Uncertainty Quantification in Dynamic Biological Models: From Foundational Principles to Clinical Applications

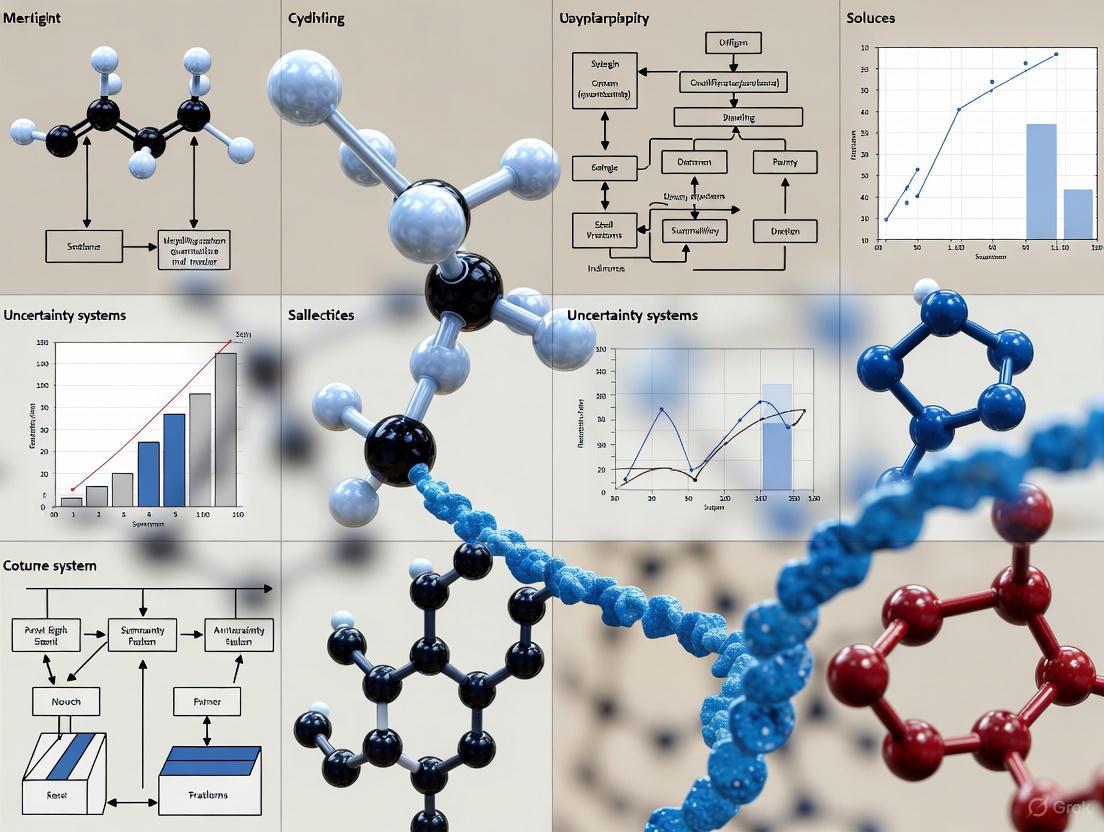

Abstract

This article provides a comprehensive overview of Uncertainty Quantification (UQ) for dynamic biological models, a critical discipline for ensuring the reliability of computational predictions in systems biology and drug discovery. We explore foundational concepts, including the distinction between aleatoric and epistemic uncertainty, and the unique challenges posed by biological complexity, such as parameter identifiability. The review systematically compares state-of-the-art UQ methodologies, from traditional Bayesian and ensemble approaches to emerging conformal prediction techniques. We further address practical troubleshooting for model optimization and establish a rigorous framework for the validation and comparative analysis of UQ methods. Designed for researchers and drug development professionals, this guide aims to bridge the gap between theoretical UQ advances and their practical application in creating trustworthy, clinically relevant biological models.

Why Uncertainty is Inevitable: Foundational Concepts in Biological Systems Modeling

In the field of biological research, particularly in the development of dynamic models and predictive algorithms, the ability to quantify uncertainty is not merely a statistical exercise but a fundamental requirement for generating reliable, reproducible, and actionable scientific insights. The proliferation of high-dimensional data in genomics, transcriptomics, and medical imaging has heightened the need for robust uncertainty quantification (UQ) to guide clinical decision-making and drug development processes [1] [2]. Uncertainty in biological data and models primarily manifests in two distinct forms: aleatoric uncertainty, stemming from inherent noise and variability in biological systems, and epistemic uncertainty, arising from limitations in knowledge, models, or data [3] [4]. This distinction is crucial because each type requires different mitigation strategies; while aleatoric uncertainty is often irreducible, epistemic uncertainty can potentially be reduced by acquiring more data or improving models [5] [6].

The accurate decomposition of total predictive uncertainty into its aleatoric and epistemic components provides researchers with a diagnostic toolkit to identify whether model inaccuracies stem from fundamental data limitations (aleatoric) or model inadequacies (epistemic) [7] [5]. For instance, in medical applications such as cancer outcome prediction or geographic atrophy assessment, understanding the nature of uncertainty directly impacts clinical interpretation and trust in the predictions [8] [2]. This guide systematically compares methodologies for quantifying and distinguishing between aleatoric and epistemic uncertainty across diverse biological applications, providing researchers with structured frameworks, experimental protocols, and visualization tools to enhance their analytical workflows.

Theoretical Foundations: Disentangling Aleatoric and Epistemic Uncertainty

Conceptual Definitions and Distinctions

At its core, aleatoric uncertainty (also known as statistical or stochastic uncertainty) refers to the inherent randomness, variability, or noise present in the data generation process itself [5] [4]. This type of uncertainty emerges from intrinsic stochasticity in biological systems, such as stochastic gene expression, biochemical randomness, measurement errors from instrumentation, or biological variation between samples or patients [9]. Aleatoric uncertainty is characterized as irreducible because it cannot be eliminated simply by collecting more data; it is a fundamental property of the system under study [7] [6]. In mathematical terms, for a regression model, aleatoric uncertainty can be represented as the variance of the residual errors: \(y = f(x) + \epsilon\), \(\epsilon \sim \mathcal{N}(0, \sigma^{2})\), where \(\epsilon\) represents the irreducible noise [4].

In contrast, epistemic uncertainty (also known as systematic or model uncertainty) originates from a lack of knowledge or incomplete information about the system being modeled [3] [8]. This form of uncertainty stems from limitations in model structure, insufficient training data that fails to adequately represent the true data distribution, simplifications in model architecture, or inadequate feature representation [5]. Unlike aleatoric uncertainty, epistemic uncertainty is theoretically reducible through obtaining more relevant data, refining model architectures, incorporating additional informative features, or integrating prior knowledge [3] [4]. In Bayesian frameworks, epistemic uncertainty is quantified through posterior distributions over model parameters: \(p(\theta \mid D) = \frac{p(D \mid \theta)\, p(\theta)}{p(D)}\), reflecting the uncertainty about model parameters \(\theta\) given the observed data \(D\) [4].
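To make the reducible versus irreducible distinction concrete, the sketch below uses Bayesian linear regression with a known noise variance, a setting chosen here purely for illustration because its posterior has a closed form. The function name `predictive_variance`, the prior variance, and the noise variance are all assumptions, not values from the cited studies.

```python
import numpy as np

def predictive_variance(X, x_star, noise_var=0.25, prior_var=10.0):
    """Split the predictive variance at x_star for Bayesian linear regression.

    Assumes y = X @ w + eps, eps ~ N(0, noise_var), and prior w ~ N(0, prior_var * I).
    """
    d = X.shape[1]
    # Posterior covariance of the weights; it depends on the inputs X only.
    post_cov = np.linalg.inv(X.T @ X / noise_var + np.eye(d) / prior_var)
    epistemic = float(x_star @ post_cov @ x_star)  # shrinks as data accumulate
    aleatoric = noise_var                          # fixed by the noise model
    return epistemic, aleatoric

rng = np.random.default_rng(0)
x_star = np.array([1.0, 0.5])  # intercept term plus one feature value
for n in (10, 1000):
    X = np.column_stack([np.ones(n), rng.uniform(-1, 1, n)])
    epi, ale = predictive_variance(X, x_star)
    print(f"n={n:5d}  epistemic={epi:.5f}  aleatoric={ale:.2f}")
```

In this toy setting the epistemic term falls roughly in proportion to 1/n, while the aleatoric term stays at the assumed noise variance no matter how much data is collected.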

Comparative Analysis: Key Characteristics and Properties

Table 1: Fundamental Characteristics of Aleatoric and Epistemic Uncertainty

| Characteristic | Aleatoric Uncertainty | Epistemic Uncertainty |
|---|---|---|
| Origin | Inherent randomness in data | Limitations in knowledge or models |
| Reducibility | Irreducible | Reducible with more information |
| Data Dependency | Does not shrink with more data; may vary with the input in heteroscedastic cases | Decreases with more representative data |
| Mathematical Representation | Variance in predictive distribution | Distribution over model parameters |
| Primary Causes | Measurement noise, biological variability, stochastic processes | Sparse data, model misspecification, inadequate features |
| Dominant in | Large datasets with inherent variability | Small datasets or extrapolation regions |

The conceptual relationship between these uncertainty types and their position within the modeling workflow can be visualized through the following framework:

Quantitative Comparison: Methodologies and Experimental Data

Computational Techniques for Uncertainty Quantification

Multiple computational approaches have been developed to quantify and distinguish between aleatoric and epistemic uncertainty in biological data analysis. Deep ensemble methods have emerged as particularly effective techniques, training multiple models with different initializations and using their disagreement to estimate epistemic uncertainty while capturing aleatoric uncertainty through data-dependent variance estimation [5] [4]. Bayesian Neural Networks (BNNs) provide a natural framework for UQ, representing epistemic uncertainty through posterior distributions over weights and aleatoric uncertainty through the noise model [6]. Monte Carlo Dropout approximates Bayesian inference by enabling dropout at test time, generating multiple stochastic forward passes to estimate both uncertainty types [2]. For biochemical applications, methods like mean-variance estimation directly parameterize aleatoric uncertainty by making the variance a function of inputs [5].
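For regression ensembles in which each member predicts a mean and a variance, a common way to separate the two uncertainty types is the law of total variance. The sketch below assumes that setup. The function name `decompose_ensemble` and the toy numbers are illustrative and are not taken from the cited studies.

```python
import numpy as np

def decompose_ensemble(means, variances):
    """Decompose predictive variance for an ensemble of mean-variance models.

    means, variances: arrays of shape (n_members, n_points).
    """
    means = np.asarray(means, dtype=float)
    variances = np.asarray(variances, dtype=float)
    aleatoric = variances.mean(axis=0)  # average predicted noise variance
    epistemic = means.var(axis=0)       # disagreement between member means
    return aleatoric, epistemic, aleatoric + epistemic

# Three hypothetical members, two test points.
member_means = [[1.0, 2.0], [1.0, 4.0], [1.0, 6.0]]
member_vars = [[0.5, 0.1], [0.5, 0.1], [0.5, 0.1]]
ale, epi, total = decompose_ensemble(member_means, member_vars)
print("aleatoric:", ale)
print("epistemic:", epi)
print("total:    ", total)
```

At the first point the members agree, so all of the uncertainty is aleatoric. At the second they disagree strongly, so the epistemic term dominates.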

Table 2: Performance Comparison of UQ Methods on Biological Tasks

| Method | Application Domain | Aleatoric UQ Accuracy | Epistemic UQ Accuracy | Computational Cost | Key Strengths |
|---|---|---|---|---|---|
| Deep Ensembles | Site-of-metabolism prediction [5] | High | High | High | State-of-the-art performance, reliable decomposition |
| Monte Carlo Dropout | Breast cancer outcome prediction [2] | Medium | Medium | Medium | Good balance of performance and efficiency |
| Bayesian Neural Networks | Molecular property prediction [5] | High | Medium | High | Strong theoretical foundations |
| Mean-Variance Estimation | Healthcare ML surveys [1] | High (heteroscedastic) | Low | Low | Effective for input-dependent noise |
| Stochastic Weight Averaging | Immunoreceptor signaling models [10] | Medium | Medium | Medium | Good for optimization uncertainty |

Experimental Evidence and Empirical Findings

Recent empirical studies across diverse biological domains provide compelling evidence for the importance of distinguishing uncertainty types. In site-of-metabolism (SOM) prediction for xenobiotic compounds, the aweSOM model utilizing deep ensembles demonstrated that epistemic uncertainty dominates for molecular structures distant from the training data, while aleatoric uncertainty increases for atoms with ambiguous metabolic labels even within the model's applicability domain [5]. Quantitative analysis revealed that predictions with high epistemic uncertainty (top quartile) showed a 45% decrease in accuracy compared to those with low epistemic uncertainty (bottom quartile), underscoring its value for identifying model limitations.

In healthcare machine learning applications, comprehensive benchmarking reveals that aleatoric uncertainty estimation is particularly unreliable in out-of-distribution settings for regression tasks, with models often outputting constant aleatoric variances regardless of input [1]. This finding challenges the conventional assumption that aleatoric uncertainty can be cleanly separated from epistemic uncertainty in practical biological applications. For geographic atrophy (GA) assessment in ophthalmology, epistemic uncertainties arising from measurement errors, subjective judgment in image annotation, and model structure uncertainties contribute significantly to variability in study results, with quantitative estimates suggesting these factors may account for 15-30% of the observed variance in progression rates [8].

In breast cancer outcome prediction using multimodal data, the UISNet framework demonstrated that integrating prior biological pathway knowledge substantially reduced epistemic uncertainty associated with feature relevance, improving the C-index from 0.650 in state-of-the-art methods to 0.691 on average across seven datasets [2]. The model's uncertainty-based interpretation method identified 20 genes associated with breast cancer outcomes, with 11 previously established in literature and 9 novel candidates, demonstrating how UQ can guide biological discovery.

Experimental Protocols and Methodological Workflows

General Framework for Uncertainty Quantification in Biological Modeling

Implementing robust uncertainty quantification in biological research follows a systematic workflow that encompasses data processing, model specification, training, and evaluation phases. The following diagram illustrates the comprehensive experimental protocol for uncertainty-aware biological modeling:

Specialized Protocol: Site-of-Metabolism Prediction with aweSOM

For biochemical applications such as site-of-metabolism prediction, the aweSOM framework provides a specialized protocol for uncertainty quantification [5]. The methodology begins with data representation, where molecules are transformed into graph structures with atoms as nodes and bonds as edges, incorporating atom-level and bond-level features. The model architecture employs a Graph Neural Network (GNN) with multiple independent instances initialized with different random seeds to form a deep ensemble. During training, each network in the ensemble is optimized using binary cross-entropy loss with Monte Carlo dropout for regularization.

For uncertainty decomposition, predictive uncertainty is quantified using the entropy of the predictive distribution: \(H[\hat{y} \mid x] = -\sum_{c=1}^{C} \hat{y}_c \log \hat{y}_c\), where \(\hat{y}\) represents the mean prediction across ensemble members. The epistemic uncertainty is specifically calculated as the mutual information between the model parameters and the prediction: \(I[y, \theta \mid x, D] = H[\hat{y} \mid x] - \mathbb{E}_{p(\theta \mid D)}[H[y \mid x, \theta]]\), which captures the disagreement between ensemble members. The aleatoric uncertainty is then derived as the remainder: \(\mathbb{E}_{p(\theta \mid D)}[H[y \mid x, \theta]]\).
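The entropy-based decomposition described above can be sketched in a few lines for a single input. This is a minimal illustration rather than the aweSOM implementation. The function names and the example probabilities are assumptions, and the expectation over \(p(\theta \mid D)\) is approximated by a plain average over ensemble members.

```python
import numpy as np

def entropy(p, axis=-1, eps=1e-12):
    """Shannon entropy in nats; probabilities are clipped to avoid log(0)."""
    p = np.clip(p, eps, 1.0)
    return -(p * np.log(p)).sum(axis=axis)

def entropy_decomposition(member_probs):
    """member_probs: (n_members, n_classes) class probabilities for one input."""
    member_probs = np.asarray(member_probs, dtype=float)
    mean_probs = member_probs.mean(axis=0)
    total = entropy(mean_probs)               # entropy of the mean prediction
    aleatoric = entropy(member_probs).mean()  # expected entropy of the members
    epistemic = total - aleatoric             # mutual information
    return total, aleatoric, epistemic

# Members agree that the label is ambiguous: purely aleatoric.
print(entropy_decomposition([[0.5, 0.5], [0.5, 0.5], [0.5, 0.5]]))
# Members are individually confident but contradict each other: mostly epistemic.
print(entropy_decomposition([[0.99, 0.01], [0.01, 0.99]]))
```

Both examples have the same total entropy (log 2), yet they call for different responses: the first reflects ambiguous labels, the second a region where more training data would help.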

This approach enables the identification of atoms with high epistemic uncertainty (indicating limited training data for similar molecular contexts) versus those with high aleatoric uncertainty (reflecting inherently ambiguous or noisy metabolic labels). Experimental validation demonstrates that predictions with low epistemic uncertainty show significantly higher accuracy (>80% for phase I metabolism), enabling reliable identification of model limitations and targeted data acquisition strategies.

Implementing effective uncertainty quantification in biological research requires both computational tools and domain-specific resources. The following table catalogues essential components of the uncertainty quantification toolkit for biological data analysis:

Table 3: Research Reagent Solutions for Uncertainty Quantification in Biological Studies

| Tool/Resource | Type | Primary Function | Example Applications |
|---|---|---|---|
| PyBioNetFit [10] | Software Tool | Parameter estimation and UQ for biological models | Immunoreceptor signaling models, metabolic pathways |
| COPASI [10] | Software Platform | Biochemical network simulation with UQ | Enzyme kinetics, metabolic flux analysis |
| Data2Dynamics [10] | Modeling Environment | Parameter estimation with profile likelihoods | Dynamic biological systems, ODE models |
| AMICI/PESTO [10] | Toolbox Combination | Gradient-based optimization with UQ | Large-scale biological models, sensitivity analysis |
| RegionFinder [8] | Specialized Software | Image analysis with epistemic uncertainty assessment | Geographic atrophy progression, medical imaging |
| Deep Ensemble Frameworks [5] [4] | Algorithmic Approach | Epistemic uncertainty via model disagreement | Site-of-metabolism prediction, healthcare ML |
| Monte Carlo Dropout [2] | Neural Network Technique | Approximation of Bayesian inference | Cancer outcome prediction, survival analysis |
| BNN Architectures [6] [4] | Modeling Framework | Native uncertainty representation | Molecular property prediction, clinical risk models |
| KEGG/Reactome [2] | Knowledge Base | Prior biological pathway information | Interpretable ML, feature selection validation |

The systematic comparison of aleatoric and epistemic uncertainty quantification methods across biological applications reveals a critical insight: the strategic decomposition of uncertainty provides not just statistical metrics, but actionable insights for guiding research investments and improving model reliability. While aleatoric uncertainty represents the fundamental limits of predictability inherent in biological systems, epistemic uncertainty serves as a diagnostic for model limitations and knowledge gaps [7] [5]. The experimental evidence consistently demonstrates that models incorporating both uncertainty types—such as aweSOM for metabolism prediction and UISNet for cancer outcomes—outperform approaches that ignore this distinction, achieving state-of-the-art predictive accuracy while providing interpretable confidence estimates [5] [2].

For researchers and drug development professionals, the practical implication is that uncertainty-aware models enable more efficient resource allocation by identifying whether predictive limitations stem from irreducible system noise (requiring acceptance of inherent variability) or reducible knowledge gaps (amenable to targeted data collection or model refinement) [9] [8]. As biological data continues to grow in volume and complexity, the integration of sophisticated uncertainty quantification frameworks will become increasingly essential for transforming data into reliable biological knowledge and clinically actionable insights. The methodologies, protocols, and tools outlined in this guide provide a foundation for researchers to implement these approaches across diverse biological domains, ultimately enhancing the robustness and reproducibility of computational biology in the era of high-dimensional data.

In the realm of systems biology, dynamic models—typically formulated as sets of ordinary differential equations (ODEs)—have become indispensable tools for deciphering the complex behaviors of biological processes. These mechanistic models provide a quantitative understanding that would be difficult to achieve through other means, enabling researchers to predict system behavior under different conditions, generate testable hypotheses, and identify knowledge gaps [11]. However, the predictive utility of these models hinges on addressing three interconnected fundamental properties: identifiability, observability, and parameter sensitivity. These properties form the foundational pillars supporting reliable model predictions and constitute the core challenge in uncertainty quantification for dynamic biological systems.

Structural identifiability addresses whether it is theoretically possible to uniquely determine a model's unknown parameters from experimental measurements, assuming perfect, noise-free data [12] [13]. A parameter is structurally globally identifiable if it can be uniquely determined from system output, and structurally locally identifiable if this uniqueness holds within a local neighborhood of parameter space [12]. Observability, a related concept from control theory, determines whether it is possible to reconstruct the internal state variables of a model from measurements of its outputs [12]. Finally, parameter sensitivity analysis examines how variations in parameters influence model outputs, which directly impacts practical identifiability—the ability to estimate parameters with acceptable precision from real-world, noisy data [14]. The interplay between these properties creates the fundamental framework for assessing model credibility and quantifying uncertainty in model predictions.

Core Concepts and Their Mathematical Foundations

Theoretical Frameworks and Their Interrelationships

The mathematical foundations of identifiability and observability analysis for nonlinear dynamic systems are deeply rooted in differential geometry. For a dynamic model described by ODEs in the general form:

\[ \begin{aligned} \dot{x}(t) &= f[x(t),u(t),p], \\ y(t) &= g[x(t),p], \\ x_0 &= x(t_0,p) \end{aligned} \]

where (x(t)) represents the state variables, (u(t)) denotes inputs, (p) represents parameters, and (y(t)) signifies the measurable outputs, structural identifiability can be approached as a generalized version of observability [12]. By treating model parameters as state variables with zero dynamics, identifiability analysis can be recast as an observability analysis problem [12]. This unified approach allows researchers to assess both properties using Lie derivatives, which measure how output functions change along the vector fields defined by the system dynamics.
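A small worked example may help show how augmenting the state with the parameters turns identifiability into a rank test. The one-state decay model below is a toy system assumed here for illustration and is not taken from [12]. The parameters \(p_1\) and \(p_2\) enter only through their product.

```latex
% Toy model (assumed): first-order decay whose rate is the product p_1 p_2
\begin{align}
\dot{x} &= -p_1 p_2\, x, \qquad y = x, \qquad \dot{p}_1 = \dot{p}_2 = 0 \\
% Augmented state and vector field
\tilde{x} &= (x,\; p_1,\; p_2)^{\top}, \qquad \tilde{f} = (-p_1 p_2 x,\; 0,\; 0)^{\top} \\
% Successive Lie derivatives of the output along \tilde{f}
L^{0}_{\tilde{f}}\, y &= x, \qquad
L^{1}_{\tilde{f}}\, y = -p_1 p_2\, x, \qquad
L^{2}_{\tilde{f}}\, y = p_1^{2} p_2^{2}\, x \\
% Observability-identifiability matrix: gradients of the Lie derivatives w.r.t. \tilde{x}
\mathcal{O} &=
\begin{pmatrix}
1 & 0 & 0 \\
-p_1 p_2 & -p_2 x & -p_1 x \\
p_1^{2} p_2^{2} & 2 p_1 p_2^{2} x & 2 p_1^{2} p_2 x
\end{pmatrix}
\end{align}
```

The third column equals \(p_1/p_2\) times the second, so \(\operatorname{rank}\,\mathcal{O} = 2 < 3\): the state \(x\) is observable, but \(p_1\) and \(p_2\) are not individually identifiable. Only the combination \(k = p_1 p_2\) can be recovered from \(y\), and rewriting the model in terms of \(k\) restores full rank.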

The relationship between these core properties is hierarchical and interdependent. Structural identifiability represents a necessary prerequisite for practical identifiability—if parameters cannot be identified even with perfect data, there is no hope of estimating them from real experimental measurements [13]. Similarly, observability ensures that the internal states governing system dynamics can be inferred from measured outputs. Both properties are influenced by parameter sensitivities, as parameters with low sensitivity have minimal effect on outputs and are consequently difficult to identify [14]. The diagram below illustrates these core concepts and their relationships:

Classification and Definitions

The terminology surrounding identifiability encompasses several nuanced classifications that are essential for proper analysis:

Structural Global Identifiability: A parameter \(p_i\) is structurally globally identifiable if, for almost any parameter vector \(p^*\), the equality of model outputs \(y(t,\hat{p}) = y(t,p^*)\) for all \(t\) implies that \(\hat{p}_i = p_i^*\) [12]. This represents the strongest form of identifiability.

Structural Local Identifiability: A parameter is structurally locally identifiable if the condition \(\hat{p}_i = p_i^*\) holds only within a neighborhood of \(p^*\), rather than across the entire parameter space [12] [13].

Structural Unidentifiability: A parameter is structurally unidentifiable if no such neighborhood exists, meaning infinitely many parameter values can produce identical model outputs [12].

Practical Identifiability: This refers to the ability to determine parameters with acceptable precision from finite, noisy, real-world data, taking into account experimental constraints and measurement errors [14].

The relationships between these different types of identifiability form a hierarchical structure that guides analysis workflows, as shown in the following conceptual classification:

Methodological Approaches: A Comparative Analysis

Computational Methods for Identifiability and Observability Analysis

Various computational methodologies have been developed to address the challenges of identifiability and observability analysis in dynamic biological systems. These approaches differ in their underlying mathematical foundations, applicability to different model types, and computational efficiency. The table below summarizes key methods and their characteristics:

Table 1: Computational Methods for Structural Identifiability and Observability Analysis

| Method | Mathematical Basis | Applicable Model Types | Computational Efficiency | Key Features |
|---|---|---|---|---|
| STRIKE-GOLDD [12] | Lie Derivatives & Differential Geometry | Nonlinear ODEs (analytic functions) | Moderate to High (with decomposition) | Treats identifiability as generalized observability; handles non-rational models |
| Differential Algebra [13] | Differential Algebra | Rational ODEs | Moderate (for medium-scale models) | Exact results; implemented in software like COMBOS |
| Taylor Series Expansion [13] | Power Series | Nonlinear ODEs | High (for small models) | Uses coefficients of Taylor expansion |
| Similarity Transformation [13] | Linear System Theory | Linear ODEs | High | Limited to linear systems |
| Symbolic Computation [15] | Symbolic Math | Nonlinear ODEs | Low to Moderate (model size-dependent) | Exact results; can handle complex expressions |
| Sensitivity-Based [14] | Sensitivity Analysis & Collinearity | Nonlinear ODEs | High (scales well) | Assesses practical identifiability; uses FIM |

Among these approaches, the STRIKE-GOLDD method represents a significant advancement due to its general applicability to analytic nonlinear systems, including those with non-rational terms such as Hill kinetics, which are common in biochemical models [12]. This method determines identifiability by calculating the rank of a generalized observability-identifiability matrix constructed using Lie derivatives. When this rank test reveals unidentifiability, the procedure can determine the subset of identifiable parameters and, in some cases, find identifiable combinations of the remaining parameters [12].

For large-scale models, methods based on sensitivity analysis and collinearity indices offer better scalability. These approaches, implemented in tools like VisId, use a collinearity index to quantify parameter correlations and integer optimization to find the largest groups of uncorrelated parameters [14]. The computational efficiency of these methods enables the practical identifiability analysis of dynamic models with dozens to hundreds of parameters.
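As a rough illustration of how a collinearity index flags correlated parameters, the sketch below uses a widely used definition: normalize each column of the output sensitivity matrix to unit length, then take the inverse square root of the smallest eigenvalue of the resulting Gram matrix. Whether VisId computes the index in exactly this way is not stated here, so treat the formula, the function name, and the decay model as assumptions.

```python
import numpy as np

def collinearity_index(S):
    """Collinearity index of the parameters whose sensitivities form the columns of S."""
    S = np.asarray(S, dtype=float)
    S_norm = S / np.linalg.norm(S, axis=0)           # unit-length columns
    eigvals = np.linalg.eigvalsh(S_norm.T @ S_norm)  # returned in ascending order
    lam_min = max(eigvals[0], 0.0)                   # guard against tiny negative round-off
    return np.inf if lam_min == 0.0 else 1.0 / np.sqrt(lam_min)

# Hypothetical model y(t) = A * exp(-k t) with analytic output sensitivities.
t = np.linspace(0, 5, 50)
A, k = 2.0, 0.5
S = np.column_stack([np.exp(-k * t), -A * t * np.exp(-k * t)])
print("collinearity index for (A, k):", collinearity_index(S))
```

An index of 1 means the sensitivity vectors are orthogonal. Large values mean that a change in one parameter can be almost fully compensated by changes in the others, which signals poor practical identifiability for that subset.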

Uncertainty Quantification Methods

Once identifiability has been established, uncertainty quantification (UQ) becomes essential for assessing the reliability of model predictions. UQ methods characterize how uncertainty in parameters and model structure propagates to uncertainty in predictions. The following table compares prominent UQ approaches:

Table 2: Uncertainty Quantification Methods for Dynamic Biological Systems

| Method | Theoretical Framework | Uncertainty Type Handled | Computational Demand | Statistical Guarantees |
|---|---|---|---|---|
| Bayesian Inference [11] [16] | Bayesian Statistics | Parameter, Structural | High (MCMC sampling) | Asymptotic with correct priors |
| Conformal Prediction [11] [17] | Frequentist | Predictive | Moderate | Non-asymptotic, distribution-free |
| Prediction Profile Likelihood [11] | Frequentist | Parameter, Predictive | High (multiple optimizations) | Asymptotic |
| Ensemble Modeling [11] | Frequentist/Bayesian | Structural, Predictive | Moderate | Heuristic |
| Fisher Information Matrix [11] | Frequentist | Parameter | Low | Local, asymptotic |

Traditional Bayesian methods, while powerful, often require specification of parameter distributions as priors and may impose parametric assumptions that don't always reflect biological reality [11]. Additionally, Bayesian approaches can be computationally expensive and susceptible to convergence failures when dealing with multimodal posterior distributions arising from identifiability issues [11].

Conformal prediction has emerged as a promising alternative that provides non-asymptotic guarantees for prediction intervals, ensuring well-calibrated coverage probabilities even with limited data and model misspecification [11] [17]. This approach is particularly valuable in systems biology applications where data are typically scarce and models are necessarily simplified representations of complex biological processes. Recent algorithms tailored for dynamic biological systems, such as those based on jackknife methodology and location-scale regression models, offer improved statistical efficiency while maintaining robustness and scalability [11].
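To give a feel for how conformal intervals are built, the sketch below implements plain split conformal prediction with absolute residuals. This is a simpler relative of the jackknife and location-scale algorithms mentioned above, not a reproduction of them. The decay model, the noise level, and the function names are hypothetical, and the calibration points are assumed to be exchangeable and held out from model fitting.

```python
import math
import numpy as np

def split_conformal_quantile(cal_residuals, alpha):
    """Conformal quantile of absolute calibration residuals for coverage 1 - alpha."""
    scores = np.sort(np.abs(np.asarray(cal_residuals, dtype=float)))
    n = scores.size
    k = math.ceil((n + 1) * (1 - alpha))  # rank with the finite-sample correction
    return np.inf if k > n else float(scores[k - 1])

def conformal_interval(y_pred, q):
    """Symmetric prediction interval of half-width q around each prediction."""
    y_pred = np.asarray(y_pred, dtype=float)
    return y_pred - q, y_pred + q

rng = np.random.default_rng(0)
model = lambda t: 2.0 * np.exp(-0.5 * t)  # hypothetical, already calibrated model
t_cal = np.linspace(0, 5, 40)             # held-out calibration time points
y_cal = model(t_cal) + rng.normal(0, 0.1, t_cal.size)
q = split_conformal_quantile(y_cal - model(t_cal), alpha=0.1)
lower, upper = conformal_interval(model(np.array([1.0, 2.0])), q)
print("half-width:", q, "intervals:", list(zip(lower, upper)))
```

The `(n + 1)` correction is what yields the finite-sample coverage guarantee. When the calibration set is too small for the requested level, the function returns an infinite half-width rather than an over-confident interval.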

Experimental Protocols and Assessment Workflows

Standardized Protocols for Identifiability Assessment

A systematic approach to identifiability analysis is crucial for ensuring model reliability. The following workflow outlines a comprehensive protocol for assessing and addressing identifiability issues in dynamic biological models:

The assessment begins with structural identifiability analysis using one of the computational methods described under "Computational Methods for Identifiability and Observability Analysis". For models that prove to be structurally unidentifiable, the protocol proceeds to model reparameterization, which involves finding identifiable combinations of parameters or eliminating redundant parameters [12] [15]. This step is crucial because attempting to estimate unidentifiable parameters will lead to failed estimation algorithms, wasted resources, and potentially misleading model predictions [12].

Once structural identifiability is established, practical identifiability analysis assesses whether parameters can be estimated with sufficient precision from available data. This typically involves sensitivity analysis and examination of the Fisher Information Matrix (FIM) or profile likelihoods [14]. Based on these results, experimental design optimization may be employed to maximize the information content of future experiments, potentially by measuring additional outputs, increasing sampling frequency, or designing informative input stimuli [14].
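The role of the Fisher Information Matrix in practical identifiability and experimental design can be illustrated with a two-parameter decay model. The model, parameter values, noise level, and function names below are assumptions chosen for illustration. With independent Gaussian noise, the FIM reduces to the scaled Gram matrix of the output sensitivities, and its inverse gives Cramér-Rao lower bounds on parameter variances.

```python
import numpy as np

def fisher_information(t, A, k, sigma):
    """FIM for y(t) = A * exp(-k t) with i.i.d. Gaussian noise of std sigma."""
    dy_dA = np.exp(-k * t)
    dy_dk = -A * t * np.exp(-k * t)
    S = np.column_stack([dy_dA, dy_dk])  # sensitivity matrix, one column per parameter
    return S.T @ S / sigma**2

def crlb_std(fim):
    """Cramér-Rao lower bounds on the parameter standard deviations."""
    return np.sqrt(np.diag(np.linalg.inv(fim)))

# Compare two hypothetical sampling designs over the same time window.
designs = {"dense (50 samples)": np.linspace(0, 5, 50),
           "sparse (5 samples)": np.linspace(0, 5, 5)}
for label, t in designs.items():
    fim = fisher_information(t, A=2.0, k=0.5, sigma=0.1)
    print(label, "-> lower bounds on std of (A, k):", crlb_std(fim))
```

Comparing the bounds across candidate designs is one simple way to judge whether measuring more often, or at different times, would make the parameters estimable with acceptable precision. These bounds are local and asymptotic, so they complement profile likelihoods rather than replace them.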

Protocol for Uncertainty Quantification in Dynamic Systems

For uncertainty quantification, the following standardized protocol integrates both traditional and emerging approaches:

1. Model Calibration: Estimate parameters using global optimization methods such as evolutionary algorithms or scatter search (e.g., eSS) combined with efficient local search methods (e.g., NL2SOL) [14]. Regularization techniques may be incorporated to prevent overfitting.

2. Identifiability Assessment: Perform both structural and practical identifiability analysis as described under "Standardized Protocols for Identifiability Assessment".

3. Uncertainty Quantification Method Selection: Choose appropriate UQ methods based on model characteristics and available computational resources. For models with potential identifiability issues, conformal prediction methods may be preferable due to their robustness to model misspecification [11].

4. Implementation: Apply the selected UQ methods, such as:
   - Bayesian inference with Markov Chain Monte Carlo (MCMC) sampling
   - Conformal prediction algorithms tailored for dynamic systems
   - Prediction profile likelihood calculations
   - Ensemble modeling approaches

5. Validation: Assess the calibration and sharpness of uncertainty estimates using validation datasets not used in model training [11].

The integration of conformal prediction into this workflow is particularly valuable for providing distribution-free, non-asymptotic guarantees on prediction intervals, which remain reliable even with complex, nonlinear dynamic models [11].
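The validation step of the protocol above relies on two simple summaries of a set of prediction intervals: empirical coverage (calibration) and mean width (sharpness). A minimal sketch follows, with a hypothetical function name and made-up validation values.

```python
import numpy as np

def coverage_and_sharpness(y_true, lower, upper):
    """Return (fraction of observations inside their interval, mean interval width)."""
    y_true, lower, upper = (np.asarray(a, dtype=float) for a in (y_true, lower, upper))
    covered = (y_true >= lower) & (y_true <= upper)
    return covered.mean(), (upper - lower).mean()

# Made-up held-out observations and their prediction intervals.
y_val = [1.0, 2.0, 3.0, 4.0]
lower = [0.5, 1.5, 3.5, 3.0]
upper = [1.5, 2.5, 4.5, 5.0]
cov, width = coverage_and_sharpness(y_val, lower, upper)
print(f"empirical coverage = {cov:.2f}, mean width = {width:.2f}")
```

Coverage should be compared with the nominal level (for example 0.90). Among methods that reach that level, narrower intervals are preferable, since very wide intervals can achieve coverage without being informative.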

Essential Computational Tools

The computational complexity of identifiability analysis and uncertainty quantification has spurred the development of specialized software tools. These resources significantly lower the barrier to performing rigorous model analysis. The following table catalogs key software tools and their applications:

Table 3: Research Toolkit for Identifiability Analysis and Uncertainty Quantification

| Tool Name | Platform | Primary Function | Supported Model Types | Access |
|---|---|---|---|---|
| STRIKE-GOLDD [12] | MATLAB | Structural Identifiability & Observability | Nonlinear ODEs | Open Source |
| VisId [14] | MATLAB | Practical Identifiability & Visualization | Nonlinear ODEs | Open Source |
| StrucID [18] | MATLAB | Identifiability, Observability & Controllability | Nonlinear State-Space | Application |
| StructuralIdentifiability.jl [13] | Julia | Structural Identifiability Analysis | Nonlinear ODEs | Open Source |
| COMBOS [13] | Web Application | Structural Identifiability Analysis | Rational ODEs | Web Access |
| Fraunhofer Chalmers Tool [13] | Mathematica | Structural Identifiability Analysis | Nonlinear ODEs | Commercial |

These tools employ different mathematical approaches and vary in their scalability, usability, and model compatibility. The STRIKE-GOLDD toolbox, for instance, implements a differential geometry-based approach that can handle non-rational nonlinearities common in biological models, such as Hill kinetics [12]. In contrast, VisId focuses on practical identifiability assessment through sensitivity-based methods and provides visualization capabilities that help researchers identify parameter correlations and model deficiencies [14].

Selection Guidelines for Research Tools

Choosing appropriate software depends on several factors, including model characteristics, analysis goals, and computational resources:

For structural identifiability analysis of nonlinear models with potential non-rational terms, STRIKE-GOLDD offers broad applicability, though it may require decomposition techniques for large-scale models [12].

For large-scale model calibration and practical identifiability assessment, VisId provides scalable algorithms combining global optimization with regularization techniques, along with visualization capabilities that facilitate result interpretation [14].

For uncertainty quantification with limited data, conformal prediction algorithms implemented in MATLAB or Python offer robust prediction intervals with theoretical guarantees, serving as complements or alternatives to Bayesian methods [11].

For automatic model discovery with identifiability guarantees, methodologies integrating SINDy-PI with structural identifiability and observability (SIO) analysis ensure that discovered models are both accurate and identifiable [15].

Researchers should consider using multiple complementary tools to validate results, as different methods may provide unique insights into model structure and identifiability properties.

Identifiability, observability, and parameter sensitivity represent fundamental challenges that must be addressed to ensure the reliability of dynamic models in systems biology. These properties are deeply interconnected—structural identifiability is a necessary prerequisite for practical identifiability, which in turn depends on parameter sensitivities and is essential for meaningful uncertainty quantification. Ignoring these relationships can lead to misleading predictions, wasted resources, and erroneous biological insights.

The methodological landscape for tackling these challenges has evolved significantly, with tools like STRIKE-GOLDD addressing structural identifiability for complex nonlinear models [12], VisId enabling practical identifiability analysis for large-scale systems [14], and conformal prediction methods providing robust uncertainty quantification with non-asymptotic guarantees [11]. The integration of these approaches into standardized workflows, from initial model development through final uncertainty assessment, represents best practice in computational systems biology.

As biological models continue to increase in complexity and scope, particularly with emerging efforts in whole-cell modeling, the core challenge of ensuring identifiability, observability, and reliable uncertainty quantification will only grow in importance. Future methodological developments will likely focus on enhancing computational efficiency, improving scalability for ultra-large models, and developing more robust approaches that seamlessly integrate model discovery with identifiability assurance. By directly addressing these foundational issues, researchers can build dynamic models that not only fit existing data but also generate predictions with quantifiable uncertainty, ultimately enhancing their utility in biological discovery and therapeutic applications.

In the field of systems biology, computational models are indispensable tools for analyzing, predicting, and understanding the intricate behaviors of complex biological processes [11] [17]. These dynamic mechanistic models, typically composed of sets of deterministic nonlinear ordinary differential equations, provide a quantitative understanding of dynamics that would be difficult to achieve through other means [11]. However, as model complexity increases with more species and unknown parameters, achieving full identifiability and observability becomes progressively more difficult [11]. This complexity directly undermines model identifiability, the ability to uniquely determine unknown parameters from available data, and leads to more uncertain predictions [11].

Uncertainty Quantification (UQ) has consequently emerged as a fundamental process for systematically determining and characterizing the degree of confidence in computational model predictions [11] [17]. Robust UQ is particularly critical for dynamic biological systems because nonlinearities and parameter sensitivities strongly influence their behavior [11]. Without proper UQ, models may become overconfident in their predictions, potentially leading to misleading results in critical applications such as cancer therapy optimization and treatment personalization [11].

The reliability of these models is fundamentally challenged by three primary sources of uncertainty: intrinsic randomness stemming from quantum-level phenomena, measurement error from observational imperfections, and model discrepancy arising from incomplete mathematical representations of biological reality. Understanding, quantifying, and managing these interrelated sources of noise is essential for building trustworthy models that can effectively guide scientific discovery and biomedical applications.

Theoretical Foundations: Classifying and Quantifying Noise

In computational modeling of biological systems, noise and uncertainty manifest in several distinct forms that require different methodological approaches for quantification and mitigation:

Intrinsic Randomness: This fundamental stochasticity arises from the inherent probabilistic nature of biological systems. Unlike other uncertainty forms, intrinsic randomness cannot be reduced through improved measurements or modeling, as it reflects genuine indeterminacy in the system [19] [20]. At the quantum level, this randomness stems from pure quantum phenomena such as vacuum noise, which represents fluctuating electromagnetic fields in their ground state [20] [21].

Measurement Error: This epistemic uncertainty results from imperfections in the observational process. Recent theoretical work has generalized the concept of measurement error to distinguish between intrinsic measurement error, which reflects some subjective quality of either the measurement tool or the measurand itself, and incidental measurement error, which represents the traditional form arising from a set of deterministic sample measurements [19].

Model Discrepancy: This systematic uncertainty emerges from simplifications, incorrect assumptions, or missing biological mechanisms in the mathematical structure of computational models. Evidence suggests that even state-of-the-art machine learning interatomic potentials (MLIPs) with carefully selected training datasets and small average errors may not fully reproduce atomic dynamics or related properties [22].

Theoretical Frameworks for Uncertainty Classification

The classification of measurement error into intrinsic and incidental varieties represents a significant advancement in uncertainty quantification theory. Intrinsic measurement error allows researchers to encode measurement uncertainty at the level of a single sample unit within a sample space, rather than only being unique to a subset defined by conditioning variables [19]. This formulation converts a traditional modeling problem into a data collection challenge, requiring additional information about the confidence or certainty that sample measurements assume particular values [19].

For Bernoulli target phenomena, the concept of intrinsic measurement error has been recognized in the cognitive psychology literature through confidence weighting, which enables the construction of estimation theories for phenomena subject to intrinsic measurement error [19]. The framework of random-variable-valued measurements (RVVMs) generalizes this approach to non-Bernoulli target phenomena, allowing for coherent integration into diverse model-building frameworks [19].

Table 1: Theoretical Frameworks for Classifying and Quantifying Uncertainty

| Framework | Uncertainty Type Addressed | Key Innovation | Biological Application Examples |
|---|---|---|---|
| Random-Variable-Valued Measurements (RVVMs) [19] | Intrinsic vs. Incidental Measurement Error | Allows measurement uncertainty at individual sample unit level | Cognitive psychology confidence weighting; survey response certainty |
| Conformal Prediction [11] [17] | Model discrepancy, Parameter uncertainty | Provides non-asymptotic guarantees for prediction regions without distributional assumptions | Dynamic biological system modeling; prediction intervals for protein concentrations |
| Matrix Factorization [23] | Multidimensional measurement error | Handles within-item multidimensionality using alternating least squares | Dyslexia risk assessment from complex cognitive test batteries |
| Quantum Randomness Quantification [20] | Intrinsic randomness | Quantifies guessing probability minimized over input states | Certification of random number generators; cryptographic systems |

Methodological Approaches: Quantifying Different Noise Types

Experimental Protocols for Noise Characterization

Protocol for Assessing Measurement and Sampling Noise in Cognitive Studies

Research investigating location probability learning highlights how neglecting psychometric properties can lead to flawed conclusions about unconscious processes [24]. The experimental protocol involves:

Participant Recruitment: 159 participants performed the additional singleton task, allowing interactions between memory-guided visual search and awareness to be examined [24].

Task Design: Implementation of the additional singleton task where participants learn to suppress salient but irrelevant distractors frequently presented in certain locations [24].

Awareness Measurement: Single-trial awareness tests that lack reliability assessment through standard methods due to their one-time administration [24].

Modeling Approach: Development of a noisy conscious model that incorporates realistic measurement noise in participants' search times and awareness responses, fitted to empirical data [24].

Simulation Procedure: Using estimated parameters to simulate new responses and participants, demonstrating how measurement and sampling noise can falsely support unconscious learning hypotheses [24].

This protocol reveals that under reasonable measurement noise and sample sizes, simulated evidence can paradoxically but falsely support arguments used to defend unconscious learning hypotheses [24].

Protocol for Evaluating Model Discrepancy in Machine Learning Interatomic Potentials

Research on machine learning interatomic potentials (MLIPs) demonstrates how conventional error metrics can mask significant model discrepancies [22]:

MLIP Construction: Retrieval of multiple MLIP models (GAP, GAPPRX, NNP, SNAP, MTP) from previous studies and training of Deep Potential (DeePMD) models using consistent training datasets encompassing diverse atomistic structures [22].

Testing Dataset Creation: Construction of specialized testing sets (e.g., interstitial-RE and vacancy-RE testing sets) consisting of snapshots of atomic configurations with single migrating vacancies or interstitials from ab initio molecular dynamics (AIMD) simulations [22].

Conventional Error Metrics: Calculation of standard root-mean-square errors (RMSEs) for energies and atomic forces across testing datasets [22].

Dynamic Property Comparison: Implementation of MLIP-driven molecular dynamics (MD) simulations with comparison to AIMD simulations for properties like diffusion coefficients, radial distribution functions, and defect migration barriers [22].

Rare Event Analysis: Development of evaluation metrics based on atomic forces of rare event migrating atoms, demonstrating that MLIPs with low average errors may still show significant discrepancies in simulating atomic dynamics [22].

This protocol revealed that MLIPs with force errors as low as 0.03 eV Å⁻¹ could still produce errors of 0.1 eV in vacancy diffusion activation energies compared to DFT values of 0.59 eV [22].

Quantitative Comparison of Uncertainty Quantification Methods

Table 2: Performance Comparison of Uncertainty Quantification Methods in Biological Systems

| UQ Method | Theoretical Basis | Computational Scalability | Statistical Guarantees | Key Limitations |
|---|---|---|---|---|
| Bayesian Inference [11] [16] | Bayesian probability | Challenging for large-scale models; convergence issues | Strong with correct priors and models | Requires specification of parameter priors; computationally expensive |
| Conformal Prediction [11] [17] | Frequentist; non-parametric | Excellent scalability | Non-asymptotic marginal coverage guarantees | Weaker theoretical justification for complex correlations |
| Prediction Profile Likelihood [11] | Frequentist; likelihood-based | Computationally demanding for multiple predictions | Asymptotic guarantees | Does not scale well with numerous predictions |
| Ensemble Modeling [11] | Empirical aggregation | Good performance for large-scale models | Weaker theoretical justification | Limited theoretical foundation for uncertainty intervals |
| Matrix Factorization [23] | Psychometric/Rasch measurement | Efficient alternating least squares algorithm | Objective measurement across instruments | Requires sufficient response data for stable estimates |

Research Reagent Solutions for Uncertainty Quantification

Table 3: Essential Computational Tools for Uncertainty Quantification in Biological Systems

| Tool/Technique | Function | Application Context |
|---|---|---|
| Conformal Prediction Algorithms [11] [17] | Provides prediction intervals with non-asymptotic guarantees | Dynamic biological system prediction; limited data settings |
| Random-Variable-Valued Measurements (RVVMs) [19] | Encodes measurement confidence directly in data structure | Surveys with confidence assessment; cognitive testing |
| Photonic Probabilistic Neurons (PPNs) [21] | Hardware-level random number generation using quantum vacuum noise | Ultra-fast stochastic sampling; probabilistic machine learning |
| Alternating Least Squares Matrix Factorization [23] | Extracts multidimensional signals while increasing Shannon entropy | Multidimensional clinical assessment; dyslexia risk measurement |
| Profile Likelihood Methods [16] | Identifies parameter identifiability issues in mechanistic models | Dynamic model parameter estimation; structural identifiability analysis |
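As an illustration of the profile likelihood entry in the table, the sketch below profiles the decay rate of a hypothetical exponential model \( y(t) = A e^{-kt} \) with Gaussian noise of known standard deviation. The model, noise level, grid, and random seed are assumptions chosen for brevity and do not come from the cited references. For each fixed \( k \), the amplitude \( A \) is re-optimized in closed form, and the interval collects the values of \( k \) whose chi-square increase stays below 3.84, the 95% threshold for one degree of freedom.

```python
import numpy as np

rng = np.random.default_rng(1)
t = np.linspace(0.0, 10.0, 30)
sigma = 0.05                                   # assumed known noise s.d.
y = 1.0 * np.exp(-0.5 * t) + rng.normal(0.0, sigma, t.size)

def profiled_chi2(k):
    """Chi-square at fixed k, with amplitude A re-optimized in closed form."""
    basis = np.exp(-k * t)
    A_hat = (basis @ y) / (basis @ basis)      # linear least squares for A
    resid = y - A_hat * basis
    return float(resid @ resid) / sigma**2

k_grid = np.linspace(0.1, 1.0, 181)
profile = np.array([profiled_chi2(k) for k in k_grid])
k_hat = k_grid[np.argmin(profile)]

# Pointwise 95% interval: chi-square increase of at most 3.84 (1 d.o.f.)
inside = profile - profile.min() <= 3.84
k_low, k_high = k_grid[inside].min(), k_grid[inside].max()
print(f"k_hat = {k_hat:.3f}, 95% profile interval = [{k_low:.3f}, {k_high:.3f}]")
```

A profile that stays flat, so that the interval runs to the edge of the grid, would point to a practically non-identifiable parameter; here the interval is expected to be bounded because the toy model is well determined by the data.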

Case Studies: Noise Management in Biological Research

Conformal Prediction for Dynamic Biological Systems

The application of conformal prediction methods to dynamic biological systems demonstrates a novel approach to managing model discrepancy and parameter uncertainty [11] [17]. Researchers proposed two conformal algorithms specifically designed for dynamic biological systems to optimize statistical efficiency under limited data constraints common in biological research [11].

Figure: Conformal Prediction Workflow for Biological Systems

This methodology provides non-asymptotic guarantees that improve robustness and scalability across various applications, even when predictive models are misspecified [11]. Through illustrative scenarios, these conformal algorithms serve as powerful complements—or even alternatives—to conventional Bayesian methods, delivering effective uncertainty quantification for predictive tasks in systems biology [11] [17].
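The coverage guarantee under misspecification can be illustrated with standard split conformal prediction. This is a generic textbook variant, not the two algorithms proposed in [11]. In the sketch below, the logistic growth curve, noise level, sample sizes, and the cubic polynomial predictor are all hypothetical choices; the predictor is deliberately the wrong model so that the effect of calibration is visible.

```python
import math
import numpy as np

rng = np.random.default_rng(0)

def logistic_growth(t, r=0.8, K=10.0, n0=0.5):
    """Closed-form logistic growth curve used as hypothetical ground truth."""
    return K / (1.0 + (K / n0 - 1.0) * np.exp(-r * t))

def simulate(n):
    """Noisy observations at random time points (exchangeable samples)."""
    t = rng.uniform(0.0, 10.0, n)
    return t, logistic_growth(t) + rng.normal(0.0, 0.5, n)

t_fit, y_fit = simulate(100)      # used to fit the predictor
t_cal, y_cal = simulate(200)      # held out for calibration
t_new, y_new = simulate(2000)     # fresh data to check coverage

# Deliberately misspecified predictor: a cubic polynomial, not the true model.
coeffs = np.polyfit(t_fit, y_fit, deg=3)

def predict(t):
    return np.polyval(coeffs, t)

alpha = 0.1                                          # target miscoverage
scores = np.sort(np.abs(y_cal - predict(t_cal)))     # nonconformity scores
k = math.ceil((len(scores) + 1) * (1 - alpha))       # conformal rank
q = scores[k - 1]                                    # interval half-width

lower, upper = predict(t_new) - q, predict(t_new) + q
coverage = np.mean((y_new >= lower) & (y_new <= upper))
print(f"half-width = {q:.2f}, empirical coverage = {coverage:.3f}")
```

The guarantee is marginal: it holds on average over exchangeable calibration and test points, so the intervals have constant width and can be too wide or too narrow at particular time points even when the overall coverage is close to the target.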

Quantum Vacuum Noise for Intrinsic Randomness

Research in photonic computing has leveraged intrinsic quantum randomness for probabilistic machine learning, demonstrating how fundamental physics can be harnessed for computational applications [21]. The experimental system consists of three modules:

Biased Optical Parametric Oscillator (OPO): A photonic probabilistic neuron (PPN) implemented as a biased degenerate OPO that leverages quantum vacuum noise to generate probability distributions encoded by a bias field [21].

Detection System: A homodyne detector that measures the optical phase of the steady state and maps it to corresponding bit values (0 rad → 0, π rad → 1) [21].

Processing Unit: Electronic processors (FPGA or GPU) that implement measurement-and-feedback loops for time-multiplexed PPNs to solve probabilistic machine learning tasks [21].

This system was applied to probabilistic inference and image generation of MNIST handwritten digits, showcasing both discriminative and generative models [21]. In both implementations, quantum vacuum noise served as a random seed to encode classification uncertainty or probabilistic generation of samples [21].

The significant advantage of this approach lies in its energy efficiency and speed, with proposals for all-optical probabilistic computing platforms estimating a sampling rate of approximately 1 Gbps and energy consumption of about 5 fJ/MAC (femtojoules per multiply-accumulate operation) [21].

Matrix Factorization for Multidimensional Measurement Noise

The challenge of multidimensional measurement noise is particularly acute in clinical assessment, where conditions like dyslexia present an intrinsically multidimensional complex of cognitive loads [23]. Researchers addressed this problem using a special form of matrix factorization called Nous, based on the Alternating Least Squares algorithm [23].

Figure: Matrix Factorization for Clinical Assessment

This methodology increases the Shannon entropy of the dataset while simultaneously being constrained to meet the special objectivity requirements of the Rasch model [23]. The resulting Dyslexia Risk Scale (DRS) yields linear equal-interval measures comparable across different item subsets, with high reliability (0.95) and excellent receiver operating characteristics (AUC = 0.96) demonstrated in a calibration sample of 828 persons aged 7 to 82 [23].

The comprehensive examination of noise sources in biological modeling reveals that effective uncertainty quantification requires integrated approaches that address intrinsic randomness, measurement error, and model discrepancy simultaneously. Each noise type demands specialized methodological strategies: conformal prediction for model discrepancy with non-asymptotic guarantees, quantum random number generation for certified intrinsic randomness, and matrix factorization for multidimensional measurement error [11] [21] [23].

The field is moving toward hybrid frameworks that combine mechanistic models with machine learning to improve interpretability and predictive performance while explicitly accounting for uncertainty [16]. Future research directions include developing benchmarks to support reproducibility and method comparison, advancing inference methods and tools, and creating integrated uncertainty quantification pipelines that span from data collection to final prediction [16].

For researchers, scientists, and drug development professionals, the practical implication is that noise and uncertainty should not be treated as nuisances to be minimized, but as fundamental aspects of biological systems that provide critical information about model reliability and predictive limitations. By embracing comprehensive uncertainty quantification approaches that address all noise sources, the systems biology community can build more robust, interpretable, and trustworthy models that accelerate scientific discovery and improve biomedical applications.

In the field of systems biology, mechanistic dynamic models are indispensable tools for analyzing and predicting the behavior of complex biological processes, from cellular signaling to whole-organism physiology [11]. However, the predictive power of these models is constrained by their Applicability Domain (AD)—the range of data and conditions within which the model's predictions are reliable [25] [26]. For researchers and drug development professionals, understanding and defining the AD is not merely a technical exercise; it is a fundamental prerequisite for ensuring that predictions used in critical decision-making, such as optimizing cancer therapy schedules or forecasting treatment responses, are trustworthy [11] [26]. Within the broader challenge of uncertainty quantification (UQ) in dynamic biological models, accurately defining the AD provides a structured approach to managing epistemic uncertainty arising from incomplete data, measurement errors, or limited biological knowledge [16].

Defining a model's AD allows scientists to identify the chemical or biological space associated with reliable predictions, a principle mandated by the OECD for QSAR model validation [25]. Using a model outside its AD risks incorrect predictions, which in a pharmaceutical context could lead to inefficient resource allocation, supply chain disruptions, or unsafe drug candidates progressing through development pipelines [26]. The core challenge lies in the fact that models are developed on structurally and parametrically limited training sets; their generalization to a broader space is not guaranteed [25].

Defining the Applicability Domain: A Comparative Analysis of Methods

Several methodologies have been developed to characterize the interpolation space of a model and define its AD. They primarily differ in how they describe the region occupied by the training data in the model's descriptor space. The table below summarizes the main categories and their characteristics.

Table: Comparison of Key Applicability Domain (AD) Definition Methods

| Method Category | Core Principle | Examples | Key Advantages | Key Limitations |
|---|---|---|---|---|
| Range-Based [25] | Defines a hyper-rectangle based on the min/max values of each model descriptor. | Bounding Box | Simple to implement and understand. | Cannot identify empty regions or account for descriptor correlations. |
| Geometric [25] | Defines the smallest convex shape containing all training data. | Convex Hull | Provides a well-defined geometric boundary. | Computationally complex for high-dimensional data; cannot identify internal empty regions. |
| Distance-Based [25] [26] | Measures the distance of a query compound to a reference point (e.g., centroid) of the training data. | Euclidean, Mahalanobis, Leverage | Mahalanobis distance can handle correlated descriptors. | Performance highly dependent on the chosen threshold; may not reflect data density well. |
| Probability Density-Based [25] | Models the underlying probability distribution of the training data. | Parametric distributions | Can naturally identify dense and sparse regions. | Relies on assumptions about the data distribution. |
| Novelty Detection [26] | Assesses the similarity of new data to the training set based on proximity. | DA-Index (K-NN), Cosine Similarity | Intuitive; does not require model-specific information. | Relies solely on input data, ignoring the model's behavior. |
| Confidence Estimation [26] | Uses information from the predictive model itself to estimate uncertainty. | Standard Deviation from Ensemble models, Bayesian Neural Networks | Potentially more powerful as it incorporates model-specific uncertainty. | Can be computationally intensive; implementation is model-dependent. |

A novel approach proposed for defining the AD uses non-deterministic Bayesian neural networks. This method falls under confidence estimation and has demonstrated superior accuracy in defining the AD compared to previous methods by directly quantifying the model's uncertainty for a given prediction [26].

Experimental Protocols for AD Assessment

To benchmark the performance of different AD techniques, a robust validation framework is essential. The following protocol, adapted from contemporary research, outlines a general workflow for comparative evaluation.

Detailed Methodology for Benchmarking AD Methods

Dataset Selection and Model Training:

Application of AD Measures:

- Apply a suite of different AD measures to the trained models. This includes:

  - Novelty Detection Measures: Such as the DA-Index, which uses k-nearest neighbors (k-NN with k = 5 and Euclidean distance) to compute distances such as \( \kappa \) (distance to the k-th nearest neighbor), \( \gamma \) (mean distance to the k nearest neighbors), and \( \delta \) (length of the mean vector to the k nearest neighbors) [26].

  - Leverage: Calculate the leverage, a Mahalanobis-type distance to the training data, from the diagonal of the hat matrix, \( h_i = x_i^T (X^T X)^{-1} x_i \), where \( X \) is the training set matrix and \( x_i \) is a query data point [25] [26].

  - Confidence Estimation Measures: Such as the standard deviation of predictions from a bagging ensemble or the predictive uncertainty from a Bayesian neural network [26].
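The novelty detection and leverage measures listed above can be sketched in a few lines of NumPy. The function names, the synthetic descriptor matrix, and the two query points are hypothetical and serve only to show how the quantities are computed; k = 5 and the Euclidean metric follow the description in the text.

```python
import numpy as np

def knn_ad_measures(X_train, x_query, k=5):
    """Return (kappa, gamma, delta) for one query point, Euclidean metric."""
    diffs = X_train - x_query
    dists = np.linalg.norm(diffs, axis=1)
    nearest = np.argsort(dists)[:k]
    kappa = dists[nearest[-1]]                            # k-th neighbor distance
    gamma = dists[nearest].mean()                         # mean neighbor distance
    delta = np.linalg.norm(diffs[nearest].mean(axis=0))   # length of mean vector
    return float(kappa), float(gamma), float(delta)

def leverage(X_train, x_query):
    """h = x^T (X^T X)^{-1} x for a query descriptor vector x."""
    xtx_inv = np.linalg.inv(X_train.T @ X_train)
    return float(x_query @ xtx_inv @ x_query)

rng = np.random.default_rng(0)
X_train = rng.normal(size=(200, 3))      # hypothetical descriptor matrix
x_inside = np.zeros(3)                   # query near the training centroid
x_outside = np.full(3, 6.0)              # query far from all training data

inside = knn_ad_measures(X_train, x_inside)
outside = knn_ad_measures(X_train, x_outside)
h_inside = leverage(X_train, x_inside)
h_outside = leverage(X_train, x_outside)
print("inside :", inside, h_inside)
print("outside:", outside, h_outside)
```

All four measures grow as the query moves away from the training data, which is the behavior an AD threshold relies on. In practice the descriptors would usually be scaled first, since Euclidean distances are sensitive to units.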

Performance Benchmarking:

- The key property of an AD measure is its discriminating ability—it should distinguish between predictions with high and low accuracy. An effective measure will show a monotonically increasing relationship between its value and the prediction error rate [26].

- Evaluate this by analyzing the correlation between the AD measure values and the actual prediction errors on test sets. Data points with higher AD measures should, on average, have larger prediction errors [26].
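A minimal version of this benchmarking step is sketched below on synthetic one-dimensional data. The target function, the cubic regression model, the nearest-neighbor distance used as the AD measure, and the choice of four bins are all illustrative assumptions rather than the setup used in [26]. The sketch computes a Spearman rank correlation between the AD measure and the absolute prediction error, then the mean error in bins of increasing AD measure.

```python
import numpy as np

rng = np.random.default_rng(0)

def truth(x):
    return np.sin(2.0 * np.pi * x)

# Train on [0, 1]; test on [0, 2] so that half the queries require extrapolation.
x_train = rng.uniform(0.0, 1.0, 100)
y_train = truth(x_train) + rng.normal(0.0, 0.1, x_train.size)
coeffs = np.polyfit(x_train, y_train, deg=3)

x_test = rng.uniform(0.0, 2.0, 200)
y_test = truth(x_test) + rng.normal(0.0, 0.1, x_test.size)
abs_error = np.abs(np.polyval(coeffs, x_test) - y_test)

# AD measure: distance from each test point to its nearest training point.
ad_measure = np.abs(x_test[:, None] - x_train[None, :]).min(axis=1)

def spearman(u, v):
    """Spearman rank correlation (assumes no ties, as with continuous data)."""
    ru = np.argsort(np.argsort(u))
    rv = np.argsort(np.argsort(v))
    return float(np.corrcoef(ru, rv)[0, 1])

rho = spearman(ad_measure, abs_error)

# Mean absolute error in four bins of increasing AD measure.
order = np.argsort(ad_measure)
binned_error = [float(abs_error[idx].mean()) for idx in np.array_split(order, 4)]
print(f"Spearman rho = {rho:.2f}")
print("mean error per AD bin:", [round(e, 2) for e in binned_error])
```

A useful AD measure should give a clearly positive rank correlation and a mean error that rises from the first bin to the last; a flat or erratic pattern suggests the measure does not discriminate between reliable and unreliable predictions for that model.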

The logical relationship and workflow for this experimental protocol can be visualized as follows:

The Scientist's Toolkit: Essential Reagents for AD and UQ Research

For experimental and computational scientists working at the intersection of AD definition and uncertainty quantification, the following tools and "reagents" are fundamental.

Table: Essential Research Reagent Solutions for Uncertainty Quantification

| Tool/Reagent | Function / Application |
|---|---|
| Conformal Prediction Algorithms [11] | A non-Bayesian UQ method that provides non-asymptotic guarantees for prediction regions, improving robustness in dynamic biological systems. |
| Bayesian Inference Tools [16] | A foundational framework for UQ that treats parameters as random variables to derive posterior distributions, though it can be computationally demanding. |
| Profile Likelihood [11] [16] | A frequentist method for parameter estimation and uncertainty analysis, particularly useful for assessing parameter identifiability in dynamic models. |
| Ensemble Modeling [11] | Utilizes multiple models to capture uncertainty; the standard deviation of ensemble predictions can serve as a confidence-based AD measure. |
| MATLAB/Python/R [25] [26] | Core computational environments for implementing AD methods (e.g., k-NN, leverage) and UQ analysis. |
| Active Learning & Optimal Experimental Design [16] | Strategies to guide the collection of new data, effectively expanding the model's AD by targeting areas of high uncertainty. |

The integration of AD assessment into the broader model development and UQ workflow is critical. This process ensures that the limitations of mechanistic models, such as nonlinearities, parameter sensitivities, and structural identifiability issues, are properly characterized, preventing overconfidence in predictions [11]. The choice of AD method often involves a trade-off between computational scalability and statistical interpretability. For large-scale biological models, ensemble modeling scales well but has a weaker theoretical foundation, while the newly proposed conformal prediction algorithms combine good scalability with non-asymptotic coverage guarantees [11].

The Impact of Uncertainty on Predictive Power and Decision-Making in Biomedicine

Uncertainty Quantification (UQ) has emerged as a pivotal discipline in biomedical research, fundamentally transforming how models are developed, validated, and utilized for critical decision-making. In fields ranging from drug discovery to clinical diagnostics, the systematic analysis and management of uncertainties determine both the reliability and applicability of predictive models [27]. The integration of UQ frameworks enables researchers and clinicians to distinguish between reliable and unreliable predictions, thereby enhancing trust in data-driven methodologies [28].

This review examines the transformative impact of UQ on predictive power and decision-making processes across biomedical domains. By analyzing recent advances in machine learning, digital twin technologies, and clinical applications, we demonstrate how UQ methodologies are reshaping biomedical research and accelerating the translation of computational models into practical tools that improve patient outcomes and optimize resource utilization.

Uncertainty Quantification Methodologies and Applications

Fundamental Approaches to Uncertainty Quantification

UQ methodologies systematically address both aleatoric (data-related) and epistemic (model-related) uncertainties that inherently affect biomedical predictions [27]. Aleatoric uncertainty stems from intrinsic variability in biological systems, including patient-specific physiological variations, measurement errors from diagnostic instruments, and incomplete medical data [27]. Epistemic uncertainty arises from limitations in model structure, mathematical approximations, and incomplete knowledge of biological mechanisms [29].

The integration of UQ with Verification and Validation (VVUQ) processes forms the foundation for establishing model credibility in clinical contexts [29]. Verification ensures computational implementations correctly solve mathematical models, while validation tests how accurately model predictions represent real-world biological behavior [27] [29]. UQ completes this framework by quantifying how uncertainties in model inputs propagate to affect outputs, enabling the prescription of confidence bounds for predictions [29].
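Forward propagation of input uncertainty can be sketched with plain Monte Carlo sampling. The example below pushes uncertain growth-rate and carrying-capacity values through a logistic growth model and summarizes the output with percentile bands. The model, the normal input distributions, and all numerical values are hypothetical and are not drawn from [29].

```python
import numpy as np

rng = np.random.default_rng(0)
n_samples = 5000
n0 = 5.0                                      # fixed initial population
t = np.linspace(0.0, 20.0, 41)

# Hypothetical input uncertainty: independent normal distributions.
r = rng.normal(0.5, 0.05, n_samples)          # growth rate
K = rng.normal(100.0, 5.0, n_samples)         # carrying capacity

# Closed-form logistic solution for every sample (rows) and time point (columns).
ratio = (K / n0 - 1.0)[:, None]
trajectories = K[:, None] / (1.0 + ratio * np.exp(-r[:, None] * t[None, :]))

# Pointwise 95% band and median across the sampled trajectories.
lower, median, upper = np.percentile(trajectories, [2.5, 50.0, 97.5], axis=0)
print(f"N(t=20): median {median[-1]:.1f}, 95% band [{lower[-1]:.1f}, {upper[-1]:.1f}]")
```

The band has zero width at the initial time, where the state is fixed, and widens as the uncertain parameters take effect. The same pattern applies to models without a closed-form solution, at the cost of one numerical simulation per sample.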

UQ in Machine Learning for Drug Discovery

In pharmaceutical research, UQ has become indispensable for machine learning (ML) applications. Roche developed an innovative approach where calibrated ML models with robust UQ methods discriminate between reliable and unreliable predictions for molecular property assessment [28] [30]. By establishing optimal uncertainty thresholds through collaborative efforts between ML and experimental scientists, they demonstrated that up to 25% of compounds could be excluded from experimental assay submission without compromising decision quality [28]. This strategic application of UQ significantly reduces development costs and accelerates candidate selection.

Table 1: Impact of Uncertainty Quantification in Pharmaceutical Research

| Application Area | UQ Methodology | Quantitative Impact | Decision-Making Enhancement |
|---|---|---|---|
| Pharmacokinetic Assay Submission | ML-predicted confidence thresholds | Up to 25% reduction in assay submissions [28] | Exclusion of low-confidence predictions from experimental testing |
| Small Molecule Property Prediction | Calibrated ML models with uncertainty thresholds | Significant time and cost savings [30] | Improved compound selection efficiency |
| Secondary Pharmacology Analysis | Robust uncertainty quantification methods | Identification of truly reliable predictions [28] | Enhanced discrimination between viable and non-viable candidates |

Digital Twins and UQ in Clinical Decision-Making

Digital twins represent a paradigm shift in precision medicine, creating virtual representations of individual patients that simulate disease progression and treatment responses [29]. The credibility of these models fundamentally depends on robust VVUQ processes [29]. In cardiology, personalized cardiac electrophysiological models incorporating CT scans enable simulation of heart electrical behavior at the individual level, aiding in diagnosing arrhythmias like atrial fibrillation [29]. Similarly, in oncology, digital twins leverage longitudinal imaging data with mechanistic models to predict spatiotemporal tumor progression while accounting for patient-specific anatomy [31].

A critical advancement in this domain is the development of end-to-end data-to-decision methodologies that combine patient data with mechanistic models through statistical inverse problems [31]. These approaches enable rigorous quantification of uncertainty arising from sparse, noisy measurements, facilitating risk-informed decision making for personalized medicine [31]. The implementation of scalable Bayesian posterior approximations makes UQ computationally tractable for complex biological systems [31].

Table 2: Uncertainty Quantification in Digital Twin Applications

| Medical Domain | Digital Twin Function | UQ Methodology | Clinical Decision Support |
|---|---|---|---|
| Cardiology | Simulates electrical behavior of personalized heart models | Bayesian methods for anatomical uncertainties from clinical data [29] | Diagnosis of arrhythmias (e.g., atrial fibrillation) |
| Oncology | Predicts spatiotemporal tumor progression | Scalable Bayesian posterior approximation for imaging data [31] | Personalized treatment planning and intervention timing |
| Cardiovascular Medicine | Assesses cardiac anomalies via myocardial deformation | Quantifying impact of MRI artifacts on predictive capabilities [29] | Assessment of valve displacement and cardiac function |

Experimental Protocols and Methodologies

Machine Learning Model Calibration for Drug Discovery

The Roche protocol for leveraging ML in pharmacokinetic assay submission exemplifies a rigorous approach to UQ implementation [28]. The methodology begins with nonadditivity analysis to establish initial error acceptance thresholds, determining the maximum tolerable uncertainty for reliable predictions [28]. ML and experimental scientists collaboratively develop optimal uncertainty thresholds through iterative validation, ensuring alignment between computational predictions and experimental requirements [28].

The core UQ process involves coupling efficient ML models with robust uncertainty quantification methods to discriminate among predictions [30]. For each compound, the model generates both property predictions and confidence estimates. Compounds falling within the pre-established confidence threshold proceed to experimental assay, while those with uncertainties exceeding the threshold are excluded from submission [28]. This approach maintains decision quality while significantly reducing experimental burden, demonstrating how UQ enhances both efficiency and reliability in pharmaceutical development.

Digital Twin Validation Framework

For digital twins in precision medicine, a comprehensive VVUQ framework is essential for establishing clinical credibility [29]. Verification processes ensure computational implementations correctly solve the underlying mathematical models through software quality engineering practices and solution verification for discretized systems [29]. Validation assesses how accurately model predictions represent real-world physiology, employing patient-specific data to evaluate predictive capabilities [29].

UQ in digital twins addresses multiple uncertainty sources, including anatomical variations from medical imaging, parameter uncertainties from patient data, and model structural uncertainties [29] [31]. Bayesian methods are particularly valuable for quantifying how uncertainties in clinical measurements propagate through computational models to affect predictions [29]. For temporal validation, digital twins require ongoing reassessment as new patient data becomes available, ensuring model accuracy throughout their deployment lifecycle [29].

Digital Twin Workflow with Integrated UQ: This diagram illustrates the continuous feedback loop between physical patients and their virtual representations, highlighting how uncertainty quantification integrates with prediction and clinical decision-making.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Uncertainty Quantification in Biomedicine

| Tool/Resource | Function | Application Context |
|---|---|---|
| Calibrated ML Models | Predict molecular properties with confidence estimates | Small molecule pharmaceutical research [28] |
| Bayesian Inference Frameworks | Quantify parameter and prediction uncertainties | Digital twin model calibration [29] [31] |
| Mechanistic Biological Models | Simulate physiological processes virtually | Cardiovascular and oncology digital twins [29] |
| High-Throughput Experimental Data | Train and validate predictive models | Automated biofoundries and parallel cultivation systems [32] |
| Statistical Inverse Problem Solvers | Estimate parameters from sparse patient data | Oncology digital twins with longitudinal imaging [31] |
| Cloud Computing Infrastructure | Enable large-scale simulations and data processing | Digital twin deployment and updating [29] |

Signaling Pathways and Uncertainty Propagation

Understanding how uncertainties propagate through biological signaling pathways is essential for reliable predictions in computational biomedicine. The complex, interconnected nature of these pathways means that small uncertainties in initial conditions or parameters can significantly impact model outputs.

Uncertainty Propagation in Biological Systems: This diagram visualizes how initial uncertainties in biological measurements amplify through signaling pathways, ultimately affecting clinical outcome predictions with quantified confidence bounds.
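One common way to examine this kind of amplification is Monte Carlo propagation: sample the uncertain input, push each sample through the model, and summarize the spread of the outputs. The sketch below uses a toy two-tier cascade of Hill-type activations as a stand-in for a signaling pathway. The cascade structure, the half-saturation constants, the Hill coefficient of 4, and the 5% input noise are all assumptions chosen for illustration, not values from any cited model.

```python
import random
import statistics


def hill(x, k, n):
    """Hill-type activation: fraction of maximal response at input level x."""
    return x**n / (k**n + x**n)


def cascade(x):
    """Toy two-tier cascade; each tier responds to the tier above it."""
    tier1 = hill(x, k=1.0, n=4)      # hypothetical first-tier parameters
    return hill(tier1, k=0.5, n=4)   # hypothetical second-tier parameters


def coefficient_of_variation(values):
    """Relative spread: standard deviation divided by mean."""
    return statistics.stdev(values) / statistics.mean(values)


rng = random.Random(42)  # fixed seed so the run is reproducible

# Input measured with roughly 5% noise around a nominal level of 1.0.
inputs = [rng.gauss(1.0, 0.05) for _ in range(5000)]
outputs = [cascade(x) for x in inputs]

cv_in = coefficient_of_variation(inputs)
cv_out = coefficient_of_variation(outputs)

# 2.5th and 97.5th percentiles give an approximate 95% interval on the output.
cuts = statistics.quantiles(outputs, n=40)
lower, upper = cuts[0], cuts[-1]

print(f"input CV:  {cv_in:.3f}")
print(f"output CV: {cv_out:.3f}")
print(f"95% output interval: [{lower:.3f}, {upper:.3f}]")
```

Because each tier operates near its half-saturation point, where a steep Hill response is most sensitive, the relative spread of the output is larger than that of the input. A cascade operating in a saturated regime would instead dampen input noise, so the direction of the effect depends on the operating point.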

Comparative Analysis and Future Directions

The integration of UQ across biomedical domains demonstrates consistent benefits for predictive power and decision-making. In pharmaceutical applications, UQ enables more efficient resource allocation by identifying high-confidence predictions worth experimental validation [28]. In clinical settings, UQ provides crucial confidence bounds that help physicians balance risks and benefits when selecting treatment strategies [27] [29].

The emerging field of digital twins represents perhaps the most advanced application of UQ in biomedicine [29]. By creating virtual patient representations that dynamically update with new clinical data, digital twins offer unprecedented opportunities for personalized treatment planning [29] [31]. The integration of mechanistic models with UQ enables causal inference rather than mere correlation, fundamentally enhancing clinical decision-making [29].

Future advances in UQ methodologies will likely focus on real-time uncertainty estimation, integration of multi-scale uncertainties from molecular to organism levels, and development of standardized frameworks for UQ in regulatory contexts [32] [29]. As these methodologies mature, UQ will increasingly become an embedded component of biomedical research and clinical practice, transforming how uncertainties are managed across the healthcare continuum.

A Practical Guide to UQ Methods: From Bayesian Frameworks to Conformal Prediction

Bayesian inference is a powerful statistical framework that updates the probability of a hypothesis as new evidence is acquired. It is grounded in Bayes' theorem, which formally combines prior knowledge with observed data to produce a posterior distribution. This approach is inherently probabilistic, allowing researchers to quantify uncertainty in a principled manner. The core formula of Bayes' theorem is:

P(H∣E) = [P(E∣H) ⋅ P(H)] / P(E)

Where:

- P(H∣E) is the posterior probability of the hypothesis H given the evidence E.

- P(E∣H) is the likelihood, representing the probability of the evidence given the hypothesis.

- P(H) is the prior probability, encapsulating initial beliefs about the hypothesis before seeing the data.

- P(E) is the marginal likelihood or evidence, serving as a normalizing constant [33].

This theorem enables sequential learning, where today's posterior becomes tomorrow's prior, creating a natural framework for updating beliefs as new data emerges [34]. In medical diagnostics, for example, a clinician might start with a prior probability of a disease based on population prevalence, then update this probability using diagnostic test results to arrive at a posterior probability that informs treatment decisions [34].
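The diagnostic example can be made concrete with a short calculation. The sketch below applies Bayes' theorem to a positive test result and then reuses the posterior as the prior for a second positive result. The prevalence (1%), sensitivity (90%), and specificity (95%) are hypothetical, and the two tests are assumed to be conditionally independent given disease status.

```python
def posterior_given_positive(prior, sensitivity, specificity):
    """P(disease | positive test) from Bayes' theorem.

    P(H|E) = P(E|H) * P(H) / P(E), where P(E) sums over
    true positives and false positives.
    """
    true_positive = sensitivity * prior
    false_positive = (1.0 - specificity) * (1.0 - prior)
    return true_positive / (true_positive + false_positive)


prevalence = 0.01  # hypothetical population prevalence used as the prior

# First positive result: the prior is the prevalence.
after_first = posterior_given_positive(prevalence, 0.90, 0.95)

# Second positive result: yesterday's posterior is today's prior.
after_second = posterior_given_positive(after_first, 0.90, 0.95)

print(f"after one positive test:  {after_first:.3f}")
print(f"after two positive tests: {after_second:.3f}")
```

With these numbers a single positive result raises the probability of disease from 1% to roughly 15%, and a second independent positive result raises it to roughly 77%, which illustrates how strongly a low prior tempers the evidence from one test.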

Fundamental Principles and Comparison with Frequentist Methods

Core Philosophical Differences

The Bayesian and frequentist paradigms represent fundamentally different approaches to statistical inference, particularly in their interpretation of probability and how they handle uncertainty.

Table 1: Core Differences Between Bayesian and Frequentist Approaches

| Aspect | Frequentist Approach | Bayesian Approach |
|---|---|---|
| Probability Definition | Long-run frequency of events | Degree of belief or uncertainty |
| Parameters | Fixed, unknown quantities | Random variables with distributions |
| Uncertainty Quantification | Confidence intervals, p-values | Credible intervals, posterior distributions |
| Prior Information | Not formally incorporated | Explicitly incorporated via priors |
| Interpretation of Results | Probability of data given hypothesis | Probability of hypothesis given data |

Practical Implications for Scientific Research

The Bayesian framework offers several distinct advantages for research applications:

- Evidence Measurement: Bayesian methods provide the Bayes factor (BF₁₀), which quantifies evidence for one hypothesis relative to another. Unlike p-values that can only reject a null hypothesis, Bayes factors can state evidence for both null and alternative hypotheses [35]. The Bayes factor represents the predictive updating factor that measures change in relative beliefs:

BF₁₀ = [P(x|H₁) / P(x|H₀)] = [P(H₁|x) / P(H₀|x)] × [P(H₀) / P(H₁)] [35]

- Intuitive Interpretation: Bayesian results often align more naturally with scientific reasoning. A 95% credible interval means there is a 95% probability that the parameter lies within that interval, given the data and prior, which is a more direct interpretation than that of a frequentist confidence interval [34] [35].

- Incorporation of Prior Knowledge: Bayesian methods allow integration of existing scientific knowledge through prior distributions, which is particularly valuable when data are limited or expensive to collect [36] [34].
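As a worked illustration of the Bayes factor, consider a binomial experiment with k successes in n trials. The sketch below compares H₀: θ = 0.5 against H₁: θ ~ Uniform(0, 1). This particular pair of hypotheses and the example counts are assumptions chosen because both marginal likelihoods have closed forms; they are not taken from the cited work.

```python
import math


def bayes_factor_10(k, n):
    """BF10 for k successes in n Bernoulli trials.

    H1: theta ~ Uniform(0, 1). Integrating the binomial likelihood
        over this prior gives a marginal likelihood of 1 / (n + 1).
    H0: theta = 0.5, so the marginal likelihood is the binomial
        probability evaluated at 0.5.
    """
    marginal_h1 = 1.0 / (n + 1)
    marginal_h0 = math.comb(n, k) * 0.5**n
    return marginal_h1 / marginal_h0


def posterior_odds(bf10, prior_odds=1.0):
    """Posterior odds of H1 over H0 = Bayes factor times prior odds."""
    return bf10 * prior_odds


bf_skewed = bayes_factor_10(k=15, n=20)    # data lean away from 0.5
bf_balanced = bayes_factor_10(k=10, n=20)  # data sit exactly at 0.5

print(f"BF10 for 15/20: {bf_skewed:.2f}")
print(f"BF10 for 10/20: {bf_balanced:.2f}  (BF01 = {1 / bf_balanced:.2f})")
print(f"posterior odds for 15/20 with even prior odds: {posterior_odds(bf_skewed):.2f}")
```

The first case gives a Bayes factor above 1, favoring H₁, while the second gives a value below 1, which is positive evidence for the null rather than a mere failure to reject it.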

Prior Distributions: Selection and Sensitivity

Types of Priors and Their Applications

The choice of prior distribution is a critical step in Bayesian analysis, influencing results especially with limited data. Different prior types serve different purposes in research contexts.

Table 2: Common Types of Prior Distributions and Their Applications

| Prior Type | Description | Common Use Cases | Examples |
|---|---|---|---|
| Uninformative/Weakly Informative | Minimal influence on posterior, lets data dominate | Default choice when prior knowledge is limited | Normal with large variance (e.g., N(0,1000)); Uniform over plausible range |
| Informative | Incorporates substantial pre-existing knowledge | When prior studies or expert knowledge exist | Normal centered at previous estimate; Beta distribution for probabilities |
| Conjugate Priors | Mathematical convenience; posterior in same family as prior | Analytical solutions; teaching foundations | Beta-Binomial; Gamma-Poisson; Normal-Normal |
| Hierarchical Priors | Parameters share common parent distribution | Multilevel data; partial pooling across groups | Centered vs. non-centered parameterizations [37] |

Prior Sensitivity and Robustness Analysis

Responsible Bayesian practice requires testing how sensitive results are to different prior choices. For example, in clinical trials, a sensitivity analysis might compare results using skeptical priors (centered on no effect), enthusiastic priors (centered on beneficial effect), and reference priors (minimally informative) [34]. As noted in one clinical trial tutorial: "Bayesian methods require that careful attention is paid to the choice of prior distribution and a sensitivity analysis is recommended" [38].

Research shows that weakly informative priors that span a realistic range of parameter values generally perform better than completely flat priors, which can imply unreasonable beliefs by putting more probability mass on extreme values than moderate ones [35]. For effect sizes in biomedical research, common choices include normal, t-, and Cauchy distributions, with scales reflecting expectations of small to medium effects (0.2≤|d|<0.5) based on field-specific knowledge [35].
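A prior sensitivity analysis of the kind described above can be sketched with a conjugate Normal-Normal update, in which a normal prior on the effect is combined with a normally distributed estimate of known standard error. The observed effect (0.4), its standard error (0.15), and the three prior specifications below are hypothetical values chosen for illustration.

```python
from statistics import NormalDist


def normal_posterior(prior_mean, prior_sd, estimate, std_error):
    """Conjugate update: normal prior, normal likelihood with known std_error.

    Posterior precision is the sum of prior and data precisions;
    the posterior mean is the precision-weighted average.
    """
    prior_precision = 1.0 / prior_sd**2
    data_precision = 1.0 / std_error**2
    post_var = 1.0 / (prior_precision + data_precision)
    post_mean = post_var * (prior_precision * prior_mean + data_precision * estimate)
    return NormalDist(post_mean, post_var**0.5)


# Hypothetical priors on a standardized effect size: (mean, sd).
priors = {
    "skeptical": (0.0, 0.2),     # centered on no effect
    "enthusiastic": (0.5, 0.2),  # centered on a beneficial effect
    "reference": (0.0, 10.0),    # very wide, minimally informative
}

estimate, std_error = 0.4, 0.15  # hypothetical trial result

results = {}
for name, (mean, sd) in priors.items():
    post = normal_posterior(mean, sd, estimate, std_error)
    lower, upper = post.inv_cdf(0.025), post.inv_cdf(0.975)
    results[name] = (post.mean, lower, upper)
    print(f"{name:>12}: mean={post.mean:.3f}, 95% CrI=[{lower:.3f}, {upper:.3f}]")
```

If the substantive conclusion (for instance, whether the credible interval excludes zero) holds under all three priors, the result is robust to the prior. If it changes, that dependence should be reported alongside the main analysis.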

Computational Frameworks and Challenges

Key Computational Methods

The practical implementation of Bayesian inference often relies on sophisticated computational algorithms, particularly for complex models where analytical solutions are intractable.

Table 3: Computational Methods for Bayesian Inference

| Method | Description | Strengths | Limitations |
|---|---|---|---|
| Markov Chain Monte Carlo (MCMC) | Samples from posterior distribution through iterative simulation | Asymptotically exact; well-established theory | Computationally intensive; convergence diagnostics needed |
| Hamiltonian Monte Carlo (HMC) & NUTS | MCMC variant using gradient information for more efficient exploration | More efficient for high-dimensional, complex posteriors | Requires differentiable model; tuning parameters |
| Variational Inference (VI) | Approximates posterior with simpler distribution | Faster computation for large datasets | Approximation error; model-specific implementations |
| Sequential Monte Carlo (SMC) | Particle-based method for sequential inference | Handles complex dependencies; parallelizable | Weight degeneracy; parameter tuning |
| Bayesian Model Averaging (BMA) | Combines predictions from multiple models, accounting for model uncertainty | Robust predictions; acknowledges model uncertainty | Computationally demanding; requires defining model space |
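To make the MCMC entry concrete, the sketch below implements a random-walk Metropolis sampler, the simplest member of that family, for a problem whose exact answer is known. The target is the posterior of a binomial success probability under a uniform prior, which is analytically Beta(k + 1, n − k + 1), so the sampler's output can be checked. The data (7 successes in 10 trials), step size, chain length, and burn-in are assumptions chosen for illustration; production work would normally rely on an established library and formal convergence diagnostics.

```python
import math
import random
import statistics


def log_posterior(theta, k, n):
    """Unnormalized log posterior: binomial likelihood, Uniform(0, 1) prior."""
    if not 0.0 < theta < 1.0:
        return -math.inf  # zero posterior density outside the unit interval
    return k * math.log(theta) + (n - k) * math.log(1.0 - theta)


def metropolis(k, n, n_samples=20000, step=0.2, seed=0):
    """Random-walk Metropolis with a symmetric Gaussian proposal."""
    rng = random.Random(seed)
    theta = 0.5
    current = log_posterior(theta, k, n)
    samples = []
    for _ in range(n_samples):
        proposal = theta + rng.gauss(0.0, step)
        candidate = log_posterior(proposal, k, n)
        # Accept with probability min(1, posterior ratio).
        accept_prob = math.exp(min(0.0, candidate - current))
        if rng.random() < accept_prob:
            theta, current = proposal, candidate
        samples.append(theta)  # a rejected proposal repeats the current state
    return samples


k, n = 7, 10
chain = metropolis(k, n)
kept = chain[2000:]  # discard burn-in

posterior_mean = statistics.mean(kept)
exact_mean = (k + 1) / (n + 2)  # mean of Beta(k + 1, n - k + 1)

print(f"MCMC posterior mean:  {posterior_mean:.3f}")
print(f"exact posterior mean: {exact_mean:.3f}")
```

Comparing against a known conjugate posterior in this way is a small instance of the ground-truth benchmarking that is much harder to arrange for realistic biological models.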

Grand Challenges in Bayesian Computation

Despite significant advances, Bayesian computation faces several fundamental challenges that limit its application, particularly in biological domains with complex models and large datasets:

Understanding the Role of Parametrization: Model performance can depend significantly on how parameters are represented. As noted by computational researchers, "the community has been prioritising the development of new algorithms over understanding when existing algorithms work well, and how their performance can be improved through more careful consideration of how the statistical model is parametrised" [37]. For hierarchical models, the choice between centered and non-centered parameterizations can dramatically affect sampling efficiency [37].

Reliable Assessment of Posterior Approximations: There is a critical need for better diagnostic tools to evaluate whether posterior approximations are fit for purpose. This includes both theoretical guarantees for specific tasks like uncertainty quantification and practical diagnostics for assessing approximation quality [37]. Sampling methods like MCMC remain popular partly because their guarantees are "better understood, more trusted, and (asymptotically) stronger than those that exist for non-sampling methods" [37].

Scalability to Large Datasets and Complex Models: Applying Bayesian methods to modern large-scale datasets and complex models, such as those in systems biology and genomics, remains computationally challenging. As one researcher noted, "Genomes have an extremely complex dependence structure and nobody that I know of even tries to use the full structure of what is known" [39].

Community Benchmarks and Reproducibility: The field lacks systematic approaches for comparing different computational algorithms, with test problems often "cherry-picked to demonstrate the effectiveness of a proposed method" [37]. Developing community benchmarks with gold-standard ground truths is essential for rigorous methodological comparisons.
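Returning to the parametrization challenge, the difference between centered and non-centered forms of a hierarchical prior can be shown in a few lines. Both forms below define the same distribution for a group-level effect θ. They differ only in which quantities a sampler would explore: θ directly, or a standardized variable z that is shifted and scaled afterwards. The values of `mu` and `tau` are hypothetical, and the sketch illustrates only the reparameterization itself, not its effect on sampling efficiency.

```python
import random
import statistics


def centered_draws(mu, tau, n, rng):
    """Centered form: theta_j ~ Normal(mu, tau), sampled directly."""
    return [rng.gauss(mu, tau) for _ in range(n)]


def non_centered_draws(mu, tau, n, rng):
    """Non-centered form: z_j ~ Normal(0, 1), then theta_j = mu + tau * z_j."""
    return [mu + tau * rng.gauss(0.0, 1.0) for _ in range(n)]


mu, tau = 1.0, 0.5  # hypothetical population mean and between-group sd

theta_c = centered_draws(mu, tau, 20000, random.Random(1))
theta_nc = non_centered_draws(mu, tau, 20000, random.Random(2))

for label, draws in (("centered", theta_c), ("non-centered", theta_nc)):
    print(f"{label:>12}: mean={statistics.mean(draws):.3f}, "
          f"sd={statistics.stdev(draws):.3f}")
```

In a full hierarchical model `mu` and `tau` are themselves sampled, and the non-centered form removes the prior dependence between `tau` and the group-level variables, which is typically what helps samplers when the data for each group are weakly informative.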