The Machine Learning Reproducibility Crisis in Biomarker Discovery: Diagnosing the Problem and Engineering Solutions


Abstract

The application of machine learning (ML) in biomarker discovery is at a critical juncture. While promising to accelerate the translation of molecular insights into clinical diagnostics and therapeutics, the field is grappling with a pervasive reproducibility crisis. This article provides a comprehensive analysis for researchers and drug development professionals, exploring the foundational causes of this crisis—from small sample sizes and data leakage to overfitting and poor generalization. It delves into methodological pitfalls, advocates for rigorous validation frameworks and explainable AI, and presents a forward-looking perspective on how embedding reproducibility by design can rebuild trust, enhance clinical applicability, and finally deliver on the promise of precision medicine.

The Silent Stumbling Block: Understanding the Reproducibility Crisis in Biomedicine

In the high-stakes field of biomarker discovery, reproducibility is not merely a scientific ideal; it is a critical gatekeeper determining whether a potential biomarker moves from a promising research finding to a clinically validated tool. The journey is fraught with challenges: across all diseases, only 0-2 new protein biomarkers achieve FDA approval each year, a translational gap often rooted in irreproducible results [1]. A large replication effort in Brazil, involving over 50 research teams, recently reported dismaying results when the teams attempted to double-check a swathe of biomedical studies, underscoring how pervasive the crisis is [2]. For researchers and drug development professionals, navigating the "reproducibility crisis" is essential for developing non-addictive pain therapeutics, refining cancer care, and advancing precision medicine [3] [4]. This guide provides actionable troubleshooting advice to overcome common barriers and achieve robust, cross-study validation for your biomarker candidates.

► FAQs: Understanding the Reproducibility Crisis

Q1: What does "reproducibility" mean in the context of biomarker research?

Reproducibility is a multi-faceted concept. According to established guidelines, it can be broken down into three key types [5]:

- Methods Reproducibility: The ability to exactly repeat the experimental and computational procedures, using the same data and tools, to obtain the same results. This requires precise documentation of every step.

- Results Reproducibility: The ability to replicate the findings of a prior study by conducting a new, independent study following the same experimental procedures. This is also known as replicability.

- Inferential Reproducibility: The ability to draw similar conclusions from a reanalysis of the original data or from an independent study.

Q2: Why is there a "reproducibility crisis" in machine learning and biomarker discovery?

The crisis stems from a combination of factors that plague modern computational and life-science research [5] [6]:

- Non-Deterministic Code: Machine learning algorithms rely on inherent randomness (e.g., random weight initialization in neural networks, data shuffling, dropout layers). If random seeds are not fixed, results will differ across runs [5] [7].

- Insufficient Data Sharing: One study found that only 6% of presenters at top AI conferences shared their code, and only a third shared their data, making independent verification nearly impossible [5].

- Poor Study Design and Small Sample Sizes: Many biomarker studies suffer from the "small n, large p" problem, where the number of potential features (e.g., genes, proteins) far exceeds the number of patients, increasing the risk of false discoveries [1] [8].

- Lack of Incentives: Researchers are often rewarded for novel findings over confirmatory or null results, leading to a publication bias that hides irreproducible studies [6].

- Environmental and Data Variability: Differences in software versions, computational environments, and even subtle changes in data files (e.g., from being opened in spreadsheet software) can break reproducibility [7].

Q3: What is the difference between a prognostic and a predictive biomarker, and why does it matter for validation?

This distinction is a fundamental statistical consideration that directly impacts study design and validation [9].

- A prognostic biomarker provides information about the patient's overall disease outcome, regardless of therapy. It is identified through a test of association between the biomarker and the outcome in a cohort or single-arm trial.

- A predictive biomarker informs about the response to a specific treatment. It must be identified through an interaction test between the treatment and the biomarker in the context of a randomized clinical trial.

Mixing up these two can lead to invalid conclusions and failed validation studies.

► Troubleshooting Guide: Common Scenarios and Solutions

| Problem Scenario | Likely Cause(s) | Solution(s) |

|---|---|---|

| Different results each time you retrain your ML model on the same data. | Uncontrolled randomness in the code (e.g., unset random seeds for weight initialization, data shuffling, or dropout layers) [5] [7]. | Set all relevant random seeds in your code (e.g., in Python, set seeds for random, numpy, and TensorFlow/PyTorch). Ensure GPU-enabled operations are deterministic if possible [7]. |

| You cannot replicate the baseline performance of a published algorithm. | The original code, data, or critical hyperparameters were not shared or were inadequately documented [5]. | Use a reproducibility checklist. Contact the original authors for specifics. If unavailable, document all your assumptions and hyperparameters when re-implementing [5] [8]. |

| Your biomarker candidate fails to validate in an independent cohort. | The discovery cohort was too small or not representative of the target population. The biomarker may be overfitted to the initial dataset [1] [9]. | Pre-specify your analysis plan. Use large, diverse datasets for discovery and validation. Apply rigorous statistical corrections for multiple comparisons (e.g., False Discovery Rate) [9] [8]. |

| You get errors when trying to run a colleague's analysis code. | A mismatched computational environment (e.g., different package versions, language versions, or operating system) [7]. | Use containerization tools (e.g., Docker, Singularity) to create a portable, version-controlled environment. Share the entire container [7]. |
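The seed-setting advice in the first row of the table can be sketched as follows. This is a minimal illustration, not a complete recipe: the helper names `set_global_seeds` and `shuffled_sample_ids` are hypothetical, NumPy is seeded only if it happens to be installed, and deep learning frameworks need their own seed calls (for example `torch.manual_seed` or `tf.random.set_seed`), which are left out here.

```python
import os
import random


def set_global_seeds(seed: int) -> None:
    """Seed the random number generators this process is likely to use."""
    # PYTHONHASHSEED only affects hash randomization when set before the
    # interpreter starts; exporting it here covers child processes.
    os.environ["PYTHONHASHSEED"] = str(seed)
    random.seed(seed)

    # NumPy is optional; seed its legacy global generator only if installed.
    try:
        import numpy as np
        np.random.seed(seed)
    except ImportError:
        pass


def shuffled_sample_ids(n_samples: int, seed: int) -> list[int]:
    """Return a reproducible shuffling of sample indices."""
    set_global_seeds(seed)
    ids = list(range(n_samples))
    random.shuffle(ids)
    return ids


# Two runs with the same seed give the same ordering.
first_run = shuffled_sample_ids(20, seed=7)
second_run = shuffled_sample_ids(20, seed=7)
print(first_run == second_run)
```

Fixing seeds makes a single run repeatable; it does not make GPU kernels deterministic, which usually requires framework-specific settings.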

► Essential Experimental Protocols for Robust Validation

Protocol 1: Designing a Reproducible Biomarker Discovery Study

- Define Objective and Biomarker Type: Clearly pre-specify the biomarker's intended use (e.g., diagnostic, prognostic, predictive) and the target population [9] [3].

- Power and Sample Size Calculation: Perform an a priori power calculation to ensure the study has enough samples and events to detect a meaningful effect. Underpowered studies both miss true effects and make the significant findings they do report less likely to replicate [9] [8].

- Pre-register Study Plan: Publicly register your research ideas, hypotheses, and analysis plan before beginning the study. This establishes authorship, reduces bias, and increases the integrity of the results [6].

- Plan for Batch Effects: In high-throughput data generation, randomly assign cases and controls to testing plates or batches to control for technical variability. Use blocking designs where appropriate [9] [8].
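As a rough illustration of the power calculation step, the sketch below uses the standard normal approximation for a two-sided, two-sample comparison of means. The function name `samples_per_group` and the default values are assumptions chosen for the example. A real study would typically use dedicated power-analysis software and a design-specific formula (for example, one based on the number of events for survival outcomes).

```python
import math
from statistics import NormalDist


def samples_per_group(effect_size: float, alpha: float = 0.05,
                      power: float = 0.80) -> int:
    """Samples per group for a two-sided, two-sample comparison of means.

    effect_size is the standardized mean difference (Cohen's d).
    Normal approximation: n = 2 * ((z_{1-alpha/2} + z_{power}) / d) ** 2.
    """
    if effect_size <= 0:
        raise ValueError("effect_size must be positive")
    z = NormalDist()
    z_alpha = z.inv_cdf(1 - alpha / 2)
    z_power = z.inv_cdf(power)
    return math.ceil(2 * ((z_alpha + z_power) / effect_size) ** 2)


# A single pre-specified biomarker with a medium effect (d = 0.5).
print(samples_per_group(0.5))

# Screening 1,000 candidate features with a Bonferroni-adjusted alpha
# requires many more samples for the same effect size.
print(samples_per_group(0.5, alpha=0.05 / 1000))
```

The second call shows why "small n, large p" screens are so often underpowered: the stricter the significance threshold, the larger the cohort needed to detect the same effect.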

Protocol 2: Distinguishing Prognostic from Predictive Biomarkers

- Prognostic Biomarker Identification:

  - Setting: Can be conducted using a retrospectively collected cohort that represents the target population.

  - Statistical Test: A main effect test (e.g., Cox regression, t-test) assessing the association between the biomarker and a clinical outcome (e.g., overall survival) [9].

- Predictive Biomarker Identification:

  - Setting: Must be performed using data from a randomized clinical trial (RCT).

  - Statistical Test: A test for a significant interaction between the treatment arm and the biomarker in a model predicting the outcome. For example, in the IPASS study, a significant interaction between EGFR mutation status and treatment (gefitinib vs. carboplatin-paclitaxel) was the key evidence for a predictive biomarker [9].
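To make the idea of an interaction test concrete, here is a deliberately simplified sketch for a binary response. It is a Wald test on the difference of risk differences, not the regression-based interaction model described above (such as a Cox model with a treatment-by-biomarker term). The function name and the counts are made up for illustration and are not taken from IPASS or any other trial.

```python
import math
from statistics import NormalDist


def interaction_z_test(responders: dict, totals: dict) -> tuple[float, float]:
    """Test whether the treatment effect differs by biomarker status.

    Keys are (biomarker, arm) pairs with biomarker in {"pos", "neg"} and
    arm in {"treated", "control"}. The interaction estimate is
    (p_pos_treated - p_pos_control) - (p_neg_treated - p_neg_control).
    Returns (interaction_estimate, two_sided_p_value).
    """
    rate, variance = {}, {}
    for key, n in totals.items():
        p = responders[key] / n
        rate[key] = p
        variance[key] = p * (1 - p) / n  # binomial variance of a proportion

    effect_pos = rate[("pos", "treated")] - rate[("pos", "control")]
    effect_neg = rate[("neg", "treated")] - rate[("neg", "control")]
    interaction = effect_pos - effect_neg
    se = math.sqrt(sum(variance.values()))
    if se == 0:
        raise ValueError("standard error is zero; rates are all 0 or 1")
    z = interaction / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return interaction, p_value


# Hypothetical counts: treatment helps biomarker-positive patients only.
totals = {(b, a): 100 for b in ("pos", "neg") for a in ("treated", "control")}
responders = {("pos", "treated"): 70, ("pos", "control"): 40,
              ("neg", "treated"): 20, ("neg", "control"): 40}
estimate, p_value = interaction_z_test(responders, totals)
print(f"interaction = {estimate:.2f}, p = {p_value:.2g}")
```

A prognostic analysis, by contrast, would compare outcomes between biomarker-positive and biomarker-negative patients without reference to the treatment arm.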

Protocol 3: Implementing a Machine Learning Pipeline for Biomarker Discovery

- Data Curation and Standardization: Apply data type-specific quality control metrics (e.g., fastQC for NGS data, arrayQualityMetrics for microarrays). Transform clinical data into standard formats (e.g., OMOP, CDISC) to ensure harmonization [8].

- Feature Selection with Regularization: To avoid overfitting in high-dimensional data (p >> n), use variable selection methods that incorporate shrinkage (e.g., Lasso regression) during model estimation [9] [8].

- Use Explainable AI (XAI): Integrate XAI techniques from the start to ensure your digital biomarkers are not only predictive but also understandable and clinically actionable, which builds trust and facilitates regulatory acceptance [1].

- Validation on Hold-Out Sets: Always validate the final model on a completely held-out test set that was not used during the feature selection or model training process. The ultimate test is clinical validation at scale across large, diverse populations [1] [8].
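The requirement that the test data play no part in feature selection can be illustrated with a small simulation on pure noise. The sketch below uses only the Python standard library. The nearest-centroid classifier, the mean-difference ranking, and all sizes are assumptions chosen to keep it short; they stand in for whatever model and selection method a real pipeline would use.

```python
import random


def top_features(X, y, rows, k):
    """Rank features by absolute class-mean difference using only `rows`."""
    pos = [i for i in rows if y[i] == 1]
    neg = [i for i in rows if y[i] == 0]

    def gap(j):
        return abs(sum(X[i][j] for i in pos) / len(pos)
                   - sum(X[i][j] for i in neg) / len(neg))

    return sorted(range(len(X[0])), key=gap, reverse=True)[:k]


def centroid_predict(X, y, train, test_row, feats):
    """Nearest-centroid classifier restricted to the selected features."""
    dists = {}
    for label in (0, 1):
        members = [i for i in train if y[i] == label]
        centroid = [sum(X[i][j] for i in members) / len(members) for j in feats]
        dists[label] = sum((X[test_row][j] - c) ** 2
                           for j, c in zip(feats, centroid))
    return min(dists, key=dists.get)


def cv_accuracy(X, y, k_feats, n_folds, select_inside_fold):
    """Cross-validated accuracy with leaky or fold-wise feature selection."""
    n = len(y)
    folds = [list(range(f, n, n_folds)) for f in range(n_folds)]
    leaked = top_features(X, y, range(n), k_feats)  # sees ALL rows: leaky
    correct = 0
    for test in folds:
        train = [i for i in range(n) if i not in test]
        feats = (top_features(X, y, train, k_feats)
                 if select_inside_fold else leaked)
        correct += sum(centroid_predict(X, y, train, i, feats) == y[i]
                       for i in test)
    return correct / n


rng = random.Random(0)
n_samples, n_features = 40, 500
X = [[rng.gauss(0, 1) for _ in range(n_features)] for _ in range(n_samples)]
y = [i % 2 for i in range(n_samples)]  # labels carry no real signal
leaky = cv_accuracy(X, y, 10, 5, select_inside_fold=False)
honest = cv_accuracy(X, y, 10, 5, select_inside_fold=True)
print(f"selection on all data: {leaky:.2f}  selection inside folds: {honest:.2f}")
```

Because the features are random noise, any accuracy well above 0.5 in the first number reflects leakage from selecting features on the full dataset, while selection inside each fold would be expected to stay near chance.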

► Key Quantitative Metrics for Biomarker Evaluation

Table: Essential Statistical Metrics for Biomarker Performance Assessment [9]

| Metric | Description | Interpretation |

|---|---|---|

| Sensitivity | Proportion of true cases that test positive. | A high value means the biomarker rarely misses patients with the disease. |

| Specificity | Proportion of true controls that test negative. | A high value means the biomarker rarely falsely flags healthy individuals. |

| Area Under the Curve (AUC) | Measures how well the biomarker distinguishes cases from controls across all possible thresholds. | Typically ranges from 0.5 (no better than chance) to 1.0 (perfect discrimination); values below 0.5 indicate discrimination in the wrong direction. |

| Positive Predictive Value (PPV) | Proportion of test-positive patients who truly have the disease. | Highly dependent on disease prevalence. |

| Negative Predictive Value (NPV) | Proportion of test-negative patients who truly do not have the disease. | Highly dependent on disease prevalence. |
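The prevalence dependence of PPV noted in the table is easy to underestimate, so a short worked example may help. The sketch computes the four count-based metrics from a confusion matrix and then re-derives PPV for a different prevalence using Bayes' rule. The function names and the counts are illustrative assumptions.

```python
def confusion_metrics(tp: int, fp: int, tn: int, fn: int) -> dict:
    """Sensitivity, specificity, PPV and NPV from confusion-matrix counts."""
    return {
        "sensitivity": tp / (tp + fn),
        "specificity": tn / (tn + fp),
        "ppv": tp / (tp + fp),
        "npv": tn / (tn + fn),
    }


def ppv_at_prevalence(sensitivity: float, specificity: float,
                      prevalence: float) -> float:
    """PPV in a population with the given disease prevalence (Bayes' rule)."""
    true_pos = sensitivity * prevalence
    false_pos = (1 - specificity) * (1 - prevalence)
    return true_pos / (true_pos + false_pos)


# Balanced case-control study: 100 cases and 100 controls.
study = confusion_metrics(tp=90, fp=10, tn=90, fn=10)
print(study["ppv"])  # PPV observed in the 50% prevalence study sample

# The same test applied where only 1% of people have the disease.
screening_ppv = ppv_at_prevalence(study["sensitivity"],
                                  study["specificity"], prevalence=0.01)
print(round(screening_ppv, 3))
```

With 90% sensitivity and specificity, PPV is 0.90 in the balanced study sample but falls to roughly 0.08 at 1% prevalence, which is why predictive values from case-control designs should not be carried over to screening populations.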

► The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Materials and Frameworks for Reproducible Research

| Item | Function in Biomarker Discovery & Validation |

|---|---|

| FAIR Data Principles | A set of guiding principles to make data Findable, Accessible, Interoperable, and Reusable. Prevents researchers from "reinventing the wheel" [1]. |

| Digital Biomarker Discovery Pipeline (DBDP) | An open-source project providing toolkits and community standards (e.g., DISCOVER-EEG) to overcome analytical variability and promote reproducible methods [1]. |

| Electronic Laboratory Notebooks (ELNs) | Digitizes lab entries to seamlessly sit alongside research data, making it easier to access, use, and share experimental details across teams [6]. |

| Containerization (e.g., Docker) | Bundles code, data, and the entire computational environment into a single, runnable unit, eliminating "it worked on my machine" problems [7]. |

| Consolidated Standards of Reporting Trials (CONSORT) | Standard reporting guidelines for clinical trials. Following such guidelines ensures comprehensive and clear documentation of the study design [8]. |

| Repository with DOI (e.g., Zenodo) | Allows for archiving and sharing of data, code, and other research outputs. A Digital Object Identifier (DOI) ensures the resource can be persistently found and cited [6]. |

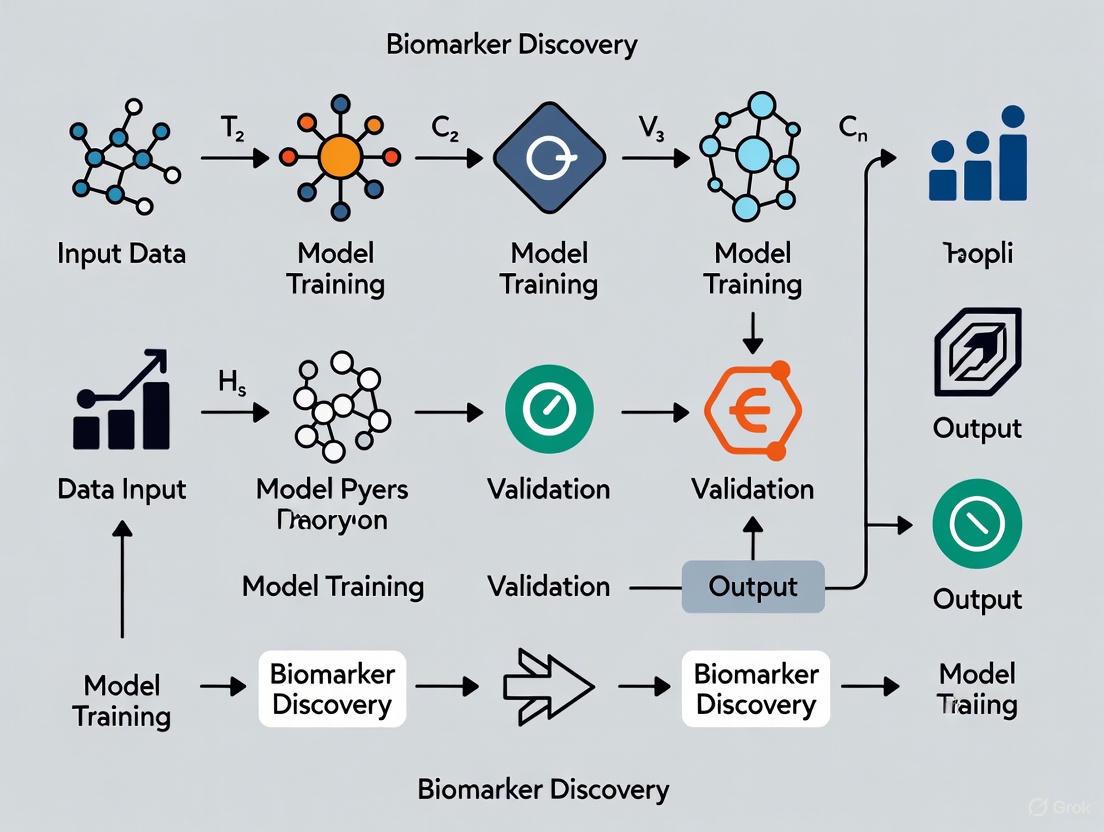

► Visualizing Workflows for Clarity and Reproducibility

Diagram 1: The Biomarker Validation Pathway

This diagram outlines the critical stages a candidate biomarker must pass through to achieve clinical utility, highlighting the iterative and rigorous nature of validation.

Diagram 2: Three Types of Reproducibility

This chart clarifies the distinct meanings of reproducibility, which are often conflated but represent different levels of scientific verification.

Reproducibility is a cornerstone of the scientific method. However, numerous fields are currently grappling with a "reproducibility crisis," where other researchers are unable to reproduce a high proportion of published findings [10]. This problem is particularly acute in machine learning (ML)-based science and biomarker discovery research, where initial promising results often fail to hold up in subsequent validation efforts [11] [12]. Landmark reports from industry have highlighted the alarming scale of this issue, revealing reproducibility rates as low as 11% to 25% in critical areas of preclinical research [10]. This technical support guide outlines the scope of the problem and provides actionable troubleshooting advice for researchers and drug development professionals working to improve the reliability of their work.

Landmark Studies on Reproducibility Rates

The following table summarizes key findings from major investigations that have quantified the reproducibility problem.

| Source / Field of Study | Reported Reproducibility Rate | Context and Findings |

|---|---|---|

| Amgen & Bayer (Biomedical) [10] | 11% – 25% | Scientists from these biotech companies reported that only 11% (Amgen) to 25% (Bayer) of landmark findings in preclinical cancer research could be replicated. |

| ML-based Science Survey [12] | Widespread errors affecting 648+ papers | A survey of 41 papers across 30 fields found data leakage and other pitfalls collectively affected 648 papers, in some cases leading to "wildly overoptimistic conclusions." |

| Clinical Metabolomics (Cancer Biomarkers) [13] | ~15% of metabolites consistently reported | A meta-analysis of 244 studies found that of 2,206 unique metabolites reported as significant, 85% were likely statistical noise. Only 3%–12% of metabolites for a specific cancer type were statistically significant. |

| Biomedical Researcher Survey [14] | ~70% perceive a crisis | A survey of over 1,600 biomedical researchers found nearly three-quarters believe there is a significant reproducibility crisis, with "pressure to publish" cited as the leading cause. |

FAQs and Troubleshooting Guides

What are the most common causes of irreproducibility in ML-based biomarker discovery?

Irreproducibility often stems from a combination of factors across the entire research pipeline. The most prevalent issues in ML-based science are related to data leakage, where information from the test dataset inadvertently influences the model training process [12]. In clinical metabolomics and other biomarker fields, inconsistencies arise from a lack of standardized protocols and low statistical power [11] [15] [13].

Troubleshooting Steps:

- Audit for Data Leakage: Systematically check your ML pipeline for these common leakage pitfalls [12]:

  - Incorrect Train-Test Split: Failing to split data before any pre-processing or feature selection.

  - Illegitimate Features: Using features that are proxies for the outcome variable.

  - Temporal Leakage: Training on data collected after the time point being predicted, so the model effectively sees the future.

  - Non-Independent Data: Having duplicate or highly correlated samples across training and test sets.

- Standardize Pre-Analytical Protocols: Document and control all sample handling steps, including collection tubes, time to processing, centrifugation conditions, and storage temperature [11] [15].

- Increase Sample Size: Use power analysis to ensure your study is large enough to detect a true effect, as small samples often yield overoptimistic results [11].
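One of the pitfalls listed above, non-independent data, can be guarded against by splitting at the patient level rather than the sample level. The sketch below is one possible approach, assuming each sample record carries a `patient_id` field (a hypothetical name). It hashes the ID so that every sample from the same patient always lands in the same split, then audits the result.

```python
import hashlib


def assign_split(patient_id: str, test_fraction: float = 0.2) -> str:
    """Deterministically assign every sample of a patient to one split."""
    digest = hashlib.sha256(patient_id.encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) / 0xFFFFFFFF  # value in [0, 1]
    return "test" if bucket < test_fraction else "train"


def find_shared_groups(samples: list[dict]) -> set[str]:
    """Return patient IDs present in both train and test (should be empty)."""
    train = {s["patient_id"] for s in samples if s["split"] == "train"}
    test = {s["patient_id"] for s in samples if s["split"] == "test"}
    return train & test


# Thirty samples from ten hypothetical patients (three samples each).
samples = [{"patient_id": f"P{i % 10}"} for i in range(30)]
for sample in samples:
    sample["split"] = assign_split(sample["patient_id"])

print(find_shared_groups(samples))  # empty set: no patient straddles the split
```

Hash-based assignment gives only approximately the requested test fraction, and it does not stratify by outcome. It is shown here because it is stable as new samples arrive, not because it is the only reasonable choice.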

How can I prevent data leakage in my machine learning workflow?

Data leakage is a pervasive cause of overoptimistic models and irreproducible findings in ML-based science [12]. Preventing it requires rigorous discipline throughout the modeling process.

Troubleshooting Steps:

- Implement Rigorous Data Splitting: Create a strict hold-out test set immediately after data collection. Do not use this set for any aspect of model development, including feature selection, parameter tuning, or normalization [12].

- Use Nested Cross-Validation: For robust internal validation, use nested cross-validation: an outer loop holds out each fold in turn to estimate performance, while an inner loop, run only on the outer training data, handles model selection and hyperparameter tuning.

- Create a "Model Info Sheet": Adopt a checklist to document and justify key decisions, including the origin of all features, the handling of data dependencies, and the exact pre-processing steps applied to training and test data separately [12].
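The nested cross-validation step can be sketched in a few dozen lines. The example below is a toy, assuming a one-dimensional feature and a k-nearest-neighbour classifier whose only hyperparameter is k. In practice a library implementation would be used, but the structure (tuning strictly inside each outer training fold) is the point.

```python
import random


def k_folds(indices, k, rng):
    """Shuffle indices and deal them into k roughly equal folds."""
    shuffled = list(indices)
    rng.shuffle(shuffled)
    return [shuffled[i::k] for i in range(k)]


def knn_predict(x_train, y_train, x, k):
    """Majority vote among the k nearest 1-D training points."""
    nearest = sorted(range(len(x_train)), key=lambda i: abs(x_train[i] - x))[:k]
    votes = sum(y_train[i] for i in nearest)
    return 1 if 2 * votes > len(nearest) else 0


def accuracy(x, y, train, test, k):
    """Fraction of test samples classified correctly by a k-NN fit on train."""
    xt, yt = [x[i] for i in train], [y[i] for i in train]
    hits = sum(knn_predict(xt, yt, x[i], k) == y[i] for i in test)
    return hits / len(test)


def nested_cv(x, y, k_grid, outer_k=5, inner_k=3, seed=0):
    """Mean outer-fold accuracy with k tuned inside each outer training set."""
    rng = random.Random(seed)
    outer_folds = k_folds(range(len(x)), outer_k, rng)
    outer_scores = []
    for test in outer_folds:
        train = [i for fold in outer_folds if fold is not test for i in fold]
        # Inner loop: tune k using ONLY the outer-training samples.
        inner_folds = k_folds(train, inner_k, rng)

        def inner_score(k):
            scores = [
                accuracy(x, y,
                         [i for f in inner_folds if f is not val for i in f],
                         val, k)
                for val in inner_folds
            ]
            return sum(scores) / len(scores)

        best_k = max(k_grid, key=inner_score)
        # Outer loop: score the tuned model on samples it has never seen.
        outer_scores.append(accuracy(x, y, train, test, best_k))
    return sum(outer_scores) / len(outer_scores)


# Synthetic, well-separated data purely to exercise the code.
data_rng = random.Random(1)
y = [i % 2 for i in range(30)]
x = [data_rng.gauss(10.0 * label, 1.0) for label in y]
print(nested_cv(x, y, k_grid=(1, 3, 5)))
```

The outer score is the number to report. The inner scores are used only to pick hyperparameters and are optimistically biased if quoted as performance.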

Our team struggles to reproduce our own ML models. How can we improve internal reproducibility?

The problem of irreproducibility often starts within the same lab or team. The ML development lifecycle is often complex and poorly documented, making it difficult to rebuild models from scratch [16].

Troubleshooting Steps:

- Implement Version Control for Code, Data, and Models: Use systems like Git for code. For data and model weights, use solutions like DVC (Data Version Control) or similar platforms to snapshot the exact state of your training data and resulting models for each experiment [16].

- Record All Hyperparameters and Environment Details: Log every configuration setting, random seed, and software library version for every training run. Tools like MLflow or Weights & Biases can automate this tracking.

- Containerize Your Environment: Use Docker or similar containerization technologies to capture the complete computational environment, including OS, libraries, and drivers, ensuring that the model can be run identically in the future [16].
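For teams not yet using a tracking tool, the logging step above can be approximated with a small run manifest written next to each model. The sketch below uses only the standard library. The manifest fields and the function names are assumptions for illustration, not the format used by MLflow, Weights & Biases, or DVC.

```python
import hashlib
import json
import platform
import sys
from importlib import metadata


def file_sha256(path: str) -> str:
    """Hash a data file so silent changes to it become detectable."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(1 << 20), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_run_manifest(data_path: str, params: dict, seed: int,
                       packages: list[str]) -> dict:
    """Collect what is needed to re-run one training experiment."""
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = "not installed"
    return {
        "data_sha256": file_sha256(data_path),
        "hyperparameters": params,
        "random_seed": seed,
        "python": sys.version.split()[0],
        "platform": platform.platform(),
        "packages": versions,
    }


def save_manifest(manifest: dict, path: str) -> None:
    """Write the manifest as sorted, indented JSON for easy diffing."""
    with open(path, "w", encoding="utf-8") as handle:
        json.dump(manifest, handle, indent=2, sort_keys=True)
```

A call such as `build_run_manifest("train.csv", {"alpha": 0.1}, seed=42, packages=["numpy"])` (file name and parameters hypothetical) yields a dictionary that can be committed alongside the code, so that a later mismatch in data hash or package version is visible before anyone tries to compare results.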

Experimental Protocols for Assessing Reproducibility

Protocol: Meta-Analysis of Reported Biomarkers

This methodology, derived from a large-scale analysis of clinical metabolomics studies, provides a framework for quantifying reproducibility across a field [13].

- Literature Search & Screening:

  - Conduct an exhaustive search of scientific literature using relevant databases (e.g., PubMed, Scopus) and keywords.

  - Apply strict inclusion/exclusion criteria (e.g., only human studies, specific biofluid, specific analytical technique).

- Data Extraction:

  - Systematically extract data from each included study: publication details, cohort characteristics, pre-analytical protocols, analytical methods, and lists of reported significant biomarkers (e.g., metabolites, proteins).

- Data Homogenization:

  - Standardize biomarker nomenclature and group related disease types to enable cross-study comparison.

- Frequency and Consistency Analysis:

  - Calculate how many studies report each unique biomarker.

  - For biomarkers reported by multiple studies, analyze the consistency in the reported direction of change (e.g., up-regulated or down-regulated in disease).

- Statistical Modeling:

  - Use statistical models to estimate the proportion of reported biomarkers that are likely to be true signals versus statistical noise, based on their frequency and consistency of reporting [13].
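The frequency and consistency step of this protocol lends itself to a short script. The sketch below assumes the extracted data have already been homogenized into `(study_id, biomarker, direction)` tuples. The study IDs and metabolite entries are invented placeholders, and the statistical modeling step of the cited analysis is not reproduced here.

```python
from collections import Counter, defaultdict


def summarize_reports(reports):
    """Count studies per biomarker and the consistency of reported direction.

    `reports` is an iterable of (study_id, biomarker, direction) tuples,
    with direction given as "up" or "down".
    """
    directions = defaultdict(dict)
    for study_id, biomarker, direction in reports:
        directions[biomarker][study_id] = direction  # one vote per study

    summary = {}
    for biomarker, by_study in directions.items():
        counts = Counter(by_study.values())
        majority, majority_count = counts.most_common(1)[0]
        summary[biomarker] = {
            "n_studies": len(by_study),
            "majority_direction": majority,
            "consistency": majority_count / len(by_study),
        }
    return summary


# Hypothetical extraction results from three studies.
reports = [
    ("study_1", "lactate", "up"),
    ("study_2", "lactate", "up"),
    ("study_3", "lactate", "down"),
    ("study_1", "glutamine", "down"),
]
for name, stats in summarize_reports(reports).items():
    print(name, stats)
```

Biomarkers reported by a single study trivially show a consistency of 1.0, so consistency should always be read together with the study count.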

The Scientist's Toolkit: Key Research Reagent Solutions

The following table lists essential materials and tools for improving reproducibility in ML and biomarker research.

| Item / Tool | Function | Field |

|---|---|---|

| Certified Reference Materials [11] | Provides a "gold standard" sample to calibrate assays and control for lot-to-lot variability in analytical kits. | Biomarker Analysis |

| Quality Control (QC) Samples [13] | Pooled samples from case and control groups, analyzed repeatedly to monitor instrument stability and data quality over time. | Metabolomics, Proteomics |

| Version Control Systems (e.g., Git) [16] | Tracks changes to code, ensuring that every modification is documented and any previous version can be recovered. | ML & General Research |

| Containerization (e.g., Docker) [16] | Packages code, dependencies, and the operating system into a single, reproducible unit that can be run on any compatible machine. | ML & General Research |

| Data Version Control (e.g., DVC) | Extends version control to large data files and ML models, linking them to the code that generated them. | ML-based Science |

| Model Info Sheets [12] | A documentation template that forces researchers to justify the absence of data leakage, increasing transparency and rigor. | ML-based Science |

Understanding Reproducibility Failures: A Pathways Diagram

The diagram below visualizes the primary pathways that lead to irreproducible results in ML-based biomarker research, highlighting critical failure points.

Troubleshooting Guides & FAQs

FAQ 1: What are the most critical yet often overlooked factors ruining my biomarker discovery model's performance?

The most critical factors are often methodological pitfalls rather than algorithmic shortcomings. These include small sample sizes, batch effects, overfitting, and data leakage, which collectively compromise model generalization. A significant, frequently neglected issue is Quality Imbalance (QI) between patient sample groups, where systematic quality differences between disease and control groups can create false biomarkers. One study of 40 clinical RNA-seq datasets found that 35% (14 datasets) suffered from high quality imbalance. This imbalance artificially inflates the number of differentially expressed genes; the higher the imbalance, the more false positives appear, directly reducing the relevance of findings and reproducibility between studies [17]. Furthermore, the uncritical application of complex deep learning models often exacerbates these problems by increasing the risk of overfitting and reducing interpretability, offering negligible performance gains for typical clinical proteomics datasets [18].

FAQ 2: My model works perfectly on my data but fails on external datasets. Is this an algorithm problem?

This is typically a generalization problem, and while algorithm tuning can help, the root cause usually lies in the data and study design. The core issue is often that your model has learned non-biological, technically confounded signals—such as batch effects, sample quality artifacts, or contaminants from consumables—instead of genuine biological signals [18] [17]. For instance, batch effects can perfectly mimic biological variation if samples from different conditions are processed in separate batches. Similarly, studies have shown that contaminant peaks from sources like lens tissue or storage containers, or variations introduced by different instrument users, can be mistakenly identified as significant features by classification models, leading to highly misleading results [19]. The solution requires a focus on rigorous study design, appropriate validation strategies, and transparent modeling practices, rather than seeking algorithmic novelty [18].

FAQ 3: How can I proactively detect and correct for batch effects in my experimental data?

Proactive detection and correction requires a multifaceted approach:

- Standardized Protocols: Implement carefully standardized sample handling and measurement procedures to minimize batch effects at the source [19].

- Experimental Design: Whenever possible, distribute biological groups and conditions evenly across processing batches to avoid confounding.

- Batch Effect Detection: Actively investigate your data for systematic differences between batches using visualization tools like PCA plots, where samples may cluster by batch rather than biology.

- Batch Effect Correction: Evaluate and apply specialized computational methods designed for batch effect correction. However, use these with caution, as they can sometimes remove biological signal if improperly applied [19]. The key is to document and report all such procedures to ensure transparency and reproducibility.
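The experimental-design point above (spreading biological groups evenly across batches) can be handled before any sample is measured. The sketch below is one simple way to do it, assuming a mapping from sample ID to group label. It randomizes within each group and deals samples round-robin to batches, then cross-tabulates the result so confounded batches are easy to spot. It does not perform PCA-based detection or any batch correction.

```python
import random
from collections import Counter


def assign_batches(sample_groups: dict, n_batches: int, seed: int = 0) -> dict:
    """Randomly spread each biological group evenly across batches.

    `sample_groups` maps sample ID -> group label (e.g. "case"/"control").
    Returns a mapping of sample ID -> batch index.
    """
    rng = random.Random(seed)
    assignment = {}
    for group in sorted(set(sample_groups.values())):
        members = sorted(s for s, g in sample_groups.items() if g == group)
        rng.shuffle(members)
        for position, sample in enumerate(members):
            assignment[sample] = position % n_batches  # deal round-robin
    return assignment


def batch_group_table(sample_groups: dict, assignment: dict) -> Counter:
    """Cross-tabulate (batch, group) counts to spot confounded batches."""
    return Counter((assignment[s], sample_groups[s]) for s in sample_groups)


# Twelve hypothetical cases and twelve controls across three batches.
groups = {f"case_{i}": "case" for i in range(12)}
groups.update({f"ctrl_{i}": "control" for i in range(12)})
plan = assign_batches(groups, n_batches=3)
for (batch, group), count in sorted(batch_group_table(groups, plan).items()):
    print(batch, group, count)
```

The same cross-tabulation is useful retrospectively: a batch containing only one group is perfectly confounded, and no correction algorithm can separate its technical effect from biology.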

FAQ 4: I have a 'small n, large p' problem (few samples, many features). How can I build a reliable model?

In the context of the "small n, large p" problem, prioritizing simplicity and interpretability over complexity is essential. Complex models like deep neural networks have a high capacity to overfit and memorize technical noise in small datasets [18]. Instead, consider the following strategies:

- Feature Selection: Aggressively reduce the number of features (p) through robust, domain-aware feature selection methods to mitigate overfitting.

- Simple Models: Start with simpler, more interpretable models (e.g., regularized linear models). These are less prone to overfitting and their results are easier to validate and interpret biologically.

- Rigorous Validation: Employ strict validation protocols such as nested cross-validation to obtain realistic performance estimates and avoid data leakage. Emphasize external validation on a completely independent dataset whenever possible to truly test generalizability [18].

The following tables consolidate key quantitative findings from recent studies investigating factors that drive the reproducibility crisis.

Table 1: Impact of Sample Quality Imbalance (QI) on Differential Gene Expression Analysis

This table summarizes data from an analysis of 40 clinically relevant RNA-seq datasets, quantifying how quality imbalance confounds results [17].

| Metric | Finding | Impact on Analysis |

|---|---|---|

| Prevalence of High QI | 14 out of 40 datasets (35%) | Highlights that quality imbalance is a common, not rare, issue in published data. |

| QI vs. Differential Genes | A strong positive linear relationship (R² = 0.57, 0.43, 0.44 in 3 large datasets). | The higher the QI, the greater the number of reported differentially expressed genes, inflating false discoveries. |

| Effect of Dataset Size | In high-QI datasets, the number of differential genes increased more than 4x faster with dataset size (slope = 114) than in balanced datasets (slope = 23.8). | Increasing sample size without addressing quality imbalance compounds, rather than solves, the problem. |

| Presence of Quality Markers | 7,708 low-quality marker genes were identified, recurring in up to 77% (10/13) of low-QI datasets studied. | These genes are often mistaken for true biological signals, directly reducing the relevance of findings. |

Table 2: Experimental Factors Influencing ASAP-MS Data Reproducibility

This table outlines key experimental factors identified as major sources of variation in mass spectrometry-based metabolomics, which can be generalized to other omics technologies [19].

| Experimental Factor | Specific Source of Variation | Impact on Data & Model |

|---|---|---|

| Instrument & Calibration | Residual calibration mix in ion source; probe temperature. | Introduces contaminant peaks and changes ionization probability, creating non-biological spectral features. |

| Sample Handling | Probe cleaning procedures; consumables (lens tissue, storage tubes); different users. | Leads to sample degradation or introduction of contaminants, skewing classification models. |

| Batch Effects | Systematic differences when samples are processed in different batches or on different days. | Can mask or, worse, perfectly mimic biological variation, invalidating study conclusions [19]. |

Experimental Protocols for Mitigating Technical Drivers

Protocol 1: Standardized Sample Preparation and ASAP-MS Measurement for Biofluids

Adapted from a study optimizing clinical metabolomics data generation using Atmospheric Solids Analysis Probe Mass Spectrometry (ASAP-MS) [19].

1. Sample Preparation (e.g., Cerebrospinal Fluid - CSF):

- Thaw frozen CSF samples on ice.

- Centrifuge at 12,000g for 15 minutes at 4°C to pellet cellular components and particulate matter.

- Carefully pipette the supernatant into a new, clean polypropylene tube, ensuring the pellet is not disturbed.

2. Pre-Measurement Setup:

- Capillary Cleaning: Bake glass ASAP probe capillaries in an oven at 250°C for 30 minutes to remove surface contaminants.

- Background Measurement: Fit the probe with a clean capillary, insert it into the ion source, and record a background mass spectrum for 30 seconds.

- Probe Cooling: Remove the probe and allow the tip to cool before sample application.

3. Sample Measurement:

- Bring the clean, cooled probe tip into contact with the smallest possible amount of sample.

- Reinsert the probe into the ion source for a 25-second measurement.

- Critical: Monitor the Total Ion Chromatogram (TIC). A valid run shows an instantaneous signal rise followed by a rapid decay over 20-30 seconds. Signal saturation indicates too much sample and will produce unreliable data [19].

Protocol 2: A Rigorous Data Processing Workflow for ASAP-MS Spectral Data

This protocol ensures consistent and transparent data processing from raw spectra to analysis-ready features [19].

1. Data Export and Region Identification:

- Export all raw mass spectra from the proprietary software. The raw data consists of sequential scans taken every ~900 ms.

- Use a custom script (e.g., in Python) to automatically identify the "background" and "sample" regions based on the sharp rise in total ion count when the loaded probe is inserted.

2. Spectral Averaging and Background Consideration:

- For each sample, calculate the average mass spectrum from all scans recorded during the 25-second sample measurement interval.

- The standard deviation of these scans can also be calculated to assess signal stability during measurement.

3. Batch Effect Detection and Correction (if necessary):

- After feature quantification, use statistical and visualization methods (e.g., PCA) to check for systematic variation associated with processing batch, date, or user.

- If a batch effect is detected and is not perfectly confounded with the biological groups, evaluate and apply a suitable batch effect correction algorithm.

- Documentation: Meticulously record any correction steps applied for full transparency.
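Steps 1 and 2 of this protocol (locating the sample region from the rise in total ion count, then averaging scans) can be sketched as below. This is not the custom script from the cited study. The baseline length `n_baseline` and the threshold `rise_factor` are illustrative assumptions, and each scan is assumed to be a list of intensities on a common m/z grid.

```python
from statistics import mean, median, pstdev


def find_sample_onset(tic, n_baseline=10, rise_factor=5.0):
    """Index of the first scan whose total ion count jumps above background.

    n_baseline and rise_factor are illustrative assumptions, not values
    taken from the protocol.
    """
    background = median(tic[:n_baseline])
    for index in range(n_baseline, len(tic)):
        if tic[index] > rise_factor * background:
            return index
    raise ValueError("no sharp rise in total ion count found")


def average_sample_spectrum(scans, tic, scan_interval_s=0.9, window_s=25.0):
    """Mean and standard deviation per m/z bin over the sample window."""
    onset = find_sample_onset(tic)
    n_scans = max(1, round(window_s / scan_interval_s))
    sample_scans = scans[onset:onset + n_scans]
    columns = list(zip(*sample_scans))  # one tuple of intensities per m/z bin
    return [mean(col) for col in columns], [pstdev(col) for col in columns]


# Synthetic trace: 12 background scans, then 30 scans with sample loaded.
scans = [[1.0, 1.0]] * 12 + [[10.0, 20.0]] * 30
tic = [100.0] * 12 + [10000.0] * 30
means, spreads = average_sample_spectrum(scans, tic)
print(find_sample_onset(tic), means, spreads)
```

Keeping the per-bin standard deviation alongside the mean gives a simple stability check for each measurement, in line with step 2 of the protocol.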

Visualizing the Workflow and Problem

Diagram 1: Standardized ASAP-MS Clinical Analysis Workflow

Diagram 2: How Technical Drivers Compromise Biomarker Discovery

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Materials for Robust Clinical Omics Studies

This table details essential materials and their critical functions in ensuring data quality and reproducibility, based on optimized protocols [19].

| Item | Function & Rationale | Critical Quality Note |

|---|---|---|

| Oven-Baked Glass Capillaries | Serves as the probe tip for introducing sample into the ASAP-MS ion source. | Baking at 250°C for 30 mins is essential to remove surface contaminants that create spurious spectral peaks [19]. |

| LC-MS Grade Water | Used as a high-purity solvent for homogenizing tissue samples (e.g., brain sections). | Prevents introduction of chemical contaminants from lower-grade water that can interfere with the metabolite profile. |

| Polypropylene Sample Tubes | Used for storage and homogenization of clinical samples (e.g., brain tissue, CSF). | Material selection is critical; certain plastics can leach compounds that appear as contaminant peaks in mass spectra [19]. |

| Bead Homogeniser | Provides thorough and reproducible mechanical homogenization of tissue samples. | Standardized settings (speed, time) across all samples are vital to ensure consistent extraction and avoid technical variation. |

| Cryostat | Used to obtain thin (e.g., 10μm) sections from frozen post-mortem brain tissue samples. | Care must be taken to avoid contamination from the Optimal Cutting Temperature (OCT) compound used for mounting. |

The integration of machine learning (ML) into biomarker discovery represents one of the most promising frontiers in modern therapeutic development. It holds the potential to decipher complex biological patterns from high-dimensional data, enabling more precise target discovery, patient stratification, and clinical trial design [20]. However, this promise is currently overshadowed by a pervasive reproducibility crisis that undermines the entire research pipeline. This crisis is not merely a theoretical concern; it has tangible, costly consequences, wasting billions of R&D dollars and critically delaying the delivery of life-saving treatments to patients. This technical support center is designed to provide researchers, scientists, and drug development professionals with actionable troubleshooting guides and FAQs to directly address and mitigate these reproducibility failures in their daily experimental work.

The Scale of the Problem: Quantitative Evidence of Reproducibility Failures

The tables below summarize key quantitative evidence that illustrates the severe reproducibility challenges in clinical metabolomics and ML-based science.

Table 1: Reproducibility Crisis in Clinical Metabolomics for Cancer Biomarker Discovery

A meta-analysis of 244 clinical metabolomics studies revealed significant inconsistencies in reported biomarkers [13].

| Metric | Value | Implication |

|---|---|---|

| Total Unique Metabolites Reported | 2,206 | Vast number of candidate biomarkers |

| Metabolites Reported by Only One Study | 1,582 (72%) | Extreme lack of consensus across studies |

| Metabolites Classified as Statistical Noise | 1,867 (85%) | Most reported findings are likely false positives |

| Statistically Significant Metabolites per Cancer Type | 3% to 12% | Very low true signal-to-noise ratio |

Table 2: Prevalence of Data Leakage in ML-Based Science

A survey across 30 scientific fields found data leakage to be a pervasive cause of irreproducibility [12].

| Field | Number of Papers Reviewed | Number of Papers with Pitfalls | Common Pitfalls |

|---|---|---|---|

| Clinical Epidemiology | 71 | 48 | Feature selection on train and test set |

| Radiology | 62 | 16 | No train-test split; duplicates in datasets |

| Neuropsychiatry | 100 | 53 | No train-test split; improper pre-processing |

| Medicine | 65 | 27 | No train-test split |

| Law | 171 | 156 | Illegitimate features; temporal leakage |

Troubleshooting Guides: Addressing Specific Experimental Issues

Guide 1: Troubleshooting Data Leakage in ML Pipelines

Problem: Your model shows excellent performance during training and validation but fails dramatically when applied to a truly independent test set or new clinical data.

Investigation Checklist:

- Verify Data Splitting: Confirm that your training and test sets were split before any pre-processing, feature selection, or parameter tuning steps. The test set should be completely locked away until the final evaluation stage [12].

- Check for Illegitimate Features: Scrutinize your feature set for "proxy" variables that directly leak information about the target label. For example, a feature derived from the outcome variable itself is illegitimate [12].

- Audit Pre-processing: Ensure that operations like normalization, imputation, and scaling were fit only on the training data and then applied to the test data. Pre-processing the entire dataset before splitting is a common critical error [12].

- Assess Temporal Validity: If your data has a time component (e.g., patient records over years), validate that all data in your test set was collected after the data in your training set. Using future data to predict the past creates unrealistic performance [12].

- Search for Duplicates: Check for and remove any duplicate samples that appear in both your training and test sets, as this invalidates the independence of the test set [12].
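The duplicate check in the last item can be sketched in a few lines. This is a minimal illustration rather than a method taken from [12]: the helper names `row_key` and `find_cross_split_duplicates` and the rounding tolerance are assumptions, and the check only catches feature vectors that are identical after rounding, not the same patient measured on two occasions.

```python
def row_key(row, decimals=6):
    """Hashable fingerprint of a feature vector (rounded to absorb float noise)."""
    return tuple(round(float(v), decimals) for v in row)


def find_cross_split_duplicates(train_rows, test_rows, decimals=6):
    """Return (train_index, test_index) pairs whose feature vectors match."""
    seen = {}
    for i, row in enumerate(train_rows):
        seen.setdefault(row_key(row, decimals), []).append(i)
    pairs = []
    for j, row in enumerate(test_rows):
        for i in seen.get(row_key(row, decimals), []):
            pairs.append((i, j))
    return pairs


train = [[0.12, 3.4, 5.0], [1.00, 2.0, 3.0], [7.70, 8.8, 9.9]]
test = [[4.00, 4.0, 4.0], [1.00, 2.0, 3.0]]
duplicates = find_cross_split_duplicates(train, test)
print(duplicates)  # [(1, 1)]
```

Any pair returned here should be resolved (typically by dropping the test-side copy) before the final evaluation is run.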

Solution Protocol: Implementing a Rigorous ML Workflow

The following diagram outlines a leakage-proof ML workflow for biomarker discovery. Adhering to this strict separation of data is the most effective defense against data leakage.
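As a rough code-level counterpart to this workflow, the sketch below splits first, fits preprocessing on the training portion only, and evaluates once. It assumes scikit-learn and NumPy are available; the synthetic data, the 75/25 split, and the choice of scaler and classifier are illustrative assumptions, not part of the original protocol.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
X = rng.normal(loc=5.0, scale=2.0, size=(120, 10))   # synthetic "biomarker" matrix
y = (X[:, 0] + rng.normal(size=120) > 5.0).astype(int)

# 1. Split first; the test set is not touched again until the final evaluation.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0
)

# 2. Bundle preprocessing and the model so scaling is fit on training data only.
pipe = Pipeline([
    ("scale", StandardScaler()),
    ("clf", LogisticRegression(max_iter=1000)),
])
pipe.fit(X_train, y_train)

# 3. One final evaluation on the held-out set.
test_accuracy = pipe.score(X_test, y_test)
print(f"held-out accuracy: {test_accuracy:.2f}")
```

Because the scaler lives inside the pipeline, its mean and variance come from `X_train` alone, and the same parameters are reused when `X_test` is scored.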

Guide 2: Resolving Biomarker Inconsistencies in Multi-Omic Studies

Problem: Your metabolomic or proteomic study identifies a promising panel of biomarkers, but subsequent validation efforts fail, or the direction of change (up/down-regulation) is inconsistent with other literature.

Investigation Checklist:

- Review Sample Preparation: Inconsistent sample collection, storage temperature, or extraction protocols (e.g., aqueous vs. methanol, Folch method) can dramatically alter the metabolite profile detected. Standardize and document every step [13].

- Confirm Analytical Method Suitability: Different analytical platforms (NMR vs. MS) and columns (HILIC vs. C18) have unique detection biases. NMR primarily measures the more abundant metabolites, while MS is more sensitive but only detects metabolites that ionize well. Ensure your method is appropriate for your target analytes [13].

- Validate Statistical Modeling: Check for overfitting, especially when the number of features (p) far exceeds the number of samples (n). Use appropriate cross-validation and multiple testing corrections. A recent meta-analysis suggests 85% of reported metabolite biomarkers may be statistical noise [13].

- Assess Coverage and Identification: The metabolome is partially "dark," and identifications can be ambiguous. Confirm your findings against known standards and be transparent about the level of confidence in your metabolite identifications [13].
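The multiple testing correction mentioned in the checklist can be illustrated with the Benjamini-Hochberg procedure, one common way to control the false discovery rate. The source does not say which correction to use, so this choice, the function name `benjamini_hochberg`, and the example p-values are all assumptions made for illustration.

```python
def benjamini_hochberg(p_values, alpha=0.05):
    """Return a list of booleans: True where the hypothesis is rejected at FDR alpha."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    # Find the largest rank k with p_(k) <= (k / m) * alpha.
    cutoff_rank = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= rank / m * alpha:
            cutoff_rank = rank
    rejected = [False] * m
    for idx in order[:cutoff_rank]:
        rejected[idx] = True
    return rejected


# Hypothetical per-metabolite p-values from a case/control comparison.
p_values = [0.001, 0.008, 0.039, 0.041, 0.042, 0.060, 0.074, 0.205, 0.212, 0.216]
rejected = benjamini_hochberg(p_values, alpha=0.05)
print(sum(rejected), "of", len(p_values), "candidates survive FDR control")
```

In this toy example five metabolites fall below an uncorrected 0.05 threshold, but only two survive the correction, which is the kind of shrinkage to expect when many features are tested at once.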

Solution Protocol: Standardized Workflow for Robust Biomarker Discovery

Implement this standardized workflow to minimize technical variability and enhance the reproducibility of your biomarker studies.

Frequently Asked Questions (FAQs)

Q1: Our team achieved a high-performance ML model for patient stratification internally, but it failed completely upon external validation. What is the most likely cause? A1: The most probable cause is data leakage, where information from the test set inadvertently influenced the training process. This can occur through seemingly innocent actions like performing feature selection on the entire dataset before splitting, or using imputation methods that calculate values from both training and test data. Always perform a rigorous audit of your pipeline using the checklist in Troubleshooting Guide 1 [12].

Q2: Why do different clinical metabolomics studies on the same cancer type report completely different, and sometimes contradictory, metabolite biomarkers? A2: This inconsistency stems from a combination of factors, including:

- Methodological Variability: Differences in sample preparation, analytical platforms (NMR vs. MS), and data processing protocols [13].

- Statistical Noise: A recent meta-analysis of 244 studies found that 85% of reported metabolite biomarkers are likely statistical noise, with 72% being reported by only a single study [13].

- Overfitting: The use of high-dimensional data with small sample sizes without proper validation leads to models that do not generalize.

Q3: How can we make our ML-based biomarker research more reproducible, even when using complex models like deep learning? A3: Adopt the following best practices:

- Set Random Seeds: For models with inherent randomness (e.g., stochastic gradient descent), always set and report the random seed. One study found that changing the random seed alone could inflate performance estimates by 2-fold [21].

- Version Control Everything: Document the exact versions of all software libraries, as default parameters can change from version to version, leading to different results [21].

- Share Code and Data: Where possible, embrace open science by sharing code and data in a "walled-garden" environment if privacy is a concern, to allow for independent verification [21].
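The first two practices can be combined into a small helper that fixes the seed and records the software environment alongside the results. This is a sketch under assumptions: the helper names `seeded_rng` and `environment_manifest`, the seed value, and the package list are illustrative, and deep learning frameworks need their own seeding calls that are not shown here.

```python
import json
import platform
import random
from importlib import metadata

import numpy as np


def seeded_rng(seed):
    """Seed Python's global RNG and return a dedicated NumPy generator."""
    random.seed(seed)
    return np.random.default_rng(seed)


def environment_manifest(packages):
    """Record interpreter and package versions for the methods section."""
    manifest = {"python": platform.python_version()}
    for name in packages:
        try:
            manifest[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            manifest[name] = "not installed"
    return manifest


SEED = 42  # report this value alongside the results
first = seeded_rng(SEED).normal(size=3)
second = seeded_rng(SEED).normal(size=3)
manifest = environment_manifest(["numpy", "scikit-learn"])
print(json.dumps({"seed": SEED, **manifest}, indent=2))
```

Saving the printed JSON next to each set of results gives reviewers the seed and library versions needed to attempt an exact rerun.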

Q4: What are the most critical, yet often overlooked, steps in the biomarker development lifecycle that lead to failure? A4: Failures most often occur during the Discovery and Analytical Validation phases.

- In Discovery: Over-reliance on hypothesis-driven cherry-picking or, conversely, blind application of machine learning that leads to overfitted biosignatures that fail to generalize [22].

- In Analytical Validation: Promoting biomarker potential prematurely before comprehensive performance evaluation, leading to later failures in clinical validation [22]. A clear definition of the clinical need and intended use from the outset is paramount.

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Tools and Platforms for Reproducible ML and Biomarker Research

| Tool / Resource Category | Specific Examples | Function & Importance for Reproducibility |

|---|---|---|

| Public Data Repositories | MIMIC-III, Philips eICU, UK Biobank, CDC NHANES [21] | Provides standardized, high-quality datasets that foster reproducibility and allow independent validation of findings across different research teams. |

| Multi-Omics Technologies | Next-Generation Sequencing (NGS), High-Throughput Proteomics, NMR & Mass Spectrometry [23] | Enables comprehensive profiling of genes, transcripts, proteins, and metabolites. Integration via multi-omics approaches provides a more robust view of biology. |

| AI-Driven Bioinformatics | PathExplore Fibrosis, Histotype Px [20] [23] | AI tools can uncover hidden patterns in complex data (e.g., histology slides) that outperform established markers, improving diagnostic and prognostic accuracy. |

| Quality Control (QC) Materials | Isotopically Labeled Standards, Pooled QC Samples [13] | Critical for controlling for technical variability in 'omic' assays. Pooled QC samples help monitor instrument stability and correct for batch effects. |

| Reporting Guidelines | TRIPOD, CONSORT, SPIRIT (adapted for AI/ML) [21] | Standardized reporting guidelines ensure transparency and provide the necessary details for other researchers to understand, evaluate, and replicate the study. |

Technical Support Center

Troubleshooting Guides

Guide 1: Diagnosing Performance Failure: Is Your Data the Problem?

- Problem: My deep learning model performs excellently on the training set but fails on the test set or external validation cohort.

- Diagnosis: This is a classic sign of overfitting, where the model has memorized noise and spurious correlations in your training data instead of learning generalizable biological patterns. This is a major contributor to the reproducibility crisis in biomarker discovery [24] [25].

- Solution:

- Conduct a Data Sufficiency Analysis: Before building a complex model, check if your dataset size is sufficient for the number of features. Deep learning models typically require far more data than simpler models to achieve stable performance [25].

- Simplify the Model: Start with a simpler machine learning model like Random Forest or LASSO regression. These models are less prone to overfitting on small-data, high-dimensional clinical datasets and can provide a performance benchmark [25] [26].

- Implement Robust Validation: Ensure you are using a strict validation protocol. A patient-wise or time-based split is more reliable than a random split for clinical data, as it prevents data leakage from the same patient appearing in both training and test sets [24] [26].
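A patient-wise split of the kind described in the last item can be sketched with scikit-learn's `GroupShuffleSplit`. The synthetic data, the three samples per patient, and the 30% hold-out fraction are assumptions for illustration only.

```python
import numpy as np
from sklearn.model_selection import GroupShuffleSplit

rng = np.random.default_rng(0)
n_patients, samples_per_patient = 20, 3
patient_ids = np.repeat(np.arange(n_patients), samples_per_patient)  # 60 samples
X = rng.normal(size=(patient_ids.size, 5))
y = rng.integers(0, 2, size=patient_ids.size)

# Hold out ~30% of *patients* (not samples) for testing.
splitter = GroupShuffleSplit(n_splits=1, test_size=0.3, random_state=0)
train_idx, test_idx = next(splitter.split(X, y, groups=patient_ids))

shared = set(patient_ids[train_idx]) & set(patient_ids[test_idx])
print(f"patients in both sets: {len(shared)}")
```

A plain random split over the 60 samples would almost certainly place samples from the same patient on both sides, which is the leakage route this guide warns about.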

Guide 2: Addressing the "Black Box" Problem for Clinical Adoption

- Problem: My deep learning model has good predictive power, but clinicians and regulators reject it because the predictions are not interpretable.

- Diagnosis: A lack of explainability and mechanistic insight undermines trust and prevents clinical translation, a common pitfall in biomarker research [24] [27].

- Solution:

- Incorporate Explainable AI (XAI) Techniques: Use post-hoc methods like SHAP (SHapley Additive exPlanations) or LIME (Local Interpretable Model-agnostic Explanations) to explain individual predictions [24] [27].

- Validate with Biological Knowledge: The explanations provided by XAI should be treated as hypotheses. Correlate the model's important features with known biological pathways and mechanisms to see if they make sense. For example, if a model for rheumatoid arthritis highlights an immune-related pathway, this builds credibility [24].

- Prioritize Explainable Architectures: When possible, use models that offer inherent interpretability, such as decision trees or logistic regression with feature selection, for critical diagnostic or prognostic tasks [26].

Frequently Asked Questions (FAQs)

Q1: When should I genuinely consider using deep learning over simpler models for my clinical dataset? A: Deep learning becomes a compelling choice when you have a very large number of samples (e.g., >10,000) and your data has a complex, hierarchical structure that simpler models cannot easily capture. This includes tasks like analyzing raw medical images, genomic sequences, or time-series sensor data [28] [25] [29]. For most tabular clinical or omics datasets with a few hundred to a few thousand samples, simpler models often provide equal or better performance with greater efficiency and interpretability [25] [26].

Q2: What are the most critical steps to ensure my machine learning model is reproducible and trustworthy? A: Trustworthiness is built on technical robustness, ethical responsibility, and domain awareness [27]. Key steps include:

- Prevent Data Leakage: Ensure information from the test set never influences the training process. This includes performing feature selection and preprocessing within each cross-validation fold [26].

- Perform External Validation: The ultimate test of a biomarker model is its performance on a completely independent dataset from a different institution or cohort [25] [26].

- Report Comprehensively: Follow reporting guidelines that detail data sources, preprocessing steps, model parameters, and validation results to allow for replication [26].
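To see why feature selection has to happen inside each cross-validation fold, the sketch below compares a leaky and an honest evaluation on data that contains no signal at all. It assumes scikit-learn is available; the sample size, number of features, and `k=20` are arbitrary illustrative choices.

```python
import numpy as np
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import Pipeline

rng = np.random.default_rng(0)
X = rng.normal(size=(60, 2000))   # 60 samples, 2000 pure-noise "features"
y = np.repeat([0, 1], 30)         # labels unrelated to X by construction
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)

# Leaky: features are chosen using all samples, including future test folds.
X_leaky = SelectKBest(f_classif, k=20).fit_transform(X, y)
leaky_acc = cross_val_score(LogisticRegression(max_iter=1000), X_leaky, y, cv=cv).mean()

# Honest: selection is refit inside every training fold via the pipeline.
honest = Pipeline([
    ("select", SelectKBest(f_classif, k=20)),
    ("clf", LogisticRegression(max_iter=1000)),
])
honest_acc = cross_val_score(honest, X, y, cv=cv).mean()

print(f"leaky CV accuracy:  {leaky_acc:.2f}")
print(f"honest CV accuracy: {honest_acc:.2f}")
```

Since the labels are independent of the features, any accuracy well above chance in the leaky variant is produced entirely by the selection step having seen the test folds.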

Q3: How can I optimize my computational resources when working with high-dimensional clinical data? A: Computational complexity is a major drawback of deep learning [30]. To optimize resources:

- Start Simple: Begin with a simple model as a baseline. You may find it meets your needs without requiring extensive computational power [26].

- Use Dimensionality Reduction: Apply techniques like Principal Component Analysis (PCA) to reduce the feature space before training a model [24] [25].

- Leverage Specialized Cores: Many institutions have biomedical computing cores that offer consulting and access to high-performance computing resources, which can be used collaboratively or for Do-It-Yourself (DIY) projects [28].

The table below summarizes key concepts and evidence related to the limitations of complex models in clinical data contexts.

| Concept / Finding | Quantitative / Descriptive Evidence | Relevant Context |

|---|---|---|

| Data Scale Requirement | Deep Learning (DL) requires "very large number of samples"; simple models suffice for "few hundred to a few thousand samples" [25]. | Highlights the data volume threshold where complexity becomes necessary. |

| Overfitting Risk | DL models have a "tendency to overfit" and find "local answers" that do not generalize [24]. | Core reproducibility problem in biomarker discovery. |

| Performance Benchmark | A novel optimized SAE+HSAPSO framework achieved 95.52% accuracy on DrugBank/Swiss-Prot data [30]. | Example of high performance achievable with non-standard DL on large, clean datasets. |

| Validation Imperative | "External validation should also be performed whenever possible" [26]. | Critical action to ensure model generalizability beyond a single dataset. |

| Explainability Need | "Lack of interpretability" is a significant hurdle for clinical adoption [25]. | Key reason why complex "black box" models face resistance in clinical practice. |

Detailed Experimental Protocols

Protocol 1: Building a Reproducible Biomarker Discovery Pipeline

This protocol outlines a robust workflow for identifying biomarkers from transcriptomic data, using a Rheumatoid Arthritis (RA) case study as a reference [24].

- Data Acquisition and QC: Source a public dataset (e.g., from TCGA, GEO). Perform initial quality control using unsupervised learning methods.

- Exploratory Data Analysis: Use PCA to get a global view of data variation and identify potential batch effects or outliers. Use t-SNE or UMAP to explore local data structure and see if patient groups naturally cluster by disease status [24].

- Model Training and Validation:

- Split data into training (70%), validation (15%), and hold-out test (15%) sets, ensuring patient-wise separation.

- On the training set, train multiple models: a simple logistic regression/LASSO model, a Random Forest, and a DL model (e.g., a simple multilayer perceptron).

- Use the validation set for hyperparameter tuning and model selection.

- Model Interpretation:

- Apply SHAP analysis to the best-performing model to identify the top features (genes) driving the predictions.

- Perform pathway enrichment analysis (e.g., using GO, KEGG) on the top features to see if the model has identified biologically relevant mechanisms [24].
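The training and validation step of this protocol can be sketched as follows. This is an illustration on synthetic data, assuming scikit-learn and one sample per patient (so a stratified random split is already patient-wise); the deep learning candidate is omitted for brevity, and the model settings are placeholders rather than recommendations from [24].

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(0)
X = rng.normal(size=(200, 50))                      # stand-in for expression data
y = (X[:, 0] - X[:, 1] + rng.normal(size=200) > 0).astype(int)

# 70% train, then split the remaining 30% evenly into validation and test.
X_train, X_rest, y_train, y_rest = train_test_split(
    X, y, test_size=0.30, stratify=y, random_state=0
)
X_val, X_test, y_val, y_test = train_test_split(
    X_rest, y_rest, test_size=0.50, stratify=y_rest, random_state=0
)

candidates = {
    "lasso_logistic": LogisticRegression(penalty="l1", solver="liblinear", C=0.5),
    "random_forest": RandomForestClassifier(n_estimators=200, random_state=0),
}

# Model selection uses the validation set only.
val_auc = {}
for name, model in candidates.items():
    model.fit(X_train, y_train)
    val_auc[name] = roc_auc_score(y_val, model.predict_proba(X_val)[:, 1])

best_name = max(val_auc, key=val_auc.get)
# The hold-out test set is scored once, for the selected model.
test_auc = roc_auc_score(y_test, candidates[best_name].predict_proba(X_test)[:, 1])
print(best_name, round(test_auc, 2))
```

When several samples come from the same patient, the two `train_test_split` calls would need to be replaced by a group-aware splitter so that no patient spans two partitions.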

Protocol 2: Rigorous Model Validation for Clinical Trustworthiness

This protocol is essential for demonstrating that a model will perform reliably in real-world settings [27] [26].

- Internal Validation: Use k-fold cross-validation (e.g., k=5 or 10) on the training dataset to get a robust estimate of model performance and to detect overfitting.

- External Validation: Apply the final model, trained on the entire original dataset, to a completely independent cohort. This is the gold standard for assessing generalizability [26].

- Performance Metrics and Reporting: Report a comprehensive set of metrics on both internal and external validation sets. For classification, this must include sensitivity, specificity, AUC-ROC, and a calibration plot [26]. All data sources, preprocessing steps, and model parameters must be thoroughly documented.
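Three of the metrics named above can be computed directly from labels and predicted probabilities. The sketch below uses plain Python so the definitions stay visible; the function names, the 0.5 threshold, and the toy predictions are assumptions, and calibration is not covered here (scikit-learn's `calibration_curve` is one option for that).

```python
def sensitivity_specificity(y_true, y_prob, threshold=0.5):
    """Sensitivity and specificity at a fixed probability threshold."""
    tp = sum(1 for t, p in zip(y_true, y_prob) if t == 1 and p >= threshold)
    fn = sum(1 for t, p in zip(y_true, y_prob) if t == 1 and p < threshold)
    tn = sum(1 for t, p in zip(y_true, y_prob) if t == 0 and p < threshold)
    fp = sum(1 for t, p in zip(y_true, y_prob) if t == 0 and p >= threshold)
    return tp / (tp + fn), tn / (tn + fp)


def auc_roc(y_true, y_prob):
    """AUC as the probability that a random case outscores a random control."""
    pos = [p for t, p in zip(y_true, y_prob) if t == 1]
    neg = [p for t, p in zip(y_true, y_prob) if t == 0]
    wins = sum(1.0 if a > b else 0.5 if a == b else 0.0 for a in pos for b in neg)
    return wins / (len(pos) * len(neg))


y_true = [0, 0, 0, 0, 1, 1, 1, 1]
y_prob = [0.10, 0.40, 0.35, 0.80, 0.20, 0.65, 0.70, 0.90]
sens, spec = sensitivity_specificity(y_true, y_prob)
auc = auc_roc(y_true, y_prob)
print(sens, spec, auc)  # 0.75 0.75 0.6875
```

Reporting the same functions on both the internal and the external cohort makes any drop in performance between the two directly comparable.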

Workflow and Relationship Diagrams

ML Model Validation Workflow

Pillars of a Trustworthy ML System

The Scientist's Toolkit: Research Reagent Solutions

The table below lists key computational and data resources essential for reproducible machine learning in biomarker discovery.

| Item | Function / Application |

|---|---|

| The Cancer Genome Atlas (TCGA) | A comprehensive public database containing genomic, epigenomic, transcriptomic, and proteomic data from over 11,000 patients across 33 cancer types. Serves as a primary source for biomarker discovery and model training [24]. |

| Python Notebooks (e.g., CTR_XAI) | Pre-built computational workflows, such as the example for Rheumatoid Arthritis transcriptome data, provide accessible starting points for applying ML and Explainable AI (XAI) with minimal coding expertise [24]. |

| SHAP (SHapley Additive exPlanations) | A game theory-based method for explaining the output of any machine learning model. It is critical for interpreting "black box" models and identifying which features contributed most to a prediction [24] [27]. |

| Stacked Autoencoder (SAE) | A type of deep learning network used for unsupervised feature learning and dimensionality reduction. It can extract robust, high-level features from complex input data, which can then be used for classification tasks [30]. |

| Particle Swarm Optimization (PSO) | An evolutionary computation technique used for hyperparameter optimization. It helps efficiently find the best model parameters without relying on gradient-based methods, improving model performance and stability [30]. |

Beyond the Hype: Building Methodologically Sound ML Pipelines for Biomarkers

In the field of biomarker discovery, the machine learning reproducibility crisis presents a significant barrier to scientific progress and clinical application. Across 17 scientific fields where machine learning has been applied, at least 294 papers were found to be affected by data leakage, leading to overly optimistic performance reports [31]. In cancer biology, only 6 of 53 published findings could be confirmed, a reproducibility rate of roughly 11% [32].

Data leakage occurs when information from outside the training dataset is used to create the model, creating a deceptive foresight that wouldn't be available during real-world prediction [31] [33]. This silent killer of predictive models creates an illusion of high competence during testing, but leads to catastrophic failures when models face real-world data [33] [34]. For biomarker researchers and drug development professionals, the consequences extend beyond poor model performance to include wasted resources, biased decision-making, and eroded trust in analytical processes [31].

This technical support center provides a comprehensive framework for identifying, troubleshooting, and preventing data leakage in biomarker discovery research, offering practical guidance to enhance the reliability and reproducibility of your machine learning workflows.

Troubleshooting Guide: Identifying and Resolving Data Leakage

Common Data Leakage Errors

| Error Type | Description | Impact on Biomarker Performance |

|---|---|---|

| Target Leakage | Inclusion of data that would not be available at prediction time [31]. | Model learns spurious correlations; fails clinical validation [33]. |

| Train-Test Contamination | Improper splitting or preprocessing that mixes training and validation data [31]. | Artificially inflated accuracy; poor generalization to new patient data [35]. |

| Temporal Leakage | Using future information to predict past events in time-series data [34]. | Inaccurate prognostic biomarkers; failed clinical deployment [34]. |

| Preprocessing Leakage | Applying scaling, imputation, or normalization before data splitting [31] [35]. | Optimistic performance estimates; irreproducible biomarker signatures [35]. |

| Feature Selection Leakage | Using entire dataset (including test set) for feature selection [31]. | Biased feature importance; non-generalizable biomarker panels [9]. |

Step-by-Step Diagnostic Protocol

Experiment 1: Temporal Integrity Validation

- Objective: Detect temporal leakage in longitudinal biomarker studies.

- Methodology: Implement time-aware cross-validation using rolling windows [34].

- Protocol:

- Split data chronologically (e.g., use first 70% of timeline for training, remaining 30% for testing)

- Compare model performance between random split and time-aware split

- Calculate performance gap: ΔAUC = AUC(random) - AUC(temporal)

- Interpretation: ΔAUC > 0.05 indicates significant temporal leakage [34]
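The chronological split and the ΔAUC comparison can be written as two small helpers. This is a sketch with hypothetical names (`chronological_split`, `delta_auc`) and made-up AUC values; only the 70/30 split and the 0.05 threshold come from the protocol above.

```python
def chronological_split(timestamps, train_fraction=0.7):
    """Indices of the earliest `train_fraction` of samples vs. the rest."""
    order = sorted(range(len(timestamps)), key=lambda i: timestamps[i])
    cut = round(len(order) * train_fraction)
    return order[:cut], order[cut:]


def delta_auc(auc_random, auc_temporal, threshold=0.05):
    """Gap between random-split and time-aware AUC, plus a leakage flag."""
    gap = auc_random - auc_temporal
    return gap, gap > threshold


collection_year = [2019, 2015, 2021, 2016, 2018, 2020, 2017, 2022, 2014, 2023]
train_idx, test_idx = chronological_split(collection_year)
gap, flagged = delta_auc(auc_random=0.91, auc_temporal=0.78)
print(sorted(collection_year[i] for i in test_idx))   # [2021, 2022, 2023]
print(round(gap, 2), flagged)                          # 0.13 True
```

In practice the two AUC values would come from training the same model twice, once on a random split and once on the indices returned by `chronological_split`.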

Experiment 2: Residual Analysis for Leakage Detection

- Objective: Identify leakage through analysis of prediction errors.

- Methodology: Examine patterns in residuals (differences between predicted and actual values) [34].

- Protocol:

- Compute residuals on proper temporal hold-out set

- Generate autocorrelation function (ACF) plot of residuals

- Calculate Durbin-Watson statistic

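- Interpretation: A Durbin-Watson statistic close to 2 indicates little lag-1 autocorrelation; values well below 2 (positive autocorrelation) or well above 2 (negative autocorrelation) suggest the model may be leveraging leaked information [34]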
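The Durbin-Watson statistic itself is simple to compute from a sequence of time-ordered residuals. The sketch below uses the standard definition; the two residual series are invented to show the two directions in which the statistic can move away from 2.

```python
def durbin_watson(residuals):
    """DW = sum((e_t - e_{t-1})^2) / sum(e_t^2); roughly 2 * (1 - lag-1 autocorrelation)."""
    num = sum((b - a) ** 2 for a, b in zip(residuals, residuals[1:]))
    den = sum(e ** 2 for e in residuals)
    return num / den


trending = [1.0, 0.9, 0.8, 0.6, 0.5, -0.5, -0.6, -0.8, -0.9, -1.0]   # slowly drifting errors
alternating = [1.0, -1.0, 1.0, -1.0, 1.0, -1.0]                      # sign flips every step

print(round(durbin_watson(trending), 2))     # well below 2: positive autocorrelation
print(round(durbin_watson(alternating), 2))  # well above 2: negative autocorrelation
```

The statistic is bounded between 0 and 4, so how far a value must be from 2 to count as significant depends on sample size and should be judged against tabulated critical values rather than by eye.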

Experiment 3: Data Provenance Audit

- Objective: Verify that all features would be available at time of prediction.

- Methodology: Systematic documentation of feature creation timelines [33].

- Protocol:

- Create feature inventory with creation dates

- Map feature availability to prediction timeline

- Flag features updated after target event

- Interpretation: Any post-prediction features must be removed to prevent target leakage [33]
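The audit reduces to comparing each feature's availability date with the moment the prediction would be made. The sketch below is a minimal version of that check; the function name, the feature names, and all dates are hypothetical.

```python
from datetime import date


def flag_post_prediction_features(feature_available_on, prediction_date):
    """Names of features first available after the prediction is made."""
    return sorted(
        name for name, available in feature_available_on.items()
        if available > prediction_date
    )


# Hypothetical inventory for one patient: when each value became available.
inventory = {
    "baseline_crp": date(2023, 1, 10),
    "age_at_enrolment": date(2023, 1, 10),
    "biopsy_result": date(2023, 3, 2),        # recorded after diagnosis
    "treatment_response": date(2023, 6, 15),  # downstream of the outcome
}
leaky = flag_post_prediction_features(inventory, prediction_date=date(2023, 1, 15))
print(leaky)  # ['biopsy_result', 'treatment_response']
```

Features that are updated in place over time need the date of the last update, not the date of first entry, for this comparison to be meaningful.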

Quantitative Impact Assessment

Table: Performance Degradation Due to Data Leakage

| Leakage Type | Apparent AUC | Real-World AUC | Performance Drop | Clinical Risk |

|---|---|---|---|---|

| Target Leakage | 0.95-0.98 | 0.50-0.65 | 35-48% | Critical - Misdiagnosis |

| Train-Test Contamination | 0.90-0.94 | 0.65-0.75 | 20-29% | High - False Assurance |

| Temporal Leakage | 0.88-0.92 | 0.60-0.70 | 22-32% | High - Prognostic Failure |

| Preprocessing Leakage | 0.87-0.91 | 0.70-0.78 | 12-21% | Moderate - Resource Waste |

Frequently Asked Questions (FAQs)

What are the most subtle signs of data leakage in biomarker research?

Subtle red flags include:

- Unusually high performance: Accuracy, precision, or recall significantly higher than expected, especially on validation data [31]

- Discrepancies between training and test performance: A large gap indicates potential overfitting due to leakage [31]

- Inconsistent cross-validation results: High variation across folds may signal improper splitting [31]

- Unexpected feature importance: Heavy reliance on features that don't make logical sense for the prediction task [31]

- Perfect correlation with future events: Features that should not be predictive yet show near-perfect correlation [34]

How can we fix data leakage after discovering it in our biomarker pipeline?

Data leakage requires a systematic remediation approach:

- Restart from raw data: Never attempt to fix a leaked dataset; return to the original source [35]

- Implement strict temporal segregation: For longitudinal studies, ensure all training data precedes test data chronologically [34]

- Use scikit-learn pipelines: Bundle preprocessing with model training to prevent test set contamination [35]

- Re-engineer problematic features: Remove or transform features that incorporate future information [33]

- Re-evaluate with proper validation: Use time-series cross-validation or chronological hold-out sets [34]
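For the time-series cross-validation mentioned in the last item, scikit-learn's `TimeSeriesSplit` produces folds in which every training index precedes every test index. The sketch assumes the rows are already sorted by collection time; the 12-sample array and `n_splits=3` are illustrative.

```python
import numpy as np
from sklearn.model_selection import TimeSeriesSplit

# Samples must already be sorted by collection time (oldest first).
X = np.arange(12).reshape(-1, 1)

splitter = TimeSeriesSplit(n_splits=3)
folds = list(splitter.split(X))
for train_idx, test_idx in folds:
    print(f"train up to {train_idx.max()}, test {test_idx.min()}-{test_idx.max()}")
```

Passing `splitter` as the `cv` argument of a cross-validation routine, together with a pipeline that contains the preprocessing, covers both the temporal and the preprocessing items in this list.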

What proactive strategies prevent data leakage in multi-site biomarker studies?

Prevention requires both technical and procedural safeguards:

- Protocol standardization: Establish and document consistent sample collection, processing, and analysis protocols across all sites [36]

- Centralized preprocessing: Process all raw data through a unified pipeline fitted only on training data [35]

- Feature documentation: Maintain complete metadata including creation dates and availability timelines for all variables [33]

- Blinded analysis: Keep individuals who generate biomarker data from knowing clinical outcomes to prevent bias [9]

- Automated quality control: Implement systems like the Omni LH 96 automated homogenizer to reduce manual processing variability [36]

How much does data leakage actually impact biomarker reproducibility?

The impact is severe and quantifiable:

- Reduced reproducibility: One study found only a very small overlap between biomarkers proposed in pairs of related studies exploring the same phenotypes [32]

- Resource wastage: Fixing data leakage requires retraining models from scratch, which is computationally expensive and resource-intensive [31]

- Clinical trial failures: Biomarkers that appear promising during discovery often fail during validation due to leakage-induced optimism [32] [9]

- Economic costs: In clinical settings, specimen mislabeling alone carries an average additional cost of $712 USD per incident [36]

Experimental Workflows and Signaling Pathways

Proper Data Handling Workflow

Data Leakage Detection Protocol

The Scientist's Toolkit: Essential Research Reagents and Solutions

Research Reagent Solutions for Leakage-Free Biomarker Research

| Tool/Solution | Function | Application in Preventing Data Leakage |

|---|---|---|

| Omni LH 96 Automated Homogenizer | Standardizes sample preparation across multiple sites | Reduces manual processing variability and batch effects that can introduce leakage [36] |

| scikit-learn Pipelines | Bundles preprocessing with model training | Ensures preprocessing steps are fitted only on training data [35] |

| Time-Series Cross-Validation | Chronological splitting for longitudinal data | Prevents future information leakage in prognostic biomarker studies [34] |

| Data Shapley Framework | Quantifies contribution of individual data points | Identifies influential training points that may indicate leakage [37] |

| Confident Learning (cleanlab) | Estimates uncertainty in dataset labels | Detects and handles label errors that can lead to misleading performance [37] |

| Standardized SOPs with Barcoding | Consistent sample tracking and processing | Reduces mislabeling and preprocessing inconsistencies [36] |

Frequently Asked Questions (FAQs) on Reproducibility

Q1: What is the core of the reproducibility crisis in machine learning-based biomarker discovery?

The crisis stems from the frequent failure of findings from one experimental study to be reproduced in subsequent studies. This is often due to the use of overly complex, black-box models that are highly sensitive to small changes in their initial conditions (random seeds), data splits, or hyperparameters. This sensitivity leads to significant variations in both the model's predictive performance and the features it identifies as important, making the results unreliable and not generalizable [38] [39].

Q2: How do 'Interpretable AI' and 'Explainable AI' (XAI) differ in addressing model transparency?

- Interpretable AI (e.g., Symbolic AI): Uses simpler, transparent models (like decision trees or rule-based systems) whose internal logic can be directly understood and audited by humans. There is no need for a separate explanation; the model itself is the explanation [40].

- Explainable AI (XAI): Attempts to explain the decisions of complex, opaque black-box models (like deep neural networks) after the fact using secondary methods. These explanations are approximations and can themselves be complex and difficult to trust [41] [40].

Q3: Why are black-box models particularly problematic for clinical biomarker research?

Trusting a black-box model means trusting not only its internal equations but also the entire database it was built from. In high-stakes fields like medicine and finance, this opacity creates unacceptable legal, regulatory, and safety risks. Furthermore, if a model cannot be understood, researchers cannot fully validate its reasoning or be sure it has learned biologically meaningful patterns rather than spurious correlations in the data [40].

Q4: What practical steps can I take to improve the reproducibility of my ML models?

- Stabilize Feature Importance: Use validation techniques that involve repeated trials with different random seeds to identify the most consistently important features.

- Prevent Data Leakage: Ensure no information from the test set leaks into the training process.

- Manage Data Wisely: Thoroughly understand your data's limitations, use appropriate validation and test sets, and be cautious with data augmentation.

- Prioritize Simplicity: Consider whether a simpler, interpretable model can achieve comparable performance to a complex one.

- Publish Code and Data: Make research artifacts openly available to allow for exact reproduction of results [38].

Q5: What is the bias-variance tradeoff and how does it relate to model complexity?

The bias-variance tradeoff is a fundamental concept that describes the balance between a model's tendency to oversimplify a problem (high bias) and its sensitivity to random noise in the training data (high variance) [43]. This is directly related to model complexity and the reproducibility crisis:

| Model Type | Bias | Variance | Effect on Reproducibility |

|---|---|---|---|

| Overly Simple (High-Bias) | High | Low | Systematic Error: Consistently misses patterns, leading to poor but stable (repeatable) inaccurate results. |

| Overly Complex (High-Variance) | Low | High | Irreproducible Error: Fits to noise in the training set. Performance fluctuates wildly on new data or with different seeds, causing irreproducible findings [39]. |

| Balanced Model | Balanced | Balanced | Optimal Generalization: Captures true underlying patterns while ignoring noise, leading to reproducible and reliable results. |

Troubleshooting Guides for Common Experimental Pitfalls

Problem 1: Unstable Feature Importance

Symptoms: The top-ranked biomarkers or features change dramatically when the model is retrained with a different random seed or data split.

Solution: Implement a Repeated-Trial Validation Approach

This methodology stabilizes feature importance and performance by aggregating results across many random initializations [39].

Experimental Protocol:

- For each subject in your dataset, run the model training and evaluation for a large number of trials (e.g., 400).

- Between each trial, re-initialize the model with a new random seed.

- In each trial, record the feature importance rankings.

- Aggregate the results: For each subject, identify the set of features that are consistently important across all trials. This is the subject-specific feature importance.

- Across all subjects, aggregate these subject-specific sets to determine the group-level feature importance.

This process filters out noise and reveals the features that are robustly linked to the outcome.
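A minimal sketch of the seed-averaging idea follows. It is not the implementation from [39]: it works at the group level only, uses scikit-learn's Random Forest with its built-in impurity importances, runs 30 trials rather than 400 to keep the example fast, and uses synthetic data. The helper name `top_k_frequency` and the 90% stability cut-off are assumptions made for illustration.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier


def top_k_frequency(X, y, n_trials=30, top_k=5, n_estimators=50):
    """Fraction of trials in which each feature ranks in the top-k by importance."""
    counts = np.zeros(X.shape[1])
    for seed in range(n_trials):
        # Only the random seed changes between trials.
        model = RandomForestClassifier(n_estimators=n_estimators, random_state=seed)
        model.fit(X, y)
        top = np.argsort(model.feature_importances_)[::-1][:top_k]
        counts[top] += 1
    return counts / n_trials


# Synthetic stand-in for a biomarker matrix: 150 samples, 30 candidate features.
X, y = make_classification(n_samples=150, n_features=30, n_informative=4,
                           n_redundant=0, shuffle=False, random_state=0)

freq = top_k_frequency(X, y)
stable = np.flatnonzero(freq >= 0.9)  # assumed stability threshold
print("Features in the top 5 in at least 90% of trials:", stable)
```

Features that reach the top-k only occasionally are the ones most likely to appear and disappear between single training runs.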

Visual Guide to Stabilizing Feature Importance:

Problem 2: Data Leakage

Symptoms: The model performs exceptionally well during training and validation but fails catastrophically when deployed on truly new data or in a clinical trial.

Solution: Rigorous Data Separation. Ensure that information from the test set never influences any part of the training process [42].

Experimental Protocol:

- Split Data Early: Partition your data into training, validation, and test sets before any exploratory data analysis or preprocessing.

- Fit Preprocessing on Training Data Only: Calculate parameters for steps like feature scaling and imputation using only the training set. Then apply these same parameters to the validation and test sets.

- Avoid Peeking: Do not use the test set to make modeling decisions, such as selecting features or choosing a model architecture. The test set should only be used for the final, unbiased evaluation of a fully-developed model.
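One common way to enforce the second step is to wrap preprocessing and the model in a single scikit-learn `Pipeline`, so that imputation and scaling parameters are learned only from whatever data `fit` receives. The sketch below assumes synthetic data with 5% missing values and a logistic regression classifier. For brevity it uses a single train/test split; a validation set or cross-validation would be carved out of the training portion in the same way.

```python
import numpy as np
from sklearn.impute import SimpleImputer
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in data (assumption): 200 samples, 10 features, 5% missing.
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 10))
y = (X[:, 0] + rng.normal(scale=0.5, size=200) > 0).astype(int)
X[rng.random(X.shape) < 0.05] = np.nan

# Split first, before any statistics are computed on the data.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=0)

pipeline = Pipeline([
    ("impute", SimpleImputer(strategy="mean")),   # means learned from training rows only
    ("scale", StandardScaler()),                  # mean/std learned from training rows only
    ("model", LogisticRegression(max_iter=1000)),
])
pipeline.fit(X_train, y_train)

# The fitted training-set parameters are reused, unchanged, on the test set.
test_accuracy = pipeline.score(X_test, y_test)
print(f"Held-out accuracy: {test_accuracy:.2f}")
```

Passing the same pipeline object to a cross-validation routine refits the preprocessing inside every fold, which avoids the subtle leak that occurs when the full dataset is scaled once up front.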

Problem 3: Poor Model Generalization

Symptoms: The model works on data from one cohort or imaging center but fails on data from another.

Solution: Challenge Your Model with Appropriate Tests

- Use Multiple Test Sets: If possible, test your model on data from different sources (e.g., different clinical sites, patient populations, or imaging protocols) to ensure robustness [42].

- Consult Domain Experts: Collaborate with biologists and clinicians to check that the model's predictions and identified features are biologically plausible. This can reveal when a model has learned irrelevant technical artifacts instead of true biomarkers [42].
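When data from several sites are already in hand, a leave-one-site-out evaluation gives a rough preview of cross-site performance. The sketch below is one possible setup, assuming synthetic data, three hypothetical site labels, and an artificial per-site offset that stands in for batch or protocol differences. It uses scikit-learn's `LeaveOneGroupOut` splitter.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import LeaveOneGroupOut
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic cohort (assumption): 240 samples collected at three sites.
X, y = make_classification(n_samples=240, n_features=20, n_informative=5,
                           random_state=0)
sites = np.repeat(["site_A", "site_B", "site_C"], 80)

# Add a site-specific offset to every feature to imitate a batch effect.
rng = np.random.default_rng(0)
for site in np.unique(sites):
    X[sites == site] += rng.normal(scale=0.5, size=X.shape[1])

site_auc = {}
for train_idx, test_idx in LeaveOneGroupOut().split(X, y, groups=sites):
    held_out = str(sites[test_idx][0])
    # Train on all other sites, evaluate on the site the model has never seen.
    model = make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))
    model.fit(X[train_idx], y[train_idx])
    scores = model.predict_proba(X[test_idx])[:, 1]
    site_auc[held_out] = roc_auc_score(y[test_idx], scores)

for site, auc in site_auc.items():
    print(f"Held-out {site}: AUC = {auc:.2f}")
```

A large spread between the per-site scores is a warning sign that the model depends on site-specific characteristics.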

The Scientist's Toolkit: Essential Research Reagents & Materials

The following table details key components for building reproducible and interpretable ML workflows in biomarker discovery.

| Item / Solution | Function / Explanation |
|---|---|
| Interpretable Model (e.g., Decision Tree, Rule-Based) | Provides direct transparency into decision-making, allowing researchers to audit the logic behind a prediction. Crucial for validating biological plausibility [40]. |
| Repeated-Trial Validation Framework | A script/protocol to run the model hundreds of times with varying random seeds. Used to stabilize performance metrics and feature importance rankings, directly combating irreproducibility [39]. |
| Stratified Data Splits | Pre-defined partitions of the dataset (training/validation/test) that preserve the distribution of the target variable (e.g., disease cases vs. controls). Stratification limits sampling bias between partitions, and fixing the partitions up front helps prevent data leakage [42]. |
| Domain Expert Validation Protocol | A checklist or procedure for a domain expert (e.g., a biologist) to qualitatively assess whether the model's top features and predictions make sense in the context of existing knowledge [42]. |
| SHAP (SHapley Additive exPlanations) | A popular XAI method used to explain the output of any black-box model. It attributes the prediction to each input feature. Use with caution, as it is a post-hoc approximation [41] [40]. |

Core Experimental Protocol: Stabilizing Biomarker Discovery

Title: A Repeated-Trial Validation Approach for Robust and Interpretable Biomarker Identification.

Hypothesis: Aggregating feature importance rankings across many model instances, initialized with different random seeds, will yield a stable, reproducible set of biomarkers superior to those from a single model run.

Workflow Diagram:

Methodology:

- Data Preparation: Obtain and clean your dataset. Perform stratified splitting to create a hold-out test set (e.g., 20% of data). Do not use this test set for any further steps until the final evaluation [42].

- Repeated-Trial Loop: On the remaining 80% (training set), initiate a loop for a large number of trials (e.g., 400). In each trial [39]:

  a. Seed: Initialize the ML algorithm (e.g., Random Forest) with a new random seed.

  b. Validate: Perform nested cross-validation to train the model and tune hyperparameters.

  c. Extract: Record the feature importance rankings from the trained model.

- Aggregation: After all trials, aggregate the feature importance rankings. Calculate the frequency or median importance of each feature across all trials. Select the top-K most stable features to form your robust biomarker signature.

- Final Evaluation: Train a final model on the entire training set using only the selected robust biomarkers. Evaluate this model's performance exactly once on the held-out test set to obtain an unbiased estimate of its generalizability [42].

Expected Outcome: This protocol produces a shortlist of biomarkers whose importance is consistent and reproducible across many model initializations, significantly increasing confidence in their biological and clinical relevance.
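The four methodology steps can be sketched end to end as follows. This is a simplified illustration under several assumptions, not a reference implementation: the data are synthetic, only 20 trials are run instead of 400, `GridSearchCV` stands in for the inner (tuning) loop of nested cross-validation while the outer loop is omitted, and the names `N_TRIALS` and `TOP_K` are invented for the example.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import GridSearchCV, StratifiedKFold, train_test_split

N_TRIALS = 20  # kept small for speed; the protocol suggests e.g. 400
TOP_K = 5      # size of the final biomarker signature (assumed)

X, y = make_classification(n_samples=200, n_features=40, n_informative=5,
                           n_redundant=0, random_state=0)

# Step 1: stratified hold-out test set, untouched until the final evaluation.
X_dev, X_test, y_dev, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=0)

# Step 2: repeated trials, each with a new seed for the model and the CV folds.
importances = np.empty((N_TRIALS, X.shape[1]))
for seed in range(N_TRIALS):
    search = GridSearchCV(
        RandomForestClassifier(n_estimators=50, random_state=seed),
        param_grid={"max_depth": [3, None]},
        cv=StratifiedKFold(n_splits=3, shuffle=True, random_state=seed),
        scoring="roc_auc",
    )
    search.fit(X_dev, y_dev)
    importances[seed] = search.best_estimator_.feature_importances_

# Step 3: aggregate across trials and keep the top-K features by median importance.
median_importance = np.median(importances, axis=0)
selected = np.argsort(median_importance)[::-1][:TOP_K]

# Step 4: final model on the selected features, evaluated once on the hold-out set.
final_model = RandomForestClassifier(n_estimators=200, random_state=0)
final_model.fit(X_dev[:, selected], y_dev)
test_auc = roc_auc_score(y_test, final_model.predict_proba(X_test[:, selected])[:, 1])

print("Selected feature indices:", sorted(selected.tolist()))
print(f"Hold-out AUC: {test_auc:.2f}")
```

Because feature selection uses only the development portion, the single hold-out evaluation at the end is not contaminated by the selection step.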

Embedding Explainable AI (XAI) as a Non-Negotiable Requirement for Clinical Trust

This technical support center addresses the critical intersection of Explainable AI (XAI), the machine learning reproducibility crisis, and biomarker discovery research. In high-stakes clinical and translational research, the inability to reproduce AI model findings or understand their decision-making process erodes trust and hinders adoption [38] [39]. XAI is not merely a convenience but a foundational requirement for establishing the credibility, reliability, and clinical trust necessary for AI-driven biomarkers to progress from research to patient impact [44] [25]. The following guides and FAQs are designed for researchers, scientists, and drug development professionals navigating these challenges.

Troubleshooting Guides & FAQs

Q1: Our AI biomarker model shows high performance internally but fails to generalize in an external validation cohort. Could explainability methods help diagnose the issue?

A: Yes. This is a classic sign of the reproducibility/generalizability crisis, often caused by the model learning spurious correlations or dataset-specific artifacts rather than genuine biological signals [38] [39]. XAI techniques like saliency maps (Grad-CAM) or feature attribution (SHAP) can be used diagnostically.

- Actionable Protocol: Apply a post-hoc XAI method (e.g., SHAP) to your model's predictions on both the internal (training) and external (validation) datasets. Compare the top contributing features for each. If the explanations differ drastically—for instance, if the model relies on imaging background artifacts or a specific lab instrument's batch effect internally—you have identified a shortcut learning problem [45]. This insight directs you to improve data curation, apply stronger augmentation, or use domain adaptation techniques.
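The comparison described in this protocol can be sketched as below. To avoid an extra dependency, the example swaps in scikit-learn's permutation importance for SHAP; the comparison logic is the same for any attribution method. The cohorts are synthetic, and the helper names `make_cohort` and `top_features` are hypothetical. Feature 0 plays the role of a batch artifact that tracks the label only in the internal cohort.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.inspection import permutation_importance


def make_cohort(n, artifact_tracks_label, rng):
    """Synthetic cohort: features 1-3 carry real signal, feature 0 is an artifact."""
    y = rng.integers(0, 2, size=n)
    X = rng.normal(size=(n, 8))
    X[:, 1:4] += 0.8 * y[:, None]
    if artifact_tracks_label:
        X[:, 0] += 3.0 * y  # shortcut available only in this cohort
    return X, y


def top_features(model, X, y, k=3, seed=0):
    """Indices of the k features with the highest mean permutation importance."""
    result = permutation_importance(model, X, y, n_repeats=10, random_state=seed)
    return set(np.argsort(result.importances_mean)[::-1][:k].tolist())


rng = np.random.default_rng(0)
X_int, y_int = make_cohort(300, artifact_tracks_label=True, rng=rng)
X_ext, y_ext = make_cohort(300, artifact_tracks_label=False, rng=rng)

model = RandomForestClassifier(n_estimators=100, random_state=0).fit(X_int, y_int)

top_int = top_features(model, X_int, y_int)
top_ext = top_features(model, X_ext, y_ext)
jaccard = len(top_int & top_ext) / len(top_int | top_ext)

print("Top internal features:", sorted(top_int))
print("Top external features:", sorted(top_ext))
print(f"Jaccard overlap: {jaccard:.2f}")
```

A low overlap, together with a technically suspicious feature dominating the internal ranking, points toward shortcut learning rather than a genuine biological signal.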

Q2: We provided model explanations to clinician partners, but their trust and performance did not improve uniformly. Some even performed worse. How should we troubleshoot this?

A: This mirrors recent findings where the impact of explanations varied significantly across clinicians, with some showing reduced performance [46]. Variability is a key human factor, not necessarily a technical flaw.

- Diagnosis Checklist:

- Explanation Usefulness Mismatch: Collect post-hoc feedback. A strong negative correlation has been observed between a clinician's perceived helpfulness of explanations and their change in performance (Mean Absolute Error) [46]. Survey your users.

- Inappropriate Reliance: Define and measure "appropriate reliance" [46]. Is the clinician correctly agreeing with the model when it is right and disagreeing when it is wrong? Your troubleshooting should analyze cases of over-reliance (following an incorrect model) and under-reliance (rejecting a correct model).

- Explanation Fidelity & Format: Ensure your explanations faithfully represent the model's reasoning. Furthermore, prototype-based explanations (e.g., "this case looks like this known prototype") may be more intuitive for some tasks than heatmaps [46]. Consider A/B testing explanation formats.

Q3: Our team gets different important features each time we retrain the same ML model on the same biomarker data, harming reproducibility. How can we stabilize this?

A: This is a direct consequence of stochastic processes in model training (e.g., random weight initialization, random seeds) [39]. A novel validation approach can stabilize feature importance.

- Stabilization Protocol [39]:

- For a given subject/dataset, run your training pipeline N times (e.g., 400 trials), each with a different random seed.

- For each trial, record the model's performance and its feature importance rankings (e.g., using Gini importance for Random Forest, SHAP values).

- Aggregate the feature importance rankings across all N trials. The features that consistently rank high are the stable, reproducible set for that subject (subject-specific) or for the entire cohort (group-specific).

- Use this aggregated feature set for your final biological interpretation. This method decouples robust signal from random noise introduced by training stochasticity.

Q4: We are preparing a regulatory submission for an AI-based diagnostic biomarker. What are the key XAI-related requirements we must address?

A: Regulatory bodies (FDA, EMA) and frameworks like GDPR emphasize transparency and the "right to explanation" [44] [47]. Your technical documentation must go beyond accuracy metrics.

- Required Evidence Dossier:

- Validation of Explanation Fidelity: Provide evidence that your chosen XAI method (e.g., LIME, attention maps) accurately reflects the model's decision process, not just creates plausible-looking outputs [44] [45].

- Human Factors Evaluation: Include results from a reader study with target end-users (e.g., pathologists, radiologists) showing the impact of explanations on appropriate reliance and decision-making, similar to a 3-stage design [46].

- Failure Mode Analysis: Document how explanations help identify and mitigate model biases (e.g., spurious correlations with demographic or acquisition variables) [45].

- Standardized Reporting: Adhere to emerging guidelines for reproducible and interpretable ML research in healthcare [38].

Detailed Experimental Protocols

Protocol 1: Three-Stage Reader Study to Evaluate XAI Impact on Clinical Decision-Making

Objective: To empirically measure the effect of AI predictions and subsequent explanations on clinician trust, reliance, and performance [46].

Design:

- Stage 1 (Baseline): Clinicians (e.g., sonographers) review a set of medical images (e.g., fetal ultrasound) and provide their estimates (e.g., gestational age) without any AI assistance. This establishes their native performance (Mean Absolute Error - MAE).

- Stage 2 (AI Prediction Only): Clinicians review a new, matched set of images. This time, the AI model's prediction (e.g., estimated gestational age in days) is displayed alongside the image. They provide their final estimate, which may or may not incorporate the AI suggestion.

- Stage 3 (AI Prediction + Explanation): Clinicians review a third set of images. The AI prediction is displayed along with an explanation (e.g., a saliency heatmap or a prototype similarity image). They again provide their final estimate.

Key Metrics Calculated Per Stage:

- Performance: MAE compared to ground truth.

- Reliance: The shift in the clinician's estimate toward the AI's prediction.

- Appropriate Reliance: Categorization of each decision into: Appropriate (relying on a better AI or rejecting a worse AI), Over-reliance (relying on a worse AI), or Under-reliance (rejecting a better AI) [46].

- Trust & Usefulness: Via post-stage questionnaires using Likert scales.
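The first three metrics can be computed from per-case records, as in the sketch below. It rests on assumptions that go beyond the protocol as described: each case is assumed to have an initial clinician estimate recorded before the AI output was shown, "relying" is operationalized as closing at least half of the gap between that initial estimate and the AI prediction, and the AI counts as "better" when it is closer to ground truth than the initial estimate. The function names, the 0.5 threshold, and the example values (gestational age in days) are all hypothetical.

```python
import numpy as np


def mae(estimates, truth):
    """Mean absolute error against ground truth."""
    return float(np.mean(np.abs(np.asarray(estimates, float) - np.asarray(truth, float))))


def reliance_shift(initial, final, ai):
    """Fraction of the initial clinician-to-AI gap closed by the final estimate."""
    initial, final, ai = (np.asarray(a, dtype=float) for a in (initial, final, ai))
    gap = ai - initial
    shift = np.full(gap.shape, np.nan)   # undefined when clinician and AI already agree
    moved = gap != 0
    shift[moved] = (final[moved] - initial[moved]) / gap[moved]
    return shift


def classify_reliance(initial, final, ai, truth, threshold=0.5):
    """Label each decision as appropriate, over-reliance, or under-reliance."""
    initial, final, ai, truth = (np.asarray(a, dtype=float)
                                 for a in (initial, final, ai, truth))
    shift = reliance_shift(initial, final, ai)
    ai_better = np.abs(ai - truth) < np.abs(initial - truth)
    labels = []
    for s, better in zip(shift, ai_better):
        if np.isnan(s):
            labels.append("undetermined")
        elif (s >= threshold) == better:
            labels.append("appropriate")      # followed a better AI or rejected a worse one
        elif s >= threshold:
            labels.append("over-reliance")    # followed a worse AI
        else:
            labels.append("under-reliance")   # rejected a better AI
    return labels


# Hypothetical records for four cases (gestational age in days).
truth = [200, 150, 180, 220]
initial = [190, 152, 170, 219]
ai = [199, 165, 181, 230]
final = [198, 164, 171, 219]

labels = classify_reliance(initial, final, ai, truth)
print("MAE before AI:", mae(initial, truth))
print("MAE after AI: ", mae(final, truth))
print("Reliance labels:", labels)
```

In this small example the overall error gets worse after AI assistance because a single over-reliance case outweighs the gains elsewhere, which is why the per-decision categorization is reported alongside MAE.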

Protocol 2: Repeated-Trials Validation for Reproducible Feature Importance

Objective: To obtain stable, reproducible biomarker rankings from a stochastic ML model [39].

Workflow:

- Fix your dataset and its train/validation/test split.

- Define your ML model (e.g., Random Forest, Neural Network) and hyperparameter ranges.

- For T trials (e.g., T=400):

  - Set a unique random seed for the trial.

  - Train the model from scratch.

  - Evaluate its performance on the held-out test set.

  - Extract feature importance scores (e.g., using SHAP, permutation importance) for the test set.

- Aggregation:

  - For group-level importance: Pool feature importance scores from all trials across all subjects. Rank features by their median or mean importance across the T trials.

  - For subject-level importance: For each individual subject in the test set, aggregate the feature importance scores across all T trials where that subject was predicted. Rank features by their median importance for that specific subject.
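The aggregation step can be sketched independently of the model and attribution method. The example below assumes the per-trial attributions have already been collected into an array of shape (trials, subjects, features), with NaN marking trials in which a subject was not predicted. It uses the median of absolute attributions as the aggregate, and the function names and the synthetic array are assumptions made for illustration.

```python
import numpy as np


def group_level_ranking(attributions):
    """Rank features by median absolute attribution pooled over trials and subjects."""
    magnitude = np.abs(attributions)
    pooled = magnitude.reshape(-1, magnitude.shape[-1])
    score = np.nanmedian(pooled, axis=0)
    return np.argsort(score)[::-1]


def subject_level_ranking(attributions):
    """Rank features per subject by median absolute attribution across trials."""
    magnitude = np.abs(attributions)
    score = np.nanmedian(magnitude, axis=0)        # shape: (subjects, features)
    return np.argsort(score, axis=1)[:, ::-1]


# Synthetic attributions (assumption): 50 trials, 6 subjects, 5 features.
rng = np.random.default_rng(0)
attributions = rng.normal(scale=0.1, size=(50, 6, 5))
attributions[:, :, 2] += 1.0    # feature 2 matters for every subject
attributions[:, 0, 0] += 2.0    # feature 0 matters strongly for subject 0 only
attributions[::5, 1, :] = np.nan  # subject 1 was not predicted in every fifth trial

group_rank = group_level_ranking(attributions)
subject_rank = subject_level_ranking(attributions)

print("Group-level ranking:", group_rank)
print("Top feature per subject:", subject_rank[:, 0])
```

Keeping the two rankings separate makes it visible when a feature is important for a single subject but not for the cohort as a whole.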