Technical Variance Correction in Multi-Source Data Integration: Strategies for Robust Biomedical Discovery


Abstract

Integrating multi-source data is essential for powerful biomedical analyses, but it introduces technical variances and batch effects that can compromise data integrity and lead to misleading conclusions. This article provides a comprehensive framework for researchers and drug development professionals to navigate the challenges of technical variance correction. We explore the foundational concepts and profound impact of batch effects, detail current methodologies and algorithms for their mitigation, and present advanced troubleshooting strategies for complex, real-world scenarios. The guide concludes with a comparative analysis of validation frameworks and performance metrics, offering practical insights for achieving reliable, reproducible data integration in omics studies and clinical research.

Understanding Batch Effects: The Hidden Challenge in Multi-Source Data

Defining Technical Variance and Batch Effects in Omics Data

Frequently Asked Questions (FAQs)

FAQ 1: What is the fundamental difference between technical variance and batch effects?

- Technical Variance: Refers to the noise or uncertainty inherent in the measurement process of individual biological samples. This is often assessed by measuring the same sample multiple times (technical replicates). High technical variance can obscure true biological signals [1].

- Batch Effects: Are larger-scale technical variations that are systematically introduced when samples are processed in different groups (batches). These batches can be defined by different times, laboratory personnel, reagent lots, sequencing machines, or analysis pipelines. Batch effects can confound biological factors of interest and lead to misleading conclusions if not properly addressed [2] [3] [4].

FAQ 2: What are the real-world consequences of uncorrected batch effects?

The impact of batch effects is profound and can extend beyond the laboratory:

- Incorrect Conclusions: Batch effects have been known to cause shifts in patient risk calculations, leading to incorrect treatment decisions. In one case, this resulted in 162 patients being misclassified, with 28 receiving incorrect or unnecessary chemotherapy [2] [3].

- Irreproducibility: Batch effects are a major contributor to the "reproducibility crisis" in science. They have led to the retraction of high-profile articles when key results could not be reproduced after a change in reagent batches [2] [3].

- Spurious Findings: In differential expression analysis, batch-correlated features can be erroneously identified as significant, especially when batch and biological outcomes are correlated [2] [3].

FAQ 3: Can I correct for batch effects if my study design is unbalanced or confounded?

This is one of the most challenging scenarios. In a balanced design, where biological groups are evenly represented across batches, many correction algorithms (e.g., ComBat, Harmony) can be effective [5] [6]. However, in a confounded design, where a biological group is completely processed in a single batch, it becomes nearly impossible for most algorithms to distinguish technical variation from true biological signal. In such cases, correction may remove the biological effect of interest [6] [4].

- Recommended Solution: The most effective strategy for confounded designs is a ratio-based approach. This involves concurrently profiling one or more common reference materials (e.g., standardized control samples) in every batch. Study sample values are then scaled relative to the reference, effectively canceling out batch-specific technical noise [6].
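Before choosing a correction method, it can help to check whether a design is balanced or confounded. The following is a minimal sketch, assuming batch and group labels are available as plain lists; the function name `design_summary` and the example labels are hypothetical.

```python
from collections import defaultdict

def design_summary(batches, groups):
    """Cross-tabulate biological group vs. batch and flag confounded groups."""
    table = defaultdict(lambda: defaultdict(int))
    for batch, group in zip(batches, groups):
        table[group][batch] += 1
    # A group observed in only one batch cannot be separated from that batch.
    single_batch_groups = sorted(g for g, counts in table.items() if len(counts) == 1)
    return {g: dict(c) for g, c in table.items()}, single_batch_groups

# Hypothetical labels for six samples processed in two batches
batches = ["B1", "B1", "B1", "B2", "B2", "B2"]
balanced = ["ctrl", "case", "ctrl", "case", "ctrl", "case"]
confounded = ["ctrl", "ctrl", "ctrl", "case", "case", "case"]

_, flagged_balanced = design_summary(batches, balanced)
_, flagged_confounded = design_summary(batches, confounded)
print(flagged_balanced)    # groups confined to a single batch (balanced layout)
print(flagged_confounded)  # groups confined to a single batch (confounded layout)
```

Any group returned in the second element is processed in one batch only, which is the situation where a reference-based ratio approach is recommended above.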

FAQ 4: How can I visualize complex omics data with multiple values per node on a network?

Traditional network visualization tools like Cytoscape typically allow only one data row per node. The Omics Visualizer app for Cytoscape was designed to overcome this limitation. It allows you to import data tables with multiple rows for the same gene or protein (e.g., different post-translational modification sites or conditions) and visualize them on networks using pie or donut charts directly on the nodes [7].

Troubleshooting Guides

Guide 1: Diagnosing and Mitigating Technical Variance

Problem: High technical variance across replicate measurements is obscuring biological signals in differential expression analysis.

Investigation & Solution:

- Step 1: Quantify Replicate Variance. Instead of simply averaging technical replicates, use methods that incorporate their variance into downstream statistics. The information on measurement uncertainty is often lost in averaging but can be highly informative [1].

- Step 2: Apply Variance-Exploiting Statistics. Use tools like RepExplore, which employs the Probability of Positive Log Ratio (PPLR) statistic. PPLR uses a variational Expectation-Maximization algorithm to model both point estimates and variation across replicates, providing a more robust ranking of differentially expressed biomolecules compared to standard methods like the empirical Bayes moderated t-statistic (eBayes) [1].

- Step 3: Visualize to Validate. Generate whisker plots for top-ranked biomolecules. A reliable differential expression signal should show minimal overlap in the value ranges of technical replicates across sample groups [1].
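The range check described in Step 3 can also be done programmatically before plotting. This is a small sketch only, not the PPLR statistic or the RepExplore implementation; the feature names and log-intensity values are made up for illustration.

```python
def replicate_range(values):
    """Return the (min, max) range of a list of replicate measurements."""
    return min(values), max(values)

def ranges_overlap(group_a, group_b):
    """True if the replicate value ranges of two sample groups overlap."""
    lo_a, hi_a = replicate_range(group_a)
    lo_b, hi_b = replicate_range(group_b)
    return lo_a <= hi_b and lo_b <= hi_a

# Hypothetical log-intensities: replicate values for control and treated groups
features = {
    "feature_1": ([10.1, 10.3, 10.2], [12.0, 12.4, 12.1]),
    "feature_2": ([8.0, 9.5, 8.7], [9.0, 9.9, 8.4]),
}
for name, (ctrl, treated) in features.items():
    status = "overlapping" if ranges_overlap(ctrl, treated) else "separated"
    print(name, status)
```

Features whose replicate ranges overlap between groups deserve a closer look in the whisker plots before being reported as differentially expressed.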

Guide 2: A Step-by-Step Protocol for Batch Effect Correction Using a Ratio-Based Approach

Objective: To effectively remove batch effects in a large-scale multi-omics study, even in confounded scenarios.

Experimental Workflow:

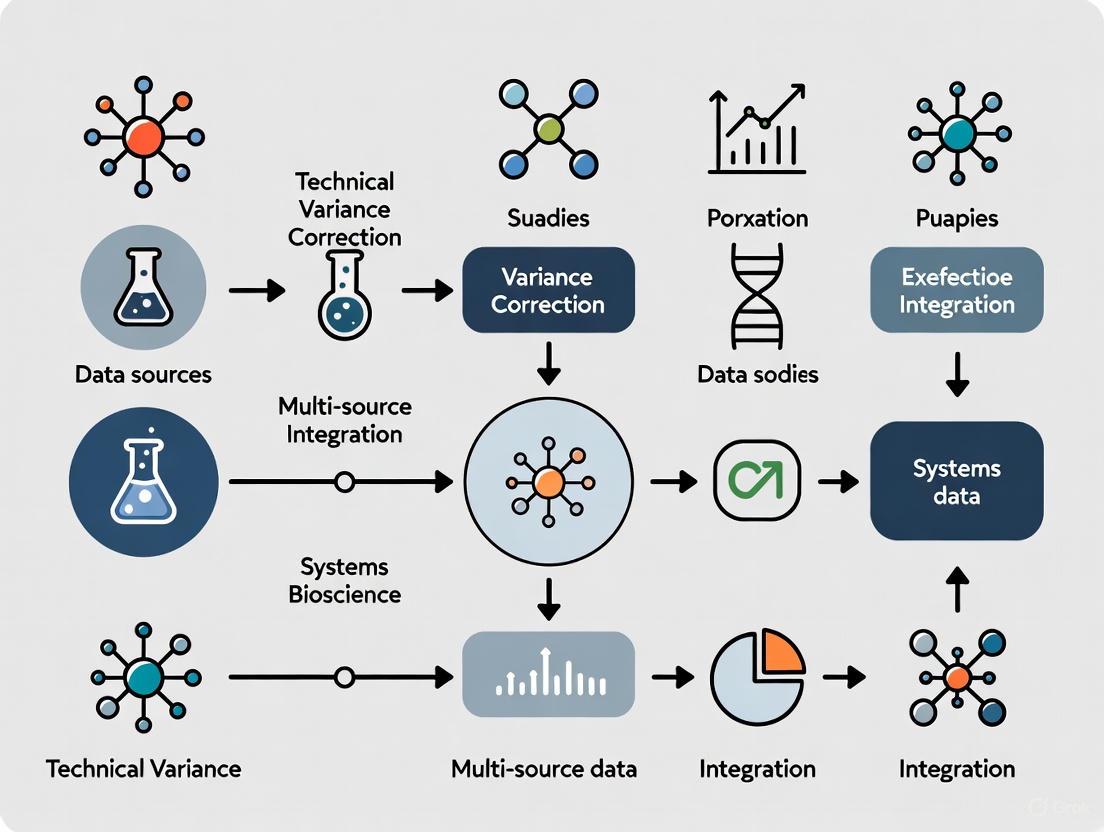

Diagram: Ratio-Based Batch Correction Workflow

Methodology:

- Select Reference Material: Choose well-characterized, stable reference materials. In the Quartet Project, suites of multiomics reference materials (DNA, RNA, protein, metabolite) are derived from the same B-lymphoblastoid cell lines, providing a gold standard for cross-batch comparability [6].

- Concurrent Profiling: In every batch of your experiment (whether defined by time, lab, or platform), profile your study samples alongside aliquots of the same reference material.

- Data Generation: Generate your omics data (transcriptomics, proteomics, metabolomics) for all samples and references as usual.

- Ratio Calculation: For each feature (e.g., gene, protein) in each study sample, transform the absolute intensity value (I_study) into a ratio relative to the value of the reference material (I_reference). This can be simply: Ratio = I_study / I_reference. This scaling step effectively normalizes out batch-specific fluctuations [6].

- Downstream Analysis: Use the ratio-scaled data for all integrated analyses, such as identifying differentially expressed features, building predictive models, or sample clustering. This dataset is now corrected for batch effects.
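The ratio calculation can be sketched in a few lines. The example below assumes each sample is a dictionary of feature intensities and that each batch contains its own reference measurement; the function name `ratio_scale`, the optional log2 transform, and all values are illustrative assumptions rather than part of the cited protocol.

```python
import math

def ratio_scale(study, reference, log2=False):
    """Scale one sample's feature values by the same-batch reference values."""
    scaled = {}
    for feature, value in study.items():
        ref = reference.get(feature)
        if ref is None or ref <= 0 or value <= 0:
            scaled[feature] = None  # ratio undefined; keep as missing
            continue
        ratio = value / ref  # Ratio = I_study / I_reference
        scaled[feature] = math.log2(ratio) if log2 else ratio
    return scaled

# Hypothetical intensities: batch 2 reads every feature twice as high as batch 1
batch1 = {"reference": {"geneA": 100.0, "geneB": 50.0},
          "sample_1": {"geneA": 200.0, "geneB": 50.0}}
batch2 = {"reference": {"geneA": 200.0, "geneB": 100.0},
          "sample_2": {"geneA": 400.0, "geneB": 100.0}}

s1 = ratio_scale(batch1["sample_1"], batch1["reference"])
s2 = ratio_scale(batch2["sample_2"], batch2["reference"])
print(s1)
print(s2)
```

In this toy case the two samples are biologically identical, and the twofold batch offset cancels because it affects the study sample and the reference equally.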

Guide 3: Preparing Data for Batch Effect Analysis in Bioinformatics Platforms

Problem: Errors occur when uploading omics data into analysis platforms (e.g., Omics Playground) for batch correction.

Solution: Adhere to strict formatting rules:

- File Format: Provide data as comma-separated values (CSV) files [8].

- Expression Matrix (counts.csv):

- Sample Information Matrix (samples.csv):

  - The first column contains sample names that exactly match the expression matrix.

  - Subsequent columns define phenotypes and batch groups. Do not use purely numerical values for phenotypes (e.g., use "Age_Group" instead of "50"); the platform requires discrete ranges with at least one alphabetical character [8].
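A quick pre-upload check can catch these formatting problems locally. The sketch below is not part of any platform's tooling; it assumes, as an illustration, that counts.csv has feature IDs in the first column and one column per sample, and the function name `check_inputs` is hypothetical.

```python
import csv
import io

def check_inputs(counts_csv, samples_csv):
    """Return a list of formatting problems found in the two CSV texts."""
    problems = []
    counts_header = next(csv.reader(io.StringIO(counts_csv)))
    count_samples = counts_header[1:]  # assumption: first column holds feature IDs
    rows = list(csv.reader(io.StringIO(samples_csv)))
    header, body = rows[0], rows[1:]
    sample_names = [row[0] for row in body]
    if sorted(sample_names) != sorted(count_samples):
        problems.append("sample names differ between counts and samples files")
    for col, name in enumerate(header[1:], start=1):
        for row in body:
            # Flag values without any alphabetical character
            if not any(ch.isalpha() for ch in row[col]):
                problems.append(f"column '{name}' has purely numeric value '{row[col]}'")
                break
    return problems

counts = "gene,S1,S2\nTP53,10,20\n"
samples_ok = "sample,age_group,batch\nS1,age50to60,batchA\nS2,age60to70,batchB\n"
samples_bad = "sample,age,batch\nS1,50,batchA\nS3,61,batchB\n"

print(check_inputs(counts, samples_ok))
print(check_inputs(counts, samples_bad))
```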

Research Reagent Solutions

Table: Essential Reagents and Resources for Managing Technical Variance and Batch Effects

| Item Name | Function/Description | Application Context |

|---|---|---|

| Quartet Reference Materials | Matched DNA, RNA, protein, and metabolite materials derived from four related cell lines. Serves as a multi-omics benchmark for cross-batch and cross-platform normalization [6]. | Large-scale multi-omics studies, quality control, and batch effect correction using the ratio-based method. |

| Common Reference Sample | A standardized control sample (can be commercial or lab-generated) included in every processing batch. Enables ratio-based scaling to correct for inter-batch variation [6]. | Any omics study design where samples are processed in multiple batches. Critical for confounded study designs. |

| RepExplore Web Service | A tool that uses technical replicate variance to compute more reliable differential expression statistics (PPLR), rather than discarding this information through averaging [1]. | Analyzing proteomics and metabolomics datasets with technical replicates to improve statistical robustness. |

| Omics Visualizer App | A Cytoscape app that allows visualization of complex omics data (e.g., multiple PTM sites per protein) on biological networks using pie or donut charts [7]. | Network biology and pathway analysis when data has multiple measurements per biological entity. |

Performance Metrics for Batch Effect Correction

Table: Quantitative Metrics for Evaluating Batch Effect Correction Algorithms (BECAs)

| Performance Metric | What It Measures | Interpretation |

|---|---|---|

| Signal-to-Noise Ratio (SNR) | The ability of the method to separate biological groups after correction [6]. | A higher SNR indicates better preservation of biological signal while removing technical noise. |

| Differentially Expressed Feature (DEF) Accuracy | The accuracy in identifying true positive and true negative DEFs between biological conditions [6]. | Assesses whether correction improves the reliability of downstream differential analysis. |

| Predictive Model Robustness | The performance and stability of predictive models (e.g., classifiers) built on the corrected data [6]. | Indicates the practical utility of the corrected data for building reproducible biomarkers. |

| Clustering Accuracy | The ability to accurately cluster cross-batch samples by their true biological origin (e.g., donor) rather than by batch [6]. | A direct measure of successful data integration and batch effect removal. |
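Clustering accuracy is often summarized with the Adjusted Rand Index (ARI), which compares cluster assignments against known labels. The following sketch implements the standard ARI formula with the standard library; the donor labels and cluster assignments are invented for illustration, and the cited study may compute clustering accuracy differently.

```python
from collections import Counter
from math import comb

def adjusted_rand_index(labels_true, labels_pred):
    """Adjusted Rand Index between two labelings of the same samples."""
    n = len(labels_true)
    pair_counts = Counter(zip(labels_true, labels_pred))
    sum_ij = sum(comb(c, 2) for c in pair_counts.values())
    sum_a = sum(comb(c, 2) for c in Counter(labels_true).values())
    sum_b = sum(comb(c, 2) for c in Counter(labels_pred).values())
    expected = sum_a * sum_b / comb(n, 2)
    max_index = (sum_a + sum_b) / 2
    if max_index == expected:
        return 1.0  # degenerate case: both labelings are trivial
    return (sum_ij - expected) / (max_index - expected)

# Hypothetical example: three donors, each profiled in two batches
donors = ["D5", "D5", "D6", "D6", "F7", "F7"]
clusters_by_donor = [0, 0, 1, 1, 2, 2]   # clustering recovers donors
clusters_by_batch = [0, 1, 0, 1, 0, 1]   # clustering follows batch instead

ari_donor = adjusted_rand_index(donors, clusters_by_donor)
ari_batch = adjusted_rand_index(donors, clusters_by_batch)
print(ari_donor, ari_batch)
```

An ARI of 1 means the clusters match the biological labels exactly, while values at or below 0 indicate agreement no better than chance, as when samples group by batch.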

Integrating data from different laboratories, experiments, or omics platforms is fundamental to modern biological research and drug development. However, this process is plagued by technical variance—unwanted systematic variations introduced by differing experimental conditions—which can lead to irreproducible findings and misleading scientific conclusions. This technical support article outlines the sources of this variance and provides tested methodologies for its correction, enabling more reliable data integration and meta-analysis.

FAQs on Technical Variance and Data Integration

1. What is the greatest source of technical variance in experimental data? Evidence from a multisite assessment study using identical protocols and reagents revealed that the most significant source of technical variability occurs between different laboratories. In high-content cell phenotyping experiments, lab-to-lab variability was a greater source of error than variability between persons, experiments, or technical replicates within the same lab [9].

2. Can't we just combine datasets from different labs directly? No, direct meta-analysis of primary data from different laboratories often provides low value due to strong batch effects [9]. However, batch effect removal strategies can markedly reduce this variability and make the data suitable for combined analysis [9].

3. What is a more reliable alternative to "absolute" feature quantification? Research from the Quartet Project for multi-omics integration has identified absolute feature quantification as a root cause of irreproducibility. They advocate for a paradigm shift to a ratio-based profiling approach, where the feature values of a study sample are scaled relative to those of a concurrently measured common reference sample. This method produces data that is more reproducible and comparable across batches, labs, and platforms [10].

4. What are the main strategies for integrating diverse data sources? The two primary architectural strategies are ETL (Extract, Transform, Load) and ELT (Extract, Load, Transform). ETL involves transforming data into a clean, structured format before loading it into a destination and is ideal for structured data and compliance-heavy workflows. ELT loads raw data directly into a powerful destination (like a cloud data warehouse) where transformation occurs later; this is better for large, messy datasets and offers more flexibility [11] [12].

Troubleshooting Guides

Problem 1: Inability to Reproduce Findings from Another Laboratory

Symptoms: Statistical significance is lost when analysis is repeated on data generated in your lab, or clustering of samples is inconsistent.

Solution: Implement a Common Reference & Ratio-Based Scaling

- Acquire Common Reference Materials: Use publicly available and well-characterized multi-omics reference materials, such as those from the Quartet Project, which provide DNA, RNA, protein, and metabolites from the same source with built-in biological truths [10].

- Concurrent Measurement: In every batch of experiments, measure the common reference sample alongside your study samples.

- Calculate Ratios: Derive your final quantitative values by scaling the absolute feature values of your study samples relative to those of the common reference sample on a feature-by-feature basis [10]. This corrects for inter-batch and inter-lab variation.

Problem 2: Poor Data Integration Leading to Incorrect Sample Grouping

Symptoms: Multi-omics data fails to cluster samples correctly according to known biological groups; principal component analysis (PCA) shows strong separation by batch instead of phenotype.

Solution: A Combined Wet-Lab and Computational Batch Correction Workflow

- Standardize Procedures: Before integration, minimize initial variance by using standardized protocols and key reagents across all data generation sites [9].

- Quantify Variance Sources: Use a Linear Mixed Effects (LME) model to quantify the variability at each hierarchical level (e.g., cell, replicate, experiment, person, lab). This helps identify the biggest sources of noise [9].

- Apply Batch Effect Removal: Apply computational batch-effect correction algorithms (e.g., ComBat, limma) to the data, using the batch identifier (e.g., Lab ID) as a covariate. This step is crucial for enabling reliable meta-analyses of image-based or omics datasets from different sources [9].
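The idea behind using the batch identifier as a covariate can be illustrated with the simplest additive model: subtract each batch's mean and add back the grand mean. This is a deliberately reduced sketch, not ComBat or limma, and it is only appropriate when biological groups are balanced across batches; the values and lab IDs are hypothetical.

```python
from statistics import mean

def center_by_batch(values, batches):
    """Remove additive batch offsets for one feature by per-batch mean-centering."""
    grand = mean(values)
    batch_means = {
        b: mean(v for v, bb in zip(values, batches) if bb == b)
        for b in set(batches)
    }
    # Shift each batch so that its mean equals the grand mean
    return [v - batch_means[b] + grand for v, b in zip(values, batches)]

# Hypothetical feature: lab2 reads 4 units higher; case is 2 units above ctrl
values = [5.0, 7.0, 9.0, 11.0]
batches = ["lab1", "lab1", "lab2", "lab2"]
groups = ["ctrl", "case", "ctrl", "case"]

corrected = center_by_batch(values, batches)
print(list(zip(groups, corrected)))
```

After centering, the lab offset is gone while the difference between case and control is preserved. In a confounded design the same operation would remove the biological difference as well, which is why the design check matters.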

Key Experimental Protocols

Objective: To systematically quantify biological and technical variability in a nested experimental design.

Methodology:

- Nested Design: A minimum of three independent laboratories participate. At each lab, three different operators perform three independent experiments. Each experiment includes control and perturbation conditions (e.g., ROCK inhibitor), with three technical replicates per condition.

- Standardization: All labs receive an identical, detailed protocol, the same cell line (e.g., HT1080 fibrosarcoma), and all key reagents.

- Data Generation: Acquire time-lapse images (e.g., 5-min intervals for 6 hours) for all conditions.

- Centralized Processing: Transfer all raw images to a single location for uniform segmentation and feature extraction (e.g., using CellProfiler and custom Matlab scripts) to extract variables describing cell morphology and dynamics.

- Statistical Analysis: Apply a Linear Mixed Effects (LME) model with a hierarchical structure of nested random intercepts to partition the total variance for each measured variable into components from the different levels (temporal, cell, technical replicate, experiment, person, laboratory).
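To make the variance partitioning concrete, the sketch below estimates only two components (between-laboratory and within-laboratory) using a balanced one-way random-effects ANOVA. This is a much simpler stand-in for the nested LME model described above, which would normally be fitted with a dedicated mixed-model package; the measurements are invented.

```python
from statistics import mean

def variance_components(groups):
    """Balanced one-way random-effects ANOVA (method of moments).

    groups: dict mapping a lab ID to a list of measurements of equal length.
    Returns (between-lab variance, within-lab variance).
    """
    k = len(groups)                       # number of labs
    n = len(next(iter(groups.values())))  # measurements per lab
    grand = mean(v for vals in groups.values() for v in vals)
    ms_between = n * sum((mean(vals) - grand) ** 2 for vals in groups.values()) / (k - 1)
    ms_within = sum(
        (v - mean(vals)) ** 2 for vals in groups.values() for v in vals
    ) / (k * (n - 1))
    # Negative estimates are truncated at zero
    var_between = max((ms_between - ms_within) / n, 0.0)
    return var_between, ms_within

# Hypothetical measurements of one morphology variable in three labs
labs = {
    "lab1": [10.0, 10.2, 9.8],
    "lab2": [12.0, 12.2, 11.8],
    "lab3": [14.0, 14.2, 13.8],
}
var_lab, var_residual = variance_components(labs)
print(var_lab, var_residual)
```

In this toy data the between-lab component dominates, mirroring the qualitative finding that lab-to-lab differences were the largest source of variability.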

Objective: To generate reproducible and comparable multi-omics data suitable for integration across batches and platforms.

Methodology:

- Material Selection: Obtain suites of multi-omics reference materials (DNA, RNA, protein, metabolites) derived from the same source, such as the immortalized cell lines from the Quartet Project.

- Experimental Design: In each measurement batch (e.g., for LC-MS/MS proteomics or RNA-seq), include the designated common reference sample (e.g., sample D6 from the Quartet) alongside the study samples.

- Absolute Quantification: Perform standard absolute quantification of all features (e.g., protein or gene expression levels) for all samples, including the common reference.

- Ratio Calculation: For each feature in every study sample, calculate a ratio by dividing its absolute value by the corresponding absolute value in the common reference sample. This creates a new, normalized dataset.

- Quality Control: Use built-in truths (e.g., Mendelian relationships in the Quartet family) and metrics like Signal-to-Noise Ratio (SNR) to evaluate the proficiency of data generation and integration.

Table 1: Sources of Technical Variability in High-Content Imaging [9]

| Source of Variability | Relative Contribution | Impact on Meta-Analysis |

|---|---|---|

| Between Laboratories | Major Source | Prevents direct meta-analysis without correction |

| Between Persons | Lower than lab-to-lab | Contributes to overall technical noise |

| Between Experiments | Lower than person-to-person | Contributes to overall technical noise |

| Between Technical Replicates | Lowest | Contributes to overall technical noise |

Table 2: Quartet Project QC Metrics for Multi-Omics Integration [10]

| QC Metric | Application | Purpose |

|---|---|---|

| Mendelian Concordance Rate | Genomic Variant Calling | Proficiency testing for DNA sequencing |

| Signal-to-Noise Ratio (SNR) | Quantitative Omics Profiling | Evaluate measurement precision for RNA, protein, metabolites |

| Sample Classification Accuracy | Vertical Integration | Assess ability to correctly cluster samples based on all omics data |

| Central Dogma Validation | Vertical Integration | Assess ability to identify correct DNA->RNA->Protein relationships |

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for Technical Variance Correction

| Item | Function | Example |

|---|---|---|

| Common Reference Materials | Provides a stable benchmark across experiments and labs to enable ratio-based profiling and cross-lab standardization. | Quartet Project multi-omics reference materials (DNA, RNA, protein, metabolites) [10]. |

| Standardized Cell Line | Minimizes biological variance at the source in cell-based assays, allowing technical variance to be isolated and measured. | HT1080 fibrosarcoma cells stably expressing fluorescent markers [9]. |

| Detailed Common Protocol | Reduces operator-induced variability by ensuring all participants follow the same precise steps for sample preparation and data acquisition. | A shared, detailed protocol distributed to all participating laboratories [9]. |

| Batch Effect Correction Algorithms | Computational tools that remove unwanted systematic variation associated with different batches or labs, making datasets combinable. | Tools like ComBat, limma, or other normalization methods. |

| Centralized Data Processing Pipeline | Eliminates variance introduced by different analysis methods; ensures all data is processed identically. | Uniform CellProfiler pipeline and Matlab scripts run by a single lab [9]. |

Batch effects are systematic technical variations introduced during the collection and processing of high-throughput data, which are unrelated to the biological objectives of a study. These unwanted variations can arise at virtually every stage of an experiment, from initial study design to sample preparation and data analysis [3] [2]. In the context of multi-source data integration research, identifying and mitigating batch effects is not merely a preprocessing step but a fundamental requirement for ensuring data reliability and reproducibility. The profound negative impact of batch effects includes diluted biological signals, reduced statistical power, and—in the worst cases—misleading or irreproducible findings that can invalidate research conclusions and even affect clinical decisions [3]. This guide details the common sources of batch effects and provides practical troubleshooting advice to help researchers manage these challenges effectively.

What Are Batch Effects and Why Do They Matter?

Batch effects are technical biases that confound data analysis, introduced by differences in machines, experimenters, reagents, processing times, or environmental conditions [13]. In multi-omics studies, these effects are particularly complex because they involve data types measured on different platforms with different distributions and scales [3].

The consequences of uncorrected batch effects are severe. They can:

- Lead to incorrect conclusions, such as falsely identifying genes, proteins, or metabolites as differentially expressed [3].

- Cause irreproducibility, a paramount concern in scientific research, resulting in retracted articles and economic losses [3] [2].

- Skew predictive models, leading to inaccurate drug target identification or incorrect patient diagnoses [13].

The table below summarizes the common sources of batch effects encountered during different phases of a high-throughput study.

Table 1: Common Sources of Batch Effects in Omics Studies

| Stage | Source | Description | Affected Omics Types |

|---|---|---|---|

| Study Design | Flawed or Confounded Design [3] [2] | Samples not randomized; batch variable correlated with biological variable of interest (e.g., all controls processed in one batch). | Common to all |

| Study Design | Minor Treatment Effect Size [3] [2] | Small biological effect sizes are harder to distinguish from technical variations. | Common to all |

| Sample Preparation & Storage | Protocol Procedure [3] [2] | Variations in centrifugal force, time, and temperature prior to centrifugation during plasma separation. | Common to all |

| Sample Preparation & Storage | Sample Storage Conditions [3] | Variations in storage temperature, duration, and number of freeze-thaw cycles. | Common to all |

| Sample Preparation & Storage | Reagent Lot Variability [14] | Using different lots of chemicals, enzymes, or kits with varying purity and efficiency. | Common to all |

| Data Generation | Sequencing Platform Differences [14] | Using different machines (e.g., Illumina HiSeq vs. NovaSeq) or different calibrations. | Transcriptomics |

| Data Generation | Library Preparation Artifacts [14] | Variations in reverse transcription efficiency, amplification cycles, or personnel. | Bulk & single-cell RNA-seq |

| Data Generation | Instrument Drift [15] | Changes in instrument performance (e.g., mass spectrometer sensitivity) over time. | Proteomics, Metabolomics |

How to Detect and Diagnose Batch Effects

Before applying any correction, it is crucial to assess whether your data suffers from batch effects.

Visualization Techniques

- Principal Component Analysis (PCA): Plot your data using the top principal components. If samples cluster strongly by batch (e.g., processing date) rather than by biological source, batch effects are likely present [16] [13].

- t-SNE or UMAP: Overlay batch labels on these nonlinear dimensionality reduction plots. Clustering by batch instead of biological condition (e.g., cell type) indicates a batch effect [16].

Table 2: Quantitative Metrics for Batch Effect Assessment

| Metric | What It Measures | Interpretation |

|---|---|---|

| k-nearest neighbor Batch Effect Test (kBET) [17] | Local mixing of batches in the data. | A higher acceptance rate indicates better batch mixing. |

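| Average Silhouette Width (ASW) [14] | How similar a sample is to its own group (batch or biological label) vs. other groups. | Computed on batch labels, values near 0 indicate well-mixed batches and values near 1 indicate strong batch separation. Computed on biological labels, higher values indicate better-preserved biological structure. |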

| Adjusted Rand Index (ARI) [14] | Similarity between two clusterings (e.g., before and after correction). | Higher values indicate that cell type/biological clusters are preserved post-correction. |

| Local Inverse Simpson's Index (LISI) [14] | Diversity of batches in a local neighborhood. | Higher LISI scores indicate better batch mixing. |
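As an illustration of one of these metrics, the following sketch computes the average silhouette width on batch labels for a small 2D embedding (for example, the first two principal components). It assumes at least two batches and Euclidean distance; the coordinates and labels are invented, and production analyses would typically rely on an established implementation.

```python
from math import dist
from statistics import mean

def average_silhouette_width(points, labels):
    """Mean silhouette width of points grouped by labels (needs >= 2 labels)."""
    widths = []
    for i, (p, lab) in enumerate(zip(points, labels)):
        own = [dist(p, q) for j, (q, l) in enumerate(zip(points, labels))
               if l == lab and j != i]
        if not own:
            widths.append(0.0)  # common convention for a singleton group
            continue
        a = mean(own)  # mean distance to the sample's own group
        b = min(       # mean distance to the nearest other group
            mean(dist(p, q) for q, l in zip(points, labels) if l == other)
            for other in set(labels) if other != lab
        )
        denom = max(a, b)
        widths.append(0.0 if denom == 0 else (b - a) / denom)
    return mean(widths)

# Hypothetical embedding with two tight clusters far apart
embedding = [(0.0, 0.0), (0.0, 1.0), (10.0, 0.0), (10.0, 1.0)]
asw_separated = average_silhouette_width(embedding, ["A", "A", "B", "B"])
asw_interleaved = average_silhouette_width(embedding, ["A", "B", "A", "B"])
print(asw_separated, asw_interleaved)
```

When the clusters coincide with the batch labels, the batch ASW is close to 1, signalling a strong batch effect. When each cluster contains both batches, the batch ASW drops sharply.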

Methodologies for Batch Effect Correction

Choosing the right Batch Effect Correction Algorithm (BECA) is highly context-dependent. The following workflows are commonly used:

Diagram: Batch Effect Correction Workflow

Selecting a Batch Effect Correction Algorithm

Table 3: Common Batch Effect Correction Algorithms (BECAs) and Their Applications

| Algorithm | Typical Use Case | Key Principle | Strengths | Limitations |

|---|---|---|---|---|

| ComBat [13] [14] | Bulk transcriptomics/proteomics with known batches. | Empirical Bayes framework to adjust for known batch variables. | Simple, widely used, effective for known, additive effects. | Requires known batch info; may not handle complex non-linear effects. |

| limma removeBatchEffect [13] [14] | Bulk transcriptomics with known batches. | Linear modeling to remove batch effects. | Efficient, integrates well with differential expression workflows. | Assumes known, additive batch effects; less flexible. |

| SVA [14] | Bulk transcriptomics with unknown batches. | Estimates hidden sources of variation (surrogate variables). | Useful when batch variables are unknown or partially observed. | Risk of removing biological signal if not carefully modeled. |

| Harmony [16] [18] | Single-cell RNA-seq, multi-omics data integration. | Iterative clustering and correction in a reduced-dimensional space. | Effective for complex datasets, preserves biological variation. | Less scalable for extremely large datasets. |

| scANVI [16] | Single-cell RNA-seq (complex batch effects). | Deep generative model using variational inference. | High performance on complex integrations. | Computationally intensive. |

| RUV [13] | Various omics data with unwanted variation. | Uses control genes/samples or replicate samples to remove unwanted variation. | Flexible, several variants available (e.g., RUV-III-C). | Requires negative controls or replicates. |

Critical Considerations to Avoid Over-Correction

A major risk in batch effect correction is the removal of true biological signal. Watch for these signs of over-correction [16]:

- Distinct cell types are clustered together on a UMAP or t-SNE plot after correction.

- A complete overlap of samples from very different biological conditions, suggesting that meaningful differences have been erased.

- Cluster-specific markers consist mainly of genes with widespread high expression (e.g., ribosomal genes) rather than biologically meaningful markers.

Frequently Asked Questions (FAQs)

Q1: I'm integrating multiple single-cell RNA-seq datasets. Which batch correction method should I use first? A: Benchmarking studies suggest starting with Harmony due to its good balance of performance and runtime, or scANVI for top-tier performance if computational resources allow [16]. Always try multiple methods and validate them rigorously, as the best method can be dataset-specific.

Q2: How can I tell if I have over-corrected my data and removed biological signals? A: Check your corrected data for key indicators: distinct cell types that should be separate are now clustered together; samples from drastically different conditions (e.g., healthy vs. diseased) show complete overlap; and your differential expression analysis yields nonspecific marker genes [16]. Always compare pre- and post-correction visualizations and metrics.

Q3: At which level should I correct batch effects in my proteomics data: precursor, peptide, or protein? A: A 2025 benchmarking study indicates that protein-level correction is the most robust strategy for mass spectrometry-based proteomics. The process of aggregating precursor/peptide intensities into protein quantities can interact with early-stage correction, making later correction more reliable [15].

Q4: My study design is imbalanced (e.g., different numbers of cells per cell type across batches). How does this affect integration? A: Sample imbalance can substantially impact integration results and their biological interpretation [16]. Standard integration methods may perform poorly. Consult specialized guidelines for imbalanced settings, which may recommend specific tools or parameter adjustments to handle such data structures more effectively.

Q5: Is batch correction always necessary? A: No. First, assess your data using PCA, UMAP, and quantitative metrics. If data from identical biological conditions cluster perfectly together regardless of batch, correction might not be needed. However, if clear batch-driven clustering is observed, correction is essential [16] [14].

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Key Research Reagent Solutions for Batch Effect Mitigation

| Item | Function in Batch Effect Management |

|---|---|

| Universal Reference Materials (e.g., Quartet) [15] | Profiled across all batches and labs to serve as a stable baseline for ratio-based batch correction (e.g., in proteomics). |

| Pooled Quality Control (QC) Samples [15] [14] | A pooled sample run repeatedly across batches to monitor technical variation and instrument drift. |

| Standardized Reagent Lots | Using the same lot number for all critical reagents (enzymes, kits, buffers) throughout a study to minimize a major source of batch variation [14]. |

| Internal Standards (for Metabolomics/Proteomics) | Chemically identical, stable isotope-labeled compounds spiked into every sample for signal normalization across runs [14]. |

The Critical Need for Correction in Multi-Omics and Longitudinal Studies

Core Concepts: Understanding Variance and Integration

What are the primary sources of technical variance in multi-omics data? Technical variance in multi-omics data arises from multiple sources, including batch effects from different processing labs or dates, platform-specific noise from different measurement technologies (e.g., different sequencing platforms or mass spectrometers), and the inherent biological and statistical heterogeneity between different omics layers (e.g., genomics, transcriptomics, proteomics) [19] [20]. Each data type has unique noise structures, detection limits, and missing value patterns, which complicate integration [20].

Why is technical variance particularly problematic for longitudinal multi-omics studies? Longitudinal studies involve repeated measurements from the same subjects over time [21]. Technical variance can confound true biological changes over time, making it difficult to distinguish between actual molecular shifts and artifacts introduced by batch effects or platform variability [22] [23] [21]. This is compounded by challenges like participant attrition and non-random missing data, which can bias results if not handled properly [21].

What is the fundamental difference between horizontal and vertical data integration?

- Horizontal Integration (Within-omics): The integration of multiple datasets from a single omics type (e.g., combining RNA-seq data from different labs or batches). Its main goal is to correct for batch effects to enable a unified analysis [19].

- Vertical Integration (Cross-omics): The integration of diverse datasets from multiple omics types (e.g., genomics, proteomics, metabolomics) derived from the same set of samples. This aims to identify interconnected biological networks and multi-layered molecular signatures [19] [24].
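The structural difference between the two integration types can be shown with plain dictionaries. This is only a data-layout sketch under the assumption that each dataset maps sample IDs to feature dictionaries; the function names and values are hypothetical, and neither function performs batch correction or statistical modeling.

```python
def horizontal(datasets):
    """Within-omics: pool samples from several batches, keep shared features."""
    shared = set.intersection(*[
        set(feat for sample in d.values() for feat in sample) for d in datasets
    ])
    merged = {}
    for d in datasets:
        for sample, feats in d.items():
            merged[sample] = {f: feats[f] for f in sorted(shared) if f in feats}
    return merged

def vertical(layers):
    """Cross-omics: keep samples present in every layer, concatenate features."""
    common = set.intersection(*[set(d) for d in layers.values()])
    return {
        s: {f"{layer}:{f}": v for layer, d in layers.items() for f, v in d[s].items()}
        for s in sorted(common)
    }

# Hypothetical RNA-seq data from two labs (horizontal case)
rna_lab1 = {"S1": {"geneA": 1.0, "geneB": 2.0}}
rna_lab2 = {"S2": {"geneA": 3.0, "geneC": 4.0}}
pooled = horizontal([rna_lab1, rna_lab2])

# Hypothetical RNA and protein layers on overlapping samples (vertical case)
layers = {"rna": {"S1": {"geneA": 1.0}},
          "protein": {"S1": {"protA": 5.0}, "S3": {"protA": 6.0}}}
stacked = vertical(layers)
print(pooled)
print(stacked)
```

Horizontal integration grows the sample axis and therefore needs batch correction across sources, whereas vertical integration grows the feature axis for a fixed set of samples.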

Troubleshooting Guides & FAQs

FAQ: Data Generation & QC

Q: How can we assess data quality and integration performance in the absence of a known ground truth? A: The Quartet Project provides a powerful solution by offering multi-omics reference materials derived from a family quartet (parents and monozygotic twins) [19]. This design provides a "built-in truth" through known genetic relationships and the central dogma of biology. Using these materials, labs can employ QC metrics such as the Mendelian concordance rate for genomic variants and the Signal-to-Noise Ratio (SNR) for quantitative profiling to objectively evaluate their proficiency and the reliability of their integration methods [19].

Q: Our lab is new to multi-omics. What is a robust starting approach to minimize technical irreproducibility? A: Evidence suggests a paradigm shift from absolute quantification to ratio-based profiling [19]. This involves scaling the absolute feature values of your study samples relative to a concurrently measured common reference sample (like the Quartet reference materials) on a feature-by-feature basis. This approach has been shown to produce more reproducible and comparable data across batches, labs, and platforms [19].

FAQ: Data Analysis & Integration

Q: A wide array of integration tools exists (e.g., MOFA, DIABLO, SNF). How do I choose the right one? A: The choice depends heavily on your biological question and data structure. The table below summarizes key methods:

Table 1: Comparison of Multi-Omics Data Integration Methods

| Method | Integration Type | Key Characteristic | Best Use Case |
|---|---|---|---|
| MOFA [20] | Unsupervised | Probabilistic Bayesian framework; infers latent factors | Exploratory analysis to discover hidden sources of variation |
| DIABLO [20] | Supervised | Uses phenotype labels for integration and feature selection | Building predictive models for disease subtyping or biomarker discovery |
| SNF [20] | Network-based | Fuses sample-similarity networks from each omics layer | Identifying disease subtypes based on multiple data layers |
| MCIA [20] | Multivariate | Captures co-inertia (shared patterns) across multiple datasets | Simultaneous analysis of more than two datasets to find global patterns |

Q: In our longitudinal study, we have missing data points due to missed visits. How should we handle this? A: Missing data is common in longitudinal research [21]. First, investigate the pattern of missingness (e.g., is it random or related to the study outcome?). For random missingness, imputation techniques such as multiple imputation, k-nearest neighbors, or matrix factorization can be used to estimate missing values [25] [21]. It is critical to perform sensitivity analyses to understand how your results might change under different assumptions about the missing data [21].
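As a first look at the missingness pattern described above, the sketch below compares the fraction of missed measurements between outcome groups. The record layout, the field names (`outcome`, `protein_x`), and the toy values are assumptions made for illustration; a large gap between groups only hints that missingness may be related to the outcome and does not replace a formal analysis.

```python
def missing_rate_by_group(records, value_key, group_key):
    """Fraction of records per group whose value_key is missing (None)."""
    totals, missing = {}, {}
    for record in records:
        group = record[group_key]
        totals[group] = totals.get(group, 0) + 1
        if record.get(value_key) is None:
            missing[group] = missing.get(group, 0) + 1
    return {group: missing.get(group, 0) / totals[group] for group in totals}


# Hypothetical visit records; None marks a missed visit.
visits = [
    {"subject": "P1", "outcome": "recovered", "protein_x": 1.2},
    {"subject": "P2", "outcome": "recovered", "protein_x": 0.9},
    {"subject": "P3", "outcome": "recovered", "protein_x": None},
    {"subject": "P4", "outcome": "recovered", "protein_x": 1.1},
    {"subject": "P5", "outcome": "persistent", "protein_x": None},
    {"subject": "P6", "outcome": "persistent", "protein_x": None},
    {"subject": "P7", "outcome": "persistent", "protein_x": 2.4},
    {"subject": "P8", "outcome": "persistent", "protein_x": None},
]

rates = missing_rate_by_group(visits, "protein_x", "outcome")
print(rates)  # missingness is three times higher in the "persistent" group
```

In this toy example the missing rate differs sharply between groups, which is the kind of pattern that would argue against treating the data as missing at random.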

Troubleshooting Guide: Common Integration Pitfalls

Problem: Poor integration results with weak biological signals.

- Potential Cause 1: Strong batch effects are obscuring true biological variation.

- Solution: Apply batch effect correction methods (e.g., ComBat) as a preprocessing step before integration. The use of ratio-based profiling with a common reference can also inherently mitigate this [19] [25].

- Potential Cause 2: The chosen integration method is mismatched to the study goal.

- Solution: Refer to Table 1. If you have a specific outcome to predict (e.g., disease state), use a supervised method like DIABLO. For unbiased exploration, an unsupervised method like MOFA is more appropriate [20].

Problem: Inability to reconcile findings from different omics layers.

- Potential Cause: The analysis is not accounting for the natural, time-lagged flow of biological information (DNA → RNA → Protein).

- Solution: When integrating data, consider the temporal hierarchy of biological regulation. Use the central dogma as a framework for interpreting correlations—for example, a genetic variant should ideally be correlated with RNA and then protein levels, not necessarily directly. The Quartet Project's QC metrics are designed to validate this flow [19].

Experimental Protocols for Variance Correction

Protocol 1: Implementing Ratio-Based Profiling for Multi-Omics QC

This protocol uses a common reference material to correct for technical variance across experiments [19].

I. Research Reagent Solutions

Table 2: Essential Materials for Ratio-Based Profiling

| Item | Function |
|---|---|
| Certified Reference Materials (e.g., Quartet Project DNA, RNA, Protein) | Provides a stable, well-characterized ground truth for cross-batch and cross-platform normalization [19]. |
| Study Samples | The experimental samples of interest (e.g., patient cohorts, cell lines). |
| Omics Profiling Platforms | Platforms for sequencing (DNA, RNA), mass spectrometry (proteomics, metabolomics), etc. |

II. Methodology

- Experimental Design: For every batch of study samples processed, include a fixed amount of the common reference material (e.g., Quartet D6) as an internal control.

- Data Generation: Process all samples (study and reference) concurrently using the same reagents, equipment, and protocols.

- Absolute Quantification: Generate absolute feature measurements (e.g., gene counts, protein intensities) for all samples.

- Ratio Calculation: For each feature in every study sample, calculate a ratio relative to the same feature in the concurrently measured reference sample: `Ratio_Study = Absolute_Value_Study / Absolute_Value_Reference`.

- Data Integration: Use the derived ratios, rather than the absolute values, for all downstream horizontal and vertical integration analyses. This scales the data to a common standard, reducing non-biological variation [19].
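The ratio calculation step can be sketched in a few lines of Python. This is a minimal illustration, not the Quartet Project's own tooling: the function name `ratio_scale`, the nested-dictionary layout, and the optional log2 transform are assumptions introduced here. The toy data simulate one sample measured in two batches, where the second batch reads every feature three times higher.

```python
import math


def ratio_scale(batch, reference_id, log2=True):
    """Scale each study sample, feature by feature, to the batch's reference.

    batch: dict mapping sample id -> {feature: absolute value}.
    Returns ratios (log2 by default, an assumption of this sketch).
    """
    reference = batch[reference_id]
    scaled = {}
    for sample_id, features in batch.items():
        if sample_id == reference_id:
            continue  # the reference itself is not a study sample
        scaled[sample_id] = {}
        for feature, value in features.items():
            ref_value = reference.get(feature)
            if ref_value is None or ref_value <= 0 or value <= 0:
                scaled[sample_id][feature] = None  # ratio is undefined
                continue
            ratio = value / ref_value
            scaled[sample_id][feature] = math.log2(ratio) if log2 else ratio
    return scaled


# Batch 2 carries a purely multiplicative 3x technical effect.
batch_1 = {"REF": {"geneA": 100.0, "geneB": 50.0},
           "S1": {"geneA": 200.0, "geneB": 25.0}}
batch_2 = {"REF": {"geneA": 300.0, "geneB": 150.0},
           "S1": {"geneA": 600.0, "geneB": 75.0}}

ratios_1 = ratio_scale(batch_1, "REF")
ratios_2 = ratio_scale(batch_2, "REF")
print(ratios_1["S1"])  # {'geneA': 1.0, 'geneB': -1.0}
print(ratios_2["S1"])  # identical: the batch factor cancels in the ratio
```

Because the reference is measured in the same batch as the study samples, any factor that multiplies both cancels in the ratio, which is why the two batches agree after scaling.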

The workflow for this protocol is illustrated below.

Protocol 2: A Longitudinal Multi-Omics Analysis Pipeline

This protocol outlines a workflow for a time-series study, such as investigating long-term patient sequelae, correcting for both multi-omics and longitudinal variances [23].

I. Research Reagent Solutions

Table 3: Key Materials for Longitudinal Multi-Omics

| Item | Function |
|---|---|
| Longitudinal Patient Cohort | Provides biological samples (e.g., blood, tissue) at multiple pre-defined time points [23]. |
| Matched Control Samples | Healthy controls for baseline comparison and to help distinguish case-specific changes from general variability [23]. |
| Multi-omics Profiling Suites | Platforms for proteomics, metabolomics, etc., applied to all collected samples [23]. |
| Clinical Data Management System (e.g., REDCap) | For structured storage of clinical metadata, sample IDs, and timepoints [21]. |

II. Methodology

- Sample Collection & Storage: Collect samples from patients and controls at all scheduled time points (e.g., 6 months, 1 year, 2 years). Process and aliquot samples using standardized protocols and store them at -80°C to preserve integrity [23] [21].

- Batch-Aligned Profiling: Profile all samples for all omics types. Crucially, distribute samples from all time points and groups (e.g., patient/control) randomly across processing batches to avoid confounding time and batch.

- Preprocessing and Horizontal Integration: For each omics dataset individually, perform quality control, normalization, and batch effect correction. This is the horizontal integration step that creates clean, within-omics datasets [19] [20].

- Vertical Integration & Temporal Modeling: Integrate the cleaned multi-omics datasets using a method appropriate for the question (see Table 1). To model changes over time, employ statistical methods designed for repeated measures (e.g., Generalized Estimating Equations) or machine learning models like Recurrent Neural Networks (RNNs) that can capture temporal dependencies [22] [25].

- Validation: Use the built-in truth from known biological relationships or validate key findings with orthogonal experiments in a separate test cohort [23].
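The batch-aligned profiling step in this methodology asks for samples from all time points and groups to be spread across processing batches. One way to do that is stratified randomization, sketched below under stated assumptions: the sample records, the field names, and the `assign_batches` helper are hypothetical, and the scheme simply shuffles within each group-by-timepoint stratum and deals samples to batches in rotation.

```python
import random
from collections import Counter, defaultdict


def assign_batches(samples, n_batches, strata_keys=("group", "timepoint"), seed=0):
    """Shuffle within each stratum, then deal samples to batches round-robin."""
    rng = random.Random(seed)  # fixed seed so the layout is reproducible
    strata = defaultdict(list)
    for sample in samples:
        strata[tuple(sample[key] for key in strata_keys)].append(sample)

    assignment = {}
    slot = 0  # keeps rotating across strata so batch sizes stay even
    for key in sorted(strata):
        members = strata[key]
        rng.shuffle(members)
        for sample in members:
            assignment[sample["id"]] = slot % n_batches
            slot += 1
    return assignment


# Hypothetical cohort: 2 groups x 3 time points x 4 subjects = 24 samples.
samples = [
    {"id": f"{group}-{timepoint}-{i}", "group": group, "timepoint": timepoint}
    for group in ("patient", "control")
    for timepoint in ("6m", "1y", "2y")
    for i in range(4)
]

assignment = assign_batches(samples, n_batches=4)
batch_sizes = Counter(assignment.values())
stratum_counts = Counter(
    (assignment[s["id"]], s["group"], s["timepoint"]) for s in samples
)
print(batch_sizes)
```

With this layout every batch contains each group and time point, so neither is confounded with batch. Real studies often add further constraints (plate positions, keeping a subject's samples together) that this sketch does not handle.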

The following diagram maps the logical flow and decision points in this pipeline.

The Scientist's Toolkit

Table 4: Essential Research Reagents and Computational Tools

| Tool / Reagent | Category | Function / Application |
|---|---|---|
| Quartet Project Reference Materials [19] | Reference Material | Provides DNA, RNA, protein, and metabolite standards from immortalized cell lines for objective QC and proficiency testing. |
| MOFA+ [20] | Software Tool | An unsupervised Bayesian method for discovering the principal sources of variation across multiple omics data sets. |
| DIABLO [20] | Software Tool | A supervised integration method to identify multi-omics biomarker panels and predict categorical outcomes. |
| Similarity Network Fusion (SNF) [20] | Software Tool | A network-based method to fuse multiple omics data types into a single sample-similarity network for clustering. |
| ComBat [25] | Statistical Method | Empirical Bayes framework for adjusting for batch effects in high-dimensional genomic data. |
| R/Bioconductor, Python | Programming Environment | Primary platforms for implementing most statistical and machine learning-based integration and correction methods. |
| REDCap, OpenClinica [21] | Data Management | Secure web-based applications for managing longitudinal clinical and omics metadata. |

A Practical Guide to Batch Effect Correction Algorithms and Their Implementation

In the field of high-throughput genomics and multi-source data integration, technical variance poses a significant challenge to biological discovery. Batch effects—systematic non-biological variations introduced during experimental processes—can obscure genuine biological signals, leading to false positives, reduced statistical power, and compromised reproducibility in downstream analyses. This technical support guide provides a comprehensive overview of four major algorithm families for batch effect correction: ComBat, limma, Harmony, and RUVseq. Designed for researchers, scientists, and drug development professionals, this resource offers practical troubleshooting guidance, experimental protocols, and comparative analyses to facilitate robust data harmonization within multi-study frameworks.

Algorithm Comparison Tables

Table 1: Key Characteristics of Major Batch Effect Correction Algorithms

| Algorithm | Statistical Approach | Primary Data Types | Key Features | Known Limitations |
|---|---|---|---|---|
| ComBat | Empirical Bayes framework [26] | Microarray gene expression, RNA-seq count data (ComBat-seq) [26], MRI-derived measurements [27] | Adjusts for additive and multiplicative batch effects; effective with small sample sizes; borrows information across features [26] | Assumes consistent covariate effects across sites; requires balanced population distributions; can over-correct with unbalanced designs [27] [28] |
| limma | Linear models with empirical Bayes moderation [29] | Microarray, RNA-seq data [29] | Incorporates batch as covariate in linear models; robust differential expression analysis; does not create "corrected" data matrix [28] | Limited to known batch effects; requires careful model specification [29] |
| Harmony | Iterative clustering with dataset correction factors [30] | Single-cell RNA-seq data [30] | Computes corrected dimensionality reduction without modifying expression values; integrates datasets while preserving biological variation [30] | Does not output corrected expression values; insufficient for differential expression in highly divergent samples [30] |
| RUVseq | Factor analysis using controls/replicates [31] [32] | Bulk RNA-seq, single-cell RNA-seq (RUV-III-NB) [32] | Uses negative control genes or pseudo-replicates to estimate unwanted variation; negative binomial GLM for count data [32] | Requires appropriate control genes; can inflate counts with poor parameter choices [31] |

Table 2: Input Requirements and Output Specifications

| Algorithm | Required Inputs | Batch Information | Control Genes/Cells | Output Type |
|---|---|---|---|---|
| ComBat | Normalized expression data [26] | Known batches required [26] | Not required | Batch-adjusted expression matrix [26] |
| limma | Expression data, design matrix [29] | Known batches as covariates [28] | Not required | Model coefficients for downstream analysis [28] |
| Harmony | Dimensionality reduction (PCA) [30] | Metadata columns for integration [30] | Not required | Corrected embeddings, not expression values [30] |
| RUVseq | Count matrix [32] | Can handle unknown batches [32] | Negative control genes or pseudo-replicate sets required [32] | Adjusted counts for downstream analysis [32] |

Frequently Asked Questions

ComBat produces seemingly perfect clustering results. Is this trustworthy?

Perfect clustering after ComBat adjustment may indicate overfitting, especially with unbalanced experimental designs. When the biological variable of interest is supplied as a covariate, ComBat preserves the differences attributed to it, which can bias the adjusted data toward the expected outcome. One researcher reported that even with randomly permuted batches, ComBat still produced perfect biological grouping [28].

Troubleshooting Steps:

- Validate with balanced designs: Ensure your experimental design has similar proportions of biological groups across batches [28].

- Perform a permutation test: Randomly shuffle batch labels and re-run ComBat. If you still observe strong clustering by biological group, the correction may be overfitting [28].

- Consider alternative approaches: For unbalanced designs, include batch as a covariate in your final analysis model (e.g., using `limma`) rather than pre-correcting the data [28].
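Before running any correction, the balance of biological groups across batches can be checked with a simple cross-tabulation. The sketch below is an illustration only; the helper names and the toy labels are assumptions. It reports the fraction of each group per batch and flags batches where a group is absent, which is the situation in which batch and biology cannot be separated.

```python
from collections import Counter


def group_fractions_by_batch(batches, groups):
    """Return {batch: {group: fraction of that batch's samples}}."""
    pair_counts = Counter(zip(batches, groups))
    batch_sizes = Counter(batches)
    all_groups = sorted(set(groups))
    return {
        batch: {g: pair_counts[(batch, g)] / size for g in all_groups}
        for batch, size in batch_sizes.items()
    }


def batches_missing_a_group(fractions):
    """Batches in which at least one biological group is absent."""
    return sorted(b for b, fr in fractions.items() if min(fr.values()) == 0)


batches = ["b1"] * 4 + ["b2"] * 4 + ["b3"] * 4
groups = [
    "case", "case", "control", "control",        # b1: balanced
    "case", "case", "case", "control",           # b2: unbalanced
    "control", "control", "control", "control",  # b3: one group only
]

fractions = group_fractions_by_batch(batches, groups)
for batch, fr in fractions.items():
    print(batch, fr)
print("confounded batches:", batches_missing_a_group(fractions))
```

A batch that contains only one group, like `b3` here, is a warning sign that pre-correcting the data may remove or manufacture biological signal.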

How do I choose between negative control genes and pseudo-replicates for RUVseq?

The choice depends on your data type and experimental design. RUVseq uses these elements to estimate unwanted variation without requiring known batch information [32].

Selection Guidelines:

- Negative Control Genes: These are genes whose expression is unaffected by biological conditions of interest but affected by technical variation. Housekeeping genes are commonly used [32]. They are suitable when you have prior knowledge of invariant genes.

- Pseudo-Replicates: These are sets of cells with similar biological states, identified through clustering or known biological labels [32]. Use this approach when reliable control genes are unavailable, particularly in single-cell analyses.

Implementation for Single-Cell Data with RUV-III-NB:

- Single Batch: Cluster cells using graph-based methods (e.g., Louvain algorithm) on normalized counts. Cells in the same cluster form a pseudo-replicate set [32].

- Multiple Batches: Use tools like `scReplicate` from the `scMerge` package to identify mutual nearest clusters (MNCs) across batches [32].

Should I create a batch-corrected dataset or include batch in my analysis model?

This is a fundamental decision with significant implications. Creating a "batch-free" dataset using tools like ComBat replaces original batch effects with estimation errors, which can still confound results [28]. The safer approach, when possible, is to retain the original data and account for batch effects directly in your statistical model.

Recommendations:

- Use limma's covariate approach for differential expression analysis by including batch in the design matrix [28].

- Reserve ComBat-style correction for situations where you must use analysis tools that cannot handle batch effects themselves [28].

- Always document that batch-adjusted data sets are not truly "batch-free" and interpret results with appropriate caution [28].
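To make the covariate approach concrete, the sketch below fits an ordinary least-squares model with an intercept, a group indicator, and a batch indicator for a single gene. It is not limma (there is no empirical Bayes moderation), and the noise-free toy data, the helper names, and the hand-written solver are assumptions chosen to keep the example self-contained. The design is deliberately unbalanced so that the naive group difference is distorted by batch.

```python
def solve(a, b):
    """Solve the linear system a x = b by Gaussian elimination with pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in reversed(range(n)):
        tail = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - tail) / m[r][r]
    return x


def ols(design, y):
    """Least-squares coefficients via the normal equations (X'X) beta = X'y."""
    p = len(design[0])
    xtx = [[sum(row[i] * row[j] for row in design) for j in range(p)] for i in range(p)]
    xty = [sum(row[i] * yi for row, yi in zip(design, y)) for i in range(p)]
    return solve(xtx, xty)


# Toy gene: expression = 5 + 1 * treated + 2 * batch (no noise).
group = [0, 0, 0, 1, 0, 1, 1, 1]  # 0 = control, 1 = treated
batch = [0, 0, 0, 0, 1, 1, 1, 1]  # treated samples sit mostly in batch 1
y = [5.0 + 1.0 * g + 2.0 * b for g, b in zip(group, batch)]

treated = [v for v, g in zip(y, group) if g == 1]
control = [v for v, g in zip(y, group) if g == 0]
naive_effect = sum(treated) / len(treated) - sum(control) / len(control)

design = [[1.0, float(g), float(b)] for g, b in zip(group, batch)]
intercept, group_effect, batch_effect = ols(design, y)
print(naive_effect, group_effect, batch_effect)
```

The naive difference of means doubles the true group effect because it absorbs part of the batch shift, whereas the model with batch in the design matrix recovers the group and batch effects separately, and the original data are never modified.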

Why does Harmony not output corrected expression values?

Harmony operates on dimensionality reductions (e.g., PCA) rather than raw expression data. It computes a new, integrated embedding where cells are aligned by biological state rather than technical batch [30]. This is sufficient for clustering and visualization but means you cannot use Harmony-corrected data for differential expression analysis that requires gene-level counts.

Workflow Solutions:

- For clustering and visualization: Use Harmony's embeddings (`harmony_umap`) with your preferred methods [30].

- For differential expression: Perform DE testing within biologically matched clusters using the original (unintegrated) counts, while including batch information in your statistical model [30].

Experimental Protocols

ComBat Batch Adjustment Protocol

Materials Needed:

- Normalized gene expression matrix (features × samples)

- Batch covariate vector (categorical)

- Biological covariate matrix (optional, for preserving signals)

Methodology:

- Data Preparation: Ensure data is properly normalized using appropriate methods (e.g., quantile normalization for microarrays) [26].

- Model Specification: ComBat models batch effects using a location-scale framework: `Y_gij = X_i * β_g + γ_gj + δ_gj * ε_gij`, where `γ_gj` represents the additive batch effect and `δ_gj` represents the multiplicative batch effect for gene g in batch j [26].

- Parameter Estimation: The algorithm uses empirical Bayes to estimate batch effect parameters, borrowing information across genes to stabilize estimates, particularly beneficial with small sample sizes [26].

- Adjustment: Apply the estimated parameters to remove additive and multiplicative batch effects while preserving biological signals of interest [26].

- Validation: Generate PCA plots before and after correction, coloring points by batch and biological group to assess correction effectiveness and signal preservation [28].
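The location-scale idea behind the adjustment step can be illustrated for a single gene. The sketch below is a deliberately simplified stand-in, not ComBat itself: it omits the empirical Bayes shrinkage across genes and the biological covariate matrix, and the function name and toy values are assumptions. It estimates an additive shift and a multiplicative scale per batch and removes both.

```python
from statistics import mean, pstdev


def location_scale_adjust(values, batches):
    """Remove per-batch additive (gamma) and multiplicative (delta) effects.

    Simplified single-gene version: no covariates, no empirical Bayes.
    Each batch needs at least two distinct values so its spread is non-zero.
    """
    by_batch = {}
    for value, batch in zip(values, batches):
        by_batch.setdefault(batch, []).append(value)

    grand_mean = mean(values)
    # Pooled within-batch standard deviation from batch-centred residuals.
    residuals = [v - mean(by_batch[b]) for v, b in zip(values, batches)]
    pooled_sd = pstdev(residuals)

    adjusted = []
    for value, batch in zip(values, batches):
        gamma = mean(by_batch[batch]) - grand_mean   # additive batch effect
        delta = pstdev(by_batch[batch]) / pooled_sd  # multiplicative batch effect
        adjusted.append((value - grand_mean - gamma) / delta + grand_mean)
    return adjusted


# Batch B is shifted upward and four times more spread out than batch A.
values = [1.0, 2.0, 3.0, 10.0, 14.0, 18.0]
batches = ["A", "A", "A", "B", "B", "B"]

adjusted = location_scale_adjust(values, batches)
adjusted_a, adjusted_b = adjusted[:3], adjusted[3:]
print([round(v, 3) for v in adjusted_a])
print([round(v, 3) for v in adjusted_b])
```

After adjustment both batches share the same mean and spread. Because this version has no biological covariates, any biological difference that coincides with batch would be removed as well, which is exactly the risk that the covariate matrix and balanced designs are meant to reduce.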

RUVseq Normalization Protocol for Single-Cell Data (RUV-III-NB)

Research Reagent Solutions:

- Raw UMI Count Matrix: Input data representing molecular counts per cell.

- Negative Control Genes: Housekeeping genes or other invariant genes unaffected by biological variables.

- Pseudo-Replicate Sets: Groups of cells with similar biological states.

Methodology:

- Data Modeling: RUV-III-NB models the count `y_gc` for gene g and cell c as Negative Binomial (for UMI data): `y_gc ~ NB(μ_gc, ψ_g)` [32].

- GLM Framework: Uses a generalized linear model with a log link function: `log(μ_g) = X * α_g + W * β_g + ζ_g`, where W represents the unobserved unwanted factors and X is the pseudo-replicate design matrix [32].

- Parameter Estimation: Implements a double-loop iteratively re-weighted least squares (IRLS) algorithm to estimate parameters, including unobserved unwanted factors [32].

- Adjustment: Returns Percentile-Adjusted Counts (PAC) suitable for downstream differential expression analysis [32].

- Validation: Assess integration quality using clustering metrics and differential expression concordance with independent datasets [32].

The Scientist's Toolkit

Table 3: Essential Research Reagents and Materials

| Item | Function | Example Applications |
|---|---|---|
| Negative Control Genes | Genes used to estimate technical variation unaffected by biology [32] | RUVseq normalization; identifying housekeeping genes for scRNA-seq [32] |
| Pseudo-Replicate Sets | Groups of cells with homogeneous biology across batches [32] | RUV-III-NB normalization; scRNA-seq data integration [32] |
| Batch Covariate Vector | Categorical variable indicating processing batch for each sample [26] | ComBat adjustment; limma model specification [26] [28] |
| Biological Covariate Matrix | Design matrix specifying biological variables of interest [26] | Preserving biological signals during ComBat correction [26] |
| Dimensionality Reduction | PCA or other embeddings representing high-dimensional data [30] | Harmony integration; clustering-based pseudo-replicate definition [30] |

Workflow Visualization

Algorithm Selection Workflow for Batch Effect Correction

ComBat Empirical Bayes Methodology Workflow

The Rising Promise of Ratio-Based Scaling and Reference Materials

In multi-source data integration research, technical variances introduced by different platforms, laboratories, or batches are a major obstacle to obtaining reliable, reproducible results. Ratio-based scaling, supported by well-characterized reference materials, has emerged as a powerful methodology to correct these batch effects and enable robust data integration. This approach involves scaling the absolute feature values of study samples relative to those of a concurrently measured common reference sample, transforming data into a comparable ratio scale that minimizes unwanted technical variation [10]. This technical support center provides practical guidance for implementing these methods in your research.

Troubleshooting FAQs

1. Why does my multi-omics data show strong batch effects despite using standard normalization?

Standard normalization methods often fail when batch effects are completely confounded with biological factors of interest. A primary cause is reliance on absolute feature quantification, which is highly susceptible to technical variation across labs and platforms [10]. Ratio-based profiling, which scales study sample values relative to a common reference material measured in every batch, has proven significantly more effective for removing these confounding technical variations [33].

2. What are the essential characteristics of effective reference materials?

Effective reference materials should have several key characteristics [10]:

- Built-in Ground Truth: Derived from sources with known biological relationships (e.g., family quartets, monozygotic twins) that provide a factual basis for validation.

- Multi-omics Compatibility: Matched sets of DNA, RNA, protein, and metabolites from the same source enable cross-omics integration.

- Scalable Production: Large quantities (1,000+ vials) allow for consistent use across large-scale, multi-site studies.

- Technology Breadth: Suitable for a wide range of platforms including sequencing, methylation arrays, and mass spectrometry.

3. How do I validate the success of ratio-based batch effect correction?

You can employ multiple validation strategies based on your reference materials [10]:

- Sample Classification Accuracy: Assess the ability to correctly cluster samples into their known biological groups (e.g., different individuals or genetically related clusters).

- Central Dogma Validation: Evaluate whether identified cross-omics feature relationships follow the expected information flow from DNA to RNA to protein.

- Predictive Model Robustness: Test the performance of predictive models built on the corrected data across different batches.

Key Experimental Protocols

Protocol 1: Implementing Ratio-Based Profiling with Reference Materials

This protocol outlines the core methodology for ratio-based scaling, which has been shown to be highly effective for batch effect correction in large-scale multi-omics studies [33].

Materials Needed

- Common reference materials (e.g., Quartet Project reference materials)

- Study samples

- Appropriate multi-omics profiling platforms

Procedure

- Experimental Design: Include the common reference material in every batch of experiments.

- Data Generation: Process both reference materials and study samples concurrently using identical protocols.

- Ratio Calculation: For each feature, calculate the ratio of the study sample value to the reference material value.

- Data Integration: Use the ratio-scaled data for all downstream integrative analyses.

Table 1: Comparison of Data Quantification Approaches

| Aspect | Absolute Quantification | Ratio-Based Scaling |
|---|---|---|
| Batch Effect Sensitivity | High | Low |
| Cross-Platform Reproducibility | Limited | High |
| Data Integration Capability | Challenging | Facilitated |
| Required Components | None | Common Reference Materials |
| Ground Truth Validation | Difficult | Built-in via reference design |

Protocol 2: Evaluating Multi-omics Data Integration Performance

This validation protocol utilizes the built-in truths provided by properly designed reference materials to assess integration quality [10].

Procedure

Horizontal Integration Assessment:

- Calculate Mendelian concordance rates for genomic data

- Compute signal-to-noise ratios for quantitative omics data

- Compare results across batches and platforms

Vertical Integration Assessment:

- Perform multi-omics clustering to verify sample classification accuracy

- Analyze cross-omics feature relationships against central dogma expectations

- Evaluate biological network reconstruction accuracy
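For the Mendelian concordance check in the horizontal assessment, a simplified calculation might look like the sketch below. It assumes biallelic autosomal sites with genotypes coded as alternate-allele counts (0, 1, 2), and it treats a site as concordant when the monozygotic twins agree and their genotype is compatible with the parents. The function names and this exact definition are assumptions of the sketch; the Quartet Project's published metric may be defined differently.

```python
def mendelian_consistent(father, mother, child):
    """True if the child's genotype can arise from one allele of each parent.

    Genotypes are alternate-allele counts (0, 1 or 2) at a biallelic site.
    """
    transmissible = {0: {0}, 1: {0, 1}, 2: {1}}  # alt alleles a parent can pass on
    return any(
        a + b == child
        for a in transmissible[father]
        for b in transmissible[mother]
    )


def quartet_concordance(calls):
    """Fraction of sites where the twins agree and fit Mendelian inheritance.

    calls: list of (father, mother, twin1, twin2) genotype tuples.
    """
    concordant = sum(
        1
        for father, mother, twin1, twin2 in calls
        if twin1 == twin2 and mendelian_consistent(father, mother, twin1)
    )
    return concordant / len(calls)


# Hypothetical genotype calls at five sites.
calls = [
    (0, 1, 1, 1),  # consistent
    (1, 1, 2, 2),  # consistent
    (0, 0, 1, 1),  # violation: neither parent carries the alt allele
    (2, 0, 1, 1),  # consistent
    (0, 1, 0, 1),  # twins disagree
]

rate = quartet_concordance(calls)
print(rate)
```

Comparing this rate across batches or platforms gives a rough indication of which variant calls are technically reliable, since true genotypes in the family must obey these constraints.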

Research Reagent Solutions

Table 2: Essential Reference Materials for Multi-omics Research

| Material Type | Key Function | Example Products |
|---|---|---|
| DNA Reference Materials | Genomic variant calling validation and standardization | Quartet DNA References (GBW 099000-099007) [10] |
| RNA Reference Materials | Transcriptomics data normalization and integration | Quartet RNA References [10] |
| Protein Reference Materials | Proteomics data standardization across platforms | Quartet Protein References from immortalized LCLs [10] |
| Metabolite Reference Materials | Metabolomics data batch effect correction | Quartet Metabolite References [10] |
| Multi-omics Reference Suites | Cross-omics integration validation | Quartet matched DNA, RNA, protein, metabolites [10] |

Data Visualization and Workflows

Ratio-Based Profiling Workflow

Multi-omics Integration Quality Control

Batch-Effect Reduction Trees (BERT) is a high-performance data integration method designed for large-scale analyses of incomplete omic profiles. It effectively combines data from multiple sources, which is often afflicted by technical biases (batch effects) and missing values, hindering quantitative comparison. BERT addresses the computational efficiency challenges and data incompleteness prevalent in contemporary large-scale data integration tasks [34].

Key Problem it Solves: Traditional batch-effect correction methods like ComBat and limma require that each batch has at least two numerical values per feature, a condition often violated in real-world, incomplete omic data. BERT relaxes this requirement, allowing for the robust integration of datasets with arbitrary missing value patterns [34].

Frequently Asked Questions (FAQs)

Q1: What types of data is BERT designed for? BERT is designed for high-dimensional omic data (e.g., from proteomics, transcriptomics, metabolomics) and other data types like clinical data. It is particularly effective for data integration tasks involving many datasets (up to 5000 in the research) that suffer from batch effects and a high ratio of missing values [34].

Q2: How does BERT handle missing data compared to other methods? Unlike many methods that require data imputation, BERT is an imputation-free framework. It uses a tree-based approach to propagate features with missing values, retaining significantly more numeric values than other methods like HarmonizR. In simulations with 50% missing values, BERT retained all numeric values, while HarmonizR exhibited up to 88% data loss depending on its blocking strategy [34].

Q3: Can BERT account for different experimental conditions or covariates? Yes, BERT allows users to specify categorical covariates (e.g., biological conditions like sex or tumor type). The algorithm passes these covariates to the underlying batch-effect correction methods (ComBat/limma) at each step, ensuring that batch effects are removed while biologically relevant covariate effects are preserved [34].

Q4: My data has unique samples not found in other batches. Can BERT handle this? Yes, BERT includes a feature for user-defined references. You can designate specific samples (e.g., a control group present in multiple batches) as references. BERT uses these to estimate the batch effect, which is then applied to correct all samples, including non-reference samples with unknown or unique covariate levels [34].

Q5: What are the computational performance advantages of BERT? BERT is engineered for high performance. It decomposes the integration task into independent sub-trees that can be processed in parallel, leveraging multi-core and distributed-memory systems. This architecture has demonstrated up to an 11x runtime improvement compared to HarmonizR [34].

Troubleshooting Common Experimental Issues

Problem: Poor Integration Quality After Running BERT

- Symptoms: Biological groups do not cluster correctly in downstream analysis; batch effects appear to remain.

- Possible Causes & Solutions:

- Cause 1: Incorrect covariate specification.

- Solution: Verify that the covariate levels (e.g., 'tumor', 'control') are accurately defined for every sample in your input data matrix.

- Cause 2: Severely imbalanced design, where certain biological conditions are unique to a single batch.

- Solution: If available, leverage the reference sample feature. Designate a subset of samples with known conditions that are shared across as many batches as possible to anchor the batch-effect correction [34].

- Cause 3: The data has a very high proportion of missing values, even for BERT's robust pre-processing.

- Solution: Consult the BERT quality control output, which reports metrics like the Average Silhouette Width (ASW) for both batch and biological labels. A low ASW label score after correction may indicate fundamental issues with the data structure for integration.


Problem: BERT Execution is Slower Than Expected

- Symptoms: The data integration process takes a very long time on a multi-core machine.

- Possible Causes & Solutions:

- Cause 1: Suboptimal parallelization parameters.

- Solution: The BERT algorithm uses parameters (P, R, S) to control parallelization. These do not affect the output quality but can impact speed. Adjust the number of initial BERT processes (`P`) and the reduction factor (`R`) based on your system's available cores [34].

- Cause 2: Using the ComBat backend when limma is sufficient.

- Solution: The limma backend is generally faster. In simulation studies, limma showed an average 13% runtime improvement over ComBat. Use ComBat only if your specific analysis requires it [34].


Problem: Error During Runtime or Job Failure

- Symptoms: BERT fails to run and returns an error message.

- Possible Causes & Solutions:

- Cause 1: Input data is not in an accepted format.

- Solution: BERT accepts `data.frame` and `SummarizedExperiment` objects. Ensure your data is loaded as one of these supported types [34].

- Cause 2: A feature has only a single numeric value in one batch after pre-processing.

- Solution: BERT's pre-processing removes singular numerical values from individual batches to meet the requirements of ComBat/limma. This typically affects a very small fraction (<1%) of values. Check the BERT log for details on removed values [34].
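The pre-processing rule mentioned in the last solution, removing a value when it is the only numeric value of a feature within a batch, can be sketched as follows. This is an illustration of the rule as described, not BERT's actual code or API: the function name, the dictionary layout, and the use of `None` for missing values are assumptions.

```python
def drop_singletons(matrix, batches):
    """Set a value to missing if it is the only numeric value of its feature
    within its batch.

    matrix: {feature: list of values aligned with batches; None = missing}.
    Returns the cleaned matrix and the number of values removed.
    """
    cleaned, removed = {}, 0
    for feature, values in matrix.items():
        numeric_per_batch = {}
        for value, batch in zip(values, batches):
            if value is not None:
                numeric_per_batch[batch] = numeric_per_batch.get(batch, 0) + 1
        new_values = []
        for value, batch in zip(values, batches):
            if value is not None and numeric_per_batch[batch] == 1:
                new_values.append(None)  # lone value: batch effect not estimable
                removed += 1
            else:
                new_values.append(value)
        cleaned[feature] = new_values
    return cleaned, removed


batches = ["b1", "b1", "b1", "b2", "b2", "b2"]
matrix = {
    "protA": [1.0, 1.2, None, 2.0, 2.1, 1.9],   # fine in both batches
    "protB": [0.5, None, None, 0.7, 0.9, None],  # single value in b1
}

cleaned, removed = drop_singletons(matrix, batches)
print(cleaned["protB"], removed)
```

Counting the removed values, as done here, gives a quick way to confirm that only a small fraction of the data is affected.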

Performance and Data Retention Metrics

The following table summarizes quantitative benchmarks comparing BERT to HarmonizR, the only other method for incomplete omic data integration, from simulation studies involving 10 repetitions with 6000 features and 20 batches [34].

| Performance Metric | BERT | HarmonizR (Full Dissection) | HarmonizR (Blocking of 4 Batches) |
|---|---|---|---|
| Data Retention | Retained 100% of numeric values across all missing value ratios. | Up to 27% data loss with increasing missing values. | Up to 88% data loss with increasing missing values. |
| Runtime | Faster than HarmonizR for all tests. Execution time decreases with more missing values. | Slower than BERT. | Slower than BERT, even with blocking. |
| Backend Choice | limma: ~13% faster on average. ComBat: more computationally intensive. | N/A | N/A |

Experimental Protocols for Key Tasks

Protocol 1: Simulating a Data Integration Benchmark

This protocol is based on the simulation studies used to characterize BERT [34].

- Data Generation: Generate a complete data matrix. The published study used datasets with 6000 features and 20 batches, with 10 samples per batch.

- Introduce Biological Conditions: Simulate two distinct biological conditions (e.g., Label A and Label B) across the samples.

- Introduce Missing Values: Randomly select a subset of features to be completely missing in each batch, following a Missing Completely at Random (MCAR) scheme. Vary the ratio of missing values (e.g., from 0% to 50%).

- Apply BERT: Run the BERT integration on the simulated, incomplete dataset.

- Validation: Calculate the Average Silhouette Width (ASW) using the formula below to assess the quality of integration, where a higher ASW label score and a lower ASW batch score indicate successful correction.

$$ASW=\frac{1}{N}\sum_{i=1}^{N}\frac{b_{i}-a_{i}}{\max (a_{i},b_{i})},\quad ASW\in [-1,1]$$

N denotes the total number of samples, and a_i, b_i indicate the mean intra-cluster and mean nearest-cluster distances of sample i with respect to its biological condition (ASW label) or batch of origin (ASW batch) [34].
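The ASW definition above can be illustrated with a minimal sketch in plain Python. It assumes Euclidean distances, averages the per-sample silhouette values over all N samples, and assigns a silhouette of 0 to samples whose own group has no other member. The function name and the toy data are illustrative assumptions and are not part of the BERT package.

```python
import math


def average_silhouette_width(points, groups):
    """Mean silhouette value over all samples for a given grouping.

    points: list of equal-length numeric tuples (one per sample)
    groups: one group identifier per sample (biological label or batch)
    """
    n = len(points)
    total = 0.0
    for i in range(n):
        # Distances from sample i to every other sample, collected per group.
        by_group = {}
        for j in range(n):
            if i != j:
                d = math.dist(points[i], points[j])
                by_group.setdefault(groups[j], []).append(d)
        own = by_group.pop(groups[i], [])
        if not own or not by_group:
            continue  # singleton group or only one group: contributes 0
        a_i = sum(own) / len(own)                               # mean intra-cluster distance
        b_i = min(sum(d) / len(d) for d in by_group.values())   # mean nearest-cluster distance
        denom = max(a_i, b_i)
        if denom > 0:
            total += (b_i - a_i) / denom
    return total / n


# Toy data: the condition separates samples along x, the batch only shifts y slightly.
points = [(0.0, 0.0), (0.0, 1.0), (10.0, 0.0), (10.0, 1.0)]
labels = ["A", "A", "B", "B"]
batches = [1, 2, 1, 2]

asw_label = average_silhouette_width(points, labels)   # roughly 0.90
asw_batch = average_silhouette_width(points, batches)  # roughly -0.45
print(f"ASW label = {asw_label:.3f}, ASW batch = {asw_batch:.3f}")
```

In this toy case the samples cluster by condition and not by batch, which is the pattern expected after a successful correction.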

Protocol 2: Integrating Experimental Data with Covariates and References

This protocol outlines the steps for a real-world integration task using BERT's advanced features [34].

- Data Inventory: Compile all datasets (batches) to be integrated. Document the known covariate levels (e.g., tumor type, treatment) for each sample.

- Define References: Identify samples that will serve as references. These should be samples with known covariate levels that are present across multiple batches (e.g., two WNT-medulloblastoma samples in each batch).

- Input Preparation: Format the data into a SummarizedExperiment object or a data.frame, ensuring covariate and reference designations are included.

- Parameter Setting: Choose the backend (limma for speed, ComBat if specifically needed) and set parallelization parameters (P, R, S) based on your computing resources.

- Execution and QC: Run BERT and review the output quality control metrics, including the ASW scores for the raw and integrated data.

BERT Algorithm Workflow and Data Flow

The diagrams below illustrate the core logic and execution flow of the BERT algorithm.

Diagram 1: BERT Core Algorithm Workflow. This diagram outlines the logical flow of the BERT algorithm, showing how it processes batches and features through a binary tree.

Diagram 2: BERT High-Performance Data Flow. This diagram shows the execution flow of BERT, highlighting the parallel processing stages controlled by user parameters P, R, and S.

The Scientist's Toolkit: Research Reagent Solutions

The following table lists key components and resources essential for working with the BERT framework.

| Item / Resource | Function / Description |
|---|---|
| R Statistical Environment | The programming language in which BERT is implemented. Required to run the algorithm [34]. |
| Bioconductor | The primary repository where the BERT library has been published and peer-reviewed [34]. |
| ComBat Algorithm | An established empirical Bayes method used by BERT at each tree node to remove batch effects for complete features [34]. |
| limma Algorithm | A linear models framework used by BERT as an alternative backend for batch-effect correction, offering faster runtime [34]. |
| SummarizedExperiment Object | A standard Bioconductor S4 class used for storing and organizing omic data, which BERT accepts as input [34]. |
| Average Silhouette Width (ASW) | A key metric reported by BERT for quality control, quantifying how well samples cluster by biology and separate from batch post-integration [34]. |
| User-Defined References | A set of samples with known covariates used by BERT to guide the correction in datasets with imbalanced or sparse conditions [34]. |

In modern drug development, Population Pharmacokinetic (popPK) modeling is a critical analytical tool that quantifies drug behavior by identifying and explaining variability in drug concentrations among individuals receiving therapeutic doses [35]. Traditional popPK analyses often rely on data from a single clinical study. However, integrated popPK models represent a significant methodological advancement by combining data from multiple, disparate sources—such as several clinical trials across different patient populations, geographic locations, or even phases of drug development [36].

This case study explores a successful implementation of an integrated popPK model, demonstrating how multi-source data integration enhances model robustness, improves covariate relationship understanding, and supports regulatory decision-making. The methodology and troubleshooting guidance presented herein are framed within the broader research context of technical variance correction in multi-source data, providing researchers with practical frameworks for addressing common computational and methodological challenges.

Featured Case Study: Integrated Rivaroxaban PopPK Analysis

A seminal example of successful integration is the development of a comprehensive popPK model for rivaroxaban, an oral anticoagulant. This model pooled data from 4,918 patients across 7 clinical trials spanning all approved indications for the drug, including venous thromboembolism prevention, atrial fibrillation, and acute coronary syndrome [36].

Primary Objectives:

- Develop a unified popPK model across all approved indications

- Harmonize covariate relationships consistently across populations

- Enable reliable exposure predictions for special patient subgroups

Data Integration Methodology

The analysis employed a one-compartment disposition model with first-order absorption, applied to pooled concentration-time data from the seven trials. The integration methodology included:

- Data Harmonization: Standardized covariate definitions and units across all studies

- Modeling Approach: Nonlinear mixed-effects modeling (NONMEM) to handle sparse sampling designs

- Covariate Analysis: Systematic evaluation of demographic and clinical factors on PK parameters
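For readers less familiar with the structural model named above, the following sketch evaluates the standard closed-form concentration curve of a one-compartment model with first-order absorption after a single oral dose, parameterized by apparent clearance (CL/F) and apparent volume (V/F). All numeric values are hypothetical placeholders chosen for illustration; they are not the rivaroxaban estimates from [36].

```python
import math


def concentration(time_h, dose_mg, ka, cl_f, v_f):
    """Concentration (mg/L) at time_h hours after a single oral dose.

    ka: first-order absorption rate constant (1/h)
    cl_f: apparent clearance CL/F (L/h); v_f: apparent volume V/F (L)
    """
    ke = cl_f / v_f  # elimination rate constant (1/h)
    if math.isclose(ka, ke):
        raise ValueError("ka equal to ke needs the limiting form of the equation")
    scale = dose_mg * ka / (v_f * (ka - ke))
    return scale * (math.exp(-ke * time_h) - math.exp(-ka * time_h))


def time_of_peak(ka, cl_f, v_f):
    """Time (h) at which the concentration curve reaches its maximum."""
    ke = cl_f / v_f
    return math.log(ka / ke) / (ka - ke)


# Hypothetical parameter values, for illustration only.
DOSE_MG, KA, CL_F, V_F = 20.0, 1.0, 6.0, 60.0

t_max = time_of_peak(KA, CL_F, V_F)
c_max = concentration(t_max, DOSE_MG, KA, CL_F, V_F)
print(f"t_max = {t_max:.2f} h, C_max = {c_max:.3f} mg/L")
```

In a population analysis the same function is evaluated with subject-specific parameters, which is where between-subject variability and covariate effects enter.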

Table 1: Integrated Rivaroxaban Dataset Composition

| Indication | Number of Studies | Number of Patients | Total PK Observations | Median Observations per Patient |
|---|---|---|---|---|
| VTE Prevention | 3 | 1,636 | 8,033 | 4-6 |
| VTE Treatment | 2 | 870 | 4,634 | 3-8 |
| Atrial Fibrillation | 1 | 161 | 800 | 5 |
| Acute Coronary Syndrome | 1 | 2,251 | 9,376 | 4 |
| Total | 7 | 4,918 | 22,843 | - |

Key Findings and Model Outputs

The integrated analysis identified consistent covariate effects across populations:

- Creatinine clearance and comedications significantly influenced apparent clearance (CL/F)

- Age, weight, and gender affected apparent volume of distribution (V/F)

- Dose-dependent bioavailability was confirmed

Table 2: Covariate Effects on Rivaroxaban Pharmacokinetics

| PK Parameter | Significant Covariates | Clinical Impact |
|---|---|---|
| Apparent Clearance (CL/F) | Creatinine Clearance | Modest influence on exposure |
| Apparent Clearance (CL/F) | Comedications | Modest influence on exposure |
| Apparent Clearance (CL/F) | Study Population | Accounted for inter-trial variability |
| Apparent Volume of Distribution (V/F) | Age, Weight, Gender | Minor influence on exposure |
| Relative Bioavailability (F) | Dose | Explained non-linear absorption |

The model successfully predicted exposure across diverse patient subgroups, demonstrating that renal function had greater impact on rivaroxaban exposure than age, body weight, or comedication use [36].

Technical Support Center: FAQs & Troubleshooting

Foundational Concepts

Q1: What distinguishes integrated popPK from standard popPK analysis? Integrated popPK simultaneously analyzes data pooled from multiple studies or data sources, whereas standard popPK typically uses data from a single clinical trial. This integrated approach increases statistical power, enhances covariate detection capability, and improves model generalizability across diverse populations [36]. The key advantage lies in quantifying covariate relationships consistently across different patient populations and clinical contexts.

Q2: When should researchers consider an integrated popPK approach? Consider integration when:

- You have multiple studies with sparse PK sampling

- You need to characterize covariate effects across diverse populations

- You aim to simulate drug exposure in under-represented subgroups

- You're preparing for regulatory submissions requiring comprehensive PK characterization [35] [36]

Data Management & Preprocessing

Q3: How should we handle batch effects and technical variance in multi-source data? Batch effects—technical biases from different experimental conditions—are common in integrated analyses. Recommended approaches include:

- Proactive Design: Implement standardized protocols across studies

- Statistical Correction: Apply established methods like ComBat or limma when standardization isn't feasible

- Algorithmic Solutions: For complex omics data integrated with PK, consider specialized tools like Batch-Effect Reduction Trees (BERT) [34]

- Reference Samples: Include common reference measurements across batches when possible

Q4: What strategies effectively manage incomplete data across sources? Multi-source data often exhibits missingness from technical and biological causes. Effective strategies include:

- Imputation-Free Methods: Use algorithms like BERT that employ matrix dissection to identify complete-data subsets [34]

- Clear Documentation: Systematically record missing data patterns and potential mechanisms

- Appropriate Handling: Select handling methods that match the assumed missingness mechanism: missing completely at random (MCAR), missing at random (MAR), or missing not at random (MNAR)

Modeling & Computational Challenges

Q5: How can we optimize model selection in complex integrated analyses? Traditional manual model selection is time-consuming and subjective. Automated approaches using machine learning can:

- Systematically explore model spaces containing thousands of potential structures

- Reduce development timelines from weeks to days [37]

- Improve reproducibility by encoding model selection criteria explicitly

- Utilize platforms like pyDarwin with predefined model spaces and penalty functions [37]

Q6: What are best practices for handling concentration data below quantification limits?

- Document the percentage of BQL values in each data source

- Consider likelihood-based handling such as the M3 method, which treats BQL observations as censored values during estimation

- Assess potential bias introduced by BQL data, particularly when percentages differ across studies
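To make the idea of likelihood-based BQL handling concrete, the sketch below computes a log-likelihood in which quantified observations contribute a normal density and BQL records contribute the probability of falling below the quantification limit, which is the core idea behind M3-style handling. It assumes an additive normal residual error with a known standard deviation; the function name and inputs are hypothetical, and a real analysis would rely on the estimation software's own implementation.

```python
import math
from statistics import NormalDist


def censored_log_likelihood(observations, predictions, sigma, lloq):
    """Log-likelihood with BQL records treated as left-censored.

    observations: measured concentration, or None when reported as BQL
    predictions: model-predicted concentration for each record
    sigma: additive residual standard deviation (assumed known here)
    lloq: lower limit of quantification
    """
    standard_normal = NormalDist()
    log_lik = 0.0
    for obs, pred in zip(observations, predictions):
        if obs is None:
            # Censored record: probability that the measurement lies below LLOQ.
            log_lik += math.log(standard_normal.cdf((lloq - pred) / sigma))
        else:
            # Quantified record: ordinary normal density around the prediction.
            log_lik += math.log(NormalDist(pred, sigma).pdf(obs))
    return log_lik


# A BQL record is far more plausible under a low prediction than a high one.
low_pred = censored_log_likelihood([None], [0.5], sigma=1.0, lloq=1.0)
high_pred = censored_log_likelihood([None], [5.0], sigma=1.0, lloq=1.0)
print(low_pred > high_pred)
```

Because the censored term depends on the LLOQ, studies with different assay limits can be pooled while each record is evaluated against its own limit.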

Detailed Experimental Protocols

Protocol: Development of an Integrated PopPK Model

This protocol outlines the methodology successfully employed in the rivaroxaban case study [36].

Step 1: Data Collection and Curation

- Identify all potential data sources (clinical trials, observational studies)

- Extract raw concentration-time data with associated sampling times

- Compile comprehensive covariate datasets (demographics, laboratory values, comorbidities)

- Standardize variable definitions and units across all sources
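Unit standardization across sources can be sketched as a lookup of conversion factors applied record by record. The variable names, record layout, and choice of target units (kg and mg/dL) below are hypothetical assumptions for illustration; the factors themselves are the standard pound-to-kilogram definition and the conventional creatinine conversion.

```python
# Factors that convert a (variable, unit) pair into the chosen common unit.
TO_COMMON_UNIT = {
    ("weight", "kg"): 1.0,
    ("weight", "lb"): 0.45359237,                 # pounds to kilograms
    ("serum_creatinine", "mg/dL"): 1.0,
    ("serum_creatinine", "umol/L"): 1.0 / 88.4,   # micromol/L to mg/dL
}


def harmonize(variable, value, unit):
    """Return the value expressed in the common unit for this variable."""
    try:
        factor = TO_COMMON_UNIT[(variable, unit)]
    except KeyError:
        # Fail loudly so an unexpected unit is never pooled silently.
        raise ValueError(f"No conversion registered for {variable!r} in {unit!r}") from None
    return value * factor


# Hypothetical records from two studies that report different units.
records = [
    {"study": "S1", "variable": "weight", "value": 176.0, "unit": "lb"},
    {"study": "S2", "variable": "weight", "value": 80.0, "unit": "kg"},
    {"study": "S1", "variable": "serum_creatinine", "value": 88.4, "unit": "umol/L"},
]

harmonized = [harmonize(r["variable"], r["value"], r["unit"]) for r in records]
print(harmonized)
```

Raising an error for unregistered units is a deliberate choice: a silent pass-through would reintroduce exactly the kind of technical variance the harmonization step is meant to remove.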

Step 2: Data Quality Assessment

- Evaluate completeness of each data source

- Identify potential outliers or erroneous measurements

- Assess consistency of bioanalytical methods across studies

- Document missing data patterns

Step 3: Structural Model Development

- Begin with simple one-compartment model

- Progress to more complex structures if needed

- Evaluate appropriate absorption models

- Test between-subject variability on key parameters

Step 4: Covariate Model Building

- Implement stepwise covariate model building

- Test physiological plausibility of relationships

- Use likelihood ratio tests for nested models

- Apply information criteria (AIC, BIC) for non-nested comparisons
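The comparison rules in this step can be sketched as follows, assuming the objective function value (OFV) reported by the estimation software equals minus twice the log-likelihood up to a constant. The likelihood ratio test shown is limited to one added parameter (one degree of freedom), for which the chi-square tail probability has a closed form. Function names and the example OFVs are hypothetical.

```python
import math


def lrt_p_value_one_df(ofv_reduced, ofv_full):
    """P-value of a likelihood ratio test for one added parameter (nested models)."""
    delta = ofv_reduced - ofv_full
    if delta <= 0:
        return 1.0  # the larger model did not improve the fit
    # For 1 degree of freedom: P(chi-square > x) = erfc(sqrt(x / 2)).
    return math.erfc(math.sqrt(delta / 2.0))


def aic(ofv, n_parameters):
    """Akaike information criterion on the OFV scale."""
    return ofv + 2 * n_parameters


def bic(ofv, n_parameters, n_observations):
    """Bayesian information criterion on the OFV scale."""
    return ofv + n_parameters * math.log(n_observations)


# Hypothetical OFVs: adding one covariate effect lowers the OFV by 7.2 points.
p = lrt_p_value_one_df(ofv_reduced=10250.0, ofv_full=10242.8)
print(f"LRT p-value = {p:.4f}")
print(aic(10242.8, 9), bic(10242.8, 9, 22843))
```

An OFV drop of about 3.84 corresponds to p = 0.05 for one degree of freedom, which is why that threshold is common in forward inclusion steps. Which count to use as the number of observations in BIC for mixed-effects models is a modeling choice, and the total number of PK observations used here is an assumption.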

Step 5: Model Validation

- Conduct internal validation (bootstrap, visual predictive checks)

- Perform external validation if hold-out data available

- Evaluate precision of parameter estimates

- Assess predictive performance in key subgroups

Step 6: Model Application

- Simulate exposures across populations of interest

- Evaluate probability of target attainment

- Inform dosing recommendations
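A minimal sketch of the simulation step, assuming that exposure is summarized as AUC = Dose / (CL/F) and that apparent clearance varies log-normally between subjects. The typical clearance, variability, and target window below are hypothetical placeholders rather than values from the case study.

```python
import math
import random


def simulate_auc(dose_mg, typical_cl_f, omega_cl, n_subjects, seed=0):
    """Simulate AUC (mg*h/L) for n_subjects with log-normal variability on CL/F."""
    rng = random.Random(seed)  # fixed seed so the simulation is reproducible
    aucs = []
    for _ in range(n_subjects):
        cl_f = typical_cl_f * math.exp(rng.gauss(0.0, omega_cl))
        aucs.append(dose_mg / cl_f)
    return aucs


def probability_of_target_attainment(aucs, lower, upper):
    """Fraction of simulated subjects whose AUC lies within [lower, upper]."""
    hits = sum(1 for auc in aucs if lower <= auc <= upper)
    return hits / len(aucs)


# Hypothetical inputs: 20 mg dose, typical CL/F of 6 L/h, about 30% variability.
aucs = simulate_auc(dose_mg=20.0, typical_cl_f=6.0, omega_cl=0.3, n_subjects=5000)
pta = probability_of_target_attainment(aucs, lower=2.5, upper=5.0)
print(f"Probability of target attainment: {pta:.2f}")
```

Covariate effects can be added by replacing the typical clearance with a subject-specific value, for example one scaled by renal function, before the random effect is applied.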

Protocol: Batch Effect Correction for Multi-Source Integration

This protocol adapts the BERT framework for popPK applications [34].

Step 1: Data Organization

- Arrange data in a features × samples matrix

- Annotate batch origin for each sample

- Identify categorical and continuous covariates

Step 2: Quality Control Metrics

- Calculate average silhouette width (ASW) for biological conditions

- Compute ASW for batch of origin

- Assess design imbalance across batches
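Design imbalance can be assessed with a simple cross-tabulation of batch against biological condition. The sketch below flags batches in which a condition is absent, since those are the cases where batch and biology are hardest to separate. The function names and sample annotations are illustrative assumptions.

```python
from collections import Counter


def design_table(batches, conditions):
    """Count samples per (batch, condition) combination as a nested dict."""
    counts = Counter(zip(batches, conditions))
    batch_ids = sorted(set(batches))
    condition_ids = sorted(set(conditions))
    return {b: {c: counts.get((b, c), 0) for c in condition_ids} for b in batch_ids}


def batches_missing_conditions(table):
    """Map each batch to the conditions that have no samples in it."""
    missing = {}
    for batch, row in table.items():
        absent = [condition for condition, n in row.items() if n == 0]
        if absent:
            missing[batch] = absent
    return missing


# Hypothetical annotations for five samples across three batches.
batches = ["b1", "b1", "b2", "b2", "b3"]
conditions = ["A", "B", "A", "A", "B"]

table = design_table(batches, conditions)
print(table)
print(batches_missing_conditions(table))
```

Batches flagged here are candidates for the reference-sample strategy described earlier, because a correction that ignores the imbalance can remove biological signal along with the batch effect.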

Step 3: Batch Effect Correction

- Decompose integration task using binary tree structure

- Apply ComBat or limma to feature subsets with sufficient data

- Propagate features with insufficient data without modification

- Merge corrected data subsets
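The tree-wise logic of Step 3 can be sketched as follows. This is a simplified illustration and not the BERT implementation: the per-node correction is a plain mean-centering stand-in where BERT would call ComBat or limma, covariates are ignored, and the threshold of two observed values per batch is an assumption. Each batch is represented as a dictionary mapping feature names to per-sample values, with None marking missing entries.

```python
from statistics import mean

MIN_VALUES = 2  # assumed minimum of observed values per batch for a feature to be corrected


def observed(values):
    """Return only the non-missing values."""
    return [v for v in values if v is not None]


def merge_pair(left, right):
    """Merge two batches, correcting features with enough data in both."""
    n_left = len(next(iter(left.values())))
    n_right = len(next(iter(right.values())))
    merged = {}
    for feature in sorted(set(left) | set(right)):
        lv = left.get(feature, [None] * n_left)
        rv = right.get(feature, [None] * n_right)
        lo, ro = observed(lv), observed(rv)
        if len(lo) >= MIN_VALUES and len(ro) >= MIN_VALUES:
            # Stand-in correction: shift both batch means to the pooled mean.
            target, l_mean, r_mean = mean(lo + ro), mean(lo), mean(ro)
            lv = [None if v is None else v - l_mean + target for v in lv]
            rv = [None if v is None else v - r_mean + target for v in rv]
        # Features with insufficient data are propagated unchanged.
        merged[feature] = lv + rv
    return merged


def integrate(batches):
    """Pairwise-merge batches level by level until a single dataset remains."""
    level = list(batches)
    while len(level) > 1:
        next_level = [merge_pair(level[i], level[i + 1]) for i in range(0, len(level) - 1, 2)]
        if len(level) % 2:
            next_level.append(level[-1])  # an unpaired batch moves up one level as is
        level = next_level
    return level[0]


# Toy input: feature f2 is entirely missing in the second batch.
b1 = {"f1": [1.0, 2.0], "f2": [5.0, 6.0]}
b2 = {"f1": [11.0, 12.0], "f2": [None, None]}
b3 = {"f1": [21.0, 22.0], "f2": [7.0, 8.0]}

result = integrate([b1, b2, b3])
print(result)
```

In the toy example, f2 cannot be corrected when the first two batches are merged, so it is carried forward and corrected one level higher, once the third batch supplies enough values. No numeric value is discarded along the way.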

Step 4: Result Validation

- Compare pre- and post-integration ASW values

- Verify preservation of biological signal

- Confirm reduction of batch-associated variance

- Assess integration quality using positive and negative controls

Workflow Visualization