Taming Imbalance: A Machine Learning Guide to Robust Biomarker Identification

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on addressing the critical challenge of class imbalance in machine learning for biomarker discovery. Imbalanced datasets, where critical biomarker-positive cases are rare, can severely bias models and lead to misleading conclusions. We explore the foundational reasons why this problem is pervasive in biomedical data, detail a suite of methodological solutions from data resampling to cost-sensitive algorithms, and offer strategies for troubleshooting and optimizing model performance. Finally, we present a rigorous framework for validation and comparison, ensuring that identified biomarkers are robust, reliable, and ready for clinical translation. By synthesizing modern machine learning techniques with domain-specific knowledge, this guide aims to enhance the accuracy and impact of predictive models in precision medicine.

Why Biomarker Data is Inherently Imbalanced: The Foundation for Effective ML

In biomedical research, class imbalance is not an exception but the rule. This occurs when one class of data (e.g., healthy patients) is significantly more common than another (e.g., diseased patients) [1] [2]. Most standard machine learning algorithms are designed with the assumption of balanced class distribution, causing them to become biased toward the majority class and perform poorly on the critical minority class [3] [4]. In practical terms, this means a model might achieve high overall accuracy by simply always predicting "healthy," thereby failing to identify the sick patients it was ultimately designed to find [2]. Effectively handling this imbalance is therefore not just a technical step, but a prerequisite for developing reliable diagnostic and drug discovery tools.

This technical support center provides targeted guides and FAQs to help you troubleshoot the specific challenges posed by imbalanced datasets in your biomarker identification research.

Frequently Asked Questions

Q1: My model has a 98% accuracy, but it fails to detect any rare disease cases. What is going wrong? This is a classic symptom of class imbalance. Your model is likely ignoring the minority class (the rare disease) because correctly classifying the majority class (healthy individuals) is enough to achieve high accuracy [2]. To properly evaluate your model, stop relying on accuracy alone and instead use metrics like precision, recall (sensitivity), and the Area Under the Receiver Operating Characteristic Curve (AUC) [4] [5]. These metrics give a clearer picture of how well your model is performing on the rare class of interest.

Q2: I have a very small dataset for my rare disease study. Can I still use machine learning? Yes, but you must use strategies specifically designed for such scenarios. A powerful approach is to combine data-level resampling with algorithm-level adjustments. At the data level, techniques like SMOTE (Synthetic Minority Over-sampling Technique) can generate synthetic examples for your rare disease class to balance the dataset [3] [4]. At the algorithm level, cost-sensitive learning instructs the model to assign a higher penalty for misclassifying a rare disease case than a common case, forcing it to pay more attention to the minority class [3] [6].

Q3: What is the difference between SMOTE and random oversampling, and which should I use?

- Random Oversampling balances the dataset by randomly duplicating existing minority class examples. A major risk is that this can lead to overfitting, as the model may learn from these specific, repeated examples and fail to generalize to new data [3].

- SMOTE creates new, synthetic minority class examples by interpolating between existing ones. This helps the model learn broader patterns and generally generalizes better than random duplication [4]. For these reasons, SMOTE is often the preferred method.
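The contrast between the two methods can be sketched in a few lines of plain Python. This is a simplified illustration of the interpolation idea, not the reference SMOTE implementation; the function names, the toy points, and the choice of k are assumptions made for the example. In practice a maintained library such as imbalanced-learn would normally be used instead.

```python
import math
import random

def random_oversample(minority, n_new, rng):
    """Random oversampling: duplicate existing minority points."""
    return [rng.choice(minority) for _ in range(n_new)]

def smote_like(minority, n_new, k, rng):
    """SMOTE-style oversampling: interpolate between a minority point
    and one of its k nearest minority-class neighbours."""
    synthetic = []
    for _ in range(n_new):
        base = rng.choice(minority)
        others = [p for p in minority if p is not base]
        neighbours = sorted(others, key=lambda p: math.dist(base, p))[:k]
        target = rng.choice(neighbours)
        gap = rng.random()  # position along the segment base -> target
        synthetic.append(tuple(b + gap * (t - b) for b, t in zip(base, target)))
    return synthetic

rng = random.Random(0)
# Hypothetical minority-class samples with two features each.
minority = [(1.0, 1.0), (1.2, 0.9), (0.8, 1.3), (1.1, 1.4)]
duplicates = random_oversample(minority, 6, rng)   # exact copies
synthetic = smote_like(minority, 6, k=2, rng=rng)  # new interpolated points
```

Every element of `duplicates` is an exact copy of an original sample, whereas each element of `synthetic` lies on a line segment between two original minority samples.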

Q4: How do I choose the right technique for my imbalanced biomarker dataset? There is no one-size-fits-all solution. The best approach depends on your specific dataset, including the Imbalance Ratio (IR) and the nature of your features [2] [6]. The most reliable strategy is experimental: try multiple methods (e.g., SMOTE, Tomek Links, cost-sensitive learning) and evaluate their performance using robust metrics like AUC and recall on a held-out test set. The table below summarizes the performance of various techniques from published studies.

Table 1: Performance of ML Models with Imbalance Handling Techniques in Biomedical Research

| Research Context | Machine Learning Model(s) | Imbalance Handling Technique | Key Performance Metric(s) | Citation |
|---|---|---|---|---|
| Prostate Cancer Biomarker Discovery | XGBoost, RF, SVM, Decision Tree | SMOTE-Tomek link, Stratified k-fold | 96.85% Accuracy (XGBoost) | [7] |
| Large-Artery Atherosclerosis Biomarker Prediction | Logistic Regression, SVM, RF, XGBoost | Recursive Feature Elimination | AUC: 0.92 (with 62 features) | [5] |
| Drug Discovery (Graph Neural Networks) | GCN, GAT, Attentive FP | Oversampling, Weighted Loss Function | High Matthews Correlation Coefficient (MCC) achieved with oversampling | [6] |
| Medical Incident Report Detection | Logistic Regression | SMOTE | Recall increased from 52.1% to 75.7% | [3] |

Troubleshooting Guides

Guide 1: Diagnosing Poor Minority Class Performance

Problem: Your model shows promising overall accuracy but fails to identify the crucial minority class (e.g., active drug compounds, rare disease patients).

Step-by-Step Troubleshooting:

Audit Your Evaluation Metrics

- Stop using accuracy as your primary metric.

- Calculate a confusion matrix to visualize true positives, false negatives, false positives, and true negatives.

- Switch to metrics that capture minority class performance: Precision, Recall (Sensitivity), F1-score, and AUC-ROC [4] [2]. For example, a high recall is critical when the cost of missing a positive case (a disease) is high.

Benchmark with a Dummy Classifier

- Train a simple classifier that always predicts the majority class. If your complex model's performance is not significantly better than this baseline, your model has learned nothing useful about the minority class and is likely suffering from the imbalance [2].
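As a rough illustration of this benchmark, the sketch below compares an always-majority baseline with a hypothetical model on a made-up cohort of 1,000 patients with 20 positives. The predictions are invented for the example; only the comparison logic matters.

```python
def accuracy(y_true, y_pred):
    """Fraction of all predictions that are correct."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)

def recall(y_true, y_pred, positive=1):
    """Fraction of true positive-class cases that were detected."""
    hits = sum(t == positive and p == positive for t, p in zip(y_true, y_pred))
    total = sum(t == positive for t in y_true)
    return hits / total if total else 0.0

# Hypothetical cohort: 20 diseased (1) and 980 healthy (0) patients.
y_true = [1] * 20 + [0] * 980

# Baseline: always predict the majority class.
baseline_pred = [0] * 1000

# Hypothetical model: finds 12 of 20 cases, raises 15 false alarms.
model_pred = [1] * 12 + [0] * 8 + [1] * 15 + [0] * 965

baseline_scores = (accuracy(y_true, baseline_pred), recall(y_true, baseline_pred))
model_scores = (accuracy(y_true, model_pred), recall(y_true, model_pred))
```

Here the baseline reaches 98% accuracy with zero recall, while the hypothetical model has slightly lower accuracy (97.7%) but a recall of 0.6. Judged on accuracy alone, the useless baseline would appear to win.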

Implement a Resampling Strategy

- Apply a resampling technique to your training data only (to avoid data leakage).

- Start with SMOTE to generate synthetic minority samples [7] [4].

- Consider a hybrid approach like SMOTE-Tomek, which uses SMOTE to oversample the minority class and then uses Tomek Links to clean the overlapping areas between classes, potentially leading to better-defined class clusters [7] [8].

Explore Algorithmic-Level Solutions

- Use models that natively handle imbalance. Many algorithms allow you to set the class_weight parameter to "balanced," which automatically adjusts weights inversely proportional to class frequencies [6].

- For neural networks, employ a weighted loss function that increases the cost of misclassifying a minority class example [6].
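To make the weighting concrete, the sketch below computes "balanced" weights with the formula n_samples / (n_classes * class_count), which is the heuristic scikit-learn documents for class_weight="balanced", and plugs them into a simple weighted log loss. The helper names and the toy labels are assumptions for illustration only.

```python
import math
from collections import Counter

def balanced_class_weights(labels):
    """Weight each class inversely to its frequency:
    n_samples / (n_classes * class_count)."""
    counts = Counter(labels)
    n_samples, n_classes = len(labels), len(counts)
    return {cls: n_samples / (n_classes * n) for cls, n in counts.items()}

def weighted_log_loss(y_true, p_positive, weights):
    """Binary cross-entropy where each example's loss is scaled by the
    weight of its true class, normalised by the total weight."""
    total, weight_sum = 0.0, 0.0
    for y, p in zip(y_true, p_positive):
        w = weights[y]
        total += -w * math.log(p if y == 1 else 1.0 - p)
        weight_sum += w
    return total / weight_sum

# Hypothetical training labels: 950 healthy (0), 50 diseased (1).
labels = [0] * 950 + [1] * 50
weights = balanced_class_weights(labels)

# Same confidence of error, once on a positive and once on a negative case.
loss_missed_positive = weighted_log_loss([1, 0], [0.1, 0.1], weights)
loss_missed_negative = weighted_log_loss([1, 0], [0.9, 0.9], weights)
```

With these labels the minority class receives a weight of 10.0 and the majority class roughly 0.53, so an equally confident mistake costs far more when it is made on a minority example.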

Guide 2: Selecting a Handling Technique for High-Dimensional Biomarker Data

Problem: You are working with high-dimensional data (e.g., from genomics, metabolomics) and need a robust pipeline to identify reliable biomarkers.

Detailed Experimental Protocol:

This protocol is based on methodologies that have successfully identified biomarkers for diseases like prostate cancer and large-artery atherosclerosis [7] [5].

1. Data Preprocessing

- Missing Value Imputation: Use methods like mean imputation or k-Nearest Neighbors (k-NN) imputation to handle missing data points [5].

- Feature Scaling: Standardize or normalize your features. Models like SVMs and algorithms using gradient descent are sensitive to the scale of data.

2. Feature Selection

- Apply Recursive Feature Elimination with Cross-Validation (RFECV): This technique recursively removes the least important features and builds a model with the remaining ones, using cross-validation to find the optimal number of features. This helps reduce overfitting and improves model interpretability by selecting the most predictive biomarkers [5].

3. Model Training with Resampling (on Training Set only)

- Split your data into training and testing sets.

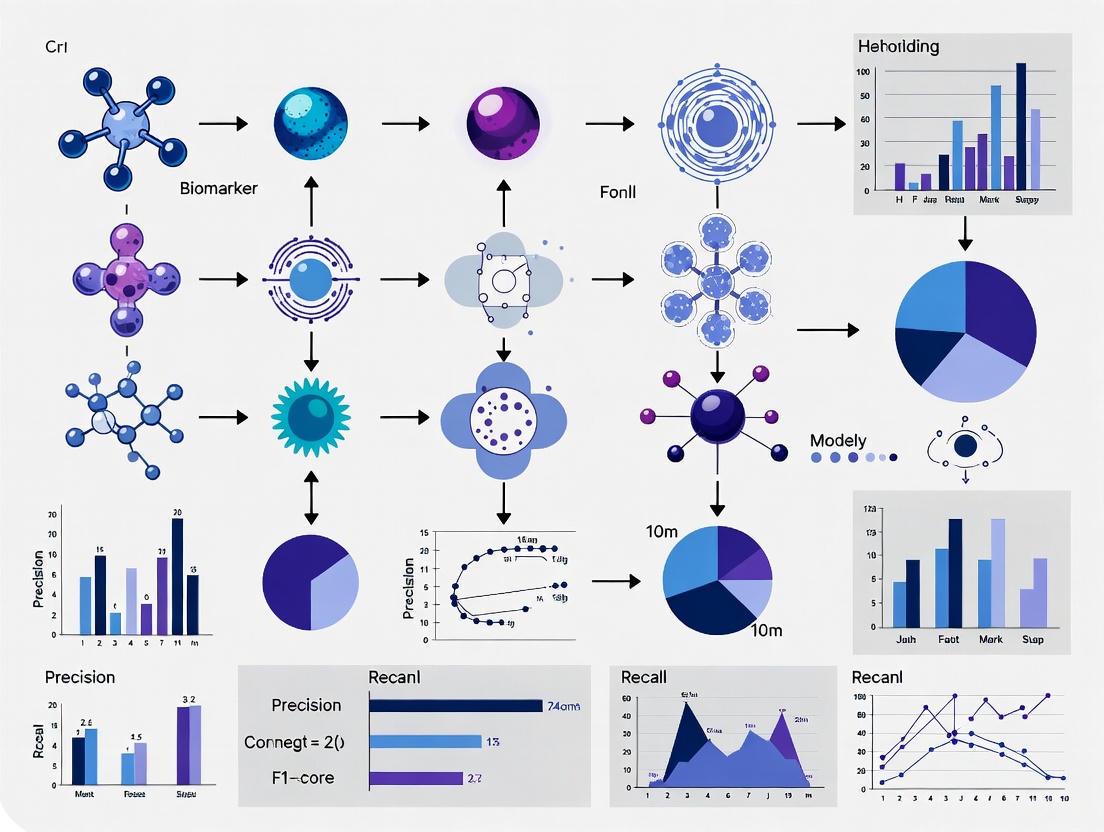

- On the training set, apply your chosen imbalance-handling technique. The following workflow diagram illustrates a robust integrated approach:

4. Model Evaluation

- Evaluate the final model on the untouched test set using the metrics discussed in Guide 1. This gives an unbiased estimate of its performance on new data.

The Scientist's Toolkit

Table 2: Essential Software and Analytical Tools

| Tool / Reagent | Function / Application | Key Consideration |
|---|---|---|
| imbalanced-learn (Python) | A scikit-learn-contrib library offering a wide range of oversampling (SMOTE, ADASYN) and undersampling (Tomek Links, NearMiss) methods [8]. | Integrates seamlessly with the scikit-learn pipeline, ensuring no data leakage during resampling. |
| XGBoost (Extreme Gradient Boosting) | A powerful ensemble learning algorithm that often achieves state-of-the-art results, even on imbalanced data [7] [5]. | Has built-in parameters for handling imbalance (e.g., scale_pos_weight) and can be effectively combined with resampling techniques [7]. |
| Recursive Feature Elimination (RFE) | A feature selection method that works by recursively removing the least important features and building a model on the remaining ones [5]. | Critical for high-dimensional biomarker data to prevent overfitting and identify a concise set of candidate biomarkers. |
| Stratified k-Fold Cross-Validation | A cross-validation technique that preserves the percentage of samples for each class in every fold [7]. | Ensures that each fold is a good representative of the whole, providing a more reliable estimate of model performance on imbalanced datasets. |
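The stratification idea in the last row can be sketched without any library: shuffle the indices of each class separately and deal them out to the folds in turn. This is a minimal illustration under assumed toy labels, not a replacement for scikit-learn's StratifiedKFold.

```python
import random
from collections import defaultdict

def stratified_folds(labels, n_splits, seed=0):
    """Assign sample indices to folds so that every fold keeps
    (approximately) the class proportions of the full dataset."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)
    folds = [[] for _ in range(n_splits)]
    for indices in by_class.values():
        rng.shuffle(indices)
        # Deal the shuffled indices of this class round-robin across folds.
        for position, idx in enumerate(indices):
            folds[position % n_splits].append(idx)
    return folds

# Hypothetical dataset: 10 positive and 90 negative samples.
labels = [1] * 10 + [0] * 90
folds = stratified_folds(labels, n_splits=5)
positives_per_fold = [sum(labels[i] for i in fold) for fold in folds]
```

With 10 positives and 5 folds, every fold receives exactly 2 positives and 18 negatives, whereas a plain random split could leave some folds with no positive cases at all.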

The following diagram outlines the logical decision process for selecting the most appropriate technique based on your dataset's characteristics.

FAQs on the Accuracy Metric Trap

Q1: Why is a model with 99% accuracy potentially useless in biomarker research?

A model that achieves 99% accuracy can be completely useless if the dataset is severely imbalanced. For example, if only 1% of patients in a study have the disease (the minority class), a model that simply predicts "no disease" for every single patient would still be 99% accurate. This model fails at its primary task—identifying the patients with the condition—and is therefore dangerously misleading [9]. High accuracy in such contexts often just reflects the model's ability to identify the majority class, masking its failure on the critical minority class.

Q2: What evaluation metrics should I use instead of accuracy for imbalanced biomarker data?

For imbalanced classification, you should use metrics that focus on the performance for the minority class. The table below summarizes key metrics and their applications [10].

| Metric | Formula | Focus & Best Use Case |
|---|---|---|
| Recall (Sensitivity) | TP / (TP + FN) | Avoiding false negatives. Critical when missing a positive case (e.g., a disease) is costly. |
| Precision | TP / (TP + FP) | Avoiding false positives. Important when falsely labeling a healthy person as sick has high consequences. |
| F1-Score | 2 * (Precision * Recall) / (Precision + Recall) | Harmonic mean of Precision and Recall. Provides a single balanced score when both FP and FN are important. |
| G-Mean | sqrt(Sensitivity * Specificity) | Geometric mean that balances performance on both the minority and majority classes. |
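The formulas in the table translate directly into code. The sketch below computes all four metrics from confusion-matrix counts; the counts in the example are invented for illustration.

```python
import math

def imbalance_metrics(tp, fp, fn, tn):
    """Compute recall, precision, F1 and G-mean from confusion-matrix
    counts, returning 0.0 where a ratio is undefined."""
    recall = tp / (tp + fn) if tp + fn else 0.0
    precision = tp / (tp + fp) if tp + fp else 0.0
    specificity = tn / (tn + fp) if tn + fp else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    g_mean = math.sqrt(recall * specificity)
    return {"recall": recall, "precision": precision,
            "f1": f1, "g_mean": g_mean}

# Hypothetical results: 40 diseased and 960 healthy patients.
metrics = imbalance_metrics(tp=30, fp=20, fn=10, tn=940)
```

For these counts, recall is 0.75, precision is 0.60, F1 is about 0.67, and G-mean is about 0.86, even though plain accuracy would be 97%.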

Q3: What are common pitfalls in biomarker study design that lead to non-reproducible results?

A major pitfall is improper handling of continuous biomarker data through dichotomania—the practice of artificially dichotomizing a continuous variable into "high" and "low" groups using an arbitrary cut-point [11]. This discards valuable biological information, reduces statistical power, and finds "thresholds" that do not exist in nature and thus fail to replicate in other datasets. Other pitfalls include using sample sizes that are too small to support the intended analysis and failing to pre-specify a rigorous statistical analysis plan [11].

Q4: What is the PRoBE design and how does it improve biomarker research?

The Prospective-specimen-collection, Retrospective-blinded-evaluation (PRoBE) design is a rigorous study framework for pivotal evaluation of a biomarker's classification accuracy. Its key components are [12]:

- Prospective Collection: Biological specimens are collected from a defined cohort before the clinical outcome (e.g., disease) is known.

- Random Selection: After outcomes are ascertained, case patients and control subjects are randomly selected from the cohort.

- Blinded Evaluation: The biomarker is assayed on the selected specimens in a fashion blinded to the case-control status.

This design eliminates common biases that pervade the biomarker research literature and ensures that the study cohort is relevant to the clinical application [12].

Troubleshooting Guides

Problem: My biomarker model has high accuracy but fails to identify any true positive cases.

Solution: This is a classic sign of a severely imbalanced dataset. Follow this troubleshooting guide to correct your approach.

| Step | Action | Description & Rationale |
|---|---|---|
| 1 | Diagnose | Check the confusion matrix and calculate Recall. A Recall of 0 for the positive class confirms the model is ignoring it [9]. |
| 2 | Resample Data | Apply techniques like downsampling the majority class or oversampling the minority class (e.g., with SMOTE) to create a more balanced training set [1] [13]. |
| 3 | Use Appropriate Metrics | Stop tracking accuracy. Instead, optimize and evaluate your model using Recall, F1-score, or G-Mean to force focus on the minority class [10]. |
| 4 | Consider Algorithmic Costs | Use algorithms that allow you to assign a higher cost to misclassifying a minority class example (e.g., cost-sensitive learning) [1]. |

Problem: My biomarker candidate does not replicate in a new validation dataset.

Solution: This is often due to a flawed discovery process. Implement a robust ML pipeline designed for consistency.

Detailed Protocol for Robust Biomarker Identification

This protocol is based on a study that identified a 15-gene composite biomarker for pancreatic ductal adenocarcinoma metastasis, leveraging data from multiple public repositories [13].

Data Preparation and Integration

- Data Sourcing: Collect data from multiple public repositories (e.g., TCGA, GEO) to maximize statistical power.

- Inclusion Criteria: Define strict clinical criteria for sample inclusion (e.g., primary tumor tissues, specific metastasis status).

- Pre-processing: Normalize data (e.g., using TMM normalization) and perform batch effect correction (e.g., with ARSyN) to remove technical variance and integrate datasets [13].

Robust Feature Selection

- Data Splitting: Split the integrated data into a training set and a hold-out validation set.

- Consensus Selection: On the training set, perform a 10-fold cross-validation process. In each fold, run multiple models (e.g., 100) that combine several feature selection algorithms (e.g., LASSO logistic regression, Boruta, and a backward selection algorithm).

- Define Robust Candidates: Identify genes that are selected in a high percentage of models (e.g., 80%) across multiple folds. This ensures the selected features are consistent and not due to random noise [13].

Model Building and Validation

- Train Model: Build a classification model (e.g., a Random Forest) using only the robustly selected features on the entire training set.

- Validate: Test the final model on the held-out validation set that was not used in any part of the feature selection process.

- Evaluation: Use a comprehensive set of metrics suitable for imbalanced data, including Precision, Recall, and F1 score for each class [13].

Robust Biomarker Discovery Workflow

The Scientist's Toolkit

Research Reagent Solutions for ML-based Biomarker Discovery

| Item | Function in the Experiment |
|---|---|
| Targeted Metabolomics Kit (e.g., AbsoluteIDQ p180) | A commercial kit used to reliably quantify the concentrations of a predefined set of 194 plasma metabolites from various compound classes, ensuring consistent data generation across samples [5]. |
| RNA Sequencing Data | Provides the raw transcriptomic data from primary tumor tissues, which serves as the high-dimensional input for the machine learning pipeline to identify gene expression biomarkers [13]. |
| Batch Effect Correction Algorithm (e.g., ARSyN) | A crucial computational tool for removing non-biological technical variation between datasets from different sources or experiments, allowing for meaningful integration and analysis [13]. |
| Synthetic Minority Oversampling (e.g., ADASYN) | A technique used to handle class imbalance by generating synthetic examples of the minority class (e.g., metastatic samples), preventing the model from being biased toward the majority class [13]. |

Handling Class Imbalance in Data

Frequently Asked Questions

Q1: My model has a 98% accuracy, but it fails to detect any active drug compounds. What is happening? This is a classic sign of class imbalance. When one class (e.g., "inactive compounds") vastly outnumbers another (e.g., "active compounds"), standard accuracy becomes a misleading metric. A model can achieve high accuracy by simply always predicting the majority class, while completely failing on the minority class that is often of primary interest, such as promising drug candidates or diseased patients [14]. You should use metrics like Precision, Recall, and the F1-score to get a true picture of your model's performance on the minority class [15].

Q2: What is the difference between fixing imbalance with data versus with algorithms? Data-level methods physically change the composition of your training dataset, while algorithm-level methods adjust the model's learning process to pay more attention to the minority class.

- Data-Level: Includes random undersampling (removing majority class samples) and random oversampling (duplicating minority class samples) [8]. More advanced techniques like SMOTE create synthetic minority samples instead of just copying them [14].

- Algorithm-Level: Involves using cost-sensitive learning or adjusting class weights, which makes the model penalize misclassifications of the minority class more heavily [14]. Ensemble methods like Random Forest can also be inherently effective [15].

Q3: I've applied random undersampling, and my model now detects the minority class. However, its performance on a real-world, imbalanced test set is poor. Why? Undersampling creates an artificial, balanced world for the model to train in. If not corrected, the model learns that the classes are equally likely, which is not true in reality. To correct for this prediction bias, upweight the loss associated with the downsampled majority class, typically by the same factor used for downsampling. This means that when the model makes a mistake on a majority class example, the error is treated as larger, so the model still learns the true data distribution [1].
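A minimal sketch of this downsample-and-upweight idea is shown below. The function name, the sample identifiers, and the factor of 20 are assumptions for illustration. The resulting per-example weights would typically be passed to a training routine that accepts sample weights.

```python
import random

def downsample_and_upweight(majority, minority, factor, seed=0):
    """Keep 1/factor of the majority class and give each kept majority
    example a weight of `factor`; minority examples keep weight 1.
    Returns a list of (example, label, weight) tuples."""
    rng = random.Random(seed)
    kept = rng.sample(majority, len(majority) // factor)
    return ([(x, 0, float(factor)) for x in kept]
            + [(x, 1, 1.0) for x in minority])

# Hypothetical data: 1,000 controls and 10 cases (identifiers only).
majority = [f"control_{i}" for i in range(1000)]
minority = [f"case_{i}" for i in range(10)]
train = downsample_and_upweight(majority, minority, factor=20)

weighted_negatives = sum(w for _, y, w in train if y == 0)
weighted_positives = sum(w for _, y, w in train if y == 1)
```

Only 50 controls remain in the training set, but their combined weight is still 1,000, so the weighted class proportions match the original 10:1000 distribution.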

Troubleshooting Guides

Problem: Model is biased towards the majority class (inactive compounds/healthy patients).

| Step | Action | Technical Details & Considerations |
|---|---|---|
| 1 | Diagnose the Issue | Calculate the Imbalance Ratio (IR). Check metrics beyond accuracy, especially Recall and F1-Score for the minority class [16] [14]. |
| 2 | Select a Strategy | For high IR (>1:50): Start with random undersampling to create a moderate imbalance (e.g., 1:10) [16]. For lower IR: Try class weight adjustment in your algorithm or synthetic oversampling with SMOTE [14]. |
| 3 | Validate Correctly | Use a strict train-test split where the test set retains the original, real-world imbalance to evaluate generalizability [8]. Employ cross-validation correctly by applying resampling techniques only to the training folds, not the validation folds [15]. |
| 4 | Mitigate Information Loss | If using undersampling, employ ensemble undersampling: create multiple balanced subsets by undersampling the majority class differently each time, train a model on each, and aggregate the predictions [16]. |

Problem: After resampling, the model is overfitting and does not generalize.

| Step | Action | Technical Details & Considerations |
|---|---|---|
| 1 | Review Resampling Method | Simple random oversampling by duplication can lead to overfitting. Switch to synthetic generation methods like SMOTE or ADASYN, which create new, interpolated samples [14]. |
| 2 | Apply Hybrid Techniques | Use SMOTE followed by Tomek Links. SMOTE generates synthetic samples, and Tomek Links cleans the space by removing overlapping samples from both classes, creating a clearer decision boundary [8]. |
| 3 | Tune Model Complexity | A model that is too complex will memorize the noise in the resampled data. Regularize your model (e.g., via L1/L2 regularization) and perform hyperparameter tuning to find a simpler, more generalizable solution [15]. |
| 4 | Validate with External Data | The ultimate test is validation on a completely external, imbalanced dataset from a different source to ensure your model has learned genuine patterns [16]. |

Quantitative Data on Imbalance and Performance

Table 1: Impact of Severe Class Imbalance in Drug Discovery Bioassays

This table summarizes the performance challenges when predicting active compounds in highly imbalanced high-throughput screening (HTS) datasets [16].

| Infectious Disease Target | Original Imbalance Ratio (Active:Inactive) | Key Performance Challenge on Imbalanced Data |
|---|---|---|
| HIV | 1:90 | Very poor performance with Matthews Correlation Coefficient (MCC) values below zero, indicating no better than random prediction [16]. |
| COVID-19 | 1:104 | Most resampling methods failed to improve performance across multiple metrics, highlighting the difficulty of extreme imbalance [16]. |
| Malaria | 1:82 | Models showed deceptively high accuracy but poor ability to identify the active compounds (low recall) without resampling [16]. |

Table 2: Comparative Performance of Resampling Techniques

This table compares the effect of different resampling strategies on model performance for a bioassay dataset. RUS often provides a strong balance of metrics [16].

| Resampling Technique | Effect on Recall (Minority Class) | Effect on Precision | Overall Recommendation |
|---|---|---|---|
| Random Undersampling (RUS) | Significantly increases | May decrease, but often leads to the best overall F1-score and MCC [16]. | Highly effective for creating a robust model [16]. |
| Random Oversampling (ROS) | Significantly increases | Often decreases significantly due to overfitting on duplicated samples [8]. | Can be useful but carries a high risk of overfitting [14]. |
| Synthetic (SMOTE/ADASYN) | Increases | Varies; can sometimes maintain higher precision than ROS [14]. | A good alternative to simple oversampling, but may introduce noise [16]. |

Experimental Protocols

Protocol 1: K-Ratio Random Undersampling for Robust Model Training

This protocol, derived from recent research, outlines a method to find an optimal imbalance ratio instead of forcing a perfect 1:1 balance [16].

- Data Preparation: Split your data into training and test sets, ensuring the test set retains the original, real-world imbalance.

- Determine Baseline IR: Calculate the original Imbalance Ratio (IR) in your training set (e.g., 1:100).

- Iterative Undersampling: Systematically reduce the majority class in the training data to create new training sets with less severe IRs, such as 1:50, 1:25, and 1:10. Do not modify the test set.

- Model Training and Validation: Train your chosen model (e.g., Random Forest, SVM) on each of the resampled training sets. Evaluate each model on the held-out, imbalanced test set.

- Optimal Ratio Selection: Identify the IR that yields the best performance on the test set, focusing on the F1-score or MCC. A moderate IR like 1:10 often provides an optimal balance between learning minority class patterns and retaining majority class information [16].
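The undersampling step of this protocol can be sketched as follows. The helper name, the sample identifiers, and the dataset sizes are assumptions for illustration; model training and test-set evaluation are left as a comment because they depend on the chosen classifier.

```python
import random

def undersample_to_ratio(majority, minority, k, seed=0):
    """Randomly keep at most k majority samples per minority sample,
    giving a training set with an imbalance ratio of about 1:k."""
    rng = random.Random(seed)
    n_keep = min(len(majority), k * len(minority))
    return rng.sample(majority, n_keep), list(minority)

# Hypothetical training split with an original IR of 1:100.
majority = list(range(5000))       # indices of inactive compounds
minority = list(range(5000, 5050)) # indices of active compounds

training_sets = {}
for k in (50, 25, 10):
    kept_majority, kept_minority = undersample_to_ratio(majority, minority, k)
    training_sets[k] = (kept_majority, kept_minority)
    # Next: train a model on this subset and score it (e.g., F1 or MCC)
    # on the untouched, originally imbalanced test set.

sizes = {k: len(maj) for k, (maj, _) in training_sets.items()}
```

The minority class is never reduced. Only the number of retained majority samples changes between candidate ratios, and the test set is not touched at any point.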

Protocol 2: Combined SMOTE and Tomek Links for Data Cleaning

This hybrid protocol aims to oversample the minority class while cleaning the data space for a clearer decision boundary [8].

- Apply SMOTE: First, use the Synthetic Minority Over-sampling Technique (SMOTE) on the training data. SMOTE works by selecting a minority class instance and its k-nearest neighbors, then creating new synthetic examples along the line segments joining them. This increases the number of minority class samples.

- Apply Tomek Links: Second, apply Tomek Links to the SMOTE-augmented dataset. A Tomek Link is a pair of instances from different classes that are each other's nearest neighbors. Removing the majority class instance from these pairs helps to reduce class overlap and noise.

- Train Model: Train your classifier on the resulting cleaned and balanced dataset.

- Validate: Evaluate the final model on the original, untouched test set.
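The Tomek Links step can be illustrated with a small brute-force sketch that finds mutual nearest neighbours of opposite classes and drops the majority member of each pair. The toy points and function names are assumptions. For real datasets, a library implementation (for example the combined SMOTE and Tomek Links resampler in imbalanced-learn) is likely a better choice than this quadratic-time version.

```python
import math

def tomek_links(points, labels):
    """Return index pairs (i, j), i < j, whose members are each other's
    nearest neighbour and belong to different classes."""
    def nearest(i):
        return min((j for j in range(len(points)) if j != i),
                   key=lambda j: math.dist(points[i], points[j]))
    nn = [nearest(i) for i in range(len(points))]
    return [(i, j) for i, j in enumerate(nn)
            if i < j and nn[j] == i and labels[i] != labels[j]]

def remove_majority_in_links(points, labels, majority_label=0):
    """Drop the majority-class member of every Tomek link."""
    drop = {idx for pair in tomek_links(points, labels)
            for idx in pair if labels[idx] == majority_label}
    keep = [i for i in range(len(points)) if i not in drop]
    return [points[i] for i in keep], [labels[i] for i in keep]

# Toy training data (after oversampling): label 0 = majority, 1 = minority.
points = [(0.0, 0.0), (0.1, 0.0), (1.0, 1.0),
          (1.05, 1.0), (3.0, 3.0), (3.2, 3.0)]
labels = [0, 0, 0, 1, 1, 1]

links = tomek_links(points, labels)
clean_points, clean_labels = remove_majority_in_links(points, labels)
```

In this toy data the only Tomek link is the pair of points at (1.0, 1.0) and (1.05, 1.0). Removing the majority member leaves five samples and a cleaner boundary between the classes.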

Workflow and Strategy Diagrams

Imbalance Troubleshooting Workflow

Resampling Strategy Comparison

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Handling Class Imbalance

| Tool / "Reagent" | Function / Purpose | Example Use-Case / Note |
|---|---|---|
| imbalanced-learn (imblearn) Library | A Python library providing a suite of resampling techniques. | The primary tool for implementing ROS, RUS, SMOTE, ADASYN, and Tomek Links [8]. |
| Cost-Sensitive Algorithms | Machine learning algorithms that can be configured with a class_weight parameter. | Use class_weight='balanced' in Scikit-learn's RandomForest or LogisticRegression to automatically adjust weights [14]. |
| Tree-Based Ensemble Methods | Algorithms like Random Forest and XGBoost that are naturally robust to imbalance. | Effective for biomarker identification from high-dimensional genomic data without heavy pre-processing [15] [14]. |
| Matthews Correlation Coefficient (MCC) | A performance metric that is reliable even when classes are of very different sizes. | More informative than accuracy for initial diagnostics on imbalanced bioassay data [16]. |
| The Cancer Genome Atlas (TCGA) | A public database containing genomic and clinical data for various cancer types. | A common source of real-world, imbalanced datasets for biomarker discovery, such as the PANCAN RNA-seq dataset [15]. |
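As a brief illustration of why MCC is listed here, the sketch below computes it from confusion-matrix counts using the standard formula (TP*TN - FP*FN) / sqrt((TP+FP)(TP+FN)(TN+FP)(TN+FN)). The counts are invented, and returning 0.0 for a zero denominator is a convention assumed for this example.

```python
import math

def mcc(tp, fp, fn, tn):
    """Matthews correlation coefficient from confusion-matrix counts.
    Returns 0.0 when the denominator is zero (assumed convention)."""
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    return (tp * tn - fp * fn) / denom if denom else 0.0

# Hypothetical screen: 10 active and 990 inactive compounds.
# Model A predicts "inactive" for everything (99% accuracy).
mcc_always_inactive = mcc(tp=0, fp=0, fn=10, tn=990)

# Model B finds 7 actives with 5 false alarms (99.2% accuracy).
mcc_useful_model = mcc(tp=7, fp=5, fn=3, tn=985)
```

Both models have near-identical accuracy, but the always-inactive model scores an MCC of 0 while the second model scores roughly 0.64, which separates them clearly.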

Biomarker Fundamentals & FAQs

What are the primary functional types of biomarkers in clinical research?

Biomarkers are objectively measurable indicators of biological processes, pathological states, or responses to therapeutic interventions. In clinical research and precision medicine, they are primarily categorized by their functional role [17] [18] [19].

- Diagnostic Biomarkers: Identify the presence or type of a disease. For example, protein biomarkers like CA 19-9 are used for detecting Pancreatic Ductal Adenocarcinoma (PDAC) [17].

- Prognostic Biomarkers: Forecast the likely course of a disease in an untreated individual, providing information on long-term outcomes. Analyses of immune signatures or microbiome compositions can serve this purpose by indicating disease aggressiveness [17].

- Predictive Biomarkers: Identify individuals who are more likely to respond to a specific treatment. The mutational status of genes like BRAF or BRCA helps predict efficacy of targeted therapies [20] [18].

The following table summarizes these key types and their applications.

Table 1: Key Biomarker Types and Their Clinical Applications

| Biomarker Type | Primary Function | Example | Clinical Application Context |
|---|---|---|---|
| Diagnostic | Detects or confirms the presence of a disease | CA 19-9, protein biomarkers [17] | Identifying patients with Pancreatic Ductal Adenocarcinoma (PDAC) |
| Prognostic | Predicts disease aggressiveness and future course | Immune signatures, microbiome biomarkers [17] | Estimating long-term outcomes in cancer patients |
| Predictive | Forecasts response to a specific therapy | BRCA mutations, BRAF mutations [20] [18] | Selecting patients for PARP inhibitor or BRAF inhibitor therapy |

What key characteristics define a reliable and effective biomarker?

For a biomarker to be clinically useful, it should possess several key characteristics [19]:

- High Sensitivity and Specificity: Sensitivity minimizes false negatives (correctly identifies diseased individuals), while specificity minimizes false positives (correctly rules out healthy individuals) [19].

- Reproducibility: The biomarker must provide consistent results across different tests, laboratories, and over time [19].

- Clinical Relevance: There should be a correlation between the biomarker's levels and the severity of the disease or damage [19].

- Dynamic Response: An ideal biomarker changes in response to treatment, allowing for real-time monitoring of therapeutic efficacy [19].

The Class Imbalance Challenge in Biomarker Discovery

How does class imbalance negatively impact biomarker discovery?

Class imbalance is a prevalent issue in biomedical datasets, where one class of samples (e.g., healthy controls) significantly outnumbers another (e.g., disease cases). This creates major challenges for machine learning (ML) models [13]:

- Model Bias: Standard ML classifiers tend to be biased toward the majority class, achieving high accuracy by simply always predicting the common outcome, while failing to identify the critical minority class (e.g., metastatic patients) [13].

- Non-Robust Feature Selection: Feature selection processes can become unstable and inconsistent, potentially missing biologically relevant markers that are characteristic of the rare class [13].

- Poor Generalizability: A model trained on imbalanced data will likely perform poorly in real-world clinical settings, particularly on the minority-class cases it rarely saw during training or when the class distribution differs from that of the training data [13].

What methodological strategies can mitigate class imbalance?

Several data handling and modeling strategies can be employed to address class imbalance:

- Data Resampling Techniques: Methods like ADASYN (Adaptive Synthetic Sampling) generate synthetic examples of the minority class to balance the dataset, creating a more robust training environment for the model [13].

- Robust Variable Selection: Implementing a rigorous, multi-step feature selection process that involves repeated cross-validation and consensus modeling helps identify features that are consistently important, reducing noise and false positives [13].

- Ensemble Machine Learning Models: Algorithms like Random Forest are inherently more robust to class imbalance. They aggregate predictions from multiple decision trees, which improves classification accuracy for the minority class [15] [13].

The following diagram illustrates a robust experimental workflow designed to counteract class imbalance challenges.

What specific experimental protocols are used in robust biomarker discovery?

Protocol: A Robust ML Pipeline for Metastatic Biomarker Discovery [13]

This protocol uses Pancreatic Ductal Adenocarcinoma (PDAC) metastasis prediction as a case study.

Data Sourcing and Inclusion Criteria:

- Source primary tumor RNA-seq data from public repositories (e.g., TCGA, GEO, ICGC).

- Apply strict inclusion criteria: samples must be from unpaired PDAC patients with available clinical data on lymph node (N) and distant (M) metastasis status.

- Stratify samples into "non-metastasis" (stages IA-IIA, N0) and "metastasis" (stages IIB-IV) groups.

Data Pre-processing and Integration:

- Normalization: Apply TMM (Trimmed Mean of M-values) normalization using the edgeR package to account for sequencing depth and composition.

- Gene Filtering: Filter out genes with low expression levels (e.g., < 5% quantile and < 0.1 Absolute Fold Change).

- Batch Effect Correction: Use the ARSyN (ASCA removal of systematic noise) method to remove technical variance introduced by different experimental batches.

Consensus Biomarker Candidate Identification:

- Split the processed data into training and validation sets.

- On the training set, perform a 10-fold cross-validation process that runs 100 models per fold.

- For variable selection in each model, sequentially apply three algorithms (e.g., LASSO, Boruta, and varSelRF).

- Define a robust biomarker candidate as a gene that appears in at least 80% of the models within a fold and across at least five folds.
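
The two-level consensus rule above can be sketched in a few lines of NumPy. This is only an illustration under stated assumptions: the selection results are taken to be stored in a boolean array of shape (folds, models, genes), and the function name consensus_genes and the toy data are hypothetical rather than part of the published pipeline.

```python
import numpy as np

def consensus_genes(selected, within_fold_frac=0.8, min_folds=5):
    """Return indices of genes that satisfy the two-level consensus rule.

    selected: boolean array of shape (n_folds, n_models, n_genes), where
    selected[f, m, g] is True if model m in fold f selected gene g.
    """
    selected = np.asarray(selected, dtype=bool)
    # Fraction of models within each fold that selected each gene.
    frac_per_fold = selected.mean(axis=1)             # (n_folds, n_genes)
    # A fold "votes" for a gene if the gene passes the within-fold threshold.
    fold_votes = frac_per_fold >= within_fold_frac    # (n_folds, n_genes)
    # Keep genes supported by at least `min_folds` folds.
    return np.flatnonzero(fold_votes.sum(axis=0) >= min_folds)

# Hypothetical selection results: 10 folds x 100 models x 6 genes.
rng = np.random.default_rng(0)
selected = rng.random((10, 100, 6)) < 0.3   # background genes: picked ~30% of the time
selected[:, :, 0] = True                    # gene 0: selected by every model in every fold
selected[:4, :, 1] = True                   # gene 1: always selected, but only in 4 folds
selected[4:, :, 1] = False

robust = consensus_genes(selected)
print(robust)
```

In this toy setup only gene 0 survives: gene 1 is perfectly stable within four folds but fails the requirement of support across at least five folds.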

Model Building and Evaluation:

- Build a final classifier (e.g., Random Forest) using the consensus genes on the balanced training data.

- Test the model's performance on the held-out validation set.

- Evaluate the model using a comprehensive set of metrics suitable for imbalanced data, including Precision, Recall, and F1-score for both the metastasis and non-metastasis classes.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Research Reagents and Resources for Biomarker Discovery

| Item | Function / Description | Application Example |

|---|---|---|

| RNA-seq Data (Illumina HiSeq) | High-throughput quantification of transcript expression levels [15]. | Profiling gene expression across cancer types (e.g., BRCA, LUAD) for classification [15]. |

| Targeted Metabolomics Kit (Absolute IDQ p180) | Quantifies 194 endogenous metabolites from 5 compound classes (e.g., amino acids, lipids) [5]. | Identifying metabolic biomarkers for Large-Artery Atherosclerosis (LAA) [5]. |

| LASSO Regression | A linear model with L1 regularization that performs variable selection by driving some coefficients to zero [15] [13]. | Initial filtering of thousands of genes or metabolites to a smaller, relevant subset for model building [13] [5]. |

| Random Forest Algorithm | An ensemble learning method that constructs multiple decision trees and aggregates their results [15] [13]. | Building robust classifiers for disease status (e.g., cancer type, metastasis) that are less prone to overfitting [15] [13]. |

| ADASYN (Adaptive Synthetic Sampling) | An oversampling technique that generates synthetic data for the minority class based on its density distribution [13]. | Balancing datasets where the number of metastatic samples is much lower than non-metastatic samples [13]. |

Advanced Concepts & Integration Strategies

How can multi-modal data integration improve biomarker discovery?

Integrating diverse data types (multi-omics) provides a more comprehensive molecular profile, which is crucial for understanding complex diseases. Machine learning offers three primary strategies for this integration [21]:

- Early Integration: Data from different sources (e.g., genomics, clinical) are combined into a single feature set before being fed into a model. Methods like Canonical Correlation Analysis (CCA) can be used to extract common features [21].

- Intermediate Integration: Data sources are joined during the model building process. Examples include kernel fusion in Support Vector Machines or multimodal neural networks [21].

- Late Integration (Stacking): Separate models are first trained on each data modality. Their predictions are then combined by a "meta-model" to make the final prediction [21].

The choice of strategy depends on the data characteristics and the biological question. A critical step is to assess whether new omics data provides added value over traditional clinical markers [21].

What is the role of novel computational tools like MarkerPredict?

Novel, hypothesis-generating frameworks are emerging to guide biomarker discovery. MarkerPredict is one such tool, designed specifically to identify candidate predictive biomarkers in oncology [20].

- Core Hypothesis: It is based on the premise that network-based properties of proteins (their position and connections in signaling networks) and structural features (like intrinsic protein disorder) influence their potential as biomarkers [20].

- Methodology:

- It analyzes proteins within signaling networks, focusing on their participation in network motifs (small, overrepresented subnetworks like three-node triangles).

- It integrates this topological information with protein disorder data from resources such as DisProt, AlphaFold, and IUPred.

- It uses machine learning models (Random Forest, XGBoost) trained on known positive and negative biomarker examples to classify and rank new candidate biomarkers [20].

- Output: The tool generates a Biomarker Probability Score (BPS), which provides a systematic ranking of potential predictive biomarkers for further experimental validation [20].

From Theory to Practice: Data-Centric and Algorithmic Solutions for Biomarker Discovery

Frequently Asked Questions

1. What is the class imbalance problem and why is it critical in biomarker research?

In machine learning for biomarker discovery, the class imbalance problem occurs when the classes of interest are not represented equally; for instance, when the number of patients with a specific disease or condition (the minority class) is much smaller than the number of healthy control subjects (the majority class) [22] [23]. This is a common challenge in medical research, where important but rare events, such as cancer metastasis or drug response, are underrepresented [13] [24]. Most standard classification algorithms are designed to maximize overall accuracy and become biased toward the majority class, failing to adequately learn the characteristics of the critical minority class. This leads to models with high false negative rates, which is unacceptable in healthcare, where failing to identify a disease or a metastatic event can have severe consequences [22] [4].

2. When should I use oversampling versus undersampling?

The choice depends on your dataset size and the specific nature of your research problem.

- Oversampling (e.g., SMOTE) is generally preferred when you have a limited amount of data overall, as it does not discard any information. It works by generating new, synthetic examples for the minority class, thereby helping the model learn its structure better [4] [6]. For example, in a study predicting breast cancer recurrence from blood microbiome data with only 7 minority class samples, SMOTE was successfully applied to augment the data and build a reliable model [25].

- Undersampling (e.g., Tomek Links, NearMiss) is often more suitable for larger datasets where discarding some majority class samples will not lead to a significant loss of information. Cleaning methods such as Tomek Links remove ambiguous or noisy majority samples, which can sharpen the class boundary, while NearMiss shrinks the majority class by keeping only samples chosen by their distance to minority instances [4] [23].
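
To make the oversampling mechanism concrete, the sketch below interpolates between a minority sample and one of its nearest minority neighbours, which is the core idea behind SMOTE. It is a simplified NumPy illustration and not the imbalanced-learn implementation; the function name smote_sketch and the seven toy samples are hypothetical.

```python
import numpy as np

def smote_sketch(X_min, n_new, k=5, seed=0):
    """Generate n_new synthetic points by interpolating between minority samples."""
    rng = np.random.default_rng(seed)
    X_min = np.asarray(X_min, dtype=float)
    n = len(X_min)
    k = min(k, n - 1)                            # cannot have more neighbours than samples
    # Pairwise Euclidean distances between minority samples.
    dists = np.linalg.norm(X_min[:, None, :] - X_min[None, :, :], axis=2)
    np.fill_diagonal(dists, np.inf)              # a sample is not its own neighbour
    neighbours = np.argsort(dists, axis=1)[:, :k]
    base = rng.integers(0, n, size=n_new)        # starting sample for each new point
    chosen = neighbours[base, rng.integers(0, k, size=n_new)]
    gap = rng.random((n_new, 1))                 # position along the connecting segment
    return X_min[base] + gap * (X_min[chosen] - X_min[base])

# Seven hypothetical minority-class samples with two features each.
X_minority = np.array([[1.0, 1.0], [1.2, 0.9], [0.8, 1.1], [1.1, 1.3],
                       [0.9, 0.7], [1.3, 1.0], [1.0, 1.2]])
X_synthetic = smote_sketch(X_minority, n_new=28)
print(X_synthetic.shape)
```

Because every synthetic point lies on a segment between two real minority samples, no genuinely new measurements are created; the method only densifies the region the minority class already occupies.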

3. Can resampling techniques be combined, and what are the advantages?

Yes, hybrid approaches that combine over- and undersampling are often highly effective. A prominent example is SMOTE-Tomek Links [26] [27]. This method first applies SMOTE to generate synthetic minority samples, which can potentially create noisy or overlapping examples. It then applies Tomek Links to remove any pairs of very close instances from opposite classes (both the original majority samples and the newly generated minority samples), effectively "cleaning" the dataset and creating a clearer decision boundary [26]. Research on fault diagnosis in electrical machines found that this combined technique provided the best performance across several classifiers [27].

4. How should I evaluate model performance on resampled imbalanced data?

Using accuracy as a sole metric is highly misleading for imbalanced datasets [23]. You should instead rely on a suite of metrics that are robust to class imbalance. Key metrics include:

- Recall (Sensitivity): The ability of the model to find all relevant minority class cases. This is often critical in medical applications.

- Precision: The accuracy of the model when it predicts the minority class.

- F1-Score: The harmonic mean of precision and recall, providing a single balanced metric.

- Area Under the Receiver Operating Characteristic Curve (AUROC): Measures the model's ability to distinguish between classes.

- Matthews Correlation Coefficient (MCC): A reliable metric that produces a high score only if the model performs well in all four confusion matrix categories [25] [13].
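
The contrast between accuracy and the metrics listed above can be checked with a small worked example. The sketch below computes them from confusion-matrix counts in plain Python; the cohort sizes and the helper name minority_metrics are made up for illustration, and AUROC is left out because it needs predicted scores rather than counts.

```python
import math

def minority_metrics(tp, fp, fn, tn):
    """Compute imbalance-aware metrics from confusion-matrix counts."""
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if (precision + recall) else 0.0)
    # MCC uses all four cells; it is set to 0 here when the denominator vanishes.
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    mcc = (tp * tn - fp * fn) / denom if denom else 0.0
    accuracy = (tp + tn) / (tp + fp + fn + tn)
    return {"accuracy": accuracy, "recall": recall, "precision": precision,
            "f1": f1, "mcc": mcc}

# Hypothetical cohort: 92 non-metastatic and 8 metastatic samples.
always_majority = minority_metrics(tp=0, fp=0, fn=8, tn=92)   # predicts "negative" for everyone
useful_model = minority_metrics(tp=6, fp=10, fn=2, tn=82)     # finds 6 of the 8 positives

print(always_majority)
print(useful_model)
```

The always-negative model scores higher on accuracy (0.92 versus 0.88) yet has zero recall, F1, and MCC, while the second model is clearly more useful for finding the minority class.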

Troubleshooting Guides

Problem: My model's recall for the minority class is still very low after applying SMOTE.

Solution: Investigate the quality of the synthetic samples and consider alternative techniques.

- Potential Cause 1: SMOTE is generating noisy samples. SMOTE can sometimes generate synthetic examples in the region of the majority class, introducing noise [4].

- Action: Apply a cleaning step after SMOTE. Use SMOTE-Tomek Links or SMOTE-ENN (Edited Nearest Neighbors) to remove such ambiguous instances. A study on cancer diagnosis found that the hybrid method SMOTEENN achieved the highest mean performance (98.19%) among many tested resampling techniques [22].

- Potential Cause 2: The internal structure of the minority class is not considered. Vanilla SMOTE treats all minority samples equally.

- Action: Use advanced variants of SMOTE that are more sensitive to the data distribution. Borderline-SMOTE only generates synthetic samples for minority instances that are near the class boundary, while ADASYN adaptively generates more samples for minority examples that are harder to learn [4] [13]. In a PDAC metastasis study, ADASYN was used during the training of a random forest model to identify robust biomarker candidates [13].

Problem: After applying Random Undersampling, my model has become unstable and performance has dropped.

Solution: You may have lost critical information from the majority class.

- Potential Cause: Indiscriminate removal of majority class samples. Random Undersampling can remove important samples that are essential for defining the overall data structure [23].

- Action: Switch to informed undersampling methods. Instead of random removal, use Tomek Links to only remove majority class samples that are "too close" to minority samples, thereby clarifying the class boundary [28] [26]. Alternatively, use the NearMiss algorithm, which selects majority class samples based on their distance to minority class instances, preserving the most informative examples [4] [23].
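
As an illustration of informed undersampling, the sketch below finds Tomek links, defined here as pairs of opposite-class samples that are each other's nearest neighbour, and drops only the majority member of each pair. It is a brute-force NumPy sketch for small data, not the imbalanced-learn implementation; the function name and the one-dimensional toy data are hypothetical.

```python
import numpy as np

def tomek_link_majority_indices(X, y, majority_label=0):
    """Return indices of majority samples that are part of a Tomek link."""
    X = np.asarray(X, dtype=float)
    y = np.asarray(y)
    dists = np.linalg.norm(X[:, None, :] - X[None, :, :], axis=2)
    np.fill_diagonal(dists, np.inf)
    nearest = dists.argmin(axis=1)           # nearest neighbour of every sample
    idx = np.arange(len(X))
    mutual = nearest[nearest] == idx         # i and nearest[i] are each other's nearest
    opposite = y != y[nearest]               # and they carry different labels
    in_link = mutual & opposite
    return idx[in_link & (y == majority_label)]

# Hypothetical 1-D data: majority (0) at 0.0, 1.0, 2.5, 2.9; minority (1) at 3.0, 5.0.
X = np.array([[0.0], [1.0], [2.5], [2.9], [3.0], [5.0]])
y = np.array([0, 0, 0, 0, 1, 1])

to_remove = tomek_link_majority_indices(X, y)
X_clean = np.delete(X, to_remove, axis=0)
y_clean = np.delete(y, to_remove)
print(to_remove, y_clean)
```

Only the majority sample at 2.9, which sits right next to the minority sample at 3.0, is removed; the rest of the majority class is left intact, which is why this approach discards far less information than random undersampling.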

Problem: I am unsure which resampling technique to choose for my biomarker dataset.

Solution: Follow an empirical, data-driven evaluation protocol.

- Action: Implement a comparative framework.

- Define a robust evaluation metric (e.g., F1-Score or MCC) and a cross-validation strategy suitable for imbalanced data (e.g., Repeated Stratified K-Fold) [26].

- Test a baseline model without any resampling.

- Evaluate a suite of resampling techniques on the same model and dataset. A suggested minimal set includes: SMOTE, ADASYN, Tomek Links, NearMiss, and one hybrid method like SMOTE-Tomek.

- Compare the results using multiple metrics and select the technique that yields the best and most stable performance for your specific task. A benchmarking study in drug discovery with GNNs highlighted that while oversampling often performed well, the best technique can be dataset-dependent [6].

Comparison of Resampling Techniques

The table below summarizes the core characteristics, mechanisms, and ideal use cases for the resampling techniques discussed.

| Technique | Type | Core Mechanism | Best For | Key Advantages | Key Limitations |

|---|---|---|---|---|---|

| SMOTE [4] [26] | Oversampling | Generates synthetic minority samples by interpolating between existing ones. | Datasets with a small overall size, where discarding samples is not affordable. | Mitigates overfitting compared to random duplication. | May generate noisy samples in overlapping regions. |

| ADASYN [4] [13] | Oversampling | Adaptively generates more synthetic data for "hard-to-learn" minority samples. | Complex datasets where the minority class is not homogeneous. | Focuses model attention on difficult minority class examples. | Can be sensitive to noisy data and outliers. |

| Tomek Links [28] [26] | Undersampling | Removes majority class samples that form a "Tomek Link" (a pair of opposite-class samples that are each other's nearest neighbors). | Cleaning datasets; clarifying class boundaries, often used in combination with SMOTE. | Effectively removes borderline majority samples, refining the decision boundary. | Does not change the number of minority samples; can be conservative. |

| NearMiss [4] [23] | Undersampling | Selects majority class samples based on their distance to minority class instances (e.g., choosing the closest). | Large datasets where informed data reduction is needed. | Preserves important structural information from the majority class. | Computational cost can be high with very large datasets. |

| SMOTE-Tomek [26] [27] | Hybrid | First applies SMOTE, then uses Tomek Links to clean the resulting dataset. | General-purpose use; achieving a balance between adding and cleaning data. | Combines the benefits of both generating and cleaning data. | Introduces complexity with two steps to implement and tune. |

Experimental Protocol: Benchmarking Resampling Techniques

This protocol provides a step-by-step methodology for comparing resampling techniques, as implemented in studies on cancer diagnosis and fault detection [22] [27].

- Data Preparation and Splitting:

- Begin with a pre-processed biomarker dataset (e.g., gene expression, protein levels) with a defined binary outcome (e.g., metastatic vs. non-metastatic).

- Split the data into training (80%) and a completely held-out test set (20%), ensuring the class imbalance is preserved in both splits [25].

- Define Resampling Candidates:

- Select a set of resampling techniques to evaluate. A recommended starting set is: No Resampling (Baseline), SMOTE, ADASYN, Tomek Links, and SMOTE-Tomek.

- Define Model and Evaluation Framework:

- Choose a classifier known to perform well on complex biological data, such as Random Forest [22] [13].

- Define a robust cross-validation strategy on the training set only. Use Repeated Stratified K-Fold Cross-Validation (e.g., 10 folds, 3 repeats) to ensure each fold maintains the class distribution [26].

- Specify evaluation metrics. Record Precision, Recall (Sensitivity), and F1-Score for the minority class, together with MCC, which summarizes the full confusion matrix.

- Model Training and Validation:

- For each resampling technique, apply it within the cross-validation loop on the training fold only. This prevents data leakage from the validation fold.

- Train the model on the resampled training fold and calculate the metrics on the untouched validation fold.

- Repeat this process for all folds and repeats, then aggregate the results (e.g., calculate the mean and standard deviation for each metric).

- Final Evaluation and Technique Selection:

- Select the resampling technique that achieved the best aggregated performance (e.g., highest mean F1-Score or MCC) during cross-validation.

- Train a final model on the entire training set using this selected resampling technique.

- Evaluate this final model on the held-out test set to obtain an unbiased estimate of its performance on new data.

Workflow Diagram: Resampling in Biomarker Discovery

The following diagram illustrates the logical workflow for integrating resampling techniques into a biomarker discovery pipeline, as demonstrated in several research studies [22] [13].

The Scientist's Toolkit: Essential Research Reagents & Software

This table details key computational "reagents" and tools essential for implementing resampling techniques in a biomarker research pipeline.

| Tool/Reagent | Function in Experiment | Example/Note |

|---|---|---|

| imbalanced-learn (imblearn) | Python library providing the core implementation of resampling algorithms like SMOTE, Tomek Links, and NearMiss. | Essential software dependency. Provides a scikit-learn compatible API for easy integration into model pipelines [28] [26]. |

| scikit-learn | Provides machine learning models (Random Forest, SVM), data splitting utilities, and comprehensive evaluation metrics. | Used for building the classifier and calculating performance metrics like precision, recall, and F1-score [25] [26]. |

| Random Forest Classifier | A robust, ensemble ML algorithm frequently used as the base model for classification tasks on imbalanced biological data. | Demonstrated top performance in studies on cancer diagnosis and prognosis, making it a strong default choice [22] [13]. |

| Synthetic Minority Class | The output of oversampling techniques; a set of generated data points that represent the underrepresented condition. | In a breast cancer recurrence study, the minority class (7 patients) was augmented to 35 synthetic samples using SMOTE for effective model training [25]. |

| Stratified K-Fold Cross-Validation | A resampling procedure used for model evaluation that preserves the class distribution in each fold, critical for imbalanced data. | Prevents over-optimistic performance estimates. Should be applied when comparing different resampling strategies [13] [26]. |

Frequently Asked Questions (FAQs)

Q1: What is cost-sensitive learning and how does it help with class imbalance in biomarker identification?

Cost-sensitive learning directly incorporates the cost of misclassification into a machine learning algorithm. In the context of biomarker discovery, where failing to identify a true positive (e.g., a metastatic cancer sample) is often far more costly than a false alarm, this approach assigns a higher penalty for misclassifying the minority class. This forces the model to pay more attention to learning the characteristics of the rare but critical cases, leading to more clinically relevant models [29].

Q2: For a Random Forest model, should I use 'class_weight' or resample my data?

Both are valid strategies. Using the class_weight parameter (e.g., setting it to 'balanced') is often more straightforward as it does not reduce your dataset size. The 'balanced' mode automatically adjusts weights inversely proportional to class frequencies, giving more weight to the minority class. Resampling techniques like SMOTE or random undersampling create a new, balanced dataset, which can also be effective. The optimal choice can be dataset-dependent, so empirical testing is recommended [30] [4] [31].
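
For reference, the 'balanced' heuristic in scikit-learn computes each class weight as n_samples / (n_classes * class_count). The short sketch below reproduces that arithmetic with NumPy on hypothetical labels; the helper name balanced_class_weights is illustrative.

```python
import numpy as np

def balanced_class_weights(y):
    """Weights following the 'balanced' heuristic: n_samples / (n_classes * count)."""
    classes, counts = np.unique(y, return_counts=True)
    weights = len(y) / (len(classes) * counts)
    return dict(zip(classes.tolist(), weights.tolist()))

# Hypothetical labels: 90 controls (0) and 10 cases (1).
y = np.array([0] * 90 + [1] * 10)
weights = balanced_class_weights(y)
print(weights)
```

With a 90/10 split, each minority sample counts nine times as much as a majority sample (5.0 versus roughly 0.56). A dictionary like this can also be passed directly as class_weight when custom costs are preferred over the automatic mode.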

Q3: How do I set the scale_pos_weight parameter in XGBoost for a binary classification problem?

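The scale_pos_weight parameter is used to balance the positive and negative classes in binary classification. A typical and effective value to start with is the ratio of the number of negative instances to the number of positive instances: sum(negative instances) / sum(positive instances) [32]. This parameter applies only to binary problems. XGBoost does not expose a class_weight parameter, so for multi-class problems the usual approach is to pass per-sample weights (for example, inversely proportional to class frequency) through the sample_weight argument of fit.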
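
As a small worked example of the ratio described here, the sketch below derives a starting value for scale_pos_weight from hypothetical training labels. It also shows one commonly used option for more than two classes, namely per-sample weights inversely proportional to class frequency. The XGBoost calls appear only in comments, since they assume the xgboost package and an existing feature matrix.

```python
import numpy as np

# Hypothetical binary labels: 180 negatives, 20 biomarker-positive samples.
y_train = np.array([0] * 180 + [1] * 20)
n_neg = int((y_train == 0).sum())
n_pos = int((y_train == 1).sum())
scale_pos_weight = n_neg / n_pos
print(scale_pos_weight)

# Assumed usage (requires the xgboost package):
#   from xgboost import XGBClassifier
#   model = XGBClassifier(scale_pos_weight=scale_pos_weight)

# Hypothetical three-class labels: weight each sample inversely to its class frequency.
y_multi = np.array([0] * 70 + [1] * 20 + [2] * 10)
classes, counts = np.unique(y_multi, return_counts=True)
class_w = len(y_multi) / (len(classes) * counts)
sample_weight = class_w[np.searchsorted(classes, y_multi)]

# Assumed usage: model.fit(X_multi, y_multi, sample_weight=sample_weight)
```

With these weights every class contributes the same total weight to the training loss, regardless of how many samples it has.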

Q4: Why is Accuracy a bad metric for my imbalanced biomarker dataset and what should I use instead?

Accuracy is misleading for imbalanced data because a model that simply always predicts the majority class will achieve a high accuracy score, while completely failing to identify the minority class of interest (e.g., metastatic samples). For evaluation, you should use metrics that are robust to class imbalance. Balanced Accuracy (BAcc), which is the arithmetic mean of sensitivity and specificity, is highly recommended. The Area Under the Curve (AUC) of the Receiver Operating Characteristic (ROC) and the F1-score for the minority class are also reliable choices [33] [13].

Q5: My Random Forest model is still biased toward the majority class even after setting class_weight. What can I do?

You can explore these advanced strategies:

- Combine Class Weighting with Undersampling: Use the class_weight parameter in conjunction with random undersampling of the majority class for a more robust effect [34].

- Use BalancedRandomForest: This variant, available in libraries like imblearn, specifically combines Random Forest with built-in undersampling to further focus on the minority class [30].

- Verify Your Evaluation Metrics: Ensure you are using balanced metrics like Balanced Accuracy to track true performance improvements [33].

Troubleshooting Guides

Problem: Your model reports high overall accuracy (e.g., 92%), but a closer look reveals it fails to predict the minority class (e.g., metastatic cancer samples) correctly.

Diagnosis: This is a classic symptom of a model biased by class imbalance. The standard accuracy metric is dominated by the majority class performance [33].

Solution Steps:

- Switch Your Performance Metric: Immediately stop using accuracy. Instead, calculate the Balanced Accuracy (BAcc) and the F1-score specifically for the minority class. This will give you a true picture of model performance [33].

- Apply Cost-Sensitive Learning: Implement class weighting in your model.

- For Random Forest: Instantiate your model with class_weight='balanced'. This automatically sets weights inversely proportional to class frequencies [30] [34].

- For XGBoost: For binary classification, set scale_pos_weight = number_of_negative_samples / number_of_positive_samples [32]. For multi-class problems, XGBoost has no class_weight parameter; instead, pass per-sample weights derived from class frequencies through the sample_weight argument of fit.

- Re-train and Re-evaluate: Train the new, cost-sensitive model and evaluate it using the balanced metrics from Step 1. You should now see a more realistic and useful assessment of its predictive power.

Issue 2: Implementing a Robust Biomarker Discovery Pipeline with Imbalanced Data

Problem: You need a reproducible and robust workflow for identifying biomarker candidates from high-dimensional, imbalanced transcriptomic data.

Diagnosis: Standard, off-the-shelf ML pipelines are prone to data leakage and irreproducibility, especially with imbalanced data and a low sample-to-feature ratio [13].

Solution Steps: This guide outlines a robust pipeline inspired by a published PDAC metastasis study [13].

- Data Integration and Pre-processing: Pool and normalize data from multiple sources (e.g., TCGA, GEO). Critically, correct for technical variance and batch effects using methods like ARSyN to isolate the true biological signal.

- Robust Feature Selection: Split data into training and validation sets. On the training set, perform a robust variable selection process using a 10-fold cross-validation loop that combines multiple algorithms (e.g., LASSO, Boruta, varSelRF). A gene is considered a robust candidate only if it is selected in a high percentage of models (e.g., 80%) across multiple folds.

- Model Training with Imbalance Handling: Build a Random Forest model using the selected robust features. To handle imbalance, employ the class_weight='balanced' parameter or use an oversampling technique like ADASYN on the training data [4] [13].

- Rigorous Validation: Finally, test the final model on the held-out validation dataset that was not used in any part of the feature selection or training process, using a comprehensive set of balanced performance metrics.

The following workflow diagram illustrates this robust pipeline:

Experimental Protocols & Data Summaries

Protocol 1: Benchmarking Class Weighting in Random Forest

This protocol details a methodology to evaluate the effectiveness of different class weighting strategies in a Random Forest classifier for imbalanced data [30] [34].

1. Hypothesis: Using class weighting (balanced or balanced_subsample) will improve the Balanced Accuracy and F1-score of a Random Forest model on an imbalanced biomarker dataset, compared to a model with no weighting.

2. Experimental Setup:

- Dataset: Use a labeled dataset with a known class imbalance (e.g., the public SEER Breast Cancer Dataset [31]).

- Models: Train three Random Forest models with n_estimators=100:

- Model A: class_weight=None

- Model B: class_weight='balanced'

- Model C: class_weight='balanced_subsample'

- Evaluation Method: Use a stratified 5-fold cross-validation. Report mean and standard deviation for all metrics.

3. Key Parameters and Variables:

- n_estimators: 100

- cross-validation: Stratified 5-Fold

- random_state: 42 (for reproducibility)

4. Analysis and Interpretation: Compare the performance metrics across the three models. The model with the highest Balanced Accuracy and minority-class F1-score, while maintaining reasonable precision, is the most effective for the given task.
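
A minimal scikit-learn sketch of this protocol is shown below. It assumes synthetic data from make_classification in place of a real cohort, so the printed numbers illustrate the workflow only and say nothing about which weighting mode performs best on actual biomarker data.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import StratifiedKFold, cross_validate

# Synthetic stand-in for the imbalanced dataset (about 10% minority class).
X, y = make_classification(n_samples=500, n_features=20, n_informative=5,
                           weights=[0.9, 0.1], random_state=42)

cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=42)
scoring = ["balanced_accuracy", "f1", "precision"]

summary = {}
for label, cw in [("A", None), ("B", "balanced"), ("C", "balanced_subsample")]:
    model = RandomForestClassifier(n_estimators=100, class_weight=cw, random_state=42)
    scores = cross_validate(model, X, y, cv=cv, scoring=scoring)
    # Store (mean, std) across the five folds for every metric.
    summary[label] = {m: (scores[f"test_{m}"].mean(), scores[f"test_{m}"].std())
                      for m in scoring}

for label, metrics in summary.items():
    print(label, {m: f"{mean:.3f} +/- {std:.3f}" for m, (mean, std) in metrics.items()})
```

Reporting the standard deviation alongside the mean matters here, because with few minority samples per fold the differences between the three models can be smaller than the fold-to-fold variation.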

Performance Metrics for Imbalanced Classification

The table below summarizes key metrics to replace accuracy when evaluating models on imbalanced data [33] [13].

| Metric | Formula | Interpretation | Ideal Value |

|---|---|---|---|

| Balanced Accuracy (BAcc) | (Sensitivity + Specificity) / 2 | Average of recall obtained on each class. Robust to imbalance. | Closer to 1 |

| F1-Score (Minority Class) | 2 * (Precision * Recall) / (Precision + Recall) | Harmonic mean of precision and recall for the class of interest. | Closer to 1 |

| Area Under the ROC Curve (AUC-ROC) | Area under the ROC plot | Measures the model's ability to distinguish between classes across thresholds. | Closer to 1 |

| Precision (Minority Class) | True Positives / (True Positives + False Positives) | When the model predicts the minority class, how often is it correct? | Closer to 1 |

| Recall (Sensitivity) | True Positives / (True Positives + False Negatives) | What proportion of actual minority class samples were correctly identified? | Closer to 1 |

Protocol 2: Integrating SMOTE with Random Forest

This protocol is based on a successful application in materials design and catalyst discovery, where SMOTE was used to handle data imbalance [4].

1. Hypothesis: Integrating the Synthetic Minority Over-sampling Technique (SMOTE) with a Random Forest classifier will enhance the model's ability to identify the minority class in a high-dimensional biomolecular dataset.

2. Experimental Workflow:

- Data Splitting: Split the data into training and test sets. Important: Apply SMOTE only to the training set to avoid data leakage.

- Resampling: Apply the SMOTE algorithm to the training data. SMOTE generates synthetic samples for the minority class by interpolating between existing instances.

- Model Training: Train a standard Random Forest classifier (class_weight=None) on the resampled (balanced) training data.

- Evaluation: Evaluate the trained model on the original, untouched test set using Balanced Accuracy and other metrics from the table above.

The following diagram visualizes this workflow:

The Scientist's Toolkit: Research Reagent Solutions

The table below lists essential computational "reagents" for handling class imbalance in biomarker research.

| Item | Function / Purpose | Example / Note |

|---|---|---|

| Random Forest (scikit-learn) | An ensemble classifier that can be made cost-sensitive via the class_weight parameter. | Use RandomForestClassifier(class_weight='balanced') [30] [34]. |

| XGBoost (xgboost) | A powerful gradient boosting framework with built-in cost-sensitivity. | Use scale_pos_weight for binary problems; for multi-class problems, pass per-sample weights via sample_weight in fit [32]. |

| BalancedRandomForest (imblearn) | A variant of Random Forest that combines undersampling with ensemble learning. | Specifically designed for imbalanced datasets [30] [31]. |

| SMOTE (imblearn) | An oversampling technique that generates synthetic minority class samples. | Helps prevent overfitting compared to simple duplication [4] [31]. |

| ADASYN (imblearn) | An adaptive oversampling method that focuses on generating samples for hard-to-learn minority class instances. | Can be more effective than SMOTE for complex distributions [4] [13]. |

| Stratified K-Fold (scikit-learn) | A cross-validation method that preserves the percentage of samples for each class in every fold. | Crucial for obtaining reliable performance estimates on imbalanced data [13]. |

| Balanced Accuracy Metric (scikit-learn) | A performance metric that is robust to imbalance, calculated as the average recall per class. | Should be the default metric for model selection [33]. |

Core Concept: What is Focal Loss and Why is it Needed?

In the context of biomarker identification, researchers often work with datasets where easily classifiable samples (e.g., healthy tissue or common biomarkers) vastly outnumber the "hard" examples that are difficult to classify but are critically important (e.g., rare cellular structures or novel biomarker patterns). This is a classic class imbalance problem.

The Problem with Standard Cross-Entropy Loss

The traditional Cross-Entropy (CE) loss treats every sample equally. In an imbalanced dataset, the loss from the numerous "easy" negative samples (e.g., background tissue) can dominate the total loss and gradient signal during training. This causes the model to become biased toward the majority class and fail to learn the nuanced features required to identify the minority, "hard-to-classify" biomarker samples [35] [36]. You may achieve a high accuracy, but the model will perform poorly on the minority class of interest.

How Focal Loss Provides a Solution

Focal Loss (FL) modifies standard cross-entropy to address this issue. It introduces a dynamically scaled cross-entropy loss, where the scaling factor decays to zero as confidence in the correct class increases. This automatically down-weights the contribution of easy examples and forces the model to focus on hard, misclassified examples during training [35] [37] [36].

The Focal Loss function is defined as:

FL(pₜ) = -αₜ(1 - pₜ)ᵞ log(pₜ)

Where:

- pₜ is the model's estimated probability for the true class.

- (1 - pₜ)ᵞ is the modulating factor. When a sample is misclassified and pₜ is small, this factor is close to 1, and the loss is nearly unaffected. When a sample is easy to classify and pₜ is close to 1, this factor tends towards 0, down-weighting the loss for that sample.

- γ (gamma) is the focusing parameter, typically set to 2. It controls the rate at which easy examples are down-weighted. A higher γ increases the focus on hard examples.

- αₜ (alpha) is a weighting factor, often used to balance class importance [35] [36].
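
The effect of the modulating factor is easiest to see with numbers. The NumPy sketch below evaluates the formula above for one easy and one hard positive example and compares the result with plain cross-entropy. The probabilities are invented for illustration, and the values γ = 2 and α = 0.25 are used only as common defaults.

```python
import numpy as np

def focal_loss(p, y, gamma=2.0, alpha=0.25, eps=1e-7):
    """Per-sample binary focal loss for predicted positive-class probabilities p."""
    p = np.clip(np.asarray(p, dtype=float), eps, 1.0 - eps)
    y = np.asarray(y)
    p_t = np.where(y == 1, p, 1.0 - p)               # probability assigned to the true class
    alpha_t = np.where(y == 1, alpha, 1.0 - alpha)   # class-dependent weighting factor
    return -alpha_t * (1.0 - p_t) ** gamma * np.log(p_t)

y_true = np.array([1, 1])
p_pred = np.array([0.95, 0.10])    # an easy positive and a hard (misclassified) positive
ce = -np.log(p_pred)               # plain cross-entropy, for comparison
fl = focal_loss(p_pred, y_true)

print("cross-entropy:", ce, "hard/easy ratio:", ce[1] / ce[0])
print("focal loss:   ", fl, "hard/easy ratio:", fl[1] / fl[0])
```

Under cross-entropy the hard example contributes a few dozen times more loss than the easy one; under focal loss the ratio grows by orders of magnitude, which is the down-weighting of easy examples described above.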

The following diagram illustrates the logical relationship and workflow for implementing Focal Loss to tackle class imbalance in biomarker research.

Performance Evidence: Quantitative Results in Medical Research

The application of Focal Loss and similar advanced loss functions has demonstrated significant performance improvements in various medical AI tasks, from disease classification to image segmentation. The table below summarizes key quantitative findings from recent studies.

Table 1: Performance of Advanced Loss Functions in Medical Research Applications

| Research Context | Model / Technique | Key Performance Metrics | Compared Baseline |
|---|---|---|---|
| Liver Disease Classification [38] | AFLID-Liver (Integrates Focal Loss, Attention, LSTM) | Accuracy: 99.9%, Precision: 99.9%, F-score: 99.9% | Baseline GRU Model (Accuracy: 99.7%, F-score: 97.9%) |
| Medical Image Segmentation (5 public datasets) [37] | Unified Focal Loss (Generalizes Dice & CE losses) | Consistently outperformed 6 other loss functions (Dice, CE, etc.) in 2D binary, 3D binary, and 3D multiclass tasks. | Standard Dice Loss & Cross-Entropy Loss |
| TMB Biomarker Prediction from Pathology Images [39] | Saliency ROB Search (SRS) Framework | AUC: 0.833, Average Precision: 0.782 | Baseline without specialized modules (AUC: 0.691) |

Implementation Guide: Integrating Focal Loss in Your Biomarker Pipeline

Here is a detailed methodology for implementing and tuning Focal Loss in a biomarker classification experiment, using a CNN-based image classifier as an example.

Experimental Protocol: Focal Loss for Biomarker Detection

Problem Formulation & Data Preparation:

- Task: Binary classification of tissue patches as containing a specific biomarker pattern ("positive") or not ("negative").

- Data Split: Divide your Whole Slide Images (WSIs) or biomarker data into training, validation, and test sets at the patient level to prevent data leakage.

- Preprocessing: Apply standard normalization and augmentation techniques (e.g., random rotations, flips, color jitter) to the training patches. This increases robustness.

Model Configuration:

- Architecture: Select a backbone architecture like a pre-trained ResNet or a U-Net-style network, depending on your task (classification or segmentation).

- Final Layer: Ensure the final layer has a single output node with a sigmoid activation for binary classification.

Focal Loss Integration:

- Code Implementation: Replace your standard loss function with Focal Loss. Below is a sample implementation in Python using TensorFlow/Keras.
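As a framework-free illustration of the arithmetic, the sketch below computes binary Focal Loss in plain Python rather than TensorFlow/Keras, so it can be checked by hand before porting it to tensor operations. The function name `binary_focal_loss`, its defaults, and the use of clipping to keep the logarithm finite are assumptions made for this example, not the implementation used in the cited studies.

```python
import math


def binary_focal_loss(y_true, p_pred, gamma=2.0, alpha=0.25, eps=1e-8):
    """Mean binary focal loss over a batch.

    y_true: sequence of 0/1 labels.
    p_pred: sequence of predicted probabilities for class 1 (sigmoid outputs).
    """
    total = 0.0
    for y, p in zip(y_true, p_pred):
        # Clip the probability so that log() never receives exactly 0.
        p = min(max(p, eps), 1.0 - eps)
        # p_t is the probability the model assigned to the true class.
        p_t = p if y == 1 else 1.0 - p
        # alpha_t weights positives by alpha and negatives by (1 - alpha).
        alpha_t = alpha if y == 1 else 1.0 - alpha
        total += -alpha_t * (1.0 - p_t) ** gamma * math.log(p_t)
    return total / len(y_true)


# One confidently correct positive versus one badly misclassified positive.
easy = binary_focal_loss([1], [0.9])
hard = binary_focal_loss([1], [0.1])
print(f"easy positive: {easy:.6f}  hard positive: {hard:.6f}")
```

Setting `gamma=0.0` removes the modulating factor and leaves an alpha-weighted cross-entropy, which is a convenient sanity check when porting the function to a deep learning framework.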

Hyperparameter Tuning:

- Gamma (γ): Start with a value of 2.0 [35] [36]. To tune, try a range (e.g., 1, 2, 3, 4) and monitor performance on your validation set. A higher γ increases the focus on hard examples.

- Alpha (α): This can be the inverse class frequency or a hyperparameter found via cross-validation. A common starting point is 0.25 for the positive class [35]. It helps balance class importance.

- Use a validation set to find the optimal (α, γ) combination for your specific dataset.

Model Training & Evaluation:

- Training: Train the model using the Focal Loss. Monitor both loss and relevant performance metrics on the validation set.

- Evaluation: Do not rely on accuracy. Use metrics that are robust to class imbalance:

- Balanced Accuracy (BAcc): The arithmetic mean of sensitivity and specificity. Highly recommended for imbalanced data [33].

- Area Under the ROC Curve (AUC): Measures the model's ability to distinguish between classes.

- Precision-Recall Curve (PRC) and Average Precision (AP): Especially informative when the positive class is rare [39].

- F-score: The harmonic mean of precision and recall.
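The gap between accuracy and the imbalance-aware metrics is easiest to see on a degenerate classifier. The sketch below uses a hypothetical cohort of 100 samples with 5 positives and a model that always predicts "negative". The helper names are illustrative, and the F-score shown is the F1 variant.

```python
def confusion_counts(y_true, y_pred):
    """Return (tp, tn, fp, fn) for binary 0/1 labels."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    return tp, tn, fp, fn


def balanced_accuracy(y_true, y_pred):
    """Arithmetic mean of sensitivity and specificity."""
    tp, tn, fp, fn = confusion_counts(y_true, y_pred)
    sensitivity = tp / (tp + fn) if tp + fn else 0.0
    specificity = tn / (tn + fp) if tn + fp else 0.0
    return (sensitivity + specificity) / 2


def f1_score(y_true, y_pred):
    """Harmonic mean of precision and recall, written in terms of counts."""
    tp, _, fp, fn = confusion_counts(y_true, y_pred)
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0


# Hypothetical cohort: 5 biomarker-positive samples out of 100.
y_true = [1] * 5 + [0] * 95
y_pred = [0] * 100  # a model that never predicts "positive"

accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
bacc = balanced_accuracy(y_true, y_pred)
f1 = f1_score(y_true, y_pred)
print(f"accuracy={accuracy:.2f}  balanced accuracy={bacc:.2f}  F1={f1:.2f}")
```

Accuracy comes out at 0.95 even though no positive sample is found, while balanced accuracy is 0.50 and F1 is 0.00.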

Troubleshooting FAQs

Q1: I've implemented Focal Loss, but my model's performance on the minority biomarker class is still poor. What should I check?

- A: First, verify your data pipeline. Ensure there are no label leaks or incorrect annotations in your "hard" samples. Second, revisit your hyperparameters. The default γ=2 and α=0.25 are starting points; systematically grid search over γ in the range [1, 5] and α around the inverse class frequency. Finally, consider combining Focal Loss with data-level strategies like strategic oversampling of the minority class (e.g., SMOTE) [40] or using more sophisticated data sampling techniques.

Q2: How is Focal Loss different from simply weighting classes in standard Cross-Entropy?

- A: Class-weighted Cross-Entropy applies a static weight to all samples in a class. It addresses the imbalance between classes but does not distinguish between easy and hard samples within a class. Focal Loss adds a dynamic, sample-specific scaling factor (1 - pₜ)ᵞ. An easy sample from the minority class is still down-weighted, while a hard sample from the majority class keeps almost its full loss, so the model focuses its learning capacity on the most informative examples, regardless of their class [35] [36].

Q3: My model training has become unstable after switching to Focal Loss. Why?

- A: Instability often arises from an excessively high γ value, which can overly suppress the loss from a large number of easy examples, making the gradient signal noisy. Try reducing the γ value (e.g., from 2.0 to 1.5 or 1.0). Also, check your model's output probabilities (pₜ). Adding a small epsilon (e.g., 1e-8) inside the logarithm in the loss function, as shown in the code sample, can prevent numerical instability from log(0).

Q4: For biomarker segmentation tasks, should I use Focal Loss or Dice Loss?

- A: Both are designed to handle class imbalance. Dice Loss is region-based and directly optimizes for the overlap between prediction and ground truth, making it a natural fit for segmentation. However, it can be unstable with very small lesions. Focal Loss is distribution-based and excels at refining boundaries and handling class imbalance by focusing on hard pixels. A common practice is to use a compound loss, such as a weighted sum of Dice and Focal Loss, or the related Unified Focal Loss [37], which generalizes Dice-based and cross-entropy-based losses. Compound losses of this kind combine the benefits of both for robust biomarker segmentation.
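A compound loss can be sketched as a soft Dice term plus a weighted Focal term, computed over a flattened mask. This is a simple sum for illustration only and is not the Unified Focal Loss formulation from [37]. The function names, the `smooth` constant, and `focal_weight` are assumptions.

```python
import math


def soft_dice_loss(y_true, p_pred, smooth=1.0):
    """1 - soft Dice coefficient over a flattened mask; `smooth` avoids 0/0."""
    intersection = sum(y * p for y, p in zip(y_true, p_pred))
    total = sum(y_true) + sum(p_pred)
    return 1.0 - (2.0 * intersection + smooth) / (total + smooth)


def focal_loss(y_true, p_pred, gamma=2.0, alpha=0.25, eps=1e-8):
    """Mean binary focal loss over the same flattened pixels."""
    total = 0.0
    for y, p in zip(y_true, p_pred):
        p = min(max(p, eps), 1.0 - eps)  # keep log() finite
        p_t = p if y == 1 else 1.0 - p
        alpha_t = alpha if y == 1 else 1.0 - alpha
        total += -alpha_t * (1.0 - p_t) ** gamma * math.log(p_t)
    return total / len(y_true)


def dice_focal_loss(y_true, p_pred, focal_weight=1.0):
    """Region-based term plus a weighted pixel-wise term."""
    return soft_dice_loss(y_true, p_pred) + focal_weight * focal_loss(y_true, p_pred)


# A tiny flattened mask with one foreground pixel out of four.
mask = [1, 0, 0, 0]
good = dice_focal_loss(mask, [1.0, 0.0, 0.0, 0.0])
bad = dice_focal_loss(mask, [0.0, 1.0, 1.0, 1.0])
print(f"good prediction: {good:.4f}  bad prediction: {bad:.4f}")
```

In practice `focal_weight` would be tuned on the validation set, since the two terms live on different scales.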

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Resources for Biomarker Identification Experiments with Imbalanced Data

| Item / Reagent | Function / Explanation |
|---|---|
| Histopathology Whole-Slide Images (WSIs) [39] | The primary raw data input. H&E-stained WSIs provide the morphological context for identifying biomarker-relevant regions. |
| Focal Loss Function [35] [37] | The core algorithmic component used during model training to mitigate class imbalance by focusing learning on misclassified biomarker samples. |
| Imbalanced-Learn (imblearn) Python Library [40] | A software toolkit providing various resampling techniques (e.g., SMOTE, Tomek Links) that can be used in conjunction with Focal Loss for data-level balancing. |
| Balanced Accuracy (BAcc) Metric [33] | A critical evaluation metric that provides a reliable performance assessment on imbalanced datasets by averaging sensitivity and specificity. |
| Functional Principal Component Analysis (FPCA) [41] | A statistical technique for feature extraction from irregular and sparse longitudinal biomarker data, reducing dimensionality before classification. |
| Region of Biomarker Relevance (ROB) Framework [39] | A conceptual and computational framework to identify and focus on the specific morphological areas in an image most associated with a biomarker, reducing noise. |

In machine learning-based biomarker identification, particularly for drug discovery, a prevalent issue is severely imbalanced datasets. In such cases, the number of active compounds (the minority class) is vastly outnumbered by inactive compounds (the majority class). This imbalance can lead to biased models that exhibit high overall accuracy but fail to identify the rare, active compounds that are of primary interest [42] [4]. This case study explores how the integration of the Synthetic Minority Over-sampling Technique (SMOTE) with the Random Forest (RF) ensemble algorithm was successfully applied to overcome this challenge and identify novel Histone Deacetylase 8 (HDAC8) inhibitors, a promising target for cancer therapy [43].

Technical Support Center: FAQs & Troubleshooting Guides

Frequently Asked Questions

Q1: Why did our initial model for virtual screening fail to identify any active HDAC8 inhibitors, despite high overall accuracy?

A: This is a classic symptom of the imbalanced data problem. Your model was likely biased toward predicting the majority "inactive" class. A Japanese research team encountered this exact issue. Their initial model, trained on an imbalanced dataset from ChEMBL (218 active vs. 1805 inactive compounds, a ratio of 1:8.28), failed to identify any active compounds in the first screening round. The model's accuracy was misleading, as it simply learned to always predict "inactive" [43].

Q2: What is SMOTE, and how does it improve the prediction of active compounds?

A: The Synthetic Minority Over-sampling Technique (SMOTE) is an advanced oversampling method that generates synthetic samples for the minority class. Instead of simply duplicating existing minority samples, SMOTE creates new, synthetic examples by interpolating between existing minority instances that are close in feature space. This technique helps balance the class distribution and allows the machine learning model to learn a more robust decision boundary for the minority class, thereby improving its ability to identify active compounds [42] [4] [43].
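The interpolation step can be sketched in a few lines of plain Python. This is a simplified illustration of the idea, not the imbalanced-learn implementation. The function name `smote_sketch`, the brute-force neighbour search, and the toy 2-D points are assumptions.

```python
import random


def smote_sketch(minority, n_synthetic, k=5, seed=0):
    """Create synthetic minority points by interpolating towards near neighbours.

    minority: list of feature vectors (lists of floats) from the minority class.
    """
    if len(minority) < 2:
        raise ValueError("need at least two minority samples to interpolate")
    rng = random.Random(seed)
    k = min(k, len(minority) - 1)
    synthetic = []
    for _ in range(n_synthetic):
        base = rng.choice(minority)
        # The k nearest minority neighbours of `base` (squared Euclidean distance).
        neighbours = sorted(
            (x for x in minority if x is not base),
            key=lambda x: sum((a - b) ** 2 for a, b in zip(base, x)),
        )[:k]
        neighbour = rng.choice(neighbours)
        gap = rng.random()  # position along the segment from base to neighbour
        synthetic.append([a + gap * (b - a) for a, b in zip(base, neighbour)])
    return synthetic


# Toy minority class: four points at the corners of the unit square.
actives = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
new_points = smote_sketch(actives, n_synthetic=10, k=2)
print(len(new_points), new_points[0])
```

Every synthetic point lies on a line segment between two real minority samples. One consequence is that, for binary fingerprints, interpolated features take fractional values.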

Q3: Why is Random Forest often chosen in conjunction with SMOTE for this task?

A: Random Forest is an ensemble algorithm that builds multiple decision trees and aggregates their results. It is particularly effective for several reasons:

- Robustness to Noise: It is generally robust to the minor noise that might be introduced by SMOTE [42].

- High Performance: In the referenced HDAC8 study, the RF model, when combined with SMOTE, demonstrated the best predictive accuracy among several tested algorithms, with an Area Under the Curve (AUC) of 0.98 [43].

- Feature Importance: RF provides estimates of feature importance, offering some insight into which molecular descriptors contribute most to the prediction [43].

Q4: Our SMOTE-RF model has high cross-validation scores, but the top predicted compounds are inactive. How can we improve the model?

A: This indicates that the model's knowledge can be refined. A successful strategy is to perform iterative model refinement. After the first screening round, the experimentally confirmed inactive compounds should be added back into the training dataset as "inactive" examples. The model (including the SMOTE balancing step) is then retrained on this expanded and more representative dataset. This process was key to the ultimate success of the HDAC8 study, leading to the identification of a potent, selective inhibitor after the second screening round [43].

Troubleshooting Guides

Problem: Model is insensitive to the minority class after applying SMOTE.

- Potential Cause 1: SMOTE may be generating noisy samples in overlapping class regions.

- Potential Cause 2: The model's hyperparameters are not tuned for the balanced dataset.

- Solution: After applying SMOTE, perform a fresh round of hyperparameter tuning for the Random Forest model. Focus on metrics that are more appropriate for imbalanced data, such as the F1-score or Matthews Correlation Coefficient (MCC), rather than simple accuracy [43].
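For reference, MCC can be computed directly from the four confusion-matrix counts. The sketch below uses hypothetical counts. Returning 0.0 when the denominator vanishes is a common convention, adopted here as an assumption.

```python
import math


def mcc(tp, tn, fp, fn):
    """Matthews Correlation Coefficient from confusion-matrix counts."""
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    # With an empty row or column the coefficient is undefined; report 0.0.
    return (tp * tn - fp * fn) / denom if denom else 0.0


# Hypothetical screens with 5 actives and 95 inactives.
print(mcc(tp=0, tn=95, fp=0, fn=5))   # model that always predicts "inactive"
print(mcc(tp=5, tn=95, fp=0, fn=0))   # perfect model
print(mcc(tp=3, tn=90, fp=5, fn=2))   # partially useful model
```

Unlike accuracy, the score stays at 0.0 for the always-"inactive" model, because MCC uses all four cells of the confusion matrix.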

Problem: High computational cost and long training times.

- Potential Cause: The dataset has become very large after oversampling, and the model is complex.

- Solution: Consider using the Random Under-Sampling (RUS) method to reduce the majority class size before applying SMOTE, creating a more manageable dataset. Alternatively, evaluate if other, less computationally intensive ensemble methods like XGBoost are suitable for your specific data [4].

Experimental Protocol: The HDAC8 Inhibitor Discovery Workflow

The following workflow, derived from the successful study, provides a detailed methodology for replicating this approach.

Workflow Diagram: SMOTE-Enhanced HDAC8 Inhibitor Screening

Detailed Methodology

Step 1: Data Curation and Preparation

- Source: Extract compound structures and corresponding HDAC8 half-maximal inhibitory concentration (IC50) data from a public database like ChEMBL [43].

- Curation: Remove duplicates and compounds without reliable IC50 values.

- Labeling: Convert IC50 values to pIC50 (-log10 of the IC50 expressed in mol/L). Define active compounds (minority class) as those with pIC50 > 7 (IC50 below 100 nM), and the remaining as inactive compounds (majority class). Document the initial imbalance ratio [43].
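The labeling rule is a one-line conversion once the units are fixed. The sketch below assumes IC50 values reported in nanomolar, which should be checked against the actual export. The function names and the example compounds are hypothetical.

```python
import math


def pic50_from_nm(ic50_nm):
    """pIC50 = -log10(IC50 in mol/L); for nanomolar input this is 9 - log10(IC50_nM)."""
    return 9.0 - math.log10(ic50_nm)


def label_activity(ic50_nm, threshold=7.0):
    """Label a compound 'active' when its pIC50 exceeds the threshold."""
    return "active" if pic50_from_nm(ic50_nm) > threshold else "inactive"


# Hypothetical compounds with IC50 values in nM.
for name, ic50 in [("cpd_a", 10.0), ("cpd_b", 1000.0)]:
    print(name, round(pic50_from_nm(ic50), 2), label_activity(ic50))

# Imbalance ratio for the class counts reported for the ChEMBL set [43].
n_active, n_inactive = 218, 1805
print(f"imbalance ratio 1:{n_inactive / n_active:.2f}")
```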

Step 2: Addressing Class Imbalance with SMOTE

- Technique: Apply SMOTE exclusively to the training set after a train-test split to avoid data leakage.

- Goal: Adjust the ratio of active to inactive compounds from the original imbalance (e.g., 1:8) to a balanced state (1:1) by generating synthetic active compounds [43].
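The order of operations matters more than the oversampler itself: split first, then balance only the training portion. The sketch below uses the class counts reported for the ChEMBL set. The 80/20 split, the seed, and the helper name `stratified_split` are assumptions for illustration.

```python
import random


def stratified_split(labels, test_fraction=0.2, seed=0):
    """Return (train_idx, test_idx), preserving the class ratio in both parts."""
    rng = random.Random(seed)
    train_idx, test_idx = [], []
    for cls in sorted(set(labels)):
        idx = [i for i, y in enumerate(labels) if y == cls]
        rng.shuffle(idx)
        n_test = round(len(idx) * test_fraction)
        test_idx.extend(idx[:n_test])
        train_idx.extend(idx[n_test:])
    return train_idx, test_idx


# 218 actives (label 1) and 1805 inactives (label 0), as reported in [43].
labels = [1] * 218 + [0] * 1805
train_idx, test_idx = stratified_split(labels)

n_active_train = sum(labels[i] for i in train_idx)
n_inactive_train = len(train_idx) - n_active_train
# Synthetic actives needed to reach 1:1 in the TRAINING set only;
# the test indices are never passed to the oversampler.
n_synthetic = n_inactive_train - n_active_train
print(n_active_train, n_inactive_train, n_synthetic)
```

Because the test indices are fixed before any synthetic samples exist, no interpolated compound can share a parent with a test compound.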

Step 3: Model Building and Selection

- Featurization: Encode the chemical structures using a fingerprint method such as PubChem fingerprint or ECFP [43].

- Algorithm Training: Train multiple machine learning models (e.g., Random Forest, Support Vector Machine, k-Nearest Neighbors) on the SMOTE-balanced training dataset.

- Model Evaluation: Use the held-out, original (unmodified) test set for evaluation. Select the best-performing model based on the Area Under the Receiver Operating Characteristic Curve (AUC-ROC). The HDAC8 study found the RF-SMOTE model to be superior [43].
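AUC-ROC has a direct probabilistic reading: it is the chance that a randomly chosen active is scored above a randomly chosen inactive. The sketch below computes it that way on hypothetical test-set scores. The pairwise loop is quadratic, so it suits small held-out sets or illustration only.

```python
def roc_auc(y_true, scores):
    """AUC as the fraction of (positive, negative) pairs ranked correctly.

    Ties between a positive and a negative score count as half a win.
    """
    pos = [s for y, s in zip(y_true, scores) if y == 1]
    neg = [s for y, s in zip(y_true, scores) if y == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Hypothetical held-out set: two actives and three inactives.
y_test = [1, 1, 0, 0, 0]
model_scores = [0.9, 0.4, 0.5, 0.3, 0.1]
auc = roc_auc(y_test, model_scores)
print(f"AUC-ROC = {auc:.3f}")
```

Here five of the six active/inactive pairs are ordered correctly, giving an AUC of about 0.833.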

Step 4: Virtual Screening and Experimental Validation

- Screening: Use the trained RF-SMOTE model to predict active compounds from a large, diverse chemical library (e.g., natural compound libraries) [44] [43].

- Filtering: Apply additional filters for drug-likeness (e.g., ADMET properties) and remove compounds highly similar to those in the training set to prioritize novel chemotypes [43].

- Validation: Subject the top-ranking virtual hits to experimental HDAC8 inhibition assays to confirm biological activity [43].
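The similarity filter in the screening step can be sketched with Tanimoto similarity on fingerprint bit sets. The source does not state which similarity measure or cutoff the study used, so the Tanimoto choice, the 0.7 threshold, and all names and fingerprints below are assumptions.

```python
def tanimoto(a, b):
    """Tanimoto (Jaccard) similarity between two sets of 'on' fingerprint bits."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def novel_hits(hits, training_fps, max_similarity=0.7):
    """Keep hits whose most similar training compound is below max_similarity."""
    return [
        name
        for name, fp in hits.items()
        if max((tanimoto(fp, t) for t in training_fps), default=0.0) < max_similarity
    ]


# Hypothetical fingerprints represented as sets of on-bit indices.
training_fps = [{1, 2, 3, 4}]
hits = {
    "hit_a": {1, 2, 3, 4, 5},  # shares 4 of 5 bits with a training compound
    "hit_b": {7, 8, 9},        # no overlap with the training set
}
print(novel_hits(hits, training_fps))
```

With these toy inputs, `hit_a` has a similarity of 0.8 to the training compound and is filtered out, while `hit_b` is kept as a novel chemotype.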

The Scientist's Toolkit: Research Reagent Solutions

The table below lists key computational and experimental reagents used in the featured HDAC8 study and related fields.

| Item Name | Function / Description | Application in HDAC8 Context |

|---|---|---|