Systems Biology in Biomarker Discovery: Integrating Multi-Omics, AI, and Network Analysis for Precision Medicine

Abstract

This article explores the transformative role of systems biology in modern biomarker identification, moving beyond single-analyte approaches to a holistic, network-based paradigm. Targeting researchers and drug development professionals, we examine how the integration of multi-omics data, artificial intelligence, and computational modeling is accelerating the discovery of diagnostic, prognostic, and predictive biomarkers. The content covers foundational principles, cutting-edge methodological applications, strategies for overcoming analytical and regulatory challenges, and frameworks for clinical validation. By synthesizing current technologies and future trends, this resource provides a comprehensive guide for leveraging systems biology to develop clinically actionable biomarkers that enhance drug development and personalized treatment strategies.

From Single Molecules to Network Biology: The Systems Approach to Biomarker Discovery

The recognition of complex diseases as manifestations of dysregulated biological networks, rather than consequences of isolated molecular defects, has fundamentally shifted the paradigm of biomarker discovery. This evolution moves beyond the single-target approach toward a systems-level framework that acknowledges the multifaceted nature of diseases such as cancer, neurodegenerative disorders, and metabolic conditions [1] [2]. The limitations of single-target biomarkers are particularly evident in their inability to capture disease heterogeneity, their frequent lack of robustness across diverse patient populations, and their insufficient blocking of disease progression pathways when used for therapeutic intervention [1]. In response, the field is increasingly adopting multi-target strategies that leverage computational network analysis and high-throughput omics technologies to identify biomarker signatures that more accurately reflect underlying disease biology [1] [3]. This Application Note details protocols and methodologies for systems biology-driven biomarker identification, providing researchers with practical frameworks for implementing these approaches in drug development pipelines.

Computational Methods for Multi-Target Biomarker Identification

Network-Based Target Identification Using Min-Cut Algorithm

The min-cut algorithm represents a powerful graph-theoretical approach for identifying critical intervention points in disease pathways by strategically disconnecting disease progression networks.

Protocol: Pathway Disruption Analysis

Purpose: To identify a minimum set of target genes capable of blocking all paths from disease onset genes to apoptotic genes in a disease pathway network.

Materials:

- Disease pathway data from KEGG or similar databases

- Directed protein-protein interaction (PPI) network data

- Network analysis software (Cytoscape with appropriate plugins)

- Min-cut algorithm implementation (e.g., via Python NetworkX library)

Procedure:

- Network Construction: Build an initial directed network from a disease-specific pathway (e.g., Alzheimer's disease pathway from KEGG) [1].

- Network Augmentation: Enhance the initial network by integrating directed PPI relationships to create a denser, more biologically complete network. In the reported analysis, this augmentation increased node and edge counts by approximately 207% and 454%, respectively [1].

- Source-Sink Definition: Manually curate onset (source) and apoptotic (sink) genes based on KEGG pathway descriptions and literature validation (Table 1).

- Min-Cut Application: Apply the min-cut algorithm to each source-sink pair to identify the minimum set of edges whose removal disconnects the source from the sink.

- Target Gene Identification: Select genes corresponding to the endpoints of cutting edges as candidate multi-target biomarkers or therapeutic targets.
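The procedure above can be sketched with NetworkX, which the Materials list names as one possible implementation. This is a minimal illustration under stated assumptions, not the pipeline used in [1]: the intermediate nodes (`GENE_A` to `GENE_D`) and every edge are hypothetical placeholders, and `min_cut_targets` is an illustrative helper name. Edges are treated as having unit capacity, so `nx.minimum_edge_cut` returns the smallest number of edges separating each source from each sink.

```python
import networkx as nx

# Toy directed pathway. All edges are hypothetical, not curated KEGG relationships.
edges = [
    ("APP", "GENE_A"), ("APP", "GENE_B"),
    ("GENE_A", "GENE_C"), ("GENE_B", "GENE_C"),
    ("GENE_C", "CASP3"), ("GENE_B", "GENE_D"), ("GENE_D", "CASP3"),
]
G = nx.DiGraph(edges)


def min_cut_targets(graph, sources, sinks):
    """Pool minimum edge cuts over all connected source-sink pairs.

    Returns the pooled cut edges and the set of genes at their endpoints.
    """
    cut_edges = set()
    for source in sources:
        for sink in sinks:
            # Skip pairs that are already disconnected.
            if nx.has_path(graph, source, sink):
                cut_edges |= nx.minimum_edge_cut(graph, source, sink)
    targets = {gene for edge in cut_edges for gene in edge}
    return cut_edges, targets


cut_edges, targets = min_cut_targets(G, sources=["APP"], sinks=["CASP3"])
print("Cut edges:", sorted(cut_edges))
print("Candidate target genes:", sorted(targets))
```

Pooling per-pair cuts is a simplification: the union of pairwise minimum cuts is not guaranteed to be the smallest edge set that separates all pairs simultaneously.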

Table 1: Example Source and Sink Genes for Neurodegenerative Disease Pathways

| Disease Pathway | Source Genes (Onset) | Sink Genes (Apoptotic) | Source-Sink Pairs |

|---|---|---|---|

| Alzheimer's Disease | APP | CASP3 | 6 distinct combinations |

| Huntington's Disease | HTT | CASP3 | Multiple configurations |

| Type 2 Diabetes | Multiple insulin-related | CASP3 | Disease-specific pairs |

Validation: The resulting candidate genes should be validated through gene set enrichment analysis (GSEA), PubMed literature mining, and comparison to known drug targets in databases such as KEGG [1].

Systems Biology Approach for Cancer Biomarker Discovery

This protocol employs protein-protein interaction network analysis to identify hub genes with central roles in cancer progression, suitable for diagnostic or prognostic biomarker development.

Protocol: PPI Network Analysis for Hub Gene Identification

Purpose: To reconstruct and analyze protein-protein interaction networks from gene expression data to identify central hub genes as potential biomarkers for complex diseases like colorectal cancer.

Materials:

- Gene expression dataset (e.g., from GEO database)

- STRING database for PPI information

- Cytoscape and Gephi software for network visualization and analysis

- R/Bioconductor packages for differential expression analysis

Procedure:

- Differential Expression Analysis: Identify differentially expressed genes (DEGs) using R/Bioconductor packages with appropriate statistical thresholds (e.g., adjusted p-value < 0.05, absolute log2 fold change > 1).

- PPI Network Reconstruction: Input DEGs into the STRING database to reconstruct the PPI network, then import into Cytoscape for further analysis [3].

- Centrality Analysis: Calculate network centrality measures (degree, betweenness, closeness) using Gephi software to identify hub genes based on their topological importance.

- Module Analysis: Perform cluster analysis using the k-means algorithm or a similar approach to identify interactive modules within the PPI network.

- Survival Analysis: Validate prognostic significance of identified hub genes using survival analysis tools such as GEPIA to correlate gene expression with patient outcomes [3].
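The centrality step of this procedure can also be scripted. The sketch below uses NetworkX rather than Gephi, which is a substitution made here for illustration. The edge list and gene labels (`HUB1`, `G1` to `G6`) are hypothetical, `rank_hub_genes` is an illustrative helper name, and averaging the three centrality scores is an arbitrary choice, not a method taken from [3].

```python
import networkx as nx


def rank_hub_genes(ppi_edges, top_n=3):
    """Rank nodes by the mean of degree, betweenness, and closeness centrality."""
    graph = nx.Graph(ppi_edges)
    measures = [
        nx.degree_centrality(graph),
        nx.betweenness_centrality(graph),
        nx.closeness_centrality(graph),
    ]
    # Simple unweighted average of the three normalized scores (an assumption).
    combined = {
        node: sum(scores[node] for scores in measures) / len(measures)
        for node in graph.nodes
    }
    ranked = sorted(combined.items(), key=lambda item: item[1], reverse=True)
    return ranked[:top_n]


# Hypothetical undirected PPI edges among differentially expressed genes.
toy_edges = [
    ("HUB1", "G1"), ("HUB1", "G2"), ("HUB1", "G3"),
    ("HUB1", "G4"), ("G4", "G5"), ("G5", "G6"),
]
hubs = rank_hub_genes(toy_edges)
for gene, score in hubs:
    print(f"{gene}\t{score:.3f}")
```

In a real analysis the edge list would come from a STRING export, and the cutoff for calling a node a hub would need to be justified for the dataset at hand.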

Expected Outcomes: In a colorectal cancer case study, this approach identified 99 hub genes from 848 DEGs, with central genes like CCNA2, CD44, and ACAN contributing to poor prognosis, and other genes (TUBA8, AMPD3, TRPC1, ARHGAP6, JPH3, DYRK1A, ACTA1) associated with decreased survival rates [3].

Experimental Validation Frameworks

Biomarker Validation Platforms and Technologies

Robust validation of computationally identified biomarkers requires careful selection of analytical platforms based on the molecular nature of the biomarkers and the required sensitivity, specificity, and throughput.

Table 2: Biomarker Validation Platforms and Their Applications

| Platform Category | Example Technologies | Advantages | Limitations | Automatability |

|---|---|---|---|---|

| DNA/RNA Analysis | Next-Generation Sequencing, qPCR, RNA-Seq | High throughput, comprehensive analysis, sensitive | Expensive, complex data analysis | High (automated sample prep and analysis) |

| Protein Analysis | ELISA, Meso Scale Discovery (MSD), Luminex | Quantitative, high specificity, multiplexing capabilities | Limited multiplexing for some platforms, expensive reagents | High (fully automated systems available) |

| Cellular Analysis | Traditional Flow Cytometry, Spectral Flow Cytometry, Single-Cell RNA Sequencing | High parameter multiplexing, single-cell resolution | Expensive, requires skilled operators, complex data analysis | High (fully automated systems available) |

| Spatial Biology | CODEX, Spatial Transcriptomics, Imaging Mass Cytometry | Spatially resolved analysis, tissue context preservation | Extensive sample preparation, expensive | High (automated imaging and analysis) |

Criteria for Biomarker Selection

When selecting potential biomarkers from computational predictions, researchers should prioritize molecules that meet the following criteria [4]:

- Easy to Access: Present in peripheral tissue or biological fluids (blood, urine, saliva) requiring minimally invasive collection.

- Easy to Detect: Highly expressed gene panels or abundant proteins suitable for clinical detection.

- Specific and Quantifiable: Specific to the disease or treatment response and easily measurable.

- Robust to Validation: Successfully validated in independent assays and highly replicable across populations.

Essential Research Reagent Solutions

The following reagents and platforms constitute critical components for implementing the protocols described in this Application Note.

Table 3: Essential Research Reagents and Platforms for Biomarker Discovery

| Reagent/Platform | Function | Application Context |

|---|---|---|

| STRING Database | Provides protein-protein interaction information | Network reconstruction in systems biology approaches |

| Cytoscape | Network visualization and analysis | Hub gene identification and pathway analysis |

| Omics Playground | Integrated data analysis and visualization | Machine learning-based biomarker discovery without coding |

| MSD & Luminex | Multiplex protein biomarker detection | Validation of protein biomarker signatures |

| NGS Platforms | Comprehensive DNA/RNA sequencing | Genomic and transcriptomic biomarker identification |

| Spectral Flow Cytometry | High-parameter single-cell analysis | Cellular biomarker validation in complex populations |

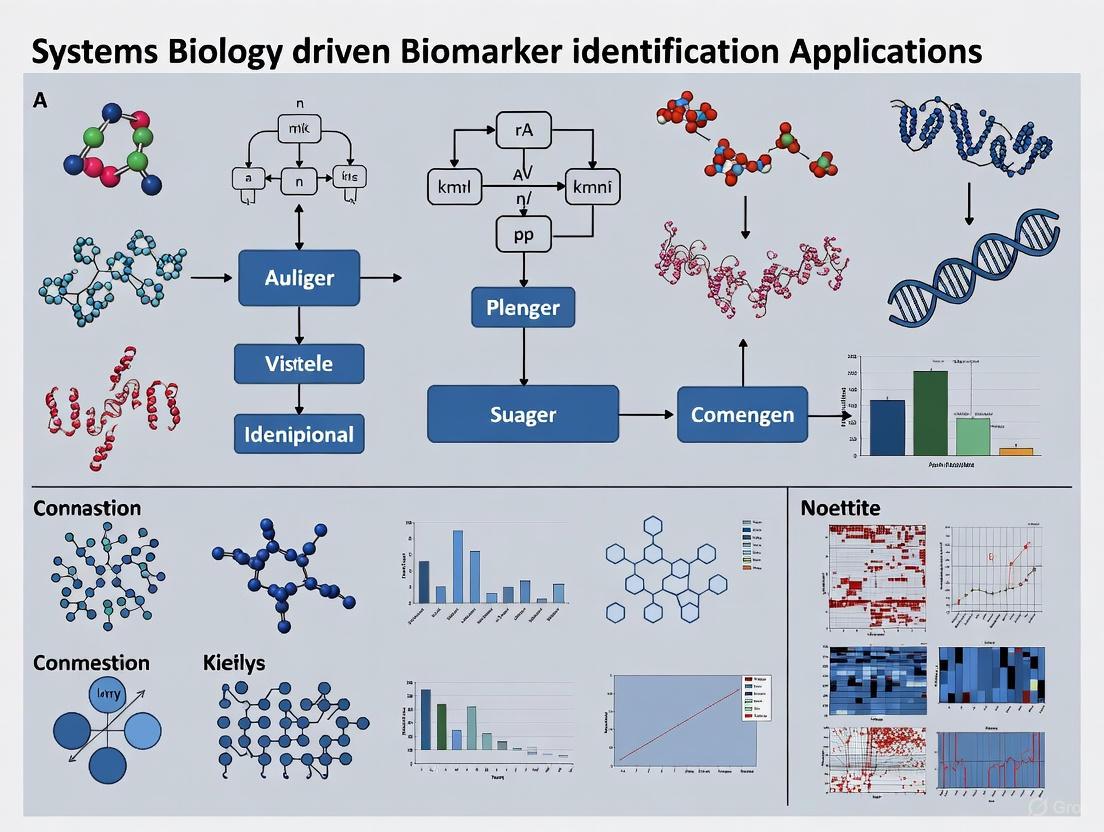

Visualization of Workflows and Pathways

Min-Cut Algorithm Application Workflow

Systems Biology Biomarker Discovery Pipeline

The evolution beyond single-target biomarkers represents a necessary adaptation to the biological complexity of human diseases. The protocols and methodologies detailed in this Application Note provide researchers with practical frameworks for implementing systems biology approaches in their biomarker discovery pipelines. By integrating computational network analysis with rigorous experimental validation and appropriate visualization techniques, researchers can identify robust multi-target biomarkers that more accurately capture disease complexity. These approaches ultimately enable the development of more effective diagnostic, prognostic, and therapeutic strategies for complex diseases, advancing the goals of precision medicine.

The complexity of human diseases, particularly rare genetic disorders and complex syndromes, presents a significant challenge for traditional, single-marker diagnostic approaches. The core principle of analyzing disease-perturbed molecular networks posits that pathogenic states are not merely the result of isolated gene defects but manifest through reproducible disruptions in interconnected molecular pathways and biological modules. By mapping these perturbations within comprehensive molecular networks, researchers can identify robust diagnostic signatures that capture the systemic nature of disease, with the potential for greater specificity and sensitivity than conventional biomarkers. This network-based paradigm represents a fundamental advancement in systems biology-driven biomarker identification, shifting the diagnostic focus from individual molecules to dysfunctional systems.

Protein-protein interaction networks (PINs) have emerged as particularly effective platforms for uncovering the molecular mechanisms of diseases and establishing diagnostic frameworks [5]. These networks represent physical interactions between gene products that accomplish specific cellular functions, providing a map of intracellular biochemical activities that traditional reductionist methods cannot capture. When disease perturbs these networks, the resulting alterations in network topology and function create identifiable signatures that can serve as diagnostic tools. The application of PINs has proven valuable across diverse conditions including Alzheimer's disease, multiple sclerosis, cancer metastasis, and various rare genetic disorders [5] [6].

Key Analytical Approaches and Quantitative Frameworks

Network Topological Analysis

The topological properties of molecular networks provide crucial insights into disease mechanisms and potential diagnostic signatures. Key topological metrics used in network analysis include several well-established measurements that reveal different aspects of network organization and function [5]:

- Degree Centrality: Quantifies the number of direct connections a node has, identifying highly connected "hub" proteins that often play critical functional roles. Disruption of hub proteins typically causes more severe network damage.

- Clustering Coefficient: Measures the tendency of nodes to form tightly interconnected groups, helping identify molecular complexes or functional modules.

- Network Modularity: Assesses the extent to which a network can be divided into separate modules, revealing functionally specialized subsystems that may be collectively perturbed in disease.
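The three metrics above, together with edge density, have compact definitions that can be written out directly. The following is a minimal pure-Python sketch for an undirected, unweighted network. The toy graph (two triangles joined by one bridge edge) and the function names are illustrative assumptions, and modularity is evaluated for a partition supplied by the user rather than discovered by a community detection algorithm.

```python
from itertools import combinations


def build_adjacency(edges):
    """Undirected adjacency map: node -> set of neighbours."""
    adj = {}
    for u, v in edges:
        adj.setdefault(u, set()).add(v)
        adj.setdefault(v, set()).add(u)
    return adj


def edge_density(adj):
    """Observed edges divided by the number of possible node pairs."""
    n = len(adj)
    m = sum(len(nbrs) for nbrs in adj.values()) / 2
    return m / (n * (n - 1) / 2)


def degree_centrality(adj):
    """Fraction of the other nodes that each node is directly connected to."""
    n = len(adj)
    return {node: len(nbrs) / (n - 1) for node, nbrs in adj.items()}


def clustering_coefficient(adj, node):
    """Fraction of a node's neighbour pairs that are themselves connected."""
    nbrs = adj[node]
    k = len(nbrs)
    if k < 2:
        return 0.0
    links = sum(1 for a, b in combinations(nbrs, 2) if b in adj[a])
    return 2 * links / (k * (k - 1))


def modularity(adj, communities):
    """Newman modularity Q of a given partition of the nodes."""
    m = sum(len(nbrs) for nbrs in adj.values()) / 2
    q = 0.0
    for community in communities:
        internal = sum(1 for a, b in combinations(community, 2) if b in adj[a])
        degree_sum = sum(len(adj[node]) for node in community)
        q += internal / m - (degree_sum / (2 * m)) ** 2
    return q


# Toy network: two triangles (A-B-C and D-E-F) joined by the bridge C-D.
edges = [("A", "B"), ("B", "C"), ("A", "C"), ("C", "D"),
         ("D", "E"), ("E", "F"), ("D", "F")]
adj = build_adjacency(edges)
density = edge_density(adj)
centrality = degree_centrality(adj)
q = modularity(adj, [{"A", "B", "C"}, {"D", "E", "F"}])
print(f"density={density:.3f}  Q={q:.3f}  C(A)={clustering_coefficient(adj, 'A'):.2f}")
```

The bridge nodes C and D have the highest degree centrality but a lower clustering coefficient than their triangle partners, which illustrates how the metrics capture different aspects of network organization.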

Analysis of rare genetic diseases using multiplex networks has revealed that disease-associated genes exhibit distinct patterns of connectivity across biological scales, with the protein-protein interaction (PPI) layer occupying a central position in network architecture [6]. The structural characteristics of network layers vary significantly, influencing their utility for diagnostic signature identification [6].

Table 1: Structural Characteristics of Molecular Network Layers in Rare Disease Analysis

| Biological Scale | Genome Coverage (Number of Genes) | Edge Density | Clustering Coefficient | Literature Bias (Spearman's ρ) |

|---|---|---|---|---|

| Proteome (PPI) | 17,944 | 2.36 × 10⁻³ | 0.22 | 0.59 |

| Transcriptome (Average per Tissue) | ~10,527 | 7.89 × 10⁻³ | 0.31 | Not Significant |

| Genetic Interactions | 8,823 | 1.13 × 10⁻² | 0.73 | Not Reported |

| Phenotypic Similarity (HPO) | 3,342 | 1.05 × 10⁻² | 0.68 | Not Reported |

Sub-Network Biomarker Identification

Beyond individual topological metrics, the identification of sub-network biomarkers represents a more comprehensive approach to diagnostic signature development. These sub-networks correspond to functionally related protein modules that become collectively perturbed in disease states [5]. Methodologically, sub-network identification often involves extracting densely connected regions of global networks that are enriched for disease-associated genes or proteins showing significant expression changes.

The PIN-based pathway analysis (PINBPA) method exemplifies this approach, having been successfully applied to identify multiple sclerosis-associated sub-networks containing genes from immunological and neural pathways [5]. This method demonstrated particular utility in prioritizing highly confident candidate genes for complex disease traits, including BCL10, CD48, REL, TRAF3, and TEC [5]. Similarly, node-weighted Steiner tree approaches have been employed to detect core interactions in cancer-related PINs, revealing important components in PI3K/Akt and MAPK signaling pathways with diagnostic and therapeutic implications [5].

Table 2: Sub-Network Biomarker Identification Methods and Applications

| Method | Key Principle | Disease Application | Identified Components/Pathways |

|---|---|---|---|

| PINBPA | Pathway enrichment and relationship analysis through distance calculations between pathway modules | Parkinson's Disease, Multiple Sclerosis | Apoptosis, focal adhesion, T cell receptor, HIF-1, MAPK, NF-kappa B signaling pathways |

| Node-Weighted Steiner Tree | Detection of minimum-weight trees connecting key nodes in large-scale networks | Cancer Signaling | Core interactions in PI3K/Akt and MAPK pathways; relationship between p53 and NF-κB |

| Two-Stage Yeast Two-Hybrid | Experimental construction of kinase sub-networks followed by scaffold identification | MAPK Signaling | FLNA, NHE1, RANBP9, KIF26A as MAPK scaffolds; novel interactions with RANBP9 |

Experimental Protocols for Network-Based Diagnostic Signature Identification

Protocol 1: Construction of Disease-Perturbed Molecular Networks

Objective: To construct a comprehensive molecular network representing interactions perturbed in a specific disease state.

Materials and Reagents:

- Biological Samples: Patient-derived tissues, blood, or primary cells appropriate to the disease context

- Protein-Protein Interaction Data: Curated from databases such as HIPPIE [6]

- Transcriptomic Data: RNA-seq or microarray data from disease and control samples

- Pathway Databases: REACTOME pathway definitions [6]

- Gene Ontology Annotations: Functional annotations from Gene Ontology database [6]

- Phenotypic Data: Human Phenotype Ontology (HPO) annotations for phenotypic similarity networks [6]

Methodology:

Data Acquisition and Integration

- Extract protein-protein interactions from curated literature sources and experimental databases [5]

- Generate or acquire transcriptomic co-expression networks using RNA-seq data across relevant tissues from sources like GTEx database [6]

- Compile genetic interaction data from CRISPR-based functional genomics screens [6]

- Annotate genes with functional information using Gene Ontology and pathway membership using REACTOME [6]

- Establish phenotypic similarity networks based on HPO annotations [6]

Network Construction and Filtering

- Apply bipartite mapping techniques to convert gene-pathway associations into gene-gene relationships [6]

- Utilize ontology-based semantic similarity metrics to quantify functional and phenotypic relationships [6]

- Implement correlation-based measures for co-expression relationships with appropriate statistical filtering [6]

- Employ network structural criteria to remove spurious connections and enhance biological relevance [6]
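The bipartite mapping and filtering steps above can be illustrated as follows. This is a sketch under assumptions: the gene and pathway labels are hypothetical, and weighting gene pairs by the Jaccard index of their pathway sets with a fixed cutoff is one common choice made here for illustration. The source does not specify which projection weight or threshold was used in [6].

```python
from itertools import combinations


def project_gene_pathway(memberships, min_jaccard=0.5):
    """Convert gene -> pathway sets into weighted gene-gene edges.

    Two genes are linked when they share at least one pathway and the
    Jaccard index of their pathway sets reaches min_jaccard.
    """
    edges = {}
    for gene_a, gene_b in combinations(sorted(memberships), 2):
        shared = memberships[gene_a] & memberships[gene_b]
        if not shared:
            continue
        union = memberships[gene_a] | memberships[gene_b]
        jaccard = len(shared) / len(union)
        if jaccard >= min_jaccard:  # drop weak, likely spurious links
            edges[(gene_a, gene_b)] = jaccard
    return edges


# Hypothetical gene-pathway annotations.
memberships = {
    "GENE_A": {"PATHWAY_1", "PATHWAY_2"},
    "GENE_B": {"PATHWAY_1", "PATHWAY_2"},
    "GENE_C": {"PATHWAY_2", "PATHWAY_3", "PATHWAY_4"},
    "GENE_D": {"PATHWAY_5"},
}
gene_edges = project_gene_pathway(memberships)
print(gene_edges)
```

Here GENE_A and GENE_C share one pathway out of four in total (Jaccard 0.25), so that link is filtered out, while GENE_A and GENE_B are retained with weight 1.0.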

Multiplex Network Assembly

- Organize the 46 network layers spanning six biological scales: genome, transcriptome, proteome, pathway, biological processes, and phenotype [6]

- Ensure consistent gene identifiers across all network layers

- Preserve tissue-specific co-expression networks while extracting a core pan-tissue co-expression network [6]

Network Construction Workflow: From multi-omic data to integrated molecular network

Protocol 2: Identification and Validation of Sub-Network Biomarkers

Objective: To identify and validate disease-relevant sub-network modules with diagnostic potential.

Materials and Reagents:

- Computational Resources: High-performance computing cluster with sufficient memory for network analyses

- Bioinformatics Tools: Network analysis software (e.g., Cytoscape, NetworkX, igraph)

- Statistical Software: R or Python with appropriate network and statistical packages

- Validation Assays: Multiplex immunohistochemistry, spatial transcriptomics, or proteomic platforms

Methodology:

Disease Module Identification

- Calculate network proximity between known disease-associated genes in the multiplex network [6]

- Apply community detection algorithms to identify densely connected network modules enriched for disease genes

- Use random walk-based methods to explore network neighborhoods of seed genes

- Quantify module specificity by comparing enrichment in disease cases versus controls
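The random walk step above is often implemented as a random walk with restart (RWR), in which a walker repeatedly jumps back to the seed genes with a fixed probability so that the stationary visiting probability reflects proximity to the seeds. The sketch below is a generic single-layer version, not the multiplex procedure of [6]. The node labels, the restart probability of 0.3, and the function name are assumptions, and the graph is assumed to contain no isolated nodes.

```python
def random_walk_with_restart(adj, seeds, restart=0.3, tol=1e-10, max_iter=1000):
    """Stationary visiting probabilities of a walker that restarts at the seeds."""
    nodes = list(adj)
    start = {n: (1.0 / len(seeds) if n in seeds else 0.0) for n in nodes}
    prob = dict(start)
    for _ in range(max_iter):
        # Restart mass goes back to the seeds on every step.
        updated = {n: restart * start[n] for n in nodes}
        # Remaining mass is split evenly among each node's neighbours.
        for node in nodes:
            share = (1.0 - restart) * prob[node] / len(adj[node])
            for neighbour in adj[node]:
                updated[neighbour] += share
        change = sum(abs(updated[n] - prob[n]) for n in nodes)
        prob = updated
        if change < tol:
            break
    return prob


# Hypothetical chain: SEED - G1 - G2 - G3.
adj = {
    "SEED": {"G1"},
    "G1": {"SEED", "G2"},
    "G2": {"G1", "G3"},
    "G3": {"G2"},
}
scores = random_walk_with_restart(adj, seeds={"SEED"})
for gene, score in sorted(scores.items(), key=lambda item: -item[1]):
    print(f"{gene}\t{score:.4f}")
```

Genes can then be ranked by score, and the top-ranked neighbourhood of the seed set treated as a candidate disease module for the enrichment and specificity checks listed above.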

Topological Analysis of Candidate Modules

- Compute key topological metrics (degree centrality, betweenness, clustering coefficient) for all nodes within candidate modules [5]

- Identify hub proteins within modules that may represent critical regulatory points

- Classify hubs as "party" hubs (simultaneous interactions) or "date" hubs (temporally regulated interactions) [5]

- Assess module resilience to random versus targeted node removal
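The resilience assessment in the last step can be sketched by tracking the size of the largest connected component as nodes are removed. The example below compares degree-ranked (targeted) removal against the average over seeded random orderings. The hub-and-spoke toy module, the node labels, the number of trials, and the function names are all illustrative assumptions.

```python
import random


def largest_component_size(adj, removed):
    """Size of the largest connected component after deleting `removed` nodes."""
    seen = set(removed)
    best = 0
    for start in adj:
        if start in seen:
            continue
        seen.add(start)
        stack, size = [start], 0
        while stack:  # depth-first traversal of one component
            node = stack.pop()
            size += 1
            for neighbour in adj[node]:
                if neighbour not in seen:
                    seen.add(neighbour)
                    stack.append(neighbour)
        best = max(best, size)
    return best


def removal_curve(adj, order):
    """Largest-component size after removing the first 1, 2, ... nodes of `order`."""
    return [largest_component_size(adj, order[: i + 1]) for i in range(len(order))]


def mean_random_curve(adj, trials=200, seed=0):
    """Average removal curve over seeded random node orderings."""
    rng = random.Random(seed)
    nodes = list(adj)
    totals = [0.0] * len(nodes)
    for _ in range(trials):
        rng.shuffle(nodes)
        for i, size in enumerate(removal_curve(adj, nodes)):
            totals[i] += size
    return [total / trials for total in totals]


# Hypothetical module: one hub linked to five genes, plus one G1-G2 interaction.
adj = {
    "HUB": {"G1", "G2", "G3", "G4", "G5"},
    "G1": {"HUB", "G2"}, "G2": {"HUB", "G1"},
    "G3": {"HUB"}, "G4": {"HUB"}, "G5": {"HUB"},
}
targeted_order = sorted(adj, key=lambda node: len(adj[node]), reverse=True)
targeted_curve = removal_curve(adj, targeted_order)
random_curve = mean_random_curve(adj)
print("targeted:", targeted_curve)
print("random (mean):", [round(x, 2) for x in random_curve])
```

A module whose targeted curve drops much faster than its random curve depends heavily on its hubs, which is consistent with treating those hubs as candidate points of vulnerability.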

Cross-Scale Validation

- Validate identified modules across multiple biological scales in the multiplex network [6]

- Correlate module perturbation with clinical severity or disease progression metrics

- Assess tissue specificity of module expression patterns using tissue-specific co-expression networks [6]

- Integrate with external datasets to confirm associations with relevant biological processes

Experimental Validation

- Utilize spatial biology techniques (spatial transcriptomics, multiplex IHC) to confirm co-localization of module components [7]

- Perform functional validation using organoid models or humanized systems to test module necessity and sufficiency for disease phenotypes [7]

- Establish correlation between module activation state and clinical outcomes in independent patient cohorts

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents and Platforms for Network-Based Biomarker Discovery

| Category | Specific Solutions | Key Functions | Application Context |

|---|---|---|---|

| Multi-omic Profiling Platforms | RNA-seq, ATAC-seq, Mass Spectrometry Proteomics, LC-MS Metabolomics | Comprehensive molecular profiling across biological scales | Generating layered data for multiplex network construction [7] |

| Spatial Biology Technologies | Multiplex Immunohistochemistry, Spatial Transcriptomics, CODEX | In situ analysis preserving tissue architecture and cellular relationships | Validating spatial co-localization of network components [7] |

| Advanced Biological Models | Organoids, Humanized Mouse Models, 3D Culture Systems | Recapitulating human tissue complexity and tumor-immune interactions | Functional validation of network perturbations [7] |

| Network Analysis Tools | HIPPIE, REACTOME, Gene Ontology, HPO | Providing curated molecular interactions and functional annotations | Constructing baseline networks and establishing ground truth [6] |

| AI and Analytics Platforms | Machine Learning Classifiers, Natural Language Processing, MOFA | Identifying subtle patterns in high-dimensional data | Extracting diagnostic signatures from complex network data [7] |

Visualization and Interpretation of Network Signatures

Effective visualization of disease-perturbed networks is essential for interpreting diagnostic signatures. The following diagram illustrates a generalized workflow for analyzing network perturbations and extracting diagnostic insights:

Network Analysis Workflow: From raw network to diagnostic signatures

The interpretation of network-based diagnostic signatures requires careful consideration of several key aspects:

- Hub Criticality: Hub proteins within identified modules often represent points of network vulnerability. Their perturbation frequently leads to more severe functional consequences, making them potential indicators of disease severity [5].

- Module Conservation: The preservation of network modules across multiple biological scales (genomic, transcriptomic, proteomic) increases confidence in their biological relevance and diagnostic utility [6].

- Dynamic Range: Diagnostic signatures should demonstrate significant differences in activation state or connectivity between disease and control states, with quantitative metrics establishing clear classification thresholds.

- Context Specificity: The diagnostic performance of network signatures may vary across tissue types, developmental stages, or patient subpopulations, necessitating appropriate contextual validation [6].

Concluding Remarks

The analysis of disease-perturbed molecular networks as diagnostic signatures represents a paradigm shift in biomarker development, moving beyond single-molecule indicators to systemic assessments of pathological states. By leveraging the organizational principles of biological systems and employing multiplex network approaches that span genomic, proteomic, and phenotypic scales, researchers can identify robust diagnostic signatures that capture the complexity of disease mechanisms. The integration of multi-omic data, advanced analytical methods, and sophisticated visualization techniques creates a powerful framework for developing next-generation diagnostics with enhanced specificity, sensitivity, and clinical utility. As network medicine continues to evolve, these approaches will play an increasingly important role in personalized healthcare, enabling earlier disease detection, more precise patient stratification, and ultimately, improved therapeutic outcomes.

The field of biology has witnessed a paradigm shift from a reductionist approach to a holistic, systems-level understanding, where biology is treated as an information science [8]. Systems biology studies biological systems as a whole and their interactions with the environment by measuring and quantifying various types of global biological information, integrating information at different levels, and studying dynamical changes of all biological systems [8]. Multi-omics data integration sits at the core of this approach, combining data from genomics, transcriptomics, proteomics, and metabolomics to reveal comprehensive insights into biological systems [9].

This integrated approach is particularly powerful in the search for informative diagnostic biomarkers because it focuses on the fundamental causes of disease and centers on identifying and understanding disease-perturbed molecular networks [8] [10]. The central premise of systems medicine is that clinically detectable molecular fingerprints resulting from these perturbed networks can be used to detect and stratify various pathological conditions [8]. This revolution is transforming our understanding of complex diseases, enabling the identification of robust biomarker signatures, and advancing the development of personalized therapeutic strategies [9] [11].

Current Landscape and Emerging Trends

The multi-omics field has experienced significant growth and evolution over the past decade. A bibliometric analysis of publications from 2013-2023 revealed a noteworthy increase in multi-omics research, with China emerging as the leading contributor to publications and the USA securing the highest number of citations [12]. The most frequently occurring terms in this literature include "multi-omics," "data integration," and "metabolomics," while "Briefings in Bioinformatics" was identified as both the most relevant source and the most cited journal [12].

Table 1: Key Trends in Multi-Omics Research (2023-2025)

| Trend Area | Specific Advancements | Research Impact |

|---|---|---|

| Single-Cell Resolution | Multi-omic measurements from same cells; Correlation of genomic, transcriptomic, and epigenomic changes [9] | Transforms understanding of tissue health and disease at cellular level; Reveals cell-type-specific mechanisms |

| Artificial Intelligence | Machine learning for data integration; Deep learning for survival prediction; Pattern detection in complex datasets [9] [11] [13] | Enables higher-level analysis of integrated data; Improves predictive accuracy for clinical outcomes |

| Clinical Translation | Liquid biopsies (cfDNA, RNA, proteins); Whole genome sequencing as first-line diagnostic [9] | Non-invasive disease monitoring; Early detection applications; Personalized treatment strategies |

| Network Medicine | Integration of multi-omics data onto shared biochemical networks; Mapping known molecular interactions [9] [8] | Improves mechanistic understanding of disease; Identifies key regulatory nodes as therapeutic targets |

| Data Integration Challenges | Need for purpose-built analysis tools; Standardization of methodologies; Federated computing solutions [9] | Addresses computational barriers; Enhances reproducibility across studies; Enables larger-scale analyses |

Methodological Framework for Multi-Omics Integration

Core Data Integration Strategies

Effective multi-omics integration requires sophisticated computational approaches that move beyond simple correlation of individual datasets. The optimal integrated multi-omics approach interweaves omics profiles into a single dataset for higher-level analysis [9]. This process begins with collecting multiple omics datasets on the same set of samples and integrating data signals from each prior to processing [9]. The integrated data improves statistical analyses where sample groups are separated based on a combination of multiple analyte levels [9].

A key component of an integrated multi-omics approach is network integration, where multiple omics datasets are mapped onto shared biochemical networks to improve mechanistic understanding [9]. In this process, analytes are connected based on known interactions, such as a transcription factor mapped to the transcript it regulates or metabolic enzymes mapped to their associated metabolite substrates and products [9]. This network-based approach can capture changes in downstream effectors and in many cases is more useful for prediction than any individual molecule [11].

Experimental Workflow for Biomarker Discovery

The following diagram illustrates a comprehensive multi-omics integration workflow for biomarker discovery, adapted from recent studies in ulcerative colitis and colorectal cancer [11] [13]:

Workflow for Multi-Omics Biomarker Discovery

Protocol: Multi-Omics Data Integration for Biomarker Discovery

Objective: To identify robust biomarker signatures for disease stratification and prognostic prediction through integrated analysis of genomic, transcriptomic, proteomic, and metabolomic data.

Materials and Equipment:

- Biological samples (tissue, plasma, serum)

- High-throughput sequencing platform

- Proteomic analysis system (e.g., multiplexed aptamer-based binding assay)

- Metabolomic profiling platform

- Computing infrastructure with sufficient RAM and storage

- R or Python with specialized packages (e.g., "TwoSampleMR", "sva")

Procedure:

Sample Preparation and Data Generation

- Collect and process biological samples according to standardized protocols [11] [13]

- Isolate and quantify molecular species (DNA, RNA, proteins, metabolites)

- Perform genome sequencing, transcriptome profiling, proteomic analysis, and metabolomic profiling

- For plasma-derived biomarkers, assess samples for haemolysis and exclude compromised samples [11]

Data Preprocessing and Quality Control

- Conduct quality assessment of raw data

- Perform normalization to adjust for technical variability (e.g., quantile normalization)

- Filter out molecules with excessive missing values (>50% missingness)

- Impute missing data using appropriate methods (e.g., KNNimpute) [11]
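The filtering and imputation steps above can be sketched in a few lines. This is a simplified nearest-neighbour imputation written for illustration, not the KNNimpute implementation referenced in [11]: the feature names and values are hypothetical, missing values are represented as `None`, and distances are computed only over samples observed in both features. Normalization is omitted from the sketch.

```python
import math


def filter_by_missingness(matrix, max_missing=0.5):
    """Keep features whose fraction of missing values is at most max_missing."""
    return {
        name: values
        for name, values in matrix.items()
        if sum(v is None for v in values) / len(values) <= max_missing
    }


def distance(a, b):
    """Root-mean-square difference over samples observed in both features."""
    pairs = [(x, y) for x, y in zip(a, b) if x is not None and y is not None]
    if not pairs:
        return math.inf
    return math.sqrt(sum((x - y) ** 2 for x, y in pairs) / len(pairs))


def knn_impute(matrix, k=1):
    """Fill each missing value with the mean of the k most similar features."""
    imputed = {}
    for name, values in matrix.items():
        filled = list(values)
        for j, value in enumerate(values):
            if value is not None:
                continue
            # Candidate donors: other features observed in sample j.
            donors = sorted(
                (distance(values, other), other[j])
                for other_name, other in matrix.items()
                if other_name != name and other[j] is not None
            )[:k]
            if donors:
                filled[j] = sum(v for _, v in donors) / len(donors)
        imputed[name] = filled
    return imputed


# Hypothetical feature-by-sample matrix (rows are molecules, columns are samples).
matrix = {
    "PROT_A": [1.0, 2.0, 3.0, 4.0],
    "PROT_B": [1.1, None, 3.1, 4.1],
    "PROT_C": [5.0, 6.0, 7.0, 8.0],
    "PROT_D": [None, None, None, 2.0],  # 75% missing, removed by the filter
}
filtered = filter_by_missingness(matrix)
imputed = knn_impute(filtered, k=1)
print(imputed["PROT_B"])
```

PROT_B most closely tracks PROT_A across the shared samples, so its missing value is filled from PROT_A. Larger values of `k` average over more donor features and are usually preferable on real data.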

Multi-Omics Data Integration

- Apply Mendelian Randomization (MR) approaches to establish causal relationships [13]

- Utilize the "TwoSampleMR" R package for proteome-wide MR analysis

- Employ multiple MR methods: MR Egger, weighted median, and inverse variance weighted (IVW) [13]

- Perform differential expression analysis across all omics layers

- Identify overlapping genes/proteins across different omics datasets
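To make the inverse variance weighted (IVW) method named above concrete, the sketch below computes a fixed-effect IVW estimate from per-variant summary statistics. It does not use or reproduce the "TwoSampleMR" package API: the function name and all numbers are hypothetical, and the weights use the common first-order approximation that ignores uncertainty in the variant-exposure effects.

```python
import math


def ivw_estimate(beta_exposure, beta_outcome, se_outcome):
    """Fixed-effect inverse variance weighted causal estimate.

    Each variant contributes a Wald ratio (outcome effect / exposure effect)
    weighted by beta_exposure**2 / se_outcome**2.
    """
    ratios = [by / bx for bx, by in zip(beta_exposure, beta_outcome)]
    weights = [bx ** 2 / se ** 2 for bx, se in zip(beta_exposure, se_outcome)]
    estimate = sum(w * r for w, r in zip(weights, ratios)) / sum(weights)
    std_error = math.sqrt(1.0 / sum(weights))
    return estimate, std_error


# Hypothetical summary statistics for three instruments (e.g., cis-pQTLs).
beta_exposure = [0.2, 0.4, 0.5]    # variant effect on protein level
beta_outcome = [0.1, 0.2, 0.25]    # variant effect on disease risk
se_outcome = [0.05, 0.05, 0.05]    # standard error of the outcome effect

estimate, std_error = ivw_estimate(beta_exposure, beta_outcome, se_outcome)
print(f"IVW estimate = {estimate:.3f} (SE {std_error:.3f})")
```

Because every Wald ratio in this toy input equals 0.5, the pooled estimate is also 0.5. With real data the ratios differ, and comparing IVW with MR Egger and weighted median results helps flag pleiotropy.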

Biomarker Signature Identification

Network and Functional Analysis

- Map biomarker signatures onto biological networks

- Construct regulatory networks (mRNA-miRNA-lncRNA)

- Perform immune infiltration analysis (e.g., CIBERSORT)

- Conduct functional enrichment analysis (GSEA)

Experimental Validation

- Validate expression changes in experimental models (e.g., DSS-induced colitis mice) [13]

- Use RT-qPCR to confirm expression trends of identified biomarkers

- Perform functional assays to verify biological roles

Troubleshooting:

- Address batch effects using the "sva" package in R [13]

- For unbalanced class distributions, apply Synthetic Minority Oversampling Technique (SMOTE) [11]

- Use cis-pQTLs as instrumental variables in MR to minimize horizontal pleiotropy [13]
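The SMOTE step mentioned above generates synthetic minority-class samples by interpolating between an existing minority sample and one of its nearest minority neighbours. The following is a simplified pure-Python sketch of that idea, not a substitute for a maintained implementation. The feature vectors, parameter defaults, and function name are hypothetical.

```python
import math
import random


def smote(minority, n_new, k=2, seed=0):
    """Create n_new synthetic samples by interpolating between minority neighbours."""
    rng = random.Random(seed)
    synthetic = []
    for _ in range(n_new):
        base = rng.choice(minority)
        # k nearest minority neighbours of the chosen sample (excluding itself).
        neighbours = sorted(
            (point for point in minority if point is not base),
            key=lambda point: math.dist(base, point),
        )[:k]
        partner = rng.choice(neighbours)
        gap = rng.random()  # position along the segment from base to partner
        synthetic.append([b + gap * (p - b) for b, p in zip(base, partner)])
    return synthetic


# Hypothetical two-feature expression profiles for the minority class.
minority = [[1.0, 2.0], [1.5, 2.5], [2.0, 2.0], [1.2, 3.0]]
new_samples = smote(minority, n_new=6)
for sample in new_samples:
    print([round(value, 3) for value in sample])
```

Oversampling should be applied only to the training split so that synthetic samples do not leak information into validation or test data.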

Computational Methods for Network Analysis and Visualization

Network-Based Biomarker Identification

Network analysis provides a powerful framework for identifying biologically meaningful biomarkers. Because molecular networks capture changes in downstream effectors, network-derived biomarkers are in many cases more useful for prediction than any individual gene [11]. The following diagram illustrates the network-based biomarker discovery process:

Network-Based Biomarker Discovery

Protocol: Biological Network Visualization for Multi-Omics Data

Objective: To create effective biological network figures that communicate multi-omics integration results clearly and accurately.

Principles of Effective Network Visualization [14]:

Determine Figure Purpose: Before creating an illustration, establish its purpose. Write down the explanation (caption) to be conveyed and note whether it relates to the whole network, a node subset, temporal aspects, or topology.

Consider Alternative Layouts:

- Node-link diagrams are most common but can produce clutter in dense networks

- Adjacency matrices work well for dense networks and can encode edge attributes

- Fixed layouts position nodes to encode additional data (e.g., maps, circular genomes)
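As a small illustration of the adjacency-matrix alternative, the sketch below converts a weighted edge list into a symmetric matrix that can be passed to any heatmap routine. The function name, node labels, and weights are hypothetical placeholders.

```python
def adjacency_matrix(edges):
    """Build a symmetric weighted adjacency matrix from (source, target, weight) edges."""
    nodes = sorted({node for source, target, _ in edges for node in (source, target)})
    index = {node: i for i, node in enumerate(nodes)}
    matrix = [[0.0] * len(nodes) for _ in nodes]
    for source, target, weight in edges:
        i, j = index[source], index[target]
        matrix[i][j] = weight   # the cell value encodes the edge attribute
        matrix[j][i] = weight   # undirected network: mirror the entry
    return nodes, matrix

# Made-up example network with three nodes and two weighted edges.
nodes, matrix = adjacency_matrix([("A", "B", 0.9), ("B", "C", 0.4)])
```

Because each edge occupies exactly one cell (mirrored for undirected networks), dense networks remain readable where a node-link drawing would be cluttered.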

Beware of Unintended Spatial Interpretations:

- Nodes drawn in proximity will be interpreted as conceptually related

- Centrality may metaphorically represent relevance

- Direction can represent information flow or developmental processes

Provide Readable Labels and Captions:

- Labels should use a font size equal to or larger than that of the caption

- Ensure the figure and its caption can be understood without detailed reference to the main text

- Use annotations to highlight salient aspects

Tools and Software:

- Cytoscape for network visualization and analysis

- yEd for graph layout and editing

- R and Python for customized visualizations

- VOSviewer for bibliometric mapping [12]

Essential Research Reagents and Computational Tools

Table 2: Research Reagent Solutions for Multi-Omics Studies

| Reagent/Tool | Specific Function | Application Context |

|---|---|---|

| SOMAscan Platform | Multiplexed aptamer-based binding assay for protein quantification [13] | Large-scale proteomic analysis in genetic studies |

| OpenArray Platform | High-throughput miRNA profiling using quantitative RT-PCR [11] | Plasma miRNA biomarker discovery |

| MirVana PARIS Kit | RNA isolation from plasma samples [11] | Preparation of circulating miRNA for analysis |

| TwoSampleMR R Package | Mendelian randomization analysis to establish causal relationships [13] | Integration of pQTL and GWAS data for causal inference |

| CIBERSORT | Computational method for immune cell infiltration estimation [13] | Characterization of tumor microenvironment |

| SVM-RFE Algorithm | Machine learning feature selection for biomarker identification [13] | Identification of optimal molecular signatures |

| Single-Cell RNA Sequencing | High-resolution expression profiling at cellular level [13] | Cell-type-specific biomarker discovery |

| VOSviewer Software | Bibliometric mapping and visualization of scientific literature [12] | Research trend analysis and knowledge mapping |

Applications in Complex Disease Research

Case Study: Ulcerative Colitis Biomarker Discovery

A recent multi-omics study on ulcerative colitis demonstrates the power of integrated approaches [13]. Researchers integrated data from the Gene Expression Omnibus database and protein quantitative trait loci from genome-wide association studies to identify overlapping genes. Using three machine learning algorithms, they identified four core hub genes (EIF5A2, IDO1, CDH5, and MYL5) and constructed a diagnostic model that demonstrated strong predictive performance. Single-cell sequencing analysis revealed cell-type-specific expression patterns, with CDH5 primarily expressed in endothelial cells, EIF5A2 enriched in stem cells/T cells, IDO1 expressed in monocytes, and MYL5 found in epithelial and endothelial cells [13].

Case Study: Colorectal Cancer Prognostic Biomarkers

In colorectal cancer, a multi-objective optimization framework effectively integrated data-driven approaches with knowledge from miRNA-mediated regulatory networks to identify robust plasma miRNA signatures [11]. This approach identified a prognostic signature comprising 11 circulating miRNAs that predict patient survival outcome and target pathways underlying colorectal cancer progression. The generality of the method was demonstrated across three publicly available miRNA datasets associated with biomarker studies in other diseases, highlighting the utility of systems biology approaches for biomarker discovery [11].

The multi-omics revolution is fundamentally transforming biomedical research by enabling a comprehensive, systems-level understanding of biological processes and disease mechanisms. Through the integration of genomic, proteomic, and metabolomic data, researchers can now identify robust biomarker signatures that more accurately reflect the complex, multifactorial nature of human diseases. The methodological frameworks presented in this application note provide researchers with practical protocols for implementing multi-omics integration strategies, from initial data generation and processing to advanced network analysis and visualization. As computational methods continue to evolve and multi-omics technologies become more accessible, these approaches will play an increasingly critical role in advancing personalized medicine, enabling earlier disease detection, more accurate prognosis, and more targeted therapeutic interventions.

In the field of systems biology, biomarkers are defined as objectively measurable indicators of normal biological processes, pathogenic processes, or responses to an exposure or intervention [15] [16]. The discipline of systems biology, which views biology as an information science and studies biological systems as a whole, has particular power in the search for informative diagnostic biomarkers because it focuses on fundamental causes and identifies disease-perturbed molecular networks [8]. This approach has transformed biomarker discovery from traditional, pauci-parameter measurements to multiparameter analyses that capture the complexity of biological systems through the integration of global data from genomics, transcriptomics, proteomics, and metabolomics [8] [17].

The critical importance of clear biomarker definitions and applications was recognized by the U.S. Food and Drug Administration (FDA) and the National Institutes of Health (NIH), which jointly established the Biomarkers, EndpointS, and other Tools (BEST) resource to create a common framework [15]. This review focuses on four core functional types of biomarkers—diagnostic, prognostic, predictive, and pharmacodynamic—within the context of systems biology-driven identification and their applications in research and drug development.

Biomarker Definitions and Clinical Applications

The following table summarizes the key characteristics and applications of the four primary biomarker types discussed in this application note.

Table 1: Core Biomarker Types: Definitions and Applications

| Biomarker Type | Definition | Primary Application | Representative Examples |

|---|---|---|---|

| Diagnostic | Detects or confirms the presence of a disease or condition, or identifies a disease subtype [15] [18]. | Disease identification and classification [15]. | Prostate-Specific Antigen (PSA) for prostate cancer; C-Reactive Protein (CRP) for inflammation [18] [19]. |

| Prognostic | Predicts the likely course of a disease, including risk of recurrence or mortality, independent of treatment [18]. | Informing disease management strategies and patient stratification [18]. | Ki-67 (MKI67) for tumor proliferation in breast cancer; BRAF mutation status in melanoma [18]. |

| Predictive | Identifies individuals who are more or less likely to respond to a specific therapeutic intervention [15] [18]. | Guiding treatment selection for personalized medicine [18]. | HER2/neu status for trastuzumab response in breast cancer; EGFR mutation status for EGFR inhibitors in non-small cell lung cancer [18]. |

| Pharmacodynamic/ Response | Shows that a biological response has occurred in an individual exposed to a medical product or environmental agent [15] [18]. | Demonstrating biological activity and mechanism of action in clinical trials and treatment monitoring [18]. | Reduction in LDL cholesterol in response to statins; reduction in blood pressure in response to antihypertensive drugs [18]. |

Systems Biology Workflow for Biomarker Identification

Systems biology provides a powerful, holistic framework for discovering and validating biomarkers by analyzing complex molecular networks. The following workflow diagram illustrates a generalized protocol for systems biology-driven biomarker identification.

Figure 1. Systems Biology Biomarker Discovery Workflow. This workflow integrates multi-omics data acquisition with computational network analysis to identify and validate robust biomarker signatures.

Experimental Protocol: A Multi-Omic Biomarker Discovery Pipeline

Objective: To identify a panel of diagnostic and prognostic biomarkers for a complex disease (e.g., colorectal cancer or a neurodegenerative disorder) by integrating multi-omics data and network analysis.

Methodology:

Sample Collection and Preparation:

- Collect matched tissue (e.g., from biopsy or animal model) and biofluid (e.g., blood, plasma, CSF) samples from case and control cohorts [8] [17].

- Process samples using standardized protocols. Automated homogenization (e.g., Omni LH 96) is recommended for consistency and to reduce human error and processing bias [7].

- Aliquot samples for downstream multi-omics analyses and store at appropriate temperatures.

Multi-Omics Data Acquisition:

- Genomics/Transcriptomics: Isolate DNA and total RNA. Perform whole-genome sequencing, RNA sequencing (RNA-seq), or microarray analysis to identify differentially expressed genes (DEGs) [3] [20]. For spatial context, implement spatial transcriptomics on tissue sections [7].

- Proteomics: Perform protein extraction from tissue or biofluids. Analyze using high-throughput mass spectrometry or multiplex immunoassays to quantify protein expression and post-translational modifications [8] [19].

- Metabolomics: Analyze metabolite profiles from plasma or tissue using Liquid Chromatography–Tandem Mass Spectrometry (LC–MS/MS) or Gas Chromatography–Mass Spectrometry (GC–MS) to identify altered metabolic pathways [17] [20].

Data Integration and Network Analysis (Systems Biology Core):

- Bioinformatics Analysis: Perform differential expression analysis on each omics dataset (e.g., using R/Bioconductor packages) [3].

- Network Construction: Reconstruct Protein-Protein Interaction (PPI) networks using databases like STRING. Visualize and analyze networks using tools like Cytoscape and Gephi [3].

- Centrality and Cluster Analysis: Identify hub genes (highly connected nodes) within the PPI network through centrality analysis (e.g., degree, betweenness). Use clustering algorithms (e.g., k-means) to dissect the network into functional modules [3].

- Pathway Enrichment: Conduct Gene Ontology (GO) and KEGG pathway enrichment analyses on hub genes and network modules to identify biologically relevant processes perturbed by the disease [3].
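The hub-gene step can be illustrated with degree centrality computed directly from a PPI edge list. This is a minimal standard-library sketch; the function names and gene labels are hypothetical, and in practice the edges would come from a database export such as STRING.

```python
from collections import defaultdict

def degree_centrality(edges):
    """Return degree centrality (degree / (n - 1)) for an undirected edge list."""
    neighbors = defaultdict(set)
    for a, b in edges:
        if a != b:                 # ignore self-interactions
            neighbors[a].add(b)
            neighbors[b].add(a)
    n = len(neighbors)
    if n < 2:
        return {}
    return {node: len(adjacent) / (n - 1) for node, adjacent in neighbors.items()}

def top_hubs(edges, k=3):
    """Rank nodes by degree centrality, breaking ties alphabetically."""
    centrality = degree_centrality(edges)
    return sorted(centrality, key=lambda node: (-centrality[node], node))[:k]

# Made-up interactions among four placeholder genes.
ppi_edges = [("G1", "G2"), ("G1", "G3"), ("G1", "G4"), ("G2", "G3")]
centrality = degree_centrality(ppi_edges)
hubs = top_hubs(ppi_edges, k=2)
```

Betweenness and other centrality measures often rank nodes differently from degree, so comparing several measures before shortlisting candidates is a reasonable precaution.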

Candidate Biomarker Validation:

- Select top candidate biomarkers from the hub genes and significantly altered molecules across the omics layers.

- Functional Validation: Use advanced models such as organoids and humanized mouse models to confirm the functional role of candidates in a context that mimics human biology and drug responses [7].

- Assay Development: Develop specific, quantitative assays (e.g., ELISA, qPCR, customized panels for mass spectrometry) for the candidate biomarkers.

- Analytical Validation: Assess the sensitivity, specificity, and reproducibility of the measurement assays following regulatory guidelines [15] [19].
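For the reproducibility part of analytical validation, one common summary is the coefficient of variation (CV) across replicate measurements. The sketch below is illustrative only: the function names, the replicate values, and the 20% acceptance limit are assumptions, not thresholds taken from the cited guidelines.

```python
import statistics

def coefficient_of_variation(replicates):
    """Percent CV: sample standard deviation divided by the mean, times 100."""
    mean = statistics.mean(replicates)
    if mean == 0:
        raise ValueError("CV is undefined when the mean is zero")
    return 100.0 * statistics.stdev(replicates) / mean

def flag_imprecise(assays, max_cv=20.0):
    """Return assay names whose replicate CV exceeds max_cv (hypothetical limit)."""
    return sorted(name for name, values in assays.items()
                  if coefficient_of_variation(values) > max_cv)

# Made-up replicate readings for two placeholder assays.
replicate_data = {"assay_A": [9.0, 10.0, 11.0], "assay_B": [5.0, 10.0, 15.0]}
cv_a = coefficient_of_variation(replicate_data["assay_A"])
flagged = flag_imprecise(replicate_data)
```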

The Scientist's Toolkit: Key Research Reagent Solutions

The following table details essential reagents and platforms for executing the systems biology biomarker discovery workflow.

Table 2: Essential Research Reagents and Platforms for Biomarker Discovery

| Reagent / Platform | Function / Application | Example Use Case |

|---|---|---|

| Automated Homogenizer | Standardized disruption of tissues and cells for reproducible biomolecule extraction. | Omni LH 96 for consistent preparation of tissue lysates prior to multi-omics analysis [7]. |

| Next-Generation Sequencing (NGS) Kits | Comprehensive analysis of genetic variations, gene expression, and epigenetic modifications. | RNA-seq library prep kits for transcriptomic profiling of disease vs. control tissues [21] [20]. |

| Multiplex Immunoassay Panels | Simultaneous quantification of multiple protein biomarkers from a single sample. | Luminex xMAP or Olink panels to validate protein expression changes identified by mass spectrometry [20]. |

| Mass Spectrometry Reagents | Preparation and analysis of proteomic and metabolomic samples. | LC–MS/MS grade solvents and iTRAQ/TMT tags for relative quantification of proteins across samples [20] [19]. |

| Spatial Biology Reagents | In-situ analysis of biomarker expression while preserving tissue architecture. | Multiplex immunohistochemistry (IHC) or RNAscope kits to visualize biomarker distribution within the tumor microenvironment [7]. |

| Organoid Culture Systems | 3D in vitro models for functional biomarker screening and target validation. | Cancer organoid co-cultures to test if biomarker expression predicts response to therapeutics [7]. |

The integration of systems biology approaches is revolutionizing biomarker science by moving beyond single-parameter measurements to multi-parameter, network-based signatures. Diagnostic, prognostic, predictive, and pharmacodynamic biomarkers each play distinct yet complementary roles in advancing personalized medicine. The application of multi-omics technologies, coupled with robust computational analysis and validation in advanced disease models, provides a powerful pipeline for discovering and translating novel biomarkers into clinical and drug development practice. This structured, evidence-based framework ensures that biomarker development keeps pace with scientific and clinical needs, ultimately enabling more precise diagnosis, prognostication, and treatment for patients.

Within the framework of systems biology, the identification of biomarkers is evolving from a reductionist focus on single molecules to a holistic analysis of complex, interconnected biological networks. This paradigm shift recognizes that the phenotypic signatures of complex diseases arise from dynamic perturbations across multiple molecular layers. Network biomarkers—comprising multiple interacting molecules—and dynamic network biomarkers that capture temporal fluctuations, offer superior potential for early diagnosis, patient stratification, and monitoring of disease progression compared to traditional, single-entity biomarkers [22]. This Application Note details pioneering studies and associated protocols that successfully leverage network-based approaches to discover and validate such biomarkers in neurodegenerative and metabolic diseases, providing a practical roadmap for researchers and drug development professionals.

Network Biomarker Successes in Neurodegeneration

The Global Neurodegeneration Proteomics Consortium (GNPC): A Large-Scale Proteomic Network Initiative

The Global Neurodegeneration Proteomics Consortium (GNPC) represents a landmark success in applying a systems-level approach to biomarker discovery. This public-private partnership established one of the world's largest harmonized proteomic datasets to address the diagnostic and prognostic challenges in heterogeneous conditions like Alzheimer's disease (AD), Parkinson's disease (PD), frontotemporal dementia (FTD), and amyotrophic lateral sclerosis (ALS) [23].

- Objective: To identify robust plasma proteomic signatures specific to common neurodegenerative diseases and transdiagnostic signatures of clinical severity by analyzing a massive, multi-cohort dataset.

- Systems Biology Context: The consortium operated on the core systems biology principle that large-scale, integrated datasets from diverse populations are necessary to capture the complex, multi-factorial nature of neurodegeneration and overcome the poor reproducibility of findings from smaller, single-site cohorts.

- Key Findings:

- Identification of disease-specific differential protein abundance patterns across AD, PD, FTD, and ALS.

- Discovery of transdiagnostic proteomic signatures correlating with clinical severity, indicating common pathways in neurodegeneration.

- Identification of a robust plasma proteomic signature of APOE ε4 carriership, a key genetic risk factor, reproducible across all four neurodegenerative diseases studied.

- Revelation of distinct patterns of organ aging associated with different neurodegenerative conditions [23].

Table 1: Key Quantitative Findings from the GNPC Initiative

| Finding Category | Specific Result | Significance |

|---|---|---|

| Dataset Scale | ~250 million protein measurements from >35,000 biofluid samples | Unprecedented statistical power for biomarker discovery |

| Transdiagnostic Signature | Proteomic signature of clinical severity shared across AD, PD, FTD, and ALS | Suggests common final pathways; useful for tracking progression |

| APOE ε4 Signature | Robust plasma proteomic signature of APOE ε4 carriership | Provides a molecular readout of a major genetic risk factor |

A Microglia-Focused Network Approach in Alzheimer's Disease

The discovery of microglial genes as key risk factors for neurodegenerative diseases (NDDs) has positioned these cells as central nodes in disease networks. Targeting microglial networks, particularly those centered on the Triggering Receptor Expressed on Myeloid cells 2 (TREM2), is a promising therapeutic and biomarker strategy [24].

- Objective: To target microglial dysfunction, a core driver of neurodegeneration, and develop companion biomarkers for tracking therapeutic response.

- Systems Biology Context: This approach moves beyond targeting individual pathological proteins (e.g., Aβ) to modulating the broader immune network of the brain. It acknowledges microglia as integrators of multiple pathological signals and regulators of neuronal health.

- Key Findings & Applications:

- TREM2 Agonists: Antibodies like AL002 (Alector) and VHB937 (Novartis) are designed to activate TREM2 signaling networks, enhancing microglial phagocytosis and promoting a neuroprotective phenotype.

- Soluble TREM2 (sTREM2) as a Dynamic Network Biomarker: Cerebrospinal fluid (CSF) levels of sTREM2, a cleavage product of the receptor, are considered a biomarker of microglial activation. In clinical trials for AL002, a dose-dependent reduction in CSF sTREM2 was observed, serving as a key pharmacodynamic biomarker indicating target engagement and receptor internalization [24].

- Therapeutic Efficacy: Preclinical models show that TREM2 activation reduces Aβ plaque burden and can improve cognitive performance, validating the microglial network as a viable therapeutic target.

Table 2: Microglia-Targeted Clinical Trials and Associated Network Biomarkers

| Therapeutic Agent | Target | Mechanism | Phase | Key Biomarker |

|---|---|---|---|---|

| AL002 (Alector) | TREM2 | Activating monoclonal antibody | Phase 2 (NCT04592874) | Reduction in CSF sTREM2 |

| VHB937 (Novartis) | TREM2 | Activating monoclonal antibody | Phase 2 in ALS (NCT06643481) | Downstream signaling (SYK phosphorylation) |

| VG-3927 (Vigil Neurosciences) | TREM2 | Brain-penetrant small molecule agonist | Phase 1 (NCT06343636) | Reduction in CSF sTREM2 |

Network Biomarker Successes in Metabolic Disease

A Network Metabolomics Approach to Major Depressive Disorder (MDD)

A seminal study successfully applied a network-based metabolomics strategy to identify a diagnostic biomarker signature for Major Depressive Disorder (MDD), a condition with high clinical heterogeneity and a lack of objective diagnostic tools [25].

- Objective: To investigate plasma metabolite signatures in MDD patients versus healthy controls and identify diagnostic biomarkers associated with core depressive features using a network-based approach.

- Systems Biology Context: The study used Weighted Gene Co-expression Network Analysis (WGCNA), a systems biology method, to move beyond univariate associations. WGCNA constructs metabolite co-expression networks to identify modules of tightly correlated metabolites that are collectively associated with clinical traits, thus capturing the functional organization of the metabolome.

- Key Findings:

- WGCNA identified key metabolite modules significantly correlated with depressive severity and specific symptoms like sadness/depressive mood.

- Seven hub metabolites were pinpointed as a diagnostic biomarker signature:

- Positively correlated with depression: SM (OH) C16:1 (a sphingomyelin), HexCer(d18:1/24:1) (a hexosylceramide), PC aa C40:6 (a phosphatidylcholine), CE(20:4) (a cholesteryl ester).

- Negatively correlated with depression: Methionine, Arginine, Tyrosine.

- Enriched pathways included biosynthesis of phenylalanine, tyrosine and tryptophan, glutathione metabolism, and arginine and proline metabolism.

- A deep neural network model incorporating these seven biomarkers achieved an area under the curve (AUC) of 0.803 for diagnosing MDD, indicating promising diagnostic potential [25].

Table 3: Hub Metabolites Identified via WGCNA for MDD Diagnosis

| Hub Metabolite | Class | Correlation with Depressive Features |

|---|---|---|

| SM (OH) C16:1 | Sphingomyelin | Positive |

| HexCer(d18:1/24:1) | Hexosylceramide | Positive |

| PC aa C40:6 | Phosphatidylcholine | Positive |

| CE(20:4) | Cholesteryl Ester | Positive |

| Methionine | Amino Acid | Negative |

| Arginine | Amino Acid | Negative |

| Tyrosine | Amino Acid | Negative |

Detailed Experimental Protocols

Protocol 1: Network Metabolomics for Disease Biomarker Discovery

This protocol outlines the key steps for discovering network-based metabolite biomarkers, as applied in the MDD study [25].

1. Sample Preparation and Metabolite Detection:

- Sample Type: Collect plasma samples from well-phenotyped patient and matched control groups.

- Metabolite Profiling: Employ a targeted metabolomics platform (e.g., MxP Quant 500 kit) using UPLC-MS/MS. This allows for the absolute quantification of hundreds of metabolites across diverse biochemical classes.

- Quality Control: Include multiple replicates of a quality control (QC) pool on each analysis plate, created from a pool of all study samples, to monitor instrumental performance.

2. Data Preprocessing and Multivariate Analysis:

- Normalization: Normalize metabolite concentrations to correct for dilution and other technical variances.

- Differential Analysis: Perform Orthogonal Partial Least Squares-Discriminant Analysis (OPLS-DA) to discriminate metabolic profiles between groups. Identify significantly altered metabolites using Variable Importance in Projection (VIP) > 1.0 and p-value < 0.05.

3. Weighted Gene Co-expression Network Analysis (WGCNA):

- Network Construction: Construct a weighted metabolite co-expression network using the WGCNA package in R. Choose a soft-thresholding power (e.g., β=7) to achieve a scale-free topology.

- Module Detection: Identify modules of highly correlated metabolites using hierarchical clustering and dynamic tree cutting.

- Module-Trait Association: Calculate correlations between module eigengenes (the first principal component of a module) and clinical traits (e.g., depression scores). Select significant modules (p < 0.05) for further analysis.

- Hub Metabolite Identification: Within significant modules, identify hub metabolites as those with high module membership (MM > 0.6) and high gene significance (GS > 0.2) for the trait of interest. The intersection of these with differentially expressed metabolites defines the final hub metabolite set.
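The quantities behind the WGCNA step can be illustrated without the R package. The sketch below uses NumPy to compute a soft-thresholded adjacency, a module eigengene, and module membership on simulated data. It illustrates the underlying definitions rather than the WGCNA API; the function names and the simulated matrix are assumptions, and module detection by tree cutting is not shown.

```python
import numpy as np

def soft_threshold_adjacency(data, beta=7):
    """Unsigned adjacency a_ij = |cor(i, j)| ** beta for a samples x metabolites matrix."""
    correlation = np.corrcoef(data, rowvar=False)
    return np.abs(correlation) ** beta

def module_eigengene(data, members):
    """First principal component (one value per sample) of the standardized module."""
    block = data[:, members]
    block = (block - block.mean(axis=0)) / block.std(axis=0, ddof=1)
    left_vectors, _, _ = np.linalg.svd(block, full_matrices=False)
    eigengene = left_vectors[:, 0]
    # Orient the eigengene so it correlates positively with the module average.
    if np.corrcoef(eigengene, block.mean(axis=1))[0, 1] < 0:
        eigengene = -eigengene
    return eigengene

def module_membership(data, members, eigengene):
    """Correlation of each member metabolite with the module eigengene (MM)."""
    return np.array([np.corrcoef(data[:, m], eigengene)[0, 1] for m in members])

# Simulated data: three metabolites share one driver, the fourth is independent.
rng = np.random.default_rng(0)
driver = rng.normal(size=60)
data = np.column_stack([
    driver + 0.1 * rng.normal(size=60),
    driver + 0.1 * rng.normal(size=60),
    driver + 0.1 * rng.normal(size=60),
    rng.normal(size=60),
])
adjacency = soft_threshold_adjacency(data, beta=7)
eigengene = module_eigengene(data, [0, 1, 2])
membership = module_membership(data, [0, 1, 2], eigengene)
is_hub_candidate = membership > 0.6   # MM cutoff quoted in the protocol
```

Raising correlations to the power β suppresses weak associations while preserving strong ones, which is what pushes the network toward a scale-free topology.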

4. Diagnostic Model Construction and Validation:

- Machine Learning: Use the hub metabolites as features to build diagnostic classifiers. Apply multiple algorithms (e.g., Ridge Regression, Support Vector Machine, Random Forest, Deep Neural Network) in a training set (e.g., 70% of data).

- Model Validation: Evaluate the performance of the optimal model on a held-out test set (e.g., 30% of data) using metrics like Area Under the Curve (AUC), sensitivity, and specificity.

- Model Interpretation: Apply explainable AI techniques like SHapley Additive exPlanations (SHAP) to interpret the contribution of each metabolite to the model's predictions.
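The evaluation logic of this step, a 70/30 split followed by AUC on the held-out set, can be sketched with the standard library alone. The function names are hypothetical, the split is not stratified by class, and the labels and scores are toy values rather than results from the MDD study.

```python
import random

def split_indices(n_samples, train_fraction=0.7, seed=0):
    """Shuffle sample indices reproducibly and split them into train and test sets."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    cut = round(train_fraction * n_samples)
    return indices[:cut], indices[cut:]

def roc_auc(labels, scores):
    """AUC as the probability that a random case outscores a random control."""
    positives = [s for label, s in zip(labels, scores) if label == 1]
    negatives = [s for label, s in zip(labels, scores) if label == 0]
    if not positives or not negatives:
        raise ValueError("both classes are required to compute AUC")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5   # ties count as half a win
    return wins / (len(positives) * len(negatives))

train_idx, test_idx = split_indices(10)
auc = roc_auc([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8])
```

Keeping the test indices untouched until the final evaluation is what makes the reported AUC an estimate of out-of-sample performance.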

Protocol 2: Large-Scale Consortia-Based Proteomic Biomarker Discovery

This protocol describes the workflow for large-scale, multi-site proteomic biomarker discovery, as exemplified by the GNPC [23].

1. Consortium Building and Data Harmonization:

- Partnership: Establish a public-private partnership with multiple academic, clinical, and industry contributors to aggregate a large number of biofluid samples (plasma, serum, CSF) with associated clinical data.

- Data Generation: Utilize high-dimensional proteomic platforms (e.g., SomaScan, Olink, Mass Spectrometry) across different sites to profile proteins.

- Harmonization: Implement rigorous computational and statistical methods to harmonize protein measurements from multiple platforms and cohorts, correcting for batch effects and technical variability.
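The simplest form of batch correction, centering each protein within each batch, gives a feel for what harmonization does. This sketch is a deliberately reduced stand-in, not the method used by the GNPC and not ComBat or sva; the function name and toy values are assumptions.

```python
import numpy as np

def center_by_batch(values, batches):
    """Shift each batch so its per-protein mean matches the overall mean.

    values: samples x proteins matrix; batches: one batch label per sample.
    """
    values = np.asarray(values, dtype=float)
    batches = np.asarray(batches)
    grand_mean = values.mean(axis=0)
    corrected = values.copy()
    for batch in np.unique(batches):
        mask = batches == batch
        corrected[mask] = values[mask] - values[mask].mean(axis=0) + grand_mean
    return corrected

# Made-up measurements: batch B reads about 10 units higher than batch A.
measurements = [[1.0, 10.0], [3.0, 12.0], [11.0, 20.0], [13.0, 22.0]]
batch_labels = ["A", "A", "B", "B"]
harmonized = center_by_batch(measurements, batch_labels)
```

Centering of this kind also removes genuine biological differences whenever batch is confounded with diagnosis, which is one reason model-based methods that include covariates are generally preferred.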

2. Centralized Data Management and Access:

- Cloud-Based Platform: Host the harmonized dataset on a secure, cloud-based environment (e.g., Alzheimer’s Disease Data Initiative’s AD Workbench) to provide controlled access to consortium members and, eventually, the wider research community.

3. Integrated Statistical and Systems-Level Analysis:

- Differential Abundance Analysis: Perform meta-analyses across cohorts to identify proteins that are consistently dysregulated in a specific disease compared to controls.

- Transdiagnostic Analysis: Test for proteomic signatures that are shared across different neurodegenerative diseases (e.g., related to clinical severity) or specific to a single disease.

- Network and Pathway Analysis: Integrate proteomic data with genetic and clinical information to identify core networks and biological pathways driving disease. Use the scale of the data to identify robust signatures of genetic risk factors (e.g., APOE ε4).

Visualization of Workflows and Pathways

Network Metabolomics Workflow for Biomarker Discovery

Network Metabolomics Discovery Workflow

TREM2-Centric Microglial Network in Neurodegeneration

TREM2 Microglial Network and Biomarker

The Scientist's Toolkit: Key Research Reagent Solutions

Table 4: Essential Research Reagents and Platforms for Network Biomarker Discovery

| Item / Solution | Function / Application | Example Use Case |

|---|---|---|

| MxP Quant 500 Kit | Targeted metabolomics kit for absolute quantification of ~630 metabolites via UPLC-MS/MS. | Profiling plasma metabolites in MDD study [25]. |

| SomaScan & Olink Platforms | High-throughput, affinity-based proteomic platforms for measuring thousands of proteins from biofluids. | Large-scale plasma proteomics in the GNPC [23] [26]. |

| WGCNA R Package | Algorithm for constructing weighted co-expression networks and identifying functional modules. | Identifying metabolite modules associated with depressive features [25]. |

| Cloud Data Platforms (e.g., AD Workbench) | Secure, cloud-based environments for storing, harmonizing, and analyzing large-scale multi-omics data. | Hosting and analyzing the GNPC dataset [23]. |

| TREM2 Agonist Antibodies | Research-grade agonists to activate TREM2 signaling and study microglial function in disease models. | Preclinical validation of microglial-targeted therapies [24]. |

Advanced Technologies and Workflows: Multi-Omics, Spatial Biology, and AI-Driven Analytics

Multi-omics integration represents a transformative approach in systems biology that combines datasets from genomic, transcriptomic, and proteomic analyses to construct comprehensive biological signatures. This methodology enables researchers to move beyond single-layer analysis to gain a holistic understanding of complex biological systems, disease mechanisms, and therapeutic responses. The core principle involves horizontal and vertical integration strategies that allow simultaneous analysis across multiple molecular layers, revealing interactions and patterns that would remain hidden in single-omics approaches [27].

The power of multi-omics integration lies in its ability to bridge the gap between genotype and phenotype by capturing the flow of biological information from DNA to RNA to proteins. Recent technological advances have revolutionized this field, particularly through single-cell multi-omics and spatial multi-omics technologies that provide unprecedented resolution for understanding cellular heterogeneity and tissue microenvironment interactions [27]. These approaches are especially valuable in complex diseases like cancer, where tumor heterogeneity and dynamic microenvironment interactions drive disease progression and treatment resistance.

For biomarker discovery, multi-omics strategies have demonstrated superior performance compared to traditional single-omics approaches. By integrating complementary data types, researchers can identify biomarker panels at single-molecule, multi-molecule, and cross-omics levels that improve accuracy in cancer diagnosis, prognosis, and therapeutic decision-making [27]. This comprehensive framework supports the development of personalized treatment strategies by providing a more complete picture of individual patient biology.

The foundation of robust multi-omics research relies on access to high-quality, well-annotated datasets from diverse biological sources. Several large-scale consortia have established comprehensive data repositories that serve as invaluable resources for the research community. These repositories provide standardized, multi-layered molecular data from thousands of samples, enabling researchers to validate findings across diverse populations and disease states.

Table 1: Major Public Multi-Omics Data Repositories

| Repository Name | Primary Focus | Data Types Available | Research Applications |

|---|---|---|---|

| The Cancer Genome Atlas (TCGA) | Cancer genomics | RNA-Seq, DNA-Seq, miRNA-Seq, SNV, CNV, DNA methylation, RPPA | Pan-cancer analysis, biomarker discovery, disease subtyping |

| Clinical Proteomic Tumor Analysis Consortium (CPTAC) | Cancer proteomics | Proteomics data corresponding to TCGA cohorts | Proteogenomic analysis, therapeutic target identification |

| International Cancer Genome Consortium (ICGC) | International cancer genomics | Whole genome sequencing, somatic and germline mutation data | Cross-population cancer studies, driver mutation identification |

| Cancer Cell Line Encyclopedia (CCLE) | Cancer cell lines | Gene expression, copy number, sequencing data, drug response profiles | Drug screening, mechanistic studies, biomarker validation |

| METABRIC | Breast cancer | Clinical traits, gene expression, SNP, CNV data | Cancer subtyping, prognostic biomarker identification |

| TARGET | Pediatric cancers | Gene expression, miRNA expression, copy number, sequencing data | Pediatric cancer research, rare cancer studies |

| Omics Discovery Index (OmicsDI) | Consolidated multi-omics data | Genomics, transcriptomics, proteomics, metabolomics from 11 repositories | Cross-database queries, meta-analyses |

These repositories enable researchers to access and integrate diverse data types, with TCGA representing one of the most comprehensive resources with data from more than 33 different cancer types across 20,000 individual tumor samples [28]. The CPTAC portal complements TCGA by providing deep proteomic characterization of TCGA cohorts, enabling true proteogenomic analyses [28]. The integration of these rich data sources provides the statistical power necessary to identify meaningful patterns and validate biomarkers across patient populations with different backgrounds, exposures, and comorbidities, ultimately enhancing clinical translatability [29].

Experimental Design and Workflows

Sample Preparation and Data Generation

Implementing a successful multi-omics study requires meticulous experimental design, beginning with sample preparation. The integrity of multi-omics data depends heavily on sample quality and on consistent processing across analytical platforms. Researchers must establish standardized protocols for sample collection, storage, and processing to minimize technical variability, especially when analyzing multiple omics layers from the same specimen [30].

For transcriptomic profiling, RNA sequencing (RNA-Seq) has emerged as the dominant technology due to its comprehensive coverage, accuracy in quantifying expression levels, and ability to reveal novel transcriptional insights [30]. While microarray technology remains reliable for certain applications, RNA-Seq provides superior sensitivity for detecting low-abundance transcripts and alternative splicing variants. For proteomic analysis, mass spectrometry-based approaches such as liquid chromatography-tandem mass spectrometry (LC-MS/MS), alongside antibody-based reverse-phase protein arrays (RPPA), enable high-throughput protein identification and quantification [30]. Emerging technologies like spatial transcriptomics and proteomics add dimensional context to molecular measurements, preserving critical information about tissue architecture and cellular localization [27].

A critical consideration in experimental design is understanding the dynamic range and detection limits of each technology. Transcriptomic methods typically offer greater depth of coverage than proteomic approaches, which can create imbalances in downstream integration. Researchers should implement quality control measures specific to each platform, such as RNA integrity number (RIN) checks for transcriptomics and protein yield measurements for proteomics.
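
The platform-specific quality checks described above can be expressed as a simple gate applied before any integration step. The following is a minimal sketch, not a validated protocol: the `Sample` record, the function names, and both cutoffs are hypothetical, and suitable thresholds depend on tissue type, protocol, and platform.

```python
from dataclasses import dataclass

# Hypothetical cutoffs for illustration only; appropriate values depend on
# tissue type, extraction protocol, and analytical platform.
MIN_RIN = 7.0
MIN_PROTEIN_UG = 50.0


@dataclass
class Sample:
    sample_id: str
    rin: float         # RNA integrity number (1-10 scale)
    protein_ug: float  # total protein yield in micrograms


def passes_qc(sample, min_rin=MIN_RIN, min_protein_ug=MIN_PROTEIN_UG):
    """Return True only if the specimen is usable for both omics layers."""
    return sample.rin >= min_rin and sample.protein_ug >= min_protein_ug


def split_by_qc(samples):
    """Partition samples into (passed, failed) lists, preserving input order."""
    passed = [s for s in samples if passes_qc(s)]
    failed = [s for s in samples if not passes_qc(s)]
    return passed, failed
```

Requiring a specimen to pass both checks reflects the vertical-integration setting, where a sample that fails on one layer cannot contribute a matched measurement on the other.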

Integrated Transcriptomic-Proteomic Analysis

The integration of transcriptomic and proteomic data presents both challenges and opportunities. Although a naive reading of the central dogma suggests that mRNA abundance should track protein abundance, studies consistently show only moderate correlation between these molecular layers, owing to post-transcriptional regulation, differing mRNA and protein half-lives, and variation in translational efficiency [30].
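
One common way to quantify the mRNA-protein relationship for matched measurements is a rank correlation, which is insensitive to the different scales of the two platforms. The sketch below implements Spearman correlation in plain Python as an illustration; the choice of Spearman is an assumption made here, not a method prescribed by the cited studies.

```python
def ranks(values):
    """Assign 1-based ranks, giving tied values their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j to cover the run of tied values starting at position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = avg_rank
        i = j + 1
    return result


def pearson(x, y):
    """Pearson correlation of two equal-length numeric sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sum((a - mx) ** 2 for a in x) ** 0.5
    sy = sum((b - my) ** 2 for b in y) ** 0.5
    if sx == 0 or sy == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / (sx * sy)


def spearman(mrna, protein):
    """Spearman correlation: Pearson correlation computed on the ranks."""
    if len(mrna) != len(protein):
        raise ValueError("inputs must be matched gene-by-gene")
    return pearson(ranks(mrna), ranks(protein))
```

Here `mrna` and `protein` are assumed to hold abundance values for the same genes in the same order, for example across genes within one sample or across samples for one gene.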

Several factors influence the relationship between mRNA and protein abundance, including:

- Translational efficiency affected by physical properties of transcripts such as Shine-Dalgarno sequences in prokaryotes and mRNA secondary structure

- Codon bias where preferred codons correlate with increased translation efficiency

- Ribosome density and occupancy time on mRNAs

- Post-translational modifications and protein degradation rates

Proteogenomic integration approaches have been developed to address these challenges. The integrated transcriptomic-proteomic (ITP) workflow uses RNA-Seq data to generate customized protein sequence databases that improve peptide identification in mass spectrometry analyses [31]. This approach has successfully identified novel proteoforms, including novel exons, translation of annotated untranslated regions, and alternative splice variants that refine genome annotation and reveal previously unrecognized protein diversity [31].
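
The core idea of a sample-specific search database can be illustrated with a three-frame translation of assembled transcripts into FASTA entries. This is a simplified sketch under stated assumptions, not the published ITP pipeline: it handles only the forward strand, uses the standard genetic code, and the function names, header format, and `min_len` cutoff are hypothetical.

```python
from itertools import product

# Standard genetic code, with codons enumerated in T, C, A, G order.
BASES = "TCAG"
AMINO_ACIDS = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {
    "".join(codon): aa for codon, aa in zip(product(BASES, repeat=3), AMINO_ACIDS)
}


def translate(seq, frame=0):
    """Translate a DNA sequence in the given forward frame (0, 1, or 2)."""
    seq = seq.upper()
    codons = (seq[i:i + 3] for i in range(frame, len(seq) - 2, 3))
    # Codons containing ambiguous bases (e.g. N) are translated as 'X'.
    return "".join(CODON_TABLE.get(codon, "X") for codon in codons)


def orf_peptides(seq, min_len=7):
    """Yield (frame, segment) for stop-delimited segments of at least min_len residues."""
    for frame in range(3):
        for segment in translate(seq, frame).split("*"):
            if len(segment) >= min_len:
                yield frame, segment


def to_fasta(transcripts, min_len=7):
    """Build FASTA text from a {transcript_id: nucleotide sequence} mapping."""
    lines = []
    for tid, seq in transcripts.items():
        for n, (frame, segment) in enumerate(orf_peptides(seq, min_len), start=1):
            lines.append(f">{tid}|frame{frame + 1}|seg{n}")
            lines.append(segment)
    return "\n".join(lines)
```

The resulting FASTA text would be supplied to a mass spectrometry search engine in place of, or in addition to, a reference proteome, so that peptides from unannotated exons or splice variants can be matched.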

Figure 1: Proteogenomic workflow for integrated transcriptomic-proteomic analysis

Computational Methods and Data Integration Strategies

Multi-Omics Data Integration Approaches

Computational integration of multi-omics data requires sophisticated strategies to handle the inherent heterogeneity of datasets with varying scales, resolutions, and noise levels. Multiple mathematical frameworks have been developed to address these challenges, each with distinct advantages for specific research applications.

Horizontal integration combines the same type of omics data across different samples or conditions, enabling comparative analyses and population-level insights. This approach is particularly valuable for identifying consistent patterns across diverse cohorts. In contrast, vertical integration combines different types of omics data from the same samples, focusing on understanding the relationships between molecular layers within individual biological systems [27].

More specifically, integration methods can be categorized into:

- Concatenation-based approaches that merge multiple omics datasets into a single composite matrix for simultaneous analysis

- Model-based approaches that incorporate biological knowledge or statistical models to guide integration

- Network-based approaches that represent molecular entities as nodes and their relationships as edges in a comprehensive biological network

- Similarity-based approaches that identify shared patterns or structures across different omics layers
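
As an illustration of the first category, concatenation-based integration can be sketched as standardizing each feature within its own layer and then joining the layers column-wise. This is a minimal example using only the standard library; the function names are hypothetical, and per-feature z-scoring is one reasonable scaling choice rather than a required one.

```python
from statistics import mean, pstdev


def zscore_features(matrix):
    """Standardize each feature (column) of a samples x features matrix."""
    scaled_cols = []
    for col in zip(*matrix):
        mu, sd = mean(col), pstdev(col)
        # Constant features carry no information; map them to zero.
        scaled_cols.append([0.0 if sd == 0 else (v - mu) / sd for v in col])
    return [list(row) for row in zip(*scaled_cols)]


def concatenate_layers(layers):
    """Join per-layer matrices column-wise.

    `layers` maps a layer name to a samples x features matrix. Rows must
    refer to the same samples in the same order in every layer.
    """
    sample_counts = {len(matrix) for matrix in layers.values()}
    if len(sample_counts) != 1:
        raise ValueError("all layers must contain the same samples")
    n_samples = sample_counts.pop()
    scaled = [zscore_features(matrix) for matrix in layers.values()]
    return [sum((layer[i] for layer in scaled), []) for i in range(n_samples)]
```

Scaling before concatenation keeps a layer with large numeric values, or simply more features, from dominating distance-based or variance-based analyses of the composite matrix.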

The choice of integration strategy depends on the specific research question, data characteristics, and desired outcomes. For biomarker discovery, network-based approaches have proven particularly valuable, as they can identify hub genes and proteins that play central roles in biological processes and may serve as more robust biomarkers than entities working in isolation [3].

Pathway Enrichment and Multivariate Analysis

Pathway enrichment analysis provides a powerful framework for interpreting multi-omics data in the context of biologically meaningful gene sets. Traditional methods face limitations when applied to multi-contrast or multi-omics datasets, leading to the development of specialized tools like mitch (multi-contrast pathway enrichment) [32].

Mitch employs a rank-MANOVA statistical approach to identify gene sets that exhibit joint enrichment across multiple contrasts or omics layers. This method offers several advantages:

- Simultaneous analysis of multiple dimensions without requiring arbitrary significance cutoffs

- Identification of pathways with consistent or discordant regulation across omics layers

- Visualization capabilities for interpreting complex enrichment patterns in high-dimensional data

For each gene, the package computes a directional significance score (D), defined as: D = -log₁₀(nominal p-value) × sign(log₂FC)

This score captures both statistical significance and direction of change, providing a more nuanced view of regulation patterns than significance alone [32].
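
The score is straightforward to compute from differential expression output. The sketch below implements only the formula stated above and a ranking built on it; it does not reproduce the mitch package's interface, and the function names and input layout are hypothetical.

```python
import math


def directional_score(p_value, log2_fc):
    """D = -log10(p) * sign(log2FC); positive for up-, negative for down-regulation."""
    if not 0 < p_value <= 1:
        raise ValueError("p_value must be in (0, 1]")
    sign = (log2_fc > 0) - (log2_fc < 0)
    return -math.log10(p_value) * sign


def rank_genes(stats):
    """Order genes from most significantly up- to most significantly down-regulated.

    `stats` maps a gene identifier to a (nominal p-value, log2 fold change) pair.
    """
    scores = {gene: directional_score(p, fc) for gene, (p, fc) in stats.items()}
    return sorted(scores, key=scores.get, reverse=True)
```

For example, a gene with p = 0.01 and a negative fold change receives D = -2, so strongly down-regulated genes fall at the bottom of the ranking while weakly changed genes sit near zero.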

For network-based integration, protein-protein interaction (PPI) networks reconstructed from databases like STRING enable centrality analysis to identify hub genes with potential biomarker utility. A study applying this approach to colorectal cancer identified hub genes such as CCNA2, CD44, and ACAN, whose high expression is associated with poor patient prognosis, illustrating the value of network-based integration for biomarker discovery [3].
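
Centrality analysis can be illustrated with degree centrality, the simplest of several measures used to rank nodes in a PPI network. The sketch below is an assumption-laden example: the source does not specify which centrality measure was applied, and the function names and edge-list input format are hypothetical.

```python
from collections import defaultdict


def degree_centrality(edges):
    """Fraction of other nodes each node is directly connected to.

    `edges` is an iterable of (gene_a, gene_b) pairs from an undirected network.
    """
    neighbors = defaultdict(set)
    for a, b in edges:
        if a != b:  # ignore self-loops
            neighbors[a].add(b)
            neighbors[b].add(a)
    n = len(neighbors)
    if n < 2:
        return {node: 0.0 for node in neighbors}
    return {node: len(nbrs) / (n - 1) for node, nbrs in neighbors.items()}


def top_hubs(edges, k=3):
    """Return the k highest-degree nodes, breaking ties alphabetically."""
    scores = degree_centrality(edges)
    return sorted(scores, key=lambda gene: (-scores[gene], gene))[:k]
```

In practice, studies often combine several measures (for example betweenness or closeness) and retain nodes that rank highly on more than one, since degree alone favors well-studied proteins with many recorded interactions.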

Figure 2: Network-based multi-omics analysis workflow for biomarker discovery

Application Notes: Biomarker Discovery in Oncology

Case Study: Colorectal Cancer Biomarker Identification

A systems biology approach to colorectal cancer (CRC) demonstrates the practical application of multi-omics integration for biomarker discovery. Researchers analyzed gene expression data from the Gene Expression Omnibus (GEO), identifying 848 differentially expressed genes between colorectal tumor and normal tissues [3]. Through protein-protein interaction network reconstruction and centrality analysis, they distilled this set to 99 hub genes with potential functional significance in CRC pathogenesis.

Clustering analysis of the PPI network revealed seven interactive modules with distinct biological functions. Survival analysis further refined the candidates, showing that high expression of CCNA2, CD44, and ACAN was associated with poor prognosis in CRC patients [3]. Seven additional genes (TUBA8, AMPD3, TRPC1, ARHGAP6, JPH3, DYRK1A, and ACTA1) were also significantly associated with decreased survival, suggesting their potential utility as prognostic biomarkers.

This multi-step filtering approach, which progresses from differential expression to network centrality to survival association, demonstrates how multi-omics integration can prioritize the most clinically relevant biomarkers from initially large candidate pools. The identification of both established CRC-related genes and novel candidates with little prior literature support highlights the discovery power of integrated systems biology approaches.

Application in Traumatic Brain Injury Biomarkers

Beyond oncology, multi-omics integration shows promise for biomarker discovery in neurological conditions such as traumatic brain injury (TBI). Researchers have applied network and pathway analysis to a manually curated list of 32 protein biomarker candidates from the literature, recovering known TBI-related mechanisms while generating hypotheses about new candidate biomarkers [33].

This approach identified both established biomarkers like S100B, GFAP, and UCHL1 and novel candidates with potential diagnostic and prognostic utility. The integration of multi-omics data helps address key challenges in TBI biomarker development, including limited specificity of individual markers and the complex multifactorial nature of secondary cellular responses to brain injury [33].

Essential Research Reagents and Computational Tools

Successful implementation of multi-omics integration requires both wet-lab reagents and computational resources. The table below outlines key solutions and their applications in multi-omics research.

Table 2: Essential Research Reagents and Computational Tools for Multi-Omics Integration

| Category | Specific Tool/Reagent | Primary Function | Application in Multi-Omics |
|---|---|---|---|
| Transcriptomic Profiling | RNA-Seq kits (Illumina) | Comprehensive transcriptome sequencing | Gene expression quantification, alternative splicing detection |
| Proteomic Analysis | LC-MS/MS systems | High-throughput protein identification and quantification | Proteome profiling, post-translational modification detection |
| Spatial Omics | 10x Genomics Visium | Spatial transcriptomic profiling | Tissue context preservation, regional expression analysis |
| Multi-omics Integration | mitch R package | Multi-contrast pathway enrichment analysis | Identifying jointly enriched pathways across omics layers |
| Network Analysis | Cytoscape with STRING | PPI network visualization and analysis | Hub gene identification, module detection |
| Statistical Analysis | limma, DESeq2 | Differential expression analysis | Identifying significantly altered molecules |
| Data Repositories | TCGA, CPTAC, ICGC | Public multi-omics data sources | Data validation, meta-analyses, cohort expansion |

Validation and Clinical Translation

Analytical Validation

Rigorous validation is essential for translating multi-omics biomarkers from discovery to clinical application. Analytical validation ensures that biomarker measurements are accurate, reproducible, and fit for purpose. For multi-omics biomarkers, this process must address the unique challenges of integrating multiple assay types with different performance characteristics.

Key components of multi-omics biomarker validation include:

- Assay precision determination for each omics platform separately

- Cross-platform reproducibility assessment using different technologies to measure the same biomarkers

- Dynamic range evaluation across expected biological concentrations

- Sample stability studies under various collection and storage conditions

The validation process should adhere to established guidelines such as the FDA's Bioanalytical Method Validation guidance, adapting traditional approaches to address multi-omics-specific considerations. For integrated biomarker signatures, validation must confirm not only the performance of individual components but also the integrative algorithm itself.
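
Assay precision is commonly summarized as the percent coefficient of variation (%CV) across replicate measurements of the same sample. The sketch below shows that calculation for each analyte in a signature; the function names are hypothetical, and the 15% acceptance limit is an illustrative assumption that should be replaced by the criterion set in the applicable guidance and validation plan.

```python
from statistics import mean, stdev

# Illustrative acceptance limit only; the actual criterion should come from
# the applicable guidance and the assay's validation plan.
MAX_CV_PERCENT = 15.0


def percent_cv(replicates):
    """Percent coefficient of variation: 100 * sample SD / mean."""
    if len(replicates) < 2:
        raise ValueError("at least two replicates are required")
    mu = mean(replicates)
    if mu == 0:
        raise ValueError("CV is undefined when the mean is zero")
    return 100.0 * stdev(replicates) / abs(mu)


def precision_report(measurements, max_cv=MAX_CV_PERCENT):
    """Summarize precision per analyte.

    `measurements` maps an analyte name to its list of replicate values.
    """
    report = {}
    for analyte, replicates in measurements.items():
        cv = percent_cv(replicates)
        report[analyte] = {"cv_percent": cv, "acceptable": cv <= max_cv}
    return report
```

Running the report separately for each platform, as the first validation component recommends, makes it clear which layer of an integrated signature limits overall reproducibility.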

Clinical Utility Assessment

Establishing clinical utility represents the final step in translating multi-omics biomarkers to practice. This process demonstrates that using the biomarker signature improves patient outcomes compared to standard approaches. For multi-omics biomarkers, clinical utility may derive from several advantages:

- Enhanced diagnostic accuracy through complementary information from multiple molecular layers

- Improved patient stratification by capturing biological heterogeneity that single-omics approaches miss

- Therapeutic response prediction by monitoring coordinated changes across molecular levels

- Resistance mechanism identification through understanding compensatory pathways