Systems Biology in Biomarker Discovery: Decoding Complex Pathology for Precision Medicine

Abstract

This article explores the transformative role of systems biology in identifying and validating biomarkers for complex diseases. Moving beyond traditional reductionist approaches, we detail how integrative analysis of multi-omics data, AI-powered analytics, and network models is revolutionizing our understanding of pathological mechanisms. Aimed at researchers and drug development professionals, the content provides a comprehensive framework—from foundational concepts and cutting-edge methodologies to overcoming translational challenges and rigorous validation. The article synthesizes key insights to guide the development of robust, clinically actionable biomarkers, ultimately advancing personalized therapeutics and proactive health management.

From Reductionism to Networks: A Systems View of Disease Pathology

Systems biology is a transformative approach that applies fundamental principles of complexity science and systems medicine to characterize the dynamic states of health and disease within biological networks. This framework moves beyond traditional reductionist methods by integrating and analyzing complex structured data—including genomics, transcriptomics, proteomics, and metabolomics—to understand disease emergence from system-level perturbations [1]. The field has matured significantly through incorporating techniques based on statistical physics and machine learning, which have refined our understanding of intricate disease networks and their behaviors [1].

The core paradigm of systems biology treats diseases not as isolated consequences of single molecular defects but as pathological states that arise from dysregulated interactions within complex biological networks. This perspective enables researchers to identify emergent properties that cannot be detected by examining individual components in isolation, providing a more comprehensive foundation for understanding complex pathologies and developing effective therapeutic interventions [1].

Multi-Omics Integration Framework

Data Types and Structures in Biological Research

Systems biology relies on the systematic integration of diverse data types to construct comprehensive models of biological systems. The table below outlines the primary data categories and their characteristics used in this integrative approach:

Table 1: Data Types in Quantitative Cell Biology and Systems Research

| Data Category | Subtype | Description | Examples |
|---|---|---|---|
| Quantitative Data | Discrete | Countable, finite numerical values | Number of cells in an image, filopodia per cell |
| Quantitative Data | Continuous | Measured values within a range | Fluorescence intensity, cell size, protein concentration |
| Qualitative Data | Categorical | Distinct groups or categories | Control vs. treated, wild type vs. mutant, viable vs. inviable phenotypes |

Understanding these distinctions is crucial for selecting appropriate data processing and visualization techniques in systems biology research [2]. The integration of both quantitative and qualitative data has proven particularly valuable in parameter identification for systems biology models, where qualitative observations can be formalized as inequality constraints on model outputs [3].

The Multi-Omics Approach in Biomarker Research

By 2025, multi-omics integration is expected to gain substantial momentum in biomarker research, with researchers increasingly leveraging combined data from genomics, proteomics, metabolomics, and transcriptomics to achieve a holistic understanding of disease mechanisms [4]. This approach enables the identification of comprehensive biomarker signatures that reflect the true complexity of diseases, facilitating improved diagnostic accuracy and treatment personalization.

The shift toward systems biology through multi-omics data promotes a deeper understanding of how different biological pathways interact in health and disease, which is crucial for identifying novel therapeutic targets and biomarkers [4]. This trend is further accelerated by collaborative efforts between disciplines such as bioinformatics, molecular biology, and clinical research, which drive the development of innovative multi-omics platforms for enhanced biomarker discovery and validation [4].

Computational Methodologies and Workflows

Data Exploration and Analysis Protocols

Robust data exploration serves as a fundamental bridge between raw biological data and meaningful scientific insights in systems biology. This process requires a flexible, hands-on approach that reveals trends, identifies outliers, and refines hypotheses throughout the research lifecycle [2]. The core principles for effective data exploration in quantitative cell biology include:

- Flexibility: Workflows must adapt as new data are added, beginning with the first biological repeat and continuing incrementally until the dataset is complete

- Visualization: Generating clear, informative plots enables quick interpretation of trends, identification of anomalies, and observation of patterns that might be missed in numerical tables

- Biological Variability Assessment: Consistently evaluating biological variability and reproducibility is crucial to avoid premature conclusions, using approaches like SuperPlots that display individual data points by biological repeat while capturing overall trends

- Metadata Tracking: Maintaining comprehensive metadata during analysis is essential for understanding variability and ensuring reproducibility [2]

For computational implementation, learning programming languages such as R or Python can significantly enhance data exploration capabilities by eliminating repetitive manual tasks and enabling the creation of automated analysis pipelines. Python's extensive imaging and machine learning libraries make it particularly valuable for image data, while R offers specialized packages for genomic analyses like single-cell RNA sequencing data [2].
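As a minimal sketch of the variability principle above, the snippet below summarises measurements by biological repeat before comparing conditions, which is the idea behind a SuperPlot. The data values and the function names `repeat_means` and `condition_summary` are hypothetical and chosen only for illustration.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical measurements: (condition, biological_repeat, value)
MEASUREMENTS = [
    ("control", 1, 10.0), ("control", 1, 12.0),
    ("control", 2, 11.0), ("control", 2, 13.0),
    ("treated", 1, 15.0), ("treated", 1, 17.0),
    ("treated", 2, 14.0), ("treated", 2, 18.0),
]

def repeat_means(rows):
    """Average the individual measurements within each (condition, repeat) pair."""
    grouped = defaultdict(list)
    for condition, repeat, value in rows:
        grouped[(condition, repeat)].append(value)
    return {key: mean(values) for key, values in grouped.items()}

def condition_summary(rows):
    """Summarise each condition from its per-repeat means, not from pooled cells."""
    by_condition = defaultdict(list)
    for (condition, _repeat), value in repeat_means(rows).items():
        by_condition[condition].append(value)
    return {condition: mean(values) for condition, values in by_condition.items()}

if __name__ == "__main__":
    print(repeat_means(MEASUREMENTS))
    print(condition_summary(MEASUREMENTS))
```

Keeping the per-repeat means separate from the individual data points makes it easier to see whether a trend is reproduced across repeats or driven by a single experiment.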

Combining Qualitative and Quantitative Data in Parameter Identification

A powerful methodology in systems biology involves the formal integration of both qualitative and quantitative data for parameter identification in biological models. This approach addresses the common challenge where quantitative time-course data may be unavailable, limited, or corrupted by noise, while qualitative data (e.g., activating/repressing, oscillatory/non-oscillatory, viability/inviability) are often abundant but underutilized [3].

The technical protocol for this integration involves:

- Formalizing Qualitative Data: Convert qualitative biological observations into inequality constraints on model outputs (e.g., $g_i(x) < 0$)

- Objective Function Construction: Create a single scalar objective function that accounts for both dataset types:

  - $f_{\mathrm{tot}}(x) = f_{\mathrm{quant}}(x) + f_{\mathrm{qual}}(x)$

  - where $f_{\mathrm{quant}}(x) = \sum_j \left(y_{j,\mathrm{model}}(x) - y_{j,\mathrm{data}}\right)^2$ (standard sum of squares)

  - and $f_{\mathrm{qual}}(x) = \sum_i C_i \cdot \max(0, g_i(x))$ (static penalty function for constraint violations)

- Constrained Optimization: Minimize $f_{\mathrm{tot}}(x)$ using optimization algorithms (e.g., differential evolution, scatter search) to identify optimal parameter values [3]

This methodology was successfully applied to parameterize a yeast cell cycle regulation model, incorporating both quantitative time courses (561 data points) and qualitative phenotypes of 119 mutant yeast strains (1647 inequalities) to identify 153 model parameters [3].
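The combined objective described above can be sketched on a toy problem. Everything specific here is an assumption made for illustration: the exponential-decay model, the three data points, the single qualitative constraint, and the penalty weight are not taken from the yeast study, and a coarse grid search stands in for the global optimizers named in the protocol.

```python
import math

def model(x, t):
    """Hypothetical toy model: y(t) = a * exp(-k * t) with parameters x = (a, k)."""
    a, k = x
    return a * math.exp(-k * t)

# Made-up quantitative time-course data: (time, observed value)
QUANT_DATA = [(0.0, 2.0), (1.0, 1.2), (2.0, 0.75)]

def g_low_at_late_time(x):
    """Qualitative observation 'output is low at t = 5' written as g(x) < 0."""
    return model(x, 5.0) - 0.2

# Each constraint is a pair (penalty weight C_i, constraint function g_i)
CONSTRAINTS = [(10.0, g_low_at_late_time)]

def f_quant(x):
    """Sum of squared residuals against the quantitative data."""
    return sum((model(x, t) - y) ** 2 for t, y in QUANT_DATA)

def f_qual(x):
    """Static penalty: a constraint contributes only when it is violated."""
    return sum(c * max(0.0, g(x)) for c, g in CONSTRAINTS)

def f_tot(x):
    return f_quant(x) + f_qual(x)

def grid_search():
    """Coarse grid search over (a, k); a stand-in for a real global optimizer."""
    best = None
    for i in range(1, 41):          # a from 0.1 to 4.0
        for j in range(1, 41):      # k from 0.05 to 2.0
            x = (i * 0.1, j * 0.05)
            score = f_tot(x)
            if best is None or score < best[0]:
                best = (score, x)
    return best

if __name__ == "__main__":
    score, params = grid_search()
    print("best f_tot:", score, "at (a, k) =", params)
```

In a realistic setting `f_tot` would be handed to a global optimizer such as SciPy's `differential_evolution`, and the weights C_i would be tuned so that neither data type dominates the fit.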

Applications in Complex Disease Biomarker Research

Network Medicine for Complex Disease Characterization

Network medicine represents a specialized application of systems biology that focuses on characterizing the dynamical states of health and disease within biological networks. This approach has significantly refined our understanding of disease networks by incorporating techniques based on statistical physics and machine learning [1]. By mapping complex diseases onto biological networks, researchers can identify disease modules, uncover network-based biomarkers, and discover potential therapeutic targets that might remain hidden through conventional approaches.

The next phase of network medicine must expand the current framework by incorporating more realistic assumptions about biological units and their interactions across multiple relevant scales. This expansion is crucial for advancing our understanding of complex diseases and improving strategies for their diagnosis, treatment, and prevention [1]. Current challenges that must be addressed include limitations in defining biological units and interactions, interpreting network models, and accounting for experimental uncertainties [1].

Advanced Biomarker Technologies and Trends

As we approach 2025, biomarker analysis is poised for transformative changes driven by advances in technology and data science. Several key trends are expected to significantly impact complex disease research:

Table 2: Key Trends in Biomarker Analysis for Complex Disease Research (2025 Outlook)

| Trend Area | Specific Advancements | Impact on Complex Disease Research |
|---|---|---|
| AI/ML Integration | Predictive analytics for disease progression, Automated data interpretation, Personalized treatment planning | Enhanced clinical decision-making, Reduced biomarker discovery time, Tailored therapeutic strategies |
| Liquid Biopsy Technologies | Enhanced sensitivity/specificity, Real-time monitoring capabilities, Expansion beyond oncology | Non-invasive early detection, Dynamic treatment response assessment, Broader application across disease types |
| Single-Cell Analysis | Deeper insights into tumor microenvironments, Identification of rare cell populations, Integration with multi-omics | Understanding tumor heterogeneity, Targeting therapy-resistant cells, Comprehensive cellular mechanism views |

These technological advancements, combined with evolving regulatory frameworks and an increased focus on patient-centric approaches, are expected to drive significant improvements in biomarker discovery and validation for complex diseases [4].

Experimental Framework and Research Toolkit

Essential Research Reagent Solutions

Systems biology research requires specialized reagents and computational tools to effectively investigate complex diseases. The table below details key resources essential for conducting comprehensive systems biology studies:

Table 3: Essential Research Reagents and Computational Tools for Systems Biology

| Category | Specific Tool/Reagent | Function in Research |
|---|---|---|
| Computational Tools | R/Python Programming Environments | Data processing automation, Statistical analysis, and Visualization |
| Computational Tools | Network Analysis Software | Construction and analysis of biological networks and pathways |
| Computational Tools | Machine Learning Libraries | Pattern recognition in complex datasets and predictive modeling |
| Experimental Reagents | Multi-Omics Profiling Kits | Simultaneous measurement of multiple molecular layers (genomics, proteomics, metabolomics) |
| Experimental Reagents | Single-Cell Analysis Platforms | Examination of cellular heterogeneity within tissues and microenvironments |
| Experimental Reagents | Liquid Biopsy Assays | Non-invasive collection and analysis of biomarkers from blood samples |

The increasing availability of generative artificial intelligence and large language models is making coding and data workflow improvement more accessible than ever, further enhancing researchers' capabilities in systems biology [2].

Visualization Standards in Biological Research

Effective visual communication is essential in systems biology, particularly when representing complex networks and pathways. Research has identified significant challenges in how arrow symbols are used in biological figures, with studies finding little correlation between arrow style and meaning across hundreds of figures in introductory biology textbooks [5]. This inconsistency creates interpretation difficulties, particularly for students and non-specialists.

To address these challenges, researchers should:

- Ensure clarity and consistency when using arrow symbols in pathway diagrams and network representations

- Be cognizant of the level of clarity of representations used during instruction and publication

- Explicitly define symbolic representations in figure legends to minimize misinterpretation [5]

Additionally, visual elements should meet minimum color contrast thresholds to ensure accessibility: WCAG 2.0 level AA requires a contrast ratio of at least 4.5:1 for normal text and 3:1 for large text, and WCAG 2.1 extends the 3:1 minimum to graphical objects [6] [7]. The color palette specified for diagrams in this document (#4285F4, #EA4335, #FBBC05, #34A853, #FFFFFF, #F1F3F4, #202124, #5F6368) can meet these thresholds, provided that each foreground color is paired with a sufficiently contrasting background.
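Contrast ratios can be checked programmatically before a figure is published. The sketch below follows the WCAG definitions of relative luminance and contrast ratio; the function names are illustrative, and the example pairings are chosen from the palette listed above rather than prescribed by it.

```python
def _linearize(channel_8bit):
    """Convert an 8-bit sRGB channel to its linear-light value (WCAG definition)."""
    c = channel_8bit / 255.0
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4

def relative_luminance(hex_color):
    """Relative luminance of a colour given as '#RRGGBB'."""
    hex_color = hex_color.lstrip("#")
    r, g, b = (int(hex_color[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)

def contrast_ratio(color_a, color_b):
    """WCAG contrast ratio, from 1 (identical) to 21 (black on white)."""
    la, lb = relative_luminance(color_a), relative_luminance(color_b)
    lighter, darker = max(la, lb), min(la, lb)
    return (lighter + 0.05) / (darker + 0.05)

def meets_aa_normal_text(foreground, background):
    """True when the pair reaches the 4.5:1 level AA threshold for normal text."""
    return contrast_ratio(foreground, background) >= 4.5

if __name__ == "__main__":
    for fg, bg in [("#202124", "#FFFFFF"), ("#FBBC05", "#FFFFFF")]:
        print(fg, "on", bg, round(contrast_ratio(fg, bg), 2),
              meets_aa_normal_text(fg, bg))
```

Because the ratio depends on the pair rather than on either color alone, the same palette entry can pass on one background and fail on another.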

The future of systems biology in understanding complex diseases will be shaped by several converging trends. The enhanced integration of artificial intelligence and machine learning is anticipated to play an increasingly significant role by enabling more sophisticated predictive models that can forecast disease progression and treatment responses based on comprehensive biomarker profiles [4]. Additionally, the continued evolution of regulatory frameworks toward streamlined approval processes and standardized validation protocols will facilitate the translation of systems biology discoveries into clinically useful applications [4].

Despite these promising developments, the field must overcome significant challenges to realize its full potential. Limitations in defining biological units and interactions, interpreting network models, and accounting for experimental uncertainties continue to hinder progress [1]. The next phase of network medicine must expand current frameworks by incorporating more realistic assumptions about biological units and their interactions across multiple relevant scales [1]. This expansion is crucial for advancing our understanding of complex diseases and improving strategies for their diagnosis, treatment, and prevention.

As systems biology continues to mature, its holistic framework will play an increasingly pivotal role in shaping the future of personalized medicine, ultimately leading to improved patient outcomes through more precise diagnostic capabilities and targeted therapeutic strategies. The integration of multi-scale data, advanced computational methodologies, and innovative experimental technologies positions systems biology as a cornerstone of 21st-century biomedical research for complex diseases.

The field of biomarker discovery is undergoing a fundamental transformation, moving from a reductionist approach focused on single molecules toward a holistic understanding of complex network signatures. This revolution is driven by the recognition that complex pathologies like cancer, autoimmune diseases, and neurological disorders cannot be adequately characterized by isolated biomarkers. The traditional "one mutation, one target, one test" model has provided important progress in companion diagnostics but has left significant blind spots in our understanding of disease biology [8]. In its place, a new paradigm has emerged that embraces the inherent complexity of biological systems through multi-analyte signatures, artificial intelligence (AI)-driven pattern recognition, and systems-level interpretations [9].

This shift has been catalyzed by two converging forces: the rise of high-dimensional, high-throughput platforms (such as single-cell technologies) and the integration of AI and advanced analytics into translational workflows [9]. Where traditional biomarker discovery often took years and relied on hypothesis-driven approaches that might miss complex molecular interactions, AI-powered methods can now systematically explore massive datasets to find patterns that humans could not detect, often reducing discovery timelines from years to months or even days [10]. The result is a move toward composite biomarkers that combine multiple weak signals into robust, interpretable readouts that better reflect biological redundancy and complexity [9].

Framed within the broader context of systems biology, this revolution represents more than just technological advancement—it signifies a fundamental change in how we conceptualize disease mechanisms and therapeutic interventions. By analyzing biomarkers as interconnected networks rather than isolated entities, researchers can capture the emergent properties of biological systems, leading to more accurate diagnostics, better patient stratification, and more effective therapeutic interventions [11].

Technological Drivers of the Revolution

Multi-Omics Integration and Spatial Biology

The backbone of the network signature revolution lies in multi-omics integration, which layers genomics, transcriptomics, proteomics, and metabolomics to capture the full complexity of disease biology [8]. This approach has transformed biomarker science from examining single endpoints to viewing molecular interactions in parallel, resolving layers of complexity that once went unseen [8].

Spatial biology techniques have emerged as one of the most significant advances, revealing the spatial context of dozens or more markers within a single tissue, enabling full characterization of complex and heterogeneous microenvironments [12]. Unlike traditional approaches, spatial transcriptomics and multiplex immunohistochemistry allow researchers to study gene and protein expression in situ without altering the spatial relationships or interactions between cells [12]. This provides critical information about physical distance between cells, cell types present, and cellular organization—factors that often prove crucial for understanding biomarker function and therapeutic response.

The distribution of expression throughout a tumor is now recognized as an important factor when considering the utility of a predictive biomarker [12]. For instance, a biomarker may only indicate the presence of cancer when expressed in a specific region, and different microenvironments may express different biomarkers relevant to different aspects of disease progression or therapeutic response [12]. Studies suggest that the distribution of spatial interactions can significantly impact treatment response, highlighting why spatial context is indispensable for next-generation biomarker discovery [12].

Artificial Intelligence and Machine Learning

AI-powered biomarker discovery transforms traditional processes by systematically exploring massive datasets to uncover patterns that conventional methods miss [10]. Recent systematic reviews of 90 studies show that 72% used standard machine learning methods, 22% used deep learning, and 6% used both approaches [10]. This represents a fundamental paradigm shift from hypothesis-driven to data-driven biomarker identification.

The power of AI lies in its ability to integrate and analyze multiple data types simultaneously. Where traditional approaches might examine one biomarker at a time, AI can consider thousands of features across genomics, imaging, and clinical data to identify meta-biomarkers—composite signatures that capture disease complexity more completely [10]. Machine learning algorithms excel at different aspects of biomarker discovery, with random forests and support vector machines providing robust performance with interpretable feature importance rankings, deep neural networks capturing complex non-linear relationships in high-dimensional data, and convolutional neural networks extracting quantitative features from medical images and pathology slides [10].

AI is particularly valuable in immuno-oncology, where traditional biomarkers like PD-L1 expression provide limited predictive value [10]. Response to immune checkpoint inhibitors depends on a dynamic interplay between tumor cells, immune cells, and the surrounding microenvironment, and AI approaches can help decipher these relationships by integrating multiple data modalities [10].

Advanced Model Systems

Advanced model systems, including organoids and humanized systems, represent another advance in biomarker discovery as these platforms can better mimic human biology and drug responses compared to conventional 2D or animal models [12]. Organoids excel at recapitulating the complex architectures and functions of human tissues, making them well-suited for functional biomarker screening, target validation, and exploration of resistance mechanisms [12]. Meanwhile, humanized mouse models allow research teams to conduct studies in the context of human immune responses, proving particularly beneficial for investigating response and resistance to immunotherapies [12].

These models become even more valuable for biomarker discovery and validation when used in conjunction with multi-omic technologies [12]. By combining data from various models, research teams can enhance the robustness and predictive accuracy of their studies, paving the way for more personalized and effective treatments [12]. This integrated approach exemplifies the systems biology principle that complex biological phenomena are best understood through multiple complementary perspectives and experimental modalities.

Table 1: Emerging Technologies in Biomarker Discovery

| Technology | Key Application | Advantages | Limitations |
|---|---|---|---|
| Spatial Biology | Characterization of tumor microenvironment [12] | Preserves spatial context of biomarkers; reveals cellular interactions [12] | Technically challenging; higher costs; complex data analysis [12] |
| Single-Cell Multi-omics | Identification of rare cell populations; cellular heterogeneity [8] | Unprecedented resolution; reveals hidden subtypes [8] | Expensive; specialized expertise required; data integration challenges [8] |
| AI-Powered Analytics | Pattern recognition in high-dimensional data [10] [12] | Identifies complex, non-linear relationships; processes massive datasets [10] | "Black box" concerns; requires large, high-quality datasets [10] |
| Organoid Models | Functional biomarker validation [12] | Recapitulates human tissue architecture; personalized screening [12] | Limited microenvironment representation; standardization challenges [12] |

Methodological Framework: From Data to Network Signatures

Data Acquisition and Integration

The AI-powered biomarker discovery pipeline follows a systematic approach that ensures robust, clinically relevant results [10]. The process begins with data ingestion: collecting multi-modal datasets from diverse sources, including genomic sequencing data, medical imaging, electronic health records, and laboratory results [10]. The main challenge is harmonizing data from different institutions and formats, which makes data lakes and cloud-based platforms essential infrastructure for managing these massive, heterogeneous datasets [10].

Preprocessing involves quality control, normalization, and feature engineering [10]. Missing data imputation and outlier detection are critical steps that dramatically impact model performance [10]. Batch effects from different sequencing platforms or imaging equipment must be corrected, and feature engineering may involve creating derived variables, such as gene expression ratios or radiomic texture features, that capture biologically relevant patterns [10]. This stage is crucial for ensuring that downstream analyses produce reliable, reproducible results.
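A few of the preprocessing steps named above can be illustrated with the standard library alone. This is a deliberately simplified sketch: median imputation and per-batch mean-centering are stand-ins chosen for brevity, not the methods used in the cited work, and dedicated batch-correction tools do considerably more than subtract a batch mean.

```python
from statistics import mean, median, pstdev

def impute_median(values):
    """Replace missing entries (None) with the median of the observed values."""
    observed = [v for v in values if v is not None]
    fill = median(observed)
    return [fill if v is None else v for v in values]

def center_by_batch(values, batches):
    """Subtract each batch's mean: a crude stand-in for batch-effect correction."""
    batch_means = {
        b: mean(v for v, vb in zip(values, batches) if vb == b)
        for b in set(batches)
    }
    return [v - batch_means[b] for v, b in zip(values, batches)]

def zscore(values):
    """Scale to zero mean and unit variance; assumes the values are not all equal."""
    mu, sigma = mean(values), pstdev(values)
    return [(v - mu) / sigma for v in values]

if __name__ == "__main__":
    # Hypothetical expression values for one gene across two sequencing batches
    raw = [1.0, 3.0, 10.0, 14.0]
    batch = ["a", "a", "b", "b"]
    print(center_by_batch(raw, batch))
    print(impute_median([1.0, None, 3.0]))
```

The order in which imputation, batch correction, and scaling are applied affects the result, so the chosen order should be recorded alongside the other analysis metadata.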

The integration of multimodal data creates a multidimensional health ecosystem across the human lifecycle that captures disease progression trajectories and elucidates mechanisms underlying individual drug response variations [13]. This integrated analysis of pharmacogenomics and proteomics creates a robust foundation for developing prognosis assessment and health risk predictive models [13].

Network Construction and Analysis

Network-based approaches provide the conceptual and analytical framework for moving from single molecules to system-level signatures. Biological networks can be constructed from various data types, including correlation-based networks from gene expression data, protein-protein interaction networks, and pathway-based networks [14]. Tools like BioLayout Express 3D enable the visualization and analysis of complex biological networks, providing powerful capabilities for identifying patterns and relationships that might otherwise remain hidden [14].

The visualization of these networks is not merely illustrative—it serves as an analytical tool that leverages human pattern recognition capabilities to complement computational analyses [14]. When data is visualized intuitively, it allows analysts to tackle certain problems whose size and complexity make them otherwise intractable [15]. BioLayout and similar tools couple advanced computational algorithms with visualization interfaces that make full use of human cognitive abilities, providing deeper understanding and better communication of data [15].

Network analysis techniques include identifying highly connected nodes (hubs) that may represent crucial regulatory elements, detecting community structures that correspond to functional modules, and analyzing network topology to understand system robustness and vulnerability [14]. These approaches align with systems biology principles by focusing on the relationships between components rather than just the components themselves.
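Hub identification in a correlation-based network can be sketched in a few lines. The expression values, gene names, correlation threshold, and degree cut-off below are all hypothetical; they are constructed so that one node correlates with every other node while the remaining nodes are mutually uncorrelated.

```python
from itertools import combinations
from math import sqrt

# Hypothetical expression profiles (four samples per gene)
EXPRESSION = {
    "KINASE_A": [8.0, 4.0, 4.0, 4.0],
    "GENE_B": [6.0, 4.0, 6.0, 4.0],
    "GENE_C": [6.0, 6.0, 4.0, 4.0],
    "GENE_D": [6.0, 4.0, 4.0, 6.0],
}

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length profiles."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    return cov / sqrt(vx * vy)

def build_network(expression, threshold=0.5):
    """Connect two genes when |r| across samples reaches the threshold."""
    edges = set()
    for a, b in combinations(sorted(expression), 2):
        if abs(pearson(expression[a], expression[b])) >= threshold:
            edges.add((a, b))
    return edges

def degrees(expression, edges):
    """Number of edges attached to each gene."""
    degree = {gene: 0 for gene in expression}
    for a, b in edges:
        degree[a] += 1
        degree[b] += 1
    return degree

def hubs(degree, min_degree):
    """Genes whose degree is at least min_degree, in alphabetical order."""
    return sorted(g for g, d in degree.items() if d >= min_degree)

if __name__ == "__main__":
    edges = build_network(EXPRESSION)
    print(sorted(edges))
    print(hubs(degrees(EXPRESSION, edges), min_degree=3))
```

With real data, the threshold is usually chosen from the distribution of correlations rather than fixed in advance, and degree is only one of several centrality measures used to nominate hubs.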

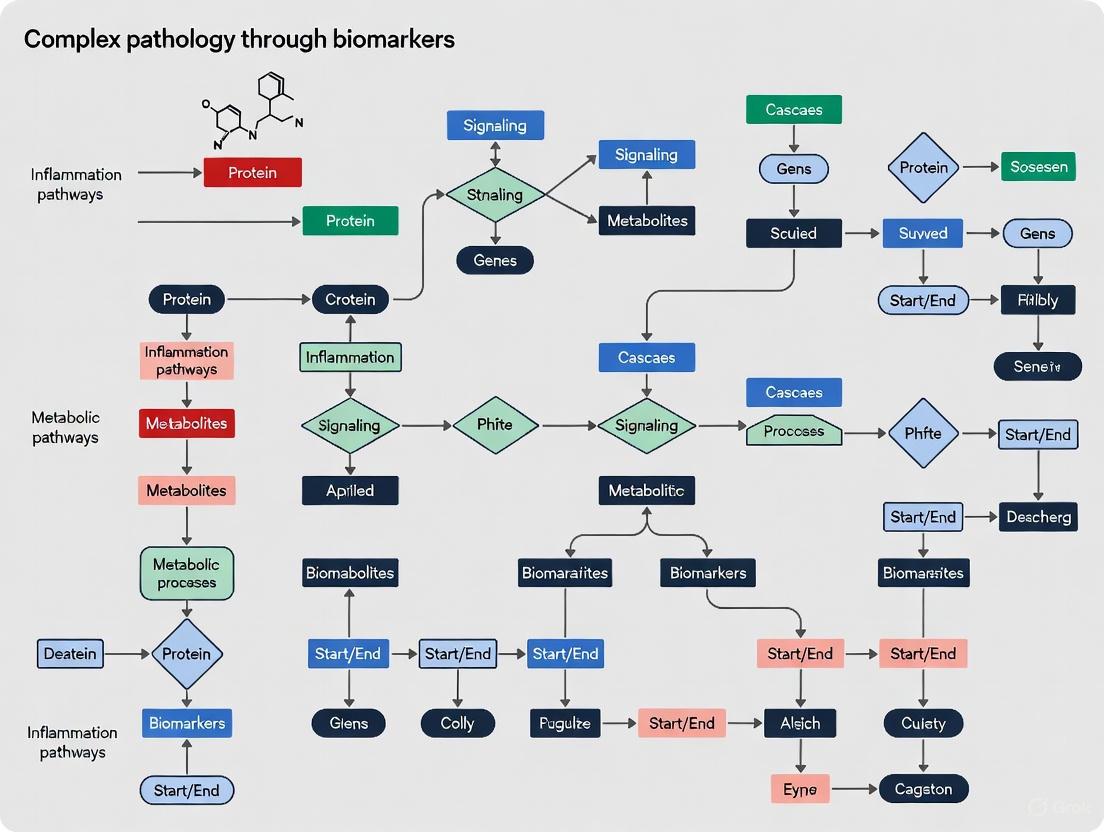

Diagram 1: Network Signature Discovery Workflow. This workflow illustrates the pipeline from multi-omics data collection through computational analysis to experimental validation of biomarker signatures.

Signature Validation and Clinical Translation

The transition from network discovery to clinically applicable signatures requires rigorous validation and attention to practical implementation. Validation requires independent cohorts and biological experiments, as computational predictions alone aren't sufficient [10]. Biomarkers must demonstrate clinical utility in real-world settings, including analytical validation (does the test work reliably?), clinical validation (does it predict the intended outcome?), and clinical utility assessment (does it improve patient care?) [10].

A critical challenge in validation is ensuring that signatures are interpretable, actionable, and portable [9]. Clinicians and regulators must understand the basis and implications of a signature, it should directly inform treatment decisions, and it must be feasible to implement under routine clinical trial conditions [9]. Many promising signatures fail not because the science is flawed, but because operational realities were overlooked [9].

Platform convergence—the principle that different technologies can resolve uncertainty, correct for each other's blind spots, and strengthen confidence in a biological signal—plays a crucial role in validation [9]. When multiple technologies corroborate a finding, confidence in the signature increases substantially [9]. This approach acknowledges that biology is redundant by nature, and therefore biomarker signatures should be as well [9].

Table 2: Classification and Applications of Biomarker Networks

| Biomarker Network Type | Components | Analytical Methods | Clinical Applications |
|---|---|---|---|
| Co-expression Networks | Genes, proteins, metabolites with correlated expression [14] | Correlation metrics (Pearson, Spearman), clustering [14] | Disease subtyping, identification of regulatory modules [14] |
| Protein-Protein Interaction Networks | Proteins and their physical interactions [14] | Topological analysis, hub identification, community detection [14] | Target identification, understanding mechanism of action [14] |
| Regulatory Networks | Transcription factors, genes, miRNAs | Bayesian networks, ODE modeling | Understanding disease pathogenesis |
| Spatial Interaction Networks | Cells and their spatial relationships [12] | Spatial statistics, neighborhood analysis [12] | Tumor microenvironment characterization, immunotherapy response prediction [12] |
| Multi-omics Integrative Networks | Multiple molecular layers (genomics, proteomics, etc.) [8] | Multivariate analysis, graph machine learning [8] | Comprehensive patient stratification, predictive biomarker discovery [8] |

The Scientist's Toolkit: Essential Research Reagents and Platforms

Table 3: Essential Research Reagents and Platforms for Network Biomarker Discovery

| Tool Category | Specific Technologies/Platforms | Key Function | Application Context |
|---|---|---|---|
| Single-Cell Analysis | 10x Genomics, Element Biosciences AVITI24 [8] | High-resolution cell profiling, RNA and protein measurement simultaneously [8] | Identification of rare cell populations, cellular heterogeneity studies [8] |
| Spatial Biology | Multiplex IHC/IF, spatial transcriptomics [12] | In situ analysis preserving tissue architecture [12] | Tumor microenvironment mapping, spatial biomarker discovery [12] |
| Network Visualization & Analysis | BioLayout Express 3D, Cytoscape [15] [14] | Network construction, visualization, and topological analysis [15] | Pattern identification in complex datasets, pathway analysis [14] |
| AI/ML Platforms | Random forests, SVMs, deep neural networks [10] | Pattern recognition in high-dimensional data [10] | Predictive model development, biomarker signature optimization [10] |
| Advanced Model Systems | Organoids, humanized mouse models [12] | Functional validation in physiologically relevant systems [12] | Therapeutic response testing, resistance mechanism studies [12] |
| Multi-omics Integration | Sapient Biosciences platforms [8] | Simultaneous measurement of thousands of molecules [8] | Comprehensive molecular profiling, systems-level insights [8] |

Analytical Workflow for Network Signature Identification

The process of identifying robust network signatures follows a structured analytical workflow that combines computational and experimental approaches. Model training uses various machine learning approaches depending on the data type and clinical question, with cross-validation and holdout test sets ensuring models generalize beyond the training data [10]. Ensemble methods that combine multiple algorithms often provide the most robust results [10].
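The combination of a holdout test set with cross-validation can be sketched as index bookkeeping, independent of any particular learning algorithm. The function names, the 20% holdout fraction, and the choice of five folds are assumptions for illustration only.

```python
import random

def train_test_holdout(n_samples, holdout_fraction=0.2, seed=0):
    """Shuffle sample indices and set aside a holdout set that is never used for tuning."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    n_holdout = int(round(n_samples * holdout_fraction))
    return indices[n_holdout:], indices[:n_holdout]

def k_fold(indices, k=5):
    """Yield (train, validation) index lists; each index is validated exactly once."""
    folds = [indices[i::k] for i in range(k)]
    for i, validation in enumerate(folds):
        train = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        yield train, validation

if __name__ == "__main__":
    development, holdout = train_test_holdout(50)
    for train, validation in k_fold(development, k=5):
        # A model would be fitted on `train` and scored on `validation` here.
        print(len(train), len(validation))
    print("holdout size:", len(holdout))
```

For patient cohorts, splits are usually made at the patient level and often stratified by outcome, so that samples from one individual never appear on both sides of a split.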

A key consideration in this workflow is the principle of redundant design [9]. Biology is redundant by nature, with cytokine signaling, for instance, involving overlapping molecules and feedback loops [9]. Therefore, resilient signatures should mimic this biological architecture: layered, flexible, and capable of generating a signal across variable conditions [9]. This doesn't mean more noise; it means intentional overlap, where multiple markers or modalities speak to the same biological event from different angles [9].
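One simple way to express intentional overlap is a composite score that still produces a readout when some markers are missing. The sketch below averages z-scores over whichever markers were measured; the marker names, reference statistics, and the minimum-marker rule are hypothetical and are not drawn from the cited sources.

```python
from statistics import mean

# Hypothetical reference statistics per marker: (mean, standard deviation)
REFERENCE = {"IL6": (5.0, 2.0), "CRP": (3.0, 1.0), "TNF": (10.0, 4.0)}

def composite_score(sample, reference=REFERENCE, min_markers=2):
    """Mean z-score over the panel markers that were measured in this sample.

    Returns None when fewer than `min_markers` markers are available, so a
    score is never reported from a single measurement.
    """
    z_scores = [
        (sample[marker] - mu) / sd
        for marker, (mu, sd) in reference.items()
        if sample.get(marker) is not None
    ]
    if len(z_scores) < min_markers:
        return None
    return mean(z_scores)

if __name__ == "__main__":
    print(composite_score({"IL6": 9.0, "CRP": 5.0, "TNF": 18.0}))
    print(composite_score({"IL6": 9.0, "CRP": 5.0}))   # one marker missing
    print(composite_score({"IL6": 9.0}))               # too few markers
```

An unweighted mean treats every marker as equally informative; in practice the weights would be learned during model training and fixed before validation.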

The final stage involves signature refinement for clinical implementation [9]. This may involve distilling a high-dimensional, multi-platform signature discovered during early development down to a handful of proteins or transcripts that still reflect the original biology but are more practical for clinical use [9]. This process requires careful balancing of biological comprehensiveness with practical implementability.

Diagram 2: Example Signaling Network with Hub Node. This diagram illustrates a simplified signaling network where Kinase A acts as a critical hub node, representing the type of network structure often identified in biomarker signature discovery.

Validation and Implementation Framework

Analytical and Clinical Validation

The validation of network signatures requires a rigorous, multi-stage process to ensure reliability and clinical utility. Analytical validation establishes that the signature can be measured accurately and reliably across different conditions and platforms [10]. This includes assessments of precision, accuracy, sensitivity, specificity, and reproducibility under defined conditions [10]. The complexity increases significantly with network signatures compared to single biomarkers due to the multivariate nature of the signatures.
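Several of the analytical validation metrics listed above reduce to short calculations once reference calls and replicate measurements are available. The sketch below computes sensitivity, specificity, and a coefficient of variation as a precision measure; the example data are invented and the function names are illustrative.

```python
from statistics import mean, stdev

def confusion_counts(truth, predicted):
    """Return (tp, fp, tn, fn) for paired binary labels (truthy = positive)."""
    tp = sum(1 for t, p in zip(truth, predicted) if t and p)
    fp = sum(1 for t, p in zip(truth, predicted) if not t and p)
    tn = sum(1 for t, p in zip(truth, predicted) if not t and not p)
    fn = sum(1 for t, p in zip(truth, predicted) if t and not p)
    return tp, fp, tn, fn

def sensitivity(truth, predicted):
    """Fraction of true positives that the signature calls positive."""
    tp, _fp, _tn, fn = confusion_counts(truth, predicted)
    return tp / (tp + fn)

def specificity(truth, predicted):
    """Fraction of true negatives that the signature calls negative."""
    _tp, fp, tn, _fn = confusion_counts(truth, predicted)
    return tn / (tn + fp)

def coefficient_of_variation(replicates):
    """Percent CV across replicate measurements of the same sample."""
    return stdev(replicates) / mean(replicates) * 100.0

if __name__ == "__main__":
    truth = [1, 1, 1, 0, 0, 0, 0, 1]       # hypothetical reference status
    predicted = [1, 1, 0, 0, 0, 1, 0, 1]   # hypothetical signature calls
    print(sensitivity(truth, predicted), specificity(truth, predicted))
    print(coefficient_of_variation([9.0, 10.0, 11.0]))
```

For a multivariate signature these metrics apply to the final call, while precision is typically assessed both for the composite score and for each contributing analyte.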

Clinical validation demonstrates that the signature is associated with the clinical phenotype, outcome, or treatment response of interest [10]. This requires testing the signature in well-characterized patient cohorts with appropriate clinical annotations [10]. For predictive signatures, this means showing differential treatment effects between signature-positive and signature-negative patients [10]. The statistical validation requirements differ significantly between prognostic and predictive markers, with predictive markers requiring specific clinical trial designs with biomarker stratification and interaction testing [10].
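
To make the distinction concrete, a predictive claim rests on a treatment-by-signature interaction: the treatment effect must differ between signature-positive and signature-negative patients. The sketch below computes that contrast on the risk-difference scale with a normal-approximation test. The counts are invented, and a real trial analysis would usually fit a regression model with an interaction term instead.

```python
import math

def interaction_contrast(arms):
    """arms maps (signature, arm) -> (responders, n_patients)."""
    def effect(sig):
        rt, nt = arms[(sig, "treated")]
        rc, nc = arms[(sig, "control")]
        return rt / nt - rc / nc  # risk difference within one signature stratum

    def variance(sig):
        total = 0.0
        for arm in ("treated", "control"):
            r, n = arms[(sig, arm)]
            p = r / n
            total += p * (1 - p) / n
        return total

    diff = effect("positive") - effect("negative")
    se = math.sqrt(variance("positive") + variance("negative"))
    z = diff / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal p-value
    return effect("positive"), effect("negative"), diff, z, p_value

# Hypothetical biomarker-stratified trial: (responders, patients) per cell.
arms = {
    ("positive", "treated"): (60, 100),
    ("positive", "control"): (30, 100),
    ("negative", "treated"): (32, 100),
    ("negative", "control"): (30, 100),
}
effect_pos, effect_neg, diff, z, p_value = interaction_contrast(arms)
```

A purely prognostic signature would shift response rates in both arms alike, leaving `diff` near zero even when signature-positive patients fare better overall.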

The evolving regulatory landscape, particularly Europe's IVDR (In Vitro Diagnostic Regulation), is reshaping biomarker and diagnostic development [8]. Implementation has proved complex, creating challenges for diagnostics companies and the broader life sciences sector [8]. Common pain points include uncertainty about requirements, inconsistencies between jurisdictions, lack of transparency compared to the US FDA system, and unpredictable timelines that complicate drug-diagnostic co-development [8].

Clinical Translation and Implementation

For biomarkers to influence clinical decision-making and improve patient outcomes, they must be embedded into clinical-grade infrastructure that ensures reliability, traceability, and compliance [8]. Without such infrastructure, even the most advanced technologies risk stalling before they reach the patient [8]. This requires purpose-built laboratories combined with quality frameworks that enable genomic and multi-omic assays to achieve regulatory and clinical standards [8].

Equally vital is the digital backbone underpinning these services, including Laboratory Information Management Systems (LIMS), electronic Quality Management Systems (eQMS), and clinician portals to streamline complex data flows from sample to report [8]. Digital pathology serves as a natural bridge between imaging and molecular biomarker workflows, with AI-driven image interpretation and fully digital reporting environments delivering greater consistency, scalability, and interoperability across sites [8].

Successful implementation also requires that signatures be interpretable, actionable, and portable [9]. Clinicians and regulators must be able to understand the basis and implications of a signature; the signature should directly inform treatment decisions; and it must be feasible to implement under routine clinical trial conditions [9]. This is where the intersection of AI and domain expertise becomes powerful: human-guided feature selection combined with automated learning can yield simplified, robust signatures [9].

Future Perspectives and Challenges

Emerging Trends and Technologies

The field of network biomarker discovery continues to evolve rapidly, with several emerging trends likely to shape future research and clinical applications. Spatial multi-omics is advancing quickly, with new technologies enabling simultaneous measurement of multiple molecular layers while preserving spatial context [12]. This approach is particularly valuable for understanding the tumor microenvironment and cellular interactions that drive treatment response and resistance [12].

AI and machine learning methodologies are becoming increasingly sophisticated, with growing emphasis on explainable AI that provides transparent, interpretable results that clinicians can trust and act upon [10]. Federated learning approaches enable secure analysis across distributed datasets without moving sensitive patient data, addressing privacy concerns while leveraging diverse datasets [10].
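
One common way to realize federated learning is federated averaging, in which each site trains locally and shares only model parameters. The text does not name a specific algorithm, so the sketch below is an assumption: it shows only the aggregation step, with invented site sizes and weights.

```python
def federated_average(site_updates):
    """Aggregate local model weights, weighting each site by its sample count.

    site_updates: list of (n_samples, weights), where weights is a list of floats.
    Only these parameters leave each site; patient-level data stays local.
    """
    total = sum(n for n, _ in site_updates)
    n_params = len(site_updates[0][1])
    return [
        sum(n * w[i] for n, w in site_updates) / total
        for i in range(n_params)
    ]

# Two hypothetical hospitals with locally trained two-parameter models.
site_updates = [(100, [0.2, 1.0]), (300, [0.6, 2.0])]
global_weights = federated_average(site_updates)
```

In practice this step alternates with rounds of local training, and production systems add safeguards such as secure aggregation that are omitted here.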

The integration of real-world data from electronic health records, wearable devices, and patient-generated health data represents another expanding frontier [13]. These digital biomarkers can provide continuous, dynamic monitoring of disease states and treatment responses, complementing traditional molecular biomarkers [13].

Addressing Implementation Challenges

Despite the exciting potential of network biomarker signatures, significant challenges remain in their widespread clinical implementation. Data heterogeneity poses substantial obstacles, requiring sophisticated normalization and harmonization approaches [13]. Inconsistent standardization protocols across platforms and institutions further complicate large-scale implementation [13].

Limited generalizability across diverse populations remains a critical concern [13]. Models developed in specific populations may not perform adequately in others, potentially exacerbating health disparities [13]. This requires intentional inclusion of diverse populations in training datasets and rigorous testing across demographic groups.

High implementation costs and clinical translation barriers also present significant challenges [13]. The infrastructure required for complex biomarker signatures—both technological and human expertise—may be unavailable in resource-limited settings, potentially limiting equitable access to advanced diagnostics [13].

Moving forward, expanding these predictive models to rare diseases, incorporating dynamic health indicators, strengthening integrative multi-omics approaches, conducting longitudinal cohort studies, and leveraging edge computing solutions for low-resource settings emerge as critical areas requiring innovation and exploration [13]. By addressing these challenges systematically, the field can realize the full potential of network biomarker signatures to transform precision medicine.

Modern neurodegenerative disease research has undergone a paradigm shift from a reductionist focus on individual pathological proteins to a systems-level understanding of complex, perturbed molecular networks. This whitepaper synthesizes cutting-edge computational and experimental frameworks for deconstructing these disease-perturbed networks, drawing on recent advances in single-cell multi-omics, proteomics, and network biology. We detail specific methodological workflows for mapping transcriptional dysregulation, identifying key network vulnerabilities, and translating these findings into biomarker and therapeutic target discovery. Designed for researchers and drug development professionals, this guide provides both the conceptual foundation and practical protocols for applying systems pathology principles to unravel the complexity of neurodegenerative diseases and other complex pathologies.

Neurodegenerative diseases (NDs), including Alzheimer's disease (AD), Parkinson's disease (PD), and frontotemporal dementia (FTD), represent a large group of neurological disorders with heterogeneous clinical and pathological traits characterized by progressive nervous system dysfunction [16]. Traditional pathological examination has focused on hallmark protein aggregates—amyloid-β and tau in AD, α-synuclein in PD—yet these represent only the terminal endpoints of widespread network failures. Systems pathology integrates all levels of functional and morphological information into a coherent model that enables understanding of perturbed physiological systems and complex pathologies in their entirety [17].

The fundamental premise of network medicine is that complex diseases are rarely caused by a mutation in a single gene but are instead influenced by combinations of genetic, epigenetic, and environmental factors that disrupt biological networks [18]. A disease-perturbed network refers to the systematic alteration in the interactions and regulatory relationships between molecular components (genes, proteins, metabolites) that leads to pathological system behavior. In neurodegeneration, these perturbations often follow a predictable spatiotemporal pattern, beginning with synaptic dysfunction and progressing through neuroinflammatory cascades to eventual cell death [19] [18].

Table 1: Key Network Types in Neurodegenerative Disease Research

| Network Type | Nodes Represent | Edges Represent | Primary Application in ND Research |
|---|---|---|---|
| Protein-Protein Interaction (PPI) Networks | Proteins | Physical interactions between proteins | Identifying hub proteins and functional modules disrupted in disease [16] |
| Gene Co-expression Networks | Genes | Similarity in expression patterns across samples | Discovering disease-associated transcriptional modules and regulatory programs [18] |
| Single-Cell Regulatory Networks | Genes/chromatin regions | Co-accessibility of chromatin/gene expression | Mapping cell-type-specific transcriptional changes in disease [19] |
| Ligand-Receptor Communication Networks | Cell types | Predicted intercellular signaling | Understanding how disease alters cell-cell communication [19] |

Analytical Frameworks for Network Deconstruction

Single-Cell Multi-Omic Integration

Recent advances in single-cell technologies have enabled the mapping of disease-perturbed networks at cellular resolution. A 2025 study of tau-driven Alzheimer's pathology exemplifies this approach, combining single-nuclei RNA sequencing (snRNA-seq) and single-nuclei Assay for Transposase-Accessible Chromatin using sequencing (snATAC-seq) from transgenic rat hippocampus to define regulatory events contributing to tau-induced neurodegeneration [19].

Experimental Protocol: Single-Cell Multiome Analysis of Disease-Perturbed Networks

Tissue Preparation and Nuclei Isolation

- Dissect fresh or frozen tissue samples (e.g., hippocampus from Tau transgenic and wild-type littermates)

- Homogenize tissue and isolate nuclei using gentle mechanical disruption and nuclear purification kits

- Quality control: Assess nuclei integrity and count using automated cell counters

Library Preparation and Sequencing

- Process nuclei using 10X Genomics Single Cell Multiome ATAC + Gene Expression kit

- For snRNA-seq: Capture RNA using poly-dT primers, reverse transcribe, and prepare sequencing libraries

- For snATAC-seq: Use transposase to fragment accessible chromatin, then amplify and prepare libraries

- Sequence on Illumina platforms (recommended: ≥20,000 read pairs per nucleus for snRNA-seq; ≥25,000 read pairs per nucleus for snATAC-seq)

Computational Data Integration

- Preprocessing: Align snRNA-seq reads to the reference genome (STAR) and process snATAC-seq reads with CellRanger-ATAC

- Cluster cells using weighted nearest neighbor (WNN) integration of RNA and ATAC modalities

- Annotate cell types using marker genes from established brain cell atlases

- Identify differentially accessible regions (DARs) and differentially expressed genes (DEGs) between conditions

- Construct gene regulatory networks by linking transcription factor motif accessibility in ATAC-seq to target gene expression
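
The final step above, linking transcription factor (TF) motif accessibility to target gene expression, can be illustrated with a deliberately simplified sketch. It assumes per-cluster summary values and uses a plain Pearson correlation with an arbitrary 0.8 cutoff; the TF and gene names and all numbers are hypothetical, and dedicated gene regulatory network tools apply more elaborate models.

```python
import math

def pearson(x, y):
    """Pearson correlation between two equal-length numeric lists."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)

def link_tfs_to_targets(motif_accessibility, gene_expression, threshold=0.8):
    """Return (tf, gene, r) edges whose absolute correlation meets the threshold."""
    edges = []
    for tf, accessibility in motif_accessibility.items():
        for gene, expression in gene_expression.items():
            r = pearson(accessibility, expression)
            if abs(r) >= threshold:
                edges.append((tf, gene, round(r, 3)))
    return edges

# Hypothetical per-cluster motif accessibility scores and mean expression values.
motif_accessibility = {"TF_A": [0.1, 0.4, 0.8, 1.2], "TF_B": [1.0, 0.2, 0.9, 0.3]}
gene_expression = {"gene_1": [1.0, 2.1, 3.9, 6.2], "gene_2": [5.0, 4.1, 2.2, 0.9]}
edges = link_tfs_to_targets(motif_accessibility, gene_expression)
```

A positive edge suggests a candidate activating relationship and a negative edge a candidate repressive one; both remain hypotheses until tested experimentally.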

Single-Cell Multi-Omic Workflow

Response Quantitative Trait Loci (reQTL) Mapping

Mapping context-dependent gene regulation requires specialized approaches that account for cellular heterogeneity in response to perturbations. A novel framework for identifying reQTLs—genetic variants whose effect on gene expression changes after environmental perturbation—leverages single-cell data to model per-cell perturbation states, significantly enhancing detection power compared to traditional bulk approaches [20].

Experimental Protocol: Continuous reQTL Mapping

Perturbation Induction and Single-Cell Profiling

- Collect peripheral blood mononuclear cells (PBMCs) from donors with known genotypes

- Apply disease-relevant perturbations (e.g., influenza A virus, Candida albicans, Pseudomonas aeruginosa)

- Process cells for single-cell RNA sequencing using 10X Genomics platform

- Include unstimulated controls from the same donors

Continuous Perturbation Scoring

- Perform logistic regression with corrected expression principal components as independent variables

- Predict log odds of being perturbed to generate continuous perturbation score for each cell

- Validate score by correlation with established marker genes (e.g., ISG15, IFI6 for interferon response)
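
The scoring steps above can be sketched with a small logistic regression fitted by gradient descent, where each cell's score is its predicted log odds of being perturbed. The principal component values, labels, learning rate, and epoch count are all invented for illustration; a real analysis would use an established statistics library and many more cells.

```python
import math

def fit_logistic(X, y, lr=0.1, epochs=2000):
    """Fit logistic regression by batch gradient descent; returns (weights, bias)."""
    n_features = len(X[0])
    w = [0.0] * n_features
    b = 0.0
    n = len(X)
    for _ in range(epochs):
        grad_w = [0.0] * n_features
        grad_b = 0.0
        for xi, yi in zip(X, y):
            z = sum(wj * xj for wj, xj in zip(w, xi)) + b
            p = 1.0 / (1.0 + math.exp(-z))
            err = p - yi
            for j in range(n_features):
                grad_w[j] += err * xi[j]
            grad_b += err
        w = [wj - lr * g / n for wj, g in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b

def perturbation_score(x, w, b):
    """Log odds of being perturbed: the continuous per-cell score."""
    return sum(wj * xj for wj, xj in zip(w, x)) + b

# Hypothetical corrected expression PCs (two per cell) and discrete labels
# (0 = unstimulated control, 1 = stimulated).
pcs = [[-2.0, 0.1], [-1.5, -0.3], [-1.0, 0.4], [-0.2, 0.0], [0.3, -0.2],
       [0.1, 0.5], [1.0, -0.1], [1.6, 0.2], [2.1, -0.4], [-0.1, 0.3]]
labels = [0, 0, 0, 0, 0, 1, 1, 1, 1, 1]

w, b = fit_logistic(pcs, labels)
scores = [perturbation_score(x, w, b) for x in pcs]
```

Cells with the same discrete label receive different scores, which is what lets the downstream model capture heterogeneity in how strongly each cell responded.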

reQTL Identification Using Poisson Mixed Effects Model

- Model gene expression = genotype + genotype × discrete perturbation + genotype × continuous perturbation score + covariates

- Include random effects for donor and batch

- Test significance using two degree-of-freedom likelihood ratio test

- Apply false discovery rate correction (Q value < 0.05)
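
The testing steps above can be sketched once the full and null models have been fitted. The likelihood ratio statistic compares the model with both genotype interaction terms against the model without them; under the null it follows a chi-square distribution with two degrees of freedom, whose survival function is exactly exp(-x/2). The log-likelihoods below are invented, and Benjamini-Hochberg is used here as an assumed stand-in for whichever Q value procedure the original study applied.

```python
import math

def lrt_pvalue_2df(loglik_full, loglik_null):
    """P-value of a 2-df likelihood ratio test (chi-square survival function)."""
    stat = 2.0 * (loglik_full - loglik_null)
    return math.exp(-max(stat, 0.0) / 2.0)

def benjamini_hochberg(pvalues):
    """Return Benjamini-Hochberg adjusted p-values in the input order."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    qvalues = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down, enforcing monotonicity.
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, pvalues[i] * m / rank)
        qvalues[i] = running_min
    return qvalues

# Hypothetical (full, null) log-likelihoods per gene-variant pair.
logliks = {
    "gene_1": (-1000.0, -1010.0),
    "gene_2": (-500.0, -501.0),
    "gene_3": (-800.0, -804.5),
}
genes = sorted(logliks)
pvalues = [lrt_pvalue_2df(*logliks[g]) for g in genes]
qvalues = benjamini_hochberg(pvalues)
significant = [g for g, q in zip(genes, qvalues) if q < 0.05]
```

Fitting the Poisson mixed effects models themselves requires a mixed-model library and is outside the scope of this sketch.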

Table 2: reQTL Mapping Performance Across Perturbations

| Perturbation | reQTLs Detected (2df-model) | Increase Over Discrete Model | Cell-Type-Specific Effects |
|---|---|---|---|
| Influenza A Virus (IAV) | 166 | 36.9% | MX1 eQTL in CD4+ T cells |
| Candida albicans (CA) | 770 | 38.2% | SAR1A eQTL in CD8+ T cells |
| Pseudomonas aeruginosa (PA) | 594 | 35.7% | Varies by cell type |
| Mycobacterium tuberculosis (MTB) | 646 | 37.1% | Varies by cell type |

Proteomic Network Analysis in Neurodegeneration

Large-scale consortia like the Global Neurodegeneration Proteomics Consortium (GNPC) have established harmonized proteomic datasets to identify disease-specific differential protein abundance and transdiagnostic signatures. The GNPC dataset comprises approximately 250 million unique protein measurements from over 35,000 biofluid samples (plasma, serum, and cerebrospinal fluid) across Alzheimer's disease, Parkinson's disease, frontotemporal dementia, and amyotrophic lateral sclerosis [21].

Experimental Protocol: Cross-Disease Proteomic Signature Identification

Sample Preparation and Proteomic Profiling

- Collect biofluid samples following standardized protocols to minimize pre-analytical variability

- Profile proteins using multiple platforms (SomaScan, Olink, mass spectrometry) for cross-validation

- Include samples from multiple neurodegenerative diseases and controls

Data Harmonization and Normalization

- Apply platform-specific normalization to account for technical variance

- Remove batch effects using ComBat or similar algorithms

- Impute missing values using k-nearest neighbors or similar approaches

Network-Based Differential Abundance Analysis

- Identify differentially abundant proteins between disease groups and controls

- Construct protein co-abundance networks using weighted correlation network analysis (WGCNA)

- Map differentially abundant proteins to existing protein-protein interaction networks (e.g., STRING, BioGRID)

- Identify conserved modules across neurodegenerative conditions
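
The co-abundance network step above can be illustrated with the adjacency calculation used in unsigned weighted correlation networks, where each pairwise correlation is raised to a soft-thresholding power. This sketch covers only that step; topological overlap, clustering, and module detection are omitted. The protein names and abundance values are hypothetical, and the power of 6 is a conventional default rather than a value from the text.

```python
import math

def pearson(x, y):
    """Pearson correlation between two equal-length numeric lists."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)

def soft_threshold_adjacency(abundance, beta=6):
    """Unsigned weighted adjacency: a_ij = |cor(i, j)| ** beta for each protein pair."""
    proteins = sorted(abundance)
    adjacency = {}
    for i, p in enumerate(proteins):
        for q in proteins[i + 1:]:
            adjacency[(p, q)] = abs(pearson(abundance[p], abundance[q])) ** beta
    return adjacency

# Hypothetical normalized abundances of three proteins across five samples.
abundance = {
    "P1": [1.0, 2.0, 3.0, 4.0, 5.0],
    "P2": [2.1, 3.9, 6.2, 8.0, 9.9],
    "P3": [5.0, 1.0, 4.0, 2.0, 3.0],
}
adjacency = soft_threshold_adjacency(abundance)
```

Raising correlations to a power suppresses weak, likely spurious associations (P1 and P3 here) while retaining strong ones (P1 and P2) without imposing a hard cutoff.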

Key Findings in Neurodegenerative Network Pathology

Tau-Driven Network Perturbations

Single-cell multiome analysis of tauopathy models has revealed that synaptic dysfunction represents a critical early event in the Alzheimer's continuum, with specific disruptions in axon guidance and synapse assembly pathways [19]. In dentate gyrus glutamatergic neurons, tau pathology causes decreased expression of adhesion molecules (Cdh10, Nectin1, Cntn4) critical for synaptic development, while upregulating semaphorin family genes (Sema3c, Sema3e) and Ephrin signaling components [19]. These findings reinforce the concept that initial synaptic failure precedes overt neurodegeneration in AD pathology.

Conserved Neuroinflammatory Networks

Cross-disease analyses have identified Toll-like receptor (TLR) signaling as a prominent pathway connecting multiple neurodegenerative conditions [16]. Network-based protein interaction studies reveal that connector proteins like TRAF6 serve as integration points for neuroinflammatory signaling across AD, PD, and FTD, suggesting potential therapeutic targets for modulating maladaptive immune responses common to multiple neurodegenerative diseases [16].

Transdiagnostic Proteomic Signatures

The GNPC analysis has identified robust plasma proteomic signatures that transcend traditional diagnostic boundaries, including an APOE ε4 carriership signature reproducible across AD, PD, FTD, and ALS [21]. These findings suggest shared molecular pathways underlying genetic risk mechanisms and highlight the power of network-based approaches to identify conserved pathological processes across clinically distinct conditions.

Network Propagation in Neurodegeneration

The Scientist's Toolkit: Essential Research Reagents and Platforms

Table 3: Research Reagent Solutions for Network Deconstruction Studies

| Reagent/Platform | Function | Application in Network Pathology |
|---|---|---|
| 10X Genomics Single Cell Multiome ATAC + Gene Expression | Simultaneous profiling of gene expression and chromatin accessibility | Mapping transcriptional regulatory networks in disease models [19] |
| SomaScan Proteomic Platform | High-throughput measurement of ~7,000 proteins | Identifying differential abundance signatures across neurodegenerative diseases [21] |
| Olink Proximity Extension Assay | Highly specific protein quantification with minimal sample volume | Validating proteomic biomarkers in biofluids [21] |
| PARTNER CPRM | Community Partner Relationship Management for network mapping | Visualizing and analyzing collaborative research networks [22] |
| Cytoscape with GeneMANIA | Open-source platform for network visualization and analysis | Integrating multi-omics data to identify hub genes and functional modules [18] |
| Poisson Mixed Effects Model | Statistical framework for single-cell eQTL mapping | Identifying context-dependent genetic regulation in perturbation responses [20] |

Visualization and Computational Tools

Effective visualization of quantitative data is essential for interpreting complex network relationships. Color accessibility must be prioritized in network visualizations, with sufficient contrast between foreground elements and background, and consideration for color vision deficiencies [23]. Professional color palettes (e.g., Dark2 for light backgrounds, Pastel1 for dark backgrounds) enhance readability and differentiation between nodes and edges [22].

For quantitative data visualization, selection of appropriate chart types is critical:

- Heatmaps effectively represent data density and intensity gradients, useful for gene expression patterns or geographic distribution of biomarkers [24] [25]

- Scatter plots analyze relationships and correlations between variables, such as gene expression in different conditions [24] [25]

- Line charts visualize trends over time, ideal for tracking disease progression or protein level changes [24] [25]

The deconstruction of disease-perturbed networks represents a transformative approach to understanding complex neurodegenerative pathologies. By integrating multi-omic data at single-cell resolution, researchers can now map the precise molecular cascades that propagate from initial protein misfolding to system-wide network failure. The methodologies outlined in this whitepaper—from single-cell multiome analysis to continuous reQTL mapping and cross-disease proteomics—provide a roadmap for applying systems pathology principles to biomarker discovery and therapeutic target identification.

Future advances will likely come from even deeper integration of spatial transcriptomics, live-cell imaging, and computational modeling to create dynamic, predictive network models that can simulate disease progression and treatment responses. As these technologies mature, network-based approaches will increasingly guide clinical trial design, patient stratification, and the development of combinatorial therapies that target multiple nodes within disease-perturbed networks simultaneously.

The investigation of complex diseases is undergoing a paradigm shift from reductionist approaches toward a systems-level understanding that acknowledges the dynamic, interactive, and emergent properties of biological systems. Traditional methods that focus on single biomarkers or linear pathways have proven inadequate for deciphering the pathophysiology of multifactorial diseases such as Alzheimer's disease (AD), cancer, and autoimmune disorders. Systems biology provides a framework for understanding how molecular components integrate into functional networks whose behavior cannot be predicted by studying individual elements in isolation [26]. This whitepaper articulates the core principles of dynamism, interactivity, and emergence within biological systems, with specific application to the discovery and validation of pathology biomarkers.

Emergent properties arise from non-linear interactions between system components, creating collective behaviors that are not evident from studying individual parts. For instance, research reveals that interacting AI agents and biological systems alike develop shared neural dynamics during social interactions, an emergent property not programmed into any single agent but arising from their interaction [27]. Similarly, in network medicine, disease phenotypes emerge from the perturbation of complex molecular networks rather than single gene defects [26]. Understanding these principles is critical for developing next-generation diagnostic tools and therapeutic interventions that address the systemic nature of disease.

Core Principle 1: Dynamism - The Temporal Dimension of Biological Systems

Theoretical Foundations of System Dynamism

Dynamism in biological systems refers to the continuous temporal evolution of molecular, cellular, and organismal states. This principle emphasizes that biological processes are not static but exist in constant flux, with system states evolving over time in response to internal programming and external stimuli. The dynamic nature of biological systems is mathematically captured through differential equation models that describe how system variables change continuously, enabling researchers to simulate and predict system behavior under various conditions and interventions [28].

In gene regulatory networks (GRNs), dynamism manifests through multi-stable states where the system can settle into distinct attractor states representing different functional phenotypes, including healthy, diseased, or apoptotic states. Research demonstrates that certain drugs can alter parameters within GRNs, prompting transitions from pathological to normal states [28]. This state transition capability underscores the therapeutic potential of manipulating dynamic network properties. The dynamic progression of pathological processes is particularly evident in neurodegenerative diseases, where biomarkers follow a predictable temporal sequence, with Aβ pathology preceding tau pathology, which in turn precedes neuronal loss and cognitive decline [29].

Quantitative Profiling of Dynamic Biomarkers

Table 1: Temporal Sequencing of Biomarkers in Alzheimer's Disease Pathology

| Disease Stage | Temporal Sequence | Key Biomarkers | Detection Methods | Dynamic Characteristics |
|---|---|---|---|---|
| Preclinical | 1-2 decades before symptoms | Aβ deposition | Aβ-PET, CSF Aβ42 | Initial exponential accumulation followed by plateau |
| Prodromal | 5-10 years before dementia | Tau pathology, synaptic dysfunction | Tau-PET, CSF p-tau | Linear increase correlated with cognitive decline |
| Mild Cognitive Impairment | Early symptomatic | Neurodegeneration, brain atrophy | sMRI, FDG-PET | Accelerated hippocampal and cortical thinning |
| Dementia | Fully symptomatic | Cognitive decline, functional impairment | Clinical assessment | Non-linear progression with compounding pathologies |

Experimental Protocol: Analyzing Gene Regulatory Network Dynamics

Objective: To quantify state transitions in a 3-node gene regulatory network and identify control parameters for inducing transitions from disease to healthy states.

Materials and Reagents:

- MATLAB with optimization toolbox

- Pre-parameterized ODE model of the GRN

- High-performance computing cluster for parallel processing

Methodology:

- Network Modeling: Implement the GRN as a system of nonlinear ODEs using Hill function kinetics to represent biochemical switches [28]. Parameters: n = 4, s = 0.5, k = 1.0, with specific activation and inhibition strengths [28].

- Attractor Identification: Numerically solve the ODE system from multiple initial conditions to identify all stable steady states (attractors) using Newton-Raphson and continuation methods.

- Bifurcation Analysis: Systematically vary regulatory parameters (e.g., b1 from 0.1 to 5.0) to identify critical transition points where the system qualitatively changes behavior.

- Control Strategy Optimization: Formulate and solve a dynamic optimization problem to identify parameter manipulation strategies that minimize transition time between pathological and healthy attractors while minimizing control energy [28].
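
The attractor identification step can be illustrated on a much smaller system. The equations of the 3-node GRN are not reproduced in this text, so the sketch below substitutes a hypothetical two-gene mutual-repression switch with Hill kinetics, reusing the stated values n = 4, s = 0.5, and k = 1.0. It also replaces Newton-Raphson with plain forward Euler integration from several starting points, which is simpler but finds only stable states.

```python
def hill_repression(x, s=0.5, n=4):
    """Hill-type repression: near 1 when x << s, near 0 when x >> s."""
    return s ** n / (s ** n + x ** n)

def simulate_toggle(x0, y0, k=1.0, dt=0.01, steps=5000):
    """Integrate dx/dt = H(y) - k*x, dy/dt = H(x) - k*y with forward Euler."""
    x, y = x0, y0
    for _ in range(steps):
        dx = hill_repression(y) - k * x
        dy = hill_repression(x) - k * y
        x, y = x + dt * dx, y + dt * dy
    return x, y

def find_attractors(initial_conditions, decimals=2):
    """Collect the distinct end states reached from the given starting points."""
    end_states = {
        tuple(round(v, decimals) for v in simulate_toggle(x0, y0))
        for x0, y0 in initial_conditions
    }
    return sorted(end_states)

# Hypothetical starting states; symmetric starts (x0 == y0) are avoided because
# they sit on the boundary between the two basins of attraction.
initial_conditions = [(1.0, 0.0), (0.0, 1.0), (0.8, 0.2), (0.2, 0.9)]
attractors = find_attractors(initial_conditions)
```

The two end states, one with the first gene high and one with the second gene high, play the role of alternative phenotypes; a bifurcation analysis would repeat this search while sweeping a parameter such as `s` or `k`.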

Diagram Title: Dynamic Network Analysis Workflow

Core Principle 2: Interactivity - Multi-Scale Cross-Talk in Biological Networks

Molecular and Cellular Interactivity Networks

Interactivity encompasses the bidirectional communication between components across multiple biological scales, from molecular interactions to organism-level social behaviors. At the molecular level, network medicine leverages protein-protein interaction (PPI) networks and gene co-expression networks to map the complex web of relationships that underlie disease phenotypes [26]. These networks demonstrate that diseases rarely result from single gene defects but rather emerge from perturbations across interconnected modules. Studies show that disease modules often overlap, sharing common pathways that explain disease co-morbidity and heterogeneous clinical presentations [26].

At the cellular level, interactivity enables coordination between different cell populations and systems. Groundbreaking research on inter-brain neural dynamics reveals that socially interacting mammals show synchronized neural patterns between their brains, particularly in GABAergic neurons in the dorsomedial prefrontal cortex [27]. This neural synchrony represents a fundamental interactive property that extends beyond individual organisms to create coupled systems. Similarly, AI agents designed to interact develop shared neural dynamics analogous to biological systems, suggesting that interactivity and its consequences may represent a universal principle of intelligent systems [27].

Experimental Protocol: Measuring Inter-Brain Neural Synchrony

Objective: To quantify shared neural dynamics between interacting subjects using calcium imaging and analytical approaches applicable to both biological and artificial systems.

Materials and Reagents:

- Genetically encoded calcium indicators (e.g., GCaMP)

- Miniature microscopes for in vivo calcium imaging

- Customized social interaction arena

- Data acquisition system with synchronized recording

- Computational resources for PLSC analysis

Methodology:

- Surgical Preparation: Express calcium indicators in specific neuronal populations (e.g., GABAergic or glutamatergic neurons) in the dorsomedial prefrontal cortex (dmPFC) of experimental subjects.

- Neural Recording: Simultaneously image calcium activity from both interacting subjects during structured social interactions using head-mounted microscopes.

- Cell Type Identification: Classify recorded neurons based on molecular markers using post-hoc immunohistochemistry.

- Shared Dynamics Analysis: Apply Partial Least Squares Correlation (PLSC) to identify shared high-dimensional neural subspaces between interacting subjects [27].

- Dimensional Characterization: Separate neural activity into shared dimensions (capturing coordinated social behaviors) and unique dimensions (capturing individual behaviors).

- Perturbation Experiments: Optogenetically inhibit specific neuronal populations during social interaction to test their causal role in generating shared neural dynamics.

Diagram Title: Inter-Subject Neural Synchronization

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Research Reagents for Studying Biological Interactivity

| Reagent/Category | Function | Application Examples |
|---|---|---|
| Calcium Indicators (GCaMP, R-GECO) | Monitor neural activity in real-time | In vivo imaging of dmPFC during social behavior [27] |
| Viral Vectors (AAV, Lentivirus) | Deliver genetic tools to specific cell types | Cell-type specific optogenetic manipulation in neural circuits |
| Optogenetic Actuators (Channelrhodopsin, Halorhodopsin) | Precisely control neuronal activity | Testing causal role of GABAergic neurons in social synchrony [27] |
| Multi-omics Reagents (scRNA-seq kits, ATAC-seq kits) | Profile molecular states at single-cell resolution | Building cell-type specific regulatory networks [26] |
| Molecular Probes (Aβ-PET, Tau-PET tracers) | Visualize protein pathology in living systems | Tracking Aβ and tau progression in Alzheimer's disease [29] |
| Cytokine Panels & Assays | Quantify inflammatory mediators | Monitoring immune activation in disease networks [26] |

Core Principle 3: Emergent Properties - System-Level Behaviors from Complex Interactions

Theoretical Framework for Emergence

Emergent properties represent system-level behaviors that arise from complex, non-linear interactions between system components but cannot be predicted or reduced to those individual components. In biological systems, emergence manifests in phenomena ranging from consciousness arising from neural networks to organism-level behaviors emerging from molecular networks. The 2025 Nature study demonstrating that AI agents develop shared neural dynamics during social interactions provides a compelling example of emergence—these dynamics were not programmed but spontaneously emerged from the interaction rules [27].

Network medicine provides a framework for understanding disease as an emergent property of perturbed molecular networks. Research shows that disease-associated genes tend to cluster in specific neighborhoods of biological networks, forming disease modules whose perturbation leads to emergent pathological states [26]. This network perspective explains why different mutations can produce similar disease phenotypes (as they perturb the same module) and why single genes can have pleiotropic effects (as they participate in multiple modules). The emergent nature of disease has profound implications for biomarker discovery, suggesting that effective biomarkers should capture network-level perturbations rather than just individual molecule concentrations.

Quantitative Framework for Emergent Network Properties

Table 3: Metrics for Quantifying Emergent Properties in Biological Networks

| Network Metric | Mathematical Definition | Biological Interpretation | Application in Pathology |
|---|---|---|---|
| Degree Centrality | Number of connections per node | Molecular hub significance in network | High-degree nodes are more likely essential; their mutation often causes disease [26] |
| Betweenness Centrality | Number of shortest paths passing through a node | Bottleneck or broker position in information flow | Identifies proteins critical for communication between disease modules |
| Modularity | Strength of division into modules (communities) | Functional specialization within networks | Quantifies separation between disease-specific and healthy network modules |
| Small-Worldness | Ratio of clustering to path length | Efficient information transfer balance | Altered in disease networks, affecting robustness and signal propagation |
| Synchronization Capacity | Ability of nodes to enter correlated dynamics | System coordination and integration | Measured as inter-brain correlation in social mammals [27] |
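
The first two metrics in the table can be computed directly on a small graph. The sketch below uses a hypothetical six-node signaling network with invented node names; betweenness is computed with Brandes' algorithm for unweighted, undirected graphs and reported as unnormalized path counts.

```python
from collections import deque

def degree_centrality(graph):
    """Number of neighbors per node (unnormalized degree)."""
    return {node: len(neighbors) for node, neighbors in graph.items()}

def betweenness_centrality(graph):
    """Unnormalized betweenness for an unweighted, undirected graph (Brandes)."""
    bc = {v: 0.0 for v in graph}
    for s in graph:
        stack = []
        preds = {v: [] for v in graph}
        sigma = {v: 0 for v in graph}   # number of shortest paths from s
        dist = {v: -1 for v in graph}
        sigma[s], dist[s] = 1, 0
        queue = deque([s])
        while queue:                    # breadth-first search from s
            v = queue.popleft()
            stack.append(v)
            for w in graph[v]:
                if dist[w] < 0:
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:
                    sigma[w] += sigma[v]
                    preds[w].append(v)
        delta = {v: 0.0 for v in graph}
        while stack:                    # accumulate dependencies in reverse order
            w = stack.pop()
            for v in preds[w]:
                delta[v] += sigma[v] / sigma[w] * (1.0 + delta[w])
            if w != s:
                bc[w] += delta[w]
    # Each unordered pair is counted from both endpoints, so halve the totals.
    return {v: b / 2.0 for v, b in bc.items()}

# Hypothetical signaling network as an adjacency list.
graph = {
    "Kinase A": ["Receptor 1", "Receptor 2", "TF 1", "TF 2"],
    "Receptor 1": ["Kinase A"],
    "Receptor 2": ["Kinase A"],
    "TF 1": ["Kinase A", "Target"],
    "TF 2": ["Kinase A"],
    "Target": ["TF 1"],
}
degree = degree_centrality(graph)
betweenness = betweenness_centrality(graph)
```

In this toy network the kinase is both the highest-degree node and the main bottleneck, while "TF 1" has low degree but non-zero betweenness because it is the only route to "Target".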

Experimental Protocol: Mapping Emergent Disease Modules via Network Medicine

Objective: To identify emergent disease modules through multi-omics network integration and validate their causal role in pathology.

Materials and Reagents:

- High-performance computing cluster

- Network analysis software (Cytoscape, NetworkX)

- Multi-omics datasets (genomics, transcriptomics, proteomics)

- Gene editing system (CRISPR-Cas9) for validation

Methodology:

- Interactome Construction: Compile a comprehensive protein-protein interaction (PPI) network integrating data from yeast two-hybrid screens, affinity purification, and literature curation.

- Multi-omics Data Integration: Map genomic, transcriptomic, and proteomic data from patient cohorts onto the interactome to create patient-specific network models.

- Disease Module Identification: Apply community detection algorithms (e.g., Louvain method) to identify densely connected network neighborhoods enriched for disease-associated molecules [26].

- Network Perturbation Analysis: Systematically perturb the identified modules in silico to predict their functional impact and relationship to disease phenotypes.

- Experimental Validation: Use CRISPR-based gene editing to perturb key nodes within identified modules in model systems and quantify phenotypic consequences.

Diagram Title: Emergent Disease Module Mapping
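
Two steps of the protocol above lend themselves to a short illustration: testing whether a detected module is enriched for disease-associated genes, and perturbing nodes in silico. The sketch below assumes that module membership has already been produced by a community detection step. It uses a hypergeometric tail probability for enrichment and a simple node-removal score (shrinkage of the largest connected component) as a stand-in for perturbation analysis. All gene names, counts, and the choice of impact score are hypothetical.

```python
from math import comb

def hypergeom_enrichment_p(population, n_disease, module_size, overlap):
    """P(X >= overlap) when drawing module_size genes without replacement
    from a population that contains n_disease disease-associated genes."""
    upper = min(n_disease, module_size)
    total = comb(population, module_size)
    tail = sum(
        comb(n_disease, k) * comb(population - n_disease, module_size - k)
        for k in range(overlap, upper + 1)
    )
    return tail / total

def largest_component_size(adj, removed=frozenset()):
    """Size of the largest connected component after deleting `removed` nodes."""
    seen, best = set(removed), 0
    for start in adj:
        if start in seen:
            continue
        stack, size = [start], 0
        seen.add(start)
        while stack:  # depth-first traversal of one component
            node = stack.pop()
            size += 1
            for nbr in adj[node]:
                if nbr not in seen:
                    seen.add(nbr)
                    stack.append(nbr)
        best = max(best, size)
    return best

def knockout_impact(adj):
    """Rank nodes by how much their removal shrinks the largest component."""
    baseline = largest_component_size(adj)
    impact = {n: baseline - largest_component_size(adj, {n}) for n in adj}
    return sorted(impact.items(), key=lambda kv: kv[1], reverse=True)

# Hypothetical example: 20 genes in the interactome, 5 disease-associated,
# and a 4-gene module that contains 3 of them.
p_value = hypergeom_enrichment_p(population=20, n_disease=5,
                                 module_size=4, overlap=3)

# Hypothetical module topology: "H" is a hub, "c" links a peripheral node.
MODULE_ADJ = {
    "H": {"a", "b", "c"},
    "a": {"H"},
    "b": {"H"},
    "c": {"H", "d"},
    "d": {"c"},
}
print(round(p_value, 4))
print(knockout_impact(MODULE_ADJ))
```

Nodes at the top of the knockout ranking would be natural first candidates for the CRISPR-based validation step, although a real analysis would use a phenotype-aware impact measure rather than connectivity alone.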

Integrated Applications: Advancing Pathology Biomarker Research

Multi-Modal Biomarker Integration in Alzheimer's Disease

The principles of dynamism, interactivity, and emergence find powerful application in the evolving framework for Alzheimer's disease (AD) biomarkers. The 2024 Alzheimer's Association guidelines introduce the AT₁T₂NISV framework, which expands beyond the classical AT(N) system to cover additional pathological processes: neuroinflammation (I), synucleinopathy (S), and vascular injury (V) [29]. This expanded framework acknowledges that the clinical presentation of AD emerges from the interaction of multiple co-occurring pathological processes rather than from a single linear pathway.

Advanced neuroimaging techniques now enable the quantification of dynamic and interactive aspects of AD pathology. Tau-PET imaging reveals distinct emergent spatial patterns of tau deposition—limbic-predominant, parietal-predominant, medial temporal lobe-sparing, and left-hemisphere asymmetric—each associated with different clinical phenotypes and progression rates [29]. These patterns represent emergent properties of network-level vulnerability rather than simple anatomical proximity. Similarly, the inflammatory biomarker component acknowledges the emergent role of neuroimmune interactions in modulating disease progression.

Quantitative Biomarker Profiles

Table 4: Multi-Modal Biomarker Profiles for Complex Disease Subtyping

| Biomarker Category | Measurement Technique | Dynamic Range | Emergent Properties Revealed | Clinical Utility |

|---|---|---|---|---|

| Aβ Pathology | Aβ-PET, CSF Aβ42 | Centiloid scale: 0-100 | Spatial expansion pattern from frontal to sensory cortex | Early detection, trial enrichment |

| Tau Pathology | Tau-PET, CSF p-tau | SUVR: 1.0-3.0+ | Spatial patterns defining AD subtypes (limbic vs. parietal) | Staging, progression forecasting |

| Neurodegeneration | sMRI, FDG-PET | Z-scores: -4 to +2 | Network-based atrophy patterns | Disease monitoring, treatment response |

| Network Synchronization | Inter-brain neural dynamics | Correlation: 0-1.0 | Shared neural subspaces in social mammals | Quantifying interaction impairment [27] |

| Network Perturbation | Node centrality in GRNs | Control energy: variable | Critical transitions between attractor states | Identifying therapeutic intervention points [28] |
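
Two of the scales in Table 4 are simple derived quantities. As a minimal sketch, the code below computes a standardized uptake value ratio (SUVR, target-region uptake divided by reference-region uptake) and a z-score relative to a normative sample. Every number is hypothetical and chosen only to show the arithmetic; the field names in the resulting profile are likewise illustrative.

```python
from statistics import mean, stdev

def suvr(target_uptake, reference_uptake):
    """Standardized uptake value ratio: target region / reference region."""
    return target_uptake / reference_uptake

def z_score(value, normative_values):
    """Deviation from a normative sample, in sample standard deviations."""
    return (value - mean(normative_values)) / stdev(normative_values)

# Hypothetical numbers, for illustration only.
tau_suvr = suvr(target_uptake=1.8, reference_uptake=1.2)
hippocampal_z = z_score(2.6, [3.0, 3.2, 3.4, 3.6, 3.8])

profile = {
    "tau_pet_suvr": round(tau_suvr, 2),
    "hippocampal_volume_z": round(hippocampal_z, 2),
}
print(profile)
```

Expressing each modality on a common, reference-anchored scale is what allows heterogeneous measurements to be combined into a single multi-modal profile.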

Future Directions: Network-Pharmacology and Dynamic Interventions

The principles outlined in this article point toward a future of network pharmacology and dynamic therapeutic interventions that acknowledge the emergent properties of biological systems. Rather than following the traditional "one drug, one target" approach, next-generation therapies will target network nodes with high betweenness centrality or will be specifically designed to perturb disease attractors back toward healthy states [26] [28]. The demonstration that shared neural dynamics can be manipulated through precise interventions provides a roadmap for developing therapies that target emergent properties rather than individual components [27].

Methodological advances in single-cell multi-omics, live imaging, and computational modeling will enable unprecedented resolution in mapping biological dynamism, interactivity, and emergence. As these tools mature, they will transform biomarker discovery from a static cataloging of molecular changes to a dynamic mapping of system-level perturbations, ultimately enabling earlier diagnosis, personalized prognostic stratification, and more effective therapeutic interventions for complex diseases.

Advanced Tools and Workflows: Building a Biomarker Discovery Pipeline

The comprehension of complex human pathologies has been fundamentally limited by traditional reductionist approaches that examine biological systems one molecule at a time. Complex diseases such as cancer, cardiovascular disease, and metabolic disorders involve intricate interactions across genetic predispositions, environmental influences, multiple tissues, and numerous molecular pathways operating under a polygenic or even omnigenic model [30]. In this model, perturbations of any interacting genes can propagate through molecular networks to cause disease manifestations, with central "hub" genes possessing more connections exerting greater influence on network stability [30]. This multidimensional complexity demands analytical strategies that embrace rather than simplify biological intricacy.

Multi-omics integration has emerged as the methodological paradigm capable of meeting this challenge through the combined analysis of diverse biological datasets across genomics, transcriptomics, proteomics, and metabolomics [31]. By offering a layered, cross-dimensional perspective, multi-omics enables researchers to uncover molecular interactions not apparent through single-omics approaches, distinguish causal mutations from inconsequential ones, and identify functionally relevant drug targets that might otherwise be overlooked [31]. The power of this approach is amplified when integrated with artificial intelligence and real-world data, shifting the research paradigm from static biological snapshots to dynamic, predictive models of disease that can inform drug development in near real-time [31]. This section explores the methodologies, applications, and practical implementation of integrating three core omics layers (genomics, proteomics, and metabolomics) within the context of systems biology and biomarker research for complex pathologies.

Foundations of Multi-Omics Science

The Core Omics Layers: Functional Relationships and Technical Considerations

The strategic integration of genomics, proteomics, and metabolomics provides a comprehensive view of the biological information flow from genetic blueprint to functional phenotype. Each layer interrogates a distinct level of biological organization with specific technological requirements and analytical considerations.

Genomics provides the foundational blueprint, identifying DNA sequences, structural variations, and mutations that establish disease predisposition and potential therapeutic targets. Modern genomics primarily utilizes next-generation sequencing platforms that generate high-throughput data on genetic variants and associations [32].

Proteomics reveals the functional effectors, quantifying protein expression, post-translational modifications, and structural characteristics that directly mediate cellular processes. Liquid chromatography coupled with tandem mass spectrometry (LC-MS/MS) serves as the cornerstone technology, with Data-Independent Acquisition (DIA) offering high reproducibility and Tandem Mass Tags (TMT) enabling multiplexed quantification across samples [33]. A significant technical challenge remains the dynamic range problem, where highly abundant proteins can mask the detection of low-abundance yet biologically critical proteins [33].

Metabolomics captures the dynamic physiological state, profiling small-molecule metabolites that represent functional outputs of biochemical activity and environmental interactions. Analytical platforms include Gas Chromatography-Mass Spectrometry (GC-MS) for volatile compounds and LC-MS for broader metabolite coverage, with Nuclear Magnetic Resonance (NMR) spectroscopy providing highly reproducible quantification despite lower sensitivity [33]. Metabolomics offers a real-time snapshot of cellular state but often lacks explanatory power about upstream regulatory mechanisms when used in isolation [33].

The Systems Biology Rationale for Integration

In isolation, each omics layer provides only a partial and potentially misleading view of biological systems. For instance, a gene may show high transcription levels but low translation into protein, indicating regulatory checkpoints that could be targeted therapeutically [31]. Similarly, metabolite shifts may indicate pathway perturbations, but without knowledge of upstream proteins or enzymes, the underlying regulatory mechanisms remain unclear [33].

The true power of multi-omics integration lies in creating bidirectional insights: proteins are understood as drivers of biochemical pathways, while metabolites reflect their functional outcomes [33]. This approach yields more accurate pathway analysis, because pathways supported by changes in both protein abundance and metabolite concentration carry stronger evidence of biological relevance [33]. In biomarker discovery, protein-metabolite correlations enhance specificity compared to single-marker approaches, enabling combined signatures that better distinguish disease states [33]. This integrated perspective is particularly valuable for resolving contradictions that frequently arise in single-omics studies. For example, protein upregulation without a corresponding metabolite change may indicate regulation with little functional consequence [33].
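
The protein-metabolite correlation idea can be sketched in a few lines. The example below computes Spearman rank correlations between one protein and two metabolites measured across the same samples, then keeps pairs whose absolute correlation exceeds a cutoff. The analyte names, the values, and the 0.8 cutoff are all hypothetical, and a real study would also correct for multiple testing and confounders.

```python
from statistics import mean

def ranks(values):
    """Average ranks (1-based); tied values share the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = avg
        i = j + 1
    return result

def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    return cov / (var_x * var_y) ** 0.5

def spearman(x, y):
    """Spearman correlation: Pearson correlation of the ranks."""
    return pearson(ranks(x), ranks(y))

# Hypothetical abundances across six matched samples.
proteins = {"ENZ1": [1.0, 2.1, 2.9, 4.2, 5.1, 6.3]}
metabolites = {
    "MET_A": [10, 14, 13, 22, 27, 31],  # tracks ENZ1 closely
    "MET_B": [5, 3, 6, 2, 4, 1],        # no consistent relationship
}

THRESHOLD = 0.8  # illustrative cutoff, not a recommended value
candidate_pairs = [
    (p_name, m_name)
    for p_name, p_vals in proteins.items()
    for m_name, m_vals in metabolites.items()
    if abs(spearman(p_vals, m_vals)) >= THRESHOLD
]
print(candidate_pairs)
```

Rank-based correlation is used here because omics measurements are often skewed; pairs that survive the filter are candidates for a combined signature, not confirmed biomarkers.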

Computational Methods and Data Integration Strategies

Approaches for Multi-Omics Data Integration

The integration of heterogeneous omics datasets presents significant computational challenges due to varying scales, resolutions, noise levels, and data structures. Multiple computational frameworks have been developed to address these challenges, each with distinct strengths and applications in biomedical research.

Table 1: Computational Methods for Multi-Omics Data Integration

| Integration Approach | Key Features | Representative Tools | Best Use Cases |

|---|---|---|---|

| Pathway-Based Integration | Uses predefined biochemical pathways for enrichment analysis; relies on existing domain knowledge | IMPALA, iPEAP, MetaboAnalyst [34] | Hypothesis-driven research; validation of known biological mechanisms |

| Network-Based Integration | Constructs molecular interaction networks without predefined pathways; identifies altered graph neighborhoods | SAMNetWeb, pwOmics, Metscape, MetaMapR [34] | Discovery of novel interactions; hypothesis generation; systems-level analysis |

| Correlation-Based Integration | Identifies statistical relationships between omics layers; useful when biochemical knowledge is limited | mixOmics, WGCNA, DiffCorr [34] | Exploratory analysis; integration of clinical metadata; large-scale dataset screening |

| Factor Analysis-Based Integration | Discovers latent factors driving variation across multiple omics layers; dimensionality reduction | MOFA2 [33] | Identifying major sources of variation; patient stratification; data compression |

Network-based analyses represent a particularly powerful approach for multi-omics integration, as they can reveal complex connections among diverse cellular components without dependence on predefined biochemical pathways [34]. These networks can map multiple omics results to identify altered graph neighborhoods, highlighting hub genes and proteins that may serve as optimal intervention points in complex diseases [30]. The organization of these biological networks typically follows a "scale-free" pattern where a small number of nodes have many more connections than average, while the majority have few connections [30]. This topological structure suggests that targeted interventions on central hubs could disproportionately impact network stability and disease progression.
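
The hub-dominated topology described above can be illustrated with a small simulation. The sketch below grows a network by preferential attachment (new nodes link more often to already well-connected nodes), which is one common way to generate a heavy-tailed degree distribution, and then flags hubs as nodes whose degree lies well above the mean. The network size, the number of links per new node, and the "mean plus two standard deviations" cutoff are assumptions made for illustration.

```python
import random
from statistics import mean, pstdev

def preferential_attachment_graph(n_nodes, m_links, seed=0):
    """Grow a network in which new nodes attach preferentially to hubs."""
    rng = random.Random(seed)
    adj = {i: set() for i in range(n_nodes)}
    endpoints = []  # each node appears once per incident edge
    # Start from a small fully connected core of m_links + 1 nodes.
    for u in range(m_links + 1):
        for v in range(u + 1, m_links + 1):
            adj[u].add(v)
            adj[v].add(u)
            endpoints += [u, v]
    for new in range(m_links + 1, n_nodes):
        targets = set()
        while len(targets) < m_links:
            # Sampling from `endpoints` picks nodes in proportion to degree.
            targets.add(rng.choice(endpoints))
        for t in targets:
            adj[new].add(t)
            adj[t].add(new)
            endpoints += [new, t]
    return adj

def find_hubs(adj, n_sd=2.0):
    """Return ({hub: degree}, cutoff) with cutoff = mean + n_sd * SD of degree."""
    degrees = {node: len(nbrs) for node, nbrs in adj.items()}
    values = list(degrees.values())
    cutoff = mean(values) + n_sd * pstdev(values)
    hubs = {node: d for node, d in degrees.items() if d >= cutoff}
    return hubs, cutoff

network = preferential_attachment_graph(n_nodes=200, m_links=2, seed=0)
hubs, cutoff = find_hubs(network)
print(f"degree cutoff: {cutoff:.1f}, hubs found: {len(hubs)}")
```

In a real interactome the same hub filter would be applied to the measured network rather than a simulated one, and hub status would be only one of several criteria for choosing intervention points.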

Key Computational Tools and Platforms

A robust ecosystem of computational tools supports the implementation of these integration strategies. The R package mixOmics provides multivariate statistical methods, including sparse Partial Least Squares (sPLS) and canonical correlation analysis, to uncover correlations across datasets [34] [33]. MetaboAnalyst offers a comprehensive web-based platform for metabolomics data analysis and pathway mapping, with specialized modules for integration with proteomic data [34]. xMWAS performs network-based integration, enabling visualization of protein-metabolite interaction networks [33]. For more advanced factor analysis, MOFA2 (Multi-Omics Factor Analysis) employs a machine learning framework to capture latent factors driving variation across multiple omics layers [33].

Data normalization and batch effect correction are critical preprocessing steps that must be completed before meaningful integration can occur. Proper normalization strategies, including log-transformation, quantile normalization, and variance stabilization, are essential to harmonize datasets with different scales and dynamic ranges [33]. Batch effect correction tools such as ComBat can mitigate technical variation, helping biological signals rather than processing artifacts drive subsequent analyses [33].
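
The preprocessing steps named above can be sketched as follows. The code shows a log2 transform, a basic quantile normalization across samples, and per-batch mean centering. The last step is a deliberately crude stand-in for batch correction and is not ComBat, which uses an empirical Bayes model. The matrix layout (rows are features, columns are samples) and the pseudocount of 1 are assumptions of this example.

```python
from math import log2
from statistics import mean

def log2_transform(matrix, pseudocount=1.0):
    """Compress dynamic range; the pseudocount avoids log2(0)."""
    return [[log2(x + pseudocount) for x in row] for row in matrix]

def quantile_normalize(matrix):
    """Force every sample (column) to share the same empirical distribution.
    Rows are features, columns are samples; ties are broken by row order."""
    n_rows, n_cols = len(matrix), len(matrix[0])
    columns = [[matrix[r][c] for r in range(n_rows)] for c in range(n_cols)]
    sorted_cols = [sorted(col) for col in columns]
    # Mean of the values sharing each rank position across samples.
    rank_means = [mean(sc[r] for sc in sorted_cols) for r in range(n_rows)]
    out = [[0.0] * n_cols for _ in range(n_rows)]
    for c, col in enumerate(columns):
        order = sorted(range(n_rows), key=lambda r: col[r])
        for rank, r in enumerate(order):
            out[r][c] = rank_means[rank]
    return out

def center_by_batch(matrix, batches):
    """Subtract each feature's per-batch mean (simple centering, not ComBat).
    Caution: this also removes biology if it is confounded with batch."""
    out = [row[:] for row in matrix]
    for r, row in enumerate(matrix):
        for batch in set(batches):
            idx = [c for c, label in enumerate(batches) if label == batch]
            mu = mean(row[c] for c in idx)
            for c in idx:
                out[r][c] = row[c] - mu
    return out

# Hypothetical intensities: 4 features x 3 samples.
raw = [[5, 4, 3], [2, 1, 4], [3, 4, 6], [4, 2, 8]]
normalized = quantile_normalize(raw)
centered = center_by_batch([[1, 3, 10, 14]], ["a", "a", "b", "b"])
print(normalized)
print(centered)
```

After quantile normalization every column contains the same set of values, so differences between samples reflect rank changes of individual features rather than global shifts in scale.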

Experimental Workflows and Protocols

Integrated Sample Preparation and Data Acquisition

Implementing a successful multi-omics study requires careful experimental design and execution, with particular attention to sample preparation protocols that preserve the integrity of multiple molecular classes. The following workflow outlines a standardized approach for generating integrated genomics, proteomics, and metabolomics data from biological specimens.