Synthetic Networks for PPI Prediction: A Comprehensive Guide to Benchmarks like NAPAbench


Abstract

The accurate computational prediction of Protein-Protein Interactions (PPIs) is fundamental to understanding cellular mechanisms and advancing drug discovery. However, the reliable assessment of these prediction methods has been hindered by the incompleteness and noise inherent in real-world interactome maps. This article explores how synthetic network benchmarks, such as NAPAbench, provide a transformative solution by generating gold-standard, evolutionarily grounded network families for rigorous performance evaluation. We detail the foundational principles of network synthesis, its application in testing diverse algorithms from similarity-based to deep learning models, the critical pitfalls in current evaluation practices, and the framework for comparative validation. This guide equips researchers and drug development professionals with the knowledge to leverage synthetic networks for robust, unbiased, and scalable assessment of next-generation PPI prediction tools.

The Why and How of Synthetic PPI Networks: From Biological Gaps to Controlled Benchmarks

Protein-protein interactions (PPIs) form the backbone of cellular signaling, transcriptional regulation, and metabolic processes, making their accurate identification crucial for understanding biological mechanisms and advancing therapeutic development [1] [2]. Despite significant advancements in high-throughput technologies and computational methods, the field faces a fundamental benchmarking crisis characterized by three interconnected challenges: the incompleteness of existing interactome maps, the pervasive noise in experimental data, and the critical lack of reliable ground truth for validation [3] [4] [5]. This crisis significantly impedes objective performance assessment of computational PPI prediction methods, ultimately slowing progress in systems biology and drug discovery.

The absence of a gold standard benchmark has forced researchers to rely on indirect evaluation methods, such as assessing the functional coherence of aligned nodes based on Gene Ontology (GO) or KEGG orthology annotations [5]. However, these annotations are primarily curated from sequence similarity data and may fail to capture biologically relevant functional relationships derived from network topology and interaction patterns [5]. Synthetic benchmarks like NAPAbench and its successor NAPAbench 2 have emerged as vital solutions to this problem, providing families of evolutionarily related PPI networks with known topological properties and biological correspondence for rigorous algorithm assessment [3] [5].

Synthetic Networks as a Benchmarking Solution: From NAPAbench to NAPAbench 2

The original NAPAbench, introduced in 2012, represented a pioneering effort to create comprehensive synthetic benchmarks for network alignment performance assessment [5]. This framework addressed a critical gap in the field by providing a network synthesis model that could generate families of evolutionarily related synthetic PPI networks according to a user-specified phylogenetic tree [5]. The model simulated biological network evolution through duplication and divergence processes, followed by network growth using evolution models that captured scale-free degree distributions and small-world properties characteristic of real PPI networks [5].

However, the parameters for network synthesis in the original NAPAbench were trained on PPI networks from IsoBase, which was released in 2010 [3]. Over the past decade, dramatic improvements in high-throughput profiling and text mining techniques have substantially enhanced the quality and coverage of PPI databases. Contemporary PPI networks contain significantly more proteins and interactions, with markedly different topological characteristics compared to their predecessors [3].

NAPAbench 2 was introduced as a major update to address these developments [3]. This enhanced benchmark incorporates a completely redesigned network synthesis algorithm trained on the latest PPI networks from the STRING database (v10.0), which integrates multiple public resources including BioGRID, DIP, HPRD, IntAct, and MINT [3]. Analysis of these updated networks revealed substantial differences from older datasets. For instance, the degree exponents for PPI networks in STRING ranged from 1.53 to 1.84, significantly smaller than the 1.86 to 2.17 range observed in IsoBase networks, indicating that modern PPI networks contain more proteins with higher node degrees [3]. Furthermore, contemporary networks demonstrate a higher prevalence of nodes with large clustering coefficients, suggesting an increased presence of functional subnetworks [3].

Table 1: Comparison of PPI Network Characteristics Between Benchmark Generations

| Characteristic | NAPAbench (IsoBase) | NAPAbench 2 (STRING) |
|---|---|---|
| Degree Exponent Range | 1.86 - 2.17 | 1.53 - 1.84 |
| Clustering Coefficient | Lower | Higher |
| Hub Nodes | Fewer | More abundant |
| Functional Subnetworks | Less prevalent | More prevalent |
| Reference Species | Limited (2010) | Comprehensive (5 species) |
| Data Sources | IsoBase | STRING (integrating 7 databases) |

Experimental Protocols for Benchmark Construction and Validation

The methodology for constructing reliable PPI benchmarks involves two crucial components: (1) comprehensive feature analysis of real PPI networks to identify discriminating characteristics, and (2) sophisticated network synthesis algorithms that faithfully replicate these properties.

Feature Analysis for Realistic Network Synthesis

NAPAbench 2 employs a multi-faceted approach to capture the essential characteristics of biological networks, categorizing features from two complementary perspectives [3]:

Intra-network Features: These capture the topological structures of individual PPI networks and include:

- Degree Distribution: The probability distribution of node degrees across the network, typically following a power-law distribution, $P_d(k) \sim k^{-\gamma}$, in scale-free networks [3].

- Clustering Coefficient Distribution: The distribution of per-node clustering coefficients, each measuring how close a node and its neighbors are to forming a complete graph; a higher prevalence of large values indicates more functional subnetworks [3].

- Graphlet Degree Distribution Agreement (GDDA): Capturing detailed local interaction patterns and statistical global PPI network structure [3].
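As an illustration of the first two intra-network features, the following minimal Python sketch computes an empirical degree distribution and per-node local clustering coefficients for an undirected graph stored as an adjacency dictionary. The function names and the toy graph are hypothetical and are not taken from NAPAbench 2; GDDA is omitted because it requires graphlet enumeration.

```python
from collections import Counter
from itertools import combinations


def degree_distribution(adj):
    """Return {k: P(k)}: the fraction of nodes that have degree k."""
    counts = Counter(len(neighbors) for neighbors in adj.values())
    n = len(adj)
    return {k: c / n for k, c in sorted(counts.items())}


def local_clustering(adj, node):
    """Fraction of pairs of neighbors of `node` that are themselves connected."""
    neighbors = adj[node]
    k = len(neighbors)
    if k < 2:
        return 0.0  # undefined for degree < 2; 0 by convention
    links = sum(1 for u, v in combinations(neighbors, 2) if v in adj[u])
    return 2.0 * links / (k * (k - 1))


# Toy undirected graph: triangle a-b-c plus a pendant node d attached to c.
toy = {
    "a": {"b", "c"},
    "b": {"a", "c"},
    "c": {"a", "b", "d"},
    "d": {"c"},
}
print(degree_distribution(toy))
print({v: round(local_clustering(toy, v), 3) for v in toy})
```

On the toy graph, the triangle nodes a and b have clustering 1.0, the hub c has 1/3, and the pendant node d has 0. For real networks, libraries such as NetworkX provide equivalent functions.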

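Cross-network Features: These quantify the biological relevance between proteins in different PPI networks:

- Sequence Similarity Score Distributions: The distributions of protein sequence similarity scores (BLAST bit scores) for orthologous versus non-orthologous protein pairs, using PANTHER orthology annotations as a curated reference [3].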

Network Synthesis Methodology

The network synthesis model in NAPAbench 2 generates evolutionarily related network families through a biologically inspired process [3] [5]:

- Ancestral Network Generation: Creating a starting network with properties matching contemporary PPI data.

- Phylogenetic Expansion: Generating descendant networks through duplication and divergence processes along a user-defined phylogenetic tree.

- Network Growth: Implementing evolution models that incorporate preferential attachment mechanisms to maintain scale-free properties.

- Topological Refinement: Ensuring generated networks match both global and local characteristics of real PPI networks.
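The Network Growth step relies on preferential attachment. A minimal sketch of that mechanism, assuming a Barabási-Albert-style rule in which each new node links to m existing nodes chosen with probability proportional to their degree (NAPAbench 2's actual growth model and trained parameters may differ), is:

```python
import random


def grow_preferential(adj, n_new, m, rng):
    """Add n_new nodes to `adj` (modified in place), each linking to m distinct
    existing nodes chosen with probability proportional to their degree."""
    # One pool entry per edge endpoint: uniform sampling from the pool is
    # therefore degree-proportional sampling over nodes.
    pool = [u for u, nbrs in adj.items() for _ in nbrs]
    next_id = max(adj) + 1  # assumes integer node identifiers
    for _ in range(n_new):
        chosen = set()
        while len(chosen) < m:  # requires >= m nodes with nonzero degree
            chosen.add(rng.choice(pool))
        adj[next_id] = set()
        for u in chosen:
            adj[next_id].add(u)
            adj[u].add(next_id)
            pool.extend([u, next_id])
        next_id += 1
    return adj


rng = random.Random(42)
seed = {0: {1, 2}, 1: {0, 2}, 2: {0, 1}}  # a triangle
grown = grow_preferential(seed, n_new=50, m=2, rng=rng)
n_edges = sum(len(nbrs) for nbrs in grown.values()) // 2
print(len(grown), n_edges)
```

Keeping a list with one entry per edge endpoint turns degree-proportional sampling into uniform sampling from that list, which avoids recomputing probabilities after every new edge.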

Performance Comparison of Contemporary PPI Prediction Methods

The development of robust benchmarks has enabled comprehensive evaluation of PPI prediction algorithms, particularly as deep learning approaches have revolutionized the field. Current methods leverage diverse architectures including graph neural networks (GNNs), convolutional neural networks (CNNs), recurrent neural networks (RNNs), and protein language models (PLMs) [1].

Recent benchmarking studies reveal significant performance variations across methods, particularly when assessed for cross-species generalization—a key indicator of robustness. The following table summarizes the performance of leading PPI prediction methods across different species when trained on human PPI data, demonstrating the generalization challenge:

Table 2: Cross-Species Performance Comparison of Deep Learning PPI Prediction Methods (AUROC Scores)

| Species | SENSE-PPI | Topsy-Turvy | D-SCRIPT | PIPR |
|---|---|---|---|---|
| H. sapiens | 0.973 | 0.934 | 0.901 | 0.839 |
| M. musculus | 0.973 | 0.934 | 0.901 | 0.839 |
| D. melanogaster | 0.969 | 0.921 | 0.890 | 0.728 |
| C. elegans | 0.969 | - | - | 0.728 |
| S. cerevisiae | 0.949 | - | - | - |

Data derived from benchmarking studies using the STRING v11.0 human dataset for training [4].
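AUROC, the metric reported in Table 2, equals the probability that a randomly chosen interacting pair receives a higher score than a randomly chosen non-interacting pair, with ties counted as one half. The sketch below implements that rank-based definition directly; the scores are made-up toy values, not results from [4].

```python
def auroc(pos_scores, neg_scores):
    """Probability that a random positive outscores a random negative (ties = 0.5)."""
    wins = 0.0
    for p in pos_scores:
        for n in neg_scores:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos_scores) * len(neg_scores))


# Toy predicted interaction probabilities (hypothetical, for illustration only).
positives = [0.9, 0.8, 0.4]
negatives = [0.7, 0.3, 0.2, 0.4]
print(auroc(positives, negatives))
```

This pairwise loop is O(P × N); for large evaluation sets, a sort-based computation or an existing implementation such as scikit-learn's `roc_auc_score` is preferable.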

SENSE-PPI demonstrates particularly strong performance, leveraging an architecture that combines gated recurrent units (GRUs) with the ESM2 protein language model to embed sequence features [4]. This approach maintains AUROC scores above 0.9 even for evolutionarily distant species such as S. cerevisiae, whose last common ancestor with H. sapiens lived approximately 1.3 billion years ago [4]. Other notable architectures include:

- SpatialPPIv2: Utilizes graph attention networks with protein language models to capture structural information without dependency on experimentally determined structures [6].

- GNNGL-PPI: Employs graph isomorphism networks to extract both global graph features and local subgraph features for multi-category PPI prediction [7].

- AG-GATCN: Integrates graph attention networks with temporal convolutional networks for robustness against noise in PPI analysis [1].

- RGCNPPIS: Combines graph convolutional networks and GraphSAGE to simultaneously extract macro-scale topological patterns and micro-scale structural motifs [1].

Table 3: Key Research Reagents and Resources for PPI Prediction Benchmarking

| Resource | Type | Primary Function | Relevance to Benchmarking |
|---|---|---|---|
| NAPAbench 2 | Synthetic Benchmark | Generates families of evolutionarily related PPI networks | Provides gold standard for evaluating alignment algorithms and scalability |
| STRING Database | PPI Database | Known and predicted PPIs across species | Source of real network data for training and parameter estimation |
| BioGRID | PPI Database | Protein-protein and gene-gene interactions | Validation resource for experimentally verified interactions |
| PANTHER | Orthology Database | Manually curated protein orthology annotations | Reference standard for biological correspondence across species |
| ESM2 | Protein Language Model | Embeddings from protein sequences | Feature extraction for sequence-based prediction methods |
| AlphaFold | Structure Prediction | Protein 3D structure prediction | Structural features for structure-aware PPI prediction |


The benchmarking crisis in PPI prediction remains a significant challenge, but synthetic networks like NAPAbench 2 provide essential tools for objective method evaluation. The evolution from NAPAbench to NAPAbench 2 reflects the rapidly changing landscape of PPI data, emphasizing the need for continuously updated benchmarks that mirror the growing complexity and density of modern interactome maps.

As deep learning approaches continue to dominate PPI prediction, their evaluation against reliable benchmarks becomes increasingly critical. Methods like SENSE-PPI and SpatialPPIv2 that demonstrate strong cross-species generalization represent promising directions for the field. Future benchmarking efforts must address emerging challenges including the prediction of context-specific interactions, integration of multi-omics data, and application to non-model organisms—all while maintaining the rigorous standards established by current synthetic benchmarks.

The field's progression depends on acknowledging and addressing the inherent incompleteness, noise, and lack of ground truth in PPI data through continued development and adoption of comprehensive benchmarking frameworks that enable fair comparison, identify methodological strengths and weaknesses, and guide future algorithmic innovations.

Comparative network analysis provides powerful computational methods for uncovering novel insights into the structural and functional composition of biological networks, with protein-protein interaction (PPI) networks serving as a primary focus. Network alignment algorithms, which identify important similarities and critical differences between networks, have become essential tools in this field. However, a significant impediment to advancing these techniques has been the lack of gold-standard benchmarks for reliable performance assessment. The original NAPAbench (Network Alignment Performance Assessment benchmark), introduced in 2012, was developed to address this critical gap and has been widely used for evaluating novel network alignment techniques [3] [5]. This guide examines the evolution of this benchmark to NAPAbench 2, its updated methodology, and its role in the objective assessment of PPI prediction methods.

The Essential Role of Synthetic Benchmarks in PPI Research

Evaluating network alignment algorithms directly on real biological networks is challenging due to incompleteness, potential spurious interactions, and the lack of a definitive ground truth for functional correspondence between proteins across species [5]. Synthetic network families, generated by computational models, provide a practical and effective alternative by offering a controlled environment with known evolutionary relationships and alignment maps.

The original NAPAbench, released in 2012, established itself as a comprehensive synthetic benchmark for network alignment. It comprised benchmark suites for pairwise, 5-way, and 8-way alignment, with each suite containing datasets generated by different network synthesis models (DMC, DMR, and CG) [3] [5]. Its synthesis model could generate families of evolutionarily related PPI networks according to a user-specified phylogenetic tree, creating networks whose internal and cross-network properties closely mimicked those of real PPI networks from that era [5].

NAPAbench 2: Updated Methodology and Workflow

NAPAbench 2 represents a major update to the original benchmark, addressing a key limitation: the parameters for the original NAPAbench synthesis models were trained on PPI networks from IsoBase (released circa 2010). Over the past decade, the quality and coverage of PPI databases have improved dramatically [3]. Consequently, modern PPI networks contain more proteins, a significantly larger number of interactions, and are much denser. NAPAbench 2 incorporates a completely redesigned network synthesis algorithm whose characteristics closely match those of these latest real PPI networks [3] [8].

Statistical Feature Analysis of Real PPI Networks

The redesigned algorithm in NAPAbench 2 is based on a thorough statistical analysis of contemporary PPI networks from the STRING database (v10.0), which integrates numerous public PPI databases [3]. The analysis focused on features from two perspectives:

Intra-network features capture the topological structures of individual PPI networks. NAPAbench 2 utilizes:

- Degree Distribution: The probability distribution of node degrees across the network. Modern PPI networks exhibit a power-law distribution ($P_d(k) \sim k^{-\gamma}$) but with a smaller degree exponent ($\gamma$ ranging from 1.53 to 1.84) compared to older datasets, indicating more proteins with higher node degrees (hubs) [3].

- Clustering Coefficient: Measures how close a node and its neighbors are to forming a complete graph. PPI networks from STRING have more nodes with high clustering coefficients than older IsoBase networks, suggesting they contain more potential functional subnetworks [3].

- Graphlet Degree Distribution Agreement (GDDA): A newer feature used to capture detailed local interaction patterns and the statistical global structure of the network [3].
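The degree exponents above come from fitting a power law to each network's degree distribution. The fitting procedure used in [3] is not described here, so this sketch assumes a common alternative: the approximate discrete maximum-likelihood estimator of Clauset, Shalizi, and Newman, $\hat{\gamma} \approx 1 + n \left[\sum_i \ln\frac{k_i}{k_{\min} - 1/2}\right]^{-1}$, applied to the n degrees with $k_i \ge k_{\min}$. The sampler is included only to produce test data with a known exponent.

```python
import math
import random


def powerlaw_exponent(degrees, k_min=1):
    """Approximate discrete MLE of gamma in P(k) ~ k^(-gamma) for k >= k_min."""
    tail = [k for k in degrees if k >= k_min]
    if not tail:
        raise ValueError("no degrees at or above k_min")
    log_sum = sum(math.log(k / (k_min - 0.5)) for k in tail)
    return 1.0 + len(tail) / log_sum


def sample_discrete_powerlaw(n, gamma, k_min, rng):
    """Draw n integers approximately distributed as P(k) ~ k^(-gamma), k >= k_min."""
    # Inverse-transform sample of a continuous power law starting at k_min - 0.5,
    # then rounded to the nearest integer.
    return [
        math.floor((k_min - 0.5) * (1.0 - rng.random()) ** (-1.0 / (gamma - 1.0)) + 0.5)
        for _ in range(n)
    ]


rng = random.Random(0)
degrees = sample_discrete_powerlaw(20000, gamma=1.7, k_min=10, rng=rng)
gamma_hat = powerlaw_exponent(degrees, k_min=10)
print(round(gamma_hat, 2))
```

Estimates depend strongly on the choice of $k_{\min}$, and real PPI degree distributions are often only approximately power-law, so a goodness-of-fit check is advisable before comparing exponents across databases.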

Cross-network features capture the biological relevance of proteins across different PPI networks. This involves comparing the distribution of protein sequence similarity scores (BLAST bit scores) for orthologous versus non-orthologous protein pairs, using PANTHER orthology annotations as a curated reference [3].
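A minimal sketch of how such a comparison could start: pairwise bit scores are split into orthologous and non-orthologous groups using a reference set of ortholog pairs, and each group is summarized. The protein identifiers, scores, and ortholog set are hypothetical stand-ins for BLASTp output and PANTHER annotations; a synthesis model would fit the full distributions rather than these summary statistics.

```python
from statistics import mean, median


def split_by_orthology(scores, ortholog_pairs):
    """Partition {(protein_a, protein_b): bit_score} into ortholog / non-ortholog lists."""
    ortho, non_ortho = [], []
    for (a, b), s in scores.items():
        # Treat pairs as unordered so (a, b) and (b, a) match the same annotation.
        if (a, b) in ortholog_pairs or (b, a) in ortholog_pairs:
            ortho.append(s)
        else:
            non_ortho.append(s)
    return ortho, non_ortho


# Hypothetical bit scores between human (h*) and yeast (y*) proteins.
bit_scores = {
    ("h1", "y1"): 412.0,
    ("h2", "y2"): 288.5,
    ("h1", "y2"): 41.2,
    ("h2", "y1"): 35.0,
    ("h3", "y3"): 150.3,
}
orthologs = {("h1", "y1"), ("y2", "h2")}
ortho, non_ortho = split_by_orthology(bit_scores, orthologs)
for name, group in (("orthologous", ortho), ("non-orthologous", non_ortho)):
    print(f"{name}: n={len(group)}, mean={mean(group):.1f}, median={median(group):.1f}")
```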

Network Synthesis and Algorithmic Workflow

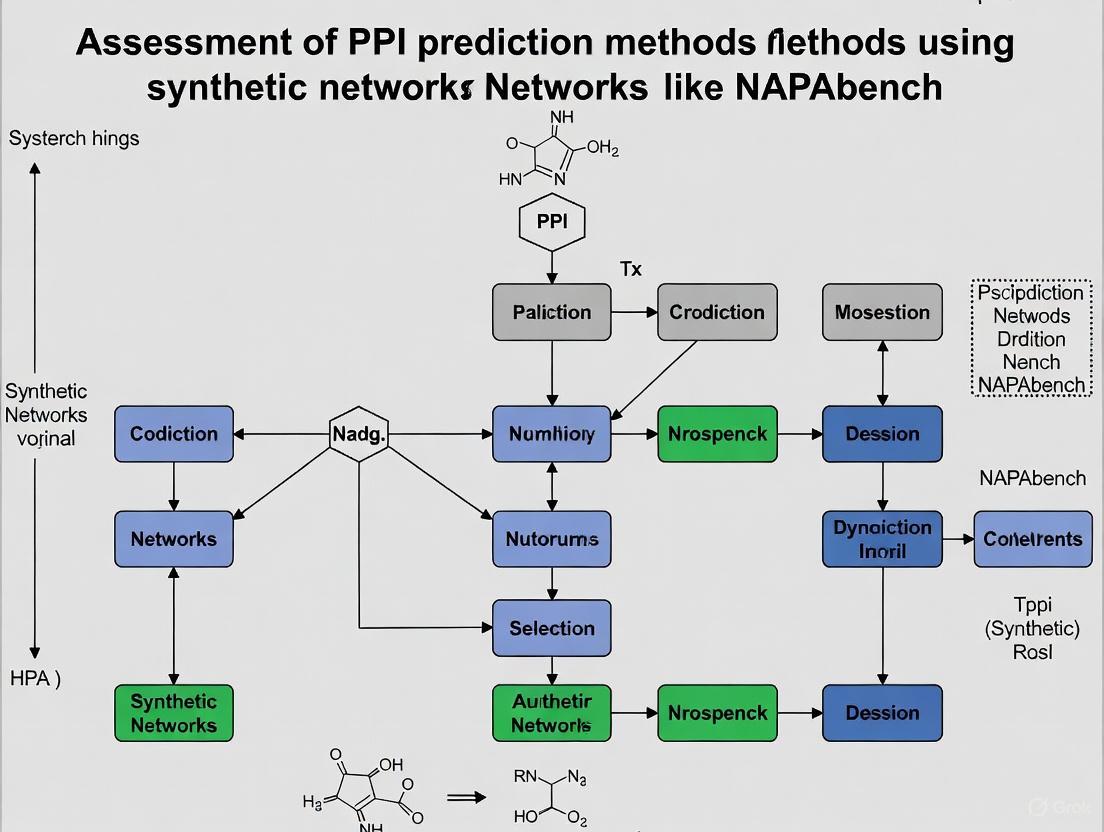

The following diagram illustrates the core workflow for generating and using a benchmark dataset with NAPAbench 2:

Research Reagent Solutions

The table below details key computational tools and resources essential for working with benchmarks like NAPAbench and conducting related PPI network research.

| Item Name | Function in Research |
|---|---|
| STRING Database | Provides comprehensive, integrated PPI networks; used as a reference for learning realistic synthesis model parameters [3]. |
| BLASTp | Computes amino acid sequence similarity scores between proteins from different networks, a key cross-network feature [3]. |
| PANTHER Orthology | A manually curated database of protein orthology annotations used to determine true biological correspondence between proteins for evaluation [3]. |
| Graphlet Degree Distribution | A topological metric used to quantify and match the local structural properties of synthetic and real networks [3]. |
| User-Defined Phylogeny | A text file (e.g., Newick format) specifying the evolutionary relationships among the networks to be synthesized, controlling their relatedness [3]. |
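Because the phylogeny is supplied as a tree description such as a Newick string, a small recursive parser shows how such a file maps onto a tree structure. This sketch supports only node labels and optional branch lengths (no quoted labels or comments); which Newick features NAPAbench 2 accepts, and whether it uses branch lengths, are not specified in this section.

```python
def parse_newick(text):
    """Parse a simple Newick string into nested dicts:
    {"name": str, "length": float or None, "children": [...]}."""
    s = text.strip().rstrip(";")
    pos = 0

    def parse_node():
        nonlocal pos
        children = []
        if s[pos] == "(":
            pos += 1  # consume "("
            while True:
                children.append(parse_node())
                if s[pos] == ",":
                    pos += 1
                elif s[pos] == ")":
                    pos += 1
                    break
                else:
                    raise ValueError(f"unexpected {s[pos]!r} at position {pos}")
        start = pos
        while pos < len(s) and s[pos] not in ",():":
            pos += 1
        name = s[start:pos].strip()
        length = None
        if pos < len(s) and s[pos] == ":":
            pos += 1
            start = pos
            while pos < len(s) and s[pos] not in ",()":
                pos += 1
            length = float(s[start:pos])
        return {"name": name, "length": length, "children": children}

    tree = parse_node()
    if pos != len(s):
        raise ValueError("trailing characters after tree")
    return tree


def leaves(node):
    """Return leaf names in left-to-right order."""
    if not node["children"]:
        return [node["name"]]
    return [x for child in node["children"] for x in leaves(child)]


tree = parse_newick("((A:0.1,B:0.2)AB:0.3,C:0.4)root;")
print(tree["name"], leaves(tree))
```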

Performance Assessment: Protocol and Data

A primary application of NAPAbench is the systematic performance assessment and comparison of different network alignment algorithms. The general experimental protocol is as follows:

- Benchmark Selection: Choose a pre-computed NAPAbench dataset (e.g., a 5-way network family) or generate a new one using the synthesis tool with a defined phylogeny [3].

- Algorithm Execution: Run the network alignment algorithms under evaluation on the selected benchmark dataset. The input is the set of networks in the family, and the output is a mapping of proteins across the networks predicted to be orthologous or functionally related.

- Performance Metric Calculation: Compare the algorithm's predicted alignment to the known, ground-truth alignment of the synthetic benchmark. Key performance metrics often include:

  - Node Correctness: The fraction of correctly aligned nodes, measuring the accuracy of orthology prediction.

  - Conserved Interaction Score: Measures the ability to identify topologically similar regions across networks.

  - Scalability: The computational time and memory usage as a function of network size.

- Comparative Analysis: Compare the metrics achieved by different algorithms to identify relative strengths and weaknesses.
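For a pairwise alignment, the first two metrics can be computed from the predicted mapping, the ground-truth mapping, and the two edge lists. The sketch below assumes one-to-one mappings stored as dictionaries and a simple definition of conserved interactions (edges of the first network whose mapped endpoints are also adjacent in the second); the exact scores used in published NAPAbench evaluations may be defined differently, and multiple (5-way or 8-way) alignments need cluster-based variants.

```python
def node_correctness(predicted, truth):
    """Fraction of ground-truth node pairs reproduced by the predicted mapping."""
    correct = sum(1 for u, v in truth.items() if predicted.get(u) == v)
    return correct / len(truth)


def conserved_edges(predicted, edges_1, edges_2):
    """Count edges (u, w) in network 1 whose mapped images are adjacent in network 2."""
    adj_2 = {frozenset(e) for e in edges_2}
    return sum(
        1
        for u, w in edges_1
        if u in predicted
        and w in predicted
        and frozenset((predicted[u], predicted[w])) in adj_2
    )


# Hypothetical ground truth and a prediction that swaps b and c.
truth = {"a": "x", "b": "y", "c": "z"}
predicted = {"a": "x", "b": "z", "c": "y"}
edges_1 = [("a", "b"), ("b", "c")]
edges_2 = [("x", "y"), ("y", "z")]
print(node_correctness(predicted, truth), conserved_edges(predicted, edges_1, edges_2))
```

The example shows why both metrics are reported: the swap leaves only one of three nodes correct, yet one of the two edges is still conserved.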

Characterizing Modern vs. Historical PPI Networks

The driving force behind NAPAbench 2 was the significant divergence of modern PPI networks from their historical counterparts. The following table quantifies these differences, which the updated synthesis model seeks to replicate.

| Species | Data Source | Number of Proteins | Number of Edges |
|---|---|---|---|
| H. sapiens | IsoBase (c. 2010) | 8,580 | 34,250 |
| H. sapiens | STRING (v10.0) | 11,852 | 95,095 |
| S. cerevisiae | IsoBase (c. 2010) | 4,899 | 27,981 |
| S. cerevisiae | STRING (v10.0) | 5,724 | 88,312 |
| D. melanogaster | IsoBase (c. 2010) | 6,572 | 19,579 |
| D. melanogaster | STRING (v10.0) | 6,652 | 64,929 |
| C. elegans | IsoBase (c. 2010) | 2,511 | 4,211 |
| C. elegans | STRING (v10.0) | 6,590 | 60,234 |
| M. musculus | IsoBase (c. 2010) | 16 | 23 |
| M. musculus | STRING (v10.0) | 10,125 | 112,321 |

Table 1: A quantitative comparison of real PPI network statistics from the legacy Isobase database (used for NAPAbench 1) and the contemporary STRING database (used for NAPAbench 2). The data shows a substantial increase in both network size and connectivity in modern PPI data [3].
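The increase in connectivity is easier to compare as the average degree $\langle k \rangle = 2E/N$, where E is the number of edges and N the number of proteins. The short script below recomputes it from the counts in the table; for human, it rises from about 8.0 in IsoBase to about 16.0 in STRING v10.0.

```python
# (proteins, edges) copied from the table above.
networks = {
    "H. sapiens": {"IsoBase": (8580, 34250), "STRING v10.0": (11852, 95095)},
    "S. cerevisiae": {"IsoBase": (4899, 27981), "STRING v10.0": (5724, 88312)},
    "D. melanogaster": {"IsoBase": (6572, 19579), "STRING v10.0": (6652, 64929)},
    "C. elegans": {"IsoBase": (2511, 4211), "STRING v10.0": (6590, 60234)},
    "M. musculus": {"IsoBase": (16, 23), "STRING v10.0": (10125, 112321)},
}


def average_degree(n_nodes, n_edges):
    """Mean degree of an undirected simple graph: each edge has 2 endpoints."""
    return 2.0 * n_edges / n_nodes


for species, sources in networks.items():
    summary = ", ".join(
        f"{src}: <k> = {average_degree(*counts):.1f}" for src, counts in sources.items()
    )
    print(f"{species}: {summary}")
```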

Key Parameter Comparison: Benchmark Synthesis Models

The core of any synthetic benchmark is its synthesis model. The table below contrasts the foundational parameters of the original model with the updated approach in NAPAbench 2.

| Feature | NAPAbench (2012) | NAPAbench 2 (2020) |
|---|---|---|
| Reference PPI Data | IsoBase (c. 2010) [5] | STRING v10.0 [3] |
| Primary Topological Features | Degree distribution, Clustering coefficient [5] | Degree distribution, Clustering coefficient, Graphlet degree distribution [3] |
| Typical Degree Exponent (γ) | 1.86 - 2.17 [3] | 1.53 - 1.84 [3] |
| Clustering Coefficient | Lower distribution profile [3] | Higher distribution profile (more dense subnetworks) [3] |
| Orthology Reference | KEGG Orthology (KO) group [3] | PANTHER orthology annotation [3] |
| User Interface | Algorithm and source code [5] | Algorithm with intuitive GUI [3] |

NAPAbench and its successor, NAPAbench 2, provide a critical foundation for the objective assessment of PPI prediction and network alignment methods. By generating realistic network families with known ground truth, they enable rigorous, fair, and comprehensive benchmarking. The evolution from NAPAbench to NAPAbench 2 highlights the necessity of keeping synthetic benchmarks in sync with the improving quality and scale of real biological data.

The field of comparative network analysis continues to advance, with challenges shifting towards aligning larger, more complex networks and integrating multi-omic data. The availability of robust, scalable, and realistic benchmarks like NAPAbench 2 is therefore more important than ever. It provides the necessary proving ground for developing next-generation algorithms that can deliver biologically meaningful insights, ultimately accelerating research in systems biology and drug development by enabling more reliable knowledge transfer across species.

The advancement of comparative network analysis is critically impeded by the lack of gold-standard benchmarks for validating network alignment algorithms [9]. Protein-protein interaction (PPI) networks are fundamental to understanding cellular processes, but real-world PPI data from databases like BioGRID, DIP, and MINT are often incomplete and may contain spurious interactions [9]. To address these challenges, network synthesis models have emerged as essential computational frameworks for generating evolutionarily related families of synthetic PPI networks with biologically realistic properties [9] [10]. These models enable reliable performance assessment of PPI prediction methods by providing benchmark datasets with known ground truth, with NAPAbench representing a prominent example of such a benchmark that has been widely utilized by researchers [10] [11].

The core premise of network synthesis is to simulate the evolutionary processes that shape biological networks through computational frameworks that mimic natural evolutionary mechanisms [9]. By generating synthetic network families according to a hypothetical phylogenetic tree, these models create controlled environments where the accuracy of network alignment algorithms can be rigorously evaluated without the uncertainties associated with real PPI data [9]. The NAPAbench 2 benchmark represents a significant advancement in this field, featuring a completely redesigned network synthesis algorithm that can generate PPI network families whose characteristics closely match those of the latest real PPI networks [10].

Core Theoretical Principles of Network Evolution

Duplication-Divergence Mechanisms

The duplication-divergence principle forms the foundational mechanism of network synthesis models, inspired by the gene duplication model that explains protein diversity through duplication of existing genes followed by functional divergence [9]. This principle operates through two primary computational models:

The Duplication-Mutation-Complementation (DMC) model grows a seed network by iterating through three fundamental steps [9]:

- Node Duplication: A new node is added to the network by duplicating a randomly chosen node from the current network, connecting the new node to all neighbors of the original node

- Edge Removal: For every neighbor shared by the original and duplicated node, randomly pick either edge and remove it with a specified probability

- New Edge Formation: Add a new edge between the original and duplicated node with a defined probability
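The three DMC steps translate directly into code. In this sketch, `q_mod` (probability of removing one of the two edges to each shared neighbor) and `q_con` (probability of linking the original and the duplicate) are hypothetical parameter names, node identifiers are assumed to be integers, and any values passed in are illustrative rather than NAPAbench's trained settings.

```python
import random


def dmc_step(adj, q_mod, q_con, rng):
    """One Duplication-Mutation-Complementation iteration on an undirected graph."""
    original = rng.choice(sorted(adj))
    new = max(adj) + 1
    # 1) Node duplication: the new node copies all neighbors of the original.
    adj[new] = set(adj[original])
    for nbr in adj[new]:
        adj[nbr].add(new)
    # 2) Edge removal: for each shared neighbor, pick one of the two edges
    #    (original-nbr or new-nbr) at random and delete it with prob. q_mod.
    for nbr in list(adj[new]):
        if rng.random() < q_mod:
            victim = original if rng.random() < 0.5 else new
            adj[victim].discard(nbr)
            adj[nbr].discard(victim)
    # 3) New edge formation: link original and duplicate with prob. q_con.
    if rng.random() < q_con:
        adj[original].add(new)
        adj[new].add(original)
    return new


def grow_dmc(seed, n_nodes, q_mod, q_con, rng):
    """Grow a copy of `seed` by repeated DMC steps until it has n_nodes nodes."""
    adj = {u: set(nbrs) for u, nbrs in seed.items()}
    while len(adj) < n_nodes:
        dmc_step(adj, q_mod, q_con, rng)
    return adj


rng = random.Random(1)
network = grow_dmc({0: {1}, 1: {0}}, n_nodes=200, q_mod=0.4, q_con=0.1, rng=rng)
print(len(network), sum(len(v) for v in network.values()) // 2)
```

With `q_mod=0` and `q_con=1`, every duplicate keeps all inherited edges and links to its original, so a single-edge seed grows into a complete graph; that limiting case is a convenient sanity check.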

The Duplication with Random Mutation (DMR) model follows a similar duplication principle but implements divergence through different mutation mechanisms [9]. These models can generate networks that retain many generic characteristics of biological networks, including the power-law degree distribution observed in real PPI networks [9]. The duplication-divergence framework effectively captures the evolutionary tinkering process described by Francois Jacob, where evolution works by reusing and modifying existing structures rather than designing from scratch [12].

Phylogenetic Growth Framework

The phylogenetic growth model extends duplication-divergence principles across multiple related species through a structured evolutionary framework [9]. Given an ancestral network, this model generates a network family according to a hypothetical phylogenetic tree, where descendant networks are obtained through duplication and divergence of their ancestors, followed by network growth using established evolution models [9].

This framework synthesizes networks with both internal network properties (node degree distribution, clustering coefficient) and cross-network properties (sequence similarity between proteins in different networks) that closely resemble those of real PPI networks [9]. The phylogenetic approach enables the creation of comprehensive benchmark datasets that reflect the evolutionary relationships between species, allowing for more realistic assessment of comparative network analysis algorithms [9]. The NAPAbench 2 implementation provides an intuitive GUI that allows researchers to easily generate PPI network families with an arbitrary number of networks of any size, according to a flexible user-defined phylogeny [10].
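The phylogenetic framework can be sketched as a recursive walk over the tree: each branch derives a child network from its parent by losing and duplicating nodes, and every node carries the identifier of the ancestral node it descends from. Those labels are what later provide the ground-truth alignment between leaf networks. The tree encoding, the loss and duplication rates, and the simplified duplication rule are hypothetical; the NAPAbench models additionally grow the networks and generate cross-network sequence-similarity scores.

```python
import random


def evolve(parent, ancestry, p_loss, p_dup, rng):
    """Derive a child network from `parent` ({node: set(neighbors)}, int ids).

    `ancestry` maps each node to the ancestral node it descends from. Each node
    is lost with probability p_loss; each survivor is duplicated with
    probability p_dup, and the copy inherits its neighbors and ancestry label.
    """
    survivors = {u for u in parent if rng.random() >= p_loss}
    child = {u: parent[u] & survivors for u in survivors}
    child_anc = {u: ancestry[u] for u in survivors}
    next_id = max(parent, default=-1) + 1
    for u in sorted(survivors):
        if rng.random() < p_dup:
            child[next_id] = set(child[u])
            for nbr in child[next_id]:
                child[nbr].add(next_id)
            child_anc[next_id] = child_anc[u]
            next_id += 1
    return child, child_anc


def simulate_family(tree, root_net, p_loss, p_dup, rng):
    """Evolve `root_net` along `tree` ({"name": str, "children": [...]}).

    Returns {leaf_name: (network, ancestry)} for every leaf of the tree.
    """
    family = {}

    def walk(node, net, anc):
        if not node["children"]:
            family[node["name"]] = (net, anc)
            return
        for child in node["children"]:
            walk(child, *evolve(net, anc, p_loss, p_dup, rng))

    walk(tree, root_net, {u: u for u in root_net})
    return family


# Hypothetical phylogeny (A,(B,C)) and a 30-node ring as the ancestral network.
tree = {"name": "root", "children": [
    {"name": "A", "children": []},
    {"name": "BC", "children": [{"name": "B", "children": []},
                                {"name": "C", "children": []}]},
]}
ring = {i: {(i - 1) % 30, (i + 1) % 30} for i in range(30)}
family = simulate_family(tree, ring, p_loss=0.1, p_dup=0.1, rng=random.Random(7))
for name, (net, anc) in sorted(family.items()):
    print(name, len(net), "nodes")
```

Two proteins in different leaf networks that share an ancestry label are true counterparts, which is the kind of known correspondence that makes synthetic families usable as gold standards.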

Table 1: Core Network Synthesis Models and Their Characteristics

| Model Type | Key Mechanisms | Biological Basis | Resulting Network Properties |
|---|---|---|---|
| DMC Model | Node duplication, edge removal, new edge formation | Gene duplication with functional divergence | Scale-free degree distribution, hierarchical modularity |
| DMR Model | Node duplication with random mutation | Genetic duplication with random mutations | Power-law degree distribution, small-world effect |
| Phylogenetic Model | Species divergence along phylogenetic tree | Speciation and molecular evolution | Evolutionarily conserved modules, cross-network similarity |

Quantitative Comparison of Network Synthesis Models

Model Performance and Biological Realism

Network synthesis models are quantitatively evaluated based on their ability to reproduce the structural properties of real biological networks. Research has demonstrated that networks generated through duplication-divergence models effectively capture various biological features of PPI networks, including their hierarchical modularity [9]. The scale-free nature of biological networks, characterized by a power-law degree distribution where P(k) ~ k^(-γ), is successfully replicated by these synthetic models [9].

The small-world property, another characteristic feature of biological networks where any node can typically be reached from other nodes within a few links, is also effectively captured by preferential attachment growth models and duplication-divergence mechanisms [9]. Biological networks are also sparse: a human transcription factor network with N = 230 nodes and L = 850 interactions, for example, has an average connectivity of ⟨k⟩ = 2L/N ≈ 7.4, far below the maximum possible degree of N − 1 = 229 [12].

Table 2: Quantitative Properties of Real vs. Synthetic Biological Networks

| Network Property | Real PPI Networks | DMC Model | DMR Model | Phylogenetic Model |
|---|---|---|---|---|
| Degree Distribution | Power-law (P(k) ~ k^(-γ)) | Power-law | Power-law | Power-law |
| Average Connectivity | Sparse (⟨k⟩ ≈ 7.4 for human TF network) | Sparse | Sparse | Sparse |
| Small-World Effect | Present (short path lengths) | Present | Present | Present |
| Modularity | High (functional modules) | High | Moderate to High | High |
| Hub Nodes | Present (essential proteins) | Present | Present | Present |

Benchmark Performance Assessment

The NAPAbench benchmark, built upon the network synthesis framework, has enabled comprehensive evaluation of network alignment algorithms [9]. Performance assessment on this benchmark has shown the relative performance of leading network alignment algorithms, along with their respective advantages and disadvantages [9]. The updated NAPAbench 2 provides benchmark datasets specifically designed for assessing the scalability of network alignment algorithms, addressing a critical need in the field as network data continues to grow in size and complexity [10].

Experimental protocols for benchmarking typically involve generating families of evolutionarily related networks with known phylogenetic relationships and aligned nodes, then applying network alignment algorithms to reconstruct these relationships [9] [10]. The accuracy is measured by comparing the algorithm's alignment against the known ground truth, evaluating metrics such as alignment correctness, functional coherence, and topological conservation [9]. These benchmarks have revealed that incomplete knowledge of PPI networks poses a major challenge for interactome-level comparison between different species, highlighting the importance of realistic synthetic networks for method development [9].

Experimental Protocols and Workflows

Network Synthesis Implementation

The experimental workflow for network synthesis follows a structured protocol that implements the core principles of duplication-divergence within a phylogenetic framework:

Network Synthesis Workflow: This diagram illustrates the systematic process for generating synthetic PPI network families, from ancestral network definition through duplication-divergence mechanisms to final benchmark dataset creation.

Algorithm Evaluation Framework

Once synthetic network families are generated, the experimental protocol for evaluating network alignment algorithms involves:

- Ground Truth Establishment: The known evolutionary relationships between nodes in the synthetic network family serve as the reference alignment for accuracy assessment [9].

- Algorithm Application: Multiple network alignment algorithms are applied to the synthetic network family to predict node correspondences [9].

- Performance Metrics Calculation: Algorithm performance is quantified using measures such as:

  - Node Correctness: Percentage of correctly aligned nodes against ground truth

  - Functional Coherence: Biological relevance of aligned protein groups

  - Topological Conservation: Preservation of interaction patterns in aligned regions [9]

- Comparative Analysis: Relative strengths and weaknesses of different alignment approaches are identified through systematic comparison across multiple network families [9].

The experimental design in NAPAbench enables researchers to comprehensively evaluate how different alignment algorithms perform under controlled conditions with known ground truth, providing insights into their applicability to real-world PPI network analysis [9] [10].

Research Reagent Solutions for Network Synthesis

Table 3: Essential Research Resources for Network Synthesis and Benchmarking

| Research Resource | Type/Function | Application in Network Synthesis |
|---|---|---|
| NAPAbench 2 | Software benchmark with GUI | Generate customizable PPI network families with user-defined phylogeny [10] |
| DMC Model | Computational algorithm | Network growth via duplication-mutation-complementation mechanism [9] |
| DMR Model | Computational algorithm | Network growth via duplication with random mutations [9] |
| Phylogenetic Tree | Evolutionary framework | Define species relationships for generating network families [9] |
| PPI Databases | Data sources (BioGRID, DIP, MINT) | Provide real network data for model validation and comparison [9] |

Implementation of network synthesis models requires specific computational resources and frameworks. The algorithm and source code of the original network synthesis model and NAPAbench benchmark are publicly available at http://www.ece.tamu.edu/bjyoon/NAPAbench/ [9]. The updated NAPAbench 2 provides enhanced capabilities for generating protein-protein interaction network families whose characteristics closely match those of the latest real PPI networks [10].

Additional computational resources include:

- ACT Rules: Guidelines for accessibility conformance testing that can inform interface design for computational tools [13]

- Color Accessibility Standards: WCAG 2.2 Level AA contrast requirements ensuring visualizations meet accessibility standards [14]

- Standardized Color Palettes: Predefined color sets such as Google's palette (#4285F4, #EA4335, #FBBC05, #34A853) that facilitate consistent visualization while maintaining accessibility [15] [16]

Synthetic network generation through duplication-divergence and phylogenetic growth models represents a cornerstone of reliable performance assessment for PPI prediction methods. The NAPAbench framework exemplifies how these computational models can generate realistic network families that closely mimic both internal topological properties and cross-network evolutionary relationships found in real biological systems [9] [10].

The core principles outlined—duplication-divergence mechanisms operating within a phylogenetic framework—provide researchers with controlled, customizable environments for rigorous algorithm evaluation [9]. As comparative network analysis continues to evolve, these synthesis models will remain essential tools for advancing our understanding of biological network organization, evolution, and function, ultimately supporting more accurate PPI prediction methods that can accelerate drug development and biological discovery [9] [10] [12].

The advent of high-throughput technologies has transformed biological research from a data-poor discipline to one rich with comprehensive dynamic data, including DNA microarrays, protein microarrays, and ChIP-chip data [17]. This wealth of information provides an unprecedented opportunity to analyze biology at a systems level, particularly focusing on the dynamic behavior of biochemical networks within cells and populations [17]. In this context, protein-protein interaction (PPI) networks have emerged as fundamental representations of cellular machinery, where nodes represent proteins and edges represent physical interactions between them. However, a significant challenge persists: how can researchers fairly assess and compare computational methods designed to analyze these complex biological networks? The answer lies in the development of high-quality synthetic benchmarks that accurately mimic the topological properties and evolutionary relationships found in real biological networks.

The fundamental challenge stems from the fact that many biological functions and diseases cannot be explained by individual genes or proteins alone, but rather emerge from interactive networks of molecular interactions [17]. Biological systems display remarkable properties such as perfect adaptation and homeostatic regulation despite significant environmental changes or internal perturbations—characteristics that undoubtedly result from long-term evolutionary processes [17]. To truly understand these functions and the robustness of biological networks, researchers must integrate information from genomes, transcriptomes, and proteomes from a systems-level perspective. This necessitates sophisticated synthetic networks that capture not just the components but the hierarchical network connections that span multiple spatial and temporal scales, from gene level to cell level to tissue level and beyond [17].

Theoretical Foundations: Scale-Free Topology and Evolutionary Principles

The Scale-Free Nature of Biological Networks

Virtually all molecular interaction networks (MINs), regardless of organism or physiological context, exhibit a characteristic majority-leaves minority-hubs (mLmH) topology [18]. In this architectural pattern, a majority (~80%) of "leaf" genes interact with only 1-3 other genes, while a minority (~6%) of highly connected "hub" genes interact with 10 or more partners [18]. This topology is mathematically characterized as scale-free, following a power-law degree distribution where the probability P(k) that a node has degree k is given by P(k) ~ k^(-γ), where γ is the degree exponent [17] [19].
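The leaf and hub fractions and the degree exponent γ can all be read off a degree sequence. The sketch below uses the 1-3 and 10+ cut-offs quoted above and estimates γ with the approximate discrete power-law maximum-likelihood formula of Clauset, Shalizi and Newman, which is a swapped-in estimator rather than the fitting procedure used in [17] [18] [19]. The default `k_min=1` and the exclusion of isolated nodes are assumptions; the estimate is only meaningful for large networks with a carefully chosen `k_min`.

```python
import math


def degree_profile(adj, leaf_max=3, hub_min=10, k_min=1):
    """Return (leaf fraction, hub fraction, estimated degree exponent).

    Isolated nodes are ignored. gamma uses the approximate discrete MLE
    gamma = 1 + n / sum(ln(k / (k_min - 0.5))) over degrees k >= k_min.
    """
    degrees = [len(nbrs) for nbrs in adj.values() if len(nbrs) > 0]
    n = len(degrees)
    leaf_frac = sum(1 for k in degrees if k <= leaf_max) / n
    hub_frac = sum(1 for k in degrees if k >= hub_min) / n
    tail = [k for k in degrees if k >= k_min]
    gamma = 1 + len(tail) / sum(math.log(k / (k_min - 0.5)) for k in tail)
    return leaf_frac, hub_frac, gamma
```

A star with one 10-partner hub and ten leaves, for example, gives a leaf fraction of 10/11 and a hub fraction of 1/11.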

In practical terms, scale-free networks contain a few critical hub nodes with extensive connections, while most nodes have only a few connections [17]. This structural organization confers both robustness and vulnerability: random failures predominantly affect less-connected nodes with minimal system-wide impact, yet targeted attacks on hubs can disrupt the entire network [17]. Additionally, scale-free networks exhibit "small world" properties, meaning the average shortest-path length between nodes is remarkably short, so that typically just a few steps lead from one molecule to almost any other in the cellular system [17].
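The small-world property is usually quantified as the average shortest-path length. For an unweighted interaction network this can be computed with one breadth-first search per node. The sketch below averages over all ordered pairs of nodes that are connected to each other, which is one common convention (an assumption here). Its cost is O(n·m), so it suits small or medium networks.

```python
from collections import deque


def average_path_length(adj):
    """Mean shortest-path length over all connected ordered node pairs (BFS)."""
    total, pairs = 0, 0
    for source in adj:
        dist = {source: 0}
        queue = deque([source])
        while queue:
            u = queue.popleft()
            for v in adj[u]:
                if v not in dist:
                    dist[v] = dist[u] + 1
                    queue.append(v)
        total += sum(dist.values())  # the source contributes distance 0
        pairs += len(dist) - 1
    return total / pairs if pairs else 0.0
```

For a four-protein chain 0-1-2-3 this returns 5/3.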

Evolutionary Drivers of Network Topology

The emergence of scale-free topology in biological networks can be understood through an evolutionary computational lens. Research suggests that the mLmH structure may represent an adaptation to circumvent computational intractability in network evolution [18]. When network evolution is modeled as an optimization problem in which organisms must maximize beneficial interactions while minimizing damaging ones under evolutionary pressure, the resulting computational problem is equivalent to the NP-complete knapsack optimization problem [18]. The scale-free architecture potentially provides an efficient solution to this computationally hard problem, suggesting that fundamental computational constraints may shape biological network topology.
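To make the knapsack analogy concrete, the textbook 0/1 knapsack dynamic program is shown below. The mapping is a hypothetical illustration, not the formulation in [18]: each candidate interaction is treated as an item with a benefit (value) and an integer damage (weight), and the organism keeps the most beneficial subset whose total damage fits a budget. The running time is O(n × budget). That is pseudo-polynomial, not polynomial in the input size, which is consistent with the problem being NP-complete.

```python
def best_interaction_set(benefits, damages, max_damage):
    """0/1 knapsack: maximize total benefit with total damage <= max_damage.

    Damages must be non-negative integers. Returns (best benefit, kept indices).
    """
    best = [0] * (max_damage + 1)
    choice = [[False] * (max_damage + 1) for _ in benefits]
    for i, (value, weight) in enumerate(zip(benefits, damages)):
        # Iterate budgets downward so each interaction is used at most once.
        for budget in range(max_damage, weight - 1, -1):
            if best[budget - weight] + value > best[budget]:
                best[budget] = best[budget - weight] + value
                choice[i][budget] = True
    # Backtrack through the recorded choices to recover the kept items.
    kept, budget = [], max_damage
    for i in range(len(benefits) - 1, -1, -1):
        if choice[i][budget]:
            kept.append(i)
            budget -= damages[i]
    return best[max_damage], sorted(kept)
```

With benefits 60, 100, 120, damages 1, 2, 3 and a budget of 5, the optimum keeps the second and third interactions for a total benefit of 220.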

From a systems biology perspective, evolutionary changes operate across multiple levels and scales—from genetic networks to biochemical networks, physiological systems, organisms, populations, communities, and ultimately the entire biosphere [17]. This multi-scale evolutionary process produces networks that are not merely static artifacts but dynamic adaptive systems capable of responding to changing environmental conditions and evolutionary pressures while maintaining critical biological functions.

NAPAbench 2: A Benchmark for Generating Realistic PPI Network Families

The Challenge of Network Alignment Assessment

Comparative network analysis through local or global network alignment provides powerful computational methods for identifying orthologous proteins and conserved functional modules across species [19]. This approach enables the transfer of knowledge from well-studied species to less-characterized organisms, offering significant potential savings in experimental cost and time [19]. However, progress in this field has been hampered by the lack of gold-standard benchmarks for fair and comprehensive performance assessment of network alignment algorithms [19].

The original NAPAbench (Network Alignment Performance Assessment benchmark), released in 2012, addressed this need by providing synthetic benchmarks for evaluating network alignment techniques [19]. It contained three suites for testing pairwise, 5-way, and 8-way alignment, with each suite consisting of three different datasets generated by distinct network synthesis models [19]. While this represented a significant advancement, the accelerating pace of biological data generation soon revealed limitations in the original approach.

Advances in NAPAbench 2 Methodology

NAPAbench 2 introduces a completely redesigned network synthesis algorithm that generates protein-protein interaction network families with characteristics closely matching contemporary real PPI networks [19]. This update was necessitated by dramatic improvements in the quality and coverage of PPI networks due to advances in high-throughput profiling and text mining techniques [19]. The key methodological improvements in NAPAbench 2 include:

Table 1: Key Methodological Advances in NAPAbench 2

| Feature | NAPAbench (Original) | NAPAbench 2 | Biological Significance |
|---|---|---|---|
| Reference Data | Isobase (2010) PPI networks | STRING (v10.0) with experimental confidence >400 | Improved coverage and reliability of interactions |
| Orthology Annotation | KEGG Orthology (KO) groups | PANTHER orthology annotations | More accurate evolutionary relationships |
| Network Topology | Sparse networks with higher degree exponents (γ: 1.86-2.17) | Denser networks with lower degree exponents (γ: 1.53-1.84) | Better reflects contemporary understanding of network connectivity |
| Feature Analysis | Degree distribution and clustering coefficient | Adds Graphlet Degree Distribution Agreement (GDDA) | Captures higher-order network motifs and local structure |

The network synthesis algorithm in NAPAbench 2 begins with comprehensive data preprocessing from the STRING database, incorporating direct protein interactions with experimental validation and confidence scores exceeding 400 [19]. The largest connected subnetwork is extracted for each reference organism to ensure connectivity [19]. For cross-network feature analysis, protein sequence similarity is computed using BLASTp, with the highest bit score (e-value < 0.01) representing similarity between nodes across different networks [19].
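The preprocessing just described (score filtering, then largest-connected-subnetwork extraction) can be sketched in a few lines. This sketch assumes a whitespace-separated STRING links file with a header row that names the columns `protein1`, `protein2` and a score column. The default column name `experimental` is an assumption and should be checked against the STRING release actually used. Because STRING lists each interaction in both directions, adjacency sets are used so that duplicates collapse.

```python
from collections import defaultdict, deque


def load_filtered_edges(lines, score_column="experimental", threshold=400):
    """Keep interactions whose chosen score column exceeds the threshold."""
    rows = iter(lines)
    header = next(rows).split()
    a_idx, b_idx = header.index("protein1"), header.index("protein2")
    s_idx = header.index(score_column)
    adj = defaultdict(set)
    for line in rows:
        fields = line.split()
        if not fields:
            continue
        a, b = fields[a_idx], fields[b_idx]
        if a != b and int(fields[s_idx]) > threshold:
            adj[a].add(b)
            adj[b].add(a)
    return adj


def largest_connected_component(adj):
    """Return the node set of the largest connected component (BFS)."""
    seen, best = set(), set()
    for start in adj:
        if start in seen:
            continue
        comp, queue = {start}, deque([start])
        while queue:
            u = queue.popleft()
            for v in adj[u]:
                if v not in comp:
                    comp.add(v)
                    queue.append(v)
        seen |= comp
        if len(comp) > len(best):
            best = comp
    return best
```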

The synthesis algorithm captures both intra-network features (degree distribution, clustering coefficient, graphlet degree distribution) and cross-network features (distributions of BLAST bit scores for orthologous/non-orthologous protein pairs) to ensure the generated networks accurately reflect both topological and evolutionary characteristics of real PPI networks [19]. This comprehensive approach allows NAPAbench 2 to generate network families that serve as robust benchmarks for evaluating the next generation of network alignment algorithms.
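The cross-network feature (bit-score distributions for orthologous versus non-orthologous pairs) can be assembled from standard BLAST tabular output. This sketch assumes BLASTp was run with `-outfmt 6`, whose default columns end with e-value and bit score (columns 11 and 12). It assumes the ortholog set has already been derived from PANTHER annotations and is stored as (query, subject) tuples in the same orientation as the BLAST hits. The function names are hypothetical.

```python
def best_bit_scores(blast_lines, max_evalue=0.01):
    """Highest bit score per (query, subject) pair from BLAST -outfmt 6 lines,
    keeping only hits with e-value below max_evalue."""
    best = {}
    for line in blast_lines:
        fields = line.rstrip("\n").split("\t")
        if len(fields) < 12:
            continue  # skip blank or malformed lines
        key = (fields[0], fields[1])
        evalue, bitscore = float(fields[10]), float(fields[11])
        if evalue < max_evalue and (key not in best or bitscore > best[key]):
            best[key] = bitscore
    return best


def split_by_orthology(scores, ortholog_pairs):
    """Separate bit scores of annotated ortholog pairs from all other pairs."""
    orth, non_orth = [], []
    for pair, score in scores.items():
        (orth if pair in ortholog_pairs else non_orth).append(score)
    return orth, non_orth
```

The two returned lists are the empirical distributions that a synthesis model would then try to reproduce.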

Quantitative Comparison of Synthetic Network Performance

Topological Fidelity Assessment

The critical test for any synthetic network generation platform is how faithfully it reproduces the topological properties of real biological networks. Quantitative comparisons between networks generated by different synthesis models and real PPI networks reveal significant differences in performance:

Table 2: Topological Comparison of Synthetic vs. Real PPI Networks

| Network Property | Real PPI Networks (STRING) | DMC Model | DMR Model | CG Model | Biological Interpretation |
|---|---|---|---|---|---|
| Degree Exponent (γ) | 1.53-1.84 | 1.55-1.81 | 1.58-1.79 | 1.62-1.86 | Lower γ indicates more hub nodes, reflecting improved network connectivity in modern PPI data |
| Hub Node Percentage | ~6% | 5.8-7.2% | 5.5-6.9% | 6.2-7.8% | Conservation of critical highly-connected proteins across evolution |
| Leaf Node Percentage | ~80% | 78-82% | 79-83% | 77-81% | Majority of proteins with limited interactions |
| Average Path Length | 3.2-4.1 | 3.4-4.3 | 3.3-4.2 | 3.5-4.4 | "Small world" property enabling efficient cellular communication |
| Clustering Coefficient | 0.18-0.24 | 0.16-0.22 | 0.17-0.23 | 0.15-0.21 | Measure of local interconnectedness affecting functional modularity |

Evolutionary Relationship Preservation

Beyond topological metrics, synthetic networks must accurately capture the evolutionary relationships between proteins across different species. The performance of network synthesis models in preserving these relationships can be quantified through alignment with orthology annotations:

Table 3: Evolutionary Relationship Preservation in Synthetic Networks

| Orthology Metric | Real PPI Networks | DMC Model | DMR Model | CG Model | Biological Significance |
|---|---|---|---|---|---|
| Ortholog Sequence Similarity | 85-92% | 83-90% | 84-91% | 82-89% | Conservation of protein sequence and function across species |
| Functional Module Conservation | 78-88% | 75-85% | 76-86% | 74-84% | Preservation of protein complexes and pathways |
| Cross-species Hub Orthology | 82-90% | 79-87% | 80-88% | 78-86% | Critical hub proteins show higher evolutionary conservation |
| Network Alignment Score | Reference | 88-94% | 89-95% | 87-93% | Measure of overall network similarity across species |

The quantitative data indicate that contemporary synthetic network generation methods, particularly those implemented in NAPAbench 2, produce value ranges that closely overlap those of real biological networks across both topological and evolutionary dimensions. The DMR model's ranges for functional module conservation (76-86%) and cross-species hub orthology (80-88%) are slightly higher than those of the DMC and CG models; both properties are critical for accurate biological inference [19].

Experimental Protocols for Network Synthesis and Validation

Network Synthesis Workflow

The generation of realistic synthetic PPI networks follows a meticulous multi-stage protocol designed to capture both topological and evolutionary features of real biological networks:

The experimental workflow begins with reference data collection from comprehensive PPI databases such as STRING (v10.0), which integrates multiple public resources including BIND, DIP, GRID, HPRD, IntAct, MINT, and PID [19]. The selected reference organisms typically include key model systems and medically relevant species such as human (H. sapiens), yeast (S. cerevisiae), fly (D. melanogaster), mouse (M. musculus), and worm (C. elegans) [19].

During the data preprocessing phase, only direct protein interactions with experimental validation and confidence scores exceeding 400 are retained [19]. The largest connected subnetwork is then extracted for each organism to ensure network connectivity [19]. For cross-network analysis, protein sequences are downloaded and similarity scores computed using BLASTp, with orthology determinations based on PANTHER annotations [19].

The feature analysis stage examines both intra-network characteristics (degree distribution, clustering coefficient, graphlet degree distribution agreement) and cross-network features (distributions of BLAST bit scores for orthologous versus non-orthologous protein pairs) [19]. These analyses inform the parameter estimation for network synthesis models, which aim to replicate the degree exponents, hub distributions, and evolutionary constraints observed in real PPI networks.
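Of the intra-network features, the clustering coefficient is the simplest to compute directly. One common way to compare its distribution between a real and a synthetic network is the average local clustering coefficient C(k) per degree k. Using that summary here is an assumption; NAPAbench 2 may aggregate the feature differently.

```python
from collections import Counter
from itertools import combinations


def local_clustering(adj, node):
    """Fraction of a node's neighbor pairs that are themselves connected."""
    nbrs = adj[node]
    k = len(nbrs)
    if k < 2:
        return 0.0
    links = sum(1 for u, v in combinations(nbrs, 2) if v in adj[u])
    return 2 * links / (k * (k - 1))


def clustering_by_degree(adj):
    """Average local clustering coefficient C(k) for each degree k >= 2."""
    totals, counts = Counter(), Counter()
    for node, nbrs in adj.items():
        k = len(nbrs)
        if k >= 2:
            totals[k] += local_clustering(adj, node)
            counts[k] += 1
    return {k: totals[k] / counts[k] for k in sorted(counts)}
```

In scale-free PPI networks, C(k) typically decreases as k grows, so comparing the whole curve is more informative than comparing a single network-wide average.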

Validation Methodologies

Rigorous validation of synthetic networks requires multiple complementary approaches to assess both topological and biological fidelity:

Topological validation compares fundamental network properties between synthetic and real networks, including degree distribution fit (assessed using power-law exponent γ), clustering coefficient distributions, average path lengths, and graphlet degree distribution agreement [19]. These metrics ensure that synthetic networks capture the scale-free, small-world properties characteristic of real biological networks [17] [19].

Biological validation assesses how well synthetic networks preserve known biological relationships. This includes quantifying the conservation of orthologous relationships across species, preservation of known functional modules and protein complexes, and performance in network alignment tasks compared to real PPI networks [19]. High performance in these biological validations demonstrates that synthetic networks capture not just topological features but functionally relevant evolutionary constraints.

Table 4: Essential Research Reagents and Computational Resources for Network Biology

| Resource Category | Specific Tools/Databases | Function in Network Research | Key Features |
|---|---|---|---|
| PPI Databases | STRING (v10.0), Isobase, DIP, MINT, HPRD | Source of experimentally validated protein interactions | Integrated data, confidence scores, cross-species comparisons |
| Orthology Resources | PANTHER, KEGG Orthology (KO) | Determining evolutionary relationships between proteins | Manual curation, functional annotations, phylogenetic trees |
| Sequence Analysis | BLASTp, ClustalOmega, MUSCLE | Computing sequence similarity for evolutionary analysis | Bit scores, e-values, multiple sequence alignment |
| Network Analysis | Cytoscape, NetworkX, Graphviz | Visualization and topological analysis of networks | Modular architecture, plugin ecosystem, multi-format support |
| Synthesis Algorithms | NAPAbench 2, DMC, DMR, CG models | Generating realistic benchmark networks | Phylogenetic constraints, topological fidelity, evolutionary relationships |
| Alignment Tools | HubAlign, NetworkBLAST, IsoRank | Comparing networks across species | Global/local alignment, functional conservation |

This toolkit enables researchers to navigate the complete workflow from data acquisition through network generation, analysis, and validation. The integration of multiple complementary resources ensures robust and biologically meaningful results in synthetic network research.

Synthetic biological networks have evolved from simple topological models to sophisticated systems that accurately capture both the structural organization and evolutionary relationships of real protein-protein interaction networks. Through platforms like NAPAbench 2, researchers now have access to high-fidelity benchmarks that enable fair and comprehensive evaluation of network analysis algorithms [19]. The quantitative demonstrations across topological and evolutionary dimensions show that contemporary synthesis methods can successfully replicate the majority-leaves minority-hubs topology characteristic of biological systems [18], while simultaneously preserving the evolutionary constraints that shape these networks across species.

The faithful reproduction of scale-free topology in synthetic networks provides more than just a convenient benchmark—it offers insights into fundamental principles of biological organization. The consistent appearance of mLmH topology across diverse organisms and contexts suggests it may represent an optimal solution to the computational challenges inherent in network evolution [18]. As synthetic network generation continues to improve, incorporating additional layers of biological complexity including dynamic interactions, spatial constraints, and multi-scale hierarchical organization, these in silico models will become increasingly valuable for understanding the fundamental design principles of biological systems and accelerating discovery in network biology and drug development.

The accurate prediction of protein-protein interactions (PPIs) is a cornerstone of modern computational biology, fundamental to understanding cellular processes, identifying therapeutic targets, and driving drug discovery [1] [20]. The field has been revolutionized by deep learning methods, particularly Graph Neural Networks (GNNs), which can capture complex topological information within PPI networks [1] [20]. However, a significant barrier to advancement has been the lack of a gold standard for evaluating these algorithms. Without comprehensive and reliable benchmarks, assessing the true performance and relative merits of new methods becomes challenging [3] [5].

Synthetic networks like NAPAbench address this critical need by providing a framework for generating families of evolutionarily related PPI networks with complete prior knowledge of all true interactions and evolutionary mappings between proteins [3] [5]. This advantage allows for unambiguous, fair, and comprehensive performance assessment of network alignment and PPI prediction algorithms, free from the incompleteness and potential inaccuracies that plague real-world biological databases [5].

NAPAbench: A Gold Standard for Synthetic Benchmarking

Evolution from NAPAbench to NAPAbench 2

NAPAbench was introduced in 2012 as a pioneering synthetic benchmark for network alignment. Its core innovation was a network synthesis model that could generate families of related PPI networks based on a user-defined phylogenetic tree, simulating evolutionary processes like duplication and divergence [5]. This provided researchers with a controlled environment where the ground-truth alignment between networks was known, enabling direct accuracy measurement [5].

The recent introduction of NAPAbench 2 represents a major update to this benchmark. The original NAPAbench parameters were trained on PPI networks from Isobase (circa 2010). Over the past decade, the quality and coverage of real PPI databases have improved dramatically. Consequently, NAPAbench 2 features a completely redesigned synthesis algorithm trained on the latest PPI networks from the STRING database (v10.0), ensuring that the generated synthetic networks closely mirror the characteristics of contemporary real networks, which are denser and more complex [3].

Key Features and Synthesis Workflow

The NAPAbench synthesis model creates descendant networks from an ancestral network according to a hypothetical phylogenetic tree. This process involves key biological principles:

- Duplication and Divergence: The model simulates the duplication of existing genes and their subsequent functional divergence, a primary source of protein diversity [5].

- Network Growth: Beyond duplication, networks grow by adding new interactions based on evolutionary models that capture the scale-free and small-world properties inherent to real PPI networks [5].
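The core idea of evolving an ancestral network down a phylogenetic tree, while recording which ancestral protein every descendant comes from, can be sketched as follows. The growth step here is a simplified stand-in inspired by DMR (duplication followed by random loss of copied interactions). NAPAbench's actual models are more elaborate, and the function names, nested-dict tree format, and `keep_prob` parameter are hypothetical. Node ids may coincide across leaves; orthology is defined only through the shared ancestry labels, which form the known ground truth.

```python
import random


def grow_by_duplication(adj, ancestry, n_new, keep_prob, rng):
    """Add n_new proteins by duplicating random ones; each copied interaction
    is kept with probability keep_prob. Integer node ids are assumed."""
    for _ in range(n_new):
        src = rng.choice(list(adj))
        new = max(adj) + 1
        adj[new] = {nb for nb in adj[src] if rng.random() < keep_prob}
        for nb in adj[new]:
            adj[nb].add(new)
        ancestry[new] = ancestry[src]  # inherit the ancestral label


def synthesize_family(ancestor, tree, keep_prob=0.6, seed=0):
    """Evolve an ancestral network down a phylogenetic tree.

    tree: {clade: (nodes_to_add, subtree)}; leaves have an empty subtree.
    Returns {leaf: (adjacency, ancestry)}; proteins sharing an ancestry label
    across leaves form the ground-truth orthology groups.
    """
    rng = random.Random(seed)
    family = {}

    def descend(adj, ancestry, subtree):
        for name, (n_new, children) in subtree.items():
            # Each branch evolves its own copy of the parent network.
            child_adj = {u: set(vs) for u, vs in adj.items()}
            child_anc = dict(ancestry)
            grow_by_duplication(child_adj, child_anc, n_new, keep_prob, rng)
            if children:
                descend(child_adj, child_anc, children)
            else:
                family[name] = (child_adj, child_anc)

    descend(ancestor, {u: u for u in ancestor}, tree)
    return family
```

For instance, the tree `{"AB": (5, {"A": (10, {}), "B": (20, {})}), "C": (15, {})}` yields three leaf networks in which A and B share a common ancestor more recent than the one they share with C.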

Figure: core workflow for generating a benchmark network family using NAPAbench (diagram not reproduced).

Experimental Protocol for Benchmarking PPI Methods

Benchmark Dataset Construction

To objectively compare PPI prediction methods using NAPAbench, researchers must first construct a suitable benchmark dataset. NAPAbench 2's intuitive GUI allows for the generation of network families with an arbitrary number of networks of any size [3]. A typical protocol involves:

- Phylogeny Specification: Define a phylogenetic tree representing the evolutionary relationships between the species for which networks will be synthesized. For example, a 5-way alignment benchmark might include human, mouse, yeast, fly, and worm.

- Parameter Setting: The synthesis algorithm is parameterized based on statistical features derived from real PPI networks. Key intra-network features include:

  - Degree Distribution: The probability distribution of node degrees across the network, modeled for scale-free networks as a power law, P_d(k) ~ k^(-γ) [3] [5].

  - Clustering Coefficient Distribution: Measures the tendency of nodes to form clusters or cliques [3].

  - Graphlet Degree Distribution Agreement (GDDA): Measures how closely two networks agree in their graphlet degree distributions, which count how often each node touches small, connected, non-isomorphic subgraphs (graphlets), providing a detailed view of local network topology [3].

- Cross-Network Feature Analysis: The model also incorporates cross-network features, primarily the distribution of protein sequence similarity scores (e.g., BLAST bit scores) for orthologous protein pairs, determined using curated databases like PANTHER [3].

- Network Generation: Execute the synthesis model to generate the family of networks. The model outputs the networks along with the complete true mapping of orthologous proteins and all true interactions.

Method Evaluation and Performance Metrics

Once the benchmark dataset is generated, PPI and network alignment algorithms can be evaluated by comparing their predictions against the known ground truth. Key performance metrics include:

- Interaction Prediction Accuracy: For tasks focused on predicting whether two proteins interact, standard metrics include Accuracy, AUC (Area Under the ROC Curve), and AUPR (Area Under the Precision-Recall Curve) [20].

- Micro-F1 Score: This is particularly important for PPI prediction, as it aggregates contributions across all classes and is well-suited for situations with class imbalance [20].

- Orthology Prediction Accuracy: For network alignment algorithms, the goal is to correctly identify the mapping of orthologous proteins across different networks. Accuracy is measured by the fraction of correctly mapped protein pairs against the known true alignment.
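The interaction-level metrics above can be computed without external libraries. In this sketch, AUC uses the Mann-Whitney formulation (ties count one half) and AUPR is computed as average precision. Average precision is one common step-wise estimate of the area under the precision-recall curve, and tied scores are broken by input order here. Micro-F1 pools true positives, false positives and false negatives over all samples, assuming each protein pair carries a set of interaction-type labels. The quadratic AUC loop is meant only for small evaluations.

```python
def roc_auc(labels, scores):
    """ROC AUC via the Mann-Whitney statistic (ties count one half)."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def average_precision(labels, scores):
    """Step-wise area under the precision-recall curve (average precision)."""
    ranked = sorted(zip(scores, labels), key=lambda t: -t[0])
    total_pos = sum(labels)
    hits, ap = 0, 0.0
    for rank, (_, y) in enumerate(ranked, start=1):
        if y == 1:
            hits += 1
            ap += hits / rank  # precision at each recall step
    return ap / total_pos


def micro_f1(true_sets, pred_sets):
    """Micro-averaged F1 for multi-label predictions (one label set per pair)."""
    tp = sum(len(t & p) for t, p in zip(true_sets, pred_sets))
    fp = sum(len(p - t) for t, p in zip(true_sets, pred_sets))
    fn = sum(len(t - p) for t, p in zip(true_sets, pred_sets))
    return 2 * tp / (2 * tp + fp + fn) if tp else 0.0
```

Because micro-F1 pools counts before averaging, frequent interaction types dominate the score. This is why it behaves differently from a per-class (macro) average under class imbalance.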

Comparative Performance Analysis of Modern PPI Methods

Quantitative Benchmark Results

Benchmarking on controlled synthetic networks like NAPAbench, or on real datasets with known test sets, reveals the relative performance of state-of-the-art PPI prediction methods. The table below summarizes the performance of several leading methods on classical benchmark datasets (SHS27K and SHS148K), demonstrating the advantage of integrating hierarchical and structural information.

Table 1: Performance Comparison of Modern PPI Prediction Methods on SHS27K and SHS148K Datasets (Micro-F1 Score; BFS and DFS denote test sets partitioned by breadth-first and depth-first search) [20]

| Method | SHS27K (BFS) | SHS27K (DFS) | SHS148K (BFS) | SHS148K (DFS) | Key Model Features |
|---|---|---|---|---|---|
| HI-PPI | 0.7923 | 0.7746 | 0.8135 | 0.8012 | Hyperbolic GCN, interaction-specific learning |
| MAPE-PPI | 0.7661 | 0.7425 | 0.7830 | 0.7706 | Heterogeneous GNN, multi-modal data |
| HIGH-PPI | 0.7522 | 0.7318 | 0.7695 | 0.7585 | Dual-view learning, structure & network |
| BaPPI | 0.7713 | 0.7536 | - | - | Not reported on SHS148K |
| AFTGAN | 0.7389 | 0.7201 | 0.7559 | 0.7441 | Attention-free transformer & GAN |
| LDMGNN | 0.7233 | 0.7058 | 0.7416 | 0.7304 | Latent distribution modeling |
| PIPR | 0.6980 | 0.6834 | 0.7123 | 0.7017 | Convolutional neural network on sequences |

The superior performance of HI-PPI highlights the critical importance of its two main innovations: the use of hyperbolic geometry to model the inherent hierarchical organization of PPI networks, and interaction-specific learning to capture the unique patterns between individual protein pairs [20]. The SHS27K and SHS148K results in Table 1 provide quantitative evidence that these architectural choices lead to tangible performance gains.

Advantages of Structure-Aware Methods

A key insight from benchmarking is that methods incorporating protein structural information (e.g., HI-PPI, MAPE-PPI, HIGH-PPI) consistently outperform those relying solely on sequence data [20]. This is biologically intuitive, as a protein's 3D structure largely determines its function and interaction capabilities.

Figure: workflow common among top-performing, structure-aware methods (diagram not reproduced).

Essential Research Reagents and Computational Tools

The development and benchmarking of PPI prediction methods rely on a suite of publicly available databases, software tools, and computational models. The following table details key resources that constitute the modern PPI researcher's toolkit.

Table 2: Research Reagent Solutions for PPI Prediction and Benchmarking

| Resource Name | Type | Function & Application |
|---|---|---|
| NAPAbench / NAPAbench 2 [3] [5] | Synthetic Benchmark | Generates families of evolutionarily related PPI networks with known ground truth for rigorous algorithm assessment. |
| STRING [3] [1] | PPI Database | A comprehensive database of known and predicted PPIs, used for training models and analyzing real-network characteristics. |
| BioGRID [1] [5] | PPI Database | A curated database of protein and genetic interactions from various species. |
| DIP [1] [5] | PPI Database | Database of experimentally determined PPIs. |
| IntAct [3] [1] | PPI Database | A protein interaction database and analysis suite maintained by the EBI. |
| HI-PPI [20] | Prediction Algorithm | A state-of-the-art method that uses hyperbolic GCN and interaction-specific learning for accurate PPI prediction. |
| MAPE-PPI [20] | Prediction Algorithm | A method using heterogeneous GNNs to handle multi-modal protein data. |
| Graph Neural Networks (GNNs) [1] | Computational Model | A class of deep learning models (GCN, GAT, GraphSAGE) adept at capturing patterns in graph-structured PPI data. |
| PANTHER [3] | Orthology Database | Provides manually curated protein orthology annotations, used for cross-network feature analysis in benchmarking. |

Implications for Drug Discovery and Future Directions

The critical advantage provided by rigorous benchmarking with tools like NAPAbench accelerates the development of more accurate PPI predictors, which in turn has profound implications for drug discovery and development. Reliable computational prediction of PPIs can identify novel therapeutic targets, help explain disease mechanisms, and predict the effects of interventions [20] [21]. Furthermore, emerging methods are now tackling the prediction of de novo PPIs, that is, interactions with no precedent in nature, opening applications in biotechnology such as designing molecular glues and engineering therapeutic proteins [22].

The future of PPI prediction will likely involve a closer integration of benchmarking efforts with these emerging applications. As the field moves towards predicting more complex and novel interactions, the role of synthetic benchmarks that can simulate these scenarios will become even more critical. The continued development of benchmarks that reflect the latest data and challenge algorithms with increasingly complex tasks will be essential for translating computational advances into real-world biological and clinical breakthroughs [3] [22] [21].

Putting Benchmarks to Work: Testing PPI Prediction Algorithms with Synthetic Networks

The accurate prediction of protein-protein interactions (PPIs) is a fundamental challenge in computational biology, with profound implications for understanding cellular functions, disease mechanisms, and drug discovery. The field has witnessed an evolution of methodologies, from early approaches leveraging semantic similarity and network topology to contemporary deep learning architectures that capture complex hierarchical relationships. Each methodological class offers distinct advantages and faces specific limitations in handling the inherent noise, sparseness, and highly skewed degree distribution of PPI networks. Assessing the performance of these diverse algorithms requires robust benchmarking frameworks. Synthetic networks, particularly those generated by platforms like NAPAbench 2, provide gold-standard benchmarks that enable fair and comprehensive performance assessment of PPI prediction methods by simulating realistic network properties and known ground-truth interactions. This guide systematically compares the performance of similarity-based, network topology, and deep learning approaches for PPI prediction, leveraging experimental data from benchmark studies to provide an objective resource for researchers, scientists, and drug development professionals.

Methodological Approaches and Comparative Performance

Similarity-Based and Local Topology Methods

Similarity-based and local topology algorithms represent some of the earliest computational approaches for PPI prediction and network reconstruction. These methods operate on the fundamental premise that proteins with similar characteristics or shared neighbors are more likely to interact.

Similarity Multiplied Similarity (SMS) Algorithm: A recently developed method, SMS, utilizes paths of length three (L3) in combination with protein similarities. It computes a mixed similarity measure that integrates topological structure and node attribute features, then calculates a prediction value by summing the product of all similarities along the L3 paths. A variant called maxSMS focuses on the maximum-impact path. Evaluations on six datasets including S. cerevisiae and H. sapiens show that maxSMS improves the precision of the top 500 predictions, the area under the precision-recall curve, and the normalized discounted cumulative gain by an average of 26.99%, 53.67%, and 6.7%, respectively, compared with the best-performing competing methods [23].
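The L3 scoring idea behind SMS and maxSMS can be sketched as a sum, and a maximum, of similarity products over all paths u-a-b-v. The mixed topological/attribute similarity of [23] is not reproduced here. As a labeled assumption, the sketch substitutes the Jaccard index of closed neighborhoods (each node's neighbors plus itself), which stays non-zero for directly interacting proteins. Any other pairwise similarity can be passed in through `sim`.

```python
def closed_jaccard(adj, u, v):
    """Assumed stand-in similarity: Jaccard index of closed neighborhoods."""
    a, b = adj[u] | {u}, adj[v] | {v}
    return len(a & b) / len(a | b)


def l3_scores(adj, u, v, sim=closed_jaccard):
    """Score a candidate pair (u, v) over all length-three paths u-a-b-v.

    Returns (sum of similarity products, largest single-path product),
    mirroring the SMS and maxSMS ideas under the similarity assumption.
    """
    total, best = 0.0, 0.0
    for a in adj[u]:
        if a == v:
            continue
        for b in adj[a]:
            if b == u or v not in adj[b]:
                continue
            product = sim(adj, u, a) * sim(adj, a, b) * sim(adj, b, v)
            total += product
            best = max(best, product)
    return total, best
```

On a six-protein ring, the two opposite proteins are joined by two L3 paths, so the summed score is twice the best single-path score.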

Common Neighbor-Based Approaches: Traditional common neighbor methods leverage the insight that two proteins sharing an unusually large number of neighbors are likely functionally associated. Enhancements to this approach have led to algorithms that measure and reduce the influence of hub proteins on detecting function-associated protein pairs. When applied to human PPI data, these improved common neighbor methods identified 4,233 significant functional associations among 1,754 proteins, enabling assignment of 466 KEGG pathway annotations to 274 proteins and 123 Gene Ontology annotations to 114 proteins with estimated false discovery rates below 21% for KEGG and 30% for GO [24].
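The effect of hubs on common-neighbor scoring can be shown with a short sketch. The hub-correction procedure of [24] is not reproduced here. As a stand-in, the sketch uses the resource-allocation index, a standard common-neighbor variant in which each shared neighbor z contributes 1/deg(z). A partner shared through a hub therefore counts for much less than one shared through a low-degree protein. The protein names are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, Set, Tuple

Graph = Dict[str, Set[str]]


def build_adjacency(edges: Iterable[Tuple[str, str]]) -> Graph:
    """Undirected adjacency sets from an edge list."""
    adj: Graph = defaultdict(set)
    for u, v in edges:
        adj[u].add(v)
        adj[v].add(u)
    return adj


def common_neighbor_count(adj: Graph, u: str, v: str) -> int:
    """Plain common-neighbor score: every shared partner counts 1."""
    return len(adj[u] & adj[v])


def resource_allocation(adj: Graph, u: str, v: str) -> float:
    """Each shared partner z contributes 1/deg(z), so hubs count for little."""
    return sum(1.0 / len(adj[z]) for z in adj[u] & adj[v])


# X and Y share only a hub H (degree 6); M and N share only L (degree 2).
edges = [("H", p) for p in ("X", "Y", "P1", "P2", "P3", "P4")]
edges += [("L", "M"), ("L", "N")]
adj = build_adjacency(edges)

print(common_neighbor_count(adj, "X", "Y"), common_neighbor_count(adj, "M", "N"))  # 1 1
print(resource_allocation(adj, "X", "Y"), resource_allocation(adj, "M", "N"))  # 1/6 vs 1/2
```

Both pairs tie under the raw count. The hub-aware score ranks the pair linked through the specific partner L three times higher.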

Gene Ontology-Based Semantic Similarity: GO-based semantic similarity measurements provide a valuable approach for assessing the reliability of PPIs and refining networks by filtering low-confidence links. Studies have systematically compared five semantic similarity metrics (Jiang, Lin, Rel, Resnik, and Wang) across three GO annotation terms (Molecular Function, Biological Process, and Cellular Component). The Resnik metric with Biological Process annotation terms performed best among all combinations, significantly improving the performance of six topology-based centrality methods in identifying essential proteins when applied to refined PPI networks [25].
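Resnik similarity scores two terms by the information content of their most informative common ancestor. Information content is IC(t) = -log p(t), where p(t) is the fraction of annotations that fall on t or any of its descendants. The sketch below uses a hypothetical five-term ontology and four hypothetical proteins, not real GO data. It assumes one common aggregation choice: the protein-level score is taken from the best-scoring pair of annotated terms.

```python
import math
from itertools import product
from typing import Dict, Set

# Toy ontology (child -> parents). Term names are hypothetical, not real GO IDs.
PARENTS: Dict[str, Set[str]] = {
    "root": set(),
    "metabolism": {"root"},
    "signaling": {"root"},
    "lipid_metabolism": {"metabolism"},
    "glucose_metabolism": {"metabolism"},
}


def ancestors(term: str) -> Set[str]:
    """The term itself plus every term reachable through parent links."""
    seen, stack = {term}, [term]
    while stack:
        for parent in PARENTS[stack.pop()]:
            if parent not in seen:
                seen.add(parent)
                stack.append(parent)
    return seen


def information_content(annotations: Dict[str, Set[str]]) -> Dict[str, float]:
    """IC(t) = -log p(t); p(t) counts annotations to t or any descendant of t."""
    counts = {t: 0 for t in PARENTS}
    for terms in annotations.values():
        for term in terms:
            for anc in ancestors(term):
                counts[anc] += 1
    total = counts["root"]
    return {t: -math.log(c / total) for t, c in counts.items() if c > 0}


def resnik(p: str, q: str, annotations: Dict[str, Set[str]],
           ic: Dict[str, float]) -> float:
    """Best term pair, scored by the IC of its most informative common ancestor."""
    best = 0.0
    for s, t in product(annotations[p], annotations[q]):
        shared = ancestors(s) & ancestors(t)
        best = max(best, max(ic.get(a, 0.0) for a in shared))
    return best


annotations = {
    "P1": {"lipid_metabolism"},
    "P2": {"glucose_metabolism"},
    "P3": {"signaling"},
    "P4": {"signaling"},
}
ic = information_content(annotations)
print(resnik("P1", "P2", annotations, ic))  # ln 2: they share "metabolism"
print(resnik("P1", "P3", annotations, ic))  # 0.0: only "root" is shared
```

In a network-refinement setting of the kind described in [25], such scores would be computed for the two endpoints of each PPI. Low-scoring links would then be filtered out.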

Table 1: Performance Comparison of Similarity-Based and Topology Methods

| Method | Key Mechanism | Reported Advantages | Limitations |
|---|---|---|---|
| SMS/maxSMS [23] | Similarity multiplied along paths of length three | 26.99% average improvement in precision of the top 500 predictions (maxSMS) | Limited to local path structures |
| Common Neighbor Enhancement [24] | Reduced hub protein influence | 4,233 significant functional associations among 1,754 human proteins; KEGG annotations assigned at <21% estimated FDR | May miss interactions between distant nodes |
| GO-Based Refinement (Resnik-BP) [25] | Semantic similarity filtering | Superior essential protein identification | Dependent on completeness of GO annotations |
| Random Walk with Resistance [26] | Novel random walk procedure handling hubs | Higher biological relevance in reconstructed network | Computationally intensive for large networks |

Deep Learning Architectures

Deep learning has revolutionized PPI prediction by automatically learning relevant features from complex data and capturing intricate patterns that elude manual feature engineering. Several architectural paradigms have emerged as particularly effective for PPI prediction tasks.

Graph Neural Networks (GNNs): GNNs and their variants have become predominant in PPI prediction due to their natural alignment with network-structured data. These models operate through message-passing mechanisms that aggregate information from neighboring nodes to generate informative protein representations. Key GNN variants include Graph Convolutional Networks (GCNs), Graph Attention Networks (GATs), GraphSAGE, and Graph Autoencoders (GAEs) [1]. For instance, GCNs apply convolutional operations to aggregate neighbor information, while GATs introduce attention mechanisms to adaptively weight the importance of neighboring nodes. GraphSAGE employs sampling and aggregation strategies that make it suitable for large-scale graphs, and GAEs utilize encoder-decoder frameworks to learn compact network representations [1].
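The GCN update and the GAE decoder fit in a few lines of NumPy. This sketch assumes the widely used symmetric normalization with self-loops, H' = ReLU(D^-1/2 (A + I) D^-1/2 H W). It pairs that update with an inner-product decoder, as used in graph autoencoders, which turns node embeddings into pairwise interaction probabilities. The features and weights are random and untrained, so the scores show only shapes and data flow, not predictive quality.

```python
import numpy as np


def normalized_adjacency(A: np.ndarray) -> np.ndarray:
    """Symmetric GCN normalization D^-1/2 (A + I) D^-1/2, with self-loops added."""
    A_hat = A + np.eye(A.shape[0])
    d_inv_sqrt = 1.0 / np.sqrt(A_hat.sum(axis=1))
    return A_hat * d_inv_sqrt[:, None] * d_inv_sqrt[None, :]


def gcn_layer(A_norm: np.ndarray, H: np.ndarray, W: np.ndarray) -> np.ndarray:
    """One message-passing step: aggregate neighbors, project with W, apply ReLU."""
    return np.maximum(A_norm @ H @ W, 0.0)


def inner_product_decoder(Z: np.ndarray) -> np.ndarray:
    """GAE-style edge probabilities sigmoid(Z Z^T) for every protein pair."""
    return 1.0 / (1.0 + np.exp(-(Z @ Z.T)))


rng = np.random.default_rng(0)
A = np.array([[0, 1, 0, 0],
              [1, 0, 1, 0],
              [0, 1, 0, 1],
              [0, 0, 1, 0]], dtype=float)  # toy 4-protein chain
X = rng.normal(size=(4, 8))               # hypothetical input features per protein
W1 = rng.normal(size=(8, 16)) * 0.1       # untrained weights, illustration only
W2 = rng.normal(size=(16, 4)) * 0.1

A_norm = normalized_adjacency(A)
# Two-layer encoder with a linear output layer, as in a basic GAE.
Z = A_norm @ gcn_layer(A_norm, X, W1) @ W2
scores = inner_product_decoder(Z)
print(scores.round(3))
```

A GAT layer would replace the fixed normalized weights in A_norm with learned attention coefficients. GraphSAGE would aggregate over a sampled subset of neighbors instead of all of them.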

HI-PPI Framework: A recently introduced method called HI-PPI represents a significant advancement by integrating hyperbolic graph convolutional networks with interaction-specific learning. This approach explicitly models the hierarchical organization of PPI networks by embedding proteins in hyperbolic space, where the distance from the origin naturally reflects hierarchical levels. Additionally, HI-PPI employs a gated interaction network to extract unique patterns between specific protein pairs. Evaluations on SHS27K and SHS148K datasets demonstrate that HI-PPI improves Micro-F1 scores by 2.62%-7.09% over the second-best method and shows superior generalization across different PPI types and robustness against edge perturbations [20].
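The geometric intuition behind hyperbolic embeddings can be checked numerically on the Poincaré ball. The sketch below is not HI-PPI's code. It assumes curvature -c with c = 1 and uses the standard exponential map at the origin and the standard Poincaré distance. A tangent vector of norm r lands at distance 2r from the origin, which is why the distance from the origin can serve as a hierarchy level. The "root" and "leaf" points are hypothetical.

```python
import math
from typing import List

Vector = List[float]


def norm(x: Vector) -> float:
    return math.sqrt(sum(v * v for v in x))


def expmap0(v: Vector, c: float = 1.0) -> Vector:
    """Map a Euclidean (tangent) vector at the origin onto the ball of curvature -c."""
    n = norm(v)
    if n == 0.0:
        return [0.0] * len(v)
    scale = math.tanh(math.sqrt(c) * n) / (math.sqrt(c) * n)
    return [scale * x for x in v]


def poincare_distance(u: Vector, v: Vector, c: float = 1.0) -> float:
    """Geodesic distance: arcosh(1 + 2c|u-v|^2 / ((1-c|u|^2)(1-c|v|^2))) / sqrt(c)."""
    sq_diff = sum((a - b) ** 2 for a, b in zip(u, v))
    denom = (1.0 - c * norm(u) ** 2) * (1.0 - c * norm(v) ** 2)
    return math.acosh(1.0 + 2.0 * c * sq_diff / denom) / math.sqrt(c)


def hierarchy_level(x: Vector, c: float = 1.0) -> float:
    """Distance from the origin: small for general proteins, large for specific ones."""
    return poincare_distance([0.0] * len(x), x, c)


root = expmap0([0.1, 0.0])    # hypothetical general (hub-like) protein
leaf_a = expmap0([2.0, 0.0])  # hypothetical specific proteins in two branches
leaf_b = expmap0([0.0, 2.0])

print(hierarchy_level(root), hierarchy_level(leaf_a))        # about 0.2 and 4.0
print(poincare_distance(leaf_a, leaf_b), poincare_distance(leaf_a, root))
```

The two leaves end up much farther from each other than either is from the root-like point. Their distance is close to the length of the path through the origin, which mirrors path lengths in a tree.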

Specialized Deep Learning Architectures: Researchers have developed numerous specialized architectures to address specific challenges in PPI prediction. The AG-GATCN framework integrates graph attention networks with temporal convolutional networks to enhance robustness against noise [1]. RGCNPPIS combines GCN and GraphSAGE to simultaneously extract macro-scale topological patterns and micro-scale structural motifs [1]. The Deep Graph Auto-Encoder (DGAE) innovatively combines canonical auto-encoders with graph auto-encoding mechanisms for hierarchical representation learning [1].

Table 2: Performance of Deep Learning Models on Benchmark Datasets

| Method | SHS27K (Micro-F1) | SHS148K (Micro-F1) | Key Innovation | Data Utilization |
|---|---|---|---|---|
| HI-PPI [20] | 0.7746 (DFS) | Not specified | Hyperbolic geometry + interaction networks | Structure + sequence |
| MAPE-PPI [20] | Second best on SHS27K | Second best on SHS148K | Multi-modal heterogeneous GNN | Multiple data types |
| BaPPI [20] | Second best on SHS27K | Not specified | Not specified in results | Not specified |
| PIPR [27] | Poor performance | Poor performance | Sequence-based | Sequence only |
| AFTGAN [1] | Not specified | Not specified | AFT + GAN integration | Not specified |

Performance Trends and Insights

Several important trends emerge from comparative analyses of PPI prediction methods. Structure-based methods consistently outperform sequence-only approaches, as protein structure more directly determines function and provides spatial biological information relevant to interactions [20]. Methods that explicitly model network hierarchy demonstrate superior performance, highlighting the importance of capturing the natural hierarchical organization of PPI networks from molecular complexes to functional modules and cellular pathways [20]. Additionally, integration of multiple data sources generally enhances prediction accuracy, as evidenced by the success of heterogeneous network approaches that combine various biological data types [2].

Experimental Protocols and Benchmarking

Benchmark Datasets and Evaluation Metrics

Robust evaluation of PPI prediction methods requires standardized benchmarks and appropriate metrics. The NAPAbench 2 benchmark represents a significant advance in this area. It provides synthetic PPI networks whose characteristics closely match those of contemporary real PPI networks. The benchmark uses a completely redesigned network synthesis algorithm trained on the latest PPI networks from the STRING database (v10.0), covering H. sapiens, S. cerevisiae, D. melanogaster, M. musculus, and C. elegans [3]. Key improvements in NAPAbench 2 include updated degree exponents, higher clustering coefficients, and more functional subnetworks, all of which better reflect the properties of modern PPI datasets [3]. The degree exponents range from 1.53 to 1.84 for the STRING networks, compared with 1.86 to 2.17 for the older IsoBase networks.
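The two statistics quoted above can be estimated from any network, real or synthetic, as a quick sanity check. This sketch assumes two choices. The degree exponent comes from the widely used approximate discrete power-law maximum-likelihood estimator, gamma ≈ 1 + n / Σ ln(k_i / (k_min - 1/2)). Clustering is measured as the average local clustering coefficient. The fitting procedure behind the NAPAbench 2 figures is not described here and may differ. The approximation is also rough when k_min is small, so treat the output as indicative only.

```python
import math
from collections import defaultdict
from itertools import combinations
from typing import Dict, Iterable, List, Set, Tuple


def degree_exponent_mle(degrees: List[int], k_min: int = 1) -> float:
    """Approximate discrete power-law MLE: 1 + n / sum(ln(k / (k_min - 0.5)))."""
    tail = [k for k in degrees if k >= k_min]
    if not tail:
        raise ValueError("no degrees at or above k_min")
    return 1.0 + len(tail) / sum(math.log(k / (k_min - 0.5)) for k in tail)


def average_clustering(edges: Iterable[Tuple[str, str]]) -> float:
    """Mean local clustering coefficient; nodes with degree < 2 contribute 0."""
    adj: Dict[str, Set[str]] = defaultdict(set)
    for u, v in edges:
        adj[u].add(v)
        adj[v].add(u)
    total = 0.0
    for node, nbrs in adj.items():
        k = len(nbrs)
        if k < 2:
            continue
        # Count links among this node's neighbors, relative to the k(k-1)/2 possible.
        links = sum(1 for a, b in combinations(nbrs, 2) if b in adj[a])
        total += links / (k * (k - 1) / 2)
    return total / len(adj)


edges = [("A", "B"), ("B", "C"), ("C", "A"), ("A", "D")]  # triangle plus a pendant
print(average_clustering(edges))                 # (1/3 + 1 + 1 + 0) / 4 = 7/12
print(degree_exponent_mle([3, 2, 2, 1], k_min=1))
```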

Commonly used evaluation metrics for PPI prediction include:

- Precision at Top K: Measures the proportion of correct predictions among the top K ranked results

- Area Under Precision-Recall Curve (AUPR): Summarizes performance across all probability thresholds

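- Micro-F1 Score: Harmonic mean of precision and recall, computed from true positives, false positives, and false negatives pooled across all classes (for example, all interaction types), so that every individual prediction carries equal weight; this is the metric used for the SHS27K and SHS148K results in Table 2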

- Area Under ROC Curve (AUC): Measures separability between interacting and non-interacting pairs

- Normalized Discounted Cumulative Gain (NDCG): Assesses ranking quality of prediction algorithms
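The metrics listed above can be written directly from their definitions. In this sketch, the ranking-based functions take the 0/1 labels of candidate pairs already sorted by descending predicted score. The sketch also assumes the ranking covers every candidate pair, so the number of positives in the list equals the total number of positives. Average precision is used as the usual step-wise estimate of AUPR. Micro-F1 treats each pair's interaction types as a set, as in multi-label PPI-type benchmarks. Library implementations (for example, in scikit-learn) may handle ties and interpolation differently.

```python
import math
from typing import List, Sequence, Set


def precision_at_k(ranked_labels: Sequence[int], k: int) -> float:
    """Fraction of true interactions among the top-k ranked pairs."""
    return sum(ranked_labels[:k]) / k


def average_precision(ranked_labels: Sequence[int]) -> float:
    """Step-wise AUPR estimate: mean precision at the rank of each true pair."""
    hits, total = 0, 0.0
    for rank, label in enumerate(ranked_labels, start=1):
        if label:
            hits += 1
            total += hits / rank
    return total / hits if hits else 0.0


def ndcg_at_k(ranked_labels: Sequence[int], k: int) -> float:
    """Binary-relevance NDCG: DCG of the ranking divided by DCG of the ideal ranking."""
    def dcg(labels: Sequence[int]) -> float:
        return sum(rel / math.log2(i + 1) for i, rel in enumerate(labels[:k], start=1))

    ideal = dcg(sorted(ranked_labels, reverse=True))
    return dcg(ranked_labels) / ideal if ideal else 0.0


def roc_auc(scores: Sequence[float], labels: Sequence[int]) -> float:
    """Probability that a random true pair outscores a random non-pair (ties count 1/2)."""
    pos = [s for s, y in zip(scores, labels) if y]
    neg = [s for s, y in zip(scores, labels) if not y]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def micro_f1(true_sets: List[Set[str]], pred_sets: List[Set[str]]) -> float:
    """Pool TP/FP/FN over all pairs and interaction types, then take F1 = 2TP/(2TP+FP+FN)."""
    tp = sum(len(t & p) for t, p in zip(true_sets, pred_sets))
    fp = sum(len(p - t) for t, p in zip(true_sets, pred_sets))
    fn = sum(len(t - p) for t, p in zip(true_sets, pred_sets))
    return 2 * tp / (2 * tp + fp + fn) if tp else 0.0


ranked = [1, 0, 1, 0]  # labels of candidate pairs sorted by descending score
print(precision_at_k(ranked, 2))              # 0.5
print(average_precision(ranked))              # (1/1 + 2/3) / 2, about 0.833
print(ndcg_at_k(ranked, 4))
print(roc_auc([0.9, 0.8, 0.4, 0.3], ranked))  # 0.75
print(micro_f1([{"binding", "activation"}, {"inhibition"}],
               [{"binding"}, {"inhibition", "binding"}]))  # 2/3
```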

Critical Experimental Considerations

When designing experiments to evaluate PPI prediction methods, researchers should address several critical considerations. Data splitting strategy significantly impacts performance assessment, with both Breadth-First Search (BFS) and Depth-First Search (DFS) approaches used to create training and test sets that evaluate different generalization capabilities [20]. Accounting for dataset shift between training and application contexts is essential, as PPI predictors may demonstrate sensitivity to small changes in training data [27]. Statistical significance testing, such as two-sample t-tests comparing multiple runs of different methods, should be conducted to ensure observed improvements are meaningful [20].
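One way to implement a traversal-based split is sketched below. It grows the test set outward from a randomly chosen seed protein and moves each visited protein's incident interactions into the test set until a target fraction is reached. The frontier is expanded as a queue for BFS or as a stack for DFS. This is one plausible reading of the BFS/DFS protocol used with SHS27K/SHS148K. Published implementations may differ in how the seed is chosen and when traversal stops, and the function name is hypothetical. The sketch assumes each undirected edge is listed once.

```python
import random
from collections import defaultdict, deque
from typing import Deque, Dict, FrozenSet, List, Set, Tuple

Edge = Tuple[str, str]


def traversal_split(edges: List[Edge], test_frac: float = 0.2,
                    mode: str = "bfs", seed: int = 0) -> Tuple[List[Edge], List[Edge]]:
    """Return (train_edges, test_edges) from a BFS- or DFS-grown test region."""
    if mode not in ("bfs", "dfs"):
        raise ValueError("mode must be 'bfs' or 'dfs'")
    adj: Dict[str, Set[str]] = defaultdict(set)
    for u, v in edges:
        adj[u].add(v)
        adj[v].add(u)
    rng = random.Random(seed)
    target = round(test_frac * len(edges))
    test: Set[FrozenSet[str]] = set()
    visited: Set[str] = set()
    frontier: Deque[str] = deque()
    while len(test) < target:
        if not frontier:  # start, or restart in another component, from an unvisited protein
            frontier.append(rng.choice(sorted(set(adj) - visited)))
        # Queue order gives breadth-first growth; stack order gives depth-first growth.
        node = frontier.popleft() if mode == "bfs" else frontier.pop()
        if node in visited:
            continue
        visited.add(node)
        for nbr in sorted(adj[node]):
            if len(test) >= target:
                break
            test.add(frozenset((node, nbr)))
            if nbr not in visited:
                frontier.append(nbr)
    test_edges = [e for e in edges if frozenset(e) in test]
    train_edges = [e for e in edges if frozenset(e) not in test]
    return train_edges, test_edges


ring = [(f"P{i}", f"P{(i + 1) % 10}") for i in range(10)]
train, test = traversal_split(ring, test_frac=0.2, mode="bfs")
print(len(train), len(test))  # 8 2
```

Repeating such a split with several seeds yields multiple runs per method, which is what a two-sample t-test between methods needs.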

Figure: PPI Prediction Method Evaluation Workflow

Research Reagent Solutions

Table 3: Essential Research Resources for PPI Prediction Studies

| Resource Name | Type | Primary Function | Application Context |
|---|---|---|---|
| STRING [1] [3] | Database | Known and predicted PPIs across species | Data source for training and validation |
| BioGRID [26] [1] | Database | Protein-protein and gene-gene interactions | Experimental validation data |
| NAPAbench 2 [3] | Benchmark | Synthetic PPI network generation | Algorithm performance assessment |
| Gene Ontology [25] | Annotation | Functional protein characterization | Semantic similarity computation |
| PDB [1] | Database | 3D protein structures | Structure-based feature extraction |
| SHS27K/SHS148K [20] | Dataset | Homo sapiens PPI subsets from STRING | Standardized model evaluation |

The landscape of PPI prediction methods encompasses a diverse spectrum of approaches, each with distinctive strengths and applicability domains. Similarity-based methods offer interpretability and solid performance, particularly when integrating multiple similarity measures. Network topology approaches provide biologically meaningful reconstructions by leveraging local and global network properties. Deep learning methods, especially those incorporating hierarchical information and interaction-specific learning, currently achieve state-of-the-art performance by automatically learning relevant features from complex data. The continued advancement of benchmarking frameworks like NAPAbench 2 enables rigorous, standardized evaluation across these methodological paradigms. For researchers and drug development professionals, method selection should be guided by specific application requirements, data availability, and interpretability needs, with ensemble approaches potentially offering the most robust solutions for critical applications in target identification and therapeutic development.

The accurate prediction of protein-protein interactions (PPIs) is a cornerstone of modern biology, underpinning our understanding of cellular functions, disease mechanisms, and drug discovery. Computational methods, particularly those based on Graph Neural Networks (GNNs), have emerged as powerful complements to experimental techniques. Those techniques are often time-consuming, costly, and prone to false positives and false negatives [28] [29]. A critical challenge in this domain is rigorously evaluating whether these GNN models capture both the local topological properties and the complex hierarchical structures inherent in biological networks. This guide compares GNN architectures for PPI prediction and frames their evaluation in the context of synthetic benchmarks such as NAPAbench. It also provides detailed experimental protocols and resources for researchers.

Comparative Analysis of GNN Architectures for PPI Prediction

Performance Across PPI Datasets

Various GNN architectures have been developed and applied to PPI prediction. The table below summarizes the comparative performance of different models, highlighting their distinct approaches and efficacy.

Table 1: Comparison of GNN Architectures for PPI Prediction

| Model | Core Mechanism | Application Focus | Key Strengths | Reported Performance |
|---|---|---|---|---|
| GCN (Graph Convolutional Network) | Spectral graph convolution using layer-wise neighborhood aggregation [28]. | General PPI prediction from sequence and structural information [29]. | Simplicity, efficiency in capturing local topology. | Foundational performance; can be outperformed by more specialized architectures [28] [29]. |
| GAT (Graph Attention Network) | Incorporates attention mechanisms to assign varying importance to neighboring nodes [28] [29]. | Learning from protein graphs built from PDB files and sequence features [29]. | Dynamic weighting of neighbor influences, increased interpretability. | Outperforms sequence-only and some traditional ML methods [29]. |
| HGCN (Hyperbolic Graph Convolution) | Performs graph convolutions in hyperbolic space, which better captures hierarchical and tree-like structures [28]. | Multi-type PPI prediction on datasets with complex relational hierarchies. | Superior modeling of hierarchical data and power-law structures. | Tends to perform better than other methods on protein-related datasets [28]. |
| HC-GNN (Hierarchical Community-aware GNN) | Generates a multi-level hierarchy of super-nodes for message-passing (bottom-up, within-level, top-down) [30]. | General graph tasks (node classification, link prediction) on complex networks. | Captures long-range interactions and multi-scale (meso- and macro-level) semantics. | Consistently outperforms flat GNNs; significant improvement in few-shot learning (up to 16.4%) [30]. |
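The bottom-up, within-level, and top-down message-passing pattern attributed to HC-GNN can be illustrated with a simplified sketch. It is weight-free and uses only two levels. It assumes a given community assignment (hypothetical here; in practice it would come from a community-detection step) and plain mean aggregation instead of learned transformations. It is not HC-GNN's implementation. It only shows how a detour through super-nodes lets information cross several hops in a single layer.

```python
import numpy as np


def mean_aggregate(A: np.ndarray, X: np.ndarray) -> np.ndarray:
    """Average each node's features with its neighbors' (self-loops included)."""
    A_hat = A + np.eye(len(A))
    return (A_hat @ X) / A_hat.sum(axis=1, keepdims=True)


def two_level_layer(A: np.ndarray, X: np.ndarray, assign: np.ndarray) -> np.ndarray:
    """Flat message passing plus a detour through community super-nodes.

    assign[i] is the (hypothetical) community id of node i.
    """
    n, n_comm = len(assign), int(assign.max()) + 1
    S = np.zeros((n, n_comm))
    S[np.arange(n), assign] = 1.0  # node-to-community membership matrix
    # Bottom-up: each super-node takes the mean of its members' features.
    super_X = (S.T @ X) / S.sum(axis=0)[:, None]
    # Within-level: super-nodes linked by at least one crossing edge exchange messages.
    super_A = (S.T @ A @ S > 0).astype(float)
    np.fill_diagonal(super_A, 0.0)
    super_X = mean_aggregate(super_A, super_X)
    # Top-down: every node receives its community's updated summary.
    return mean_aggregate(A, X) + S @ super_X


A = np.zeros((6, 6))
for i in range(5):
    A[i, i + 1] = A[i + 1, i] = 1.0  # chain P0-P1-P2-P3-P4-P5
X = np.zeros((6, 1))
X[5, 0] = 1.0                        # only P5 carries a signal
assign = np.array([0, 0, 0, 1, 1, 1])

flat = mean_aggregate(A, X)
hier = two_level_layer(A, X, assign)
print(flat[0, 0], hier[0, 0])        # 0.0 and 1/6
```

After one flat layer, P0 sees nothing from P5, which is five hops away. With the super-node detour, P0 receives a share of P5's signal in the same layer. This is the long-range effect the table attributes to hierarchical message passing.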