Structural and Evolutionary Principles for PPI Prediction: From Deep Learning to Drug Discovery

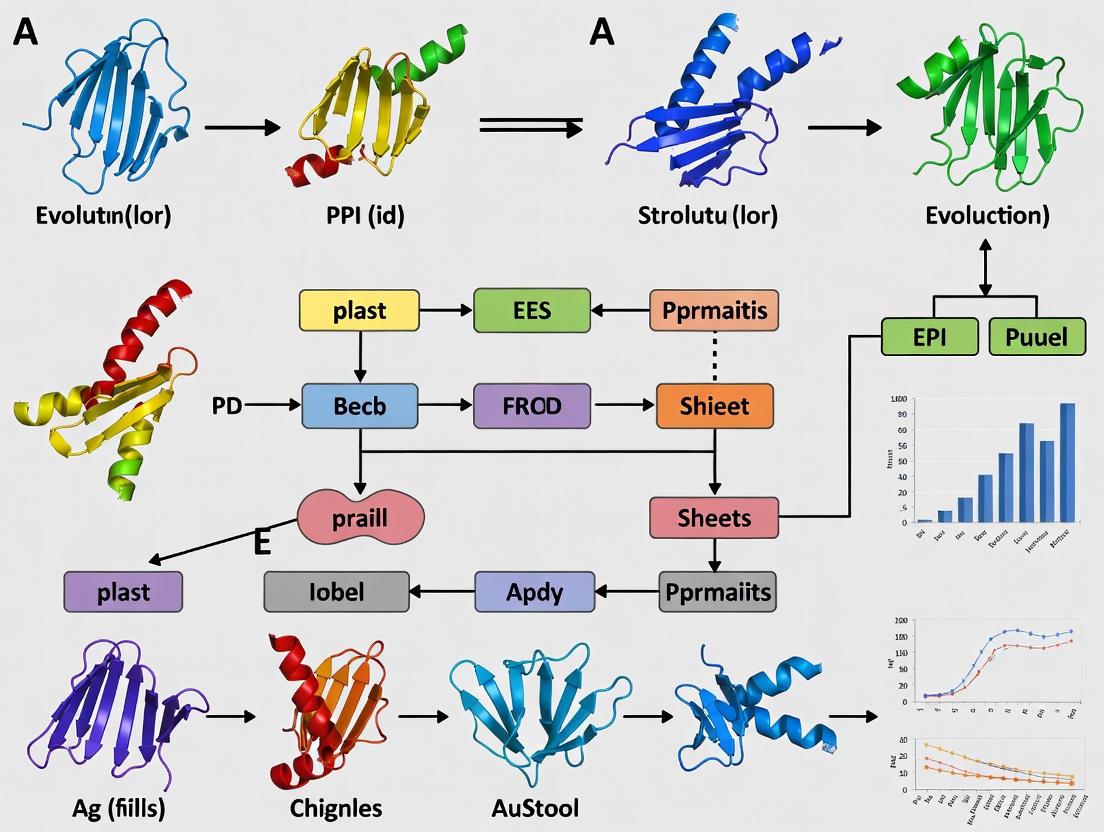

Abstract

This article provides a comprehensive overview of computational methods for predicting protein-protein interactions (PPIs) by integrating structural biology and evolutionary principles. It explores foundational concepts, from structural matching and template-based docking to the latest deep learning models, such as graph neural networks and hyperbolic embeddings that capture hierarchical network properties. We detail methodological advances, including algorithms for de novo interaction prediction and the use of energetic profiles for evolutionary analysis, while addressing critical challenges such as data imbalance, benchmarking pitfalls, and the prediction of interactions with no natural precedent. Finally, we examine rigorous validation frameworks and the applications of these methods in identifying drug targets and constructing disease-specific interactomes, offering a resource for researchers and drug development professionals navigating this rapidly evolving field.

The Structural and Evolutionary Bedrock of Protein Interactions

Core Principles of Structural Matching and Interface Architecture

Protein-protein interactions (PPIs) govern virtually all cellular processes, and understanding their architectural principles is fundamental to advancing biological science and therapeutic development [1]. The core challenge in PPI prediction lies in accurately modeling the structural matching between interacting proteins and the evolutionary principles that shape their interfaces. Structural matching refers to the physicochemical and geometric complementarity between protein surfaces that enables specific binding, while interface architecture encompasses the spatial organization of residues that form the functional binding site. These principles are not static; they are governed by evolutionary pressures that optimize binding affinity, specificity, and regulatory control [2].

Recent advances in artificial intelligence (AI) and deep learning have transformed computational methods for modeling protein complexes, enabling researchers to move from sequence-based predictions to accurate structure-based interface characterization [1]. This document provides detailed application notes and experimental protocols for applying these core principles in PPI research, framed within a broader thesis on structural and evolutionary biology. The content is specifically designed for researchers, scientists, and drug development professionals working at the intersection of computational biology and structural bioinformatics.

Core Principles and Theoretical Framework

The Structural Matching Paradigm

Structural matching in PPIs is a multi-dimensional optimization problem where interacting surfaces evolve toward complementary patterns. This complementarity occurs at multiple levels:

- Geometric Complementarity: Surface contours, protrusions, and depressions must physically fit together with minimal steric clashes, often described as a "lock-and-key" or "induced-fit" mechanism.

- Electrostatic Complementarity: Charge distributions across interacting surfaces create favorable electrostatic potentials that guide binding partners and stabilize complexes.

- Hydrophobic Complementarity: Hydrophobic patches tend to associate to minimize solvent exposure, while hydrophilic residues often remain at the interface to form specific hydrogen bonds.

The evolutionary conservation of these properties creates recognizable signatures in protein sequences and structures. Coevolutionary analysis can detect these signatures by identifying pairs of positions in interacting proteins that have undergone correlated mutations over evolutionary time, preserving functional interactions despite sequence changes [1].
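As a minimal illustration of how correlated mutations can be scored, the sketch below computes the mutual information between one alignment column from each interacting partner. Mutual information is a deliberately simple stand-in chosen for illustration and is not claimed to be the method used in [1]. The function name and toy columns are hypothetical, and the rows of the two columns are assumed to be paired by species.

```python
import math
from collections import Counter


def mutual_information(col_a, col_b):
    """Mutual information (in nats) between two paired alignment columns.

    col_a[k] and col_b[k] are assumed to come from the same species.
    """
    n = len(col_a)
    freq_a = Counter(col_a)
    freq_b = Counter(col_b)
    freq_ab = Counter(zip(col_a, col_b))
    mi = 0.0
    for (x, y), count in freq_ab.items():
        p_xy = count / n
        p_x = freq_a[x] / n
        p_y = freq_b[y] / n
        mi += p_xy * math.log(p_xy / (p_x * p_y))
    return mi


# Toy columns (hypothetical): residues at one position in protein A and protein B.
coupled = mutual_information("AAGG", "LLVV")      # substitutions co-occur
independent = mutual_information("AAGG", "LVLV")  # substitutions are unrelated
print(coupled, independent)
```

Column pairs whose substitutions track each other score higher than pairs that vary independently, which is the signal coevolutionary analysis looks for. Real pipelines typically add corrections for phylogenetic bias and indirect couplings that this sketch omits.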

Interface Architecture Hierarchies

Protein interfaces exhibit hierarchical structural organization that can be analyzed at increasing levels of complexity:

Table: Hierarchical Levels of Protein Interface Architecture

| Architectural Level | Key Characteristics | Experimental Approaches |
|---|---|---|
| Primary (Residue) | Amino acid composition, physicochemical properties, conservation patterns | Multiple sequence alignment, conservation analysis |
| Secondary (Motif) | Short linear motifs, β-sheet pairing, α-helical bundles | Motif discovery, structural fragment analysis |
| Tertiary (Domain) | Structured domains, fold complementarity, surface topography | Domain-domain interaction mapping, docking studies |
| Quaternary (Complex) | Stoichiometry, symmetry, allosteric regulation | Native mass spectrometry, cross-linking, cryo-EM |

This hierarchical organization implies that interface prediction requires integrated methods that can operate across these spatial scales, from residue-level contact predictions to complex assembly modeling [2].

Evolutionary Principles in Interface Architecture

Evolutionary constraints on interface regions differ significantly from other protein surfaces due to their functional importance:

- Purifying Selection: Core interface residues are typically conserved because mutations at these positions tend to disrupt critical interactions.

- Adaptive Evolution: Interface periphery may undergo positive selection in host-pathogen interactions or other evolutionary arms races.

- Structural Conservation: While sequences diverge, interface structures often remain more conserved, enabling homology-based inference of interactions.

These principles form the theoretical foundation for the computational protocols outlined in the following sections.

Quantitative Data and Performance Metrics

The field has established rigorous benchmarks for evaluating PPI prediction methods. The following tables summarize key quantitative data from recent methodological advances.

Table: Performance Comparison of PPI Prediction Methods on Standard Benchmarks [2] [3]

| Method Category | Approach | Average Precision | Recall | F1-Score | AUC-ROC |
|---|---|---|---|---|---|
| Deep Learning (CNN) | Sequence-to-structure prediction | 0.89 | 0.85 | 0.87 | 0.93 |
| Graph Convolutional Networks | Network-based inference | 0.85 | 0.88 | 0.86 | 0.92 |
| Evolutionary Algorithms | Multi-objective optimization | 0.82 | 0.91 | 0.86 | 0.90 |
| Traditional ML | Feature-based classification | 0.78 | 0.80 | 0.79 | 0.85 |
| Docking-Based | Template-based modeling | 0.75 | 0.72 | 0.73 | 0.81 |

Table: MIPS Complex Detection Performance Under Varying Noise Conditions [3]

| Noise Level (%) | MCODE Precision | MCODE Recall | DECAFF Precision | DECAFF Recall | MOEA-FS Precision | MOEA-FS Recall |
|---|---|---|---|---|---|---|
| 0% (Original) | 0.62 | 0.58 | 0.71 | 0.65 | 0.82 | 0.91 |
| 10% | 0.59 | 0.54 | 0.68 | 0.61 | 0.80 | 0.88 |
| 20% | 0.53 | 0.49 | 0.63 | 0.57 | 0.76 | 0.84 |
| 30% | 0.47 | 0.42 | 0.58 | 0.51 | 0.71 | 0.79 |

The multi-objective evolutionary algorithm (MOEA) with functional similarity-based perturbation shows notable robustness to noise, maintaining higher precision and recall across noise conditions compared to established methods like MCODE and DECAFF [3].

Application Notes: Computational Protocols

Multi-Objective Evolutionary Algorithm for Complex Detection

This protocol implements a novel multi-objective optimization model that integrates both topological and biological data for detecting protein complexes in PPI networks [3].

MOEA for Protein Complex Detection Workflow

Initialization and Representation

- Input Data Preparation: Obtain PPI network data from validated databases (e.g., MIPS, BioGRID, STRING). Acquire Gene Ontology (GO) annotations for all proteins in the network, focusing on biological process, molecular function, and cellular component ontologies [3].

- Solution Representation: Encode potential protein complexes as binary strings of length N (where N is the number of proteins in the network), with '1' indicating membership in the complex and '0' indicating exclusion.

- Population Initialization: Generate an initial population of 100-200 candidate solutions using a combination of random initialization and heuristic initialization based on network topology (e.g., starting from highly connected seed proteins).
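The representation and initialization steps above can be sketched as follows. This is an illustrative outline only: the helper names, the split between seeded and random individuals (`n_seeded`), and the choice to seed an individual with a hub protein plus its direct neighbors are assumptions, not details taken from [3].

```python
import random


def encode(members, proteins):
    """Binary string of length N: 1 if the protein is in the complex, else 0."""
    members = set(members)
    return [1 if p in members else 0 for p in proteins]


def decode(bits, proteins):
    """Inverse of encode: recover the set of member proteins."""
    return {p for p, b in zip(proteins, bits) if b}


def initial_population(proteins, adjacency, size=100, n_seeded=50, rng=random):
    """Mix heuristic individuals (hub + neighbors) with random bit strings."""
    by_degree = sorted(proteins, key=lambda p: len(adjacency.get(p, ())), reverse=True)
    population = []
    for k in range(n_seeded):
        seed = by_degree[k % len(by_degree)]  # cycle through hubs, highest degree first
        population.append(encode({seed} | set(adjacency.get(seed, ())), proteins))
    while len(population) < size:
        population.append([rng.randint(0, 1) for _ in proteins])
    return population


# Toy network with hypothetical protein names.
PROTEINS = ["A", "B", "C", "D"]
ADJACENCY = {"A": ["B", "C"], "B": ["A"], "C": ["A"], "D": []}
POPULATION = initial_population(PROTEINS, ADJACENCY, size=10, n_seeded=4,
                                rng=random.Random(0))
print(POPULATION[0], decode(POPULATION[0], PROTEINS))
```

Passing an explicit `random.Random` instance keeps runs reproducible, which helps when comparing operator settings.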

Multi-Objective Optimization Model

The algorithm simultaneously optimizes three conflicting objectives that reflect the core principles of structural matching and interface architecture:

Topological Density Objective: Maximize the internal connectivity of the candidate complex using the subgraph density metric:

f₁(C) = (2 × |E(C)|) / (|C| × (|C| - 1))

where |E(C)| is the number of edges within candidate complex C, and |C| is the number of proteins in C.

Functional Coherence Objective: Maximize the functional similarity of proteins within the complex based on Gene Ontology annotations:

f₂(C) = (ΣᵢΣⱼ GO_sim(pᵢ, pⱼ)) / (|C| × (|C| - 1))

where GO_sim(pᵢ, pⱼ) is the semantic similarity between proteins pᵢ and pⱼ based on their GO annotations.

Interface Conservation Objective: Maximize the evolutionary conservation of interface residues based on co-evolutionary signals:

f₃(C) = (1 / |C|) × Σᵢ EV_score(pᵢ)

where EV_score(pᵢ) is the evolutionary conservation score for protein pᵢ derived from multiple sequence alignments, and the sum runs over all proteins in C.
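A minimal sketch of the three objectives on a toy network is given below. It assumes an undirected edge set with each edge listed once, a symmetric GO similarity function, f₂ summed over ordered pairs i ≠ j, and f₃ taken as the mean EV_score over members. The function names and toy values are hypothetical.

```python
from itertools import combinations


def density(members, edges):
    """f1: 2|E(C)| / (|C|(|C|-1)) for a set of unique undirected edges."""
    members = set(members)
    n = len(members)
    if n < 2:
        return 0.0
    internal = sum(1 for u, v in edges if u in members and v in members)
    return 2.0 * internal / (n * (n - 1))


def functional_coherence(members, go_sim):
    """f2: GO similarity over ordered pairs i != j, divided by |C|(|C|-1)."""
    members = sorted(set(members))
    n = len(members)
    if n < 2:
        return 0.0
    # Each unordered pair counts twice because go_sim is assumed symmetric.
    total = sum(2.0 * go_sim(a, b) for a, b in combinations(members, 2))
    return total / (n * (n - 1))


def mean_conservation(members, ev_score):
    """f3: mean evolutionary conservation score of the member proteins."""
    members = set(members)
    if not members:
        return 0.0
    return sum(ev_score[p] for p in members) / len(members)


# Toy data (hypothetical values).
EDGES = {("A", "B"), ("B", "C"), ("A", "C"), ("C", "D")}
TOY_SIM = {frozenset(("A", "B")): 0.9, frozenset(("A", "C")): 0.6,
           frozenset(("B", "C")): 0.3}
EV = {"A": 0.8, "B": 0.6, "C": 0.7}


def toy_go_sim(a, b):
    return TOY_SIM.get(frozenset((a, b)), 0.0)


candidate = ["A", "B", "C"]
print(density(candidate, EDGES),
      functional_coherence(candidate, toy_go_sim),
      mean_conservation(candidate, EV))
```

Returning the three values as a tuple per candidate makes them easy to feed into a dominance-based ranking.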

Functional Similarity-Based Protein Translocation Operator (FS-PTO)

This novel mutation operator enhances the integration of biological knowledge with topological data [3]:

- Functional Affinity Calculation: For each protein in a candidate complex, compute its functional affinity to the complex as the average GO semantic similarity to all other members.

- Translocation Decision: Identify proteins with low functional affinity (bottom 20%) as candidates for removal. Simultaneously, identify external proteins with high functional affinity to the complex (top 10%) as candidates for inclusion.

- Controlled Perturbation: With probability μ = 0.3, replace the lowest-affinity internal protein with the highest-affinity external protein. This preserves complex size while improving functional coherence.
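The translocation steps above might be sketched as follows. This is a simplified reading, not the reference implementation from [3]: the percentile pools are collapsed to the single lowest-affinity member and the single highest-affinity outsider, a Jaccard overlap of toy GO terms stands in for GO semantic similarity, and all names are hypothetical.

```python
import random


def functional_affinity(protein, members, go_sim):
    """Average similarity of `protein` to the complex members other than itself."""
    others = [m for m in members if m != protein]
    if not others:
        return 0.0
    return sum(go_sim(protein, m) for m in others) / len(others)


def fs_pto(members, candidates, go_sim, mu=0.3, rng=random):
    """With probability mu, swap the weakest member for the best outsider."""
    members = list(members)
    outsiders = [p for p in candidates if p not in members]
    if len(members) < 2 or not outsiders or rng.random() >= mu:
        return set(members)  # no perturbation this time
    worst = min(members, key=lambda p: functional_affinity(p, members, go_sim))
    best = max(outsiders, key=lambda p: functional_affinity(p, members, go_sim))
    return (set(members) - {worst}) | {best}  # complex size is preserved


# Toy GO annotations (hypothetical) and a Jaccard stand-in for GO similarity.
TOY_GO = {"A": {"g1", "g2"}, "B": {"g1", "g2"}, "C": {"g9"},
          "D": {"g1", "g2", "g3"}, "E": {"g8"}}


def jaccard_sim(a, b):
    union = TOY_GO[a] | TOY_GO[b]
    return len(TOY_GO[a] & TOY_GO[b]) / len(union) if union else 0.0


# mu=1.0 forces the swap so the effect is visible.
print(fs_pto(["A", "B", "C"], list(TOY_GO), jaccard_sim, mu=1.0))
```

Because one protein leaves and one enters, the operator changes functional coherence without altering complex size, matching the description of the controlled perturbation.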

Evaluation and Termination

- Fitness Assignment: Use non-dominated sorting and crowding distance computation to rank solutions across the multiple objectives.

- Selection and Variation: Apply binary tournament selection for reproduction. Use standard crossover (probability = 0.8) and mutation (probability = 0.2) operators alongside the FS-PTO operator.

- Termination Condition: Run the algorithm for a maximum of 500 generations or until the hypervolume of the Pareto front shows less than 1% improvement over 50 consecutive generations.
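Two pieces of the evaluation loop lend themselves to a short sketch: the Pareto dominance test that underlies non-dominated sorting, and the stagnation check used for termination. The code below is an assumption-laden outline. It treats all objectives as maximized, reads "less than 1% improvement over 50 consecutive generations" as a relative gain measured against the value 50 generations earlier, and assumes positive hypervolume values computed elsewhere.

```python
def dominates(a, b):
    """True if a is no worse than b on every objective and better on at least one."""
    return (all(x >= y for x, y in zip(a, b))
            and any(x > y for x, y in zip(a, b)))


def pareto_front(scores):
    """Indices of solutions that no other solution dominates (the first front)."""
    return [i for i, s in enumerate(scores)
            if not any(dominates(t, s) for j, t in enumerate(scores) if j != i)]


def stalled(hypervolumes, window=50, tol=0.01):
    """True if hypervolume gained less than tol (relative) over the last `window` generations."""
    if len(hypervolumes) <= window:
        return False
    old, new = hypervolumes[-window - 1], hypervolumes[-1]
    return (new - old) < tol * abs(old)


# Toy objective vectors (f1, f2, f3) for four candidate complexes.
SCORES = [(0.9, 0.2, 0.5), (0.5, 0.5, 0.5), (0.4, 0.4, 0.4), (0.9, 0.2, 0.6)]
print(pareto_front(SCORES))
```

A full non-dominated sort repeats `pareto_front` on the remaining solutions to build successive fronts; crowding distance is then computed within each front.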

Deep Learning Framework for Structure-Based PPI Prediction

This protocol details a deep learning approach for predicting protein-protein interactions from sequence and structural features [2].

Deep Learning Framework for PPI Prediction

Multi-Modal Feature Extraction

Sequence Feature Extraction:

- Input: Protein sequences in FASTA format.

- Processing: Generate position-specific scoring matrix (PSSM) profiles using PSI-BLAST against a non-redundant database (e-value threshold: 0.001, 3 iterations).

- Architecture: Use 1D convolutional neural network (CNN) with 3 convolutional layers (256, 128, 64 filters) and kernel sizes of 9, 7, and 5 to capture sequence motifs at multiple scales.

Structural Feature Extraction:

- Input: Protein structures from PDB or predicted structures from AlphaFold2.

- Processing: Represent structures as graphs where nodes are residues and edges represent spatial proximity (≤8Å).

- Architecture: Use graph convolutional network (GCN) with 3 layers to propagate structural information and capture interface neighborhoods.
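The graph construction step can be sketched in a few lines. The source specifies residues as nodes and an 8 Å proximity cutoff but not which atoms define the distance; the sketch assumes one representative coordinate per residue (for example the Cα atom). The function name and toy coordinates are hypothetical.

```python
import math


def contact_graph(coords, cutoff=8.0):
    """Adjacency list linking residues whose coordinates lie within `cutoff` Å."""
    n = len(coords)
    neighbors = {i: [] for i in range(n)}
    for i in range(n):
        for j in range(i + 1, n):
            if math.dist(coords[i], coords[j]) <= cutoff:
                neighbors[i].append(j)
                neighbors[j].append(i)
    return neighbors


# Toy coordinates in Å (hypothetical): three residues along a line, one far away.
COORDS = [(0.0, 0.0, 0.0), (3.8, 0.0, 0.0), (7.6, 0.0, 0.0), (20.0, 0.0, 0.0)]
GRAPH = contact_graph(COORDS)
print(GRAPH)
```

The resulting adjacency list is the input a GCN layer would use to aggregate features from spatial neighbors. For large structures a spatial index would replace the quadratic loop.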

Evolutionary Feature Extraction:

- Input: Multiple sequence alignments for each protein.

- Processing: Compute co-evolutionary signals using direct coupling analysis (DCA) or similar methods.

- Architecture: Use bidirectional LSTM network to model evolutionary constraints across the sequence.

Feature Fusion and Prediction

- Cross-Attention Mechanism: Implement attention-based fusion to dynamically weight the importance of different feature types for each potential interaction.

- Interaction Prediction: Use fully connected layers with dimensions 512, 256, and 128 with ReLU activation, followed by a sigmoid output layer for binary classification (interaction vs. non-interaction).

- Regularization: Apply batch normalization, dropout (rate=0.5), and L2 regularization (λ=0.001) to prevent overfitting.

Training Protocol

- Data Partitioning: Use strict leave-one-species-out cross-validation or time-split validation to avoid homology bias.

- Loss Function: Optimize weighted binary cross-entropy to handle class imbalance.

- Optimization: Use Adam optimizer with initial learning rate of 0.001, reduced by factor of 0.5 when validation loss plateaus for 10 epochs.
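To make the class-imbalance handling concrete, here is a framework-free sketch of weighted binary cross-entropy. The `pos_weight` parameter (commonly set near the ratio of negative to positive examples) and the clipping constant `eps` are assumptions for illustration; the source does not state how the weights are chosen.

```python
import math


def weighted_bce(y_true, y_prob, pos_weight=1.0, eps=1e-7):
    """Mean binary cross-entropy with positive examples up-weighted by pos_weight."""
    total = 0.0
    for y, p in zip(y_true, y_prob):
        p = min(max(p, eps), 1.0 - eps)  # clip to avoid log(0)
        total += -(pos_weight * y * math.log(p) + (1 - y) * math.log(1.0 - p))
    return total / len(y_true)


# Toy labels and predictions: one interacting pair among four candidates.
labels = [1, 0, 0, 0]
probs = [0.3, 0.1, 0.2, 0.1]
print(weighted_bce(labels, probs, pos_weight=1.0),
      weighted_bce(labels, probs, pos_weight=3.0))
```

Raising `pos_weight` increases the penalty for missing a true interaction, which counteracts the tendency of a model trained on mostly negative pairs to predict "non-interaction" everywhere.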

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Research Resources for Structural Matching and Interface Architecture Studies

| Resource Type | Specific Examples | Function and Application |
|---|---|---|
| PPI Databases | MIPS, BioGRID, STRING, IntAct | Provide curated experimental PPI data for training and benchmarking prediction methods [3]. |
| Structure Databases | PDB, AlphaFold DB, ModelArchive | Source of protein structures for structural analysis and template-based modeling [1]. |
| Ontology Resources | Gene Ontology (GO), InterPro | Functional annotations for evaluating biological relevance of predicted complexes [3]. |
| Computational Frameworks | TensorFlow, PyTorch, scikit-learn | Deep learning and machine learning frameworks for implementing prediction algorithms [2]. |
| Specialized Software | COTH, PRISM, InterEvol | Tools specifically designed for protein interface prediction and evolutionary analysis. |
| Evaluation Suites | CAPRI criteria, AUC implementation | Standardized metrics and tools for method performance assessment [1]. |

Future Challenges and Research Directions

Despite significant advances, several challenges remain in structural matching and interface architecture prediction [1] [2]:

- Host-Pathogen Interactions: Modeling the specialized interfaces in host-pathogen interactions remains difficult due to rapid co-evolution and limited structural data.

- Intrinsically Disordered Regions: Predicting interactions involving intrinsically disordered regions requires new paradigms beyond static structural complementarity.

- Immune-Related Interactions: Modeling the extreme diversity and adaptive evolution of immune system interactions presents unique challenges.

- Dynamic Complexes: Current methods predominantly predict static structures, while biological function often emerges from dynamic conformational ensembles.

- Multi-Scale Integration: Effectively integrating atomic-level structural details with cellular-scale network context remains an open challenge.

Future research should focus on developing temporal models that can capture the dynamics of interface formation, graph neural networks that can operate across organizational scales, and few-shot learning approaches to address limited training data for specialized interaction types. The integration of physics-based models with deep learning approaches appears particularly promising for achieving more accurate and biophysically realistic predictions [1] [2].

Protein-protein interactions (PPIs) are fundamental to virtually all cellular processes, from signal transduction to defense against pathogens. Understanding the structural basis of these interactions is essential for deciphering molecular function and guiding therapeutic design [4] [5]. While experimental techniques like X-ray crystallography provide high-resolution complex structures, a significant gap exists between the number of known interactions and those with determined structures; for instance, only approximately 6% of known human interactome interactions have an associated experimental complex structure [4] [5]. Computational prediction methods, particularly template-based docking (TBD), have emerged as powerful tools to bridge this gap by leveraging the known structures of related complexes to model unknown targets [4] [6] [7].

Template-based docking operates on the principle that proteins with similar sequences or structures tend to form similar complexes [7]. This paradigm extends the concepts of homology modeling and threading from single-chain proteins to multi-chain complexes, allowing interaction models to be constructed from amino acid sequences alone, without first requiring the structures of the monomer components [4]. Compared to free docking, which relies on shape and physicochemical complementarity, TBD is generally less sensitive to structural inaccuracies in protein models and to conformational changes upon binding, making it particularly valuable for large-scale interactome mapping [6]. This application note details the methodologies, protocols, and practical resources for implementing template-based docking, framed within the structural and evolutionary principles of PPI research.

Core Principles and Methodological Frameworks

Comparative Analysis of Docking Methodologies

Template-based docking is one of two primary methods for computational modeling of protein-protein complexes. The distinction between these approaches is critical for selecting the appropriate tool for a given prediction problem.

Table 1: Comparison of Protein-Protein Complex Modeling Approaches

| Feature | Template-Based Docking (TBD) | Free Docking |
|---|---|---|
| Fundamental Principle | Leverages known complex structures (templates) through sequence or structure alignment [4] [7] | Exhaustive search based on shape and physicochemical complementarity [4] [6] |
| Requirement for Monomer Structures | Not pre-required; models can be built from sequence [4] | Essential starting point [4] |
| Sensitivity to Conformational Change | Low; uses bound templates [4] [6] | High; accuracy decreases with large conformational changes [4] |
| Best For | Targets with detectable homologous templates or interface similarity [7] | Complexes with obvious shape complementarity and large interfaces [4] |
| Reported Success Rate (Top 1) | ~26% (structure alignment-based) [7] | Varies significantly; typically lower than TBD when good templates exist [6] |

Evolutionary Underpinnings of Template-Based Prediction

The success of template-based docking is rooted in evolutionary principles. Proteins that share evolutionary ancestry often preserve not only their fold but also their interaction modes—a concept known as interologs [8]. Methods that transfer interaction information from well-understood proteins to lesser-known ones based on homology are therefore a cornerstone of TBD [8]. Beyond simple homology, co-evolutionary signals between interacting partners can provide insights into interface residues, further guiding template selection and complex model construction [8]. The integration of these evolutionary concepts with geometric network analysis has been shown to improve PPI prediction accuracy by up to 14.6% compared to baseline methods without evolutionary information [9].
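The interolog idea can be illustrated with a short sketch that transfers known interactions from a well-studied species to a target species through an ortholog map. The protein identifiers and the function name are hypothetical, and real pipelines usually add sequence-identity or confidence thresholds that are omitted here.

```python
def transfer_interologs(known_pairs, orthologs):
    """Predict target-species pairs whose orthologs interact in the source species."""
    predicted = set()
    for a, b in known_pairs:
        for target_a in orthologs.get(a, ()):
            for target_b in orthologs.get(b, ()):
                # Store each pair in sorted order so (x, y) and (y, x) coincide.
                predicted.add(tuple(sorted((target_a, target_b))))
    return predicted


# Hypothetical source-species interactions and ortholog assignments.
KNOWN = [("yeastP1", "yeastP2"), ("yeastP2", "yeastP3")]
ORTHOLOGS = {"yeastP1": ["humanQ1"], "yeastP2": ["humanQ2", "humanQ2b"]}
print(sorted(transfer_interologs(KNOWN, ORTHOLOGS)))
```

Pairs involving a protein with no mapped ortholog (here `yeastP3`) produce no prediction, which is one reason interolog coverage depends strongly on the quality of the ortholog map.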

Experimental Protocols and Workflows

General Pipeline for Template-Based Complex Modeling

A standard template-based modeling (TBM) procedure, starting from the sequences of the complex components, mirrors the steps used in TBM of single-chain proteins [4].

Figure 1: The generalized workflow for template-based modeling of protein complexes, highlighting the sequential steps from sequence input to a refined structural model.

Protocol 1: Standard TBM for Protein Complexes

Template Identification: Search for known protein complex structures (templates) related to the target sequences. This can be achieved through:

- Homology-Based Detection: Matching query sequences against sequences of subunits in a complex template library using tools like BLAST or HHsearch [4] [6].

- Structure-Based Comparison: Given monomer structures, align them to a library of complex templates using structural alignment tools like TM-align [7]. This can be done via full-structure alignment or interface-only alignment.

- Remote Homology Detection: Using profile hidden Markov models (HMMs) via tools like HH-suite to find distantly related templates [6] [7].

Target-Template Alignment: Align the target sequences to the selected template structure(s). Methods range from simple sequence alignment to sophisticated profile-based alignment and threading [4].

Structural Framework Construction: Build an initial model for the target by copying the coordinates of the structurally aligned regions from the template(s). This creates a crude backbone model that may contain gaps [4].

Loop Modeling and Side-Chain Placement: Construct missing loop regions and termini using fragment libraries or ab initio methods. Add and optimize side-chain conformations using rotamer libraries with tools like SCWRL to match the target sequence [4] [6].

Model Refinement: Perform energy minimization and limited structural refinement to correct stereochemical errors and optimize the interface. This step is computationally intensive and not always implemented in large-scale pipelines [4].

Advanced Protocol: Template-Based Docking by Structure Alignment

This protocol is applicable when the structures of the interacting monomer components are known or can be reliably modeled [7].

Protocol 2: Docking via Structural Alignment

Template Library Curation: Compile a non-redundant set of protein-protein complex structures from resources like DOCKGROUND [7].

Structure Alignment:

- Perform independent structural alignment of the target receptor and ligand monomers against the template library. This can be done using two approaches:

- Full-Structure Alignment: Align the entire monomer structures to the full structures of the template complexes.

- Interface Alignment: Align the target monomers only to the interface parts of the template complexes. This is preferable when significant conformational change (e.g., domain rearrangement) is suspected [7].

- Use a structural alignment tool like TM-align for this step.

Template Selection and Complex Assembly:

- Identify templates where both the receptor and ligand show significant structural similarity to the target proteins.

- Exclude "self-hits" (templates with TM-score ≥ 0.98 and sequence identity ≥ 95% to the target).

- Superimpose the target monomer structures onto the selected template complex based on the alignments generated in the Structure Alignment step. The relative orientation of the target proteins is inherited from the template.

Model Scoring and Ranking: Rank the generated models using a combined scoring function that may include structural similarity measures, statistical potentials, or evolutionary information [7].
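The template selection logic in Protocol 2 can be sketched as a filter followed by a ranking. The self-hit thresholds (TM-score ≥ 0.98, sequence identity ≥ 95%) come from the protocol; everything else is an assumption made for illustration, including the `TemplateHit` record, the `min_tm` similarity cutoff of 0.4, applying the self-hit test to the weaker of the two chain scores, and ranking by that same weaker score.

```python
from dataclasses import dataclass


@dataclass
class TemplateHit:
    template_id: str
    tm_receptor: float   # TM-score of target receptor vs. template chain
    tm_ligand: float     # TM-score of target ligand vs. template chain
    seq_identity: float  # sequence identity to the target, as a fraction


def is_self_hit(hit, tm_cut=0.98, id_cut=0.95):
    """Templates that are essentially the target itself are excluded."""
    return min(hit.tm_receptor, hit.tm_ligand) >= tm_cut and hit.seq_identity >= id_cut


def select_templates(hits, min_tm=0.4):
    """Keep templates where BOTH chains are similar, ranked by the weaker chain."""
    kept = [h for h in hits
            if not is_self_hit(h) and min(h.tm_receptor, h.tm_ligand) >= min_tm]
    return sorted(kept, key=lambda h: min(h.tm_receptor, h.tm_ligand), reverse=True)


# Hypothetical alignment results for one target complex.
HITS = [
    TemplateHit("T_self", 0.99, 0.99, 0.97),
    TemplateHit("T_good", 0.80, 0.70, 0.40),
    TemplateHit("T_half", 0.90, 0.30, 0.35),
    TemplateHit("T_best", 0.85, 0.82, 0.30),
]
print([h.template_id for h in select_templates(HITS)])
```

Ranking by the weaker chain reflects the requirement that both receptor and ligand resemble the template; a combined scoring function as described in the final step would replace this simple key.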

Performance Benchmarking

The performance of template-based docking methods has been systematically evaluated, providing guidance on expected outcomes.

Table 2: Benchmarking Docking Approaches on Protein Models [6]

| Docking Approach | Sensitivity to Model Inaccuracy | Key Strength | Typical Application Context |
|---|---|---|---|
| Template-Based Docking | Low | Robustness; higher rank of near-native poses [6] | Preferred when good templates are available |
| Free Docking | High | No template dependency; models interaction multiplicity [6] | Essential for novel interfaces and crowded cellular environments |
| Integrated Approach | Moderate | Combines strengths of both methods [6] [10] | Most practical strategy for robust performance |

Table 3: Success Rates of Structure Alignment-Based Docking [7]

| Alignment Method | Top-1 Success Rate (Bound Structures) | Top-1 Success Rate (Unbound Structures) | Notes |
|---|---|---|---|
| Full-Structure Alignment | 26% | Similar to bound | Performance is consistent between bound and unbound forms. |
| Interface Alignment | 26% | Similar to bound | Marginally better model quality than full-structure alignment. |
| Consensus (Both Methods Select Same Top Template) | ~Twofold increase | ~Twofold increase | Highlighting the value of consensus in template selection. |

A successful template-based docking experiment relies on a suite of computational tools, databases, and reagents.

Table 4: Key Research Reagent Solutions for Template-Based Docking

| Resource Name | Type | Function in Workflow | Access |
|---|---|---|---|
| DOCKGROUND [7] | Database | Provides comprehensive benchmark sets and template libraries for docking. | http://dockground.compbio.ku.edu |
| BioLiP [10] | Database | A curated library of biologically relevant protein-ligand interactions, useful for identifying binding pocket templates. | https://zhanggroup.org/BioLiP/ |
| HH-suite [6] [7] | Software Toolkit | Detects remote homologous templates by comparing profile HMMs. | https://toolkit.tuebingen.mpg.de/tools/hhpred |
| TM-align [7] | Algorithm | Performs structural alignment between target and template proteins, used for both full and interface-based alignment. | https://zhanggroup.org/TM-align/ |
| GNINA [10] | Scoring Function | A convolutional neural network (CNN)-based model for scoring and ranking docking poses. | https://github.com/gnina/gnina |
| PRISM [4] | Web Server | A TBD method that predicts protein interactions by structural matching of template interfaces. | http://prism.ccbb.ku.edu.tr/ |
| PrePPI [4] [8] | Web Server | Integrates structural modeling with non-structural features (e.g., co-expression, functional similarity) for PPI prediction. | http://bhapp.c2b2.columbia.edu/PrePPI/ |
| Phyre2 [6] | Web Server | Models monomeric protein structures via homology, which can serve as input for subsequent docking. | http://www.sbg.bio.ic.ac.uk/phyre2 |

Integrated and Hybrid Approaches

The most powerful modern applications of TBD integrate it with other data sources and methodologies. For instance, the PrePPI algorithm combines structural evidence from templates with non-structural features like gene co-expression, functional similarity, and protein essentiality, using a Bayesian approach to predict interacting partners with greater accuracy [4] [8]. Similarly, for ligand-binding prediction, tools like CoDock-Ligand hybridize template-based modeling with CNN-based scoring (GNINA), demonstrating that incorporating experimental template data significantly improves success rates over docking with scoring functions alone [10].

Figure 2: A conceptual diagram of integrated approaches that combine template-based docking with evolutionary, network, and other data types to enhance prediction accuracy.

Template-based docking has matured into an indispensable method for high-throughput structural characterization of protein-protein interactions. Its ability to generate plausible complex structures from sequence, coupled with robustness to imperfections in monomer models, makes it uniquely suited for constructing 3D interactomes. While challenges remain—particularly in refining models to high accuracy and identifying templates for distantly related targets—the integration of TBD with evolutionary principles, co-evolutionary analysis, and machine learning scoring functions points to a future where computational models will play an ever more central role in illuminating the structural basis of cellular life.

Leveraging Evolutionary Trace through Sequence and Structural Conservation

Evolutionary Trace (ET) is a computational method that identifies functionally important residues in proteins by analyzing patterns of sequence conservation and variation across a protein family. The core hypothesis is that residues critical for function, such as those involved in catalysis, binding, or allosteric regulation, will exhibit variation patterns that correlate with major evolutionary divergences [11] [12]. Unlike simple conservation metrics, ET ranks residues not merely by their invariance, but by whether their variations occur between, rather than within, major evolutionary branches. This provides a more nuanced view of functional importance, distinguishing residues conserved for structural stability from those directly involved in molecular functions like protein-protein interactions (PPIs) [12] [13]. The method is particularly valuable in structural biology and drug discovery, as it helps pinpoint specific residues that can be targeted for mutagenesis to probe function, for engineering novel specificities, or for therapeutic intervention [11] [12].

The integration of ET with structural data has proven powerful because top-ranked ET residues frequently form spatial clusters on the protein surface, demarcating potential functional interfaces [11] [12]. This makes ET a cornerstone technique for annotating protein function and understanding the structural basis of molecular recognition, especially within a broader research thesis focused on structural and evolutionary principles for PPI prediction.

Key Principles and Methodological Framework

Core Algorithm and Ranking

The ET method begins with a multiple sequence alignment (MSA) of homologous proteins and an associated phylogenetic tree. The fundamental ranking algorithm has evolved into two primary forms:

- Integer-Value ET (ivET): The original method assigns an integer rank to each residue position $i$ using the formula $r_i = 1 + \sum_{n=1}^{N-1} \delta_n$, where $\delta_n$ is 0 if the residue is invariant within the sequences of node $n$, and 1 otherwise. This approach is highly sensitive to perfect correlation patterns between residue variation and phylogenetic divergence [12].

- Real-Value ET (rvET): A refined, more robust version incorporates Shannon entropy to measure invariance within phylogenetic branches. The rank $\rho_i$ for a residue is calculated as $\rho_i = 1 + \sum_{n=1}^{N-1} \frac{1}{n} \sum_{g=1}^{n} s_i$, where $s_i$ is the Shannon entropy for the sub-alignment of group $g$. This real-value approach is less sensitive to alignment errors and natural polymorphisms, making it suitable for automated, large-scale analysis [12].

The resulting ranks are converted into percentile ranks, with residues in the top 20-30% typically considered evolutionarily important [12].
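A small sketch of the real-value rank may help make the formula concrete. It assumes the phylogenetic tree has already been converted into a list of partitions, one per node n = 1 … N−1, where the n-th partition splits the sequences into n groups; deriving those partitions from a tree is not shown. The function names and the toy alignment column are hypothetical.

```python
import math
from collections import Counter


def shannon_entropy(residues):
    """Shannon entropy (natural log) of the residue types in one group."""
    counts = Counter(residues)
    total = len(residues)
    return -sum((c / total) * math.log(c / total) for c in counts.values())


def rv_et_rank(column, partitions):
    """rho_i = 1 + sum over nodes n of (1/n) * sum over groups g of entropy."""
    rank = 1.0
    for groups in partitions:
        n = len(groups)
        rank += sum(shannon_entropy([column[s] for s in g]) for g in groups) / n
    return rank


# Toy example: 4 sequences, so N - 1 = 3 nodes with 1, 2, and 3 groups.
PARTITIONS = [
    [[0, 1, 2, 3]],
    [[0, 1], [2, 3]],
    [[0], [1], [2, 3]],
]
between_branches = rv_et_rank("AAGG", PARTITIONS)  # varies only between branches
within_branches = rv_et_rank("AGAG", PARTITIONS)   # varies inside each branch
print(between_branches, within_branches)
```

Lower ranks indicate greater evolutionary importance, so the column whose variation follows the branch structure scores better than the one that varies within branches, and a fully invariant column receives the minimum rank of 1.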

Spatial Clustering and Statistical Significance

A critical validation step involves mapping top-ranked ET residues onto a three-dimensional protein structure. Functionally important residues are expected to cluster spatially rather than distribute randomly. The significance of this clustering is quantified using a clustering z-score [12].

The cluster weight w is calculated as: w = Σ Si Sj Aij (j-i) for i<j, where Si and Sj are 1 if residues meet the ET threshold, Aij is the adjacency matrix (1 if residues i and j are within 4Å), and (j-i) weights residues that are close in structure but distant in sequence. The z-score is then: z = (w - ⟨w⟩) / σ, where ⟨w⟩ and σ are the mean and standard deviation from an ensemble of random residue choices. A high z-score indicates a statistically significant cluster that likely corresponds to a functional site [12].
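The clustering statistic can be sketched directly from the definitions above. The sketch assumes one representative coordinate per residue when testing the 4 Å adjacency (a simplification), and it estimates ⟨w⟩ and σ by sampling random residue sets of the same size. The sample count, seed, function names, and toy coordinates are hypothetical.

```python
import math
import random


def cluster_weight(selected, coords, cutoff=4.0):
    """w = sum over selected pairs i<j within `cutoff` Å of (j - i)."""
    sel = sorted(selected)
    w = 0
    for a in range(len(sel)):
        for b in range(a + 1, len(sel)):
            i, j = sel[a], sel[b]
            if math.dist(coords[i], coords[j]) <= cutoff:
                w += j - i  # favors contacts between sequence-distant residues
    return w


def clustering_z(selected, coords, n_random=1000, seed=0, cutoff=4.0):
    """z = (w - <w>) / sigma, with <w> and sigma from random residue sets."""
    rng = random.Random(seed)
    k = len(selected)
    samples = [cluster_weight(rng.sample(range(len(coords)), k), coords, cutoff)
               for _ in range(n_random)]
    mean = sum(samples) / n_random
    sd = math.sqrt(sum((s - mean) ** 2 for s in samples) / n_random)
    w_obs = cluster_weight(selected, coords, cutoff)
    return (w_obs - mean) / sd if sd > 0 else 0.0


# Toy coordinates in Å: residues 0, 3, and 5 sit together; the rest are spread out.
COORDS = [(0, 0, 0), (10, 0, 0), (20, 0, 0), (1, 0, 0), (30, 0, 0), (2, 0, 0)]
Z_CLUSTERED = clustering_z({0, 3, 5}, COORDS)
Z_SCATTERED = clustering_z({1, 2, 4}, COORDS)
print(Z_CLUSTERED, Z_SCATTERED)
```

The spatially grouped selection yields a positive z-score and the scattered one a negative z-score, which mirrors how a high z-score flags a candidate functional site.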

Workflow Visualization

The following diagram illustrates the core workflow of an Evolutionary Trace analysis, from data preparation to functional prediction.

Performance and Validation Data

Evolutionary Trace has been extensively validated through both case studies and large-scale benchmarks. Its predictions have been confirmed by site-directed mutagenesis, functional assays, and the successful design of peptide inhibitors.

Table 1: Key Validation Studies of Evolutionary Trace

| Study Focus/Protein | Key Finding | Experimental Validation |
|---|---|---|
| G-protein Signaling (Gα, RGS proteins) [12] | ET identified binding sites for Gβγ subunits, GPCRs, and PDE. | ~100 mutations confirmed predicted binding interfaces. |
| Function Transfer (RGS7 & RGS9) [12] | ET residues defined functional specificity. | Swapping a few ET residues successfully transferred function between homologs. |
| Large-Scale Function Annotation (ETA pipeline) [12] | ET-derived 3D-templates enable function prediction for proteins of unknown function. | Benchmarking showed accurate annotation of enzymatic and non-enzymatic functions. |
| Machine Learning Integration [13] | Combining ET-like conservation (ΔΔE) with stability (ΔΔG) improves functional residue identification. | Trained on multiplexed assay data (MAVEs); validated on independent datasets like GRB2 SH3 domain. |

Table 2: Quantitative Outcomes of ET-Based Predictions

| Validation Metric | Outcome | Context |
|---|---|---|
| Spatial Clustering [12] | Top-ranked ET residues show significant clustering (high z-score) on protein surfaces. | Found across numerous protein families; clusters overlap known functional sites. |
| Stable But Inactive (SBI) Prediction [13] | Machine learning model using conservation & stability correctly identified 116 of 127 functional residues. | Training on MAVE data from NUDT15, PTEN, CYP2C9; validation on GRB2 SH3. |
| Functional Specificity [11] | Accurately delineated functional epitopes and residues critical for binding specificity. | Tests on SH2, SH3 domains, and nuclear hormone receptor DNA-binding domains. |

Application Notes: Protocol for Predicting PPI Interfaces

This protocol details the steps for using Evolutionary Trace to identify and validate potential protein-protein interaction interfaces.

Stage 1: Sequence-Based Evolutionary Analysis

Goal: To generate a ranked list of evolutionarily important residues.

Step 1: Gather Homologous Sequences

- Use BLAST or PSI-BLAST to search the non-redundant (nr) protein database using the query protein sequence as input.

- Parameters: Expect threshold (E-value) of 1e-5; enable filtering for low-complexity regions.

- Collect several hundred to a few thousand homologous sequences to ensure a robust evolutionary analysis. Avoid over-representation of specific clades.

Step 2: Construct Multiple Sequence Alignment (MSA)

- Use alignment tools like Clustal Omega, MAFFT, or MUSCLE with default parameters.

- Curate the MSA: Remove fragments, sequences with excessive gaps, and outliers to improve quality.
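The curation step can be approximated with a simple gap filter. This is a minimal sketch under stated assumptions: the alignment is held as a dictionary of equal-length strings, and the 30% gap threshold is an illustrative choice, not a value given in the protocol.

```python
def curate_msa(msa, max_gap_fraction=0.3):
    """Drop aligned sequences whose gap fraction exceeds the threshold.

    msa: dict mapping sequence name -> aligned sequence ('-' marks a gap).
    max_gap_fraction: assumed cutoff; tune it to the protein family.
    """
    lengths = {len(seq) for seq in msa.values()}
    if len(lengths) > 1:
        raise ValueError("All aligned sequences must have the same length")
    return {
        name: seq
        for name, seq in msa.items()
        if seq.count("-") / len(seq) <= max_gap_fraction
    }
```

Fragments show up as sequences dominated by gaps, so the same filter removes most of them; outliers still need to be inspected separately.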

Step 3: Build Phylogenetic Tree

- Construct a phylogenetic tree from the curated MSA using methods like Maximum Likelihood (e.g., RAxML) or Neighbor-Joining.

- The tree topology is critical for the ET analysis as it defines the evolutionary branches.

Step 4: Run Evolutionary Trace

- Input the MSA and phylogenetic tree into an ET implementation (e.g., the public ET server at http://mammoth.bcm.tmc.edu/).

- Output: A list of all residues ranked by evolutionary importance (e.g., top 5%, 10%, 20%, etc.). Residues in the top quintile (20%) are typically considered for further analysis.

Stage 2: Structure-Based Interface Prediction

Goal: To identify spatially clustered, top-ranked residues that form a putative interface.

Step 5: Map Residues to a 3D Structure

- Obtain a 3D structure of the query protein from the PDB or via a high-quality homology model.

- Using molecular visualization software (e.g., PyMOL, Chimera), map the top-ranked ET residues onto the structure, coloring them by their ET percentile rank.

Step 6: Identify Spatial Clusters

- Visually inspect the structure for clusters of top-ranked residues on the protein surface.

- Quantify Clustering: Calculate the clustering z-score to assess statistical significance. A z-score > 3 is typically considered significant.

- The largest and/or most significant cluster often corresponds to a primary functional interface, such as a PPI site.

Stage 3: Experimental Validation and Functional Perturbation

Goal: To confirm the predicted interface through targeted experiments.

Step 7: Design Mutants

- Design point mutations for key residues within the predicted cluster.

- Strategies:

    - Alanine Scanning: Replace residues with alanine to remove side-chain functionality.

    - Charge Reversal: Replace positively charged residues (e.g., Arg, Lys) with negatively charged ones (e.g., Asp, Glu) and vice versa to disrupt electrostatic interactions.

    - Conservation Swap: Replace a residue with one commonly found at that position in a non-interacting ortholog.
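The first two strategies lend themselves to a small helper that enumerates candidate point mutations. The sketch below is illustrative only: the specific charge-reversal substitutions in `CHARGE_REVERSAL` are an assumed choice among the options the protocol allows, and the conservation swap is omitted because it requires an ortholog alignment.

```python
# Assumed substitutions: basic residues -> Glu, acidic residues -> Lys
CHARGE_REVERSAL = {"R": "E", "K": "E", "D": "K", "E": "K"}

def design_mutants(sequence, positions):
    """Return mutation labels such as 'K2A' for 1-based residue positions."""
    mutants = []
    for pos in positions:
        wild_type = sequence[pos - 1]
        if wild_type != "A":  # alanine scanning is meaningless for Ala itself
            mutants.append(f"{wild_type}{pos}A")
        if wild_type in CHARGE_REVERSAL:
            mutants.append(f"{wild_type}{pos}{CHARGE_REVERSAL[wild_type]}")
    return mutants
```

For example, `design_mutants("MKRDA", [2, 4, 5])` proposes an alanine and a charge-reversal mutant for Lys2 and Asp4, and nothing for Ala5.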

Step 8: Experimental Assays

- Expression & Abundance Check: Use Western blotting or flow cytometry to ensure mutants express at wild-type levels, ruling out stability defects [13].

- Functional PPI Assay: Employ techniques like Yeast Two-Hybrid (Y2H), Surface Plasmon Resonance (SPR), or Co-Immunoprecipitation (Co-IP) to quantify interaction strength.

- Interpretation: Mutations that disrupt the PPI without affecting protein stability provide strong evidence for the residue's direct role in binding.

The following diagram summarizes this multi-stage experimental protocol.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Conducting Evolutionary Trace Analysis

| Resource Name | Type | Primary Function in ET/Validation | Access Link/Reference |
|---|---|---|---|
| NCBI BLAST | Database & Tool | Finding homologous sequences for MSA construction. | https://blast.ncbi.nlm.nih.gov/ |
| Clustal Omega / MAFFT | Software Tool | Performing multiple sequence alignment. | https://www.ebi.ac.uk/Tools/msa/clustalo/ ; https://mafft.cbrc.jp/alignment/software/ |
| Evolutionary Trace Server | Web Server | Performing ET analysis using MSA and tree. | http://mammoth.bcm.tmc.edu/ [12] |
| Protein Data Bank (PDB) | Database | Source for high-resolution 3D protein structures. | https://www.rcsb.org/ |
| PyMOL / UCSF Chimera | Software Tool | Visualizing 3D structures and mapping ET residues. | https://pymol.org/ ; https://www.cgl.ucsf.edu/chimera/ |
| Rosetta | Software Suite | Predicting changes in protein stability (ΔΔG) upon mutation. | https://www.rosettacommons.org/ [13] |
| Negatome Database | Database | Curated dataset of non-interacting protein pairs for negative training data in computational methods. | [14] |
| Yeast Two-Hybrid (Y2H) System | Experimental Assay | Detecting binary PPIs in vivo for experimental validation. | [14] [15] |
| Surface Plasmon Resonance (SPR) | Experimental Assay | Label-free, quantitative measurement of binding kinetics and affinity for PPI validation. | [14] |

Integration with Modern PPI Prediction Frameworks

Evolutionary Trace provides a foundational, evolutionarily-grounded perspective that complements modern computational methods for PPI prediction. While advanced deep learning models like HI-PPI [16] and MAPE-PPI [16] leverage graph neural networks and hyperbolic geometry to capture complex network topology and hierarchical relationships, they often rely on protein structure and sequence as primary inputs. The functional insights from ET can directly inform these models by highlighting specific, evolutionarily critical residues that should be prioritized in interaction interfaces.

Furthermore, ET principles are being integrated into machine learning models that deconvolute function from stability. For instance, combining ET-like evolutionary information (ΔΔE) with biophysical stability calculations (ΔΔG) has been shown to significantly improve the identification of functional residues that are "stable but inactive" [13]. This synergy between evolutionary analysis, structural biophysics, and modern deep learning creates a powerful, multi-faceted framework for advancing PPI prediction research, directly supporting the development of novel therapeutic strategies.

The Hierarchical Organization of PPI Networks in Biological Systems

Application Notes and Protocols for Structural and Evolutionary PPI Prediction Research

Protein-protein interaction (PPI) networks are not flat, random assortments of connections but are intrinsically organized into hierarchical layers that reflect biological function and evolutionary history [17] [18]. This hierarchy operates across multiple scales: from atomic-level residue contacts forming binding sites, to the assembly of proteins into stable complexes and pathways, and further to the organization of these pathways into functional modules within the global cellular interactome [17] [16] [18]. Understanding this nested organization is a core structural and evolutionary principle that significantly enhances the accuracy and interpretability of computational PPI prediction [17] [16]. For drug development professionals, targeting proteins or interactions at specific hierarchical levels—such as critical hub proteins in a top-level network or key residues in a binding interface—offers a strategic approach for therapeutic intervention [19].

Evidence and Quantitative Characterization of Hierarchical Organization

The hierarchical nature of PPI networks is supported by multiple lines of evidence from structural biology, network theory, and evolutionary analysis. Key quantitative features are summarized below.

Table 1: Quantitative Evidence for Hierarchical Organization in PPI Networks

| Hierarchical Level | Measurable Property | Typical Finding/Value | Implication for PPI Prediction | Source |
|---|---|---|---|---|
| Residue/Interface | Interface Planarity | Single-segmented interfaces are more planar than multi-segmented ones [19]. | Distinguishes interaction types; informs druggability of pockets. | [19] |
| Residue/Interface | Buried Surface Area (BSA) | Multi-segmented interfaces have ~1000 Ų larger average BSA [19]. | Correlates with interaction stability and affinity. | [19] |
| Residue/Interface | Concavity Depth | Single-segmented interfaces often bind at "groove" depths (>5Å) suitable for small molecules [19]. | Identifies potentially druggable PPI targets. | [19] |
| Protein/Node | Hyperbolic Embedding Radius (in HI-PPI) | Distance from origin in hyperbolic space indicates protein's hierarchical level [16]. | Automatically identifies hub vs. peripheral proteins. | [16] |
| Network/System | Fractal & Scaling Exponents | PPI networks exhibit multiplicative growth and fractal topology [20]. | Informs evolutionary models (Duplication-Divergence). | [20] |
| Network/System | Modularity Density (D) | A quality function for module detection that overcomes resolution limits [21]. | Enables identification of biologically meaningful functional modules. | [21] |

Evolutionary Basis: The hierarchy is a product of evolutionary dynamics. The dominant Duplication-Divergence model drives multiplicative network growth, where gene duplication events create new nodes that initially share interactions, followed by selective pruning or rewiring [20]. This process naturally generates self-similar, fractal network topologies where functional modules are preserved and expanded across evolutionary time [20] [18].

Computational Protocols for Hierarchical PPI Analysis and Prediction

Leveraging hierarchy requires specialized computational models. Below are detailed protocols for two representative approaches.

Protocol 3.1: Hierarchical Graph Learning with HIGH-PPI

Objective: To predict PPIs by jointly modeling intra-protein (residue-level) and inter-protein (network-level) graphs.

Materials (The Scientist's Toolkit):

- PPI Network Data: From STRING [17], BioGRID, or DIP [22].

- Protein Structures: PDB files or AlphaFold2 predictions for proteins of interest [23].

- Software: HIGH-PPI implementation (https://github.com/zqgao22/HIGH-PPI) [17].

- Feature Sets: Residue-level physicochemical descriptors (e.g., charge, hydrophobicity) for node attributes [17].

Procedure:

1. Bottom-View Graph Construction (Protein Graph):

    - For each protein, represent residues as nodes.

    - Connect nodes with edges if the Cα atoms of the residues are within a cutoff distance (e.g., 10Å) to create a contact map [17].

    - Assign node features from precomputed residue-level physicochemical descriptors.

2. Top-View Graph Construction (PPI Network Graph):

    - Represent each protein graph from Step 1 as a single node in the top-level network.

    - Connect nodes with edges if an experimental or predicted interaction exists between the corresponding proteins.

    - Initialize the feature vector for each top-level node with the learned embedding from the bottom-view graph.

3. Model Training & Prediction:

    - Train the Bottom GNN (BGNN) and Top GNN (TGNN) end-to-end.

    - BGNN (using Graph Convolutional Networks) learns a fixed-dimensional embedding for each protein graph [17].

    - TGNN (using Graph Isomorphism Networks) propagates information through the PPI network to refine protein representations [17].

    - For a candidate protein pair, concatenate their final embeddings and pass through a Multi-Layer Perceptron (MLP) classifier to predict interaction probability.
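The bottom-view graph construction reduces to thresholding pairwise Cα distances. The following sketch shows that single step with NumPy; it is not taken from the HIGH-PPI code base, and the function name and input layout (one Cα coordinate per residue) are assumptions.

```python
import numpy as np

def contact_edges(ca_coords, cutoff=10.0):
    """Return residue-residue edges (i, j), i < j, for Cα atoms closer than cutoff (Å)."""
    coords = np.asarray(ca_coords, dtype=float)
    # Pairwise Euclidean distance matrix between all Cα atoms
    dists = np.linalg.norm(coords[:, None, :] - coords[None, :, :], axis=-1)
    adjacency = dists < cutoff
    # Keep the upper triangle only: drops self-loops and duplicate pairs
    i_idx, j_idx = np.nonzero(np.triu(adjacency, k=1))
    return list(zip(i_idx.tolist(), j_idx.tolist()))
```

The resulting edge list, together with per-residue feature vectors, is the kind of input a graph neural network library expects for each protein graph.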

Workflow Diagram: Hierarchical Graph Learning for PPI Prediction

Protocol 3.2: Hyperbolic Embedding for Hierarchy-Aware Prediction with HI-PPI

Objective: To capture the latent hierarchical organization among proteins in a PPI network using hyperbolic geometry for improved prediction.

Materials:

- PPI Network & Features: As in Protocol 3.1. HI-PPI uses both sequence and structural features [16].

- Software: HI-PPI framework (method described in [16]).

- Mathematical Framework: Hyperbolic space (Poincaré ball model).

Procedure:

1. Feature Extraction:

    - Generate initial protein representations by concatenating sequence-based features (e.g., from ESM-2 language model) and structure-based features (e.g., from a pre-trained graph encoder on contact maps) [16].

2. Hyperbolic Graph Convolution:

    - Map the initial Euclidean protein features into hyperbolic space using an exponential map.

    - Perform graph convolution operations (analogous to GCN) within the hyperbolic space. The hyperbolic distance from the origin of this space naturally corresponds to the node's centrality or hierarchical position: proteins near the origin are top-level hubs [16].

    - Iteratively aggregate neighbor information to learn hierarchy-aware protein embeddings.

3. Interaction-Specific Learning & Prediction:

    - For a given protein pair (u, v), retrieve their hyperbolic embeddings.

    - Apply a gated interaction network: compute the Hadamard product (element-wise multiplication) of the embeddings and pass it through a gating mechanism (e.g., a learnable sigmoid gate) to extract pair-specific interaction patterns [16].

    - The gated representation is then used by a classifier to predict the interaction.
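Two of the operations above have compact closed forms in the Poincaré ball model. The sketch below shows the exponential map at the origin, the distance from the origin, and a sigmoid-gated Hadamard product. It is an illustration under assumptions, not the HI-PPI implementation: the curvature parameter `c`, the gate parameters `W` and `b`, and the choice to apply the gate directly to the element-wise product are all hypothetical.

```python
import numpy as np

def exp_map_origin(v, c=1.0):
    """Map a Euclidean vector into the Poincaré ball (curvature -c) at the origin."""
    v = np.asarray(v, dtype=float)
    norm = np.linalg.norm(v)
    if norm == 0:
        return v
    sqrt_c = np.sqrt(c)
    return np.tanh(sqrt_c * norm) * v / (sqrt_c * norm)

def distance_from_origin(x, c=1.0):
    """Hyperbolic distance between the origin and a point x inside the ball."""
    sqrt_c = np.sqrt(c)
    return 2.0 / sqrt_c * np.arctanh(sqrt_c * np.linalg.norm(x))

def gated_interaction(h_u, h_v, W, b):
    """Sigmoid gate applied to the Hadamard product of two embeddings."""
    product = np.asarray(h_u) * np.asarray(h_v)   # element-wise multiplication
    gate = 1.0 / (1.0 + np.exp(-(W @ product + b)))
    return gate * product
```

With `c = 1`, a Euclidean vector of norm 5 lands at hyperbolic distance 10 from the origin, which shows how the map stretches distances toward the boundary of the ball.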

Experimental Validation Protocols for Hierarchical Predictions

Computational predictions of hierarchy and PPIs require orthogonal experimental validation.

Protocol 4.1: Validating Predicted Interfaces via Crosslinking Mass Spectrometry (XL-MS)

Objective: To confirm the structural accuracy of a predicted binary protein complex model, especially its interface.

Materials:

- Proteins: Purified, recombinant proteins for the predicted pair.

- Crosslinker: Membrane-permeable, amine-reactive crosslinker (e.g., DSSO).

- Equipment: LC-MS/MS system.

Procedure:

- In vitro Complex Formation: Incubate the two purified proteins under native conditions.

- Crosslinking: Add DSSO crosslinker to the mixture to covalently link spatially proximal lysine residues.

- Digestion and Enrichment: Quench the reaction, digest with trypsin, and optionally enrich for crosslinked peptides.

- LC-MS/MS Analysis: Run the sample and identify crosslinked peptide pairs.

- Validation: Map the identified lysine-lysine crosslinks onto the predicted 3D model of the complex. Successful validation occurs if a significant fraction of crosslinks are consistent with the distances (<~30Å) and orientations in the predicted interface [23]. This provides orthogonal evidence supporting the high-confidence models from AlphaFold2-based pipelines [23].
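The validation step amounts to counting how many crosslinks fit the model. A minimal sketch, assuming each crosslink is a pair of residue numbers and that one coordinate per lysine (for example its Cα atom, an assumption not stated in the protocol) has been extracted from the predicted complex:

```python
import math

def crosslink_satisfaction(crosslinks, coords_a, coords_b, max_distance=30.0):
    """Fraction of crosslinks whose linked residues lie within max_distance (Å).

    crosslinks: list of (residue_in_protein_A, residue_in_protein_B).
    coords_a, coords_b: dicts mapping residue number -> (x, y, z) in the model.
    """
    if not crosslinks:
        return 0.0
    satisfied = sum(
        1
        for res_a, res_b in crosslinks
        if math.dist(coords_a[res_a], coords_b[res_b]) <= max_distance
    )
    return satisfied / len(crosslinks)
```

What counts as a "significant fraction" is left to the analyst; comparing the value against alternative or decoy models is one way to put it in context.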

Workflow Diagram: Experimental Validation of Predicted Complexes

Protocol 4.2: Functional Validation of Hierarchical Modules via Gene Ontology Enrichment

Objective: To assess the biological relevance of a predicted protein complex or functional module identified by hierarchical clustering algorithms.

Materials:

- Gene List: The set of proteins constituting the predicted module.

- Software: GO enrichment analysis tools (e.g., clusterProfiler, DAVID).

- Database: Gene Ontology (GO) annotations.

Procedure:

- Module Detection: Use a hierarchical or multi-objective optimization algorithm (e.g., maximizing modularity density D [21] or using a GO-informed evolutionary algorithm [3]) to identify a candidate protein cluster from a PPI network.

- Enrichment Analysis:

    - Input the protein list into the GO enrichment tool, specifying the appropriate organism background.

    - Perform statistical testing (e.g., Fisher's exact test with Benjamini-Hochberg correction) for over-representation of GO Biological Process, Molecular Function, and Cellular Component terms.

- Interpretation: A statistically significant enrichment (adjusted p-value < 0.05) for coherent, specific GO terms (e.g., "mitochondrial electron transport") indicates that the computationally detected module corresponds to a bona fide functional unit in the cell, validating the hierarchical decomposition of the network [3] [21].
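The statistics behind the enrichment step can be sketched in pure Python. The example uses the hypergeometric upper tail, which is equivalent to a one-sided Fisher's exact test for over-representation, followed by Benjamini-Hochberg adjustment. Function names are illustrative; tools such as clusterProfiler or DAVID apply their own implementations and background definitions.

```python
from math import comb

def hypergeom_pvalue(k, n, K, N):
    """P(X >= k): k annotated proteins in a module of size n, given K annotated
    proteins in a background of N."""
    upper = min(n, K)
    return sum(comb(K, x) * comb(N - K, n - x) for x in range(k, upper + 1)) / comb(N, n)

def benjamini_hochberg(pvalues):
    """Return BH-adjusted p-values in the original order."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down, enforcing monotonicity
    for rank in range(m, 0, -1):
        idx = order[rank - 1]
        running_min = min(running_min, pvalues[idx] * m / rank)
        adjusted[idx] = running_min
    return adjusted
```

One p-value is computed per GO term, and the adjusted values are then compared against the 0.05 threshold mentioned above.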

Application in Drug Discovery: Targeting Hierarchical Layers

The hierarchical view directly informs therapeutic strategy.

Table 2: Druggability Considerations Across Hierarchical Levels

| Target Level | Description | Druggability Consideration | Example Strategy |
|---|---|---|---|
| Residue/Interface | Specific binding/catalytic sites, "hotspots". | High if a concave pocket exists; challenging for flat, large interfaces [19]. | Design of small-molecule inhibitors that occupy interfacial pockets (e.g., at a "groove") [19]. |
| Protein (Hub) | Highly connected proteins in the top-level network. | Often essential; inhibition may have severe side effects. Could possess specific interfaces. | Allosteric inhibition or targeted degradation (PROTACs) to selectively modulate hub function. |
| Functional Module | A cluster of proteins performing a specific cellular process. | Allows for polypharmacology or network medicine. | Identify and target a critical, druggable protein within an oncogenic module while sparing other modules. |

Protocol Notes: When prioritizing PPI drug targets, first use hierarchical prediction models (like HIGH-PPI) to identify likely interactions. Then, analyze the predicted or modeled interface geometry using metrics from Table 1 (planarity, concavity) to assess the likelihood of successful small-molecule inhibition [19]. Finally, cross-reference with tissue-specific hierarchical networks from resources like TissueNet v.2 to evaluate potential on-target toxicity in healthy tissues [24].

Protein-protein interactions (PPIs) form the bedrock of nearly all cellular processes, from signal transduction to metabolic regulation. Understanding these interactions is crucial for a systems-level description of biological function and dysfunction, particularly in drug development where PPIs represent promising therapeutic targets [25]. The prediction and characterization of PPIs rely heavily on specialized biological databases that compile, curate, and disseminate interaction data. These resources provide the essential structural and evolutionary context needed to formulate and test hypotheses about protein function and interaction networks.

For researchers investigating the structural and evolutionary principles governing PPIs, four databases stand as foundational resources: the Protein Data Bank (PDB) for structural biology, STRING for functional associations, and BioGRID and IntAct for curated molecular interaction data. Each database offers complementary data types, curation philosophies, and analytical tools that together enable a multi-faceted approach to PPI prediction and validation. This article provides detailed application notes and experimental protocols for leveraging these resources within a comprehensive PPI prediction research framework, with particular emphasis on their integration for structural and evolutionary analysis.

Database Comparative Analysis

The major PPI databases differ significantly in scope, content, and underlying data models, making strategic selection essential for research efficacy. The table below provides a quantitative comparison of these key resources, highlighting their distinctive features and dataset sizes.

Table 1: Key Protein Interaction Databases: Comparative Analysis

| Database | Primary Focus | Interaction Count | Organism Coverage | Key Features | Data Types |
|---|---|---|---|---|---|
| PDB | Macromolecular structures | 245,778 released structures (as of 2025) [26] | Multiple | 3D structural data; Annual growth: ~16,000 structures [26] | X-ray crystallography, NMR, EM structures |
| STRING | Functional protein associations | ~210,914 interactions (E. coli example at medium confidence) [27] | >14,000 species | Directionality of regulation; Network clustering; Pathway enrichment [25] | Experimental, predicted, curated pathway data |
| BioGRID | Genetic & physical interactions | 2,901,447 raw interactions; 2,251,953 non-redundant [28] | 10+ major organisms [29] | Open Repository of CRISPR Screens (ORCS); Themed curation projects [28] | Physical, genetic, chemical associations, PTMs |
| IntAct | Molecular interaction data | 1,726,476 interactions; 150,010 interactors [30] | Multiple | PSI-MI standard compliance; Complex Portal [30] | Binary interactions, protein complexes |

These databases employ different data representation models that significantly impact how interactions can be analyzed. PPI datasets are typically visualized as graphs where proteins represent nodes and interactions represent connections between nodes [29]. However, representation differs based on experimental method – for affinity purification followed by mass spectrometry (AP-MS) data, the "spokes model" assumes interactions only between the tagged bait protein and each prey, while the "matrix model" assumes all proteins in a purified complex interact with each other [29]. Understanding these representation differences is crucial for accurate biological interpretation.
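The difference between the two AP-MS representations is easy to see in code. This is a small illustrative sketch; the function names are not taken from any database API.

```python
from itertools import combinations

def spokes_model(bait, preys):
    """Bait-prey pairs only: one edge per co-purified prey."""
    return {frozenset((bait, prey)) for prey in preys if prey != bait}

def matrix_model(bait, preys):
    """All pairwise edges among the bait and every co-purified prey."""
    members = {bait, *preys}
    return {frozenset(pair) for pair in combinations(sorted(members), 2)}
```

For one bait with three preys, the spokes model yields 3 interactions while the matrix model yields 6, so the choice of model directly changes network density and any statistic derived from it.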

Table 2: Experimental Methodologies for Protein Interaction Detection

| Method | Principle | Key Databases | Advantages | Limitations |
|---|---|---|---|---|
| Yeast Two-Hybrid (Y2H) | Bait-prey interaction triggers reporter gene expression [29] | BioGRID, IntAct, MINT | Tests direct binary interactions; High-throughput capability | False positives from auto-activation; Membrane protein challenges |
| Affinity Purification + MS (AP-MS) | Tagged protein purification with co-purifying partners identified by MS [29] | BioGRID, IntAct | Identifies native complex components; Works in near-physiological conditions | Cannot distinguish direct from indirect interactions |
| CRISPR Screens | Gene knockout followed by phenotypic assessment | BioGRID ORCS [28] | Genome-wide functional assessment; Identifies genetic interactions | Indirect relationships; Off-target effects |

Database-Specific Application Notes

PDB (Protein Data Bank)

The Protein Data Bank serves as the single global repository for three-dimensional structural data of biological macromolecules, providing essential structural context for interpreting PPIs at atomic resolution. As of 2025, the PDB contains over 245,000 released structures, with approximately 16,000 new structures added annually [26]. This structural information is fundamental for understanding the physical principles governing protein interactions, including binding interfaces, conformational changes, and allosteric regulation mechanisms.

Application Protocol: Extracting Structural Information for PPI Prediction

Objective: Retrieve and analyze protein structures and complexes to inform PPI prediction models.

Materials: PDB database (rcsb.org), molecular visualization software (e.g., PyMOL, UCSF Chimera)

Procedure:

- Structure Retrieval: Navigate to the PDB website and search using protein identifiers, keywords, or sequence similarity using BLAST

- Complex Identification: Filter results to include only structures containing multiple protein chains or protein-ligand complexes

- Interface Analysis: Identify residues at protein-protein interfaces using built-in analysis tools measuring solvent accessibility and inter-atomic distances

- Conservation Mapping: Map evolutionary conservation scores from resources like ConSurf onto the structure to identify functionally important interface residues

- Data Export: Download structural coordinates in PDB format and interface information for further computational analysis

Research Reagent Solutions:

- PDB Structure Files: Atomic coordinates of macromolecular structures; provide physical basis for interactions

- PDB-101 Educational Resources: Tutorials and explanatory materials on structural biology concepts; enhance database usability [31]

- Ligand Explorer: Integrated visualization tool for analyzing protein-ligand interaction geometries

STRING Database

STRING integrates both physical and functional protein associations drawn from numerous sources, including experimental repositories, computational prediction methods, and curated pathway databases [25]. Its recently introduced "regulatory network" feature gathers evidence on the type and directionality of interactions using curated pathway databases and a fine-tuned language model that parses the scientific literature [25]. This makes STRING particularly valuable for constructing context-specific networks that reflect the dynamic nature of cellular signaling and regulatory processes.

Application Protocol: Constructing Functional Association Networks

Objective: Build comprehensive protein association networks incorporating multiple evidence channels to predict novel functional relationships.

Materials: STRING database (string-db.org), protein identifier list

Procedure:

- Query Input: Enter protein identifiers (UniProt, gene names, etc.) or protein sequences into the STRING search interface

- Evidence Channel Selection: Specify which evidence types to include (experimental, gene neighborhood, gene fusion, co-expression, textmining) via the "Data Settings" tab [27]

- Confidence Thresholding: Adjust the combined score threshold (0-1) to balance coverage and reliability; 0.7 represents high confidence [27]

- Network Analysis: Use built-in tools to identify significantly enriched functional terms and pathways within the network

- Data Export: Download the network in various formats (PSI-MI, TSV, FASTA) via the "Tables/Exports" feature for further analysis [27]
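The query and thresholding steps can also be scripted. The sketch below only builds a request URL and does not contact the server. The endpoint layout and the parameter names (`identifiers`, `species`, `required_score`) follow the STRING REST API as commonly documented, but they should be treated as assumptions and checked against the current STRING API documentation before use.

```python
from urllib.parse import urlencode

STRING_API = "https://string-db.org/api"  # assumed base URL

def string_network_url(identifiers, species, min_confidence=0.7, output_format="tsv"):
    """Build a STRING network request URL for a list of protein identifiers.

    min_confidence is the combined score on the 0-1 scale; the API is assumed
    to expect it scaled to 0-1000 in `required_score`.
    """
    params = {
        "identifiers": "\r".join(identifiers),  # carriage-return separated list
        "species": species,                     # NCBI taxonomy ID, e.g. 9606 for human
        "required_score": int(round(min_confidence * 1000)),
    }
    return f"{STRING_API}/{output_format}/network?{urlencode(params)}"
```

The returned URL can be fetched with any HTTP client, and the tab-separated response loaded into a table for downstream network analysis.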

Research Reagent Solutions:

- Combined Scoring Algorithm: Integrates probabilities from multiple evidence channels while correcting for random observation; provides confidence metrics [27]

- Organism-Specific Datasets: Precomputed networks for >14,000 organisms; enable cross-species comparisons

- Programmatic Access (API): Enables automated querying and network retrieval for high-throughput analyses [27]

Figure 1: STRING Database Functional Association Workflow

BioGRID

BioGRID is one of the most comprehensive repositories for genetic and physical interaction data, with continuous monthly updates to its curated dataset [28]. As of November 2025, BioGRID contains interaction data from over 87,000 publications, encompassing approximately 2.9 million raw interactions and over 563,000 post-translational modification sites [28]. A key innovation is the BioGRID Open Repository of CRISPR Screens (ORCS), a publicly accessible database compiled through comprehensive curation of genome-wide CRISPR screen data from the biomedical literature [28].

Application Protocol: Genetic Interaction Screening Analysis

Objective: Identify and analyze genetic interactions using BioGRID's curated dataset to inform PPI prediction in disease contexts.

Materials: BioGRID database (thebiogrid.org), gene list of interest, statistical analysis software

Procedure:

- Dataset Access: Navigate to the BioGRID download page to retrieve the complete interaction dataset or use the web interface for targeted queries

- Interaction Filtering: Filter interactions by organism, experimental type (physical vs. genetic), and detection method using the available metadata annotations

- CRISPR Screen Integration: Access the ORCS database to incorporate functional genomic data from CRISPR screens, including cell line, phenotype, and significance metrics [28]

- Network Integration: Combine physical and genetic interaction data to construct comprehensive networks highlighting functional relationships

- Themed Curation Utilization: Leverage BioGRID's themed curation projects (e.g., autophagy, ubiquitin-proteasome system) for disease-relevant biological processes [28]

Research Reagent Solutions:

- BioGRID-ORCS: Curated CRISPR screening database; enables integration of functional genomic data

- GIX Browser Extension: Retrieves gene product information directly on webpages by double-clicking gene names; facilitates rapid data access [28]

- Themed Curation Projects: Expert-curated datasets focused on specific biological processes; provide high-quality subnetworks for disease research [28]

IntAct Molecular Interaction Database

IntAct provides an open-source database system and analysis tools for molecular interaction data, serving as a core member of the International Molecular Exchange (IMEx) consortium [29]. The database recently surpassed 1.5 million binary interaction evidences in its 247th release [32]. IntAct distinguishes itself through strict adherence to proteomics standards and provides the Complex Portal, a dedicated resource for protein complexes. For PPI prediction research, IntAct offers particularly high-quality data with detailed experimental annotation.

Application Protocol: Standard-Compliant Interaction Data Retrieval

Objective: Extract high-confidence binary interaction data compliant with proteomics standards for predictive model training.

Materials: IntAct database (ebi.ac.uk/intact), PSI-MI compliant software tools

Procedure:

- Data Access: Access IntAct through the main portal or programmatically via its web services API

- Binary Interaction Focus: Specify "binary interaction" search filters to obtain high-quality pairwise interaction data

- Experimental Detail Extraction: For each interaction, retrieve detailed experimental parameters including detection method, participant identification, and interaction parameters

- Complex Analysis: Use the integrated Complex Portal to analyze curated protein complexes and subunit interactions

- Standards-Compliant Export: Download data in PSI-MI format for interoperability with other bioinformatics tools and databases

Research Reagent Solutions:

- PSI-MI Data Format: Standardized representation for molecular interaction data; ensures interoperability [29]

- Complex Portal: Resource for manually curated protein complexes; provides authoritative complex information [30]

- IntAct Core Database: Curation platform supporting multiple molecular interaction databases; offers consistently annotated data [30]

Integrated Experimental Protocols

Integrated Protocol 1: Cross-Database PPI Network Construction for Novel Interaction Prediction

Objective: Integrate complementary data from multiple databases to construct a comprehensive PPI network and computationally predict novel interactions.

Materials:

- Computational environment (R/Python)

- Database access (PDB, STRING, BioGRID, IntAct)

- Network analysis tools (Cytoscape, NetworkX)

Procedure:

Step 1: Seed Generation from Structural Data

- Retrieve structures of interest from PDB with proteins in complex conformations

- Extract interface residues using distance cutoffs (< 5 Å between non-hydrogen atoms)

- Generate sequence-based position-specific scoring matrices for interface regions
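The distance-cutoff rule in Step 1 can be sketched as follows. This is a minimal illustration that assumes heavy-atom coordinates have already been parsed from a structure file into plain dictionaries; the function name, the input layout, and the toy coordinates are hypothetical, and a real pipeline would typically use a structure-parsing library and a spatial index rather than the all-pairs loop shown here.

```python
import math


def interface_residues(chain_a, chain_b, cutoff=5.0):
    """Return residue IDs on each chain with a heavy atom within `cutoff` Å of the partner.

    Each chain maps residue ID -> list of (x, y, z) non-hydrogen atom coordinates.
    """
    iface_a, iface_b = set(), set()
    for res_a, atoms_a in chain_a.items():
        for res_b, atoms_b in chain_b.items():
            # A residue pair is in contact if any atom pair is closer than the cutoff.
            if any(math.dist(p, q) < cutoff for p in atoms_a for q in atoms_b):
                iface_a.add(res_a)
                iface_b.add(res_b)
    return sorted(iface_a), sorted(iface_b)


# Toy coordinates in Å (hypothetical, not taken from a PDB entry).
chain_a = {10: [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0)], 11: [(0.0, 12.0, 0.0)]}
chain_b = {55: [(4.0, 0.0, 0.0)], 56: [(30.0, 30.0, 30.0)]}

print(interface_residues(chain_a, chain_b))
```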

Step 2: Experimental Evidence Integration

- Query BioGRID and IntAct for physical interactions involving seed proteins using their API interfaces

- Apply confidence filters: keep interactions with multiple publications or orthogonal detection methods

- Resolve identifier mapping issues using uniform conversion to UniProt identifiers throughout
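The confidence filter in Step 2 can be expressed as a simple evidence count. The sketch below assumes each evidence record has already been mapped to UniProt-style identifiers and reduced to a `(protein_a, protein_b, publication, method)` tuple; that layout, the function name, and the sample records are illustrative. Treating any two distinct method names as "orthogonal" is a simplification, since related methods may need to be grouped first.

```python
from collections import defaultdict


def high_confidence_pairs(evidence, min_support=2):
    """Keep pairs backed by >= min_support publications or >= min_support detection methods."""
    publications = defaultdict(set)
    methods = defaultdict(set)
    for protein_a, protein_b, publication, method in evidence:
        pair = tuple(sorted((protein_a, protein_b)))  # A-B and B-A are the same pair
        publications[pair].add(publication)
        methods[pair].add(method)
    return sorted(
        pair for pair in publications
        if len(publications[pair]) >= min_support or len(methods[pair]) >= min_support
    )


# Hypothetical evidence records.
evidence = [
    ("P1", "P2", "pub1", "two hybrid"),
    ("P2", "P1", "pub2", "two hybrid"),               # second publication
    ("P1", "P3", "pub3", "two hybrid"),
    ("P1", "P3", "pub3", "affinity chromatography"),  # second method
    ("P2", "P3", "pub4", "two hybrid"),               # single report
]

print(high_confidence_pairs(evidence))
```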

Step 3: Functional Context Addition

- Use STRING to incorporate functional associations and co-expression data

- Apply a high-confidence threshold (combined score ≥0.7; STRING's medium-confidence cutoff is 0.4) to favor reliability over coverage

- Extract directionality information where available for regulatory networks [25]
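The 0.7 cutoff in Step 3 can be applied as shown below. The sketch assumes the associations were obtained from STRING's downloadable flat files, where, to our knowledge, the combined score is stored as an integer scaled by 1000 (so 0.7 corresponds to 700); the function name and the example edges are hypothetical.

```python
def filter_by_combined_score(edges, min_score=0.7):
    """Keep edges whose integer combined score (0-1000) meets `min_score` (0-1 scale)."""
    threshold = round(min_score * 1000)
    return [(a, b, score) for a, b, score in edges if score >= threshold]


# Hypothetical edges with STRING-style integer scores (combined score x 1000).
edges = [("P1", "P2", 912), ("P1", "P3", 700), ("P2", "P4", 455), ("P3", "P4", 699)]
kept = filter_by_combined_score(edges)
print(kept)
```

Lowering `min_score` to 0.4 would trade reliability for coverage, which may be preferable when the network is only used to generate candidate pairs for later filtering.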

Step 4: Evolutionary Conservation Analysis

- Retrieve orthologous sequences for proteins of interest using STRING's cross-species transfer capabilities

- Map conservation scores to structural models when available

- Identify evolutionarily conserved interface residues as potential functional "hotspots"
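One common way to obtain the conservation scores used in Step 4 is a per-column Shannon entropy over a multiple sequence alignment of orthologs. The sketch below is an assumption about how this could be done, not the scoring scheme of any particular database: it ignores gaps, normalizes by the maximum entropy for 20 amino acids, and uses a toy alignment, hypothetical interface positions, and an arbitrary 0.9 cutoff.

```python
import math
from collections import Counter


def column_conservation(alignment):
    """Score each column as 1 - H / log2(20), where H is the Shannon entropy in bits."""
    length = len(alignment[0])
    if any(len(seq) != length for seq in alignment):
        raise ValueError("all aligned sequences must have the same length")
    scores = []
    for col in range(length):
        residues = [seq[col] for seq in alignment if seq[col] != "-"]  # ignore gaps
        if not residues:
            scores.append(0.0)  # all-gap column carries no conservation signal
            continue
        total = len(residues)
        entropy = -sum(
            (n / total) * math.log2(n / total) for n in Counter(residues).values()
        )
        scores.append(1.0 - entropy / math.log2(20))
    return scores


# Toy alignment of four orthologs (hypothetical sequences).
alignment = ["MKLV", "MKIV", "MRLV", "MK-V"]
scores = column_conservation(alignment)

# Hypothetical interface positions (0-based alignment columns).
interface_positions = [0, 1, 3]
conserved_interface = [pos for pos in interface_positions if scores[pos] >= 0.9]
print(conserved_interface)
```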

Step 5: Computational Prediction and Validation Prioritization

- Train machine learning classifier using features from integrated dataset

- Apply trained model to predict novel interactions from uncharacterized protein pairs

- Prioritize predictions based on evolutionary conservation, functional coherence, and structural plausibility

Figure 2: Integrated PPI Prediction Workflow

Integrated Protocol 2: Disease Mechanism Elucidation Through Multi-Scale Network Analysis

Objective: Elucidate disease mechanisms by integrating PPI data across structural, functional, and genetic levels.

Materials:

- Disease-associated gene list

- Multi-omics data (transcriptomics, proteomics)

- Pathway analysis tools (Enrichr, clusterProfiler)

Procedure:

Step 1: Disease Module Identification

- Extract physical interactions from BioGRID and IntAct for disease-associated proteins

- Apply network clustering algorithms to identify densely connected disease modules

- Annotate modules with structural information from PDB where available
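As a minimal illustration of Step 1, the sketch below finds candidate disease modules as connected components of the interaction subgraph induced by the disease-associated proteins. Connected components are used here only as a simple stand-in for the clustering algorithms mentioned above; dedicated community-detection methods would usually split large components further. The function name and the toy data are hypothetical.

```python
from collections import defaultdict, deque


def disease_modules(interactions, disease_proteins):
    """Return connected components among disease proteins, largest first."""
    disease = set(disease_proteins)
    neighbors = defaultdict(set)
    for a, b in interactions:
        # Keep only edges whose two endpoints are both disease-associated.
        if a in disease and b in disease and a != b:
            neighbors[a].add(b)
            neighbors[b].add(a)

    seen, modules = set(), []
    for start in sorted(disease):
        if start in seen:
            continue
        seen.add(start)
        queue, component = deque([start]), []
        while queue:  # breadth-first traversal of one component
            node = queue.popleft()
            component.append(node)
            for neighbor in sorted(neighbors[node]):
                if neighbor not in seen:
                    seen.add(neighbor)
                    queue.append(neighbor)
        modules.append(sorted(component))
    return sorted(modules, key=len, reverse=True)


# Toy network: X is not disease-associated, so it does not bridge the two modules.
interactions = [("A", "B"), ("B", "C"), ("C", "X"), ("X", "D"), ("D", "E")]
modules = disease_modules(interactions, ["A", "B", "C", "D", "E", "F"])
print(modules)
```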

Step 2: Regulatory Layer Integration

- Use STRING's new regulatory network features to add directionality information [25]

- Integrate transcriptomic data to identify condition-specific interactions

- Map post-translational modification sites from BioGRID to identify regulatory switches

Step 3: Structural Modeling of Pathogenic Interactions

- Retrieve or homology-model structures for disease-relevant interactions

- Map disease-associated mutations to structural models

- Predict impact on binding affinity and interface stability

Step 4: CRISPR Functional Data Integration

- Incorporate genetic interaction data from BioGRID ORCS [28]

- Identify synthetic lethal relationships and genetic dependencies

- Cross-reference with drug-target information for therapeutic prioritization

Step 5: Therapeutic Hypothesis Generation

- Integrate multi-scale data to generate mechanistic disease hypotheses

- Identify key network nodes as potential therapeutic targets

- Design validation experiments using structural and evolutionary information

Research Reagent Solutions

Table 3: Essential Research Reagents and Computational Tools for PPI Research

| Category | Resource | Function | Source |

|---|---|---|---|

| Database Access | BioGRID GIX Browser Extension | Retrieves gene product information directly on webpages | [28] |

| Data Standards | PSI-MI Standards | Ensures interoperability between interaction databases | [29] |

| Computational Tools | STRING API | Enables programmatic access to functional association networks | [27] |

| Validation Resources | BioGRID ORCS | Provides curated CRISPR screen data for functional validation | [28] |

| Structural Analysis | PDB-101 | Educational resources for structural biology concepts | [31] |

The integrated use of PDB, STRING, BioGRID, and IntAct provides a powerful framework for advancing PPI prediction research grounded in structural and evolutionary principles. Each database brings unique strengths: PDB offers atomic-resolution structural insights; STRING provides functional context and directionality; BioGRID delivers comprehensive genetic and physical interaction data with specialized curation; and IntAct supplies standards-compliant molecular interaction data. The protocols outlined in this article demonstrate how these resources can be strategically combined to generate biologically meaningful predictions, from initial network construction through disease mechanism elucidation and therapeutic target identification. As these databases continue to evolve—with PDB expanding its structural coverage, STRING incorporating directionality of regulation, BioGRID enhancing its CRISPR screen curation, and IntAct progressing toward more standardized data representation—their collective utility for predicting and characterizing PPIs will only increase, opening new avenues for understanding cellular function and dysfunction.

Advanced Computational Methods: From Deep Learning to Energetic Profiling

Graph Neural Networks (GNNs) for Modeling PPI Network Topology

Protein-protein interactions (PPIs) are fundamental regulators of cellular functions, and their prediction is crucial for understanding biological systems and drug discovery [22]. Deep learning approaches offer an affordable and efficient way to tackle PPI prediction, and among them, Graph Neural Networks (GNNs) have emerged as a powerful architecture [33]. GNNs capture both local patterns and global relationships in protein structures by processing graph-structured data with minimal information loss, which makes them well suited to representing protein macromolecules [22] [33]. This section details the application of GNNs for modeling PPI network topology, providing structured experimental data, detailed protocols, and essential resource information to facilitate research in this field.

Core GNN Architectures for PPI Prediction

The application of GNNs to PPI prediction can be broadly implemented through two conceptual frameworks: molecular structure-based and PPI network-based approaches [34]. In the molecular structure-based approach, the graph represents the three-dimensional structure of a single protein, where nodes are amino acid residues, and edges represent spatial or chemical relationships between them [35] [33]. In the PPI network-based approach, the entire interactome is modeled as a graph, where each node represents a whole protein, and edges represent known or predicted interactions between them [34]. Several core GNN architectures have been successfully adapted for PPI tasks, each with distinct strengths as summarized in the table below.

Table 1: Core Graph Neural Network Architectures for PPI Prediction

| GNN Architecture | Core Mechanism | Advantages for PPI Prediction | Representative Models |

|---|---|---|---|

| Graph Convolutional Network (GCN) [22] | Applies convolutional operations to aggregate features from a node's neighbors. | Simple, efficient, effective for learning from graph structure. | GCN-PPI [34], Base model in MGPPI [35] |

| Graph Attention Network (GAT) [22] | Introduces attention mechanisms to weight the importance of neighboring nodes. | Handles noisy connections; captures variable influence of residues. | GAT-PPI [34], AG-GATCN [22] |

| GraphSAGE [22] | Uses sampling and aggregation to generate node embeddings. | Scalable to large PPI networks; inductively learns from node features. | RGCNPPIS [22] |

| Graph Autoencoder (GAE) [22] | Encodes graph nodes into a latent space and decodes them to reconstruct the graph. | Suitable for link prediction in PPI networks; can handle unlabeled data. | Deep Graph Auto-Encoder (DGAE) [22] |
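The neighbor aggregation described for the GCN in Table 1 can be made concrete with a single layer. The sketch below assumes the widely used symmetric normalization with self-loops, H' = ReLU(D^(-1/2) (A + I) D^(-1/2) H W); the cited models may differ in detail, and the helper names, the three-node graph, and the identity weight matrix are purely illustrative. Plain Python lists are used to keep the example dependency-free.

```python
import math


def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def normalized_adjacency(adj):
    """Return D^(-1/2) (A + I) D^(-1/2), with D the degree matrix of A + I."""
    n = len(adj)
    a_hat = [[adj[i][j] + (1.0 if i == j else 0.0) for j in range(n)] for i in range(n)]
    degree = [sum(row) for row in a_hat]
    return [
        [a_hat[i][j] / math.sqrt(degree[i] * degree[j]) for j in range(n)]
        for i in range(n)
    ]


def gcn_layer(adj, features, weights):
    """One graph convolution: aggregate neighbor features, project, apply ReLU."""
    propagated = matmul(normalized_adjacency(adj), features)
    return [[max(0.0, value) for value in row] for row in matmul(propagated, weights)]


# Toy residue graph: a path 0 - 1 - 2 with two features per node.
adj = [[0, 1, 0], [1, 0, 1], [0, 1, 0]]
features = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
weights = [[1.0, 0.0], [0.0, 1.0]]  # identity, so the output shows pure aggregation

output = gcn_layer(adj, features, weights)
for row in output:
    print([round(value, 4) for value in row])
```

A GAT would replace the fixed normalization coefficients with learned attention weights over the same neighborhoods.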

Quantitative Performance Benchmarking

Evaluating the performance of GNN models on standardized datasets is critical for assessing their predictive capability. The following table consolidates key performance metrics reported by several recent GNN-based methods on common PPI prediction tasks, providing a benchmark for comparison.

Table 2: Performance Benchmarks of GNN Models on PPI Prediction Tasks

| Model | Dataset | Accuracy | Precision | Recall | F-Score | AUC |

|---|---|---|---|---|---|---|

| GNN (Whole Dataset) [33] | PDBe PISA (Dimer Complexes) | 0.9467 | 0.8982 | 0.8108 | 0.8522 | 0.9794 |

| GNN (Interface Dataset) [33] | PDBe PISA (Interacting Chains) | 0.9610 | 0.8627 | 0.7927 | 0.8262 | 0.9793 |

| GNN (Chain Dataset) [33] | PDBe PISA (Single Chains) | 0.8335 | 0.5454 | 0.6731 | 0.6025 | 0.8679 |

| CurvePotGCN [36] | Human PPI | - | - | - | - | 0.98 |

| CurvePotGCN [36] | Yeast PPI | - | - | - | - | 0.89 |

| MGPPI [35] | Multi-species Dataset | Outperformed state-of-the-art methods | - | - | - | - |
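When comparing rows in Table 2, it helps to check that the reported metrics are mutually consistent. The sketch below assumes the "F-Score" column is the F1 score, i.e. the harmonic mean of precision and recall; under that assumption the recomputed values agree with the three PDBe PISA rows to about three decimal places.

```python
def f_score(precision, recall):
    """F1 score: harmonic mean of precision and recall."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# (precision, recall, reported F-score) copied from Table 2.
reported = {
    "GNN (Whole Dataset)": (0.8982, 0.8108, 0.8522),
    "GNN (Interface Dataset)": (0.8627, 0.7927, 0.8262),
    "GNN (Chain Dataset)": (0.5454, 0.6731, 0.6025),
}

for name, (precision, recall, reported_f) in reported.items():
    recomputed = f_score(precision, recall)
    print(f"{name}: recomputed {recomputed:.4f}, reported {reported_f:.4f}")
```

The gap between accuracy and F-score in the chain-level row is what one would expect on an imbalanced dataset, where accuracy is dominated by the majority class.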

Experimental Protocols

Protocol 1: Residue-Level PPI Prediction Using GCN/GAT

This protocol describes the procedure for predicting interactions between two proteins by representing each as an individual graph and using a GNN to learn features for a pair-wise classifier [34].

Input Data Preparation:

Model Architecture and Training:

- GNN Encoder: Build a GCN or GAT model to process each protein graph [34].

- The GNN performs message passing, updating each node's representation by aggregating features from its connected neighbors [35].

- Graph-Level Readout: After processing through the GNN layers, generate a single feature vector representing the entire protein graph. This is often done using a global mean pooling operation: \( y_G = \frac{1}{|M|} \sum_{i \in M} x_i^{T} \), where \( M \) is the set of all nodes in the graph, and \( x_i^{T} \) is the feature vector of node \( i \) at the final layer \( T \) [35].

- Classifier: For a protein pair (A, B), concatenate their graph-level feature vectors \( y_G^A \) and \( y_G^B \). Feed this concatenated vector into a classifier, typically a multi-layer perceptron (MLP) with two hidden layers and an output layer, to predict the probability of interaction [34].

Validation: Validate the model on standardized datasets such as Pan's human dataset (HPRD) or the S. cerevisiae dataset from the Database of Interacting Proteins (DIP) [34].
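The readout and classifier steps of Protocol 1 can be sketched as a forward pass. The example below assumes the GNN encoder has already produced final-layer node vectors for each protein; the layer sizes, the random untrained weights, and all function names are illustrative, so the printed probability has no biological meaning. In practice these layers would be built and trained with a deep learning framework.

```python
import math
import random


def mean_pool(node_features):
    """Global mean pooling: average final-layer node vectors into one graph vector."""
    count = len(node_features)
    return [sum(column) / count for column in zip(*node_features)]


def dense(vector, weights, bias):
    """Fully connected layer; `weights` holds one row of input weights per output unit."""
    return [sum(x * w for x, w in zip(vector, row)) + b for row, b in zip(weights, bias)]


def relu(vector):
    return [max(0.0, x) for x in vector]


def init_layer(rng, n_in, n_out):
    """Random, untrained weights and zero biases (placeholder for learned parameters)."""
    weights = [[rng.uniform(-0.5, 0.5) for _ in range(n_in)] for _ in range(n_out)]
    return weights, [0.0] * n_out


def interaction_probability(nodes_a, nodes_b, layers):
    """Concatenate pooled graph vectors and pass them through an MLP with sigmoid output."""
    x = mean_pool(nodes_a) + mean_pool(nodes_b)  # list concatenation
    for weights, bias in layers[:-1]:
        x = relu(dense(x, weights, bias))
    weights, bias = layers[-1]
    logit = dense(x, weights, bias)[0]
    return 1.0 / (1.0 + math.exp(-logit))


rng = random.Random(0)
# Hypothetical final-layer node features: protein A has 3 residues, protein B has 2.
nodes_a = [[1.0, 0.0, 2.0, 1.0], [3.0, 2.0, 0.0, 1.0], [2.0, 1.0, 1.0, 1.0]]
nodes_b = [[0.0, 1.0, 0.0, 2.0], [2.0, 1.0, 2.0, 0.0]]
# Two hidden layers (6 and 4 units) followed by a single output unit.
layers = [init_layer(rng, 8, 6), init_layer(rng, 6, 4), init_layer(rng, 4, 1)]

probability = interaction_probability(nodes_a, nodes_b, layers)
print(f"predicted interaction probability (untrained): {probability:.3f}")
```

Mean pooling makes the graph vector independent of protein length, which is why proteins with different residue counts can share one classifier. Note that simple concatenation is order-dependent, so (A, B) and (B, A) may receive different scores unless both orders are seen during training.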

Protocol 2: Interpretable PPI Site Prediction with Multiscale GNN

This protocol uses a multiscale GNN to predict PPI sites at the residue level and provides explanations for the predictions by identifying key binding residues [35].

Input Data Preparation:

- Graph Construction: Represent paired protein structures as amino acid-level graphs G = (N, E), where nodes (N) are residues and edges (E) represent various physicochemical relationships [35].

- Node Features: Include a comprehensive set of biophysical and structural attributes as listed in Table 3 below [35].

- Edge Features: Encode the types of bonds or contacts between residues (e.g., covalent, hydrophobic, hydrogen bond, ionic bond) as edge attributes [35].

Model Architecture and Training:

- Multiscale GCN (MGCN): Implement a GCN with a layer-wise sampling approach.

- The model extracts node features after each graph convolutional layer, allowing it to capture both local structural information (from early layers with a small receptive field) and global protein features (from deeper layers with a larger receptive field) [35].

- The feature vectors from multiple layers are integrated to form the final protein representation.

- Readout and Classification: Use a readout function to get a graph-level representation for each protein and combine them for binary interaction prediction.

Interpretation with Grad-WAM:

- To identify key binding residues, use the Gradient Weighted interaction Activation Mapping (Grad-WAM) method [35].

- This technique utilizes the gradient magnitudes flowing back from the output to the final GCN layer, which indicate the importance of each node (residue) for the model's prediction.

- The contributions of each amino acid position are calculated and visualized, highlighting crucial residues involved in the interaction [35].

The Scientist's Toolkit

Table 3: Essential Research Reagents and Resources for GNN-based PPI Studies

| Resource Category | Specific Examples | Function and Application |

|---|---|---|

| PPI Databases | STRING, BioGRID, DIP, HPRD, MINT, IntAct [22] | Provide known and predicted PPIs for model training, validation, and benchmarking. |

| Structure Databases | Protein Data Bank (PDB) [22] | Source of 3D protein structures required for constructing molecular graphs. |

| Protein Language Models | SeqVec, ProtBert [34] | Generate informative, context-aware feature vectors for amino acid residues from sequence data, used as node features. |