Stochastic vs Deterministic Optimization: A Researcher's Guide for Biomedical Applications

Abstract

This article provides a comprehensive analysis of deterministic and stochastic optimization methods, tailored for researchers and professionals in drug development and biomedical sciences. It explores the foundational principles of both paradigms, contrasting their theoretical guarantees and operational mechanisms. The scope extends to methodological applications in areas like process optimization and epidemic modeling, addresses troubleshooting common challenges like high-dimensionality and noise, and offers a rigorous validation framework for method selection. By bringing together these four aims (foundations, methodology, troubleshooting, and validation), this guide seeks to equip scientists with the knowledge to choose and apply suitable optimization strategies in complex biomedical research scenarios.

Core Principles: Understanding Deterministic and Stochastic Optimization

In the scientific domain of drug discovery, the choice of an optimization strategy is pivotal. This guide delineates the fundamental divide between two overarching paradigms: deterministic optimization, which provides exact, reproducible solutions, and probabilistic (or stochastic) optimization, which employs guided search and randomness to explore complex solution spaces [1]. Deterministic methods, such as Sequential Quadratic Programming (SQP), are characterized by their rule-based logic; for any given input, they will invariably produce the same output, offering precision and auditability [2] [3]. In contrast, probabilistic methods—including genetic algorithms and simulated annealing—leverage statistical inference and randomness, allowing them to handle uncertainty, avoid local optima, and adapt to noisy or incomplete data [1] [3].

The distinction is not merely academic but has practical implications for predictive modeling and algorithm selection. Deterministic models provide precise point estimates, making them ideal for scenarios requiring high precision and explainability. Probabilistic models, however, output confidence scores and probability distributions, quantifying the uncertainty inherent in their predictions [4] [3]. This capability is crucial for risk assessment and robust decision-making in high-stakes environments. As we explore these paradigms within the context of pharmaceutical research, it becomes clear that the "best" choice is deeply contextual, depending on factors such as data quality, the problem's complexity, and the need for interpretability versus exploratory power.

The following tables synthesize experimental data and key characteristics comparing deterministic and probabilistic methodologies across various applications, from machine learning to direct optimization.

Table 1: Comparative Performance of Deterministic and Probabilistic Machine Learning Models in Additive Manufacturing

| Metric | Deterministic Model (SVR) | Probabilistic Model (GPR) | Probabilistic Model (BNN) |
|---|---|---|---|
| Predictive Accuracy | Accuracy close to process repeatability [4] | Strong predictive performance [4] | Varies by approach; one balances accuracy/uncertainty, another has lower dimensional accuracy [4] |
| Output Type | Precise point estimate [4] | Predictive distribution [4] | Captures both aleatoric and epistemic uncertainty [4] |
| Interpretability | N/A | High interpretability [4] | Lower interpretability; complex uncertainty decomposition [4] |
| Primary Strength | Precision [4] | Strong performance and interpretability [4] | Comprehensive uncertainty quantification for risk assessment [4] |

Table 2: High-Level Comparison of Deterministic vs. Probabilistic Optimization Models

| Factor | Deterministic Models | Probabilistic Models |
|---|---|---|
| Output Type | Binary, yes/no decision [3] | Probability score (e.g., match confidence) [3] |
| Data Requirements | Requires complete, clean data [3] | Tolerates incomplete or noisy data [3] |
| Flexibility & Adaptability | Rigid, requires manual updates [3] | Learns and adapts from new data [3] |
| Transparency & Explainability | Easy to audit and explain [2] [3] | "Black-box" nature; may need additional tools for explainability [3] |
| Best-Fit Use Cases | Compliance, exact matching, high-precision decisions [3] | Pattern recognition, exploratory problems, fragmented data [3] |

Experimental Protocols: Methodologies in Practice

Protocol 1: A Hybrid Optimization Framework for Process Design

A comparative study detailed a rigorous methodology for applying deterministic, stochastic, and hybrid optimization methods to integrated process design, considering dynamical non-linear models [1].

- Problem Formulation: The problem was stated as a multi-objective non-linear optimization problem with non-linear constraints. Key objectives included optimizing for controllability indexes such as disturbance sensitivity gains, the H∞ norm, and the Integral Square Error (ISE) to achieve optimum disturbance rejection.

- Algorithms Tested:

  - Deterministic Method: Sequential Quadratic Programming (SQP).

  - Stochastic Methods: Genetic Algorithms (GA) and Simulated Annealing (SA).

  - Hybrid Method: A novel methodology combining both deterministic and stochastic approaches.

- Case Study & Validation: The proposed strategies were applied to an activated sludge process with Proportional-Integral (PI) control schemes. This real-world, non-linear system served to illustrate and validate the performance of the different optimization methods.

- Reported Outcome: The study found that the hybrid methodology, which leveraged the strengths of both deterministic and stochastic paradigms, showed an improvement in performance compared to using either type of method alone [1].
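The hybrid idea described in this protocol (stochastic exploration followed by deterministic refinement) can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the method of [1]: uniform random search stands in for GA/SA, fixed-step gradient descent stands in for SQP, and the Rastrigin-style test function, bounds, step size, and sample counts are all hypothetical choices made for the example.

```python
import math
import random


def objective(x):
    """Rastrigin-style multimodal test function (assumed for illustration);
    its global minimum is 0 at the origin."""
    return 10 * len(x) + sum(xi * xi - 10 * math.cos(2 * math.pi * xi) for xi in x)


def random_search(f, bounds, n_samples, rng):
    """Stochastic stage: uniform sampling to locate a promising basin."""
    best_x, best_f = None, float("inf")
    for _ in range(n_samples):
        x = [rng.uniform(lo, hi) for lo, hi in bounds]
        fx = f(x)
        if fx < best_f:
            best_x, best_f = x, fx
    return best_x, best_f


def numeric_gradient(f, x, h=1e-6):
    """Central finite-difference gradient, so f can stay a black box."""
    grad = []
    for i in range(len(x)):
        xp, xm = list(x), list(x)
        xp[i] += h
        xm[i] -= h
        grad.append((f(xp) - f(xm)) / (2 * h))
    return grad


def gradient_descent(f, x0, step=0.002, iters=500):
    """Deterministic stage: fixed-step descent from the stochastic result.
    The step is chosen below 2/L for this function's curvature bound."""
    x = list(x0)
    for _ in range(iters):
        g = numeric_gradient(f, x)
        x = [xi - step * gi for xi, gi in zip(x, g)]
    return x, f(x)


rng = random.Random(42)
bounds = [(-5.12, 5.12)] * 2
coarse_x, coarse_f = random_search(objective, bounds, 2000, rng)
refined_x, refined_f = gradient_descent(objective, coarse_x)
print(f"stochastic stage: {coarse_f:.4f}  after refinement: {refined_f:.6f}")
```

The refinement stage can only polish the basin that the stochastic stage found, so the final result is a local optimum of that basin and is not guaranteed to be global.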

Protocol 2: AI-Guided Peptide Optimization in Drug Discovery

Gubra's streaMLine platform exemplifies a modern, probabilistic machine learning protocol for peptide drug discovery, integrating high-throughput data generation with predictive modeling [5].

- Platform: The streaMLine drug discovery platform.

- Objective: To simultaneously optimize peptide candidates for multiple drug-like properties, including receptor potency, selectivity, and stability.

- Methodology: The platform combines high-throughput experimental data generation with advanced AI models. Machine learning models are trained on this data to guide the selection of the most promising drug candidates. This enables informed decision-making by predicting molecular properties and optimizing lead compounds.

- Application Example: In developing a novel GLP-1 receptor agonist, the AI-guided platform made specific, data-driven substitutions that enhanced GLP-1R affinity while reducing off-target effects. It also optimized stability by reducing peptide aggregation and achieved a pharmacokinetic profile compatible with once-weekly dosing, as confirmed in vivo in diet-induced obese mice [5].

Protocol 3: Deep Learning for Hit-to-Lead Progression

A landmark study published in Nature Communications (2025) demonstrated a protocol for expediting hit-to-lead progression using deep learning for reaction prediction and multi-dimensional optimization [6].

- Data Set Generation: Researchers employed high-throughput experimentation (HTE) to generate a comprehensive data set of 13,490 novel Minisci-type C-H alkylation reactions.

- Model Training: This large-scale HTE data served as the foundation for training deep graph neural networks to accurately predict reaction outcomes.

- Virtual Library Creation & Screening: Scaffold-based enumeration was used to generate a virtual library of 26,375 molecules from moderate inhibitors of monoacylglycerol lipase (MAGL). This library was then evaluated using a combination of reaction prediction, physicochemical property assessment, and structure-based scoring.

- Validation: From 212 identified candidates, 14 compounds were synthesized and tested. These exhibited subnanomolar activity, representing a potency improvement of up to 4500 times over the original hit compound. Co-crystallization of three computationally designed ligands with the MAGL protein provided structural validation of the predicted binding modes [6].

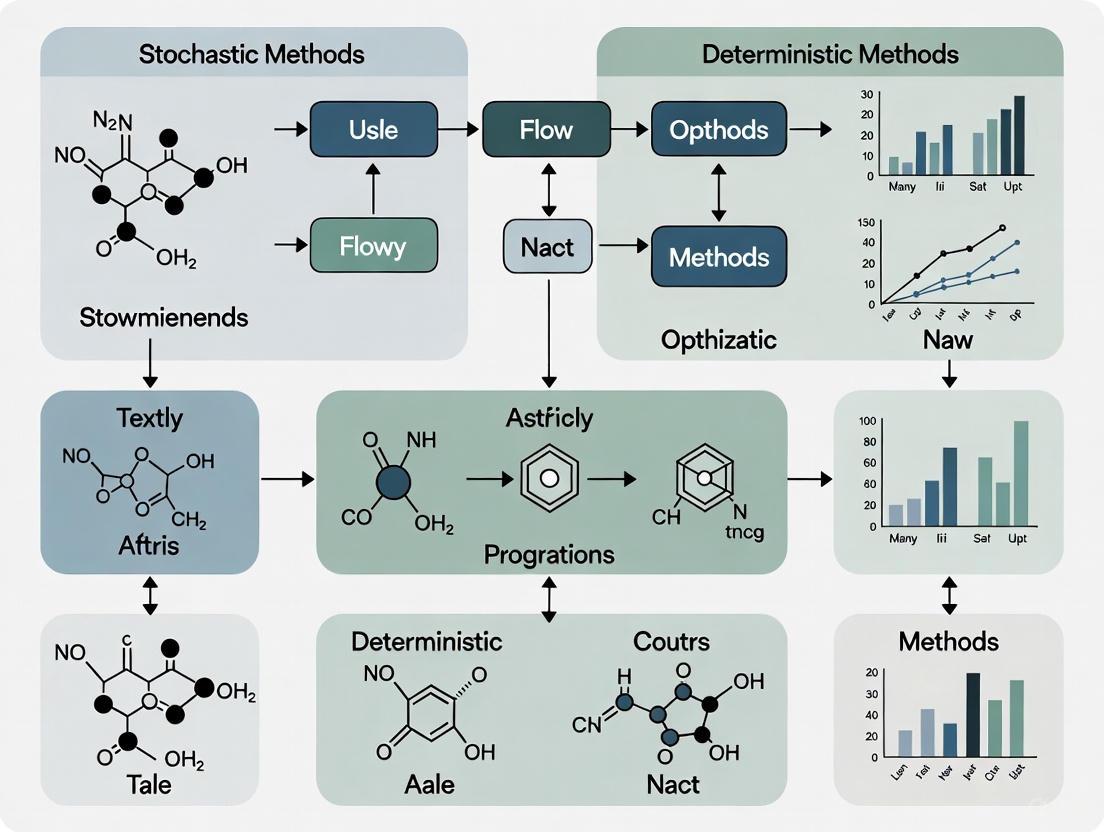

Workflow Visualization: Experimental Pathways

The following diagrams, generated with Graphviz DOT language, illustrate the logical workflows of the key experimental protocols described above.

Diagram 1: Hybrid Optimization Workflow

Diagram 2: AI-Driven Hit-to-Lead Workflow

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 3: Key Research Reagent Solutions for AI-Driven Drug Discovery

| Tool or Reagent | Function / Explanation |
|---|---|
| High-Throughput Experimentation (HTE) | A methodology for rapidly generating thousands of parallel chemical reactions to create large, high-quality datasets for training machine learning models [6]. |
| Deep Graph Neural Networks (GNNs) | A class of machine learning architectures that operate on graph-structured data, ideal for predicting molecular properties, reaction outcomes, and protein-ligand interactions [7] [6]. |
| Geometric Deep Learning Platform | A reference implementation (e.g., based on PyTorch Geometric) for building models that learn from the inherent 3D geometry and structure of molecules and proteins [6]. |
| Structure Prediction Tools (e.g., AlphaFold) | Software that predicts the 3D structure of proteins from amino acid sequences, providing critical structural context for target identification and de novo drug design [5]. |
| Generative Models (e.g., proteinMPNN) | AI systems that can propose novel amino acid or molecular sequences (de novo design) that are compatible with a desired 3D structure or function [5]. |
| StreaMLine Platform | An integrated platform that combines high-throughput data generation with machine learning to systematically guide the optimization of peptide candidates for multiple properties simultaneously [5]. |

In computational mathematics and operations research, the pursuit of an optimal solution is guided by two fundamentally different philosophies: deterministic optimization, which provides guaranteed global optima, and stochastic optimization, which offers controllable execution times with probabilistic performance [8]. This dichotomy represents a critical trade-off between solution quality and computational feasibility that every researcher must navigate when selecting an optimization method. Deterministic methods aim to find the global best result with theoretical guarantees, exploiting specific problem structures to provide completeness and rigor [8]. In contrast, stochastic optimization employs processes with random factors, sacrificing guaranteed optimality for practical computation times and the ability to handle complex, black-box problems where deterministic methods struggle [8] [9].

The selection between these approaches has profound implications across scientific domains, particularly in drug development where optimization problems range from molecular docking studies to clinical trial design. Understanding their theoretical foundations and performance characteristics is essential for building effective computational workflows. This guide provides a systematic comparison of these methodologies, supported by experimental data and practical implementation frameworks for researchers navigating this crucial decision.

Theoretical Foundations and Performance Guarantees

Core Principles of Deterministic Optimization

Deterministic optimization encompasses rigorous algorithmic classes that provide theoretical guarantees for finding global optima. These methods are classified as either complete (guaranteeing global optimality with indefinite execution time) or rigorous (finding global optima in finite time within predefined tolerances) [8]. This mathematical certainty comes from exploiting convenient problem features through methods such as branch-and-bound, cutting plane, outer approximation, and interval analysis [8] [9].

The effectiveness of deterministic methods depends heavily on problem structure. For convex optimization problems, where the objective function is convex and the feasible set is a convex set, any local minimum is automatically a global minimum [10]. This property makes deterministic methods particularly powerful for problems with clear exploitable features. A function $f$ is convex if it satisfies the inequality $f(\alpha x_2 + (1-\alpha)x_1) \le \alpha f(x_2) + (1-\alpha) f(x_1)$ for all $0 \le \alpha \le 1$ and any two points $x_1$, $x_2$ in a convex set [10]. For twice-differentiable functions, convexity can be verified by checking whether the Hessian matrix is positive semidefinite at all points [10].
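When no analytic Hessian is available, the defining inequality itself can be probed numerically. The sketch below is an assumption-laden illustration: it samples random point pairs and mixing weights and reports whether any sample violates the convexity inequality. It can disprove convexity but never prove it, and the function name `violates_convexity`, the sampling box, and the tolerance are hypothetical choices.

```python
import random


def violates_convexity(f, sample_point, trials=10_000, tol=1e-9, seed=0):
    """Return True if some sampled (x1, x2, alpha) breaks
    f(a*x2 + (1-a)*x1) <= a*f(x2) + (1-a)*f(x1).
    A False result means only that no counterexample was found."""
    rng = random.Random(seed)
    for _ in range(trials):
        x1, x2 = sample_point(rng), sample_point(rng)
        a = rng.random()
        mix = [a * u + (1 - a) * v for u, v in zip(x2, x1)]
        if f(mix) > a * f(x2) + (1 - a) * f(x1) + tol:
            return True
    return False


def sample_box(rng):
    """Draw a point uniformly from the (convex) box [-5, 5]^2."""
    return [rng.uniform(-5.0, 5.0) for _ in range(2)]


def bowl(x):
    """Convex quadratic."""
    return sum(xi * xi for xi in x)


def dome(x):
    """Concave quadratic, hence not convex."""
    return -sum(xi * xi for xi in x)


print(violates_convexity(bowl, sample_box))
print(violates_convexity(dome, sample_box))
```

A failed check is a cheap early warning that a local solver's result should not be treated as a global optimum.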

Deterministic approaches excel for problems with linear programming (LP), integer programming (IP), and convex nonlinear programming (NLP) formulations [8]. However, they face significant challenges with black-box problems, extremely complex search spaces, and intricate problem structures where exploitable features are not readily available [8].

Fundamental Aspects of Stochastic Optimization

Stochastic optimization employs randomized processes to explore solution spaces, offering fundamentally different theoretical guarantees compared to deterministic approaches. While these methods cannot guarantee global optima in finite time, the probability of finding the global optimum increases with execution time, approaching 100% only as time approaches infinity [8]. This probabilistic framework makes stochastic methods particularly valuable for real-world scenarios where good-enough solutions within feasible timeframes are more valuable than guaranteed optima after impractical computation periods.
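The growth of success probability with run time can be made concrete for the simplest stochastic method, pure random search. As an illustrative assumption (not taken from [8]), suppose each independent sample lands in an acceptable neighbourhood of the global optimum with fixed probability $p > 0$. Then after $n$ samples:

$$
P(\text{success within } n \text{ samples}) = 1 - (1-p)^n \xrightarrow[n \to \infty]{} 1,
\qquad
n \ge \frac{\ln(1-q)}{\ln(1-p)} \ \text{ for a target confidence } q .
$$

For example, with $p = 0.001$ and $q = 0.95$ this gives $n \ge \ln(0.05)/\ln(0.999) \approx 2994.2$, so about 2,995 samples. The probability stays below 1 for every finite $n$, which matches the asymptotic nature of the guarantee. Practical metaheuristics do not sample independently, so this is only a baseline intuition.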

The theoretical foundation of stochastic optimization enables controllable execution time, allowing users to balance solution quality against computational resources [8]. This capability is implemented through various metaheuristics including trajectory methods (e.g., tabu search) and population-based methods (e.g., genetic algorithms, particle swarm optimization, and ant colony optimization) [8] [9]. These algorithms are especially effective for problems where the search space is too large for exhaustive methods or where the objective function lacks nice mathematical properties like convexity or differentiability.

For drug development applications, stochastic methods can handle the complex, noisy, and multi-modal landscapes commonly encountered in molecular design and protein folding problems. Their ability to escape local optima through randomization makes them particularly suitable for these challenging domains where deterministic methods might become trapped in suboptimal regions.

Comparative Theoretical Framework

Table 1: Theoretical Guarantees of Optimization Methods

| Theoretical Aspect | Deterministic Optimization | Stochastic Optimization |
|---|---|---|
| Global Optima Guarantee | Guaranteed with theoretical proofs | Stochastic; approaches 100% probability only with infinite time |
| Execution Time | May be very long for medium/big problems; often unpredictable | Controllable based on user requirements and resource constraints |
| Problem Models | LP, IP, MLP, NMLP, MINLP with exploitable structures | Any model, including black-box and non-convex problems |
| Convergence Proofs | Based on mathematical programming theory | Rely on probability theory and convergence in expectation |
| Typical Algorithms | Branch-and-Bound, Cutting Plane, Outer Approximation, Interval Analysis | Genetic Algorithms, Particle Swarm Optimization, Simulated Annealing, Ant Colony |
| Required Problem Structure | Exploitable mathematical features (convexity, linearity) | No specific structure required; operates on evaluation only |

The relationship between execution time and solution quality represents the fundamental trade-off between these approaches. This relationship can be visualized through the following conceptual diagram:

Theoretical Trade-offs Between Deterministic and Stochastic Methods

Methodological Approaches and Experimental Protocols

Deterministic Method Implementation

Deterministic optimization methods follow systematic procedures that guarantee solution quality through mathematical rigor rather than random sampling. The branch and bound method, for instance, operates by recursively dividing the feasible region into smaller subproblems (branching) and calculating bounds on optimal solutions to prune suboptimal branches [9]. The algorithm maintains a global bound that progressively tightens as the search proceeds, eventually converging to the proven optimal solution.
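A compact way to see branching, bounding, and pruning together is the 0/1 knapsack problem. The sketch below is an illustrative assumption rather than an example from [9]: the bound is the fractional (LP-relaxation) value of the remaining items, and the item data are the usual textbook toy instance.

```python
def knapsack_branch_and_bound(values, weights, capacity):
    """Maximise total value subject to a weight limit (weights must be > 0).
    Returns the proven optimal value."""
    # Sort by value density so the fractional relaxation is a greedy fill.
    items = sorted(zip(values, weights), key=lambda vw: vw[0] / vw[1], reverse=True)
    best = 0  # incumbent: best feasible value found so far

    def upper_bound(i, value, room):
        """Optimistic bound: fill greedily, allowing one fractional item."""
        for v, w in items[i:]:
            if w <= room:
                room -= w
                value += v
            else:
                return value + v * room / w
        return value

    def branch(i, value, room):
        nonlocal best
        if value > best:
            best = value
        # Prune: this subtree cannot beat the incumbent.
        if i == len(items) or upper_bound(i, value, room) <= best:
            return
        v, w = items[i]
        if w <= room:
            branch(i + 1, value + v, room - w)  # branch 1: take item i
        branch(i + 1, value, room)              # branch 2: skip item i

    branch(0, 0, capacity)
    return best


print(knapsack_branch_and_bound([60, 100, 120], [10, 20, 30], 50))  # prints 220
```

The returned value is proven optimal because every discarded subtree had an upper bound no better than the incumbent. The worst-case running time is still exponential, which is the unpredictability noted for deterministic methods.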

Cutting plane methods employ a different strategy, iteratively refining the feasible set by adding linear inequalities that eliminate portions of the space while preserving optimal solutions [9]. These methods begin with a relaxation of the original problem, then sequentially add "cuts" that remove fractional solutions until an integer solution is obtained (for MILP problems) or until the optimal solution is identified.

Interval methods use interval arithmetic to maintain rigorous bounds on function values throughout the optimization process [9]. By representing numbers as intervals that are guaranteed to contain the true value, these methods can provide mathematically proven enclosures of global optima, making them particularly valuable for safety-critical applications where approximation errors are unacceptable.
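The enclosure idea can be illustrated with a toy interval type. This is a sketch only: the `Interval` class and helper names are hypothetical, and the code omits the outward rounding that real interval libraries use, so it is not rigorous in floating point. It bounds $f(x) = x^2 - 2x$ on $[0, 3]$, whose true range is $[-1, 3]$, and shows how splitting the domain tightens the lower bound.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Interval:
    lo: float
    hi: float

    def __add__(self, other):
        return Interval(self.lo + other.lo, self.hi + other.hi)

    def __sub__(self, other):
        return Interval(self.lo - other.hi, self.hi - other.lo)

    def __mul__(self, other):
        products = [self.lo * other.lo, self.lo * other.hi,
                    self.hi * other.lo, self.hi * other.hi]
        return Interval(min(products), max(products))


def f_enclosure(x):
    """Interval extension of f(x) = x*x - 2*x."""
    return x * x - Interval(2.0, 2.0) * x


def lower_bound_by_splitting(domain, pieces):
    """Split the domain into equal pieces; the smallest piecewise lower
    bound is still a valid lower bound on the global minimum."""
    width = (domain.hi - domain.lo) / pieces
    bounds = []
    for k in range(pieces):
        piece = Interval(domain.lo + k * width, domain.lo + (k + 1) * width)
        bounds.append(f_enclosure(piece).lo)
    return min(bounds)


domain = Interval(0.0, 3.0)
coarse = f_enclosure(domain)                   # Interval(lo=-6.0, hi=9.0)
tighter = lower_bound_by_splitting(domain, 2)  # -3.75
print(coarse, tighter)
```

The coarse enclosure is valid but loose. Bisection of this kind is what interval branch-and-bound uses to tighten bounds until the optimum is enclosed to the requested tolerance.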

For convex problems, deterministic methods can leverage the powerful property that any local optimum is necessarily global [10]. This enables highly efficient algorithms that terminate once any local optimum is found, dramatically reducing computation time for problems that satisfy convexity assumptions. The verification of convexity can be performed by checking if the Hessian matrix of the objective function is positive semidefinite throughout the feasible region [10].

Stochastic Algorithm Frameworks

Stochastic optimization methods employ randomized strategies to explore complex solution spaces. Genetic algorithms maintain a population of candidate solutions that undergo selection, crossover, and mutation operations inspired by biological evolution [8] [9]. These algorithms effectively explore multiple regions of the search space simultaneously, using fitness-based selection to progressively improve solution quality.
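The selection, crossover, and mutation loop can be sketched on bitstrings. The operator choices here (binary tournament selection, one-point crossover, bit-flip mutation, one elite survivor) and all parameter values are assumptions made for illustration; [8] and [9] do not prescribe them.

```python
import random


def tournament(pop, fitness, rng):
    """Binary tournament: pick two individuals at random, keep the fitter."""
    a, b = rng.sample(pop, 2)
    return a if fitness(a) >= fitness(b) else b


def genetic_algorithm(fitness, n_bits, pop_size=30, generations=60,
                      mutation_rate=0.02, seed=0):
    """Maximise `fitness` over bitstrings; returns (best, best-per-generation)."""
    rng = random.Random(seed)
    pop = [[rng.randint(0, 1) for _ in range(n_bits)] for _ in range(pop_size)]
    best = max(pop, key=fitness)
    history = [fitness(best)]
    for _ in range(generations):
        children = [best[:]]  # elitism: the current best always survives
        while len(children) < pop_size:
            p1 = tournament(pop, fitness, rng)
            p2 = tournament(pop, fitness, rng)
            cut = rng.randrange(1, n_bits)       # one-point crossover
            child = p1[:cut] + p2[cut:]
            child = [1 - g if rng.random() < mutation_rate else g
                     for g in child]             # bit-flip mutation
            children.append(child)
        pop = children
        best = max(pop, key=fitness)
        history.append(fitness(best))
    return best, history


# Toy objective (assumed): count of ones in the string ("OneMax").
best, history = genetic_algorithm(sum, n_bits=20)
print(history[0], history[-1])
```

Because of elitism the best fitness never decreases between generations, but nothing certifies that the final string is the global optimum.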

Simulated annealing mimics the physical process of annealing in metallurgy, where a material is heated and slowly cooled to reduce defects [9]. The algorithm employs a temperature parameter that controls the probability of accepting worse solutions, allowing escape from local optima in early stages while converging toward better solutions as the "temperature" decreases.
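The temperature-controlled acceptance rule can be written compactly. The sketch below assumes a one-dimensional objective, a uniform proposal of half-width `step`, the Metropolis acceptance probability $\exp(-\Delta f / T)$, and a geometric cooling schedule; these are common textbook choices rather than details taken from [9].

```python
import math
import random


def simulated_annealing(f, x0, step=0.5, t0=5.0, cooling=0.995,
                        iters=5000, seed=0):
    """Minimise a 1-D function; returns the best point seen and its value."""
    rng = random.Random(seed)
    x, fx = x0, f(x0)
    best_x, best_f = x, fx
    t = t0
    for _ in range(iters):
        cand = x + rng.uniform(-step, step)
        fc = f(cand)
        # Always accept improvements; accept a worse move with
        # probability exp(-(fc - fx) / t), which shrinks as t cools.
        if fc <= fx or rng.random() < math.exp((fx - fc) / t):
            x, fx = cand, fc
            if fx < best_f:
                best_x, best_f = x, fx
        t *= cooling  # geometric cooling schedule
    return best_x, best_f


def bumpy(x):
    """Multimodal toy objective (assumed for illustration)."""
    return x * x + 10 * math.sin(3 * x)


start = 4.0
best_x, best_f = simulated_annealing(bumpy, start)
print(best_x, best_f)
```

Geometric cooling is a practical heuristic. The asymptotic convergence results for simulated annealing rely on much slower schedules, so a run like this carries no optimality guarantee.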

Particle swarm optimization coordinates a population of particles that move through the search space, with each particle adjusting its trajectory based on its own experience and the experiences of neighboring particles [8]. This social behavior enables efficient information sharing across the population, often leading to rapid discovery of promising regions in the search space.
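The velocity update that blends a particle's own best position with the swarm's best can be sketched as follows. This version assumes a global-best topology (every particle is a neighbour of every other), position clamping at the bounds, and typical inertia and acceleration coefficients; all names and values are illustrative assumptions.

```python
import random


def particle_swarm(f, bounds, n_particles=20, iters=100,
                   inertia=0.7, c_personal=1.5, c_social=1.5, seed=0):
    """Minimise f over a box; returns (best position, best value)."""
    rng = random.Random(seed)
    dim = len(bounds)
    pos = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(n_particles)]
    vel = [[0.0] * dim for _ in range(n_particles)]
    pbest = [p[:] for p in pos]               # each particle's own best position
    pbest_f = [f(p) for p in pos]
    g = min(range(n_particles), key=lambda i: pbest_f[i])
    gbest, gbest_f = pbest[g][:], pbest_f[g]  # swarm-wide best
    for _ in range(iters):
        for i in range(n_particles):
            for d in range(dim):
                r1, r2 = rng.random(), rng.random()
                vel[i][d] = (inertia * vel[i][d]
                             + c_personal * r1 * (pbest[i][d] - pos[i][d])
                             + c_social * r2 * (gbest[d] - pos[i][d]))
                lo, hi = bounds[d]
                pos[i][d] = min(max(pos[i][d] + vel[i][d], lo), hi)
            fi = f(pos[i])
            if fi < pbest_f[i]:
                pbest[i], pbest_f[i] = pos[i][:], fi
                if fi < gbest_f:
                    gbest, gbest_f = pos[i][:], fi
    return gbest, gbest_f


def sphere(x):
    """Smooth toy objective with its minimum of 0 at the origin."""
    return sum(xi * xi for xi in x)


box = [(-5.0, 5.0)] * 2
gbest, gbest_f = particle_swarm(sphere, box)
print(gbest, gbest_f)
```

Restricting the social term to a local neighbourhood instead of the whole swarm is a common variant that slows information sharing in exchange for more exploration.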

The theoretical foundation for many stochastic methods lies in Markov chain theory, which ensures that under appropriate conditions (such as careful cooling schedules in simulated annealing), the probability distribution of solutions will converge to a distribution concentrated on global optima given sufficient time [9]. While this asymptotic guarantee doesn't ensure finite-time performance, it provides the mathematical foundation for the method's global optimization properties.

Experimental Comparison Methodology

Rigorous comparison between optimization approaches requires standardized evaluation protocols. For the activated sludge process optimization studied by [1], researchers implemented both deterministic (sequential quadratic programming) and stochastic (genetic algorithms, simulated annealing) methods on the same non-linear constrained problem. Performance was evaluated using multiple criteria: solution quality (objective function value), computational effort (function evaluations and CPU time), constraint satisfaction, and reliability across multiple runs.

In COVID-19 control optimization [11], deterministic and stochastic formulations were compared using compartmental models parameterized with real-world data from Algeria. The stochastic model incorporated white noise perturbations to account for uncertainties in disease transmission dynamics. Both approaches were evaluated based on their ability to minimize infection rates while considering control costs, with additional analysis of the stochastic method's performance across multiple realizations.
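The difference between the two formulations can be illustrated with a toy SIR model in which the transmission term is optionally perturbed by white noise. This is a crude sketch, not the model or the parameters of [11]: the compartment structure, the Euler and Euler-Maruyama stepping, the clipping that keeps compartments non-negative, and every numeric value are assumptions.

```python
import math
import random


def simulate_sir(beta, gamma, s0, i0, days, dt=0.1, noise=0.0, seed=0):
    """Simulate susceptible/infected fractions.
    noise=0 gives the deterministic model; noise>0 adds a white-noise
    perturbation to transmission (Euler-Maruyama step, clipped)."""
    rng = random.Random(seed)
    s, i = s0, i0
    peak = i
    for _ in range(int(days / dt)):
        dw = rng.gauss(0.0, math.sqrt(dt))        # Brownian increment
        infection = beta * s * i * dt + noise * s * i * dw
        infection = min(max(infection, 0.0), s)   # keep s non-negative
        recovery = min(gamma * i * dt, i + infection)
        s -= infection
        i += infection - recovery
        peak = max(peak, i)
    return s, i, peak


params = dict(beta=0.3, gamma=0.1, s0=0.99, i0=0.01, days=160)

# The deterministic model yields one trajectory, whatever the seed.
det_peak = simulate_sir(**params)[2]

# The stochastic model yields a distribution of outcomes across realisations.
peaks = [simulate_sir(**params, noise=0.05, seed=k)[2] for k in range(20)]
print(det_peak, min(peaks), max(peaks))
```

A control policy tuned only against the single deterministic trajectory is evaluated on one scenario, whereas the spread of peaks across realisations is what a stochastic formulation optimises against.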

For protein structure prediction [9], a classic global optimization challenge, researchers have employed both deterministic (branch-and-bound with interval analysis) and stochastic (replica exchange molecular dynamics) approaches. Performance metrics included energy minimization, structural accuracy compared to experimental data, and computational requirements, revealing the complementary strengths of both methodologies for different molecular systems.

Table 2: Experimental Protocol for Method Comparison

| Evaluation Metric | Measurement Method | Application Context |
|---|---|---|
| Solution Quality | Deviation from known optimum or best-found solution | All benchmark problems |
| Computational Effort | CPU time, function evaluations, memory usage | Activated sludge process [1] |
| Reliability | Success rate across multiple runs or initial conditions | Non-linear constraint satisfaction |
| Constraint Handling | Degree of constraint violation or feasibility maintenance | Engineering design problems |
| Uncertainty Quantification | Sensitivity to parameter variations and noise | COVID-19 control [11] |
| Scalability | Performance degradation with problem size | Molecular docking studies |
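Two of the metrics in the table, solution quality and reliability across runs, are straightforward to compute once an optimizer is wrapped in a repeatable harness. The sketch below is a hypothetical harness, not the protocol of [1] or [11]: random search stands in for any stochastic optimizer, and the success tolerance, run count, and test function are assumptions.

```python
import random
import statistics


def random_search(f, lo, hi, budget, rng):
    """Stand-in stochastic optimizer: best value among `budget` samples."""
    return min(f(rng.uniform(lo, hi)) for _ in range(budget))


def benchmark(f, known_optimum, lo, hi, budget, runs=30, tol=1e-2):
    """Repeat the optimizer over independent seeds and summarise
    solution quality (gap to the known optimum) and reliability."""
    gaps = []
    for seed in range(runs):
        best = random_search(f, lo, hi, budget, random.Random(seed))
        gaps.append(best - known_optimum)
    return {
        "success_rate": sum(g <= tol for g in gaps) / runs,
        "mean_gap": statistics.mean(gaps),
        "worst_gap": max(gaps),
        "evaluations_per_run": budget,
    }


def shifted_parabola(x):
    """Toy objective with known optimum 0 at x = 1."""
    return (x - 1.0) ** 2


small = benchmark(shifted_parabola, 0.0, -5.0, 5.0, budget=50)
large = benchmark(shifted_parabola, 0.0, -5.0, 5.0, budget=500)
print(small)
print(large)
```

Reporting the success rate and the worst-case gap alongside the mean matters for stochastic methods, since a single favourable run says little about reliability.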

Performance Analysis and Comparative Data

Quantitative Performance Comparison

Empirical studies reveal distinct performance patterns between deterministic and stochastic approaches. In the integrated design of processes using dynamical non-linear models, [1] conducted a systematic comparison showing that while deterministic methods (sequential quadratic programming) found higher-precision solutions for tractable problems, stochastic methods (genetic algorithms, simulated annealing) demonstrated superior performance on complex non-convex problems with multiple constraints. Most significantly, a hybrid methodology combining both approaches achieved the best overall performance, leveraging the precision of deterministic methods with the global exploration capability of stochastic approaches.

For epidemic control optimization, [11] demonstrated that stochastic formulations provided more robust policies under uncertainty compared to their deterministic counterparts. The deterministic optimal control solutions, while mathematically elegant, showed significant performance degradation when applied to realistic scenarios with noisy data and unpredictable transmission dynamics. In contrast, stochastic optimization produced solutions that maintained effectiveness across a wider range of possible scenarios, albeit at higher computational cost.

In molecular simulations, [9] notes that stochastic methods like parallel tempering have become the dominant approach for protein folding and structure prediction, despite the existence of deterministic alternatives. This preference stems from the ability of stochastic methods to navigate the extremely complex energy landscapes of biomolecules, where deterministic methods often become trapped in local minima corresponding to misfolded structures.

Time-Quality Trade-off Analysis

The fundamental trade-off between computation time and solution quality follows different patterns for deterministic and stochastic methods. Deterministic algorithms often exhibit asymptotic convergence - they may show limited progress initially followed by rapid convergence once the algorithm identifies the optimal region [8]. The computation time for these methods depends critically on problem structure rather than just size, with some problems solved efficiently while others require practically infinite time.

Stochastic methods typically demonstrate diminishing returns - rapid improvement in early iterations followed by progressively slower refinement [8]. This characteristic enables practical implementation where users can terminate the search once acceptable quality is achieved, rather than waiting for guaranteed convergence. The following diagram illustrates this fundamental difference in convergence behavior:

Comparative Convergence Patterns Between Method Classes

Application-Specific Performance

The performance characteristics of optimization methods vary significantly across application domains. In process engineering design problems studied by [1], deterministic methods excelled for problems with well-defined mathematical structure and convex properties, while stochastic methods proved more effective for highly constrained, non-convex problems with discontinuous design spaces.

For epidemiological control strategies [11], stochastic optimization demonstrated superior performance in handling the inherent uncertainties in disease transmission parameters and intervention effectiveness. The deterministic approaches produced solutions that were optimal in a theoretical sense but fragile when applied to real-world scenarios with noisy data and unpredictable human behavior.

In molecular modeling and drug design [9], stochastic global optimization methods have become the standard for protein folding and molecular docking problems due to their ability to navigate complex energy landscapes with numerous local minima. While deterministic methods provide guarantees for certain simplified molecular models, they typically cannot handle the full complexity of biomolecular systems.

Table 3: Application-Based Performance Comparison

| Application Domain | Deterministic Strength | Stochastic Strength | Hybrid Approach |
|---|---|---|---|
| Process Engineering | High precision for structured problems | Handles non-convex constraints | Sequential: stochastic exploration then deterministic refinement |
| Epidemiological Control | Mathematical elegance for simplified models | Robustness to uncertainty and noise | Stochastic with deterministic subproblems |
| Drug Discovery | Guarantees for simplified molecular models | Navigates complex energy landscapes | Parallel: both methods with solution exchange |
| Protein Folding | Limited to small or coarse-grained systems | Handles full atomic complexity | Multi-scale: stochastic at atomic, deterministic at residue level |
| Clinical Trial Design | Optimal for simplified patient models | Accommodates real-world variability | Stochastic optimization with deterministic constraints |

Implementation Framework and Research Toolkit

Selection Guidelines for Research Applications

Choosing between deterministic and stochastic optimization requires careful analysis of problem characteristics and research constraints. Researchers should consider these key factors:

Solution Quality Requirements: Applications demanding mathematically proven optima (safety-critical systems, regulatory submissions) favor deterministic approaches, while scenarios where good-enough solutions suffice (preliminary screening, exploratory research) can utilize stochastic methods [8] [10].

Problem Structure: Problems with convex properties, linear constraints, and exploitable mathematical structure are ideal for deterministic methods, while black-box problems, non-convex landscapes, and systems with numerous local optima warrant stochastic approaches [10] [9].

Computational Budget: Limited computational resources or strict time constraints often favor stochastic methods with their controllable execution times, while problems where computation time is secondary to solution quality may justify deterministic approaches [8].

Uncertainty Considerations: Problems with significant parameter uncertainty, noisy evaluations, or stochastic dynamics align with stochastic optimization frameworks, while deterministic problems with precise parameters suit deterministic methods [11] [12].

Implementation Complexity: Deterministic methods often require specialized mathematical expertise to formulate problems appropriately, while stochastic methods can be more straightforward to implement for complex, poorly understood systems [9].

Computational Research Toolkit

Implementing optimization strategies requires both theoretical understanding and practical tools. The following research toolkit provides essential components for developing optimization workflows:

Table 4: Research Reagent Solutions for Optimization Implementation

| Research Reagent | Function | Implementation Examples |
|---|---|---|
| Convexity Verification | Determines if local optima are global | Hessian matrix positive semidefiniteness check [10] |
| Branch-and-Bound Framework | Provides deterministic global optimization | Integer programming, spatial branching for NLP |
| Interval Arithmetic Library | Enables rigorous bound computation | Verified constraint propagation, uncertainty quantification |
| Metaheuristic Template | Implements stochastic search strategies | Genetic algorithm, particle swarm, simulated annealing [9] |
| Hybrid Coordination Algorithm | Manages deterministic-stochastic interaction | Solution passing, search space decomposition, multi-start |
| Performance Profiling | Tracks time-quality tradeoffs | Convergence monitoring, solution quality assessment |

Workflow Integration Strategy

Successful integration of optimization methods requires systematic workflow design. The following diagram illustrates a decision framework for method selection and implementation:

Optimization Method Selection Decision Framework

The theoretical guarantees of deterministic and stochastic optimization methods present researchers with a fundamental trade-off between solution quality certainty and computational practicality. Deterministic methods provide unmatched guarantees of global optimality but often require impractical computation times for complex real-world problems. Stochastic methods offer controllable execution and robust performance across diverse problem structures but cannot provide mathematical certainty of global optimality [8].

This comparison reveals that method selection is highly application-dependent. For drug development applications, stochastic methods frequently excel in early-stage discovery where problem complexity is high and good-enough solutions enable rapid iteration, while deterministic approaches may prove valuable for later-stage optimization problems with well-characterized structure and validated models. The emerging trend toward hybrid methodologies [1] offers promising avenues for leveraging the strengths of both approaches, using stochastic methods for global exploration and deterministic techniques for local refinement.

As optimization challenges in pharmaceutical research continue to grow in scale and complexity, understanding these fundamental trade-offs becomes increasingly critical. Researchers must balance theoretical guarantees against practical constraints, selecting methods that align with their specific quality requirements, computational resources, and application contexts. By applying the systematic comparison and implementation frameworks presented here, scientists can make informed decisions that advance both methodological rigor and practical impact in drug discovery and development.

In the field of mathematical optimization, researchers and practitioners are frequently confronted with a fundamental choice: whether to employ deterministic models, which produce precisely reproducible results from a fixed set of inputs, or stochastic models, which explicitly incorporate randomness and uncertainty to generate a distribution of possible outcomes [13] [14]. This distinction forms a critical axis in the broader thesis of optimization methodology, with profound implications for applications ranging from pharmaceutical development to energy systems engineering [15] [16]. The selection between these approaches hinges on multiple factors, including problem structure, data availability, computational resources, and the inherent uncertainty present in the system being modeled [14].

Deterministic approaches, including Linear Programming (LP), Integer Programming (IP), and Nonlinear Programming (NLP), have historically dominated optimization practice due to their conceptual clarity and computational efficiency [16] [17]. These methods assume perfect knowledge of all system parameters and establish clear cause-and-effect relationships between inputs and outputs [14]. In contrast, stochastic models embrace the inherent randomness of real-world systems, making them particularly valuable for modeling biological processes, financial markets, and other domains where uncertainty cannot be ignored [15] [13]. As optimization problems grow increasingly complex and high-dimensional, the rigid dichotomy between deterministic and stochastic paradigms is giving way to sophisticated hybrid approaches that leverage the strengths of both methodologies [18] [19].

This guide systematically compares the suitability of major optimization model classes (LP, IP, NLP, and black-box methods) across diverse problem landscapes, with particular attention to their application in scientific domains such as drug development. Drawing on published experimental data, detailed methodologies, and structured analysis, we provide researchers with a framework for selecting appropriate modeling approaches based on problem characteristics and performance requirements.

Conceptual Foundations: Deterministic vs. Stochastic Paradigms

Core Definitions and Characteristics

Deterministic models operate on the principle that system behavior is fully determined by parameter values and initial conditions, without incorporating random variation [15] [14]. In these models, identical inputs will always produce identical outputs, establishing a transparent cause-and-effect relationship that facilitates straightforward interpretation and implementation [14]. Mathematical representations typically take the form of ordinary differential equations (ODEs) or algebraic constraint systems, where the trajectory of model components is precisely fixed once initial conditions are specified [15].

Stochastic models intentionally incorporate randomness as an inherent feature of system dynamics [15] [13]. These approaches recognize that many real-world processes—particularly in biological and economic systems—are influenced by random events that can profoundly impact outcomes, especially when population sizes are small [15]. Unlike their deterministic counterparts, stochastic models with identical parameters and initial conditions can produce an ensemble of different outputs, requiring probabilistic rather than deterministic interpretation [13] [14].

Table 1: Fundamental Characteristics of Deterministic vs. Stochastic Models

| Characteristic | Deterministic Models | Stochastic Models |
|---|---|---|
| Output Nature | Unique, precisely determined result | Distribution of possible outcomes |
| Uncertainty Handling | Assumes perfect knowledge | Explicitly incorporates randomness |
| Computational Demand | Generally lower | Typically higher due to sampling needs |
| Data Requirements | Less data intensive | Requires extensive data for distribution estimation |
| Interpretability | Straightforward cause-effect relationships | Probabilistic, requires statistical literacy |
| Ideal Application Domain | Well-defined systems with minimal uncertainty | Complex systems with inherent randomness |

Mathematical Formulations

The mathematical representation of deterministic models for chemical process optimization often takes the form of NLP problems [16]:

Minimize: ( f(x) ) Subject to: ( g(x) \leq 0 ), ( h(x) = 0 ), ( x \in \mathbb{R}^n )

Where ( f(x) ) represents the objective function (e.g., economic performance), while ( g(x) ) and ( h(x) ) represent inequality and equality constraints governing system behavior.

In contrast, stochastic models frequently employ master equations to describe the time evolution of probability distributions [15]. For a simple birth-death process representing cell population dynamics, the master equation takes the form:

( \frac{dP_n(t)}{dt} = \beta \cdot (n-1) \cdot P_{n-1}(t) + \delta \cdot (n+1) \cdot P_{n+1}(t) - (\beta + \delta) \cdot n \cdot P_n(t) \quad \text{for } n \geq 1 )

Where ( P_n(t) ) represents the probability of population size ( n ) at time ( t ), with ( \beta ) and ( \delta ) denoting birth and death rates, respectively [15].
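As a minimal sketch of how this master equation can be evaluated numerically, the following Python snippet integrates it with a forward-Euler scheme on a truncated state space. The truncation level `n_max`, the step size `dt`, the parameter values, and the function names are illustrative assumptions rather than anything specified in [15]; births out of the highest state are switched off so that total probability is conserved on the truncated grid.

```python
import math

def master_rhs(p, beta, delta):
    """Right-hand side dP_n/dt of the birth-death master equation for n = 0..n_max."""
    n_max = len(p) - 1
    dp = []
    for n in range(n_max + 1):
        gain_birth = beta * (n - 1) * p[n - 1] if n >= 1 else 0.0
        gain_death = delta * (n + 1) * p[n + 1] if n < n_max else 0.0
        # Assumption: no births out of the top state, so the truncated
        # system conserves total probability.
        birth_out = beta * n if n < n_max else 0.0
        loss = (birth_out + delta * n) * p[n]
        dp.append(gain_birth + gain_death - loss)
    return dp

def integrate_master(n0, beta, delta, t_end, n_max=200, dt=1e-3):
    """Forward-Euler integration starting from exactly n0 individuals."""
    p = [0.0] * (n_max + 1)
    p[n0] = 1.0
    for _ in range(int(round(t_end / dt))):
        dp = master_rhs(p, beta, delta)
        p = [p_n + dt * dp_n for p_n, dp_n in zip(p, dp)]
    return p

p = integrate_master(n0=5, beta=1.0, delta=0.5, t_end=1.0)
mean_n = sum(n * p_n for n, p_n in enumerate(p))
print(f"total probability: {sum(p):.6f}")
print(f"mean population:   {mean_n:.3f}")
print(f"deterministic ODE: {5 * math.exp(0.5):.3f}")
```

For this linear birth-death process the mean of the distribution follows the deterministic growth law ( n_0 e^{(\beta - \delta) t} ), so the two printed values should nearly coincide; what the master equation adds is the spread around that mean, including the probability of extinction ( P_0(t) ).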

Mathematical Programming Approaches: LP, IP, and NLP

Algorithmic Landscape and Solver Technologies

Deterministic optimization encompasses a hierarchy of mathematical programming approaches, with Linear Programming (LP), Integer Programming (IP), and Nonlinear Programming (NLP) representing progressively more complex model classes [17]. Modern solver technologies such as Artelys Knitro implement multiple algorithm classes for addressing these problem types, including interior-point methods, active-set methods, and sequential quadratic programming (SQP) [17].

Table 2: Performance Characteristics of NLP Algorithms in Knitro Solver

| Algorithm Type | Problem Scale | Derivative Requirements | Strengths | Weaknesses |
|---|---|---|---|---|
| Interior/Direct | Large-scale (sparse) | Explicit Hessian matrix | Handles ill-conditioned problems; works with degenerate constraints | Requires explicit Hessian storage |
| Interior/CG | Large-scale (sparse/dense) | Hessian-vector products | Avoids Hessian formation/factorization; suitable for large problems | May require excessive CG iterations |
| Active Set | Small-medium scale | Explicit Hessian matrix | Efficient warm-starting; rapid infeasibility detection | Less efficient for large-scale problems |
| SQP | Small scale with expensive evaluations | Explicit Hessian matrix | Fewest function evaluations; handles expensive simulations | High per-iteration cost |
| Augmented Lagrangian | Small-large scale with degenerate constraints | Various options | Handles constraint degeneracy; works when LICQ fails | May require solving multiple subproblems |

The interior-point methods implemented in Knitro replace the original constrained problem with a series of barrier subproblems controlled by a barrier parameter, solving each through direct linear algebra (Interior/Direct) or conjugate gradient approaches (Interior/CG) [17]. Active-set methods follow a fundamentally different strategy, solving a sequence of quadratic programming subproblems while progressively identifying active constraints [17]. The SQP method also solves a sequence of QP subproblems but is primarily designed for small to medium-scale problems with expensive function evaluations [17].
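The barrier idea can be illustrated on a one-dimensional toy problem. The sketch below is not Knitro's implementation; it is a hand-written log-barrier loop, under the assumption of a hypothetical problem (minimize ( (x-2)^2 ) subject to ( x \leq 1 )), that solves each barrier subproblem with damped Newton steps and then shrinks the barrier parameter.

```python
import math

def barrier_minimize(mu_values, x0=0.0, newton_iters=50, tol=1e-12):
    """Minimize (x - 2)^2 subject to x <= 1 through a sequence of
    log-barrier subproblems: (x - 2)^2 - mu * log(1 - x)."""
    x = x0
    path = []
    for mu in mu_values:
        for _ in range(newton_iters):
            slack = 1.0 - x                        # stays strictly positive
            grad = 2.0 * (x - 2.0) + mu / slack    # first derivative
            hess = 2.0 + mu / slack ** 2           # second derivative (> 0)
            step = -grad / hess
            while x + step >= 1.0:                 # damp to remain feasible
                step *= 0.5
            x += step
            if abs(step) < tol:
                break
        path.append((mu, x))                       # warm-start the next mu
    return x, path

mu_schedule = [10.0 ** (-k) for k in range(9)]     # 1, 0.1, ..., 1e-8
x_star, path = barrier_minimize(mu_schedule)
for mu, x in path:
    print(f"mu = {mu:.0e}  x = {x:.9f}")
```

As the barrier parameter decreases, the subproblem minimizers approach the constrained optimum ( x = 1 ) from the interior of the feasible region, which is the behavior the interior-point description above relies on. Production solvers add many refinements (adaptive barrier updates, line searches, handling of equality constraints) that this sketch omits.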

Experimental Comparison: NLP vs. MILP Formulations

A comprehensive evaluation comparing NLP and Mixed-Integer Linear Programming (MILP) formulations for Organic Rankine Cycle (ORC) systems provides insightful performance data [16]. The experimental protocol involved modeling four different ORC configurations using MATLAB R2017a with OPTI Toolbox v2.27 on a Windows laptop with a 3.1 GHz Intel Core i5 processor, with solvers selected based on academic availability and compatibility [16].

Table 3: Performance Comparison of NLP vs. MILP Formulations for ORC Systems

| Formulation Type | Number of Variables | Number of Constraints | Solution Time | Convergence Behavior |
|---|---|---|---|---|
| NLP Formulations | Fewer variables | Fewer constraints | Faster (all solvers <13s) | All solvers converged to feasible solutions |
| MILP Formulations | Significantly more variables | Significantly more constraints | Slower (~1s to ~2200s) | Mixed convergence results |

The results demonstrated that NLP formulations coupled with state-of-the-art solvers (IPOPT, SNOPT, KNITRO) significantly outperformed MILP approaches in computational efficiency, with all NLP solvers converging to feasible solutions in under 13 seconds while MILP solvers exhibited highly variable solution times ranging from approximately 1 second to 2200 seconds [16]. This performance advantage was attributed to the availability of exact derivatives—particularly second derivatives—in NLP formulations, allowing more efficient navigation of the solution space [16]. The experimental findings challenge the conventional wisdom that linearized formulations necessarily yield computational advantages, suggesting that with modern NLP solvers, certain problem classes are more efficiently solved directly as NLPs rather than through linearization and integer reformulation [16].

Black-Box Optimization and Complex Landscapes

Derivative-Free Optimization and Stochastic Global Search

Many real-world optimization problems present challenges that render derivative-based approaches ineffective, including non-convex landscapes, discontinuous functions, or computationally expensive evaluations where gradient information is unavailable [20] [21]. These "black-box" optimization scenarios necessitate specialized approaches that do not rely on derivative information, instead employing strategic sampling of the objective function to navigate complex solution spaces [20].

Black-box optimization algorithms fall into two broad categories: deterministic derivative-free methods and stochastic global search algorithms [20]. Deterministic approaches include pattern search, mesh adaptive direct search, and model-based methods that systematically explore the parameter space without randomness [20]. Stochastic approaches encompass evolutionary strategies, particle swarm optimization, ant colony optimization, and other population-based metaheuristics inspired by natural systems [22] [20].

A recent comprehensive benchmarking study evaluated 25 state-of-the-art algorithms from both classes on problems with up to 20 dimensions and large evaluation budgets (10⁵×n function evaluations) [20]. The findings revealed significant performance variation across problem classes, with no single algorithm dominating all others, highlighting the importance of algorithm selection based on specific problem characteristics [20].

Case Study: High-Dimensional Stochastic Order Allocation

Supply chain optimization under uncertainty presents particularly challenging black-box optimization problems characterized by high dimensionality and complex constraints [19]. A recent study addressed the stochastic order allocation problem, where orders must be assigned to parallel machines with varying efficiencies under conditions of uncertain demand, with the goal of maximizing expected profit while considering potential order cancellations [19].

The mathematical model for this high-dimensional stochastic optimization problem incorporates scenario-based reasoning, where each scenario ( s ) represents a possible realization of order demands [19]. The probability of scenario ( s ) is given by:

( \pi^s = \prod_i \left( y_i^s \cdot p_i + (1 - y_i^s)(1 - p_i) \right) )

Where ( y_i^s ) indicates whether order ( i ) is demanded in scenario ( s ), and ( p_i ) represents the marginal probability that order ( i ) will be selected for processing [19].
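A small worked example may make the product form concrete. The snippet below enumerates every scenario for three orders and evaluates the scenario probability as the product over orders of ( y_i p_i + (1 - y_i)(1 - p_i) ); the probability values and function name are hypothetical, and the product form implicitly treats the orders as independent.

```python
from itertools import product
from math import prod

def scenario_probability(demanded, p):
    """Probability of one scenario: product over orders of
    y_i * p_i + (1 - y_i) * (1 - p_i), with y_i in {0, 1}."""
    return prod(y * p_i + (1 - y) * (1 - p_i) for y, p_i in zip(demanded, p))

p = [0.9, 0.5, 0.2]                                # hypothetical marginal probabilities
scenarios = list(product([0, 1], repeat=len(p)))   # all 2^3 demand patterns
probs = {s: scenario_probability(s, p) for s in scenarios}

for s, value in probs.items():
    print(s, round(value, 4))
print("sum over scenarios:", round(sum(probs.values()), 10))
```

The scenario probabilities sum to one, and the number of scenarios doubles with every additional order, which is why exhaustive enumeration becomes intractable and scenario generation is needed for realistic instance sizes.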

To address this challenging problem, researchers developed a Modified Adaptive Variable Neighborhood Search (MAVNS) algorithm combined with scenario generation (MAVNS-SG) [19]. The experimental protocol evaluated the algorithm on problems with varying numbers of orders and machines, comparing performance against traditional Monte Carlo Simulation approaches [19]. The MAVNS-SG algorithm demonstrated superior optimization performance and computational efficiency, effectively handling the high-dimensional stochastic variables that render exact methods intractable [19].

Emerging Approaches: Large Language Models in Optimization

Recent investigations have explored the potential of Large Language Models (LLMs) as black-box optimizers, with systematic evaluations assessing their capabilities across diverse optimization scenarios [21]. The experimental protocol employed a progressive evaluation framework testing LLMs on both discrete and continuous optimization problems, examining fundamental properties including numerical value understanding, multidimensional data handling, scalability, and exploration-exploitation balance [21].

Findings revealed that LLMs currently demonstrate limited effectiveness for pure numerical optimization tasks, struggling with floating-point precision, multidimensional vector manipulation, and maintaining appropriate exploration-exploitation balance [21]. However, researchers identified specific scenarios where LLMs offer distinct advantages, particularly in problems where they can leverage contextual information from prompts to generate effective heuristics without explicit programming [21]. This suggests a promising role for LLMs in optimization domains extending beyond traditional numerical problems, such as prompt engineering and code generation [21].

Hybrid Stochastic-Deterministic Methods

Algorithmic Frameworks and Integration Strategies

Recognition of the complementary strengths of stochastic and deterministic approaches has motivated development of hybrid algorithms that strategically combine both methodologies [18]. These hybrids typically employ stochastic methods for global exploration of the solution space, leveraging their ability to escape local optima, while applying deterministic approaches for local refinement, exploiting their rapid convergence properties [18].

A representative example from electrochemical impedance spectroscopy demonstrates the hybrid paradigm combining three stochastic algorithms—Genetic Algorithms (GA), Particle Swarm Optimization (PSO), and Simulated Annealing (SA)—with the deterministic Nelder-Mead (NM) algorithm [18]. In this implementation, the stochastic component performs broad global search to identify promising regions of the solution space, whose outputs then serve as initial values for deterministic local refinement [18].

The experimental protocol evaluated these hybrid methods (GA-NM, PS-NM, SA-NM) on mathematical test functions and Proton Exchange Membrane Fuel Cell (PEMFC) impedance data using equivalent electrical circuit models of varying complexity [18]. Performance metrics included stability, efficiency, solution quality, and computational resource requirements, with all hybrid methods demonstrating improved interpretation of experimental data compared to standalone stochastic or deterministic approaches [18].
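The two-stage structure can be sketched in a few lines of Python. This is not the GA-NM, PS-NM, or SA-NM code from [18]: to keep the example short and dependency-free, uniform random sampling stands in for the stochastic global stage and a compass (pattern) search stands in for Nelder-Mead as the deterministic local stage. The double-well objective, bounds, and sample count are hypothetical.

```python
import random

def objective(x, y):
    """Hypothetical double-well function: global minimum near x = -1.04,
    a poorer local minimum near x = +0.96."""
    return (x * x - 1.0) ** 2 + y * y + 0.3 * x

def random_search(f, bounds, n_samples, seed):
    """Stochastic stage: sample the box uniformly and keep the best point."""
    rng = random.Random(seed)
    best_point, best_value = None, float("inf")
    for _ in range(n_samples):
        point = [rng.uniform(lo, hi) for lo, hi in bounds]
        value = f(*point)
        if value < best_value:
            best_point, best_value = point, value
    return best_point, best_value

def compass_search(f, start, step=0.5, tol=1e-7):
    """Deterministic stage: poll +/- step along each axis and halve the
    step whenever no poll point improves the current value."""
    point, value = list(start), f(*start)
    while step > tol:
        improved = False
        for i in range(len(point)):
            for direction in (1.0, -1.0):
                trial = list(point)
                trial[i] += direction * step
                trial_value = f(*trial)
                if trial_value < value:
                    point, value, improved = trial, trial_value, True
        if not improved:
            step *= 0.5
    return point, value

bounds = [(-2.0, 2.0), (-2.0, 2.0)]
coarse_point, coarse_value = random_search(objective, bounds, n_samples=300, seed=0)
refined_point, refined_value = compass_search(objective, coarse_point)
print("after global stage:", coarse_point, coarse_value)
print("after local stage: ", refined_point, refined_value)
```

The global stage only needs to land in the right basin; the local stage then supplies the precision. That division of labor is the reason the hybrids are reported to be less sensitive to initial values than a purely deterministic fit.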

Performance Analysis and Application Guidelines

The comparative analysis of hybrid algorithms yielded specific application guidelines based on problem characteristics [18]. For problems with unknown parameter orders of magnitude, the PS-NM (Particle Swarm-Nelder-Mead) and GA-NM (Genetic Algorithm-Nelder-Mead) hybrids demonstrated superior performance, effectively exploring the solution space before refinement [18]. For problems with approximately known parameter ranges, the SA-NM (Simulated Annealing-Nelder-Mead) approach proved most effective, efficiently leveraging prior knowledge for accelerated convergence [18].

All hybrid methods shared the common advantage of reduced sensitivity to initial conditions while accelerating convergence compared to purely stochastic approaches, achieving lower least-square residuals with physically meaningful solutions [18]. This robust performance across diverse problem instances highlights the value of hybrid frameworks for complex optimization challenges in scientific domains.

Table 4: Hybrid Algorithm Selection Guidelines Based on Problem Characteristics

| Problem Characteristics | Recommended Hybrid | Performance Advantages | Application Context |
|---|---|---|---|
| Unknown parameter orders of magnitude | PS-NM or GA-NM | Effective global exploration | Broad search domains with limited prior knowledge |
| Approximately known parameter ranges | SA-NM | Efficient convergence with prior information | Parameter estimation with approximate initial guesses |
| High-dimensional complex landscapes | GA-NM | Effective navigation of multimodal spaces | Molecular docking, protein folding |
| Computationally expensive evaluations | SA-NM | Fewer function evaluations to convergence | Complex simulation-based optimization |

Application in Drug Discovery and Development

Stochastic Approaches in Pharmacometrics

The model-informed drug discovery and development (MID3) paradigm has established itself as a cornerstone of modern pharmaceutical research, integrating diverse modeling strategies including population pharmacokinetics/pharmacodynamics (PK/PD) and systems biology [15]. While nonlinear mixed-effect modeling represents the current methodological standard for characterizing PK/PD data across individuals, stochastic approaches offer particular value when modeling small populations where random events can profoundly impact system behavior [15].

In oncological applications, stochastic models effectively capture critical phenomena including mutation acquisition leading to cancerous cells or drug resistance, patient withdrawal from clinical trials, and initial transmission dynamics of infectious diseases [15]. These random events significantly influence disease progression and treatment effects, particularly in small populations, and ignoring such stochasticity can bias parameter estimation and subsequent conclusions [15].

The mathematical framework for stochastic pharmacometric modeling typically employs master equations or stochastic simulation algorithms, with the Gillespie Stochastic Simulation Algorithm (SSA) representing a gold standard for exact simulation of possible trajectories [15]. While computationally demanding—particularly for large biological systems requiring numerous simulation replicates—these approaches provide unique insights into system variability that deterministic approximations may obscure [15].
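To illustrate what an exact stochastic simulation looks like in practice, the following sketch applies Gillespie's direct method to a simple birth-death process with per-capita birth rate `beta` and death rate `delta`. The parameter values, seed, and function name are illustrative assumptions, not settings taken from [15].

```python
import random

def gillespie_birth_death(n0, beta, delta, t_end, seed):
    """Simulate one exact trajectory of a birth-death process
    (Gillespie direct method) until t_end or extinction."""
    rng = random.Random(seed)
    t, n = 0.0, n0
    times, counts = [t], [n]
    while n > 0:
        birth_rate, death_rate = beta * n, delta * n
        total_rate = birth_rate + death_rate
        t += rng.expovariate(total_rate)       # waiting time to next event
        if t > t_end:
            break
        # Pick the event type in proportion to its rate.
        if rng.random() * total_rate < birth_rate:
            n += 1
        else:
            n -= 1
        times.append(t)
        counts.append(n)
    return times, counts

times, counts = gillespie_birth_death(n0=5, beta=1.0, delta=0.5, t_end=3.0, seed=1)
print(f"{len(times) - 1} events, final population {counts[-1]}")

# Replicates with different seeds expose trajectory-to-trajectory variability.
finals = [gillespie_birth_death(5, 1.0, 0.5, 3.0, seed=s)[1][-1] for s in range(200)]
print("extinct replicates:", sum(1 for n in finals if n == 0))
```

Each call yields one possible trajectory; the computational burden mentioned above arises because characterizing the distribution of outcomes requires many such replicates, and the number of events per replicate grows with population size.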

Experimental Protocols and Research Reagent Solutions

Implementing optimization methodologies in pharmaceutical research requires specialized computational tools and analytical frameworks. The following research reagent solutions represent essential components for conducting optimization experiments in drug development contexts:

Table 5: Research Reagent Solutions for Optimization in Drug Development

| Research Reagent | Function | Application Context |
|---|---|---|
| Artelys Knitro | Nonlinear optimization solver | Mechanism-based PK/PD model parameter estimation |
| MATLAB with OPTI Toolbox | Modeling environment and solver interface | Organic Rankine Cycle optimization; prototype implementation |
| Stochastic Simulation Algorithm (SSA) | Exact stochastic trajectory simulation | Intracellular pathway dynamics with small molecule counts |
| Modified Adaptive VNS (MAVNS) | Stochastic local search with adaptive mechanisms | High-dimensional clinical trial design optimization |
| NLME Software (NONMEM, Monolix) | Nonlinear mixed-effects modeling | Population pharmacokinetics and dose optimization |
| TensorFlow/PyTorch | Deep learning frameworks with automatic differentiation | Molecular property prediction and generative chemistry |

Experimental protocols for optimization in pharmaceutical applications typically follow a structured workflow: (1) problem formulation and objective definition; (2) data collection and preprocessing; (3) model selection and implementation; (4) solver configuration and parameter tuning; (5) validation and sensitivity analysis [16] [15] [18]. For stochastic problems involving high-dimensional uncertainty, scenario generation techniques coupled with intelligent optimization algorithms have demonstrated particular effectiveness, significantly reducing computational burden compared to traditional Monte Carlo simulation while maintaining solution quality [19].

The comprehensive comparison of optimization approaches reveals a complex landscape without universal solutions, where appropriate method selection depends critically on problem characteristics, data availability, and computational constraints. LP formulations offer computational efficiency for properly linearizable problems but may oversimplify complex nonlinear systems. IP approaches provide essential modeling capability for discrete decisions but encounter combinatorial complexity in large-scale instances. NLP methods deliver accurate representations of continuous nonlinear systems but may converge to local optima for nonconvex problems.

Black-box optimization approaches expand the addressable problem domain to include functions without analytical expressions or derivative information, with stochastic global search algorithms particularly effective for multimodal landscapes, albeit at increased computational cost [20] [21]. Hybrid stochastic-deterministic frameworks increasingly represent the state-of-the-art for complex optimization challenges, strategically balancing global exploration and local refinement to achieve robust performance across diverse problem instances [18] [19].

For drug development professionals and researchers, method selection should be guided by systematic consideration of key factors: (1) problem structure and linearity; (2) discrete versus continuous variables; (3) availability of derivative information; (4) computational budget; (5) solution quality requirements; and (6) uncertainty characterization. Through thoughtful application of these guidelines and leveraging ongoing algorithmic advances, optimization methodologies continue to provide powerful approaches for addressing complex challenges across scientific domains.

Within the broader research on optimization methodologies, a fundamental dichotomy exists between deterministic and stochastic approaches. This guide provides a structured comparison between two prominent families: the deterministic Branch-and-Bound (BnB) and Cutting Plane methods, and the stochastic Genetic Algorithms (GA) and Simulated Annealing (SA). Framed within the context of optimization research for complex scientific problems, such as those encountered in drug development and materials discovery, this analysis aims to equip researchers with a clear understanding of each family's principles, performance, and optimal use cases [23] [24].

Algorithm Family Comparison: Philosophical Foundations and Core Mechanisms

Stochastic Meta-Heuristics: GA and SA

Genetic Algorithms and Simulated Annealing are high-level meta-heuristics designed for navigating complex, multi-modal solution spaces where traditional gradient-based methods falter [25]. Both are inherently stochastic, incorporating randomness to escape local optima.

- Simulated Annealing (SA): SA is a single-state method inspired by the metallurgical annealing process. It iteratively modifies a single candidate solution. Its defining feature is the probabilistic acceptance of worse solutions, controlled by a decreasing "temperature" parameter. This allows extensive exploration early on, followed by a gradual shift toward exploitation as the temperature falls [25] [26]. A single SA iteration is typically computationally inexpensive [25].

- Genetic Algorithms (GA): GA is a population-based method inspired by Darwinian evolution [23]. It maintains a pool of candidate solutions (individuals). New generations are created through selection of fit individuals, crossover (recombination of genetic material from two parents), and mutation (random alteration) [25] [23]. The crossover operation is a key differentiator, allowing the combination of beneficial traits from different solutions, which is a form of directed search not present in SA [25] [26].
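The acceptance rule that distinguishes SA can be shown in a compact sketch. The code below assumes a Metropolis acceptance criterion with a geometric cooling schedule, a common textbook choice rather than one prescribed by [25] or [26]; the test function, step size, and schedule values are hypothetical.

```python
import math
import random

def simulated_annealing(f, x0, step, t_start, t_end, cooling, seed):
    """Minimize a 1-D function with Metropolis acceptance and
    geometric cooling; returns the best point visited."""
    rng = random.Random(seed)
    x, fx = x0, f(x0)
    best_x, best_f = x, fx
    temperature = t_start
    while temperature > t_end:
        candidate = x + rng.uniform(-step, step)   # random neighbor
        f_candidate = f(candidate)
        delta = f_candidate - fx
        # Always accept improvements; accept worse moves with
        # probability exp(-delta / T), which shrinks as T falls.
        if delta <= 0 or rng.random() < math.exp(-delta / temperature):
            x, fx = candidate, f_candidate
            if fx < best_f:
                best_x, best_f = x, fx
        temperature *= cooling
    return best_x, best_f

def rugged(x):
    """Hypothetical multimodal test function."""
    return x * x + 10.0 * math.sin(3.0 * x)

best_x, best_f = simulated_annealing(
    rugged, x0=4.0, step=1.0, t_start=10.0, t_end=1e-3, cooling=0.995, seed=42
)
print(f"best x = {best_x:.4f}, f(best x) = {best_f:.4f}")
```

At high temperature almost every move is accepted, so the walk crosses barriers between local minima; as the temperature falls, uphill moves become rare and the search settles into one basin. A GA would replace the single state `x` with a population and add crossover between individuals.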

Deterministic Combinatorial Optimizers: BnB and Cutting Planes

Branch-and-Bound and Cutting Plane methods are foundational techniques for solving combinatorial optimization problems, such as Integer Programming (IP) and Mixed-Integer Programming (MIP) [24]. Their logic is deterministic and rooted in mathematical programming.

- Branch-and-Bound (BnB): BnB is an enumerative algorithm that recursively partitions the feasible solution space into smaller subsets (branching). For each subset, it calculates bounds on the objective function. Subsets that cannot contain a better solution than the current best are "pruned," drastically reducing the search space [24].

- Cutting Plane Methods: These methods iteratively refine the feasible region of a linear programming relaxation of an integer problem by adding valid inequalities (cuts) that exclude fractional solutions but no integer-feasible points. This tightens the relaxation, providing better bounds [27].

- Branch-and-Cut: Modern solvers typically combine both in the Branch-and-Cut framework, using cutting planes to strengthen bounds within a BnB tree [27] [24]. Heuristics are often incorporated to find good feasible solutions early [24].
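Branching, bounding, and pruning can be seen together in a small 0/1 knapsack example. The sketch below is a teaching implementation, not how CPLEX, Gurobi, or SCIP work internally: it uses depth-first branching and the fractional (LP-relaxation) knapsack bound, and the item data are a standard textbook instance.

```python
def knapsack_branch_and_bound(values, weights, capacity):
    """Exact 0/1 knapsack by depth-first branch-and-bound.
    Returns (optimal value, number of nodes visited)."""
    # Sort by value density so the fractional relaxation is a greedy fill.
    items = sorted(zip(values, weights), key=lambda vw: vw[0] / vw[1], reverse=True)
    best = 0
    nodes_visited = 0

    def upper_bound(index, value, room):
        """Bound from the relaxation that allows a fraction of one item."""
        for v, w in items[index:]:
            if w <= room:
                value, room = value + v, room - w
            else:
                return value + v * room / w
        return value

    def branch(index, value, room):
        nonlocal best, nodes_visited
        nodes_visited += 1
        best = max(best, value)            # every node is a feasible selection
        if index == len(items) or upper_bound(index, value, room) <= best:
            return                         # leaf reached, or subtree pruned
        v, w = items[index]
        if w <= room:
            branch(index + 1, value + v, room - w)   # include the item
        branch(index + 1, value, room)               # exclude the item

    branch(0, 0, capacity)
    return best, nodes_visited

best_value, nodes = knapsack_branch_and_bound([60, 100, 120], [10, 20, 30], 50)
print(best_value, nodes)
```

For this instance the optimum is 220 (the second and third items). The subtree that excludes the first two items is never explored, because its relaxation bound of 120 cannot beat the incumbent; on larger instances this pruning is what separates branch-and-bound from brute-force enumeration.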

Performance and Application Scenarios: Experimental Insights

The choice between these families hinges on problem structure, solution requirements, and computational resources. The table below summarizes key comparative insights derived from literature and experimental studies.

Table 1: Algorithm Family Performance and Use Case Comparison

| Aspect | Genetic Algorithms (GA) & Simulated Annealing (SA) | Branch-and-Bound (BnB) & Cutting Planes |
|---|---|---|
| Problem Domain | General-purpose optimization, especially with black-box, non-convex, or noisy objective functions [25] [23]. | Combinatorial Optimization, Integer Linear Programming (ILP/MIP) [27] [24]. |
| Solution Guarantee | Heuristic. No guarantee of global optimality; seeks high-quality approximations [25] [23]. | Exact (with full execution). Can prove optimality or provide optimality gaps [24]. |
| Core Strength | Exploration of vast, unstructured spaces. GA's crossover can effectively combine partial solutions [25] [23]. | Exploitation of problem structure through mathematical bounds, enabling systematic search and proof. |
| Typical Performance | In practice, GAs often find better solutions than SA but at higher computational cost [25]. SA can be faster per iteration [25]. | Performance heavily depends on the strength of formulations, cuts, and heuristics. Default settings are usually best, but exceptions exist [24]. |
| Sample Application | Inverse design of molecules and materials [23], statistical image reconstruction [28]. | Scheduling, resource allocation, logistics (classic IP problems) [24]. |
| Parallelizability | GA is inherently parallel (population members evaluate independently) [25]. SA is sequential in its classic form. | BnB tree traversal can be parallelized, but load balancing is challenging. |

Results from a study on statistical image reconstruction illustrate the performance dynamics between SA and GA [28]. The study found that for this high-dimensional problem with many equally influential variables, standard GAs performed poorly compared to SA. However, a hybrid algorithm that used SA for the initial search, followed by GA-style crossover to recombine solutions, proved more effective and efficient than either method alone [28].

Table 2: Experimental Performance in Image Reconstruction (Adapted from [28])

| Algorithm | Relative Solution Quality | Key Finding |
|---|---|---|
| Standard Genetic Algorithm | Poor | Not adept at problems with many variables of roughly equal influence. |
| Simulated Annealing (SA) | Good | More effective than the tested GAs for this high-dimensional problem. |
| Hybrid (SA + Crossover) | Best | Combining SA's search with GA's crossover operation was most efficient. |

Detailed Experimental Protocol: Algorithm Comparison Study

The following protocol is synthesized from methodologies used in comparative studies, such as the image reconstruction experiment [28] and general optimization benchmarking.

1. Problem Formulation & Benchmark Suite:

- Select a set of benchmark instances from a target domain (e.g., a library of MIP problems from MIPLIB for deterministic solvers, or a set of molecular design objectives for stochastic methods).

- For stochastic vs. deterministic comparisons, choose problems that can be formulated both as a black-box optimization (for GA/SA) and as a structured IP/MIP (for BnB/Cut).

2. Algorithm Implementation & Parameter Tuning:

- GA: Define chromosome representation (e.g., bitstring, permutation, SMILES string for molecules [23]), fitness function, selection mechanism (e.g., roulette wheel, tournament), crossover and mutation operators, and population size [25] [23].

- SA: Define neighbor solution generation function, cooling schedule (initial temperature, cooling rate, final temperature), and acceptance probability function [25].

- BnB/Cut: Use a standard solver (e.g., CPLEX, Gurobi, SCIP). Configure two modes: (A) "pure" BnB with all cuts and heuristics disabled, and (B) full-featured Branch-and-Cut with default settings [24].

- Perform preliminary parameter tuning for GA and SA on a small subset of benchmarks to find robust settings.

3. Execution & Data Collection:

- For each algorithm and problem instance, run multiple trials (with different random seeds for stochastic methods) with a fixed computational budget (e.g., wall-clock time or number of function evaluations).

- Record: best objective value found, time to find the best solution, final optimality gap (for exact methods), and iteration/generation count.

4. Analysis:

- Compare mean and variance of solution quality across trials.

- For deterministic solvers, compare the solve time and tree size between "pure" and default configurations [24].

- Perform statistical significance tests on performance differences.
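The execution and analysis steps of this protocol can be sketched as a small trial harness. The stand-in optimizer (pure random search on a quadratic), the seed list, and the function names below are hypothetical placeholders; in a real study the `optimizer` argument would wrap the GA, SA, or solver call under test.

```python
import random
import statistics

def noisy_optimizer(seed, evaluations=200):
    """Stand-in stochastic method: random search on f(x) = (x - 3)^2,
    limited to a fixed budget of function evaluations."""
    rng = random.Random(seed)
    return min((rng.uniform(-10.0, 10.0) - 3.0) ** 2 for _ in range(evaluations))

def run_trials(optimizer, seeds):
    """Run one trial per seed and summarize solution quality."""
    results = [optimizer(seed) for seed in seeds]
    return {
        "results": results,
        "mean": statistics.mean(results),
        "variance": statistics.variance(results),   # sample variance
        "best": min(results),
    }

seeds = list(range(10))
summary = run_trials(noisy_optimizer, seeds)
print(f"mean = {summary['mean']:.5f}, variance = {summary['variance']:.3e}, "
      f"best = {summary['best']:.5f}")
```

Recording the seed alongside each result keeps stochastic runs reproducible, and holding the evaluation budget fixed across methods keeps the comparison fair. A formal significance test (for example a rank-based test across paired instances) would then be applied to the per-trial results rather than to the means alone.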

Visualization of Algorithm Relationships and Workflow

Figure: Algorithm Taxonomy: Stochastic vs. Deterministic Optimization Families

Figure: Comparative Workflow of Genetic Algorithms and Simulated Annealing

The Scientist's Toolkit: Key Components for Algorithm Implementation

Table 3: Essential "Research Reagent" Components for Algorithm Experimentation

| Component | Function in Stochastic GA/SA | Function in Deterministic BnB/Cut |

|---|---|---|

| Representation (Chromosome/Model) | Encodes a candidate solution (e.g., bitstring, SMILES string for molecules [23], vector of parameters). Defines the search space. | The mathematical model: Decision variables, objective function, and constraints (linear, integer). |

| Fitness Function / Objective | The "cost function" to be minimized/maximized. Often the computational bottleneck [25] [23]. Can be a physical property calculated via simulation. | The formal objective function of the IP/MIP. Evaluating the LP relaxation is a core step. |

| Variation Operators (Crossover/Mutation) | Crossover: Recombines two parents to exploit building blocks [25] [23]. Mutation: Introduces random exploration. | Cutting Planes: Generate valid inequalities to cut off fractional LP solutions, refining the model [27]. |

| Selection / Pruning Mechanism | Selection: Chooses parents for reproduction based on fitness (exploitation) [23]. | Pruning: In BnB, discards nodes (solution subsets) whose bound is worse than the current best solution [24]. |

| Control Parameters | GA: Population size, crossover/mutation rates. SA: Initial temperature, cooling schedule. | BnB/Cut: Node selection rule, cutting plane separation frequency and aggressiveness, heuristic intensity [24]. |

| Surrogate Model | A machine-learned model used as a fast approximation of the expensive true fitness function, accelerating evolution [23]. | Less common, but can be used to predict promising branching variables or the utility of specific cuts. |
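To make the stochastic column of the table concrete, the following is a minimal genetic algorithm sketch. It assumes a bitstring representation and a toy "OneMax" fitness (count of 1-bits) as a stand-in for an expensive property calculation. All function names and parameter values are illustrative choices, not settings from the cited studies.

```python
import random


def one_max(bits):
    """Toy fitness function: number of 1-bits (to be maximized)."""
    return sum(bits)


def tournament(pop, fitness, rng, k=3):
    """Selection: the fittest of k randomly drawn individuals."""
    return max(rng.sample(pop, k), key=fitness)


def crossover(p1, p2, rng):
    """One-point crossover: recombine two parents at a random cut."""
    cut = rng.randrange(1, len(p1))
    return p1[:cut] + p2[cut:]


def mutate(bits, rate, rng):
    """Mutation: flip each bit independently with probability `rate`."""
    return [b ^ 1 if rng.random() < rate else b for b in bits]


def run_ga(n_bits=20, pop_size=30, generations=60,
           crossover_rate=0.9, mutation_rate=0.02, seed=1):
    rng = random.Random(seed)
    pop = [[rng.randint(0, 1) for _ in range(n_bits)] for _ in range(pop_size)]
    best = max(pop, key=one_max)
    for _ in range(generations):
        children = [best[:]]  # elitism: carry the best individual forward
        while len(children) < pop_size:
            p1 = tournament(pop, one_max, rng)
            p2 = tournament(pop, one_max, rng)
            child = crossover(p1, p2, rng) if rng.random() < crossover_rate else p1[:]
            children.append(mutate(child, mutation_rate, rng))
        pop = children
        best = max(pop, key=one_max)
    return best


best_solution = run_ga()
```

The control parameters in the signature of `run_ga` correspond to the "Control Parameters" row of the table: population size and crossover/mutation rates.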

This guide delineates the conceptual and practical territories of two pivotal optimization families. Stochastic meta-heuristics (GA/SA) offer flexibility and robustness for ill-defined or vast search spaces, with hybrids often yielding the best results [28]. Deterministic Branch-and-Cut methods provide precision and provable guarantees for structured combinatorial problems, though their performance is highly dependent on problem formulation and solver engineering [27] [24]. The choice for researchers in fields like drug development is not either/or; it is guided by the problem's nature: whether it is a de novo molecular design task requiring exploration of a latent chemical space (suited for GA/SA) [23], or a resource-constrained scheduling problem with well-defined rules (suited for BnB/Cut). Understanding this landscape is crucial for selecting the right tool from the algorithmic toolkit.
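For the deterministic side of this comparison, bound-based pruning can be sketched on a small 0/1 knapsack problem. This is an illustrative branch-and-bound only (no cutting planes). It assumes positive item weights and uses the fractional knapsack relaxation as the bound. The function name and the instance are hypothetical.

```python
def knapsack_branch_and_bound(values, weights, capacity):
    """Return (optimal value, number of nodes explored) for a 0/1 knapsack."""
    # Sort by value density so the fractional relaxation can be solved greedily.
    items = sorted(zip(values, weights), key=lambda vw: vw[0] / vw[1], reverse=True)
    n = len(items)
    best = 0
    nodes_explored = 0

    def bound(idx, value, room):
        # Upper bound: fill the remaining room, allowing one fractional item.
        for v, w in items[idx:]:
            if w <= room:
                value += v
                room -= w
            else:
                return value + v * room / w
        return value

    def visit(idx, value, room):
        nonlocal best, nodes_explored
        nodes_explored += 1
        best = max(best, value)  # every node is a feasible partial selection
        if idx == n or bound(idx, value, room) <= best:
            return  # prune: this subtree cannot beat the incumbent
        v, w = items[idx]
        if w <= room:
            visit(idx + 1, value + v, room - w)  # branch: take the item
        visit(idx + 1, value, room)              # branch: skip the item

    visit(0, 0, capacity)
    return best, nodes_explored


optimum, nodes = knapsack_branch_and_bound([60, 100, 120], [10, 20, 30], 50)
```

Given the same instance, this procedure visits the same nodes in the same order on every run, which is the reproducibility property that distinguishes it from the stochastic methods.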

From Theory to Practice: Methodologies and Real-World Biomedical Applications

Stochastic Optimization in Machine Learning for Drug Discovery

The integration of machine learning (ML) into drug discovery represents a paradigm shift from traditional, deterministic workflows to more adaptive, data-driven approaches. At the heart of this evolution lies a critical methodological choice: stochastic versus deterministic optimization. Deterministic methods, such as sequential quadratic programming (SQP), provide predictable, reproducible paths to an optimum based on gradient information but can struggle with complex, multi-modal landscapes common in biological systems [1]. In contrast, stochastic optimization methods—including genetic algorithms, simulated annealing, and stochastic gradient descent—leverage randomness to explore solution spaces more broadly, offering a higher probability of escaping local minima and discovering novel molecular entities [1]. This comparative guide objectively evaluates the performance of stochastic optimization techniques within ML pipelines for drug discovery, contextualized within the broader research thesis on their advantages and limitations versus deterministic counterparts.

Comparative Analysis of Optimization Method Performance

The efficacy of an optimization strategy is measured by its accuracy, computational efficiency, and ability to navigate the high-dimensional, noisy search space of drug design. The following tables synthesize quantitative data from key experiments comparing stochastic and deterministic-inspired ML approaches.

Table 1: Performance of Active Learning (Stochastic Batch Selection) on ADMET Property Prediction

Active learning employs stochastic batch selection to optimize model training. Performance is measured by Root Mean Square Error (RMSE) against iteration count.

| Dataset (Property) | Method (Stochastic Approach) | Initial RMSE | RMSE at 300 Samples | Key Improvement Over Random Selection |

|---|---|---|---|---|

| Aqueous Solubility | COVDROP (MC Dropout) [29] | High | ~1.05 | Achieves target accuracy with 40% fewer experiments |

| Lipophilicity | COVLAP (Laplace Approx.) [29] | High | ~0.68 | Faster convergence; superior diversity sampling |

| Cell Permeability (Caco-2) | BAIT (Fisher Information) [29] | Moderate | ~0.52 | Effective but outperformed by COVDROP in later stages |

| Plasma Protein Binding | Random (Baseline) [29] | Very High | ~1.45 | Slowest convergence, high initial error |

Table 2: Computational Performance: Stochastic Simulation Algorithm (SSA) on Edge GPUs

Stochastic simulations are computationally intensive. This table compares the efficiency of GPU platforms in executing the SSA, a core stochastic method [30].

| Hardware Platform | GPU Architecture | Power Envelope (W) | SSA Performance (Million Iter/sec) | Energy Efficiency (ms/W) |

|---|---|---|---|---|

| NVIDIA Jetson Orin NX | Ampere | 20 | 4.86 | 2102.7 |

| NVIDIA Jetson Orin Nano | Ampere | 15 | 3.12 | 1850.1 |

| NVIDIA Jetson Xavier NX | Volta | 15 | 2.01 | 1340.5 |

| Desktop RTX 3080 (Reference) | Ampere | 320 | 42.50 (Est.) | ~132.8 (Est.) |

Table 3: High-Level Comparison of Optimization Philosophies in Drug Discovery ML

| Aspect | Deterministic Optimization (e.g., SQP) | Stochastic Optimization (e.g., GA, SA, SGD) |

|---|---|---|

| Core Principle | Follows a defined, reproducible path using gradients/Hessians [1]. | Incorporates randomness to explore solution space globally [1]. |

| Handling of Noise | Can be sensitive to data noise and irregularities. | Naturally robust to noise through probabilistic sampling. |

| Risk of Local Optima | High; convergence is to the nearest local minimum. | Lower; random jumps facilitate escape from local minima. |

| Parallelizability | Often sequential. | Highly parallelizable (e.g., population in GA, batches in SGD). |

| Best Suited For | Well-defined, convex problems, final-stage refinement. | Early-stage exploration, high-dimensional & multi-modal spaces (e.g., molecular generation [31]). |

Experimental Protocols for Key Cited Studies

1. Protocol for Batch Active Learning in ADMET Optimization [29]

Objective: To minimize the number of experimental cycles needed to train an accurate predictive model for molecular properties.

Workflow:

1. Pool & Oracle Setup: A large pool of unlabeled molecules is established. An "oracle" (e.g., historical data or a high-fidelity simulator) holds the true property labels.

2. Initialization: A small, randomly selected subset of molecules is labeled from the oracle to train an initial deep learning model (e.g., Graph Neural Network).

3. Stochastic Batch Selection: For each cycle:

a. The trained model predicts properties and, crucially, estimates uncertainty for all molecules in the unlabeled pool. Methods include Monte Carlo Dropout (COVDROP) or Laplace Approximation (COVLAP) [29].

b. A covariance matrix representing prediction uncertainties and inter-sample correlations is computed.

c. A batch of molecules (e.g., 30) is selected by finding the submatrix with the maximal log-determinant, maximizing joint entropy and diversity.

4. Iteration: The selected batch is "labeled" by the oracle, added to the training set, and the model is retrained. Steps 3-4 repeat until a performance threshold is met.

Outcome Measurement: Model accuracy (RMSE, AUC-ROC) is plotted against the cumulative number of labeled samples.
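The log-determinant batch selection in step 3c can be illustrated with a greedy sketch. Finding the exact maximal-log-determinant submatrix is combinatorial, so this example assumes a greedy approximation: at each step it picks the molecule with the largest variance conditional on those already chosen. Whether [29] uses this exact procedure is not stated here. The function name and the toy covariance matrix are hypothetical.

```python
import math


def greedy_logdet_batch(cov, batch_size, tol=1e-12):
    """Greedily pick indices whose covariance submatrix has a large log-determinant.

    cov is a symmetric positive semi-definite matrix given as a list of lists.
    Returns (chosen indices, log-determinant of the chosen submatrix).
    """
    n = len(cov)
    resid = [row[:] for row in cov]  # conditional covariance, updated in place
    chosen = []
    logdet = 0.0
    for _ in range(min(batch_size, n)):
        candidates = [i for i in range(n) if i not in chosen]
        best = max(candidates, key=lambda i: resid[i][i])
        pivot = resid[best][best]
        if pivot <= tol:
            break  # remaining candidates add no new information
        chosen.append(best)
        logdet += math.log(pivot)
        # Schur complement update: condition every entry on the chosen item.
        col = [resid[i][best] for i in range(n)]
        for i in range(n):
            for j in range(n):
                resid[i][j] -= col[i] * col[j] / pivot
    return chosen, logdet


# Toy pool: molecules 0 and 1 are uncertain but nearly redundant (correlation 0.99);
# molecule 2 is less uncertain but independent of both.
toy_cov = [
    [1.00, 0.99, 0.0],
    [0.99, 1.00, 0.0],
    [0.00, 0.00, 0.5],
]
batch, batch_logdet = greedy_logdet_batch(toy_cov, batch_size=2)
```

On the toy matrix the selection skips molecule 1 despite its high variance, because it is almost fully explained by molecule 0. This is the diversity effect that the log-determinant criterion is meant to capture.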

2. Protocol for Stochastic Simulation Algorithm (SSA) Benchmarking [30]

Objective: To evaluate the performance and energy efficiency of edge GPU platforms for compute-intensive stochastic simulations.

Workflow:

1. Algorithm Implementation: The Gillespie SSA is implemented in CUDA C++. The system models a set of N molecular species interacting through M reaction channels with propensity functions a_j(x) [30].

2. Hardware Configuration: Jetson devices (Xavier NX, Orin Nano, Orin NX) are set to their maximum stable power mode (10W-20W). A desktop RTX 3080 serves as a reference.

3. Workload Definition: A benchmark biochemical reaction network (e.g., a gene regulatory network) is defined. The simulation scales by increasing the number of parallel stochastic trajectories.

4. Execution & Metrics: The SSA kernel is executed, measuring total execution time, iterations per second, and system power draw using integrated sensors.

5. Analysis: Energy efficiency (ms/W) and cost-performance (ms/USD) are calculated from the primary metrics [30].
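For readers unfamiliar with the algorithm being benchmarked, the following is a minimal serial sketch of the Gillespie direct method for N species and M reaction channels with propensity functions a_j(x). It is written in Python for readability and is not the CUDA C++ implementation of [30]. The birth-death network and its rate constants are hypothetical.

```python
import random


def gillespie_ssa(x0, stoich, propensities, t_end, rng):
    """Simulate one stochastic trajectory; returns a list of (time, state) pairs.

    stoich[j] is the state change vector of reaction j;
    propensities[j](x) returns a_j(x) for the current state x.
    """
    t, x = 0.0, list(x0)
    trajectory = [(t, tuple(x))]
    while True:
        a = [f(x) for f in propensities]
        a0 = sum(a)
        if a0 <= 0.0:
            break  # no reaction can fire
        tau = rng.expovariate(a0)  # waiting time to the next reaction
        if t + tau > t_end:
            break
        t += tau
        # Choose reaction j with probability a_j / a0.
        threshold = rng.random() * a0
        cumulative = 0.0
        # Fallback guards against floating-point round-off in the cumulative sum.
        j = max(i for i, a_i in enumerate(a) if a_i > 0.0)
        for idx, a_j in enumerate(a):
            cumulative += a_j
            if threshold < cumulative:
                j = idx
                break
        x = [xi + d for xi, d in zip(x, stoich[j])]
        trajectory.append((t, tuple(x)))
    return trajectory


# Hypothetical one-species birth-death network: 0 -> X (rate 10), X -> 0 (rate 0.1 * X).
stoich = [(+1,), (-1,)]
propensities = [lambda x: 10.0, lambda x: 0.1 * x[0]]
trajectory = gillespie_ssa([0], stoich, propensities, t_end=50.0, rng=random.Random(42))
```

Each trajectory is independent of the others, which is why the benchmark workload scales by running many trajectories in parallel on the GPU.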

Visualization of Key Workflows and Relationships

[Figure: Stochastic Batch Active Learning Cycle for Drug Property Prediction]

[Figure: Optimization Method Selection Logic in Drug Discovery ML]

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 4: Key Computational Tools and Datasets for Stochastic Optimization in Drug Discovery ML

| Item Name | Category | Primary Function in Stochastic Optimization |

|---|---|---|

| DeepChem Library [29] | Software Framework | Provides building blocks for deep learning on molecules, enabling the implementation of stochastic active learning pipelines and model training. |

| ChEMBL Datasets [29] | Data Resource | Large-scale, curated bioactivity data serving as the "oracle" or training pool for active learning tasks, particularly for affinity prediction. |

| ADMET Property Datasets (e.g., Solubility, Lipophilicity) [29] | Data Resource | Benchmark datasets used to validate and compare the performance of stochastic optimization methods in property prediction tasks. |

| NVIDIA Jetson Orin NX [30] | Hardware Platform | An energy-efficient edge GPU device for deploying and benchmarking compute-intensive stochastic simulations (e.g., SSA) in resource-constrained or real-time settings. |

| Ant Colony Optimization (ACO) Algorithm [32] | Optimization Algorithm | A stochastic, nature-inspired metaheuristic used for feature selection in hybrid ML models to improve drug-target interaction prediction. |

| Context-Aware Hybrid Model (CA-HACO-LF) [32] | ML Model | An example of a hybrid model combining stochastic optimization (ACO) for feature selection with a classifier, designed to improve prediction accuracy in drug discovery. |

| Generative Adversarial Networks (GANs) [31] | ML Model | A deep learning framework where a generator and discriminator are trained adversarially in a stochastic process, used for de novo molecular design. |

| Stochastic Simulation Algorithm (SSA) [30] | Simulation Algorithm | The core computational method for modeling biochemical systems with inherent randomness, crucial for understanding intracellular dynamics and variability. |

The design and operation of chemical reactors are fundamental to the chemical, pharmaceutical, and energy industries, where improvements in yield, purity, and energy efficiency directly translate to economic and environmental benefits. Optimization is central to achieving these improvements, yet reactor systems present unique challenges due to their complex, multivariable, and often non-linear nature. The choice of optimization strategy—whether stochastic methods that incorporate randomness to explore complex spaces or deterministic methods that follow precise mathematical rules—profoundly impacts the efficiency and outcome of the optimization process.

Stochastic optimization methods, such as Simulated Annealing (SA), Particle Swarm Optimization (PSO), and Genetic Algorithms (GA), are particularly well-suited for tackling the high-dimensional, non-convex problems common in reactor engineering. They excel at global exploration of the parameter space, reducing the risk of becoming trapped in local optima. In contrast, deterministic methods, like gradient-based algorithms or the Nelder-Mead simplex method, often provide fast and efficient local refinement but are highly dependent on initial conditions and may miss globally optimal solutions.

This case study examines the application of Simulated Annealing and Artificial Intelligence (AI) models to chemical reactor optimization. It objectively compares their performance against other stochastic and deterministic alternatives, presenting experimental data and detailed methodologies. The analysis is framed within the broader research context of understanding the respective roles and synergies of stochastic versus deterministic optimization paradigms.

Theoretical Foundations: Stochastic vs. Deterministic Optimization

Fundamental Differences and Mechanisms

Optimization algorithms can be broadly categorized based on their use of randomness and their approach to navigating the search space.

Stochastic Optimization: These algorithms incorporate probabilistic elements to explore the solution space. They do not guarantee the same result on every run but are highly effective for problems with multiple local optima. Their key strength is global exploration. Examples highly relevant to chemical engineering include:

- Simulated Annealing (SA): Models the physical annealing process of metals, where a material is heated and slowly cooled to reduce defects. It probabilistically accepts worse solutions early in the process to escape local minima, gradually focusing on convergence as the "temperature" cools [33] [34].

- Particle Swarm Optimization (PSO): A population-based method where candidate solutions ("particles") move through the search space based on their own experience and the experience of neighboring particles [35] [34].

- Genetic Algorithms (GA): Inspired by natural selection, these algorithms use operations such as selection, crossover, and mutation on a population of solutions to evolve toward better fitness over generations [18] [34].

- Ant Colony Optimization (ACO): Mimics the behavior of ants foraging for food, using pheromone trails to probabilistically build solutions and reinforce promising paths [35] [34].
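As one concrete instance of the methods listed above, simulated annealing can be sketched as follows. This is a minimal illustration, assuming a geometric cooling schedule, a uniform random-step proposal, and the Metropolis acceptance rule. The one-dimensional objective is a hypothetical tilted double well with a local minimum near x = 1 and the global minimum near x = -1.

```python
import math
import random


def simulated_annealing(f, x0, rng, t_start=5.0, cooling=0.995,
                        steps=5000, step_size=0.5):
    """Minimize f starting from x0; returns (best x, best f(x)) seen."""
    x, fx = x0, f(x0)
    best_x, best_fx = x, fx
    temp = t_start
    for _ in range(steps):
        cand = x + rng.uniform(-step_size, step_size)
        f_cand = f(cand)
        delta = f_cand - fx
        # Always accept improvements; accept worse moves with
        # probability exp(-delta / temp), which shrinks as temp cools.
        if delta <= 0 or rng.random() < math.exp(-delta / temp):
            x, fx = cand, f_cand
            if fx < best_fx:
                best_x, best_fx = x, fx
        temp *= cooling  # geometric cooling schedule
    return best_x, best_fx


def tilted_double_well(x):
    """Local minimum near x = +1 (f > 0), global minimum near x = -1 (f < 0)."""
    return (x * x - 1.0) ** 2 + 0.3 * x


# Start inside the basin of the local minimum.
best_x, best_fx = simulated_annealing(tilted_double_well, x0=1.0, rng=random.Random(0))
```

Early on, the high temperature lets the search cross the barrier at x = 0; as the temperature falls, uphill moves become rare and the search settles into a basin.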

Deterministic Optimization: These algorithms follow a fixed set of rules and, given the same starting point, will always produce the same result. They are often efficient at local refinement (exploitation) but can be sensitive to initial conditions.

- Nelder-Mead Simplex: A direct search method that uses a geometric simplex (e.g., a triangle in 2D space) to converge to a minimum without using gradient information [18].

- Gradient-Based Methods: Algorithms like gradient descent use the derivative of the objective function to determine the direction of steepest descent for finding local minima [36].

The Hybrid Approach: Combining Strengths

A powerful trend in modern optimization is the development of hybrid stochastic-deterministic algorithms. These methods leverage the global search capability of a stochastic algorithm to locate a promising region in the solution space, then hand off the solution to a fast local deterministic optimizer for fine-tuning. This combines the strengths of both paradigms, mitigating their individual weaknesses [18].
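The hand-off described above can be sketched in two stages. This minimal example assumes uniform random sampling as the stochastic global stage and fixed-step gradient descent as the deterministic local stage. Other pairings (for example SA followed by Nelder-Mead) follow the same pattern. The objective, bounds, and step sizes are hypothetical.

```python
import random


def objective(x):
    """Tilted double well: local minimum near x = +0.96, global near x = -1.04."""
    return (x * x - 1.0) ** 2 + 0.3 * x


def gradient(x):
    """Analytic derivative of `objective`."""
    return 4.0 * x * (x * x - 1.0) + 0.3


def global_stage(f, lower, upper, n_samples, rng):
    """Stochastic exploration: best of n uniform random samples."""
    samples = [rng.uniform(lower, upper) for _ in range(n_samples)]
    return min(samples, key=f)


def local_stage(grad, x0, learning_rate=0.05, steps=200):
    """Deterministic refinement: fixed-step gradient descent."""
    x = x0
    for _ in range(steps):
        x -= learning_rate * grad(x)
    return x


rng = random.Random(7)
x_start = global_stage(objective, -2.0, 2.0, n_samples=200, rng=rng)
x_hybrid = local_stage(gradient, x_start)

# For comparison: the deterministic stage alone, started in the wrong basin.
x_local_only = local_stage(gradient, 1.0)
```

Started from x = 1.0, gradient descent alone converges to the nearby local minimum. Seeded by the stochastic stage, the same deterministic routine refines a point that already lies in the global basin.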