Robust Kinetic Model Calibration: Advanced Strategies to Prevent Overfitting in Biomedical Research

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on preventing overfitting during kinetic model calibration. Moving from foundational concepts to advanced methodologies, we explore why kinetic models of biological systems are particularly prone to overfitting, especially when many parameters must be estimated from limited experimental data. The content details robust parameter estimation techniques combining global optimization with regularization, practical troubleshooting strategies for ill-conditioned problems, and rigorous validation frameworks to ensure model generalizability. Through critical analysis of current tools and future directions, this resource equips scientists with the knowledge to build more reliable, predictive models for therapeutic development and clinical translation.

Understanding Overfitting: Why Kinetic Models in Biomedical Research Are Particularly Vulnerable

Frequently Asked Questions (FAQs)

1. What is overfitting in the context of calibrating kinetic models?

Overfitting occurs when a model, whether a machine learning model or a mechanistic kinetic model, fits its training data too closely. It gives accurate predictions for the data it was trained on but fails to generalize and make accurate predictions for new, unseen data [1] [2]. In kinetic models, this means the model may perfectly describe the dataset used for parameter identification (for example, rate constants or initial concentrations) but will perform poorly when predicting the outcome of a new experiment under different conditions [3]. An overfitted model essentially memorizes the noise and specific random fluctuations in its training data instead of learning the true underlying physical relationships [4].

2. How can I tell if my kinetic model is overfitted?

The primary method is to test the model on data it has never seen before [1]. The key indicators of an overfit model are:

- Low error on training data: The model has a high accuracy or low prediction error on the dataset used to train it.

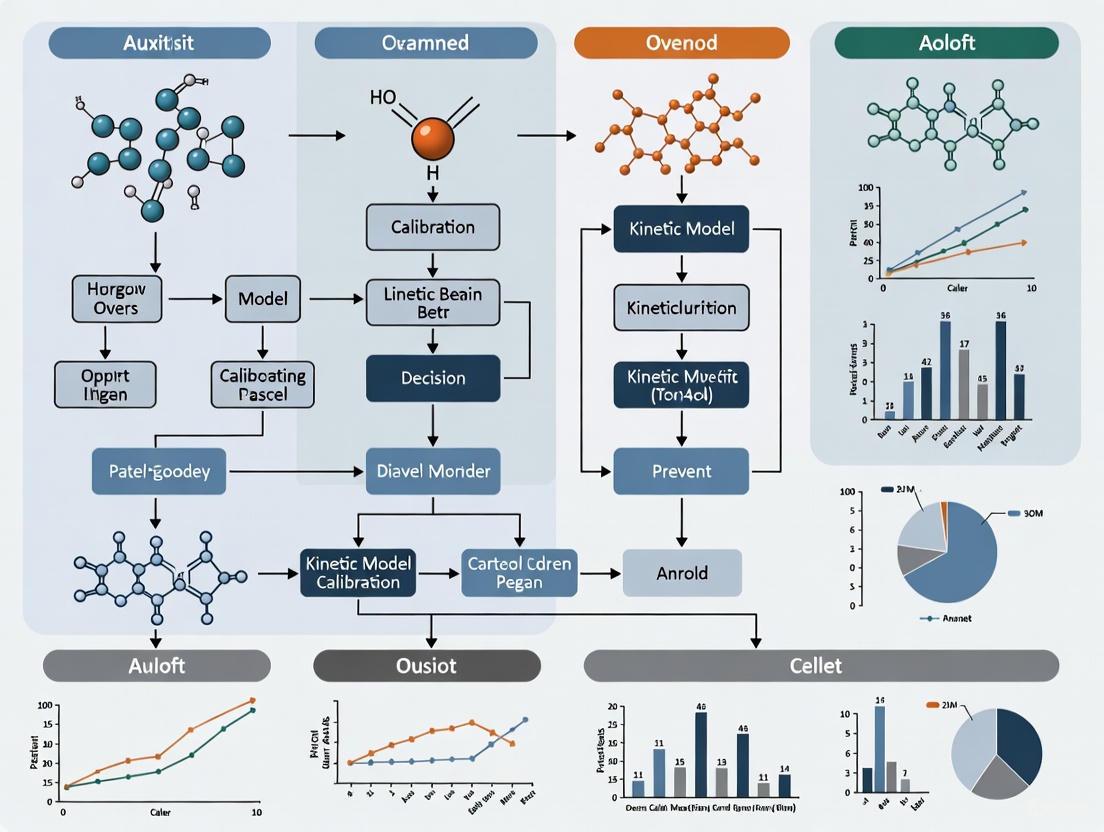

- High error on test/validation data: The model performs poorly on a separate, unseen test dataset or new experimental conditions [2] [5].

Common detection techniques include k-fold cross-validation, where your data is split into 'k' subsets. The model is trained on k-1 subsets and validated on the remaining one, a process repeated until each subset has been used for validation. An average validation error that is much higher than the training error indicates overfitting [1] [6]. The workflow for this diagnostic approach is outlined in the diagram below.

3. What are the main causes of overfitting in complex scientific models?

The primary causes of overfitting include [1] [4] [6]:

- Excessive Model Complexity: Using a model that is too complex or flexible relative to the amount of data available.

- Insufficient Training Data: Having a dataset that is too small to capture the true population trends.

- Noisy Data: Training on data that contains a significant amount of irrelevant information or measurement errors, which the model then learns.

- Inadequate Validation: Relying solely on the model's performance on the training data without proper evaluation on a hold-out test set.

4. What is the difference between overfitting and underfitting?

| Feature | Overfitting | Underfitting |

|---|---|---|

| Model Complexity | Too complex for the data [4] | Too simplistic for the data [4] |

| Performance on Training Data | High accuracy / Low error [1] | High error / Low accuracy [5] |

| Performance on New Data | Poor accuracy / High error [1] | Poor accuracy / High error [5] |

| Core Problem | High variance; model is sensitive to noise [1] [2] | High bias; model cannot capture underlying patterns [2] [6] |

| Analogy | Memorizing a textbook without understanding concepts | Failing to learn the key concepts in the textbook |

5. Are certain types of machine learning algorithms more prone to overfitting?

Yes, algorithms with high inherent flexibility and capacity are more prone to overfitting, especially when data is limited. These include [5]:

- Deep Neural Networks: Particularly those with many layers and neurons, which can learn overly complex functions.

- Decision Trees: Can become overfit if they grow very deep, creating a complex tree that captures every detail in the training data.

- Support Vector Machines (SVMs): With complex kernels (e.g., high-degree polynomial or RBF) on high-dimensional data.

However, techniques like pruning (for trees), dropout (for neural networks), and regularization (for many models) can be applied to mitigate this risk [1] [5].

Troubleshooting Guide: Preventing Overfitting in Kinetic Model Calibration

Problem: Suspected Overfitting in Kinetic Parameter Identification

You observe that your calibrated kinetic model achieves an excellent fit on your training dataset (e.g., a specific set of concentration and temperature conditions) but produces unreliable and inaccurate predictions when applied to a new validation dataset (e.g., different concentrations, flow rates, or mixer geometries) [3].

Investigation and Solution Protocol

Follow the systematic troubleshooting workflow below to diagnose the root cause and apply the correct remedy.

Step 1: Evaluate Training Data Quantity and Quality

- Action: Assess the size and scope of your experimental dataset.

- Why: A small dataset or one that lacks diversity (e.g., limited range of reactant concentrations) is a primary cause of overfitting. The model cannot discern general trends [1] [6].

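- Solution: Where possible, gather more experimental data points across a wider range of conditions or reduce measurement noise; where new experiments are impractical, consider data augmentation as summarized in the Core Remediation Techniques Table.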

Step 2: Evaluate Model Complexity

- Action: Compare the complexity of your model (number of parameters, layers, etc.) to the size of your dataset.

- Why: A model with high complexity can "memorize" the training data, including its noise, rather than learning the true kinetic relationships [4].

- Solution:

- Feature Selection/Pruning: Identify and use only the most important input parameters (features) for your prediction, eliminating redundant or irrelevant ones [1] [7].

- Reduce Model Complexity: Choose a simpler model architecture. For a neural network, this could mean reducing the number of layers or units per layer [7] [6].

Step 3: Review Validation Protocols for Bias

- Action: Scrutinize how you are evaluating your model's performance.

- Why: Using the same data for feature selection, model training, and final evaluation leads to optimistically biased performance estimates (a common pitfall in high-dimensional data) [8].

- Solution:

- Use k-Fold Cross-Validation: This provides a more robust estimate of generalization error [1] [6].

- Apply Nested Cross-Validation: For a rigorous and unbiased evaluation, especially when also performing feature selection or hyperparameter tuning, use a nested protocol where an inner loop performs these tasks within the training fold, and an outer loop provides the final performance estimate [8].

Core Remediation Techniques Table

The following table summarizes key techniques you can implement to prevent overfitting, applicable across various model types.

| Technique | Brief Description | Application in Kinetic Modeling |

|---|---|---|

| Cross-Validation [1] [6] | Splits data into k folds; trains on k-1 and validates on the held-out fold, repeated k times. | Provides a realistic estimate of how your model will perform on new experimental conditions. |

| Regularization (L1/L2) [7] [2] | Adds a penalty to the model's loss function to discourage complex models. | Prevents kinetic parameters from taking extreme values, promoting a more robust and generalizable model. |

| Early Stopping [1] [2] | Halts the training process before the model starts to learn the noise in the data. | Monitor validation error during iterative training (e.g., of a neural network); stop when validation error begins to rise. |

| Ensemble Methods (e.g., Random Forest) [1] [6] | Combines predictions from multiple models to improve generalization. | Train multiple models on different data subsamples; the aggregate prediction is often more accurate and stable. |

| Dropout [7] [6] | Randomly "drops" a subset of neurons during training in a neural network. | Prevents complex co-adaptations between neurons, forcing the network to learn more robust features. |

| Data Augmentation [1] [5] | Artificially increases the size and diversity of the training set. | Apply small, realistic perturbations to your input data (e.g., adding minor noise to initial concentration values). |

The Scientist's Toolkit: Essential Reagents for Robust Modeling

| Research Reagent / Resource | Function in Preventing Overfitting |

|---|---|

| High-Quality, Diverse Experimental Datasets | Serves as the foundation for learning generalizable patterns, reducing the risk of the model latching onto spurious correlations [1] [2]. |

| Validation Dataset (Hold-Out Set) | Acts as the ultimate test for generalization performance, providing an unbiased evaluation of the model's predictive power on unseen data [1] [6]. |

| K-Fold Cross-Validation Script | A computational tool that systematically partitions data to provide a robust estimate of model generalization error, guarding against over-optimistic results [1] [9]. |

| Regularization Algorithms (Lasso, Ridge, Dropout) | Mathematical constraints applied during model training to penalize excessive complexity and promote simpler, more reliable models [1] [7] [2]. |

| Feature Selection Tools | Identifies and retains the most relevant input variables, simplifying the model and reducing the chance of learning from irrelevant noise [1] [7]. |

| Computational Framework for Nested Validation | A rigorous experimental protocol that isolates the test data from any model development step (like feature selection), ensuring a truly unbiased error estimate [8]. |

Technical Support Center: Troubleshooting Guides and FAQs for Robust Calibration

Framed within a thesis on preventing overfitting in kinetic model calibration research, this guide addresses the core challenges of ill-conditioning and nonconvexity, providing practical solutions for researchers, scientists, and drug development professionals.

Core Challenges & Manifestations

Calibrating kinetic models—described by nonlinear ordinary differential equations—is an inverse problem fraught with pathological issues [10]. Two primary challenges dominate:

- Nonconvexity: The parameter estimation problem often involves a cost function (e.g., sum of squared errors) with multiple local minima. This rugged landscape means local optimization methods can converge to suboptimal solutions, leading to incorrect conclusions about the model's validity [10] [11].

- Ill-conditioning: This arises from over-parameterization, scarce or noisy data, and high model flexibility. It leads to large uncertainties in parameter estimates, sensitivity to small data perturbations, and, crucially, overfitting. An overfit model captures noise instead of the underlying signal, resulting in excellent fit to calibration data but poor generalization to new data [10] [1].
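Ill-conditioning can be made visible numerically by inspecting the condition number of the sensitivity (Jacobian) matrix of the model output with respect to the parameters. The following is a minimal sketch rather than a method taken from the cited references: it assumes a hypothetical two-exponential decay model y(t) = a1·exp(-k1·t) + a2·exp(-k2·t) and illustrative parameter values, and compares well-separated rate constants with nearly equal ones.

```python
import numpy as np

def sensitivity_matrix(t, a1, k1, a2, k2):
    """Analytic Jacobian of y(t) = a1*exp(-k1*t) + a2*exp(-k2*t)
    with respect to (a1, k1, a2, k2); one row per time point."""
    e1, e2 = np.exp(-k1 * t), np.exp(-k2 * t)
    return np.column_stack([e1, -a1 * t * e1, e2, -a2 * t * e2])

# Hypothetical sampling times and parameter values, chosen only for illustration.
t = np.linspace(0.0, 5.0, 20)
cond_separated = np.linalg.cond(sensitivity_matrix(t, 1.0, 0.2, 1.0, 3.0))
cond_similar = np.linalg.cond(sensitivity_matrix(t, 1.0, 1.0, 1.0, 1.05))

print(f"well-separated rates: condition number = {cond_separated:.3g}")
print(f"nearly equal rates:   condition number = {cond_similar:.3g}")
```

A much larger condition number in the second case means that small perturbations in the data can translate into large changes in the estimated parameters, which is the practical signature of ill-conditioning described above.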

The following table summarizes quantitative benchmarks from the literature, illustrating the scale and nature of typical calibration problems [11]:

Table 1: Benchmark Problems in Kinetic Model Calibration

| Problem ID | Description | Parameters | States | Data Points | Key Challenge |

|---|---|---|---|---|---|

| B2 | E. coli Metabolic Network | 116 | 18 | 110 | Nonconvexity, Real Noise |

| B3 | E. coli Metabolic & Transcription | 178 | 47 | 7567 | High-Dimensionality |

| B4 | Chinese Hamster Metabolic Network | 117 | 34 | 169 | Ill-conditioning |

| BM1 | Mouse Signaling Pathway | 383 | 104 | 120 | Large-scale, Nonconvex |

| TSP | Generic Metabolic Pathway | 36 | 8 | 2688 | Multi-modality |

Troubleshooting Guide: FAQs & Solutions

Q1: My optimization run converges, but the parameters change dramatically with different initial guesses. What's happening? A: This is a classic symptom of nonconvexity. Your solver is finding different local minima. Solution: Shift from local to global optimization strategies. Do not rely on a single local search. Implement a multi-start approach (launching many local searches from random points) or use a dedicated metaheuristic (e.g., scatter search, genetic algorithms) [10] [11]. For medium-to-large scale problems, a hybrid metaheuristic that combines a global search with a gradient-based local optimizer has been shown to be particularly effective [11].
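The multi-start idea from Q1 can be sketched in a few lines. This is an illustrative example under stated assumptions, not the benchmarked implementation from [11]: the damped-oscillation model, its parameter values, the bounds, and the function names `simulate`, `sse`, and `multi_start` are all hypothetical, and SciPy's L-BFGS-B is used as the local solver.

```python
import numpy as np
from scipy.optimize import minimize

# Hypothetical model with a multimodal cost: y(t) = exp(-k*t) * cos(w*t).
data_rng = np.random.default_rng(0)
t = np.linspace(0.0, 10.0, 60)

def simulate(theta):
    k, w = theta
    return np.exp(-k * t) * np.cos(w * t)

y_obs = simulate([0.3, 2.0]) + 0.02 * data_rng.normal(size=t.size)

def sse(theta):
    """Sum of squared errors between simulation and synthetic data."""
    return float(np.sum((simulate(theta) - y_obs) ** 2))

def multi_start(n_starts, bounds, seed=1):
    """Run a local search from n_starts random points; return results sorted by cost."""
    rng = np.random.default_rng(seed)
    lower, upper = np.array(bounds).T
    results = [
        minimize(sse, rng.uniform(lower, upper), method="L-BFGS-B", bounds=bounds)
        for _ in range(n_starts)
    ]
    return sorted(results, key=lambda r: r.fun)

results = multi_start(30, bounds=[(0.01, 2.0), (0.1, 6.0)])
best = results[0]
print("best cost:", best.fun, "at", best.x)
print("distinct final costs:", sorted({round(r.fun, 3) for r in results}))
```

If the list of distinct final costs contains several clearly different values, the local searches are ending in different minima, which is the symptom described in Q1.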

Q2: My calibrated model fits my training data perfectly but fails to predict validation data. Why? A: This is the hallmark of overfitting due to ill-conditioning. The model has excessive freedom to fit the noise in your specific dataset [10] [1]. Solutions:

- Regularization: Introduce a penalty term to the cost function that discourages extreme parameter values. L2 regularization (Ridge) pushes parameters toward zero, while L1 regularization (Lasso) can drive them to exactly zero, performing automatic feature selection [7] [12].

- Cross-Validation: Use k-fold cross-validation during calibration to assess generalizability. If performance varies wildly across folds, you are likely overfitting [7] [1].

- Increase Data Quality/Quantity: If possible, gather more experimental data points or reduce measurement noise [10].

Q3: How do I choose between L1 and L2 regularization, and how do I set the penalty strength? A: L2 is generally preferred when you believe all parameters should contribute to the model but with constrained magnitude. L1 is useful for feature selection, to identify and exclude irrelevant mechanisms [7] [12]. Tuning the penalty strength (λ) is critical. A common method is the L-curve criterion: plot the model fit error against the regularization penalty for a range of λ values. The optimal λ is often near the "corner" of the resulting L-shaped curve, balancing fit and complexity [12]. Always validate the chosen λ on a hold-out dataset.

Q4: I have a large-scale model with hundreds of parameters. Which optimization method is most robust? A: Based on systematic benchmarking, for problems with tens to hundreds of parameters, a well-tuned hybrid metaheuristic is recommended. Specifically, a global scatter search metaheuristic combined with an interior-point local method using adjoint-based sensitivity analysis has demonstrated superior performance in terms of both robustness and efficiency [11]. A multi-start of gradient-based methods can also be successful if computational resources for sensitivity analysis are available [11].

Q5: How can I proactively design experiments to minimize calibration challenges? A: Employ Optimal Experimental Design (OED). OED uses the current model to identify which new experiments (e.g., time points, stimuli levels) would provide the most information to reduce parameter uncertainty and improve identifiability, thereby combatting ill-conditioning before data is collected [10].
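As a small illustration of the OED idea, the sketch below picks sampling times that maximize the determinant of the Fisher information matrix (a D-optimality criterion). It is an assumption-laden toy example, not the procedure of [10]: the first-order decay model y(t) = a0·exp(-k·t), the current parameter guess, the candidate time grid, and the function names are all hypothetical, and the result is only locally optimal for the assumed parameter values.

```python
import itertools
import numpy as np

def fisher_information(times, a0, k, sigma=1.0):
    """Fisher information for y(t) = a0*exp(-k*t) with i.i.d. Gaussian noise."""
    t = np.asarray(times, dtype=float)
    e = np.exp(-k * t)
    jac = np.column_stack([e, -a0 * t * e])  # dy/da0, dy/dk at each time
    return jac.T @ jac / sigma**2

def d_optimal_design(candidates, n_samples, a0, k):
    """Exhaustively search candidate time sets for the largest det(FIM)."""
    return max(
        itertools.combinations(candidates, n_samples),
        key=lambda ts: np.linalg.det(fisher_information(ts, a0, k)),
    )

# Hypothetical current parameter guess and candidate measurement times.
candidates = np.linspace(0.0, 10.0, 21)
design = d_optimal_design(candidates, n_samples=2, a0=1.0, k=0.5)
print("selected sampling times:", design)
```

For this simple model the criterion favors one sample at the start and one near t = 1/k, which shows how the design depends on the current parameter estimate and why OED is usually applied iteratively.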

Detailed Experimental & Computational Protocols

Protocol 1: Regularization Tuning via L-curve and Cross-Validation

Objective: To select the optimal regularization strength λ that prevents overfitting.

Materials: Calibration dataset, validation dataset, modeling software with regularization capability.

Method:

- Split your data into training (e.g., 70%) and hold-out validation (30%) sets [7].

- For a log-spaced range of λ values (e.g., 10^-6 to 10^2):

  a. Calibrate the model on the training set using the regularized cost function J(θ) = SSE(θ) + λ * Penalty(θ).

  b. Record the SSE(θ) on the training set and the norm of the penalty term.

  c. Crucially, record the SSE(θ) on the hold-out validation set.

- Plot two curves: (i) Validation SSE vs. λ, and (ii) L-curve (Penalty Norm vs. Training SSE).

- The optimal λ is the one that minimizes the validation SSE. The L-curve corner should correspond to a similar λ value [12].
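The λ sweep in this protocol can be sketched as follows. This is a minimal illustration under several assumptions that are not in the source: a hypothetical two-exponential model, synthetic data, an L2 penalty measured as the squared distance from a reference vector `theta_ref`, and SciPy's `least_squares` as the calibration routine. The penalty is implemented by appending sqrt(λ)·(θ - θ_ref) to the residual vector, so the minimized sum of squares equals SSE(θ) + λ·Penalty(θ).

```python
import numpy as np
from scipy.optimize import least_squares

def model(theta, t):
    a1, k1, a2, k2 = theta
    return a1 * np.exp(-k1 * t) + a2 * np.exp(-k2 * t)

def calibrate(t, y, lam, theta_ref, theta0):
    """Minimize SSE(theta) + lam * ||theta - theta_ref||^2 via augmented residuals."""
    def residuals(theta):
        return np.concatenate([model(theta, t) - y, np.sqrt(lam) * (theta - theta_ref)])
    return least_squares(residuals, theta0).x

def sweep_lambda(t_tr, y_tr, t_val, y_val, lambdas, theta_ref):
    """Return rows of (lambda, training SSE, penalty, validation SSE)."""
    rows = []
    for lam in lambdas:
        theta = calibrate(t_tr, y_tr, lam, theta_ref, theta_ref.copy())
        sse_tr = np.sum((model(theta, t_tr) - y_tr) ** 2)
        sse_val = np.sum((model(theta, t_val) - y_val) ** 2)
        rows.append((lam, sse_tr, np.sum((theta - theta_ref) ** 2), sse_val))
    return np.array(rows)

# Synthetic data and a hypothetical prior guess, for illustration only.
rng = np.random.default_rng(0)
t_all = np.linspace(0.1, 5.0, 20)
y_all = model([1.0, 0.3, 0.5, 2.0], t_all) + 0.05 * rng.normal(size=t_all.size)
idx = rng.permutation(t_all.size)
train, val = idx[:14], idx[14:]  # 70% / 30% split
theta_ref = np.array([1.0, 0.5, 1.0, 1.0])

table = sweep_lambda(t_all[train], y_all[train], t_all[val], y_all[val],
                     np.logspace(-6, 2, 9), theta_ref)
best_lam = table[np.argmin(table[:, 3]), 0]
print("lambda with lowest validation SSE:", best_lam)
```

Columns 1 and 2 of `table` (training SSE and penalty) give the points of the L-curve, and column 3 gives the validation curve, so both plots in the protocol can be drawn from the same sweep.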

Protocol 2: Hybrid Global-Local Optimization for Nonconvex Problems

Objective: To reliably find the global optimum (or a robust approximation) in a multimodal landscape.

Materials: Global optimization toolbox (e.g., MEIGO, ARES), local gradient-based solver (e.g., IPOPT, fmincon), model with sensitivity equations or adjoint capabilities.

Method (based on the top performer from benchmarks [11]):

- Global Phase (Scatter Search): Initialize a diverse population of parameter vectors within bounds. Iteratively generate new trial points by combining existing solutions and applying diversification mechanisms. Use the direct model simulation (no gradients) to evaluate cost.

- Refinement Phase: Periodically select the most promising points from the global search.

- Local Phase (Interior-Point with Adjoints): Launch a gradient-based local optimizer (e.g., interior-point) from each selected point. Use adjoint sensitivity analysis to compute gradients efficiently, even for large models.

- Iterate: Return refined solutions to the global pool. Continue until a convergence criterion (max iterations, lack of improvement) is met.

- Report the best solution found across all phases.
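A simplified two-phase version of this protocol can be sketched with SciPy. Note the substitutions, which are assumptions of this example and not the benchmarked method: differential evolution stands in for the scatter search metaheuristic, L-BFGS-B with finite-difference gradients stands in for an interior-point solver with adjoint sensitivities, and the phases run once in sequence instead of iterating. The model, data, and bounds are hypothetical.

```python
import numpy as np
from scipy.optimize import differential_evolution, minimize

# Hypothetical multimodal calibration problem: y(t) = exp(-k*t) * cos(w*t).
rng = np.random.default_rng(0)
t = np.linspace(0.0, 10.0, 60)

def simulate(theta):
    k, w = theta
    return np.exp(-k * t) * np.cos(w * t)

y_obs = simulate([0.3, 2.0]) + 0.02 * rng.normal(size=t.size)

def cost(theta):
    return float(np.sum((simulate(theta) - y_obs) ** 2))

bounds = [(0.01, 2.0), (0.1, 6.0)]

# Global phase: derivative-free population search over the bounded space.
global_result = differential_evolution(cost, bounds, seed=0, polish=False)

# Local phase: gradient-based refinement started from the best global point.
local_result = minimize(cost, global_result.x, method="L-BFGS-B", bounds=bounds)

print("after global phase:", global_result.x, global_result.fun)
print("after local phase: ", local_result.x, local_result.fun)
```

The global phase is responsible for landing in the right basin, while the local phase supplies the final precision cheaply; for large models the finite-difference gradients used here would be replaced by sensitivity or adjoint computations as the protocol describes.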

Protocol 3: k-Fold Cross-Validation for Overfitting Detection

Objective: To assess the generalizability of a calibrated model.

Method:

- Randomly partition the entire dataset into k equally sized folds (commonly k=5 or 10).

- For i = 1 to k:

  a. Set fold i aside as the validation set.

  b. Use the remaining k-1 folds as the training set.

  c. Calibrate the model on the training set.

  d. Calculate the error (e.g., RMSE) of the calibrated model on the validation set (E_val_i).

- Calculate the average validation error: Avg(E_val_i). A low average error indicates good generalization.

- Compare the average validation error to the training error. If validation error is significantly higher, overfitting is present [7] [1].
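The k-fold procedure above can be written compactly with NumPy. This sketch assumes a hypothetical first-order decay model y(t) = a0·exp(-k·t) calibrated by a log-linear least-squares fit, with synthetic data; the function names `calibrate`, `rmse`, and `k_fold_cv` are illustrative and any calibration routine could take the place of `calibrate`.

```python
import numpy as np

def calibrate(t, y):
    """Fit y = a0*exp(-k*t) by linear least squares on log(y); returns (a0, k)."""
    slope, intercept = np.polyfit(t, np.log(y), 1)
    return np.exp(intercept), -slope

def rmse(params, t, y):
    a0, k = params
    return float(np.sqrt(np.mean((a0 * np.exp(-k * t) - y) ** 2)))

def k_fold_cv(t, y, k_folds=5, seed=0):
    """Return (average training RMSE, average validation RMSE) over k folds."""
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(t.size), k_folds)
    train_err, val_err = [], []
    for i, val_idx in enumerate(folds):
        train_idx = np.concatenate([f for j, f in enumerate(folds) if j != i])
        params = calibrate(t[train_idx], y[train_idx])
        train_err.append(rmse(params, t[train_idx], y[train_idx]))
        val_err.append(rmse(params, t[val_idx], y[val_idx]))
    return float(np.mean(train_err)), float(np.mean(val_err))

# Synthetic data; multiplicative noise keeps y positive for the log transform.
rng = np.random.default_rng(1)
t = np.linspace(0.0, 4.0, 25)
y = 2.0 * np.exp(-0.7 * t) * np.exp(0.05 * rng.normal(size=t.size))

avg_train, avg_val = k_fold_cv(t, y)
print(f"average training RMSE:   {avg_train:.4f}")
print(f"average validation RMSE: {avg_val:.4f}")
```

For a two-parameter model with 25 points the two averages should be close; a validation average far above the training average is the overfitting signal the protocol looks for.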

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for Robust Kinetic Calibration

| Tool Category | Specific Solution/Software | Function in Calibration |

|---|---|---|

| Global Optimizers | MEIGO (Scatter Search, ESS), Genetic Algorithms, Particle Swarm Optimization | Navigate nonconvex cost landscapes to avoid local minima [13] [11]. |

| Local Optimizers with Gradients | IPOPT, NLopt, MATLAB's fmincon | Efficiently refine solutions using gradient information; essential for hybrid methods [11]. |

| Sensitivity Analysis | Adjoint Method (CVODES), Forward Sensitivity Equations | Compute parameter gradients efficiently, especially for large models (>50 params) [11]. |

| Regularization Solvers | Custom implementation in Python (SciPy), R, or using LASSO/Elastic Net packages | Implement L1/L2 penalty terms to constrain parameters and combat ill-conditioning [12]. |

| Model Simulation & ODE Solving | COPASI, AMIGO, PySB, Julia DifferentialEquations.jl, MATLAB ODE suites, SUNDIALS (CVODE, IDA) | Reliable numerical integration of the kinetic ODE system for cost evaluation [10]. |

| Cross-Validation & Diagnostics | Custom k-fold scripts, scikit-learn (for ML wrappers) | Assess model generalizability and detect overfitting [7] [1]. |

Troubleshooting Guide: Identifying and Resolving Overfitting

This guide helps you diagnose and fix common overfitting problems in computational biology research.

FAQ: Overfitting Fundamentals

Q1: What is overfitting and why is it a critical issue in biological model calibration? Overfitting occurs when a machine learning model learns not only the underlying patterns in the training data but also the noise and random fluctuations. This results in models that perform well on training data but generalize poorly to unseen data [7] [14]. In biological contexts like kinetic model calibration, this is particularly problematic because it can lead to misleading scientific conclusions, wasted resources, and reduced reproducibility of studies [15] [14].

Q2: How can I detect if my kinetic model is overfitted? The primary indicator is a significant performance gap between training and validation data. Monitor these key signs:

- Validation loss increases while training loss continues to decrease [7] [16]

- High performance metrics on training data but poor metrics on testing/validation data [17]

- Large discrepancy between training and validation AUROC curves [18]

K-fold cross-validation is a robust technical method for detection, where the model is trained on multiple data subsets and tested on held-out folds [7] [19].

Q3: What are the most effective strategies to prevent overfitting in biochemical reaction systems? Implement multiple complementary approaches:

- Regularization: Apply L1 (Lasso) or L2 (Ridge) regularization to constrain model complexity [7] [18]

- Cross-validation: Use k-fold or repeated k-fold cross-validation for reliable performance estimation [15]

- Data augmentation: Artificially increase training data through synthetic generation or transformations [7] [19]

- Feature selection: Reduce dimensionality by selecting only the most important features [7] [14]

- Early stopping: Halt training when validation performance begins to degrade [7] [20]

- Dropout: Randomly disable network units during training to prevent co-adaptation [20]

Q4: How does thermodynamically consistent model calibration help prevent overfitting? Thermodynamically Consistent Model Calibration (TCMC) incorporates physical constraints from thermodynamics into parameter estimation, which naturally restricts the solution space to physically plausible values. This approach provides dimensionality reduction, better estimation performance, and lower computational complexity, all of which help alleviate overfitting [21].
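The dimensionality reduction that thermodynamic constraints provide can be illustrated with a deliberately simple case. The sketch below is not the TCMC algorithm of [21]; it only assumes a hypothetical reversible reaction A ⇌ B whose equilibrium constant K_eq = k_f / k_r is known, so that only k_f remains to be estimated. All numerical values and function names are illustrative.

```python
import numpy as np
from scipy.optimize import minimize_scalar

K_EQ = 4.0  # hypothetical equilibrium constant, assumed known from thermodynamics

def conc_a(t, k_f, k_r, a_total=1.0):
    """Analytic A(t) for A <-> B starting from pure A."""
    a_eq = a_total * k_r / (k_f + k_r)
    return a_eq + (a_total - a_eq) * np.exp(-(k_f + k_r) * t)

def fit_constrained(t, y):
    """Estimate k_f alone; k_r is tied to it through k_r = k_f / K_EQ."""
    def sse(k_f):
        return np.sum((conc_a(t, k_f, k_f / K_EQ) - y) ** 2)
    return minimize_scalar(sse, bounds=(1e-3, 10.0), method="bounded").x

# Synthetic data generated with k_f = 2.0 and k_r = 0.5 (so k_f / k_r = K_EQ).
rng = np.random.default_rng(0)
t = np.linspace(0.0, 3.0, 12)
y = conc_a(t, 2.0, 0.5) + 0.01 * rng.normal(size=t.size)

k_f_hat = fit_constrained(t, y)
k_r_hat = k_f_hat / K_EQ
print(f"k_f = {k_f_hat:.3f}, k_r = {k_r_hat:.3f}")
```

The constrained problem has one free parameter instead of two, and every candidate solution automatically respects the equilibrium relation, which is the sense in which physical constraints shrink the search space.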

Q5: What are the consequences of overfitting in biomarker discovery and drug development? Overfitting can have severe real-world impacts:

- Identification of spurious biomarkers that fail validation in independent datasets [14]

- Wasted resources on validating false-positive findings [14]

- Incorrect diagnoses or treatment recommendations in clinical applications [14]

- Reduced reproducibility of scientific studies [15] [14]

Detection Methods Comparison

Table: Quantitative Methods for Detecting Overfitting

| Method | Key Metrics | Implementation Complexity | Best Use Cases |

|---|---|---|---|

| Hold-out Validation [7] | Training vs. test accuracy/loss | Low | Large datasets, initial screening |

| K-fold Cross-validation [7] [15] | Average performance across folds | Medium | Small to medium datasets, reliable estimation |

| Training History Analysis [16] | Divergence between training/validation loss | Medium | Deep learning models, epoch optimization |

| Bias-Variance Analysis [19] [22] | Error decomposition | High | Model diagnosis, complexity tuning |

Research Reagent Solutions

Table: Essential Computational Tools for Preventing Overfitting

| Tool Category | Specific Examples | Primary Function | Application Context |

|---|---|---|---|

| Regularization Libraries | Scikit-learn L1/L2, PyTorch Regularization [14] [19] | Add penalty terms to loss function | All model types, especially high-dimensional data |

| Cross-validation Frameworks | Scikit-learn KFold, StratifiedKFold [15] [14] | Robust performance estimation | Small datasets, class imbalance |

| Feature Selection Tools | Scikit-learn SelectKBest, RFE [7] [14] | Dimensionality reduction | High feature-to-sample ratio scenarios |

| Neural Network Regularization | Dropout layers, Early stopping callbacks [20] [16] | Prevent complex co-adaptations | Deep learning applications |

| Thermodynamic Constraint Tools | TCMC method [21] | Ensure physical plausibility | Biochemical reaction systems, kinetic models |

Experimental Protocol: Nested Cross-Validation for Kinetic Models

Objective: Reliably evaluate model performance while minimizing overfitting risk during hyperparameter tuning.

Procedure:

- Outer Loop: Split data into k-folds (typically k=5 or k=10)

- Inner Loop: For each training fold, perform another k-fold cross-validation to tune hyperparameters

- Parameter Selection: Choose hyperparameters that maximize inner loop performance

- Final Evaluation: Train with selected parameters on outer training fold, test on outer test fold

- Repeat: Iterate until each outer fold serves as test set once

This approach prevents optimistic bias that occurs when using the same data for both parameter tuning and performance estimation [15].
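The nested procedure can be sketched with NumPy alone. To keep the example short it makes assumptions that are not in the source: the kinetic model is replaced by a linear-in-parameters surrogate (a sum of exponentials on a hypothetical fixed rate grid `RATES`), the hyperparameter being tuned is a ridge penalty λ, and the data are synthetic.

```python
import numpy as np

RATES = np.array([0.1, 0.3, 1.0, 3.0, 10.0])  # hypothetical fixed rate grid

def design(t):
    return np.exp(-np.outer(t, RATES))

def ridge_fit(t, y, lam):
    X = design(t)
    return np.linalg.solve(X.T @ X + lam * np.eye(X.shape[1]), X.T @ y)

def mse(theta, t, y):
    return float(np.mean((design(t) @ theta - y) ** 2))

def cv_error(t, y, lam, folds):
    """Mean validation MSE over the given folds (arrays of data indices)."""
    errs = []
    for i, val in enumerate(folds):
        tr = np.concatenate([f for j, f in enumerate(folds) if j != i])
        errs.append(mse(ridge_fit(t[tr], y[tr], lam), t[val], y[val]))
    return float(np.mean(errs))

def nested_cv(t, y, lambdas, k_outer=5, k_inner=4, seed=0):
    rng = np.random.default_rng(seed)
    outer = np.array_split(rng.permutation(t.size), k_outer)
    scores, chosen = [], []
    for i, test in enumerate(outer):
        tr = np.concatenate([f for j, f in enumerate(outer) if j != i])
        # Inner loop: tune lambda using only the outer training indices.
        inner = np.array_split(rng.permutation(tr), k_inner)
        lam_best = lambdas[int(np.argmin([cv_error(t, y, lam, inner) for lam in lambdas]))]
        # Final evaluation on the untouched outer test fold.
        scores.append(mse(ridge_fit(t[tr], y[tr], lam_best), t[test], y[test]))
        chosen.append(lam_best)
    return float(np.mean(scores)), chosen

rng = np.random.default_rng(1)
t = np.linspace(0.0, 5.0, 40)
y = np.exp(-0.3 * t) + 0.5 * np.exp(-3.0 * t) + 0.03 * rng.normal(size=t.size)
lambdas = np.logspace(-6, 0, 7)

score, chosen = nested_cv(t, y, lambdas)
print("outer-loop MSE estimate:", score)
print("lambda chosen in each outer fold:", chosen)
```

The outer test fold never influences the choice of λ, so `score` estimates the performance of the whole tuning-plus-fitting procedure; the per-fold choices in `chosen` also show how stable the selected hyperparameter is.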

[Figure: Workflow diagram, "Model Development with Validation Checkpoints" (model development with overfitting safeguards)]

[Figure: Bias-variance relationship diagram, "Bias-Variance Tradeoff in Model Complexity"]

Troubleshooting Guides

Guide 1: Diagnosing and Addressing Overfitting in Drug-Target Interaction (DTI) Models

Problem: Model shows high training accuracy but fails to predict novel drug-target interactions or generalizes poorly to external validation sets.

Primary Symptoms:

- Discrepancy >15% between training and validation/test set performance metrics (e.g., AUC, accuracy) [23] [24].

- High-confidence predictions (probability >0.9) that prove incorrect during experimental validation [24].

- Performance degradation when predicting interactions for new target classes or drug scaffolds [24].

Diagnostic Steps:

- Perform Cold-Start Validation: Test model performance on target proteins or drug compounds completely absent from training data [24].

- Implement Randomization Test: Compare model performance on real data versus randomly shuffled labels to assess statistical significance of learned patterns [23].

- Analyze Uncertainty Calibration: Use evidential deep learning to quantify prediction uncertainty; high uncertainty often indicates overfitting to spurious correlations [24].

Solutions:

- Integrate Evidential Deep Learning (EDL): Incorporate an evidence layer that provides uncertainty estimates alongside predictions to flag low-confidence inferences [24].

- Apply Multidimensional Representations: Use both 2D topological graphs and 3D spatial structures for drugs, combined with protein sequence features, to force learning of robust, multimodal features rather than dataset-specific noise [24].

- Utilize Pre-trained Models: Leverage protein (e.g., ProtTrans) and molecule (e.g., MG-BERT) pre-trained models as feature encoders to benefit from transfer learning and reduce parameter overfitting on limited task-specific data [24].

Guide 2: Mitigating Overfitting in Metabolic Pathway and PBPK Models

Problem: Model perfectly fits training metabolic data but fails to predict drug-induced metabolic changes or pharmacokinetics in new biological contexts.

Primary Symptoms:

- Accurate reproduction of metabolic flux training data but poor prediction of perturbation responses [25].

- PBPK models that simulate known population data but generate unrealistic predictions for special populations (pediatric, hepatic impaired) [26] [27].

- Overly complex models with many parameters relative to available experimental observations [23] [27].

Diagnostic Steps:

- Apply Task Inference Analysis: Use TIDE (Tasks Inferred from Differential Expression) algorithm to test if model-predicted pathway activities align with transcriptomic data from validation conditions [25].

- Conduct Sensitivity Analysis: Identify parameters with implausibly narrow confidence intervals, indicating potential overfitting [27].

- Perform External Validation: Test model predictions against entirely independent datasets not used during calibration [23].

Solutions:

- Implement "Fit-for-Purpose" Modeling: Align model complexity with context of use (COU) and available data; avoid unnecessary mechanistic details for the decision being supported [27].

- Apply Regularization via Physiological Constraints: Incorporate known biological boundaries (e.g., tissue volumes, blood flows, thermodynamic constraints) as priors to restrict parameter space [26] [25].

- Use Cross-Validation with Early Stopping: Monitor validation error during training and halt when validation performance plateaus or deteriorates despite improving training metrics [23].
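Early stopping, the last solution above, applies to any iteratively trained model. The following sketch shows the mechanism under assumptions of this example only: plain gradient descent on a linear-in-parameters surrogate (exponentials on a hypothetical rate grid), synthetic data, a single hold-out split, and a `patience` counter that ends training once validation error has stopped improving.

```python
import numpy as np

def early_stopping_fit(X_tr, y_tr, X_val, y_val, lr=0.05, max_iter=5000, patience=50):
    """Gradient descent on training MSE; keep the parameters with the best validation MSE."""
    theta = np.zeros(X_tr.shape[1])
    best_theta, best_val, wait, n_iter = theta.copy(), np.inf, 0, 0
    for n_iter in range(1, max_iter + 1):
        grad = 2.0 * X_tr.T @ (X_tr @ theta - y_tr) / len(y_tr)
        theta = theta - lr * grad
        val = float(np.mean((X_val @ theta - y_val) ** 2))
        if val < best_val:
            best_theta, best_val, wait = theta.copy(), val, 0
        else:
            wait += 1
            if wait >= patience:  # no improvement for `patience` consecutive steps
                break
    return best_theta, best_val, n_iter

# Synthetic decay data and a hypothetical fixed rate grid, for illustration only.
rng = np.random.default_rng(0)
rates = np.array([0.1, 0.3, 1.0, 3.0, 10.0])
t = np.linspace(0.0, 5.0, 30)
X = np.exp(-np.outer(t, rates))
y = np.exp(-0.5 * t) + 0.05 * rng.normal(size=t.size)
idx = rng.permutation(t.size)
tr, val = idx[:20], idx[20:]

theta, best_val, n_iter = early_stopping_fit(X[tr], y[tr], X[val], y[val])
print(f"stopped after {n_iter} iterations, best validation MSE = {best_val:.5f}")
```

Returning the best-so-far parameters instead of the last iterate matters: by the time the patience counter expires, the current parameters have already drifted past the point of best validation performance.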

Frequently Asked Questions (FAQs)

Q1: How can I determine the optimal model complexity to avoid overfitting when building a DTI prediction model?

A: Use a statistical significance test for component selection rather than relying solely on cross-validation. The randomization test approach enables objective assessment of each component's significance, reducing reliance on "soft" decision rules that can lead to overfitting [23]. For neural network-based DTI models, integrate evidential deep learning to automatically calibrate model complexity based on prediction uncertainty [24].

Q2: What are the most effective strategies to prevent overfitting when working with limited metabolic flux data?

A: Implement constraint-based modeling with physiological boundaries to restrict solution space [25]. Apply task inference approaches (TIDE) that use differential expression data without requiring full metabolic flux measurements. Utilize regularization techniques that incorporate prior knowledge from genome-scale metabolic models, and consider a variant like TIDE-essential that focuses on essential genes without relying on flux assumptions [25].

Q3: How can I validate that my PBPK model isn't overfitted to a specific population and will generalize to special populations?

A: Use virtual population simulations that incorporate known physiological differences across populations (age, genetics, organ function) during model development [26] [27]. Validate against multiple independent datasets representing different populations. Apply sensitivity analysis to ensure parameters remain within physiologically plausible ranges when extrapolating [26].

Q4: What practical steps can I take to ensure my machine learning models for toxicity prediction don't become overconfident on novel chemical scaffolds?

A: Implement uncertainty quantification methods like evidential deep learning that provide well-calibrated confidence estimates [24]. Use multi-task learning that jointly predicts potency, hERG, CYP inhibition, and PK parameters to encourage learning of generalizable features rather than scaffold-specific artifacts [28]. Continuously validate with prospective compounds and update models with experimental results [28].

Data Presentation Tables

Table 1: Performance Comparison of DTI Models with Overfitting Controls

| Model Type | AUC on Training | AUC on Test | Cold-Start AUC | Uncertainty Calibration | Overfitting Risk |

|---|---|---|---|---|---|

| Traditional DL (No UQ) | 95.2% | 81.5% | 72.3% | Poor | High [24] |

| EviDTI (With EDL) | 92.8% | 86.7% | 79.96% | Well-calibrated | Moderate [24] |

| Random Forest | 98.5% | 82.1% | 75.4% | Moderate | High [24] |

| SVM | 94.3% | 80.8% | 70.2% | Poor | High [24] |

Table 2: Metabolic Pathway Analysis Performance with Different Validation Approaches

| Validation Method | RMSECV | RMSEP | Identified True Synergies | False Synergies | Overfitting Indicator |

|---|---|---|---|---|---|

| Conventional CV | 0.15 | 0.28 | 3 | 5 | High (RMSECV ≪ RMSEP) [23] |

| Randomization Test | 0.21 | 0.23 | 4 | 1 | Low (RMSECV ≈ RMSEP) [23] |

| External Test Set | 0.18 | 0.19 | 5 | 2 | Low [23] |

| TIDE Algorithm | N/A | N/A | 4 | 1 | Low (Model-constrained) [25] |

Table 3: Research Reagent Solutions for Overfitting Prevention

| Reagent/Resource | Function in Preventing Overfitting | Application Context |

|---|---|---|

| MTEApy Python Package | Implements TIDE framework for metabolic task inference without full GEM construction | Metabolic pathway analysis [25] |

| ProtTrans Pre-trained Model | Provides robust protein features transferable to new targets, reducing parameter fitting | DTI prediction [24] |

| MG-BERT Molecular Encoder | Generates molecular representations from pre-trained knowledge, limiting overfitting to small datasets | DTI prediction, compound screening [24] |

| Evidential Deep Learning Layer | Produces uncertainty estimates alongside predictions, flagging low-confidence inferences | All predictive models [24] |

| Virtual Population Simulators | Tests model generalizability across physiological variants before experimental validation | PBPK modeling [26] [27] |

Experimental Protocols

Protocol 1: Randomization Test for Model Significance Assessment

Purpose: To statistically validate that a model has learned meaningful patterns rather than fitting dataset-specific noise [23].

Materials: Dataset (features X, target Y), modeling algorithm, computational environment.

Procedure:

- Train Initial Model: Develop model M using standard procedures on dataset (X, Y).

- Generate Randomization Distribution:

  - For i = 1 to N (N ≥ 100):

    - Randomly permute target values Y to create Y_shuffled

    - Train model M_i on (X, Y_shuffled)

    - Record performance metric P_i of M_i on (X, Y_shuffled)

- Compare Actual Performance: Calculate performance P of model M on (X, Y)

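- Assess Significance: Compute the p-value as (1 + number of permutations with P_i ≥ P) / (N + 1). This form assumes a higher metric is better; for error metrics such as RMSE, count the permutations with P_i ≤ P instead.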

- Interpretation: p < 0.05 indicates model has learned statistically significant patterns beyond chance [23].
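The procedure above can be sketched in a few lines of Python. This is a minimal illustration, not the implementation used in [23]: the model is a one-variable least-squares line, the performance metric is R² (higher is better), and the data, the function names (`fit_line`, `r_squared`, `randomization_test`), and the choice N = 200 are all assumptions made for the example.

```python
import random

def fit_line(x, y):
    """Ordinary least-squares fit of y = a + b*x; returns (a, b)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    b = sxy / sxx
    return my - b * mx, b

def r_squared(x, y):
    """Train on (x, y) and score on the same data, as in the protocol."""
    a, b = fit_line(x, y)
    my = sum(y) / len(y)
    ss_res = sum((yi - (a + b * xi)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - my) ** 2 for yi in y)
    return 1.0 - ss_res / ss_tot

def randomization_test(x, y, n_perm=200, seed=0):
    """Return (actual score P, p-value) from a permutation test on y."""
    rng = random.Random(seed)
    p_actual = r_squared(x, y)
    count = 0
    for _ in range(n_perm):
        y_shuffled = list(y)
        rng.shuffle(y_shuffled)          # break the X-Y relationship
        if r_squared(x, y_shuffled) >= p_actual:
            count += 1
    return p_actual, (count + 1) / (n_perm + 1)

# Hypothetical toy data with a genuine linear trend plus noise.
data_rng = random.Random(1)
x = [i / 10 for i in range(30)]
y = [2.0 * xi + data_rng.gauss(0, 0.3) for xi in x]
score, p_value = randomization_test(x, y)
print(f"R^2 = {score:.3f}, p-value = {p_value:.4f}")
```

Because the "+1" terms count the unpermuted model as one of the N + 1 arrangements, the smallest attainable p-value is 1 / (N + 1), so N must be large enough for the chosen significance level.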

Protocol 2: Evidential Deep Learning for Uncertainty Quantification in DTI Prediction

Purpose: To provide well-calibrated confidence estimates for DTI predictions, reducing overconfident errors on novel data [24].

Materials: Drug-target interaction dataset, protein sequences, drug structures (2D graphs and 3D coordinates), computational resources with GPU acceleration.

Procedure:

- Feature Encoding:

- Protein Features: Use the ProtTrans pre-trained model to extract sequence embeddings, then apply a light attention mechanism to identify important residue-level features [24].

- Drug Features: Encode 2D topological information using the MG-BERT pre-trained model and process it with a 1D CNN. Encode 3D spatial structure using geometric deep learning (GeoGNN) on atom-bond and bond-angle graphs [24].

- Evidence Layer Integration:

- Concatenate protein and drug representations

- Feed through fully connected layers to evidence layer

- Output parameters α of Dirichlet distribution

- Uncertainty Calculation:

- Prediction probability for class k = α_k / α₀, where α₀ = sum(α) is the total Dirichlet strength

- Uncertainty = K / α₀ where K is number of classes

- Model Training: Use Dirichlet loss function to jointly optimize accuracy and uncertainty calibration [24].

- Validation: Assess using calibration curves and cold-start scenarios where drugs/targets are absent from training data [24].
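The uncertainty calculation step can be illustrated with a short sketch. It assumes the common evidential formulation in which the network outputs non-negative evidence e_k per class and the Dirichlet parameters are α_k = e_k + 1; that mapping, the function name `dirichlet_summary`, and the example evidence values are assumptions for illustration and are not taken from [24].

```python
def dirichlet_summary(evidence):
    """Map per-class evidence to (class probabilities, uncertainty).

    Assumes alpha_k = evidence_k + 1 (a common evidential convention).
    """
    alpha = [e + 1.0 for e in evidence]
    alpha0 = sum(alpha)                      # total Dirichlet strength
    probs = [a / alpha0 for a in alpha]      # expected class probabilities
    uncertainty = len(alpha) / alpha0        # K / alpha0, in (0, 1]
    return probs, uncertainty

# Strong evidence for class 0: confident prediction, low uncertainty.
probs_seen, u_seen = dirichlet_summary([9.0, 1.0])
# No evidence at all (e.g., a novel scaffold): uniform probabilities, uncertainty 1.
probs_novel, u_novel = dirichlet_summary([0.0, 0.0])
print(probs_seen, u_seen)
print(probs_novel, u_novel)
```

Under this convention the uncertainty equals 1 when the model has gathered no evidence and shrinks toward 0 as evidence accumulates, which is what allows low-confidence inferences to be flagged.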

Pathway Diagrams and Workflows

Diagram 1: Randomization Test Workflow for Model Validation

Diagram 2: EviDTI Framework for Robust DTI Prediction

Diagram 3: Metabolic Pathway Analysis with TIDE Algorithm

Frequently Asked Questions

Q: My model performs well on training data but poorly on new, unseen data. Is this overfitting?

Q: Can a model be overfitted even if I use a separate validation set for calibration?

Q: How does model complexity relate to overfitting in calibration?

- A: Excessively complex models are more prone to overfitting because they have a high capacity to memorize training data idiosyncrasies. Such models often produce overconfident predictions (probabilities that are too extreme), which is a key indicator of overfitting during the calibration process [8] [31].

Q: What is the most reliable visual tool to diagnose poor calibration and potential overfitting?

Q: For high-dimensional data common in my research, what is a critical step to avoid overfitted models?

Troubleshooting Guides

Problem 1: A Large Gap Between Training and Validation Performance

Description: You observe high accuracy or low loss on your training (or calibration) data, but performance significantly degrades on the validation or test set [8] [29].

Diagnostic Steps

- Monitor Performance Metrics: Track key metrics (e.g., accuracy, log loss, Brier score) on both training and validation sets throughout the training and calibration process [29].

- Plot Learning Curves: Graph the model's performance on both sets over time (epochs) or with increasing model complexity. A diverging gap is a clear warning sign [8].

- Check Data Splits: Ensure your training, validation, and test sets are truly independent and that there is no data leakage between them [29].

Solutions

- Apply Regularization: Introduce L1 (Lasso) or L2 (Ridge) regularization to penalize overly complex models and prevent coefficients from taking extreme values [7] [34].

- Simplify the Model: Reduce model complexity by using fewer parameters, removing layers from a neural network, or decreasing the number of neurons [7].

- Implement Early Stopping: Halt the training process when validation performance stops improving and begins to degrade [7] [34].

- Expand Your Data: Use data augmentation techniques to artificially increase the size and diversity of your training set, or collect more data if possible [7].
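As an illustration of the early-stopping rule listed among the solutions, the sketch below scans a sequence of per-epoch validation losses and reports where training would halt. The function name `early_stopping_epoch`, the `patience` and `min_delta` parameters, and the loss values are hypothetical; deep learning frameworks ship their own callbacks with similar semantics.

```python
def early_stopping_epoch(val_losses, patience=3, min_delta=0.0):
    """Return (stop_epoch, best_epoch) for a list of validation losses.

    Training stops once the loss has failed to improve by more than
    min_delta for `patience` consecutive epochs.
    """
    best = float("inf")
    best_epoch = -1
    waited = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best - min_delta:
            best, best_epoch, waited = loss, epoch, 0
        else:
            waited += 1
            if waited >= patience:
                return epoch, best_epoch
    return len(val_losses) - 1, best_epoch

# Hypothetical validation curve: improves, then degrades as overfitting sets in.
val_losses = [0.90, 0.70, 0.55, 0.50, 0.52, 0.53, 0.56, 0.60]
stop_epoch, best_epoch = early_stopping_epoch(val_losses, patience=3)
print(stop_epoch, best_epoch)
```

In practice the parameters saved at `best_epoch` are the ones to keep, not the parameters at the epoch where training stopped.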

Problem 2: The Model is Overconfident in Its Predictions

Description: The model's predicted probabilities are not aligned with true likelihoods. For example, for samples predicted with 90% confidence, the actual correct rate may only be 70% [32] [31].

Diagnostic Steps

- Create a Calibration Plot: Use this primary diagnostic tool. Plot the model's mean predicted probabilities (binned) against the observed fraction of positives. If the curve lies below the diagonal, the model is overconfident; if above, it is underconfident [32] [31].

- Calculate Quantitative Metrics: Compute the Brier Score (mean squared error of predictions) or Log Loss. Lower scores indicate better calibration. A model can have good accuracy but a poor Brier Score if its probabilities are inaccurate [31].
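Both diagnostics can be computed directly from predicted probabilities and binary labels. The sketch below is a minimal standard-library version; the function names are illustrative, the calibration error is the binary positive-class variant (mean predicted probability versus observed positive fraction per bin), and the data reproduce the 90%-confidence, 70%-correct situation described for this problem.

```python
def brier_score(probs, labels):
    """Mean squared difference between predicted probability and 0/1 outcome."""
    return sum((p - y) ** 2 for p, y in zip(probs, labels)) / len(probs)

def reliability_bins(probs, labels, n_bins=5):
    """Return (mean predicted prob, observed positive fraction, count) per non-empty bin."""
    bins = [[] for _ in range(n_bins)]
    for p, y in zip(probs, labels):
        idx = min(int(p * n_bins), n_bins - 1)   # p == 1.0 goes in the last bin
        bins[idx].append((p, y))
    out = []
    for b in bins:
        if b:
            mean_p = sum(p for p, _ in b) / len(b)
            frac_pos = sum(y for _, y in b) / len(b)
            out.append((mean_p, frac_pos, len(b)))
    return out

def expected_calibration_error(probs, labels, n_bins=5):
    """Count-weighted average gap between predicted and observed frequency."""
    n = len(probs)
    return sum(cnt / n * abs(mean_p - frac)
               for mean_p, frac, cnt in reliability_bins(probs, labels, n_bins))

# Ten predictions at 0.9, of which only seven are actually positive.
probs = [0.9] * 10
labels = [1] * 7 + [0] * 3
bs = brier_score(probs, labels)
ece = expected_calibration_error(probs, labels)
print(reliability_bins(probs, labels), bs, ece)
```

Plotting the first two elements of each bin tuple against each other gives the calibration plot; here the single point (0.9, 0.7) lies below the diagonal, the overconfident case.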

Solutions

- Apply Calibration Methods: Post-process your model's outputs using calibration techniques.

- Use a Simpler, Well-Calibrated Model: Models like Logistic Regression typically output well-calibrated probabilities by nature. Use them as a baseline or for final deployment if performance is acceptable [31].

Problem 3: Performance Varies Wildly with Different Data Splits

Description: Your model's reported performance is highly sensitive to the specific random split of the data into training and validation sets.

Diagnostic Steps

- Employ Cross-Validation: Instead of a single hold-out validation, use k-fold cross-validation. This involves splitting the data into 'k' folds, training the model k times (each time with a different fold as the validation set), and averaging the results. High variance in the performance across folds indicates instability and potential overfitting [29].

- Stratify Your Data Splits: Ensure that each fold in cross-validation has the same proportion of class labels or important features as the complete dataset, which provides a more reliable performance estimate [29].
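A stratified split can be sketched without any external library by dealing the indices of each class round-robin across the folds. The function name `stratified_kfold` and the imbalanced label vector are assumptions for illustration; scikit-learn's `StratifiedKFold` is the usual production choice.

```python
import random
from collections import defaultdict

def stratified_kfold(labels, k=5, seed=0):
    """Yield (train_idx, val_idx) pairs that preserve class proportions per fold."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for i, y in enumerate(labels):
        by_class[y].append(i)
    folds = [[] for _ in range(k)]
    for idx in by_class.values():
        rng.shuffle(idx)
        for j, i in enumerate(idx):
            folds[j % k].append(i)           # deal each class evenly across folds
    for f in range(k):
        val = sorted(folds[f])
        train = sorted(i for g in range(k) if g != f for i in folds[g])
        yield train, val

# Hypothetical imbalanced dataset: 10 positives, 40 negatives.
labels = [1] * 10 + [0] * 40
folds = list(stratified_kfold(labels, k=5))
for train_idx, val_idx in folds:
    print(len(train_idx), len(val_idx), sum(labels[i] for i in val_idx))
```

Each validation fold here contains 2 positives and 8 negatives, matching the 20% positive rate of the full dataset; comparing the metric across these folds then shows how stable the model is.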

Solutions

- Increase Data Size: A larger dataset generally leads to more stable performance estimates across different splits.

- Use Ensemble Methods: Methods like Random Forests or Gradient Boosting combine multiple models to average out the biases and variances of individual models, leading to more robust performance [34].

Diagnostic Metrics & Methods

The following table summarizes key metrics for identifying overfitting during calibration.

Table 1: Key Quantitative Metrics for Diagnosing Overfitting and Poor Calibration

| Metric | Description | Interpretation | How It Indicates Overfitting |

|---|---|---|---|

| Performance Gap | Difference between training and validation set performance (e.g., accuracy, loss) [8] [29]. | A small gap is desirable. | A large gap suggests the model has memorized the training data and does not generalize. |

| Brier Score | Mean squared difference between predicted probabilities and actual outcomes (0/1) [31]. | Lower is better. A perfect model has a score of 0. | A high Brier Score indicates poor calibration, often a result of overconfident predictions from an overfitted model. |

| Log Loss / Cross-Entropy | Measures the uncertainty of predictions based on how much they diverge from the true labels [32] [31]. | Lower is better. | A high Log Loss penalizes overconfidence on incorrect predictions, which is common in overfitted models. |

| Expected Calibration Error (ECE) | Weighted average of the absolute difference between confidence and accuracy across bins [32]. | Lower is better. A score of 0 indicates perfect calibration. | A high ECE shows a miscalibration, which can be a symptom of an overfitted model. (Note: ECE can be sensitive to bin size) [32]. |

Table 2: Comparison of Common Calibration Methods

| Method | Principle | Best For | Advantages | Disadvantages |

|---|---|---|---|---|

| Platt Scaling | Applies a logistic regression to the model's outputs [32] [31]. | Models where miscalibration is sigmoid-shaped. | Simple, fast, less prone to overfitting with small datasets. | Limited flexibility; assumes a specific shape of miscalibration. |

| Isotonic Regression | Learns a non-decreasing piecewise constant function to map outputs to probabilities [32] [31]. | Models with any monotonic miscalibration. | Highly flexible, can correct any monotonic distortion. | Requires more data, can overfit on small datasets. |
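To make the Platt Scaling row concrete, the sketch below fits the two parameters of p = sigmoid(a * score + b) by plain gradient descent on the log loss. It is an illustrative, standard-library version under stated assumptions: the raw scores are treated as logits, the data are synthetic, and the names `fit_platt`, `lr`, and `n_iter` are hypothetical. In practice the fit should use a held-out calibration set, and scikit-learn's `CalibratedClassifierCV` with `method="sigmoid"` provides a maintained implementation.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def log_loss(probs, labels, eps=1e-12):
    return -sum(y * math.log(max(p, eps)) + (1 - y) * math.log(max(1 - p, eps))
                for p, y in zip(probs, labels)) / len(labels)

def fit_platt(scores, labels, lr=0.05, n_iter=5000):
    """Fit p = sigmoid(a * score + b) by gradient descent on the log loss."""
    a, b = 1.0, 0.0                      # start from the uncalibrated mapping
    n = len(scores)
    for _ in range(n_iter):
        grad_a = grad_b = 0.0
        for s, y in zip(scores, labels):
            err = sigmoid(a * s + b) - y
            grad_a += err * s / n
            grad_b += err / n
        a -= lr * grad_a
        b -= lr * grad_b
    return a, b

# Overconfident scores: logit +/-4 implies ~98% confidence, but only 75% are right.
scores = [4.0] * 8 + [-4.0] * 8
labels = [1] * 6 + [0] * 2 + [0] * 6 + [1] * 2

a, b = fit_platt(scores, labels)
loss_before = log_loss([sigmoid(s) for s in scores], labels)
loss_after = log_loss([sigmoid(a * s + b) for s in scores], labels)
print(f"a={a:.4f}, b={b:.4f}, log loss {loss_before:.3f} -> {loss_after:.3f}")
```

The fitted slope is well below 1, which pulls the extreme probabilities back toward the observed 75% / 25% frequencies.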

The following workflow diagram illustrates the logical process for diagnosing and addressing overfitting during calibration.

Diagram 1: Diagnostic workflow for identifying overfitting during calibration.

The Scientist's Toolkit

Table 3: Essential Research Reagents & Computational Tools

| Item / Solution | Function / Explanation |

|---|---|

| Stratified K-Fold Cross-Validation | A resampling procedure that ensures each fold is a good representative of the whole dataset by preserving the percentage of samples for each class. Critical for obtaining unbiased performance estimates [29] [30]. |

| scikit-learn Library (Python) | A core machine learning library providing implementations for data splitting, cross-validation, various models (with L1/L2 regularization), calibration methods (Platt Scaling, Isotonic Regression), and all standard evaluation metrics [31]. |

| Regularization (L1/L2) | A mathematical technique that adds a penalty term to the model's loss function to discourage complexity. L1 can drive feature coefficients to zero (feature selection), while L2 shrinks them uniformly [7] [34]. |

| Calibration Curve (Reliability Diagram) | The primary visual diagnostic tool for assessing probability calibration. It directly shows the relationship between a model's predicted probabilities and the true observed frequency of events [32] [31]. |

| Synthetic Data | Artificially generated data that mimics the statistical properties of real data. Can be used for data augmentation to increase training set size and improve generalization, or for creating controlled test scenarios, though it must be validated rigorously [29]. |

| Early Stopping Callback | A programming function that monitors validation loss during training and automatically halts the process when performance plateaus or starts to degrade, preventing the model from over-optimizing on the training data [7]. |

Proven Methodologies: Robust Parameter Estimation Frameworks to Combat Overfitting

Frequently Asked Questions (FAQs)

FAQ 1: Why is escaping local minima particularly challenging when calibrating kinetic models for pharmaceutical applications?

The calibration of kinetic models, such as those used in drug metabolism studies, often involves high-dimensional, non-convex optimization problems. In these landscapes, the number of saddle points and local minima increases exponentially with dimensionality [35]. The primary challenge is not just local minima but also flat regions and saddle points where the gradient is zero, which can cause optimization algorithms to stagnate prematurely. This is especially problematic in kinetic models where small parameter changes can lead to significant differences in predicted drug concentration trajectories, directly impacting the model's predictive accuracy and leading to overfitting [35] [23].

FAQ 2: What is the fundamental difference between global and local optimization methods in this context?

Local optimization methods are designed to find the nearest local minimum from an initial starting point. They are efficient for refinement but are inherently limited in their ability to explore the objective landscape globally; in molecular modeling, where many of these methods originate, this landscape is the Potential Energy Surface (PES). In contrast, Global Optimization (GO) methods combine global exploration with local refinement to locate the global minimum (in molecular terms, the most stable configuration). This is crucial for kinetic model calibration, as it increases the likelihood of finding a parameter set that generalizes well to new data, thereby helping to prevent overfitting [36].

FAQ 3: How can I determine if my optimization algorithm is stuck at a saddle point instead of a local minimum?

A key diagnostic tool is the analysis of the Hessian matrix (the matrix of second-order partial derivatives) at the suspected point. A local minimum will have a Hessian matrix with all positive eigenvalues. In contrast, a saddle point is characterized by a Hessian with both positive and negative eigenvalues, indicating directions of descent that the algorithm could potentially follow [35]. In high-dimensional problems, computing the full Hessian can be expensive, but stochastic perturbations in methods like Stochastic Gradient Descent (SGD) can help escape these regions without explicit Hessian calculation [35].
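For a two-parameter problem the eigenvalue test can be written in closed form, which makes the logic easy to see. The sketch below is illustrative only: it assumes the Hessian entries at the stationary point are already available, and the function names and tolerance are hypothetical.

```python
import math

def symmetric_2x2_eigenvalues(h11, h12, h22):
    """Eigenvalues (smallest, largest) of the symmetric matrix [[h11, h12], [h12, h22]]."""
    mean = 0.5 * (h11 + h22)
    radius = math.sqrt((0.5 * (h11 - h22)) ** 2 + h12 ** 2)
    return mean - radius, mean + radius

def classify_stationary_point(h11, h12, h22, tol=1e-8):
    """Classify a point where the gradient is zero from its Hessian spectrum."""
    lo, hi = symmetric_2x2_eigenvalues(h11, h12, h22)
    if lo > tol:
        return "local minimum"
    if hi < -tol:
        return "local maximum"
    if lo < -tol and hi > tol:
        return "saddle point"
    return "degenerate (flat direction)"

# f(x, y) = x^2 + y^2 at the origin: Hessian [[2, 0], [0, 2]]
print(classify_stationary_point(2.0, 0.0, 2.0))
# f(x, y) = x^2 - y^2 at the origin: Hessian [[2, 0], [0, -2]]
print(classify_stationary_point(2.0, 0.0, -2.0))
# f(x, y) = x^2 at the origin: Hessian [[2, 0], [0, 0]], flat along y
print(classify_stationary_point(2.0, 0.0, 0.0))
```

For models with many parameters the same test applies to the eigenvalues returned by a numerical linear algebra routine; only the cost of forming the Hessian changes.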

FAQ 4: What role do stochastic perturbations play in preventing overfitting during optimization?

Stochastic perturbations, such as the noise injected in Stochastic Gradient Descent (SGD) or Perturbed Gradient Descent, help the optimization process escape shallow local minima and saddle points. With controlled noise added, the algorithm is less likely to converge prematurely to a suboptimal solution that fits the training data well but fails on validation data. This encourages exploration of the loss landscape, leading to parameter sets that are often more generalizable, thus mitigating overfitting [35].

Troubleshooting Guides

Problem 1: Algorithm Convergence to Poor Local Minima

Symptoms: The loss function stagnates at a high value, or the calibrated model performs well on training data but poorly on validation data (overfitting).

Solutions:

- Implement Stochastic Perturbations: Modify your gradient descent update rule to include noise. The update becomes x_{k+1} = x_k - η ∇f(x_k) + η ζ_k, where ζ_k is Gaussian noise. This helps push the algorithm out of attractive but suboptimal regions [35].

- Use Global Optimization Algorithms: Switch from local to global solvers. The following table compares several methods suitable for kinetic model calibration.

| Method | Type | Key Mechanism | Suitability for Kinetic Models |

|---|---|---|---|

| Basin Hopping (BH) [36] | Stochastic | Transforms the energy landscape into a collection of local minima, accepting/rejecting jumps based on a Monte Carlo criterion. | Effective for complex, rugged landscapes common in molecular system models. |

| Particle Swarm Optimization (PSO) [36] | Stochastic | A population-based method where particles navigate the search space based on their own and the swarm's best-known positions. | Good for broad exploration of high-dimensional parameter spaces. |

| Simulated Annealing (SA) [36] | Stochastic | Introduces a probabilistic acceptance of worse solutions that decreases over time, allowing escape from local minima early on. | Useful for initial broad searches before fine-tuning with local methods. |

| Stochastic Gradient Descent (SGD) [35] | Stochastic | Uses a noisy estimate of the gradient, which inherently provides a perturbation mechanism. | Standard in high-dimensional machine learning; requires careful learning rate tuning. |
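As one concrete instance of the stochastic methods in the table, the sketch below applies simulated annealing to a one-dimensional double-well function standing in for a calibration objective. Everything specific here is an assumption made for illustration: the test function, the geometric cooling schedule, the Gaussian proposal, and the parameter names (`t0`, `cooling`, `step`). It is not the implementation described in [36].

```python
import math
import random

def objective(x):
    """Double-well test function: local minimum near x = 1.13, global near x = -1.30."""
    return x ** 4 - 3.0 * x ** 2 + x

def simulated_annealing(f, x0, n_iter=5000, t0=1.0, cooling=0.999, step=0.5, seed=0):
    """Return (best_x, best_f) found by simulated annealing started at x0."""
    rng = random.Random(seed)
    x, fx = x0, f(x0)
    best_x, best_f = x, fx
    t = t0
    for _ in range(n_iter):
        cand = x + rng.gauss(0.0, step)          # random proposal around current point
        fc = f(cand)
        # Always accept improvements; accept worse moves with Boltzmann probability.
        if fc <= fx or rng.random() < math.exp(-(fc - fx) / t):
            x, fx = cand, fc
            if fx < best_f:
                best_x, best_f = x, fx
        t *= cooling                             # geometric cooling schedule
    return best_x, best_f

# Start inside the basin of the poorer local minimum.
x_start = 1.1
best_x, best_f = simulated_annealing(objective, x_start)
print(f"start f={objective(x_start):.3f}, best x={best_x:.3f}, best f={best_f:.3f}")
```

A purely local method started at the same point would settle in the shallower well; the early high-temperature phase is what lets the search cross the barrier. A local refinement from `best_x` is a natural follow-up step.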

Problem 2: Prohibitively Slow Convergence in High Dimensions

Symptoms: Optimization takes an excessively long time, making it impractical to complete a full model calibration.

Solutions:

- Employ Subspace Optimization: Reduce the problem's dimensionality by restricting the optimization to a lower-dimensional random subspace 𝒮 ⊂ ℝ^n. This can dramatically decrease computational cost while maintaining the efficiency of global convergence [35].

- Utilize Hybrid Methods: Combine a fast global explorer (e.g., a genetic algorithm) with an efficient local refiner (e.g., a gradient-based method). This leverages the strengths of both approaches: broad exploration and fast local convergence [36].

Problem 3: Selecting the Appropriate Model Complexity to Avoid Overfitting

Symptoms: Adding more parameters (e.g., more reaction pathways or intermediates) continuously improves fit to training data but worsens validation performance.

Solutions:

- Apply a Randomization Test: To objectively determine the optimal number of model components (e.g., in Partial Least Squares regression), use a randomization test. This method assesses the statistical significance of each added component, providing a more objective stopping criterion than visual inspection of validation curves, which can be ambiguous [23].

- Follow a Disentangled Modeling Scheme: Separate the decision for data pre-treatment from the decision on model complexity. First, use expert knowledge to pre-treat data, then use a statistical test like the randomization test to select the final model dimensionality objectively [23]. This workflow is illustrated in the diagram below.

Problem 4: Flat Loss Landscapes and Gradient Vanishing

Symptoms: The optimization progress becomes extremely slow, and gradient values approach zero.

Solutions:

- Incorporate Adaptive Learning Rates: Use algorithms like Adam or RMSprop that adapt the learning rate for each parameter. This can help navigate flat regions and ravines more effectively than standard gradient descent [35].

- Perform Eigenvalue Analysis: Analyze the Hessian matrix's eigenvalues at the current point. Where the gradient is near zero, a negative smallest eigenvalue indicates a saddle point (or a maximum), and eigenvalues close to zero indicate a flat region. This knowledge can justify the use of more aggressive perturbation strategies [35].

Experimental Protocols & Workflows

Protocol 1: Implementing Perturbed Gradient Descent

This protocol adds controlled noise to standard gradient descent to escape saddle points [35].

- Initialization: Choose an initial point x_0, learning rate η, and noise standard deviation σ.

- Iteration:

a. Compute Gradient: Calculate the gradient ∇f(x_k) at the current point.

b. Apply Perturbation: Generate a noise vector ζ_k ~ 𝒩(0, σ^2 I_n) from a Gaussian distribution.

c. Update Parameters: Apply the update rule: x_{k+1} = x_k - η ∇f(x_k) + η ζ_k.

- Termination: Repeat until convergence criteria are met (e.g., a fixed number of iterations or minimal progress).
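A minimal sketch of this protocol follows, using the update x_{k+1} = x_k - η ∇f(x_k) + η ζ_k. The two-dimensional test function, its analytic gradient, the parameter values, and the function names are assumptions chosen so that the origin is a saddle point; they are not taken from [35].

```python
import random

def grad_f(x, y):
    """Gradient of f(x, y) = x**4/4 - x**2/2 + y**2/2.

    The origin is a saddle point; the minima are at (1, 0) and (-1, 0).
    """
    return x ** 3 - x, y

def perturbed_gradient_descent(start, eta=0.1, sigma=0.1, n_iter=2000, seed=0):
    """Run gradient descent with Gaussian noise of standard deviation sigma."""
    rng = random.Random(seed)
    x, y = start
    for _ in range(n_iter):
        gx, gy = grad_f(x, y)                                    # a. gradient
        zx, zy = rng.gauss(0.0, sigma), rng.gauss(0.0, sigma)    # b. perturbation
        x = x - eta * gx + eta * zx                              # c. update
        y = y - eta * gy + eta * zy
    return x, y

# Plain gradient descent (sigma = 0) started exactly on the saddle never moves.
plain = perturbed_gradient_descent((0.0, 0.0), sigma=0.0)
# With noise, the iterate leaves the saddle and settles near one of the minima.
px, py = perturbed_gradient_descent((0.0, 0.0), sigma=0.1)
print(plain, (round(px, 3), round(py, 3)))
```

Because the noise is never switched off in this sketch, the final iterate fluctuates slightly around the minimum; decaying σ over time, or finishing with a few noise-free steps, removes that residual jitter.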

Protocol 2: Randomization Test for Model Component Selection

This protocol provides an objective method to select the number of components in a model (e.g., PLS factors) to prevent overfitting [23].

- Pre-treatment: Pre-process the data (X, Y) based on expert knowledge (e.g., filtering, scaling).

- Build Initial Model: Construct a PLS model with a sufficiently large number of components, A_max.

- Randomization:

a. Randomly permute the values in the Y vector to break the true relationship with X.

b. Build a new PLS model using the permuted Y and the original X.

c. Record the Root Mean Squared Error (RMSE) for this model with the randomized data.

- Significance Testing: Repeat step 3 many times (e.g., 100-1000) to create a distribution of RMSE values under the null hypothesis (no real relationship).

- Decision: The optimal number of components is the smallest A for which the real model's RMSE is statistically significantly lower than the RMSE from the randomized models.

Essential Visualizations

Workflow for Overfitting Prevention

This diagram outlines the alternative calibration workflow that uses a randomization test to objectively prevent overfitting by selecting a model complexity that generalizes well [23].

Topology of an Optimization Landscape

This diagram visualizes key topological features—like local minima, saddle points, and the global minimum—on a non-convex optimization landscape, which are critical concepts for understanding optimization challenges [35] [36].

The Scientist's Toolkit: Research Reagent Solutions

The following table details key computational and algorithmic "reagents" essential for conducting global optimization in kinetic model calibration.

| Item Name | Type | Function/Benefit |

|---|---|---|

| Stochastic Gradient Perturbation [35] | Algorithmic Technique | Injects noise into gradient updates to escape saddle points and shallow local minima, preventing premature convergence. |

| Hessian Eigenvalue Analysis [35] | Diagnostic Tool | Uses the spectrum of the Hessian matrix to diagnose the nature of a stationary point (minimum vs. saddle point). |

| Randomization Test [23] | Statistical Method | Provides an objective, statistical criterion for selecting model complexity to avoid overfitting, superior to visual inspection of validation curves. |

| Subspace Optimization [35] | Dimensionality Reduction | Restricts the search to a random lower-dimensional subspace, reducing computational cost in high-dimensional problems. |

| Basin Hopping [36] | Global Optimization Algorithm | Simplifies the energy landscape by working with local minima, using Monte Carlo to accept/reject jumps between them for effective exploration. |

This technical support center provides troubleshooting guides and FAQs for researchers applying regularization techniques to prevent overfitting in kinetic model calibration, particularly in pharmaceutical development.

### Understanding Overfitting and the Need for Regularization

What is overfitting in the context of kinetic model calibration? Overfitting occurs when a model learns the training data too well, including its noise and random fluctuations, rather than capturing the underlying patterns. This leads to poor performance when the model is applied to new, unseen data [37] [38]. In kinetic models, this might manifest as a model that perfectly fits your calibration data but fails to accurately predict drug concentration-time profiles or metabolic pathways outside the specific experimental conditions it was trained on.

How can I detect overfitting in my models? A key indicator of overfitting is a significant gap between performance on training data and performance on validation or test data [39] [38]. For example, your model may have a very low error (e.g., Mean Squared Error) on the training set but a high error on the test set. Techniques like k-fold cross-validation are essential for detecting overfitting [39] [38].

### Comparison of Regularization Techniques

The table below summarizes the core characteristics of L1, L2, and Elastic Net regularization to guide your selection.

| Feature | L1 (Lasso) | L2 (Ridge) | Elastic Net |

|---|---|---|---|

| Penalty Term | Absolute value of coefficients [37] [40] | Squared value of coefficients [37] [40] | Mix of L1 and L2 penalties [41] |

| Impact on Coefficients | Drives some coefficients to exactly zero [40] [42] | Shrinks coefficients towards zero, but not exactly zero [40] [43] | Can drive some coefficients to zero while shrinking others [41] |

| Feature Selection | Yes, inherent to the method [37] [42] | No, all features are retained [37] | Yes, but less aggressive than L1 alone [41] [44] |

| Handling Correlated Features | Tends to select one feature from a correlated group [44] | Distributes weight evenly among correlated features [37] [44] | Handles groups of correlated features well [41] [44] |

| Best Use Case | High-dimensional data where only a few features are expected to be important [41] [42] | When all features are expected to contribute to the outcome [41] | Datasets with many correlated features [41] [44] |

### Workflow for Implementing Regularization

The following diagram illustrates a general workflow for applying and tuning regularization techniques in your research.

Diagram 1: Regularization implementation workflow.

### Frequently Asked Questions (FAQs) & Troubleshooting

FAQ 1: Should I use L1 or L2 regularization for my kinetic model with hundreds of potential parameters? If you are working with a high-dimensional kinetic model (where the number of parameters or features is large relative to the number of observations) and you suspect only a subset is biologically relevant, L1 (Lasso) regularization is often a good starting point. Its ability to perform feature selection will simplify the model and enhance interpretability by identifying the most critical parameters [37] [42]. However, if your parameters are highly correlated, L1 may arbitrarily select only one from a group. In such cases, Elastic Net is a robust alternative as it can select groups of correlated features while still promoting sparsity [41] [44].

FAQ 2: Why does my regularized model have high error on both training and test data? This is a sign of underfitting [38]. The most common cause is that your regularization parameter (λ) is set too high, over-penalizing the model coefficients and making the model too simple to capture the underlying kinetics [37] [43]. To troubleshoot:

- Reduce the value of λ. This decreases the strength of the penalty, allowing the model more flexibility to learn from the data [38].

- Consider if the model itself is too simple for the complexity of the system being modeled.

FAQ 3: My model performs well on training data but poorly on validation data, even with regularization. What should I do? This indicates that overfitting is still occurring. Several strategies can help:

- Increase the regularization parameter (λ): A higher λ applies a stronger penalty, further discouraging model complexity [43].

- Try a different regularization type: If using L2, try switching to L1 or Elastic Net for a stronger sparsity-inducing effect [41].

- Collect more training data: Overfitting is often a result of too much model flexibility for the amount of available data [38].

- Simplify the model architecture: Reduce the number of features or model parameters independent of regularization [38].

FAQ 4: How do I choose the right value for the regularization parameter (λ)? The optimal value for λ is data-dependent and must be found empirically. The standard methodology is to use cross-validation [39] [42]:

- Define a grid of potential λ values.

- For each value, train your model using k-fold cross-validation on your training set.

- Calculate the average cross-validation performance (e.g., Mean Squared Error) across the k folds.

- Select the λ value that gives the best average performance.

- Finally, assess this chosen model on your held-out test set for a final evaluation.

### Experimental Protocol: Tuning Regularization with Cross-Validation

Objective: To systematically identify the optimal regularization parameter (λ) for a Lasso (L1) regression model predicting a kinetic response variable.

Materials & Reagents (The Scientist's Toolkit):

| Item/Software | Function |

|---|---|

| Python with scikit-learn | Programming environment and library providing Lasso, LassoCV, and GridSearchCV classes for implementation [41] [42]. |

| R with glmnet package | Statistical computing environment with a package built for penalized regression (Lasso, Ridge, Elastic Net), offering efficient cross-validation for λ selection via cv.glmnet [42]. |

| Training Dataset | The subset of data used to train the model and tune the hyperparameter λ. |

| Validation/Test Dataset | A held-out subset of data not used during training, reserved for final model evaluation. |

| Computational Resources | Adequate processing power, as k-fold cross-validation involves training multiple models. |

Methodology:

- Data Preparation: Split your data into training and final test sets (e.g., 75%/25%). Do not use the test set for parameter tuning [39].

- Preprocessing: Rescale continuous predictors (e.g., Z-score scaling) to ensure the regularization penalty is applied uniformly [42].

- Define Parameter Grid: Specify a list of λ values (e.g., [0.01, 0.1, 1.0, 10.0]) to test.

- Configure Cross-Validation: Use a tool like LassoCV in scikit-learn or cv.glmnet in R, specifying the number of folds (k, typically 5 or 10).

- Execute Cross-Validation: The algorithm will, for each λ value, train the model on (k-1) folds and validate on the remaining fold, cycling through all folds.

- Identify Optimal λ: Calculate the average performance (e.g., Mean Squared Error) across all folds for each λ. The λ with the best average performance is chosen.

- Final Evaluation: Train a final model on the entire training set using the optimal λ, and report its performance on the held-out test set.
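The methodology above can be traced end to end with a small, dependency-free sketch. It is not a substitute for `LassoCV` or `cv.glmnet`: the coordinate-descent solver, the helper names, the synthetic data (four standard-normal predictors, of which only two influence the response), and the omission of an intercept are all simplifying assumptions made for illustration.

```python
import random

def soft_threshold(z, lam):
    """Soft-thresholding operator used in the Lasso coordinate update."""
    if z > lam:
        return z - lam
    if z < -lam:
        return z + lam
    return 0.0

def lasso_cd(X, y, lam, n_sweeps=50):
    """Coordinate descent for (1/2n)*||y - Xw||^2 + lam*||w||_1 (no intercept)."""
    n, p = len(X), len(X[0])
    w = [0.0] * p
    for _ in range(n_sweeps):
        for j in range(p):
            # Correlation of feature j with the residual that ignores feature j.
            rho = sum(X[i][j] * (y[i] - sum(X[i][k] * w[k] for k in range(p) if k != j))
                      for i in range(n)) / n
            scale = sum(X[i][j] ** 2 for i in range(n)) / n
            w[j] = soft_threshold(rho, lam) / scale
    return w

def mse(X, y, w):
    preds = [sum(xij * wj for xij, wj in zip(xi, w)) for xi in X]
    return sum((yi - pi) ** 2 for yi, pi in zip(y, preds)) / len(y)

def cv_select_lambda(X, y, lambdas, k=5):
    """Return (best lambda, {lambda: mean validation MSE}) from k-fold CV."""
    n = len(y)
    folds = [list(range(f, n, k)) for f in range(k)]
    scores = {}
    for lam in lambdas:
        errs = []
        for val in folds:
            held = set(val)
            tr = [i for i in range(n) if i not in held]
            w = lasso_cd([X[i] for i in tr], [y[i] for i in tr], lam)
            errs.append(mse([X[i] for i in val], [y[i] for i in val], w))
        scores[lam] = sum(errs) / k
    return min(scores, key=scores.get), scores

# Synthetic, already-standardized data: only predictors 0 and 1 matter.
rng = random.Random(0)
X = [[rng.gauss(0, 1) for _ in range(4)] for _ in range(60)]
y = [3.0 * x[0] - 2.0 * x[1] + rng.gauss(0, 0.5) for x in X]

X_train, y_train = X[:45], y[:45]    # 75% used for tuning lambda
X_test, y_test = X[45:], y[45:]      # 25% held out for the final evaluation

best_lam, cv_scores = cv_select_lambda(X_train, y_train, [0.01, 0.1, 1.0, 10.0])
w_final = lasso_cd(X_train, y_train, best_lam)
test_mse = mse(X_test, y_test, w_final)
w_heavy = lasso_cd(X_train, y_train, 10.0)   # over-penalized: every coefficient is zero
print(best_lam, [round(v, 3) for v in w_final], round(test_mse, 3), w_heavy)
```

The over-penalized fit at λ = 10 shows the underfitting end of the grid, while the cross-validated choice keeps the two informative predictors and evaluates them once on the untouched test split.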

### Advanced Topics: Bias-Variance Tradeoff and Regularization

How does regularization relate to the bias-variance tradeoff? Regularization directly manages the bias-variance tradeoff, a fundamental concept in model building [42] [38].

By increasing the regularization parameter λ, you increase bias but decrease variance [42]. This results in a simpler model that may not fit the training data as closely but is more likely to generalize to new data. The goal of tuning λ is to find the sweet spot that balances these two sources of error, minimizing the total generalization error [38].

### Frequently Asked Questions (FAQs)

1. What is the core difference between Bayesian and Frequentist statistics in model calibration? The core difference lies in how they treat unknown parameters and use existing information. The Frequentist approach regards parameters as fixed, unknown values and relies solely on the current dataset for estimation, aiming to control long-run error rates [45] [46]. In contrast, the Bayesian approach treats parameters as random variables with distributions. It explicitly incorporates prior knowledge (as a prior distribution) with the current data to form a posterior distribution, which is an updated summary of belief about the parameters [45] [47] [46]. This makes Bayesian methods particularly suited for preventing overfitting when data is limited, as the prior acts as a natural constraint on the parameter space [48].

2. When should I consider using a Bayesian approach for my kinetic model? A Bayesian approach is especially valuable in several scenarios common in kinetic model calibration:

- When you have limited data, as is often the case with complex kinetic studies, and need to avoid unreliable parameter estimates [48].

- When high-quality, relevant prior information exists from previous experiments, literature, or expert knowledge that can inform parameter values [47] [49].

- When you want to make direct probability statements about your parameters (e.g., "There is a 95% probability that the rate constant lies between X and Y") [46].

- In areas like drug development for rare diseases or dose-finding trials in oncology, where ethical and practical concerns demand learning from every available piece of information [49].

3. What are the potential pitfalls of using informative priors? The primary pitfall is introducing bias. If the prior knowledge is unreliable or incorrectly specified, it can lead to misleading posterior results. As highlighted in a review, "Bayesian estimation is preferred if prior parameter knowledge is reliable, but provides misleading results when the modeler is overly confident about poor parameter guesses" [48]. It is crucial to perform sensitivity analyses—running the analysis with different prior specifications—to ensure your conclusions are robust and not unduly influenced by a single, potentially flawed, prior assumption.
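A prior sensitivity analysis can be illustrated with the simplest conjugate case: a single parameter with a Normal prior and Normal measurement noise of known standard deviation. The rate-constant measurements, the noise level, both priors, and the function name `normal_posterior` are hypothetical values chosen for the example, not results from [48].

```python
def normal_posterior(prior_mean, prior_sd, data, noise_sd):
    """Posterior (mean, sd) for a Normal prior and Normal likelihood with known noise_sd."""
    n = len(data)
    prior_prec = 1.0 / prior_sd ** 2          # precision = 1 / variance
    data_prec = n / noise_sd ** 2
    post_var = 1.0 / (prior_prec + data_prec)
    data_mean = sum(data) / n
    post_mean = post_var * (prior_prec * prior_mean + data_prec * data_mean)
    return post_mean, post_var ** 0.5

# Three hypothetical measurements of a rate constant (sample mean 0.57).
data = [0.52, 0.61, 0.58]
noise_sd = 0.1

# Same prior guess (0.3), two very different levels of confidence in it.
m_vague, s_vague = normal_posterior(0.3, 1.0, data, noise_sd)
m_conf, s_conf = normal_posterior(0.3, 0.05, data, noise_sd)
print(f"vague prior:     mean={m_vague:.4f}, sd={s_vague:.4f}")
print(f"confident prior: mean={m_conf:.4f}, sd={s_conf:.4f}")
```

With the vague prior the posterior mean stays close to the data, whereas the overconfident prior drags it well below the measurements while also reporting a smaller posterior standard deviation. A large shift of this kind between reasonable priors is the signal that conclusions depend on the prior.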

4. How do I report Bayesian analysis to ensure clarity and reproducibility? Reporting should be comprehensive and include:

- Justification for the prior: Clearly state the source of your prior knowledge (e.g., pilot data, meta-analysis, expert elicitation).

- The prior distribution itself: Specify the type (e.g., Normal, Gamma) and its parameters (e.g., mean, variance).

- Results of the posterior distribution: Report key summaries like the posterior mean, median, and credible intervals for parameters.

- Sensitivity analysis: Document how the results change with different reasonable priors to demonstrate robustness [46].

### Troubleshooting Guides

Problem: Model is Overfitting despite Using Bayesian Methods

Symptoms:

- Parameter estimates are extreme or physically implausible.

- The model predicts your calibration data perfectly but fails on new validation data.

- Posterior distributions are unusually wide, indicating that the data and the prior together do not constrain the parameters.

Possible Causes and Solutions:

Cause: The prior distribution is too weak (too vague).

- Solution: Incorporate more substantive prior information. If reliable historical data exists, use it to construct a more informative prior. Consult domain experts to refine your prior beliefs. A more informative prior exerts a stronger regularizing effect, pulling estimates toward reasonable values and reducing overfitting [48] [46].

Cause: The model is too complex for the available data.

- Solution: Simplify the model structure. Use subset-selection (estimability) analysis to identify and fix the values of parameters that the data cannot reliably estimate. This method ranks parameters from most- to least-estimable, allowing you to reduce the number of parameters being estimated and avoid overfitting [48].
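One common way to rank parameters by estimability is successive orthogonalization of a scaled sensitivity matrix: pick the column with the largest norm, remove its direction from the remaining columns, and repeat. The sketch below assumes this approach (the text above does not specify the algorithm). The sensitivity values and the parameter names `k1`, `k2`, `k3` are hypothetical.

```python
import math

def rank_estimability(Z, names):
    """Rank parameters by successive orthogonalization of sensitivity columns.

    Z is a list of rows (observations); column j holds the scaled
    sensitivities of all observations to parameter names[j].
    Returns (name, residual_norm) pairs from most to least estimable.
    """
    cols = {name: [row[j] for row in Z] for j, name in enumerate(names)}
    ranking = []
    while cols:
        norms = {name: math.sqrt(sum(v * v for v in col)) for name, col in cols.items()}
        best = max(norms, key=norms.get)
        ranking.append((best, norms[best]))
        q = cols.pop(best)
        qq = sum(v * v for v in q)
        if qq == 0.0:
            # All remaining columns are zero: nothing left to orthogonalize.
            ranking.extend((name, 0.0) for name in cols)
            break
        # Remove the component along the selected column from the others.
        deflated = {}
        for name, col in cols.items():
            coef = sum(a * b for a, b in zip(col, q)) / qq
            deflated[name] = [a - coef * b for a, b in zip(col, q)]
        cols = deflated
    return ranking

# Hypothetical scaled sensitivities: 4 observations x 3 parameters.
# The k3 column is exactly half of the k1 column, so k3 adds no new information.
Z = [
    [1.0, 0.0, 0.5],
    [0.8, 0.3, 0.4],
    [0.6, 0.5, 0.3],
    [0.4, 0.6, 0.2],
]
ranking = rank_estimability(Z, ["k1", "k2", "k3"])
for name, norm in ranking:
    print(f"{name}: residual norm {norm:.4f}")
```

Parameters whose residual norm falls below a chosen cutoff are candidates for fixing at nominal values. Choosing that cutoff is a separate modeling decision not covered by this sketch.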

Cause: Poor choice of likelihood function.

- Solution: Re-evaluate the likelihood to ensure it accurately represents the data-generating process. For instance, if your experimental data has known heteroscedasticity (changing variance), using a simple Gaussian likelihood may be inadequate. Specify a likelihood that better captures the noise structure of your measurements.
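As an illustration of how the noise model enters the likelihood, the sketch below evaluates a Gaussian log-likelihood under two assumed error models: a constant standard deviation and a standard deviation proportional to the predicted value. The predictions, observations, and the 10% coefficient of variation are hypothetical. The proportional model as written assumes strictly positive predictions.

```python
import math

def gaussian_loglik(obs, pred, sd_fn):
    """Sum of Gaussian log-densities with a prediction-dependent SD."""
    total = 0.0
    for y, mu in zip(obs, pred):
        sd = sd_fn(mu)
        total += -0.5 * math.log(2 * math.pi * sd ** 2) - 0.5 * ((y - mu) / sd) ** 2
    return total

def constant_sd(mu):
    # Homoscedastic noise: same SD at every concentration (hypothetical value).
    return 0.5

def proportional_sd(mu):
    # Heteroscedastic noise: SD is 10% of the predicted value (hypothetical value).
    return 0.1 * mu

# Hypothetical model predictions and measurements spanning two orders of magnitude.
pred = [10.0, 5.0, 1.0, 0.1]
obs = [11.0, 4.6, 1.08, 0.09]

ll_const = gaussian_loglik(obs, pred, constant_sd)
ll_prop = gaussian_loglik(obs, pred, proportional_sd)
print(f"constant-SD log-likelihood:     {ll_const:.3f}")
print(f"proportional-SD log-likelihood: {ll_prop:.3f}")
```

When measurement error scales with signal size, the constant-SD model overweights the large values and effectively ignores the small ones. This can bias the fitted rate constants.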

Problem: MCMC Sampling is Slow or Failing to Converge

Symptoms:

- Very high autocorrelation between samples.

- Diagnostic plots (e.g., trace plots) show the chain is not mixing well or "sticking."

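- The Gelman-Rubin diagnostic (R-hat) is noticeably greater than 1. Depending on the convention used, values above roughly 1.01 to 1.1 are commonly treated as a sign of non-convergence.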
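For reference, the classic Gelman-Rubin statistic compares between-chain and within-chain variance. The sketch below implements that classic, non-split form for a single parameter using synthetic chains. Current software typically reports a split, rank-normalized variant, so values from this sketch may differ slightly from what a given package prints.

```python
import random
import statistics

def gelman_rubin(chains):
    """Classic (non-split) potential scale reduction factor for one parameter.

    chains: list of equal-length lists of post-warm-up draws.
    """
    n = len(chains[0])
    chain_means = [statistics.fmean(chain) for chain in chains]
    # W: average within-chain variance; B: between-chain variance scaled by n.
    W = statistics.fmean([statistics.variance(chain) for chain in chains])
    B = n * statistics.variance(chain_means)
    var_hat = (n - 1) / n * W + B / n
    return (var_hat / W) ** 0.5

rng = random.Random(42)

# Synthetic example 1: four chains sampling the same distribution.
well_mixed = [[rng.gauss(0.0, 1.0) for _ in range(500)] for _ in range(4)]

# Synthetic example 2: two chains stuck in a different region of parameter space.
stuck = [[rng.gauss(mu, 1.0) for _ in range(500)] for mu in (0.0, 0.0, 3.0, 3.0)]

r_good = gelman_rubin(well_mixed)
r_bad = gelman_rubin(stuck)
print(f"R-hat, well-mixed chains: {r_good:.3f}")
print(f"R-hat, stuck chains:      {r_bad:.3f}")
```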

Possible Causes and Solutions:

Cause: Poorly scaled parameters.

- Solution: Reparameterize your model. If parameters are on vastly different scales (e.g., a rate constant of 0.001 and an equilibrium constant of 1000), sampling can slow down considerably. Bring the parameters onto a common scale, for example by standardizing them or by sampling strictly positive parameters on a log scale, to improve sampling efficiency.

Cause: Strong correlations between parameters in the posterior.

- Solution: This is common in kinetic models. Consider using a sampling algorithm like Hamiltonian Monte Carlo (HMC) or the No-U-Turn Sampler (NUTS), which are better at handling correlated parameters. Additionally, reparameterizing the model to reduce correlations, perhaps by using a Cholesky decomposition of the covariance matrix, can be beneficial.

Cause: Inefficient proposal distribution.

- Solution: Tune the sampler's parameters (e.g., the step size) during the warm-up phase. Modern software like Stan automatically performs this tuning, so ensuring you are using an up-to-date and adaptive sampler is key.

### Methodologies & Data Presentation

Table 1: Comparison of Statistical Approaches for Parameter Estimation

This table summarizes the core differences between Frequentist and Bayesian methods, which is fundamental to understanding how Bayesian approaches constrain parameter space.

| Feature | Frequentist Approach | Bayesian Approach |
|---|---|---|
| Nature of Parameters | Fixed, unknown constants [46] | Random variables with probability distributions [46] |
| Inference Basis | Frequency of data in hypothetical repeated samples (p-values) [47] [46] | Updated belief given the data (posterior probability) [47] [46] |
| Use of Prior Knowledge | Not directly incorporated [45] [49] | Explicitly incorporated via the prior distribution [45] [49] |
| Output | Point estimate and confidence interval | Entire posterior distribution and credible interval |
| Interpretation of Interval | Long-run frequency: proportion of intervals containing the true parameter over infinite repeats [46] | Direct probability: 95% probability the true parameter lies within the interval [46] |
| Handling Limited Data | Prone to overfitting; estimates can be unstable [48] | Prior regularizes estimates, reducing overfitting risk [48] |

Table 2: Bayesian Estimation Methods to Prevent Overfitting

This table compares two key methodological strategies for dealing with limited data.

| Method | Core Principle | Advantages | Disadvantages | Best Used When |
|---|---|---|---|---|
| Bayesian Estimation with Informative Priors | Summarizes prior knowledge as a probability distribution, which is updated with data [48] [46] | Directly incorporates existing knowledge; provides a natural regularization penalty; yields a full distribution for parameters [48] | Results are sensitive to poor prior choices; requires careful justification and sensitivity analysis [48] | High-quality, reliable prior information is available [48] |
| Subset-Selection (Estimability) Analysis | Ranks parameters based on estimability from the data; only a subset is estimated, others are fixed [48] | Less susceptible to bias from poor initial guesses; identifies model simplifications; reduces number of estimated parameters [48] | Computationally expensive; does not fully utilize prior knowledge in a probabilistic way [48] | Prior knowledge is limited or unreliable, and the model is potentially over-parameterized [48] |

### Experimental Workflow Visualization

The following diagram illustrates the logical workflow for applying a Bayesian approach to kinetic model calibration, emphasizing the steps that prevent overfitting.

Bayesian Model Calibration Workflow

### The Scientist's Toolkit: Research Reagent Solutions

The following table lists essential computational and statistical "reagents" for implementing Bayesian approaches in kinetic research.

| Item | Function in Bayesian Calibration |
|---|---|
| Probabilistic Programming Languages (e.g., Stan, PyMC3, WinBUGS) | Provides the core environment for specifying Bayesian statistical models and performing inference, often via efficient MCMC sampling [46]. |
| Informative Prior Distribution | The "regularizing reagent" that incorporates previous knowledge to constrain parameter estimates, preventing them from taking on implausible values due to noise in a limited dataset [48] [46]. |
| Sensitivity Analysis Plan | A methodological protocol to test the robustness of conclusions against different choices of prior distributions and model structures, ensuring results are not artifacts of a single subjective choice [48]. |
| MCMC Diagnostics (e.g., R-hat, trace plots) | Tools to assess the convergence and reliability of the sampling algorithm, verifying that the obtained posterior distribution is a genuine result and not a computational artifact. |
| Subset-Selection Algorithm | A computational tool used to identify which parameters in a complex model can be reliably estimated from the available data, helping to simplify the model and avoid overfitting [48]. |

Your Toolkit at a Glance

The following table summarizes the core characteristics of SKiMpy, Tellurium, and MASSpy to help you understand their different approaches to kinetic modeling.

| Toolkit | Primary Parameter Determination Method | Key Data Requirements | Key Advantages | Major Limitations / Overfitting Risks |
|---|---|---|---|---|
| SKiMpy | Sampling [50] | Steady-state fluxes & concentrations; thermodynamic information [50] | Uses stoichiometric network as a scaffold; efficient & parallelizable; ensures physiologically relevant time scales [50]. | Explicit time-resolved data fitting is not implemented, limiting calibration against dynamic datasets [50]. |
| Tellurium | Fitting [50] | Time-resolved metabolomics data [50] | Integrates many tools and standardized model structures; suitable for dynamic simulation and analysis [50]. | Limited built-in parameter estimation capabilities can push users toward custom, potentially unvalidated, scripts [50]. |
| MASSpy | Sampling [50] | Steady-state fluxes & concentrations [50] | Well-integrated with COBRApy for constraint-based modeling; computationally efficient & parallelizable [50]; uses mechanistic, mass-action kinetics [51]. | Implemented primarily with mass-action rate law, which can be complex for large networks without curated mechanisms [50]. |

Troubleshooting Common Modeling Issues

Problem: My model fits the training data perfectly but fails to predict new conditions.

Potential Cause and Solution: This is a classic sign of overfitting, where the model has too many degrees of freedom. Simplify your model.

- For MASSpy: Since it uses detailed mass-action kinetics by default, consider if your network has unnecessary intermediate species or reactions. The complexity can lead to over-parameterization [51].

- For SKiMpy: Leverage its ability to automatically assign rate law mechanisms from a built-in library, which can prevent the ad-hoc addition of complex, unjustified mechanisms [50].

- General Best Practice: A first-order kinetic model can be highly effective and more robust than complex models for many biologics, as it reduces the number of parameters to fit and minimizes overfitting [52].
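To show how few parameters a first-order model involves, the sketch below fits C(t) = C0 · exp(−k t) by ordinary least squares on the log-transformed concentrations. The time points and the synthetic, noise-free data are hypothetical. With real data, the log transform also changes the implied error model, which should be checked.

```python
import math

def fit_first_order(times, conc):
    """Least-squares fit of ln C = ln C0 - k t; returns (C0, k)."""
    y = [math.log(c) for c in conc]
    n = len(times)
    t_mean = sum(times) / n
    y_mean = sum(y) / n
    sxx = sum((t - t_mean) ** 2 for t in times)
    sxy = sum((t - t_mean) * (yi - y_mean) for t, yi in zip(times, y))
    slope = sxy / sxx
    intercept = y_mean - slope * t_mean
    return math.exp(intercept), -slope

# Synthetic, noise-free data generated with C0 = 100 and k = 0.3 (hypothetical).
times = [0.0, 1.0, 2.0, 4.0, 8.0]
conc = [100.0 * math.exp(-0.3 * t) for t in times]

c0, k = fit_first_order(times, conc)
print(f"C0 = {c0:.3f}, k = {k:.4f}")

# Extrapolate to a time point outside the calibration range.
t_new = 12.0
predicted = c0 * math.exp(-k * t_new)
print(f"predicted C({t_new}) = {predicted:.4f}")
```

Only two parameters are estimated, so even a handful of time points leaves residual degrees of freedom for judging goodness of fit.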

Problem: Parameter estimation fails or yields unrealistic values.

Potential Cause and Solution: The parameter space is too large or poorly constrained.

- For Tellurium: Its limited built-in parameter estimation capabilities may require you to use external packages or scripts. Ensure you are providing tight, physiologically realistic bounds for all parameters during estimation [50].

- For SKiMpy & MASSpy: Both tools can sample parameter sets that are consistent with thermodynamic constraints and experimental data. Use this feature to generate ensembles of models that are all thermodynamically feasible, rather than searching for a single "perfect" parameter set [50]. This approach inherently quantifies uncertainty and prevents over-reliance on one potentially overfit solution.
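The ensemble idea can be illustrated without either toolkit. The sketch below is a generic rejection sampler for a single reversible reaction A ⇌ B and does not use the SKiMpy or MASSpy API. It draws forward and reverse rate constants log-uniformly and keeps only those that match an assumed equilibrium constant within a tolerance and give a relaxation time in an assumed range. The function name, bounds, tolerance, and time-scale range are all hypothetical.

```python
import random

def sample_ensemble(n_keep, keq, rel_tol=0.2, tau_range=(0.1, 10.0),
                    seed=0, max_draws=1_000_000):
    """Rejection-sample (kf, kr) pairs consistent with simple constraints."""
    rng = random.Random(seed)
    ensemble = []
    for _ in range(max_draws):
        # Log-uniform proposals over six orders of magnitude.
        kf = 10 ** rng.uniform(-3, 3)
        kr = 10 ** rng.uniform(-3, 3)
        # Thermodynamic constraint: kf / kr must match the equilibrium constant.
        thermo_ok = abs(kf / kr - keq) <= rel_tol * keq
        # Time-scale constraint: relaxation time of A <-> B is 1 / (kf + kr).
        tau = 1.0 / (kf + kr)
        tau_ok = tau_range[0] <= tau <= tau_range[1]
        if thermo_ok and tau_ok:
            ensemble.append((kf, kr))
            if len(ensemble) == n_keep:
                break
    return ensemble

ensemble = sample_ensemble(n_keep=50, keq=4.0)

# Summarize the ensemble by the range of a prediction, not a single value.
eq_fraction_b = [kf / (kf + kr) for kf, kr in ensemble]
print(f"accepted parameter sets: {len(ensemble)}")
print(f"equilibrium fraction of B: {min(eq_fraction_b):.3f} to {max(eq_fraction_b):.3f}")
```

Reporting the spread of predictions across the accepted sets gives a direct, if rough, picture of how much the available constraints leave undetermined.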

Problem: The model is numerically unstable during simulation.

Potential Cause and Solution: This can arise from parameter sets that create "stiff" systems of equations.

- For MASSpy: It uses libRoadRunner, a high-performance SBML simulator designed to handle complex models, as its simulation engine [51]. Ensure you are using an updated version.

- For All Tools: Unstable simulations can result from overfit parameters that are pushed to extreme, non-physiological values. The ensemble modeling approach supported by SKiMpy and MASSpy helps avoid this by filtering for parameters that produce physiologically relevant time scales [50].

Frequently Asked Questions (FAQs)

Q1: How can I prevent overfitting when I have limited experimental data? Prioritize model simplicity. Using a first-order kinetic model has been demonstrated to effectively predict long-term stability for various complex protein modalities because it reduces the number of fitted parameters, enhancing robustness and reliability [52]. Furthermore, leverage tool features like SKiMpy's and MASSpy's parameter sampling to generate an ensemble of models that are consistent with the available data and thermodynamic laws, rather than trying to find one exact fit [50]. This explicitly accounts for uncertainty.

Q2: Which toolkit is best for integrating with existing genome-scale metabolic reconstructions? MASSpy is specifically designed for this purpose. It expands the COBRApy framework, creating a unified environment for both constraint-based and kinetic modeling. This allows you to directly build upon established stoichiometric models [51] [50].

Q3: My model needs to capture specific enzyme mechanisms. Which tool is most flexible? Tellurium is a versatile tool that supports various standardized model formulations and is excellent for modeling specific, curated biochemical pathways with custom mechanisms [50]. SKiMpy also allows for user-defined kinetic mechanisms in addition to its built-in library [50].

Q4: What is a practical workflow to minimize overfitting risk from the start? A robust, preventative workflow is key. The diagram below outlines the process.

Diagram: Overfitting Prevention Workflow

Experimental Protocol: Ensemble Modeling to Quantify Uncertainty

This protocol uses MASSpy or SKiMpy to generate an ensemble of kinetic models, a best practice for avoiding overfitting and quantifying prediction uncertainty [50].

1. Objective: To generate a population of kinetic models that are all consistent with available steady-state and thermodynamic data, rather than a single overfit model.

2. Materials & Reagent Solutions:

- Software: MASSpy Python package [51] or SKiMpy [50].

- Input Data: