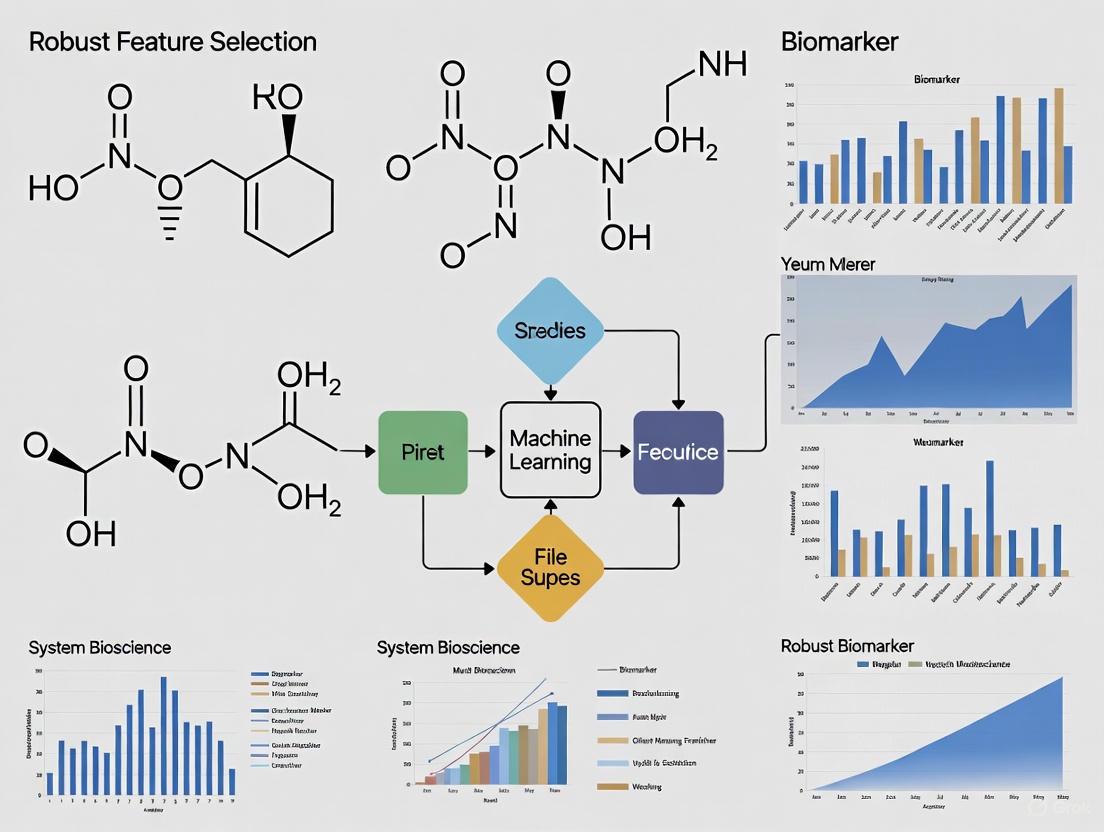

Robust Feature Selection for Biomarker Discovery in Machine Learning: Strategies for Stable and Clinically Actionable Models

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on implementing robust feature selection in machine learning for biomarker discovery. It covers the foundational importance of stability and reproducibility in high-dimensional omics data, explores advanced methodological frameworks including causal inference and multi-modal integration, and addresses critical challenges like data heterogeneity and overfitting. Through comparative analysis of validation strategies and interpretability techniques like SHAP, the article outlines a pathway for translating computational models into reliable tools for precision medicine, early diagnosis, and therapeutic development.

The Critical Role of Robust Feature Selection in Modern Biomarker Discovery

Troubleshooting Guides

Troubleshooting Guide 1: Unstable Feature Selection Results

Problem: Selected feature subsets change drastically between different runs or subsamples of your dataset, compromising result reliability.

Explanation: In high-dimensional settings where features vastly outnumber samples, feature selection algorithms become vulnerable to small data perturbations, leading to high instability [1] [2]. This undermines confidence in the identified biomarkers.

Solution:

- Implement Ensemble Feature Selection: Apply the same selection algorithm to multiple perturbed versions (e.g., bootstrap samples) of your training data. Aggregate results by selecting features based on high inclusion frequency across all runs [1].

- Use Stability-Enhanced Algorithms: Employ modern methods like BCenetTucker for tensor data or other bootstrap-integrated approaches that explicitly model and mitigate instability during estimation [2].

- Apply Regularization: Integrate Elastic Net regularization, which combines L1 (Lasso) and L2 (Ridge) penalties. This promotes sparsity while handling correlated features better than Lasso alone, improving stability [2].

Verification: After applying these methods, stability metrics like the Jaccard Index (measuring similarity between selected feature sets) should show significant improvement. For example, the BCenetTucker method achieved a Jaccard Index of 0.975, indicating high reproducibility [2].

Troubleshooting Guide 2: Poor Model Generalization Despite High Training Accuracy

Problem: Your model performs well on training data but fails on external validation sets or real-world deployment.

Explanation: This overfitting occurs when a model learns noise or spurious correlations specific to the training set, often due to high dimensionality and complex models with insufficient data [3].

Solution:

- Rigorously Partition Data: Strictly separate data into training, validation, and test sets. Do not use the test set for any aspect of model development or feature selection to prevent information leakage [3].

- Apply Dimensionality Reduction: Use feature selection (choosing a feature subset) or extraction (creating new features, e.g., PCA) to reduce the feature space, mitigating the "curse of dimensionality" [4].

- Implement Robust Validation: Use nested cross-validation to provide an unbiased performance estimate when tuning hyperparameters and selecting features simultaneously [5].

Verification: Model performance on the held-out test set should closely match performance on the training set. A significant drop indicates overfitting.
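The nested cross-validation recommended above can be sketched in plain Python. This is a minimal illustration under stated assumptions, not a reference implementation: the mean-gap filter, the nearest-centroid classifier, the grid of subset sizes `k_grid`, and the synthetic data are all hypothetical stand-ins chosen to keep the example self-contained. The point it shows is that feature selection is refit inside every training split, so no held-out row influences which features are chosen.

```python
import random

def take(rows, idx):
    return [rows[i] for i in idx]

def stratified_folds(labels, k, rng):
    """Split indices into k folds with roughly equal class balance."""
    idx = list(range(len(labels)))
    rng.shuffle(idx)
    idx.sort(key=lambda i: labels[i])  # stable sort keeps the shuffle within each class
    return [idx[i::k] for i in range(k)]

def rest(folds, held_out):
    """All indices except those in the held-out fold."""
    return [i for g, fold in enumerate(folds) if g != held_out for i in fold]

def select_top_k(X, y, k):
    """Illustrative filter: rank features by absolute gap between class means."""
    def gap(j):
        pos = [r[j] for r, lab in zip(X, y) if lab == 1]
        neg = [r[j] for r, lab in zip(X, y) if lab == 0]
        return abs(sum(pos) / len(pos) - sum(neg) / len(neg))
    return sorted(range(len(X[0])), key=gap, reverse=True)[:k]

def accuracy(X_tr, y_tr, X_te, y_te, k):
    """Select features on the training rows only, then score a nearest-centroid classifier."""
    feats = select_top_k(X_tr, y_tr, k)
    def centroid(label):
        rows = [r for r, lab in zip(X_tr, y_tr) if lab == label]
        return [sum(r[j] for r in rows) / len(rows) for j in feats]
    c0, c1 = centroid(0), centroid(1)
    def dist(r, c):
        return sum((r[j] - cj) ** 2 for j, cj in zip(feats, c))
    preds = [1 if dist(r, c1) < dist(r, c0) else 0 for r in X_te]
    return sum(p == t for p, t in zip(preds, y_te)) / len(y_te)

def nested_cv(X, y, k_grid=(1, 3, 5), outer=5, inner=3, seed=0):
    rng = random.Random(seed)
    folds = stratified_folds(y, outer, rng)
    outer_scores = []
    for f, test_idx in enumerate(folds):
        train_idx = rest(folds, f)
        X_tr, y_tr = take(X, train_idx), take(y, train_idx)
        inner_folds = stratified_folds(y_tr, inner, rng)

        def inner_score(k):
            # Inner loop: tune k using the outer-training rows only.
            scores = []
            for h, val_idx in enumerate(inner_folds):
                fit_idx = rest(inner_folds, h)
                scores.append(accuracy(take(X_tr, fit_idx), take(y_tr, fit_idx),
                                       take(X_tr, val_idx), take(y_tr, val_idx), k))
            return sum(scores) / len(scores)

        best_k = max(k_grid, key=inner_score)
        # Outer loop: the test fold is touched exactly once, after all choices are made.
        outer_scores.append(accuracy(X_tr, y_tr, take(X, test_idx), take(y, test_idx), best_k))
    return sum(outer_scores) / len(outer_scores)

# Synthetic data (assumption): 2 informative features, 28 pure-noise features.
data_rng = random.Random(1)
y = [i % 2 for i in range(60)]
X = [[2.0 * lab + data_rng.gauss(0, 1) for _ in range(2)]
     + [data_rng.gauss(0, 1) for _ in range(28)] for lab in y]
score = nested_cv(X, y)
print(f"nested CV accuracy: {score:.2f}")
```

Selecting features once on the full dataset and then cross-validating only the classifier would reuse test rows during selection, which is the leakage the partitioning advice above warns against.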

Troubleshooting Guide 3: Managing Sex and Other Biases in Biomarker Prediction

Problem: Your biomarker prediction model performs poorly for specific demographic subgroups, such as one biological sex.

Explanation: Machine learning models can perpetuate and even amplify existing biases in data. Significant sex-based differences in biomarker mean values and variances have been documented. Building a single model on combined data can obscure these differences, leading to suboptimal predictions for all groups [5].

Solution:

- Stratify Data by Sex: Build separate, sex-specific models. Research shows this can yield more accurate predictions for biomarkers like waist circumference, BMI, and blood glucose than a single model trained on combined data [5].

- Incorporate Sex as a Feature: If stratification is not feasible, explicitly include sex as an input feature to allow the model to learn sex-specific patterns [5].

- Conduct Pre-Modelling Analysis: Use statistical tests (e.g., Levene's test for variances, t-tests for means) and visualize density distributions to identify significant differences in feature distributions between subgroups [5].

Verification: Evaluate model performance metrics (e.g., error rates) separately for each subgroup. Sex-specific models should show lower prediction error for their respective subgroups compared to a general model [5].
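The subgroup check described in the verification step can be sketched as follows. Mean absolute error is used here as one possible error metric, and the function name `mae_by_group` and the toy values are hypothetical.

```python
from collections import defaultdict

def mae_by_group(y_true, y_pred, groups):
    """Mean absolute error computed separately for each subgroup label."""
    errors = defaultdict(list)
    for truth, pred, group in zip(y_true, y_pred, groups):
        errors[group].append(abs(truth - pred))
    return {group: sum(errs) / len(errs) for group, errs in errors.items()}

# Toy waist-circumference predictions (illustrative numbers only).
y_true = [80, 95, 70, 102]
y_pred = [82, 90, 71, 110]
sex = ["F", "M", "F", "M"]
per_group = mae_by_group(y_true, y_pred, sex)
print(per_group)
```

Running the same function on the predictions of a general model and of the sex-specific models gives the side-by-side comparison the verification step calls for.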

Frequently Asked Questions (FAQs)

What is the practical difference between feature selection and feature extraction?

Answer: Both aim to reduce dimensionality, but their outputs differ.

- Feature Selection identifies a subset of the original features (e.g., selecting 10 specific proteins from 3440 analytes). This preserves interpretability, which is crucial for biomarker discovery [6].

- Feature Extraction creates new, transformed features from the originals (e.g., Principal Components). While effective, these new features can be difficult to interpret biologically [4].

- When to Use: Prioritize feature selection when interpretability and identifying specific biomarkers are key. Use feature extraction when prediction accuracy is the sole priority and interpretability is secondary [4].

How can I quantitatively measure the stability of my feature selection?

Answer: Stability is distinct from predictive accuracy and must be measured separately. Common metrics include [2]:

- Jaccard Index: Measures the similarity between two selected feature sets. Ranges from 0 (no overlap) to 1 (identical sets).

- Support-Size Deviation: The standard deviation in the number of features selected across different data perturbations. Lower values indicate higher stability.

- Inclusion Frequency: For each feature, the proportion of times it is selected across all runs. Stable features will have a frequency near 1.0.
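As a rough illustration, the three metrics can be computed from the feature subsets returned by repeated runs. The sketch below assumes each run yields a set of feature names; averaging the Jaccard index over all pairs of runs is one common convention, not necessarily the one used in [2], and the gene names are placeholders.

```python
from itertools import combinations
from statistics import mean, pstdev

def jaccard(a, b):
    """Jaccard index of two feature sets: |A ∩ B| / |A ∪ B|."""
    a, b = set(a), set(b)
    if not a and not b:
        return 1.0  # convention: two empty selections count as identical
    return len(a & b) / len(a | b)

def stability_report(selections):
    """Return (mean pairwise Jaccard, support-size deviation, inclusion frequencies)."""
    mean_jaccard = mean([jaccard(a, b) for a, b in combinations(selections, 2)])
    support_sd = pstdev([len(s) for s in selections])
    all_features = set().union(*selections)
    inclusion = {f: sum(f in s for s in selections) / len(selections)
                 for f in all_features}
    return mean_jaccard, support_sd, inclusion

# Three hypothetical runs of the same selector on perturbed data.
runs = [{"TP53", "BRCA1", "EGFR"}, {"TP53", "BRCA1", "MYC"}, {"TP53", "BRCA1", "EGFR"}]
mean_j, size_sd, freq = stability_report(runs)
print(round(mean_j, 3), size_sd, freq["TP53"])
```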

Table: Stability Metrics for Bootstrap-Based Feature Selection Method (BCenetTucker) on a Gene Expression Dataset [2]

| Stability Metric | Value Achieved | Interpretation |
|---|---|---|
| Jaccard Index | 0.975 | Very high similarity between selected feature sets across runs. |
| Support-Size Deviation | 1.7 genes | Low variability in the number of features selected. |
| Proportion of Stable Support | High | A large majority of selected features were consistently chosen. |

My dataset is very small. How can I reliably perform feature selection?

Answer: Small sample size is a major challenge for stability.

- Leverage Resampling: Use bootstrap methods or repeated cross-validation to create multiple training sets. This simulates the process of drawing new samples from the population [2].

- Use Regularized Models: Algorithms with built-in feature selection like Lasso or Elastic Net are often more stable on small data than unregularized models [2] [6].

- Prioritize Simplicity: With limited data, avoid complex, data-hungry models. Start with simple models and heuristics, as they can provide a strong baseline and are less prone to overfitting [7].

What are the main types of feature selection methods?

Answer: Methods can be categorized by how they interact with the learning model [1] [4]:

- Filter Methods: Select features based on statistical scores (e.g., correlation with the target) independently of the classifier. They are computationally efficient but may ignore feature dependencies.

- Wrapper Methods: Use the performance of a specific classifier to evaluate feature subsets. They can find high-performing subsets but are computationally expensive and risk overfitting.

- Embedded Methods: Perform feature selection as part of the model training process (e.g., Lasso, which applies an L1 penalty). They offer a good balance of efficiency and performance.

How do I know if I have enough data for a robust machine learning project?

Answer: There is no universal number, but these are key considerations [3]:

- Signal-to-Noise Ratio: Strong biological signals may require less data, while weak signals require more.

- Model Complexity: Complex models like deep neural networks need vast amounts of data. For small datasets, prefer simpler models (linear models, shallow trees) with strong regularization [3].

- Use Cross-Validation: It maximizes the utility of available data for both training and reliable evaluation [3].

- Data Augmentation: If possible, artificially expand your dataset using domain-specific techniques (e.g., adding slight noise to measurements) [3].

Experimental Protocols

Protocol 1: Implementing Homogeneous Ensemble Feature Selection

This protocol uses data perturbation and aggregation to improve the stability of any base feature selection algorithm [1].

Workflow Diagram: Homogeneous Ensemble Feature Selection

Methodology:

- Input: High-dimensional dataset D with N samples and M features.

- Generate Bootstrap Samples: Create B (e.g., 100) bootstrap samples {D_1, D_2, ..., D_B} by randomly sampling from D with replacement.

- Apply Base Selector: Run your chosen feature selection algorithm (e.g., Lasso, mRMR) on each bootstrap sample D_b to get a feature subset S_b.

- Aggregate Results: Calculate the inclusion frequency for each feature f_j across all B subsets: F_j = (Number of times f_j is selected) / B.

- Output Final Set: Select all features with an inclusion frequency F_j above a predefined threshold (e.g., 0.6) or select the top-K features ranked by F_j.
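The protocol above can be sketched in a few lines of standard-library Python. The base selector here, a top-k filter on absolute Pearson correlation, is a hypothetical stand-in for Lasso or mRMR chosen only to keep the example dependency-free; the function names and the synthetic data are likewise assumptions.

```python
import random

def pearson(x, y):
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        return 0.0  # a constant column or constant labels carry no signal
    return sxy / (sxx * syy) ** 0.5

def top_k_by_correlation(X, y, k):
    """Stand-in base selector: the k features most correlated with y."""
    m = len(X[0])
    scores = [abs(pearson([row[j] for row in X], y)) for j in range(m)]
    return set(sorted(range(m), key=lambda j: scores[j], reverse=True)[:k])

def ensemble_select(X, y, base_selector, B=100, threshold=0.6, seed=0):
    """Run base_selector on B bootstrap samples and keep frequently chosen features."""
    rng = random.Random(seed)
    n, m = len(X), len(X[0])
    counts = [0] * m
    for _ in range(B):
        idx = [rng.randrange(n) for _ in range(n)]  # sample rows with replacement
        subset = base_selector([X[i] for i in idx], [y[i] for i in idx])
        for j in subset:
            counts[j] += 1
    F = [c / B for c in counts]  # inclusion frequency F_j
    # Features at or above the threshold are kept in this sketch.
    return {j for j in range(m) if F[j] >= threshold}, F

# Synthetic data (assumption): feature 0 tracks the label, features 1-19 are noise.
data_rng = random.Random(1)
y = [i % 2 for i in range(40)]
X = [[lab + data_rng.gauss(0, 0.3)] + [data_rng.gauss(0, 1) for _ in range(19)]
     for lab in y]
selected, F = ensemble_select(X, y, lambda Xb, yb: top_k_by_correlation(Xb, yb, k=2))
print(sorted(selected), round(F[0], 2))
```

Any selector with the same call shape (rows and labels in, a set of column indices out) can be passed as `base_selector`, which is what makes the ensemble "homogeneous": one algorithm, many perturbed datasets.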

Protocol 2: Benchmarking Biomarker Selection and Classifier Pairs

This protocol provides a systematic framework for evaluating different combinations of feature selection and classification methods to identify the optimal pipeline for a specific diagnostic task [6].

Workflow Diagram: Biomarker Selection Benchmarking

Methodology (as applied in [6]):

- Dataset: A balanced gastric cancer dataset with 50 cases and 50 controls, each with 3440 molecular measurements (analytes).

- Feature Selection:

  - Define the maximum number of biomarkers K (e.g., 1, 3, 10, 30).

  - Apply multiple feature selection methods (e.g., Univariate using Chi-square, Causal-based metric) to select the top-K features.

  - Optionally, binarize the analyte measurements based on a threshold τ (e.g., τ = 1.0).

- Classification:

  - Using only the selected K features, train multiple classifiers (e.g., Logistic Regression, Gradient Boosted Trees, Neural Networks).

  - Evaluate using rigorous validation like Leave-One-Out Cross-Validation (LOOCV).

- Evaluation:

  - Fix specificity at a clinically relevant level (e.g., 0.9) and compare the resulting sensitivity.

  - The best-performing combination is the one that achieves the highest sensitivity for a given K and specificity.
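The evaluation step, reading off sensitivity at a fixed specificity, can be illustrated with a short helper. This is a sketch under assumptions: samples are called positive when their score exceeds the threshold, the scores would come from held-out predictions (for example, LOOCV), and the function name and toy scores are hypothetical.

```python
def sensitivity_at_specificity(scores, labels, target_specificity=0.9):
    """Return (sensitivity, threshold) at the lowest threshold meeting the target.

    A sample is called positive when its score is strictly above the threshold.
    """
    negatives = [s for s, lab in zip(scores, labels) if lab == 0]
    positives = [s for s, lab in zip(scores, labels) if lab == 1]
    # Raising the threshold can only increase specificity and decrease sensitivity,
    # so the first threshold that meets the target gives the highest sensitivity.
    for threshold in [float("-inf")] + sorted(set(scores)):
        specificity = sum(s <= threshold for s in negatives) / len(negatives)
        if specificity >= target_specificity:
            sensitivity = sum(s > threshold for s in positives) / len(positives)
            return sensitivity, threshold

# Toy held-out scores: 10 controls followed by 10 cases (illustrative values).
scores = [0.1, 0.2, 0.3, 0.4, 0.5, 0.15, 0.25, 0.35, 0.45, 0.7,
          0.6, 0.8, 0.9, 0.3, 0.55, 0.65, 0.75, 0.85, 0.95, 0.2]
labels = [0] * 10 + [1] * 10
sens, thr = sensitivity_at_specificity(scores, labels, target_specificity=0.9)
print(sens, thr)
```

Comparing this number across selector and classifier pairs at the same K reproduces the kind of comparison shown in the benchmarking table.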

Table: Example Benchmarking Results for K=10 Biomarkers at 0.9 Specificity (Adapted from [6])

| Feature Selection Method | Classifier | Sensitivity |
|---|---|---|
| Causal-Based | Gradient Boosted Trees | 0.520 |
| Univariate | Neural Network | 0.480 |
| Univariate | Logistic Regression | 0.040 |

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Computational and Methodological Tools for Robust Biomarker Research

| Item | Function / Purpose | Example / Notes |
|---|---|---|
| Elastic Net Regularization | A penalized regression method that performs feature selection (via L1 penalty) while handling correlated features better than Lasso (via L2 penalty), improving stability [2]. | Implemented in most ML libraries (e.g., scikit-learn). Key for creating sparse, stable models in high-dimensional data. |
| Bootstrap Resampling | A statistical technique that creates multiple new datasets by randomly sampling with replacement from the original data. Used to simulate the process of drawing new samples and assessing stability [2]. | Foundation for ensemble feature selection and methods like BCenetTucker and Bolasso [2]. |
| Stability Selection | A robust feature selection framework that combines bootstrap resampling with a base selector (e.g., Lasso) and selects features based on their inclusion frequency across all runs [2]. | Helps control false discoveries and improves reproducibility. |
| Recursive Feature Elimination with Cross-Validation (RFECV) | A wrapper method that recursively removes the least important features and uses cross-validation to find the optimal number of features [5]. | Useful for optimizing the feature set size while maintaining predictive performance. |
| Causal-Based Feature Selection Metrics | A method that evaluates a feature's importance based on its causal effect in the context of other correlated features, moving beyond simple univariate associations [6]. | Particularly performant when a very small number of biomarkers are permitted [6]. |
| Nested Cross-Validation | A validation scheme where an inner cross-validation loop (for hyperparameter tuning/feature selection) is inside an outer loop (for error estimation). Prevents optimistic bias in performance estimates [5]. | The gold standard for obtaining a reliable estimate of model performance when tuning and selection are required. |

Frequently Asked Questions (FAQs)

What exactly is the 'p >> n' problem in the context of my omics research?

The "p >> n" problem, closely tied to the "curse of dimensionality," occurs when the number of variables or features (p) in your dataset is vastly larger than the number of observations or samples (n). In omics studies, a single omic dataset commonly contains tens of thousands of features (e.g., RNA-seq measuring over 20,000 genes) but only a few hundred samples at most [8]. This creates a high-dimensional data space where traditional statistical methods break down because they assume more samples than features [8]; ordinary least-squares regression, for example, has no unique solution when p > n.

Why is this problem particularly critical for biomarker discovery?

The p >> n problem is especially detrimental to biomarker discovery for several key reasons. It leads to model overfitting, where a model learns the noise in the training data rather than the true underlying biological signal, performing well on training data but poorly on new validation data [8] [9]. It also complicates feature selection, as it becomes statistically challenging to distinguish genuinely important biomarkers from thousands of irrelevant features. Furthermore, high-dimensional spaces exhibit counterintuitive geometry where data points become sparse and distances between them become less meaningful, distorting similarity measures essential for clustering and classification [10]. Finally, the computational cost of model training and hyperparameter optimization rises steeply with dimensionality [9]; the number of candidate feature subsets, for instance, grows exponentially with p.

I have a limited budget for sample collection. Can I still perform robust analysis?

Yes, but it requires strategic methodology. While large sample sizes are ideal, researchers with limited budgets can leverage specialized machine learning techniques designed for high-dimensional data with few samples. The field heavily utilizes methods like dimensionality reduction and models that perform well with relatively few samples, such as support vector machines, random forests, and regularized regression [8]. A 2023 literature survey confirmed that these are popular and effective techniques for multi-omic data integration in low-sample settings [8].

Troubleshooting Guides

Problem: Poor Model Performance and Overfitting Despite High Training Accuracy

Description: Your machine learning model achieves near-perfect accuracy on your training dataset but fails to generalize to your test set or independent validation cohorts. This is a classic symptom of overfitting in a high-dimensional setting.

Solution:

- Implement Dimensionality Reduction: Use techniques like Principal Component Analysis (PCA) or autoencoders to transform your high-dimensional data into a lower-dimensional space that retains most of the biological signal while discarding noise. A 2023 review found autoencoders to be particularly common in multi-omics research for this purpose [8].

- Apply Robust Feature Selection: Before model training, aggressively reduce the number of features. Do not rely on univariate filtering alone.

  - Protocol: Ensemble Feature Selection for Biomarker Discovery

    - Objective: To identify a minimal, robust subset of biomarkers resistant to random variations in the dataset.

    - Procedure:

      - Step 1: Apply at least three different feature selection algorithms (e.g., LASSO, Random Forest feature importance, mRMR) to your training data.

      - Step 2: Aggregate the results, for example, by selecting features that appear in the top ranks of at least two or three of the algorithms. A 2025 study on miRNA biomarkers for Usher Syndrome used this strategy, selecting top miRNAs that appeared in at least three algorithms [11].

      - Step 3: Train your final model (e.g., SVM, Random Forest) using only this consensus subset of features.

    - Validation: Use nested cross-validation to avoid bias. The inner loop performs feature selection and hyperparameter tuning, while the outer loop provides an unbiased estimate of model performance [11].
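The aggregation in Step 2 amounts to a vote across algorithms. A minimal sketch, assuming each algorithm returns its features ranked from most to least important; the algorithm keys, feature names, and cutoffs below are placeholders, not values from [11].

```python
from collections import Counter

def consensus_features(rankings, top_n=10, min_votes=2):
    """Keep features that appear in the top_n of at least min_votes algorithms."""
    votes = Counter()
    for ranked in rankings.values():
        votes.update(set(ranked[:top_n]))  # one vote per algorithm per feature
    return sorted(f for f, v in votes.items() if v >= min_votes)

# Hypothetical ranked outputs from three selectors run on the same training data.
rankings = {
    "lasso": ["feat_a", "feat_b", "feat_c", "feat_d"],
    "random_forest": ["feat_b", "feat_a", "feat_e", "feat_c"],
    "mrmr": ["feat_b", "feat_f", "feat_a", "feat_g"],
}
panel = consensus_features(rankings, top_n=3, min_votes=3)
print(panel)
```

Inside nested cross-validation, this vote would be recomputed on each training split, so the consensus panel never sees the rows it is later evaluated on.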

Problem: Inconsistent Biomarker Panels Across Studies or Datasets

Description: A biomarker panel identified in one cohort or dataset fails to replicate in another, even for the same condition. This is a major challenge in translational research.

Solution:

- Combat Technical Variability: Ensure rigorous pre-processing, normalization, and correction for batch effects. Technical artifacts from different sequencing platforms or preparation batches can overshadow true biological signals [12] [13].

- Employ Stable Feature Selection Algorithms: Use advanced machine learning methods designed to identify robust features in high-dimensional spaces.

  - Protocol: SVM-Recursive Feature Elimination with Cross-Validation (SVM-RFECV)

    - Objective: To find a compact, highly predictive, and stable protein biomarker panel for disease classification, as demonstrated in a 2025 Alzheimer's disease study [14].

    - Procedure:

      - Step 1: Pre-process and normalize your multi-omics data. Handle missing values (e.g., using K-Nearest Neighbor imputation) [14].

      - Step 2: Train a Support Vector Machine (SVM) model on the full set of features.

      - Step 3: Recursively eliminate the least important feature (based on the SVM's weight coefficients) in each iteration.

      - Step 4: At each step of elimination, perform k-fold cross-validation (e.g., 5-fold or 10-fold) to evaluate the model's performance with the current feature set.

      - Step 5: The optimal feature subset is the one that yields the best cross-validation score (e.g., highest AUC). This process was used to identify a robust 12-protein panel for Alzheimer's disease from CSF proteomic data [14].

    - Validation: The final model must be validated on multiple, completely independent cohorts that were not used in the discovery phase to prove its generalizability [14].

The Scientist's Toolkit

Research Reagent Solutions

The following table lists key resources and their functions for managing high-dimensional omics data, as evidenced by recent research.

| Research Reagent / Resource | Function & Application in p >> n Context |
|---|---|
| The Cancer Genome Atlas (TCGA) | A highly accessible, community-standard dataset containing multi-omic data from thousands of patients. Its use in 73% of surveyed papers enables benchmarking and provides a critical resource for developing and testing methods when in-house sample size is limited [8]. |
| SVM-RFECV Pipeline | A computational "reagent" for stable feature selection. It is used to identify minimal, highly predictive biomarker panels (e.g., a 12-protein panel for Alzheimer's) that generalize well across different patient cohorts and measurement technologies [14]. |
| Ensemble Feature Selection | A methodological approach that combines multiple feature selection algorithms to achieve consensus. It increases the robustness and reliability of identified biomarkers, as demonstrated in miRNA discovery for Usher Syndrome [11]. |
| Dirichlet-Multinomial (DM-RPart) | A specialized regression model for complex outcome data like microbiome composition. It allows for supervised partitioning of samples based on covariates (e.g., cytokine levels) to identify associations in a high-dimensional, low-sample setting while remaining interpretable [10]. |
| Multivariate Data Analysis (MVDA) Software (e.g., SIMCA) | Software designed to handle the dimensionality problem by using projection methods like PCA and OPLS-DA. It simplifies complex omics data, models noise, and provides powerful visualization for spotting outliers and patterns [13]. |

Quantitative Landscape of the p >> n Problem in Omics

The table below summarizes key quantitative findings from the literature, highlighting the scale of the challenge.

| Metric | Value from Literature | Context & Implications |
|---|---|---|
| Median Number of Features | 33,415 [8] | Based on a survey of multi-omics ML literature, this shows the typical high dimensionality of datasets. |
| Median Number of Samples | 447 [8] | Highlights the stark imbalance between features (p) and samples (n) that is common in the field. |
| Use of TCGA Database | 73% of surveyed papers [8] | Underlines the field's reliance on a few key public resources to overcome the high cost of generating large multi-omics datasets. |
| Top ML Techniques | Autoencoders, Random Forests, Support Vector Machines [8] | These methods are specifically popular because they help address the challenges of datasets with few samples and many features. |
| Classification Performance (Ensemble Feature Selection) | 97.7% Accuracy, 97.5% AUC [11] | Demonstrates that robust methodology can achieve high performance in a p >> n context (e.g., for Usher Syndrome miRNA biomarkers). |

FAQ: Understanding the Core Problem

What are spurious correlations in the context of high-throughput biological data? A spurious correlation occurs when a machine learning model incorrectly associates a data feature (e.g., a specific protein or miRNA) with an outcome, not because of a true biological relationship, but because of a coincidental pattern or a hidden, confounding factor in the training data [15]. In biomarker discovery, this means a selected "biomarker" might perform well in initial tests but fails utterly when applied to new patient cohorts or different detection technologies, as it never captured the underlying disease biology [16].

Why are traditional statistical and machine learning methods particularly vulnerable to these pitfalls? Traditional methods, like standard Empirical Risk Minimization (ERM), are designed to minimize the average error across all training data [16]. High-throughput data is often characterized by high dimensionality (thousands of features) and sparsity (many missing or zero values) [17]. In such data, it is statistically likely that some features will, by pure chance, appear to be correlated with the outcome. Models exploiting these features will achieve high average accuracy but show poor worst-group accuracy, meaning they fail on minority subgroups of patients or data that do not exhibit the same spurious pattern [16].
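The claim that chance alone produces apparently predictive features is easy to check by simulation. The sketch below draws pure-noise features that have no relationship to the labels and reports the strongest correlation found; the sample and feature counts are arbitrary illustrative choices.

```python
import random

def pearson(x, y):
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5

rng = random.Random(0)
n_samples, n_features = 20, 2000      # a small p >> n setting
labels = [0] * 10 + [1] * 10          # balanced outcome, unrelated to any feature

# Every feature is independent Gaussian noise, so any correlation is spurious.
max_abs_r = max(
    abs(pearson([rng.gauss(0, 1) for _ in range(n_samples)], labels))
    for _ in range(n_features)
)
print(f"strongest chance correlation among {n_features} noise features: {max_abs_r:.2f}")
```

A feature selected for this correlation would look like a promising biomarker in the discovery cohort and carry no signal in any other, which is the failure mode described above.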

Troubleshooting Guide: Identifying and Diagnosing Spurious Correlations

If your biomarker model performs well in training but generalizes poorly to independent validation sets, use the following table to diagnose potential causes.

| Observed Symptom | Potential Root Cause | Diagnostic & Solution Steps |
|---|---|---|
| High training accuracy, poor performance on new cohorts. [16] | The model relied on spurious features prevalent in the majority of your training samples but not universally present. | Diagnose: Analyze performance across data subgroups. Use techniques from [16] to infer majority/minority groups. Solution: Apply robust training methods like Group Distributionally Robust Optimization (GDRO) or use ensemble feature selection [11]. |
| Model fails on data from a different geographic region or processed with a different technology. [14] | Batch effects or technology-specific artifacts were learned as predictive features instead of true biology. | Diagnose: Conduct a PCA analysis to see if samples cluster more strongly by batch/technology than by biological outcome [14]. Solution: Build models on multi-cohort, multi-technology datasets and use cross-validation schemes that keep batches separate [14]. |
| Inconsistent biomarker panel identified from different study subsets. | High-dimensional sparsity leads to multiple, equally likely but spurious, feature combinations. | Diagnose: Perform stability analysis on your feature selection algorithm. If different runs yield different features, the result is unstable [17]. Solution: Employ ensemble feature selection that aggregates results from multiple algorithms to find a robust, consensus feature set [11]. |
| The final biomarker panel lacks biological plausibility or connection to known disease pathways. | The feature selection process was purely mathematical, with no constraint for biological relevance. | Diagnose: Conduct pathway enrichment analysis (e.g., GO, KEGG) on your candidate biomarkers [14]. A spurious set will not enrich for coherent biology. Solution: Integrate biological network data (e.g., from STRING) into the model selection process to prioritize features with known connections to the disease [14]. |

A Robust Methodological Shift: Strategies for Success

To overcome these pitfalls, move beyond traditional ERM. The following workflow, implemented in studies for Usher Syndrome and Alzheimer's Disease, provides a robust alternative.

Diagram: A Robust Biomarker Discovery Workflow

The key phases of this workflow are:

- Ensemble Feature Selection: Instead of relying on a single feature selection algorithm, run several (e.g., SVM-RFE, random forest importance, mRMR) on your training data. Select only those features that consistently appear across different algorithms. This strategy identified a minimal set of 10 miRNA biomarkers for Usher syndrome [11]; the Alzheimer's disease study obtained its robust 12-protein panel with SVM-RFECV [14].

- Nested Cross-Validation: Use an outer loop of cross-validation to estimate model performance, and an inner loop to tune hyperparameters. This prevents data leakage and provides a more realistic estimate of how the model will perform on unseen data, which is critical for reliable biomarker discovery [11].

- Multi-Cohort, Multi-Technology Validation: The ultimate test of a biomarker is its performance across diverse, independent datasets. As demonstrated in the Alzheimer's study, a robust biomarker panel should maintain high accuracy across cohorts from different countries and data generated using different detection technologies (e.g., label-free mass spectrometry, TMT, DIA, or ELISA) [14].

The Scientist's Toolkit: Essential Research Reagents & Materials

The following table details key reagents and computational tools referenced in the robust studies cited above.

| Item / Technology | Function / Relevance in Biomarker Research |
|---|---|
| Luna qPCR/RT-qPCR Reagents | Used in high-throughput qPCR workflows for validating gene expression biomarkers. The "dots in boxes" analysis method ensures data meets MIQE guidelines for robustness [18]. |
| SVM-RFECV (Computational Method) | A machine learning technique (Support Vector Machine with Recursive Feature Elimination and Cross-Validation) that identifies an optimal, minimal subset of predictive features, as used for the 12-protein AD panel [14]. |
| ELISA Kits | Used for orthogonal, non-mass spectrometry validation of candidate protein biomarkers (e.g., BASP1, SMOC1, FN1) in independent patient samples [14]. |
| Chromeleon CDS | Chromatography Data System software with built-in troubleshooting tools for HPLC/UHPLC, which is often used in metabolomics and proteomic sample preparation [19]. |
| STRINGdb & Cytoscape | Used for Protein-Protein Interaction (PPI) network construction and analysis to assess the biological coherence and centrality of a candidate biomarker panel [14]. |

Experimental Protocol: Implementing a Robust Biomarker Workflow

This protocol outlines the key steps for a robust analysis, as applied in the Alzheimer's disease biomarker study [14].

Step 1: Data Curation and Preprocessing

- Gather multiple independent cohorts. For the AD study, this involved 7+ mass spectrometry datasets from different regions [14].

- Handle missing values. Remove proteins with >80% missing values, then impute remaining missing values using a method like K-Nearest Neighbors (KNN) [14].

- Normalize data. Apply appropriate standardization (e.g., Z-score) to make samples comparable.
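A compact sketch of Step 1 follows. One substitution is made deliberately and should be noted: per-feature median imputation stands in for the KNN imputation used in [14], purely to keep the example short and dependency-free. The function name, the `None` encoding of missing values, and the toy matrix are assumptions.

```python
from statistics import mean, median, pstdev

def preprocess(matrix, max_missing=0.8):
    """Drop sparse features, impute the rest, and z-score each kept feature.

    matrix: list of sample rows; None marks a missing value.
    Returns (indices of kept features, processed sample rows).
    """
    n = len(matrix)
    kept, processed_cols = [], []
    for j, col in enumerate(zip(*matrix)):
        observed = [v for v in col if v is not None]
        if (n - len(observed)) / n > max_missing:
            continue  # more than 80% missing: remove the feature
        fill = median(observed)  # stand-in for KNN imputation (assumption)
        filled = [fill if v is None else v for v in col]
        mu, sd = mean(filled), pstdev(filled)
        processed_cols.append([(v - mu) / sd if sd > 0 else 0.0 for v in filled])
        kept.append(j)
    return kept, [list(row) for row in zip(*processed_cols)]

# Toy abundance matrix: 6 samples x 3 proteins (illustrative values).
matrix = [
    [1.0, None, 10.0],
    [2.0, None, None],
    [3.0, None, 30.0],
    [4.0, None, 20.0],
    [5.0, None, 40.0],
    [6.0, 7.0, 50.0],
]
kept, rows = preprocess(matrix)
print(kept, [round(v, 2) for v in rows[1]])
```

In a full pipeline the imputation values and scaling parameters would be estimated on the training portion only and then applied to validation cohorts, for the same leakage reasons that motivate nested cross-validation.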

Step 2: Candidate Biomarker Identification with SVM-RFECV

- Identify a loose set of Differentially Expressed Proteins (DEPs) using a two-sided t-test (p < 0.05) on your primary discovery cohort [14].

- Apply the SVM-RFECV algorithm to this candidate list. This process:

- Ranks features by their importance using an SVM model.

- Recursively removes the least important features.

- At each step, uses cross-validation to calculate the model's performance.

- Selects the feature subset that yields the best cross-validation score (e.g., highest AUC) [14].

Step 3: Model Training and Validation

- Train a final model (e.g., SVM or Random Forest) using the selected biomarker panel on the full discovery cohort.

- Employ Nested Cross-Validation to get unbiased performance estimates [11].

- Validate on external cohorts. Test the trained model on all available independent datasets without any retraining to assess generalizability [14].

Step 4: Biological Validation and Interpretation

- Perform pathway analysis. Use Gene Ontology (GO) and KEGG enrichment analysis to confirm the biomarker panel is involved in biological processes relevant to the disease [14].

- Correlate with known biomarkers. Check that your new panel shows a statistically significant correlation with established clinical biomarkers or scores (e.g., the 12-protein AD panel was correlated with amyloid-β, tau, and Montreal Cognitive Assessment scores) [14].

Troubleshooting Guides

FAQ 1: Why does my polygenic risk score (PRS) model show poor transferability from the research cohort to a clinical population?

Issue: A PRS model, developed from a large-scale GWAS, fails to generalize when applied to a new, independent cohort for individual patient risk prediction.

| Potential Cause | Diagnostic Checks | Corrective Actions |

|---|---|---|

| Population Stratification | - Perform Principal Component Analysis (PCA) to compare genetic ancestry between discovery and target cohorts.- Check for systematic differences in allele frequency distributions. | - Use ancestry-matched LD reference panels [20].- Develop or apply population-specific PRS models.- Include ancestry principal components as covariates in the model. |

| Overfitting in Feature Selection | - Evaluate performance drop between cross-validation and external validation sets.- Audit the feature selection process for data leakage. | - Employ nested cross-validation, where feature selection is performed within each training fold of the outer loop [21].- Use regularization techniques (e.g., LASSO) to penalize model complexity [22]. |

| Inadequate LD Pruning | - Check for high correlation (linkage disequilibrium) between selected SNPs in the model. | - Re-prune SNPs using an appropriate LD threshold (e.g., r² < 0.1-0.2) from a reference panel that matches the target population's ancestry [23]. |
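The LD re-pruning action in the last table row can be illustrated with a minimal sketch. It assumes a small in-memory genotype matrix of allele counts and uses a simple greedy pairwise r² rule; production pipelines normally use windowed pruning in dedicated tools with an ancestry-matched reference panel. The function name `greedy_ld_prune` and the simulated SNPs are hypothetical.

```python
import numpy as np

def greedy_ld_prune(genotypes, r2_threshold=0.2):
    """Return column indices of SNPs kept after greedy pairwise r^2 pruning.

    genotypes: (n_samples, n_snps) array of allele counts (0, 1, 2).
    SNPs are visited in column order; a SNP is kept only if its r^2 with
    every SNP already kept is below r2_threshold.
    """
    r2 = np.corrcoef(genotypes, rowvar=False) ** 2
    kept = []
    for j in range(genotypes.shape[1]):
        if all(r2[j, k] < r2_threshold for k in kept):
            kept.append(j)
    return kept

# Simulated toy data (assumption): two SNPs in perfect LD plus one independent SNP.
rng = np.random.default_rng(0)
n_samples = 500
snp_a = rng.binomial(2, 0.3, size=n_samples)
snp_b = snp_a.copy()                          # perfect LD with snp_a
snp_c = rng.binomial(2, 0.4, size=n_samples)  # independent of snp_a
G = np.column_stack([snp_a, snp_b, snp_c])

kept = greedy_ld_prune(G, r2_threshold=0.2)
print("SNP columns kept:", kept)
```

In a real PRS workflow the visiting order would usually follow association strength (as in clumping) rather than column order.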

FAQ 2: How can I improve the clinical actionability of a PRS that has high statistical significance but low individual discriminative power?

Issue: A PRS is significantly associated with a disease at the population level (low p-value) but fails to effectively stratify individuals into clinically meaningful risk categories.

| Potential Cause | Diagnostic Checks | Corrective Actions |

|---|---|---|

| Limited Phenotypic Variance Explained | - Calculate the proportion of variance explained (R²) by the PRS on the observed scale. | - Integrate Clinical Features: Combine the PRS with established clinical risk factors (e.g., BMI, smoking status) in a combined model [22]. - Focus on Etiology: Use the genetic risk to explore modifiable risk factors via the Risk Score Ratio (RSR) for targeted prevention [24]. |

| Omnigenic Architecture | - Review the number of SNPs included in the PRS. A model with thousands of SNPs of minuscule effects may be inherently non-actionable. | - Prioritize Actionability over Heritability: Shift focus from maximizing heritability explained to identifying subsets of features with stronger biological plausibility or larger effect sizes [20]. - Combine with Omics Data: Integrate proteomic or metabolomic biomarkers that may be more proximal to the disease phenotype and offer clearer therapeutic targets [14]. |

FAQ 3: My biomarker discovery pipeline identifies a large number of candidate features. How do I select a robust and minimal panel for clinical validation?

Issue: High-dimensional data (e.g., from proteomics or metabolomics) yields hundreds of differentially expressed features, making downstream validation costly and impractical.

| Potential Cause | Diagnostic Checks | Corrective Actions |

|---|---|---|

| Weak Feature Selection Strategy | - Check if feature importance is unstable across different data splits or bootstrap samples. | - Use Ensemble Feature Selection: Run multiple feature selection algorithms (e.g., SVM-RFE, Random Forest, LASSO) and prioritize features that consistently appear across methods [11] [14]. - Apply SVM-RFECV: Combine Recursive Feature Elimination with Cross-Validation (SVM-RFECV) to identify the feature subset with the best cross-validation score [14]. |

| Technical Noise and Batch Effects | - Use PCA to visualize whether sample clustering is driven by batch or processing date rather than disease status. | - Rigorous Preprocessing: Apply data-type specific normalization and transformation (e.g., variance stabilizing transformation) [21]. - Quality Control Metrics: Use established software (e.g., arrayQualityMetrics for microarrays, Normalyzer for proteomics) to filter out uninformative features and correct for batch effects [21]. |

Experimental Protocols for Robust Biomarker Research

Protocol 1: Nested Cross-Validation with Ensemble Feature Selection

This protocol is designed to identify a minimal, robust biomarker panel while controlling for overfitting, as demonstrated in studies on Usher syndrome and Alzheimer's disease [11] [14].

1. Data Preprocessing

- Missing Values: Remove features with >80% missing values. Impute remaining missing values using a method like K-Nearest Neighbors (KNN) [14].

- Normalization: Log-transform and standardize data (e.g., Z-score normalization) to make features comparable.

2. Outer Loop: Performance Estimation

- Split the entire dataset into (k) folds (e.g., (k=10)).

- For each fold (i): a. Hold out fold (i) as the validation set. b. Use the remaining (k-1) folds as the model development set.

3. Inner Loop: Feature Selection and Model Tuning (on the model development set)

- Split the model development set into (m) inner folds.

- For each inner fold (j): a. Hold out fold (j) as the test set. b. Use the remaining (m-1) folds as the training set. c. Ensemble Feature Selection: Run multiple feature selection algorithms (e.g., SVM-RFE, Random Forest, LASSO) on the training set. d. Aggregate results to create a consensus list of top-ranked features.

- Define the final feature subset for the outer fold (i) as the features selected by at least three different selection algorithms [11].

4. Model Training and Validation

- Train a final model (e.g., Logistic Regression, SVM) on the entire model development set using only the selected feature subset.

- Evaluate the trained model on the held-out validation set from step 2.

5. Final Model

- Repeat steps 2-4 for all (k) outer folds.

- The average performance across all (k) validation sets provides an unbiased estimate of generalizability.

- To create a production model, rerun the ensemble feature selection and training on the entire dataset.
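The nested structure in steps 2 to 5 can be sketched with scikit-learn. This is a minimal illustration under stated assumptions, not the cited studies' pipeline: the data are synthetic, and a single univariate filter (`SelectKBest`) stands in for the ensemble of selectors so that the example stays short. The grid values and fold counts are arbitrary.

```python
from sklearn.datasets import make_classification
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GridSearchCV, StratifiedKFold, cross_val_score
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in for an omics matrix (assumption): 120 samples, 200 features.
X, y = make_classification(n_samples=120, n_features=200, n_informative=8,
                           random_state=0)

# Scaling, selection, and the classifier live in one pipeline, so every
# step is refitted on the training portion of each split only.
pipe = Pipeline([
    ("scale", StandardScaler()),
    ("select", SelectKBest(f_classif)),
    ("clf", LogisticRegression(max_iter=1000)),
])
param_grid = {"select__k": [5, 10, 20], "clf__C": [0.1, 1.0]}

inner_cv = StratifiedKFold(n_splits=3, shuffle=True, random_state=0)  # step 3
outer_cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=1)  # step 2

# Inner loop: choose the number of features and C on the development set.
search = GridSearchCV(pipe, param_grid, scoring="roc_auc", cv=inner_cv)

# Outer loop: each held-out fold is scored by a model that never saw it.
outer_scores = cross_val_score(search, X, y, scoring="roc_auc", cv=outer_cv)
print("Outer-fold AUCs:", outer_scores.round(3))

# Step 5: refit selection and model on the entire dataset for production use.
final_model = search.fit(X, y)
print("Chosen settings:", final_model.best_params_)
```

The mean of `outer_scores` is the performance estimate; the features chosen by `final_model` may differ from those chosen in individual outer folds.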

Protocol 2: Development and Validation of a Population-MeAN Polygenic Risk Score (PM-PRS)

This protocol extends individual PRS to population-level risk estimation, useful for public health planning and etiological exploration [24].

1. Input Data Preparation

- Genetic Associations: Obtain effect sizes (\beta_i) and minor allele frequencies (MAF_i) for (n) SNPs from a large-scale GWAS.

- Target Population: Obtain (MAF_i) for the same (n) SNPs in your specific study population.

2. Calculate Expected Minor Allele Expression

- For each SNP (i), calculate the expected number of effect alleles in the population using the MAF: (E[G_i] = 0 \times (1 - MAF_i)^2 + 1 \times 2 \times MAF_i \times (1 - MAF_i) + 2 \times MAF_i^2 = 2 \times MAF_i)

- This simplifies to the expectation that each individual in the population carries, on average, (2 \times MAF_i) effect alleles at locus (i) [24].

3. Compute the Population-MeAN Polygenic Risk Score (PM-PRS)

- Calculate the PM-PRS using the formula: ( PM\text{-}PRS = \sum_{i=1}^{n} \beta_i \times E[G_i] = \sum_{i=1}^{n} \beta_i \times (2 \times MAF_i) )

- This score represents the average genetic burden for the disease in the study population [24].
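Steps 2 and 3 reduce to a few lines of arithmetic. The sketch below evaluates the genotype-frequency expansion term by term (the frequencies used are those implied by Hardy-Weinberg proportions) and then sums the weighted expectations. The effect sizes and allele frequencies are made-up illustrative values, and the function names are hypothetical.

```python
import numpy as np

def expected_effect_alleles(maf):
    """E[G_i] from genotype frequencies: 0, 1, or 2 copies of the effect allele."""
    maf = np.asarray(maf, dtype=float)
    return 0 * (1 - maf) ** 2 + 1 * 2 * maf * (1 - maf) + 2 * maf ** 2

def pm_prs(beta, maf):
    """Population-mean PRS: sum over SNPs of beta_i * E[G_i]."""
    beta = np.asarray(beta, dtype=float)
    return float(np.sum(beta * expected_effect_alleles(maf)))

# Illustrative values only (assumption), not taken from any GWAS.
beta = [0.10, -0.05, 0.20]   # per-allele effect sizes
maf = [0.25, 0.40, 0.10]     # allele frequencies in the target population

print("E[G_i]:", expected_effect_alleles(maf))
print("PM-PRS:", pm_prs(beta, maf))
```

The expansion and the shortcut `2 * maf` agree for any frequency, which is why only allele frequencies, not individual genotypes, are needed at the population level.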

4. Etiological Exploration via Risk Score Ratio (RSR)

- To investigate the role of a potential risk factor (e.g., an inflammatory biomarker), calculate its RSR.

- Formula: ( RSR = \frac{\text{Risk Score (Exposure)}}{\text{Risk Score (Disease)}} )

- The RSR uses genetic variants shared by the exposure and the disease to provide a causal inference estimate, less prone to confounding [24].

Research Reagent Solutions

Table: Essential Resources for Biomarker Discovery and Validation

| Category | Item / Resource | Function / Application | Key Considerations |

|---|---|---|---|

| Data & Software | PLINK [20] | Whole-genome association analysis toolset. Handles genotype/phenotype data, QC, and basic association analyses. | Still often uses GRCh37 reference; transitioning to pangenome references is recommended. |

| Data & Software | SVM-RFECV (in scikit-learn) [14] | Feature selection combined with cross-validation to identify optimal, minimal biomarker panels. | Prevents overfitting by integrating feature selection directly into the validation process. |

| Data & Software | PheWeb [20] | Visualization and dissemination of GWAS results. | Useful for collaborative interpretation of association results. |

| Analytical Kits & Reagents | Absolute IDQ p180 Kit (Biocrates) [22] | Targeted metabolomics kit quantifying 194 endogenous metabolites from plasma/serum. | Provides standardized quantification for biomarker discovery in cardiovascular and metabolic diseases. |

| Analytical Kits & Reagents | ELISA Kits (e.g., for BASP1, SMOC1) [14] | Antibody-based validation of protein biomarker candidates identified via mass spectrometry. | Essential for orthogonal validation of proteomic discoveries in independent cohorts. |

| Reference Data | LD Reference Panels (e.g., from 1000 Genomes, gnomAD) [23] | Population-specific linkage disequilibrium patterns for SNP pruning and heritability analysis. | Critical: Mismatch between study cohort and LD panel ancestry is a major source of error. |

| Reference Data | NHGRI-EBI GWAS Catalog [23] | Curated repository of all published GWAS results. | Primary source for SNP-trait associations and effect sizes for PRS construction. |

Frequently Asked Questions (FAQs)

FAQ 1: Why is my feature selection method producing biomarkers that lack biological plausibility?

This common issue often arises from relying on a single feature selection technique, which can be biased towards specific data properties and may capture spurious correlations. A robust solution is to implement a hybrid sequential feature selection approach. This method combines multiple techniques, such as variance thresholding, recursive feature elimination (RFE), and LASSO regression, to leverage their complementary strengths [25]. For instance, one study successfully reduced 42,334 mRNA features to 58 robust biomarkers for Usher syndrome by integrating these methods within a nested cross-validation framework, ensuring the selected features were both statistically significant and biologically relevant [25]. Furthermore, always validate computationally selected biomarkers with experimental methods like droplet digital PCR (ddPCR) to confirm their expression patterns and biological relevance [25].

FAQ 2: How can I improve the interpretability of my machine learning model for clinical stakeholders?

To enhance interpretability, employ explainable AI (XAI) techniques such as SHapley Additive exPlanations (SHAP). SHAP provides both global and local interpretability by quantifying the contribution of each feature to individual predictions [26]. For example, in an Alzheimer's disease diagnostic model, SHAP analysis clearly visualized how specific hub genes like RHOQ and MYH9 influenced model outputs, distinguishing risk factors from protective factors [26]. This allows clinicians to understand the model's decision-making process, building necessary trust for clinical adoption. Complement this with decision curve analysis (DCA) to demonstrate the clinical utility of the model across a range of threshold probabilities [26].

FAQ 3: My model performance drops significantly on an independent validation set. How can I ensure robustness?

Performance drops often indicate overfitting or a failure to account for dataset heterogeneity. Implement a nested cross-validation scheme, where an inner loop is dedicated to feature selection and hyperparameter tuning, and an outer loop provides an unbiased performance estimate [25]. Additionally, ensure your feature selection process is integrated within the cross-validation folds themselves, not performed on the entire dataset before splitting, to avoid data leakage [6]. If your data contains known sources of heterogeneity (e.g., sex differences), stratify your data and build sex-specific models, as combined models can obscure important biological differences and generalize poorly [5].

FAQ 4: What is the most effective way to select a minimal set of biomarkers for a cost-effective diagnostic?

The optimal strategy depends on the permitted number of biomarkers. When a very small number of biomarkers are required (e.g., 3-5), causal-based feature selection methods have been shown to be the most performant, as they prioritize features with a potential causal relationship to the outcome, reducing spurious correlations [6]. When a larger number of features are permissible (e.g., 10-15), univariate feature selection methods like chi-squared or ANOVA can be highly effective [6]. Recursive Feature Elimination with Cross-Validation (RFECV) is another powerful technique that systematically removes the least important features based on model performance, optimizing the number of features for a given task [5].

FAQ 5: How do I validate that my computationally identified biomarkers are functionally relevant?

Computational identification is only the first step. A robust validation pipeline must include:

- Experimental Validation: Use laboratory techniques like droplet digital PCR (ddPCR) to confirm the expression levels of selected mRNA biomarkers in patient-derived cell lines [25]. This provides absolute quantification and validates the patterns observed in your transcriptomic data.

- Biological Pathway Analysis: Perform Gene Ontology (GO) and Kyoto Encyclopedia of Genes and Genomes (KEGG) enrichment analyses on the selected biomarker set to determine if they are enriched in pathways known to be associated with the disease [26].

- Single-Cell Analysis: For a deeper understanding, explore the biological significance of top candidate biomarkers at the single-cell transcriptome level to identify which cell types they are active in [26].

Troubleshooting Guides

Problem: Poor Model Generalization Across Different Patient Subgroups

Symptoms:

- High performance on the training cohort but significantly lower AUC (e.g., drop of >0.1) on external validation sets or specific patient subgroups [26] [5].

- The feature importance rankings differ vastly when models are trained on different subgroups (e.g., males vs. females).

Diagnosis: This is frequently caused by sex-based or other demographic bias in the data and model. The model may have learned patterns that are specific to the majority subgroup in the training data.

Solution:

- Stratified Analysis: Instead of building a single model on the entire dataset, stratify the data by the confounding variable (e.g., sex) and build separate models for each subgroup [5].

- Bias-Aware Feature Selection: Use Recursive Feature Elimination with Cross-Validation (RFECV) independently on each subgroup. This will identify the optimal feature set specific to each group's physiology [5].

- Validation: Validate each subgroup-specific model on a corresponding hold-out validation set. As shown in Table 1, this approach often yields higher accuracy than a combined model.

Table 1: Example of Sex-Specific Model Performance for Biomarker Prediction

| Biomarker Target | Data Subgroup | Number of Selected Features | Top Performance Metric (Error <10%) |

|---|---|---|---|

| Waist Circumference | Male | 5 | 92.1% |

| Waist Circumference | Female | 4 | 89.5% |

| Waist Circumference | Combined | 6 | 85.3% |

| Blood Glucose | Male | 5 | 88.7% |

| Blood Glucose | Female | 5 | 86.2% |

| Blood Glucose | Combined | 7 | 82.9% |

Problem: Inconsistent Feature Selection Results

Symptoms:

- The list of top-K selected features varies wildly with different random seeds or small perturbations in the dataset.

- Features with known biological importance are consistently ranked low.

Diagnosis: The feature selection method may be unstable or overly sensitive to noise in high-dimensional data.

Solution:

- Adopt a Hybrid Sequential Approach: Implement a multi-stage filter. For example:

- Stage 1 (Variance Filter): Remove low-variance features.

- Stage 2 (Univariate Filter): Use a method like ANOVA or chi-squared to reduce the feature space further.

- Stage 3 (Wrapper/Embedded Method): Apply a more computationally intensive method like RFE or LASSO on the filtered set [25].

- Ensemble Feature Selection: Run multiple feature selection algorithms (e.g., MRMR, ReliefF, LASSO) and aggregate the results to create a more stable, consensus feature set [25].

- Leverage Domain Knowledge: After the computational selection, intentionally include features with established biological roles in the disease pathway in your final model, even if their statistical score is marginally lower, to improve biological insight and face validity.
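The ensemble idea in the solution list can be sketched as a simple vote across selectors. The text names MRMR, ReliefF, and LASSO; the sketch below swaps in three selectors that ship with scikit-learn (an ANOVA F-test, an L1-penalised linear SVM, and random forest importances) so that it depends on a single library. The data are synthetic, and `top_k` and the two-vote rule are illustrative assumptions.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.feature_selection import f_classif
from sklearn.preprocessing import StandardScaler
from sklearn.svm import LinearSVC

X, y = make_classification(n_samples=150, n_features=50, n_informative=5,
                           n_redundant=0, random_state=0)
X = StandardScaler().fit_transform(X)
top_k = 10

def top_nonzero(scores, k):
    """Indices of the k largest scores, ignoring features scored exactly zero."""
    order = np.argsort(-scores)[:k]
    return {int(i) for i in order if scores[i] > 0}

# Method 1: univariate ANOVA F-statistic (filter).
f_scores, _ = f_classif(X, y)
# Method 2: absolute coefficients of an L1-penalised linear SVM (embedded).
svm = LinearSVC(penalty="l1", dual=False, C=0.1, max_iter=5000).fit(X, y)
# Method 3: impurity-based random forest importances (embedded).
forest = RandomForestClassifier(n_estimators=200, random_state=0).fit(X, y)

selections = [
    top_nonzero(f_scores, top_k),
    top_nonzero(np.abs(svm.coef_[0]), top_k),
    top_nonzero(forest.feature_importances_, top_k),
]

# Count how many methods picked each feature; keep those with >= 2 votes.
votes = np.zeros(X.shape[1], dtype=int)
for selected in selections:
    votes[list(selected)] += 1
consensus = np.flatnonzero(votes >= 2)
print("Consensus features:", consensus)
```

To avoid leakage, a vote like this belongs inside the training folds of a cross-validation loop rather than on the full dataset.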

Experimental Protocols

Protocol 1: Hybrid Sequential Feature Selection for mRNA Biomarker Discovery

This protocol is adapted from a study on Usher syndrome and provides a robust framework for narrowing down thousands of mRNA features to a handful of validated biomarkers [25].

1. Sample Preparation and RNA Sequencing:

- Source: Use immortalized B-lymphocytes from patients and healthy controls. Extract RNA in triplicate for patient lines and quadruplicate for controls.

- Library Prep: Perform next-generation sequencing (NGS) library preparation.

- Data Generation: Sequence the libraries to generate raw transcriptomic data.

2. Computational Feature Selection Workflow: The multi-stage feature selection process consists of the following steps:

- Step 1 - Variance Thresholding: Filter out mRNA features with negligible variance across samples, as they are unlikely to be informative.

- Step 2 - Recursive Feature Elimination (RFE): Use a machine learning model (e.g., Random Forest or SVM) to recursively remove the least important features.

- Step 3 - LASSO Regression: Apply L1 regularization to penalize the absolute size of coefficients, effectively shrinking some to zero and performing feature selection.

- Step 4 - Nested Cross-Validation: Embed the entire feature selection process (Steps 1-3) within the inner loop of a cross-validation to prevent overfitting and provide an unbiased performance estimate.
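Steps 1 to 4 can be chained so that every selection stage is refitted inside each training fold. The following is a minimal sketch on synthetic data, not the cited study's code. An L1-penalised linear SVM stands in for LASSO because the toy outcome is binary, and all thresholds and feature counts are arbitrary assumptions.

```python
from sklearn.datasets import make_classification
from sklearn.feature_selection import RFE, SelectFromModel, VarianceThreshold
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import LinearSVC

# Synthetic stand-in for an expression matrix (assumption).
X, y = make_classification(n_samples=100, n_features=300, n_informative=6,
                           random_state=0)

pipe = Pipeline([
    # Step 1: drop features with zero variance.
    ("variance", VarianceThreshold(threshold=0.0)),
    ("scale", StandardScaler()),
    # Step 2: RFE removes 20% of the original features per iteration
    # until 30 remain.
    ("rfe", RFE(LinearSVC(dual=False, max_iter=5000),
                n_features_to_select=30, step=0.2)),
    # Step 3: L1 penalty keeps only features with non-zero coefficients.
    ("l1", SelectFromModel(LinearSVC(penalty="l1", dual=False, C=1.0,
                                     max_iter=5000))),
    ("clf", LogisticRegression(max_iter=1000)),
])

# Step 4: cross-validation refits all stages on each training fold.
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
scores = cross_val_score(pipe, X, y, scoring="roc_auc", cv=cv)
print("Fold AUCs:", scores.round(3))

pipe.fit(X, y)
n_final = int(pipe.named_steps["l1"].get_support().sum())
print("Features surviving all stages:", n_final)
```

Wrapping this pipeline in a hyperparameter search and an outer loop gives the fully nested scheme described in Step 4.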

3. Biological Validation via ddPCR:

- Candidate Selection: Select top-ranked mRNA biomarkers from the computational output.

- Experimental Validation: Perform droplet digital PCR (ddPCR) on the original RNA samples to quantitatively validate the expression patterns of the selected biomarkers. Compare the ddPCR results with the computational predictions to confirm consistency [25].

Protocol 2: Interpretable Model Building with SHAP

This protocol details how to build an interpretable diagnostic model for a disease like Alzheimer's, as demonstrated in [26].

1. Identify Hub Genes via Integrative Bioinformatics:

- Differential Expression: Identify Differentially Expressed Genes (DEGs) from transcriptomic data (e.g., from GEO database GSE109887).

- Weighted Co-expression Network Analysis (WGCNA): Construct co-expression modules and identify modules highly correlated with the disease trait.

- Protein-Protein Interaction (PPI) Network: Build a PPI network using the overlapping genes from DEGs and key modules. Use algorithms like MCC to identify the top 10 hub genes.

2. Build and Interpret the Diagnostic Model: The workflow below outlines the process from data integration to model interpretation:

- Model Construction: Train a machine learning model, such as Random Forest, using the expression profiles of the 10 hub genes along with clinical data like age and gender.

- Performance Assessment: Evaluate the model using 5-fold cross-validation and on an independent external validation set. Use AUC and other metrics.

- SHAP Analysis: Apply SHapley Additive exPlanations to the trained model. Generate summary plots to show the global importance of each hub gene and force plots to explain individual predictions [26].
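To make the quantity behind a SHAP force plot concrete, the sketch below computes exact Shapley values for one sample by enumerating every feature coalition. It does not use the `shap` library, which relies on faster approximations for realistic models. The three-feature toy model, the background data, and the function name `exact_shapley` are all illustrative assumptions.

```python
import itertools
import math

import numpy as np

def exact_shapley(predict, x, background):
    """Exact Shapley values for one sample x.

    Features outside a coalition are filled in from the background rows and
    the predictions are averaged (an interventional value function).
    """
    n = len(x)

    def value(coalition):
        data = background.copy()
        idx = np.array(coalition, dtype=int)
        data[:, idx] = x[idx]          # fix coalition features to x's values
        return predict(data).mean()

    phi = np.zeros(n)
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            weight = (math.factorial(size) * math.factorial(n - size - 1)
                      / math.factorial(n))
            for subset in itertools.combinations(others, size):
                phi[i] += weight * (value(subset + (i,)) - value(subset))
    return phi

rng = np.random.default_rng(0)
background = rng.normal(size=(50, 3))
weights = np.array([2.0, -1.0, 0.0])

def predict(data):
    # Toy model: linear terms plus one interaction; feature 2 is unused.
    return data @ weights + 0.5 * data[:, 0] * data[:, 1]

x = np.array([1.0, 2.0, -1.0])
phi = exact_shapley(predict, x, background)
print("Shapley values:", phi.round(3))
```

The attributions sum to the difference between the sample's prediction and the average background prediction, and the unused feature receives zero, which are the properties that make force plots readable for individual patients.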

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for Biomarker Discovery and Validation

| Item | Function/Application | Example from Literature |

|---|---|---|

| Immortalized B-Lymphocytes | A readily available, minimally invasive cell source for studying mRNA expression profiles in genetic diseases. | Used as the source of mRNA for Usher syndrome biomarker discovery [25]. |

| Epstein-Barr Virus (EBV) | Used to immortalize human B-lymphocytes, creating a renewable cell resource for repeated studies [25]. | EBV (B95-8 strain) was used to immortalize lymphocytes from Usher patients and controls [25]. |

| Droplet Digital PCR (ddPCR) | Provides absolute quantification of nucleic acid molecules for validating computationally identified mRNA biomarkers with high precision. | Used to validate the expression of top 10 selected mRNAs from the Usher syndrome study [25]. |

| RNA Purification Kit | For extracting high-quality total RNA from cell lines for downstream sequencing or PCR. | GeneJET RNA Purification Kit was used for RNA extraction from B-lymphocytes [25]. |

| SHAP (SHapley Additive exPlanations) | A Python library for interpreting the output of any machine learning model, providing both global and local interpretability. | Used to explain the impact of hub genes (e.g., MYH9, RHOQ) in an Alzheimer's disease diagnostic model [26]. |

| Random Forest Classifier | A robust, ensemble machine learning algorithm available in Scikit-learn, often used for building high-performance diagnostic models. | Achieved the highest AUC (0.896) for an Alzheimer's disease diagnostic model using hub genes [26]. |

Advanced Frameworks and Techniques for Stable Biomarker Identification

In high-dimensional biomarker discovery research, robust feature selection is not merely a preprocessing step but a foundational component of building reliable, interpretable, and generalizable models. The "curse of dimensionality" is a significant challenge in biomarker development, where the number of features (e.g., genes, proteins) often vastly exceeds the number of available samples (a situation known as the p >> n problem) [21]. Unnecessary features increase model complexity, training time, and the risk of overfitting, leading to models that fail to validate on independent datasets [27] [21]. This guide provides a technical deep dive into the three primary families of feature selection methods—Filter, Wrapper, and Embedded—to help you navigate these challenges and implement rigorous, reproducible pipelines for your biomarker research.

Core Concepts: Defining the Feature Selection Triad

What are Filter Methods?

Filter methods select features based on their intrinsic, statistical properties, independently of any machine learning model. They are often univariate, assessing each feature in isolation [28].

- Key Principle: Use statistical measures (e.g., correlation, chi-squared) to score and select features prior to model training [28] [29].

- Model Relationship: Model-agnostic; the selection process is completely separate from the classifier or regression algorithm [28].

- Typical Use Case: Ideal as a fast, preliminary feature reduction step, especially for very high-dimensional data like gene expression or metabarcoding datasets [28] [30].

What are Wrapper Methods?

Wrapper methods evaluate feature subsets by using the performance of a specific predictive model as the selection criterion. They "wrap" a search algorithm around a model [27] [29].

- Key Principle: Train a model on a subset of features and use its performance (e.g., accuracy, R-squared) to guide the search for an optimal subset [27] [31].

- Model Relationship: Model-dependent; the choice of machine learning algorithm is central to the selection process [31].

- Typical Use Case: When you have a well-defined model and computational resources to spend on finding a high-performing, concise feature set, potentially capturing feature interactions [27] [29].

What are Embedded Methods?

Embedded methods integrate the feature selection process directly into the model training phase. The model itself learns which features are most important [31] [32].

- Key Principle: Feature selection is a built-in consequence of the model's training algorithm, such as regularization or impurity-based importance [31].

- Model Relationship: Model-inherent; selection is embedded within the training of specific algorithms [31].

- Typical Use Case: A balanced approach that is more efficient than wrappers and often more accurate than filters, suitable for many general-purpose modeling tasks [32].

Table 1: High-Level Comparison of Feature Selection Methods

| Characteristic | Filter Methods | Wrapper Methods | Embedded Methods |

|---|---|---|---|

| Model Involvement | None (Model-agnostic) | High (Model-dependent) | Integrated into Model Training |

| Computational Cost | Low (Fast) | High (Slow) | Moderate |

| Risk of Overfitting | Low | High (if not properly validated) | Moderate |

| Captures Feature Interactions | Typically No | Yes | Yes |

| Primary Selection Criteria | Statistical scores | Model Performance | Model-derived importance (e.g., coefficients) |

| Examples | Correlation, Chi-Squared, Variance Threshold | Forward Selection, Backward Elimination, Exhaustive Search | Lasso Regression, Random Forest Feature Importance |

Troubleshooting Guides & FAQs

FAQ 1: How do I choose the right method for my biomarker dataset?

Answer: The choice depends on your dataset's dimensionality, computational budget, and project goals.

Decision Guide:

- For Ultra-High-Dimensional Data (p >> n): Start with a Filter Method like Variance Thresholding or Mutual Information to quickly reduce the feature space to a manageable size [21] [30].

- When Computational Efficiency is Critical: Filter Methods are the clear choice due to their speed and scalability [28].

- For a Robust, General-Purpose Pipeline: Embedded Methods like Lasso or Random Forests offer a powerful balance between performance and efficiency. Recent benchmarks suggest tree-based models like Random Forests are often robust even without additional feature selection [30].

- For Maximizing Predictive Performance with a Specific Model: If resources allow, Wrapper Methods like Sequential Feature Selection can be used on a pre-filtered dataset to find a high-performing subset [27] [29].

FAQ 2: Why does my feature selection perform well on training data but fail in validation?

Answer: This is a classic sign of overfitting in your feature selection process, a critical risk in biomarker development [21].

Troubleshooting Steps:

- Isolate Data Leaks: Ensure that your entire feature selection process (including calculation of statistical scores for filters) is performed only on the training set after a data split. Any use of the test set for selection contaminates the process [33].

- Apply Proper Cross-Validation (CV): For wrapper and embedded methods, use nested cross-validation. An outer CV loop estimates model performance, while an inner loop performs the feature selection and hyperparameter tuning on the training fold of the outer loop. This gives an unbiased performance estimate [33].

- Re-evaluate Method Choice: Wrapper methods are particularly prone to overfitting. If using a wrapper, ensure you have a sufficiently large sample size and use a stringent stopping criterion. Consider switching to a more robust embedded method [31] [30].

- Simplify Your Model: A model that is too complex for the amount of available data will overfit. Regularization (an embedded method) can help mitigate this [31].
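The effect of the data leak described in the first step can be reproduced on pure noise. In this minimal sketch the labels are unrelated to the features, so any accuracy clearly above chance comes from selecting features on the full dataset before cross-validation. The sample and feature counts are arbitrary assumptions.

```python
import numpy as np
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import Pipeline

rng = np.random.default_rng(0)
X = rng.normal(size=(60, 2000))   # pure noise features
y = np.repeat([0, 1], 30)         # labels carry no real signal
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)

# Leaky: features are chosen using all samples, including future test folds.
X_leaky = SelectKBest(f_classif, k=20).fit_transform(X, y)
leaky = cross_val_score(LogisticRegression(max_iter=1000),
                        X_leaky, y, cv=cv).mean()

# Honest: selection sits inside the pipeline and is refitted per training fold.
pipe = Pipeline([
    ("select", SelectKBest(f_classif, k=20)),
    ("clf", LogisticRegression(max_iter=1000)),
])
honest = cross_val_score(pipe, X, y, cv=cv).mean()

print(f"Leaky CV accuracy:  {leaky:.2f}")
print(f"Honest CV accuracy: {honest:.2f}")
```

The honest estimate should hover around chance, while the leaky one looks like a working biomarker panel even though no signal exists.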

FAQ 3: How can I handle highly correlated biomarkers in my feature set?

Answer: Correlated features (multicollinearity) can destabilize model coefficients and impair interpretability.

Solutions by Method Type:

- Filter Methods: Calculate the correlation matrix between features. If two features are highly correlated, keep the one with the stronger correlation with the target variable and remove the other [34].

- Wrapper Methods: Since wrappers evaluate feature subsets by model performance, they may naturally select one feature from a correlated pair if it provides sufficient predictive power. However, the selection can be arbitrary [27].

- Embedded Methods:

- Lasso (L1) Regression: Tends to select one feature from a group of correlated features arbitrarily, which can be useful for radical simplification [31].

- Ridge (L2) Regression: Shrinks coefficients of correlated features but does not set them to zero, keeping all features but making interpretation difficult [31].

- Elastic Net: Combines L1 and L2 penalties, often effective for datasets with many correlated features [32].

- Tree-Based Methods (Random Forest, XGBoost): Are generally robust to correlated features, though the importance may be spread across them [31].
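The different behaviour of the penalties can be seen on a toy regression with one near-duplicate feature pair. This is a sketch on simulated data; the penalty strengths are arbitrary assumptions, and which member of a correlated pair Lasso retains can change with the data.

```python
import numpy as np
from sklearn.linear_model import ElasticNet, Lasso, Ridge

rng = np.random.default_rng(0)
n = 200
x0 = rng.normal(size=n)
x1 = x0 + 0.05 * rng.normal(size=n)      # near-duplicate of x0
noise_features = rng.normal(size=(n, 8))  # unrelated to the outcome
X = np.column_stack([x0, x1, noise_features])
y = 3.0 * x0 + rng.normal(size=n)

models = {
    "lasso": Lasso(alpha=0.5),
    "ridge": Ridge(alpha=1.0),
    "elastic_net": ElasticNet(alpha=0.5, l1_ratio=0.5),
}

nonzero = {}
for name, model in models.items():
    model.fit(X, y)
    nonzero[name] = int(np.sum(model.coef_ != 0))
    # Print the coefficients of the correlated pair and the non-zero count.
    print(name, model.coef_[:2].round(2), "non-zero:", nonzero[name])
```

Ridge keeps every coefficient non-zero and shares weight across the pair, whereas the L1-based models produce sparse solutions.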

FAQ 4: My dataset is a mix of clinical and omics data. How should I approach feature selection?

Answer: This is a common scenario in modern biomarker research. The key question is whether omics data provides added value over traditional clinical markers [21].

Integration Strategies:

- Early Integration: Combine all features (clinical and omics) into a single dataset and apply feature selection to the entire set. This allows the model to directly compare the importance of different data types [21].

- Intermediate Integration: Use algorithms like Multiple Kernel Learning or specific neural network architectures that can integrate different data modalities during the model building process [21].

- Late Integration: Build separate models for clinical and omics data, then combine their predictions (e.g., via stacking). This requires feature selection to be performed independently within each modality [21].

Recommended Protocol: A pragmatic approach is to use early integration followed by an embedded method like Random Forest, which can rank the importance of both clinical and omics features, allowing you to assess their relative contribution [21].

Detailed Experimental Protocols

Protocol 1: Implementing Forward Selection using mlxtend

This protocol outlines the steps for a wrapper-based forward feature selection to identify a compact biomarker signature.

Principle: A greedy sequential search that starts with no features, adding the most significant feature one at a time based on model performance until a stopping criterion is met [27].

Step-by-Step Code (Python):
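A minimal sketch of forward selection follows. To keep the example dependent on one library, it uses scikit-learn's `SequentialFeatureSelector` rather than mlxtend's class of the same name; here `n_features_to_select` plays the role of `k_features` and `direction="forward"` corresponds to `forward=True`. The data are synthetic and the target of three features is an arbitrary assumption.

```python
from sklearn.datasets import make_classification
from sklearn.feature_selection import SequentialFeatureSelector
from sklearn.linear_model import LogisticRegression

# Synthetic stand-in for a pre-filtered biomarker matrix (assumption).
X, y = make_classification(n_samples=120, n_features=12, n_informative=3,
                           n_redundant=0, random_state=0)

# Greedy forward search: start from no features and add, one at a time,
# the feature that most improves cross-validated ROC AUC.
sfs = SequentialFeatureSelector(
    LogisticRegression(max_iter=1000),
    n_features_to_select=3,
    direction="forward",
    scoring="roc_auc",
    cv=5,
)
sfs.fit(X, y)

selected = sfs.get_support(indices=True)
X_reduced = sfs.transform(X)
print("Selected feature indices:", selected)
print("Reduced matrix shape:", X_reduced.shape)
```

For an unbiased performance estimate, the selector should itself be placed inside a pipeline that is evaluated on held-out data.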

Key Parameters to Tune:

- `k_features`: The number of features to select. Can be an integer or a tuple `(min, max)` to find the optimal number.

- `scoring`: The performance metric to optimize. For binary classification of biomarkers, `'roc_auc'` is often appropriate.

- `cv`: The number of cross-validation folds. More folds give a less noisy performance estimate for each candidate subset but increase computation time [27] [29].

Protocol 2: Applying Lasso (L1) Regularization for Embedded Selection

This protocol uses Lasso regression, an embedded method, for continuous outcome biomarkers. For classification, Lasso logistic regression can be used.

Principle: Adds a penalty (L1 norm) to the regression model's loss function, which shrinks the coefficients of less important features to exactly zero, effectively removing them from the model [31] [32].

Step-by-Step Code (Python):
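A minimal sketch of Lasso-based selection for a continuous outcome follows, using scikit-learn's `LassoCV` to choose the regularization strength by cross-validation. The data are synthetic, and the fold count and iteration limit are illustrative assumptions.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.linear_model import LassoCV
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in: 100 samples, 200 features, 5 of them informative.
X, y = make_regression(n_samples=100, n_features=200, n_informative=5,
                       noise=5.0, random_state=0)

# Standardize first so the L1 penalty treats all features on the same scale.
model = make_pipeline(
    StandardScaler(),
    LassoCV(cv=5, max_iter=10000, random_state=0),
)
model.fit(X, y)

lasso = model.named_steps["lassocv"]
selected = np.flatnonzero(lasso.coef_)   # features with non-zero coefficients
print("Chosen alpha:", lasso.alpha_)
print("Number of selected features:", len(selected))
```

Features whose coefficients are shrunk to exactly zero are dropped; the remaining indices in `selected` form the candidate panel.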

Key Parameters to Tune:

- `alpha` (λ): The regularization strength. This is the most important parameter. Use `LassoCV` to automatically find the optimal alpha via cross-validation.

- `max_iter`: The maximum number of iterations for the solver to converge [31].

Protocol 3: Executing Variance Thresholding as a Pre-Filter

A simple, effective filter method to remove low-variance features, which are unlikely to be informative biomarkers.

Principle: Removes all features whose variance does not meet a specified threshold. Variance is dataset-specific, so thresholding should be done relative to your data's distribution [28] [34].

Step-by-Step Code (Python):
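A minimal sketch of variance thresholding with scikit-learn's `VarianceThreshold` follows. The four simulated features and the threshold of 0.01 are illustrative assumptions; on real data the threshold should be chosen from the observed variance distribution.

```python
import numpy as np
from sklearn.feature_selection import VarianceThreshold

rng = np.random.default_rng(0)
X = np.column_stack([
    rng.normal(size=50),               # variance near 1: kept
    np.full(50, 3.0),                  # constant: removed
    rng.normal(scale=0.01, size=50),   # near-constant: removed
    rng.normal(scale=2.0, size=50),    # variance near 4: kept
])

# Inspect the variance distribution before fixing a threshold.
print("Feature variances:", X.var(axis=0).round(4))

selector = VarianceThreshold(threshold=0.01)
X_reduced = selector.fit_transform(X)
kept = selector.get_support(indices=True)
print("Kept feature indices:", kept)
```

Because variance depends on measurement scale, apply the filter before standardization or to features that share a common scale.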

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Software and Libraries for Feature Selection in Biomarker Research

| Tool / Reagent | Function / Purpose | Key Features / Notes |

|---|---|---|

| Scikit-learn (sklearn) | Comprehensive machine learning library in Python. | Provides VarianceThreshold, SelectKBest (for filters), SelectFromModel (for embedded), Lasso, Ridge, tree-based models. The workhorse for many ML tasks [28] [31]. |

| MLXtend | Extension library for data science and ML tasks. | Implements wrapper methods like SequentialFeatureSelector (SFS) and ExhaustiveFeatureSelector (EFS) with a simple API [27] [29]. |

| Pandas | Data manipulation and analysis library. | Essential for handling structured data, data frames, and seamless integration with Scikit-learn pipelines. |

| Random Forest / XGBoost | Tree-based ensemble algorithms. | Provide robust, embedded feature importance scores. Benchmarks show they perform well on high-dimensional biological data, often with minimal need for pre-selection [31] [30]. |

| Cross-Validation (e.g., cross_val_score) | Model and selection process evaluation. | Critical for obtaining unbiased performance estimates and avoiding overfitting, especially when using wrapper methods [33]. |

| Pipeline Class (sklearn.pipeline) | Chains preprocessing and modeling steps. | Ensures that all preprocessing (like feature selection) is correctly fitted on the training data and applied to the test data, preventing data leaks [33]. |

Recursive Feature Elimination Cross-Validation (RFE-CV) for Enhanced Stability

Troubleshooting Guides

FAQ 1: Why does RFE-CV sometimes select all features or get stuck at a high feature count?

Problem: During RFE-CV, the feature selection process sometimes fails to eliminate any features, remaining at the initial high feature count across multiple iterations.

Explanation: This occurs when the algorithm determines that retaining all features yields the best cross-validation performance. RFE-CV uses a performance metric (like accuracy or F1-score) to guide feature elimination. If removing any feature subset causes performance to drop below the current optimum, RFE-CV will retain all features [35]. This behavior can be particularly pronounced with small sample sizes or when many weakly relevant features collectively contribute to prediction.

Solutions:

- Adjust stopping criteria: Explicitly set `n_features_to_select` to force elimination rather than relying solely on performance optimization [36] [35]. In scikit-learn this parameter belongs to `RFE`; `RFECV` exposes `min_features_to_select`, which only sets a lower bound on the subset size.

- Modify step parameter: Increase the `step` parameter to remove more features between iterations, potentially bypassing local performance maxima [35].

- Fix random state: Use a fixed `random_state` for cross-validation splits to ensure reproducible feature selection behavior [35].

- Try different algorithms: Test alternative base estimators (e.g., Logistic Regression instead of Decision Trees), as their feature importance calculations may be less prone to this issue [37].
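The options above can be sketched as follows, assuming scikit-learn's `RFECV` and `RFE` with a logistic regression base estimator. The synthetic data, the step of 2, and the target of 10 features are illustrative assumptions.

```python
from sklearn.datasets import make_classification
from sklearn.feature_selection import RFE, RFECV
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold

# Synthetic stand-in (assumption): 120 samples, 40 features, 5 informative.
X, y = make_classification(n_samples=120, n_features=40, n_informative=5,
                           n_redundant=0, random_state=0)

# Fixed random_state on the splitter makes the CV folds reproducible.
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)

# CV-guided selection: the subset size is chosen by cross-validated AUC,
# removing 2 features per iteration and never going below 3.
selector = RFECV(
    estimator=LogisticRegression(max_iter=1000),
    step=2,
    min_features_to_select=3,
    cv=cv,
    scoring="roc_auc",
)
selector.fit(X, y)
print("RFECV kept", selector.n_features_, "features")

# Forced elimination: plain RFE stops at a fixed subset size regardless of score.
forced = RFE(LogisticRegression(max_iter=1000), n_features_to_select=10, step=2)
forced.fit(X, y)
print("RFE kept", forced.n_features_, "features")
```

If `RFECV` still keeps nearly everything, comparing its result with the forced `RFE` subset on held-out data is one way to judge whether the extra features carry real signal.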

FAQ 2: How can I address inconsistent feature selection results across different RFE-CV runs?

Problem: RFE-CV selects different feature subsets when run multiple times on the same dataset, reducing methodological reliability for publication.

Explanation: Feature selection instability typically stems from two sources: (1) random splitting in cross-validation folds creates different training environments across runs, and (2) some algorithms (particularly tree-based methods) may have inherent variability in feature importance calculations [37].

Solutions:

- Stratified cross-validation: Use `StratifiedKFold` to maintain class distribution across folds, creating more consistent training conditions [36] [33].

- Increase cross-validation folds: Higher fold counts (e.g., 10 instead of 5) provide more stable performance estimates [38].

- Multiple random states: Run RFE-CV with several random states and select features that consistently appear across multiple runs [39].

- Alternative RFE variants: Consider "Enhanced RFE" or other modified approaches that demonstrate better selection stability according to benchmarking studies [37].
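A minimal sketch of the multiple-random-states strategy: RFE-CV is repeated with different fold seeds and features are ranked by how often they are selected. The five repeats and the 80% frequency cut-off are assumptions chosen for illustration.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.feature_selection import RFECV
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold

# Synthetic stand-in (assumption): 120 samples, 30 features, 4 informative.
X, y = make_classification(n_samples=120, n_features=30, n_informative=4,
                           n_redundant=0, random_state=0)

n_runs = 5
counts = np.zeros(X.shape[1], dtype=int)

for seed in range(n_runs):
    # Only the fold assignment changes between runs.
    cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=seed)
    selector = RFECV(LogisticRegression(max_iter=1000), step=1,
                     cv=cv, scoring="roc_auc")
    selector.fit(X, y)
    counts += selector.support_  # boolean mask adds 1 for each selected feature

frequency = counts / n_runs
stable = np.flatnonzero(frequency >= 0.8)  # selected in at least 80% of runs

print("selection frequency per feature:", frequency)
print("stable feature indices:", stable)
```

With a deterministic base estimator, the fold seed mainly changes how many features are kept rather than their ranking, so the frequency profile shows which features sit near the cut-off.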

FAQ 3: Why does my RFE-CV model show excellent cross-validation performance but poor external validation results?

Problem: A model built with RFE-CV-selected features performs well during cross-validation but generalizes poorly to independent test sets.

Explanation: This typically indicates overfitting during the feature selection process itself, where features are selected based on their ability to capture dataset-specific noise rather than biologically meaningful signals [22]. This is particularly problematic in high-dimensional, low-sample-size scenarios common in biomarker research.

Solutions:

- Nested cross-validation: Implement a nested CV structure where RFE-CV occurs only within the training folds of an outer validation loop, completely separating feature selection from final performance assessment [22].

- Independent validation set: Hold back a completely independent test set before beginning any feature selection process [22] [33].

- Simplify the model: Reduce model complexity or increase regularization to improve generalizability of the selected features [40].

- Increase sample size: When possible, collect more samples to improve the stability of feature selection [37].
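A minimal sketch of nested cross-validation, assuming scikit-learn. Wrapping scaling, RFE-CV, and the classifier in one `Pipeline` means all three are refit inside every outer training fold, so the outer scores never see data used for selection. The fold counts and synthetic data are illustrative assumptions.

```python
from sklearn.datasets import make_classification
from sklearn.feature_selection import RFECV
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in (assumption): 100 samples, 50 features, 5 informative.
X, y = make_classification(n_samples=100, n_features=50, n_informative=5,
                           n_redundant=0, random_state=0)

inner_cv = StratifiedKFold(n_splits=3, shuffle=True, random_state=0)  # drives selection
outer_cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=1)  # estimates performance

pipeline = Pipeline([
    ("scale", StandardScaler()),
    ("select", RFECV(LogisticRegression(max_iter=1000), step=5,
                     cv=inner_cv, scoring="roc_auc")),
    ("clf", LogisticRegression(max_iter=1000)),
])

# Each outer fold reruns scaling and RFE-CV on its own training portion only.
outer_scores = cross_val_score(pipeline, X, y, cv=outer_cv, scoring="roc_auc")
print(f"nested CV AUC: {outer_scores.mean():.3f} +/- {outer_scores.std():.3f}")
```

The outer-loop score estimates the performance of the whole procedure, not of one fixed feature list; the final biomarker panel is typically obtained by refitting the pipeline on all training data afterwards.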

FAQ 4: How can I manage the computational demands of RFE-CV with large feature sets?

Problem: RFE-CV becomes computationally prohibitive when working with high-dimensional data (e.g., genomic or proteomic datasets with thousands of features).

Explanation: RFE-CV requires repeatedly training and evaluating models, which becomes computationally intensive with large feature sets, especially when using complex base estimators or many cross-validation folds [37].

Solutions:

- Pre-filtering: Apply fast filter methods (e.g., correlation, mutual information) to reduce feature space before applying RFE-CV [41] [37].

- Larger step sizes: Increase the `step` parameter to eliminate more features per iteration, reducing total iterations required [35] [38].

- Efficient algorithms: Use computationally efficient base estimators (e.g., Logistic Regression instead of Random Forest) for the feature selection phase [40] [37].

- Parallelization: Leverage the `n_jobs=-1` parameter in scikit-learn to distribute computation across available CPU cores [35].

- Cloud computing: For extremely large datasets, consider distributed computing approaches [37].
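A minimal sketch combining three of these measures: a mutual-information pre-filter, a fractional `step`, and parallel fold evaluation. The feature counts, `k=100`, and `step=0.1` are assumptions for illustration.

```python
from functools import partial

from sklearn.datasets import make_classification
from sklearn.feature_selection import RFECV, SelectKBest, mutual_info_classif
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold
from sklearn.pipeline import Pipeline

# Synthetic stand-in (assumption): 150 samples, 500 features, 10 informative.
X, y = make_classification(n_samples=150, n_features=500, n_informative=10,
                           n_redundant=0, random_state=0)

# Fix the seed of the mutual-information estimator for reproducibility.
mi_score = partial(mutual_info_classif, random_state=0)

pipeline = Pipeline([
    # Fast filter: keep the 100 features with the highest mutual information.
    ("prefilter", SelectKBest(score_func=mi_score, k=100)),
    # step=0.1 removes 10% of the features entering RFE-CV per iteration;
    # n_jobs=-1 spreads the cross-validation folds over all available cores.
    ("rfecv", RFECV(LogisticRegression(max_iter=1000), step=0.1,
                    cv=StratifiedKFold(n_splits=5, shuffle=True, random_state=0),
                    scoring="roc_auc", n_jobs=-1)),
])
pipeline.fit(X, y)

n_prefiltered = int(pipeline.named_steps["prefilter"].get_support().sum())
n_final = pipeline.named_steps["rfecv"].n_features_
print(f"{X.shape[1]} -> {n_prefiltered} (filter) -> {n_final} (RFE-CV)")
```

Because the pre-filter uses the outcome labels, it should be fitted on training data only, for example by placing this whole pipeline inside an outer validation loop.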

Experimental Protocols & Data Presentation

Quantitative Performance Comparison of RFE-CV Variants in Biomarker Discovery

Table 1: Benchmarking RFE-CV variants across domains demonstrates trade-offs between accuracy, feature parsimony, and computational efficiency. Adapted from empirical evaluations [37].

| RFE-CV Variant | Base Estimator | Domain | Original Features | Selected Features | Predictive Accuracy | Relative Computational Cost |
|---|---|---|---|---|---|---|
| RFECV-DT | Decision Tree | Network Security | 42 | 15 | 95.30% | Low |
| RFECV-LR | Logistic Regression | Atherosclerosis | 67 | 27 | 92.00% | Low |
| RFECV-RF | Random Forest | Thermal Comfort | 19 | 7 | 91.20% | High |
| RFECV-XGB | XGBoost | Education | 388 | 31 | 89.50% | Very High |
| Enhanced RFE | Mixed | Healthcare | 255 | 18 | 88.90% | Medium |

Detailed Methodology: RFE-CV for Biomarker Discovery

Protocol Title: Cross-Validated Recursive Feature Elimination for Robust Biomarker Identification

Background: This protocol describes an RFE-CV workflow specifically optimized for biomarker discovery studies, where small sample sizes and high-dimensional data present particular challenges [40] [22].

Materials & Equipment:

- High-dimensional dataset (e.g., genomic, proteomic, or metabolomic data)

- Computing environment with Python and scikit-learn

- Sufficient computational resources (RAM, CPU cores)

Procedure:

Data Preprocessing:

RFE-CV Configuration:

- Select base estimator appropriate for your data type (Logistic Regression for linear relationships, Random Forest for complex interactions) [37].

- Configure k-fold cross-validation (typically 5-10 folds based on sample size) [40] [33].

- Set step parameter to eliminate 1-10% of features per iteration [35].

- Define performance metric aligned with research goals (accuracy, F1-score, AUC-ROC) [33].

Feature Selection Execution:

- Execute RFE-CV on training data only.

- Record selected features and their importance scores.

- Repeat with multiple random seeds to assess selection stability [37].

Model Validation:

- Train final model using selected features on entire training set.

- Evaluate performance on held-out test set.

- Compare with negative controls (random features) and positive controls (full feature set) [22].
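The execution and validation steps above can be sketched as follows, assuming scikit-learn and a binary outcome. The synthetic data, the 70/30 split, and the helper `held_out_auc` are hypothetical and serve only to illustrate the comparison against controls.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.feature_selection import RFECV
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import StratifiedKFold, train_test_split

# Synthetic stand-in (assumption): 200 samples, 60 features, 6 informative.
X, y = make_classification(n_samples=200, n_features=60, n_informative=6,
                           n_redundant=0, random_state=0)

# Hold out the test set before any feature selection takes place.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, stratify=y, random_state=0)

# Run RFE-CV on the training data only.
selector = RFECV(LogisticRegression(max_iter=1000), step=3,
                 cv=StratifiedKFold(n_splits=5, shuffle=True, random_state=0),
                 scoring="roc_auc")
selector.fit(X_train, y_train)
selected = np.flatnonzero(selector.support_)


def held_out_auc(columns):
    """Train on the given columns of the training set, score on the test set."""
    model = LogisticRegression(max_iter=1000).fit(X_train[:, columns], y_train)
    return roc_auc_score(y_test, model.predict_proba(X_test[:, columns])[:, 1])


# Negative control: a random subset of the same size as the selected one.
rng = np.random.default_rng(0)
random_cols = rng.choice(X.shape[1], size=selected.size, replace=False)

results = {
    "selected features": held_out_auc(selected),
    "random features (negative control)": held_out_auc(random_cols),
    "all features (positive control)": held_out_auc(np.arange(X.shape[1])),
}
for name, auc in results.items():
    print(f"{name}: AUC = {auc:.3f}")
```

A selected panel that does not clearly beat the random subset suggests the selection step is capturing noise; repeating the random draw several times gives a fairer baseline than a single draw.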

Troubleshooting:

- If feature selection is unstable, increase cross-validation folds or employ stratified sampling [36] [33].

- If computational time is excessive, pre-filter features or increase step parameter [38] [37].

- If performance is poor, try different base estimators or check for data leakage [22] [33].

Workflow Visualization

Table 2: Essential research reagents and computational solutions for RFE-CV in biomarker discovery.

| Category | Item | Specifications/Function | Example Applications |
|---|---|---|---|
| Computational Libraries | scikit-learn RFE/RFECV | Primary implementation of recursive feature elimination with cross-validation | General feature selection in biomarker studies [36] |
| | XGBoost | Gradient boosting implementation usable as RFE base estimator | Handling complex feature interactions [37] |
| | Pandas/NumPy | Data manipulation and numerical computation | Data preprocessing and transformation [22] |
| Data Resources | Targeted Metabolomics | AbsoluteIDQ p180 kit (Biocrates) quantifying 194 metabolites | Atherosclerosis biomarker discovery [22] |
| | Genomic Data | Pan-genome presence/absence datasets | Antimicrobial resistance biomarker identification [39] |
| | Clinical Data | Body mass index, smoking status, medication history | Cardiovascular disease risk assessment [22] |
| Validation Tools | Nested Cross-Validation | Prevents overfitting in performance estimation | Robust biomarker validation [22] |
| | Independent Test Sets | Completely held-out data for final validation | Assessing generalizability [33] |
| | SHAP Values | Model-agnostic feature importance explanation | Interpreting selected biomarkers [40] |

Integrating Causal Inference with Graph Neural Networks for Biologically Plausible Biomarkers

What is the core premise of integrating Causal Inference with Graph Neural Networks (GNNs) for biomarker discovery?

This integration addresses a fundamental limitation in traditional biomarker discovery methods. Conventional machine learning approaches often identify features based on spurious correlations rather than genuine causal relationships, leading to biomarkers that lack stability and biological interpretability across different datasets. The Causal-GNN framework is designed to overcome this by combining GNNs' capacity to model complex gene-gene regulatory networks with causal inference methods that distinguish true causal effects from mere correlations [42] [43]. This synergy enables the identification of stable, biologically plausible biomarkers by leveraging both the structural prior knowledge of biological networks and robust causal effect estimation.

Why does this integration produce more robust biomarkers for real-world applications?

The integration yields more robust biomarkers because it explicitly addresses two critical challenges: (1) the instability of feature selection across different biological datasets, and (2) the conflation of correlation with causation. By utilizing GNNs to model the regulatory context of genes through propensity score estimation and then applying causal effect measurements like Average Causal Effect (ACE), the method prioritizes genes that maintain their predictive power regardless of dataset-specific variations [42] [44]. This results in biomarker signatures that are more likely to be reproducible and biologically interpretable, which is crucial for clinical translation in areas like cancer diagnostics and drug development [45].

FAQs: Core Conceptual Questions

What distinguishes Causal-GNN from traditional feature selection methods in biomarker discovery?

Traditional feature selection methods, such as filter, wrapper, or embedded methods, typically rank genes based on their individual correlation with the outcome of interest (e.g., disease state). These approaches often ignore the complex interdependencies between genes and are highly susceptible to dataset-specific noise, resulting in unstable biomarker lists [44]. In contrast, Causal-GNN incorporates the topological structure of gene regulatory networks as prior knowledge, enabling it to account for biological context. Furthermore, it moves beyond correlation to estimate the causal effect of each gene on the outcome using propensity scores and Average Causal Effect (ACE), thereby identifying features with genuine biological relevance [42] [43].

How does the "propensity score" mechanism within the GNN framework function?

In the Causal-GNN architecture, a Graph Convolutional Network (GCN) is employed to estimate a propensity score for each gene (mRNA). This score represents the probability of a gene's expression level conditional on the expression patterns of its co-regulated neighbors within the gene regulatory network. The GCN achieves this through a multi-layer message-passing mechanism where each gene (node) aggregates feature information from its regulatory neighbors [43]. Formally, the propagation rule for a single GCN layer is:

\[ \mathbf{H}^{(l+1)} = \sigma\left(\hat{\mathbf{D}}^{-1/2}\hat{\mathbf{A}}\hat{\mathbf{D}}^{-1/2}\mathbf{H}^{(l)}\mathbf{W}^{(l)}\right) \]

where \(\hat{\mathbf{A}} = \mathbf{A} + \mathbf{I}\) is the adjacency matrix of the gene network with self-loops, \(\hat{\mathbf{D}}\) is its degree matrix, \(\mathbf{H}^{(l)}\) are the node representations at layer \(l\), and \(\mathbf{W}^{(l)}\) is a trainable weight matrix [43]; \(\sigma\) denotes a nonlinear activation function such as ReLU. This allows the model to capture complex, cross-regulatory signals that inform the propensity score.
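The propagation rule can be sketched in NumPy as follows. This illustrates a single layer only, not the full Causal-GNN model; the three-gene chain network, the toy feature matrix, the identity weights, and the choice of ReLU for the activation are assumptions.

```python
import numpy as np


def gcn_layer(A, H, W):
    """One GCN layer: ReLU(D_hat^{-1/2} A_hat D_hat^{-1/2} H W)."""
    A_hat = A + np.eye(A.shape[0])           # add self-loops
    degrees = A_hat.sum(axis=1)              # diagonal of D_hat (always >= 1)
    D_inv_sqrt = np.diag(degrees ** -0.5)    # D_hat^{-1/2}
    propagated = D_inv_sqrt @ A_hat @ D_inv_sqrt @ H @ W
    return np.maximum(propagated, 0.0)       # ReLU as the activation sigma


# Toy regulatory network (assumption): gene 0 - gene 1 - gene 2 in a chain.
A = np.array([[0, 1, 0],
              [1, 0, 1],
              [0, 1, 0]], dtype=float)

# Two input features per gene and an identity weight matrix for readability.
H0 = np.array([[1.0, 0.0],
               [0.0, 1.0],
               [1.0, 1.0]])
W0 = np.eye(2)

H1 = gcn_layer(A, H0, W0)
print(H1)
```

Each output row mixes a gene's own features with those of its neighbors, weighted by the symmetric degree normalization; in a trained model the weight matrix would be learned rather than fixed.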