Regularization Parameter Tuning: A Practical Guide for Robust Biomedical Data Models



Abstract

This article provides a comprehensive guide to regularization parameter tuning, tailored for researchers and professionals in drug development and biomedical science. It covers the foundational theory of regularization for preventing overfitting, explores practical methodologies like L1/L2 penalization and advanced optimizers, details systematic troubleshooting and optimization strategies for high-dimensional biological data, and outlines rigorous validation and comparative analysis frameworks. The goal is to equip practitioners with the knowledge to build generalizable, interpretable, and reliable predictive models that accelerate therapeutic discovery.

Why Regularization is Non-Negotiable in Biomedical Data Science

This technical support center is framed within a broader research thesis aimed at establishing rigorous, evidence-based guidelines for regularization parameter tuning. For researchers, scientists, and drug development professionals, mastering these guidelines is not merely an academic exercise but a critical step in developing robust, generalizable predictive models. Such models are foundational for high-stakes applications, from identifying novel therapeutic targets to optimizing clinical trial design [1] [2]. Regularization serves as the primary methodological lever to control the bias-variance tradeoff, systematically preventing a model from memorizing noise (overfitting) while retaining its capacity to learn genuine signal [3] [4]. This guide provides targeted troubleshooting, protocols, and resources to navigate common pitfalls in implementing these essential techniques.

Troubleshooting Guide: Common Regularization Issues in Research Experiments

Issue 1: Model Shows Perfect Training Accuracy but Poor Validation Performance

- Problem Identification: This is the classic signature of overfitting. The model has become overly complex, learning idiosyncratic patterns and noise specific to the training dataset [1] [3].

- Diagnostic Steps:

- Plot learning curves showing training and validation loss over epochs/iterations. A diverging gap indicates overfitting [3].

- Evaluate model performance on a held-out test set that was not used during any phase of training or validation.

- Solution Pathway:

- Increase Regularization Strength: Systematically increase the λ (lambda) parameter for L1/L2 regularization or the dropout rate and observe validation performance [5] [6].

- Implement Early Stopping: Halt training when validation loss stops decreasing for a pre-defined number of epochs (patience) [7] [4].

- Apply Data Augmentation: Artificially expand your training dataset using label-preserving transformations (e.g., rotation, scaling for images; synonym replacement for text) [2] [4].
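The early-stopping rule in the solution pathway can be sketched without any framework. The `EarlyStopping` class, its `min_delta` argument, and the toy loss values below are illustrative assumptions, not part of any library; `patience` counts consecutive epochs without improvement.

```python
class EarlyStopping:
    """Hypothetical helper: track validation loss and signal when to stop."""

    def __init__(self, patience=3, min_delta=0.0):
        self.patience = patience      # epochs to wait without improvement
        self.min_delta = min_delta    # smallest decrease that counts as improvement
        self.best_loss = float("inf")
        self.best_epoch = -1
        self.wait = 0

    def step(self, epoch, val_loss):
        """Record one epoch; return True when training should stop."""
        if val_loss < self.best_loss - self.min_delta:
            self.best_loss = val_loss
            self.best_epoch = epoch
            self.wait = 0
        else:
            self.wait += 1
        return self.wait >= self.patience


# Toy validation losses: improvement until epoch 3, then the loss climbs again.
val_losses = [0.90, 0.70, 0.60, 0.55, 0.56, 0.58, 0.61, 0.65]

stopper = EarlyStopping(patience=3)
stopped_at = None
for epoch, loss in enumerate(val_losses):
    if stopper.step(epoch, loss):
        stopped_at = epoch
        break

print(f"best epoch={stopper.best_epoch}, stopped at epoch={stopped_at}")
```

In a real training loop, the model weights would also be checkpointed whenever `best_epoch` is updated, so that the best weights can be restored after stopping.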

Issue 2: Unstable Model Performance Across Different Training Runs

- Problem Identification: High variance in outcomes suggests the model is sensitive to specific random initializations or data shuffling, often due to insufficient regularization in a high-capacity network [4].

- Diagnostic Steps: Train the model multiple times with different random seeds, recording final validation accuracy. High standard deviation confirms instability.

- Solution Pathway:

- Enforce L2 Regularization (Weight Decay): This technique shrinks all weights toward zero in proportion to their magnitude, promoting stability and reducing sensitivity to small input variations [1] [8].

- Utilize Dropout: Randomly disabling neurons during training prevents complex co-adaptations, effectively training an ensemble of networks and improving robustness [5] [2].

- Increase Training Data Size: If possible, collect more diverse data. Regularization is most effective when combined with sufficient data [3].
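The seed-based diagnostic for this issue can be sketched as follows. This is a minimal example under stated assumptions: the data are synthetic, scikit-learn's `MLPClassifier` stands in for whatever network is being studied, and the two `alpha` values (its L2 penalty strength) are arbitrary illustrations.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.model_selection import train_test_split
from sklearn.neural_network import MLPClassifier

# Synthetic stand-in data (assumption): 300 samples, 40 features, 5 informative.
X, y = make_classification(n_samples=300, n_features=40, n_informative=5, random_state=0)
X_train, X_val, y_train, y_val = train_test_split(
    X, y, test_size=0.3, random_state=0, stratify=y
)


def seed_sweep(alpha, seeds=range(5)):
    """Train the same model under different random seeds; return validation accuracies."""
    scores = []
    for seed in seeds:
        model = MLPClassifier(
            hidden_layer_sizes=(32,), alpha=alpha, max_iter=500, random_state=seed
        )
        model.fit(X_train, y_train)
        scores.append(model.score(X_val, y_val))
    return np.array(scores)


results = {}
for alpha in (1e-4, 1.0):
    results[alpha] = seed_sweep(alpha)
    print(f"alpha={alpha:g}: mean={results[alpha].mean():.3f}, std={results[alpha].std():.3f}")
```

A standard deviation that is large relative to the differences between candidate models suggests that single-run comparisons are not trustworthy.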

Issue 3: Difficulty in Selecting and Tuning the Regularization Hyperparameter (λ/Alpha)

- Problem Identification: The strength of the regularization penalty is controlled by a hyperparameter. Choosing a value that is too low fails to prevent overfitting; a value that is too high causes underfitting, where the model fails to learn meaningful patterns [7] [3].

- Diagnostic Steps: Perform a hyperparameter sweep. Plot model performance (both training and validation error) against a logarithmic range of λ values. The optimal point minimizes validation error.

- Solution Pathway:

- Employ Systematic Hyperparameter Tuning: Use `GridSearchCV` or `RandomizedSearchCV` with k-fold cross-validation to objectively find the optimal λ [9].

- Leverage Bayesian Optimization: For computationally expensive models, use Bayesian optimization to intelligently search the hyperparameter space, modeling the performance landscape to find the optimum faster [9].
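A grid search over λ might look like the sketch below. The dataset is synthetic, the grid bounds are arbitrary, and note that scikit-learn calls the penalty strength `alpha` rather than λ. Scaling is placed inside the pipeline so that each cross-validation fold is standardized using only its own training portion.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.linear_model import Ridge
from sklearn.model_selection import GridSearchCV
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in for a wide biomedical matrix (assumption): p > n.
X, y = make_regression(n_samples=120, n_features=200, n_informative=10,
                       noise=10.0, random_state=0)

pipeline = make_pipeline(StandardScaler(), Ridge())

# Logarithmic grid of penalty strengths; make_pipeline names the step "ridge".
param_grid = {"ridge__alpha": np.logspace(-3, 3, 13)}

search = GridSearchCV(pipeline, param_grid, cv=5, scoring="neg_mean_squared_error")
search.fit(X, y)

best_alpha = search.best_params_["ridge__alpha"]
print(f"best alpha={best_alpha:g}, CV MSE={-search.best_score_:.1f}")
```

If the best value sits at either edge of the grid, the grid should be widened before the result is trusted.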

Issue 4: L1 Regularization for Feature Selection Yields Inconsistent Results with Correlated Features

- Problem Identification: In datasets with highly correlated predictors (common in genomics or biomarker studies), L1 (Lasso) regularization may arbitrarily select one feature from a correlated group and discard the others, leading to unstable and non-reproducible feature sets [5] [7].

- Diagnostic Steps: Check the correlation matrix of your input features. High absolute correlation coefficients (e.g., |r| > 0.8) indicate potential for this issue.

- Solution Pathway:

- Switch to L2 or Elastic Net Regularization: L2 (Ridge) shrinkage tends to distribute weight across correlated features more evenly. Elastic Net, which combines L1 and L2 penalties, can provide a balance between feature selection and handling correlation [5] [6].

- Apply Domain Knowledge: Pre-process features by grouping correlated variables based on biological or chemical pathways before model input.
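The correlation check and the switch to Elastic Net can be sketched as below, assuming synthetic data in which two features are near-duplicates. The 0.8 cutoff follows the diagnostic step; the penalty strengths are arbitrary. Note that scikit-learn's `l1_ratio` weights the L1 term, which is the mirror image of the α convention used in Table 1.

```python
import numpy as np
from sklearn.linear_model import ElasticNet, Lasso

rng = np.random.default_rng(0)
n = 200
base = rng.normal(size=n)

# Features 0 and 1 are noisy copies of the same signal; feature 2 is independent.
X = np.column_stack([
    base + 0.05 * rng.normal(size=n),
    base + 0.05 * rng.normal(size=n),
    rng.normal(size=n),
])
y = 2.0 * base + rng.normal(scale=0.5, size=n)

# Flag feature pairs whose absolute Pearson correlation exceeds 0.8.
corr = np.corrcoef(X, rowvar=False)
high_pairs = [
    (i, j)
    for i in range(corr.shape[0])
    for j in range(i + 1, corr.shape[1])
    if abs(corr[i, j]) > 0.8
]
print("highly correlated pairs:", high_pairs)

# Fit both penalties with the same overall strength and compare coefficients.
lasso = Lasso(alpha=0.1).fit(X, y)
enet = ElasticNet(alpha=0.1, l1_ratio=0.5).fit(X, y)
print("lasso coefficients:      ", np.round(lasso.coef_, 3))
print("elastic net coefficients:", np.round(enet.coef_, 3))
```

Comparing how each model splits weight between features 0 and 1 across resampled data is one way to see the instability described above.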

Issue 5: Regularized Model Performance Plateaus or is Worse Than a Simpler Model

- Problem Identification: Excessive regularization leads to underfitting. The model is too constrained, resulting in high bias and an inability to capture the underlying data structure [3] [4].

- Diagnostic Steps: Learning curves where training and validation error are both high and close together are indicative of underfitting [3].

- Solution Pathway:

- Reduce Regularization Strength: Decrease the λ parameter or dropout rate.

- Increase Model Capacity: Add more layers or neurons to the network (while cautiously monitoring for the return of overfitting).

- Reduce Other Constraints: Review and potentially increase limits like tree depth in tree-based models or reduce kernel regularization penalties [3].

Frequently Asked Questions (FAQs)

Q1: What is regularization, and why is it non-negotiable in research ML models? A1: Regularization is a set of techniques that add a penalty for complexity to a model's loss function during training [10] [6]. Its primary goal is to prevent overfitting, ensuring the model generalizes well to new, unseen data—a critical requirement for any scientific finding or diagnostic tool intended for real-world application [1] [3].

Q2: What is the fundamental difference between L1 (Lasso) and L2 (Ridge) regularization? A2: The difference lies in the penalty term. L1 adds a penalty proportional to the absolute value of model weights (λ∑|w|), which can drive some weights to exactly zero, performing automatic feature selection [1] [6]. L2 adds a penalty proportional to the square of the weights (λ∑w²), which shrinks weights smoothly towards zero but rarely eliminates them completely, preserving all features while controlling their influence [1] [5].
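The sparsity difference described in A2 is easy to observe empirically. The sketch below uses synthetic data with three informative features out of twenty; the penalty strength of 0.2 is an arbitrary choice for illustration.

```python
import numpy as np
from sklearn.linear_model import Lasso, Ridge

rng = np.random.default_rng(42)
X = rng.normal(size=(100, 20))

# Only the first three features carry signal.
true_w = np.zeros(20)
true_w[:3] = [3.0, -2.0, 1.5]
y = X @ true_w + rng.normal(scale=0.5, size=100)

lasso = Lasso(alpha=0.2).fit(X, y)
ridge = Ridge(alpha=0.2).fit(X, y)

# Count coefficients that are exactly zero under each penalty.
n_zero_lasso = int(np.sum(lasso.coef_ == 0))
n_zero_ridge = int(np.sum(ridge.coef_ == 0))
print(f"exact zeros: lasso={n_zero_lasso}, ridge={n_zero_ridge}")
```

Lasso sets many of the uninformative coefficients to exactly zero, while Ridge leaves them small but nonzero.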

Q3: How do I scientifically choose between L1, L2, or Elastic Net regularization? A3: The choice is hypothesis-driven:

- Use L1 (Lasso) when you have a high-dimensional dataset and the research goal includes identifying the most critical features or biomarkers [1] [7].

- Use L2 (Ridge) when you believe all measured features contribute to the outcome and you need a stable, robust model, especially in the presence of multicollinearity [5] [8].

- Use Elastic Net as a hybrid approach when your dataset has many correlated features, and you want to balance feature selection with coefficient stability [7] [6].

Q4: What are the most reliable methods for tuning regularization hyperparameters? A4: Reliable tuning requires a validation set and systematic search:

- Grid Search with Cross-Validation (`GridSearchCV`): Exhaustively tries all combinations from a predefined set of hyperparameter values. It is thorough but computationally expensive [9].

- Randomized Search (`RandomizedSearchCV`): Samples a fixed number of parameter settings from specified distributions. It is often more efficient than grid search for high-dimensional spaces [9].

- Bayesian Optimization: Builds a probabilistic model of the function mapping hyperparameters to validation score and uses it to select the most promising hyperparameters to evaluate next. It is highly efficient for very costly models [9].
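As a sketch of the randomized approach, the example below samples the penalty strength from a log-uniform distribution. It assumes synthetic data and a logistic regression model; in scikit-learn's `LogisticRegression` the parameter `C` is the inverse of the regularization strength, so λ corresponds to 1/C.

```python
from scipy.stats import loguniform
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import RandomizedSearchCV

# Synthetic binary classification data (assumption).
X, y = make_classification(n_samples=200, n_features=50, n_informative=5, random_state=0)

search = RandomizedSearchCV(
    LogisticRegression(max_iter=1000),
    param_distributions={"C": loguniform(1e-3, 1e2)},  # sample C on a log scale
    n_iter=20,            # number of sampled settings
    cv=5,
    scoring="roc_auc",
    random_state=0,
)
search.fit(X, y)

best_C = search.best_params_["C"]
best_lambda = 1.0 / best_C
print(f"best C={best_C:.4g} (lambda={best_lambda:.4g}), CV AUC={search.best_score_:.3f}")
```

Sampling on a log scale matters here: a uniform distribution over the same range would spend almost all of its draws on large values of `C`.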

Q5: How does regularization interact with other techniques like Dropout or Early Stopping? A5: These techniques are complementary and often used in concert, especially in deep learning:

- Dropout is a form of structural regularization that randomly drops units during training, preventing complex co-adaptations on training data [5] [2].

- Early Stopping is a form of procedural regularization that halts training when validation performance degrades, implicitly limiting the effective model complexity [7] [4].

- L1/L2 are parameter norm penalties applied directly to the weights. Using Dropout with L2 weight decay is a common best practice in neural networks [8].

Q6: My dataset is small, which is common in early-stage research. How can I regularize effectively? A6: Small datasets are highly prone to overfitting. A multi-pronged approach is essential:

- Prioritize Data Augmentation: Generate realistic, synthetic training examples to artificially increase dataset size and diversity [2] [4].

- Use Stronger Regularization: Employ higher λ values, dropout rates, or shallower model architectures.

- Leverage Transfer Learning: Use a model pre-trained on a large, related dataset and fine-tune it on your small dataset with careful regularization [2].

- Employ k-Fold Cross-Validation Rigorously: This maximizes the use of available data for both training and validation [9].

Table 1: Comparative Analysis of Core Regularization Techniques [1] [5] [2]

| Technique | Mathematical Penalty | Key Mechanism | Primary Effect | Optimal Use Case in Research |

|---|---|---|---|---|

| L1 (Lasso) | λ ∑ \|w\| | Absolute value penalty | Sparsity: Drives some weights to zero. | High-dimensional feature selection (e.g., genomic biomarker discovery). |

| L2 (Ridge) | λ ∑ w² | Squared magnitude penalty | Shrinkage: Smoothly reduces weight magnitudes. | Stable regression with correlated predictors; general neural network training. |

| Elastic Net | λ[(1-α)∑\|w\| + α∑w²] | Convex combination of L1 & L2 | Balanced: Selects features while grouping correlated ones. | Datasets with many correlated features where pure L1 is unstable. |

| Dropout | N/A | Random deactivation of units | Ensemble Effect: Trains a "committee" of thinned networks. | Regularizing large, fully-connected layers in deep neural networks. |

| Early Stopping | N/A | Halting training based on validation loss | Implicit Constraint: Limits effective training epochs. | Preventing overfitting in iterative learners; simple and efficient. |

| Data Augmentation | N/A | Artificial expansion of training set | Increased Diversity: Exposes model to more data variations. | Computer vision, NLP, and any domain with limited labeled data. |

Table 2: Impact of Regularization Strength (λ) on Model Metrics [3] [4]

| Regularization Strength (λ) | Training Error | Validation/Test Error | Model Complexity | Risk |

|---|---|---|---|---|

| λ → 0 (No Regularization) | Very Low | High | Very High | High Variance / Overfitting |

| λ Optimal | Moderately Low | Minimized | Balanced | Managed Bias-Variance Tradeoff |

| λ → High | High | High | Very Low | High Bias / Underfitting |

Detailed Experimental Protocols

Protocol 1: Establishing the Bias-Variance Tradeoff via Regularization Strength Sweep

Objective: To empirically demonstrate how the regularization parameter λ controls the bias-variance tradeoff in a linear or logistic regression model.

Materials: Dataset (e.g., gene expression matrix with clinical outcome), standard ML library (scikit-learn).

Methodology:

- Split data into training (60%), validation (20%), and test (20%) sets.

- Define a logarithmic range for λ (e.g., `np.logspace(-5, 3, 15)`).

- For each λ value:

a. Train a Lasso or Ridge regression model on the training set.

b. Calculate and record the Mean Squared Error (MSE) or log loss on both the training and validation sets.

- Plot two curves: Training Error vs. log(λ) and Validation Error vs. log(λ).

- Identify the λ value that minimizes validation error.

- Final Evaluation: Retrain the model with the optimal λ on the combined training+validation set and report final performance on the held-out test set.

Expected Outcome: A plot showing validation error forming a "U-shaped" curve, with underfitting on the right (high λ), overfitting on the left (low λ), and an optimum in the middle [4].
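The sweep in this protocol can be sketched as follows. This is a minimal example assuming synthetic regression data and Ridge as the model; plotting is omitted, but `lambdas`, `train_mse`, and `val_mse` are the arrays one would plot on a logarithmic x-axis.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.linear_model import Ridge
from sklearn.metrics import mean_squared_error
from sklearn.model_selection import train_test_split

# Synthetic stand-in for an expression matrix with a continuous outcome (assumption).
X, y = make_regression(n_samples=200, n_features=100, n_informative=10,
                       noise=20.0, random_state=0)

# 60/20/20 split: hold out 20% for test, then 25% of the remainder for validation.
X_tmp, X_test, y_tmp, y_test = train_test_split(X, y, test_size=0.2, random_state=0)
X_train, X_val, y_train, y_val = train_test_split(X_tmp, y_tmp, test_size=0.25,
                                                  random_state=0)

lambdas = np.logspace(-5, 3, 15)
train_mse, val_mse = [], []
for lam in lambdas:
    model = Ridge(alpha=lam).fit(X_train, y_train)
    train_mse.append(mean_squared_error(y_train, model.predict(X_train)))
    val_mse.append(mean_squared_error(y_val, model.predict(X_val)))

# Pick the lambda with the lowest validation error.
best_lambda = lambdas[int(np.argmin(val_mse))]

# Final evaluation: refit on training+validation, score once on the test set.
final_model = Ridge(alpha=best_lambda).fit(X_tmp, y_tmp)
test_mse = mean_squared_error(y_test, final_model.predict(X_test))
print(f"best lambda={best_lambda:g}, test MSE={test_mse:.1f}")
```

The test set is touched exactly once, after λ has been fixed, which is what keeps the final estimate unbiased.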

Protocol 2: Hyperparameter Tuning for Regularization using Grid Search with Cross-Validation

Objective: To systematically identify the optimal combination of regularization hyperparameters (e.g., λ for L2, dropout rate) for a neural network.

Materials: Training dataset, validation dataset, deep learning framework (TensorFlow/PyTorch).

Methodology:

- Define the hyperparameter grid. Example for a simple CNN:

- Implement a model-building function that creates a CNN with the given hyperparameters.

- Use `GridSearchCV` (for scikit-learn compatible wrappers like `KerasClassifier`) or a custom cross-validation loop:

a. For each fold in k-fold cross-validation:

i. Train the model on the training fold.

ii. Evaluate on the validation fold.

b. Average the performance metric (e.g., validation accuracy) across all folds for that hyperparameter set.

- Select the hyperparameter set with the best average validation performance.

Expected Outcome: A defined set of hyperparameters that provides robust, generalizable model performance, mitigating the risk of overfitting to a single validation split [9].
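The custom cross-validation loop can be sketched as below. Several things here are assumptions made to keep the example small and runnable: the grid values are hypothetical, the data are synthetic, and scikit-learn's `MLPClassifier` stands in for a CNN (its `alpha` is the L2 penalty strength; it has no dropout parameter, so hidden layer width is varied instead).

```python
from itertools import product

import numpy as np
from sklearn.datasets import make_classification
from sklearn.model_selection import StratifiedKFold
from sklearn.neural_network import MLPClassifier

X, y = make_classification(n_samples=200, n_features=30, n_informative=5, random_state=0)

# Hypothetical grid for illustration only.
grid = {"alpha": [1e-4, 1e-2, 1.0], "hidden_layer_sizes": [(16,), (64,)]}


def build_model(alpha, hidden_layer_sizes):
    """Model-building function: returns a fresh, untrained model for one setting."""
    return MLPClassifier(alpha=alpha, hidden_layer_sizes=hidden_layer_sizes,
                         max_iter=300, random_state=0)


cv = StratifiedKFold(n_splits=3, shuffle=True, random_state=0)
results = []
for values in product(*grid.values()):
    params = dict(zip(grid.keys(), values))
    fold_scores = []
    for train_idx, val_idx in cv.split(X, y):
        model = build_model(**params).fit(X[train_idx], y[train_idx])
        fold_scores.append(model.score(X[val_idx], y[val_idx]))
    # Average validation accuracy across folds for this hyperparameter set.
    results.append((params, float(np.mean(fold_scores))))

best_params, best_score = max(results, key=lambda item: item[1])
print(f"best params={best_params}, mean CV accuracy={best_score:.3f}")
```

The same loop structure carries over to a deep learning framework by swapping `build_model` for a function that constructs and trains the network.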

Protocol 3: Applying L2 Regularization and Dropout in a Multilayer Perceptron (MLP) for Drug Response Prediction

Objective: To build a predictive model for drug sensitivity using gene expression data, employing weight decay (L2) and dropout for regularization.

Materials: Processed gene expression matrix (features), normalized drug response metric as the target (e.g., IC50), PyTorch/TensorFlow.

Methodology:

- Architecture: Design an MLP with 2-3 hidden layers. Use ReLU activation functions.

- Regularization Implementation:

a. L2 (Weight Decay): Set the `weight_decay` parameter in the optimizer (e.g., `torch.optim.Adam(..., weight_decay=1e-4)`). This applies an L2 penalty to every parameter passed to the optimizer.

b. Dropout: Add `Dropout(p=0.5)` layers after each hidden layer activation. Dropout is active only in training mode and must be disabled for evaluation.

- Training: Use a batch size of 32-64. Monitor training and validation loss.

- Early Stopping: Implement a callback to stop training if validation loss does not improve for 20 epochs, restoring the weights from the best epoch.

- Evaluation: Report the Root Mean Squared Error (RMSE) or Concordance Index on the test set.

Expected Outcome: A model that predicts drug response with improved generalization compared to an unregularized baseline, as evidenced by a smaller gap between training and test error [8] [4].
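A compact PyTorch sketch of this protocol is shown below. The data are random tensors standing in for an expression matrix, the layer widths and learning rate are arbitrary assumptions, and a validation split is used in place of a separate test set to keep the example short.

```python
import copy

import torch
from torch import nn

torch.manual_seed(0)

# Synthetic stand-in: 200 samples x 50 "genes", continuous response (assumption).
X = torch.randn(200, 50)
y = X[:, :5].sum(dim=1, keepdim=True) + 0.1 * torch.randn(200, 1)
X_train, y_train, X_val, y_val = X[:150], y[:150], X[150:], y[150:]

# MLP with two hidden layers, ReLU activations, and dropout after each.
model = nn.Sequential(
    nn.Linear(50, 64), nn.ReLU(), nn.Dropout(p=0.5),
    nn.Linear(64, 32), nn.ReLU(), nn.Dropout(p=0.5),
    nn.Linear(32, 1),
)
# weight_decay adds an L2 penalty on all parameters handed to the optimizer.
optimizer = torch.optim.Adam(model.parameters(), lr=1e-3, weight_decay=1e-4)
loss_fn = nn.MSELoss()

best_val, best_state, wait, patience = float("inf"), None, 0, 20
for epoch in range(300):
    model.train()  # enables dropout
    perm = torch.randperm(X_train.size(0))
    for start in range(0, X_train.size(0), 32):  # mini-batches of 32
        idx = perm[start:start + 32]
        optimizer.zero_grad()
        loss_fn(model(X_train[idx]), y_train[idx]).backward()
        optimizer.step()

    model.eval()  # disables dropout for evaluation
    with torch.no_grad():
        val_loss = loss_fn(model(X_val), y_val).item()

    # Early stopping: keep the best weights, stop after `patience` bad epochs.
    if val_loss < best_val:
        best_val, best_state, wait = val_loss, copy.deepcopy(model.state_dict()), 0
    else:
        wait += 1
        if wait >= patience:
            break

# Restore the best-epoch weights before the final evaluation.
model.load_state_dict(best_state)
model.eval()
with torch.no_grad():
    val_rmse = loss_fn(model(X_val), y_val).sqrt().item()
print(f"stopped after epoch {epoch}, validation RMSE={val_rmse:.3f}")
```

Excluding biases or normalization parameters from weight decay would require passing separate parameter groups to the optimizer.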

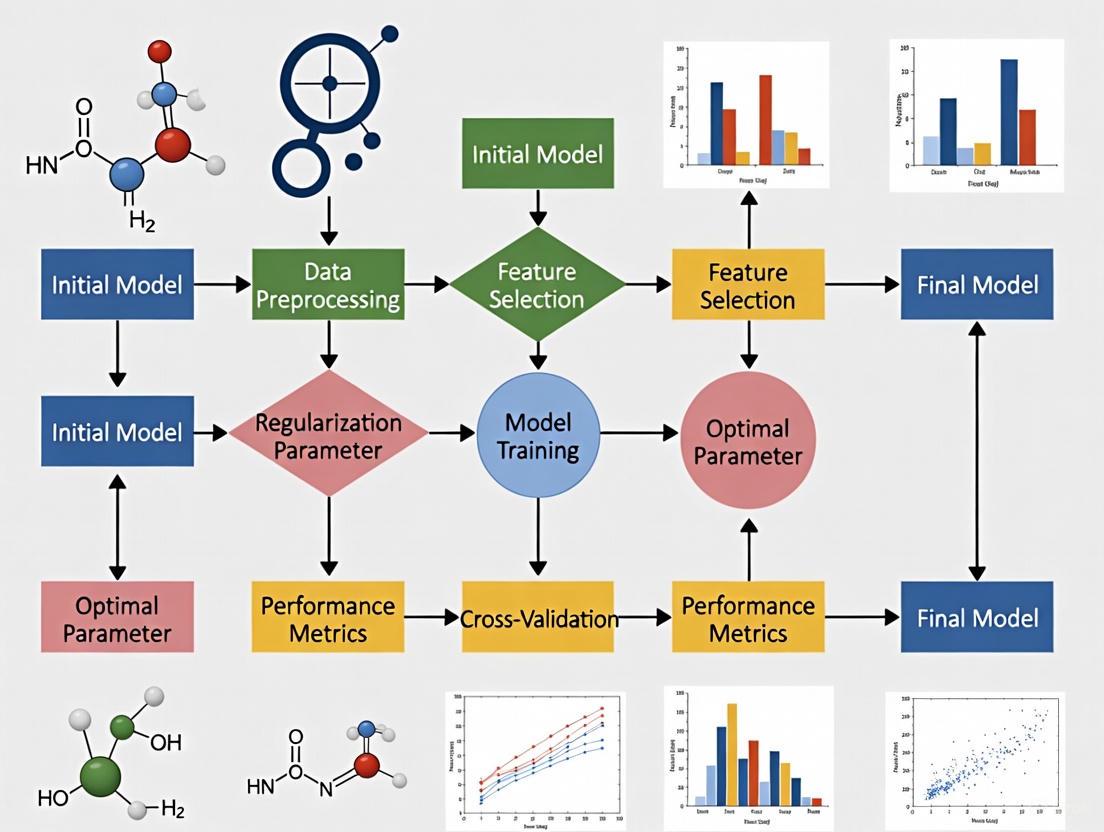

Visualizations of Regularization Concepts and Workflows

Diagram 1: The Bias-Variance Tradeoff Governed by Regularization

Diagram 2: Systematic Hyperparameter Tuning Workflow

Diagram 3: Regularization Technique Selection Decision Tree

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential "Reagents" for Regularization Experiments

| Research Reagent Solution | Function in the Regularization "Experiment" |

|---|---|

| Regularization Parameter (λ / Alpha) | The primary "knob" to control penalty strength. Determines the trade-off between fitting the data and model simplicity. Must be tuned empirically [6]. |

| Validation & Test Datasets | Critical controls. The validation set guides hyperparameter tuning, and the held-out test set provides an unbiased final estimate of generalization error [3]. |

| Cross-Validation Framework (e.g., k-Fold) | A methodological tool to maximize data usage and obtain a robust estimate of model performance for a given λ, reducing variance in the tuning process [9]. |

| Optimization Algorithm with Weight Decay | The "reaction chamber." Optimizers like SGD or Adam, when configured with a weight_decay argument, directly implement L2 regularization during the weight update step [8] [4]. |

| Dropout Layer / Early Stopping Callback | Structural and procedural modifiers. Dropout layers are inserted into network architectures; early stopping callbacks monitor validation loss to halt training automatically [5] [4]. |

| Data Augmentation Pipeline | A method to synthetically increase the diversity and effective size of the training dataset, acting as a powerful regularizer by presenting more varied examples [2] [4]. |

| Hyperparameter Optimization Library (e.g., scikit-learn's GridSearchCV) | An automation tool for systematically testing different "concentrations" (values) of regularization parameters and other hyperparameters [9]. |

| Visualization Tools (Learning Curves, Validation Curves) | Diagnostic instruments. Plots of loss/accuracy over time or against λ are essential for identifying overfitting/underfitting and selecting the optimal regularization point [3] [4]. |

The Critical Link Between Regularization and Generalization in Predictive Modeling

Troubleshooting Guide: Common Regularization Issues

Symptom: Model Performance is Poor on New Data (Overfitting)

- Problem: Your model performs well on training data but poorly on validation/test data.

- Diagnostic Check: Compare training and validation loss curves; a widening gap between them indicates overfitting.

- Solution:

Symptom: Model is Overly Simplified (Underfitting)

- Problem: Model shows high bias and performs poorly on both training and test data.

- Diagnostic Check: Consistently high training error with simple decision boundaries.

- Solution:

Symptom: Model is Unstable with Small Data Changes

- Problem: Model predictions vary significantly with minor training data modifications.

- Diagnostic Check: High variance in cross-validation scores across different data splits.

- Solution:

Symptom: Feature Selection is Needed

- Problem: Too many features making interpretation difficult.

- Diagnostic Check: Many features with small, non-zero coefficients.

- Solution:

Frequently Asked Questions (FAQs)

How do I choose between L1 and L2 regularization?

Answer: The choice depends on your goals:

- Use L1 (Lasso) for feature selection and sparse models - it can drive coefficients to zero [6] [5].

- Use L2 (Ridge) for handling multicollinearity and when all features may be relevant - it shrinks coefficients evenly without eliminating them [6] [11].

- Use Elastic Net for a balanced approach, particularly with correlated features [6].

How do I determine the optimal regularization parameter?

Answer: Follow this methodology:

- Use cross-validation to test lambda values across a logarithmic scale (0.001, 0.01, 0.1, 1, 10, 100) [10].

- Select the lambda that minimizes validation error without significantly increasing training error.

- For MEG connectivity studies, consider using 1-2 orders of magnitude less regularization than typical source estimation parameters [13].

What regularization approach works best for small datasets in drug development?

Answer: For limited data scenarios:

- Prefer L2 regularization over L1 to retain all available features [11].

- Disable augmentation to prevent overfitting to noise in small datasets [11].

- Consider higher lambda values (heavier regularization) to prevent overfitting [11].

- Implement early stopping based on validation performance [12].

How do I know if my regularization is working effectively?

Answer: Monitor these indicators:

- Good: Small gap between training and validation/test performance [12].

- Good: Stable feature importance across different data samples [5].

- Concerning: Validation performance deteriorates with increased regularization (underfitting) [11].

- Concerning: Large performance difference between training and validation (overfitting persists) [12].

Regularization Techniques: Quantitative Comparison

Table 1: Comparison of Regularization Methods for Predictive Modeling

| Method | Mathematical Formulation | Key Strengths | Typical Use Cases | Parameter Range |

|---|---|---|---|---|

| L1 (Lasso) | Cost = MSE + λ∑\|wᵢ\| [6] | Feature selection, sparse models, interpretability [6] [5] | High-dimensional data, feature reduction, model simplification [6] | λ: 0.001 to 1.0 [6] |

| L2 (Ridge) | Cost = MSE + λ∑wᵢ² [6] | Handles multicollinearity, stable solutions, all features retained [6] [11] | Correlated features, ill-conditioned problems, default regularization [11] | λ: 0.1 to 10.0 (default 0.5) [11] |

| Elastic Net | Cost = MSE + λ[(1-α)∑\|wᵢ\| + α∑wᵢ²] [6] | Balanced L1/L2 benefits, grouped feature selection [6] | Highly correlated features, when both selection and stability needed [6] | λ: 0.001 to 1.0, α: 0.1 to 0.9 [6] |

| Dropout | Random node deactivation during training [12] [5] | Prevents co-adaptation, neural network specific, ensemble effect [12] [5] | Deep neural networks, complex architectures, overfitting prevention [5] | Dropout rate: 0.2 to 0.5 [5] |

Table 2: Regularization Performance Across Domains (Based on Published Results)

| Application Domain | Optimal Method | Performance Gain | Key Findings | Citation |

|---|---|---|---|---|

| MEG Connectivity | Minimum-norm with reduced regularization | Significant improvement in connectivity estimation | Optimal regularization is 1-2 orders of magnitude lower than for source estimation [13] | [13] |

| Clinical Predictive Analytics | L2 Regularization | 15% improvement in customer segmentation accuracy | Reduced model complexity with faster training times [10] | [10] |

| Recommendation Systems | L2 Regularization | Improved generalization to unseen preferences | Prevented overfitting on user data while maintaining accuracy [10] | [10] |

| Bike Sharing Prediction | Linear vs Ridge Comparison | Weak dependence on small lambda values | Small datasets show minimal overfitting with proper regularization [11] | [11] |

Experimental Protocols for Regularization Parameter Tuning

Protocol 1: Cross-Validation for Lambda Selection

Purpose: Systematically determine optimal regularization strength [10].

Materials Needed:

- Training dataset with labels

- Validation dataset (holdout)

- Modeling framework (Scikit-learn, TensorFlow, etc.)

- Computational resources for multiple training runs

Methodology:

- Split data into training (60%), validation (20%), and test (20%) sets

- Define lambda values to test: [0.001, 0.01, 0.1, 0.5, 1.0, 10.0]

- For each lambda value:

- Train model on training set with specified regularization

- Evaluate performance on validation set

- Record training and validation accuracy

- Select lambda with best validation performance

- Final evaluation on test set with chosen lambda

Expected Outcomes:

- U-shaped validation curve showing optimal lambda

- 5-15% improvement in generalization performance vs. unregularized baseline

Protocol 2: Regularization Technique Comparison

Purpose: Identify most effective regularization method for specific dataset.

Materials Needed:

- Dataset with known ground truth

- Multiple regularization implementations (L1, L2, Elastic Net, Dropout)

- Performance metrics relevant to domain (AUC, accuracy, F1-score)

Methodology:

- Preprocess data and create standardized features

- Implement each regularization technique with parameter sweep

- Train models with identical architectures except regularization

- Evaluate on consistent validation set

- Compare:

- Final performance metrics

- Training/validation gap

- Model complexity (number of features, convergence time)

- Stability across multiple runs

Expected Outcomes:

- Ranking of regularization methods by effectiveness

- Understanding of trade-offs between sparsity and performance

- Guidelines for method selection based on data characteristics
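A minimal version of this comparison for the linear penalties is sketched below. It assumes synthetic regression data and fixed placeholder penalty strengths; a full run of the protocol would wrap each candidate in its own parameter sweep and use domain-appropriate metrics.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.linear_model import ElasticNet, Lasso, Ridge
from sklearn.model_selection import KFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

X, y = make_regression(n_samples=150, n_features=60, n_informative=8,
                       noise=15.0, random_state=1)

# Same folds for every method so the comparison is on a consistent split.
cv = KFold(n_splits=5, shuffle=True, random_state=1)

candidates = {
    "lasso": Lasso(alpha=1.0),
    "ridge": Ridge(alpha=1.0),
    "elastic_net": ElasticNet(alpha=1.0, l1_ratio=0.5),
}

summary = {}
for name, estimator in candidates.items():
    pipe = make_pipeline(StandardScaler(), estimator)
    scores = cross_val_score(pipe, X, y, cv=cv, scoring="r2")
    pipe.fit(X, y)  # refit on all data to inspect model complexity
    summary[name] = {
        "mean_r2": float(scores.mean()),
        "std_r2": float(scores.std()),  # stability across folds
        "n_nonzero": int(np.sum(pipe[-1].coef_ != 0)),  # retained features
    }

for name, row in summary.items():
    print(name, row)
```

Reading `mean_r2` next to `n_nonzero` makes the sparsity versus performance trade-off explicit for each method.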

Workflow Visualization

Regularization Strategy Workflow: This diagram outlines the decision process for selecting and tuning regularization techniques based on dataset characteristics and model performance.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Regularization Research

| Tool/Resource | Function | Application Context | Implementation Example |

|---|---|---|---|

| Scikit-learn Regularization | L1, L2, and Elastic Net implementations | Linear models, generalized linear models | Lasso(alpha=0.1), Ridge(alpha=1.0), ElasticNet(alpha=1.0, l1_ratio=0.5) [6] |

| PEFT Library | Parameter-Efficient Fine-Tuning | Large Language Models (LLMs) | LoRA (Low-Rank Adaptation) for efficient LLM fine-tuning [14] |

| Cross-Validation Framework | Hyperparameter tuning and validation | All regularization methods | GridSearchCV for systematic lambda testing [10] |

| Early Stopping Callbacks | Prevent overfitting in neural networks | Deep learning models | Stop training when validation loss plateaus [12] |

| Dropout Layers | Neural network regularization | Deep learning architectures | tf.keras.layers.Dropout(0.2) in hidden layers [12] [5] |

| Model Interpretability Tools | Feature importance analysis | Understanding regularization effects | SHAP, LIME for explaining regularized models [15] |

Troubleshooting Guide: Frequently Asked Questions

Q1: My model's performance drops significantly when I apply it to a new dataset. It seems to have memorized the training data. What regularization should I use?

A: This is a classic case of overfitting. The L2 penalty (Ridge Regression) is specifically designed to address this by shrinking coefficients to reduce model complexity and improve generalization [16]. It introduces a penalty term (the sum of the squares of the coefficients) to the model's loss function, which helps to prevent any single feature from having an excessively large weight [16]. The strength of the penalty is controlled by a hyperparameter, lambda (λ). As λ increases, model bias increases but variance decreases, which can help the model perform better on new, unseen test data [16].

Q2: I have a dataset with many genetic markers, but I suspect only a few are truly relevant for predicting disease. How can I identify them?

A: For this feature selection task, the L1 penalty (Lasso) is the appropriate tool. Unlike L2, the L1 penalty can shrink some coefficients to exactly zero, effectively removing those features from the model [17] [18]. This results in a sparse, interpretable model that highlights the most important predictors. This property makes Lasso particularly valuable in genomics and biomarker discovery, where the goal is to identify a small number of key drivers from a high-dimensional dataset [19] [20].

Q3: My predictors are highly correlated (e.g., different clinical measurements from the same patient). Which method is more stable?

A: In the presence of multicollinearity, L2 (Ridge) regression is generally more stable than L1 [16]. When predictors are highly correlated, Lasso tends to select one variable from the group arbitrarily and ignore the others, which can lead to unstable models when the data changes slightly [17]. Ridge regression, by contrast, shrinks the coefficients of correlated variables towards each other, distributing the effect among them and providing more reliable estimates [16]. For a middle-ground approach that offers both grouping and sparsity, consider the Elastic Net, which combines both L1 and L2 penalties [17].

Q4: I've used Lasso for feature selection and want to report confidence intervals for the selected biomarkers. Is standard statistical inference valid?

A: No, standard inference is not valid after using the same data for variable selection. Classical statistical methods assume a pre-specified set of covariates, which is violated when selection is data-driven [18]. You must use specialized selective inference methods to obtain valid confidence intervals and p-values. These methods account for the selection process and prevent over-optimistic results. Available approaches include sample splitting, conditional inference, and universally valid post-selection inference [18].
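Of the approaches listed, sample splitting is the simplest to sketch: select features on one half of the data, then run ordinary least squares on the other half using only the selected features. The example below assumes synthetic, already-shuffled data and computes classical t-based 95% intervals by hand; it illustrates the idea and is not a substitute for a dedicated selective inference package.

```python
import numpy as np
from scipy import stats
from sklearn.linear_model import LassoCV

rng = np.random.default_rng(0)
n, p = 400, 30
X = rng.normal(size=(n, p))
beta = np.zeros(p)
beta[:3] = [1.0, -0.8, 0.6]  # only three true signals
y = X @ beta + rng.normal(size=n)

# Step 1: variable selection on the first half only.
half = n // 2
X_sel, y_sel = X[:half], y[:half]
X_inf, y_inf = X[half:], y[half:]
selected = np.flatnonzero(LassoCV(cv=5, random_state=0).fit(X_sel, y_sel).coef_)

# Step 2: ordinary least squares on the untouched second half.
Z = np.column_stack([np.ones(len(y_inf)), X_inf[:, selected]])
coef, *_ = np.linalg.lstsq(Z, y_inf, rcond=None)
resid = y_inf - Z @ coef
dof = Z.shape[0] - Z.shape[1]
sigma2 = resid @ resid / dof
se = np.sqrt(sigma2 * np.diag(np.linalg.inv(Z.T @ Z)))

# Classical intervals are valid here because selection never saw this half.
t_crit = stats.t.ppf(0.975, dof)
ci = np.column_stack([coef - t_crit * se, coef + t_crit * se])[1:]  # drop intercept

for j, (lo, hi) in zip(selected, ci):
    print(f"feature {j}: 95% CI [{lo:.2f}, {hi:.2f}]")
```

The price of validity is power: each step uses only half of the samples.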

Performance Comparison of Penalty Functions

The table below summarizes the properties and recommended use cases for core penalty functions based on empirical studies.

| Penalty Method | Key Mechanism | Primary Use Case | Performance Notes |

|---|---|---|---|

| L1 (Lasso) | Shrinks coefficients to exactly zero [17] | Feature selection, creating sparse models [19] | Superior discriminative performance in healthcare predictions; may select correlated features arbitrarily [17]. |

| L2 (Ridge) | Shrinks coefficients towards zero but not to zero [16] | Handling multicollinearity, preventing overfitting [16] | Does not perform feature selection; improves generalization by reducing model variance [16]. |

| Elastic Net | Hybrid of L1 and L2 penalties [17] | Scenarios with grouped, correlated features [17] | Often matches L1's discrimination; typically produces larger models than Lasso [17]. |

| Adaptive Lasso | Applies weights to L1 penalty (e.g., based on initial coefficient estimates) [18] [19] | Addressing Lasso's bias, achieving consistent selection [18] | Can generate sparser, more stable models with fewer false positives [17] [18]. |

Detailed Experimental Protocols

Protocol 1: Biomarker Selection for Malnutrition Studies

This protocol is adapted from studies identifying biomarkers associated with Environmental Enteropathy (EE) and child growth [19].

- Problem Framing: Define a continuous outcome variable, such as the Height-for-Age Z-score (HAZ) at one year of age [19].

- Data Preparation: Collect a set of potential predictor variables (p). These may include:

- Model Application: Apply penalized regression methods (e.g., Lasso, Adaptive Lasso, SCAD) to the data. The objective is to minimize the loss function `Loss(β) + λ * Penalty(β)`, where `Penalty(β)` is the L1 norm for Lasso [19].

- Validation: Use bootstrapping to evaluate the consistency of the selected biomarkers across resampled datasets. This helps verify that the identified biomarkers are robust and not artifacts of a particular sample [19].
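The bootstrap validation step can be sketched as a selection-frequency calculation. The data below are synthetic, the Lasso strength is fixed at an arbitrary value, and the 80% stability threshold is an assumption chosen for illustration rather than a value taken from the cited studies.

```python
import numpy as np
from sklearn.linear_model import Lasso

rng = np.random.default_rng(0)
n, p = 150, 25
X = rng.normal(size=(n, p))
# Only the first two candidate biomarkers truly affect the outcome.
y = 1.5 * X[:, 0] - 1.0 * X[:, 1] + rng.normal(size=n)

n_boot = 100
selection_counts = np.zeros(p)
for _ in range(n_boot):
    idx = rng.integers(0, n, size=n)  # resample rows with replacement
    coef = Lasso(alpha=0.1).fit(X[idx], y[idx]).coef_
    selection_counts += coef != 0     # record which features were kept

# Fraction of bootstrap samples in which each feature was selected.
selection_freq = selection_counts / n_boot
stable = np.flatnonzero(selection_freq >= 0.8)
print("features selected in at least 80% of resamples:", stable)
```

Features that are selected in only a small share of resamples are candidates for artifacts of the particular sample.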

Protocol 2: Gene Selection for Cancer Classification

This protocol outlines the process for building a sparse logistic regression model for classifying cancer types based on high-dimensional genomic data [20].

- Data Setup: Begin with an \( n \times p \) gene expression matrix \( X \) and a corresponding binary outcome vector \( y \) (e.g., Class 1 vs. Class 2), where \( p \) (number of genes) is much larger than \( n \) (number of samples) [20].

- Model Specification: Use a sparse logistic regression model. The probability of a sample being in Class 2 is given by:

\[ P(y_i = 1 \mid X_i) = \frac{e^{X_i^\prime \beta}}{1 + e^{X_i^\prime \beta}} \]

The coefficients \( \beta \) are estimated by minimizing a penalized log-likelihood function [20]:

\[ \arg\min_{\beta} \left\{ l(\beta) + \lambda \sum_{j=1}^{p} P(\beta_j) \right\} \]

where \( l(\beta) \) is the negative log-likelihood and \( P(\beta_j) = |\beta_j| \) is the L1 penalty term for Lasso [20].

- Algorithm Fitting: Implement a coordinate descent algorithm to solve the optimization problem. This algorithm updates one coefficient \( \beta_j \) at a time while keeping all others fixed, which is computationally efficient for high-dimensional problems [20].

- Evaluation: Assess the model's performance based on:

- Sparsity: The number of genes with non-zero coefficients.

- Classification Accuracy: The model's ability to correctly classify cancer types on a held-out test set [20].
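A minimal sketch of this protocol in Python, assuming scikit-learn is available. The expression matrix is simulated, the value `C=0.1` is an arbitrary illustrative choice (in scikit-learn, `C` is the inverse of the regularization strength), and the `liblinear` solver is used because it supports the L1 penalty with a coordinate-descent-type algorithm. It is not the implementation from [20].

```python
import numpy as np
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import StandardScaler

# Simulated n x p "expression" matrix with p >> n; only 5 genes carry signal.
rng = np.random.default_rng(0)
n, p = 100, 1000
X = rng.normal(size=(n, p))
beta = np.zeros(p)
beta[:5] = 2.0
y = rng.binomial(1, 1.0 / (1.0 + np.exp(-(X @ beta))))

# Hold out a test set before any fitting or scaling.
X_tr, X_te, y_tr, y_te = train_test_split(
    X, y, test_size=0.3, stratify=y, random_state=0
)
scaler = StandardScaler().fit(X_tr)

# L1-penalized (sparse) logistic regression; smaller C means a stronger penalty.
clf = LogisticRegression(penalty="l1", solver="liblinear", C=0.1)
clf.fit(scaler.transform(X_tr), y_tr)

n_selected = int(np.count_nonzero(clf.coef_))        # sparsity
accuracy = clf.score(scaler.transform(X_te), y_te)   # held-out accuracy
print(f"Genes with non-zero coefficients: {n_selected} of {p}")
print(f"Held-out classification accuracy: {accuracy:.2f}")
```

In practice `C` would be chosen by cross-validation on the training portion only, so that the held-out accuracy remains an honest estimate.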

Workflow Visualization

The following diagram illustrates a generalized workflow for applying penalized regression in a biomedical research context, from data preparation to model deployment.

The Scientist's Toolkit: Research Reagent Solutions

The table below lists key software tools and statistical packages essential for implementing penalized regression methods.

| Tool / Package Name | Function / Purpose | Key Application Context |
|---|---|---|
| R package `ipflasso` | Implements Integrative LASSO with Penalty Factors (IPF-LASSO) for multi-omics data [21]. | Assigning different penalties to different data modalities (e.g., gene expression, methylation) for improved prediction [21]. |
| R package `PatientLevelPrediction` | Provides a standardized pipeline for model development and external validation [17]. | Comparing regularization variants (L1, L2, ElasticNet) on observational health data mapped to the OMOP-CDM [17]. |
| Coordinate Descent Algorithm | An efficient "one-at-a-time" optimization algorithm for fitting penalized regression models [20]. | Solving high-dimensional logistic regression problems for biomarker selection and cancer classification [20]. |
| Selective Inference Methods | Provides valid confidence intervals and hypothesis tests after variable selection [18]. | Addressing over-optimism in statistical inference for biomarkers selected by Lasso [18]. |

Frequently Asked Questions (FAQs)

FAQ 1: What is the fundamental difference between LASSO, SCAD, and MCP in terms of bias? The core difference lies in how they penalize large coefficients. LASSO applies a constant penalization rate: every non-zero coefficient is penalized at the same rate λ regardless of its size, which can significantly bias large coefficients toward zero. In contrast, SCAD and MCP are folded concave penalties that reduce the penalization rate for larger coefficients, mitigating this bias. SCAD applies the LASSO rate to small coefficients and then relaxes it smoothly to zero, while MCP starts relaxing the rate immediately and reaches zero at a threshold, so that under both penalties sufficiently large coefficients are estimated with minimal shrinkage [22] [23] [24].
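The difference in penalization rate can be made concrete by evaluating the derivative of each penalty with respect to |β|. The sketch below uses the standard textbook forms of the SCAD and MCP derivatives with the default concavity values quoted elsewhere in this guide (`a=3.7`, `gamma=3`); the function names are chosen for this example only.

```python
import numpy as np


def lasso_rate(beta, lam):
    """LASSO: the penalty derivative is lam for every coefficient size."""
    return np.full_like(np.abs(beta), lam, dtype=float)


def scad_rate(beta, lam, a=3.7):
    """SCAD: rate lam up to |beta| = lam, then a linear decay to 0 at a*lam."""
    b = np.abs(beta)
    return np.where(b <= lam, lam, np.maximum(a * lam - b, 0.0) / (a - 1.0))


def mcp_rate(beta, lam, gamma=3.0):
    """MCP: rate decays linearly from lam at 0 to 0 at gamma*lam."""
    b = np.abs(beta)
    return np.maximum(lam - b / gamma, 0.0)


betas = np.array([0.5, 1.0, 2.0, 3.0, 4.0])
lam = 1.0
print("LASSO:", lasso_rate(betas, lam))  # constant rate of 1.0
print("SCAD: ", scad_rate(betas, lam))   # 1.0, 1.0, then decaying to 0
print("MCP:  ", mcp_rate(betas, lam))    # decaying from the start, 0 beyond 3.0
```

A rate of zero means the coefficient is no longer shrunk at all, which is why large effects are estimated with little bias under SCAD and MCP.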

FAQ 2: My SCAD/MCP model fails to converge during training. What could be the cause? Non-convergence is a common challenge with non-convex penalties like SCAD and MCP. Unlike the convex optimization problem of LASSO, these methods can have multiple local minimizers, causing algorithms to get trapped [23]. To address this:

- Ensure Proper Initialization: Use the LASSO or OLS solution as a starting point for SCAD/MCP algorithms rather than a random initialization [22].

- Check Tuning Parameters: The `gamma` parameter in MCP and `a` in SCAD control the concavity. Ensure they are set to recommended starting values (e.g., `gamma=3` for MCP, `a=3.7` for SCAD) and validate their selection via cross-validation [22] [23].

- Verify Data Preprocessing: High multicollinearity can exacerbate instability. Standardize all predictors and check for perfect collinearity before model fitting [25].

FAQ 3: When should I prefer SCAD or MCP over LASSO for my feature selection problem? You should strongly consider SCAD or MCP in the following scenarios, particularly within drug development where identifying true predictors is critical:

- When Unbiased Estimation is Critical: If you need accurate effect sizes for key variables, such as the impact of a specific excipient on drug release rates [26].

- In High-Dimensional Settings with Likely Strong Signals: When `p > n` and you have prior reason to believe some predictors have large effects, as LASSO's bias can be detrimental [24].

- To Achieve the "Oracle Property": When your goal is to asymptotically perform as well as if the true underlying model were known in advance, a property possessed by SCAD and MCP but not by LASSO [22] [27].

FAQ 4: How do SCAD and MCP handle correlated independent variables compared to LASSO? LASSO tends to arbitrarily select one variable from a group of correlated predictors. SCAD and MCP can also be unstable with highly correlated features. For such situations, the Elastic Net penalty, which combines L1 and L2 penalties, is often recommended because it promotes a grouping effect where correlated variables are selected together [23]. If using SCAD or MCP, a two-stage approach that first applies a screening method like Sure Independence Screening (SIS) can help reduce dimension and manage correlation before applying the non-convex penalty [25].

FAQ 5: What are the primary computational considerations when using SCAD/MCP? SCAD and MCP are computationally more demanding than LASSO due to their non-convexity [22]. Efficient algorithms, such as local linear approximation (LLA) and coordinate descent, are used to fit these models. The LLA algorithm, for instance, can solve SCAD by iteratively solving a series of weighted LASSO problems [22] [27].
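The LLA idea can be sketched in a few lines: start from a LASSO fit, then repeatedly re-solve a weighted LASSO whose per-coefficient weights are the SCAD derivative evaluated at the current estimate. The code below is a didactic sketch under simplifying assumptions (synthetic data, a hand-written coordinate descent with a fixed number of sweeps, a fixed `lam`), not a substitute for a tested package.

```python
import numpy as np


def scad_derivative(beta, lam, a=3.7):
    b = np.abs(beta)
    return np.where(b <= lam, lam, np.maximum(a * lam - b, 0.0) / (a - 1.0))


def weighted_lasso_cd(X, y, weights, beta0, n_sweeps=100):
    """Coordinate descent for (1/2n)||y - Xb||^2 + sum_j weights[j] * |b_j|."""
    n, p = X.shape
    beta = beta0.copy()
    col_sq = (X ** 2).sum(axis=0) / n
    r = y - X @ beta
    for _ in range(n_sweeps):
        for j in range(p):
            r += X[:, j] * beta[j]                 # remove feature j's contribution
            z = X[:, j] @ r / n
            # Soft-thresholding update with a coefficient-specific threshold.
            beta[j] = np.sign(z) * max(abs(z) - weights[j], 0.0) / col_sq[j]
            r -= X[:, j] * beta[j]                 # add the updated contribution back
    return beta


def scad_lla(X, y, lam, n_outer=3):
    """SCAD via local linear approximation: a short series of weighted LASSO fits."""
    p = X.shape[1]
    beta = weighted_lasso_cd(X, y, np.full(p, lam), np.zeros(p))  # LASSO start
    for _ in range(n_outer):
        beta = weighted_lasso_cd(X, y, scad_derivative(beta, lam), beta)
    return beta


rng = np.random.default_rng(0)
n, p, lam = 400, 20, 0.5
X = rng.normal(size=(n, p))
beta_true = np.zeros(p)
beta_true[:2] = [3.0, -2.0]
y = X @ beta_true + rng.normal(size=n)

beta_lasso = weighted_lasso_cd(X, y, np.full(p, lam), np.zeros(p))
beta_scad = scad_lla(X, y, lam)
print("LASSO estimate of the two signals:", beta_lasso[:2])
print("SCAD  estimate of the two signals:", beta_scad[:2])
```

In this toy setting the LASSO estimates of the large coefficients are pulled toward zero by roughly `lam`, while the LLA iterations remove most of that shrinkage because the SCAD weight for large coefficients drops to zero.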

Troubleshooting Guides

Issue 1: Model Selection Instability and High False Positive Rates

Problem: Your SCAD or MCP model selects different variables across different samples or cross-validation folds, or includes too many irrelevant variables (false positives).

Diagnosis and Solution Pathway:

Step-by-Step Instructions:

- Analyze Predictor Correlation: Calculate the correlation matrix of your predictors. If you find groups of highly correlated variables, a sparsity-inducing penalty with no L2 component (as in LASSO, SCAD, and MCP) may be inherently unstable for selection [23] [24].

- Implement a Two-Stage Procedure: Use a pre-screening method like Point-Biserial SIS (PB-SIS) for binary responses or correlation-based SIS for linear models to reduce the feature space to a manageable size (e.g., from 10,000 to 100 features) before applying SCAD/MCP. This improves stability and reduces computational cost [25].

- Re-tune Regularization Parameters: Use K-fold cross-validation (with K=5 or 10) more rigorously. Ensure you are not under-penalizing by selecting a `lambda` value that is too small. Perform cross-validation on multiple data splits to check for consistency in the `lambda` path.

- Explore Alternative Methods: If false positive control is paramount, consider L0-penalized regression (e.g., via the `L0Learn` or `abess` packages), which directly penalizes the number of non-zero coefficients and has been shown to produce sparser models with fewer false positives than LASSO [24].
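The pre-screening stage of the two-stage procedure above can be sketched with plain NumPy. The helper `sis_screen` is a hypothetical name for this example; it ranks predictors by absolute marginal correlation with the response and keeps the top `d`. With a 0/1-coded binary response, the same Pearson correlation is the point-biserial correlation. The data and the choice `d=50` are illustrative assumptions.

```python
import numpy as np


def sis_screen(X, y, d):
    """Keep the d predictors with the largest absolute marginal correlation."""
    Xs = (X - X.mean(axis=0)) / X.std(axis=0)
    ys = (y - y.mean()) / y.std()
    score = np.abs(Xs.T @ ys) / len(y)           # |Pearson correlation| per column
    keep = np.argsort(score)[::-1][:d]           # indices of the d largest scores
    return np.sort(keep), score


rng = np.random.default_rng(0)
n, p = 200, 2000
X = rng.normal(size=(n, p))
beta = np.zeros(p)
beta[:5] = 1.5                                   # five true signals
y = X @ beta + rng.normal(size=n)

kept, score = sis_screen(X, y, d=50)
print("Features retained:", len(kept))
print("True signals retained:", sorted(set(kept.tolist()) & set(range(5))))
X_reduced = X[:, kept]                           # pass this matrix on to SCAD/MCP
```

Screening must be repeated inside every cross-validation fold; screening once on the full data set and then cross-validating leaks information from the held-out folds.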

Issue 2: Poor Model Performance with Outliers or Heavy-Tailed Errors

Problem: The predictive performance of your SCAD/MCP model degrades because the data contains outliers or the error distribution is not normal.

Diagnosis and Solution Pathway:

Step-by-Step Instructions:

- Diagnose the Error Distribution: Plot the residuals from an initial model (e.g., OLS or LASSO). Look for heavy tails or influential points that deviate significantly from the pattern.

- Apply a Robust Variable Selection Framework: Integrate your SCAD penalty with a robust loss function. Instead of the standard least squares loss, use:

- Huber Loss: which is less sensitive to outliers.

- Least Absolute Deviation (LAD): which uses the L1-norm of the residuals.

- These combine a robust loss `Ψ` with a non-convex penalty: `min_β [ Σ_i Ψ(y_i - x_i β) + Σ_j p_λ(|β_j|) ]` [27].

- Utilize Flexible Error Distributions: For a data-adaptive approach, model the regression error using a Nonparametric Gaussian Scale Mixture (NGSM). This method automatically adapts to the shape of the error distribution (normal, heavy-tailed, etc.) within the penalized likelihood framework, providing robustness without requiring manual specification of the loss function [27]. This has been shown to maintain efficiency and improve selection accuracy in the presence of outliers.

Protocol: Benchmarking SCAD and MCP against LASSO

Objective: To empirically compare the feature selection performance and estimation bias of SCAD, MCP, and LASSO under controlled conditions.

Workflow:

Detailed Methodology:

- Data Generation:

- Set a sample size `n=100` and number of predictors `p=500` to simulate a high-dimensional setting.

- Generate predictors `X` from a multivariate normal distribution with mean 0 and a covariance matrix Σ. Define Σ to have a block structure with high correlation (e.g., ρ=0.9) within blocks and no correlation between blocks.

- Set the true coefficient vector `β` to be sparse. For example, have 5 non-zero coefficients: two with large values (e.g., 2.5), two medium (e.g., 1.5), and one small (e.g., 0.5). The rest are zero.

- Generate the response via `Y = Xβ + ε`, where `ε` can be drawn from i) a standard normal distribution, and ii) a Student's t-distribution with 3 degrees of freedom to simulate heavy-tailed errors.

- Model Fitting:

- Fit LASSO, SCAD, and MCP models to the generated data.

- For SCAD, set `a=3.7`; for MCP, set `gamma=3` [23].

- Use 10-fold cross-validation to select the optimal `lambda` value for each method.

- Repeat the entire process over 100 independent Monte Carlo simulations.

- Performance Evaluation: Calculate the following metrics averaged over the simulations:

- True Positive Rate (TPR): Proportion of non-zero true coefficients correctly identified.

- False Positive Rate (FPR): Proportion of zero true coefficients incorrectly selected.

- Estimation Bias: Average absolute difference between the estimated non-zero coefficients and their true values.

- Prediction Error: Mean Squared Error (MSE) on an independently generated large test set.
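The data-generation and evaluation steps of this benchmark can be sketched in Python as follows. Several details are assumptions made for the example because the protocol leaves them open: blocks of size 5, the five signals placed in separate blocks, and only 3 replications instead of 100 to keep the run short. Only the LASSO arm is shown, since scikit-learn does not provide SCAD or MCP; those arms would be fitted with a dedicated package and scored with the same `selection_metrics` helper.

```python
import numpy as np
from sklearn.linear_model import LassoCV


def simulate(n=100, p=500, block=5, rho=0.9, heavy_tailed=False, seed=0):
    """Block-correlated Gaussian predictors with a sparse true coefficient vector."""
    rng = np.random.default_rng(seed)
    # Within-block covariance: 1 on the diagonal, rho elsewhere.
    B = np.full((block, block), rho) + (1.0 - rho) * np.eye(block)
    L = np.linalg.cholesky(B)
    Z = rng.normal(size=(n, p // block, block))
    X = (Z @ L.T).reshape(n, p)                  # blocks are mutually independent
    beta = np.zeros(p)
    beta[[0, 5, 10, 15, 20]] = [2.5, 2.5, 1.5, 1.5, 0.5]   # one signal per block
    eps = rng.standard_t(3, size=n) if heavy_tailed else rng.normal(size=n)
    return X, X @ beta + eps, beta


def selection_metrics(beta_hat, beta_true):
    """True positive rate, false positive rate, and mean absolute bias on true signals."""
    selected, truth = beta_hat != 0, beta_true != 0
    tpr = (selected & truth).sum() / truth.sum()
    fpr = (selected & ~truth).sum() / (~truth).sum()
    bias = np.abs(beta_hat[truth] - beta_true[truth]).mean()
    return tpr, fpr, bias


results = []
for rep in range(3):                             # the protocol calls for 100
    X, y, beta_true = simulate(seed=rep)
    fit = LassoCV(cv=10, max_iter=5000).fit(X, y)
    results.append(selection_metrics(fit.coef_, beta_true))

mean_tpr, mean_fpr, mean_bias = np.mean(results, axis=0)
print(f"LASSO  TPR={mean_tpr:.2f}  FPR={mean_fpr:.3f}  bias={mean_bias:.2f}")
```

Passing `heavy_tailed=True` to `simulate` switches the errors to the Student's t-distribution with 3 degrees of freedom described in the data-generation step.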

The table below summarizes key characteristics of the penalties, informed by theoretical properties and simulation studies [22] [23] [24].

Table 1: Comparison of Regularization Penalties for Feature Selection

| Feature | LASSO | SCAD | MCP | Elastic Net |

|---|---|---|---|---|

| Penalty Form | λ\|β\| | Complex, non-convex | Complex, non-convex | λ(α\|β\| + (1-α)β²/2) |

| Bias for Large Coefs | High | Low | Low | Medium (adjustable via α) |

| Oracle Property | No | Yes | Yes | No |

| Handling Correlated Features | Selects one randomly | Can be unstable | Can be unstable | Groups correlated features |

| Computational Complexity | Low | Medium-High | Medium-High | Low-Medium |

| Robustness to Outliers | Low | Low (but can be integrated with robust loss) | Low (but can be integrated with robust loss) | Low |

Table 2: Typical Simulation Results (Illustrative, n=100, p=500)

| Metric | LASSO | SCAD | MCP |

|---|---|---|---|

| True Positive Rate (TPR) | 0.85 | 0.94 | 0.95 |

| False Positive Rate (FPR) | 0.12 | 0.08 | 0.05 |

| Bias (Large Coefficients) | 0.45 | 0.10 | 0.08 |

| Prediction MSE | 2.1 | 1.5 | 1.4 |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Advanced Penalized Regression

| Item | Function | Example Packages / Commands |
|---|---|---|
| R `ncvreg` Package | Primary tool for fitting SCAD and MCP models in high-dimensional GLMs. | `fit <- ncvreg(X, y, penalty="SCAD")` |
| R `glmnet` Package | Industry standard for fitting LASSO and Elastic Net models; useful for initialization. | `fit <- glmnet(X, y, alpha=1)` |
| R `L0Learn` Package | For fitting L0-penalized models, an alternative for ultra-sparse solutions. | `fit <- L0Learn.fit(X, y, penalty="L0")` |
| Python `scikit-learn` | Provides LASSO and Elastic Net; SCAD/MCP may require custom implementation or other libraries. | `from sklearn.linear_model import Lasso` |
| Cross-Validation Function | Critical for tuning the regularization parameter `lambda`. | `cv.ncvreg()` in R, `GridSearchCV` in Python |
| Sure Independence Screening (SIS) | Pre-screening method to reduce dimensionality before applying SCAD/MCP. | `SIS` package in R |

Frequently Asked Questions (FAQs)

Foundational Concepts

1. What is the fundamental difference between a Bayesian prior and traditional regularization? Traditional regularization techniques, such as L1 (Lasso) and L2 (Ridge), add an explicit penalty term to a loss function to constrain model parameters [28]. In contrast, within the Bayesian framework, the prior distribution itself acts as an implicit regularization mechanism [29] [30]. A prior represents your beliefs about the parameters before observing the data. By choosing a prior that assigns higher probability to "simpler" parameter values (e.g., values near zero), you naturally guide the model away from overfitting, achieving the same goal as explicit regularization [31] [32].

2. How can a probabilistic prior prevent overfitting? Overfitting often occurs when model parameters become excessively large to fit noise in the training data. A Bayesian prior, such as a Gaussian distribution centered at zero, encodes the belief that large parameter values are unlikely. During inference, the posterior distribution combines this prior belief with the evidence from the data [33]. This process inherently penalizes complex models that would require extreme parameter values, thereby reducing overfitting and improving generalization [34].
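The link between a zero-centered Gaussian prior and an explicit penalty can be written out in a few lines. The derivation below assumes a linear model with Gaussian noise of variance \sigma^2 and independent priors \beta_j \sim N(0, \sigma_\beta^2); the symbol \sigma_\beta^2 for the prior variance is introduced here for illustration.

```latex
\begin{aligned}
\hat{\beta}_{\mathrm{MAP}}
  &= \arg\max_{\beta} \; \log p(y \mid X, \beta) + \log p(\beta) \\
  &= \arg\min_{\beta} \; \frac{1}{2\sigma^2} \lVert y - X\beta \rVert_2^2
     + \frac{1}{2\sigma_\beta^2} \lVert \beta \rVert_2^2 \\
  &= \arg\min_{\beta} \; \lVert y - X\beta \rVert_2^2
     + \lambda \lVert \beta \rVert_2^2,
  \qquad \lambda = \frac{\sigma^2}{\sigma_\beta^2}.
\end{aligned}
```

A tighter prior (smaller \sigma_\beta^2) therefore corresponds to a larger penalty \lambda, and a very diffuse prior corresponds to almost no penalty.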

3. My model is still overfitting despite using a prior. What might be wrong? This is often a result of a misspecified prior or an incorrectly tuned scale (hyperparameter) of the prior. A prior that is too "weak" (e.g., a Gaussian with a very large variance) will exert insufficient influence, allowing the model to fit the noise. Conversely, a prior that is too "strong" can lead to underfitting. The solution is to either:

- Use domain knowledge to select a more informative prior.

- Use hierarchical models to learn the prior's scale from the data.

- Employ methods like cross-validation to tune the hyperparameters [29].

Implementation and Tuning

4. Which prior should I use for my specific problem? The choice of prior depends on the type of sparsity or constraint you want to induce. The table below summarizes common priors and their equivalents in traditional regularization.

| Desired Constraint | Bayesian Prior | Frequentist Equivalent | Common Use Cases |

|---|---|---|---|

| Small coefficients, no sparsity | Gaussian (Normal) Prior [31] [32] | L2 / Ridge Regularization [31] [28] | General-purpose prevention of overfitting; robust regression. |

| Sparsity (feature selection) | Laplace Prior [31] [32] | L1 / Lasso Regularization [31] [28] | Models where interpretability is key; identifying key predictors. |

| Strong sparsity on a few signals | Horseshoe Prior [31] [35] | - | Very high-dimensional problems (e.g., genetics, neuroimaging) where only a few variables are relevant [35]. |

| Structured sparsity | Spike-and-Slab Prior [31] | - | Model selection; explicitly testing whether a parameter is zero or non-zero. |

5. How do I set the hyperparameters (e.g., λ, τ) for my priors? Tuning the scale of the prior is crucial. Several strategies exist:

- Empirical Bayes: Treat the hyperparameters as unknown constants and estimate them from the data by maximizing the marginal likelihood [29].

- Full Bayes: Place a prior (a hyperprior) on the hyperparameter itself. This is often done with the Horseshoe prior, or by using half-Cauchy distributions on scale parameters, allowing the data to inform the appropriate level of shrinkage [35].

- Cross-Validation: Use held-out data to evaluate model performance across a range of hyperparameter values, similar to the frequentist approach [28].

Domain-Specific Applications

6. Can Bayesian regularization be applied beyond linear regression? Absolutely. The principle is general and has been successfully applied to a wide range of models, including:

- Structural Equation Modeling (SEM): Priors can be used to shrink cross-loadings, small coefficients, or specific paths, helping to achieve parsimonious models without stepwise modification [30].

- Drug Development and Clinical Trials: Bayesian methods incorporate prior information (e.g., from adult studies) into pediatric trials, potentially reducing the required number of participants and accelerating the process [33] [36]. They are also used in dose-finding trials to identify the maximum tolerated dose more efficiently [36].

- Generalized Linear Models (GLMs): Bayesian regularization with shrinkage priors like Laplace or Horseshoe can be applied to logistic regression for prediction modeling, especially with a low number of events per variable [35].

Troubleshooting Guides

Problem 1: Model is Underfitting (High Bias)

Symptoms:

- Poor performance on both training and test data.

- Model predictions are consistently inaccurate and lack complexity.

- Parameter estimates are shrunk too aggressively towards zero.

Diagnosis and Solutions:

| Possible Cause | Diagnostic Steps | Recommended Solution |

|---|---|---|

| Overly Informative (Strong) Prior | Examine the prior distribution. Is its variance (or scale) set too small? Check if the prior is dominating the likelihood. | Weaken the prior by increasing its variance. Consider using a more diffuse or weakly informative prior. |

| Incorrect Prior Centering | The prior mean is far from the true parameter value. | If domain knowledge exists, re-center the prior. Otherwise, a common default is to center at zero. |

| Excessive Regularization Hyperparameter (λ) | The value of λ is too large, giving the prior too much weight. | Use cross-validation or a Bayesian method (Empirical/Full Bayes) to select a smaller, more appropriate λ value [28]. |

Experimental Protocol: Diagnosing Prior Impact

- Run a Baseline: Fit your model with the current strong prior.

- Fit a Weak Prior Model: Fit the same model with a much weaker prior (e.g., a Gaussian with a variance 100x larger).

- Compare Posteriors: Plot the posterior distributions of key parameters from both models. If they differ significantly, your original prior is likely too strong.

- Compare Predictive Performance: Evaluate both models on a held-out test set using a metric like Mean Squared Error or log-predictive density. The weaker prior model should perform better if underfitting was the issue.
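For a linear model with a Gaussian prior, this protocol can be run in closed form. The sketch below assumes a known noise variance of 1, synthetic data, and a deliberately over-tight prior variance of `1e-3`; the helper `gaussian_posterior_mean` is named for this example only.

```python
import numpy as np


def gaussian_posterior_mean(X, y, noise_var, prior_var):
    """Posterior mean of beta under y ~ N(X beta, noise_var * I), beta_j ~ N(0, prior_var)."""
    p = X.shape[1]
    precision = X.T @ X / noise_var + np.eye(p) / prior_var
    return np.linalg.solve(precision, X.T @ y / noise_var)


rng = np.random.default_rng(1)
n, p = 200, 10
beta_true = rng.normal(scale=2.0, size=p)        # true effects are not small
X = rng.normal(size=(n, p))
y = X @ beta_true + rng.normal(size=n)
X_tr, y_tr, X_te, y_te = X[:150], y[:150], X[150:], y[150:]

strong_var = 1e-3                                # baseline: very tight prior
beta_strong = gaussian_posterior_mean(X_tr, y_tr, 1.0, strong_var)
beta_weak = gaussian_posterior_mean(X_tr, y_tr, 1.0, 100 * strong_var)  # 100x weaker

mse_strong = np.mean((y_te - X_te @ beta_strong) ** 2)
mse_weak = np.mean((y_te - X_te @ beta_weak) ** 2)
print(f"Largest |coefficient|  strong prior: {np.abs(beta_strong).max():.2f}, "
      f"weak prior: {np.abs(beta_weak).max():.2f}")
print(f"Held-out MSE           strong prior: {mse_strong:.2f}, weak prior: {mse_weak:.2f}")
```

A large gap between the two fits, with the weaker prior predicting better on held-out data, points to the original prior as the source of underfitting.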

Problem 2: Model is Overfitting (High Variance)

Symptoms:

- Excellent performance on training data, but poor performance on test data.

- Parameter estimates are large and unstable, showing high sensitivity to small changes in the data.

Diagnosis and Solutions:

| Possible Cause | Diagnostic Steps | Recommended Solution |

|---|---|---|

| Non-informative (Weak) Prior | The prior variance is set too large, providing effectively no constraint on the parameters. | Introduce a regularizing prior. Start with a Gaussian prior for general shrinkage or a Laplace prior if you suspect sparsity [31] [32]. |

| Missing Regularization | The model is fit using Maximum Likelihood Estimation (MLE) with no prior. | Transition from MLE to Maximum a Posteriori (MAP) estimation by adding a prior. This is the direct Bayesian interpretation of regularization [32] [34]. |

| Insufficient Shrinkage | The hyperparameter λ is too small. | Systematically increase λ and observe performance on a validation set. Use Bayesian optimization for efficient tuning [28]. |

Experimental Protocol: Implementing Regularization with a Horseshoe Prior

The Horseshoe prior is effective in high-dimensional settings for strong regularization of noise while preserving signals [35].

- Standardize Data: Center and scale all predictor variables to have mean 0 and standard deviation 1.

- Specify the Model: For a linear regression ( y_i = x_i^T\beta + \epsilon_i ), with ( \epsilon_i \sim N(0, \sigma^2) ), specify the hierarchical prior:

- ( \beta_j \mid \lambda_j, \tau \sim N(0, \lambda_j^2 \tau^2) ) (Conditional prior for coefficients)

- ( \lambda_j \sim C^+(0, 1) ) (Local shrinkage parameter; half-Cauchy)

- ( \tau \sim C^+(0, 1) ) (Global shrinkage parameter; half-Cauchy)

- Posterior Inference: Use Markov Chain Monte Carlo (MCMC) sampling (e.g., with Stan, PyMC3, or JAGS) to approximate the posterior distribution of ( \beta, \lambda, \tau ).

- Evaluate: Check the posterior distributions for ( \beta_j ). True signals will have posteriors away from zero, while noise variables will be shrunk heavily toward zero.
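Before running MCMC, it can help to see why this prior separates noise from signal. The sketch below only samples from the prior specified above; it does not perform the posterior inference step. It fixes `tau = 1` and assumes unit noise variance, in which case the shrinkage weight `kappa = 1 / (1 + lambda_j^2)` gives the fraction by which an observed effect is pulled toward zero, and it follows a Beta(1/2, 1/2) distribution, the U-shape that gives the prior its name.

```python
import numpy as np

rng = np.random.default_rng(0)
n_draws = 200_000

lam = np.abs(rng.standard_cauchy(n_draws))   # local scales, half-Cauchy C+(0, 1)
tau = 1.0                                    # global scale fixed for illustration
kappa = 1.0 / (1.0 + tau**2 * lam**2)        # shrinkage weight in [0, 1]

near_zero = np.mean(kappa < 0.1)             # almost no shrinkage: signals survive
near_one = np.mean(kappa > 0.9)              # almost total shrinkage: noise removed
middle = 1.0 - near_zero - near_one

print(f"P(kappa < 0.1) = {near_zero:.3f}")
print(f"P(kappa > 0.9) = {near_one:.3f}")
print(f"P(0.1 <= kappa <= 0.9) = {middle:.3f}")
```

Roughly 40% of the prior mass sits in the two extreme tenths of the interval, so each coefficient tends to be either left nearly untouched or shrunk almost completely. In a real analysis `tau` is not fixed but given its own prior, as in the specification above.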

Problem 3: Computational Issues and Slow Sampling

Symptoms:

- MCMC samplers take a very long time to converge.

- High autocorrelation between samples or low effective sample size.

Diagnosis and Solutions:

| Possible Cause | Diagnostic Steps | Recommended Solution |

|---|---|---|

| Poor Parameterization | Models with strong dependencies between parameters can slow down sampling. | Use non-centered parameterizations for hierarchical models to break dependencies. |

| Ill-conditioned Prior/Likelihood | The prior scale is mismatched with the scale of the data. | Ensure all variables are standardized. Reparameterize the model to improve geometry. |

| Complex Priors | Priors like the Laplace lack conjugate forms, leading to slower sampling. | Use modern HMC-based samplers (e.g., NUTS) which are efficient for non-conjugate models. Alternatively, use a Gaussian prior which is often computationally easier. |

The Scientist's Toolkit: Research Reagent Solutions

| Item / Concept | Function / Explanation | Example in Bayesian Regularization |

|---|---|---|

| Gaussian (Normal) Prior | A symmetric, bell-shaped distribution that encodes the belief that a parameter is likely to be near its mean value. | Used as the Bayesian equivalent of L2 (Ridge) regularization. It shrinks coefficients towards zero but does not set them exactly to zero [31] [32]. |

| Laplace Prior | A distribution with a sharp peak at zero and heavier tails than a Gaussian. It promotes sparsity. | The Bayesian counterpart to L1 (Lasso) regularization. Under MAP estimation it can drive parameter estimates exactly to zero, performing automatic feature selection [31] [32]. |

| Horseshoe Prior | A continuous shrinkage prior with a very sharp peak at zero and heavy tails. It strongly shrinks noise while preserving large signals [35]. | Ideal for high-dimensional problems where most variables are irrelevant, but a few have large effects. Used in clinical prediction models [35]. |

| Spike-and-Slab Prior | A discrete mixture prior combining a "spike" (a point mass at zero) and a "slab" (a diffuse distribution, like a Gaussian). | Provides a direct method for variable selection by assigning a probability to a variable being included in the model [31]. |

| Markov Chain Monte Carlo (MCMC) | A class of algorithms for sampling from complex probability distributions, such as the posterior in Bayesian models. | Essential for performing inference with complex, non-conjugate models that use advanced shrinkage priors like the Horseshoe [35]. |

| Maximum a Posteriori (MAP) Estimation | A point estimate of the parameters that maximizes the posterior distribution. | Provides a direct link to traditional regularized estimates. The MAP estimate with a Gaussian prior is identical to the Ridge estimate, and with a Laplace prior to the Lasso estimate [32] [34]. |

| Stan / PyMC3 | Probabilistic programming languages that allow for flexible specification of Bayesian models, including those with custom priors. | The primary software tools for implementing Bayesian regularized models, as they provide powerful and efficient MCMC samplers. |

Workflow and Conceptual Diagrams

Bayesian Regularization Logic

Experimental Implementation Workflow

A Methodological Toolkit for Effective Regularization Tuning

FAQ: Hyperparameter Optimization Fundamentals

What is the core difference between a model parameter and a hyperparameter?

Model parameters are variables learned by the model from the training data during the training process itself, such as the weights and biases in a neural network. In contrast, hyperparameters are configuration variables that are set before the learning process begins. They control the very behavior of the learning algorithm, influencing how the model parameters are learned [37]. Examples include the learning rate, the number of layers in a neural network, or the regularization parameter C in a support vector machine [38].

Why is hyperparameter tuning critical in research, especially for regularization?

Hyperparameter tuning is essential for building models that are both accurate and generalizable. A well-tuned model can significantly outperform a poorly tuned one, even if they use the same algorithm [37]. Proper tuning helps prevent both overfitting (where the model learns the training data too well, including its noise) and underfitting (where the model fails to learn the underlying patterns) [9]. For regularization parameters specifically, which control a model's complexity, the choice is a direct trade-off between bias and variance. For instance, research in fields like neuroimaging has shown that the amount of regularization optimal for one task (e.g., source estimation) can be suboptimal for another (e.g., connectivity analysis), highlighting the need for careful, problem-specific tuning [13].

When should I choose Grid Search over more advanced methods?

Grid Search is most appropriate when you have a relatively small and well-understood hyperparameter space [39]. It is a logical starting point if the number of hyperparameters is limited and you have sufficient computational resources to exhaustively evaluate all combinations. It guarantees finding the best combination within the predefined grid. However, it becomes computationally prohibitive as the number of hyperparameters or the range of their values increases, a phenomenon known as the "curse of dimensionality" [38].

Troubleshooting Common Experimental Issues

Issue 1: My hyperparameter search is taking too long and is computationally expensive.

Solutions:

- Switch from Grid to Random Search: For a similar computational budget, Random Search can explore a wider and more complex hyperparameter space by randomly sampling a fixed number of parameter combinations, often outperforming Grid Search [38] [37].

- Adopt Bayesian Optimization: This method builds a probabilistic model of the objective function to intelligently select the most promising hyperparameters to evaluate next. It typically finds a good set of hyperparameters in far fewer iterations, making it ideal for complex models and large datasets where each model training is slow and expensive [40] [39].

- Use Early Stopping: Integrate early stopping into your training workflow. This technique halts the training of a model if the validation performance stops improving for a predefined number of epochs, saving significant time during the evaluation of each hyperparameter configuration [39].

Issue 2: I am unsure if my hyperparameter optimization is overfitting to my validation set.

Solutions:

- Implement Nested Cross-Validation: To get an unbiased estimate of your model's generalization performance after hyperparameter tuning, use nested cross-validation. This involves an outer loop for estimating generalization performance and an inner loop dedicated solely to hyperparameter optimization. This ensures that the test set in the outer loop never influences the hyperparameter selection process [38].

- Hold Out a Final Test Set: Always reserve a completely separate test set that is not used at any point during the hyperparameter search. This set is only used for the final evaluation of the model trained with the selected optimal hyperparameters [38].
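Nested cross-validation can be expressed compactly in scikit-learn by passing a `GridSearchCV` object to `cross_val_score`. The example below is a minimal sketch on a synthetic classification data set; the grid over `C` (the inverse regularization strength of L2-penalized logistic regression) and the fold counts are illustrative assumptions.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GridSearchCV, StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

X, y = make_classification(
    n_samples=200, n_features=50, n_informative=5, random_state=0
)

# Scaling lives inside the pipeline so it is re-fitted on each training fold.
pipe = make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))
param_grid = {"logisticregression__C": np.logspace(-3, 2, 6)}

inner_cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=1)  # tunes C
outer_cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=2)  # estimates performance

search = GridSearchCV(pipe, param_grid, cv=inner_cv, scoring="accuracy")
outer_scores = cross_val_score(search, X, y, cv=outer_cv, scoring="accuracy")

print("Accuracy per outer fold:", np.round(outer_scores, 3))
print(f"Nested CV estimate: {outer_scores.mean():.3f} +/- {outer_scores.std():.3f}")
```

Each outer fold runs its own inner search, so the selected `C` may differ between folds; the outer scores estimate the performance of the whole tuning procedure rather than of one fixed hyperparameter value.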

Quantitative Comparison of Core Search Strategies

The table below summarizes the key characteristics of the three primary hyperparameter search strategies.

Table 1: Comparison of Hyperparameter Optimization Methods

| Feature | Grid Search | Random Search | Bayesian Optimization |

|---|---|---|---|

| Core Principle | Exhaustive search over a predefined grid [38] | Random sampling from specified distributions [38] | Probabilistic model guides search based on past results [37] |

| Search Strategy | Brute-force, non-adaptive [9] | Random, non-adaptive [9] | Adaptive and sequential [40] |

| Parallelization | Highly parallel (embarrassingly parallel) [38] | Highly parallel (embarrassingly parallel) [38] | Inherently sequential; difficult to parallelize [40] |

| Best For | Small, low-dimensional hyperparameter spaces [39] | Larger, higher-dimensional spaces [38] | Complex models with expensive-to-evaluate training [40] [39] |

| Key Advantage | Guaranteed to find best point in the grid | Explores wider space efficiently; good baseline [38] [37] | Finds good parameters in fewer evaluations; balances exploration/exploitation [38] [39] |

| Key Disadvantage | Computationally intractable for large spaces (curse of dimensionality) [38] | Can miss optimal regions; no learning from past trials [37] | Higher computational overhead per iteration; complex to implement [40] |

Experimental Protocols for Hyperparameter Tuning

Protocol 1: Implementing a Random Search with Scikit-Learn

This protocol provides a step-by-step methodology for using RandomizedSearchCV, a common tool for random hyperparameter search.

Output: Tuned Decision Tree Parameters: {'criterion': 'entropy', 'max_depth': None, 'max_features': 6, 'min_samples_leaf': 6}. Best score is 0.842 [9].
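The code that produced the output above is not reproduced here. A minimal sketch of a comparable random search over the same four decision-tree hyperparameters is shown below; the synthetic data set, the sampling ranges, and `n_iter=20` are assumptions, so the selected parameters and score will differ from the reported values.

```python
from scipy.stats import randint
from sklearn.datasets import make_classification
from sklearn.model_selection import RandomizedSearchCV
from sklearn.tree import DecisionTreeClassifier

X, y = make_classification(
    n_samples=500, n_features=10, n_informative=5, random_state=0
)

# Distributions to sample from; lists are sampled uniformly.
param_dist = {
    "max_depth": [3, None],
    "max_features": randint(1, 10),       # integers 1..9
    "min_samples_leaf": randint(1, 10),   # integers 1..9
    "criterion": ["gini", "entropy"],
}

search = RandomizedSearchCV(
    DecisionTreeClassifier(random_state=0),
    param_distributions=param_dist,
    n_iter=20,          # number of sampled configurations
    cv=5,               # 5-fold cross-validation per configuration
    random_state=0,
)
search.fit(X, y)

print("Tuned Decision Tree Parameters:", search.best_params_)
print("Best score is", round(search.best_score_, 3))
```

Increasing `n_iter` widens the search at a proportional cost in model fits, which makes the computational budget explicit in a way a grid does not.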

Workflow Logic: The following diagram illustrates the logical process of a random search, which can be generalized to other methods where an evaluation and selection step is involved.

Protocol 2: Bayesian Optimization with a Gaussian Process Surrogate

This protocol outlines the core steps of a Bayesian optimization loop of the kind implemented by frameworks such as Optuna and scikit-optimize (`skopt`).

Objective: To find the hyperparameters x that minimize a loss function f(x) (e.g., validation error) with the fewest evaluations.

Methodology:

1. Initialization: Start by evaluating the objective function `f(x)` for a small number of randomly selected hyperparameter sets `x_1, x_2, ..., x_n` [37].

2. Build Surrogate Model: Construct a probabilistic surrogate model, often a Gaussian Process (GP), that approximates the unknown function `f(x)`. The GP provides a mean prediction and an uncertainty (variance) for any point in the hyperparameter space [37].

3. Maximize Acquisition Function: Use an acquisition function (e.g., Expected Improvement/EI), which balances exploration (high uncertainty) and exploitation (good mean prediction), to select the next most promising hyperparameter set `x_next` to evaluate [41] [37].

4. Evaluate and Update: Evaluate the true objective function `f(x_next)` by training the model with `x_next`. Then, update the surrogate model with this new data point `(x_next, f(x_next))` [37].

5. Iterate: Repeat steps 3 and 4 until a stopping criterion is met (e.g., maximum iterations, no improvement, or time limit) [37].
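The loop above can be written out directly with a Gaussian Process from scikit-learn and a hand-coded Expected Improvement. This is a one-dimensional sketch under stated assumptions: the `objective` function is a hypothetical stand-in for a validation loss as a function of log10(λ), the acquisition function is maximized over a fixed grid rather than with an optimizer, and the kernel and iteration counts are arbitrary choices.

```python
import numpy as np
from scipy.stats import norm
from sklearn.gaussian_process import GaussianProcessRegressor
from sklearn.gaussian_process.kernels import Matern


def objective(log_lam):
    """Hypothetical validation loss; in practice this would train and score a model."""
    return (log_lam + 1.0) ** 2 + 0.1 * np.sin(5.0 * log_lam)


def expected_improvement(candidates, gp, best_y, xi=0.01):
    """EI for minimization: expected amount by which a candidate beats best_y."""
    mu, sd = gp.predict(candidates, return_std=True)
    sd = np.maximum(sd, 1e-9)                     # avoid division by zero
    improvement = best_y - mu - xi
    z = improvement / sd
    return improvement * norm.cdf(z) + sd * norm.pdf(z)


rng = np.random.default_rng(0)
bounds = (-4.0, 2.0)
grid = np.linspace(*bounds, 400).reshape(-1, 1)   # candidate points

# Step 1: a few random initial evaluations.
X_obs = rng.uniform(*bounds, size=(4, 1))
y_obs = objective(X_obs).ravel()

for _ in range(15):
    # Step 2: fit the surrogate to everything observed so far.
    gp = GaussianProcessRegressor(
        kernel=Matern(nu=2.5), normalize_y=True, alpha=1e-6, random_state=0
    )
    gp.fit(X_obs, y_obs)
    # Step 3: pick the candidate with the highest expected improvement.
    ei = expected_improvement(grid, gp, y_obs.min())
    x_next = grid[np.argmax(ei)].reshape(1, 1)
    # Step 4: evaluate the true objective and add the result to the data.
    X_obs = np.vstack([X_obs, x_next])
    y_obs = np.append(y_obs, objective(x_next).ravel())

best_x = X_obs[np.argmin(y_obs), 0]
print(f"Best log10(lambda) found: {best_x:.3f} (loss {y_obs.min():.3f}) "
      f"after {len(y_obs)} evaluations")
```

Dedicated libraries add refinements such as better acquisition optimizers, handling of categorical parameters, and parallel suggestions, so this sketch is best read as an explanation of the mechanism rather than a production tuner.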

Workflow Logic:

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 2: Key Software Tools and Libraries for Hyperparameter Optimization

| Tool / Library | Primary Function | Key Tuning Algorithms Supported | Reference |

|---|---|---|---|

| Scikit-Learn | Machine learning library for Python | GridSearchCV, RandomizedSearchCV | [9] [39] |

| Optuna | Dedicated hyperparameter optimization framework | Bayesian Optimization (TPE), Random Search, CMA-ES | [41] |

| Hyperopt | Distributed hyperparameter optimization library | Bayesian Optimization (TPE), Random Search, Annealing | [41] |

| scikit-optimize (`skopt`) | Sequential model-based optimization library | Bayesian Optimization (GP), among others | [41] |

| Ray Tune | Scalable model tuning library | Population-Based Training (PBT), ASHA, HyperBand, Bayesian Opt. | [38] |

Tuning L1 and L2 Regularization Strength (λ) in Linear and Logistic Regression Models

Core Concepts FAQ

What is the fundamental difference between L1 and L2 regularization?

L1 and L2 regularization both prevent overfitting by adding a penalty term to the model's loss function, but they do so through distinct mechanisms. L1 regularization (Lasso) adds a penalty proportional to the absolute value of the coefficients (L1-norm), which can drive some coefficients to exactly zero, effectively performing feature selection. In contrast, L2 regularization (Ridge) adds a penalty proportional to the square of the coefficients (L2-norm), which shrinks coefficients toward zero without eliminating them entirely, helping to manage multicollinearity and stabilize predictions [1] [42] [6].

How does the choice of λ affect my regression model?

The regularization parameter λ controls the trade-off between fitting the training data and model complexity.

- λ = 0: No regularization; the model corresponds to ordinary least squares (linear regression) or maximum likelihood estimation (logistic regression), risking overfitting.

- Small λ: A weak penalty; the model may still overfit if the original problem was high-variance.

- Large λ: A strong penalty; significantly constrains coefficients, which can lead to underfitting and high bias [1] [43].
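
In symbols, the three regimes above can be read off a generic penalized objective. The notation is an assumption introduced here for illustration (a data-fit loss 𝓛 and a penalty P), not taken from the cited sources.

```latex
\hat{\beta}(\lambda) \;=\; \arg\min_{\beta}\; \mathcal{L}(\beta) + \lambda\, P(\beta),
\qquad
P(\beta) =
\begin{cases}
\lVert \beta \rVert_1 = \sum_j \lvert \beta_j \rvert & \text{(L1, Lasso)} \\[4pt]
\lVert \beta \rVert_2^2 = \sum_j \beta_j^2 & \text{(L2, Ridge)}
\end{cases}
```

Setting λ = 0 removes the penalty and recovers the unpenalized fit, while letting λ grow without bound makes the penalty dominate and drives every penalized coefficient toward zero.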

When should I prefer L1 over L2 regularization in a biological or drug discovery context?

Choose L1 regularization when you are in an exploratory phase and need to identify key biomarkers, genes, or molecular descriptors from a high-dimensional dataset (where the number of features p is much larger than the number of samples n). Its feature selection capability yields sparse, interpretable models [1] [44] [45]. Prefer L2 regularization when you believe most features contribute some signal and you want to build a stable, generalizable predictive model without discarding any variables, which is common in image recognition or sensor data analysis [42]. For problems with highly correlated features and a need for both selection and stability, a hybrid like Elastic Net (combining L1 and L2) is often beneficial [46] [6].

Troubleshooting Guides

Issue 1: Model Performance is Poor on Validation Set

Problem: Your model shows high accuracy on training data but performs poorly on the validation or test set, indicating overfitting.

| Potential Cause | Diagnostic Check | Corrective Action |

|---|---|---|

| λ is too small | Compare training vs. validation loss; a large gap indicates overfitting [46]. | Systematically increase λ using a geometric grid (e.g., 0.001, 0.01, 0.1, 1). Re-tune. |

| Incorrect regularization type | You have many irrelevant features but used L2, which keeps all features. | Switch to L1 or Elastic Net to perform feature selection and reduce model complexity [1] [6]. |

| Inadequate validation | You tuned λ directly on the test set, leading to optimistic bias. | Ensure you use a separate validation set or cross-validation for tuning. Use the test set only for final evaluation [47]. |

Experimental Protocol for Diagnosis:

- Train your model with the current λ.

- Calculate the loss (e.g., Mean Squared Error, Log Loss) on both training and validation sets.

- Plot these losses against a range of λ values. The optimal λ is typically near the point where the validation loss is minimized, before the two curves start to diverge significantly.
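
A minimal sketch of this diagnostic, assuming synthetic data and a closed-form ridge fit without an intercept (both are illustrative choices, not part of the protocol). It records the training and validation MSE for each λ on a geometric grid; the resulting rows are what you would plot.

```python
import numpy as np

def ridge_fit(X, y, lam):
    """Closed-form ridge solution (no intercept): (X'X + lam*I)^-1 X'y."""
    p = X.shape[1]
    return np.linalg.solve(X.T @ X + lam * np.eye(p), X.T @ y)

def mse(X, y, beta):
    return float(np.mean((X @ beta - y) ** 2))

# Synthetic data: 30 features, only the first 5 carry signal.
rng = np.random.default_rng(0)
n_train, n_val, p = 40, 40, 30
beta_true = np.zeros(p)
beta_true[:5] = [2.0, -1.5, 1.0, 0.5, -0.5]
X_train = rng.normal(size=(n_train, p))
X_val = rng.normal(size=(n_val, p))
y_train = X_train @ beta_true + rng.normal(scale=1.0, size=n_train)
y_val = X_val @ beta_true + rng.normal(scale=1.0, size=n_val)

lambdas = [1e-3, 1e-2, 1e-1, 1.0, 10.0, 100.0, 1000.0]
curve = []
for lam in lambdas:
    beta = ridge_fit(X_train, y_train, lam)
    curve.append((lam, mse(X_train, y_train, beta), mse(X_val, y_val, beta)))

for lam, train_mse, val_mse in curve:
    print(f"lambda={lam:<8g} train MSE={train_mse:8.3f}  val MSE={val_mse:8.3f}")

best_lam = min(curve, key=lambda row: row[2])[0]   # lambda with lowest validation MSE
print("lambda with lowest validation MSE:", best_lam)
```

The training error can only rise as λ increases, so a validation error that first falls and then rises is the signature to look for.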

Issue 2: Model is Underfitting

Problem: The model performs poorly on both training and validation data, showing high bias.

| Potential Cause | Diagnostic Check | Corrective Action |

|---|---|---|

| λ is too large | The coefficients are shrunk too aggressively toward zero. Training loss is almost as high as validation loss [43]. | Decrease the value of λ. Consider a grid search over smaller values (e.g., 1e-5 to 1e-2). |

| Excessive feature selection with L1 | L1 has set too many potentially relevant coefficients to zero. | Reduce the λ for L1. Alternatively, use L2 regularization or Elastic Net, which allows more features to remain in the model with small weights [46]. |

Issue 3: Unstable or Inconsistent Feature Selection with L1

Problem: When you run the model multiple times on different splits of the data, L1 regularization selects different sets of features.

| Potential Cause | Diagnostic Check | Corrective Action |

|---|---|---|

| Highly correlated features | L1 tends to arbitrarily select one feature from a correlated group [42]. | Use Elastic Net, which distributes weight among correlated predictors and leads to more stable selection, or L2 regularization if an explicit feature subset is not required [48] [6]. |

| Small sample size | The feature selection is highly sensitive to the specific data sample. | Employ resampling methods like bootstrapping. Use the frequency with which a feature is selected across samples as a more robust measure of its importance. |
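
The bootstrap remedy in the last row can be sketched as follows, assuming scikit-learn's `Lasso` (whose `alpha` argument plays the role of λ) and synthetic data in which only the first three features carry signal. The helper name `selection_frequency` is hypothetical.

```python
import numpy as np
from sklearn.linear_model import Lasso

def selection_frequency(X, y, alpha, n_boot=50, seed=0):
    """Fraction of bootstrap resamples in which each feature gets a nonzero coefficient."""
    rng = np.random.default_rng(seed)
    n, p = X.shape
    counts = np.zeros(p)
    for _ in range(n_boot):
        rows = rng.integers(0, n, size=n)            # sample rows with replacement
        model = Lasso(alpha=alpha, max_iter=10000).fit(X[rows], y[rows])
        counts += (model.coef_ != 0)
    return counts / n_boot

# Synthetic example: 20 features, signal only in features 0, 1 and 2.
rng = np.random.default_rng(1)
X = rng.normal(size=(60, 20))
y = 3.0 * X[:, 0] - 2.0 * X[:, 1] + 2.0 * X[:, 2] + rng.normal(scale=0.5, size=60)

freq = selection_frequency(X, y, alpha=0.2)
for j in np.argsort(-freq)[:5]:
    print(f"feature {j}: selected in {freq[j]:.0%} of resamples")
```

Features that are selected in nearly every resample are more credible candidates than those that appear only occasionally; the cutoff for "stable" is a judgment call that depends on the study.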

Experimental Protocols & Data Presentation

Protocol 1: Two-Step Regularization for High-Dimensional Biological Data

This protocol is highly effective for datasets with thousands of features (e.g., gene expression, molecular descriptors) and few samples, a common scenario in drug development [44].


Methodology:

- Stage 1: Feature Selection with L1 Regularization. Apply L1-penalized regression (Lasso) to the full high-dimensional dataset. Use cross-validation to find the optimal λ1 that minimizes the loss. This will create a sparse model, reducing the feature set to the most relevant 50-100 predictors [44].

- Stage 2: Model Refinement with L2 Regularization. Using only the features selected in Stage 1, train a new model with L2 regularization (Ridge). Perform a second cross-validation to find the optimal λ2. This "milder" regularization on the reduced feature set often yields a model with higher prediction accuracy and better generalization [44].
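
A minimal sketch of the two stages, assuming scikit-learn's `LassoCV` and `RidgeCV` and synthetic data. It is not the implementation used in [44]; the data dimensions and the λ2 grid are illustrative, and scikit-learn calls the regularization strength `alpha`.

```python
import numpy as np
from sklearn.linear_model import LassoCV, RidgeCV

# Synthetic high-dimensional data: 500 features, 80 samples, 10 true predictors.
rng = np.random.default_rng(0)
n, p = 80, 500
X = rng.normal(size=(n, p))
beta = np.zeros(p)
beta[:10] = rng.uniform(1.0, 2.0, size=10)
y = X @ beta + rng.normal(scale=0.5, size=n)

# Stage 1: L1-penalized regression, lambda1 chosen by 5-fold cross-validation.
stage1 = LassoCV(cv=5, max_iter=10000).fit(X, y)
selected = np.flatnonzero(stage1.coef_)          # indices of retained features

# Stage 2: L2-penalized regression on the selected features only,
# lambda2 chosen by a second cross-validation over a geometric grid.
stage2 = RidgeCV(alphas=np.logspace(-3, 2, 20), cv=5).fit(X[:, selected], y)

print(f"lambda1 = {stage1.alpha_:.4g}, features kept = {len(selected)} of {p}")
print(f"lambda2 = {stage2.alpha_:.4g}")
```

When reporting performance, both stages should be repeated inside each outer cross-validation fold; selecting features on the full dataset and then cross-validating only Stage 2 gives an optimistically biased estimate.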

Performance Table: The following table summarizes results from a study applying this two-step method to biological regression tasks (CoEPrA contest) [44].

| Task | Initial Features | Features after L1 | Best λ1 (Stage 1) | Best λ2 (Stage 2) | Performance (q²) |

|---|---|---|---|---|---|

| Task I | ~6,000 | 50 | 0.05 | 0.1 | 0.691 |

| Task II | ~6,000 | 43 | 0.05 | 0.01 | 0.668 |

| Task III | ~6,000 | 56 | 0.08 | 0.3 | 0.131 |

| Task IV | ~6,000 | 41 | 0.1 | 0.2 | 0.586 (SRCC) |

Protocol 2: Tuning λ via Cross-Validation and Grid Search

This is the standard methodology for selecting the optimal regularization parameter.


Methodology:

- Define a Parameter Grid: Start with a coarse grid spanning several orders of magnitude, using a geometric progression (e.g., [0.0005, 0.005, 0.05, 0.5, 5]). Thinking in multiplicative steps is more effective than linear steps [47].

- K-Fold Cross-Validation: For each candidate λ value in the grid:

- Partition the training data into K folds (e.g., K=5 or 10).

- Train the model on K-1 folds and validate on the held-out fold.

- Repeat for all K folds and compute the average validation performance (e.g., validation loss or accuracy) [48].

- Select Optimal λ: Choose the λ value that gives the best average cross-validation performance.

- Refine Grid (Optional): If higher precision is needed, define a finer grid around the best-performing λ from the coarse search and repeat step 2 [47].
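
The steps above map directly onto scikit-learn's `GridSearchCV`. The sketch below assumes a Ridge model on synthetic data and uses the coarse grid from step 1; the width and resolution of the refined grid are arbitrary choices made for illustration.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.linear_model import Ridge
from sklearn.model_selection import GridSearchCV

X, y = make_regression(n_samples=100, n_features=50, n_informative=10,
                       noise=10.0, random_state=0)

# Steps 1-3: coarse geometric grid, 5-fold CV, pick the best lambda (alpha).
coarse_grid = {"alpha": [0.0005, 0.005, 0.05, 0.5, 5]}
coarse = GridSearchCV(Ridge(), coarse_grid, cv=5,
                      scoring="neg_mean_squared_error").fit(X, y)
best_coarse = coarse.best_params_["alpha"]

# Step 4 (optional): finer geometric grid centered on the coarse optimum.
fine_grid = {"alpha": list(np.geomspace(best_coarse / 5, best_coarse * 5, 9))}
fine = GridSearchCV(Ridge(), fine_grid, cv=5,
                    scoring="neg_mean_squared_error").fit(X, y)
best_fine = fine.best_params_["alpha"]

print(f"coarse best alpha = {best_coarse:g} (CV MSE {-coarse.best_score_:.2f})")
print(f"fine best alpha   = {best_fine:.4g} (CV MSE {-fine.best_score_:.2f})")
```

If the coarse optimum sits at either end of the grid, extend the grid in that direction before refining, since the true optimum may lie outside the range searched.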

The Scientist's Toolkit: Research Reagent Solutions

| Reagent / Resource | Function in Regularization Tuning | Example / Notes |

|---|---|---|

| glmnet (R package) | Highly efficient package for fitting L1, L2, and Elastic Net regularized models. It includes built-in cross-validation for λ tuning. | The de facto standard for regularized regression in R; can handle both Gaussian (linear) and binomial (logistic) families [44] [43]. |

| scikit-learn (Python) | Provides modules Lasso, Ridge, and ElasticNet for linear models, and LogisticRegression with penalty argument for classification. | Use GridSearchCV or RandomizedSearchCV for automated hyperparameter tuning [6]. |

| Coordinate Descent Algorithm | The optimization solver used by glmnet and scikit-learn to efficiently compute the regularization path for L1 and L2 models. | Particularly effective for high-dimensional problems; solves by iteratively optimizing one parameter at a time [45]. |

| Validation Set / K-Fold CV | A mandatory methodological "reagent" for obtaining an unbiased estimate of model performance during tuning. | Prevents overfitting to the test set. K-fold CV is preferred for small datasets [47] [48]. |

| Bayesian Optimization | An advanced "reagent" for guided hyperparameter search, potentially more efficient than grid search for very complex tuning. | Can be implemented with libraries like scikit-optimize or BayesianOptimization in Python [47]. |

Troubleshooting Guides

Guide 1: Diagnosing Overfitting Despite Regularization

Problem: Your model shows a large gap between training and validation accuracy, even after adding L2 regularization or Dropout.

- Check Your Optimizer and Regularization Coupling: The interaction between your optimizer and regularization technique is crucial. If using Adam, ensure you are using AdamW, which correctly decouples weight decay from the gradient-based update. The standard Adam implementation in many libraries incorrectly ties L2 regularization to the adaptive learning rate, reducing its effectiveness [49].

- Verify Hyperparameters: Adaptive optimizers like Adam often require stronger regularization (e.g., a higher weight decay value) compared to SGD to achieve the same generalization effect [49]. Systematically tune your regularization strength when switching optimizers.

- Inspect the Training Curves: If the validation loss starts to increase while the training loss continues to decrease, it is a classic sign of overfitting. Consider applying early stopping at the point where the validation loss is at its minimum [50].
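
The early-stopping rule in the last item can be sketched in plain Python. The `patience` and `min_delta` parameters are common conventions assumed here, and the validation losses are made-up numbers for illustration.

```python
def early_stopping_epoch(val_losses, patience=3, min_delta=0.0):
    """Return (best_epoch, stop_epoch) for a sequence of per-epoch validation losses.

    Training stops once `patience` epochs have passed without the validation
    loss improving on the best value by more than `min_delta`.
    """
    best_loss = float("inf")
    best_epoch = -1
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss - min_delta:
            best_loss, best_epoch = loss, epoch      # new best: remember this checkpoint
        elif epoch - best_epoch >= patience:
            return best_epoch, epoch                 # patience exhausted: stop here
    return best_epoch, len(val_losses) - 1           # never triggered: ran to the end

# Validation loss falls, bottoms out at epoch 3, then rises (overfitting).
val_losses = [0.90, 0.70, 0.60, 0.55, 0.57, 0.58, 0.61, 0.65]
best_epoch, stop_epoch = early_stopping_epoch(val_losses, patience=3)
print(f"restore weights from epoch {best_epoch}; training stopped at epoch {stop_epoch}")
```

In a real training loop the model weights would be checkpointed at each new best epoch so they can be restored after stopping.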

Guide 2: Addressing Unstable or Diverging Training

Problem: Training loss oscillates wildly or diverges, especially when using adaptive learning rate optimizers.

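- Adjust Learning Rate and Decay Factors: For RMSprop, the decay factor (β, typically 0.9) controls the moving average of squared gradients. A value very close to 1.0 (e.g., 0.999) makes that average slow to react to changes in gradient magnitude, so the effective step size can be poorly matched to the current gradients and training can become unstable. If β has been raised above the default, try a lower value such as 0.9 or 0.95, and consider reducing the base learning rate as well [51] [52].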

- Apply Gradient Clipping: This is particularly useful for RNNs or very deep networks. It prevents exploding gradients by capping the gradient values before the parameter update [50].

- Use a Learning Rate Schedule: A static learning rate might be too high once the parameters are close to a minimum. Implement a schedule (e.g., exponential decay) to reduce the learning rate over time, helping the optimizer converge stably [53].
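
Two of the remedies above are simple enough to sketch in plain Python. The clipping variant shown rescales the whole gradient vector when its norm exceeds a threshold (clipping each value independently is another common variant), and the schedule is a standard exponential decay; the function names and numbers are illustrative assumptions.

```python
import math

def clip_by_global_norm(grads, max_norm):
    """Rescale the gradient vector so its L2 norm does not exceed max_norm."""
    norm = math.sqrt(sum(g * g for g in grads))
    if norm <= max_norm or norm == 0.0:
        return list(grads)                      # already within the limit
    scale = max_norm / norm
    return [g * scale for g in grads]           # direction kept, magnitude capped

def exponential_decay(lr0, decay_rate, step, decay_steps):
    """Learning rate after `step` updates: lr0 * decay_rate^(step / decay_steps)."""
    return lr0 * decay_rate ** (step / decay_steps)

clipped = clip_by_global_norm([3.0, 4.0], max_norm=1.0)   # original norm is 5
print("clipped gradient:", clipped)

for step in (0, 100, 200, 300):
    lr = exponential_decay(lr0=0.1, decay_rate=0.5, step=step, decay_steps=100)
    print(f"step {step:3d}: learning rate = {lr:.4f}")
```

Deep learning frameworks ship their own versions of both utilities; the sketch is only meant to show what they compute.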

Frequently Asked Questions (FAQs)

Q1: I am using Adam with L2 regularization, but my model is still overfitting. What is wrong?

A1: The core issue is likely that you are using the standard Adam optimizer instead of AdamW. In Adam, the L2 regularization term is folded into the gradient and is then rescaled by the adaptive, per-parameter learning rate. As a result, the effective strength of the penalty differs from parameter to parameter and depends on each parameter's gradient history. AdamW decouples the weight decay from the gradient update and applies it directly to the weights. This decoupled implementation has been shown to yield better generalization and is a truer form of weight decay [49]. Prefer AdamW if your framework supports it.
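
A deliberately contrived single-parameter example makes the difference visible. The sketch below assumes the standard Adam update with bias correction, takes one step from zero-initialized moment estimates, and sets the loss gradient to zero so that only the regularization acts; `adam_update` is a hypothetical helper, not a framework API.

```python
import math

def adam_update(w, grad, m, v, t, lr=0.1, beta1=0.9, beta2=0.999,
                eps=1e-8, l2=0.0, weight_decay=0.0):
    """One Adam step. `l2` is coupled (added to the gradient);
    `weight_decay` is decoupled (applied directly to the weight, as in AdamW)."""
    g = grad + l2 * w                           # coupled L2 passes through adaptive scaling
    m = beta1 * m + (1.0 - beta1) * g
    v = beta2 * v + (1.0 - beta2) * g * g
    m_hat = m / (1.0 - beta1 ** t)
    v_hat = v / (1.0 - beta2 ** t)
    w_new = w - lr * m_hat / (math.sqrt(v_hat) + eps) - lr * weight_decay * w
    return w_new, m, v

shrinkage = {}
for w0 in (1.0, 100.0):
    coupled, _, _ = adam_update(w0, 0.0, 0.0, 0.0, t=1, l2=0.01)
    decoupled, _, _ = adam_update(w0, 0.0, 0.0, 0.0, t=1, weight_decay=0.01)
    shrinkage[w0] = (w0 - coupled, w0 - decoupled)

for w0, (c, d) in shrinkage.items():
    print(f"w = {w0:5.1f}: Adam + L2 shrinks by {c:.4f}, AdamW shrinks by {d:.4f}")
```

With coupled L2 the adaptive normalization cancels the size of the penalty gradient, so both weights move by roughly the learning rate regardless of their magnitude or of the penalty strength. With decoupled decay each weight shrinks in proportion to its own value, which is the behavior weight decay is meant to have.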

Q2: When should I use SGD over adaptive optimizers like Adam or RMSprop?

A2: The choice can depend on your model and dataset. SGD with Momentum is often recommended when you can afford extensive hyperparameter tuning and have the computational budget to train for more epochs. It can sometimes reach a better final optimum, especially for convex problems or well-scaled data. Adaptive optimizers (Adam, RMSprop) are generally preferred for their faster convergence in the early stages, robustness to sparse gradients, and good performance on non-convex problems (like deep neural networks) with less tuning of the base learning rate [51] [52] [49]. For tasks like training RNNs, RMSprop and Adam are particularly useful [52].

Q3: How does Batch Normalization interact with weight regularization and optimizers?

A3: Batch Normalization (BatchNorm) helps to stabilize and accelerate training by normalizing the inputs to each layer, reducing internal covariate shift [54]. This has an indirect regularizing effect. Importantly, the scale and shift parameters in BatchNorm are affected by weight decay (L2 regularization). Applying too much weight decay to these parameters can counter their beneficial effect. Some practitioners choose to exclude BatchNorm parameters from weight decay. Regarding optimizers, BatchNorm's stabilization of activations allows for the use of higher learning rates, which can benefit all optimizer types [54].

Q4: What is the fundamental difference between L2 Regularization and Weight Decay?

A4: While mathematically equivalent for standard Stochastic Gradient Descent (SGD), they are not the same for optimizers with adaptive learning rates, like Adam [49].

- L2 Regularization: Adds a penalty term (wd * all_weights.pow(2).sum() / 2) directly to the loss function. The optimizer then calculates gradients from this combined loss.