Profile Likelihood for Uncertainty Quantification: A Practical Guide for Biomedical Research and Drug Discovery

Abstract

This article provides a comprehensive guide to profile likelihood for uncertainty quantification, tailored for researchers and professionals in drug discovery and biomedical science. It covers foundational concepts, from defining profile likelihood and its relation to maximum likelihood estimation, to its core mechanics for deriving confidence intervals and assessing parameter identifiability. The piece details methodological applications in computational modeling and pharmaceutical contexts, including handling censored data and ODE models. It also addresses troubleshooting for non-identifiability and optimization strategies, and validates the approach through comparisons with Bayesian and ensemble methods. The goal is to equip practitioners with the knowledge to reliably quantify uncertainty, thereby enhancing trust and decision-making in predictive models for clinical trials and molecular design.

What is Profile Likelihood? Core Concepts for Quantifying Uncertainty

In statistical inference, particularly in fields like systems biology and drug development, the likelihood function measures how well a statistical model explains observed data by calculating the probability of seeing that data under different parameter values [1]. For a model parameterized by θ = (δ,ξ), where δ represents parameters of interest and ξ represents nuisance parameters, the likelihood function for observed data y is denoted as L(θ;y) = L(δ,ξ;y) [2].

The profile likelihood provides a powerful method for dealing with nuisance parameters while making inferences about parameters of interest. It is defined as:

$$L_p(\delta) = \sup_{\xi} L(\delta,\xi;y)$$

This represents the maximum likelihood value achievable for a fixed value of the parameter of interest δ when the nuisance parameters ξ are optimized over their domain [2]. In practice, analysts often work with a normalized version:

$$R_p(\delta) = \frac{\sup_{\xi} L(\delta,\xi;y)}{\sup_{(\delta,\xi)} L(\delta,\xi;y)}$$

which is the profile likelihood ratio relative to the overall maximum likelihood [2].

Table: Key Components of Likelihood-Based Inference

| Component | Mathematical Representation | Interpretation |
|---|---|---|
| Likelihood Function | $L(\theta;y) = L(\delta,\xi;y)$ | Probability of data y given parameters θ |
| Profile Likelihood | $L_p(\delta) = \sup_{\xi} L(\delta,\xi;y)$ | Maximum likelihood for fixed δ |
| Profile Likelihood Ratio | $R_p(\delta) = \sup_{\xi} L(\delta,\xi;y) / \sup_{(\delta,\xi)} L(\delta,\xi;y)$ | Normalized profile likelihood |

Theoretical Foundation and Mathematical Framework

The theoretical justification for profile likelihood lies in its relationship with the χ² distribution. For a parameter of interest δ, the deviance statistic:

$$D(\delta) = -2 \log R_p(\delta)$$

follows approximately a χ² distribution with degrees of freedom equal to the dimension of δ [3]. This property enables the construction of confidence intervals through:

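$$\text{CR}_\theta = \left\{ \theta \mid \chi_{PL}^2(\theta) - \chi_{PL}^2(\hat{\theta}) < \delta_\alpha \right\}$$

where $\delta_\alpha$ is the α quantile of the χ² distribution with appropriate degrees of freedom (α here denotes the confidence level, for example 0.95), and χ² equals -2 log L up to an additive constant for normally distributed errors with known variances [3].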
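To make the threshold concrete, the short sketch below evaluates the χ² quantile for a few settings using `scipy.stats.chi2.ppf`. The helper name `deviance_threshold` and the chosen confidence levels are illustrative assumptions, not part of the cited work.

```python
from scipy.stats import chi2


def deviance_threshold(confidence_level, dof=1):
    """Chi-squared quantile used as the cutoff on the deviance."""
    return chi2.ppf(confidence_level, df=dof)


# One parameter of interest, 95% confidence: about 3.84
thr_95_1 = deviance_threshold(0.95, dof=1)
# Two parameters profiled jointly, 95% confidence: about 5.99
thr_95_2 = deviance_threshold(0.95, dof=2)
```

A parameter value belongs to the confidence region whenever its deviance lies below the relevant threshold.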

Profile likelihood effectively projects the full parameter space onto subspaces of interest, enabling tractable inference in high-dimensional problems. As noted by Royall [2000], the profile likelihood ratio performs satisfactorily despite being an "ad hoc" solution in the sense that true likelihoods are not being compared [3].

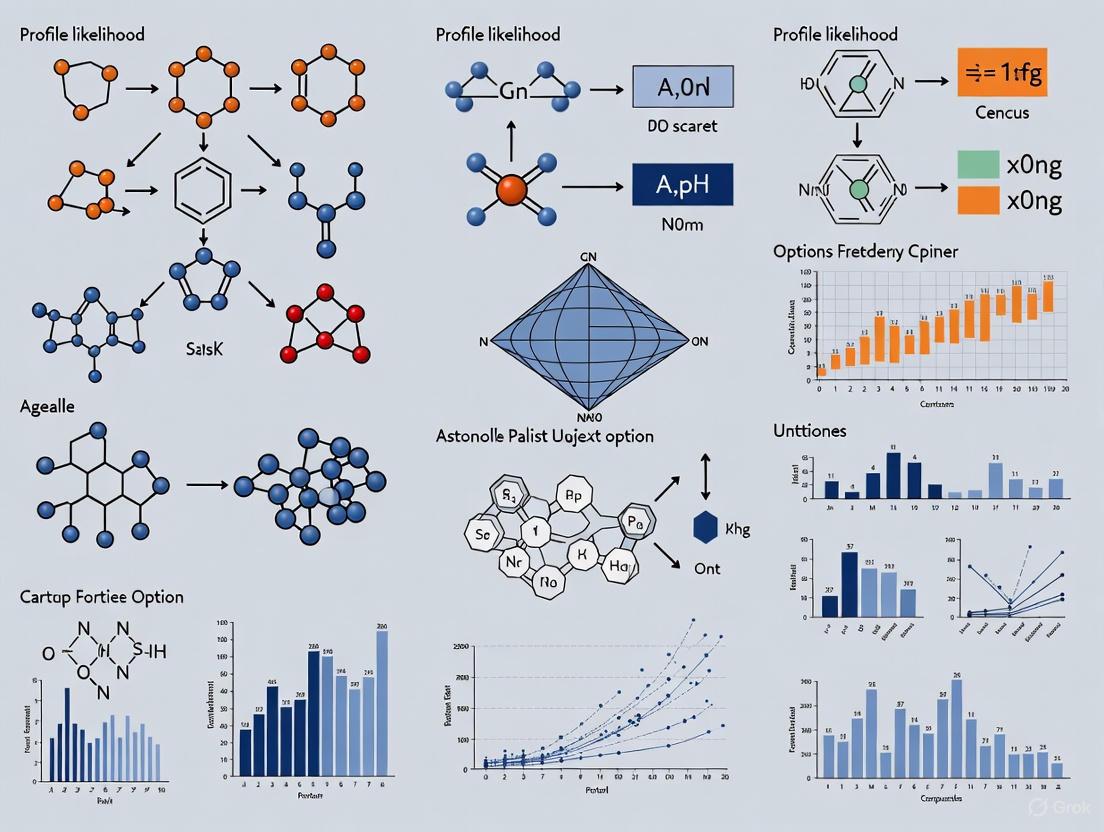

Figure 1: Profile Likelihood Workflow Logic - This diagram illustrates the conceptual process of deriving profile likelihood, where parameters of interest are fixed while nuisance parameters are optimized over.

Computational Methods and Implementation

Multiple computational approaches have been developed for calculating profile likelihoods, each with distinct strengths and applications.

Optimization-Based Profiling

The classical approach to profile likelihood calculation uses stepwise optimization, where the parameter of interest is fixed at various values across a defined range, and at each point, the likelihood is maximized with respect to all other parameters [4]. This method directly implements the mathematical definition of profile likelihood but can be computationally intensive for complex models.
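The stepwise procedure can be sketched in a few lines of Python. The example below is a minimal illustration under stated assumptions, not the implementation used in any package cited here: it assumes a toy exponential-decay model `y = A * exp(-k * t)` with known Gaussian noise, synthetic data, `k` as the parameter of interest, and `A` as the single nuisance parameter. All names (`neg_log_lik`, `profile_nll`, `k_grid`) are hypothetical.

```python
import numpy as np
from scipy.optimize import minimize

# Synthetic data from an assumed toy model (illustration only)
rng = np.random.default_rng(0)
t = np.linspace(0.0, 5.0, 20)
sigma = 0.1  # noise standard deviation, assumed known
y = 2.0 * np.exp(-0.7 * t) + rng.normal(0.0, sigma, t.size)


def neg_log_lik(A, k):
    """Negative log-likelihood up to an additive constant."""
    resid = y - A * np.exp(-k * t)
    return 0.5 * np.sum((resid / sigma) ** 2)


def profile_nll(k, A_start):
    """Fix k and re-optimize the nuisance parameter A."""
    res = minimize(lambda p: neg_log_lik(p[0], k), x0=[A_start], method="BFGS")
    return res.fun, res.x[0]


k_grid = np.linspace(0.4, 1.0, 61)
profile = []
A_start = 1.0
for k in k_grid:
    nll, A_hat = profile_nll(k, A_start)
    profile.append(nll)
    A_start = A_hat  # warm start the next grid point
profile = np.array(profile)

# Deviance relative to the best grid point, and a grid-based 95% interval
deviance = 2.0 * (profile - profile.min())
inside = k_grid[deviance <= 3.841]
ci = (inside.min(), inside.max())
```

Warm-starting each optimization from the previous solution is a common way to reduce cost, since neighbouring grid points usually have similar nuisance-parameter optima. The interval resolution here is limited by the grid spacing.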

Integration-Based Profiling

The integration-based approach computes likelihood profiles by solving a system of differential equations that describe how parameters evolve along the profile path [5] [4]. This method can be more efficient than stepwise optimization for certain model classes, particularly when implemented with adaptive ordinary differential equation (ODE) solvers.

Constrained Optimization Confidence Intervals

The CICOProfiler method estimates confidence interval endpoints directly through constrained optimization without restoring the full profile shape [5]. This approach is computationally efficient when only confidence bounds are needed rather than the complete profile curve.

Table: Comparison of Profile Likelihood Computation Methods

| Method | Key Principle | Advantages | Limitations |
|---|---|---|---|
| Optimization-Based | Stepwise re-optimization with fixed parameters | Direct implementation, general applicability | Computationally expensive |
| Integration-Based | Solving differential equation systems | Potentially faster for certain ODE models | Model-specific implementation |
| CICOProfiler | Constrained optimization for CI endpoints | Efficient for confidence interval estimation | Does not provide the full profile shape |

Research Reagent Solutions for Profile Likelihood Analysis

Table: Essential Software Tools for Profile Likelihood Implementation

| Tool/Platform | Function | Key Features |
|---|---|---|
| LikelihoodProfiler.jl | Unified package for practical identifiability | Multiple profiling methods, SciML compatibility [5] |
| ProfileLikelihood.jl | Fixed-step optimization-based profiles | Bivariate profile likelihood support [5] |
| InformationalGeometry.jl | Differential geometry approaches | Various methods to study likelihood functions [5] |
| Optimization.jl | Core optimization interface | Multiple optimizer support, automatic differentiation [5] |
| OrdinaryDiffEq.jl | Differential equations solver | Integration-based profiling support [5] |

Performance Comparison and Experimental Data

Statistical Performance in Ordered Categorical Data

A comparative study of profile-likelihood-based confidence intervals for two-sample problems in ordered categorical data revealed important performance characteristics [6]. The researchers compared actual type I error rates (or 1 - coverage probability) of various rank-based methods, including profile likelihood, at the relative effect of 50%.

The study found that in large or medium samples, actual type I error rates of the profile-likelihood method and the Brunner-Munzel test were close to the nominal level even under unequal distributions [6]. In contrast, the Wilcoxon-Mann-Whitney test showed substantially different error rates from the nominal level under unequal distributions, particularly with unequal sample sizes.

In small samples, the profile likelihood method was slightly anti-conservative, with actual type I error rates slightly larger than the nominal level, though it still performed better than some alternatives [6].

Computational Efficiency in Biological Models

The computational efficiency of LikelihoodProfiler.jl has been tested on multiple benchmark models, demonstrating its applicability to complex systems biology and quantitative systems pharmacology (QSP) models [4]. The package leverages the Julia SciML ecosystem, providing access to various optimizers, differential equation solvers, and automatic differentiation backends.

Figure 2: Uncertainty Quantification Workflow - This diagram shows the role of profile likelihood in the broader context of uncertainty quantification for mechanistic models.

Applications in Uncertainty Quantification and Systems Biology

Profile likelihood plays a crucial role in practical identifiability analysis for complex biological models. In mathematical biology, developing mechanistic insight by combining models with experimental data requires assessing whether model parameters can be reliably estimated from available data [7].

The profile likelihood approach is particularly valuable for quantifying uncertainty in parameters, model states, and predictions [4]. It provides several advantages over Fisher Information Matrix (FIM)-based approaches: profile likelihood-based confidence intervals can be asymmetric, are invariant under parameter transformations, and are more reliable for nonlinear models [3].

In optimal experimental design, profile likelihood helps identify the most informative targets and time points for new measurements by examining model trajectories along parameter profiles [3]. Parameters with flat profiles indicate practical non-identifiability, suggesting where additional data collection would be most beneficial.

The emerging Profile-Wise Analysis (PWA) framework uses profile likelihood to propagate uncertainty from parameters to predictions, creating profile-wise prediction intervals that isolate how different parameter combinations affect model predictions [7]. This approach provides fully "curvewise" predictive confidence sets that trap the entire model trajectory with the specified confidence level, offering stronger guarantees than pointwise intervals.

Profile likelihood represents a powerful statistical methodology that bridges theoretical likelihood principles with practical implementation needs in computational biology and drug development. Its ability to handle nuisance parameters while providing reliable confidence intervals makes it particularly valuable for complex mechanistic models where traditional methods fail.

The continuing development of computational frameworks like LikelihoodProfiler.jl and Profile-Wise Analysis demonstrates the evolving nature of profile likelihood methods, with increasing emphasis on computational efficiency, uncertainty propagation, and integration with biological modeling workflows. As mathematical models grow more complex and central to drug development decisions, profile likelihood will remain an essential tool for quantifying and managing uncertainty in parameter estimation and model predictions.

Maximum Likelihood Estimation (MLE) and Chi-Squared statistics form a cornerstone of modern statistical inference, with deep theoretical connections that underpin many advanced methodologies, including profile likelihood for uncertainty quantification. MLE is a method for estimating parameters of an assumed probability distribution, given some observed data, achieved by maximizing a likelihood function so that under the assumed statistical model, the observed data is most probable [8]. The fundamental goal is to find the parameter values that make the observed data most likely, providing a principled approach to parameter estimation that reveals connections between different statistical paradigms.

The integration of these methods is particularly relevant in uncertainty quantification research, where profile likelihood has emerged as a powerful frequentist approach for identifiability analysis, parameter estimation, and prediction confidence sets [7]. Profile likelihood methods enable the propagation of likelihood-based confidence sets for parameters to predictions, systematically isolating how different parameter combinations affect model outputs. This workflow provides a computationally efficient alternative to Bayesian methods while maintaining rigorous frequentist coverage properties, making it particularly valuable for researchers, scientists, and drug development professionals working with complex mechanistic models.

Mathematical Foundations and Theoretical Connections

Maximum Likelihood Estimation: Core Principles

For a random sample $X_1, X_2, \cdots, X_n$ from a distribution with probability density (or mass) function $f(x_i;\theta)$, the likelihood function is defined as the joint probability of the observed data viewed as a function of the parameter $\theta$:

$$L(\theta) = \prod_{i=1}^n f(x_i;\theta)$$

The maximum likelihood estimate $\hat{\theta}$ is the value that maximizes this function [9]:

$$\hat{\theta} = \underset{\theta}{\operatorname{arg\,max}} \, L(\theta)$$

In practice, we often work with the log-likelihood $\ell(\theta) = \log L(\theta) = \sum_{i=1}^n \log f(x_i;\theta)$, as the logarithm is a monotonic function that simplifies calculations by converting products into sums [8] [9]. The score function, defined as the derivative of the log-likelihood, provides the slope information used in optimization:

$$g'(\theta) = \frac{\partial \ell(\theta)}{\partial \theta}$$

The MLE is found by solving the score equation $g'(\theta) = 0$, which represents the point where the slope of the log-likelihood function is zero in all directions [10].
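As a small worked case, consider an exponential model with rate θ, for which the score equation has the closed-form solution θ̂ = 1 / (sample mean). The sketch below checks this against a numerical maximization; the data values and function names are made up for illustration.

```python
import numpy as np
from scipy.optimize import minimize_scalar

# Hypothetical waiting-time data, assumed exponentially distributed
x = np.array([0.8, 1.9, 0.4, 2.7, 1.2, 0.6, 3.1, 0.9])


def log_lik(rate):
    """Exponential log-likelihood: n * log(rate) - rate * sum(x)."""
    return x.size * np.log(rate) - rate * np.sum(x)


def score(rate):
    """Derivative of the log-likelihood with respect to the rate."""
    return x.size / rate - np.sum(x)


# Closed-form root of the score equation
rate_closed_form = 1.0 / np.mean(x)

# Numerical maximization of the log-likelihood over a bounded interval
res = minimize_scalar(lambda r: -log_lik(r), bounds=(1e-6, 10.0), method="bounded")
rate_numeric = res.x
```

Both routes should agree to within the optimizer tolerance, and the score evaluated at the closed-form estimate is zero up to rounding.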

Chi-Squared Statistics and Hypothesis Testing

Chi-squared tests are statistical hypothesis tests used primarily for analyzing contingency tables and assessing goodness-of-fit. Pearson's chi-squared test statistic is calculated as [11]:

$$X^2 = \sum_{i=1}^k \frac{(O_i - E_i)^2}{E_i}$$

where $O_i$ represents observed frequencies and $E_i$ represents expected frequencies under the null hypothesis. This test determines whether there is a statistically significant difference between observed and expected frequencies, with the test statistic following a $\chi^2$ distribution under the null hypothesis.
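The statistic is simple to compute directly. The following sketch uses hypothetical counts in five categories with equal expected frequencies and compares a manual calculation with `scipy.stats.chisquare`.

```python
import numpy as np
from scipy.stats import chisquare

# Hypothetical observed counts; the null hypothesis assumes equal frequencies
observed = np.array([18, 22, 20, 25, 15])
expected = np.full(observed.size, observed.sum() / observed.size)

# Manual Pearson statistic: sum of (O - E)^2 / E
x2_manual = np.sum((observed - expected) ** 2 / expected)

# Same statistic and its p-value (k - 1 = 4 degrees of freedom)
x2_scipy, p_value = chisquare(observed, f_exp=expected)
```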

The Wilks' Theorem Connection

The profound connection between MLE and chi-squared statistics is formally established by Wilks' Theorem, which states that for nested models, the likelihood ratio test statistic follows a chi-squared distribution asymptotically [12]. Consider testing the null hypothesis $H_0: \theta \in \Theta_0$ against the alternative $H_1: \theta \in \Theta$. The likelihood ratio test statistic is defined as:

$$\lambda_{\text{LR}} = -2 \ln \left[ \frac{\sup_{\theta \in \Theta_0} L(\theta)}{\sup_{\theta \in \Theta} L(\theta)} \right] = -2[\ell(\hat{\theta}_0) - \ell(\hat{\theta})]$$

where $\hat{\theta}_0$ maximizes the likelihood over $\Theta_0$ and $\hat{\theta}$ maximizes it over $\Theta$.

Under the null hypothesis and regularity conditions, Wilks' Theorem establishes that as the sample size approaches infinity:

$$\lambda_{\text{LR}} \sim \chi_d^2$$

where $d$ is the difference in dimensionality between the full parameter space $\Theta$ and the restricted space $\Theta_0$ [12]. This result provides the crucial bridge between likelihood-based methods and the well-established chi-squared distribution, enabling rigorous hypothesis testing within the likelihood framework.
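A minimal sketch of a likelihood ratio test is given below. It assumes a normal model with unknown variance and tests whether the mean is zero, so the restricted and full models differ by one parameter (d = 1). The synthetic data and the helper `max_log_lik` are illustrative assumptions.

```python
import numpy as np
from scipy.stats import chi2

# Synthetic data (illustration only)
rng = np.random.default_rng(1)
y = rng.normal(loc=0.3, scale=1.0, size=50)


def max_log_lik(y, mu=None):
    """Maximized normal log-likelihood; mu is fixed if given, else estimated."""
    mu_hat = np.mean(y) if mu is None else mu
    var_hat = np.mean((y - mu_hat) ** 2)  # MLE of the variance for this mu
    return -0.5 * y.size * (np.log(2.0 * np.pi * var_hat) + 1.0)


# Likelihood ratio statistic: restricted model (mu = 0) versus full model
lam = -2.0 * (max_log_lik(y, mu=0.0) - max_log_lik(y))

# Asymptotic p-value from the chi-squared distribution with d = 1
p_value = chi2.sf(lam, df=1)
```

For this model the statistic reduces to n log(σ̂₀² / σ̂²), where σ̂₀² and σ̂² are the variance estimates under the restricted and full models.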

Table 1: Key Mathematical Relationships Between MLE and Chi-Squared Statistics

| Concept | Mathematical Expression | Role in Connecting MLE and χ² |
|---|---|---|
| Likelihood Function | $L(\theta) = \prod_{i=1}^n f(x_i;\theta)$ | Foundation for both estimation and testing |
| Log-Likelihood Ratio | $\lambda_{\text{LR}} = -2[\ell(\hat{\theta}_0) - \ell(\hat{\theta})]$ | Test statistic with known asymptotic distribution |
| Score Function | $g'(\theta) = \frac{\partial \ell(\theta)}{\partial \theta}$ | Determines MLE through solving score equations |
| Pearson Chi-Squared | $X^2 = \sum_{i=1}^k \frac{(O_i - E_i)^2}{E_i}$ | Measures discrepancy between observed and expected |
| Wilks' Theorem | $\lambda_{\text{LR}} \xrightarrow{d} \chi_d^2$ | Establishes asymptotic equivalence between LRT and χ² |

Figure 1: Theoretical relationships between MLE, likelihood ratio tests, chi-squared distributions, and profile likelihood methods for uncertainty quantification.

Profile Likelihood for Uncertainty Quantification

Foundations of Profile Likelihood

Profile likelihood provides a computationally efficient method for quantifying uncertainty in complex models, particularly those with multiple parameters. In this framework, we partition the parameter vector $\theta = (\psi, \lambda)$ into interest parameters $\psi$ and nuisance parameters $\lambda$. The profile likelihood for $\psi$ is defined as [7]:

$$L_p(\psi) = \max_\lambda L(\psi, \lambda)$$

This construction eliminates nuisance parameters by maximizing over them for each fixed value of the interest parameters. The corresponding profile log-likelihood is:

$$\ell_p(\psi) = \ln L_p(\psi)$$

The uncertainty in the interest parameters $\psi$ can then be quantified using the likelihood ratio test and its connection to the chi-squared distribution. Specifically, an approximate $100(1-\alpha)\%$ confidence set for $\psi$ is given by [7]:

$$\left\{\psi : 2[\ell(\hat{\psi}, \hat{\lambda}) - \ell_p(\psi)] \leq \chi_{1,1-\alpha}^2\right\}$$

where $\chi_{1,1-\alpha}^2$ is the $(1-\alpha)$ quantile of the chi-squared distribution with 1 degree of freedom, which is the appropriate choice when $\psi$ is a scalar.
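The confidence set can be computed by locating where the profile deviance crosses the chi-squared threshold. The sketch below assumes a normal model in which the mean is the interest parameter and the variance is the nuisance parameter, so the inner maximization has a closed form; the data are hypothetical and the endpoints are found by root-finding with `scipy.optimize.brentq`.

```python
import numpy as np
from scipy.optimize import brentq
from scipy.stats import chi2

# Hypothetical measurements, assumed i.i.d. normal
y = np.array([4.9, 5.6, 5.1, 4.4, 5.8, 5.3, 4.7, 5.2, 5.0, 5.5])
n = y.size
mu_hat = y.mean()
var_hat = np.mean((y - mu_hat) ** 2)  # joint MLE of the variance


def profile_deviance(mu):
    """2 * [l(mu_hat, var_hat) - l_p(mu)] with the variance profiled out."""
    var_mu = np.mean((y - mu) ** 2)  # variance that maximizes L for this mu
    return n * np.log(var_mu / var_hat)


threshold = chi2.ppf(0.95, df=1)


def crossing(mu):
    return profile_deviance(mu) - threshold


# Bracket each endpoint between the MLE and a point far out in the tail
span = 10.0 * np.sqrt(var_hat)
lower = brentq(crossing, mu_hat - span, mu_hat)
upper = brentq(crossing, mu_hat, mu_hat + span)
```

In models without a closed-form inner maximization, `profile_deviance` would call a numerical optimizer over the nuisance parameters instead.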

Uncertainty Decomposition in Profile Likelihood

In practical applications, understanding the decomposition of total uncertainty into statistical and systematic components is essential. In the covariance representation, the total uncertainty combines these components [13]:

$$\sigma_i^2 = \sigma_{\text{stat},i}^2 + \sigma_{\text{syst},i}^2$$

When combining measurements using profile likelihood methods, the weights $\lambda_i$ that minimize the variance in the combined result account for all uncertainty sources [13]:

$$m_\text{cmb} = \sum_i \lambda_i m_i, \quad \sigma_\text{cmb}^2 = \sum_i \lambda_i^2 \sigma_i^2$$

with corresponding statistical and systematic contributions:

$$\sigma_\text{stat,cmb}^2 = \sum_i \lambda_i^2 \sigma_{\text{stat},i}^2, \quad \sigma_\text{syst,cmb}^2 = \sum_i \lambda_i^2 \sigma_{\text{syst},i}^2$$

This decomposition enables researchers to identify dominant sources of uncertainty and prioritize efforts for reduction.
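For intuition, the weights have a simple closed form in the special case of uncorrelated measurements. This is an assumption made here for illustration; correlated systematic uncertainties require the full covariance matrix. Minimizing $\sum_i \lambda_i^2 \sigma_i^2$ subject to $\sum_i \lambda_i = 1$ with a Lagrange multiplier $\mu$ gives $\lambda_i \sigma_i^2 = \mu$ for every $i$, and therefore:

$$\lambda_i = \frac{\sigma_i^{-2}}{\sum_j \sigma_j^{-2}}, \quad \sigma_\text{cmb}^2 = \sum_i \lambda_i^2 \sigma_i^2 = \frac{1}{\sum_j \sigma_j^{-2}}$$

Because $\sigma_i^2 = \sigma_{\text{stat},i}^2 + \sigma_{\text{syst},i}^2$, the statistical and systematic contributions defined above add up exactly to $\sigma_\text{cmb}^2$.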

Table 2: Profile Likelihood Workflow for Uncertainty Quantification (Adapted from PWA [7])

| Workflow Step | Methodological Approach | Connection to MLE and χ² |
|---|---|---|
| Model Specification | Define mechanistic model with parameters θ and probability model p(y;θ) | Forms foundation for likelihood function |
| Parameter Estimation | Maximize likelihood function to obtain MLE $\hat{\theta}$ | Direct application of MLE principles |
| Identifiability Analysis | Calculate profile likelihood for parameters | Uses LRT and χ² distribution for confidence intervals |
| Uncertainty Propagation | Construct profile-wise prediction intervals | Propagates parameter confidence sets to predictions |
| Result Combination | Decompose uncertainties using BLUE or nuisance parameters | Applies χ²-based weighting schemes |

Experimental Protocols and Applications

Experimental Framework in High-Energy Physics

The application of profile likelihood with MLE and chi-squared statistics is well-established in high-energy physics, particularly at facilities like the LHC. A typical analysis involves constructing a likelihood function that incorporates both statistical uncertainties from data and systematic uncertainties through nuisance parameters [13]:

$$-2\ln \mathscr{L} = \sum_{i} \left(\frac{m_i+\sum_r (\alpha_r - a_r) \Gamma_{ir} - m_\text{H}}{\sigma_{\text{stat},i}}\right)^2 + \sum_r (\alpha_r - a_r)^2$$

Here, $m_i$ represents measurements, $m_\text{H}$ is the parameter of interest (e.g., Higgs boson mass), $\alpha_r$ are nuisance parameters corresponding to systematic uncertainty sources, $a_r$ are constraint terms, and $\Gamma_{ir}$ quantifies how systematic uncertainty $r$ affects measurement $i$.
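A simplified special case, worked out here for illustration rather than taken from [13], shows what profiling does to this likelihood. Take a single measurement $m$ with one systematic source, and write $u = \alpha - a$ and $r = m - m_\text{H}$:

$$-2\ln \mathscr{L} = \frac{(r + u\Gamma)^2}{\sigma_\text{stat}^2} + u^2$$

Setting the derivative with respect to $u$ to zero gives the profiled value of the nuisance parameter:

$$\hat{u} = -\frac{\Gamma r}{\sigma_\text{stat}^2 + \Gamma^2}$$

Substituting back yields:

$$-2\ln \mathscr{L}_p = \frac{(m - m_\text{H})^2}{\sigma_\text{stat}^2 + \Gamma^2}$$

In this case, profiling out the nuisance parameter is equivalent to adding the systematic uncertainty $\Gamma$ in quadrature to the statistical uncertainty, consistent with the decomposition $\sigma^2 = \sigma_\text{stat}^2 + \sigma_\text{syst}^2$.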

Case Study: Higgs Boson Mass Measurement

Consider the first ATLAS Run 2 measurement of the Higgs boson mass in the $H\rightarrow \gamma \gamma$ and $H\rightarrow 4\ell$ final states [13]. The measurements were:

- $m_{\gamma \gamma} = 124.93 \pm 0.40\ (\pm 0.21 \text{ (stat)} \pm 0.34 \text{ (syst)})$ GeV

- $m_{4\ell} = 124.79 \pm 0.37\ (\pm 0.36 \text{ (stat)} \pm 0.09 \text{ (syst)})$ GeV

The combination using profile likelihood methods accounted for the different statistical and systematic uncertainty balances in each channel. The $\gamma \gamma$ channel benefited from a large data sample but had significant systematic uncertainties from photon energy calibration, while the $4\ell$ channel had a smaller data sample but small calibration-related systematic uncertainties. The profile likelihood approach properly weighted these contributions according to their uncertainties, demonstrating the practical application of the theoretical foundations.
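The weighting can be illustrated numerically with the two measurements quoted above. The sketch below is a simplified inverse-variance combination that treats the two channels as uncorrelated. That is an assumption made here for illustration, not the procedure used in the ATLAS analysis, so its output should not be read as the published combined result.

```python
import numpy as np

# Values quoted above, in GeV: [gamma-gamma channel, four-lepton channel]
values = np.array([124.93, 124.79])
stat = np.array([0.21, 0.36])
syst = np.array([0.34, 0.09])

# Total variance per channel: statistical and systematic parts in quadrature
total_var = stat ** 2 + syst ** 2

# Inverse-variance weights (ASSUMPTION: no correlation between channels)
weights = (1.0 / total_var) / np.sum(1.0 / total_var)

# Combined value and its uncertainty decomposition
m_cmb = np.sum(weights * values)
sigma_cmb = np.sqrt(np.sum(weights ** 2 * total_var))
sigma_stat_cmb = np.sqrt(np.sum(weights ** 2 * stat ** 2))
sigma_syst_cmb = np.sqrt(np.sum(weights ** 2 * syst ** 2))
```

Under this simplification the four-lepton channel receives the slightly larger weight because its total uncertainty is smaller, even though its statistical uncertainty is larger.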

Figure 2: Experimental workflow for profile likelihood analysis, showing the integration of MLE and chi-squared calibration for uncertainty quantification.

Research Reagent Solutions: Computational Tools

Table 3: Essential Computational Tools for Profile Likelihood Analysis

| Tool Category | Specific Examples | Function in MLE and χ² Analysis |
|---|---|---|
| Optimization Algorithms | Gradient-based methods (BFGS, Newton-Raphson), EM algorithm | Solve score equations to find MLE |
| Statistical Software | R, Python (SciPy, statsmodels), specialized HEP tools | Implement profile likelihood and calculate LRT |
| Uncertainty Propagation | Profile-wise analysis (PWA), bootstrap methods | Propagate parameter uncertainties to predictions |
| Visualization Tools | Likelihood surface plotters, confidence interval visualizers | Display profile likelihood functions and confidence sets |
| Model Validation | Goodness-of-fit tests, residual analysis, Q-Q plots | Assess model adequacy using χ² tests |

Comparative Performance Analysis

Methodological Comparisons

The performance of profile likelihood methods can be evaluated against alternative approaches for uncertainty quantification. Recent research has established that profile likelihood provides a computationally efficient middle ground between simplistic linearization methods and computationally expensive full Bayesian approaches [7].

Table 4: Performance Comparison of Uncertainty Quantification Methods

| Method | Computational Efficiency | Statistical Rigor | Implementation Complexity |
|---|---|---|---|
| Linearization (Fisher Information) | High | Low (for nonlinear models) | Low |
| Profile Likelihood | Medium | High | Medium |
| Full Bayesian (MCMC) | Low | High | High |
| Bootstrap Methods | Low to Medium | Medium | Medium |

Impact on Experimental Outcomes

In practical applications, the choice between MLE with chi-squared calibration and alternative methods significantly impacts experimental conclusions. In the Higgs boson mass combination example [13], the profile likelihood approach properly accounted for the different statistical and systematic uncertainty balances between channels, producing a combined result that appropriately weighted each measurement according to its precision. This approach outperformed simplistic combination methods that might overemphasize measurements with apparently small total uncertainty but large systematic components.

The profile-wise analysis (PWA) workflow has demonstrated particular value in mathematical biology and systems pharmacology, where it enables parameter identifiability analysis, estimation, and prediction within a unified framework [7]. By propagating profile-likelihood-based confidence sets for parameters to predictions, PWA explicitly isolates how different parameter combinations affect model predictions, providing insights that are obscured in other methods.

The deep mathematical connections between Maximum Likelihood Estimation and Chi-Squared statistics, formalized through Wilks' Theorem, provide a robust foundation for modern uncertainty quantification methods. Profile likelihood builds upon this foundation, offering a computationally efficient framework for quantifying uncertainty in complex models with multiple parameters. The theoretical equivalence between likelihood ratio tests and chi-squared statistics enables rigorous frequentist inference with well-calibrated error rates.

For researchers, scientists, and drug development professionals, these methods offer powerful tools for parameter estimation, hypothesis testing, and prediction interval construction. The continued development of profile likelihood methodologies, particularly in emerging areas like profile-wise analysis, ensures that these foundational statistical principles remain relevant for addressing contemporary challenges in scientific inference and uncertainty quantification.

In scientific research, particularly in fields like medical imaging and systems biology, accurately estimating key parameters of interest is often complicated by the presence of nuisance parameters—unwanted variables that influence the data but are not the primary focus of investigation. Nuisance parameters pose a significant challenge to the reliability and interpretability of computational models [14]. These can include unknown target range in radar systems, background interference in medical images, or unmeasured biological variables in drug development studies [15] [16]. Profiling provides a powerful statistical framework to address this challenge by systematically scanning parameter spaces to isolate parameters of interest while accounting for the uncertainty introduced by nuisance parameters.

The core mechanics of profiling involve exploring the likelihood function of a statistical model, where nuisance parameters are optimized out at each candidate value of the parameters of interest. This process, known as profile likelihood, creates a reduced dimensional space that enables focused inference on target parameters [15]. Royall (2000) recommends the profile likelihood ratio as a general solution for dealing with nuisance parameters, noting that while it represents an ad hoc solution where true likelihoods are not directly compared, its performance remains very satisfactory for practical applications [15]. This approach has proven particularly valuable in magnetic resonance imaging (MRI) relaxometry, biological system modeling, and signal processing, where it enables researchers to extract meaningful information from complex, noisy data environments.

Theoretical Foundations of Parameter Profiling

The Mathematical Framework of Profile Likelihood

The profile likelihood approach operates on a general statistical model where experimental data, denoted as $y$, is described as a function $f$ of interesting parameters $x$, nuisance parameters $\nu$, and experimental design parameters $p$, with added measurement noise $\varepsilon$: $y = f(x; \nu, p) + \varepsilon$ [17]. The foundational work builds upon Fisher information theory and Cramér-Rao Bound (CRB) optimization to create a min-max framework that robustly enables precise parameter estimation even in the presence of nuisance variables [17].

The profile likelihood method effectively reduces the dimensionality of the parameter estimation problem by "profiling out" nuisance parameters. For a given parameter of interest $\theta$, the profile likelihood $L_p(\theta)$ is obtained by maximizing the full likelihood $L(\theta,\nu)$ over the nuisance parameters $\nu$: $L_p(\theta) = \max_\nu L(\theta,\nu)$. This transformation allows researchers to work with a function that depends only on the parameters of interest, while still accounting for the uncertainty in the nuisance parameters through the optimization process [15]. The resulting profile likelihood ratio, which compares the profile likelihood to the maximum achievable likelihood, serves as a test statistic for hypothesis testing and confidence interval construction for the parameters of interest.

Cramér-Rao Bound Optimization in Scan Design

In the context of MR scan design for parameter mapping, the Cramér-Rao Bound provides a theoretical lower bound on the variance of any unbiased estimator [17]. This statistical measure enables researchers to optimize scan parameters—such as flip angles and repetition times—for precise T1 and T2 estimation in the presence of nuisance parameters like radiofrequency field inhomogeneities [17]. The CRB-inspired min-max optimization finds scan parameter combinations that minimize the worst-case variance of parameter estimates across a defined range of biological conditions, ensuring robust performance in practical applications.

The Fisher information matrix $I(x(r); \nu(r), P)$ plays a central role in this framework, quantifying how much information the observed data carries about the parameters of interest [17]. When nuisance parameters are present, the matrix inversion needed to compute the CRB must appropriately account for their influence, typically through partitioning or marginalization strategies. The profile likelihood approach naturally handles this challenge by concentrating the nuisance parameters out of the estimation problem, creating a direct path to inference on the parameters of interest.
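The effect of a nuisance parameter on the bound can be seen in a two-parameter sketch. The Fisher information values below are hypothetical numbers chosen for illustration. The point is that the CRB for the parameter of interest is the corresponding diagonal entry of the inverse matrix, which is never smaller than the bound obtained when the nuisance parameter is known.

```python
import numpy as np

# Hypothetical 2x2 Fisher information matrix:
# index 0 = parameter of interest, index 1 = nuisance parameter
fisher = np.array([[4.0, 1.5],
                   [1.5, 1.0]])

# CRB with the nuisance parameter unknown: diagonal entry of the inverse
crb_full = np.linalg.inv(fisher)
crb_interest_with_nuisance = crb_full[0, 0]

# CRB if the nuisance parameter were known exactly
crb_interest_known_nuisance = 1.0 / fisher[0, 0]

# Equivalent partitioned form: inverse of the Schur complement
schur = fisher[0, 0] - fisher[0, 1] ** 2 / fisher[1, 1]
```

The gap between the two bounds grows with the off-diagonal term, which measures how strongly the nuisance parameter is confounded with the parameter of interest.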

Comparative Analysis of Parameter Estimation Methods

Performance Comparison of Estimation Techniques

Table 1: Comparison of parameter estimation methods for handling nuisance parameters

| Method | Mechanism | Computational Demand | Accuracy with Nuisance Parameters | Primary Applications |
|---|---|---|---|---|
| Profile Likelihood | Scans parameters of interest while optimizing nuisance parameters | Moderate | High with sufficient data | Generalized linear models, MR relaxometry [17] [15] |
| Wiener Estimation | Linear operation minimizing ensemble mean-squared error [16] | Low | Limited for location estimation [16] | Signal processing, image analysis |
| Scanning-Linear Estimation | Seeks global maximum via linear metric optimization [16] | Moderate to High | High for location parameters | Target localization, noisy image environments [16] |
| Marginal/Conditional Likelihood | Integrates out nuisance parameters [15] | Variable | High when tractable | Specialized statistical models |
| Generalized Likelihood Ratio Test | Uses maximum likelihood estimates of nuisance parameters [15] | Moderate | Good with accurate estimation | Signal detection, radar systems |

Practical Performance Metrics

Table 2: Empirical performance characteristics across methodologies

| Method | Bias Control | Variance Handling | Robustness to Model Misspecification | Implementation Complexity |
|---|---|---|---|---|
| Profile Likelihood | Low bias with correct model | Efficient variance estimation | Moderate | Medium |
| Wiener Estimation | Low in linear Gaussian settings [16] | Optimal for Gaussian noise [16] | Low | Low |
| Scanning-Linear Estimation | Low for amplitude and shape [16] | Good with proper covariance [16] | Moderate with Gaussian assumption [16] | Medium to High |
| Posterior Mean (MCMC) | Theoretically optimal [16] | Full Bayesian accounting | High with flexible models | Very High |

The profile likelihood method demonstrates particular strength in maintaining calibration across diverse scenarios. As highlighted in recent uncertainty quantification research, properly calibrated predictions can be reliably interpreted as probabilities, and a calibration measure is called truthful when a predictor minimizes it by outputting the true probabilities, so that predictors are not incentivized to distort their outputs merely to appear more calibrated [18]. This property makes profiling particularly valuable in drug development contexts where accurate uncertainty quantification is essential for regulatory decision-making.

Experimental Protocols and Methodologies

Profile Likelihood Implementation Workflow

The experimental implementation of profile likelihood methods follows a systematic workflow designed to ensure robust parameter estimation. The process begins with model specification, where researchers define the full statistical model including both parameters of interest and nuisance parameters. This is followed by data collection using optimized experimental designs that maximize information content for the parameters of interest while controlling for nuisance factors [17]. The core profiling procedure then iterates through candidate values of the parameters of interest, at each point optimizing the likelihood over nuisance parameters to construct the profile function.

For MR relaxometry applications, this typically involves acquiring multiple scans with varied acquisition parameters (flip angles, repetition times) to enhance sensitivity to T1 and T2 values while accounting for nuisance parameters like RF inhomogeneity [17]. The profile likelihood is then computed by fixing candidate T1 and T2 values and optimizing over the nuisance parameters, creating a 2D profile surface that can be used for point estimation and uncertainty quantification. This approach has been shown to yield excellent agreement with reference measurements in phantom studies while providing practical advantages for in vivo applications [17].

Handling Method Failure in Comparative Studies

An essential consideration in experimental implementation is the proper handling of method failure, which occurs when an estimation method fails to produce output for some data sets [19]. In comparative studies of parameter estimation methods, researchers often encounter failures manifesting as error messages, system crashes, or excessive computation times. The prevalent approaches of discarding affected data sets or imputing values are generally inappropriate as they can introduce significant bias, particularly when failure is correlated with data characteristics [19].

Instead, recommended practice involves implementing fallback strategies that reflect how real-world users would proceed when a method fails [19]. This includes documenting failure rates as performance metrics themselves, as they provide valuable information about method robustness. For profile likelihood methods specifically, implementation should include safeguards against convergence failures in the optimization steps, potentially employing multiple starting points or alternative optimization algorithms when the primary method fails.
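The safeguards described above can be sketched as a multi-start optimizer with a fallback algorithm that also reports how many runs failed. This is a minimal illustration, assuming SciPy is available; `robust_minimize` and the toy objective `toy_nll` are hypothetical names introduced here, not part of any cited method.

```python
import numpy as np
from scipy.optimize import minimize


def robust_minimize(objective, starts, methods=("L-BFGS-B", "Nelder-Mead")):
    """Run the primary method from every start; use later methods only if it never succeeds.

    Returns the best converged result (or None) and the number of failed runs.
    """
    best, failures = None, 0
    for method in methods:
        for x0 in starts:
            try:
                res = minimize(objective, x0, method=method)
            except (ValueError, FloatingPointError):
                failures += 1
                continue
            if not res.success or not np.isfinite(res.fun):
                failures += 1
                continue
            if best is None or res.fun < best.fun:
                best = res
        if best is not None:
            break  # this method converged from at least one start; skip the fallbacks
    return best, failures


# Toy objective with two local minima (hypothetical stand-in for a negative log-likelihood).
def toy_nll(x):
    return (x[0] ** 2 - 1.0) ** 2 + 0.3 * x[0]


starts = [np.array([s]) for s in np.linspace(-2.0, 2.0, 8)]
best, n_failed = robust_minimize(toy_nll, starts)
print("best x:", best.x, "objective:", best.fun, "failed runs:", n_failed)
```

Reporting `n_failed` alongside the estimate keeps the failure rate visible as a performance metric instead of silently discarding failed runs.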

The Scientist's Toolkit: Research Reagent Solutions

Essential Computational and Analytical Tools

Table 3: Key research reagents and computational tools for profiling methods

| Tool Category | Specific Examples | Function in Profiling Workflow | Implementation Considerations |
|---|---|---|---|
| Optimization Algorithms | Gradient-based methods, EM algorithm | Maximize likelihood over nuisance parameters | Convergence diagnostics, multiple starting points |
| Uncertainty Quantification | Profile likelihood confidence intervals, bootstrap | Quantify estimation uncertainty | Calibration assessment, coverage verification [18] |
| Experimental Design | Cramér-Rao Bound analysis [17] | Optimize scan parameters for estimation efficiency | Computational cost of CRB calculation [17] |
| Statistical Software | R, Python with specialized packages | Implement profiling procedures | Custom programming often required |
| Visualization Tools | Profile plots, confidence curve displays | Communicate results and diagnose problems | Interactive exploration capabilities |

The Cramér-Rao Bound analysis serves as a particularly important tool in the experimental design phase for profile likelihood studies. By calculating the lower bound on estimator variance before data collection, researchers can optimize scan parameters to maximize information content for parameters of interest while effectively controlling for nuisance parameters [17]. In MR relaxometry, this approach has been successfully applied to optimize combinations of Spoiled Gradient-Recalled Echo (SPGR) and Dual-Echo Steady-State (DESS) sequences for rapid T1 and T2 mapping [17].
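To make the design calculation concrete, the sketch below computes a Cramér-Rao bound for a toy mono-exponential decay with Gaussian noise and compares two candidate sets of sampling times. The signal model, parameter values, and the function name `crb_exponential` are assumptions made for illustration; the SPGR and DESS signal equations used in the cited work are more involved.

```python
import numpy as np


def crb_exponential(times, amplitude, rate, sigma):
    """Cramér-Rao bound for (amplitude, rate) in s(t) = amplitude * exp(-rate * t)
    with independent Gaussian noise of standard deviation sigma."""
    t = np.asarray(times, dtype=float)
    decay = np.exp(-rate * t)
    # Jacobian of the signal with respect to (amplitude, rate).
    jac = np.column_stack([decay, -amplitude * t * decay])
    fisher = jac.T @ jac / sigma**2          # Fisher information matrix
    return np.linalg.inv(fisher)             # lower bound on the estimator covariance


design_a = np.linspace(0.1, 1.0, 6)          # hypothetical sampling times
design_b = np.linspace(0.1, 3.0, 6)
for name, design in [("A", design_a), ("B", design_b)]:
    bound = crb_exponential(design, amplitude=1.0, rate=1.0, sigma=0.05)
    print(name, "lower bound on sd(rate):", np.sqrt(bound[1, 1]))
```

Because the bound depends only on the design and on nominal parameter values, candidate acquisition schemes can be ranked this way before any data are collected.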

Applications in Scientific Research and Drug Development

Medical Imaging and Biomarker Quantification

Profile likelihood methods have demonstrated significant utility in medical imaging applications, particularly for quantitative biomarker estimation. In magnetic resonance relaxometry, profiling enables rapid, reliable quantification of T1 and T2 relaxation parameters, which serve as important biomarkers for monitoring neurological disorders, classifying lesions in multiple sclerosis, characterizing tumors, and predicting symptom onset in stroke [17]. The method's ability to efficiently handle nuisance parameters like radiofrequency field inhomogeneity makes it particularly valuable in clinical research settings where scan time is limited and robustness is essential.

The optimization of steady-state sequences such as SPGR and DESS through CRB-based experimental design has enabled scan times sufficiently fast for clinical practice while maintaining precision comparable to traditional methods [17]. This illustrates how profile likelihood methods can reveal new parameter mapping techniques from combinations of established pulse sequences, expanding the utility of existing imaging technologies without requiring hardware modifications.

Uncertainty Quantification in Systems Biology and Drug Development

In systems biology and drug development, profile likelihood provides a powerful framework for uncertainty quantification in complex biological models [14]. The approach helps manage epistemic uncertainty arising from incomplete data, measurement errors, or limited biological knowledge—common challenges in pharmacological research and development. By profiling out nuisance parameters related to cellular dynamics or environmental factors, researchers can obtain more reliable estimates of key pharmacological parameters such as drug-receptor binding affinities, metabolic rates, and signal transduction efficiencies.

Recent advances in distribution-free inference methods, including conformal prediction, have complemented traditional profile likelihood approaches by providing confidence sets with finite-sample coverage guarantees under minimal assumptions [18]. These methods are particularly valuable in drug development applications where model misspecification is a concern and reliable uncertainty quantification is essential for regulatory decision-making. The integration of profiling with these modern uncertainty quantification techniques represents an active area of methodological research with significant practical implications for pharmaceutical research.

Profile likelihood methods provide a powerful statistical framework for parameter estimation in the presence of nuisance parameters, with demonstrated applications across medical imaging, systems biology, and drug development. The core mechanics of profiling—scanning parameters of interest while optimizing over nuisance parameters—enable researchers to extract meaningful information from complex data environments where traditional estimation methods may fail. The method's strong theoretical foundations in Fisher information theory and the Cramér-Rao Bound facilitate optimal experimental design, while its practical implementation balances computational efficiency with statistical robustness.

Future methodological developments will likely focus on scaling profile likelihood approaches to high-dimensional problems, improving computational efficiency through advanced optimization techniques, and enhancing integration with modern machine learning methods. As noted in recent uncertainty quantification research, emerging frameworks that combine mechanistic models with machine learning show particular promise for improving both interpretability and predictive performance [14]. The continued development of robust profiling methods will further strengthen their role as essential tools in the scientist's toolkit for parameter estimation and uncertainty quantification across diverse research applications.

Deriving Asymmetric Confidence Intervals and Assessing Parameter Identifiability

In mechanistic mathematical modeling, particularly in systems biology and drug development, reliably connecting models to empirical data is fundamental for prediction and decision-making. Profile likelihood has emerged as a powerful frequentist approach for uncertainty quantification, addressing two critical challenges: deriving robust, potentially asymmetric confidence intervals for parameters and assessing practical parameter identifiability. Unlike traditional symmetric intervals that rely on local quadratic approximations, profile likelihood constructs intervals by exploring the likelihood surface directly, providing accurate uncertainty bounds even when models are nonlinear, parameters are near boundaries, or the likelihood is highly asymmetric [20]. This capability is essential for building models that deliver predictions with robust, quantifiable uncertainty, moving beyond merely achieving a good model fit to data [21].

The relationship between parameter identifiability and confidence interval estimation is intrinsic. A parameter is considered practically identifiable when its confidence interval is finite for a given confidence level and data set [3]. Profile likelihood analysis simultaneously diagnoses identifiability issues and provides a rigorous method for constructing confidence intervals, making it an indispensable tool for researchers aiming to tailor model complexity to the information content of their data.
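The difference between a symmetric Wald interval and a likelihood-based interval can be seen in a one-parameter example. The sketch below assumes exponentially distributed waiting times with hypothetical summary values; with no nuisance parameters the profile likelihood reduces to the ordinary likelihood, which keeps the example short.

```python
import numpy as np
from scipy.optimize import brentq
from scipy.stats import chi2, norm

n, total = 10, 12.5            # hypothetical: 10 exponential waiting times summing to 12.5
rate_hat = n / total           # MLE of the rate


def lr_stat(rate):
    """Likelihood-ratio statistic -2 * [l(rate) - l(rate_hat)] for the exponential model."""
    loglik = lambda r: n * np.log(r) - r * total
    return -2.0 * (loglik(rate) - loglik(rate_hat))


cutoff = chi2.ppf(0.95, df=1)
# Solve lr_stat(rate) = cutoff on each side of the MLE.
lower = brentq(lambda r: lr_stat(r) - cutoff, 1e-6, rate_hat)
upper = brentq(lambda r: lr_stat(r) - cutoff, rate_hat, 10.0 * rate_hat)

# Wald interval from the local curvature: se = rate_hat / sqrt(n).
half_width = norm.ppf(0.975) * rate_hat / np.sqrt(n)

print(f"likelihood-based: [{lower:.3f}, {upper:.3f}]")
print(f"Wald:             [{rate_hat - half_width:.3f}, {rate_hat + half_width:.3f}]")
```

The likelihood-based interval extends further above the MLE than below it, whereas the Wald interval is symmetric by construction.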

Core Theoretical Foundations

The Mathematics of Profile Likelihood

Profile likelihood confidence intervals are constructed by inverting a likelihood ratio test for a scalar parameter of interest in the presence of nuisance parameters [20]. Let ( \theta \in \Theta \subset \mathbb{R}^p ) denote the full parameter vector of a statistical model, with scalar parameter of interest ( \psi = g(\theta) ), and let ( \lambda ) represent the nuisance parameters.

The profile log-likelihood for ( \psi ) is defined as: [ \ell_p(\psi) = \max_{\theta:\, g(\theta) = \psi} \ell(\theta), ] where ( \ell(\theta) ) is the full log-likelihood function [20]. This represents the best possible log-likelihood achievable when the parameter of interest ( \psi ) is fixed at a specific value.

The likelihood-ratio statistic for testing the hypothesis ( H_0: \psi = \psi_0 ) is: [ \lambda(\psi_0) = -2 \left[ \ell_p(\psi_0) - \ell(\hat\theta) \right], ] where ( \hat\theta ) is the global maximum likelihood estimate (MLE). Here ( \lambda(\cdot) ), written as a function, denotes the test statistic and is distinct from the nuisance parameter vector ( \lambda ). Under standard regularity conditions and in large samples, Wilks' theorem states that ( \lambda(\psi_0) ) asymptotically follows a ( \chi^2_1 ) distribution under ( H_0 ) [20].

The ( 100(1-\alpha)\% ) profile likelihood confidence interval is then: [ \mathrm{CI}_{1-\alpha} = \left\{ \psi : \lambda(\psi) \leq \chi^2_{1,1-\alpha} \right\}, ] where ( \chi^2_{1,1-\alpha} ) is the ( (1-\alpha) ) quantile of the ( \chi^2_1 ) distribution [20].
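As a worked illustration (an example chosen here, not taken from the cited sources), consider a normal sample with the mean as the parameter of interest and the variance as the nuisance parameter. In this case every step of the construction has a closed form:

```latex
% Normal sample y_1, ..., y_n: mean \mu is the parameter of interest, variance \sigma^2 is the nuisance.
\begin{align*}
\ell(\mu, \sigma^2) &= -\frac{n}{2} \log(2\pi\sigma^2) - \frac{1}{2\sigma^2} \sum_{i=1}^{n} (y_i - \mu)^2, \\
\hat\sigma^2(\mu) &= \frac{1}{n} \sum_{i=1}^{n} (y_i - \mu)^2
  \quad \text{(maximizer over } \sigma^2 \text{ for fixed } \mu\text{)}, \\
\ell_p(\mu) &= -\frac{n}{2} \log\bigl(2\pi\hat\sigma^2(\mu)\bigr) - \frac{n}{2}, \\
\lambda(\mu_0) &= n \log\frac{\hat\sigma^2(\mu_0)}{\hat\sigma^2(\bar y)}
  = n \log\!\left(1 + \frac{(\bar y - \mu_0)^2}{\hat\sigma^2(\bar y)}\right), \\
\mathrm{CI}_{1-\alpha} &= \left\{ \mu : |\mu - \bar y| \le \hat\sigma(\bar y)
  \sqrt{\exp\!\left(\chi^2_{1,1-\alpha}/n\right) - 1} \right\}.
\end{align*}
```

The interval is symmetric here only because the model is linear in the mean; in nonlinear models the same inversion generally produces asymmetric endpoints.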

Structural vs. Practical Identifiability

Understanding parameter identifiability is a prerequisite for meaningful parameter estimation:

- Structural Identifiability: A model parameter is structurally identifiable if it can be uniquely determined from ideal data, that is, noise-free observations available in unlimited quantity. It is a mathematical property of the model structure itself [21] [22].

- Practical Identifiability: A parameter is practically identifiable (or estimable) if it can be estimated with reasonable precision from the finite, noisy data actually available [21] [22]. Practical non-identifiability manifests as a profile log-likelihood that stays above the critical threshold (equivalently, a likelihood-ratio statistic that never exceeds the ( \chi^2 ) cutoff) over a wide parameter range, on one or both sides of the MLE, leading to infinite or very wide confidence intervals [3].

Profile likelihood analysis is particularly effective for diagnosing practical identifiability, as the shape of the likelihood profile directly reveals the information content of the data with respect to each parameter [3] [22].
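One simple way to turn a computed profile into a diagnosis is to check whether the likelihood-ratio statistic crosses the cutoff on each side of its minimum. The helper below is a hypothetical sketch (the name `classify_identifiability` and the three labels are choices made here), and its verdict only applies to the range that was actually scanned.

```python
import numpy as np
from scipy.stats import chi2


def classify_identifiability(grid, lr_stat, level=0.95):
    """Classify a parameter from its profile likelihood-ratio values on a sorted grid.

    lr_stat holds -2 * (profile log-likelihood - maximum log-likelihood) per grid point.
    """
    lr_stat = np.asarray(lr_stat, dtype=float)
    cutoff = chi2.ppf(level, df=1)
    i_hat = int(np.argmin(lr_stat))                       # grid point closest to the MLE
    crosses_left = bool(np.any(lr_stat[:i_hat] > cutoff))
    crosses_right = bool(np.any(lr_stat[i_hat + 1:] > cutoff))
    if crosses_left and crosses_right:
        return "practically identifiable"
    if crosses_left or crosses_right:
        return "one-sided: interval open on one side of the scanned range"
    return "practically non-identifiable on the scanned range"


grid = np.linspace(-5.0, 5.0, 101)
print(classify_identifiability(grid, grid**2))                                  # sharp profile
print(classify_identifiability(grid, 0.01 * grid**2))                           # flat profile
print(classify_identifiability(grid, np.where(grid < 0, grid**2, 0.01 * grid**2)))
```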

Computational Workflow and Protocols

Implementing profile likelihood analysis involves a systematic computational workflow. The following diagram illustrates the core process for assessing practical identifiability and deriving confidence intervals.

Figure 1: The core computational workflow for profile likelihood analysis, illustrating the sequence from model initialization to the final construction of confidence intervals and identifiability assessment.

Step-by-Step Experimental Protocol

The standard algorithmic workflow for profile likelihood analysis consists of the following steps [20] [3]:

- Compute the Global MLE: Find the parameter vector ( \hat{\theta} ) that maximizes the full log-likelihood ( \ell(\theta) ). Set the reference log-likelihood value ( \ell^* = \ell(\hat{\theta}) ).

- Select Parameter and Define Grid: Choose a parameter of interest ( \theta_i ) and define a sufficiently wide and dense grid of values ( \{\theta_i^{(k)}\} ) around its MLE ( \hat{\theta}_i ).

- Profile the Likelihood: For each grid value ( \theta_i^{(k)} ), solve the constrained optimization problem: [ \ell_p(\theta_i^{(k)}) = \max_{\lambda} \ell(\theta_i^{(k)}, \lambda), ] where ( \lambda ) represents all other parameters. This involves holding ( \theta_i ) fixed and optimizing over all nuisance parameters.

- Compute Likelihood Ratio: For each profiled value, compute the likelihood ratio statistic: [ \lambda(\theta_i^{(k)}) = -2 [\ell_p(\theta_i^{(k)}) - \ell^*]. ]

- Determine Confidence Intervals: Find the values of ( \theta_i ) where ( \lambda(\theta_i) = \chi^2_{1, 1-\alpha} ). These are the endpoints of the ( (1-\alpha) ) confidence interval, often found via interpolation of the profiled values.

This process is repeated for each parameter in the model. The computational intensity depends on the cost of each optimization and the number of grid points, but modern optimization tools and adaptive gridding can improve efficiency [20].
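The five steps can be sketched end to end on a small synthetic problem. The example below assumes an exponential decay model with a known noise standard deviation, profiles the decay rate, and treats the amplitude as the nuisance parameter; the model, data, and grid width are illustrative choices, not values from the cited studies.

```python
import numpy as np
from scipy.optimize import minimize, minimize_scalar
from scipy.stats import chi2

rng = np.random.default_rng(1)
t = np.linspace(0.0, 4.0, 25)
sigma = 0.05                                        # noise sd, assumed known here
y = 2.0 * np.exp(-0.8 * t) + rng.normal(0.0, sigma, t.size)   # synthetic data


def loglik(amplitude, rate):
    resid = y - amplitude * np.exp(-rate * t)
    return -0.5 * np.sum(resid**2) / sigma**2       # additive constants dropped


# Step 1: global MLE over (amplitude, rate) and reference log-likelihood.
fit = minimize(lambda p: -loglik(p[0], p[1]), x0=[1.0, 1.0], method="Nelder-Mead",
               options={"xatol": 1e-8, "fatol": 1e-8})
a_hat, k_hat = fit.x
ell_star = -fit.fun

# Step 2: grid for the parameter of interest (rate) around its MLE.
grid = np.linspace(0.85 * k_hat, 1.15 * k_hat, 81)

# Step 3: at each grid value, maximize the log-likelihood over the nuisance amplitude.
profile_ll = np.array(
    [-minimize_scalar(lambda a, k=k: -loglik(a, k)).fun for k in grid]
)

# Step 4: likelihood-ratio statistic along the profile.
lr = -2.0 * (profile_ll - ell_star)

# Step 5: interpolate the crossings with the chi-square cutoff on each side of the MLE.
cutoff = chi2.ppf(0.95, df=1)
left = grid < k_hat
lower = np.interp(cutoff, lr[left][::-1], grid[left][::-1])
upper = np.interp(cutoff, lr[~left], grid[~left])

print(f"rate MLE {k_hat:.3f}, 95% profile CI [{lower:.3f}, {upper:.3f}]")
```

For models with several nuisance parameters, the one-dimensional inner solver would be replaced by a multivariate optimizer, ideally warm-started from the solution at the neighbouring grid point.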

Profile-Wise Analysis (PWA): An Integrated Workflow

A recent advancement is Profile-Wise Analysis (PWA), a unified workflow that integrates identifiability analysis, parameter estimation, and prediction uncertainty quantification [7]. PWA's key innovation is propagating profile-likelihood-based confidence sets for parameters to model predictions. This isolates how different parameter combinations affect predictions, providing a more efficient and interpretable method for constructing "curvewise" (simultaneous) prediction confidence bands compared to more expensive brute-force methods [7].
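The propagation idea can be illustrated on a straight-line model, where the nuisance optimization has a closed form. The sketch below is a simplified reading of profile-wise prediction, not the PWA authors' implementation: for each parameter, the points along its profile that lie inside the 95% threshold are pushed through the model, and the pointwise envelope of the resulting curves is taken as an approximate band. The data, grid ranges, and helper names are assumptions.

```python
import numpy as np
from scipy.stats import chi2

rng = np.random.default_rng(2)
t = np.linspace(0.0, 5.0, 20)
sigma = 0.3                                          # noise sd, assumed known
y = 1.0 + 0.5 * t + rng.normal(0.0, sigma, t.size)   # synthetic data


def loglik(a, b):
    return -0.5 * np.sum((y - a - b * t) ** 2) / sigma**2


b_hat, a_hat = np.polyfit(t, y, 1)                   # least squares = MLE for this model
ell_star = loglik(a_hat, b_hat)
cutoff = chi2.ppf(0.95, df=1)


def best_a(b):
    """Intercept that maximizes the likelihood for a fixed slope."""
    return np.mean(y - b * t)


def best_b(a):
    """Slope that maximizes the likelihood for a fixed intercept."""
    return np.sum(t * (y - a)) / np.sum(t**2)


t_pred = np.linspace(0.0, 8.0, 50)
curves = []
for b in np.linspace(b_hat - 0.3, b_hat + 0.3, 201):     # profile for the slope
    a = best_a(b)
    if -2.0 * (loglik(a, b) - ell_star) <= cutoff:
        curves.append(a + b * t_pred)
for a in np.linspace(a_hat - 1.0, a_hat + 1.0, 201):     # profile for the intercept
    b = best_b(a)
    if -2.0 * (loglik(a, b) - ell_star) <= cutoff:
        curves.append(a + b * t_pred)

curves = np.array(curves)
band_lower, band_upper = curves.min(axis=0), curves.max(axis=0)
print("band width at t = 8:", band_upper[-1] - band_lower[-1])
```

Keeping the curves from each parameter's profile separate before taking the union shows which parameter drives the prediction uncertainty at a given time point.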

Comparative Performance Analysis

Profile Likelihood vs. Alternative Methods

The table below summarizes a quantitative comparison of profile likelihood against other common methods for confidence interval estimation and identifiability analysis.

Table 1: Comparative analysis of methods for confidence interval estimation and identifiability.

| Method | Core Principle | Key Advantages | Key Limitations | Best-Suited Applications |
|---|---|---|---|---|
| Profile Likelihood [20] [3] [22] | Inversion of likelihood ratio tests via constrained optimization. | Handles asymmetry and nonlinearity; transformation invariant; superior finite-sample coverage; directly diagnoses identifiability. | Computationally intensive for many parameters; requires careful optimization. | Nonlinear ODE models, non-identifiable parameters, non-Gaussian models. |
| Wald / FIM-based [3] [22] | Local curvature of likelihood (Fisher Information Matrix). | Computationally very cheap; simple to implement. | Assumes symmetric, quadratic likelihood; poor coverage for nonlinear models; not transformation invariant. | Initial screening, models with linear or near-linear parameter dependencies. |
| Bayesian MCMC [7] [23] [22] | Characterizes the full posterior parameter distribution. | Provides full distributional information; incorporates prior knowledge naturally. | Computationally expensive (sampling); choice of prior can be influential. | Problems with informative priors, or where full posterior exploration is desired. |
| Bootstrap [7] [24] | Resampling data to empirically estimate parameter distribution. | Conceptually simple; makes few assumptions. | Extremely computationally expensive; can be ad hoc, with accuracy that is challenging to analyze. | Models where likelihood is intractable but simulation is fast. |

Empirical Performance Data

Case studies illustrate the practical value of profile likelihood. In a study comparing models of coral reef regrowth, profile likelihood analysis confirmed the practical identifiability of both simple and complex models, providing finite, asymmetric confidence intervals. The subsequent parameter-wise prediction interval analysis, built on the profiles, offered efficient and insightful uncertainty propagation to model predictions [22].

Furthermore, benchmarks comparing neural network-based (amortized) methods to traditional likelihood-based methods for model fitting found convergence in parameter estimation performance. However, for model comparison, machine learning classifiers significantly outperformed traditional likelihood-based metrics like AIC and BIC [23]. This highlights that while profile likelihood is powerful for inference and uncertainty quantification, other approaches may be superior for specific tasks like model selection.

Essential Research Reagents and Computational Tools

Successfully implementing profile likelihood analysis requires a suite of computational tools and conceptual "reagents." The table below details key components of the research toolkit.

Table 2: Key "Research Reagent Solutions" for implementing profile likelihood analysis.

| Tool / Concept | Function | Implementation Notes |
|---|---|---|
| Optimization Algorithm (e.g., SQP, Trust-Region) [20] | Solves the inner constrained optimization problem for each profile point. | Must be robust to handle potential non-convexities. Good initial guesses (from the MLE) are critical. |
| Critical Threshold (( \chi^2_{1, 0.95} \approx 3.84 )) [20] [3] | Defines the cutoff in the likelihood ratio for the confidence interval. | For a 95% CI, the drop in profile log-likelihood is ( \Delta = 3.84/2 \approx 1.92 ). |
| Prediction Profile Likelihood [3] | Propagates parameter uncertainty to model predictions. | Defined as ( \mathrm{PPL}(z) = \min_{p \in \{p \mid g_{\mathrm{pred}}(p) = z\}} \chi^2_{\mathrm{res}}(p) ), allowing construction of CIs for predictions. |
| Mechanistic Model (e.g., ODE system) [21] [22] | Represents the biological, chemical, or physical process under study. | The forward model must be coupled with a probabilistic error model to define the likelihood function. |
| Global Optimizer | Finds the initial Maximum Likelihood Estimate (MLE). | Needed to ensure the starting point ( \hat{\theta} ) is the true global maximum before profiling. |

Advanced Concepts and Extensions

Modified Profile Likelihood

A known limitation of the standard profile likelihood is that it can underestimate uncertainty by treating the profiled nuisance parameters as known, ignoring the error in their estimation. Modified profile likelihood introduces higher-order corrections to address this. A common approach (Barndorff-Nielsen modification) adds a penalization term: [ \tilde{\ell}_p(\psi) = \ell_p(\psi) + \frac{1}{2} \log |I_{\lambda\lambda}(\psi, \hat{\lambda}(\psi))| + \ldots, ] where ( I_{\lambda\lambda} ) is the observed information for the nuisance parameters. This yields more accurate uncertainty quantification, especially for small samples and complex models [20].

Handling Non-Regularity and Model Uncertainty

Profile likelihood methods can be adapted for challenging scenarios:

- Near-Boundary Parameters: When parameters are near physical boundaries, the sampling distribution of the likelihood ratio may not follow the standard ( \chi^2 ) distribution. Empirical calibration of the cutoff via Monte Carlo simulation (Feldman-Cousins approach) can be necessary [20].

- Model Selection Uncertainty: Model-averaged profile likelihood intervals, which combine CIs from multiple candidate models, have been proposed. However, they can undercover when model dimension is high, suggesting caution in their application [20].
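The effect of a boundary on the cutoff can be checked by simulation. The sketch below assumes a normal mean constrained to be non-negative with unit variance, simulates the likelihood-ratio statistic under the boundary value, and reads off its empirical 95% quantile. It calibrates the cutoff at a single parameter value only, which is simpler than a full Feldman-Cousins construction.

```python
import numpy as np
from scipy.stats import chi2

rng = np.random.default_rng(3)
n_obs, n_sim = 20, 20000

# Simulate sample means under the null mu = 0, which sits on the boundary of mu >= 0.
ybar = rng.normal(0.0, 1.0 / np.sqrt(n_obs), size=n_sim)
mu_hat = np.maximum(ybar, 0.0)                      # constrained MLE
# For N(mu, 1) data: -2 * [l(0) - l(mu_hat)] = n * (2 * ybar * mu_hat - mu_hat**2).
lr = n_obs * (2.0 * ybar * mu_hat - mu_hat**2)

empirical_cutoff = np.quantile(lr, 0.95)
print("empirical 95% cutoff:     ", empirical_cutoff)
print("nominal chi-square cutoff:", chi2.ppf(0.95, df=1))
```

In this setting the statistic is zero in roughly half of the simulations, so the calibrated cutoff falls below the nominal ( \chi^2_1 ) value and the standard threshold would give conservative intervals.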

The following diagram illustrates the logical relationships between core and advanced concepts in the profile likelihood ecosystem, guiding users on when to apply specific techniques.

Figure 2: A decision tree illustrating the logical relationships between core profile likelihood concepts and the advanced techniques used to address specific challenges like non-identifiability, small sample bias, and model uncertainty.

Profile likelihood provides a statistically rigorous and computationally feasible framework for deriving asymmetric confidence intervals and diagnosing parameter identifiability in complex mechanistic models. Its ability to accurately characterize likelihood surfaces without relying on potentially misleading local approximations makes it a superior choice over Wald-type intervals for nonlinear models common in biology and drug development.

The integration of profile likelihood into unified workflows like Profile-Wise Analysis (PWA) represents the state of the art, enabling researchers to move seamlessly from identifiability analysis and parameter estimation to quantified predictive uncertainty. As computational power and accessible software for these methods continue to improve, their adoption will be crucial for building robust, predictive models that can reliably inform critical decisions in science and industry.

The Critical Role of Uncertainty Quantification (UQ) in Trustworthy Drug Discovery and Biomedical Models

Uncertainty quantification (UQ) is transforming from a technical nicety to a foundational requirement for trustworthy artificial intelligence (AI) and computational modeling in drug discovery and biomedical research. As machine learning (ML) and deep learning (DL) systems increasingly inform high-stakes decisions—from molecular subtype classification in oncology to de novo drug design—the inability to assess prediction reliability has become a critical barrier to clinical adoption [25] [26]. Traditional AI models consistently demonstrate exceptional predictive performance in controlled settings, yet often struggle to transition into clinical practice, largely due to insufficient accountability of prediction reliability [25]. This challenge is particularly acute in biological and healthcare applications, where models frequently lack the foundational conservation laws that govern physical systems and must contend with profound data heterogeneity [26]. The COVID-19 pandemic starkly highlighted these limitations, with many modeling efforts lacking confidence intervals, ultimately undermining public trust and policy implementation [26].

Within this context, profile likelihood emerges as a particularly valuable UQ methodology within the frequentist framework, especially for dynamic biological systems, where it is obtained as the maximum projection of the likelihood onto each parameter by solving a sequence of optimization problems [27]. However, it represents just one approach in a rapidly diversifying UQ landscape. This guide provides a comprehensive comparison of UQ methodologies, their performance characteristics, and implementation protocols to empower researchers in selecting appropriate techniques for robust, reliable biomedical AI.

Comparative Analysis of UQ Method Performance

Understanding the relative strengths and weaknesses of different UQ approaches is essential for method selection in biomedical applications. The table below synthesizes empirical findings from multiple studies evaluating UQ methods across key performance dimensions.

Table 1: Performance Comparison of Uncertainty Quantification Methods in Biomedical Applications

| UQ Method | Calibration Quality | OOD Detection | Robustness to Adversarial Attacks | Computational Efficiency | Interpretability | Key Strengths |
|---|---|---|---|---|---|---|
| Single Deterministic | Low | Poor | Low | High | Medium | Baseline simplicity |
| Monte Carlo Dropout (MCD) | Medium | Medium | Medium | Medium | Medium | Good trade-off for compute-limited applications |
| Bayesian Neural Networks (BNN) | High | Medium | High | Low | Medium | Strong robustness, good calibration |
| Deep Ensemble (DE) | Medium | High | High | Low | High | Best overall performance, reliable uncertainty estimates |
| Bootstrap Ensemble (BG) | Medium | High | High | Low | High | Comparable to Deep Ensemble |
| Conformal Prediction | High (with exchangeability) | Medium | Varies | High | High | Distribution-free guarantees |
| PCS-UQ | High (subgroup-aware) | High | High | Medium (Low for DL) | High | Stable across subgroups, integrates model selection |

Key Performance Insights from Empirical Studies

Ensemble Methods (Deep Ensemble, Bootstrap Ensemble) consistently demonstrate superior performance in out-of-distribution (OOD) detection and provide more robust uncertainty estimates, making them particularly valuable for real-world deployment where distribution shifts are common [25]. Their main limitation is computational expense, as training multiple models increases resource requirements.

Bayesian Methods (BNN) excel in scenarios requiring robustness against adversarial attacks and well-calibrated predictions, with studies showing they "demonstrate strong robustness to adversarial attacks, an attribute that may enhance the generalization capacity of classifiers" [25]. This makes them particularly suitable for safety-critical applications.

Conformal Prediction offers a distribution-free approach with non-asymptotic guarantees for prediction intervals, functioning as a powerful complement or even alternative to conventional Bayesian methods, especially when parametric assumptions may not hold [27]. Its coverage guarantees rely on the exchangeability assumption, which may be challenging with temporal or structured biological data.

PCS-UQ represents an emerging framework that integrates model selection via predictability checks with stability assessment through bootstrapping. In comparative studies, PCS-UQ "reduces width over conformal approaches by ≈20%" while maintaining target coverage across subgroups where conventional methods often fail [28].

Experimental Protocols for UQ Evaluation in Biomedical Research

Implementing rigorous experimental protocols is essential for meaningful UQ evaluation. Below we detail standardized methodologies for assessing UQ method performance.

Protocol 1: Molecular Subtype Classification with UQ

Table 2: Experimental Protocol for Breast Cancer Molecular Subtype Classification with UQ

| Protocol Component | Specification | Purpose in UQ Assessment |
|---|---|---|
| Dataset | TCGA breast cancer gene expression data (∼25,000 genes) | High-dimensional molecular data with inherent biological variability |
| UQ Methods Evaluated | Single Deterministic, MCD, BNN, Deep Ensemble, Bootstrap Ensemble | Comparative assessment of architectural approaches |
| OOD Generation | GMGS (β-TCVAE-based synthetic data generation) | Tests robustness to distributional shifts and technical variations |
| Evaluation Metrics | Calibration curves, OOD detection AUC, adversarial robustness, accuracy with rejection | Multi-dimensional performance assessment beyond simple accuracy |
| Key Application | Uncertainty-guided sample rejection; refers uncertain cases for expert review | Demonstrates clinical utility of uncertainty estimates |

Methodological Details: The experimental process involves three main steps: (1) model training with different UQ methods, (2) comprehensive evaluation using both classical performance metrics (accuracy, F1-score) and advanced criteria (calibration, interpretability, robustness, OOD detection), and (3) implementation of uncertainty-guided rejection strategies [25]. For OOD detection assessment, researchers introduced GMGS, a β-TCVAE-based approach for generating synthetic OOD data, crucial for evaluating UQ method reliability when real-world OOD samples are limited [25].
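Step (3), uncertainty-guided rejection, can be sketched as follows. The example assumes an ensemble that returns class probabilities and uses the entropy of the averaged prediction as the uncertainty score; the synthetic data, the entropy criterion, and the function name `accuracy_with_rejection` are illustrative assumptions and not the protocol of the cited study.

```python
import numpy as np


def accuracy_with_rejection(probs, labels, reject_fraction):
    """Accuracy on the samples kept after rejecting the most uncertain fraction.

    probs: array of shape (n_members, n_samples, n_classes) with ensemble class probabilities.
    """
    mean_probs = probs.mean(axis=0)
    entropy = -np.sum(mean_probs * np.log(mean_probs + 1e-12), axis=1)
    n_keep = int(round(len(labels) * (1.0 - reject_fraction)))
    keep = np.argsort(entropy)[:n_keep]              # lowest-entropy samples are kept
    preds = mean_probs[keep].argmax(axis=1)
    return float(np.mean(preds == labels[keep]))


# Synthetic ensemble output: samples with a weak signal are harder to classify.
rng = np.random.default_rng(5)
n_members, n_samples, n_classes = 5, 500, 3
labels = rng.integers(0, n_classes, n_samples)
signal = rng.uniform(0.0, 4.0, n_samples)
logits = rng.normal(0.0, 1.0, (n_members, n_samples, n_classes))
logits[:, np.arange(n_samples), labels] += signal
probs = np.exp(logits) / np.exp(logits).sum(axis=2, keepdims=True)

for frac in (0.0, 0.2, 0.4):
    print(f"reject {frac:.0%}: accuracy {accuracy_with_rejection(probs, labels, frac):.3f}")
```

In a clinical workflow the rejected samples would be referred for expert review rather than discarded.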

Protocol 2: UQ-Enhanced Molecular Design Optimization

Table 3: Experimental Protocol for Molecular Design with UQ-Enhanced Graph Neural Networks

| Protocol Component | Specification | Purpose in UQ Assessment |
|---|---|---|
| Model Architecture | Directed Message Passing Neural Networks (D-MPNNs) | Captures molecular structure and connectivity relationships |
| Optimization Framework | Genetic Algorithm with UQ-guided acquisition functions | Enables exploration of vast chemical spaces |
| UQ Integration | Probabilistic Improvement Optimization (PIO) | Uses uncertainty to assess threshold exceedance probability |
| Benchmarks | Tartarus and GuacaMol platforms (16 tasks total) | Standardized evaluation across diverse molecular properties |
| Evaluation Focus | Optimization success rate, multi-objective balancing | Tests UQ utility in practical design scenarios |

Methodological Details: This protocol combines GNNs with genetic algorithms for molecular optimization, allowing direct exploration of chemical space without predefined libraries. The key innovation is integrating UQ through acquisition functions like Probabilistic Improvement Optimization (PIO), which "quantifies the likelihood that a candidate molecule will exceed predefined property thresholds, reducing the selection of molecules outside the model's reliable range" [29]. The D-MPNN implementation operates directly on molecular graphs, capturing detailed connectivity and spatial relationships between atoms, which is essential for accurate property prediction [29].
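The threshold-exceedance idea behind such an acquisition function can be sketched under the assumption that the surrogate returns a Gaussian predictive mean and standard deviation for each candidate. The exact form of PIO in the cited work may differ; the function name, candidate values, and threshold below are hypothetical.

```python
import numpy as np
from scipy.stats import norm


def probability_of_improvement(mean, std, threshold):
    """P(property > threshold) under a Gaussian predictive distribution N(mean, std**2)."""
    mean = np.asarray(mean, dtype=float)
    std = np.asarray(std, dtype=float)
    return norm.sf((threshold - mean) / std)


# Hypothetical surrogate predictions for three candidate molecules.
means = np.array([0.70, 0.85, 0.95])
stds = np.array([0.05, 0.30, 0.02])
scores = probability_of_improvement(means, stds, threshold=0.80)
ranking = np.argsort(-scores)                        # most promising candidate first
print("exceedance probabilities:", np.round(scores, 3))
print("ranking (candidate indices):", ranking)
```

A candidate with a mean just above the threshold but a large predictive uncertainty receives a moderate score, which is how the criterion discourages selection outside the model's reliable range.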

Emerging UQ Frameworks and Profile Likelihood Context

While profile likelihood remains important for parameter inference in dynamic biological systems, several emerging UQ frameworks offer complementary capabilities for different research contexts.

The PCS-UQ Framework

The Predictability-Computability-Stability (PCS) framework addresses uncertainty arising throughout the entire data science life cycle. PCS-UQ implements this through a structured process:

- Predictability Check: Multiple models are trained and evaluated on a validation set, with poorly performing algorithms screened out [28].

- Stability Assessment: The remaining algorithms are fitted on multiple bootstrapped training datasets to assess finite-sample variability and algorithmic instability [28].

- Calibration: A multiplicative calibration extends interval lengths to achieve desired coverage while maintaining subgroup adaptivity [28].

In comparative studies, PCS-UQ achieved desired coverage while reducing interval length by approximately 20% compared to conformal approaches, demonstrating particular strength in maintaining coverage across subgroups [28].

Conformal Prediction for Biological Systems

Conformal prediction offers distribution-free uncertainty quantification with non-asymptotic guarantees, making it particularly valuable for complex biological systems where parametric assumptions may not hold [27]. Recent adaptations have extended conformal prediction to dynamic biological systems through two novel algorithms that optimize statistical efficiency despite limited data availability [27]. As noted in research, "conformal prediction has also been extended to accommodate general statistical objects, such as graphs and functions that evolve over time, which can be very relevant in many biological problems" [27].
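For orientation, the basic split conformal procedure for regression is sketched below on synthetic data with heavy-tailed noise. This is the standard textbook variant, not either of the two algorithms for dynamic systems described in [27], and the data-generating model and split sizes are assumptions.

```python
import numpy as np

rng = np.random.default_rng(4)
n = 600
x = rng.uniform(0.0, 10.0, n)
y = 1.5 * x + rng.standard_t(df=3, size=n)           # heavy-tailed noise

train, calib, test = np.split(rng.permutation(n), [200, 400])

# Fit any point predictor on the training split (here a least-squares line).
slope, intercept = np.polyfit(x[train], y[train], 1)


def predict(x_new):
    return slope * x_new + intercept


# Conformity scores on the held-out calibration split.
scores = np.abs(y[calib] - predict(x[calib]))
alpha = 0.1
k = int(np.ceil((calib.size + 1) * (1 - alpha)))     # rank giving finite-sample coverage
q_hat = np.sort(scores)[k - 1]

lower, upper = predict(x[test]) - q_hat, predict(x[test]) + q_hat
coverage = np.mean((y[test] >= lower) & (y[test] <= upper))
print(f"interval half-width {q_hat:.2f}, empirical coverage {coverage:.2f}")
```

The guarantee is marginal and rests on exchangeability of calibration and test points, which is the assumption that becomes delicate for time-ordered biological data.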

The Scientist's Toolkit: Essential Research Reagents for UQ Implementation

Table 4: Key Research Reagents and Computational Tools for UQ in Biomedical Research

| Tool/Reagent | Type | Function in UQ Research | Example Applications |
|---|---|---|---|
| TCGA Gene Expression Data | Biological Dataset | Provides high-dimensional molecular data for UQ method validation | Breast cancer molecular subtype classification [25] |
| β-TCVAE Framework | Computational Algorithm | Generates synthetic OOD data for UQ reliability assessment | Testing model robustness to distribution shifts [25] |
| Directed MPNNs (D-MPNNs) | Neural Architecture | Molecular graph representation with inherent structure-awareness | Property prediction in molecular design [29] |
| Tartarus & GuacaMol | Benchmarking Platform | Standardized evaluation of molecular design algorithms | Comparing UQ methods across 16 design tasks [29] |
| Profile Likelihood | Statistical Method | Parameter uncertainty quantification in dynamic systems | Identifiability analysis in ODE models [27] |
| Conformal Prediction | UQ Framework | Distribution-free prediction intervals with coverage guarantees | Uncertainty quantification in dynamic biological systems [27] |
| PCS-UQ Implementation | UQ Framework | Integrates model selection with stability assessment | Regression and classification with subgroup-aware uncertainty [28] |

As drug discovery and biomedical modeling increasingly rely on complex AI systems, uncertainty quantification has evolved from an optional enhancement to an essential component of trustworthy research. No single UQ method dominates all applications: ensemble methods excel in OOD detection, Bayesian approaches offer robustness, conformal prediction provides distribution-free guarantees, and emerging frameworks like PCS-UQ address model selection and stability. The optimal approach depends on specific research constraints, including data modality, computational resources, and deployment requirements. By integrating these UQ methodologies into standard research workflows, and by recognizing profile likelihood's role within this broader ecosystem, biomedical researchers can develop AI systems that are more reliable, interpretable, and clinically translatable, and that communicate their limitations appropriately.

Implementing Profile Likelihood: Methods and Real-World Applications in Biomedicine

Step-by-Step Workflow for Profile Likelihood Calculation in Computational Models

Profile likelihood is a powerful statistical technique for identifiability analysis, parameter estimation, and uncertainty quantification in computational models, particularly for biological systems and drug development research. This method provides a computationally efficient approach to understanding parameter uncertainties and their propagation to model predictions, which is crucial for reliable model-based decision-making in pharmaceutical development. Unlike Bayesian methods that can be computationally expensive and require specification of prior distributions, profile likelihood offers a frequentist alternative with well-defined statistical properties and often lower computational demands [30] [7]. The core principle involves profiling the likelihood function by systematically varying parameters of interest while optimizing over nuisance parameters, creating a projected representation of the full likelihood surface that reveals practical identifiability and confidence regions for parameters and predictions [3].

The growing importance of profile likelihood in computational biology is underscored by recent methodological developments. The Profile-Wise Analysis (PWA) workflow represents a systematic framework that unifies identifiability analysis, parameter estimation, and prediction, addressing key challenges in mechanistic model development [30] [7]. For drug development professionals, these methods provide crucial insights into parameter identifiability and prediction reliability, enabling more robust quantitative decisions in therapeutic optimization and clinical trial design.

Table 1: Comparison of Uncertainty Quantification Methods in Systems Biology

| Method | Computational Demand | Theoretical Justification | Scalability to Complex Models | Ease of Implementation |
|---|---|---|---|---|
| Profile Likelihood | Moderate | Strong (frequentist) | Good for ODE/PDE models | Moderate (requires optimization) |
| Bayesian Sampling | High | Strong (Bayesian) | Challenged by multimodality | Moderate to difficult |
| Fisher Information Matrix | Low | Weak for nonlinear models | Good but unreliable | Easy |
| Ensemble Methods | Moderate to High | Ad hoc but practical | Good for large-scale models | Easy to moderate |
| Conformal Prediction | Low to Moderate | Strong non-asymptotic guarantees | Good for various models | Moderate |

Theoretical Foundations of Profile Likelihood

Mathematical Formulation

Profile likelihood operates on the principle of converting a multi-dimensional likelihood function into a one-dimensional representation by focusing on parameters of interest while accounting for nuisance parameters. Formally, for a model with parameter vector θ = (θi, θj) where θi is the parameter of interest and θj represents nuisance parameters, the profile likelihood for θi is defined as [3]:

\[ \chi_{PL}^{2}(\theta_i) = \min_{\theta_{j \neq i}} \chi^{2}(\theta) \]

where χ²(θ) represents the residual sum of squares or, more generally, -2 times the log-likelihood function. This formulation represents a function in θi of least increase in the residual sum of squares, achieved by adjusting the other parameters θj accordingly [3]. The minimization process ensures that for each fixed value of the parameter of interest, we obtain the best possible fit to the data by optimizing over the remaining parameters.
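The link between the two readings of χ²(θ) can be made explicit with a short worked step. Assume independent Gaussian measurement errors with known standard deviations \(\sigma_i\), and write \(y_i\) for the measurements and \(f(t_i, \theta)\) for the model output at time \(t_i\) (notation assumed here for illustration). Then

\[ -2\log L(\theta) = \sum_{i=1}^{n} \frac{\left(y_i - f(t_i, \theta)\right)^2}{\sigma_i^2} + \sum_{i=1}^{n} \log\left(2\pi\sigma_i^2\right) \]

The second sum does not depend on θ. Under these assumptions, minimizing the weighted residual sum of squares and minimizing -2 log L therefore give the same estimates, and differences between profile values are identical in both formulations.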

Profile likelihood-based confidence regions can be derived through the relationship [3]:

\[ \text{CR}_{\theta} = \left\{ \theta \, \middle| \, \chi_{PL}^{2}(\theta) - \chi_{PL}^{2}(\hat{\theta}) < \delta_{\alpha} \right\} \]

where \(\delta_{\alpha}\) is the α quantile of the χ² distribution with the appropriate degrees of freedom (α here plays the role of the confidence level, for example 0.95), and \(\hat{\theta}\) denotes the maximum likelihood estimate. For nonlinear ordinary differential equation (ODE) models commonly used in pharmacological modeling, profile likelihood-based confidence intervals provide more accurate uncertainty quantification than traditional Fisher information matrix approaches, which assume local linearity and can produce misleading results [3].
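To make "appropriate degrees of freedom" concrete, the sketch below computes the threshold for two common conventions: one degree of freedom for an interval on a single parameter, and as many degrees of freedom as there are parameters for a simultaneous region. The helper name and the four-parameter model are hypothetical; the quantile itself comes from `scipy.stats.chi2.ppf`.

```python
from scipy.stats import chi2

def profile_threshold(confidence=0.95, df=1):
    """Allowed increase of the profile above its minimum."""
    return chi2.ppf(confidence, df)

pointwise = profile_threshold(0.95, df=1)     # one parameter at a time
simultaneous = profile_threshold(0.95, df=4)  # hypothetical 4-parameter model
print(f"pointwise: {pointwise:.2f}, simultaneous: {simultaneous:.2f}")
# roughly 3.84 and 9.49
```

The simultaneous threshold is larger, so the same profile yields wider intervals under that convention.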

Extensions to Prediction Uncertainty

A significant advancement in profile likelihood methodology is the prediction profile likelihood, which directly quantifies how parameter uncertainty propagates to model predictions. For a model prediction z, the prediction profile likelihood is defined as [3]:

\[ PPL_z = \min_{p \in \{p \, | \, g_{pred}(p) = z\}} \chi_{res}^{2}(p) \]

This approach propagates uncertainty from experimental data to predictions by exploring the prediction space rather than the parameter space, providing a more direct and computationally efficient method for constructing predictive confidence intervals [3]. The Profile-Wise Analysis workflow further extends this concept through "profile-wise predictions" that explicitly isolate how different parameter combinations affect model predictions, enabling more nuanced uncertainty analysis [30] [7].
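A minimal sketch of a prediction profile, assuming a hypothetical two-parameter decay model f(t) = A·exp(-k·t) and synthetic data. For this toy model the constraint g_pred(p) = z can be solved for A, so the constrained minimization reduces to a one-dimensional search over k. A general model would need a constrained optimizer instead. The 3.84 threshold is the approximate 95% χ² quantile with one degree of freedom.

```python
import numpy as np
from scipy.optimize import minimize_scalar

def prediction_profile(z, t, y, sigma, t_pred, k_bounds=(0.01, 3.0)):
    """Prediction profile value at z for the toy model f(t) = A * exp(-k * t).

    The constraint A * exp(-k * t_pred) = z is eliminated by substituting
    A = z * exp(k * t_pred), leaving a bounded 1-D minimization over k.
    """
    def chi2_res(k):
        A = z * np.exp(k * t_pred)
        return np.sum(((y - A * np.exp(-k * t)) / sigma) ** 2)
    return minimize_scalar(chi2_res, bounds=k_bounds, method="bounded").fun

# Synthetic data (hypothetical true values A = 2.0, k = 0.5).
rng = np.random.default_rng(1)
t = np.linspace(0.0, 5.0, 20)
sigma = 0.05
y = 2.0 * np.exp(-0.5 * t) + rng.normal(0.0, sigma, t.size)

# Scan candidate prediction values at an unobserved time point t = 6.
z_grid = np.linspace(0.03, 0.25, 45)
ppl = np.array([prediction_profile(z, t, y, sigma, t_pred=6.0) for z in z_grid])
inside = z_grid[ppl - ppl.min() < 3.84]
print(f"approx. 95% prediction interval at t = 6: "
      f"[{inside.min():.3f}, {inside.max():.3f}]")
```

The scan runs over prediction values z rather than over parameters, which is the sense in which the method explores the prediction space.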

Comparative Analysis of Profile Likelihood Workflows

Profile-Wise Analysis (PWA) Workflow

The Profile-Wise Analysis framework represents a unified workflow that systematically addresses parameter identifiability, estimation, and prediction. This approach constructs profile-wise predictions that propagate profile-likelihood-based confidence sets for parameters to model predictions, explicitly isolating how different parameter combinations affect model outputs [30]. The key advantage of PWA is its ability to approximate the full likelihood-based prediction confidence set efficiently by combining profile-wise prediction confidence sets, providing a computationally tractable alternative to more expensive methods like full Bayesian sampling [7].

Recent applications demonstrate that PWA successfully maintains the statistical rigor of profile likelihood methods while improving computational efficiency. In case studies focusing on ODE-based mechanistic models with both Gaussian and non-Gaussian noise models, PWA generated prediction intervals that closely approximated those obtained through more computationally expensive full likelihood evaluations [30] [22]. The method naturally provides fully "curvewise" predictive confidence sets for model trajectories, offering a stronger guarantee than typical "pointwise" intervals that only trap trajectories at specific time points [7].

Traditional Profile Likelihood Implementation

Traditional profile likelihood implementation follows a more sequential process of identifiability analysis followed by prediction uncertainty quantification. This approach typically involves:

- Structural Identifiability Analysis: Determining whether parameters can theoretically be identified from perfect data using algebraic methods [22]

- Practical Identifiability Assessment: Evaluating parameter identifiability given finite, noisy experimental data [22]

- Profile Likelihood Calculation: Computing univariate or bivariate profiles for parameters of interest [3]

- Confidence Interval Construction: Deriving parameter confidence intervals from profile likelihoods [3]

- Prediction Uncertainty Quantification: Propagating parameter uncertainties to model predictions [3]

While this traditional approach is methodologically sound, it can become computationally demanding when a large number of predictions need to be assessed, particularly for complex biological models with many parameters and outputs [27].

Table 2: Workflow Comparison for Coral Reef Population Dynamics Case Study [22]

| Analysis Step | Single Species Model | Two Species Model | Computational Requirements |
|---|---|---|---|
| Parameter Profiling | 2 parameters | 4 parameters | 2x more evaluations for two-species model |
| Practical Identifiability | Both parameters identifiable | All parameters identifiable | Similar optimization effort per parameter |
| Confidence Interval Width | Narrow for both parameters | Wider intervals for growth parameters | Not applicable |
| Prediction Interval Construction | Efficient with parameter-wise approximation | Efficient with parameter-wise approximation | Similar computational cost |
| Model Selection Insight | Adequate for total population prediction | Necessary for species-specific dynamics | Dependent on research question |

Comparison with Alternative Uncertainty Quantification Methods

Profile likelihood occupies a middle ground in the spectrum of uncertainty quantification methods, balancing computational efficiency with statistical rigor. Recent comparative studies highlight its position relative to other approaches:

Bayesian methods typically require specification of prior distributions and involve sampling from posterior distributions using Markov Chain Monte Carlo techniques. While powerful, these methods can be computationally expensive and face convergence issues with multimodal distributions common in ODE models [27]. Profile likelihood methods often achieve similar conclusions with significantly less computational effort [22].

Conformal prediction methods represent a newer approach that provides non-asymptotic guarantees for prediction intervals without requiring strong distributional assumptions. These methods offer excellent reliability and scalability but are less established for dynamical systems in computational biology [27].

Ensemble methods and Fisher Information Matrix approaches provide alternatives with different trade-offs. Ensemble methods offer better scalability for large-scale models but have weaker theoretical justification, while FIM is computationally efficient but unreliable for nonlinear models [27].

Figure 1: Traditional Profile Likelihood Workflow

Step-by-Step Protocol for Profile Likelihood Calculation

Workflow Implementation

The implementation of profile likelihood analysis follows a systematic protocol that can be applied to various computational models:

Step 1: Model and Likelihood Specification. Define the mechanistic model (typically ODE-based) and the corresponding likelihood function based on the assumed error model. For ODE models with Gaussian measurement error, the likelihood is often formulated as:

\[ L(\theta) = \prod_{i=1}^{n} \frac{1}{\sqrt{2\pi\sigma_i^2}} \exp\left(-\frac{(y_i - f(t_i, \theta))^2}{2\sigma_i^2}\right) \]

where \(y_i\) are measurements, \(f(t_i, \theta)\) is the model prediction at time \(t_i\) with parameters θ, and \(\sigma_i^2\) is the measurement variance [30]. For non-Gaussian error models, appropriate likelihood functions should be specified.
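The Gaussian likelihood above might be coded as follows for an ODE model. This is a sketch under stated assumptions: the logistic growth equation, the known initial value `c0`, the parameter names `r` and `K`, and the synthetic data are all hypothetical stand-ins for a real mechanistic model. The function returns -2 log L, which is the quantity minimized in the later steps.

```python
import numpy as np
from scipy.integrate import solve_ivp

def logistic_rhs(t, c, r, K):
    """Right-hand side of dC/dt = r * C * (1 - C / K)."""
    return r * c * (1.0 - c / K)

def neg2_log_likelihood(theta, t_obs, y_obs, sigma, c0=0.05):
    """-2 log L(theta) for Gaussian errors with known standard deviation(s)."""
    r, K = theta
    sol = solve_ivp(logistic_rhs, (0.0, t_obs[-1]), [c0], t_eval=t_obs,
                    args=(r, K), rtol=1e-8, atol=1e-10)
    residuals = (y_obs - sol.y[0]) / sigma
    sigma_arr = np.broadcast_to(sigma, y_obs.shape)
    # Weighted residual sum of squares plus the normalization term.
    return np.sum(residuals ** 2) + np.sum(np.log(2.0 * np.pi * sigma_arr ** 2))

# Synthetic observations generated from the model itself plus noise.
rng = np.random.default_rng(4)
t_obs = np.linspace(0.0, 10.0, 25)
clean = solve_ivp(logistic_rhs, (0.0, 10.0), [0.05], t_eval=t_obs,
                  args=(0.8, 1.0), rtol=1e-8, atol=1e-10).y[0]
y_obs = clean + rng.normal(0.0, 0.03, t_obs.size)

print(neg2_log_likelihood((0.8, 1.0), t_obs, y_obs, 0.03))
print(neg2_log_likelihood((0.4, 1.0), t_obs, y_obs, 0.03))  # worse fit, larger value
```

Tight solver tolerances are used so that integration error stays small relative to the measurement noise. Loose tolerances can introduce spurious roughness into the likelihood surface.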

Step 2: Structural Identifiability Analysis. Before profile computation, assess whether model parameters are structurally identifiable using algebraic methods such as differential algebra or Taylor series approaches [22]. This step determines whether unique parameter estimation is theoretically possible given perfect data.

Step 3: Maximum Likelihood Estimation. Obtain the maximum likelihood estimate (MLE) \(\hat{\theta}\) by solving:

\[ \hat{\theta} = \arg\min_{\theta} \left[-2\log L(\theta)\right] \]

This optimization typically requires specialized algorithms for ODE-constrained problems, such as gradient-based or derivative-free optimizers [22].
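A minimal sketch of the estimation step, assuming Gaussian errors with known standard deviation so that minimizing -2 log L reduces to weighted least squares. The closed-form logistic curve stands in for a numerical ODE solve, and the parameter names, bounds, starting point, and data are hypothetical. `scipy.optimize.least_squares` is one reasonable gradient-based choice, not the only one.

```python
import numpy as np
from scipy.optimize import least_squares

def logistic(t, r, K, c0=0.05):
    """Closed-form logistic curve, standing in for a numerical ODE solve."""
    return K * c0 / (c0 + (K - c0) * np.exp(-r * t))

def fit_mle(t, y, sigma, start=(0.5, 0.8)):
    """Estimate (r, K) by minimizing the weighted residual sum of squares."""
    res = least_squares(lambda th: (y - logistic(t, *th)) / sigma, start,
                        bounds=([0.01, 0.1], [5.0, 5.0]))
    # res.cost is half the sum of squared residuals, so chi^2 = 2 * cost.
    return res.x, 2.0 * res.cost

rng = np.random.default_rng(2)
t = np.linspace(0.0, 10.0, 25)
sigma = 0.03
y = logistic(t, 0.8, 1.0) + rng.normal(0.0, sigma, t.size)

theta_hat, chi2_min = fit_mle(t, y, sigma)
print(f"r_hat = {theta_hat[0]:.3f}, K_hat = {theta_hat[1]:.3f}, "
      f"chi2 at optimum = {chi2_min:.1f}")
```

For models with several local optima, repeating the fit from multiple starting points and keeping the best result is a common safeguard.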

Step 4: Univariate Profile Likelihood Calculation. For each parameter of interest \(\theta_i\), compute the profile likelihood by solving a series of optimization problems:

\[ PL(\theta_i) = \min_{\theta_{j \neq i}} \left[-2\log L(\theta)\right] \]

across a range of fixed values for \(\theta_i\) [3]. This creates a projected representation of the likelihood surface.
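The series of optimization problems can be sketched as a loop over a grid, re-fitting the nuisance parameters at each grid value and reusing the previous optimum as the next starting point. The three-parameter logistic model, the bounds, the grid, and the data below are hypothetical. With known Gaussian noise the profile is computed on the χ² scale, which differs from -2 log L only by a constant.

```python
import numpy as np
from scipy.optimize import least_squares

def logistic(t, r, K, c0):
    """Closed-form logistic curve with growth rate r, capacity K, initial value c0."""
    return K * c0 / (c0 + (K - c0) * np.exp(-r * t))

def profile_r(r_grid, t, y, sigma, nuisance_start):
    """Chi-squared profile over r, re-optimizing the nuisance parameters (K, c0)."""
    lower, upper = [0.2, 1e-3], [5.0, 0.19]   # keeps K above c0
    values = []
    start = np.asarray(nuisance_start, dtype=float)
    for r in r_grid:
        res = least_squares(lambda nu: (y - logistic(t, r, *nu)) / sigma,
                            start, bounds=(lower, upper))
        values.append(2.0 * res.cost)
        start = res.x   # warm start for the next grid value
    return np.array(values)

rng = np.random.default_rng(3)
t = np.linspace(0.0, 10.0, 25)
sigma = 0.03
y = logistic(t, 0.8, 1.0, 0.05) + rng.normal(0.0, sigma, t.size)

r_grid = np.linspace(0.5, 1.2, 29)
pl = profile_r(r_grid, t, y, sigma, nuisance_start=(1.0, 0.05))
print(f"profile minimum near r = {r_grid[np.argmin(pl)]:.3f}")
```

Warm starting keeps successive inner fits close to each other, which tends to give smoother profiles than restarting every fit from the same initial guess.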

Step 5: Confidence Interval Construction. Determine confidence intervals for each parameter using the likelihood ratio test:

\[ CI_{\theta_i} = \left\{ \theta_i \, \middle| \, PL(\theta_i) - PL(\hat{\theta}_i) < \chi^2_{1,1-\alpha} \right\} \]

where \(\chi^2_{1,1-\alpha}\) is the critical value from the chi-squared distribution with 1 degree of freedom [3].
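Given a profile evaluated on a grid, the interval endpoints are the points where the profile crosses the critical value on either side of its minimum. The sketch below locates them by linear interpolation. The function name is hypothetical, and the quadratic test profile is an idealized example (a parameter estimated at 2.0 with standard error 0.5).

```python
import numpy as np
from scipy.stats import chi2

def profile_ci(grid, pl, alpha=0.05):
    """Interval where PL - min(PL) stays below the chi-squared critical value.

    Returns (lower, upper). An endpoint is None if the profile never crosses
    the threshold on that side of the minimum within the grid.
    """
    delta = pl - pl.min() - chi2.ppf(1.0 - alpha, df=1)
    i_min = int(np.argmin(pl))

    def crossing(indices):
        # Walk outward from the minimum until the threshold is exceeded.
        for a, b in zip(indices[:-1], indices[1:]):
            if delta[a] < 0.0 <= delta[b]:
                w = -delta[a] / (delta[b] - delta[a])
                return grid[a] + w * (grid[b] - grid[a])
        return None

    left = crossing(list(range(i_min, -1, -1)))
    right = crossing(list(range(i_min, len(grid))))
    return left, right

grid = np.linspace(0.0, 4.0, 401)
pl = ((grid - 2.0) / 0.5) ** 2 + 7.0   # idealized quadratic profile
lo, hi = profile_ci(grid, pl)
print(f"95% CI: [{lo:.3f}, {hi:.3f}]")  # close to 2 -/+ 1.96 * 0.5
```

For a quadratic profile the result matches the familiar estimate plus or minus 1.96 standard errors. For asymmetric profiles the two endpoints sit at different distances from the estimate.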

Step 6: Practical Identifiability Assessment. Evaluate practical identifiability by examining the shape of profile likelihoods. Well-formed profiles with clear minima indicate identifiable parameters, while flat profiles suggest practical non-identifiability [22].
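The visual check on profile shape can be backed by a simple rule: does the profile rise above the threshold on both sides of its minimum within the scanned range? The sketch below applies that rule. The function name, the label wording, and the three example profiles are hypothetical, and the default threshold of 3.84 is the approximate 95% χ² quantile with one degree of freedom.

```python
import numpy as np

def classify_profile(pl, threshold=3.84):
    """Label a profile by whether it exceeds the threshold on each side of its minimum."""
    pl = np.asarray(pl, dtype=float)
    excess = pl - pl.min()
    i_min = int(np.argmin(pl))
    bounded_left = bool(np.any(excess[:i_min] >= threshold))
    bounded_right = bool(np.any(excess[i_min + 1:] >= threshold))
    if bounded_left and bounded_right:
        return "identifiable"
    if not bounded_left and not bounded_right:
        return "non-identifiable (flat within the scanned range)"
    return "practically non-identifiable (interval open on one side)"

grid = np.linspace(0.0, 10.0, 101)
print(classify_profile(((grid - 5.0) / 0.5) ** 2))   # clear minimum
print(classify_profile(np.full(grid.size, 2.0)))     # completely flat
print(classify_profile(20.0 * np.exp(-grid)))        # levels off to the right
```

The verdict depends on the scanned range: a profile that looks open on one side may close if the grid is extended, so the range should cover all plausible parameter values.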

Step 7: Prediction Uncertainty Quantification. Propagate parameter uncertainties to model predictions using profile-wise predictions or prediction profile likelihood methods [30] [3].
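One way to sketch the profile-wise idea: collect the parameter sets lying along each univariate profile, keep those inside the confidence threshold, simulate the model for each, and take the envelope of the resulting trajectories. The example below does this by brute force on a parameter grid for a hypothetical decay model f(t) = A·exp(-k·t). It is a simplified illustration, not the implementation of [30] or [7]; the grid-based profiling and the 3.84 threshold are assumptions.

```python
import numpy as np

def profile_wise_band(A_grid, k_grid, t, y, sigma, t_pred, threshold=3.84):
    """Union of profile-wise prediction bands for f(t) = A * exp(-k * t)."""
    A, k = np.meshgrid(A_grid, k_grid, indexing="ij")
    model = A[..., None] * np.exp(-k[..., None] * t)       # shape (nA, nk, nt)
    chi2 = np.sum(((y - model) / sigma) ** 2, axis=-1)     # shape (nA, nk)
    excess = chi2 - chi2.min()

    # Points along each univariate profile: best nuisance value per grid value.
    along_A = [(i, int(np.argmin(chi2[i, :]))) for i in range(len(A_grid))]
    along_k = [(int(np.argmin(chi2[:, j])), j) for j in range(len(k_grid))]
    kept = [(i, j) for i, j in along_A + along_k if excess[i, j] < threshold]

    # Simulate each retained parameter set and take the pointwise envelope.
    curves = np.array([A_grid[i] * np.exp(-k_grid[j] * t_pred) for i, j in kept])
    return curves.min(axis=0), curves.max(axis=0)

rng = np.random.default_rng(5)
t = np.linspace(0.0, 5.0, 20)
sigma = 0.05
y = 2.0 * np.exp(-0.5 * t) + rng.normal(0.0, sigma, t.size)

t_pred = np.linspace(0.0, 8.0, 50)
lower, upper = profile_wise_band(np.linspace(1.5, 2.5, 101),
                                 np.linspace(0.3, 0.7, 101),
                                 t, y, sigma, t_pred)
print(f"band width at t = 8: {upper[-1] - lower[-1]:.4f}")
```

A full grid is only feasible for very small models. With more parameters, each profile would be traced with an optimizer instead, and the band assembled from those profile paths.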

Protocol Variations for Specific Scenarios