Practical Non-Identifiability in Dynamic Models: Diagnosis, Solutions, and Best Practices for Biomedical Research


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on addressing the critical challenge of practical non-identifiability in dynamic models. Practical non-identifiability occurs when available data are insufficient to uniquely determine model parameters, leading to unreliable predictions and hampering model utility in decision-making. We explore the fundamental concepts distinguishing structural from practical identifiability, present a suite of diagnostic methods including profile likelihood and collinearity analysis, and detail strategies for overcoming identifiability issues through optimal experimental design, model reduction, and incorporation of multiple data features. The article also covers validation frameworks and compares methodological approaches, offering a holistic perspective for developing robust, predictive models in biomedical research and drug development.

Understanding Practical Non-Identifiability: Core Concepts and Consequences for Model Reliability

Welcome to the Model Diagnostics & Identifiability Support Center

This resource is designed for researchers, scientists, and drug development professionals working with dynamic models, particularly ordinary differential equations (ODEs) in systems biology and pharmacokinetics/pharmacodynamics (PK/PD). Framed within a broader thesis on addressing practical non-identifiability, this guide provides troubleshooting FAQs and protocols to diagnose and resolve common identifiability issues in your modeling workflow.

Core Concept FAQ

Q1: What is the fundamental difference between structural and practical non-identifiability?

- A: This is the most critical distinction for effective troubleshooting.

- Structural Non-Identifiability is a property of the model equations themselves. It means that even with perfect, continuous, and noise-free data, certain parameters (or combinations thereof) cannot be uniquely determined because different parameter values yield identical model outputs [1] [2]. It is an a priori issue related to model design.

- Practical Non-Identifiability arises from limitations in the real-world data. Even if a model is structurally identifiable, parameters may not be uniquely estimated due to noisy, sparse, or insufficiently informative data [1] [2] [3]. The model structure allows for identification, but the available data is inadequate to achieve it.

Q2: Why is distinguishing between them crucial for my research?

- A: The diagnosis dictates the cure.

- If the issue is structural, you must modify the model itself (e.g., reparameterize, simplify, or incorporate additional prior knowledge) before any amount of data collection will help [4] [3].

- If the issue is practical, the solution lies in improving the data (e.g., optimizing experimental design, collecting more or different data points, reducing measurement noise) or using more robust estimation methods that handle uncertainty [1] [5].

Common symptoms of non-identifiability include:

- Poor convergence of optimization algorithms.

- Extremely large or infinite confidence intervals for parameter estimates.

- High correlations between parameter estimates.

- Sensitivity of the optimal parameter set to initial guesses.

- Good model fit to data achieved with wildly different parameter sets.

Troubleshooting Guides & Methodologies

Guide 1: Diagnosing Structural Non-Identifiability

Protocol: Taylor Series Expansion Method [3] This method tests whether parameters can be solved uniquely from the coefficients of a Taylor series expansion of the model output around a known point (e.g., t=0).

- Define your model: ODE system dx/dt = f(x, p, u), output y = g(x, p), with parameters p.

- Compute derivatives: Calculate the first several time derivatives of the output y analytically (y', y'', y''', ...). The number needed is at most 2n-1 for a linear system with n states, but may be higher for nonlinear systems [3].

- Form a system of equations: The derivatives are functions of the parameters p and initial conditions. Set up equations: y(t0) = Y0, y'(t0) = Y1, etc., where Yi are considered known symbolic quantities.

- Solve for parameters: Attempt to solve this algebraic system for the parameters p. If you cannot obtain a unique solution for a parameter (e.g., it can take any value, or it only appears combined with another as p1*p2), that parameter is structurally unidentifiable.
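The steps above can be sketched with SymPy for a single-state autonomous model. This is a minimal illustration under stated assumptions, not the procedure from [3]: the two toy models, the helper names `taylor_coefficients` and `identifiable_rank`, and the choice of three derivatives are all hypothetical. Rather than solving the algebraic system explicitly, the sketch checks the rank of the Jacobian of the Taylor coefficients with respect to the parameters, which is a local test: a rank below the number of parameters means the coefficients cannot pin down every parameter.

```python
import sympy as sp


def taylor_coefficients(f, g, x, x0, n_derivs):
    """Output derivatives y, y', ..., y^(n) at t0 for dx/dt = f(x), y = g(x)."""
    derivs = [g]
    for _ in range(n_derivs):
        # Chain rule for a single-state autonomous system: d/dt h(x) = h'(x) * f(x)
        derivs.append(sp.diff(derivs[-1], x) * f)
    return [sp.simplify(d.subs(x, x0)) for d in derivs]


def identifiable_rank(f, g, x, x0, params, n_derivs=3):
    """Rank of the Jacobian of the Taylor coefficients w.r.t. the parameters."""
    coeffs = sp.Matrix(taylor_coefficients(f, g, x, x0, n_derivs))
    return coeffs.jacobian(params).rank(simplify=True)


x, x0, p1, p2 = sp.symbols("x x0 p1 p2", positive=True)

# Hypothetical model A: the parameters only ever appear as the product p1*p2.
rank_a = identifiable_rank(-p1 * p2 * x, x, x, x0, [p1, p2])

# Hypothetical model B: p1 sets the decay rate, p2 scales the output.
rank_b = identifiable_rank(-p1 * x, p2 * x, x, x0, [p1, p2])

print("model A rank:", rank_a, "| model B rank:", rank_b, "| parameters: 2")
```

In model A the rank falls short of the two parameters, reflecting that only p1*p2 is determined. In model B both parameters can be recovered locally when x0 is known.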

Guide 2: Diagnosing Practical Non-Identifiability

Protocol: Profile Likelihood Analysis [1] This is a powerful global method recommended over traditional, and potentially misleading, Fisher Information Matrix approaches [1].

- Obtain the Maximum Likelihood Estimate (MLE): Fit your model to the data to find the best-fit parameter vector p* and the corresponding maximum log-likelihood L*.

- Profile a parameter: Select a parameter of interest, p_i. Fix p_i at a value θ away from its MLE. Re-optimize the log-likelihood over all other free parameters. Record the optimized log-likelihood value L(θ).

- Construct the profile: Repeat the profiling step across a wide range of values for θ. Plot L(θ) against θ.

- Diagnose from the plot:

  - A profile with a single, well-defined optimum indicates practical identifiability. This is a sharp peak in L(θ), which appears as a V- or U-shaped minimum when the negative log-likelihood is plotted instead.

  - A flat valley or extended plateau around the optimum indicates practical non-identifiability. The confidence interval for p_i based on a likelihood ratio threshold will be unbounded on one or both sides.
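The protocol above can be sketched in a few lines with SciPy. Everything specific here is an assumption made for illustration: the exponential-decay model, the noise level, the grid for k, and the use of Nelder-Mead. The 3.84 cut-off is the 95% chi-square threshold with one degree of freedom.

```python
import numpy as np
from scipy.optimize import minimize

rng = np.random.default_rng(0)

# Hypothetical example: y(t) = A * exp(-k * t) observed with Gaussian noise.
t = np.linspace(0.0, 5.0, 12)
sigma = 0.05
y_obs = 2.0 * np.exp(-0.8 * t) + rng.normal(0.0, sigma, t.size)


def log_likelihood(A, k):
    """Gaussian log-likelihood up to an additive constant (sigma known)."""
    resid = y_obs - A * np.exp(-k * t)
    return -0.5 * np.sum((resid / sigma) ** 2)


# Step 1: maximum likelihood estimate over both parameters.
mle = minimize(lambda p: -log_likelihood(p[0], p[1]), x0=[1.0, 1.0], method="Nelder-Mead")
A_hat, k_hat = mle.x
L_star = -mle.fun

# Steps 2-3: fix k on a grid and re-optimize the remaining parameter A each time.
k_grid = np.linspace(0.4, 1.2, 41)
profile = []
for k_fixed in k_grid:
    fit = minimize(lambda a: -log_likelihood(a[0], k_fixed), x0=[A_hat], method="Nelder-Mead")
    profile.append(-fit.fun)
profile = np.array(profile)

# Step 4: grid values of k whose profile stays within the 95% threshold.
inside = 2.0 * (L_star - profile) < 3.84
print(f"k_hat = {k_hat:.3f}")
print(f"approx. 95% interval: [{k_grid[inside].min():.3f}, {k_grid[inside].max():.3f}]")
```

If `inside` were still true at either end of the grid, the interval would not be closed within the explored range, which is the numerical signature of the flat profile described above.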

Guide 3: Addressing Non-Identifiability in Hierarchical (NLME) Models

In Nonlinear Mixed Effects models, unidentifiability at the individual level may be resolved at the population level due to inter-individual variability [5] [6].

Protocol: Nonparametric Population Distribution Comparison [5] This method checks if different population-level parameter distributions can be distinguished given the data.

- Multi-start Estimation: Fit your NLME model to the population data multiple times from different initial guesses to find multiple potential "best-fit" solutions.

- Extract Individual Estimates: For each solution, obtain the set of empirical Bayes estimates (EBEs) for the parameters of each individual.

- Compare Distributions (Individual Level): For a target parameter, take the EBEs from two different solution fits. Use a Kolmogorov-Smirnov two-sample test to determine if the two samples come from significantly different population distributions.

- Compare Distributions (Population Level): Estimate the kernel density for the parameter distribution from each solution fit. Calculate the overlapping index (area under the minimum of the two density curves). An index near 1 suggests the distributions are indistinguishable, indicating practical non-identifiability.
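The two comparison steps can be sketched as follows. The EBE samples are synthetic placeholders (hypothetical log-normal draws); in practice they would come from two converged NLME fits. The overlapping index is approximated by a Riemann sum over a shared grid, which is one simple choice among several.

```python
import numpy as np
from scipy.stats import gaussian_kde, ks_2samp

rng = np.random.default_rng(1)

# Placeholder EBEs for one parameter from two different multi-start solutions.
ebe_fit_a = rng.lognormal(mean=0.0, sigma=0.3, size=200)
ebe_fit_b = rng.lognormal(mean=0.05, sigma=0.3, size=200)

# Individual level: two-sample Kolmogorov-Smirnov test on the EBEs.
ks = ks_2samp(ebe_fit_a, ebe_fit_b)

# Population level: kernel density estimates evaluated on a shared grid.
lo = min(ebe_fit_a.min(), ebe_fit_b.min())
hi = max(ebe_fit_a.max(), ebe_fit_b.max())
grid = np.linspace(lo, hi, 1000)
dens_a = gaussian_kde(ebe_fit_a)(grid)
dens_b = gaussian_kde(ebe_fit_b)(grid)

# Overlapping index: area under the pointwise minimum of the two densities.
overlap = np.sum(np.minimum(dens_a, dens_b)) * (grid[1] - grid[0])

print(f"KS statistic = {ks.statistic:.3f}, p-value = {ks.pvalue:.3f}")
print(f"overlapping index = {overlap:.3f}")
```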

Table 1: Comparison of Identifiability Analysis Methods

| Method | Applies to | Key Principle | Strengths | Weaknesses | Citation |
|---|---|---|---|---|---|
| Taylor Series | Structural | Solves parameters from output derivatives. | Conceptually simple, analytic. | Can be algebraically complex for large models. | [3] |
| Exact Arithmetic Rank (EAR) | Structural | Differential algebra-based. | Powerful, available in software (e.g., Mathematica). | Can be computationally heavy. | [3] |
| Fisher Information Matrix (FIM) | Practical | Local curvature of likelihood. | Fast, standard output of many estimators. | Misleading for non-identifiable models; local approximation only. | [1] |
| Profile Likelihood | Practical | Global exploration of likelihood. | Reliable for detecting both structural & practical issues; provides confidence intervals. | Computationally expensive (requires repeated optimization). | [1] |
| Nonparametric NLME Comparison | Practical (NLME) | Compares population distributions from different fits. | Accounts for hierarchical structure; uses statistical tests. | Requires significant computation (multi-start fits). | [5] |

Table 2: Impact of Available Derivatives on Structural Identifiability [7] This table summarizes findings from a study introducing κ-identifiability, which relaxes the unrealistic assumption of having infinite derivatives.

| Model | Identifiable Parameters (All Derivatives) | Identifiable Parameters (Max 3 Derivatives) | Notes |
|---|---|---|---|
| Example Drosophila Model | 17 | 1 | Demonstrates severe overestimation by traditional methods. |
| Example NF-κB Model | 21 | 6 | Highlights the critical dependency on high-order derivative information. |

The Scientist's Toolkit: Essential Research Reagents & Software

Table 3: Key Software Tools for Identifiability Analysis

| Tool Name | Language/Platform | Primary Function | Useful For | Citation/Source |
|---|---|---|---|---|
| PottersWheel | MATLAB | Profile likelihood for structural & practical identifiability. | Comprehensive modeling, fitting, and identifiability analysis. | [2] |
| STRIKE-GOLDD | MATLAB | Structural identifiability analysis. | Determining a priori identifiability of nonlinear models. | [2] |
| StructuralIdentifiability.jl | Julia | Assessing structural parameter identifiability. | Symbolic computation for ODE models in the Julia ecosystem. | [2] |
| LikelihoodProfiler.jl | Julia | Practical identifiability analysis via profiling. | Calculating likelihood profiles and confidence intervals. | [2] |
| Model Reduction Code | Julia (Jupyter) | Data-informed model reduction for non-identifiable models. | Finding identifiable reparameterizations from data. | [4] |

Diagnostic Visualization Workflows

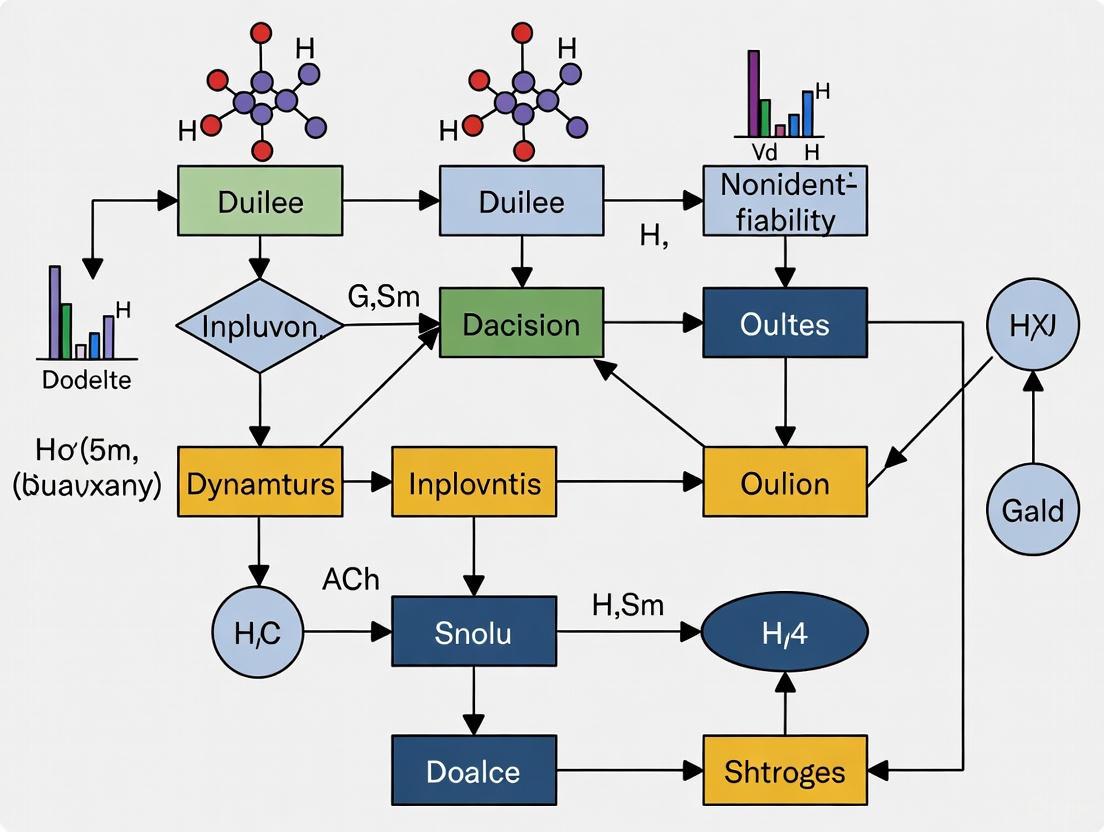

Diagnostic Decision Tree for Identifiability Issues


How Non-Identifiability Undermines Parameter Estimation and Model Predictions

Technical Support Center: Troubleshooting Non-Identifiable Dynamic Models

Welcome, Researcher. This technical support center is designed within the broader thesis that addressing practical non-identifiability is crucial for robust, predictive modeling in systems biology and pharmacometrics. Below, you will find targeted troubleshooting guides, FAQs, and resources to diagnose and resolve common issues arising from non-identifiable models during your experiments.

Troubleshooting Guide: Common Symptoms & Diagnosis

If your model fitting is unstable or predictions are unreliable, consult this flowchart to identify potential root causes related to non-identifiability.

Diagram 1: Diagnostic flowchart for non-identifiability.

Frequently Asked Questions (FAQs)

Q1: My Markov Chain Monte Carlo (MCMC) sampling shows strong correlations between parameters and poor convergence. What does this mean? A1: This is a classic symptom of practical non-identifiability, where the data cannot uniquely constrain individual parameters, only certain combinations of them [9] [10]. The sampler explores a "ridge" in the posterior where changes in one parameter can be compensated by changes in another without affecting the model fit to the data. This leads to wide marginal posterior distributions and high correlation in pairwise plots.

Q2: How can I distinguish between structural and practical non-identifiability? A2:

- Structural Non-Identifiability: A property of the model structure itself, present even with perfect, infinite data. It arises from redundant parameterizations (e.g., product of two parameters appearing as a single term). Tools like Lie derivatives or generating series methods can detect it [11].

- Practical Non-Identifiability: Arises from limited, noisy data. The model is structurally identifiable, but the available data lacks the information to precisely estimate parameters. It is diagnosed using methods like profile likelihood, which reveals flat profiles for non-identifiable parameters [11] [1].

Q3: Is it acceptable to proceed with predictions from a non-identifiable model? A3: Yes, but with crucial caveats. A model can have predictive power for specific outputs even if its parameters are not uniquely identified [9]. For example, training a signaling cascade model only on a downstream variable (e.g., K4) can yield accurate predictions for that variable's trajectory under new stimulation protocols, despite high uncertainty in all individual parameters [9]. However, predictions for unobserved variables or extrapolations far from training conditions will be unreliable. The key is to rigorously assess and report prediction uncertainties.

Q4: Why does the Fisher Information Matrix (FIM) approach sometimes fail to diagnose non-identifiability? A4: The FIM is a local, linear approximation (curvature) of the likelihood around the estimated parameters. For nonlinear models with flat or complex likelihood surfaces (common in non-identifiable problems), this approximation can be severely misleading, suggesting identifiability when none exists [1]. The profile likelihood is a more reliable, global method for assessing practical identifiability [11] [1].

Q5: Can a model be non-identifiable at the individual level but identifiable at the population level? A5: Yes. In hierarchical frameworks like Nonlinear Mixed Effects (NLME) models, inter-individual variability can provide additional information. A parameter that is non-identifiable from a single subject's data may become identifiable when data from a population is analyzed simultaneously, as the population distribution acts as a constraint [5]. This highlights the importance of choosing the right modeling framework for your data structure.
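To make the FIM discussion in Q4 concrete, here is a minimal NumPy sketch of what the matrix measures. The model, the parameter values, and the sampling times are hypothetical. The output depends only on the product p1*p2, so the FIM has a zero eigenvalue and its eigenvector points along the direction in which the parameters can trade off without changing the output.

```python
import numpy as np

t = np.linspace(0.0, 5.0, 20)
sigma = 0.1           # assumed known measurement standard deviation
p1, p2 = 0.5, 1.6     # hypothetical parameter estimate

# Hypothetical model y(t) = exp(-p1 * p2 * t): only the product affects the output.
y = np.exp(-p1 * p2 * t)

# Analytic output sensitivities dy/dp1 and dy/dp2, one column per parameter.
S = np.column_stack([-p2 * t * y, -p1 * t * y])

# FIM for independent Gaussian noise with known standard deviation sigma.
fim = S.T @ S / sigma**2

# eigh returns eigenvalues in ascending order; the first one marks the flat direction.
eigvals, eigvecs = np.linalg.eigh(fim)
flat_direction = eigvecs[:, 0]

print("eigenvalues:", eigvals)
print("flat direction (p1, p2):", flat_direction)
```

Because the FIM only describes curvature at a single point, it says nothing about how far the flat direction extends or whether it bends. In the practical (rather than structural) case the smallest eigenvalue is small but non-zero, and intervals derived from the inverse FIM rest on a quadratic approximation that may not hold, which is why the profile likelihood is preferred above.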

Table 1: Comparison of Identifiability Analysis Methods

| Method | Principle | Strengths | Weaknesses | Best For |
|---|---|---|---|---|
| Profile Likelihood [11] [1] | Explores likelihood by profiling over parameters. | Global, reliable for practical non-identifiability, provides confidence intervals. | Computationally intensive for high-dimensional parameters. | Practical identifiability analysis, confidence set construction. |
| Fisher Information Matrix (FIM) [1] | Local curvature of likelihood at optimum. | Fast, easy to compute. | Can be misleading for nonlinear/non-identifiable models; local approximation. | Initial screening, experimental design. |
| Markov Chain Monte Carlo (MCMC) [9] [10] | Samples from posterior parameter distribution. | Reveals correlations and full uncertainty; works with priors. | Computationally heavy; diagnostics required; may not converge if badly non-identifiable. | Bayesian inference, exploring parameter spaces. |
| Data-Informed Model Reduction [4] | Reparameterizes model based on likelihood. | Creates identifiable, predictive reduced models. | Requires computational implementation; reduces original parameter interpretation. | Obtaining a simplified, identifiable model for prediction. |

Table 2: Parameter Uncertainty in Sequential Training Experiment [9] (Based on a 4-step signaling cascade model trained on different variable combinations. "δ" represents multiplicative deviation.)

| Training Data | Effective Params (Dimensionality) | Largest δ (Variation) | Smallest δ (Variation) | Predictive Outcome |
|---|---|---|---|---|
| Prior Only | 9 | ~20-fold | ~20-fold | No predictions. |
| Variable K4 only | 8 | ~12-fold | <1.5-fold | Accurate prediction for K4 only. |
| Variables K2 & K4 | 7 | ~10-fold | <1.5-fold | Accurate prediction for K2 & K4. |
| All 4 Variables | 5 | ~12-fold | <1.5-fold | Accurate prediction for all variables. |

Detailed Experimental Protocols

Protocol 1: Sequential Training to Assess Predictive Power This protocol, derived from a study on a biochemical signaling cascade, illustrates how predictive power can be incrementally built despite parameter uncertainty [9].

- Model System: A four-step signaling cascade with negative feedback (e.g., RAS-RAF-MEK-ERK MAPK cascade).

- Data Generation:

  - Simulate the model using a nominal parameter set and an "on-off" stimulation protocol S(t).

  - Generate noisy time-course data for all four cascade variables (K1, K2, K3, K4). Use at least 3 measurement replicates.

- Sequential Bayesian Training:

  - Step 1: Train the model using only K4 data. Use MCMC (Metropolis-Hastings) with broad log-normal priors to sample the posterior parameter distribution.

  - Step 2: Use the posterior samples to predict K4 under a novel stimulation protocol. The 80% prediction interval should accurately contain the true trajectory.

  - Step 3: Attempt to predict K1, K2, K3. Observe that prediction bands are very broad.

  - Step 4: Expand training data to include K2, then retrain. Predictions for K2 and K4 should now be accurate.

  - Step 5: Train using all variables. The model is now "well-trained" and can predict all variables.

- Analysis: Perform Principal Component Analysis (PCA) on the logarithm of posterior parameter samples to quantify the reduction in plausible parameter space dimensionality after each training step (see Table 2).
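The PCA step in the analysis can be sketched with NumPy alone. The samples below are synthetic placeholders standing in for MCMC output (hypothetical rate constants k1, k2, k3), and expressing the spread along each principal axis as exp(standard deviation) is an assumption about how a multiplicative deviation might be computed, not necessarily the definition used in [9].

```python
import numpy as np

rng = np.random.default_rng(2)

# Placeholder "posterior" samples for three rate constants:
# the product k1*k2 is tightly constrained, k3 is constrained only by a broad prior.
n = 5000
log_k1 = rng.normal(0.0, 1.0, n)
log_k2 = np.log(2.0) - log_k1 + rng.normal(0.0, 0.05, n)
log_k3 = rng.normal(0.0, 1.0, n)
log_samples = np.column_stack([log_k1, log_k2, log_k3])

# PCA via eigen-decomposition of the covariance of the log-parameters.
cov = np.cov(log_samples, rowvar=False)
eigvals, eigvecs = np.linalg.eigh(cov)  # ascending order: stiffest direction first

# Multiplicative spread along each principal axis.
delta = np.exp(np.sqrt(eigvals))

for d, v in zip(delta, eigvecs.T):
    print(f"{d:6.2f}-fold spread along direction {np.round(v, 2)}")
```

A direction with a spread close to 1-fold is well constrained by the data; here it corresponds to log k1 + log k2, that is, the product k1*k2. Counting the directions whose spread stays near the prior width gives a rough measure of the remaining effective dimensionality.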

Protocol 2: Profile Likelihood Analysis for Practical Identifiability This is a gold-standard method for detecting practically non-identifiable parameters and constructing reliable confidence intervals [11] [1].

- Maximum Likelihood Estimation (MLE): Fit your model to the data to obtain the best-fit parameter vector θ* and the maximum log-likelihood L*.

- Profiling a Parameter:

  - Select a parameter of interest, θ_i.

  - Define a grid of fixed values for θ_i around its MLE.

  - For each fixed value of θ_i, optimize the likelihood over all other parameters θ_j (j≠i). Record the optimized log-likelihood value.

- Calculate Profile Likelihood Ratio: For each grid point, compute PLR = 2[L* - L(θ_i)].

- Diagnosis & Interval Construction:

  - A profile that is flat (PLR remains below the critical threshold over a wide range) indicates practical non-identifiability for θ_i [1].

  - A likelihood-based confidence interval for θ_i is given by all values where PLR < χ²(1-α, df=1) (e.g., < 3.84 for 95% confidence). For non-identifiable parameters, this interval may be one-sided or infinite [11].
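Once a profile has been computed, the interval construction step reduces to a threshold comparison. The helper below is a hypothetical sketch (the name `profile_interval` and the convention of returning `None` for an open bound are assumptions). It also assumes the accepted region is a single connected interval, and it is exercised on two synthetic profiles rather than a fitted model.

```python
import numpy as np
from scipy.stats import chi2


def profile_interval(grid, profile_loglik, L_star, alpha=0.05):
    """Likelihood-based interval; None marks a bound not reached inside the grid."""
    plr = 2.0 * (L_star - np.asarray(profile_loglik))
    threshold = chi2.ppf(1.0 - alpha, df=1)  # about 3.84 for alpha = 0.05
    inside = plr < threshold
    if not inside.any():
        raise ValueError("no grid point below the threshold; refine the grid")
    lower = None if inside[0] else float(grid[inside][0])
    upper = None if inside[-1] else float(grid[inside][-1])
    return lower, upper


grid = np.linspace(0.0, 2.0, 201)

# Synthetic identifiable profile: quadratic around 1.0 with standard error 0.2.
quadratic = -0.5 * ((grid - 1.0) / 0.2) ** 2
ci_closed = profile_interval(grid, quadratic, L_star=0.0)

# Synthetic one-sided profile: flat for all values above 1.0.
one_sided = -0.5 * (np.clip(1.0 - grid, 0.0, None) / 0.2) ** 2
ci_open = profile_interval(grid, one_sided, L_star=0.0)

print("closed interval:", ci_closed)
print("one-sided interval:", ci_open)
```

An open bound only means the threshold was not crossed within the explored grid, so widening the grid is a sensible check before declaring a parameter practically non-identifiable.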

Visualizing Key Concepts

Signaling Cascade with Feedback Motif This diagram represents the core model used in the sequential training experiment [9].

Diagram 2: Signaling cascade with nominal (solid) and relaxed (dashed) feedback.

Profile-Wise Analysis (PWA) Workflow This diagram outlines the unified workflow for identifiability analysis, estimation, and prediction [11].

Diagram 3: Profile-Wise Analysis (PWA) workflow for non-identifiable models.

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 3: Key Computational Tools for Addressing Non-Identifiability

| Item | Function & Purpose | Example/Reference |
|---|---|---|
| Profile Likelihood Software | Implements the core algorithm for detecting practical non-identifiability and building parameter confidence sets. Essential for diagnosis. | dMod R package; PWA Julia code [11]. |
| MCMC Sampler | Explores the posterior parameter distribution, revealing correlations and uncertainties in non-identifiable settings. | Stan [10], PyMC, NONMEM's Bayesian tools. |
| Structural Identifiability Checker | Determines if the model structure is theoretically identifiable with perfect data. | DAISY, GenSSI2 [11]. |
| Model Reduction Algorithm | Automates the process of reparameterizing a non-identifiable model into an identifiable one based on the data. | Data-informed likelihood reparameterization [4]. |
| Sloppiness/Identifiability Analysis Suite | Provides multiple diagnostics (e.g., PCA on parameter samples, eigenvalue analysis of FIM). | MATLAB DRAM toolbox; custom analysis based on posterior samples [9]. |
| Hierarchical Modeling Framework | Enables population-level analysis where individual-level non-identifiability may be resolved. | NONMEM, Monolix, brms in R [5]. |

Frequently Asked Questions (FAQs) on Model Non-Identifiability

FAQ 1: What is the fundamental difference between structural and practical non-identifiability?

- Structural non-identifiability arises from the model formulation itself; even with perfect, error-free data, certain parameters cannot be uniquely determined because different parameter combinations yield identical model outputs [12]. An example is a simple cancer growth model x' = βx - δx, where only the net growth rate m = β - δ can be estimated, not the specific birth (β) and death (δ) rates [12].

- Practical non-identifiability occurs when the available clinical or experimental data are insufficient in quantity, quality, or frequency to uniquely estimate parameters, despite the model being structurally identifiable [13] [12]. This is common in clinical settings where data points are sparse or measurements are noisy.
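The structural example can be written out in one line. Solving the growth equation shows that the output depends on the two rates only through their difference:

```latex
\frac{dx}{dt} = \beta x - \delta x = (\beta - \delta)\,x = m\,x
\quad\Longrightarrow\quad
x(t) = x(0)\,e^{m t}, \qquad m = \beta - \delta .
```

For any constant c, the pair (β + c, δ + c) gives the same m and therefore exactly the same trajectory x(t), so no amount of data on x alone can separate β from δ.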

FAQ 2: How can I check if my model is practically non-identifiable?

You can use several diagnostic methods:

- Profile Likelihood Analysis: If the likelihood function remains flat (or near-flat) when a parameter is varied, it indicates practical non-identifiability [13] [12].

- Collinearity Analysis: A high collinearity index between parameters suggests their estimates are highly correlated and not independently identifiable [13].

- Markov Chain Monte Carlo (MCMC) Diagnostics: In Bayesian modeling, failure of MCMC chains to converge, high R̂ values (>1.01), or bimodal posterior distributions can signal identifiability issues [14].
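As an illustration of the collinearity diagnostic, the sketch below computes a collinearity index from finite-difference output sensitivities, using the common definition 1 / sqrt(smallest eigenvalue of S̃ᵀS̃) with the columns of S̃ normalized to unit length. The logistic model, its parameter values, the known initial size, and the two sampling designs are hypothetical choices made only for the comparison.

```python
import numpy as np


def logistic(t, r, K, x0=0.1):
    """Logistic growth curve with a known (hypothetical) initial size x0."""
    return K / (1.0 + (K / x0 - 1.0) * np.exp(-r * t))


def collinearity_index(t, r, K, rel_step=1e-6):
    """Collinearity index of (r, K) from central finite-difference sensitivities."""
    base = np.array([r, K])
    cols = []
    for i in range(base.size):
        up, down = base.copy(), base.copy()
        up[i] *= 1.0 + rel_step
        down[i] *= 1.0 - rel_step
        cols.append((logistic(t, *up) - logistic(t, *down)) / (2.0 * rel_step * base[i]))
    S = np.column_stack(cols)
    S_norm = S / np.linalg.norm(S, axis=0)  # unit-length sensitivity columns
    lam_min = np.linalg.eigvalsh(S_norm.T @ S_norm)[0]
    return 1.0 / np.sqrt(lam_min)


gamma_early = collinearity_index(np.linspace(0.0, 4.0, 15), r=0.5, K=10.0)
gamma_full = collinearity_index(np.linspace(0.0, 20.0, 15), r=0.5, K=10.0)

print(f"early-phase sampling only: {gamma_early:.2f}")
print(f"sampling through plateau:  {gamma_full:.2f}")
```

The index equals 1 when the sensitivity columns are orthogonal and grows without bound as they become parallel, so a larger value for the early-phase design indicates that changes in r and K are harder to tell apart from those data.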

FAQ 3: What are the direct consequences of using a non-identifiable model for clinical prediction?

Using a non-identifiable model can lead to significantly biased and unreliable clinical predictions. A study on prostate cancer demonstrated that five different, equally well-fitting parameter sets for the same model produced accurate fits to the initial patient data but resulted in vastly different forecasts of long-term treatment outcomes [12]. Relying on such a model for precision medicine could lead to incorrect treatment decisions.

Troubleshooting Guides

Guide 1: Addressing Practical Non-Identifiability in a Cancer Growth Model

Problem: A researcher is calibrating a logistic growth model to tumor volume data from a mouse xenograft study. The parameter estimates for the growth rate (r) and carrying capacity (K) are highly uncertain and correlated.

Diagnosis:

- Symptoms: Strong correlation between r and K in the posterior distribution; a flat profile likelihood for one or both parameters.

- Root Cause: The data may lack information from the saturation phase of the growth curve, making it difficult to distinguish between a fast-growing tumor that reaches a small size and a slow-growing tumor that reaches a large size [15] [12].
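A short calculation shows why early-phase data say so little about the carrying capacity. Writing x_0 for the initial tumor volume (a symbol introduced here for illustration), the logistic solution reduces to pure exponential growth while the tumor is far below K:

```latex
x(t) = \frac{K\,x_0\,e^{rt}}{K + x_0\,(e^{rt} - 1)}
\;\approx\; x_0\,e^{rt}
\quad \text{when } x_0\,e^{rt} \ll K .
```

In that regime K drops out of the expression almost entirely, so it is constrained only once the measurements begin to bend away from the exponential curve, and until then it can trade off against r within the noise.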

Solutions:

- Augment Calibration Targets: Include additional types of data beyond overall tumor volume. If available, incorporate biomarker data that provides information on the underlying biological state [13]. For example, in a study of crizotinib, data on target kinase inhibition (ALK or MET phosphorylation) was used alongside tumor growth inhibition to strengthen the pharmacodynamic model [16].

- Improve Data Collection Frequency: Increase the frequency of measurements, particularly during the transition from exponential growth to the plateau phase. Simulation experiments show that data collection strategy is crucial for identifiability [12].

- Incorporate Prior Knowledge: Use Bayesian methods to incorporate prior distributions for parameters from literature or previous experiments. This can constrain the plausible parameter space and improve identifiability [14].

- Consider Model Reduction: If the goal is to estimate a specific quantity (e.g., low-density growth rate), simplifying the crowding term in the growth model might be necessary, but beware of introducing model misspecification [15].

Prevention:

- Perform an observing-system simulation experiment (OSSE) before finalizing the experimental design. Simulate the data you expect to collect from your model and check if you can recover the known parameters. This helps determine the optimal data type, frequency, and accuracy required for identifiability [12].

Guide 2: Troubleshooting Non-Identifiability in a Bayesian Pharmacodynamic Model

Problem: During the fitting of a hierarchical Bayesian model for drug response, MCMC chains fail to converge, and R̂ values are unacceptably high.

Diagnosis:

- Symptoms: R̂ > 1.01; divergent transitions reported by the sampler (e.g., in Stan); low Effective Sample Size (ESS); trace plots showing chains that do not mix well [14].

- Root Cause: The posterior geometry may be highly correlated or have sharp edges, making it difficult for the sampler to explore efficiently. This is common in cognitive models and can similarly affect complex pharmacological models with non-linear dynamics [14].
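For reference, the R̂ diagnostic compares between-chain and within-chain variance. The function below implements the classic (non-split) Gelman-Rubin form as a minimal sketch; samplers such as Stan report a more refined rank-normalized split version, so values will not match exactly. The chains are synthetic placeholders.

```python
import numpy as np


def gelman_rubin_rhat(chains):
    """Classic (non-split) R-hat for an array of shape (n_chains, n_draws)."""
    chains = np.asarray(chains, dtype=float)
    n = chains.shape[1]
    W = chains.var(axis=1, ddof=1).mean()      # mean within-chain variance
    B = n * chains.mean(axis=1).var(ddof=1)    # between-chain variance
    var_hat = (n - 1) / n * W + B / n          # pooled variance estimate
    return float(np.sqrt(var_hat / W))


rng = np.random.default_rng(3)

# Four synthetic chains that sample the same distribution.
mixed = rng.normal(0.0, 1.0, size=(4, 2000))

# Four synthetic chains stuck around two different values.
stuck = mixed + np.array([[0.0], [0.0], [1.0], [1.0]])

rhat_mixed = gelman_rubin_rhat(mixed)
rhat_stuck = gelman_rubin_rhat(stuck)
print(f"well-mixed chains: {rhat_mixed:.3f}")
print(f"stuck chains:      {rhat_stuck:.3f}")
```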

Solutions:

- Reparameterize the Model: Use a non-centered parameterization for hierarchical models. Instead of drawing individual patient parameters from a group distribution as θ_i ~ Normal(μ, σ), express them as θ_i = μ + σ * ζ_i, where ζ_i ~ Normal(0, 1). This can reduce dependencies between parameters and improve sampling efficiency [14].

- Check and Specify Priors: Avoid using improperly vague priors (e.g., Uniform(0, 10000)). Use weakly informative priors that restrict parameters to biologically plausible ranges, which can regularize the estimation and help achieve identifiability [14].

- Increase Model Flexibility Cautiously: If non-identifiability stems from an incorrect model assumption (e.g., the functional form of the growth curve), consider a semi-parametric approach. For instance, represent an unknown crowding function with a Gaussian process, which allows the data to inform the shape of the function rather than assuming a specific parametric form [15].
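The non-centered parameterization in the first solution is easiest to see outside a sampler. The NumPy sketch below (with hypothetical group-level values) draws patient parameters both ways and shows that they follow the same distribution. It does not run MCMC; the practical benefit arises inside a sampler, where the standardized variable ζ_i is a priori independent of μ and σ.

```python
import numpy as np

rng = np.random.default_rng(4)
mu, sigma, n_patients = 1.5, 0.4, 100_000  # hypothetical group-level values

# Centered form: theta_i ~ Normal(mu, sigma)
theta_centered = rng.normal(mu, sigma, n_patients)

# Non-centered form: zeta_i ~ Normal(0, 1), then theta_i = mu + sigma * zeta_i
zeta = rng.normal(0.0, 1.0, n_patients)
theta_noncentered = mu + sigma * zeta

print(f"centered:     mean = {theta_centered.mean():.3f}, sd = {theta_centered.std():.3f}")
print(f"non-centered: mean = {theta_noncentered.mean():.3f}, sd = {theta_noncentered.std():.3f}")
```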

Verification:

- After implementing a solution, always run a posterior predictive check. Simulate new data using the fitted model and compare it to the observed data. This checks the model's overall adequacy, not just its computational stability [14].

Data Requirements for Identifiable Cancer Models

The table below summarizes findings from simulation experiments on the identifiability of common cancer growth models, highlighting how data characteristics influence the ability to uniquely estimate parameters [12].

Table 1: Impact of Data Characteristics on Model Identifiability

| Model Type | Key Prognostic Parameters | Minimum Data for Identifiability | Impact of Low Data Accuracy | Impact of Sparse Sampling |
|---|---|---|---|---|
| Exponential Growth | Net growth rate (m) | Data from at least two time points | Moderate uncertainty in estimate | Can completely prevent identification if too few points |
| Logistic Growth | Intrinsic growth rate (r), Carrying capacity (K) | Data covering exponential and saturation phases | High uncertainty, strong parameter correlation | Inability to identify K without saturation data |
| Generalized Growth (e.g., Richards) | Growth rate (r), Carrying capacity (K), Shape parameter (β) | High-frequency data across all growth phases | Very high uncertainty, practical non-identifiability likely | Shape parameter (β) often becomes unidentifiable |

Experimental Protocols for Generating Identifiable Models

Protocol: Observing-System Simulation Experiment (OSSE) for Clinical Trial Design

Purpose: To determine the data requirements (type, frequency, accuracy) for achieving practical identifiability of a candidate mathematical model before initiating a costly clinical study [12].

Methodology:

- Model Selection: Choose one or more candidate mechanistic models (e.g., logistic growth, system of ODEs for disease progression).

- Generate Synthetic Data:

  - Select a "true" parameter set θ_true believed to be representative of a patient population.

  - Use the model to simulate error-free output y(t) at a high temporal resolution.

  - Add realistic measurement noise to create synthetic datasets y_obs(t). Vary the noise level and sampling frequency to create different experimental scenarios [12].

- Calibration and Recovery:

  - For each synthetic dataset, attempt to recover θ_true using your standard calibration method (e.g., maximum likelihood, Bayesian inference).

  - Repeat this for many different θ_true sampled from a biologically plausible space (Monte Carlo approach) [12].

- Diagnosis:

  - Analyze the results using profile likelihoods or posterior distributions. A model is deemed identifiable for a given data scenario if θ_true can be recovered with high accuracy and precision across most simulations [12].

Interpretation: This protocol helps answer critical design questions: "Is monthly imaging sufficient, or is weekly required?" or "Do we need to measure this biomarker in addition to tumor volume?" [12].
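A stripped-down version of this protocol can be sketched with SciPy. The simplifications are deliberate and should be read as assumptions: a logistic model with known initial size, a single fixed θ_true instead of a Monte Carlo sweep, additive Gaussian noise, least-squares fitting via `curve_fit`, and the spread of log(K̂) as the recovery metric. The helper name `recovery_spread` and all numerical values are hypothetical.

```python
import numpy as np
from scipy.optimize import curve_fit

X0 = 0.1  # initial size, assumed known


def logistic(t, r, K):
    return K / (1.0 + (K / X0 - 1.0) * np.exp(-r * t))


def recovery_spread(t, r_true=0.5, K_true=10.0, noise_sd=0.2, n_rep=50, seed=0):
    """Std of log(K_hat) over repeated synthetic datasets for one sampling design."""
    rng = np.random.default_rng(seed)
    log_K_hat = []
    for _ in range(n_rep):
        # Synthetic observations: error-free output plus measurement noise.
        y_obs = logistic(t, r_true, K_true) + rng.normal(0.0, noise_sd, t.size)
        try:
            (r_hat, K_hat), _ = curve_fit(
                logistic, t, y_obs, p0=[0.3, 5.0],
                bounds=([0.01, 0.5], [5.0, 1000.0]),
            )
        except RuntimeError:
            continue  # skip datasets where the optimizer did not converge
        log_K_hat.append(np.log(K_hat))
    return float(np.std(log_K_hat))


# Two candidate designs with the same number of measurements.
spread_full = recovery_spread(np.linspace(0.0, 20.0, 11))   # reaches the plateau
spread_early = recovery_spread(np.linspace(0.0, 6.0, 11))   # stops before saturation

print(f"spread of log(K_hat), full design:  {spread_full:.3f}")
print(f"spread of log(K_hat), early design: {spread_early:.3f}")
```

Comparing the two spreads for different sampling windows, frequencies, or noise levels is the kind of evidence that answers the design questions listed above before any real data are collected.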

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Key Computational and Analytical Tools for Managing Non-Identifiability

| Tool / Reagent | Type | Primary Function in Troubleshooting | Application Context |
|---|---|---|---|
| Profile Likelihood | Statistical Method | Identifies practically non-identifiable parameters by finding parameter ranges that are consistent with the data [13]. | General model calibration |
| Stan / PyMC3 | Software Library | Implements advanced MCMC (HMC, NUTS) for Bayesian inference; provides diagnostics (e.g., R̂, ESS) to detect sampling problems [14]. | Complex hierarchical models |
| DAISY Software | Software Tool | Tests for structural identifiability using differential algebra, before any data is collected [12]. | Dynamic system models (ODEs) |
| Semi-Parametric Gaussian Processes | Modeling Approach | Replaces a potentially misspecified model term with a flexible function; reduces bias from model misspecification, a common cause of non-identifiability [15]. | Models with uncertain functional forms (e.g., growth curves) |
| Collinearity Index | Diagnostic Metric | Quantifies the degree of correlation between parameter estimates; a high index indicates non-identifiability [13]. | Multi-parameter model calibration |

Workflow and Pathway Diagrams

Diagram 1: Diagnostic and Remediation Workflow for Non-Identifiable Models

Diagram 2: Data Integration Pathway for Robust Drug Effect Assessment

Understanding Parameter Identifiability

What is parameter identifiability in the context of dynamic models? Parameter identifiability is a fundamental property of a mathematical model that determines whether its parameters can be uniquely determined from the available data. A model is considered identifiable if each unique set of parameters produces a unique model output. Formally, for a model \( M \) that maps parameters \( \theta \) to outputs \( y \), identifiability requires that \( \theta_1 \neq \theta_2 \) implies \( M(\theta_1) \neq M(\theta_2) \) [17] [18]. If different parameters can produce identical outputs, it becomes impossible to identify the "true" parameters based on data alone [17].

What is the difference between structural and practical non-identifiability? Non-identifiability manifests in two primary forms:

- Structural Non-identifiability: This is an inherent flaw in the model structure itself. It occurs when a change in one parameter can be perfectly compensated for by changes in one or more other parameters, making them impossible to uniquely identify even with perfect, infinite data [19]. This often arises from over-parameterization or redundant parameter combinations.

- Practical Non-identifiability: This type arises from limitations in the experimental data. A model may be structurally identifiable, but its parameters can be practically non-identifiable if the data is too noisy, too sparse, or lacks the necessary stimulation to inform the parameters [20] [19]. The quantity and quality of the data are insufficient to constrain the parameters to a unique value within a reasonable confidence interval.

Why is my model a good fit, but the parameter estimates are unreliable? A good model fit does not guarantee reliable parameter estimates. In non-identifiable or "sloppy" models, the goodness-of-fit might remain almost unchanged across a wide range of parameter values [19]. This means the estimated parameters could vary drastically without significantly affecting the model's output, making them untrustworthy. This is a common pitfall where a good fit is achieved at the cost of meaningful parameter interpretation [19].

Diagnostic Methods & Tools

How can I diagnose a non-identifiable model? Several methods exist to diagnose identifiability issues. The choice of method often depends on whether you are assessing the model structure a priori or diagnosing issues a posteriori after fitting experimental data.

The table below summarizes key diagnostic methods [18]:

| Method | Type of Analysis | Identifiability Indicator | Key Feature |

|---|---|---|---|

| DAISY (Differential Algebra for Identifiability of SYstems) | Structural, Global | Categorical (Yes/No) | Provides a definitive, analytical answer for systems of rational ODEs [18] |

| Sensitivity Matrix Method (SMM) | Practical, Local | Continuous & Categorical | Analyzes the sensitivity of model outputs to parameter changes at specific timepoints [18] |

| Fisher Information Matrix Method (FIMM) | Practical, Local | Continuous & Categorical | Evaluates the curvature of the log-likelihood function; can handle random effects [18] |

| Profile Likelihood | Practical, Local | Continuous | Explores parameter uncertainties by profiling the likelihood function [19] |

What does a non-identifiable covariance matrix indicate? After parameter estimation, inspecting the covariance matrix of the parameter estimates is a common diagnostic. If two or more parameter estimates are perfectly (or highly) correlated, or if one parameter estimate is a linear combination of several others, your model is likely non-identifiable [17]. This correlation implies that the data cannot distinguish between the effects of these parameters. In such cases, the covariance matrix will be singular or nearly singular, indicating that it cannot be inverted, which is a clear sign of identifiability problems [17].
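
As a rough illustration of this diagnostic, the sketch below approximates the covariance of clearance (CL) and volume (V) estimates as the inverse of a Fisher Information Matrix built from analytic sensitivities. The one-compartment IV bolus model, the parameter values, the noise level, and the two sampling schedules are all assumptions made for the example.

```python
import numpy as np

def sensitivity_matrix(times, dose=100.0, cl=5.0, v=50.0):
    """Analytic sensitivities of C(t) = dose/v * exp(-cl/v * t) to CL and V."""
    conc = dose / v * np.exp(-cl / v * times)
    d_cl = -conc * times / v
    d_v = conc * (cl * times / v**2 - 1.0 / v)
    return np.column_stack([d_cl, d_v])

def estimate_correlation(times, sigma=0.1):
    """Correlation between the CL and V estimates, from the inverse FIM."""
    s = sensitivity_matrix(np.asarray(times, dtype=float))
    cov = np.linalg.inv(s.T @ s / sigma**2)   # asymptotic covariance approximation
    return cov[0, 1] / np.sqrt(cov[0, 0] * cov[1, 1])

# Hypothetical schedules (hours): two early samples vs. a full time course
corr_sparse = estimate_correlation([0.5, 1.0])
corr_rich = estimate_correlation([0.5, 1, 2, 4, 8, 12, 24])

print(f"early samples only: r = {corr_sparse:.2f}")
print(f"full time course:   r = {corr_rich:.2f}")
```

Under these assumptions the early-only schedule gives a much stronger correlation between the two estimates than the full time course, which is the pattern the covariance check is meant to reveal.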

How can I visualize the workflow for identifiability analysis? The following diagram outlines a general workflow for conducting identifiability analysis in dynamic model research:

A Practical Protocol: Iterative Training of a Signaling Cascade Model

This protocol is adapted from research on a biochemical signaling cascade, demonstrating how to handle practical non-identifiability through iterative experimentation [20].

1. Model and Initial Training

- Model Structure: A four-step signaling cascade (e.g., K1 → K2 → K3 → K4) with a negative feedback loop, represented by a system of ordinary differential equations [20].

- Initial Data: The model is first trained using only the trajectory of the final cascade variable (K4), measured in response to an "on-off" stimulation protocol.

- Outcome: After training, the model can accurately predict the K4 trajectory under a different stimulation protocol. However, predictions for the intermediate variables (K1, K2, K3) remain poor with very broad confidence bands, indicating that most model parameters are still uncertain and the model is practically non-identifiable [20].

2. Iterative Experimentation and Training

The key is to sequentially add measurements of more model variables to constrain the parameter space further [20].

- Step 2: Expand the training dataset to include the trajectory of an intermediate variable (e.g., K2). Re-train the model.

- Step 3: Finally, train the model using a complete dataset containing trajectories of all four variables (K1, K2, K3, K4).

Research Reagent Solutions

The table below lists essential materials and their functions for such an experiment [20]:

| Item | Function in the Experiment |

|---|---|

| Computational Model | Represents the signaling cascade dynamics using ordinary differential equations (ODEs). |

| Stimulation Protocol | A defined time-dependent signal (e.g., S(t)) that activates the cascade; can be an "on-off" or other pattern. |

| Time-Series Data | Measured concentrations or activities of the cascade variables (K1, K2, K3, K4) at multiple time points. |

| Markov Chain Monte Carlo (MCMC) | A Bayesian sampling algorithm used to explore the "plausible parameter space" consistent with the data. |

| Principal Component Analysis (PCA) | Used to analyze the space of plausible parameters and quantify the reduction in dimensionality after each training step. |

3. Results and Interpretation

This iterative process systematically reduces the dimensionality of the "plausible parameter space." Even when parameters are not uniquely identified, the model can still have predictive power for the measured variables [20]. The diagram below illustrates this sequential training and prediction process:

Frequently Asked Questions (FAQs)

Can a non-identifiable model still be useful for making predictions? Yes. A model can be non-identifiable (parameters are not unique) yet still possess significant predictive power. Research shows that by training a model on a specific variable, you can reduce the dimensionality of the parameter space enough to make accurate predictions for that variable's behavior under new conditions, even if all individual parameters remain unknown [20]. The model's predictive power depends on which outputs were used for training and which you wish to predict.

What are the most common causes of practical non-identifiability in drug development? In pharmacometrics, common causes include:

- Insufficient Data: Too few subjects, sparse sampling times, or a lack of data during critical dynamic phases.

- Poor Study Design: Dosing regimens or sampling schedules that do not sufficiently excite the system's dynamics to inform all parameters.

- High Correlation Between Parameters: For example, when the effects of a drug's clearance and volume of distribution are highly correlated based on the available plasma concentration data.

- High Measurement Noise: This obscures the underlying system dynamics, making it difficult to estimate parameters precisely.

My model is structurally identifiable but practically non-identifiable. What should I do? When facing practical non-identifiability, consider these steps:

- Refine Your Experimental Design: The most robust solution is to collect more informative data. This could involve increasing the number of data points, sampling at more informative time points, or measuring additional model variables, as demonstrated in the signaling cascade protocol [20].

- Use a Continuous Identifiability Indicator: Methods like the Fisher Information Matrix Method (FIMM) can provide a continuous scale of identifiability, helping you identify which parameters are "hard-to-identify" and guiding targeted improvements to your study design [18].

- Consider Model Reduction: Use methods like likelihood reparameterization to create a simplified, identifiable model that retains predictive capability, even if some composite parameters lose a direct mechanistic interpretation [4] [20].

How can I check if my model is sloppy? Sloppiness is characterized by a spectrum of parameter sensitivities. You can diagnose it by computing the eigenvalues of the Fisher Information Matrix (FIM) or the Hessian of the cost function. A sloppy model will have a few large eigenvalues (stiff directions, well-constrained by data) and many very small eigenvalues (sloppy directions, poorly constrained by data) [20] [18]. This indicates that while the model output is sensitive to a few parameter combinations, it is largely insensitive to many others.
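
A minimal sketch of this eigenvalue check follows. It assumes a hypothetical sum-of-exponentials model with four closely spaced decay rates, log-parameterized rates, and unit noise variance; none of these choices come from the cited references.

```python
import numpy as np

rates = np.array([1.0, 1.2, 1.4, 1.6])      # hypothetical decay rates k_i
t = np.linspace(0.0, 5.0, 50)

# Model: y(t) = sum_i exp(-k_i * t).
# Sensitivity with respect to log(k_i) is -k_i * t * exp(-k_i * t).
S = -(rates * t[:, None]) * np.exp(-rates * t[:, None])   # shape (50, 4)
fim = S.T @ S                                              # unit noise variance assumed

eigenvalues = np.linalg.eigvalsh(fim)[::-1]                # sorted largest first
decades = np.log10(eigenvalues[0] / eigenvalues[-1])

for i, value in enumerate(eigenvalues):
    print(f"eigenvalue {i}: {value:.3e}")
print(f"spectrum spans about {decades:.1f} decades")
```

A spectrum spread over many decades, with only the leading eigenvalue large, is the signature described above: one stiff direction and several sloppy ones.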

Troubleshooting Guides

Guide 1: Diagnosing and Resolving Data-Related Non-Identifiability

This guide helps researchers systematically identify and address the most common data-related causes of practical non-identifiability in dynamic models.

Table 1: Data Deficiency Symptoms and Solutions

| Data Deficiency | Key Symptoms in Model Calibration | Recommended Corrective Actions |

|---|---|---|

| Insufficient Data Points | Profile-likelihood confidence intervals for parameters remain unbounded: the profile never rises above the confidence threshold on at least one side [21]. | Implement a Minimally Sufficient Experimental Design to identify critical time points for measurement [21]. |

| Excessively Noisy Data | Broad, flat profile likelihoods, even with adequate data points [20]. | Increase experimental replicates; review data collection protocols; employ Bayesian methods with appropriate noise models [4] [20]. |

| Uninformative Data | Parameters are non-identifiable despite a good model fit; model fails to predict new experimental conditions [20]. | Design experiments to measure the variable most directly linked to the parameters of interest; use sensitivity analysis to guide experimental design [21]. |

Diagnostic Workflow

The following diagram outlines a systematic workflow for diagnosing the root cause of practical non-identifiability in a model.

Guide 2: Implementing a Minimally Sufficient Experimental Design

This protocol provides a methodology for determining the minimal experimental data required to achieve practical identifiability, thereby optimizing resource use.

Step-by-Step Protocol

- Model Development and Validation: Begin with a candidate mathematical model that embodies the hypothesized mechanisms. Perform initial calibration and validation using any available preliminary data [21].

- Select Parameters of Interest: Identify which model parameters are critical for your research question. Remove from consideration those that can be directly measured experimentally (e.g., PK parameters from drug concentration data). Use local sensitivity analysis to rank the remaining parameters by their influence on the model output [21].

- Generate "Complete" Simulated Data: Simulate an ideal, noise-free dataset for your variable of interest (e.g., percent target occupancy in the tumor microenvironment) over a dense time course. This "complete" data should, by design, render the parameters of interest practically identifiable [21].

- Iterative Data Reduction and Identifiability Check: Systematically reduce the simulated dataset by removing time points. After each reduction, perform a practical identifiability analysis (e.g., using profile likelihood) on the parameters of interest [21].

- Define the Minimal Set: The minimally sufficient experimental design is the smallest set of time points at which data must be collected to maintain the practical identifiability of all key parameters [21].
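
The data-reduction loop in the last two steps can be sketched as a greedy backward elimination. To keep the example short, this sketch swaps the profile-likelihood check for a cheaper stand-in: the largest relative standard error obtained from the Fisher Information Matrix. The exponential-decay model, parameter values, noise level, 20% threshold, and the function names `max_rse` and `minimal_design` are all hypothetical.

```python
import numpy as np

def max_rse(times, a=1.0, k=0.5, sigma=0.05):
    """Largest relative standard error (SE / value) for y = a * exp(-k * t).

    FIM-based proxy for practical identifiability, used here in place of
    a full profile-likelihood analysis.
    """
    times = np.asarray(times, dtype=float)
    if times.size < 2:
        return np.inf                      # two parameters need at least two points
    y = a * np.exp(-k * times)
    S = np.column_stack([y / a, -times * y])   # dy/da, dy/dk
    fim = S.T @ S / sigma**2
    if np.linalg.cond(fim) > 1e12:
        return np.inf
    se = np.sqrt(np.diag(np.linalg.inv(fim)))
    return float(np.max(se / np.array([a, k])))

def minimal_design(times, threshold=0.2):
    """Drop time points one at a time while the identifiability proxy stays acceptable."""
    kept = list(times)
    while len(kept) > 1:
        # Try removing each remaining point; keep the removal that hurts least.
        candidates = [(max_rse(kept[:i] + kept[i + 1:]), i) for i in range(len(kept))]
        best_rse, best_i = min(candidates)
        if best_rse > threshold:
            break                          # any further removal loses identifiability
        kept.pop(best_i)
    return kept

full_design = np.linspace(0.0, 10.0, 21)
kept = minimal_design(full_design)
print("retained time points:", [round(float(tp), 2) for tp in kept])
print("max relative SE:", round(max_rse(kept), 3))
```

A greedy search is not guaranteed to find the smallest possible design, and a FIM-based criterion can be optimistic for strongly nonlinear models, so the retained set is best confirmed with a profile-likelihood analysis as the protocol describes.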

Experimental Design Workflow

The following diagram visualizes the iterative process of defining a minimally sufficient experimental design.

Frequently Asked Questions (FAQs)

1. What is the fundamental difference between structural and practical non-identifiability?

Answer: Structural non-identifiability is an inherent property of the model structure itself, where different parameter combinations yield identical model outputs, even with perfect, noise-free data. Practical non-identifiability, the focus of this guide, arises from issues with the data, such as insufficient data points, excessive noise, or data that does not inform the specific parameters of interest [22] [20]. It is a problem of quality, quantity, and relevance of the available experimental measurements.

2. My model has many parameters and collecting data for all of them is infeasible. What should I do?

Answer: A sequential, iterative approach is recommended. Start by training your model on the most easily measurable variable. Even if this leaves most parameters uncertain (sloppy), it may allow for accurate predictions of that specific variable under different conditions [20]. Then, successively include measurements of additional key variables. Each new variable reduces the dimensionality of the "plausible parameter space," progressively increasing the model's overall predictive power without requiring a full, immediate dataset [20].

3. Can I use Bayesian methods to manage non-identifiability instead of collecting more data?

Answer: Yes, Bayesian inference provides a powerful framework for handling practical non-identifiability. By incorporating informative prior distributions—derived from expert knowledge, previous studies, or external data sources—you can constrain the plausible parameter space [23]. This approach allows for probabilistic inference and can resolve non-identifiability, but it requires careful sensitivity analysis to ensure results are not overly dependent on the chosen priors [23].

4. How do general data quality issues directly contribute to practical non-identifiability?

Answer: Common data quality failures create the conditions for practical non-identifiability [24]:

- Incomplete Data: Missing essential variables or time points leave some model parameters unconstrained by the data [24].

- Inaccurate Data Entry & Veracity Issues: Typos, incorrect units, or data that is untrustworthy in context (low veracity) act as systematic noise, misleading the parameter estimation process [24].

- Lack of Data Governance: Unclear ownership and standards lead to inconsistent data across experiments, making it difficult to build a reliable, unified model [24].

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools and Methods for Addressing Non-Identifiability

| Tool / Method | Function | Application Context |

|---|---|---|

| Profile Likelihood Analysis | A computational method to assess practical identifiability by analyzing the sensitivity of the likelihood function to individual parameters [21]. | Determining if parameters are identifiable with a given dataset; used in the minimally sufficient experimental design workflow [21]. |

| Markov Chain Monte Carlo (MCMC) | A Bayesian sampling algorithm (e.g., Metropolis-Hastings) used to explore the posterior distribution of parameters [23]. | Calibrating models and quantifying parameter uncertainty, especially when incorporating informative priors to handle non-identifiability [23] [20]. |

| Sensitivity Analysis | Evaluates how changes in model parameters affect the model output, ranking parameters by influence [21]. | Identifying the most critical parameters to target for estimation; guiding experimental design to collect the most informative data [21]. |

| Data Profiling and Cleansing Tools | Software that automatically analyzes datasets for structure, content, and quality issues like null values, duplicates, and outliers [24] [25]. | The essential first step in any modeling exercise: ensuring the foundational data is complete, consistent, and accurate before calibration [24]. |

Diagnostic Toolkit: Methods for Detecting and Analyzing Practical Non-Identifiability

Profile likelihood is a powerful statistical method for quantifying parameter uncertainty in complex models, particularly when dealing with nuisance parameters (other unknown parameters that are not the primary focus of interest) [26]. It is a cornerstone technique for addressing practical non-identifiability in dynamic models common in systems biology, pharmacology, and drug development [27] [1]. Unlike simpler methods like Wald intervals that rely on local curvature assumptions, profile likelihood constructs confidence intervals by inverting likelihood ratio tests, making it more reliable for nonlinear models, moderate sample sizes, and non-Gaussian settings [26].

This guide provides troubleshooting and FAQs to help researchers successfully implement profile likelihood analysis in their experiments.

Frequently Asked Questions (FAQs)

FAQ 1: When should I use profile likelihood over other confidence interval methods? You should prioritize profile likelihood in these scenarios [26]:

- Your model is nonlinear, and you suspect the likelihood surface is not quadratic.

- You have a moderate sample size where asymptotic approximations (like those from the Fisher Information Matrix) may be unreliable.

- Model parameters are near a boundary or you suspect non-identifiability.

- The Wald (Fisher Information-based) confidence intervals seem implausibly narrow or symmetric when you expect asymmetry.

FAQ 2: My profile likelihood is multi-modal or highly irregular. What does this indicate and how should I proceed? A multi-modal or irregular profile (with several "peaks" or "dips") is a strong indicator of practical non-identifiability [26] [1]. It suggests that for your specific dataset, multiple distinct parameter values explain the data almost equally well.

- Troubleshooting Steps:

- Verify Model and Data: Double-check the model equations and data for errors.

- Check Structural Identifiability: Ensure your model is theoretically identifiable with perfect, noise-free data.

- Consider Data Collection: The most robust solution is often to collect more informative data. Active learning algorithms like E-ALPIPE can help design optimal experiments to resolve non-identifiability [28].

- Use Modified Profile Likelihood: In some cases, applying a higher-order correction (e.g., Barndorff-Nielsen modification) can smooth the profile [26].

FAQ 3: How can I efficiently compute profile likelihood confidence intervals for computationally expensive models? For models where each likelihood evaluation is slow (e.g., ODE models):

- Start with a Coarse Grid: Initially, profile over a coarse grid of parameter values to locate the approximate region of the confidence interval boundaries [26].

- Use Adaptive Root-Finding: Once the approximate boundaries are known, apply root-finding algorithms (like bisection or secant methods) to precisely find where the profile likelihood crosses the critical threshold, minimizing the number of required evaluations [26] [28].

- Leverage Parallelization: The profiling process for each parameter value is independent and can be parallelized across multiple CPUs or GPUs [26].
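
The coarse-grid and root-finding steps above can be sketched as follows. The example assumes a hypothetical model y = a·exp(-k·t) with known Gaussian noise, so the nuisance parameter a can be re-optimized in closed form; a real ODE model would need a numerical optimizer at that point. The hand-written `bisect` helper and all numerical settings are illustrative.

```python
import numpy as np

rng = np.random.default_rng(1)
t = np.linspace(0.0, 8.0, 15)
sigma = 0.1                                    # assumed known noise SD
y = 2.0 * np.exp(-0.5 * t) + rng.normal(0.0, sigma, t.size)
CRIT = 3.84                                    # chi-squared(1) value for a 95% CI

def profile_sse(k):
    """Sum of squared errors with amplitude a re-optimized for a fixed rate k."""
    basis = np.exp(-k * t)
    a_best = (y @ basis) / (basis @ basis)     # closed-form least squares for a
    return float(np.sum((y - a_best * basis) ** 2))

def bisect(f, lo, hi, tol=1e-8):
    """Plain bisection; assumes f(lo) and f(hi) have opposite signs."""
    f_lo = f(lo)
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        f_mid = f(mid)
        if f_lo * f_mid <= 0:
            hi = mid
        else:
            lo, f_lo = mid, f_mid
    return 0.5 * (lo + hi)

# Step 1: coarse grid to locate the minimum and the rough interval boundaries.
grid = np.linspace(0.05, 3.0, 60)
sse_grid = np.array([profile_sse(k) for k in grid])
i_min = int(np.argmin(sse_grid))
local = np.linspace(grid[i_min - 1], grid[i_min + 1], 2001)   # refine the minimum
sse_local = np.array([profile_sse(k) for k in local])
k_hat, sse_min = float(local[np.argmin(sse_local)]), float(sse_local.min())

def excess(k):
    """Likelihood-ratio statistic minus the critical value; zero at a CI endpoint."""
    return (profile_sse(k) - sse_min) / sigma**2 - CRIT

# Step 2: bracket each endpoint with a grid point outside the interval, then bisect.
outside = np.array([excess(k) for k in grid]) > 0
left_bracket = max(k for k, out in zip(grid, outside) if out and k < k_hat)
right_bracket = min(k for k, out in zip(grid, outside) if out and k > k_hat)
lower = bisect(excess, left_bracket, k_hat)
upper = bisect(excess, k_hat, right_bracket)

print(f"k_hat = {k_hat:.3f}, 95% profile CI = [{lower:.3f}, {upper:.3f}]")
```

The grid costs 60 profile evaluations, while each bisection adds only a few dozen more, all concentrated near the endpoint where they matter.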

FAQ 4: How do I propagate parameter uncertainty to model predictions using profile likelihood? The Profile-Wise Analysis (PWA) workflow provides an efficient method [27]:

- For your parameter of interest, define the profile likelihood confidence set.

- Propagate this set of parameter values through your model prediction function.

- The union of all resulting prediction curves forms a profile-wise prediction confidence set. This provides a confidence band for your model output that acknowledges parameter uncertainty. Combining these for different parameters can approximate the full likelihood-based prediction confidence set.

The Scientist's Toolkit: Essential Research Reagents

The table below outlines key computational "reagents" and their functions for a successful profile likelihood analysis.

| Research Reagent / Tool | Function / Purpose |

|---|---|

| Likelihood Function | The core probability model linking parameters to observed data; the foundation for all inference [27] [29]. |

| Constrained Optimizer | Algorithm (e.g., Sequential Quadratic Programming) to maximize likelihood subject to a fixed parameter of interest [26]. |

| Root-Finding Algorithm | Method (e.g., bisection) to find where the profile likelihood crosses the critical value, defining interval endpoints [26]. |

| Profile-Wise Prediction Framework | Methodology to propagate profile-based confidence sets for parameters to confidence sets for model predictions [27]. |

Experimental Protocols & Workflows

Protocol 1: Basic Workflow for Constructing a 1D Profile Likelihood CI

This is the standard methodology for profiling a single parameter of interest, ψ [26].

- Global Optimization: Find the unconstrained Maximum Likelihood Estimate (MLE) for the full parameter vector, θ̂, and compute the maximum log-likelihood value, ℓ(θ̂).

- Define Parameter Grid: Select a range of values for your parameter of interest, {ψ₁, ψ₂, ..., ψₖ}, that covers a plausible interval around its MLE.

- Constrained Optimization: For each value ψᵢ in the grid, solve the constrained optimization problem: θ*(ψᵢ) = argmax ℓ(θ) subject to g(θ) = ψᵢ. This maximizes the likelihood while keeping the parameter of interest fixed. Store the resulting profile log-likelihood value ℓ_p(ψᵢ) = ℓ(θ*(ψᵢ)).

- Compute Likelihood Ratio: For each ψᵢ, calculate the likelihood ratio statistic: λ(ψᵢ) = -2[ ℓ_p(ψᵢ) - ℓ(θ̂) ].

- Find Interval Endpoints: The approximate 100(1-α)% confidence interval for ψ includes all values for which λ(ψ) ≤ χ²₁,₁₋α, where χ²₁,₁₋α is the (1-α) quantile of the chi-squared distribution with 1 degree of freedom (e.g., ~3.84 for a 95% CI). Use interpolation on your computed λ(ψᵢ) values to find the exact roots.
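
The five steps can be sketched on a case where the interval turns out to be open on one side. The example assumes a hypothetical saturation model y = vmax·x/(km + x) observed only at inputs far below km, with noise-free synthetic data and a known noise SD. The grid minimum stands in for the global optimization step, and vmax is re-optimized in closed form because the model is linear in it.

```python
import numpy as np

# Hypothetical saturation model, sampled only where x << km
x = np.array([0.1, 0.2, 0.3, 0.4, 0.5])
y = 10.0 * x / (5.0 + x)          # noise-free synthetic data (vmax = 10, km = 5)
sigma = 0.05                      # assumed known measurement SD
CRIT = 3.84                       # chi-squared(1) value for a 95% CI

def profile_neg2loglik(km):
    """-2 log-likelihood (up to a constant) with vmax re-optimized for fixed km."""
    basis = x / (km + x)
    vmax_best = (y @ basis) / (basis @ basis)
    return float(np.sum((y - vmax_best * basis) ** 2)) / sigma**2

# Steps 2-4: grid over the parameter of interest, profile, likelihood ratio
km_grid = np.logspace(-2, 3, 200)
prof = np.array([profile_neg2loglik(km) for km in km_grid])
lam = prof - prof.min()           # grid minimum used as the MLE reference

# Step 5: interval endpoints by linear interpolation between grid points
inside = np.flatnonzero(lam <= CRIT)
first, last = inside[0], inside[-1]

def crossing(i_out, i_in):
    """Interpolated km at which lam equals CRIT between two adjacent grid points."""
    frac = (lam[i_out] - CRIT) / (lam[i_out] - lam[i_in])
    return km_grid[i_out] + frac * (km_grid[i_in] - km_grid[i_out])

lower = crossing(first - 1, first) if first > 0 else -np.inf
upper = crossing(last + 1, last) if last < km_grid.size - 1 else np.inf

print(f"95% profile CI for km: [{lower:.3g}, {upper:.3g}]")
```

Here the profile stays below the threshold all the way to the upper edge of the grid, so the upper bound is reported as infinite. Extending the grid would not change that in this setup, because the data only constrain the ratio vmax/km.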

Protocol 2: Profile-Wise Analysis (PWA) for Prediction Uncertainty

This extended protocol, used for dynamic model predictions, builds upon the basic profiling workflow [27].

- Perform Identifiability Analysis: Use profile likelihood to assess which parameters are practically identifiable given your data.

- Construct Profile Likelihoods: Generate the profile likelihood for each parameter of interest, following Protocol 1.

- Generate Profile-Wise Predictions: For each parameter, take the set of parameter values within its profile likelihood confidence set (not just the MLE). Simulate your model (e.g., solve the ODE) for all these parameter values to create a family of prediction curves.

- Combine Confidence Sets: The overall prediction confidence set is formed by taking the union of the profile-wise prediction sets from step 3. This combines the uncertainties from the various parameters into a unified confidence band for your model's output.
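
A compact sketch of steps 2 to 4 is given below for a hypothetical two-parameter model y = a·exp(-k·t). Profiles are obtained by brute force on a parameter grid rather than by an optimizer, which is only practical for very small models; the data, grid ranges, and noise level are assumptions.

```python
import numpy as np

rng = np.random.default_rng(0)
t_obs = np.linspace(0.0, 6.0, 10)
sigma = 0.1                                   # assumed known noise SD
y_obs = 2.0 * np.exp(-0.5 * t_obs) + rng.normal(0.0, sigma, t_obs.size)
CRIT = 3.84                                   # chi-squared(1) value for 95%

def predict(a, k, t):
    return a * np.exp(-k * t)

# Likelihood-ratio statistic on a 2D grid (Gaussian noise, known sigma)
a_grid = np.linspace(1.5, 2.5, 201)
k_grid = np.linspace(0.2, 0.9, 201)
A, K = np.meshgrid(a_grid, k_grid, indexing="ij")
resid = y_obs - predict(A[..., None], K[..., None], t_obs)
lam = np.sum(resid**2, axis=-1) / sigma**2
lam -= lam.min()
i_hat, j_hat = np.unravel_index(np.argmin(lam), lam.shape)

# Profiles: for each value of one parameter, the best value of the other
j_star = lam.argmin(axis=1)                   # best k for each a
prof_a = lam[np.arange(a_grid.size), j_star]
i_star = lam.argmin(axis=0)                   # best a for each k
prof_k = lam[i_star, np.arange(k_grid.size)]

# Profile-wise parameter sets: points along each profile inside its confidence set
members = [(a_grid[i], k_grid[j_star[i]]) for i in np.flatnonzero(prof_a <= CRIT)]
members += [(a_grid[i_star[j]], k_grid[j]) for j in np.flatnonzero(prof_k <= CRIT)]

# Propagate to predictions and take the union as an envelope
t_new = np.linspace(0.0, 10.0, 50)
curves = np.array([predict(a, k, t_new) for a, k in members])
band_lo, band_hi = curves.min(axis=0), curves.max(axis=0)
mle_curve = predict(a_grid[i_hat], k_grid[j_hat], t_new)

print(f"{len(members)} parameter sets propagated")
print(f"band width at t = 0: {band_hi[0] - band_lo[0]:.3f}")
```

The resulting envelope approximates the full likelihood-based prediction set from the parameter-wise profiles, so it may be somewhat narrower than a band built from the joint confidence region.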

The following workflow diagram illustrates the structured process of Profile-Wise Analysis (PWA) for integrating parameter identifiability, estimation, and prediction uncertainty.

Data Presentation: Critical Values for Profile Likelihood

The table below summarizes the key critical values from the chi-squared distribution used for constructing profile likelihood confidence intervals, based on Wilks' theorem [26].

| Confidence Level | Alpha (α) | Critical Value (χ²₁,₁₋α) | Log-Likelihood Drop Threshold (χ²₁,₁₋α / 2) |

|---|---|---|---|

| 90% | 0.10 | 2.71 | 1.35 |

| 95% | 0.05 | 3.84 | 1.92 |

| 99% | 0.01 | 6.63 | 3.32 |
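
These values can be reproduced with the Python standard library alone, using the fact that a chi-squared variable with 1 degree of freedom is the square of a standard normal variable. The helper name `chi2_1df_quantile` is introduced here for illustration.

```python
from statistics import NormalDist

def chi2_1df_quantile(confidence):
    """Quantile of chi-squared with 1 df, using chi2_1 = Z**2 for standard normal Z."""
    z = NormalDist().inv_cdf(0.5 + confidence / 2.0)
    return z * z

for confidence in (0.90, 0.95, 0.99):
    critical = chi2_1df_quantile(confidence)
    print(f"{confidence:.0%}: critical value {critical:.2f}, "
          f"log-likelihood drop {critical / 2:.2f}")
```

The log-likelihood drop is computed from the unrounded critical value, which is why the 90% threshold comes out as 1.35 rather than half of the rounded 2.71.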

The following diagram illustrates the logical relationship between the profile likelihood function and the resulting confidence interval, highlighting the role of the critical value.

Frequently Asked Questions

Q1: What is the fundamental difference between multicollinearity and practical non-identifiability? While both concepts relate to challenges in parameter estimation, multicollinearity occurs when predictor variables in a regression model are highly correlated, making it difficult to isolate their individual effects on the dependent variable [30] [31]. Practical non-identifiability, in the context of dynamic models, arises when available data is insufficient to reliably estimate unique parameter values, even if the model structure is theoretically identifiable (structurally identifiable) [1] [32]. Essentially, multicollinearity is a specific data problem in regression analysis, whereas practical non-identifiability is a broader model-data mismatch challenge in computational modeling.

Q2: Why should I be concerned about multicollinearity in my predictive model if its overall accuracy remains high? Multicollinearity primarily affects the interpretability of your model, not necessarily its predictive power [30]. A model with severe multicollinearity can still provide accurate predictions but will have unreliable coefficient estimates, making it difficult to understand the individual influence of each predictor [30]. This becomes problematic in scientific and drug development contexts where you need to identify key biological drivers or therapeutic targets.

Q3: Can a model be practically non-identifiable even without severe multicollinearity? Yes. Practical non-identifiability can stem from various issues beyond multicollinearity, including model symmetries, over-parameterization, or simply a lack of informative data for certain parameters [33] [32]. For instance, in a complex systems biology model, multiple distinct parameter combinations might produce nearly identical output trajectories for the observed variables, rendering those parameters non-identifiable even if no strong pairwise correlations exist [20].

Q4: What is the most reliable method for detecting multicollinearity in my dataset? The Variance Inflation Factor (VIF) is widely considered the most robust diagnostic [30] [34] [31]. Unlike simple correlation matrices that only detect pairwise relationships, VIF can detect multicollinearity among three or more variables [34]. A VIF of 1 indicates no correlation, values between 1 and 5 suggest moderate correlation, and values exceeding 5 indicate high multicollinearity that may warrant corrective measures, with values above 10 commonly treated as severe [30] [31].

Q5: How can I resolve severe multicollinearity without collecting new data? Several analytical approaches can mitigate multicollinearity:

- Remove redundant variables: Use domain knowledge to remove one variable from a highly correlated pair [31] [35].

- Center your variables: For models with interaction terms (e.g., X₁ × X₂) or polynomial terms (e.g., X²), centering the variables (subtracting the mean) can reduce structural multicollinearity without changing the interpretation of coefficients [30].

- Use dimensionality reduction: Techniques like Principal Component Analysis (PCA) or Partial Least Squares (PLS) regression transform the original correlated variables into a smaller set of uncorrelated components [31] [35].
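
The effect of centering on structural multicollinearity is easy to verify. The sketch below uses a made-up predictor spread evenly over 10 to 20 and compares its correlation with its own square before and after subtracting the mean.

```python
import numpy as np

x = np.linspace(10.0, 20.0, 101)          # hypothetical predictor values

# Raw predictor vs. its square: structural multicollinearity
corr_raw = np.corrcoef(x, x**2)[0, 1]

# Mean-centered predictor vs. its square
x_centered = x - x.mean()
corr_centered = np.corrcoef(x_centered, x_centered**2)[0, 1]

print(f"corr(x, x^2) before centering: {corr_raw:.4f}")
print(f"corr(x, x^2) after centering:  {corr_centered:.4f}")
```

The correlation vanishes completely here because the values are symmetric about their mean; for skewed predictors centering reduces the correlation but does not remove it entirely.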

Troubleshooting Guides

Guide 1: Diagnosing and Resolving Multicollinearity in Regression Models

Problem: Your regression model has high overall significance, but individual predictors are statistically insignificant, or coefficient signs are counter-intuitive. You suspect multicollinearity.

Investigation & Diagnosis:

- Calculate VIFs: For each predictor variable, compute the Variance Inflation Factor (VIF). This measures how much the variance of a coefficient is inflated due to multicollinearity [30] [31].

- Interpret VIF Values: Use the following table to interpret your results:

| VIF Value | Interpretation | Recommended Action |

|---|---|---|

| VIF = 1 | No correlation | No action needed. |

| 1 < VIF ≤ 5 | Moderate correlation | Generally acceptable; monitor. |

| 5 < VIF ≤ 10 | High correlation | Investigate and consider remediation. |

| VIF > 10 | Severe multicollinearity | Model coefficients are poorly estimated; remediation is required [30]. |

- Supplement with a Correlation Matrix: Create a heatmap of the correlation matrix between all predictors. Look for absolute correlation coefficients above roughly 0.7 to 0.8, which indicate strong pairwise relationships [34] [35].
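
The VIF calculation in the first step can be sketched directly from its definition, VIF_j = 1 / (1 - R_j²), where R_j² comes from regressing predictor j on all the others. The function name and the synthetic predictors below are made up for the example.

```python
import numpy as np

def variance_inflation_factors(X):
    """VIF for each column of X, from regressing that column on the remaining ones."""
    X = np.asarray(X, dtype=float)
    n, p = X.shape
    vifs = np.empty(p)
    for j in range(p):
        target = X[:, j]
        others = np.column_stack([np.ones(n), np.delete(X, j, axis=1)])  # with intercept
        coef, *_ = np.linalg.lstsq(others, target, rcond=None)
        resid = target - others @ coef
        r2 = 1.0 - (resid @ resid) / np.sum((target - target.mean()) ** 2)
        vifs[j] = 1.0 / (1.0 - r2)
    return vifs

rng = np.random.default_rng(0)
x1 = rng.normal(size=200)
x2 = x1 + 0.1 * rng.normal(size=200)    # nearly a copy of x1
x3 = rng.normal(size=200)               # unrelated predictor
vif = variance_inflation_factors(np.column_stack([x1, x2, x3]))

for name, value in zip(["x1", "x2", "x3"], vif):
    print(f"VIF({name}) = {value:.1f}")
```

The two near-duplicate predictors land in the "severe" row of the table, while the unrelated one stays close to 1.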

Resolution Protocol:

- If one or two variables have high VIFs: Check if they are conceptually distinct. If not, consider dropping the less critical variable [35].

- If the model has interaction or polynomial terms: Apply mean-centering to the continuous variables involved. This reduces structural multicollinearity without altering the model's fundamental relationships [30].

- If multiple key variables are involved: Apply PCA to create a new set of uncorrelated variables. The downside is that these new components may be less interpretable [31].

Guide 2: Addressing Practical Non-Identifiability in Dynamic Models

Problem: When calibrating a dynamic model (e.g., a system of ODEs for a signaling cascade), you find that many different parameter sets yield an equally good fit to your observed data. This is practical non-identifiability.

Investigation & Diagnosis:

- Profile Likelihood Analysis: For each parameter, fix it to a range of values and re-optimize all other parameters. A flat profile likelihood indicates that the parameter is non-identifiable, as changes in its value can be compensated for by other parameters without worsening the fit [1].

- Fisher Information Matrix (FIM) Analysis: Calculate the FIM at your parameter estimates. Perform an Eigenvalue Decomposition (EVD) of the FIM. Eigenvalues close to zero indicate sloppy directions in parameter space—combinations of parameters that the data cannot inform [32]. The corresponding eigenvectors reveal which parameters are involved in these non-identifiable combinations [33] [32].
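
The eigenvector step of the FIM analysis can be illustrated on a toy model whose non-identifiable combination is known in advance. The sketch assumes the hypothetical model y = a·b·exp(-k·t), where only the product a·b is informed by the data, so one eigenvalue is zero up to rounding. With real data the sloppy eigenvalues are small rather than exactly zero, but they are read the same way.

```python
import numpy as np

a, b, k, sigma = 2.0, 3.0, 0.5, 0.1          # hypothetical values
t = np.linspace(0.0, 5.0, 20)
decay = np.exp(-k * t)

# Sensitivities of y = a * b * exp(-k * t) with respect to (a, b, k)
S = np.column_stack([b * decay, a * decay, -a * b * t * decay])
fim = S.T @ S / sigma**2

eigenvalues, eigenvectors = np.linalg.eigh(fim)   # eigenvalues in ascending order
sloppy_direction = eigenvectors[:, 0]             # eigenvector of the smallest eigenvalue

print("eigenvalues:", eigenvalues)
print("sloppy direction (a, b, k):", np.round(sloppy_direction, 3))
```

The sloppy direction has weight on a and b with opposite signs and essentially none on k: raising a while lowering b in proportion leaves the output unchanged, whereas k is well constrained.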

Resolution Protocol:

- Increase Data Informativeness: Design new experiments to measure additional model variables or use more informative stimulation protocols (e.g., time-varying signals instead of steady-state measurements) [20]. The table below outlines a sequential training approach for a signaling cascade model.

| Training Step | Data Used (Measured Variables) | Resulting Predictive Power | Dimensionality Reduction |

|---|---|---|---|

| 1 | Last cascade variable (K4) | Accurate prediction of K4 only | 1 (of 9 possible) |

| 2 | Variables K2 and K4 | Accurate prediction of K2 and K4 | 2 (of 9 possible) |

| 3 | All four variables (K1, K2, K3, K4) | Accurate prediction of all variables | 4 (of 9 possible) [20] |

- Model Reduction: If certain parameters are consistently non-identifiable, consider fixing them to literature values or re-parameterizing the model to reduce its complexity [1].

- Bayesian Methods: Use Bayesian inference with informative priors. The prior distributions can help constrain the plausible parameter space, effectively compensating for a lack of identifiability in the data alone [33].
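
The way a prior compensates for missing information can be sketched under a local Gaussian (Laplace) approximation, in which the posterior precision is roughly the FIM plus the prior precision. The model y = a·b·exp(-k·t), the parameter values, and the prior standard deviations below are all hypothetical, and the approximation is only a rough guide for strongly non-Gaussian posteriors.

```python
import numpy as np

a, b, k, sigma = 2.0, 3.0, 0.5, 0.1          # hypothetical values
t = np.linspace(0.0, 5.0, 20)
decay = np.exp(-k * t)

# FIM for y = a * b * exp(-k * t); singular because only a*b is informed by the data
S = np.column_stack([b * decay, a * decay, -a * b * t * decay])
fim = S.T @ S / sigma**2

# Independent Gaussian priors on (a, b, k) with assumed standard deviations
prior_sd = np.array([0.5, 0.5, 0.5])
prior_precision = np.diag(1.0 / prior_sd**2)

# Laplace approximation: posterior precision = data information + prior information
posterior_precision = fim + prior_precision
posterior_sd = np.sqrt(np.diag(np.linalg.inv(posterior_precision)))

print(f"condition number, FIM only:       {np.linalg.cond(fim):.2e}")
print(f"condition number, FIM plus prior: {np.linalg.cond(posterior_precision):.2e}")
print("approximate posterior SDs (a, b, k):", np.round(posterior_sd, 3))
```

The prior makes the problem well posed, but along the direction the data cannot inform, the posterior spread is set by the prior alone, which is why results should be checked for sensitivity to the prior choice.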

The Scientist's Toolkit: Research Reagent Solutions

The following table lists key computational and statistical tools essential for conducting robust collinearity and identifiability analysis.

| Tool / Reagent | Type | Primary Function in Analysis |

|---|---|---|

| Variance Inflation Factor (VIF) | Statistical Diagnostic | Quantifies the severity of multicollinearity by measuring how much the variance of a coefficient is inflated due to correlations with other predictors [30] [31]. |

| Correlation Matrix & Heatmap | Visual Diagnostic | Provides a visual representation of pairwise linear relationships between predictor variables, allowing for quick identification of strongly correlated pairs [35]. |

| Profile Likelihood | Computational Method | Assesses practical identifiability by analyzing how the model's fit changes when a single parameter is varied while others are re-optimized. Flat profiles indicate non-identifiability [13] [1]. |

| Fisher Information Matrix (FIM) | Mathematical Framework | A matrix whose invertibility is linked to practical identifiability. Its eigenvalue decomposition reveals sloppy (non-identifiable) and stiff (identifiable) directions in parameter space [32]. |

| Markov Chain Monte Carlo (MCMC) | Computational Algorithm | A Bayesian method for sampling the posterior distribution of parameters. It is robust to identifiability issues and provides full uncertainty quantification for parameters and predictions [20] [33]. |

| Principal Component Analysis (PCA) | Dimensionality Reduction | Transforms a set of potentially correlated variables into a smaller number of uncorrelated variables called principal components, which can remedy multicollinearity [31] [35]. |

Fisher Information Matrix (FIM) and Its Limitations in Identifiability Assessment

Frequently Asked Questions (FAQs)

Q1: What is the fundamental principle behind using the FIM for identifiability analysis? The Fisher Information Matrix (FIM) quantifies the amount of information that observed data carries about the model's unknown parameters. For local practical identifiability, a non-singular FIM (i.e., having all eigenvalues significantly greater than zero) is a sufficient condition for many models. This indicates that the log-likelihood surface has sufficient curvature around the parameter estimates, allowing them to be uniquely identified from the available data [18] [33].

Q2: My model is structurally identifiable, but the FIM analysis indicates practical non-identifiability. What does this mean? This is a common scenario. Structural identifiability assumes idealized, noise-free data observed continuously. Practical non-identifiability, detected by a near-singular FIM, arises from real-world data limitations, such as insufficient sample size, inadequate sampling schedules, or high noise levels. Even though parameters are uniquely determinable in theory, your specific dataset lacks the information to estimate them with acceptable precision [18] [1].

Q3: Are there specific types of models where FIM is known to be a poor indicator? Yes, the FIM can be misleading for models that are highly nonlinear or when its calculation relies on crude approximations. For Nonlinear Mixed Effects Models (NLME), the choice of linearization method (like FO or FOCE) to compute the FIM can lead to different and sometimes incorrect conclusions about identifiability [36]. In such cases, profile likelihood or Bayesian methods are often more reliable [1].

Q4: What are the main alternatives to FIM for assessing identifiability? Several robust alternatives exist, including:

- Profile Likelihood: A powerful method to detect and resolve practical non-identifiability by analyzing the likelihood function when one parameter is fixed and all others are re-optimized [1].

- Sensitivity Matrix Method (SMM): Analyzes how model outputs change with parameter variations. Unidentifiable parameters are indicated by a sensitivity matrix with a non-trivial null space [37] [18].

- Bayesian Methods: These methods sample from the full posterior distribution of parameters. Even for non-identifiable models, Bayesian inference can be used to sample from the parameter space, making it more robust to these issues than maximum likelihood methods [33] [20].
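
The profile likelihood idea can be sketched on a toy problem. The example below assumes a mono-exponential model `y = A*exp(-k*t)` with Gaussian noise of known standard deviation; the model, the data, and the function name `profile_sse` are illustrative assumptions. Because the model is linear in `A`, the re-optimization of the nuisance parameter has a closed form, which keeps the sketch free of a numerical optimizer.

```python
import numpy as np

def profile_sse(k_grid, t, y):
    """Profile the sum of squared errors of y = A*exp(-k*t) over k.

    For each fixed k the nuisance parameter A is re-optimized; since the
    model is linear in A, the best A is an ordinary least-squares solution.
    """
    sse = np.empty_like(k_grid)
    for i, k in enumerate(k_grid):
        basis = np.exp(-k * t)
        a_hat = basis @ y / (basis @ basis)      # best A for this k
        sse[i] = np.sum((y - a_hat * basis) ** 2)
    return sse

# Synthetic data (illustrative values only).
rng = np.random.default_rng(1)
sigma = 0.05
t = np.linspace(0.25, 8.0, 12)
y = 10.0 * np.exp(-0.5 * t) + rng.normal(scale=sigma, size=t.size)

k_grid = np.linspace(0.1, 1.5, 1401)
sse = profile_sse(k_grid, t, y)

# With Gaussian noise of known sigma, -2*log-likelihood = SSE/sigma^2 + const.
delta = (sse - sse.min()) / sigma**2
inside = k_grid[delta <= 3.84]       # 3.84: 95% quantile of chi-square, 1 dof
k_hat = k_grid[np.argmin(sse)]
ci_low, ci_high = inside.min(), inside.max()
print(f"k_hat={k_hat:.3f}, 95% profile interval=({ci_low:.3f}, {ci_high:.3f})")
```

A profile that stays below the threshold all the way to one or both ends of the scanned range would indicate that the parameter is practically non-identifiable over that range; here the interval closes well inside the grid.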

Troubleshooting Guides

Problem: FIM is Singular or Nearly Singular

A singular or near-singular FIM is a primary indicator of local practical non-identifiability.

Diagnosis:

- Check the eigenvalues of the FIM. The presence of one or more eigenvalues close to zero suggests non-identifiability [33]. The eigenvector corresponding to a near-zero eigenvalue indicates the direction in parameter space that is poorly identifiable [37] [33].

- In population models, this can occur if the model is over-parameterized or if the study design (dosing, sampling times) is insufficient to inform all parameters [36].

Solutions:

- Modify Study Design: Use optimal design principles to adjust dosing levels or sampling times to maximize the information content for the unidentifiable parameters [36].

- Model Reduction: Identify and fix unidentifiable parameters to literature values or remove them entirely. Techniques like reparameterization can also help [4] [1].

- Add Prior Information: In a Bayesian framework, incorporate informative priors to constrain the plausible range of unidentifiable parameters [20].
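
To illustrate the study-design remedy, the sketch below compares two candidate sampling schedules for a toy model `y = A*exp(-k*t)` using the determinant (the D-optimality criterion) and the condition number of the FIM. The model, parameter values, schedules, and the helper `fim_exponential` are assumptions chosen for illustration; this is not the API of PopED, PFIM, or any other tool.

```python
import numpy as np

def fim_exponential(t, A, k, sigma):
    """FIM of y = A*exp(-k*t) for parameters (A, k), additive Gaussian noise."""
    t = np.asarray(t, dtype=float)
    S = np.column_stack([np.exp(-k * t),             # dy/dA
                         -A * t * np.exp(-k * t)])   # dy/dk
    return S.T @ S / sigma**2

A, k, sigma = 10.0, 0.5, 1.0            # assumed initial estimates
designs = {
    "late only": [6.0, 7.0, 8.0, 9.0],
    "spread":    [0.5, 2.0, 4.0, 8.0],
}
results = {}
for name, times in designs.items():
    fim = fim_exponential(times, A, k, sigma)
    results[name] = {
        "det": np.linalg.det(fim),      # larger is better (D-optimality)
        "cond": np.linalg.cond(fim),    # large value means near-singular
    }
    print(name, results[name])
```

With the same number of samples, the schedule that covers both the early and late phase of the decay yields a much larger determinant and a far smaller condition number, which is the kind of improvement optimal design methods search for systematically.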

Problem: Inconsistent Results Between FIM and Other Methods

You may find that the FIM suggests identifiability, but parameter estimation fails, or vice versa.

Diagnosis:

- FIM Approximation Error: The FIM is often computed using approximations (e.g., FO, FOCE). The FO approximation can be inaccurate for highly nonlinear models or models with large inter-individual variability, leading to incorrect identifiability conclusions [36].

- Data vs. Design: The FIM is typically computed for an experimental design and a set of initial parameter values, not the actual data. Discrepancies can arise if the initial parameter values are misspecified or if the actual data is particularly noisy [36] [1].

Solutions:

- Use a Better Approximation: If possible, switch from the First Order (FO) to the First Order Conditional Estimation (FOCE) approximation for a more accurate FIM calculation in NLME models [36].

- Validate with Another Method: Cross-validate the FIM result using a different technique, such as the profile likelihood method or a bootstrap analysis, which are more directly based on the fitted model and the actual data [1].

Problem: Handling Non-identifiable Models for Prediction

A model can be non-identifiable yet still have useful predictive power.

Diagnosis:

- Assess whether the non-identifiability affects the model predictions for your specific quantity of interest. Some parameter combinations may be unidentifiable, but the model output may be insensitive to these combinations [20].

Solutions:

- Exploit Stiff/Sloppy Directions: Identify the "stiff" parameter combinations (those that the data does inform) and use the model for predictions that depend primarily on these well-constrained combinations [20].

- Iterative Training and Prediction: Train the model on available data, which reduces the dimensionality of the plausible parameter space. Even with many unidentified parameters, the model might accurately predict the specific variables that were measured during training under different conditions [20].

- Bayesian Predictive Checks: Use the posterior parameter distribution from a Bayesian analysis to generate prediction intervals. This acknowledges parameter uncertainty while still allowing for model use [33] [20].
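
The point that a non-identifiable model can still predict well can be illustrated with a deliberately simple case. The sketch assumes a toy model `y = a*b*t`, in which only the product `a*b` influences the output, and it uses a deterministic grid approximation of the posterior instead of MCMC so that it runs without a sampling library. All names and values are illustrative.

```python
import numpy as np

# Toy model (illustrative assumption): y = a * b * t.
# Only the product a*b affects the output, so a and b are not identifiable.
rng = np.random.default_rng(2)
sigma = 1.0
t_obs = np.arange(1.0, 6.0)
y_obs = 1.0 * 2.0 * t_obs + rng.normal(scale=sigma, size=t_obs.size)

# Grid approximation of the posterior with flat priors on log a and log b.
log_a = np.linspace(np.log(0.1), np.log(10.0), 201)
log_b = np.linspace(np.log(0.1), np.log(10.0), 201)
LA, LB = np.meshgrid(log_a, log_b, indexing="ij")
product = np.exp(LA + LB)
resid = y_obs[None, None, :] - product[..., None] * t_obs[None, None, :]
log_post = -0.5 * np.sum(resid**2, axis=-1) / sigma**2
weights = np.exp(log_post - log_post.max())
weights /= weights.sum()

def weighted_sd(values, w):
    """Standard deviation of `values` under normalized weights `w`."""
    mean = np.sum(w * values)
    return np.sqrt(np.sum(w * (values - mean) ** 2))

sd_log_a = weighted_sd(LA, weights)          # individual parameter: wide
sd_log_ab = weighted_sd(LA + LB, weights)    # informed combination: narrow

# Posterior mean and spread of the model prediction at an unobserved time.
t_new = 10.0
pred = product * t_new
pred_mean = np.sum(weights * pred)
pred_sd = weighted_sd(pred, weights)
print(sd_log_a, sd_log_ab, pred_mean, pred_sd)
```

In this sketch the posterior for `a` alone spans most of its prior range, yet the prediction at the new time point is tightly constrained because it depends only on the well-informed combination.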

Experimental Protocols

Protocol for Local Identifiability Analysis using FIM

Purpose: To determine if a model is locally practically identifiable given a specific experimental design and initial parameter estimates.

Materials:

- A defined mathematical model (e.g., a system of ODEs).

- A proposed experimental design (dosing regimens, sampling time points).

- Initial parameter estimates (e.g., from literature or preliminary fits).

- Software capable of computing the FIM (e.g., OptimalDesign in Julia, PopED, PFIM).

Methodology:

- Define Model and Design: Formally specify your model structure, parameters to be estimated, and the complete experimental design.

- Compute the FIM: Calculate the FIM for the given design and initial parameter values. For NLME models, specify the linearization method (FO or FOCE) and implementation (full or block-diagonal FIM) [36].

- Eigenvalue Decomposition: Perform an eigenvalue decomposition of the FIM.

- Interpret Results:

  - Categorical Assessment: If the smallest eigenvalue is zero (or numerically very close to zero), the model is locally unidentifiable. The number of near-zero eigenvalues indicates the number of unidentifiable parameter combinations [33].

  - Continuous Indicators: Calculate the relative parameter changes or the skewing angle. These continuous metrics provide a more nuanced view of the identifiability level, showing how close the model is to being unidentifiable [37] [18].

  - Identify Problematic Parameters: The eigenvectors corresponding to near-zero eigenvalues indicate the linear combinations of parameters that are not well-identified by the design [37] [33].
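
The methodology above can be sketched end to end for a single-subject model. The example assumes a one-compartment oral absorption model with additive constant-variance Gaussian error, log-scale parameters, and finite-difference sensitivities; it does not cover NLME linearizations such as FO or FOCE, and the function names, parameter values, and design are illustrative rather than the interface of any listed software.

```python
import numpy as np

names = ["ka", "ke", "V"]

def concentration(log_theta, t, dose=100.0):
    """One-compartment oral model; parameters are passed on the log scale."""
    ka, ke, V = np.exp(log_theta)
    return dose * ka / (V * (ka - ke)) * (np.exp(-ke * t) - np.exp(-ka * t))

def fim(log_theta, t, sigma, h=1e-5):
    """FIM for additive Gaussian noise; sensitivities by central differences."""
    log_theta = np.asarray(log_theta, dtype=float)
    t = np.asarray(t, dtype=float)
    S = np.empty((t.size, log_theta.size))
    for j in range(log_theta.size):
        step = np.zeros_like(log_theta)
        step[j] = h
        S[:, j] = (concentration(log_theta + step, t)
                   - concentration(log_theta - step, t)) / (2 * h)
    return S.T @ S / sigma**2

# Define model, design, and initial estimates (all illustrative).
log_theta0 = np.log([2.0, 0.1, 10.0])        # ka, ke, V
design = [12.0, 16.0, 20.0, 24.0]            # all samples after absorption
F = fim(log_theta0, design, sigma=0.1)

# Eigenvalue decomposition (eigh returns eigenvalues in ascending order).
eigvals, eigvecs = np.linalg.eigh(F)

# Interpret: a tiny smallest eigenvalue flags a sloppy direction, and its
# eigenvector shows which parameters are involved.
ratio = eigvals[0] / eigvals[-1]
sloppy = eigvecs[:, 0]
worst = names[int(np.argmax(np.abs(sloppy)))]
print("eigenvalues:", eigvals)
print("smallest/largest:", ratio)
print("sloppy direction:", dict(zip(names, np.round(sloppy, 3))), "->", worst)
```

Because every sample in this assumed design is taken after absorption is essentially complete, the smallest eigenvalue is numerically zero and the corresponding eigenvector is dominated by `ka`, pointing to the absorption rate constant as the parameter the design fails to inform.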

The workflow for this protocol is outlined below.

Protocol for Comparing FIM Approximations in NLME Models

Purpose: To evaluate the impact of different FIM calculation methods on identifiability conclusions and optimal design.

Materials: As in the Protocol for Local Identifiability Analysis using FIM above.

Methodology:

- Compute Multiple FIMs: Calculate the FIM for the same model and design using different combinations of approximation methods (FO vs. FOCE) and implementations (full FIM vs. block-diagonal FIM) [36].

- Compare Eigenvalues: For each FIM variant, compute and record the eigenvalues, particularly the smallest one.

- Compare Optimal Designs: Use each FIM variant to optimize the same design criterion (e.g., D-optimality). Compare the resulting optimal sampling schedules and the number of support points [36].

- Performance Evaluation: Evaluate the performance of each design generated from the different FIMs using a stochastic simulation and estimation (SSE) study. Compare the bias and empirical precision of the parameter estimates [36].

The table below summarizes the expected differences between FIM approximations based on published findings [36].

Table 1: Comparison of FIM Approximations and Implementations

| FIM Implementation | Model Linearization | Typical Outcome on Design | Performance under Misspecification |

|---|---|---|---|

| Full FIM | First Order (FO) | More support points, less clustering | Superior robustness to parameter misspecification |

| Block-Diagonal FIM | First Order (FO) | More clustering of sample points | Higher parameter bias |

| Full FIM | First Order Conditional Estimation (FOCE) | More support points, less clustering | Generally good performance |

| Block-Diagonal FIM | First Order Conditional Estimation (FOCE) | More support points than FO block-diagonal | Good performance, but full FIM may be preferred |

Research Reagent Solutions

Table 2: Key Software Tools for Identifiability and FIM Analysis

| Tool / "Reagent" | Function | Application Context |

|---|---|---|

| DAISY | Performs structural identifiability analysis using differential algebra. | Determines global/local identifiability for ODE models assuming perfect, continuous data [18]. |

| SMM & FIMM Software | Implements the Sensitivity Matrix and Fisher Information Matrix Methods. | Assesses practical, local identifiability for a given study design; provides continuous identifiability indicators [37] [18]. |

| Profile Likelihood | A computational method to assess practical identifiability by profiling the likelihood function. | Robustly identifies identifiable and non-identifiable parameters and their correlations in real datasets [1]. |

| Pumas/OptimalDesign | A pharmacometric framework with tools for FIM calculation and optimal design. | Computes FIM for NLME models and optimizes sampling designs for clinical trials [33]. |

| Bayesian MCMC Sampling | A method for sampling parameter posteriors using Markov Chain Monte Carlo. | Fits non-identifiable and poorly identifiable models and quantifies their predictive power despite parameter uncertainty [20]. |

Advanced Workflow: From Identifiability Analysis to Prediction

For complex dynamic models, a single identifiability check is often insufficient. The following diagram illustrates an iterative workflow that integrates identifiability assessment with model training and prediction, acknowledging that non-identifiable models can still be useful [20].

Frequently Asked Questions (FAQs)

FAQ 1: What are the primary causes of non-identifiability in NLME models, and how can I diagnose them? Non-identifiability occurs when different parameter combinations yield indistinguishable model solutions, making it impossible to pinpoint a unique set of parameters from the available data. This can be structural (inherent to the model equations) or practical (due to data quality or quantity) [4] [15]. Diagnosis involves profile likelihood analysis or examining the correlation matrix of parameter estimates for values near ±1. In practice, you may also observe extremely large standard errors or confidence intervals for parameter estimates, or failure of the optimization algorithm to converge [15].
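
The two numerical warning signs mentioned here, very large standard errors and correlations near ±1, can both be read from the inverse of the FIM. The sketch below uses a single-subject toy model `y = A*exp(-k*t)` rather than a full NLME model; the helper `estimate_correlations`, the 0.95 threshold, and all values are illustrative assumptions.

```python
import numpy as np

def estimate_correlations(fim, names, threshold=0.95):
    """Approximate SEs and correlations of estimates from the inverse FIM."""
    cov = np.linalg.inv(fim)                 # Cramér-Rao lower bound
    se = np.sqrt(np.diag(cov))
    corr = cov / np.outer(se, se)
    flagged = [(names[i], names[j], corr[i, j])
               for i in range(len(names)) for j in range(i + 1, len(names))
               if abs(corr[i, j]) > threshold]
    return se, corr, flagged

# Illustrative case: y = A*exp(-k*t), sampled only late in the decay.
A, k, sigma = 10.0, 0.5, 1.0
t = np.array([6.0, 7.0, 8.0, 9.0])
S = np.column_stack([np.exp(-k * t), -A * t * np.exp(-k * t)])
fim = S.T @ S / sigma**2

se, corr, flagged = estimate_correlations(fim, ["A", "k"])
rse = se / np.array([A, k])                  # relative standard errors
print("relative SE:", np.round(rse, 2))
print("flagged pairs:", flagged)
```

For this assumed late-only design, both relative standard errors exceed 100% and the pair `(A, k)` is flagged, which matches the symptoms described above.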

FAQ 2: How does the hierarchical structure of NLME models help with practical non-identifiability? The NLME framework models all subjects' data simultaneously, allowing the model to "borrow strength" across the population. This shrinkage effect pulls individual parameter estimates toward the population mean, which stabilizes estimates and can mitigate practical non-identifiability that might be present when fitting models to each subject's data individually [38]. This is particularly valuable when working with sparse or noisy data, as the population structure provides additional information to constrain parameter values [38] [39].

FAQ 3: My model fails to converge. What are the first steps I should take? First, simplify the model by reducing the number of random effects or fixing certain parameters to literature values. Second, check your initial parameter values; starting values that are too far from the true optimum can prevent convergence. Third, consider re-parameterizing the model. For parameters that span several orders of magnitude, log-transformation can improve numerical stability and convergence [40]. Finally, ensure your data is scaled appropriately, as large differences in variable scales can cause numerical issues.
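
The effect of log-transformation can be made visible through the conditioning of the Gauss-Newton approximation to the Hessian, `S.T @ S`, which gradient-based optimizers effectively work with. The sketch assumes a toy model `y = A*exp(-k*t)` whose two parameters differ by six orders of magnitude; the model and values are illustrative.

```python
import numpy as np

# Illustrative model y = A*exp(-k*t) with parameters on very different scales.
A, k = 1000.0, 0.001
t = np.array([100.0, 500.0, 1000.0, 2000.0])
theta = np.array([A, k])

# Sensitivities with respect to the natural parameters (A, k).
S_nat = np.column_stack([np.exp(-k * t), -A * t * np.exp(-k * t)])
# Chain rule: dy/dlog(theta_j) = theta_j * dy/dtheta_j (scales each column).
S_log = S_nat * theta

cond_nat = np.linalg.cond(S_nat.T @ S_nat)
cond_log = np.linalg.cond(S_log.T @ S_log)
print(f"condition number, natural scale: {cond_nat:.3g}")
print(f"condition number, log scale:     {cond_log:.3g}")
```

In this sketch the condition number drops by many orders of magnitude on the log scale, so the same data and model present a far better-conditioned problem to the optimizer.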

FAQ 4: When should I consider machine learning or automated approaches for NLME model development? Automated model search is beneficial when facing a vast space of potential model structures, such as in population pharmacokinetics (popPK) for extravascular drugs with complex absorption behavior [41]. These approaches can systematically explore model configurations that might be missed by manual, sequential searches, helping to avoid local minima and improve reproducibility. They are especially useful when time constraints limit manual exploration or when you want to standardize the model selection process [41].

Troubleshooting Common Experimental Issues

Table 1: Common NLME Modeling Problems and Solutions