Performance Analysis of LevMar SE vs. GLSDC: Optimization Algorithms for Systems Biology Parameter Estimation

Abstract

This article provides a comprehensive performance analysis of two prominent optimization algorithms used for parameter estimation in dynamic biological system models: the gradient-based Levenberg-Marquardt with Sensitivity Equations (LevMar SE) and the hybrid stochastic-deterministic Genetic Local Search with Distance independent Diversity Control (GLSDC). Tailored for researchers, scientists, and drug development professionals, we explore their foundational principles, methodological applications, and relative performance in handling real-world challenges like non-identifiability and high-dimensional parameter spaces. The analysis synthesizes evidence on convergence speed, computational efficiency, and the critical impact of data normalization strategies—Data-driven Normalization of Simulations (DNS) versus Scaling Factors (SF)—on algorithm success. Practical guidance is offered for algorithm selection and optimization to enhance the development of predictive models in systems biology and drug discovery.

The Critical Role of Parameter Estimation in Systems Biology and Drug Development

Dynamic models, particularly those based on Ordinary Differential Equations (ODEs), are fundamental tools in systems and synthetic biology for formalizing hypotheses and predicting the quantitative, time-evolving behavior of cellular processes such as signal transduction and gene regulation [1] [2]. These models describe the rate of change of molecular species concentrations (e.g., dx/dt = f(x, θ)) as a function of the current state x and a set of kinetic parameters θ [1]. The process of parameter estimation, or model calibration, is the critical inverse problem of finding the unknown parameter values θ that best align model simulations with experimental data [3]. This is mathematically formulated as an optimization problem, where an objective function measuring the discrepancy between simulations and data is minimized [2].
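As a minimal sketch of this formulation, the snippet below simulates a one-species model dx/dt = f(x, θ) and evaluates a sum-of-squares objective against data. The first-order decay model, the fixed-step RK4 integrator, the synthetic noise-free data, and all function names are assumptions chosen for illustration; they are not taken from the cited studies.

```python
import math

def rk4(f, x0, theta, t_end, n_steps):
    """Integrate dx/dt = f(x, theta) with classical RK4; return all states."""
    h = t_end / n_steps
    xs = [x0]
    x = x0
    for _ in range(n_steps):
        k1 = f(x, theta)
        k2 = f(x + 0.5 * h * k1, theta)
        k3 = f(x + 0.5 * h * k2, theta)
        k4 = f(x + h * k3, theta)
        x = x + (h / 6.0) * (k1 + 2.0 * k2 + 2.0 * k3 + k4)
        xs.append(x)
    return xs

def decay(x, theta):
    # Hypothetical model: first-order degradation with rate constant theta.
    return -theta * x

def objective(theta, data, x0=1.0, t_end=2.0, n_steps=200):
    """Sum of squared differences between simulation and data.

    `data` holds (grid_index, measured_value) pairs; grid index i is t = i * 0.01.
    """
    xs = rk4(decay, x0, theta, t_end, n_steps)
    return sum((xs[i] - y) ** 2 for i, y in data)

# Synthetic, noise-free data generated with theta = 0.8 (illustration only).
TRUE_THETA = 0.8
data = [(i, math.exp(-TRUE_THETA * i * 0.01)) for i in (50, 100, 200)]

print(objective(0.8, data))  # close to zero at the generating value
print(objective(0.5, data))  # larger for a mismatched rate constant
```

Parameter estimation then amounts to searching for the θ that minimises `objective`, which is the task the algorithms discussed in this article address.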

The task is notoriously challenging due to the non-linearity of biological systems, the frequent existence of local minima in the objective function, and parameter non-identifiability, where multiple parameter sets fit the data equally well [1] [4]. The challenge is compounded by the nature of experimental data, which are often expressed in relative or arbitrary units (e.g., from Western blotting or RT-qPCR), unlike the well-defined units (e.g., nano-Molar concentrations) of model simulations [2]. This discrepancy necessitates a strategy to make simulations and data comparable, a choice that profoundly impacts the performance of optimization algorithms [1].

Scaling Factor vs. Data-Driven Normalisation: A Critical Choice

Two primary methods exist to align model simulations with normalized experimental data, and the choice between them significantly influences the complexity and success of parameter estimation [1] [2].

- Scaling Factor (SF) Approach: This common method introduces an unknown scaling factor parameter (α) for each observable, which multiplies the simulation outputs to match the scale of the data: ỹᵢ ≈ αⱼ · yᵢ(θ). These scaling factors must be estimated alongside the dynamic parameters θ, thereby increasing the problem's dimensionality [1] [4].

- Data-Driven Normalisation of Simulations (DNS) Approach: This method applies the same normalisation procedure used on the experimental data directly to the model simulations. For instance, if data are normalized by dividing by a control data point (ỹᵢ = ŷᵢ / ŷ_ref), the simulations are normalized identically: ỹᵢ ≈ yᵢ(θ) / y_ref(θ). The key advantage is that DNS does not introduce new parameters to be estimated [1] [2].
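The two approaches can be contrasted in a few lines. This is a sketch under simple assumptions: one observable, normalisation by a control point at index 0, and made-up numbers. The function names `residuals_sf` and `residuals_dns` are hypothetical.

```python
def residuals_sf(sim, data_norm, alpha):
    """Scaling-factor residuals; alpha is an extra unknown to be estimated."""
    return [d - alpha * s for s, d in zip(sim, data_norm)]

def residuals_dns(sim, data_norm, ref_index=0):
    """DNS residuals; the simulation is divided by its own reference point."""
    ref = sim[ref_index]
    return [d - s / ref for s, d in zip(sim, data_norm)]

# Made-up measurements in arbitrary units, normalised by the control point.
raw = [200.0, 150.0, 80.0]
data_norm = [v / raw[0] for v in raw]

# Made-up simulation in concentration units with the same shape.
sim = [40.0, 30.0, 16.0]

print(residuals_dns(sim, data_norm))           # no extra parameter needed
print(residuals_sf(sim, data_norm, 1 / 40.0))  # matches only for alpha = 1/40
print(residuals_sf(sim, data_norm, 1.0))       # wrong alpha gives large residuals
```

With SF the optimiser has to find `alpha` in addition to the kinetic parameters, whereas with DNS the reference value comes from the simulation itself.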

The following diagram illustrates the fundamental difference in how these two approaches integrate simulations and data within the objective function.

The Algorithms: LevMar SE vs. GLSDC

Optimization algorithms for parameter estimation can be broadly categorized into local and global/hybrid methods. This guide focuses on two prominent representatives: a local, gradient-based algorithm and a hybrid, stochastic-deterministic one [1] [4].

- Levenberg-Marquardt with Sensitivity Equations (LevMar SE): This is a local, gradient-based nonlinear least-squares algorithm [5]. It interpolates between the Gauss-Newton algorithm and gradient descent, using a damping factor to ensure robustness [5]. Its key feature is the use of Sensitivity Equations (SE) to compute the required gradients of the objective function with respect to parameters accurately and efficiently [1]. It is typically deployed with multiple restarts (e.g., via Latin hypercube sampling) to mitigate the risk of converging to local minima [1] [4].

- Genetic Local Search with Distance independent Diversity Control (GLSDC): This is a hybrid, global optimization algorithm that combines a stochastic global search phase based on a genetic algorithm with a deterministic local search phase (e.g., using Powell's method) [1] [6]. The "Distance independent Diversity Control" mechanism helps maintain population diversity effectively, allowing it to escape local minima and explore the parameter space more broadly than purely local methods [1].

Performance Comparison: Experimental Data and Analysis

A systematic study compared LevMar SE, LevMar FD (which uses finite differences instead of sensitivity equations), and GLSDC on three test-bed problems of increasing complexity, using both SF and DNS approaches [1] [4]. The key performance metrics were convergence speed (computation time) and parameter identifiability.

Benchmark Problems and Protocols

The experimental comparison was based on three established test problems [1] [4]:

- STYX-1-10: A problem with 1 observable and 10 unknown parameters.

- EGF/HRG-8-10: A problem with 8 observables and 10 unknown parameters.

- EGF/HRG-8-74: A problem with 8 observables and 74 unknown parameters.

The core protocol for each parameter estimation run involved [1]:

- Model and Data Definition: Specifying the ODE model, parameters to estimate (with bounds), and normalized experimental data.

- Objective Function Setup: Configuring the objective function (Least Squares or Log-Likelihood) using either the SF or DNS method.

- Algorithm Execution: Running each optimization algorithm (LevMar SE, LevMar FD, GLSDC) from multiple starting points to ensure statistical robustness.

- Performance Measurement: Recording the computation time until convergence and analyzing the resulting parameter sets for practical non-identifiability.
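The multiple starting points in the protocol above are often drawn by Latin hypercube sampling, which this article mentions for LevMar SE restarts. The following sketch shows one way to generate such points with the standard library. The function name, the seed handling, and the example bounds are illustrative assumptions, not the setup of the cited study.

```python
import random

def latin_hypercube(n_samples, bounds, seed=0):
    """Return n_samples points with exactly one point per stratum in each dimension."""
    rng = random.Random(seed)
    columns = []
    for lo, hi in bounds:
        # One random point inside each of n_samples equal-width strata.
        points = [lo + (hi - lo) * (i + rng.random()) / n_samples
                  for i in range(n_samples)]
        # Shuffle so that strata are paired randomly across dimensions.
        rng.shuffle(points)
        columns.append(points)
    return [list(row) for row in zip(*columns)]

# Example: five starting points for two parameters with hypothetical bounds.
starts = latin_hypercube(5, [(0.0, 1.0), (-2.0, 3.0)], seed=1)
for point in starts:
    print(point)
```

Each starting point would then be handed to a local optimiser, and the best of the resulting fits retained.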

Comparative Performance Results

The table below summarizes the key findings from the benchmark studies, highlighting how algorithm performance depends on problem size and the chosen normalisation method [1] [4].

Table 1: Performance Comparison of LevMar SE vs. GLSDC across different problems

| Test Problem | Number of Parameters | Normalisation Method | LevMar SE Performance | GLSDC Performance | Key Finding |
|---|---|---|---|---|---|
| STYX-1-10 | 10 | SF | Fastest | Good | For smaller problems, LevMar SE is highly efficient [1]. |
| EGF/HRG-8-10 | 10 | SF | Good | Markedly improved with DNS | DNS provides a significant advantage to GLSDC even with fewer parameters [1]. |
| EGF/HRG-8-74 | 74 | SF | Slower, high non-identifiability | Outperforms LevMar SE | For large parameter counts, GLSDC performs better [1]. |
| EGF/HRG-8-74 | 74 | DNS | Convergence speed improved | Best performance, fastest convergence | DNS greatly improves speed for all algorithms with large parameter numbers [1] [4]. |

A critical finding was that the Scaling Factor (SF) approach aggravates practical non-identifiability, increasing the number of parameter directions that are not uniquely determined by the data [1] [4]. In contrast, the DNS approach does not introduce this additional identifiability problem and, by reducing the number of effective parameters, leads to faster convergence [1] [2].

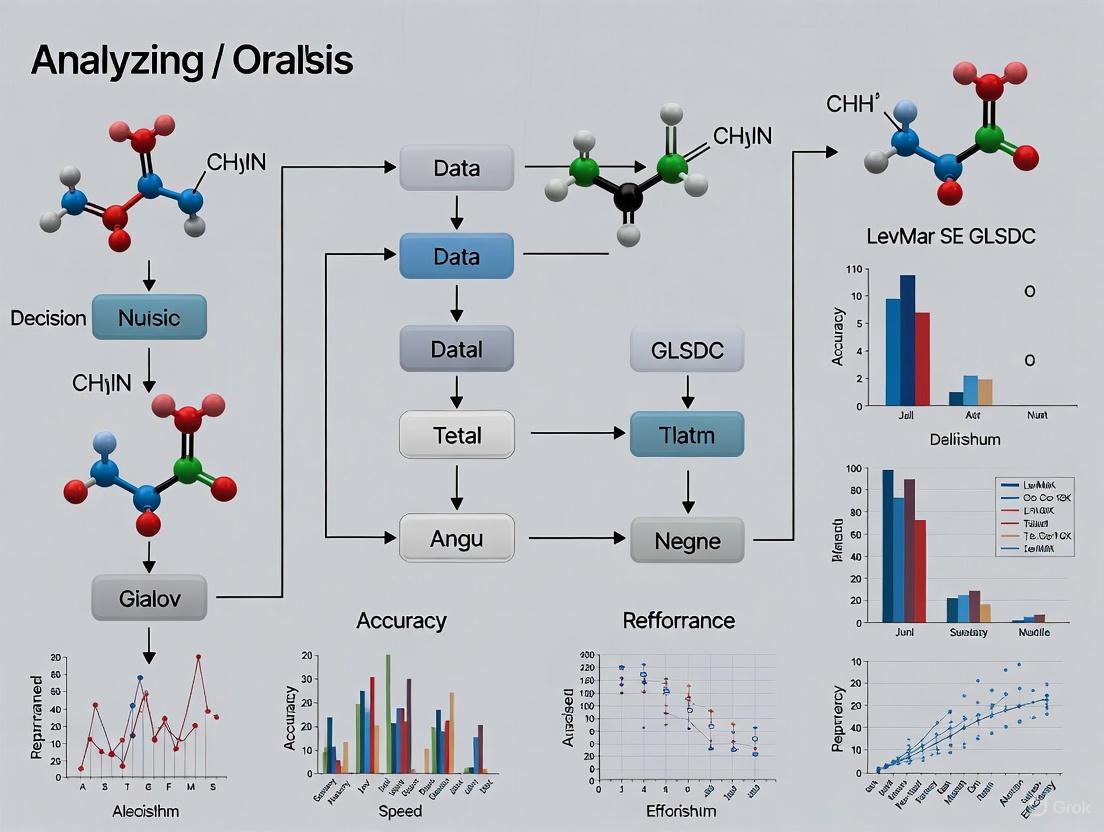

The following workflow diagram encapsulates the complete experimental procedure from problem setup to performance analysis.

The Scientist's Toolkit: Essential Research Reagents

Successful parameter estimation relies on a combination of software tools, benchmark models, and methodological standards. The following table details key resources for researchers in this field.

Table 2: Essential Research Reagents and Resources for Parameter Estimation

| Item Name | Type | Function & Purpose |
|---|---|---|
| PEPSSBI | Software Pipeline | The first software to fully support Data-driven Normalisation of Simulations (DNS), automating the construction of the objective function and enabling parallel parameter estimation runs [2]. |
| BioPreDyn-bench | Benchmark Suite | A collection of ready-to-run, medium to large-scale dynamic models (e.g., of E. coli, S. cerevisiae) used as standard reference problems to evaluate parameter estimation methods [3]. |
| Systems Biology Markup Language (SBML) | Standardized Format | A common XML-based format for representing computational models in systems biology, enabling model sharing and simulation across different software tools [2] [3]. |
| Multi-condition Experiments | Experimental Design | A set of experiments involving different perturbations (e.g., various ligands, doses) essential for constraining parameters and ensuring model identifiability [2]. |
| Sensitivity Equations (SE) | Computational Method | A technique for efficiently calculating gradients required by local optimizers like LevMar SE, often more accurate and faster than finite differences [1]. |

The performance analysis of LevMar SE versus GLSDC reveals that there is no universally superior algorithm; the optimal choice is highly dependent on the specific problem context. Based on the experimental evidence, we can derive the following conclusions and recommendations for researchers and drug development professionals [1] [4] [2]:

- For Models with a Limited Number of Parameters (e.g., ~10): LevMar SE with multiple restarts is an excellent choice, offering high speed and accuracy, particularly when using the DNS approach.

- For Large-Scale and Complex Models (e.g., Dozens to Hundreds of Parameters): The hybrid GLSDC algorithm combined with the DNS approach is the preferred strategy. It outperforms local methods by more effectively navigating the complex, multi-modal parameter space and avoiding local minima.

- Regarding Data-Simulation Alignment: The Data-driven Normalisation of Simulations (DNS) method should be strongly favored over Scaling Factors (SF). DNS reduces the dimensionality of the optimization problem, mitigates practical non-identifiability, and significantly accelerates convergence for all algorithms, especially as the number of observables and parameters grows.

In summary, while LevMar SE remains a powerful and efficient tool for smaller-scale parameter estimation problems, the combination of GLSDC and DNS emerges as the most robust and efficient framework for tackling the large-scale dynamic models increasingly common in modern systems biology and drug development.

Ordinary Differential Equations (ODEs) serve as a fundamental mathematical framework for modeling the dynamic behavior of cellular signaling pathways. These models capture the quantitative and temporal nature of how cells sense, process, and transmit information through molecular interactions. Mathematically, ODE models of signaling pathways are expressed as \( \frac{d}{dt}x = f(x,\theta) \), where \( x \) represents the state vector of molecular concentrations, \( \theta \) denotes kinetic parameters, and \( f(\cdot) \) describes the nonlinear function governing rate changes [7] [8]. This formulation allows researchers to simulate pathway behavior under different conditions, formalize biological understanding, identify inconsistencies, and generate testable hypotheses.

Signaling pathways comprise interconnected components that transduce extracellular signals into appropriate intracellular responses. Despite their functional diversity, many pathways share conserved building blocks including receptors, G proteins, kinase cascades (such as MAPK pathways), and small GTPases [9]. The interconnected nature of these pathways often leads to cross-talk, where components of one pathway influence another, creating complex networks that can exhibit emergent behaviors such as bistability, oscillations, and ultrasensitivity [10] [11]. ODE-based modeling helps unravel this complexity by providing a deterministic framework to simulate system dynamics over time.

Parameter estimation presents a significant challenge in developing accurate ODE models of signaling pathways. The complexity and nonlinearity of biological systems render this estimation mathematically difficult, with issues arising from both local minima in optimization landscapes and practical non-identifiability of parameters [7] [8]. This comparison guide examines two prominent optimization algorithms—LevMar SE and GLSDC—for addressing these challenges, evaluating their performance across different modeling scenarios and experimental conditions.

Optimization Algorithms for Parameter Estimation

Algorithm Specifications and Theoretical Foundations

LevMar SE (Levenberg-Marquardt with Sensitivity Equations) implements a gradient-based local optimization approach combined with Latin hypercube restarts [7] [8]. The algorithm computes gradients using sensitivity equations, which describe how solutions change with respect to parameters. This implementation represents LSQNONLIN SE, previously identified as a top-performing method in benchmarking studies [8]. The sensitivity equation approach provides computational efficiency for gradient calculation, particularly valuable for models with many parameters.
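To make the idea of sensitivity equations concrete, the sketch below integrates the state x together with its sensitivity s = ∂x/∂k for a hypothetical one-species model dx/dt = -k·x. Differentiating the ODE with respect to k gives ds/dt = -k·s - x with s(0) = 0. The model, the fixed-step RK4 integrator, and all names are assumptions for illustration and do not reproduce the LevMar SE implementation.

```python
import math

def augmented_rhs(state, k):
    """Right-hand side for the state x and its sensitivity s = dx/dk."""
    x, s = state
    return (-k * x, -k * s - x)

def rk4_system(rhs, state, k, t_end, n_steps):
    """Classical RK4 for a small system stored as a tuple; returns the final state."""
    h = t_end / n_steps
    for _ in range(n_steps):
        k1 = rhs(state, k)
        k2 = rhs(tuple(v + 0.5 * h * d for v, d in zip(state, k1)), k)
        k3 = rhs(tuple(v + 0.5 * h * d for v, d in zip(state, k2)), k)
        k4 = rhs(tuple(v + h * d for v, d in zip(state, k3)), k)
        state = tuple(v + h / 6.0 * (a + 2.0 * b + 2.0 * c + d)
                      for v, a, b, c, d in zip(state, k1, k2, k3, k4))
    return state

K, T_END = 0.8, 2.0
x_end, s_end = rk4_system(augmented_rhs, (1.0, 0.0), K, T_END, 200)

# Closed-form sensitivity for this model: d/dk exp(-k t) = -t exp(-k t).
analytic = -T_END * math.exp(-K * T_END)

# Central finite difference for comparison; needs two extra simulations.
eps = 1e-6
x_plus = rk4_system(augmented_rhs, (1.0, 0.0), K + eps, T_END, 200)[0]
x_minus = rk4_system(augmented_rhs, (1.0, 0.0), K - eps, T_END, 200)[0]
finite_diff = (x_plus - x_minus) / (2.0 * eps)

print(s_end, analytic, finite_diff)
```

The sensitivity is obtained in the same integration pass as the state, whereas the finite-difference estimate requires additional simulations per parameter and depends on the choice of `eps`.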

GLSDC (Genetic Local Search with Distance-independent Diversity Control) employs a hybrid stochastic-deterministic strategy that alternates between global search phases based on a genetic algorithm and local search phases utilizing Powell's derivative-free method [7] [8]. This combination enables effective exploration of complex parameter spaces while maintaining convergence properties. The algorithm does not require gradient computation, making it suitable for problems where sensitivity equations are difficult to derive or compute.
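The alternation between stochastic global search and deterministic local refinement can be sketched generically. The code below is not GLSDC: it omits the distance independent diversity control entirely, uses a simple coordinate pattern search as a stand-in for Powell's method, and uses blend crossover with Gaussian mutation as an assumed genetic operator. The Rastrigin test function and all names are likewise illustrative assumptions.

```python
import math
import random

def rastrigin(x):
    """Standard multimodal test function with global minimum 0 at the origin."""
    return 10 * len(x) + sum(v * v - 10 * math.cos(2 * math.pi * v) for v in x)

def local_refine(f, x, bounds, step=1.0, tol=1e-6):
    """Derivative-free coordinate pattern search (stand-in for Powell's method)."""
    x = list(x)
    fx = f(x)
    while step > tol:
        improved = False
        for i in range(len(x)):
            for delta in (step, -step):
                cand = list(x)
                cand[i] = min(max(cand[i] + delta, bounds[i][0]), bounds[i][1])
                fc = f(cand)
                if fc < fx:
                    x, fx, improved = cand, fc, True
        if not improved:
            step *= 0.5  # shrink the pattern once no move helps
    return x, fx

def hybrid_search(f, bounds, pop_size=12, generations=15, seed=0):
    rng = random.Random(seed)
    # Initial population: random points, each refined locally.
    pop = [local_refine(f, [rng.uniform(lo, hi) for lo, hi in bounds], bounds)
           for _ in range(pop_size)]
    for _ in range(generations):
        children = []
        for _ in range(pop_size):
            (pa, _fa), (pb, _fb) = rng.sample(pop, 2)
            w = rng.random()
            # Blend crossover plus Gaussian mutation, clipped to the bounds.
            child = [w * a + (1 - w) * b + rng.gauss(0.0, 0.3)
                     for a, b in zip(pa, pb)]
            child = [min(max(v, lo), hi) for v, (lo, hi) in zip(child, bounds)]
            children.append(local_refine(f, child, bounds))
        # Elitist survivor selection: keep the best pop_size individuals.
        pop = sorted(pop + children, key=lambda item: item[1])[:pop_size]
    return pop[0]

best, cost = hybrid_search(rastrigin, [(-5.12, 5.12)] * 2, seed=1)
print(best, cost)
```

No gradient is evaluated anywhere in this loop, which mirrors the property described above; a faithful implementation would add the diversity control step from the original GLSDC description.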

Table 1: Algorithm Characteristics and Theoretical Foundations

| Feature | LevMar SE | GLSDC |
|---|---|---|
| Optimization Strategy | Gradient-based local search with restarts | Hybrid stochastic-deterministic |
| Gradient Computation | Sensitivity Equations | Not required |
| Global Optimization | Limited (depends on restart strategy) | Excellent (genetic algorithm component) |
| Local Convergence | Fast (quadratic near minima) | Good (Powell's method) |
| Theoretical Basis | Damped Gauss-Newton method | Evolutionary algorithms + direct search |

Experimental Setup and Performance Metrics

Comprehensive algorithm evaluation employed three test problems with varying complexity [7] [8]:

- STYX-1-10: 1 observable, 10 unknown parameters

- EGF/HRG-8-10: 8 observables, 10 unknown parameters

- EGF/HRG-8-74: 8 observables, 74 unknown parameters

Experimental protocols assessed performance using two approaches for aligning simulated and experimental data [7] [8]:

- Scaling Factors (SF): Introduces unknown scaling parameters that multiply simulations to match experimental data scales

- Data-driven Normalization of Simulations (DNS): Normalizes simulations identically to experimental data processing

Performance metrics included convergence speed (computation time), success rates, parameter identifiability, and objective function minimization. Identifiability analysis quantified practical non-identifiability as the number of parameter space directions along which parameters could not be uniquely identified [7].

Figure 1: Parameter Estimation Workflow for ODE Models of Signaling Pathways

Performance Comparison: Experimental Data

Convergence Speed and Computational Efficiency

Table 2: Algorithm Performance Across Test Problems and Scaling Methods

| Test Problem | Algorithm | Scaling Method | Relative Computation Time | Convergence Success | Identifiability Impact |
|---|---|---|---|---|---|
| STYX-1-10 | LevMar SE | SF | 1.0 (reference) | High | Aggravated |
| STYX-1-10 | LevMar SE | DNS | 0.7 | High | Minimal |
| STYX-1-10 | GLSDC | SF | 1.8 | Medium | Aggravated |
| STYX-1-10 | GLSDC | DNS | 0.9 | High | Minimal |
| EGF/HRG-8-74 | LevMar SE | SF | 1.0 (reference) | Low | Severely Aggravated |
| EGF/HRG-8-74 | LevMar SE | DNS | 0.6 | Medium | Minimal |
| EGF/HRG-8-74 | GLSDC | SF | 1.5 | Low | Severely Aggravated |
| EGF/HRG-8-74 | GLSDC | DNS | 0.8 | High | Minimal |

Experimental results demonstrated that DNS markedly improved optimization speed for both algorithms, with particularly pronounced benefits for larger parameter estimation problems [7] [8]. For the most complex test case (EGF/HRG-8-74 with 74 parameters), the relative computation times in Table 2 correspond to a reduction of 40% for LevMar SE (1.0 to 0.6) and of roughly 47% for GLSDC (1.5 to 0.8) when DNS replaces SF. The advantage of DNS was especially notable for the non-gradient-based GLSDC algorithm, which showed performance improvements even for smaller parameter sets [7].

GLSDC outperformed LevMar SE on large-scale parameter estimation problems (74 parameters), achieving higher convergence success rates with reasonable computation times [7]. This performance advantage stems from GLSDC's hybrid strategy, which effectively combines global exploration of parameter space with efficient local refinement. For smaller problems (10 parameters), both algorithms achieved similar success rates, though LevMar SE maintained faster computation times when paired with DNS [7] [8].

Identifiability and Parameter Estimation Quality

A critical finding from comparative studies revealed that SF approaches significantly increased practical non-identifiability compared to DNS [7]. The scaling factor method introduced additional unknown parameters and created dependencies between scaling factors and kinetic parameters, resulting in multiple parameter combinations producing equally good fits to experimental data. This identifiability problem became progressively severe as model complexity increased.
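The dependency between a scaling factor and a kinetic parameter can be seen in a toy model. The sketch assumes a hypothetical production-degradation model dx/dt = p - k·x with x(0) = 0, whose solution is x(t) = (p/k)(1 - e^(-kt)), observed through a scaling factor alpha. The model, the synthetic data, and the names are illustrative and are not the benchmark models of the cited study.

```python
import math

def observable(alpha, p, k, t):
    """Scaled observable alpha * x(t) for dx/dt = p - k*x with x(0) = 0."""
    return alpha * (p / k) * (1.0 - math.exp(-k * t))

def cost(alpha, p, k, data):
    return sum((observable(alpha, p, k, t) - y) ** 2 for t, y in data)

# Synthetic, noise-free data generated with alpha = 1, p = 2, k = 0.5.
data = [(t, observable(1.0, 2.0, 0.5, t)) for t in (1.0, 2.0, 4.0, 8.0)]

print(cost(1.0, 2.0, 0.5, data))  # generating parameters
print(cost(4.0, 0.5, 0.5, data))  # alpha quadrupled, p quartered: same fit
print(cost(1.0, 2.0, 0.7, data))  # changing k alone degrades the fit
```

In this toy case only the product alpha·p is constrained by the data, so the objective is flat along the direction that trades one against the other. This is the kind of compensating parameter combination described above.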

DNS substantially alleviated non-identifiability issues by eliminating the need for additional scaling parameters and properly normalizing simulations to match experimental data processing [7] [8]. This approach allowed both algorithms to more reliably identify biologically meaningful parameter values, with GLSDC demonstrating particular robustness in handling non-identifiable parameter spaces through its global search capabilities.

Figure 2: Impact of Data Scaling Methods on Parameter Estimation

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for ODE Modeling of Signaling Pathways

| Resource Category | Specific Tools | Function in Research |
|---|---|---|
| Modeling Software | PEPSSBI [7] | Supports data-driven normalization of simulations (DNS) |
| Optimization Algorithms | LevMar SE [7] [8] | Gradient-based parameter estimation with sensitivity equations |
| Optimization Algorithms | GLSDC [7] [8] | Hybrid stochastic-deterministic global optimization |
| Model Exchange | SBML [9] | Standardized format for model representation and sharing |
| Model Repositories | BioModels, JWS Online, DOQCS [9] | Curated collections of published models |
| Experimental Techniques | Western blotting, multiplexed ELISA, proteomics [8] | Generate quantitative data for parameter estimation |

Implementation Guidelines and Best Practices

Algorithm Selection Framework

Choosing between LevMar SE and GLSDC depends on specific modeling characteristics. LevMar SE excels for medium-scale problems (10-30 parameters) with good initial parameter estimates and models where sensitivity equations can be efficiently computed [7] [8]. The algorithm provides fast convergence when started near optimal parameter values and benefits significantly from DNS approaches.

GLSDC proves superior for large-scale problems (50+ parameters), poorly characterized systems with limited prior knowledge, and models with substantial non-identifiability issues [7]. Its hybrid nature provides robustness against local minima, making it particularly valuable for novel pathway modeling where parameter landscapes are poorly understood.

Data Normalization Protocol

Implement DNS by applying identical normalization procedures to both experimental data and model simulations [7] [8]:

- Identify reference points used in experimental data normalization (e.g., maximum values, control conditions, timepoint averages)

- Normalize experimental data using established biological protocols

- Apply identical normalization to simulated trajectories during objective function calculation

- Avoid introducing scaling factors unless absolutely necessary for unit conversion

This protocol eliminates unnecessary parameters, reduces non-identifiability, and accelerates convergence for both optimization algorithms [7].
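The protocol steps can be sketched as a single normalisation routine that is applied to both the data and the simulation. The three modes correspond to the reference points named in the list (maximum value, control condition, average). The function names, the mode strings, and the example numbers are assumptions made for illustration.

```python
def normalise(values, mode, control_index=0):
    """Divide a series by a reference value chosen according to `mode`."""
    if mode == "max":
        ref = max(values)
    elif mode == "control":
        ref = values[control_index]
    elif mode == "mean":
        ref = sum(values) / len(values)
    else:
        raise ValueError(f"unknown normalisation mode: {mode}")
    return [v / ref for v in values]

def dns_objective(sim, data_raw, mode):
    """Sum of squares after applying the same normalisation to both series."""
    data_n = normalise(data_raw, mode)
    sim_n = normalise(sim, mode)
    return sum((d - s) ** 2 for d, s in zip(data_n, sim_n))

# Made-up example: data in arbitrary units, simulation in concentration units.
data_raw = [10.0, 20.0, 40.0]
sim = [2.0, 4.0, 8.0]

for mode in ("max", "control", "mean"):
    print(mode, dns_objective(sim, data_raw, mode))
```

Because the simulation has the same shape as the data, all three modes give an objective of essentially zero without any scaling parameter being fitted.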

Experimental Design Recommendations

Effective parameter estimation requires data that sufficiently constrains possible parameter values [7] [10]. Recommended practices include:

- Measure multiple observables simultaneously to increase parameter identifiability

- Implement multiple perturbations (genetic, environmental, pharmacological) to probe different aspects of pathway regulation

- Collect high-resolution time-courses to capture system dynamics

- Include experimental controls appropriate for data normalization procedures

- Design experiments to specifically probe known non-identifiable parameter combinations

Performance comparison between LevMar SE and GLSDC reveals a complex landscape where algorithm superiority depends on problem context. For models of moderate complexity with good initial parameter estimates, LevMar SE with DNS provides computationally efficient parameter estimation. As models increase in size and complexity, GLSDC with DNS emerges as the preferred approach, offering robust performance despite computational overhead.

The critical importance of data scaling methods transcends algorithm choice, with DNS consistently outperforming SF approaches across all test scenarios [7] [8]. Future methodological development should focus on hybrid approaches that combine the global search capabilities of GLSDC with the gradient computation efficiency of LevMar SE, along with continued refinement of identifiability analysis techniques.

ODE modeling of signaling pathways will continue to benefit from integration with emerging experimental techniques providing richer, more quantitative data. As single-cell measurements and live-cell imaging advance, parameter estimation methods must adapt to handle increasingly complex models while providing uncertainty quantification and identifiability assessment. The combination of appropriate optimization algorithms with careful data handling practices will remain essential for extracting biological insight from mathematical models of cellular signaling.

In the field of systems biology, the development of predictive mathematical models relies on the accurate estimation of unknown parameters from experimental data. This parameter estimation problem is an optimization process where an objective function, quantifying the discrepancy between model simulations and experimental data, is minimized [2]. The choice of optimization algorithm and the method for aligning model simulations with data are critical decisions that directly impact the success of this process. This guide provides a performance comparison between two such algorithms—LevMar SE (a gradient-based local algorithm) and GLSDC (a hybrid stochastic-deterministic global algorithm)—in the context of different objective function formulations, with a particular focus on the challenge of practical non-identifiability [1] [4].

Experimental Setup and Performance Metrics

To objectively compare the performance of LevMar SE and GLSDC, we draw on a systematic study that evaluated these algorithms using three test-bed models of increasing complexity from systems biology [1] [4]. The core of the comparison lies in how each algorithm handles two different approaches for matching model outputs to experimental data.

- Scaling Factor (SF) Approach: This common method introduces an additional unknown parameter (a scaling factor) for each model observable. The simulated data is multiplied by this factor to align it with the scale of the measured data [1] [2].

- Data-Driven Normalisation of Simulations (DNS) Approach: This method avoids extra parameters by normalizing the model simulations in the exact same way that the experimental data were normalized (e.g., by a control data point or the average response) [1] [2].

The algorithms were tested on problems with varying numbers of unknown parameters (10 and 74) [1]. Performance was measured primarily by the convergence time (computation time required to find an optimal solution) and the analysis of practical non-identifiability (the number of directions in parameter space for which parameters cannot be uniquely determined) [1].

Performance Comparison: Convergence and Identifiability

The following tables summarize the key quantitative findings from the comparative analysis.

Table 1: Algorithm Performance Across Problem Sizes and Normalisation Methods

| Problem Size (Parameters) | Algorithm | Normalisation Method | Convergence Speed | Practical Non-Identifiability |
|---|---|---|---|---|
| Relatively Small (10) | LevMar SE | SF | Fastest for small problems [1] | Higher [1] |
| Relatively Small (10) | LevMar SE | DNS | Fast | Lower [1] |
| Relatively Small (10) | GLSDC | SF | Slow | Higher [1] |
| Relatively Small (10) | GLSDC | DNS | Marked improvement over SF [1] | Lower [1] |
| Relatively Large (74) | LevMar SE | SF | Slower | Higher [1] |
| Relatively Large (74) | LevMar SE | DNS | Improved speed vs. SF [1] | Lower [1] |
| Relatively Large (74) | GLSDC | SF | Slow | Higher [1] |
| Relatively Large (74) | GLSDC | DNS | Best performing; outperformed LevMar SE [1] | Lower [1] |

Table 2: Summary of Key Findings and Recommendations

| Aspect | Finding | Recommendation |
|---|---|---|
| Overall Best Performer | For large parameter numbers (74), GLSDC with DNS performed better than LevMar SE [1]. | Use GLSDC with DNS for complex problems with many parameters. |
| Impact of DNS | DNS improves convergence speed for all algorithms with large parameter numbers and reduces practical non-identifiability compared to SF [1] [2]. | Prefer DNS over SF, especially as model complexity grows. |
| Gradient Computation | Assessing convergence by counting function evaluations is inappropriate for algorithms using sensitivity equations (SE); computation time is a more accurate metric [1]. | Use wall-clock time for performance comparisons involving gradient-based methods. |
| Algorithm Strategy | Hybrid stochastic-deterministic methods (GLSDC) can outperform local gradient-based methods with restarts (LevMar SE) for complex problems [1]. | Consider hybrid algorithms for problems suspected to have multiple local minima. |
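The recommendation on measuring wall-clock time can be illustrated with a small harness that records both the number of objective evaluations and the elapsed time. With sensitivity equations, a single evaluation also integrates the sensitivities, so evaluation counts alone are not comparable across algorithms. The wrapper class, the harness, and the toy scan "optimiser" below are hypothetical stand-ins, not part of any cited software.

```python
import time

class CountingObjective:
    """Wrap an objective function and count how often it is called."""
    def __init__(self, func):
        self.func = func
        self.evaluations = 0

    def __call__(self, theta):
        self.evaluations += 1
        return self.func(theta)

def timed_run(optimiser, objective, start):
    """Run an optimiser and report result, evaluation count, and seconds elapsed."""
    counted = CountingObjective(objective)
    t0 = time.perf_counter()
    result = optimiser(counted, start)
    elapsed = time.perf_counter() - t0
    return result, counted.evaluations, elapsed

def toy_scan(objective, start, width=1.0, n=101):
    # Placeholder optimiser: evaluate a regular grid around the start point.
    candidates = [start - width + 2.0 * width * i / (n - 1) for i in range(n)]
    return min(candidates, key=objective)

result, evaluations, seconds = timed_run(toy_scan, lambda th: (th - 0.3) ** 2, 0.0)
print(result, evaluations, seconds)
```

Reporting both numbers side by side makes it visible when one algorithm uses fewer but more expensive evaluations than another.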

Detailed Experimental Protocols

Optimisation Algorithms and Implementation

- LevMar SE: This is an implementation of the Levenberg-Marquardt nonlinear least squares algorithm. It is a local gradient-based method that uses sensitivity equations (SE) to compute the gradient of the objective function efficiently [1]. The algorithm was used with multiple restarts from points chosen by Latin hypercube sampling to better explore the parameter space [1].

- GLSDC (Genetic Local Search with Distance independent Diversity Control): This is a hybrid stochastic-deterministic global algorithm. It alternates between a global search phase, which uses a genetic algorithm to explore the parameter space and escape local minima, and a local search phase, which uses Powell's derivative-free method to refine solutions [1] [6].
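The Levenberg-Marquardt iteration named in the first item can be sketched for a two-parameter toy problem. The example fits y = a·exp(-k·t) to synthetic data. It uses an analytic Jacobian in place of sensitivity equations and a simple accept/reject rule for the damping factor. The model, the damping schedule, the starting point, and the names are illustrative assumptions rather than the LevMar SE implementation.

```python
import math

def residuals_and_jacobian(p, ts, ys):
    """Residuals r_i = a*exp(-k*t_i) - y_i and their derivatives w.r.t. (a, k)."""
    a, k = p
    r, J = [], []
    for t, y in zip(ts, ys):
        e = math.exp(-k * t)
        r.append(a * e - y)
        J.append((e, -a * t * e))
    return r, J

def levenberg_marquardt(p, ts, ys, lam=1e-2, iters=100):
    r, J = residuals_and_jacobian(p, ts, ys)
    cost = sum(v * v for v in r)
    for _ in range(iters):
        # Damped normal equations: (J^T J + lam * I) delta = -J^T r
        a11 = sum(j[0] * j[0] for j in J) + lam
        a12 = sum(j[0] * j[1] for j in J)
        a22 = sum(j[1] * j[1] for j in J) + lam
        g1 = sum(j[0] * v for j, v in zip(J, r))
        g2 = sum(j[1] * v for j, v in zip(J, r))
        det = a11 * a22 - a12 * a12
        d1 = (-g1 * a22 + g2 * a12) / det  # 2x2 solve by Cramer's rule
        d2 = (-g2 * a11 + g1 * a12) / det
        trial = (p[0] + d1, p[1] + d2)
        r_new, J_new = residuals_and_jacobian(trial, ts, ys)
        cost_new = sum(v * v for v in r_new)
        if cost_new < cost:
            # Accept the step and trust the Gauss-Newton model more.
            p, r, J, cost = trial, r_new, J_new, cost_new
            lam *= 0.1
        else:
            # Reject the step and move towards gradient descent.
            lam *= 10.0
    return p, cost

ts = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [2.0 * math.exp(-0.5 * t) for t in ts]  # synthetic, noise-free data
(a_fit, k_fit), final_cost = levenberg_marquardt((1.0, 1.0), ts, ys)
print(a_fit, k_fit, final_cost)
```

In a multistart setting this routine would be called once per starting point, which is where the Latin hypercube restarts described above come in.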

Test-Bed Models and Objective Functions

The study used three established models of intracellular signalling pathways [1]:

- STYX-1-10: A model with 1 observable and 10 unknown parameters.

- EGF/HRG-8-10: A model with 8 observables and 10 unknown parameters.

- EGF/HRG-8-74: A model with 8 observables and 74 unknown parameters.

For each model, the parameter estimation was formulated as an optimization problem using both least-squares (LS) and log-likelihood (LL) objective functions. The core of the objective function involved calculating the sum of squared errors or the log-likelihood between the normalized experimental data and the correspondingly normalized model simulations [1].
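The two objective types can be written down compactly. The sketch assumes independent Gaussian measurement errors with known standard deviations for the log-likelihood. The source does not state the exact likelihood used, so this form is an assumption, as are the function names and the example values.

```python
import math

def least_squares(sim, data):
    """Sum of squared errors between normalised simulation and data."""
    return sum((d - s) ** 2 for s, d in zip(sim, data))

def neg_log_likelihood(sim, data, sigma):
    """Negative Gaussian log-likelihood with per-point standard deviations."""
    return sum(0.5 * math.log(2.0 * math.pi * sg ** 2)
               + (d - s) ** 2 / (2.0 * sg ** 2)
               for s, d, sg in zip(sim, data, sigma))

# Made-up normalised values for illustration.
sim = [1.0, 2.0, 3.0]
data = [1.1, 1.9, 3.2]

ls = least_squares(sim, data)
nll = neg_log_likelihood(sim, data, [1.0, 1.0, 1.0])
print(ls, nll)
```

With unit standard deviations the negative log-likelihood equals half the sum of squares plus a constant, so both objectives share the same minimiser. They differ once the error magnitudes vary between data points.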

Workflow for Parameter Estimation with DNS and SF

The following diagram illustrates the logical workflow for a single parameter estimation run, highlighting the critical difference between the SF and DNS approaches.

Assessing Practical Non-Identifiability

To evaluate whether the estimated parameters were practically identifiable (i.e., a unique optimal value could be found), the study employed an ensemble approach [2]. The parameter estimation was run hundreds of times from different starting points, generating an ensemble of optimal parameter sets. Parameters that showed large variations across these sets, forming flat ridges or valleys in the objective function landscape, were deemed practically non-identifiable. The study found that the SF approach increased the number of such non-identifiable directions compared to the DNS approach [1].
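One simple way to screen such an ensemble is to compute the coefficient of variation of each parameter across the fitted sets. This is only a rough per-parameter proxy: the study counted non-identifiable directions in parameter space, which this sketch does not do. The threshold of 0.5, the parameter ordering, and the ensemble values are made up for illustration.

```python
import statistics

def coefficient_of_variation(ensemble):
    """Per-parameter standard deviation divided by the absolute mean."""
    n_params = len(ensemble[0])
    cvs = []
    for j in range(n_params):
        column = [row[j] for row in ensemble]
        cvs.append(statistics.stdev(column) / abs(statistics.mean(column)))
    return cvs

# Hypothetical ensemble of fitted (k, alpha, p) sets from repeated runs:
# k is tightly determined, while alpha and p compensate for each other.
ensemble = [
    (0.50, 1.0, 4.0),
    (0.51, 2.0, 2.0),
    (0.49, 4.0, 1.0),
    (0.50, 8.0, 0.5),
]

cvs = coefficient_of_variation(ensemble)
flagged = [cv > 0.5 for cv in cvs]  # 0.5 is an arbitrary illustrative threshold
print(cvs, flagged)
```

Parameters spanning orders of magnitude are often better compared on a logarithmic scale, and a direction-based analysis would be needed to detect compensating combinations directly.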

The Scientist's Toolkit: Essential Research Reagents and Software

Table 3: Key Software and Methodological Tools

| Item | Function in Performance Analysis |
|---|---|
| PEPSSBI (Parameter Estimation Pipeline for Systems and Synthetic Biology) | Software that provides full support for DNS, which is critical for the performance gains observed with complex models [1] [2]. |
| SBML (Systems Biology Markup Language) | A standard file format for sharing and exchanging computational models of biological processes, used by PEPSSBI and other tools [2]. |
| Multi-Condition Experimental Data | High-resolution time-course data under multiple perturbations (e.g., different ligands, doses) essential for constraining complex models and testing algorithm performance under realistic conditions [1] [2]. |
| Sensitivity Equations (SE) | A method for efficiently computing the gradient of the objective function, used by LevMar SE. More efficient than finite differences (FD) but requires measuring performance via computation time, not just function evaluations [1]. |
| Least-Squares (LS) & Log-Likelihood (LL) | The two primary types of objective functions compared in the underlying study for formulating the error between model and data [1]. |

The comparative analysis demonstrates that there is no single "best" algorithm for all parameter estimation problems in systems biology. For models with a relatively small number of parameters, LevMar SE is an efficient and fast choice. However, as model complexity and the number of unknown parameters grow, the GLSDC algorithm, especially when combined with Data-Driven Normalisation of Simulations (DNS), emerges as the superior option. It not only achieves faster convergence for large problems but also, in conjunction with DNS, mitigates the issue of practical non-identifiability that plagues the more traditional Scaling Factor approach. This combination provides a robust and effective framework for building predictive models from relative biological data.

In the field of systems biology and drug development, mathematical modeling of biological processes relies heavily on parameter estimation to create accurate, predictive simulations of intracellular signaling pathways. This process involves determining unknown model parameters by minimizing the discrepancy between experimental data and model simulations, a fundamental optimization problem. Two distinct algorithmic philosophies dominate this space: gradient-based optimization and hybrid stochastic-deterministic approaches. The choice between these methodologies significantly impacts the efficiency, accuracy, and practical feasibility of constructing biological models, particularly as models increase in complexity and scale. Within this context, this guide provides a performance analysis focusing on two specific implementations: the gradient-based Levenberg-Marquardt with Sensitivity Equations (LevMar SE) and the hybrid Genetic Local Search with Distance Independent Diversity Control (GLSDC) [1] [4].

Gradient-based algorithms, such as LevMar SE, utilize calculus-based principles to iteratively move parameter estimates in the direction of steepest descent of the objective function. These methods rely on precise gradient information—often computed via sensitivity equations or finite differences—to efficiently locate local minima [12] [13]. In contrast, hybrid stochastic-deterministic algorithms like GLSDC combine global exploration and local refinement. They employ stochastic strategies (e.g., genetic algorithms) to broadly explore the parameter space and avoid local traps, coupled with deterministic local search methods (e.g., Powell's method) to fine-tune solutions once a promising region is identified [1] [14]. The performance and applicability of these core philosophies vary dramatically based on problem dimensionality, data normalization techniques, and biological context.

Core Algorithmic Mechanisms and Workflows

The Gradient-Based Approach: LevMar SE

Gradient-based optimization algorithms operate on the principle of iterative descent. At each iteration, the algorithm computes the gradient of the objective function with respect to the parameters, which points in the direction of the steepest ascent. The parameters are then updated in the opposite direction—the steepest descent—to reduce the objective function value [12] [13]. The Levenberg-Marquardt algorithm is a sophisticated variant that interpolates between the Gauss-Newton method and gradient descent, adapting its behavior based on the local landscape [1] [4].

When enhanced with Sensitivity Equations (LevMar SE), the gradient computation becomes highly efficient. Sensitivity equations are auxiliary differential equations that describe how the model outputs change with respect to parameters. Their use provides gradient information that is exact up to the ODE solver's tolerance, which is more accurate than finite-difference approximations and more computationally efficient when the number of parameters is large [1]. A typical workflow for parameter estimation in systems biology using LevMar SE involves several key stages, as shown in the diagram below.
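The idea of sensitivity equations can be illustrated with a minimal sketch. It assumes a hypothetical one-state model dx/dt = -k·x (not one of the models in the cited studies) and a hand-written fixed-step RK4 integrator; the sensitivity s = ∂x/∂k is integrated alongside the state and compared with the known analytic derivative.

```python
import math

def rhs(state, k):
    """Augmented right-hand side: model state x plus sensitivity s = dx/dk."""
    x, s = state
    dx = -k * x          # model equation f(x, k) = -k * x
    ds = -x - k * s      # sensitivity equation: ds/dt = df/dk + (df/dx) * s
    return (dx, ds)

def rk4(state, k, t_end, n_steps):
    """Classic fixed-step fourth-order Runge-Kutta integration."""
    h = t_end / n_steps
    for _ in range(n_steps):
        k1 = rhs(state, k)
        k2 = rhs(tuple(y + 0.5 * h * d for y, d in zip(state, k1)), k)
        k3 = rhs(tuple(y + 0.5 * h * d for y, d in zip(state, k2)), k)
        k4 = rhs(tuple(y + h * d for y, d in zip(state, k3)), k)
        state = tuple(
            y + h / 6.0 * (a + 2 * b + 2 * c + d)
            for y, a, b, c, d in zip(state, k1, k2, k3, k4)
        )
    return state

# Hypothetical values chosen only for illustration.
x0, k, t_end = 2.0, 0.7, 3.0

# s(0) = 0 because the initial condition does not depend on k.
x_num, s_num = rk4((x0, 0.0), k, t_end, 300)

# Analytic solution and its derivative with respect to k, for comparison.
x_exact = x0 * math.exp(-k * t_end)
s_exact = -t_end * x0 * math.exp(-k * t_end)

print(x_num, x_exact)
print(s_num, s_exact)
```

For a model with n states and p parameters, the same construction adds n·p sensitivity equations to the n model equations, which is why each evaluation is more expensive than a plain simulation.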

The Hybrid Stochastic-Deterministic Approach: GLSDC

Hybrid stochastic-deterministic algorithms are designed to overcome the fundamental limitation of purely local gradient-based methods: their susceptibility to becoming trapped in local minima. This is particularly valuable in systems biology, where objective function landscapes are often non-convex and riddled with local minima due to model non-linearity and noisy experimental data [1] [14].

GLSDC, the specific hybrid algorithm examined here, operates through a two-phase cyclic process. The stochastic global phase uses a genetic algorithm to maintain and evolve a population of parameter sets. This exploration is guided by selection, crossover, and mutation, which allow the algorithm to jump across different regions of the parameter space and avoid premature convergence. This phase is followed by a deterministic local phase, which employs a derivative-free direct search, Powell's conjugate direction method, to intensively exploit promising areas located by the global search [1]. This combination leverages the complementary strengths of both strategies, as visualized in the following workflow.

Performance Comparison: Experimental Data and Analysis

Direct experimental comparisons between LevMar SE and GLSDC reveal that their relative performance is not absolute but is highly dependent on the problem context, particularly the number of unknown parameters and the chosen data normalization method [1] [4]. Key studies have evaluated these algorithms using test-bed problems from systems biology, such as the "STYX-1-10" (10 parameters) and "EGF/HRG-8-74" (74 parameters) models [1].

Convergence Speed and Computational Efficiency

The convergence speed of an optimization algorithm is a critical metric, especially for complex biological models that can be computationally expensive to simulate. Performance varies significantly with problem size.

Table 1: Algorithm Convergence Performance vs. Problem Dimensionality [1] [4]

| Algorithm | Algorithm Type | Performance on Small Problem (10 params) | Performance on Large Problem (74 params) | Key Dependencies |
|---|---|---|---|---|
| LevMar SE | Gradient-based (Local) | Fastest convergence, high accuracy | Slower convergence; performance degrades with increasing parameters | Requires accurate gradients; sensitive to initial guesses |
| LevMar FD | Gradient-based (Local) | Slower than LevMar SE due to approximate gradients | Computationally expensive; less practical for high dimensions | Gradient accuracy affects speed and stability |
| GLSDC | Hybrid Stochastic-Deterministic | Good performance, but can be slower than LevMar SE | Superior convergence speed and reliability for large parameter spaces | Benefits greatly from DNS; robust to initial conditions |

A crucial finding from recent research is that for gradient-based algorithms using Sensitivity Equations, the traditional measure of "number of function evaluations" is an insufficient metric for assessing convergence speed. Because each evaluation that also solves the sensitivity equations is computationally expensive, total computation time is a more realistic and meaningful measure of efficiency. In this light, the hybrid GLSDC can outperform LevMar SE on large problems: even when it needs more function evaluations, each one avoids the cost of solving the sensitivity equations, so the total computation time can be lower [1].

Robustness and Identifiability

A paramount challenge in parameter estimation is non-identifiability, where multiple distinct parameter sets fit the experimental data equally well, making it impossible to determine a unique solution. The choice of optimization strategy and data scaling approach significantly impacts this issue.

Table 2: Robustness and Identifiability Analysis [1] [4] [2]

| Algorithm | Resilience to Local Minima | Impact on Parameter Identifiability | Stability of Convergence |
|---|---|---|---|
| LevMar SE | Low; a local search method that can get trapped in local minima. Requires multiple restarts. | Aggravates practical non-identifiability when used with Scaling Factors (SF). | Stable, predictable convergence path when started near a minimum. |
| GLSDC | High; the stochastic global phase allows it to escape local minima effectively. | Lower degree of practical non-identifiability, especially when combined with DNS. | Less predictable path but higher probability of finding a global optimum. |

The connection between algorithm choice and identifiability is often mediated by the method used to scale model simulations to experimental data. The Scaling Factor (SF) approach introduces new unknown parameters (the scaling factors themselves), which increases the problem's dimensionality and can worsen non-identifiability [1] [2]. In contrast, the Data-driven Normalisation of Simulations (DNS) approach normalizes the model output in the same way the experimental data is normalized, avoiding extra parameters. Research shows that using DNS markedly improves the performance of all algorithms, but the improvement is particularly pronounced for GLSDC, making it the preferred combination for large-scale problems [1] [4] [2].

Experimental Protocols and Methodologies

To ensure the reproducibility of the comparative results between LevMar SE and GLSDC, it is essential to understand the standard experimental protocols used in such benchmarks.

Parameter Estimation Workflow for Systems Biology Models

A rigorous parameter estimation experiment typically follows this protocol [1] [4] [2]:

- Model Formulation: The biological system is formalized as a set of Ordinary Differential Equations (ODEs). The model structure, including states (e.g., protein concentrations) and parameters (e.g., kinetic rates), is defined using standards like SBML.

- Experimental Data Collection: Time-course data for model observables (e.g., phosphorylated protein levels) are collected via techniques like Western blotting or ELISA. These data are typically in relative, arbitrary units and are organized into multi-condition experiments (e.g., different ligand doses).

- Data Normalization: Raw data are normalized to make biological replicates comparable. Common methods include normalizing to a control time point or the average value of a replicate.

- Objective Function Definition: The cost function quantifying the mismatch between data and simulations is defined. Least-squares and log-likelihood functions are most common. At this stage, the scaling method (SF or DNS) is implemented within the objective function.

- Optimization Execution: The optimization algorithm (e.g., LevMar SE or GLSDC) is executed, often with multiple random starts or population initializations to probe the global optimality of the solution.

- Identifiability and Validation Analysis: The resulting parameter estimates are analyzed for practical identifiability (e.g., via profile likelihoods or parameter ensembles). The model's predictive power is then tested on validation data not used during estimation.

Key Reagents and Computational Tools

The following toolkit is essential for conducting research in this field.

Table 3: The Scientist's Toolkit for Parameter Estimation Research

| Tool/Reagent | Type | Primary Function | Relevance to Algorithm Comparison |
|---|---|---|---|
| PEPSSBI | Software Pipeline | Supports Data-driven Normalisation of Simulations (DNS) and parallel parameter estimation. | Key for implementing DNS, which is critical for GLSDC performance [2]. |
| SBML (Systems Biology Markup Language) | Data Standard | Represents mathematical models of biological systems in a standardized XML format. | Ensures models are portable and can be run consistently across different software tools [2]. |
| COPASI, Data2Dynamics, PottersWheel | Software Tools | Provide environments for model simulation, parameter estimation, and analysis. | Often used as benchmarks; they traditionally support SF but not DNS, highlighting a software gap filled by PEPSSBI [1] [2]. |
| Multi-condition Experimental Data | Biological Reagent | Data from perturbation experiments (e.g., ligand doses, inhibitors). | Essential for estimating global parameters and ensuring model reliability. Used to stress-test algorithm performance [1] [4]. |
| High-Performance Computing (HPC) Cluster | Computational Resource | Provides massive parallel processing capabilities. | Necessary for running the many parallel optimizations required for robust benchmarking and for handling large-scale models [2]. |

The comparative analysis between gradient-based and hybrid stochastic-deterministic algorithms demonstrates that there is no universally superior choice. Instead, the optimal selection depends on the specific characteristics of the parameter estimation problem at hand.

For problems with a relatively small number of unknown parameters (e.g., ~10) and a good initial parameter estimate, the gradient-based LevMar SE algorithm is likely the most efficient choice. Its use of sensitivity equations enables rapid and precise convergence to a local minimum [1]. However, for larger-scale problems (e.g., tens to hundreds of parameters) or problems where the objective function landscape is suspected to be complex with multiple local minima, the hybrid GLSDC algorithm is demonstrably superior. Its ability to globally explore the parameter space, combined with the efficiency of DNS, allows it to find optimal solutions in a reasonable time where local methods struggle [1] [4].

A critical, overarching recommendation is to adopt the Data-driven Normalisation of Simulations (DNS) approach whenever possible. Regardless of the chosen algorithm, DNS reduces problem dimensionality, mitigates practical non-identifiability, and significantly accelerates convergence, with performance gains being most dramatic for hybrid methods like GLSDC applied to large models [1] [2]. As systems biology models continue to grow in size and complexity, embracing robust hybrid algorithms coupled with efficient data normalization strategies will be paramount for generating accurate, predictive models in drug development and biological research.

Implementing LevMar SE and GLSDC: A Practical Guide for Biomedical Research

In the fields of computational science and engineering, efficiently solving non-linear inverse problems and parameter estimation tasks is paramount. Within a broader thesis on performance analysis, this guide objectively compares two prominent algorithms for these challenges: the Levenberg-Marquardt algorithm with Sensitivity Equations (LevMar SE) and the Gaussian Least Squares Differential Correction (GLSDC) algorithm. The Levenberg-Marquardt (LM) algorithm has long been a cornerstone for solving non-linear least squares problems, acting as a robust hybrid between the Gauss-Newton method and gradient descent [15]. Its enhancement with Sensitivity Equations (LevMar SE) represents a specialized advancement for handling complex, coupled systems. Conversely, the GLSDC algorithm offers a distinct batch-processing approach for parameter identification in noisy environments [6]. This guide provides a comparative analysis of their performance, supported by experimental data and detailed methodologies, to inform researchers and professionals in their selection process.

Algorithmic Fundamentals: A Theoretical Breakdown

Levenberg-Marquardt with Sensitivity Equations (LevMar SE)

The core Levenberg-Marquardt algorithm solves non-linear least squares problems by iteratively minimizing the sum of squared residuals. For an objective \( F(x) = \frac{1}{2}\sum_i \|f_i(x)\|^2 \) with residual vector \( f(x) \), it iterates by solving a damped linear approximation [15]:

\[ (J^T J + \mu I)\, \Delta x = -J^T f(x) \]

where \( J \) is the Jacobian matrix of the residuals, \( \mu \) is the damping parameter, and \( \Delta x \) is the step update.

The Sensitivity Equations enhancement provides an efficient method to compute the Jacobian ( J ) or the required sensitivity matrices, which describe how the solution changes with respect to parameters. This is achieved through complex variable derivative methods (CVDM) or by solving auxiliary differential equations [16]. The CVDM approach, for instance, allows for highly accurate calculation of sensitivity matrices independent of step size, overcoming a critical limitation of finite-difference methods [16]. This makes LevMar SE particularly effective for dynamic coupled thermoelasticity problems and other systems where governing equations are known but boundary conditions and physical properties must be identified inversely.
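The step-size independence of complex-variable differentiation can be seen in a small sketch. It uses the standard complex-step formula f'(p) ≈ Im f(p + ih) / h on a hypothetical scalar function (the function and step sizes are illustrative assumptions, not taken from [16]) and compares it with a forward finite difference.

```python
import cmath
import math

def model(p):
    """Hypothetical smooth scalar model; accepts real or complex input."""
    return cmath.exp(-p) * cmath.sin(p)

def complex_step(f, p, h=1e-30):
    """Complex-step derivative: no subtraction, so h can be made tiny."""
    return f(complex(p, h)).imag / h

def forward_difference(f, p, h):
    """Forward finite difference: accuracy depends strongly on h."""
    return (f(p + h).real - f(p).real) / h

p = 1.0
exact = math.exp(-p) * (math.cos(p) - math.sin(p))  # analytic derivative

d_cs = complex_step(model, p)
d_fd = forward_difference(model, p, 1e-12)  # deliberately too-small step

print("complex step error:      ", abs(d_cs - exact))
print("finite difference error: ", abs(d_fd - exact))
```

The finite-difference estimate suffers from subtractive cancellation when the step is very small, while the complex-step estimate involves no subtraction and stays accurate.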

Gaussian Least Squares Differential Correction (GLSDC)

The GLSDC algorithm is a batch estimation technique designed for parameter identification where the underlying model is a correct representation of state dynamics, and outputs are measured in a noisy environment [6]. It operates by estimating unknown parameters that constitute the coefficients of non-linear state and input signal terms. The algorithm uses batch input and output signals to iteratively estimate the parameter set and recover filtered state trajectories. A key characteristic is its simultaneous estimation of initial state values alongside unknown coefficients, whose bounds are typically known in industrial applications [6]. This method is particularly valuable for Permanent Magnet Synchronous Motor (PMSM) modeling and similar applications where real-time operation must be guaranteed and system health must be monitored.

Performance Comparison: Quantitative Analysis

The table below summarizes key performance characteristics based on experimental results from the literature.

Table 1: Comparative Performance of LevMar SE and GLSDC Algorithms

| Performance Metric | LevMar SE with CVDM | GLSDC Algorithm |
|---|---|---|
| Primary Application Domain | Inverse dynamic coupled thermoelasticity problems [16] | Permanent Magnet Synchronous Motor (PMSM) parameter estimation [6] |
| Stability | Good stability and robustness, even with measurement errors [16] | Performance depends on initial estimate quality; methods suggested to shorten convergence [6] |
| Accuracy | High accuracy in identifying thermal-mechanical properties and loadings [16] | Accurate parameter estimation in noisy environments [6] |
| Sensitivity to Guess Values | Analyzed; method demonstrates robustness [16] | Requires correct initial state value; also estimates this value [6] |
| Sensitivity to Measurement Errors | Analyzed; method demonstrates robustness [16] | Designed for noisy measurements; uses batch processing to improve accuracy [6] |

Experimental Protocols: Methodologies for Algorithm Evaluation

Protocol for LevMar SE: Inverse Thermoelasticity Problem

This protocol is derived from research on identifying thermal-mechanical loading and material properties [16].

- Problem Setup: A plate with four circular holes is subjected to unknown thermal and mechanical loadings on its left side. The goal is to identify these loadings and material properties simultaneously.

- Sensor Placement: Displacement and temperature sensors are installed at specific points within the structure's interior.

- Direct Problem Solver: The Element Differential Method (EDM) is used to solve the forward dynamic coupled thermoelasticity problem, considering the full coupling between temperature and displacement fields.

- Sensitivity Calculation: The Complex Variable Derivative Method (CVDM) is employed to compute the sensitivity matrix with high accuracy, independent of step size.

- Inversion Process: The Levenberg-Marquardt algorithm iteratively minimizes the difference between sensor measurements and model predictions. The complex variable EDM element provides the required sensitivity information at each step.

- Validation: The identified thermal-mechanical loadings and properties are validated against the known exact values in the numerical experiment. The influence of guess values and measurement errors is analyzed.

Protocol for GLSDC: PMSM Parameter Estimation

This protocol outlines the method for identifying parameters of a Permanent Magnet Synchronous Motor model [6].

- Data Collection: Noisy input and output signals from the PMSM are collected in a batch.

- Model Assumption: A non-linear model is assumed to be the correct representation of the underlying state dynamics.

- Parameter Initialization: Initial guesses for the unknown parameters are defined, with their bounds assumed to be known from industrial specifications.

- State Trajectory Reconstruction: The algorithm uses the batch data to iteratively estimate the parameter set and simultaneously recover the filtered state trajectories.

- Convergence Check: The estimation process continues until the parameter estimates converge to stable values. Techniques may be applied to compute better initial estimates and shorten convergence time.

- Validation: The estimated parameters are used in the model, and the output is compared against the real system behavior to assess estimation accuracy.

Workflow Visualization: Algorithm Structures

The following diagrams illustrate the logical workflows and core structures of the two algorithms, highlighting their distinct approaches to parameter estimation.

The Scientist's Toolkit: Essential Research Reagents

This table details key computational components and their functions in implementing and analyzing the LevMar SE and GLSDC algorithms, serving as essential "research reagents" for experimental work in this field.

Table 2: Key Research Reagent Solutions for Algorithm Implementation

| Research Reagent | Function & Purpose | Algorithm Context |
|---|---|---|
| Complex Variable Derivative Method (CVDM) | Calculates sensitivity matrices with high accuracy, independent of numerical step size, avoiding finite-difference errors [16]. | LevMar SE |
| Element Differential Method (EDM) | Solves the direct problem of dynamic coupled thermoelasticity; provides the foundation for the inverse solution [16]. | LevMar SE |
| Variable Projection (VP) Algorithm | Separates linear and nonlinear parameters in separable least squares problems, reducing the problem's dimensionality [17]. | LevMar SE |
| Truncated SVD (TSVD) / Modified SVD (MSVD) | Regularizes ill-conditioned linear systems that arise in parameter estimation, improving solution stability and reducing mean square error [17]. | LevMar SE |
| Batch Signal Processor | Processes a set of input/output measurements simultaneously to improve parameter estimation accuracy in noisy conditions [6]. | GLSDC |
| State Initialization Estimator | Provides an initial estimate for the system's state vector, which is critical for the convergence of the GLSDC algorithm [6]. | GLSDC |

Discussion and Concluding Remarks

The experimental data and theoretical analysis demonstrate that both LevMar SE and GLSDC are powerful algorithms for parameter estimation, yet they are suited to different problem domains. The LevMar SE algorithm, particularly when enhanced with Complex Variable Derivative Methods, shows exceptional performance in coupled multi-physics problems like inverse thermoelasticity. Its primary strengths lie in its high accuracy, good stability, and robustness against measurement noise and suboptimal guess values [16]. Furthermore, recent research has developed accelerated versions of the LM method that provide theoretical guarantees like oracle complexity bounds and local quadratic convergence under certain conditions [18].

The GLSDC algorithm excels in dynamic system identification where a reliable model structure exists and operations must be maintained under continuous, noisy monitoring conditions, such as in electric motor control and fault detection [6]. Its batch-processing nature allows it to effectively filter noise and produce accurate parameter estimates.

The selection between LevMar SE and GLSDC should be guided by the specific problem context. For inverse problems involving coupled physical fields with known governing equations, LevMar SE with sensitivity equations is the more specialized and effective tool. For traditional dynamic system identification and parameter estimation in control systems, GLSDC presents a robust and systematic approach. Future work in this performance analysis thesis will involve direct numerical comparisons on a common benchmark problem to provide more definitive guidance for researchers and industrial practitioners.

Parameter estimation is a critical step in building quantitative, predictive models of complex biological systems, such as intracellular signalling pathways. This process involves calibrating mathematical models, often based on ordinary differential equations (ODEs), to experimental data by finding the set of unknown parameters that minimize the discrepancy between model simulations and observations [2]. The non-linear nature of these models makes parameter estimation a challenging optimization problem, prone to local minima and parameter non-identifiability [1] [4].

Within this field, the choice of optimization algorithm significantly impacts the success of model development. This guide provides a performance analysis of two prominent algorithms: LevMar SE (Levenberg-Marquardt with Sensitivity Equations) and GLSDC (Genetic Local Search algorithm with Distance-independent Diversity Control). LevMar SE represents a class of fast, gradient-based local optimization methods, while GLSDC is a hybrid stochastic-deterministic algorithm that combines a global genetic algorithm search with a local refinement using Powell's derivative-free method [1] [4] [19]. The core thesis of this research is that while LevMar SE is highly efficient for many problems, the hybrid architecture of GLSDC provides superior performance and reliability for large-scale, complex parameter estimation problems, especially when combined with a data-driven normalization strategy.

Algorithm Fundamentals and Architecture

Levenberg-Marquardt with Sensitivity Equations (LevMar SE)

The Levenberg-Marquardt (LM) algorithm is an iterative non-linear least-squares solver that interpolates between the Gauss-Newton algorithm and gradient descent [5] [20]. It is used to solve problems of the form \( \min_{\beta} \sum_{i=1}^{m} [y_i - f(x_i, \beta)]^2 \), where \( \beta \) is the parameter vector and \( f \) is the non-linear model.

- Core Mechanism: At each iteration, the parameter update step \( \delta \) is computed by solving the damped normal equations: \( (\mathbf{J}^T \mathbf{J} + \lambda \mathbf{I})\, \delta = \mathbf{J}^T [\mathbf{y} - \mathbf{f}(\beta)] \), where \( \mathbf{J} \) is the Jacobian matrix of \( \mathbf{f} \) with respect to \( \beta \) and \( \lambda \) is the damping parameter [5] [20].

- Sensitivity Equations (SE): The LevMar SE variant computes the required Jacobian efficiently using sensitivity equations, which are differential equations that describe how the model solution changes with respect to its parameters [1] [4]. This is more accurate and computationally efficient than estimating the Jacobian via finite differences (FD), especially for ODE models.

- Strengths: Known for fast (quadratic) convergence near a minimum and high efficiency for well-behaved functions with reasonable initial guesses [1] [20].
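The damped normal equations above can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions, not the implementation used in the cited studies: the two-parameter model a·exp(-b·t), the noise-free synthetic data, the analytic Jacobian, and the simple "divide or multiply λ by 10" damping schedule are all hypothetical choices.

```python
import math

def residuals(beta, ts, ys):
    """r = y - f(beta) for the hypothetical model f = a * exp(-b * t)."""
    a, b = beta
    return [y - a * math.exp(-b * t) for t, y in zip(ts, ys)]

def jacobian(beta, ts):
    """Rows of J: (df/da, df/db) at each time point."""
    a, b = beta
    return [(math.exp(-b * t), -a * t * math.exp(-b * t)) for t in ts]

def lm_fit(beta, ts, ys, lam=1e-2, n_iter=100):
    cost = sum(r * r for r in residuals(beta, ts, ys))
    for _ in range(n_iter):
        r = residuals(beta, ts, ys)
        J = jacobian(beta, ts)
        # Build (J^T J + lam * I) and J^T r for the 2x2 case.
        a11 = sum(j[0] * j[0] for j in J) + lam
        a12 = sum(j[0] * j[1] for j in J)
        a22 = sum(j[1] * j[1] for j in J) + lam
        g1 = sum(j[0] * ri for j, ri in zip(J, r))
        g2 = sum(j[1] * ri for j, ri in zip(J, r))
        # Solve the 2x2 linear system with Cramer's rule.
        det = a11 * a22 - a12 * a12
        d1 = (g1 * a22 - a12 * g2) / det
        d2 = (a11 * g2 - a12 * g1) / det
        trial = (beta[0] + d1, beta[1] + d2)
        trial_cost = sum(x * x for x in residuals(trial, ts, ys))
        if trial_cost < cost:
            # Accept the step and move towards Gauss-Newton behaviour.
            beta, cost, lam = trial, trial_cost, lam / 10.0
        else:
            # Reject the step and move towards gradient-descent behaviour.
            lam *= 10.0
    return beta, cost

ts = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [2.0 * math.exp(-0.5 * t) for t in ts]   # synthetic data, a=2, b=0.5
beta_hat, final_cost = lm_fit((1.0, 1.0), ts, ys)
print(beta_hat, final_cost)
```

A small λ makes the step close to Gauss-Newton, while a large λ shrinks it towards a short gradient-descent step, which is the interpolation described above.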

Genetic Local Search with Distance-independent Diversity Control (GLSDC)

GLSDC is a hybrid algorithm designed to tackle complex, multi-modal optimization problems where the risk of converging to local minima is high.

- Global Phase - Genetic Algorithm (GA): This phase operates on a population of candidate parameter sets. It uses selection, crossover, and mutation operators to explore the parameter space broadly. Its stochastic nature helps escape local minima [19].

- Local Phase - Powell's Method: The genetic algorithm is hybridized with Powell's conjugate direction method, a local, derivative-free search algorithm [1] [21]. Powell's method minimizes the function by performing a series of bi-directional line searches along a set of conjugate directions, which is efficient and does not require gradient calculations [21].

- Hybrid Workflow: The algorithm alternates between a global search phase (GA) to explore the parameter space and a local search phase (Powell's method) to refine promising solutions [1]. This combines the global robustness of a stochastic algorithm with the fast local convergence of a deterministic one.
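The alternation between a global and a local phase can be sketched as follows. This is a simplified illustration, not the GLSDC implementation: the test function, population settings, and operators are hypothetical, the diversity-control mechanism is omitted, and a basic coordinate pattern search stands in for Powell's conjugate direction method.

```python
import math
import random

def rastrigin(x):
    """Hypothetical multi-modal test objective with many local minima."""
    return 10 * len(x) + sum(v * v - 10 * math.cos(2 * math.pi * v) for v in x)

def local_search(f, x, step=0.5, tol=1e-6):
    """Derivative-free coordinate pattern search (stand-in for Powell's method)."""
    x, fx = list(x), f(x)
    while step > tol:
        improved = False
        for i in range(len(x)):
            for d in (step, -step):
                y = x[:]
                y[i] += d
                fy = f(y)
                if fy < fx:
                    x, fx, improved = y, fy, True
        if not improved:
            step *= 0.5   # refine the search once no neighbour improves
    return x, fx

def hybrid_search(f, bounds, pop_size=20, generations=15, seed=0):
    rng = random.Random(seed)
    pop = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(pop_size)]
    history = []
    for _ in range(generations):
        # Global phase: truncation selection, blend crossover, Gaussian mutation.
        pop.sort(key=f)
        history.append(f(pop[0]))
        parents = pop[: pop_size // 2]
        children = []
        while len(children) < pop_size - 1:
            p1, p2 = rng.sample(parents, 2)
            w = rng.random()
            child = [
                min(max(w * a + (1 - w) * b + rng.gauss(0, 0.1 * (hi - lo)), lo), hi)
                for a, b, (lo, hi) in zip(p1, p2, bounds)
            ]
            children.append(child)
        # Local phase: refine the current best and keep it (elitism).
        refined, _ = local_search(f, pop[0])
        pop = [refined] + children
    best = min(pop, key=f)
    return best, f(best), history

bounds = [(-5.12, 5.12), (-5.12, 5.12)]
best, best_cost, history = hybrid_search(rastrigin, bounds)
print(best, best_cost)
```

Because the refined best candidate is always carried into the next generation, the best objective value never gets worse from one cycle to the next, while the stochastic children keep exploring other regions.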

Diagram 1: High-level workflow of the GLSDC algorithm, showing the alternation between its global and local search phases.

Experimental Performance Comparison

A systematic study compared LevMar SE, LevMar FD (Finite Differences), and GLSDC on three test-bed problems from systems biology with different complexities: STYX-1-10, EGF/HRG-8-10, and EGF/HRG-8-74 (the number suffix indicates the number of unknown parameters) [1] [4]. A critical factor in this comparison was the method used to align model simulations (e.g., in nM concentrations) with experimental data (e.g., in arbitrary units).

- Scaling Factor (SF) Approach: Introduces an additional unknown parameter (a scaling factor) for each observable to multiply the simulations and match the data scale.

- Data-Driven Normalization of Simulations (DNS) Approach: Normalizes the model simulations in the same way the experimental data were normalized (e.g., by a control or maximum value), avoiding extra parameters [1] [2].
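The difference between the two approaches can be made concrete with a small sketch. The numbers and helper names below are hypothetical; the sketch assumes the data were normalized by their maximum value, so DNS applies the same normalization to the simulation, while SF needs an extra scale parameter to bridge the units.

```python
def normalise_by_max(values):
    """Normalise a trajectory by its maximum (one common choice)."""
    m = max(values)
    return [v / m for v in values]

def sf_residuals(data, sim, scale):
    """SF: simulation is multiplied by an extra unknown parameter `scale`."""
    return [d - scale * s for d, s in zip(data, sim)]

def dns_residuals(data_normalised, sim):
    """DNS: simulation is normalised exactly as the data were; no extra parameter."""
    return [d - s for d, s in zip(data_normalised, normalise_by_max(sim))]

# Hypothetical simulation in nM and raw measurements in arbitrary units.
sim_nM = [0.0, 10.0, 40.0, 20.0]
raw_au = [0.0, 2.5, 10.0, 5.0]
data = normalise_by_max(raw_au)          # data as typically reported

# SF: the fit is only perfect for one specific value of the extra parameter.
res_sf_wrong = sf_residuals(data, sim_nM, scale=1.0)
res_sf_right = sf_residuals(data, sim_nM, scale=1.0 / 40.0)

# DNS: no scale to estimate, and the result is invariant to simulation units.
res_dns = dns_residuals(data, sim_nM)
res_dns_rescaled = dns_residuals(data, [2.0 * s for s in sim_nM])

print(res_sf_wrong, res_sf_right, res_dns, res_dns_rescaled)
```

With several observables, SF adds one such scale parameter per observable to the search space, whereas DNS leaves the number of unknowns unchanged.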

The following tables summarize the key experimental findings.

Table 1: Performance Comparison on Test Problems (10 Parameters)

| Algorithm | Normalization Method | Convergence Speed | Practical Non-Identifiability |
|---|---|---|---|
| LevMar SE | Scaling Factor (SF) | Fastest for this problem size [1] | Higher [1] |
| LevMar SE | Data-Driven (DNS) | Fast | Lower [1] |
| GLSDC | Scaling Factor (SF) | Slow | Higher [1] |
| GLSDC | Data-Driven (DNS) | Markedly Improved [1] | Lower [1] |

Note: For the smaller 10-parameter problem, LevMar SE generally showed the fastest convergence. However, using DNS already provided a significant performance boost for GLSDC, even at this scale [1].

Table 2: Performance Comparison on Large Test Problem (74 Parameters)

| Algorithm | Normalization Method | Convergence Speed | Practical Non-Identifiability |
|---|---|---|---|
| LevMar SE | Scaling Factor (SF) | Slower | High [1] |
| LevMar SE | Data-Driven (DNS) | Improved | Medium [1] |
| GLSDC | Scaling Factor (SF) | Slow | High [1] |
| GLSDC | Data-Driven (DNS) | Best Performance [1] | Lower [1] |

Note: For the large 74-parameter problem, GLSDC combined with DNS outperformed LevMar SE in terms of convergence speed. The DNS approach also consistently reduced practical non-identifiability for all algorithms [1] [4].

Detailed Experimental Protocols

To ensure reproducibility and provide a clear framework for benchmarking, this section outlines the key methodological components of the cited studies.

Optimization Algorithms and Objective Functions

The compared algorithms were implemented as follows [1] [4]:

- LevMar SE: A local gradient-based optimizer using the Levenberg-Marquardt algorithm. Gradients were computed via sensitivity equations, and the algorithm was restarted multiple times from randomly sampled initial parameters (Latin Hypercube sampling) to mitigate local minima.

- LevMar FD: Identical to LevMar SE, except gradients were approximated using finite differences, providing a baseline to judge the efficiency gain from sensitivity equations.

- GLSDC: A hybrid algorithm alternating a global search (Genetic Algorithm) with a local search (Powell's conjugate direction method). This combination aims to balance broad exploration and efficient local refinement.
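The multi-start strategy relies on spreading initial guesses evenly over the parameter space. A minimal Latin Hypercube sampler is sketched below; the function name, bounds, and sample count are hypothetical, and the bounds are assumed to be given in log10 units, a common but here assumed convention for kinetic parameters.

```python
import random

def latin_hypercube(n_samples, bounds, seed=0):
    """Draw n_samples points so that each dimension has one point per stratum."""
    rng = random.Random(seed)
    columns = []
    for lo, hi in bounds:
        # One random point inside each of n_samples equal-width strata.
        points = [
            lo + (hi - lo) * (i + rng.random()) / n_samples
            for i in range(n_samples)
        ]
        # Shuffle so strata are paired randomly across dimensions.
        rng.shuffle(points)
        columns.append(points)
    return [list(row) for row in zip(*columns)]

# Hypothetical log10 bounds for two kinetic parameters.
bounds = [(-3.0, 3.0), (0.0, 1.0)]
starts = latin_hypercube(8, bounds, seed=1)

for start in starts:
    print([10 ** v for v in start])   # back-transform to linear scale
```

Each row of `starts` would then serve as the initial parameter vector for one independent local optimization run.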

The optimization minimized one of two objective functions:

- Least Squares (LS): \( S(\theta) = \sum_i [\tilde{y}_i - y_i(\theta)]^2 \), focusing on the raw sum of squared errors.

- Log-Likelihood (LL): A probabilistic objective function that incorporates assumptions about measurement noise.
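The two objective types can be sketched side by side. The exact log-likelihood used in the cited study is not reproduced here; the sketch assumes independent Gaussian measurement noise with a known standard deviation `sigma`, and the data values are hypothetical.

```python
import math

def least_squares(data, sim):
    """S(theta): raw sum of squared differences between data and simulation."""
    return sum((d - s) ** 2 for d, s in zip(data, sim))

def neg_log_likelihood(data, sim, sigma):
    """Negative log-likelihood assuming i.i.d. Gaussian noise (an assumption)."""
    n = len(data)
    return 0.5 * n * math.log(2 * math.pi * sigma ** 2) + least_squares(
        data, sim
    ) / (2 * sigma ** 2)

# Hypothetical measurements and two candidate simulations.
data = [1.0, 2.0, 3.0]
sim_a = [1.1, 1.9, 3.2]
sim_b = [1.0, 2.0, 3.5]
sigma = 0.2

ls_a, ls_b = least_squares(data, sim_a), least_squares(data, sim_b)
nll_a = neg_log_likelihood(data, sim_a, sigma)
nll_b = neg_log_likelihood(data, sim_b, sigma)
print(ls_a, ls_b, nll_a, nll_b)
```

Under this fixed-noise assumption the two objectives rank candidate simulations identically; they differ once the noise level is itself estimated or varies between data points.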

Data Normalization and Scaling Procedures

A central aspect of the protocol was the treatment of relative data [1] [2].

Diagram 2: A comparison of the Scaling Factor (SF) and Data-Driven Normalization (DNS) approaches for aligning model simulations with experimental data.

Test Problems and Identifiability Analysis

The performance of the algorithms was evaluated on three established mathematical models of signalling pathways [1] [4]:

- STYX-1-10: A simpler model with 1 observable and 10 unknown parameters.

- EGF/HRG-8-10: A more complex model with 8 observables and 10 unknown parameters.

- EGF/HRG-8-74: A large-scale model with 8 observables and 74 unknown parameters.

Performance was measured by convergence speed (computation time and number of function evaluations) and the degree of practical non-identifiability. Non-identifiability was assessed by running the estimation multiple times to generate an ensemble of parameter sets and analyzing the directions in parameter space along which parameters could vary without significantly worsening the fit [1] [2].
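The ensemble-based view of practical non-identifiability can be illustrated with a toy calculation. The ensemble below is hypothetical: it mimics repeated fits in which a kinetic parameter k and a scaling factor s are only constrained through their product, so each varies widely while the combination stays fixed.

```python
import math
import statistics

# Hypothetical ensemble of fitted (k, s) pairs that all satisfy k * s = 6.
ensemble = [(k, 6.0 / k) for k in (0.5, 1.0, 2.0, 4.0, 8.0)]

log_k = [math.log10(k) for k, _ in ensemble]
log_s = [math.log10(s) for _, s in ensemble]
log_product = [a + b for a, b in zip(log_k, log_s)]

# Spread of each parameter (in log10 units) across the ensemble.
spread_k = statistics.pstdev(log_k)
spread_s = statistics.pstdev(log_s)
# Spread along the direction log k + log s, i.e. of the product k * s.
spread_product = statistics.pstdev(log_product)

print(spread_k, spread_s, spread_product)
```

A large spread for the individual parameters combined with a near-zero spread along one direction signals that only the combination is determined by the data, which is the pattern a scaling factor can introduce.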

This section catalogs key software, data resources, and methodological concepts essential for research in this field.

Table 3: Key Resources for Parameter Estimation and Drug Discovery Research

| Resource Name | Type | Function & Application |

|---|---|---|

| PEPSSBI [2] | Software Pipeline | First major parameter estimation software to fully support Data-Driven Normalization (DNS), streamlining its implementation and reducing errors. |

| Data2Dynamics [1] [2] | Software Toolbox | A modeling environment for MATLAB that supports parameter estimation in dynamic systems, including multi-condition experiments. |

| COPASI [1] [2] | Software Application | A widely used open-source software for simulating and analyzing biochemical networks and performing parameter estimation. |

| SBML [2] | Model Format | Systems Biology Markup Language; a standard, interoperable format for sharing and exchanging computational models of biological processes. |

| Relative Data [1] [2] | Data Type | Experimental data (e.g., from Western Blots) expressed in arbitrary units, necessitating normalization strategies like DNS or SF for modeling. |

| GLASS Database [22] | Bioactivity Database | A comprehensive, manually curated resource for experimentally validated GPCR-ligand associations, useful for drug discovery and screening. |

| GDSC / CTRP [23] | Pharmacogenomic DB | Databases linking genetic features of cancer cell lines to drug sensitivity, aiding in target discovery and drug prioritization. |

The experimental data lead to several conclusive insights for researchers and drug development professionals. For parameter estimation problems with a relatively small number of unknowns (e.g., 10 parameters), LevMar SE remains a strong and fast candidate, particularly when computational speed is critical. However, as model complexity and the number of unknown parameters increase, the hybrid GLSDC algorithm, especially when paired with Data-Driven Normalization (DNS), demonstrates superior performance in terms of convergence speed and reduced parameter non-identifiability [1] [4].

The choice of data scaling method is as crucial as the choice of algorithm. The DNS approach is highly recommended for complex problems, as it reduces the optimization problem's dimensionality and mitigates practical non-identifiability without the need to estimate additional scaling parameters [1] [2]. Future developments in parameter estimation software, such as the wider adoption of DNS in user-friendly tools like PEPSSBI, are poised to make these powerful techniques more accessible, ultimately accelerating the development of predictive models in systems biology and drug discovery.

In the field of systems biology, mathematical modelling serves as a powerful tool to formalize hypotheses and predict the behaviour of complex biological systems. Ordinary differential equation (ODE) models are widely used to represent intracellular signalling pathways, capturing the quantitative and dynamic nature of cellular processes [1] [2]. The development of quantitative and predictive mathematical models requires estimating unknown model parameters using experimental data, a task known as parameter estimation. This process is formulated as an optimization problem where an objective function quantifies the discrepancy between experimental data and model simulations [2]. The choice of objective function and optimization algorithm significantly impacts the efficiency, accuracy, and practical identifiability of the estimated parameters.

The parameter estimation problem is mathematically challenging due to the non-linearity of biological systems, the existence of local minima, and prevalent non-identifiability issues [1]. Non-identifiability occurs when multiple parameter sets fit the experimental data equally well, preventing the determination of a unique solution [1] [2]. This comparison guide focuses on two fundamental objective functions—Least Squares (LS) and Log-Likelihood (LL)—within the context of evaluating LevMar SE and GLSDC optimization algorithms, providing experimental data and methodological insights for researchers, scientists, and drug development professionals.

Theoretical Foundations of LS and LL

Least Squares (LS) Estimation

The Least Squares method estimates parameters by minimizing the sum of squared differences between observed and predicted values. For a model with predictions ( y_i(\theta) ) and measurements ( \tilde{y}_i ), the LS objective function is:

[ \min_{\theta} \sum_{i=1}^{N} \left( \tilde{y}_i - y_i(\theta) \right)^2 ]

LS estimation is one of the most common approaches for parameter estimation in dynamic systems [1]. Its widespread adoption stems from computational simplicity and intuitive interpretation—it seeks the parameter values that bring model simulations as close as possible to the experimental measurements in a geometric sense.

Log-Likelihood (LL) Estimation

Maximum Likelihood Estimation (MLE) determines parameter values that make the observed data most probable under an assumed statistical model [24]. The likelihood function ( L(\theta) ) for a parameter vector ( \theta ) given observations ( \mathbf{y} = (y_1, y_2, \ldots, y_n) ) is proportional to the joint probability density:

[ L_n(\theta) = L_n(\theta; \mathbf{y}) = f_n(\mathbf{y}; \theta) ]

For computational convenience, we typically work with the log-likelihood function:

[ \ell(\theta; \mathbf{y}) = \ln L_n(\theta; \mathbf{y}) ]

The maximum likelihood estimate ( \hat{\theta} ) is obtained by maximizing the log-likelihood function:

[ \hat{\theta} = \underset{\theta \in \Theta}{\operatorname{arg\,max}} \, \ell(\theta; \mathbf{y}) ]

For normally distributed errors with constant variance, MLE is equivalent to LS estimation [25] [26]. This equivalence arises because the normal distribution likelihood function contains the sum of squares term in its exponent. However, under non-normal error distributions, LS and LL estimators diverge, making the choice between them consequential for parameter estimation accuracy [26].
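The equivalence can be made explicit with a short derivation. Using the notation of the LS section, assume each measurement satisfies ( \tilde{y}_i = y_i(\theta) + \varepsilon_i ) with independent errors ( \varepsilon_i \sim \mathcal{N}(0, \sigma^2) ) and a constant, known ( \sigma ); the symbols ( \varepsilon_i ) and ( \sigma ) are introduced here for illustration. The log-likelihood is then:

[ \ell(\theta) = -\frac{N}{2} \ln\left( 2\pi\sigma^2 \right) - \frac{1}{2\sigma^2} \sum_{i=1}^{N} \left( \tilde{y}_i - y_i(\theta) \right)^2 ]

The first term does not depend on ( \theta ), and the second is the LS objective multiplied by a negative constant, so:

[ \underset{\theta}{\operatorname{arg\,max}} \, \ell(\theta) = \underset{\theta}{\operatorname{arg\,min}} \sum_{i=1}^{N} \left( \tilde{y}_i - y_i(\theta) \right)^2 ]

If the variance differs between data points, each squared residual is weighted by ( 1 / \sigma_i^2 ) and the two estimators no longer coincide in general.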

Relationship Between LS and LL

The relationship between LS and LL estimation depends critically on the distributional assumptions about the errors:

- Under Gaussian errors: LS and LL estimators are identical [25] [26]. The LS solution maximizes the likelihood when errors are independent and identically distributed following a normal distribution.

- Under non-Gaussian errors: LS and LL estimators differ substantially. For non-normal distributions (e.g., Gamma, Poisson, Binomial), the LL approach incorporates the correct probability structure, while LS does not [26].

This theoretical distinction has practical implications for computational systems biology, where experimental data often violate normality assumptions due to their discrete nature (e.g., count data) or inherent asymmetries.

Optimization Algorithms: LevMar SE vs. GLSDC

LevMar SE Algorithm

LevMar SE implements the Levenberg-Marquardt nonlinear least squares optimization algorithm with sensitivity equations (SEs) for gradient computation [1] [4]. This approach combines gradient-based local optimization with Latin hypercube restarts to enhance convergence probability. The sensitivity equations provide exact gradients by solving auxiliary differential equations that describe how state variables change with respect to parameters [1]. This exact gradient computation can be more efficient and accurate than finite-difference approximations, particularly for stiff systems where numerical differentiation proves challenging.
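To illustrate what solving auxiliary differential equations means in practice, the sketch below augments a one-parameter decay model with its sensitivity equation. The model ( dx/dt = -k x ) is a hypothetical stand-in chosen because its sensitivity has a closed form; the function names are likewise illustrative. Differentiating the ODE with respect to ( k ) gives ( ds/dt = -x - k s ) for ( s = \partial x / \partial k ), with ( s(0) = 0 ).

```python
import numpy as np
from scipy.integrate import solve_ivp


def augmented_rhs(t, z, k):
    # z stacks the state x and its sensitivity s = dx/dk
    x, s = z
    dx_dt = -k * x
    ds_dt = -x - k * s  # d/dk of the right-hand side (-k * x), via the chain rule
    return [dx_dt, ds_dt]


def simulate_with_sensitivity(k, x0, t_eval):
    # The initial state does not depend on k, so the sensitivity starts at zero
    sol = solve_ivp(
        augmented_rhs, (t_eval[0], t_eval[-1]), [x0, 0.0],
        t_eval=t_eval, args=(k,), rtol=1e-9, atol=1e-12,
    )
    return sol.y[0], sol.y[1]


k, x0 = 0.5, 2.0
t = np.linspace(0.0, 4.0, 9)
x, s = simulate_with_sensitivity(k, x0, t)
# Closed-form check for this toy model: s(t) = -t * x0 * exp(-k * t)
print(np.max(np.abs(s - (-t * x0 * np.exp(-k * t)))))
```

The gradient of a least-squares objective then follows from the residuals and `s` by the chain rule, with no finite-difference step size to tune.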

GLSDC Algorithm

GLSDC (Genetic Local Search algorithm with Distance independent Diversity Control) represents a hybrid stochastic-deterministic optimization approach [1] [4]. This algorithm alternates between a global search phase based on a genetic algorithm and a local search phase utilizing Powell's method [1]. Unlike LevMar SE, GLSDC does not require gradient computation, making it suitable for problems with discontinuous or noisy objective functions. The stochastic global search component helps escape local minima, while the local refinement efficiently converges to nearby optima [1].
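The alternation between a stochastic global phase and a derivative-free local phase can be sketched as follows. This is a deliberately simplified toy and not the GLSDC algorithm itself: it omits the distance independent diversity control, and the crossover, mutation, and elitism rules are assumptions made for illustration. The Himmelblau test function stands in for a multi-modal objective.

```python
import numpy as np
from scipy.optimize import minimize


def himmelblau(p):
    # Standard multi-modal test function with four minima, all of value 0
    x, y = p
    return (x**2 + y - 11.0) ** 2 + (x + y**2 - 7.0) ** 2


def hybrid_search(objective, lower, upper, pop_size=20, generations=10, seed=0):
    rng = np.random.default_rng(seed)
    lower, upper = np.asarray(lower, dtype=float), np.asarray(upper, dtype=float)
    population = rng.uniform(lower, upper, size=(pop_size, lower.size))
    for _ in range(generations):
        fitness = np.array([objective(p) for p in population])
        order = np.argsort(fitness)
        parents = population[order[: pop_size // 2]]  # keep the better half
        # Local phase: polish the current best individual with Powell's method
        local = minimize(objective, parents[0], method="Powell")
        elite = local.x if local.fun < fitness[order[0]] else parents[0]
        # Global phase: blend crossover of random parent pairs plus Gaussian mutation
        idx_a = rng.integers(0, len(parents), size=pop_size - 1)
        idx_b = rng.integers(0, len(parents), size=pop_size - 1)
        w = rng.uniform(size=(pop_size - 1, 1))
        children = w * parents[idx_a] + (1.0 - w) * parents[idx_b]
        children += rng.normal(scale=0.1 * (upper - lower), size=children.shape)
        population = np.vstack([elite, np.clip(children, lower, upper)])
    fitness = np.array([objective(p) for p in population])
    best = int(np.argmin(fitness))
    return population[best], float(fitness[best])


best_x, best_f = hybrid_search(himmelblau, lower=[-5.0, -5.0], upper=[5.0, 5.0])
print(best_x, best_f)
```

No gradient is evaluated anywhere in the loop, which is why a scheme of this kind tolerates noisy or non-smooth objectives.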

Algorithmic Strengths and Limitations

Table 1: Comparative Analysis of Optimization Algorithms

| Feature | LevMar SE | GLSDC |

|---|---|---|

| Search Strategy | Local gradient-based with restarts | Hybrid stochastic-deterministic |

| Gradient Computation | Sensitivity equations (exact) | Not required |

| Global Convergence | Limited (depends on restarts) | Strong (genetic algorithm component) |

| Local Refinement | Excellent (Levenberg-Marquardt) | Good (Powell's method) |

| Computational Overhead | Higher per iteration (SE solutions) | Lower per function evaluation |

| Suitable Problem Types | Well-behaved differentiable systems | Complex, multi-modal problems |

Scaling Approaches: DNS vs. SF

The Scaling Challenge in Biological Data

A fundamental challenge in parameter estimation for systems biology arises from the relative nature of most experimental data. Techniques such as western blotting, multiplexed ELISA, proteomics, and RT-qPCR typically produce data in arbitrary units (au), while mathematical models simulate well-defined units such as molar concentrations [1] [2] [4]. This discrepancy necessitates scaling approaches to align simulations with measurements.

Scaling Factors (SF) Approach

The SF approach introduces additional parameters—scaling factors—that multiply simulations to convert them to the scale of the data [1] [2]. Mathematically, this is represented as:

[ \tilde{y}_i \approx \alpha_j y_i(\theta) ]

where ( \alpha_j > 0 ) is the scaling factor for observable ( j ), ( \tilde{y}_i ) denotes measured data-points, and ( y_i(\theta) ) represents simulated data-points [1]. These scaling factors are unknown and must be estimated alongside model parameters, thereby increasing the dimensionality of the optimization problem.
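A minimal sketch of the SF residual, assuming a hypothetical exponential-decay model and a single observable, shows how the scaling factor is appended to the vector of estimated parameters. The model, names, and numbers are illustrative assumptions.

```python
import numpy as np


def simulate(theta, t):
    # Hypothetical stand-in model (an assumption): decay from x0 at rate k
    x0, k = theta
    return x0 * np.exp(-k * t)


def sf_residuals(params, t, y_data):
    # The last entry is the scaling factor alpha; the rest are model parameters
    *theta, alpha = params
    return y_data - alpha * simulate(theta, t)


t = np.linspace(0.0, 4.0, 9)
y_data = 6.0 * np.exp(-0.5 * t)  # synthetic data in arbitrary units

# Two different parameter sets with the same product alpha * x0
res_a = sf_residuals([2.0, 0.5, 3.0], t, y_data)
res_b = sf_residuals([3.0, 0.5, 2.0], t, y_data)
print(np.allclose(res_a, res_b))
```

In this toy case only the product of `alpha` and `x0` affects the fit, so the extra parameter introduces a direction the data cannot constrain. Real models are rarely this degenerate, but the example shows how scaling factors can add to non-identifiability.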

Data-driven Normalisation of Simulations (DNS) Approach

The DNS approach normalises simulations in the same way as the experimental data, making them directly comparable without additional parameters [1] [2] [4]. If experimental data are normalised as ( \tilde{y}_i = \hat{y}_i / \hat{y}_{\text{ref}} ) (where ( \hat{y}_i ) represents un-normalised data), then simulations are normalised as ( \tilde{y}_i \approx y_i / y_{\text{ref}} ) [1]. The reference point ( y_{\text{ref}} ) could be the maximum value, a control condition, or the average of measured values.
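A small sketch of DNS, using the maximum value as the reference point, is given here. The saturating model, the unit-conversion factor of 37, and the function names are assumptions chosen for illustration only.

```python
import numpy as np


def simulate(k, t):
    # Hypothetical stand-in model (an assumption): saturating response
    return 1.0 - np.exp(-k * t)


def normalise_by_max(values):
    # Reference point: the maximum of the series
    values = np.asarray(values, dtype=float)
    return values / values.max()


def dns_residuals(k, t, raw_data):
    # Data and simulation go through the same normalisation; no scaling parameter
    return normalise_by_max(raw_data) - normalise_by_max(simulate(k, t))


t = np.linspace(0.5, 5.0, 10)
raw_data = 37.0 * simulate(0.8, t)  # arbitrary-unit data with an unknown factor

res_true = dns_residuals(0.8, t, raw_data)
res_wrong = dns_residuals(0.3, t, raw_data)
print(np.max(np.abs(res_true)), np.max(np.abs(res_wrong)))
```

The unknown factor cancels in the normalisation, so the residuals vanish at the true rate constant without estimating any scaling factor. The reference is recomputed from each simulation, which means the normalised output depends on the parameters through both numerator and denominator.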

Figure 1: Comparison of SF and DNS scaling approaches. SF introduces additional parameters, while DNS applies identical normalization to simulations and data.

Comparative Performance of Scaling Approaches

Research demonstrates that the DNS approach offers significant advantages over SF, particularly for problems with large parameter sets [1] [2] [4]. DNS reduces optimization dimensionality by eliminating scaling parameters, accelerates convergence, and decreases practical non-identifiability—defined as the number of directions in parameter space along which parameters cannot be uniquely identified [1] [4].

Experimental Comparison Framework

Test Problems and Methodology

To systematically evaluate the performance of LS vs. LL objective functions and LevMar SE vs. GLSDC optimization algorithms, researchers have developed three test-bed parameter estimation problems with varying complexity [1] [4]:

- STYX-1-10: 1 observable, 10 unknown parameters

- EGF/HRG-8-10: 8 observables, 10 unknown parameters

- EGF/HRG-8-74: 8 observables, 74 unknown parameters

These test problems represent increasingly challenging parameter estimation scenarios, allowing comprehensive assessment of algorithm performance across different problem sizes and structures [1].

Quantitative Performance Metrics

Table 2: Performance Metrics for Algorithm Evaluation

| Metric | Description | Measurement Method |

|---|---|---|

| Convergence Speed | Time or function evaluations required to reach optimum | Computation time and function-evaluation counts |

| Success Rate | Percentage of runs converging to acceptable solution | Multiple random restarts |

| Parameter Identifiability | Number of non-identifiable parameter directions | Analysis of parameter ensembles |

| Objective Function Value | Final achieved value of LS or LL objective function | Direct comparison at convergence |

Experimental Results: LS vs. LL Performance

Experimental comparisons reveal that the choice between LS and LL objective functions significantly impacts optimization performance, particularly when combined with different scaling approaches [1]. Under the SF approach, LL objective functions demonstrate slightly better performance for smaller parameter problems (10 parameters), while both LS and LL perform similarly for larger parameter sets (74 parameters) [1].

For the DNS approach, LS and LL show comparable performance across problem sizes, with minor advantages for LL in certain scenarios [1]. This suggests that DNS mitigates some of the disadvantages of LS estimation when dealing with relative biological data.

Experimental Results: LevMar SE vs. GLSDC

Table 3: Algorithm Performance Across Test Problems

| Test Problem | Algorithm | Convergence Speed | Success Rate | Notes |

|---|---|---|---|---|

| STYX-1-10 (10 params) | LevMar SE | Fastest | High | Excellent for small problems |

| STYX-1-10 (10 params) | LevMar FD | Moderate | Medium | FD gradient computation slower |

| STYX-1-10 (10 params) | GLSDC | Slowest | High | Benefits from DNS approach |

| EGF/HRG-8-74 (74 params) | LevMar SE | Moderate | Low | Struggles with large parameter space |

| EGF/HRG-8-74 (74 params) | GLSDC | Faster | Higher | Hybrid approach excels with DNS |