Network-Based Prediction of Protein Function: From AI-Driven Methods to Clinical Applications

Abstract

Accurate protein function prediction is pivotal for understanding biological mechanisms and accelerating drug discovery, yet the vast majority of the over 200 million known proteins remain uncharacterized. This article provides a comprehensive overview of the computational methods revolutionizing this field, focusing on network-based approaches that interpret protein function in the context of molecular interaction networks. We explore foundational principles, detail cutting-edge methodologies including graph neural networks and heterogeneous data integration, and address key challenges like data sparsity and functional ambiguity. By comparing state-of-the-art tools and their validation on standardized benchmarks, we offer researchers, scientists, and drug development professionals a clear roadmap for selecting and optimizing prediction strategies to bridge the widening sequence-function gap in biomedical research.

The Network Perspective: Core Principles for Inferring Protein Function from Cellular Interactions

The "Guilt-by-Association" (GBA) principle stands as a foundational concept in functional genomics, positing that genes or proteins which interact or share similar associations are more likely to perform related biological functions [1]. This principle has become increasingly important for annotating gene function, identifying disease genes, and understanding cellular pathways. The conceptual framework of GBA operates on the premise that molecular components operating within shared functional pathways exhibit measurable associations—whether through physical interaction, co-regulation, or co-expression—that can be captured as networks [2]. These networks, representing protein-protein interactions (PPIs), gene co-expression patterns, or genetic interactions, provide a scaffold for propagating functional information from characterized to uncharacterized elements [3].

The biological rationale underlying GBA stems from the fundamental organization of cellular processes. Proteins rarely operate in isolation but rather form complex macromolecular assemblies to execute biological functions [2]. This functional modularity implies that proteins participating in the same cellular process are more likely to interact with one another, creating dense neighborhoods within biological networks that correspond to functional modules [1]. From an evolutionary perspective, selective pressure conserves not only protein sequences but also their interaction patterns, further strengthening the relationship between network proximity and functional similarity. The GBA principle has demonstrated remarkable predictive power across diverse organisms, from yeast to human, making it an indispensable tool for functional annotation in the era of high-throughput biology [4].

Theoretical Foundations and Mechanisms

Statistical and Computational Basis

The computational implementation of GBA relies on quantifying associations between biological entities and establishing significance thresholds for these associations. In practice, each entity (gene or protein) is represented as a data profile comprising multiple characteristics—such as expression levels across different conditions, genetic variants, or interaction partners. Distance measures, including Euclidean distance or correlation coefficients, then quantify similarity between these profiles [5]. For a set of n entities, this process generates a distance matrix that encodes their pairwise relationships. Statistical frameworks like the Mantel test and RV coefficient can assess the congruence between different distance matrices, helping establish whether patterns of association in one data type (e.g., co-expression) correspond to associations in another (e.g., functional annotation) [5].
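As a rough illustration of the paragraph above, the sketch below builds a correlation-based distance matrix from toy expression profiles and compares it with a functional distance matrix using a simple permutation Mantel test. The data, the function names, and the choice of `1 - r` as the distance are assumptions made for this example; they are not taken from [5].

```python
import math
import random


def pearson(x, y):
    """Pearson correlation between two equal-length numeric sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def distance_matrix(profiles):
    """Pairwise correlation distance (1 - r) between all profiles."""
    n = len(profiles)
    return [[0.0 if i == j else 1.0 - pearson(profiles[i], profiles[j])
             for j in range(n)] for i in range(n)]


def upper_triangle(matrix, order):
    """Off-diagonal entries above the diagonal, after reordering rows/columns."""
    n = len(order)
    return [matrix[order[i]][order[j]] for i in range(n) for j in range(i + 1, n)]


def mantel_test(d1, d2, permutations=999, seed=0):
    """Correlate two distance matrices; permute the labels of d2 for a p-value."""
    rng = random.Random(seed)
    identity = list(range(len(d1)))
    fixed = upper_triangle(d1, identity)
    observed = pearson(fixed, upper_triangle(d2, identity))
    hits = 0
    for _ in range(permutations):
        order = identity[:]
        rng.shuffle(order)
        # Small tolerance so permutations that tie with the observed value count.
        if pearson(fixed, upper_triangle(d2, order)) >= observed - 1e-12:
            hits += 1
    return observed, (hits + 1) / (permutations + 1)


# Toy data (hypothetical): five genes measured in four conditions.
expression = [
    [1.0, 2.0, 3.0, 4.0],
    [2.0, 4.0, 6.0, 8.5],
    [1.0, 2.2, 2.9, 4.1],
    [4.0, 3.0, 2.0, 1.0],
    [8.0, 6.1, 4.0, 2.0],
]
functions = ["A", "A", "A", "B", "B"]  # hypothetical functional labels

expr_dist = distance_matrix(expression)
func_dist = [[0.0 if a == b else 1.0 for b in functions] for a in functions]
r_obs, p_value = mantel_test(expr_dist, func_dist)
print(f"Mantel r = {r_obs:.3f}, permutation p = {p_value:.3f}")
```

With only five genes the permutation p-value cannot become very small, which is one reason real analyses need many more entities.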

Network propagation algorithms form the computational engine for many GBA-based prediction methods. These algorithms simulate the flow of functional information across network edges, under the assumption that function propagates more readily to nearby nodes than to distant ones. The Markov random field framework represents one sophisticated approach that incorporates network topology to prioritize candidate genes, effectively weighting functional predictions based on both direct and indirect associations within the network [3]. Such methods demonstrate that network connectivity significantly influences prediction robustness, with highly connected nodes often presenting both opportunities and challenges for accurate functional inference [1] [4].
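One widely used propagation scheme is the random walk with restart. The sketch below is a minimal version on a toy path graph, not the Markov random field method of [3]; the graph, the restart probability, and the function name are illustrative assumptions.

```python
def random_walk_with_restart(adjacency, seeds, restart=0.3, tol=1e-9, max_iter=1000):
    """Propagate scores from seed nodes over an undirected graph.

    adjacency: {node: set(neighbours)}, symmetric.
    seeds: nodes with known function; the walk restarts at them.
    """
    nodes = sorted(adjacency)
    p0 = {n: (1.0 / len(seeds) if n in seeds else 0.0) for n in nodes}
    p = dict(p0)
    for _ in range(max_iter):
        nxt = {}
        for n in nodes:
            # Each neighbour m splits its current score equally among its own neighbours.
            inflow = sum(p[m] / len(adjacency[m]) for m in adjacency[n])
            nxt[n] = (1.0 - restart) * inflow + restart * p0[n]
        delta = sum(abs(nxt[n] - p[n]) for n in nodes)
        p = nxt
        if delta < tol:
            break
    return p


# Toy path graph A - B - C - D - E with a single annotated seed protein A.
graph = {
    "A": {"B"},
    "B": {"A", "C"},
    "C": {"B", "D"},
    "D": {"C", "E"},
    "E": {"D"},
}
scores = random_walk_with_restart(graph, seeds={"A"})
for node in sorted(scores, key=scores.get, reverse=True):
    print(node, round(scores[node], 4))
```

Scores fall off with distance from the seed, which is the "function propagates more readily to nearby nodes" assumption in executable form.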

Molecular Mechanisms Underlying Network Associations

Several distinct but complementary molecular mechanisms create the associations that enable GBA predictions:

Physical Protein Interactions: Direct physical binding between proteins facilitates the formation of macromolecular complexes that execute coordinated functions, such as the ribosomal complex for protein synthesis or the proteasome for protein degradation [2]. These stable interactions create strong functional links that are readily detectable through methods like yeast two-hybrid (Y2H) or affinity purification mass spectrometry (AP-MS).

Co-Regulation and Co-Expression: Genes participating in the same biological process often share transcriptional regulatory programs, resulting in correlated expression patterns across diverse conditions [3]. Such co-expression networks can reveal functional relationships even between proteins that do not physically interact, identifying members of the same pathway or process.

Genetic Interactions: Synthetic lethality or other genetic interactions often occur between genes whose products function in compensatory pathways or the same protein complex, creating another layer of functional association [1].

Table 1: Molecular Mechanisms Creating Functional Associations

| Mechanism | Detection Methods | Typical Functional Relationships |
|---|---|---|
| Physical Interaction | Y2H, AP-MS, MYTH | Protein complex membership, transient signaling |
| Co-Expression | Microarray, RNA-seq | Pathway co-membership, shared regulation |
| Genetic Interaction | Synthetic lethality screens | Compensatory pathways, parallel processes |

Experimental Protocols for Network-Based Function Prediction

Protein-Protein Interaction Mapping

Protocol 1: Yeast Two-Hybrid (Y2H) Screening

Principle: The classic Y2H system relies on the reconstitution of a transcription factor through interaction between two proteins—one fused to a DNA-binding domain (BD) and the other to a transcriptional activation domain (AD). Interaction brings BD and AD together, activating reporter gene expression [2].

Workflow:

- Bait Construction: Clone the gene of interest into a BD vector

- Prey Library: Transform yeast with a cDNA library fused to AD

- Selection: Plate transformants on selective media lacking specific nutrients

- Confirmation: Isolate positive clones and sequence inserts

- Validation: Verify interactions through independent methods

Advantages and Limitations:

- Advantages: Simple, established, low-cost; scalable for large-scale screening; performed in vivo [2]

- Limitations: Requires nuclear localization; potential for false positives from overexpression; may miss interactions requiring post-translational modifications [2]

Protocol 2: Affinity Purification Mass Spectrometry (AP-MS)

Principle: AP-MS identifies protein complexes through immunoaffinity purification of a bait protein followed by mass spectrometric identification of co-purifying proteins [2].

Workflow:

- Tagging: Introduce an affinity tag (e.g., FLAG, HA) to the bait protein

- Cell Lysis: Prepare cell extract under non-denaturing conditions

- Affinity Purification: Incubate extract with tag-specific antibody beads

- Wash: Remove non-specifically bound proteins

- Elution and Analysis: Identify co-purifying proteins by LC-MS/MS

Advantages and Limitations:

- Advantages: Identifies multi-protein complexes; can be performed under near-physiological conditions

- Limitations: May capture non-specific interactions; requires careful controls; may miss transient interactions

Co-Expression Network Analysis

Protocol 3: Constructing Co-Expression Networks for Function Prediction

Principle: Genes with similar expression patterns across diverse conditions often participate in related biological processes. Co-expression networks capture these relationships as edges between genes, with edge weights representing correlation strength [3].

Workflow:

- Data Collection: Compile gene expression data across multiple conditions (e.g., tissues, treatments, time courses)

- Similarity Calculation: Compute pairwise correlation coefficients (e.g., Pearson, Spearman) for all gene pairs

- Network Construction: Create an adjacency matrix by applying a threshold to correlation values

- Module Detection: Identify densely connected clusters (modules) using algorithms like hierarchical clustering or weighted gene co-expression network analysis (WGCNA)

- Functional Enrichment: Annotate modules through enrichment analysis of Gene Ontology terms or pathways

Applications and Considerations:

- Particularly effective for identifying pathway members and condition-specific processes

- Network rewiring between conditions can reveal disease-relevant alterations [3]

- Requires large sample sizes for robust correlation estimates
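The workflow in Protocol 3 can be sketched in a few lines. The example below uses toy expression values and hypothetical gene names, applies a hard threshold on the signed Pearson correlation, and substitutes connected components for a proper module-detection method such as WGCNA or hierarchical clustering, so it should be read as a simplification.

```python
import math
from itertools import combinations


def pearson(x, y):
    """Pearson correlation between two equal-length numeric sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def coexpression_edges(expression, threshold=0.8):
    """Keep gene pairs whose correlation is at or above the threshold."""
    edges = {}
    for g1, g2 in combinations(sorted(expression), 2):
        r = pearson(expression[g1], expression[g2])
        if r >= threshold:
            edges[(g1, g2)] = r
    return edges


def connected_modules(genes, edges):
    """Group genes into connected components (a crude stand-in for modules)."""
    neighbours = {g: set() for g in genes}
    for a, b in edges:
        neighbours[a].add(b)
        neighbours[b].add(a)
    seen, modules = set(), []
    for gene in sorted(genes):
        if gene in seen:
            continue
        stack, component = [gene], set()
        while stack:
            node = stack.pop()
            if node in component:
                continue
            component.add(node)
            stack.extend(neighbours[node] - component)
        seen |= component
        modules.append(sorted(component))
    return modules


# Toy expression compendium (hypothetical): five genes across five conditions.
expression = {
    "g1": [1.0, 2.0, 3.0, 4.0, 5.0],
    "g2": [2.0, 4.0, 6.0, 8.0, 11.0],
    "g3": [1.1, 2.1, 2.9, 4.2, 4.8],
    "g4": [3.0, 1.0, 4.0, 1.0, 5.0],
    "g5": [3.0, 1.2, 4.1, 0.9, 5.2],
}
edges = coexpression_edges(expression, threshold=0.8)
modules = connected_modules(expression, edges)
print(modules)
```

Each resulting module would then be passed to a GO or pathway enrichment tool; that step is omitted here.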

Advanced Computational Methods and Recent Innovations

From Static to Dynamic Network Analysis

Traditional GBA approaches treat biological networks as static entities, but cellular networks are inherently dynamic, rewiring in response to different stimuli and conditions. The emerging "guilt by rewiring" principle focuses on network changes between states (e.g., healthy vs. disease) rather than static topology [3]. In Crohn's disease, for example, immune-related genes show significantly more rewiring in patient co-expression networks compared to controls, providing additional functional insights beyond static associations [3].

The GOHPro (GO Similarity-based Heterogeneous Network Propagation) method represents a recent innovation that integrates protein functional similarity with Gene Ontology (GO) semantic relationships [4]. This approach constructs a heterogeneous network with two layers—a protein functional similarity network and a GO semantic similarity network—then applies network propagation to prioritize functional annotations. When evaluated on yeast and human datasets, GOHPro achieved Fmax improvements of 6.8% to 47.5% over existing methods across Biological Process, Molecular Function, and Cellular Component ontologies [4].
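For readers unfamiliar with the Fmax metric quoted above, the following sketch computes a protein-centric Fmax in the style commonly used in function-prediction benchmarks: at each score threshold, precision is averaged over proteins with at least one prediction, recall over all benchmark proteins, and the best harmonic mean is kept. The protein names, GO identifiers, and scores are invented for illustration, and the exact evaluation protocol of [4] may differ.

```python
def fmax(predictions, truth, thresholds=None):
    """Protein-centric Fmax.

    predictions: {protein: {term: score in [0, 1]}}
    truth: {protein: non-empty set of true terms}
    """
    if thresholds is None:
        thresholds = [i / 100 for i in range(1, 101)]
    best = 0.0
    for t in thresholds:
        precisions, recalls = [], []
        for protein, true_terms in truth.items():
            scored = predictions.get(protein, {})
            predicted = {term for term, s in scored.items() if s >= t}
            hits = len(predicted & true_terms)
            if predicted:  # precision is only defined when something is predicted
                precisions.append(hits / len(predicted))
            recalls.append(hits / len(true_terms))
        if not precisions:
            continue
        pr = sum(precisions) / len(precisions)
        rc = sum(recalls) / len(recalls)
        if pr + rc > 0:
            best = max(best, 2 * pr * rc / (pr + rc))
    return best


# Toy benchmark (hypothetical identifiers and scores).
truth = {"P1": {"GO:1", "GO:2"}, "P2": {"GO:3"}}
predictions = {
    "P1": {"GO:1": 0.9, "GO:2": 0.6, "GO:4": 0.7},
    "P2": {"GO:3": 0.8, "GO:1": 0.4},
}
score = fmax(predictions, truth)
print(round(score, 3))
```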

Table 2: Comparison of Network-Based Function Prediction Methods

| Method | Network Type | Key Features | Performance |
|---|---|---|---|
| Classic GBA | Single network | Propagation from annotated neighbors | Varies by network quality and density |
| Guilt by Rewiring | Differential network | Focuses on network changes between conditions | Identifies condition-specific functions |
| GOHPro | Heterogeneous network | Integrates multiple data types with GO semantics | Fmax improvements of 6.8% to 47.5% over alternatives |

Addressing Methodological Challenges

Controlling for Multifunctionality Bias

A critical challenge in GBA analysis is the "multifunctionality bias"—where highly connected "hub" genes accumulate predictions across diverse functions, sometimes artifactually [1]. Surprisingly, knowledge of multifunctionality alone can produce strong function prediction performance, indicating that some predictions may reflect general promiscuity rather than specific functional links [1].

Solutions:

- Computational controls that account for node degree and multifunctionality

- Explicit modeling of the relationship between connectivity and functional diversity

- Differential weighting of interactions based on confidence or specificity
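One simple control in the spirit of the first bullet is to check how well node degree alone ranks the genes annotated to a function. If a degree-only ranking scores nearly as well as the network method, the method's performance may reflect hubness rather than specific associations. The sketch below uses an invented toy network; it is an illustrative baseline, not the exact procedure of [1].

```python
def auroc(scores, positives):
    """Probability that a random positive outranks a random negative (ties count 0.5)."""
    pos = [s for g, s in scores.items() if g in positives]
    neg = [s for g, s in scores.items() if g not in positives]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def degree_baseline(adjacency):
    """Score every gene by its number of interaction partners only."""
    return {gene: len(partners) for gene, partners in adjacency.items()}


# Toy network (hypothetical): H1 and H2 are hubs, A-D are peripheral.
network = {
    "H1": {"A", "B", "C", "D", "H2"},
    "H2": {"A", "B", "H1"},
    "A": {"H1", "H2"},
    "B": {"H1", "H2"},
    "C": {"H1"},
    "D": {"H1"},
}
degree_scores = degree_baseline(network)

hub_function = {"H1", "H2"}    # function carried by the hubs
mixed_function = {"H1", "C"}   # function carried by one hub and one peripheral gene

print(auroc(degree_scores, hub_function))
print(auroc(degree_scores, mixed_function))
```

A network-based predictor should be reported alongside this baseline so that readers can see how much it adds beyond connectivity.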

Handling Data Sparsity and Noise

Biological networks are typically sparse and contain both false positives and false negatives, complicating GBA applications [4].

Solutions:

- Data integration from multiple sources to create more robust networks

- Similarity-based network reconstruction that incorporates domain profiles and protein complex information to overcome limitations of direct interaction data [4]

- Benchmarking against gold-standard datasets to optimize parameters and thresholds

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents for Network-Based Function Prediction

| Reagent/Tool | Function | Application Examples |
|---|---|---|
| Y2H Systems | Detect binary protein interactions | Full-length ORFeome libraries; split-ubiquitin systems for membrane proteins |
| Affinity Tags | Purify protein complexes | FLAG, HA, TAP tags for AP-MS; biotin ligase (BioID) for proximity labeling |
| Co-Expression Resources | Construct correlation networks | Gene expression compendia (GEO); tissue-specific transcriptome datasets |
| Protein Interaction Databases | Reference network data | BioGRID, STRING, Complex Portal for validation and integration |
| GO Annotations | Functional benchmarking | GO term annotations; semantic similarity measures |
| Network Analysis Software | Visualize and analyze networks | Cytoscape with plugins; NAViGaTOR for large networks; custom scripts for propagation algorithms |

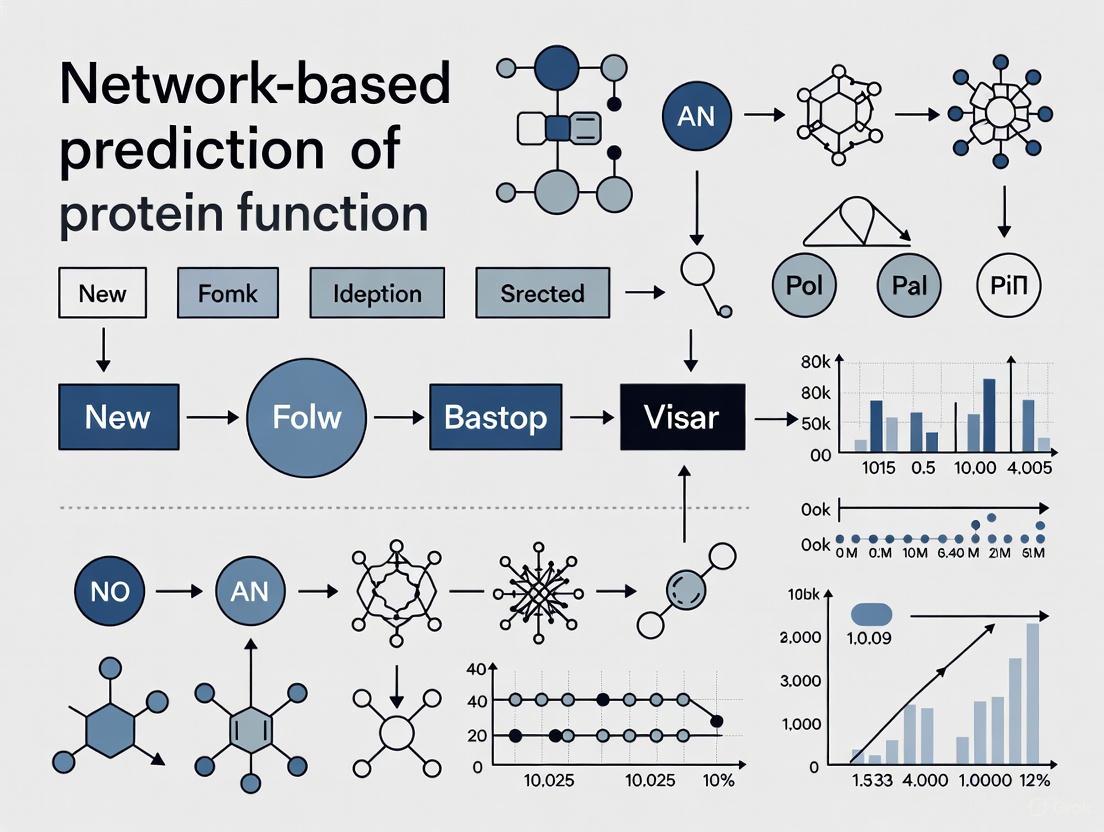

Experimental Workflows and Diagram

The following workflow diagram illustrates the integrated experimental and computational pipeline for network-based function prediction using the guilt-by-association principle:

Integrated Workflow for Guilt-by-Association Based Function Prediction

Troubleshooting and Technical Considerations

Common Experimental Challenges

Low Yield in Y2H Screens:

- Potential cause: Poor expression or improper folding of bait/prey proteins in yeast

- Solution: Codon-optimize genes for yeast expression; test autoactivation and toxicity controls; try multiple fusion orientations

High False Positives in AP-MS:

- Potential cause: Non-specific binders or contaminant proteins

- Solution: Implement stringent controls (empty tag, unrelated baits); use quantitative proteomics to distinguish specific interactions; apply statistical frameworks like SAINT

Weak Co-expression Signals:

- Potential cause: Insufficient sample size or limited condition diversity

- Solution: Increase sample number; integrate public datasets; focus on condition-specific correlations rather than global patterns

Computational Validation Strategies

Cross-Validation:

- Perform leave-one-out cross-validation where each annotated gene is sequentially treated as unannotated

- Use temporal validation where older annotations train predictions tested on newer annotations

Benchmarking:

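- Compare against randomized networks that preserve each node's degree (e.g., generated by edge swapping), so that performance attributable to connectivity alone can be separated from performance attributable to specific interactions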

- Evaluate precision-recall curves against gold-standard functional annotations

- Assess biological relevance through pathway enrichment analysis
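The cross-validation and precision-recall ideas above can be combined in a small sketch. It scores each gene by the fraction of its neighbours that carry the annotation, hiding the gene's own label while it is being scored, and then reports precision and recall at a chosen threshold. The toy network and the neighbour-voting scorer are assumptions for illustration only.

```python
def neighbor_vote(adjacency, annotated, gene):
    """Fraction of a gene's neighbours that carry the annotation."""
    neighbours = adjacency[gene]
    if not neighbours:
        return 0.0
    return sum(1 for n in neighbours if n in annotated) / len(neighbours)


def leave_one_out_scores(adjacency, annotated):
    """Score each gene with its own annotation withheld."""
    scores = {}
    for gene in adjacency:
        hidden = annotated - {gene}  # treat this gene as unannotated
        scores[gene] = neighbor_vote(adjacency, hidden, gene)
    return scores


def precision_recall(scores, annotated, threshold):
    """Precision and recall when predicting every gene scoring >= threshold."""
    predicted = {g for g, s in scores.items() if s >= threshold}
    true_pos = len(predicted & annotated)
    precision = true_pos / len(predicted) if predicted else 1.0
    recall = true_pos / len(annotated)
    return precision, recall


# Toy network (hypothetical): two triangles joined by the edge c - d.
network = {
    "a": {"b", "c"},
    "b": {"a", "c"},
    "c": {"a", "b", "d"},
    "d": {"c", "e", "f"},
    "e": {"d", "f"},
    "f": {"d", "e"},
}
gold_standard = {"a", "b", "c"}  # genes annotated to the function of interest

loo = leave_one_out_scores(network, gold_standard)
for threshold in (0.3, 0.5):
    print(threshold, precision_recall(loo, gold_standard, threshold))
```

Sweeping the threshold over all observed scores yields the full precision-recall curve.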

The Guilt-by-Association principle remains a powerful framework for functional genomics, continually evolving through methodological improvements. The integration of heterogeneous data sources, development of dynamic network analyses, and implementation of controls for multifunctionality bias represent significant advances that enhance prediction accuracy [4] [3]. Future directions will likely incorporate single-cell resolution data, spatial organization information, and deep learning approaches to further refine network-based function prediction. As these methods mature, they will increasingly bridge the annotation gap for uncharacterized proteomes, accelerating biological discovery and therapeutic development [4].

The comprehensive mapping of protein-protein interaction (PPI) networks, known as the interactome, provides a crucial framework for understanding cellular organization and function. These networks form the backbone of cellular processes, revealing how proteins work together in living organisms and providing fundamental insights into molecular mechanisms [6]. For researchers and drug development professionals, accurately constructing and analyzing these networks is a critical step in unraveling complex biological systems, predicting protein functions, and identifying novel therapeutic targets for various diseases.

The challenge lies in effectively integrating diverse, multi-source interaction data into a biologically meaningful network. As protein interactions can be stable (forming long-lasting complexes) or transient (temporary binding for cellular processes), utilizing appropriate data sources and analytical methods becomes paramount for generating reliable hypotheses in network-based prediction of protein function [6]. This protocol details the methodologies for achieving this integration, from data acquisition to functional validation.

Protein-protein interaction data are available from various sources, each with distinct advantages and characteristics. Understanding these sources is essential for building a high-confidence network.

Primary Databases and Metadatabases

Primary PPI databases extract interactions from experimental evidence reported in the scientific literature through manual curation processes. In contrast, metadatabases aggregate and unify information from multiple primary sources, and predictive databases use computational methods to infer interactions in unexplored areas of the interactome [7].

Table 1: Key Protein-Protein Interaction Data Resources

| Resource Name | Type | Key Characteristics | Use Case |
|---|---|---|---|
| IntAct [7] [6] | Primary Database | Manually curated molecular interaction data. | Accessing experimentally verified, literature-derived interactions. |
| BioGRID [6] [8] | Primary Database | Provides protein and genetic interactions from major model organisms. | Studying physical and genetic interaction networks. |
| DIP [9] [6] | Primary Database | Focuses on experimentally determined interactions. | Building high-quality, evidence-based core networks. |
| MINT [6] | Primary Database | Stores mammalian and viral protein interactions. | Pathogen-host interaction studies. |
| STRING [6] [8] | Integrated/Metadatabase | Combines experimental, predicted, and other evidence (e.g., co-expression, text mining). | Comprehensive network analysis including direct and indirect functional associations. |
| OmniPath [8] | Integrated Resource | Considered a high-quality data source; often integrated with others. | Constructing high-confidence interaction sets. |

Assessing Data Quality and Integration

A significant challenge in interactome mapping is the variable quality and coverage of different datasets. False positives (experimental artifacts or prediction errors) and false negatives (undetected real interactions) are common [6]. Furthermore, the dynamic nature of interactions, which change across cellular conditions and over time, adds another layer of complexity.

To address quality concerns, resources like STRING provide a probabilistic confidence score for each interaction [8]. When integrating multiple sources, a practical approach is to assign a confidence score to non-STRING data based on the distribution of scores for overlapping interactions. For instance, data from OmniPath and InWeb_IM are generally considered high-quality, as a large percentage of their interactions have high STRING physical scores (>0.9) [8]. Integrating data from multiple sources, as done by platforms like Metascape, can significantly increase coverage while allowing users to select conservative ("Physical (Core)") or comprehensive ("Combined (All)") datasets [8].

Application Note: A Protocol for Reconstructing Weighted PPI Networks

This protocol describes a methodology for integrating multiple PPI datasets into a single, functionally validated weighted network, optimized using functional module similarity. This approach is particularly valuable for predicting protein complexes and generating high-confidence hypotheses for experimental validation [9].

Materials and Reagents

Research Reagent Solutions

- PPI Datasets: Collect data from multiple sources (e.g., AP-MS experiments, DIP, BIND, IntAct, orthologous interactions from related organisms) [9]. Ensure consistent protein identifier mapping across datasets.

- Functional Module Sets: These serve as the optimization target.

- Software and Computational Tools:

- Harmony Search Algorithm: For global optimization of dataset weights [9].

- MCL (Markov Clustering) Algorithm: For detecting modules (clusters) within the weighted PPI network [9] [6].

- Cytoscape: For network visualization and analysis [9] [6].

- Normalized Mutual Information (NMI) Measure: To quantify similarity between detected PPI modules and reference functional modules [9].

Step-by-Step Procedure

Data Acquisition and Preprocessing:

- Download PPI datasets from selected primary and metadatabases.

- Map all protein identifiers to a consistent namespace (e.g., UniProt IDs) to ensure seamless integration.

- Compile your functional module reference sets (e.g., co-expression modules or GO-based modules).

Network Integration and Weight Assignment:

- Integrate the \(k\) PPI datasets into a single weighted network using the naïve Bayesian formula. The combined similarity between two proteins \(p_i\) and \(p_j\) is calculated as:

\[ \text{Similarity}(p_i, p_j) = 1 - \prod_{p=1}^{k} \left(1 - S_p(p_i, p_j)\right) \]

where \(S_p(p_i, p_j)\) is the confidence score (weight) for the \(p^{th}\) dataset if it contains the interaction, and zero otherwise [9].

- Initialize the confidence scores \(S_p\) for each dataset with starting values.
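The combination formula above is straightforward to evaluate. The sketch below assumes each dataset is a set of unordered protein pairs with one confidence weight per dataset; the dataset names, weights, and protein identifiers are hypothetical starting values, not the optimized weights from [9].

```python
from math import prod


def combined_similarity(pair, datasets, weights):
    """1 - product of (1 - S_p) over the datasets that contain the pair.

    Datasets that do not contain the pair contribute S_p = 0, i.e. a factor of 1.
    """
    return 1.0 - prod(1.0 - weights[name]
                      for name, edges in datasets.items() if pair in edges)


def edge(a, b):
    """Unordered protein pair."""
    return frozenset((a, b))


# Hypothetical datasets and initial confidence weights.
datasets = {
    "apms": {edge("P1", "P2"), edge("P2", "P3")},
    "dip": {edge("P1", "P2")},
    "orthologs": {edge("P3", "P4")},
}
weights = {"apms": 0.6, "dip": 0.8, "orthologs": 0.3}

all_pairs = set().union(*datasets.values())
network = {pair: combined_similarity(pair, datasets, weights) for pair in all_pairs}
for pair, weight in sorted(network.items(), key=lambda item: -item[1]):
    print(sorted(pair), round(weight, 3))
```

An interaction supported by several datasets receives a higher weight than any single dataset would give it, while unsupported pairs stay at zero.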

Module Detection and Optimization:

- Use the MCL clustering algorithm on the current weighted network to detect protein modules.

- Calculate the Normalized Mutual Information (NMI) between the detected PPI modules and the reference functional modules.

- Employ the Harmony Search metaheuristic optimization algorithm to iteratively adjust the dataset weights \(S_p\) to maximize the NMI value. The optimization runs for a sufficient number of iterations (e.g., 10,000) for the weights to converge [9]. As with any metaheuristic, the search targets the global optimum but does not guarantee reaching it.
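The objective that the optimizer maximizes can be sketched as follows. Several normalizations of mutual information are in use; this example divides by the mean of the two entropies, which is an assumption, since the variant used in [9] is not specified here. Cluster labels and protein names are invented.

```python
import math
from collections import Counter


def normalized_mutual_information(labels_a, labels_b):
    """NMI = 2 * I(A; B) / (H(A) + H(B)) over proteins present in both partitions."""
    shared = sorted(set(labels_a) & set(labels_b))
    n = len(shared)
    count_a = Counter(labels_a[p] for p in shared)
    count_b = Counter(labels_b[p] for p in shared)
    joint = Counter((labels_a[p], labels_b[p]) for p in shared)

    mutual_info = sum(c / n * math.log(c * n / (count_a[a] * count_b[b]))
                      for (a, b), c in joint.items())
    entropy_a = -sum(c / n * math.log(c / n) for c in count_a.values())
    entropy_b = -sum(c / n * math.log(c / n) for c in count_b.values())
    if entropy_a + entropy_b == 0:
        return 1.0  # both partitions put everything in one cluster
    return 2.0 * mutual_info / (entropy_a + entropy_b)


# Hypothetical MCL clusters versus reference functional modules.
mcl_clusters = {"p1": 0, "p2": 0, "p3": 1, "p4": 1}
same_grouping = {"p1": "m1", "p2": "m1", "p3": "m2", "p4": "m2"}
unrelated_grouping = {"p1": "m1", "p2": "m2", "p3": "m1", "p4": "m2"}

nmi_same = normalized_mutual_information(mcl_clusters, same_grouping)
nmi_unrelated = normalized_mutual_information(mcl_clusters, unrelated_grouping)
print(nmi_same, nmi_unrelated)
```

In the full protocol this function would be called once per candidate weight vector, after re-clustering the reweighted network.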

Validation and Analysis:

- Extract the final weighted PPI network using the optimized dataset weights.

- Identify central proteins (hubs) within modules as those with a node degree larger than twice the average node degree in the module [9].

- Validate the biological relevance of the predicted modules through literature mining and functional enrichment analysis using resources like EcoCyc or Gene Ontology [9].
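The hub criterion in the validation step can be written directly. This sketch assumes that "node degree" means the number of partners inside the module; if the degree in the whole network is intended, the intersection with the module members should be dropped. The module and protein names are hypothetical.

```python
def module_hubs(module, adjacency):
    """Proteins whose within-module degree exceeds twice the module average."""
    members = set(module)
    degree = {p: len(adjacency[p] & members) for p in members}
    average = sum(degree.values()) / len(degree)
    return sorted(p for p, d in degree.items() if d > 2 * average)


# Toy module (hypothetical): one central protein with seven partners.
leaves = [f"leaf{i}" for i in range(1, 8)]
adjacency = {"center": set(leaves)}
adjacency.update({leaf: {"center"} for leaf in leaves})

hubs = module_hubs(["center"] + leaves, adjacency)
print(hubs)
```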

The following workflow diagram illustrates the key steps of this protocol:

Application Note: A Protocol for Differential PPI Network Mapping with AP-MS

This protocol outlines an experimental-computational workflow for identifying changes in protein-protein interactions between two conditions (e.g., disease vs. normal, treated vs. untreated) using Affinity Purification-Mass Spectrometry (AP-MS), allowing for the study of network dynamics [10].

Materials and Reagents

Research Reagent Solutions

- Cell Culture: Appropriate mammalian cell lines for the biological question.

- Plasmids: For expressing affinity-tagged "bait" proteins (e.g., FLAG, HA tags).

- Affinity Resins: For purifying the tagged bait protein and its interactors (e.g., anti-FLAG M2 agarose).

- Mass Spectrometry System: High-resolution LC-MS/MS system for protein identification and quantification.

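- Software Tools: MaxQuant for protein identification and quantification, MSstats (R) for statistical analysis, and Cytoscape for network visualization [10].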

Step-by-Step Procedure

Experimental Design and Sample Preparation:

- Express the affinity-tagged bait protein in mammalian cells under pairwise conditions (e.g., control and stimulated).

- Perform affinity purification to isolate the bait protein and its co-purifying "prey" proteins for each condition. Include appropriate controls.

Protein Identification and Quantification:

- Digest the purified proteins and analyze them by mass spectrometry.

- Process the raw MS data using software like MaxQuant to identify proteins and quantify their abundance (e.g., using label-free quantification or isobaric tagging methods) [10].

Statistical Analysis and Differential Interaction Mapping:

- Use a statistical framework like MSstats in R to analyze the quantitative data. Identify prey proteins that show a statistically significant change in abundance with the bait between the two conditions [10].

- Construct separate PPI networks for each condition. The edges (interactions) can be weighted based on quantitative changes.
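To make the differential-interaction step concrete, the sketch below compares prey intensities between two conditions using a log2 fold change and a Welch t-statistic. This is a deliberate simplification and not the MSstats model, which fits a more elaborate statistical model in R; the intensities, prey names, and cut-offs are invented for illustration.

```python
import math
from statistics import mean, variance


def differential_preys(control, treated, min_abs_log2fc=1.0, min_abs_t=4.0):
    """Return {prey: log2 fold change} for preys passing both cut-offs.

    control, treated: {prey: [positive intensities, at least two replicates]}
    """
    changed = {}
    for prey in sorted(set(control) & set(treated)):
        c = [math.log2(v) for v in control[prey]]
        t = [math.log2(v) for v in treated[prey]]
        log2fc = mean(t) - mean(c)
        std_err = math.sqrt(variance(c) / len(c) + variance(t) / len(t))
        if std_err > 0:
            t_stat = log2fc / std_err
        else:
            # Identical replicates: any non-zero change is treated as infinitely strong.
            t_stat = math.copysign(math.inf, log2fc) if log2fc else 0.0
        if abs(log2fc) >= min_abs_log2fc and abs(t_stat) >= min_abs_t:
            changed[prey] = log2fc
    return changed


# Hypothetical label-free intensities for two preys of one bait, three replicates each.
control = {"PREY_X": [100.0, 110.0, 90.0], "PREY_Y": [200.0, 210.0, 190.0]}
treated = {"PREY_X": [400.0, 420.0, 380.0], "PREY_Y": [205.0, 195.0, 200.0]}

changed = differential_preys(control, treated)
# Edge weights for the differential network: bait -> prey, weighted by log2 fold change.
differential_network = {("BAIT", prey): fc for prey, fc in changed.items()}
print(differential_network)
```

The sign of each weight could drive the red/blue coloring when the network is visualized.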

Network Visualization and Interpretation:

- Visualize the differential networks in Cytoscape. Use visual features like node color (e.g., red for upregulated, blue for downregulated interactions) or edge width to represent quantitative changes [10].

- Integrate the differential network with functional data (e.g., pathway databases) to infer biological mechanisms.

The experimental and computational workflow for this protocol is summarized below:

Assessing Functional Similarity

Once a PPI network is constructed, a critical next step is to interpret it functionally. Measuring the functional similarity between proteins provides a powerful tool for this task, aiding in the validation of interactions and the prediction of protein function.

Functional Similarity Databases and Measures

The FunSimMat database is a comprehensive resource that provides precomputed functional similarity values for proteins in UniProtKB and protein families in Pfam and SMART [11]. It leverages the structured, controlled vocabulary of Gene Ontology (GO) to compute several semantic similarity measures between GO terms, which are then used to derive functional similarity between proteins [11]. These measures help evaluate whether interacting proteins are functionally related, a key principle in interactome analysis.
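As one example of a GO-based semantic similarity measure, the sketch below computes Resnik similarity: the information content of the most informative ancestor shared by two terms. The miniature ontology, term names, and annotations are made up, and the code is not how FunSimMat itself is implemented.

```python
import math

# Hypothetical miniature ontology: term -> set of parent terms.
PARENTS = {
    "root": set(),
    "metabolism": {"root"},
    "signaling": {"root"},
    "glycolysis": {"metabolism"},
    "tca_cycle": {"metabolism"},
}


def ancestors(term, parents):
    """The term itself plus every term reachable by following parent links."""
    found, stack = {term}, [term]
    while stack:
        for parent in parents[stack.pop()]:
            if parent not in found:
                found.add(parent)
                stack.append(parent)
    return found


def information_content(annotations, parents):
    """IC(t) = log(N / n_t), where n_t proteins are annotated to t or a descendant."""
    counts = {term: 0 for term in parents}
    for terms in annotations.values():
        covered = set()
        for term in terms:
            covered |= ancestors(term, parents)
        for term in covered:
            counts[term] += 1
    total = len(annotations)
    return {term: math.log(total / c) for term, c in counts.items() if c > 0}


def resnik(term1, term2, ic, parents):
    """Information content of the most informative common ancestor."""
    common = ancestors(term1, parents) & ancestors(term2, parents)
    return max(ic.get(term, 0.0) for term in common)


# Hypothetical annotations for four proteins.
annotations = {
    "P1": {"glycolysis"},
    "P2": {"glycolysis"},
    "P3": {"tca_cycle"},
    "P4": {"signaling"},
}
ic = information_content(annotations, PARENTS)
sim_related = resnik("glycolysis", "tca_cycle", ic, PARENTS)
sim_unrelated = resnik("glycolysis", "signaling", ic, PARENTS)
print(round(sim_related, 3), round(sim_unrelated, 3))
```

Protein-level similarity is then usually derived by combining term-level scores across the two proteins' annotation sets, for example by taking the maximum or a best-match average.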

The Scientist's Toolkit

Table 2: Essential Research Reagents and Resources for Interactome Mapping

| Item Name | Function/Application | Example/Note |
|---|---|---|
| Cytoscape [9] [6] | Open-source software for visualizing, analyzing, and modeling molecular interaction networks. | Essential for creating publication-quality network figures and performing network topology analysis. |
| Harmony Search Algorithm [9] | A metaheuristic global optimization algorithm. | Used to find the optimal weights for different PPI datasets to maximize functional relevance. |
| MCL Algorithm [9] [6] | A fast and scalable clustering algorithm for graphs. | Applied to detect protein complexes and functional modules within the larger PPI network. |
| Affinity Purification Resins | To isolate protein complexes from cell lysates. | e.g., anti-FLAG M2 agarose, used in AP-MS protocols [10]. |
| MaxQuant Software [10] | A quantitative proteomics software package for analyzing high-resolution MS data. | Used for identifying and quantifying proteins in AP-MS experiments. |
| FunSimMat Database [11] | Provides precomputed functional similarity measures based on Gene Ontology. | Used to validate interactions and infer protein function based on semantic similarity. |

The integration of diverse PPI data sources into a coherent and functionally validated interactome model is a cornerstone of modern systems biology. The protocols outlined here—one computational, focusing on optimal data integration, and the other experimental-computational, focusing on capturing interaction dynamics—provide robust frameworks for researchers. By systematically employing these methods and the associated toolkit, scientists can generate high-confidence, biologically interpretable networks. These networks, in turn, powerfully illuminate cellular function and dysfunction, directly supporting the discovery of novel therapeutic targets and advancing drug development efforts.

Proteins are the fundamental executors of biological processes, but they rarely act in isolation. The majority of cellular functions arise from precisely coordinated protein-protein interactions (PPIs) that form complexes and pathways. Understanding these collaborations is crucial for elucidating disease mechanisms and developing therapeutic strategies. The field of network biology has emerged as a powerful framework for predicting protein function by analyzing interaction patterns within the cellular interactome. This approach moves beyond studying individual proteins to investigating how functional modules – groups of proteins working together – drive cellular processes. Network-based prediction leverages the principle of "guilt by association," where uncharacterized proteins can be assigned functions based on their interacting partners within biological networks [12] [4].

Recent advances in computational methods, particularly artificial intelligence and deep learning, have revolutionized our ability to map and interpret these complex interaction networks. These technologies can integrate diverse data sources – from sequence information to structural data and experimental interaction evidence – to build comprehensive models of protein collaboration [13] [14]. As these models become more sophisticated, they offer increasingly accurate predictions about how proteins form functional complexes and pathways, providing critical insights for both basic biological research and drug development.

Computational Prediction of Protein Complexes and Interactions

Structure-Based Interaction Prediction with DeepSCFold

Accurately predicting the structures of protein complexes is fundamental to understanding their function. DeepSCFold represents a cutting-edge computational pipeline that significantly improves protein complex structure modeling by leveraging sequence-derived structure complementarity. This method addresses a key limitation of traditional approaches that rely primarily on sequence co-evolution signals, which are often absent in certain complexes like antibody-antigen pairs or host-pathogen interactions [15].

The DeepSCFold protocol employs two specialized deep learning models that work in concert:

- Protein-protein structural similarity prediction (pSS-score): Quantifies structural similarity between query sequences and their homologs

- Interaction probability estimation (pIA-score): Predicts interaction likelihood based solely on sequence features

These models enable the construction of deep paired multiple-sequence alignments (MSAs) that capture intrinsic protein-protein interaction patterns through structural awareness rather than just sequence conservation [15]. The workflow integrates multi-source biological information including species annotations, UniProt accession numbers, and experimentally determined complexes from the Protein Data Bank to enhance biological relevance.

Table 1: Performance Comparison of Protein Complex Structure Prediction Methods

| Method | TM-score Improvement | Key Innovation | Limitations Addressed |
|---|---|---|---|
| DeepSCFold | 11.6% over AlphaFold-Multimer; 10.3% over AlphaFold3 | Sequence-derived structure complementarity | Poor prediction for complexes lacking co-evolution signals |
| AlphaFold-Multimer | Baseline | Extension of AlphaFold2 for multimers | Lower accuracy than monomer predictions |
| Coev2Net | Superior to PRISM on SCOPPI dataset | Threading-based interface prediction | Limited structural data availability |

When benchmarked on CASP15 protein complex targets, DeepSCFold demonstrated remarkable performance, achieving an 11.6% improvement in TM-score compared to AlphaFold-Multimer and 10.3% improvement over AlphaFold3. For challenging antibody-antigen complexes from the SAbDab database, it enhanced prediction success rates for binding interfaces by 24.7% and 12.4% over AlphaFold-Multimer and AlphaFold3, respectively [15]. This performance highlights how incorporating structural complementarity information can overcome limitations of methods relying solely on sequence-level co-evolution.
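For reference, the TM-score used in these comparisons is conventionally defined as follows, where \(L_{\text{target}}\) is the length of the reference structure, \(L_{\text{aligned}}\) the number of aligned residue pairs, and \(d_i\) the distance between the \(i^{th}\) pair after superposition. How the score was aggregated over chains for the complex targets in [15] is not described here.

\[ \text{TM-score} = \max \left[ \frac{1}{L_{\text{target}}} \sum_{i=1}^{L_{\text{aligned}}} \frac{1}{1 + \left( d_i / d_0(L_{\text{target}}) \right)^2} \right], \qquad d_0(L_{\text{target}}) = 1.24 \sqrt[3]{L_{\text{target}} - 15} - 1.8 \]

The maximum is taken over superpositions. Scores lie between 0 and 1, and values above roughly 0.5 are generally taken to indicate the same overall fold.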

Graph Neural Networks for Functional Prediction

Graph neural networks (GNNs) have emerged as powerful computational frameworks for predicting protein functions from network data. These approaches model the cellular interactome as a graph in which nodes represent proteins and edges represent interactions. GNNs can learn rich representations that capture both structural features and relational patterns within these protein graphs [16].

GNN-based methods operate at multiple levels of granularity:

- Atomic-level graphs: Model atomic interactions within proteins

- Residue-level graphs: Capture amino acid-level interactions

- Multi-scale graphs: Integrate different levels of biological organization

These approaches leverage the underlying structural knowledge of proteins to make predictions about Gene Ontology terms and protein-protein interactions [16]. By propagating information across the interaction network, GNNs can infer functions for uncharacterized proteins based on their position and connectivity within the graph, effectively implementing the "guilt by association" principle at a computational scale.
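To make the idea of propagating information across the interaction network concrete, the following is a minimal sketch of a single graph-convolution step in NumPy. It uses the widely used symmetric normalization with self-loops; this is an illustrative assumption and not necessarily the layer used by the methods cited in [16]. The toy graph, the one-hot features, and the random weights are all hypothetical.

```python
import numpy as np

def gcn_layer(adj, features, weights):
    """One graph-convolution step: ReLU(D^-1/2 (A + I) D^-1/2 X W)."""
    a_hat = adj + np.eye(adj.shape[0])            # add self-loops
    deg = a_hat.sum(axis=1)                       # node degrees incl. self-loop
    d_inv_sqrt = np.diag(1.0 / np.sqrt(deg))
    a_norm = d_inv_sqrt @ a_hat @ d_inv_sqrt      # symmetric normalization
    return np.maximum(a_norm @ features @ weights, 0.0)  # ReLU

# Hypothetical toy interactome: 4 proteins connected in a path 0-1-2-3.
adj = np.array([[0, 1, 0, 0],
                [1, 0, 1, 0],
                [0, 1, 0, 1],
                [0, 0, 1, 0]], dtype=float)
features = np.eye(4)                              # one-hot node features
rng = np.random.default_rng(0)
weights = rng.normal(size=(4, 2))                 # untrained, illustrative weights

hidden = gcn_layer(adj, features, weights)        # shape (4, 2)
```

Each row of `hidden` mixes a protein's own features with those of its direct neighbors; stacking several such layers lets information travel further across the graph.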

Figure 1: DeepSCFold Workflow for Protein Complex Structure Prediction. The pipeline integrates sequence-based structural similarity and interaction probability to construct paired multiple sequence alignments for accurate complex modeling.

Integrated Functional Prediction with GOHPro

The GOHPro framework represents a novel approach to protein function prediction that constructs a heterogeneous network integrating protein functional similarity with Gene Ontology semantic relationships. This method addresses key challenges in functional prediction, including data sparsity and functional ambiguity, by leveraging network propagation algorithms to prioritize annotations based on multi-omics context [4].

GOHPro constructs its predictive model through several sophisticated steps:

- Domain structural similarity network: Combines contextual similarity (domain-based similarity of level-1 neighbors) and compositional similarity (proteins' internal domain structure)

- Modular similarity network: Established using protein complex information from Complex Portal, a manually curated resource of macromolecular complexes

- GO semantic similarity network: Based on hierarchical relationships between GO terms

- Heterogeneous network integration: Combines protein functional similarity with GO semantic similarity

When evaluated on yeast and human datasets, GOHPro outperformed six state-of-the-art methods, achieving Fmax improvements ranging from 6.8% to 47.5% across Biological Process, Molecular Function, and Cellular Component ontologies [4]. The method demonstrated particular effectiveness in resolving functional ambiguity for proteins with shared domains, such as AAA+ ATPases, by leveraging contextual interactions and modular complexes.

Experimental Validation of Protein Complexes

Quantitative Complexome Analysis by CN-PAGE

Experimental validation of computationally predicted complexes requires methods that can capture protein interactions under near-physiological conditions. The CN-PAGE (Clear-Native PAGE) workflow combined with mass spectrometry provides a robust approach for identifying protein complexes and establishing quantitative complexome profiles. This method enables researchers to study how protein complex abundance and composition change under different biological conditions [17].

The CN-PAGE protocol involves several key steps:

- Native protein extraction: Proteins and intact complexes are extracted in detergent-free buffer at 4°C to preserve native interactions

- Size-based fractionation: Complexes are separated by CN-PAGE based on molecular weight

- In-gel digestion: Fractionated complexes are processed using HiT-Gel, a high-throughput digestion method

- LC-MS/MS analysis: Peptides are identified and quantified using liquid chromatography tandem mass spectrometry

- Profile deconvolution: Computational analysis reconstructs protein migration profiles and identifies oligomeric states

This approach shows low technical variation, with Pearson correlation coefficients higher than 0.9 between biological replicates, demonstrating high reproducibility [17]. In a proof-of-concept study analyzing Arabidopsis thaliana at different diurnal time points, the method identified 2338 proteins at the end of day and 2469 at the end of night, with an 88.3% overlap between conditions. Importantly, fewer than 11% of detected proteins peaked in fractions corresponding to monomeric ranges, confirming that most cellular proteins exist in complexes.
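As an illustration of the reproducibility check described above, the sketch below computes the Pearson correlation between two replicate migration profiles of one protein and locates the peak fraction. The intensity values and variable names are hypothetical and only stand in for real per-fraction abundances.

```python
from math import sqrt

def pearson(x, y):
    """Pearson correlation coefficient between two equal-length profiles."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sd_x = sqrt(sum((a - mean_x) ** 2 for a in x))
    sd_y = sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (sd_x * sd_y)

# Hypothetical abundance of one protein across six gel fractions, two replicates.
replicate_1 = [0.0, 1.2, 8.5, 3.1, 0.4, 0.0]
replicate_2 = [0.1, 1.0, 8.9, 2.8, 0.5, 0.0]

r = pearson(replicate_1, replicate_2)
peak_fraction = max(range(len(replicate_1)), key=replicate_1.__getitem__)
```

The index of the peak fraction can then be compared with the fractions expected for the monomeric mass range to decide whether the protein migrates as part of a complex.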

Table 2: Key Research Reagents for Protein Complex Analysis

| Reagent/Resource | Function in Analysis | Application Context |

|---|---|---|

| Clear-Native PAGE | Size-based separation of native protein complexes | Preservation of protein interactions without denaturation |

| PINOT Web Tool | Integration of PPI data from multiple databases | Construction of protein interaction networks from curated literature |

| Orbitrap Mass Analyzer | High-resolution mass detection for peptide identification | Discovery proteomics with broad dynamic range |

| Triple Quadrupole MS | Targeted quantitation with high sensitivity | Absolute quantification of specific protein complexes |

| Isobaric Tags (TMT/iTRAQ) | Multiplexed relative quantitation of proteins | Comparison of complex abundance across multiple conditions |

| SILAC Labeling | Metabolic labeling for relative quantitation | In vivo tracking of protein complex dynamics |

Confidence Assessment with Coev2Net Framework

Validating predicted protein interactions requires rigorous confidence assessment. The Coev2Net framework provides a structure-based approach for computing confidence scores that address both false-positive and false-negative rates in high-throughput interaction data [18]. This method is particularly valuable for assessing interactions in poorly characterized regions of the interactome.

The Coev2Net framework operates through several computational stages:

- Interface prediction: Sequences are threaded onto the best-fit template complex

- Co-evolution likelihood calculation: A probabilistic graphical model assesses interface co-evolution with respect to artificial homologous sequences

- Classifier training: Scores are input into a classifier trained on high-confidence networks

- Confidence scoring: Outputs a score between 0-1 representing interaction confidence

When applied to human MAPK networks, Coev2Net successfully predicted interactions for approximately 1,500 pairs where clear homologous complexes did not exist in the PDB, demonstrating its ability to extend beyond known structural templates [18]. The framework also predicted interfaces enriched for cancer-related or damaging SNPs, highlighting its biological relevance for understanding disease mechanisms.

Interaction Data Integration with PINOT

Collating protein-protein interaction data from multiple sources presents significant challenges due to inconsistencies in data formats and curation standards across databases. The PINOT (Protein Interaction Network Online Tool) web resource optimizes this process by providing live integration of PPI data from IMEx consortium databases and WormBase [12].

PINOT implements a sophisticated quality control pipeline:

- Data download: Direct querying of seven primary databases via PSICQUIC interface

- Data parsing and merging: Integration of interaction data from multiple sources

- Confidence scoring: Based on detection methods and publication records

- Filtering: Application of lenient or stringent quality filters

Each interaction is assigned a confidence score based on the number of distinct detection methods and supporting publications. Interactions with a final score of 2 (reported by one publication using one technique) should be interpreted with caution as they lack independent replication [12]. This transparent scoring system helps researchers prioritize interactions for experimental validation based on available evidence.
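The scoring rule can be sketched as follows, assuming the score is simply the number of distinct detection methods plus the number of distinct publications. That reading is consistent with the "one publication, one technique gives 2" example above, but the exact PINOT implementation may differ. Protein names and publication identifiers are placeholders.

```python
def confidence_score(evidence):
    """evidence: iterable of (detection_method, publication_id) pairs."""
    methods = {method for method, _ in evidence}
    publications = {pub for _, pub in evidence}
    return len(methods) + len(publications)

# Hypothetical evidence records for two interactions.
interactions = {
    ("P1", "P2"): [("two hybrid", "pub_A"), ("affinity chromatography", "pub_B")],
    ("P1", "P3"): [("two hybrid", "pub_C")],
}

scores = {pair: confidence_score(ev) for pair, ev in interactions.items()}
# Interactions at the minimum score of 2 lack independent replication.
needs_caution = [pair for pair, score in scores.items() if score == 2]
```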

Integrated Computational-Experimental Workflows

Synergistic Approaches for Complex Identification

The most robust insights into protein complexes emerge from workflows that integrate computational prediction with experimental validation. These synergistic approaches leverage the scalability of computational methods with the empirical grounding of experimental techniques, creating a virtuous cycle of hypothesis generation and testing [17] [15].

An effective integrated workflow typically involves:

- Computational complex prediction using structure-based methods like DeepSCFold or network-based approaches like GNNs

- Experimental complex validation through native separation techniques like CN-PAGE followed by mass spectrometry

- Confidence assessment using frameworks like Coev2Net to evaluate interaction reliability

- Functional annotation through tools like GOHPro that leverage complex information for function prediction

This integrated approach is particularly powerful for studying condition-specific changes in complex composition and abundance, such as comparing protein complexes at different diurnal time points or in disease versus healthy states [17]. The quantitative nature of mass spectrometry-based complexome profiling enables researchers to track how complex formation and stoichiometry change in response to cellular signals or perturbations.

Figure 2: Integrated Workflow for Protein Complex Identification. The synergistic cycle combines computational prediction with experimental validation to build high-confidence models of protein complexes.

Quantitative Proteomics for Complex Dynamics

Understanding how protein complexes change in response to cellular conditions requires quantitative methodologies. Quantitative proteomics provides powerful approaches for both discovery and targeted analysis of global proteomic dynamics, enabling researchers to track changes in complex abundance and composition [19].

Two fundamental strategies dominate quantitative proteomics:

- Discovery proteomics: Optimizes protein identification through extensive fractionation and high-resolution mass spectrometry (e.g., Orbitrap instruments)

- Targeted proteomics: Quantifies specific proteins with high precision, sensitivity, and throughput (e.g., triple quadrupole MS)

For protein complex studies, quantitative strategies are further divided into:

- Relative quantitation: Compares peptide abundance between samples using metabolic labeling (SILAC) or isobaric tags (TMT, iTRAQ)

- Absolute quantitation: Spikes samples with known concentrations of isotopically-labeled synthetic peptides

These quantitative approaches reveal how protein complex formation, dissociation, and stoichiometry change in different biological states, providing critical insights into regulatory mechanisms [19]. When combined with native separation methods like CN-PAGE, quantitative proteomics enables comprehensive mapping of complexome dynamics across conditions.
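The arithmetic behind absolute quantitation with a spiked standard can be illustrated with a short sketch. It assumes that the endogenous (light) and isotopically labeled (heavy) peptide respond identically in the instrument and that one peptide represents each protein; intensities, spike amounts, and subunit names are hypothetical.

```python
def absolute_amount(light_intensity, heavy_intensity, spiked_fmol):
    """Endogenous amount = (light / heavy intensity ratio) * spiked heavy amount."""
    return light_intensity / heavy_intensity * spiked_fmol

# Hypothetical measurements for two subunits of one complex, 50 fmol standard each.
subunit_a = absolute_amount(4.0e6, 2.0e6, 50.0)   # 100 fmol
subunit_b = absolute_amount(1.0e6, 2.0e6, 50.0)   # 25 fmol

stoichiometry = subunit_a / subunit_b             # 4 copies of A per copy of B
```

Comparing absolute amounts of subunits in the same native fraction gives an estimate of complex stoichiometry, which relative quantitation alone cannot provide.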

Applications in Biomedical Research and Drug Discovery

The network-based understanding of protein complexes and pathways has profound implications for biomedical research and therapeutic development. By elucidating how proteins collaborate in functional modules, researchers can identify novel drug targets and understand disease mechanisms at a systems level [13].

Key applications include:

- Drug target identification: Mapping interactions between pathogenic and host proteins reveals potential intervention points

- Drug mechanism elucidation: Understanding how therapeutics disrupt or modulate protein complexes

- Polypharmacology: Designing drugs that target multiple proteins within a functional module

- Biomarker discovery: Identifying characteristic complex signatures in disease states

Structure-based PPI prediction methods like DeepSCFold are particularly valuable for drug discovery, as they provide atomic-level details of interaction interfaces that can be targeted with small molecules or biologics [15]. Similarly, network-based functional prediction methods like GOHPro help prioritize candidate proteins for therapeutic intervention by placing them in functional context [4].

As these computational and experimental methods continue to advance, they promise to accelerate the translation of basic biological knowledge into clinical applications, ultimately enabling more precise targeting of disease-relevant protein complexes and pathways.

Table 3: Performance Benchmarks of Protein Complex Analysis Methods

| Method | Key Metric | Performance | Application Scope |

|---|---|---|---|

| DeepSCFold | TM-score improvement | +11.6% vs. AlphaFold-Multimer; +10.3% vs. AlphaFold3 | Challenging complexes lacking co-evolution |

| GOHPro | Fmax improvement | 6.8-47.5% over state-of-the-art methods | Functional annotation across GO categories |

| CN-PAGE/MS | Technical variation | Pearson correlation >0.9 between replicates | Quantitative complexome across conditions |

| Coev2Net | Prediction coverage | ~1,500 interactions in human MAPK networks | Confidence assessment for interactome mapping |

| PINOT | Data integration | 7 primary databases via PSICQUIC | Unified access to curated PPI data |

The rapid advancement of sequencing technologies has generated an unprecedented volume of protein sequence data, creating a critical bottleneck in biological research: the functional annotation of these sequences. This application note quantifies the extensive gap between sequenced and annotated proteins, framed within the context of network-based prediction methodologies, which represent a promising frontier for closing this knowledge gap. The UniProt database now contains over 356 million protein sequences, yet the vast majority (~80%) lack any functional characterization [20]. More critically, fewer than 0.1% of proteins in UniProt have been assigned experimental functional annotations, creating an immense sequence-function gap that hinders advances in biomedicine, drug discovery, and fundamental biology [21]. This document provides researchers with quantitative frameworks to assess this challenge and detailed protocols for implementing cutting-edge network-based and deep learning approaches to expand functional protein annotation.

Table 1: The Protein Sequence-Function Annotation Gap

| Metric | Value | Source/Reference |

|---|---|---|

| Total proteins in UniProt | >356 million | [20] |

| Proteins with experimental annotations | <0.1% | [21] |

| Uncharacterized proteins ("Dark Proteome") | ~80% | [20] |

| Animal proteomes unannotated by traditional homology | Up to 50% | [22] |

| CAFA evaluation benchmark (Fmax score progression) | 0.5 (CAFA1) to ~0.65-0.8 (CAFA5) | [23] |

Quantitative Landscape of the Annotation Gap

The UniProt knowledgebase is divided into two primary sections that highlight the annotation disparity: Swiss-Prot, containing over 570,000 proteins with high-quality, manually curated annotations derived from expert literature review, and TrEMBL, containing over 250 million proteins with automated annotations that often lack depth and accuracy [21]. This structural division institutionalizes the annotation gap, with TrEMBL accommodating the rapid growth of sequence data while sacrificing annotation quality due to scalability constraints. The challenge is particularly pronounced for non-model organisms, where traditional homology-based methods fail to annotate nearly half of all genes, especially in less-studied phyla [22]. For example, approximately 30% of proteins in the model organism Caenorhabditis elegans lack functional annotation in UniProt, while this problem affects 41% of tardigrade genes and 50% of sponge genes [22].

Performance Metrics for Prediction Algorithms

The Critical Assessment of Protein Function Annotation (CAFA) has established standardized evaluation metrics to quantify prediction accuracy. The primary metric, the Fmax score, is the maximum harmonic mean of precision and recall (the F-measure) over all decision thresholds on the precision-recall curve; it ranges from 0 to 1, where 1 indicates perfect prediction [23]. From CAFA1 to CAFA5, the average Fmax scores across all Gene Ontology (GO) domains have improved from approximately 0.5 to nearly 0.65, with molecular function predictions reaching up to 0.8, demonstrating progress while highlighting significant room for improvement [23]. Performance varies substantially across the three GO domains, with molecular function typically achieving the highest scores, followed by biological process, while cellular component predictions have proven most challenging due to both ontological complexities and reduced research focus [23].
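A minimal sketch of a protein-centric Fmax computation is given below. It follows the usual convention of averaging precision over proteins with at least one prediction at the threshold and recall over all benchmark proteins; the official CAFA evaluation additionally propagates terms through the ontology, which is omitted here. The prediction scores and GO identifiers are placeholders.

```python
def fmax(predictions, truth, thresholds=None):
    """predictions: {protein: {term: score}}; truth: {protein: non-empty set of terms}."""
    thresholds = thresholds or [i / 100 for i in range(1, 101)]
    best = 0.0
    for t in thresholds:
        precisions, recalls = [], []
        for protein, true_terms in truth.items():
            scored = predictions.get(protein, {})
            predicted = {term for term, s in scored.items() if s >= t}
            tp = len(predicted & true_terms)
            if predicted:                      # precision only where something is predicted
                precisions.append(tp / len(predicted))
            recalls.append(tp / len(true_terms))
        if not precisions:
            continue
        p = sum(precisions) / len(precisions)
        r = sum(recalls) / len(recalls)
        if p + r > 0:
            best = max(best, 2 * p * r / (p + r))
    return best

# Hypothetical predictions and ground truth for two proteins.
predictions = {"P1": {"GO:1": 0.9, "GO:2": 0.4},
               "P2": {"GO:1": 0.2, "GO:3": 0.8}}
truth = {"P1": {"GO:1"}, "P2": {"GO:1", "GO:3"}}

score = fmax(predictions, truth)   # best F-measure over thresholds, here 6/7
```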

Table 2: Prediction Performance Across Gene Ontology Domains

| GO Domain | Representative Fmax Score | Primary Prediction Methods | Key Challenges |

|---|---|---|---|

| Molecular Function (MFO) | ~0.8 (CAFA5) | Remote homology detection, structure integration, embedding models | Limited for rapidly evolving functions |

| Biological Process (BPO) | ~0.65 (CAFA5) | Text mining, network propagation, multi-modal data | Evolutionary divergence between species |

| Cellular Component (CCO) | Lower than MFO/BPO | Sequence-based features | Complex ontology structure, less research focus |

Network-Based Prediction Frameworks: Experimental Protocols

Protein-Protein Interaction Network Construction and Analysis

Purpose: To infer protein function through guilt-by-association principles by analyzing interaction patterns within biological networks.

Workflow:

- Data Collection: Compile protein-protein interaction (PPI) data from experimental techniques (e.g., affinity purification-mass spectrometry, yeast two-hybrid systems) and curated databases [24].

- Network Construction: Represent proteins as nodes and interactions as edges in a graph structure. Utilize standardized formats such as GraphML or CSV for node and edge definitions.

- Topological Analysis: Calculate key network metrics using tools like NetworkX or Cytoscape:

  - Node Degree: Number of interactions per protein; high-degree proteins are "hubs" often essential for network stability [24].

  - Betweenness Centrality: Measures how often a node lies on shortest paths; high betweenness indicates "bottleneck" proteins critical for information flow [24].

  - Closeness Centrality: Measures how quickly a node can reach other nodes; indicates potential for functional influence.

- Functional Module Identification: Apply clustering algorithms to detect densely connected regions that often correspond to functional units:

  - Louvain Method: Optimizes modularity to identify community structure [24].

  - Walktrap Algorithm: Uses random walks to detect communities; effective for drug target identification [24].

  - Evolutionary Clustering Algorithm (ECTG): Combines topological features with gene expression data to reduce noise [24].
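The topological analysis and module detection steps above can be sketched with NetworkX. The edge list is a hypothetical toy network of two triangles joined by one edge, and `louvain_communities` assumes NetworkX 2.8 or later.

```python
import networkx as nx

# Hypothetical PPI edge list: two triangles (A, B, C) and (D, E, F) bridged by C-D.
edges = [("A", "B"), ("A", "C"), ("B", "C"),
         ("C", "D"),
         ("D", "E"), ("D", "F"), ("E", "F")]
graph = nx.Graph(edges)

degree = dict(graph.degree())                    # interactions per protein
betweenness = nx.betweenness_centrality(graph)   # bottleneck proteins
closeness = nx.closeness_centrality(graph)       # reachability of other proteins

# Louvain modularity optimization; a fixed seed makes the partition reproducible.
communities = nx.community.louvain_communities(graph, seed=0)

bottleneck = max(betweenness, key=betweenness.get)
```

In this toy graph the bridging proteins C and D carry all shortest paths between the two triangles, so they have the highest betweenness even though their degree is only slightly above that of the other nodes.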

Statistics-Informed Graph Network (PhiGnet Protocol)

Purpose: To annotate protein functions and identify functional sites at residue-level resolution using evolutionary couplings and residue communities [20].

Workflow:

- Input Representation:

  - Generate protein embeddings using pre-trained ESM-1b model [20].

  - Compute Evolutionary Couplings (EVCs) from multiple sequence alignments to capture co-evolving residue pairs.

  - Identify Residue Communities (RCs) through hierarchical clustering of co-evolving residues.

- Dual-Channel Graph Construction:

  - Represent residues as graph nodes with ESM-1b embeddings as node features.

  - Construct two graph edge types: EVC edges (weighted by coupling strength) and RC edges (based on community membership).

- Network Architecture:

  - Process both edge types through separate stacked Graph Convolutional Network (GCN) channels.

  - Integrate outputs from both channels using concatenation or attention mechanisms.

  - Pass integrated representations through fully connected layers for GO term or EC number prediction.

- Functional Site Identification:

  - Compute activation scores per residue using Gradient-weighted Class Activation Mapping (Grad-CAM) [20].

  - Residues with scores ≥0.5 indicate high functional significance.

  - Map significant residues to 3D structures (from PDB or AlphaFold predictions) for biological validation.
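The dual-channel graph construction and the final thresholding step can be sketched in NumPy as below. This is an illustration under stated assumptions, not the PhiGnet code: the coupling matrix is random, the `top_fraction` cutoff for keeping EVC edges is a hypothetical parameter, and activation scores are min-max scaled before the 0.5 threshold is applied.

```python
import numpy as np

def build_channels(couplings, communities, top_fraction=0.2):
    """Return (evc_adj, rc_adj): weighted coupling edges and community edges."""
    n = couplings.shape[0]
    sym = (couplings + couplings.T) / 2                  # symmetrize coupling scores
    upper = sym[np.triu_indices(n, k=1)]
    cutoff = np.quantile(upper, 1 - top_fraction)        # keep the strongest pairs
    evc_adj = np.where(sym >= cutoff, sym, 0.0)
    np.fill_diagonal(evc_adj, 0.0)
    labels = np.asarray(communities)
    rc_adj = (labels[:, None] == labels[None, :]).astype(float)
    np.fill_diagonal(rc_adj, 0.0)                        # no self-edges
    return evc_adj, rc_adj

def functional_residues(activation, threshold=0.5):
    """Indices of residues whose min-max scaled activation is >= threshold."""
    span = activation.max() - activation.min()
    if span == 0:
        return np.array([], dtype=int)
    scaled = (activation - activation.min()) / span
    return np.flatnonzero(scaled >= threshold)

# Hypothetical inputs for a 6-residue protein.
rng = np.random.default_rng(0)
couplings = rng.random((6, 6))
communities = [0, 0, 1, 1, 2, 2]
evc_adj, rc_adj = build_channels(couplings, communities)

activation = np.array([0.1, 0.9, 0.2, 0.8, 0.05, 0.3])   # stand-in for Grad-CAM output
sites = functional_residues(activation)                  # residues 1 and 3
```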

Multi-Channel Equivariant Graph Framework (ENGINE Protocol)

Purpose: To integrate protein 3D structural information with evolutionary sequence data for robust function prediction [25].

Workflow:

- Input Feature Generation:

  - Structure Channel: Process 3D coordinates (from PDB or AlphaFold) using an Equivariant Graph Convolutional Network (EGCN) to capture geometric features.

  - Sequence Channel: Encode evolutionary and sequence-derived information using ESM-C protein language model.

  - 3D-Sequence Fusion: Create unified representation combining spatial and sequential signals.

- Network Architecture:

  - Construct separate graph networks for structure and sequence representations.

  - Implement attention mechanisms to weight important residues and structural motifs.

  - Fuse multi-channel information through concatenation or cross-attention layers.

- Training Protocol:

  - Use GO term annotations as training targets with multi-label classification objective.

  - Implement class-balanced loss functions to address annotation bias.

  - Apply gradient clipping and learning rate scheduling for stable training.

- Interpretation and Validation:

  - Identify functionally critical residues and substructures through attention weights.

  - Perform ablation studies to quantify contribution of different input modalities.

  - Compare predictions with experimental annotations from BioLiP database [20].
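One way to realize the class-balanced, multi-label objective mentioned in the training protocol is a binary cross-entropy in which positives of rare GO terms are up-weighted. The sketch below uses inverse-frequency positive weights; this is a common choice and an assumption here, not necessarily the loss used by ENGINE. The label matrix and predicted probabilities are hypothetical.

```python
import numpy as np

def class_balanced_bce(probs, labels, eps=1e-7):
    """Weighted binary cross-entropy; arrays have shape (n_proteins, n_terms)."""
    pos_freq = labels.mean(axis=0).clip(eps, 1 - eps)   # how often each term occurs
    pos_weight = (1 - pos_freq) / pos_freq              # rare terms get larger weight
    p = probs.clip(eps, 1 - eps)
    loss = -(pos_weight * labels * np.log(p) + (1 - labels) * np.log(1 - p))
    return loss.mean()

# Hypothetical labels: term 0 is common (3 of 4 proteins), term 1 is rare (1 of 4).
labels = np.array([[1, 0], [1, 0], [1, 1], [0, 0]], dtype=float)
good = labels * 0.9 + (1 - labels) * 0.1                # confident, correct predictions
bad = 1 - good                                          # confident, wrong predictions

loss_good = class_balanced_bce(good, labels)
loss_bad = class_balanced_bce(bad, labels)
```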

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents and Computational Tools

| Resource/Tool | Type | Function in Protein Annotation | Access |

|---|---|---|---|

| FireProtDB 2.0 | Manually curated database | Provides standardized protein stability data (ΔΔG, ΔTm) for 2,762 proteins with 546K experiments; trains stability prediction models | Public database [26] |

| AlphaFold/ESMFold | Structure prediction tools | Generates reliable 3D protein structures from sequence; provides input for structure-based function prediction | Public servers/API |

| ESM-1b/ESM-2 | Protein Language Model | Converts protein sequences to embeddings; captures evolutionary constraints and functional signals | Downloadable models |

| PPI Networks (STRING) | Protein interaction database | Provides functional context via guilt-by-association; inputs for network propagation algorithms | Public database |

| FANTASIA | Annotation pipeline | Performs zero-shot function prediction using embedding similarity; covers proteins missed by homology | GitHub [22] |

| PhiGnet | Prediction framework | Annotates functions and identifies functional residues using evolutionary statistics | Available upon request [20] |

| ENGINE | Multi-modal framework | Integrates structure and sequence data for precise function prediction | GitHub [25] |

| GOAnnotator | Literature mining tool | Retrieves relevant literature and identifies GO terms without manual curation | GitHub [21] |

Visualization and Data Interpretation Framework

Network Propagation Diagram for Function Prediction

Performance Benchmarking Visualization

The quantitative gap between sequenced and annotated proteins remains substantial, with fewer than 0.1% of proteins having experimental functional characterization. Network-based prediction methods have demonstrated significant progress in bridging this gap, with Fmax scores improving from approximately 0.5 to over 0.7 on molecular function prediction in the past decade [23]. The most promising approaches integrate multiple data modalities (sequence, structure, evolutionary constraints, and interaction networks) to achieve robust performance across diverse protein families and organisms [25] [20]. Emerging strategies including zero-shot learning with protein language models [22] and residue-level function identification [20] offer particularly exciting avenues for illuminating the "dark proteome." For drug development professionals and researchers, adopting these network-based frameworks can significantly accelerate target identification and functional validation while providing crucial insights into molecular mechanisms underlying protein function. Continued development of standardized benchmarks like CAFA and curated resources like FireProtDB 2.0 will be essential for driving further innovation in this critical domain of bioinformatics [23] [26].

From Algorithms to Action: A Guide to Modern Network-Based Prediction Methods

Protein function prediction is a cornerstone of modern bioinformatics, critical for understanding biological processes, disease mechanisms, and accelerating drug discovery. Among computational approaches, direct annotation methods that leverage protein network data have emerged as powerful tools. These methods operate on the fundamental principle that proteins interacting within a network tend to perform related functions. Direct methods specifically predict the function of a protein based on the known functions of its direct neighbors in the network, distinguishing them from indirect methods that first identify functional modules before assigning functions [27] [28].

The reliance on network data addresses a key limitation of traditional sequence-similarity approaches, which often lack contextual information about the biological processes proteins participate in. As high-throughput technologies generate increasingly large protein-protein interaction (PPI) datasets, direct annotation methods provide a framework for inferring functional context at a systems biology level [27]. This document details the core methodologies, practical protocols, and recent advancements in three fundamental direct annotation approaches: neighborhood counting, graph theory applications, and Markov Random Fields.

Core Methodologies and Comparative Analysis

The table below summarizes the key characteristics, strengths, and limitations of the three primary direct annotation methods.

Table 1: Comparison of Direct Annotation Methods for Protein Function Prediction

| Method | Core Principle | Key Algorithmic Features | Strengths | Limitations |

|---|---|---|---|---|

| Neighborhood Counting | Simple aggregation of neighbors' functions | Majority voting; frequency-based scoring | Computational simplicity; intuitive logic; fast for large networks | Limited by immediate neighbors; ignores network topology |

| Graph Theory Applications | Leverages topological properties of the entire network | Random walks; network propagation; community detection | Captures global network structure; more robust to local noise | Higher computational complexity; parameter sensitivity |

| Markov Random Fields (MRF) | Probabilistic graphical model incorporating neighbor dependencies | Gibbs sampling; belief propagation; iterative probability updates | Models functional dependencies; probabilistic confidence scores | Complex parameter estimation; convergence issues in large networks |

Neighborhood Counting

This is the most straightforward direct method. It annotates an uncharacterized protein based on the frequency of functional labels among its direct interacting partners in the network. A common implementation is the majority vote, where the most frequent function among neighbors is assigned. The underlying assumption is that if a protein interacts with many proteins having a specific function, it is likely to share that function [27].
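A minimal sketch of majority-vote neighborhood counting follows. The network, the protein names, and the assignment of GO terms to proteins are hypothetical; unannotated neighbors simply contribute no votes.

```python
from collections import Counter

def majority_vote(network, annotations, protein, top_k=1):
    """Rank functions by how many direct neighbors of `protein` carry them."""
    counts = Counter()
    for neighbor in network.get(protein, ()):
        counts.update(annotations.get(neighbor, ()))
    return counts.most_common(top_k)

# Hypothetical neighborhood of the uncharacterized protein P5.
network = {"P5": ["P1", "P2", "P3", "P4"]}
annotations = {
    "P1": {"GO:0006412"},
    "P2": {"GO:0006412", "GO:0006457"},
    "P3": {"GO:0006412"},
    "P4": set(),                       # neighbor without annotation
}

prediction = majority_vote(network, annotations, "P5")
```

Here three of the four neighbors share `GO:0006412`, so it is returned with a count of 3; raising `top_k` yields a ranked list instead of a single label.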

Graph Theory Applications

Methods in this category utilize algorithms from graph theory to propagate functional information across the network. For instance, random walk algorithms simulate a walker moving randomly from node to node, with the probability of a function being assigned to a node proportional to the time the walker spends on nodes known to have that function. This allows the influence of annotated proteins to spread beyond their immediate neighborhood, capturing more complex functional relationships embedded in the network's global structure [4].
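One common graph-theoretic formulation is the random walk with restart, in which the walker starts from proteins annotated with a function and the steady-state visiting probability is used as the function score for every other node. The sketch below is an illustration of that general technique; the restart probability of 0.3 and the toy path graph are assumptions.

```python
import numpy as np

def random_walk_with_restart(adj, seeds, restart=0.3, tol=1e-8, max_iter=1000):
    """Steady-state visiting probabilities for a walker restarting at `seeds`."""
    col_sums = adj.sum(axis=0)
    col_sums[col_sums == 0] = 1.0                 # avoid division by zero for isolated nodes
    transition = adj / col_sums                   # column-stochastic transition matrix
    p0 = np.zeros(adj.shape[0])
    p0[seeds] = 1.0 / len(seeds)                  # restart distribution on annotated proteins
    p = p0.copy()
    for _ in range(max_iter):
        nxt = (1 - restart) * transition @ p + restart * p0
        if np.abs(nxt - p).sum() < tol:
            return nxt
        p = nxt
    return p

# Hypothetical path network 0-1-2-3; protein 0 is annotated with the function.
adj = np.array([[0, 1, 0, 0],
                [1, 0, 1, 0],
                [0, 1, 0, 1],
                [0, 0, 1, 0]], dtype=float)
scores = random_walk_with_restart(adj, seeds=[0])
```

The scores decay with network distance from the annotated protein, which is how influence spreads beyond the immediate neighborhood.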

Markov Random Fields (MRF)

MRF models provide a statistical framework for protein function prediction. In an MRF, the probability that a protein has a specific function depends on two factors: its own inherent propensity (a prior probability) and the functions of its direct neighbors in the network. This dependency is modeled via an energy function, and the goal is to find the most probable joint assignment of functions to all unannotated proteins in the network. The standard approach involves using Gibbs sampling to estimate these probabilities iteratively [27] [28].

Advanced Implementation and Benchmarking

The Bayesian Markov Random Field (BMRF) Enhancement

A significant advancement in MRF methodology is the Bayesian Markov Random Field (BMRF), which addresses a critical flaw in the standard MRF approach (MRF-Deng). The original method performs parameter estimation using only annotated proteins, ignoring interactions with unannotated proteins. This leads to biased parameters and reduced prediction performance, especially when many proteins lack annotations [27] [28].

BMRF amends this by performing simultaneous estimation of model parameters and prediction of protein functions using a Bayesian approach. It models the joint posterior distribution of the parameters and unknown functional states, sampling from this distribution via a Markov Chain Monte Carlo (MCMC) algorithm. This effectively "averages across" the uncertainty of the unannotated proteins, leading to more accurate parameter estimates and, consequently, superior prediction performance [28].

Table 2: Performance Benchmark of Protein Function Prediction Methods

| Method | Mean AUC (across 90 GO terms) | Key Differentiator |

|---|---|---|

| Kernel Logistic Regression (KLR) | 0.8195 | Uses a diffusion kernel to expand protein neighborhoods |

| Bayesian MRF (BMRF) | 0.8137 | Joint parameter estimation and prediction via MCMC |

| Letovsky & Kasif (LK) | 0.7867 | Belief propagation for prediction |

| MRF-Deng | 0.7578 | Standard MRF with Gibbs sampling; ignores unannotated nodes during parameter estimation |

Performance benchmarks on a high-quality S. cerevisiae network with 1622 proteins show that BMRF outperforms its foundational methods (MRF-Deng and LK) and is competitive with the more computationally expensive Kernel Logistic Regression (KLR) [28].
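For readers reproducing this kind of benchmark, the sketch below shows how a mean AUC across GO terms can be computed from prediction scores. The AUC is calculated as the fraction of positive-negative pairs ranked correctly, with ties counted as one half; the scores and labels are hypothetical.

```python
def roc_auc(scores, labels):
    """AUC as the probability that a positive outranks a negative (ties count 0.5)."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

# Hypothetical held-out predictions for two GO terms over four proteins.
per_term = {
    "term_1": ([0.9, 0.8, 0.3, 0.1], [1, 0, 1, 0]),
    "term_2": ([0.7, 0.6, 0.2, 0.1], [1, 1, 0, 0]),
}

aucs = {term: roc_auc(s, y) for term, (s, y) in per_term.items()}
mean_auc = sum(aucs.values()) / len(aucs)
```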

Integrated Modern Frameworks

Recent state-of-the-art methods often integrate direct network-based principles with other data types and deep learning. For example, the GOHPro framework constructs a heterogeneous network by integrating a protein functional similarity network (built from domain profiles and modular complexes) with a Gene Ontology (GO) semantic similarity network. It then uses a network propagation algorithm, a graph-theoretic technique, to prioritize functions for unannotated proteins, demonstrating superior performance over existing methods [4].

Similarly, DPFunc is a deep learning-based method that uses domain information to guide the identification of functionally important regions in protein structures. While not a pure network method, it exemplifies the trend of combining multiple data sources and sophisticated algorithms for enhanced accuracy and interpretability [29].

Protocol for Bayesian Markov Random Field Analysis

Experimental Workflow

The following diagram illustrates the logical workflow and key components for implementing a Bayesian MRF analysis for protein function prediction.

Step-by-Step Procedure

Step 1: Data Preparation and Input

- Input: A protein-protein interaction (PPI) network and a set of known functional annotations (e.g., Gene Ontology terms) for a subset of proteins.

- Software Requirement: Implement the BMRF algorithm in a computational environment like R or Python. Custom code is typically required, based on the original methodology [27] [28].

- Action: Format the network into an adjacency matrix where entries indicate the presence or strength of an interaction. Organize functional annotations into a binary matrix where rows are proteins and columns are GO terms.
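The formatting in Step 1 can be sketched as follows. This is a minimal illustration using only the Python standard library; the function name `build_matrices`, the edge-list layout `(protein_a, protein_b, weight)`, and the toy identifiers are assumptions made for the example, not part of the BMRF methodology in [27] [28].

```python
def build_matrices(edges, annotations, go_terms):
    """Build a symmetric adjacency matrix and a binary protein-by-GO-term matrix.

    edges:       iterable of (protein_a, protein_b, weight) tuples (assumed layout)
    annotations: dict mapping protein -> set of GO term IDs (annotated proteins only)
    go_terms:    ordered list of GO term IDs used as matrix columns
    """
    proteins = sorted({p for a, b, _ in edges for p in (a, b)} | set(annotations))
    index = {p: i for i, p in enumerate(proteins)}
    n = len(proteins)

    # Undirected network: store each interaction weight in both directions.
    adjacency = [[0.0] * n for _ in range(n)]
    for a, b, weight in edges:
        i, j = index[a], index[b]
        adjacency[i][j] = adjacency[j][i] = weight

    # Rows are proteins, columns are GO terms.
    labels = [[1 if t in annotations.get(p, ()) else 0 for t in go_terms]
              for p in proteins]

    # A 0 in `labels` is ambiguous (unknown vs. known negative), so also record
    # which proteins carry any annotation at all.
    annotated = [p in annotations for p in proteins]
    return proteins, adjacency, labels, annotated


edges = [("P1", "P2", 1.0), ("P2", "P3", 0.7), ("P3", "P4", 1.0)]
annotations = {"P1": {"GO:0006412"}, "P2": {"GO:0006412", "GO:0005840"}}
go_terms = ["GO:0005840", "GO:0006412"]
proteins, adjacency, labels, annotated = build_matrices(edges, annotations, go_terms)
```

Keeping the `annotated` flags separate from the label matrix matters later, because the sampler must treat unannotated proteins as unknowns rather than as negatives.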

Step 2: Define the Bayesian MRF Model

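- Action: Specify the joint probabilistic model. For a given GO term, the conditional probability that protein i has the function, given the functional states of its network neighbors, is modeled as a logistic function of a linear log-odds term: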

P(Y_i = 1 | Y_j, j in N(i)) = σ( α + β_1 * n_i^(1) + β_0 * n_i^(0) )

where:

- Y_i is the functional state of protein i.

- N(i) is the set of network neighbors of protein i.

- σ is the logistic function.

- α is the baseline log-odds (prior parameter).

- n_i^(1) and n_i^(0) are the numbers of neighbors of i with and without the function, respectively.

- β_1 and β_0 are the interaction parameters quantifying the influence of neighbors.

- Key Differentiator: Unlike standard MRF, the parameters (α, β_1, β_0) and the unknown states Y_i are treated as random variables to be estimated jointly [28].
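As a concrete reading of the conditional model above, the following sketch evaluates the probability for a single protein. The function name, the dictionary-of-lists neighbor representation, and the parameter values are illustrative assumptions; only the formula itself comes from the text.

```python
import math


def conditional_prob(i, states, neighbors, alpha, beta1, beta0):
    """P(Y_i = 1 | neighbor states) under the logistic MRF conditional."""
    n1 = sum(1 for j in neighbors[i] if states[j] == 1)  # neighbors with the function
    n0 = sum(1 for j in neighbors[i] if states[j] == 0)  # neighbors without it
    log_odds = alpha + beta1 * n1 + beta0 * n0
    return 1.0 / (1.0 + math.exp(-log_odds))


# Protein 0 has three neighbors; two carry the function and one does not.
neighbors = {0: [1, 2, 3]}
states = [0, 1, 1, 0]
p = conditional_prob(0, states, neighbors, alpha=-1.0, beta1=1.0, beta0=-0.5)
# log-odds = -1.0 + 1.0 * 2 + (-0.5) * 1 = 0.5
```

With these hypothetical parameters, two positive neighbors outweigh the negative baseline and the single negative neighbor, so the probability lands slightly above one half.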

Step 3: Execute MCMC Sampling

- Action: Use an adaptive Markov Chain Monte Carlo (MCMC) algorithm to draw samples from the complex joint posterior distribution of all unknowns.

- Sub-step 3.1: Initialize all unknown functional states Y_i and model parameters randomly or with heuristic values.

- Sub-step 3.2: Iterate between the following two steps for a large number of cycles:

  - a. Sample Parameters: Conditioned on the current guess of all functional states (both known and unknown), sample new values for the parameters (α, β_1, β_0).

  - b. Sample Functional States: Conditioned on the current parameter values, sample new functional states for the unannotated proteins.

- Convergence Check: Monitor the MCMC chain for convergence using trace plots and diagnostic statistics like the Gelman-Rubin statistic [27] [28].
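The alternation in Step 3 can be sketched as below. This is a simplified illustration rather than the published BMRF sampler: it assumes a random-walk Metropolis update for the parameters scored by a pseudo-likelihood (the product of each protein's conditional), a wide Gaussian prior, and a fixed (non-adaptive) proposal step. All function names and default values are hypothetical.

```python
import math
import random


def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def log_sigmoid(z):
    """Numerically stable log of the logistic function."""
    return -math.log1p(math.exp(-z)) if z >= 0 else z - math.log1p(math.exp(z))


def log_odds(i, states, neighbors, params):
    alpha, beta1, beta0 = params
    n1 = sum(states[j] for j in neighbors[i])
    n0 = len(neighbors[i]) - n1
    return alpha + beta1 * n1 + beta0 * n0


def log_target(states, neighbors, params, prior_sd=10.0):
    """Assumed target: Gaussian log-prior plus log pseudo-likelihood."""
    total = -sum(p * p for p in params) / (2.0 * prior_sd ** 2)
    for i in range(len(states)):
        z = log_odds(i, states, neighbors, params)
        total += log_sigmoid(z) if states[i] == 1 else log_sigmoid(-z)
    return total


def run_sampler(neighbors, known, n_iter=2000, step=0.3, seed=0):
    """neighbors: dict index -> list of indices; known: dict index -> 0/1."""
    rng = random.Random(seed)
    n = len(neighbors)
    unknown = [i for i in range(n) if i not in known]
    # Sub-step 3.1: random initial states for unannotated proteins.
    states = [known[i] if i in known else rng.randint(0, 1) for i in range(n)]
    params = [0.0, 0.0, 0.0]
    current = log_target(states, neighbors, params)
    state_samples, param_samples = [], []
    for _ in range(n_iter):
        # (a) Sample parameters given all current states (Metropolis step).
        proposal = [p + rng.gauss(0.0, step) for p in params]
        candidate = log_target(states, neighbors, proposal)
        if rng.random() < math.exp(min(0.0, candidate - current)):
            params, current = proposal, candidate
        # (b) Sample states of unannotated proteins given the parameters (Gibbs step).
        for i in unknown:
            p_one = sigmoid(log_odds(i, states, neighbors, params))
            states[i] = 1 if rng.random() < p_one else 0
        current = log_target(states, neighbors, params)
        state_samples.append(list(states))
        param_samples.append(list(params))
    return state_samples, param_samples


# Toy network: two triangles joined by the edge 2-3; proteins 2 and 3 are unannotated.
neighbors = {0: [1, 2], 1: [0, 2], 2: [0, 1, 3], 3: [2, 4, 5], 4: [3, 5], 5: [3, 4]}
known = {0: 1, 1: 1, 4: 0, 5: 0}
state_samples, param_samples = run_sampler(neighbors, known, n_iter=500)
```

In practice, several chains started from different initial values would be run so that diagnostics such as the Gelman-Rubin statistic can be computed across chains.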

Step 4: Interpret Results and Output

- Action: After discarding an initial "burn-in" period and confirming convergence, use the remaining MCMC samples to make inferences.

- Output 1: The posterior mean probability of a protein having a specific GO term is calculated as the average of its sampled states across all post-burn-in iterations.

- Output 2: Proteins can be ranked by these posterior probabilities, and annotations are assigned above a chosen probability threshold (e.g., 0.5).
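The two outputs of Step 4 reduce to simple averages over the retained samples. The sketch below assumes the samples are stored as a list of 0/1 state vectors, one per MCMC iteration; the function names and the toy samples are illustrative only.

```python
def posterior_means(state_samples, burn_in):
    """Average each protein's sampled state over the post-burn-in iterations."""
    kept = state_samples[burn_in:]
    if not kept:
        raise ValueError("burn_in discards every sample")
    n = len(kept[0])
    return [sum(sample[i] for sample in kept) / len(kept) for i in range(n)]


def call_annotations(means, proteins, threshold=0.5):
    """Rank proteins by posterior probability and keep those above the threshold."""
    ranked = sorted(zip(proteins, means), key=lambda pm: pm[1], reverse=True)
    return [(protein, prob) for protein, prob in ranked if prob > threshold]


# Five toy iterations for three proteins; the first iteration is treated as burn-in.
samples = [[1, 0, 0], [1, 1, 0], [1, 1, 0], [1, 0, 1], [1, 1, 0]]
means = posterior_means(samples, burn_in=1)
calls = call_annotations(means, ["P1", "P2", "P3"])
```

Here the retained four iterations give posterior means of 1.0, 0.75, and 0.25, so P1 and P2 pass the 0.5 threshold and P3 does not.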

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Solutions

| Item Name | Type | Function in Protocol | Example/Note |
|---|---|---|---|
| Protein-Protein Interaction (PPI) Data | Data | Provides the foundational network structure for all analyses. | From databases like STRING, BioGRID, or IntAct. |
| Gene Ontology (GO) Annotations | Data | Provides the functional labels to be propagated through the network. | Curated annotations from UniProt-GOA or model organism databases. |
| MCMC Sampling Algorithm | Software/Algorithm | The core computational engine for performing Bayesian inference in BMRF. | Custom implementations in R/Python using Gibbs or Metropolis-Hastings sampling. |
| GO Semantic Similarity Network | Data/Construct | Used in advanced frameworks like GOHPro to integrate functional hierarchies. | Calculated based on the overlap and relationships between GO terms [4]. |
| Protein Domain Profiles | Data/Feature | Used to construct functional similarity networks, augmenting physical PPI data. | Sourced from Pfam database; indicates functional modules [4]. |
| Validation Dataset (e.g., CAFA) | Data | Benchmark for objectively assessing prediction performance. | Critical Assessment of Functional Annotation (CAFA) provides standardized benchmarks [29] [30]. |

Within the framework of network-based protein function prediction, computational methods are broadly categorized into direct annotation schemes and module-assisted schemes [31]. Direct methods propagate functional information to unannotated proteins directly from their neighbors in the protein-protein interaction (PPI) network. In contrast, module-assisted schemes involve a two-stage process: first, identifying densely connected modules within the complex PPI network, and second, performing a collective functional annotation of all proteins within each discovered module [31]. This approach is grounded in the biological principle that molecular networks are organized into functional modules—groups of proteins that work together in a coordinated fashion to carry out specific cellular processes [32]. These modules can represent stable protein complexes or dynamic functional units, such as signaling cascades [32]. By leveraging this modular architecture, module-assisted schemes provide a powerful strategy for the collaborative annotation of protein function on a systems level.

Key Concepts and Biological Rationale

Defining Functional Modules in Networks

In the context of PPI networks, a functional module is typically defined as a set of proteins that exhibit a high density of interactions within the set and a lower density of interactions with the rest of the network [32]. This topological structure reflects their cooperative biological function. There are two primary types of cellular modules that can be discovered:

- Protein Complexes: Multimolecular machines where proteins interact simultaneously in the same location (e.g., the anaphase-promoting complex, RNA splicing machinery) [32].

- Dynamic Functional Units: Groups of proteins that participate in the same cellular process but may not interact all at once or in the same place (e.g., signaling pathways, cell-cycle regulation modules) [32].

The fundamental principle behind module-assisted annotation is that proteins within the same module are functionally related. Therefore, annotating an uncharacterized protein can be achieved by transferring functional information from its well-annotated module partners. This "guilt-by-association" principle within modules often leads to more robust and accurate predictions compared to considering only immediate network neighbors, as it incorporates information from a broader, yet functionally coherent, network context [31].

Quantitative Measures for Module Identification

The process of identifying modules relies on graph-theoretic measures to evaluate the connectivity and significance of candidate subnets. The table below summarizes the key metrics used.

Table 1: Key Quantitative Measures for Module Identification

| Measure | Formula | Interpretation |
|---|---|---|
| Interaction Density (Q) | $Q = \frac{2m}{n(n-1)}$ | Measures the fraction of observed interactions (m) out of all possible interactions in a module of size n. Ranges from 0 to 1 (fully connected) [32]. |
| P-value | $P(n, m)$ | Probability of finding a module with n proteins and m or more interactions in a comparable random network. Indicates statistical significance [32]. |
| E-value | $E = P \times \Omega_n$ | Expected number of modules with n proteins and m or more interactions, accounting for the huge number of possible subnets ($\Omega_n$) [32]. |
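A small worked example may help fix the scale of these measures. The sketch below computes Q directly from its definition. For the E-value it assumes that the number of possible subnets of size n is the binomial coefficient C(N, n) for a network of N proteins; that counting choice, the function names, and the example numbers are assumptions of this illustration, not values taken from [32].

```python
import math


def interaction_density(n, m):
    """Q = 2m / (n (n - 1)): fraction of possible pairs that actually interact."""
    if n < 2:
        raise ValueError("a module needs at least two proteins")
    return 2.0 * m / (n * (n - 1))


def e_value(p_value, network_size, n):
    """E = P * Omega_n, with Omega_n assumed to be C(network_size, n)."""
    return p_value * math.comb(network_size, n)


q = interaction_density(n=5, m=8)                    # 16 / 20 = 0.8
e = e_value(p_value=1e-9, network_size=100, n=5)     # 1e-9 * 75,287,520
```

Even a P-value of 1e-9 yields an E-value of roughly 0.075 in a 100-protein network, which shows why the correction for the number of candidate subnets matters.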

Application Notes: Experimental Protocols and Workflows

Protocol for Module-Assisted Function Prediction

The following workflow provides a detailed, step-by-step protocol for predicting protein function using a module-assisted scheme.

Step 1: Network Preprocessing and Data Integration

- Obtain a PPI network from a reliable database such as DIP (Database of Interacting Proteins) or STRING [33] [34].

- Integrate functional annotations from structured ontologies, primarily the Gene Ontology (GO), which provides standardized terms for Biological Process, Molecular Function, and Cellular Component [34].

- Clean the network by removing proteins that lack any interaction data and standardize protein identifiers to ensure consistency.

Step 2: Identification of Functional Modules

- Apply one or more clustering algorithms to the PPI network to identify candidate modules. The choice of algorithm depends on the research goal and network characteristics.

- Clique Enumeration: Identifies all fully connected subgraphs (cliques). Effective for finding stable cores of complexes [32].

- Superparamagnetic Clustering (SPC): A physics-inspired method that assigns a "spin" to each node. Correlated fluctuations of spins identify nodes belonging to a highly connected cluster [32].

- Monte Carlo (MC) Optimization: An optimization procedure that seeks to maximize the interaction density (Q) of a candidate module of a given size n [32].

- Subject the resulting candidate modules to statistical significance testing. Compare the observed connectivity (m) against a distribution generated from 1000 randomized networks that preserve the original node degrees. Retain only modules with a P-value < 0.05 or a sufficiently low E-value [32].
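The significance test in the last bullet can be approximated with a degree-preserving edge-swap randomization, sketched here with the standard library only. Two simplifications are assumed: the test scores a fixed set of proteins in each randomized network (rather than searching every randomized network for its best module), and the empirical P-value uses the common add-one correction. Function names, the number of swap attempts, and the toy network are hypothetical, and the input edge list is assumed to contain no duplicates or self-loops.

```python
import random


def count_internal_edges(edges, module):
    """Number of interactions (m) with both endpoints inside the module."""
    members = set(module)
    return sum(1 for a, b in edges if a in members and b in members)


def degree_preserving_shuffle(edges, n_swaps, rng):
    """Rewire edges by double-edge swaps so that every node keeps its degree."""
    edge_list = [tuple(sorted(e)) for e in edges]
    edge_set = set(edge_list)
    for _ in range(n_swaps):
        i, j = rng.sample(range(len(edge_list)), 2)
        old1, old2 = edge_list[i], edge_list[j]
        (a, b), (c, d) = old1, old2
        if rng.random() < 0.5:          # try both orientations of the second edge
            c, d = d, c
        new1, new2 = tuple(sorted((a, d))), tuple(sorted((c, b)))
        # Reject swaps that would create a self-loop or a duplicate edge.
        if a == d or c == b or new1 in edge_set or new2 in edge_set:
            continue
        edge_set -= {old1, old2}
        edge_set |= {new1, new2}
        edge_list[i], edge_list[j] = new1, new2
    return edge_list


def empirical_p_value(edges, module, n_random=1000, seed=0):
    """Fraction of randomized networks with at least the observed connectivity."""
    rng = random.Random(seed)
    observed = count_internal_edges(edges, module)
    hits = 0
    for _ in range(n_random):
        shuffled = degree_preserving_shuffle(edges, 10 * len(edges), rng)
        if count_internal_edges(shuffled, module) >= observed:
            hits += 1
    return (hits + 1) / (n_random + 1)


# Toy network: a 4-clique (A-D) attached to a sparser region (E-J).
edges = [("A", "B"), ("A", "C"), ("A", "D"), ("B", "C"), ("B", "D"), ("C", "D"),
         ("D", "E"), ("E", "F"), ("F", "G"), ("G", "H"), ("H", "I"), ("I", "J"),
         ("J", "E"), ("F", "I"), ("G", "J")]
module = ["A", "B", "C", "D"]
p_value = empirical_p_value(edges, module, n_random=200)
```

Because the smallest attainable value is 1 / (n_random + 1), the number of randomizations sets the resolution of the test; 1000 randomizations cannot resolve P-values below about 0.001.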

Step 3: Collaborative Functional Annotation

- For each statistically significant module, compile the set of all known GO annotations associated with its member proteins [35].

- Perform an annotation enrichment analysis for each module. This typically involves a hypergeometric test (or similar statistical test) to determine which GO terms are significantly over-represented in the module compared to their frequency in the entire proteome [35].

- The functional annotation is a collaborative effort: the known functions of a subset of proteins within a module provide strong evidence for annotating all members, including uncharacterized ones. Assign the top significantly enriched functions to the entire module.
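The enrichment step can be sketched with an exact hypergeometric tail computed from binomial coefficients. The Bonferroni correction across the terms tested in a module is an assumption added for this example (the protocol only calls for a hypergeometric or similar test), and the function names, GO labels, and toy proteome are hypothetical.

```python
import math


def hypergeom_sf(k, N, K, n):
    """P(X >= k) when n proteins are drawn from N, of which K carry the term."""
    upper = min(K, n)
    favorable = sum(math.comb(K, x) * math.comb(N - K, n - x)
                    for x in range(k, upper + 1))
    return favorable / math.comb(N, n)


def enrich_module(module, annotations, proteome_size, alpha=0.05):
    """Return (term, count_in_module, adjusted_p) for significantly enriched terms."""
    proteome_counts = {}
    for terms in annotations.values():
        for t in terms:
            proteome_counts[t] = proteome_counts.get(t, 0) + 1
    module_counts = {}
    for protein in module:
        for t in annotations.get(protein, ()):
            module_counts[t] = module_counts.get(t, 0) + 1

    n_tests = len(module_counts)
    significant = []
    for term, k in module_counts.items():
        p = hypergeom_sf(k, proteome_size, proteome_counts[term], len(module))
        adjusted = min(1.0, p * n_tests)   # Bonferroni correction (assumed choice)
        if adjusted < alpha:
            significant.append((term, k, adjusted))
    return sorted(significant, key=lambda r: r[2])


# Toy proteome of 20 proteins: GO:A is rare (4 carriers), GO:B is common (10 carriers).
annotations = {"P1": {"GO:A", "GO:B"}, "P2": {"GO:A"}, "P3": {"GO:A"}, "P10": {"GO:A"}}
annotations.update({f"P{i}": {"GO:B"} for i in range(11, 20)})
module = ["P1", "P2", "P3", "P4"]          # P4 is uncharacterized
enriched = enrich_module(module, annotations, proteome_size=20)
# The enriched terms become the predicted functions of every member, including P4.
predicted = {protein: [term for term, _, _ in enriched] for protein in module}
```

In this toy case only GO:A survives the correction, since three of its four carriers sit inside a four-protein module, while the single GO:B member is unremarkable for a term carried by half the proteome.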

Step 4: Validation and Interpretation

- Biologically validate the predicted module functions by reviewing the scientific literature for supporting evidence.

- Technically validate predictions using cross-validation techniques; for instance, hide the annotations of a subset of proteins, run the prediction process, and then check the recovery rate of the held-out functions.
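The hold-out check described above can be sketched as a small harness that hides a fraction of annotated proteins and measures how many hidden protein-term pairs a predictor recovers. The `predict` callable stands in for whatever module-assisted pipeline is being evaluated; its signature, the 20% hold-out fraction, and the toy predictors are assumptions of this example.

```python
import random


def holdout_recovery(annotations, predict, fraction=0.2, seed=0):
    """Hide annotations for a random subset of proteins and report the recovery rate.

    predict(visible_annotations, held_out_proteins) must return a dict mapping
    each held-out protein to an iterable of predicted GO terms.
    """
    rng = random.Random(seed)
    annotated = sorted(p for p, terms in annotations.items() if terms)
    if not annotated:
        raise ValueError("no annotated proteins to hold out")
    n_hidden = max(1, int(fraction * len(annotated)))
    held_out = set(rng.sample(annotated, n_hidden))

    visible = {p: t for p, t in annotations.items() if p not in held_out}
    predicted = predict(visible, held_out)

    hidden_pairs = {(p, t) for p in held_out for t in annotations[p]}
    recovered = {(p, t) for p in held_out for t in predicted.get(p, ())
                 if (p, t) in hidden_pairs}
    return len(recovered) / len(hidden_pairs)


# Toy check with two trivial predictors.
toy = {f"P{i}": {"GO:A"} for i in range(10)}
always_a = lambda visible, held_out: {p: ["GO:A"] for p in held_out}
predicts_nothing = lambda visible, held_out: {}
best_case = holdout_recovery(toy, always_a)
worst_case = holdout_recovery(toy, predicts_nothing)
```

Repeating the procedure over several seeds, or over disjoint folds, gives a spread of recovery rates rather than a single point estimate.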

Figure: Workflow for module-assisted functional annotation.

Successful implementation of module-assisted annotation relies on a suite of computational tools and data resources.

Table 2: Research Reagent Solutions for Module-Assisted Annotation

| Tool / Resource | Type | Primary Function | Access |
|---|---|---|---|
| STRING | Database | Provides comprehensive PPI networks, including both experimental and predicted interactions, for a vast number of organisms [33]. | Web interface, API |
| DIP (Database of Interacting Proteins) | Database | A curated repository of experimentally determined PPIs, often used as a core dataset for method development [34]. | Downloadable files |
| Gene Ontology (GO) | Knowledge Base | Provides a controlled vocabulary of functional terms and their relationships, essential for annotation and enrichment analysis [34]. | Web interface, OBO files |
| Cytoscape | Software Platform | An open-source platform for visualizing molecular interaction networks and integrating with other data. Essential for visualizing discovered modules [35]. | Desktop application |
| BiNGO/ClueGO | Software Tool | Cytoscape apps specifically designed to perform statistical enrichment analysis of GO terms on a network or a list of genes/proteins [35]. | Cytoscape plugin |

Discussion

Module-assisted schemes offer a powerful paradigm for elucidating protein function by leveraging the inherent modularity of biological systems. The primary advantage of this approach is its ability to provide context-specific functional hypotheses. By considering a protein within its functional module, predictions move beyond generic functional transfer from immediate neighbors to a more systems-level understanding of the protein's role in a coordinated cellular process [32]. Furthermore, methods that rely on multibody interactions within modules have been shown to be robust to false-positive interactions that are common in high-throughput PPI screens, as random false interactions are unlikely to form coherent, densely connected subgraphs [32].

However, several challenges remain. The performance and biological relevance of the identified modules are highly dependent on the choice of clustering algorithm and its parameters [32]. Moreover, distinguishing between different types of modules, such as stable complexes and dynamic functional units, from network topology alone remains difficult and often requires additional biological context [32]. Future directions in this field point towards the integration of heterogeneous data sources, such as gene expression profiles or genetic interaction data, to refine module detection and annotation [34]. Despite these challenges, module-assisted schemes for collaborative annotation stand as a cornerstone in the computational toolbox for translating network biology into functional insight.