Network-Based Biomarkers: Unlocking Predictive Power in Precision Oncology

Abstract

This article explores the transformative role of network-based biomarkers in predicting treatment response and patient outcomes in complex diseases like cancer. Moving beyond single-molecule markers, we examine how integrative approaches that leverage protein-protein interaction networks, signaling pathways, and multi-omics data provide superior predictive power. Covering foundational concepts, advanced methodologies like graph neural networks and machine learning frameworks, implementation challenges, and rigorous validation strategies, this resource offers researchers and drug development professionals a comprehensive guide to the current landscape and future potential of network-driven biomarker discovery for precision medicine.

The Paradigm Shift: From Single Molecules to Network Biology in Biomarker Discovery

The rise of precision medicine has underscored the limitation of single-molecule biomarkers for complex diseases, which are often caused by the malfunction of interconnected biological networks rather than individual genes or proteins. Network-based biomarkers represent a paradigm shift, defined as sets of biomolecules and their interactions that collectively serve as measurable indicators of biological processes, pathogenic states, or therapeutic responses [1] [2]. This approach moves beyond individual components to capture the dynamic interactions within regulatory networks, protein-protein interactions (PPIs), and signaling pathways that underlie disease heterogeneity and drug response variability [3] [2]. By leveraging systems-level properties, network biomarkers offer enhanced predictive power for patient stratification, prognosis, and treatment selection in oncology, autoimmune diseases, and other complex conditions [4] [3].

The core hypothesis is that the therapeutic effect of a drug propagates through a PPI network to reverse disease states. Therefore, proteins topologically close to drug targets or within dysregulated disease modules are strong candidates for predictive biomarkers [3]. This framework integrates three critical data types: (1) therapy-targeted proteins, (2) disease-specific molecular signatures, and (3) the underlying human interactome, enabling the discovery of biomarkers with mechanistic links to both the disease and the intervention [3].

Key Methodological Frameworks and Experimental Protocols

The MarkerPredict Framework for Predictive Biomarkers in Oncology

MarkerPredict is a computational framework that integrates network motifs and protein disorder to predict biomarkers for targeted cancer therapies [4].

- Experimental Protocol:

- Network Construction: Utilize three signed signaling networks with distinct topological characteristics (e.g., Human Cancer Signaling Network (CSN), SIGNOR, ReactomeFI) [4].

- Motif and Protein Disorder Analysis: Identify three-nodal network motifs (triangles) using the FANMOD tool. Annotate proteins using intrinsic disorder databases (DisProt) and prediction methods (AlphaFold, IUPred) [4].

- Training Set Curation: Establish positive and negative training sets from literature evidence. Positive controls (class 1) are protein pairs where one is an established predictive biomarker for a drug targeting its partner, annotated using the CIViCmine text-mining database [4].

- Machine Learning Classification: Train multiple Random Forest and XGBoost models on network-specific and combined data, incorporating topological features and protein disorder annotations. Optimize hyperparameters via competitive random halving [4].

- Biomarker Scoring: Calculate a Biomarker Probability Score (BPS) as a normalized summative rank across all models to classify and rank potential predictive biomarker-target pairs [4].
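The scoring step can be illustrated with a small sketch. The exact normalization behind the BPS is not spelled out here, so the version below is an assumption: each model ranks all pairs by its predicted probability, the ranks are summed across models, and the sum is divided by the largest possible rank sum. The function name, the model names, and the pair labels are hypothetical, and ties are not handled specially.

```python
def biomarker_probability_scores(model_probs):
    """Normalized summative rank across models (illustrative assumption).

    model_probs: dict mapping model name -> dict mapping pair -> probability.
    Returns a dict mapping pair -> score in (0, 1]; higher means more models
    rank the pair near the top.
    """
    pairs = sorted(next(iter(model_probs.values())))
    rank_sum = {p: 0 for p in pairs}
    for probs in model_probs.values():
        # Rank 1 goes to the lowest probability, rank n to the highest.
        ordered = sorted(pairs, key=lambda p: probs[p])
        for rank, pair in enumerate(ordered, start=1):
            rank_sum[pair] += rank
    max_sum = len(pairs) * len(model_probs)
    return {p: rank_sum[p] / max_sum for p in pairs}


# Hypothetical predicted probabilities from two models for three pairs.
model_probs = {
    "random_forest": {"pair_1": 0.9, "pair_2": 0.4, "pair_3": 0.1},
    "xgboost": {"pair_1": 0.8, "pair_2": 0.6, "pair_3": 0.2},
}
bps = biomarker_probability_scores(model_probs)
ranking = sorted(bps, key=bps.get, reverse=True)
print(ranking)
```

A rank-based score of this kind is insensitive to differences in probability calibration between models, which is one reason to aggregate ranks rather than raw probabilities.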

Table 1: Performance Metrics of MarkerPredict Machine Learning Models (LOOCV) [4]

| Signaling Network | Machine Learning Model | Accuracy Range | Key Predictive Features |
|---|---|---|---|
| Combined Networks | XGBoost | 0.89 - 0.96 | Network Motifs, Protein Disorder |
| SIGNOR | Random Forest | 0.75 - 0.89 | Triangle Participation, Link Sign |
| ReactomeFI | XGBoost | 0.81 - 0.93 | Network Centrality, Protein Disorder |
| CSN | Random Forest | 0.70 - 0.85 | Unbalanced Triangles, Interaction Type |

The PRoBeNet Framework for Predicting Treatment Response

PRoBeNet is a network medicine framework designed to discover biomarkers that predict patient response to therapy, particularly in complex autoimmune diseases [3].

- Experimental Protocol:

- Define Inputs:

  - Therapeutic Target: The protein or proteins targeted by the drug of interest.

  - Disease Signature: A set of genes or proteins differentially expressed in the disease state, typically derived from transcriptomic data of patient tissues.

  - Interactome: A comprehensive human PPI network (e.g., from STRING, BioGRID) [3].

- Network Propagation: Model the theoretical therapeutic effect as a signal that propagates through the interactome from the drug target(s). Algorithms such as random walk with restart are used to identify network nodes most influenced by the target [3].

- Biomarker Prioritization: Integrate the propagation results with the disease signature. Proteins that are both topologically close to the drug target and part of the disease-associated module are prioritized as candidate biomarkers [3].

- Predictive Model Building: Use the expression levels of the prioritized biomarker genes in patient samples to train machine learning classifiers (e.g., logistic regression, support vector machines) to predict responder vs. non-responder status [3].

- Validation: Validate predictive power using retrospective gene-expression data from patient cohorts and, if possible, prospective validation in clinical samples [3].

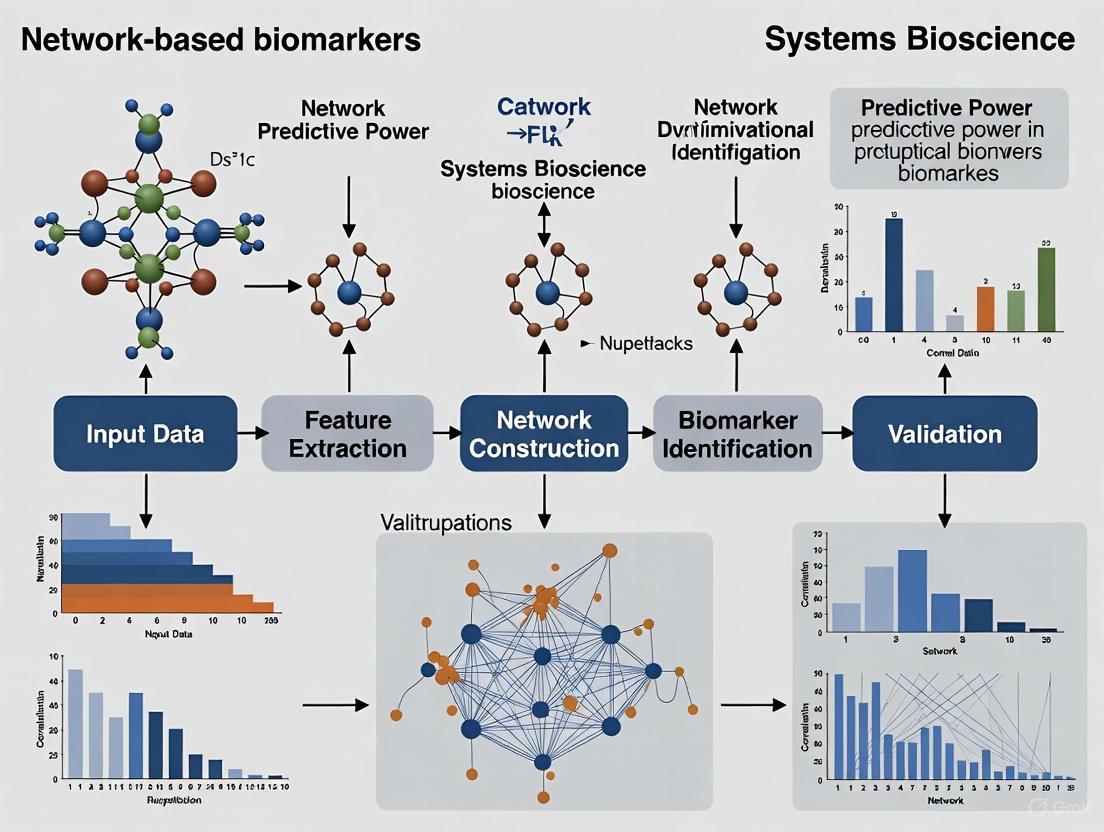

Figure 1: PRoBeNet Workflow for Treatment-Response Biomarker Discovery.
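The propagation and prioritization steps of the protocol can be sketched with a plain random walk with restart. This is a minimal illustration, not the PRoBeNet implementation: the toy interactome, the node names, the restart probability of 0.3, and the function name are all assumptions made for the example.

```python
def random_walk_with_restart(adjacency, seeds, restart=0.3, tol=1e-10, max_iter=1000):
    """Propagate a signal from seed nodes over an undirected graph.

    adjacency: dict mapping each node to the set of its neighbours
               (every node is assumed to have at least one neighbour).
    seeds:     set of drug-target nodes where the walker restarts.
    """
    nodes = list(adjacency)
    start = {n: (1.0 / len(seeds) if n in seeds else 0.0) for n in nodes}
    prob = dict(start)
    for _ in range(max_iter):
        # With probability `restart` the walker jumps back to a seed ...
        nxt = {n: restart * start[n] for n in nodes}
        # ... otherwise it moves to a uniformly chosen neighbour.
        for n in nodes:
            share = (1.0 - restart) * prob[n] / len(adjacency[n])
            for m in adjacency[n]:
                nxt[m] += share
        change = sum(abs(nxt[n] - prob[n]) for n in nodes)
        prob = nxt
        if change < tol:
            break
    return prob


# Hypothetical toy interactome; node names are placeholders, not real proteins.
interactome = {
    "TARGET": {"A", "B"},
    "A": {"TARGET", "B", "C"},
    "B": {"TARGET", "A"},
    "C": {"A", "D"},
    "D": {"C"},
}
disease_signature = {"A", "C", "D"}

scores = random_walk_with_restart(interactome, seeds={"TARGET"})
# Keep only disease-signature proteins and rank them by propagated signal.
candidates = sorted(disease_signature, key=lambda n: scores[n], reverse=True)
print(candidates)
```

Proteins that are both in the disease signature and close to the target receive the highest scores; their expression values would then serve as classifier features in the model-building step.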

Experimental Validation and Quality Control

Biomarker Toolkit for Clinical Translation

A major challenge in biomarker development is the transition from discovery to clinical use. The Biomarker Toolkit is an evidence-based guideline designed to evaluate and promote the clinical potential of biomarkers [5]. It provides a checklist of attributes critical for success, grouped into four categories:

- Rationale: Justification of the unmet clinical need and pre-specified hypothesis [5].

- Analytical Validity: Ensures the accuracy, precision, reproducibility, and reliability of the biomarker assay. This includes detailed specifications on biospecimen matrix, collection, storage, and assay validation [5].

- Clinical Validity: Establishes the biomarker's ability to correctly identify a clinical state. This involves study design, patient eligibility, blinding, statistical modeling, and demonstration of sensitivity and specificity [5].

- Clinical Utility: Demonstrates the biomarker's usefulness in informing clinical decision-making, including cost-effectiveness, ethical considerations, feasibility of implementation, and approval by relevant authorities [5].

Applying this toolkit as a scoring system during research and development can help identify biomarkers with the highest promise for clinical adoption [5].

Table 2: Essential Research Reagent Solutions for Network Biomarker Studies

| Reagent / Resource | Function in Protocol | Example Sources / Databases |
|---|---|---|
| Protein-Protein Interaction Network | Provides the scaffold for network analysis and propagation models. | STRING, BioGRID, Human Cancer Signaling Network (CSN) [4] [3] |
| Signaling Network Database | Supplies signed, directed interactions for motif analysis in specific pathways. | SIGNOR, ReactomeFI [4] |
| Intrinsic Disorder Database | Annotates proteins with unstructured regions, a feature linked to biomarker potential. | DisProt, IUPred, AlphaFold DB [4] |
| Biomarker Annotation Database | Provides literature-curated evidence on known biomarkers for training sets. | CIViCmine [4] |
| Gene Expression Omnibus (GEO) | Source of patient-derived transcriptomic data for disease signature discovery and validation. | Public Repository |
| Machine Learning Library | Implementation of classification algorithms (XGBoost, Random Forest) for model building. | Scikit-learn, XGBoost (Python/R) [4] |

Advanced Concepts: Dynamic Network Biomarkers

While static network biomarkers are powerful, Dynamic Network Biomarkers (DNBs) represent a further refinement by capturing time-dependent alterations in biomarker interactions [2]. DNBs are particularly valuable for detecting the "pre-disease state," a critical transition period before the clinical onset of a complex disease [2].

The core principle of DNBs is that as a system approaches a critical transition, the molecular group (subnetwork) associated with the impending shift will exhibit three key dynamic properties:

- A sharp rise in the standard deviation of its constituent molecules.

- A sharp rise in the correlation between these molecules.

- A sharp decrease in the correlation between this molecule group and other molecules in the network [2].

Monitoring these statistical properties in longitudinal high-throughput data (e.g., repeated transcriptomic or proteomic measurements) can provide an early-warning signal for disease initiation, enabling preventative interventions.

Figure 2: DNB Concept for Early Disease Detection.
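The three properties listed above are often folded into a single composite index in the DNB literature: the mean standard deviation inside the candidate group, multiplied by the mean absolute correlation inside the group, divided by the mean absolute correlation between the group and the remaining molecules. The sketch below assumes that form; the gene names and expression values are invented for illustration, and the small `eps` term is only a guard against division by zero.

```python
from itertools import combinations
from statistics import mean, stdev


def pearson(x, y):
    """Pearson correlation of two equally long sequences."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sum((a - mx) ** 2 for a in x) ** 0.5
    sy = sum((b - my) ** 2 for b in y) ** 0.5
    return cov / (sx * sy)


def dnb_index(expr, group, eps=1e-9):
    """Composite index: SD_in * |PCC_in| / |PCC_out| (assumed form).

    expr:  dict mapping gene -> expression values across samples at one time point.
    group: list of candidate DNB genes (at least two).
    """
    others = [g for g in expr if g not in group]
    sd_in = mean(stdev(expr[g]) for g in group)
    pcc_in = mean(abs(pearson(expr[a], expr[b])) for a, b in combinations(group, 2))
    pcc_out = mean(abs(pearson(expr[a], expr[b])) for a in group for b in others)
    return sd_in * pcc_in / (pcc_out + eps)


# Invented expression values for two time points (five samples each).
baseline = {
    "g1": [1.0, 1.1, 0.9, 1.0, 1.05],
    "g2": [2.0, 1.95, 2.05, 2.1, 1.9],
    "other": [3.0, 3.2, 2.8, 3.0, 3.1],
}
pre_transition = {
    "g1": [1.0, 2.0, 0.2, 1.5, 0.4],
    "g2": [2.1, 3.0, 1.1, 2.6, 1.4],
    "other": [3.0, 2.9, 3.1, 3.1, 2.9],
}
group = ["g1", "g2"]
index_baseline = dnb_index(baseline, group)
index_pre = dnb_index(pre_transition, group)
print(index_baseline, index_pre)
```

In the invented data the group becomes more variable, more tightly correlated internally, and less correlated with the outside gene, so the index rises sharply at the second time point. That rise is the kind of early-warning signal the DNB concept looks for.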

Network-based biomarkers represent a powerful systems-level approach that transcends the limitations of single-molecule markers. Frameworks like MarkerPredict and PRoBeNet, which integrate interactome data, disease signatures, and machine learning, demonstrate robust performance in identifying biomarkers predictive of therapy response in cancer and complex autoimmune diseases. The application of validation tools like the Biomarker Toolkit and the exploration of dynamic changes through DNBs are critical steps toward translating these discoveries into clinically actionable assays that can truly personalize patient care.

The transition from traditional, reductionist biomarker discovery to a network-based paradigm represents a fundamental shift in precision oncology. Traditional methods often evaluate biomarkers in isolation, overlooking the complex biological systems in which they operate [6]. This approach can miss critical interactions and fail to explain why many statistically significant biomarkers stall in clinical translation [5]. In contrast, network-based frameworks explicitly incorporate the topological properties of biological systems, recognizing that a protein's position and connectivity within molecular networks significantly influence its potential as a predictive biomarker [7]. This paradigm operates on the principle that disease phenotypes rarely arise from single gene defects but rather from perturbations within complex interaction networks [6] [3]. The structural and dynamic properties of these networks therefore provide a powerful lens for identifying biomarkers with greater biological relevance and clinical predictive power.

Network Topology Concepts in Biomarker Discovery

Key Topological Features

The predictive potential of a biomolecule is profoundly shaped by its structural role within biological networks. Several key topological features have emerged as critical determinants:

- Network Motifs: Small, recurring circuit patterns within larger networks serve as fundamental regulatory units. Specifically, three-nodal triangles—fully connected triplets—function as information processing hotspots. Proteins embedded within these motifs, particularly those involving drug targets, demonstrate significantly stronger co-regulation and are enriched for predictive biomarker potential [7].

- Hub and Bottleneck Positions: Proteins occupying highly connected central positions (hubs) or those connecting disparate network modules (bottlenecks) often control essential biological processes and information flow. Their strategic placement makes them sensitive indicators of network perturbation, though their essentiality can sometimes limit therapeutic utility [8].

- Dynamic Network Biomarkers (DNBs): Rather than static entities, DNBs capture temporal rewiring in gene regulatory networks across disease states. Genes exhibiting significant shifts in their regulatory interactions during critical transitions (e.g., from normal to pre-disease states) offer high predictive power for impending pathological shifts [8].

The Special Case of Intrinsically Disordered Proteins (IDPs)

Intrinsically disordered proteins (IDPs), which lack stable tertiary structures, exemplify the link between molecular characteristics and network topology. System-level analyses reveal that IDPs are significantly enriched in triangular network motifs with oncotherapeutic targets [7]. Their structural flexibility allows them to act as flexible connectors, facilitating new interactions and integrating signals across multiple pathways. This topological role, combined with their prevalence in cancer signaling, makes them compelling candidates for predictive biomarker development [7].

Quantitative Frameworks and Supporting Data

Performance Metrics of Network-Based Tools

Table 1: Comparative performance of network-based biomarker discovery tools.

| Tool Name | Underlying Methodology | Network Data Used | Reported Performance |
|---|---|---|---|
| MarkerPredict [7] | Random Forest, XGBoost on network motifs and protein disorder | Human Cancer Signaling Network (CSN), SIGNOR, ReactomeFI | LOOCV accuracy: 0.7–0.96; Identified 2084 potential predictive biomarkers |
| TransMarker [8] | Graph Attention Networks, Gromov-Wasserstein optimal transport | Prior interaction data integrated with state-specific single-cell expression | Outperforms existing multilayer network ranking in classification accuracy and robustness |
| PRoBeNet [3] | Network propagation on the human interactome | Protein-protein interaction network, disease molecular signatures | Machine learning models using its biomarkers significantly outperform models using all genes |
| NetRank [9] | Random surfer model (PageRank-inspired) | STRINGdb PPI network or WGCNA co-expression networks | AUC >90% for segregating 16/19 cancer types in TCGA; Breast cancer AUC: 93% |

Statistical Evidence for Network Topology Relevance

Table 2: Key quantitative findings establishing the biological rationale.

| Finding | Supporting Data | Biological Implication |
|---|---|---|
| IDP Enrichment in Motifs [7] | IDPs are significantly overrepresented in triangles with drug targets (p-value < 0.05) across CSN, SIGNOR, and ReactomeFI networks. | Close regulatory connection with targets enhances predictive value for therapy response. |
| IDPs as Cancer Biomarkers [7] | >86% of IDPs found in network triangles were annotated as prognostic biomarkers in the CIViCmine database. | Intrinsic structural properties are leveraged by networks for critical signaling roles. |
| Compact Biomarker Signatures [9] | Top 100 proteins selected by NetRank achieved 93% AUC in segregating breast cancer from other cancers. | Network-prioritized gene sets are highly interpretable and non-redundant. |

Experimental Protocols and Application Notes

Protocol 1: Identifying Biomarkers via Network Motifs and Protein Disorder

Purpose: To identify predictive biomarkers for targeted cancer therapeutics by analyzing network motifs and integrating intrinsic protein disorder.

Materials & Reagents:

- Signaling Networks: Human Cancer Signaling Network (CSN), SIGNOR, or ReactomeFI data.

- IDP Databases: DisProt, AlphaFold (pLDDT < 50), IUPred (score > 0.5).

- Biomarker Annotation: CIViCmine database for known clinical associations.

- Software: FANMOD for network motif detection; Custom scripts (e.g., MarkerPredict GitHub repository).

Procedure:

- Network Motif Identification: Use FANMOD to scan selected signaling networks for all three-node motifs. Extract triangles (fully connected three-node subgraphs) for subsequent analysis [7].

- Protein Annotation Overlay: Map known oncotherapeutic targets and proteins from IDP databases onto the network. Identify all "IDP-target pairs" — triangles containing both an IDP and a drug target [7].

- Training Set Construction:

  - Positive Controls: Manually review literature (e.g., via CIViCmine) to identify proteins established as predictive biomarkers for a drug targeting their triangle neighbor.

  - Negative Controls: Compile proteins not listed in biomarker databases or form random protein pairs not expected to have a predictive relationship [7].

- Machine Learning Classification: Train Random Forest or XGBoost classifiers using features derived from network topology and protein disorder. Validate models using leave-one-out cross-validation (LOOCV) [7].

- Biomarker Prioritization: Calculate a Biomarker Probability Score (BPS) as a normalized summative rank across all models. Prioritize candidate biomarkers with high BPS for experimental validation [7].
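The first two steps of this procedure (triangle extraction and annotation overlay) can be sketched without any dedicated motif tool. The snippet below is a simplified stand-in for FANMOD: it treats the network as undirected and unsigned, which discards the edge sign and direction that the signaling networks carry. All node names and the helper functions are hypothetical.

```python
from itertools import combinations


def find_triangles(edges):
    """Return all fully connected three-node subgraphs of an undirected graph."""
    adj = {}
    for u, v in edges:
        adj.setdefault(u, set()).add(v)
        adj.setdefault(v, set()).add(u)
    triangles = set()
    for u in adj:
        # Two neighbours of u that are also connected close a triangle.
        for v, w in combinations(sorted(adj[u]), 2):
            if w in adj[v]:
                triangles.add(tuple(sorted((u, v, w))))
    return sorted(triangles)


def idp_target_pairs(triangles, targets, idps):
    """Collect (IDP, target) pairs that share at least one triangle."""
    pairs = set()
    for tri in triangles:
        for a in tri:
            for b in tri:
                if a != b and a in idps and b in targets:
                    pairs.add((a, b))
    return sorted(pairs)


# Hypothetical toy network; names are placeholders, not real proteins.
edges = [("T1", "D1"), ("D1", "X"), ("X", "T1"), ("X", "Y"), ("Y", "Z")]
targets = {"T1"}   # assumed drug targets
idps = {"D1", "Z"}  # assumed intrinsically disordered proteins

triangles = find_triangles(edges)
pairs = idp_target_pairs(triangles, targets, idps)
print(triangles, pairs)
```

Only `D1` is paired with the target, because `Z` is disordered but does not share a triangle with `T1`. Pairs found this way are the candidates that the training-set and classification steps then label and score.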

Protocol 2: Discovering Dynamic Network Biomarkers with Cross-State Alignment

Purpose: To identify genes that undergo significant regulatory role transitions (Dynamic Network Biomarkers) during cancer progression using single-cell data.

Materials & Reagents:

- Single-Cell RNA-Seq Data: From multiple disease states (e.g., normal, pre-cancerous, tumor).

- Prior Interaction Knowledge: A reference protein-protein interaction or gene regulatory network (e.g., from STRINGdb).

- Software: TransMarker framework (available on GitHub).

Procedure:

- Multilayer Network Construction: Encode each disease state (e.g., normal, tumor) as a separate layer in a multilayer network. Intralayer edges represent state-specific interactions, while interlayer connections link the same gene across different states [8].

- Graph Embedding: Generate contextualized embeddings for each gene in each state using a Graph Attention Network (GAT), which integrates prior interaction data with state-specific expression profiles [8].

- Quantify Structural Shifts: Use Gromov-Wasserstein optimal transport to compute a pairwise distance matrix between the embeddings of each gene across two states. This quantifies the magnitude of each gene's structural and regulatory rewiring [8].

- Dynamic Network Index (DNI) Calculation: For each gene, compute the DNI based on its alignment distance and the distances of its neighbors in the union network. Rank all genes by their DNI values [8].

- Validation via Classification: Use the top-ranked genes as features in a deep neural network classifier to predict disease states. The classification performance on held-out data validates the biological relevance of the identified DNBs [8].

Table 3: Key resources for network-based biomarker discovery.

| Resource Name | Type | Function in Research |
|---|---|---|
| STRINGdb [9] | Protein-Protein Interaction Database | Provides a comprehensive source of known and predicted protein interactions for network construction. |
| CIViCmine [7] | Literature Mining Database | Annotates proteins with their known clinical roles (prognostic, predictive, diagnostic) for training and validation. |
| DisProt / IUPred [7] | Protein Disorder Database & Tool | Catalogs and predicts intrinsically disordered protein regions, a key feature for MarkerPredict-like analyses. |
| FANMOD [7] | Network Motif Detection Tool | Identifies statistically over-represented small subgraphs (like triangles) in large biological networks. |
| NetRank R Package [9] | Biomarker Ranking Algorithm | Integrates network connectivity with phenotypic association for robust feature selection from RNA-seq data. |
| TransMarker [8] | Computational Framework | Detects dynamic network biomarkers from single-cell data across disease states using graph alignment and optimal transport. |

Visualizing Workflows and Network Relationships

Workflow for Predictive Biomarker Identification

Workflow for Predictive Biomarker Identification. This diagram outlines the key steps in a network-based biomarker discovery pipeline, from data input and network construction through to the generation of ranked candidate biomarkers using machine learning.

Multilayer Network for Dynamic Biomarkers

Dynamic Network Rewiring Across States. This multilayer network visualization illustrates how gene-gene interactions can rewire between disease states (e.g., normal vs. tumor). Gene C shows a major shift in its regulatory role, making it a strong candidate Dynamic Network Biomarker.

The integration of network topology into biomarker discovery provides a powerful, biologically rational framework that transcends the limitations of reductionist approaches. By considering a biomolecule's position, connectivity, and dynamic behavior within interaction networks, researchers can prioritize candidates with a higher likelihood of clinical predictive power. This paradigm, supported by robust computational tools and validated by successful applications across cancer types, promises to accelerate the development of more effective companion diagnostics and improve patient stratification for targeted therapies.

The discovery of predictive biomarkers is being transformed by computational methods that analyze the intricate architecture of biological systems. Traditional, hypothesis-driven approaches often overlook the complex molecular interactions that dictate disease progression and treatment response. The integration of network science and machine learning provides a powerful, systems-level framework for identifying robust biomarkers. This paradigm shift leverages key network properties—network motifs, centrality measures, and the presence of intrinsically disordered proteins (IDPs)—to pinpoint molecules with critical roles in cellular information flow and signaling fidelity. These properties help elucidate why certain proteins are more likely to function as successful biomarkers, as they often occupy privileged, information-rich positions within the cellular interactome and possess unique structural characteristics that facilitate versatile interactions [4] [10] [11]. Framing biomarker discovery within this context moves the field beyond single-molecule associations towards an understanding of the disrupted system, ultimately enhancing the predictive power for patient-specific therapeutic outcomes.

Foundational Network Properties and Their Biological Significance

Network Motifs as Functional Hotspots

Network motifs are small, recurring circuit patterns within a larger network that appear more frequently than in random networks. They are considered the fundamental functional modules and building blocks of complex biological networks [10]. In transcriptional regulatory networks (TRNs), the Feed-Forward Loop (FFL), a three-node motif, is a statistically significant and well-characterized example [10]. Motifs like FFLs are not just structural artifacts; they confer specific dynamic properties to the network. They can act as filters for transient signals, generate pulse-like responses, accelerate response times, and provide robustness against network perturbations [4] [10]. From a biomarker perspective, proteins that co-participate in motifs with a drug target are enmeshed in a tight regulatory relationship, making their state a potential indicator of pathway activity and, consequently, drug response [4].
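To make the feed-forward loop concrete, the sketch below enumerates FFLs in a small directed graph: a regulator X that acts on Z both directly and through an intermediate Y. It is a plain enumeration for illustration only. It does not test whether the motif is over-represented relative to randomized networks, and the edge list and function name are hypothetical.

```python
def feed_forward_loops(edges):
    """Return (x, y, z) triples with edges x->y, y->z and x->z."""
    succ = {}
    for u, v in edges:
        succ.setdefault(u, set()).add(v)
        succ.setdefault(v, set())
    loops = []
    for x in sorted(succ):
        for y in sorted(succ[x]):
            if y == x:
                continue
            for z in sorted(succ[y]):
                # z must be a distinct node that x also regulates directly.
                if z != x and z != y and z in succ[x]:
                    loops.append((x, y, z))
    return loops


# Hypothetical directed regulatory edges (regulator, regulated gene).
edges = [("X", "Y"), ("Y", "Z"), ("X", "Z"), ("Z", "W")]
ffls = feed_forward_loops(edges)
print(ffls)
```

In a biomarker setting, Y and Z would be the co-participants whose state may reflect the activity of X when X is a drug target.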

Centrality Measures for Identifying Key Players

Centrality analysis provides quantitative metrics to rank nodes (e.g., proteins or genes) based on their topological importance within a network. Identifying these "key players" is crucial for biomarker prioritization, as they are often essential for network stability and information flow [12] [13]. Different centrality measures capture distinct aspects of a node's importance:

- Degree Centrality: The number of direct connections a node has. It is a simple measure of local influence, where hubs (high-degree nodes) were initially thought to be universally essential [12] [13].

- Betweenness Centrality: Quantifies how often a node lies on the shortest path between other nodes. Nodes with high betweenness act as critical gatekeepers of information flow [12] [13].

- Closeness Centrality: Measures how quickly a node can reach all other nodes in the network via shortest paths. Nodes with high closeness can rapidly disseminate or receive information [12] [13].

- Eigenvector Centrality: A more sophisticated measure where a node's importance is determined by both its own connections and the importance of its neighbors [12].

No single centrality measure is perfect, and their performance can vary across different biological contexts. Studies have shown that combining multiple centrality measures often yields more reliable predictions of essential genes than any single measure alone [12].
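The measures above can be computed on a small graph with the standard library alone. The sketch below covers degree, closeness, and betweenness (the latter via Brandes' algorithm for unweighted graphs) and combines them by summed rank. The rank aggregation is one simple choice made for illustration, not a method taken from the cited studies, and the toy graph is hypothetical.

```python
from collections import deque


def degree_centrality(adj):
    return {n: len(adj[n]) for n in adj}


def closeness_centrality(adj):
    out = {}
    for s in adj:
        dist, queue = {s: 0}, deque([s])
        while queue:  # breadth-first search from s
            u = queue.popleft()
            for v in adj[u]:
                if v not in dist:
                    dist[v] = dist[u] + 1
                    queue.append(v)
        total = sum(dist.values())
        out[s] = (len(dist) - 1) / total if total else 0.0
    return out


def betweenness_centrality(adj):
    """Brandes' algorithm for unweighted, undirected graphs."""
    bc = {n: 0.0 for n in adj}
    for s in adj:
        stack, preds = [], {n: [] for n in adj}
        sigma = {n: 0 for n in adj}
        sigma[s] = 1
        dist, queue = {s: 0}, deque([s])
        while queue:
            v = queue.popleft()
            stack.append(v)
            for w in adj[v]:
                if w not in dist:
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:  # v lies on a shortest path to w
                    sigma[w] += sigma[v]
                    preds[w].append(v)
        delta = {n: 0.0 for n in adj}
        while stack:
            w = stack.pop()
            for v in preds[w]:
                delta[v] += sigma[v] / sigma[w] * (1 + delta[w])
            if w != s:
                bc[w] += delta[w]
    return {n: b / 2 for n, b in bc.items()}  # each pair was counted twice


def rank_aggregate(*scores):
    """Order nodes by summed rank across measures (illustrative choice)."""
    nodes = list(scores[0])
    total = {n: 0 for n in nodes}
    for s in scores:
        ordered = sorted(nodes, key=lambda n: -s[n])
        for rank, n in enumerate(ordered, start=1):
            total[n] += rank
    return sorted(nodes, key=lambda n: total[n])


# Two triangles joined through a bridge node D (hypothetical toy graph).
graph = {
    "A": {"B", "C"}, "B": {"A", "C"}, "C": {"A", "B", "D"},
    "D": {"C", "E"},
    "E": {"D", "F", "G"}, "F": {"E", "G"}, "G": {"E", "F"},
}
deg = degree_centrality(graph)
clo = closeness_centrality(graph)
bet = betweenness_centrality(graph)
combined = rank_aggregate(deg, clo, bet)
print(combined[:3])
```

The bridge node `D` has a low degree but the highest betweenness and closeness, which shows why a single measure can miss bottleneck proteins that a combined ranking still surfaces.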

Intrinsic Disorder and Network Regulation

Intrinsically Disordered Proteins (IDPs) or regions lack a stable tertiary structure under physiological conditions. This structural flexibility allows them to interact with multiple diverse partners and act as hubs in protein interaction networks [4]. IDPs are enriched in signaling and regulatory networks, where their plasticity is advantageous for facilitating new connections and integrating information from different pathways [4]. Their overrepresentation in three-nodal triangles with oncotherapeutic targets suggests a close regulatory connection, making them compelling candidates for predictive biomarkers. The presence of intrinsic disorder can be predicted computationally using tools like IUPred and AlphaFold (via per-residue confidence metrics, pLDDT), or curated from databases like DisProt [4].
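Per-residue scores can be turned into a protein-level disorder estimate in several ways. The sketch below flags a residue as disordered when its pLDDT is below 50 or its IUPred score is above 0.5 (the cutoffs quoted in this document's materials list) and reports the flagged fraction. Combining the two predictors with a logical OR and summarizing by fraction are assumptions made for the example, and the score lists are invented.

```python
def disordered_fraction(plddt=None, iupred=None, plddt_cutoff=50.0, iupred_cutoff=0.5):
    """Fraction of residues flagged as disordered by either predictor.

    plddt:  per-residue AlphaFold confidence values (0-100), or None.
    iupred: per-residue IUPred scores (0-1), or None.
    When both are given they must have the same length.
    """
    reference = plddt if plddt is not None else iupred
    flagged = 0
    for i in range(len(reference)):
        low_confidence = plddt is not None and plddt[i] < plddt_cutoff
        high_disorder = iupred is not None and iupred[i] > iupred_cutoff
        if low_confidence or high_disorder:
            flagged += 1
    return flagged / len(reference)


# Invented per-residue scores for a six-residue toy sequence.
plddt = [90, 85, 40, 30, 45, 92]
iupred = [0.1, 0.2, 0.7, 0.9, 0.4, 0.6]
fraction = disordered_fraction(plddt, iupred)
print(round(fraction, 3))
```

A protein-level label would then require a further threshold on this fraction or on the length of the longest disordered stretch; that choice is left open here.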

Table 1: Key Network Properties and Their Biomarker Relevance

| Network Property | Biological Significance | Role in Biomarker Function |
|---|---|---|
| Network Motifs (e.g., FFLs) | Information-processing modules; provide robustness, signal filtering, and specific temporal dynamics [10]. | Indicate tight co-regulation; a neighbor's state in a motif can predict target pathway activity and drug response [4]. |
| Centrality Measures | Identify topologically essential nodes for network connectivity and information flow [12] [13]. | Prioritize proteins whose perturbation has widespread network consequences, correlating with essentiality and potential biomarker value [11]. |
| Intrinsic Disorder | Confers binding promiscuity and structural flexibility; enriched in regulatory hubs [4]. | IDPs are often critical network connectors; their state or expression can be a sensitive indicator of network rewiring in disease [4]. |

Quantitative Insights from Recent Studies

Recent research provides quantitative evidence supporting the integration of these network properties for biomarker discovery. The MarkerPredict framework, which integrates network motifs and protein disorder, classified 3,670 target-neighbor pairs using machine learning models (Random Forest and XGBoost), achieving a high leave-one-out cross-validation (LOOCV) accuracy range of 0.7 to 0.96 [4]. This study defined a Biomarker Probability Score (BPS) and identified 2,084 potential predictive biomarkers for targeted cancer therapeutics, 426 of which were classified as biomarkers by all four calculation methods [4].

Furthermore, analysis of the three signaling networks (CSN, SIGNOR, ReactomeFI) showed that triangles containing both an IDP and a drug target were significantly enriched, occurring far more often than expected by chance. Unbalanced triangles were particularly overrepresented among these IDP-target pairs [4]. Text-mining annotations from the CIViCmine database showed that more than 86% of the IDPs in these networks were also annotated as prognostic biomarkers, underscoring their clinical relevance [4].

Table 2: Performance Metrics of a Network-Based Biomarker Discovery Framework (MarkerPredict) [4]

| Metric | Value / Range | Description / Context |
|---|---|---|
| LOOCV Accuracy | 0.7 - 0.96 | Performance of 32 different ML models across three signaling networks. |
| Target-Neighbor Pairs Classified | 3,670 | Total number of protein pairs evaluated. |
| Potential Biomarkers Identified | 2,084 | Biomarkers with a defined Biomarker Probability Score (BPS). |
| High-Confidence Biomarkers | 426 | Biomarkers classified positively by all 4 calculation methods. |
| IDPs as Prognostic Biomarkers | >86% | Percentage of Intrinsically Disordered Proteins annotated as prognostic biomarkers in CIViCmine. |

Experimental Protocols for Network-Based Biomarker Discovery

Protocol 1: Identifying and Analyzing Motif-Central Biomarkers

This protocol outlines the steps to discover biomarker candidates by analyzing proteins that participate in network motifs with known drug targets.

Materials & Reagents:

- Signaling Network Data: Curated network files from sources like the Human Cancer Signaling Network (CSN), SIGNOR, or ReactomeFI [4].

- Motif Detection Software: FANMOD or an equivalent tool for enumerating network motifs [4] [10].

- Drug Target List: A curated list of proteins that are targets of known therapeutics.

- Biomarker Database: CIViCmine or similar for annotating known biomarker functions [4].

- IDP Prediction Tools: IUPred and/or AlphaFold (for pLDDT scores) to identify intrinsically disordered regions [4].

Procedure:

- Network Curation: Obtain and pre-process the chosen signaling network. Ensure the network is represented as a directed graph where possible, as link direction is crucial for motif definition.

- Motif Detection: Run the motif detection tool (e.g., FANMOD) to identify all instances of three-node motifs (specifically triangles) within the network. Statistical analysis (e.g., Z-score) should be performed to confirm the motifs are significantly over-represented compared to randomized networks [4] [10].

- Target-Motif Overlap: Cross-reference the list of drug targets with the proteins participating in the identified motifs. Create a set of "target-neighbor pairs," which are proteins that share a motif with a drug target [4].

- Biomarker Annotation: Annotate the target-neighbor pairs using a biomarker database like CIViCmine. This step establishes a ground-truth set of known predictive biomarkers (positive controls) and non-biomarkers (negative controls) for model training [4].

- Feature Engineering: For each target-neighbor pair, compute a set of features that will be used for machine learning. This should include:

  - Motif-based features: The number and type of shared motifs.

  - Topological features: Centrality measures (degree, betweenness) of the neighbor protein.

  - Structural features: Intrinsic disorder scores from IUPred or AlphaFold for the neighbor protein [4].

- Model Training and Validation: Train a binary classifier (e.g., Random Forest or XGBoost) on the annotated dataset. Use rigorous validation methods like Leave-One-Out Cross-Validation (LOOCV) or k-fold cross-validation to assess model performance and avoid overfitting [4].

- Biomarker Prioritization: Apply the trained model to uncharacterized target-neighbor pairs. Use a composite score, like the Biomarker Probability Score (BPS), to rank the predictions and generate a final list of high-confidence biomarker candidates [4].
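The validation step can be illustrated with a bare leave-one-out loop. To keep the example dependency-free, a nearest-centroid rule stands in for the Random Forest or XGBoost classifier named in the protocol, so this shows the validation scheme and not the actual model. The two features (shared-motif count and a disorder score) and all values are invented.

```python
def nearest_centroid_predict(train_x, train_y, x):
    """Predict the label whose class centroid is closest to x (stand-in model)."""
    centroids = {}
    for label in set(train_y):
        rows = [r for r, y in zip(train_x, train_y) if y == label]
        centroids[label] = [sum(col) / len(rows) for col in zip(*rows)]

    def squared_distance(centroid):
        return sum((a - b) ** 2 for a, b in zip(x, centroid))

    return min(centroids, key=lambda label: squared_distance(centroids[label]))


def loocv_accuracy(features, labels, predict=nearest_centroid_predict):
    """Hold out each sample once, train on the rest, and score the prediction."""
    hits = 0
    for i in range(len(features)):
        train_x = features[:i] + features[i + 1:]
        train_y = labels[:i] + labels[i + 1:]
        hits += predict(train_x, train_y, features[i]) == labels[i]
    return hits / len(features)


# Invented target-neighbor pairs: (shared motif count, disorder score).
features = [(3, 0.8), (4, 0.7), (5, 0.9), (1, 0.1), (0, 0.2), (1, 0.3)]
labels = [1, 1, 1, 0, 0, 0]  # 1 = known predictive biomarker, 0 = negative control

accuracy = loocv_accuracy(features, labels)
print(accuracy)
```

LOOCV suits small curated training sets such as literature-derived positive controls, because every labeled pair is used for both training and testing; with larger sets, k-fold cross-validation is cheaper.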

Protocol 2: A Multi-Layer Consensus Approach for Biomarker Prioritization

This protocol uses an ensemble of functional data to build a robust sub-network from which hub genes (potential biomarkers) are identified via centrality analysis.

Materials & Reagents:

- Gene Expression Data: A disease-relevant transcriptomic dataset (e.g., from NCBI GEO).

- Module Detection Algorithms: A set of different algorithms (e.g., WGCNA, EXPANDER, CLICK) to identify co-expression modules [11].

- Ontological Data: Gene Ontology (GO) databases for functional annotation.

- Network Analysis Platform: Software like Cytoscape for visualization and analysis.

Procedure:

- Data Collection and Preprocessing: Obtain a disease-specific gene expression dataset (e.g., from NCBI GEO). Normalize and preprocess the data to remove technical artifacts and batch effects [11].

- Functional Module Detection: Apply multiple, independent module detection algorithms to the preprocessed expression data. This identifies groups of genes with highly correlated expression patterns, which often represent functional units [11].

- Build Consensus Networks:

- Consensus Co-expression Network: For the genes present in the top-ranked functional modules from step 2, generate a co-expression network. Use an ensemble of network inference methods to create a robust, consensus network that is less dependent on any single algorithm [11].

- Consensus Ontological Network: For the same set of genes, build a network where edges represent shared functional annotations across the Gene Ontology aspects (Biological Process, Molecular Function, Cellular Component) [11].

- Create Final Confidence Network: Merge the consensus co-expression network and the consensus ontological network to form a single, functional confidence network. This network integrates both statistical correlation and prior biological knowledge [11].

- Centrality Analysis: Calculate multiple centrality measures (e.g., degree, betweenness, closeness) for all nodes in the confidence network.

- Hub Gene Identification: Rank the genes based on their centrality values. The top-ranked hub genes in this disease-specific, functionally-validated network are high-priority candidates for further validation as disease biomarkers or drug targets [11].
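The merge, centrality, and ranking steps can be sketched as follows. This is a minimal sketch under stated assumptions: "merging" is interpreted here as an edge union in which each edge records how many layers support it, the combined hub score is a plain average of degree and closeness centrality, and the gene labels (`G1`..`G5`) are hypothetical. The cited protocol may weight or combine these differently.

```python
from collections import defaultdict, deque


def merge_layers(*layers):
    """Union the edges of several network layers; value = number of supporting layers."""
    support = defaultdict(int)
    for layer in layers:
        for edge in {tuple(sorted(e)) for e in layer}:
            support[edge] += 1
    return dict(support)


def adjacency(edges):
    adj = defaultdict(set)
    for u, v in edges:
        adj[u].add(v)
        adj[v].add(u)
    return adj


def degree_centrality(adj):
    n = len(adj)
    return {node: len(nbrs) / (n - 1) for node, nbrs in adj.items()}


def closeness_centrality(adj):
    """Closeness via breadth-first search (computed within each node's component)."""
    scores = {}
    for source in adj:
        dist = {source: 0}
        queue = deque([source])
        while queue:
            u = queue.popleft()
            for v in adj[u]:
                if v not in dist:
                    dist[v] = dist[u] + 1
                    queue.append(v)
        total = sum(dist.values())
        scores[source] = (len(dist) - 1) / total if total else 0.0
    return scores


# Hypothetical consensus layers for five genes.
coexpression = [("G1", "G2"), ("G1", "G3"), ("G1", "G4"), ("G2", "G3")]
ontological = [("G1", "G2"), ("G4", "G5"), ("G1", "G5")]

confidence = merge_layers(coexpression, ontological)
adj = adjacency(confidence)
degree = degree_centrality(adj)
closeness = closeness_centrality(adj)

# Rank genes by the mean of the two centralities (ties broken by name).
hub_ranking = sorted(adj, key=lambda g: (-(degree[g] + closeness[g]) / 2, g))
```

In this toy network `G1` ranks first because it touches every other gene; the per-edge support count could additionally be used to filter low-confidence edges before computing centralities.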

Table 3: Key Research Reagents and Computational Tools for Network-Based Biomarker Discovery

| Category / Item | Specific Examples | Function and Application |

|---|---|---|

| Signaling Network Databases | Human Cancer Signaling Network (CSN), SIGNOR, ReactomeFI [4] | Provide curated maps of protein-protein interactions and signaling pathways for network construction. |

| Motif Analysis Tools | FANMOD [4] [10] | Enumerates and identifies over-represented network motifs within a larger network structure. |

| Centrality Analysis Software | Cytoscape with plugins, NetworkX [11] [13] | Platforms for calculating a wide array of centrality measures and other topological parameters. |

| IDP Prediction Resources | IUPred, AlphaFold (pLDDT), DisProt database [4] | Predict or catalog intrinsically disordered regions in protein sequences. |

| Biomarker Annotation Databases | CIViCmine [4] | Text-mined and curated database linking genes and variants to clinical evidence in cancer. |

| Module Detection Algorithms | WGCNA, EXPANDER, CLICK [11] | Identify functional modules (groups of co-expressed/co-regulated genes) from expression data. |

| Machine Learning Frameworks | Scikit-learn (Random Forest), XGBoost [4] | Provide libraries for building and validating classification models to rank biomarker candidates. |

Integrated Analysis and Future Directions

The convergence of motifs, centrality, and intrinsic disorder provides a multi-faceted lens through which to view and discover predictive biomarkers. Proteins that score highly across these dimensions—such as a hub protein with high betweenness centrality that is also an IDP and participates in multiple regulatory motifs with a drug target—are exceptionally strong candidates. Frameworks like MarkerPredict and PRoBeNet demonstrate the tangible power of this integrated approach, showing that machine learning models built on these network features significantly outperform models using randomly selected genes or all genes, especially when data is limited [4] [3].

Future research directions will likely involve a deeper integration of multi-omics data and more sophisticated temporal network analysis to capture the dynamic rewiring of biological systems in disease and treatment. Furthermore, the push for explainable AI in this field is critical for building clinical trust and generating biologically interpretable insights [14] [15]. As these methodologies mature, network-based biomarker discovery will become an indispensable component of precision medicine, enabling more accurate prediction of treatment response and improving patient outcomes in complex diseases.

Application Note: Leveraging Network-Based Predictive Biomarkers in Oncology

Therapeutic resistance and patient heterogeneity are among the most significant challenges in modern oncology. Tumor heterogeneity, both between patients (inter-tumor) and within a single tumor (intra-tumor), drives differential treatment responses and underlies therapy resistance, progression, and disease recurrence [16] [17]. To address this clinical need, network-based frameworks that integrate multi-omics data, protein-protein interaction networks, and machine learning have emerged as powerful tools for discovering predictive biomarkers that can guide personalized treatment strategies.

Quantitative Landscape of Predictive Biomarkers in Oncology

Table 1: Performance Metrics of Network-Based Biomarker Discovery Platforms

| Platform Name | Algorithm(s) Used | Validation Performance (AUC) | Key Biomarkers Identified | Clinical Application |

|---|---|---|---|---|

| MarkerPredict [4] | Random Forest, XGBoost | 0.7-0.96 (LOOCV accuracy) | 426 high-confidence biomarkers among 3670 classified target-neighbor pairs | Predictive biomarker identification for targeted therapies |

| PRoBeNet [3] | Network propagation + ML | Significant outperformance vs. random genes | Biomarkers for infliximab response in ulcerative colitis | Autoimmune disease therapy selection |

| GTR-ITH Radiomics [17] | Multiple ML ensemble | 0.94 (training), 0.83 (test) | 17 global tumor region + 27 heterogeneity features | HCC response to TACE-ICI-MTT therapy |

Table 2: Biomarker Classification and Clinical Utility

| Biomarker Category | Definition | Key Examples | Clinical Utility |

|---|---|---|---|

| Predictive [15] | Determines likelihood of response to specific treatment | HER2 (breast cancer), EGFR mutations (lung cancer), PD-L1 | Guides targeted therapy and immunotherapy selection |

| Prognostic [15] | Indicates disease outcome independent of treatment | Ki67 (breast cancer), Oncotype DX Recurrence Score | Assesses disease aggressiveness and recurrence risk |

| Diagnostic [18] | Identifies presence and type of cancer | PSA (prostate cancer), CA-125 (ovarian cancer) | Facilitates early detection and cancer classification |

Network-Based Frameworks for Addressing Therapy Resistance

Network medicine approaches have demonstrated significant promise in unraveling the complexity of therapy resistance. The PRoBeNet framework operates on the hypothesis that the therapeutic effect of a drug propagates through a protein-protein interaction network to reverse disease states [3]. This approach prioritizes biomarkers by considering: (1) therapy-targeted proteins, (2) disease-specific molecular signatures, and (3) an underlying network of interactions among cellular components (the human interactome) [3]. Machine learning models using PRoBeNet biomarkers significantly outperformed models using either all genes or randomly selected genes, especially when data were limited.

Similarly, MarkerPredict combines network motifs and protein disorder to discover predictive biomarkers [4]. The platform treats intrinsically disordered proteins (IDPs), which are enriched in network triangles, as potential predictive biomarkers, on the premise that these structural and topological features shape biomarker potential. MarkerPredict classified 3670 target-neighbor pairs with 32 different models, achieving a LOOCV accuracy of 0.7-0.96, and identified 2084 potential predictive biomarkers for targeted cancer therapeutics [4].

Imaging Biomarkers for Decoding Tumor Heterogeneity

Radiomic biomarkers have emerged as powerful non-invasive tools for quantifying intratumor heterogeneity (ITH). A recent multicenter cohort study developed a composite GTR-ITH score integrating both global tumor region and ITH-related features extracted from pre-treatment computed tomography scans [17]. This approach demonstrated high discriminative performance in predicting treatment response to transarterial chemoembolization combined with immune checkpoint inhibitor plus molecular targeted therapy (TACE-ICI-MTT) in hepatocellular carcinoma patients, with AUCs of 0.94 in the training set and 0.83 in independent testing [17]. The GTR-ITH low-risk group exhibited an immune-inflamed microenvironment characterized by enriched plasma cells and M1 macrophages, and reduced M2 macrophage infiltration, providing biological relevance to the imaging biomarkers.

Experimental Protocols

Protocol 1: Network-Based Biomarker Discovery Using MarkerPredict

Principle and Scope

This protocol describes the methodology for identifying predictive biomarkers using the MarkerPredict framework, which integrates network motifs, protein disorder, and machine learning to predict clinically relevant biomarkers for targeted cancer therapies [4]. The approach is based on the observation that intrinsically disordered proteins are enriched in network triangles and are likely to be cancer biomarkers.

Materials and Reagents

Table 3: Research Reagent Solutions for Network-Based Biomarker Discovery

| Item | Specification/Function | Example Sources/Platforms |

|---|---|---|

| Signaling Networks | Human Cancer Signaling Network (CSN), SIGNOR, ReactomeFI | [4] |

| IDP Databases | DisProt, AlphaFold (pLDDT < 50), IUPred (score > 0.5) | [4] |

| Biomarker Annotation | CIViCmine text-mining database | [4] |

| Machine Learning Frameworks | Random Forest, XGBoost (Python implementations) | [4] |

| Motif Identification | FANMOD programme | [4] |

Procedure

Step 1: Network Motif Identification and Triangle Selection

- Obtain three signed subnetworks from Human Cancer Signaling Network (CSN), SIGNOR, and ReactomeFI

- Identify three-nodal motifs using FANMOD programme

- Select fully connected three-nodal motifs (triangles) for analysis, with special attention to unbalanced triangles (with odd number of negative links) and cycles

- Confirm enrichment of triangles containing both DisProt IDP and target members compared to random chance
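The distinction between balanced and unbalanced triangles can be made concrete with a small sketch. It assumes each interaction carries a sign of +1 (activating) or -1 (inhibiting), ignores edge direction (so directed cycles are not distinguished), and uses hypothetical node labels. The brute-force enumeration over node triples is only suitable for toy networks.

```python
from itertools import combinations


def classify_triangles(signed_edges):
    """Label each triangle 'unbalanced' if it has an odd number of negative links.

    signed_edges: iterable of (u, v, sign) with sign = +1 or -1.
    """
    sign = {tuple(sorted((u, v))): s for u, v, s in signed_edges}
    nodes = sorted({n for edge in sign for n in edge})
    result = {}
    for a, b, c in combinations(nodes, 3):
        # combinations() over sorted nodes guarantees a < b < c,
        # so each side is already in the sorted key order used above.
        sides = [(a, b), (a, c), (b, c)]
        if all(side in sign for side in sides):
            negatives = sum(1 for side in sides if sign[side] < 0)
            result[(a, b, c)] = "unbalanced" if negatives % 2 else "balanced"
    return result


# Hypothetical signed network.
signed_edges = [("A", "B", +1), ("B", "C", +1), ("A", "C", -1),
                ("C", "D", -1), ("B", "D", -1)]
labels = classify_triangles(signed_edges)
```

Triangle A-B-C has one negative link and is therefore unbalanced, while B-C-D has two and is balanced.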

Step 2: Training Dataset Construction

- Annotate biomarker properties using CIViCmine text-mining database

- Establish positive controls (class 1): cases where the disordered protein is an annotated predictive biomarker for the target with which it shares a triangle (332 of 4550 neighbor-target pairs)

- Establish negative control dataset from neighbor proteins not present in CIViCmine and random pairs

- Create final training set of 880 target-interacting protein pairs total

Step 3: Machine Learning Model Training and Optimization

- Implement both Random Forest and XGBoost binary classification methods

- Train on both network-specific and combined data of all 3 signaling networks

- Train on individual and combined data of all 3 IDP databases and prediction methods (32 total models)

- Set optimal hyperparameters with competitive random halving

- Perform validation using Leave-one-out-cross-validation (LOOCV), k-fold cross-validation, and 70:30 train-test splitting
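Leave-one-out cross-validation itself is independent of the classifier, which the following sketch illustrates. To stay dependency-free it uses a nearest-centroid classifier as a stand-in; this is an assumption made for illustration and is not the Random Forest or XGBoost model used by MarkerPredict. The two features (shared-triangle count and a disorder score) and all values are hypothetical.

```python
def nearest_centroid_fit(X, y):
    """Stand-in classifier: store the mean feature vector of each class."""
    centroids = {}
    for label in set(y):
        rows = [x for x, lab in zip(X, y) if lab == label]
        centroids[label] = [sum(col) / len(rows) for col in zip(*rows)]
    return centroids


def nearest_centroid_predict(centroids, x):
    def sq_dist(c):
        return sum((a - b) ** 2 for a, b in zip(x, c))
    return min(sorted(centroids), key=lambda label: sq_dist(centroids[label]))


def loocv_accuracy(X, y):
    """Hold out each sample once, train on the rest, and score the held-out sample."""
    correct = 0
    for i in range(len(X)):
        X_train = X[:i] + X[i + 1:]
        y_train = y[:i] + y[i + 1:]
        model = nearest_centroid_fit(X_train, y_train)
        correct += nearest_centroid_predict(model, X[i]) == y[i]
    return correct / len(X)


# Hypothetical target-neighbor pairs: [shared triangles, disorder score]; 1 = biomarker.
X = [[3, 0.9], [4, 0.8], [5, 0.7], [0, 0.1], [1, 0.2], [1, 0.3]]
y = [1, 1, 1, 0, 0, 0]
accuracy = loocv_accuracy(X, y)
```

With n samples LOOCV trains n models, which is affordable for a training set of a few hundred pairs but becomes costly for large datasets, where k-fold cross-validation is the usual compromise.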

Step 4: Biomarker Probability Score (BPS) Calculation and Classification

- Define Biomarker Probability Score as normalized summative rank of the models

- Classify 3670 target-neighbor pairs using the 32 different models

- Identify high-confidence biomarkers (426 classified as biomarker by all 4 calculations)
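One plausible reading of "normalized summative rank of the models" is sketched below: rank all pairs within each model by predicted probability, sum the ranks across models, and rescale the sum to the interval [0, 1]. The exact normalization and tie handling used by MarkerPredict are not given in the text above, so both are assumptions here, as are the model names and pair labels.

```python
def biomarker_probability_scores(model_probs):
    """Rank-sum score per pair, rescaled to [0, 1].

    model_probs: {model_name: {pair: predicted probability of being a biomarker}}
    Assumes every model scores the same pairs and that there are at least two pairs.
    """
    pairs = sorted(next(iter(model_probs.values())))
    n_pairs, n_models = len(pairs), len(model_probs)
    rank_sum = {pair: 0 for pair in pairs}
    for probs in model_probs.values():
        # Ascending order: rank 1 = lowest probability. Ties keep name order.
        ordered = sorted(pairs, key=lambda p: probs[p])
        for rank, pair in enumerate(ordered, start=1):
            rank_sum[pair] += rank
    lowest, highest = n_models, n_models * n_pairs
    return {pair: (rank_sum[pair] - lowest) / (highest - lowest) for pair in pairs}


# Hypothetical predictions from two models for three target-neighbor pairs.
model_probs = {
    "rf":  {"T1-P1": 0.9, "T1-P2": 0.4, "T2-P3": 0.1},
    "xgb": {"T1-P1": 0.8, "T1-P2": 0.6, "T2-P3": 0.2},
}
bps = biomarker_probability_scores(model_probs)
```

Because the score is built from ranks instead of raw probabilities, models with differently calibrated outputs contribute equally to the final ordering.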

Protocol 2: Radiomic Biomarker Development for Tumor Heterogeneity

Principle and Scope

This protocol details the methodology for developing imaging biomarkers that capture intratumor heterogeneity (ITH) to predict treatment response in hepatocellular carcinoma (HCC) patients receiving combination therapy [17]. The approach integrates radiomic features representing both global tumor regions and ITH to create a composite biomarker score.

Materials and Reagents

- Pre-treatment computed tomography scans from multicenter cohort

- Radiomic feature extraction software (Python-based radiomics packages)

- Bulk RNA sequencing data from The Cancer Imaging Archive for biological validation

- Multiple machine learning algorithms for ensemble learning

- Principal component analysis tools for feature integration

Procedure

Step 1: Patient Cohort Selection and Image Acquisition

- Include patients with unresectable HCC receiving first-line TACE-ICI-MTT

- Ensure consistent CT imaging protocols across multiple centers

- Curate clinical data including treatment response and survival outcomes

Step 2: Radiomic Feature Extraction and Selection

- Extract features representing global tumor regions (GTR) and intratumor heterogeneity (ITH)

- Perform feature selection to retain most informative features (17 GTR-related and 27 ITH-related features)

- Generate composite GTR-ITH score using principal component analysis

Step 3: Machine Learning Model Development and Validation

- Employ ensemble learning with multiple machine learning algorithms

- Divide data into training, internal validation, and independent test sets

- Evaluate model performance by area under the receiver operating characteristic curve (AUC)

- Validate survival prediction using Kaplan-Meier analysis and log-rank test
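The AUC used to evaluate the model has a direct probabilistic interpretation: it is the fraction of responder/non-responder pairs in which the responder receives the higher score. The sketch below computes it that way, counting ties as half. The labels and scores are hypothetical and are not data from the cited study.

```python
def roc_auc(labels, scores):
    """AUC as the probability that a positive case outscores a negative case."""
    pos = [s for lab, s in zip(labels, scores) if lab == 1]
    neg = [s for lab, s in zip(labels, scores) if lab == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative case")
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Hypothetical responders (1) and non-responders (0) with model scores.
labels = [1, 1, 1, 0, 0, 0]
scores = [0.9, 0.8, 0.4, 0.5, 0.3, 0.2]
auc = roc_auc(labels, scores)
```

Here eight of the nine responder/non-responder pairs are ordered correctly, so the AUC is 8/9. The pairwise loop is quadratic in cohort size, which is acceptable for typical clinical cohorts.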

Step 4: Biological Validation and Microenvironment Characterization

- Characterize immune infiltration patterns using bulk RNA sequencing data

- Correlate GTR-ITH score with immune microenvironment phenotypes

- Confirm immune-inflamed microenvironment in GTR-ITH low-risk group (enriched plasma cells and M1 macrophages, reduced M2 macrophage infiltration)

Protocol 3: PRoBeNet Framework for Predictive Biomarker Discovery

Principle and Scope

This protocol describes the PRoBeNet (Predictive Response Biomarkers using Network medicine) framework for discovering treatment-response-predicting biomarkers for complex diseases [3]. The method operates under the hypothesis that the therapeutic effect of a drug propagates through a protein-protein interaction network to reverse disease states.

Materials and Reagents

- Protein-protein interaction network (human interactome)

- Disease-specific molecular signatures

- Therapy-targeted proteins information

- Gene expression data from patient cohorts

- Machine learning frameworks for model building

Procedure

Step 1: Network Construction and Data Integration

- Compile comprehensive human interactome (protein-protein interaction network)

- Integrate disease-specific molecular signatures

- Incorporate therapy-targeted proteins information

Step 2: Biomarker Prioritization

- Prioritize biomarkers based on network proximity to therapy targets

- Consider network propagation of therapeutic effects

- Identify key nodes that reverse disease states when targeted

Step 3: Model Validation with Retrospective and Prospective Data

- Validate predictive power with retrospective gene-expression data from patients with ulcerative colitis and rheumatoid arthritis

- Perform prospective validation with tissues from patients with ulcerative colitis and Crohn disease

- Compare performance against models using all genes or randomly selected genes

Step 4: Clinical Translation

- Develop companion and complementary diagnostic assays

- Stratify suitable patient subgroups for clinical trials

- Implement biomarkers for improved patient outcomes

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 4: Key Research Reagent Solutions for Biomarker Discovery

| Category | Specific Items | Function/Application | Key References |

|---|---|---|---|

| Network Databases | Human Cancer Signaling Network (CSN), SIGNOR, ReactomeFI | Provide signed signaling networks for motif analysis and biomarker discovery | [4] |

| IDP Resources | DisProt, AlphaFold (pLDDT < 50), IUPred (score > 0.5) | Identify intrinsically disordered proteins with biomarker potential | [4] |

| Biomarker Annotation | CIViCmine text-mining database | Annotate biomarker properties and establish training sets | [4] |

| Machine Learning Platforms | Random Forest, XGBoost, Ensemble Methods | Develop predictive models for biomarker classification | [4] [17] |

| Imaging Biomarker Tools | Radiomic feature extraction software, PCA tools | Quantify intra-tumor heterogeneity and global tumor characteristics | [17] |

| Validation Resources | Bulk RNA sequencing, Immune profiling assays | Biological validation of biomarker associations with microenvironment | [17] |

Methodologies in Action: Machine Learning and Network Algorithms for Biomarker Identification

The advancement of precision medicine relies heavily on the identification of robust predictive biomarkers to guide therapeutic decisions, particularly in complex diseases like cancer and autoimmune disorders. Traditional methods for biomarker discovery often face challenges of limited data availability and inadequate sample sizes when compared to the high dimensionality of molecular data. Computational frameworks that leverage network biology principles have emerged as powerful tools to address these limitations. By integrating protein-protein interaction networks, multi-omics data, and machine learning algorithms, these frameworks can systematically prioritize biomarker candidates with higher predictive potential. This article explores three prominent computational frameworks—PRoBeNet, MarkerPredict, and Comparative Network Stratification (CNS)—that utilize network-based approaches to discover biomarkers with predictive power for treatment response. These frameworks represent a paradigm shift from reductionist approaches to systems-level analyses that capture the complex interplay within biological systems, potentially offering more clinically relevant biomarkers for personalized treatment strategies.

The PRoBeNet Framework

Conceptual Foundation and Methodology

PRoBeNet (Predictive Response Biomarkers using Network medicine) is a novel framework developed to address the challenge of limited data availability in precision medicine for complex autoimmune diseases. This framework operates on the core hypothesis that the therapeutic effect of a drug propagates through a protein-protein interaction network to reverse disease states [3]. Unlike conventional approaches that focus solely on differential expression, PRoBeNet employs a more sophisticated strategy that prioritizes biomarkers by considering three critical elements: (1) therapy-targeted proteins, (2) disease-specific molecular signatures, and (3) an underlying network of interactions among cellular components (the human interactome) [3].

The methodology involves mapping both disease signatures and drug targets onto a comprehensive human interactome network. The framework then identifies proteins that occupy strategic positions in the network relative to both disease processes and drug mechanisms. These proteins are considered strong candidates for predictive biomarkers because they potentially mediate or reflect the propagation of therapeutic effects through biological networks. Validation studies have demonstrated that PRoBeNet successfully discovered biomarkers predicting patient responses to both established autoimmune therapies (infliximab) and investigational compounds (a mitogen-activated protein kinase 3/1 inhibitor) [3].

Experimental Protocol and Implementation

Protocol: PRoBeNet Biomarker Discovery

Step 1: Data Collection and Curation

- Compile a comprehensive human protein-protein interaction (PPI) network from reference databases (e.g., STRING, BioGRID).

- Obtain disease-specific molecular signatures from transcriptomic studies of patient tissues.

- Curate a list of therapy-targeted proteins based on drug mechanism of action studies.

Step 2: Network Integration and Analysis

- Map disease signatures and drug targets onto the PPI network.

- Implement network propagation algorithms to simulate the spread of therapeutic effects from drug targets.

- Identify proteins within the network that show significant proximity to both disease-associated regions and drug targets.
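Network propagation from drug targets is commonly implemented as a random walk with restart, sketched below in dependency-free Python. This illustrates the general technique and is not the PRoBeNet code: the restart probability of 0.5, the convergence tolerance, the unweighted undirected network, and the node labels are all assumptions chosen for illustration.

```python
from collections import defaultdict


def random_walk_with_restart(edges, seeds, restart=0.5, tol=1e-10, max_iter=1000):
    """Propagate a signal from seed nodes (e.g. drug targets) over an undirected network.

    Returns the stationary visiting probability of each node.
    """
    adj = defaultdict(set)
    for u, v in edges:
        adj[u].add(v)
        adj[v].add(u)
    nodes = sorted(adj)
    missing = set(seeds) - set(nodes)
    if missing:
        raise ValueError(f"seeds not in network: {sorted(missing)}")

    p0 = {n: (1.0 / len(seeds) if n in seeds else 0.0) for n in nodes}
    p = dict(p0)
    for _ in range(max_iter):
        # With probability `restart` jump back to a seed, otherwise step to a neighbor.
        nxt = {n: restart * p0[n] for n in nodes}
        for u in nodes:
            share = (1.0 - restart) * p[u] / len(adj[u])
            for v in adj[u]:
                nxt[v] += share
        delta = sum(abs(nxt[n] - p[n]) for n in nodes)
        p = nxt
        if delta < tol:
            break
    return p


# Hypothetical chain: drug target T, then proteins A, B, C at increasing distance.
edges = [("T", "A"), ("A", "B"), ("B", "C")]
scores = random_walk_with_restart(edges, seeds={"T"})
candidates = sorted((n for n in scores if n != "T"), key=lambda n: -scores[n])
```

Scores decay with distance from the target, so `candidates` orders the non-seed proteins by network proximity. A lower restart probability lets the signal spread further but also favors high-degree nodes, which is why the parameter is usually tuned or the scores are compared against degree-preserving randomizations.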

Step 3: Biomarker Prioritization

- Rank candidate biomarkers based on their network topological properties (e.g., centrality, proximity to targets).

- Apply machine learning models built specifically with PRoBeNet-identified features.

- Validate predictive power using retrospective gene-expression data from patient cohorts.

Step 4: Experimental Validation

- Verify top biomarker candidates in prospective clinical samples using appropriate molecular assays (e.g., RT-qPCR, RNA-seq).

- Assess the clinical utility of biomarkers for patient stratification in relevant therapeutic contexts.

The framework has shown particular strength in constructing robust machine-learning models when data are limited, significantly outperforming models using either all genes or randomly selected genes [3]. This makes PRoBeNet especially valuable for biomarker discovery in rare diseases or patient subgroups where large sample sizes are difficult to obtain.


The MarkerPredict Framework

Conceptual Foundation and Methodology

MarkerPredict is a specialized computational framework designed specifically for predicting clinically relevant predictive biomarkers for targeted cancer therapies [4]. This approach integrates two key biological concepts that shape biomarker potential: network-based properties of proteins and structural features such as intrinsic disorder [4]. The framework is founded on the observation that intrinsically disordered proteins (IDPs) are significantly enriched in three-nodal network motifs (triangles) with oncotherapeutic targets, suggesting a close regulatory relationship that may be exploitable for biomarker development [4].

The methodology employs machine learning to classify target-neighbor pairs based on their potential as predictive biomarkers. MarkerPredict was trained on literature evidence-based positive and negative training sets comprising 880 target-interacting protein pairs in total, using both Random Forest and XGBoost algorithms across three different signaling networks [4]. Leave-One-Out Cross-Validation (LOOCV) accuracy ranged from 0.7 to 0.96 across the 32 models [4]. To facilitate biomarker prioritization, MarkerPredict introduces a Biomarker Probability Score (BPS), defined as a normalized summative rank of the models, which enables quantitative assessment and ranking of potential biomarkers [4].

Experimental Protocol and Implementation

Protocol: MarkerPredict Biomarker Prediction

Step 1: Data Collection and Feature Extraction

- Collect three signaling networks: Human Cancer Signaling Network (CSN), SIGNOR, and ReactomeFI.

- Identify three-nodal motifs (triangles) using the FANMOD programme.

- Calculate network topological properties for all proteins (centrality, participation in motifs).

- Annotate intrinsic disorder using multiple databases/methods: DisProt, AlphaFold (pLDDT < 50), and IUPred (score > 0.5).

Step 2: Training Set Construction

- Create positive controls from established predictive biomarker-target pairs (332 pairs annotated from CIViCmine database).

- Compile negative controls from neighbor proteins not present in CIViCmine and random pairs.

- Manually review and curate the final training set of 880 protein pairs.

Step 3: Machine Learning Model Development

- Implement both Random Forest and XGBoost algorithms.

- Train models on both network-specific and combined data across all three signaling networks.

- Optimize hyperparameters using competitive random halving.

- Validate models using LOOCV, k-fold cross-validation, and 70:30 train-test splitting.

Step 4: Biomarker Classification and Scoring

- Apply trained models to classify 3,670 target-neighbor pairs.

- Calculate the Biomarker Probability Score (BPS) as the normalized summative rank of the models.

- Prioritize candidates based on BPS for experimental validation.

Application of MarkerPredict identified 2,084 potential predictive biomarkers for targeted cancer therapeutics, with 426 classified as biomarkers by all four calculations [4]. The framework has been specifically used to detail the biomarker potential of LCK and ERK1 in cancer therapeutics, demonstrating its utility for generating clinically relevant hypotheses [4].


Comparative Network Stratification (CNS)

Conceptual Foundation

Comparative Network Stratification (CNS) is a computational framework designed for patient stratification through comparative analysis of molecular networks across patient subgroups. A detailed methodology for CNS is not described in the sources cited here; in general, the approach involves constructing disease-specific networks for different patient subgroups and identifying differential network regions that correspond to distinct disease mechanisms or treatment responses. This framework is particularly valuable for addressing disease heterogeneity, which often undermines the development of universally effective biomarkers and therapies.

CNS typically integrates multi-omics data to construct comprehensive molecular networks that capture the complex interactions within biological systems. By comparing these networks across patient populations with different clinical outcomes, the framework can identify network-based subtypes that may respond differently to treatments. This approach aligns with the broader trend in precision medicine toward moving beyond traditional biomarkers to network-based stratification that better reflects the complexity of disease mechanisms.

Comparative Analysis of Frameworks

Framework Comparison Table

The following table provides a detailed comparison of the key features, methodologies, and applications of PRoBeNet, MarkerPredict, and CNS:

| Feature | PRoBeNet | MarkerPredict | CNS |

|---|---|---|---|

| Primary Application | Predicting response biomarkers for complex autoimmune diseases [3] | Predicting predictive biomarkers for targeted cancer therapies [4] | Patient stratification through network comparison |

| Core Methodology | Network propagation from drug targets through interactome | Machine learning on network motifs and protein disorder features [4] | Comparative analysis of molecular networks across subgroups |

| Network Types | Protein-protein interaction networks | Signaling networks (CSN, SIGNOR, ReactomeFI) [4] | Disease-specific molecular networks |

| Key Biological Features | Drug targets, disease signatures, network proximity | Intrinsic disorder, network motif participation [4] | Multi-omics patterns, network topology |

| Machine Learning Approach | Models using PRoBeNet-selected features | Random Forest & XGBoost (32 models) [4] | Not specified in available sources |

| Validation Methods | Retrospective & prospective gene-expression data [3] | LOOCV, k-fold CV, train-test split (0.7-0.96 accuracy) [4] | Not specified in available sources |

| Key Output | Prioritized predictive biomarkers | Biomarker Probability Score (BPS) [4] | Patient subgroups, network subtypes |

| Unique Strength | Effective with limited data; reduces features for robust models [3] | Integrates structural disorder with network topology [4] | Addresses disease heterogeneity |

Performance Metrics Table

The following table summarizes the performance characteristics and validation results for the frameworks:

| Performance Aspect | PRoBeNet | MarkerPredict | CNS |

|---|---|---|---|

| Reported Accuracy | Significantly outperforms all-gene or random-gene models [3] | LOOCV accuracy: 0.7-0.96 across models [4] | Not available |

| Data Efficiency | Works well with limited data samples [3] | Requires sufficient training pairs (880 in original) [4] | Not available |

| Validation Evidence | Retrospective data from UC and RA; prospective data from UC and CD [3] | Classification of 3,670 pairs; 426 high-confidence biomarkers [4] | Not available |

| Clinical Translation | Potential for companion diagnostics [3] | Encourages clinical validation [4] | Not available |

| Scalability | Suitable for multi-omics integration | Can scale with network size and disorder data | Not available |

The Scientist's Toolkit

Successful implementation of network-based biomarker discovery frameworks requires specific computational tools and biological resources. The following table details essential components of the research toolkit for these approaches:

| Resource Type | Specific Tools/Databases | Function in Research |

|---|---|---|

| Signaling Networks | Human Cancer Signaling Network (CSN), SIGNOR, ReactomeFI [4] | Provide curated biological networks for analysis and feature extraction |

| IDP Databases | DisProt, IUPred, AlphaFold [4] | Annotate intrinsic protein disorder, a key feature in MarkerPredict |

| Biomarker Databases | CIViCmine [4] | Provide validated biomarker information for training and validation |

| Motif Detection | FANMOD programme [4] | Identify network motifs (triangles) for feature calculation |

| Machine Learning | Random Forest, XGBoost [4] | Implement classification algorithms for biomarker prediction |

| Implementation | PRoBeNet framework, MarkerPredict (GitHub) [4] [3] | Ready-to-use computational frameworks for biomarker discovery |

| Validation Data | Retrospective gene-expression data from patient cohorts [3] | Validate predictive power of discovered biomarkers |

Network-based computational frameworks represent a powerful paradigm shift in predictive biomarker discovery. PRoBeNet, MarkerPredict, and CNS each offer distinct approaches to addressing the fundamental challenge of connecting biological complexity to clinically actionable predictions. While PRoBeNet excels in contexts with limited data availability and MarkerPredict offers specialized capability for cancer therapeutics by integrating structural disorder, CNS focuses on addressing disease heterogeneity through comparative network analysis.

The future development of these frameworks will likely involve several key directions: First, increased integration of multi-omics data will provide more comprehensive biological context for predictions. Second, improvement in interpretability methods will enhance clinical translation by providing mechanistic insights alongside predictions. Third, incorporation of temporal dynamics through longitudinal data analysis may capture the evolving nature of treatment responses. Finally, standardization of validation protocols across frameworks will facilitate comparative assessment and clinical adoption.

As these frameworks mature, they hold significant promise for transforming precision medicine by enabling more accurate prediction of treatment responses, ultimately leading to improved patient outcomes and more efficient therapeutic development.

Within the paradigm of network medicine, diseases are rarely caused by single gene defects but rather arise from perturbations in complex cellular networks. Network propagation has emerged as a powerful computational technique that leverages protein-protein interaction (PPI) networks to identify biomarkers and therapeutic targets by simulating the flow of information from known disease-associated genes. This approach is grounded in the "guilt-by-association" principle, wherein proteins proximal to known targets in the network are likely involved in related biological processes and disease mechanisms. This protocol details practical methodologies for implementing network propagation to uncover biomarkers proximal to drug targets, with the aim of accelerating therapeutic development.

The core hypothesis is that genes causing similar phenotypes tend to interact with one another, and that the functional influence of a gene or protein extends to its network neighbors. Network-based methods systematically contextualize individual molecular entities within the broader cellular system, moving beyond differential expression alone to identify biomarkers based on their topological significance. This is particularly valuable for understanding complex drug response mechanisms, as resistance is often mediated through alternative pathways that bypass the primary drug target [19].

Quantitative Foundations of Network Propagation

The application of network propagation relies on several key quantitative metrics and algorithms that determine how "influence" spreads through a network. The following table summarizes the core computational components.

Table 1: Core Algorithms and Metrics in Network Propagation

| Component | Algorithm/Metric | Function in Biomarker Identification | Key Formula/Parameters |
|---|---|---|---|
| Network Propagation | PageRank / Random Walk with Restart | Prioritizes genes based on their proximity and connectivity to known seed genes (e.g., drug targets) in the PPI network. | \( PR(g_i; t) = \frac{1-d}{N} + d \sum_{g_j \in B(g_i)} \frac{PR(g_j; t-1)}{L(g_j)} \), where \(d\) is the damping factor, \(N\) is the number of genes in the network, \(B(g_i)\) is the set of neighbors of \(g_i\), and \(L(g_j)\) is the out-degree of \(g_j\) [20]. |
| Path Analysis | k-Shortest Paths (PathLinker) | Identifies critical communication pathways between proteins, revealing potential bypass routes used in drug resistance. | Parameter k (e.g., 200) defines the number of shortest simple paths to compute between source and target nodes [19]. |
| Centrality Analysis | Betweenness, Closeness, Degree | Quantifies the topological importance of a node within the network, helping to identify bottleneck proteins or key connectors. | Integrated into frameworks like BEERE for iterative gene list ranking and expansion [21]. |
| Statistical Enrichment | Hypergeometric Test | Determines if a set of candidate genes is significantly over-represented in a specific biological pathway, adding functional context. | Used to map candidate genes to immune checkpoint inhibitor (ICI)-related pathways [20]. |

The PageRank algorithm, a cornerstone of many propagation methods, operates by iteratively distributing a "score" across the network. Seeds (e.g., known drug targets) are initialized with a high score, which is then propagated to their immediate neighbors and, over successive iterations, further into the network. The damping factor d (typically ~0.85) is the probability that the walk continues along an edge at each step; with probability 1 - d it restarts, which ensures that the process converges. In the seed-based (personalized) variant, restarts return to the seed nodes rather than to a uniformly chosen node. This process prioritizes nodes that are highly connected and close to multiple seeds, making them strong biomarker candidates [20].
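As a minimal sketch of this propagation step, the following pure-Python function applies the seed-restart (personalized) form of the PageRank update to a small undirected toy graph. The graph, the node names, and the function names `propagate` and `build_graph` are illustrative assumptions rather than part of any cited framework. Note that the restart term here returns score to the seeds, whereas the formula in Table 1 uses the uniform term (1-d)/N.

```python
def propagate(adjacency, seeds, damping=0.85, tol=1e-10, max_iter=1000):
    """Seed-restart PageRank on an undirected graph {node: set_of_neighbors}.

    Assumes every node has at least one neighbor.
    """
    restart = {n: (1.0 / len(seeds) if n in seeds else 0.0) for n in adjacency}
    score = dict(restart)  # all initial score sits on the seeds
    for _ in range(max_iter):
        updated = {}
        for node, neighbors in adjacency.items():
            # Each neighbor splits its current score evenly over its edges.
            inflow = sum(score[m] / len(adjacency[m]) for m in neighbors)
            updated[node] = (1.0 - damping) * restart[node] + damping * inflow
        change = sum(abs(updated[n] - score[n]) for n in adjacency)
        score = updated
        if change < tol:
            break
    return score


def build_graph(edges):
    """Build a symmetric adjacency map from a list of undirected edges."""
    adjacency = {}
    for a, b in edges:
        adjacency.setdefault(a, set()).add(b)
        adjacency.setdefault(b, set()).add(a)
    return adjacency


# Hypothetical toy network: S1 and S2 stand in for known drug targets.
toy_edges = [("S1", "A"), ("S1", "B"), ("S2", "B"),
             ("S2", "C"), ("B", "D"), ("D", "E")]
toy_graph = build_graph(toy_edges)
toy_scores = propagate(toy_graph, seeds={"S1", "S2"})

for gene, value in sorted(toy_scores.items(), key=lambda kv: -kv[1]):
    print(f"{gene}\t{value:.4f}")
```

In this toy graph, node B is adjacent to both seeds and therefore receives the highest score among the non-seed nodes, which is the behavior the ranking step relies on. For real PPI networks a sparse-matrix or graph-library implementation would be the more practical choice.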

The k-shortest paths approach complements this by not just looking at direct neighbors but at the ensemble of shortest paths that connect two proteins of interest, such as a drug target and a transcription factor. Analyzing these paths can reveal which intermediary proteins are most frequently used for communication within the cell. In cancer, these frequently used intermediaries can represent vulnerabilities whose targeting can block resistance mechanisms [19].
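The idea of counting how often intermediaries appear on short paths can be sketched as follows. This is a brute-force best-first enumeration of simple paths on an unweighted toy graph, not PathLinker's algorithm, and it is only practical for very small networks. The node names (`T` for a drug target, `TF` for a transcription factor) and the edges are hypothetical.

```python
import heapq
from collections import Counter


def k_shortest_simple_paths(adjacency, source, target, k):
    """Return up to k simple paths from source to target, shortest first."""
    heap = [(0, [source])]  # (number of edges so far, partial path)
    found = []
    while heap and len(found) < k:
        length, path = heapq.heappop(heap)
        last = path[-1]
        if last == target:
            found.append(path)
            continue
        for nxt in sorted(adjacency[last]):
            if nxt not in path:  # keep the path simple (no repeated nodes)
                heapq.heappush(heap, (length + 1, path + [nxt]))
    return found


# Hypothetical toy network connecting a drug target T to a transcription factor TF.
toy_net = {
    "T": {"A", "B"},
    "A": {"T", "C"},
    "B": {"T", "C"},
    "C": {"A", "B", "TF", "E"},
    "E": {"C", "TF"},
    "TF": {"C", "E"},
}

toy_paths = k_shortest_simple_paths(toy_net, "T", "TF", k=4)

# Count how often each intermediate node (endpoints excluded) is used.
usage = Counter(node for path in toy_paths for node in path[1:-1])

for path in toy_paths:
    print(" -> ".join(path))
print(usage.most_common())
```

Here every path passes through node C, so C is the candidate bottleneck in this toy example; in a real analysis the same count would be taken over the paths returned by a dedicated k-shortest-paths tool.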

Application Note: Predictive Biomarker Discovery for Immune Checkpoint Inhibitor (ICI) Response

Background and Rationale

Predicting patient response to Immune Checkpoint Inhibitors (ICIs) remains a major challenge in oncology. While biomarkers like PD-L1 expression and tumor mutational burden are used, they lack consistent predictive power across cancer types. The PathNetDRP framework was developed to address this by identifying functionally relevant biomarkers using network propagation on PPI and pathway networks, moving beyond simple differential expression analysis [20].

Protocol: The PathNetDRP Workflow

This protocol outlines the steps for identifying and validating biomarkers for ICI response.

Table 2: PathNetDRP Protocol Workflow

| Step | Procedure | Input Data | Output |
|---|---|---|---|
| 1. Seed Initialization | Compile a list of known ICI target genes (e.g., PD-1, CTLA-4). | ICI target lists from databases like DrugBank or TTD. | Seed gene set \( S \). |
| 2. Network Propagation | Run the PageRank algorithm on a PPI network, initializing scores with seed genes \( S \). | High-confidence PPI network (e.g., from HIPPIE, STRING). | A ranked list of candidate genes influenced by the ICI targets. |
| 3. Pathway Mapping | Perform hypergeometric testing to identify biological pathways significantly enriched with the top-ranked candidate genes. | Pathway databases (e.g., KEGG, Reactome). | A set of ICI-response-related pathways \( P \). |
| 4. PathNetGene Scoring | For each pathway in \( P \), construct a pathway-specific subnetwork and re-run PageRank. Calculate the final PathNetGene Score as the mean PageRank score across all relevant pathways. | Pathway \( P \) and the PPI network. | Final prioritized list of biomarkers with PathNetGene scores. |
| 5. Validation | Use the top biomarkers as features in a machine learning classifier to predict ICI response (Responder vs. Non-Responder) on transcriptomic data from patient cohorts. | Gene expression data from ICI-treated patients (e.g., from TCGA). | A predictive model with performance metrics (AUC, accuracy). |
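Steps 3 and 4 can be illustrated with a short sketch. The enrichment function computes the upper tail of the hypergeometric distribution, which is the standard form of the over-representation test. The scoring function averages per-pathway PageRank scores over the pathways in which a gene appears; that averaging rule, the function names, and the example numbers are assumptions made for illustration, since the exact definition is not given here.

```python
from math import comb


def enrichment_p_value(n_background, n_pathway, n_candidates, n_overlap):
    """P(X >= n_overlap) for X ~ Hypergeometric(n_background, n_pathway, n_candidates).

    n_background: genes in the network; n_pathway: genes annotated to the pathway;
    n_candidates: top-ranked genes tested; n_overlap: candidates inside the pathway.
    """
    upper = min(n_pathway, n_candidates)
    total = comb(n_background, n_candidates)
    tail = sum(
        comb(n_pathway, x) * comb(n_background - n_pathway, n_candidates - x)
        for x in range(n_overlap, upper + 1)
    )
    return tail / total


def mean_pathway_scores(per_pathway_scores):
    """Average each gene's score over the pathways in which it appears (assumed rule)."""
    totals, counts = {}, {}
    for scores in per_pathway_scores.values():
        for gene, value in scores.items():
            totals[gene] = totals.get(gene, 0.0) + value
            counts[gene] = counts.get(gene, 0) + 1
    return {gene: totals[gene] / counts[gene] for gene in totals}


# Hypothetical numbers: 20,000 background genes, a 100-gene pathway,
# 200 top-ranked candidates, 10 of which fall inside the pathway.
p_example = enrichment_p_value(20000, 100, 200, 10)
print(f"enrichment p-value: {p_example:.3g}")

# Hypothetical per-pathway PageRank scores for two pathways.
example_scores = mean_pathway_scores({
    "pathway_1": {"geneA": 0.2, "geneB": 0.4},
    "pathway_2": {"geneA": 0.6},
})
print(example_scores)
```

When many pathways are tested, the resulting p-values would normally be corrected for multiple testing before selecting the pathway set.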

The following diagram illustrates the logical flow and data transformation throughout the PathNetDRP protocol.

Figure: PathNetDRP Biomarker Discovery Workflow

Outcome and Interpretation

In validation across multiple independent ICI-treated patient cohorts, PathNetDRP demonstrated a significant increase in predictive performance, with the area under the receiver operating characteristic (ROC) curve improving from 0.780 using conventional methods to 0.940 [20]. The framework not only provided a predictive gene list but also offered biological interpretability by highlighting key immune-related pathways. Researchers should prioritize biomarkers with high PathNetGene scores that also reside in pathways with known immune function (e.g., T cell signaling, cytokine-cytokine receptor interaction) for further experimental validation.
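For readers less familiar with the metric, the area under the ROC curve equals the probability that a randomly chosen responder receives a higher predicted score than a randomly chosen non-responder, with ties counted as one half. The sketch below computes it directly from that definition; the labels and scores are made-up toy values, and the function name `roc_auc` is an illustrative choice.

```python
def roc_auc(labels, scores):
    """AUC as the fraction of (responder, non-responder) pairs ranked correctly."""
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("Both classes must be present to compute AUC.")
    # Count a full win when the responder scores higher, half a win on ties.
    wins = sum((p > n) + 0.5 * (p == n) for p in positives for n in negatives)
    return wins / (len(positives) * len(negatives))


# Toy example: 1 = responder, 0 = non-responder (values are made up).
toy_labels = [1, 1, 0, 0]
toy_predictions = [0.9, 0.4, 0.5, 0.1]
toy_auc = roc_auc(toy_labels, toy_predictions)
print(toy_auc)
```

The pairwise form is quadratic in cohort size, which is acceptable for typical ICI cohorts; library implementations use a sort-based equivalent.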

Protocol: Discovering Combinatorial Drug Targets to Overcome Resistance

Background and Rationale

A major limitation of monotherapy in oncology is the development of drug resistance, often through cancer cells activating alternative signaling pathways that bypass the inhibited target. This protocol describes a network-based strategy to discover optimal co-target combinations that preemptively block these bypass routes [19].

Detailed Experimental Methodology

Table 3: Protocol for Combinatorial Target Discovery

| Step | Activity | Specifications & Reagents |
|---|---|---|
| 1. Input Data Curation | Identify significant pairs of co-existing mutations from cancer genomics data. | Data: Somatic mutation profiles from TCGA, AACR GENIE. Tool: Fisher's Exact Test with multiple-testing correction. |
| 2. Shortest Path Calculation | For each significant mutation pair, compute the k-shortest paths in the PPI network. | Tool: PathLinker. Parameter: k = 200 (Jaccard index ~0.73 vs. k=300/400). Network: HIPPIE PPI network. |
| 3. Subnetwork Construction | Aggregate all nodes and edges from the computed shortest paths for all mutation pairs. | Output: A focused subnetwork representing key communication pathways. |
| 4. Topological Analysis | Identify key connector nodes within the subnetwork based on centrality measures. | Metrics: Betweenness centrality, degree. Focus: Proteins that serve as bridges between different mutation pairs. |
| 5. Target Prioritization & Validation | Select connector nodes from alternative pathways as co-targets. Test combinations in vitro and in vivo. | Validation: Patient-derived xenograft (PDX) models. Example: Alpelisib (PI3Ki) + LJM716 (HER3i) in breast cancer. |

The following pathway diagram visualizes the conceptual rationale behind targeting connector proteins to block resistance routes.

Figure: Network-Based Resistance and Combination Targeting

Outcome and Interpretation

Application of this protocol to patient-derived breast and colorectal cancer models successfully identified effective drug combinations. In breast cancer with ESR1/PIK3CA co-mutations, the combination of alpelisib (PI3K inhibitor) and LJM716 (HER3 inhibitor) diminished tumors. In colorectal cancer with BRAF/PIK3CA co-mutations, the triple combination of alpelisib, cetuximab (EGFR inhibitor), and encorafenib (BRAF inhibitor) showed context-dependent tumor growth inhibition [19]. The key to success is selecting co-targets that are topological connectors in the subnetwork, thereby disrupting the cancer cell's ability to re-route signals.
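To make "topological connector" concrete, the sketch below computes betweenness centrality with Brandes' algorithm on an unweighted, undirected toy subnetwork. The two modules and the bridging node `X` are hypothetical; in practice the input would be the subnetwork aggregated from the k-shortest paths.

```python
from collections import deque


def betweenness_centrality(adjacency):
    """Unnormalized betweenness for an unweighted, undirected graph (Brandes)."""
    centrality = {v: 0.0 for v in adjacency}
    for source in adjacency:
        # Breadth-first search that counts shortest paths from the source.
        order = []
        predecessors = {v: [] for v in adjacency}
        n_paths = {v: 0 for v in adjacency}
        distance = {v: -1 for v in adjacency}
        n_paths[source], distance[source] = 1, 0
        queue = deque([source])
        while queue:
            v = queue.popleft()
            order.append(v)
            for w in adjacency[v]:
                if distance[w] < 0:
                    distance[w] = distance[v] + 1
                    queue.append(w)
                if distance[w] == distance[v] + 1:
                    n_paths[w] += n_paths[v]
                    predecessors[w].append(v)
        # Back-propagate pair dependencies from the farthest nodes inward.
        dependency = {v: 0.0 for v in adjacency}
        for w in reversed(order):
            for v in predecessors[w]:
                dependency[v] += n_paths[v] / n_paths[w] * (1.0 + dependency[w])
            if w != source:
                centrality[w] += dependency[w]
    # Each unordered pair was counted from both endpoints.
    return {v: c / 2.0 for v, c in centrality.items()}


# Hypothetical subnetwork: two modules that communicate only through X.
toy_subnetwork = {
    "M1a": {"M1b", "X"},
    "M1b": {"M1a", "X"},
    "M2a": {"M2b", "X"},
    "M2b": {"M2a", "X"},
    "X": {"M1a", "M1b", "M2a", "M2b"},
}
toy_centrality = betweenness_centrality(toy_subnetwork)
top_connector = max(toy_centrality, key=toy_centrality.get)
print(top_connector, toy_centrality[top_connector])
```

All four cross-module pairs route through X, so it receives the highest betweenness and would be the candidate co-target in this toy setting.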

Successful implementation of network propagation requires a curated set of computational tools and biological datasets. The following table details essential resources.

Table 4: Key Research Reagents and Computational Resources

| Resource Name | Type | Function in Protocol | Access Link/Reference |

|---|---|---|---|

| HIPPIE PPI Database | Biological Database | Provides a high-confidence, continuously updated human protein-protein interaction network for network construction. | http://cbdm-01.zdv.uni-mainz.de/~mschaefer/hippie/ [19] |

| PathLinker | Algorithm/Software | Reconstructs signaling pathways by computing k-shortest paths between source and target proteins in a network. | https://github.com/Murali-group/PathLinker [19] |

| TCGA & AACR GENIE | Genomic Data Repository | Provides somatic mutation profiles and clinical data from thousands of cancer patients for identifying co-existing mutations. | https://www.cancer.gov/ccg/research/genome-sequencing/tcga [19] |

| Kyoto Encyclopedia of Genes and Genomes (KEGG) | Pathway Database | Curated repository of biological pathways used for functional enrichment analysis of candidate biomarkers. | https://www.genome.jp/kegg/ [20] |

| BEERE (Biological Entity Expansion and Ranking Engine) | Computational Tool | A network-based tool that uses centrality measures to iteratively rank and expand a list of candidate genes. | Described in GETgene-AI framework [21] |

This document provides detailed Application Notes and Protocols for the integrated use of Random Forest (RF), XGBoost, and Graph Neural Networks (GNNs) for classification tasks, with a specific focus on identifying predictive network-based biomarkers in oncology. This integrated approach leverages the complementary strengths of tree-based models and deep learning for enhanced feature analysis, robust classification, and improved interpretability of biological networks. The protocols outlined below have been validated in research for classifying clinically relevant biomarkers and predicting drug responses, demonstrating superior performance over single-model approaches [7] [22].

Performance Comparison of Integrated Models

The integration of these models has been systematically benchmarked across various biological and chemical datasets. The following table summarizes the typical performance metrics achieved by individual and integrated models in relevant tasks.

Table 1: Comparative Performance of ML Models in Biomedical Classification and Regression Tasks

| Model / Study | Task Description | Key Performance Metrics | Key Findings |
|---|---|---|---|
| MarkerPredict (RF & XGBoost) [7] | Classifying predictive oncological biomarkers (LOOCV) | LOOCV Accuracy: 0.7 - 0.96 | High accuracy in identifying predictive biomarker-protein pairs from signaling networks. |
| Biologically Informed NN (BINN) [22] | Stratifying septic AKI and COVID-19 subphenotypes | ROC-AUC: 0.99 ± 0.00 (AKI), 0.95 ± 0.01 (COVID-19) | Outperformed benchmarked models, including RF and XGBoost. |
| Stacking Ensemble [23] | Predicting Pharmacokinetic (PK) parameters | R²: 0.92, MAE: 0.062 | Stacking of multiple models, including GNNs and XGBoost, achieved highest accuracy. |
| GNNSeq (GNN+RF+XGBoost) [24] | Protein-ligand binding affinity prediction | Pearson CC: 0.784, R²: 0.595 | Hybrid model leveraging sequence-based features showed robust performance. |
| Descriptor-Based (SVM, XGB, RF) [25] | Molecular property prediction (11 public datasets) | Variable by dataset | On average, descriptor-based models (XGBoost, RF) outperformed graph-based models in accuracy and computational efficiency. |
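The leave-one-out cross-validation (LOOCV) accuracy reported for MarkerPredict in the table above is obtained by holding out each sample once, training on the rest, and scoring the held-out prediction. The sketch below shows only that evaluation loop. To stay dependency-free it uses a 1-nearest-neighbor classifier as a stand-in; it is not the RF or XGBoost model used in the cited work, and the feature values are invented.

```python
def loocv_accuracy(features, labels, fit_predict):
    """Hold out each sample once; fit_predict(train_x, train_y, x) returns a label."""
    correct = 0
    for i in range(len(features)):
        train_x = features[:i] + features[i + 1:]
        train_y = labels[:i] + labels[i + 1:]
        prediction = fit_predict(train_x, train_y, features[i])
        correct += int(prediction == labels[i])
    return correct / len(features)


def nearest_neighbor(train_x, train_y, x):
    """Stand-in classifier: label of the closest training sample (squared distance)."""
    closest = min(
        range(len(train_x)),
        key=lambda j: sum((a - b) ** 2 for a, b in zip(train_x[j], x)),
    )
    return train_y[closest]


# Invented two-feature samples; 1 = annotated biomarker pair, 0 = negative pair.
toy_features = [[0.0, 0.1], [0.1, 0.0], [1.0, 0.9], [0.9, 1.1]]
toy_classes = [0, 0, 1, 1]
toy_accuracy = loocv_accuracy(toy_features, toy_classes, nearest_neighbor)
print(toy_accuracy)
```

Any model exposing a fit-then-predict step can be passed in place of `nearest_neighbor`, which is why the loop takes the classifier as an argument.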

Detailed Experimental Protocols

Protocol 1: Biomarker-Target Pair Classification Using Tree-Based Ensembles

This protocol is adapted from the MarkerPredict framework for identifying predictive biomarkers in cancer signaling networks using RF and XGBoost [7].

1. Objective: To classify protein-neighbor pairs in biological networks as potential predictive biomarkers for targeted cancer therapeutics.

2. Research Reagent Solutions & Materials:

- Biological Networks: Human Cancer Signaling Network (CSN), SIGNOR, or ReactomeFI networks [7].

- Biomarker Database: CIViCmine database for annotated, evidence-based biomarkers [7].

- Protein Disorder Data: DisProt, AlphaFold (pLDDT < 50), or IUPred (score > 0.5) databases [7].

- Software: Python with Scikit-learn and XGBoost libraries.
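The disorder thresholds listed above (AlphaFold pLDDT below 50, IUPred score above 0.5) can be turned into a simple per-protein feature. The sketch below computes the fraction of residues classified as disordered from a list of per-residue scores. Using the disordered fraction as the feature is an illustrative assumption, as is every score in the example; how MarkerPredict aggregates disorder is not specified in this section.

```python
def disordered_fraction_plddt(plddt_scores, threshold=50.0):
    """Fraction of residues with AlphaFold pLDDT below the threshold."""
    if not plddt_scores:
        raise ValueError("No residues supplied.")
    return sum(score < threshold for score in plddt_scores) / len(plddt_scores)


def disordered_fraction_iupred(iupred_scores, threshold=0.5):
    """Fraction of residues with IUPred score above the threshold."""
    if not iupred_scores:
        raise ValueError("No residues supplied.")
    return sum(score > threshold for score in iupred_scores) / len(iupred_scores)


# Invented per-residue scores for a four-residue toy protein.
toy_plddt = [90.0, 45.0, 30.0, 80.0]
toy_iupred = [0.2, 0.6, 0.7, 0.4]

plddt_feature = disordered_fraction_plddt(toy_plddt)
iupred_feature = disordered_fraction_iupred(toy_iupred)
print(plddt_feature, iupred_feature)
```

Note that the two predictors point in opposite directions: low pLDDT and high IUPred both indicate disorder, so the comparison operators differ.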

3. Procedure:

- Step 1: Data Compilation and Feature Engineering