Network Propagation Algorithms for Disease Gene Prioritization: A Comprehensive Guide for Biomedical Research

Abstract

This article provides a comprehensive overview of network propagation algorithms for disease gene prioritization, a critical computational approach in the post-GWAS era. Aimed at researchers, scientists, and drug development professionals, it covers foundational concepts, key methodological frameworks including Random Walk with Restart (RWR) and conditional random fields, and practical optimization strategies to handle noisy biological data. The content also includes rigorous validation techniques and comparative performance analysis of state-of-the-art tools, offering a holistic resource to enhance the efficiency and accuracy of identifying disease-associated genes for therapeutic development.

The Foundations of Network Medicine: From Biological Networks to Disease Gene Discovery

Network science provides a powerful framework for representing and analyzing complex biological systems. In this paradigm, biological entities are represented as nodes (or vertices), and their interactions are represented as edges (or links). This approach allows researchers to move beyond studying components in isolation to understanding the system-level properties that emerge from their interactions [1] [2]. The fundamental tenet of network medicine, a field within this domain, is that diseases can be viewed as localized perturbations within the cellular interactome—the comprehensive network of molecular interactions within a cell [1].

Biological networks exist at multiple scales, from molecular interactions within cells to ecological relationships between species. The analysis of these networks using graph theory has revealed that many biological networks share common architectural features, such as scale-free and small-world properties, which profoundly impact their robustness and function [2]. The application of network science in biology has accelerated discoveries in disease gene identification, drug target validation, and understanding of evolutionary processes.

Key Network Types in Biology

Biological networks can be categorized based on the entities they connect and the nature of their interactions. The table below summarizes the primary types of molecular biological networks used in disease research.

Table 1: Key Types of Biological Networks in Disease Research

| Network Type | Nodes Represent | Edges Represent | Primary Applications in Disease Research |
|---|---|---|---|
| Protein-Protein Interaction (PPI) Network | Proteins | Physical or functional interactions between proteins | Identifying disease modules and protein complexes; uncovering novel disease genes [1] [2] |
| Gene Regulatory Network (GRN) | Genes, Transcription Factors | Regulatory relationships (activation/inhibition) | Understanding transcriptional dysregulation; identifying key regulators in disease states [1] [2] |
| Gene Co-expression Network | Genes | Similarity in expression patterns across conditions | Identifying functionally related gene modules; discovering biomarkers [1] [2] |
| Metabolic Network | Metabolites, Enzymes | Biochemical reactions | Mapping metabolic alterations in disease; identifying therapeutic targets [2] |
| Signaling Network | Signaling Molecules | Signal transduction events | Elucidating signaling pathway rewiring in disease; predicting drug effects [2] |
| Competing Endogenous RNA (ceRNA) Network | RNAs (mRNAs, lncRNAs, miRNAs) | Competition for miRNA binding | Understanding post-transcriptional regulation; exploring RNA interference therapies [1] |

Network Propagation Algorithms for Disease Gene Prioritization

Network propagation algorithms leverage the interconnectivity within biological networks to infer gene-disease associations. These methods are based on the "guilt-by-association" principle, which posits that genes causing the same or similar diseases tend to interact with each other or reside in the same network neighborhood [1] [3].

Algorithmic Foundations

At their core, network propagation methods simulate the flow of information through a network. The most common approaches include:

- Random Walk with Restart (RWR): Models a random walker that traverses the network from a set of seed nodes (known disease genes) and has a probability of restarting from the seeds at each step. The steady-state probability of the walker landing on each node represents its functional relevance to the seed set [4] [5].

- Label Propagation: Iteratively propagates labels from known disease genes to unlabeled nodes through their connections, eventually converging to a stable assignment where each node receives a score reflecting its association with the disease [3].

- Network Diffusion: Applies a diffusion process, often modeled with heat equations, to smooth the initial signal (e.g., GWAS p-values) across the network, amplifying signals for genes connected to many other candidate genes [4].
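The Random Walk with Restart idea described above can be sketched in a few lines. This is a minimal illustration, not the implementation used by any of the cited tools: the function name, the dictionary-based network representation, and the default restart probability are assumptions made for the example.

```python
def random_walk_with_restart(adjacency, seeds, restart=0.7, tol=1e-10, max_iter=1000):
    """Iterate p <- restart * p0 + (1 - restart) * W p until convergence.

    adjacency: dict mapping node -> dict of neighbor -> edge weight
               (assumed symmetric, every neighbor is also a key)
    seeds:     iterable of seed nodes (known disease genes)
    restart:   probability of jumping back to the seed set at each step
    """
    nodes = sorted(adjacency)
    seed_set = set(seeds) & set(nodes)
    if not seed_set:
        raise ValueError("no seed node is present in the network")
    # Restart distribution: uniform over the seeds.
    p0 = {n: (1.0 / len(seed_set) if n in seed_set else 0.0) for n in nodes}
    strength = {n: sum(adjacency[n].values()) for n in nodes}
    p = dict(p0)
    for _ in range(max_iter):
        nxt = {n: restart * p0[n] for n in nodes}
        for u in nodes:
            if strength[u] == 0:
                # Isolated node: the walker has nowhere to go and stays put.
                nxt[u] += (1.0 - restart) * p[u]
                continue
            share = (1.0 - restart) * p[u] / strength[u]
            for v, w in adjacency[u].items():
                nxt[v] += share * w
        delta = sum(abs(nxt[n] - p[n]) for n in nodes)
        p = nxt
        if delta < tol:
            break
    return p
```

Genes are then ranked by their value in the returned dictionary. A higher restart probability keeps the scores concentrated near the seeds, while a lower one lets them spread further into the network.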

Practical Implementation: uKIN Guided Network Propagation

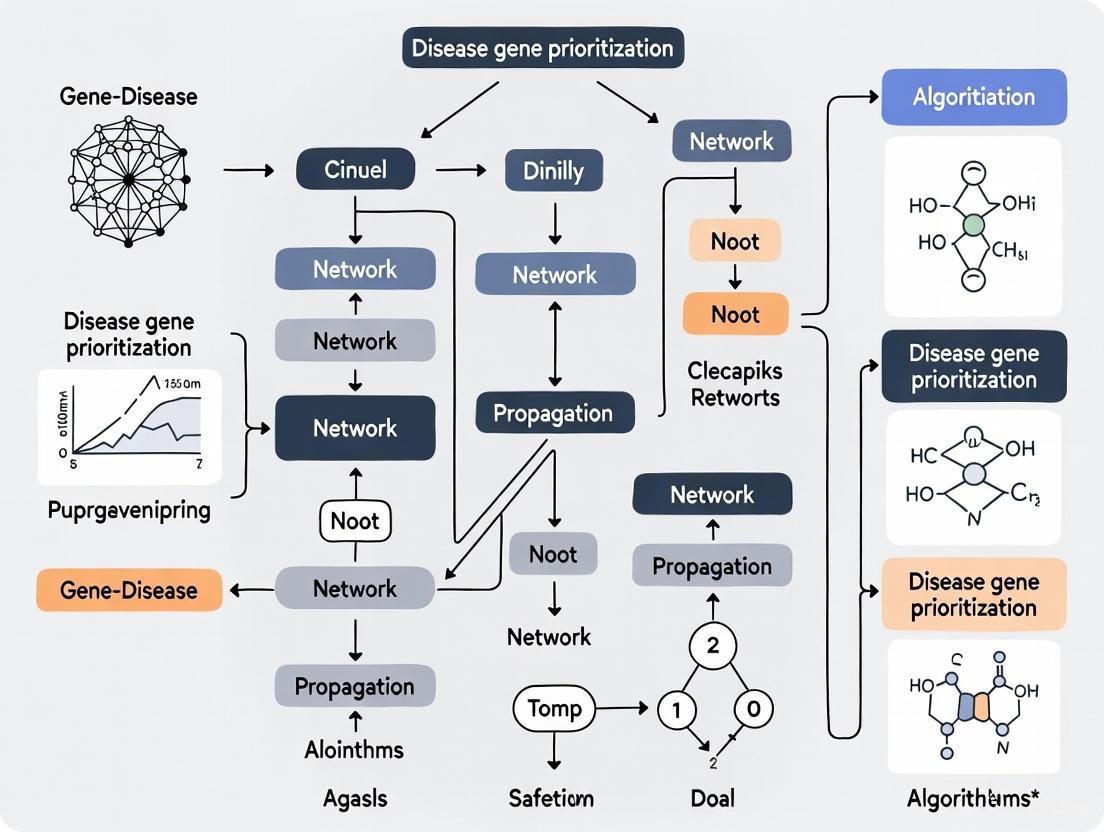

The uKIN algorithm represents an advanced implementation of guided network propagation that integrates both prior knowledge and new experimental data [5]. The workflow is depicted below:

Diagram: uKIN algorithm workflow.

uKIN uses known disease-associated genes to guide random walks initiated at newly identified candidate genes within a PPI network. This integration of prior and new information has been shown to outperform methods using either source alone, successfully identifying cancer driver genes across 24 cancer types and genes relevant to complex diseases from genome-wide association studies [5].
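One way to picture a guided walk of this kind is to bias each step toward neighbors that score highly under prior knowledge. The sketch below is an illustration under stated assumptions and not the published uKIN code: `prior_score` is assumed to be a precomputed per-gene closeness to the known disease genes, `start_weights` is assumed to hold the weights of the newly identified candidates, and all names are hypothetical.

```python
def guided_walk(adjacency, prior_score, start_weights, restart=0.5, tol=1e-10, max_iter=1000):
    """Random walk with restart whose steps are biased by prior knowledge.

    adjacency:     dict mapping node -> iterable of neighbor nodes
    prior_score:   dict mapping node -> non-negative prior relevance (assumed precomputed)
    start_weights: dict mapping candidate node -> weight of the new evidence
    """
    nodes = sorted(adjacency)
    total = sum(w for n, w in start_weights.items() if n in adjacency)
    if total <= 0:
        raise ValueError("no weighted start node is present in the network")
    # Walks start (and restart) at the newly identified candidates.
    p0 = {n: start_weights.get(n, 0.0) / total for n in nodes}
    # Transition probability u -> v is proportional to the prior score of v.
    trans = {}
    for u in nodes:
        z = sum(prior_score.get(v, 0.0) for v in adjacency[u])
        trans[u] = {v: prior_score.get(v, 0.0) / z for v in adjacency[u]} if z > 0 else {}
    p = dict(p0)
    for _ in range(max_iter):
        nxt = {n: restart * p0[n] for n in nodes}
        for u in nodes:
            if not trans[u]:
                nxt[u] += (1.0 - restart) * p[u]  # no usable neighbor: stay put
                continue
            for v, t in trans[u].items():
                nxt[v] += (1.0 - restart) * p[u] * t
        delta = sum(abs(nxt[n] - p[n]) for n in nodes)
        p = nxt
        if delta < tol:
            break
    return p
```

In this sketch a candidate's neighbors receive visiting probability in proportion to their prior scores, so genes supported by both the new data and the prior knowledge rise to the top of the ranking.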

Implementation of the IDLP Framework

The Improved Dual Label Propagation (IDLP) framework addresses two key challenges in disease gene prioritization: limited known disease genes and false positive interactions in PPI networks. The method constructs a heterogeneous network connecting gene networks with phenotype similarity networks through known gene-phenotype associations [3].

Table 2: Key Mathematical Notations in IDLP Framework

| Variable | Description | Dimensions |
|---|---|---|
| W₁ | Binary adjacency matrix of PPI network | n × n (n = number of genes) |
| W₂ | Phenotype similarity network | m × m (m = number of phenotypes) |
| Ŷ | Binary matrix of known gene-phenotype associations | n × m |
| Y | Predicted gene-phenotype association matrix (to be learned) | n × m |
| S₁ | Weighted PPI network (to be learned) | n × n |
| S₂ | Weighted phenotype similarity network (to be learned) | m × m |

The overall objective function of IDLP is:

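$$
L(Y, S_1, S_2) = \operatorname{tr}\left(Y^{\top}(I - S_1)\,Y\right) + \operatorname{tr}\left(Y\,(I - S_2)\,Y^{\top}\right) + (\mu + \zeta)\,\lVert Y - \hat{Y} \rVert_F^2 + \nu\,\lVert S_1 - \bar{S}_1 \rVert_F^2 + \eta\,\lVert S_2 - \bar{S}_2 \rVert_F^2
$$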

where μ, ζ, ν, η are regularization parameters, and S̄₁, S̄₂ are normalized versions of the initial networks [3]. This formulation allows the algorithm to simultaneously learn the gene-phenotype associations while correcting for noise in both the PPI and phenotype similarity networks.
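To make the terms of the objective concrete, the following sketch evaluates it for small matrices stored as nested lists. It only computes the value of the objective as written above and does not perform the IDLP optimization; the function and argument names are illustrative assumptions.

```python
def frobenius_sq(a, b):
    """Squared Frobenius norm of (a - b) for equally shaped nested lists."""
    return sum((x - y) ** 2 for ra, rb in zip(a, b) for x, y in zip(ra, rb))


def smoothness(z, s):
    """tr(Z^T (I - S) Z), where the rows of Z are indexed like the rows of S."""
    total = 0.0
    for i, zi in enumerate(z):
        for j, zj in enumerate(z):
            lap = (1.0 if i == j else 0.0) - s[i][j]
            total += lap * sum(a * b for a, b in zip(zi, zj))
    return total


def idlp_objective(y, y_known, s1, s2, s1_bar, s2_bar, mu, zeta, nu, eta):
    """Value of L(Y, S1, S2) for an n x m matrix Y (genes x phenotypes)."""
    y_t = [list(col) for col in zip(*y)]  # transpose: m x n
    return (
        smoothness(y, s1)                        # tr(Y^T (I - S1) Y)
        + smoothness(y_t, s2)                    # tr(Y (I - S2) Y^T)
        + (mu + zeta) * frobenius_sq(y, y_known)  # fit to known associations
        + nu * frobenius_sq(s1, s1_bar)          # keep S1 close to the input PPI network
        + eta * frobenius_sq(s2, s2_bar)         # keep S2 close to the input phenotype network
    )
```

The first two terms reward predictions that vary smoothly over the gene and phenotype networks, and the last three penalize departures from the known associations and from the original networks.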

Application Notes: From GWAS to Disease Genes

Genome-wide association studies (GWAS) identify statistical associations between genetic variants and diseases, but translating these variant associations to causal genes remains challenging. Network propagation has emerged as a powerful approach to address this limitation [4].

Objective: Prioritize likely causal genes from GWAS summary statistics using network propagation.

Workflow:

Diagram: GWAS to gene prioritization workflow.

Step-by-Step Procedure:

1. Variant-to-Gene Mapping: Associate SNPs with genes using one of three primary methods:

   - Genomic Proximity: Assign SNPs to genes within a defined window (typically ±10-50 kb from gene boundaries) [4].

   - Chromatin Interaction Mapping: Utilize chromatin conformation data (e.g., Hi-C) to associate SNPs with genes within the same topologically associated domain (TAD) or connected through chromatin loops [4].

   - eQTL Mapping: Associate SNPs with genes whose expression they significantly influence, using tissue-relevant expression quantitative trait locus (eQTL) data [4].

2. Gene-Level Score Calculation: Aggregate SNP-level p-values to generate gene-level scores.

3. Network Selection and Propagation:

   - Select an appropriate molecular network (PPI, co-expression, or functional network) based on disease context [4].

   - Perform network propagation using algorithms like RWR or diffusion kernels to smooth gene scores across the network.

   - Critical Parameter: The restart probability in RWR (typically 0.5-0.8) balances local exploration near seed genes against global exploration of the network [4] [5].

4. Gene Prioritization: Rank genes by their propagated scores and select the top-ranked genes for experimental validation.
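The first two steps of this procedure can be sketched as follows, using genomic proximity for the mapping. The aggregation rule shown here (taking the smallest SNP p-value per gene) is an assumption chosen only for illustration, and the tuple layouts and function names are hypothetical.

```python
import math


def map_snps_to_genes(snps, genes, window_kb=50):
    """Assign each SNP to genes within +/- window_kb of the gene boundaries.

    snps:  list of (snp_id, chromosome, position_bp, p_value)
    genes: list of (gene_symbol, chromosome, start_bp, end_bp)
    Returns a dict gene_symbol -> smallest p-value among its mapped SNPs
    (minimum-p aggregation is an illustrative assumption).
    """
    window = window_kb * 1000
    gene_p = {}
    for _snp_id, chrom, pos, pval in snps:
        for gene, g_chrom, start, end in genes:
            if chrom == g_chrom and start - window <= pos <= end + window:
                if gene not in gene_p or pval < gene_p[gene]:
                    gene_p[gene] = pval
    return gene_p


def to_initial_scores(gene_p):
    """Convert gene-level p-values to -log10 scores for use as propagation input."""
    return {gene: -math.log10(p) for gene, p in gene_p.items()}
```

The resulting scores can serve as the starting vector for whichever propagation algorithm is selected in step 3. Note that the minimum p-value does not correct for gene length or linkage disequilibrium.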

Advanced Applications: Differential Causal Network Analysis

Differential Causal Network (DCN) analysis represents a cutting-edge approach for comparing biological networks across different states (e.g., disease vs. healthy, male vs. female) [6]. Unlike standard differential network analysis that focuses on correlation changes, DCNs specifically model changes in causal relationships.

Protocol: Constructing Differential Causal Networks

Objective: Identify differences in causal gene regulatory relationships between two biological conditions.

Procedure:

- Data Collection: Collect gene expression data for two conditions to compare (e.g., case vs. control, male vs. female).

- Causal Network Inference: For each condition, infer a causal network from the expression data.

- DCN Construction: Given two causal networks $C_1 = \{G_1, E_1\}$ and $C_2 = \{G_2, E_2\}$, construct the differential causal network $DCN_{12} = \{G_1, E_{12}\}$, whose adjacency matrix is $A_{DCN} = A_{C_1} - A_{C_2}$ [6].

- Biological Interpretation: Identify significantly rewired nodes and edges, and perform pathway enrichment analysis on rewired gene sets.
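The DCN construction step amounts to an element-wise subtraction of two adjacency matrices defined over the same gene ordering. A minimal sketch, assuming each causal network is given as a dictionary of directed, weighted edges (the representation and names are illustrative, not those of the cited tool):

```python
def differential_causal_network(edges_1, edges_2, genes):
    """Return A_DCN = A_C1 - A_C2 and the edges whose weight differs.

    edges_1, edges_2: dict mapping (source, target) -> causal edge weight
    genes:            shared, ordered list of gene identifiers
    """
    index = {g: i for i, g in enumerate(genes)}
    n = len(genes)

    def to_matrix(edges):
        a = [[0.0] * n for _ in range(n)]
        for (src, dst), w in edges.items():
            a[index[src]][index[dst]] = w
        return a

    a1, a2 = to_matrix(edges_1), to_matrix(edges_2)
    diff = [[a1[i][j] - a2[i][j] for j in range(n)] for i in range(n)]
    # Non-zero entries are the rewired causal relationships.
    rewired = {
        (genes[i], genes[j]): diff[i][j]
        for i in range(n)
        for j in range(n)
        if diff[i][j] != 0
    }
    return diff, rewired
```

Positive entries mark edges present (or stronger) in the first condition, and negative entries mark edges present (or stronger) in the second.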

Table 3: Research Reagent Solutions for Network Biology

| Resource Type | Examples | Primary Function | Access |
|---|---|---|---|
| PPI Databases | BioGRID [3], MINT [2], IntAct [2], STRING | Catalog experimentally determined and predicted protein-protein interactions | Public web access, downloadable files |
| Gene-Phenotype Associations | OMIM database [3] | Curated database of known gene-disease associations | Public web access, licensed data |
| Network Analysis Software | DIAMOnD [1], SWIM [1], uKIN [5], IDLP [3] | Implement network propagation and disease module detection algorithms | Python, R, MATLAB packages |
| Gene Expression Data | GTEx [6], TCGA | Provide tissue-specific gene expression data for co-expression network construction | Public access with data use restrictions |
| Causal Network Tools | Differential Causal Networks tool [6] | Implement DCN construction and analysis | GitHub repository |

Application of DCN analysis to Type 2 Diabetes Mellitus (T2DM) revealed sex-specific causal gene networks across nine tissues, providing insights into differential disease mechanisms between males and females [6]. This approach demonstrates how network science can uncover nuanced biological differences that may inform personalized therapeutic strategies.

Visualization Guidelines for Biological Networks

Effective visualization is crucial for interpreting and communicating biological network analyses. The following guidelines ensure clarity and accessibility:

- Color Selection: Choose color palettes based on data type:

  - Sequential data: Use a single hue with varying luminance/saturation (e.g., light to dark blue) [7].

  - Divergent data: Use two contrasting hues (e.g., blue to red) with a neutral midpoint [7].

  - Qualitative data: Use distinct hues for different categories, limiting to 7-8 colors for discriminability [7].

- Accessibility: Ensure sufficient color contrast and avoid red-green combinations, which are hard to distinguish for users with red-green color vision deficiency (approximately 1 in 12 men) [7] [8].

- Node-Link Relationships: Use complementary-colored or neutral (gray) links to enhance discriminability of node colors, particularly in dense networks [9].

Network science approaches have fundamentally transformed biological research by providing system-level insights into disease mechanisms. The continued development of network propagation algorithms and causal inference methods promises to further accelerate the identification of disease genes and therapeutic targets.

Biological systems are fundamentally built upon complex networks of interactions rather than isolated actions of single molecules. The observable clustering of functionally related genes within these networks is not a random occurrence but a reflection of deep-seated biological principles essential for cellular operation, robustness, and evolutionary fitness. This clustering provides a critical organizational framework for interpreting the functional consequences of genetic perturbations in disease.

Research in network biology consistently demonstrates that genes implicated in similar diseases or biological processes exhibit significant functional connectivity and tend to reside in close network proximity [10] [11]. This principle forms the cornerstone of modern approaches for disease gene prioritization, where network propagation algorithms leverage these topological relationships to identify novel candidate genes associated with pathological conditions. Understanding why and how these clusters form is therefore paramount for advancing both basic biological knowledge and translational applications in drug development.

The Quantitative Evidence for Functional Clustering

Statistical Significance in Genomic Studies

Empirical evidence from large-scale genomic analyses provides compelling support for the non-random clustering of functionally related genes. A pivotal study examining copy number variants (CNVs) in patients with developmental disorders revealed that pathogenic CNVs frequently span multiple functionally related genes, a phenomenon significantly less common in CNVs from healthy controls [12].

Table 1: Functional Clustering in Pathogenic CNVs

| Cohort | CNVs with Functional Clusters | Average Cluster Size | Statistical Significance (P-value) |
|---|---|---|---|
| DECIPHER (626 CNVs) | 49.4% | 3.46 genes | 0.0217 |
| NIJMEGEN (426 CNVs) | 54.0% | 3.69 genes | 0.0005 |

This study employed a Phenotypic Linkage Network (PLN), an integrated functional network combining protein-protein interactions, gene co-expression data, and model organism phenotype information to assess functional relationships [12]. The finding that de novo CNVs in patients are more likely to affect these functional clusters—and affect them more extensively—than benign CNVs underscores the pathogenic contribution of disrupting coordinated genetic modules.

Network Topology and Disease Module Hypothesis

The conceptual framework of "disease modules" posits that genes associated with a specific disease are not scattered randomly across the cellular interactome but localize within specific neighborhoods of the network [11]. This hypothesis is substantiated by systematic analyses of protein-protein interaction (PPI) networks, which show that products of genes implicated in similar diseases exhibit significant topological proximity and tend to form highly interconnected subnetworks [10] [11].

This organizational principle enables powerful computational approaches. Genes within these clusters often share similar topological profiles, meaning their patterns of connectivity within the larger network are alike. This similarity can be exploited by algorithms like Vavien, which uses topological resemblance to known disease genes to prioritize new candidate genes from linkage intervals [10].

Underlying Biological Mechanisms Driving Clustering

The formation of functional gene clusters is driven by several convergent evolutionary and biological mechanisms that confer advantages to the organism.

Coordinated Regulation and Expression: Genes involved in the same biological process or pathway often require synchronized expression. Physical proximity in the genome can facilitate this through shared regulatory elements, such as bidirectional promoters or common enhancer regions, enabling efficient co-transcriptional control [12].

Protein Interaction Proximity: For genes whose products must physically interact to perform their function (e.g., subunits of a protein complex), genomic clustering can reduce the stochasticity of encounter, increasing the efficiency of complex assembly. This is reflected in the enrichment of physical PPIs among products of clustered genes [13] [12].

Epistatic Selection and Co-inheritance: Genes with functional epistasis—where the effect of one gene depends on the presence of another—may be kept in close genomic linkage to ensure they are co-inherited, thus preserving beneficial genetic combinations and phenotypic stability across generations [12].

Common Evolutionary Origin: Some clusters, like those arising from tandem gene duplications, contain paralogous genes with similar or related functions. While these represent a specific type of cluster, studies indicate they are distinct from the larger functional clusters that include non-paralogous genes [12].

The following diagram illustrates the relationship between genomic organization, functional networks, and phenotypic outcomes:

Diagram: Relationship between genomic organization, functional networks, and disease phenotypes. A copy number variant (CNV) disrupting clustered genes can propagate through the network to disrupt biological processes.

Experimental Protocols for Validating Functional Clusters

Protocol: Identifying Dysregulated Subnetworks from Transcriptomic Data

This protocol outlines the steps for discovering functionally related gene clusters that are dysregulated in a disease state by integrating gene expression data with protein-protein interaction networks [13].

1. Input Data Preparation

- Gene Expression Matrix: Obtain a case-control gene expression dataset (e.g., RNA-seq or microarray data) with appropriate sample size for statistical power.

- Protein-Protein Interaction (PPI) Network: Download a comprehensive human PPI network from databases such as STRING, BioGRID, or HumanNet. Filter for high-confidence interactions.

2. Differential Expression Scoring

- For each gene, compute a differential expression score between case and control groups using a statistical test (e.g., t-test or moderated t-test). Apply multiple testing correction (e.g., Benjamini-Hochberg FDR).

3. Subnetwork Scoring and Search

- Define candidate subnetworks as connected subgraphs of the PPI network.

- For each subnetwork, compute an aggregate score reflecting collective dysregulation. Common methods include:

  - Mean-based aggregation: Average the differential expression scores of all genes in the subnetwork.

  - Maximum-based aggregation: Use the maximum score within the subnetwork.

- Employ a search algorithm (e.g., greedy search, simulated annealing) to identify subnetworks with statistically significant aggregate scores, correcting for multiple hypotheses.

4. Functional Enrichment and Validation

- Perform functional enrichment analysis (e.g., Gene Ontology, KEGG pathways) on genes within significant subnetworks.

- Validate biologically using experimental approaches such as siRNA knockdown of central hub genes followed by phenotypic assays relevant to the disease.
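The subnetwork search in step 3 of this protocol can be illustrated with a simple greedy expansion. This sketch swaps in a size-normalized sum (sum of scores divided by the square root of the subnetwork size) as the aggregate, because a plain mean can never improve when a lower-scoring neighbor is added; that choice, the function names, and the `max_size` limit are assumptions for illustration. Significance testing against permuted scores is not shown.

```python
import math


def aggregate_score(scores, members):
    """Size-normalized sum of per-gene differential expression scores."""
    return sum(scores[g] for g in members) / math.sqrt(len(members))


def greedy_subnetwork(adjacency, scores, seed, max_size=10):
    """Grow a connected subnetwork from `seed`, adding the best neighbor each round.

    adjacency: dict mapping gene -> iterable of interacting genes
    scores:    dict mapping gene -> differential expression score (e.g., z-score)
    """
    members = {seed}
    best = aggregate_score(scores, members)
    while len(members) < max_size:
        frontier = {v for u in members for v in adjacency[u]} - members
        candidates = [
            (aggregate_score(scores, members | {v}), v) for v in frontier if v in scores
        ]
        if not candidates:
            break
        new_score, node = max(candidates)
        if new_score <= best:
            break  # no neighbor improves the aggregate score
        members.add(node)
        best = new_score
    return members, best
```

Greedy expansion is fast but can stop at a local optimum. A strongly dysregulated gene that sits behind a weakly scoring neighbor is never reached, which is one reason the protocol also mentions simulated annealing.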

Protocol: Network-Based Prioritization of Candidate Disease Genes

This protocol utilizes the topological properties of biological networks to prioritize candidate genes from genomic intervals identified in genome-wide association studies (GWAS) or linkage analyses [10].

1. Input Definition

- Seed Genes: Compile a set of known disease-associated genes from databases like OMIM or ClinVar.

- Candidate Genes: Compile genes located within linkage intervals or significant loci from GWAS.

- Integrated Functional Network: Obtain an integrated network (e.g., HumanNet, PLN) combining PPIs, co-expression, genetic interactions, and functional annotations.

2. Topological Similarity Calculation

- For the integrated network, compute the topological profile of each protein. This profile can be based on:

  - Network centrality measures (e.g., betweenness, closeness, eigenvector centrality)

  - Graphlet degrees or other topological descriptors

- Calculate the pairwise topological similarity between all seed proteins and candidate proteins using a similarity metric (e.g., cosine similarity, Pearson correlation).

3. Candidate Gene Ranking

- For each candidate gene, compute a prioritization score based on its topological similarity to seed genes. This can be the maximum similarity to any seed gene or the average similarity to all seed genes.

- Rank all candidate genes based on this score in descending order.

4. Experimental Validation Triaging

- Select top-ranking candidates for functional validation.

- Design experiments based on the shared biological functions of the seed genes and the predicted cluster (e.g., common pathway assays, model organism phenotypes).
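Steps 2 and 3 of this protocol can be sketched with a deliberately small topological profile. The three features used here (degree, mean neighbor degree, local clustering coefficient) are illustrative assumptions and not the profile used by Vavien or any other cited method; the function names are hypothetical.

```python
import math


def topological_profile(adjacency, node):
    """Return (degree, mean neighbor degree, clustering coefficient) for a node."""
    neighbors = list(adjacency[node])
    degree = len(neighbors)
    if degree == 0:
        return (0.0, 0.0, 0.0)
    mean_nb_degree = sum(len(adjacency[v]) for v in neighbors) / degree
    # Count edges among the neighbors (each unordered pair once).
    links = sum(1 for u in neighbors for v in neighbors if u < v and v in adjacency[u])
    possible = degree * (degree - 1) / 2
    clustering = links / possible if possible else 0.0
    return (float(degree), mean_nb_degree, clustering)


def cosine(a, b):
    """Cosine similarity between two equal-length numeric vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def rank_candidates(adjacency, seeds, candidates, how="max"):
    """Rank candidates by maximum (or mean) profile similarity to the seed genes."""
    seed_profiles = [topological_profile(adjacency, s) for s in seeds]
    ranked = []
    for c in candidates:
        profile = topological_profile(adjacency, c)
        sims = [cosine(profile, sp) for sp in seed_profiles]
        score = max(sims) if how == "max" else sum(sims) / len(sims)
        ranked.append((c, score))
    return sorted(ranked, key=lambda item: item[1], reverse=True)
```

Using the maximum favors candidates that closely resemble any single seed, while the mean favors candidates that resemble the seed set as a whole.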

Table 2: Key Research Reagents and Computational Tools for Network Biology

| Resource Type | Example Resources | Primary Function | Application in Validation |
|---|---|---|---|
| Integrated Networks | HumanNet [12], Phenotypic Linkage Network (PLN) [12], STRING | Provides functional gene-gene associations from multiple evidence sources | Serves as the reference network for cluster identification and gene prioritization |
| Protein Interaction Data | BioGRID, IntAct, AP-MS datasets [13] | Catalogs physical protein-protein interactions | Experimental validation of predicted physical interactions within a cluster |
| Gene Expression Data | GTEx [14], GEO datasets, RNA-seq from case-control studies | Provides transcriptomic profiles across tissues/conditions | Input for identifying co-expressed gene modules and dysregulated subnetworks |
| Phenotype Databases | OMIM [10], Mouse Genome Database (MGD) [12] | Curates gene-disease associations and model organism phenotypes | Validation of phenotypic concordance for genes within a predicted cluster |
| Analysis Platforms | GeneNetwork [14], Cytoscape, R/Bioconductor | Offers integrated toolkits for systems genetics and network visualization | Enables QTL mapping, co-expression analysis, and network visualization |

Application in Disease Gene Prioritization and Drug Development

The principle of functional clustering is directly leveraged in network propagation algorithms for disease gene prioritization. These algorithms simulate the flow of information through the interactome, starting from known disease genes, to identify novel candidate genes that reside within the same network neighborhood [10] [11]. The underlying assumption is that genes causing the same or similar diseases are proximate in the network, a phenomenon often referred to as "guilt by association" [10].

In translational research, identifying a cluster of functionally related genes disrupted in a disease provides a more robust set of targets than focusing on individual genes. This systems-level perspective helps explain disease mechanisms, as the clinical phenotype often arises from perturbations to the entire functional module rather than a single gene [12] [11]. Furthermore, drugs typically target multiple proteins within a network, and understanding cluster organization aids in predicting drug effects, identifying repurposing opportunities, and understanding resistance mechanisms [11].

The following workflow diagram illustrates how these concepts are applied in a practical research pipeline:

Diagram: A network propagation workflow for disease gene prioritization, moving from known disease genes to experimental validation of candidate clusters.

Application Note: Protein-Protein Interaction (PPI) Networks

Core Concept and Utility

Protein-protein interaction networks provide a physical map of cellular machinery, where nodes represent proteins and edges represent confirmed or predicted physical interactions. In disease gene prioritization, these networks operate on the principle that genes associated with similar disease phenotypes tend to have protein products that are closer within the PPI network topology than expected by chance [15]. This "guilt-by-association" approach enables the identification of novel disease genes based on their network proximity to known disease-associated genes, often referred to as "seed" genes [15] [16].

Complex genetic disorders involve products of multiple genes acting cooperatively, making PPI networks particularly valuable for understanding polygenic diseases [15]. Rather than having random connections throughout the network, proteins encoded by genes implicated in similar phenotypes tend to interact with partners from the same disease phenotypes [15]. This topological signature provides the foundation for network-based prioritization algorithms.

Quantitative Performance of PPI-Based Prioritization Methods

Table 1: Performance comparison of network-based prioritization methods across multiple datasets (AUC %)

| Method | OMIM Dataset | Goh Dataset | Chen Dataset |
|---|---|---|---|
| NetCombo | 72.09 | 67.08 | 78.41 |
| NetScore | 67.49 | 67.32 | 75.92 |
| Network Propagation | 65.97 | 54.74 | 69.07 |
| NetZcore | 62.99 | 61.45 | 72.80 |
| NetShort | 65.63 | 55.36 | 63.11 |
| Functional Flow | 58.55 | 54.78 | 63.56 |
| PageRank with Priors | 57.03 | 52.39 | 65.30 |
| Random Walk with Restart | 55.36 | 49.35 | 61.78 |

The table above demonstrates that network-based prioritization methods show significant variation in their ability to identify disease genes, with consensus methods like NetCombo generally outperforming individual algorithms [15]. Performance is also highly dependent on the quality and completeness of the underlying PPI data, with incompleteness (false negatives) and noise (false positives) representing significant challenges [15].

Experimental Protocol: PPI-Based Gene Prioritization Using GUILD

Purpose: To prioritize candidate disease genes using protein-protein interaction networks and known disease-associated seed genes.

Materials:

- Protein-protein interaction data (e.g., from STRING, InWeb, or OmniPath databases)

- Known disease-associated genes (seed genes)

- GUILD software framework (http://sbi.imim.es/GUILD.php)

- Network analysis environment (Python/R with network analysis libraries)

Procedure:

- Network Preparation: Compile a comprehensive PPI network using databases such as STRING, InWeb, or OmniPath [17]. Filter interactions based on confidence scores if available.

- Seed Gene Selection: Curate a set of high-confidence known disease-associated genes from databases such as OMIM or GWAS catalog.

- Algorithm Selection: Choose one or more network prioritization algorithms implemented in GUILD:

  - NetScore: Measures the network relevance of paths connecting disease-associated nodes

  - NetZcore: Computes z-scores based on network topology

  - NetShort: Utilizes shortest path distances to seed genes

  - NetCombo: Consensus method combining multiple algorithms [15]

- Score Calculation: Execute the selected algorithm(s) to compute prioritization scores for all genes in the network.

- Cross-Validation: Perform k-fold cross-validation (typically 5-fold) to assess method performance using area under ROC curve (AUC) and sensitivity at top 1% predictions [15].

- Candidate Gene Selection: Generate a prioritized list of candidate genes based on their network scores for experimental validation.

Troubleshooting:

- If results show bias toward highly connected genes, consider statistical adjustments using random networks [15].

- If performance is poor, integrate additional data types (e.g., gene expression, functional annotations) to create a more robust functional network [15].
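The cross-validation step of this procedure can be sketched independently of GUILD. The code below is an illustration under assumptions: `score_fn` stands for any prioritization method that takes training seeds and returns a score per gene, and the fold assignment and function names are hypothetical rather than part of the GUILD framework.

```python
def roc_auc(scores, positives):
    """Area under the ROC curve via pairwise comparison (ties count as 0.5).

    scores:    dict mapping gene -> prioritization score
    positives: set of held-out true disease genes
    """
    pos = [s for g, s in scores.items() if g in positives]
    neg = [s for g, s in scores.items() if g not in positives]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative gene")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


def cross_validated_auc(seeds, score_fn, k=5):
    """Hold out each fold of seeds in turn and average the resulting AUCs."""
    seeds = sorted(seeds)
    folds = [seeds[i::k] for i in range(k)]
    aucs = []
    for held_out in folds:
        if not held_out:
            continue
        train = [s for s in seeds if s not in held_out]
        scores = score_fn(train)
        # Training seeds are excluded from the evaluated ranking.
        evaluated = {g: s for g, s in scores.items() if g not in train}
        aucs.append(roc_auc(evaluated, set(held_out)))
    return sum(aucs) / len(aucs)
```

An AUC of 0.5 corresponds to a random ranking, which gives a reference point for reading the AUC percentages in the performance table.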

Application Note: Gene Co-expression Networks

Core Concept and Utility

Gene co-expression networks represent functional relationships between genes based on similarity in their expression patterns across multiple conditions, treatments, or tissues [18]. Unlike PPI networks that represent physical interactions, co-expression networks capture coordinated transcriptional regulation and functional relatedness, operating on the principle that genes involved in the same biological process or pathway tend to show similar expression patterns [18] [19].

These networks are constructed from high-throughput transcriptomic data (microarray or RNA-seq) and have found widespread application in predicting gene function, identifying gene modules, and prioritizing disease genes [18] [19]. Co-expression analysis allows the simultaneous identification, clustering, and exploration of thousands of genes with similar expression patterns across multiple conditions [18].

Network Construction and Normalization Considerations

The accuracy of co-expression networks heavily depends on appropriate normalization and processing of gene expression data. For RNA-seq data, specific considerations include:

Between-sample normalization has the most significant impact on network quality, with counts adjusted by size factors (e.g., TMM, UQ) producing networks that most accurately recapitulate known functional relationships [19].

Within-sample normalization methods such as CPM (counts per million) and TPM (transcripts per million) account for sequencing depth; TPM additionally accounts for gene length [19].

Network transformation techniques such as weighted topological overlap (WTO) and context likelihood of relatedness (CLR) can enhance biological signal by upweighting connections more likely to be real and downweighting spurious correlations [19].
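The two within-sample measures mentioned above can be written out directly from their definitions. This is a minimal sketch for a single sample with hypothetical function names; between-sample size factors such as TMM are computed across samples and are not shown here.

```python
def cpm(counts):
    """Counts per million: scale raw counts by the sample's total count."""
    total = sum(counts.values())
    return {gene: c / total * 1e6 for gene, c in counts.items()}


def tpm(counts, lengths_bp):
    """Transcripts per million: length-normalize first, then scale to one million.

    counts:     dict mapping gene -> raw read count
    lengths_bp: dict mapping gene -> gene (or transcript) length in base pairs
    """
    rate = {gene: counts[gene] / (lengths_bp[gene] / 1000) for gene in counts}
    scale = sum(rate.values())
    return {gene: r / scale * 1e6 for gene, r in rate.items()}
```

For example, a gene with three times the reads and three times the length of another has three times the CPM but the same TPM.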

Table 2: Key co-expression network resources and their applications

| Resource Type | Examples | Primary Application |
|---|---|---|
| Expression Databases | Gene Expression Omnibus (GEO), recount2 | Source of expression data for network construction |
| Network Construction Tools | WGCNA, ARACNE | Inference of co-expression networks from expression data |
| Functional Annotation | Gene Ontology, KEGG | Validation of co-expression modules |

Experimental Protocol: Constructing Co-expression Networks from RNA-seq Data

Purpose: To build a biologically meaningful gene co-expression network from RNA-seq data for disease gene prioritization.

Materials:

- RNA-seq count data from relevant tissues or conditions

- Computing environment with R/Bioconductor

- Normalization tools (edgeR, DESeq2)

- Network construction packages (WGCNA, COEN)

Procedure:

- Data Preprocessing:

  - Filter genes with low expression (e.g., <10 counts in most samples)

  - Apply within-sample normalization (CPM, TPM, or RPKM) to account for technical variability [19]

- Between-Sample Normalization:

  - Adjust counts by size factors (e.g., TMM, UQ) [19]

- Similarity Calculation:

  - Compute a pairwise gene-gene similarity matrix from the normalized expression profiles (e.g., using a correlation measure)

- Network Construction:

  - Apply an adjacency function to convert the similarity matrix to connection strengths

  - Use hard thresholds or soft thresholds (preferred for weighted networks) [18]

- Network Transformation:

  - Apply weighted topological overlap (WTO) to account for shared neighbors

  - Alternatively, use context likelihood of relatedness (CLR) to remove indirect interactions [19]

- Module Identification:

  - Identify modules of highly interconnected genes using hierarchical clustering or community detection algorithms

  - Annotate modules using functional enrichment analysis (GO, KEGG)

- Candidate Gene Prioritization:

  - Apply the guilt-by-association principle within modules to predict gene function

  - Prioritize unknown genes that co-express with known disease genes

Troubleshooting:

- If networks are too dense, apply more stringent thresholds or use ARACNE algorithm to prune indirect interactions [18]

- If biological interpretation is challenging, integrate prior knowledge or use tissue-aware gold standards for validation [19]

Application Note: Integrated Disease Networks

Core Concept and Utility

Integrated disease networks combine multiple data types—including PPI, co-expression, genetic, and phenotypic data—into unified frameworks for enhanced disease gene prioritization [20] [17]. These networks address limitations of single-network approaches by leveraging complementary information from diverse molecular perspectives.

The fundamental premise is that genes associated with similar diseases tend to reside in the same neighborhood of integrated networks, share common protein interaction partners, exhibit correlated expression patterns, and display similar phenotypic profiles [20]. The DREAM Challenge assessment revealed that different network types capture complementary trait-associated modules, suggesting that integration can provide a more comprehensive view of disease mechanisms [17].

Performance of Integrated Network Approaches

Network propagation methods that integrate multiple data types have demonstrated superior performance in disease gene identification compared to single-network approaches. Methods like uKIN, which use known disease genes to guide random walks initiated from newly identified candidate genes, show better identification of disease genes than using single sources of information alone [5].

The RWRHN (Random Walk with Restart on Heterogeneous Networks) algorithm, which fuses PPI networks reconstructed by topological similarity, phenotype similarity networks, and known disease-gene associations, shows improved performance in inferring disease genes compared to single-network methods [20]. This approach successfully predicted novel causal genes for 16 diseases including breast cancer, diabetes mellitus type 2, and prostate cancer, with top predictions supported by literature evidence [20].

Experimental Protocol: Multi-Network Propagation for GWAS Analysis

Purpose: To integrate GWAS summary statistics with molecular networks for improved disease gene identification.

Materials:

- GWAS summary statistics for disease of interest

- Molecular networks (PPI, co-expression, signaling, etc.)

- Reference panels for LD information (1000 Genomes, HRC)

- Functional genomic annotations (eQTL data, chromatin interactions)

Procedure:

- Variant-to-Gene Mapping:

- Map SNPs to genes using genomic proximity (e.g., ±10kb from gene body)

- Alternatively, use chromatin interaction mapping (Hi-C) or eQTL data for more accurate functional mapping [4]

- Gene-Level Score Calculation:

- Aggregate SNP P-values to gene-level scores using methods like minSNP, VEGAS, fastBAT, or PEGASUS [4]

- Correct for gene length bias and linkage disequilibrium between SNPs

- Network Selection and Integration:

- Select appropriate molecular networks based on disease context:

- Protein-protein interaction networks for pathway context

- Co-expression networks for tissue-specific functional relationships

- Signaling networks for understanding pathway perturbations [17]


- Network Propagation:

- Candidate Gene Prioritization:

- Rank genes based on their propagated scores

- Validate top candidates using independent datasets or functional enrichment

Troubleshooting:

- If propagation results are noisy, pre-sparsify networks by removing weak edges [17]

- If tissue-specificity is important, use tissue-aware networks and validation standards [19]

The Scientist's Toolkit

Table 3: Essential research reagents and computational tools for network-based disease gene prioritization

| Resource Category | Specific Tools/Databases | Function and Application |

|---|---|---|

| Protein Interaction Databases | STRING, InWeb, OmniPath | Source of curated physical protein interactions for PPI network construction |

| Gene Expression Resources | GEO, recount2, GTEx | Source of transcriptomic data for co-expression network inference |

| Disease Gene Associations | OMIM, GWAS Catalog | Curated known disease-gene associations for seed genes and validation |

| Network Analysis Software | GUILD, uKIN, WGCNA | Implementations of network propagation and module identification algorithms |

| Functional Annotation | Gene Ontology, KEGG | Functional enrichment analysis of network modules and prioritized genes |

| Benchmarking Resources | DREAM Challenge modules | Gold standards for method validation and performance assessment |

Visualizing Network Propagation Workflows

Integrated Network Analysis Methodology

Network Propagation Workflow: This diagram illustrates the integrated methodology for disease gene prioritization, combining genomic data, molecular networks, and prior knowledge through network propagation algorithms to generate candidate genes for experimental validation.

Multi-Network Gene Prioritization Framework

Multi-Network Framework: This visualization shows how diverse molecular networks are integrated and analyzed using multiple algorithms to identify trait-associated modules and prioritize disease genes.

Genome-wide association studies (GWAS) have successfully identified thousands of genetic variants associated with complex human diseases and traits. The NHGRI-EBI GWAS Catalog currently contains thousands of publications and top associations with full summary statistics [21]. However, a critical bottleneck has emerged: over 90% of disease-associated variants map to non-coding regions of the genome, making their functional interpretation and target gene identification profoundly challenging [22]. This translation gap between statistical association and biological mechanism represents a fundamental challenge in human genetics.

The scale of this challenge is substantial. A comprehensive systematic review of experimental validation studies identified only 309 experimentally validated non-coding GWAS variants regulating 252 genes across 130 human disease traits from an initial set of 36,676 articles [22]. This represents a tiny fraction of the reported associations, highlighting the pressing need for efficient prioritization strategies. Network propagation algorithms have emerged as powerful computational frameworks that integrate GWAS findings with biological networks to address this prioritization challenge, enabling researchers to bridge statistical associations with biological mechanisms for experimental validation.

Network Propagation: A Computational Framework for Gene Prioritization

Network propagation represents a class of algorithms that leverage molecular interaction networks to contextualize GWAS findings. These methods are based on the "guilt-by-association" principle, which posits that genes causing similar diseases tend to interact with each other or reside in the same functional modules within biological networks.

Core Algorithmic Principles

The uKIN algorithm exemplifies the modern network propagation approach, using known disease genes to guide random walks initiated at newly implicated candidate genes within protein-protein interaction networks [5]. This guided network propagation framework allows for the integration of prior biological knowledge with new GWAS data, effectively amplifying weak signals and identifying disease-relevant genes with higher accuracy than using either source of information alone [5].

The mathematical foundation of these methods involves simulating random walks on biological networks, where the propagation process diffuses association signals from initial seed genes to their network neighbors. This approach effectively smooths noisy GWAS data and prioritizes genes that are both genetically associated and network-proximal to other known disease genes.

Performance Advantages

In large-scale testing across 24 cancer types, guided network propagation approaches have demonstrated superior performance in identifying cancer driver genes compared to methods using either prior knowledge or new GWAS data alone [5]. These methods also readily outperform other state-of-the-art network-based approaches, establishing network propagation as a leading strategy for gene prioritization.

Table 1: Key Network Propagation Algorithms and Applications

| Algorithm | Methodology | Key Features | Applications |

|---|---|---|---|

| uKIN [5] | Guided network propagation using known disease genes | Integrates prior knowledge with new data via guided random walks | Cancer driver gene identification, complex disease gene discovery |

| Standard Network Propagation [4] | Random walks or information diffusion on molecular networks | Uses gene-level scores from GWAS P-values; signal amplification | Polygenic disease gene prioritization |

| Ensemble Methods [4] | Combination of multiple networks and algorithms | Improves robustness by integrating diverse network sources | Enhanced prediction across diverse disease domains |

Integrated Protocol for GWAS Functional Validation

This protocol provides a comprehensive framework for progressing from GWAS summary statistics to experimentally validated disease genes, integrating both computational prioritization and experimental validation approaches.

Stage 1: Computational Gene Prioritization

Data Acquisition and Preprocessing

Begin by obtaining GWAS summary statistics from public databases such as the GWAS Catalog [21] or the Atlas of GWAS Summary Statistics, which contains thousands of GWAS from unique studies across diverse traits and domains [23]. For the prioritization algorithm, gene-level scores must be computed from SNP-level P-values. The minSNP approach (assigning the lowest P-value within gene boundaries) represents the simplest method, though it exhibits bias toward longer genes [4]. Superior alternatives include:

- PEGASUS: Computes gene scores analytically from a null chi-square distribution that captures linkage disequilibrium (LD) between SNPs [4]

- FastCGP: Employs circular genomic permutation to correct for gene length bias while considering LD between SNPs [4]

- MAGMA: Uses regression-based models that can include covariates for population stratification [4]
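
As a point of reference for the alternatives listed above, the minSNP baseline is simple enough to sketch directly. The example below assumes SNPs are given as (chromosome, position, P-value) tuples and genes as (chromosome, start, end) intervals, uses a ±10 kb buffer around the gene body, and reports the score as -log10 of the smallest P-value. The function name `min_snp_gene_scores` and the data layout are illustrative assumptions.

```python
import math

def min_snp_gene_scores(snps, genes, buffer_bp=10_000):
    """minSNP gene scores: -log10 of the lowest SNP P-value near each gene.

    snps:  iterable of (chrom, position, pvalue)
    genes: dict mapping gene name -> (chrom, start, end)
    Genes with no SNP inside the buffered interval receive no score.
    """
    scores = {}
    for name, (g_chrom, start, end) in genes.items():
        pvals = [p for chrom, pos, p in snps
                 if chrom == g_chrom and start - buffer_bp <= pos <= end + buffer_bp]
        if pvals:
            scores[name] = -math.log10(min(pvals))
    return scores

# Toy usage with made-up coordinates and P-values.
example_snps = [("1", 1_000, 1e-8), ("1", 5_000, 0.05), ("1", 50_000, 1e-3)]
example_genes = {"GENE_A": ("1", 2_000, 6_000), "GENE_B": ("1", 45_000, 48_000)}
example_scores = min_snp_gene_scores(example_snps, example_genes)
```

The length bias is visible in the code: a longer interval collects more SNPs, so its minimum P-value tends to be smaller by chance alone. This sketch makes no correction for that or for LD, which is the gap the methods listed above are designed to close.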

Table 2: SNP-to-Gene Mapping Strategies

| Mapping Approach | Methodology | Advantages | Limitations |

|---|---|---|---|

| Gene Body + Buffer | Associates SNPs within extended gene boundaries | Simple to implement; accounts for proximal regulatory elements | Misses distal regulatory connections |

| Chromatin Interaction Mapping | Uses 3D chromatin contact maps (e.g., Hi-C) | Captures long-range regulatory interactions | Tissue-specific; data not always available |

| eQTL Mapping | Correlates variants with gene expression | Provides functional evidence of regulatory effect | Tissue-specificity; may miss causal genes |

Network Selection and Propagation

Select appropriate biological networks based on the disease context. Protein-protein interaction networks (e.g., from STRING, BioGRID) often serve as the foundation [5]. Consider network size and density, as these factors significantly impact propagation performance [4]. Implement the propagation algorithm:

- Construct the network graph G = (V,E) where nodes represent genes and edges represent interactions

- Initialize the prior information vector p with gene-level scores from GWAS

- Apply the propagation equation: F = α(I - (1-α)W)^{-1}p, where W is the normalized adjacency matrix and α is the restart probability

- For guided methods like uKIN, incorporate known disease genes as additional priors [5]

- Rank genes based on their propagated scores for experimental follow-up
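
The propagation equation in the steps above can be evaluated directly on a small network. The sketch below assumes W is the column-normalized adjacency matrix (the text says only "normalized"), stores the network as a dense list of lists, and solves the linear system (I - (1-α)W) x = p by Gaussian elimination instead of forming the inverse. The helper names `column_normalize`, `solve`, and `propagate` are illustrative; a genome-scale network would call for a sparse linear-algebra library in place of this pure-Python solver.

```python
def column_normalize(adj):
    """Divide each column by its sum so that columns sum to 1 (zero columns stay zero)."""
    n = len(adj)
    col_sums = [sum(adj[i][j] for i in range(n)) for j in range(n)]
    return [[adj[i][j] / col_sums[j] if col_sums[j] else 0.0 for j in range(n)]
            for i in range(n)]

def solve(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (m[i][n] - sum(m[i][j] * x[j] for j in range(i + 1, n))) / m[i][i]
    return x

def propagate(adj, p, alpha=0.5):
    """Return F = alpha * (I - (1 - alpha) W)^{-1} p for adjacency matrix adj."""
    n = len(adj)
    w = column_normalize(adj)
    system = [[(1.0 if i == j else 0.0) - (1 - alpha) * w[i][j] for j in range(n)]
              for i in range(n)]
    return [alpha * v for v in solve(system, p)]

def rank_genes(names, scores):
    """Gene names ordered by propagated score, highest first."""
    return [g for g, _ in sorted(zip(names, scores), key=lambda t: -t[1])]
```

With a column-normalized W and every node connected to at least one neighbour, the propagated scores sum to the same total as the input vector p, so propagation redistributes the GWAS signal without inflating it.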

Ensemble Approaches

Recent evidence suggests that combining multiple networks may improve prioritization performance [4]. Implement ensemble methods by running propagation separately on different network types (protein-protein, genetic interaction, co-expression) and aggregating results, or by constructing integrated networks before propagation.
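
One simple way to aggregate per-network results is to average each gene's rank across networks. The text does not prescribe an aggregation rule, so mean-rank aggregation is an assumption here, as is the choice to give a gene missing from a network that network's worst rank plus one. The function names are hypothetical.

```python
def ranks(scores):
    """Map gene -> rank (1 = highest score); ties are broken by gene name."""
    ordered = sorted(scores, key=lambda g: (-scores[g], g))
    return {g: i + 1 for i, g in enumerate(ordered)}

def aggregate_mean_rank(per_network_scores):
    """Combine propagated scores from several networks by mean rank.

    per_network_scores: dict mapping network name -> {gene: propagated score}
    Returns a list of (gene, mean rank) pairs, best (lowest mean rank) first.
    """
    all_genes = set()
    for scores in per_network_scores.values():
        all_genes.update(scores)
    totals = {g: 0.0 for g in all_genes}
    for scores in per_network_scores.values():
        r = ranks(scores)
        worst = len(scores) + 1  # penalty rank for genes absent from this network
        for g in all_genes:
            totals[g] += r.get(g, worst)
    k = len(per_network_scores)
    return sorted(((g, t / k) for g, t in totals.items()), key=lambda t: (t[1], t[0]))
```

Working with ranks avoids having to put scores from different networks on a common scale, at the cost of discarding the size of the score differences.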

Stage 2: Experimental Validation of Prioritized Genes

Once genes are prioritized through computational methods, experimental validation is essential to confirm their functional role in disease pathogenesis. The systematic review by Unlu et al. revealed that multiple complementary approaches are typically employed for validation [22].

Functional Characterization of Non-coding Variants

For non-coding variants, which represent the majority of GWAS findings, employ a multi-step approach:

Fine-mapping and Annotation: Identify causal variants through statistical fine-mapping and overlap with functional genomic annotations (e.g., chromatin accessibility, transcription factor binding sites) [24]. Utilize regulatory target analysis to connect non-coding variants with their target genes through eQTL analysis or chromatin interaction data [24].

Protein Binding Assays: Determine molecular functions using:

- ChIP-Seq/qPCR: Compare allelic ratios in heterozygous samples to identify transcription factor binding differences [24]

- Electrophoretic Mobility Shift Assays (EMSAs): Incubate DNA probes surrounding candidate variants with nuclear extracts to assess binding affinity differences [24]

- DNA-Affinity Pulldown with Mass Spectrometry: Identify proteins specifically binding to risk versus protective alleles [24]

Genome Editing Approaches: Implement CRISPR-based genome editing to modify candidate causal variants in disease-relevant cell models [24]. Assess functional consequences on:

- Gene expression (qPCR, RNA-seq)

- Chromatin state (ATAC-seq, histone modification ChIP-seq)

- Cellular phenotypes relevant to disease

High-Throughput Validation Strategies

For scalable validation of multiple candidates:

- Massively Parallel Reporter Assays (MPRAs): Simultaneously test thousands of variants for regulatory activity

- CRISPR Screens: Implement pooled or arrayed CRISPR screens to assess functional impact of multiple candidate genes/variants

- High-Throughput Protein Binding Assays: Utilize methods like SNP-seq to identify functional SNPs that allelically modulate regulatory protein binding [24]

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Research Reagents for GWAS Validation Studies

| Reagent/Resource | Function | Application Examples |

|---|---|---|

| GWAS Catalog [21] | Public repository of GWAS associations | Source of initial variant-disease associations for prioritization |

| uKIN Algorithm [5] | Guided network propagation tool | Prioritizing disease genes from GWAS data using biological networks |

| ATLAS of GWAS [23] | Database of GWAS summary statistics | Access to processed summary statistics for gene-level analysis |

| CRISPR/Cas9 Systems [24] | Precision genome editing | Functional validation of causal variants in cellular models |

| eQTL Databases | Repository of expression quantitative trait loci | Linking non-coding variants to potential target genes |

| ChIP-grade Antibodies | Protein-DNA interaction mapping | Assessing transcription factor binding to candidate variants |

| Mass Spectrometry Platforms | Protein identification and quantification | Identifying proteins that differentially bind to risk alleles |

Visualization of Workflows

GWAS Validation Pathway

Network Propagation Algorithm

Experimental Validation Framework

Network propagation approaches represent a powerful methodology for bridging the gap between GWAS discoveries and biological mechanisms. By integrating statistical genetics with systems biology, these methods effectively prioritize genes for labor-intensive experimental validation, significantly accelerating the functional interpretation of GWAS findings.

The future of disease gene prioritization lies in the continued refinement of multi-modal integration strategies, combining GWAS data with diverse biological networks, single-cell omics profiles, and clinical data. As these approaches mature, they will increasingly enable the translation of genetic discoveries into novel therapeutic strategies, ultimately fulfilling the promise of genomic medicine for complex human diseases.

Network propagation has emerged as a powerful computational paradigm for analyzing high-throughput biological data within the context of molecular interaction networks. This approach leverages the global topology of networks to smooth vertex scores using random walk or diffusion processes, enabling researchers to infer functional relationships and identify biologically significant patterns [25]. In disease gene prioritization, network propagation methods address the critical challenge of identifying potential disease-causing genes from hundreds of candidates generated by high-throughput studies such as Genome-Wide Association Studies (GWAS) and linkage analyses [26]. The fundamental hypothesis underpinning these methods is that genes associated with similar phenotypes tend to interact with each other or reside in the same neighborhood of biological networks, a concept often described as "guilt by association" [27].

The algorithmic assumption central to network propagation is that random walk or diffusion processes on biological networks can effectively capture the functional relationships between genes or proteins, thereby allowing the prioritization of candidate genes based on their proximity to known disease-associated genes in the network [25]. This approach has become the dominant framework for network ranking problems in computational biology, with demonstrated asymptotic optimality for certain random graph models [25]. As belief networks model increasingly complex biological situations, propagation algorithms that make minimal assumptions about the underlying data distributions become increasingly valuable for robust inference [28] [29].

Core Principles and Algorithmic Assumptions

Foundational Hypotheses

Network propagation methods operate on several foundational hypotheses that guide their application in disease gene prioritization. The network smoothness hypothesis proposes that functionally related genes exhibit similar phenotypes and tend to cluster together in biological networks, implying that information can be propagated smoothly across the network [25] [27]. The local connectivity hypothesis assumes that genes involved in the same disease often participate in the same functional modules or pathways, forming connected subnetworks within larger interaction networks [25]. The diffusion state hypothesis suggests that the steady-state distribution of a random walk on a biological network captures meaningful functional relationships between genes, with closely connected genes having similar diffusion profiles [25].

These hypotheses translate into specific algorithmic assumptions during implementation. The homogeneity assumption presumes that the propagation rules remain consistent across different regions of the network, though recent approaches like IDLP challenge this by modeling network-specific biases [27]. The topological primacy assumption treats the network structure as correct and complete, though in reality biological networks contain false positives and incomplete data [27]. The linearity assumption underlies many propagation models, which use linear diffusion processes despite the potential need for nonlinear models to capture complex biological relationships [25].

Mathematical Framework

Network propagation algorithms typically employ either a random walk with restart (RWR) framework or a heat kernel diffusion approach. For a network with \( n \) vertices, let \( G = (V, E) \) represent the graph with vertices \( V \) and edges \( E \). The adjacency matrix \( A \) encodes edge weights, while the degree matrix \( D \) is a diagonal matrix containing vertex degrees. The transition matrix \( P \) is defined as \( P = D^{-1}A \), so each row of \( P \) sums to 1.

In RWR, the propagation process follows: \[ \mathbf{p}_{t+1} = (1 - r)P^{\top}\mathbf{p}_t + r\mathbf{q} \] where \( \mathbf{p}_t \) is the probability distribution (a column vector) at time step \( t \), \( r \) is the restart probability, and \( \mathbf{q} \) is the initial probability distribution based on prior knowledge [26]. The transpose appears because \( P \) is row-normalized while \( \mathbf{p}_t \) is a column vector; with it, \( \mathbf{p}_{t+1} \) remains a probability distribution. The heat kernel diffusion employs: \[ \mathbf{p} = \exp(-\alpha(I - P^{\top}))\mathbf{q} \] where \( \alpha \) is the diffusion parameter and \( I \) is the identity matrix [25]. These mathematical formulations share the common assumption that propagating initial information through the network structure will reveal biologically meaningful relationships that are not apparent from the initial data alone.
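
The heat kernel can be evaluated without a matrix exponential routine by expanding it as a series. Because the identity commutes with the transition matrix, exp(-α(I - M)) q equals e^{-α} Σ_k (α^k / k!) M^k q, a Poisson-weighted average of k-step random walks. The sketch below assumes an undirected network, uses the column-stochastic matrix M (for a symmetric adjacency matrix this is the transpose of D^{-1}A) so that a column vector of probabilities stays normalized, and truncates the series once the weights become negligible. All function names are illustrative.

```python
import math

def column_stochastic(adj):
    """Column-normalize an adjacency matrix (zero columns are left as zeros)."""
    n = len(adj)
    col_sums = [sum(adj[i][j] for i in range(n)) for j in range(n)]
    return [[adj[i][j] / col_sums[j] if col_sums[j] else 0.0 for j in range(n)]
            for i in range(n)]

def matvec(m, v):
    """Dense matrix-vector product."""
    return [sum(row[j] * v[j] for j in range(len(v))) for row in m]

def heat_kernel(adj, q, alpha=1.0, tol=1e-12, max_terms=200):
    """Approximate exp(-alpha (I - M)) q by a truncated power series."""
    m = column_stochastic(adj)
    walk = list(q)               # holds M^k q, starting with k = 0
    weight = math.exp(-alpha)    # Poisson weight e^{-alpha} alpha^k / k!
    result = [weight * x for x in walk]
    for k in range(1, max_terms):
        walk = matvec(m, walk)
        weight *= alpha / k
        result = [r + weight * x for r, x in zip(result, walk)]
        # Weights rise until k is about alpha, then decay; stop once negligible.
        if weight < tol and k > alpha:
            break
    return result
```

Read this way, the diffusion parameter α is the expected number of steps the signal travels: small values keep scores close to the seeds, and large values spread them towards the network's stationary distribution.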

Experimental Protocols and Benchmarking

Standardized Benchmarking Methodology

Robust evaluation of network propagation methods requires carefully designed benchmarks that minimize knowledge cross-contamination and provide statistically meaningful performance measures. The Gene Ontology (GO)-based benchmark framework utilizes the intrinsic clustering property of GO terms, where gene products annotated with the same term are associated with similar biological processes, cellular components, or molecular functions [26]. This approach employs three-fold cross-validation: genes annotated with a certain GO term are randomly divided into three equally sized parts, with two parts used as query and the third as holdout for validation [26].

The benchmark implementation follows these steps:

- GO Term Selection: Select GO terms from Cellular Component (CC), Molecular Function (MF), and Biological Process (BP) ontologies within specific size ranges ({10-30}, {31-100}, {101-300}) to ensure meaningful clustering [26].

- Network Preparation: Utilize comprehensive functional association networks such as FunCoup that do not include GO data to avoid knowledge cross-contamination [26].

- Cross-Validation: For each GO term, perform three-fold cross-validation, using two-thirds of genes as input and evaluating the method's ability to recover the held-out genes [26].

- Performance Calculation: Compute performance measures for each term and visualize their distributions across all evaluated terms [26].
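
The cross-validation step above can be sketched as follows. The example assumes the prioritization method is exposed as a function `rank_fn(query, candidates)` that returns candidates ordered best first; that interface, and the names `three_fold_splits` and `holdout_ranks`, are hypothetical and not taken from the benchmark in [26].

```python
import random

def three_fold_splits(term_genes, seed=0):
    """Yield (query, holdout) pairs; each third of the genes is held out once."""
    genes = sorted(term_genes)
    random.Random(seed).shuffle(genes)          # reproducible random partition
    folds = [genes[k::3] for k in range(3)]     # sizes differ by at most one
    for k in range(3):
        query = [g for j in range(3) if j != k for g in folds[j]]
        yield query, folds[k]

def holdout_ranks(term_genes, all_genes, rank_fn, seed=0):
    """For each fold, return the sorted ranks of held-out genes among candidates.

    Query genes are excluded from the candidate list, so a rank reflects how
    early a held-out gene appears among genes the method has not seen.
    """
    results = []
    for query, holdout in three_fold_splits(term_genes, seed):
        query_set = set(query)
        candidates = [g for g in all_genes if g not in query_set]
        ranking = rank_fn(query, candidates)
        position = {g: i + 1 for i, g in enumerate(ranking)}
        results.append(sorted(position[g] for g in holdout))
    return results
```

The per-fold rank lists returned here are the raw material for the performance measures computed in the final step.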

Performance Metrics for Evaluation

Multiple performance metrics are necessary to comprehensively evaluate gene prioritization methods, each capturing different aspects of performance:

Table 1: Performance Metrics for Network Propagation Algorithms

| Metric | Formula | Interpretation | Application Context |

|---|---|---|---|

| Partial AUC (pAUC) | \( \int_{0}^{0.02} \mathrm{TPR}(\mathrm{FPR})\, d\mathrm{FPR} \) | Probability of ranking true positives high in the list; focuses on top candidates | Primary performance measure for practical applications where only top candidates are validated |

| Median Rank Ratio (MedRR) | \( \mathrm{median}(\mathrm{rank}_{TP}) / N \) | Normalized median rank of true positives; lower values indicate better performance | Measures skewness of true positive ranks while normalizing for candidate list length |

| Normalized Discounted Cumulative Gain (NDCG) | \( \frac{DCG_p}{IDCG_p} \) where \( DCG_p = \sum_{i=1}^{p} \frac{2^{rel_i} - 1}{\log_2(i+1)} \) | Emphasizes early retrieval of true positives; penalizes late true positives | Information retrieval perspective; important when ranking quality is critical |

| Top Percentage Recovery | \( \frac{\text{TP in top 1\% or 10\%}}{\text{total TP}} \) | Direct measure of performance in practically relevant range | Assesses utility for guiding experimental design with limited resources |
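
The four measures in the table can be computed from the ranks of the true positives alone. The sketch below makes several assumptions: ranks start at 1, relevance is binary (so the NDCG gain 2^rel - 1 is 1 for a true positive and 0 otherwise), and the partial AUC is left unnormalized (dividing by the FPR limit rescales it to the range 0 to 1). Function names are illustrative.

```python
import math
from statistics import median

def median_rank_ratio(tp_ranks, n_candidates):
    """Median rank of the true positives divided by the candidate list length."""
    return median(tp_ranks) / n_candidates

def top_fraction_recovery(tp_ranks, n_candidates, fraction=0.01):
    """Share of true positives ranked within the top `fraction` of the list."""
    cutoff = math.ceil(fraction * n_candidates)
    return sum(r <= cutoff for r in tp_ranks) / len(tp_ranks)

def ndcg(tp_ranks, p):
    """NDCG at cutoff p with binary relevance."""
    dcg = sum(1.0 / math.log2(r + 1) for r in tp_ranks if r <= p)
    n_ideal = min(len(tp_ranks), p)   # ideal list puts all true positives first
    ideal = sum(1.0 / math.log2(i + 1) for i in range(1, n_ideal + 1))
    return dcg / ideal if ideal else 0.0

def partial_auc(tp_ranks, n_candidates, max_fpr=0.02):
    """Area under the ROC curve for FPR in [0, max_fpr] (unnormalized)."""
    tp = set(tp_ranks)
    n_pos = len(tp)
    n_neg = n_candidates - n_pos
    budget = max_fpr * n_neg          # number of negatives allowed, may be fractional
    area, seen_pos, seen_neg = 0.0, 0, 0
    for rank in range(1, n_candidates + 1):
        if rank in tp:
            seen_pos += 1             # ROC curve moves up
            continue
        width = min(1.0, budget - seen_neg)
        if width <= 0:
            break
        # ROC curve moves right: add a strip at the current true positive rate.
        area += (seen_pos / n_pos) * (width / n_neg)
        seen_neg += 1
    return area
```

As an example, with true positives at ranks 2 and 4 and a cutoff of 10, the NDCG falls below 1 because the ideal ordering would place them at ranks 1 and 2.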

The results of these performance measures typically follow non-normal distributions, necessitating the use of non-parametric statistical tests such as the Mann-Whitney U test for pairwise comparisons, with correction for multiple hypothesis testing using the Benjamini-Hochberg procedure [26].
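
The multiple-testing correction mentioned above is short enough to write out. This sketch assumes the raw P-values have already been obtained from the pairwise Mann-Whitney U tests, which are not implemented here; the function name `benjamini_hochberg` is illustrative.

```python
def benjamini_hochberg(pvalues):
    """Return Benjamini-Hochberg adjusted P-values in the original order."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])   # indices, smallest P first
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest P-value down, enforcing monotone adjusted values.
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, pvalues[i] * m / rank)
        adjusted[i] = running_min
    return adjusted
```

A comparison is then called significant at false discovery rate q when its adjusted P-value is at most q.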

Implementation Protocols

Network Propagation Workflow

The following Graphviz diagram illustrates the complete network propagation workflow for disease gene prioritization:

Figure 1: Network Propagation Workflow for Gene Prioritization

Advanced Implementation: IDLP Framework

The Improved Dual Label Propagation (IDLP) framework addresses limitations of standard network propagation by explicitly modeling noise in protein-protein interaction networks and phenotype similarity matrices [27]. The following protocol details its implementation:

Protocol 1: IDLP Implementation for Disease Gene Prioritization

Network Preparation

- Obtain protein-protein interaction network from BioGRID or similar database [27]

- Construct phenotype similarity network using semantic similarity measures

- Build heterogeneous network by connecting gene and phenotype networks

Matrix Learning Phase

- Treat PPI network matrix and phenotype similarity matrix as matrices to be learned

- Correct noise in the training matrices through iterative optimization

- Model bias caused by false positive protein interactions

Dual Propagation Process

- Propagate labels throughout both PPI and phenotype similarity networks

- Implement restart mechanism to handle cases with few known disease genes

- Balance influence from both networks using weighting parameters

Candidate Prioritization

- Compute final association scores for all candidate genes

- Rank genes based on propagated scores

- Apply statistical significance testing

The IDLP framework demonstrates particular effectiveness for querying phenotypes without known associated genes, making it valuable for studying novel or less-characterized diseases [27].

Research Reagent Solutions

Table 2: Essential Research Resources for Network Propagation Studies

| Resource Category | Specific Examples | Function and Application | Key Features |

|---|---|---|---|

| Interaction Networks | FunCoup [26], BioGRID [27] | Provides functional association data between genes/proteins; serves as propagation substrate | Comprehensive coverage, multiple evidence types, regular updates |

| Benchmark Databases | Gene Ontology [26], OMIM [27] | Supplies ground truth data for algorithm training and validation | Structured vocabulary, manual curation, disease associations |

| Prioritization Tools | NetMix2 [25], IDLP [27], NetRank [26] | Implements propagation algorithms for candidate gene ranking | Specialized functions, parameter tuning, visualization capabilities |

| Evaluation Frameworks | GO-based benchmark [26], Cross-validation suite | Provides standardized performance assessment | Statistical robustness, multiple metrics, bias minimization |

Comparative Performance Analysis

Table 3: Quantitative Performance Comparison of Propagation Algorithms

| Algorithm | pAUC (Mean ± SD) | MedRR (Mean ± SD) | NDCG (Mean ± SD) | Top 1% Recovery | Key Assumptions |

|---|---|---|---|---|---|

| NetMix2 [25] | 0.891 ± 0.042 | 0.032 ± 0.008 | 0.872 ± 0.035 | 38.7% | Explicit subnetwork family definition combined with propagation |

| IDLP [27] | 0.885 ± 0.045 | 0.035 ± 0.009 | 0.865 ± 0.038 | 36.9% | Models false positive PPIs and learns network matrices |

| NetRank [26] | 0.862 ± 0.048 | 0.041 ± 0.011 | 0.851 ± 0.041 | 33.5% | Standard random walk with restart framework |

| Random Walk with Restart [26] | 0.854 ± 0.051 | 0.045 ± 0.012 | 0.843 ± 0.043 | 31.2% | Classical propagation with fixed restart probability |

| MaxLink [26] | 0.831 ± 0.055 | 0.052 ± 0.015 | 0.826 ± 0.047 | 28.7% | Utilizes direct network neighborhood without propagation |

Performance data represents aggregate results across multiple GO term sizes ({10-30}, {31-100}, {101-300}) and ontologies (BP, MF, CC) based on three-fold cross-validation [26]. NetMix2 demonstrates superior performance by unifying subnetwork family and network propagation approaches, while IDLP shows particular strength in handling noisy network data [25] [27].

Technical Considerations and Algorithmic Specifications

NetMix2 Algorithmic Details

NetMix2 represents a significant advancement in network propagation by deriving the propagation family, a subnetwork family that approximates the sets of vertices ranked highly by network propagation approaches [25]. This unification enables the algorithm to combine the advantages of both subnetwork family and network propagation approaches. The key innovation lies in its flexibility to accept a wide range of subnetwork families, including not only connected subgraphs but also subnetworks defined by linear or quadratic constraints such as high edge density or small cut-size [25].

The algorithm operates through the following computational steps:

- Input Processing: Takes interaction network \( G = (V, E) \) and vertex scores \( s(v) \) for all \( v \in V \)

- Family Specification: Defines propagation family \( \mathcal{F} \) that approximates network propagation rankings

- Optimization: Identifies altered subnetworks by maximizing a scoring function over \( \mathcal{F} \)

- Significance Assessment: Evaluates statistical significance of identified subnetworks

NetMix2 has demonstrated superior performance on simulated data, pan-cancer somatic mutation data, and genome-wide association data from multiple human diseases compared to existing methods [25].

Visualization of Algorithmic Relationships

The following diagram illustrates the conceptual relationships between different network propagation approaches and their underlying assumptions:

Figure 2: Network Propagation Approaches and Assumptions

This conceptual framework highlights how unified approaches like NetMix2 integrate the global topology utilization of propagation methods with the explicit statistical foundation of subnetwork family approaches, addressing limitations of both methodologies while preserving their respective strengths [25].

Algorithmic Deep Dive: Key Network Propagation Methods and Their Applications

The identification of genes associated with hereditary disorders and complex diseases represents a fundamental challenge in biomedical research. Network-based gene prioritization approaches have emerged as powerful computational methods that leverage the "guilt-by-association" principle, which posits that genes causing similar diseases tend to lie close to one another in biological networks [30] [31]. Among these methods, Random Walk with Restart (RWR) has established itself as a leading algorithm for prioritizing candidate disease genes based on their proximity to known disease-associated genes in biological networks [30] [32] [31]. The RWR algorithm simulates a random walker that traverses a biological network, starting from known disease genes (seed nodes), and at each step either moves to a neighboring node or restarts from one of the seed nodes. This process produces a steady-state probability distribution that quantifies the functional proximity of all genes in the network to the seed genes, thereby enabling the prioritization of candidate genes for experimental validation [30] [32].

Theoretical Foundations of Random Walk with Restart

Mathematical Formulation

The RWR algorithm operates on a graph structure G = {V, E}, where V = {gene_i} represents the set of genes or proteins, and E = {(i→j)} represents the set of edges between them, typically with degree-normalized edge weights so that each gene's outgoing edges sum to 1 [33]. The fundamental RWR equation is defined as:

pₜ₊₁ = (1 - r)Wpₜ + rp₀

Where:

- pₜ is a vector in which the i-th element holds the probability of being at node i at time step t

- W is the column-normalized adjacency matrix of the graph

- r is the restart probability, controlling the likelihood that the walker returns to the seed nodes at each step

- p₀ is the initial probability vector, constructed such that equal probabilities are assigned to nodes representing known disease genes, with the sum of probabilities equal to 1 [30]

The algorithm iterates until convergence, typically when the change between pₜ and pₜ₊₁ falls below a predetermined threshold (e.g., 10⁻⁶), yielding a steady-state probability vector p∞ [30]. Candidate genes are then ranked according to their values in p∞, with higher values indicating greater potential association with the query disease.
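
The iteration described above can be sketched on a toy network. The example assumes a small undirected graph stored as a dense adjacency matrix, seeds given as a set of node indices, and the L1 norm as the measure of change between successive vectors (the text does not name a norm). The function names `column_normalize` and `rwr` are illustrative.

```python
def column_normalize(adj):
    """Column-normalize an adjacency matrix so each column sums to 1.

    Columns of isolated nodes are left as zeros, so any probability
    placed on such a node is not passed on.
    """
    n = len(adj)
    col_sums = [sum(adj[i][j] for i in range(n)) for j in range(n)]
    return [[adj[i][j] / col_sums[j] if col_sums[j] else 0.0 for j in range(n)]
            for i in range(n)]

def rwr(adj, seeds, r=0.7, tol=1e-6, max_iter=1000):
    """Random walk with restart; returns the approximate steady-state vector."""
    n = len(adj)
    w = column_normalize(adj)
    # p0: equal probability on every seed node, summing to 1.
    p0 = [1.0 / len(seeds) if i in seeds else 0.0 for i in range(n)]
    p = p0[:]
    for _ in range(max_iter):
        nxt = [(1 - r) * sum(w[i][j] * p[j] for j in range(n)) + r * p0[i]
               for i in range(n)]
        change = sum(abs(a - b) for a, b in zip(nxt, p))   # L1 distance
        p = nxt
        if change < tol:
            break
    return p

# Toy usage: a four-gene path 0-1-2-3 with gene 0 as the only seed.
path_graph = [[0, 1, 0, 0], [1, 0, 1, 0], [0, 1, 0, 1], [0, 0, 1, 0]]
steady_state = rwr(path_graph, seeds={0}, r=0.5)
candidate_order = sorted(range(4), key=lambda i: -steady_state[i])
```

In this path graph the steady-state probability decreases with distance from the seed, which is the behaviour the ranking step relies on: genes reachable from the seeds by many short paths accumulate more probability than distant ones.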

Biological Rationale

The effectiveness of RWR for disease gene prioritization stems from the observation that genes associated with similar diseases often reside in specific neighborhoods within protein-protein interaction networks [30]. This organization reflects the modular nature of biological systems, where functionally related genes participate in common pathways or complexes. RWR represents a global network similarity measure that captures relationships between disease proteins more effectively than algorithms based solely on direct interactions or shortest paths [30]. By considering all possible paths through the network and their weights, RWR integrates both local and global topological information, enabling the identification of genes that may not directly interact with known disease genes but share broader network connectivity patterns.

Table 1: Key Parameters in the RWR Algorithm

| Parameter | Mathematical Symbol | Biological Interpretation | Typical Values |
|---|---|---|---|
| Restart Probability | r | Controls the preference for returning to known disease genes versus exploring the network | 0.1-0.9 [30] [32] |
| Convergence Threshold | ε | Determines when the iterative process stops | 10⁻⁶ [30] |
| Initial Probability Vector | p₀ | Represents the starting point based on known disease genes | Uniform distribution across seed genes [30] |
| Normalized Adjacency Matrix | W | Encodes the transition probabilities between connected nodes | Column-normalized edge weights [33] |

RWR Extensions and Implementations

Heterogeneous Network Formulations

The basic RWR approach has been extended to operate on heterogeneous networks that incorporate multiple biological entities. The RWR on Heterogeneous Network (RWRH) algorithm integrates both gene/protein networks and phenotypic disease similarity networks, enabling simultaneous prioritization of candidate genes and diseases [34] [35]. This approach connects a gene network and a disease similarity network through known gene-disease associations, creating a unified framework that leverages both molecular and phenotypic information [36] [35]. The HGPEC Cytoscape app implements this heterogeneous network approach, allowing researchers to predict novel disease-gene and disease-disease associations through a user-friendly interface [34] [35].

Further extending this concept, MultiXrank enables RWR on generic multilayer networks comprising any number and combination of multiplex and monoplex networks connected by bipartite interaction networks [32]. This framework can incorporate diverse data types including protein-protein interactions, drug-target associations, regulatory networks, and metabolic pathways, providing a comprehensive representation of biological knowledge that enhances prioritization accuracy [32].

Comparison with Alternative Network Algorithms

RWR belongs to the category of network diffusion algorithms that propagate information throughout the entire network, in contrast to direct neighborhood methods like naïve Bayes (NB) that only consider immediate network neighbors [37]. Benchmarking studies have demonstrated that the effectiveness of these algorithmic approaches depends on the connectivity patterns of disease-associated genes in the network. Specifically, network diffusion methods generally outperform direct neighborhood approaches for diseases whose associated genes form well-connected network modules [37]. However, for "early retrieval" of top candidate genes (e.g., the top 200 candidates), direct neighborhood methods may sometimes provide better performance, particularly when the connectivity among pathway genes is limited [37].

Table 2: Comparison of Network-Based Gene Prioritization Algorithms

| Algorithm | Mechanism | Network Type | Advantages | Limitations |
|---|---|---|---|---|
| RWR [30] | Network propagation throughout entire network | Gene/protein network | Global network proximity measure; Robust to noisy data | Performance depends on seed gene connectivity |
| RWRH [34] [35] | Random walk on heterogeneous network | Gene-disease heterogeneous network | Integrates phenotypic information; Predicts both genes and diseases | Increased computational complexity |
| Direct Neighborhood [37] | Propagation only to direct neighbors | Gene/protein network | Better for top candidates in some diseases; Computationally efficient | Limited to local information |
| GenePanda [31] | Seed association based on heuristic rules | Gene/protein network | Effective for diseases with strong network modules | May miss functionally related but distant genes |
| Node2Vec [31] | Graph embedding followed by machine learning | Gene/protein network | Captures complex topological features; Transferable embeddings | Requires substantial training data |

Experimental Protocol for RWR-Based Gene Prioritization

The following diagram illustrates the comprehensive workflow for disease gene prioritization using the RWR algorithm:

Protocol Steps

Step 1: Data Collection and Network Construction

Input Data Sources:

- Protein-Protein Interactions (PPI): Curate high-quality interactions from databases such as BioGRID [36], STRING (experimentally confirmed interactions with score ≥350) [31], the Human Reference Interactome (HuRI) [31], and the Gene Transcription Regulation Database (GTRD) [31].

- Gene-Disease Associations: Obtain known associations from DisGeNET (filter with GDA score ≥0.3) [31] and OMIM database [30] [36].

- Disease Similarity Networks: Calculate phenotypic similarity using text-mining approaches like MimMiner (similarity score >0.6) [31] or ontology-based methods.
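Applying a confidence cutoff such as those listed above amounts to a simple filter over scored edges. The sketch below assumes the interactions have already been parsed into `(gene_a, gene_b, score)` tuples; the function name `filter_edges`, the gene labels, and the scores are hypothetical and do not come from any of the cited databases.

```python
def filter_edges(edges, min_score):
    """Keep undirected edges scoring at least min_score.

    Self-loops are dropped, and duplicate pairs (in either orientation)
    are merged by keeping the highest score.
    """
    kept = {}
    for a, b, score in edges:
        if a == b or score < min_score:
            continue
        key = tuple(sorted((a, b)))
        kept[key] = max(score, kept.get(key, score))
    return kept


# Hypothetical scored interactions (illustrative values only).
raw_edges = [
    ("GENE_A", "GENE_B", 999),
    ("GENE_B", "GENE_A", 850),   # same pair, reversed orientation
    ("GENE_A", "GENE_A", 900),   # self-loop, dropped
    ("GENE_C", "GENE_D", 340),   # below the cutoff, dropped
    ("GENE_C", "GENE_E", 700),
]
ppi = filter_edges(raw_edges, min_score=350)
```

The same pattern applies to the gene-disease association and disease similarity cutoffs, with the threshold and comparison adjusted to the resource in question.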

Network Integration: For heterogeneous network approaches, construct an integrated network comprising:

- Gene/protein interaction network (S₁)

- Phenotypic disease similarity network (S₂)

- Gene-disease association bipartite network connecting the two [36]
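One way to combine the three components into a single transition structure is sketched below. It is an assumption-laden illustration rather than the HGPEC or MultiXrank implementation: the `jump` parameter (the probability of crossing between layers when a node has cross-layer links), the function name `heterogeneous_transitions`, and the toy node labels are introduced here for the example. Gene and disease labels are assumed to be distinct, edge weights positive, and every node present as a key in its own layer.

```python
def heterogeneous_transitions(gene_adj, disease_adj, associations, jump=0.5):
    """Build column-normalized transitions for a gene-disease network.

    gene_adj, disease_adj : dict node -> {neighbor: weight} within each layer
    associations          : iterable of (gene, disease) known associations
    jump                  : share of a node's outgoing probability that crosses
                            layers when it has both kinds of links (assumed)
    Returns dict source -> {target: probability}.
    """
    cross = {}
    for gene, disease in associations:
        cross.setdefault(gene, {})[disease] = 1.0
        cross.setdefault(disease, {})[gene] = 1.0

    W = {}
    for layer in (gene_adj, disease_adj):
        for src, nbrs in layer.items():
            links = cross.get(src, {})
            within_total = sum(nbrs.values())
            cross_total = sum(links.values())
            # Split outgoing probability only if both link types exist.
            within_share = (1.0 - jump) if cross_total else 1.0
            cross_share = jump if within_total else 1.0
            col = {t: within_share * w / within_total for t, w in nbrs.items()}
            col.update({t: cross_share * w / cross_total for t, w in links.items()})
            W[src] = col
    return W


# Hypothetical toy layers: three genes, two diseases, one known association.
gene_adj = {"G1": {"G2": 1.0}, "G2": {"G1": 1.0, "G3": 1.0}, "G3": {"G2": 1.0}}
disease_adj = {"D1": {"D2": 0.8}, "D2": {"D1": 0.8}}
W_het = heterogeneous_transitions(gene_adj, disease_adj, [("G1", "D1")], jump=0.5)
```

Because every non-empty column sums to 1, the resulting structure can be fed to the same iterative update used for a single-layer network, with seeds placed on genes, diseases, or both.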

Table 3: Essential Research Reagents and Data Resources

| Resource Type | Specific Examples | Purpose | Access Information |
|---|---|---|---|
| Protein Interaction Databases | BioGRID [36], STRING [31], HuRI [31] | Provides physical and functional interactions between proteins | Publicly available |
| Disease Association Databases | OMIM [30] [36], DisGeNET [31] | Source of known gene-disease relationships | Publicly available |
| Disease Similarity Resources | MimMiner [31] | Enables construction of phenotypic disease network | Publicly available |
| Implementation Tools | HGPEC Cytoscape App [34], MultiXrank [32] | Software for executing RWR algorithms | Open source |

Step 2: Seed Selection and Parameter Configuration

Seed Gene Selection:

- Compile known disease-associated genes from authoritative sources (e.g., OMIM, DisGeNET)

- For diseases with few known genes, consider incorporating genes associated with phenotypically similar diseases

- Construct initial probability vector p₀ with uniform probability distribution across seed genes
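Constructing p₀ is straightforward, but seeds that are absent from the network must be dropped before the probabilities are normalized, otherwise the vector no longer sums to 1. A minimal sketch, with the function name `build_seed_vector` and the gene labels being hypothetical:

```python
def build_seed_vector(seed_genes, network_nodes):
    """Return p0 as {gene: probability}, uniform over seeds found in the network."""
    usable = sorted(set(seed_genes) & set(network_nodes))
    if not usable:
        raise ValueError("none of the seed genes are present in the network")
    weight = 1.0 / len(usable)
    return {gene: weight for gene in usable}


# Hypothetical example: GENE_X is a known disease gene missing from the network.
network_nodes = {"GENE_A", "GENE_B", "GENE_C", "GENE_D"}
p0 = build_seed_vector(["GENE_A", "GENE_B", "GENE_X"], network_nodes)
```

Reporting how many seeds were dropped is worth doing in practice, since a disease whose seeds are mostly unmapped will yield weakly supported rankings.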

Parameter Configuration:

- Set the restart probability r, typically between 0.1 and 0.9 [30] [32]

- Define convergence threshold ε (e.g., 10⁻⁶) [30]

- Configure network normalization parameters (e.g., column-normalize adjacency matrix)
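Column normalization divides each edge weight by the total weight leaving its source node, so that every column of W sums to 1. The sketch below uses a dictionary-of-dictionaries layout as a lightweight sparse representation; the helper names and the toy weights are assumptions made for illustration.

```python
def adjacency_from_edges(weighted_edges):
    """Build a symmetric adjacency dict node -> {neighbor: weight}."""
    adj = {}
    for a, b, w in weighted_edges:
        adj.setdefault(a, {})[b] = w
        adj.setdefault(b, {})[a] = w
    return adj


def column_normalize(adjacency):
    """Scale each node's outgoing weights so that they sum to 1.

    Isolated nodes (no outgoing weight) keep an empty column.
    """
    W = {}
    for j, nbrs in adjacency.items():
        total = sum(nbrs.values())
        W[j] = {i: w / total for i, w in nbrs.items()} if total > 0 else {}
    return W


# Hypothetical weighted edges.
adj = adjacency_from_edges([("A", "B", 1.0), ("B", "C", 3.0)])
W_norm = column_normalize(adj)
```

Here node B sends a quarter of its probability to A and three quarters to C, reflecting the relative edge weights.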

Step 3: Algorithm Execution and Convergence

Execute the iterative RWR process using the equation:

pₜ₊₁ = (1 - r)Wpₜ + rp₀

Continue iterating until the L1 norm of the difference between pₜ and pₜ₊₁ falls below the convergence threshold ε [30]. For large networks, use sparse matrix operations to reduce memory requirements and computation time.

Step 4: Results Analysis and Candidate Ranking

- Generate candidate gene rankings based on steady-state probabilities in p∞

- Apply thresholding or top-k selection to identify strongest candidates

- Perform functional enrichment analysis to validate biological coherence of predictions

- Compare results with existing knowledge and independent datasets for validation
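The ranking and top-k steps can be sketched as follows. Seed genes are excluded from the candidate list because their high scores are a direct consequence of the restart term rather than evidence of a new association. The function name `rank_candidates` and the score values are hypothetical.

```python
def rank_candidates(scores, seeds, top_k=None):
    """Sort non-seed genes by descending steady-state score.

    Ties are broken alphabetically so that the output is deterministic.
    """
    candidates = [(gene, s) for gene, s in scores.items() if gene not in seeds]
    candidates.sort(key=lambda item: (-item[1], item[0]))
    return candidates if top_k is None else candidates[:top_k]


# Hypothetical steady-state scores, with gene A as the only seed.
steady_state = {"A": 0.40, "B": 0.25, "C": 0.20, "D": 0.10, "E": 0.05}
top_two = rank_candidates(steady_state, seeds={"A"}, top_k=2)
```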

Validation and Benchmarking Strategies

Performance Evaluation Metrics

Comprehensive validation of RWR predictions requires multiple assessment approaches:

Leave-One-Out Cross-Validation (LOOCV): Systematically remove each known disease gene from the seed set and measure its recovery rank when used as a candidate [31]. Performance is typically reported as:

- Median rediscovery rank (e.g., 185.5 out of 19,463 genes for RWRH in a cerebral small vessel disease (cSVD) study) [31]

- Area under the ROC curve (AUC) for entire rank list

- AUC for top N candidates (e.g., AUCTop200) to assess early retrieval performance [37]
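The leave-one-out procedure can be sketched end to end on a toy network. Everything below is illustrative: the compact `rwr` helper, the six-node network (a triangle A, B, C attached to a chain D, E, F), and r = 0.7 are assumptions, and a real evaluation would run over a genome-scale network with thousands of candidates.

```python
from statistics import median


def rwr(W, seeds, r=0.7, eps=1e-6, max_iter=1000):
    """Random walk with restart; W maps source j -> {target i: W[i][j]}."""
    p0 = {n: (1.0 / len(seeds) if n in seeds else 0.0) for n in W}
    p = dict(p0)
    for _ in range(max_iter):
        nxt = {n: r * p0[n] for n in W}
        for j, col in W.items():
            for i, w in col.items():
                nxt[i] += (1.0 - r) * w * p[j]
        change = sum(abs(nxt[n] - p[n]) for n in W)
        p = nxt
        if change < eps:
            break
    return p


def loocv_ranks(W, disease_genes, r=0.7):
    """Hold out each disease gene in turn and record its rank among non-seeds."""
    ranks = {}
    for held_out in disease_genes:
        seeds = set(disease_genes) - {held_out}
        scores = rwr(W, seeds, r=r)
        candidates = sorted(
            (g for g in W if g not in seeds), key=lambda g: (-scores[g], g)
        )
        ranks[held_out] = candidates.index(held_out) + 1
    return ranks


# Hypothetical network: triangle A-B-C, then chain C-D-E-F (column-normalized).
W = {
    "A": {"B": 0.5, "C": 0.5},
    "B": {"A": 0.5, "C": 0.5},
    "C": {"A": 1 / 3, "B": 1 / 3, "D": 1 / 3},
    "D": {"C": 0.5, "E": 0.5},
    "E": {"D": 0.5, "F": 0.5},
    "F": {"E": 1.0},
}
ranks = loocv_ranks(W, ["A", "B", "C"])
median_rank = median(ranks.values())
```

In this toy case the three disease genes form a tight module, so each held-out gene is recovered at rank 1. On real data the distribution of ranks, not only its median, is informative about which diseases are well captured by the network.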

External Validation with GWAS Data: Compare prioritized candidates with genes identified through genome-wide association studies (GWAS) [31]. Measure the enrichment of GWAS-significant genes in top-ranked predictions.
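One common way to quantify such enrichment is a one-sided hypergeometric test on the overlap between the top-ranked genes and the GWAS gene set. The source does not state which test the cited study used, so the choice of test, the function names, and the toy gene identifiers below are assumptions.

```python
from math import comb


def overlap_in_top(ranked_genes, gwas_genes, top_n):
    """Count GWAS genes among the first top_n ranked genes."""
    return len(set(ranked_genes[:top_n]) & set(gwas_genes))


def hypergeom_enrichment_p(n_universe, n_gwas, n_top, n_overlap):
    """P(X >= n_overlap) when n_top genes are drawn without replacement
    from n_universe genes, of which n_gwas are GWAS hits."""
    total = comb(n_universe, n_top)
    tail = sum(
        comb(n_gwas, k) * comb(n_universe - n_gwas, n_top - k)
        for k in range(n_overlap, min(n_gwas, n_top) + 1)
    )
    return tail / total


# Hypothetical ranking of 10 genes, 3 of which are GWAS hits.
ranked = [f"g{i}" for i in range(1, 11)]
gwas = {"g2", "g4", "g9"}
k = overlap_in_top(ranked, gwas, top_n=4)
p_value = hypergeom_enrichment_p(len(ranked), len(gwas), 4, k)
```

The universe should be restricted to genes that are present in the network and could therefore have been ranked. Using the whole genome as background would overstate the enrichment.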

Literature Validation: Conduct systematic surveys of biomedical literature to confirm predicted gene-disease associations that were not in the original training data [36].

Comparative Performance Benchmarking

In a comprehensive benchmarking assessment for cerebral small vessel disease (cSVD), RWRH achieved the best LOOCV performance among the network-based algorithms compared, with a median rediscovery rank of 185.5 out of 19,463 genes, outperforming methods such as Node2Vec, DIAMOnD, and GenePanda [31].

The following diagram illustrates the benchmarking workflow for evaluating RWR performance:

Applications and Case Studies

Disease-Specific Gene Prioritization

RWR algorithms have been successfully applied to prioritize candidate genes for numerous diseases. In a study on leukemia, MultiXrank was used with HRAS and Tipifarnib as seed nodes, successfully prioritizing known leukemia-associated genes such as CYP3A4 (involved in drug resistance) and FNTB (target of Tipifarnib) [32]. The top-ranked drug, Astemizole, was validated as having anti-leukemic properties in human leukemic cells [32].

For inborn errors of metabolism (IEMs), the metPropagate method implements a label propagation algorithm on a network combining protein interactions and metabolomic data, successfully prioritizing causative genes in the top 20th percentile of candidates for 92% of patients with known IEMs [38].

Drug Repurposing and Target Discovery

The application of RWR extends beyond gene discovery to drug prioritization and repurposing. By exploring multilayer networks containing drug-target interactions, RWR can identify novel therapeutic applications for existing drugs [32]. For example, in the leukemia case study, RWR prioritization identified Zoledronic acid as a top candidate for leukemia treatment, which was supported by existing literature evidence [32].

Troubleshooting and Technical Considerations

Common Implementation Challenges

Network Quality and Coverage:

- Challenge: Incomplete or biased network data may limit prediction accuracy

- Solution: Integrate multiple complementary data sources to improve coverage

- Consideration: Balance between comprehensiveness and data quality by applying stringent confidence filters

Parameter Sensitivity:

- Challenge: RWR performance can be sensitive to restart probability (r)

- Solution: Perform parameter sweep to identify optimal values for specific applications

- Guideline: Typical r values range from 0.1 to 0.9, with lower values favoring network exploration and higher values emphasizing seed proximity [30] [32]

Computational Complexity:

- Challenge: Large biological networks require efficient computational implementations

- Solution: Utilize sparse matrix representations and optimized linear algebra libraries

- Implementation: For networks with thousands of nodes, convergence typically occurs within 100-200 iterations [30]
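The number of iterations needed depends mainly on the restart probability rather than on network size. A worst-case bound follows from the update equation, assuming W is column-stochastic (so that multiplying by W does not increase the L1 norm) and that p₀ and p₁ are probability vectors:

```latex
\begin{aligned}
p_{t+1} - p_t &= (1 - r)\,W\,(p_t - p_{t-1}) \\
\lVert p_{t+1} - p_t \rVert_1 &\le (1 - r)^{t}\,\lVert p_1 - p_0 \rVert_1 \le 2\,(1 - r)^{t} \\
2\,(1 - r)^{t} < \varepsilon \;&\Longleftrightarrow\; t > \frac{\ln(\varepsilon / 2)}{\ln(1 - r)}
\end{aligned}
```

For ε = 10⁻⁶ this gives at most 138 iterations at r = 0.1, 21 at r = 0.5, and 7 at r = 0.9. Iteration counts in the low hundreds are therefore expected only at the exploratory end of the r range, and a fixed iteration cap can be chosen from this bound.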

Interpretation Considerations

When interpreting RWR results, researchers should consider:

- Topological Bias: Highly connected genes (hubs) may be prioritized due to network structure rather than biological relevance

- Seed Dependence: Predictions are influenced by the selection and quality of seed genes

- Functional Coherence: Validate predictions through enrichment analysis of biological pathways and processes

- Independent Validation: Always seek corroborating evidence from orthogonal data sources and experimental follow-up

RWR algorithms represent a powerful and versatile approach for disease gene prioritization that continues to evolve with improvements in network data quality and computational methods. When properly implemented and validated, these methods can significantly accelerate the identification of novel disease genes and potential therapeutic targets.