Navigating the Data Maze: A Comprehensive Guide to Batch Effect Correction in Biomarker Studies

Abstract

The integration of large-scale omics data is paramount for modern biomarker discovery but is persistently challenged by technical variations known as batch effects. These non-biological artifacts can skew analytical results, increase false discovery rates, and jeopardize the clinical translation of promising biomarkers. This article provides a systematic framework for researchers and drug development professionals, addressing the foundational concepts of batch effects, exploring advanced methodological corrections, outlining troubleshooting strategies for complex datasets, and establishing robust validation protocols. By synthesizing current evidence and emerging solutions, this guide aims to enhance the reliability, reproducibility, and clinical utility of integrated biomarker data.

Understanding the Invisible Adversary: What Are Batch Effects and Why Do They Threaten Biomarker Discovery?

In molecular biology and high-throughput research, a batch effect refers to systematic, non-biological variations in data caused by technical differences when samples are processed and measured in different batches [1]. These effects are unrelated to the biological variation under study but can be strong enough to confound analysis, leading to inaccurate conclusions and, ultimately, contributing to the broader reproducibility crisis in life science research [2] [3]. This article defines batch effects, explores their direct link to irreproducible results, and provides a practical technical support guide for researchers navigating these challenges within data integration and biomarker studies.

What Are Batch Effects? A Technical Definition

Batch effects are sub-groups of measurements that exhibit qualitatively different behavior across experimental conditions due to technical, not biological, variables [2]. They occur because measurements are affected by a complex interplay of laboratory conditions, reagent lots, personnel differences, and instrumentation [1]. In high-throughput experiments—such as microarrays, mass spectrometry, and single-cell RNA sequencing—these effects are pervasive and can be a dominant source of variation, often exceeding true biological signal [2].

The Mechanisms: How Batch Effects Lead to Irreproducible Results

The path from a technical artifact to an irreproducible finding is often systematic. Batch effects introduce a systematic bias that can be correlated with an outcome of interest. For example, if all control samples are processed on Monday and all disease samples on Tuesday, the day-of-week effect can be mistaken for a disease signature [2]. This confounding severely undermines analytic replication (re-analysis of the original data) and direct replication (repeating the experiment under the same conditions) [4].

A Nature survey quantified the problem: over 70% of researchers in biology had been unable to reproduce the findings of other scientists, and approximately 60% could not reproduce their own findings [4]. Another source states that up to 65% of researchers have tried and failed to reproduce their own research [3]. Preclinical research is particularly affected: one attempt to confirm landmark studies succeeded in only 6 of 53 cases [5]. The financial toll is substantial, with an estimated $28 billion per year spent on non-reproducible preclinical research in the US alone [4] [3].

The diagram below illustrates how uncontrolled technical variables introduce batch effects, which then mask biological truth and lead to irreproducible conclusions in downstream analysis.

Diagram 1: How Batch Effects Arise and Cause Irreproducible Results.

The Scientist's Toolkit: Essential Reagents and Materials for Batch Management

Effective management of batch effects begins with strategic experimental design and the use of key materials. The following table details essential "Research Reagent Solutions."

| Item | Function in Batch Effect Management |
|---|---|
| Authenticated, Low-Passage Cell Lines/Bioreagents | Ensures biological starting material is consistent and traceable, reducing variability introduced by misidentified or contaminated samples [4]. |
| Standardized Reagent Kits from Single Lot | Minimizes variation from differing reagent compositions or performance between manufacturing lots [1] [6]. |
| Internal Standard (IS) Spikes | Isotopically labeled compounds added to each sample to correct for variations in sample preparation and instrument response for target analytes [6]. |
| Pooled Quality Control (QC) Sample | A homogeneous sample made by pooling aliquots from all study samples. Run repeatedly throughout the batch to monitor and correct for instrumental drift [6]. |
| Reference RNA/DNA or Protein Material | Provides a universal benchmark across batches and laboratories to calibrate measurements and assess technical performance [7]. |

Experimental Protocols for Batch Effect Management and Correction

Protocol 1: Designing an Experiment with Batch Effect Mitigation

Goal: To minimize the introduction of batch effects at the source.

- Single-Batch Processing: Process all samples in a single batch whenever feasible [6].

- Randomization: If multiple batches are unavoidable, fully randomize the assignment of samples from different biological groups (e.g., case/control) across batches and within batch run order [6].

- Replication: Include technical replicates of the same biological sample within and across batches to assess technical variability [6].

- QC Sample Integration: Prepare a pooled QC sample. Inject/analyze the QC after every 4-10 experimental samples throughout the run sequence to track drift [6].

- Metadata Logging: Meticulously record all potential batch variables: personnel, reagent lot numbers, instrument IDs, date/time, and environmental conditions [1].
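The randomization and QC-injection steps above can be sketched in a few lines of Python. This is a minimal illustration rather than a prescribed tool: the function names `assign_batches` and `run_order_with_qc`, the round-robin stratification, and the default of one QC every 5 samples (inside the 4-10 range given above) are assumptions made for the example.

```python
import random
from collections import defaultdict


def assign_batches(samples, n_batches, seed=0):
    """Spread each biological group evenly over batches (stratified randomization).

    `samples` is a list of (sample_id, group) tuples. Hypothetical helper.
    """
    rng = random.Random(seed)
    by_group = defaultdict(list)
    for sample_id, group in samples:
        by_group[group].append(sample_id)

    batches = [[] for _ in range(n_batches)]
    offset = 0
    for group in sorted(by_group):
        ids = by_group[group]
        rng.shuffle(ids)  # random order within the group
        # Deal round-robin, continuing where the previous group stopped
        for i, sample_id in enumerate(ids):
            batches[(offset + i) % n_batches].append((sample_id, group))
        offset += len(ids)
    return batches


def run_order_with_qc(batch, qc_every=5, seed=0):
    """Shuffle the run order within a batch and insert pooled-QC injections."""
    rng = random.Random(seed)
    order = list(batch)
    rng.shuffle(order)
    sequence = [("QC", "QC")]  # start the run with a QC injection
    for i, item in enumerate(order, start=1):
        sequence.append(item)
        # QC after every `qc_every` samples and once more at the end of the run
        if i % qc_every == 0 or i == len(order):
            sequence.append(("QC", "QC"))
    return sequence


samples = [(f"S{i:02d}", "case" if i < 12 else "control") for i in range(24)]
batches = assign_batches(samples, n_batches=3, seed=1)
sequence = run_order_with_qc(batches[0], qc_every=5, seed=1)
```

Fixing the seed and saving the resulting run order alongside the metadata log makes the design auditable later.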

Protocol 2: Applying a QC-Based Batch Correction Using Robust Spline Correction (RSC)

Goal: To computationally remove time-dependent signal drift using pooled QC samples.

Materials: Processed data file (e.g., peak areas), metadata file with run order and QC labels.

Software: R with metaX or statTarget package.

Methodology:

- Data Preparation: Format your data matrix (samples as rows, features as columns) and a sample info file tagging QC samples.

- Trend Modeling: For each metabolite/feature, the RSC algorithm fits a robust spline regression model between the feature's intensity and the injection order, using only the data from the QC samples.

- Drift Estimation: The fitted spline model represents the systematic technical drift over time.

- Correction: For each experimental sample, the predicted drift value based on its run order is subtracted from (or used to divide) the measured intensity for that feature.

- Validation: Assess correction effectiveness by checking if QC samples cluster tightly in PCA and if the correlation between technical replicates improves [6].
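To make the trend-modeling and correction steps concrete, here is a deliberately simplified sketch for a single feature. It is not the RSC algorithm as implemented in metaX or statTarget: instead of a robust spline it uses piecewise-linear interpolation between neighboring QC injections, and it applies the division form of the correction, rescaling to the QC median. The function name `qc_drift_correct` is hypothetical.

```python
from statistics import median


def qc_drift_correct(intensities, is_qc):
    """Divide each injection by the QC-derived drift at its run position.

    intensities: one feature's values, in injection order
    is_qc:       booleans, True where the injection is a pooled QC
    Simplification: linear interpolation between QCs stands in for a spline.
    """
    qc_pos = [i for i, flag in enumerate(is_qc) if flag]
    if len(qc_pos) < 2:
        raise ValueError("need at least two QC injections to model drift")
    qc_val = [intensities[i] for i in qc_pos]
    target = median(qc_val)  # level that corrected QCs are rescaled to

    def drift_at(pos):
        # Outside the QC range, hold the nearest QC value constant
        if pos <= qc_pos[0]:
            return qc_val[0]
        if pos >= qc_pos[-1]:
            return qc_val[-1]
        # Inside the range, interpolate between the two bracketing QCs
        for k in range(len(qc_pos) - 1):
            p0, p1 = qc_pos[k], qc_pos[k + 1]
            if p0 <= pos <= p1:
                v0, v1 = qc_val[k], qc_val[k + 1]
                return v0 + (v1 - v0) * (pos - p0) / (p1 - p0)

    return [x * target / drift_at(i) for i, x in enumerate(intensities)]


# Simulated feature: constant true signal with a 2% upward drift per injection
raw = [100 * (1 + 0.02 * i) for i in range(11)]
qc_flags = [i in (0, 5, 10) for i in range(11)]
corrected = qc_drift_correct(raw, qc_flags)
```

In this toy case the drift is exactly linear, so every corrected value lands on the QC median. Real drift is rarely that clean, which is why spline or other smooth fits are generally preferred.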

Technical Support Center: Troubleshooting Guides and FAQs

FAQ 1: How can I detect if my dataset has significant batch effects?

A: Visualization and statistical tests are key. First, perform Principal Component Analysis (PCA) or UMAP and color the data points by batch (e.g., processing date). If samples cluster strongly by batch rather than by biological group, a batch effect is likely present [6] [2]. Quantitatively, you can use tests like the k-nearest neighbor batch effect test (kBET), which measures whether the local neighborhood of each cell is well-mixed with respect to batch labels [8] [9].

FAQ 2: What is the best method to correct for batch effects in my single-cell RNA-seq data?

A: There is no universal "best" method; the choice depends on your data scale and structure. However, comprehensive benchmarks have provided strong guidance. A 2020 benchmark of 14 methods on single-cell data, evaluating computational runtime and the ability to preserve biological variation, recommended Harmony, LIGER, and Seurat 3 (Integration) as top performers [8]. Harmony is often suggested as a first try due to its fast runtime and good efficacy [8]. It's critical to never apply a correction blindly. Always validate by checking that batch mixing improves while biological cluster separation (e.g., by cell type) is maintained.

FAQ 3: Can batch correction methods remove real biological signal?

A: Yes, this is a major risk. If a biological factor of interest (e.g., disease status) is completely confounded with batch (e.g., all controls in batch A, all cases in batch B), computational methods cannot distinguish the technical effect from the biological signal. Attempting to "correct" this will remove the biological signal [7]. This underscores why proper experimental design (randomization) is always superior to post-hoc computational correction. Always assess the impact of correction on known biological variables.

FAQ 4: We didn't include QC samples. Can we still correct for batch effects?

A: Yes, but options are more limited and come with greater assumptions. Sample-based correction methods like Empirical Bayes (ComBat) or mean-centering can be applied. ComBat is widely used in genomics; it pools information across genes to estimate and adjust for batch-specific location and scale parameters [1] [7]. However, these methods assume the overall biological signal is consistent across batches and are less effective at correcting complex, non-linear drift over time compared to QC-based methods [6] [7].
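As an illustration of the simpler of the two options, the following sketch mean-centers one feature per batch and restores the overall mean. It is not ComBat: there is no empirical Bayes shrinkage and no scale adjustment, and the function name `mean_center_by_batch` is an assumption made for the example.

```python
from collections import defaultdict
from statistics import mean


def mean_center_by_batch(values, batches):
    """Remove each batch's mean from one feature, then add back the grand mean."""
    grand_mean = mean(values)
    by_batch = defaultdict(list)
    for value, batch in zip(values, batches):
        by_batch[batch].append(value)
    batch_mean = {b: mean(vs) for b, vs in by_batch.items()}
    return [v - batch_mean[b] + grand_mean for v, b in zip(values, batches)]


values = [1.0, 2.0, 3.0, 11.0, 12.0, 13.0]
batches = ["A", "A", "A", "B", "B", "B"]
centered = mean_center_by_batch(values, batches)
```

Note that this removes any difference in batch means, technical or biological. If the batches differ in biological composition, the same caveat about consistent biological signal across batches applies.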

FAQ 5: How do I validate that my batch correction worked?

A: Use a combination of metrics:

- Visual Inspection: Use PCA/UMAP plots colored by batch (should be mixed) and by cell type or phenotype (should remain separated) [8].

- Quantitative Metrics:

- Replicate Correlation: Calculate the correlation between technical replicates before and after correction; it should increase or remain high [6].

- kBET/LISI Scores: The kBET rejection rate should decrease, and the Local Inverse Simpson's Index (LISI) for batch should increase, indicating better mixing [8] [9].

- Average Silhouette Width (ASW): Evaluate if the silhouette width for biological labels remains high while for batch labels decreases [8].

- Biological Consistency: Key known differentially expressed genes or biomarkers should remain significant post-correction.

Comparative Analysis of Batch Effect Correction Methods

The table below summarizes key characteristics of commonly used correction strategies, synthesized from benchmarking studies and reviews [6] [7] [8].

| Method Category | Example Tools | Key Principle | Best For | Major Caveat |
|---|---|---|---|---|
| Sample-Based (Statistical) | ComBat, limma | Empirical Bayes or linear modeling to adjust location/scale per batch. | Bulk genomics (microarray, RNA-seq), when batch info is known, no QC samples. | Assumes most features are not differential across batches; risk of over-correction if biology is confounded. |
| QC-Based | RSC (metaX), SVR, QC-RFSC | Models signal drift over run order using pooled QC samples, then subtracts trend. | Metabolomics, proteomics, any LC/GC-MS data with time-dependent drift. | Requires dense, regular QC injections. Poor QC quality ruins correction. |
| Matching-Based (scRNA-seq) | MNN Correct, Seurat 3, Scanorama | Identifies mutual nearest neighbors (MNNs) or "anchors" across batches to align datasets. | Integrating single-cell data from different technologies or labs. | Computationally intensive for huge datasets; assumes shared cell states exist. |
| Clustering-Based (scRNA-seq) | Harmony | Iteratively clusters cells while diversifying batch membership per cluster to remove batch effects. | Fast, effective integration of multiple single-cell batches. | Like others, may struggle with highly unique batches. |
| Deep Learning | scGen, BERMUDA | Uses autoencoder-based models (a variational autoencoder in scGen) to learn a latent representation that factors out batch. | Complex, non-linear batch effects; potential for cross-modality prediction. | Requires substantial data for training; "black box" nature can complicate interpretation. |

Integrated Workflow for Batch-Effect-Aware Biomarker Research

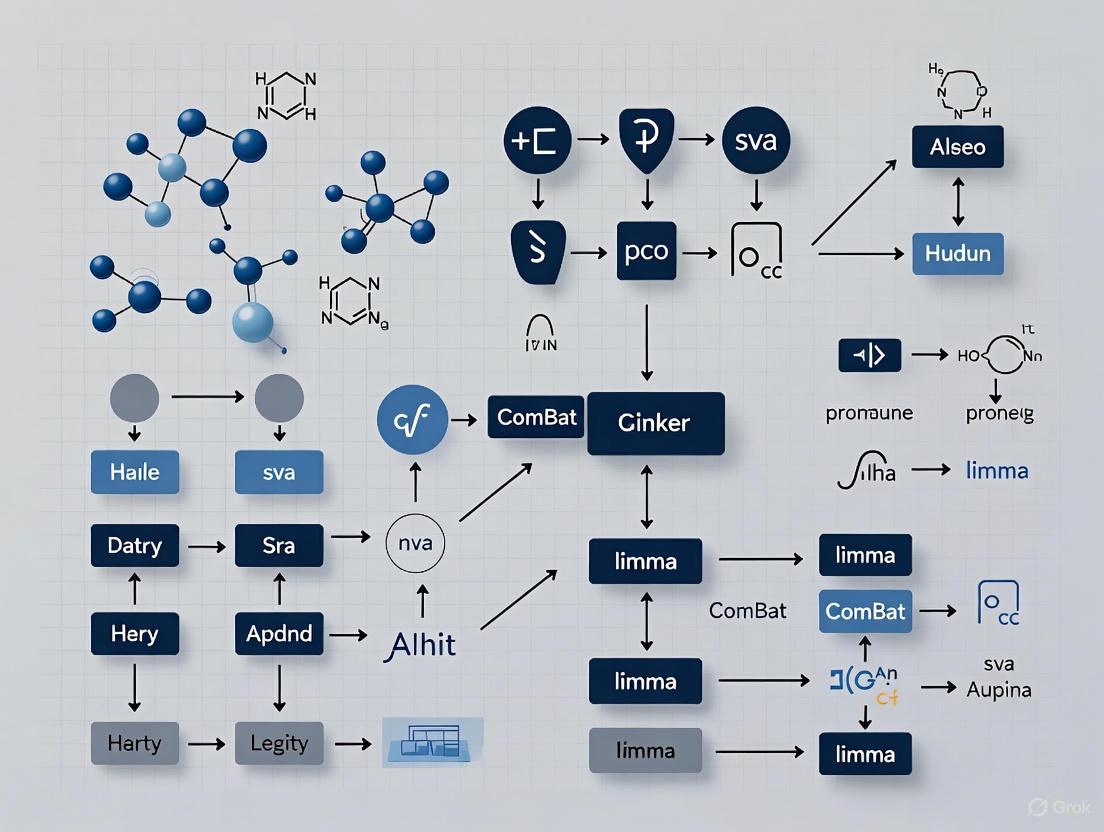

The following diagram outlines a robust workflow for biomarker discovery research that proactively addresses batch effects at every stage, from design to validation.

Diagram 2: Batch-Effect-Aware Biomarker Discovery Workflow.

Batch effects are not merely a nuisance but a fundamental threat to the integrity of high-throughput biology and translational research. They serve as a direct mechanistic link between uncontrolled technical variability and the pervasive crisis of irreproducible results [2] [3]. Success in data integration and biomarker studies hinges on a two-pronged approach: rigorous experimental design to minimize batch effects at their source, followed by prudent application and validation of computational correction tools when necessary. By treating batch effect management as a non-negotiable component of the research lifecycle—as outlined in the protocols, toolkit, and workflow above—researchers can safeguard the biological truth in their data and produce findings that are robust, reliable, and reproducible.

Troubleshooting Guides

Guide 1: My Biomarker Model Performs Poorly on New Data. Could Batch Effects Be the Cause?

Problem: A predictive model, developed from biomarker data (e.g., gene expression, proteomics), shows high accuracy during internal validation but fails to generalize to new datasets from different clinics or sequencing batches.

Diagnosis and Solution: This is a classic symptom of batch effects confounding model training. Technical variations in the original data can be inadvertently learned by the model as a predictive signal. When applied to new data with different technical characteristics, this false signal disappears, and the model's performance drops [10] [11].

Investigation Protocol:

- Visualize Batch Influence: Generate a PCA plot using the top variable features from your combined training and validation datasets. Color the data points by the dataset or batch of origin. A clear separation of batches, rather than by the biological outcome of interest, strongly indicates pervasive batch effects [12] [13].

- Conduct Differential Analysis: Perform a statistical test (e.g., t-test) to identify genes or features that are significantly different between batches. If you find a large number of differentially expressed features between technical batches, it confirms that batch effects are a major source of variation that can mask or mimic true biological signals [14].

- Audit the Cross-Validation: If your internal validation used a simple random split of the data, the model may have been evaluated on samples from the same batch it was trained on, giving an over-optimistic performance estimate. Re-audit your model using a batch-out cross-validation scheme, where entire batches are left out as the validation set. A significant drop in performance under this scheme indicates that the model is learning batch-specific artifacts [10].
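The batch-out scheme in the last step can be sketched as a small split generator. The function name `leave_one_batch_out` is hypothetical, and model fitting is left out on purpose; only the index bookkeeping is shown.

```python
def leave_one_batch_out(batches):
    """Yield (held_out_batch, train_indices, test_indices), one split per batch.

    `batches` holds one batch label per sample. Whole batches are held out,
    so no batch contributes samples to both the training and the test side.
    """
    for held_out in sorted(set(batches)):
        train = [i for i, b in enumerate(batches) if b != held_out]
        test = [i for i, b in enumerate(batches) if b == held_out]
        yield held_out, train, test


batch_labels = ["b1", "b1", "b2", "b3", "b2"]
splits = list(leave_one_batch_out(batch_labels))
```

If scikit-learn is available, `sklearn.model_selection.LeaveOneGroupOut` provides the same kind of split when the batch labels are passed as `groups`.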

Guide 2: After Data Integration, My Cell Types Have Merged. Did I Overcorrect?

Problem: After applying a batch-effect correction method to single-cell RNA-seq data from multiple patients, distinct cell types (e.g., T-cells and B-cells) are no longer separable in the visualization.

Diagnosis and Solution: This is a sign of overcorrection, where the batch-effect algorithm has removed not only technical variation but also the biological heterogeneity you aimed to study [12] [13].

Investigation Protocol:

- Check for Biological Signal Loss: Examine the cluster-specific markers after correction. A key sign of overcorrection is when these marker lists are dominated by genes with widespread high expression (e.g., ribosomal genes) rather than canonical, well-established cell-type-specific markers [12].

- Benchmark Against Ground Truth: If the expected cell types are known, use a metric like the Adjusted Rand Index (ARI) to compare cluster identities before and after correction. A significant decrease in ARI after correction suggests that biologically meaningful separations have been lost [8].

- Re-run with Milder Parameters: Most batch-correction methods have parameters that control the strength of integration. Try reducing the strength of correction or the number of covariates being corrected. The goal is to achieve batch mixing without destroying the biological population structure [14] [13].

- Try a Different Algorithm: Algorithms have different philosophies; some are more aggressive than others. If overcorrection persists, switch to a method known for better biological conservation. Benchmarking studies have consistently highlighted Harmony as a method that effectively balances batch removal with biological preservation [15] [8].
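For the ground-truth benchmark in this protocol, the Adjusted Rand Index can be computed directly from pair counts. The sketch below is a plain-Python version of the standard formula; the example labels are made up for illustration and do not come from any dataset.

```python
from collections import Counter
from math import comb


def adjusted_rand_index(labels_a, labels_b):
    """ARI between two labelings of the same cells (1.0 = identical partitions)."""
    n = len(labels_a)
    total_pairs = comb(n, 2)
    if total_pairs == 0:
        return 1.0  # fewer than two cells: nothing to disagree on
    # Pairs of cells that share a cluster in both labelings, in A, and in B
    same_both = sum(comb(c, 2) for c in Counter(zip(labels_a, labels_b)).values())
    same_a = sum(comb(c, 2) for c in Counter(labels_a).values())
    same_b = sum(comb(c, 2) for c in Counter(labels_b).values())
    expected = same_a * same_b / total_pairs  # agreement expected by chance
    max_index = (same_a + same_b) / 2
    if max_index == expected:
        return 1.0  # degenerate case, e.g. both labelings are one cluster
    return (same_both - expected) / (max_index - expected)


truth = ["T", "T", "T", "B", "B", "B"]           # hypothetical known cell types
before = [0, 0, 0, 1, 1, 1]                      # clusters before correction
after = [0, 0, 0, 0, 0, 0]                       # overcorrected: types merged
ari_before = adjusted_rand_index(truth, before)
ari_after = adjusted_rand_index(truth, after)
```

Here the ARI falls from 1.0 to 0.0 once the two cell types collapse into a single cluster, which is the pattern to watch for when overcorrection is suspected.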

Guide 3: I Have an Unbalanced Study Design. How Can I Safely Correct for Batch Effects?

Problem: Your experimental groups are confounded with batch. For example, most of the control samples were processed in Batch 1, while most of the disease samples were processed in Batch 2.

Diagnosis and Solution: This unbalanced batch-group design is a high-risk scenario. Standard batch-effect correction methods can create false signals or remove genuine biological effects because they cannot reliably distinguish the technical effect from the biological effect [10] [11].

Investigation Protocol:

- Acknowledge the Limitation: The first step is to recognize that no computational method can fully resolve a confounded design. The solution is primarily rooted in improved experimental design, such as randomizing samples across batches [10].

- Use a Causal Framework: Emerging causal approaches to batch effects are better equipped to handle this. They explicitly model the relationships between covariates, batches, and outcomes. In cases of severe confounding and low covariate overlap, these methods may correctly return "no answer" rather than provide a potentially misleading corrected dataset [16].

- Leverage Robust Feature Selection: Instead of correcting the entire dataset, use feature-selection methods that are more resistant to batch effects. Network-based approaches or other methods that focus on coherent biological pathways rather than individual features can be more robust in these situations [10].
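Before choosing among these options, it helps to measure how unbalanced the design actually is. The sketch below cross-tabulates group by batch and flags batches that contain a single group. The function name `confounding_report` and the report fields are assumptions for illustration; no particular threshold for "too unbalanced" is implied.

```python
from collections import Counter


def confounding_report(groups, batches):
    """Summarize the biological-group composition of each batch."""
    table = Counter(zip(batches, groups))
    all_groups = sorted(set(groups))
    report = {}
    for batch in sorted(set(batches)):
        counts = {g: table[(batch, g)] for g in all_groups}
        total = sum(counts.values())
        report[batch] = {
            "counts": counts,
            # Share of the batch taken by its largest group (1.0 = one group only)
            "max_group_fraction": max(counts.values()) / total,
            "single_group": sum(1 for c in counts.values() if c > 0) == 1,
        }
    return report


# Hypothetical unbalanced design: 9 controls + 1 disease in B1, the reverse in B2
groups = ["control"] * 9 + ["disease"] + ["control"] + ["disease"] * 9
batches = ["B1"] * 10 + ["B2"] * 10
report = confounding_report(groups, batches)
```

A batch with `single_group` set to true signals complete confounding for that batch, the situation in which correction cannot separate technical from biological effects.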

Frequently Asked Questions (FAQs)

Q1: What is the fundamental difference between data normalization and batch effect correction?

A: These processes address different technical issues. Normalization corrects for variations between individual cells or samples, such as differences in sequencing depth, library size, or gene length. It operates on the raw count matrix and is a prerequisite for most analyses. In contrast, batch effect correction addresses systematic technical differences between groups of samples (batches) caused by different sequencing platforms, reagent lots, handling personnel, or processing times [12] [14].

Q2: How can I quantitatively measure the success of my batch effect correction?

A: Beyond visual inspection with t-SNE or UMAP plots, several quantitative metrics can be used to evaluate batch mixing and biological conservation. These should be calculated before and after correction for comparison [12] [8].

Table: Key Quantitative Metrics for Evaluating Batch Effect Correction

| Metric Name | What It Measures | Interpretation |
|---|---|---|
| k-nearest neighbor Batch Effect Test (kBET) | Whether local neighborhoods of cells are well-mixed with respect to batch labels [8]. | A lower rejection rate indicates better batch mixing. |
| Local Inverse Simpson's Index (LISI) | The diversity of batches within a local neighborhood of cells [8]. | A higher LISI score indicates better batch mixing. |
| Adjusted Rand Index (ARI) | The similarity between two clusterings (e.g., how well cell type clusters are preserved after correction) [8]. | A value closer to 1 indicates better preservation of biological clusters. |
| Average Silhouette Width (ASW) | How well individual cells match their assigned cluster (cell type) versus other clusters [8]. | A higher value indicates clearer separation of biological groups. |
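To give a feel for what a batch-mixing score computes, here is a simplified inverse Simpson's index over each cell's k nearest neighbors. It is a sketch of the idea only: the published LISI weights neighbors by distance, whereas this version counts each neighbor equally, and the name `simple_lisi` and the brute-force neighbor search are assumptions made for the example.

```python
import math
from collections import Counter


def simple_lisi(points, labels, k=5):
    """Unweighted inverse Simpson's index of `labels` among k nearest neighbors.

    Returns one score per point: 1.0 means all neighbors share one label,
    and the score approaches the number of labels as mixing improves.
    """
    scores = []
    for i, p in enumerate(points):
        # Brute-force neighbor search; fine for small illustrative inputs
        dists = sorted((math.dist(p, q), j) for j, q in enumerate(points) if j != i)
        neighbor_labels = [labels[j] for _, j in dists[:k]]
        counts = Counter(neighbor_labels)
        simpson = sum((c / len(neighbor_labels)) ** 2 for c in counts.values())
        scores.append(1.0 / simpson)
    return scores


# Two batches far apart in the embedding: no mixing
separated_pts = [(0, 0), (0, 1), (1, 0), (1, 1), (50, 50), (50, 51), (51, 50), (51, 51)]
separated_lbl = ["A"] * 4 + ["B"] * 4
separated = simple_lisi(separated_pts, separated_lbl, k=3)

# Two batches interleaved along a line: every neighborhood holds both batches
mixed_pts = [(i, 0) for i in range(8)]
mixed_lbl = ["A", "A", "B", "B", "A", "A", "B", "B"]
mixed = simple_lisi(mixed_pts, mixed_lbl, k=2)
```

Computed on batch labels, higher scores after correction indicate better mixing. Computed on cell-type labels, scores should stay close to 1 if biological structure is preserved.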

Q3: What are the most recommended tools for batch effect correction in single-cell RNA-seq data?

A: Independent benchmark studies that evaluate methods on their ability to remove technical artifacts while preserving biological variation consistently recommend a subset of tools. A 2020 benchmark in Genome Biology and a 2024 review both point to the same top performers [15] [8] [13].

Table: Benchmark-Recommended Batch Effect Correction Methods

| Method | Brief Description | Key Strengths |
|---|---|---|
| Harmony | Iteratively clusters cells in PCA space and corrects for batch effects within clusters [8]. | Fast runtime, well-calibrated, balances integration and biological preservation effectively [15] [8]. |
| Seurat 3 | Uses Canonical Correlation Analysis (CCA) and mutual nearest neighbors (MNNs) as "anchors" to integrate datasets [17] [8]. | High performance in many scenarios, widely used and integrated into a comprehensive toolkit [8] [13]. |
| LIGER | Uses integrative non-negative matrix factorization (iNMF) to factorize datasets and align shared factors [8]. | Does not assume all inter-dataset differences are technical, can preserve biologically relevant variation [8]. |

Q4: Can batch effects really lead to tangible harm in a clinical setting?

A: Yes, the consequences can be severe. In one documented case, a change in the RNA-extraction solution used to generate gene expression profiles introduced a batch effect. This shift led to an incorrect gene-based risk calculation for 162 patients, resulting in 28 patients receiving incorrect or unnecessary chemotherapy regimens [11]. Such instances underscore that batch effects are not just a theoretical statistical problem but a critical issue impacting patient care and translational research.

Table: Key Research Reagent Solutions and Their Functions in Mitigating Batch Effects

| Item | Function in Batch Effect Mitigation |
|---|---|
| Common Laboratory Reagents | |
| Single, standardized reagent lots | Using the same lot of kits, enzymes, and chemicals for all samples in a study minimizes a major source of technical variation [17]. |
| Multiplexed sample barcoding | Allowing multiple samples to be pooled and processed in a single sequencing run technically eliminates batch effects between those samples [13]. |
| Computational & Data Resources | |
| Reference datasets | Publicly available, well-annotated datasets (e.g., from consortia like HuBMAP) can serve as a stable anchor for aligning and assessing new data [11]. |
| Benchmarking frameworks | Standardized workflows and metrics (like kBET, LISI) allow for objective evaluation of batch effect correction methods on your specific data [8]. |
| Causal batch effect algorithms | Newer methods that model batch effects as causal, rather than associational, problems can prevent erroneous conclusions when biological and technical variables are confounded [16]. |

Visualizing Workflows and Relationships

Batch Effect Diagnostic Workflow

The Confounding Problem of Batch Effects

This diagram illustrates how batch effects can create spurious associations or obscure real ones, leading to false discoveries.

Batch effects are technical variations introduced during the processing and measurement of samples that are unrelated to the biological factors under study. These non-biological variations can arise at virtually every step of a high-throughput experiment, from initial sample collection to final data generation [11] [1]. In omics studies, including genomics, transcriptomics, proteomics, and metabolomics, batch effects can introduce noise that dilutes biological signals, reduces statistical power, and may even lead to misleading or irreproducible conclusions [11]. The profound negative impact of batch effects includes their role as a paramount factor contributing to the reproducibility crisis in scientific research, sometimes resulting in retracted articles and invalidated findings [11].

Understanding and tracing the sources of batch effects is particularly crucial in biomarker studies and drug development, where accurate data interpretation directly impacts diagnostic, prognostic, and therapeutic decisions. One documented example from a clinical trial showed that batch effects introduced by a change in RNA-extraction solution resulted in incorrect classification outcomes for 162 patients, 28 of whom received incorrect or unnecessary chemotherapy regimens [11]. This guide provides researchers with practical information to identify, troubleshoot, and mitigate batch effects throughout their experimental workflows.

Batch effects originate from diverse technical sources throughout the experimental workflow. The table below categorizes these sources by experimental phase with corresponding mitigation strategies.

Table 1: Common Batch Effect Sources and Mitigation Strategies in Sample Preparation

| Experimental Phase | Specific Source | Impact Description | Prevention Strategy |
|---|---|---|---|
| Study Design | Flawed or confounded design | Samples not randomized or selected based on specific characteristics | Randomize sample processing order; balance biological groups across batches [11] |
| Study Design | Minor treatment effect size | Small biological effects harder to distinguish from technical variation | Increase sample size; optimize assay sensitivity [11] |
| Sample Preparation & Storage | Protocol procedures | Different centrifugal forces, time/temperature before centrifugation | Standardize protocols across all samples; use identical equipment [11] |
| Sample Preparation & Storage | Sample storage conditions | Variations in temperature, duration, freeze-thaw cycles | Use consistent storage conditions; minimize freeze-thaw cycles [11] |
| Reagents & Materials | Reagent lot variability | Different batches of reagents (e.g., fetal bovine serum) | Use single lot for entire study; test new lots before implementation [11] [1] |
| Laboratory Conditions | Personnel differences | Different technicians with varying techniques | Cross-train personnel; rotate staff systematically [1] |
| Laboratory Conditions | Time of day/day of week | Variations in environmental conditions, equipment performance | Randomize processing time across experimental groups [1] |
| Data Generation | Instrument variation | Different machines or same machine over time | Calibrate regularly; use same instrument for entire study when possible [1] |
| Data Generation | Analysis pipelines | Different bioinformatics tools or parameters | Standardize computational methods; process batches together [11] |

How can I detect batch effects in my dataset before beginning formal analysis?

Several visual and statistical methods can help identify batch effects prior to formal analysis:

- Principal Component Analysis (PCA): Plot the first two principal components colored by batch. Clustering of samples by batch rather than biological group suggests strong batch effects [18].

- Quality Metric Analysis: Examine quality control metrics (e.g., sequencing depth, mapping rates) across batches. Significant differences may indicate batch effects [18].

- Intraclass Correlation Coefficient (ICC): Quantify the proportion of total variance explained by between-batch differences. One study found batch effects explained 1-48% of variance across different protein biomarkers [19].

- Machine Learning Quality Prediction: Tools like seqQscorer use machine learning to predict sample quality (Plow scores) that may correlate with batch membership [18].

Why do my negative control samples show different values across batches?

Systematic technical variations affect all samples in a batch, including controls. Several specific batch effect types can impact control samples:

Table 2: Batch Effect Types Affecting Control Samples

| Batch Effect Type | Description | Impact on Controls |
|---|---|---|
| Protein-Specific | Certain proteins deviate systematically between batches | Controls show different baseline values for specific proteins [20] |
| Sample-Specific | All values for a particular sample offset between measurements | Control samples show consistent upward/downward shift [20] |
| Plate-Wide | Overall deviation affecting all proteins and samples equally | Controls show global value shifts across entire plate [20] |

The visual presence of these effects can be detected by plotting measurements from one batch against another. Without batch effects, points should fall along the line of identity (x=y). Deviations from this line indicate batch effects [20].

Experimental Protocols for Batch Effect Assessment

Protocol: Assessing Batch Effects Using Bridging Controls

Bridging controls (BCs) are identical samples included across multiple batches to directly measure batch effects.

Materials:

- Bridging control samples (identical aliquots from same source)

- 8-12 recommended replicates per batch for optimal correction [20]

- Standard laboratory equipment for your assay

Procedure:

- Include identical BCs on every processing batch (plate, sequencing run, etc.)

- Process all samples according to standard protocol

- For each biomarker/analyte, calculate the batch effect magnitude for each BC using the formula: \[ BE_j = \sum_{i=1}^{N_{BC}} \left( NPX_{i,1}^{j} - NPX_{i,2}^{j} \right) \] where \(NPX_{i,1}^{j}\) and \(NPX_{i,2}^{j}\) are measurements of the same BC \(j\) on batches 1 and 2 [20].

- Use the Interquartile Range (IQR) to identify outlier BCs with excessive batch effects

- Apply statistical correction methods (e.g., BAMBOO, ComBat, median centering) using the BC measurements

Validation: After correction, BCs should show minimal systematic differences between batches. The optimal number of BCs is typically 10-12 for robust correction [20].
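As a rough illustration of the last two procedure steps, the sketch below handles one analyte: it takes the per-BC difference between batches, drops BCs outside an IQR fence, and uses the median of the remaining differences to align batch 2 with batch 1 (median centering). It is not the BAMBOO method, and the 1.5 x IQR fence, the function names, and the NPX-like values are assumptions chosen for the example.

```python
from statistics import median, quantiles


def bc_shift(bc_batch1, bc_batch2, fence=1.5):
    """Estimate the batch shift for one analyte from paired bridging controls.

    bc_batch1[j] and bc_batch2[j] are the same BC measured on batches 1 and 2.
    Returns (median difference after outlier removal, number of BCs dropped).
    """
    diffs = [a - b for a, b in zip(bc_batch1, bc_batch2)]
    q1, _, q3 = quantiles(diffs, n=4)
    iqr = q3 - q1
    # Assumed rule: keep differences within `fence` x IQR of the quartiles
    kept = [d for d in diffs if q1 - fence * iqr <= d <= q3 + fence * iqr]
    return median(kept), len(diffs) - len(kept)


def align_batch2(values_batch2, shift):
    """Median centering: move batch 2 values onto the batch 1 scale."""
    return [v + shift for v in values_batch2]


# Ten hypothetical BCs; the last one behaves very differently between batches
bc1 = [10.0, 10.5, 9.5, 10.25, 9.75, 10.0, 10.5, 9.5, 10.0, 14.0]
bc2 = [9.5, 9.75, 9.0, 9.75, 9.0, 9.5, 10.25, 9.0, 9.25, 9.5]
shift, n_dropped = bc_shift(bc1, bc2)
aligned = align_batch2([9.5, 8.0], shift)
```

After alignment, re-plotting the BCs from batch 1 against batch 2 is a quick way to check that the systematic offset is gone.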

Protocol: Machine Learning-Based Batch Effect Detection

This protocol uses quality metrics to detect batches without prior knowledge of batch membership.

Materials:

- FASTQ files from RNA-seq experiment

- seqQscorer software [18]

- Computing resources for analysis

Procedure:

- Download a maximum of 10 million reads per FASTQ file to standardize analysis

- Derive quality features from full files and subsets (e.g., 1 million reads)

- Compute Plow scores (probability of being low quality) for each sample using seqQscorer

- Compare Plow scores between suspected batches using Kruskal-Wallis test

- Perform PCA using abundance data, colored by predicted quality groups

- Evaluate clustering metrics (Gamma, Dunn1, WbRatio) before and after quality-based correction

Interpretation: Significant differences in Plow scores between batches (p < 0.05) indicate quality-related batch effects. Improved clustering after quality-based correction confirms the presence of batch effects [18].
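The batch comparison step can be illustrated with a small pure-Python Kruskal-Wallis test. The Plow values below are made up, seqQscorer itself is not called, and the p-value helper covers only the two-batch case (chi-square with 1 degree of freedom); for more batches a statistics library would be the practical choice.

```python
import math
from itertools import chain


def kruskal_wallis_h(groups):
    """Kruskal-Wallis H statistic with the standard tie correction."""
    pooled = sorted(chain.from_iterable(groups))
    n = len(pooled)
    rank_of, tie_term = {}, 0
    i = 0
    while i < n:
        j = i
        while j < n and pooled[j] == pooled[i]:
            j += 1
        rank_of[pooled[i]] = (i + 1 + j) / 2  # mean of ranks i+1 .. j
        tie_term += (j - i) ** 3 - (j - i)
        i = j
    rank_part = sum(sum(rank_of[x] for x in g) ** 2 / len(g) for g in groups)
    h = 12 / (n * (n + 1)) * rank_part - 3 * (n + 1)
    correction = 1 - tie_term / (n ** 3 - n)
    if correction == 0:
        return 0.0  # all values identical: no evidence of a difference
    return h / correction


def p_value_two_groups(h):
    """Upper tail of a chi-square distribution with 1 degree of freedom."""
    return math.erfc(math.sqrt(h / 2))


# Hypothetical Plow scores for two suspected batches
plow_batch1 = [0.10, 0.20, 0.30, 0.15, 0.25]
plow_batch2 = [0.70, 0.80, 0.90, 0.75, 0.85]
h_stat = kruskal_wallis_h([plow_batch1, plow_batch2])
p_value = p_value_two_groups(h_stat)
```

With these toy scores the p-value falls below the 0.05 threshold used in the interpretation above, which would point to a quality-related batch difference.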

Figure 1: Batch effects can originate at multiple experimental stages, requiring comprehensive detection and correction strategies.

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Essential Materials for Batch Effect Management

| Reagent/Material | Function in Batch Effect Control | Implementation Guidelines |
|---|---|---|
| Bridging Controls (BCs) | Identical samples across batches to quantify technical variation | Use 10-12 BCs per batch; select representative samples [20] |
| Single Lot Reagents | Prevent reagent batch variability | Purchase entire study supply from single manufacturing lot [11] |
| Calibration Standards | Instrument performance monitoring | Run with each batch to detect instrument drift [1] |
| Reference Materials | Process standardization across time/locations | Use well-characterized reference samples (e.g., NIST standards) [18] |
| Quality Control Kits | Assessment of sample quality pre-processing | Implement pre-batch quality screening [18] |

FAQ on Batch Effect Management

How can I distinguish true biological signals from batch effects?

True biological signals typically correlate with biological variables (e.g., disease status, treatment group), while batch effects correlate with technical variables (processing date, reagent lot, instrument). Several approaches help distinguish them:

- Experimental Design: Process samples from different biological groups together in each batch

- Statistical Analysis: Include both biological and technical variables in models

- Validation: Replicate findings in independently processed samples

- Control Samples: Use samples with known biological characteristics as references

In one study, what appeared to be cross-species differences between human and mouse were actually batch effects caused by different subject designs and data generation timepoints separated by 3 years. After batch correction, data clustered by tissue rather than species [11].

What is the minimum number of samples per batch needed for effective batch effect correction?

The minimum sample size depends on the correction method, but generally:

- ComBat and limma: Require at least 2 samples per batch for correction [21]

- Bridging control approaches: Require at least 8 BCs per plate, with 10-12 recommended for optimal performance [20]

- Multi-batch studies: Should have sufficient samples to represent biological variation within each batch

For rare sample types, consider pooling samples or using specialized methods like BERT (Batch-Effect Reduction Trees) designed for incomplete data [21].

Which batch effect correction method should I choose for my multi-omics study?

Method selection depends on your data type and study design:

Table 4: Batch Effect Correction Method Selection Guide

| Method | Best For | Considerations |

|---|---|---|

| ComBat | Bulk omics data (microarray, RNA-seq) | Uses empirical Bayes; handles known batches well [21] [1] |

| HarmonizR | Multi-omics with missing values | Imputation-free; uses matrix dissection [21] |

| BERT | Large-scale integration of incomplete profiles | Tree-based; retains more numeric values [21] |

| BAMBOO | Proteomics (PEA data) with bridging controls | Corrects protein-, sample-, and plate-wide effects [20] |

| DeepBID | Single-cell RNA-seq data | Deep learning approach; integrates clustering [22] |

| Harmony | Multiple batches of single-cell data | Uses PCA and soft k-means clustering [22] |

Can proper experimental design eliminate the need for statistical batch effect correction?

While proper experimental design significantly reduces batch effects, it rarely eliminates them completely. Key design elements include:

- Randomization: Process samples in random order relative to biological groups

- Balancing: Ensure each batch contains similar numbers from each biological group

- Blocking: Group similar samples together within batches

- Replication: Include technical replicates across batches

However, even with optimal design, unknown technical factors can introduce batch effects. Therefore, both good design and statistical correction are recommended [11] [23].
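The randomization and balancing elements listed above can be combined in a simple assignment routine. The sketch below is one possible approach under stated assumptions: sample names, group labels, and the function name are hypothetical, and each group is shuffled and then dealt out round-robin so that every batch receives a similar number of samples from each group.

```python
import random
from collections import defaultdict

def assign_balanced_batches(sample_groups, n_batches, seed=0):
    """Randomly assign samples to batches, dealing each group out round-robin."""
    rng = random.Random(seed)
    by_group = defaultdict(list)
    for sample, group in sample_groups.items():
        by_group[group].append(sample)
    assignment = {}
    for group in sorted(by_group):
        members = by_group[group]
        rng.shuffle(members)               # random order within the group
        offset = rng.randrange(n_batches)  # avoid always starting at batch 0
        for i, sample in enumerate(members):
            assignment[sample] = (i + offset) % n_batches
    return assignment

# Hypothetical study: 6 cases and 6 controls spread over 3 batches.
samples = {f"case_{i}": "case" for i in range(6)}
samples.update({f"ctrl_{i}": "control" for i in range(6)})
batches = assign_balanced_batches(samples, n_batches=3)
```

Fixing the seed makes the allocation reproducible, which helps when the batch layout has to be documented alongside the data.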

Figure 2: Follow this decision pathway to select appropriate batch effect correction methods based on your data characteristics.

Tracing batch effects from sample preparation through data generation is essential for producing reliable, reproducible research outcomes, particularly in biomarker studies and drug development. By implementing rigorous experimental designs, utilizing appropriate control materials, and applying validated correction methods, researchers can significantly reduce the impact of technical variation on their results. The tools and strategies outlined in this guide provide a comprehensive approach to managing batch effects throughout the research workflow, ultimately leading to more accurate data interpretation and more robust scientific conclusions.

Understanding Batch Effects

What are batch effects and why are they a problem in omics studies?

Batch effects are systematic technical variations introduced into high-throughput data due to differences in experimental conditions, such as reagent lots, personnel, sequencing machines, or measurement dates [24]. These non-biological variations can skew data analysis, leading to increased false positives in differential expression analysis, masking genuine biological signals, and ultimately threatening the reproducibility and reliability of research findings [24] [25]. In severe cases, batch effects have been linked to incorrect clinical classifications and retracted scientific publications [24].

How prevalent are batch effects in real-world studies?

Batch effects are notoriously common in omics data [24]. In proteomics, a benchmarking study leveraging the Quartet Project reference materials found that batch effects are a major challenge for data integration [26]. Similarly, in genomics, the analysis of DNA methylation array data is significantly challenged by batch effects, which can influence biological interpretations and clinical decision-making [27].

Quantitative Evidence from Omics Studies

The tables below summarize empirical evidence on batch effect prevalence and the performance of various correction methods from recent proteomics and genomics studies.

- Proteomics: Evidence from Mass Spectrometry and Proximity Extension Assays

| Study Description | Key Quantitative Findings | Correction Methods Benchmarked |

|---|---|---|

| Multi-batch MS-based proteomics using Quartet reference materials (balanced and confounded designs) [26]. | Protein-level correction was the most robust strategy. The MaxLFQ-Ratio combination showed superior prediction performance in a cohort of 1,431 plasma samples from type 2 diabetes patients [26]. | ComBat, Median Centering, Ratio, RUV-III-C, Harmony, WaveICA2.0, NormAE [26]. |

| PEA (Olink) proteomics study characterizing three distinct batch effects [20]. | Identified three batch effect types: protein-specific, sample-specific, and plate-wide. Simulation showed optimal correction achieved with 10–12 bridging controls (BCs). With large plate-wide effects, BAMBOO accuracy remained >90%, outperforming other methods [20]. | BAMBOO, Median of the Difference (MOD), ComBat, Median Centering [20]. |

- Genomics: Evidence from DNA Methylation Studies

| Study Description | Key Quantitative Findings | Correction Methods Benchmarked |

|---|---|---|

| Incremental batch effect correction for DNA methylation array data in longitudinal studies [27]. | Proposed iComBat to correct newly added data without re-processing old data. Demonstrated efficiency in simulation studies and real-world data applications, proving useful for clinical trials and epigenetic clock analyses [27]. | iComBat (based on ComBat), Quantile Normalization, SVA, RUV-2 [27]. |

Experimental Protocols for Batch Effect Assessment

Protocol 1: Benchmarking Batch Effect Correction in Proteomics

This protocol is adapted from a large-scale study that utilized reference materials to evaluate correction strategies [26].

- Dataset Preparation: Acquire multi-batch datasets, such as those from the Quartet protein reference materials, which include triplicate MS runs of four distinct reference samples. Design both balanced (Quartet-B) and confounded (Quartet-C) scenarios to test robustness.

- Data Quantification: Process raw MS data using multiple quantification methods (e.g., MaxLFQ, TopPep, iBAQ) to generate protein abundance matrices.

- Batch Effect Correction: Apply a suite of batch-effect correction algorithms (BECAs) such as ComBat, Ratio, and Harmony at different data levels (precursor, peptide, or protein).

- Performance Evaluation:

  - Feature-based metrics: Calculate the coefficient of variation (CV) within technical replicates across batches.

  - Sample-based metrics: Compute the signal-to-noise ratio (SNR) from PCA plots to assess group separation. Use Principal Variance Component Analysis (PVCA) to quantify the variance contributed by batch versus biological factors.

- Validation: Test the best-performing workflow on a large-scale independent cohort (e.g., clinical plasma samples) to validate prediction performance.
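The feature-based metric in the performance evaluation step can be illustrated with a short calculation. This is a minimal sketch with hypothetical intensities for one protein measured in the same reference sample; it assumes values on the linear (non-log) scale.

```python
from statistics import mean, stdev

def coefficient_of_variation(values):
    """CV (%) of replicate intensities on the linear (non-log) scale."""
    return 100.0 * stdev(values) / mean(values)

# Hypothetical intensities: three replicate runs in each of two batches.
before = [100.0, 104.0, 98.0, 150.0, 155.0, 147.0]  # batch 2 reads higher
after = [100.0, 104.0, 98.0, 101.0, 105.0, 99.0]    # after batch correction

cv_before = coefficient_of_variation(before)
cv_after = coefficient_of_variation(after)
```

A lower CV across batches after correction suggests that technical variation was reduced for that feature; in a benchmark this would be summarized over all quantified features.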

The following workflow diagram illustrates the key steps of this benchmarking protocol:

Protocol 2: Characterizing and Correcting Batch Effects in PEA Proteomics

This protocol outlines the process for identifying specific batch effect types and applying a robust correction method like BAMBOO [20].

- Experimental Design: Measure a set of at least 24 samples across two different plates. Include at least 8 bridging controls (BCs), ideally 10-12; these are identical samples placed on every plate.

- Effect Characterization: Plot the normalized protein expression (NPX) values from plate 2 against plate 1. Color-code data points by protein and by sample to visually identify:

  - Protein-specific effects: Grouped deviations of a specific protein from the diagonal.

  - Sample-specific effects: Offsets affecting all proteins in a specific sample.

  - Plate-wide effects: A global shift from the diagonal, assessed via robust linear regression.

- Quality Control & Filtering: Calculate the total batch effect for each BC. Remove outlier BCs using the interquartile range (IQR) method. Flag proteins with many measurements below the limit of detection.

- Batch Correction with BAMBOO:

  - Use robust linear regression on the BC data to estimate plate-wide adjustment factors (intercept and slope).

  - Calculate a protein-specific adjustment factor (AF) as the median of the adjusted differences for each protein across BCs.

  - Apply both the plate-wide and protein-specific adjustments to all samples on the test plate.
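The quality control and filtering step of this protocol can be sketched as follows. The BC identifiers and values are hypothetical, and the quartiles use the default (exclusive) method of Python's `statistics.quantiles`; other quartile conventions can give slightly different fences.

```python
from statistics import quantiles

def total_batch_effect(npx_plate1, npx_plate2):
    """Sum over proteins of the plate 1 minus plate 2 difference for one BC."""
    return sum(a - b for a, b in zip(npx_plate1, npx_plate2))

def iqr_outliers(values_by_bc):
    """Return BC ids whose value lies outside Q1 - 1.5*IQR .. Q3 + 1.5*IQR."""
    q1, _, q3 = quantiles(values_by_bc.values(), n=4)
    iqr = q3 - q1
    low, high = q1 - 1.5 * iqr, q3 + 1.5 * iqr
    return [bc for bc, v in values_by_bc.items() if v < low or v > high]

# Hypothetical total batch effects for 10 bridging controls.
be = {"BC01": 1.9, "BC02": 2.1, "BC03": 2.0, "BC04": 2.2, "BC05": 1.8,
      "BC06": 2.0, "BC07": 2.3, "BC08": 1.7, "BC09": 2.1, "BC10": 9.5}
flagged = iqr_outliers(be)
```

Flagged BCs would be dropped before the adjustment factors are estimated, so that a single aberrant control does not distort the correction.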

The logical flow of the BAMBOO method is shown below:

The Scientist's Toolkit

This table lists essential reagents and materials used in the featured experiments for reliable batch effect assessment and correction.

| Research Reagent / Material | Function in Batch Effect Studies |

|---|---|

| Quartet Reference Materials [26] | Commercially available protein reference materials from four cell lines (D5, D6, F7, M8) used as a gold standard for benchmarking batch effects and correction algorithms in proteomics. |

| Bridging Controls (BCs) [20] | Identical biological samples (e.g., from a single pool) included on every processing plate/run in a study. They serve as technical anchors to quantify and correct for plate-to-plate variation. |

| Universal Reference Samples [26] | A common reference sample profiled concurrently with study samples across all batches, enabling ratio-based normalization methods (e.g., MaxLFQ-Ratio) for cross-batch integration. |

| Protease Inhibitor Cocktails (EDTA-free) | Added during protein extraction and sample preparation to prevent protein degradation, which is a potential source of pre-analytical variation and batch effects [28]. |

| DNA Methylation Array Kits | Commercial kits (e.g., from Illumina) used for epigenome-wide association studies (EWAS). Different kit batches can be a source of batch effects, requiring statistical correction [27]. |

Frequently Asked Questions

What is the fundamental difference between normalization and batch effect correction?

Normalization addresses technical variations within a single batch or run, such as differences in sequencing depth, library size, or overall signal intensity. In contrast, batch effect correction addresses systematic variations between different batches of samples, such as those processed on different days, by different personnel, or using different reagent lots [12].

How can I visually detect the presence of batch effects in my dataset?

The most common method is to use dimensionality reduction techniques like Principal Component Analysis (PCA) for bulk data, or t-SNE/UMAP plots for single-cell data. If your samples cluster strongly by technical factors like processing date or sequencing batch, rather than by biological condition or cell type, this is a clear indicator of batch effects [12] [25].

What are the key signs that my batch effect correction may have been too aggressive (overcorrection)?

Overcorrection can remove genuine biological signal. Key signs include [12]:

- The loss of known, expected cluster-specific markers (e.g., canonical T-cell markers no longer appear in a T-cell cluster).

- A significant overlap in the markers identified for different cell types or conditions.

- The emergence of widespread, non-informative genes (e.g., ribosomal genes) as top markers.

Is batch effect correction the same for all omics technologies (e.g., proteomics vs. genomics)?

While the core purpose is the same—to remove technical variation—the specific algorithms and strategies can differ. For example, metabolomics often uses quality control (QC) samples and internal standards for correction, while transcriptomics and proteomics rely more heavily on statistical modeling. Furthermore, methods designed for the high sparsity and scale of single-cell RNA-seq data may not be suitable for bulk genomic data, and vice versa [12] [25].

The Correction Toolbox: From Established Algorithms to Next-Generation Solutions

Frequently Asked Questions

Q1: Do I always need to correct for batch effects? If principal component analysis (PCA) or other clustering methods (like UMAP) show that your samples group by a technical variable (e.g., processing date) rather than by your biological condition of interest, then batch correction is highly recommended [25].

Q2: Can batch correction remove true biological signal? Yes. A primary risk is over-correction, which can remove real biological variation if the batch effects are confounded with—meaning they overlap significantly with—the experimental conditions you want to study. Always validate results after correction [29] [25].

Q3: What is the core difference between using ComBat and including batch in a model? Programs like ComBat directly modify your data to subtract the batch effect. In contrast, including 'batch' as a covariate in a statistical model (e.g., in DESeq2 or limma) estimates and accounts for the effect size of the batch during hypothesis testing without altering the original data matrix [29].

Q4: How do I choose a method for raw count data? For bulk RNA-seq raw count data, ComBat-Seq is specifically designed for this purpose, as it uses a negative binomial model suitable for counts [30] [29]. The original ComBat was designed for normalized, microarray-style data, and applying it to raw counts is not recommended [29].

Q5: What if I don't know what my batches are? Methods like Surrogate Variable Analysis (SVA) can be used to estimate and adjust for hidden sources of variation, or unobserved batch effects, when batch labels are unknown or partially missing [25] [31].

Troubleshooting Guides

Problem: Poor performance after batch correction.

- Potential Cause 1: Violation of distributional assumptions.

- Solution: Check the distribution of your data. ComBat assumes an approximately Gaussian distribution and is best suited to normalized, log-transformed data. For raw counts that may follow a negative binomial or gamma distribution, use ComBat-Seq or methods that allow batch to be included as a covariate in a generalized linear model (e.g., in DESeq2 or edgeR) [30] [29].

- Potential Cause 2: Confounded study design.

- Solution: If your biological groups are perfectly aligned with batches (e.g., all controls in one batch and all treatments in another), it is statistically impossible to disentangle biological from technical effects. The best solution is a better experimental design. If this is not possible, interpret results with extreme caution, as any correction method may remove biological signal or introduce artifacts [32].
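Before applying any correction, it can help to check whether the design is confounded in the sense described above. The sketch below cross-tabulates batch and group labels and flags the extreme case where no batch contains more than one biological group. The labels and function name are hypothetical.

```python
from collections import defaultdict

def confounding_summary(batches, groups):
    """Cross-tabulate batch and group labels and flag perfect confounding."""
    table = defaultdict(lambda: defaultdict(int))
    for b, g in zip(batches, groups):
        table[b][g] += 1
    # Perfectly confounded: several groups exist, but no batch mixes them.
    fully_confounded = (
        len(set(groups)) > 1
        and all(len(counts) == 1 for counts in table.values())
    )
    return {b: dict(c) for b, c in table.items()}, fully_confounded

batch_labels = ["b1"] * 3 + ["b2"] * 3
confounded_design = ["control"] * 3 + ["treated"] * 3
balanced_design = ["control", "treated", "control", "treated", "control", "treated"]

table_bad, is_bad = confounding_summary(batch_labels, confounded_design)
table_ok, is_ok = confounding_summary(batch_labels, balanced_design)
```

Partial confounding (unequal but non-zero group counts per batch) is not flagged here; the returned table can be inspected for that.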

Problem: Over-correction removing biological signal.

- Potential Cause: The correction method is too aggressive, often because the batch is weakly correlated with the condition of interest.

- Solution:

  - Use a less aggressive method: Consider using limma::removeBatchEffect or including batch as a covariate in your model instead of ComBat [29].

  - Validate rigorously: After correction, check that known biological differences between groups (e.g., a validated biomarker) are still detectable. Use positive controls if available [33].

Problem: Which method to choose for single-cell or complex data integration?

- Context: Single-cell RNA-seq or multi-omics data integration often involves more complex, non-linear batch effects.

Method Comparison & Selection

The table below summarizes the characteristics of major batch correction method families to guide your selection.

| Method Family | Example Algorithms | Primary Data Type | Strengths | Key Limitations |

|---|---|---|---|---|

| Linear Models | limma::removeBatchEffect [29] | Normalized, continuous data (e.g., microarray, log-CPM) | Simple, fast, integrates well with linear model-based DE analysis; does not alter the original data structure drastically [25]. | Assumes batch effects are additive and known; less flexible for complex, non-linear effects [25]. |

| Empirical Bayes | ComBat [34], ComBat-Seq [30] | ComBat: Normalized data; ComBat-Seq: Raw counts | Powerful, adjusts for known batches using a robust Bayesian framework; can handle small sample sizes better than some linear methods [25]. | Requires known batch information; standard ComBat may not handle non-linear effects; can be prone to over-correction [29] [25]. |

| Nearest Neighbor-Based | Harmony [34], fastMNN [34], Seurat (RPCA/CCA) [34] | Single-cell RNA-seq, high-dimensional profiles | Effective for complex, non-linear batch effects; does not require all cell types to be present in all batches; top-performing in benchmarks [34]. | Can be computationally intensive for very large datasets; may require re-computation when new data is added [34]. |

| Hidden Factor Analysis | SVA (Surrogate Variable Analysis) [25] | Bulk or single-cell RNA-seq | Does not require known batch labels; useful for discovering and adjusting for unknown sources of technical variation [25]. | High risk of removing biological signal if not modeled carefully; requires careful interpretation of surrogate variables [25]. |

Experimental Protocol: A Standard Bulk RNA-seq Batch Correction Workflow

This protocol outlines a standard workflow for batch correction in a bulk RNA-seq analysis, using R and popular Bioconductor packages [31] [35].

1. Input Data Preparation

Begin with a raw count matrix. For this example, we use a publicly available Arabidopsis thaliana dataset [31].

2. Normalization

Normalization corrects for differences in library size and composition between samples. The Trimmed Mean of M-values (TMM) method in edgeR is a common choice.

3. Batch Effect Detection with PCA

Visualize the normalized data to check for batch effects.

- Interpretation: If the PCA plot shows clear clustering by batch (e.g., all squares from batch 1 are separate from batch 2 circles), you have a batch effect that needs correction [35].

4. Batch Effect Correction

Apply a correction method. Two common approaches are:

- limma::removeBatchEffect (for known batches)

- ComBat-Seq (for raw counts and known batches)

5. Validation

Repeat the PCA on the corrected data (e.g., corrected_log_cpm). A successful correction will show samples clustering primarily by biological condition, not by batch [25] [35].

The Scientist's Toolkit

| Category | Item | Function in Batch Management |

|---|---|---|

| Core R/Bioconductor Packages | sva (contains ComBat/ComBat-Seq, SVA) [31] | Provides multiple algorithms for batch effect correction and surrogate variable analysis. |

| | limma (contains removeBatchEffect) [29] | Provides linear model-based batch correction for normalized expression data. |

| | edgeR / DESeq2 [29] | Enable batch to be included as a covariate during differential expression analysis. |

| Quality Control Metrics | PCA (Principal Component Analysis) [35] | A visual, qualitative method to detect the presence of batch effects. |

| | kBET, ARI, LISI [25] | Quantitative metrics to assess the success of batch correction in mixing batches while preserving biology. |

| Experimental Design Aids | Balanced Block Design | The most crucial "tool"—ensuring biological conditions are balanced across processing batches to minimize confounding [32]. |

Method Selection and Application Workflow

The diagram below outlines a logical workflow for selecting and applying a batch correction method.

Frequently Asked Questions (FAQ)

Q: What is BERT, and what specific problem does it solve? A: BERT (Batch-Effect Reduction Trees) is a high-performance data integration method designed for large-scale analyses of incomplete omic profiles (e.g., from proteomics, transcriptomics, or metabolomics). It specifically addresses the dual challenge of batch effects (technical biases between datasets) and missing values, which are common when combining independently acquired datasets [21].

Q: My data is very incomplete, with many missing values. Can BERT handle it? A: Yes, a key advantage of BERT is its ability to handle arbitrarily incomplete data. Unlike some methods that remove features with missing values, BERT uses a tree-based approach to retain a significantly higher number of numeric values, minimizing data loss during integration [21].

Q: How does BERT's performance compare to other methods like HarmonizR? A: BERT offers substantial improvements over existing methods like HarmonizR [21]:

- Data Retention: Retains up to five orders of magnitude more numeric values.

- Speed: Leverages parallel computing for up to 11x runtime improvement.

- Correction Quality: Improves data integration quality, as measured by the Average Silhouette Width (ASW).
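For readers unfamiliar with the Average Silhouette Width, the following sketch computes it from the general silhouette definition for a handful of points. It is not BERT's implementation; the points and labels are hypothetical, Euclidean distance is assumed, and at least two distinct labels are required.

```python
from statistics import mean

def average_silhouette_width(points, labels):
    """Mean silhouette over samples, using Euclidean distance between points."""
    def dist(p, q):
        return sum((a - b) ** 2 for a, b in zip(p, q)) ** 0.5

    scores = []
    for i, (p, lab) in enumerate(zip(points, labels)):
        same = [dist(p, q) for j, (q, l) in enumerate(zip(points, labels))
                if l == lab and j != i]
        if not same:
            scores.append(0.0)  # convention for singleton clusters
            continue
        a = mean(same)  # mean distance to samples with the same label
        other_labels = set(labels) - {lab}
        b = min(  # mean distance to the nearest differently labelled group
            mean(dist(p, q) for q, l in zip(points, labels) if l == other)
            for other in other_labels
        )
        denom = max(a, b)
        scores.append((b - a) / denom if denom else 0.0)
    return mean(scores)

# Hypothetical one-dimensional embedding of four samples.
points = [(0.0,), (1.0,), (10.0,), (11.0,)]
biology = ["A", "A", "B", "B"]    # biological label
batch = ["b1", "b2", "b1", "b2"]  # batch label

asw_label = average_silhouette_width(points, biology)
asw_batch = average_silhouette_width(points, batch)
```

After a successful integration one would hope for a high ASW with respect to the biological label and a low (near zero or negative) ASW with respect to batch, as in this toy example.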

Q: Can I use BERT with different types of omic data? A: Yes, BERT's scope is broad. It has been characterized on various omic types, including proteomics, transcriptomics, and metabolomics, as well as other data types like clinical data [21].

Q: Why is batch effect correction so important in biomarker studies? A: Batch effects are technical variations that can obscure true biological signals and lead to incorrect conclusions [24]. In tumor biomarker studies, for example, more than 10% of a biomarker's variance can sometimes be attributable to batch effects rather than biology. Correcting them is essential for identifying reliable biomarkers and ensuring the validity of your research [19].

Q: What are common sources of batch effects I should be aware of in my experiments? A: Batch effects can arise at nearly any stage of a high-throughput study [24]:

- Study Design: Non-random assignment of samples to batches.

- Sample Preparation: Differences in collection, storage, or processing.

- Instrumentation: Variations between machines, reagents, or operators.

- Data Generation: Changes over time, such as different sequencing runs or LC/MS batches [36].

Troubleshooting Guides

Issue 1: Poor Data Integration After Batch Correction

Problem: After running a batch effect correction, your biological groups still do not separate well, or the correction seems to have removed the biological signal.

| Investigation Step | Action to Take |

|---|---|

| Check Design Balance | Ensure biological conditions are not perfectly confounded with batches. BERT allows specification of covariates to account for imbalanced designs [21]. |

| Inspect Pre-processing | For LC/MS data, ensure proper peak alignment and RT correction across batches in the pre-processing stage, as errors here cannot be fixed later [36]. |

| Validate with Metrics | Use quality metrics like Average Silhouette Width (ASW) reported by BERT to assess integration quality for both batch removal (ASW Batch) and biological signal retention (ASW Label) [21]. |

| Review Correction Method | BERT uses established algorithms (ComBat, limma). If over-correction is suspected, check the parameters used for these underlying models [21]. |

Issue 2: Slow Performance with Large Datasets

Problem: The data integration process is taking an excessively long time.

| Investigation Step | Action to Take |

|---|---|

| Leverage Parallelization | BERT is designed for high-performance computing. Utilize its multi-core and distributed-memory capabilities by adjusting the user-defined parameters for processes (P), reduction factor (R), and sequential batch number (S) [21]. |

| Benchmark Performance | Compare BERT's runtime against HarmonizR. BERT has demonstrated up to an 11x runtime improvement in benchmark studies [21]. |

Issue 3: Handling Unique Covariate Levels and Reference Samples

Problem: Your dataset contains covariate levels that only appear in one batch, or you have a set of reference samples you want to use to guide the correction.

| Investigation Step | Action to Take |

|---|---|

| Use the Reference Feature | BERT allows users to indicate specific samples as references. The algorithm uses these to estimate the batch effect, which is then applied to correct all samples in the batch pair, including those with unknown covariates [21]. |

| Specify Covariates | Provide all known categorical covariates (e.g., sex, disease status) for every sample. BERT will pass these to the underlying correction models (ComBat/limma) to preserve these biological conditions while removing the batch effect [21]. |

Experimental Protocols & Data

BERT Performance Comparison

The following table summarizes a simulation study comparing BERT against HarmonizR, highlighting its advantages in handling missing data and computational speed [21].

| Method | Data Retention with 50% Missingness | Runtime (Relative) | Handles Covariates & References |

|---|---|---|---|

| BERT | Retains all numeric values | Up to 11x faster | Yes |

| HarmonizR (Full Dissection) | Up to 27% data loss | Baseline | No |

| HarmonizR (Blocking of 4) | Up to 88% data loss | Slower than BERT | No |

Key Research Reagent Solutions

The following table lists key computational tools and resources relevant for batch effect correction in omics studies.

| Item | Function in Research |

|---|---|

| BERT R Package | The primary tool for high-performance data integration of incomplete omic profiles, implementing the BERT algorithm [21]. |

| apLCMS Platform | A preprocessing tool for LC/MS metabolomics data; includes methods to address batch effects during peak alignment and quantification [36]. |

| batchtma R Package | A tool developed for mitigating batch effects in tissue microarray (TMA)-based protein biomarker studies [19]. |

| Pluto Bio Platform | A commercial platform designed to harmonize multi-omics data (e.g., RNA-seq, scRNA-seq) and correct batch effects through a web interface without coding [33]. |

| ComBat & limma | Established statistical methods for batch effect correction that form the core correction engines used within the BERT framework [21]. |

Workflow Diagrams

BERT Data Integration Workflow

BERT Pairwise Correction Logic

Batch effects, defined as unwanted technical variations caused by differences in labs, reagents, instrumentation, or processing times, are notoriously common in proteomic studies and can skew statistical analyses, increasing the risk of false discoveries [20] [37]. In proximity extension assay (PEA) proteomics, which enables large-scale investigation of numerous proteins and samples, these technical variations present a significant challenge for data integration and reliability [20]. The BAMBOO (Batch Adjustments using Bridging cOntrOls) method was developed specifically to address three distinct types of batch effects identified in PEA data: protein-specific effects (where values for specific proteins are offset across plates), sample-specific effects (where all values for a particular sample are shifted), and plate-wide effects (an overall deviation affecting all proteins and samples on a plate) [20]. This robust regression-based approach utilizes bridging controls (BCs)—identical samples included on every plate—to correct these technical variations and enhance the reliability of large-scale proteomic analyses [20].

Experimental Protocols & Workflows

The BAMBOO Batch Effect Correction Protocol

The BAMBOO method implements a structured, four-step correction procedure:

Step 1: Quality Filtering of Bridging Controls

Calculate the batch effect for each BC using the formula:

$$BE_j = \sum_{i} \left( NPX_{i,1}^{j} - NPX_{i,2}^{j} \right)$$

where NPX represents normalized protein expression values [20]. Identify and remove BC outliers using the Interquartile Range (IQR) method (values below $Q_1 - 1.5\,(Q_3 - Q_1)$ or above $Q_3 + 1.5\,(Q_3 - Q_1)$). Remove values below the limit of detection (LOD) unless this results in fewer than 6 BC measurements for a protein, in which case retain the values but flag the protein for cautious interpretation [20].

Step 2: Plate-Wide Effect Correction

Estimate plate-wide batch effects using a robust linear regression model on the bridging control data:

$$NPX_{i,1}^{j} = b_0 + b_1 \, NPX_{i,2}^{j}$$

where $b_0$ and $b_1$ serve as adjustment factors for plate-wide effects [20].

Step 3: Protein-Specific Effect Calculation

Compute the adjustment factor for protein-specific batch effects [20]:

$$AF_i = \operatorname{median}_{j} \left( NPX_{i,1}^{j} - \left( b_0 + b_1 \, NPX_{i,2}^{j} \right) \right)$$

Step 4: Sample Adjustment

Adjust non-bridging control samples to the reference plate using the formula [20]:

$$\mathrm{adj.NPX}_{i,2}^{j} = \left( b_0 + b_1 \, NPX_{i,2}^{j} \right) + AF_i$$
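Steps 2 to 4 can be sketched in a few lines of code. Note the assumptions: the source specifies a robust linear regression but not the estimator, so this sketch substitutes a Theil-Sen fit (median of pairwise slopes) as a stand-in; the function names, data layout, and values are hypothetical, and this is not the published BAMBOO implementation.

```python
from itertools import combinations
from statistics import median

def theil_sen(x, y):
    """Robust line fit y = b0 + b1*x: median pairwise slope, median intercept."""
    slopes = [(y[j] - y[i]) / (x[j] - x[i])
              for i, j in combinations(range(len(x)), 2) if x[j] != x[i]]
    b1 = median(slopes)
    b0 = median(yi - b1 * xi for xi, yi in zip(x, y))
    return b0, b1

def bamboo_like_adjust(bc_plate1, bc_plate2, samples_plate2):
    """bc_plate*: {protein: [NPX per BC]}; samples_plate2: {protein: [NPX per sample]}."""
    # Step 2: plate-wide line fitted on all BC values (plate 1 regressed on plate 2).
    x = [v for p in bc_plate2 for v in bc_plate2[p]]
    y = [v for p in bc_plate2 for v in bc_plate1[p]]
    b0, b1 = theil_sen(x, y)
    adjusted = {}
    for protein, values in samples_plate2.items():
        # Step 3: protein-specific adjustment factor, median residual across BCs.
        af = median(n1 - (b0 + b1 * n2)
                    for n1, n2 in zip(bc_plate1[protein], bc_plate2[protein]))
        # Step 4: map plate 2 samples onto the plate 1 (reference) scale.
        adjusted[protein] = [(b0 + b1 * v) + af for v in values]
    return adjusted

# Hypothetical BC data: protein A is shifted by 0.5 and protein B by 0.8 on plate 2.
bc1 = {"A": [1.0, 2.0, 3.0, 4.0], "B": [2.0, 3.0, 4.0, 5.0]}
bc2 = {"A": [0.5, 1.5, 2.5, 3.5], "B": [1.2, 2.2, 3.2, 4.2]}
adjusted = bamboo_like_adjust(bc1, bc2, {"A": [2.0], "B": [3.0]})
```

In this toy case the plate-wide fit absorbs the shift common to both proteins, and the protein-specific factors recover the remaining per-protein offsets, so the adjusted plate 2 values land on the plate 1 scale.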

Experimental Design for Optimal BAMBOO Performance

For researchers planning experiments utilizing the BAMBOO method, several key design considerations are essential:

Bridging Control Implementation: Include at least 8 bridging controls on every measurement plate, with 10-12 BCs recommended for optimal batch correction [20]. These should be identical samples with identical freeze-thaw cycles replicated across every plate [20].

Sample Randomization: Randomize samples across batches in a balanced manner to prevent confounding of biological factors with technical batches [38]. When possible, incorporate a sample mix per batch for additional quality control [38].

Data Recording: Meticulously record all technical factors, including both planned variables (reagent lots, instrumentation) and unexpected variations that occur during experimentation [38].

Reference Materials: For multi-site or longitudinal studies, consider implementing standardized reference materials across all batches and sites to facilitate ratio-based correction approaches [37] [39].

Figure 1: BAMBOO Method Workflow - The four-step correction procedure for robust batch effect adjustment in proteomic studies.

Performance Comparison of Batch Effect Correction Methods

Quantitative Method Comparison

Table 1: Performance comparison of batch effect correction methods under different conditions

| Method | Accuracy (No Plate-Wide Effect) | Accuracy (Large Plate-Wide Effect) | Robustness to BC Outliers | Optimal BC Requirements |
|---|---|---|---|---|
| BAMBOO | >95% [20] | Maintains high accuracy (>90%) [20] | Highly robust [20] | 10-12 [20] |
| MOD | >95% [20] | Lower accuracy in plate-wide scenarios [20] | Highly robust [20] | 10-12 [20] |
| ComBat | >95% (slightly higher than BAMBOO) [20] | Lower than BAMBOO for moderate/large effects [20] | Significantly impacted by outliers [20] | Not specified |
| Median Centering | 96.8-97.2% (lower than others) [20] | Lowest accuracy among methods [20] | Significantly impacted by outliers [20] | Not specified |
| Ratio-Based | Not specified for PEA | Effective in confounded scenarios [37] | Not specified | Reference materials [39] |

Advanced Correction Methods for Specific Scenarios

While BAMBOO is particularly effective for PEA proteomics, other correction methods have demonstrated utility in specific contexts and technologies:

Ratio-Based Methods: Particularly effective when biological and batch factors are completely confounded, as they scale feature values relative to concurrently profiled reference materials [37] [39]. This approach has shown superior performance in large-scale multi-omics studies [37].

Protein-Level Correction: Evidence suggests that performing batch-effect correction at the protein level (after quantification) rather than at the precursor or peptide level provides the most robust strategy for MS-based proteomics [40] [41].

BERT Algorithm: For large-scale data integration tasks with incomplete omic profiles, the Batch-Effect Reduction Trees (BERT) method efficiently handles missing values while correcting batch effects, retaining significantly more numeric values than alternative approaches [21].
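To make the ratio-based idea described above concrete, the following sketch scales each feature of a study sample by the value of the reference material profiled in the same batch. It is a minimal illustration under stated assumptions: intensities are on a linear scale, the reference value is taken as the mean of the reference replicates, and the function name `ratio_scale` is hypothetical. The published ratio-based workflows [37] [39] may differ in these details.

```python
from statistics import mean

def ratio_scale(batch_samples, batch_references):
    """Scale each feature by the mean of the reference replicates in the same batch.

    batch_samples: dict sample ID -> dict feature -> intensity (linear scale).
    batch_references: list of dicts feature -> intensity for the reference material.
    """
    features = batch_references[0].keys()
    ref_mean = {f: mean(r[f] for r in batch_references) for f in features}
    return {
        sample_id: {f: values[f] / ref_mean[f] for f in features}
        for sample_id, values in batch_samples.items()
    }

# Toy data: batch B reads every feature twice as high as batch A.
batch_a = ratio_scale({"s1": {"P1": 10.0, "P2": 4.0}}, [{"P1": 5.0, "P2": 2.0}])
batch_b = ratio_scale({"s2": {"P1": 20.0, "P2": 8.0}}, [{"P1": 10.0, "P2": 4.0}])
```

Because the reference material experiences the same technical shift as the study samples in its batch, the ratios of the two toy samples coincide after scaling, even though each batch contains only one study sample. This is why the approach remains usable when batch and biological group are completely confounded.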

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key research reagents and materials for implementing BAMBOO in proteomic studies

| Reagent/Material | Specification | Function in Experiment |
|---|---|---|
| Bridging Controls | Identical samples with identical freeze-thaw cycles [20] | Technical replicates across plates for batch effect quantification |
| Reference Materials | Standardized samples (e.g., Quartet project materials) [37] [39] | Cross-batch normalization and quality assessment |
| PEA Assay Plates | Olink Target panels or similar [20] | Multiplexed protein measurement from minimal sample volumes |
| Quality Control Samples | Pooled samples or commercial standards [38] | Monitoring technical variation and signal drift |
| Normalization Buffers | Platform-specific dilution and assay buffers | Maintaining consistent matrix effects across batches |

Troubleshooting Guides & FAQs

Common Implementation Challenges

Q: What should I do if my dataset has insufficient bridging controls (fewer than 8)? A: With limited BCs, BAMBOO's robustness may be compromised. Consider these approaches: (1) Flag the analysis as preliminary and interpret results with caution; (2) Implement additional quality control measures, such as examining correlation structures between existing BCs; (3) If using MS-based proteomics, explore ratio-based correction using any available reference samples [37]. For future experiments, always plan for 10-12 BCs per plate as recommended [20].

Q: How can I distinguish true biological signals from residual batch effects after correction? A: Employ multiple validation strategies: (1) Check if significant findings are driven by samples from a single batch; (2) Validate key results using orthogonal methods or techniques; (3) Examine positive controls and known biological relationships to ensure they are preserved; (4) For MS-based data, ensure batch-effect correction is performed at the protein level for maximum robustness [40] [41].

Q: What is the best approach when batch effects are completely confounded with biological groups of interest? A: In completely confounded scenarios (where all samples from one biological group are in a single batch), most standard correction methods fail. The ratio-based method using reference materials has demonstrated particular effectiveness in these challenging situations [37] [39]. When possible, redesign experiments to avoid completely confounded designs through staggered sample processing.

Q: How do I handle outliers in my bridging controls?

A: BAMBOO includes specific quality filtering steps for BC outliers using the IQR method. BCs with batch effect values below Q1 - 1.5*(Q3-Q1) or above Q3 + 1.5*(Q3-Q1) should be removed [20]. If excessive outliers are detected, investigate potential technical issues with sample preparation, storage, or measurement.
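The IQR fences quoted above can be sketched as follows. The input is assumed to be one batch effect value per bridging control; the function name `filter_bc_outliers` and the choice of quartile interpolation (`method="inclusive"`) are assumptions made for illustration and may not match the reference BAMBOO implementation [20].

```python
from statistics import quantiles

def filter_bc_outliers(bc_effects):
    """Drop bridging controls whose batch effect value falls outside the IQR fences.

    bc_effects: dict BC ID -> estimated batch effect value for that BC.
    """
    q1, _, q3 = quantiles(bc_effects.values(), n=4, method="inclusive")
    iqr = q3 - q1
    lower, upper = q1 - 1.5 * iqr, q3 + 1.5 * iqr
    return {bc: v for bc, v in bc_effects.items() if lower <= v <= upper}

# Toy data: seven consistent BCs and one that is far off.
effects = {"BC1": 0.10, "BC2": 0.12, "BC3": 0.09, "BC4": 0.11,
           "BC5": 0.13, "BC6": 0.10, "BC7": 0.12, "BC8": 1.50}
kept = filter_bc_outliers(effects)
```

In this toy example only `BC8` lies above the upper fence and is removed; the remaining seven BCs are retained for estimating the correction.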

Data Quality & Validation

Q: What metrics should I use to validate successful batch effect correction? A: Multiple assessment approaches are recommended: (1) Examine PCA plots before and after correction; after correction, samples from different batches should intermix rather than separate by technical factors; (2) Calculate the Average Silhouette Width (ASW) with respect to batch labels to quantify batch mixing [21]; (3) Assess correlation structures within and between batches; (4) Monitor known biological signals to ensure they are preserved through correction [38].
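As an illustration of the ASW metric mentioned above, the sketch below computes the average silhouette width of samples grouped by batch label, using Euclidean distance. It is a plain-Python teaching version that assumes at least two batches and small data; in practice the calculation is usually run on principal components with an optimized library. The function name `average_silhouette_width` and the toy coordinates are hypothetical.

```python
from math import dist
from statistics import mean

def average_silhouette_width(points, labels):
    """Average silhouette width of `points` grouped by `labels` (Euclidean distance).

    Assumes at least two distinct labels.
    """
    groups = sorted(set(labels))
    widths = []
    for i, p in enumerate(points):
        # Mean distance from point i to the other members of each label group.
        mean_dist = {}
        for g in groups:
            members = [q for j, q in enumerate(points) if labels[j] == g and j != i]
            if members:
                mean_dist[g] = mean(dist(p, q) for q in members)
        own = labels[i]
        if own not in mean_dist:  # singleton group: silhouette is defined as 0
            widths.append(0.0)
            continue
        a = mean_dist[own]                                      # cohesion with own batch
        b = min(d for g, d in mean_dist.items() if g != own)    # nearest other batch
        denom = max(a, b)
        widths.append((b - a) / denom if denom else 0.0)
    return mean(widths)

batch = ["A", "A", "A", "B", "B", "B"]
# Before correction: the two batches form two distant clusters.
before = [(0.0, 0.0), (0.1, 0.0), (0.0, 0.1), (5.0, 5.0), (5.1, 5.0), (5.0, 5.1)]
# After correction: samples from both batches overlap.
after = [(0.0, 0.0), (1.0, 1.0), (2.0, 0.0), (0.0, 0.1), (1.0, 1.1), (2.0, 0.1)]
asw_before = average_silhouette_width(before, batch)
asw_after = average_silhouette_width(after, batch)
```

When the silhouette is computed on batch labels, values close to 1 indicate that samples still separate by batch, while values near or below 0 indicate that batches are well mixed. The same function applied to biological group labels should ideally show the opposite trend, that is, biological separation is preserved or improved after correction.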

Q: How should I handle missing values in relation to batch effect correction? A: Missing values present special challenges. For BAMBOO implementation, values below the limit of detection (LOD) should be removed unless this results in fewer than 6 BC measurements for a protein [20]. For extensive missing data, consider the BERT algorithm, which specifically addresses incomplete omic profiles while correcting batch effects [21]. Avoid imputation before batch correction, as it may introduce artifacts.
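The LOD rule described above can be expressed as a small helper applied to one protein at a time. The sketch reflects one reading of the rule, namely that all BC values are kept when filtering would leave fewer than six; this interpretation, the function name `filter_below_lod`, and the toy values are assumptions rather than details confirmed by [20].

```python
def filter_below_lod(bc_values, lod, min_bcs=6):
    """Apply the LOD rule to the bridging-control values of one protein.

    bc_values: dict BC ID -> measured value; lod: limit of detection for the protein.
    Values below the LOD are dropped unless fewer than `min_bcs` would remain,
    in which case all values are kept (an assumed reading of the rule).
    """
    above = {bc: v for bc, v in bc_values.items() if v >= lod}
    return above if len(above) >= min_bcs else dict(bc_values)

many = {f"BC{i}": v for i, v in enumerate([0.5, 2.1, 2.3, 2.2, 2.4, 2.0, 2.5, 2.6])}
few = {f"BC{i}": v for i, v in enumerate([0.5, 0.4, 0.6, 2.2, 2.4, 2.0, 2.5, 2.6])}
kept_many = filter_below_lod(many, lod=1.0)  # 7 remain, so the low value is dropped
kept_few = filter_below_lod(few, lod=1.0)    # only 5 would remain, so all 8 are kept
```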

Figure 2: Batch Effect Diagnostic Guide - Decision pathway for identifying and addressing different types of batch effects in proteomic data.

The BAMBOO method represents a significant advancement for addressing batch effects in PEA proteomic studies, providing robust correction specifically designed for the three distinct types of batch effects encountered in this technology. Through the strategic implementation of bridging controls and a structured four-step correction process, researchers can significantly enhance the reliability of their large-scale proteomic analyses. The method's particular strength lies in its robustness to outliers in bridging controls and its effective handling of plate-wide effects, outperforming established methods like ComBat and median centering in these challenging scenarios. When implementing BAMBOO, careful experimental design incorporating sufficient bridging controls (10-12 recommended) and comprehensive quality control measures remain essential for optimal performance and biologically meaningful results in biomarker discovery and proteomic research.

Frequently Asked Questions (FAQs)

Q1: What is the core problem that iComBat solves for longitudinal biomarker studies? iComBat addresses the challenge of batch effects in datasets that are expanded over time with new measurement batches [42]. Traditional batch-effect correction methods require re-processing the entire dataset when new samples are added, which can alter previously corrected data and disrupt ongoing longitudinal analysis. iComBat uses an incremental framework based on ComBat and empirical Bayes estimation to correct newly added data without affecting previously processed data, making it ideal for studies with repeated measurements [42].

Q2: In which types of studies is an incremental framework like iComBat most critical? This framework is particularly crucial for clinical trials and research involving repeated measurements over time, such as:

- Studies of DNA methylation in relation to diseases and aging [42].

- Clinical trials of anti-aging interventions [42].

- Longitudinal oncology research monitoring treatment response via biomarkers like circulating tumor DNA (ctDNA) [43].

- Any long-term study where data collection occurs in sequential batches over weeks, months, or years.

Q3: What are the main advantages of using iComBat over standard ComBat? The primary advantages are:

- Data Consistency: Previously corrected data remains unchanged, ensuring stable and comparable results throughout the study timeline [42].

- Computational Efficiency: Eliminates the need to re-process the entire historical dataset every time new samples are added [42].

- Practicality for Long-Term Studies: Provides a feasible workflow for studies where data is incrementally measured and included [42].

Q4: What underlying methodology does iComBat use for correction? iComBat is based on the ComBat method, which is a location and scale adjustment approach that uses a Bayesian hierarchical model and empirical Bayes estimation to remove batch effects [42].
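For readers who want the model behind this answer, the location and scale adjustment used by ComBat is commonly written as below. The notation follows the usual presentation of ComBat and is an assumption here; the exact parameterization used by iComBat in [42] is not reproduced in this guide. For feature $g$, batch $i$, and sample $j$:

$$
Y_{ijg} = \alpha_g + X\beta_g + \gamma_{ig} + \delta_{ig}\,\varepsilon_{ijg}
$$

where $\alpha_g$ is the overall feature level, $X\beta_g$ captures biological covariates, and $\gamma_{ig}$ and $\delta_{ig}$ are the additive (location) and multiplicative (scale) batch effects. After standardizing the data to $Z_{ijg} = (Y_{ijg} - \hat\alpha_g - X\hat\beta_g)/\hat\sigma_g$ and shrinking the batch parameters with empirical Bayes to $\hat\gamma^{*}_{ig}$ and $\hat\delta^{*}_{ig}$, the adjusted value is

$$
Y^{*}_{ijg} = \frac{\hat\sigma_g}{\hat\delta^{*}_{ig}}\left(Z_{ijg} - \hat\gamma^{*}_{ig}\right) + \hat\alpha_g + X\hat\beta_g
$$

The empirical Bayes step pools information across features, which stabilizes the batch estimates when individual batches are small.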

Troubleshooting Guide

Issue 1: Corrected Data Becomes Inconsistent After Adding New Batches

Problem Statement

After adding and processing a new batch of longitudinal samples, previously corrected data shifts or becomes inconsistent, making it impossible to track true biological changes over time.

Symptoms & Error Indicators

- Significant drift in the values of established control samples between batches.

- Previously stable biomarker measurements show unexpected jumps or drops after a new batch is integrated.

- Statistical models for temporal trends break after new data is incorporated.

Possible Causes

- Using a traditional batch-effect correction method that reprocesses the entire dataset, thereby altering the baseline of previously corrected data [42].

- The model parameters (e.g., mean, variance) for batch effect adjustment are not fixed for prior batches.

Step-by-Step Resolution Process

- Diagnose the Method: Confirm that you are not using a standard batch-effect correction tool in "re-analysis" mode on the full, growing dataset.

- Implement an Incremental Framework: Switch to a method specifically designed for incremental data, such as iComBat [42].

- Parameter Isolation: Ensure that the correction model applies estimated parameters from the original, baseline data to new batches without re-estimating parameters for old data. iComBat achieves this by correcting new data based on the existing model [42].

- Validation: After correction with the incremental method, validate that the values of control samples or baseline patient measurements remain consistent across the entire study timeline.

Escalation Path or Next Steps

If inconsistency persists, verify the integrity of the baseline dataset and the implementation of the incremental algorithm. Consult with a biostatistician specializing in longitudinal data analysis or batch-effect correction.

Issue 2: Integrating Heterogeneous Data from Multiple Longitudinal Time Points

Problem Statement

Difficulty in combining and analyzing biomarker data (e.g., from DNA methylation arrays, plasma ctDNA) collected from the same subjects at multiple time points, especially when assays are run in different batches.

Symptoms & Error Indicators

- Strong clustering of data points by "batch date" or "processing run" instead of by subject or biological time point in a PCA plot.

- Apparent biomarker changes over time are correlated with batch processing dates rather than clinical events.

Possible Causes

- Technical variation (e.g., different reagent lots, machine calibrations, lab personnel) introduced during each sample processing batch is obscuring true biological signals [42].

- Failure to properly account for the study's longitudinal design and the batch-effect structure during the data integration phase.

Step-by-Step Resolution Process

- Characterize Batches: Clearly document the batch identifier (e.g., processing date, plate ID) for every sample at every time point.

- Visualize Batch Effects: Use PCA or other clustering methods to confirm that batch effects are present and to understand their magnitude.

- Apply Incremental Correction: Use the iComBat framework. For the initial baseline data, perform a standard batch-effect correction if multiple batches exist. For each new longitudinal time point processed as a new batch, apply iComBat to correct it incrementally against the established baseline [42].

- Verify Biological Signal: After correction, confirm that PCA plots show data clustering by subject or biological group rather than by batch.

Validation or Confirmation Step

Check that known biological correlations (e.g., between established biomarkers) are strengthened and that technical artifacts are minimized in the corrected dataset. The trajectory of individual subjects' biomarkers over time should appear biologically plausible.

Experimental Protocols & Data Presentation

Key Experimental Protocol: Incremental Batch Effect Correction with iComBat

The following workflow is adapted from the iComBat framework for DNA methylation data, applicable to other biomarker datasets from evolving longitudinal studies [42].

- Initial Baseline Correction: For the first set of samples (the baseline, which may itself span several batches), run the standard ComBat algorithm to estimate and remove batch effects. This creates a stabilized baseline dataset.

- Model Parameter Storage: Save the estimated parameters (location and scale adjustments, empirical Bayes estimates) from the baseline ComBat model.

- New Data Introduction: As new data from a subsequent time point (Batch N) is collected, preprocess it similarly to the baseline data.

- Incremental Adjustment: Apply the iComBat algorithm. This step uses the saved parameters from the baseline model to correct the new Batch N data, without re-processing Batches 1 through N-1.

- Data Integration: The newly corrected Batch N data is now compatible with the original baseline data and can be integrated for longitudinal analysis.

- Validation: Use statistical measures and visualization (e.g., PCA plots before and after correction) to confirm the removal of batch effects and preservation of biological variance.
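The parameter-storage and incremental-adjustment steps above can be sketched in simplified form. This is not the iComBat algorithm: it omits covariates and empirical Bayes shrinkage and only aligns the per-feature mean and standard deviation of a new batch to parameters saved from the corrected baseline. The function names `fit_baseline` and `correct_new_batch` and the toy values are hypothetical.

```python
from statistics import mean, stdev

def fit_baseline(baseline):
    """Store per-feature mean and standard deviation of the corrected baseline data.

    baseline: dict feature -> list of corrected values from the baseline batches.
    """
    return {f: (mean(v), stdev(v)) for f, v in baseline.items()}

def correct_new_batch(new_batch, params):
    """Align a new batch to the stored baseline parameters, feature by feature.

    Only the new batch is transformed; the baseline values are never revisited.
    """
    corrected = {}
    for f, values in new_batch.items():
        base_mean, base_sd = params[f]
        batch_mean, batch_sd = mean(values), stdev(values)
        corrected[f] = [(v - batch_mean) / batch_sd * base_sd + base_mean for v in values]
    return corrected

baseline = {"cg001": [0.40, 0.50, 0.60, 0.50]}
params = fit_baseline(baseline)                 # saved once, reused for every later batch
batch_n = {"cg001": [0.70, 0.90, 1.10, 0.90]}   # shifted and stretched by technical effects
batch_n_corrected = correct_new_batch(batch_n, params)
```

The design point the sketch illustrates is that `params` is estimated once and then frozen, so adding Batch N never changes the values of Batches 1 through N-1. Note that a plain mean and variance alignment like this would also remove genuine biological change between time points; ComBat-style models guard against that by including biological covariates in the model, which this sketch leaves out.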