Navigating Multimodal Parameter Landscapes in Drug Discovery: Strategies, Applications, and AI-Driven Solutions

This article provides a comprehensive guide for researchers and drug development professionals on navigating multimodal parameter landscapes, where problems feature multiple optimal solutions.


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on navigating multimodal parameter landscapes, where problems feature multiple optimal solutions. It explores the foundational concepts of multimodality and its critical importance in biomedical research, from offering flexible therapeutic candidates to enhancing robustness against uncertainty. The content details cutting-edge methodological frameworks, including Evolutionary Algorithms and AI-driven fusion techniques like transformers and graph neural networks, which are revolutionizing target identification and compound design. It also addresses pervasive challenges such as data heterogeneity and model interpretability, offering practical troubleshooting and optimization strategies. Finally, the article covers rigorous validation approaches and provides a forward-looking perspective on the integration of these methods into the next generation of personalized and efficient drug development pipelines.

Understanding Multimodality: Why Multiple Solutions Matter in Drug Development

Defining Multimodal Parameter Landscapes in a Biomedical Context

In biomedical research, a multimodal parameter landscape refers to the complex, high-dimensional space defined by the numerous and diverse parameters from different data types that influence a biological outcome or therapeutic objective [1]. In drug discovery and personalized medicine, navigating this landscape means balancing competing properties: optimizing one parameter (e.g., drug potency) often degrades others (e.g., toxicity or metabolic stability) [2]. Integrating data modalities such as genomic, imaging, clinical, and time-series data produces a more holistic but also more intricate landscape that researchers must map and optimize [3]. Traversing it successfully requires computational frameworks that can handle multiple, often conflicting, objectives and can identify the sets of parameters (the "hills" of the landscape, separated by "valleys") that lead to a successful outcome, such as a safe and effective personalized drug target [1].

Troubleshooting Guide: FAQs on Multimodal Experiments

Q1: Our multimodal model is not outperforming unimodal baselines. What could be the issue?

This is often a problem of ineffective fusion or data misalignment [4]. The representation spaces of different modalities (e.g., text and images) may not be properly aligned, preventing the model from learning meaningful cross-modal interactions. Furthermore, if the datasets from different departments (e.g., genomics and radiology) are not correctly synchronized or normalized, the model will learn from noisy or misrepresented data [5].

- Solution: Re-examine your data pre-processing and fusion strategy.

- Technical Protocol: Implement a structured fusion framework like the Holistic AI in Medicine (HAIM) pipeline [3]. This involves:

- Modality-Specific Embedding: Use pre-trained models (e.g., BERT for text, ResNet for images) to convert each raw data modality into a fixed-length feature embedding. These embeddings are then combined into a shared representation for fusion [4] [3].

- Systematic Fusion Testing: Experiment with different fusion techniques. Start with simple early or late fusion, and progress to more complex joint fusion using cross-attention mechanisms or transformer architectures that allow the model to learn the importance of each modality dynamically [4] [6].

- Validation Step: Calculate Shapley values for each data modality to quantify its contribution to the final prediction. This can reveal if a particular modality is being underutilized or is introducing noise [3].
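The Shapley validation step can be made concrete with a short sketch. It assumes you can score a model trained on every subset of modalities (for example, validation AUROC). With only a handful of modalities, the exact Shapley value can then be computed by enumerating all 2^n subsets. The function name and the toy scores are hypothetical and illustrative. This is not the HAIM implementation.

```python
from itertools import combinations
from math import factorial


def modality_shapley(modalities, value):
    """Exact Shapley value of each modality.

    value(frozenset) -> performance (e.g. validation AUROC) of a model that uses
    only that subset of modalities; value(frozenset()) is the no-data baseline.
    Cost grows as 2^n, which is acceptable for a few modalities.
    """
    n = len(modalities)
    result = {}
    for m in modalities:
        others = [x for x in modalities if x != m]
        total = 0.0
        for k in range(n):  # size of the coalition that m joins
            weight = factorial(k) * factorial(n - k - 1) / factorial(n)
            for subset in combinations(others, k):
                coalition = frozenset(subset)
                total += weight * (value(coalition | {m}) - value(coalition))
        result[m] = total
    return result


# Hypothetical subset scores (illustrative numbers, not results from [3]).
scores = {
    frozenset(): 0.50,
    frozenset({"tabular"}): 0.70,
    frozenset({"image"}): 0.65,
    frozenset({"tabular", "image"}): 0.78,
}
print(modality_shapley(["tabular", "image"], lambda s: scores[s]))
```

In this toy table the two values sum to 0.28, which equals the gain of the full model over the no-data baseline (the Shapley efficiency property). A modality with a value near zero or below zero is a candidate for being underused or noisy.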


Q2: How can we handle missing or incomplete data across modalities?

Data heterogeneity and incompleteness are fundamental challenges in multimodal biomedical research [5]. A single missing data point in one modality can render an entire patient's multimodal sample unusable if not handled properly.

- Solution: Employ strategies that allow for flexible input configurations.

- Technical Protocol: Adapt your framework to be robust to missingness.

- Imputation with Uncertainty: Use advanced imputation methods (e.g., multivariate imputation by chained equations) but couple them with uncertainty quantification. This allows the model to weigh the confidence of the imputed value [2].

- Flexible Architecture: Design or use models that can dynamically adjust to available modalities. The HAIM framework, for instance, is designed to work with various combinations of tabular, time-series, text, and image data without requiring every sample to have all four [3].
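One simple way to make a fusion model tolerate absent modalities can be sketched as follows. Missing modalities are zero-filled, and a 0/1 availability flag is appended for each modality so the downstream model can tell "absent" from "zero". This missing-indicator scheme is a common generic technique that we have swapped in as an assumption. It is not claimed to be how HAIM handles missingness. The modality names and dimensions are hypothetical.

```python
def fuse_with_missing(embeddings, dims):
    """Early fusion that tolerates absent modalities.

    embeddings: modality name -> embedding vector (key absent or None if missing).
    dims: modality name -> expected embedding length; its order fixes the layout.
    Returns the concatenated embeddings (zeros for absent modalities) followed
    by one 0/1 availability flag per modality.
    """
    fused, flags = [], []
    for name, dim in dims.items():
        vec = embeddings.get(name)
        if vec is None:
            fused.extend([0.0] * dim)  # placeholder values for the missing modality
            flags.append(0.0)
        else:
            if len(vec) != dim:
                raise ValueError(f"{name}: expected {dim} values, got {len(vec)}")
            fused.extend(float(v) for v in vec)
            flags.append(1.0)
    return fused + flags


# Hypothetical layout: 2 tabular features, 3-d text embedding, 2-d image embedding.
DIMS = {"tabular": 2, "text": 3, "image": 2}
print(fuse_with_missing({"tabular": [0.4, -1.2], "text": [0.1, 0.0, 0.7]}, DIMS))
```

Because every sample gets the same fixed-length vector, patients who lack an imaging study can still be used for training rather than discarded.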


Q3: How do we optimize for multiple, conflicting objectives in drug target identification?

Traditional methods often focus on a single objective, like minimizing the number of driver nodes in a network, but ignore other crucial factors like prior knowledge of drug targets or functional differences between target sets [1]. This can lead to suboptimal or clinically non-viable candidates.

- Solution: Formulate the problem as a Multimodal Multiobjective Optimization (MMO) problem [1].

- Technical Protocol: Implement a framework like MMONCP (Multimodal Multiobjective optimization with Network Control Principles).

- Problem Formulation: Define your objectives (e.g., minimize toxicity, maximize efficacy) and your decision variables (e.g., candidate drug targets) [1].

- Algorithm Selection: Use an evolutionary algorithm designed for MMO, such as CMMOEA-GLS-WSCD, which combines a global and local search strategy with a weighting-based special crowding distance. This algorithm is specifically designed to find multiple, functionally different sets of personalized drug targets (Pareto-optimal solutions) that are equivalent in objective space but differ in their biological configuration [1].
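The core idea of "equivalent in objective space but different in biological configuration" can be shown with a plain Pareto filter. The filter treats all objectives as minimized, so a maximized objective is negated. It then groups non-dominated target sets that share an objective vector. This is an O(N^2) illustration of the concept and not the CMMOEA-GLS-WSCD algorithm. The gene names and objective values are hypothetical.

```python
from collections import defaultdict


def dominates(a, b):
    """True if objective vector a Pareto-dominates b (every objective minimized)."""
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))


def pareto_front(solutions):
    """Keep the (decision, objectives) pairs that no other solution dominates."""
    return [(d, f) for d, f in solutions
            if not any(dominates(g, f) for _, g in solutions)]


def equivalent_sets(front):
    """Group non-dominated decisions that share an objective vector. Groups with
    more than one member are the equivalent-but-different solutions MMO keeps."""
    groups = defaultdict(list)
    for d, f in front:
        groups[tuple(f)].append(d)
    return dict(groups)


# Hypothetical candidates: (target set, (number of drivers, -prior-target overlap)).
candidates = [
    (frozenset({"G1", "G2"}), (2, -1)),
    (frozenset({"G3", "G4"}), (2, -1)),
    (frozenset({"G1", "G5", "G6"}), (3, -2)),
    (frozenset({"G2", "G7", "G8"}), (3, -1)),  # dominated by the first two
]
front = pareto_front(candidates)
print(equivalent_sets(front))
```

The two-member group at (2, -1) is exactly the kind of multimodal result that a single-solution optimizer would collapse into one answer.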


Q4: Our model performs well on internal data but fails to generalize. How can we improve robustness?

This is typically caused by overfitting to noise or spurious correlations in the training data and a lack of robustness to adversarial variations [4].

- Solution: Incorporate robustness checks and leverage data-efficient learning.

- Technical Protocol:

- Adversarial Training: During model training, intentionally introduce small, realistic perturbations to your input data and train the model to be invariant to them [4].

- Zero- and Few-Shot Learning: Utilize Vision-Language Models (VLMs) like CLIP. These models, pre-trained on vast internet-scale datasets, can be adapted to new tasks with very few examples (few-shot learning) or even without any task-specific training (zero-shot learning), which can improve generalization and reduce overfitting [7].

- Cross-Domain Validation: Always validate your model on external datasets from different institutions or patient populations to test its generalizability before clinical deployment [5].
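The text does not name a specific perturbation method, so the adversarial-training step can be illustrated with an assumed stand-in. We swap in the fast gradient sign method (FGSM) applied to a toy logistic-regression model in pure Python. Each clean example is followed by a copy shifted by epsilon in the sign of the input gradient. A real pipeline would use a deep-learning framework and domain-realistic perturbations. The toy data and hyperparameters are hypothetical.

```python
import math
import random


def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def predict(w, b, x):
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)


def log_loss(w, b, x, y):
    p = predict(w, b, x)
    eps = 1e-12  # guard against log(0)
    return -(y * math.log(p + eps) + (1 - y) * math.log(1 - p + eps))


def fgsm(w, b, x, y, epsilon):
    """Step each feature by epsilon in the direction that increases the loss.
    For logistic regression, dLoss/dx_i = (p - y) * w_i."""
    g = predict(w, b, x) - y
    return [xi + epsilon * ((g * wi > 0) - (g * wi < 0)) for xi, wi in zip(x, w)]


def adversarial_train(data, epsilon=0.1, lr=0.1, epochs=200, seed=0):
    """Plain SGD in which every clean example is followed by its FGSM copy."""
    rng = random.Random(seed)
    data = list(data)  # shuffle a copy, not the caller's list
    w, b = [0.0] * len(data[0][0]), 0.0
    for _ in range(epochs):
        rng.shuffle(data)
        for x, y in data:
            x_adv = fgsm(w, b, x, y, epsilon)  # attack the current model
            for xin in (x, x_adv):
                g = predict(w, b, xin) - y
                w = [wi - lr * g * xi for wi, xi in zip(w, xin)]
                b -= lr * g
    return w, b


# Hypothetical 2-feature toy data: (features, label).
toy = [([0.0, 0.0], 0), ([0.0, 1.0], 0), ([2.0, 2.0], 1), ([2.0, 3.0], 1)]
print(adversarial_train(toy))
```

Epsilon controls how large a perturbation the model must become invariant to. It should reflect plausible measurement noise, not an arbitrary constant.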


Key Experimental Protocols

Protocol 1: Building a Multimodal Predictive Pipeline

This protocol is based on the HAIM framework, which has been demonstrated to consistently improve predictive performance by integrating tabular, time-series, text, and image data [3].

Data Curation and Pre-processing:

- Gather multimodal data from Electronic Health Records (EHRs), ensuring patient-level alignment across modalities.

- Tabular Data: Normalize continuous variables and one-hot encode categorical variables.

- Time-Series Data: (e.g., vital signs) Interpolate to handle missing timestamps and extract summary statistics (mean, variance).

- Text Data: (e.g., clinical notes) Clean and tokenize text.

- Image Data: (e.g., X-rays) Normalize pixel values and resize images to a standard dimension.
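The tabular and time-series pre-processing steps above can be sketched in pure Python. Text tokenization and image resizing are omitted because they depend on the chosen tokenizer and imaging library. Several choices are assumptions rather than prescriptions of the protocol: the population standard deviation, linear interpolation between neighbours, and nearest-value filling at the series edges. The heart-rate values are hypothetical.

```python
from statistics import mean, pstdev, pvariance


def zscore(column):
    """Standardize a continuous tabular variable (population standard deviation)."""
    mu, sd = mean(column), pstdev(column)
    return [0.0 if sd == 0 else (v - mu) / sd for v in column]


def one_hot(column):
    """One-hot encode a categorical variable; returns (rows, category order)."""
    categories = sorted(set(column))
    return [[1.0 if v == c else 0.0 for c in categories] for v in column], categories


def fill_missing(times, values):
    """Linearly interpolate None readings from the nearest observed neighbours;
    gaps at either end take the nearest observed value."""
    observed = sorted((t, v) for t, v in zip(times, values) if v is not None)
    if not observed:
        raise ValueError("series has no observed values")
    filled = []
    for t, v in zip(times, values):
        if v is not None:
            filled.append(v)
            continue
        before = [p for p in observed if p[0] < t]
        after = [p for p in observed if p[0] > t]
        if before and after:
            (t0, v0), (t1, v1) = before[-1], after[0]
            filled.append(v0 + (v1 - v0) * (t - t0) / (t1 - t0))
        else:
            filled.append((before[-1] if before else after[0])[1])
    return filled


def summarize(series):
    """Summary statistics used as the time-series feature vector."""
    return {"mean": mean(series), "variance": pvariance(series)}


# Hypothetical hourly heart-rate readings with two missing values.
heart_rate = fill_missing([0, 1, 2, 3], [60, None, 80, None])
print(heart_rate, summarize(heart_rate))
```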

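Feature Extraction:

- Use modality-specific pre-trained models (e.g., BERT for clinical notes, ResNet for images) to convert each pre-processed modality into a fixed-length embedding vector [4] [3]. The processed tabular features and time-series summary statistics can serve directly as their modalities' feature vectors.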

Fusion and Model Training:

- Concatenate the feature embeddings from all modalities into a unified representation vector.

- Feed this unified vector into a downstream predictor (e.g., a classifier or regressor) for the specific task (e.g., disease diagnosis, mortality prediction).

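- Either train the predictor on the fixed embeddings or, when the feature extractors are differentiable networks, fine-tune the whole model end-to-end so that gradients flow back to the feature extractors.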

Validation and Interpretation:

- Evaluate model performance on a held-out test set using area under the receiver operating characteristic curve (AUROC).

- Use Shapley value analysis to determine the contribution of each data modality to the final prediction [3].
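The AUROC used in the evaluation step equals the probability that a randomly chosen positive case receives a higher score than a randomly chosen negative case (the Mann-Whitney formulation), with ties counted as one half. The pure-Python sketch below shows that definition. In practice a library routine such as scikit-learn's `roc_auc_score` computes the same quantity more efficiently. The labels and scores are hypothetical.

```python
def auroc(labels, scores):
    """AUROC as P(score of a random positive > score of a random negative),
    counting ties as 1/2. Quadratic in the number of samples."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative case")
    wins = 0.0
    for p in pos:
        for n in neg:
            wins += 1.0 if p > n else 0.5 if p == n else 0.0
    return wins / (len(pos) * len(neg))


# Hypothetical held-out labels and predicted risks.
print(auroc([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8]))
```

Comparing this number between unimodal and multimodal models on the same held-out set gives the kind of gain reported in Table 1.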

Protocol 2: Multimodal Multiobjective Optimization for Drug Targets (MMONCP)

This protocol is designed to identify multiple, equivalent sets of personalized drug targets (PDTs) by integrating network control principles with multiobjective optimization [1].

Construct a Personalized Gene Interaction Network (PGIN): Use tools like LIONESS or SSN to create a sample-specific molecular network for an individual patient from their genomic data [1].
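LIONESS estimates a sample-specific network from an aggregate network built on all N samples and one built with sample q left out. It uses the relation e_q = N(e_all - e_without_q) + e_without_q. The sketch below applies this relation with Pearson correlation as the aggregate edge measure. Pearson is an assumption on our part, since LIONESS can wrap other network-inference methods. SSN is a different method and is not shown. Genes with constant expression would cause a division by zero and should be filtered first.

```python
import math


def pearson(x, y):
    """Pearson correlation of two equal-length sequences."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def correlation_network(samples):
    """Gene-by-gene Pearson matrix; samples[i][g] is gene g's expression in sample i."""
    genes = list(zip(*samples))
    return [[1.0 if i == j else pearson(genes[i], genes[j])
             for j in range(len(genes))] for i in range(len(genes))]


def lioness(samples, q):
    """Single-sample network for sample q:
    e_q = N * (e_all - e_without_q) + e_without_q."""
    n = len(samples)
    e_all = correlation_network(samples)
    e_loo = correlation_network(samples[:q] + samples[q + 1:])
    return [[n * (a - b) + b for a, b in zip(row_all, row_loo)]
            for row_all, row_loo in zip(e_all, e_loo)]


# Hypothetical expression matrix: 5 samples x 3 genes.
expr = [[1.0, 2.1, 0.3], [2.0, 3.9, 0.1], [3.0, 6.2, 0.4], [4.0, 8.1, 0.2], [5.0, 9.7, 0.5]]
print(lioness(expr, q=2))
```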

Define the Multimodal Multiobjective Problem:

- Objective 1: Minimize the number of driver nodes (a measure of intervention cost).

- Objective 2: Maximize the information from prior-known drug targets (embedding existing knowledge).

- Constraints: Apply constraints based on the network control method used (e.g., minimum dominating set (MDS), NCUA).
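The problem definition can be sketched as a constraint check plus an objective evaluation. We assume an undirected network with MDS-style control, where every node must be a driver or adjacent to one. We also simplify the prior-knowledge objective to a plain count of overlap with known drug targets. The exact MMONCP formulation may differ. The gene names and network are hypothetical.

```python
def is_dominating_set(adjacency, drivers):
    """MDS-style constraint on an undirected network: every node is a driver
    or has at least one driver among its neighbours."""
    return all(node in drivers or adjacency[node] & drivers for node in adjacency)


def evaluate(adjacency, drivers, known_targets):
    """Objective vector for a candidate driver set, or None if infeasible.
    Objective 1 (minimize): number of driver nodes.
    Objective 2 (maximize): overlap with prior-known drug targets (simplified)."""
    drivers = frozenset(drivers)
    if not is_dominating_set(adjacency, drivers):
        return None
    return len(drivers), len(drivers & known_targets)


# Hypothetical 4-gene path network A - B - C - D with one known target (C).
net = {"A": {"B"}, "B": {"A", "C"}, "C": {"B", "D"}, "D": {"C"}}
known = frozenset({"C"})
for cand in ({"B", "C"}, {"A", "D"}, {"B"}):
    print(sorted(cand), evaluate(net, cand, known))
```

Here {B, C} and {A, D} are both feasible two-driver sets. Only the second objective separates them, which is why a single-objective formulation cannot tell them apart.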

Execute the Optimization Algorithm:

- Apply the CMMOEA-GLS-WSCD algorithm [1].

- This algorithm performs a global and local search to find a set of Pareto-optimal solutions. These solutions represent different sets of PDTs that are equivalent in their trade-off between the two objectives but may differ in their biological functions (i.e., they are multimodal).
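Crowding measures are what keep such algorithms from collapsing onto one region of the front. As a reference point, the sketch below implements the standard NSGA-II crowding distance. It is not the weighting-based special crowding distance used by CMMOEA-GLS-WSCD, whose weighting is not reproduced here. Special crowding distances in multimodal optimization typically also consider crowding among decision vectors, and the same function can be applied to decision vectors for that purpose.

```python
def crowding_distance(vectors):
    """NSGA-II crowding distance for a list of vectors (one front).
    Boundary points get infinity; larger values mean less crowded."""
    n = len(vectors)
    if n == 0:
        return []
    dist = [0.0] * n
    for k in range(len(vectors[0])):
        order = sorted(range(n), key=lambda i: vectors[i][k])
        lo, hi = vectors[order[0]][k], vectors[order[-1]][k]
        dist[order[0]] = dist[order[-1]] = float("inf")
        if hi == lo:
            continue  # this coordinate cannot discriminate between points
        for j in range(1, n - 1):
            gap = vectors[order[j + 1]][k] - vectors[order[j - 1]][k]
            dist[order[j]] += gap / (hi - lo)
    return dist


# Hypothetical two-objective front.
print(crowding_distance([(1, 5), (2, 3), (4, 2), (5, 1)]))
```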

Validate the MDTs: Experimentally or computationally validate the predicted Multimodal Drug Targets (MDTs) for their efficacy and functional differences.

Performance Data and Landscape Analysis

Table 1: Quantitative Performance of Multimodal AI in Healthcare Tasks

This table summarizes the performance gains achieved by multimodal AI models over their unimodal counterparts, as demonstrated in large-scale studies [5] [3].

| Application Domain | Metric | Reported Gain over Unimodal Baseline | Average Gain | Key Modalities Integrated |
|---|---|---|---|---|
| General Medical Applications | AUC | +6.2 percentage points | +6.2 pp | Imaging, Clinical, Genomic [5] |
| Chest Pathology Diagnosis | AUC | +6% to +22% | +9% | Chest X-ray (Image), Clinical Text, Time-Series [3] |
| Hospital Length-of-Stay Prediction | AUC | +8% to +20% | +14% | Clinical Tabular, Time-Series, Text [3] |
| 48-Hour Mortality Prediction | AUC | +11% to +33% | +22% | Clinical Tabular, Time-Series, Text [3] |

Table 2: Research Reagent Solutions for Multimodal Experiments

This table lists essential computational tools and data resources for constructing and analyzing multimodal parameter landscapes.

| Reagent / Resource | Type | Primary Function in Research | Example Use Case |
|---|---|---|---|
| HAIM Framework [3] | Software Pipeline | Provides a unified pipeline for processing, fusing, and modeling diverse EHR data modalities (tabular, time-series, text, images). | Building a holistic patient model for outcome prediction. |
| MMONCP Framework [1] | Optimization Algorithm | Solves constrained multimodal multiobjective problems to identify multiple sets of personalized drug targets. | Finding equivalent but functionally different drug target combinations. |
| Pre-trained Models (BERT, ResNet) [4] | Feature Extractors | Converts raw text and image data into meaningful, lower-dimensional feature vectors for downstream fusion. | Creating aligned embeddings from clinical notes and medical images. |
| CLIP (Contrastive Language-Image Pre-training) [7] | Vision-Language Model | Enables zero-shot and few-shot learning by understanding the relationship between images and text descriptions. | Assessing landscape scenicness from images and text prompts; adaptable to medical image and report analysis. |
| Shapley Values [3] | Interpretation Metric | Quantifies the marginal contribution of each data modality (or source) to the final model's prediction. | Explaining a model's decision and identifying the most informative data types. |

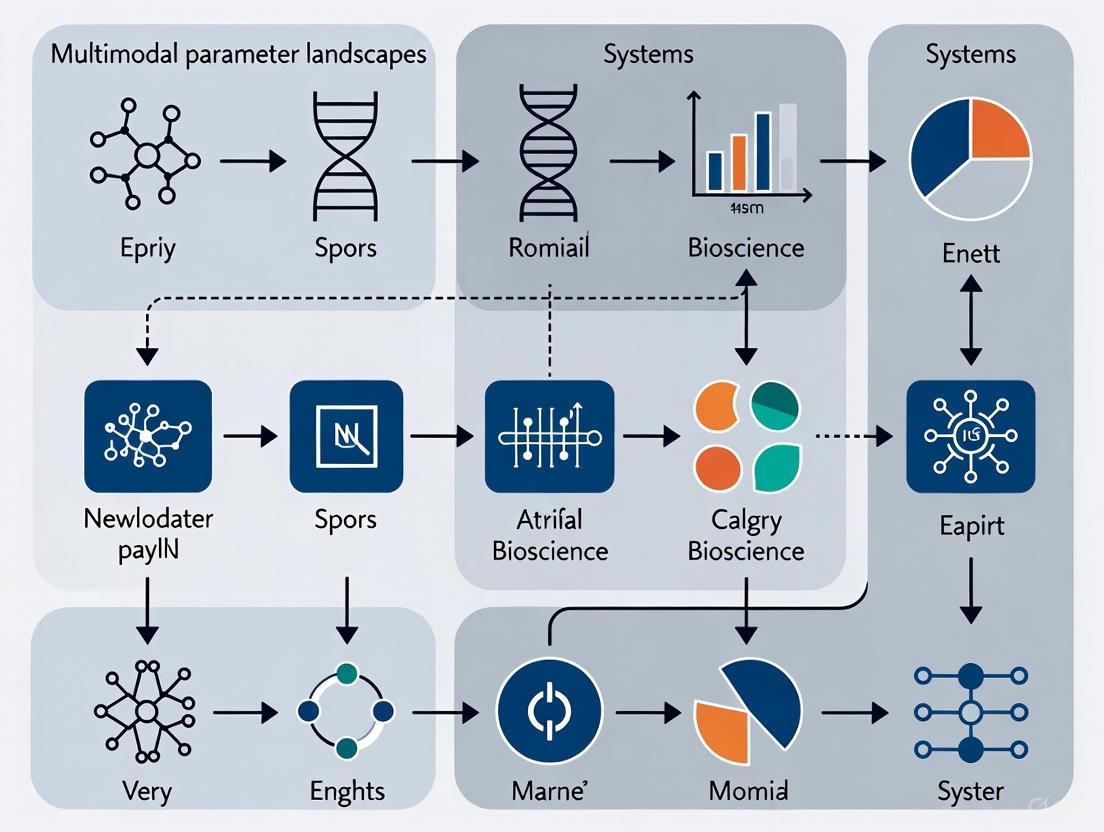

Visualizing Workflows and Landscapes

[Diagrams: Multimodal AI Pipeline for Healthcare; Multimodal Multiobjective Optimization]

Technical Troubleshooting Guides

Guide: Handling Premature Convergence in Multi-Objective Molecular Optimization

Problem: Optimization algorithm converges to a single dominant solution, missing the diverse Pareto front of potential drug candidates.

Symptoms:

- Generated molecular candidates exhibit minimal structural diversity despite varying property targets.

- Algorithm repeatedly returns similar solutions with nearly identical physicochemical properties.

- Failure to discover candidates balancing conflicting objectives (e.g., potency vs. solubility).

Solution Steps:

Step 1: Verify Landscape Multimodality

- Procedure: Conduct preliminary landscape analysis using visualization techniques [8]. Sample your search space broadly and plot fitness landscapes to identify presence of multiple local optima.

- Expected Outcome: Confirmation that your problem contains multiple promising regions in the search space, not just a single global optimum.

Step 2: Implement Diversity-Preserving Algorithms

- Procedure: Incorporate niching or crowding mechanisms into your optimization workflow. For Bayesian optimization, use q-NParEGO or q-ES algorithms as described in Pareto optimization studies [9].

- Configuration: Set population size to at least 100 individuals and maintain an archive of non-dominated solutions throughout the optimization process.

Step 3: Adjust Objective Sensitivity

- Procedure: Recalibrate property weighting in multi-objective functions. For molecular optimization, ensure no single property (e.g., binding affinity) dominates the fitness function to prevent premature convergence [10].

- Validation: Test with known diverse solutions to verify the algorithm can maintain them in the population.
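Step 3 can be illustrated by rescaling each property before weighting it. In the sketch below, each property is min-max scaled to [0, 1] across the current candidates so that a property with a large numeric range cannot dominate the score through its units alone. This is one simple recalibration that we assume for illustration. The property names and values are hypothetical. A weighted sum, even a normalized one, cannot reach solutions in non-convex regions of a Pareto front, which is why Step 2's Pareto-based methods remain the primary tool.

```python
def normalized_weighted_sum(candidates, weights, maximize):
    """Weighted-sum fitness after min-max scaling every property to [0, 1].

    candidates: list of dicts property -> value; weights: property -> weight;
    maximize: property -> True to maximize, False to minimize."""
    bounds = {p: (min(c[p] for c in candidates), max(c[p] for c in candidates))
              for p in weights}
    scores = []
    for c in candidates:
        total = 0.0
        for p, w in weights.items():
            lo, hi = bounds[p]
            x = 0.5 if hi == lo else (c[p] - lo) / (hi - lo)
            total += w * (x if maximize[p] else 1.0 - x)
        scores.append(total)
    return scores


# Hypothetical candidates: affinity as pKd, solubility in ug/mL (very different ranges).
mols = [{"pKd": 9.0, "solubility": 10.0},
        {"pKd": 6.0, "solubility": 500.0},
        {"pKd": 7.5, "solubility": 255.0}]
print(normalized_weighted_sum(mols, {"pKd": 0.5, "solubility": 0.5},
                              {"pKd": True, "solubility": True}))
```

Without scaling, a raw sum of these properties would be driven almost entirely by solubility, whose values span hundreds of units.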

Guide: Resolving Conflicting Objectives in Clinical Dose Optimization

Problem: Unable to determine the optimal dosage balancing efficacy and toxicity in oncology drug development.

Symptoms:

- High rates of dose reduction or treatment discontinuation in clinical trials.

- Significant efficacy-toxicity trade-offs with no clear optimal dosage.

- Regulatory feedback requesting better dose justification prior to pivotal trials.

Solution Steps:

Step 1: Implement Randomized Dose-Ranging Studies

- Procedure: Follow Project Optimus guidelines by evaluating multiple doses early in development [11] [12]. Design trials to explicitly compare at least 3-5 different dose levels with sufficient patient allocation per arm.

- Data Collection: Incorporate patient-reported outcomes (PROs) and pharmacokinetic/pharmacodynamic (PK/PD) data to capture both efficacy and tolerability metrics.

Step 2: Apply Multi-Objective Decision Framework

- Procedure: Formalize the trade-off using Pareto optimization principles [13] [9]. Structure the problem with clear constraints (e.g., minimum efficacy threshold) and competing objectives (maximize efficacy, minimize toxicity).

- Implementation: Use mathematical optimization to identify the Pareto front of non-dominated dose solutions, then apply clinical judgment for final selection.

Step 3: Engage Regulators Early

- Procedure: Schedule Type B meetings with FDA Oncology Center of Excellence during Phase I development to discuss dose-optimization strategy [11].

- Documentation: Prepare comprehensive data packages including dose-response models, exposure-response analyses, and justification for proposed dosing regimen.

Frequently Asked Questions (FAQs)

Q1: What practical value do multiple optimal solutions provide in drug discovery?

Multiple optima provide crucial flexibility in drug development by offering:

- Backup Candidates: When lead compounds fail in later stages due to unforeseen toxicity or pharmacokinetic issues [10].

- Portfolio Strategy: Multiple structurally distinct scaffolds with similar potency allow parallel development paths [14].

- Development Flexibility: Candidates optimized for different specific properties can be matched to particular patient populations or formulation strategies [9].

Q2: How can we efficiently identify multiple optima in high-dimensional molecular search spaces?

Recent approaches include:

- Collaborative LLM Systems: MultiMol architecture uses specialized agents to explore chemical space more comprehensively [10].

- Pareto Optimization: Superior to scalarization for maintaining diverse solution fronts [9].

- Visualization Techniques: Landscape visualization helps identify promising regions containing multiple local optima [8].

Q3: What are the regulatory implications of presenting multiple optimal dosing regimens?

FDA's Project Optimus encourages:

- Comprehensive Data: Submission of data across multiple doses rather than a single maximum tolerated dose (MTD) [11] [12].

- Risk-Benefit Profiles: Clear documentation of efficacy-safety trade-offs for different dosing options.

- Patient-Centric Justification: Rationale for selected dose based on quality of life and tolerability considerations, not just efficacy.

Q4: How do we handle decision-making when faced with multiple non-dominated solutions?

Effective approaches include:

- Scenario Analysis: Testing solutions under different assumptions and constraints [13].

- Stakeholder Prioritization: Engaging clinicians, patients, and regulators to weight competing objectives.

- Sequential Decision Making: Implementing priority-based optimization where critical objectives are satisfied first [13].
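Priority-based (lexicographic) selection handles the objectives one at a time. At each level it keeps only the candidates within a tolerance of the best value, then moves to the next objective. Without a tolerance, the first objective alone usually decides. The sketch below shows this mechanic. The dose summaries, objective names, and tolerances are hypothetical, and choosing the tolerances is itself a clinical judgment.

```python
def lexicographic_select(candidates, priorities, tolerances):
    """Priority-based selection with tolerances.

    priorities: ordered list of (key, "max" | "min"); tolerances: allowed
    shortfall from the best value at each level."""
    pool = list(candidates)
    for (key, sense), tol in zip(priorities, tolerances):
        values = [c[key] for c in pool]
        best = max(values) if sense == "max" else min(values)
        pool = [c for c in pool if abs(c[key] - best) <= tol]
    return pool


# Hypothetical dose-level summaries (illustrative numbers only).
doses = [
    {"dose_mg": 50, "response_rate": 0.30, "grade3_tox_rate": 0.12},
    {"dose_mg": 100, "response_rate": 0.42, "grade3_tox_rate": 0.30},
    {"dose_mg": 200, "response_rate": 0.45, "grade3_tox_rate": 0.38},
]
chosen = lexicographic_select(
    doses,
    priorities=[("response_rate", "max"), ("grade3_tox_rate", "min")],
    tolerances=[0.05, 0.0],
)
print([d["dose_mg"] for d in chosen])
```

With a 0.05 tolerance on response rate, the 100 mg level survives the first level alongside 200 mg and then wins on toxicity.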

Table 1: Performance Comparison of Multi-Objective Optimization Approaches in Molecular Design

| Method | Success Rate (%) | Diversity Metric | Computational Cost | Key Applications |
|---|---|---|---|---|
| MultiMol (LLM System) [10] | 82.30 | High | Medium-High | Lead optimization, selectivity enhancement |
| Traditional AI Methods [10] | 27.50 | Low | Medium | Single-property optimization |
| Pareto Optimization (Virtual Screening) [9] | 100% Pareto front coverage | High | Low (8% library exploration) | High-throughput screening |
| Bayesian Optimization [9] | Varies by scalarization | Medium | Medium | Property prediction |

Table 2: Project Optimus Dose Optimization Framework Components [11] [12]

| Component | Traditional Paradigm | Optimus Paradigm | Key Benefits |
|---|---|---|---|
| Dose Finding | Maximum Tolerated Dose (MTD) | Multiple dose levels | Reduced toxicity, better tolerability |
| Trial Design | 3+3 design | Randomized dose-ranging | Comprehensive efficacy-toxicity characterization |
| Data Collection | Focus on efficacy and severe toxicity | Includes PROs, PK/PD, quality of life | Patient-centric dosing |
| Timing | Late-phase adjustment | Early development (Phase I/II) | Reduced post-market modifications |

Experimental Protocols

Protocol: Multi-Objective Molecular Optimization Using Collaborative LLM Systems

Purpose: To optimize multiple molecular properties simultaneously while maintaining structural integrity and scaffold consistency.

Materials:

- Software: MultiMol framework or similar collaborative LLM system [10]

- Data: Molecular dataset in SMILES format with property annotations

- Hardware: GPU-enabled workstation for LLM inference

Procedure:

Input Preparation:

- Extract molecular scaffold SMILES using RDKit [10]

- Define target property values based on optimization goals (e.g., LogP, QED, selectivity)

- Set optimization strength parameter Δ to control property adjustment magnitude

Worker Agent Execution:

- Input scaffold SMILES and adjusted property targets to fine-tuned LLM

- Generate candidate molecules satisfying new specifications

- Maintain scaffold consistency through masked-and-recover training approach

Research Agent Filtering:

- Perform literature review via web search for target properties

- Identify key molecular characteristics associated with desired properties

- Filter candidates based on literature-derived insights

Validation:

- Assess generated molecules for chemical validity

- Verify scaffold preservation and property improvements

- Select top-performing candidates for experimental validation

Protocol: Dose Optimization Following Project Optimus Guidelines

Purpose: To identify optimal therapeutic dose balancing efficacy, safety, and tolerability in oncology drug development.

Materials:

- Regulatory Guidance: FDA Project Optimus documents [11]

- Trial Design Resources: Adaptive trial protocol templates

- Data Collection Tools: PRO instruments, PK/PD assay protocols

Procedure:

Early Phase Planning:

- Engage FDA Oncology Review Division before registration trials [11]

- Design Phase I trials to evaluate multiple dose levels (minimum 3-5 doses)

- Incorporate biomarker assessments and PK/PD modeling

Randomized Dose Evaluation:

- Implement randomized dose-ranging studies with sufficient power

- Include patient-reported outcomes and quality of life metrics

- Assess multiple efficacy endpoints and toxicity profiles across doses

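Data Integration and Analysis:

- Integrate efficacy, safety, PRO, and PK/PD data using dose-response and exposure-response models.

- Identify the Pareto front of non-dominated doses, then apply clinical judgment to select the candidate doses.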

Regulatory Submission:

- Document comprehensive dose justification

- Present multiple candidate doses with risk-benefit profiles

- Prepare for discussions on recommended Phase II and Phase III doses

Visualization Diagrams

[Diagrams: Multi-Objective Optimization Workflow; Multimodal Fitness Landscape; Dose Optimization Decision Framework]

Research Reagent Solutions

Table 3: Essential Tools for Multi-Objective Optimization Research

| Tool/Reagent | Function | Application Context | Key Features |
|---|---|---|---|
| MultiMol Framework [10] | Collaborative LLM for molecular optimization | Multi-property lead optimization | Dual-agent system, literature integration |
| Pareto Optimization Software [9] | Multi-objective Bayesian optimization | Virtual screening campaigns | Efficient library exploration, Pareto front identification |
| RDKit [10] | Cheminformatics toolkit | Molecular manipulation and analysis | Scaffold extraction, property calculation |
| Landscape Visualization Tools [8] | Multimodality analysis | Algorithm development and debugging | Fitness landscape mapping, optimum identification |
| Project Optimus Toolkit [11] | Regulatory guidance framework | Oncology dose optimization | Dose-ranging methodologies, FDA-aligned approaches |

Peaks, Valleys, and Basins of Attraction in Fitness Landscapes

Frequently Asked Questions (FAQs)

1. What defines a 'peak' or 'optimum' in a fitness landscape? In evolutionary biology, a fitness landscape is a mapping of genotypes to fitness. A peak, or fitness optimum, is a high-fitness genotype whose single-step mutational neighbors all have lower fitness. In optimization terms, it is a solution where no small change in the decision variables can lead to an improvement in the objective function [15]. In multimodal optimization, multiple such peaks can exist, representing multiple satisfactory solutions to a given problem [16].

2. What is a 'basin of attraction' and why is it important? A basin of attraction is the set of points in the search space from which a local search (e.g., steepest ascent) converges to a particular peak [15]. Identifying these basins is crucial for multimodal optimization, as it allows algorithms to find multiple distinct optima instead of having multiple solutions converge to the same peak [16].

3. What is the practical significance of finding multiple peaks in drug development? In drug development, particularly in studying antibiotic resistance, fitness landscapes reveal that the number of adaptive mutational paths is often limited. Identifying these paths and the corresponding fitness peaks helps understand how resistance evolves. This knowledge can inform the use of alternating antibiotics to restore susceptibility after resistance has evolved [17].

4. How can I distinguish between a true peak and a local, non-optimal solution in my data? A two-phase multimodal optimization model can be employed. The first phase uses a population-based search algorithm to locate potential optima. The second phase uses a peak identification (PI) procedure, such as the hill–valley method, to filter out non-optimal solutions. This method checks whether two individuals are in the same region of attraction without requiring prior knowledge of niche radii [16].

5. What is 'sign epistasis' and how does it create valleys in the fitness landscape? Sign epistasis occurs when a mutation that is beneficial in one genetic background becomes deleterious in another. In reciprocal sign epistasis, the sign of each mutation's effect depends on the other. For example, two mutations can each be deleterious on their own yet beneficial when combined. The single mutants then form a fitness valley: low-fitness genotypes whose neighbors (the wild type and the double mutant) both have higher fitness. This makes the landscape more rugged and constrains evolutionary paths [17].
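The definitions above can be checked mechanically for a pair of mutations, given the fitness of the wild type, both single mutants, and the double mutant. The helper below is a hypothetical sketch. It compares the sign of each mutation's effect with and without the other mutation, and it falls back to the additive epistasis term w11 - w10 - w01 + w00 to detect magnitude epistasis. Neutral effects (exactly zero) are not counted as sign changes.

```python
def classify_epistasis(w00, w10, w01, w11, tol=1e-9):
    """Classify pairwise epistasis between mutations A and B from the fitness of
    the wild type (w00), single mutants (w10 = A only, w01 = B only) and the
    double mutant (w11)."""
    a_alone, a_with_b = w10 - w00, w11 - w01  # effect of A without / with B
    b_alone, b_with_a = w01 - w00, w11 - w10  # effect of B without / with A
    sign_a = a_alone * a_with_b < 0  # A's effect flips sign depending on B
    sign_b = b_alone * b_with_a < 0  # B's effect flips sign depending on A
    if sign_a and sign_b:
        return "reciprocal sign"
    if sign_a or sign_b:
        return "sign"
    if abs(w11 - w10 - w01 + w00) > tol:  # non-additive but no sign change
        return "magnitude"
    return "none"


# Hypothetical fitness values: both singles deleterious, double beneficial.
print(classify_epistasis(w00=1.0, w10=0.6, w01=0.7, w11=1.2))
```

In the example, both the wild type and the double mutant are fitter than every single-mutant neighbor, so the landscape has two peaks separated by the valley described above.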

Troubleshooting Guides

Problem 1: Algorithm Convergence to a Single Peak

Symptoms: Your optimization algorithm consistently returns the same solution, even when started from different initial points, despite suspicion of multiple optima.

Solution:

- Implement Niching: Integrate a niching technique into your evolutionary algorithm to preserve population diversity. Prominent methods include:

- Crowding: Have each offspring replace the most similar individual in the population (often one of its parents), so that no single niche takes over.

- Fitness Sharing: Penalize the fitness of individuals based on how crowded their neighborhood is.

- Species Conserving: Explicitly form and maintain subpopulations (species) within different basins of attraction [16].

- Apply a Two-Phase Model: Separate the search and identification processes.

- Phase 1 - Search: Run a population-based algorithm (e.g., an EA with niching) to broadly explore the search space.

- Phase 2 - Peak Identification: Use a procedure like the Hill–Valley (HVPI) algorithm on the final population. This algorithm samples points on the line between two candidate solutions. If any intermediate point has lower fitness than both endpoints, a valley separates them and they belong to different peaks [16].
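Fitness sharing, one of the niching methods listed above, can be sketched with the classic sharing function sh(d) = 1 - (d/sigma)^alpha for d < sigma, else 0. Each individual's raw fitness is divided by its niche count, the sum of sh over its distances to the whole population. The sketch assumes maximization with positive raw fitness and Euclidean distance in decision space. The niche radius sigma_share is a user choice that this sketch does not tune.

```python
import math


def shared_fitness(population, fitness, sigma_share, alpha=1.0, distance=math.dist):
    """Fitness sharing: divide each raw fitness by its niche count sum_j sh(d_ij),
    where sh(d) = 1 - (d / sigma_share) ** alpha if d < sigma_share, else 0."""
    shared = []
    for xi, fi in zip(population, fitness):
        niche = 0.0
        for xj in population:
            d = distance(xi, xj)
            if d < sigma_share:
                niche += 1.0 - (d / sigma_share) ** alpha
        shared.append(fi / niche)  # niche >= 1 because d(xi, xi) = 0
    return shared


# Two crowded individuals near x = 0 and one isolated individual at x = 5.
print(shared_fitness([(0.0,), (0.1,), (5.0,)], [1.0, 1.0, 1.0], sigma_share=1.0))
```

The crowded pair is penalized while the isolated individual keeps its full fitness, so selection pressure shifts toward under-explored peaks.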

Problem 2: Difficulty in Precisely Identifying Distinct Peaks

Symptoms: Your algorithm finds many candidate solutions, but it is unclear how many represent truly distinct optima versus redundant solutions clustered around the same peak.

Solution:

- Use the Hill–Valley Peak Identification (HVPI) Algorithm: This method distinguishes between optima without needing a pre-defined niche radius.

- Sort all candidate solutions by their fitness in descending order.

- Initialize an empty solution list S.

- For each candidate p in the sorted list, apply the hill–valley test between p and every existing member of S by sampling points on the path between them. If a point with lower fitness than both endpoints separates p from every member of S, p lies in a new basin and is added to S [16].

- Reduce Computational Cost with Clustering: To minimize the number of fitness evaluations in HVPI, first group the candidate solutions using a bisecting K-means clustering algorithm. The hill–valley check can then be performed between cluster centers rather than every individual, resulting in the more efficient HVPIC algorithm [16].
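The HVPI procedure above can be sketched in a few lines for a maximization problem. The hill-valley test below evaluates evenly spaced interior points on the segment between two solutions. The number of samples and the tolerance `tol` are assumptions, and the bisecting K-means pre-clustering of HVPIC is not included.

```python
def hill_valley(f, a, b, samples=5, tol=0.0):
    """True if some interior point on the segment a->b is less fit than both
    endpoints (maximization), i.e. a valley separates a and b."""
    floor = min(f(a), f(b)) - tol
    for k in range(1, samples + 1):
        t = k / (samples + 1)
        point = [ai + t * (bi - ai) for ai, bi in zip(a, b)]
        if f(point) < floor:
            return True
    return False


def hvpi(candidates, f, samples=5, tol=0.0):
    """Scan candidates best-first and keep p only if a valley separates it from
    every peak kept so far, leaving one representative per basin."""
    peaks = []
    for p in sorted(candidates, key=f, reverse=True):
        if all(hill_valley(f, p, s, samples, tol) for s in peaks):
            peaks.append(p)
    return peaks


# Hypothetical 1-D landscape with peaks at x = -1 and x = 1 and a valley at 0.
landscape = lambda x: -min((x[0] - 1) ** 2, (x[0] + 1) ** 2)
print(hvpi([[1.0], [0.9], [-1.0], [-1.1]], landscape))
```

No niche radius appears anywhere: the redundant candidates at 0.9 and -1.1 are discarded only because no valley separates them from the better solution already in S.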

Problem 3: Navigating Rugged Landscapes with Sign Epistasis

Symptoms: Evolutionary pathways are highly constrained, and populations get stuck on sub-optimal peaks because all immediate mutational steps lead to a decrease in fitness (a fitness valley).

Solution:

- Identify Permissive Pathways: Construct a combinatorially complete set of mutants to map all possible evolutionary trajectories. This reveals if any paths exist where each step increases fitness [17].

- Explore Adaptive Reversions: Be aware that some pathways may involve an adaptive reversion, where a favorable mutation incorporated early is later reverted to allow access to a higher fitness peak [17].

- Utilize Compensatory Mutations: Look for compensatory mutations that mitigate unfavorable fitness interactions introduced at earlier stages, effectively creating a bridge across a fitness valley [17].

Experimental Protocols & Data Presentation

Protocol 1: Mapping an Adaptive Landscape for a Protein

Objective: To empirically determine the fitness landscape for a set of n mutant sites in a gene, revealing all peaks, valleys, and possible evolutionary paths.

Methodology:

- Genotype Construction: Create all 2^n possible combinations of the n mutant sites of interest.

- Phenotypic Assay: Measure a proxy for fitness (e.g., enzyme activity, antibiotic resistance, growth rate) for each genotype under controlled, reproducible conditions.

- Data Analysis:

- Calculate the epistasis (interaction) between mutations.

- Identify all local and global fitness peaks.

- Map all permissible evolutionary trajectories where each single mutational step increases fitness [17].
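Once all 2^n genotypes have been measured, the peak and trajectory analysis can be automated. The sketch below encodes each genotype as an integer whose bit i marks whether site i is mutated. It lists local optima and enumerates the shortest paths from wild type to the full mutant in which every step strictly increases fitness. This covers only forward, shortest paths: trajectories involving adaptive reversions are not enumerated. The fitness values are hypothetical.

```python
from itertools import permutations


def local_optima(fitness, n):
    """Genotypes fitter than all of their single-mutant neighbours."""
    return [g for g in range(2 ** n)
            if all(fitness[g] > fitness[g ^ (1 << i)] for i in range(n))]


def accessible_paths(fitness, n, start=0, end=None):
    """Shortest mutational paths start -> end in which every step strictly
    increases fitness (one path per order of introducing the mutations)."""
    end = (1 << n) - 1 if end is None else end
    sites = [i for i in range(n) if (start ^ end) >> i & 1]
    paths = []
    for order in permutations(sites):
        g, path = start, [start]
        for i in order:
            nxt = g ^ (1 << i)
            if fitness[nxt] <= fitness[g]:
                break  # this step would not increase fitness
            g = nxt
            path.append(g)
        else:
            paths.append(path)
    return paths


# Hypothetical 2-site landscape indexed by genotype 0b00, 0b01, 0b10, 0b11.
w = [1.0, 1.2, 0.9, 1.5]
print(local_optima(w, 2), accessible_paths(w, 2))
```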

Key Reagents and Solutions:

- Template DNA: The wild-type gene or genome.

- Site-Directed Mutagenesis Kit: For constructing all mutant combinations.

- Selection Medium: To apply selective pressure (e.g., containing an antibiotic).

- Spectrophotometer/Microplate Reader: For high-throughput growth rate measurements.

Quantitative Measures of Landscape Ruggedness

The following table summarizes key metrics for analyzing the structure of a fitness landscape [15].

| Metric | Formula/Description | Interpretation |
|---|---|---|
| Autocorrelation (Ruggedness) | $\rho(s) \approx \frac{1}{\sigma_\Phi^2 (m - s)} \sum_{t=1}^{m-s} \left(\Phi(u_t) - \bar{\Phi}\right)\left(\Phi(u_{t+s}) - \bar{\Phi}\right)$, calculated from a random walk $u_1, \dots, u_m$ of length $m$ through the landscape. | A low autocorrelation indicates a rugged landscape, making it harder for local search algorithms to navigate. |
| Fitness Distance Correlation (FDC) | $\rho(\Phi, d) = \frac{\operatorname{cov}(\Phi, d)}{\sigma_\Phi \sigma_d}$; measures the correlation between fitness ($\Phi$) and distance ($d$) to the nearest global optimum. | A value of -1 (for maximization) indicates an easy problem. A value of 1 indicates a difficult one. |
| Number of Local Optima | The count of genotypes that are fitter than all their single-mutant neighbors. | A higher number indicates a more rugged landscape with many potential traps for optimization algorithms. |
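The first two metrics in the table translate directly into code. The sketch below assumes you have already recorded the fitness values Φ(u_1), ..., Φ(u_m) along a random walk. For FDC, it assumes you have the fitness of each sampled point together with its distance to the nearest global optimum. Population variances are used, matching σ in the formulas.

```python
from statistics import mean, pvariance


def walk_autocorrelation(phi, s):
    """rho(s) for fitness values phi[0..m-1] recorded along a random walk."""
    m = len(phi)
    if not 0 < s < m:
        raise ValueError("lag s must satisfy 0 < s < m")
    mu, var = mean(phi), pvariance(phi)
    total = sum((phi[t] - mu) * (phi[t + s] - mu) for t in range(m - s))
    return total / (var * (m - s))


def fitness_distance_correlation(fitness, distance):
    """FDC = cov(Phi, d) / (sigma_Phi * sigma_d), population statistics."""
    mf, md = mean(fitness), mean(distance)
    cov = mean([(f - mf) * (d - md) for f, d in zip(fitness, distance)])
    return cov / (pvariance(fitness) ** 0.5 * pvariance(distance) ** 0.5)


# Fitness falls steadily with distance to the optimum: an "easy" landscape.
print(fitness_distance_correlation([10, 9, 8, 7], [0, 1, 2, 3]))
```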

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Fitness Landscape Research |
|---|---|
| Combinatorially Complete Library | A set of mutants containing all possible combinations of the genetic changes of interest. It is the fundamental requirement for empirically mapping an adaptive landscape [17]. |
| High-Throughput Phenotyping Assay | A reproducible and scalable method to measure fitness or a proxy (e.g., growth rate, fluorescence, drug resistance) for a large number of genotypes in parallel [17]. |
| Niching Algorithm | A computational method (e.g., crowding, fitness sharing) that maintains population diversity in evolutionary algorithms, enabling the simultaneous location of multiple fitness peaks [16]. |
| Hill–Valley Peak Identification (HVPI) | A post-processing algorithm that filters a set of candidate solutions to identify distinct optima by checking if solutions reside in the same basin of attraction [16]. |

Landscape Analysis and Evolutionary Workflows

[Diagrams: Fitness Landscape Analysis Workflow; Reciprocal Sign Epistasis]

FAQ: Troubleshooting Guide for Natural Product Drug Discovery

FAQ 1: Our high-throughput screening of a natural product library is yielding an unmanageably high number of hits with similar activity. How can we prioritize compounds for further investigation?

Answer: This is a common challenge when working with complex natural extracts. We recommend implementing an Integrated Dereplication Strategy to quickly identify known compounds and prioritize novel chemistries.

- Recommended Action: Employ a combination of analytical techniques before committing to full isolation.

- Detailed Methodology:

- Liquid Chromatography-High Resolution Mass Spectrometry (LC-HRMS): Use this technique to obtain precise molecular weights and formula predictions for active constituents [18].

- Tandem MS/MS Analysis: Fragment the ions and compare the resulting spectra against natural product databases like Global Natural Products Social Molecular Networking (GNPS) to identify known compounds [18].

- Hyphenated Techniques: For critical samples, utilize HPLC-HRMS-SPE-NMR. This system automates the separation, trapping, and nuclear magnetic resonance analysis of individual compounds, providing structural information without the need for large-scale isolation [18].
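The first dereplication step, matching an accurately measured mass against known compounds, comes down to a parts-per-million (ppm) tolerance check. The sketch below assumes a small in-memory table of candidate ions; the compound names and m/z values are placeholders, not real database entries, and a real workflow would query a curated natural product database and also compare isotope patterns and MS/MS spectra.

```python
def ppm_error(observed_mz, theoretical_mz):
    """Mass error in parts per million."""
    return (observed_mz - theoretical_mz) / theoretical_mz * 1e6


def match_features(features, library, tolerance_ppm=5.0):
    """Return (observed m/z, compound, ppm error) for every hit within tolerance.

    features: observed m/z values
    library:  dict compound name -> theoretical m/z of the same ion type
    """
    hits = []
    for mz in features:
        for name, ref_mz in library.items():
            err = ppm_error(mz, ref_mz)
            if abs(err) <= tolerance_ppm:
                hits.append((mz, name, round(err, 2)))
    return hits


# Hypothetical [M+H]+ library (placeholder values, not real compounds).
library = {"known_compound_A": 301.1410, "known_compound_B": 455.3520}
observed = [301.1418, 455.3700, 612.2051]

hits = match_features(observed, library)
matched = {h[0] for h in hits}
novel = [mz for mz in observed if mz not in matched]
print(hits)   # features that dereplicate to known compounds
print(novel)  # features worth prioritizing as potentially new chemistry
```

The 5 ppm tolerance is an assumption; the appropriate window depends on the instrument's calibrated mass accuracy.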

FAQ 2: Our lead natural product has excellent efficacy but poor solubility and pharmacokinetic properties. What strategies can we explore to develop a viable drug candidate?

Answer: Optimizing the properties of a natural product lead is a central challenge in drug development. The solution often lies in Chemical Modification and Analogue Development.

- Recommended Action: Systematically generate and test synthetic analogues of your lead compound.

- Detailed Methodology:

- Structure-Activity Relationship (SAR) Analysis: Synthesize a library of analogues with modifications to different regions of the parent molecule. Test these analogues for both activity and improved properties [19].

- Property-Based Design: Focus modifications on the parameters of Lipinski's Rule of Five (molecular weight under 500 Da, cLogP no greater than 5, no more than 5 hydrogen bond donors, and no more than 10 hydrogen bond acceptors) to enhance the likelihood of oral bioavailability [20] [19].

- Semi-Synthesis: Use the natural product as a starting material for chemical synthesis to create novel derivatives that are inaccessible through fermentation or extraction alone [18].
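A Rule of Five filter for an analogue series is simple to script. The sketch below assumes the four descriptors have already been computed by a cheminformatics toolkit and are passed in as plain numbers; the analogue names and descriptor values are invented for illustration. Following Lipinski's original formulation, poor absorption is flagged only when more than one criterion is violated.

```python
from dataclasses import dataclass


@dataclass
class Descriptors:
    name: str
    mol_weight: float  # Da
    clogp: float
    h_donors: int
    h_acceptors: int


def ro5_violations(d):
    """List the Rule of Five criteria a compound violates."""
    violations = []
    if d.mol_weight >= 500:
        violations.append("MW >= 500")
    if d.clogp > 5:
        violations.append("cLogP > 5")
    if d.h_donors > 5:
        violations.append("HBD > 5")
    if d.h_acceptors > 10:
        violations.append("HBA > 10")
    return violations


def likely_orally_bioavailable(d):
    """Poor absorption is more likely when more than one criterion is violated."""
    return len(ro5_violations(d)) <= 1


# Hypothetical parent natural product and two synthetic analogues.
series = [
    Descriptors("parent", 812.0, 3.0, 4, 14),
    Descriptors("analogue_1", 640.0, 2.5, 3, 9),
    Descriptors("analogue_2", 455.0, 3.1, 2, 7),
]
for d in series:
    print(d.name, ro5_violations(d), likely_orally_bioavailable(d))
```

Natural products frequently fall outside the Rule of Five yet remain orally active, so a filter like this is better used to rank analogues than to discard them.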

FAQ 3: We are encountering significant variability in the biological activity of different batches of a plant extract. How can we ensure consistency and identify the true active component?

Answer: Batch variability often stems from differences in plant genetics, growing conditions, or extraction methods. A Metabolomics-Driven Quality Control approach can resolve this.

- Recommended Action: Move from a single-compound assay to a comprehensive metabolite profiling workflow.

- Detailed Methodology:

- Metabolomic Profiling: Use UHPLC-HRMS to generate detailed chemical fingerprints of all active and inactive batches [18].

- Multivariate Data Analysis: Apply statistical methods like Principal Component Analysis (PCA) to correlate the presence and abundance of specific metabolites in the extracts with the observed biological activity [18].

- Bioactivity-Guided Fractionation: Couple the chromatographic separation to a real-time, high-throughput biological assay (e.g., an online biochemical assay) to directly trace the activity to specific fractions, dramatically accelerating the identification of the true active [18].
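The multivariate step can be prototyped with PCA computed by singular value decomposition. The sketch below assumes a batches-by-features intensity matrix (rows are extract batches, columns are aligned UHPLC-HRMS features) and uses synthetic numbers in which one feature tracks activity by construction. The data are only mean-centred, an assumption; metabolomics workflows often add a scaling step before PCA.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic stand-in data: 12 extract batches x 6 aligned metabolite features.
n_batches, n_features = 12, 6
activity = np.linspace(0.0, 1.0, n_batches)  # bioassay readout per batch
X = rng.normal(size=(n_batches, n_features))  # batch-to-batch background variation
X[:, 2] += 8.0 * activity                     # by construction, feature 2 tracks activity


def pca(X, n_components=2):
    """PCA via SVD of the mean-centred matrix; returns scores and loadings."""
    Xc = X - X.mean(axis=0)
    U, S, Vt = np.linalg.svd(Xc, full_matrices=False)
    scores = U[:, :n_components] * S[:n_components]
    loadings = Vt[:n_components].T  # (n_features, n_components)
    return scores, loadings


scores, loadings = pca(X)

# Which component tracks activity, and which feature dominates that component?
corr = [abs(np.corrcoef(scores[:, k], activity)[0, 1]) for k in range(scores.shape[1])]
best_pc = int(np.argmax(corr))
top_feature = int(np.argmax(np.abs(loadings[:, best_pc])))
print(f"PC{best_pc + 1}: |r| = {corr[best_pc]:.2f}, top-loading feature = {top_feature}")
```

PCA itself is unsupervised; correlating its scores with activity afterwards, as here, only points to candidate features that still need confirmation by fractionation.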

FAQ 4: How can we efficiently navigate the complex parameter landscape of ion channel modulation to identify optimal compounds?

Answer: Navigating multimodal parameter spaces, where multiple parameter sets can yield similar functional outputs, requires specialized computational approaches.

- Recommended Action: Utilize Bayesian inference methods to map the parameter landscape rather than seeking a single "best" solution.

- Detailed Methodology:

- Markov Chain Monte Carlo (MCMC) Sampling: Employ MCMC algorithms to explore the high-dimensional parameter space (e.g., ion channel conductance densities) [21].

- Posterior Distribution Analysis: The output is not a single value but a probability distribution (a "landscape") of all parameter sets that are consistent with your experimental data. This allows you to visualize the relationships between parameters and identify regions of high model robustness [21].

- Solution Map Visualization: Analyze the resulting multimodal posteriors to select optimal parameter sets and understand parameter sensitivities for designing future experiments [21].
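To make the contrast with single-solution fitting concrete, the sketch below runs a random-walk Metropolis sampler, a simple member of the MCMC family, on a toy one-dimensional bimodal posterior standing in for a real conductance-density posterior. The mixture of two Gaussians, the proposal width, and the burn-in length are assumptions chosen so the chain can hop between modes; a local optimizer started at the same point would report only the nearest mode.

```python
import math
import random


def log_posterior(theta):
    """Toy bimodal log-density (assumption): equal mixture of N(-2, 0.5) and N(2, 0.5)."""
    def log_normal(x, mu, sd):
        return -0.5 * ((x - mu) / sd) ** 2 - math.log(sd * math.sqrt(2 * math.pi))

    a = log_normal(theta, -2.0, 0.5)
    b = log_normal(theta, 2.0, 0.5)
    m = max(a, b)
    return m + math.log(0.5 * math.exp(a - m) + 0.5 * math.exp(b - m))  # log-sum-exp


def metropolis(log_p, start, n_steps, step_sd, seed=0):
    """Random-walk Metropolis with a symmetric Gaussian proposal."""
    rng = random.Random(seed)
    x, lp = start, log_p(start)
    samples = []
    for _ in range(n_steps):
        proposal = x + rng.gauss(0.0, step_sd)
        lp_new = log_p(proposal)
        # Accept with probability min(1, p(proposal) / p(x)).
        if rng.random() < math.exp(min(0.0, lp_new - lp)):
            x, lp = proposal, lp_new
        samples.append(x)
    return samples


samples = metropolis(log_posterior, start=-3.0, n_steps=20000, step_sd=2.0)
kept = samples[2000:]  # discard burn-in
frac_right = sum(s > 0 for s in kept) / len(kept)
print(f"fraction of samples in the right-hand mode: {frac_right:.2f}")
```

Both modes appear in the retained samples, which is the "landscape" view the answer describes. In higher dimensions, well-separated modes usually need tempering or multiple chains rather than a wide proposal.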

Experimental Protocols for Key Techniques

Protocol 1: LC-HRMS-SPE-NMR for Targeted Compound Identification

Objective: To isolate and structurally elucidate a bioactive compound from a complex natural extract without large-scale purification.

Materials:

- Natural extract dissolved in suitable solvent (e.g., methanol)

- Ultra-High-Performance Liquid Chromatography (UHPLC) system

- High-Resolution Mass Spectrometer (HRMS)

- Solid-Phase Extraction (SPE) cartridges or microfluidic traps

- Nuclear Magnetic Resonance (NMR) spectrometer (with cryoprobe)

Workflow:

- Separation: The extract is separated using a UHPLC system with a reversed-phase column and a water-acetonitrile gradient.

- Detection & Triggering: The eluent passes through a UV/Vis detector and is then split, with a minor flow directed to the HRMS for exact mass determination. Based on the UV trace or MS signal, the system triggers the collection of the peak of interest.

- Trapping: The major flow is diverted to capture the target compound onto an SPE cartridge or a loop, effectively concentrating it and removing the chromatographic solvent.

- Elution to NMR: The trapped compound is eluted from the SPE cartridge directly into an NMR tube using a deuterated solvent (e.g., deuterated methanol or DMSO).

- Structural Elucidation: 1D and 2D NMR experiments (e.g., 1H, 13C, COSY, HSQC, HMBC) are performed to determine the complete structure [18].

Protocol 2: Bayesian Inference for Parameter Estimation in Neuron Models

Objective: To infer the parameters (e.g., ion channel densities) of a complex neuron model from electrophysiological data, accounting for multimodality.

Materials:

- Experimental voltage trace data from the neuron of interest.

- A defined Hodgkin-Huxley-type neuron model.

- Computational environment (e.g., Python with PyMC3 or Stan).

Workflow:

- Model Definition: Formulate the problem in a Bayesian framework. Define the prior distributions for the parameters to be inferred, based on physiological constraints.

- Likelihood Specification: Establish a likelihood function that quantifies the probability of observing the experimental data given a specific set of model parameters.

- MCMC Sampling: Run an MCMC algorithm (e.g., Hamiltonian Monte Carlo) to sample from the posterior distribution. This distribution represents the probability of different parameter combinations given the observed data.

- Analysis & Visualization:

- Check convergence of the MCMC chains.

- Visualize the posterior distributions for individual parameters (marginal distributions).

- Create 2D scatter plots of parameter pairs to visualize correlations and identify the multimodal landscape of viable solutions [21].
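For the convergence check, the Gelman-Rubin potential scale reduction factor (R-hat) compares between-chain and within-chain variance; values close to 1 suggest the chains agree. PyMC (through ArviZ) and Stan report R-hat in their summaries using more robust variants, so the classic formula below is only meant to show the computation. It also illustrates a multimodality pitfall: chains stuck in different modes produce a large R-hat. The synthetic chains are assumptions.

```python
import random
import statistics


def gelman_rubin(chains):
    """Classic (non-split) potential scale reduction factor for equal-length chains."""
    n = len(chains[0])
    means = [statistics.fmean(c) for c in chains]
    W = statistics.fmean([statistics.variance(c) for c in chains])  # within-chain
    B = n * statistics.variance(means)                               # between-chain
    var_plus = (n - 1) / n * W + B / n
    return (var_plus / W) ** 0.5


rng = random.Random(1)
mixed = [[rng.gauss(0, 1) for _ in range(1000)] for _ in range(4)]
stuck = [[rng.gauss(mu, 0.5) for _ in range(1000)] for mu in (-2, -2, 2, 2)]
print(round(gelman_rubin(mixed), 3))  # close to 1
print(round(gelman_rubin(stuck), 3))  # far above 1: chains sit in different modes
```

On a genuinely multimodal posterior a large R-hat does not always mean "run longer"; it can mean the chains have each found a different valid region, which should be examined rather than averaged away.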

Table 1: Clinically Significant Plant-Derived Therapeutic Compounds and Their Targets

| Therapeutic Compound | Natural Source | Primary Indication | Mechanism of Action | Key Molecular Target |

|---|---|---|---|---|

| Paclitaxel [22] | Taxus brevifolia (Pacific Yew) | Ovarian, Breast Cancer | Promotes microtubule assembly, inhibits depolymerization | Tubulin |

| Artemisinin [20] [22] | Artemisia annua (Sweet Wormwood) | Malaria | Generates reactive oxygen species upon activation | Heme/Parasite Biomolecules |

| Quinine [20] [22] | Cinchona spp. (Cinchona Bark) | Malaria | Inhibits hemozoin formation in malaria parasite | Heme Polymerase |

| Morphine [22] | Papaver somniferum (Opium Poppy) | Severe Pain | Agonist of opioid receptors in CNS | μ-opioid receptor |

| Digitoxin [22] | Digitalis purpurea (Foxglove) | Heart Failure | Inhibits Na+/K+ ATPase, increasing cardiac contractility | Na+/K+ ATPase pump |

Table 2: Key Analytical Technologies for Natural Product Discovery and Their Performance Metrics

| Technology | Primary Application in Discovery | Key Performance Strengths | Common Throughput |

|---|---|---|---|

| LC-HRMS/MS [18] | Dereplication, Metabolite Profiling | High mass accuracy, sensitivity, enables formula prediction | High |

| UHPLC-UV [18] | Crude extract profiling, Purity analysis | Excellent separation efficiency, robust, quantitative | High |

| HPLC-SPE-NMR [18] | Structural Elucidation | Direct structural information, minimal purification needed | Medium |

| High-Throughput Screening [20] [19] | Lead Identification | Rapid testing of 100,000+ compounds | Very High |

Visualizing Workflows and Landscapes

Diagram 1: Natural Product Drug Discovery Workflow

Diagram 2: Multimodal Parameter Landscape

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents and Materials for Featured Experiments

| Item | Function/Application | Brief Explanation of Role |

|---|---|---|

| Natural Product Libraries [18] | Lead Identification | Pre-fractionated extracts or pure compounds from diverse biological sources for HTS campaigns. |

| Deuterated Solvents (e.g., DMSO-d6, CD3OD) [18] | NMR Spectroscopy | Dissolves the sample without contributing large solvent ¹H signals that would obscure analyte peaks, and supplies the deuterium signal the spectrometer uses for its field-frequency lock. |

| UHPLC Columns (C18 phase) [18] | Analytical Separation | Provides high-resolution separation of complex mixtures prior to MS or NMR analysis. |

| Stable Cell Lines [19] | Target-Based Screening | Engineered cells consistently expressing a specific molecular target for reproducible compound testing. |

| MCMC Software (e.g., PyMC, Stan) [21] | Parameter Estimation | Computational tools for implementing Bayesian inference and exploring complex, multimodal parameter landscapes. |

AI and Algorithmic Frameworks for Mapping and Exploiting Multimodal Landscapes

Evolutionary Multimodal Optimization (EMO) involves the use of evolutionary algorithms (EAs) to locate and maintain multiple optimal solutions—both global and local—in problems with multiple optima. Unlike traditional optimization that converges on a single solution, EMO provides a comprehensive view of the problem's landscape. This is particularly valuable in fields like drug discovery and engineering design, where identifying multiple viable solutions offers flexibility based on secondary criteria such as cost, material, or side effects [23].

The core challenge in EMO is preventing the population of candidate solutions from prematurely converging to a single optimum. This is addressed through specialized diversity-preserving mechanisms, which maintain a diverse set of solutions throughout the evolutionary process, enabling the algorithm to explore and exploit multiple peaks in the fitness landscape simultaneously [23].

Core Principles of EMO

The workflow of an EMO algorithm is built upon standard evolutionary algorithms but integrates diversity preservation at its core. The key steps are as follows [23]:

- Initialization: A population of candidate solutions is generated randomly.

- Evaluation: Each individual's fitness is assessed using the objective function.

- Diversity Preservation: Mechanisms like fitness sharing or niching are applied to maintain population diversity.

- Selection: Individuals are selected for breeding, often based on a fitness that has been adjusted to promote diversity.

- Variation: Genetic operations (crossover and mutation) are applied to generate offspring.

- Replacement: The population is updated by integrating the new offspring.

- Termination: The algorithm stops when a condition (e.g., a maximum number of generations) is met.

Troubleshooting Guide: Common EMO Experimental Issues

FAQ 1: How can I prevent my algorithm from converging to a single peak?

Problem: The population loses diversity and converges to a single optimum, missing other viable solutions.

Solutions:

- Implement a Niching Technique: Introduce a fitness sharing mechanism. This reduces the fitness of individuals based on how crowded their neighborhood is, discouraging convergence to a single region [23]. The sharing function is typically defined as:

$$\mathrm{sh}(d_{ij}) = \begin{cases} 1 - \left(d_{ij}/\sigma\right)^{\alpha}, & \text{if } d_{ij} \le \sigma \\ 0, & \text{otherwise} \end{cases}$$

where $d_{ij}$ is the distance between individuals $i$ and $j$, $\sigma$ is the niche radius, and $\alpha$ is a scaling constant [23].

- Use Crowding Methods: Employ deterministic crowding during the replacement phase. Each offspring competes with its most similar parent and replaces it only if it is fitter, preserving the genetic diversity within the population from one generation to the next [23].

- Adopt an Island Model: Split your population into several sub-populations (islands) that evolve independently. Periodically allow migration between islands to introduce new genetic material and maintain global diversity [23].

- Try an Adaptive Algorithm: Use modern algorithms like the Diversity-based Adaptive Differential Evolution (DADE), which automatically divides the population into niches of appropriate sizes at different search stages and adaptively chooses mutation strategies to balance exploration and exploitation [24].
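The crowding strategy above can be sketched for a real-valued problem as follows. This follows the usual deterministic crowding pattern (random pairing, one crossover per pair, each child competing with its closer parent); the blend crossover, the Gaussian mutation width, and the five-peak test function are assumptions, not details from [23].

```python
import math
import random


def deterministic_crowding(population, fitness, generations, bounds, seed=0):
    """Maximize `fitness` on a 1-D interval while preserving multiple niches."""
    rng = random.Random(seed)
    lo, hi = bounds
    pop = list(population)
    for _ in range(generations):
        rng.shuffle(pop)  # random pairing of parents
        next_pop = []
        for p1, p2 in zip(pop[0::2], pop[1::2]):
            # Blend crossover plus small Gaussian mutation, clipped to the bounds.
            w = rng.random()
            c1 = min(hi, max(lo, w * p1 + (1 - w) * p2 + rng.gauss(0, 0.02)))
            c2 = min(hi, max(lo, (1 - w) * p1 + w * p2 + rng.gauss(0, 0.02)))
            # Match each child with the closer parent (minimum total distance).
            if abs(p1 - c1) + abs(p2 - c2) > abs(p1 - c2) + abs(p2 - c1):
                c1, c2 = c2, c1
            # A child replaces its matched parent only if it is fitter.
            next_pop.append(c1 if fitness(c1) > fitness(p1) else p1)
            next_pop.append(c2 if fitness(c2) > fitness(p2) else p2)
        pop = next_pop
    return pop


def five_peaks(x):
    """Test landscape with equal maxima at x = 0.1, 0.3, 0.5, 0.7, 0.9."""
    return math.sin(5 * math.pi * x) ** 6


rng = random.Random(42)
init = [rng.random() for _ in range(60)]  # population size must be even here
final = deterministic_crowding(init, five_peaks, generations=100, bounds=(0.0, 1.0))
peaks_found = sorted({round(x, 1) for x in final if five_peaks(x) > 0.9})
print(peaks_found)
```

Because replacement only ever happens between a child and its nearest parent, a well-populated peak is rarely overwritten by individuals from a distant one, which is what keeps several peaks alive.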

FAQ 2: My algorithm is not locating all the known global optima. What could be wrong?

Problem: The search is stuck or is unable to find some of the known optimal solutions.

Solutions:

- Adjust Niche Radius (σ): The performance of many niching methods is highly sensitive to the niche radius parameter. If σ is set too large, niches may merge; if too small, the population may fracture unnecessarily. Perform parameter tuning or use an adaptive method like DADE that is less sensitive to this parameter [23] [24].

- Check Population Size: The population may be too small to maintain stable subpopulations around all optima. Increase the population size to ensure sufficient individuals are available to cover all peaks.

- Implement a Local Optima Escape Strategy: Algorithms like DADE include a local optima processing strategy. When a subpopulation's diversity falls below a threshold (indicating stagnation), its individuals are reinitialized, helping them escape local optima and continue the search [24].

- Balance Exploration and Exploitation: Ensure your algorithm has a mechanism to balance global search (exploration) with local refinement (exploitation). Monitoring population diversity can serve as a metric for this balance [24].
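The stagnation check and diversity monitoring described above can be combined in a few lines. The sketch below is a generic illustration, not DADE's published procedure: diversity is measured as the mean distance to the subpopulation centroid, the threshold and bounds are hypothetical parameters, and the best point is archived before the restart so that a located optimum is not lost.

```python
import random


def diversity(subpop):
    """Mean Euclidean distance of individuals to the subpopulation centroid."""
    dim = len(subpop[0])
    centroid = [sum(ind[k] for ind in subpop) / len(subpop) for k in range(dim)]
    return sum(
        sum((ind[k] - centroid[k]) ** 2 for k in range(dim)) ** 0.5 for ind in subpop
    ) / len(subpop)


def reinitialize_if_stagnant(subpop, bounds, threshold, rng, archive):
    """If a niche has collapsed, archive its best point and restart it at random.

    Assumes `subpop` is sorted best-first.
    """
    if diversity(subpop) >= threshold:
        return subpop
    archive.append(subpop[0])
    return [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in subpop]


rng = random.Random(0)
bounds = [(-5.0, 5.0), (-5.0, 5.0)]
collapsed = [[1.0 + 1e-6 * i, 2.0] for i in range(10)]  # niche converged near (1, 2)
archive = []
restarted = reinitialize_if_stagnant(collapsed, bounds, 1e-3, rng, archive)
print(archive, round(diversity(restarted), 2))
```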

FAQ 3: The computational cost of my EMO experiment is too high. How can I improve efficiency?

Problem: The algorithm takes too long to run, often due to expensive fitness evaluations or complex diversity calculations.

Solutions:

- Optimize Diversity Calculations: The computational complexity of calculating pairwise distances for fitness sharing grows with the square of the population size. Consider using clustering or other efficient methods to approximate niche distributions.

- Use a Co-evolutionary Approach: For constrained multi-objective problems, algorithms like DESCA use a two-population approach. An auxiliary population explores the unconstrained Pareto front, while a main population converges to the constrained front. This division of labor can lead to faster and more robust convergence [25].

- Leverage Adaptive Operators: Allow the algorithm to dynamically choose genetic operators based on the current state of the population. This avoids wasting computational effort on ineffective operations [25].

Key Diversity-Preserving Mechanisms

The following table summarizes the primary mechanisms used in EMO to maintain diversity.

Table 1: Diversity-Preserving Mechanisms in EMO

| Mechanism | Core Principle | Key Parameters | Common Issues |

|---|---|---|---|

| Fitness Sharing [23] | Reduces the fitness of an individual based on the number of other, similar individuals in its neighborhood. | Niche radius (σ), sharing exponent (α). | High computational cost; sensitive to σ setting. |

| Crowding & Deterministic Crowding [23] | Each offspring competes with its most similar parent and replaces it only if fitter, preserving the distribution of solutions. | Distance metric. | Can be less effective in high-dimensional spaces. |

| Niching & Speciation [23] | Divides the population into subgroups (species) that focus on different regions of the search space. | Species radius (σ_s). | Sensitive to the species radius parameter. |

| Island Models [23] | Splits the population into isolated sub-populations that evolve independently, with occasional migration. | Number of islands, migration rate, migration frequency. | Configuration of migration policy can be complex. |

| Diversity-based Adaptive Niching [24] | Uses population diversity to adaptively divide the population into niches without fixed parameters. | Diversity threshold. | Requires a method to accurately measure diversity. |

Experimental Protocol: Applying EMO to a Benchmark Problem

This protocol outlines the steps to implement and test a basic EMO algorithm on the Rastrigin function, a common multimodal benchmark.

Materials and Reagents

Table 2: Research Reagent Solutions for EMO Experiments

| Item | Function in the Experiment |

|---|---|

| Benchmark Function (e.g., Rastrigin) | Provides a standardized, multimodal fitness landscape with known optima to validate algorithm performance [23]. |

| Computational Environment (e.g., Python/MATLAB) | The platform for implementing the evolutionary algorithm, fitness evaluation, and diversity mechanisms. |

| Population Initialization Routine | Generates the initial set of candidate solutions, typically uniformly random within the defined variable bounds. |

| Diversity-Preserving Algorithm (e.g., NSGA-II, DADE) | The core EMO logic that performs selection, variation, and critically, maintains diversity. Public-domain codes are often available [24]. |

| Performance Metrics (e.g., Peak Ratio) | Measures used to quantify success, such as the ratio of known optima successfully located by the algorithm. |

Methodology

Problem Definition:

- Define the Rastrigin function for $n = 1$ variable: $f(x) = 10 + x^2 - 10\cos(2\pi x)$ with $x \in [-5.12, 5.12]$ [23].

- The goal is to find the single global minimum at $x = 0$, where $f(0) = 0$, together with the local minima that lie close to each nonzero integer value of $x$.

Algorithm Initialization:

- Set the population size (e.g., $P = 10$).

- Initialize the population by randomly generating individuals within the domain $[-5.12, 5.12]$ [23].

- Configure algorithm parameters (e.g., for fitness sharing, set the niche radius σ and sharing exponent α).

Execution:

- Evaluate Fitness: Calculate the raw Rastrigin value for each individual. Fitness sharing and roulette wheel selection assume a non-negative fitness that is maximized, whereas the Rastrigin function is minimized, so convert it first, for example with $f_i = 1 / (1 + f(x_i))$.

- Apply Diversity Mechanism: For example, apply fitness sharing:

  a. Calculate pairwise distances $d_{ij}$ between all individuals.

  b. Compute the sharing function $\mathrm{sh}(d_{ij})$ for each pair.

  c. Calculate the niche count for each individual: $c_i = \sum_j \mathrm{sh}(d_{ij})$.

  d. Derive the shared fitness: $f'_i = f_i / c_i$ [23].

- Selection: Select parents for reproduction using a method like roulette wheel selection based on the shared fitness $f'$.

- Variation: Create offspring through crossover and mutation operators.

- Replacement: Form the new population for the next generation.

Termination and Analysis:

- Run the algorithm for a fixed number of generations or until a performance threshold is met.

- Count the number of distinct optima found by the final population.

- Calculate performance metrics like the peak ratio to evaluate effectiveness.

The following workflow diagram visualizes this experimental process and the key diversity mechanisms.

EMO Experimental Workflow
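The protocol can be prototyped in plain Python. The sketch below follows the listed steps (sharing, roulette wheel selection, variation, generational replacement) on the one-dimensional Rastrigin function. Several choices are assumptions rather than part of the protocol: the transform 1/(1 + f(x)) that turns minimization into a positive fitness, arithmetic crossover with Gaussian mutation, σ = 0.5 with α = 1, and a population of 100, since a population of 10 cannot hold a separate niche for each of the 11 minima in the domain. The peak ratio counts the minima near the integers -5 to 5 that have a population member within 0.1.

```python
import math
import random


def rastrigin(x):
    return 10 + x * x - 10 * math.cos(2 * math.pi * x)


def shared_fitness(pop, raw, sigma, alpha):
    """f'_i = f_i / sum_j sh(d_ij), with sh(d) = 1 - (d / sigma)^alpha for d <= sigma."""
    shared = []
    for xi, fi in zip(pop, raw):
        niche_count = sum(
            1 - (abs(xi - xj) / sigma) ** alpha for xj in pop if abs(xi - xj) <= sigma
        )  # includes j == i, so niche_count >= 1
        shared.append(fi / niche_count)
    return shared


def run_sharing_ea(pop_size=100, generations=100, sigma=0.5, alpha=1.0,
                   p_cross=0.5, mut_sd=0.05, seed=0):
    rng = random.Random(seed)
    lo, hi = -5.12, 5.12
    pop = [rng.uniform(lo, hi) for _ in range(pop_size)]
    for _ in range(generations):
        raw = [1.0 / (1.0 + rastrigin(x)) for x in pop]  # minimization -> positive fitness
        shared = shared_fitness(pop, raw, sigma, alpha)
        parents = rng.choices(pop, weights=shared, k=pop_size)  # roulette wheel
        offspring = []
        for a, b in zip(parents[0::2], parents[1::2]):
            if rng.random() < p_cross:  # arithmetic crossover
                w = rng.random()
                a, b = w * a + (1 - w) * b, (1 - w) * a + w * b
            offspring += [min(hi, max(lo, c + rng.gauss(0, mut_sd))) for c in (a, b)]
        pop = offspring  # generational replacement
    return pop


def peak_ratio(pop, optima, tol=0.1):
    """Fraction of known optima with at least one individual within `tol`."""
    return sum(any(abs(x - o) <= tol for x in pop) for o in optima) / len(optima)


final_pop = run_sharing_ea()
known_minima = list(range(-5, 6))  # minima lie close to each integer in [-5.12, 5.12]
print(f"peak ratio: {peak_ratio(final_pop, known_minima):.2f}")
```

Because sharing allocates individuals roughly in proportion to peak fitness, the shallow minima near ±4 and ±5 receive very few individuals and are the first to be lost; increasing the population size or adding crowding-based replacement usually helps there.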

The Scientist's Toolkit: Essential Materials for EMO Research

Table 3: Key Research Reagent Solutions for EMO

| Category | Item | Purpose |

|---|---|---|

| Algorithms & Software | NSGA-II, SPEA2, DADE | Foundational and state-of-the-art algorithms for multimodal and multi-objective optimization. Public-domain code is often available [24] [26]. |

| Benchmark Problems | CEC2013 MMOP Test Suite, Rastrigin Function | Standardized test functions with known properties for validating and comparing algorithm performance [23] [24]. |

| Performance Metrics | Peak Ratio, Maximum Peak Ratio | Quantify the proportion of known optima that an algorithm successfully locates. |

| Theoretical Foundations | Self-Adaptation, Co-Evolution | Advanced strategies like the DESCA algorithm use co-evolution between main and auxiliary populations to handle complex constraints and enhance diversity [25]. |

Troubleshooting Guide: Common Experimental Challenges and Solutions

Data Integration and Preprocessing

Problem: How do I handle misalignment between different data modalities (e.g., molecular graphs and protein sequences)?

Misalignment between heterogeneous data structures is a fundamental challenge in multimodal parameter landscapes. Effective solutions involve creating unified embedding spaces.

Solution: Implement joint embedding spaces that map different modalities into a shared latent representation. For graph and sequence data, use specialized encoding techniques:

- For molecular graphs: Utilize Graph Neural Networks with message-passing layers to generate graph embeddings [27] [28]

- For protein sequences: Employ Transformer-based encoders with self-attention mechanisms [29]

- Fusion strategy: Apply cross-attention mechanisms between graph and sequence embeddings, allowing each modality to attend to relevant parts of the other [30]

Experimental Protocol:

- Input Preparation: Represent molecules as 2D/3D graphs (atoms as nodes, bonds as edges) and proteins as amino acid sequences [28]

- Modality-Specific Encoding:

- Cross-Modal Alignment: Implement attention mechanisms between GNN-generated graph embeddings and Transformer-generated sequence embeddings

- Joint Training: Optimize with a combined loss function including task-specific loss and modality alignment regularization
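The cross-modal alignment step can be illustrated with single-head scaled dot-product cross-attention in NumPy, where atom (node) embeddings from a GNN act as queries and residue embeddings from a sequence encoder act as keys and values. The random embeddings and projection matrices stand in for trained encoder outputs and learned weights, and the dimensions and mean-pooling readout are assumptions.

```python
import numpy as np


def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)  # numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def cross_attention(query_src, kv_src, W_q, W_k, W_v):
    """Each row of query_src attends over all rows of kv_src."""
    Q, K, V = query_src @ W_q, kv_src @ W_k, kv_src @ W_v
    scores = Q @ K.T / np.sqrt(Q.shape[-1])
    weights = softmax(scores, axis=-1)  # (n_atoms, n_residues); rows sum to 1
    return weights @ V, weights


rng = np.random.default_rng(0)
d_model, d_head = 32, 16
atom_emb = rng.normal(size=(24, d_model))      # stand-in for GNN node embeddings
residue_emb = rng.normal(size=(300, d_model))  # stand-in for Transformer residue embeddings
W_q, W_k, W_v = (rng.normal(scale=d_model ** -0.5, size=(d_model, d_head)) for _ in range(3))

atom_ctx, attn = cross_attention(atom_emb, residue_emb, W_q, W_k, W_v)
# Fuse: concatenate each atom's embedding with its protein-aware context,
# then mean-pool into one complex-level vector for a downstream predictor.
fused = np.concatenate([atom_emb, atom_ctx], axis=-1).mean(axis=0)
print(atom_ctx.shape, attn.shape, fused.shape)  # (24, 16) (24, 300) (48,)
```

A symmetric block with residues as queries over atoms is a common addition; the attention matrix `attn` is also what interpretability tools visualize.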

Problem: What strategies exist for managing incomplete multimodal datasets?

Real-world experimental data often has missing modalities, which poses significant challenges for model training.

Solution: Deploy flexible fusion architectures that can function robustly even when certain data types are unavailable [5].

Experimental Protocol:

- Architecture Selection: Implement hybrid fusion approaches rather than strictly early or late fusion

- Modality Dropout: During training, randomly omit entire modalities with probability p=0.2-0.5 to enhance robustness [5]

- Imputation Techniques: For partially missing features, use graph-based similarity measures (for molecular data) or attention-weighted aggregates (for sequential data) to infer missing values

- Validation Strategy: Use k-fold cross-validation specifically designed to test performance under different missing modality conditions
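Modality dropout can be implemented by masking whole modality embeddings per training example before fusion. The sketch below assumes each sample is a dict of per-modality feature vectors and that a missing or dropped modality is represented by a zero vector plus a presence flag, one common convention among several; the guard that always keeps at least one available modality is also an assumption.

```python
import random


def modality_dropout(sample, p_drop, rng, dims):
    """Randomly drop whole modalities, always keeping at least one available one.

    sample: dict modality -> list of floats (absent modalities are missing keys)
    dims:   dict modality -> embedding size, used to build zero vectors
    Returns (features followed by presence flags, presence flags).
    """
    present = [m for m in dims if m in sample]
    kept = [m for m in present if rng.random() >= p_drop]
    if not kept and present:
        kept = [rng.choice(present)]  # never drop every available modality
    fused, flags = [], []
    for m in dims:  # fixed order keeps the fused layout stable
        if m in kept:
            fused.extend(sample[m])
            flags.append(1.0)
        else:
            fused.extend([0.0] * dims[m])
            flags.append(0.0)
    return fused + flags, flags


dims = {"graph": 4, "sequence": 3, "assay": 2}
sample = {"graph": [0.1, 0.2, 0.3, 0.4], "sequence": [1.0, 1.1, 1.2]}  # no assay data
rng = random.Random(0)
vec, flags = modality_dropout(sample, p_drop=0.3, rng=rng, dims=dims)
print(len(vec), flags)
```

Dropout is applied only during training; at inference the same function would be called with `p_drop=0.0`, so genuinely missing modalities still appear as zeros with a flag of 0.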

Model Architecture and Training

Problem: How can I address the computational complexity of Transformer attention on large molecular graphs?

The quadratic complexity of self-attention with respect to sequence length becomes prohibitive for large graphs.

Solution: Integrate efficient attention mechanisms and hybrid architectures that combine the strengths of GNNs and Transformers [30] [31].

Experimental Protocol:

- Graph Coarsening: Apply graph pooling layers (e.g., TopK pooling, self-attention pooling) to reduce node count before Transformer application

- Efficient Attention: Implement linear-complexity attention variants such as Performer or Linformer architectures for long sequences [31]

- Hierarchical Processing: Use GNNs for local feature extraction and reserve Transformers for capturing global dependencies [27]

- Complexity Monitoring: Track memory usage and computation time during training to identify bottlenecks
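The cost difference between quadratic and linear-complexity attention is easiest to see side by side. The sketch below uses kernelized linear attention with the feature map φ(x) = elu(x) + 1, a swapped-in technique rather than Performer's random features or Linformer's low-rank projection. It replaces softmax attention rather than reproducing it exactly, but all three avoid materializing the n × n attention matrix.

```python
import numpy as np


def feature_map(x):
    """phi(x) = elu(x) + 1, which keeps every feature positive."""
    return np.where(x > 0, x + 1.0, np.exp(x))


def quadratic_kernel_attention(Q, K, V):
    """Reference O(n^2) version: builds the full n x n weight matrix."""
    A = feature_map(Q) @ feature_map(K).T  # (n, n)
    return (A @ V) / A.sum(axis=1, keepdims=True)


def linear_attention(Q, K, V):
    """O(n) in sequence length: reorder the products so no n x n matrix exists."""
    Qf, Kf = feature_map(Q), feature_map(K)
    KV = Kf.T @ V                    # (d, d_v), size independent of n
    normalizer = Qf @ Kf.sum(axis=0)  # (n,)
    return (Qf @ KV) / normalizer[:, None]


rng = np.random.default_rng(0)
n, d = 512, 16
Q, K, V = (rng.normal(size=(n, d)) for _ in range(3))
out_linear = linear_attention(Q, K, V)
out_quadratic = quadratic_kernel_attention(Q, K, V)
print(np.allclose(out_linear, out_quadratic))  # True: same result, different cost
```

The only change is associativity: computing φ(K)ᵀV first costs O(n·d²) instead of O(n²·d), which is what makes long node sequences tractable.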

Problem: My hybrid model suffers from over-smoothing and over-squashing when capturing long-range dependencies in graph structures.

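GNNs struggle to propagate information between distant nodes. Stacking enough message-passing layers to reach them causes over-smoothing (node representations becoming indistinguishable), while information from an exponentially growing neighborhood must be compressed into fixed-size node vectors, a bottleneck known as over-squashing that is most severe where few edges connect large regions of the graph [31].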

Solution: Leverage Graph Transformers that can directly model relationships between distant nodes through global attention mechanisms [31].

Experimental Protocol:

- Structural Encoding: Incorporate graph structural information into Transformers using:

- Attention Modification: Implement structure-aware attention where attention scores are modulated by graph distance or edge features

- Hybrid Layers: Alternate between GNN layers (for local structure) and Transformer layers (for global context) in deep architectures [27]

- Evaluation Metrics: Monitor both task performance and representational quality (e.g., using metrics like PageRank to assess information flow)
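One way to implement structure-aware attention is to subtract a penalty proportional to shortest-path (hop) distance from the attention logits, in the spirit of the spatial encodings used by Graph Transformers such as Graphormer, which learn a bias per distance instead. The fixed linear penalty `lam` and the toy graphs below are assumptions.

```python
import math
from collections import deque


def hop_distances(adj):
    """All-pairs shortest-path lengths (in bonds) via BFS; inf if disconnected."""
    n = len(adj)
    dist = [[math.inf] * n for _ in range(n)]
    for s in range(n):
        dist[s][s] = 0
        queue = deque([s])
        while queue:
            u = queue.popleft()
            for v in adj[u]:
                if dist[s][v] == math.inf:
                    dist[s][v] = dist[s][u] + 1
                    queue.append(v)
    return dist


def structure_biased_attention(logits, dist, lam=1.0):
    """Softmax over (logit - lam * distance); disconnected pairs get zero weight."""
    weights = []
    for row_logits, row_dist in zip(logits, dist):
        biased = [-math.inf if d == math.inf else logit - lam * d
                  for logit, d in zip(row_logits, row_dist)]
        m = max(biased)  # finite: every node is at distance 0 from itself
        exps = [math.exp(b - m) for b in biased]
        total = sum(exps)
        weights.append([e / total for e in exps])
    return weights


# Toy 5-atom chain 0-1-2-3-4 with uniform content logits, so only structure matters.
adj = {0: [1], 1: [0, 2], 2: [1, 3], 3: [2, 4], 4: [3]}
dist = hop_distances(adj)
attn = structure_biased_attention([[0.0] * 5 for _ in range(5)], dist, lam=1.0)
print([round(w, 3) for w in attn[0]])  # decays with bond distance from atom 0
```

In a full model the content logits would come from query-key products, and the bias would typically be learned per distance and per head rather than fixed.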

Performance Optimization and Evaluation

Problem: How do I properly evaluate hybrid models against unimodal baselines in drug discovery applications?

Comprehensive evaluation requires both standard metrics and modality-specific assessments.

Solution: Implement a multi-dimensional evaluation framework that assesses performance gains, data efficiency, and robustness across diverse scenarios [5] [27].

Experimental Protocol:

- Baseline Establishment: Train and evaluate unimodal models (GNN-only, Transformer-only) using identical data splits

- Performance Metrics:

- For classification tasks: AUC-ROC, F1-score, precision-recall curves

- For regression tasks: Mean squared error, R² coefficient

- Ablation Studies: Systematically remove modality components to quantify their individual contributions

- Data Efficiency Analysis: Train models on subsets of training data (10%, 30%, 50%, 100%) to assess learning efficiency [27]
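When comparing a hybrid model with its unimodal baselines on the same split, ROC AUC can be computed directly from its rank interpretation: the probability that a randomly chosen positive is scored above a randomly chosen negative, with ties counting one half. The plain-Python version below is mainly useful for sanity-checking library output; the labels and scores are made up.

```python
def roc_auc(labels, scores):
    """AUC as P(score_pos > score_neg) + 0.5 * P(tie); O(n_pos * n_neg)."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# The same held-out split for every model (hypothetical scores).
y_test = [1, 1, 1, 0, 0, 0, 0, 1]
predictions = {
    "gnn_only": [0.9, 0.4, 0.6, 0.5, 0.2, 0.7, 0.1, 0.3],
    "hybrid":   [0.9, 0.7, 0.6, 0.5, 0.2, 0.4, 0.1, 0.8],
}
for name, s in predictions.items():
    print(name, round(roc_auc(y_test, s), 3))
```

For the data-efficiency analysis, the same function is applied to models retrained on each training-set fraction while keeping the test split fixed.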

Table: Reported Performance Improvements with Hybrid Architectures in Drug Discovery

| Application Domain | Unimodal Baseline (AUC) | Hybrid Model (AUC) | Performance Gain (absolute AUC) | Key Fusion Strategy |

|---|---|---|---|---|

| Nuclear Receptor Binding Prediction [27] | 0.79 (GNN only) | 0.87 | +0.08 | GNN-Transformer with meta-learning |

| Molecular Property Prediction [28] | 0.82 (Transformer only) | 0.89 | +0.07 | Graph Transformer with 3D encodings |

| General Medical Applications [5] | Varies by modality | Consolidated improvement | +0.062 (average; 6.2 percentage points) | Multimodal fusion |

Frequently Asked Questions (FAQs)

What are the key advantages of combining GNNs and Transformers over using either architecture alone?

The hybrid approach combines complementary strengths: GNNs excel at capturing local graph structure and neighborhood relationships through message passing, while Transformers specialize in modeling global dependencies and long-range interactions via self-attention [32] [30] [31]. This combination is particularly valuable in drug discovery applications, where molecular activity depends on both local chemical groups (better captured by GNNs) and overall molecular configuration (better captured by Transformers) [27] [28]. Reported results support this: hybrid GNN-Transformer models improved AUC over unimodal baselines by 0.07 to 0.08 on molecular tasks [27] [28], and multimodal fusion across medical applications has been reported to improve AUC by an average of 6.2 percentage points [5].

When should I choose early fusion versus late fusion strategies for multimodal data?

The optimal fusion strategy depends on your data characteristics and computational constraints [33] [34]:

- Early Fusion: Combine raw data or low-level features at the input level; ideal when modalities are tightly synchronized and have strong inter-dependencies

- Late Fusion: Process each modality independently and combine predictions or high-level features; preferable when modalities are weakly correlated or have different sampling rates

- Hybrid Fusion: Leverage both approaches, providing flexibility for handling diverse data relationships; generally provides the most robust performance but increases architectural complexity
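The difference between early and late fusion comes down to where the modalities meet. In the sketch below, early fusion concatenates feature vectors into one input for a single model, while late fusion runs one model per modality and averages the predicted probabilities of whichever modalities are present. The logistic "models", their weights, and the equal averaging are hypothetical.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def linear_logit(weights, bias, x):
    return bias + sum(w * xi for w, xi in zip(weights, x))


def early_fusion_predict(features, model):
    """One model on the concatenated input (every modality must be present)."""
    x = features["genomic"] + features["imaging"]
    return sigmoid(linear_logit(model["w"], model["b"], x))


def late_fusion_predict(features, models):
    """One model per modality; average the probabilities of available modalities."""
    probs = [
        sigmoid(linear_logit(models[m]["w"], models[m]["b"], x))
        for m, x in features.items()
        if m in models
    ]
    return sum(probs) / len(probs)


# Hypothetical, already-trained weights.
early_model = {"w": [0.8, -0.3, 1.2, 0.5], "b": -0.2}
late_models = {
    "genomic": {"w": [0.8, -0.3], "b": -0.1},
    "imaging": {"w": [1.2, 0.5], "b": -0.1},
}
patient = {"genomic": [1.0, 0.5], "imaging": [0.2, 1.0]}
print(round(early_fusion_predict(patient, early_model), 3))
print(round(late_fusion_predict(patient, late_models), 3))
# Late fusion still produces a prediction when the imaging modality is missing.
print(round(late_fusion_predict({"genomic": [1.0, 0.5]}, late_models), 3))
```

Early fusion can learn interactions such as a genomic feature mattering only with a particular imaging feature, which late fusion cannot; hybrid fusion adds a learned cross-modal layer between these two extremes.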

How can I address the data scarcity problem for specific biological targets with limited labeled examples?

Few-shot learning approaches, particularly meta-learning frameworks, help address data scarcity in drug discovery [27]. The Meta-GTNRP framework demonstrates how to optimize model parameters across multiple nuclear receptor (NR) tasks, enabling knowledge transfer from data-rich targets to data-poor targets [27]. The technique involves:

- Meta-training: Learning across diverse but related tasks (e.g., different NR targets)

- Task Adaptation: Rapid fine-tuning with limited target-specific examples

- Architecture Design: Combining GNNs for local molecular structure with Transformers for preserving global semantic information

What are the most effective positional encoding strategies for graph-structured data in Transformers?

Graph Transformers employ several positional encoding strategies to incorporate structural information [31]:

- Laplacian Eigenvectors: Capture spectral graph properties and overall connectivity

- Random Walk Probabilities: Encode node proximity and diffusion patterns

- Spatial Encodings: For 3D molecular graphs, include distance-based relationships

- Centrality Encodings: Incorporate node importance measures (degree, betweenness)

The optimal choice depends on your specific graph characteristics and task requirements.
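The first two encodings can be computed directly from the adjacency matrix. The NumPy sketch below takes the k eigenvectors of the symmetric normalized Laplacian with the smallest nonzero eigenvalues, and builds the random-walk encoding from return probabilities (the diagonal of powers of D⁻¹A). The choice of k, the number of walk steps, and dropping the trivial eigenvector of a connected graph are assumptions. Eigenvector signs are arbitrary, which is why training pipelines often flip them randomly.

```python
import numpy as np


def laplacian_pe(A, k):
    """k eigenvectors of L = I - D^-1/2 A D^-1/2 with the smallest nonzero eigenvalues."""
    deg = A.sum(axis=1)
    d_inv_sqrt = deg ** -0.5  # assumes no isolated nodes
    L = np.eye(len(A)) - d_inv_sqrt[:, None] * A * d_inv_sqrt[None, :]
    eigvals, eigvecs = np.linalg.eigh(L)  # eigenvalues in ascending order
    return eigvecs[:, 1:k + 1]            # skip the trivial eigenvector (connected graph)


def random_walk_pe(A, steps):
    """Probability that a random walk from node i is back at i after 1..steps hops."""
    P = A / A.sum(axis=1)[:, None]  # row-stochastic D^-1 A
    Pk = np.eye(len(A))
    feats = []
    for _ in range(steps):
        Pk = Pk @ P
        feats.append(np.diag(Pk))
    return np.stack(feats, axis=1)  # (n_nodes, steps)


# Toy molecular graph: 6-membered ring with one substituent atom attached to node 0.
A = np.zeros((7, 7))
for i, j in [(0, 1), (1, 2), (2, 3), (3, 4), (4, 5), (5, 0), (0, 6)]:
    A[i, j] = A[j, i] = 1.0

lap = laplacian_pe(A, k=3)
rw = random_walk_pe(A, steps=4)
print(lap.shape, rw.shape)  # (7, 3) (7, 4)
print(rw[6])  # substituent atom: 0 after 1 step, 1/3 after 2 steps (via degree-3 node 0)
```

The resulting per-node vectors are typically concatenated to, or projected and added to, the atom features before the first Transformer layer.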

Experimental Workflows and Methodologies

Standardized Protocol for Molecular Property Prediction

Table: Essential Research Reagents for Hybrid AI Experiments in Drug Discovery

| Reagent/Resource | Function/Purpose | Example Sources/Tools |

|---|---|---|

| Molecular Graph Datasets | Provides structured representations of compounds for GNN processing | NURA Database [27], ChEMBL [27], BindingDB [27] |

| Protein Sequence Databases | Supplies sequential data for Transformer-based protein modeling | Protein Data Bank, UniProt |

| Benchmarking Platforms | Enables standardized model evaluation and comparison | MoleculeNet [28], OGB (Open Graph Benchmark) |

| Nuclear Receptor Activity Data | Specialized datasets for few-shot learning applications | NURA Database (11 NR targets) [27] |

| 3D Molecular Conformation Data | Enhances spatial relationship modeling in geometric graphs | Public crystal structure databases, conformation generation tools |

Graph Title: Hybrid Architecture for Molecular Analysis

Meta-Learning Protocol for Few-Shot Drug Discovery

Graph Title: Few-Shot Learning Workflow

Advanced Implementation Considerations

For researchers implementing these architectures, several advanced considerations impact real-world performance:

Scalability Optimization: For large-scale graphs, implement graph sampling techniques (e.g., neighborhood sampling, graph partitioning) to manage memory requirements while maintaining model performance [31].

Explainability Integration: Incorporate attention visualization tools to interpret which molecular substructures and sequence regions most influence predictions, crucial for building trust in model outputs for drug development decisions [34].

Geometric Graph Handling: For 3D molecular data, extend standard Graph Transformers with rotational and translational invariance properties to properly handle molecular conformations and spatial relationships [28].

Troubleshooting Guides and FAQs

This technical support center addresses common challenges researchers face when working with multimodal data fusion, a core component of navigating complex multimodal parameter landscapes. The guides below provide solutions for specific experimental issues.

Data Integration and Preprocessing

FAQ: How do we handle the pervasive heterogeneity and misalignment between genomic, imaging, and clinical data streams?

- Challenge: Data from different sources (e.g., MRI machines, RNA sequencers, EHR systems) have different formats, scales, dimensions, and temporal resolutions, making direct integration impossible.

- Solution: Implement a modular preprocessing pipeline where each modality is processed independently before fusion.

- Imaging Data: Use Convolutional Neural Networks (CNNs) like VGGNet or ResNet, or Vision Transformers (ViTs), to extract high-level features from medical images (MRI, CT, histopathology slides). These features, known as Imaging-Derived Phenotypes (IDPs), provide quantitative, structured descriptors of anatomy and function [35] [36].

- Genomic Data: Process genetic variations, such as Single Nucleotide Polymorphisms (SNPs), from sequencing data. Dimensionality reduction techniques or dedicated deep neural networks can be used to extract meaningful features from high-dimensional genomic data [37] [35].

- Clinical Data: Structure and normalize data from Electronic Health Records (EHRs), including patient history, lab results, and medications. Models like BERT can be effective for processing clinical notes [37] [38].

- Troubleshooting Tip: If model performance is poor, check the alignment of your patient samples across modalities. Incomplete datasets or mislabeled samples are a common source of error. Employ rigorous data management and version control.
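A minimal sketch of the modular idea, assuming each modality has already been reduced to a per-patient feature matrix (for example, CNN-derived IDPs or selected SNP dosages) keyed by a patient ID. Each modality is standardized independently, and only patients present in every modality are kept, all in the same row order. This is the alignment check the troubleshooting tip calls for. The function names are illustrative, and in a real study the scaling statistics should be estimated on the training split only.

```python
from typing import Dict, List, Tuple
import numpy as np

def standardize(X: np.ndarray) -> np.ndarray:
    """Z-score each column; constant columns are only centered."""
    sd = X.std(axis=0)
    sd[sd == 0] = 1.0
    return (X - X.mean(axis=0)) / sd

def align_modalities(
    modalities: Dict[str, Tuple[List[str], np.ndarray]],
) -> Tuple[List[str], Dict[str, np.ndarray]]:
    """Keep only patients present in every modality, in one shared row order."""
    for name, (ids, X) in modalities.items():
        if len(set(ids)) != len(ids):
            raise ValueError(f"duplicate patient IDs in modality {name!r}")
        if len(ids) != X.shape[0]:
            raise ValueError(f"ID/row count mismatch in modality {name!r}")
    shared = sorted(set.intersection(*(set(ids) for ids, _ in modalities.values())))
    aligned = {}
    for name, (ids, X) in modalities.items():
        row = {pid: i for i, pid in enumerate(ids)}
        aligned[name] = X[[row[pid] for pid in shared]]
    return shared, aligned

# Hypothetical inputs: row orders and patient sets differ between modalities.
imaging = (["p3", "p1", "p2"], np.array([[3.0, 30.0], [1.0, 10.0], [2.0, 20.0]]))
genomic = (["p1", "p2", "p4"], np.array([[0.1], [0.2], [0.4]]))

patients, aligned = align_modalities({"imaging": imaging, "genomic": genomic})
fused_input = np.hstack([standardize(X) for X in aligned.values()])
print(patients)           # ['p1', 'p2']
print(fused_input.shape)  # (2, 3)
```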

FAQ: What is the best strategy to fuse these preprocessed features from different modalities?

- Challenge: Choosing a fusion strategy that effectively leverages the complementary information in each data type without introducing excessive complexity or overfitting.

- Solution: The choice of fusion strategy depends on the research question, data availability, and the desired level of interaction between modalities. The main strategies and their trade-offs are summarized in Table 1.

| Fusion Strategy | Description | Advantages | Disadvantages | Best-Suited Application |
|---|---|---|---|---|
| Early Fusion | Concatenating raw or low-level features from all modalities into a single input vector [36]. | Allows the model to learn complex, cross-modal interactions from the start. | Highly susceptible to overfitting due to the curse of dimensionality; requires modalities to be well-aligned [36]. | Exploring novel, low-level correlations between, e.g., pixel intensity and specific genetic markers. |
| Late Fusion | Training separate models on each modality and combining their final predictions (e.g., by averaging or voting) [36]. | Robust to missing data; allows use of modality-specific model architectures. | Cannot capture intricate, intermediate cross-modal relationships. | Clinical settings where modularity and interpretability are valued, or when data streams are asynchronous. |
| Intermediate Fusion | Integrating modalities at intermediate layers of a deep learning model, often using attention mechanisms or transformers [38] [36]. | Offers a balance, enabling rich cross-modal representation learning while being more robust than early fusion. | Model architecture and training become more complex. | Most modern applications seeking to maximize predictive performance, such as tumor subtype classification [37]. |
| Hybrid Fusion | Combining elements of early, late, and intermediate fusion within a single framework [36]. | Highly flexible; can capture interactions at multiple levels. | Highest complexity; can be difficult to design and train. | Cutting-edge research aiming to extract the maximum possible information from all available data. |
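The contrast between early and late fusion fits in a few lines. The sketch below uses scikit-learn logistic regression on synthetic "imaging" and "genomic" features (random data, not a real cohort) and averages predicted probabilities for late fusion. Averaging only over the modalities that are available shows why late fusion tolerates missing data. Intermediate and hybrid fusion need a deep learning framework and are not shown here.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression

def early_fusion_fit(modalities, y):
    """Early fusion: concatenate all modality features into one input vector."""
    return LogisticRegression(max_iter=1000).fit(np.hstack(modalities), y)

def late_fusion_fit(modalities, y):
    """Late fusion: train one independent model per modality."""
    return [LogisticRegression(max_iter=1000).fit(X, y) for X in modalities]

def late_fusion_predict(models, modalities):
    """Average class-1 probabilities over the modalities that are present (not None)."""
    probs = [m.predict_proba(X)[:, 1] for m, X in zip(models, modalities) if X is not None]
    if not probs:
        raise ValueError("at least one modality must be present")
    return np.mean(probs, axis=0)

rng = np.random.default_rng(0)
n = 200
y = rng.integers(0, 2, size=n)
X_img = rng.normal(size=(n, 5)) + 0.8 * y[:, None]  # synthetic "imaging" features
X_gen = rng.normal(size=(n, 3)) + 0.5 * y[:, None]  # synthetic "genomic" features

early = early_fusion_fit([X_img, X_gen], y)
p_early = early.predict_proba(np.hstack([X_img, X_gen]))[:, 1]

late = late_fusion_fit([X_img, X_gen], y)
p_late = late_fusion_predict(late, [X_img, X_gen])
p_late_img_only = late_fusion_predict(late, [X_img, None])  # genomic data missing
```

The early-fusion model cannot score a patient whose genomic block is missing, whereas the late-fusion ensemble simply falls back to the imaging model.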

The following diagram illustrates the workflow and decision process for selecting a fusion technique.

Model Performance and Optimization

FAQ: Our multimodal model is performing well on training data but generalizing poorly to the validation set. What could be the cause?

- Challenge: Overfitting is common in multimodal models because they often have many parameters relative to the small sample sizes typical of biomedical datasets.

- Solution:

- Data Augmentation: Systematically augment your training data. For images, use rotations, flips, and contrast adjustments. For genomic data, consider adding noise or using generative models to create synthetic samples.

- Regularization: Implement strong regularization techniques such as L1/L2 regularization, Dropout, and Early Stopping.

- Cross-Validation: Use nested cross-validation to more reliably estimate the model's performance on unseen data and tune hyperparameters without optimism bias.

- Simplify the Model: Reduce model complexity or switch to a simpler fusion strategy (e.g., from hybrid to intermediate fusion) to lower the risk of overfitting.

- Troubleshooting Tip: Perform an ablation study. Systematically remove one modality at a time to understand its contribution. This helps identify if poor generalization is due to one noisy modality or the fusion process itself [5].
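The regularization, nested cross-validation, and ablation advice above can be combined in one small experiment. The sketch below assumes tabular per-modality feature matrices and uses scikit-learn: an inner loop tunes the L2 strength `C` of a logistic regression, an outer loop estimates AUC, and each modality is dropped in turn. The data are synthetic, with an informative "imaging" block and a pure-noise "genomic" block, so the expected pattern is that removing imaging hurts while removing genomics does not.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GridSearchCV, StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

def nested_cv_auc(X, y, seed=0):
    """Outer 5-fold CV estimates AUC; inner 3-fold CV tunes the L2 strength C."""
    inner = StratifiedKFold(n_splits=3, shuffle=True, random_state=seed)
    outer = StratifiedKFold(n_splits=5, shuffle=True, random_state=seed)
    pipe = make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))
    search = GridSearchCV(pipe, {"logisticregression__C": [0.01, 0.1, 1.0, 10.0]},
                          cv=inner, scoring="roc_auc")
    return float(cross_val_score(search, X, y, cv=outer, scoring="roc_auc").mean())

def ablation(modalities, y):
    """AUC with all modalities, then with each modality left out in turn."""
    results = {"all": nested_cv_auc(np.hstack(list(modalities.values())), y)}
    for left_out in modalities:
        kept = [X for name, X in modalities.items() if name != left_out]
        results[f"without {left_out}"] = nested_cv_auc(np.hstack(kept), y)
    return results

rng = np.random.default_rng(0)
n = 200
y = rng.integers(0, 2, size=n)
modalities = {
    "imaging": rng.normal(size=(n, 5)) + 0.8 * y[:, None],  # informative block
    "genomic": rng.normal(size=(n, 20)),                     # pure-noise block
}
results = ablation(modalities, y)
print(results)
```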

FAQ: We achieved a performance improvement with multimodal fusion, but clinicians do not trust the "black box" model. How can we improve interpretability?

- Challenge: The complexity of multimodal AI, particularly with deep learning and intermediate fusion, can obscure the reasoning behind predictions, hindering clinical adoption.

- Solution: Integrate Explainable AI (XAI) techniques into your workflow.

- Attention Mechanisms: Use models that generate attention maps, highlighting which regions of an image or which genomic loci were most influential in making a prediction. For instance, in oncology, this can show a link between a specific tumor microenvironment feature on a histology slide and a genetic mutation [37] [36].

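- SHAP/Saliency Maps: Apply post-hoc methods such as SHapley Additive exPlanations (SHAP) to quantify the contribution of each input feature to the final prediction, or gradient-based saliency maps to highlight the input regions to which a prediction is most sensitive.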

- Generate Biological Hypotheses: Frame model interpretations in the context of known biological pathways. For example, if your model associates a specific imaging phenotype with a set of genes, investigate if those genes are part of a known signaling pathway relevant to the disease [35].

- Troubleshooting Tip: Start with simpler, more interpretable models like logistic regression with late fusion to establish a baseline of trust and understanding before moving to more complex architectures.
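For the interpretable baseline suggested above, SHAP values have a closed form: for a linear model f(x) = w·x + b with features treated as independent, the SHAP value of feature j is w_j (x_j - E[x_j]). The sketch below applies this to a logistic regression on the log-odds scale and sums the values per modality. The synthetic data and the column-to-modality mapping are assumptions for illustration. For non-linear models, the `shap` package provides general-purpose explainers.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression

def linear_shap(model: LogisticRegression, X_background: np.ndarray, x: np.ndarray) -> np.ndarray:
    """SHAP values (log-odds scale) of one sample for a binary linear model,
    assuming independent features: phi_j = w_j * (x_j - mean_j)."""
    return model.coef_[0] * (x - X_background.mean(axis=0))

rng = np.random.default_rng(0)
n = 300
y = rng.integers(0, 2, size=n)
X = np.hstack([
    rng.normal(size=(n, 4)) + 1.0 * y[:, None],  # columns 0-3: hypothetical imaging features
    rng.normal(size=(n, 3)),                      # columns 4-6: hypothetical genomic features (noise)
])
groups = {"imaging": slice(0, 4), "genomic": slice(4, 7)}

model = LogisticRegression(max_iter=1000).fit(X, y)
phi = linear_shap(model, X, X[0])

# Per-modality contribution to this patient's log-odds, relative to the model's average output.
per_modality = {name: float(phi[cols].sum()) for name, cols in groups.items()}
print(per_modality)
```

Because the values sum exactly to the difference between this patient's log-odds and the average log-odds, clinicians can read the per-modality totals as an additive breakdown of the prediction.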

Experimental Protocols and Workflows

FAQ: Can you provide a detailed protocol for a foundational experiment in multimodal fusion, such as linking imaging phenotypes to genomics?

- Experiment: A Data-Driven Imaging Genomics Workflow for Tumor Subtype Classification [35] [36].

- Objective: To identify associations between imaging-derived phenotypes (IDPs) and genetic variations (e.g., SNPs) to build a classifier for cancer subtypes.

- Detailed Methodology:

- Cohort Selection: Identify a patient cohort with matched multimodal imaging (e.g., MRI), genomic sequencing data, and confirmed clinical diagnoses (e.g., from TCGA/TCIA).

- IDP Extraction:

- Segment the region of interest (e.g., tumor) from the medical images.

- Extract quantitative features (e.g., texture, shape, intensity) using a pre-defined radiomics software package or a pre-trained CNN to generate a deep feature vector.

- Genomic Feature Processing:

- Perform quality control on the genomic data.

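- Conduct a Genome-Wide Association Study (GWAS) to filter SNPs associated with the disease of interest, reducing dimensionality. Run this filtering on the training data only (or on an independent cohort) so that information from the held-out test set does not leak into feature selection.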

- Data Integration and Fusion:

- Correlation Analysis: Perform canonical correlation analysis (CCA) to find linear relationships between the IDP matrix and the SNP matrix.

- Machine Learning Model:

- Split the data into training, validation, and test sets.

- For a classification task, use an intermediate fusion model. For example, create separate branches for IDPs and SNPs, process them with fully connected layers, and fuse the outputs using a concatenation layer or an attention-based fusion module.

- Train the model using the cross-entropy loss function and an Adam optimizer.

- Validation:

- Evaluate the model on the held-out test set using Area Under the Curve (AUC), accuracy, and F1-score.

- Perform statistical validation (e.g., permutation testing) to confirm the significance of identified imaging-genomic associations.

The workflow for this foundational experiment is outlined below.

The Scientist's Toolkit: Research Reagent Solutions

This table lists essential computational "reagents" and tools for constructing and analyzing multimodal fusion models.

| Item Name | Function / Purpose | Example Use Case in Multimodal Research |
|---|---|---|
| Convolutional Neural Networks (CNNs) | Automated feature extraction from medical images. | Generating Imaging-Derived Phenotypes (IDPs) from MRI or histopathology slides for integration with genomic data [38] [36]. |
| Vision Transformers (ViTs) | Image feature extraction using self-attention mechanisms, capturing global context. | An alternative to CNNs for creating more contextual image representations in multimodal Large Language Models (MLLMs) [38]. |
| BERT & Large Language Models (LLMs) | Processing and understanding complex textual data, such as clinical notes and reports. | Structuring unstructured EHR data to create a clinical modality for fusion with imaging and genomics [38] [39]. |
| Canonical Correlation Analysis (CCA) | Identifying linear relationships between two sets of variables from different modalities. | A foundational statistical method for discovering correlations between imaging features and genetic markers [35]. |
| Attention Mechanisms / Transformers | Enabling dynamic, weighted integration of features from different modalities (Intermediate Fusion). | Allowing a model to focus on the most relevant image regions and genomic signals when making a prediction, improving performance and interpretability [38] [36]. |
| Multimodal Large Language Models (MLLMs) | General-purpose models pre-trained to understand and reason over multiple data types (image, text). | Serving as a foundational backbone for building integrated diagnostic systems that can process patient data from multiple sources [40]. |
| Vector Databases (e.g., Milvus) | Efficient storage, indexing, and retrieval of high-dimensional vector embeddings. | Managing the embeddings generated from multimodal data to power efficient similarity search and retrieval-augmented generation (RAG) systems [41]. |
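At its core, the similarity search a vector database performs is a nearest-neighbor lookup over embeddings. The brute-force NumPy sketch below shows that operation with cosine similarity. It is not the Milvus API: production vector databases replace the exhaustive scan with approximate nearest-neighbor indexes so they can scale to millions of vectors. The embedding matrix is random placeholder data.

```python
import numpy as np

def cosine_top_k(index: np.ndarray, query: np.ndarray, k: int):
    """Return the row indices and cosine similarities of the k rows closest to `query`."""
    index_unit = index / np.linalg.norm(index, axis=1, keepdims=True)
    query_unit = query / np.linalg.norm(query)
    sims = index_unit @ query_unit
    top = np.argsort(-sims)[:k]
    return top, sims[top]

rng = np.random.default_rng(0)
embeddings = rng.normal(size=(1000, 64))  # placeholder multimodal embeddings, one row per record
query = embeddings[42] + 0.01 * rng.normal(size=64)  # a slightly perturbed copy of record 42
ids, scores = cosine_top_k(embeddings, query, k=3)
print(ids, scores.round(3))
```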

Accessing New Biological Targets and Designing Novel Compounds

Troubleshooting Guides

FAQ 1: Why is my multimodal AI model for Drug-Target Interaction (DTI) prediction failing to generalize to novel compound classes?

| Potential Cause | Symptoms | Diagnostic Steps | Solution |
|---|---|---|---|
| Data Imbalance & Bias | High accuracy on known drugs/targets, poor performance on new candidates. | Analyze dataset composition for over-represented target families. Perform ablation studies by systematically removing data subsets [42]. | Apply curriculum learning strategies (e.g., ACMO) to prioritize reliable data first. Use data augmentation techniques for under-represented classes [42]. |
| Ineffective Multimodal Fusion | Model performance is no better than using a single data modality. | Conduct ablation studies to test model performance with individual modalities (e.g., structure vs. gene expression alone) [42]. | Implement hierarchical attention-based fusion to dynamically weight the importance of different data types (e.g., genomic, proteomic, structural) [42]. |
| Inadequate Representation Learning | Model fails to capture key biochemical features for binding affinity. | Evaluate pretrained embeddings (e.g., ChemBERTa, ProtBERT) on benchmark tasks. Check for alignment between different modality spaces [42]. | Employ cross-modal contrastive learning to align representations from different data types into a unified semantic space [42]. |
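The contrastive-alignment fix in the last row can be illustrated with a symmetric InfoNCE loss, one common formulation (used, for example, in CLIP-style training) in which matched drug/target embedding pairs are pulled together and all other pairs in the batch act as negatives. The cited work may use a different loss. This NumPy sketch only evaluates the objective on placeholder embeddings. In practice the embeddings would come from encoders such as ChemBERTa and ProtBERT and be optimized with an autodiff framework. The temperature of 0.1 is an assumed value.

```python
import numpy as np

def _l2_normalize(Z: np.ndarray) -> np.ndarray:
    return Z / np.linalg.norm(Z, axis=1, keepdims=True)

def _log_softmax(logits: np.ndarray) -> np.ndarray:
    shifted = logits - logits.max(axis=1, keepdims=True)
    return shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))

def info_nce(z_a: np.ndarray, z_b: np.ndarray, temperature: float = 0.1) -> float:
    """Symmetric InfoNCE: row i of z_a and row i of z_b form a positive pair;
    every other row in the batch serves as a negative."""
    a, b = _l2_normalize(z_a), _l2_normalize(z_b)
    logits = a @ b.T / temperature          # cosine similarities scaled by temperature
    idx = np.arange(len(a))
    loss_ab = -_log_softmax(logits)[idx, idx].mean()    # drug -> target direction
    loss_ba = -_log_softmax(logits.T)[idx, idx].mean()  # target -> drug direction
    return float((loss_ab + loss_ba) / 2)

rng = np.random.default_rng(0)
drug_emb = rng.normal(size=(8, 16))                          # placeholder drug embeddings
aligned_target = drug_emb + 0.05 * rng.normal(size=(8, 16))  # well-aligned target embeddings
random_target = rng.normal(size=(8, 16))                     # unaligned target embeddings

loss_aligned = info_nce(drug_emb, aligned_target)
loss_random = info_nce(drug_emb, random_target)
print(f"aligned: {loss_aligned:.3f}  random: {loss_random:.3f}")
```

A low loss means that each drug embedding is closer to its own target than to any other target in the batch, which is the "unified semantic space" the table refers to.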

FAQ 2: How can I troubleshoot a target-focused compound library that yields low hit rates in phenotypic screening?

| Potential Cause | Symptoms | Diagnostic Steps | Solution |
|---|---|---|---|
| Non-optimal Library Design Strategy | Low hit rates despite high predicted binding affinity in silico. | Review design basis: target-structure vs. chemogenomic vs. ligand-based. Cross-validate with a known active reference compound [43]. | For kinases, use a panel of kinase structures (active/inactive conformations) for docking. For novel targets, shift to a ligand-based scaffold hopping approach [43]. |
| Poor Scaffold Selection | No initial hits, or hits with no tractable structure-activity relationship (SAR). | Analyze if the scaffold's core structure can make key interactions (e.g., hydrogen bonds with a kinase's hinge region) [43]. | Select scaffolds with proven "privileged" structures for the target family and ensure synthetic feasibility for rapid analog synthesis [43]. |