Multi-Omics Integration for Biomarker Discovery: A Comprehensive Guide for Researchers and Drug Developers

Abstract

This article provides a comprehensive exploration of multi-omics integration for biomarker discovery, tailored for researchers, scientists, and drug development professionals. It covers the foundational principles of major omics layers—genomics, transcriptomics, proteomics, and metabolomics—and their synergistic power in revealing complex disease mechanisms. The content delves into advanced computational methodologies for data integration, including machine learning and network-based approaches, while offering practical solutions for overcoming common challenges like data heterogeneity and batch effects. Furthermore, it examines the critical pathway for validating multi-omics biomarkers and their transformative applications in precision oncology, patient stratification, and accelerating therapeutic development, synthesizing current trends and future directions in the field.

The Foundation of Multi-Omics: From Single Layers to a Holistic Biological View

The advent of high-throughput technologies has revolutionized biomedical research, enabling the comprehensive study of biological systems at multiple molecular levels. The term "omics" refers to fields of study in biology that end with the suffix -omics, such as genomics, transcriptomics, proteomics, and metabolomics, with the related "-ome" addressing the collective objects of study (e.g., genome, transcriptome) [1]. These technologies provide global insights into biological processes and hold great promise in elucidating the myriad molecular interactions associated with human diseases [2]. In the context of biomarker discovery, multi-omics integration provides a powerful framework for identifying robust, clinically actionable biomarkers by offering a multidimensional perspective that captures the complex interplay between different molecular layers [3] [4]. This integrated approach is particularly valuable for addressing multifactorial diseases such as cancer, cardiovascular disorders, and neurodegenerative conditions, where single-omics approaches often provide incomplete pathological pictures [2] [5].

The fundamental premise behind multi-omics biomarker discovery is that each molecular layer provides complementary information. Genomics reveals disease predisposition and potential therapeutic targets, transcriptomics captures dynamic gene regulation, proteomics reflects functional effector molecules and drug targets, and metabolomics provides the most proximal readout of physiological activity and pharmacological responses [3] [1]. Integrating these data types helps researchers move beyond isolated single-layer correlations toward mechanistic hypotheses that link molecular changes across layers. This increases the probability of identifying biomarkers with high diagnostic, prognostic, and predictive value [4] [6]. Technological advances and the declining cost of high-throughput data generation have also made multi-omics approaches increasingly accessible, turning them from specialized methodologies into central tools of precision medicine initiatives [2] [7].

Core Omics Technologies: Definitions and Methodologies

Genomics

Genomics is the systematic study of an organism's complete set of DNA, including genes, non-coding regions, and structural elements [1]. The primary goal of genomics in biomarker research is to identify genetic variations associated with disease susceptibility, progression, and treatment response [1]. Single nucleotide polymorphisms (SNPs) are the most commonly used genetic markers. In genome-wide association studies (GWAS), array-based genotyping platforms can assess hundreds of thousands to millions of SNPs per assay [1]. Sequencing technologies, including whole exome sequencing (WES) and whole genome sequencing (WGS), enable identification of copy number variations (CNVs), point mutations, and structural variants [3]. Clinically, genomics has yielded biomarkers such as tumor mutational burden (TMB). In 2020 the FDA approved pembrolizumab for previously treated solid tumors with high TMB (at least 10 mutations per megabase), establishing TMB as a tumor-agnostic predictive biomarker for immunotherapy response [3].
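
At its core, TMB is a ratio. It is the number of qualifying somatic mutations divided by the size of the sequenced genomic territory in megabases. The sketch below illustrates that arithmetic under stated assumptions. The variant record, the function names, and the rule of counting only coding non-synonymous variants are all hypothetical. Commercial assays differ in which variant classes they count and in how much territory they sequence, so TMB values are not directly interchangeable across assays. The default cutoff of 10 mutations per megabase mirrors the threshold used in the pembrolizumab approval.

```python
from dataclasses import dataclass


@dataclass
class SomaticVariant:
    # Hypothetical minimal record for one somatic variant call
    gene: str
    in_coding_region: bool
    consequence: str  # e.g. "missense", "nonsense", "synonymous", "intronic"


def tumor_mutational_burden(variants, territory_mb):
    """Return mutations per megabase of sequenced territory.

    Assumption: only coding, non-synonymous variants are counted;
    real assays define their own counting rules.
    """
    if territory_mb <= 0:
        raise ValueError("territory_mb must be positive")
    counted = [
        v for v in variants
        if v.in_coding_region and v.consequence != "synonymous"
    ]
    return len(counted) / territory_mb


def is_tmb_high(tmb, cutoff=10.0):
    # Cutoff in mutations per megabase; assay-specific in practice
    return tmb >= cutoff


# Illustrative input: 12 missense, 5 synonymous, and 3 intronic calls
calls = (
    [SomaticVariant("GENE_X", True, "missense")] * 12
    + [SomaticVariant("GENE_Y", True, "synonymous")] * 5
    + [SomaticVariant("GENE_Z", False, "intronic")] * 3
)
tmb = tumor_mutational_burden(calls, territory_mb=1.0)
print(tmb, is_tmb_high(tmb))  # 12.0 True
```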

Transcriptomics

Transcriptomics involves the global analysis of RNA expression patterns within a biological sample. It reveals which genes are actively expressed under specific physiological or pathological conditions [1]. Unlike the largely static genome, the transcriptome varies over time, between cell types, and in response to environmental changes, making it particularly valuable for understanding disease mechanisms [1]. Methodologically, transcriptomics relies primarily on microarrays and RNA sequencing (RNA-Seq). RNA-Seq offers superior sensitivity, a wider dynamic range, and the ability to detect novel transcripts [3]. These technologies enable comprehensive profiling of diverse RNA species, including messenger RNAs (mRNAs), long noncoding RNAs (lncRNAs), microRNAs (miRNAs), and small nuclear RNAs (snRNAs) [3]. Clinically, transcriptomics has yielded successful biomarker panels such as the Oncotype DX (21-gene) and MammaPrint (70-gene) assays, which guide adjuvant chemotherapy decisions in breast cancer [3].

Proteomics

Proteomics encompasses the large-scale study of proteins, including their expression levels, post-translational modifications, interactions, and localization [1]. The proteome is highly dynamic and reflects the functional state of a cell or tissue, providing critical information that cannot be inferred from genomic or transcriptomic data alone due to post-translational regulation and protein turnover [1]. Mass spectrometry (MS) represents the cornerstone technology in modern proteomics, with liquid chromatography-mass spectrometry (LC-MS) enabling high-throughput protein identification and quantification [3] [5]. Reverse-phase protein arrays and antibody-based methods also contribute to proteomic analyses, particularly for validation studies [3]. Proteomics has identified clinically relevant biomarkers such as phosphorylated signaling proteins that reflect pathway activation and protein cleavage products indicative of specific disease processes [3] [6]. The Clinical Proteomic Tumor Analysis Consortium (CPTAC) has demonstrated that proteomics can identify functional subtypes and druggable vulnerabilities missed by genomics alone [3].

Metabolomics

Metabolomics focuses on the comprehensive analysis of small-molecule metabolites (typically <1 kDa) within a biological system, providing the most proximal readout of physiological activity [1]. The metabolome includes metabolic intermediates, hormones, signaling molecules, and secondary metabolites that reflect the functional outcome of genomic, transcriptomic, and proteomic regulation [1]. The main analytical platforms are nuclear magnetic resonance (NMR) spectroscopy and mass spectrometry (MS), usually coupled to liquid or gas chromatography (LC-MS and GC-MS) [3] [4]. A classic example of a metabolomics-derived biomarker is 2-hydroxyglutarate (2-HG). This oncometabolite accumulates in IDH1/2-mutant gliomas and serves as both a diagnostic and a mechanistic biomarker [3]. More recently, multi-metabolite panels have been reported to outperform conventional biomarkers in diagnostic accuracy for several cancers [3].

Table 1: Comparative Analysis of Major Omics Technologies

| Omics Field | Analytical Target | Primary Technologies | Key Biomarker Applications | Technical Considerations |
|---|---|---|---|---|
| Genomics | DNA sequence and variation | WGS, WES, Microarrays, Genotyping | Disease susceptibility, Tumor mutational burden, Pharmacogenomics | Static information, Variant interpretation challenges |
| Transcriptomics | RNA expression and splicing | RNA-Seq, Microarrays | Gene expression signatures, Pathway activation, Alternative splicing | RNA stability, Temporal dynamics, Post-transcriptional regulation |
| Proteomics | Protein expression and modification | LC-MS/MS, RPPA, Antibody arrays | Signaling pathway activity, Protein cleavage products, Drug targets | PTM complexity, Dynamic range limitations, Antibody specificity |
| Metabolomics | Small molecule metabolites | LC-MS, GC-MS, NMR | Metabolic pathway disturbances, Drug response, Diagnostic panels | Metabolic flux, Sample stability, Comprehensive coverage challenging |

Experimental Workflows and Methodological Details

Genomic and Transcriptomic Workflows

Genomic analysis typically begins with DNA extraction from tissues, cells, or bodily fluids, followed by quality control assessment. For sequencing-based approaches, libraries are prepared through fragmentation, adapter ligation, and amplification steps [3]. Whole genome sequencing covers the entire genome, while whole exome sequencing targets only protein-coding regions, offering a cost-effective alternative for variant discovery [3]. For transcriptomic analysis, RNA extraction is the critical first step and requires careful handling to preserve RNA integrity [1]. After extraction, reverse transcription converts RNA to complementary DNA (cDNA), which is then used for library preparation and sequencing [1]. The resulting reads are aligned (or pseudo-aligned) to a reference genome or transcriptome. Reads assigned to each gene or transcript are then counted, and these counts are normalized for sequencing depth to give expression values. Single-cell RNA sequencing is a major technological advance: it profiles the transcriptome at individual-cell resolution and reveals cellular heterogeneity within tissues [3].
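
Raw gene-level counts scale with sequencing depth, so they are usually normalized before samples are compared. The sketch below shows one common option: counts per million (CPM) followed by a log2 transform with a pseudocount. The input format and function name are illustrative assumptions. CPM corrects only for library size, not gene length; TPM handles gene length. Differential expression tools such as DESeq2 and edgeR also apply their own normalization schemes (median-of-ratios and TMM, respectively).

```python
import math


def log_cpm(counts, pseudocount=1.0):
    """Convert one sample's raw gene counts to log2(CPM + pseudocount).

    counts: dict mapping gene identifier -> raw read count (hypothetical format).
    """
    library_size = sum(counts.values())
    if library_size == 0:
        raise ValueError("sample has no assigned reads")
    return {
        gene: math.log2(count / library_size * 1e6 + pseudocount)
        for gene, count in counts.items()
    }


# Two samples sequenced at different depths but with identical composition
shallow = {"GENE_A": 500, "GENE_B": 300, "GENE_C": 200}
deep = {gene: 4 * n for gene, n in shallow.items()}
print(log_cpm(shallow) == log_cpm(deep))  # True: depth differences are removed
```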

Proteomic and Metabolomic Workflows

Proteomic workflows typically begin with protein extraction and digestion into peptides, followed by separation using liquid chromatography [1] [5]. The eluted peptides are then ionized and analyzed by mass spectrometry, generating spectra that are matched to theoretical spectra from protein databases for identification [1]. Quantitative proteomics employs either label-based (e.g., TMT, SILAC) or label-free methods to compare protein abundance across samples [5]. Metabolomic studies require careful sample collection and preparation to preserve metabolic profiles, often involving immediate freezing or chemical stabilization [1]. Following extraction, metabolites are separated by gas or liquid chromatography and detected by mass spectrometry [4]. NMR spectroscopy provides an alternative method that requires less sample preparation and enables structural elucidation of unknown metabolites [4]. Both proteomic and metabolomic data analysis involve sophisticated computational pipelines for peak detection, alignment, normalization, and compound identification [5].
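
For label-free data, one widely used way to carry out the normalization step is to log2-transform intensities and then shift each sample so that all samples share the same median. This assumes that most proteins do not change between samples. The sketch below shows the idea on a hypothetical nested-dictionary input. It is not the method of any particular pipeline, and dedicated proteomics software offers more elaborate options.

```python
import math
import statistics


def median_normalize(intensities):
    """Log2-transform and median-center label-free intensities.

    intensities: dict sample -> dict protein -> raw intensity, with None or
    non-positive values treated as missing (hypothetical format).
    """
    logged = {
        sample: {p: math.log2(v) for p, v in proteins.items() if v is not None and v > 0}
        for sample, proteins in intensities.items()
    }
    medians = {s: statistics.median(list(vals.values())) for s, vals in logged.items()}
    target = statistics.mean(list(medians.values()))  # common reference median
    return {
        s: {p: v - medians[s] + target for p, v in vals.items()}
        for s, vals in logged.items()
    }


# Sample s2 was loaded at twice the amount of s1; normalization removes the offset
raw = {
    "s1": {"P1": 100.0, "P2": 200.0, "P3": 400.0, "P4": None},
    "s2": {"P1": 200.0, "P2": 400.0, "P3": 800.0, "P4": 0.0},
}
normalized = median_normalize(raw)
```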

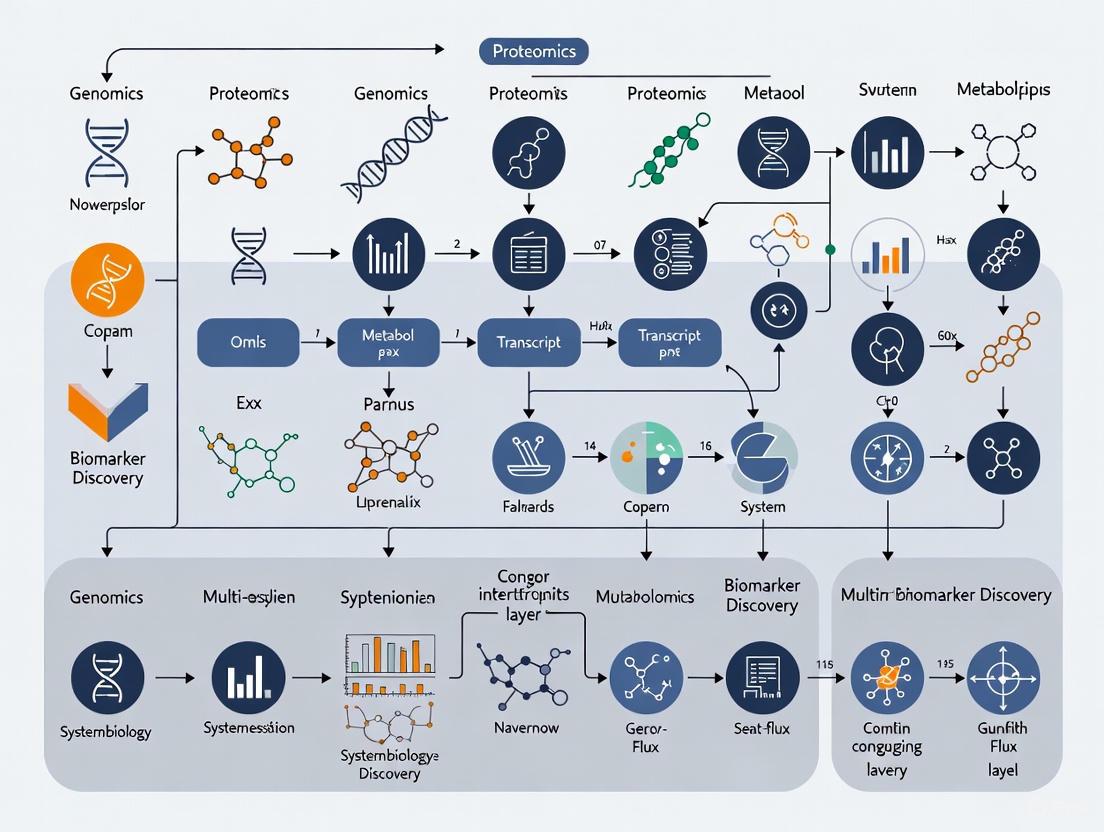

Diagram Title: Multi-Omics Experimental Workflow

Multi-Omics Integration Strategies and Computational Approaches

Data Integration Frameworks

The integration of multi-omics data presents significant computational challenges due to the high dimensionality, heterogeneity, and noise inherent in each data type [2]. Integration strategies can be broadly categorized into horizontal and vertical approaches [3]. Horizontal integration combines the same type of omics data from multiple studies or cohorts to increase statistical power, while vertical integration combines different types of omics data from the same samples to obtain a comprehensive view of biological systems [3]. Network-based approaches have gained prominence as they provide a holistic view of relationships among biological components in health and disease, revealing key molecular interactions and biomarkers that might be missed in single-omics analyses [2]. Tools such as InCroMAP facilitate integrated enrichment analysis and pathway-centered visualization of multi-omics data, enabling researchers to identify coordinated changes across molecular layers [8].
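
The two strategies differ mainly in which axis is shared. In the hypothetical sketch below, each dataset is a dictionary that maps sample IDs to feature values. Horizontal integration pools samples from several cohorts over the features they have in common. Vertical integration joins different omics layers on the samples they have in common and prefixes each feature with its layer name. Real pipelines would also correct for cohort or batch effects after pooling, which this sketch omits. All names are illustrative.

```python
def horizontal_integration(cohorts):
    """Same omics layer measured in several cohorts: pool samples on shared features.

    cohorts: list of dicts, each mapping sample ID -> dict feature -> value.
    """
    shared = set.intersection(
        *(set(features) for cohort in cohorts for features in cohort.values())
    )
    pooled = {}
    for cohort in cohorts:
        for sample, features in cohort.items():
            if sample in pooled:
                raise ValueError(f"duplicate sample ID across cohorts: {sample}")
            pooled[sample] = {f: features[f] for f in sorted(shared)}
    return pooled


def vertical_integration(layers):
    """Different omics layers measured on the same samples: join on sample ID.

    layers: dict layer name -> dict sample ID -> dict feature -> value.
    """
    shared_samples = set.intersection(*(set(data) for data in layers.values()))
    joined = {}
    for sample in sorted(shared_samples):
        row = {}
        for layer, data in layers.items():
            for feature, value in data[sample].items():
                row[f"{layer}:{feature}"] = value  # keep layer provenance
        joined[sample] = row
    return joined


cohort_a = {"p1": {"TP53": 1.0, "EGFR": 2.0}}
cohort_b = {"p2": {"TP53": 0.5, "KRAS": 3.0}}
print(horizontal_integration([cohort_a, cohort_b]))  # only TP53 is shared

layers = {
    "rna": {"p1": {"TP53": 1.0}, "p2": {"TP53": 0.5}},
    "protein": {"p1": {"TP53": 7.2}},
}
print(vertical_integration(layers))  # only p1 has both layers
```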

Advanced Computational Methods

Recent advances in computational methods have substantially improved the integration and interpretation of multi-omics data. Machine learning and deep learning approaches are increasingly used for this purpose because they can capture complex, non-linear patterns across omics layers [3]. The SynOmics framework, for example, uses graph convolutional networks to model both within- and cross-omics dependencies by constructing omics networks in the feature space [9]. Instead of traditional early or late integration, SynOmics adopts a parallel learning strategy that processes feature-level interactions at each layer of the model. Its authors report that it outperforms state-of-the-art multi-omics integration methods across several biomedical classification tasks [9]. Such methods are valuable for biomarker discovery because they can identify multi-omics biomarker panels with greater diagnostic and prognostic value than single-omics biomarkers [3] [5].

Table 2: Multi-Omics Integration Methods and Applications

| Integration Approach | Methodology | Key Tools/Platforms | Advantages | Biomarker Applications |
|---|---|---|---|---|
| Network-Based Integration | Constructs molecular interaction networks | InCroMAP, NetworkAnalyst | Identifies emergent properties, Captures system-level dynamics | Pathway-centric biomarkers, Network modules as biomarkers |
| Graph Neural Networks | Models intra- and inter-omics relationships | SynOmics (graph convolutional networks) | Preserves topological structure, Handles sparse data | Cancer subtype classification, Patient stratification |
| Similarity-Based Fusion | Integrates multiple omics similarity networks | SNF (Similarity Network Fusion) | Robust to noise, Preserves data type-specific patterns | Integrative cancer subtypes, Cross-omics patient similarity |
| Matrix Factorization | Joint dimensionality reduction | JIVE, MOFA | Simultaneous analysis of shared and specific variation | Multi-omics disease endotypes, Composite biomarker panels |

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful multi-omics research requires a comprehensive set of specialized reagents and materials tailored to each omics technology. The following table details essential research reagent solutions for multi-omics biomarker discovery:

Table 3: Essential Research Reagents for Multi-Omics Biomarker Discovery

| Reagent/Material | Omics Application | Function | Technical Considerations |
|---|---|---|---|
| Next-Generation Sequencing Kits | Genomics, Transcriptomics | Library preparation, Target enrichment, Sequencing | Read length, Error rates, Compatibility with sequencing platform |
| Mass Spectrometry Grade Solvents | Proteomics, Metabolomics | Sample preparation, Chromatographic separation | Purity, Ion suppression effects, LC-MS compatibility |
| Protein Digestion Enzymes | Proteomics | Protein cleavage into peptides for MS analysis | Specificity, Efficiency, Compatibility with denaturants |
| Stable Isotope Labels | Proteomics, Metabolomics | Quantitative analysis through internal standards | Labeling efficiency, Metabolic incorporation, Cost |
| Nucleic Acid Stabilization Reagents | Genomics, Transcriptomics | Preserve nucleic acids during sample collection | Stabilization time, Compatibility with downstream assays |
| Chromatography Columns | Proteomics, Metabolomics | Separation of complex mixtures prior to detection | Resolution, Reproducibility, Pressure tolerance |
| Quality Control Reference Materials | All omics fields | Method validation, Batch effect correction | Commutability, Stability, Matrix matching |
| Antibody Panels | Proteomics, Single-cell omics | Protein detection and quantification | Specificity, Cross-reactivity, Epitope accessibility |

Signaling Pathways and Biological Networks in Multi-Omics Context

Multi-omics approaches provide new insight into complex biological pathways by measuring multiple molecular layers within the same biological system. Jointly analyzing genomic variants, transcript expression, protein abundance, and metabolite levels gives a more complete view of pathway activity and regulatory mechanisms [3]. In cancer research, for instance, multi-omics analyses have shown how alterations in oncogenes and tumor suppressor genes propagate through the transcriptomic and proteomic layers to reshape cellular metabolism, a hallmark known as metabolic reprogramming [3] [6]. Similarly, in prediabetes research, integrated multi-omics approaches have shown that insulin resistance manifests differently across molecular layers, with proteomic and metabolomic changes often preceding clinical symptoms [5].

Diagram Title: Multi-Omics Pathway Integration

The visualization above illustrates how multi-omics integration provides a comprehensive understanding of biological pathways by connecting alterations across molecular layers. This integrated view is particularly valuable for identifying master regulatory nodes that coordinate responses across multiple biological processes, as these often represent high-value biomarker candidates and therapeutic targets [2] [3]. For example, in tissue repair and regeneration research, multi-omics approaches have identified key signaling pathways such as TGF-β signaling that coordinate transcriptional, proteomic, and metabolic responses during wound healing [4]. The integration of epigenomic data further enhances our understanding by revealing how DNA methylation and histone modifications establish persistent changes in gene regulatory programs that influence disease progression and treatment responses [3] [5]. These insights are driving the development of multi-modal biomarker panels that capture the complexity of biological systems more effectively than single-analyte biomarkers [6] [7].
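
Pathway-level interpretation commonly relies on over-representation analysis. Given a list of genes flagged in one or more omics layers, a one-sided hypergeometric test asks whether a pathway contains more of them than expected by chance. The sketch below is a generic textbook version, not the algorithm of InCroMAP or any other specific tool, and the function name and toy numbers are illustrative. In a multi-omics setting, the hit list might be the union of genes altered at the transcript or protein level. The gene universe should be restricted to genes measurable in the layers considered, and p-values across many pathways need multiple-testing correction.

```python
from math import comb


def enrichment_p_value(overlap, n_hits, pathway_size, universe_size):
    """One-sided hypergeometric p-value P(X >= overlap).

    X counts pathway genes among n_hits genes drawn without replacement from a
    universe of universe_size genes, pathway_size of which belong to the pathway.
    """
    upper = min(pathway_size, n_hits)
    total = comb(universe_size, n_hits)
    tail = sum(
        comb(pathway_size, k) * comb(universe_size - pathway_size, n_hits - k)
        for k in range(overlap, upper + 1)
    )
    return tail / total


# Toy example: a 4-gene pathway in a 10-gene universe; all 3 hits fall in it
p = enrichment_p_value(overlap=3, n_hits=3, pathway_size=4, universe_size=10)
print(round(p, 4))  # 0.0333
```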

The field of biomarker discovery is undergoing a fundamental transformation, moving from isolated single-omics investigations to comprehensive multi-omics approaches that capture the complex interplay within biological systems. Traditional single-omics studies—focusing solely on genomics, transcriptomics, proteomics, or metabolomics—have provided valuable but limited insights into disease mechanisms, often failing to capture the full complexity of diseases like cancer [3] [10]. Multi-omics integration represents a paradigm shift that simultaneously analyzes multiple molecular layers, enabling researchers to construct more complete models of disease biology and discover more robust, clinically actionable biomarkers [3] [11].

This revolution is driven by technological advances in high-throughput sequencing, mass spectrometry, and computational biology, which now make it feasible to generate and integrate massive multidimensional datasets from the same set of biological samples [3] [12]. The power of multi-omics lies in its ability to connect genetic predispositions with functional molecular phenotypes, bridging the critical gap between genotype and clinical phenotype [3] [10]. For biomarker discovery, this means moving beyond single molecules to complex signatures that reflect the dynamic interactions within biological systems, ultimately leading to more precise diagnostic, prognostic, and predictive biomarkers in oncology and other disease areas [3] [11] [10].

The Multi-Omics Landscape: From Single Layers to Integrated Networks

Complementary Omics Technologies

Multi-omics strategies integrate various molecular profiling technologies, each providing a unique perspective on biological systems. The table below summarizes the key omics technologies and their contributions to biomarker discovery.

Table 1: Omics Technologies and Their Applications in Biomarker Discovery

| Omics Layer | Key Technologies | Biomarker Applications | Clinical Examples |
|---|---|---|---|
| Genomics | Whole Genome Sequencing (WGS), Whole Exome Sequencing (WES) | Identification of driver mutations, copy number variations | Tumor Mutational Burden (TMB) for immunotherapy response [3] |
| Transcriptomics | RNA-seq, single-cell RNA-seq (scRNA-seq) | Gene expression signatures, alternative splicing patterns | Oncotype DX (21-gene) and MammaPrint (70-gene) for breast cancer prognosis [3] |
| Proteomics | Mass spectrometry (LC-MS/MS), reverse-phase protein arrays | Protein abundance, post-translational modifications, signaling networks | CPTAC studies revealing functional cancer subtypes [3] |
| Metabolomics | LC-MS, GC-MS, mass spectrometry imaging | Metabolic pathway activities, small molecule biomarkers | 2-hydroxyglutarate (2-HG) in IDH1/2-mutant gliomas [3] |
| Epigenomics | Whole Genome Bisulfite Sequencing (WGBS), ChIP-seq | DNA methylation patterns, histone modifications | MGMT promoter methylation predicting temozolomide response in glioblastoma [3] |
| Spatial Omics | Spatial transcriptomics, multiplex IHC | Tissue architecture, cellular neighborhoods, spatial gradients | TIM-3+ cell spatial distribution affecting T-cell function in lung cancer [10] |

The Integration Framework: Horizontal and Vertical Strategies

Multi-omics integration strategies can be broadly categorized into two complementary approaches: horizontal and vertical integration. Horizontal integration combines data from the same omics layer across different studies, cohorts, or laboratories, addressing biological and technical heterogeneity while increasing statistical power [13]. Integration within a single layer can also combine complementary measurement modalities. For example, combining single-cell RNA sequencing with spatial transcriptomics resolves cellular heterogeneity while preserving spatial context. This approach led to the discovery of KRT8+ alveolar intermediate cells (KACs) in early-stage lung adenocarcinoma [10].

Vertical integration connects different biological layers (e.g., genomics to transcriptomics to proteomics) from the same set of samples, enabling the construction of comprehensive models from genetic variation to functional phenotype [3] [13]. This approach can reveal how genomic alterations manifest as transcriptional dysregulation, which subsequently influences proteomic and metabolic states, ultimately driving disease phenotypes [10]. Vertical integration is particularly powerful for mapping complete signaling pathways and understanding mechanistic relationships in cancer biology [3].

Figure 1: Multi-omics integration strategies. Vertical integration connects different biological layers, while horizontal integration combines data from the same omics layer across multiple studies.

Technical Implementation: Methodologies for Multi-Omics Integration

Data Generation and Quality Control

Robust multi-omics integration begins with rigorous experimental design and quality control across all molecular layers. The following protocol outlines critical steps for ensuring data quality:

- Sample Collection and Preparation: Maintain consistent experimental conditions and sample collection methods across all omics layers to minimize batch effects. Use the same biological samples for all omics profiling where possible [12].

- Technology-Specific Quality Metrics: Implement appropriate quality checks for each omics modality: sequencing depth and mapping quality for genomics; transcript quantification metrics for transcriptomics; peak intensity and false discovery rates for proteomics and metabolomics [12].

- Missing Value Handling: Address missing values using statistical or machine learning methods like Least-Squares Adaptive (LSA) imputation. Exclude variables with high percentages (>25-30%) of missing values across samples [12].

- Standardization and Normalization: Apply appropriate transformations (logarithmic, centering, scaling) to ensure consistent feature scaling and prevent dominance by high-abundance molecules [12].
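
The missing-value, transformation, and scaling steps above can be chained feature by feature. The sketch below first drops features with more than 30% missing values. It then fills the remaining gaps with the feature median, applies a log2(x + 1) transform, and z-scores each feature. Median imputation is a deliberately simple stand-in for the LSA method named above. The input format and function name are hypothetical, and the log transform assumes non-negative abundances.

```python
import math
import statistics


def preprocess_layer(matrix, max_missing=0.3):
    """Filter, impute, log-transform, and standardize one omics layer.

    matrix: dict feature -> list of per-sample values, None marking a missing value.
    Returns a dict containing only the features that pass filtering.
    """
    processed = {}
    for feature, values in matrix.items():
        missing_fraction = sum(v is None for v in values) / len(values)
        if missing_fraction > max_missing:
            continue  # too sparse to impute reliably
        observed = [v for v in values if v is not None]
        fill = statistics.median(observed)  # simple stand-in for LSA imputation
        logged = [math.log2((fill if v is None else v) + 1.0) for v in values]
        mean = statistics.mean(logged)
        sd = statistics.pstdev(logged)
        if sd == 0:
            continue  # constant features carry no information after scaling
        processed[feature] = [(x - mean) / sd for x in logged]
    return processed


layer = {
    "feature_kept": [1.0, 3.0, 7.0, None],     # 25% missing -> kept
    "feature_sparse": [None, None, 5.0, 6.0],  # 50% missing -> dropped
    "feature_constant": [2.0, 2.0, 2.0, 2.0],  # zero variance -> dropped
}
clean = preprocess_layer(layer)
print(sorted(clean))  # ['feature_kept']
```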

Computational Integration Methods

Multi-omics data integration employs diverse computational approaches, each with distinct strengths for specific research questions. The table below summarizes major integration methodologies and their applications.

Table 2: Multi-Omics Data Integration Methods and Applications

| Integration Method | Category | Key Features | Best Use Cases |
|---|---|---|---|
| Early Integration (Concatenation) | Low-level | Simple concatenation of omics datasets into single matrix | Identifying coordinated changes across omics layers [12] |
| MOFA (Multi-Omics Factor Analysis) | Intermediate | Unsupervised Bayesian factorization; identifies latent factors | Exploratory analysis of shared variation across omics [14] |
| DIABLO (Data Integration Analysis for Biomarker discovery using Latent Components) | Intermediate | Supervised integration with feature selection; uses phenotype labels | Biomarker discovery for disease classification [14] |
| SNF (Similarity Network Fusion) | Intermediate | Fuses sample-similarity networks from each omics dataset | Identifying patient subgroups across molecular layers [14] |
| Late Integration | High-level | Separate analysis per omics with result combination | When different omics layers provide complementary predictions [12] |
| Deep Learning (VAEs, GANs) | Intermediate | Neural network-based feature extraction and integration | Handling non-linear relationships, missing data [15] [16] |
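
To make the low-level versus high-level distinction concrete, the sketch below contrasts concatenation-based early integration with a simple late-integration rule. The early variant scales each block by one over the square root of its feature count. This is a common heuristic: for standardized features, it gives every omics block the same total variance, so a large block such as the transcriptome does not dominate. The late variant averages per-layer class probabilities produced by separately trained models. Data formats, weights, and function names are illustrative assumptions, not the interface of any tool in the table above.

```python
import math


def early_integration(blocks):
    """Concatenate standardized omics blocks per sample.

    blocks: dict layer -> dict sample -> list of standardized feature values.
    Each block is scaled by 1/sqrt(n_features) so blocks contribute equal total variance.
    """
    layers = sorted(blocks)
    samples = sorted(set.intersection(*(set(blocks[layer]) for layer in layers)))
    fused = {}
    for sample in samples:
        row = []
        for layer in layers:
            values = blocks[layer][sample]
            weight = 1.0 / math.sqrt(len(values))
            row.extend(weight * v for v in values)
        fused[sample] = row
    return fused


def late_integration(layer_probabilities, weights=None):
    """Combine per-layer predicted probabilities by a weighted average.

    layer_probabilities: dict layer -> dict sample -> P(class = 1) from a per-layer model.
    """
    layers = sorted(layer_probabilities)
    if weights is None:
        weights = {layer: 1.0 for layer in layers}
    total_weight = sum(weights[layer] for layer in layers)
    samples = set.intersection(*(set(layer_probabilities[layer]) for layer in layers))
    return {
        sample: sum(weights[layer] * layer_probabilities[layer][sample] for layer in layers)
        / total_weight
        for sample in sorted(samples)
    }


blocks = {
    "rna": {"p1": [1.0, 1.0, 1.0, 1.0]},  # 4 features -> weight 1/2
    "protein": {"p1": [2.0]},             # 1 feature  -> weight 1
}
print(early_integration(blocks))  # {'p1': [2.0, 0.5, 0.5, 0.5, 0.5]}
print(late_integration({"rna": {"p1": 0.8}, "protein": {"p1": 0.4}}))
```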

Figure 2: Multi-omics data analysis workflow. The process involves sequential steps from raw data preprocessing to integration and biological interpretation.

Research Reagent Solutions and Computational Tools

Successful multi-omics biomarker discovery requires both wet-lab reagents and dry-lab computational tools. The following toolkit outlines essential resources for implementing multi-omics approaches.

Table 3: Essential Research Toolkit for Multi-Omics Biomarker Discovery

| Category | Tool/Reagent | Specific Function | Application Context |
|---|---|---|---|
| Wet-Lab Technologies | Single-cell RNA-seq kits | High-resolution transcriptome profiling at cellular level | Cellular heterogeneity analysis in tumor ecosystems [10] |
| | Spatial transcriptomics platforms | Gene expression with tissue spatial context | Tumor microenvironment mapping [10] [17] |
| | LC-MS/MS systems | Protein and metabolite identification and quantification | Proteomic and metabolomic profiling [3] |
| | Multiplex immunohistochemistry | Simultaneous detection of multiple protein markers | Immune cell infiltration analysis in tumor tissues [17] |
| Computational Tools | MOFA+ | Unsupervised multi-omics factor analysis | Exploratory analysis of shared variation patterns [14] |
| | DIABLO | Supervised integration for biomarker discovery | Multi-omics biomarker panel identification [14] |
| | Seurat v5 | Single-cell and spatial omics integration | Cellular mapping with spatial context [10] |
| | Omics Playground | No-code multi-omics analysis platform | Accessible integration for non-bioinformaticians [14] |

Clinical Applications and Impact: Case Studies in Oncology

Enhancing Cancer Diagnosis and Prognosis

Multi-omics approaches have demonstrated remarkable success in improving cancer diagnosis and prognosis across multiple cancer types. In lung cancer, integrating genomics, transcriptomics, and spatial omics has revealed previously unrecognized cellular states and interactions within the tumor microenvironment [10]. For example, the combination of single-cell RNA sequencing with spatial transcriptomics identified KRT8+ alveolar intermediate cells (KACs) as transitional cells during the transformation of alveolar type II cells into tumor cells in early-stage lung adenocarcinoma [10]. This finding provides potential novel biomarkers for early detection and intervention.

In breast cancer, multi-omics analyses through projects like the Clinical Proteomic Tumor Analysis Consortium (CPTAC) have revealed functional subtypes and therapeutic vulnerabilities that were missed by genomics alone [3]. The integration of proteomic data with genomic information demonstrated that proteomics can identify distinct cancer subtypes with different clinical outcomes, enabling more precise prognostic stratification [3].

Predicting Therapeutic Response and Resistance

Multi-omics biomarkers have shown considerable utility in predicting response to therapy, particularly for immunotherapy and targeted treatments. Tumor mutational burden (TMB), a genomic biomarker evaluated in the KEYNOTE-158 trial, underpinned the FDA approval of pembrolizumab for previously treated solid tumors with high TMB (at least 10 mutations per megabase) [3]. However, subsequent multi-omics studies suggest that combining TMB with transcriptomic and proteomic signatures predicts immunotherapy response more accurately than TMB alone [3] [10].

Similarly, in glioblastoma, MGMT promoter methylation status has long been used as a predictive biomarker for temozolomide response [3]. Recent multi-omics studies have enhanced this prediction by integrating MGMT methylation with proteomic profiles of DNA repair machinery and metabolic adaptations, creating more comprehensive predictive models of therapeutic efficacy [3].

Future Directions and Challenges

Emerging Technologies and Approaches

The field of multi-omics biomarker discovery continues to evolve rapidly with several emerging technologies poised to enhance integration capabilities. Single-cell multi-omics technologies now enable simultaneous measurement of multiple molecular layers (e.g., genome, epigenome, transcriptome, proteome) from the same single cell, providing unprecedented resolution for deciphering cellular heterogeneity in complex tissues [3]. Spatial multi-omics represents another frontier, combining spatial context with multidimensional molecular profiling to map cellular interactions and microenvironments in intact tissues [3] [10] [17].

Artificial intelligence and deep learning are revolutionizing multi-omics integration through approaches such as variational autoencoders (VAEs), generative adversarial networks (GANs), and transformer models [15] [11] [16]. These methods excel at handling non-linear relationships, missing data, and high-dimensional spaces that challenge traditional statistical approaches [15] [16]. Furthermore, foundation models pre-trained on large-scale multi-omics datasets show promise for transfer learning, potentially enabling robust biomarker discovery with smaller sample sizes [15].

Addressing Current Challenges

Despite significant progress, multi-omics biomarker discovery faces several persistent challenges that require methodological advances:

- Data Heterogeneity and Batch Effects: Technical variability across platforms and batches remains a major obstacle, necessitating improved normalization and batch correction methods [14] [13].

- High Dimensionality and Sample Size: The "high dimension, low sample size" (HDLSS) problem leads to overfitting and reduced generalizability, requiring sophisticated regularization and validation approaches [16] [13].

- Interpretability and Biological Validation: Complex multi-omics models often function as "black boxes," highlighting the need for explainable AI and rigorous experimental validation [11] [16].

- Data Integration Complexity: The absence of universal standards for multi-omics data integration creates reproducibility challenges and barriers to clinical translation [14] [13].
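
The sketch below gives a minimal illustration of what batch correction does. It removes additive batch shifts from a single log-scale feature by subtracting each batch's mean and adding back the overall mean. This location-only adjustment is a simplified stand-in, not ComBat or any published method; ComBat additionally adjusts batch-specific variances using empirical Bayes shrinkage. If batches are confounded with the phenotype of interest, this adjustment will also erase genuine biology, which is why balanced study designs matter. Names and data are illustrative.

```python
import statistics
from collections import defaultdict


def center_by_batch(values, batches):
    """Remove additive batch offsets from one feature.

    values: per-sample measurements on a log scale; batches: parallel list of
    batch labels. Each batch mean is subtracted and the grand mean added back.
    """
    if len(values) != len(batches):
        raise ValueError("values and batches must have the same length")
    grouped = defaultdict(list)
    for value, batch in zip(values, batches):
        grouped[batch].append(value)
    batch_means = {batch: statistics.mean(vs) for batch, vs in grouped.items()}
    grand_mean = statistics.mean(values)
    return [v - batch_means[b] + grand_mean for v, b in zip(values, batches)]


# Batch B was measured with a +10 offset relative to batch A
corrected = center_by_batch(
    [1.0, 2.0, 3.0, 11.0, 12.0, 13.0], ["A", "A", "A", "B", "B", "B"]
)
print(corrected)  # [6.0, 7.0, 8.0, 6.0, 7.0, 8.0]
```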

Future efforts should focus on developing standardized workflows, improving computational efficiency, enhancing model interpretability, and establishing rigorous validation frameworks to translate multi-omics biomarkers into clinical practice [3] [11] [16].

Multi-omics integration represents a transformative approach to biomarker discovery that fundamentally expands our ability to decipher complex biological systems and disease processes. By simultaneously interrogating multiple molecular layers and their dynamic interactions, researchers can identify more robust, clinically relevant biomarkers that reflect the true complexity of diseases like cancer. While significant technical and computational challenges remain, continued advances in measurement technologies, integration algorithms, and analytical frameworks are rapidly enhancing our capacity to extract meaningful biological insights from multi-dimensional datasets. As these approaches mature and become more accessible, multi-omics integration is poised to revolutionize precision medicine by enabling earlier disease detection, more accurate prognosis, and more personalized therapeutic strategies tailored to individual patients' molecular profiles.

Tumor heterogeneity describes the observation that different tumor cells can show distinct morphological and phenotypic profiles, including cellular morphology, gene expression, metabolism, motility, proliferation, and metastatic potential [18]. This phenomenon, a fundamental characteristic of cancer, occurs both between tumors (inter-tumor heterogeneity) and within individual tumors (intra-tumor heterogeneity) [18]. The heterogeneity of cancer cells introduces significant challenges in designing effective treatment strategies, primarily through the expansion of treatment-resistant subclones that lead to disease relapse [18].

In the era of personalized oncology, multi-omics strategies have revolutionized our approach to dissecting this complexity. By integrating genomics, transcriptomics, proteomics, and metabolomics, researchers can now obtain a systematic and comprehensive understanding of the biology of tumor development and progression [19] [4]. This integration allows for the identification and validation of robust biomarkers and therapeutic strategies aimed at improving outcomes for cancer patients [19] [4]. This technical guide synthesizes key biological insights into tumor heterogeneity, framing them within the context of multi-omics integration for advanced biomarker discovery.

Models and Mechanisms of Tumor Heterogeneity

Theoretical Frameworks

Two primary models explain the heterogeneity of tumor cells, which are not mutually exclusive and likely both contribute to heterogeneity across different tumor types [18]:

The Cancer Stem Cell (CSC) Model: This model asserts that within a population of tumor cells, only a small subset of cells—termed cancer stem cells (CSCs)—are tumorigenic (able to form tumors). These cells are marked by the abilities to both self-renew and differentiate into non-tumorigenic progeny. The heterogeneity observed between tumor cells is, therefore, the result of differences in the stem cells from which they originated [18]. Evidence for this model has been demonstrated in leukemias, glioblastoma, breast cancer, and prostate cancer [18].

The Clonal Evolution Model: First proposed by Peter Nowell in 1976, this model posits that tumors arise from a single mutated cell that accumulates additional mutations as it progresses [18]. These changes give rise to additional subpopulations (subclones), each with the potential to divide and mutate further. This model explains heterogeneity through two expansion mechanisms:

- Linear Expansion: Sequentially ordered mutations accumulate in driver genes, tumor suppressor genes, and DNA repair enzymes, resulting in clonal expansion [18].

- Branched Expansion: The tumor splits into multiple subclonal populations, a mode more strongly associated with heterogeneity than linear expansion. Mutations are acquired randomly because of genomic instability, and certain mutations may provide a selective advantage during tumor progression [18].

Molecular Drivers

Heterogeneity stems from both genetic and non-genetic variability [18]:

Genetic Heterogeneity: Arises from sources like exogenous mutagens (e.g., UV radiation, tobacco) or, more commonly, from genomic instability. This instability can result from impaired DNA repair mechanisms (leading to replication errors) or defects in the mitosis machinery (causing large-scale chromosomal gains/losses) [18]. Some cancer therapies can further increase this genetic variability [18].

Non-Genetic Heterogeneity: Tumor cells can show heterogeneous expression profiles, often caused by underlying epigenetic changes such as mutations affecting histone modifiers (e.g., SETD2, KDM5C) [18]. The tumor microenvironment also plays a crucial role, as regional differences (e.g., oxygen availability) impose different selective pressures on tumor cells, leading to spatial variation in dominant subclones [18].

Multi-Omics Technologies for Deconvoluting Heterogeneity

Advanced multi-omics technologies are essential for dissecting the layers of tumor heterogeneity. The following table summarizes the core omics approaches and their applications in this field.

Table 1: Multi-Omics Technologies for Analyzing Tumor Heterogeneity

| Omics Approach | Key Technologies | Primary Application in Tumor Heterogeneity | Representative Biomarkers/Targets |
|---|---|---|---|
| Genomics/Exomics | Whole-Exome Sequencing, Next-Generation Sequencing | Identifying mutational profiles, copy number variations (CNV), and subclonal architecture [20]. | CTNNB1 mutations, RAS/MAPK pathway mutations (KRAS, NRAS, BRAF) [21] [20]. |
| Transcriptomics | Single-Cell RNA Sequencing (scRNA-seq), Bulk RNA-seq | Defining gene expression heterogeneity, identifying cell subtypes, and tracing transcriptional trajectories [21]. | CREB3L2, VEGF, FGF, SPP1 [21] [4]. |
| Proteomics | Mass Spectrometry | Profiling protein expression, post-translational modifications, and signaling pathway activity [4]. | MMP-9, ADAM12, Phospho-S6, TGF-β [20] [4]. |
| Metabolomics | NMR Spectroscopy, Mass Spectrometry | Tracking metabolic reprogramming and oxidative stress across heterogeneous cell populations [4]. | Glycolytic intermediates, TCA cycle metabolites [4]. |
| Epigenomics | Methylation Arrays, ChIP-seq | Mapping epigenetic alterations that drive phenotypic plasticity and drug-tolerant states [21]. | KDM5 family demethylases, DNA methylation patterns [21]. |

Integrated Experimental Workflow

A standard integrated workflow for profiling tumor heterogeneity combines these technologies; its key experimental protocols are outlined below.

Key Experimental Protocols and Biomarker Discovery

Single-Cell RNA Sequencing (scRNA-seq) Analysis

Protocol Overview: This methodology is critical for resolving cellular heterogeneity within tumors [21].

- Sample Preparation & Single-Cell Isolation: Tumor tissues are dissociated into single-cell suspensions. For hematopoietic malignancies like Multiple Myeloma (MM), bone marrow aspirates may be used directly [21].

- Library Preparation & Sequencing: Single-cell libraries are prepared using platforms like 10x Genomics. The barcoded cDNA is then sequenced on a high-throughput sequencer [21].

- Bioinformatic Data Processing:

  - Quality Control: Cells are filtered based on thresholds for unique molecular identifier (UMI) count (e.g., 200-20,000), gene number (e.g., 200-5,000), and mitochondrial gene content (e.g., < 20%) to remove low-quality cells, ambient RNA, and multiplets [21].

  - Normalization & Integration: Data are normalized (e.g., using NormalizeData in Seurat) and integrated using algorithms such as Harmony to remove batch effects [21].

  - Clustering & Annotation: Dimensionality reduction (PCA, UMAP) is performed, followed by clustering (Louvain algorithm). Cell types are annotated based on canonical markers (e.g., plasma cells: SDC1, MZB1; T cells: CD3D, CD3E) [21].

  - Subpopulation Analysis: Tumor cells are subsetted and re-analyzed to identify transcriptomically distinct subpopulations. Differential expression analysis (FindAllMarkers; thresholds: P < 0.05, log2 FC > 0.25) identifies subgroup-specific markers [21].
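The QC filter and normalization steps above can be sketched in plain Python to make the thresholds concrete. This is not Seurat code. The per-cell dictionary layout, the human "MT-" prefix for mitochondrial genes, and the function names are assumptions. The log-normalization mirrors Seurat's LogNormalize method, whose documented default scale factor is 10,000.

```python
import math

def passes_qc(counts, umi_range=(200, 20_000), gene_range=(200, 5_000),
              max_mito_frac=0.20, mito_prefix="MT-"):
    """Apply the example QC thresholds to one cell.

    counts -- dict mapping gene symbol -> UMI count for a single cell
    """
    total_umi = sum(counts.values())
    n_genes = sum(1 for c in counts.values() if c > 0)
    mito_umi = sum(c for g, c in counts.items() if g.startswith(mito_prefix))
    mito_frac = mito_umi / total_umi if total_umi else 1.0
    return (umi_range[0] <= total_umi <= umi_range[1]
            and gene_range[0] <= n_genes <= gene_range[1]
            and mito_frac < max_mito_frac)

def log_normalize(counts, scale_factor=10_000):
    """Per-cell log-normalization: ln(1 + count / total_umi * scale_factor)."""
    total = sum(counts.values())
    return {g: math.log1p(c / total * scale_factor) for g, c in counts.items()}

# Toy cell: 250 detected genes with 2 UMIs each (500 UMIs, no mitochondrial reads).
cell = {f"GENE{i}": 2 for i in range(250)}
# Same cell plus heavy mitochondrial signal, typical of damaged or dying cells.
damaged = {**cell, **{f"MT-GENE{i}": 50 for i in range(5)}}
```

Here the damaged cell fails QC because a third of its UMIs are mitochondrial, even though its UMI and gene counts fall inside the example ranges.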

Functional Validation of Genetic Alterations

Protocol Overview: This protocol validates the functional impact of mutations identified in omics studies, using the example of a CTNNB1 mutation in liver cancer [20].

- Gene Editing: CRISPR/Cas9 is used to generate isogenic HCC cell lines harboring specific mutations (e.g., CTNNB1 c.890T>C) [20].

- In Vitro Functional Assays:

  - Proliferation: Measured using assays such as CCK-8 or colony formation.

  - Migration/Invasion: Assessed via Transwell assays with or without Matrigel.

  - Angiogenesis: Evaluated by co-culturing mutant HCC cells with Human Umbilical Vein Endothelial Cells (HUVECs) and measuring tube formation.

- Signaling Pathway Analysis: Western blotting or immunofluorescence to assess pathway activity (e.g., PI3K/AKT, EMT markers) [20].

- In Vivo Validation: Animal models, such as xenografts in immunodeficient mice, are used to confirm the tumorigenic properties of the mutant cells [20].

Key Signaling Pathways in Heterogeneity and Progression

The integration of multi-omics data often reveals dysregulated signaling pathways that drive tumor heterogeneity, progression, and therapy resistance. One such mechanism, reconstructed from recent findings in TACE-resistant liver cancer, links a CTNNB1 mutation through ITGB1/PI3K/AKT signaling to enhanced proliferation, migration, EMT, and angiogenesis [20].

Case Studies in Multi-Omics Integration

Multiple Myeloma (MM) Prognosis and Relapse

A 2025 study integrated transcriptomic and scRNA-seq data from MM patients to investigate how tumor cell heterogeneity and angiogenesis-related genes impact prognosis [21].

Key Findings:

- Significant genomic copy number variations were identified in MM tumor cells [21].

- Different tumor subgroups exhibited differences in angiogenic activity and gene expression [21].

- High expression of the transcription factor CREB3L2 in one subgroup (C1) was associated with the inhibition of angiogenesis and tumor cell proliferation/migration, suggesting a tumor-suppressive role [21].

- Separately, scRNA-seq analyses of MM patient samples at diagnosis have shown that high-risk subclones can be present at very low frequencies, evading detection by standard genetic assessments but later expanding to cause relapse [18]. The presence of such subclones, even at low levels, confers a poor prognosis [18].

Clinical Implication: The study constructed a prognostic model based on angiogenesis-related genes and transcription factors, providing new theoretical insights for the precise diagnosis and personalized treatment of MM [21]. The low-frequency subclone findings further highlight the need for highly sensitive detection methods at diagnosis so that high-risk subclones can be identified and eradicated [18].
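A simple sampling argument shows why low-frequency subclones escape detection. The sketch assumes that cells are sampled independently and that every sampled subclone cell is recognized; both are idealizations. Under those assumptions, the probability of observing at least one cell from a subclone at frequency f among n profiled cells is 1 - (1 - f)^n. The sketch inverts this formula to estimate how many cells are needed for a target detection probability. The function names are illustrative.

```python
import math

def detection_probability(freq, n_cells):
    """P(at least one subclone cell among n_cells independent draws)."""
    return 1.0 - (1.0 - freq) ** n_cells

def cells_needed(freq, target=0.95):
    """Smallest number of cells giving detection_probability >= target."""
    if not (0 < freq < 1 and 0 < target < 1):
        raise ValueError("freq and target must lie strictly between 0 and 1")
    # Solve 1 - (1 - f)^n >= target for n, then round up to a whole cell count.
    return math.ceil(math.log(1.0 - target) / math.log(1.0 - freq))
```

Under the same assumptions, a 1% subclone needs about 300 cells for 95% detection. A 0.1% subclone needs roughly 3,000 cells, which helps explain why such subclones can be missed at diagnosis. Real assays also have imperfect per-cell sensitivity, so these figures are best read as lower bounds.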

TACE-Resistant Liver Cancer

A 2025 study on hepatocellular carcinoma (HCC) resistant to transarterial chemoembolization (TACE) employed single-cell and whole-exome sequencing to unravel the mechanisms of therapy resistance [20].

Key Findings:

- A CTNNB1 (c.890T>C) mutation was identified in TACE-resistant patients [20].

- Functional experiments confirmed that this mutation enhanced proliferation, migration, and EMT in HCC cells [20].

- The pro-angiogenic effect of CTNNB1 mutant cells was mediated via the ITGB1/PI3K/AKT signaling pathway [20].

- Animal models confirmed the tumorigenic properties of the CTNNB1 mutant cells [20].

Clinical Implication: The study suggests novel therapeutic targets for a subset of HCC patients with TACE resistance driven by CTNNB1 mutations and provides a mechanistic understanding of the associated aggressive phenotype [20].

Table 2: Summary of Key Findings from Case Studies

| Case Study | Key Molecular Alteration | Affected Pathway/Process | Functional Outcome | Clinical/Prognostic Impact |
|---|---|---|---|---|
| Multiple Myeloma [21] | CREB3L2 (High Expression) | Angiogenesis, Cell Proliferation/Migration | Inhibition of tumor-promoting processes | Favorable factor; used in prognostic model |
| Multiple Myeloma [18] | Presence of low-frequency high-risk subclones (e.g., specific mutations, deletions) | Various | Expansion under therapeutic pressure | Poor prognosis, early relapse |
| TACE-Resistant HCC [20] | CTNNB1 (c.890T>C) mutation | ITGB1/PI3K/AKT → EMT | Enhanced proliferation, migration, angiogenesis | TACE resistance, aggressive disease |

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Reagents and Materials for Tumor Heterogeneity Studies

| Reagent/Material | Function/Application | Specific Examples/Notes |
|---|---|---|
| Single-Cell Isolation Kits | Dissociation of solid tumor tissues into viable single-cell suspensions. | Enzyme-based kits (e.g., collagenase, dispase); critical for preserving RNA integrity. |
| scRNA-seq Library Prep Kits | Preparation of barcoded sequencing libraries from single cells. | Commercial platforms like 10x Genomics Chromium [21]. |
| CRISPR/Cas9 System | Gene editing to introduce or correct specific mutations in cell lines for functional validation. | Used to generate isogenic lines with mutations like CTNNB1 c.890T>C [20]. |
| Cell Culture Media & Supplements | For in vitro cultivation of primary and engineered tumor cell lines. | Includes specific media for different cell types (e.g., HUVECs for angiogenesis assays [20]). |
| Antibodies for Flow Cytometry/IHC | Cell surface and intracellular marker identification, protein localization, and quantification. | Used for cell type annotation (e.g., anti-CD3 for T cells [21]) and signaling analysis (e.g., anti-phospho-S6 [20]). |
| Functional Assay Kits | Quantitative measurement of cellular processes. | Proliferation (CCK-8), migration (Transwell), angiogenesis (Tube formation on Matrigel) [20]. |
| Animal Model Reagents | Establishment of in vivo models for tumorigenesis and therapy response. | Diethylnitrosamine for inducing HCC; immunodeficient mice for xenografts [20]. |
| Bioinformatic Software Tools | Data processing, analysis, and visualization. | Seurat (v4.0.6) for scRNA-seq [21]; Cytoscape for network visualization [22]; R/Bioconductor packages. |

The unraveling of tumor heterogeneity is intrinsically linked to the advancement of multi-omics technologies. The integration of genomics, transcriptomics, proteomics, and other omics layers provides an unprecedented, multidimensional view of the complex cellular and molecular ecosystems within tumors. As demonstrated in the case studies of Multiple Myeloma and TACE-resistant liver cancer, this approach is indispensable for discovering novel biomarkers, understanding the mechanistic basis of therapy resistance, and identifying new therapeutic targets. The future of personalized oncology relies on continued innovation in these technologies and, crucially, on the development of sophisticated analytical frameworks to integrate the data they produce, ultimately guiding the creation of refined treatment strategies that overcome the challenge of tumor heterogeneity.

Large-scale research initiatives have revolutionized cancer research by generating comprehensive, publicly available multi-omics datasets that serve as foundational resources for biomarker discovery. These programs have systematically characterized molecular profiles across thousands of patient samples, enabling researchers to move beyond single-omics approaches to integrated analyses that capture the complex interplay between genomic, transcriptomic, proteomic, and epigenomic layers in cancer biology. The Cancer Genome Atlas (TCGA), Clinical Proteomic Tumor Analysis Consortium (CPTAC), and large-scale biobanks like the UK Biobank represent pioneering efforts that have established new paradigms for generating and utilizing large-scale molecular data [3] [23]. These initiatives have not only produced vast data resources but have also developed standardized analytical frameworks and computational tools that continue to shape contemporary multi-omics research strategies in oncology.

The evolution of these initiatives reflects the rapid technological advances in high-throughput sequencing, mass spectrometry, and computational biology. Starting with TCGA's focus on genomic characterization, the field has progressively expanded to include proteogenomic integration through CPTAC and diverse population studies through biobanks [3] [24]. This progression has enabled increasingly sophisticated biomarker discovery approaches that leverage machine learning and artificial intelligence to integrate heterogeneous data types. The resulting resources have become indispensable for identifying diagnostic, prognostic, and predictive biomarkers, ultimately advancing the goal of personalized oncology by linking molecular profiles to clinical outcomes and therapeutic responses [3] [25].

The Cancer Genome Atlas (TCGA)

TCGA represents one of the most comprehensive efforts to systematically characterize the molecular basis of cancer. Launched in 2006, this collaborative project between the National Cancer Institute (NCI) and the National Human Genome Research Institute (NHGRI) generated multi-dimensional maps of key genomic changes in 33 cancer types. It molecularly characterized over 20,000 primary cancer and matched normal samples from approximately 11,000 patients [3] [26]. The program initially focused on genomic and transcriptomic profiling but expanded to include epigenomic and other molecular data types, creating an unprecedented resource for cancer genomics research. TCGA demonstrated that multi-omics integration could reveal novel cancer subtypes, driver pathways, and molecular signatures that transcend traditional histopathological classifications [3].

The Pan-Cancer Atlas, one of TCGA's culminating projects, integrated diverse molecular data across 33 cancer types to identify commonalities and differences, providing insights into tumorigenesis across tissue types and lineages. This effort highlighted the power of cross-cancer analyses for identifying fundamental mechanisms of cancer development and progression [3]. TCGA's data generation followed rigorous standardized protocols, ensuring consistency and quality across samples and cancer types. The initiative established robust pipelines for DNA sequencing (whole exome and whole genome), RNA sequencing, DNA methylation profiling, and microRNA analysis, creating a legacy of methodological standards that continue to influence cancer genomics [3] [27].

Clinical Proteomic Tumor Analysis Consortium (CPTAC)

CPTAC was established to complement genomic initiatives like TCGA by adding deep proteomic and phosphoproteomic characterization to genomic foundations. Recognizing that genomic alterations alone cannot fully capture the functional state of tumors, CPTAC employs advanced mass spectrometry-based proteomics to quantify protein abundance, post-translational modifications, and signaling pathway activities [3] [24]. This proteogenomic integration provides critical insights into how genomic alterations manifest at the functional protein level, enabling the identification of therapeutic targets and biomarkers that might be missed by genomic approaches alone [24].

CPTAC's study designs increasingly emphasize clinical translation, analyzing treatment-naive tumors alongside matched normal adjacent tissues to identify tumor-specific alterations. The consortium has developed standardized analytical workflows for proteogenomic data generation and integration, including liquid chromatography-mass spectrometry (LC-MS/MS) for global proteome and phosphoproteome profiling, and whole genome sequencing for genomic characterization [24]. Recent CPTAC investigations have demonstrated the clinical utility of this approach; for instance, a 2025 proteogenomic study of lung adenocarcinoma identified IGF2BP3 as a robust proteomic biomarker for genomic fragmentation and predictor of immune checkpoint inhibitor response [24].

Large-Scale Biobanks

Large-scale biobanks represent a complementary approach to disease-specific initiatives like TCGA and CPTAC, focusing on population-level data collection with longitudinal clinical follow-up. The UK Biobank stands as a prominent example, containing genetic, lifestyle, and health information from approximately 500,000 participants aged 40-69 at recruitment [23]. Unlike disease-specific cohorts, biobanks capture pre-diagnostic molecular measurements, enabling truly prospective analyses of disease development and the identification of early biomarkers [23].

These resources have enabled the development of sophisticated predictive models like MILTON (Machine Learning with Phenotype Associations), which integrates clinical biomarkers, plasma protein levels, and other quantitative traits to predict disease risk across 3,213 phenotypes [23]. Such approaches demonstrate how biobank data can augment traditional case-control genetic studies by identifying "cryptic cases" - individuals who may develop disease but are not yet clinically diagnosed. The population-based design of biobanks also facilitates the study of how environmental exposures, lifestyle factors, and genetic predispositions interact to influence disease risk and progression [23].

Table 1: Comparison of Major Multi-Omics Initiatives

| Initiative | Primary Focus | Key Omics Layers | Sample Scale | Notable Outputs |
|---|---|---|---|---|
| TCGA | Comprehensive molecular characterization of cancer | Genomics, transcriptomics, epigenomics | ~20,000 samples across 33 cancer types | Pan-Cancer Atlas, molecular subtypes, driver mutations |
| CPTAC | Proteogenomic integration for functional insights | Proteomics, phosphoproteomics, genomics | Thousands of tumors with matched normal | Therapeutic targets, predictive biomarkers, signaling networks |
| UK Biobank | Population-level longitudinal studies | Genomics, proteomics, metabolomics, clinical biomarkers | ~500,000 participants | Disease risk prediction models, pre-diagnostic biomarkers |

Experimental Methodologies and Workflows

TCGA Molecular Profiling Workflows

TCGA established standardized experimental protocols across sequencing centers to ensure data consistency and quality. Genomic characterization included whole exome sequencing (WES) to identify somatic mutations, including single nucleotide variants (SNVs) and small insertions/deletions (indels). A subset of samples also underwent whole genome sequencing (WGS) for comprehensive variant discovery [3]. Copy number variations (CNVs) were profiled using single nucleotide polymorphism (SNP) arrays, providing information on chromosomal gains and losses that drive oncogene activation and tumor suppressor inactivation [3].

Transcriptomic profiling primarily utilized RNA sequencing (RNA-Seq) to quantify gene expression levels, alternative splicing, and gene fusions. For microRNA analysis, both sequencing and array-based platforms were employed to capture post-transcriptional regulation networks [3]. Epigenomic characterization focused primarily on DNA methylation profiling using Illumina Infinium BeadChip arrays, enabling identification of promoter hypermethylation events that silence tumor suppressor genes [3]. All TCGA data generation followed rigorous quality control metrics, with centralized data processing pipelines ensuring consistency across different processing centers and technology platforms.

CPTAC Proteogenomic Integration Pipeline

CPTAC's integrated proteogenomic workflow begins with tumor tissue procurement, typically fresh-frozen specimens with matched normal adjacent tissue collected under standardized protocols. Nucleic acid extraction precedes genomic characterization via WGS or WES, while proteins are digested and prepared for mass spectrometry analysis [24]. For global proteome profiling, samples undergo liquid chromatography-tandem mass spectrometry (LC-MS/MS) with tandem mass tag (TMT) multiplexing to enable quantitative comparisons across samples [24].
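In TMT designs, each plex commonly includes a pooled reference channel so that samples run in different plexes can be compared. The sketch below assumes that layout, with a hypothetical "REF" channel. It converts reporter-ion intensities to log2 ratios against the reference and then median-centers each sample, as in the median centering described under Data Processing and Normalization Approaches. It is a simplified illustration, not CPTAC's actual pipeline.

```python
import math
from statistics import median

def tmt_log_ratios(plex, ref_channel="REF"):
    """Median-centered log2(sample / reference) ratios for one TMT plex.

    plex        -- dict: channel name -> {protein: reporter-ion intensity}
    ref_channel -- name of the pooled reference channel (hypothetical label)
    Proteins that are missing or zero in either channel are skipped.
    """
    ref = plex[ref_channel]
    result = {}
    for channel, intensities in plex.items():
        if channel == ref_channel:
            continue
        ratios = {
            protein: math.log2(value / ref[protein])
            for protein, value in intensities.items()
            if value > 0 and ref.get(protein, 0) > 0
        }
        # Median centering assumes most proteins do not change vs. the reference.
        center = median(ratios.values())
        result[channel] = {p: r - center for p, r in ratios.items()}
    return result

# Toy plex: S1 is uniformly twice the reference (a loading difference);
# S2 differs from the reference only for P3.
plex = {
    "REF": {"P1": 100.0, "P2": 200.0, "P3": 400.0},
    "S1": {"P1": 200.0, "P2": 400.0, "P3": 800.0},
    "S2": {"P1": 100.0, "P2": 200.0, "P3": 1600.0},
}
normalized = tmt_log_ratios(plex)
```

The uniform twofold shift in S1 is removed as a loading artifact, whereas the protein-specific change in S2 survives as a log2 ratio of 2.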

A critical component of CPTAC's approach is phosphoproteomic analysis, which employs enrichment techniques such as immobilized metal affinity chromatography (IMAC) or titanium dioxide (TiO2) to capture phosphorylated peptides before LC-MS/MS analysis. This enables comprehensive mapping of signaling network alterations in cancer [24]. Bioinformatics pipelines then integrate genomic and proteomic data to identify proteogenomic relationships, including: (1) correlation of mutation and copy number alterations with protein abundance; (2) identification of novel peptide sequences from genomic variants; and (3) mapping of pathway activities through phosphoproteomic profiling [24].
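The first of these analyses relates copy number to protein abundance. It is often summarized as a per-gene rank correlation across tumors, a so-called "cis" effect. The sketch below implements Spearman correlation in plain Python, with average ranks for ties. The input layout and function names are assumptions for illustration.

```python
from statistics import mean

def average_ranks(values):
    """1-based ranks; tied values share the average of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with values[order[i]].
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks

def spearman(x, y):
    """Spearman correlation: Pearson correlation of the average ranks."""
    rx, ry = average_ranks(x), average_ranks(y)
    mx, my = mean(rx), mean(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sx = sum((a - mx) ** 2 for a in rx) ** 0.5
    sy = sum((b - my) ** 2 for b in ry) ** 0.5
    if sx == 0 or sy == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / (sx * sy)

# Hypothetical gene across five tumors: copy number state vs. protein level.
copy_number = [-1, 0, 0, 1, 2]
protein = [0.1, 0.5, 0.4, 1.2, 2.0]
rho = spearman(copy_number, protein)
```

Rank correlation is a common choice here because copy number is often discrete and ties are frequent. It also makes the result insensitive to non-linear but monotonic dosage effects.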

Data Processing and Normalization Approaches

Multi-omics data integration requires sophisticated preprocessing and normalization to address technical variability across platforms. For transcriptomic data, TCGA and similar initiatives typically employ reads per kilobase per million (RPKM) or transcripts per million (TPM) normalization to enable cross-sample comparison [26]. Proteomic data from CPTAC undergoes median centering and variance stabilization to correct for batch effects, while DNA methylation data is processed using background correction and normalization algorithms specific to array technology [26].
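RPKM and TPM use the same ingredients but different denominators. RPKM normalizes each gene's count by total mapped reads and by gene length. TPM first divides counts by gene length and then rescales so that each sample sums to one million. This makes TPM values more directly comparable between samples. A minimal sketch with made-up counts and lengths:

```python
def rpkm(counts, lengths_bp):
    """Reads per kilobase of transcript per million mapped reads."""
    total = sum(counts)
    return [c * 1e9 / (length * total) for c, length in zip(counts, lengths_bp)]

def tpm(counts, lengths_bp):
    """Transcripts per million: divide by length first, then rescale to 1e6."""
    rates = [c / length for c, length in zip(counts, lengths_bp)]
    scale = sum(rates)
    return [r / scale * 1e6 for r in rates]

# Made-up counts for three genes in one sample; longer genes collect more reads.
counts = [100, 200, 300]
lengths = [1_000, 2_000, 3_000]
sample_tpm = tpm(counts, lengths)    # equal values: same per-base expression
sample_rpkm = rpkm(counts, lengths)
```

The TPM values sum to one million regardless of sequencing depth, so a given TPM corresponds to the same fraction of transcripts in every sample. RPKM totals, by contrast, vary from sample to sample.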

Missing value imputation represents a particular challenge in proteomic data, where absence of measurement may reflect true biological absence or technical limitations. CPTAC employs multiple imputation strategies including k-nearest neighbors (KNN) and maximum likelihood approaches to address this issue [26]. For cross-omics integration, additional normalization such as z-score transformation is often applied to make features comparable across fundamentally different data types [27].
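The sketch below illustrates the two steps just mentioned on a small samples-by-features matrix. The first step is a basic k-nearest-neighbor imputation, with neighbors found by distance over features observed in both samples. The second step is per-feature z-scoring. It assumes missing values are encoded as None and that each missing feature is observed in at least one other sample. Production workflows typically rely on established implementations. They should also consider whether values are missing at random, because KNN imputation can bias features whose absence reflects low abundance.

```python
import math
from statistics import mean, pstdev

def knn_impute(matrix, k=2):
    """Replace None entries with the mean of the k nearest samples.

    matrix -- list of samples (rows); each row lists feature values or None.
    Distance is the root-mean-square difference over features observed in
    both samples; only samples that observe the missing feature can donate.
    """
    def distance(a, b):
        shared = [(x, y) for x, y in zip(a, b) if x is not None and y is not None]
        if not shared:
            return math.inf
        return math.sqrt(sum((x - y) ** 2 for x, y in shared) / len(shared))

    imputed = [row[:] for row in matrix]
    for i, row in enumerate(matrix):
        for j, value in enumerate(row):
            if value is not None:
                continue
            donors = sorted(
                (distance(row, other), other[j])
                for r, other in enumerate(matrix)
                if r != i and other[j] is not None
            )[:k]
            if not donors:
                raise ValueError(f"feature {j} is not observed in any other sample")
            imputed[i][j] = mean(v for _, v in donors)
    return imputed

def zscore_columns(matrix):
    """Standardize each feature (column) to mean 0 and population SD 1."""
    stats = [(mean(col), pstdev(col)) for col in zip(*matrix)]
    return [[(x - m) / s if s > 0 else 0.0 for x, (m, s) in zip(row, stats)]
            for row in matrix]

# Samples x features with one missing protein measurement (None).
data = [
    [1.0, 2.0, 3.0],
    [1.1, 2.1, None],
    [5.0, 6.0, 7.0],
    [1.2, 1.9, 3.2],
]
filled = knn_impute(data, k=2)
scaled = zscore_columns(filled)
```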

Diagram 1: Multi-omics integration workflow showing the parallel processing of different molecular layers and their convergence through bioinformatics analysis.

Multi-Omics Databases and Repositories

The exponential growth of multi-omics data has driven the development of specialized databases that curate and integrate molecular data from large-scale initiatives. MLOmics represents a recent innovation specifically designed to serve machine learning applications, containing 8,314 patient samples across 32 cancer types with four omics types (mRNA expression, microRNA expression, DNA methylation, and copy number variations) [26]. Unlike raw data repositories, MLOmics provides "off-the-shelf" datasets with three feature versions (Original, Aligned, and Top) to support different analytical needs, along with extensive baselines from highly cited methods to enable fair model comparison [26].

Disease-specific databases have also emerged to support focused research communities. GliomaDB integrates 21,086 glioblastoma multiforme samples from 4,303 patients across TCGA, GEO, Chinese Glioma Genome Atlas (CGGA), and MSK-IMPACT, enabling meta-analyses across diverse patient populations [3]. Similarly, HCCDBv2 provides a comprehensive liver cancer multi-omics database incorporating clinical phenotype data, bulk transcriptomics, single-cell transcriptomics, and spatial transcriptomics [3]. These specialized resources demonstrate how large-scale initiative data can be enhanced through integration with complementary datasets to address specific biological questions.

Machine Learning and Integration Algorithms

Multi-omics integration employs diverse computational strategies ranging from unsupervised clustering to supervised machine learning and deep learning approaches. Unsupervised methods include matrix factorization techniques such as non-negative matrix factorization (NMF) and network-based methods such as similarity network fusion (SNF), which identify coherent molecular patterns across omics layers without prior biological knowledge [3] [27]. Supervised approaches leverage algorithms such as XGBoost, random forests, and support vector machines (SVM) to build predictive models that integrate multiple data types for classification or regression tasks [26].
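The sketch below makes the matrix-factorization idea concrete. It factorizes a non-negative features-by-samples matrix V into W (feature loadings) and H (sample scores). It uses the classic Lee-Seung multiplicative updates for squared reconstruction error. It is a single-matrix illustration only; multi-omics variants typically share one factor across omics blocks. The function names, iteration count, and random initialization are assumptions.

```python
import random

def matmul(A, B):
    """Multiply matrices stored as lists of rows."""
    cols = list(zip(*B))
    return [[sum(a * b for a, b in zip(row, col)) for col in cols] for row in A]

def transpose(A):
    return [list(col) for col in zip(*A)]

def frobenius_error(V, W, H):
    """||V - WH||_F, the reconstruction error being minimized."""
    WH = matmul(W, H)
    return sum((v - x) ** 2
               for vrow, xrow in zip(V, WH) for v, x in zip(vrow, xrow)) ** 0.5

def nmf(V, rank, iters=200, seed=0, eps=1e-12):
    """Lee-Seung multiplicative updates; V is features x samples, non-negative.

    Returns W (features x rank) and H (rank x samples); columns of H are
    per-sample factor scores that can be used to group samples into subtypes.
    """
    rng = random.Random(seed)
    n, m = len(V), len(V[0])
    W = [[rng.random() + 0.1 for _ in range(rank)] for _ in range(n)]
    H = [[rng.random() + 0.1 for _ in range(m)] for _ in range(rank)]
    for _ in range(iters):
        # H <- H * (W^T V) / (W^T W H)
        Wt = transpose(W)
        num, den = matmul(Wt, V), matmul(matmul(Wt, W), H)
        H = [[h * a / (b + eps) for h, a, b in zip(hr, nr, dr)]
             for hr, nr, dr in zip(H, num, den)]
        # W <- W * (V H^T) / (W H H^T)
        Ht = transpose(H)
        num, den = matmul(V, Ht), matmul(W, matmul(H, Ht))
        W = [[w * a / (b + eps) for w, a, b in zip(wr, nr, dr)]
             for wr, nr, dr in zip(W, num, den)]
    return W, H

# Toy features x samples matrix built from two non-negative patterns.
V = [[1, 2, 0, 1], [0, 1, 3, 1], [1, 3, 3, 2]]
W, H = nmf(V, rank=2, iters=300)
```

The multiplicative form keeps every entry non-negative without explicit constraints. This is what makes the factors interpretable as additive molecular programs.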

Recent advances have incorporated deep learning architectures specifically designed for multi-omics integration. Methods like XOmiVAE, CustOmics, and Subtype-GAN employ variational autoencoders, attention mechanisms, and generative adversarial networks to learn latent representations that capture shared and complementary information across omics modalities [26]. These approaches have demonstrated superior performance in cancer subtyping, prognosis prediction, and biomarker identification compared to traditional methods. Benchmark studies have shown that feature selection is particularly critical for model performance, with appropriate filtering improving clustering performance by up to 34% [27].

Table 2: Essential Research Reagents and Computational Tools

| Category | Resource/Tool | Specific Function | Application in Multi-Omics |
|---|---|---|---|
| Experimental Platforms | Illumina sequencing platforms | DNA/RNA sequencing | Genomic and transcriptomic profiling |
| | Liquid chromatography-mass spectrometry (LC-MS/MS) | Protein and metabolite quantification | Proteomic and metabolomic analysis |
| | Illumina Infinium BeadChips | DNA methylation profiling | Epigenomic characterization |
| Computational Tools | MLOmics database | Preprocessed multi-omics datasets | Machine learning model development |
| | DriverDBv4 | Multi-omics driver identification | Cancer gene discovery |
| | MILTON framework | Disease prediction from biomarkers | Risk stratification and genetic discovery |

Biomarker Discovery Applications and Case Studies

Diagnostic and Prognostic Biomarkers

Multi-omics initiatives have yielded numerous clinically relevant biomarkers across cancer types. TCGA's pan-cancer data helped establish tumor mutational burden (TMB) as a biomarker measurable across tumor types. TMB was subsequently validated in the KEYNOTE-158 trial as a predictive biomarker for pembrolizumab treatment across solid tumors [3]. Transcriptomic signatures such as Oncotype DX (21-gene) and MammaPrint (70-gene) have demonstrated utility in tailoring adjuvant chemotherapy decisions in breast cancer patients, as validated in the TAILORx and MINDACT trials, respectively [3].
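TMB is typically reported as somatic mutations per megabase of sequenced territory. The sketch below shows the arithmetic. Which variant classes are counted and the exact territory size are assay-specific, so both are assumptions here. The default cutoff of 10 mutations/Mb reflects the TMB-high threshold used in the tumor-agnostic pembrolizumab approval supported by KEYNOTE-158.

```python
def tumor_mutational_burden(n_mutations, territory_bp):
    """Eligible somatic mutations per megabase of sequenced territory."""
    if territory_bp <= 0:
        raise ValueError("territory_bp must be positive")
    return n_mutations / (territory_bp / 1_000_000)

def is_tmb_high(tmb, threshold=10.0):
    """Flag TMB-high with a >= threshold rule (10 mutations/Mb by default)."""
    return tmb >= threshold

# Hypothetical examples: a 2 Mb panel and a ~30 Mb exome.
panel_tmb = tumor_mutational_burden(40, 2_000_000)
exome_tmb = tumor_mutational_burden(150, 30_000_000)
```

The same mutation count can yield very different TMB values depending on the territory. This is one reason panel-based and exome-based TMB estimates need harmonization before their cutoffs can be compared.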

CPTAC's proteogenomic approaches have identified functional protein biomarkers that complement genomic findings. In ovarian and breast cancers, CPTAC studies revealed proteomic subtypes that identified potential druggable vulnerabilities missed by genomics alone [3]. A recent 2025 CPTAC study of lung adenocarcinoma developed a novel metric called Breakage Intensity Clustering (BIC) that classifies tumors by analyzing DNA breakpoint clustering and successfully stratified patients into three groups with significantly different survival outcomes [24]. This study also identified the protein IGF2BP3 as both a robust proteomic biomarker for genomic fragmentation and a predictor of immune checkpoint inhibitor response [24].

Predictive Biomarkers and Therapeutic Applications

Multi-omics data has been instrumental in identifying biomarkers that predict response to targeted therapies. The integration of genomic and proteomic data has revealed how genomic alterations translate to functional signaling pathway activities that influence therapeutic susceptibility. For example, proteogenomic analyses have identified phosphorylation events that activate oncogenic signaling pathways independent of mutational status, explaining heterogeneous responses to targeted agents [24] [25].

CPTAC's 2025 lung adenocarcinoma study exemplifies how multi-omics data can guide therapeutic strategy by identifying drug targets and nominating potential drugs for different molecular subtypes [24]. The study prioritized a gene as a drug target if two conditions held. First, its protein, an activating phosphorylation site, or another post-translational modification site was overexpressed in a particular subtype. Second, knocking down the gene impaired the survival of corresponding cell lines. This approach identified numerous dependencies, including the splicing factor SF3B, the kinase MET, and the protein transporter XPO1. It classified targets into five tiers of actionability, ranging from approved drugs to novel therapy candidates [24].
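The prioritization logic described above amounts to a conjunction of two evidence streams. The sketch below encodes it with hypothetical inputs. The first input is a subtype-versus-rest log2 fold change for the protein or its activating site. The second is a dependency score from knockdown screens in matched cell lines, where more negative values mean stronger dependency. The thresholds, field names, and score convention are illustrative assumptions, not the study's actual cutoffs. The study's five-tier actionability classification is not reproduced.

```python
from dataclasses import dataclass

@dataclass
class Candidate:
    gene: str
    log2fc_in_subtype: float  # protein/PTM abundance, subtype vs. other tumors
    dependency_score: float   # knockdown effect; more negative = more essential

def prioritize(candidates, min_log2fc=1.0, max_dependency=-0.5):
    """Keep candidates that are overexpressed AND essential; strongest first."""
    hits = [c for c in candidates
            if c.log2fc_in_subtype >= min_log2fc
            and c.dependency_score <= max_dependency]
    return sorted(hits, key=lambda c: c.dependency_score)

# Hypothetical candidates (placeholder names and values, not study data).
candidates = [
    Candidate("GENE_A", 1.5, -1.2),   # overexpressed and essential
    Candidate("GENE_B", 2.0, 0.1),    # overexpressed but not essential
    Candidate("GENE_C", 0.2, -2.0),   # essential but not overexpressed
    Candidate("GENE_D", 1.1, -0.6),   # overexpressed and moderately essential
]
ranked = prioritize(candidates)
```

Requiring both conditions filters out overexpressed passengers (GENE_B) as well as broadly essential genes that offer no subtype selectivity (GENE_C).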

Diagram 2: Proteogenomic biomarker discovery pipeline showing how genomic alterations propagate through molecular layers to influence clinical applications.

Best Practices and Guidelines for Multi-Omics Study Design

Experimental Design Considerations

Robust multi-omics study design requires careful consideration of both computational and biological factors. Benchmark analyses across TCGA datasets have identified nine critical factors that fundamentally influence multi-omics integration outcomes [27]. Computational factors include sample size, feature selection, preprocessing strategy, noise characterization, class balance, and number of classes, while biological factors encompass cancer subtype combinations, omics combinations, and clinical feature correlation [27].

Evidence-based recommendations indicate that studies should include at least 26 samples per class to provide adequate statistical power for subtype discrimination [27]. Feature selection is particularly critical: selecting fewer than 10% of omics features is recommended to reduce dimensionality while preserving biological signal. Keeping the class ratio below 3:1 and noise levels below 30% further improves analytical robustness [27]. Together, these thresholds provide a framework for designing multi-omics studies that yield reproducible and biologically meaningful results.
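The four numeric thresholds above can be turned into a quick pre-analysis check. The sketch below is a hypothetical helper (`check_design` is not from any library). It encodes only the cut-offs quoted from [27] and assumes that "noise" has already been estimated as a fraction between 0 and 1.

```python
def check_design(samples_per_class, n_selected, n_total, noise_fraction):
    """Return guideline violations for a planned study (empty list = all met).

    Thresholds as summarized from [27]: >= 26 samples per class,
    < 10% of features selected, class ratio < 3:1, noise < 30%.
    """
    issues = []
    smallest = min(samples_per_class.values())
    largest = max(samples_per_class.values())
    if smallest < 26:
        issues.append(f"smallest class has {smallest} samples (< 26)")
    if n_selected / n_total >= 0.10:
        issues.append(f"{n_selected / n_total:.0%} of features selected (>= 10%)")
    if largest / smallest >= 3:
        issues.append(f"class ratio {largest / smallest:.1f}:1 (>= 3:1)")
    if noise_fraction >= 0.30:
        issues.append(f"noise level {noise_fraction:.0%} (>= 30%)")
    return issues


# Two hypothetical subtypes with 120 and 30 samples: only the 4:1 imbalance is flagged.
print(check_design({"A": 120, "B": 30}, n_selected=1500, n_total=20000,
                   noise_fraction=0.1))  # ['class ratio 4.0:1 (>= 3:1)']
```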

Data Integration Strategies and Challenges

Multi-omics integration approaches can be categorized into horizontal and vertical strategies. Horizontal integration combines the same type of omics data across different samples or conditions to increase statistical power and identify consistent patterns. Vertical integration combines different types of omics data from the same samples to build a comprehensive view of biological systems [3]. Each approach requires specialized computational methods and addresses distinct biological questions.

The field continues to face several methodological challenges, including data heterogeneity, missing data, batch effects, and computational scalability [27]. Different omics data types exhibit different distributions and sources of noise. For instance, RNA-seq read counts are typically modeled with a negative binomial distribution, whereas DNA methylation levels show a bimodal distribution [27]. These technical differences must be addressed through appropriate transformation, normalization, and batch correction before meaningful biological integration can occur. Missing data are also particularly prevalent in proteomic and metabolomic datasets, which calls for careful imputation strategies to avoid introducing bias [27].
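Because each layer has its own distribution, each is usually transformed on its own terms before integration. The sketch below uses three common choices, which are assumptions rather than methods prescribed by [27]. RNA-seq counts get log2(x + 1). Methylation beta values are converted to M-values, log2(β / (1 − β)). Missing proteomic or metabolomic intensities get half-minimum imputation, a simple approach that assumes values are missing because they fell below the detection limit. It can bias results when that assumption does not hold.

```python
import numpy as np


def log_counts(counts):
    """RNA-seq counts (negative binomial-like, heavy right tail) -> log2(x + 1)."""
    return np.log2(np.asarray(counts, dtype=float) + 1.0)


def beta_to_m(beta, eps=1e-6):
    """Methylation beta values (bimodal, bounded in [0, 1]) -> M-values.

    M = log2(beta / (1 - beta)); clipping avoids infinities at exactly 0 or 1.
    """
    b = np.clip(np.asarray(beta, dtype=float), eps, 1.0 - eps)
    return np.log2(b / (1.0 - b))


def impute_half_min(x):
    """Replace NaNs in each feature (column) with half its observed minimum.

    Assumes left-censored missingness (below detection); a column that is
    entirely NaN stays NaN.
    """
    x = np.array(x, dtype=float)  # copy so the caller's array is untouched
    col_min = np.nanmin(x, axis=0)
    rows, cols = np.where(np.isnan(x))
    x[rows, cols] = col_min[cols] / 2.0
    return x


print(log_counts([0, 1, 3]))  # [0. 1. 2.]
print(impute_half_min([[1, np.nan], [3, 4], [np.nan, 6]]))
```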

Future Directions and Emerging Technologies

Single-Cell and Spatial Multi-Omics

The advent of single-cell and spatial multi-omics technologies represents a paradigm shift in resolving tumor heterogeneity. Single-cell approaches enable the characterization of cellular states and activities at unprecedented resolution, moving beyond bulk tissue averages to capture the true diversity of tumor cell populations and their microenvironment [3] [28]. Recent technological advances now allow simultaneous measurement of multiple molecular layers from the same single cells, providing matched genomic, epigenomic, transcriptomic, and proteomic profiles from individual cells within complex tissues [3].

Spatial transcriptomics and spatial proteomics provide complementary information by preserving the architectural context of tissues, enabling researchers to map molecular profiles within their native tissue morphology [3]. These approaches are particularly valuable for understanding tumor-immune interactions, cellular communication networks, and the spatial organization of heterogeneous subclones within tumors. As these technologies mature and become more widely accessible, they are expected to generate increasingly rich datasets that will further enhance our understanding of cancer biology and therapeutic resistance mechanisms [28].

AI-Driven Integration and Clinical Translation

Artificial intelligence and machine learning are playing an increasingly prominent role in multi-omics data analysis, enabling the identification of complex patterns that may not be apparent through traditional statistical approaches. Deep learning architectures such as convolutional neural networks (CNNs), transformers, and graph neural networks are being employed to model complex relationships between different data modalities [29]. These approaches are particularly powerful for integrating imaging and omics data, where early, late, and hybrid fusion strategies each offer distinct advantages depending on the specific clinical question and data characteristics [29].
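The difference between early and late fusion is easiest to see in terms of where the modalities are combined. This sketch assumes tabular imaging features and omics features for the same patients in the same row order. Early fusion standardizes and concatenates features so that a single model is trained on the combined matrix. Late fusion averages the class probabilities that separate per-modality models have already produced. The weighted average used here is one simple combiner among many, and hybrid fusion, which merges learned intermediate representations, is not shown.

```python
import numpy as np


def zscore(x):
    """Standardize each feature (column) to mean 0 and SD 1."""
    x = np.asarray(x, dtype=float)
    sd = x.std(axis=0)
    sd[sd == 0] = 1.0  # constant features become 0 instead of dividing by 0
    return (x - x.mean(axis=0)) / sd


def early_fusion(*modalities):
    """Early fusion: standardize each modality, then concatenate features."""
    return np.hstack([zscore(m) for m in modalities])


def late_fusion(probabilities, weights=None):
    """Late fusion: weighted average of per-modality class probabilities.

    probabilities: list of (patients x classes) arrays, one per modality.
    """
    p = np.stack([np.asarray(q, dtype=float) for q in probabilities])
    w = np.ones(len(p)) if weights is None else np.asarray(weights, dtype=float)
    return np.tensordot(w / w.sum(), p, axes=1)  # -> patients x classes


imaging = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]  # 3 patients x 2 features
omics = [[10, 0, 1], [20, 0, 2], [30, 0, 3]]    # 3 patients x 3 features
print(early_fusion(imaging, omics).shape)       # (3, 5)

p_imaging = [[0.8, 0.2], [0.4, 0.6]]
p_omics = [[0.6, 0.4], [0.2, 0.8]]
print(late_fusion([p_imaging, p_omics], weights=[1, 3]))  # [[0.65 0.35] [0.25 0.75]]
```

Early fusion lets a model learn cross-modal interactions but requires all modalities for every patient. Late fusion tolerates a missing modality more easily but cannot model interactions between features from different modalities.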

The convergence of medical imaging and multi-omics data represents a particularly promising direction for clinical translation. Radiogenomic studies have demonstrated correlations between imaging characteristics and gene expression profiles, suggesting that noninvasive imaging can serve as a proxy for molecular characterization [29]. Integrated frameworks that combine histopathological images with genomic profiles have shown improved performance in predicting patient outcomes and identifying molecular subtypes compared to unimodal approaches [29]. As these multimodal AI approaches continue to evolve, they hold immense promise for advancing precision medicine by leveraging routinely collected clinical data to infer molecular characteristics and guide treatment decisions.
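Radiogenomic association screens often begin with rank correlations between each imaging feature and each gene. The sketch below computes a Spearman correlation matrix in NumPy, assuming both matrices share the same patient order. To stay short, it assumes there are no tied values, because ranks come from a double `argsort` without tie averaging. A real analysis would also need multiple-testing correction across all feature-gene pairs.

```python
import numpy as np


def spearman_matrix(imaging, expression):
    """Spearman correlations between imaging features and genes.

    imaging:    patients x imaging features
    expression: patients x genes (same patient order)
    Returns an (imaging features x genes) matrix. Assumes no tied values.
    """
    def ranks(x):
        x = np.asarray(x, dtype=float)
        return x.argsort(axis=0).argsort(axis=0).astype(float)

    def standardize(r):
        return (r - r.mean(axis=0)) / r.std(axis=0)

    ri = standardize(ranks(imaging))
    re = standardize(ranks(expression))
    # Pearson correlation of ranks = mean product of standardized ranks.
    return ri.T @ re / ri.shape[0]


imaging = [[1.0], [2.0], [3.0], [4.0]]  # one hypothetical radiomic feature
expression = [[10, 40], [20, 30], [35, 20], [50, 10]]  # two hypothetical genes
print(spearman_matrix(imaging, expression))  # approximately [[ 1. -1.]]
```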

Table 3: Key Biomarkers Discovered Through Multi-Omics Initiatives

| Biomarker | Cancer Type | Omics Layer | Clinical Application | Initiative Source |
|---|---|---|---|---|
| Tumor Mutational Burden (TMB) | Multiple solid tumors | Genomics | Predicts response to immune checkpoint inhibitors | TCGA [3] |
| Oncotype DX (21-gene) | Breast cancer | Transcriptomics | Guides adjuvant chemotherapy decisions | TCGA [3] |
| IGF2BP3 | Lung adenocarcinoma | Proteomics | Predicts genomic fragmentation and immunotherapy response | CPTAC [24] |
| Breakage Intensity Clustering (BIC) | Lung adenocarcinoma | Genomics | Stratifies patients by survival outcomes | CPTAC [24] |
| HER2 amplification | Breast cancer | Genomics | Guides HER2-targeted therapies | TCGA [25] |

Strategies and Tools: A Practical Guide to Multi-Omics Data Integration

The staggering molecular heterogeneity of complex diseases like cancer demands analytical approaches that look beyond single molecular layers. Multi-omics integration has emerged as a transformative framework that combines data from genomics, transcriptomics, proteomics, metabolomics, and epigenomics to provide a system-level understanding of biological processes and disease mechanisms [3] [30]. The primary goal of these integration strategies is to elucidate comprehensive molecular signatures that drive tumor initiation, progression, and therapeutic resistance, thereby accelerating biomarker discovery for precision oncology [3] [31]. The evolution from Sanger sequencing to high-throughput next-generation sequencing (NGS), together with advances in mass spectrometry, has enabled this paradigm shift, allowing researchers to capture the intricate cross-talk between different regulatory layers within cells [3] [32].

Multi-omics data fusion techniques are broadly categorized into two distinct paradigms: horizontal and vertical integration. These approaches differ fundamentally in their experimental design, data structure, analytical objectives, and computational requirements [3]. Horizontal integration, also referred to as intra-omics integration, involves combining the same type of omics data across multiple different samples or cohorts. This approach is particularly valuable for increasing statistical power in biomarker discovery by enlarging sample sizes and for identifying consistent molecular patterns across diverse populations [3]. In contrast, vertical integration, known as inter-omics integration, focuses on analyzing multiple types of omics data measured on the same set of biological samples. This strategy aims to reconstruct the functional flow of information from genetic blueprint to cellular phenotype, enabling researchers to connect genomic variations with their functional consequences across transcriptional, proteomic, and metabolic layers [3] [2].
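The structural difference between the two paradigms shows up directly in how the data tables are combined. In this pandas sketch, rows are samples and columns are features, and all sample IDs, gene names, and values are hypothetical. Horizontal integration stacks samples from different cohorts that share a feature space. Vertical integration joins different omics layers on shared sample IDs.

```python
import pandas as pd

# Horizontal: same omics type (RNA-seq) from two cohorts; rows = samples.
cohort_a = pd.DataFrame({"TP53": [5.1, 4.8], "MYC": [7.2, 6.9], "EGFR": [3.3, 3.0]},
                        index=["A1", "A2"])
cohort_b = pd.DataFrame({"TP53": [5.5], "MYC": [7.0]}, index=["B1"])

# Stack samples, keeping only features measured in every cohort (EGFR drops out).
horizontal = pd.concat([cohort_a, cohort_b], axis=0, join="inner")

# Vertical: different omics types measured on the same samples.
rna = pd.DataFrame({"TP53": [5.1, 4.8, 5.0]}, index=["S1", "S2", "S3"])
protein = pd.DataFrame({"TP53": [2.2, 2.0]}, index=["S1", "S3"])

# Join layers on sample ID, keeping samples profiled in every layer (S2 drops out).
# `keys` labels each column with its layer so the two "TP53" features stay distinct.
vertical = pd.concat([rna, protein], axis=1, join="inner", keys=["rna", "protein"])

print(horizontal)
print(vertical)
```

The inner joins mirror the trade-off in the text. Horizontal integration loses features not shared across cohorts, while vertical integration loses samples missing any layer, which is one reason missing-data handling matters so much for vertical designs.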

The selection between horizontal and vertical integration strategies is dictated by specific research objectives, available data resources, and computational constraints. Horizontal integration primarily addresses challenges of data harmonization and batch effects when combining datasets from different sources, while vertical integration tackles the complexity of modeling nonlinear relationships across biologically interconnected but technologically disparate data modalities [3] [32]. Both paradigms are increasingly powered by sophisticated artificial intelligence (AI) and machine learning (ML) approaches that can handle the high dimensionality, heterogeneity, and scale of modern multi-omics datasets [30] [32]. As the field progresses toward clinical applications, understanding the methodological nuances, requirements, and limitations of these two fundamental integration strategies becomes crucial for researchers and clinicians aiming to implement multi-omics biomarkers in personalized cancer care [3].

Horizontal Data Fusion: Concepts and Applications

Core Principles and Experimental Design

Horizontal data fusion, also termed intra-omics integration, refers to the aggregation and combined analysis of the same type of omics data across multiple samples, experimental batches, or patient cohorts [3]. This integration strategy operates on the fundamental principle that combining similar data types from disparate sources enhances statistical power and improves the robustness of biological findings. The primary objective of horizontal integration is to identify consistent molecular patterns that persist across different studies, technologies, or populations, thereby increasing confidence in discovered biomarkers and enabling the detection of subtle but reproducible signals that might be overlooked in individual studies due to limited sample sizes or cohort-specific biases [3].
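One common way to pool evidence across cohorts for a single feature is a weighted Stouffer meta-analysis. The document does not prescribe this method; it is used here only to illustrate how modest but consistent per-study signals can combine into a stronger one. The sketch assumes independent cohorts, per-cohort z-statistics with a consistent sign convention, and √n weights.

```python
import math


def stouffer(z_scores, sample_sizes):
    """Weighted Stouffer combination of per-cohort z-statistics for one feature.

    Weights are sqrt(n), so larger cohorts contribute more. Returns the
    combined z and its two-sided p-value.
    """
    weights = [math.sqrt(n) for n in sample_sizes]
    numerator = sum(w * z for w, z in zip(weights, z_scores))
    z_combined = numerator / math.sqrt(sum(w * w for w in weights))
    p_two_sided = math.erfc(abs(z_combined) / math.sqrt(2))
    return z_combined, p_two_sided


# Three hypothetical cohorts, each with z = 1.5 (two-sided p ~ 0.13 on its own).
z, p = stouffer([1.5, 1.5, 1.5], [50, 50, 50])
print(round(z, 2), p < 0.01)  # 2.6 True
```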

The experimental design for horizontal integration requires meticulous planning of metadata collection and standardization. Researchers must obtain the same omics data type (e.g., whole genome sequencing, RNA-seq, or LC-MS proteomics) from multiple sample collections, often generated at different institutions, using various technological platforms, or at different time points [3] [31]. A critical consideration in this design is the anticipation of technical variations, or batch effects, that inevitably arise when combining datasets from different sources. These technical artifacts can create spurious associations and obscure genuine biological signals if not properly accounted for in the analytical workflow [3]. Therefore, the experimental design should incorporate comprehensive sample tracking, detailed documentation of laboratory protocols, and standardized clinical annotation to facilitate effective batch effect correction during computational analysis.
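Sample-tracking metadata can be checked for confounding before any correction is attempted. The helper below is hypothetical. It assumes each sample is recorded as a `(sample_id, batch, group)` tuple and reports which batches contain a single biological group. When every batch is "pure", the group indicator is a sum of batch indicators, so a linear batch model such as those used by ComBat or limma cannot separate biology from batch.

```python
from collections import Counter, defaultdict


def design_summary(samples):
    """Summarize batch-by-group balance from (sample_id, batch, group) tuples.

    Returns (counts per batch, batches holding a single group,
    whether batch and group are fully confounded).
    """
    counts = defaultdict(Counter)
    for _sample_id, batch, group in samples:
        counts[batch][group] += 1
    pure = sorted(b for b, c in counts.items() if len(c) == 1)
    fully_confounded = len(pure) == len(counts)
    return dict(counts), pure, fully_confounded


samples = [  # hypothetical tracking sheet
    ("s1", "run1", "tumor"), ("s2", "run1", "normal"),
    ("s3", "run2", "tumor"), ("s4", "run2", "tumor"),
]
counts, pure, fully = design_summary(samples)
print(pure, fully)  # ['run2'] False
```

Randomizing sample groups across processing batches at the design stage is the most reliable way to keep the final flag `False`.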

Horizontal integration finds particular utility in biomarker discovery when individual studies lack sufficient statistical power to detect molecular signatures with small effect sizes or when validating candidate biomarkers across diverse populations to ensure generalizability [3]. For example, in oncology research, horizontal integration of genomic data from multiple cancer cohorts has been instrumental in distinguishing driver mutations from passenger alterations, while similar integration of transcriptomic datasets has revealed conserved gene expression programs across different tumor types [3]. The growing availability of large-scale multi-omics databases and biorepositories has significantly accelerated the application of horizontal integration approaches, though this has simultaneously intensified challenges related to data harmonization and computational scalability [3].

Methodological Workflow and Technical Considerations

The methodological workflow for horizontal data fusion follows a structured sequence: data retrieval, quality control, normalization, batch effect correction, and integrated analysis. The initial phase involves gathering datasets from multiple sources, which may include public repositories such as The Cancer Genome Atlas (TCGA), Gene Expression Omnibus (GEO), or institution-specific databases [3]. Each dataset must undergo rigorous quality assessment using modality-specific metrics: sequencing depth and coverage uniformity for genomic data; library complexity and ribosomal RNA contamination for transcriptomics; and peptide-spectrum match quality and protein inference confidence for proteomics [3].
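Modality-specific QC can be expressed as a table of per-metric rules applied to each sample. In this sketch, every metric name and cut-off in `QC_RULES` is hypothetical: duplicate rate stands in for library complexity, and fraction of bases at 20x stands in for coverage uniformity. Real thresholds depend on platform, assay, and study goals. A sample missing a required metric is treated as failing.

```python
import operator

# Hypothetical metrics and thresholds, for illustration only.
QC_RULES = {
    "wgs": {"mean_coverage": (">=", 30.0), "frac_bases_20x": (">=", 0.90)},
    "rnaseq": {"rrna_fraction": ("<=", 0.10), "duplicate_rate": ("<=", 0.50)},
    "proteomics": {"psm_fdr": ("<=", 0.01)},
}
OPS = {">=": operator.ge, "<=": operator.le}


def passes_qc(metrics, modality, rules=QC_RULES):
    """Return (passed, failures) for one sample's dict of QC metrics."""
    failures = []
    for metric, (op, threshold) in rules[modality].items():
        value = metrics.get(metric)
        if value is None or not OPS[op](value, threshold):
            failures.append(f"{metric}={value} (needs {op} {threshold})")
    return not failures, failures


rna_samples = {  # hypothetical per-sample metrics
    "S1": {"rrna_fraction": 0.04, "duplicate_rate": 0.30},
    "S2": {"rrna_fraction": 0.22, "duplicate_rate": 0.35},
}
kept = [s for s, m in rna_samples.items() if passes_qc(m, "rnaseq")[0]]
print(kept)  # ['S1']
```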

Following quality control, data harmonization addresses technical variability through normalization, which adjusts for systematic differences in data distribution across batches, platforms, or experimental conditions. For RNA-seq data, common choices include DESeq2's median-of-ratios size factors and TPM normalization, while proteomics data are often processed with quantile normalization or a variance-stabilizing transformation [3] [30]. The subsequent batch effect correction step uses algorithms such as ComBat, limma, or Harmony to remove unwanted technical variance while preserving biological signal [3] [30]. ComBat and limma model batch as a covariate in a linear model and adjust the data accordingly, whereas Harmony iteratively corrects a low-dimensional embedding of the samples or cells. All of these methods require careful parameter choices to avoid overcorrection that removes genuine biological variation.
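The sketch below illustrates two of the steps named above. The first function reimplements the idea behind DESeq2's median-of-ratios size factors in NumPy; it is not the DESeq2 API. The second is a deliberately simplified, location-only batch adjustment. Full ComBat also rescales each batch and shrinks batch estimates with empirical Bayes, which is omitted here. Both functions assume a features × samples matrix, and the batch adjustment assumes batches are not confounded with biology.

```python
import numpy as np


def median_of_ratios_size_factors(counts):
    """Median-of-ratios size factors (the idea behind DESeq2's normalization).

    counts: genes x samples raw counts. Each gene's geometric mean across
    samples is a pseudo-reference; a sample's size factor is the median ratio
    of its counts to that reference, over genes non-zero in every sample.
    """
    counts = np.asarray(counts, dtype=float)
    expressed = (counts > 0).all(axis=1)
    log_counts = np.log(counts[expressed])
    log_geo_means = log_counts.mean(axis=1, keepdims=True)
    return np.exp(np.median(log_counts - log_geo_means, axis=0))


def center_batches(x, batches):
    """Location-only batch adjustment (simplified; not full ComBat).

    x: features x samples on a log scale. Removes each batch's per-feature
    mean, then adds back the overall per-feature mean.
    """
    x = np.asarray(x, dtype=float)
    batches = np.asarray(batches)
    out = x.copy()
    grand_mean = x.mean(axis=1, keepdims=True)
    for b in np.unique(batches):
        cols = batches == b
        out[:, cols] = x[:, cols] - x[:, cols].mean(axis=1, keepdims=True) + grand_mean
    return out


counts = [[10, 20], [100, 200], [5, 10]]  # sample 2 sequenced twice as deeply
sf = median_of_ratios_size_factors(counts)
print(sf)                                 # approximately [0.707 1.414]
print(np.asarray(counts) / sf)            # both columns now identical
print(center_batches([[1.0, 3.0, 11.0, 13.0]], ["a", "a", "b", "b"]))  # [[6. 8. 6. 8.]]
```

If one batch contained mostly tumors and another mostly normals, the per-batch centering above would subtract part of the tumor-normal difference too. This is the overcorrection risk described in the text.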