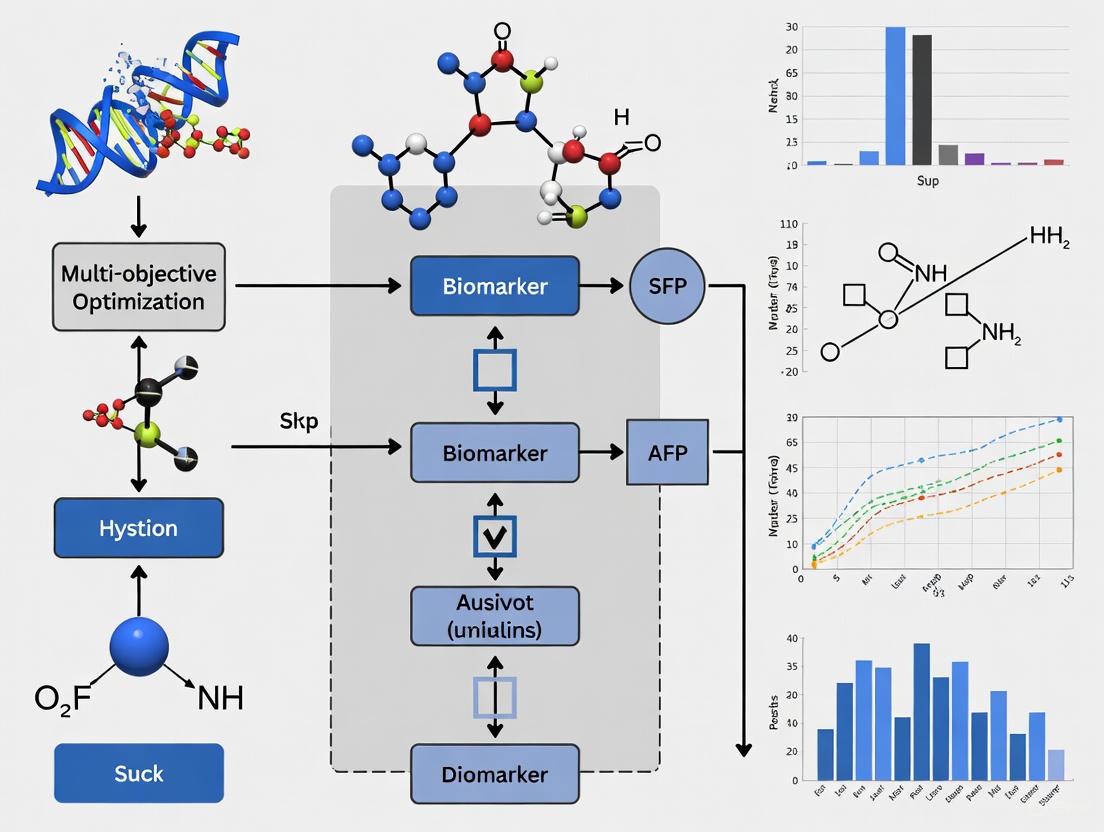

Multi-Objective Optimization for Biomarker Discovery: Integrating Evolutionary Algorithms and Multi-Omics in Precision Medicine

Abstract

This article explores the transformative role of multi-objective optimization (MOO) in modern biomarker discovery. As biomarker research shifts from single-molecule to multi-omics and network-based approaches, MOO provides a powerful computational framework for balancing competing objectives like accuracy, cost, and biological relevance. We examine foundational concepts, key methodologies including evolutionary algorithms like NSGA-II and their applications in patient selection and drug molecule optimization, address troubleshooting and optimization challenges, and review validation strategies. This guide equips researchers and drug development professionals with the knowledge to leverage MOO for more efficient and clinically impactful biomarker identification in the era of personalized medicine.

From Single Molecules to Dynamical Networks: The Foundation of Modern Biomarker Discovery

Biomarker science is undergoing a fundamental transformation, moving beyond the traditional single-molecule approach to embrace the complexity of biological systems. This paradigm shift is driven by the recognition that valuable diagnostic and prognostic information resides not only in the differential expression of individual molecules but also in their associations, interactions, and dynamic fluctuations over time [1]. The limitations of single-target biomarkers have become increasingly apparent, particularly their inability to capture the network effects, unforeseen feedback loops, and dynamic adaptations that characterize complex diseases such as cancer and neurodegenerative disorders [2].

The evolving biomarker taxonomy now includes molecular biomarkers (based on differential expression/concentration of single molecules), network biomarkers (based on differential associations/correlations of molecule pairs), and dynamic network biomarkers (DNBs) (based on differential fluctuations/correlations of molecular groups) [1]. This progression marks a shift from static to dynamic measurement and from reductionist to systems-level analysis. DNBs are especially notable because they can identify pre-disease states or critical transition points, enabling predictive and preventative medicine rather than merely diagnosing established disease [1].

Table 1: Evolution of Biomarker Paradigms

| Biomarker Type | Fundamental Basis | Primary Application | Key Advantage |
|---|---|---|---|
| Molecular Biomarker | Differential expression/concentration of single molecules [1] | Disease state diagnosis and characterization [1] | Simple measurement and interpretation |
| Network Biomarker | Differential associations/correlations between molecule pairs [1] | Disease state diagnosis with improved stability [1] | Captures biological interactions and network stability |
| Dynamic Network Biomarker (DNB) | Differential fluctuations/correlations within molecular groups [1] | Pre-disease state recognition and prediction [1] | Identifies critical transitions before disease manifestation |

This shift is technologically enabled by breakthroughs in multi-omics technologies, artificial intelligence, and sophisticated computational modeling [3] [4]. The integration of genomics, transcriptomics, proteomics, metabolomics, and epigenomics provides the multidimensional data necessary to construct these network and dynamic biomarkers [3]. Furthermore, the emergence of single-cell and spatial multi-omics technologies offers unprecedented resolution for characterizing cellular heterogeneity and microenvironment interactions that were previously obscured in bulk analyses [3].

The Multi-omics Engine: Data Generation for Complex Biomarkers

Multi-omics Technologies and Data Integration

The foundation of network and dynamic biomarker discovery lies in multi-omics strategies that integrate diverse molecular data layers. Each omics layer provides unique insights into biological systems, and their integration reveals emergent properties not apparent from any single layer [3]. Genomics investigates DNA-level alterations including mutations, copy number variations, and single nucleotide polymorphisms through techniques like whole exome sequencing and whole genome sequencing [3]. Transcriptomics explores RNA expression patterns using microarray and RNA sequencing technologies, while proteomics investigates protein abundance, modifications, and interactions through mass spectrometry-based approaches [3]. Metabolomics examines cellular metabolites including lipids, carbohydrates, and nucleosides, and epigenomics focuses on DNA and histone modifications that regulate gene expression [3].

The integration of these diverse data types occurs through both horizontal (intra-omics) and vertical (inter-omics) integration strategies [3]. Horizontal integration combines data from the same omics type across different studies or platforms to increase statistical power, while vertical integration combines different omics types from the same samples to build comprehensive molecular profiles [3]. Successful multi-omics integration requires sophisticated computational approaches including machine learning and deep learning algorithms that can identify complex, non-linear relationships across omics layers [3].

Experimental Protocol: Multi-omics Data Generation for Network Biomarker Discovery

Purpose: To generate comprehensive multi-omics data from patient samples for the construction of network and dynamic biomarkers.

Materials:

- Fresh or properly preserved tissue/blood samples

- DNA/RNA extraction kits (e.g., Qiagen AllPrep)

- Next-generation sequencing platform (e.g., Illumina, Element Biosciences AVITI24)

- Mass spectrometry system for proteomics and metabolomics

- Single-cell RNA sequencing platform (e.g., 10x Genomics)

- Spatial transcriptomics platform (e.g., 10x Genomics Visium)

- High-performance computing infrastructure

Procedure:

- Sample Preparation: Extract DNA, RNA, proteins, and metabolites from matched samples using standardized protocols. Preserve spatial relationships for spatial omics applications.

- Library Preparation and Sequencing: Prepare sequencing libraries for whole genome, exome, and transcriptome analyses following manufacturer protocols. For single-cell analysis, prepare single-cell suspensions and load onto appropriate platforms.

- Proteomic Profiling: Process samples using liquid chromatography-mass spectrometry (LC-MS) for protein identification and quantification.

- Metabolomic Profiling: Extract metabolites and analyze using gas or liquid chromatography coupled to mass spectrometry.

- Spatial Analysis: For spatial transcriptomics, fix tissue sections on specialized slides and perform spatial barcoding and sequencing.

- Data Processing: Convert raw data to analyzable formats (FASTQ to BAM to count matrices) using standardized pipelines.

- Quality Control: Remove low-quality cells/sequences, normalize for technical variation, and batch correct across samples.

Validation: Technical replication across platforms, cross-validation with orthogonal methods (e.g., IHC for protein validation), and computational imputation to assess data completeness.

Network Biomarkers: From Molecules to Interactions

Theoretical Foundation and Computational Approaches

Network biomarkers represent a significant advancement over single-molecule approaches by capturing the interactions and associations between molecular components. Traditional molecular biomarkers focus on differential expression or concentration of individual molecules, potentially missing vital information about system-level biological processes [1]. In contrast, network biomarkers are based on differential associations or correlations between pairs of molecules, providing a more stable and reliable approach to disease state diagnosis [1].

The construction of network biomarkers begins with correlation networks, where nodes represent molecules and edges represent significant associations between them. Differential network analysis identifies changes in these association patterns between disease states and healthy controls. The statistical foundation typically involves calculating pairwise correlation coefficients (e.g., Pearson, Spearman) or mutual information metrics between all molecular pairs in different biological states. Network inference algorithms then reconstruct the underlying biological networks from the observed correlation structure.
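The differential correlation idea above can be sketched in a few lines. This is a minimal illustration on invented toy data, not a published pipeline: the function names, the molecule labels `G1` to `G3`, and the `min_delta` cutoff are all assumptions made for the example, and a real analysis would add significance testing and multiple-comparison correction.

```python
from itertools import combinations
from math import sqrt


def pearson(x, y):
    """Pearson correlation of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    return cov / sqrt(var_x * var_y)


def differential_edges(control, disease, min_delta=0.8):
    """Return molecule pairs whose correlation changes between two states.

    control, disease: dict mapping molecule name -> list of measurements.
    min_delta: hypothetical cutoff on |r_disease - r_control|.
    """
    edges = []
    for a, b in combinations(sorted(control), 2):
        r_control = pearson(control[a], control[b])
        r_disease = pearson(disease[a], disease[b])
        if abs(r_disease - r_control) >= min_delta:
            edges.append((a, b, round(r_control, 2), round(r_disease, 2)))
    return edges


# Toy data: G1 and G2 are tightly coupled in controls but decoupled in disease.
control = {
    "G1": [1.0, 2.0, 3.0, 4.0, 5.0],
    "G2": [2.1, 3.9, 6.2, 8.0, 9.9],
    "G3": [5.0, 3.0, 4.0, 6.0, 2.0],
}
disease = {
    "G1": [1.0, 2.0, 3.0, 4.0, 5.0],
    "G2": [9.0, 2.0, 7.5, 1.0, 6.0],
    "G3": [5.2, 2.9, 4.1, 6.3, 1.8],
}
edges = differential_edges(control, disease)
print(edges)
```

Only the G1/G2 pair is reported here, because it is the only pair whose association changes substantially between the two conditions, even though the individual expression ranges of G1 are identical in both.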

Machine learning approaches are particularly valuable for network biomarker discovery. Algorithms can identify discriminative sub-networks or modules that differ significantly between disease states. These network features often provide more robust classification than individual molecules and offer biological interpretability by highlighting dysregulated pathways and processes.

Experimental Protocol: Constructing Correlation Networks for Biomarker Discovery

Purpose: To identify differential correlation networks that serve as stable biomarkers for disease states.

Materials:

- Normalized multi-omics data matrix

- High-performance computing environment

- R or Python with necessary packages (e.g., WGCNA, igraph, numpy)

- Visualization software (e.g., Cytoscape)

Procedure:

- Data Preprocessing: Normalize omics data to remove technical artifacts and transform if necessary. For RNA-seq data, use VST or TPM normalization.

- Correlation Matrix Calculation: Compute pairwise correlations between all molecular features (genes, proteins, metabolites) within each biological condition using appropriate correlation measures.

- Network Construction: Convert correlation matrices to adjacency matrices using soft thresholding to preserve scale-free topology properties.

- Module Detection: Identify modules of highly interconnected molecules using hierarchical clustering and dynamic tree cutting.

- Differential Network Analysis: Compare network topologies between conditions using appropriate statistical tests (e.g., Mantel test) or by comparing intramodular connectivity measures.

- Functional Annotation: Annotate significant modules with functional enrichment analysis (GO, KEGG) to interpret biological meaning.

- Validation: Validate network structures in independent cohorts using bootstrapping or cross-validation approaches.

Analysis: Identify hub molecules within significant modules as potential key regulators. Calculate module preservation statistics between datasets. Construct consensus networks across multiple studies to identify robust network biomarkers.
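The network construction and hub identification steps can be illustrated with a small sketch. It assumes the unsigned soft-thresholding form, in which the adjacency is the absolute correlation raised to a power; the value `beta=6` and the four-node correlation matrix are purely illustrative, and in practice the power is tuned per dataset and packages such as WGCNA handle this at scale.

```python
def soft_threshold_adjacency(corr, beta=6):
    """Convert a correlation matrix (nested dict) into a weighted adjacency.

    Uses a_ij = |r_ij| ** beta, the unsigned soft-thresholding form.
    beta=6 is only an illustrative default.
    """
    names = sorted(corr)
    return {
        i: {j: abs(corr[i][j]) ** beta for j in names if j != i}
        for i in names
    }


def connectivity(adjacency):
    """Sum of edge weights per node; high values mark candidate hubs."""
    return {node: sum(neighbors.values()) for node, neighbors in adjacency.items()}


# Hypothetical correlations among four molecules.
corr = {
    "A": {"A": 1.0, "B": 0.9, "C": 0.8, "D": 0.1},
    "B": {"A": 0.9, "B": 1.0, "C": 0.85, "D": 0.2},
    "C": {"A": 0.8, "B": 0.85, "C": 1.0, "D": -0.3},
    "D": {"A": 0.1, "B": 0.2, "C": -0.3, "D": 1.0},
}
adj = soft_threshold_adjacency(corr)
k = connectivity(adj)
hub = max(k, key=k.get)
print(hub, round(k[hub], 3))
```

Raising correlations to a power suppresses weak associations (0.1 becomes effectively zero) while keeping strong ones, so no hard cutoff is needed before module detection.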

Dynamic Network Biomarkers: Capturing Critical Transitions

Theoretical Foundation of DNBs

Dynamic Network Biomarkers (DNBs) represent the cutting edge of biomarker science, focusing on detecting critical transitions in complex biological systems before those transitions become apparent at the phenotypic level [1]. While traditional biomarkers diagnose established disease states, and network biomarkers offer more stable diagnosis of those states, DNBs specifically aim to recognize pre-disease states—the critical tipping points where a system is poised to transition from health to disease [1].

The mathematical foundation of DNBs relies on detecting specific patterns of fluctuations in molecular groups as a system approaches a critical transition. As a biological system nears such a transition, certain telltale statistical patterns emerge in high-dimensional omics data: dramatically increased fluctuations in molecule concentrations within a specific group, strongly strengthened correlations among these molecules, and simultaneously weakened correlations between this group and the rest of the network [1]. This combination of patterns signals the loss of system resilience and impending state transition.
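The three signals described above are often combined into a single composite index. The sketch below assumes one commonly used form, (mean within-module standard deviation × mean within-module |correlation|) / mean |correlation| between module and non-module molecules. That formula, the function names, and the toy measurements are assumptions for illustration and are not taken from this article.

```python
from itertools import combinations
from math import sqrt
from statistics import mean, pstdev


def pearson(x, y):
    """Pearson correlation of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    return cov / sqrt(sum((a - mx) ** 2 for a in x) * sum((b - my) ** 2 for b in y))


def dnb_score(data, module, eps=1e-9):
    """Composite index for one candidate module at one stage.

    data: dict molecule -> measurements across samples at that stage.
    module: set of molecule names forming the candidate DNB group
            (needs at least two members and one outside molecule).
    """
    inside = sorted(m for m in data if m in module)
    outside = sorted(m for m in data if m not in module)
    sd_in = mean(pstdev(data[m]) for m in inside)
    pcc_in = mean(abs(pearson(data[a], data[b])) for a, b in combinations(inside, 2))
    pcc_out = mean(abs(pearson(data[a], data[b])) for a in inside for b in outside)
    return sd_in * pcc_in / max(pcc_out, eps)  # eps guards against division by zero


# Toy data: M1 and M2 form the candidate module, X1 is the rest of the network.
stable = {
    "M1": [5.0, 5.1, 4.9, 5.0, 5.0],
    "M2": [3.0, 3.0, 2.9, 3.1, 3.0],
    "X1": [7.0, 7.1, 6.9, 7.1, 6.9],
}
pre_transition = {
    "M1": [5.0, 7.0, 3.0, 6.0, 4.0],
    "M2": [3.0, 4.9, 1.1, 4.2, 1.8],
    "X1": [7.0, 7.1, 6.9, 6.9, 7.1],
}
stable_score = dnb_score(stable, {"M1", "M2"})
pre_score = dnb_score(pre_transition, {"M1", "M2"})
print(round(stable_score, 3), round(pre_score, 3))
```

In the toy pre-transition stage the module members fluctuate more, move together more tightly, and decouple from X1, so the index rises sharply relative to the stable stage.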

DNBs have particular relevance for rare diseases and conditions where early intervention is critical [1]. By identifying these pre-disease states, DNBs enable truly predictive and preventative medicine rather than reactive treatment after disease establishment. The ability to detect critical transitions makes DNBs invaluable for understanding the dynamic characteristics of disease initiation and progression [1].

Experimental Protocol: Identifying Dynamic Network Biomarkers for Pre-Disease States

Purpose: To detect Dynamic Network Biomarkers (DNBs) that signal critical transitions from health to disease.

Materials:

- Longitudinal multi-omics data collected at multiple timepoints

- High-performance computing cluster for intensive calculations

- Programming environment (R, Python, MATLAB) with necessary libraries

- Data visualization tools

Procedure:

- Longitudinal Sampling Design: Collect serial samples from subjects at high risk for disease transition with sufficiently frequent sampling to capture dynamics.

- Data Acquisition: Generate multi-omics data (transcriptomics, proteomics, metabolomics) for each timepoint using standardized protocols.

- Sliding Window Analysis: Divide time series into overlapping windows and analyze each window separately.

- DNB Score Calculation: For each window, identify candidate DNB modules by calculating:

  - Fluctuation Increase: Standard deviation of molecules within candidate module

  - Correlation Strengthening: Pearson correlation coefficient between molecules within module

  - Correlation Weakening: Pearson correlation coefficient between module molecules and outside molecules

- Critical Transition Detection: Flag timepoints where DNB scores exceed empirically determined thresholds, indicating imminent state transition.

- Validation: Validate predicted transitions in independent longitudinal cohorts or experimental models.

Analysis: Apply dimensionality reduction techniques (t-SNE, UMAP) to visualize trajectory through state space. Use hidden Markov models or dynamical systems modeling to quantify transition probabilities. Perform sensitivity analysis to optimize DNB detection parameters.
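The sliding-window and threshold steps of this protocol can be sketched as follows. To keep the example short it uses a fluctuation-only proxy (the standard deviation of a single molecule within each window) as the score; a full DNB score would be passed in as `score_fn` instead. The series, window width, step, and threshold are hypothetical values chosen for illustration.

```python
from statistics import pstdev


def sliding_windows(series, width, step=1):
    """Yield (start_index, window_values) for overlapping windows."""
    for start in range(0, len(series) - width + 1, step):
        yield start, series[start:start + width]


def flag_windows(series, width, step, score_fn, threshold):
    """Return start indices of windows whose score exceeds the threshold."""
    return [
        start
        for start, window in sliding_windows(series, width, step)
        if score_fn(window) > threshold
    ]


# Toy time series: stable, then strongly fluctuating, then settled at a new level.
series = [5.0, 5.1, 4.9, 5.0, 5.1, 4.9, 5.0, 6.5, 3.6, 6.8, 3.2, 9.0, 9.1, 9.0]
flagged = flag_windows(series, width=4, step=2, score_fn=pstdev, threshold=0.5)
print(flagged)
```

Because the windows overlap, the first flagged window is the one that begins to include the fluctuating measurements, which is the behavior an early-warning signal needs.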

Visualization and Interpretation of Complex Biomarkers

Advanced Visualization Tools and Techniques

The complexity of network and dynamic biomarkers necessitates sophisticated visualization approaches to make them interpretable to researchers and clinicians. Interactive visualization tools like SiViT (Signaling Visualization Toolkit) have been developed specifically to convert systems biology models into interactive simulations that can be used without specialist computational expertise [2]. These tools allow domain experts to introduce perturbations such as loss-of-function mutations or specific inhibitors and immediately visualize the effects on pathway dynamics, enabling more effective biomarker discovery and assessment [2].

Effective visualization of network biomarkers requires representing multiple dimensions of information simultaneously: network topology, quantitative changes in node properties, dynamic changes over time, and differences between experimental conditions [2]. SiViT addresses these challenges through intuitive color-coding schemes—using white to represent no difference between conditions, red for increased values in experimental versus control, and blue for decreased values, with intensity proportional to the magnitude of difference [2]. This approach allows researchers to quickly identify the most significantly altered network components.
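The color-coding rule described above can be expressed as a small mapping function. This is a sketch of the scheme as stated in the text, not SiViT's actual implementation; the function name, the RGB encoding, and the `max_abs_diff` scaling parameter are assumptions.

```python
def difference_color(control, experiment, max_abs_diff):
    """Map an experiment-vs-control difference to an (R, G, B) tuple in 0..255.

    White means no difference, red means experiment > control, and blue means
    experiment < control. Color intensity grows with |difference| and
    saturates at max_abs_diff.
    """
    if max_abs_diff <= 0:
        raise ValueError("max_abs_diff must be positive")
    diff = experiment - control
    intensity = min(abs(diff) / max_abs_diff, 1.0)
    fade = round(255 * (1.0 - intensity))  # 255 = white, 0 = fully saturated
    if diff > 0:
        return (255, fade, fade)
    if diff < 0:
        return (fade, fade, 255)
    return (255, 255, 255)


print(difference_color(1.0, 1.0, 2.0))  # no difference
print(difference_color(1.0, 3.0, 2.0))  # experiment well above control
print(difference_color(3.0, 1.0, 2.0))  # experiment well below control
```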

For dynamic biomarkers, visualization must capture temporal patterns and state transitions. This often involves representing trajectories through multidimensional state space, with particular attention to regions corresponding to critical transitions. Animation techniques can effectively illustrate how network properties evolve over time, helping researchers identify patterns that might be missed in static representations.

Experimental Protocol: Visualizing Network Biomarkers with SiViT

Purpose: To utilize the Signaling Visualization Toolkit (SiViT) for interactive exploration of network biomarker dynamics and drug effects.

Materials:

- SiViT software platform (requires MATLAB 2011b or later)

- Systems Biology Markup Language (SBML) format model of signaling pathways

- Experimental data for model parameterization

- Computer workstation with sufficient graphics capabilities

Procedure:

- Model Import: Import SBML-format model of relevant signaling pathways into SiViT environment.

- Network Layout: Allow SiViT to automatically arrange the network using force-directed graph algorithms that optimize node placement and visibility.

- Baseline Simulation: Run baseline simulation to establish normal dynamics of the system. Observe concentration changes (represented by node sphere radius) and reaction velocities (represented by edge thickness) over time.

- Intervention Setup: Introduce specific interventions through the intervention panel—including drug additions, concentration changes, or genetic modifications—at specified timepoints.

- Comparative Analysis: Set up comparative simulations between control and experimental conditions. Designate one regimen as "Control" and the other as "Experiment."

- Dynamic Visualization: Animate the network response over time, observing color-coded differences (white=no difference, red=experiment>control, blue=experiment<control).

- Biomarker Identification: Identify stable network patterns and dynamic signatures that robustly distinguish disease states or treatment responses.

Analysis: Use the comparative visualization to identify key nodes and edges that show consistent, significant differences between conditions. These network features represent candidate network biomarkers. Validate these candidates through iterative experimentation and model refinement.

The Scientist's Toolkit: Essential Research Reagents and Platforms

Table 2: Essential Research Reagents and Platforms for Network Biomarker Discovery

| Category | Specific Tools/Platforms | Function in Biomarker Discovery |
|---|---|---|
| Multi-omics Platforms | AVITI24 System (Element Biosciences) [5], 10x Genomics [5], LC-MS/MS [3] | Simultaneous measurement of DNA, RNA, protein, and metabolite profiles from single samples |
| Single-Cell & Spatial Technologies | 10x Genomics Single-Cell [3] [5], Spatial Transcriptomics [3] [4], Multiplex IHC [4] | Resolution of cellular heterogeneity and spatial relationships in tumor microenvironment |
| Computational & AI Tools | SiViT Visualization Toolkit [2], DriverDBv4 [3], AI/ML Algorithms [4] [6] | Network analysis, dynamic simulation, and pattern recognition in high-dimensional data |
| Advanced Model Systems | Organoids [4], Humanized Mouse Models [4] | Functional validation of biomarker candidates in context of human biology and immune responses |
| Data Resources | TCGA [3], CPTAC [3], HCCDBv2 [3] | Reference datasets for multi-omics integration and validation studies |

Multi-objective Optimization in Biomarker Discovery

Framework for Optimized Biomarker Selection

The identification of optimal biomarker panels represents a classic multi-objective optimization problem, requiring balance between competing criteria such as diagnostic accuracy, clinical feasibility, cost efficiency, and biological interpretability. Multi-objective optimization frameworks like the Non-dominated Sorting Genetic Algorithm III (NSGA-III) have been successfully applied to optimize patient selection criteria across multiple objectives including patient identification accuracy (F1 score), recruitment balance, and economic efficiency [7].

In the context of Alzheimer's disease trials, such optimization approaches have identified Pareto-optimal solutions spanning F1 scores from 0.979 to 0.995 while maintaining viable patient pool sizes from 108 to 327 participants [7]. This demonstrates the power of computational optimization to systematically evaluate trade-offs that are typically addressed through expert consensus alone. The optimization process typically involves defining decision variables (e.g., age boundaries, cognitive thresholds, biomarker criteria), objective functions (diagnostic accuracy, cost, feasibility), and constraints (biological plausibility, clinical relevance).

SHAP (SHapley Additive exPlanations) interpretability analysis reveals that biomarker requirements often function as the dominant cost driver in optimized solutions [7]. This insight helps researchers balance the informational value of complex biomarker panels against their economic impact on clinical development programs.

Experimental Protocol: Multi-objective Optimization for Biomarker Panel Selection

Purpose: To identify optimal biomarker panels that balance multiple competing objectives using multi-objective optimization algorithms.

Materials:

- Multi-omics dataset with clinical annotations

- High-performance computing environment

- Optimization software (e.g., Python with pymoo, R with mco)

- Validation cohort data

Procedure:

- Objective Definition: Define three primary optimization objectives:

  - Diagnostic Accuracy: Maximize F1 score for disease classification

  - Clinical Feasibility: Maximize potential patient recruitment pool

  - Economic Efficiency: Minimize total biomarker assessment cost

- Decision Variables: Identify tunable parameters including:

  - Threshold values for continuous biomarkers

  - Inclusion/exclusion of specific molecular features

  - Weighting factors for combined biomarker scores

- Algorithm Implementation: Implement NSGA-III or similar multi-objective optimization algorithm to identify Pareto-optimal solutions.

- Constraint Specification: Define biological and clinical constraints (e.g., minimum specificity, maximum cost).

- Solution Evaluation: Evaluate Pareto front solutions using Monte Carlo simulation with 10,000 iterations to assess robustness.

- Validation: Apply optimized biomarker panels to independent validation cohorts.

- Interpretability Analysis: Perform SHAP analysis to identify dominant features driving objective performance.

Analysis: Identify knee-point solutions on the Pareto front that offer balanced performance across objectives. Calculate cost-benefit ratios for incremental improvements in diagnostic accuracy. Assess clinical implementation feasibility of optimal solutions.
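Knee-point selection can be sketched with a simple heuristic: normalize each objective to the range 0 to 1 and pick the solution closest to the ideal point. This is one common approach among several and is assumed here; the text does not specify how knee points were chosen. The two-objective front of (error, cost) pairs is invented for illustration.

```python
from math import sqrt


def knee_point(front):
    """Return the index of the solution closest to the ideal point.

    front: list of objective tuples, every objective to be minimized.
    Each objective is min-max normalized so that units do not dominate.
    """
    k = len(front[0])
    lows = [min(s[j] for s in front) for j in range(k)]
    highs = [max(s[j] for s in front) for j in range(k)]

    def normalized(solution):
        return [
            (solution[j] - lows[j]) / (highs[j] - lows[j]) if highs[j] > lows[j] else 0.0
            for j in range(k)
        ]

    distances = [sqrt(sum(v * v for v in normalized(s))) for s in front]
    return min(range(len(front)), key=distances.__getitem__)


# Hypothetical Pareto front: (classification error, assay cost per patient).
front = [(0.02, 900), (0.05, 500), (0.08, 450), (0.20, 350)]
best = knee_point(front)
print(best, front[best])
```

Objectives that are maximized in the protocol, such as F1 score or recruitment pool size, would be negated or converted (for example, error = 1 - F1) before being passed in.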

Table 3: Multi-objective Optimization Outcomes for Biomarker Selection

| Optimization Objective | Performance Range | Key Influencing Factors | Clinical Implications |
|---|---|---|---|
| Diagnostic Accuracy (F1 Score) | 0.979 - 0.995 [7] | Biomarker specificity, disease prevalence | Higher accuracy reduces misdiagnosis but may limit applicable population |
| Recruitment Feasibility | 108 - 327 patients [7] | Inclusion criteria stringency, biomarker availability | Broader criteria increase recruitment but may dilute treatment effects |
| Economic Efficiency | Mean savings $1,048 per patient (95% CI: -$1,251 to $3,492) [7] | Biomarker test costs, screening efficiency | Cost savings enable larger trials or resource reallocation to other areas |

Future Perspectives and Concluding Remarks

The paradigm shift from single molecular biomarkers to network and dynamical biomarkers represents a fundamental transformation in how we understand, diagnose, and treat complex diseases. This evolution is being driven by technological advances in multi-omics profiling, computational power, and analytical approaches that can capture biological complexity rather than reducing it to isolated components [1] [3]. The future of biomarker science will increasingly focus on dynamic processes, network interactions, and system-level properties rather than static measurements of individual molecules.

Several emerging trends are poised to further accelerate this paradigm shift. Artificial intelligence and machine learning are becoming indispensable for identifying subtle patterns in high-dimensional multi-omics and imaging datasets that conventional methods miss [4] [6]. The integration of digital biomarkers from wearables and connected devices provides continuous, real-world data streams that capture disease dynamics in ways impossible through periodic clinic visits [8]. Spatial biology technologies are revealing how cellular organization and microenvironment interactions influence disease progression and treatment response [4] [5].

The clinical implementation of network and dynamic biomarkers will require addressing several significant challenges. Regulatory science must evolve to establish validation frameworks for these complex biomarker types [5]. Standardization of analytical protocols and computational pipelines will be essential for reproducibility across institutions [6]. Perhaps most importantly, the successful translation of these advanced biomarkers will depend on collaborative efforts across disciplines—integrating expertise from biology, clinical medicine, computational science, and engineering to build a new generation of diagnostic and prognostic tools that truly capture the complexity of human disease.

As we look toward 2025 and beyond, the convergence of multi-omics technologies, advanced computational analytics, and patient-centered approaches will continue to drive the evolution of biomarker science [6]. This progression from single molecules to networks to dynamic systems promises to transform precision medicine from its current focus on static stratification to truly predictive, preventive, and personalized healthcare.

Biological systems are continually shaped by evolutionary pressures to perform multiple, often competing, tasks. Multi-objective optimization provides a mathematical framework for understanding how these systems resolve trade-offs when no single solution can simultaneously optimize all objectives [9]. In the context of biomarker identification, researchers face similar trade-offs, such as balancing a biomarker's sensitivity with its specificity, or its predictive power with the cost of its assay [7] [10]. The solution to such problems is not a single "best" answer, but a set of optimal compromises, known as the Pareto front [11]. Understanding these core principles is essential for leveraging computational optimization in biological research and drug development.

Core Theoretical Principles

Definition of Multi-Objective Optimization

Multi-objective optimization involves optimizing a problem with multiple, conflicting objective functions simultaneously. In a biological context, a phenotype \(v\) can be represented as a vector of trait values in a morphospace. Its performance at \(k\) different tasks is given by functions \(p_1(v), p_2(v), \ldots, p_k(v)\) [9]. The goal is to find the set of phenotypes that best balance these competing performances.

Formally, a multi-objective optimization problem can be expressed as finding a vector of decision variables that satisfies constraints and optimizes a vector function whose elements represent the objective functions [11]. The problem is defined as: \[ \min_{x \in X} \left( f_1(x), f_2(x), \ldots, f_k(x) \right) \] where the integer \( k \geq 2 \) is the number of objectives and \( X \) is the feasible set of decision variables [11].

The Pareto Front and Pareto Optimality

A solution is considered Pareto optimal or non-dominated if none of the objective functions can be improved in value without degrading some of the other objective values [11].

- Performance Space and Domination: The concept is usually defined in performance space. If a phenotype \(v\) has higher performance at all tasks than another phenotype \(v'\), then \(v'\) is dominated and can be eliminated. The set of phenotypes that remain after all such eliminations constitutes the Pareto front [9].

- The Front as a Set of Compromises: Moving along the Pareto front entails improving performance in one objective at the expense of others. This front represents the set of best possible compromises [9]. In biological terms, natural selection tends to select phenotypes on or near the Pareto front, as these represent the most fit compromises for a given ecological niche [9].

The ideal objective vector and the nadir objective vector bound the Pareto front. The ideal vector contains the best possible values for each objective independently, while the nadir vector contains the worst values achieved by any Pareto optimal solution for each objective [11].
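These definitions translate directly into code. The sketch below follows the minimization convention of the formal definition and uses the standard dominance test (no worse in every objective and strictly better in at least one); the function names and the candidate points are illustrative assumptions.

```python
def dominates(a, b):
    """True if a is no worse than b in every objective and better in at least one."""
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))


def pareto_front(points):
    """Keep only the points that no other point dominates (minimization)."""
    return [p for p in points if not any(dominates(q, p) for q in points)]


def ideal_and_nadir(front):
    """Ideal = best value per objective; nadir = worst value per objective on the front."""
    k = len(front[0])
    ideal = tuple(min(p[j] for p in front) for j in range(k))
    nadir = tuple(max(p[j] for p in front) for j in range(k))
    return ideal, nadir


# Hypothetical candidates scored on two objectives to minimize, e.g. (error, cost).
points = [(1, 5), (2, 3), (4, 1), (3, 4), (5, 5)]
front = pareto_front(points)
ideal, nadir = ideal_and_nadir(front)
print(front, ideal, nadir)
```

Note that the ideal vector is generally not itself a feasible solution: no candidate here achieves both the lowest error and the lowest cost.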

The Geometry of the Pareto Front in Biological Phenotype Space

The shape of the Pareto front in trait space (morphospace) provides deep insight into the evolutionary trade-offs at play.

- Archetypes and Polytopes: Under the assumption that performance for each task decays monotonically with distance from a single optimal phenotype (the archetype), the Pareto front is the convex hull of the archetypes [9]. This leads to low-dimensional shapes in the morphospace:

  - Two tasks: A line segment connecting two archetypes.

  - Three tasks: A triangle.

  - Four tasks: A tetrahedron [9].

- Generalized Shapes: When the assumptions of monotonic decay are relaxed, the edges of these polytopes can become curved. For instance, with two tasks and different inner-product norms for performance decay, the Pareto front can take the shape of a hyperbola [9]. Despite this, the front generally remains a low-dimensional structure, explaining why complex biological data often collapses onto simple, interpretable shapes.
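The two-task case can be checked numerically. The sketch below assumes the simplest setting, in which performance at each task is the negative Euclidean distance to that task's archetype, and shows that a phenotype off the line segment is outperformed at both tasks by its projection onto the segment. The archetype coordinates and the test phenotype are invented for illustration.

```python
from math import dist


def performances(v, archetypes):
    """Performance at each task = negative Euclidean distance to its archetype."""
    return tuple(-dist(v, a) for a in archetypes)


def project_onto_segment(v, a, b):
    """Closest point to v on the segment joining archetypes a and b (2-D)."""
    ax, ay = a
    bx, by = b
    dx, dy = bx - ax, by - ay
    t = ((v[0] - ax) * dx + (v[1] - ay) * dy) / (dx * dx + dy * dy)
    t = max(0.0, min(1.0, t))  # clamp so the result stays on the segment
    return (ax + t * dx, ay + t * dy)


archetypes = [(0.0, 0.0), (4.0, 0.0)]  # hypothetical optima for task 1 and task 2
phenotype = (1.0, 2.0)                 # a phenotype off the segment
projected = project_onto_segment(phenotype, *archetypes)

perf_off = performances(phenotype, archetypes)
perf_on = performances(projected, archetypes)
print(projected, perf_off, perf_on)
```

Because the projected phenotype is closer to both archetypes, it dominates the original, which is why only points on the segment survive as Pareto optimal under these assumptions.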

Table 1: Key Properties of Multi-Objective Optimization in a Biological Context

| Property | Mathematical/Biological Description | Implication for Biomarker Research |
|---|---|---|
| Pareto Optimality | A solution where no objective can be improved without worsening another [11]. | Identifies biomarker panels that offer the best compromise between competing metrics (e.g., cost vs. accuracy). |
| Archetype | The phenotype that is optimal for a single, specific task [9]. | Represents an ideal, but likely impractical, biomarker (e.g., 100% sensitive but prohibitively expensive). |
| Performance Space | The space defined by the values of all objective functions [9] [11]. | Allows for visualization of the trade-offs between different biomarker performance metrics. |
| Trade-off | The compromise between tasks; improving one necessitates declining another [9]. | The fundamental challenge in designing a biomarker panel, e.g., increasing sensitivity may reduce specificity. |
| Nadir Point | The vector of the worst objective values found on the Pareto front [11]. | Defines the lower bounds of performance for any optimal biomarker solution. |

Application to Biomarker Identification and Validation

The framework of multi-objective optimization is directly applicable to the challenges of identifying and validating disease biomarkers (DBs), particularly with the integration of high-dimensional multiomics data [12].

Multi-Objective Problems in Biomarker Discovery

The journey from biomarker discovery to clinical use is long and arduous, fraught with inherent trade-offs that are naturally modeled as multi-objective optimization problems [10] [12]. Key conflicts include:

- Accuracy vs. Cost: Maximizing identification accuracy (e.g., F1 score) while minimizing economic cost [7].

- Performance vs. Feasibility: Balancing statistical power with recruitment feasibility and safety in clinical trial design [7].

- Sensitivity vs. Specificity: A classic diagnostic trade-off that can be explored on a Pareto front.

- Interpretability vs. Complexity: In models using multiomics data (genomics, transcriptomics, proteomics), there is a trade-off between using a complex panel of many biomarkers for maximum predictive power and a simpler, more interpretable panel for clinical utility [10] [12].
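The sensitivity versus specificity conflict is easy to see by sweeping a decision threshold over a continuous biomarker score. The scores, labels, and thresholds below are invented toy values, and the function name is an assumption for the example.

```python
def sens_spec(scores, labels, threshold):
    """Sensitivity and specificity when calling 'positive' at score >= threshold."""
    tp = sum(1 for s, y in zip(scores, labels) if y == 1 and s >= threshold)
    fn = sum(1 for s, y in zip(scores, labels) if y == 1 and s < threshold)
    tn = sum(1 for s, y in zip(scores, labels) if y == 0 and s < threshold)
    fp = sum(1 for s, y in zip(scores, labels) if y == 0 and s >= threshold)
    return tp / (tp + fn), tn / (tn + fp)


# Toy biomarker scores with true disease labels (1 = disease, 0 = healthy).
scores = [0.1, 0.2, 0.35, 0.4, 0.55, 0.6, 0.8, 0.9]
labels = [0, 0, 0, 1, 0, 1, 1, 1]

for threshold in (0.3, 0.5, 0.7):
    sensitivity, specificity = sens_spec(scores, labels, threshold)
    print(threshold, sensitivity, specificity)
```

Raising the threshold trades sensitivity for specificity, so each threshold is a different point on the trade-off curve rather than a strictly better or worse choice.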

Algorithmic Approaches for Biomarker Optimization

Evolutionary Computation (EC) methods are particularly well-suited for tackling the non-convex, high-dimensional, multi-objective discrete optimization problems presented by biomarker identification [12]. These include:

- Evolutionary Algorithms (EAs) and Swarm Intelligence: These are used to identify individual critical biomarker molecules from multiomics data by efficiently searching a vast solution space [12].

- Non-dominated Sorting Genetic Algorithm (NSGA) variants: For example, NSGA-III has been successfully implemented to optimize patient selection criteria in Alzheimer's disease clinical trials across objectives like F1 score, recruitment balance, and economic efficiency [7].

Experimental Protocols and Data Analysis

Protocol: A Multi-Objective Workflow for Biomarker Panel Identification from Transcriptomic Data

This protocol outlines a step-by-step approach for identifying a biomarker panel optimized for multiple objectives, adapted from methodologies used in immunotherapy and Alzheimer's disease research [7] [13].

Objective: To identify a gene expression signature that optimally balances sensitivity, specificity, and economic cost for predicting response to immunotherapy in human cancers.

Materials and Reagents:

- Input Data: Public transcriptomic datasets (e.g., from NCBI GEO, EBI ArrayExpress) containing RNA-seq data and annotated response to immune checkpoint inhibitors [12] [13].

- Software Tools: Computational environment for executing evolutionary algorithms (e.g., Julia, Python with DEAP or Pymoo libraries) [7].

- Validation Cohorts: Independent in-house or public cohorts for validating the identified biomarker panel [13].

Procedure:

Candidate Biomarker Selection:

- Obtain normalized gene expression matrices and clinical response data from chosen transcriptomic datasets [13].

- Perform initial differential expression analysis to filter for genes significantly associated with response (e.g., p < 0.05, after multiple comparison correction) to reduce the search space dimensionality [10].
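The protocol does not name a specific multiple-comparison correction. As one common choice, the sketch below applies the Benjamini-Hochberg procedure to per-gene p-values and keeps genes with an adjusted value below 0.05. The gene names and p-values are hypothetical.

```python
from typing import Dict, List


def benjamini_hochberg(p_values: List[float]) -> List[float]:
    """Return Benjamini-Hochberg adjusted p-values in the original order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down so each adjusted value is monotone.
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, p_values[i] * m / rank)
        adjusted[i] = running_min
    return adjusted


# Hypothetical raw p-values from a differential expression test.
raw: Dict[str, float] = {"GENE_A": 0.001, "GENE_B": 0.02, "GENE_C": 0.03, "GENE_D": 0.8}
names = list(raw)
q_values = dict(zip(names, benjamini_hochberg([raw[g] for g in names])))
candidates = [g for g in names if q_values[g] < 0.05]
print(q_values)
print("Candidate genes:", candidates)
```

Only the genes that survive this filter enter the binary encoding used later. This keeps the solution vector short and the search space manageable.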

Define Optimization Objectives:

- Formulate the problem as a multi-objective optimization with at least three target objectives:

- Maximize Sensitivity: True Positive Rate (TPR).

- Maximize Specificity: True Negative Rate (TNR).

- Minimize Cost: A proxy cost function based on the number of genes in the panel (e.g., Cost = number of genes × fixed_unit_cost) [7].

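The three objectives can be sketched as a single function that returns an objective vector. The function name, the `unit_cost` parameter, and the toy labels are illustrative assumptions. The negation helper reflects the convention, used by many solvers, that all objectives are minimized.

```python
from typing import Sequence, Tuple


def panel_objectives(
    y_true: Sequence[int],
    y_pred: Sequence[int],
    gene_mask: Sequence[int],
    unit_cost: float = 1.0,
) -> Tuple[float, float, float]:
    """Return (sensitivity, specificity, cost) for one candidate panel."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    sensitivity = tp / (tp + fn) if (tp + fn) else 0.0  # true positive rate
    specificity = tn / (tn + fp) if (tn + fp) else 0.0  # true negative rate
    cost = sum(gene_mask) * unit_cost                   # proxy: genes in panel
    return sensitivity, specificity, cost


def as_minimization(objectives: Tuple[float, float, float]) -> Tuple[float, float, float]:
    """Negate the two maximized objectives so that all three are minimized."""
    sens, spec, cost = objectives
    return (-sens, -spec, cost)


# Toy labels: 1 = responder, 0 = non-responder.
y_true = [1, 1, 1, 1, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 0, 0, 1, 0]
mask = [1, 0, 1, 1, 0]  # three of five candidate genes selected
result = panel_objectives(y_true, y_pred, mask)
print(result, as_minimization(result))
```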

Configure and Execute Multi-Objective Algorithm:

- Implement an algorithm like NSGA-III. The solution representation is a binary vector where each bit indicates the presence or absence of a candidate gene.

- The fitness function evaluates each potential gene panel by training a simple classifier (e.g., logistic regression) and calculating the three objective values on a hold-out validation set [7] [12].

- Run the algorithm until convergence, typically determined by a stable Pareto front over successive generations.
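The fitness evaluation for one binary solution vector might look like the sketch below. To stay dependency-free it substitutes a nearest-centroid classifier for the logistic regression mentioned in the procedure, and the expression values are invented. A real run would call such a function from inside an NSGA-III implementation, for example in pymoo or DEAP.

```python
from typing import List, Sequence, Tuple


def evaluate_panel(
    mask: Sequence[int],
    X_train: List[List[float]],
    y_train: List[int],
    X_val: List[List[float]],
    y_val: List[int],
    unit_cost: float = 1.0,
) -> Tuple[float, float, float]:
    """Score one binary gene mask as (sensitivity, specificity, cost)."""
    genes = [j for j, bit in enumerate(mask) if bit]
    if not genes:  # an empty panel cannot classify anything
        return 0.0, 0.0, 0.0

    def centroid(label: int) -> List[float]:
        rows = [x for x, y in zip(X_train, y_train) if y == label]
        return [sum(r[j] for r in rows) / len(rows) for j in genes]

    c_pos, c_neg = centroid(1), centroid(0)

    def predict(x: List[float]) -> int:
        # Nearest-centroid rule: a stand-in for logistic regression.
        d_pos = sum((x[j] - c) ** 2 for j, c in zip(genes, c_pos))
        d_neg = sum((x[j] - c) ** 2 for j, c in zip(genes, c_neg))
        return 1 if d_pos < d_neg else 0

    preds = [predict(x) for x in X_val]
    tp = sum(1 for t, p in zip(y_val, preds) if t == 1 and p == 1)
    fn = sum(1 for t, p in zip(y_val, preds) if t == 1 and p == 0)
    tn = sum(1 for t, p in zip(y_val, preds) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_val, preds) if t == 0 and p == 1)
    sens = tp / (tp + fn) if (tp + fn) else 0.0
    spec = tn / (tn + fp) if (tn + fp) else 0.0
    return sens, spec, len(genes) * unit_cost


# Invented expression values for three candidate genes; gene 1 is pure noise.
X_train = [[5.0, 1.0, 0.2], [4.8, 3.0, 0.1], [1.0, 2.0, 0.9], [1.2, 1.5, 1.0]]
y_train = [1, 1, 0, 0]
X_val = [[4.9, 2.0, 0.3], [1.1, 2.5, 0.8], [4.5, 1.0, 0.0], [0.9, 3.0, 1.1]]
y_val = [1, 0, 1, 0]

informative = evaluate_panel([1, 0, 0], X_train, y_train, X_val, y_val)
noisy = evaluate_panel([0, 1, 0], X_train, y_train, X_val, y_val)
print(informative, noisy)
```

Both panels cost the same, but the panel built on the noise gene is dominated. A non-dominated sort would discard it in favor of the informative one.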

Analyze the Pareto Front and Select Panel:

- The output is a set of non-dominated gene panels lying on the Pareto front.

- Analyze the trade-offs: A panel with 5 genes may have (Sens: 0.85, Spec: 0.80, Cost: 5), while a 10-gene panel may have (Sens: 0.90, Spec: 0.85, Cost: 10).

- The final selection is made by the researcher based on the clinical context and resource constraints [7] [11].
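The selection step can be sketched with the two example panels above. The check confirms that neither panel dominates the other. The decision rule (highest sensitivity within a cost budget and a specificity floor) is only one hypothetical way to encode clinical context and is not prescribed by the protocol.

```python
from typing import Dict, Optional, Tuple

Panel = Tuple[float, float, float]  # (sensitivity, specificity, cost)

front: Dict[str, Panel] = {
    "5-gene panel": (0.85, 0.80, 5),
    "10-gene panel": (0.90, 0.85, 10),
}


def dominates(a: Panel, b: Panel) -> bool:
    """Sensitivity and specificity are maximized; cost is minimized."""
    no_worse = a[0] >= b[0] and a[1] >= b[1] and a[2] <= b[2]
    better = a[0] > b[0] or a[1] > b[1] or a[2] < b[2]
    return no_worse and better


def select_panel(
    candidates: Dict[str, Panel],
    max_cost: float,
    min_specificity: float = 0.0,
) -> Optional[str]:
    """Pick the most sensitive panel that satisfies the stated constraints."""
    feasible = {
        name: v
        for name, v in candidates.items()
        if v[2] <= max_cost and v[1] >= min_specificity
    }
    if not feasible:
        return None
    return max(feasible, key=lambda name: feasible[name][0])


small, large = front["5-gene panel"], front["10-gene panel"]
print("Mutually non-dominated:", not dominates(small, large) and not dominates(large, small))
print("Budget of 6 genes:", select_panel(front, max_cost=6))
print("Budget of 12 genes:", select_panel(front, max_cost=12))
```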

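Validation:

- Evaluate the selected panel on the independent validation cohorts listed under Materials and Reagents to check that its performance holds outside the data used for optimization [13].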

Table 2: Example Results from a Multi-Objective Optimization of a Biomarker Panel for Alzheimer's Disease Trial Recruitment [7]

| Pareto Solution | Identification Accuracy (F1 Score) | Estimated Eligible Patient Pool | Mean Cost Saving per Patient (USD) |

|---|---|---|---|

| Solution A | 0.979 | 327 | $1,048 (95% CI: -$1,251 to $3,492) |

| Solution B | 0.987 | 254 | Data not specified |

| Solution C | 0.995 | 108 | Data not specified |

| Standard Criteria | (Baseline) | 101 | (Baseline) |

Table 3: Essential Research Reagent Solutions for Multi-Omics Biomarker Identification

| Reagent / Resource | Function / Description | Application in Protocol |

|---|---|---|

| National Alzheimer's Coordinating Center (NACC) Data | A database comprising participant data with comprehensive clinical assessments and biomarker measurements [7]. | Provides the real-world dataset for optimizing patient selection criteria, as used in [7]. |

| NCBI Gene Expression Omnibus (GEO) | A public repository for high-throughput gene expression and other functional genomics datasets [12]. | Primary source for transcriptomic data used in biomarker discovery [13]. |

| Single-cell RNA Sequencing (scRNA-Seq) Data | Enables analysis of gene expression at the level of individual cells, revealing cellular heterogeneity [12]. | Used to discover cell-type-specific biomarker signatures, e.g., in the tumor microenvironment [12]. |

| JuliQAOA | A Julia-based simulator for the Quantum Approximate Optimization Algorithm [14]. | Used for optimizing QAOA parameters; an example of a tool for advanced optimization algorithms. |

| Gurobi Optimizer | A state-of-the-art mathematical programming solver for mixed-integer programming problems [14]. | Can be used to solve ε-constraint problems in classical multi-objective optimization. |

Multi-objective optimization and the concept of the Pareto front provide a powerful, biologically-grounded framework for addressing complex problems in biomarker research. By formally acknowledging and quantifying the inherent trade-offs between objectives like accuracy, cost, and feasibility, researchers can move beyond suboptimal single-objective designs. The convergence of computational approaches, such as evolutionary algorithms, with rich multiomics data holds the promise of identifying biomarker panels and trial designs that are not only statistically sound but also clinically practical and economically viable, thereby accelerating the path to precision medicine.

The complexity of biological systems and disease pathologies necessitates a holistic approach to biomarker discovery. Multi-omics strategies, which integrate data from genomics, transcriptomics, proteomics, and metabolomics, have revolutionized our capacity to identify robust, clinically actionable biomarkers. This integrated approach provides a comprehensive understanding of the intricate molecular networks governing cellular life, enabling researchers to capture the flow of biological information from genetic blueprint to functional phenotype [3]. The transition from single-omics analyses to multi-omics integration represents a paradigm shift in biomarker research, offering unprecedented opportunities to elucidate disease mechanisms, discover novel biomarkers, and develop precision therapeutic strategies [3] [15].

Multi-omics integration is particularly crucial for addressing the challenges of complex diseases like cancer, where molecular heterogeneity and adaptive resistance mechanisms often limit the utility of single-analyte biomarkers. By simultaneously analyzing multiple molecular layers, researchers can identify composite biomarker signatures that more accurately reflect disease status, predict therapeutic responses, and capture tumor heterogeneity [3] [6]. The emergence of high-throughput technologies, including next-generation sequencing, advanced mass spectrometry, and microarray platforms, has enabled the generation of massive multi-omics datasets from large patient cohorts, providing the foundational data for integrative biomarker discovery [3] [16].

Table 1: Omics Technologies and Their Contributions to Biomarker Discovery

| Omics Layer | Key Technologies | Biomarker Examples | Clinical Utility |

|---|---|---|---|

| Genomics | Whole exome sequencing (WES), Whole genome sequencing (WGS) | Tumor Mutational Burden (TMB), IDH1/2 mutations | Predictive biomarker for immunotherapy response (pembrolizumab); Diagnostic biomarker in gliomas |

| Transcriptomics | RNA sequencing, Microarrays | Oncotype DX (21-gene), MammaPrint (70-gene) | Prognostic biomarkers for adjuvant chemotherapy decisions in breast cancer |

| Proteomics | Mass spectrometry, Reverse-phase protein arrays | Phosphorylation patterns, Protein abundance signatures | Functional biomarkers revealing druggable vulnerabilities missed by genomics |

| Metabolomics | LC-MS, GC-MS, NMR | 2-hydroxyglutarate (2-HG), 10-metabolite plasma signature | Diagnostic biomarker for gliomas; Superior diagnostic accuracy in gastric cancer |

| Epigenomics | Whole genome bisulfite sequencing, ChIP-seq | MGMT promoter methylation | Predictive biomarker for temozolomide benefit in glioblastoma |

Multi-Omics Integration Strategies and Methodologies

Conceptual Frameworks for Data Integration

The integration of multi-omics data can be approached through several computational frameworks, each with distinct advantages for biomarker discovery. Horizontal integration combines the same type of omics data from different studies or cohorts to increase statistical power, while vertical integration simultaneously analyzes different omics layers from the same biological samples to reconstruct complete molecular pathways [3]. The three primary methodological approaches include combined omics integration, correlation-based strategies, and machine learning integrative approaches [17]. Combined omics integration explains phenomena within each data type independently before synthesis, while correlation-based methods apply statistical correlations between different omics datasets to uncover relationships. Machine learning strategies utilize one or more omics types to comprehensively understand biological responses at classification and regression levels [17].

Similarity Network Fusion (SNF) represents a powerful framework for integrating diverse omics data types by constructing and fusing patient similarity networks. Each omics data type is used to create a separate network where patients are nodes and similarities between their molecular profiles define edges. These individual networks are then iteratively fused into a single network that captures shared information across all omics layers [18]. This approach effectively handles data heterogeneity and high dimensionality while identifying patient subgroups with distinct molecular characteristics, a crucial step for stratified biomarker discovery [18].

Correlation-Based Integration Methods

Correlation-based strategies apply statistical correlations between different omics datasets to identify coordinated changes across molecular layers. Gene co-expression analysis integrated with metabolomics data identifies modules of co-expressed genes and links them to metabolite abundance patterns, revealing metabolic pathways co-regulated with specific transcriptional programs [17]. Weighted Correlation Network Analysis (WGCNA) is particularly valuable for identifying clusters of highly correlated genes and metabolites that may represent functional biomarker modules [17].

Gene-metabolite interaction networks provide visual representations of relationships between transcriptional and metabolic changes. These networks are constructed by calculating correlation coefficients (e.g., Pearson correlation coefficient) between gene expression and metabolite abundance data, with nodes representing genes and metabolites and edges representing significant correlations [17]. Visualization tools like Cytoscape enable researchers to explore these networks and identify hub nodes that may serve as master regulators or key biomarkers in pathological processes [17] [18].
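A minimal sketch of this construction follows. It uses an absolute-correlation cutoff of 0.7 as a stand-in for a formal significance test, which is an assumption, and the gene and metabolite profiles are invented.

```python
import math
from collections import Counter
from typing import Dict, List, Tuple


def pearson(x: List[float], y: List[float]) -> float:
    """Pearson correlation coefficient; returns 0.0 if either vector is constant."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    if vx == 0 or vy == 0:
        return 0.0
    return cov / math.sqrt(vx * vy)


def build_network(
    genes: Dict[str, List[float]],
    metabolites: Dict[str, List[float]],
    min_abs_r: float = 0.7,
) -> List[Tuple[str, str, float]]:
    """Return (gene, metabolite, r) edges whose |r| reaches the cutoff."""
    edges = []
    for g, g_values in genes.items():
        for m, m_values in metabolites.items():
            r = pearson(g_values, m_values)
            if abs(r) >= min_abs_r:
                edges.append((g, m, r))
    return edges


# Invented profiles measured across the same five samples.
genes = {"G1": [1, 2, 3, 4, 5], "G2": [5, 3, 4, 1, 2]}
metabolites = {"M1": [2, 4, 6, 8, 10], "M2": [1, 0, 1, 0, 1]}

edges = build_network(genes, metabolites)
degree = Counter(node for g, m, _ in edges for node in (g, m))
print(edges)
print("Highest-degree node:", degree.most_common(1)[0][0])
```

Edges produced this way can be exported as a simple table and loaded into Cytoscape for layout and hub analysis.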

Table 2: Computational Tools and Platforms for Multi-Omics Integration

| Tool/Platform | Integration Approach | Compatible Data Types | Key Features |

|---|---|---|---|

| Similarity Network Fusion (SNF) | Network-based fusion | mRNA-seq, miRNA-seq, methylation, proteomics | Handles data heterogeneity; Identifies patient subgroups |

| Weighted Correlation Network Analysis (WGCNA) | Correlation-based | Transcriptomics, metabolomics | Identifies co-expression modules; Links genes to metabolites |

| Cytoscape | Network visualization and analysis | All omics types | Visualizes interaction networks; Plugin architecture for extended functionality |

| Metware Cloud Platform | Pathway-based integration | Transcriptomics, metabolomics | KEGG pathway analysis; Joint enrichment visualization |

| Ranked SNF (rSNF) | Feature ranking from fused networks | mRNA-seq, miRNA-seq, methylation | Ranks features by importance in fused similarity matrix |

Experimental Protocols for Multi-Omics Biomarker Discovery

Protocol 1: Network-Based Biomarker Identification Using Similarity Network Fusion

Application: Identification of diagnostic and prognostic biomarkers in neuroblastoma through integration of mRNA-seq, miRNA-seq, and methylation data [18].

Experimental Workflow:

Data Acquisition and Preprocessing

- Obtain mRNA-seq, miRNA-seq, and methylation array data from 99 patients

- Normalize data using appropriate methods (e.g., TPM for RNA-seq, beta values for methylation)

- Perform quality control to remove technical artifacts and low-quality samples

Similarity Matrix Construction

- For each data type, construct a patient similarity matrix using Euclidean distance or other appropriate metrics

- Convert distance matrices to similarity matrices using heat kernel weighting
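The two steps above can be sketched as follows. This is a simplified heat kernel with one global bandwidth, set to the mean pairwise distance. Published SNF implementations use a locally scaled kernel, so the bandwidth choice here is an assumption made for brevity. The patient profiles are invented.

```python
import math
from typing import List


def euclidean_distance_matrix(profiles: List[List[float]]) -> List[List[float]]:
    """Pairwise Euclidean distances between patient profiles (one row per patient)."""
    n = len(profiles)
    return [[math.dist(profiles[i], profiles[j]) for j in range(n)] for i in range(n)]


def heat_kernel_similarity(dist: List[List[float]]) -> List[List[float]]:
    """Convert distances to similarities with exp(-d^2 / (2 * sigma^2)).

    sigma is the mean off-diagonal distance (a simplifying assumption);
    at least two distinct profiles are required so that sigma > 0.
    """
    n = len(dist)
    off_diagonal = [dist[i][j] for i in range(n) for j in range(n) if i != j]
    sigma = sum(off_diagonal) / len(off_diagonal)
    return [
        [math.exp(-(dist[i][j] ** 2) / (2 * sigma ** 2)) for j in range(n)]
        for i in range(n)
    ]


# Three invented patients described by two features of a single omics layer.
patients = [[0.0, 0.0], [0.0, 1.0], [3.0, 4.0]]
D = euclidean_distance_matrix(patients)
W = heat_kernel_similarity(D)
for row in W:
    print([round(v, 3) for v in row])
```

One such matrix is built per data type (mRNA-seq, miRNA-seq, methylation). The fusion step then operates on these matrices.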

Network Fusion and Parameter Tuning

- Apply SNF to integrate the three similarity matrices into a single fused network

- Optimize hyperparameters through iterative testing (T=15, K=20, α=0.5 established for neuroblastoma data)

- Validate convergence by monitoring relative changes between fused graphs in consecutive iterations

Feature Selection Using Ranked SNF

- Apply ranked SNF to assign importance scores to all features (genes, miRNAs, CpG sites)

- Select top 10% of high-ranking features from each data type for further analysis

- Identify essential genes by intersecting high-rank genes from methylation and mRNA-seq data
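The selection and intersection steps above reduce to simple set operations once each feature has an importance score. The sketch assumes the scores have already been produced by ranked SNF and that CpG sites have been mapped to gene symbols. Both inputs are hypothetical here.

```python
from typing import Dict, Set


def top_percent(scores: Dict[str, float], percent: int = 10) -> Set[str]:
    """Return the highest-scoring features, keeping at least one."""
    k = max(1, len(scores) * percent // 100)
    ranked = sorted(scores, key=lambda name: (-scores[name], name))
    return set(ranked[:k])


# Hypothetical importance scores for 20 genes in each data type.
mrna_scores = {f"G{i}": float(i) for i in range(20)}
methylation_scores = {f"G{i}": 1.0 for i in range(20)}
methylation_scores["G19"] = 9.0
methylation_scores["G3"] = 8.0

top_mrna = top_percent(mrna_scores)
top_methylation = top_percent(methylation_scores)
essential_genes = top_mrna & top_methylation
print(sorted(top_mrna), sorted(top_methylation), sorted(essential_genes))
```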

Regulatory Network Construction

- Retrieve TF-miRNA interactions from TransmiR 2.0 database

- Obtain miRNA-target interactions from TarBase v8 database

- Integrate interactions to construct a comprehensive regulatory network using Cytoscape

Hub Node Identification

- Apply Maximal Clique Centrality algorithm to identify top hub nodes

- Validate candidate biomarkers through survival analysis using Kaplan-Meier curves

- Confirm findings in independent validation cohort (e.g., GSE62564 for neuroblastoma)

Protocol 2: Pathway-Centric Integration of Transcriptomics and Metabolomics

Application: Uncovering mechanistic insights in septic myocardial dysfunction through integrated analysis of transcriptomic, proteomic, and metabolomic data [19].

Experimental Workflow:

Experimental Design and Sample Preparation

- Establish disease model (LPS-treated H9C2 cardiomyocytes) and intervention (rPvt1 knockdown)

- Collect samples for multi-omics analysis under standardized conditions

- Include appropriate controls (negative control shRNA) and biological replicates

Multi-Omics Data Generation

- Transcriptomics: RNA extraction, library preparation (Hieff NGS mRNA Library Prep Kit), sequencing (Illumina HiSeq)

- Proteomics: Protein extraction, tryptic digestion, LC-MS/MS analysis (timsTOF Pro mass spectrometer)

- Metabolomics: Metabolite extraction, LC-MS analysis in multiple ionization modes

Differential Analysis

- Identify differentially expressed genes (DEGs) using DESeq2 (q<0.05, log2FC>1)

- Detect differentially abundant proteins using MaxQuant (FDR<0.05)

- Determine differentially expressed metabolites (DEMs) using statistical testing (p<0.05, VIP>1)

Pathway-Based Integration

- Annotate all differentially expressed/abundant molecules to KEGG pathways

- Create pathway correlation diagrams integrating genes, proteins, and metabolites

- Generate joint enrichment plots visualizing significantly enriched pathways across omics layers

- Construct KGML interaction networks from KEGG database to explore gene-metabolite interactions

Functional Interpretation

- Perform Gene Ontology enrichment analysis on integrated molecule sets

- Identify key biological processes and pathways disrupted in the disease model

- Formulate testable hypotheses about regulatory mechanisms for experimental validation

Table 3: Essential Research Reagents and Computational Resources for Multi-Omics Biomarker Discovery

| Resource Category | Specific Tools/Reagents | Application in Multi-Omics | Key Features |

|---|---|---|---|

| Sequencing Reagents | Hieff NGS mRNA Library Prep Kit | Transcriptomics library preparation | Poly-A selection for mRNA enrichment; Compatible with Illumina platforms |

| Mass Spectrometry Resources | timsTOF Pro Mass Spectrometer | Proteomics and metabolomics analysis | Parallel Accumulation-Serial Fragmentation (PASEF); High sensitivity and throughput |

| Cell Culture Models | H9C2 Cardiomyocytes | Disease modeling for multi-omics studies | Rat myocardial cell line; Responsive to LPS-induced injury |

| Gene Manipulation Tools | Lentiviral shRNA vectors | Functional validation of candidate biomarkers | Stable gene knockdown; Fluorescent markers for transduction efficiency |

| Public Data Repositories | TCGA, CPTAC, ICGC, CCLE | Source of validation cohorts and reference data | Curated multi-omics data with clinical annotations; Large sample sizes |

| Pathway Databases | KEGG, GO, Reactome | Functional annotation and pathway analysis | Curated biological pathways; Multi-omics compatibility |

| Interaction Databases | TransmiR, TarBase | Regulatory network construction | Experimentally validated TF-miRNA and miRNA-target interactions |

| Bioinformatics Platforms | Cytoscape, Metware Cloud | Data integration and visualization | User-friendly interfaces; Extensive plugin ecosystems |

Multi-omics integration represents the cornerstone of next-generation biomarker discovery, enabling a systems-level understanding of disease mechanisms that cannot be captured through single-omics approaches. The protocols and methodologies outlined herein provide a framework for researchers to design and implement robust multi-omics studies that yield clinically actionable biomarkers. As the field advances, several emerging trends are poised to further transform biomarker discovery, including the increased incorporation of artificial intelligence and machine learning for pattern recognition in high-dimensional data [6], the maturation of single-cell and spatial multi-omics technologies to resolve cellular heterogeneity [3], and the development of more sophisticated computational methods for data integration and interpretation.

The successful translation of multi-omics biomarkers to clinical practice will require close attention to analytical validation standards, as emphasized in the 2025 FDA Biomarker Guidance, which maintains that while biomarker assays should address the same validation parameters as drug assays (accuracy, precision, sensitivity, etc.), the technical approaches must be adapted to demonstrate suitability for measuring endogenous analytes [20]. Furthermore, the adoption of FAIR (Findable, Accessible, Interoperable, Reusable) data principles and open-source initiatives like the Digital Biomarker Discovery Pipeline will be crucial for enhancing reproducibility and accelerating the validation of candidate biomarkers across diverse populations [21]. Through the continued refinement and application of integrated multi-omics strategies, researchers are well-positioned to deliver on the promise of precision medicine by developing biomarkers that enable earlier disease detection, more accurate prognosis, and personalized therapeutic interventions.

The progression of complex diseases, including many cancers and chronic conditions, is often characterized not by a smooth decline, but by sudden, catastrophic shifts from a relatively healthy state to a clear disease state. These abrupt deteriorations occur at a critical transition point, or "tipping point" [22]. Identifying the pre-disease state immediately before this transition is crucial for early intervention and preventive medicine, as this stage is often reversible with appropriate treatment, whereas the disease state is typically stable and irreversible [23] [24]. Dynamical Network Biomarkers (DNBs) represent a powerful theoretical and computational framework designed to detect these early-warning signals by analyzing high-dimensional omics data [25] [26].

Unlike traditional biomarkers, which are static molecules used to distinguish a disease state from a normal state, DNBs are dynamic groups of molecules that form a strongly correlated network whose statistical properties change dramatically as the system approaches the critical transition [22] [24]. The DNB theory leverages the concept of "critical slowing down" from dynamical systems theory, which occurs near a bifurcation point where the system becomes increasingly slow to recover from small perturbations [22]. This framework is particularly suited for integration with multi-objective optimization in biomarker discovery, as it provides a quantifiable objective (the detection of a network's critical transition) that can be balanced against other goals such as clinical feasibility, cost, and prognostic power [7].

Theoretical Foundations and Quantitative Criteria

The DNB methodology conceptualizes disease progression as a nonlinear dynamical system traversing three distinct stages: the normal state, the pre-disease state (critical state), and the disease state [23] [27]. The pre-disease state is the limit of the normal state and is characterized by a significant loss of resilience, making the system highly susceptible to a phase transition into the disease state. The core innovation of the DNB approach is its model-free identification of a dominant group or module of molecules that exhibits specific statistical behaviors as the system enters this pre-disease state [22].

A group of molecules is identified as a DNB when it simultaneously satisfies the following three quantitative criteria in the pre-disease state [22] [27] [24]:

- Criterion I: The average Pearson correlation coefficient (PCC) between pairs of molecules within the DNB group drastically increases in absolute value.

- Criterion II: The average PCC between molecules inside the DNB group and those outside it drastically decreases in absolute value.

- Criterion III: The average standard deviation (SD) of the expression levels of molecules within the DNB group drastically increases.

These criteria can be combined into a single composite index \( I \) for robust pre-disease state detection [22]:

\[ I = \frac{\text{SD}_d \times \text{PCC}_d}{\text{PCC}_o} \]

where \( \text{SD}_d \) is the average standard deviation of the dominant group, \( \text{PCC}_d \) is the average PCC within the dominant group, and \( \text{PCC}_o \) is the average PCC between the dominant group and others. This composite index is expected to spike sharply as the system approaches the critical transition, serving as a clear early-warning signal.
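As a worked illustration of the composite index, the sketch below computes the three terms for a candidate group at two hypothetical time points. PCCs are taken in absolute value, the sample standard deviation is used, and a small `eps` guards against a zero denominator. The last two choices and all expression values are assumptions.

```python
import math
import statistics
from itertools import combinations
from typing import List


def pearson(x: List[float], y: List[float]) -> float:
    """Pearson correlation coefficient; returns 0.0 if either vector is constant."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    if vx == 0 or vy == 0:
        return 0.0
    return cov / math.sqrt(vx * vy)


def dnb_index(group: List[List[float]], others: List[List[float]], eps: float = 1e-12) -> float:
    """Composite index I = SD_d * PCC_d / PCC_o; each row is one molecule across samples."""
    sd_d = statistics.mean(statistics.stdev(row) for row in group)
    pcc_d = statistics.mean(abs(pearson(a, b)) for a, b in combinations(group, 2))
    pcc_o = statistics.mean(abs(pearson(a, b)) for a in group for b in others)
    return sd_d * pcc_d / (pcc_o + eps)


# Invented expression values: rows are molecules, columns are samples.
others = [[3.0, 1.0, 4.0, 1.0, 5.0], [2.0, 7.0, 1.0, 8.0, 2.0]]
group_normal = [[3.0, 3.1, 2.9, 3.0, 3.1], [6.0, 5.9, 6.1, 6.1, 5.9]]
group_critical = [[1.0, 2.0, 3.0, 4.0, 5.0], [2.0, 4.0, 6.0, 8.0, 10.0]]

index_normal = dnb_index(group_normal, others)
index_critical = dnb_index(group_critical, others)
print(f"normal: {index_normal:.2f}  critical: {index_critical:.2f}")
```

In this toy data the group fluctuates far more strongly at the second time point, so the index rises sharply. This is the kind of spike the method looks for.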

The logical relationship between the system state and the emergence of a DNB is summarized in the diagram below.

Diagram 1: The relationship between disease progression stages and Dynamical Network Biomarker (DNB) emergence. The pre-disease state triggers the emergence of a DNB module, which serves as an early-warning signal before the irreversible transition to the disease state.

Protocols for DNB Identification

This section provides detailed experimental and computational workflows for applying the DNB method, covering both traditional bulk analysis and advanced single-sample approaches.

Standard DNB Protocol with Time-Series Bulk Data

The following table outlines the key reagents and data sources required for a standard DNB analysis.

Table 1: Key Research Reagent Solutions for DNB Analysis

| Item | Function in DNB Analysis | Specific Examples |

|---|---|---|

| Transcriptomics Data | Provides genome-wide RNA expression levels for calculating correlations and standard deviations. | Bulk RNA-Seq [23], Microarray data [22] [24], single-cell RNA-Seq [23] |

| Protein-Protein Interaction (PPI) Network | Serves as a prior-knowledge template to constrain or guide the search for correlated modules. | STRING database (confidence score > 0.80) [27] |

| Public Multi-omics Databases | Source of validated omics data for analysis and as reference populations for single-sample methods. | The Cancer Genome Atlas (TCGA) [3] [27], Gene Expression Omnibus (GEO) [27], DriverDBv4 [3] |

| Computational Tools | Platforms and algorithms for data processing, network construction, and statistical calculation. | Horizontal & vertical multi-omics integration tools [3], Machine Learning/Deep Learning platforms [3] |

The standard protocol for identifying a DNB from time-series bulk omics data (e.g., gene expression from microarrays or RNA-Seq) involves the following steps [22] [24]:

- Data Collection & Preprocessing: Collect high-throughput omics data (e.g., transcriptomics, proteomics) from multiple samples across a time course that spans the normal state to the disease state. Normalize and log-transform the data as appropriate.

- Candidate Module Selection: For each time point, use a clustering method (e.g., hierarchical clustering based on PCC distance) to group molecules into potential modules. An empirical randomization process can be used to set a statistically significant PCC threshold for cluster formation [24].

- DNB Scoring: For each candidate module at each time point, calculate the three DNB criteria:

- Calculate \( \text{PCC}_d \), the average PCC in absolute value between all pairs within the module.

- Calculate \( \text{PCC}_o \), the average PCC in absolute value between molecules in the module and all molecules outside it.

- Calculate \( \text{SD}_d \), the average standard deviation of the molecules within the module.

- Composite Index Calculation & Critical Point Identification: Compute the composite index \( I \) for each candidate module at each time point. The time point at which a specific module's \( I \) value shows a significant peak is identified as the pre-disease state, and that module is designated the DNB.

Advanced Protocol: Single-Sample DNB and Network Entropy Methods

A significant limitation of the standard DNB method is its requirement for multiple samples at each time point. To overcome this for clinical application, single-sample methods have been developed. The Single-Sample Network (SSN) approach constructs a network for an individual sample by comparing it to a large reference group (e.g., healthy controls) [23]. The difference network for the individual relative to the reference group is then analyzed for DNB properties.

Another powerful model-free method is the Local Network Entropy (LNE) algorithm, which can identify the critical state from a single sample [27]. The workflow is as follows:

- Form a Global Network: Map all genes to a background network, typically a high-confidence PPI network from databases like STRING.

- Map Expression Data: For a single test sample and a set of reference samples (e.g., from healthy individuals), map the gene expression data to the global network.

- Extract Local Networks: For each gene \( g^k \), extract its local network, which includes the gene and its first-order neighbors \( \{g^k_1, \ldots, g^k_M\} \) in the global PPI network.

- Calculate Local Entropy: For each local network, calculate the network entropy \( E^n(k,t) \) for the test sample against the reference set using the formula: \[ E^n(k,t) = -\frac{1}{M}\sum_{i=1}^{M} p_i^n(t)\log p_i^n(t) \] where \( p_i^n(t) \) is the absolute value of the PCC between neighbor \( g^k_i \) and the center gene \( g^k \), calculated based on the reference samples and the single test sample [27].

- Identify Critical Transition: A significant rise in the LNE score for a sample indicates that it is in the critical pre-disease state.
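The entropy calculation in the workflow above can be sketched for one local network. This follows the formula as written in this section, with each term taken as the absolute PCC computed over the reference samples plus the test sample. It is a simplified reading rather than the full published algorithm, and the gene names and values are hypothetical.

```python
import math
from typing import Dict, List


def pearson(x: List[float], y: List[float]) -> float:
    """Pearson correlation coefficient; returns 0.0 if either vector is constant."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    if vx == 0 or vy == 0:
        return 0.0
    return cov / math.sqrt(vx * vy)


def entropy_term_sum(weights: List[float]) -> float:
    """Return -(1/M) * sum(p * log p), treating 0 * log 0 as 0."""
    total = sum(p * math.log(p) for p in weights if p > 0.0)
    return -total / len(weights)


def local_network_entropy(
    center: str,
    neighbors: List[str],
    reference: Dict[str, List[float]],
    test_sample: Dict[str, float],
) -> float:
    """Entropy of one local network for a single test sample against a reference set."""
    def with_test(gene: str) -> List[float]:
        return reference[gene] + [test_sample[gene]]

    center_values = with_test(center)
    # Clamp to 1.0 so floating-point overshoot cannot produce a negative term.
    weights = [min(1.0, abs(pearson(with_test(g), center_values))) for g in neighbors]
    return entropy_term_sum(weights)


# Hypothetical local network: center g0 with first-order neighbors g1 and g2.
reference = {
    "g0": [1.0, 2.0, 3.0, 4.0],
    "g1": [2.0, 4.1, 5.9, 8.0],
    "g2": [4.0, 1.0, 3.0, 2.0],
}
test_sample = {"g0": 5.0, "g1": 3.0, "g2": 9.0}
lne = local_network_entropy("g0", ["g1", "g2"], reference, test_sample)
print(f"Local network entropy for g0: {lne:.4f}")
```

Repeating this over every gene in the background network, and comparing against the values obtained from the reference samples alone, gives the per-sample scores whose rise flags the critical state.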

Diagram 2: Workflow for the Local Network Entropy (LNE) method, a single-sample approach for identifying critical transitions.

Experimental Validation and Application Notes

The DNB methodology has been successfully validated across numerous disease models, providing concrete case studies for researchers.

Table 2: Experimental Validation of DNB in Disease Models

| Disease / Condition | Key DNB Findings | Validation & Functional Significance |

|---|---|---|

| Liver Cancer & Lymphoma | Successfully identified pre-disease state and specific DNBs from microarray data [22]. | Pathway enrichment and bootstrap analysis confirmed relevance. The composite index \( I \) spiked prior to phenotypic deterioration [22]. |

| Type 1 Diabetes (NOD Mouse) | Identified two separate DNBs signaling peri-insulitis and hyperglycemia onset from pancreatic lymph node expression data [24]. | DNBs were enriched in pathways causally related to T1D (e.g., T cell receptor, NF-kappa B, and Insulin signaling pathways), consistent with independent experimental literature [24]. |

| Ten Cancers (e.g., KIRC, LUAD) | LNE method detected pre-disease states (e.g., KIRC in Stage III; LIHC in Stage II) prior to lymph node metastasis [27]. | Identified "dark genes" with non-differential expression but differential LNE values. Defined optimistic (O-LNE) and pessimistic (P-LNE) prognostic biomarkers [27]. |

When applying DNB protocols, consider the following notes:

- Data Requirements: The standard DNB method requires longitudinal, high-dimensional data (e.g., transcriptomics, proteomics) with multiple samples per time point. For single-sample methods, a well-defined reference population is critical.

- Multi-Omics Integration: DNB discovery can be enhanced by multi-omics strategies that integrate genomics, transcriptomics, proteomics, and metabolomics data, providing a more holistic view of the system's dynamics [3].

- Role of AI/ML: The integration of machine learning and deep learning is becoming increasingly important for automating the analysis of complex DNB-associated datasets and for building predictive models of disease progression [3] [6].

- Biological Interpretation: An identified DNB is not merely a statistical signal; it often represents the leading biomolecular network that drives the system toward the disease state. Functional analysis (e.g., pathway enrichment) is essential for validating its biological relevance and identifying potential therapeutic targets [24].

Integration with Multi-Objective Optimization in Biomarker Research

The pursuit of biomarkers, including DNBs, for clinical application inherently involves balancing multiple, often competing, objectives. Framing DNB discovery within a multi-objective optimization paradigm can significantly enhance its translational potential.

A primary challenge is balancing the sensitivity of detecting the pre-disease state with the specificity required to avoid false alarms. A highly sensitive DNB may still have a low F1 score if it misclassifies many normal-state samples as critical. Furthermore, clinical implementation requires optimizing for recruitment feasibility, economic efficiency, and patient safety [7]. For instance, in complex diseases like Alzheimer's, multi-objective optimization algorithms (e.g., NSGA-III) can be used to fine-tune eligibility criteria, balancing identification accuracy (F1 score) with the size of the eligible patient pool and cost per patient [7].

Similarly, a DNB-based clinical trial would need to optimize:

- Accuracy: Maximizing the F1 score of the DNB for predicting the critical transition.

- Generalizability: Ensuring the DNB performs well across diverse patient populations.

- Cost-Efficiency: Minimizing the economic burden of the omics measurements required for DNB calculation.

- Clinical Utility: Ensuring the DNB leads to actionable interventions that improve patient outcomes.

Computational frameworks that simultaneously optimize these objectives can help transition DNBs from a powerful theoretical concept to a practical tool in personalized and preventive medicine [7] [6].

Algorithmic Approaches and Real-World Applications: Implementing MOO in Biomarker Research

Multi-objective optimization presents a significant challenge in biomarker identification research, where conflicting objectives such as diagnostic accuracy, biological relevance, and technical feasibility must be simultaneously balanced. Evolutionary algorithms (EAs) have emerged as powerful tools for addressing these complex problems by identifying a set of optimal trade-off solutions known as the Pareto front [28]. Within this domain, the Non-dominated Sorting Genetic Algorithm II (NSGA-II) has established itself as a benchmark approach, while its successor NSGA-III extends capabilities to many-objective problems, and specialized variants like MoGA-TA demonstrate domain-specific enhancements for drug discovery applications [29] [30] [31].

This article provides a comprehensive technical overview of these three prominent algorithms, with specific emphasis on their application to biomarker identification and validation. We present structured comparative analyses, detailed experimental protocols, and practical implementation guidelines to equip researchers with the necessary framework for applying these advanced optimization techniques to complex biological datasets. Integrating these computational methods can accelerate biomarker discovery by efficiently navigating high-dimensional solution spaces and identifying biologically relevant candidate panels with optimal performance characteristics.

Algorithmic Foundations and Comparative Analysis

NSGA-II: Core Architecture and Mechanisms

NSGA-II employs a sophisticated multi-objective optimization architecture that combines elitism with explicit diversity preservation. The algorithm begins with population initialization, where candidate solutions are generated, often through random sampling or domain-specific heuristics [28] [32]. Each solution is evaluated against multiple objective functions, which in biomarker research might include sensitivity, specificity, cost-effectiveness, and clinical practicality.

The algorithm's distinctive non-dominated sorting approach classifies the population into hierarchical Pareto fronts [28]. Solutions in the first front are not dominated by any other solution: no other solution is at least as good in every objective and strictly better in at least one. The second front contains solutions dominated only by those in the first front, and the sorting continues until all solutions are classified. This ranking mechanism creates selection pressure toward the true Pareto-optimal region.
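The dominance test and front assignment described above can be sketched in plain Python. This is a minimal illustration rather than the reference implementation from [28]: it assumes every objective is expressed so that lower is better (for example 1 - sensitivity, 1 - specificity, panel size), and the function names and example panel values are hypothetical.

```python
from typing import List, Sequence


def dominates(a: Sequence[float], b: Sequence[float]) -> bool:
    """True if a Pareto-dominates b, assuming all objectives are minimized."""
    no_worse = all(x <= y for x, y in zip(a, b))
    strictly_better = any(x < y for x, y in zip(a, b))
    return no_worse and strictly_better


def non_dominated_sort(objs: List[Sequence[float]]) -> List[List[int]]:
    """Return fronts as lists of indices into objs; front 0 is non-dominated."""
    n = len(objs)
    dominated_by_me = [[] for _ in range(n)]  # solutions that i dominates
    n_dominators = [0] * n                    # how many solutions dominate i
    for i in range(n):
        for j in range(n):
            if i == j:
                continue
            if dominates(objs[i], objs[j]):
                dominated_by_me[i].append(j)
            elif dominates(objs[j], objs[i]):
                n_dominators[i] += 1
    fronts = [[i for i in range(n) if n_dominators[i] == 0]]
    while fronts[-1]:
        next_front = []
        for i in fronts[-1]:
            for j in dominated_by_me[i]:
                n_dominators[j] -= 1
                if n_dominators[j] == 0:
                    next_front.append(j)
        fronts.append(next_front)
    return fronts[:-1]  # drop the trailing empty front


# Hypothetical panels scored as (1 - sensitivity, 1 - specificity, panel size).
panels = [(0.10, 0.20, 5), (0.15, 0.25, 6), (0.05, 0.30, 8),
          (0.20, 0.10, 4), (0.20, 0.30, 9)]
print(non_dominated_sort(panels))  # [[0, 2, 3], [1], [4]]
```

The pairwise comparison loop is what gives the sort its O(MN²) cost for M objectives and N solutions.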

NSGA-II's crowding distance calculation maintains solution diversity along the Pareto front by measuring the density of solutions surrounding a particular solution in objective space [28] [32]. Solutions in less crowded regions receive preferential selection, preventing premature convergence and ensuring a well-distributed approximation of the entire Pareto front. The algorithm uses binary tournament selection for reproduction, where solutions are compared first by front rank and then by crowding distance when ranks are equal.
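A compact sketch of the crowding distance and the crowded-comparison rule used in binary tournaments is shown below. It assumes a single front passed as a list of objective tuples; the function names are illustrative and the example front is made up.

```python
import math
from typing import List, Sequence


def crowding_distance(front: List[Sequence[float]]) -> List[float]:
    """Crowding distance of each solution within one non-dominated front."""
    n = len(front)
    if n == 0:
        return []
    dist = [0.0] * n
    for m in range(len(front[0])):
        order = sorted(range(n), key=lambda i: front[i][m])
        lo, hi = front[order[0]][m], front[order[-1]][m]
        # Boundary solutions are always kept, so they get infinite distance.
        dist[order[0]] = dist[order[-1]] = math.inf
        if hi == lo:
            continue  # objective is constant on this front
        for k in range(1, n - 1):
            gap = front[order[k + 1]][m] - front[order[k - 1]][m]
            dist[order[k]] += gap / (hi - lo)
    return dist


def tournament_winner(i: int, j: int, rank: Sequence[int],
                      dist: Sequence[float]) -> int:
    """Lower front rank wins; equal ranks are decided by larger distance."""
    if rank[i] != rank[j]:
        return i if rank[i] < rank[j] else j
    return i if dist[i] >= dist[j] else j


front = [(0.0, 1.0), (0.2, 0.7), (0.5, 0.5), (1.0, 0.0)]
distances = crowding_distance(front)
```

In this example the third solution sits in a sparser region than the second, so it would win a tournament between the two when both belong to the same front.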

NSGA-III: Reference Point-Based Extension

NSGA-III extends NSGA-II to many-objective optimization problems (typically those with four or more objectives) through a reference point-based niching mechanism [33] [30] [31]. It retains the non-dominated sorting procedure from NSGA-II but replaces the crowding distance operator with a reference-line approach: each reference line joins the ideal point to one of a set of reference points placed on a normalized hyperplane in objective space.

The algorithm requires a set of reference points that define the regions of interest in the objective space, typically generated using systematic methods such as the Das-Dennis method for uniform distribution of points [30]. During selection, NSGA-III associates each population member with a reference point based on perpendicular distance and aims to preserve population members associated with underrepresented reference points, ensuring diversity across all objectives in high-dimensional spaces.
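The two ingredients described here, uniformly spaced reference points and association by perpendicular distance, can be illustrated as follows. The sketch assumes objectives have already been normalized so that the ideal point sits at the origin; the function names are hypothetical, and production code would normally rely on a library routine instead.

```python
import math
from itertools import combinations
from typing import List, Sequence, Tuple


def das_dennis(n_obj: int, n_partitions: int) -> List[Tuple[float, ...]]:
    """All points with coordinates k / n_partitions (k >= 0) that sum to 1."""
    points = []
    slots = n_partitions + n_obj - 1
    # Stars and bars: choose where the n_obj - 1 separators go.
    for bars in combinations(range(slots), n_obj - 1):
        counts, prev = [], -1
        for b in bars:
            counts.append(b - prev - 1)
            prev = b
        counts.append(slots - prev - 1)
        points.append(tuple(c / n_partitions for c in counts))
    return points


def perpendicular_distance(f: Sequence[float], w: Sequence[float]) -> float:
    """Distance from point f to the line through the origin with direction w."""
    scale = sum(a * b for a, b in zip(f, w)) / sum(b * b for b in w)
    return math.sqrt(sum((a - scale * b) ** 2 for a, b in zip(f, w)))


def associate(norm_objs: List[Sequence[float]],
              ref_points: List[Sequence[float]]) -> List[int]:
    """Index of the closest reference line for each normalized solution."""
    return [min(range(len(ref_points)),
                key=lambda r: perpendicular_distance(f, ref_points[r]))
            for f in norm_objs]


refs = das_dennis(n_obj=3, n_partitions=4)
niche = associate([(0.9, 0.05, 0.05)], refs)
```

Counting how many survivors are attached to each reference line then tells the selection step which niches are underrepresented.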

This reference direction approach makes NSGA-III particularly suitable for biomarker discovery problems involving numerous competing objectives, such as when simultaneously optimizing for multiple disease subtypes, demographic considerations, and analytical performance metrics across different technology platforms.

MoGA-TA: Domain-Specific Enhancement

The MoGA-TA algorithm represents a specialized adaptation for molecular optimization that incorporates Tanimoto similarity-based crowding distance and a dynamic acceptance probability population update strategy [29]. This approach integrates the multi-objective optimization capabilities of NSGA-II with structural similarity measures particularly relevant to chemical space exploration.

The Tanimoto coefficient, calculated based on molecular fingerprints, measures structural similarity between compounds by quantifying the ratio of common molecular features to total unique features [29]. By incorporating this domain-specific metric into the crowding distance calculation, MoGA-TA more accurately captures structural differences between molecules, preserving diverse molecular scaffolds and guiding population evolution toward structurally novel candidates with desirable properties.
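As a small illustration of the ratio described above, the sketch below treats a fingerprint as a set of "on" bit indices. The fingerprints are made-up integers, not real ECFP output, and the mean pairwise similarity is shown as one common way to summarize how alike a population is; whether [29] computes its diversity measure exactly this way is an assumption.

```python
from itertools import combinations
from typing import List, Set


def tanimoto(a: Set[int], b: Set[int]) -> float:
    """Shared features divided by total unique features."""
    union = len(a | b)
    # Returning 0.0 for two empty fingerprints is a convention chosen here.
    return len(a & b) / union if union else 0.0


def internal_similarity(fingerprints: List[Set[int]]) -> float:
    """Mean pairwise Tanimoto similarity; lower means a more diverse set."""
    pairs = list(combinations(fingerprints, 2))
    if not pairs:
        return 0.0
    return sum(tanimoto(a, b) for a, b in pairs) / len(pairs)


# Hypothetical fingerprints given as sets of active bit positions.
fp_a = {1, 2, 3, 4}
fp_b = {3, 4, 5, 6}
fp_c = {1, 2, 3, 4}
similarity_ab = tanimoto(fp_a, fp_b)  # 2 shared bits out of 6 unique bits
```

With RDKit, the same quantity is usually obtained from bit-vector fingerprints through its built-in similarity functions rather than from Python sets.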

The dynamic acceptance probability strategy enables broader exploration of chemical space during early generations while progressively favoring exploitation of high-quality regions in later stages, effectively balancing exploration-exploitation tradeoffs throughout the optimization process [29]. This approach has demonstrated particular efficacy in multi-objective drug molecule optimization tasks where structural diversity alongside specific pharmacological properties is essential.
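The exact acceptance rule of MoGA-TA is not reproduced in this article, so the following sketch only illustrates the general idea with an assumed linear schedule: the chance of keeping a candidate that does not improve on the solution it would replace starts high and shrinks as generations pass. The function names, the start and end probabilities, and the linear form are all assumptions.

```python
import random


def acceptance_probability(gen: int, n_gen: int,
                           p_start: float = 0.5, p_end: float = 0.05) -> float:
    """Assumed linear decay from p_start (first generation) to p_end (last)."""
    frac = gen / max(n_gen - 1, 1)
    return p_start + (p_end - p_start) * frac


def accept(candidate_is_better: bool, gen: int, n_gen: int,
           rng: random.Random) -> bool:
    """Always keep improvements; keep other candidates with decaying odds."""
    if candidate_is_better:
        return True
    return rng.random() < acceptance_probability(gen, n_gen)


rng = random.Random(0)
early = sum(accept(False, 0, 100, rng) for _ in range(1000))
late = sum(accept(False, 99, 100, rng) for _ in range(1000))
```

Early generations therefore admit more non-improving molecules (exploration), while late generations mostly keep improvements (exploitation).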

Table 1: Comparative Analysis of Multi-Objective Evolutionary Algorithms

| Feature | NSGA-II | NSGA-III | MoGA-TA |
|---|---|---|---|
| Primary Selection Mechanism | Non-dominated sorting + crowding distance [28] | Non-dominated sorting + reference direction [30] | Non-dominated sorting + Tanimoto crowding [29] |
| Optimal Objective Scope | 2-3 objectives [29] | 4+ objectives (many-objective) [30] | 2-3 objectives (domain-optimized) [29] |
| Diversity Preservation | Crowding distance (objective space) [28] | Reference point association [33] | Tanimoto similarity (structural space) [29] |
| Computational Complexity | O(MN²) for non-dominated sort [34] | Similar to NSGA-II with additional reference point overhead [30] | Similar to NSGA-II with similarity calculation overhead [29] |
| Specialized Strengths | Well-distributed Pareto fronts for few objectives [32] | Uniform distribution in high-dimensional spaces [30] | Structural diversity in molecular optimization [29] |
| Biomarker Research Application | Initial candidate screening, 2-3 objective problems | Multi-omics integration, patient stratification | Molecular biomarker optimization |

Table 2: Performance Metrics in Molecular Optimization Tasks (Adapted from [29])

| Algorithm | Success Rate (%) | Hypervolume | Geometric Mean | Internal Similarity |
|---|---|---|---|---|
| MoGA-TA | 78.3 | 0.892 | 0.781 | 0.456 |
| NSGA-II | 65.7 | 0.835 | 0.692 | 0.512 |
| GB-EPI | 54.2 | 0.761 | 0.603 | 0.498 |

Experimental Protocols and Workflows

NSGA-II Implementation for Biomarker Panel Selection

Objective: Identify optimal biomarker panels balancing sensitivity, specificity, and analytical complexity.

Materials and Reagents:

- Clinical dataset with candidate biomarker measurements and outcome labels

- Python 3.8+ with NSGA-II implementation (pymoo library recommended) [32]

- Computing resources (minimum 8GB RAM, multi-core processor recommended)

Procedure:

Problem Formulation:

- Define decision variables as binary indicators for biomarker inclusion

- Formulate objective 1: Maximize sensitivity (1 - FNR)

- Formulate objective 2: Maximize specificity (1 - FPR)

- Formulate objective 3: Minimize panel size/number of biomarkers

- Define constraints based on clinical implementation feasibility
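One way to turn this formulation into an objective function is sketched below. The classification rule is a deliberately simple placeholder (the mean of the selected, pre-scaled biomarker values compared against a threshold); in practice a cross-validated classifier would take its place. The function name, the threshold, and the toy data are hypothetical. All three objectives are returned in minimization form.

```python
from typing import List, Sequence, Tuple


def evaluate_panel(mask: Sequence[int],
                   X: List[Sequence[float]],
                   y: Sequence[int],
                   threshold: float = 0.5) -> Tuple[float, float, int]:
    """Return (1 - sensitivity, 1 - specificity, panel size) for one panel."""
    selected = [j for j, bit in enumerate(mask) if bit]
    if not selected:
        # Empty panel: worst-case accuracy. A real run would usually forbid
        # this case through a constraint instead.
        return (1.0, 1.0, 0)
    tp = fn = tn = fp = 0
    for row, label in zip(X, y):
        score = sum(row[j] for j in selected) / len(selected)
        predicted = 1 if score >= threshold else 0
        if label == 1 and predicted == 1:
            tp += 1
        elif label == 1:
            fn += 1
        elif predicted == 0:
            tn += 1
        else:
            fp += 1
    sensitivity = tp / (tp + fn) if tp + fn else 0.0
    specificity = tn / (tn + fp) if tn + fp else 0.0
    return (1.0 - sensitivity, 1.0 - specificity, len(selected))


# Toy data: rows are samples, columns are three biomarkers scaled to [0, 1].
X = [[0.9, 0.2, 0.8], [0.8, 0.9, 0.7], [0.2, 0.8, 0.1], [0.1, 0.1, 0.3]]
y = [1, 1, 0, 0]
good_panel = evaluate_panel([1, 0, 1], X, y)
weak_panel = evaluate_panel([0, 1, 0], X, y)
```

Expressing sensitivity and specificity as error rates keeps every objective in the same direction, which most NSGA-II implementations expect.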

Algorithm Configuration:
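A starting configuration for a binary panel-selection run might look like the sketch below. All numeric values are assumptions chosen as common starting points, not settings reported in [28] or [32], and the class and function names are hypothetical. The per-bit mutation rate of 1/n is a widely used convention for binary encodings. In pymoo, comparable settings are passed to the NSGA-II algorithm object (population size, sampling, crossover, and mutation operators).

```python
import random
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class NSGA2Config:
    n_biomarkers: int
    pop_size: int = 100          # assumed starting value
    n_generations: int = 200     # assumed starting value
    crossover_prob: float = 0.9  # assumed starting value
    seed: int = 42

    @property
    def mutation_prob(self) -> float:
        """Per-bit flip probability: about one flip per child on average."""
        return 1.0 / self.n_biomarkers


def two_point_crossover(a: List[int], b: List[int],
                        rng: random.Random) -> Tuple[List[int], List[int]]:
    """Swap the segment between two random cut points."""
    i, j = sorted(rng.sample(range(len(a) + 1), 2))
    return a[:i] + b[i:j] + a[j:], b[:i] + a[i:j] + b[j:]


def bitflip_mutation(mask: List[int], prob: float,
                     rng: random.Random) -> List[int]:
    """Flip each inclusion bit independently with probability prob."""
    return [1 - bit if rng.random() < prob else bit for bit in mask]


config = NSGA2Config(n_biomarkers=20)
rng = random.Random(config.seed)
child_a, child_b = two_point_crossover([0] * 20, [1] * 20, rng)
mutated = bitflip_mutation(child_a, config.mutation_prob, rng)
```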

Execution and Monitoring:

- Initialize random population of binary vectors

- For each generation:

  - Evaluate objectives for all population members

  - Perform non-dominated sorting [28]

  - Calculate crowding distance for each front [28]

  - Select parents via binary tournament selection

  - Apply crossover and mutation operators

  - Combine parent and offspring populations

  - Select new population based on non-domination rank and crowding distance

- Track hypervolume indicator to monitor convergence
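For convergence monitoring, the hypervolume indicator can be computed exactly with a simple sweep when only two objectives are involved. The sketch below covers that two-objective case only (for example 1 - sensitivity versus 1 - specificity, both minimized); the three-objective panel problem above would need a general hypervolume routine from an optimization library. The reference point and the example front are assumptions.

```python
from typing import List, Tuple


def hypervolume_2d(front: List[Tuple[float, float]],
                   ref: Tuple[float, float]) -> float:
    """Area dominated by the front and bounded by ref (both minimized)."""
    # Keep only points that are strictly better than the reference point.
    pts = sorted(p for p in front if p[0] < ref[0] and p[1] < ref[1])
    area, best_f2 = 0.0, ref[1]
    for f1, f2 in pts:
        if f2 < best_f2:
            # New horizontal strip between the previous best f2 and this f2.
            area += (ref[0] - f1) * (best_f2 - f2)
            best_f2 = f2
    return area


generation_front = [(0.1, 0.4), (0.2, 0.2), (0.4, 0.1)]
hv = hypervolume_2d(generation_front, ref=(1.0, 1.0))
```

A hypervolume that stops increasing over many generations is a practical sign that the front has stabilized.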

Result Analysis:

- Extract non-dominated solutions from final population

- Validate selected biomarker panels on independent test set

- Perform clinical relevance assessment of top candidates

NSGA-III Protocol for Multi-Omics Biomarker Integration

Objective: Integrate genomic, proteomic, and metabolomic biomarkers for comprehensive disease subtyping.

Materials and Reagents:

- Multi-omics datasets (RNA-seq, LC-MS proteomics, NMR metabolomics)

- Reference point generation utility (pymoo.util.ref_dirs)

- High-performance computing resources (16GB+ RAM recommended)

Procedure:

Reference Point Specification:

- Determine number of objectives (typically 4-6 for multi-omics)

- Generate reference directions using Das-Dennis method [30]

- Scale reference points based on objective ranges

- Set the population size relative to the number of reference points (commonly the smallest multiple of four that is at least that number)

Many-Objective Problem Formulation:

- Objective 1: Maximize genomic biomarker accuracy

- Objective 2: Maximize proteomic biomarker accuracy

- Objective 3: Maximize metabolomic biomarker accuracy

- Objective 4: Minimize analytical cost/complexity

- Objective 5: Maximize cross-platform reproducibility

Algorithm Configuration:
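The main configuration decision in NSGA-III is how many reference points to use, since the population size follows from it. The sketch below computes the number of Das-Dennis points for a given number of objectives and partitions, then rounds up to a multiple of four, a commonly used convention. The function names and the chosen partition counts are illustrative assumptions.

```python
import math


def n_reference_points(n_obj: int, n_partitions: int) -> int:
    """Number of Das-Dennis points: C(n_obj + n_partitions - 1, n_partitions)."""
    return math.comb(n_obj + n_partitions - 1, n_partitions)


def suggested_pop_size(n_ref: int) -> int:
    """Smallest multiple of four that is at least the number of points."""
    return 4 * math.ceil(n_ref / 4)


# Five objectives, as in the formulation above, with six partitions.
n_ref = n_reference_points(n_obj=5, n_partitions=6)
pop_size = suggested_pop_size(n_ref)
print(n_ref, pop_size)  # 210 212
```

Because the point count grows combinatorially with the number of objectives, the partition count is usually lowered as objectives are added.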

Execution and Monitoring:

- For each generation:

  - Evaluate population members on all objectives

  - Perform non-dominated sorting

  - Normalize objectives based on extreme points

  - Associate solutions with reference lines

  - Niche preservation operation

  - Environmental selection based on reference line diversity

- Track convergence using generational distance metric
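Generational distance measures how far the current front lies from a reference front. The sketch below uses the mean Euclidean distance to the nearest reference point, which is one common variant. Since the true Pareto front is usually unknown for biomarker problems, the reference front here is assumed to be an approximation, such as the pooled non-dominated set from several runs; the function name and the data are hypothetical.

```python
import math
from typing import List, Sequence


def generational_distance(front: List[Sequence[float]],
                          reference_front: List[Sequence[float]]) -> float:
    """Mean distance from each front member to its nearest reference point."""
    def nearest(p: Sequence[float]) -> float:
        return min(math.dist(p, q) for q in reference_front)
    return sum(nearest(p) for p in front) / len(front)


reference_front = [(0.0, 1.0), (0.5, 0.5), (1.0, 0.0)]
current_front = [(0.0, 1.1), (0.6, 0.5)]
gd = generational_distance(current_front, reference_front)
```

Values approaching zero indicate that the population has moved onto the reference front; the metric says nothing about how evenly the front is covered.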


Result Interpretation:

- Analyze solution distribution across reference directions

- Identify omics trade-offs in optimal solutions

- Validate integrated biomarker panels in clinical cohorts

MoGA-TA Protocol for Molecular Biomarker Optimization

Objective: Optimize molecular structures for diagnostic biomarker candidates balancing multiple physicochemical properties.

Materials and Reagents:

- Chemical database (ChEMBL, PubChem) or proprietary compound library

- RDKit software package (version 2022.09+) [29]

- Molecular fingerprinting capabilities (ECFP, FCFP, AP fingerprints)

- Tanimoto similarity calculation utilities

Procedure:

Molecular Representation:

- Encode molecules as SMILES strings or molecular graphs

- Generate molecular fingerprints (ECFP4, FCFP4, or AP fingerprints) [29]

- Define chemical space boundaries based on lead compounds

Multi-Objective Formulation:

- Objective 1: Maximize Tanimoto similarity to target structure (Thresholded: 0.7-0.8) [29]

- Objective 2: Optimize physicochemical property (e.g., logP using MinGaussian) [29]

- Objective 3: Optimize structural property (e.g., TPSA using MaxGaussian) [29]

- Apply appropriate modifier functions (Gaussian, Thresholded) to normalize scores [29]
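The modifier names used above match those of the GuacaMol benchmark suite, and the sketch below follows the definitions used there; whether [29] applies exactly these forms and parameters is an assumption. Each function maps a raw property value to a score in [0, 1]. The example thresholds and Gaussian parameters are illustrative only.

```python
import math


def thresholded(x: float, threshold: float) -> float:
    """Linear up to the threshold, then capped at 1."""
    return min(x, threshold) / threshold


def gaussian(x: float, mu: float, sigma: float) -> float:
    """Score peaks at 1 when x equals mu and decays on both sides."""
    return math.exp(-0.5 * ((x - mu) / sigma) ** 2)


def min_gaussian(x: float, mu: float, sigma: float) -> float:
    """Full score for x <= mu, Gaussian decay above mu."""
    return gaussian(max(x, mu), mu, sigma)


def max_gaussian(x: float, mu: float, sigma: float) -> float:
    """Full score for x >= mu, Gaussian decay below mu."""
    return gaussian(min(x, mu), mu, sigma)


# Illustrative scores for a hypothetical candidate molecule.
similarity_score = thresholded(0.9, threshold=0.75)
logp_score = min_gaussian(3.0, mu=2.0, sigma=1.0)
tpsa_score = max_gaussian(90.0, mu=100.0, sigma=10.0)
```

Putting every objective on the same [0, 1] scale keeps any single property from dominating distance-based operations such as crowding.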

Algorithm Configuration:

- Implement Tanimoto-based crowding distance calculation [29]

- Configure dynamic acceptance probability strategy (decreases over generations)

- Set decoupled crossover and mutation rates

- Initialize with lead compound structures

Execution and Monitoring:

- For each generation:

  - Evaluate molecular properties using RDKit

  - Calculate Tanimoto similarities to reference compounds

  - Perform non-dominated sorting

  - Compute Tanimoto crowding distances

  - Apply dynamic acceptance for population update

  - Execute molecular crossover and mutation operations

- Track structural diversity and property improvements


Result Validation:

- Assess chemical feasibility of proposed structures

- Synthesize and test top candidate molecules

- Evaluate diagnostic performance in target applications

Table 3: Research Reagent Solutions for Molecular Optimization

| Reagent/Resource | Function | Example Source/Implementation |
|---|---|---|
| RDKit Software Package | Calculates molecular descriptors and fingerprints [29] | Open-source cheminformatics toolkit |
| ECFP/FCFP Fingerprints | Encodes molecular structure for similarity computation [29] | Extended Connectivity Fingerprints |
| Tanimoto Coefficient | Measures molecular similarity based on fingerprint overlap [29] | Implementation in RDKit or custom code |
| ChEMBL Database | Provides reference compounds and bioactivity data [29] | Open-access bioactivity database (EMBL-EBI) |
| SMILES Representation | String-based encoding of molecular structure [29] | Simplified Molecular-Input Line-Entry System |
| Gaussian/Thresholded Modifiers | Normalizes objective scores to [0,1] interval [29] | Custom implementation based on task requirements |