Gradient-Based vs. Metaheuristic Optimization in Drug Discovery: A Comprehensive Guide for Researchers



Abstract

This article provides a systematic comparison of gradient-based and metaheuristic optimization algorithms, with a focused application for researchers and professionals in drug development. We explore the foundational principles of both methodological families, from classic gradient descent to modern nature-inspired algorithms like Particle Swarm Optimization and the Gradient-Based Optimizer. The scope includes their practical application in solving complex pharmacometric problems, such as parameter estimation in nonlinear mixed-effects models and ligand-based virtual screening. We also address critical troubleshooting and optimization strategies to enhance algorithm performance and avoid common pitfalls like local optima. Finally, we present a rigorous validation and comparative framework, equipping scientists with the knowledge to select and apply the most effective optimization technique for their specific research challenges in biomedical and clinical research.

Core Principles: Demystifying Gradient-Based and Metaheuristic Algorithms

The Landscape of Optimization in Computational Drug Discovery

The process of drug discovery is characterized by its immense complexity, high costs, and prolonged timelines, often exceeding 12 years from target identification to market approval [1]. Within this pipeline, computational drug discovery has emerged as a transformative approach, leveraging optimization algorithms to navigate vast chemical spaces and predict molecular behavior with increasing accuracy. Optimization methods form the computational engine that powers virtual screening, binding affinity prediction, and molecular property optimization. These methods can be broadly categorized into gradient-based optimization techniques, which use derivative information to find local minima, and metaheuristic algorithms, which are population-based methods inspired by natural processes that excel at global exploration of complex search spaces [2].

The fundamental challenge in computational drug discovery lies in the enormous dimensionality of the problem. Researchers must evaluate billions of potential drug candidates against multiple criteria including binding affinity, solubility, toxicity, and metabolic stability. This multi-objective optimization problem demands algorithms that can efficiently balance exploration of diverse chemical spaces with exploitation of promising molecular scaffolds. Gradient-based methods, rooted in mathematical optimization theory, offer precision and convergence speed for well-defined problems with smooth parameter spaces. In contrast, metaheuristic approaches like Particle Swarm Optimization (PSO) and Ant Colony Optimization (ACO) provide robust mechanisms for handling non-convex, discontinuous search landscapes common in molecular design problems [2] [3]. This review systematically compares these approaches through experimental data, methodological analysis, and practical implementation guidelines to inform researchers' selection of appropriate optimization strategies for specific drug discovery challenges.

Comparative Analysis of Optimization Methods

Methodological Foundations

Gradient-Based Optimization methods use derivative information to navigate parameter spaces efficiently. The Gradient-Based Optimizer (GBO) applies this idea inside a population-based search, combining a gradient search rule (GSR) for exploration with a local escaping operator (LEO) for exploitation [2]. The GSR improves exploration capability and convergence rate, while the LEO helps candidate solutions escape local optima. The mathematical foundation lies in approximating Newton's method, where the search direction is determined by both gradient and Hessian information, which gives theoretically sound convergence properties for suitable problem domains [2].

Metaheuristic Optimization encompasses a diverse family of nature-inspired algorithms. Particle Swarm Optimization (PSO) simulates social behavior patterns of bird flocking, while Ant Colony Optimization (ACO) mimics pheromone-based foraging behavior of ants [2] [3]. Genetic Algorithms (GA) employ evolutionary principles of selection, crossover, and mutation [4]. These methods share a population-based approach where multiple candidate solutions evolve through iterative improvement, offering distinct advantages for problems with rugged fitness landscapes or discontinuous parameter spaces where gradient information is unavailable or misleading.

Performance Comparison in Drug Discovery Applications

Experimental evaluations across multiple drug discovery domains reveal distinct performance patterns for different optimization classes. The table below summarizes quantitative comparisons from published studies:

Table 1: Performance Comparison of Optimization Algorithms in Virtual Screening

| Algorithm | Dataset | Accuracy (%) | Computational Efficiency | Key Advantage |
|---|---|---|---|---|
| GBO-kNN [5] | MAO (1665 features) | 98.8 | Moderate | High-dimensional data handling |
| HHO-SVM [5] | MAO | 96.2 | High | Feature reduction |
| GWO-kNN [5] | MAO | 95.7 | Moderate | Balanced performance |
| HSAPSO-SAE [6] | DrugBank/Swiss-Prot | 95.5 | High (0.010s/sample) | Hyperparameter optimization |
| ACO-RF [3] | Clobetasol Solubility | R²: 0.94 | Variable | Process parameter optimization |
| ACO-GBDT [3] | Clobetasol Solubility | R²: 0.987 | Variable | Non-linear relationship modeling |

Table 2: Optimization Methods by Drug Discovery Phase

| Drug Discovery Phase | Recommended Algorithm | Rationale | Limitations |
|---|---|---|---|
| Target Identification | HSAPSO [6] | High accuracy (95.5%) for druggable target classification | Dependent on training data quality |
| Virtual Screening (High-Dimensional) | GBO-kNN [5] | Superior performance (98.8% accuracy) with 1665 features | Moderate computational efficiency |
| Virtual Screening (Low-Dimensional) | HHO-SVM [5] | Efficient feature reduction capabilities | Lower accuracy on complex datasets |
| Solubility Optimization | ACO-GBDT [3] | Excellent non-linear fitting (R²: 0.987) | Parameter tuning sensitivity |
| Molecular Dynamics | Gradient-Based Newton [7] | Physical validity and geometric accuracy | Limited conformational sampling |

The experimental data indicate that metaheuristic methods generally excel in feature selection and high-dimensional virtual screening tasks. The GBO-kNN framework achieved 98.8% accuracy on the Monoamine Oxidase (MAO) dataset comprising 1665 molecular features [5]. On the same dataset, this is higher than the other metaheuristic approaches tested, including Hybrid Harris Hawks Optimization (96.2%), Grey Wolf Optimization (95.7%), and Butterfly Optimization Algorithm (94.1%). The success of GBO-kNN is attributed to its balance between exploration and exploitation, with the GSR component enhancing population diversity while the LEO facilitates escape from local optima [5].

For molecular property prediction tasks, hybrid approaches combining metaheuristics with machine learning demonstrate particular strength. In modeling Clobetasol Propionate solubility in supercritical CO₂, Ant Colony Optimization-tuned ensemble methods achieved exceptional performance, with Gradient Boosting Decision Trees (GBDT) reaching R² = 0.987, followed by Random Forest (R² = 0.94) and Extremely Randomized Trees (R² = 0.91) [3]. The ACO algorithm effectively optimized hyperparameters including tree depth, learning rate, and feature subsampling ratios, demonstrating the value of metaheuristics for complex parameter tuning problems where gradient information is unavailable.

Experimental Protocols and Methodologies

Virtual Screening with GBO-kNN

The GBO-kNN framework for ligand-based virtual screening employs a structured workflow combining feature selection with classification. The methodology proceeds through several well-defined phases:

Data Preprocessing: Molecular datasets undergo comprehensive preprocessing including normalization, tokenization, and descriptor calculation. For the MAO dataset, this involved processing 1665 molecular descriptors representing structural and physicochemical properties [5].

Feature Selection: The GBO algorithm optimizes feature subsets using a wrapper approach, evaluating feature combinations based on classification performance. The algorithm maintains a population of candidate solutions (feature subsets), with each solution represented as a vector in D-dimensional space: \(X_n = [X_{n,1}, X_{n,2}, \ldots, X_{n,D}]\), where \(D\) represents the total feature count [2].

Fitness Evaluation: The k-NN classifier assesses each feature subset's quality using classification accuracy as the primary fitness function. This creates a computationally efficient evaluation pipeline crucial for handling large chemical databases [5].

Iterative Refinement: The GBO's Gradient Search Rule (GSR) and Local Escaping Operator (LEO) collaboratively refine solutions over generations. The GSR employs parameter \(\rho_1\) to control exploration: \(\rho_1 = 2 \times \mathrm{rand} \times \alpha - \alpha\), where \(\alpha = \left|\beta \times \sin\left(\frac{3\pi}{2} + \sin\left(\beta \times \frac{3\pi}{2}\right)\right)\right|\), with \(\beta\) adapted over iterations [2] [5].

This protocol was validated through comparison with seven established metaheuristics, with statistical significance assessed via multiple runs, convergence curves, and boxplot analyses [5].
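The wrapper-style fitness evaluation described above can be sketched in a few lines. This is a minimal illustration and not the implementation from [5]: the toy data, the function names `knn_predict` and `subset_fitness`, the leave-one-out scoring, and the exhaustive search over masks (standing in for the GBO search) are all assumptions made for the example.

```python
from itertools import product
import random


def knn_predict(train_X, train_y, x, k=3):
    """Majority vote among the k nearest training points (squared Euclidean distance)."""
    dists = sorted(
        (sum((a - b) ** 2 for a, b in zip(row, x)), label)
        for row, label in zip(train_X, train_y)
    )
    votes = [label for _, label in dists[:k]]
    return max(set(votes), key=votes.count)


def subset_fitness(mask, X, y, k=3):
    """Leave-one-out k-NN accuracy using only the features where mask[j] == 1."""
    cols = [j for j, keep in enumerate(mask) if keep]
    if not cols:
        return 0.0  # an empty feature subset cannot classify anything
    reduced = [[row[j] for j in cols] for row in X]
    hits = 0
    for i, (x, label) in enumerate(zip(reduced, y)):
        rest_X = reduced[:i] + reduced[i + 1:]
        rest_y = y[:i] + y[i + 1:]
        hits += knn_predict(rest_X, rest_y, x, k) == label
    return hits / len(y)


# Hypothetical toy data: feature 0 separates the classes, features 1-2 are noise.
rng = random.Random(0)
y = [i % 2 for i in range(40)]
X = [[label + 0.02 * (i % 7), rng.uniform(-5, 5), rng.uniform(-5, 5)]
     for i, label in enumerate(y)]

# Exhaustive search over all 8 masks stands in for the metaheuristic search.
best_mask = max(product([0, 1], repeat=3), key=lambda m: subset_fitness(m, X, y))
print(best_mask, subset_fitness(best_mask, X, y))
```

With 1665 descriptors the mask space is far too large to enumerate, which is the reason a population-based search is used to propose masks while the classifier only scores them.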

HSAPSO for Druggable Target Identification

The Hierarchically Self-Adaptive Particle Swarm Optimization (HSAPSO) protocol implements a sophisticated adaptation mechanism for deep learning optimization:

Network Architecture: A Stacked Autoencoder (SAE) framework processes molecular descriptors and protein features, creating hierarchical representations [6].

Hierarchical Adaptation: HSAPSO implements a dual-layer adaptation strategy where the first layer adjusts particle velocity and position using standard PSO equations, while the second layer dynamically modifies algorithmic parameters including inertia weight, acceleration coefficients, and velocity constraints [6].

Fitness Evaluation: The validation accuracy of the SAE classifier serves as the objective function, with careful regularization to prevent overfitting on pharmaceutical datasets.

Convergence Monitoring: The algorithm incorporates early stopping based on validation performance plateaus, optimizing computational efficiency [6].
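A plateau-based stopping rule of the kind mentioned in the convergence monitoring step might look like the following sketch. The function name `should_stop` and the `patience` and `min_delta` parameters are hypothetical choices for illustration; the source does not specify how [6] defines a plateau.

```python
def should_stop(history, patience=5, min_delta=1e-4):
    """Return True when the last `patience` scores did not beat the earlier best by min_delta.

    `history` is a list of validation scores (higher is better), one per iteration.
    """
    if len(history) <= patience:
        return False  # not enough evaluations to judge a plateau
    best_before = max(history[:-patience])
    best_recent = max(history[-patience:])
    return best_recent < best_before + min_delta


# Hypothetical validation accuracies recorded once per optimizer iteration.
scores = [0.80, 0.85, 0.90, 0.90, 0.90, 0.90, 0.90, 0.90]
print(should_stop(scores))
```

In an optimizer loop, the check would run after each fitness evaluation so that the swarm stops as soon as further iterations no longer improve validation accuracy.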

Experimental validation using DrugBank and Swiss-Prot datasets demonstrated the framework's robustness, achieving 95.52% accuracy with minimal computational overhead (0.010 seconds per sample) and exceptional stability (±0.003) [6].

Table 3: Key Computational Resources for Optimization in Drug Discovery

| Resource Category | Specific Tools/Algorithms | Application Context | Performance Considerations |
|---|---|---|---|
| Metaheuristic Algorithms | GBO, HSAPSO, ACO | Virtual screening, target identification, hyperparameter optimization | GBO excels in high-dimensional feature selection; HSAPSO offers adaptive parameter control [5] [6] |
| Gradient-Based Optimizers | Newton-type methods, GBO | Structure-based drug design, binding pose prediction | Enhanced physical validity and geometric accuracy for receptor-ligand complexes [7] |
| Benchmark Datasets | QSAR Biodegradation, MAO, DrugBank | Method validation and comparative studies | MAO dataset (1665 features) tests high-dimensional capability [5] |
| Feature Selection Methods | Wrapper, Filter, Embedded approaches | Descriptor optimization, dimensionality reduction | GBO-kNN uses wrapper approach for optimal feature subset identification [5] |
| Solubility Prediction Models | GBDT, RF, ET with ACO tuning | Pharmaceutical processing optimization | GBDT with ACO achieves R² = 0.987 for supercritical solvent systems [3] |
| Validation Frameworks | Statistical comparison, convergence analysis | Method performance assessment | Cross-validation, boxplots, and convergence curves essential for robust evaluation [5] |

Integrated Workflows and Decision Framework

Hybrid Optimization Strategies

The most effective computational drug discovery pipelines increasingly employ hybrid strategies that leverage the complementary strengths of both gradient-based and metaheuristic approaches. Integrated workflows typically deploy metaheuristic algorithms for global exploration of chemical space during early discovery phases, followed by gradient-based refinement for lead optimization [7] [5]. For example, the GBO algorithm demonstrates this hybrid principle internally through its combination of gradient search rules (exploration) and local escaping operators (exploitation) [2].

The optSAE + HSAPSO framework exemplifies successful integration, where the metaheuristic component (HSAPSO) optimizes the architecture and hyperparameters of a deep learning model that itself employs gradient-based learning [6]. This hierarchical approach achieves state-of-the-art performance in drug classification tasks while maintaining computational efficiency. Similarly, in structure-based drug discovery, AlphaFold2 generates initial protein structures using deep learning (trained via gradient descent), while molecular docking often employs metaheuristics for conformational sampling of ligand binding poses [7].
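The two-stage pattern described in this section, global exploration followed by gradient-based refinement, can be illustrated on a one-dimensional toy problem. This is a sketch under stated assumptions: plain random sampling stands in for the metaheuristic stage, and the objective `f`, the search interval, and the step size are hypothetical values chosen so the example is easy to follow.

```python
import random


def f(x):
    """Toy objective with a global minimum near x = -2.03 and a local one near x = 1.97."""
    return (x * x - 4.0) ** 2 + x


def grad_f(x):
    """Analytic derivative of f."""
    return 4.0 * x * (x * x - 4.0) + 1.0


def global_stage(n_samples=200, lo=-3.0, hi=3.0, seed=0):
    """Stand-in for a metaheuristic: sample the interval and keep the best point."""
    rng = random.Random(seed)
    return min((rng.uniform(lo, hi) for _ in range(n_samples)), key=f)


def local_stage(x, lr=0.01, steps=500):
    """Plain gradient descent started from the best explored point."""
    for _ in range(steps):
        x -= lr * grad_f(x)
    return x


x_coarse = global_stage()
x_refined = local_stage(x_coarse)
print(x_coarse, x_refined, f(x_refined))
```

Gradient descent alone, started in the right-hand basin, would settle at the local minimum near x = 1.97; the exploration stage exists to hand the refinement stage a starting point in the better basin.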

Selection Guidelines for Practitioners

Choosing between gradient-based and metaheuristic optimization approaches depends on multiple factors specific to the drug discovery problem:

Problem Dimensionality: For high-dimensional feature spaces (e.g., molecular descriptor sets with >1000 features), metaheuristics like GBO and HSAPSO demonstrate superior performance [5] [6]. For lower-dimensional parameter optimization (e.g., solubility modeling with temperature/pressure inputs), gradient-enhanced methods may suffice [3].

Data Availability: With extensive training data, gradient-based deep learning models excel through comprehensive feature learning. For limited data scenarios, metaheuristic-optimized models like HSAPSO-SAE provide better generalization [6].

Computational Constraints: When computational efficiency is paramount, particularly for virtual screening of ultra-large libraries, highly optimized metaheuristics like GBO-kNN offer favorable performance profiles [5].

Accuracy Requirements: For critical applications requiring maximum predictive accuracy, hybrid approaches consistently outperform individual methods, as demonstrated by the 95.5% classification accuracy achieved by HSAPSO-SAE [6].

The landscape of optimization in computational drug discovery reveals a complex ecosystem where both gradient-based and metaheuristic methods play vital, complementary roles. Experimental evidence demonstrates that metaheuristic algorithms currently hold advantages for high-dimensional virtual screening and feature selection tasks, with GBO-kNN achieving exceptional 98.8% accuracy on challenging molecular datasets [5]. Meanwhile, gradient-based approaches provide mathematically rigorous solutions for well-defined problems with smooth parameter spaces and adequate training data.

The most promising future direction lies in sophisticated hybrid approaches that combine the global exploration capabilities of metaheuristics with the local refinement power of gradient-based methods. Frameworks like HSAPSO-SAE exemplify this trend, achieving state-of-the-art performance in drug classification while maintaining computational efficiency [6]. As drug discovery continues to grapple with increasingly complex problems—from polypharmacology to multi-target therapeutics—optimization methods will remain essential computational tools. Future research should focus on developing more adaptive optimization frameworks that can automatically select and combine algorithms based on problem characteristics, further accelerating the transformation of computational predictions into clinical therapeutics.

Optimization algorithms are the cornerstone of computational science, enabling advancements from traditional numerical analysis to modern artificial intelligence. These algorithms can be broadly categorized into gradient-based methods, which use derivative information to navigate the loss landscape, and metaheuristic approaches, which employ stochastic, population-based strategies for global exploration. While gradient-based methods dominate in deep learning and differentiable problems, metaheuristics prove invaluable for complex, non-convex, or non-differentiable objective functions commonly encountered in engineering design and drug discovery [8] [9].

This guide provides a comprehensive comparison of gradient-based optimization techniques, tracing their evolution from Newton's method to contemporary deep learning optimizers. We present experimental data across diverse applications, including image classification, text processing, and energy management, to objectively evaluate performance, convergence properties, and computational efficiency, providing researchers with evidence-based insights for algorithm selection.

Foundations: Newton's Method and Its Progeny

Historical Development and Core Mechanism

Newton's method, originally developed in the 17th century for finding roots of equations, was later adapted for optimization by targeting the roots of a function's derivative (i.e., its critical points) [10] [11]. For twice-differentiable functions, the method leverages both first and second-order derivative information to achieve rapid convergence near optima.

The iterative update rule for Newton's method in optimization is derived from the second-order Taylor approximation:

\[ x_{k+1} = x_k - \gamma [f''(x_k)]^{-1} f'(x_k) \]

where \(f'(x_k)\) is the gradient, \(f''(x_k)\) is the Hessian matrix of second derivatives, and \(\gamma\) is a step size parameter [10]. This update simultaneously determines both the direction and step size of each iteration, theoretically providing quadratic convergence under favorable conditions.
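The update rule above can be tried on a scalar problem, where the Hessian inverse reduces to division by the second derivative. This is a minimal sketch: the function name `newton_minimize`, the test function, and the tolerance are assumptions made for illustration.

```python
def newton_minimize(grad, hess, x0, gamma=1.0, tol=1e-10, max_iter=50):
    """Iterate x_{k+1} = x_k - gamma * f'(x_k) / f''(x_k) until |f'(x_k)| < tol."""
    x = x0
    for _ in range(max_iter):
        g = grad(x)
        if abs(g) < tol:
            break
        x -= gamma * g / hess(x)
    return x


# Hypothetical test function f(x) = x^4 - 3x^3 + 2, which has a minimum at x = 9/4.
def grad(x):
    return 4 * x**3 - 9 * x**2


def hess(x):
    return 12 * x**2 - 18 * x


x_min = newton_minimize(grad, hess, x0=3.0)
print(x_min)
```

Started from x0 = 3.0, the iteration reaches the minimum at 2.25 in a handful of steps. Started from x0 = 0.5, where the second derivative is negative, the same code drifts toward the stationary point at x = 0, which is not a minimum; the method only seeks points where the derivative vanishes.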

Practical Considerations and Modern Variants

Despite its theoretical advantages, Newton's method faces practical challenges in high-dimensional spaces. The computational cost of calculating, storing, and inverting the full Hessian matrix scales poorly with problem dimension [10]. Additionally, the method may converge to saddle points rather than minima and can diverge when initialized far from solutions [10] [12].

To address these limitations, researchers have developed several modifications:

- Damped Newton methods incorporate step size control via line search or trust regions to ensure stability [10]

- Quasi-Newton methods (e.g., BFGS) approximate the Hessian using gradient information, avoiding expensive matrix inversions [12]

- Gauss-Newton and Levenberg-Marquardt specialize in nonlinear least squares problems [10]

- Stochastic Newton methods extend the approach to large-scale machine learning problems [12]

These Newton-inspired approaches maintain a balance between convergence speed and computational practicality, influencing the development of modern adaptive gradient methods.

Evolution of Gradient-Based Optimizers in Deep Learning

From SGD to Adaptive Learning Rates

The limitations of Newton's method in high dimensions led to the dominance of first-order methods in deep learning, beginning with Stochastic Gradient Descent (SGD) and evolving into sophisticated adaptive optimizers [9].

Stochastic Gradient Descent (SGD) introduced minibatch-based parameter updates, injecting noise that helps escape local minima but often requiring careful learning rate tuning [9]. SGD with momentum improved upon this by accumulating velocity in directions of persistent gradient descent, dampening oscillations in narrow valleys of the loss landscape [9].

The breakthrough came with adaptive learning rate methods, which automatically adjust step sizes for each parameter based on historical gradient information:

- Adam (Adaptive Moment Estimation) combines momentum with per-parameter learning rate adaptations, making it robust to gradient scale variations [13] [9]

- AMSGrad addresses convergence issues in Adam by keeping the running maximum of past squared-gradient estimates, a long-term memory that prevents the effective step size from growing [13]

- AdamW decouples weight decay from gradient updates, improving generalization performance [13]

- QHAdam and Demon Adam incorporate advanced learning rate and momentum scheduling techniques [13]
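To make the per-parameter adaptation in the list above concrete, here is a minimal sketch of the standard Adam update in plain Python. The default hyperparameters follow commonly used values, and the badly scaled quadratic used as a test problem is a hypothetical example, not one of the benchmarks from [13].

```python
import math


def adam_minimize(grad, x0, lr=0.1, beta1=0.9, beta2=0.999, eps=1e-8, steps=500):
    """Minimize a function from its gradient using the Adam update rule."""
    x = list(x0)
    m = [0.0] * len(x)  # first-moment (momentum) estimate per parameter
    v = [0.0] * len(x)  # second-moment (squared-gradient) estimate per parameter
    for t in range(1, steps + 1):
        g = grad(x)
        for i in range(len(x)):
            m[i] = beta1 * m[i] + (1 - beta1) * g[i]
            v[i] = beta2 * v[i] + (1 - beta2) * g[i] ** 2
            m_hat = m[i] / (1 - beta1 ** t)  # bias correction for the zero initialization
            v_hat = v[i] / (1 - beta2 ** t)
            x[i] -= lr * m_hat / (math.sqrt(v_hat) + eps)
    return x


# Hypothetical test problem: curvature differs by a factor of 100 between coordinates.
def f(x):
    return x[0] ** 2 + 100.0 * x[1] ** 2


def grad_f(x):
    return [2.0 * x[0], 200.0 * x[1]]


x_final = adam_minimize(grad_f, [3.0, -2.0])
print(x_final, f(x_final))
```

Dividing by the square root of the second-moment estimate gives both coordinates steps of similar size despite the difference in gradient scale, which is the robustness to gradient scale noted in the Adam entry.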

Emerging Approaches: Probabilistic and Hybrid Methods

Recent research has explored probabilistic interpretations of optimization, treating gradients as random variables to better account for uncertainty. Variational Stochastic Gradient Descent (VSGD) exemplifies this trend, combining traditional gradient descent with probabilistic modeling for improved gradient estimation and noise handling [14].

In 2025 studies, VSGD demonstrated competitive or superior performance compared to Adam and SGD across various image classification benchmarks and network architectures, achieving higher accuracy on CIFAR100 and TinyImagenet-200 datasets while maintaining stable convergence without extensive hyperparameter tuning [14].

Comparative Performance Analysis

Experimental Protocols for Algorithm Evaluation

To ensure fair and meaningful comparisons, researchers typically employ standardized evaluation protocols across multiple domains:

Image Classification Experiments:

- Datasets: CIFAR10, CIFAR100, TinyImagenet-200

- Architectures: LeNet, ResNet, VGG, ResNeXt, ConvMixer

- Metrics: Validation accuracy, F1 score, test accuracy, time complexity (seconds/epoch)

- Training regime: Fixed number of epochs with standardized data augmentation [13] [14]

Text Classification Experiments:

- Datasets: IMDB for sentiment analysis

- Architectures: LSTM, BERT

- Metrics: Validation/test accuracy and F1 score [13]

Image Generation Experiments:

- Datasets: MNIST

- Architectures: Variational Autoencoders (VAE)

- Metrics: Fréchet Inception Distance (FID), Inception Score (IS), time complexity (minutes) [13]

Quantitative Performance Comparison

Table 1: Image Classification Performance on CIFAR10 Dataset [13]

| Optimizer | LeNet Test Accuracy (%) | ResNet Test Accuracy (%) | Time Complexity (s/epoch, LeNet/ResNet) |
|---|---|---|---|
| SGDM | 65.260 | 73.110 | 6.992/15.205 |
| Adam | 64.160 | 75.070 | 7.135/16.079 |
| QHM | 65.860 | 73.140 | 7.111/16.006 |
| AMSGrad | 64.700 | 73.660 | 7.313/15.965 |
| QHAdam | 64.600 | 75.130 | 7.277/16.959 |
| Demon Adam | 65.270 | 74.200 | 8.533/16.660 |
| AdamW | 63.110 | 75.030 | 8.294/16.723 |

Table 2: Text Classification Performance on IMDB Dataset [13]

| Optimizer | LSTM Test Accuracy (%) | BERT Test Accuracy (%) | LSTM Test F1 | BERT Test F1 |
|---|---|---|---|---|
| SGDM | 79.790 | 80.900 | 79.783 | 80.898 |
| Adam | 81.470 | 82.090 | 81.439 | 82.022 |
| AggMo | 80.620 | 81.170 | 80.618 | 81.151 |
| DemonSGD | 79.460 | 82.510 | 79.460 | 82.508 |
| QHAdam | 82.070 | 82.790 | 82.047 | 82.762 |
| AdamW | 81.410 | 82.010 | 81.410 | 81.949 |

Table 3: Image Generation Performance on MNIST Dataset [13]

| Optimizer | FID Score | Inception Score (IS) | Time Complexity (min) |
|---|---|---|---|
| SGDM | 93.535 | 2.090 | 4.403 |
| Adam | 74.447 | 2.151 | 4.565 |
| DemonAdam | 74.398 | 2.256 | 4.646 |
| QHAdam | 71.718 | 2.208 | 4.815 |
| AdamW | 74.028 | 2.262 | 4.548 |

Key Observations and Trends

The experimental data reveals several important patterns:

- Adam-based optimizers (QHAdam, AdamW, Demon Adam) generally achieve superior performance on vision tasks with complex architectures like ResNet [13]

- QHM-based algorithms demonstrate effective and stable performance across all scenarios, making them reliable default choices [13]

- SGDM remains the fastest optimizer due to minimal computational overhead, advantageous for large-scale deployments [13]

- QHAdam excels in image generation tasks, achieving the best FID score (71.718) while maintaining competitive IS scores [13]

- AdamW shows strong performance in both image classification (75.03% test accuracy on ResNet) and image generation (best IS score of 2.262) [13]

Gradient-Based vs. Metaheuristic Approaches

Complementary Strengths and Applications

While gradient-based methods dominate differentiable optimization problems, metaheuristic algorithms provide distinct advantages for specific problem classes:

Table 4: Comparison of Optimization Paradigms

| Characteristic | Gradient-Based Methods | Metaheuristic Approaches |
|---|---|---|
| Domain | Differentiable loss landscapes | Non-convex, non-differentiable, or discontinuous problems |
| Convergence Speed | Fast local convergence | Slower, more exploratory |
| Memory Requirements | Moderate to high (Hessian storage) | Low to moderate (population size) |
| Theoretical Guarantees | Strong local convergence theory | Limited theoretical guarantees |
| Primary Applications | Deep learning, numerical optimization | Engineering design, scheduling, drug discovery |

Hybrid Approaches in Real-World Applications

Recent research demonstrates the effectiveness of combining gradient-based and metaheuristic approaches:

In energy management systems, hybrid algorithms like Gradient-Assisted PSO (GD-PSO) and WOA-PSO consistently achieve the lowest operational costs with strong stability, outperforming classical metaheuristics such as Ant Colony Optimization (ACO) and Ivy Algorithm (IVY) [15]. These hybrids leverage gradient information to guide population-based search. In a related DC microgrid control study, PSO kept power load tracking error under 2%, compared with 8-16% for a Genetic Algorithm [8].

In drug discovery, AI platforms like Insilico Medicine's Pharma.AI combine reinforcement learning with gradient-based policy optimization to balance multiple objectives including potency, toxicity, and novelty in small molecule design [16] [17]. Similarly, Iambic Therapeutics integrates specialized AI systems (Magnet for generative molecular design, NeuralPLexer for structure prediction, and Enchant for clinical property inference), creating an iterative, model-driven workflow where candidates are designed and evaluated entirely in silico before synthesis [17].

The Scientist's Toolkit: Essential Research Reagents

Table 5: Key Experimental Resources for Optimization Research

| Resource | Function | Example Implementations |
|---|---|---|
| CIFAR10/100 Datasets | Benchmarking image classification optimizers | Standardized vision datasets with 10/100 classes [13] |
| IMDB Review Dataset | Evaluating text classification performance | 50,000 movie reviews for sentiment analysis [13] |
| MNIST Dataset | Image generation task benchmarking | Handwritten digit generation using VAEs [13] |
| LeNet Architecture | Small-scale vision model for efficiency testing | CNN with ~60,000 parameters [13] |
| ResNet Architecture | Large-scale vision model for accuracy assessment | Deep residual networks with ~1-50M parameters [13] |
| LSTM/BERT Models | Text processing optimizer evaluation | Sequential and transformer-based architectures [13] |
| Variational Autoencoders | Image generation capability assessment | Generative models for output quality evaluation [13] |

The evolution of gradient-based methods from Newton's foundational work to modern deep learning optimizers demonstrates a continuous refinement balancing computational efficiency with convergence guarantees. Experimental evidence indicates that Adam-based optimizers currently provide the best overall performance for most deep learning applications, while Newton-inspired methods remain relevant for problems with favorable structure where second-order information is computationally tractable.

The emerging trend toward probabilistic interpretations (exemplified by VSGD) and hybrid gradient-metaheuristic approaches points to a future where optimizers become more adaptive, robust, and problem-aware. For researchers and practitioners, algorithm selection should be guided by problem structure, computational constraints, and desired convergence properties, leveraging the comprehensive experimental data provided in this guide to make evidence-based decisions.

In the pursuit of solving complex real-world problems, researchers and engineers increasingly rely on sophisticated optimization algorithms. These algorithms generally fall into two broad categories: gradient-based methods and metaheuristic approaches. Gradient-based methods use calculus-based principles and gradient information to find optimal solutions efficiently, particularly in continuous, well-defined search spaces; the Gradient-Based Optimizer (GBO) carries these principles into a population-based search [2]. In contrast, metaheuristic algorithms are nature-inspired optimization techniques that excel at tackling problems where traditional methods struggle: non-differentiable functions, discontinuous domains, multiple objectives, or complex constraints that make gradient information unavailable or impractical [8] [2].

The fundamental distinction between these approaches lies in their operation principles. While gradient-based methods follow mathematical gradients toward local optima, metaheuristics employ population-based search strategies inspired by natural phenomena such as biological evolution, swarm intelligence, and physical processes [2]. Genetic Algorithms (GA) mimic Darwinian evolution through selection, crossover, and mutation operations, while Particle Swarm Optimization (PSO) emulates the social behavior of bird flocking or fish schooling [18]. These nature-inspired problem solvers have demonstrated remarkable success across diverse fields including drug discovery, energy management, engineering design, and artificial intelligence model optimization [8] [19] [6].

This guide provides a comprehensive comparison of prominent metaheuristic algorithms, with particular focus on their performance characteristics, implementation methodologies, and application-specific strengths to assist researchers in selecting appropriate optimization techniques for their domains.

Algorithm Fundamentals and Comparative Mechanics

Key Metaheuristic Algorithms

Genetic Algorithms (GA): Inspired by natural selection, GA operates on a population of candidate solutions through selection, crossover, and mutation operators. It explores the search space by combining elements of different solutions (crossover) while maintaining diversity through random changes (mutation). GA is particularly effective for discrete and combinatorial optimization problems [8] [18].

Particle Swarm Optimization (PSO): Simulating social behavior, PSO maintains a population of particles that navigate the search space. Each particle adjusts its position based on its own experience and the knowledge of neighboring particles. PSO typically demonstrates faster convergence compared to GA for continuous optimization problems [8] [18].

Gradient-Based Optimizer (GBO): A hybrid approach combining population-based methods with gradient-based Newton's method principles. GBO employs two main operations: Gradient Search Rule (GSR) for enhancing exploration, and Local Escaping Operator (LEO) for improving exploitation. This combination allows it to efficiently handle various research problems in health, environment, and public safety [2].

Hierarchically Self-Adaptive PSO (HSAPSO): An enhanced PSO variant that dynamically adapts parameters during the optimization process. It features a hierarchical self-adaptation mechanism that optimizes the trade-off between exploration and exploitation, demonstrating superior performance in complex optimization tasks such as pharmaceutical data classification [6].
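As an illustration of the swarm mechanics described above, here is a minimal sketch of a basic global-best PSO. The inertia weight `w` and acceleration coefficients `c1` and `c2` are fixed, commonly used values chosen for the example; HSAPSO, by contrast, adapts such parameters during the run, and that adaptation is not reproduced here.

```python
import random


def pso(objective, dim, bounds, n_particles=20, iters=100,
        w=0.7, c1=1.5, c2=1.5, seed=0):
    """Basic global-best PSO that minimizes `objective` inside a box."""
    rng = random.Random(seed)
    lo, hi = bounds
    pos = [[rng.uniform(lo, hi) for _ in range(dim)] for _ in range(n_particles)]
    vel = [[0.0] * dim for _ in range(n_particles)]
    pbest = [p[:] for p in pos]                  # each particle's own best position
    pbest_val = [objective(p) for p in pos]
    g = min(range(n_particles), key=pbest_val.__getitem__)
    gbest, gbest_val = pbest[g][:], pbest_val[g]  # best position seen by the swarm
    for _ in range(iters):
        for i in range(n_particles):
            for d in range(dim):
                r1, r2 = rng.random(), rng.random()
                vel[i][d] = (w * vel[i][d]
                             + c1 * r1 * (pbest[i][d] - pos[i][d])   # own experience
                             + c2 * r2 * (gbest[d] - pos[i][d]))     # swarm knowledge
                pos[i][d] = min(hi, max(lo, pos[i][d] + vel[i][d]))  # clamp to the box
            val = objective(pos[i])
            if val < pbest_val[i]:
                pbest[i], pbest_val[i] = pos[i][:], val
                if val < gbest_val:
                    gbest, gbest_val = pos[i][:], val
    return gbest, gbest_val


def sphere(x):
    """Hypothetical test objective with its minimum at the origin."""
    return sum(xi * xi for xi in x)


best_pos, best_val = pso(sphere, dim=2, bounds=(-5.0, 5.0))
print(best_pos, best_val)
```

No derivative of `objective` is used anywhere, so the same loop applies unchanged to non-differentiable or black-box fitness functions such as classifier accuracy.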

Algorithm Workflows

The following diagram illustrates the core operational workflow of a typical metaheuristic optimization process, shared across many population-based algorithms:

Metaheuristic Algorithm Workflow
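
As a rough illustration of this workflow, the sketch below runs the usual loop (initialize, evaluate, select, vary, repeat) as a small genetic algorithm on a toy bit-counting problem. The problem, the operator choices (tournament selection, one-point crossover, bit-flip mutation, elitism), and all parameter values are assumptions made for illustration, not settings from the cited studies.

```python
import random

def one_max(bits):
    """Toy fitness: number of 1-bits (higher is better)."""
    return sum(bits)

def tournament(pop, fitness, rng, k=3):
    """Return the fittest of k randomly drawn individuals."""
    return max(rng.sample(pop, k), key=fitness)

def run_ga(n_bits=20, pop_size=30, generations=60, p_mut=0.02, seed=0):
    rng = random.Random(seed)
    # 1. Initialize a random population.
    pop = [[rng.randint(0, 1) for _ in range(n_bits)] for _ in range(pop_size)]
    for _ in range(generations):
        # 2. Evaluate and keep the current best unchanged (elitism).
        best = max(pop, key=one_max)
        new_pop = [best[:]]
        while len(new_pop) < pop_size:
            # 3. Select two parents.
            a = tournament(pop, one_max, rng)
            b = tournament(pop, one_max, rng)
            # 4. Vary: one-point crossover, then bit-flip mutation.
            cut = rng.randint(1, n_bits - 1)
            child = a[:cut] + b[cut:]
            child = [1 - g if rng.random() < p_mut else g for g in child]
            new_pop.append(child)
        # 5. Replace the population and repeat.
        pop = new_pop
    return max(pop, key=one_max)

best = run_ga()
print(one_max(best), "of", len(best))
```

Because the best individual is always copied into the next generation, the best fitness can never decrease from one generation to the next.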

Performance Comparison: Quantitative Analysis

Control Application in DC Microgrid

Experimental studies comparing metaheuristic algorithms for Model Predictive Control (MPC) weight optimization in a DC microgrid provide insightful performance data. The experimental setup involved optimizing MPC parameters to balance control effort and tracking accuracy in a system comprising photovoltaic panels, battery, supercapacitor, grid, and load [8].

Table 1: Performance Comparison in MPC Tuning for DC Microgrid [8]

| Algorithm | Power Load Tracking Error | Convergence Speed | Response to Sudden Changes | Key Characteristics |
|---|---|---|---|---|
| Particle Swarm Optimization (PSO) | <2% | Fast | Excellent | Superior accuracy even without parameter interdependency |
| Genetic Algorithm (GA) | 8% (improved from 16%) | Moderate | Good | Performance improves with parameter interdependency consideration |
| Pareto Search | Moderate | Slow | Limited | Effective trade-off support but less responsive |
| Pattern Search | Moderate | Slow | Limited | Supports trade-offs, globally convergent |

Drug Classification Application

In pharmaceutical informatics, researchers evaluated algorithm performance for drug classification and target identification using datasets from DrugBank and Swiss-Prot. The experimental protocol involved preprocessing drug-related data, followed by classification using a Stacked Autoencoder (SAE) optimized with different metaheuristics [6].

Table 2: Performance in Drug Classification Tasks [6]

| Algorithm | Accuracy | Computational Time (per sample) | Stability | Notable Features |
|---|---|---|---|---|
| HSAPSO-Optimized SAE | 95.52% | 0.010 s | ±0.003 | Excellent generalization, reduced overfitting |
| XGB-DrugPred | 94.86% | Not specified | Not specified | Optimized DrugBank features |
| SVM with Feature Selection | 93.78% | Not specified | Not specified | Bagging ensemble with genetic algorithm |
| DrugMiner (SVM/NN) | 89.98% | Not specified | Not specified | 443 protein features |

Experimental Protocols and Methodologies

MPC Weight Optimization Protocol

The experimental methodology for comparing metaheuristic algorithms in control system applications followed this structured protocol [8]:

System Modeling: Develop a mathematical model of the DC microgrid incorporating photovoltaic panels, battery storage, supercapacitor, grid connection, and variable load.

Objective Function Definition: Formulate the cost function to balance control effort and tracking accuracy, with constraints on system variables.

Algorithm Implementation: Configure each metaheuristic algorithm (PSO, GA, Pareto Search, Pattern Search) with appropriate parameter settings:

- PSO: Swarm size 30-50, cognitive and social parameters tuned for exploration-exploitation balance

- GA: Population size 50-100, tournament selection, simulated binary crossover, polynomial mutation

- Pareto Search: Population size adapted for multi-objective optimization

- Pattern Search: Mesh size adaptively adjusted based on success of previous iterations

Performance Metrics: Define evaluation criteria including tracking error (%), convergence speed (iterations), computational time, and response to disturbance.

Validation: Execute multiple independent runs with randomized initial conditions to ensure statistical significance of results.

Drug Classification Framework

The experimental protocol for pharmaceutical classification employed the following methodology [6]:

Data Collection and Preprocessing: Curate datasets from DrugBank and Swiss-Prot, including protein sequences, molecular descriptors, and known drug-target interactions.

Feature Engineering: Apply dimensionality reduction and feature selection to handle high-dimensional biological data.

Model Architecture: Implement Stacked Autoencoder (SAE) with multiple encoding and decoding layers for robust feature extraction.

Optimization Integration: Employ HSAPSO for hyperparameter tuning, including:

- Network architecture optimization (layer sizes, activation functions)

- Learning rate adaptation

- Regularization parameter tuning

- Batch size optimization

Training Procedure: Execute hierarchical self-adaptation mechanism where HSAPSO dynamically adjusts PSO parameters during training to balance exploration and exploitation.

Evaluation: Validate performance using k-fold cross-validation, measuring accuracy, precision, recall, F1-score, and computational efficiency.
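
The evaluation step can be sketched with the standard library alone. The helper names below (`k_fold_indices`, `classification_metrics`) are hypothetical and only illustrate how k-fold splits and the listed metrics are typically computed; they are not taken from the implementation in [6].

```python
import random

def k_fold_indices(n_samples, k=5, seed=0):
    """Yield (train_idx, test_idx) pairs; each sample is tested exactly once."""
    idx = list(range(n_samples))
    random.Random(seed).shuffle(idx)
    folds = [idx[i::k] for i in range(k)]          # k roughly equal folds
    for i in range(k):
        test = folds[i]
        train = [j for m, fold in enumerate(folds) if m != i for j in fold]
        yield train, test

def classification_metrics(y_true, y_pred, positive=1):
    """Accuracy, precision, recall, and F1 for a binary problem."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    accuracy = sum(t == p for t, p in pairs) / len(pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    return {"accuracy": accuracy, "precision": precision,
            "recall": recall, "f1": f1}

for train_idx, test_idx in k_fold_indices(10, k=5):
    print(len(train_idx), len(test_idx))
```

In practice each fold would train the model on `train_idx`, predict on `test_idx`, and the per-fold metrics would be averaged.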

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Research Reagents and Computational Tools

| Tool/Resource | Function | Application Context |
|---|---|---|
| Optuna | Hyperparameter optimization framework | Automated tuning of AI models and optimization algorithms [19] |
| DrugBank Database | Pharmaceutical knowledge base | Source for drug-target interaction data and biomolecular information [6] |
| XGBoost | Gradient boosting framework | Baseline comparisons and feature importance analysis [19] [6] |
| OpenVINO Toolkit | Model optimization | Deployment optimization for Intel hardware platforms [19] |
| TensorRT | Deep learning inference optimizer | Acceleration of neural network deployment [19] |
| ONNX Runtime | Model interoperability framework | Cross-platform optimization and deployment [19] |

Application-Specific Implementation Guidelines

Algorithm Selection Framework

The following diagram illustrates the decision process for selecting an appropriate optimization algorithm based on problem characteristics:

Algorithm Selection Guide

Emerging Trends and Hybrid Approaches

Recent research demonstrates increasing interest in hybrid optimization approaches that combine strengths of multiple algorithms. Studies have established "algorithmic linking" between PSO and GA, demonstrating that PSO can benefit from incorporating key algorithmic features of effective GA implementations [18]. Similarly, reinforcement learning-enhanced parameter adaptation methods are emerging as promising approaches for dynamic parameter control in metaheuristics [20].

In industrial applications, multi-objective optimization capabilities are becoming essential. Pareto-based methods effectively balance competing objectives such as energy consumption and tracking accuracy in control systems, or fatigue loads and power generation in wind turbine optimization [8]. The integration of machine learning with metaheuristics continues to advance, with frameworks like HSAPSO demonstrating how adaptive optimization can significantly enhance deep learning model performance in critical domains like drug discovery [6].

This comparison guide demonstrates that algorithm performance significantly depends on application context. PSO emerges as a robust choice for control applications requiring high accuracy and rapid convergence, while HSAPSO-optimized deep learning models achieve state-of-the-art performance in pharmaceutical classification tasks. GA maintains relevance for problems with discrete search spaces or when parameter interdependencies can be effectively leveraged. Gradient-based hybrid approaches like GBO offer competitive alternatives for continuous optimization landscapes. Researchers should consider problem dimensionality, computational constraints, accuracy requirements, and solution landscape characteristics when selecting appropriate metaheuristic algorithms for their specific domains.

This guide provides an objective comparison of how gradient-based and metaheuristic optimization methods manage the fundamental exploration-exploitation trade-off, with a specific focus on applications in computational drug discovery.

Table of Contents

- Introduction to the Dilemma in Optimization

- Comparative Analysis of Optimization Methods

- Detailed Methodologies and Experimental Protocols

- Visualizing Algorithmic Pathways

- The Scientist's Toolkit: Research Reagent Solutions

- Performance and Application Analysis

In computational optimization, the exploration-exploitation dilemma describes the challenge of balancing two competing goals: exploring the search space to discover promising new regions, and exploiting known good regions to refine solutions and converge to an optimum. This trade-off is a central concern in fields ranging from machine learning to drug design, where the landscape of possible solutions is often vast, complex, and expensive to navigate. Exploration involves taking risks by testing new, unknown configurations, while exploitation involves leveraging current knowledge to improve existing solutions. An over-emphasis on exploration can lead to slow convergence and excessive resource consumption, whereas excessive exploitation can cause the algorithm to become trapped in a local optimum, missing a potentially superior global solution. The way different algorithms manage this balance fundamentally influences their performance, efficiency, and applicability to real-world scientific problems.

Comparative Analysis of Optimization Methods

The following table summarizes the core characteristics of gradient-based and metaheuristic approaches, highlighting their distinct strategies for navigating the exploration-exploitation dilemma.

Table 1: Core Characteristics of Optimization Paradigms

| Feature | Gradient-Based Methods | Metaheuristic Methods |
|---|---|---|
| Core Principle | Uses gradient information (e.g., from Newton's method) to navigate the search space [21]. | Mimics natural phenomena (e.g., swarms, evolution) to guide the search [21] [15]. |
| Exploration Mechanism | Guided by the slope of the objective function; can be enhanced with specific operators [21]. | Relies on stochasticity and population diversity to explore wide areas [21]. |
| Exploitation Mechanism | Naturally exploits gradient information to descend rapidly toward a local minimum [21]. | Uses selection pressure and local search behaviors to refine the best solutions [21]. |
| Balance Strategy | Often requires manual tuning of learning rates; can use specialized operators (e.g., LEO) to escape local optima [21]. | Typically employs intrinsic parameters (e.g., inertia) or hybrid designs to dynamically balance the trade-off [21] [15]. |
| Key Advantage | Fast convergence in smooth, convex landscapes. | Ability to handle non-convex, discontinuous problems without derivative information. |
| Primary Limitation | Prone to becoming trapped in local optima and requires differentiable objective functions. | Can require many function evaluations and offers no guarantee of optimality. |
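
One common way an intrinsic parameter such as PSO's inertia weight is used to shift from exploration to exploitation is a linearly decreasing schedule. The sketch below is illustrative only: the function name and the start and end values (0.9 and 0.4) are assumptions, not settings taken from [21] or [15].

```python
def linear_inertia(iteration, max_iter, w_start=0.9, w_end=0.4):
    """Inertia weight that decays linearly from w_start to w_end.

    A large weight early on keeps particles moving (exploration);
    a small weight later lets them settle near good regions (exploitation).
    """
    frac = min(1.0, iteration / max(1, max_iter - 1))
    return w_start + (w_end - w_start) * frac

schedule = [linear_inertia(t, 100) for t in range(100)]
print(schedule[0], schedule[50], schedule[-1])
```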

Detailed Methodologies and Experimental Protocols

Gradient-Based Optimizer (GBO) with Local Escaping

The GBO algorithm is a prime example of a modern gradient-inspired method that explicitly addresses the exploration-exploitation trade-off. It relies on two key operators [21]:

- Gradient Search Rule (GSR): This operator leverages the gradient-based Newton's method to guide the population toward promising regions, enhancing the exploitation of local gradient information. It uses a set of vectors to explore the search space and accelerates convergence.

- Local Escaping Operator (LEO): This operator is specifically designed to help the algorithm explore new regions by escaping local optima. It activates when a solution is suspected of being trapped, allowing the algorithm to jump to a new area of the search space and continue the search.

This combination allows the GBO to dynamically adjust its strategy, exploiting gradient information where beneficial while retaining a mechanism to explore more broadly when progress stalls.

Hybrid Metaheuristic: Gradient-Assisted Particle Swarm Optimization (GD-PSO)

Hybrid algorithms combine the strengths of different paradigms to achieve a superior balance. The protocol for GD-PSO, as applied in energy cost minimization for microgrids, demonstrates this principle [15]:

- Base Metaheuristic (PSO): The standard PSO algorithm maintains a population of candidate solutions (particles). Each particle updates its position based on its own experience and the knowledge of the swarm, creating a balance between personal (exploitation) and social (exploration) learning.

- Gradient Assistance: The GD-PSO hybrid incorporates gradient information to assist the PSO update rules. This enhances the exploitation phase by providing a more direct and efficient path toward local improvement, guided by the slope of the objective function. Experimental results have shown that this hybridization leads to lower average costs and stronger stability compared to classical metaheuristics like Ant Colony Optimization (ACO) or the Ivy Algorithm (IVY) [15].
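
The general idea of gradient assistance can be sketched as a standard PSO update followed by one small gradient-descent step per particle. This is a simplified illustration under stated assumptions, not the GD-PSO formulation from [15]: the central-difference gradient, the step size `lr`, the toy Sum Square objective, and all parameter values are hypothetical.

```python
import random

def sum_square(x):
    """Toy objective: sum_i i * x_i^2 with 1-based i, minimum 0 at the origin."""
    return sum((i + 1) * v * v for i, v in enumerate(x))

def numeric_grad(f, x, h=1e-6):
    """Central-difference gradient estimate."""
    grad = []
    for d in range(len(x)):
        up, dn = x[:], x[:]
        up[d] += h
        dn[d] -= h
        grad.append((f(up) - f(dn)) / (2 * h))
    return grad

def gd_pso(f, dim=3, n=20, iters=60, w=0.6, c1=1.4, c2=1.4, lr=0.05, seed=0):
    rng = random.Random(seed)
    pos = [[rng.uniform(-5, 5) for _ in range(dim)] for _ in range(n)]
    vel = [[0.0] * dim for _ in range(n)]
    pbest = [p[:] for p in pos]
    pbest_val = [f(p) for p in pos]
    g = min(range(n), key=lambda i: pbest_val[i])
    gbest, gbest_val = pbest[g][:], pbest_val[g]
    for _ in range(iters):
        for i in range(n):
            # Standard PSO move (personal and social attraction).
            for d in range(dim):
                vel[i][d] = (w * vel[i][d]
                             + c1 * rng.random() * (pbest[i][d] - pos[i][d])
                             + c2 * rng.random() * (gbest[d] - pos[i][d]))
                pos[i][d] += vel[i][d]
            # Gradient assistance: one local descent step from the new position.
            grad = numeric_grad(f, pos[i])
            pos[i] = [p - lr * gd for p, gd in zip(pos[i], grad)]
            val = f(pos[i])
            if val < pbest_val[i]:
                pbest[i], pbest_val[i] = pos[i][:], val
                if val < gbest_val:
                    gbest, gbest_val = pos[i][:], val
    return gbest, gbest_val

best_x, best_val = gd_pso(sum_square)
print(best_val)
```

The gradient step only makes sense when the objective is smooth enough for a finite-difference estimate to be informative; on noisy or discontinuous objectives it would need to be disabled or replaced.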

Metaheuristic Framework for Drug Classification (optSAE + HSAPSO)

In drug discovery, a novel framework integrating a Stacked Autoencoder (optSAE) with a Hierarchically Self-Adaptive PSO (HSAPSO) algorithm has been developed for drug classification and target identification [6].

- Feature Extraction: The Stacked Autoencoder first performs unsupervised learning to extract robust and latent features from high-dimensional pharmaceutical data (e.g., from DrugBank and Swiss-Prot).

- Hyperparameter Optimization: The Hierarchically Self-Adaptive PSO is then used to optimize the hyperparameters of the SAE. The "self-adaptive" component is key to managing the exploration-exploitation dilemma: it dynamically adjusts the algorithm's parameters during training, optimizing the trade-off without manual intervention. This methodology achieved a high classification accuracy of 95.52%, demonstrating effective navigation of the complex optimization landscape associated with pharmaceutical data [6].

Visualizing Algorithmic Pathways

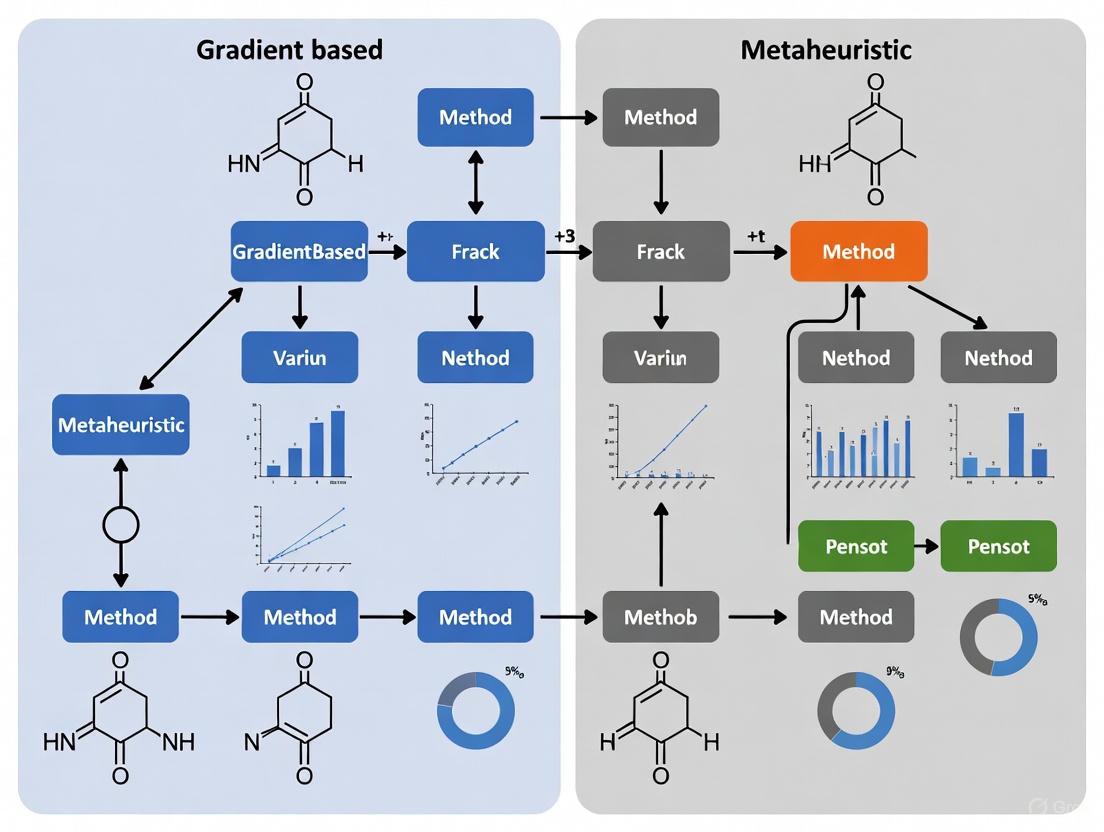

The diagram below illustrates the typical workflows for gradient-based and metaheuristic algorithms, highlighting the points where the exploration-exploitation dilemma is actively managed.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for Optimization Research

| Tool / Solution | Function in Research |
|---|---|
| High-Quality Curated Datasets (e.g., DrugBank, Swiss-Prot) | Provide the biological and chemical data that forms the objective function for optimization tasks in drug discovery; data quality is paramount for meaningful results [6]. |
| Stacked Autoencoder (SAE) | A deep learning architecture used for unsupervised feature extraction, which reduces data dimensionality and reveals latent patterns that are more tractable for optimization algorithms [6]. |
| Gradient-Based Optimizer (GBO) | A standalone metaheuristic inspired by gradient-based methods, useful for solving complex engineering and design problems where traditional gradients are unavailable [21]. |
| Hierarchically Self-Adaptive PSO (HSAPSO) | An advanced swarm intelligence algorithm that dynamically tunes its own parameters during execution, effectively managing the exploration-exploitation trade-off without manual intervention [6]. |
| Local Escaping Operator (LEO) | A specific algorithmic component, as seen in GBO, that can be integrated into other methods to actively escape local optima and promote exploration [21]. |

Performance and Application Analysis

Table 3: Quantitative Performance Comparison Across Domains

| Application Domain | Algorithm | Key Performance Metric | Result | Implied Trade-Off Balance |
|---|---|---|---|---|
| Drug Classification & Target ID [6] | optSAE + HSAPSO | Classification Accuracy | 95.52% | Excellent balance: High exploitation of features via SAE with adaptive exploration via HSAPSO. |
| Mathematical Test Functions [21] | GBO vs. 5 other algorithms | Convergence & Avoidance of Local Optima | High Competitiveness | Effective balance: Strong exploitation via GSR combined with targeted exploration via LEO. |
| Microgrid Energy Cost Minimization [15] | GD-PSO (Hybrid) | Average Cost & Stability | Lowest Cost, High Stability | Superior balance: Exploration from PSO enhanced by gradient-assisted exploitation. |
| Microgrid Energy Cost Minimization [15] | ACO, IVY (Classical) | Average Cost & Stability | Higher Cost and Variability | Poorer balance: Likely insufficient exploration or inefficient exploitation. |
| Truss Structure Optimization [22] | Stochastic Paint Optimizer (SPO) | Accuracy & Convergence Rate | Outperformed 7 other algorithms | Effective balance: Unique stochastic strategy for navigating complex constraints. |

The experimental data consistently shows that algorithms which explicitly and dynamically manage the exploration-exploitation dilemma achieve superior performance. In drug discovery, the optSAE + HSAPSO framework demonstrates that adaptive metaheuristics can yield high accuracy on complex biological data [6]. In engineering and energy systems, hybrids like GD-PSO and specialized metaheuristics like GBO and SPO prove more robust and efficient than static algorithms [21] [15] [22]. The common thread is that a rigid approach to the trade-off is suboptimal; the most effective solutions incorporate mechanisms to dynamically shift strategy between exploration and exploitation based on the problem landscape and search progress.

The pursuit of optimal solutions lies at the heart of computational science, driving advancements across fields from structural engineering to drug discovery. For decades, optimization methodologies have been broadly divided into two paradigms: gradient-based methods rooted in mathematical rigor, and metaheuristic algorithms leveraging stochastic search. Gradient-based techniques, such as Stochastic Gradient Descent (SGD) and its adaptive variants, use calculated derivatives to efficiently navigate parameter spaces [23]. In contrast, metaheuristic approaches like Genetic Algorithms and Particle Swarm Optimization mimic natural processes to explore complex landscapes without gradient information [24]. While each approach has distinct strengths and limitations, a new paradigm is emerging through hybrid methodologies that integrate mathematical precision with stochastic exploration. This guide examines the performance and experimental foundations of these hybrid approaches, providing researchers and drug development professionals with objective comparisons for method selection.

Theoretical Foundations: Bridging Two Paradigms

Gradient-Based Methods: Mathematical Precision

Gradient-based optimization algorithms utilize derivative information to guide the search for minima in loss functions. The fundamental principle involves iteratively adjusting parameters in the direction opposite to the gradient of the objective function. Stochastic Gradient Descent (SGD) computes gradients using subsets of data, enabling application to large-scale problems [23]. Enhancements like momentum and Nesterov acceleration improve convergence by incorporating historical gradient information, while adaptive learning rate methods like Adam, Adagrad, and RMSprop automatically adjust step sizes based on gradient histories [23].

These methods excel in exploitation, efficiently refining solutions in smooth, convex landscapes. However, they face limitations in non-convex problems with numerous local minima, where gradient information can lead to premature convergence at suboptimal solutions [24]. The sensitivity to learning rate parameters and difficulty escaping saddle points further constrain their effectiveness in complex optimization landscapes [23].
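
The momentum and Adam updates described above can be written in a few lines. The sketch below applies the textbook forms of both rules to the gradient of a small Sum Square function; the learning rates, step counts, and starting point are illustrative assumptions, and full-batch gradients are used instead of mini-batches to keep the example deterministic.

```python
import math

def grad_sum_square(x):
    """Gradient of f(x) = sum_i i * x_i^2 (1-based i)."""
    return [2 * (i + 1) * v for i, v in enumerate(x)]

def sgd_momentum(grad, x0, lr=0.01, beta=0.9, steps=500):
    """Gradient descent with a velocity term that accumulates past gradients."""
    x, v = list(x0), [0.0] * len(x0)
    for _ in range(steps):
        g = grad(x)
        v = [beta * vi + gi for vi, gi in zip(v, g)]
        x = [xi - lr * vi for xi, vi in zip(x, v)]
    return x

def adam(grad, x0, lr=0.05, b1=0.9, b2=0.999, eps=1e-8, steps=500):
    """Adam: per-coordinate step sizes from running gradient moments."""
    x = list(x0)
    m = [0.0] * len(x0)   # first moment (mean of gradients)
    s = [0.0] * len(x0)   # second moment (mean of squared gradients)
    for t in range(1, steps + 1):
        g = grad(x)
        m = [b1 * mi + (1 - b1) * gi for mi, gi in zip(m, g)]
        s = [b2 * si + (1 - b2) * gi * gi for si, gi in zip(s, g)]
        m_hat = [mi / (1 - b1 ** t) for mi in m]   # bias correction
        s_hat = [si / (1 - b2 ** t) for si in s]
        x = [xi - lr * mh / (math.sqrt(sh) + eps)
             for xi, mh, sh in zip(x, m_hat, s_hat)]
    return x

start = [3.0, -2.0, 1.0]
x_momentum = sgd_momentum(grad_sum_square, start)
x_adam = adam(grad_sum_square, start)
print(x_momentum, x_adam)
```

On this convex toy problem both rules approach the origin; the limitations noted above only appear on non-convex landscapes.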

Metaheuristic Algorithms: Stochastic Exploration

Metaheuristic algorithms employ stochastic strategies inspired by natural phenomena to explore solution spaces. Examples include Genetic Algorithms (GA) simulating natural selection, Particle Swarm Optimization (PSO) mimicking collective animal behavior, and Ant Colony Optimization (ACO) based on ant foraging principles [24]. These methods are population-based, maintaining multiple candidate solutions simultaneously, and typically operate without gradient information [22].

The strength of metaheuristics lies in global exploration, effectively navigating complex, high-dimensional search spaces with multiple local optima. They demonstrate particular efficacy in non-convex, non-differentiable, and noisy environments where gradient-based methods struggle [25]. However, they often require extensive function evaluations, exhibit slower convergence rates, and may lack mathematical convergence guarantees compared to their gradient-based counterparts [24].

Hybridization Strategy: Synergistic Integration

Hybrid approaches strategically combine mathematical rigor with stochastic search to leverage their complementary strengths. The integration typically follows one of three patterns:

- Mathematical-guided metaheuristics: Incorporating gradient information or mathematical properties into metaheuristic frameworks to improve local refinement [25].

- Multi-stage optimization: Employing metaheuristics for global exploration followed by gradient-based methods for precise local exploitation [26].

- Unified frameworks: Designing new algorithms that intrinsically balance exploration and exploitation through mathematical principles [23].

These hybrid methodologies aim to overcome the limitations of either approach used independently, particularly for complex real-world optimization problems in fields like drug discovery and structural design [26] [22].
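
The multi-stage pattern can be sketched as a global stage that picks a promising starting point followed by gradient descent from that point. In this minimal example a plain random search stands in for the metaheuristic, and the Rastrigin function, sample count, and step size are assumptions chosen for illustration rather than settings from [26].

```python
import math
import random

def rastrigin(x):
    """Multimodal test function with global minimum 0 at the origin."""
    return 10 * len(x) + sum(v * v - 10 * math.cos(2 * math.pi * v) for v in x)

def rastrigin_grad(x):
    return [2 * v + 20 * math.pi * math.sin(2 * math.pi * v) for v in x]

def global_stage(f, dim, n_samples, lo, hi, rng):
    """Stage 1 (exploration): sample widely and keep the best candidate."""
    candidates = [[rng.uniform(lo, hi) for _ in range(dim)]
                  for _ in range(n_samples)]
    return min(candidates, key=f)

def local_stage(grad, x0, lr=1e-3, steps=500):
    """Stage 2 (exploitation): refine the candidate with gradient descent."""
    x = list(x0)
    for _ in range(steps):
        x = [xi - lr * gi for xi, gi in zip(x, grad(x))]
    return x

def hybrid_minimize(seed=0):
    rng = random.Random(seed)
    start = global_stage(rastrigin, 2, 200, -5.12, 5.12, rng)
    end = local_stage(rastrigin_grad, start)
    return start, end

start, end = hybrid_minimize()
print(rastrigin(start), rastrigin(end))
```

The local stage only descends into the basin that the global stage selected, so the quality of the final answer still depends on how well the first stage explored.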

Experimental Comparison of Hybrid Approaches

Performance Metrics and Evaluation Framework

Objective evaluation of optimization algorithms requires multiple performance metrics capturing different aspects of algorithm behavior. For comparative analysis, we consider: convergence rate (iterations to reach threshold), computational efficiency (CPU time/resources), solution quality (objective function value), and success rate (consistency across problem instances). Experimental protocols should include diverse benchmark functions and real-world problems with varying characteristics (convex/non-convex, smooth/non-smooth, low/high-dimensional) [22] [25].
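
A small helper can turn the results of repeated runs into the metrics listed above. The function name `summarize_runs`, the dictionary keys, and the example numbers are hypothetical; the values are made up solely to show the calculation and are not results from any cited study.

```python
import statistics

def summarize_runs(final_values, iterations, threshold):
    """Aggregate independent runs of one optimizer on one problem.

    final_values: best objective value reached in each run (lower is better)
    iterations:   iterations used by each run
    threshold:    a run counts as a success if its final value is <= threshold
    """
    successes = [v <= threshold for v in final_values]
    return {
        "success_rate": sum(successes) / len(final_values),
        "mean_value": statistics.mean(final_values),
        "std_value": statistics.stdev(final_values) if len(final_values) > 1 else 0.0,
        "mean_iterations": statistics.mean(iterations),
    }

# Made-up numbers for four runs, used only to exercise the helper.
summary = summarize_runs([0.01, 0.2, 0.03, 0.005], [100, 250, 120, 90], threshold=0.05)
print(summary)
```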

Comparative Performance Analysis

Table 1: Performance Comparison of Optimization Algorithms on Benchmark Problems

| Algorithm | Convergence Rate | Solution Quality | Local Optima Avoidance | Computational Cost | Implementation Complexity |
|---|---|---|---|---|---|
| SGD with Momentum [23] | Moderate | Good in convex problems | Poor | Low | Low |
| Adam [23] | Fast | Good in smooth landscapes | Moderate | Low | Low |
| Genetic Algorithm [24] | Slow | Excellent global | Excellent | High | Moderate |
| Particle Swarm Optimization [24] | Moderate | Very Good | Very Good | Moderate | Moderate |
| Stochastic Paint Optimizer (SPO) [22] | Fast | Excellent | Excellent | Moderate | Moderate |
| Adam Gradient Descent Optimizer (AGDO) [25] | Very Fast | Excellent | Good | Moderate | High |
| Dual Enhanced SGD (DESGD) [23] | Very Fast | Excellent | Good | Moderate | High |
| Context-Aware HACO-LF [26] | Moderate | Superior in specific domains | Excellent | High | High |

Table 2: Quantitative Performance Results on Standard Benchmarks

| Algorithm | Rosenbrock Function (Iterations) | Sum Square Function (Iterations) | MNIST Accuracy (%) | Truss Weight Reduction (%) |
|---|---|---|---|---|
| SGD with Momentum [23] | 12,500 | 8,900 | 97.8 | N/A |
| Adam [23] | 7,200 | 5,400 | 98.2 | N/A |
| DESGD [23] | 2,400 | 1,800 | 98.5 | N/A |
| Stochastic Paint Optimizer [22] | N/A | N/A | N/A | 22.5 |
| AGDO [25] | 3,100 | 2,200 | 98.4 | N/A |

Domain-Specific Performance

Engineering Design Optimization

In structural engineering applications, hybrid approaches have demonstrated superior performance. A comprehensive comparison of eight metaheuristic algorithms for truss structure optimization with static constraints showed the Stochastic Paint Optimizer (SPO) achieved the best performance in terms of both accuracy and convergence rate, significantly reducing structural weight while satisfying displacement and stress constraints [22]. The study utilized three truss structure benchmarks (25-bar, 75-bar, and 120-member dome trusses) with aluminum materials, with SPO consistently outperforming other algorithms including African Vultures Optimization Algorithm, Flow Direction Algorithm, and Arithmetic Optimization Algorithm [22].

Drug Discovery Applications

In pharmaceutical applications, hybrid approaches are being applied to target identification and compound optimization. The Context-Aware Hybrid Ant Colony Optimized Logistic Forest (CA-HACO-LF) model combines ant colony optimization for feature selection with logistic forest classification to improve drug-target interaction prediction [26]. When applied to a dataset of over 11,000 drug details, the model achieved a reported accuracy of 98.6% and was also evaluated on precision, recall, F1 score, and AUC-ROC [26].

The Adam Gradient Descent Optimizer (AGDO) represents another hybrid approach, inspired by the Adam optimizer but incorporating three mathematical rules: progressive gradient momentum integration, dynamic gradient interaction system, and system optimization operator [25]. When evaluated on CEC2017 benchmarks across multiple dimensions (10, 30, 50, and 100), AGDO demonstrated strong performance compared to 19 other algorithms, achieving the highest Wilcoxon rank-sum test scores in three of four dimensions [25].

Machine Learning Optimization

For training machine learning models, the Dual Enhanced SGD (DESGD) algorithm keeps the update rule of SGD with momentum (SGDM) but adapts both the momentum and the step size dynamically, which is intended to help on challenging optimization landscapes [23]. In tests on the Rosenbrock and Sum Square functions, DESGD achieved comparable errors with 81-95% fewer iterations and 66-91% less CPU time than SGDM, and with 67-78% fewer iterations and 62-70% shorter runtimes than Adam [23]. On the MNIST dataset, DESGD achieved the highest accuracies and lowest test losses across most batch sizes, consistently improving accuracy by 1-2% compared to SGDM [23].

Experimental Protocols and Methodologies

Standardized Testing Frameworks

Benchmark Functions and Evaluation Metrics

Performance evaluation of hybrid optimization approaches requires standardized testing protocols. For mathematical benchmarking, well-established test functions including Rosenbrock, Sum Square, Rastrigin, and Ackley functions provide landscapes with diverse characteristics [23]. Experiments should measure both iterations to convergence and computational time, reporting mean and standard deviation across multiple independent runs to account for stochastic variability [22].
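
For reference, the four test functions named above can be written as follows. These are the standard textbook definitions (all with a global minimum value of 0); the Ackley constants `a`, `b`, and `c` are the commonly used defaults and are stated here as an assumption, since the cited studies may use different settings.

```python
import math

def rosenbrock(x):
    """Narrow curved valley; global minimum 0 at (1, ..., 1)."""
    return sum(100 * (x[i + 1] - x[i] ** 2) ** 2 + (1 - x[i]) ** 2
               for i in range(len(x) - 1))

def sum_square(x):
    """Convex, axis-weighted quadratic; global minimum 0 at the origin."""
    return sum((i + 1) * v * v for i, v in enumerate(x))

def rastrigin(x):
    """Highly multimodal; global minimum 0 at the origin."""
    return 10 * len(x) + sum(v * v - 10 * math.cos(2 * math.pi * v) for v in x)

def ackley(x, a=20.0, b=0.2, c=2 * math.pi):
    """Nearly flat outer region with a deep central hole; minimum 0 at the origin."""
    n = len(x)
    mean_sq = sum(v * v for v in x) / n
    mean_cos = sum(math.cos(c * v) for v in x) / n
    return -a * math.exp(-b * math.sqrt(mean_sq)) - math.exp(mean_cos) + a + math.e

for name, f, x_star in [("rosenbrock", rosenbrock, [1.0, 1.0]),
                        ("sum_square", sum_square, [0.0, 0.0]),
                        ("rastrigin", rastrigin, [0.0, 0.0]),
                        ("ackley", ackley, [0.0, 0.0])]:
    print(name, f(x_star))
```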

For real-world applications, domain-specific benchmarks are essential. In drug discovery, metrics include predictive accuracy, precision-recall curves, hit rates in virtual screening, and experimental validation rates [26]. In engineering design, standard measures include weight reduction, constraint satisfaction, and structural integrity under load conditions [22].

Table 3: Key Research Reagents and Computational Tools

| Resource Type | Specific Tools/Platforms | Function/Purpose | Application Context |
|---|---|---|---|
| Chemical Databases | ZINC, ChEMBL, DrugBank | Provide annotated compound libraries for virtual screening | Drug discovery [27] |
| Protein Structure Resources | Protein Data Bank (PDB), UniProt | Offer target structures for molecular docking | Structure-based drug design [27] |
| Optimization Frameworks | DeepChem, OpenEye, Schrödinger Platform | Enable implementation and testing of optimization algorithms | Computational chemistry [27] |
| Benchmark Datasets | CEC2017, MNIST, Kaggle Medicine Details | Standardized performance evaluation across algorithms | General optimization [25] [26] |
| AI-Driven Discovery Platforms | Exscientia, Insilico Medicine, BenevolentAI | Integrate AI and optimization for end-to-end drug discovery | Pharmaceutical development [28] |

Detailed Methodological Protocols

Protocol 1: Drug-Target Interaction Prediction Using CA-HACO-LF

The CA-HACO-LF methodology follows a structured workflow [26]:

- Data Acquisition and Preprocessing: Obtain drug datasets (e.g., Kaggle's 11,000 Medicine Details). Apply text normalization including lowercasing, punctuation removal, and elimination of numbers and spaces. Perform stop word removal and tokenization. Apply lemmatization to refine word representations.

- Feature Extraction: Utilize N-grams for sequential pattern recognition. Compute Cosine Similarity to assess semantic proximity of drug descriptions.

- Optimization and Classification: Implement customized Ant Colony Optimization for feature selection. Integrate optimized features with Logistic Regression and Random Forest classifiers. Train the hybrid model on processed features and similarity metrics.

- Validation: Evaluate using k-fold cross-validation. Measure performance metrics including accuracy, precision, recall, F1 Score, RMSE, AUC-ROC, MSE, MAE, F2 Score, and Cohen's Kappa.
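
The preprocessing and feature-extraction steps above can be sketched with the standard library. This is an illustrative simplification, not the pipeline from [26]: the stop-word list is a tiny hypothetical sample, lemmatization is omitted because it normally relies on an external NLP library, and the two example descriptions are invented.

```python
import math
import re
from collections import Counter

# Tiny illustrative stop-word list; a real pipeline would use a fuller one.
STOP_WORDS = {"a", "an", "the", "of", "for", "and", "in", "to", "is"}

def normalize(text):
    """Lowercase, drop punctuation and digits, tokenize, remove stop words."""
    text = re.sub(r"[^a-z\s]", " ", text.lower())
    return [tok for tok in text.split() if tok not in STOP_WORDS]

def ngrams(tokens, n=2):
    """Contiguous word n-grams, joined with spaces."""
    return [" ".join(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]

def cosine_similarity(a, b):
    """Cosine similarity between two bags of terms (term-count vectors)."""
    ca, cb = Counter(a), Counter(b)
    dot = sum(ca[k] * cb[k] for k in ca.keys() & cb.keys())
    na = math.sqrt(sum(v * v for v in ca.values()))
    nb = math.sqrt(sum(v * v for v in cb.values()))
    return dot / (na * nb) if na and nb else 0.0

# Invented example descriptions.
d1 = normalize("Used for the treatment of bacterial infections.")
d2 = normalize("Treatment of bacterial infections in adults!")
print(ngrams(d1), cosine_similarity(ngrams(d1), ngrams(d2)))
```

The resulting n-gram features and similarity scores are what a feature-selection step such as ACO would then choose among.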

Protocol 2: Structural Optimization with Metaheuristic Hybrids

For engineering design applications such as truss optimization [22]:

- Problem Formulation: Define design variables (member cross-sectional areas, nodal coordinates). Specify constraints (stress, displacement, frequency). Establish objective function (weight minimization).

- Algorithm Implementation: Select appropriate hybrid metaheuristic (e.g., SPO, AGDO). Configure population size and termination criteria. Implement constraint handling techniques.

- Evaluation: Execute multiple independent runs to account for stochasticity. Compare final solutions, convergence history, and computational effort. Perform statistical significance testing (e.g., Wilcoxon rank-sum test).
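
One widely used constraint-handling technique for this kind of problem is a static penalty that inflates the weight of designs violating the stress limit. The sketch below is a simplified illustration, not the scheme used in [22]: it assumes member forces are known and fixed (as in a statically determinate truss), and the function name, penalty factor, and numbers are hypothetical.

```python
def penalized_weight(areas, lengths, forces, density, stress_limit, penalty=1e3):
    """Truss weight with a static penalty for stress-constraint violations.

    areas:        member cross-sectional areas (the design variables)
    lengths:      member lengths
    forces:       axial member forces, assumed fixed for this sketch
    stress_limit: allowable absolute stress
    """
    weight = density * sum(a * l for a, l in zip(areas, lengths))
    # Relative violation per member: 0 when |force| / area <= stress_limit.
    violation = sum(max(0.0, abs(f) / a / stress_limit - 1.0)
                    for f, a in zip(forces, areas))
    return weight * (1.0 + penalty * violation)

# Hypothetical two-member example with consistent but arbitrary units.
feasible = penalized_weight([2.0, 2.0], [1.0, 1.0], [100.0, -100.0], 0.1, 100.0)
infeasible = penalized_weight([0.5, 0.5], [1.0, 1.0], [100.0, -100.0], 0.1, 100.0)
print(feasible, infeasible)
```

A metaheuristic minimizing this function sees the lighter but overstressed design as far worse than the feasible one, which steers the search back toward the feasible region.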

Protocol 3: Training Deep Networks with Enhanced SGD Variants

For machine learning optimization tasks [23]:

- Experimental Setup: Select benchmark dataset (e.g., MNIST, CIFAR-10). Define network architecture. Set mini-batch size and initialization scheme.

- Algorithm Configuration: Implement DESGD with dynamic momentum and step size adaptation. Compare against baseline optimizers (SGD, Adam, RMSprop). Use consistent initialization across experiments.

- Evaluation Metrics: Track training loss convergence. Measure final test accuracy. Compute computational efficiency (time per epoch, total training time).

Visualization of Hybrid Method Workflows

Hybrid Optimization Workflow

Figure 1: This workflow illustrates the iterative process of hybrid optimization approaches, combining stochastic global exploration with mathematical local refinement.

Drug Discovery Optimization Process

Figure 2: Specific workflow for drug-target interaction prediction using the CA-HACO-LF model, demonstrating the integration of stochastic optimization with classification.

Hybrid optimization approaches that combine mathematical rigor with stochastic search represent a significant advancement beyond standalone gradient-based or metaheuristic methods. In the studies reviewed here, algorithms such as DESGD, AGDO, SPO, and CA-HACO-LF outperformed the baselines they were compared against across applications including structural design, drug discovery, and machine learning model training.

The key advantage of hybrid methods lies in their balanced approach to exploration and exploitation, enabling effective navigation of complex, high-dimensional search spaces while efficiently converging to high-quality solutions. For researchers and drug development professionals, these approaches offer tangible benefits in terms of accelerated discovery timelines, improved solution quality, and enhanced robustness across problem domains.

As optimization challenges grow increasingly complex, the continued development and refinement of hybrid methodologies will play a crucial role in addressing the next generation of scientific and engineering problems. The experimental protocols and performance comparisons provided in this guide offer a foundation for informed method selection and implementation.

Solving Drug Discovery Challenges: Practical Applications and Methodologies

Parameter Estimation in Nonlinear Mixed-Effects Models (NLMEMs)

Parameter estimation in Nonlinear Mixed-Effects Models (NLMEMs) represents a cornerstone of computational pharmacology and drug development, enabling researchers to quantify both population-level trends and individual-specific variations in drug response. The estimation landscape is broadly divided into two methodological families: gradient-based optimization and metaheuristic approaches. Gradient-based methods, including first-order conditional estimation (FOCE) and Laplacian approximation, utilize derivative information to efficiently navigate parameter spaces toward locally optimal solutions. In contrast, metaheuristic methods employ stochastic search strategies inspired by natural processes to explore complex parameter landscapes, potentially avoiding local minima at the cost of increased computational demand.

The fundamental challenge in NLMEM estimation lies in balancing computational efficiency with statistical robustness, particularly when dealing with complex biological systems, sparse clinical data, or models with numerous parameters. As drug development increasingly targets rare diseases and complex biological systems, the choice of estimation algorithm can significantly impact trial design, power calculations, and ultimately, regulatory decisions. This guide provides a comprehensive comparison of contemporary parameter estimation methodologies, supported by experimental data and practical implementation considerations for pharmacometric applications.

Theoretical Foundations and Computational Frameworks

Gradient-Based Optimization Methods

Gradient-based optimization algorithms form the backbone of most modern NLMEM software platforms. These methods leverage calculus to determine the direction of steepest descent or ascent in the objective function landscape. The first-order conditional estimation extended least squares (FOCE ELS) algorithm approximates the likelihood function by linearizing the model around the conditional estimates of the random effects. Similarly, the Laplacian method employs a higher-order approximation, potentially improving accuracy for highly nonlinear models but at increased computational cost.

A significant advancement in this domain is the integration of automatic differentiation (AD), which applies the chain rule to the elementary operations of the model code and so yields derivatives that are exact to floating-point precision, avoiding the step-size sensitivity and round-off error of traditional finite-difference approaches. The recently introduced automatic-differentiation-assisted parametric optimization (ADPO) implementation in Phoenix NLME 8.6 demonstrates the practical benefits of this approach, substantially reducing computation time for both ordinary differential equation (ODE) and non-ODE models [29].
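The contrast between AD and finite differences can be illustrated with a minimal forward-mode sketch based on dual numbers. This is not the ADPO implementation in Phoenix NLME; the `Dual` class, the one-compartment bolus model, and all parameter values are assumptions chosen only to make the idea concrete.

```python
import math


class Dual:
    """Value plus derivative, propagated together through arithmetic (eps**2 == 0)."""

    def __init__(self, value, deriv=0.0):
        self.value = value
        self.deriv = deriv

    @staticmethod
    def _lift(other):
        return other if isinstance(other, Dual) else Dual(other)

    def __add__(self, other):
        other = self._lift(other)
        return Dual(self.value + other.value, self.deriv + other.deriv)

    __radd__ = __add__

    def __neg__(self):
        return Dual(-self.value, -self.deriv)

    def __mul__(self, other):
        other = self._lift(other)
        # Product rule
        return Dual(self.value * other.value,
                    self.deriv * other.value + self.value * other.deriv)

    __rmul__ = __mul__

    def __truediv__(self, other):
        other = self._lift(other)
        # Quotient rule
        return Dual(self.value / other.value,
                    (self.deriv * other.value - self.value * other.deriv)
                    / other.value ** 2)


def dexp(x):
    """exp() that also propagates the derivative when given a Dual."""
    if isinstance(x, Dual):
        e = math.exp(x.value)
        return Dual(e, e * x.deriv)
    return math.exp(x)


def concentration(cl, v=10.0, dose=100.0, t=2.0):
    """Illustrative one-compartment IV bolus: C(t) = dose / v * exp(-cl / v * t)."""
    return dose / v * dexp(-(cl / v) * t)


cl = 2.0
exact = -(2.0 / 10.0) * concentration(cl)      # analytic dC/dCL = -(t / v) * C
ad_grad = concentration(Dual(cl, 1.0)).deriv   # seed dCL/dCL = 1
# Forward difference with a deliberately tiny step to expose round-off error
fd_grad = (concentration(cl + 1e-13) - concentration(cl)) / 1e-13

print("AD error:", abs(ad_grad - exact))
print("FD error:", abs(fd_grad - exact))
```

The AD derivative agrees with the analytic value to rounding error regardless of any step size, whereas the finite-difference estimate depends on a step that must be tuned between truncation error (too large) and cancellation error (too small).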

Metaheuristic Optimization Approaches

Metaheuristic algorithms provide a derivative-free alternative for parameter estimation, particularly valuable for problems with discontinuous, noisy, or highly multimodal objective functions. These methods include genetic algorithms, particle swarm optimization, differential evolution, and artificial bee colony algorithms. Rather than following deterministic gradient information, metaheuristics maintain a population of candidate solutions that evolve according to rules balancing exploration of new regions and exploitation of promising areas.

Recent research has focused on enhancing metaheuristics through opposition-based learning (OBL) techniques, which simultaneously evaluate candidate solutions and their "opposites" to accelerate convergence. A 2025 systematic comparison identified quasi-reflection opposition-based learning as particularly effective, consistently outperforming other OBL variants across benchmark optimization problems [30]. This approach generates each new candidate at a random position between the original solution and the center of the search space, maintaining diversity while promoting convergence.
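A minimal sketch of a quasi-reflection step is shown below, assuming the common definition in which each coordinate of the quasi-reflected point is drawn uniformly between the center of its bounds and the original value. The function names, the sphere objective, and the "keep the best half" selection rule are illustrative assumptions, not details taken from [30].

```python
import random


def quasi_reflect(x, lower, upper, rng):
    """Draw each coordinate uniformly between the interval center and x[i]."""
    reflected = []
    for xi, lo, hi in zip(x, lower, upper):
        center = (lo + hi) / 2.0
        reflected.append(rng.uniform(center, xi))  # works whether xi is above or below center
    return reflected


def obl_generation_jump(population, objective, lower, upper, rng):
    """Evaluate candidates and their quasi-reflections; keep the best len(population)."""
    reflected = [quasi_reflect(x, lower, upper, rng) for x in population]
    merged = sorted(population + reflected, key=objective)
    return merged[:len(population)]


def sphere(x):
    """Toy minimization objective with its optimum at the origin."""
    return sum(xi * xi for xi in x)


rng = random.Random(0)
lower, upper = [-5.0, -5.0], [5.0, 5.0]
population = [[rng.uniform(lo, hi) for lo, hi in zip(lower, upper)]
              for _ in range(10)]
improved = obl_generation_jump(population, sphere, lower, upper, rng)
print(min(map(sphere, population)), min(map(sphere, improved)))
```

Because the original candidates remain in the merged pool, the best objective value can never get worse after the jump; the same function can be applied once at initialization or periodically between generations.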

Comparative Performance Analysis

Computational Efficiency Benchmarks

Table 1: Computational Performance Comparison of Estimation Methods

| Method | Implementation | Speed Advantage | Accuracy Metrics | Optimal Use Cases |
|---|---|---|---|---|
| ADPO FOCE ELS | Phoenix NLME 8.6 | 20-50% reduction vs traditional FOCE ELS; up to 95% with auto-detect ODE solver [29] | Equivalent accuracy to finite difference gradients [29] | Large PK/PD models; ODE-based systems |
| Traditional FOCE ELS | Phoenix NLME (pre-8.6) | Baseline | Reasonable accuracy and robustness [29] | Standard PK models; non-stiff systems |
| Gradient-based with semi-analytical gradients | pyPESTO | >10x speedup vs gradient-free methods [31] | Improved objective function values in some examples [31] | ODE models with qualitative data |
| Quasi-reflection OBL | Enhanced metaheuristics | Superior convergence speed vs other OBL variants [30] | Better solution quality across most benchmark functions [30] | Multimodal problems; global optimization |

Statistical Performance in Clinical Trial Applications

Table 2: Performance in Rare Disease Trial Settings Based on Simulation Studies

| Design & Method | Power (Slow Progression) | Power (Fast Progression) | Type I Error Control | Required Trial Duration |
|---|---|---|---|---|
| NLMEM with population-based LRT | 88% (parallel design) [32] | >80% with 2-year duration [32] | Controlled [32] | 5 years (slow), 2 years (fast) [32] |
| Linear mixed effect model (rich) | 75% [32] | Not reported | Not reported | Longer than NLMEM required |
| Linear mixed effect model (sparse) | 49% [32] | Not reported | Not reported | Longer than NLMEM required |
| Standard statistical analysis | 36% [32] | Not reported | Not reported | Longer than NLMEM required |
| Pharmacometrics-informed CSE framework | High (specific values not provided) [33] | Not reported | Valid, robust [33] | Optimized via simulation |

The superior performance of NLMEM approaches is particularly evident in rare disease settings, where a pharmacometrics-informed clinical scenario evaluation (CSE-PMx) framework demonstrated advantages over conventional methods for designing trials in conditions like Autosomal Recessive Spastic Ataxia of Charlevoix-Saguenay (ARSACS) [33]. The nonlinear mixed-effects model with a population-based likelihood ratio test analysis showed improved validity, robustness, and statistical power compared to two-sample t-tests, analysis of covariance, or mixed models with repeated measurements [33].

Experimental Protocols and Methodologies

Clinical Trial Simulation Framework

The evaluation of estimation methods in rare neurological disorders followed a rigorous simulation protocol:

Disease Progression Modeling: Researchers developed a four-parameter logistic model to describe the evolution of the Scale for Assessment and Rating of Ataxia (SARA) scores over time since symptom onset [32]:

- Model parameters: δ (lower asymptote), γ (amplitude), α (disease progression rate), β (location parameter)

- Incorporation of treatment effect: α_trt = α(1 - DE × TRT), where DE represents drug effect and TRT is treatment indicator
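The source does not spell out the functional form of the four-parameter logistic model, so the sketch below assumes a standard parameterization, SARA(t) = δ + γ / (1 + exp(−α(t − β))), and applies the treatment effect to α exactly as stated above. The function name and all parameter values are hypothetical and chosen only for illustration.

```python
import math


def sara_score(t, delta, gamma, alpha, beta, de=0.0, trt=0):
    """Assumed four-parameter logistic disease progression curve.

    t     : time since symptom onset
    delta : lower asymptote
    gamma : amplitude (upper asymptote is delta + gamma)
    alpha : disease progression rate
    beta  : location parameter (time of the curve midpoint)
    de    : drug effect, as a fractional reduction of alpha
    trt   : treatment indicator (0 = control, 1 = treated)
    """
    alpha_trt = alpha * (1.0 - de * trt)  # alpha_trt = alpha(1 - DE x TRT)
    return delta + gamma / (1.0 + math.exp(-alpha_trt * (t - beta)))


# Hypothetical parameter values (not taken from [32])
params = dict(delta=0.0, gamma=40.0, alpha=0.1, beta=20.0)
control = sara_score(30.0, **params)
treated = sara_score(30.0, de=0.5, trt=1, **params)
print(control, treated)
```

Under this assumed form, a positive drug effect flattens the curve, so past the midpoint β a treated patient has a lower predicted score than a control patient at the same time point.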

Trial Design Simulation: Three designs were implemented in silico:

- Parallel design: Patients randomized to control or treatment groups

- Crossover design: Patients switch groups mid-trial

- Delayed-start design: Control patients receive treatment mid-trial

Performance Evaluation: Each design was tested under multiple scenarios varying trial duration (2 or 5 years), disease progression rate, residual error magnitude (σ = 0.5 or 2), and sample size (40 or 100 patients) [32]

Analysis Comparison: NLMEM approaches were compared against linear mixed effects models and standard statistical analyses using type I error and power as primary metrics [32]
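Type I error and power are both rejection rates of the test across simulated trials, estimated under the null and alternative scenarios respectively. The sketch below shows that calculation for a likelihood ratio test, assuming a single tested parameter (for example, the drug effect DE) so that the statistic is compared against a chi-square distribution with one degree of freedom. The function names and the −2 log-likelihood values are hypothetical.

```python
import math


def lrt_p_value(neg2ll_reduced, neg2ll_full):
    """p-value of a 1-df likelihood ratio test from two -2 log-likelihood values.

    For one degree of freedom, P(chi2 > x) = erfc(sqrt(x / 2)).
    """
    stat = max(neg2ll_reduced - neg2ll_full, 0.0)
    return math.erfc(math.sqrt(stat / 2.0))


def rejection_rate(neg2ll_pairs, alpha=0.05):
    """Fraction of simulated trials in which the reduced model is rejected.

    Computed on trials simulated without a drug effect this estimates type I
    error; on trials simulated with a drug effect it estimates power.
    """
    rejected = sum(1 for reduced, full in neg2ll_pairs
                   if lrt_p_value(reduced, full) < alpha)
    return rejected / len(neg2ll_pairs)


# Hypothetical (reduced, full) fits from four simulated trials
fits = [(10.0, 5.0), (10.0, 9.0), (10.0, 6.0), (10.0, 9.9)]
print(rejection_rate(fits))
```

In a real study each pair would come from fitting the model with and without the treatment effect to one simulated data set, and several hundred to several thousand replicates per scenario would be needed for a stable estimate.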

Gradient-Based Optimization with Qualitative Data

The integration of gradient-based optimization with qualitative data followed an optimal scaling approach:

Surrogate Data Optimization: Qualitative observations were transformed into quantitative surrogate data through a constrained optimization process that preserves category ordering [31]

Gradient Calculation: Semi-analytical gradient computation was implemented for the hierarchical optimization problem, enabling efficient parameter estimation [31]

Model Fitting: Parameters were estimated by minimizing the discrepancy between model simulations and surrogate data using gradient-based optimization in the pyPESTO toolbox [31]

This approach demonstrated particular value for parameterizing models from imaging data, FRET data, and phenotypic observations where quantitative measurements are unavailable [31].

Metaheuristic Enhancement Protocol

The evaluation of opposition-based learning techniques followed a standardized benchmarking approach:

Algorithm Selection: Five metaheuristics (differential evolution, genetic algorithm, particle swarm optimization, artificial bee colony, harmony search) were hybridized with five OBL variants [30]

Integration Testing: Each OBL variant was tested across different integration phases (initialization, generation jumps, both phases) [30]

Performance Metrics: Algorithms were evaluated using the 12 benchmark functions of the CEC2022 suite, with analysis of maximum, minimum, mean, standard deviation, and convergence curves [30]

Statistical Validation: Friedman tests provided statistical validation of performance differences between variants [30]
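The Friedman test ranks the competing variants within each benchmark function and then asks whether the rank sums differ more than chance would allow. A minimal sketch of the statistic is given below; it uses average ranks for ties but omits the tie correction of the statistic itself, and the results matrix is invented purely for illustration rather than taken from [30].

```python
def average_ranks(row):
    """Rank values ascending (1 = best when minimizing), averaging tied ranks."""
    order = sorted(range(len(row)), key=lambda j: row[j])
    ranks = [0.0] * len(row)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with position i
        while j + 1 < len(order) and row[order[j + 1]] == row[order[i]]:
            j += 1
        shared = (i + j) / 2.0 + 1.0
        for pos in range(i, j + 1):
            ranks[order[pos]] = shared
        i = j + 1
    return ranks


def friedman_statistic(results):
    """results[p][a] = final objective of algorithm a on problem p.

    Returns (chi-square statistic with k - 1 df, mean rank per algorithm).
    """
    n, k = len(results), len(results[0])
    rank_sums = [0.0] * k
    for row in results:
        for a, r in enumerate(average_ranks(row)):
            rank_sums[a] += r
    stat = (12.0 / (n * k * (k + 1)) * sum(r * r for r in rank_sums)
            - 3.0 * n * (k + 1))
    return stat, [r / n for r in rank_sums]


# Invented mean errors: 4 benchmark functions x 3 OBL variants
results = [
    [0.10, 0.20, 0.30],
    [1.50, 2.50, 3.50],
    [0.01, 0.02, 0.03],
    [7.00, 8.00, 9.00],
]
stat, mean_ranks = friedman_statistic(results)
print(stat, mean_ranks)
```

The statistic is compared against a chi-square distribution with k − 1 degrees of freedom; the mean ranks indicate which variant tends to perform best and are what post hoc comparisons are usually built on.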

Visualization of Method Workflows and Relationships

Gradient-Based Estimation Workflow

Gradient-Based NLMEM Estimation Workflow - This diagram illustrates the iterative process of parameter estimation using gradient-based methods, highlighting the critical decision point in selecting gradient computation approaches.

Metaheuristic Enhancement with OBL

Metaheuristic Enhancement with OBL - This visualization shows how opposition-based learning variants are integrated into metaheuristic algorithms to improve convergence and solution quality.

Essential Research Reagent Solutions

Table 3: Key Software Tools for NLMEM Parameter Estimation

| Tool/Platform | Primary Method | Key Features | Representative Applications |
|---|---|---|---|
| Phoenix NLME | Gradient-based (FOCE ELS, Laplacian) | ADPO implementation; Fast Optimization option [29] | PK/PD modeling; clinical trial simulation [33] [29] |
| pyPESTO | Gradient-based and metaheuristic | Parameter EStimation TOolbox; optimal scaling for qualitative data [31] | ODE models; qualitative data integration [31] |
| Pumas | Gradient-based (FOCE) | NLME-QSP model parameter estimation [34] | QSP-PK/PD model integration [34] |
| MATLAB NLMEM | Gradient-based | nlmefitsa function; random starting values [35] | Medical dosimetry; STP calculation [35] |
| Custom OBL-enhanced algorithms | Metaheuristic | Quasi-reflection OBL implementation [30] | Global optimization; multimodal problems [30] |

Discussion and Implementation Recommendations

The comparative analysis reveals a nuanced landscape for NLMEM parameter estimation where method selection should be guided by specific research requirements. Gradient-based methods, particularly those enhanced with automatic differentiation, demonstrate clear advantages in computational efficiency for large-scale pharmacometric applications. The reported 20-50% reduction in runtime with ADPO implementation [29] translates to substantial practical benefits in drug development timelines. Furthermore, the model-based analyses that these estimators make practical have proven statistically superior in rare disease trial simulations, where NLMEM with population-based likelihood ratio tests achieved 88% power compared to 36% for standard statistical analysis [32]. This gain reflects the choice of analysis model, not the choice of optimizer.

Metaheuristic approaches enhanced with opposition-based learning offer complementary strengths, particularly for problems characterized by multimodal objective functions or parameter identifiability challenges. The consistent outperformance of quasi-reflection OBL across benchmark functions [30] suggests its value as a default enhancement strategy for metaheuristic algorithms. However, the computational overhead of these population-based methods may be prohibitive for large NLMEM problems with numerous random effects.

For contemporary drug development, particularly in rare diseases with limited patient populations, we recommend a hierarchical approach to parameter estimation: beginning with gradient-based methods for initial estimation and leveraging metaheuristics for refinement in cases of convergence failure or suspected local minima. The pharmacometrics-informed clinical scenario evaluation framework [33] provides a structured methodology for comparing design and analysis strategies within specific resource constraints, representing a best practice for trial optimization in rare neurological disorders.
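One possible reading of this recommendation is sketched below on a toy one-dimensional multimodal objective: run the gradient stage first, and only when it fails a convergence check or a local minimum is suspected, launch a small differential evolution search and polish its best candidate with the same gradient routine. The objective, step size, population settings, and function names are all assumptions made for illustration; an NLMEM likelihood would replace `objective` and `gradient` in practice.

```python
import math
import random


def objective(x):
    """Toy multimodal surface (1-D Rastrigin); stands in for an NLMEM objective."""
    return x * x + 10.0 * (1.0 - math.cos(2.0 * math.pi * x))


def gradient(x):
    return 2.0 * x + 20.0 * math.pi * math.sin(2.0 * math.pi * x)


def gradient_descent(x, lr=0.001, steps=2000):
    """Fixed-step gradient descent; converges to the nearest local minimum."""
    for _ in range(steps):
        x -= lr * gradient(x)
    return x


def differential_evolution(rng, lower, upper, pop_size=20, generations=50, f=0.7):
    """Minimal 1-D differential evolution with greedy one-to-one selection."""
    pop = [rng.uniform(lower, upper) for _ in range(pop_size)]
    for _ in range(generations):
        for i in range(pop_size):
            others = [p for j, p in enumerate(pop) if j != i]
            a, b, c = rng.sample(others, 3)
            trial = min(max(a + f * (b - c), lower), upper)  # mutate, then clip
            if objective(trial) <= objective(pop[i]):
                pop[i] = trial
    return min(pop, key=objective)


def staged_fit(x0, rng, lower=-5.0, upper=5.0, tol=1e-6,
               suspect_local_minimum=False):
    """Gradient stage first; metaheuristic stage only when it is needed."""
    local = gradient_descent(x0)
    converged = abs(gradient(local)) < tol
    if converged and not suspect_local_minimum:
        return local
    candidate = gradient_descent(differential_evolution(rng, lower, upper))
    return candidate if objective(candidate) < objective(local) else local


start = 3.2
print(objective(gradient_descent(start)))
print(objective(staged_fit(start, random.Random(0), suspect_local_minimum=True)))
```

The second stage can only match or improve on the gradient-only result, at the cost of roughly a thousand extra objective evaluations in this configuration. That trade-off is the reason for keeping it conditional when each evaluation is itself an expensive model fit.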

Future methodological development will likely focus on hybrid approaches that combine the efficiency of gradient-based optimization with the global search capabilities of metaheuristics, potentially through adaptive switching mechanisms or embedded opposition-based learning within gradient estimation routines.