Gene Regulatory Networks: From Foundational Principles to Clinical Applications in Biomedical Research

Abstract

This article provides a comprehensive overview of gene regulatory networks (GRNs), the complex systems that control gene expression in living organisms. Tailored for researchers, scientists, and drug development professionals, it explores the fundamental components of GRNs, details the evolution of computational inference methods from bulk to single-cell multi-omic data, and addresses key challenges in network analysis. It further outlines rigorous validation frameworks and comparative analytical techniques. By synthesizing foundational knowledge with current methodological advances and troubleshooting insights, this resource aims to bridge the gap between theoretical network biology and its practical applications in deciphering disease mechanisms and identifying novel therapeutic targets.

Deconstructing the Blueprint: Core Components and Principles of Gene Regulatory Networks

A Gene Regulatory Network (GRN) is a graph-level representation that describes the complex regulatory interactions between transcription factors (TFs) and target genes within cells [1]. At its core, a GRN consists of nodes representing genes and edges representing regulatory relationships between them [1]. These networks form the fundamental control systems that orchestrate cellular identity, function, and response to environmental stimuli throughout biological development [2] [1]. The structure and dynamics of GRNs enable cells to execute complex developmental programs, maintain homeostasis, and respond to perturbations—functions that emerge from the intricate patterns of connection between regulatory elements.

The study of GRNs represents a paradigm shift from the linear conception of genetic information flow described by the Central Dogma of molecular biology toward a complex systems perspective. Where the Central Dogma outlines the unidirectional flow of genetic information from DNA to RNA to protein, GRNs reveal a reality of multidirectional feedback and interconnected control logic [2]. This network perspective has become increasingly essential for understanding how relatively static genetic blueprints give rise to dynamic, adaptive biological systems. For researchers and drug development professionals, mapping these networks provides critical insights into disease mechanisms, potential therapeutic targets, and cellular behavior under various conditions [3] [1].

Core Architectural Principles of GRNs

Gene regulatory networks exhibit several defining structural properties that shape their functional capabilities and distinguish them from random networks. Understanding these architectural principles is essential for accurate inference, modeling, and interpretation of GRN behavior in biological contexts.

Key Structural Properties

Sparsity: Despite the potential for extensive interconnection, biological GRNs are remarkably sparse. Most genes are directly regulated by only a small number of transcription factors, and the number of regulators for any single gene is much smaller than the total number of regulators in the network. Experimental evidence from perturbation studies indicates that only approximately 41% of perturbations targeting a primary transcript have significant effects on the expression of any other gene [4].

Directed Edges and Feedback Loops: Regulatory relationships in GRNs are inherently directional: "A regulates B" is a fundamentally different relationship from "B regulates A." At the same time, feedback loops are pervasive in GRN architecture and create complex control circuits. Empirical data reveal that 3.1% of ordered gene pairs exhibit at least a one-directional perturbation effect, with a subset demonstrating bidirectional regulation [4].

Hierarchical Organization and Modularity: GRNs typically exhibit hierarchical structures with transcription factors occupying different tiers of regulatory control. This hierarchy is complemented by modular organization, where densely interconnected groups of genes perform specialized functions. These modules often correspond to specific biological processes or response programs [4].

Scale-Free Topology and Small-World Properties: Many biological GRNs approximate scale-free networks characterized by power-law distributions of node connectivity. This structure yields a small number of highly connected "hub" genes and many poorly connected genes. The resulting "small-world" property means most genes are connected by short paths, facilitating efficient information transmission [4].
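The structural properties listed above can be made concrete on a toy example. The sketch below is a minimal illustration only: the network, the gene names, and the variable names are hypothetical placeholders rather than real regulatory interactions. It computes the edge density (sparsity), the in- and out-degree of each gene (hubs), and the bidirectional pairs that form the simplest feedback loops.

```python
from collections import Counter

# Hypothetical toy network: regulator -> set of direct targets.
# Gene names are placeholders, not real regulatory interactions.
edges = {
    "TF_A": {"TF_B", "G1", "G2", "G3"},
    "TF_B": {"TF_A", "G3"},
    "TF_C": {"G4"},
    "G1": set(), "G2": set(), "G3": set(), "G4": set(),
}

genes = sorted(edges)
n = len(genes)
edge_list = [(src, dst) for src in genes for dst in sorted(edges[src])]

# Sparsity: fraction of all possible directed edges (no self-loops) present.
density = len(edge_list) / (n * (n - 1))

# Out-degree highlights hub regulators; in-degree counts regulators per gene.
out_degree = {g: len(edges[g]) for g in genes}
in_degree = Counter(dst for _, dst in edge_list)
hub = max(out_degree, key=out_degree.get)

# Direction matters: (A, B) and (B, A) are distinct edges. A pair with both
# directions present is the simplest possible feedback loop.
edge_set = set(edge_list)
bidirectional = sorted(
    {tuple(sorted((a, b))) for a, b in edge_set if (b, a) in edge_set}
)

print(f"density={density:.3f}, hub={hub}, feedback pairs={bidirectional}")
```

Storing the network as a mapping from regulator to targets keeps direction explicit, which is why the reverse edge has to be looked up separately when searching for feedback.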

Computational Representations of GRN Architecture

The following diagram illustrates the core structural elements and hierarchical organization typical of gene regulatory networks:

Graphical representation of GRN hierarchical structure with three tiers of regulation and feedback loops (dashed lines)

Methodological Approaches for GRN Modeling and Inference

The reconstruction and analysis of GRNs employ diverse computational approaches, each with distinct strengths, limitations, and appropriate application domains. These methodologies can be broadly categorized based on their underlying mathematical frameworks and data requirements.

Classification of GRN Models

| Model Type | Core Principle | Data Requirements | Strengths | Limitations |
|---|---|---|---|---|
| Topological Models [2] | Represent GRNs as graphs showing connections between elements | Protein-protein interaction data, coexpression networks | Intuitive representation of network structure; Scalable to genome-wide applications | Does not capture regulatory logic or dynamics |
| Control Logic Models [2] | Describe dependencies between genes and their regulatory significance | Known regulatory interactions; Perturbation data | Reveals regulatory logic; Useful when knowledge is limited | May oversimplify complex regulatory relationships |
| Dynamic Models [2] | Describe and simulate dynamic fluctuations in system states | Time-series expression data; Kinetic parameters | Captures temporal behavior; Predicts network response to perturbations | Computationally intensive; Requires detailed parameter estimation |
| Machine Learning Models [3] [2] | Use algorithms to predict regulatory relationships from data | Large-scale expression data; Known regulatory relationships for training | Handles complex patterns; Adapts to new data; Reduces need for explicit modeling | Requires extensive training data; Limited interpretability in some cases |
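To illustrate what the Dynamic Models row means in practice, the following is a minimal sketch of a two-gene mutual-repression circuit (a toggle switch) integrated with forward Euler. The Hill-function form, the parameter values (`beta`, `k`, `n`, `decay`), and the function names are assumptions chosen for illustration and are not taken from any cited tool.

```python
def hill_repression(repressor, k=1.0, n=2.0):
    """Fraction of maximal transcription remaining at a given repressor level."""
    return 1.0 / (1.0 + (repressor / k) ** n)


def simulate_toggle(x0, y0, beta=4.0, decay=1.0, dt=0.01, steps=5000):
    """Forward-Euler integration of
        dx/dt = beta * H(y) - decay * x
        dy/dt = beta * H(x) - decay * y
    where H is the repressive Hill function above."""
    x, y = x0, y0
    for _ in range(steps):
        dx = beta * hill_repression(y) - decay * x
        dy = beta * hill_repression(x) - decay * y
        x, y = x + dt * dx, y + dt * dy
    return x, y


# Two different starting points settle into two different stable states.
x_high, y_low = simulate_toggle(2.0, 0.1)
x_low, y_high = simulate_toggle(0.1, 2.0)
print(f"start x>y: x={x_high:.3f}, y={y_low:.3f}")
print(f"start y>x: x={x_low:.3f}, y={y_high:.3f}")
```

With these assumed parameters the asymmetric steady states satisfy x + y = 4 and xy = 1, so the model settles near (3.732, 0.268) or its mirror image depending on the initial condition. This dependence on kinetic parameters is the estimation burden noted in the table.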

Experimental Data Types for GRN Reconstruction

Different data modalities provide complementary insights into GRN structure and function, with optimal inference approaches often combining multiple data types:

Microarray Data: Historically important, widely available for various organisms and tissues, but largely superseded by more quantitative approaches [2].

RNA-seq Data: Provides more accurate quantification of gene expression levels across the transcriptome, enabling comprehensive mapping of expression relationships [2].

Single-cell RNA-seq (scRNA-seq): Reveals cell-type-specific gene expression patterns and heterogeneity, particularly valuable for understanding GRN dynamics in development and disease [2] [1].

Time-series Expression Data: Captures changes in gene expression over time, enabling inference of dynamic GRNs and causal relationships based on temporal patterns [2].

Perturbation Experiments: Utilize gene knockouts, knockdowns, or other interventions (e.g., CRISPR-based approaches) to establish causal relationships and identify direct regulatory effects [2] [4].

Multi-omics Datasets: Integrate information from multiple molecular levels (genomics, epigenomics, transcriptomics, proteomics) to construct more complete models of gene regulation [2].

Contemporary GRN Inference Technologies and Tools

Recent advances in computational methods have dramatically improved our ability to infer GRN structure from experimental data. The table below summarizes key contemporary tools and their applications:

Comparison of Modern GRN Inference Tools

| Tool/Platform | Core Methodology | Key Features | Application Context | Reference |
|---|---|---|---|---|
| RegNetwork 2025 [5] | Integrative database with scoring system | Curates TF, miRNA, gene interactions; Includes lncRNAs/circRNAs; Confidence scoring | Reference repository for human and mouse regulatory data | [5] |
| GRN_modeler [6] | Phenomenological modeling with GUI | User-friendly interface; Dynamical behavior and spatial pattern analysis | Synthetic biology; Biosensor design; Oscillator development | [6] |
| Meta-TGLink [3] | Structure-enhanced graph meta-learning | Few-shot learning capability; Transferable regulatory patterns; Handles limited labeled data | GRN inference with sparse prior knowledge; Cross-domain applications | [3] |
| GRLGRN [1] | Graph representation learning with transformer networks | Extracts implicit links; Attention mechanisms; Graph contrastive learning | Single-cell RNA-seq data; Prior network refinement | [1] |

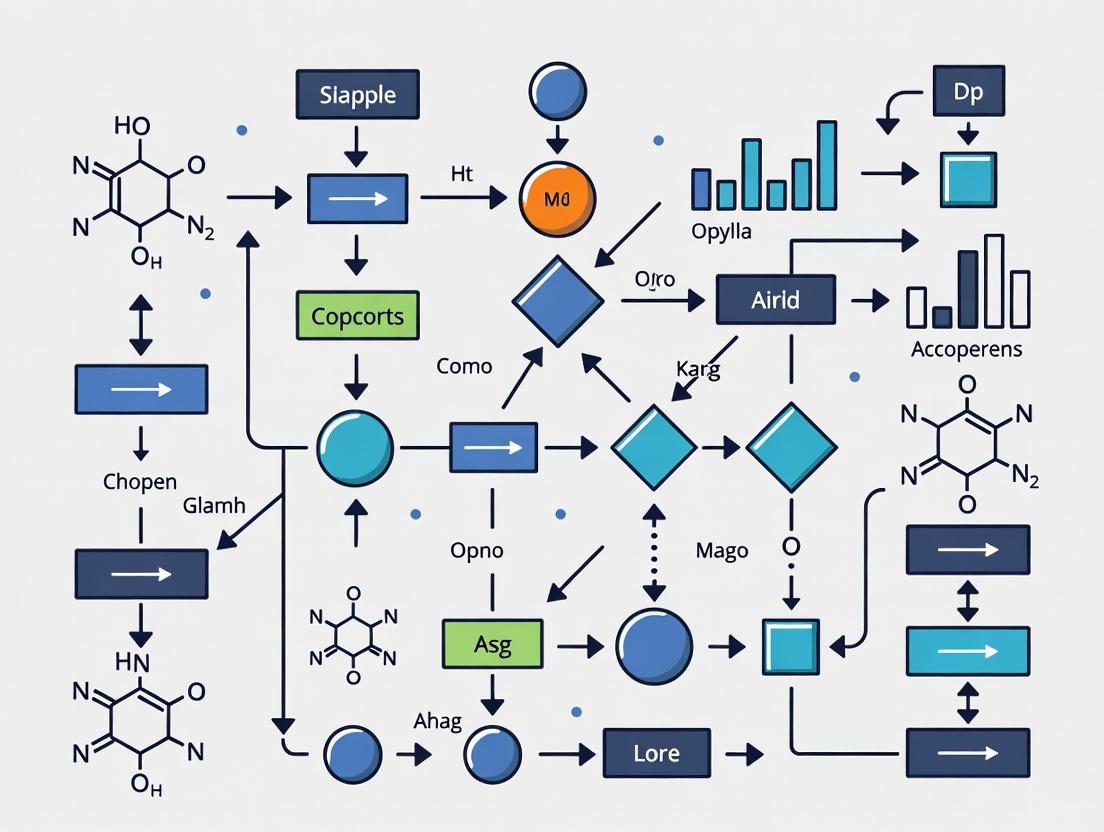

Workflow for Modern GRN Inference

The following diagram illustrates a typical computational workflow for GRN inference using contemporary machine learning approaches:

Computational workflow for GRN inference integrating experimental data and prior knowledge

Experimental Design and Methodological Protocols

Protocol: GRN Inference from Single-Cell RNA-seq Data

This protocol outlines the key steps for inferring gene regulatory networks from single-cell RNA sequencing data using contemporary computational approaches:

Data Acquisition and Preprocessing:

- Obtain scRNA-seq count matrix from relevant cell lines or tissues (e.g., human embryonic stem cells, mouse dendritic cells) [1].

- Perform quality control, normalization, and batch effect correction using standard bioinformatics pipelines.

- Select highly variable genes (e.g., top 500-1000 genes with largest expression variance) for focused analysis [1].
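The gene-selection step above can be sketched as follows. This is a deliberately simple version that ranks genes by raw variance, as the step describes. The function name `top_variable_genes` and the toy expression values are hypothetical, and production pipelines often rank on mean-adjusted dispersion instead of raw variance.

```python
from statistics import pvariance


def top_variable_genes(expression, n_top):
    """Return the n_top gene names with the largest variance across cells.

    expression: dict mapping gene name -> list of normalized values, one per cell.
    Ties are broken alphabetically so the result is deterministic.
    """
    variances = {gene: pvariance(values) for gene, values in expression.items()}
    ranked = sorted(variances, key=lambda g: (-variances[g], g))
    return ranked[:n_top]


# Hypothetical normalized expression values for four genes in four cells.
expression = {
    "GENE_A": [0.0, 5.0, 0.0, 5.0],  # variance 6.25
    "GENE_B": [2.0, 2.0, 2.0, 2.0],  # variance 0 (uninformative)
    "GENE_C": [1.0, 3.0, 1.0, 3.0],  # variance 1.0
    "GENE_D": [0.0, 0.0, 0.0, 8.0],  # variance 12.0
}

selected = top_variable_genes(expression, n_top=2)
print(selected)
```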

Integration of Prior Knowledge:

- Compile prior regulatory network from established databases (e.g., STRING, cell type-specific ChIP-seq, non-specific ChIP-seq) [1].

- Represent prior knowledge as directed graph structure with transcription factors and potential target genes.

- Formulate multiple graph perspectives: TF→target, target→TF, TF-TF regulatory relationships, and self-connections [1].
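One way to read the "multiple graph perspectives" step is as a set of edge lists derived from the same prior network. The sketch below follows that reading. The function name `build_graph_views`, the dictionary keys, and the decision to give every node a self-connection are assumptions for illustration, not a description of how any cited method implements it.

```python
def build_graph_views(prior_edges, tfs):
    """Split a directed prior network into four edge sets.

    prior_edges: iterable of (regulator, target) pairs.
    tfs: names of the nodes that are transcription factors.
    """
    tf_set = set(tfs)
    nodes = {node for edge in prior_edges for node in edge}
    # Keep only edges whose source is a TF, and drop self-edges here because
    # self-connections are handled as their own view below.
    tf_to_target = {(s, t) for s, t in prior_edges if s in tf_set and s != t}
    return {
        "tf_to_target": tf_to_target,
        "target_to_tf": {(t, s) for s, t in tf_to_target},  # reversed edges
        "tf_tf": {(s, t) for s, t in tf_to_target if t in tf_set},
        "self": {(node, node) for node in nodes},
    }


# Hypothetical prior network with two TFs and two non-TF targets.
prior_edges = [("TF1", "G1"), ("TF1", "TF2"), ("TF2", "G2")]
views = build_graph_views(prior_edges, tfs=["TF1", "TF2"])
for name, edge_set in views.items():
    print(name, sorted(edge_set))
```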

Model Architecture Configuration:

Model Training and Optimization:

Validation and Interpretation:

| Resource Category | Specific Examples | Function/Application | Key Features |
|---|---|---|---|
| Data Resources [5] [1] | RegNetwork 2025; BEELINE database | Provides curated regulatory interactions; Benchmark scRNA-seq datasets | Comprehensive TF-miRNA-gene interactions; Standardized evaluation frameworks |
| Computational Tools [6] [3] | GRN_modeler; Meta-TGLink | User-friendly GRN simulation; Few-shot inference with limited labeled data | Graphical interface; Adaptability to new tasks with minimal data |
| Experimental Platforms [4] | Perturb-seq (CRISPR-based screening) | High-throughput functional validation of regulatory interactions | Single-cell resolution; Genome-scale perturbation capability |
| Validation Databases [3] | ChIP-Atlas; STRING | Experimental validation of predicted regulatory relationships | Integration of multiple evidence types; Cross-species comparisons |

Advanced Applications and Future Directions

Synthetic Biology Applications

GRN modeling has enabled significant advances in synthetic biology, particularly through the design of programmable genetic circuits. The GRN_modeler tool demonstrates how computational approaches can guide the engineering of novel biological functions [6]. Key applications include:

Oscillator Design: Development of novel oscillator families capable of robust oscillation with even numbers of nodes, complementing the classical repressilator topology that requires odd-numbered nodes [6].

Biosensor Engineering: Creation of light-detecting biosensors in Escherichia coli that track light intensity over extended periods and record information through ring patterns in bacterial colonies [6].

Pattern Formation Systems: Programming of spatiotemporal pattern formation in concentration gradients using synthetic toggle switches and other regulatory motifs [6].
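The odd-versus-even distinction mentioned for classical ring oscillators can be seen in a highly simplified Boolean abstraction, sketched below. This is not how GRN_modeler works. The synchronous update rule and the function names are assumptions, and the sketch only shows why a plain ring of repressors with an even number of nodes can settle into a stable state while an odd ring cannot.

```python
from itertools import product


def step(state):
    """Synchronous update of a repressor ring.

    Gene i is ON in the next step exactly when its upstream repressor,
    gene i-1 (wrapping around), is currently OFF.
    """
    n = len(state)
    return tuple(not state[(i - 1) % n] for i in range(n))


def fixed_points(n_genes):
    """Enumerate all Boolean states that map to themselves."""
    return [s for s in product((False, True), repeat=n_genes) if step(s) == s]


for n_genes in (2, 3, 4, 5):
    print(n_genes, "genes ->", len(fixed_points(n_genes)), "stable states")
```

In this abstraction, even rings have two stable alternating ON/OFF states (the two-gene case is a toggle switch), whereas odd rings have none and must keep cycling. Designs that oscillate robustly with even node counts therefore need additional regulatory structure beyond the plain ring.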

Emerging Computational Paradigms

Future advances in GRN research will be increasingly driven by sophisticated computational approaches that address current limitations:

Few-Shot and Zero-Shot Learning: Methods like Meta-TGLink enable accurate GRN inference with minimal labeled data by transferring knowledge from well-characterized systems to less-studied cell types or species [3].

Integration of Multi-Modal Data: Combined analysis of single-cell epigenomic, transcriptomic, and proteomic data to construct more comprehensive and accurate regulatory models.

Causal Inference Frameworks: Approaches that move beyond correlation to establish causal regulatory relationships, particularly through the integration of perturbation data with observational studies [4].

Interpretable AI Models: Development of methods that not only predict regulatory interactions but also provide biological insights into regulatory mechanisms and patterns.

The continued evolution of GRN research promises to deepen our understanding of biological regulation and enhance our ability to intervene therapeutically in disease processes characterized by regulatory dysfunction. For drug development professionals, these advances offer new avenues for target identification, mechanism elucidation, and therapeutic strategy development across a wide range of complex diseases.

A gene regulatory network (GRN) is a collection of molecular regulators that interact with each other and with other substances in the cell to govern gene expression levels of mRNA and proteins, which in turn determine cellular function [7]. These networks form the fundamental control system that dictates how genetic information is translated into diverse phenotypes, coordinating complex processes such as development, environmental responses, and maintenance of cell identity [8] [7]. GRNs consist of directed interactions where transcription factors (TFs) bind to specific cis-regulatory elements (CREs) to activate or repress target genes, creating intricate circuits that can exhibit complex dynamic behaviors including feedback and feedforward loops [7] [9].

The structure and function of GRNs are profoundly influenced by the interplay between three key molecular players: transcription factors, cis-regulatory elements, and chromatin states. These components work in concert to ensure precise spatial and temporal control of gene expression, with disruptions in this regulatory apparatus often leading to disease states [10]. Recent advances in genomic technologies have enabled researchers to map these interactions at unprecedented scale and resolution, revealing that the combinatorial action of these elements forms a predictive regulatory code that can be deciphered through computational and experimental approaches [8] [11].

Transcription Factors: The Master Regulators

Functional Properties and Dynamics

Transcription factors (TFs) are proteins that recognize and bind to specific DNA sequences to regulate transcriptional initiation. They serve as the primary actuators of regulatory decisions within GRNs, integrating internal and external signals to modulate gene expression programs [12]. TFs can function as activators that enhance transcription or repressors that diminish it, with some factors capable of both functions depending on cellular context [7]. A special class of TFs known as pioneer factors possesses the unique ability to interact with closed chromatin structures and initiate chromatin remodeling, thereby creating accessible regions for other TFs to bind [10].

The in vivo dynamics of TF-chromatin interactions have revealed unexpected complexity. Rather than maintaining static binding, many TFs exhibit rapid exchange on genomic elements with short residence times, a finding that contrasts with earlier views of long-term residency [10]. This dynamic behavior enables flexible responses to changing cellular conditions and may facilitate the sampling of multiple genomic sites to establish appropriate expression patterns. The binding specificity of TFs is determined by their affinity for particular DNA sequences or motifs, though this specificity is modulated by chromatin structure and cooperative interactions with other nuclear proteins [10].

Quantitative Sensitivity to TF Dosage

Recent research has revealed that transcriptional responses are highly sensitive to TF dosage, with sequence-level features determining this sensitivity. Transfer learning approaches have predicted how concentrations of dosage-sensitive TFs like TWIST1 and SOX9 affect chromatin accessibility in progenitor cells with near-experimental accuracy [13]. Key sequence determinants have been identified:

- Buffering motifs: High-affinity motifs that allow heterotypic TF co-binding and are concentrated at the centers of regulatory elements (REs) buffer against quantitative changes in TF dosage

- Sensitizing motifs: Low-affinity or homotypic binding motifs distributed throughout REs drive sensitive responses to TF dosage changes

Biophysical modeling indicates that TF-nucleosome competition explains why low-affinity motifs sensitize REs to TF dosage, as these sites require higher TF concentrations for stable binding [13]. Both buffering and sensitizing features display signatures of purifying selection, underscoring their functional importance in fine-tuning gene regulatory responses to varying TF concentrations.
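The intuition that low-affinity sites respond more steeply to dosage can be illustrated with a single-site equilibrium binding curve. The sketch below is a strong simplification: it omits the TF-nucleosome competition and co-binding that the cited modeling considers, and the concentrations, dissociation constants, and function names are hypothetical values in arbitrary units.

```python
def occupancy(tf_conc, kd):
    """Equilibrium probability that a single site is bound (binding isotherm)."""
    return tf_conc / (tf_conc + kd)


def response_to_halving(tf_conc, kd):
    """Occupancy at half dosage divided by occupancy at full dosage."""
    return occupancy(tf_conc / 2, kd) / occupancy(tf_conc, kd)


tf_conc = 1.0           # assumed full TF dosage (arbitrary units)
high_affinity_kd = 0.1  # strong site: saturated at this dosage
low_affinity_kd = 10.0  # weak site: far from saturation

buffered = response_to_halving(tf_conc, high_affinity_kd)
sensitive = response_to_halving(tf_conc, low_affinity_kd)
print(f"high-affinity site retains {buffered:.0%} of its occupancy")
print(f"low-affinity site retains {sensitive:.0%} of its occupancy")
```

Under these assumptions, halving the TF concentration barely changes binding at the high-affinity site but nearly halves it at the low-affinity site, which matches the qualitative buffering versus sensitizing behavior described above.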

Cis-Regulatory Elements: The Genomic Control Code

Definition and Functional Significance

Cis-regulatory elements (CREs) are non-coding DNA sequences that regulate the expression of genes located on the same chromosome [14]. These elements, which include enhancers, promoters, silencers, and insulators, contain binding sites (motifs) for transcription factors and function as critical information processing units that integrate regulatory signals [8] [14]. Enhancers are of particular importance as they can act over long distances to control cell-type-specific gene expression, often through looping interactions that bring them into proximity with their target promoters [8].

CREs typically range from 100 to 2000 base pairs in length and contain multiple binding sites for various TFs arranged in specific configurations that define their regulatory logic [8]. The combinatorial nature of TF binding to CREs allows a finite number of TFs to generate enormous regulatory complexity, enabling the precise spatial and temporal control of gene expression that underlies cellular differentiation and organismal development [8] [12].

Identification and Characterization Methods

Multiple experimental and computational approaches have been developed to identify and characterize CREs at genome-wide scale. The table below summarizes major CRE profiling methods and their key characteristics:

Table 1: Methods for Genome-wide Identification of Cis-Regulatory Elements

| Method | Principle | Resolution | Advantages | Limitations |
|---|---|---|---|---|
| ChIP-seq [14] | Immunoprecipitation of TF-bound DNA | ~500 bp | Direct in vivo binding measurement; TF-specific | Low throughput; requires specific antibodies |
| ATAC-seq [8] | Transposase insertion into accessible DNA | ~100-500 bp | Simple protocol; works on small cell numbers | Indirect measure of TF binding |
| MOA-seq [11] | MNase digestion of unprotected DNA | <100 bp | High-resolution footprinting; identifies bound sites | Complex data analysis; lower signal |
| DNA methylation [14] | Bisulfite sequencing of methylated regions | Single-base | Stable epigenetic signal across conditions | Indirect correlation with activity |
| Conserved non-coding sequence (CNS) detection [14] | Evolutionary sequence conservation | Varies | Evolutionarily informed; context-independent | May miss lineage-specific elements |

Integrated approaches that combine multiple methods have proven most effective. A recent maize study demonstrated that integrating five computational conservation methods with epigenetic profiles, namely accessible chromatin regions (ACRs) and unmethylated regions (UMRs), generated a comprehensive map of integrated CREs (iCREs) with improved completeness and precision for identifying functional TF binding sites [14]. This integrated approach successfully identified drought-specific regulatory networks and highlighted the contribution of transposable elements to the regulatory landscape.

Chromatin States: The Epigenetic Dimension

Definition and Functional Role

Chromatin states refer to the combinatorial patterns of histone modifications, DNA methylation, nucleosome positioning, and associated protein factors that define the functional status of genomic regions [10] [9]. These epigenetic modifications create a dynamic chromatin landscape that regulates DNA accessibility and determines the functional output of genomic sequences. Chromatin states can be classified into broad categories based on their associated histone marks and functional consequences:

- Strongly active: Characterized by H3K4me3 (promoters) or H3K4me1/H3K27ac (enhancers); associated with high gene expression [9]

- Weakly active: Moderate levels of active marks; associated with intermediate expression levels [9]

- Poised: Simultaneous presence of both activating (H3K4me3) and repressing (H3K27me3) marks; prepares genes for rapid activation [9]

- Repressed: Enriched for H3K27me3 or H3K9me3; associated with transcriptional silencing [9]

These chromatin states are not merely reflective of transcriptional activity but play causal roles in gene regulation by influencing chromatin accessibility and three-dimensional architecture [10] [9]. The dynamic interplay between chromatin states and TF binding creates a self-reinforcing cycle where TFs can recruit chromatin-modifying enzymes that in turn facilitate additional TF binding.

Impact on Regulatory Network Topology

Chromatin states significantly influence the topological organization and functional output of GRNs. Studies integrating chromatin state maps with regulatory networks have revealed that specific chromatin state compositions are significantly associated with network motifs, particularly feedforward loops (FFLs) [9]. These chromatin state-modified FFLs are highly cell-type-specific and determine cell-selective functions to a large extent.

For instance, embryonic stem cells exhibit a characteristic bivalent chromatin state (poised promoters marked by both H3K4me3 and H3K27me3) in developmentally important genes, which maintains them in a transcriptionally poised state ready for lineage-specific activation upon differentiation [9]. The presence of these bivalent domains in FFLs creates a regulatory circuit architecture that supports the pluripotent state while priming genes for future lineage commitment.

Table 2: Chromatin State Categories and Their Functional Associations

| Chromatin State | Representative Marks | Genomic Location | Functional Association |
|---|---|---|---|
| Active Promoter | H3K4me3, H3K27ac, low DNA methylation | Transcription start sites | High transcriptional activity |
| Strong Enhancer | H3K4me1, H3K27ac, low DNA methylation | Distal intergenic regions | Enhanced transcriptional activation |
| Poised Promoter | H3K4me3, H3K27me3 | Developmental gene promoters | Transcriptional poising for rapid activation |
| Weak Enhancer | Moderate H3K4me1, variable H3K27ac | Distal regulatory regions | Context-dependent enhancer activity |
| Repressed State | H3K27me3, H3K9me3, high DNA methylation | Silent genomic regions | Transcriptional silencing |
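The categories in Table 2 can be read as a small decision procedure over histone marks. The sketch below encodes that reading as a rule-based lookup. It is a simplification under stated assumptions: marks are treated as present or absent rather than as quantitative signal, DNA methylation is ignored, the rule order is a design choice made here, and the function name is hypothetical. Genome-wide annotation in practice generally relies on probabilistic segmentation of quantitative data rather than fixed rules like these.

```python
def classify_chromatin_state(marks):
    """Map a collection of detected histone marks to a coarse state label.

    Rules are checked from most to least specific, so the bivalent
    (poised) combination is tested before the single-mark cases.
    """
    marks = set(marks)
    if {"H3K4me3", "H3K27me3"} <= marks:
        return "poised promoter"
    if marks & {"H3K27me3", "H3K9me3"}:
        return "repressed"
    if "H3K4me3" in marks:
        return "active promoter"
    if "H3K4me1" in marks:
        # Assumption: H3K27ac presence separates strong from weak enhancers.
        return "strong enhancer" if "H3K27ac" in marks else "weak enhancer"
    return "unclassified"


examples = [
    {"H3K4me3", "H3K27ac"},
    {"H3K4me1", "H3K27ac"},
    {"H3K4me3", "H3K27me3"},
    {"H3K4me1"},
    {"H3K9me3"},
]
for marks in examples:
    print(sorted(marks), "->", classify_chromatin_state(marks))
```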

Comparative analyses of chromatin state-modified networks between cancerous, stem, and primary cell lines have revealed specific types of chromatin state alterations that cooperate with motif structural changes to generate cell-to-cell functional differences [9]. These findings highlight the importance of incorporating chromatin state information when analyzing GRN properties and dynamics across different biological contexts.

Integrated Experimental Approaches

The Bag-of-Motifs (BOM) Framework

The Bag-of-Motifs (BOM) framework is a computational approach that represents distal cis-regulatory elements as unordered counts of transcription factor binding motifs, combined with gradient-boosted trees for prediction of cell-type-specific regulatory activity [8]. This minimalist representation has demonstrated remarkable predictive accuracy, outperforming more complex deep learning models while offering direct interpretability through identification of the most predictive motifs.

The experimental workflow for BOM involves:

- Identification of candidate CREs as distal (>1 kb from the transcription start site, TSS), non-exonic ATAC-seq peaks trimmed to 500 bp windows

- Motif annotation using databases like GimmeMotifs to reduce redundancy in TF binding motifs

- Vector encoding of each sequence as an unordered count of motif occurrences ("bag")

- Model training using XGBoost gradient-boosting algorithm on 60% of data

- Model validation on held-out test sets (20% of data) and experimental verification through synthetic enhancer construction
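The encoding and data-splitting steps of the workflow above can be sketched in plain Python. This is an illustration under several assumptions. Motifs are matched as exact consensus strings, whereas the workflow annotates motifs from a motif database. The function names are hypothetical. The source does not say how the remaining 20% of the data is used, so it is returned simply as a remainder. Training a gradient-boosted model on the resulting vectors is omitted to keep the example dependency-free.

```python
import random


def count_overlapping(sequence, motif):
    """Count occurrences of motif in sequence, allowing overlaps."""
    last_start = len(sequence) - len(motif)
    return sum(sequence.startswith(motif, i) for i in range(last_start + 1))


def bag_of_motifs(sequence, motifs):
    """Encode a sequence as an unordered vector of motif counts (the 'bag')."""
    return [count_overlapping(sequence, motif) for motif in motifs]


def split_60_20(items, seed=0):
    """Shuffle, then return (train 60%, test 20%, remainder)."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)
    n_train = len(shuffled) * 6 // 10
    n_test = len(shuffled) * 2 // 10
    return (
        shuffled[:n_train],
        shuffled[n_train:n_train + n_test],
        shuffled[n_train + n_test:],
    )


# Illustrative consensus strings standing in for database motifs.
motifs = ["GATA", "CACGTG", "TGACTCA"]
vector = bag_of_motifs("AGATAAGATACACGTG", motifs)
print(dict(zip(motifs, vector)))

train, test, remainder = split_60_20(range(10))
print(len(train), len(test), len(remainder))
```

Because the vector records only counts, motif order and spacing are discarded, which is the minimalist representation the framework's name refers to.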

This approach has successfully predicted cell-type-specific enhancers across mouse, human, zebrafish, and Arabidopsis datasets, with models achieving 93% accuracy in assigning CREs to their cell type of origin in mouse embryonic data [8]. The method remains predictive even with limited training data, maintaining Matthews correlation coefficients above 0.7 with as few as 330 positive examples.

Diagram 1: BOM framework for predicting CRE activity from sequence.

MOA-Seq for Pan-Cistrome Mapping

MNase-defined cistrome occupancy analysis (MOA-seq) identifies putative transcription factor binding sites globally in a single experiment with high resolution (<100 bp), producing footprint regions that accurately reflect TF occupancy [11]. This approach has proven particularly valuable for mapping functional genetic variation affecting TF binding, enabling the identification of binding quantitative trait loci (bQTL) that explain the majority of heritable trait variation.

The MOA-seq protocol for pan-cistrome mapping involves:

- Nuclei isolation from target tissues (e.g., maize leaf blades under well-watered and drought conditions)

- MNase digestion to cleave unprotected DNA, leaving protein-bound regions intact

- Library preparation and sequencing of small DNA fragments

- Haplotype-specific alignment to concatenated hybrid genomes to avoid reference bias

- Footprint calling to identify regions protected from MNase digestion (FDR 5%)

- Allele-specific analysis to identify binding QTLs with significant allelic bias
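The final allele-specific step can be illustrated with an exact binomial test on the read counts covering each allele at a heterozygous site. The source does not state which statistical test is used, so the choice of a binomial test with a null expectation of 0.5, the function names, and the example counts are all assumptions. A real analysis would also correct for multiple testing across sites.

```python
from math import comb


def binomial_two_sided_p(k, n):
    """Exact two-sided p-value for k successes in n trials under p = 0.5.

    For a symmetric null, the two-sided value is twice the smaller tail,
    capped at 1.
    """
    lower_tail = sum(comb(n, i) for i in range(0, k + 1))
    upper_tail = sum(comb(n, i) for i in range(k, n + 1))
    return min(1.0, 2 * min(lower_tail, upper_tail) / 2 ** n)


def allelic_bias(ref_reads, alt_reads):
    """Return (reference allele fraction, two-sided p-value) for one site."""
    total = ref_reads + alt_reads
    return ref_reads / total, binomial_two_sided_p(ref_reads, total)


# Hypothetical footprint read counts at two heterozygous sites.
biased_fraction, biased_p = allelic_bias(30, 10)
balanced_fraction, balanced_p = allelic_bias(20, 20)
print(f"30:10 -> fraction={biased_fraction:.2f}, p={biased_p:.4f}")
print(f"20:20 -> fraction={balanced_fraction:.2f}, p={balanced_p:.4f}")
```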

In maize, this approach identified approximately 100,000 TF-occupied loci, covering about 70% of sequences identified in more than 100 ChIP-seq experiments [11]. When applied to a diverse population of 25 F1 hybrids, MOA-seq revealed that ~20% of motif polymorphisms showed significant allelic bias, closely matching allele-specific TF binding sites detected by gold-standard ChIP-seq.

Diagram 2: MOA-seq workflow for identifying TF footprints and bQTLs.

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential Research Reagents for Regulatory Genomics

| Reagent/Resource | Function | Application Examples |
|---|---|---|
| GimmeMotifs [8] | Motif discovery and analysis | Annotation of TF binding motifs in CREs for BOM models |
| XGBoost [8] | Gradient-boosted tree algorithm | Training predictive models of CRE activity from motif counts |
| MOA-seq reagents [11] | MNase enzyme and buffers | Genome-wide mapping of TF footprints and binding sites |
| Chromatin state maps [9] | Reference epigenomes | Annotation of functional chromatin states across cell types |
| SHAP values [8] | Model interpretability framework | Quantifying contribution of individual motifs to predictions |
| Pan-genome assemblies [11] | Multiple reference genomes | Haplotype-specific analysis of regulatory variation |
| Synthetic enhancer constructs [8] | Engineered DNA sequences | Experimental validation of computational predictions |

Quantitative Performance Benchmarks

Predictive Modeling Performance

The performance of different computational approaches for predicting regulatory activity from DNA sequence has been systematically benchmarked across diverse datasets. The table below summarizes the performance of prominent methods in classifying cell-type-specific cis-regulatory elements:

Table 4: Performance Comparison of Sequence-Based Classification Methods for CREs

| Method | Principle | auPR | MCC | Key Advantages |
|---|---|---|---|---|
| BOM (XGBoost) [8] | Bag-of-motifs with gradient boosting | 0.99 | 0.93 | High interpretability; cross-species applicability |
| LS-GKM [8] | Gapped k-mer support vector machine | 0.84 | 0.52 | Discovery of novel sequence patterns |
| DNABERT [8] | Transformer language model | 0.64 | 0.30 | Captures long-range dependencies |
| Enformer [8] | Hybrid convolutional-transformer | 0.90 | 0.70 | Models long-range interactions (up to 196 kb) |
| Simple CNN [8] | Convolutional neural network | 0.10-0.50 (recall) | N/R | Standard deep learning approach |

The BOM framework consistently outperformed more complex models, achieving 93% accuracy in assigning CREs to their cell type of origin in mouse embryonic data, with area under the ROC curve of 0.98 and area under the precision-recall curve of 0.98 [8]. The method maintained strong performance when trained on one developmental time point (E8.25) and tested on another (E8.5), demonstrating generalization across closely related biological contexts.
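Because Table 4 reports the Matthews correlation coefficient (MCC) alongside accuracy-style metrics, it helps to see how the two can diverge. The sketch below computes the standard binary MCC from a confusion matrix. The counts are hypothetical, and the benchmark itself may use a multi-class variant.

```python
from math import sqrt


def matthews_corrcoef(tp, fp, tn, fn):
    """Binary Matthews correlation coefficient from confusion-matrix counts.

    Returns 0.0 when any marginal total is zero, a common convention for
    the otherwise undefined case.
    """
    numerator = tp * tn - fp * fn
    denominator = sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    return numerator / denominator if denominator else 0.0


def accuracy(tp, fp, tn, fn):
    """Fraction of all predictions that are correct."""
    return (tp + tn) / (tp + fp + tn + fn)


# Hypothetical imbalanced test set: 100 positives, 900 negatives.
counts = dict(tp=90, fp=10, tn=890, fn=10)
print(f"accuracy={accuracy(**counts):.3f}, MCC={matthews_corrcoef(**counts):.3f}")

# A classifier that calls everything negative is accurate but uninformative.
trivial = dict(tp=0, fp=0, tn=900, fn=100)
print(f"accuracy={accuracy(**trivial):.3f}, MCC={matthews_corrcoef(**trivial):.3f}")
```

The second case shows why MCC is informative for imbalanced classification: predicting the majority class everywhere still scores 90% accuracy but an MCC of zero.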

Functional Validation of Predictions

Critical validation of computational predictions involves experimental testing of synthetic regulatory elements. The BOM approach validated its predictions by constructing synthetic enhancers from the most predictive motifs, demonstrating that these motif sets could indeed drive cell-type-specific expression patterns [8]. This validation strategy provides direct causal evidence for the regulatory potential of identified motif combinations rather than relying solely on correlative evidence from genomic observations.

Similarly, MOA-seq predictions were validated through comparison with allele-specific ChIP-seq data for ZmBZR1, showing that more than 70% of allele-specific MOA polymorphisms overlapping with ZmBZR1 binding sites showed allelic bias in the same direction in both assays [11]. The high concordance between these independent methods confirms the accuracy of MOA-seq in identifying functional TF binding sites and their genetic variants.

Future Directions and Clinical Applications

The integration of transcription factor binding information, cis-regulatory maps, and chromatin state data is progressively enhancing our ability to interpret non-coding genetic variation and its impact on disease risk. As GRN inference methods improve, they hold increasing promise for identifying master regulator TFs that could serve as therapeutic targets, particularly in cancer and developmental disorders [15] [9]. The ability to predict how sequence variation influences TF binding and chromatin states will be crucial for advancing personalized medicine approaches that account for individual regulatory differences.

Emerging single-cell technologies are poised to revolutionize our understanding of GRNs by revealing cell-to-cell heterogeneity in regulatory states that is masked in bulk measurements [10]. Combining single-cell multi-omics with advanced computational modeling will enable the reconstruction of context-specific GRNs across different cell types, developmental stages, and disease states, providing unprecedented resolution of regulatory dynamics.

As these technologies mature, the clinical implementation of GRN-based diagnostics and therapeutics will require standardized frameworks for network validation and assessment [15]. Developing such standards represents a critical next step for translating basic research on transcriptional regulatory networks into clinically actionable insights for drug development and therapeutic intervention.

Gene regulatory networks (GRNs) are complex systems comprising molecular regulators that interact to govern gene expression levels, ultimately determining cellular function and identity [7]. The architecture of these networks—the specific pattern of connections between genes and their regulators—profoundly influences their dynamic behavior, robustness, and functional capabilities. Within systems biology, certain topological patterns recur across diverse biological systems. This technical guide examines three fundamental architectural themes observed in GRNs: scale-free, small-world, and hierarchical topologies. Each structure confers distinct advantages: scale-free networks enhance robustness against random failures, small-world networks enable efficient information propagation, and hierarchical organization facilitates modular control of complex processes. Understanding these blueprints is essential for researchers and drug development professionals aiming to decipher the organizational principles of cellular regulation and identify potential therapeutic intervention points.

Scale-Free Networks

Definition and Properties

Scale-free networks are a class of graphs characterized by a power-law degree distribution, meaning the probability ( P(k) ) that a node has degree ( k ) is proportional to ( k^{-\alpha} ), where ( \alpha ) is the scaling exponent [16]. This distribution reveals a fundamental asymmetry: while most nodes possess few connections, a few critical nodes, known as hubs, maintain a very high number of links. The term "scale-free" arises from the fact that the power-law distribution lacks a characteristic peak or scale, appearing similar at all levels of magnification. This topology is often generated through mechanisms like preferential attachment, where new nodes entering the network preferentially connect to already well-connected nodes [17].
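To make the preferential-attachment mechanism concrete, the following is a minimal sketch of a growth process in which each new node links to existing nodes with probability proportional to their degree. The function name `preferential_attachment`, the network size, and the parameter `m` are illustrative assumptions, not taken from the cited work; the point is only that a few hubs emerge while most nodes stay poorly connected.

```python
import random
from collections import Counter

def preferential_attachment(n_nodes, m=2, seed=0):
    """Grow an undirected graph in which each new node links to m existing
    nodes chosen with probability proportional to their current degree."""
    rng = random.Random(seed)
    # Start from a small fully connected seed graph of m + 1 nodes.
    edges = [(i, j) for i in range(m + 1) for j in range(i)]
    # Every node appears in this list once per edge endpoint, so sampling
    # uniformly from it is the same as sampling proportionally to degree.
    endpoints = [v for e in edges for v in e]
    for new in range(m + 1, n_nodes):
        targets = set()
        while len(targets) < m:
            targets.add(rng.choice(endpoints))
        for t in targets:
            edges.append((new, t))
            endpoints.extend((new, t))
    return edges

edges = preferential_attachment(2000)
degree = Counter(v for e in edges for v in e)
degrees = sorted(degree.values())
median_degree = degrees[len(degrees) // 2]
max_degree = degrees[-1]
print("median degree:", median_degree, "max degree:", max_degree)
```

In a run like this the typical node keeps roughly the `m` links it was born with, while the earliest nodes accumulate far more, which is the hub asymmetry described above. Whether a real GRN follows a power law still has to be tested statistically rather than assumed.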

Evidence in Biological Systems

The scale-free nature of biological networks, including GRNs, has been widely studied. GRNs are generally thought to consist of a few highly connected nodes (hubs) and many poorly connected nodes nested within a hierarchical regulatory regime, approximating a scale-free topology [7]. This structure is believed to evolve through the preferential attachment of duplicated genes to more highly connected genes [7]. Transcription factor networks derived from genome-wide identification of DNA binding sites also show early evidence of scale-free behavior [18]. However, a severe test of nearly 1,000 real-world networks across domains found that strongly scale-free structure is empirically rare, with log-normal distributions often fitting the data as well as or better than power laws [16]. Specifically, social (including some biological) networks were found to be at best weakly scale-free, while a handful of technological and biological networks appeared strongly scale-free [16].

Functional Implications

The scale-free architecture of GRNs carries significant functional consequences. Robustness against random failures is a key characteristic; random removal of nodes (e.g., due to mutation) rarely causes network-wide disruption because low-degree nodes are numerically dominant [17] [7]. However, this creates a corresponding vulnerability to targeted attacks on hubs, as disruption of these highly connected nodes can fragment the network and cause systemic failure [17]. Hubs in GRNs often represent master transcription factors or critical signaling proteins whose dysfunction can lead to severe pathological states, including cancer [7] [19]. Furthermore, hubs shorten the average path length between nodes and can act as key sources of trans-acting genetic variance, shaping the genetic architecture of gene expression and complex traits [19].

Table 1: Key Characteristics of Scale-Free Networks

| Feature | Description | Biological Example |
|---|---|---|
| Degree Distribution | Power-law distribution ( P(k) \sim k^{-\alpha} ) | Connectivity of transcription factors in a GRN [7] [18] |
| Hubs | Few nodes with exceptionally high connectivity | Master regulator transcription factors (e.g., MYOD, p53) [7] |
| Robustness | Resilient to random node removal | Genetic redundancy; viability despite random mutations [17] [7] |
| Vulnerability | Sensitive to targeted hub disruption | Disease states arising from mutation of key regulatory genes [17] [7] |
| Evolution | Grows via preferential attachment | Gene duplication and divergence events [7] |

Small-World Networks

Definition and Properties

Small-world networks represent a topological class that combines high local clustering with short global path lengths [20]. Formally, a network exhibits the small-world property if the typical distance ( L ) between two randomly chosen nodes grows proportionally to the logarithm of the number of nodes ( N ) in the network (( L \propto \log N )), while the global clustering coefficient remains high [20]. The clustering coefficient quantifies the probability that two neighbors of a given node are also connected to each other, indicating the presence of dense local neighborhoods. This structure stands in contrast to both regular lattices (which have high clustering but long path lengths) and purely random networks (which have short path lengths but low clustering) [20].
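The contrast between a regular lattice and a small-world graph can be checked numerically. The sketch below builds a ring lattice, adds a handful of random long-range shortcuts, and compares average clustering and average shortest path length. All function names, the graph size, and the number of shortcuts are illustrative assumptions; shortcuts are added rather than rewired so that the graph stays connected.

```python
import random
from collections import deque

def ring_lattice(n, k):
    """Undirected ring in which each node links to its k nearest
    neighbours on each side (degree 2k)."""
    adj = {i: set() for i in range(n)}
    for i in range(n):
        for d in range(1, k + 1):
            j = (i + d) % n
            adj[i].add(j)
            adj[j].add(i)
    return adj

def add_shortcuts(adj, n_shortcuts, seed=0):
    """Add random long-range edges between previously unlinked nodes."""
    rng = random.Random(seed)
    nodes = list(adj)
    added = 0
    while added < n_shortcuts:
        a, b = rng.sample(nodes, 2)
        if b not in adj[a]:
            adj[a].add(b)
            adj[b].add(a)
            added += 1
    return adj

def average_clustering(adj):
    """Mean over nodes of the fraction of neighbour pairs that are linked."""
    total = 0.0
    for nbrs in adj.values():
        nbrs = list(nbrs)
        deg = len(nbrs)
        if deg < 2:
            continue
        links = sum(1 for i in range(deg) for j in range(i)
                    if nbrs[j] in adj[nbrs[i]])
        total += links / (deg * (deg - 1) / 2)
    return total / len(adj)

def average_path_length(adj):
    """Mean shortest-path length over reachable pairs, by breadth-first search."""
    total, pairs = 0, 0
    for source in adj:
        dist = {source: 0}
        queue = deque([source])
        while queue:
            u = queue.popleft()
            for w in adj[u]:
                if w not in dist:
                    dist[w] = dist[u] + 1
                    queue.append(w)
        total += sum(dist.values())
        pairs += len(dist) - 1
    return total / pairs

lattice = ring_lattice(200, 3)
small_world = add_shortcuts(ring_lattice(200, 3), n_shortcuts=20)

c_lattice, l_lattice = average_clustering(lattice), average_path_length(lattice)
c_small, l_small = average_clustering(small_world), average_path_length(small_world)
print(f"lattice:     C={c_lattice:.2f}  L={l_lattice:.2f}")
print(f"small-world: C={c_small:.2f}  L={l_small:.2f}")
```

A few shortcuts leave clustering close to the lattice value while shortening typical paths, which is the signature the definition describes.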

Evidence in Biological Systems

Small-world properties are prominently observed in gene regulatory networks. Research indicates that the global properties of inferred GRNs show not only scale-free distributions but also exhibit small-world graph properties [18]. This architecture emerges naturally from the three-dimensional organization of the human genome, where spatial correlations lead to complex small-world regulatory networks in which the transcriptional activity of each genomic region subtly affects almost all others [21]. A key feature of small-world GRNs is efficient information transfer across the network, enabled by the presence of both local, densely connected clusters and long-range connections that serve as shortcuts, drastically reducing the average number of steps between any two nodes [17] [21].

Functional Implications

The small-world topology of GRNs enables several critical functional capabilities. Rapid signal propagation allows cellular responses to environmental changes or developmental cues to occur quickly, as regulatory signals need to traverse only a few steps to affect distant parts of the network [20]. The high clustering supports functional modularity, where groups of genes involved in related processes (e.g., a metabolic pathway or a developmental program) are tightly interconnected, facilitating coordinated expression [17] [21]. This combination of local specialization and global efficiency makes small-world networks particularly well-suited for balancing the competing demands of modular function and integrated system-wide responses in complex biological systems [4] [21].

Diagram 1: Small-World Network Topology showing high local clustering with long-range shortcuts enabling short path lengths.

Hierarchical and Modular Networks

Definition and Properties

Hierarchical networks exhibit a tree-like structure with clear levels of organization, where top-level controllers influence broader functional modules [17]. This organization often incorporates modularity, where the network is composed of densely connected subgroups (modules) with sparser connections between them [17]. In GRNs, hierarchical organization enables top-down control and functional specialization, while modular organization provides functional independence and robustness, as perturbations within one module are less likely to propagate to others [17]. Many biological networks exhibit a combination of both hierarchical and modular features, creating a sophisticated control architecture that balances centralized regulation with distributed processing [17] [4].

Evidence in Biological Systems

Hierarchical organization is a well-replicated feature of gene regulatory networks. GRNs are generally thought to be structured with a hierarchical regulatory regime [7]. This architecture is particularly evident during development, where master regulators control cell type-specific gene expression programs, and temporal changes in network structure drive developmental transitions [17] [19]. For example, core developmental GRNs are evolutionarily conserved across species, with hierarchical structures guiding embryonic development and cell fate decisions [17]. The Hippo signaling pathway in Drosophila provides a specific illustration of a conserved regulatory module that can be used for multiple functions depending on context, demonstrating how changing network topology within a hierarchical framework can alter the final network output [7].

Functional Implications

The hierarchical and modular structure of GRNs provides several evolutionary and functional advantages. Evolutionary adaptability is enhanced because modules can be rewired, duplicated, or repurposed without disrupting the entire network [7]. This facilitates the isolation of functional domains, allowing specific processes like cell cycle control or stress response to operate semi-autonomously [17] [4]. From a regulatory perspective, hierarchy enables coordinated expression of gene batteries, where a single transcription factor can activate multiple genes involved in a common function [19]. This organization also supports robustness to perturbations, as damage or mutations typically affect only localized modules rather than causing system-wide failure [17] [4].

Table 2: Comparative Analysis of Network Topologies in GRNs

| Topology | Structural Signature | Functional Advantage | Experimental Detection |
|---|---|---|---|
| Scale-Free | Power-law degree distribution; presence of hubs | Robustness to random failure; efficient routing | Degree distribution analysis; hub identification [16] [7] |
| Small-World | High clustering & short path lengths | Rapid signal propagation; coordinated activity | Clustering coefficient & average path length calculation [18] [20] |
| Hierarchical | Tree-like organization; nested modules | Functional specialization; evolvability | Community detection algorithms; motif analysis [17] [4] |
| Hybrid | Combination of above features | Balances multiple functional constraints | Integrated topological analysis [17] [7] |

Experimental and Computational Methodologies

Network Inference from Expression Data

Inferring gene regulatory networks from high-throughput data requires sophisticated computational approaches. A common foundation involves linear models of gene expression on directed graphs [4]. The differential form uses coupled equations where the expression level ( a_i(t) ) of gene ( i ) at time ( t ) depends on the influence of other genes: ( \frac{da_i}{dt} = \sum_{j=1}^m W_{ij}a_j(t) + b_i(t) + \xi_i(t) ), where ( W_{ij} ) represents the influence of gene ( j ) on gene ( i ), ( b_i(t) ) is an external forcing function, and ( \xi_i(t) ) is a noise term [18]. Alternatively, a finite difference Markovian model can be employed: ( a_i(t+1) = \sum_{j=1}^m \lambda_{ij}a_j(t) ), where transition coefficients ( \lambda_{ij} ) represent the influence of gene ( j ) on gene ( i ) [18]. These models are typically solved using singular value decomposition (SVD) to handle the underdetermined nature of the problem (where measurements are fewer than genes) [18].
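As a worked illustration of how SVD handles the underdetermined case, consider the finite difference model written in matrix form. The stacking of time points into matrices ( X ) and ( Y ) and the choice of the minimum-norm solution are assumptions made here for clarity; the cited work may select among the admissible solutions differently. With ( n ) time points and ( m ) genes, collect consecutive expression vectors as columns:

$$ X = \left[\, a(t_1), \ldots, a(t_{n-1}) \,\right], \quad Y = \left[\, a(t_2), \ldots, a(t_n) \,\right], \quad Y = \Lambda X $$

When ( n - 1 < m ), infinitely many matrices ( \Lambda ) satisfy this relation. Writing the decomposition of ( X ) and inverting only its nonzero singular values gives the solution of smallest norm:

$$ X = U \Sigma V^{T}, \quad \hat{\Lambda} = Y V \Sigma^{+} U^{T} $$

where ( \Sigma^{+} ) is obtained from ( \Sigma ) by transposing it and replacing each nonzero singular value with its reciprocal. Any other solution differs from ( \hat{\Lambda} ) only in directions that the measured expression vectors do not probe, so additional constraints such as sparsity are needed to choose among them.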

Perturbation-Based Inference

Systematic perturbation strategies provide powerful approaches for GRN inference. Modular Response Analysis (MRA) infers network connections by analyzing experimental data from various perturbations [22]. The local response matrix ( r_{ij} ) quantifies the direct regulation from node ( j ) to node ( i ) and is defined as ( r_{ij} = \frac{\bar{x}_j}{\bar{x}_i} \cdot \frac{\partial x_i}{\partial x_j} ), where ( \bar{x} ) represents steady-state concentrations [22]. Recent advances involve calculating confidence intervals (CIs) of local response matrices under multiple perturbations to identify network sparsity and eliminate the impact of perturbation degrees [22]. For CRISPR-based perturbation approaches like Perturb-seq, network structure is inferred by measuring how the knockout of specific genes affects the expression of other genes across thousands of cells [4].
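A small numerical sketch can show how local responses are recovered from measured global responses. It relies on the relation ( r = -[\mathrm{diag}(R^{-1})]^{-1} R^{-1} ) from the standard MRA formulation, which is not stated in the text above and is used here as an assumption; column ( j ) of the global response matrix ( R ) is taken to be the steady-state response to a perturbation acting directly on node ( j ) only. The two-node network and its coefficients are hypothetical.

```python
def inv2(m):
    """Inverse of a 2x2 matrix given as nested lists."""
    (a, b), (c, d) = m
    det = a * d - b * c
    return [[d / det, -b / det], [-c / det, a / det]]

def global_response(r, strengths):
    """Simulated measurement: R = -r^-1 * diag(strengths), where
    strengths[j] is the (unknown) size of the perturbation on node j."""
    rinv = inv2(r)
    return [[-rinv[i][j] * strengths[j] for j in range(2)] for i in range(2)]

def local_from_global(R):
    """Assumed MRA relation: r = -[diag(R^-1)]^-1 * R^-1."""
    Rinv = inv2(R)
    return [[-Rinv[i][j] / Rinv[i][i] for j in range(2)] for i in range(2)]

# Hypothetical network: node 2 activates node 1 (r12 = 0.8) and
# node 1 represses node 2 (r21 = -0.5); the diagonal is -1 by convention.
r_true = [[-1.0, 0.8], [-0.5, -1.0]]

r_weak = local_from_global(global_response(r_true, [0.1, 0.1]))
r_strong = local_from_global(global_response(r_true, [0.5, 2.0]))
print(r_weak)
print(r_strong)
```

Both perturbation settings return the same local response matrix, which illustrates why the inferred connections do not depend on the perturbation degree in the noise-free case. With real, noisy measurements the estimates scatter, which is where confidence intervals become useful.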

Diagram 2: Experimental Workflow for GRN Inference and Topological Analysis

Topological Analysis Techniques

Once inferred, GRNs are analyzed using graph theory metrics to identify their architectural features. The degree distribution is analyzed to detect scale-free properties by testing whether ( P(k) ) follows a power law [16]. The clustering coefficient measures the tendency of nodes to form clusters, calculated for each node as the fraction of pairs of its neighbors that are themselves connected [20]. The average shortest path length determines the typical number of steps between any two nodes, with small-world networks exhibiting short average paths [20]. Motif analysis identifies recurring, statistically significant subgraphs (e.g., feed-forward loops) that may perform specific information-processing functions [7]. Community detection algorithms uncover modular organization by partitioning the network into densely connected subgroups [17] [4].
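As an example of the motif-analysis step, the sketch below enumerates feed-forward loops in a directed edge list by brute force. The function name and the toy network are illustrative assumptions. A full motif analysis would also compare the count against randomized networks to judge statistical significance, which is omitted here.

```python
from itertools import permutations

def find_feed_forward_loops(edges):
    """Return all (X, Y, Z) triples with edges X->Y, Y->Z and X->Z."""
    edge_set = set(edges)
    nodes = sorted({v for e in edges for v in e})
    return [
        (x, y, z)
        for x, y, z in permutations(nodes, 3)
        if (x, y) in edge_set and (y, z) in edge_set and (x, z) in edge_set
    ]

# Toy directed network: X regulates Y and Z, Y regulates Z, Z regulates W.
toy_edges = [("X", "Y"), ("Y", "Z"), ("X", "Z"), ("Z", "W")]
ffls = find_feed_forward_loops(toy_edges)
print(ffls)
```

Brute-force enumeration scales with the cube of the number of nodes, so it suits small networks or illustrative checks; larger networks call for neighbor-based enumeration.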

Table 3: Essential Research Reagents and Computational Tools for GRN Analysis

| Reagent/Tool | Type | Primary Function | Application Context |
|---|---|---|---|
| CRISPR-Cas9 | Molecular Tool | Precise gene knockout/editing | Generating perturbations for network inference [17] [4] |
| ChIP-seq | Experimental Assay | Genome-wide TF binding site identification | Mapping direct regulatory interactions [17] [7] |
| scRNA-seq | Profiling Technology | Single-cell resolution gene expression | Revealing cell-to-cell variability in GRNs [17] [4] |
| Cytoscape | Software Platform | Network visualization & analysis | Integrating and visualizing inferred GRNs [17] |
| GENIE3 | Algorithm | GRN inference from expression data | Predicting regulatory interactions using tree-based methods [15] |
| ARACNe | Algorithm | Mutual information-based inference | Reconstructing regulatory networks from data [15] |
| PIDC | Algorithm | Information-theoretic inference | Detecting regulatory relationships without directionality [15] |

Gene regulatory networks embody sophisticated architectural designs that balance multiple functional constraints. The scale-free, small-world, and hierarchical topologies discussed are not mutually exclusive; rather, they represent complementary perspectives on the organization of biological systems. Real-world GRNs typically incorporate features of all these architectures, creating robust, efficient, and adaptable systems. Scale-free organization provides robustness through hubs, small-world structure enables rapid information processing, and hierarchical modularity facilitates evolutionary adaptability and functional specialization. For researchers and drug development professionals, understanding these architectural principles provides a framework for interpreting high-throughput data, predicting system behavior, and identifying critical control points for therapeutic intervention. As network biology continues to mature, integrating these topological insights with dynamical models and multi-omics data will be essential for unraveling the complexity of gene regulation in health and disease.

Gene regulatory networks (GRNs) achieve sophisticated control over cellular functions through recurring patterns of interactions known as network motifs [23]. These motifs represent fundamental computational units that perform core regulatory functions across diverse biological systems. The complex molecular networks within cells execute precise regulatory functions not as arbitrary connections but through evolutionarily conserved designs that represent optimal solutions to common regulatory challenges [23]. This guide examines three fundamental motifs—autoregulation, feedback loops, and feed-forward loops—that serve as the foundational building blocks for complex biological behaviors, providing researchers with a framework for understanding cellular information processing, disease mechanisms, and therapeutic targeting strategies.

The principles underlying these network architectures reflect convergent evolution toward designs that effectively harness biochemical components to solve specific functional needs under physical constraints [23]. Just as chairs across cultures share abstract structural commonalities dictated by the physics of supporting a seated human, molecular regulatory networks display common organizational patterns dictated by the constraints of biochemical implementation [23]. For researchers investigating cellular processes and drug development professionals targeting disease pathways, understanding these core design principles provides critical insight into how regulatory networks control cellular decision-making and physiological functions.

Core Control Mechanisms: Architecture and Function

Autoregulatory Circuits

Autoregulation represents the simplest network motif, where a gene product regulates its own expression [24]. This self-regulation can occur through direct mechanisms, such as a transcription factor binding to its own promoter, or through indirect pathways with intervening molecular steps [23].

Positive Autoregulation: In this configuration, a gene product activates its own expression, creating a self-reinforcing loop. This architecture typically generates bistable switch-like behavior and provides cellular memory [23] [24]. Once activated, positive autoregulation can maintain the activated state even after the initial stimulus diminishes, making it crucial for developmental processes and cell fate decisions where transient signals need to be converted into stable cellular states.

Negative Autoregulation: Here, a gene product represses its own expression. This design provides robustness against perturbations and noise in the system [23]. It also accelerates the system's response time, allowing it to reach steady state more rapidly than equivalent systems without self-repression [23]. The rapid equilibration makes negative autoregulation particularly valuable in stress response systems where precise homeostasis is required.

Table 1: Functional Properties of Autoregulatory Circuits

| Autoregulation Type | Key Functions | Dynamic Behavior | Biological Examples |
|---|---|---|---|
| Positive | Cellular memory, bistability | Switch-like transitions | Cell fate determination, commitment processes |
| Negative | Noise suppression, response acceleration | Rapid stabilization, homeostasis | Stress response systems, precision control |
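The response acceleration attributed to negative autoregulation can be illustrated with a minimal simulation. The sketch compares a constitutively produced gene with a self-repressed gene tuned to reach the same steady state, and measures the time each takes to reach half of that level. The Hill-type repression term and all parameter values are assumptions chosen for illustration.

```python
ALPHA = 1.0   # degradation/dilution rate
X_SS = 1.0    # common steady-state level for both designs
K = 0.1       # repression threshold (assumed)
N = 2         # Hill coefficient (assumed)

def simple_production(x):
    """Constant production rate giving steady state X_SS."""
    return ALPHA * X_SS

# Maximal rate for the self-repressed gene, chosen so that production
# balances degradation exactly at X_SS.
BETA_NAR = ALPHA * X_SS * (1 + (X_SS / K) ** N)

def autorepressed_production(x):
    """Production rate that falls as the gene product accumulates."""
    return BETA_NAR / (1 + (x / K) ** N)

def time_to_half(production, dt=1e-4, t_max=10.0):
    """Euler-integrate dx/dt = production(x) - ALPHA * x from x = 0 and
    return the time at which x first reaches half of X_SS."""
    x, t = 0.0, 0.0
    while x < 0.5 * X_SS and t < t_max:
        x += dt * (production(x) - ALPHA * x)
        t += dt
    return t

t_simple = time_to_half(simple_production)
t_nar = time_to_half(autorepressed_production)
print(f"simple regulation:       {t_simple:.3f}")
print(f"negative autoregulation: {t_nar:.3f}")
```

The self-repressed gene starts from a strong promoter and throttles itself as product accumulates, so it reaches the half-maximal level much sooner than the unregulated gene with the same final level.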

Feedback Loops

Feedback loops involve mutual regulation between two or more genes in a circular topology [24]. These motifs represent fundamental units that enable sophisticated control behaviors essential for complex cellular functions.

Positive Feedback Loops: In these interconnected systems, each element activates the others, creating a cooperative, self-reinforcing circuit. Positive feedback amplifies signals and creates bistable systems that can toggle between distinct ON and OFF states [23] [24]. This architecture is frequently observed in systems requiring irreversible decisions, such as cell cycle commitment, developmental patterning, and cellular differentiation. The bistability ensures clear phenotypic distinctions rather than graded intermediate states.

Negative Feedback Loops: These contain an odd number of inhibitory interactions, so that each element ultimately suppresses its own activity around the loop (for example, A activates B while B represses A). Negative feedback provides stability and homeostasis, resisting deviations from set points [23] [24]. This design is ubiquitous in systems requiring precise regulation, such as circadian rhythms, metabolic pathways, and maintenance of intracellular conditions. The continuous correction mechanism enables robustness against internal and external perturbations.

Table 2: Comparative Analysis of Feedback Loop Architectures

| Feedback Type | Network Structure | Functional Role | Therapeutic Implications |
|---|---|---|---|
| Positive Feedback | Mutual activation | Signal amplification, bistability, decision-making | Targeting pathological state stabilization (e.g., cancer cell states) |
| Negative Feedback | Activation coupled with repression (net inhibitory loop) | Homeostasis, stability, robustness | Addressing system instability, oscillation control |
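Bistability from positive feedback can be seen in a one-variable sketch. The model below collapses a self-reinforcing circuit into a single species that activates its own production cooperatively; this reduction, the Hill form, and the parameter values are assumptions for illustration. Two nearby starting levels on either side of the threshold settle into different stable states.

```python
def settle(x0, beta=2.0, K=1.0, n=4, alpha=1.0, dt=0.01, t_end=50.0):
    """Euler-integrate dx/dt = beta * x^n / (K^n + x^n) - alpha * x
    from the initial level x0 and return the final level."""
    x = x0
    for _ in range(int(t_end / dt)):
        x += dt * (beta * x**n / (K**n + x**n) - alpha * x)
    return x

low = settle(0.9)    # starts just below the unstable threshold at x = 1
high = settle(1.1)   # starts just above it
print(f"start 0.9 -> {low:.3f}")
print(f"start 1.1 -> {high:.3f}")
```

With these parameters the system has an OFF state at zero and an ON state near 1.84, separated by an unstable point at 1. A transient stimulus that pushes the level past that point is enough to lock in the ON state, which is the memory property described above.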

Feedforward Loops (FFLs)

Feedforward loops represent a three-node architecture where an upstream regulator controls both a target gene and an intermediate regulator, which also controls the target [23] [24]. This creates parallel regulatory pathways that converge on the output node. FFLs are among the most highly enriched motifs in transcriptional networks across organisms, from bacteria to humans [23].

Coherent FFLs: In these motifs, the direct and indirect pathways have the same net sign of action on the target gene. A common subtype (coherent type 1) functions as a persistence detector, only activating the output when the input signal persists beyond a specific duration [23]. This design filters against transient, spurious signals while responding reliably to sustained inputs, preventing unnecessary cellular responses to noise.

Incoherent FFLs (IFFLs): These architectures feature opposing effects through the two pathways, with one branch activating and the other repressing the target. Incoherent FFLs can generate pulse-like dynamics, accelerate response times, enable fold-change detection, and achieve perfect adaptation (return to baseline after response) [23] [25]. These are particularly valuable in systems requiring transient responses to persistent signals or sensitivity to relative rather than absolute signal changes.

Table 3: Feedforward Loop Classification and Functional Properties

| FFL Type | Sign Pattern | Key Functions | Dynamic Response |
|---|---|---|---|
| Coherent Type 1 | X→Z +, X→Y→Z + | Persistence detection, signal filtering | Step response after delay |
| Incoherent Type 1 | X→Z +, X→Y→Z - | Pulse generation, fold-change detection, perfect adaptation | Transient pulse, adaptation |
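The persistence-detection behavior of the coherent type 1 loop can be sketched with a simple threshold model. The AND logic at the Z promoter, the step-like activation thresholds, and all parameter values are assumptions for illustration. Z is produced only while X is active and Y has accumulated past its threshold, so a brief input pulse produces no output.

```python
def c1_ffl_peak(pulse_duration, k_yz=0.5, alpha=1.0, beta=1.0,
                dt=0.001, t_end=10.0):
    """Simulate a coherent type 1 FFL with AND logic and return the
    peak level of the output Z for an input pulse of the given length."""
    y = z = z_peak = 0.0
    for step in range(int(t_end / dt)):
        t = step * dt
        x_on = t < pulse_duration        # input X is active during the pulse
        y_on = y > k_yz                  # Y must exceed its threshold to act on Z
        y += dt * (beta * x_on - alpha * y)
        z += dt * (beta * (x_on and y_on) - alpha * z)
        z_peak = max(z_peak, z)
    return z_peak

brief = c1_ffl_peak(0.3)
sustained = c1_ffl_peak(5.0)
print(f"brief pulse peak Z:     {brief:.3f}")
print(f"sustained pulse peak Z: {sustained:.3f}")
```

The delay before Z switches on is the time Y needs to cross its threshold, so in this sketch the minimum input duration that triggers a response is set by the Y accumulation rate and the threshold `k_yz`.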

Experimental and Computational Methodologies

Mathematical Modeling of Network Motifs

Quantitative analysis of network motifs requires mathematical formalisms that capture the dynamics of regulatory interactions. Ordinary differential equations (ODEs) provide a powerful framework for modeling these systems. For an incoherent feedforward loop (I1-FFL) capable of perfect adaptation, the dynamics can be modeled using the following scaled equations [25]:

$$ \tau_y\frac{dy}{dt} = f_y\left(\frac{x(t-\theta_y)}{K_1}\right) - y $$

$$ \tau_z\frac{dz}{dt} = f_z\left(\frac{x(t-\theta_z)}{K_1}, \frac{y(t-\theta_z)}{K_2}\right) - z $$

where ( x ), ( y ), and ( z ) represent the concentrations of the input, intermediate, and output species, respectively; ( \tau_y ) and ( \tau_z ) are their degradation time constants; ( K_1 ) and ( K_2 ) are dissociation constants; ( \theta_y ) and ( \theta_z ) represent time delays; and the regulatory functions are defined as [25]:

$$ f_y(a) = \frac{a}{1+a}, \quad f_z(a,b) = \frac{a}{1+a+b+ab/C} $$

The condition for perfect adaptation (return to exact pre-stimulus baseline) can be derived by equating the steady-state output before and after the stimulus, yielding a specific parameter relationship that must be satisfied [25]. Engineering principles demonstrate that combining IFFLs with negative feedback increases the robustness of perfect adaptation and improves dynamical characteristics [25].
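The pulse-generating behavior of this model can be explored with a short simulation of the scaled equations. The sketch sets both time delays to zero and uses illustrative values for the time constants, dissociation constants, and C; these choices are assumptions, and they are not tuned to satisfy the perfect-adaptation condition, so the output settles near but not exactly at its pre-stimulus level.

```python
def f_y(a):
    return a / (1 + a)

def f_z(a, b, C):
    return a / (1 + a + b + a * b / C)

def simulate_i1_ffl(x_before=0.1, x_after=10.0, K1=1.0, K2=0.1, C=1.0,
                    tau_y=1.0, tau_z=0.1, dt=0.001, t_end=20.0):
    """Step the input from x_before to x_after at t = 0 (delays set to
    zero) and return the pre-stimulus, peak, and final output levels."""
    # Start from the steady state for the pre-stimulus input.
    y = f_y(x_before / K1)
    z = f_z(x_before / K1, y / K2, C)
    z_before = z_peak = z
    for _ in range(int(t_end / dt)):
        dy = (f_y(x_after / K1) - y) / tau_y
        dz = (f_z(x_after / K1, y / K2, C) - z) / tau_z
        y += dt * dy
        z += dt * dz
        z_peak = max(z_peak, z)
    return z_before, z_peak, z

z_before, z_peak, z_final = simulate_i1_ffl()
print(f"before: {z_before:.3f}  peak: {z_peak:.3f}  final: {z_final:.3f}")
```

Because the output responds faster than the intermediate repressor accumulates, the output overshoots and then relaxes, giving a transient pulse in response to a sustained step input.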

Computational GRN Inference Methods

Reconstructing gene regulatory networks from experimental data employs diverse computational approaches, each with distinct strengths and limitations [26]:

Correlation-based approaches operate on "guilt-by-association," identifying co-expressed genes using measures like Pearson's correlation (linear relationships) or Spearman's correlation (monotonic relationships, including nonlinear ones). While computationally efficient, these methods struggle to distinguish direct from indirect regulation.

Regression models treat gene expression as a response variable predicted by potential regulators. Regularization techniques like LASSO prevent overfitting in high-dimensional data. These methods provide interpretable parameters but can be misled by correlated predictors.

Probabilistic models use graphical models to represent dependencies between variables, estimating the most probable regulatory relationships that explain observed data.

Dynamical systems approaches model the time evolution of gene expression, capturing complex behaviors but requiring temporal data and substantial computational resources.

Deep learning models use neural networks to learn complex regulatory relationships from large datasets, offering flexibility but requiring substantial data and computational resources while suffering from interpretability challenges [26].

Table 4: Methodological Approaches for GRN Inference

| Method Category | Key Principles | Advantages | Limitations |
|---|---|---|---|
| Correlation-based | Co-expression analysis | Computational efficiency, simplicity | Cannot distinguish direct/indirect regulation |
| Regression models | Predictor-response relationships | Interpretable parameters, handles multiple regulators | Sensitive to correlated predictors |
| Probabilistic models | Graphical models, dependence | Uncertainty quantification | Distributional assumptions may not hold |
| Dynamical systems | Differential equations, time evolution | Captures complex temporal behaviors | Requires temporal data, computationally intensive |
| Deep learning | Neural networks, pattern recognition | Models complex nonlinear relationships | Low interpretability, large data requirements |
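The difference between the two correlation measures used in co-expression analysis can be shown on a toy example. The sketch below computes Pearson's and Spearman's coefficients for a hypothetical regulator and a target whose expression rises exponentially with it. The data are invented for illustration, and the rank function assumes no tied values.

```python
import math

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)

def ranks(values):
    """Rank positions of the values (assumes no ties)."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0] * len(values)
    for rank, index in enumerate(order):
        result[index] = rank
    return result

def spearman(xs, ys):
    """Spearman correlation: Pearson correlation of the ranks."""
    return pearson(ranks(xs), ranks(ys))

# Hypothetical expression levels: the target responds monotonically
# but nonlinearly to the regulator.
regulator = [0.0, 1.0, 2.0, 3.0, 4.0, 5.0]
target = [math.exp(level) for level in regulator]

r_pearson = pearson(regulator, target)
r_spearman = spearman(regulator, target)
print(f"Pearson:  {r_pearson:.3f}")
print(f"Spearman: {r_spearman:.3f}")
```

Spearman's coefficient registers the perfectly monotonic dependence while Pearson's is pulled below one by the curvature. Neither measure indicates the direction of regulation or separates direct from indirect effects.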

Research Reagent Solutions for GRN Analysis

Contemporary research into gene regulatory networks utilizes sophisticated experimental tools that enable precise perturbation and measurement of regulatory interactions:

Table 5: Essential Research Reagents and Platforms for GRN Analysis

| Reagent/Platform | Function | Application in GRN Studies |
|---|---|---|
| Single-cell RNA-seq (scRNA-seq) | Measures transcriptomes of individual cells | Resolves cellular heterogeneity in regulatory programs [26] |
| Single-cell ATAC-seq (scATAC-seq) | Profiles chromatin accessibility at single-cell resolution | Identifies accessible regulatory elements across cell types [26] |
| SHARE-seq/10x Multiome | Simultaneously profiles RNA and chromatin accessibility in same cell | Enables direct correlation of regulatory elements with gene expression [26] |
| ChIP-seq | Maps genome-wide protein-DNA interactions | Identifies transcription factor binding sites [26] |
| CRISPR-based perturbations | Enables targeted manipulation of regulatory elements | Tests causal relationships in regulatory networks |

Visualization of Network Motifs

The following diagrams, generated using Graphviz DOT language, illustrate the core architectures of the regulatory motifs discussed in this guide.

Research Applications and Future Directions

Understanding dynamic control mechanisms in gene regulatory networks has profound implications for both basic research and therapeutic development. For researchers investigating developmental processes, these motifs explain how complex patterns emerge from simple regulatory logic. For drug development professionals, targeting pathological network architectures offers promising therapeutic strategies.

Network motifs frequently become dysregulated in disease states. Positive feedback loops can lock cells into pathological stable states, as seen in cancer cell phenotypes and chronic inflammatory conditions. Breakdowns in negative feedback mechanisms can lead to uncontrolled proliferation or autoimmunity. Incoherent feedforward loops that normally provide precise temporal control may become disrupted, leading to improper cellular responses. Therapeutic interventions that specifically target these dysregulated motifs represent an emerging frontier in precision medicine.

Future research directions include developing more sophisticated multi-omic integration methods to reconstruct comprehensive regulatory networks, engineering synthetic regulatory circuits for therapeutic applications, and creating computational tools that can predict network behavior across physiological and pathological contexts. As single-cell technologies continue to advance, researchers will increasingly be able to map these regulatory motifs across cell types and states, providing unprecedented resolution of the control mechanisms governing cellular identity and function.

Gene regulatory networks (GRNs) represent the complex interplay of molecular regulators that control cellular identity and function [12]. Composed of transcription factors, cis-regulatory elements, and various signaling components, GRNs integrate multiple signals to produce robust cellular responses to developmental cues and environmental stimuli [17]. The temporal dynamics of these networks—including oscillatory behaviors, feedback loops, and stimulus-specific activation patterns—serve as critical determinants of cell fate decisions in processes ranging from embryonic development to immune responses [27]. This technical review examines the fundamental principles connecting GRN architecture and dynamics to phenotypic outcomes, surveys current methodologies for network inference and analysis, and discusses applications in disease research and therapeutic development. By synthesizing recent advances in single-cell technologies and computational modeling, we provide researchers with a comprehensive framework for investigating how network dynamics govern cellular identity transitions.

A gene regulatory network is a collection of molecular regulators that interact with each other and with other substances in the cell to govern gene expression rates [12]. These networks form the architectural basis of complex biological processes, integrating various regulatory elements to control spatial and temporal gene expression patterns that define cellular states [17]. The fundamental components of GRNs include cis-regulatory elements (DNA sequences regulating nearby genes, such as promoters, enhancers, and silencers), trans-regulatory factors (proteins and non-coding RNAs that interact with cis-regulatory elements), and feedback loops that create recurring patterns of interactions [17].

GRNs exhibit specific topological structures that influence their functional capabilities and dynamic behaviors. Scale-free networks characterized by hub nodes with many connections provide robustness against random failures, while small-world networks with short path lengths enable efficient information transfer [17]. Many biological networks display hierarchical organization with top-down control structures and modular organization with densely connected subgroups that operate with functional independence [17]. These architectural principles enable GRNs to process complex environmental information and generate appropriate phenotypic responses through precise spatiotemporal control of gene expression.

GRN Dynamics and Signaling Systems in Cell Fate Decisions

Temporal Dynamics of Signaling Systems

Single-cell technologies have revealed that signaling systems display complex temporal behaviors beyond simple on/off switching [27]. Key signaling pathways exhibit diverse dynamic patterns including oscillations, pulses, and sustained activation in response to different stimuli. The NF-κB system, a crucial regulator of immune and inflammatory responses, displays oscillatory nuclear localization with a period of approximately 1.5 hours [27]. These oscillations are not mere noise but functionally significant behaviors that enable selective activation of target genes based on their kinetic parameters [27]. Similarly, the p53 network shows dynamic pulses in response to DNA damage, with specific patterns influencing cell fate decisions toward repair versus apoptosis [27].

Network Motifs and Their Functional Roles

GRNs contain recurrent regulatory patterns or "motifs" that perform specific information-processing functions [17]. The table below summarizes key network motifs and their functional significance in cell fate decisions:

Table 1: Characteristic Network Motifs in GRNs and Their Functional Roles

| Motif Type | Structural Features | Dynamic Behavior | Functional Role in Cell Fate |
|---|---|---|---|
| Positive Feedback Loop | Self-activation, mutual activation, or mutual inhibition (double-negative loop) | Bistability, hysteresis | Locking in cell fate decisions; creating discrete stable states |
| Negative Feedback Loop | Self-repression, or an activator that induces its own repressor | Homeostasis, oscillations | Stabilizing gene expression; enabling pulsatile dynamics |
| Feed-Forward Loop | Regulator X controls Y, and X and Y both control target Z | Sign-sensitive delay; pulse generation | Decision-making under specific signal durations; noise filtering |
| Autoregulation | Gene regulating its own expression | Acceleration (negative autoregulation) or delay (positive autoregulation) of response | Fine-tuning temporal dynamics of state transitions |

These motifs generate specific dynamic behaviors that enable cells to decode complex environmental information. For example, positive feedback loops can create bistable systems that exhibit hysteresis, allowing cells to maintain their identity even after initial differentiation signals subside [17]. Negative feedback loops enable homeostasis and adaptive responses, while feed-forward loops can process temporal information to distinguish transient from persistent signals [17].
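The memory effect of a positive feedback loop can be sketched with a single self-activating gene. This is a toy model under stated assumptions, not a model taken from the cited work: the Hill-type production term, all parameter values, and the function names are chosen only to make the bistability visible.

```python
def hill(x, K=0.5, n=4):
    """Cooperative self-activation term (assumed Hill form)."""
    return x**n / (K**n + x**n)

def simulate(x0, signal, t_end=50.0, dt=0.01, basal=0.05, beta=1.0, gamma=1.0):
    """Forward-Euler integration of
    dx/dt = basal + signal + beta * hill(x) - gamma * x
    and return the expression level at t_end."""
    x = x0
    for _ in range(int(t_end / dt)):
        dx = basal + signal + beta * hill(x) - gamma * x
        x += dt * dx
    return x

before = simulate(0.0, signal=0.0)     # settles in the low-expression state
during = simulate(before, signal=0.5)  # a differentiation signal pushes expression up
after = simulate(during, signal=0.0)   # signal removed: expression stays high
```

With these parameters the gene rests near the low state before the signal and remains in the high state after the signal is withdrawn, which is the hysteresis described above. Lowering the Hill coefficient `n` toward 1 removes the second stable state.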

Dynamics-to-Fate Mapping in Biological Contexts

The connection between signaling dynamics and cell fate has been experimentally demonstrated across multiple biological contexts. In immune signaling, NF-κB dynamics control gene expression programs that determine immune cell activation and inflammatory responses [27]. Genes belonging to different functional classes respond to distinct NF-κB dynamic patterns by accumulating at different rates, effectively decoding temporal information into specific transcriptional programs [27]. In developmental systems, signaling dynamics guide embryonic patterning and cell type specification through precise temporal control of gene expression [27]. The Hes1 oscillator, for instance, controls neurogenesis through its dynamic expression pattern, with different oscillation frequencies leading to distinct differentiation outcomes [27].

Methodologies for GRN Inference and Analysis

Computational Approaches for Network Inference

Advances in computational methods have enabled increasingly sophisticated reconstruction of GRNs from experimental data. The table below compares major approaches for GRN inference:

Table 2: Computational Methods for Gene Regulatory Network Inference

| Method Category | Key Principles | Strengths | Limitations |
|---|---|---|---|
| Correlation-based | Pearson/Spearman correlation, mutual information | Simple implementation; captures linear/non-linear relationships | Cannot determine directionality; confounded by indirect effects |
| Regression Models | LASSO, ridge regression, ordinary least squares | Identifies directionality; handles multiple predictors | Unstable with correlated predictors; prone to overfitting |
| Information Theory | Mutual information, transfer entropy, maximum entropy | Detects non-linear dependencies; minimal assumptions | Computationally intensive; requires large sample sizes |
| Bayesian Networks | Probabilistic graphical models, directed acyclic graphs | Incorporates uncertainty; models causal interactions | Computationally challenging for large networks |
| Dynamical Systems | Ordinary differential equations, stochastic models | Captures temporal dynamics; mechanistic interpretation | Parameter intensive; requires time-series data |
| Machine Learning | Random forests, support vector machines, deep learning | Handles complex patterns; integrates multi-omics data | "Black box" nature; requires extensive training data |
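The correlation-based row of the table can be illustrated with a small sketch. The expression values, gene names, and the 0.8 threshold are hypothetical; the example is meant only to show why this class of method yields undirected edges and can report indirect associations.

```python
from itertools import combinations
from math import sqrt

def pearson(x, y):
    """Pearson correlation coefficient of two equal-length profiles."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sd_x = sqrt(sum((a - mean_x) ** 2 for a in x))
    sd_y = sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (sd_x * sd_y)

# Hypothetical expression profiles across five samples.
expression = {
    "TF1": [1.0, 2.0, 3.0, 4.0, 5.0],
    "G1": [2.1, 3.9, 6.2, 8.1, 9.8],  # rises with TF1
    "G2": [5.0, 4.1, 2.9, 2.2, 1.0],  # falls as TF1 rises
    "G3": [3.0, 1.0, 4.0, 1.5, 3.2],  # unrelated to TF1
}

def rank_edges(profiles, threshold=0.8):
    """Return undirected gene pairs with |r| >= threshold, strongest first."""
    found = []
    for a, b in combinations(sorted(profiles), 2):
        r = pearson(profiles[a], profiles[b])
        if abs(r) >= threshold:
            found.append((a, b, round(r, 3)))
    return sorted(found, key=lambda edge: -abs(edge[2]))

candidate_edges = rank_edges(expression)
```

In this toy data the pair G1 and G2 passes the threshold even though both simply follow TF1, which is the indirect-effect confounding listed in the table. The output also carries no information about which gene in a pair is the regulator.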

Recent methods have leveraged graph representation learning and transformer networks to infer regulatory relationships from single-cell RNA sequencing data, demonstrating improved performance by incorporating prior network information and handling data sparsity [1]. For example, GRLGRN uses a graph transformer network to extract implicit links from prior GRN knowledge and combines this with gene expression profiles to predict regulatory dependencies [1]. Its authors report average improvements of 7.3% in AUROC and 30.7% in AUPRC over the methods it was benchmarked against [1].
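For readers less familiar with these metrics, the sketch below shows how AUROC and an average-precision estimate of AUPRC can be computed for a ranked list of predicted edges. The scores and labels are invented for illustration, and the function names are hypothetical; benchmark studies typically rely on an established library implementation instead.

```python
def auroc(scores, labels):
    """Probability that a true edge outranks a false edge (ties count as half)."""
    positives = [s for s, y in zip(scores, labels) if y == 1]
    negatives = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum((p > q) + 0.5 * (p == q) for p in positives for q in negatives)
    return wins / (len(positives) * len(negatives))

def average_precision(scores, labels):
    """Mean of the precision values at the rank of each true edge,
    a common step-wise estimate of the area under the precision-recall curve."""
    ranked = sorted(zip(scores, labels), key=lambda pair: -pair[0])
    hits, precision_sum = 0, 0.0
    for rank, (_, label) in enumerate(ranked, start=1):
        if label == 1:
            hits += 1
            precision_sum += hits / rank
    return precision_sum / hits

# Hypothetical predictions: confidence score per candidate edge,
# and whether the edge is present in the reference network (1) or not (0).
edge_scores = [0.9, 0.8, 0.6, 0.4, 0.2]
edge_labels = [1, 0, 1, 0, 0]

roc = auroc(edge_scores, edge_labels)
ap = average_precision(edge_scores, edge_labels)
```

Because true regulatory edges are rare compared with all possible gene pairs, the precision-recall measure is usually the more demanding of the two.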

Experimental Methods for Network Validation

Computational GRN inferences require experimental validation to establish biological relevance. Key experimental approaches include:

Chromatin Immunoprecipitation (ChIP) Techniques: ChIP-seq identifies genome-wide binding sites of transcription factors, providing direct evidence of physical interactions between regulators and target genes [17]. Advanced variants like CUT&RUN offer improved resolution with reduced background signal [17].

Perturbation Experiments: Systematic perturbations through knockout, knockdown (RNAi), or CRISPR-Cas9 editing reveal causal relationships by observing network responses to targeted interventions [22] [17]. A general computational approach based on systematic perturbation calculates local response matrices to infer network topologies and identify differences during cell fate decisions [22].

Gene Expression Profiling: Single-cell RNA sequencing (scRNA-seq) captures transcriptional heterogeneity and reveals cell-to-cell variability in gene expression, enabling reconstruction of context-specific GRNs [17] [1]. Time-series experiments further capture dynamic changes in gene expression across state transitions [17].

Experimental Framework for Analyzing GRN Dynamics

Systematic Perturbation Strategy

A robust methodology for inferring GRN topology involves systematic perturbation analysis followed by statistical and differential examination of expression data [22]. The fundamental principle involves measuring network responses to targeted perturbations of sensitive parameters, typically degradation rates or production rates of specific network components.

The workflow consists of:

- System Preparation: Allow the GRN system to reach a stable steady state under basal conditions

- Parameter Perturbation: Apply slight perturbations to sensitive parameters associated with each node

- Response Measurement: Quantify the steady-state expression changes for all network components

- Matrix Construction: Calculate the local response matrix (r_ij) representing the direct regulatory influence of node j on node i

- Statistical Validation: Use confidence intervals from multiple perturbations to determine significant interactions

- Differential Analysis: Compute relative local response matrices to identify critical regulations specific to each cell state

This approach successfully inferred network topologies during epithelial-to-mesenchymal transition (EMT), quantitatively identifying differences in network structures between epithelial, mesenchymal, and hybrid states [22].
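The matrix construction step can be sketched as follows. This assumes the modular response analysis formulation, in which the local response matrix is obtained from the inverse of the measured global response matrix as r_ij = -(R^-1)_ij / (R^-1)_ii; the method in [22] may differ in detail. The toy network, the perturbation strengths, and the helper names are hypothetical.

```python
def invert(matrix):
    """Gauss-Jordan inverse with partial pivoting for a small square matrix."""
    n = len(matrix)
    aug = [[float(v) for v in row] + [float(i == j) for j in range(n)]
           for i, row in enumerate(matrix)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        if abs(aug[pivot][col]) < 1e-12:
            raise ValueError("matrix is singular")
        aug[col], aug[pivot] = aug[pivot], aug[col]
        scale = aug[col][col]
        aug[col] = [v / scale for v in aug[col]]
        for r in range(n):
            if r != col:
                factor = aug[r][col]
                aug[r] = [a - factor * b for a, b in zip(aug[r], aug[col])]
    return [row[n:] for row in aug]

def local_response(global_response):
    """Entry [i][j] is the direct influence of node j on node i; the diagonal is -1.
    Column j of global_response holds every node's steady-state change
    after perturbing a parameter that acts only on node j."""
    inv = invert(global_response)
    n = len(inv)
    return [[-inv[i][j] / inv[i][i] for j in range(n)] for i in range(n)]

# Toy ground truth: node 0 activates node 1, node 1 activates node 2,
# and node 2 represses node 0.
true_r = [[-1.0, 0.0, -0.5],
          [0.8, -1.0, 0.0],
          [0.0, 0.6, -1.0]]
direct_effect = [0.1, 0.2, 0.3]  # assumed strength of each perturbation

# Global responses that such a network would show: R = -r^-1 * diag(direct_effect).
inv_r = invert(true_r)
global_R = [[-inv_r[i][j] * direct_effect[j] for j in range(3)] for i in range(3)]

recovered = local_response(global_R)
```

The recovered matrix matches the ground truth regardless of the perturbation strengths, which is why the perturbations only need to be small and node-specific rather than precisely calibrated. With a numerical library available, its matrix inverse would replace the `invert` helper.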

Visualization and Modeling Tools

Specialized software tools facilitate GRN modeling, visualization, and analysis. BioTapestry is an open-source platform specifically designed for GRN modeling, providing hierarchical representations that track network states across different cell types and developmental times [28]. It employs genome-oriented representations with emphasis on cis-regulatory DNA, using automated layout templates to highlight regulatory relationships [28]. The platform supports three hierarchical views: View from the Genome (summary of all regulatory inputs), View from All Nuclei (interactions across regions), and View from the Nucleus (network state at specific time and location) [28].

Diagram: Signaling Dynamics in Cell Fate Decisions

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents for GRN Dynamics Studies

| Reagent/Category | Specific Examples | Primary Function | Application Context |
|---|---|---|---|
| Live-Cell Imaging Reporters | Fluorescently tagged RelA (NF-κB), p53-GFP, ERK-KTR | Real-time visualization of signaling dynamics | Tracking transcription factor localization and activity in live cells |
| Perturbation Tools | CRISPR-Cas9 libraries, siRNA collections, small molecule inhibitors | Targeted disruption of network components | Establishing causal relationships in GRN topology |
| Single-Cell Sequencing Platforms | 10x Genomics, SHARE-Seq, scATAC-seq | High-resolution mapping of transcriptional states and chromatin accessibility | Characterizing cellular heterogeneity and inferring GRN architecture |
| Chromatin Profiling Reagents | ChIP-seq antibodies, CUT&RUN kits, ATAC-seq reagents | Mapping transcription factor binding and chromatin states | Experimental validation of regulatory interactions |
| Bioinformatics Software | BioTapestry, Cytoscape, GENIE3, GRLGRN | Network visualization, inference, and analysis | Computational reconstruction and modeling of GRNs |

Applications in Disease and Therapeutics

GRN analysis provides critical insights into disease mechanisms and therapeutic opportunities. In cancer biology, network dysregulation represents a fundamental driver of malignancy, with mutations in regulatory elements or trans-factors disrupting normal cellular homeostasis [17]. Network-based approaches enable identification of disease-associated modules and hub genes that may serve as potential drug targets [17] [1]. The visualization of GRN alterations in pathological states can reveal novel therapeutic strategies that target network stability rather than individual components.

Personalized medicine applications leverage patient-specific network alterations to predict disease progression and treatment responses [17]. For example, GRN analysis of tumor biopsies can identify master regulators driving oncogenic states, potentially guiding combination therapies that target multiple nodes within dysregulated networks [17]. In synthetic biology, GRN principles guide the design of artificial genetic circuits for therapeutic applications, including engineered immune cells and gene therapies [17].

The fundamental connection between GRN dynamics and cellular phenotype represents a paradigm shift in our understanding of biological regulation. Moving beyond static network maps to dynamic, context-specific representations will be essential for unraveling the complexity of cell fate decisions. Future research directions include the development of multi-omics integration frameworks that combine genomic, epigenomic, transcriptomic, and proteomic data into unified network models [29] [17]. Advancing single-cell technologies will enable the reconstruction of GRNs with unprecedented resolution across temporal and spatial dimensions [1]. Additionally, machine learning approaches that incorporate prior biological knowledge while handling the inherent noise and sparsity of single-cell data will continue to improve the accuracy of network inferences [1]. As these methodologies mature, our capacity to predict and manipulate cell fate decisions through targeted network interventions will transform both basic research and therapeutic development.

From Data to Networks: Evolutionary and Cutting-Edge Inference Methods

For researchers charting the complex territory of gene regulatory networks (GRNs), the Knowledge Discovery in Databases (KDD) process provides a systematic framework for extracting meaningful biological insights from vast genomic datasets. KDD refers to the complete, iterative process of uncovering valuable knowledge from data, emphasizing the high-level application of particular data-mining methods [30] [31]. In the context of GRN inference, which aims to reveal the genomic mechanisms governing how cells respond to environmental and developmental cues, this structured approach is particularly valuable [32]. Where single experiments might provide snapshots of gene expression, the KDD process enables researchers to build comprehensive models of regulatory interactions, identify key transcription factors, and ultimately understand the mechanistic principles underlying phenotypic transitions in health and disease [33] [34].

The KDD workflow is especially relevant for research aimed at drug development, where understanding network rewiring—significant changes in network connectivity between different biological conditions—can provide crucial insights into disease mechanisms and potential therapeutic targets [33]. This technical guide will explore each stage of the KDD process through the lens of GRN research, providing methodologies, visualizations, and resources to equip researchers with a robust framework for inference workflow design.

The KDD Process: A Step-by-Step Technical Guide

The KDD process is iterative and interactive, consisting of multiple stages that may require moving back to previous steps as new insights emerge [30]. The process spans from understanding the application domain to implementing the discovered knowledge, with each step building upon the previous one.

Step 1: Developing an Understanding of the Application Domain