From Data to Networks: A Comprehensive Overview of Modern GRN Inference Methodologies


Abstract

This article provides a comprehensive overview of the rapidly evolving field of Gene Regulatory Network (GRN) inference, tailored for researchers, scientists, and drug development professionals. It begins by exploring the fundamental principles and challenges of reconstructing regulatory networks from high-throughput data. The review then delves into the latest methodological advances, categorizing and explaining cutting-edge computational approaches from machine learning and multi-omics integration. A dedicated section addresses common troubleshooting and optimization strategies to overcome persistent issues like data sparsity and integration tuning. Finally, the article synthesizes the current landscape of validation benchmarks and performance comparisons, offering a clear-eyed assessment of the state-of-the-art. The goal is to equip practitioners with the knowledge to select, apply, and critically evaluate GRN inference methods in their own research, ultimately bridging the gap between network models and clinical insights.

The GRN Inference Challenge: Foundations, Obstacles, and the Quest for Accuracy

Defining Gene Regulatory Networks (GRNs) and Their Biological Significance

A Gene Regulatory Network (GRN) is a collection of molecular regulators that interact with each other and with other substances in the cell to govern the gene expression levels of mRNA and proteins, which in turn determine cellular function [1]. These networks represent the complex causal relationships by which gene expression is controlled, forming the molecular basis of a regulatory code that dictates cellular identity, behavior, and response to stimuli [2] [3]. GRNs play a central role in morphogenesis (the creation of body structures) and are fundamental to evolutionary developmental biology (evo-devo) [1]. In essence, GRNs provide a graph-level representation of the regulatory relationships between transcription factors (TFs) and their target genes, where nodes represent genes and edges represent regulatory interactions between them [4]. The intricate architecture of these networks enables cells to process information, respond to environmental cues, and execute the precise developmental programs that build and maintain complex organisms.

Architectural Principles of GRNs

Biological systems exhibit remarkable complexity, yet GRNs are built upon several key organizational principles that define their structure and function.

Core Structural Properties

Extensive research has identified consistent architectural features across biological GRNs. These properties provide both challenges and opportunities for network inference and analysis [2].

Table 1: Key Structural Properties of Gene Regulatory Networks

| Property | Description | Biological Significance |
|---|---|---|
| Sparsity | Most genes are directly regulated by only a small number of regulators [2] | Enables modularity and reduces pleiotropic effects; only ~41% of gene perturbations significantly affect other genes [2] |
| Directed Edges & Feedback | Regulatory relationships are directional ("A regulates B" ≠ "B regulates A") and often form feedback loops [2] | Permits dynamic control, cellular memory, and stable state maintenance; 2.4% of regulatory pairs show bidirectional effects [2] |
| Hierarchical Scale-Free Topology | Few highly connected nodes (hubs) with many poorly connected nodes [1] [5] | Creates robust yet adaptable systems; follows power-law degree distribution with preferential attachment [1] |
| Modularity | Genes group by function in hierarchical organization [2] | Allows coordinated response to perturbations; functional units can be reused in different contexts [1] |
| Network Motifs | Recurring, statistically over-represented sub-networks [1] | Performs specific regulatory functions (e.g., feed-forward loops for pulse-generation/noise-filtering) [1] |

Recurring Network Motifs

GRNs contain characteristic local patterns called network motifs that perform specific information-processing functions. The feed-forward loop is among the most abundant motifs, consisting of three nodes where a master regulator controls a target gene both directly and through an intermediate regulator [1]. This configuration can create different input-output behaviors, such as accelerating metabolic transitions in the E. coli galactose system or delaying activation in the arabinose utilization system to prevent unnecessary metabolic changes due to transient fluctuations [1]. In the Xenopus Wnt signaling pathway, feed-forward loops act as fold-change detectors that respond to relative rather than absolute changes in β-catenin levels, increasing resistance to biochemical noise [1].

Diagram 1: Feed-forward loop motif
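To make the motif definition concrete, the following minimal sketch enumerates feed-forward loops in a directed edge list: every triple (X, Y, Z) in which X regulates Y, Y regulates Z, and X also regulates Z directly. The function name `find_feed_forward_loops` and the toy network are illustrative assumptions, not taken from the cited sources, and the brute-force search is only practical for small networks.

```python
from itertools import permutations

def find_feed_forward_loops(edges):
    """Return all (X, Y, Z) triples with edges X->Y, Y->Z and X->Z."""
    edge_set = set(edges)
    nodes = sorted({node for edge in edge_set for node in edge})
    loops = []
    # Brute force over ordered triples: fine for toy graphs, cubic in node count.
    for x, y, z in permutations(nodes, 3):
        if (x, y) in edge_set and (y, z) in edge_set and (x, z) in edge_set:
            loops.append((x, y, z))
    return loops

# Hypothetical toy network: master regulator X, intermediate Y, target Z,
# plus one extra edge (Z -> W) that is not part of any feed-forward loop.
toy_edges = [("X", "Y"), ("Y", "Z"), ("X", "Z"), ("Z", "W")]
ffls = find_feed_forward_loops(toy_edges)
print(ffls)
```

Motif-enrichment analyses typically compare such counts against randomized networks with the same degree sequence; that comparison is omitted here.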

Methodologies for GRN Inference

Reconstructing GRNs from experimental data remains a central challenge in systems biology. Multiple approaches have been developed, each with distinct strengths and limitations.

Experimental Approaches for Network Mapping

Table 2: Key Experimental Methods for GRN Inference

| Method | Principle | Output | Applications |
|---|---|---|---|
| CAP-SELEX [3] | High-throughput mapping of TF-TF interactions and composite DNA binding motifs | Identifies spacing/orientation preferences (1,329 pairs) and novel composite motifs (1,131 pairs) [3] | Mapping cooperative binding landscape; solving "hox specificity paradox" [3] |
| Perturb-seq [2] | CRISPR-based perturbations combined with single-cell RNA sequencing | Measures effects of 11,258 perturbations on 5,530 transcripts across 1.9M cells [2] | Discovering local network structure, trait-relevant genes, novel gene functions [2] |
| ChIP-seq [5] | Chromatin immunoprecipitation with sequencing | Genome-wide identification of TF binding sites | Direct mapping of physical TF-DNA interactions [5] |
| Time-Series Expression [5] | Measuring expression profiles over time after perturbation | Dynamic response patterns for inferring causal relationships | Revealing regulatory hierarchies and causal relationships [5] |

Computational Inference Frameworks

Computational methods leverage the data generated from experimental techniques to infer network structures. These can be broadly categorized into several frameworks.

Table 3: Computational Approaches for GRN Inference

| Method Category | Representative Algorithms | Key Principles | Best Applications |
|---|---|---|---|
| Linear Models [5] | SVD-based solutions, Robust regression | Coupled differential equations: \( \dot{a}_i(t) = \sum_j W_{ij}\, a_j(t) + b_i(t) + \xi_i(t) \) [5] | Time-series expression data; initial network mapping [5] |
| Machine Learning [6] [4] | GENIE3, GRNBoost2, GRLGRN | Tree-based methods, graph neural networks, transformer architectures | Integrating prior knowledge with expression data [4] |
| Deep Learning [7] [4] | DeepSEM, DAZZLE, CNNGRN | Autoencoders, graph convolutional networks, attention mechanisms | Handling zero-inflated single-cell data; large-scale networks [7] [4] |
| Differential Equations [2] | SCODE, SINGE | Ordinary differential equations, Granger causality | Modeling dynamics from pseudotime trajectories [2] |
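As an illustration of the linear-model row in Table 3, the sketch below simulates a small system of coupled linear differential equations and then recovers the interaction matrix W by least squares on finite-difference derivatives. It is a toy under stated assumptions: the noise term is set to zero, the external input b is constant, and the matrix values, time step, and number of trajectories are invented for the example. It is not the SVD-based or robust-regression procedure of [5].

```python
import numpy as np

def simulate(W, b, a0, n_steps, dt):
    """Forward-Euler integration of da/dt = W a + b (noise term set to zero)."""
    traj = np.empty((n_steps + 1, len(a0)))
    traj[0] = a0
    for t in range(n_steps):
        traj[t + 1] = traj[t] + dt * (W @ traj[t] + b)
    return traj

rng = np.random.default_rng(0)
dt, n_steps = 0.05, 40

# Hypothetical 3-gene cascade: gene 0 activates gene 1, gene 1 activates gene 2.
W_true = np.array([[-1.0, 0.0, 0.0],
                   [0.8, -2.0, 0.0],
                   [0.0, 0.5, -0.5]])
b_true = np.array([1.0, 0.0, 0.2])

# Several short time series from different initial states improve identifiability.
states, derivs = [], []
for _ in range(5):
    traj = simulate(W_true, b_true, rng.uniform(0.0, 2.0, size=3), n_steps, dt)
    states.append(traj[:-1])
    derivs.append((traj[1:] - traj[:-1]) / dt)  # finite-difference derivative
states = np.vstack(states)
derivs = np.vstack(derivs)

# Solve derivs ≈ states @ W.T + b for W and b in one least-squares problem.
design = np.hstack([states, np.ones((len(states), 1))])
coef, *_ = np.linalg.lstsq(design, derivs, rcond=None)
W_est, b_est = coef[:3].T, coef[3]
print(np.round(W_est, 3))
```

With noisy, sparsely sampled real data the problem is usually underdetermined, which is why regularized or SVD-based solutions are used in practice.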

The DAZZLE model exemplifies recent advances, addressing the zero-inflation problem (57-92% zeros in single-cell data) through Dropout Augmentation rather than imputation [7]. This approach regularizes models by adding synthetic dropout noise during training, improving robustness against this characteristic of single-cell RNA-seq data [7].
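The core idea of Dropout Augmentation, adding synthetic zeros to the input during training, can be sketched in a few lines. This is a minimal illustration only and not DAZZLE's actual implementation: the function name `augment_with_dropout`, the 10% rate, and the Poisson toy matrix are assumptions made for the example.

```python
import numpy as np

def augment_with_dropout(expr, rate, rng):
    """Return a copy of `expr` with a random fraction `rate` of entries zeroed,
    together with the boolean mask of the entries that were dropped."""
    mask = rng.random(expr.shape) < rate
    augmented = expr.copy()
    augmented[mask] = 0.0
    return augmented, mask

rng = np.random.default_rng(42)
# Hypothetical cells x genes count matrix (100 cells, 20 genes).
expr = rng.poisson(2.0, size=(100, 20)).astype(float)

# In a training loop, a fresh mask would be sampled for every batch or epoch.
augmented, mask = augment_with_dropout(expr, rate=0.1, rng=rng)
zero_frac_before = float(np.mean(expr == 0))
zero_frac_after = float(np.mean(augmented == 0))
print(zero_frac_before, zero_frac_after)
```

Because the model sees a differently corrupted copy of the data at each step, it is discouraged from fitting any individual zero too closely, which is the regularizing effect described above.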

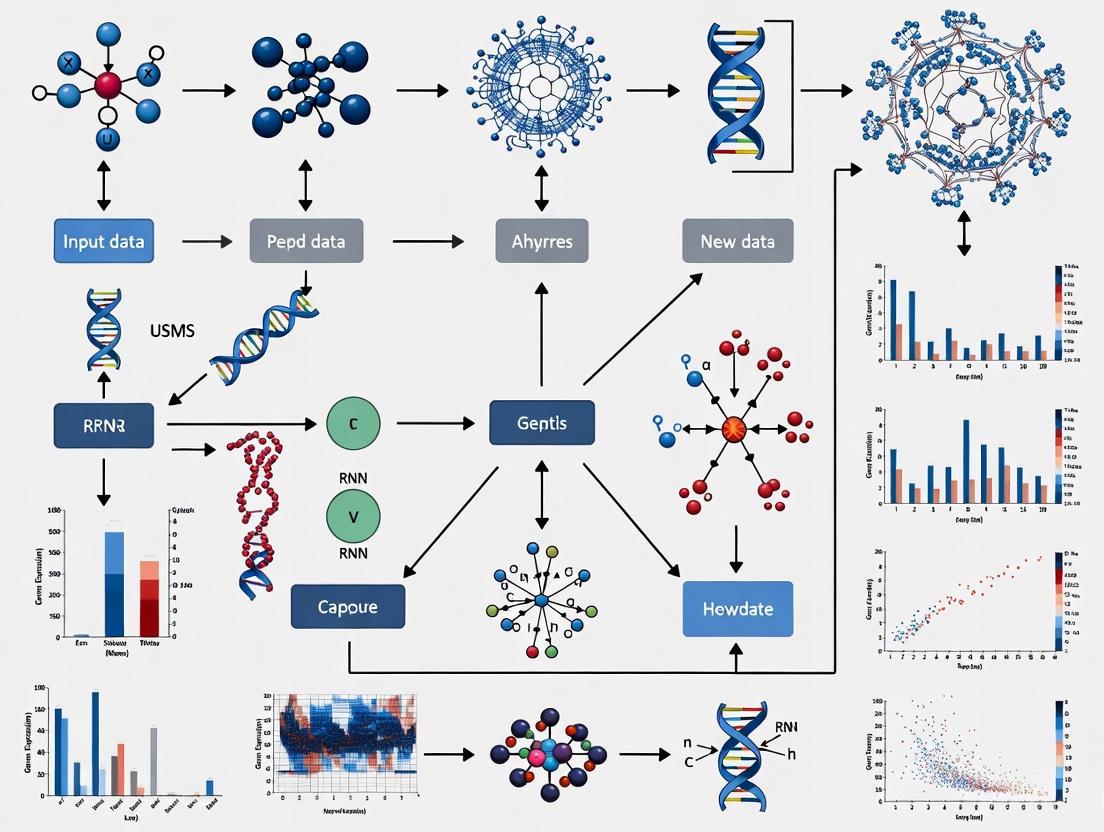

Diagram 2: GRN inference methodology integration

Advanced Experimental Techniques: CAP-SELEX Case Study

The CAP-SELEX (Consecutive-Affinity-Purification Systematic Evolution of Ligands by Exponential Enrichment) method represents a cutting-edge approach for mapping the biochemical interactions between DNA-bound transcription factors [3]. This technique was adapted to a 384-well microplate format to enable high-throughput screening of over 58,000 TF-TF pairs, leading to the identification of 2,198 specifically interacting TF pairs [3].

The experimental workflow comprises several key stages:

- Protein Preparation: Expression in E. coli of human TFs, enriched for proteins conserved across mammals

- TF Pair Combination: Systematic combination into 58,754 TF-TF pairs in microplate format

- CAP-SELEX Cycles: Three consecutive cycles of selection and amplification

- Sequencing: Massively parallel sequencing of selected DNA ligands

- Computational Analysis: Novel algorithms for identifying spacing/orientation preferences and composite motifs

Key Reagents and Research Solutions

Table 4: Essential Research Reagents for CAP-SELEX

| Reagent/Solution | Function | Application Note |
|---|---|---|
| Recombinant TFs | DNA-binding proteins for interaction screening | Focus on conserved mammalian TFs; underrepresentation of KRAB zinc fingers [3] |
| DNA Library | Randomized oligonucleotides for binding selection | Contains diverse sequence space for TF binding |
| Affinity Matrices | Purification of TF-DNA complexes | Sequential purification for specific isolation |
| HT-SELEX Controls | Reference binding specificities for individual TFs | Enables comparison with cooperative binding [3] |
| Microplate Platform | High-throughput screening format | Enables testing of 58,000+ TF-TF pairs [3] |

Data Analysis Algorithms

The massive dataset generated by CAP-SELEX required novel computational approaches:

- Mutual Information Algorithm: Identifies TF-TF pairs with preferential binding to specific spacings and orientations (1,329 pairs) [3]

- K-mer Enrichment Analysis: Detects novel composite motifs by comparing enrichment in CAP-SELEX versus HT-SELEX (1,131 composite motifs) [3]

Global analysis revealed that short binding distances (<5bp) are generally preferred, with few longer-range interactions observed. TF-TF interactions commonly crossed family boundaries, with TEA family TFs being particularly promiscuous while C2H2 zinc fingers showed fewer interactions [3].
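The k-mer enrichment comparison can be illustrated with a simplified sketch: count k-mers in sequences selected by a TF pair and in sequences selected by a single TF, then rank k-mers by the ratio of their relative frequencies. The function names, pseudocount, and toy sequences are hypothetical, and the published analysis in [3] is considerably more elaborate.

```python
from collections import Counter

def kmer_frequencies(sequences, k):
    """Relative frequency of every k-mer observed in a list of sequences."""
    counts = Counter()
    for seq in sequences:
        for i in range(len(seq) - k + 1):
            counts[seq[i:i + k]] += 1
    total = sum(counts.values())
    return {kmer: count / total for kmer, count in counts.items()}

def kmer_enrichment(pair_seqs, single_seqs, k, pseudo=1e-6):
    """Ratio of k-mer frequency in pair-selected vs single-TF-selected reads.
    The pseudocount avoids division by zero for k-mers absent from one set."""
    f_pair = kmer_frequencies(pair_seqs, k)
    f_single = kmer_frequencies(single_seqs, k)
    return {kmer: (freq + pseudo) / (f_single.get(kmer, 0.0) + pseudo)
            for kmer, freq in f_pair.items()}

# Hypothetical toy reads; real inputs would be sequenced SELEX ligands.
pair_reads = ["ACGTTAATTA", "GGTTAATTAC"]
single_reads = ["ACGTACGTAC", "TTAATGGCCA"]

enrichment = kmer_enrichment(pair_reads, single_reads, k=6)
top = sorted(enrichment, key=enrichment.get, reverse=True)[:3]
print(top)
```

K-mers that are strongly enriched only in the pair-selected reads are candidates for composite motifs, since neither TF alone selects for them.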

Diagram 3: DNA-guided TF interactions in CAP-SELEX

Biological Significance and Applications

Developmental Biology and Disease

GRNs form the fundamental control systems that guide embryonic development and maintain tissue homeostasis. The hierarchical organization of GRNs enables the precise spatial and temporal patterns of gene expression required for morphogenesis [1]. Through morphogen gradients, cells receive positional information that dictates their fate by activating specific regulatory programs [1]. When these networks malfunction, the consequences can be severe: loss of feedback regulation in GRNs can lead to uncontrolled cell proliferation in cancer [1].

The "hox specificity paradox" illustrates how GRN context determines biological function: anterior homeodomain proteins (HOX1-HOX8) bind to identical TAATTA motifs yet have distinct developmental functions [3]. This paradox is resolved through TF-TF interactions that expand the regulatory lexicon, allowing TFs with similar binding specificities to achieve distinct outcomes through cooperative binding with different partners [3].

Drug Discovery and Therapeutic Targeting

Understanding GRNs provides powerful insights for pharmaceutical development. By mapping the regulatory networks underlying disease states, researchers can identify key nodes whose perturbation might yield therapeutic benefits. Transcription factors that define embryonic axes commonly interact with different TFs and bind to distinct motifs, explaining how similar specificities can define distinct cell types [3]. This knowledge enables more targeted therapeutic approaches that aim to reprogram pathological network states rather than simply inhibiting individual components.

Hub genes with high connectivity in GRNs represent particularly attractive therapeutic targets, as their perturbation can potentially redirect entire regulatory programs [4]. Advanced inference methods like GRLGRN, which uses graph transformer networks to extract implicit links from prior GRNs, can help identify these critical regulators and their associated pathways [4].

Future Directions and Challenges

Despite significant advances, GRN inference remains challenging due to cellular heterogeneity, measurement noise, and data sparsity [7] [4]. Single-cell sequencing technologies have revealed substantial diversity in cell types and states, complicating network inference [2]. The prevalence of "dropout" events in single-cell RNA-seq data (where transcripts are erroneously not captured) creates zero-inflated datasets that require specialized computational approaches [7].

Future methodologies will likely integrate multiple data types, including protein-protein interactions, epigenetic modifications, and spatial information, to construct more comprehensive regulatory models [6]. Techniques that combine perturbation data with observational studies show particular promise, as perturbation data are critical for discovering specific regulatory interactions, while data from unperturbed cells may be sufficient to reveal broader regulatory programs [2].

As our understanding of GRNs deepens, we move closer to fundamental insights about the organizational principles of biological systems and their implications for medicine and biotechnology. The continuing development of both experimental and computational methods for mapping and analyzing GRNs promises to unlock further secrets of the regulatory code that governs life.

The advent of single-cell technologies represents a paradigm shift in molecular biology, enabling the interrogation of cellular machinery at an unprecedented resolution. This revolution is particularly transformative for the inference of Gene Regulatory Networks (GRNs), which are graph-level representations depicting the regulatory interactions between transcription factors (TFs) and their target genes [8] [4]. Understanding GRN architecture is fundamental for unraveling the mechanisms controlling cellular identity, differentiation, and response to stimuli, with profound implications for drug discovery and understanding disease etiology [9] [4]. Traditional bulk sequencing methods averaged signals across millions of cells, obscuring the critical heterogeneity inherent in biological systems. Single-cell RNA sequencing (scRNA-seq) and related modalities overcome this limitation, revealing the diverse transcriptional states that constitute complex tissues. However, this new depth of information introduces significant computational and experimental challenges. This whitepaper examines how the field is leveraging single-cell data to reconstruct GRNs, detailing the novel opportunities created, the inherent obstacles encountered, and the sophisticated methodologies developed to navigate this complex landscape.

New Opportunities in GRN Inference

The single-cell revolution has unlocked several novel avenues for accurately inferring gene regulatory networks.

Leveraging Cellular Heterogeneity and CRISPR Perturbations

Rather than treating cellular variation as noise, modern GRN methods treat each cell as a distinct observation of network activity. This allows for the inference of regulatory relationships from the natural covariance of gene expression across a population of cells [4]. Furthermore, the integration of scRNA-seq with CRISPR-based perturbations (scCRISPR) provides a powerful causal framework. By measuring the transcriptional consequences of knocking out individual genes in single cells, researchers can move beyond correlation to establish causality [10] [9].

A key advancement is the use of time-series scCRISPR data. Methods like RENGE (REgulatory Network inference using GEne perturbation data) model the propagation of knockout effects over time, distinguishing between direct regulatory targets and indirect downstream effects, a task difficult with snapshot data [10]. RENGE's model conceptualizes that the observed gene expression at time t after knocking out gene g is a function of the cumulative, weighted effects of regulatory paths of different orders from the perturbed gene.

Integration of Multi-Omic Data at Single-Cell Resolution

The emergence of multi-omic technologies allows for the simultaneous measurement of different molecular layers in individual cells. Integrating scRNA-seq with single-cell ATAC-seq (scATAC-seq), which profiles chromatin accessibility, provides a more direct readout of regulatory potential. Algorithms like ScReNI (Single-cell Regulatory Network Inference) exemplify this approach, using a modified random forest model to establish nonlinear regulatory relationships between gene expression and chromatin accessibility from paired or unpaired datasets [11]. This integration helps prioritize regulatory interactions that are not only correlated at the expression level but are also supported by an open chromatin state, significantly improving inference accuracy.

Advanced Deep Learning and Graph-Based Models

The complexity and high-dimensionality of single-cell data have spurred the development of advanced deep learning models. These methods can capture non-linear relationships and incorporate prior biological knowledge. GRLGRN is one such model that uses a graph transformer network to learn implicit links in a prior GRN, generating rich gene embeddings that are combined with gene expression data [4]. It further employs attention mechanisms and graph contrastive learning to refine features and prevent over-smoothing, leading to superior performance in predicting gene-gene interactions as demonstrated on benchmark datasets from the BEELINE framework [4].

Inherent Challenges and Methodological Hurdles

Despite these opportunities, the path to accurate GRN inference is fraught with challenges intrinsic to single-cell data and biological complexity.

Data Sparsity, Noise, and Technological Artifacts

scRNA-seq data is notoriously sparse, characterized by a high number of zero counts ("dropout" events) due to technical limitations where lowly expressed mRNAs are not captured [4]. This sparsity, coupled with significant technical noise and batch effects, complicates the reliable estimation of gene-gene relationships. Distinguishing true biological regulation from technical artifacts remains a primary hurdle for all inference methods.

The Scalability and Evaluation Bottleneck

As the scale of perturbation experiments grows to encompass thousands of genes, the scalability of inference methods becomes critical. Traditional causal inference algorithms often struggle with this scale [9]. Moreover, evaluating the performance of GRN methods is challenging due to the lack of comprehensive, known ground-truth networks for real biological systems. While simulators like dyngen can generate in-silico benchmarks, performance on synthetic data does not always translate to real-world scenarios [10] [9]. Initiatives like CausalBench have been established to address this, providing a benchmark suite with large-scale real-world scCRISPR data and biologically-motivated metrics to objectively compare methods [9].

Disentangling Direct and Indirect Regulation

A fundamental problem in network inference is distinguishing direct regulation from indirect effects that are mediated through other genes. A knockout can influence multi-layered downstream genes over time, creating a cascade of expression changes that are difficult to deconvolute from a single snapshot [10]. While time-series data and sophisticated models like RENGE help address this, the problem is not fully solved, especially for large, densely connected networks.

Quantitative Benchmarking of GRN Inference Methods

Systematic benchmarking is essential for tracking progress in the field. The table below summarizes the performance of various state-of-the-art methods as evaluated by the CausalBench suite on large-scale perturbation datasets from two cell lines (K562 and RPE1) [9].

Table 1: Performance Comparison of GRN Inference Methods on CausalBench

| Method | Type | Key Strength | Notable Limitation |
|---|---|---|---|
| Mean Difference [9] | Interventional | Top performance on statistical metrics (e.g., Mean Wasserstein distance) | - |
| Guanlab [9] | Interventional | Top performance on biologically-motivated evaluation (e.g., F1 score) | - |
| GRNBoost2 [9] | Observational | High recall (discovers many true interactions) | Low precision (many false positives) |
| NOTEARS / DCDI variants [9] | Observational / Interventional | Theoretical grounding in causal discovery | Extracts limited information from large-scale data in practice |
| PC / GES [9] | Observational | Classic constraint-based and score-based causal methods | Poor scalability to very large datasets |
| Betterboost / SparseRC [9] | Interventional | Good statistical evaluation performance | Lower performance on biological evaluation |
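To give a feel for the statistical metric named in the table, the sketch below computes a mean Wasserstein distance over predicted edges: for each edge (regulator, target), it compares the target's expression in control cells with its expression in cells where the regulator was perturbed. This is a simplified reading of the metric rather than CausalBench's code; the data layout, function names, and toy values are assumptions, and the one-dimensional distance shown requires equal sample sizes.

```python
import numpy as np

def wasserstein_1d(x, y):
    """Wasserstein-1 distance between two equal-size 1-D samples."""
    x = np.sort(np.asarray(x, dtype=float))
    y = np.sort(np.asarray(y, dtype=float))
    if x.shape != y.shape:
        raise ValueError("this sketch assumes equal sample sizes")
    # For equal-size samples, W1 is the mean gap between sorted values.
    return float(np.mean(np.abs(x - y)))

def mean_wasserstein(edges, control, perturbed):
    """Average, over predicted edges, of the shift in target expression
    between control cells and cells with the regulator perturbed."""
    distances = [wasserstein_1d(control[target], perturbed[regulator][target])
                 for regulator, target in edges]
    return float(np.mean(distances))

rng = np.random.default_rng(0)
# Hypothetical data: knocking out A lowers B by 2 units and leaves C unchanged.
control = {"B": rng.normal(5.0, 1.0, 200), "C": rng.normal(3.0, 1.0, 200)}
perturbed = {"A": {"B": control["B"] - 2.0, "C": control["C"].copy()}}

score_true_edge = mean_wasserstein([("A", "B")], control, perturbed)
score_false_edge = mean_wasserstein([("A", "C")], control, perturbed)
print(score_true_edge, score_false_edge)
```

A higher mean distance indicates that the predicted edges point at targets whose distributions actually shift under intervention on the proposed regulator.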

Table 2: Common Benchmarking Frameworks for GRN Inference

| Framework | Primary Data Type | Key Feature | Reported Limitation |
|---|---|---|---|
| BEELINE [4] [12] | scRNA-seq | Standardized evaluation on multiple cell lines with curated ground-truth networks (e.g., STRING, ChIP-seq) | - |
| CausalBench [9] | scCRISPR (Perturbation) | Uses real-world large-scale interventional data; biology-driven and statistical metrics | Focuses on perturbation data |
| NetBenchmark / GRNbenchmark [12] | Bulk & scRNA-seq | Compares multiple methods using synthetic and real data | Under-representation of bulk RNA-seq data in some tools |
| GReNaDIne [12] | scRNA-seq | - | Usability challenges for non-technical users |

Experimental Workflow for Time-Series scCRISPR

A cutting-edge experimental protocol for inferring GRNs with causal information involves time-series single-cell CRISPR perturbation. The workflow below details the key steps, from experimental design to network inference.

Detailed Experimental Protocol

Experimental Design: Select a set of genes (e.g., transcription factors) for perturbation. Design and synthesize CRISPR guide RNAs (gRNAs) targeting each gene, along with non-targeting control gRNAs. Plan multiple time points for harvesting cells post-transduction, capturing early, intermediate, and late regulatory responses [10] [13].

CRISPR Perturbation & Time-Course Sampling: Transduce the population of cells (e.g., human induced pluripotent stem cells or primary T cells) with the pooled CRISPR library. At each predetermined time point, harvest a sample of cells. In the case of primary CD4+ T cells, activate the cells and perform CRISPR-knockout prior to scRNA-seq library preparation [13].

Single-Cell RNA Sequencing: For each harvested sample, prepare a scRNA-seq library using a platform such as 10x Genomics. Sequence the libraries to obtain gene expression counts for each individual cell, while also capturing the gRNA identity present in each cell to link perturbation to transcriptome [10] [13].

Computational Data Processing:

- Preprocessing: Perform standard scRNA-seq quality control (QC): filter cells by mitochondrial read percentage, number of detected genes, and count depth. Filter out doublets. Normalize the gene expression data.

- Perturbation Assignment: Use tools like CellRanger or CITE-Seq-Count to map gRNA sequences and assign each cell to its respective perturbation group (e.g., KO of gene X) or control group.

- Expression Matrix Generation: Create a normalized expression matrix for each perturbation condition and time point.
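The preprocessing step can be sketched with plain NumPy as follows. This is a minimal illustration, not a replacement for a dedicated single-cell toolkit: the thresholds, the `MT-` prefix convention for mitochondrial genes, the library-size target, and the function name `qc_and_normalize` are all assumptions, and doublet detection is omitted.

```python
import numpy as np

def qc_and_normalize(counts, gene_names, min_genes=200, min_counts=500,
                     max_mito_frac=0.2, target_sum=1e4):
    """Filter cells (rows) on basic QC metrics, then library-size normalize
    and log-transform. Returns the normalized matrix and the keep mask.
    Assumes min_counts > 0 so that every kept cell has a nonzero total."""
    counts = np.asarray(counts, dtype=float)
    is_mito = np.array([g.upper().startswith("MT-") for g in gene_names])

    total = counts.sum(axis=1)                 # count depth per cell
    n_genes = (counts > 0).sum(axis=1)         # detected genes per cell
    mito_frac = np.divide(counts[:, is_mito].sum(axis=1), total,
                          out=np.zeros_like(total), where=total > 0)

    keep = ((n_genes >= min_genes) & (total >= min_counts)
            & (mito_frac <= max_mito_frac))
    kept = counts[keep]
    # Scale every cell to the same total, then apply log(1 + x).
    normalized = np.log1p(kept / kept.sum(axis=1, keepdims=True) * target_sum)
    return normalized, keep

# Hypothetical 4-cell, 4-gene toy matrix with deliberately small thresholds.
genes = ["MT-CO1", "GATA1", "TAL1", "KLF1"]
raw = [[1, 10, 5, 4],    # passes all filters
       [15, 2, 2, 1],    # too many mitochondrial reads
       [0, 1, 0, 0],     # too few genes and counts
       [2, 20, 10, 8]]   # passes all filters
normalized, keep = qc_and_normalize(raw, genes, min_genes=2, min_counts=10)
print(keep)
```

Appropriate thresholds depend on the tissue and protocol and are usually chosen by inspecting the distributions of these metrics.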

Network Inference with RENGE: Input the processed time-series perturbation data into the RENGE algorithm [10]. The model regresses the expression of all genes at each time point t against the decrease in expression of the knocked-out gene g. It solves for the adjacency matrix A (representing the GRN) by fitting the data to its model equation: \( \mathbf{E}_{g,t} = \sum_{k=1}^{K} w(t,k,g)\, \mathbf{A}^{k} \mathbf{X}_{g} + \mathbf{b}_{t} \) where \( \mathbf{E}_{g,t} \) is the expression vector after KO of *g* at time *t*, \( \mathbf{X}_{g} \) is the vector of KO-driven expression decrease, \( w(t,k,g) \) is a weight for the k-th order regulation at time t, \( K \) is the highest regulation order considered, and \( \mathbf{b}_{t} \) is the wild-type expression vector. Bootstrap methods are used to calculate p-values for each inferred regulatory edge in A [10].
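The forward direction of this model equation, predicting expression from a given adjacency matrix, can be written out directly. The sketch below evaluates the sum over regulation orders for a hypothetical three-gene chain; the matrix orientation (entry [i, j] is the effect of gene j on gene i), the weights, and all numeric values are assumptions for illustration. It does not implement RENGE's fitting or bootstrap procedure.

```python
import numpy as np

def predict_expression(A, x_g, b_t, weights):
    """Evaluate E = sum_{k=1..K} w_k * A^k @ x_g + b_t,
    where weights[k-1] plays the role of w(t, k, g)."""
    effect = np.zeros(len(b_t), dtype=float)
    A_power = np.eye(A.shape[0])
    for w_k in weights:
        A_power = A_power @ A          # A^k for k = 1, 2, ...
        effect += w_k * (A_power @ x_g)
    return effect + b_t

# Hypothetical chain: gene 0 activates gene 1, gene 1 activates gene 2.
A = np.array([[0.0, 0.0, 0.0],
              [1.0, 0.0, 0.0],
              [0.0, 1.0, 0.0]])
x_g = np.array([-1.0, 0.0, 0.0])       # knockout lowers gene 0 by one unit
b_t = np.array([5.0, 5.0, 5.0])        # wild-type expression

# Early time point: only first-order (direct) effects carry weight.
early = predict_expression(A, x_g, b_t, weights=[1.0, 0.0])
# Later time point: second-order (indirect) effects have propagated.
late = predict_expression(A, x_g, b_t, weights=[1.0, 1.0])
print(early, late)
```

In this toy, gene 1 responds at the early time point while gene 2 responds only once the second-order term is weighted in, which is the temporal signature used to separate direct from indirect targets.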

Table 3: Key Research Reagent Solutions for scCRISPR GRN Inference

| Reagent / Resource | Function | Application in Workflow |
|---|---|---|
| CRISPR gRNA Library | Designed to knock out specific target genes. | Introduces targeted perturbations to disrupt the network. |
| Viral Transduction System | Delivers gRNAs into cells. | Enables efficient and stable knockout in a pool of cells. |
| Single-Cell Kit | Prepares barcoded scRNA-seq libraries. | Captures transcriptome of thousands of single cells. |
| Validated Antibodies | Confirms protein-level knockdown. | Experimental validation of perturbation efficiency. |
| BEELINE / CausalBench Software | Benchmarks GRN inference methods. | Provides standardized evaluation of computational predictions. |

The single-cell revolution has fundamentally altered our approach to deciphering gene regulatory networks, moving the field from static, correlative maps to dynamic, causal models. The convergence of single-cell multi-omics, large-scale CRISPR perturbations, and sophisticated deep-learning algorithms represents a powerful toolkit for this endeavor. However, significant challenges persist, including data sparsity, the need for scalable and biologically interpretable models, and the development of robust benchmarks grounded in real-world data. Future progress will likely hinge on the continued development of multi-omic perturbation technologies, the creation of more comprehensive benchmark resources like CausalBench, and the adoption of models that can not only predict network topology but also simulate network dynamics in response to novel perturbations. Overcoming these hurdles will be crucial for realizing the full potential of single-cell technologies in drug discovery and our fundamental understanding of cellular biology.

Gene Regulatory Network (GRN) inference, the process of deciphering the complex web of interactions where genes and other molecules control cellular functions, is fundamental to advancing systems biology, drug discovery, and personalized medicine [14] [15]. Despite the advent of high-throughput sequencing technologies and sophisticated machine learning models, the accuracy of inferred GRNs has often been disappointingly low, frequently only marginally surpassing random predictions [16]. This persistent challenge stems from a confluence of intrinsic data limitations and profound methodological hurdles. This review delineates these fundamental limits, supported by quantitative data and experimental insights, to provide researchers with a clear understanding of the current landscape and the trajectory of ongoing innovations.

The Data Challenge: Sparsity, Noise, and Limited Independent Observations

The very nature of the biological data used for GRN inference presents the first and most significant barrier to high accuracy.

The Pervasiveness of Dropout in Single-Cell Data

Single-cell RNA sequencing (scRNA-seq) provides unparalleled resolution but is plagued by "dropout," a phenomenon where transcripts are erroneously not captured, leading to zero-inflated data [17] [7]. These dropouts are not random; they preferentially affect lowly or moderately expressed genes, distorting the true biological signal and complicating the detection of genuine co-expression and regulatory relationships.

Table 1: Impact of Dropout in Single-Cell Data Analysis

| Aspect of Dropout | Quantitative Impact / Characteristic | Consequence for GRN Inference |
|---|---|---|
| Prevalence of Zeros | 57% to 92% of observed counts are zeros across datasets [7] | High false-negative rate; obscures true gene-gene interactions |
| Nature of Error | Erroneous non-capture of expressed transcripts [17] | Introduces bias, as dropout is more likely for low/moderate expression genes |
| Modeling Approach | Dropout Augmentation (DA) simulates additional dropout during training [17] | Regularizes models like DAZZLE to improve robustness against zero-inflation |

The "Limited Data" Paradox of Single-Cell Experiments

While a single-cell experiment may profile thousands of cells, these cells are often not independent biological replicates. They represent snapshots from a continuum of states within a population, limiting the independent data points available for learning complex regulatory mechanisms [16]. This creates a paradox where datasets are large in volume but limited in independent informational value, making it difficult for models to generalize.

Methodological Hurdles: From Correlation to Causation

A second class of challenges arises from the computational and statistical methods themselves.

The Insufficiency of Correlation-Based Methods

Many classical GRN inference methods, such as those based on Pearson correlation or mutual information, identify gene-gene interactions based on co-expression patterns [15] [18]. A fundamental limitation is that correlation does not imply causation. Not all predicted correlations represent direct causal regulatory relationships, leading to a high rate of false positives [15]. Methods like GENIE3 and GRNBoost2, while powerful, operate on this principle and can be misled by indirect regulation and co-regulation.
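A small simulation shows how indirect regulation produces misleading correlations. In the sketch below, gene A regulates B and B regulates C, with no direct A-to-C edge; the marginal correlation between A and C is nevertheless high, while the partial correlation conditioned on B is close to zero. The coefficients, noise levels, and sample size are arbitrary assumptions.

```python
import numpy as np

rng = np.random.default_rng(1)
n_cells = 5000

# Hypothetical regulatory chain A -> B -> C with Gaussian noise.
a = rng.normal(size=n_cells)
b = 0.9 * a + 0.3 * rng.normal(size=n_cells)
c = 0.9 * b + 0.3 * rng.normal(size=n_cells)
expr = np.column_stack([a, b, c])

# Marginal (Pearson) correlation between every pair of genes.
corr = np.corrcoef(expr, rowvar=False)

# Partial correlations from the inverse correlation (precision) matrix:
# rho_ij|rest = -P_ij / sqrt(P_ii * P_jj).
precision = np.linalg.inv(corr)
scale = np.sqrt(np.diag(precision))
partial = -precision / np.outer(scale, scale)
np.fill_diagonal(partial, 1.0)

print("corr(A, C)      =", round(float(corr[0, 2]), 3))
print("pcorr(A, C | B) =", round(float(partial[0, 2]), 3))
```

Partial correlation removes this particular artifact in a linear Gaussian setting, but it does not establish edge direction and becomes unreliable when genes outnumber cells, which is part of the motivation for perturbation-based approaches.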

The Black-Box Problem in Deep Learning

Deep learning models, including graph neural networks (GNNs) and transformers, have shown promise in capturing complex, non-linear regulatory relationships that linear models miss [14] [19]. However, their "black-box" nature limits mechanistic interpretability, restricting utility for generating testable biological hypotheses [19]. Without understanding why a model predicts an interaction, it is difficult for biologists to trust and validate its outputs.

The Challenge of Integrating Prior Knowledge

Integrating existing biological knowledge from databases like TRRUST, KEGG, or motif information can enhance inference and reduce false positives [15] [16]. However, this integration is technically challenging, especially in non-linear models. Furthermore, prior networks are often incomplete and not cell-type-specific, risking the propagation of errors or the failure to capture novel, context-specific interactions [15].

Experimental Protocols for Benchmarking GRN Inference

To objectively assess and compare the accuracy of GRN inference methods, the field relies on standardized benchmarking frameworks. The BEELINE framework is a prominent example [15].

1. Objective: To provide a systematic and unbiased evaluation of the accuracy, robustness, and efficiency of various GRN inference techniques on scRNA-seq benchmark datasets.

2. Input Data: The framework utilizes multiple scRNA-seq datasets from human and mouse cell lines (e.g., hESC, mESC). The input is a gene expression matrix where rows represent individual cells and columns represent genes.

3. Ground Truth Construction: A critical component is the use of experimentally derived ground-truth networks for validation. These can include:

   - Cell type-specific ChIP-seq networks: Direct measurements of transcription factor binding.

   - Non-specific ChIP-seq networks: Broader binding data.

   - Functional interaction networks: From databases like STRING.

   - Loss-of-function/Gain-of-function (LOF/GOF) networks: Derived from perturbation experiments.

4. Method Evaluation: Participating algorithms infer GRNs from the input data. Their predictions are compared against the ground truth networks.

5. Performance Metrics:

- Early Precision Ratio (EPR): Early precision is the fraction of true positives among the top-k predicted edges, where k is the number of edges in the ground truth network. EPR divides this value by the early precision expected from a random predictor (the density of the ground truth network), so an EPR of 1 corresponds to random performance [15].

- Area Under the Precision-Recall Curve (AUPR): Measures the trade-off between precision and recall across all prediction thresholds.

- Area Under the Receiver Operating Characteristic Curve (AUC): Measures the trade-off between the true positive rate and false positive rate.

This protocol allows for a direct and fair comparison of different methods, revealing that many methods have historically achieved only modest performance, often just above that of a random predictor [15] [12].
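The metrics above can be sketched in a few lines. The following is a minimal illustration, not BEELINE's own code: it assumes the prediction is a fully ranked list of all candidate edges without ties, uses average precision as a common approximation of AUPR, and computes AUC as the fraction of (true edge, false edge) pairs ranked in the correct order. The function names and the toy three-gene network are hypothetical.

```python
from typing import List, Set, Tuple

Edge = Tuple[str, str]  # (regulator, target)


def early_precision(ranked: List[Edge], truth: Set[Edge]) -> float:
    """Fraction of true edges among the top-k predictions, k = |truth|."""
    k = len(truth)
    return sum(1 for edge in ranked[:k] if edge in truth) / k


def early_precision_ratio(ranked: List[Edge], truth: Set[Edge]) -> float:
    """Early precision divided by the value expected from a random ranking."""
    density = len(truth) / len(ranked)  # assumes `ranked` lists every candidate edge
    return early_precision(ranked, truth) / density


def average_precision(ranked: List[Edge], truth: Set[Edge]) -> float:
    """Mean of the precision values at each true edge (approximates AUPR)."""
    hits, total = 0, 0.0
    for rank, edge in enumerate(ranked, start=1):
        if edge in truth:
            hits += 1
            total += hits / rank
    return total / len(truth)


def auroc(ranked: List[Edge], truth: Set[Edge]) -> float:
    """Fraction of (true, false) edge pairs where the true edge ranks higher."""
    n_pos = sum(1 for edge in ranked if edge in truth)
    n_neg = len(ranked) - n_pos
    negatives_seen, correct_pairs = 0, 0
    for edge in ranked:
        if edge in truth:
            correct_pairs += n_neg - negatives_seen
        else:
            negatives_seen += 1
    return correct_pairs / (n_pos * n_neg)


# Toy example: 3 genes, 6 possible directed edges, 2 true edges.
truth = {("A", "B"), ("B", "C")}
ranked = [("A", "B"), ("C", "A"), ("B", "C"), ("A", "C"), ("B", "A"), ("C", "B")]

print("EPR:", early_precision_ratio(ranked, truth))
print("AP :", average_precision(ranked, truth))
print("AUC:", auroc(ranked, truth))
```

Because EPR is normalized by network density, it can be compared across ground-truth networks of different sizes, whereas raw early precision cannot.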

Visualization of Core GRN Inference Workflows

The following diagrams illustrate two predominant computational frameworks for GRN inference, highlighting their approaches to overcoming historical limitations.

Table 2: Essential Resources for GRN Inference Research

| Resource Name | Type | Primary Function in GRN Inference |

|---|---|---|

| BEELINE Framework [15] [12] | Software Benchmark | Provides standardized scRNA-seq datasets and ground truths to evaluate and compare inference methods. |

| TRRUST, KEGG, RegNetwork [15] | Prior Knowledge DB | Databases of known gene interactions used to guide inference algorithms and reduce false positives. |

| GENIE3 / GRNBoost2 [17] [15] | Inference Algorithm | Tree-based ensemble methods (Random Forests in GENIE3, gradient boosting in GRNBoost2) that predict each target gene's expression from regulator expression. |

| DAZZLE [17] [7] | Inference Algorithm | A stabilized autoencoder model using Dropout Augmentation to improve robustness to zero-inflated data. |

| LINGER [16] | Inference Algorithm | A lifelong learning method that integrates large-scale external bulk data to enhance inference from single-cell multiome data. |

| GTAT-GRN [18] | Inference Algorithm | A graph neural network that uses topology-aware attention and multi-source feature fusion. |

| BIO-INSIGHT [20] | Consensus Optimizer | An evolutionary algorithm that optimizes consensus among multiple inference methods using biological objectives. |

Emerging Solutions and Future Directions

The field is actively developing innovative strategies to overcome these historical limits, showing promising improvements in accuracy.

1. Enhanced Robustness to Noise: New methods like DAZZLE directly address the dropout problem not through imputation, but via Dropout Augmentation (DA), a regularization technique that augments training data with synthetic zeros to make models more resilient to this noise [17] [7]. This leads to more stable and robust network inferences.

2. Leveraging External Data at Scale: Methods like LINGER employ lifelong learning to incorporate knowledge from atlas-scale external bulk data across diverse cellular contexts [16]. This mitigates the "limited data" paradox by pre-training models on a vast corpus of regulatory information before fine-tuning them on specific single-cell data, achieving a fourfold to sevenfold relative increase in accuracy.

3. Integrating Knowledge in a Guided Framework: Frameworks like KEGNI integrate scRNA-seq data with prior biological knowledge in a more sophisticated way, using a graph autoencoder coupled with a knowledge graph embedding model [15]. This multi-task learning approach ensures predictions are both data-driven and biologically plausible, consistently outperforming methods that use expression data alone.

4. Pursuing Interpretability and Combinatorial Logic: LogicSR moves beyond black-box predictions by framing GRN inference as a symbolic regression task [19]. It discovers interpretable Boolean logic rules (e.g., "Gene A is ON if (TF1 AND TF2) OR (NOT TF3)"), explicitly modeling the combinatorial cooperation of transcription factors and providing testable mechanistic hypotheses.
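To make the idea of an interpretable Boolean rule concrete, the example rule quoted above can be written as an ordinary function and enumerated over every combination of regulator states. This is only an illustration of what such a rule encodes, not LogicSR's search procedure; the function and variable names are hypothetical.

```python
from itertools import product


def gene_a_is_on(tf1: bool, tf2: bool, tf3: bool) -> bool:
    """The example rule: Gene A is ON if (TF1 AND TF2) OR (NOT TF3)."""
    return (tf1 and tf2) or (not tf3)


# Enumerate the full truth table over the three regulators.
truth_table = {
    states: gene_a_is_on(*states)
    for states in product([False, True], repeat=3)
}

for (tf1, tf2, tf3), is_on in truth_table.items():
    print(f"TF1={tf1!s:5} TF2={tf2!s:5} TF3={tf3!s:5} -> Gene A {'ON' if is_on else 'OFF'}")

on_states = [states for states, is_on in truth_table.items() if is_on]
```

Each row of the truth table corresponds to a perturbation experiment that could, in principle, confirm or refute the rule, which is what makes this representation testable.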

In conclusion, while the fundamental limits of GRN inference are deeply rooted in data quality and methodological complexity, the field is making significant strides. The integration of robust noise-handling techniques, large-scale external data, structured prior knowledge, and interpretable models represents a powerful, multi-faceted approach that is steadily pushing the boundaries of inference accuracy.

Gene Regulatory Networks (GRNs) are intricate biological systems that control gene expression and regulation in response to environmental and developmental cues [21]. The inference of accurate, cell type-specific GRNs is a fundamental step in investigating complex regulatory mechanisms underlying both normal physiology and disease [15]. Single-cell technologies have revolutionized this field by enabling researchers to dissect complex tissues and identify distinct cell populations with unique functional states, moving beyond the limitations of bulk sequencing approaches [22].

The emergence of various single-cell omics technologies—particularly single-cell RNA sequencing (scRNA-seq) and single-cell ATAC sequencing (scATAC-seq)—has provided unprecedented resolution for studying cellular heterogeneity. More recently, multiome profiling, which simultaneously measures multiple molecular modalities from the same cell, has paved the way for more powerful and integrative approaches to GRN inference [23]. These technological advances, coupled with sophisticated computational methods including machine learning and artificial intelligence, are significantly improving the accuracy of GRN reconstruction [21] [6].

This technical guide provides an in-depth examination of these key data sources, their experimental protocols, computational integration strategies, and their transformative applications in drug discovery and development.

Core Single-Cell Technologies and Their Characteristics

The foundation of modern GRN inference lies in several key single-cell technologies, each providing a unique perspective on cellular state and function.

Single-cell RNA sequencing (scRNA-seq) captures the transcriptome of individual cells, revealing gene expression profiles and enabling the identification of cell types and states based on expressed genes [24]. Since its demonstration in 2009, scRNA-seq has advanced substantially, with workflows typically involving single-cell isolation, mRNA capture, barcoding, cDNA library generation, and high-throughput sequencing [25].

Single-cell ATAC sequencing (scATAC-seq) identifies accessible chromatin regions across the genome using Tn5 transposase [26]. The organization of accessible chromatin reflects a cell's network of possible physical interactions through which enhancers, promoters, insulators, and transcription factors regulate gene expression [27].

Multiome profiling represents a significant technological leap by simultaneously measuring chromatin accessibility and gene expression from the same individual cells [28] [23]. Commercial platforms like the 10x Genomics Multiome kit use microfluidics to isolate cells into separate droplets where each cell is tagged with unique barcodes before lysis; RNA undergoes reverse transcription into barcoded cDNA, while accessible DNA is tagged for ATAC sequencing [26].

Table 1: Comparison of Key Single-Cell Technologies for GRN Inference

| Technology | Molecular Target | Key Outputs | Primary Strengths | Limitations |

|---|---|---|---|---|

| scRNA-seq | mRNA transcripts | Gene expression counts per cell | Identifies cell types and states; reveals expressed genes | Does not directly capture regulatory mechanisms |

| scATAC-seq | Accessible chromatin | Chromatin accessibility peaks | Maps regulatory elements; identifies potential enhancers and promoters | Indirect measure of gene regulation; highly sparse data |

| Multiome | Both mRNA and chromatin accessibility | Paired gene expression and accessibility per cell | Directly links regulators to target genes; identifies "primed" cell states | Higher cost; more complex data analysis; mandatory nuclei isolation |

Multiome Profiling: Experimental Protocols and Workflows

The implementation of multiome sequencing requires specific experimental protocols and considerations. A prominent example is the ScISOr-ATAC (Single-cell Isoform RNA sequencing and ATAC) method, which was developed to interrogate the correlation between splicing and chromatin accessibility modalities in single cells from frozen cortical tissue samples [28].

Detailed ScISOr-ATAC Protocol

The ScISOr-ATAC protocol involves several critical steps:

- Sample Preparation: Collecting tissue samples (e.g., human and rhesus macaque frozen cortical tissues) and performing nuclei isolation—a mandatory step for scATAC-seq due to its tagmentation requirement [28] [27].

- Multiome Library Preparation: Using the 10x Genomics Multiome kit to prepare single-nucleus RNA and ATAC libraries from the same cells.

- Sequencing: High-throughput sequencing generates millions of paired-end reads for both RNA and ATAC modalities. For example, applications of ScISOr-ATAC achieved 293 million–385 million paired-end reads for RNA and 350 million–381 million reads for ATAC [28].

- Targeted Enrichment (Optional): Custom-designed enrichment arrays (e.g., Agilent) can be applied to cover annotated splice junctions in genes of interest before Oxford Nanopore Technologies (ONT) long-read sequencing, significantly improving on-target capture rates (79-83% with enrichment versus ~2% without) [28].

- Data Processing: Reads are mapped to the reference genome using tools like minimap2, and barcodes are matched to assign reads to individual cells. Spliced reads are filtered, and unique molecular identifiers (UMIs) are used to distinguish distinct transcripts [28].

Key Research Reagent Solutions

Table 2: Essential Research Reagents and Tools for Multiome Experiments

| Item | Function | Example Product/Method |

|---|---|---|

| Nuclei Isolation Kit | Extracts intact nuclei from tissue or cells | Mandatory for ATAC tagmentation [27] |

| Multiome Kit | Enables co-assay of RNA and ATAC from same cell | 10x Genomics Multiome Kit [28] [26] |

| Chromium Controller | Partitions single cells into nanoliter droplets | 10x Genomics Chip [24] |

| Enrichment Panel | Targets specific genes of interest for sequencing | Custom Agilent enrichment array [28] |

| Sequencing Platform | Generates high-throughput sequence data | Illumina Novaseq 6000; Oxford Nanopore Technologies [28] [26] |

| Cell Ranger | Processes sequencing data and performs initial alignment | 10x Genomics Pipeline [24] |

Diagram 1: Multiome experimental workflow showing parallel RNA and ATAC processing.

Computational Integration and GRN Inference Methods

The complex, multi-modal data generated by single-cell technologies requires sophisticated computational approaches for GRN inference. These methods can be broadly categorized by the data types they utilize and their underlying algorithms.

Multiome Data Integration Methods

SCARlink (Single-cell ATAC + RNA linking) is a gene-level regulatory model that predicts single-cell gene expression and links enhancers to target genes using multi-ome sequencing data [23]. Unlike pairwise correlation approaches, SCARlink uses regularized Poisson regression on tile-level accessibility data (500 bp tiles spanning ±250 kb of the gene body) to jointly model all regulatory effects at a gene locus. This approach avoids limitations of peak calling and outperforms existing gene scoring methods, particularly in high-coverage datasets [23].
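As a rough sketch of the modeling idea described above, the code below counts the tiles for a gene locus and fits an L2-regularized Poisson regression by gradient descent. It is a simplified stand-in, not SCARlink's implementation: the function names, the optimizer, the hyperparameters, and the toy data are assumptions, and any additional constraints the published model places on its coefficients are not reproduced here.

```python
import math
from typing import List, Tuple


def n_tiles(gene_length_bp: int, flank_bp: int = 250_000, tile_bp: int = 500) -> int:
    """Number of fixed-width tiles covering the gene body plus both flanks."""
    return math.ceil((gene_length_bp + 2 * flank_bp) / tile_bp)


def fit_poisson_ridge(
    X: List[List[float]],  # cells x tiles accessibility
    y: List[float],        # expression counts of one gene per cell
    l2: float = 0.1,
    lr: float = 0.01,
    n_iter: int = 2000,
) -> Tuple[List[float], float]:
    """Minimize mean Poisson negative log-likelihood + (l2 / 2) * ||w||^2."""
    n, p = len(X), len(X[0])
    w, b = [0.0] * p, 0.0
    for _ in range(n_iter):
        grad_w, grad_b = [0.0] * p, 0.0
        for xi, yi in zip(X, y):
            mu = math.exp(b + sum(wj * xj for wj, xj in zip(w, xi)))  # log link
            residual = mu - yi
            grad_b += residual
            for j in range(p):
                grad_w[j] += residual * xi[j]
        b -= lr * grad_b / n
        for j in range(p):
            w[j] -= lr * (grad_w[j] / n + l2 * w[j])
    return w, b


# A 20 kb gene with 250 kb flanks on each side.
print("tiles:", n_tiles(20_000))

# Toy data: expression tracks tile 0 but not tile 1.
X = [[0, 1], [1, 0], [2, 1], [3, 0], [0, 0], [1, 1], [2, 0], [3, 1]]
y = [1, 2, 3, 4, 1, 2, 3, 5]
weights, intercept = fit_poisson_ridge(X, y)
print("tile weights:", [round(v, 3) for v in weights])
```

Fitting all tiles jointly, rather than correlating each tile with expression separately, is what lets the regularized model share credit among neighbouring regulatory regions.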

FigR, SCENIC+, and LINGER are additional frameworks that integrate scRNA-seq and scATAC-seq data to deduce regulatory interactions, leveraging the paired nature of multiome measurements to connect transcription factors to their target genes [15].

Knowledge-Enhanced GRN Inference

KEGNI (Knowledge graph-Enhanced Gene regulatory Network Inference) represents a cutting-edge framework that employs a graph autoencoder to capture gene regulatory relationships and incorporates a knowledge graph to infer GRNs based on scRNA-seq data [15]. KEGNI uses a self-supervised learning strategy where it randomly masks a subset of gene expression features and learns to reconstruct them, effectively capturing the underlying gene-gene interactions. The integration of prior biological knowledge from databases like KEGG PATHWAY further enhances its performance, with demonstrated superiority over multiple methods using either scRNA-seq data alone or paired scRNA-seq and scATAC-seq data [15].

Diagram 2: KEGNI framework integrating scRNA-seq data and prior knowledge.

Machine Learning and Deep Learning Approaches

Modern GRN inference increasingly leverages artificial intelligence, particularly machine learning techniques including supervised, unsupervised, semi-supervised, and contrastive learning to analyze large-scale omics data [21]. Deep learning-based computational strategies have demonstrated strong capabilities in capturing complex and nonlinear dependencies from gene expression data [15]. For instance:

- scGeneRAI employs an interpretable framework based on layer-wise relevance propagation

- AttentionGRN utilizes graph neural network architectures to integrate topological and contextual information

- CNNC and DeepIMAGER transform gene pairs into image-like representations and apply convolutional neural networks

These methods aim to overcome the limitations of traditional co-expression-based approaches, which are prone to false positives because not every correlation between genes reflects a causal regulatory relationship [15].

Table 3: Computational Methods for GRN Inference from Single-Cell Data

| Method | Data Input | Core Algorithm | Key Advantage |

|---|---|---|---|

| SCARlink | Multiome (RNA+ATAC) | Regularized Poisson Regression | Models joint regulatory effects; avoids peak calling |

| KEGNI | scRNA-seq + Knowledge Graph | Graph Autoencoder + Contrastive Learning | Integrates prior knowledge; self-supervised learning |

| GENIE3 | scRNA-seq | Random Forest | Established benchmark for co-expression networks |

| SCENIC+ | Multiome (RNA+ATAC) | Random Forest + RcisTarget | Combines co-expression with motif analysis |

| LINGER | Multiome (RNA+ATAC) + external bulk data | Lifelong-learning neural network | Transfers regulatory knowledge from atlas-scale bulk data to single-cell data |

Applications in Drug Discovery and Development

Single-cell technologies, particularly multiome profiling, are transforming drug discovery by providing unprecedented insights into disease mechanisms, cellular heterogeneity, and therapeutic responses [24] [25]. These approaches are being applied across the entire drug development pipeline:

Target Identification and Validation

Single-cell technologies help identify and validate disease-related targets by providing precise cell-specific data [25]. In Alzheimer's disease research, application of ScISOr-ATAC revealed that oligodendrocytes show high dysregulation in both chromatin and splicing, highlighting this cell type as a potential therapeutic target [28]. Similarly, in cancer research, single-cell analyses have identified molecular pathways that allow prediction of survival, response to therapy, likelihood of resistance, and candidacy for alternative intervention [24].

Preclinical Model Evaluation and Candidate Selection

ScRNA-seq has enabled identification of novel cell types and subtypes, refinement of cell differentiation trajectories, and dissection of heterogeneously manifested human traits [24]. The availability of single-cell sequencing data for animal model systems is improving our understanding of translatability to humans, helping researchers select the most relevant preclinical models for testing candidate therapies [24].

Clinical Development and Biomarker Identification

In clinical development, single-cell technologies can inform decision-making via improved biomarker identification for patient stratification and more precise monitoring of drug response and disease progression [24]. For instance, 10x Multiome has been applied to identify mechanisms of resistance in cancer patients who underwent monoclonal antibody therapy, implicating both genetic inactivation and epigenetic silencing of regulatory elements in treatment resistance [27].

Comparative Analysis and Best Practices

Technology Selection Considerations

When deciding between single-modality and multiome approaches, researchers should consider several factors:

- Data Quality: Compared to standalone scATAC-seq, 10x Multiome is currently outperformed in terms of sensitivity and library complexity, producing approximately half the unique fragment peaks as the most advanced 10x Single Cell ATAC protocol [27].

- Cost Efficiency: For designs where scATAC-seq is the primary focus, standalone 10x Single Cell ATAC may be preferred due to additional costs with Multiome [27].

- Biological Context: The utility of ATAC data for cell type annotation varies by tissue type. For example, studies demonstrate improvement in supervised annotation of immune cells when combining RNA and ATAC data, but no such improvement was observed when annotating neuronal cells [26].

Emerging Trends and Future Directions

The field of GRN inference is rapidly evolving, with several emerging trends shaping its future:

- Integration of Large-Scale Knowledge Bases: Methods like KEGNI that incorporate structured biological knowledge from multiple databases represent a promising direction for reducing false positives in network inference [15].

- Multi-omics at Scale: As technologies advance, the integration of additional modalities beyond RNA and ATAC—including proteomics, metabolomics, and spatial information—will provide more comprehensive views of cellular regulation [22] [25].

- AI-Driven Discovery: Deep learning techniques are increasingly being applied to predict drug perturbations at the single-cell level, using frameworks such as variational autoencoders (VAE) and transformers to simulate cellular responses to various therapeutic interventions [25].

The evolution from standalone scRNA-seq and scATAC-seq to integrated multiome profiling represents a paradigm shift in GRN inference methodologies. These advanced data sources, coupled with sophisticated computational frameworks, are enabling researchers to construct more accurate, cell type-specific regulatory networks that reflect the complex interplay between chromatin accessibility, gene expression, and regulatory element activity.

As these technologies continue to mature and computational methods become increasingly refined, we can expect further transformations in our understanding of gene regulation in health and disease. The integration of single-cell multi-omics data with machine learning approaches will likely play a pivotal role in accelerating drug discovery, enabling more precise target identification, improved preclinical model selection, and enhanced patient stratification in clinical development.

The inference of Gene Regulatory Networks (GRNs) is fundamentally compromised by the challenge of distinguishing true biological regulation from technical artifacts and biological noise. In single-cell RNA sequencing (scRNA-seq) data, which provides unprecedented resolution for cell-type-specific GRN analysis, a predominant source of technical noise is dropout—a phenomenon where transcripts with low or moderate expression are not detected, resulting in an excess of false zeros [7] [29]. This zero-inflation can obscure true gene-gene interactions and lead to incorrect network inferences. Simultaneously, biological variations stemming from the stochastic nature of gene expression, environmental niches, and cell-cycle effects create additional layers of complexity that confound accurate network reconstruction [29]. The performance of many existing GRN inference methods remains disappointingly low, often marginally exceeding random predictions, highlighting the critical importance of addressing these noise sources [16].

Computational Strategies for Noise Mitigation

Foundational Models and Data Integration

Table 1: Computational Methods for Addressing Noise in GRN Inference

| Method | Underlying Approach | Noise Mitigation Strategy | Key Innovation |

|---|---|---|---|

| scPRINT [30] | Bidirectional Transformer | Multi-task pretraining (denoising, bottleneck learning, label prediction) | Uses protein embeddings (ESM2) and genomic location as priors; pre-trained on 50 million cells. |

| LINGER [16] | Lifelong Neural Network | Integration of atlas-scale external bulk data | Uses elastic weight consolidation to transfer knowledge from bulk to single-cell data. |

| DAZZLE [7] | Autoencoder-based SEM | Dropout Augmentation (DA) | Augments data with synthetic zeros to improve model robustness to dropout noise. |

| IDEMAX [31] | Z-score Outlier Detection | Infers effective perturbation design from noisy data | Reduces regression dilution bias by reconstructing the perturbation matrix from expression. |

Advanced computational methods have been developed to specifically counter the challenges posed by noise. The scPRINT model introduces an innovative pre-training regimen designed to learn meaningful gene connections despite noisy data [30]. Its three core tasks—denoising, bottleneck learning, and label prediction—are optimized jointly to force the model to robustly represent gene networks. The model further incorporates biological priors by using protein sequence embeddings and genomic location data, which provide a structural and evolutionary foundation that helps differentiate signal from noise [30].

The LINGER framework addresses the problem of limited independent data points in single-cell experiments by leveraging lifelong learning. It pre-trains a neural network on external bulk data from resources like ENCODE, which encompass hundreds of samples across diverse cellular contexts. When refining the model on single-cell multiome data, it applies an elastic weight consolidation (EWC) loss, which uses the parameters learned from bulk data as a prior. This regularization prevents the model from overfitting to the noisy single-cell data and guides it toward more biologically plausible parameters, resulting in a fourfold to sevenfold relative increase in accuracy over existing methods [16].
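The regularization idea can be illustrated with the standard elastic weight consolidation penalty, which adds a quadratic term that pulls each parameter toward its pre-trained value, weighted by an importance estimate. The sketch below uses the generic EWC form; how LINGER estimates the importance weights and sets the penalty strength is not reproduced here, and the names `fisher`, `lam`, and the toy numbers are assumptions.

```python
from typing import Sequence


def ewc_penalty(
    theta: Sequence[float],       # current parameters (single-cell refinement)
    theta_bulk: Sequence[float],  # parameters learned from bulk pre-training
    fisher: Sequence[float],      # per-parameter importance (diagonal Fisher estimate)
    lam: float,                   # penalty strength
) -> float:
    """Generic EWC term: (lam / 2) * sum_i F_i * (theta_i - theta_bulk_i)^2."""
    return 0.5 * lam * sum(
        f * (t - tb) ** 2 for t, tb, f in zip(theta, theta_bulk, fisher)
    )


def total_loss(single_cell_loss: float, theta, theta_bulk, fisher, lam: float = 1.0) -> float:
    """Data-fit loss on single-cell data plus the EWC prior from bulk data."""
    return single_cell_loss + ewc_penalty(theta, theta_bulk, fisher, lam)


# Toy numbers: the second parameter is more important (larger Fisher value),
# so moving it away from the bulk estimate costs more per unit of change.
penalty = ewc_penalty(theta=[1.0, 2.0], theta_bulk=[0.0, 2.5], fisher=[2.0, 4.0], lam=1.0)
print("EWC penalty:", penalty)
print("total loss :", total_loss(0.8, [1.0, 2.0], [0.0, 2.5], [2.0, 4.0]))
```

Parameters that mattered little during bulk pre-training remain free to adapt to the single-cell data, while important ones stay close to their bulk-derived values.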

Direct Modeling of Technical Noise

Counter-intuitively, the DAZZLE model improves robustness to dropout noise by augmenting the input data with additional, synthetically generated zeros—a technique termed Dropout Augmentation (DA) [7]. During each training iteration, a small proportion of expression values are randomly set to zero, exposing the model to multiple noisy versions of the same data. This process regularizes the model, making it less likely to overfit to any specific instance of dropout noise. DAZZLE also incorporates a noise classifier that identifies which zeros are likely to be technical artifacts, allowing the model's decoder to downweight their influence during reconstruction [7].

For perturbation-based studies, where the intended experimental design can be obfuscated by off-target effects and noise, the IDEMAX algorithm improves GRN inference by reconstructing the effective perturbation matrix directly from the gene expression data itself [31]. It uses a Z-score approach to identify experiments where a gene's expression is most different from its overall distribution, assigning these as the inferred perturbations. This method mitigates regression dilution bias, a common issue in which noise in the predictor variables biases estimated regression coefficients toward zero, thereby recovering regulatory edges that would otherwise be lost [31].
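The Z-score step can be sketched as follows. This is a simplified reading of the description above, not the published IDEMAX algorithm: it assigns to each gene the single experiment in which its expression deviates most from its own distribution, and the function name, data layout, and toy values are assumptions.

```python
from statistics import mean, pstdev
from typing import Dict, List


def infer_perturbations(expr: Dict[str, List[float]]) -> Dict[str, int]:
    """Map each gene to the index of the experiment with its most extreme Z-score."""
    inferred = {}
    for gene, values in expr.items():
        mu, sd = mean(values), pstdev(values)
        if sd == 0:
            continue  # a constant gene carries no evidence of perturbation
        z_scores = [(v - mu) / sd for v in values]
        inferred[gene] = max(range(len(z_scores)), key=lambda k: abs(z_scores[k]))
    return inferred


# Toy log fold-changes: rows are genes, columns are experiments.
expr = {
    "G1": [0.1, -2.0, 0.0, 0.2],  # strongly reduced in experiment 1
    "G2": [0.0, 0.1, -1.8, 0.1],  # strongly reduced in experiment 2
    "G3": [1.0, 1.0, 1.0, 1.0],   # unchanged everywhere
}
design = infer_perturbations(expr)
print(design)
```

The inferred assignments can then replace the intended design matrix as the predictor in a downstream regression-based inference step.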

Experimental Design and Validation

Robust Experimental Protocols

Protocol 1: GRN Inference with Integrated Noise Handling using scPRINT

- Input Data Preparation: Collect a gene expression matrix (cells x genes) from scRNA-seq experiments. The model is designed to handle a context of 2,200 randomly selected expressed genes per cell profile.

- Gene Representation Embedding:

  - Generate gene ID embeddings using the ESM2 protein language model to create an amino-acid embedding for each gene's most common protein product.

  - Encode gene expression values by processing log-normalized counts through a multi-layer perceptron (MLP).

  - Create genomic positional encodings based on each gene's location in the genome.

- Model Input Construction: Sum the three embeddings (ID, expression, location) for each gene. Concatenate these across all expressed genes in a cell, along with placeholder cell embeddings, to form the input matrix for the transformer.

- Multi-Task Pre-training/Fine-tuning: Train the model by optimizing a combined loss function from three simultaneous tasks:

  - Denoising Task: The model learns to upsample transcript counts, effectively imputing missing expressions.

  - Bottleneck Learning Task: The model generates a cell embedding and then attempts to reconstruct the true expression profile from this embedding alone, forcing a compressed, meaningful representation.

  - Label Prediction Task: A hierarchical classifier predicts cell metadata (e.g., cell type, disease) from the embeddings, disentangling facets of the cell state.

- GRN Extraction: After training, compute the gene-gene interaction strengths. This can be done by analyzing the attention mechanisms within the transformer or via dedicated output layers designed to reflect regulatory influence.
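The input construction step of this protocol can be sketched with plain lists. This shows only the element-wise sum of the three per-gene embeddings and the concatenation with placeholder cell tokens. It is not scPRINT's code: the zero-valued placeholders, the position of the cell tokens at the start of the sequence, and all names are assumptions.

```python
from typing import List

Vector = List[float]


def build_transformer_input(
    id_emb: List[Vector],    # one protein-derived ID embedding per expressed gene
    expr_emb: List[Vector],  # one expression embedding per expressed gene
    loc_emb: List[Vector],   # one genomic-location encoding per expressed gene
    n_cell_tokens: int = 1,  # number of placeholder cell-embedding tokens
) -> List[Vector]:
    """Sum the three embeddings per gene, then prepend placeholder cell tokens."""
    dim = len(id_emb[0])
    gene_tokens = [
        [a + b + c for a, b, c in zip(gene_id, gene_expr, gene_loc)]
        for gene_id, gene_expr, gene_loc in zip(id_emb, expr_emb, loc_emb)
    ]
    cell_tokens = [[0.0] * dim for _ in range(n_cell_tokens)]
    return cell_tokens + gene_tokens


# Two expressed genes with 2-dimensional embeddings.
tokens = build_transformer_input(
    id_emb=[[1.0, 0.0], [0.0, 1.0]],
    expr_emb=[[0.5, 0.5], [0.1, 0.2]],
    loc_emb=[[0.0, 1.0], [1.0, 0.0]],
)
print(len(tokens), "tokens of dimension", len(tokens[0]))
```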

Protocol 2: Dropout Augmentation for Robust GRN Inference (DAZZLE Workflow)

- Data Preprocessing: Transform the raw count matrix ( x ) using ( \log(x+1) ) to reduce variance and avoid undefined values.

- Dropout Augmentation (DA): During each training iteration, randomly select a small proportion ( p ) (a hyperparameter, e.g., 5-10%) of the non-zero values in the input matrix and set them to zero. This simulates additional dropout noise.

- Model Training:

  - The autoencoder-based model (e.g., DAZZLE) takes the augmented expression matrix as input.

  - The model is trained to reconstruct the original (non-augmented) input.

  - A noise classifier component operates in parallel, learning to distinguish real zeros from augmented ones.

- Sparsity Control: Introduce a sparsity loss term for the adjacency matrix after a customisable number of training epochs to prevent premature convergence to a dense network.

- Network Inference: Upon convergence, the weights of the trained adjacency matrix ( A ) are extracted, representing the inferred GRN. The model's stability is assessed by monitoring the consistency of the inferred network across training epochs.
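The preprocessing and augmentation steps of this workflow can be sketched as below. This covers only the log transform and the random zeroing of non-zero entries, not the autoencoder, noise classifier, or sparsity loss, and it is not DAZZLE's implementation. The function names, the seeded random generator, and the toy count matrix are assumptions.

```python
import math
import random
from typing import List, Tuple

Matrix = List[List[float]]


def log1p_transform(counts: Matrix) -> Matrix:
    """Apply log(x + 1) to every entry of the raw count matrix."""
    return [[math.log(x + 1) for x in row] for row in counts]


def dropout_augment(
    matrix: Matrix, p: float, rng: random.Random
) -> Tuple[Matrix, List[List[bool]]]:
    """Zero out a proportion p of the non-zero entries; return the copy and a mask."""
    augmented = [row[:] for row in matrix]  # leave the original untouched
    mask = [[False] * len(row) for row in matrix]
    nonzero = [
        (i, j) for i, row in enumerate(matrix) for j, v in enumerate(row) if v != 0
    ]
    n_drop = int(round(p * len(nonzero)))
    for i, j in rng.sample(nonzero, n_drop):
        augmented[i][j] = 0.0
        mask[i][j] = True  # marks a synthetic zero (usable as a noise-classifier label)
    return augmented, mask


counts = [[0, 3, 1, 4, 2], [5, 1, 2, 1, 7], [2, 2, 9, 1, 1], [1, 6, 1, 3, 2]]
x = log1p_transform(counts)

rng = random.Random(0)
# In training, a fresh augmentation would be drawn at every iteration.
x_aug, synthetic_zero_mask = dropout_augment(x, p=0.1, rng=rng)
n_synthetic = sum(sum(row) for row in synthetic_zero_mask)
print("synthetic zeros added:", n_synthetic)
```

Returning the mask alongside the augmented matrix keeps track of which zeros were introduced artificially, which is the information a noise classifier would need as a training signal.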

Benchmarking and Validation

Rigorous validation is paramount, given the absence of a complete ground-truth network in most real-world scenarios. Benchmarking platforms like the BEELINE framework provide synthetic and curated real networks with known relationships to quantitatively evaluate inference accuracy [29]. Performance is typically measured using the Area Under the Receiver Operating Characteristic Curve (AUC) and the Area Under the Precision-Recall Curve (AUPR) [16]. For experimental data, where a full ground-truth is unavailable, reference networks built from literature-curated knowledge or orthogonal data sources like ChIP-seq and eQTL studies are used for validation [29] [16].

Diagram 1: A workflow outlining the major sources of noise in GRN inference and the strategies to mitigate them, leading to a validated network.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents and Resources for Robust GRN Inference

| Resource / Reagent | Function in GRN Inference | Utility in Noise Mitigation |

|---|---|---|

| cellxgene Database [30] | A curated atlas of single-cell data. | Provides massive-scale datasets (e.g., 50M+ cells) for pre-training foundation models like scPRINT, teaching models to distinguish consistent patterns from noise. |

| ENCODE Bulk Data [16] | A comprehensive collection of functional genomic data from bulk assays. | Serves as a prior knowledge base in lifelong learning (LINGER) to constrain and guide inference from noisier single-cell data. |

| BEELINE Benchmark [7] [29] | A software suite and dataset for benchmarking GRN algorithms. | Provides standardized synthetic and curated real networks with known ground truth to quantitatively evaluate a method's robustness to noise. |

| ChIP-seq & eQTL Data [16] | Orthogonal experimental data defining TF-binding and variant-gene links. | Used as high-confidence ground truth for validating inferred regulatory interactions, separating true positives from artifacts. |

| Multiome Kit (e.g., 10x Multiome) [16] | Allows simultaneous measurement of gene expression and chromatin accessibility in the same single cell. | Reduces confounding by enabling direct linking of accessible cis-regulatory elements to their target genes, strengthening causal inference. |

Distinguishing biological signal from technical and biological noise is not merely a preprocessing step but a central consideration that dictates the success of GRN inference. The advent of sophisticated computational strategies—including multi-task foundation models, lifelong learning with external data, and targeted regularization techniques like dropout augmentation—represents a significant leap forward. When these methods are coupled with rigorous experimental designs, such as single-cell multiome protocols, and validated against orthogonal biological data, researchers can achieve a level of accuracy and reliability that was previously unattainable. As these tools continue to evolve, they pave the way for more precise identification of disease-associated regulatory pathways and potential therapeutic targets.

The Modern GRN Toolkit: Machine Learning, Multi-omics, and Advanced Computational Strategies

Gene Regulatory Networks (GRNs) represent the complex web of interactions where transcription factors (TFs) regulate the expression of target genes, controlling fundamental biological processes [21]. The inference of these networks from high-throughput genomic data remains a central challenge in computational biology and systems biology. Regression-based models form a cornerstone of GRN inference methodologies, offering a powerful framework for deciphering these regulatory relationships from gene expression data.

These approaches fundamentally operate on the principle that the expression of a target gene can be predicted as a function of the expression levels of its potential regulators. The field has evolved along two primary branches: tree-based ensemble methods like GENIE3, which capture complex, non-linear relationships, and regularized linear models, which introduce sparsity constraints to handle high-dimensional data. This technical guide provides a comprehensive overview of these regression-based frameworks, detailing their theoretical foundations, methodological implementations, performance characteristics, and practical applications within the broader context of GRN inference research.

Theoretical Foundations of Regression-Based GRN Inference

The core problem of GRN inference is to reconstruct a network of directed regulatory interactions between genes from expression data, typically represented as a directed graph where edges indicate regulation [32]. Regression-based approaches frame this problem as a series of supervised learning tasks.

The foundational assumption is that the expression level of each gene ( j ) at condition or time ( k ), denoted ( x_j(k) ), can be modeled as a function of the expression levels of its potential regulators plus some noise:

[ x_j(k) = f_j(\mathbf{x}_{-j}(k)) + \varepsilon_k ]

Here, ( \mathbf{x}_{-j}(k) ) represents the vector of expression levels of all other genes (or a predefined set of candidate regulators) at condition ( k ), and ( f_j ) is a function that encodes the regulatory program for gene ( j ) [32] [33]. The function ( f_j ) is learned from the data, and the importance of each input gene ( i ) in predicting the target gene ( j ) is quantified as a confidence score for the regulatory link ( i \rightarrow j ).

This framework offers several advantages. It naturally handles the directionality of regulatory interactions, can accommodate both linear and non-linear relationships, and provides a principled way to rank putative interactions based on their predictive importance. The choice of the function class for ( f_j ) and the method for quantifying feature importance differentiate the various regression-based approaches.

Tree-Based Ensemble Methods: The GENIE3 Framework

Core Algorithm and Methodology

GENIE3 (GEne Network Inference with Ensemble of trees) is a leading GRN inference method that won the DREAM4 In Silico Multifactorial challenge and has demonstrated state-of-the-art performance in multiple independent benchmarks [32] [34]. Its success stems from its use of powerful tree-based ensemble methods.

The algorithm decomposes the network inference problem into ( p ) separate regression problems, where ( p ) is the number of genes [32]. For each gene ( j ):

Learning Sample Generation: A learning sample is created where the expression profile of gene ( j ) serves as the target output, and the expression profiles of all other genes (or a predefined set of candidate regulators) serve as input features: ( LS^j = \{(\mathbf{x}_{-j}(k), x_j(k)),\ k = 1, \dots, M\} ).

Model Training: A regression model ( f_j ) is learned from ( LS^j ) using either Random Forests or Extra-Trees ensemble methods. These methods aggregate predictions from multiple decision trees, each trained on bootstrapped samples of the data and random subsets of input features.

Importance Calculation: The importance of each input gene ( i ) in predicting target gene ( j ) is computed as the total decrease in variance (Mean Decrease in Impurity) across all trees in the forest when gene ( i ) is used for splitting. This importance score ( w_{i,j} ) represents the confidence for the regulatory link ( i \rightarrow j ).

The final network is reconstructed by aggregating the weights ( w_{i,j} ) across all genes, producing a ranked list of potential regulatory interactions [32].
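The three steps above can be sketched in a few lines of Python. This is a minimal illustration and not the reference GENIE3 implementation: it assumes scikit-learn's `RandomForestRegressor` as the tree ensemble, and the function names `genie3_sketch` and `ranked_edges` are hypothetical. scikit-learn normalizes impurity-based importances to sum to one for each target, so the scores may not be directly comparable with those of the original software.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor


def genie3_sketch(expr, regulators=None, n_trees=100, seed=0):
    """Return a (p x p) matrix whose entry [i, j] scores the link i -> j.

    expr: array of shape (M, p), M conditions by p genes.
    regulators: optional list of column indices allowed as inputs
                (assumption: defaults to all genes).
    """
    expr = np.asarray(expr, dtype=float)
    n_genes = expr.shape[1]
    if regulators is None:
        regulators = list(range(n_genes))
    weights = np.zeros((n_genes, n_genes))

    for j in range(n_genes):
        # One regression problem per target gene; the target is never its own input.
        inputs = [i for i in regulators if i != j]
        target = expr[:, j]
        if not inputs or target.std() == 0:
            continue
        # Scale the target to unit variance so scores are comparable across targets.
        target = (target - target.mean()) / target.std()
        forest = RandomForestRegressor(
            n_estimators=n_trees, max_features="sqrt", random_state=seed
        )
        forest.fit(expr[:, inputs], target)
        # Impurity-based importances play the role of the link confidences.
        weights[inputs, j] = forest.feature_importances_
    return weights


def ranked_edges(weights):
    """Flatten the weight matrix into (regulator, target, score), best first."""
    n_genes = weights.shape[0]
    edges = [
        (i, j, float(weights[i, j]))
        for i in range(n_genes)
        for j in range(n_genes)
        if i != j
    ]
    return sorted(edges, key=lambda edge: edge[2], reverse=True)
```

Calling `ranked_edges(genie3_sketch(expr))` on an expression matrix with conditions in rows and genes in columns yields the ranked list of candidate interactions described above.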

Experimental Protocol and Implementation

Implementing GENIE3 requires careful consideration of several parameters and data preparation steps:

Input Data Preparation: GENIE3 was originally designed for static steady-state expression data from multifactorial perturbations, where ( M ) represents different experimental conditions [32]. The data should be properly normalized before analysis.

Candidate Regulators: If prior knowledge is available (e.g., a list of known transcription factors), the input genes for each regression problem can be restricted to these candidate regulators, improving efficiency and biological relevance.

Key Parameters: The main parameters include the choice of tree-based method (Random Forests or Extra-Trees), the number of trees in the ensemble, and the number of input features randomly selected at each split (commonly either the square root of the number of candidate regulators or all of them).

Validation: Since the method provides a ranked list of interactions, practical network reconstruction requires setting a threshold on the weights. This can be done based on a desired number of top interactions or through stability analysis.
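The thresholding step can be illustrated with a short sketch. It assumes the ranked output is available as `(regulator, target, weight)` tuples, and it interprets stability analysis as the fraction of subsampled runs in which an edge is selected, which is one possible reading and not a prescribed procedure. Both function names are hypothetical.

```python
def top_k_edges(scored_edges, k):
    """Keep the k highest-weight edges from (regulator, target, weight) tuples."""
    ranked = sorted(scored_edges, key=lambda edge: edge[2], reverse=True)
    return {(regulator, target) for regulator, target, _ in ranked[:k]}


def selection_frequency(edge_sets):
    """Fraction of runs (e.g. on data subsamples) in which each edge was kept."""
    counts = {}
    for edges in edge_sets:
        for edge in edges:
            counts[edge] = counts.get(edge, 0) + 1
    return {edge: count / len(edge_sets) for edge, count in counts.items()}
```

Edges with a high selection frequency across subsamples are more likely to be robust to variation in the input data than edges that appear in only a few runs.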

Figure: The GENIE3 workflow, from data input to network reconstruction.

dynGENIE3: Extension to Time Series Data

The original GENIE3 method was adapted to handle time series expression data through dynGENIE3 (dynamical GENIE3) [33]. This extension uses a semi-parametric approach where the temporal evolution of each gene's expression is described by an ordinary differential equation (ODE):

[ \frac{dx_j}{dt} = g_j(\mathbf{x}(t)) - \alpha_j x_j(t) ]

Here, ( g_j ) represents the transcription function of gene ( j ), modeled non-parametrically using Random Forests, and ( \alpha_j ) is the degradation rate [33]. The regulators of each target gene are identified from the variable importance scores derived from the corresponding Random Forest model. dynGENIE3 can also jointly analyze both time series and steady-state data, making it highly flexible for different experimental designs.
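One way to turn the ODE into a regression problem is to approximate the derivative by a finite difference between consecutive time points, so that the quantity to be predicted from the expression vector at one time point is the finite difference plus the degradation term. The sketch below assumes this discretization and a known degradation rate. The function name and arguments are illustrative and not taken from the dynGENIE3 software, and whether gene ( j ) itself is kept among the inputs is a modelling choice this sketch does not settle.

```python
import numpy as np


def dyn_learning_sample(series, times, alpha, j):
    """Build regression inputs and targets for gene j from one time series.

    series: array of shape (T, p), expression of p genes at T time points.
    times:  array of shape (T,), the measurement times.
    alpha:  assumed degradation rate of gene j.
    """
    series = np.asarray(series, dtype=float)
    times = np.asarray(times, dtype=float)
    dt = np.diff(times)              # t_{k+1} - t_k
    dx = np.diff(series[:, j])       # x_j(t_{k+1}) - x_j(t_k)
    inputs = series[:-1, :]          # x(t_k) for every interval
    # Finite-difference estimate of dx_j/dt plus the degradation term,
    # which the ODE equates with g_j(x(t_k)).
    targets = dx / dt + alpha * series[:-1, j]
    return inputs, targets
```

The resulting `(inputs, targets)` pair can then be passed to any tree-based regressor to estimate the transcription function and its variable importances.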

Regularized Linear Regression Approaches

Mathematical Formulations and Regularization Strategies

While GENIE3 captures non-linear relationships, regularized linear approaches provide an alternative framework that explicitly models linear regulatory relationships while addressing the high-dimensionality of GRN inference (where the number of genes ( p ) often exceeds the number of samples ( n )).

The basic multivariate linear regression model for GRN inference is formulated as:

[ \mathbf{Y} = \mathbf{XB} + \mathbf{E} ]

where ( \mathbf{Y}_{n \times s} ) contains expression values of ( s ) target genes across ( n ) samples, ( \mathbf{X}_{n \times p} ) contains expression values of ( p ) transcription factors, ( \mathbf{B}_{p \times s} ) represents the regression coefficients (indicating regulatory relationships), and ( \mathbf{E}_{n \times s} ) is the error matrix [35] [36].

To handle the ( p > n ) problem and induce sparsity (reflecting the biological fact that each gene is regulated by only a few TFs), various regularization techniques are employed:

L1 Regularization (Lasso): Promotes sparsity by penalizing the sum of absolute values of coefficients: ( \|\mathbf{B}\|_1 ). This forces weak regulators to have exactly zero coefficients.

L2 Regularization (Ridge): Penalizes the sum of squares of coefficients: ( \|\mathbf{B}\|_2^2 ), shrinking coefficients toward zero but not exactly to zero.

L2,1-Norm Regularization: Used in multivariate regression settings, this norm penalizes the sum of L2-norms of rows of ( \mathbf{B} ): ( \|\mathbf{B}\|_{2,1} = \sum_{i=1}^p \|\mathbf{b}_i\|_2 ). This encourages row-sparsity, meaning entire rows of ( \mathbf{B} ) become zero, effectively selecting a common set of regulators for all target genes [35] [36].
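The difference between the three penalties is easiest to see on a small coefficient matrix. The following sketch uses NumPy and treats each row of the matrix as one regulator and each column as one target gene, matching the ( p \times s ) layout above. The function names are illustrative.

```python
import numpy as np


def l1_norm(B):
    """Sum of absolute values of all coefficients (Lasso penalty)."""
    return float(np.abs(B).sum())


def squared_l2_norm(B):
    """Sum of squared coefficients (Ridge penalty)."""
    return float((B ** 2).sum())


def l21_norm(B):
    """Sum over rows (regulators) of the Euclidean norm of each row."""
    return float(np.linalg.norm(B, axis=1).sum())


# Three regulators (rows) and two targets (columns); the second regulator is inactive.
B = np.array([[3.0, 4.0],
              [0.0, 0.0],
              [1.0, 0.0]])
print(l1_norm(B))          # 8.0
print(squared_l2_norm(B))  # 26.0
print(l21_norm(B))         # 6.0
```

An all-zero row contributes nothing to the L2,1 norm, which is why minimizing it tends to switch off whole regulators instead of individual coefficients.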

Mixed-Norms Regularized Multivariate Models

Recent advancements have proposed blended approaches that combine regularized multivariate regression with graphical models. These methods simultaneously model multiple target genes while accounting for the correlations among them, addressing a limitation of approaches that model each target gene independently [35] [36].

The objective function for these mixed-norms approaches takes the form:

[ \mathcal{L}(\mathbf{B}, \Omega) = \text{Tr}\left[\frac{1}{n}(\mathbf{Y} - \mathbf{XB})^T(\mathbf{Y} - \mathbf{XB})\Omega\right] - \log|\Omega| + \lambda_1\|\mathbf{B}\|_{2,1} + \lambda_2\|\Omega\|_1 ]

Here, ( \Omega ) is the precision matrix (inverse covariance matrix) of the errors, which captures conditional dependencies among target genes after accounting for the effects of regulators [36]. The L2,1-norm on ( \mathbf{B} ) selects a common set of regulators across targets, while the L1-norm on ( \Omega ) encourages sparsity in the residual dependency structure.

These models are estimated through an iterative algorithm that alternates between updating ( \mathbf{B} ) and ( \Omega ) until convergence, leveraging the fact that the optimization problem is biconvex [36].
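A function that evaluates the objective is a useful companion to any alternating scheme, since its value can be tracked across iterations to check convergence. The sketch below only evaluates the objective and does not implement the update steps. It assumes the L1 penalty applies to every entry of the precision matrix, diagonal included (some formulations penalize only off-diagonal entries), and the function name is hypothetical.

```python
import numpy as np


def mixed_norm_objective(Y, X, B, Omega, lam1, lam2):
    """Evaluate the penalized objective for given B (p x s) and Omega (s x s)."""
    n = Y.shape[0]
    residual = Y - X @ B
    # Tr[(1/n) (Y - XB)^T (Y - XB) Omega]
    fit = np.trace(residual.T @ residual @ Omega) / n
    sign, logdet = np.linalg.slogdet(Omega)
    if sign <= 0:
        raise ValueError("Omega must be positive definite")
    row_sparsity = lam1 * np.linalg.norm(B, axis=1).sum()  # L2,1 norm of B
    precision_sparsity = lam2 * np.abs(Omega).sum()        # L1 norm of Omega
    return float(fit - logdet + row_sparsity + precision_sparsity)
```

In an alternating procedure, this value would be expected not to increase after each update of the coefficient matrix or the precision matrix, provided each subproblem is solved exactly.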

Figure: Conceptual framework of mixed-norms regularized multivariate models.

Experimental Protocol for Regularized Linear Models

Implementing regularized linear models for GRN inference involves:

Data Preprocessing: Standard normalization of expression data is essential. For time series data, proper interpolation or smoothing might be required.

Candidate Regulator Specification: As with GENIE3, providing a list of known transcription factors as candidate regulators improves biological relevance and computational efficiency.

Parameter Tuning: The regularization parameters ( \lambda_1 ) and ( \lambda_2 ) control the sparsity of the solution and must be carefully tuned, typically through cross-validation or information criteria.

Model Fitting: Efficient optimization algorithms, such as coordinate descent or alternating direction method of multipliers (ADMM), are used to solve the convex optimization problems.

Network Construction: The non-zero entries in the estimated coefficient matrix ( \mathbf{B} ) define the inferred regulatory network, with the magnitude of coefficients indicating interaction strengths.
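As a concrete illustration of the fitting and network construction steps, the sketch below fits an independent Lasso model for each target gene using plain coordinate descent. It is deliberately the simplest member of this family, not the mixed-norms multivariate model, and it assumes the objective ( \frac{1}{2n}\|\mathbf{y} - \mathbf{Xb}\|_2^2 + \lambda\|\mathbf{b}\|_1 ) with a fixed number of passes in place of a convergence test. All function names are illustrative.

```python
import numpy as np


def soft_threshold(value, threshold):
    """Shrink value toward zero by threshold, returning 0 inside the band."""
    return np.sign(value) * max(abs(value) - threshold, 0.0)


def lasso_coordinate_descent(X, y, lam, n_iter=200):
    """Minimize (1/2n)||y - Xb||^2 + lam * ||b||_1 by cyclic coordinate descent."""
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    n, p = X.shape
    coef = np.zeros(p)
    col_scale = (X ** 2).sum(axis=0) / n
    residual = y - X @ coef
    for _ in range(n_iter):
        for j in range(p):
            if col_scale[j] == 0:
                continue
            residual += X[:, j] * coef[j]   # remove regulator j's contribution
            rho = X[:, j] @ residual / n    # correlation with partial residual
            coef[j] = soft_threshold(rho, lam) / col_scale[j]
            residual -= X[:, j] * coef[j]   # add the updated contribution back
    return coef


def fit_all_targets(tf_expr, target_expr, lam):
    """Return B (p x s): one Lasso fit per target gene, stacked column-wise."""
    columns = [
        lasso_coordinate_descent(tf_expr, target_expr[:, t], lam)
        for t in range(target_expr.shape[1])
    ]
    return np.column_stack(columns)


def edges_from_coefficients(B):
    """Non-zero entries of B define edges (regulator index, target index, weight)."""
    return [(int(i), int(t), float(B[i, t])) for i, t in zip(*np.nonzero(B))]
```

Larger values of `lam` remove more edges, so in practice the value would be chosen by cross-validation or an information criterion, as noted above.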

Performance Comparison and Benchmarking

Quantitative Performance Metrics

Evaluating GRN inference methods requires carefully designed benchmarks and appropriate metrics. Common evaluation frameworks include:

Area Under the Precision-Recall Curve (AUPR): Particularly informative for network inference where positive interactions are rare compared to the vast number of possible interactions.

Area Under the Receiver Operating Characteristic Curve (AUC): Measures the overall ability to distinguish true regulatory interactions from non-interactions.

Early Precision: Precision at the top k predictions, reflecting practical usage where researchers typically consider only the highest-confidence predictions.

Stability: Measured by the Jaccard index between networks inferred from different subsamples of the data, indicating robustness to variations in the input data.
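Three of these metrics are simple enough to compute directly from a ranked edge list and a set of reference edges. The sketch below uses average precision as a step-wise estimate of the area under the precision-recall curve, which is one common choice among several. The edge names are made up for illustration.

```python
def precision_at_k(ranked_edges, true_edges, k):
    """Early precision: fraction of the top-k predictions that are true edges."""
    return sum(1 for edge in ranked_edges[:k] if edge in true_edges) / k


def average_precision(ranked_edges, true_edges):
    """Mean of the precision values at the ranks where true edges are recovered."""
    hits = 0
    total = 0.0
    for rank, edge in enumerate(ranked_edges, start=1):
        if edge in true_edges:
            hits += 1
            total += hits / rank
    return total / len(true_edges) if true_edges else 0.0


def jaccard_index(edges_a, edges_b):
    """Overlap between two inferred networks: |intersection| / |union|."""
    a, b = set(edges_a), set(edges_b)
    union = a | b
    return len(a & b) / len(union) if union else 1.0


ranked = [("A", "B"), ("A", "C"), ("B", "C"), ("C", "A")]
truth = {("A", "B"), ("B", "C")}
print(precision_at_k(ranked, truth, 2))               # 0.5
print(round(average_precision(ranked, truth), 4))     # 0.8333
print(round(jaccard_index(ranked[:2], truth), 4))     # 0.3333
```

Dividing the area under the precision-recall curve by the value expected from a random predictor (the fraction of possible edges that are true) gives a ratio that is easier to compare across networks of different density.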

The BEELINE framework provides a comprehensive evaluation platform specifically designed for benchmarking GRN inference algorithms on single-cell data, incorporating synthetic networks with predictable trajectories, literature-curated Boolean models, and diverse transcriptional regulatory networks [34].

Comparative Performance Analysis

Extensive benchmarking studies reveal distinct performance characteristics across different regression-based methods:

Table 1: Performance Comparison of Regression-Based GRN Inference Methods

| Method | Model Type | Key Strengths | Performance Notes | Computational Scalability |
|---|---|---|---|---|
| GENIE3 | Tree-based ensemble | Captures non-linear interactions; No assumptions about regulation nature; Won DREAM4 challenge [32] | Good performance on synthetic networks (AUPRC ratio >5 for Linear Long Network); Robust to number of cells [34] | Fast and scalable to thousands of genes [32] [33] |
| dynGENIE3 | Semi-parametric ODE + Tree ensembles | Handles time series data; Joint analysis of steady-state and time series data [33] | Competitive performance on DREAM4 benchmarks; Outperforms GENIE3 on artificial data [33] | Highly scalable compared to Bayesian alternatives [33] |
| Mixed-Norms Models | Regularized multivariate linear | Joint modeling of multiple targets; Identifies master regulators; Considers target gene correlations [35] [36] | Consistently good performance across diverse datasets; Effective for master regulator identification [35] | Efficient iterative algorithms for high-dimensional data |
| LINGER | Neural network with external data | Incorporates atlas-scale external data; Uses motif information as regularization [16] | 4-7x relative increase in accuracy over existing methods; Superior cis-regulatory inference [16] | Requires significant computational resources for training |

Table 2: BEELINE Benchmark Results on Synthetic Networks (Adapted from [34])

| Network Type | Best Performing Methods | Median AUPRC Ratio | Notable Observations |
|---|---|---|---|
| Linear | 10/12 methods | >2.0 | Easiest network to infer |
| Linear Long | 7 methods | >5.0 | GENIE3 among top performers |
| Cycle | SINCERITIES | <2.0 | Moderately difficult |
| Bifurcating | SINCERITIES | <2.0 | Challenging for most methods |
| Trifurcating | PIDC | <2.0 | Most challenging network |

Benchmarking results indicate that no single method consistently outperforms all others across every network type and dataset. GENIE3 and its variants show robust performance across multiple challenges and maintain good scalability [34]. Regularized linear methods, particularly the more recent multivariate approaches, demonstrate consistently good performance and offer advantages in identifying master regulators—transcription factors that regulate a sizeable proportion of target genes [35].

Performance is influenced by multiple factors including network topology, data type (steady-state vs. time series), number of cells/samples, and noise levels. Methods that do not require pseudotime-ordered cells generally show better accuracy in benchmark studies [34].

Successful implementation of regression-based GRN inference methods requires both computational tools and biological data resources. The following table details key components of the research toolkit:

Table 3: Essential Research Reagent Solutions for GRN Inference

| Resource Type | Specific Examples | Function in GRN Inference | Implementation Notes |
|---|---|---|---|
| Expression Data | Static steady-state data (multifactorial perturbations); Time series expression profiles; Single-cell RNA-seq data [32] [33] [34] | Primary input for inferring regulatory relationships; Captures system response to perturbations | Normalization (e.g., TMM for RNA-seq) is critical; Quality control essential for single-cell data |
| Candidate Regulator Lists | Known transcription factors; Chromatin regulators; Signaling molecules | Constrains search space to biologically plausible regulators; Improves efficiency and relevance | Databases like PlantTFDB, AnimalTFDB provide comprehensive lists |
| Benchmark Networks | DREAM challenges; Literature-curated Boolean models; Synthetic networks [34] | Gold standards for method validation and comparison | BEELINE framework provides standardized evaluation [34] |
| Validation Data | ChIP-seq; eQTL studies; Perturbation studies [16] | Experimental validation of predicted interactions | Independent from inference data; Provides ground truth assessment |
| Software Libraries | R/Python implementations; Docker containers [34] | Accessible implementation of algorithms | BEELINE provides uniform interface to multiple methods [34] |
| External Bulk Data | ENCODE project; GTEx; eQTLGen [16] | Provides additional regulatory context; Enables transfer learning | LINGER uses this for lifelong learning [16] |