From Data to Discovery: A Machine Learning Framework for Identifying Robust Biomarker Candidates

Abstract

This article provides a comprehensive overview for researchers and drug development professionals on the application of machine learning (ML) for robust biomarker discovery. It covers the foundational principles of biomarkers and the necessity of ML for analyzing complex, high-dimensional omics data. The piece explores a suite of ML methodologies, from established algorithms to novel, biologically-informed techniques, and addresses critical challenges such as model overfitting and data integration. Through comparative analysis and validation strategies, it outlines a path for translating computational findings into clinically actionable biomarkers, synthesizing key takeaways and future directions for the field.

The New Frontier: Why Machine Learning is Revolutionizing Biomarker Discovery

A biomarker is defined as "a characteristic that is objectively measured and evaluated as an indicator of normal biological processes, pathogenic processes, or pharmacologic responses to a therapeutic intervention" [1] [2]. This broad definition encompasses molecular, histologic, radiographic, or physiologic characteristics that provide objective, quantifiable measures of biological processes [1] [2]. It is crucial to distinguish biomarkers from Clinical Outcome Assessments (COAs), which are direct measures of how a patient feels, functions, or survives [1]. Biomarkers can serve as surrogate endpoints in research, but they become clinically meaningful only when they consistently predict or correlate with these patient-centric outcomes [1] [2].

The U.S. Food and Drug Administration (FDA) and National Institutes of Health (NIH) have established precise definitions for biomarker categories through their Biomarkers, EndpointS, and other Tools (BEST) resource, clarifying their distinct applications in patient care, clinical research, and therapeutic development [1]. A biomarker's journey from initial discovery to clinical application hinges on establishing a clear chain of evidence that progresses from technical measurability to clinical impact, requiring rigorous validation at each stage to ensure robustness and reliability [3].

Table 1: Biomarker Categories and Definitions

| Category | Definition | Example |
|---|---|---|
| Diagnostic | Detects or confirms presence of a disease or condition [1] | Troponin for acute myocardial infarction [1] |
| Monitoring | Measured serially to assess disease status or exposure effects [1] | HbA1c for diabetes management [1] |
| Predictive | Identifies likelihood of response to a specific therapeutic intervention [1] [3] | HER2 for trastuzumab response in breast cancer [3] |
| Prognostic | Provides information about disease course and future outcomes [4] | Cancer staging for survival probability [4] |
| Safety | Measures exposure to or effects of a medical product/environmental agent [3] | Troponin for cardiotoxicity [3] |
| Pharmacodynamic/Response | Shows a biological response has occurred in an individual who has received a medical product [1] | Cholesterol reduction after statin treatment [1] |

Machine Learning Approaches for Robust Biomarker Discovery

Traditional biomarker discovery approaches that focus on single molecular features face significant challenges, including limited reproducibility, high false-positive rates, and inadequate predictive accuracy when dealing with biologically heterogeneous diseases [4]. Machine learning (ML) and deep learning (DL) methods address these limitations by analyzing large, complex multi-omics datasets to identify reliable multivariate biomarker signatures that capture intricate biological networks [4].

Supervised and Unsupervised Learning Applications

Supervised learning approaches train predictive models on labeled datasets to classify disease status or predict clinical outcomes. Commonly used techniques include Support Vector Machines (SVM), Random Forests, and gradient boosting algorithms (e.g., XGBoost, LightGBM) [5] [4]. These methods are particularly effective for developing diagnostic and prognostic biomarkers from high-dimensional omics data. For example, a study on Alzheimer's disease utilized an SVM-based approach to identify a robust 12-protein panel from cerebrospinal fluid proteomic datasets that demonstrated high diagnostic accuracy across ten different cohorts [6].

Unsupervised learning methods explore unlabeled datasets to discover inherent structures or novel subgroupings without predefined outcomes. These techniques include clustering methods (k-means, hierarchical clustering) and dimensionality reduction approaches (principal component analysis) [4]. These are invaluable for disease endotyping—classifying subtypes based on underlying biological mechanisms rather than purely clinical symptoms [4].

Robust Feature Selection and Model Training

A critical challenge in ML-based biomarker discovery is the "p >> n problem," where the number of potential features (genes, proteins, metabolites) far exceeds the number of available samples [7]. This necessitates robust feature selection methods to identify the most informative biomarkers. A promising approach involves combining multiple algorithms in a consensus framework. For pancreatic ductal adenocarcinoma (PDAC) metastasis biomarker discovery, researchers implemented a pipeline that applied three algorithms (LASSO logistic regression, Boruta, and varSelRF) across 100 models per fold in a 10-fold cross-validation process [8]. Genes consistently found in at least 80% of models and five folds were considered robust candidates for building a consensus multivariate model [8].
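The consensus rule described above can be sketched in a few lines. This is only an illustration of the counting logic, not the pipeline from [8]: the function name, the toy gene names, and the reading of the rule (a gene must be selected by at least 80% of the models within a fold, and must meet that bar in at least five folds) are assumptions made for the example.

```python
from collections import defaultdict

def consensus_features(selections, model_frac=0.8, min_folds=5):
    """Return features selected by >= model_frac of models in >= min_folds folds.

    selections: dict mapping fold id -> list of feature sets (one set per model).
    """
    folds_passed = defaultdict(int)
    for model_sets in selections.values():
        counts = defaultdict(int)
        for feats in model_sets:
            for feat in set(feats):
                counts[feat] += 1
        for feat, count in counts.items():
            if count / len(model_sets) >= model_frac:
                folds_passed[feat] += 1
    return sorted(f for f, k in folds_passed.items() if k >= min_folds)

def toy_fold(fold):
    """Hypothetical selection results for one fold (100 models)."""
    models = []
    for m in range(100):
        feats = {"GENE_A"}                       # selected by every model
        if m < (85 if fold < 6 else 10):         # stable in 6 of 10 folds
            feats.add("GENE_B")
        if fold < 3 and m < 90:                  # stable in only 3 folds
            feats.add("GENE_C")
        if m % 2 == 0:                           # selected by half the models
            feats.add("GENE_D")
        models.append(feats)
    return models

selections = {fold: toy_fold(fold) for fold in range(10)}
robust = consensus_features(selections)
print(robust)
```

In this toy setup only the genes that are stable both within and across folds survive; a gene that is chosen often in a few folds, or moderately often everywhere, is dropped.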

Table 2: Machine Learning Techniques for Different Data Types

| Omics Data Type | ML Techniques | Typical Applications |
|---|---|---|
| Transcriptomics | Feature selection (e.g., LASSO); SVM; Random Forest [4] | Gene expression signatures for disease classification [4] |
| Proteomics | SVM-RFECV; Random Forest [6] | Protein panels for diagnosis and stratification [6] |
| Metabolomics | Logistic Regression; Random Forest [5] | Metabolic pathway analysis for disease prediction [5] |
| Multi-omics Integration | Multimodal neural networks; kernel fusion [7] | Comprehensive biomarker signatures from multiple data layers [4] [7] |

Validation Protocols: From Analytical to Clinical Utility

The validation process represents the most significant bottleneck in biomarker development, with approximately 95% of biomarker candidates failing to progress to clinical use [3]. Successful validation requires demonstrating three distinct properties: analytical validity, clinical validity, and clinical utility [3].

Analytical Validation

Analytical validation establishes that an assay accurately and reliably measures the biomarker of interest. This requires demonstrating precision, accuracy, sensitivity, specificity, and reproducibility under specified conditions [3] [7]. Key requirements include a coefficient of variation under 15% for repeat measurements, recovery rates between 80% and 120%, and correlation coefficients above 0.95 when comparing to reference standards [3]. This phase must also address technical noise, batch effects, and platform-specific variability through rigorous quality control measures and standardized preprocessing pipelines [8] [7].
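As a rough illustration of how the three acceptance thresholds above might be checked, the sketch below computes a percent coefficient of variation, a spike recovery, and a Pearson correlation against a reference method. All measurements are invented for the example, and the helper names are hypothetical; a real assay validation would follow the laboratory's own protocol.

```python
import math
import statistics

def coefficient_of_variation(values):
    """Percent CV = 100 * sample standard deviation / mean."""
    return 100.0 * statistics.stdev(values) / statistics.mean(values)

def percent_recovery(measured, expected):
    """Percent of a known (spiked) amount that the assay recovers."""
    return 100.0 * measured / expected

def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length series."""
    mx, my = statistics.mean(x), statistics.mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

# Hypothetical measurements in arbitrary concentration units.
replicates = [10.1, 9.8, 10.3, 9.9, 10.0]      # repeat runs of one sample
spiked_amount, measured_amount = 10.0, 9.4     # spike-recovery experiment
assay = [1.1, 2.0, 2.9, 4.2, 5.0]              # candidate assay readings
reference = [1.0, 2.0, 3.0, 4.0, 5.0]          # reference-standard readings

cv = coefficient_of_variation(replicates)
recovery = percent_recovery(measured_amount, spiked_amount)
r = pearson_r(assay, reference)

# Thresholds as quoted in the text: CV < 15%, recovery 80-120%, r > 0.95.
passes = cv < 15.0 and 80.0 <= recovery <= 120.0 and r > 0.95
print(f"CV={cv:.2f}%  recovery={recovery:.1f}%  r={r:.4f}  pass={passes}")
```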

Clinical Validation

Clinical validation provides evidence that the biomarker consistently and accurately predicts clinical outcomes of interest across the intended use population [3]. This requires large-scale studies with appropriate statistical power—typically hundreds to thousands of patient samples—to demonstrate meaningful associations with clinical endpoints [3] [9]. The 2025 FDA Biomarker Guidance emphasizes that diagnostic biomarkers typically require ≥80% sensitivity and specificity, though exact requirements depend on the specific indication and context of use [3]. Validation must also assess generalizability across diverse genetic backgrounds, environmental factors, and disease subtypes [3].
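One way to see why clinical validation tends to need hundreds of samples is to look at the uncertainty around an observed sensitivity. The sketch below uses the Wilson score interval for a binomial proportion; comparing the lower bound of the interval to the 80% figure is an illustrative criterion chosen for this example, not a rule taken from the cited guidance, and the case counts are hypothetical.

```python
import math

def wilson_interval(successes, n, z=1.96):
    """Wilson score interval for a binomial proportion (z=1.96 ~ 95%)."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = successes / n
    denom = 1 + z ** 2 / n
    centre = (p + z ** 2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z ** 2 / (4 * n ** 2)) / denom
    return centre - half, centre + half

# Same observed sensitivity (85%) from two hypothetical study sizes.
lo_small, hi_small = wilson_interval(34, 40)     # 34 of 40 cases detected
lo_large, hi_large = wilson_interval(340, 400)   # 340 of 400 cases detected

print(f"n=40:  sensitivity 0.85, 95% CI [{lo_small:.3f}, {hi_small:.3f}]")
print(f"n=400: sensitivity 0.85, 95% CI [{lo_large:.3f}, {hi_large:.3f}]")
```

With 40 cases the interval still extends well below 0.80, whereas with 400 cases the whole interval sits above it, even though the point estimate is identical.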

Establishing Clinical Utility

Clinical utility represents the highest level of validation, demonstrating that using the biomarker actually improves patient outcomes, changes treatment decisions, or provides other beneficial impacts on human health [3]. This requires evidence that biomarker-informed decision-making leads to better clinical results compared to standard care, often through prospective clinical trials or well-designed observational studies [3]. The FDA's biomarker qualification program under the 21st Century Cures Act provides a structured regulatory pathway, but qualification requires extensive evidence of clinical utility [3].

Experimental Protocols and Research Toolkit

Protocol: Machine Learning Pipeline for Biomarker Discovery

This protocol outlines the robust ML-based biomarker discovery approach applied to pancreatic ductal adenocarcinoma metastasis, which can be adapted for other disease contexts [8].

Data Preparation and Preprocessing

- Data Acquisition: Collect primary tumor RNAseq data from public repositories (TCGA, GEO, ICGC, CPTAC) with clinical annotation for metastasis status [8].

- Inclusion Criteria: Apply strict filters for sample quality: primary tumor tissues only, availability of metastasis status (N0 vs. N1/M1), and RNA sequencing data [8].

- Normalization and Batch Correction: Apply Trimmed Mean of M-values (TMM) normalization using the edgeR package to account for sequencing depth differences. Correct for batch effects using ARSyN (ASCA removal of systematic noise) or similar methods to remove technical variance while preserving biological signals [8].

- Data Splitting: Split datasets into training (TCGA-PAAD, PACA-AU, PACA-CA) and validation (CPTAC-PDAC, GSE79668) cohorts, maintaining class balance for metastasis status [8].

Feature Selection and Model Training

- Cross-Validation Setup: Implement 10-fold cross-validation on training data with 100 models per fold to ensure robustness [8].

- Multi-Algorithm Feature Selection: Apply three feature selection methods in sequence: (1) LASSO logistic regression (glmnet package) for initial variable selection; (2) Boruta algorithm for all-relevant feature selection; and (3) Backwards selection (varSelRF package) for final refinement [8].

- Consensus Biomarker Identification: Select genes appearing in ≥80% of models across ≥5 folds as robust biomarker candidates [8].

- Model Construction: Build random forest models (ranger method in caret package) using selected features, with 5-fold cross-validation and ADASYN oversampling to address class imbalance [8].
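The cross-validation setup in the steps above can be sketched as a fold assignment. The source does not say whether the folds were stratified; stratifying by metastasis status is an assumption made here because the classes are imbalanced, and the function name, class labels, and sample counts are hypothetical.

```python
import random
from collections import defaultdict

def stratified_folds(labels, k=10, seed=0):
    """Assign sample indices to k folds, spreading each class evenly."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)
    folds = [[] for _ in range(k)]
    for idxs in by_class.values():
        rng.shuffle(idxs)
        # Deal the shuffled indices of this class round-robin across folds.
        for pos, idx in enumerate(idxs):
            folds[pos % k].append(idx)
    return folds

# Hypothetical training cohort: 30 metastasis and 70 non-metastasis samples.
labels = ["metastasis"] * 30 + ["non_metastasis"] * 70
folds = stratified_folds(labels, k=10)

for fold_id, test_idx in enumerate(folds):
    held_out = set(test_idx)
    train_idx = [i for i in range(len(labels)) if i not in held_out]
    n_met = sum(labels[i] == "metastasis" for i in test_idx)
    print(f"fold {fold_id}: train={len(train_idx)} test={len(test_idx)} "
          f"metastasis_in_test={n_met}")
```

Feature selection would then be run on `train_idx` only within each fold, so that the held-out samples never influence which genes are chosen.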

Validation and Biological Contextualization

- Model Evaluation: Test final model on held-out validation datasets using comprehensive metrics: Precision, Recall, F1 score for both metastasis and non-metastasis classes [8].

- Biological Interpretation: Conduct pathway analysis (QIAGEN Ingenuity Pathway Analysis, GeneMANIA) to explore biological relevance of identified biomarkers and their connections to disease mechanisms [8].

- Independent Validation: Where possible, recalibrate models using only validation data to assess generalizability and performance stability [8].
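For the model evaluation step above, per-class precision, recall, and F1 can be computed directly from predicted and true labels. The labels and predictions below are made up for illustration; only the metric definitions are standard.

```python
def per_class_metrics(y_true, y_pred, positive):
    """Precision, recall, and F1, treating `positive` as the class of interest."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    return {"precision": precision, "recall": recall, "f1": f1}

# Hypothetical held-out validation labels and model predictions.
y_true = ["met"] * 6 + ["non"] * 4
y_pred = ["met", "met", "met", "met", "non", "non",
          "non", "non", "non", "met"]

met = per_class_metrics(y_true, y_pred, positive="met")
non = per_class_metrics(y_true, y_pred, positive="non")
print("metastasis:    ", met)
print("non-metastasis:", non)
```

Reporting both classes matters with imbalanced cohorts, since a model can score well on the majority class while missing most of the minority class.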

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents and Platforms for Biomarker Development

| Reagent/Platform | Function | Application Example |
|---|---|---|
| Absolute IDQ p180 Kit (Biocrates) | Targeted metabolomics analysis quantifying 194 endogenous metabolites from 5 compound classes [5] | Identification of metabolite biomarkers in large-artery atherosclerosis [5] |
| QIAGEN Ingenuity Pathway Analysis | Bioinformatics tool for pathway analysis, biological interpretation of omics data [8] | Contextualizing biomarker candidates within known biological networks and disease mechanisms [8] |
| MultiBaC Package (R) | Batch effect correction for multi-omics data integration across different platforms [8] | Removing technical variance when combining datasets from different sources [8] |
| edgeR Package (R) | Differential expression analysis of RNAseq data with TMM normalization [8] | Normalizing transcriptomics data to account for sequencing depth and composition [8] |
| Caret Package (R) | Unified interface for training and evaluating multiple machine learning models [8] | Implementing random forest models with cross-validation for biomarker discovery [8] |

The journey from biomarker discovery to clinical application requires navigating a complex pathway with significant attrition rates. Only approximately 5% of initially promising biomarker candidates ultimately achieve clinical use, primarily due to failures in analytical validation, clinical validation, or demonstration of clinical utility [3]. Modern approaches that integrate machine learning with multi-omics data offer promising strategies to improve this success rate by identifying more robust, multivariate biomarker signatures from the outset [4].

Successful biomarker development requires meticulous attention to three distinct validity types: analytical validity (accurate measurement), clinical validity (prediction accuracy), and clinical utility (patient benefit) [3]. By implementing robust machine learning pipelines with rigorous validation protocols and maintaining focus on clinically meaningful endpoints, researchers can enhance the translational potential of biomarker discoveries. The evolving regulatory landscape, particularly the FDA's 2025 Biomarker Guidance and provisions under the 21st Century Cures Act, provides clearer pathways for biomarker qualification, emphasizing the importance of early regulatory alignment in biomarker development strategies [3].

The advent of high-throughput technologies has revolutionized biomedical research, enabling the large-scale collection of multiple molecular datasets collectively known as "multi-omics." These technologies measure various biological layers, including the genomic (DNA sequences and variations), transcriptomic (gene expression patterns), epigenomic (DNA methylation, histone modifications), proteomic (protein expression and post-translational modifications), and metabolomic (small-molecule metabolite profiles) levels of biological systems [10]. The primary challenge in multi-omics analyses stems from the inherent characteristics of these datasets: they exhibit extremely high dimensionality, where the number of measured features (e.g., genes, proteins) vastly exceeds the number of samples, creating a statistical "curse of dimensionality" [11] [10].

This high-dimensional nature is compounded by significant data heterogeneity, as each omics layer possesses its own unique data structure, statistical distribution, noise profile, and measurement scale [12]. For instance, genomics data typically consists of discrete mutations, while proteomics and metabolomics data are continuous intensity values. Furthermore, technical variability from different analytical platforms and batch effects introduces unwanted noise that can obscure true biological signals [12] [10]. These characteristics collectively make multi-omics data integration and analysis a substantial computational challenge that requires specialized methodologies to extract biologically meaningful insights while avoiding spurious findings.

Computational Challenges in Multi-Omics Integration

Data Heterogeneity and Technical Variability

The integration of multi-omics data presents formidable bioinformatics challenges that can stall discovery efforts, particularly for researchers without computational expertise [12]. A critical issue is the absence of standardized preprocessing protocols across different omics modalities [12]. Each data type has unique data structures, distribution properties, measurement errors, and batch effects that challenge harmonization. Tailored preprocessing pipelines for each data type can introduce additional variability across datasets, further complicating integration.

The sheer volume and variety of multi-omics data create additional obstacles. Modern oncology studies, for example, generate petabyte-scale data streams from technologies including next-generation sequencing (genomic variants at terabase resolution), mass spectrometry (quantifying thousands of proteins and metabolites), and radiomics (extracting quantitative features from medical images) [10]. The "four Vs" of big data (volume, velocity, variety, and veracity) pose formidable analytical challenges as dimensionality (e.g., >20,000 genes, >500,000 CpG sites) often dwarfs sample sizes in most cohorts [10].

The Problem of "Dark Matter" in Omics

A particularly vexing challenge across all omics disciplines is the presence of significant "dark matter"—molecular species that are detected but not confidently identified or annotated [11]. In metabolomics, for example, structural diversity results in only 1.8% of untargeted metabolomics spectra being annotated using mass spectrometry [11]. Similarly, routine proteomic workflows neglect an estimated 50% of the "dark proteome," while genomic and transcriptomic analyses have historically focused on protein-coding regions, leaving non-coding regions with established biological implications less characterized [11]. These gaps in coverage fundamentally limit the biological context that can be annotated within a given system and consequently impair multi-omics data interpretation.

Table 1: Key Computational Challenges in Multi-Omics Data Integration

| Challenge Category | Specific Issues | Impact on Analysis |
|---|---|---|
| Data Heterogeneity | Different statistical distributions, noise profiles, measurement scales [12] | Difficulties in data harmonization and comparison |
| Technical Variability | Batch effects, platform-specific artifacts, different detection limits [12] | Obscured biological signals, misleading conclusions |
| Dimensionality | Features >> Samples (e.g., >20,000 genes vs. hundreds of samples) [10] | Statistical "curse of dimensionality," overfitting risk |
| Data Complexity | Missing data, unknown signals ("dark matter"), incomplete annotations [11] | Limited biological context, incomplete interpretations |
| Integration Methods | Multiple algorithms with different approaches and parameters [12] | Confusion in method selection, irreproducible results |

Machine Learning Approaches for Robust Biomarker Discovery

A Framework for Robust Biomarker Identification

Machine learning (ML) plays a crucial role in addressing the challenges of high-dimensional omics data, yet conventional ML approaches face limitations in data integration and reproducibility [8]. To address these challenges, robust computational frameworks that incorporate rigorous validation are essential. One such approach involves a consensus feature selection process that combines multiple algorithms to identify robust biomarker candidates [8]. This methodology employs a 10-fold cross-validation process that applies three algorithms (LASSO logistic regression, Boruta, and variable selection using random forests) across 100 models per fold [8]. Genes consistently found in at least 80% of models across multiple folds are considered robust candidates for building consensus multivariate models.

The application of paired differential analysis represents another robust approach for biological feature selection in machine learning models [13]. This method compares primary tumor tissue with the same patient's healthy tissue, which improves gene selection by eliminating individual-specific artifacts and accounting for patient variability [13]. When applied to carcinoma, this approach identified 27 pivotal genes capable of distinguishing between healthy and carcinoma tissue, even in unseen carcinoma types, demonstrating the method's robustness for biomarker discovery [13].

Integration Methods for Multi-Omics Data

Several computational methods have been developed specifically for multi-omics data integration, each with distinct approaches and strengths:

- MOFA (Multi-Omics Factor Analysis): An unsupervised factorization method that uses a Bayesian probabilistic framework to infer latent factors capturing principal sources of variation across data types [12]. Some factors may be shared across all data types, while others may be specific to a single modality.

- DIABLO (Data Integration Analysis for Biomarker discovery using Latent Components): A supervised integration method that uses known phenotype labels to achieve integration and feature selection [12]. It identifies latent components as linear combinations of original features and employs penalization techniques to select the most informative features.

- SNF (Similarity Network Fusion): A network-based method that fuses multiple data types by constructing sample-similarity networks for each omics dataset and then fusing them via non-linear processes [12].

- MCIA (Multiple Co-Inertia Analysis): A multivariate statistical method that extends co-inertia analysis to handle more than two datasets simultaneously and capture relationships and shared patterns of variation [12].

Table 2: Comparison of Multi-Omics Data Integration Methods

| Method | Type | Key Approach | Best Suited For |
|---|---|---|---|
| MOFA [12] | Unsupervised | Bayesian factor analysis | Exploring hidden sources of variation without predefined outcomes |
| DIABLO [12] | Supervised | Multiblock sPLS-DA with feature selection | Biomarker discovery when sample categories are known |
| SNF [12] | Unsupervised | Similarity network fusion | Identifying sample clusters and subgroups across omics layers |
| MCIA [12] | Unsupervised | Covariance optimization across datasets | Simultaneous analysis of multiple omics datasets |

[Figure: Biomarker Discovery Workflow]

Experimental Protocols for Biomarker Discovery

Protocol: A Robust Machine Learning Pipeline for PDAC Metastasis Biomarkers

Background: Pancreatic ductal adenocarcinoma (PDAC) is a highly aggressive cancer with a high potential for metastasis, making treatment particularly challenging [8]. The 5-year survival rate for PDAC patients with metastatic disease is only 5-10% [8]. This protocol outlines a robust machine learning pipeline for identifying composite biomarker candidates for PDAC metastasis using RNA sequencing data.

Step 1: Data Collection and Inclusion Criteria

- Collect primary tumor RNAseq data from public repositories (TCGA, GEO, ICGC, CPTAC) [8]

- Apply inclusion criteria: samples from primary tumor tissues of unpaired PDAC patients only, datasets containing clinical data for metastasis status, and data from RNA sequencing platforms [8]

- Stratify samples into "non-metastasis group" (stage IA to IIA, no regional lymph node metastasis) and "metastasis group" (stage IIB to IV) based on AJCC cancer staging [8]

Step 2: Data Pre-processing and Integration

- Normalize data using Trimmed Mean of M-values (TMM) normalization to account for sequencing depth and composition differences [8]

- Filter out genes with low expression levels (<5% quantile & <0.1 Absolute Fold Change) [8]

- Address batch effects using ARSyN (ASCA removal of systematic noise) mode 1 from the MultiBaC package [8]

- Filter out genes that do not show consistent expression patterns across technical batches

Step 3: Biomarker Candidate Identification

- Split processed data into train and validation datasets [8]

- Perform 10-fold cross-validation on train data using three algorithms: LASSO logistic regression, Boruta, and backwards selection with random forests (varSelRF) [8]

- Build 100 models per fold and identify genes found in at least 80% of models in at least five folds [8]

- Consider these consensus genes as robust biomarker candidates

Step 4: Model Building and Validation

- Construct random forest models using the ranger method in the caret package [8]

- Use 5-fold cross-validation and address class imbalance with ADASYN oversampling [8]

- Build 100 models using the train set and assess each model on the test set [8]

- Evaluate models using twelve metrics, including Precision, Recall, and F1 score for both metastasis and non-metastasis classes [8]

- Test the final model on independent validation datasets

Step 5: Biological Contextualization

- Perform enrichment and pathway analyses using QIAGEN Ingenuity Pathway Analysis and GeneMANIA [8]

- Explore biological functions and relevance of identified biomarker candidates

- Validate potential relevance through links to cancer progression and metastasis mechanisms

Protocol: Explainable ML with Paired Differential Gene Expression

Background: This protocol describes an approach for robust biomarker identification using paired differential gene expression analysis, which enhances robustness and interpretability while accounting for patient variability [13].

Step 1: Sample Collection and Preparation

- Collect matched tissue pairs (primary tumor and healthy tissue) from the same patients [13]

- Ensure appropriate sample preservation for RNA extraction and sequencing

Step 2: RNA Sequencing and Data Generation

- Perform RNA sequencing on all samples using consistent platforms and protocols

- Generate gene expression profiles for both tumor and normal tissues

Step 3: Paired Differential Expression Analysis

- Conduct differential expression analysis comparing tumor vs. normal tissue for each patient

- Account for patient-specific effects by using paired statistical tests

- Identify consistently differentially expressed genes across multiple patients
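To illustrate why pairing helps, the sketch below contrasts a paired t statistic with an unpaired (Welch) one on the same invented data. The source [13] does not specify which paired test was used, so the paired t-test here is an assumed stand-in, and the log2 expression values are hypothetical; RNA-seq analyses in practice often use count-based models with the patient as a blocking factor.

```python
import math
import statistics

def paired_t(tumor, normal):
    """Paired t statistic: mean within-patient difference over its standard error."""
    diffs = [t - n for t, n in zip(tumor, normal)]
    se = statistics.stdev(diffs) / math.sqrt(len(diffs))
    return statistics.mean(diffs) / se

def welch_t(a, b):
    """Unpaired (Welch) t statistic, which ignores the patient matching."""
    se = math.sqrt(statistics.variance(a) / len(a)
                   + statistics.variance(b) / len(b))
    return (statistics.mean(a) - statistics.mean(b)) / se

# Hypothetical log2 expression of one gene in five patients.
# Baselines differ a lot between patients; the tumor shift is about +1 in each.
normal = [5.0, 7.0, 9.0, 6.0, 8.0]
tumor = [6.1, 7.9, 10.2, 7.0, 8.8]

t_paired = paired_t(tumor, normal)
t_unpaired = welch_t(tumor, normal)
print(f"paired t = {t_paired:.2f}, unpaired t = {t_unpaired:.2f}")
```

Because the between-patient spread cancels out in the within-patient differences, the consistent tumor shift stands out clearly in the paired statistic but is buried in the unpaired one.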

Step 4: Machine Learning Model Development

- Use the paired differential expression patterns as features in machine learning models

- Implement explainable ML approaches to maintain interpretability [13]

- Validate models on independent datasets, including unseen carcinoma types [13]

Step 5: Biomarker Panel Refinement

- Identify pivotal genes that robustly distinguish between healthy and carcinoma tissue [13]

- Assess the panel's ability to identify tissue-of-origin in carcinoma types [13]

- Test the panel on metastatic samples to evaluate primary tissue origin identification [13]

Table 3: Research Reagent Solutions for Multi-Omics Biomarker Discovery

| Resource Category | Specific Tools | Function and Application |
|---|---|---|
| Public Data Repositories | TCGA, GEO, ICGC, CPTAC [8] | Sources of primary multi-omics data for analysis and validation |
| Bioinformatics Platforms | Omics Playground, Galaxy, DNAnexus [12] [10] | Integrated solutions for multi-omics analysis, often with code-free interfaces |
| Normalization Tools | edgeR (TMM normalization) [8] | Account for sequencing depth and composition differences between samples |
| Batch Effect Correction | ARSyN, ComBat, MultiBaC [8] [10] | Remove unwanted technical variability between experiments |
| Machine Learning Frameworks | caret, glmnet, ranger [8] | Implement ML algorithms for feature selection and predictive modeling |
| Pathway Analysis Tools | QIAGEN IPA, GeneMANIA [8] | Biological contextualization of identified biomarkers |
| Multi-Omics Integration | MOFA, DIABLO, SNF [12] | Specialized algorithms for integrating disparate omics data types |

[Figure: Multi-Omics Data Integration Pipeline]

The integration of multi-omics data represents a powerful approach for uncovering disease mechanisms and identifying robust biomarkers, but it requires carefully designed computational strategies to navigate the challenges of high-dimensional datasets. Artificial intelligence, particularly machine learning and deep learning, has emerged as an essential scaffold bridging multi-omics data to clinically actionable insights [10]. Unlike traditional statistics, AI excels at identifying non-linear patterns across high-dimensional spaces, making it uniquely suited for multi-omics integration [10].

Future developments in AI-driven multi-omics integration will likely focus on several key areas. Explainable AI (XAI) techniques like SHapley Additive exPlanations (SHAP) are becoming increasingly important for interpreting "black box" models and clarifying how different molecular features contribute to predictions [10]. Generative AI shows promise for synthesizing in silico "digital twins"—patient-specific avatars simulating treatment response [10]. Federated learning approaches enable privacy-preserving collaboration across institutions, while spatial and single-cell omics technologies provide unprecedented resolution for microenvironment decoding [10]. As these technologies mature, they promise to transform precision oncology from reactive population-based approaches to proactive, individualized cancer management [10].

Despite these advances, operationalizing AI-driven multi-omics integration requires confronting persistent challenges in algorithm transparency, batch effect robustness, ethical equity in data representation, and regulatory alignment [10]. By addressing these challenges while leveraging the robust computational frameworks outlined in this article, researchers can harness the full potential of multi-omics data to advance biomarker discovery and ultimately improve patient outcomes in complex diseases like cancer.

Traditional statistical methods, including t-tests and ANOVA, have long been the cornerstone of biomarker discovery. However, these methods face significant challenges when applied to modern, high-dimensional biological data. They often assume specific data distributions (e.g., normality) that are frequently violated by complex omics data, and they struggle with the problem of multiple testing and nonlinear relationships inherent in datasets containing millions of features [14]. Furthermore, conventional approaches typically focus on single molecular features, which proves inadequate for capturing the multifaceted biological networks underlying complex and heterogeneous diseases such as cancer [4]. These limitations reduce reproducibility, increase false-positive rates, and ultimately hinder the development of clinically useful biomarkers.

Machine learning (ML) overcomes these constraints by handling large, complex datasets without stringent distributional assumptions. ML algorithms excel at identifying intricate patterns and interactions among various molecular features that traditional methods miss, providing a powerful framework for robust biomarker identification [14] [4].
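The multiple-testing problem mentioned above can be made concrete with a small example. The article does not name a specific correction; the Benjamini-Hochberg false discovery rate procedure is used here as one common choice, and the p-values are invented.

```python
def benjamini_hochberg(pvalues, alpha=0.05):
    """Return a list of booleans: True where the hypothesis is rejected at FDR alpha."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    # Find the largest rank k with p_(k) <= alpha * k / m.
    max_rank = 0
    for rank, idx in enumerate(order, start=1):
        if pvalues[idx] <= alpha * rank / m:
            max_rank = rank
    rejected = [False] * m
    for rank, idx in enumerate(order, start=1):
        if rank <= max_rank:
            rejected[idx] = True
    return rejected

# Hypothetical per-feature p-values from univariate tests.
pvals = [0.001, 0.008, 0.039, 0.041, 0.042, 0.06, 0.074, 0.205, 0.212, 0.216]

naive_hits = sum(p < 0.05 for p in pvals)
bh_hits = sum(benjamini_hochberg(pvals, alpha=0.05))
print(f"uncorrected p < 0.05: {naive_hits} features")
print(f"after Benjamini-Hochberg: {bh_hits} features")
```

With only ten tests the correction already removes most of the nominal hits; with tens of thousands of features the gap between uncorrected and corrected results is typically far larger.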

Comparative Analysis: Traditional Statistics vs. Machine Learning

Table 1: Comparative Performance of Traditional Statistics vs. Machine Learning in Biomarker Research

| Aspect | Traditional Statistics | Machine Learning | Practical Implication |
|---|---|---|---|
| Data Distribution | Assumes normality, often violated [14] | Non-parametric; makes no strict distributional assumptions [14] | ML can be applied to a wider range of data types without transformation |
| High-Dimensionality | Struggles with millions of features; multiple testing problem [14] [4] | Designed for scale; employs regularization and embedded feature selection [4] | ML is suited for modern omics data (genomics, proteomics) |
| Non-Linear Relationships | Limited capacity to model complex interactions [14] | Excels at identifying non-linear and interaction effects [14] | ML can uncover complex, non-intuitive biological pathways |
| Model Output | Often a single biomarker or a limited linear model | Multi-feature panels and complex, predictive models [4] | ML enables multi-biomarker signatures for better stratification |
| Clinical Validation | Well-established but often with limited predictive accuracy [4] | Can achieve high accuracy (e.g., AUC >0.90) but requires rigorous validation [5] [15] | ML models offer high potential but need careful external validation |

Table 2: Quantitative Performance of ML Models in Biomarker Applications

| Disease Area | Machine Learning Model | Key Biomarkers | Performance | Source |

|---|---|---|---|---|

| Large-Artery Atherosclerosis (LAA) | Logistic Regression (LR) | Clinical factors (BMI, smoking) + Metabolites (aminoacyl-tRNA biosynthesis) | AUC: 0.92 (External Validation) [5] | Scientific Reports (2023) |

| Ovarian Cancer (OC) Diagnosis | Ensemble Methods (RF, XGBoost) | CA-125, HE4, CRP, NLR | AUC > 0.90, Accuracy up to 99.82% [15] | PMC Review (2025) |

| Wastewater CRP Monitoring | Cubic Support Vector Machine (CSVM) | C-Reactive Protein (CRP) via absorption spectroscopy | Accuracy: ~65.5% (5-class classification) [16] | Scientific Reports (2025) |

| Rheumatoid Arthritis (RA) | Supervised ML on Transcriptomics | Blood transcriptome profiles | Clear patient-control separation in t-SNE/PCA [14] | Cell and Tissue Research (2023) |

Machine Learning Methodologies for Biomarker Discovery

Key Machine Learning Approaches

The application of ML in biomarker research primarily utilizes supervised and unsupervised learning techniques. Supervised learning trains models on labeled datasets to classify disease status or predict clinical outcomes. Common and effective algorithms include Support Vector Machines (SVM), which are effective for small-sample, high-dimensional omics data; Random Forests (RF), ensemble models robust against noise and overfitting; and Gradient Boosting algorithms (e.g., XGBoost), which iteratively correct errors for high accuracy [4]. Unsupervised learning explores unlabeled data to discover inherent structures or novel patient subgroups, invaluable for disease endotyping—classifying diseases based on shared molecular mechanisms rather than just clinical symptoms [14]. Techniques include clustering (k-means) and dimensionality reduction (PCA, t-SNE) [4].

Critical Step: Feature Selection Techniques

Feature selection is a critical step to improve model accuracy, reduce overfitting, and enhance interpretability [17]. The three main types of feature selection methods are:

- Filter Methods: These assess feature relevance based on statistical measures (e.g., correlation, chi-square) independent of the ML model. They are fast and model-agnostic but may miss feature interactions [17]. Example: `SelectKBest` with ANOVA F-test [18].

- Wrapper Methods: These use a specific ML algorithm to evaluate feature subsets based on model performance. Examples include Recursive Feature Elimination (RFE). They can find high-performing subsets but are computationally expensive [17]. Example: `RFE` with Logistic Regression [18].

- Embedded Methods: These integrate feature selection into the model training process itself. Examples include LASSO regression, which adds a penalty to shrink coefficients, and tree-based algorithms that provide feature importance scores [18] [17]. They offer a good balance of efficiency and performance [4].
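The three families can be compared side by side in scikit-learn. The sketch below is illustrative only: the data are synthetic (`make_classification`), and the choices of `k=10`, `n_features_to_select=10`, and `C=0.1` are assumptions rather than values from the cited studies.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.feature_selection import RFE, SelectFromModel, SelectKBest, f_classif
from sklearn.linear_model import LogisticRegression
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in for an omics matrix: 120 samples x 50 features, 5 informative.
X, y = make_classification(n_samples=120, n_features=50, n_informative=5,
                           n_redundant=0, random_state=0)
X = StandardScaler().fit_transform(X)

# Filter: rank features by ANOVA F-statistic, independent of any classifier.
filter_sel = SelectKBest(score_func=f_classif, k=10).fit(X, y)
filter_idx = np.flatnonzero(filter_sel.get_support())

# Wrapper: recursive feature elimination around a logistic regression model.
wrapper_sel = RFE(LogisticRegression(max_iter=1000), n_features_to_select=10).fit(X, y)
wrapper_idx = np.flatnonzero(wrapper_sel.get_support())

# Embedded: an L1 (LASSO-style) penalty shrinks weak coefficients to exactly zero,
# so selection happens as a by-product of model fitting.
l1_model = LogisticRegression(penalty="l1", solver="liblinear", C=0.1)
embedded_sel = SelectFromModel(l1_model).fit(X, y)
embedded_idx = np.flatnonzero(embedded_sel.get_support())

print("filter  :", filter_idx)
print("wrapper :", wrapper_idx)
print("embedded:", embedded_idx)
```

Features chosen by more than one family are often treated as stronger candidates, although agreement on synthetic data says nothing about biological relevance.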

Experimental Protocols for ML-Driven Biomarker Discovery

Protocol 1: Biomarker Panel Discovery for Disease Classification

This protocol outlines the process for identifying a biomarker panel to classify diseased versus healthy patients, as applied in studies of Large-Artery Atherosclerosis (LAA) and Ovarian Cancer [5] [15].

- Sample Collection & Data Generation: Collect patient samples (e.g., plasma, serum) under approved ethical guidelines. Generate high-dimensional data using targeted metabolomics kits (e.g., Biocrates Absolute IDQ p180) or measure established biomarkers (e.g., CA-125, HE4, CRP) [5] [15].

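- Data Preprocessing: Split the dataset into a training/validation set (e.g., 80%) and a hold-out test set (e.g., 20%) that is reserved for the final validation [5]. Handle missing values using imputation (e.g., mean imputation), estimating the imputation values from the training portion only so that no information from the test set leaks into model development.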

- Feature Selection: Apply recursive feature elimination with cross-validation (RFECV) or embedded methods (e.g., LASSO) on the training set to identify the most predictive features from the clinical and molecular data [5] [18].

- Model Training & Validation: Train multiple classifiers (e.g., Logistic Regression, SVM, Random Forest) using the selected features on the training set. Optimize hyperparameters via cross-validation. Evaluate the final model on the held-out test set, reporting metrics such as AUC, accuracy, precision, and recall [5] [15].
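The protocol above can be sketched as a single scikit-learn pipeline. This is a minimal illustration under stated assumptions: the data are synthetic with about 5% of values masked as missing, logistic regression is used both inside `RFECV` and as the final classifier, and `min_features_to_select=3` is an arbitrary choice. Wrapping imputation and scaling in the pipeline means they are fit on the training split only.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.feature_selection import RFECV
from sklearn.impute import SimpleImputer
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import StratifiedKFold, train_test_split
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic clinical + molecular feature matrix with ~5% missing values.
X, y = make_classification(n_samples=200, n_features=30, n_informative=6, random_state=42)
rng = np.random.default_rng(42)
X[rng.random(X.shape) < 0.05] = np.nan

# 80/20 split; the 20% is held out until the very end.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=42)

pipeline = Pipeline([
    ("impute", SimpleImputer(strategy="mean")),   # mean imputation, fit on training data
    ("scale", StandardScaler()),
    ("select", RFECV(LogisticRegression(max_iter=1000), step=1,
                     cv=StratifiedKFold(5), scoring="roc_auc",
                     min_features_to_select=3)),  # cross-validated RFE
    ("clf", LogisticRegression(max_iter=1000)),
])
pipeline.fit(X_train, y_train)

# Final evaluation on the untouched test set.
test_auc = roc_auc_score(y_test, pipeline.predict_proba(X_test)[:, 1])
n_selected = pipeline.named_steps["select"].n_features_
print(f"selected features: {n_selected}, hold-out AUC: {test_auc:.3f}")
```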

Protocol 2: Multi-Class Biomarker Level Estimation

This protocol is designed for classifying samples into multiple concentration levels, such as monitoring biomarker dynamics in wastewater or for patient stratification [16].

- Spectral Data Acquisition: For a target biomarker (e.g., C-Reactive Protein), acquire UV-Vis absorption spectra from prepared samples with known concentration classes (e.g., from \(10^{-4}\) to \(10^{-1}\) µg/mL) [16].

- Spectral Data Preprocessing: Optionally restrict the spectral range (e.g., 400 nm to 700 nm) to simulate cost-effective sensor designs. Normalize the spectral data and extract features from the full or restricted spectrum [16].

- Model Training & Evaluation: Train a Cubic Support Vector Machine (CSVM) or other ML models to perform multi-class classification. Evaluate performance using metrics like accuracy and F1-score, and visualize results with confusion matrices and ROC curves to interpret classification performance across different concentration levels [16].
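A hedged sketch of the classification step follows. "Cubic SVM" is interpreted here as a support vector machine with a degree-3 polynomial kernel, which is an assumption about the cited setup. The spectra are simulated (a Gaussian absorption band whose height depends on the class), and the number of classes, noise level, and wavelength grid are hypothetical.

```python
import numpy as np
from sklearn.metrics import accuracy_score, confusion_matrix, f1_score
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

rng = np.random.default_rng(0)
wavelengths = np.linspace(400, 700, 61)   # restricted spectral range in nm
n_classes, n_per_class = 4, 40

# Simulated spectra: peak height increases with the concentration class.
spectra, labels = [], []
for level in range(n_classes):
    peak = (0.2 + 0.2 * level) * np.exp(-((wavelengths - 550) / 40) ** 2)
    spectra.append(peak + rng.normal(0, 0.05, size=(n_per_class, wavelengths.size)))
    labels.append(np.full(n_per_class, level))
X = np.vstack(spectra)
y = np.concatenate(labels)

X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0)

# Degree-3 polynomial kernel as a stand-in for a "cubic" SVM.
model = make_pipeline(StandardScaler(), SVC(kernel="poly", degree=3, coef0=1, C=1.0))
model.fit(X_train, y_train)
y_pred = model.predict(X_test)

accuracy = accuracy_score(y_test, y_pred)
macro_f1 = f1_score(y_test, y_pred, average="macro")
cm = confusion_matrix(y_test, y_pred)   # rows: true class, columns: predicted class
print(f"accuracy={accuracy:.3f}, macro F1={macro_f1:.3f}")
print(cm)
```

Off-diagonal entries of the confusion matrix show which neighbouring concentration levels are confused most often.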

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Essential Research Reagents and Solutions for ML-Driven Biomarker Studies

| Item Name | Function/Application | Example Use Case |

|---|---|---|

| Absolute IDQ p180 Kit | Targeted metabolomics kit quantifying 194 endogenous metabolites from 5 compound classes [5]. | Biomarker discovery for Large-Artery Atherosclerosis; input for ML models [5]. |

| CA-125 & HE4 ELISA Kits | Immunoassays for measuring established protein biomarkers in serum/plasma [15]. | Building input features for ovarian cancer diagnostic and prognostic ML models [15]. |

| Sodium Citrate Blood Collection Tubes | Anticoagulant for plasma preparation in metabolomic and proteomic studies [5]. | Standardized collection of patient blood samples for subsequent high-throughput analysis. |

| UV-Vis Spectrophotometer | Instrument for measuring absorption spectra of samples in a label-free manner [16]. | Rapid, cost-effective data acquisition for monitoring biomarker levels (e.g., CRP) in complex matrices. |

| Scikit-learn Python Library | Open-source ML library providing feature selection tools, classifiers, and model evaluation metrics [18]. | Implementing the entire ML pipeline from data preprocessing to model validation. |

The identification of robust biomarkers is a cornerstone of precision medicine, enabling improved disease diagnosis, prognosis, and treatment selection. Traditional biomarker discovery approaches, often focused on single molecular features, face significant challenges including limited reproducibility, high false-positive rates, and inadequate predictive accuracy [4]. Machine learning (ML) has emerged as a transformative technology for biomarker discovery, capable of analyzing large-scale, complex datasets to identify subtle patterns and multi-parameter signatures that escape conventional statistical methods [19]. This document outlines key applications and detailed protocols for ML-driven identification of predictive, prognostic, and diagnostic biomarkers, providing researchers with practical frameworks for implementation.

Biomarker Classification and Clinical Utility

Biomarkers serve distinct roles in clinical practice and clinical research, each with specific applications and implications for patient management as shown in the table below.

Table 1: Biomarker Types, Definitions, and Clinical Applications

| Biomarker Type | Definition | Clinical/Research Application | Exemplary Biomarkers |

|---|---|---|---|

| Diagnostic | Identifies the presence or absence of a disease [4]. | Early disease detection and classification; distinguishing malignant from benign tumors [15] [20]. | CA-125 and HE4 in ovarian cancer [15]; CDKN3, TRIP13 in HCC [20]. |

| Prognostic | Forecasts disease progression or recurrence risk independent of therapeutic intervention [4]. | Patient risk stratification and treatment planning; predicting overall survival [21] [20]. | 8-gene signature (e.g., BCAT1, CDKN2B) for HCC overall survival [20]. |

| Predictive | Estimates treatment efficacy and likelihood of response to a specific therapeutic [22] [4]. | Therapy selection for targeted treatments and immunotherapy; predicting drug response and resistance [22] [23]. | BRAF mutations for EGFR inhibitor resistance in colon cancer; biomarkers for IO therapy response [22] [23]. |

Machine Learning Approaches for Biomarker Discovery

ML algorithms can be applied across various data modalities to identify different types of biomarkers. The choice of algorithm depends on the data structure, sample size, and the specific biomarker discovery goal.

Table 2: Machine Learning Techniques in Biomarker Discovery

| ML Algorithm | Best-Suited Data Types | Primary Biomarker Application | Reported Performance |

|---|---|---|---|

| Random Forest (RF) / RF with Recursive Feature Elimination (RF-RFE) | Transcriptomics, Proteomics, Clinical Data [20] [24]. | Diagnostic, Prognostic | 79.59% accuracy for predicting MASLD [24]. |

| XGBoost | Multi-omics, Clinical & Biomarker Data [22] [15]. | Diagnostic, Predictive | Achieved AUC >0.90 in ovarian cancer diagnosis [15]. |

| Support Vector Machine with RFE (SVM-RFE) | Transcriptomics [20]. | Diagnostic | AUC = 1.0 (TCGA) and 0.95 (validation) for HCC gene identification [20]. |

| LASSO Cox Regression | Transcriptomics, Survival Data [20]. | Prognostic | Identifies prognostic gene signatures for overall survival prediction [20]. |

| Contrastive Learning (PBMF) | Clinicogenomic, Real-world & Trial Data [23]. | Predictive | Uncovered biomarkers yielding 15% improvement in survival risk in a Phase 3 IO trial [23]. |

| Causal-Based Feature Selection | High-dimensional analyte data (e.g., proteomics) [25]. | Diagnostic | Outperformed logistic regression in sensitivity for gastric cancer diagnosis [25]. |

Workflow for ML-Driven Biomarker Discovery

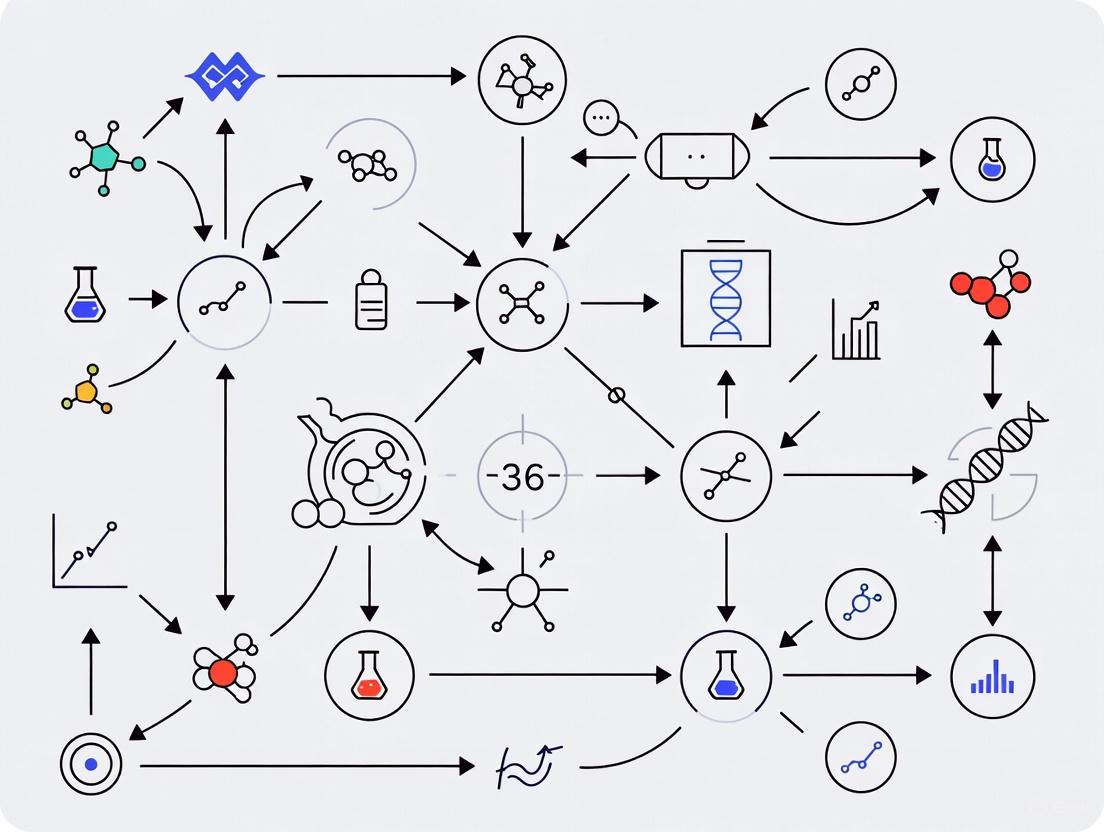

The following diagram illustrates a generalized computational workflow for identifying and validating biomarkers using machine learning.

Detailed Experimental Protocols

Protocol 1: Diagnostic Biomarker Identification Using SVM-RFE/RF-RFE

This protocol is adapted from a study identifying diagnostic mitotic cell cycle genes in hepatocellular carcinoma (HCC) [20].

I. Research Reagent Solutions

| Item | Function | Exemplary Sources/Tools |

|---|---|---|

| RNA-seq Data | Source of transcriptomic features for analysis. | TCGA LIHC, GEO (GSE77509, GSE144269) [20]. |

| Gene Set | Defines the biological context for biomarker candidacy. | MSigDB (e.g., GOBP_MITOTIC_CELL_CYCLE) [20]. |

| R/Bioconductor Packages | Software environment for data analysis and model building. | TCGAbiolinks, edgeR, limma, e1071, caret, pROC, randomForest [20]. |

II. Step-by-Step Methodology

Data Acquisition and Preprocessing:

- Obtain RNA-seq count data for tumor and normal samples from public repositories (e.g., TCGA, GEO).

- Perform data normalization (e.g., TMM normalization using `edgeR`/`limma`) and filter genes with low counts [20].

Differential Expression Analysis:

- Identify differentially expressed genes (DEGs) between tumor and normal groups. A standard threshold is adjusted p-value < 0.05 and |log fold change| > 1 [20].

- Intersect the DEGs with a pre-defined, biologically relevant gene set (e.g., mitotic cell cycle genes) to obtain candidate genes for ML analysis.
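The thresholding and intersection steps amount to a simple filter. The sketch below uses placeholder gene names and made-up statistics; in practice the inputs would come from the differential expression tool's output table.

```python
# Hypothetical differential-expression results: gene -> (log2 fold change, adjusted p-value).
de_results = {
    "GENE_1": (2.4, 1e-8),
    "GENE_2": (1.9, 3e-6),
    "GENE_3": (0.4, 1e-4),    # significant, but effect size too small
    "GENE_4": (-1.6, 0.20),   # large effect, but not significant
    "GENE_5": (-2.1, 2e-5),   # passes both thresholds, but not in the gene set
}

# Hypothetical pre-defined gene set (e.g., a pathway of interest).
gene_set = {"GENE_1", "GENE_2", "GENE_3"}


def select_degs(results, padj_cutoff=0.05, lfc_cutoff=1.0):
    """Keep genes with adjusted p-value < cutoff and |log fold change| > cutoff."""
    return {gene for gene, (lfc, padj) in results.items()
            if padj < padj_cutoff and abs(lfc) > lfc_cutoff}


degs = select_degs(de_results)
candidates = sorted(degs & gene_set)   # DEGs that also belong to the gene set
print(candidates)
```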

Feature Selection with Recursive Feature Elimination:

- SVM-RFE/RF-RFE Setup: Implement the RFE algorithm wrapped around a Support Vector Machine with a linear kernel or a Random Forest classifier.

- Robust Feature Selection: To enhance reliability, perform RFE with 10-fold cross-validation for 50 iterations. Retain only genes selected in at least 90% of the iterations.

- Final Gene Selection: Subject the robust genes to a final round of RFE with 10-fold repeated cross-validation (e.g., 5 repeats) to obtain the most relevant diagnostic biomarkers [20].

Model Training and Validation:

- Train final SVM or RF models using the selected features.

- Evaluate model performance using a separate validation cohort, not used in the training or feature selection process.

- Performance Metrics: Calculate Area Under the Curve (AUC), sensitivity, specificity, and accuracy.

- Statistical Robustness: Perform permutation testing (e.g., n=100) to confirm that the model's performance is significantly better than chance [20].
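The robust feature selection step can be approximated in Python as follows. The cited study used R packages, so this is a translation under assumptions: "10-fold cross-validation for 50 iterations" is read here as 10 folds repeated 5 times (50 RFE fits), each fit keeps 8 features, and the data are synthetic. Permutation testing and validation on a separate cohort are not shown.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.feature_selection import RFE
from sklearn.model_selection import RepeatedStratifiedKFold
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Synthetic candidate-gene matrix: 100 samples x 30 genes, 5 informative.
X, y = make_classification(n_samples=100, n_features=30, n_informative=5,
                           n_redundant=0, random_state=1)

cv = RepeatedStratifiedKFold(n_splits=10, n_repeats=5, random_state=1)  # 50 resamples
selection_counts = np.zeros(X.shape[1])
n_runs = 0

for train_idx, _ in cv.split(X, y):
    # Scale using the training fold only, then run SVM-RFE with a linear kernel.
    X_tr = StandardScaler().fit_transform(X[train_idx])
    rfe = RFE(SVC(kernel="linear", C=1.0), n_features_to_select=8, step=1)
    rfe.fit(X_tr, y[train_idx])
    selection_counts += rfe.support_   # count how often each feature survives
    n_runs += 1

selection_freq = selection_counts / n_runs
robust_features = np.flatnonzero(selection_freq >= 0.9)  # kept in >= 90% of runs
print("robust feature indices:", robust_features)
```

Swapping `SVC(kernel="linear")` for a `RandomForestClassifier` gives the RF-RFE variant, since `RFE` only needs an estimator that exposes coefficients or feature importances.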

Protocol 2: Prognostic Biomarker Signature Construction Using LASSO Cox Regression

This protocol outlines the development of a gene signature for predicting patient survival, as applied in HCC research [20].

I. Research Reagent Solutions

| Item | Function | Exemplary Sources/Tools |

|---|---|---|

| Survival Data | Links gene expression to clinical outcomes (overall/progression-free survival). | TCGA clinical data; validated independent cohorts (e.g., GSE14520) [20]. |

| R/Bioconductor Packages | Software for survival and regression analysis. | survival, glmnet |

II. Step-by-Step Methodology

Data Preparation and Integration:

- Obtain normalized gene expression data and corresponding clinical data (survival time and vital status).

- Filter patients to include only those with adequate follow-up (e.g., ≥ 30 days) and complete clinical information [20].

Univariate Cox Regression Analysis:

- Perform univariate Cox regression for each candidate gene to test its individual association with overall survival.

- Test the proportional hazards (PH) assumption using Schoenfeld residuals and exclude genes that violate this assumption (p ≤ 0.05).

- Apply multiple testing correction (e.g., Benjamini-Hochberg FDR) and retain genes with FDR < 0.05 [20].
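For readers unfamiliar with the Benjamini-Hochberg adjustment, a small worked example may help. The p-values below are invented for illustration and do not come from the cited study.

```python
def benjamini_hochberg(p_values):
    """Return BH-adjusted p-values (q-values) in the original input order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])  # indices, smallest p first
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down so adjusted values stay monotone.
    for rank in range(m, 0, -1):
        idx = order[rank - 1]
        running_min = min(running_min, p_values[idx] * m / rank)
        adjusted[idx] = running_min
    return adjusted


# Hypothetical univariate Cox p-values for five candidate genes.
cox_p = {"GENE_1": 0.001, "GENE_2": 0.008, "GENE_3": 0.039,
         "GENE_4": 0.041, "GENE_5": 0.60}

q_values = dict(zip(cox_p, benjamini_hochberg(list(cox_p.values()))))
retained = [gene for gene, q in q_values.items() if q < 0.05]
print(q_values)
print(retained)
```

GENE_3 and GENE_4 have raw p-values below 0.05 but adjusted values of about 0.051, so they are dropped at FDR < 0.05.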

Multivariate Signature Construction with LASSO Cox Regression:

- Input the significant genes from the univariate analysis into a LASSO Cox regression model.

- LASSO penalizes the coefficients of non-informative genes to zero, effectively performing variable selection and yielding a parsimonious model.

- Use 10-fold cross-validation to identify the optimal penalty parameter (lambda) that minimizes the partial likelihood deviance [20].

Risk Score Calculation and Validation:

- Calculate a prognostic risk score for each patient using the formula: Risk Score = (ExprGene1 × CoefGene1) + (ExprGene2 × CoefGene2) + ... + (ExprGeneN × CoefGeneN).

- Stratify patients into high-risk and low-risk groups based on the median risk score.

- Validate the prognostic power of the signature using Kaplan-Meier survival analysis and log-rank tests in an independent validation cohort [20].
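The risk score formula and median split can be written out directly. The coefficients and expression values below are hypothetical; in a real analysis the coefficients would come from the fitted LASSO Cox model, and the cutoff derived in the training cohort would normally be reused unchanged in the validation cohort.

```python
from statistics import median

# Hypothetical non-zero LASSO Cox coefficients for a three-gene signature.
coefficients = {"GENE_1": 0.5, "GENE_2": -0.25, "GENE_3": 1.0}

# Hypothetical normalized expression values per patient.
expression = {
    "P1": {"GENE_1": 2.0, "GENE_2": 4.0, "GENE_3": 1.0},
    "P2": {"GENE_1": 1.0, "GENE_2": 2.0, "GENE_3": 0.5},
    "P3": {"GENE_1": 4.0, "GENE_2": 0.0, "GENE_3": 2.0},
    "P4": {"GENE_1": 0.0, "GENE_2": 4.0, "GENE_3": 0.0},
}


def risk_score(profile, coefs):
    """Sum of expression x coefficient over the signature genes."""
    return sum(profile[gene] * coef for gene, coef in coefs.items())


scores = {patient: risk_score(profile, coefficients)
          for patient, profile in expression.items()}
cutoff = median(scores.values())
groups = {patient: ("high" if score > cutoff else "low")
          for patient, score in scores.items()}
print(scores)
print(cutoff, groups)
```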

Protocol 3: Predictive Biomarker Discovery with Contrastive Learning

This protocol is based on an AI-driven framework designed to discover predictive, rather than prognostic, biomarkers from complex clinicogenomic data [23].

I. Research Reagent Solutions

| Item | Function | Exemplary Sources/Tools |

|---|---|---|

| Clinicogenomic Datasets | High-dimensional data from clinical trials or real-world sources. | Immuno-oncology (IO) trial data; real-world clinicogenomic data [23]. |

| Contrastive Learning Framework | AI model to distinguish patients who benefit from a specific therapy. | Predictive Biomarker Modeling Framework (PBMF) [23]. |

II. Step-by-Step Methodology

Problem Formulation and Data Curation:

- Define the clinical question: Identify biomarkers that predict response to a specific therapy (e.g., immunotherapy) compared to a control therapy.

- Assemble a dataset containing molecular profiles (e.g., genomic, transcriptomic) and clinical outcomes for patients from both treatment arms [23].

Application of Contrastive Learning Framework:

- Utilize the Predictive Biomarker Modeling Framework (PBMF), which employs contrastive learning.

- The model is trained to explore potential predictive biomarkers in an automated, systematic manner by learning representations that distinguish patients who survive longer on the investigational therapy versus those who do better on the control therapy [23].

Biomarker Interpretation and Clinical Actionability:

- The framework generates interpretable biomarkers to facilitate clinical decision-making.

- Analyze the model output to understand the specific features (e.g., gene expressions, mutations) that the algorithm identifies as predictive [23].

Retrospective and Prospective Validation:

- Perform retrospective validation on held-out trial data or independent datasets.

- The key metric is whether the biomarker-positive group, identified by the model, shows a significant improvement in survival risk or other clinical endpoints when treated with the specific therapy [23].

- The ultimate goal is to prospectively inform patient selection for future clinical trials.

Application Examples Across Diseases

The following table summarizes specific implementations of ML for biomarker discovery in various oncological and metabolic diseases.

Table 3: Exemplary Applications of ML in Biomarker Discovery

| Disease Context | Biomarker Type | ML Approach | Key Findings |

|---|---|---|---|

| Ovarian Cancer [15] | Diagnostic | Ensemble Methods (RF, XGBoost) | ML models integrating CA-125, HE4, and additional markers (e.g., CRP, NLR) achieved AUC >0.90, outperforming single markers. |

| Lung Cancer [21] | Predictive & Prognostic | Deep Learning, ML | AI models for predicting EGFR, PD-L1, and ALK status showed pooled sensitivity of 0.77 and specificity of 0.79, enabling non-invasive assessment. |

| Hepatocellular Carcinoma (HCC) [20] | Diagnostic & Prognostic | SVM-RFE, LASSO | Identified a 9-gene diagnostic panel and an 8-gene prognostic signature for overall survival, validated on independent cohorts. |

| Metabolic Dysfunction–Associated Steatotic Liver Disease (MASLD) [24] | Diagnostic | Random Forest | A model incorporating demographic, metabolic, and biochemical biomarkers predicted MASLD with 79.59% accuracy. |

| Gastric Cancer [25] | Diagnostic | Causal-Based Feature Selection | Outperformed traditional logistic regression, achieving higher sensitivity (0.240 vs. 0.000) with only 3 biomarkers. |

Machine learning has fundamentally enhanced the landscape of biomarker discovery by enabling the integration of complex, high-dimensional data to identify robust diagnostic, prognostic, and predictive signatures. The protocols outlined herein provide a framework for researchers to implement these advanced computational approaches. Future directions will focus on strengthening multi-omics integration, improving model interpretability through explainable AI, and conducting rigorous validation in large-scale, prospective clinical studies to ensure translation into clinical practice [26] [4] [23].

The ML Toolbox: Algorithms and Techniques for Biomarker Identification

The identification of robust biomarker candidates is a cornerstone of modern precision medicine, enabling advances in early disease detection, prognosis, and therapeutic selection. This article provides a comparative analysis of four core machine learning (ML) algorithms—Logistic Regression, Support Vector Machines (SVM), Random Forest, and eXtreme Gradient Boosting (XGBoost)—within the context of biomarker discovery research. We evaluate these algorithms based on key performance characteristics, including their handling of imbalanced datasets, interpretability, and computational efficiency, providing a structured framework for their application. The discussion is substantiated with recent case studies from oncology, demonstrating how ensemble methods like Random Forest and XGBoost are employed to identify predictive biomarkers from high-dimensional biological data. The article further presents detailed experimental protocols and resources, offering researchers a practical toolkit for implementing these algorithms in biomarker research.

Biomarkers, defined as objectively measurable indicators of biological processes, are invaluable for disease diagnosis, prognosis, and predicting response to treatment [26]. The discovery of reliable biomarkers, however, is fraught with challenges, including high-dimensional data, class imbalance, and the need for models that generalize well to new populations [26] [8]. Machine learning offers powerful tools to navigate this complex landscape, with different algorithms presenting distinct advantages and limitations.

This article focuses on four foundational ML algorithms widely used in classification tasks relevant to biomarker discovery: the linear model Logistic Regression; the kernel-based method Support Vector Machine (SVM); and the ensemble tree-based methods Random Forest and XGBoost. Selecting the appropriate algorithm is not a one-size-fits-all endeavor; it requires a careful balance of predictive performance, interpretability, and computational resources [27]. By providing a structured comparison and practical protocols, this work aims to guide researchers and drug development professionals in building robust, reliable predictive models for biomarker identification.

Core Algorithm Comparative Analysis

The following section provides a detailed comparison of the four core algorithms, summarizing their key characteristics, strengths, and weaknesses, with a particular focus on their applicability to biomarker research.

Table 1: Algorithm Characteristics and Suitability for Biomarker Research

| Criteria | Logistic Regression | Support Vector Machine (SVM) | Random Forest | XGBoost |

|---|---|---|---|---|

| Interpretability | High; provides clear feature coefficients [28] | Medium; "black-box" nature makes interpretation difficult [29] | Medium; provides feature importance scores [27] | Low; complex ensemble is less interpretable [27] |

| Computational Cost | Very Low [27] | High on large datasets [28] | Moderate [27] | High [27] |

| Nonlinear Capability | Poor; requires feature engineering [27] | Good; can handle non-linearity with kernels [28] | Good [27] | Excellent [27] |

| Handling Imbalance | Via `class_weight` parameter [27] | Via class weights [30] | Via class weights or resampling [27] | Via `scale_pos_weight` + resampling [27] |

| Typical Performance (Imbalanced Data) | Low–Moderate recall [27] | Can achieve high sensitivity [30] | Moderate–High recall [27] | High recall and accuracy [27] [30] |

| Ideal Biomarker Use Case | Baseline model, when interpretability is paramount [27] | When data has clear margin of separation, high-dimensional space [28] | General-purpose, sturdy model with mixed data types [27] | Large, complex datasets where predictive power is key [27] |

Table 2: Key Hyperparameters for Tuning in Biomarker Models

| Algorithm | Critical Hyperparameters | Recommended Starting Values |

|---|---|---|

| Logistic Regression | C (inverse regularization strength), penalty (regularization type), solver [31] | C: [100, 10, 1.0, 0.1, 0.01], penalty: ['l2', 'l1'], solver: ['liblinear', 'lbfgs'] [31] |

| Support Vector Machine | C (regularization), kernel (e.g., 'rbf', 'linear'), gamma (kernel coefficient) [31] | C: [0.1, 1, 10, 100], kernel: ['rbf', 'linear'] |

| Random Forest | n_estimators (number of trees), max_depth, max_features, min_samples_leaf [29] | n_estimators: [100, 200, 500], max_depth: [None, 10, 30] |

| XGBoost | n_estimators, max_depth, learning_rate, scale_pos_weight (for imbalance), subsample [27] [29] | n_estimators: [100, 200], max_depth: [3, 6, 9], learning_rate: [0.01, 0.1, 0.2], scale_pos_weight: n_negative / n_positive [27] |
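Starting values like those in the table are typically explored with a cross-validated grid search. The sketch below uses the SVM row as an example; the synthetic imbalanced dataset, the `roc_auc` scoring choice, and the use of `class_weight="balanced"` are assumptions made for illustration.

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import GridSearchCV, StratifiedKFold
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Synthetic, imbalanced dataset (about 80% negative, 20% positive).
X, y = make_classification(n_samples=150, n_features=20, n_informative=5,
                           weights=[0.8, 0.2], random_state=0)

# Scaling lives inside the pipeline so it is re-fit within each CV fold.
pipe = Pipeline([
    ("scale", StandardScaler()),
    ("svm", SVC(class_weight="balanced")),
])

# Grid taken from the SVM row of the table above.
param_grid = {
    "svm__C": [0.1, 1, 10, 100],
    "svm__kernel": ["rbf", "linear"],
}

search = GridSearchCV(pipe, param_grid, scoring="roc_auc",
                      cv=StratifiedKFold(5, shuffle=True, random_state=0))
search.fit(X, y)

best_params = search.best_params_
best_auc = search.best_score_
print(best_params, round(best_auc, 3))
```

The cross-validated score from the search is optimistic for the chosen setting, so a separate held-out set is still needed for the final performance estimate.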

Key Strengths and Weaknesses in Context

- Logistic Regression is highly valued for its simplicity and interpretability. The coefficients of the model can be directly interpreted as the influence of each feature on the log-odds of the outcome, which is crucial for understanding the biological relevance of a potential biomarker [28]. Its primary weakness is its inability to capture complex, non-linear relationships without extensive feature engineering [27].

- Support Vector Machine (SVM) excels in high-dimensional spaces, making it suitable for genomic or proteomic data with many features [28]. It is also robust to outliers. However, it can be computationally intensive on large datasets, and its performance is highly sensitive to the choice of kernel and hyperparameters [28]. The model's "black-box" nature also limits its interpretability [29].

- Random Forest is a versatile and robust algorithm that handles non-linear relationships and mixed data types well. It reduces the risk of overfitting through its ensemble of decorrelated trees and provides useful measures of feature importance [27] [29]. A key weakness is that its probability estimates can be poorly calibrated, and it may require significant memory for large forests [27].

- XGBoost is often the top performer in predictive accuracy on structured data. It builds trees sequentially, correcting the errors of previous trees, and includes built-in mechanisms for handling imbalanced data (e.g., `scale_pos_weight`), a common challenge in biomarker discovery [27] [22]. Its main drawbacks are a higher propensity for overfitting if not carefully tuned and greater computational demands [27].
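The imbalance settings mentioned above reduce to simple ratios of class counts. The following sketch computes them for a hypothetical cohort of 90 controls and 10 cases; the resulting value is what would be passed as `scale_pos_weight` to an XGBoost classifier, and the second calculation mirrors the formula behind scikit-learn's `class_weight="balanced"` option.

```python
from collections import Counter

# Hypothetical labels: 90 controls (0) and 10 cases (1).
labels = [0] * 90 + [1] * 10
counts = Counter(labels)

# Ratio commonly used for XGBoost's scale_pos_weight: negatives / positives.
scale_pos_weight = counts[0] / counts[1]

# "Balanced" class weights: n_samples / (n_classes * class_count).
n_samples, n_classes = len(labels), len(counts)
balanced_weights = {cls: n_samples / (n_classes * n) for cls, n in counts.items()}

print(scale_pos_weight)
print(balanced_weights)
```

Both schemes up-weight the minority class by the same relative factor (9 to 1 here); they differ only in overall scaling.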

Application Notes and Protocols from Recent Research

Case Study 1: Predictive Biomarkers in Oncology with MarkerPredict

A 2025 study developed MarkerPredict, a framework to identify predictive biomarkers for targeted cancer therapies by integrating network biology and protein disorder features [22].

Objective: To classify whether a protein pair (a target and its neighbour) represents a predictive biomarker relationship.

Experimental Protocol:

1. Data Curation: Positive and negative training sets were established using literature evidence from the CIViCmine database, resulting in 880 target-interacting protein pairs [22].

2. Feature Engineering: Topological information from three signalling networks and protein disorder annotations were used as features [22].

3. Model Training and Validation: Both Random Forest and XGBoost models were trained. The study utilized Leave-One-Out-Cross-Validation (LOOCV) and k-fold cross-validation to ensure robustness [22].

4. Performance: Thirty-two different models were built, achieving a LOOCV accuracy range of 0.7–0.96. XGBoost marginally outperformed Random Forest. A Biomarker Probability Score (BPS) was defined to rank the predictions [22].

Case Study 2: A Robust Pipeline for PDAC Metastasis Biomarker Discovery

A 2024 study on Pancreatic Ductal Adenocarcinoma (PDAC) metastasis exemplifies a rigorous ML workflow for identifying robust composite biomarker candidates from transcriptomic data [8].

Objective: To identify a robust gene signature (composite biomarker) capable of predicting metastatic PDAC from primary tumour RNAseq data.

Experimental Protocol:

1. Data Integration: Data from five public repositories (TCGA, GEO, etc.) were pooled. Technical variance and batch effects were corrected using the ARSyN method [8].

2. Robust Feature Selection: A 10-fold cross-validation process was employed, combining three variable selection algorithms (LASSO logistic regression, Boruta, and varSelRF) across 100 models per fold. Genes appearing in at least 80% of models and five folds were considered robust [8].

3. Model Building and Validation: A Random Forest model was constructed using the selected genes. The dataset was split into training and validation sets, and the model was evaluated on the held-out validation data using metrics suited for imbalanced data (Precision, Recall, F1-score) [8].
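The consensus rule in the robust feature selection step (at least 80% of models within a fold, in at least five folds) can be expressed as a two-level count. The sketch below replaces the real selectors with a simulated one, so the gene names, the 20% noise rate, and the `simulated_selection` helper are all hypothetical.

```python
import random
from collections import Counter

random.seed(0)
genes = [f"GENE_{i}" for i in range(1, 21)]
stable_genes = set(genes[:3])          # pretend these carry real signal
n_folds, n_models_per_fold = 10, 100


def simulated_selection():
    """Stand-in for one fitted selector (e.g., LASSO, Boruta, or varSelRF)."""
    noise = {g for g in genes[3:] if random.random() < 0.2}
    return stable_genes | noise


folds_passed = Counter()
for fold in range(n_folds):
    counts = Counter()
    for _ in range(n_models_per_fold):
        counts.update(simulated_selection())
    # Within-fold rule: gene selected by at least 80% of the models.
    for gene, n_selected in counts.items():
        if n_selected / n_models_per_fold >= 0.8:
            folds_passed[gene] += 1

# Across-fold rule: gene passes the within-fold rule in at least five folds.
robust_genes = sorted(g for g, k in folds_passed.items() if k >= 5)
print(robust_genes)
```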

Table 3: The Scientist's Toolkit for ML-Based Biomarker Discovery

| Research Reagent / Resource | Function in Workflow |

|---|---|

| Public Data Repositories (TCGA, GEO, ICGC, CPTAC) | Provides primary tumour RNAseq and clinical data for analysis [8]. |

| Batch Effect Correction Tools (e.g., ARSyN, ComBat) | Removes unwanted technical variance from integrated datasets to reveal true biological signal [8]. |

| Synthetic Oversampling (e.g., SMOTE, ADASYN) | Addresses class imbalance by generating synthetic samples of the minority class (e.g., metastatic cases) [30] [8]. |

| Feature Selection Algorithms (e.g., LASSO, Boruta) | Identifies the most relevant biomarkers from a high-dimensional feature space, reducing noise and overfitting [8]. |

| Tree-Based Models (Random Forest, XGBoost) | Serves as high-performance, robust classifiers that can handle complex interactions in biological data [27] [22] [8]. |

Visualizing the Biomarker Discovery Workflow

The following diagram illustrates the robust machine learning pipeline for biomarker candidate identification, as demonstrated in the PDAC case study [8].

ML Biomarker Discovery Pipeline

The comparative analysis presented herein underscores that there is no single "best" algorithm for all biomarker discovery tasks. The choice between Logistic Regression, SVM, Random Forest, and XGBoost must be guided by the specific research context. For highly interpretable baseline models, Logistic Regression is ideal. For complex, high-dimensional datasets where predictive power is paramount, XGBoost and Random Forest consistently demonstrate superior performance, as evidenced by their successful application in recent oncology studies.

The path to robust biomarker identification, however, relies on more than just algorithm selection. It requires a rigorous and reproducible workflow that integrates high-quality data, thoughtful handling of technical variance and class imbalance, robust feature selection, and thorough validation. By adopting the structured protocols and insights outlined in this article, researchers can enhance the reliability and translational potential of their machine learning-driven biomarker research.

Ensemble learning is a machine learning technique that combines multiple learning algorithms to obtain better predictive performance than could be obtained from any of the constituent learning algorithms alone [32]. In the context of robust biomarker identification, ensemble methods function like consulting multiple scientific experts before making a critical diagnostic decision—instead of relying on a single model that may be affected by noise, bias, or variance, ensemble techniques blend the outputs of different models to create more accurate and reliable predictions [33] [34]. The fundamental premise involves training a diverse set of weak models on the same task, where individually they would produce unsatisfactory predictive results, but when combined or averaged, they form a single, high-performing, accurate, and low-variance model ideal for the rigorous demands of biomarker discovery and validation [32].

These techniques are particularly valuable in precision oncology and biomarker research, where selecting targeted cancer therapies relies heavily on identifying predictive biomarkers with high confidence [22]. Ensemble methods strengthen normal behavior modeling and enhance diagnostic accuracy by reducing the risk of misdiagnosis, offering healthcare professionals a more reliable tool for clinical decision-making [34]. By leveraging the collective intelligence of multiple models, ensemble learning provides resilience against data uncertainties and variability common in biological datasets, making it an indispensable approach for identifying robust biomarker candidates in complex biomedical data.

Core Ensemble Techniques

Theoretical Foundations

Bagging (Bootstrap Aggregating)

Bootstrap Aggregating, or Bagging, is a supervised learning technique designed primarily to decrease a model's variance and overcome overfitting issues [34]. The method creates multiple subsets from the original dataset through bootstrapping (random sampling with replacement), then builds a base model on each of these subsets [32] [34]. The final prediction is made by combining predictions from all these models, typically by averaging for regression or majority voting for classification tasks [34]. This approach is particularly effective with unstable models like deep decision trees: bootstrap resampling makes the individual trees differ from one another, and aggregating them averages out much of that variance [34].
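As a rough illustration of bagging, the sketch below compares a single unpruned decision tree with 50 bagged trees on synthetic, deliberately noisy data. The dataset, the number of estimators, and the 5-fold evaluation are assumptions chosen only to make the example run quickly.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import BaggingClassifier
from sklearn.model_selection import cross_val_score
from sklearn.tree import DecisionTreeClassifier

# Synthetic data with 10% label noise, which unpruned trees tend to overfit.
X, y = make_classification(n_samples=300, n_features=20, n_informative=5,
                           flip_y=0.1, random_state=0)

single_tree = DecisionTreeClassifier(random_state=0)

# 50 trees, each fit on a bootstrap sample; predictions combined by voting.
bagged_trees = BaggingClassifier(DecisionTreeClassifier(random_state=0),
                                 n_estimators=50, bootstrap=True, random_state=0)

tree_acc = cross_val_score(single_tree, X, y, cv=5).mean()
bag_acc = cross_val_score(bagged_trees, X, y, cv=5).mean()
print(f"single tree: {tree_acc:.3f}, bagged trees: {bag_acc:.3f}")
```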

Boosting

Boosting follows an iterative, sequential process where each new model in the ensemble focuses on correcting the errors of the previous ones [32]. Unlike bagging, boosting gives more weight to observations that were incorrectly predicted, forcing subsequent models to focus more on difficult cases [34]. The goal is to decrease the model's bias by turning multiple weak learners into a strong one through this sequential optimization process [34]. Classical boosting's subset creation is not random; each model's performance is directly influenced by the previous ones, creating an additive model that progressively reduces overall errors [32] [34].
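To make the reweighting step concrete, the following is a minimal AdaBoost-style sketch in plain Python. It is one classical instance of boosting, chosen here for illustration; the decision-stump base learner, the ten-point toy dataset, and all function names are assumptions for the example rather than anything prescribed by the cited sources.

```python
import math

def stump_predict(stump, row):
    j, t, polarity = stump
    return 1 if polarity * (row[j] - t) > 0 else -1

def fit_weighted_stump(X, y, w):
    """Return (weighted_error, feature, threshold, polarity) with minimal weighted error."""
    best = None
    for j in range(len(X[0])):
        for t in sorted({row[j] for row in X}):
            for polarity in (1, -1):
                err = sum(wi for row, yi, wi in zip(X, y, w)
                          if stump_predict((j, t, polarity), row) != yi)
                if best is None or err < best[0]:
                    best = (err, j, t, polarity)
    return best

def adaboost_fit(X, y, n_rounds=3):
    n = len(X)
    w = [1.0 / n] * n                      # start with uniform sample weights
    ensemble = []
    for _ in range(n_rounds):
        err, j, t, polarity = fit_weighted_stump(X, y, w)
        err = min(max(err, 1e-10), 1 - 1e-10)   # guard against log(0)
        alpha = 0.5 * math.log((1 - err) / err)  # better stumps get a larger say
        stump = (j, t, polarity)
        ensemble.append((alpha, stump))
        # Misclassified samples are up-weighted, correct ones down-weighted.
        w = [wi * math.exp(-alpha * yi * stump_predict(stump, row))
             for row, yi, wi in zip(X, y, w)]
        total = sum(w)
        w = [wi / total for wi in w]
    return ensemble

def adaboost_predict(ensemble, row):
    score = sum(alpha * stump_predict(s, row) for alpha, s in ensemble)
    return 1 if score > 0 else -1

# Toy 1-D data (hypothetical): no single threshold separates the labels.
X = [[float(v)] for v in range(10)]
y = [1, 1, 1, -1, -1, -1, 1, 1, 1, -1]

ensemble = adaboost_fit(X, y, n_rounds=3)
train_preds = [adaboost_predict(ensemble, row) for row in X]
print(train_preds == y)
```

No single stump fits this data, but three sequentially reweighted stumps do, which illustrates how boosting reduces bias by combining weak learners.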

Key Technical Differences

Table 1: Comparison of Bagging and Boosting Techniques

| Aspect | Bagging | Boosting |
|---|---|---|
| Primary Goal | Reduce variance | Reduce bias |
| Training Approach | Parallel training of independent models | Sequential training with error correction |
| Data Sampling | Random subsets with equal probability | Weighted sampling based on previous errors |
| Model Weighting | Generally equal weighting | Performance-based weighting |
| Overfitting | Reduces overfitting | Can be prone to overfitting |
| Optimal Use Cases | Unstable models, overfit datasets | Poorly performing models, complex patterns |

Algorithmic Workflows

The following diagram illustrates the core structural and procedural differences between bagging and boosting ensemble methods:

Advanced Implementations and Applications in Biomarker Research

Random Forests for Biomarker Discovery

Random Forests extend the bagging concept by combining multiple decision trees to make final predictions [34]. Instead of using the entire dataset and all features to grow each tree, the method relies on random subsets of both features and data [34]. Starting from training data with N observations and M features, the procedure draws a bootstrap sample (sampling with replacement), selects a random subset of m features (m < M) at each node, splits the node on the best feature in that subset, and repeats the process to grow multiple trees [34]. For biomarker discovery, this approach enables researchers to select and rank potential biomarkers according to their discriminatory power while optimizing their combinations [35].
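As a rough illustration of ranking candidates by discriminatory power, the sketch below fits scikit-learn's `RandomForestClassifier` to synthetic data and sorts features by impurity-based importance. The data, the `gene_*` names, and the choice of two "true" markers are hypothetical; the settings are plausible defaults, not values taken from the cited studies.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier

rng = np.random.default_rng(0)
n_samples, n_features = 200, 10
feature_names = [f"gene_{i}" for i in range(n_features)]  # hypothetical names

# Synthetic expression matrix; only gene_0 and gene_3 drive the label.
X = rng.normal(size=(n_samples, n_features))
y = (X[:, 0] + X[:, 3] > 0).astype(int)

forest = RandomForestClassifier(
    n_estimators=200,
    max_features="sqrt",   # random subset of m = sqrt(M) features per split
    oob_score=True,        # out-of-bag estimate from the bootstrap leftovers
    random_state=0,
)
forest.fit(X, y)

# Rank candidates by mean decrease in impurity, highest first.
order = np.argsort(forest.feature_importances_)[::-1]
ranked = [(feature_names[i], float(forest.feature_importances_[i])) for i in order]
top_two = {name for name, _ in ranked[:2]}

for name, importance in ranked[:5]:
    print(f"{name}: {importance:.3f}")
print("OOB accuracy:", forest.oob_score_)
```

Impurity-based importances can be biased toward high-cardinality or correlated features, so in practice a ranking like this is usually cross-checked, for example with permutation importance on held-out data.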

In a practical application to meat authentication, used here as a proxy for biological sample classification, Random Forests distinguished carcasses of pasture-finished lambs from those of stall-fed lambs with an accuracy of 95.1-95.7% [35]. The models identified that perirenal fat skatole and perirenal fat carotenoid pigment content (out of 19 variables) played a prominent role in classification, demonstrating how ensemble methods can pinpoint the most biologically relevant biomarkers from numerous candidates [35]. The random forest designed for use at the point of sale, based on dorsal fat spectrocolorimetric characteristics and muscle color coordinates, still achieved 84.3-85.4% accuracy, showing robustness even with simplified biomarker panels [35].

Gradient Boosting Machines for Predictive Biomarker Identification

Advanced boosting implementations like XGBoost (Extreme Gradient Boosting) have demonstrated remarkable effectiveness in biomarker discovery applications [34] [22]. These methods build upon the fundamental boosting principle but incorporate additional enhancements like tree pruning, regularization, and parallel processing to improve performance and prevent overfitting [34]. In precision oncology, the MarkerPredict framework successfully employed XGBoost to classify target-neighbor pairs as potential predictive biomarkers, achieving leave-one-out cross-validation (LOOCV) accuracies ranging from 0.7 to 0.96 across 32 different models [22].

The implementation typically involves training ensemble members sequentially, with each new model focusing on the mistakes of the previous ones [34]. For biomarker classification, this approach progressively refines the model's ability to distinguish true biomarkers from non-biomarkers based on features such as network topology properties, protein disorder characteristics, and motif participation in signaling networks [22]. The iterative nature of boosting makes it particularly effective for complex biomarker discovery tasks where subtle patterns in the data must be detected and amplified through successive modeling iterations.
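The sequential error-correcting idea behind gradient boosting can be shown with a deliberately small sketch. This is not XGBoost and omits its regularization and pruning; it assumes squared-error loss, a single numeric feature, and one-split regression stumps, and every name and data value is made up for illustration.

```python
def fit_regression_stump(x, residuals):
    """Best single split on a 1-D feature: returns (threshold, left_mean, right_mean)."""
    best = None
    for t in sorted(set(x))[:-1]:            # keep both sides of the split non-empty
        left = [r for xi, r in zip(x, residuals) if xi <= t]
        right = [r for xi, r in zip(x, residuals) if xi > t]
        lm, rm = sum(left) / len(left), sum(right) / len(right)
        sse = sum((r - lm) ** 2 for r in left) + sum((r - rm) ** 2 for r in right)
        if best is None or sse < best[0]:
            best = (sse, t, lm, rm)
    return best[1:]

def boost_fit(x, y, n_rounds=50, learning_rate=0.1):
    base = sum(y) / len(y)                   # initial prediction: the mean
    pred = [base] * len(y)
    stumps, history = [], []
    for _ in range(n_rounds):
        # For squared error, the negative gradient is simply the residual.
        residuals = [yi - pi for yi, pi in zip(y, pred)]
        t, lm, rm = fit_regression_stump(x, residuals)
        stumps.append((t, lm, rm))
        pred = [pi + learning_rate * (lm if xi <= t else rm)
                for xi, pi in zip(x, pred)]
        history.append(sum((yi - pi) ** 2 for yi, pi in zip(y, pred)) / len(y))
    return (base, stumps, learning_rate), history

def boost_predict(model, xi):
    base, stumps, learning_rate = model
    return base + learning_rate * sum(lm if xi <= t else rm for t, lm, rm in stumps)

# Toy data (hypothetical): a step-like response to a single marker level.
x = [float(v) for v in range(10)]
y = [1.0, 1.2, 0.9, 3.1, 3.0, 2.8, 5.2, 5.0, 4.9, 5.1]

model, history = boost_fit(x, y)
print(f"MSE after round 1: {history[0]:.3f}, after round 50: {history[-1]:.3f}")
```

The training error shrinks round by round because each stump is fitted to what the current ensemble still gets wrong; the learning rate controls how aggressively each correction is applied.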

Application Protocol: Biomarker Candidate Identification

Table 2: Experimental Protocol for Biomarker Discovery Using Ensemble Methods

| Step | Procedure | Parameters & Considerations |
|---|---|---|
| 1. Data Preparation | Collect and preprocess biomarker candidate data including protein expression, mutation status, network topology metrics, and structural features. | Handle missing values, normalize numerical features, encode categorical variables. |
| 2. Feature Selection | Perform initial feature importance analysis using Random Forest or XGBoost built-in capabilities. | Focus on biomarkers measurable in clinical settings; prioritize interpretable features. |
| 3. Model Training | Implement multiple ensemble methods: Random Forest (bagging) and XGBoost (boosting) with appropriate hyperparameter tuning. | Use cross-validation; set n_estimators=100-500, max_depth=3-10, learning_rate=0.01-0.3 for boosting. |
| 4. Validation | Evaluate using LOOCV, k-fold cross-validation, and train-test splits (70:30). | Assess AUC, accuracy, F1-score; calculate Biomarker Probability Score for ranking. |
| 5. Interpretation | Extract feature importance rankings and identify top biomarker candidates. | Validate biological plausibility; consider clinical applicability of identified biomarkers. |
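Steps 1, 3, and 4 of the protocol might be wired together roughly as follows. This is a sketch under several assumptions: the data are synthetic, scikit-learn's `GradientBoostingClassifier` stands in for XGBoost so the example needs only one library, and the hyperparameters are single points picked from the ranges in Table 2 rather than tuned values.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import GradientBoostingClassifier, RandomForestClassifier
from sklearn.impute import SimpleImputer
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline

# Synthetic stand-in for a candidate-feature matrix (hypothetical data).
X, y = make_classification(n_samples=200, n_features=20, n_informative=5,
                           random_state=0)
rng = np.random.default_rng(0)
X[rng.random(X.shape) < 0.02] = np.nan      # simulate about 2% missing values

models = {
    "random_forest": RandomForestClassifier(
        n_estimators=100, max_depth=5, random_state=0),
    "gradient_boosting": GradientBoostingClassifier(
        n_estimators=100, max_depth=3, learning_rate=0.1, random_state=0),
}

cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
mean_auc = {}
for name, model in models.items():
    # Imputation sits inside the pipeline so it is refit on each training
    # fold, which keeps held-out samples from leaking into preprocessing.
    pipeline = make_pipeline(SimpleImputer(strategy="median"), model)
    scores = cross_val_score(pipeline, X, y, cv=cv, scoring="roc_auc")
    mean_auc[name] = float(scores.mean())
    print(f"{name}: mean AUC = {mean_auc[name]:.3f}")
```

Keeping preprocessing inside the cross-validated pipeline matters most in small-sample omics settings, where even mild leakage can inflate apparent performance.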

Case Study: MarkerPredict Framework for Predictive Oncology Biomarkers

Implementation Workflow

The MarkerPredict framework provides a comprehensive example of advanced ensemble methods applied to predictive biomarker identification in oncology [22]. The system integrates network-based properties of proteins with structural features such as intrinsic disorder to explore their contribution to predictive biomarker discovery [22]. The following diagram illustrates the complete experimental workflow:

Research Reagent Solutions

Table 3: Essential Research Resources for Ensemble-Based Biomarker Discovery

| Resource Category | Specific Tools & Databases | Application in Biomarker Research |
|---|---|---|
| Signaling Networks | Human Cancer Signaling Network (CSN), SIGNOR, ReactomeFI | Provide network topology features and motif analysis context for biomarker identification [22]. |
| Protein Disorder Databases | DisProt, AlphaFold (pLDDT<50), IUPred (score>0.5) | Supply intrinsic disorder predictions as features for biomarker classification [22]. |
| Biomarker Annotation | CIViCmine text-mining database | Offers evidence-based positive and negative training sets for supervised learning [22]. |
| Machine Learning Libraries | Scikit-learn (Random Forest), XGBoost, AdaBoost | Provide implemented ensemble algorithms for model development and validation [33] [34]. |
| Validation Frameworks | LOOCV, k-fold Cross-Validation, Train-Test Splits | Enable rigorous assessment of model performance and biomarker reliability [22]. |

Performance Metrics and Outcomes

The MarkerPredict implementation demonstrated the powerful synergy between ensemble methods and biomarker discovery. By leveraging both Random Forest and XGBoost algorithms on three different signaling networks with multiple intrinsic disorder protein databases, the framework classified 3,670 target-neighbor pairs with high accuracy [22]. The ensemble approach allowed the definition of a Biomarker Probability Score (BPS) as a normalized summative rank of the models, which successfully identified 2,084 potential predictive biomarkers for targeted cancer therapeutics, with 426 classified as biomarkers by all four calculations [22].
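One way to read "normalized summative rank" is sketched below: rank every candidate pair within each model by predicted probability, sum the ranks across models, and rescale the sum to the interval [0, 1]. The exact formula used by MarkerPredict is not given here, so this function, its tie handling, the pair identifiers, and the probabilities are all illustrative assumptions.

```python
def biomarker_probability_score(model_probs):
    """Hypothetical rank-sum score.

    model_probs maps model name -> {pair_id: predicted probability}.
    Returns {pair_id: score in [0, 1]}; requires at least two pairs.
    """
    pairs = sorted(next(iter(model_probs.values())))
    n_pairs, n_models = len(pairs), len(model_probs)
    totals = {p: 0 for p in pairs}
    for probs in model_probs.values():
        # Rank 1 = lowest probability; ties keep their sorted order (a simplification).
        ordered = sorted(pairs, key=lambda p: probs[p])
        for rank, p in enumerate(ordered, start=1):
            totals[p] += rank
    lowest, highest = n_models * 1, n_models * n_pairs
    return {p: (totals[p] - lowest) / (highest - lowest) for p in pairs}

# Made-up probabilities from two hypothetical models for three candidate pairs.
model_probs = {
    "random_forest": {"pair_a": 0.9, "pair_b": 0.4, "pair_c": 0.1},
    "boosting":      {"pair_a": 0.8, "pair_b": 0.6, "pair_c": 0.2},
}

scores = biomarker_probability_score(model_probs)
print(scores)
```

Working with ranks instead of raw probabilities makes models with differently calibrated outputs comparable, at the cost of discarding how far apart the probabilities actually are.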

The study further detailed the biomarker potential of specific proteins like LCK and ERK1, demonstrating how ensemble methods can prioritize candidates for further validation [22]. The high-performing machine learning models achieved excellent metrics with properly tuned hyperparameters, with the XGBoost algorithm marginally outperforming Random Forest in most configurations [22]. This case study illustrates how advanced ensemble methods can significantly impact clinical decision-making in oncology by providing a robust, systematic approach for predictive biomarker identification.

Advanced ensemble methods represent a paradigm shift in biomarker discovery and validation. By combining the strengths of multiple models through bagging and boosting techniques, researchers can achieve enhanced predictive power that exceeds the capabilities of individual algorithms. The application of Random Forests and Gradient Boosting machines in biomedical research has demonstrated remarkable success in identifying robust biomarker candidates with clinical relevance, particularly in complex domains like precision oncology. As these techniques continue to evolve and integrate with emerging biological insights, they offer promising avenues for accelerating the development of reliable diagnostic and predictive tools that can inform therapeutic decisions and improve patient outcomes. The structured protocols and implementation frameworks presented in this article provide researchers with practical guidance for leveraging these powerful methods in their biomarker discovery pipelines.

The pursuit of robust biomarker candidates is a fundamental objective in precision medicine, essential for advancing disease diagnosis, prognosis, and therapeutic development. Traditional biomarker discovery methods, which often focus on single molecular layers, face significant challenges including limited reproducibility, high false-positive rates, and an inability to capture the complex, multifactorial nature of human disease [4]. The integration of multi-genomic, clinical, and demographic data presents a promising path forward but demands sophisticated computational strategies to unravel the intricate biological patterns within these high-dimensional datasets [36] [37].

Artificial intelligence (AI) and machine learning (ML) have emerged as transformative technologies for this task, capable of identifying non-linear structures and interactions that typically elude conventional statistical techniques [36] [4]. This application note focuses on IntelliGenes, a novel ML pipeline specifically designed for biomarker discovery and predictive analysis using multi-genomic profiles. Developed by researchers at Rutgers, The State University of New Jersey, IntelliGenes combines classical statistical methods with cutting-edge ML algorithms to discover biomarkers significant in disease prediction with high accuracy [36] [38] [39]. We will detail its operational protocols, present quantitative performance data, and frame its utility within the broader context of robust, ML-driven biomarker research for scientists and drug development professionals.

Core Architecture and Workflow

IntelliGenes is engineered to be a user-friendly, portable, and cross-platform application compatible with major operating systems, including Microsoft Windows, macOS, and UNIX [36] [40]. Its architecture is modular, allowing researchers to apply default combinations of algorithms or customize and create new AI/ML pipelines tailored to specific research needs [38]. The pipeline operates on AI/ML-ready data formatted in the Clinically Integrated Genomics and Transcriptomics (CIGT) format, which integrates patient age, gender, racial and ethnic background, diagnoses, and RNA-seq-driven gene expression data [36].

The analytical workflow of IntelliGenes is logically segmented into three main sections:

- Data Manager: Supports the user in loading and customizing the input data and any existing lists of biomarkers.

- AI/ML Analysis: Allows the application of statistical techniques and ML classifiers for biomarker discovery and disease prediction.

- Visualization: Provides options to interpret results through performance metrics, disease predictions, and various charts, enhancing the interpretability of the findings [38].

The Intelligent Gene (I-Gene) Score

A cornerstone innovation of the IntelliGenes platform is the Intelligent Gene (I-Gene) score, a novel metric designed to measure the importance of individual biomarkers for the prediction of complex traits [36] [40]. The calculation of the I-Gene score is a multi-step process that integrates two key components:

- SHAP (SHapley Additive exPlanations) Values: This approach from cooperative game theory is used to assign importance to each feature (biomarker) based on its contribution to the model's predictions [36].

- Herfindahl-Hirschman Index (HHI): This index measures how concentrated a classifier's reliance on individual biomarkers is. Classifiers whose predictions depend on a few high-impact biomarkers receive a greater weight, thereby prioritizing biomarkers that are decisively influential in specific models [36].
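The two components above can be combined in many ways; the sketch below shows one plausible arrangement and should not be read as the published I-Gene formula. It assumes mean absolute SHAP values per biomarker are already available for each classifier, computes the HHI as the sum of squared importance shares, and uses the normalized HHI as a classifier weight. All names and numbers are hypothetical.

```python
def importance_shares(mean_abs_shap):
    """Convert per-biomarker importances into shares that sum to 1."""
    total = sum(abs(v) for v in mean_abs_shap.values())
    return {gene: abs(v) / total for gene, v in mean_abs_shap.items()}

def hhi(mean_abs_shap):
    """Herfindahl-Hirschman Index: sum of squared shares (1/n up to 1)."""
    return sum(s * s for s in importance_shares(mean_abs_shap).values())

def weighted_gene_scores(shap_by_classifier):
    """Illustrative aggregation: HHI-weighted average of importance shares."""
    hhis = {clf: hhi(vals) for clf, vals in shap_by_classifier.items()}
    hhi_total = sum(hhis.values())
    scores = {}
    for clf, vals in shap_by_classifier.items():
        weight = hhis[clf] / hhi_total     # concentrated classifiers weigh more
        for gene, share in importance_shares(vals).items():
            scores[gene] = scores.get(gene, 0.0) + weight * share
    return scores

# Hypothetical mean |SHAP| values from two classifiers.
shap_by_classifier = {
    "clf_concentrated": {"gene_a": 0.8, "gene_b": 0.1, "gene_c": 0.1},
    "clf_diffuse":      {"gene_a": 0.2, "gene_b": 0.2, "gene_c": 0.2},
}

hhi_concentrated = hhi(shap_by_classifier["clf_concentrated"])
hhi_diffuse = hhi(shap_by_classifier["clf_diffuse"])
scores = weighted_gene_scores(shap_by_classifier)
print(hhi_concentrated, hhi_diffuse, scores)
```

In this toy case the concentrated classifier has the higher HHI, so its strong reliance on `gene_a` dominates the combined score, which mirrors the prioritization described in the HHI bullet.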