Evaluating Composite Biomarker Performance: Key Metrics, Methodologies, and Clinical Translation for 2025

This article provides a comprehensive framework for evaluating composite biomarker performance, essential for researchers and drug development professionals advancing precision medicine.

Abstract

This article provides a comprehensive framework for evaluating composite biomarker performance, essential for researchers and drug development professionals advancing precision medicine. It covers foundational concepts of composite biomarkers and their superiority over single-analyte approaches. The piece explores cutting-edge methodological applications, including AI-driven predictive models and multi-omics integration, alongside practical troubleshooting for data and regulatory challenges. Finally, it details rigorous validation frameworks and comparative analyses against established clinical tools, synthesizing key metrics and future directions to bridge biomarker discovery with robust clinical application.

The Foundation of Composite Biomarkers: From Basic Concepts to Clinical Necessity

Composite biomarkers, which integrate multiple biological signals into a single diagnostic measure, represent a paradigm shift in precision medicine. This guide objectively evaluates the performance of composite biomarkers against traditional single-analyte approaches through a detailed analysis of recent clinical research. Using non-small cell lung cancer (NSCLC) immunotherapy response prediction as a case study, we show that although the tested composite biomarkers did not outperform the best single biomarkers, an integrated approach provides a more comprehensive framework for understanding complex disease biology. Supporting experimental data show that PD-1T TILs alone achieved 74% specificity for identifying patients with no long-term benefit from PD-1 blockade at 12 months, outperforming the tested composite combinations [1].

Traditional biomarker strategies relying on single-analyte measurements face significant limitations in predicting treatment response for complex diseases like cancer. The tumor microenvironment exhibits multifaceted biology that cannot be adequately captured by measuring individual analytes such as PD-L1 expression alone, which fails to predict response in 60-70% of PD-L1 positive NSCLC patients [1]. Composite biomarkers address this limitation by integrating multiple complementary signals—including immune cell infiltration, spatial organization, and molecular signatures—to create a more holistic representation of disease state and therapeutic potential.

The conceptual framework for composite biomarkers aligns with the growing recognition that diseases involve complex, interconnected biological networks rather than isolated molecular events. As biomarker research evolves from univariate to multivariate approaches, composite biomarkers enable more granular patient stratification and personalized treatment strategies [2]. This guide systematically evaluates the performance of composite versus single-analyte biomarkers through objective comparison of experimental data, methodological protocols, and clinical validation studies.

Performance Comparison: Composite vs. Single Biomarkers

A 2024 study directly compared the predictive performance of composite biomarkers against individual biomarkers in 135 NSCLC patients treated with nivolumab. The research assessed multiple biomarkers including CD8 tumor-infiltrating lymphocytes (TILs), PD-1T TILs, CD3 TILs, CD20 B-cells, tertiary lymphoid structures (TLS), PD-L1 tumor proportion score (TPS), and Tumor Inflammation Score (TIS) [1].

Table 1: Predictive Performance for Disease Control at 6 Months (Validation Cohort)

| Biomarker Type | Specific Biomarker | Sensitivity (%) | Specificity (%) | NPV (%) |
|---|---|---|---|---|
| Composite | CD8+IT-CD8 | 64 | 64 | 76 |
| Composite | CD3+IT-CD8 | 83 | 50 | 85 |
| Single | PD-1T TILs | 72 | 64 | 86 |
| Single | TIS | 83 | 50 | 84 |

Table 2: Predictive Performance for Disease Control at 12 Months (Validation Cohort)

| Biomarker Type | Specific Biomarker | Sensitivity (%) | Specificity (%) | NPV (%) |
|---|---|---|---|---|
| Composite | CD8+IT-CD8 | 71 | 63 | 85 |
| Composite | CD8+TIS | 86 | 53 | 92 |
| Single | PD-1T TILs | 86 | 74 | 95 |
| Single | TIS | 100 | 39 | 100 |

The data reveal a critical finding: the tested composite biomarkers did not show improved predictive performance compared to superior individual biomarkers like PD-1T TILs and TIS for both 6- and 12-month endpoints [1]. Specifically, PD-1T TILs demonstrated substantially higher specificity (74% vs. 39-63%) for identifying patients with no long-term benefit at 12 months, suggesting better discrimination capability than composite approaches or TIS alone.
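The sensitivity, specificity, and NPV columns in the tables above all derive from a 2×2 confusion matrix. The sketch below shows the arithmetic, assuming that a "positive" call means the biomarker predicts disease control. The `ConfusionCounts` class, the function name, and the counts are hypothetical and are not taken from [1].

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConfusionCounts:
    tp: int  # biomarker-positive patients who achieved disease control
    fp: int  # biomarker-positive patients who did not
    fn: int  # biomarker-negative patients who achieved disease control
    tn: int  # biomarker-negative patients who did not


def diagnostic_metrics(c: ConfusionCounts) -> dict[str, float]:
    """Return sensitivity, specificity, and NPV as percentages."""
    return {
        "sensitivity": 100 * c.tp / (c.tp + c.fn),
        "specificity": 100 * c.tn / (c.tn + c.fp),
        "npv": 100 * c.tn / (c.tn + c.fn),
    }


# Hypothetical counts for illustration only; these are not data from [1].
example = ConfusionCounts(tp=12, fp=10, fn=2, tn=28)
metrics = diagnostic_metrics(example)
for name, value in metrics.items():
    print(f"{name}: {value:.1f}%")
```

A high NPV paired with modest specificity, as seen for TIS at 12 months, means that a negative result is reliable even though many patients without benefit still test positive.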

Experimental Protocols and Methodologies

Patient Cohort and Study Design

The referenced NSCLC study employed rigorous methodological standards [1]:

- Patient Population: 135 patients with pathologically confirmed stage IV NSCLC receiving second-line or later nivolumab monotherapy (3 mg/kg every two weeks)

- Study Design: Patients were randomly allocated to training (n=55) and validation cohorts (n=80), stratified by treatment outcome at 6 and 12 months

- Endpoints: Primary endpoint was Disease Control at 6 months (DC 6m), defined as complete response, partial response, or stable disease per RECIST 1.1 criteria; secondary endpoint was Disease Control at 12 months (DC 12m)

- Exclusion Criteria: Tumors with EGFR mutations or ALK translocations; samples with less than 10,000 cells, endobronchial lesions, or fixation/staining artifacts
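A stratified random allocation like the one described above can be sketched as follows. This is an illustration under stated assumptions, not the procedure used in [1]: the function name, patient IDs, outcome labels, and seed are hypothetical, and a single label stands in for the combined 6- and 12-month outcome strata.

```python
import random
from collections import defaultdict


def stratified_split(outcomes: dict[str, str], train_fraction: float, seed: int = 0):
    """Split patient IDs into training/validation sets within each outcome stratum."""
    rng = random.Random(seed)
    strata = defaultdict(list)
    for patient_id, outcome in outcomes.items():
        strata[outcome].append(patient_id)

    training, validation = [], []
    for outcome in sorted(strata):
        ids = sorted(strata[outcome])
        rng.shuffle(ids)
        # Allocate the same fraction of every stratum to the training cohort.
        n_train = round(len(ids) * train_fraction)
        training.extend(ids[:n_train])
        validation.extend(ids[n_train:])
    return training, validation


# Hypothetical cohort of 135 patients; outcome labels are invented for illustration.
outcomes = {f"P{i:03d}": ("DC" if i % 3 == 0 else "no_DC") for i in range(135)}
training, validation = stratified_split(outcomes, train_fraction=55 / 135)
print(len(training), len(validation))
```

Splitting within each stratum keeps the proportion of responders similar in both cohorts, which matters when the validation cohort is used to estimate sensitivity and specificity.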

Biomarker Assessment Protocols

Tissue Processing and Staining [1]:

- Pretreatment FFPE tumor tissues were sectioned at 3 μm thickness

- CD8 immunostaining used BenchMark Ultra autostainer with C8/144B clone (1:200 dilution)

- Heat-induced antigen retrieval performed with Cell Conditioning 1 for 32 minutes

- Detection employed OptiView DAB Detection Kit with hematoxylin counterstaining

- PD-1 staining used clone NAT105; PD-L1 used clone 22C3

Biomarker Evaluation Criteria:

- PD-1T TILs: Excluded samples with abundant normal lymphoid tissue to prevent false positives

- PD-L1: Assessed via Tumor Proportion Score (TPS)

- Tertiary Lymphoid Structures (TLS) and CD20+ B-cells: Scored according to established morphological criteria

- Tumor Inflammation Score (TIS): Standardized RNA expression signature characterizing immune activity

Data Integration and Visualization

Advanced computational methods enable the integration of multiple biomarker data streams. The "Composite Biomarker Image" (CBI) approach aligns immunohistochemistry biomarker images with H&E slides using a unified coordinate system, then filters and combines positive or negative regions into a single image using a fuzzy inference system [3]. This facilitates more efficient clinical assessment of biomarker co-expression patterns that might be missed when examining separate slides.

For complex biomarker data visualization, heatmaps with hierarchical clustering effectively display temporal patterns and source transitions during dynamic processes [4]. The methodology involves:

- Organizing data as a matrix (n samples × i biomarkers)

- Scaling biomarker concentrations to z-scores to avoid weighting artifacts

- Applying hierarchical clustering to reorder biomarkers based on similarity

- Visualizing using color gradients to identify co-variation patterns
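The scaling and reordering steps above can be sketched in plain Python. This is a minimal illustration, assuming Euclidean distance and average linkage (the source does not specify either); the function names and the small data matrix are hypothetical. In practice a package such as ComplexHeatmap would handle clustering and rendering.

```python
from statistics import mean, pstdev


def zscore_columns(matrix):
    """Scale each biomarker (column) to mean 0 and unit standard deviation."""
    scaled = []
    for col in zip(*matrix):
        mu, sigma = mean(col), pstdev(col)
        scaled.append([(v - mu) / sigma if sigma else 0.0 for v in col])
    return scaled  # one z-scored profile per biomarker


def euclidean(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b)) ** 0.5


def cluster_order(profiles):
    """Average-linkage agglomerative clustering; returns biomarker indices in leaf order."""
    clusters = [[i] for i in range(len(profiles))]
    while len(clusters) > 1:
        best = None
        for i in range(len(clusters)):
            for j in range(i + 1, len(clusters)):
                d = mean(
                    euclidean(profiles[a], profiles[b])
                    for a in clusters[i]
                    for b in clusters[j]
                )
                if best is None or d < best[0]:
                    best = (d, i, j)
        _, i, j = best
        merged = clusters[i] + clusters[j]
        clusters = [c for k, c in enumerate(clusters) if k not in (i, j)] + [merged]
    return clusters[0]


# Hypothetical matrix: 4 samples (rows) x 4 biomarkers (columns A-D).
names = ["A", "B", "C", "D"]
matrix = [
    [1.0, 10.0, 2.1, 9.0],
    [2.0, 8.2, 4.0, 7.1],
    [3.1, 6.0, 6.2, 5.0],
    [4.0, 4.1, 8.0, 3.2],
]
profiles = zscore_columns(matrix)
order = [names[i] for i in cluster_order(profiles)]
print(order)
```

Because A and C rise together while B and D fall together, the clustering places each pair in adjacent columns, which is what makes co-variation visible in the heatmap.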

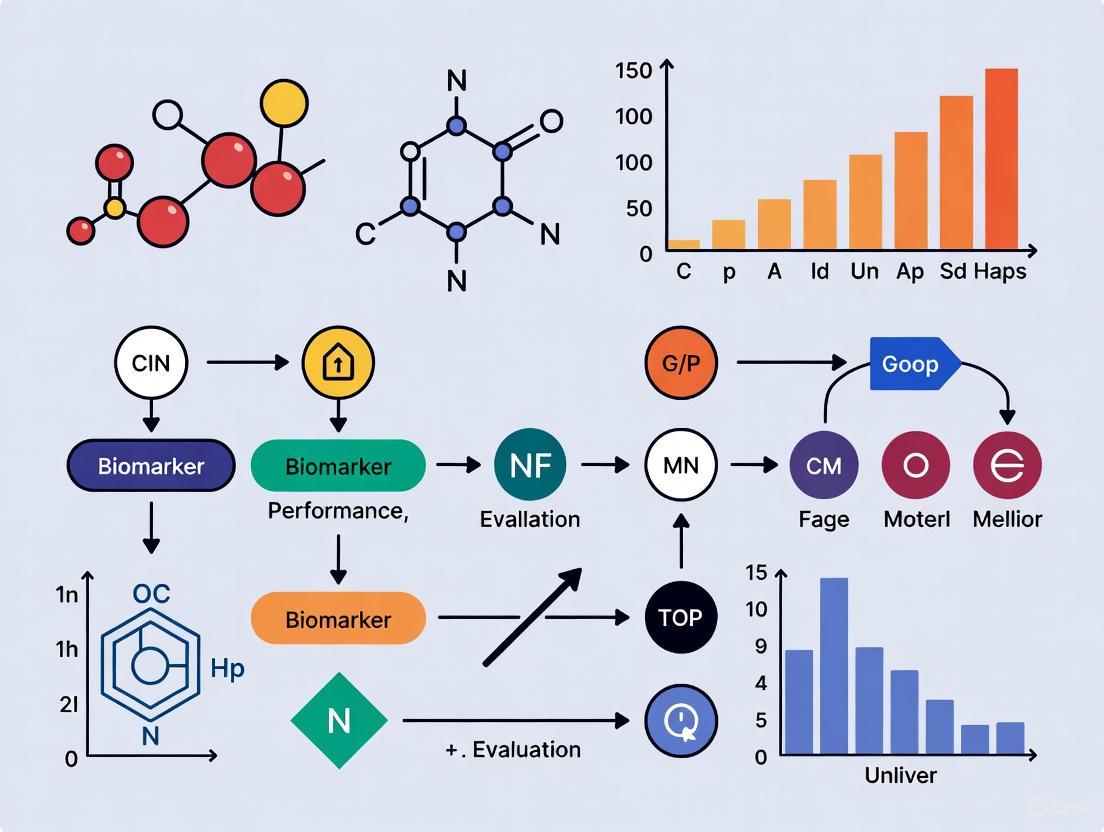

Experimental Workflow for Composite Biomarker Validation

Technological Framework for Composite Biomarker Development

Multi-Omics Integration Platforms

Contemporary composite biomarker development leverages multi-omics approaches that integrate genomic, transcriptomic, proteomic, and metabolomic data [5]. Advanced platforms like Element Biosciences' AVITI24 system combine sequencing with cell profiling to capture RNA, protein, and morphological data simultaneously, while 10x Genomics enables million-cell analyses that reveal clinically actionable subgroups missed by traditional bulk assays [5].

Digital Biomarkers and Continuous Monitoring

Digital biomarkers derived from wearables, smartphones, and connected devices provide continuous, real-world data streams that complement molecular biomarkers [6]. In oncology trials, these tools monitor heart rate variability, sleep quality, and activity levels, capturing daily symptom fluctuations that offer a more dynamic understanding of treatment tolerance and functional status than periodic clinic assessments.

Artificial Intelligence and Data Integration

AI technologies, particularly deep learning algorithms, systematically identify complex biomarker-disease associations that traditional statistical methods overlook [2]. Random Forest algorithms effectively quantify variable importance in multidimensional biomarker data, while digital twin platforms simulate disease trajectories to optimize biomarker validation strategies [7] [8].

Research Reagent Solutions for Composite Biomarker Studies

Table 3: Essential Research Reagents for Composite Biomarker Development

| Reagent/Category | Specific Examples | Research Function | Application Context |
|---|---|---|---|
| IHC Antibodies | CD8 clone C8/144B, PD-1 clone NAT105, PD-L1 clone 22C3 | Immune cell profiling and checkpoint marker localization | Tumor microenvironment characterization in immunotherapy studies [1] |
| Detection Systems | OptiView DAB Detection Kit, Ventana BenchMark Ultra | Signal amplification and visualization in tissue sections | Automated IHC staining for standardized biomarker assessment [1] |
| Spatial Biology Platforms | 10x Genomics, Element Biosciences AVITI24 | Simultaneous RNA, protein, and morphological analysis | Multi-omics integration for comprehensive biomarker discovery [5] |
| Digital Pathology Tools | AIRA Matrix, Pathomation, ComplexHeatmap R package | Image analysis, data integration, and visualization | Composite Biomarker Image creation and heatmap visualization [3] [4] |
| RNA Expression Panels | Tumor Inflammation Signature (TIS) | Characterization of immune-active tumor microenvironment | Predictive biomarker for immunotherapy response [1] |

The empirical comparison presented in this guide demonstrates that while composite biomarkers represent a theoretically superior approach to capturing disease complexity, their practical implementation does not invariably outperform optimized single biomarkers. In the NSCLC case study, PD-1T TILs alone more accurately identified non-responders than the tested composite biomarkers, highlighting the continued value of focused single-analyte approaches in specific clinical contexts [1].

Future composite biomarker development should prioritize several strategic directions:

- Advanced Multi-Omics Integration: Deeper integration of proteomic, metabolomic, and epigenomic data to capture fuller biological complexity [5]

- Dynamic Monitoring: Incorporation of digital biomarkers for continuous, real-world assessment of treatment response [6]

- Standardized Validation Frameworks: Implementation of rigorous analytical and clinical validation standards across diverse populations [2]

- Computational Advancements: Leverage AI and machine learning to identify optimal biomarker combinations from high-dimensional datasets [7]

As biomarker science evolves from static, single-analyte measurements to dynamic, multi-dimensional composites, researchers must balance the theoretical appeal of comprehensive assessment with demonstrated predictive performance. The optimal approach will likely be context-dependent, with composite biomarkers providing greatest value in heterogeneous disease states where multiple biological pathways drive clinical outcomes.

In the evolving landscape of precision medicine, composite biomarkers have emerged as powerful tools that integrate multiple biological signals to provide a more holistic view of patient health than single biomarkers alone. By simultaneously capturing activity across interconnected biological pathways such as inflammation, myocardial injury, and oxidative stress, these composites offer enhanced prognostic and diagnostic capabilities for complex conditions like cardiovascular disease [9]. This guide provides a comparative analysis of contemporary composite biomarker research, detailing experimental protocols, key biological pathways, and essential research tools for scientists and drug development professionals engaged in biomarker performance evaluation.

Comparative Analysis of Composite Biomarker Approaches

The table below summarizes four distinct approaches to composite biomarker development, highlighting their components, applications, and performance characteristics.

Table 1: Comparative Analysis of Composite Biomarker Strategies

| Composite Name/Strategy | Biological Pathways Captured | Components | Application Context | Performance Data |
|---|---|---|---|---|
| ln[ALP × sCr] Index [9] | Vascular calcification/inflammation; Renal function; Cardiac-renal-metabolic axis | Alkaline Phosphatase (ALP); Serum Creatinine (sCr) | Mortality risk stratification in Type 2 Diabetes | Q4 vs Q1: All-cause mortality HR=1.47 (1.18-1.82); CVD mortality HR=1.44 (1.01-2.04) [9] |
| AI-Derived Protein Panel [10] | Immune & inflammatory response; Apoptosis & cell death; Metabolic reprogramming | CAMP, CLTC, CTNNB1; FUBP3, IQGAP1, MANBA; ORM1, PSME1, SPP1 | Diagnosis and risk stratification of Acute Myocardial Infarction (AMI) | ML model identified 9 key proteins from 437 DEPs; validated across bulk, single-cell, and spatial datasets [10] |
| Oxidative Stress Pathway Integration [11] [12] | Mitochondrial ROS production; Calcium overload; Inflammatory cell activation | Multiple ROS sources (mitochondria, NOX, XO); Inflammatory mediators (IL-1β, IL-6, TNF-α) | Assessment of Myocardial Ischemia-Reperfusion Injury (MIRI) | Preclinical promise but clinical translation challenges; requires precision timing and patient stratification [12] |
| Multi-Omics Biomarker Discovery [5] [13] | Complex disease biology across genomic, proteomic, and metabolomic layers | Genomics, transcriptomics, proteomics, metabolomics data | Precision oncology; expanding to cardiovascular research | AI analysis can reduce biomarker discovery timelines from years to months or days [13] |

Experimental Protocols for Composite Biomarker Development

Deep Learning-Driven Composite Identification

A 2025 study established a protocol for developing the ln[ALP × sCr] composite index, leveraging deep learning for feature selection [9]:

- Cohort Design: 82,091 U.S. adults from NHANES (1999-2014), with 4,839 T2DM patients included in final analysis.

- Feature Selection: A feedforward neural network analyzed demographic, clinical, and biochemical variables (age, sex, BMI, diabetes parameters, lipid profile, inflammatory markers, liver enzymes, renal markers).

- Model Optimization: Hyperparameters (layer size, dropout rate, activation function) were optimized via grid search. SHAP (Shapley Additive Explanations) values quantified feature contributions.

- Composite Formulation: Feature ranking placed ALP, sCr, and vitamin D at the top; ALP and sCr were combined into the composite index ln[ALP × sCr], reflecting integrated cardiac-renal dysfunction.

- Validation: Restricted cubic spline analysis defined risk thresholds. Cox proportional hazards models assessed mortality risk over median 11.4-year follow-up.
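The composite itself is simple to compute once ALP and sCr are measured. The sketch below computes ln[ALP × sCr] and assigns cohort quartiles of the kind used in a Q4 vs Q1 comparison. The measurement units (U/L and mg/dL), the function names, and the eight example values are assumptions for illustration and are not NHANES data; the quartile cut points use Python's default "exclusive" method, which may differ from the method in [9].

```python
import math
from statistics import quantiles


def alp_scr_index(alp_u_per_l: float, scr_mg_per_dl: float) -> float:
    """Composite index ln[ALP x sCr]; the units are an assumption of this sketch."""
    return math.log(alp_u_per_l * scr_mg_per_dl)


def quartile_of(value: float, cut_points: list[float]) -> int:
    """Return the quartile (1-4) given the three cohort cut points."""
    return 1 + sum(value > c for c in cut_points)


# Hypothetical (ALP, sCr) pairs for illustration only.
cohort = [(55, 0.7), (62, 0.8), (70, 0.9), (78, 1.0),
          (85, 1.1), (95, 1.3), (110, 1.6), (140, 2.1)]
scores = [alp_scr_index(alp, scr) for alp, scr in cohort]
cuts = quantiles(scores, n=4)
groups = [quartile_of(s, cuts) for s in scores]
print(groups)
```

Taking the logarithm of the product is equivalent to summing ln(ALP) and ln(sCr), so each marker contributes on a multiplicative scale and the index is less sensitive to extreme raw values.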

Proteomic and Machine Learning Integration

A multi-omics study employed an integrated proteomic and machine learning workflow for Acute Myocardial Infarction (AMI) biomarker discovery [10]:

- Sample Preparation: Plasma from 48 AMI patients and 50 healthy controls processed using TCEP buffer, digested with trypsin, and fractionated via C18 column.

- Proteomic Analysis: Nano-LC-MS/MS using Q Exactive HF-X Hybrid Quadrupole-Orbitrap Mass Spectrometer. Protein identification against human NCBI RefSeq database with Mascot 2.4, FDR < 1%.

- Data Processing: Label-free quantification via intensity-based absolute quantification (iBAQ). Missing value imputation for proteins detected in >30% of samples.

- Machine Learning Feature Selection: Enhanced particle swarm optimization (ISPSO) algorithm integrated sub-feature grouping and probability-based search operators to identify hub proteins from 437 differentially expressed proteins.

- Validation Framework: Cross-dataset validation across bulk, single-cell, and spatial transcriptomic datasets for atherosclerosis and MI.
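The detection filter in the data-processing step can be sketched as below. The source states only that imputation was applied to proteins detected in more than 30% of samples; the half-minimum imputation rule used here is an assumption chosen for illustration, and the function name, protein labels, and intensities are hypothetical.

```python
def filter_and_impute(intensities, min_detect_fraction=0.30):
    """Keep proteins detected in more than min_detect_fraction of samples, then impute."""
    kept = {}
    for protein, values in intensities.items():
        observed = [v for v in values if v is not None]
        if len(observed) / len(values) <= min_detect_fraction:
            continue  # detected in too few samples to impute reliably
        # ASSUMPTION: half-minimum imputation; the source does not name the method.
        fill = min(observed) / 2
        kept[protein] = [fill if v is None else v for v in values]
    return kept


# Hypothetical iBAQ-style intensities; None marks a missing value.
intensities = {
    "PROT_A": [10.0, None, 12.0, 8.0],
    "PROT_B": [None, None, None, 5.0],
    "PROT_C": [None, 4.0, None, 6.0],
}
cleaned = filter_and_impute(intensities)
print(cleaned)
```

Here `PROT_B` is dropped because it was detected in only 25% of samples, while the gaps in `PROT_A` and `PROT_C` are filled with half of each protein's lowest observed intensity.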

Biological Pathways Captured by Effective Composites

Inflammation Pathways in Cardiovascular Disease

Inflammation serves as a central pathway in cardiovascular pathology, with effective composites capturing multiple aspects of the immune response:

- Acute Phase Response: C-reactive protein (CRP) remains a cornerstone inflammatory biomarker, with recent research extending its utility to wastewater-based epidemiology for population-level monitoring [14]. CRP responds to a broad spectrum of inflammatory stimuli including infections, environmental pollutants, and psychosocial stress [14] [15].

- Cytokine Signaling: Interleukin-6 (IL-6) demonstrates strong association with major adverse cardiovascular events (MACE) in preclinical hypertension, with a doubling of IL-6 associated with a 62% higher MACE risk [15].

- Inflammasome Activation: The NLRP3 inflammasome, activated by oxidative stress during myocardial ischemia-reperfusion injury, triggers processing and release of pro-inflammatory cytokines IL-1β and IL-18 [12].

- Vascular Inflammation: Lipoprotein-associated phospholipase A2 (Lp-PLA2) mass and activity increase across blood pressure categories and associate with MACE in stage 1 hypertension [15].
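Risk estimates reported "per doubling" of a biomarker can be rescaled to other fold changes. The sketch below assumes that the reported 62% higher risk corresponds to a hazard ratio of 1.62 from a model with a log2-transformed biomarker, so that risk scales log-linearly with concentration. Both the assumption and the function name are illustrative and not taken from [15].

```python
import math


def hazard_ratio_for_fold_change(hr_per_doubling: float, fold_change: float) -> float:
    """Rescale a per-doubling hazard ratio to an arbitrary fold change in the biomarker."""
    return hr_per_doubling ** math.log2(fold_change)


# Assumed HR of 1.62 per doubling of IL-6 (a 62% higher MACE risk).
hr_fourfold = hazard_ratio_for_fold_change(1.62, 4.0)
hr_unchanged = hazard_ratio_for_fold_change(1.62, 1.0)
print(round(hr_fourfold, 2), hr_unchanged)
```

Under this assumption a fourfold increase is two doublings, so the hazard ratio is 1.62 squared rather than twice 1.62.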

Myocardial Injury Mechanisms

Myocardial injury involves complex molecular events that composites can capture from multiple angles:

- Direct Cardiomyocyte Damage: Cardiac troponin I/T (cTnI/cTnT) remain gold standards for detecting myocardial cell death [11] [16].

- Stress Response: N-terminal pro-B-type natriuretic peptide (NT-proBNP) reflects ventricular wall stress and hemodynamic load [16].

- Proteomic Landscape: Machine learning analysis of AMI plasma proteomics identified 437 differentially expressed proteins, with 291 up-regulated and 146 down-regulated, highlighting pathways in inflammation, immunity, metabolism, and cellular stress responses [10].

Oxidative Stress Dynamics

Oxidative stress represents a key pathological mechanism in myocardial ischemia-reperfusion injury, characterized by dynamic changes throughout ischemia and reperfusion:

- Ischemic Phase: Moderate ROS production occurs due to impaired mitochondrial electron transport chain function with limited oxygen availability [12].

- Reperfusion Burst: Sudden reintroduction of oxygen triggers massive ROS generation via mitochondrial reverse electron transport, NADPH oxidase activation, and xanthine oxidase activity [11] [12].

- Oxidative Damage Markers: Urinary isoprostanes, validated biomarkers of lipid peroxidation, increase across blood pressure categories and associate with MACE in preclinical hypertension (39% increased risk with doubling of concentration) [15].

- Antioxidant Defense Failure: Downregulation of mitochondrial histidine triad nucleotide-binding protein 2 (HINT2) and fibroblast growth factor 7 (FGF7) compromises endogenous antioxidant systems during AMI [11].

Diagram 1: Oxidative Stress Pathway in MIRI

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Key Research Reagent Solutions for Composite Biomarker Studies

| Reagent/Category | Specific Examples | Research Function | Application Context |
|---|---|---|---|
| Proteomics Sample Prep | TCEP buffer, Trypsin (Promega #V5280), Formic Acid, NH4HCO3 [10] | Protein denaturation, reduction, digestion, and peptide fractionation | Plasma proteomics workflow for biomarker discovery |
| Chromatography Separation | C18 columns (trap and analytical), ReproSil-Pur C18-AQ beads [10] | Peptide separation prior to mass spectrometry analysis | Nano-liquid chromatography (Nano-LC) |
| Mass Spectrometry | Q Exactive HF-X Hybrid Quadrupole-Orbitrap Mass Spectrometer, EASY nLC 1200 system [10] | High-resolution peptide identification and quantification | Proteomic sequencing and biomarker identification |
| Bioinformatics Platforms | "Firmiana" proteomic cloud platform, Mascot 2.4 [10] | Protein database searching, false discovery rate control | Proteomic data analysis and protein identification |
| AI/ML Analysis Tools | Feedforward Neural Networks, SHAP analysis, Particle Swarm Optimization (PSO) [10] [9] | Feature selection, biomarker prioritization, model interpretability | Identification of key proteins and composite biomarkers |
| Immunoassay Reagents | ELISA kits, Electrochemiluminescence immunosensors [14] | Targeted protein quantification and validation | Validation of candidate biomarkers in specific pathways |

The development of effective composite biomarkers represents a paradigm shift in cardiovascular diagnostics and risk stratification. By capturing complementary biological information from inflammation, myocardial injury, and oxidative stress pathways, these composites provide a more comprehensive physiological picture than single biomarkers. The integration of advanced proteomics, multi-omics technologies, and machine learning has accelerated the discovery and validation of these sophisticated tools. Future success in this field will depend on continued refinement of experimental protocols, deeper understanding of pathway interactions, and thoughtful application of AI-driven analytics to develop clinically impactful composites that improve patient outcomes in cardiovascular disease and beyond.

The 'Health Space' model represents a paradigm shift in nutritional science and preventive medicine, moving from a traditional disease-focused approach to a dynamic assessment of an individual's health. It conceptualizes health not merely as the absence of disease, but as the ability to adapt and maintain homeostasis in response to environmental challenges, a concept termed "phenotypic flexibility" or "resilience" [17] [18]. This model leverages advanced computational techniques and challenge tests to quantify and visualize health status within a multidimensional space, providing researchers with a powerful tool for assessing subtle intervention effects that are often undetectable through conventional fasting biomarkers.

The fundamental premise of health space modeling is that a system's robustness is best measured when it is perturbed. In line with this, the PhenFlex Challenge Test (PFT) has been developed as a standardized high-caloric liquid meal test containing lipids, carbohydrates, and proteins to quantitatively assess phenotypic flexibility in both health and metabolic diseases [18]. By measuring biomarker responses before and after this controlled challenge, researchers can construct a health space where an individual's position reflects their metabolic and inflammatory resilience. This approach has proven particularly valuable for evaluating nutritional interventions and herbal extracts, where changes in phenotype are often subtle and difficult to measure with traditional methods [17].

Core Principles and Methodological Framework

Theoretical Foundations

The health space model is built upon several interconnected physiological concepts. Phenotypic flexibility refers to the body's capacity to adjust its physiological processes dynamically in response to metabolic challenges such as food intake [18]. This adaptability is essential for maintaining overall balance and promoting a healthy life. Health is thus operationally defined within this model as "the capacity to keep a consistent state of homeostasis in diverse and altering environmental conditions" [17].

The model also incorporates the concept of allostatic load, which represents the cumulative physiological burden imposed on the body through adaptations to repeated or chronic stress. By measuring an individual's biomarker trajectories in response to a standardized challenge, the health space model quantifies this adaptive capacity, providing insight into underlying physiological robustness that would remain hidden in static, fasting measurements. Studies using this approach report that quantifying the challenge response is more sensitive than fasting markers for detecting subtle health improvements or deteriorations [17].

Experimental Protocol: The PhenFlex Challenge Test

The standardized PhenFlex Challenge Test (PFT) serves as the cornerstone perturbation for health space modeling. The detailed experimental protocol is as follows:

- Participant Preparation: Participants fast for at least 12 hours overnight before the challenge test to establish baseline measurements [18].

- Challenge Meal Administration: Within a 5-minute period, participants consume a high-calorie liquid meal containing 75 g of glucose, 60 g of fat, and 18 g of protein [18].

- Blood Sample Collection: Plasma samples are collected at multiple predetermined time points: typically at t=0 (fasting baseline), 30, 60, 120, and 240 minutes after consuming the challenge drink [18].

- Biomarker Analysis: Samples are analyzed for a broad panel of metabolic and inflammatory biomarkers, which may include glucose, insulin, triglycerides, free fatty acids, interleukin (IL)-6, IL-8, IL-10, IL-12p70, IL-13, interferon (IFN)-γ, tumour necrosis factor (TNF)-α, and other proteomic markers depending on the research focus [17] [18].

- Data Processing: Response curves for each biomarker are analyzed, and features are extracted for model input.

This rigorous standardized protocol ensures that interventional effects can be distinguished from normal biological variability, a critical consideration when studying healthy populations where intervention effects are often subtle [17].
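The data-processing step can be illustrated with a small feature extractor for a single biomarker's response curve. The source does not list which features are extracted, so the choice here (baseline, peak, time to peak, and net incremental area under the curve by the trapezoid rule) is an assumption, as are the function names and the example glucose values.

```python
TIMES_MIN = [0, 30, 60, 120, 240]  # sampling schedule described in the protocol


def net_incremental_auc(times, values):
    """Trapezoid-rule area of the response relative to the fasting baseline."""
    baseline = values[0]
    area = 0.0
    for k in range(1, len(times)):
        width = times[k] - times[k - 1]
        left = values[k - 1] - baseline
        right = values[k] - baseline
        area += width * (left + right) / 2  # excursions below baseline subtract
    return area


def response_features(times, values):
    """Summarize one biomarker's challenge response as model-ready features."""
    peak = max(values)
    return {
        "baseline": values[0],
        "peak": peak,
        "time_to_peak": times[values.index(peak)],
        "iauc": net_incremental_auc(times, values),
    }


# Hypothetical plasma glucose response in mmol/L, for illustration only.
glucose = [5.0, 8.0, 7.0, 5.5, 5.0]
features = response_features(TIMES_MIN, glucose)
print(features)
```

Features of this kind turn each time series into a fixed-length vector, so responses from many biomarkers can be concatenated into a single input row per participant.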

Computational Architecture and Model Construction

The transformation of raw biomarker data into a meaningful health space involves sophisticated computational methods. The process typically employs Generalized Linear Models (GLMs) with 10-fold cross-validation to distinguish between reference groups representing different health states [17]. The computational workflow proceeds through several stages:

- Feature Selection: The algorithm identifies the most discriminative biomarkers from the postprandial response data. The number of selected features varies by experiment, with one study selecting 13 features for metabolic scores and 13 for inflammation scores in Experiment 1, and 11 features for metabolic scores and 35 for inflammatory resilience in Experiment 2 [17].

- Model Training: Reference groups representing phenotypic extremes (e.g., placebo vs. high-dose intervention, or young lean vs. older obese individuals) are used to train the classification algorithm [17] [18].

- Health Estimation Scores: The model generates quantitative scores for different biological processes (e.g., metabolic health, inflammatory resilience), which serve as coordinates in the health space [17].

- Validation: Model performance is evaluated using Receiver Operating Characteristic (ROC) curves comparing training and test set performance to ensure robust classification [17].
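The training and validation stages above can be sketched with a logistic-regression GLM evaluated by 10-fold cross-validation. This is a simplified stand-in, not the pipeline from [17]: it omits feature selection, fits by plain gradient descent, and pools out-of-fold predictions into one AUC. All function names, hyperparameters, and the simulated reference groups are hypothetical.

```python
import math
import random


def predict(w, xi):
    """Predicted probability from a logistic model; the last weight is the intercept."""
    z = sum(wk * xk for wk, xk in zip(w, xi + [1.0]))
    z = max(-30.0, min(30.0, z))  # clamp to keep exp() numerically safe
    return 1.0 / (1.0 + math.exp(-z))


def fit_logistic(X, y, lr=0.1, epochs=500):
    """Fit a binomial GLM with logit link by batch gradient descent."""
    w = [0.0] * (len(X[0]) + 1)
    for _ in range(epochs):
        grad = [0.0] * len(w)
        for xi, yi in zip(X, y):
            err = predict(w, xi) - yi
            for k, xk in enumerate(xi + [1.0]):
                grad[k] += err * xk
        w = [wk - lr * gk / len(X) for wk, gk in zip(w, grad)]
    return w


def roc_auc(scores, labels):
    """AUC as the probability that a positive outranks a negative (ties count 0.5)."""
    pos = [s for s, lab in zip(scores, labels) if lab == 1]
    neg = [s for s, lab in zip(scores, labels) if lab == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def cross_validated_auc(X, y, k=10, seed=0):
    """Pool out-of-fold predictions from k-fold cross-validation into one AUC."""
    idx = list(range(len(X)))
    random.Random(seed).shuffle(idx)
    scores = [0.0] * len(X)
    for fold in (idx[i::k] for i in range(k)):
        held_out = set(fold)
        train = [i for i in idx if i not in held_out]
        w = fit_logistic([X[i] for i in train], [y[i] for i in train])
        for i in fold:
            scores[i] = predict(w, X[i])
    return roc_auc(scores, y)


# Simulated reference groups (e.g., phenotypic extremes) with two features each.
rng = random.Random(1)
X = [[rng.gauss(-1, 1), rng.gauss(-1, 1)] for _ in range(30)]
X += [[rng.gauss(1, 1), rng.gauss(1, 1)] for _ in range(30)]
y = [0] * 30 + [1] * 30
auc = cross_validated_auc(X, y)
print(f"cross-validated AUC: {auc:.2f}")
```

In a health space setting, the fitted model's predicted probability for each biological process would serve as one coordinate, and a large gap between training and held-out AUC would signal overfitting.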

The following diagram illustrates the complete experimental and computational workflow for health space modeling:

Figure 1: Health Space Modeling Workflow

Comparative Analysis of Composite Biomarker Configurations

Inflammatory Resilience Biomarkers

Composite biomarkers of inflammatory resilience vary in their constituent markers and sensitivity to intervention effects. The table below compares different configurations evaluated in energy restriction studies:

Table 1: Performance Comparison of Composite Inflammatory Biomarkers

| Biomarker Configuration | Composition | Sensitivity to Energy Restriction | Correlation with Body Composition |
|---|---|---|---|
| Minimal Composite | IL-6, IL-8, IL-10, TNF-α | Unable to detect postprandial intervention effects in both Bellyfat and Nutritech studies [18] | Not significant [18] |
| Extended Composite | Multiple inflammatory markers beyond cytokines (unspecified) | Significant response to energy restriction in Nutritech study (P < 0.005) [18] | Reduction in score correlated with reduced BMI and body fat percentage [18] |
| Endothelial Composite | Inflammatory markers with endothelial focus (unspecified) | Significant response to energy restriction in Nutritech study (P < 0.005) [18] | Reduction in score correlated with reduced BMI and body fat percentage [18] |
| Optimized Composite | Statistically optimized inflammatory panel | Significant response to energy restriction in Nutritech study (P < 0.005) [18] | Reduction in score correlated with reduced BMI and body fat percentage [18] |

The performance disparities highlight the importance of biomarker selection in composite indicator development. While the minimal composite comprising only cytokines lacked sensitivity, more comprehensive panels successfully detected intervention effects and correlated with clinical improvements, underscoring the multidimensional nature of inflammatory resilience [18].

Metabolic Health Assessments

Different computational approaches exist for quantifying metabolic health from challenge test data, each with distinct strengths and biomarker requirements:

Table 2: Comparison of Metabolic Health Assessment Models

| Model Name | Key Biomarkers | Physiological Processes Quantified | Validation Approach |
|---|---|---|---|
| Health Space Model [17] | Postprandial metabolic and inflammatory proteins (13-35 features selected via machine learning) | Phenotypic flexibility, Metabolic resilience, Inflammatory resilience | ROC curves (AUC), separation of reference groups in 2-D space [17] |
| Mixed Meal Model [19] | Triglycerides, Free Fatty Acids, Glucose, Insulin | Insulin resistance, β-cell functionality, Liver fat | Comparison to gold-standard measures (e.g., MRI for liver fat) [19] |
| Deep Learning Composite [9] | Alkaline Phosphatase (ALP), Serum Creatinine (sCr), Vitamin D | Cardiovascular-renal-metabolic dysfunction, Mortality risk | NHANES cohort with mortality follow-up (median 11.4 years) [9] |

The health space model distinguishes itself by integrating multiple biological processes into a unified visualization framework, while other models focus more specifically on particular physiological subsystems or long-term risk prediction.

Applications in Nutritional Intervention Studies

Herbal Extract Efficacy Assessment

The health space model has been successfully applied to quantify the effects of herbal extracts on healthy individuals. In two randomized, double-blind, placebo-controlled crossover trials, intervention with Angelica keiskei (AK) and Capsosiphon fulvescens (CF) extracts resulted in higher health scores in the health space compared to placebo [17]. Participants receiving high-dose herbal extracts displayed distinct positions in the health space compared to untreated individuals, demonstrating improved phenotypic flexibility [17].

This application is particularly significant because it demonstrates the model's sensitivity to detect subtle changes in healthy populations, where intervention effects are typically minimal and difficult to quantify with traditional approaches. The visualization aspect allows researchers to immediately comprehend both the magnitude and direction of intervention effects relative to reference populations.

Energy Restriction Interventions

In studies examining the effects of energy restriction, the health space approach has proven valuable for detecting changes in inflammatory resilience. In the Nutritech study, which involved a 12-week 20% energy restriction intervention in overweight and obese individuals (age 50-65, BMI 25-35 kg/m²), multiple composite biomarker configurations detected significant improvements in inflammatory resilience [18].

Notably, these improvements correlated with reductions in BMI and body fat percentage, connecting the physiological resilience measured by the model with conventional clinical endpoints [18]. However, the same composite biomarkers failed to detect effects in the Bellyfat study, which might reflect differences in study populations or intervention designs, highlighting the context-dependent performance of specific biomarker configurations.

Interventional Biomarker Dynamics

The following diagram illustrates the biological systems and biomarker responses measured in health space studies following a PhenFlex challenge:

Figure 2: Biomarker Systems in Health Space Assessment

The Researcher's Toolkit

Essential Research Reagents and Materials

Successful implementation of health space modeling requires specific reagents and methodological components. The following table details the essential research toolkit:

Table 3: Essential Research Reagents and Materials for Health Space Studies

| Item Category | Specific Examples | Function/Application |

|---|---|---|

| Challenge Test Formulations | PhenFlex Challenge Drink (75g glucose, 60g fat, 18g protein) [18] | Standardized metabolic perturbation to assess phenotypic flexibility |

| Biomarker Analysis Kits | Multiplex Immunoassays (e.g., Meso Scale Discovery Panels for cytokines) [18] | Simultaneous measurement of multiple inflammatory markers from small plasma volumes |

| Metabolic Assays | Enzymatic assays for glucose, triglycerides, free fatty acids [19] | Quantification of metabolic responses to challenge test |

| Proteomic Analysis | Plasma proteomics platforms [17] | Measurement of protein biomarkers for integrated health assessment |

| Computational Tools | Machine learning algorithms (Generalized Linear Models), R/Python with specialized packages [17] | Development of health estimation scores and health space visualization |

| Reference Materials | Samples from reference populations (young lean vs. older obese individuals) [18] | Calibration of health space model using phenotypic extremes |

Methodological Considerations and Limitations

While health space modeling offers significant advantages, researchers must consider several methodological aspects. The selection of reference populations is critical, as they define the extremes of the health spectrum against which intervention effects are calibrated [18]. Additionally, feature selection requires careful attention, as the number and type of biomarkers included can significantly impact model sensitivity [17] [18].

Current limitations include insufficient exploration of sex-specific differences in phenotypic flexibility and the relatively narrow age ranges studied to date [17]. Furthermore, the massive amounts of continuous data generated pose challenges for data management, integration, and analysis, necessitating sophisticated computational infrastructure and analytical approaches [20].

The health space model represents a transformative approach to quantifying health as a dynamic, multidimensional state rather than merely the absence of disease. By integrating standardized challenge tests with advanced computational modeling, it provides researchers with a sensitive tool for detecting subtle intervention effects and quantifying phenotypic flexibility. The comparative analysis presented in this guide demonstrates that specific composite biomarker configurations vary significantly in their sensitivity and applicability across different intervention types and population characteristics.

As nutritional science and preventive medicine continue to evolve toward more personalized approaches, the health space model offers a robust framework for translating complex physiological responses into actionable insights. Its ability to visualize health status in an intuitive, two-dimensional space while maintaining mathematical rigor positions it as an increasingly valuable tool for researchers developing targeted interventions to enhance metabolic and inflammatory resilience.

Major Adverse Cardiovascular Events (MACE) serve as a primary endpoint in cardiovascular outcome trials. MACE is itself a composite endpoint, typically combining cardiovascular death, myocardial infarction, and stroke. The establishment of clinical utility for novel biomarkers, particularly composite biomarkers, necessitates rigorous evaluation against these hard endpoints to demonstrate value in risk stratification, patient management, and drug development. Within the broader study of composite biomarker performance metrics, this guide objectively compares the experimental performance of various biomarker approaches (single molecules, multi-parameter panels, and algorithmically derived composites) in predicting MACE across diverse patient populations. For researchers and drug development professionals, understanding the methodological frameworks and evidentiary standards required for biomarker validation is paramount to translating promising candidates from discovery to clinical application.

Comparative Performance of Biomarker Panels in Predicting MACE

Multi-Biomarker Panels in Atrial Fibrillation

A comprehensive study of 3,817 patients with atrial fibrillation (AF) evaluated a panel of 12 circulating biomarkers representing diverse pathophysiological pathways for their association with adverse cardiovascular outcomes [21]. The research identified a core set of biomarkers that independently predicted a composite endpoint of cardiovascular death, stroke, myocardial infarction, and systemic embolism.

Table 1: Performance of Individual Biomarkers for Predicting Composite Cardiovascular Events in AF Patients

| Biomarker | Physiological Pathway Represented | Association with Composite CV Outcome | Key Findings |

|---|---|---|---|

| High-Sensitivity Troponin T (hsTropT) | Myocardial injury | Independent predictor | Among most significant variables in model [21] |

| N-terminal pro-B-type Natriuretic Peptide (NT-proBNP) | Cardiac dysfunction | Independent predictor | Among most significant variables in model [21] |

| Growth Differentiation Factor-15 (GDF-15) | Oxidative stress, fibrosis | Independent predictor | Among most significant variables in model; also predicted major bleeding [21] |

| Interleukin-6 (IL-6) | Inflammation | Independent predictor | Significant association; also linked to myocardial infarction [21] |

| D-dimer | Coagulation | Independent predictor | Significant association with composite outcome [21] |

The integration of these five biomarkers significantly enhanced predictive accuracy for the composite outcome compared to clinical variables alone, with the area under the curve (AUC) increasing from 0.74 to 0.77 in traditional Cox models [21]. Machine learning models reached higher absolute discrimination, although the incremental gain from biomarkers was similar in size: XGBoost performance increased from AUC 0.95 to 0.97 with biomarker inclusion [21].

Inflammatory Biomarkers in Heart Failure

Evidence increasingly supports the role of inflammation in heart failure (HF) pathogenesis and progression. Specific inflammatory biomarkers show particular promise for risk stratification [22].

Table 2: Inflammatory Biomarkers in Heart Failure Pathophysiology and Prognosis

| Biomarker | Pathophysiological Role | Association with HF | Clinical Utility |

|---|---|---|---|

| Interleukin-6 (IL-6) | Pro-inflammatory cytokine; central to inflammatory cascade | Causal role supported by Mendelian randomization; associated with HF development and adverse outcomes [22] | Potential therapeutic target; prognostic marker |

| High-sensitivity C-Reactive Protein (hsCRP) | Downstream acute-phase protein | Marker of residual inflammatory risk; associated with incident HF and adverse outcomes [22] | Prognostic marker; no causal involvement |

| Soluble Suppression of Tumorigenicity-2 (sST2) | Interleukin-33 receptor; fibrosis and stress marker | Released in response to vascular congestion, inflammation, and pro-fibrotic stimuli [23] | Predicts poor outcomes in heart failure, independent of natriuretic peptides |

Elevated levels of IL-6 and hsCRP are associated with increased risk of incident HF and adverse outcomes in established disease, highlighting their potential for improving individual risk assessment and guiding anti-inflammatory interventions [22].

Biomarker Performance in High-Risk Populations (End-Stage Kidney Disease)

Patients with end-stage kidney disease (ESKD) face markedly increased cardiovascular risk, and biomarker interpretation in this population is complicated by altered clearance and concomitant cardiac remodeling [23].

A systematic review of 14 studies (4,965 participants) examined traditional and novel biomarkers for predicting MACE in ESKD populations [23]. N-terminal pro-Brain-Natriuretic Peptide (NT-proBNP) was the most frequently studied biomarker (7 studies), demonstrating consistent prognostic value despite renal clearance limitations [23]. Novel biomarkers including Galectin-3 (a marker of inflammation and fibrosis) and soluble suppression of tumorigenicity-2 (sST2) showed promise as predictors of cardiac morbidity, though their role in ESKD requires further investigation due to kidney function influence on circulating levels [23].

Experimental Protocols and Methodologies for Biomarker Validation

Large-Scale Cohort Study Design (Atrial Fibrillation Example)

The foundational evidence for the AF biomarker panel was generated through a prospective cohort study design [21]:

- Population: 3,817 well-phenotyped AF patients with mean age 71±10 years, 28% female.

- Biomarker Measurement: A predefined panel of 12 biomarkers measured from circulating blood using standardized, quality-controlled assays.

- Outcomes Ascertainment: Prospective follow-up for predefined endpoints including composite cardiovascular events (cardiovascular death, nonfatal ischemic stroke, nonfatal systemic embolism, nonfatal myocardial infarction), heart failure hospitalization, and major bleeding.

- Statistical Analysis:

  - Age- and sex-adjusted, and multivariable-adjusted Cox regression analyses for each biomarker and outcome.

  - Comparison of model performance with and without biomarkers using area under the curve (AUC).

  - Machine learning approaches (random forest, XGBoost) to assess non-linear relationships and interactions.
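The "with and without biomarkers" comparison can be illustrated with a rank-based AUC on synthetic data. This is a minimal sketch, not the study's analysis: the cohort is simulated, the outcome is treated as binary rather than time-to-event, and the names `clinical`, `biomarker`, and the equal weighting of the two scores are assumptions made for illustration.

```python
import random

def auc(scores, labels):
    """Rank-based AUC: chance that a random case outscores a random non-case (ties count 0.5)."""
    cases = [s for s, y in zip(scores, labels) if y == 1]
    controls = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum((c > k) + 0.5 * (c == k) for c in cases for k in controls)
    return wins / (len(cases) * len(controls))

rng = random.Random(0)  # fixed seed so the sketch is reproducible
labels, clinical_only, with_biomarker = [], [], []
for _ in range(2000):
    event = 1 if rng.random() < 0.2 else 0
    clinical = rng.gauss(0.8 * event, 1.0)   # hypothetical clinical risk score
    biomarker = rng.gauss(0.8 * event, 1.0)  # hypothetical standardized biomarker
    labels.append(event)
    clinical_only.append(clinical)
    with_biomarker.append(clinical + biomarker)  # equal weighting is an assumption

auc_clinical = auc(clinical_only, labels)
auc_combined = auc(with_biomarker, labels)
print(f"clinical only: {auc_clinical:.3f}, with biomarker: {auc_combined:.3f}")
```

In a real analysis the combined score would come from a fitted model (for example the Cox regression described above), and the AUC difference would be reported with a confidence interval.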

AI-Driven Composite Biomarker Development (Type 2 Diabetes Example)

A novel approach combining deep learning with traditional epidemiological methods was used to develop a composite biomarker for mortality risk in diabetes [9]:

- Data Source: 82,091 U.S. adults from NHANES (1999-2014) with mortality follow-up through 2019.

- Feature Selection: A deep learning model analyzed comprehensive clinical, biochemical, and demographic features to identify top mortality-related biomarkers.

- Composite Derivation: Alkaline phosphatase (ALP) and serum creatinine (sCr) were identified as key predictors and combined into a novel composite index: ln[ALP × sCr].

- Validation: The composite biomarker was tested in 4,839 T2DM patients over median 11.4 years follow-up, showing significantly elevated risks for all-cause (HR 1.47), cardiovascular (HR 1.44), and diabetes-related mortality (HR 2.50) in the highest versus lowest quartile.
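The composite index itself is simple arithmetic, and a short sketch shows how it might be computed and binned into quartiles. The patient values below are invented, and the units (U/L for ALP, mg/dL for sCr) are an assumption, since the units are not stated here.

```python
import math
import statistics

def composite_index(alp, scr):
    """ln[ALP x sCr]; equivalent to ln(ALP) + ln(sCr)."""
    return math.log(alp * scr)

# Invented (ALP, sCr) pairs; assumed units are U/L and mg/dL.
patients = [(62, 0.7), (75, 0.8), (88, 0.9), (95, 1.0),
            (104, 1.1), (118, 1.2), (131, 1.4), (150, 1.9)]
indices = [composite_index(alp, scr) for alp, scr in patients]

# Three cut points that split the cohort into quartiles.
cut_points = statistics.quantiles(indices, n=4)

def quartile_of(value):
    """Return 1 (lowest) to 4 (highest) according to the cohort cut points."""
    return 1 + sum(value > cut for cut in cut_points)

for (alp, scr), index in zip(patients, indices):
    print(f"ALP={alp}, sCr={scr}: index={index:.2f}, quartile={quartile_of(index)}")
```

The hazard ratios quoted above compare the highest and lowest of these quartiles; estimating them would additionally require follow-up times and a survival model.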

Machine Learning for Multimodal Composite Biomarkers (Neurological Disease Example)

While not cardiovascular, the methodological approach from Friedreich ataxia research demonstrates the cutting edge in composite biomarker development [24]:

- Data Integration: Combined multimodal neuroimaging (structural MRI, diffusion MRI, quantitative susceptibility mapping) with background clinical and genetic data.

- Model Training: Used elastic net regression, a regularized linear machine learning method, to derive a weighted combination of measures predicting clinical scores.

- Performance Validation: Achieved high accuracy (R² = 0.79) and strong sensitivity to disease progression over two years (Cohen's d = 1.12), outperforming conventional clinical scales.
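The two metrics reported for this composite can be computed as follows. This is a sketch under assumptions: the exact Cohen's d variant used in the study is not stated here, so the paired-change form (mean change divided by the standard deviation of change) is shown as one common choice for longitudinal sensitivity.

```python
import statistics

def r_squared(observed, predicted):
    """Coefficient of determination: 1 - residual sum of squares / total sum of squares."""
    mean_observed = statistics.fmean(observed)
    ss_res = sum((o - p) ** 2 for o, p in zip(observed, predicted))
    ss_tot = sum((o - mean_observed) ** 2 for o in observed)
    return 1 - ss_res / ss_tot

def cohens_d_paired(baseline, followup):
    """Effect size of within-subject change: mean change / SD of change (an assumed variant)."""
    changes = [f - b for b, f in zip(baseline, followup)]
    return statistics.fmean(changes) / statistics.stdev(changes)

# Invented clinical scores and composite values for five participants.
clinical_scores = [10.0, 14.0, 18.0, 25.0, 31.0]
model_predictions = [11.0, 13.0, 20.0, 24.0, 30.0]
composite_baseline = [1.0, 1.4, 1.9, 2.6, 3.0]
composite_year_two = [1.5, 2.1, 2.3, 3.4, 3.6]

print(f"R^2 = {r_squared(clinical_scores, model_predictions):.2f}")
print(f"Cohen's d = {cohens_d_paired(composite_baseline, composite_year_two):.2f}")
```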

Signaling Pathways and Biological Context of Key Biomarkers

The clinical utility of cardiovascular biomarkers is grounded in their representation of fundamental pathophysiological processes driving MACE. The following diagram illustrates key pathways and their interactions:

This interconnected network demonstrates how biomarkers reflect complementary biological processes: hsTropT indicates myocardial injury; NT-proBNP reflects ventricular wall stress and cardiac dysfunction; IL-6 represents systemic inflammation that promotes atherosclerosis and plaque instability; GDF-15 indicates oxidative stress and tissue response to injury; and D-dimer reflects coagulation activation and thrombotic risk [21] [22]. The multimodal nature of this pathophysiology helps explain why composite approaches can outperform individual biomarkers.

Research Reagent Solutions for Biomarker Investigation

Table 3: Essential Research Tools for Cardiovascular Biomarker Development

| Research Tool | Function & Application | Examples & Specifications |

|---|---|---|

| High-Sensitivity Immunoassays | Quantification of low-abundance circulating biomarkers (e.g., troponins, IL-6) | Electrochemiluminescence (ECLIA), Single molecule array (Simoa) technologies; Require validation to accepted standards (e.g., FDA-approved platforms) [21] [22] |

| Multi-Omics Platforms | Comprehensive biomarker discovery across biological layers | Genomic, transcriptomic, proteomic, and metabolomic profiling; Spatial biology and single-cell analysis technologies [5] [13] |

| Automated Clinical Platforms | High-throughput clinical-grade measurement for validation studies | FDA-approved platforms like Lumipulse G for pTau217/β-Amyloid ratio; Similar principles apply to cardiovascular biomarker validation [25] |

| Machine Learning Algorithms | Development of weighted composite biomarkers from high-dimensional data | Random forest, XGBoost, elasticnet regression; Implemented in R, Python with specialized packages [21] [24] [9] |

| Biobanked Cohort Samples | Validation across diverse populations with longitudinal outcomes | Large-scale epidemiological cohorts (e.g., NHANES); Disease-specific registries with adjudicated endpoints [9] [21] |

The establishment of clinical utility for biomarkers predicting MACE requires robust evidence generated through methodologically rigorous studies. The comparative data presented in this guide demonstrates that multi-marker approaches—whether predefined panels or algorithmically derived composites—consistently outperform individual biomarkers across diverse patient populations. Key findings indicate:

- Panel-based approaches focusing on complementary pathophysiological domains (myocardial injury, cardiac dysfunction, inflammation, coagulation) improve risk stratification beyond clinical factors alone [21].

- Inflammatory biomarkers, particularly IL-6, provide both prognostic information and potential therapeutic targets, with causal roles supported by genetic evidence [22].

- Novel composite biomarkers, especially those derived through machine learning integration of multimodal data, represent the cutting edge of biomarker science with demonstrated sensitivity to disease progression [24] [9].

- Context-specific validation remains essential, as biomarker performance varies across clinical settings (e.g., atrial fibrillation, heart failure, end-stage kidney disease) [21] [22] [23].

For drug development professionals and researchers, these findings underscore the importance of incorporating biomarker strategies early in clinical trial design, using appropriate methodological frameworks for validation, and considering composite approaches that better reflect the multidimensional nature of cardiovascular disease pathogenesis.

Advanced Methodologies for Composite Biomarker Development and Application

The integration of genomics, proteomics, and metabolomics represents a paradigm shift in biomarker discovery, moving beyond single-omics approaches to create comprehensive signatures that more accurately reflect complex disease states. This comparative analysis evaluates the performance of individual and integrated omics approaches, demonstrating that multi-omics signatures consistently outperform single-omics biomarkers in predictive accuracy, clinical utility, and biological insight. Through examination of experimental data from recent studies, this guide provides researchers with validated methodologies and performance metrics for implementing multi-omics integration in biomarker research and therapeutic development.

Performance Comparison of Omics Approaches

Table 1: Predictive Performance of Single vs. Multi-Omics Biomarkers

| Omics Approach | Median AUC (Incident Disease) | Median AUC (Prevalent Disease) | Optimal Feature Count | Key Strengths | Primary Limitations |

|---|---|---|---|---|---|

| Proteomics | 0.79 [26] | 0.84 [26] | ~5 proteins [26] | High predictive power with minimal features; directly reflects functional state | Does not capture genetic determinants or metabolic dynamics |

| Metabolomics | 0.70 [26] | 0.86 (max) [26] | Varies by disease | Close proximity to phenotype; sensitive to environmental influences | Limited by biochemical domain knowledge [27] |

| Genomics | 0.57 [26] | 0.60 [26] | Polygenic risk scores | Strong causal inference; stable throughout life | Lower predictive power for complex diseases [26] |

| Multi-Omics Integration | 0.61-0.99 [28] | Superior to single-omics [29] [30] | Combination of features [29] | Comprehensive biological view; captures interactions [29] [30] | Computational complexity; data heterogeneity [29] [31] |

Table 2: Experimental Validation of Multi-Omics Biomarkers in Gastric Cancer

| Biomarker | Omics Type | Association with GC (AUC) | Validation Method | Clinical Potential |

|---|---|---|---|---|

| IQGAP1 | Genomic/Proteomic | 0.99 [28] | scRNA-seq, MR, knockout models [28] | Therapeutic target and diagnostic |

| KRTCAP2 | Genomic | 0.61-0.99 range [28] | Colocalization (PPH4=0.97) [28] | Diagnostic biomarker |

| PARP1 | Genomic | 0.61-0.99 range [28] | Colocalization (PPH4=0.93) [28] | Diagnostic biomarker |

| ECM1 | Proteomic | 0.61-0.99 range [28] | MR, drug prediction [28] | Immunotherapy target |

Core Methodologies for Multi-Omics Integration

Network-Based Integration

Network approaches map multiple omics datasets onto shared biochemical networks to improve mechanistic understanding [30] [31]. Analytes (genes, transcripts, proteins, metabolites) are connected based on known interactions, such as transcription factors mapped to the transcripts they regulate or metabolic enzymes mapped to their associated metabolite substrates and products [31].

Experimental Protocol: Protein-Metabolite Association Study

- Sample Preparation: Collect 3,626 plasma samples from three independent human cohorts [32].

- Multi-Omics Profiling: Conduct proteomic analysis (1,265 proteins) and metabolomic analysis (365 metabolites) using high-throughput mass spectrometry [32].

- Correlation Analysis: Calculate pairwise Pearson correlations between all protein-metabolite pairs (171,800 significant associations detected) [32].

- Causal Inference: Perform Mendelian Randomization (MR) analyses using genomic data to identify putative causal protein-to-metabolite associations [32].

- Experimental Validation: Validate top MR findings through plasma metabolomics studies in murine knockout strains of key protein regulators [32].

Protein-Metabolite Association Workflow

Mendelian Randomization for Causal Inference

Mendelian Randomization serves as a natural counterpart to randomized controlled trials by leveraging genetic variations randomly allocated at conception [28]. This approach is particularly valuable for establishing whether circulating proteins and metabolites have causal effects on disease outcomes.

Experimental Protocol: Biomarker Discovery for Gastric Cancer

- Sample Collection: Perform single-cell RNA sequencing of PBMCs from gastric cancer patients and healthy controls (57,064 cells after quality control) [28].

- Cell Type Identification: Use unsupervised clustering to identify 10 cell types based on canonical marker expression (CD8+ T cells, monocytes, B cells, etc.) [28].

- Differential Expression Analysis: Identify 1,343 differentially expressed genes between GC patients and healthy controls [28].

- Molecular QTL Mapping: Identify cis-eQTLs (for genes) and cis-pQTLs (for proteins) from the eQTLGen Consortium (31,684 individuals) [28].

- Two-Sample MR Analysis: Integrate GC GWAS data from UK Biobank and FinnGen with eQTL/pQTL data to uncover causal genes/proteins [28].

- Sensitivity Analysis: Conduct Bayesian colocalization, Steiger filtering, and phenotypic heterogeneity assessments to validate findings [28].
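The core estimate in a two-sample MR analysis can be sketched with the Wald ratio for a single instrument and the fixed-effect inverse-variance weighted (IVW) estimator for several. This is a simplified illustration with invented summary statistics; it uses first-order standard errors that ignore uncertainty in the exposure effects, and it does not cover the colocalization or Steiger steps listed above.

```python
import math

def wald_ratio(beta_exposure, beta_outcome, se_outcome):
    """Causal estimate from one genetic instrument: outcome effect / exposure effect."""
    estimate = beta_outcome / beta_exposure
    se = se_outcome / abs(beta_exposure)  # first-order approximation
    return estimate, se

def ivw_estimate(instruments):
    """Fixed-effect IVW estimate from (beta_exposure, beta_outcome, se_outcome) tuples."""
    numerator = sum(bx * by / se ** 2 for bx, by, se in instruments)
    denominator = sum(bx ** 2 / se ** 2 for bx, by, se in instruments)
    return numerator / denominator, math.sqrt(1 / denominator)

# Invented summary statistics for three cis-QTL instruments of one gene.
instruments = [(0.10, 0.050, 0.010), (0.20, 0.100, 0.020), (0.15, 0.075, 0.015)]

estimate, se = ivw_estimate(instruments)
print(f"IVW estimate = {estimate:.3f} (SE {se:.3f})")
print("Wald ratio for first instrument:", wald_ratio(*instruments[0]))
```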

Machine Learning Integration Pipelines

Advanced machine learning pipelines enable the integration of disparate omics data types into predictive models for disease classification and biomarker prioritization.

Experimental Protocol: Multi-Omics Biomarker Prioritization

- Data Cleaning: Process genotypes for 90 million variants, 1,453 proteins, and 325 metabolites from 500,000 UK Biobank participants [26].

- Feature Selection: Implement supervised feature selection methods to identify optimal biomarker combinations [26].

- Model Training: Train classification models using tenfold cross-validation on training datasets [26].

- Performance Validation: Compare results on holdout test sets and calculate AUC values for incident and prevalent disease [26].

- Biomarker Optimization: Determine the minimal number of biomarkers needed for clinical significance (AUC ≥0.8) [26].
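The cross-validation and panel-size steps can be sketched with the standard library alone. This is a toy stand-in, not the cited pipeline: the cohort is simulated, the "model" is a simple mean-difference weighted score, and feature ranking is done inside each training fold to avoid leakage. Only the tenfold scheme and the AUC ≥ 0.8 threshold come from the protocol above.

```python
import random
from statistics import fmean

def auc(scores, labels):
    """Rank-based AUC (ties count 0.5)."""
    cases = [s for s, y in zip(scores, labels) if y == 1]
    controls = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum((c > k) + 0.5 * (c == k) for c in cases for k in controls)
    return wins / (len(cases) * len(controls))

def kfold(n_samples, k, rng):
    """Shuffle sample indices and deal them into k disjoint test folds."""
    order = list(range(n_samples))
    rng.shuffle(order)
    return [order[i::k] for i in range(k)]

def fit_weights(rows, labels, n_features):
    """Weight each feature by its case-control mean difference; keep the n_features largest."""
    diffs = []
    for j in range(len(rows[0])):
        case_mean = fmean(r[j] for r, y in zip(rows, labels) if y == 1)
        control_mean = fmean(r[j] for r, y in zip(rows, labels) if y == 0)
        diffs.append(case_mean - control_mean)
    ranked = sorted(range(len(diffs)), key=lambda j: abs(diffs[j]), reverse=True)
    return {j: diffs[j] for j in ranked[:n_features]}

def cross_validated_auc(rows, labels, n_features, k=10, seed=0):
    """Mean held-out AUC over k folds; the same seed gives the same folds for every panel size."""
    aucs = []
    for test_idx in kfold(len(rows), k, random.Random(seed)):
        held_out = set(test_idx)
        train = [i for i in range(len(rows)) if i not in held_out]
        weights = fit_weights([rows[i] for i in train], [labels[i] for i in train], n_features)
        scores = [sum(w * rows[i][j] for j, w in weights.items()) for i in test_idx]
        aucs.append(auc(scores, [labels[i] for i in test_idx]))
    return fmean(aucs)

# Synthetic cohort: 4 informative and 2 noise biomarkers (all values invented).
rng = random.Random(42)
labels = [i % 2 for i in range(600)]
rows = [[rng.gauss(0.8 * y if j < 4 else 0.0, 1.0) for j in range(6)] for y in labels]

results = {n: cross_validated_auc(rows, labels, n) for n in range(1, 7)}
minimal_panel = next((n for n, a in results.items() if a >= 0.8), None)
print({n: round(a, 3) for n, a in results.items()}, "minimal panel size:", minimal_panel)
```

A final holdout test set, as in the protocol, would still be needed to confirm the selected panel, since the panel size is itself chosen using the cross-validated results.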

Signaling Pathways in Multi-Omics Biomarkers

Multi-Omics Network in Complex Diseases

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Research Reagent Solutions for Multi-Omics Integration

| Reagent Category | Specific Tools/Frameworks | Primary Function | Application Context |

|---|---|---|---|

| Biobank Resources | UK Biobank, FinnGen [26] [28] | Large-scale cohort data with multi-omics measurements | Biomarker discovery and validation across diverse populations |

| Computational Environments | R packages (pwOmics, mixOmics, WGCNA) [27] | Statistical analysis and integration of multi-omics data | Horizontal and vertical data integration [29] |

| Network Analysis Platforms | Cytoscape with Metscape [27] | Visualization of gene-metabolite networks | Pathway analysis and network medicine [30] |

| Single-Cell Technologies | 10x Genomics, scRNA-seq platforms [28] | Resolution of cellular heterogeneity in tumors | Tumor microenvironment characterization [29] |

| Database Integration | DriverDBv4, HCCDBv2 [29] | Multi-omics data management and analysis | Cancer biomarker discovery and computational oncology |

| Mass Spectrometry Platforms | LC-MS, GC-MS [29] [32] | High-throughput proteomic and metabolomic profiling | Quantitative measurement of proteins and metabolites |

The integration of genomics, proteomics, and metabolomics represents the new frontier in biomarker science, enabling a systems-level understanding of disease mechanisms that cannot be captured by any single omics approach. As computational methods advance and multi-omics datasets become more accessible, the field is moving toward clinical applications that leverage these holistic signatures for early detection, patient stratification, and personalized treatment selection. The experimental data presented in this guide demonstrates that strategically integrated multi-omics biomarkers consistently outperform single-omics approaches, providing a robust foundation for the next generation of precision medicine applications in oncology and complex disease management.

Predictive analytics has become a cornerstone of modern scientific research, particularly in precision medicine. Among the plethora of machine learning algorithms, Random Forest and XGBoost have emerged as preeminent ensemble methods for tackling complex classification and regression tasks. This guide provides an objective comparison of their performance, with a special focus on applications in composite biomarker research, to help researchers and drug development professionals select the optimal tool for their predictive models.

Algorithmic Foundations: Bagging vs. Boosting

The core distinction between Random Forest and XGBoost lies in their ensemble learning techniques: bagging for Random Forest and boosting for XGBoost.

Random Forest (Bagging): This algorithm operates by constructing a multitude of decision trees at training time. Each tree is trained on a random subset of the data (bootstrap sample) and uses a random subset of features for splitting at each node. This randomness, injected in parallel, decorrelates the individual trees, reducing variance and mitigating overfitting. The final prediction is determined by majority voting (classification) or averaging (regression) across all trees in the forest [33] [34] [35].

XGBoost (Boosting): XGBoost, short for eXtreme Gradient Boosting, builds models sequentially. Each new tree is trained to correct the errors made by the ensemble of all previous trees. It uses a gradient descent framework to minimize a defined loss function, and each tree's contribution is scaled by a learning rate. XGBoost incorporates advanced regularization (L1 and L2) to further control complexity and prevent overfitting [33] [34].

Table 1: Core Algorithmic Differences between Random Forest and XGBoost

| Feature | Random Forest | XGBoost |

|---|---|---|

| Ensemble Method | Bagging (Bootstrap Aggregating) | Gradient Boosting |

| Model Building | Parallel construction of independent trees | Sequential construction, with each tree correcting its predecessor |

| Core Optimization | Averaging predictions from multiple trees | Gradient descent to minimize a loss function |

| Key Strength | Robust to noise and overfitting | High predictive accuracy, handles complex relationships |
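The bagging and boosting ideas can be contrasted in a few lines using one-split regression trees (stumps) on a toy one-dimensional problem. This is a teaching sketch, not Random Forest or XGBoost themselves: it omits per-node feature subsampling, second-order gradients, and regularization. For squared-error loss the negative gradient is the residual, so fitting residuals is gradient boosting in its simplest form.

```python
import math
import random
from statistics import fmean

def fit_stump(xs, ys):
    """Return (threshold, left_mean, right_mean) minimizing squared error."""
    best = None
    for t in sorted(set(xs))[:-1]:  # thresholds that leave both sides non-empty
        left = [y for x, y in zip(xs, ys) if x <= t]
        right = [y for x, y in zip(xs, ys) if x > t]
        lm, rm = fmean(left), fmean(right)
        sse = sum((y - lm) ** 2 for y in left) + sum((y - rm) ** 2 for y in right)
        if best is None or sse < best[0]:
            best = (sse, t, lm, rm)
    if best is None:  # all x identical: fall back to a constant prediction
        mean = fmean(ys)
        return (xs[0], mean, mean)
    return best[1:]

def predict_stump(stump, x):
    threshold, left_mean, right_mean = stump
    return left_mean if x <= threshold else right_mean

def bagging(xs, ys, n_trees, rng):
    """Independent stumps on bootstrap samples; predictions are averaged."""
    stumps = []
    for _ in range(n_trees):
        sample = [rng.randrange(len(xs)) for _ in xs]
        stumps.append(fit_stump([xs[i] for i in sample], [ys[i] for i in sample]))
    return lambda x: fmean(predict_stump(s, x) for s in stumps)

def boosting(xs, ys, n_trees, learning_rate):
    """Sequential stumps, each fitted to the residuals of the current ensemble."""
    base = fmean(ys)
    residuals = [y - base for y in ys]
    stumps = []
    for _ in range(n_trees):
        stump = fit_stump(xs, residuals)
        stumps.append(stump)
        residuals = [r - learning_rate * predict_stump(stump, x) for x, r in zip(xs, residuals)]
    return lambda x: base + learning_rate * sum(predict_stump(s, x) for s in stumps)

def mse(model, xs, ys):
    return fmean((model(x) - y) ** 2 for x, y in zip(xs, ys))

rng = random.Random(0)
xs = [i / 50 for i in range(50)]
ys = [math.sin(2 * math.pi * x) + rng.gauss(0, 0.1) for x in xs]

single = fit_stump(xs, ys)
stump_mse = mse(lambda x: predict_stump(single, x), xs, ys)
bagged_mse = mse(bagging(xs, ys, 50, rng), xs, ys)
boosted_mse = mse(boosting(xs, ys, 50, 0.3), xs, ys)
print(f"single stump {stump_mse:.3f}, bagged {bagged_mse:.3f}, boosted {boosted_mse:.3f}")
```

These are training-set errors, shown only to make the mechanics visible; they say nothing about generalization, which is where the regularization and validation choices discussed in this guide matter.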

Performance and Experimental Data in Biomarker Research

Empirical studies across various biomedical domains often show XGBoost holding a marginal edge in predictive performance, though not uniformly (Random Forest scored slightly higher in the colorectal cancer study below), and Random Forest remains a robust and reliable alternative.

Biomarker Discovery for Targeted Cancer Therapies

The MarkerPredict framework was designed to identify clinically relevant predictive biomarkers for targeted cancer therapies. The study integrated network-based properties of proteins and structural features like intrinsic disorder.

- Experimental Protocol: Researchers built a dataset of 880 target-interacting protein pairs from three signaling networks (CSN, SIGNOR, ReactomeFI). They then trained and evaluated 32 different models using both Random Forest and XGBoost.

- Model Validation: Model performance was rigorously assessed using Leave-One-Out-Cross-Validation (LOOCV), k-fold cross-validation, and a 70:30 train-test split.

- Results: Both algorithms produced high-performing models with LOOCV accuracy ranging from 0.7 to 0.96. However, the study noted that the "Random Forest algorithm marginally underperformed compared to XGBoost" [36]. The ensemble of these models successfully classified 3670 target-neighbour pairs to identify potential predictive biomarkers.

Ovarian Cancer Detection and Classification

A 2025 review analyzed 17 investigations integrating multi-modal data, including tumor markers (e.g., CA-125, HE4), inflammatory, metabolic, and hematologic parameters, for ovarian cancer management [37].

- Findings: The review concluded that ensemble methods, including both Random Forest and XGBoost, "excel in classification accuracy (up to 99.82%), survival prediction (AUC up to 0.866), and treatment response forecasting" [37]. These models significantly outperformed traditional statistical methods, with biomarker-driven ML models achieving AUC values exceeding 0.90 in diagnosing ovarian cancer.

Colorectal Cancer Subtype Classification

A study aimed at developing AI-driven classification models for colorectal cancer (CRC) utilized exome datasets. After initial models like SVM and DNN showed low accuracy, the researchers focused on tree-based ensembles [38].

- Experimental Protocol: The study employed a custom-built automated NGS pipeline for public CRC exome datasets. Feature engineering was performed to select relevant genomic variants before training and validating the ML models.

- Results: Both Random Forest and XGBoost demonstrated strong performance. The Random Forest model achieved an overall F1-score of 0.93, while the XGBoost model followed closely with an F1-score of 0.92 in classifying CRC subtypes. Confusion matrices indicated minimal misclassifications [38].
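For reference, an F1-score can be derived directly from a confusion matrix. The sketch below uses an invented three-class matrix, and macro-averaging is an assumption: the averaging scheme behind the "overall" F1-scores quoted above is not specified.

```python
def per_class_f1(confusion):
    """confusion[i][j] = number of samples of true class i predicted as class j."""
    n_classes = len(confusion)
    scores = []
    for c in range(n_classes):
        tp = confusion[c][c]
        fp = sum(confusion[r][c] for r in range(n_classes)) - tp
        fn = sum(confusion[c]) - tp
        denominator = 2 * tp + fp + fn
        scores.append(2 * tp / denominator if denominator else 0.0)
    return scores

def macro_f1(confusion):
    """Unweighted mean of the per-class F1-scores."""
    scores = per_class_f1(confusion)
    return sum(scores) / len(scores)

# Invented confusion matrix for three hypothetical subtypes.
confusion = [
    [45, 3, 2],
    [4, 40, 1],
    [1, 2, 47],
]
print([round(s, 3) for s in per_class_f1(confusion)], round(macro_f1(confusion), 3))
```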

Table 2: Summary of Experimental Performance in Biomedical Studies

| Application / Study | Random Forest Performance | XGBoost Performance | Key Metric |

|---|---|---|---|

| MarkerPredict (Oncology) | Marginal underperformance vs. XGBoost | Marginally superior performance | LOOCV Accuracy (0.7 - 0.96) |

| Ovarian Cancer Review | Excel in classification & prediction | Excel in classification & prediction | Accuracy (up to 99.82%), AUC (up to 0.866) |

| Colorectal Cancer Subtyping | F1-Score: 0.93 | F1-Score: 0.92 | F1-Score |

| Air Quality Classification | Accuracy: 97.08% (with feature selection) | Accuracy: 98.91% (with feature selection) | Accuracy |

Key Considerations for Model Selection

Beyond raw accuracy, several practical factors influence the choice between Random Forest and XGBoost.

Handling of Unbalanced Data: XGBoost is often more effective for imbalanced datasets. Each boosting round fits the errors left by the current ensemble, so poorly predicted samples, which are frequently the rare cases, drive the subsequent trees; the library also exposes a `scale_pos_weight` parameter for up-weighting the positive class. This is crucial in biomarker research where case samples can be rare. Random Forest has no comparable error-driven mechanism, though imbalance can be mitigated through class weighting or sampling techniques [33] [34].

Overfitting and Generalization: Random Forest reduces overfitting by averaging multiple deep, unpruned trees. XGBoost combats overfitting with built-in L1 and L2 regularization and a pruning step that removes splits whose gain (loss reduction) falls below a minimum threshold. This often allows XGBoost to generalize better to unseen test data [33] [34].

Computational Efficiency and Hyperparameter Tuning: XGBoost is engineered for speed and efficiency, leveraging parallel processing and distributed computing. However, it has more hyperparameters than Random Forest, making its tuning process more complex. Random Forest is simpler to tune (primarily the number of trees and their depth) and can be less computationally demanding when not extensively tuned [33] [34].
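On the imbalance point above, two common weighting heuristics are easy to compute by hand. The sketch assumes binary labels coded 0/1 and an invented 10% case rate. The negative-to-positive ratio is the typical starting value suggested for XGBoost's `scale_pos_weight`, and the second function reproduces the "balanced" class-weight heuristic used by scikit-learn, which can be passed to a Random Forest.

```python
from collections import Counter

def scale_pos_weight(labels):
    """Ratio of negative to positive samples (labels coded 0/1)."""
    counts = Counter(labels)
    return counts[0] / counts[1]

def balanced_class_weights(labels):
    """n_samples / (n_classes * class_count) for each class."""
    counts = Counter(labels)
    n_samples, n_classes = len(labels), len(counts)
    return {cls: n_samples / (n_classes * count) for cls, count in counts.items()}

# Invented cohort: 20 cases among 200 participants.
labels = [1] * 20 + [0] * 180
print(scale_pos_weight(labels))        # 9.0
print(balanced_class_weights(labels))  # {1: 5.0, 0: 0.555...}
```

Either value is a starting point for tuning rather than a guaranteed optimum, and weighting changes predicted probabilities, so calibration should be rechecked afterwards.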

The Scientist's Toolkit: Research Reagent Solutions

Building effective predictive models in biomarker research requires a suite of computational and data resources. The following table details key materials and their functions based on the cited experimental protocols.

Table 3: Essential Research Reagents and Resources for Biomarker ML Models

| Item / Resource | Function in the Research Context | Example from Literature |

|---|---|---|

| Signaling Network Databases | Provide structured protein-protein interaction data for feature engineering. | Human Cancer Signaling Network (CSN), SIGNOR, ReactomeFI [36]. |

| Biomarker Annotation Databases | Serve as ground truth for model training and validation of biomarker-disease links. | CIViCmine text-mining database [36]. |

| Intrinsic Disorder Predictors | Generate features related to protein structure, hypothesized to influence biomarker potential. | DisProt, IUPred, AlphaFold (pLDDT score) [36]. |

| Automated NGS Pipelines | Process raw exome or genomic sequencing data into analyzable variant calls. | Custom-built pipelines for CRC exome data [38]. |

| SHAP (SHapley Additive exPlanations) | Provides post-hoc interpretability for complex models by quantifying feature importance for individual predictions [39]. | Used to explain RF and XGBoost predictions by clustering instances based on SHAP values [39]. |

Implementation and Interpretation in Biomarker Studies

Practical Implementation Notes

- XGBoost for Random Forest: The XGBoost library can also be configured to train a standalone Random Forest by setting specific parameters: booster='gbtree', subsample and colsample_bynode to less than 1, num_parallel_tree to the forest size, num_boost_round=1, and learning_rate=1 [40].

- Feature Selection: The performance of both algorithms can be enhanced by appropriate feature selection. For instance, using Pearson Correlation to remove weakly related features has been shown to improve accuracy and interpretability in tree-based models [41].
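
Both implementation notes can be sketched in a few lines. The example below is an illustration under stated assumptions: the helper names, the default sampling fractions of 0.8, the binary objective, and the correlation threshold of 0.2 are all hypothetical choices, and the Pearson filter is written in plain Python rather than taken from any cited study.

```python
import math


def xgb_random_forest_config(n_trees: int, subsample: float = 0.8,
                             colsample_bynode: float = 0.8, seed: int = 0):
    """Return (params, num_boost_round) for a Random Forest trained via XGBoost."""
    if not (0.0 < subsample < 1.0 and 0.0 < colsample_bynode < 1.0):
        raise ValueError("subsample and colsample_bynode must be in (0, 1)")
    params = {
        "booster": "gbtree",
        "subsample": subsample,                # row sampling per tree
        "colsample_bynode": colsample_bynode,  # feature sampling per split
        "num_parallel_tree": n_trees,          # forest size
        "learning_rate": 1.0,                  # no shrinkage: trees are averaged
        "objective": "binary:logistic",        # assumed binary outcome
        "seed": seed,
    }
    return params, 1  # a single boosting round yields a plain forest


def pearson_r(x, y) -> float:
    """Pearson correlation coefficient; returns 0.0 for constant inputs."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        return 0.0
    return cov / math.sqrt(var_x * var_y)


def select_features(features: dict, target, min_abs_r: float = 0.2) -> list:
    """Keep features whose absolute correlation with the target meets the threshold."""
    return [name for name, values in features.items()
            if abs(pearson_r(values, target)) >= min_abs_r]


if __name__ == "__main__":
    params, rounds = xgb_random_forest_config(n_trees=200)
    print(params, rounds)
    # With xgboost installed and a DMatrix named dtrain, these could be used as:
    #   xgb.train(params, dtrain, num_boost_round=rounds)
```

Note that a Pearson filter only captures linear association with the outcome, so a feature that matters through interactions alone could be discarded by this step.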

Model Interpretability

While both models are less interpretable than a single decision tree, they offer avenues for explanation. Random Forest provides feature importance scores based on mean decrease in impurity [35]. For both RF and XGBoost, advanced XAI techniques like SHAP can be employed to create surrogate models (e.g., shallow decision trees) that explain predictions for groups of instances with high fidelity and comprehensibility [39].
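
To make the idea behind SHAP values concrete, the sketch below computes exact Shapley attributions for a toy scoring function by averaging marginal contributions over all feature orderings. It is not the SHAP library and not the surrogate-model method of [39]; the function names, the toy model, and the all-zero baseline are assumptions made for illustration, and the brute-force approach is only feasible for a handful of features.

```python
from itertools import permutations


def exact_shapley(predict, instance, baseline):
    """Exact Shapley values of `predict` at `instance` relative to `baseline`.

    Each feature's value is its average marginal contribution when features
    are switched from the baseline to the instance in every possible order.
    """
    n = len(instance)
    contributions = [0.0] * n
    orderings = list(permutations(range(n)))
    for order in orderings:
        current = list(baseline)
        previous = predict(current)
        for i in order:
            current[i] = instance[i]
            updated = predict(current)
            contributions[i] += updated - previous
            previous = updated
    return [c / len(orderings) for c in contributions]


def toy_risk_score(x):
    """Hypothetical three-feature model with one interaction term."""
    return 2.0 * x[0] + 1.0 * x[1] + 0.5 * x[0] * x[2]


if __name__ == "__main__":
    values = exact_shapley(toy_risk_score, [1.0, 1.0, 1.0], [0.0, 0.0, 0.0])
    print(values)
    # The attributions sum to the difference between the two predictions.
    print(sum(values), toy_risk_score([1.0, 1.0, 1.0]) - toy_risk_score([0.0, 0.0, 0.0]))
```

The interaction term is split evenly between the two features involved, which illustrates why per-instance attributions can differ from global impurity-based importance scores.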

The choice between Random Forest and XGBoost in predictive analytics for biomarker research is not absolute. Random Forest is an excellent choice for its robustness, simplicity, and strong baseline performance, making it suitable for initial prototyping and when computational resources or tuning expertise are limited. XGBoost, while more complex, often delivers marginally superior accuracy and is particularly adept at handling imbalanced datasets and complex, non-linear relationships, making it a favorite in performance-critical applications like high-stakes biomarker discovery.

The experimental data from oncology research consistently shows that both models are top performers, with the optimal choice often depending on the specific dataset and research goals. As the field advances, the integration of these powerful models with explainable AI (XAI) techniques will be crucial for building not only predictive but also interpretable and trustworthy tools for clinical decision-making.

Liquid biopsy represents a transformative approach in molecular diagnostics, enabling the non-invasive detection and analysis of tumor-derived components through bodily fluids such as blood. Unlike traditional tissue biopsies that provide a static snapshot from a single location, liquid biopsy offers dynamic insights into tumor heterogeneity and evolution over time, facilitating real-time monitoring of disease progression and treatment response [42] [43]. This paradigm shift is particularly valuable for assessing composite biomarkers—multianalyte signatures that integrate information from circulating tumor DNA (ctDNA), circulating tumor cells (CTCs), and extracellular vesicles (EVs) to provide a more comprehensive diagnostic picture than any single marker alone [2].

The clinical utility of liquid biopsy spans the entire cancer care continuum, from early detection and prognostic stratification to therapy selection and minimal residual disease monitoring [44] [45]. Technological advancements in next-generation sequencing (NGS), digital PCR, and microfluidic platforms have significantly enhanced the sensitivity and specificity of liquid biopsy assays, allowing detection of rare genetic alterations even at low variant allele frequencies [42] [46]. Within composite biomarker research, liquid biopsy enables the longitudinal tracking of multiple biomarker classes, providing critical insights into their collective performance as predictive and prognostic indicators [2].

Comparative Analysis of Liquid Biopsy Technologies

Core Biomarker Platforms and Performance Characteristics

Liquid biopsy technologies vary significantly in their target analytes, detection methodologies, and performance characteristics. The table below provides a comparative analysis of the major technology platforms based on key performance metrics relevant to composite biomarker evaluation.

Table 1: Performance Comparison of Major Liquid Biopsy Technology Platforms

| Technology Platform | Target Biomarkers | Analytical Sensitivity (Detection Limit) | Specificity | Variant Allele Frequency Range | Multiplexing Capacity | Turnaround Time | Key Applications |
|---|---|---|---|---|---|---|---|
| Next-Generation Sequencing (NGS) | ctDNA, cfDNA, CNVs | ~0.1% | >99% | 0.1%-95% | High (tens to hundreds of genes) | 7-14 days | Comprehensive genomic profiling, mutation detection, treatment selection [47] [48] |
| Digital PCR (dPCR) | Specific gene mutations (e.g., EGFR, KRAS) | ~0.01%-0.1% | >99% | 0.01%-100% | Low to moderate (typically <10 targets) | 1-2 days | High-sensitivity mutation detection, treatment response monitoring [45] |
| Microfluidic CTC Capture | CTCs, CTC clusters | ~1 CTC/mL blood | >90% | N/A | Moderate (based on phenotypic markers) | 3-6 hours | Metastasis research, prognostic assessment, drug resistance mechanisms [44] [46] |
| Extracellular Vesicle Analysis | EVs, exosomes, microRNAs | Varies by platform | Varies by platform | N/A | High (multi-omics analysis) | 2-5 days | Early detection, disease monitoring, tumor microenvironment analysis [42] [43] |

Analytical Sensitivity and Limit of Detection Across Platforms

The limit of detection (LOD) represents a critical performance metric for evaluating liquid biopsy technologies, particularly in minimal residual disease monitoring where biomarker concentrations are exceedingly low. The following table compares the analytical sensitivity of different platforms for detecting tumor-derived content in blood samples.

Table 2: Analytical Sensitivity and Limit of Detection Comparison

| Technology | Sample Input | Limit of Detection (LOD) | Detection Dynamic Range | Input Material Requirements | Best Suited Clinical Contexts |
|---|---|---|---|---|---|
| Tumor-Informed NGS (e.g., Signatera) | 10-20 mL blood | 0.01% variant allele frequency | 0.01%-100% | Custom patient-specific assay requiring tumor tissue | MRD monitoring, recurrence detection [46] |
| Tumor-Agnostic NGS Panels | 10-20 mL blood | 0.1%-0.5% variant allele frequency | 0.1%-100% | No tumor tissue required | Treatment selection, comprehensive genomic profiling [48] |
| Droplet Digital PCR | 2-5 mL plasma | 0.02%-0.05% variant allele frequency | 0.02%-100% | Requires pre-specified mutations | Known mutation tracking, therapy response monitoring [45] |
| CTC Enumeration (CellSearch) | 7.5 mL blood | 1-2 CTCs/7.5 mL | 1 to several thousand CTCs | Blood collection in preservative tubes | Prognostic assessment in metastatic breast, prostate, colorectal cancers [44] |
| EV RNA Analysis | 1-4 mL plasma | ~100 EVs/mL | 10^2-10^6 particles/mL | Plasma processing within 4 hours of collection | Early detection, cancer subtyping [42] |
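
The LOD figures above are bounded by how many DNA molecules are actually sampled: a variant cannot be detected if no mutant fragment reaches the assay. The sketch below estimates that sampling limit under simplifying assumptions that are not taken from the cited studies: mutant molecules follow a binomial distribution, one haploid human genome weighs roughly 3.3 pg, and extraction and sequencing losses are ignored. Function names and the example inputs are hypothetical.

```python
import math

HAPLOID_GENOME_PG = 3.3  # approximate mass of one haploid human genome, in picograms


def genome_equivalents(cfdna_ng: float) -> float:
    """Approximate number of haploid genome copies in a cfDNA input mass."""
    return cfdna_ng * 1000.0 / HAPLOID_GENOME_PG


def detection_probability(vaf: float, n_molecules: int, min_mutant: int = 1) -> float:
    """P(at least `min_mutant` mutant molecules) under Binomial(n_molecules, vaf)."""
    p_fewer = sum(
        math.comb(n_molecules, k) * vaf ** k * (1.0 - vaf) ** (n_molecules - k)
        for k in range(min_mutant)
    )
    return 1.0 - p_fewer


if __name__ == "__main__":
    copies = int(genome_equivalents(30.0))  # e.g. 30 ng of cfDNA input
    for vaf in (0.001, 0.0001):
        p = detection_probability(vaf, copies, min_mutant=2)
        print(f"VAF {vaf:.2%}: {copies} copies, P(>=2 mutant molecules) = {p:.3f}")
```

Under this model, lowering the target VAF by a factor of ten requires roughly ten times more input molecules for the same detection probability, which is one reason tumor-informed assays track many variants per patient rather than one.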

Experimental Methodologies for Composite Biomarker Evaluation

Integrated Workflow for Multi-Analyte Liquid Biopsy Analysis

Robust evaluation of composite biomarker performance requires standardized methodologies that ensure reproducibility and analytical validity. The following experimental protocols represent current best practices for multi-analyte liquid biopsy analysis.

Table 3: Essential Research Reagent Solutions for Liquid Biopsy Workflows

| Research Reagent Category | Specific Product Examples | Primary Function | Key Considerations for Composite Biomarker Studies |
|---|---|---|---|
| Blood Collection Tubes | CellSave Preservative Tubes, Streck Cell-Free DNA BCT, EDTA tubes | Stabilize blood cells and inhibit nucleases to prevent biomarker degradation | Choice affects ctDNA yield, CTC viability, and extracellular vesicle integrity; must match downstream applications [44] [43] |
| Nucleic Acid Extraction Kits | QIAamp Circulating Nucleic Acid Kit, Maxwell RSC ccfDNA Plasma Kit, MagMAX Cell-Free DNA Isolation Kit | Isolate high-quality ctDNA/cfDNA from plasma | Extraction efficiency significantly impacts sensitivity; must be optimized for fragment size selection (<200 bp) [42] |
| CTC Enrichment Systems | CellSearch CTC Kit, Parsortix System, ClearCell FX System | Isolate and enumerate circulating tumor cells | Platform choice depends on enrichment strategy (EpCAM-based vs. size-based); affects downstream molecular characterization [44] [45] |
| Library Preparation Kits | AVENIO ctDNA Targeted Kits, Ion AmpliSeq HD Technology, QIAseq Targeted DNA Panels | Prepare sequencing libraries from low-input ctDNA | Unique molecular identifiers (UMIs) are essential for error correction and accurate variant calling in NGS workflows [42] [48] |
| EV Isolation Reagents | ExoQuick precipitation solution, qEV size exclusion columns, MagCapture EV isolation kit | Concentrate and purify extracellular vesicles | Method selection balances yield, purity, and functional preservation; influences downstream RNA and protein analyses [42] [43] |

Protocol for Parallel Analysis of ctDNA and CTCs from Single Blood Draw

Objective: Simultaneously isolate and analyze ctDNA and CTCs from a single blood sample to generate complementary molecular profiles for composite biomarker evaluation.

Sample Collection and Processing:

- Blood Collection: Draw 20-30 mL peripheral blood into appropriate collection tubes (e.g., CellSave for CTCs, Streck BCT for ctDNA).

- Plasma Separation: Within 4 hours of collection, centrifuge blood at 800-1600 × g for 10 minutes at room temperature to separate plasma from cellular components.

- CTC Preservation: Transfer cellular fraction to appropriate storage conditions for subsequent CTC isolation.

- Plasma Processing: Perform a second centrifugation of plasma at 16,000 × g for 10 minutes to remove residual cells and debris. Aliquot and store at -80°C until nucleic acid extraction.

ctDNA Isolation and Analysis:

- Extraction: Isolate ctDNA from 4-8 mL plasma using silica membrane or magnetic bead-based methods specifically validated for cell-free DNA.

- Quality Control: Assess DNA concentration using fluorometric methods and fragment size distribution using Bioanalyzer or TapeStation.

- Library Preparation: Utilize kits incorporating unique molecular identifiers to enable error correction during sequencing.

- Sequencing: Perform targeted NGS using panels covering relevant cancer genes with minimum coverage of 10,000×.
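
The role of unique molecular identifiers in the steps above can be illustrated with a simplified consensus-calling sketch. It assumes reads have already been aligned and reduced to (UMI, base) pairs at a single position; the function names, the minimum family size of 2, and the 60% agreement threshold are hypothetical, and production pipelines additionally handle UMI sequencing errors, strand information, and mapping coordinates.

```python
from collections import Counter, defaultdict


def umi_consensus(reads, min_family_size: int = 2, min_agreement: float = 0.6) -> dict:
    """Collapse reads sharing a UMI into one consensus base per original molecule.

    `reads` is an iterable of (umi, base) pairs observed at one genomic position.
    Families that are too small or too discordant are discarded.
    """
    families = defaultdict(list)
    for umi, base in reads:
        families[umi].append(base)

    consensus = {}
    for umi, bases in families.items():
        if len(bases) < min_family_size:
            continue  # a singleton cannot be error-corrected
        base, count = Counter(bases).most_common(1)[0]
        if count / len(bases) >= min_agreement:
            consensus[umi] = base
    return consensus


def variant_allele_frequency(consensus: dict, alt_base: str) -> float:
    """Fraction of consensus molecules carrying the alternate base."""
    if not consensus:
        return 0.0
    alt = sum(1 for base in consensus.values() if base == alt_base)
    return alt / len(consensus)


if __name__ == "__main__":
    reads = [
        ("AAT", "C"), ("AAT", "C"), ("AAT", "T"),  # one PCR/sequencing error
        ("GGC", "T"), ("GGC", "T"),                # true variant molecule
        ("TTA", "C"),                              # singleton, dropped
        ("CCG", "C"), ("CCG", "C"),
    ]
    calls = umi_consensus(reads)
    print(calls, variant_allele_frequency(calls, "T"))
```

Because every original molecule must be read several times to form a family, deep raw coverage is needed to retain enough consensus molecules at low variant allele frequencies.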

CTC Isolation and Molecular Characterization:

- Enrichment: Use immunomagnetic (e.g., CellSearch) or microfluidic (e.g., Parsortix) platforms to isolate CTCs from the cellular fraction.

- Enumeration: Identify CTCs using immunofluorescence staining for epithelial markers (EpCAM, cytokeratins) and nuclear staining, with negative selection for CD45.

- Molecular Analysis: For genomic analysis, pool multiple CTCs for whole genome amplification followed by NGS. For transcriptomic analysis, perform single-cell or pooled RNA sequencing.
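
The enumeration criteria above amount to a simple phenotype rule, sketched below. The class and function names are hypothetical, and treating either epithelial marker as sufficient is an assumption of this example; platform-specific scoring criteria should take precedence in practice.

```python
from dataclasses import dataclass


@dataclass
class CellEvent:
    """Staining calls for one imaged event (fields are illustrative)."""
    epcam_positive: bool
    cytokeratin_positive: bool
    nucleus_positive: bool  # nuclear stain, e.g. DAPI
    cd45_positive: bool     # leukocyte marker


def is_ctc(event: CellEvent) -> bool:
    """Epithelial marker present, nucleated, and CD45-negative."""
    epithelial = event.epcam_positive or event.cytokeratin_positive
    return epithelial and event.nucleus_positive and not event.cd45_positive


def ctc_count_per_7_5_ml(events, blood_volume_ml: float) -> float:
    """CTC count normalized to the 7.5 mL volume used for CellSearch reporting."""
    if blood_volume_ml <= 0:
        raise ValueError("blood_volume_ml must be positive")
    return sum(is_ctc(e) for e in events) * 7.5 / blood_volume_ml


if __name__ == "__main__":
    events = [
        CellEvent(True, True, True, False),    # CTC
        CellEvent(True, False, True, True),    # CD45+: leukocyte
        CellEvent(False, False, True, False),  # no epithelial marker
        CellEvent(False, True, True, False),   # CTC
    ]
    print(ctc_count_per_7_5_ml(events, blood_volume_ml=7.5))
```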

Data Integration:

- Compare mutation profiles between ctDNA and CTCs to assess heterogeneity.

- Correlate quantitative measures (ctDNA variant allele frequency, CTC count) with clinical parameters.

- Integrate findings into composite biomarker scores for clinical outcome prediction [42] [44] [43].
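
The integration steps above can be sketched as two small functions: one that compares mutation calls between compartments and one that combines quantitative readouts into a single score. This is an illustration only. The function names, the logistic form, and especially the weights and intercept are hypothetical placeholders; in a real study they would be fitted on training data and validated on an independent cohort.

```python
import math


def mutation_concordance(ctdna_mutations, ctc_mutations) -> dict:
    """Summarize the overlap between ctDNA and CTC mutation calls."""
    ctdna, ctc = set(ctdna_mutations), set(ctc_mutations)
    shared, union = ctdna & ctc, ctdna | ctc
    return {
        "shared": sorted(shared),
        "ctdna_only": sorted(ctdna - ctc),
        "ctc_only": sorted(ctc - ctdna),
        "jaccard": len(shared) / len(union) if union else 1.0,
    }


def composite_score(ctdna_vaf_percent: float, ctc_count: float,
                    w_vaf: float = 0.8, w_ctc: float = 0.3,
                    intercept: float = -2.0) -> float:
    """Logistic combination of log-scaled ctDNA VAF (%) and CTC count.

    Returns a value in (0, 1); the default coefficients are placeholders.
    """
    z = (intercept
         + w_vaf * math.log1p(ctdna_vaf_percent)
         + w_ctc * math.log1p(ctc_count))
    return 1.0 / (1.0 + math.exp(-z))


if __name__ == "__main__":
    overlap = mutation_concordance(
        {"EGFR L858R", "TP53 R175H"},
        {"EGFR L858R", "KRAS G12D"},
    )
    print(overlap)
    print(composite_score(ctdna_vaf_percent=5.0, ctc_count=10))
```

Mutations found in only one compartment are reported separately rather than discarded, since discordance between ctDNA and CTCs is itself informative about tumor heterogeneity.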

Visualization of Liquid Biopsy Workflows and Composite Biomarker Integration

Liquid Biopsy Experimental Workflow

[Figure: Integrated workflow for processing liquid biopsy samples and analyzing multiple biomarker classes from a single blood draw.]

Composite Biomarker Integration Pathway

[Figure: Conceptual framework for integrating multiple liquid biopsy biomarkers into a unified clinical decision support tool.]

Liquid biopsy technologies have revolutionized our approach to cancer detection and monitoring by providing non-invasive access to tumor-derived molecular information. The comparative analysis presented in this guide demonstrates that each technological platform offers distinct advantages depending on the clinical context and biomarker of interest. As the field advances, the integration of multiple analyte classes into composite biomarker signatures represents the most promising path toward enhanced diagnostic accuracy and clinical utility [2].

Future developments will likely focus on standardizing analytical protocols across platforms, improving sensitivity for early-stage detection, and validating composite biomarkers in large prospective clinical trials. The integration of artificial intelligence and multi-omics approaches will further refine our ability to extract meaningful biological insights from liquid biopsy samples, ultimately advancing personalized cancer care and strengthening the foundation of precision oncology [46] [5].

Single-cell RNA sequencing (scRNA-seq) has revolutionized oncology research by enabling the detailed dissection of tumor ecosystems at unprecedented resolution. This guide objectively compares the performance of leading commercial scRNA-seq technologies and computational tools, providing researchers with data-driven insights for selecting optimal methods to evaluate composite biomarker performance in studying tumor heterogeneity and rare cell populations.

Table of Contents

- Experimental Protocols in Tumor Heterogeneity Research

- Performance Comparison of scRNA-seq Technologies

- Computational Tools for Marker Gene Identification

- Essential Research Reagents and Materials

Experimental Protocols in Tumor Heterogeneity Research