Escaping Local Minima: Advanced Strategies for Robust Parameter Estimation in Biomedical Research

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on navigating the complex challenge of local minima in parameter estimation. It explores the foundational concepts of optimization landscapes, details a wide array of escape methodologies from basic stochastic approaches to advanced algorithms, offers practical troubleshooting techniques for real-world application, and presents rigorous validation frameworks for comparing solution quality. By integrating theoretical insights with practical case studies from pharmacometrics and systems biology, this resource aims to equip scientists with the multidisciplinary knowledge needed to achieve more reliable and physiologically plausible model parameterizations in drug development.

Understanding the Optimization Landscape: Why Algorithms Get Stuck in Local Minima

Frequently Asked Questions (FAQs)

Q1: In high-dimensional parameter spaces, like those in drug design, is the "hiking analogy" still a valid mental model?

Yes, the core concept holds, but the landscape becomes far more complex. In a real mountain range you can see the valleys and peaks; in a high-dimensional parameter space you cannot, because the loss surface has one axis per parameter. The goal is still to find the deepest valley (the global minimum), but the number of smaller valleys (local minima) can grow very quickly with the number of parameters [1]. This is a central challenge in modern small-molecule drug discovery, where many properties must be optimized simultaneously [2].
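To make the growth in local minima concrete, here is a minimal sketch using the Rastrigin function, a standard multimodal test function chosen as an assumed stand-in for a real loss surface. Because it is a sum of independent one-dimensional terms, its local minima in d dimensions are all combinations of the 1-D minima, so their count is m^d.

```python
import numpy as np

def rastrigin_1d(x):
    # One coordinate of the Rastrigin function (assumed example landscape).
    return x**2 - 10.0 * np.cos(2.0 * np.pi * x)

def count_interior_minima(f, lo=-5.12, hi=5.12, n=100_001):
    # Count strict local minima of a 1-D function on a fine grid (endpoints excluded).
    x = np.linspace(lo, hi, n)
    y = f(x)
    is_min = (y[1:-1] < y[:-2]) & (y[1:-1] < y[2:])
    return int(is_min.sum())

m = count_interior_minima(rastrigin_1d)
# The d-dimensional Rastrigin function is a sum of independent 1-D terms, so a point
# is a local minimum exactly when every coordinate sits at a 1-D local minimum.
for d in (1, 2, 5, 10):
    print(f"d = {d:2d}: {m**d:,} local minima")
```

On [-5.12, 5.12] the 1-D count is 11, so ten parameters already give 11^10 (about 2.6 x 10^10) local minima.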

Q2: What are the practical consequences of my optimization getting stuck in a local minimum?

In practical terms, a local minimum represents a suboptimal solution. For example:

- In drug discovery, it could mean a candidate molecule with good binding affinity but poor solubility or high toxicity, ultimately failing as a viable drug [2].

- In machine learning, it results in a model with lower-than-possible accuracy or performance [1].

In both cases, the process converges on a "good enough" solution but misses the best possible outcome.

Q3: My model evaluation is computationally expensive (e.g., takes hours/days). How can I possibly explore the parameter space widely enough to avoid local minima?

This is a key challenge in fields such as materials science and drug design. The strategy involves using efficient, data-driven optimization methods. Instead of evaluating the expensive model at every point, you build a fast surrogate model (e.g., a deep neural network or Gaussian process) that approximates your system [3] [4]. Advanced algorithms like Bayesian Optimization or meta-learning frameworks then guide the search for the global optimum by intelligently selecting which few points to evaluate with the expensive true model, dramatically reducing the number of required evaluations [3] [4].
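The surrogate loop can be sketched in a few lines of NumPy. This is an illustration under stated assumptions, not the method of [3] or [4]: `expensive_model` is a hypothetical cheap stand-in for a slow simulation, the Gaussian process uses a fixed kernel length scale, and the lower-confidence-bound acquisition rule was chosen for brevity (expected improvement is a common alternative).

```python
import numpy as np

def expensive_model(x):
    # Hypothetical stand-in for a slow simulation (multimodal on [0, 4]).
    return np.sin(3.0 * x) + 0.3 * (x - 2.0) ** 2

def rbf_kernel(a, b, length=0.4):
    # Squared-exponential kernel with a fixed (assumed) length scale.
    return np.exp(-0.5 * (a[:, None] - b[None, :]) ** 2 / length**2)

def gp_predict(x_train, y_train, x_query, jitter=1e-6):
    # Exact Gaussian-process posterior mean and std, fitted on standardized targets.
    mu, sd = y_train.mean(), y_train.std() + 1e-12
    y = (y_train - mu) / sd
    L = np.linalg.cholesky(rbf_kernel(x_train, x_train) + jitter * np.eye(len(x_train)))
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y))
    k_star = rbf_kernel(x_train, x_query)
    v = np.linalg.solve(L, k_star)
    var = np.clip(1.0 - np.sum(v**2, axis=0), 1e-12, None)
    return mu + sd * (k_star.T @ alpha), sd * np.sqrt(var)

def surrogate_optimize(budget=15, n_init=4, kappa=2.0, seed=0):
    rng = np.random.default_rng(seed)
    grid = np.linspace(0.0, 4.0, 801)                  # cheap to score with the surrogate
    x = rng.uniform(0.0, 4.0, n_init)                   # initial expensive evaluations
    y = expensive_model(x)
    while len(x) < budget:
        mean, std = gp_predict(x, y, grid)
        x_next = grid[np.argmin(mean - kappa * std)]    # lower confidence bound
        x = np.append(x, x_next)
        y = np.append(y, expensive_model(x_next))       # one true-model call per loop
    return x, y

x_eval, y_eval = surrogate_optimize()
best = np.argmin(y_eval)
print(f"{len(x_eval)} expensive calls; best x = {x_eval[best]:.3f}, f = {y_eval[best]:.3f}")
```

Each iteration refits the surrogate on every evaluated point and spends exactly one call of the true model; the budget, not a convergence test, ends the search.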

Q4: Are there specific techniques to make an optimization algorithm more "adventurous" and help it escape local minima?

Absolutely. Several techniques introduce controlled "instability" or "noise" to help the algorithm jump out of small valleys:

- Stochastic Gradient Descent (SGD): Uses small, random batches of data to compute gradients, introducing noise that can bounce the algorithm out of shallow local minima [1].

- Momentum: Helps the algorithm build "inertia," allowing it to power through small bumps and flat regions [1].

- Advanced Optimizers: Algorithms like Adam adapt the learning rate for each parameter and incorporate momentum, which typically makes them more robust on poorly scaled or noisy landscapes [1].

- Simulated Annealing: Occasionally allows the algorithm to take "uphill" steps to escape local minima, with the probability of such steps decreasing over time [1].
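A small deterministic experiment illustrates the momentum point. The toy objective f(x) = x^4 - 3x^2 + x (an assumption, not from the cited sources) has a shallow minimum near x = 1.13 and a deeper one near x = -1.30. Starting both methods at x = 2 with the same learning rate, plain gradient descent stops in the shallow minimum, while heavy-ball momentum carries enough velocity over the barrier.

```python
def grad(x):
    # Derivative of the toy objective f(x) = x**4 - 3*x**2 + x.
    return 4 * x**3 - 6 * x + 1

def gradient_descent(x, lr=0.01, steps=500):
    for _ in range(steps):
        x -= lr * grad(x)
    return x

def momentum_descent(x, lr=0.01, beta=0.9, steps=500):
    v = 0.0
    for _ in range(steps):
        v = beta * v - lr * grad(x)   # velocity accumulates past gradients
        x += v
    return x

print(f"plain GD from x = 2: {gradient_descent(2.0):.3f}")   # 1.131 (shallow minimum)
print(f"momentum from x = 2: {momentum_descent(2.0):.3f}")   # -1.301 (deeper minimum)
```

Momentum is not a guarantee: with other starting points or a different `beta`, the same velocity can carry the iterate out of a good basin as easily as a bad one.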

Troubleshooting Guides

Problem: Optimization Converges Too Quickly to an Apparently Suboptimal Solution

Description: The parameter estimation process stabilizes, but the resulting model or compound has a performance profile (e.g., prediction accuracy, binding score) that is lower than expected or required.

Diagnosis: This is a common symptom of being trapped in a local minimum: the algorithm has reached a point where the gradient is (near) zero, but that point is not the best solution in the broader parameter space.

Solution Steps:

- Verify with Multiple Initializations: Restart the optimization from several different, randomly chosen starting points. If every run converges to the same suboptimal result, the limitation more likely lies in the model or data than in the optimizer. If runs end at different points with different losses, the landscape has multiple local minima, and the best run is your current best estimate [1].

- Introduce Stochasticity: Switch from full-batch gradient descent to stochastic (mini-batch) gradient descent (SGD). The noise from mini-batches can keep the optimizer from settling in the first shallow minimum it reaches [1].

- Increase "Exploration" Parameters:

- Temporarily increase the learning rate: A larger step size can help "jump" over narrow valleys.

- Use or increase momentum: This helps overcome small bumps.

- Employ Advanced Algorithms: Implement optimizers such as Adam or RMSprop, which adapt per-parameter step sizes during training [1].

- Leverage Ensemble Methods: Train multiple models with different initializations and randomness, then combine their results. This reduces reliance on any single model that may be stuck [1].

Problem: Inability to Efficiently Explore High-Dimensional Parameter Space

Description: With a large number of parameters (e.g., 50+), it becomes computationally infeasible to explore the entire space, and the optimization process fails to find satisfactory solutions within a reasonable budget.

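Diagnosis: You are experiencing the "curse of dimensionality": the volume of the parameter space, and with it the number of evaluations needed to cover it at a fixed resolution, grows exponentially with the number of parameters (with 10 grid points per axis, 50 parameters would require 10^50 evaluations) [5].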

Solution Steps:

- Perform Parameter Space Reduction: Before optimization, use techniques like Active Subspaces (AS) to identify lower-dimensional structures within the high-dimensional space. This reduces the number of effective parameters you need to optimize [5] [6].

- Implement a Surrogate-Assisted Framework: Adopt a meta-learning or active optimization pipeline.

- Use a surrogate model (e.g., a deep neural network) to approximate the expensive objective function [3] [4].

- Use an efficient search strategy (e.g., a guided tree search) to find promising candidates using the surrogate [4].

- Evaluate only the most promising candidates with the true, expensive model and add them back to the training data to improve the surrogate.

- Adopt a Holistic Drug Design (HDD) Approach: In drug discovery, strategically combine multiple computational approaches (e.g., structure-based design, AI/ML models, ligand-based design) for Multi-Parameter Optimization (MPO). Use probabilistic approaches and general principles in early stages, and rational, data-driven approaches when more data is available [2].
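The core computation behind Active Subspaces can be sketched with NumPy alone (this is not the ATHENA API): estimate C = E[grad f grad f^T] by Monte Carlo over the input range, then eigendecompose it; directions with large eigenvalues are the ones worth optimizing. The 20-parameter test function, which depends on x only through a @ x, is hypothetical and chosen so that the exact answer (a single active direction along a) is known.

```python
import numpy as np

def active_subspace(grad_f, dim, n_samples=500, seed=0):
    # Monte Carlo estimate of C = E[grad f(x) grad f(x)^T] for x ~ U[-1, 1]^dim,
    # then eigenvectors sorted by decreasing eigenvalue.
    rng = np.random.default_rng(seed)
    X = rng.uniform(-1.0, 1.0, size=(n_samples, dim))
    G = np.array([grad_f(x) for x in X])        # one gradient per row
    C = G.T @ G / n_samples
    eigvals, eigvecs = np.linalg.eigh(C)        # eigh returns ascending order
    order = np.argsort(eigvals)[::-1]
    return eigvals[order], eigvecs[:, order]

# Hypothetical 20-parameter model f(x) = exp(0.1 * a @ x): only the direction a matters.
dim = 20
a = np.linspace(1.0, 2.0, dim)
grad_f = lambda x: 0.1 * a * np.exp(0.1 * a @ x)

eigvals, W = active_subspace(grad_f, dim)
alignment = abs(W[:, 0] @ a) / np.linalg.norm(a)
print("top 3 eigenvalues:", eigvals[:3])
print(f"|cos(angle)| between first eigenvector and a: {alignment:.6f}")
```

Here one eigenvalue dominates and the rest are numerically zero, so the 20-parameter search collapses to a 1-D search along the first eigenvector; real models usually show a gradual decay, and the cut-off is a judgment call.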

Experimental Protocols

Protocol: Parameter Sensitivity Analysis for PBPK Model Optimization

This protocol, adapted from a pharmacokinetic study, provides a structured workflow for optimizing complex models and avoiding local minima [7].

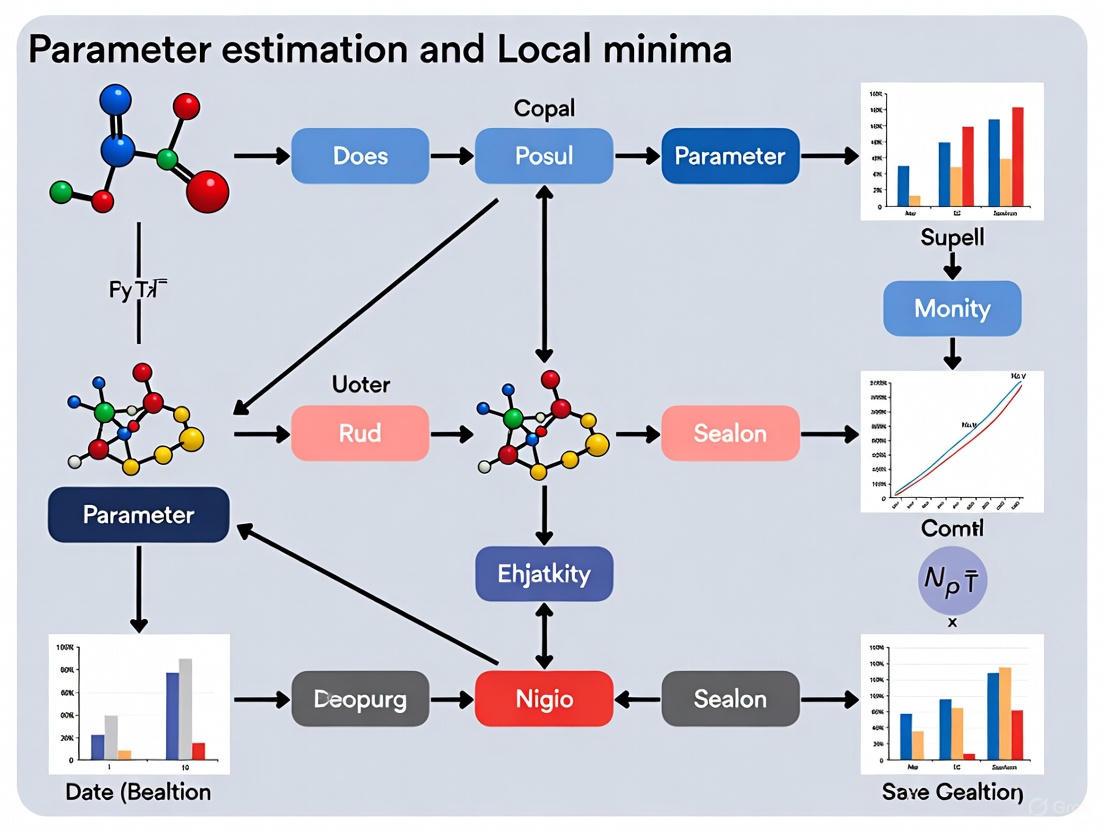

Workflow Diagram: PBPK Model Optimization

1. Simulation:

- Build an initial Physiologically Based Pharmacokinetic (PBPK) model using in silico, in vivo, and in vitro derived parameters for the compound of interest [7].

2. Verification:

- Compare the simulated pharmacokinetic (PK) profile (e.g., concentration-time curve) with observed clinical data.

- Calculate the fold error between predicted and observed values of key PK parameters (Cmax, AUClast). A fold error ≤ 2 is typically considered acceptable [7].

3. Parameter Sensitivity Analysis (PSA):

- If the verification fails, conduct a PSA to understand the root cause of the discrepancy.

- Systematically vary key input parameters (e.g., solubility, permeability, clearance) within a physiological range and observe their impact on the output PK profile.

- Identify the parameters to which the model output is most sensitive; these are the primary candidates for optimization [7].

4. Optimization:

- Optimize the sensitive parameters identified in the PSA to minimize the difference between the simulated and observed PK data.

5. Final Evaluation:

- Re-evaluate the optimized model against a new, separate set of observed PK data to confirm its predictive performance before final deployment [7].
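Steps 2 and 3 can be illustrated with a toy model. The sketch below assumes a one-compartment model with first-order oral absorption, hypothetical parameter values, and hypothetical observed Cmax and AUC; it computes the symmetric fold error (the larger of predicted/observed and observed/predicted) and a one-at-a-time normalized sensitivity (relative change in output divided by relative change in parameter).

```python
import numpy as np

def simulate_pk(ka, CL, V, dose=100.0, F=1.0, t_end=120.0, dt=0.01):
    # One-compartment model with first-order absorption (assumed toy model).
    t = np.arange(0.0, t_end + dt, dt)
    ke = CL / V
    c = F * dose * ka / (V * (ka - ke)) * (np.exp(-ke * t) - np.exp(-ka * t))
    return t, c

def pk_metrics(t, c):
    # Cmax and AUC up to the last sample (linear trapezoidal rule).
    auc_last = np.sum((c[1:] + c[:-1]) * np.diff(t)) / 2.0
    return {"Cmax": float(c.max()), "AUC": float(auc_last)}

def fold_error(pred, obs):
    # Symmetric fold error: >= 1 for both over- and under-prediction.
    return max(pred / obs, obs / pred)

def sensitivity(params, rel_step=0.1):
    # One-at-a-time normalized sensitivity (dY/Y) / (dP/P) for each parameter.
    base = pk_metrics(*simulate_pk(**params))
    out = {}
    for name, value in params.items():
        perturbed = dict(params, **{name: value * (1.0 + rel_step)})
        new = pk_metrics(*simulate_pk(**perturbed))
        out[name] = {k: (new[k] / base[k] - 1.0) / rel_step for k in base}
    return out

params = {"ka": 1.0, "CL": 5.0, "V": 50.0}     # hypothetical initial estimates
observed = {"Cmax": 1.4, "AUC": 24.0}          # hypothetical clinical data

pred = pk_metrics(*simulate_pk(**params))
for key in observed:
    fe = fold_error(pred[key], observed[key])
    print(f"{key}: predicted {pred[key]:.2f}, fold error {fe:.2f}, acceptable: {fe <= 2}")

for name, s in sensitivity(params).items():
    print(f"{name:>2}: dCmax {s['Cmax']:+.2f}, dAUC {s['AUC']:+.2f}")
```

In this toy model AUC depends only on dose and clearance (AUC to infinity = F*Dose/CL), so the sensitivity analysis flags CL, not ka or V, as the parameter to adjust for an AUC discrepancy.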

Protocol: Meta-learning for Expensive Dynamic Optimization Problems

This protocol is designed for scenarios where the objective function is both expensive to evaluate and changes over time, requiring efficient tracking of the shifting optimum [3].

Workflow Diagram: Meta-learning Optimization

1. Meta-Training Phase:

- Objective: Learn effective initial parameters for the surrogate model from experience gained across different environmental states over time.

- Process: A gradient-based meta-learning approach trains the surrogate model so that it can be adapted rapidly to new environments, capturing patterns shared across the states of the dynamic optimization problem [3].

2. Meta-Test (Adaptation) Phase:

- Trigger: An environmental change is detected.

- Process: The learned experience (model parameters from the meta-training phase) is used as the initial parameters for the surrogate model. This model is then quickly fine-tuned (adapted) using a very limited number of samples (few-shot learning) from the new environment [3].

3. Optimization Initiation:

- The adapted surrogate model, which now has a good representation of the new environment, is used to quickly initiate the search for the new optimal solution, all within a strictly restricted computational budget [3].
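A gradient-based meta-learning loop can be sketched with Reptile, a simple first-order meta-learning algorithm swapped in here for illustration; the framework in [3] may use a different update. Everything in the sketch is hypothetical: each "environment" is a linear target function whose coefficients drift around a common mean, and the surrogate is a two-parameter linear model.

```python
import numpy as np

rng = np.random.default_rng(0)

def sample_environment():
    # Hypothetical environment: target coefficients drift around a common mean.
    return np.array([-0.5, 1.5]) + 0.2 * rng.standard_normal(2)

def features(x):
    return np.stack([np.ones_like(x), x], axis=1)    # surrogate: w0 + w1 * x

def adapt(w, env, n_shots=5, steps=5, lr=0.1):
    # Few-shot adaptation: a few gradient steps on squared error using n_shots samples.
    X = features(rng.uniform(-1.0, 1.0, n_shots))
    y = X @ env
    for _ in range(steps):
        w = w - lr * 2.0 * X.T @ (X @ w - y) / n_shots
    return w

def surrogate_error(w, env):
    # Mean squared gap between surrogate and target over the whole input range.
    X = features(np.linspace(-1.0, 1.0, 50))
    return float(np.mean((X @ (w - env)) ** 2))

# Meta-training (Reptile): move the shared initialization a little toward the
# weights obtained after adapting to each past environment.
w_meta = np.zeros(2)
for _ in range(1000):
    env = sample_environment()
    w_meta = w_meta + 0.1 * (adapt(w_meta, env) - w_meta)

# Meta-test: a new environment appears; adapt from both initializations.
env_new = sample_environment()
err_meta = surrogate_error(adapt(w_meta, env_new), env_new)
err_cold = surrogate_error(adapt(np.zeros(2), env_new), env_new)
print(f"meta-learned init: {err_meta:.4f}   cold start: {err_cold:.4f}")
```

The meta-learned initialization sits near the centre of the environments seen during training, so the same five gradient steps on five samples typically leave a much smaller surrogate error than a cold start at zero.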

Research Reagent Solutions

The following table details key computational tools and methodologies referenced in this guide for tackling local minima and complex parameter optimization.

| Tool/Method Name | Function/Brief Explanation | Relevant Context |
|---|---|---|
| Active Subspaces (AS) [5] [6] | A linear dimensionality reduction technique for input parameter space; identifies directions of greatest sensitivity to make high-D problems more tractable. | Parameter space reduction for industrial optimization (e.g., ship hull design). |
| ATHENA [6] | An open-source Python package that implements Advanced Techniques for High dimensional parameter spaces, including Active Subspaces. | General-purpose parameter space reduction for enhancing numerical analysis pipelines. |
| STELLA [8] | A metaheuristics-based generative molecular design framework combining an evolutionary algorithm with clustering-based conformational space annealing for MPO. | De novo drug design and extensive exploration of fragment-level chemical space. |
| DANTE [4] | (Deep Active Optimization with Neural-Surrogate-Guided Tree Exploration) An AI pipeline using a deep neural surrogate and a modified tree search to find optima with limited data. | Optimizing complex, high-dimensional systems (e.g., alloy design, peptide binders). |
| Meta-learning Framework [3] | A "learning to learn" approach that uses knowledge from previous tasks (past environments) to enable fast adaptation to new tasks with few samples. | Solving expensive optimization problems in dynamic environments. |
| Holistic Drug Design (HDD) [2] | A strategic mindset for Multi-Parameter Optimization that leverages multiple, orthogonal drug design approaches tailored to the program's stage and data availability. | Modern small molecule drug discovery from hit-to-lead to candidate optimization. |

Technical Support Center

Frequently Asked Questions (FAQs)

FAQ 1: Why does my biological model yield different results on every run, even with the same code and dataset?

This is a common issue stemming from the inherent non-determinism of many AI and computational models, especially in deep learning. Key sources of this variability include [9]:

- Randomness in Training: Processes like random weight initialization, data shuffling, and the use of mini-batches in Stochastic Gradient Descent (SGD) inherently introduce variation.

- Model Architecture Choices: Techniques like dropout regularization deliberately deactivate neurons randomly during training.

- Hardware-Level Variations: Parallel computing on GPUs/TPUs can produce non-deterministic results because floating-point addition is not associative, so a different order of parallel operations can change the rounded result.

- Troubleshooting Guide:

- Action 1: Set Random Seeds. Use a fixed seed for random number generators in your code (e.g., in Python with NumPy, TensorFlow, or PyTorch) and note the specific software library versions used.

- Action 2: Audit Preprocessing. Ensure data preprocessing steps (e.g., normalization, feature selection) are performed after splitting data into training and test sets to prevent data leakage.

- Action 3: Check for Non-Deterministic Functions. Identify and configure your deep learning framework to use deterministic algorithms where possible, though this may come with a performance cost.

- Expected Outcome: Increased consistency across runs, though identical results are not always guaranteed due to the factors above.
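Action 1 in code: a minimal sketch that seeds Python's `random` module and NumPy, then shows that two runs of a hypothetical noisy training loop match exactly under the same seed. Frameworks keep their own generators (for example `torch.manual_seed` in PyTorch or `tf.random.set_seed` in TensorFlow), and PyTorch's `torch.use_deterministic_algorithms(True)` addresses Action 3; those calls are not exercised here.

```python
import random

import numpy as np

def set_seeds(seed: int) -> np.random.Generator:
    # Seed Python's and NumPy's global generators, and return a seeded NumPy Generator.
    # Deep learning frameworks keep separate generators that need their own seeds.
    random.seed(seed)
    np.random.seed(seed)
    return np.random.default_rng(seed)

def noisy_training_run(rng: np.random.Generator) -> float:
    # Hypothetical stand-in for training: random initialization plus shuffled
    # mini-batch SGD steps on a squared-error loss (its minimum is the data mean).
    data = np.linspace(-1.0, 1.0, 64)
    w = rng.normal()
    for _ in range(20):
        batch = rng.permutation(data)[:8]
        w -= 0.1 * np.mean(2.0 * (w - batch))
    return float(w)

run_a = noisy_training_run(set_seeds(42))
run_b = noisy_training_run(set_seeds(42))
run_c = noisy_training_run(set_seeds(7))
print(run_a == run_b, run_a == run_c)
```

Identical seeds reproduce the run bit for bit on the same machine and library versions; a different seed gives a different (equally valid) result, which is exactly the run-to-run variation described above.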

FAQ 2: My model performs well during training but fails on new data. What is the cause?

This typically indicates a problem with generalizability, often caused by overfitting or data leakage [9].

- Data Leakage: Information from the test set inadvertently influences the training process. This artificially inflates performance metrics during validation.

- Overfitting: The model has learned patterns specific to the training data that do not generalize to the broader data distribution.

- Unrepresentative Data: The training dataset may lack representation from diverse demographic groups or experimental conditions, causing the model to perform poorly on underrepresented populations [9].

- Troubleshooting Guide:

- Action 1: Review Data Splitting. Rigorously check that no preprocessing that learns from data (like normalization) is applied before the train-test split. Perform such operations on the training set and then apply the transformation to the test set.

- Action 2: Analyze Data Composition. Evaluate your training data for biases and imbalances across relevant biological or demographic variables.

- Action 3: Implement Robust Validation. Use techniques like k-fold cross-validation and hold-out validation sets that were completely isolated during model development.

- Expected Outcome: A model whose performance on the validation set is a reliable indicator of its performance on new, unseen data.
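Actions 1 and 3 combined: a sketch of k-fold cross-validation in which standardization statistics are computed from the training fold only and then applied to the held-out fold. The data, the ordinary least-squares model, and the function names are hypothetical.

```python
import numpy as np

def kfold_indices(n, k, rng):
    # Shuffle once, then split the indices into k roughly equal folds.
    return np.array_split(rng.permutation(n), k)

def standardize(train, test):
    # Statistics come from the training fold only and are then applied to the test fold.
    mean, std = train.mean(axis=0), train.std(axis=0) + 1e-12
    return (train - mean) / std, (test - mean) / std

def cross_validate(X, y, k=5, seed=0):
    rng = np.random.default_rng(seed)
    folds = kfold_indices(len(X), k, rng)
    scores = []
    for i, test_idx in enumerate(folds):
        train_idx = np.concatenate([f for j, f in enumerate(folds) if j != i])
        X_tr, X_te = standardize(X[train_idx], X[test_idx])
        # Ordinary least squares with an intercept column, fitted on the training fold.
        A_tr = np.column_stack([np.ones(len(X_tr)), X_tr])
        A_te = np.column_stack([np.ones(len(X_te)), X_te])
        coef, *_ = np.linalg.lstsq(A_tr, y[train_idx], rcond=None)
        scores.append(np.mean((A_te @ coef - y[test_idx]) ** 2))
    return float(np.mean(scores)), float(np.std(scores))

# Hypothetical data: three features on very different scales, plus noise (sd 0.5).
rng = np.random.default_rng(1)
X = rng.normal(size=(200, 3)) * np.array([1.0, 50.0, 0.01])
y = X @ np.array([2.0, 0.05, 100.0]) + rng.normal(scale=0.5, size=200)
mse_mean, mse_sd = cross_validate(X, y)
print(f"5-fold CV MSE: {mse_mean:.3f} ± {mse_sd:.3f}")
```

Because the model is correctly specified, the cross-validated MSE should sit near the noise variance (0.25); a validation score far better than that would be a hint of leakage.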

FAQ 3: What optimization algorithms should I use for parameter estimation in problems with many local minima?

For high-dimensional, non-convex optimization landscapes, local gradient-based methods can stall in whichever minimum lies nearest the starting point. The following global optimization strategies are recommended [10]:

- Table: Optimization Algorithms for Problems with Local Minima

| Algorithm | Key Principle | Best for Scenarios with... | Key Considerations |
|---|---|---|---|
| Simulated Annealing | Probabilistically accepts worse moves to escape local minima, with a "temperature" parameter that decreases over time [10]. | A moderate number of parameters; can tolerate a slow, guided search. | Highly sensitive to its own parameters (e.g., cooling schedule). |
| Particle Swarm Optimization (PSO) | A "swarm" of particles explores the space, each moving based on its own best-found position and the swarm's global best [10]. | Continuous parameters and parallelizable function evaluations. | Performance depends on swarm size and topology. |
| Markov chain Monte Carlo (e.g., Metropolis-Hastings) | Draws samples from the parameter space in proportion to how well parameters fit, giving a probabilistic view of good regions; ensemble variants run multiple "walkers" in parallel [10]. | Quantifying uncertainty in parameter estimates. | Computationally intensive; requires many evaluations. |
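For reference, a compact version of the common inertia-weight form of PSO. The Rastrigin objective and the coefficient values (w = 0.7, c1 = c2 = 1.5) are assumptions chosen for illustration, not tuned settings from [10].

```python
import numpy as np

def rastrigin(x):
    # Multimodal benchmark standing in for an expensive objective; x has shape (..., dim).
    return 10.0 * x.shape[-1] + np.sum(x**2 - 10.0 * np.cos(2.0 * np.pi * x), axis=-1)

def pso(f, dim, bounds=(-5.12, 5.12), n_particles=40, iters=300,
        w=0.7, c1=1.5, c2=1.5, seed=0):
    rng = np.random.default_rng(seed)
    lo, hi = bounds
    x = rng.uniform(lo, hi, (n_particles, dim))
    v = np.zeros_like(x)
    p_best, p_val = x.copy(), f(x)                  # each particle's best position so far
    g_best = p_best[np.argmin(p_val)].copy()        # best position found by the swarm
    for _ in range(iters):
        r1, r2 = rng.random((2, n_particles, dim))
        # Velocity: inertia + pull toward own best + pull toward swarm best.
        v = w * v + c1 * r1 * (p_best - x) + c2 * r2 * (g_best - x)
        x = np.clip(x + v, lo, hi)
        val = f(x)                                  # independent evaluations: parallelizable
        improved = val < p_val
        p_best[improved], p_val[improved] = x[improved], val[improved]
        g_best = p_best[np.argmin(p_val)].copy()
    return g_best, float(p_val.min())

best_x, best_f = pso(rastrigin, dim=2)
print(f"best point {np.round(best_x, 3)}, objective {best_f:.4f}")
```

The `f(x)` call inside the loop scores the whole swarm at once; with a real expensive model, that is the step to distribute across cores or nodes.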

FAQ 4: The computational cost for verifying my model is prohibitively high. How can I address this?

High computational cost is a significant barrier to reproducibility and verification, as seen with models like AlphaFold [9].

- Strategies:

- Code and Model Sharing: Provide not only your code but also pre-trained model weights. This allows others to bypass the expensive training phase and proceed directly to validation and inference.

- Utilize Cloud/HPC Resources: Leverage high-performance computing (HPC) clusters or cloud computing platforms to parallelize and speed up computations.

- Model Simplification: Explore if a simplified or more computationally efficient version of your model can be used for verification purposes without sacrificing critical predictive power.

The Scientist's Toolkit: Essential Research Reagents & Materials

Table: Key Computational Tools for Biological Modeling

| Item | Function & Application |
|---|---|
| BioNetGen Language (BNGL) | A rule-based modeling language well-suited for capturing the site-specific details of molecular interactions (e.g., in cell signaling systems) and helping to manage combinatorial complexity [11]. |
| Method of Regularized Stokeslets (MRS) | A computational method for modeling fluid-structure interactions at low Reynolds numbers, crucial for understanding biological processes like cellular motility [12]. |
| Immersed Boundary (IB) Method | A numerical framework for simulating elastic structures immersed in a viscous fluid, with wide applications in biological fluid dynamics [12]. |
| UCSC Genome Browser / Ensembl | Interactive platforms for visualizing genomic sequences, gene annotations, and genetic variations [13]. |
| PyMOL / ChimeraX | Molecular visualization software for rendering and analyzing protein structures and interactions in 3D space [13]. |
| Extended Contact Map | A visualization convention for illustrating the scope of a rule-based model, showing molecules, interactions, and modifications to make complex models understandable [11]. |

Experimental Protocols & Workflows

Detailed Methodology: Multi-Scale Cardiac Electrophysiology Modeling [14]

This protocol outlines the creation of a multi-scale model to simulate cardiac electrical activity, from ion channels to tissue-level excitation waves.

- Objective: To understand how ion channel dynamics propagate to create a cardiac action potential and how this excitation spreads through tissue, particularly in disease states.

Procedure:

- Ion Channel Modeling (Single Channel Level):

- Model the kinetics of individual ion channels (e.g., sodium, potassium, calcium) using ordinary differential equations (ODEs).

- The channel kinetics depend on ion concentrations and the transmembrane voltage (Vm).

- Incorporate effects of genetic mutations or drug blockades by modifying rate constants in the ODEs.

- Cellular Electrophysiology (Myocyte Level):

- Integrate the various ion current models into a system of coupled ODEs representing a cardiac cell.

- Simulate to generate an action potential.

- Adjust model parameters to reflect different cell types (atrial, ventricular) or disease-induced remodeling (e.g., heart failure).

- Tissue-Level Excitation (Tissue Level):

- Couple the cellular models using a reaction-diffusion system based on the monodomain or bidomain equations, represented by partial differential equations (PDEs).

- Simulate the spatio-temporal propagation of the electrical wave (excitation) through cardiac tissue.

- Introduce heterogeneities (e.g., scar tissue) by varying model parameters spatially.
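The tissue-level step can be sketched as a 1-D monodomain-style cable. For brevity the sketch replaces detailed ion-channel kinetics with the two-variable FitzHugh-Nagumo model, using its common textbook parameters (a = 0.7, b = 0.8, eps = 0.08); the cable length, diffusion coefficient, grid, and stimulus are assumptions.

```python
import numpy as np

def fhn_cable(n_cells=100, dx=0.5, D=1.0, dt=0.05, t_end=150.0,
              a=0.7, b=0.8, eps=0.08, n_stim=20):
    # FitzHugh-Nagumo kinetics at every node, coupled by diffusion along a 1-D cable.
    v = np.full(n_cells, -1.199)        # approximate resting state for a = 0.7, b = 0.8
    w = np.full(n_cells, -0.624)
    v[:n_stim] = 1.5                    # stimulus: depolarize the left end at t = 0
    arrival = np.full(n_cells, np.nan)  # first time each node crosses v = 0
    for step in range(int(round(t_end / dt))):
        # Discrete Laplacian with no-flux (mirrored) boundaries.
        v_pad = np.concatenate(([v[1]], v, [v[-2]]))
        lap = (v_pad[2:] - 2.0 * v + v_pad[:-2]) / dx**2
        dv = v - v**3 / 3.0 - w + D * lap
        dw = eps * (v + a - b * w)
        v, w = v + dt * dv, w + dt * dw  # explicit Euler; D*dt/dx**2 = 0.2 keeps it stable
        newly = np.isnan(arrival) & (v > 0.0)
        arrival[newly] = (step + 1) * dt
    return arrival

arrival = fhn_cable()
speed = (99 - 50) * 0.5 / (arrival[99] - arrival[50])   # space units per time unit
print(f"activation at cell 50: t = {arrival[50]:.1f}; at cell 99: t = {arrival[99]:.1f}")
print(f"estimated conduction velocity: {speed:.2f}")
```

A scar-like heterogeneity can be tried by setting `D` to an array with near-zero values over a block of cells, which is the spatial parameter variation mentioned in the last step.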


Visualization of Workflow:

Detailed Methodology: Parameter Optimization in a Rugged Landscape [10]

This protocol describes a direct search optimization strategy designed to navigate high-dimensional, non-convex parameter spaces with expensive function evaluations.

- Objective: To find a robust set of parameters that minimizes (or maximizes) an objective function characterized by many local minima, where each function evaluation is computationally costly (e.g., taking minutes).

Procedure:

- Initialization:

- Define the bounds of each continuous parameter (the method targets problems with roughly 5-10 parameters).

- Set an initial step size (jump distance).

- Exploratory Move:

- From a starting point, pick a random direction in the parameter space.

- Evaluate the objective function.

- Continue moving in this direction as long as the result improves, increasing the step size with each successful move.

- Pattern Move & Refinement:

- When improvement stops, step back to the previous best point.

- Generate a set of orthogonal directions (e.g., using a random orthogonal matrix).

- Attempt to move along each orthogonal direction by the current step size.

- If any orthogonal move improves the result, continue the search in that new direction.

- Local Minimum Detection and Restart:

- If no moves (original or orthogonal) yield improvement, halve the step size.

- If the step size falls below a defined threshold, conclude a local minimum has been found. Record this minimum.

- Restart the entire process from a new, random point in the parameter space to continue exploring the landscape.
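The procedure above can be sketched as follows. The growth factor, thresholds, and helper names are assumptions; clipping to the bounds and trying both signs of each orthonormal direction are implementation choices not specified in [10].

```python
import numpy as np

def line_walk(f, x, fx, d, step, lo, hi, grow):
    # Exploratory move: keep stepping along d while f improves, enlarging the step.
    while True:
        cand = np.clip(x + step * d, lo, hi)
        fc = f(cand)
        if fc >= fx:
            return x, fx, step          # x is the last (best) point on this line
        x, fx, step = cand, fc, step * grow

def local_search(f, lo, hi, rng, step=0.5, min_step=1e-4, grow=1.5, max_iter=10_000):
    dim = len(lo)
    x = rng.uniform(lo, hi)
    fx = f(x)
    d = rng.standard_normal(dim)
    d /= np.linalg.norm(d)              # random initial direction
    for _ in range(max_iter):
        if step < min_step:
            break                       # step below threshold: local minimum
        x, fx, step = line_walk(f, x, fx, d, step, lo, hi, grow)
        # Pattern move: try +/- each axis of a random orthonormal basis.
        q, _ = np.linalg.qr(rng.standard_normal((dim, dim)))
        for trial_dir in np.vstack([q.T, -q.T]):
            trial = np.clip(x + step * trial_dir, lo, hi)
            f_trial = f(trial)
            if f_trial < fx:
                x, fx, d = trial, f_trial, trial_dir   # continue in this direction
                break
        else:
            step /= 2.0                 # no move improved: halve the step
    return x, fx

def multistart_direct_search(f, lo, hi, n_restarts=10, seed=0):
    # Restart from fresh random points and record every local minimum found.
    rng = np.random.default_rng(seed)
    minima = [local_search(f, lo, hi, rng) for _ in range(n_restarts)]
    return sorted(minima, key=lambda m: m[1])

def rastrigin(x):
    # Assumed test objective with many local minima.
    return 10.0 * len(x) + float(np.sum(x**2 - 10.0 * np.cos(2.0 * np.pi * x)))

lo, hi = np.full(3, -5.12), np.full(3, 5.12)
for x_min, f_min in multistart_direct_search(rastrigin, lo, hi)[:3]:
    print(np.round(x_min, 3), round(f_min, 4))
```

The list of recorded minima, not only the best one, is the useful output: it shows how many distinct basins the restarts reached.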


Visualization of Workflow:

Table: Summary of Computational Resource Requirements

| Model / Process | Estimated Resource Demand | Primary Bottlenecks |
|---|---|---|
| Training Deep Learning Models (e.g., AlphaFold) | Extreme (e.g., 264 hours on specialized TPUs) [9]. | Memory, Floating-point operations, Parallel scaling. |
| Third-party Model Verification | High to Extreme [9]. | Access to equivalent hardware, Energy costs, Time. |
| Parameter Optimization (per evaluation) | Moderate to High (e.g., 1 minute per parameter set on a multi-core machine) [10]. | Single-thread performance, Total number of evaluations required. |
| Multi-scale Tissue Simulations | High [14]. | Solving coupled PDEs, Spatial resolution, Simulation duration. |

Frequently Asked Questions

FAQ 1: My parameter estimation algorithm consistently converges to different solutions with similar loss values. Is this a sign of local minima, and how can I determine which solution to trust?

This is a classic sign of a model with multiple local minima or an identifiability issue. When different parameter sets yield similar error values, it indicates a complex loss landscape. This is common in models with symmetries or over-parameterization, such as Gaussian Mixture Models (GMMs) and deep neural networks [15] [16].

- Actionable Steps:

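- Statistical Comparison: Use criteria such as AIC (Akaike Information Criterion) or BIC (Bayesian Information Criterion) rather than the loss value alone. Both add a penalty that grows with the number of parameters, so when candidate solutions come from models of different complexity and fit about equally well, the simpler model is preferred. For alternative parameter sets of the same model the penalty is identical, and the comparison reduces to the loss.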

- Check Physical Plausibility: Evaluate if the different parameter sets are physiologically or physically plausible within the context of your research (e.g., drug clearance rates must be within a biologically possible range) [17].

- Ensemble Methods: If several solutions are statistically and physically equivalent, consider using an ensemble approach, combining predictions from multiple good models to improve robustness [18] [1].

- Regularization: Add a regularization term (e.g., an L1 or L2 penalty) to the loss function to penalize large or unnecessary parameter values and guide the optimization toward simpler, more reliable solutions [18] [1].
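A worked example of the information criteria, using the common least-squares forms that assume independent Gaussian errors (additive constants dropped): AIC = n*ln(RSS/n) + 2k and BIC = n*ln(RSS/n) + k*ln(n). The two fits are hypothetical.

```python
import numpy as np

def aic_bic(rss, n, k):
    # Least-squares forms assuming i.i.d. Gaussian errors (constants dropped):
    #   AIC = n*ln(RSS/n) + 2k,   BIC = n*ln(RSS/n) + k*ln(n)
    base = n * np.log(rss / n)
    return base + 2 * k, base + k * np.log(n)

# Hypothetical fits to the same n = 50 observations.
aic_a, bic_a = aic_bic(rss=10.0, n=50, k=3)   # simpler model
aic_b, bic_b = aic_bic(rss=9.8, n=50, k=6)    # 3 extra parameters, slightly lower RSS
print(f"A: AIC {aic_a:.2f}, BIC {bic_a:.2f}")   # A: AIC -74.47, BIC -68.74
print(f"B: AIC {aic_b:.2f}, BIC {bic_b:.2f}")   # B: AIC -69.48, BIC -58.01
```

Lower is better for both criteria: model B's 2% lower RSS does not pay for three extra parameters under either one.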

FAQ 2: During hyperparameter tuning for my machine learning model, the performance landscape appears extremely rugged with many dips. What is the risk, and how can I find a robust solution?

A highly rugged performance landscape suggests that your model's performance is very sensitive to small changes in hyperparameters. The primary risk is that a standard grid search may accidentally land on a fragile local minimum that does not generalize well to new data [19].

- Actionable Steps:

- Increase Granularity: Use a finer-granularity search grid in the promising regions to better map the landscape and uncover hidden, more stable minima [19].

- Use Robust Optimizers: Switch from basic gradient descent to optimizers with momentum or adaptive step sizes (e.g., Adam, RMSProp). Momentum helps the algorithm power through small bumps and escape shallow local minima [18] [1].

- Stochasticity: Employ Stochastic Gradient Descent (SGD) or mini-batch training. The inherent noise from batching helps the algorithm escape local minima [18] [20].

- Multiple Runs: Always perform multiple tuning runs with different random initializations to increase the chance of finding a globally robust solution [21].

FAQ 3: I am fitting a complex differential equation model to my pharmacological data. The optimizer gets stuck in a solution that fails to capture the later phases of the time-series data. What strategies can help?

This occurs when the optimizer finds a local minimum that fits the initial part of the data well but cannot adjust parameters to fit the entire trajectory without temporarily increasing the overall error [20].

- Actionable Steps:

- Iterative Growing: Do not fit the entire dataset at once. First, fit only the early portion of the time series (e.g., t from 0 to 1.5). Once this segment fits well, use the resulting parameters as the initial guess for a fit on a longer span (e.g., t from 0 to 3.0). Keep growing the time span until the full dataset is fitted [20].

- Fit Initial Conditions: In the initial stages of optimization, allow the initial conditions of the differential equation to be trained along with the parameters. This provides more degrees of freedom to find a good trajectory. Later, you can fix the initial conditions to their known values and refine only the parameters [20].

- Weighted Loss Functions: Modify your loss function to assign higher weights to the later portions of the time series, forcing the optimizer to pay more attention to fitting that data [20].
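A sketch of iterative growing, under assumptions: a logistic growth model (whose ODE has a closed-form solution, so no numerical solver is needed), hypothetical noise-free data, and a simple compass search standing in for whatever local optimizer you use. Each stage starts from the previous stage's estimate.

```python
import numpy as np

def logistic(t, r, K, x0=0.5):
    # Closed-form solution of dx/dt = r*x*(1 - x/K) with x(0) = x0.
    e = np.exp(r * t)
    return K * x0 * e / (K + x0 * (e - 1.0))

def sse(theta, t, y):
    r, K = theta
    if r <= 0 or K <= 0:
        return np.inf                    # keep the search in the valid region
    return float(np.sum((logistic(t, r, K) - y) ** 2))

def compass_search(f, theta, step=0.5, tol=1e-6, max_iter=5000):
    # Simple derivative-free local optimizer: try +/- step on each coordinate,
    # halve the step when no move improves.
    theta = np.array(theta, dtype=float)
    best = f(theta)
    for _ in range(max_iter):
        if step < tol:
            break
        improved = False
        for i in range(len(theta)):
            for s in (step, -step):
                trial = theta.copy()
                trial[i] += s
                val = f(trial)
                if val < best:
                    theta, best, improved = trial, val, True
        if not improved:
            step /= 2.0
    return theta

t_all = np.linspace(0.0, 6.0, 61)
y_all = logistic(t_all, r=1.5, K=10.0)          # hypothetical noise-free data
theta = np.array([0.5, 5.0])                     # deliberately poor initial guess
for t_max in (1.5, 3.0, 4.5, 6.0):
    mask = t_all <= t_max + 1e-9                 # grow the fitted time span
    theta = compass_search(lambda p: sse(p, t_all[mask], y_all[mask]), theta)
    print(f"fit on t <= {t_max}: r = {theta[0]:.3f}, K = {theta[1]:.3f}")
```

The early stage mostly pins down the growth rate r, since the carrying capacity K barely affects the first part of the curve; later stages then refine K from a starting point that already matches the early dynamics.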

Troubleshooting Guides

Problem: Proliferation of Local Minima in Complex Model Structures

Root Cause: Certain model architectures are inherently prone to local minima. For example, Gaussian Mixture Models (GMMs) can have multiple local minima where different components fit the same true cluster or a single component splits across multiple true clusters [15]. Deep neural networks also have a vast number of (often equivalent) local minima due to non-identifiability, such as from weight symmetries [16].

Experimental Protocol for Diagnosis and Mitigation:

- Diagnosis: Perform multiple runs of your optimization algorithm from vastly different random starting points. If the algorithm consistently converges to parameter sets with significantly different structures but similar loss values, your model likely has a complex local minima structure [15] [21].

- Protocol:

- Run the optimization 50-100 times with random initializations [21].

- Cluster the resulting parameter estimates.

- Analyze the loss values and model predictions for each cluster.

- Mitigation Strategy: Use a hybrid global-local optimization algorithm. A proposed method combines Simulated Annealing (SA) to broadly explore the parameter space, a descent method to quickly find the local minimum in a region, and Tabu Search (TS) to avoid revisiting already discovered minima [22]. This can systematically identify multiple global and good local minima.
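The diagnosis protocol (many random starts, then clustering of the estimates) can be sketched on a hypothetical two-parameter loss with four minima. Plain gradient descent is used as the local optimizer, and a greedy distance-threshold rule stands in for a proper clustering algorithm.

```python
import numpy as np

def loss(p):
    # Hypothetical two-parameter loss with four minima near (+/-1, +/-1).
    x, y = p[..., 0], p[..., 1]
    return (x**2 - 1) ** 2 + (y**2 - 1) ** 2 + 0.3 * x + 0.1 * y

def grad(p):
    x, y = p[..., 0], p[..., 1]
    return np.stack([4 * x * (x**2 - 1) + 0.3, 4 * y * (y**2 - 1) + 0.1], axis=-1)

def multistart(n_starts=50, lr=0.01, steps=2000, seed=0):
    rng = np.random.default_rng(seed)
    p = rng.uniform(-2.0, 2.0, size=(n_starts, 2))   # random initializations
    for _ in range(steps):
        p -= lr * grad(p)                             # all runs descend in parallel
    return p

def cluster_estimates(points, tol=0.05):
    # Greedy clustering: each estimate joins the first cluster centre within tol.
    centres, members = [], []
    for i, pt in enumerate(points):
        for centre, idx in zip(centres, members):
            if np.linalg.norm(pt - centre) < tol:
                idx.append(i)
                break
        else:
            centres.append(pt.copy())
            members.append([i])
    return centres, members

ends = multistart()
centres, members = cluster_estimates(ends)
for centre, idx in sorted(zip(centres, members), key=lambda cm: loss(cm[0])):
    print(f"centre {np.round(centre, 3)}  loss {loss(centre):+.4f}  runs {len(idx)}")
```

Four clusters with clearly different losses point to genuine local minima; clusters with near-identical losses but different parameters would instead point to an identifiability problem.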

Table 1: Hybrid Algorithm for Global and Local Minima Identification [22]

| Stage | Algorithm Component | Purpose |
|---|---|---|
| 1 | Simulated Annealing (SA) | Global exploration to find promising regions in the parameter space. |
| 2 | Descent Method | Rapid local convergence to the nearest minimum from the SA-proposed point. |
| 3 | Tabu Search (TS) | Prevents the algorithm from cycling back to previously found minima, forcing further exploration. |
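A deliberately simplified version of the three-stage idea, on a hypothetical 1-D objective with two minima. This is not the algorithm of [22]: an annealing-style random jump proposes a start, gradient descent finds its local minimum, and a short tabu list forbids falling straight back into the minimum just visited, while every distinct minimum is recorded.

```python
import math
import random
from collections import deque

def f(x):
    # Toy objective with a shallow minimum near 1.13 and a deeper one near -1.30.
    return x**4 - 3 * x**2 + x

def df(x):
    return 4 * x**3 - 6 * x + 1

def descend(x, lr=0.01, steps=1000):
    # Descent stage: gradient descent to the bottom of the current basin.
    for _ in range(steps):
        x -= lr * df(x)
    return x

def hybrid_search(n_iter=300, t0=2.0, cooling=0.98, tabu_len=1,
                  radius=0.05, bounds=(-2.5, 2.5), seed=0):
    rng = random.Random(seed)
    lo, hi = bounds
    current = descend(rng.uniform(lo, hi))
    found = [current]                            # every distinct minimum discovered
    tabu = deque([current], maxlen=tabu_len)     # recently visited minima: forbidden
    temp = t0
    for _ in range(n_iter):
        temp *= cooling
        # Annealing stage: random jump from the current minimum, then descend.
        start = min(max(current + rng.gauss(0.0, 1.0), lo), hi)
        cand = descend(start)
        if any(abs(cand - m) < radius for m in tabu):
            continue                             # tabu stage: reject, explore elsewhere
        if all(abs(cand - m) >= radius for m in found):
            found.append(cand)
        delta = f(cand) - f(current)
        if delta < 0 or rng.random() < math.exp(-delta / temp):
            current = cand                       # Metropolis acceptance
            tabu.append(cand)
    return sorted(found)

print([round(m, 4) for m in hybrid_search()])
```

The returned list contains every minimum the search reached, which matches the goal in the table of identifying multiple global and good local minima rather than a single answer.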

Problem: Data Limitations Leading to Optimization Instability

Root Cause: The objective function itself can be a source of local minima. The standard "single-shooting" method, where a model is simulated from the start for the entire dataset, can create a complex loss landscape. Small parameter changes can lead to large simulation errors, creating many local minima [23].

Experimental Protocol for Diagnosis and Mitigation:

- Diagnosis: Observe if your optimization progress is highly erratic and sensitive to the learning rate or initial parameters. If reducing the dataset size simplifies the optimization, the data structure or objective function is likely a key issue.

- Protocol: Implement a piecewise evaluation method like Multiple Shooting for Stochastic Systems (MSS). This method breaks the time-series data into intervals [23].

- The model is simulated separately for each interval, often with estimated initial states.

- The loss is computed as the sum of errors across all intervals, plus a term that penalizes discrepancies between the end state of one interval and the start state of the next.

- Mitigation Strategy: Adopting the MSS objective function has been shown to "smooth" the fitness landscape, reducing the number and depth of local minima and making global optimization more tractable for systems biology models (e.g., Lotka-Volterra, FitzHugh-Nagumo) [23].
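The structure of a multiple-shooting objective can be sketched for the Lotka-Volterra model. This deterministic version (fixed-step RK4, a unit continuity weight, hypothetical parameters and data) is not the stochastic MSS formulation of [23] and does not demonstrate the landscape-smoothing result; it only shows how the intervals, the per-interval misfit, and the continuity penalty fit together, next to the single-shooting loss.

```python
import numpy as np

def lotka_volterra(z, theta):
    a, b, c, d = theta
    x, y = z
    return np.array([a * x - b * x * y, c * x * y - d * y])

def rk4(z, theta, t_span, n_steps=20):
    # Fixed-step fourth-order Runge-Kutta over one interval of length t_span.
    h = t_span / n_steps
    for _ in range(n_steps):
        k1 = lotka_volterra(z, theta)
        k2 = lotka_volterra(z + 0.5 * h * k1, theta)
        k3 = lotka_volterra(z + 0.5 * h * k2, theta)
        k4 = lotka_volterra(z + h * k3, theta)
        z = z + h / 6.0 * (k1 + 2.0 * k2 + 2.0 * k3 + k4)
    return z

def single_shooting_loss(theta, data, dt):
    # One simulation from the first observation across the whole record.
    z, loss = data[0], 0.0
    for obs in data[1:]:
        z = rk4(z, theta, dt)
        loss += float(np.sum((z - obs) ** 2))
    return loss

def multiple_shooting_loss(theta, starts, data, dt, weight=1.0):
    # Interval i starts from its own estimated state starts[i]; the loss sums the
    # end-of-interval data misfit plus a continuity penalty between intervals.
    loss = 0.0
    for i, z_start in enumerate(starts):
        z_end = rk4(z_start, theta, dt)
        loss += float(np.sum((z_end - data[i + 1]) ** 2))
        if i + 1 < len(starts):
            loss += weight * float(np.sum((z_end - starts[i + 1]) ** 2))
    return loss

# Hypothetical noise-free data: 41 observations every 0.5 time units.
theta_true = np.array([1.0, 0.5, 0.5, 1.0])
dt, data = 0.5, [np.array([1.0, 1.0])]
for _ in range(40):
    data.append(rk4(data[-1], theta_true, dt))
data = np.array(data)

theta_off = np.array([1.2, 0.5, 0.5, 1.0])
starts = data[:-1].copy()          # common initialization: interval starts = data
for name, th in (("true", theta_true), ("perturbed", theta_off)):
    print(name, single_shooting_loss(th, data, dt), multiple_shooting_loss(th, starts, data, dt))
```

In a full fit, `starts` would be optimized together with `theta`; because each interval is short, a parameter error only produces a local mismatch instead of a drift accumulated over the whole record.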

The diagram below illustrates the workflow for diagnosing and mitigating local minima stemming from model structure and data limitations.

Workflow for Diagnosing and Mitigating Local Minima

Problem: Algorithmic Constraints and the Saddle Point Trap

Root Cause: In high-dimensional spaces, saddle points (stationary points where the gradient is zero but the loss curves upward in some directions and downward in others) are often a more common obstacle than local minima. Basic gradient descent can become extremely slow near them, because the gradient is small throughout the surrounding plateau [16].

Experimental Protocol for Diagnosis and Mitigation:

- Diagnosis: Monitor the norm of the gradient and the loss value during training. If the loss stagnates for a long time while the gradient norm remains very small (but not zero), the algorithm is likely traversing a saddle point or a flat region.

- Protocol:

- Use optimizers with momentum and/or adaptive learning rates, such as Adam (which combines both) or SGD with Nesterov momentum [18] [16].

- Momentum helps the algorithm build up velocity to move through flat regions and shallow local minima.

- Adaptive methods such as Adam divide each step by a running estimate of the gradient magnitude, so steps do not shrink toward zero on shallow slopes.

- Mitigation Strategy: Avoid pure (unmodified) second-order methods such as Newton's method for very high-dimensional problems: the Newton step moves toward any stationary point, saddle points included, and forming the Hessian is expensive. First-order methods with momentum (like Adam) are generally more effective in these contexts [16].
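A toy illustration of the saddle-point problem and the gradient-norm diagnostic. The function f(x, y) = x^2 + y^4/4 - y^2/2 (an assumption) has a saddle at the origin; starting just off it, plain gradient descent needs many iterations to leave because the y-gradient is tiny, whereas Adam's normalized steps move at roughly the learning rate from the first iteration. Adam's hyperparameters are its usual defaults.

```python
import numpy as np

def grad(p):
    # f(x, y) = x**2 + y**4/4 - y**2/2: saddle at (0, 0), minima at (0, +/-1).
    x, y = p
    return np.array([2.0 * x, y**3 - y])

def gradient_descent(p, g, state, k, lr=0.01):
    return p - lr * g

def adam(p, g, state, k, lr=0.01, b1=0.9, b2=0.999, eps=1e-8):
    m = b1 * state.get("m", 0.0) + (1 - b1) * g
    v = b2 * state.get("v", 0.0) + (1 - b2) * g**2
    state["m"], state["v"] = m, v
    m_hat, v_hat = m / (1 - b1**k), v / (1 - b2**k)   # bias correction
    return p - lr * m_hat / (np.sqrt(v_hat) + eps)

def run(update, p0=(1.0, 1e-6), max_steps=10_000):
    # Returns the iteration at which |y| first exceeds 0.5 (left the saddle region)
    # and the gradient-norm history, the quantity to monitor during training.
    p, state, norms = np.array(p0), {}, []
    for k in range(1, max_steps + 1):
        g = grad(p)
        norms.append(float(np.linalg.norm(g)))
        p = update(p, g, state, k)
        if abs(p[1]) > 0.5:
            return k, norms
    return max_steps, norms

k_gd, norms_gd = run(gradient_descent)
k_adam, _ = run(adam)
print(f"gradient descent: escaped after {k_gd} steps; |grad| at step 500 = {norms_gd[499]:.1e}")
print(f"Adam:             escaped after {k_adam} steps")
```

For plain gradient descent, the gradient norm stays far below 1e-3 for hundreds of iterations while the loss barely moves, which is the signature described in the diagnosis step.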

Table 2: Key Algorithmic Solutions and Their Applications

| Algorithmic Solution | Mechanism | Best For |
|---|---|---|
| Stochastic Gradient Descent (SGD) [18] [1] | Introduces noise via data sampling, helping to escape local minima. | Large-scale datasets and deep learning. |
| Momentum & Nesterov Momentum [18] | Accumulates a velocity vector from past gradients to power through flat spots and minor minima. | Loss landscapes with high curvature or saddle points. |
| Adaptive Optimizers (Adam, RMSprop) [1] [16] | Uses per-parameter learning rates and incorporates momentum for robust traversal of complex landscapes. | Default choice for many deep learning and non-convex problems. |
| Simulated Annealing [18] [22] | Occasionally accepts worse solutions to explore more of the space, with a decreasing probability over time. | Global search in the initial phases of optimization. |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Handling Local Minima

| Tool / Reagent | Function in Experimentation |
|---|---|
| COPASI Software Package [23] | A widely accessible software platform for simulating biological system models and estimating their parameters, which includes implementations of advanced objective functions such as Multiple Shooting (MSS). |
| Hybrid Global-Local Algorithms [22] | A combination of Simulated Annealing, Tabu Search, and a descent method, used as a "reagent" to systematically identify multiple global and good local minima, rather than just one. |
| Multiple Shooting (MSS) Objective Function [23] | A specific formulation of the loss function that treats intervals between data points separately, serving as a "reagent" to smooth the fitness landscape and reduce local minima. |
| Random Initialization Protocol [21] | A standard methodological "reagent" involving 50-100 optimization runs from random starting points to probe the loss landscape and avoid poor local minima, especially for models with 3+ parameters. |

Frequently Asked Questions (FAQs)

Q1: What is a local minimum in the context of parameter estimation, and why is it a problem for my biomedical models?

A local minimum is a point in the parameter space where the value of your objective function (e.g., a loss function) is lower than all surrounding points, but it is not the lowest possible value in the entire space (the global minimum). Optimization algorithms can "get stuck" in these local minima during parameter estimation [24]. This is a significant problem because the resulting model parameters are not the best possible fit for your data. Consequently, the model's predictive accuracy is compromised, which can lead to incorrect biological inferences and reduce the clinical translatability of your findings [25] [26].

Q2: My complex Physiologically-Based Pharmacokinetic (PBPK) model failed to converge. Could local minima be the cause?

Yes. Complex models like PBPK and Quantitative Systems Pharmacology (QSP) models with many parameters are particularly susceptible to issues during parameter estimation. The choice of algorithm and its initial settings can significantly influence the results, often due to the presence of local minima. It is advisable to conduct multiple rounds of parameter estimation using different algorithms and initial values to mitigate this risk and identify the most credible parameter set [26].

Q3: How can I improve the chances of my model finding the global minimum instead of a local minimum?

Several strategies can help your optimization algorithm escape local minima:

- Stochastic Initialization: Start multiple optimization runs from different, randomly chosen initial parameter values. This increases the probability that at least one run will converge to the global minimum [27].

- Advanced Algorithms: Utilize global optimization methods like genetic algorithms, simulated annealing, or particle swarm optimization. These methods are specifically designed to explore the parameter space more broadly and are less likely to get trapped in local minima compared to traditional gradient-based methods [27] [26].

- Ensemble Learning: Train multiple models with different initializations and aggregate their predictions. Techniques like boosting or bagging can diminish the impact of any single model getting stuck in a poor local minimum [27].
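A minimal multi-start sketch, assuming SciPy is available: a one-parameter frequency fit to a noise-free sine wave is a classic multimodal least-squares problem, because the sum of squared errors oscillates as the frequency moves away from the truth. Each start runs a local optimizer (`scipy.optimize.minimize` with L-BFGS-B) and the run with the lowest objective is retained. The data, true frequency, bounds, and number of starts are hypothetical.

```python
import numpy as np
from scipy.optimize import minimize

rng = np.random.default_rng(42)
t = np.linspace(0.0, 10.0, 50)
OMEGA_TRUE = 2.3                      # hypothetical true frequency used to simulate data
y_obs = np.sin(OMEGA_TRUE * t)        # noise-free synthetic observations

def sse(params):
    return np.sum((y_obs - np.sin(params[0] * t)) ** 2)

def multistart(n_starts=200, bounds=(0.5, 4.0)):
    """Run a local optimizer from many random starts; return (sse, omega) pairs, best first."""
    results = []
    for omega0 in rng.uniform(bounds[0], bounds[1], size=n_starts):
        res = minimize(sse, x0=[omega0], method="L-BFGS-B", bounds=[bounds])
        results.append((float(res.fun), float(res.x[0])))
    return sorted(results)

results = multistart()
best_sse, best_omega = results[0]
n_basins = len({round(omega, 2) for _, omega in results})   # approximate count of end points
print(f"best omega = {best_omega:.4f}, distinct end points ~ {n_basins}")
```

Counting the distinct end points across starts is a quick way to see how many basins the local optimizer fell into; a single run from an unlucky start would have reported one of the poorer ones.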

Q4: What is parameter identifiability, and how does it relate to local minima?

Parameter identifiability concerns whether it is possible to uniquely determine the values of a model's parameters given a specific set of data [25]. If a model is not structurally identifiable, or if the available data are insufficient (a condition known as practical non-identifiability), the optimization problem may have multiple solutions or flat regions in the parameter space. This can exacerbate the local minima problem, as many different parameter sets can appear to fit the data equally well, making it difficult for an algorithm to find a single best solution [25].
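One practical way to probe local identifiability at a given parameter point is to inspect the sensitivity (Jacobian) matrix of the model outputs: if two parameters only affect the output through their product, the corresponding columns are proportional and the smallest singular value collapses toward zero. The sketch below uses a hypothetical model $A \cdot B \cdot e^{-kt}$ and central finite differences; the model, parameter values, and step size are assumptions, and a near-zero ratio only signals non-identifiability at the point tested.

```python
import numpy as np

t = np.linspace(0.5, 12.0, 24)  # hypothetical sampling times

def model(p):
    # Hypothetical model in which A and B only ever appear as the product A * B
    A, B, k = p
    return A * B * np.exp(-k * t)

def jacobian(f, p, rel_step=1e-6):
    """Central finite-difference sensitivities of the model outputs w.r.t. each parameter."""
    p = np.asarray(p, dtype=float)
    cols = []
    for i in range(p.size):
        h = rel_step * max(abs(p[i]), 1.0)
        up, dn = p.copy(), p.copy()
        up[i] += h
        dn[i] -= h
        cols.append((f(up) - f(dn)) / (2.0 * h))
    return np.column_stack(cols)

def singular_value_ratio(J):
    s = np.linalg.svd(J, compute_uv=False)  # singular values, largest first
    return s[-1] / s[0]

ratio_full = singular_value_ratio(jacobian(model, [2.0, 3.0, 0.5]))
# Fix A at an assumed prior value and examine the remaining two parameters
ratio_fixed = singular_value_ratio(jacobian(lambda q: model([2.0, q[0], q[1]]), [3.0, 0.5]))
print(f"{ratio_full:.1e}  {ratio_fixed:.1e}")
```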

Troubleshooting Guides

Issue: Optimization Algorithm Converges to Different Parameter Values on Different Runs

This is a classic symptom of an optimization landscape with multiple local minima.

| Observation | Possible Cause | Solution Steps | Verification Method |
|---|---|---|---|
| Parameter estimates vary widely between runs. | Algorithm is getting stuck in different local minima. | 1. Use a global optimization algorithm (e.g., Genetic Algorithm, Particle Swarm Optimization) [27] [26]. 2. Implement multi-start optimization: run a local optimizer (e.g., quasi-Newton) from many starting points [26]. | Compare the final objective function value (e.g., loss) across runs. The run with the lowest value is the best minimum found, though not guaranteed to be global. |
| Small changes in initial guesses lead to different results. | The objective function is highly non-convex. | 1. Use Bayesian Optimization to guide the search more efficiently [27]. 2. Apply regularization to the objective function to smooth the landscape and reduce complexity [27]. | Check the consistency of model predictions on a held-out validation dataset. |
| Parameters are highly correlated. | Practical non-identifiability; the data cannot support estimating all parameters [25]. | 1. Perform sensitivity analysis to determine which parameters are most influential [25]. 2. Conduct subset selection: fix non-essential or correlated parameters to literature values and estimate only the most sensitive subset [25]. | Calculate profile likelihoods or confidence intervals for parameters to check whether they are well defined. |

Experimental Protocol: Multi-Start Optimization with a Global Algorithm

- Algorithm Selection: Choose a global optimizer such as a Genetic Algorithm (GA) or Particle Swarm Optimization (PSO) from your software package [26].

- Define Bounds: Set physiologically plausible lower and upper bounds for all parameters to be estimated.

- First-Pass Optimization: Run the global optimizer to find a promising region of the parameter space.

- Refine with Local Search: Use the result from the global optimizer as the initial guess for a more efficient local optimizer (e.g., the Nelder-Mead method or a quasi-Newton method) to refine the solution [26].

- Repeat: Execute the first-pass optimization and the local refinement multiple times with different random seeds for the global optimizer to check consistency.
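The protocol above can be sketched with SciPy, assuming a one-compartment IV-bolus model with a hypothetical dose, sampling times, and true parameters used to simulate noise-free data. SciPy does not ship a GA or PSO, so `scipy.optimize.differential_evolution` (a population-based evolutionary method) stands in for the global optimizer here; its built-in polishing is switched off so that the local refinement step stays explicit.

```python
import numpy as np
from scipy.optimize import differential_evolution, minimize

DOSE = 100.0                                          # hypothetical IV bolus dose
t = np.array([0.5, 1, 2, 4, 8, 12, 24], dtype=float)  # hypothetical sampling times
TRUE_PARAMS = np.array([10.0, 0.3])                   # hypothetical V and k used to simulate data
c_obs = DOSE / TRUE_PARAMS[0] * np.exp(-TRUE_PARAMS[1] * t)

def sse(params):
    V, k = params
    return np.sum((c_obs - DOSE / V * np.exp(-k * t)) ** 2)

# Define Bounds: plausible lower/upper limits for V and k (assumed values)
BOUNDS = [(1.0, 100.0), (0.01, 2.0)]

def global_then_local(seed):
    # First-Pass Optimization: global, population-based search
    coarse = differential_evolution(sse, BOUNDS, seed=seed, maxiter=200, polish=False)
    # Refine with Local Search: quasi-Newton (L-BFGS-B) started from the global result
    return minimize(sse, coarse.x, method="L-BFGS-B", bounds=BOUNDS)

# Repeat: different random seeds for the global stage
fits = [global_then_local(seed) for seed in range(5)]
for fit in fits:
    print(np.round(fit.x, 4), fit.fun)
```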

Issue: Model Fits Training Data Well But Fails to Generalize to New Data

This indicates overfitting, which can be related to finding a minimum that is too specific to the training data.

| Observation | Possible Cause | Solution Steps | Verification Method |
|---|---|---|---|
| Low training error, high validation error. | Overfitting to noise in the training data; the found minimum may not be the physiologically meaningful global minimum. | 1. Introduce regularization (e.g., L1/L2) to penalize model complexity [27]. 2. Simplify the model by reducing the number of estimated parameters if possible [25]. 3. Use Bayesian estimation methods, which incorporate prior knowledge and can be more robust [28]. | Use cross-validation to tune hyperparameters (like regularization strength) and assess generalizability. |
| Model predictions are biologically implausible. | The algorithm converged to a local minimum that is mathematically sound but physiologically invalid. | 1. Incorporate Bayesian priors to constrain parameters to biologically realistic ranges during estimation [28]. 2. Add constraints to the optimization problem based on domain knowledge. | Validate model mechanisms and output against established biological literature, not just data fit. |

Experimental Protocol: Regularized Maximum Likelihood Estimation

- Objective Function: Modify the standard least-squares or likelihood objective function to include a penalty term. For example, for L2 regularization: $\text{Objective} = \sum (\text{Data} - \text{Model})^2 + \lambda \lVert \theta \rVert^2$, where $\theta$ is the parameter vector and $\lambda$ is the regularization parameter.

- Cross-Validation: Split your data into training and validation sets. Estimate parameters on the training set for a range of $\lambda$ values.

- Parameter Tuning: Choose the $\lambda$ value that results in the best model performance on the validation set.

- Final Evaluation: Re-estimate the parameters with the chosen $\lambda$ on the combined training and validation data, and evaluate performance on a completely separate test set.
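For a model that is linear in its parameters, the L2-penalized least-squares objective has a closed-form minimizer, $\hat\theta = (X^\top X + \lambda I)^{-1} X^\top y$, which makes the λ-selection loop easy to see. The sketch below uses simulated data and a hypothetical λ grid; for nonlinear PK/PD models the same penalty would be added to the objective and minimized numerically instead.

```python
import numpy as np

rng = np.random.default_rng(0)
n, p = 60, 8
X = rng.normal(size=(n, p))
theta_true = np.array([1.5, -2.0, 0.0, 0.0, 0.0, 0.0, 0.5, 0.0])  # hypothetical true parameters
y = X @ theta_true + rng.normal(scale=1.0, size=n)

idx = rng.permutation(n)
train_idx, val_idx, test_idx = idx[:30], idx[30:45], idx[45:]

def fit_ridge(X, y, lam):
    # Minimizes sum((y - X @ theta)**2) + lam * ||theta||**2 in closed form
    return np.linalg.solve(X.T @ X + lam * np.eye(X.shape[1]), X.T @ y)

def mse(X, y, theta):
    return np.mean((y - X @ theta) ** 2)

lambdas = [0.0, 0.01, 0.1, 1.0, 10.0, 100.0]       # candidate regularization strengths
val_err = [mse(X[val_idx], y[val_idx], fit_ridge(X[train_idx], y[train_idx], lam))
           for lam in lambdas]
best_lam = lambdas[int(np.argmin(val_err))]        # tuned on the validation set

dev_idx = np.concatenate([train_idx, val_idx])     # training + validation data
theta_final = fit_ridge(X[dev_idx], y[dev_idx], best_lam)
test_err = mse(X[test_idx], y[test_idx], theta_final)   # reported once, on held-out data
print(best_lam, round(test_err, 3))
```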

Workflow Diagrams for Overcoming Local Minima

Global Optimization Strategy

Parameter Identification and Subset Selection

Research Reagent Solutions: Computational Tools

This table details key computational "reagents" (algorithms and methods) essential for tackling local minima in biomedical parameter estimation.

| Research Reagent | Function | Key Considerations |
|---|---|---|
| Genetic Algorithm (GA) | A global optimization technique inspired by natural selection that maintains a population of candidate solutions, making it robust to local minima [27] [26]. | Computationally intensive; well suited to complex models with many parameters. Requires tuning of hyperparameters (e.g., mutation rate). |
| Particle Swarm Optimization (PSO) | A global optimizer in which a "swarm" of particles explores the parameter space, sharing the best positions found so far to steer the search toward the global minimum [26]. | Effective for a wide range of problems; often easier to implement than GAs. |
| Simulated Annealing | A probabilistic technique that accepts worse solutions early on (at high "temperature") to escape local minima, then focuses on convergence as it "cools" [27]. | Good for problems with a rough fitness landscape; the cooling schedule needs careful design. |
| Bayesian Estimation | A method that treats parameters as probability distributions. It incorporates prior knowledge (e.g., physiological parameter ranges), which can guide the estimation away from implausible local minima [28]. | Particularly useful when data are sparse or noisy. Provides uncertainty estimates for parameters. |
| Multi-Start Local Optimization | A simple yet effective strategy that runs a fast local optimizer from numerous random starting points, increasing the chance of finding the global minimum [26]. | Can be parallelized for speed. The robustness of the final solution depends on the number of starts. |
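A compact particle swarm sketch in NumPy, assuming the common inertia-weight form of the velocity update (inertia `w`, cognitive weight `c1` pulling each particle toward its own best position, social weight `c2` pulling it toward the swarm's best). The coefficient values and the toy two-dimensional objective, which has a shallow and a deep well along each coordinate, are illustrative assumptions rather than settings from [26].

```python
import numpy as np

def tilted_double_well(x):
    # Toy objective (assumed): per coordinate, a deep well near -1.04 and a shallow one near +0.96
    return np.sum((x**2 - 1.0) ** 2 + 0.3 * x, axis=-1)

def pso(f, lower, upper, n_particles=30, n_iter=150, w=0.7, c1=1.5, c2=1.5, seed=0):
    rng = np.random.default_rng(seed)
    lower, upper = np.asarray(lower, float), np.asarray(upper, float)
    pos = rng.uniform(lower, upper, size=(n_particles, lower.size))
    vel = np.zeros_like(pos)
    pbest_pos, pbest_val = pos.copy(), f(pos)                  # each particle's best so far
    g = np.argmin(pbest_val)
    gbest_pos, gbest_val = pbest_pos[g].copy(), pbest_val[g]   # swarm's best so far
    for _ in range(n_iter):
        r1, r2 = rng.random((2, n_particles, lower.size))
        vel = w * vel + c1 * r1 * (pbest_pos - pos) + c2 * r2 * (gbest_pos - pos)
        pos = np.clip(pos + vel, lower, upper)                 # keep particles inside the bounds
        val = f(pos)
        improved = val < pbest_val
        pbest_pos[improved], pbest_val[improved] = pos[improved], val[improved]
        g = np.argmin(pbest_val)
        if pbest_val[g] < gbest_val:                           # information shared via gbest
            gbest_pos, gbest_val = pbest_pos[g].copy(), pbest_val[g]
    return gbest_pos, gbest_val

best_x, best_f = pso(tilted_double_well, [-2.0, -2.0], [2.0, 2.0])
print(np.round(best_x, 3), round(float(best_f), 3))
```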

FAQ: Understanding and Identifying Local Minima

Q1: What are local minima in the context of TMDD model parameter estimation?

A local minimum is a set of parameter values at which the estimation algorithm (e.g., SAEM) converges, but the resulting model fit is not the best possible. The objective function value (e.g., -2LL) is lower there than at nearby parameter values, but it is not the lowest value achievable overall. In TMDD models, this often manifests as a model that fits the data poorly for certain dose levels or time periods, where small changes to the parameters do not improve the fit even though the solution is suboptimal [29].

Q2: Why are TMDD models particularly prone to convergence issues like local minima?

TMDD models are highly complex, nonlinear systems characterized by a large number of parameters (e.g., kon, koff, kint, kdeg) that can be highly correlated [29]. For instance, the parameters kdeg (receptor degradation rate) and KD (equilibrium dissociation constant) often have a similar influence on the shape of the concentration-time curve. This correlation makes the model "over-parameterized" when faced with limited data, meaning the data cannot uniquely identify all parameters, leading to unstable estimates and convergence to local solutions [29].

Q3: What are the key diagnostic signs of local minima or over-parameterization?

Monitor these key indicators during estimation [29]:

- Non-convergence of SAEM: Unstable fixed-effect estimates, or random effects (omega) that converge to very high values.

- High Condition Number: A condition number above 100 (the ratio of the largest to the smallest eigenvalue of the correlation matrix of the estimates) strongly hints at over-parameterization and high parameter correlations. Values between 10 and 100 are questionable.

- High Relative Standard Errors (r.s.e.): Large uncertainties (r.s.e.) on parameter estimates indicate that the data are insufficient to identify them reliably.

- Trends in Residual Plots: Systematic patterns in population weighted residuals (PWRES) vs. time or predictions suggest a model mis-specification that can be related to a local solution.

- Parameter-Covariate Trends: A clear trend in the distribution of individual parameters (e.g., Vm) when stratified by dose group indicates that the model is not adequately capturing the dose-dependent behavior.
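The condition-number and r.s.e. checks can be computed from any estimated covariance matrix of the parameters. The sketch below assumes such a matrix is available (the numbers are hypothetical), converts it to a correlation matrix, takes the ratio of its largest to smallest eigenvalue, and applies the 10/100 thresholds quoted above; r.s.e. is computed as standard error divided by the absolute estimate, in percent.

```python
import numpy as np

def correlation_from_covariance(cov):
    sd = np.sqrt(np.diag(cov))
    return cov / np.outer(sd, sd)

def condition_number(corr):
    eig = np.linalg.eigvalsh(corr)   # real eigenvalues of a symmetric matrix, ascending
    return eig[-1] / eig[0]

def classify_condition_number(kappa):
    if kappa > 100:
        return "strong hint of over-parameterization"
    if kappa >= 10:
        return "questionable"
    return "acceptable"

def rse_percent(estimates, cov):
    return 100.0 * np.sqrt(np.diag(cov)) / np.abs(estimates)

# Hypothetical estimates and covariance matrix for two parameters with correlation 0.99
estimates = np.array([10.0, 0.3])
cov = np.array([[4.0, 0.99 * 2.0 * 0.15],
                [0.99 * 2.0 * 0.15, 0.0225]])
kappa = condition_number(correlation_from_covariance(cov))
print(round(kappa, 1), classify_condition_number(kappa), rse_percent(estimates, cov))
```

For a 2 × 2 correlation matrix with correlation r the eigenvalues are 1 ± r, so the condition number is (1 + r)/(1 − r); r = 0.99 already gives about 199.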

Q4: Our full TMDD model failed to converge. What is the recommended strategy?

A bottom-up approach is highly recommended over a top-down approach [29]. Start with simpler, more robust approximations of the TMDD model and add complexity progressively, only if diagnostic plots show mis-specification. This is more reliable than trying to fit the full model first, which may never converge.

Q5: How does the available data guide the choice of an initial TMDD model to avoid estimation issues?

The type of data you have can restrict model choice and thus help avoid unidentifiable parameters [29]:

- If you have only measured the free ligand concentration (L), all TMDD models are applicable.

- If you have measured total ligand (Ltot), free/total receptor (R, Rtot), complex (P), or target occupancy (TO), you can use all models except the Michaelis-Menten (MM) model.

Troubleshooting Guide: Protocols for Resolving Estimation Problems

Protocol 1: Model Simplification Based on Prior Knowledge and Data Inspection

Objective: To select an appropriate, simpler TMDD model that reduces the number of parameters to be estimated, thereby mitigating the risk of local minima and non-convergence.

Methodology:

- Step 1: Assess Binding Kinetics. Review prior in vitro evidence (e.g., from Biacore experiments) [29].

  - Evidence of fast binding (high kon and koff) -> Use Quasi-Equilibrium (QE), Wagner, or MM approximations.

  - Evidence of irreversible binding (very low KD) -> Use Irreversible Binding (IB), "Constant Rtot + IB", or MM models.

- Step 2: Assess Receptor Turnover. Evaluate whether the elimination rates of the free receptor and the drug-receptor complex are similar [29].

  - Evidence that kdeg ≈ kint -> Use Constant Rtot, Wagner, "Constant Rtot + IB", or MM models.

- Step 3: Inspect Concentration-Time Curve Shape. Analyze the measured PK profiles [29].

  - Phase 1 (initial rapid drop) is not observable -> Binding is too fast for the data to capture. Use QE, Wagner, or MM models.

  - Phase 4 (terminal phase) is below the limit of quantification (LOQ) -> Binding is very strong/irreversible. Use IB, "Constant Rtot + IB", or MM models.

Expected Outcome: A robust initial model with fewer parameters, leading to more stable convergence and identifiable parameters.

Protocol 2: Parameter Fixation and Bottom-Up Model Building

Objective: To stabilize estimation by fixing non-identifiable parameters to literature or in vitro values, then testing their estimability.

Methodology:

- Step 1: Fit a Simplified Model. Begin with a Michaelis-Menten model or a QE approximation to obtain initial estimates for systemic parameters (e.g., CL, V).

- Step 2: Identify Problematic Parameters. Analyze the correlation matrix and RSE values from the simplified model fit to identify highly correlated or uncertain parameters.

- Step 3: Fix Parameters. Introduce parameters from a more complex model one at a time, fixing their values to prior knowledge (e.g., fix kon and koff from Biacore data, or R0 from proteomic studies [30] [29]).

- Step 4: Re-estimate and Assess. Fit the model with fixed parameters and check for improvements in convergence and diagnostics.

- Step 5: Release Parameters Iteratively. If the model with fixed parameters fits well, try to re-estimate one fixed parameter at a time to see if the data contains enough information to inform it.

Expected Outcome: A step-wise progression to a more complex model without encountering convergence issues, resulting in a final model with reliable and interpretable parameter estimates.
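Mechanically, fixing a parameter means the optimizer only sees the free parameters, while fixed values are merged back in before every model evaluation. A minimal sketch, assuming SciPy's `least_squares` and a hypothetical model in which `A` and `B` enter only as a product; `A` is fixed to an assumed prior value and only `B` and `k` are estimated. The helper `fit_with_fixed` is illustrative, not a MonolixSuite feature.

```python
import numpy as np
from scipy.optimize import least_squares

t = np.linspace(0.5, 12.0, 24)  # hypothetical sampling times

def model(p, t):
    # Hypothetical model: A and B only enter as the product A * B
    return p["A"] * p["B"] * np.exp(-p["k"] * t)

def fit_with_fixed(model, t, y, start, fixed):
    """Estimate only the parameters not listed in `fixed`; fixed values are held constant."""
    free = [name for name in start if name not in fixed]

    def residuals(x):
        p = {**start, **dict(zip(free, x)), **fixed}   # fixed values always take precedence
        return model(p, t) - y

    res = least_squares(residuals, x0=[start[name] for name in free])
    return {**dict(zip(free, res.x)), **fixed}

y_obs = model({"A": 2.0, "B": 3.0, "k": 0.5}, t)      # noise-free synthetic data
# A fixed to an assumed prior (e.g., literature) value; only B and k are estimated
estimates = fit_with_fixed(model, t, y_obs, start={"A": 1.0, "B": 1.0, "k": 0.1}, fixed={"A": 2.0})
print(estimates)
```

Releasing `A` as well (Step 5) would ask the data to separate `A` from `B`, which this model cannot do; the estimates would then drift along the ridge where `A * B` is constant.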

The following workflow summarizes the diagnostic and resolution process for addressing local minima:

Diagnostic Reference Table

The table below summarizes key diagnostic checks and their interpretation for identifying local minima and over-parameterization [29].

| Diagnostic Check | Tool/Metric | Problematic Indicator | Probable Cause |
|---|---|---|---|
| Algorithm Convergence | SAEM Estimation History | Unstable parameter values; high random effects (omega) | Over-parameterization; model too complex for data |
| Parameter Identifiability | Correlation Matrix & Condition Number | Condition number > 100 | High correlation between parameters (e.g., kdeg & KD) |
| Parameter Uncertainty | Relative Standard Error (RSE) | RSE > 50% for key parameters | Insufficient data to reliably estimate the parameter |
| Model Fit Adequacy | Residual Plots (PWRES vs. TAD) | Systematic trends, not random around zero | Model mis-specification; key process not captured |

TMDD Model Selection Guide

This table outlines common TMDD model approximations and the scenarios for their application to prevent estimation issues [29].

| TMDD Model | Key Assumption | When to Use | Parameters Reduced |
|---|---|---|---|
| Quasi-Equilibrium (QE) | Binding is rapid and at equilibrium | Fast binding; Phase 1 not observed in data | kon & koff replaced by KD |
| Quasi-Steady-State (QSS) | Binding is at steady state | General-purpose approximation for mAbs [31] | kon & koff replaced by KSS |
| Irreversible Binding (IB) | Drug-target complex does not dissociate | Very high affinity; Phase 4 below LOQ | koff is set to zero |
| Constant Rtot | Total target concentration is constant | Receptor degradation rate kdeg ≈ complex internalization rate kint | ODE for Rtot is removed |
| Michaelis-Menten (MM) | Linear and saturable elimination | Low affinity & slow systemic clearance [31]; limited dose range | All target-mediated parameters replaced by Vm & Km |

The Scientist's Toolkit: Research Reagent Solutions

The following table lists key materials and computational tools essential for developing and troubleshooting TMDD models.

| Item / Reagent | Function / Application | Technical Notes |
|---|---|---|
| Biacore / SPR System | Measures binding kinetics (kon, koff) in vitro. | Provides critical prior knowledge to fix parameters or guide model selection [29]. |
| LC-MS/MS System | Quantifies free ligand, total ligand, and sometimes target or complex concentrations. | Essential for generating rich PK data for model fitting [30]. |
| MonolixSuite | Pharmacometric software for nonlinear mixed-effects modeling (SAEM algorithm). | Used for TMDD model parameter estimation and diagnostics [29]. |
| Mlxplore | Simulation tool (part of MonolixSuite). | Used for prior simulation of TMDD models to assess parameter identifiability [29]. |
| WebAIM Color Contrast Checker | Online tool to check color contrast ratios. | Ensures accessibility of generated graphs and presentations [32]. |
| R / Python with ggplot2/Matplotlib | Programming languages and libraries for data visualization and analysis. | Used for creating custom diagnostic plots (e.g., residuals, parameter correlations). |

The relationships between different TMDD models, based on their simplifying assumptions, are visualized below. This map aids in selecting an appropriate simplification path.

Escape Methodologies: From Stochastic Optimization to Experimental Design

Technical Support Center: Troubleshooting Guides and FAQs

This technical support resource is designed for researchers and scientists working on parameter estimation, particularly in fields like pharmacometrics and drug development. A central challenge in this work is the optimization algorithm becoming trapped in a local minimum, leading to biased parameter estimates and unreliable models. The following guides address common issues encountered when using stochastic optimization algorithms to overcome this problem.

Frequently Asked Questions (FAQs)

Q1: My parameter estimation consistently converges to different, suboptimal values. How can I escape these local minima?

A: This is a classic symptom of an optimization process getting trapped in local minima. We recommend the following actions:

- Action 1: Switch to a Global Optimization Algorithm. Local search methods such as the quasi-Newton method can be sensitive to initial values [26]. Algorithms such as Simulated Annealing and Genetic Algorithms are designed to explore the parameter space broadly and are less likely to get stuck [33].

- Action 2: Leverage a Hybrid Approach. Run a global algorithm (e.g., Genetic Algorithm) to broadly explore the parameter space, then use its output as the initial estimates for a more precise local method (e.g., Stochastic Gradient Descent). This combines the strengths of both global exploration and local refinement [26].

- Action 3: Conduct Multiple Estimation Rounds. Run your parameter estimation multiple times with different, randomly perturbed initial values. Consistent convergence to the same parameter set increases confidence in the result, while divergence indicates instability or the presence of multiple minima [26] [34].

Q2: My Stochastic Gradient Descent (SGD) optimization is noisy and unstable. What can I do to improve convergence?

A: The inherent noise in SGD can be managed with a few established techniques:

- Action 1: Implement a Learning Rate Schedule. Instead of a fixed learning rate, use a schedule that gradually decreases the rate over time. This allows for large steps initially to escape shallow minima and smaller steps later for fine-tuning.

- Action 2: Use a Momentum Term. Incorporate a momentum term into your SGD updates. Momentum helps accelerate convergence in relevant directions and dampens oscillations by adding a fraction of the previous update vector to the current one, allowing the algorithm to navigate through noisy gradients more effectively [33].

- Action 3: Validate with Mini-Batch SGD. If you are using pure SGD (one sample at a time), try switching to mini-batch SGD. This variant computes the gradient on a small, random subset of the data, offering a compromise between the computational efficiency of SGD and the stability of batch gradient descent [33].
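The three remedies can be combined in a few lines. The sketch fits a hypothetical straight-line model by mini-batch SGD with classical momentum and a decaying learning rate, $\eta_e = \eta_0 / (1 + d\,e)$ for epoch $e$; the data, batch size, decay constant, and momentum value are assumptions chosen for illustration.

```python
import numpy as np

data_rng = np.random.default_rng(1)
x = data_rng.normal(size=200)
y = 2.0 * x - 1.0 + data_rng.normal(scale=0.1, size=200)   # hypothetical data: slope 2, intercept -1

def grad_mse(params, xb, yb):
    w, b = params
    r = yb - (w * xb + b)
    return np.array([-2.0 * np.mean(r * xb), -2.0 * np.mean(r)])

def sgd_momentum(n_epochs=100, batch_size=20, lr0=0.05, decay=0.05, mu=0.9, seed=2):
    rng = np.random.default_rng(seed)
    params, v = np.zeros(2), np.zeros(2)
    for epoch in range(n_epochs):
        lr = lr0 / (1.0 + decay * epoch)              # decaying learning-rate schedule
        order = rng.permutation(len(x))               # reshuffle the data every epoch
        for start in range(0, len(x), batch_size):
            idx = order[start:start + batch_size]     # one random mini-batch
            v = mu * v - lr * grad_mse(params, x[idx], y[idx])   # momentum update
            params = params + v
    return params

w_hat, b_hat = sgd_momentum()
print(round(w_hat, 3), round(b_hat, 3))
```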

Q3: How do I handle Below the Limit of Quantification (BLQ) data in my pharmacokinetic model to avoid biased parameter estimates?

A: The handling of censored BLQ data is critical for accurate parameter estimation.

- Action 1: Understand the Gold Standard (M3 Method). The M3 method is a likelihood-based approach that is considered the gold standard as it introduces the least bias. It maximizes the likelihood for all data, where the likelihood for BLQ data is the probability that the observation is indeed BLQ [34].

- Action 2: Be Aware of M3's Instability. The M3 method can suffer from numerical issues and fail to converge consistently, leading to instability in the objective function value across different runs [34].

- Action 3: Consider a Stable Alternative (M7+). For greater stability during model development, consider the M7+ method. This involves replacing all BLQ data with zero and inflating the additive residual error for these imputed values (e.g., by 100% of the LLOQ). This accounts for the additional uncertainty in the imputed data and performs comparably to M3 with superior stability [34].
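The two likelihood treatments can be contrasted on one quantified and one BLQ record. The sketch assumes a purely additive normal residual error with standard deviation σ and returns −2 log-likelihood: under M3 each BLQ record contributes $\Phi((\text{LLOQ} - \hat{y})/\sigma)$, the probability of falling below the LLOQ, while under M7+ the BLQ value is imputed as 0 and scored with an inflated standard deviation σ + LLOQ, following the θAdd + LLOQ rule [34]. The function names are hypothetical, and real NONMEM implementations (combined error models, FOCE-I/Laplace) are more involved.

```python
import numpy as np
from scipy.stats import norm

def m2ll_m3(obs, pred, is_blq, lloq, sigma):
    """-2 log-likelihood with M3: BLQ records contribute P(y < LLOQ)."""
    obs, pred, is_blq = np.asarray(obs, float), np.asarray(pred, float), np.asarray(is_blq, bool)
    ll_quant = norm.logpdf(obs[~is_blq], loc=pred[~is_blq], scale=sigma)
    ll_blq = norm.logcdf((lloq - pred[is_blq]) / sigma)
    return -2.0 * (ll_quant.sum() + ll_blq.sum())

def m2ll_m7plus(obs, pred, is_blq, lloq, sigma_add):
    """-2 log-likelihood with M7+: BLQ imputed as 0, additive SD inflated by LLOQ."""
    obs, pred, is_blq = np.asarray(obs, float), np.asarray(pred, float), np.asarray(is_blq, bool)
    y = np.where(is_blq, 0.0, obs)                        # impute BLQ records as zero
    sd = np.where(is_blq, sigma_add + lloq, sigma_add)    # inflate error for imputed values
    return -2.0 * norm.logpdf(y, loc=pred, scale=sd).sum()

obs = [2.0, np.nan]      # second record is BLQ (no usable value)
pred = [2.0, 0.5]        # hypothetical model predictions
is_blq = [False, True]
print(m2ll_m3(obs, pred, is_blq, lloq=0.5, sigma=1.0))
print(m2ll_m7plus(obs, pred, is_blq, lloq=0.5, sigma_add=1.0))
```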

Algorithm Comparison and Selection Guide

The table below summarizes the key characteristics of the three stochastic optimization algorithms to aid in selection.

Table 1: Comparison of Stochastic Optimization Algorithms for Parameter Estimation

| Algorithm | Primary Strength | Key Mechanism | Best for Problem Type | Stability & Bias Notes |
|---|---|---|---|---|
| Stochastic Gradient Descent (SGD) | Efficiency on large datasets [33] | Uses random data subsets to calculate the gradient [33] | High-dimensional, convex landscapes | Can be noisy; prone to local minima [33] |
| Simulated Annealing | Global optimum search [33] | Probabilistically accepts worse solutions to escape local minima [33] | Complex landscapes with multiple local optima | Less efficient but more robust [33] |
| Genetic Algorithms | Global search, no gradient needed [33] | Evolves a population via selection, crossover, and mutation [33] | Discontinuous, non-differentiable, complex problems | Computationally intensive; good for avoiding local traps [33] |
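A bare-bones simulated annealing loop, assuming Gaussian neighbour moves, the Metropolis acceptance rule $\exp(-\Delta f / T)$, and a geometric cooling schedule. The toy objective (a tilted double well whose shallow minimum sits next to the starting point), the initial temperature, step size, and cooling rate are illustrative choices, not values from [33].

```python
import numpy as np

def f(x):
    # Toy 1-D objective (assumed): shallow local minimum near +0.96, global minimum near -1.04
    return (x**2 - 1.0) ** 2 + 0.3 * x

def simulated_annealing(f, x0, t0=1.0, cooling=0.995, step=0.3, n_iter=2000, seed=0):
    rng = np.random.default_rng(seed)
    x, fx = x0, f(x0)
    best_x, best_f = x, fx
    temp = t0
    for _ in range(n_iter):
        cand = x + rng.normal(scale=step)           # random neighbour
        fc = f(cand)
        # Always accept improvements; accept worse moves with probability exp(-delta / T)
        if fc < fx or rng.random() < np.exp(-(fc - fx) / temp):
            x, fx = cand, fc
            if fx < best_f:
                best_x, best_f = x, fx
        temp *= cooling                             # geometric cooling schedule
    return best_x, best_f

best_x, best_f = simulated_annealing(f, x0=1.0)     # start inside the shallow well
print(round(best_x, 3), round(best_f, 3))
```

Early on, the high temperature lets the search climb out of the shallow well; as it cools, uphill moves become rare and the search settles.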

Experimental Protocol: Benchmarking Optimization Algorithms

Objective: To systematically evaluate and compare the performance of SGD, Simulated Annealing, and Genetic Algorithms on a specific parameter estimation problem, assessing their ability to find the global minimum and avoid local traps.

Materials & Methods:

- Model & Data: Use a known model (e.g., a two-compartment pharmacokinetic model) and a corresponding dataset. The dataset should ideally present a known challenge, such as a high proportion of BLQ data, to test algorithm robustness [34].

- Algorithm Implementation:

  - SGD: Implement with a momentum term and a decaying learning rate schedule [33].

  - Simulated Annealing: Define a cooling schedule (initial temperature, cooling rate) and a neighbor solution generation function.

  - Genetic Algorithm: Set population size, fitness function, and parameters for crossover and mutation rates [33].

- Initialization: Run each algorithm from the same set of multiple (e.g., 50) different starting points to test sensitivity to initial values [26].

- Evaluation Metrics: For each run, record:

  - Final Objective Function Value (OFV)

  - Estimated Parameter Values

  - Number of Iterations/Function Evaluations to Convergence

  - Computation Time
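A minimal real-coded genetic algorithm for the benchmark, with binary tournament selection, arithmetic (blend) crossover, Gaussian mutation applied to every gene, and one-individual elitism; it also counts objective evaluations so that metric can be logged alongside OFV and run time. The operators, population size, mutation scale, and toy objective are assumptions, not the configuration used in [33].

```python
import numpy as np

def objective(pop):
    # Toy 2-D objective (assumed): global minimum near (-1.04, -1.04), shallower minima elsewhere
    return np.sum((pop**2 - 1.0) ** 2 + 0.3 * pop, axis=-1)

def genetic_algorithm(f, lower, upper, pop_size=40, n_gen=100, mut_sd=0.1, seed=0):
    rng = np.random.default_rng(seed)
    lower, upper = np.asarray(lower, float), np.asarray(upper, float)
    pop = rng.uniform(lower, upper, size=(pop_size, lower.size))
    fit = f(pop)
    n_evals = pop_size                      # performance metric: objective evaluations
    for _ in range(n_gen):
        # Selection: binary tournament, the fitter of two random individuals becomes a parent
        a, b = rng.integers(pop_size, size=(2, pop_size))
        parents = np.where((fit[a] < fit[b])[:, None], pop[a], pop[b])
        # Crossover: random convex blend of each parent with its neighbour in the mating list
        w = rng.random((pop_size, 1))
        children = w * parents + (1.0 - w) * np.roll(parents, 1, axis=0)
        # Mutation: Gaussian noise on every gene, clipped back into the bounds
        children = np.clip(children + rng.normal(scale=mut_sd, size=children.shape), lower, upper)
        child_fit = f(children)
        n_evals += pop_size
        # Elitism: the best current individual replaces the worst child
        elite, worst = np.argmin(fit), np.argmax(child_fit)
        children[worst], child_fit[worst] = pop[elite], fit[elite]
        pop, fit = children, child_fit
    best = np.argmin(fit)
    return pop[best], fit[best], n_evals

best_x, best_f, n_evals = genetic_algorithm(objective, [-2.0, -2.0], [2.0, 2.0])
print(np.round(best_x, 3), round(float(best_f), 3), n_evals)
```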

The workflow for this experiment is outlined below.

The Scientist's Toolkit: Essential Research Reagents

Table 2: Key Computational Tools and Methods for Optimization Research

| Tool/Reagent | Function in Experiment | Technical Specification / Example |
|---|---|---|
| Optimization Software (e.g., NONMEM) | Platform for implementing models and estimation algorithms [34] | Supports multiple estimation methods; used with FOCE-I/Laplace for PK/PD modeling [34]. |
| BLQ Data Handling Method (M7+) | Accounts for uncertainty in censored observations to reduce bias [34] | Impute BLQ as 0; inflate additive error: θAdd + LLOQ [34]. |
| Global Optimization Algorithm (e.g., GA) | Finds the global minimum in complex, multi-modal parameter spaces [33] [26] | Uses population-based search with crossover/mutation [33]. |
| Parameter Perturbation Script | Tests stability of the solution by re-running with varied initial values [26] | Automates multiple runs with slightly different initial estimates. |
| Performance Metrics Logger | Records OFV, parameters, and runtime for comparative analysis. | Custom script to capture metrics from each algorithm run. |

Core Algorithm Workflows

The following diagrams illustrate the fundamental operational logic of each algorithm, highlighting their approach to navigating the optimization landscape and avoiding local minima.

Stochastic Gradient Descent with Momentum

Simulated Annealing Search Process

Genetic Algorithm Evolution Cycle

Frequently Asked Questions (FAQs)

FAQ 1: What is the fundamental difference between Classical Momentum and Nesterov's Accelerated Gradient?

Classical Momentum (CM) and Nesterov's Accelerated Gradient (NAG) are both optimization techniques that use a velocity vector to accumulate past gradients. The core difference lies in where the gradient is evaluated. CM evaluates the gradient at the current parameters, adds it to the decayed velocity, and then steps by the updated velocity. In contrast, NAG first makes a "look-ahead" step in the direction of the accumulated velocity, calculates the gradient at this future position, and then corrects the step using this gradient [35] [36]. This look-ahead property makes NAG more responsive to the changing loss landscape, often leading to faster convergence and reduced oscillation [37].

FAQ 2: When should I use NAG over Classical Momentum in my experiments?

NAG is generally preferred when you are training deep neural networks or optimizing complex, non-convex functions commonly encountered in parameter estimation research. Empirical studies, such as those on MNIST, have shown that with careful hyperparameter tuning, Nesterov momentum often converges faster and achieves better precision than Classical Momentum [37]. It is particularly beneficial when the optimization path is prone to sharp curvatures or when the algorithm needs to make more cautious updates to avoid overshooting minima [35].

FAQ 3: Why does my model's loss oscillate heavily when using momentum-based methods?

Oscillations are a common challenge when using momentum, primarily caused by three factors [38]:

- Inherent Stochasticity: Using random mini-batches of data introduces noise into the gradient estimates.

- Large Learning Rate: A step size that is too high can cause the optimizer to consistently overshoot the minimum.

- Imperfect Gradient Estimates: The stochastic nature of the gradients means they do not always point in the true direction of steepest descent.

Momentum can amplify these oscillations, especially when navigating steep, narrow valleys in the loss landscape. To mitigate this, you can try reducing the learning rate, increasing the batch size to get more stable gradient estimates, or using a learning rate schedule that decays over time [38].

FAQ 4: How can momentum methods help in escaping local minima in parameter estimation?

Momentum helps overcome local minima by incorporating information from past gradients. In Classical Momentum, the velocity term acts like a "ball" rolling through the loss landscape, allowing it to pass through shallow local minima due to its inertia [39] [40]. NAG's look-ahead mechanism further enhances this ability. By evaluating the gradient after a momentum step, it can detect an upcoming slope (e.g., leading out of a local minimum) earlier and adjust its update accordingly, making it more effective at navigating away from suboptimal regions [35] [37]. This is particularly valuable in drug development research where objective functions can be highly complex and riddled with local minima.

Troubleshooting Guides

Problem: Training Becomes Unstable or Diverges After Introducing Momentum

Description: The loss value increases dramatically (diverges) or exhibits large, unstable swings instead of steadily decreasing.

Solution: This is frequently a sign that the effective step size is too large.

- Reduce the Learning Rate: The momentum-accelerated velocity vector can lead to larger overall steps: under a roughly constant gradient $g$, the velocity approaches $-\eta g / (1 - \mu)$, so $\mu = 0.9$ amplifies the step about tenfold. Start by reducing your learning rate by a factor of 10 and monitor stability. The relationship between learning rate ($\eta$) and momentum ($\mu$) is critical; a high momentum value often necessitates a lower learning rate [38].

- Check Your Momentum Value: Ensure your momentum coefficient ($\mu$) is set to a sensible value, typically between 0.5 and 0.99. A value too close to 1 (e.g., 0.999) without a correspondingly small learning rate can cause instability.

- Gradient Clipping: Implement gradient clipping to cap the norm of the gradients. This prevents excessively large parameter updates, which is especially useful when training recurrent neural networks (RNNs) or models with exploding gradients [41].
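Clipping by norm rescales the whole gradient vector when its L2 norm exceeds a threshold, so the direction is preserved and only the step length is capped. A minimal sketch with a hypothetical `max_norm` of 1.0, applied before the classical momentum update; the helper names are illustrative.

```python
import numpy as np

def clip_by_norm(grad, max_norm):
    """Rescale grad so that its L2 norm is at most max_norm; the direction is unchanged."""
    norm = np.linalg.norm(grad)
    if norm > max_norm:
        return grad * (max_norm / norm)
    return grad

def momentum_step(theta, v, grad, lr=0.01, mu=0.9, max_norm=1.0):
    """One classical-momentum update using the clipped gradient."""
    g = clip_by_norm(grad, max_norm)
    v = mu * v - lr * g
    return theta + v, v

theta, v = np.zeros(2), np.zeros(2)
theta, v = momentum_step(theta, v, grad=np.array([30.0, 40.0]), lr=0.1)
print(theta)   # the raw gradient (norm 50) was scaled down to norm 1 before the update
```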

Problem: NAG is Performing Worse than Classical Momentum

Description: Contrary to expectations, the model with NAG converges slower or to a worse minimum than the one with Classical Momentum.

Solution:

- Verify Your Implementation: A common issue is an incorrect implementation of the NAG update rule. Double-check that you are calculating the gradient at the "look-ahead" point ($\theta + \mu v$) and not at the current parameters [36].

- Re-tune Hyperparameters: The optimal learning rate and momentum values for CM may not be directly transferable to NAG. Conduct a new hyperparameter search specifically for NAG, which can benefit from a slightly different configuration than CM [37].

- Inspect the Loss Landscape: In some very noisy or specific non-convex landscapes, the look-ahead correction of NAG might be too aggressive initially. Consider using a learning rate warm-up period to allow the optimization to stabilize before the full effect of NAG is applied.

Experimental Data and Protocols

Quantitative Comparison of Optimization Algorithms

The following table summarizes typical performance characteristics of various optimizers, including CM and NAG, as observed in controlled experiments like those on the MNIST dataset [37].

Table 1: Optimizer Performance Comparison on Benchmark Tasks

| Optimizer | Convergence Speed | Stability | Ease of Tuning | Typical Use Case |
|---|---|---|---|---|
| SGD | Slow | High (low oscillation) | Moderate | Simple convex problems, baseline |
| Classical Momentum | Medium-Fast | Medium | Moderate | General non-convex optimization |
| Nesterov Momentum | Fast | Medium-High | Moderate-Difficult | Deep learning, complex loss landscapes |
| Adagrad | Medium (early) | High | Easy | Sparse data, natural language processing |
| Adam | Fast (early) | Medium | Easy | Default for many deep learning tasks |

Detailed Experimental Methodology

To reproduce comparative experiments between CM and NAG, follow this protocol:

- Baseline Establishment: First, train your model using plain SGD to establish a baseline convergence time and final performance.

- Implement Momentum Updates:
  - Classical Momentum: The update rule is implemented as follows [35]:
    - Velocity: $v_{t+1} = \mu v_t - \eta \nabla f(\theta_t)$
    - Update: $\theta_{t+1} = \theta_t + v_{t+1}$
  - Nesterov Accelerated Gradient: The update rule can be implemented in its "look-ahead" form [36] [37]:
    - "Look-ahead" position: $\theta_{\text{lookahead}} = \theta_t + \mu v_t$
    - Gradient calculation: compute $\nabla f(\theta_{\text{lookahead}})$
    - Velocity update: $v_{t+1} = \mu v_t - \eta \nabla f(\theta_{\text{lookahead}})$
    - Parameter update: $\theta_{t+1} = \theta_t + v_{t+1}$

- Hyperparameter Search: Perform a grid search for each optimizer. A standard starting point is:
  - Learning Rate (η): [0.1, 0.01, 0.001, 0.0001]
  - Momentum (μ): [0.5, 0.9, 0.95, 0.99]

- Evaluation: Monitor the training loss and a validation metric (e.g., accuracy, C-index for survival models [41]) over epochs. The optimizer that achieves the lowest loss or highest metric in the fewest epochs is considered superior for that task.
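The protocol can be rehearsed end to end on a toy problem before spending compute on a real model. The sketch below is an illustration under stated assumptions, not the protocol from [37]. It swaps the real training loss for a hypothetical ill-conditioned quadratic f(θ) = ½(θ₁² + 50θ₂²), counts iterations instead of epochs, and uses an arbitrary success tolerance of 1e-6. The grid is the one listed above. The SGD, CM and NAG updates follow the update rules listed in the protocol.

```python
import numpy as np

# Hypothetical stand-in loss: f(theta) = 0.5 * sum(h_i * theta_i^2) with h = (1, 50).
H = np.array([1.0, 50.0])

def loss(theta):
    return 0.5 * float(np.sum(H * theta ** 2))

def grad(theta):
    return H * theta

def run(method, lr, mu, n_steps=300, tol=1e-6):
    """Return (first step with loss < tol or None, final loss)."""
    theta, v = np.array([1.0, 1.0]), np.zeros(2)
    f = loss(theta)
    for step in range(1, n_steps + 1):
        if method == "sgd":
            theta = theta - lr * grad(theta)
        elif method == "cm":                  # classical momentum
            v = mu * v - lr * grad(theta)
            theta = theta + v
        elif method == "nag":                 # Nesterov, look-ahead form
            v = mu * v - lr * grad(theta + mu * v)
            theta = theta + v
        else:
            raise ValueError(f"unknown method: {method}")
        f = loss(theta)
        if f < tol:
            return step, f
        if f > 1e12:                          # treat as diverged
            return None, np.inf
    return None, f

learning_rates = [0.1, 0.01, 0.001, 0.0001]
momenta = [0.5, 0.9, 0.95, 0.99]
best = {}
for method in ("sgd", "cm", "nag"):
    mus = momenta if method != "sgd" else [0.0]
    scored = []
    for lr in learning_rates:
        for mu in mus:
            hit, f = run(method, lr, mu)
            scored.append((hit if hit is not None else np.inf, f, lr, mu))
    best[method] = min(scored)  # fewest steps to tolerance, then lowest final loss
```

On this toy problem, plain SGD never reaches the tolerance within 300 steps. Any stable learning rate (η < 2/50) crawls along the flat direction, whereas both momentum variants do reach it. Real models will, of course, rank the optimizers differently.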

Workflow and Conceptual Diagrams

Momentum Optimization Workflow

Diagram Title: Momentum Algorithms Workflow Comparison

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for Momentum Optimization Research

| Item | Function | Example Use Case |
|---|---|---|
| Automatic Differentiation Library | Automatically computes gradients of complex functions, which is essential for backpropagation in neural networks. | PyTorch, TensorFlow, JAX. |
| Hyperparameter Tuning Framework | Automates the search for optimal learning rates and momentum coefficients. | Weights & Biases, Optuna, Ray Tune. |
| Numerical Computation Environment | Provides a high-level language and ecosystem for implementing and testing optimization algorithms. | Python with NumPy/SciPy, MATLAB, R. |
| Visualization Toolkit | Plots loss curves, parameter trajectories, and loss landscapes to diagnose optimizer behavior. | Matplotlib, Seaborn, Plotly. |
| Stochastic Gradient Descent (SGD) Optimizer | The foundational optimizer class upon which momentum methods are built. | torch.optim.SGD (with momentum and nesterov parameters). |
| Learning Rate Scheduler | Dynamically adjusts the learning rate during training to improve convergence and escape local minima. | Step decay, cosine annealing, torch.optim.lr_scheduler. |

Technical Support & Troubleshooting Center

This guide addresses common challenges researchers face when implementing hybrid PSO-CGNM methods for parameter estimation, particularly in avoiding local minima. The content is framed within the thesis context of developing robust strategies to handle non-unique solutions and premature convergence in complex models.

Frequently Asked Questions (FAQs)

Q1: My parameter estimation consistently converges to different local minima depending on the initial guess. How can I obtain a more complete picture of the solution space? A: This is a classic symptom of a multimodal optimization problem. Instead of relying on a single run, employ a multi-start method with a systematic exploration strategy. The Cluster Gauss-Newton Method (CGNM) is specifically designed for this purpose [42] [43] [44]. CGNM starts from multiple initial iterates within a user-specified range and uses a collective global linear approximation to efficiently find multiple approximate minimizers of the nonlinear least squares problem simultaneously, revealing parameter identifiability issues [44].

Q2: My standard Particle Swarm Optimization (PSO) algorithm is "stuck" in a local optimum and shows premature convergence. What enhancements can I implement? A: Standard PSO is prone to this issue [45] [46]. Consider hybridizing PSO with strategies from other algorithms:

- Incorporate a local optimal jump-out strategy: Reset a portion (e.g., 40%) of the particle population's position when premature convergence is detected to reintroduce diversity [45].

- Use dynamic and adaptive parameters: Implement dynamic inertial weight to improve global search early on and adaptive acceleration coefficients to balance exploration and exploitation [45] [46].

- Hybridize with other metaheuristics: Integrate mutation strategies from Differential Evolution (DE) or spiral search mechanisms from the Whale Optimization Algorithm (WOA) in later iterations to refine solutions and escape local basins [45] [47].
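The two late-stage operators mentioned above can be sketched in NumPy as follows. DE/best/2 builds each mutant as best + F(x_r1 − x_r2) + F(x_r3 − x_r4) from four distinct other particles and then applies binomial crossover. The spiral move follows the Whale Optimization Algorithm's logarithmic-spiral equation around the current best. Several settings are assumptions rather than values from [45] or [47]: F = 0.5, CR = 0.9, the spiral constant b = 1, the greedy "keep only if better" selection, and the sphere test function. The function names are hypothetical.

```python
import numpy as np

def de_best_2(X, best, F=0.5, CR=0.9, rng=None):
    """DE/best/2 mutation + binomial crossover for every row of X (needs >= 5 rows)."""
    rng = np.random.default_rng() if rng is None else rng
    n, dim = X.shape
    trials = X.copy()
    for i in range(n):
        others = [j for j in range(n) if j != i]
        r1, r2, r3, r4 = rng.choice(others, size=4, replace=False)
        mutant = best + F * (X[r1] - X[r2]) + F * (X[r3] - X[r4])
        cross = rng.random(dim) < CR
        cross[rng.integers(dim)] = True       # at least one coordinate comes from the mutant
        trials[i, cross] = mutant[cross]
    return trials

def woa_spiral(X, best, b=1.0, rng=None):
    """WOA spiral: x_new = |best - x| * exp(b*l) * cos(2*pi*l) + best, l ~ U(-1, 1)."""
    rng = np.random.default_rng() if rng is None else rng
    l = rng.uniform(-1.0, 1.0, size=(X.shape[0], 1))   # one l per particle
    return np.abs(best - X) * np.exp(b * l) * np.cos(2 * np.pi * l) + best

def greedy_select(f, X, fX, trials):
    """Replace a particle by its trial vector only if the trial has lower cost."""
    f_trial = np.array([f(x) for x in trials])
    better = f_trial < fX
    X_new, f_new = X.copy(), fX.copy()
    X_new[better], f_new[better] = trials[better], f_trial[better]
    return X_new, f_new

def sphere(x):
    return float(np.sum(x ** 2))

rng = np.random.default_rng(0)
X0 = rng.uniform(-5.0, 5.0, size=(20, 4))
f0 = np.array([sphere(x) for x in X0])
X1, f1 = greedy_select(sphere, X0, f0, de_best_2(X0, X0[np.argmin(f0)], rng=rng))
X2, f2 = greedy_select(sphere, X1, f1, woa_spiral(X1, X1[np.argmin(f1)], rng=rng))
```

The greedy selection step is a design choice that keeps the operators from undoing progress. It never makes any particle worse, at the cost of some diversity.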

Q3: Function evaluations for my physiological model are computationally expensive (e.g., ~1 minute per set). Which method is more efficient for broad parameter space exploration? A: When evaluations are costly, efficiency is critical. While multi-start methods are ideal for broad exploration, naive repetition is prohibitive [10]. The CGNM provides a significant computational advantage in this scenario. It reuses intermediate computation results across all iterates to build a collective Jacobian-like approximation, drastically reducing the number of unique model evaluations needed compared to running independent optimizations from each starting point [42] [43] [44].

Q4: How can I statistically validate the confidence intervals of parameters estimated using these hybrid methods, especially when some parameters are not uniquely identifiable? A: The profile likelihood method is used to determine parameter identifiability and confidence intervals [42]. However, drawing a profile likelihood is computationally intensive because it requires repeated optimizations. A key advantage of CGNM is that the large number of parameter combinations evaluated during its run can be reused to quickly approximate the profile likelihood for all parameters without additional model evaluations. Because the minimum is taken only over points that were actually evaluated, this approximation is an upper bound on the true profile (expressed as minimum SSR) [42].
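The reuse idea can be sketched in a few lines. The parameter axis is split into bins, and the smallest SSR among the already-evaluated points in each bin is kept. The function name `approx_profile`, the bin count, and the toy objective are assumptions for illustration. The toy objective (p₀ − 1)² + 10(p₁ − p₀)² was chosen because its exact profile in p₀ is (p₀ − 1)², so the upper-bound property can be checked.

```python
import numpy as np

def approx_profile(samples, ssr, param_index, n_bins=20):
    """Approximate SSR profile for one parameter from already-evaluated points.

    samples: (n_points, n_params) parameter vectors evaluated during a run
    ssr:     (n_points,) sum of squared residuals of each vector
    Returns bin centres and the minimum SSR per bin (NaN for empty bins).
    Each value bounds the true profile from above, because it only minimises
    over the points that happened to be evaluated.
    """
    values = samples[:, param_index]
    edges = np.linspace(values.min(), values.max(), n_bins + 1)
    # digitize gives edges[k-1] <= x < edges[k]; clip puts the maximum in the last bin
    idx = np.clip(np.digitize(values, edges) - 1, 0, n_bins - 1)
    profile = np.full(n_bins, np.nan)
    for b in range(n_bins):
        in_bin = idx == b
        if in_bin.any():
            profile[b] = ssr[in_bin].min()
    centres = 0.5 * (edges[:-1] + edges[1:])
    return centres, profile

# Hypothetical stand-in for the points a CGNM run would have evaluated.
rng = np.random.default_rng(1)
pts = rng.uniform(-1.0, 3.0, size=(5000, 2))
ssr_vals = (pts[:, 0] - 1.0) ** 2 + 10.0 * (pts[:, 1] - pts[:, 0]) ** 2
centres, prof = approx_profile(pts, ssr_vals, param_index=0)
```

Plotting `prof` against `centres` gives the quick profile described in the answer above. Its accuracy improves with the number and spread of evaluated points.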

Q5: For my photovoltaic cell parameter estimation problem, the error landscape is highly multimodal. Are there specific hybrid PSO strategies recommended? A: Yes. Research on photovoltaic model parameter estimation, a highly multimodal problem, suggests effective strategies include:

- Using multiple initial populations: An Optimizer Leveraging Multiple Initial Populations (OLMIP) uses separate evolution strategies from distinct starts to explore multiple regions before building an elite population, effectively avoiding local minima [48].

- PSO combined with local search: Hybrids like Quadratic PSO with Local Search (QPSO) integrate local search strategies around the best agent to refine solutions and escape local optima [48].

- PSO-DE hybrids: Integrating Differential Evolution (DE) operations with PSO increases swarm diversity and reduces the probability of converging to local optima [48].

The following tables summarize experimental results from cited studies on hybrid PSO and CGNM performance.

Table 1: Performance of NDWPSO (A Hybrid PSO Algorithm) on Benchmark Functions [45]

| Comparison Group | Number of Benchmark Functions / Datasets | Performance Result (NDWPSO vs. Group) | Context / Dimension |
|---|---|---|---|
| Other PSO Variants | 49 sets of data | Obtained better results for all 49 sets | Aggregate result across tests |
| 5 Other Intelligent Algorithms (e.g., GA, WOA) | 13 functions (f₁–f₁₃), Dim = 30, 50, 100 | Achieved 69.2%, 84.6%, and 84.6% of the best results (Dim = 30, 50, 100, respectively) | Unimodal & multimodal functions |
| 5 Other Intelligent Algorithms | 10 fixed-dimension multimodal functions | Achieved 80% of the best optimal solutions | Fixed-dimensional multimodal |
| - | 3 practical engineering design problems | Obtained the best design solutions for all 3 problems | Welded beam, pressure vessel, etc. |

Table 2: Performance of CGNM on Pharmacokinetic (PBPK) Model Problems [44]

| Metric | CGNM Performance | Comparative Method |
|---|---|---|
| Computational Efficiency | More computationally efficient in finding multiple solutions | Standard Levenberg-Marquardt (multi-start) |
| Robustness to Local Minima | More robust against local minima | Standard Levenberg-Marquardt & state-of-the-art derivative-free methods |
| Primary Application | Efficiently finds multiple approximate minimizers for overparameterized models | Traditional methods focused on finding a single minimizer |

Detailed Experimental Protocols

Protocol 1: Implementing the NDWPSO Hybrid Algorithm for Benchmark Testing [45]

- Initialization: Use Elite Opposition-Based Learning to generate the initial particle position matrix, improving initial solution quality.

- Parameter Setup: Define a dynamic inertia weight (ω) that decreases nonlinearly from 0.9 to 0.4 over iterations. Set acceleration coefficients c₁ and c₂.

- Iterative Search: In each iteration: a. Update particle velocities and positions using the standard PSO equations [45] [46]. b. Monitor population diversity. If the global best solution stagnates for a predefined number of iterations, trigger the local optimal jump-out strategy: randomly reset 40% of the particles' positions within bounds.

- Late-Stage Refinement: After a certain iteration threshold, apply: a. The spiral shrinkage search strategy from WOA to exploit the region around the current best solution. b. The DE/best/2 mutation strategy to the particle positions to enhance diversity and precision.

- Termination & Evaluation: Run for a fixed number of iterations (e.g., 1000). Evaluate the final global best solution on the benchmark function (e.g., Sphere, Rosenbrock, Ackley) and record the error.
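Protocol 1 can be condensed into the simplified NDWPSO-style loop below. It is not the published algorithm, and several pieces are assumptions:

- Plain opposition-based learning (opposite point = lower + upper − x) stands in for the elite variant.
- The inertia weight follows a quadratic decrease from 0.9 to 0.4, which is one possible nonlinear schedule; [45] may use another.
- Velocities are clamped to 20% of the range.
- c₁ = c₂ = 2 and a stagnation patience of 20 iterations are arbitrary choices.
- The WOA spiral and DE/best/2 refinement steps are left out to keep the sketch short.

The function name `pso_jump_out` is hypothetical.

```python
import numpy as np

def sphere(x):
    return float(np.sum(x ** 2))

def pso_jump_out(f, lower, upper, n_particles=40, n_iter=500, c1=2.0, c2=2.0,
                 w_max=0.9, w_min=0.4, patience=20, reset_frac=0.4, seed=0):
    rng = np.random.default_rng(seed)
    lower, upper = np.asarray(lower, float), np.asarray(upper, float)
    dim = lower.size
    # Opposition-based initialisation: random points plus their opposites, keep the best half.
    X = rng.uniform(lower, upper, size=(n_particles, dim))
    cand = np.vstack([X, lower + upper - X])
    cand_f = np.array([f(x) for x in cand])
    keep = np.argsort(cand_f)[:n_particles]
    X, fX = cand[keep], cand_f[keep]
    V = np.zeros_like(X)
    v_max = 0.2 * (upper - lower)
    P, fP = X.copy(), fX.copy()                     # personal bests
    g = int(np.argmin(fP))
    G, fG = P[g].copy(), fP[g]                      # global best
    stall = 0
    for t in range(n_iter):
        w = w_min + (w_max - w_min) * (1.0 - t / n_iter) ** 2   # nonlinear 0.9 -> 0.4
        r1, r2 = rng.random((2, n_particles, dim))
        V = np.clip(w * V + c1 * r1 * (P - X) + c2 * r2 * (G - X), -v_max, v_max)
        X = np.clip(X + V, lower, upper)
        fX = np.array([f(x) for x in X])
        improved = fX < fP
        P[improved], fP[improved] = X[improved], fX[improved]
        g = int(np.argmin(fP))
        if fP[g] < fG:
            G, fG, stall = P[g].copy(), fP[g], 0
        else:
            stall += 1
        if stall >= patience:
            # Local optimal jump-out: re-scatter a fraction of the swarm within bounds.
            idx = rng.choice(n_particles, size=int(reset_frac * n_particles), replace=False)
            X[idx] = rng.uniform(lower, upper, size=(idx.size, dim))
            V[idx] = 0.0
            stall = 0
    return G, fG

best_x, best_f = pso_jump_out(sphere, [-5.12] * 10, [5.12] * 10)
```

Reset particles keep their personal bests, so the jump-out adds exploration without discarding what the swarm has already found. Swapping `sphere` for Rosenbrock or Ackley reproduces the benchmark step of the protocol.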

Protocol 2: Applying CGNM for Parameter Estimation in PBPK Models [42] [44]

- Problem Formulation: Define the PBPK model as a set of ODEs. Formulate the parameter estimation task as a nonlinear least squares problem: minimize $\mathrm{SSR}(\theta) = \lVert y_{\text{model}}(\theta) - y_{\text{observed}} \rVert^2$.

- Initialization: Specify a physiologically plausible range for each parameter. Randomly generate N initial parameter vectors (iterates) within this range (e.g., N=100).

- CGNM Iteration Loop: a. Evaluate Model: Simulate the PBPK model for all N parameter vectors to obtain model outputs. b. Compute Collective Approximation: Construct a global linear approximation (a pseudo-Jacobian matrix) using the input-output pairs from all current iterates, instead of computing separate Jacobians for each. c. Update Iterates: Using this collective approximation, perform a Gauss-Newton-type update step for all N parameter vectors simultaneously to reduce their SSR. d. Check Convergence: Repeat steps a-c until the reduction in SSR for the cluster falls below a threshold or a maximum iteration count is reached.

- Analysis of Results: The output is a cluster of N parameter vectors with low SSR. Analyze the distribution: a. Identifiability: Parameters with narrow distributions are likely identifiable; wide distributions indicate non-identifiability [44]. b. Profile Likelihood Approximation: Bin the results by the value of a parameter of interest and take the minimum SSR in each bin to quickly plot an approximate profile likelihood [42].

- Validation: Use the cluster of parameters for ensemble simulation to predict drug concentration under new dosing scenarios, capturing uncertainty from non-identifiable parameters.
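The iteration loop in Protocol 2 can be sketched in NumPy. This is a deliberately simplified, CGNM-style illustration, not the reference implementation from the method's authors, and several choices are assumptions:

- Each iterate gets its own approximate Jacobian from a weighted least-squares fit to the input-output pairs already available for the whole cluster, so no extra model runs are spent on derivatives. The weights fall off with the fourth power of distance.
- Steps are Levenberg-Marquardt-damped and clipped to the specified range.
- The mono-exponential "model", in which only k1 + k2 is identifiable, is a hypothetical stand-in for a PBPK ODE system.
- The name `cgnm_sketch` and all defaults are illustrative.

```python
import numpy as np

def cgnm_sketch(residual_fn, lower, upper, n_iterates=100, n_iter=25, seed=0):
    """Simplified cluster Gauss-Newton sketch.

    residual_fn(theta) must return y_model(theta) - y_observed.
    Returns the final cluster, its SSR values, and the initial SSR values.
    """
    rng = np.random.default_rng(seed)
    lower, upper = np.asarray(lower, float), np.asarray(upper, float)
    scale, n_params = upper - lower, lower.size

    def to_theta(u):
        return lower + u * scale                      # unit box -> parameter range

    U = rng.uniform(size=(n_iterates, n_params))      # random initial iterates
    R = np.array([residual_fn(to_theta(u)) for u in U])
    ssr = np.sum(R ** 2, axis=1)
    ssr_init = ssr.copy()
    lam = np.ones(n_iterates)                         # per-iterate damping
    for _ in range(n_iter):
        U_new = U.copy()
        for i in range(n_iterates):
            dU, dR = U - U[i], R - R[i]
            # Fit dR ~ dU @ A.T over the whole cluster; nearby iterates weigh more.
            w = 1.0 / (np.sum(dU ** 2, axis=1) ** 2 + 1e-30)
            w[i] = 0.0
            sw = np.sqrt(w)[:, None]
            A_T, *_ = np.linalg.lstsq(sw * dU, sw * dR, rcond=None)
            A = A_T.T                                 # approx. Jacobian (n_obs x n_params)
            step = np.linalg.solve(A.T @ A + lam[i] * np.eye(n_params), -A.T @ R[i])
            U_new[i] = np.clip(U[i] + step, 0.0, 1.0)
        R_new = np.array([residual_fn(to_theta(u)) for u in U_new])  # N model runs
        ssr_new = np.sum(R_new ** 2, axis=1)
        better = ssr_new < ssr                        # accept only improving moves
        U[better], R[better], ssr[better] = U_new[better], R_new[better], ssr_new[better]
        lam = np.clip(np.where(better, lam / 10.0, lam * 10.0), 1e-8, 1e8)
    return to_theta(U), ssr, ssr_init

t = np.array([0.5, 1.0, 2.0, 4.0, 8.0])
y_obs = 10.0 * np.exp(-0.5 * t)

def residual(theta):
    # Hypothetical model: only the sum k1 + k2 is identifiable from these data.
    dose_over_v, k1, k2 = theta
    return dose_over_v * np.exp(-(k1 + k2) * t) - y_obs

thetas, ssr, ssr_init = cgnm_sketch(residual, lower=[1.0, 0.01, 0.01], upper=[20.0, 1.0, 1.0])
```

A move is accepted only if it lowers that iterate's SSR, so no iterate ends worse than it started. To mirror the identifiability analysis above, one would keep the low-SSR rows of `thetas` and compare their spreads. The spread of k1 would be expected to be wide here, while k1 + k2 would be expected to stay narrow, near 0.5.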

Workflow and Algorithm Structure Diagrams

Diagram 1: Hybrid PSO-CGNM Framework for Robust Parameter Estimation

Diagram 2: Core Iterative Loop of the Cluster Gauss-Newton Method (CGNM)

The Scientist's Toolkit: Key Research Reagent Solutions

This table lists essential algorithmic components ("reagents") for constructing robust hybrid parameter estimation methods.

| Research Reagent (Algorithm Component) | Primary Function in the "Experiment" | Key Reference / Role |
|---|---|---|
| Elite Opposition-Based Learning | Generates a high-quality, diverse initial population for PSO, improving starting point and convergence speed. | [45] |
| Dynamic Inertia Weight (ω) | Balances exploration and exploitation: higher ω early promotes global search, lower ω later fine-tunes solutions. | [45] [46] |