Ensuring Machine Learning Biomarker Consistency: From Multi-Omics Integration to Clinical Translation


Abstract

The application of machine learning (ML) for biomarker discovery holds transformative potential for precision medicine, yet the consistency of these biomarkers across diverse datasets remains a significant challenge. This article provides a comprehensive analysis for researchers and drug development professionals, exploring the foundational principles of ML-driven biomarker discovery. It delves into advanced methodologies for multi-omics data integration, identifies key obstacles such as data heterogeneity and model overfitting, and outlines rigorous validation frameworks essential for ensuring generalizability and clinical adoption. By synthesizing insights from recent studies and emerging best practices, this review offers a strategic roadmap for developing robust, reliable, and clinically actionable ML-derived biomarkers.

The Foundation of ML-Driven Biomarkers: Core Concepts and Data Landscape

Biomarkers, defined as measurable indicators of biological processes or responses to therapeutic interventions, serve as critical molecular signposts that illuminate the intricate pathways of health and disease [1]. These indicators can take the form of molecules, genes, proteins, cells, hormones, enzymes, or physiological traits, providing essential insights that bridge the gap between benchside discovery and bedside application [2]. In the era of precision medicine, biomarkers have evolved from simple diagnostic tools to sophisticated instruments that guide therapeutic decisions, predict treatment outcomes, and enable personalized treatment strategies tailored to individual patient characteristics.

The fundamental importance of biomarkers lies in their ability to objectively measure and evaluate normal biological processes, pathogenic processes, or pharmacological responses to therapeutic interventions [1]. This capability has revolutionized drug development and clinical practice, moving medicine from a population-based "one-size-fits-all" approach to a more nuanced strategy that considers individual variability in genes, environment, and lifestyle. As the field advances, the precise classification and application of biomarkers have become increasingly important for ensuring their appropriate use in both clinical research and patient care, with distinct categories serving specific roles in the continuum of disease management from risk assessment to treatment selection.

Biomarker Classification and Definitions

Core Biomarker Types in Precision Medicine

Biomarkers are broadly categorized based on their clinical applications, with diagnostic, prognostic, and predictive biomarkers representing three fundamental types that serve distinct yet sometimes overlapping roles in patient care [1]. Understanding the precise definitions and appropriate contexts of use for each biomarker category is essential for their correct application in both research and clinical settings. These categories are not necessarily mutually exclusive—a single biomarker may fulfill multiple roles depending on the context—but each serves a specific primary purpose in the clinical decision-making pathway.

Diagnostic Biomarkers: These biomarkers detect or confirm the presence of a disease or condition of interest, or identify individuals with a specific disease subtype [1]. They are used to establish the presence or absence of disease, often enabling earlier detection than would be possible based on clinical symptoms alone. In precision medicine, diagnostic biomarkers are increasingly used not only to identify people with a disease but to redefine disease classification itself, moving from organ-based to molecular-based classification schemes. For example, cancer diagnosis is rapidly evolving toward molecular and imaging-based classification rather than traditional histopathological approaches alone.

Prognostic Biomarkers: Prognostic biomarkers provide information about the likely course of a disease in untreated individuals, identifying the likelihood of a clinical event, disease recurrence, or progression in patients who already have the disease or medical condition of interest [3] [1]. These biomarkers reflect the intrinsic characteristics of the patient or disease and help stratify patients based on their likely disease outcomes, independent of specific treatments. Prognostic biomarkers are often identified from observational data and are regularly used to identify patients more likely to have a particular outcome, enabling clinicians to tailor monitoring intensity or consider more aggressive initial therapies for those at highest risk of poor outcomes.

Predictive Biomarkers: Predictive biomarkers identify individuals who are more likely than similar individuals without the biomarker to experience a favorable or unfavorable effect from exposure to a specific medical product or environmental agent [3] [1]. These biomarkers help determine whether a particular treatment will be effective or whether specific side effects might occur in a given patient, enabling therapy selection based on the biological characteristics of both the patient and their disease. The identification of predictive biomarkers generally requires a comparison of treatment to a control in patients with and without the biomarker, though compelling preclinical and early clinical data may sometimes support enrichment strategies in definitive clinical trials.

Comparative Analysis of Biomarker Types

Table 1: Comparative Characteristics of Major Biomarker Types

| Biomarker Type | Primary Function | Clinical Question Addressed | Measurement Context | Examples |
|---|---|---|---|---|
| Diagnostic | Detects or confirms disease presence | "Does the patient have the disease?" | Single measurement often sufficient | CA-125 for ovarian cancer, troponin for myocardial infarction [4] [1] |
| Prognostic | Predicts disease course and outcomes | "What is the likely disease outcome regardless of treatment?" | Measured at diagnosis or before treatment | BRCA mutations in ovarian cancer for overall survival likelihood [3] [4] |
| Predictive | Forecasts treatment response | "Will this specific treatment work for this patient?" | Measured before treatment selection | BRAF V600E mutation for vemurafenib response in melanoma [3] |

Table 2: Methodological Requirements for Biomarker Validation

| Biomarker Type | Study Design Requirements | Statistical Considerations | Common Validation Challenges |
|---|---|---|---|
| Diagnostic | Comparison to reference standard | Sensitivity, specificity, ROC curves, positive/negative predictive values | Establishing appropriate thresholds, context of use, false positives in low-prevalence settings [1] |
| Prognostic | Observational studies of natural history | Survival analysis, Cox proportional hazards, Kaplan-Meier curves | Controlling for confounding factors, distinguishing from predictive effects [3] |
| Predictive | Randomized controlled trials with biomarker stratification | Treatment-by-biomarker interaction tests, qualitative vs quantitative interactions | Requirement for control groups, adequate sample size for biomarker subgroups [3] |
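
Table 2 lists false positives in low-prevalence settings as a diagnostic validation challenge. The following is a minimal sketch of why this happens, using Bayes' rule to convert sensitivity and specificity into predictive values. The function name and all numeric inputs are hypothetical and are not taken from any cited study.

```python
def predictive_values(sensitivity, specificity, prevalence):
    """Return (PPV, NPV) for a binary test via Bayes' rule."""
    true_pos = sensitivity * prevalence
    false_neg = (1.0 - sensitivity) * prevalence
    true_neg = specificity * (1.0 - prevalence)
    false_pos = (1.0 - specificity) * (1.0 - prevalence)
    ppv = true_pos / (true_pos + false_pos)
    npv = true_neg / (true_neg + false_neg)
    return ppv, npv


# Hypothetical test with 90% sensitivity and 90% specificity,
# evaluated in a high-prevalence and a low-prevalence population.
for prevalence in (0.20, 0.01):
    ppv, npv = predictive_values(0.90, 0.90, prevalence)
    print(f"prevalence={prevalence:.2f}  PPV={ppv:.3f}  NPV={npv:.3f}")
```

With identical test characteristics, the positive predictive value falls sharply as prevalence drops, which is why thresholds and context of use need to be set for the intended population.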

Distinguishing Prognostic and Predictive Biomarkers

Conceptual and Methodological Differences

While both prognostic and predictive biomarkers provide information about future outcomes, they differ fundamentally in their clinical applications and the methodological approaches required for their validation [3]. Prognostic biomarkers provide information about the natural history of the disease regardless of specific treatments, while predictive biomarkers provide information about the differential benefit (or harm) of a specific treatment between biomarker-defined subgroups. This distinction has profound implications for clinical practice, as prognostic biomarkers help answer questions about disease monitoring and management intensity, while predictive biomarkers directly inform treatment selection.

The differentiation between prognostic and predictive biomarkers presents methodological challenges, as they cannot generally be distinguished when only patients who have received a particular therapy are studied [3]. In the absence of appropriate control groups, what appears to be a predictive effect may actually reflect underlying prognostic factors. For example, a biomarker might appear to predict better response to an experimental therapy simply because patients with that biomarker have better outcomes regardless of treatment. Proper discrimination requires comparison of treatment effects between biomarker-defined subgroups in studies that include appropriate control arms, ideally through randomized controlled trials designed to assess treatment-by-biomarker interactions.

Visualizing Biomarker Distinctions Through Clinical Outcomes

Diagram 1: Prognostic vs. Predictive Biomarker Pathways. This diagram illustrates the distinct clinical pathways for prognostic biomarkers (which indicate disease outcomes regardless of treatment) and predictive biomarkers (which indicate likelihood of response to specific treatments).

Statistical interaction forms the basis for distinguishing predictive from prognostic biomarkers [3]. Qualitative interactions, where a treatment is beneficial in one biomarker subgroup but harmful in another, provide the strongest evidence for predictive biomarkers. Quantitative interactions, where the magnitude of benefit differs between subgroups but the direction remains the same, may be less useful for treatment selection unless toxicity or other costs overshadow the differential benefits. The ideal predictive biomarker demonstrates a qualitative interaction where one subgroup clearly benefits from the experimental treatment while another does not, or may even experience harm, enabling precise targeting of therapies to those most likely to benefit.
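
The distinction between no interaction, a quantitative interaction, and a qualitative interaction can be sketched with simple arithmetic on subgroup response rates. This is an illustrative sketch only: the function names and response rates are hypothetical, the comparison uses point estimates with no statistical test, and "qualitative" is defined here strictly as a change of sign in the treatment effect.

```python
def treatment_effect(rate_treated, rate_control):
    """Absolute difference in response rate between treated and control arms."""
    return rate_treated - rate_control


def classify_interaction(effect_marker_pos, effect_marker_neg, tol=1e-9):
    """Label the treatment-by-biomarker interaction from two subgroup effects."""
    if abs(effect_marker_pos - effect_marker_neg) <= tol:
        return "none"          # same benefit in both subgroups
    if effect_marker_pos * effect_marker_neg < 0:
        return "qualitative"   # benefit in one subgroup, harm in the other
    return "quantitative"      # same direction, different magnitude


# Hypothetical predictive biomarker: benefit only in marker-positive patients.
predictive = classify_interaction(
    treatment_effect(0.60, 0.30),   # marker-positive: treated vs control
    treatment_effect(0.25, 0.30),   # marker-negative: treated vs control
)

# Hypothetical purely prognostic biomarker: marker-positive patients do better
# in both arms, but the treatment adds the same benefit in each subgroup.
prognostic_only = classify_interaction(
    treatment_effect(0.60, 0.50),
    treatment_effect(0.40, 0.30),
)

print(predictive, prognostic_only)
```

The second case shows why a control arm is needed: looking only at treated patients (0.60 versus 0.40) would suggest a predictive effect that disappears once each subgroup is compared with its own control.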

Machine Learning Approaches for Biomarker Discovery and Validation

Enhanced Biomarker Selection Through Machine Learning

Recent advances in machine learning (ML) have transformed biomarker discovery by enabling analysis of complex, high-dimensional datasets that traditional statistical methods struggle to process effectively [4] [5]. ML algorithms can identify subtle patterns and interactions in large-scale molecular data, leading to more robust biomarker signatures. Studies have demonstrated that ML models such as random forests (RF), support vector machines (SVM), and gradient boosting machines (e.g., XGBoost) can outperform traditional statistical methods in various cancer prediction tasks, including ovarian cancer detection and classification [4].

Comparative research evaluating multiple ML approaches for biomarker selection has revealed important insights into their relative strengths [5]. With specificity fixed at 0.9, ML approaches substantially outperformed standard logistic regression, reaching sensitivities of 0.240 (with 3 biomarkers) and 0.520 (with 10 biomarkers), compared with 0.000 and 0.040 for logistic regression at the same panel sizes. The study also found that causal-based methods for biomarker selection were most effective when fewer biomarkers were permitted, while univariate feature selection performed best when a larger number of biomarkers was allowed. This suggests that the optimal ML approach depends on the specific constraints and goals of the biomarker development program.
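
Reporting sensitivity at a fixed specificity means choosing the decision threshold from the control scores and then counting how many cases exceed it. The sketch below illustrates that calculation under stated assumptions: the function name and the scores are hypothetical, higher scores are taken to indicate disease, and the data are unrelated to the study in [5].

```python
def sensitivity_at_specificity(case_scores, control_scores, target_specificity=0.9):
    """Find the lowest threshold meeting the target specificity.

    A sample is called positive when its score is strictly above the threshold.
    Returns (threshold, achieved_specificity, sensitivity).
    """
    n_controls = len(control_scores)
    n_cases = len(case_scores)
    # Specificity only changes at observed control scores, so scanning them in
    # ascending order finds the lowest qualifying threshold, which also gives
    # the highest sensitivity.
    for threshold in sorted(set(control_scores)):
        specificity = sum(s <= threshold for s in control_scores) / n_controls
        if specificity >= target_specificity:
            sensitivity = sum(s > threshold for s in case_scores) / n_cases
            return threshold, specificity, sensitivity
    raise ValueError("target_specificity must be at most 1.0")


# Hypothetical classifier scores for 10 controls and 10 cases.
controls = [0.05, 0.10, 0.15, 0.20, 0.25, 0.30, 0.35, 0.40, 0.55, 0.70]
cases = [0.20, 0.30, 0.45, 0.50, 0.60, 0.65, 0.80, 0.85, 0.90, 0.95]

threshold, specificity, sensitivity = sensitivity_at_specificity(cases, controls)
print(threshold, specificity, sensitivity)
```

Fixing specificity first makes panels of different sizes directly comparable, since each is judged on the cases it detects at the same false-positive rate.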

Biomarker-Driven ML Models in Ovarian Cancer

Ovarian cancer management provides a compelling case study for the application of biomarker-driven ML models [4]. Biomarker-integrated ML approaches have significantly outperformed traditional statistical methods, achieving AUC values exceeding 0.90 in diagnosing ovarian cancer and distinguishing malignant from benign tumors. Ensemble methods such as Random Forest and XGBoost, along with deep learning approaches including recurrent neural networks (RNNs), have demonstrated exceptional performance with classification accuracy up to 99.82%, survival prediction AUC up to 0.866, and improved treatment response forecasting.

The integration of multiple biomarkers in ML models has proven particularly powerful [4]. Combining established biomarkers like CA-125 and HE4 with additional inflammatory markers such as C-reactive protein (CRP) and neutrophil-to-lymphocyte ratio (NLR) enhances both specificity and sensitivity in ovarian cancer detection. This multi-biomarker approach, enabled by ML's capacity to model complex interactions, represents a significant advancement over single-biomarker strategies. Furthermore, ML models integrating clinical, biomarker, and molecular data have shown promise in predicting chemotherapy response and survival outcomes, enabling more personalized treatment planning.

Table 3: Performance of Machine Learning Models in Biomarker Applications

| Application Domain | ML Algorithm | Performance Metrics | Biomarkers Used | Reference |
|---|---|---|---|---|
| Ovarian Cancer Diagnosis | Random Forest, XGBoost | AUC > 0.90, Accuracy up to 99.82% | CA-125, HE4, CRP, NLR | [4] |
| Gastric Cancer Biomarker Selection | Causal ML Methods | Sensitivity: 0.240 (3 biomarkers), 0.520 (10 biomarkers) | 3440 analytes down to selected biomarkers | [5] |
| Wastewater CRP Classification | Cubic SVM | Accuracy: 64.88%-65.48% | CRP absorption spectra | [6] |
| Survival Prediction | RNNs | AUC up to 0.866 | Multi-modal data integration | [4] |

Experimental Protocols and Methodologies

Biomarker Validation Framework

The validation of biomarkers for clinical use requires a rigorous, multi-stage process that progresses from analytical validation to clinical qualification [1]. Analytical validation ensures that the biomarker test accurately and reliably measures the intended analyte across the specified range of biological samples. This includes establishing analytical sensitivity, specificity, precision, reproducibility, and limits of detection and quantification. The requirements for analytical validation depend on the context of use, with more stringent requirements for biomarkers that will guide critical treatment decisions compared to those used for preliminary hypothesis generation.

Clinical qualification establishes the evidence that a biomarker is fit for its specific purpose in a defined context of use [1]. This process requires demonstrating that the biomarker reliably predicts the biological process, pathological state, or response to intervention that it claims to measure. The level of evidence required depends on the intended application, with risk biomarkers requiring different evidence than diagnostic or predictive biomarkers. For regulatory acceptance, the evidence must demonstrate that the biomarker measurement is accurate, reproducible, and clinically meaningful for its intended use.

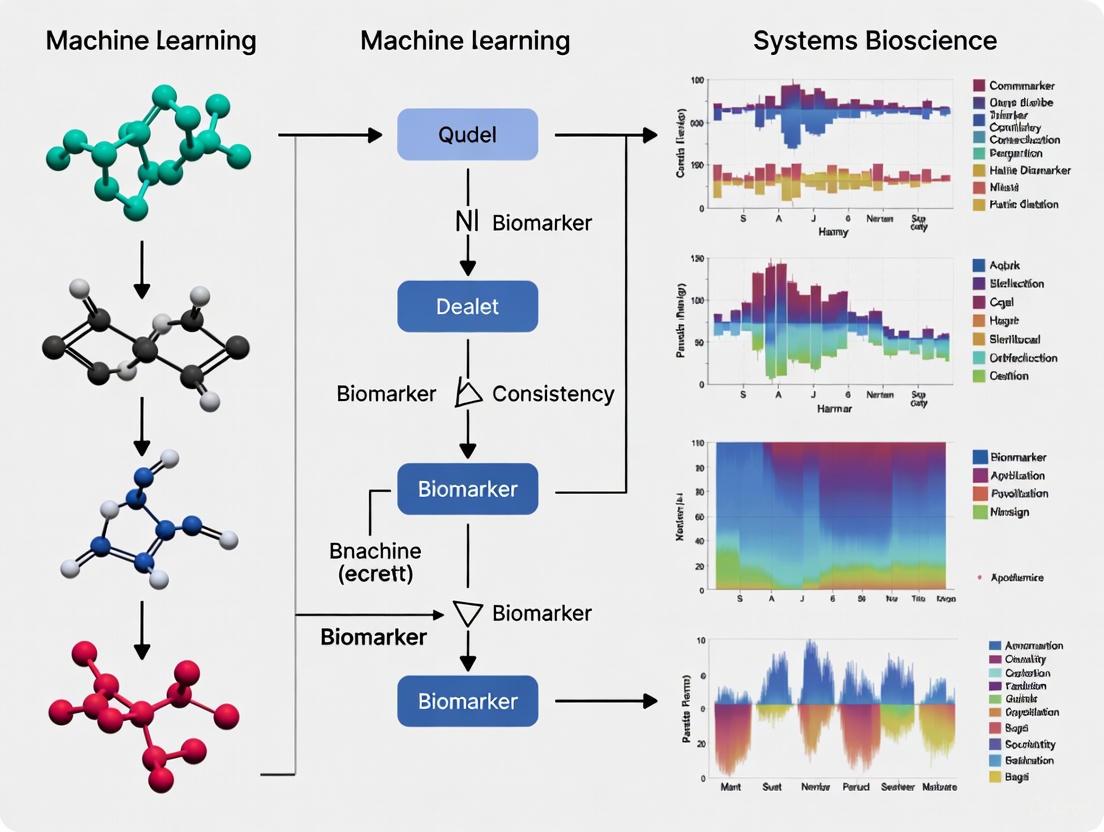

Machine Learning Workflow for Biomarker Discovery

Diagram 2: ML Workflow for Biomarker Discovery. This diagram outlines the standard machine learning workflow for biomarker discovery, from high-dimensional data collection through feature selection, model training, cross-validation, and performance assessment.

A typical ML-driven biomarker discovery pipeline involves several methodical steps [5]. The process begins with data collection from high-throughput technologies that can measure thousands of analytes simultaneously. Feature selection methods then identify the most promising biomarker candidates from these analytes, using approaches ranging from univariate selection based on statistical tests to more sophisticated causal inference methods that account for complex biological relationships. The selected biomarkers then serve as inputs to ML classifiers, which are trained and validated using rigorous cross-validation approaches such as leave-one-out cross-validation (LOOCV) or k-fold cross-validation to ensure generalizability.

For biomarker selection, causal-based methods have shown particular promise when the number of permitted biomarkers is severely restricted [5]. These methods examine the effect of a single analyte in the context of other analytes that may have co-occurring measurements, adapting causal metrics to identify biomarkers with potentially more direct biological relevance. The resulting biomarker panels are then evaluated based on their performance characteristics, including sensitivity, specificity, area under the curve (AUC), and clinical utility metrics that assess their potential impact on patient management and outcomes.
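
The pipeline described above only gives an honest performance estimate if feature selection is repeated inside each cross-validation fold, so that the held-out sample never influences which biomarkers are chosen. The following sketch shows leave-one-out cross-validation with in-fold univariate selection. It is a simplified illustration on synthetic data: the scoring rule, the nearest-centroid classifier, and all function names are stand-ins chosen for brevity, not the methods used in [5].

```python
import random
from statistics import mean, pstdev


def rank_features(rows, labels):
    """Rank features by |difference in class means| / average within-class spread."""
    scores = []
    for j in range(len(rows[0])):
        pos = [r[j] for r, y in zip(rows, labels) if y == 1]
        neg = [r[j] for r, y in zip(rows, labels) if y == 0]
        spread = (pstdev(pos) + pstdev(neg)) / 2 + 1e-12
        scores.append(abs(mean(pos) - mean(neg)) / spread)
    return sorted(range(len(scores)), key=lambda j: scores[j], reverse=True)


def nearest_centroid_predict(rows, labels, selected, sample):
    """Predict the class whose centroid (on the selected features) is closest."""
    best_label, best_dist = None, float("inf")
    for label in (0, 1):
        members = [r for r, y in zip(rows, labels) if y == label]
        centroid = [mean(r[j] for r in members) for j in selected]
        dist = sum((sample[j] - c) ** 2 for j, c in zip(selected, centroid))
        if dist < best_dist:
            best_label, best_dist = label, dist
    return best_label


def loocv_accuracy(rows, labels, n_selected):
    """Leave-one-out accuracy with feature selection redone in every fold."""
    correct = 0
    for i in range(len(rows)):
        train_rows = rows[:i] + rows[i + 1:]
        train_labels = labels[:i] + labels[i + 1:]
        # Selection sees the training fold only; the held-out sample is unseen.
        selected = rank_features(train_rows, train_labels)[:n_selected]
        prediction = nearest_centroid_predict(train_rows, train_labels, selected, rows[i])
        correct += prediction == labels[i]
    return correct / len(rows)


# Synthetic data: 40 samples, 50 analytes, only the first 3 carry signal.
rng = random.Random(0)
labels = [i % 2 for i in range(40)]
rows = [[rng.gauss(2.0 * y if j < 3 else 0.0, 1.0) for j in range(50)] for y in labels]

print(loocv_accuracy(rows, labels, n_selected=3))
```

Selecting features once on the full dataset and then cross-validating only the classifier would leak information from the held-out samples and tend to inflate the estimate, particularly when analytes far outnumber samples.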

Essential Research Reagent Solutions

Table 4: Key Research Reagents and Platforms for Biomarker Studies

| Reagent/Platform Type | Specific Examples | Primary Function in Biomarker Research | Application Context |
|---|---|---|---|
| Protein Array Technologies | Nucleic Acid Programmable Protein Array (NAPPA) | High-throughput assessment of humoral responses to thousands of proteins | Biomarker discovery in gastric cancer [5] |
| Liquid Chromatography Systems | Reversed-phase LC-MS (rLC-MS) | Untargeted profiling of thousands of small molecule biomarkers per sample | Small molecule biomarker discovery [7] |
| Absorption Spectroscopy | UV-Vis Spectrophotometry | Measuring absorption spectra for biomarker classification | Wastewater CRP monitoring [6] |
| Immunoassay Platforms | ELISA, Electrochemiluminescence Immunosensor | Quantitative detection of specific protein biomarkers | Spike S1 protein detection in wastewater [6] |

The precise classification of biomarkers into diagnostic, prognostic, and predictive categories provides an essential framework for their appropriate application in precision medicine. Each category serves distinct yet complementary roles in the continuum of patient care, from initial diagnosis through treatment selection and monitoring. The distinction between prognostic and predictive biomarkers deserves particular emphasis, as conflating these categories can lead to inappropriate clinical decisions and squandered therapeutic opportunities. Proper validation of biomarkers for their intended context of use remains paramount, requiring rigorous analytical and clinical evaluation standards.

Machine learning approaches are revolutionizing biomarker discovery and validation, enabling analysis of complex, high-dimensional data and identification of robust biomarker signatures that outperform those derived from traditional statistical methods. The integration of multi-omics data, including genomics, proteomics, and metabolomics, with clinical variables through ML models promises to further enhance the precision and personalization of medical care. As these technologies advance, the development of explainable AI and standardized validation frameworks will be critical for translating biomarker research into clinically actionable tools that improve patient outcomes across diverse disease areas.

The discovery of biomarkers—measurable indicators of biological processes, pathological states, or responses to therapeutic interventions—is fundamental to advancing precision medicine [8]. Traditional biomarker discovery methods, which often focus on single molecular features, face significant challenges including limited reproducibility, high false-positive rates, and inadequate predictive accuracy when confronting complex diseases [8]. Machine learning (ML) paradigms have emerged as powerful tools that address these limitations by analyzing large, complex multi-omics datasets to identify more reliable and clinically useful biomarkers [8] [9].

ML enhances biomarker discovery by integrating diverse and high-volume data types, including genomics, transcriptomics, proteomics, metabolomics, imaging, and clinical records [8]. This review systematically compares the three key machine learning paradigms—supervised learning, unsupervised learning, and deep learning—in the context of biomarker discovery, evaluating their methodological approaches, performance characteristics, and applications across different disease domains to guide researchers and drug development professionals in selecting appropriate computational strategies.

Comparative Analysis of Machine Learning Paradigms

The table below provides a systematic comparison of the three primary machine learning paradigms in biomarker discovery, highlighting their core functions, key algorithms, and typical applications.

Table 1: Comparison of Machine Learning Paradigms in Biomarker Discovery

| Paradigm | Core Function | Key Algorithms | Data Requirements | Typical Biomarker Applications |
|---|---|---|---|---|
| Supervised Learning | Predictive modeling using labeled datasets | Random Forest, SVM, XGBoost, Logistic Regression [8] [10] | Labeled data with known outcomes [9] | Disease classification, treatment response prediction [8] [10] |
| Unsupervised Learning | Pattern discovery in unlabeled data | k-means, Hierarchical Clustering, PCA, t-SNE [8] [11] | Unlabeled data without predefined outcomes [11] | Patient stratification, disease endotyping [8] [11] |
| Deep Learning | Complex pattern recognition in high-dimensional data | CNN, RNN, Transformers, LLMs [8] [12] | Large-scale datasets (imaging, omics, sequences) [8] | Image-based biomarkers, multi-omics integration [8] [12] |

Supervised Learning in Biomarker Discovery

Methodological Approach and Experimental Protocols

Supervised learning trains predictive models on labeled datasets to classify disease status or predict clinical outcomes [9]. The fundamental approach involves using known input-output pairs to learn a mapping function that can then be applied to new, unseen data. In biomarker discovery, feature selection is typically incorporated prior to or during classification to remove noise and identify the most informative molecular features [9].

The standard experimental protocol for supervised biomarker discovery includes: (1) data collection and preprocessing (quality control, normalization, handling missing values); (2) feature selection using filter, wrapper, or embedded methods; (3) model training with cross-validation; (4) performance evaluation on holdout test sets; and (5) external validation using independent cohorts [9] [10]. For example, in a study predicting large-artery atherosclerosis (LAA), researchers used recursive feature elimination with cross-validation to identify optimal biomarker combinations, subsequently testing six different classifiers including logistic regression, support vector machines, random forests, and gradient boosting methods [10].

Performance Metrics and Comparative Data

Supervised learning models are typically evaluated using metrics derived from confusion matrices, including recall and precision, together with the area under the receiver operating characteristic curve (AUC-ROC) [9]. The table below summarizes performance data from recent biomarker discovery studies employing supervised learning approaches.

Table 2: Performance of Supervised Learning Models in Biomarker Discovery Applications

| Disease Area | ML Algorithm | Biomarker Type | Performance | Reference |
|---|---|---|---|---|
| Large-artery Atherosclerosis | Logistic Regression | Metabolites + Clinical Factors | AUC: 0.92 (external validation) | [10] |
| Large-artery Atherosclerosis | Multiple Models | 27 Shared Features | AUC: 0.93 | [10] |
| Cancer Signaling Networks | XGBoost | Predictive Biomarkers | LOOCV Accuracy: 0.7-0.96 | [13] |
| Embryonic Development | F-score + SVM | Gene Expression | Superior to limma, edgeR, DESeq | [9] |
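
For reference, the evaluation metrics named in this section can be computed directly from labels, predictions, and scores. The sketch below is illustrative: function names and data are hypothetical, and AUC-ROC is computed through its rank interpretation (the probability that a randomly chosen case scores higher than a randomly chosen control, with ties counted as one half).

```python
def confusion_counts(y_true, y_pred):
    """Return (TP, FP, FN, TN) for binary labels coded as 0/1."""
    tp = sum(t == 1 and p == 1 for t, p in zip(y_true, y_pred))
    fp = sum(t == 0 and p == 1 for t, p in zip(y_true, y_pred))
    fn = sum(t == 1 and p == 0 for t, p in zip(y_true, y_pred))
    tn = sum(t == 0 and p == 0 for t, p in zip(y_true, y_pred))
    return tp, fp, fn, tn


def precision_recall(y_true, y_pred):
    """Precision = TP / (TP + FP); recall = TP / (TP + FN)."""
    tp, fp, fn, _ = confusion_counts(y_true, y_pred)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


def auc_roc(y_true, scores):
    """AUC as the fraction of case/control pairs ranked correctly."""
    cases = [s for t, s in zip(y_true, scores) if t == 1]
    controls = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum((c > k) + 0.5 * (c == k) for c in cases for k in controls)
    return wins / (len(cases) * len(controls))


# Hypothetical model output for 4 cases (label 1) and 4 controls (label 0).
y_true = [1, 1, 1, 1, 0, 0, 0, 0]
scores = [0.9, 0.8, 0.6, 0.4, 0.7, 0.5, 0.3, 0.2]
y_pred = [int(s >= 0.5) for s in scores]

print(precision_recall(y_true, y_pred), auc_roc(y_true, scores))
```

Precision and recall depend on the chosen threshold (0.5 here), whereas AUC-ROC summarizes ranking quality across all thresholds, so the two kinds of metric answer different questions about the same model.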

Strengths and Limitations

Supervised learning excels in predictive accuracy when sufficient high-quality labeled data is available [10]. These methods provide robust performance for classification tasks and can handle high-dimensional data effectively. However, they require substantial labeled datasets, which can be costly and time-consuming to generate [9]. There is also a risk of overfitting, particularly with complex models applied to small sample sizes, necessitating careful feature selection and regularization [8] [9].

Unsupervised Learning in Biomarker Discovery

Methodological Approach and Experimental Protocols

Unsupervised learning explores unlabeled datasets to discover inherent structures or novel subgroupings without predefined outcomes [8] [11]. These methods are particularly valuable for identifying disease endotypes—subtypes based on shared molecular mechanisms rather than clinical symptoms alone [8]. Common techniques include clustering algorithms (k-means, hierarchical clustering) and dimensionality reduction approaches (principal component analysis, t-SNE) [8] [11].

A representative experimental protocol for unsupervised biomarker discovery is illustrated in Alzheimer's disease research, where investigators applied t-SNE followed by k-means clustering to the ADNIMERGE dataset, which included patient sociodemographics, brain imaging, biomarkers, cognitive tests, and medication usage [11]. This approach identified four distinct clinical sub-populations with characteristic patterns of disease severity and progression [11]. Association rule mining was subsequently applied to identify frequently occurring pharmacologic substances within each sub-population, revealing potential protective factors and treatment patterns [11].
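
The clustering step of such a protocol can be sketched as follows. This is a minimal illustration under explicit assumptions: the two-dimensional embedding is assumed to have been computed already (for example by a t-SNE implementation), synthetic coordinates stand in for it here, and the k-means routine with farthest-point initialization is a plain-Python stand-in rather than the code used in [11]. The choice of k = 4 mirrors the four sub-populations reported in that study.

```python
import random


def squared_distance(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))


def kmeans(points, k, n_iter=50):
    """Lloyd's algorithm with deterministic farthest-point initialization."""
    centroids = [points[0]]
    while len(centroids) < k:
        # Next seed: the point farthest from its nearest existing centroid.
        centroids.append(
            max(points, key=lambda p: min(squared_distance(p, c) for c in centroids))
        )
    assignments = None
    for _ in range(n_iter):
        new_assignments = [
            min(range(k), key=lambda c: squared_distance(p, centroids[c]))
            for p in points
        ]
        if new_assignments == assignments:
            break  # converged: no point changed cluster
        assignments = new_assignments
        for c in range(k):
            members = [p for p, a in zip(points, assignments) if a == c]
            if members:
                centroids[c] = tuple(sum(dim) / len(members) for dim in zip(*members))
    return assignments, centroids


# Synthetic stand-in for a 2-D embedding: four groups of 25 patients each.
rng = random.Random(0)
centers = [(0.0, 0.0), (10.0, 0.0), (0.0, 10.0), (10.0, 10.0)]
points = [(rng.gauss(cx, 0.5), rng.gauss(cy, 0.5)) for cx, cy in centers for _ in range(25)]

assignments, centroids = kmeans(points, k=4)
print(sorted(assignments.count(c) for c in range(4)))
```

In practice the number of clusters is itself a modelling choice, and distances in a t-SNE embedding should be interpreted with care because the method does not preserve global geometry.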

Unsupervised Learning Workflow for Biomarker Discovery

Applications and Performance in Disease Subtyping

In the Alzheimer's disease study, unsupervised learning successfully identified four distinct clinical sub-populations with characteristic patterns: cluster-1 represented least severe disease (+17.3% cognitive performance, +13.3% brain volume); cluster-0 and cluster-3 represented mid-severity sub-populations with different profiles; and cluster-2 represented most severe disease (-18.4% cognitive performance, -8.4% brain volume) [11]. Association rule mining further revealed distinct medication patterns, with anti-hyperlipidemia drugs associated with one mid-severity cluster, antioxidants vitamin C and E with another, and antidepressants associated with the most severe disease cluster [11].
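
Association rules of the kind described here are usually ranked by support, confidence, and lift. The sketch below computes these three quantities for a single rule. The patient records are invented toy data for illustration only and do not reproduce the ADNIMERGE findings; the function and item names are likewise hypothetical.

```python
def rule_metrics(transactions, antecedent, consequent):
    """Return (support, confidence, lift) for the rule antecedent -> consequent."""
    antecedent, consequent = set(antecedent), set(consequent)
    n = len(transactions)
    n_antecedent = sum(antecedent <= t for t in transactions)
    n_consequent = sum(consequent <= t for t in transactions)
    n_both = sum((antecedent | consequent) <= t for t in transactions)
    support = n_both / n
    confidence = n_both / n_antecedent if n_antecedent else 0.0
    # Lift > 1 means the consequent is more common given the antecedent
    # than in the population as a whole.
    lift = confidence / (n_consequent / n) if n_consequent else 0.0
    return support, confidence, lift


# Toy records: each patient is a set holding a cluster label and medications.
patients = [
    {"cluster_2", "antidepressant"},
    {"cluster_2", "antidepressant", "statin"},
    {"cluster_2", "statin"},
    {"cluster_0", "statin"},
    {"cluster_0", "statin", "vitamin_c"},
    {"cluster_1", "vitamin_c"},
    {"cluster_1"},
    {"cluster_3", "vitamin_c", "vitamin_e"},
]

support, confidence, lift = rule_metrics(patients, {"cluster_2"}, {"antidepressant"})
print(round(support, 3), round(confidence, 3), round(lift, 3))
```

Such rules describe co-occurrence within a cluster, not causation: a medication that is frequent in a severe cluster may reflect prescribing in response to severity rather than any effect of the drug.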

Deep Learning in Biomarker Discovery

Architectures and Methodological Innovations

Deep learning architectures, particularly convolutional neural networks (CNNs) and recurrent neural networks (RNNs), have demonstrated remarkable capabilities in analyzing complex biomedical data [8]. CNNs utilize convolutional layers to identify spatial patterns, making them highly effective for imaging data such as histopathology slides, while RNNs process sequential information through recurrent connections, making them suitable for time-series data or molecular sequences [8]. More recently, transformers and large language models have found application in omics data analysis, enabling more sophisticated pattern recognition [8].

The Predictive Biomarker Modeling Framework (PBMF) is a deep learning approach that uses contrastive learning to systematically explore predictive biomarkers in clinical trial data [14]. The framework processes tens of thousands of clinicogenomic measurements per individual to identify biomarkers that specifically predict treatment response rather than general prognosis [14]. When applied retrospectively to immuno-oncology trials, it uncovered interpretable biomarkers that identified patients with a 15% improvement in survival risk relative to the original trial selection criteria [14].

Performance Comparison Across Data Modalities

Deep learning approaches have demonstrated particular strength in analyzing complex data modalities, including digital pathology, radiomics, and multi-omics integration. A systematic review of artificial intelligence for predictive biomarker discovery in immuno-oncology analyzed 90 studies and found that deep learning methods were used in 22% of studies, with standard machine learning in 72%, and both approaches in 6% [12]. The review highlighted that non-small-cell lung cancer was the most frequently studied cancer type (36%), followed by melanoma (16%), with 25% of studies taking a pan-cancer approach [12].

Table 3: Deep Learning Applications Across Data Modalities in Oncology

| Data Modality | Deep Learning Architecture | Application | Key Findings |
|---|---|---|---|
| Digital Pathology (Pathomics) | Convolutional Neural Networks (CNNs) [8] | Tumor micro-environment analysis | Extracted prognostic and predictive signals from standard histology slides [15] |
| Radiomics | 3D Convolutional Networks [12] | Treatment response prediction | Identified novel imaging biomarkers for immunotherapy response [12] |
| Genomics/Transcriptomics | Recurrent Neural Networks (RNNs) [8] | Molecular biomarker discovery | Analyzed sequential patterns in gene expression data [8] |
| Multi-omics Integration | Transformers, Autoencoders [8] [16] | Meta-biomarker discovery | Integrated multimodal data to identify composite signatures [16] [12] |

Research Reagent Solutions for Biomarker Discovery

The table below outlines essential research reagents and computational tools used in machine learning-driven biomarker discovery, as identified from the experimental methodologies in the cited literature.

Table 4: Essential Research Reagents and Tools for ML-Driven Biomarker Discovery

| Reagent/Tool | Function | Example Applications |
|---|---|---|
| AbsoluteIDQ p180 Kit [10] | Targeted metabolomics quantifying 194 endogenous metabolites | Identification of plasma metabolites for large-artery atherosclerosis prediction [10] |
| ADNIMERGE Dataset [11] | Standardized Alzheimer's disease data incorporating multi-modal patient information | Unsupervised clustering of AD sub-populations using clinical, imaging, and biomarker data [11] |
| CIViCmine Database [13] | Text-mined cancer biomarker knowledge base | Training and validation of predictive biomarkers in cancer signaling networks [13] |
| DisProt, IUPred, AlphaFold [13] | Databases and prediction tools for intrinsically disordered protein regions | Analysis of protein disorder contribution to biomarker function [13] |
| FANMOD Software [13] | Network motif detection tool | Identification of three-nodal motifs in cancer signaling networks [13] |
| Biocrates MetIDQ Software [10] | Metabolomics data processing and quality control | Processing of targeted metabolomics data for machine learning analysis [10] |

Integration of Machine Learning Paradigms and Future Directions

The future of biomarker discovery lies in the strategic integration of multiple machine learning paradigms to leverage their complementary strengths. Combining unsupervised learning for patient stratification with supervised learning for outcome prediction represents a powerful approach for handling disease heterogeneity [11]. Similarly, deep learning feature extraction combined with traditional machine learning classifiers can enhance interpretability while maintaining high accuracy [12].

Key emerging trends include the development of explainable AI (XAI) methods to address the "black box" nature of complex models, facilitating clinical adoption by providing transparent reasoning for predictions [8] [17]. Federated learning approaches enable collaborative model training across institutions without sharing sensitive patient data, addressing critical privacy concerns while expanding dataset diversity and size [16]. The systematic review of AI in immuno-oncology emphasized that while most studies show promise, prospective trial designs incorporating AI from the outset are needed to provide high-level evidence for clinical practice changes [12].

As these technologies mature, the integration of multi-modal data—combining genomics, radiomics, pathomics, and clinical records—will enable the discovery of meta-biomarkers that more comprehensively capture disease complexity and therapeutic opportunities [16] [12]. This integrated approach, leveraging the distinct strengths of supervised, unsupervised, and deep learning paradigms, promises to accelerate the development of robust, clinically actionable biomarkers that advance precision medicine across diverse disease areas.

Multi-omics strategies integrate various molecular data layers to provide a comprehensive understanding of biological systems, particularly in complex diseases like cancer [18]. This approach has revolutionized biomarker discovery and enabled novel applications in personalized oncology by capturing the intricate interactions between genes, transcripts, proteins, and metabolites [18]. The fundamental premise of multi-omics rests on overcoming the limitations of single-omics approaches, which cannot account for the complex, multi-layer regulation governing cellular functions [19]. For instance, measuring gene expression levels alone does not quantify translated proteins, nor does protein presence confirm metabolic activity, creating critical knowledge gaps that multi-omics aims to bridge [19].

The field has evolved significantly from early genomic studies to now encompass transcriptomics, proteomics, metabolomics, and epigenomics, with recent advances introducing single-cell and spatially resolved multi-omics methods [19] [18]. These technological developments have been instrumental in characterizing the complex molecular networks that drive disease initiation, progression, and therapeutic resistance [18]. For researchers focused on machine learning biomarker consistency across datasets, multi-omics provides orthogonal data perspectives that enhance biomarker robustness and predictive power across diverse patient populations.

Comparative Analysis of Omics Technologies

Table 1: Comparative analysis of major omics technologies and their applications in biomarker research

| Omics Type | Primary Analytical Methods | Key Biomarkers Detected | Clinical/Research Applications | Technical Considerations |

|---|---|---|---|---|

| Genomics | Whole Genome Sequencing (WGS), Whole Exome Sequencing (WES) [18] | Single nucleotide polymorphisms (SNPs), Copy number variations (CNVs), Mutations [18] | Tumor mutational burden (TMB) for immunotherapy response [18], MSK-IMPACT (37% tumors harbor actionable alterations) [18] | Read length varies by platform: Sanger (800-1000bp), Illumina (100-300bp), PacBio (10,000-25,000bp) [19] |

| Transcriptomics | RNA sequencing, Microarrays [18] | mRNA, long noncoding RNAs (lncRNAs), miRNAs, small noncoding RNAs (sncRNAs) [18] | Oncotype DX (21-gene), MammaPrint (70-gene) for breast cancer chemotherapy decisions [18] | High sensitivity and cost-effectiveness; dominant in multi-omics research [18] |

| Proteomics | Liquid chromatography-mass spectrometry (LC-MS), Reverse-phase protein arrays [18] | Protein abundance, Post-translational modifications (phosphorylation, acetylation, ubiquitination) [18] | CPTAC studies identify functional subtypes and druggable vulnerabilities in ovarian/breast cancers [18] | Captures functional effectors; reveals therapeutic targets missed by genomics [18] |

| Metabolomics | Mass spectrometry (MS), LC-MS, Gas chromatography-mass spectrometry [18] | Small molecules, carbohydrates, peptides, lipids, nucleosides [18] | IDH1/2-mutant gliomas (oncometabolite 2-HG); 10-metabolite plasma signature for gastric cancer diagnosis [18] | Close representation of phenotypic state; useful for diagnostic accuracy and treatment outcome prediction [18] |

| Epigenomics | Whole genome bisulfite sequencing (WGBS), ChIP-seq [18] | DNA methylation, Histone modifications (acetylation) [18] | MGMT promoter methylation in glioblastoma; multi-cancer early detection assays (Galleri test) [18] | Regulatory layer; DNMT and HDAC inhibitors already FDA-approved [18] |

Sequencing Platform Comparison

Table 2: Technical specifications of sequencing generations and platforms

| Generation | First-Generation | Second-Generation | Third-Generation | Third-Generation |

|---|---|---|---|---|

| Platform | Sanger [19] | Illumina [19] | PacBio [19] | Oxford Nanopore [19] |

| Year Introduced | 1987 [19] | 2006 [19] | 2009 [19] | 2015 [19] |

| Sequencing Technology | Chain termination method [19] | Sequencing by synthesis [19] | Circular consensus sequencing [19] | Electrical detection [19] |

| Current Read Length | 800-1000 bp [19] | 100-300 bp [19] | 10,000-25,000 bp [19] | 10,000-30,000 bp [19] |

| Throughput | Low [19] | High [19] | High [19] | Moderate [19] |

| Read Accuracy | High [19] | High [19] | Moderate [19] | Low [19] |

| Difficulty of Analysis | Low [19] | Moderate [19] | High [19] | High [19] |

| Computing Requirements | Low [19] | High [19] | High [19] | High [19] |

Experimental Protocols for Multi-Omics Integration

Data Generation and Processing Workflows

Figure 1: Comprehensive multi-omics workflow from sample collection to biomarker validation

Single-Cell Multi-Omics Protocol

The emerging single-cell multi-omics approach requires specialized experimental and computational methods [18]. A representative protocol based on recent literature involves:

Cell Preparation and Sequencing: Single-cell suspensions are prepared from tissue samples (e.g., 93 lung samples encompassing normal, COPD, IPF, and LUAD tissues) [20]. After quality control and doublet exclusion, cells are processed using platforms like 10x Genomics for single-cell RNA sequencing [20]. Harmony analysis is employed to mitigate batch effects, followed by dimensionality reduction using PCA and UMAP for clustering [20].

Cell Type Identification and Subpopulation Analysis: Unsupervised clustering identifies distinct cell clusters (e.g., 24 clusters in lung tissue analysis) [20]. Cell types are annotated based on canonical marker genes: T cells (CD3D), NK cells (NCAM1), macrophages (CD68), epithelial cells (EPCAM), proliferating cells (MKI67), and fibroblasts (COL1A1) [20]. Proliferating cells can be further subdivided into subpopulations (e.g., six distinct proliferating subpopulations with unique markers like KRT8, MMP9, FABP4) [20].

Phenotype Association Analysis: The Scissor algorithm identifies cell subgroups associated with clinical phenotypes within single-cell data [20]. This method pinpoints Scissor+ proliferating cell genes (e.g., 663 genes identified in LUAD) with prognostic implications [20]. Functional enrichment analysis (e.g., G2M Checkpoint, Epithelial-Mesenchymal Transition pathways) reveals potential roles in disease pathogenesis [20].

Integration with Spatial Multi-Omics: Spatial transcriptomics validation confirms colocalization of specific subpopulations (e.g., C1_FABP4, C2_MMP9, C3_KRT8) at spatial resolution, supporting potential synergistic roles in disease progression [20]. Cellular communication analysis using tools like CellChat identifies key signaling pathways (e.g., MIF-CD74+CD44) mediating interactions among subpopulations [20].
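The marker-based annotation step in this protocol can be illustrated with a short sketch. This is not the pipeline used in [20]: the marker-to-cell-type map is taken from the text above, while the function name `annotate_cluster`, the input format (mean normalized expression per gene within a cluster), the expression threshold, and the toy values are all assumptions made for illustration.

```python
# Canonical markers as listed in the protocol; everything else is illustrative.
CANONICAL_MARKERS = {
    "CD3D": "T cell",
    "NCAM1": "NK cell",
    "CD68": "macrophage",
    "EPCAM": "epithelial cell",
    "MKI67": "proliferating cell",
    "COL1A1": "fibroblast",
}


def annotate_cluster(mean_expression, min_expression=1.0):
    """Label a cluster by its most highly expressed canonical marker.

    mean_expression: dict mapping gene symbol -> mean normalized expression
    in the cluster (hypothetical input format).
    Returns "unassigned" when no marker reaches min_expression.
    """
    best_gene, best_value = None, min_expression
    for gene in CANONICAL_MARKERS:
        value = mean_expression.get(gene, 0.0)
        if value >= best_value:
            best_gene, best_value = gene, value
    return CANONICAL_MARKERS[best_gene] if best_gene else "unassigned"


# Toy cluster summaries (made-up numbers, not data from the study).
clusters = {
    0: {"CD3D": 3.2, "CD68": 0.1},
    1: {"EPCAM": 2.5, "MKI67": 0.4},
    2: {"COL1A1": 0.3},
}
labels = {cluster_id: annotate_cluster(expr) for cluster_id, expr in clusters.items()}
```

In practice, annotation usually compares marker expression between clusters rather than against a fixed threshold, so this sketch only conveys the lookup logic.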

Computational Integration Strategies

Machine Learning Approaches for Multi-Omics Data

Figure 2: Computational workflow for multi-omics data integration and machine learning analysis

Advanced computational strategies are essential for meaningful integration of multi-omics datasets [18]. The Scissor+ proliferating cell risk score (SPRS) development exemplifies a sophisticated machine learning approach, employing 111 algorithm combinations to construct a robust predictive model [20]. This methodology demonstrates superior performance in predicting prognosis and clinical outcomes compared to 30 previously published models [20].

Horizontal integration addresses intra-omics harmonization, combining datasets of the same type from different sources, while vertical integration combines different omics types from the same samples [18]. Successful implementation requires specialized tools such as DriverDBv4, which encompasses data from over 70 cancer cohorts and employs eight multi-omics integration algorithms to elucidate driver characteristics [18]. Disease-specific databases like GliomaDB (integrating 21,086 glioblastoma samples) and HCCDBv2 for liver cancer provide specialized resources for biomarker discovery [18].
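The distinction between horizontal and vertical integration is easiest to see as a difference in how tables are joined. The sketch below is a minimal illustration under assumed data structures (nested dictionaries keyed by sample ID and feature name); the function names are hypothetical, and real workflows would add batch-effect correction and handle duplicate sample IDs, which are omitted here.

```python
def horizontal_integration(cohorts):
    """Stack samples from several cohorts of the SAME omics type.

    cohorts: list of dicts, each mapping sample_id -> {feature: value}.
    Only features measured in every sample of every cohort are kept.
    """
    shared = None
    for cohort in cohorts:
        for profile in cohort.values():
            keys = set(profile)
            shared = keys if shared is None else shared & keys
    shared = sorted(shared or [])
    merged = {}
    for cohort in cohorts:
        for sample_id, profile in cohort.items():
            merged[sample_id] = {feature: profile[feature] for feature in shared}
    return merged


def vertical_integration(layers):
    """Join DIFFERENT omics layers measured on the same samples.

    layers: dict mapping layer name -> {sample_id: {feature: value}}.
    Only samples present in every layer are kept; feature names are
    prefixed with the layer name to avoid collisions.
    """
    sample_sets = [set(samples) for samples in layers.values()]
    shared_samples = sorted(set.intersection(*sample_sets)) if sample_sets else []
    merged = {}
    for sample_id in shared_samples:
        row = {}
        for layer_name, samples in layers.items():
            for feature, value in samples[sample_id].items():
                row[f"{layer_name}:{feature}"] = value
        merged[sample_id] = row
    return merged


# Toy inputs (made-up values).
cohort_a = {"A1": {"TP53": 5.1, "EGFR": 2.0}}
cohort_b = {"B1": {"TP53": 4.7, "KRAS": 1.2}}
stacked = horizontal_integration([cohort_a, cohort_b])

layers = {
    "rna": {"S1": {"TP53": 5.1}, "S2": {"TP53": 4.0}},
    "protein": {"S1": {"TP53": 0.8}},
}
joined = vertical_integration(layers)
```

Horizontal integration grows the number of samples over a shared feature set, whereas vertical integration grows the number of features over a shared sample set.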

Case Study: Multi-Omics Biomarker Discovery in Lung Adenocarcinoma

A comprehensive multi-omics study on lung adenocarcinoma (LUAD) exemplifies the power of integrated approaches for biomarker discovery [20]. This research utilized single-cell RNA sequencing data from 93 samples (368,904 cells after quality control) encompassing normal lung tissue, COPD, IPF, and LUAD [20]. The analysis revealed significant enrichment of proliferating cells in IPF and LUAD tissues compared to COPD and normal tissues [20].

The study identified 22 Scissor+ proliferating cell genes with significant prognostic implications for LUAD patients [20]. Through integrative machine learning (111 algorithm combinations), researchers developed the Scissor+ proliferating cell risk score (SPRS), which demonstrated superior performance in predicting prognosis compared to 30 existing models [20]. The SPRS model effectively stratified patients into high- and low-risk groups with distinct biological functions, immune cell infiltration patterns, and therapeutic responses [20].

High SPRS patients showed resistance to immunotherapy but increased sensitivity to chemotherapeutic and targeted therapeutic agents [20]. Multifactorial analysis confirmed SPRS as an independent prognostic factor affecting LUAD patient survival [20]. This case study illustrates how multi-omics approaches can generate clinically actionable biomarkers that inform personalized therapeutic strategies.
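To make the idea of risk-score stratification concrete, the following sketch computes a linear gene-expression score and splits patients at the cohort median. The source does not state how the SPRS is calculated or which cut-off was used; the weighted-sum form, the median split, the gene names, and the coefficients below are assumptions chosen because they are a common convention for prognostic signatures.

```python
from statistics import median


def risk_score(expression, coefficients):
    """Weighted sum of expression values over the signature genes.

    expression: dict gene -> expression value for one patient.
    coefficients: dict gene -> model weight (hypothetical values).
    """
    return sum(weight * expression.get(gene, 0.0) for gene, weight in coefficients.items())


def stratify_by_median(scores):
    """Assign 'high' to scores above the cohort median, 'low' otherwise."""
    cutoff = median(scores.values())
    return {pid: ("high" if score > cutoff else "low") for pid, score in scores.items()}


# Placeholder signature and patients; none of these values come from [20].
coefficients = {"GENE_A": 0.5, "GENE_B": -0.25}
patients = {
    "P1": {"GENE_A": 2.0, "GENE_B": 0.0},
    "P2": {"GENE_A": 0.0, "GENE_B": 4.0},
    "P3": {"GENE_A": 4.0, "GENE_B": 0.0},
    "P4": {"GENE_A": 1.0, "GENE_B": 2.0},
}
scores = {pid: risk_score(expr, coefficients) for pid, expr in patients.items()}
groups = stratify_by_median(scores)
```

A cut-off derived from the training cohort should be frozen before it is applied to validation cohorts; re-estimating the median in each dataset would hide calibration differences between them.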

Research Reagent Solutions for Multi-Omics Studies

Table 3: Essential research reagents and computational tools for multi-omics experiments

| Category | Specific Tools/Reagents | Primary Function | Application Context |

|---|---|---|---|

| Sequencing Kits | Illumina Sequencing Kits, PacBio SMRTbell Kits, Oxford Nanopore Ligation Kits | Library preparation for genomic and transcriptomic analysis | Whole genome sequencing, whole exome sequencing, RNA sequencing [19] [18] |

| Mass Spectrometry Reagents | LC-MS grade solvents, Trypsin/Lys-C digestion enzymes, TMT/Isobaric tags | Protein and metabolite identification and quantification | Proteomic profiling, post-translational modification analysis, metabolomic studies [18] |

| Single-Cell Platforms | 10x Genomics Chromium, BD Rhapsody, Parse Biosciences reagents | Single-cell partitioning and barcoding | Single-cell RNA sequencing, cellular heterogeneity studies, tumor microenvironment analysis [18] [20] |

| Spatial Omics Reagents | Visium Spatial Gene Expression Slide & Reagent Kit, CODEX antibodies | Spatially resolved molecular profiling | Spatial transcriptomics, spatial proteomics, tumor-immune interactions [18] [20] |

| Computational Tools | FastQC, Trimmomatic, Cell Ranger, Seurat, Scanpy | Quality control, data processing, and initial analysis | Raw data processing, single-cell analysis, quality assessment [19] [20] |

| Integration Algorithms | Scissor, MOFA+, iCluster, mixOmics, MOGONET | Multi-omics data integration | Horizontal and vertical integration, biomarker discovery, subtype identification [18] [21] [20] |

| Machine Learning Frameworks | Random Forest, SVM, Neural Networks, Autoencoders | Predictive modeling and pattern recognition | Biomarker validation, risk score development, treatment response prediction [18] [20] |

Biomarker Validation and Clinical Translation

The transition from multi-omics biomarker discovery to clinical application requires rigorous validation frameworks [18]. Successful examples include the tumor mutational burden (TMB), validated in the KEYNOTE-158 trial and approved by the FDA as a predictive biomarker for pembrolizumab treatment across solid tumors [18]. Similarly, transcriptomic biomarkers like Oncotype DX (21-gene) and MammaPrint (70-gene) have demonstrated utility in tailoring adjuvant chemotherapy decisions in breast cancer patients through prospective clinical trials (TAILORx and MINDACT trials) [18].

For machine learning-derived biomarkers like the SPRS in LUAD, validation encompasses multiple dimensions: prognostic accuracy, biological interpretability, and therapeutic predictive power [20]. The integration of single-cell and spatial multi-omics data provides mechanistic insights that support biomarker validity, such as spatial colocalization of relevant cell subpopulations and identification of key signaling pathways (e.g., MIF-CD74+CD44) driving the observed clinical associations [20].

Critical challenges in biomarker validation include data heterogeneity, reproducibility across platforms, and generalizability across diverse patient populations [18]. Multi-omics approaches inherently address some challenges through data triangulation, where consistent patterns across multiple molecular layers strengthen biomarker credibility. Furthermore, public data resources like The Cancer Genome Atlas (TCGA) Pan-Cancer Atlas and the Clinical Proteomic Tumor Analysis Consortium (CPTAC) provide essential validation cohorts for assessing biomarker consistency across datasets [18].

The Critical Importance of Biomarker Consistency for Clinical Reliability and Generalizability

In the pursuit of precision medicine, biomarkers have become indispensable tools for disease detection, diagnosis, prognosis, and predicting treatment response [22]. However, the transition of biomarker signatures from research settings to clinically applicable tools faces a significant hurdle: biomarker consistency. This concept refers to the robustness and reliability of a biomarker or biomarker panel to perform accurately across different datasets, patient populations, and measurement conditions. The emergence of machine learning (ML) techniques for analyzing high-dimensional biological data has further amplified both the promise and challenges of biomarker development [4] [23].

Biomarker consistency is the cornerstone of clinical reliability and generalizability: without it, even the most biologically plausible biomarkers fail in real-world applications. Inconsistencies can arise from multiple sources, including biological variability across populations, differences in sample collection and processing, analytical measurement variability, and statistical artifacts from overfitting in model development [23] [24]. As research increasingly focuses on complex multi-biomarker panels rather than single biomarkers, and as ML models become more sophisticated, ensuring consistency becomes both more critical and more challenging. This section examines the factors affecting biomarker consistency, evaluates methodological approaches to enhance it, and provides practical guidance for researchers developing ML-based biomarker models.

Methodological Approaches for Ensuring Biomarker Consistency

Experimental Design and Statistical Considerations

Robust biomarker discovery begins with rigorous experimental design that anticipates and controls for sources of variability. Key considerations include pre-specifying analytical plans before data collection to avoid data-driven artifacts, implementing randomization and blinding procedures during specimen analysis to prevent bias, and ensuring adequate sample sizes with appropriate power calculations [22]. Statistical methods must control for multiple comparisons, particularly when dealing with high-dimensional omics data, with false discovery rate (FDR) correction being particularly useful in genomic studies [22].
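As an illustration of FDR control, the sketch below implements the Benjamini-Hochberg step-up adjustment in plain Python. The source names FDR correction without specifying a procedure, so the choice of Benjamini-Hochberg, the function name, and the example p-values are assumptions.

```python
def benjamini_hochberg(p_values):
    """Return Benjamini-Hochberg adjusted p-values in the input order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down so adjusted values stay monotone.
    for rank in range(m, 0, -1):
        index = order[rank - 1]
        running_min = min(running_min, p_values[index] * m / rank)
        adjusted[index] = running_min
    return adjusted


# Made-up p-values for four candidate biomarkers.
p_values = [0.01, 0.04, 0.03, 0.20]
q_values = benjamini_hochberg(p_values)
significant = [q <= 0.05 for q in q_values]
```

With these inputs only the first candidate remains significant at an FDR of 0.05, even though three of the four raw p-values fall below 0.05.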

The intended use of a biomarker must be defined early in development, as this determines the validation pathway. Prognostic biomarkers (providing information about overall disease outcomes) can be identified through properly conducted retrospective studies, while predictive biomarkers (informing treatment response) require data from randomized clinical trials and testing for treatment-by-biomarker interaction effects [22]. Distinct analytical metrics apply to different biomarker applications, with sensitivity, specificity, positive and negative predictive values, and ROC-AUC being fundamental for diagnostic biomarkers [22] [25].
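The diagnostic metrics named above can be computed directly from labels and scores. The following is a minimal sketch using only the standard library; the function names, the 0.5 decision threshold, and the toy data are assumptions. The AUC is computed through its rank interpretation: the probability that a randomly chosen positive case scores higher than a randomly chosen negative case, with ties counted as one half.

```python
def _ratio(numerator, denominator):
    """Safe division that returns NaN when the denominator is zero."""
    return numerator / denominator if denominator else float("nan")


def confusion_metrics(y_true, y_pred):
    """Sensitivity, specificity, PPV and NPV for binary labels (1 = disease)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    return {
        "sensitivity": _ratio(tp, tp + fn),
        "specificity": _ratio(tn, tn + fp),
        "ppv": _ratio(tp, tp + fp),
        "npv": _ratio(tn, tn + fn),
    }


def roc_auc(y_true, scores):
    """AUC as P(score of a positive > score of a negative), ties count 0.5."""
    positives = [s for t, s in zip(y_true, scores) if t == 1]
    negatives = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in positives for n in negatives)
    return _ratio(wins, len(positives) * len(negatives))


# Toy labels and biomarker scores (made-up values).
y_true = [1, 1, 1, 0, 0, 0, 0, 1]
scores = [0.9, 0.8, 0.4, 0.3, 0.6, 0.2, 0.1, 0.7]
y_pred = [1 if s >= 0.5 else 0 for s in scores]
metrics = confusion_metrics(y_true, y_pred)
auc = roc_auc(y_true, scores)
```

Sensitivity and specificity depend on the chosen threshold, whereas the AUC summarizes ranking quality across all thresholds; PPV and NPV additionally depend on disease prevalence in the evaluated cohort.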

Biomarker Selection Techniques and Their Impact on Consistency

Different feature selection methods can yield substantially different biomarker sets, raising important questions about biological significance and consistency [23]. Research has systematically compared biomarker selection techniques along two critical dimensions: similarity of selected gene sets and implications for predictive performance and stability.

Table 1: Comparison of Biomarker Selection Techniques

| Selection Method | Approach | Strengths | Consistency Performance |

|---|---|---|---|

| Univariate Methods | Evaluates each feature independently | Computational efficiency, simplicity | Moderate stability, depends on data structure |

| Multivariate Methods | Considers feature interdependencies | Captures biological complexity | Variable stability, can be context-dependent |

| Causal-Based Selection | Adapts causal metrics for biomarker discovery | Reduces spurious correlations | Highest performance with limited biomarkers [5] |

| Ensemble Methods | Combines multiple selection approaches | Mitigates individual method limitations | Improved robustness across datasets |

Univariate approaches typically evaluate the relevance of each biomarker independently using statistical tests such as chi-square, while multivariate approaches account for interdependencies between biomarkers [23]. Recent research introduces causal-based feature selection adapted specifically for biomarker discovery, which examines the effect of a single analyte considering other analytes that may have co-occurring measurements [5]. This approach has demonstrated particular strength when the number of permitted biomarkers is severely restricted, outperforming traditional methods in scenarios where only 3-10 biomarkers can be used [5].

Data Normalization Strategies for Cross-Dataset Consistency

Data-driven normalization methods are essential for mitigating technical variability and biological variance across cohorts, which is crucial for long-term validation of developed models [24]. Different normalization approaches can significantly impact both the identified biomarker signatures and subsequent model performance.

Table 2: Comparison of Data Normalization Methods in Biomarker Research

| Normalization Method | Principle | Best Application Context | Performance Evidence |

|---|---|---|---|

| Probabilistic Quotient Normalization (PQN) | Uses median relative signal intensity ratio | Metabolomics data with consistent reference | High diagnostic quality in metabolomics [24] |

| Median Ratio Normalization (MRN) | Employs geometric averages of sample concentrations | Large-scale cross-study investigations | High diagnostic quality in metabolomics [24] |

| Variance Stabilizing Normalization (VSN) | Determines optimal glog transformation parameters | Large-scale and cross-study investigations | Superior sensitivity (86%) and specificity (77%) [24] |

| Quantile Normalization | Rearranges distributions to match across samples | Omics data with similar distribution shapes | Common in metabolomics but outperformed by VSN [24] |

Comparative studies have demonstrated that VSN uniquely highlighted pathways related to the oxidation of brain fatty acids and purine metabolism in metabolomics research, suggesting it may capture biological insights missed by other methods [24]. The choice of normalization method should be guided by both the data structure and the biological context, with empirical testing of multiple approaches recommended for optimal results.
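To show what a data-driven normalization does in practice, the sketch below implements the core of probabilistic quotient normalization: each sample is divided by the median of its feature-wise ratios to a reference spectrum. It is a simplified illustration rather than the implementation evaluated in [24]; the reference is assumed to be the feature-wise median across samples, the total-intensity pre-normalization that often precedes PQN is omitted, and the function name and toy intensities are hypothetical.

```python
from statistics import median


def pqn_normalize(samples):
    """Probabilistic quotient normalization (core step only).

    samples: list of equal-length lists of positive intensities,
    one inner list per sample.
    """
    n_features = len(samples[0])
    # Reference spectrum: feature-wise median across all samples.
    reference = [median(sample[j] for sample in samples) for j in range(n_features)]
    normalized = []
    for sample in samples:
        quotients = [sample[j] / reference[j] for j in range(n_features) if reference[j] > 0]
        dilution = median(quotients)  # most probable dilution factor
        normalized.append([value / dilution for value in sample])
    return normalized


# Toy data: sample 2 is sample 1 at twice the concentration;
# sample 3 differs from sample 1 in one feature only.
raw = [
    [10, 20, 30, 40],
    [20, 40, 60, 80],
    [10, 20, 30, 80],
]
normalized = pqn_normalize(raw)
```

In this toy case the twofold dilution difference between the first two samples is removed, while the genuine difference in the last feature of the third sample is preserved.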

Comparative Performance of ML Approaches Across Data Modalities

Context-Dependent Performance of Biomarker-Driven ML Models

Machine learning models show highly variable performance depending on data modalities and clinical contexts, highlighting the importance of context-aware model selection [26]. Research systematically evaluating ML models across diverse clinical data scenarios reveals that prediction accuracy is highly dependent on data type and clinical context.

Table 3: ML Model Performance Across Different Data Modalities and Clinical Contexts

| Data Modality | Clinical Context | Best Performing Model | AUC Performance | Key Findings |

|---|---|---|---|---|

| Laboratory Biomarkers | Diabetes and cardiovascular disease | Multiple traditional models | 0.62 | Modest accuracy from lab data alone [26] |

| Patient History & Lifestyle | Diabetes risk stratification | Ensemble methods | 0.84 | Strong performance with comprehensive history [26] |

| Symptoms & ECG Data | Heart disease detection | Neural networks and ensemble methods | 0.87 | Highest accuracy with structured clinical data [26] |

| Syndromic Surveillance | Communicable disease prediction | Multiple models tested | 0.49 | Poor performance due to symptom overlap [26] |

| Ovarian Cancer Biomarkers | Ovarian cancer detection | Random Forest, XGBoost | >0.90 | Biomarker-driven ML outperforms traditional methods [4] |

The integration of established biomarkers like CA-125 and HE4 with additional parameters such as C-Reactive Protein (CRP) and neutrophil-to-lymphocyte ratio (NLR) through ensemble ML methods like Random Forest and XGBoost has demonstrated exceptional performance in ovarian cancer detection, achieving AUC values exceeding 0.90 and classification accuracy up to 99.82% in some studies [4]. These findings underscore the potential of combining traditional biomarkers with ML approaches for complex diagnostic challenges.

Experimental Protocols for Assessing Biomarker Consistency

Robust assessment of biomarker consistency requires systematic experimental protocols. The following methodology has been demonstrated effective for evaluating the stability and generalizability of biomarker signatures:

Dataset Formation and Partitioning: Create multiple reduced datasets (e.g., 80% of samples) through random sampling from the original dataset while preserving class distributions [23].

Biomarker Selection on Subsets: Apply biomarker selection algorithms to each reduced dataset to identify candidate biomarkers [23].

Consistency Measurement: Calculate overlap between biomarker sets selected from different subsets using similarity indices such as the Kuncheva index, which accounts for chance agreement [23].

Performance Validation: Assess predictive performance of selected biomarker panels using cross-validation or external validation sets, evaluating metrics such as AUC, sensitivity, and specificity [23].

Functional Consistency Analysis: Evaluate whether different biomarker sets capture similar biological pathways using gene ontology or pathway enrichment analysis, even when specific biomarkers differ [23].

This protocol allows researchers to simultaneously evaluate both the stability of biomarker selection and the predictive performance of selected panels, providing a comprehensive assessment of biomarker consistency [23].
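The subsampling and consistency-measurement steps of this protocol can be sketched as follows. The Kuncheva index for two feature subsets of equal size k drawn from n features with r features in common is (r·n − k²) / (k·(n − k)); it equals 1 for identical subsets and is close to 0 when the overlap is what chance alone would produce. The function names, the 80% fraction, and the toy inputs are assumptions, and the biomarker selection step itself is left out because it depends on the chosen algorithm.

```python
import random
from itertools import combinations


def stratified_subsample(labels, fraction, rng):
    """Return sorted sample indices, drawing `fraction` of each class."""
    by_class = {}
    for index, label in enumerate(labels):
        by_class.setdefault(label, []).append(index)
    chosen = []
    for indices in by_class.values():
        k = max(1, round(fraction * len(indices)))
        chosen.extend(rng.sample(indices, k))
    return sorted(chosen)


def kuncheva_index(set_a, set_b, n_features):
    """Kuncheva consistency index for two equal-sized feature subsets."""
    k = len(set_a)
    if k != len(set_b):
        raise ValueError("subsets must have equal size")
    if k == 0 or k == n_features:
        raise ValueError("index is undefined for k = 0 or k = n_features")
    r = len(set(set_a) & set(set_b))
    return (r * n_features - k * k) / (k * (n_features - k))


def selection_stability(selected_sets, n_features):
    """Average pairwise Kuncheva index over all selected subsets."""
    pairs = list(combinations(selected_sets, 2))
    return sum(kuncheva_index(a, b, n_features) for a, b in pairs) / len(pairs)


# Toy example: 60 samples with a 50/10 class split, 100 candidate features.
rng = random.Random(0)
labels = [0] * 50 + [1] * 10
subset = stratified_subsample(labels, 0.8, rng)

set_a = set(range(0, 10))   # features selected on subsample 1 (made up)
set_b = set(range(5, 15))   # features selected on subsample 2 (made up)
overlap_score = kuncheva_index(set_a, set_b, n_features=100)
```

Here half of the ten selected features are shared, which gives an index of about 0.44 after correcting for the overlap expected by chance.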

Signaling Pathways and Experimental Workflows

Figure: Experimental workflow for assessing biomarker consistency across datasets and technical variations

The Scientist's Toolkit: Essential Research Reagent Solutions

Implementing robust biomarker consistency research requires specific methodological tools and analytical solutions. The following table details key resources and their applications in biomarker development workflows:

Table 4: Essential Research Reagent Solutions for Biomarker Consistency Studies

| Reagent/Resource | Function | Application Context | Implementation Notes |

|---|---|---|---|

| Gene Ontology Database | Functional similarity analysis | Genomic/proteomic biomarker studies | Enables comparison of biological functions captured by different biomarker sets [23] |

| VSN Normalization Package | Variance stabilizing normalization | Metabolomics and cross-study investigations | Superior for large-scale studies; available through the vsn2 function of the Bioconductor vsn package [24] |

| Probabilistic Quotient Normalization | Normalization based on reference spectra | Metabolomics data | Implemented using Rcpm package [24] |

| Causal Metric Algorithms | Causal-based biomarker selection | High-dimensional biomarker discovery | Adapted from Kleinberg causal measures; particularly effective with limited biomarkers [5] |

| Gradient Boosting Machines | Predictive modeling with biomarker panels | Multi-biomarker panel validation | XGBoost implementation shows strong performance across contexts [4] [26] |

| Electronic Health Record Data | Real-world validation of biomarker generalizability | Assessment of clinical utility | Enables evaluation of prognostic heterogeneity across patient phenotypes [27] |

Biomarker consistency is not merely a statistical concern but a fundamental requirement for clinical applicability. The evidence presented demonstrates that methodological choices, from experimental design and normalization techniques to feature selection algorithms and model validation, profoundly impact the consistency and subsequent generalizability of biomarker signatures. In the studies reviewed, causal-based feature selection outperformed traditional approaches when the panel was restricted to a small number of biomarkers [5], while variance stabilizing normalization showed the strongest performance in cross-cohort metabolomics applications [24].

The path forward requires a disciplined, context-aware approach to biomarker development that prioritizes consistency from the earliest discovery phases. Researchers should embrace multi-center validation studies, assess functional consistency beyond simple biomarker overlap, and select ML approaches appropriate to their specific data modalities and clinical contexts. As biomarker research increasingly integrates multi-omics data and artificial intelligence, maintaining focus on the fundamental principles of consistency and generalizability will ensure that promising discoveries translate into clinically valuable tools that benefit diverse patient populations.

Methodologies for Robust Biomarker Discovery: Techniques and Real-World Applications

The identification of robust biomarkers is crucial for advancing precision medicine, enabling early disease detection, accurate prognosis, and personalized treatment strategies. Machine learning (ML) algorithms, particularly Random Forest (RF), XGBoost, and Neural Networks (NNs), have transformed the biomarker discovery landscape by analyzing complex, high-dimensional biological data. This section compares these three algorithmic approaches, detailing their performance characteristics, optimal application contexts, and implementation protocols. With biomarker consistency across datasets as the guiding concern, we evaluate the algorithms based on empirical evidence from recent studies, highlighting their capacities to manage data heterogeneity, ensure model generalizability, and support clinical translation.

Biomarkers, defined as objectively measurable indicators of biological processes, are fundamental to precision medicine, supporting disease diagnosis, prognosis, and therapeutic decision-making [28]. Traditional biomarker discovery methods, often focused on single molecular features, face challenges with reproducibility, predictive accuracy, and integrating multifaceted biological data [8]. Machine learning approaches help address these limitations by identifying complex, multivariate biomarker signatures from large-scale omics datasets.

Among diverse ML algorithms, Random Forest, an ensemble of decision trees, is prized for its robustness and interpretability. XGBoost (eXtreme Gradient Boosting), another ensemble method, sequentially builds trees to correct previous errors, often achieving state-of-the-art predictive performance. In contrast, Neural Networks, especially deep learning architectures, excel at identifying intricate, non-linear patterns in highly complex data types like genomic sequences and medical images [29] [8]. The selection between these algorithms significantly impacts the reliability, consistency, and clinical applicability of discovered biomarkers, a critical consideration within research focused on biomarker consistency across diverse genomic and clinical datasets.
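The phrase "sequentially builds trees to correct previous errors" can be made concrete with a toy gradient-boosting loop. The sketch below fits one-split decision stumps to the residuals of the current prediction, round after round, for a squared-error objective on a single feature. It is a didactic simplification and not XGBoost itself, which additionally uses second-order gradient information, regularization, and multi-level trees; all names, hyperparameters, and data are assumptions.

```python
def fit_stump(x, residuals):
    """Best single-threshold split on one feature, minimizing squared error.

    Requires at least two distinct values in x.
    Returns (threshold, left_mean, right_mean).
    """
    best = None
    for threshold in sorted(set(x))[:-1]:
        left = [r for xi, r in zip(x, residuals) if xi <= threshold]
        right = [r for xi, r in zip(x, residuals) if xi > threshold]
        left_mean = sum(left) / len(left)
        right_mean = sum(right) / len(right)
        error = sum((r - left_mean) ** 2 for r in left)
        error += sum((r - right_mean) ** 2 for r in right)
        if best is None or error < best[0]:
            best = (error, threshold, left_mean, right_mean)
    return best[1:]


def boost(x, y, n_rounds=20, learning_rate=0.5):
    """Fit stumps sequentially, each one to the residuals left by the others."""
    base = sum(y) / len(y)
    predictions = [base] * len(y)
    stumps = []
    for _ in range(n_rounds):
        residuals = [yi - pi for yi, pi in zip(y, predictions)]
        threshold, left_mean, right_mean = fit_stump(x, residuals)
        stumps.append((threshold, left_mean, right_mean))
        predictions = [
            p + learning_rate * (left_mean if xi <= threshold else right_mean)
            for xi, p in zip(x, predictions)
        ]
    return base, stumps, predictions


def mean_squared_error(y, predictions):
    return sum((yi - pi) ** 2 for yi, pi in zip(y, predictions)) / len(y)


# Toy data: a biomarker level (x) and a continuous outcome (y), made up.
x = [1, 2, 3, 4, 5, 6]
y = [1.0, 1.2, 0.9, 3.1, 2.9, 3.0]
base, stumps, fitted = boost(x, y)
mse_baseline = mean_squared_error(y, [base] * len(y))
mse_boosted = mean_squared_error(y, fitted)
```

A Random Forest, by contrast, would fit its trees independently on bootstrap samples and average them, which is why the two ensembles differ in their sensitivity to noise and to hyperparameter settings.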

Performance Comparison and Experimental Data

Direct comparative studies and application-specific implementations demonstrate the distinct performance profiles of RF, XGBoost, and Neural Networks.

Table 1: Comparative Performance Metrics Across Cancer Types

| Cancer Type | Algorithm | Reported Accuracy | Reported AUC | Key Biomarkers Identified |

|---|---|---|---|---|

| Ovarian Cancer [4] | Random Forest / XGBoost | Up to 99.82% | Exceeding 0.90 | CA-125, HE4, CRP, NLR |

| Ovarian Cancer [4] | RNN (Deep Learning) | N/A | Up to 0.866 | CA-125, HE4, CRP, NLR |

| Gastric, Lung, Breast [30] [31] | XGBoost (XGB-BIF) | > 90% | Up to 0.93 | CBX2, CLDN1 (Gastric); CAVIN2, ADAMTS5 (Breast); CLDN18, MYBL2 (Lung) |

| Metaplastic Breast [29] | Deep Reinforcement Learning | 96.20% | N/A | MALAT1, HOTAIR, NEAT1, GAS5 (ncRNAs) |

| Colorectal Cancer [32] | Random Forest | 0.93 F1-Score | N/A | Genomic variants from exome data |

| Colorectal Cancer [32] | XGBoost | 0.92 F1-Score | N/A | Genomic variants from exome data |

Table 2: Algorithm Strengths and Clinical Applicability

| Feature | Random Forest (RF) | XGBoost | Neural Networks (NNs) |

|---|---|---|---|

| Best Use Case | Initial biomarker screening, robust tabular data analysis | Maximizing predictive accuracy on structured data | Complex data: images, sequences, multi-omics integration |

| Interpretability | High (Feature Importance) | High (Gain, SHAP, LIME) [30] | Low ("Black box"); requires SHAP/LIME [8] |

| Data Efficiency | Performs well on small-to-medium datasets | Requires sufficient data for boosting | Requires very large datasets to avoid overfitting |

| Handling Non-linearity | Good | Excellent | Superior, captures highly complex interactions |

| Implementation Speed | Fast training, parallelizable | Fast, efficient resource use [30] | Slower training, high computational cost |

| Clinical Validation | High; widely adopted in biomarker studies [4] | High; increasing use in genomics [30] [31] | Emerging; requires rigorous external validation [29] |

Detailed Experimental Protocols

To ensure reproducibility and rigorous benchmarking, the following sections detail the experimental methodologies commonly employed in biomarker discovery studies utilizing these algorithms.

The XGB-BIF Framework for Genomic Data

The XGBoost-Driven Biomarker Identification Framework (XGB-BIF) has been successfully applied to identify biomarkers for gastric, lung, and breast cancers using human genomic data [30] [31]. The protocol involves a multi-stage process:

- Data Preprocessing and Feature Ranking: The genomic dataset (e.g., from RNA sequencing) is first normalized. XGBoost is then trained on the full dataset, after which features (genes) are ranked based on the "Gain" metric, which measures their relative contribution to the model's predictive accuracy.

- Classification with Meta-Learners: The top-ranked features are used to train and validate downstream classifiers, including Support Vector Machines (SVM), Logistic Regression (LR), and Random Forest. This ensemble approach mitigates the risk of model-specific biases.

- Interpretability and Validation: Model interpretability is ensured using SHapley Additive exPlanations (SHAP) and Local Interpretable Model-agnostic Explanations (LIME) to identify high-impact biomarkers. The biological significance of these biomarkers is further validated through pathway enrichment analysis and survival analysis (Kaplan-Meier curves, Cox regression) to strengthen translational value [30].

- External Validation: The final model is tested on an independent external dataset (e.g., the METABRIC dataset for breast cancer) to assess its generalizability and robustness [31].
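To make the "Gain" ranking step more concrete, the following is a minimal sketch of how the loss reduction of a single split is computed in gradient-boosted trees, using the regularized gain formula from the XGBoost formulation. It is an illustration only, not the XGB-BIF code: the function names, the toy data, and the choice of scoring each feature by its best first split are assumptions made here. XGBoost's own gain importance averages this quantity over every split that uses a feature across all trees.

```python
from typing import List, Sequence


def split_gain(g_left: float, h_left: float, g_right: float, h_right: float,
               lam: float = 1.0, gamma: float = 0.0) -> float:
    """Loss reduction from one split, given summed gradients (g) and Hessians (h)."""
    def score(g: float, h: float) -> float:
        return g * g / (h + lam)

    parent = score(g_left + g_right, h_left + h_right)
    return 0.5 * (score(g_left, h_left) + score(g_right, h_right) - parent) - gamma


def best_split_gain_per_feature(X: Sequence[Sequence[float]], y: Sequence[int],
                                lam: float = 1.0) -> List[float]:
    """Best single-split gain per feature at the first boosting step (toy illustration)."""
    p0 = 0.5  # initial predicted probability before any tree is added
    grads = [p0 - label for label in y]   # logistic-loss gradient: p - y
    hess = [p0 * (1.0 - p0)] * len(y)     # logistic-loss Hessian: p * (1 - p)
    gains = []
    for j in range(len(X[0])):
        best = 0.0
        # Candidate thresholds: every distinct value except the largest,
        # so that the right-hand child is never empty.
        for t in sorted({row[j] for row in X})[:-1]:
            left = [i for i, row in enumerate(X) if row[j] <= t]
            right = [i for i, row in enumerate(X) if row[j] > t]
            gain = split_gain(sum(grads[i] for i in left), sum(hess[i] for i in left),
                              sum(grads[i] for i in right), sum(hess[i] for i in right),
                              lam)
            best = max(best, gain)
        gains.append(best)
    return gains


# Hypothetical expression values: gene_0 separates the classes, gene_1 does not.
X_toy = [[0.1, 5.0], [0.2, 1.0], [0.9, 5.0], [0.8, 1.0]]
y_toy = [0, 0, 1, 1]
gains = best_split_gain_per_feature(X_toy, y_toy)
ranking = sorted(range(len(gains)), key=lambda j: gains[j], reverse=True)
```

In this toy case the informative gene receives a positive gain and the uninformative one receives zero, so it is ranked first. In practice the ranking would be read from a trained XGBoost model rather than recomputed by hand.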

The MarkerPredict Framework for Predictive Biomarkers

The MarkerPredict framework was designed to identify predictive biomarkers in oncology, defined as biomarkers that forecast response to targeted cancer therapies [13]. Its workflow integrates network biology and machine learning:

- Network Motif Analysis: Three signaling networks (Human Cancer Signaling Network, SIGNOR, ReactomeFI) are analyzed to identify three-node network motifs (triangles). Proteins within these motifs, particularly Intrinsically Disordered Proteins (IDPs) and known drug targets, are hypothesized to have close regulatory relationships.

- Training Set Construction: A positive training set is built from literature-curated, known predictive biomarker-target pairs (e.g., BRAF mutations predicting response to EGFR inhibitors). A negative set is derived from protein pairs not annotated as predictive in databases like CIViCmine.

- Model Training and Classification: Multiple Random Forest and XGBoost models are trained on this data, using features derived from network topology and protein disorder. Leave-one-out cross-validation (LOOCV) is used for internal validation.

- Biomarker Scoring and Ranking: A Biomarker Probability Score (BPS) is calculated as a normalized summative rank across all models, providing a single metric to prioritize potential predictive biomarkers for further experimental validation [13].
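The scoring step can be sketched as follows. The source describes the BPS only as a "normalized summative rank across all models", so the exact normalization below (rescaling the rank sum to the range 0 to 1, with 1 meaning top-ranked by every model) is an assumption, as are the function name, the model labels, and the candidate identifiers.

```python
from typing import Dict


def biomarker_probability_score(
    model_scores: Dict[str, Dict[str, float]]
) -> Dict[str, float]:
    """Combine per-model predicted probabilities into one rank-based score.

    model_scores maps model name -> {candidate -> predicted probability}.
    Every model is assumed to score the same set of candidates.
    """
    models = list(model_scores)
    candidates = sorted(model_scores[models[0]])
    n, m = len(candidates), len(models)

    # Sum each candidate's rank over all models (rank 1 = highest probability).
    # Ties are broken by candidate name order, an arbitrary choice made here.
    rank_sum = {c: 0 for c in candidates}
    for model in models:
        ordered = sorted(candidates, key=lambda c: model_scores[model][c], reverse=True)
        for rank, c in enumerate(ordered, start=1):
            rank_sum[c] += rank

    if n == 1:
        return {candidates[0]: 1.0}
    # The best possible rank sum is m, the worst is m * n; rescale to [0, 1].
    return {c: 1.0 - (rank_sum[c] - m) / (m * (n - 1)) for c in candidates}


# Hypothetical outputs of two models for three candidate biomarker-target pairs.
scores = {
    "random_forest": {"pair_A": 0.9, "pair_B": 0.5, "pair_C": 0.1},
    "xgboost": {"pair_A": 0.8, "pair_B": 0.3, "pair_C": 0.6},
}
bps = biomarker_probability_score(scores)
```

Working with ranks rather than raw probabilities keeps a single poorly calibrated model from dominating the combined score, which is one plausible motivation for a rank-based design.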

Deep Learning for ncRNA Biomarker Discovery

A Deep Reinforcement Learning (DRL) framework for predicting non-coding RNA (ncRNA) associations with Metaplastic Breast Cancer (MBC) exemplifies the deep learning approach [29]:

- Multi-dimensional Feature Engineering: A comprehensive feature set is constructed for each ncRNA, integrating 550 sequence-based features and 1,150 target gene descriptors (based on miRDB scores ≥ 90). This creates a high-dimensional input vector.

- Feature Selection and Dimensionality Reduction: To enhance computational efficiency and avoid overfitting, feature selection and optimization techniques are applied, significantly reducing the feature space (e.g., by 42.5% from 4,430 to 2,545 features) while maintaining predictive performance.

- Model Training and Interpretation: The DRL model is trained on the processed feature set. SHAP analysis is subsequently used to interpret the model's predictions, identifying key sequence motifs (e.g., "UUG") and structural features (e.g., free energy ΔG = -12.3 kcal/mol) as critical drivers, thereby providing biological insights.

- Prognostic Validation: The clinical relevance of identified ncRNA biomarkers (e.g., MALAT1, HOTAIR) is confirmed by conducting survival analysis on independent cohorts like TCGA, linking high biomarker expression to poor patient survival (Hazard Ratios of 1.76–2.71) [29].
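Survival analyses of this kind usually begin with a Kaplan-Meier curve for each expression group. The block below is a minimal sketch of the Kaplan-Meier product-limit estimator under stated assumptions: the function name and the follow-up data are hypothetical, and it is not the cited study's pipeline. The hazard ratios reported above would come from a Cox regression, which is not shown here.

```python
from typing import List, Sequence, Tuple


def kaplan_meier(times: Sequence[float], events: Sequence[int]) -> List[Tuple[float, float]]:
    """Return (time, survival probability) at each distinct event time.

    events[i] is 1 if the event (e.g., death) was observed at times[i],
    and 0 if the patient was censored at that time.
    """
    data = sorted(zip(times, events))
    n_at_risk = len(data)
    survival = 1.0
    curve = []
    i = 0
    while i < len(data):
        t = data[i][0]
        deaths = 0
        leaving = 0
        # Group all patients sharing the same follow-up time.
        while i < len(data) and data[i][0] == t:
            deaths += data[i][1]
            leaving += 1
            i += 1
        if deaths > 0:
            survival *= 1.0 - deaths / n_at_risk
            curve.append((t, survival))
        # Both events and censored patients leave the risk set after time t.
        n_at_risk -= leaving
    return curve


# Hypothetical follow-up (months) for four patients; the second is censored.
curve = kaplan_meier([1, 2, 3, 4], [1, 0, 1, 1])
```

Comparing the curves of a high-expression and a low-expression group, typically with a log-rank test, is the usual next step before fitting a Cox model.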

Visualization of Workflows

The following diagrams illustrate the core logical workflows for the key algorithms discussed in this guide.

Diagram: Random Forest/XGBoost Biomarker Discovery Workflow

Diagram: Neural Network Biomarker Discovery Workflow

Successful implementation of ML-driven biomarker discovery relies on a suite of computational tools and data resources.

Table 3: Key Resources for ML-Based Biomarker Identification

| Resource Name | Type | Primary Function in Research | Relevant Context |

|---|---|---|---|

| CIViCmine [13] | Database | Literature-curated evidence for cancer biomarkers; used for training set construction. | Predictive biomarker annotation for model training. |

| SHAP / LIME [30] [31] | Software Library | Provides post-hoc interpretability for ML models, explaining feature contributions to predictions. | Critical for translating model output to biologically intelligible biomarkers. |

| DisProt / IUPred [13] | Database / Tool | Provides data on Intrinsically Disordered Proteins (IDPs) and prediction of protein disorder. | Feature generation for network-based biomarker prediction. |

| ReactomeFI, SIGNOR [13] | Database | Curated human signaling networks containing protein-protein interactions. | Used for network motif analysis and identifying biomarker-target pairs. |

| TCGA [29] | Database | The Cancer Genome Atlas provides large-scale genomic, transcriptomic, and clinical data. | Primary data source for training and external validation of models. |

| METABRIC [31] | Dataset | Molecular taxonomy of breast cancer genomic dataset. | Used for external validation of biomarker models to test generalizability. |

The rapid advancement in healthcare technology has generated an explosion of data from diverse sources, including clinical examinations, medical imaging, and molecular analysis [33]. No single data modality can provide a complete picture of a patient's health status or disease pathology [34]. Multi-modal data fusion has emerged as a transformative approach that systematically integrates these complementary data sources to create a unified representation for analysis [35]. This integrated approach significantly enhances diagnostic accuracy, enables personalized treatment planning, and improves predictive modeling for various medical conditions [35] [36].

Within the specific context of machine learning biomarker consistency research, multi-modal fusion addresses a critical challenge: the validation of biomarkers across diverse datasets and populations [28]. By combining information from multiple sources, researchers can develop more robust and generalizable models, distinguishing true biological signals from dataset-specific noise [15]. This review comprehensively compares the primary strategies for multi-modal data fusion, evaluates their performance across clinical applications, and details experimental protocols that enable rigorous assessment of biomarker consistency.

Multi-Modal Data Fusion Levels and Strategies

Multi-modal data fusion occurs at three principal levels, each with distinct methodologies and applications. The table below summarizes the core characteristics of these fusion levels.

Table 1: Comparison of Data Fusion Levels

| Fusion Level | Description | Common Algorithms | Advantages | Limitations |

|---|---|---|---|---|

| Early Fusion (Data-Level) | Integration of raw data from multiple sources before feature extraction [36]. | Wavelet Transform, PCA | Preserves complete information from original data [34]. | Highly susceptible to noise and requires perfect data alignment [34]. |

| Intermediate Fusion (Feature-Level) | Salient features are extracted from each modality independently and then combined [33]. | CNN-based feature extractors, Multi-Channel Neural Networks [33] [34] | Handles heterogeneous data effectively; robust to missing data [35]. | Risk of information loss during feature selection [36]. |

| Late Fusion (Decision-Level) | Separate models process each modality, and their decisions are combined [36]. | Random Forest, SVM, Ensemble Methods [4] [37] | High flexibility; allows for different model architectures per modality [38]. | Fails to capture cross-modal interactions at the data level [33]. |

Deep learning architectures, particularly Convolutional Neural Networks (CNNs), have significantly advanced intermediate fusion techniques. CNNs automatically learn hierarchical features from imaging data and can be adapted to integrate features from non-imaging sources [34]. Generative Adversarial Networks (GANs) and transformer-based architectures represent the cutting edge, showing remarkable capability in generating high-quality fused images and capturing complex relationships across modalities [36].

Diagram 1: Multi-modal data fusion strategies at different levels

Performance Comparison Across Clinical Applications

The effectiveness of multi-modal fusion strategies is demonstrated through their application across diverse medical specialties. The following table compares the performance of uni-modal versus multi-modal approaches in specific clinical scenarios.

Table 2: Performance Comparison of Uni-Modal vs. Multi-Modal Approaches

| Clinical Application | Data Modalities | Fusion Strategy | Performance Metrics | Key Findings |

|---|---|---|---|---|

| Osteoporosis Screening [38] | Chest X-ray, Clinical data (age, sex, BMI, lab values) | Probability Fusion (Late Fusion) | Multi-modal: AUC: 0.975, Accuracy: 92.36%, Sensitivity: 91.23%, Specificity: 93.92%; X-ray only: AUC: 0.951, Accuracy: 89.32% | Multi-modal fusion significantly outperformed single-modality models across all metrics (P=.004 for AUC) |

| Ovarian Cancer Detection [4] | Serum biomarkers (CA-125, HE4, CRP), Clinical variables | Ensemble Methods (Late Fusion) | Multi-modal: AUC > 0.90, Accuracy up to 99.82%; CA-125 alone: Limited specificity | Combining multiple biomarkers with ML significantly outperformed traditional single-biomarker approaches |

| Cardiovascular Risk Stratification [37] | Clinical data (age, blood pressure, cholesterol, etc.) | Random Forest with SHAP explanations | Accuracy: 81.3% | Integration of explainable AI provided transparent risk assessments for clinical decision support |

| Wastewater Biomarker Monitoring [6] | Absorption spectroscopy, CRP concentrations | Cubic Support Vector Machine | Accuracy: 65.48% (5-class classification) | Demonstrated feasibility of ML for classifying biomarker levels in complex environmental samples |

| Oncology Therapy Response [35] | Radiology, Pathology, Genomics, Clinical data | Multi-layer Neural Networks | AUC: 0.91 for predicting anti-HER2 therapy response | Integration of diverse data sources enabled highly accurate personalized treatment predictions |

Where these studies report a direct comparison, multi-modal approaches outperform single-modality analyses; the cardiovascular and wastewater entries in Table 2 report only single-model accuracy and therefore do not bear on this comparison. In oncology, the integration of radiology, pathology, and genomic data has enabled more precise tumor characterization and personalized treatment planning [35]. For example, a multi-modal model combining radiology, pathology, and clinical information achieved an area under the curve (AUC) of 0.91 for predicting response to anti-human epidermal growth factor receptor 2 (HER2) therapy, significantly surpassing single-modality predictors [35].

In ophthalmology, the combination of genetic and imaging data has facilitated early diagnosis of retinal diseases such as glaucoma and age-related macular degeneration [35]. These advancements highlight how multi-modal fusion can leverage complementary information from different biological scales to create a more comprehensive understanding of disease processes.
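For readers comparing the figures in Table 2, the sketch below shows how AUC, sensitivity, and specificity can be computed from a model's predicted scores. It uses the rank-based definition of AUC (the probability that a randomly chosen positive case scores higher than a randomly chosen negative one, with ties counted as one half). The function names, labels, scores, and the 0.5 decision threshold are all hypothetical.

```python
from typing import Sequence, Tuple


def auc(y_true: Sequence[int], scores: Sequence[float]) -> float:
    """Area under the ROC curve via pairwise comparison of positives and negatives."""
    pos = [s for y, s in zip(y_true, scores) if y == 1]
    neg = [s for y, s in zip(y_true, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative case")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def sensitivity_specificity(y_true: Sequence[int], scores: Sequence[float],
                            threshold: float = 0.5) -> Tuple[float, float]:
    """Sensitivity and specificity when scores >= threshold are called positive.

    Assumes both classes are present in y_true.
    """
    pairs = list(zip(y_true, scores))
    tp = sum(1 for y, s in pairs if y == 1 and s >= threshold)
    fn = sum(1 for y, s in pairs if y == 1 and s < threshold)
    tn = sum(1 for y, s in pairs if y == 0 and s < threshold)
    fp = sum(1 for y, s in pairs if y == 0 and s >= threshold)
    return tp / (tp + fn), tn / (tn + fp)


# Hypothetical labels (1 = disease) and model scores for six patients.
labels = [1, 1, 0, 0, 1, 0]
preds = [0.9, 0.7, 0.6, 0.2, 0.4, 0.4]
model_auc = auc(labels, preds)
sens, spec = sensitivity_specificity(labels, preds)
```

AUC is threshold-free, whereas sensitivity and specificity depend on the chosen cut-off, which is why studies that report all three are easier to compare than those reporting accuracy alone.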

Experimental Protocols for Biomarker Consistency Research

Protocol 1: Multi-Modal Fusion for Osteoporosis Screening

A recent study demonstrated a robust experimental protocol for fusing chest X-ray images and clinical data for osteoporosis screening [38].

Dataset and Population: The study retrospectively collected multimodal data from 1,780 patients, with 990 in the osteoporosis group and 790 in the non-osteoporosis group. Inclusion criteria were age ≥50 years and availability of both chest X-ray and DXA (gold standard) performed on the same day [38].

Preprocessing:

- Imaging: DICOM format X-ray images were converted to PNG, intensity-normalized, squared using zero padding, and downsampled to 224×224 pixels. Data augmentation included random flipping, rotation, translation, zooming, and contrast adjustment [38].

- Clinical Data: Missing values were handled using mean imputation for normally distributed features (e.g., white blood cell count) and median imputation for skewed features (e.g., platelet count) [38].
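The clinical-data imputation rule can be sketched as follows. This is a minimal illustration under stated assumptions: the function name and the laboratory values are hypothetical, and whether a feature counts as normally distributed or skewed is supplied by the caller rather than tested statistically.

```python
from statistics import mean, median
from typing import List, Optional, Sequence


def impute(values: Sequence[Optional[float]], strategy: str) -> List[float]:
    """Fill missing entries (None) with the mean or median of the observed values."""
    if strategy not in ("mean", "median"):
        raise ValueError("strategy must be 'mean' or 'median'")
    observed = [v for v in values if v is not None]
    if not observed:
        raise ValueError("cannot impute a feature with no observed values")
    fill = mean(observed) if strategy == "mean" else median(observed)
    return [fill if v is None else v for v in values]


# Hypothetical lab values; None marks a missing measurement.
wbc = [6.0, None, 8.0, 7.0]                # roughly symmetric: use the mean
platelets = [150.0, 900.0, None, 200.0]    # skewed by an outlier: use the median

wbc_filled = impute(wbc, "mean")
platelets_filled = impute(platelets, "median")
```

The median is less affected by the outlying platelet value than the mean would be. To avoid information leakage, the fill values are normally computed on the training split only and then reused for validation and test data.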

Model Architecture and Fusion:

- Image Model: Utilized a pre-trained ResNet50 as the backbone network for feature extraction, augmented with wavelet transform for multi-scale analysis and a soft attention mechanism to focus on key regions [38].

- Clinical Model: Processed structured clinical data including age, sex, BMI, and laboratory values.

- Fusion Strategy: Implemented probability fusion (late fusion) to combine predictions from both models, significantly outperforming single-modality approaches [38].
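Probability-level (late) fusion can be sketched as a weighted average of the two models' predicted probabilities. The source does not state the exact combination rule used in the study, so the weighted average, the default equal weighting, the function name, and the example probabilities are all assumptions made for illustration.

```python
def fuse_probabilities(p_image: float, p_clinical: float, w_image: float = 0.5) -> float:
    """Late fusion: weighted average of per-modality predicted probabilities.

    w_image is the weight given to the image model; the clinical model
    receives 1 - w_image. In practice the weight would be tuned on a
    validation set rather than fixed in advance.
    """
    for name, value in (("p_image", p_image), ("p_clinical", p_clinical),
                        ("w_image", w_image)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must lie in [0, 1]")
    return w_image * p_image + (1.0 - w_image) * p_clinical


# Hypothetical outputs for one patient: the image model is more confident.
fused_equal = fuse_probabilities(0.8, 0.6)
fused_image_heavy = fuse_probabilities(0.8, 0.6, w_image=0.75)
```

Because each modality keeps its own model, either branch can be retrained or replaced without touching the other, which is the flexibility usually attributed to late fusion.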

Diagram 2: Osteoporosis screening multi-modal fusion protocol

Protocol 2: Biomarker-Driven Machine Learning for Ovarian Cancer

This protocol focuses on integrating multiple biomarkers with machine learning for improved ovarian cancer detection [4].

Biomarker Panel: The study analyzed a comprehensive panel of biomarkers including CA-125, HE4, kallikreins, prostasin, transthyretin, and inflammatory markers like CRP and NLR [4].

Experimental Design:

- Sample Collection: Serum samples from patients with confirmed ovarian cancer and benign controls.

- Biomarker Measurement: Standardized laboratory techniques (ELISA, chemiluminescence) for quantitative biomarker assessment.

- Model Development: Multiple machine learning algorithms including Random Forest, XGBoost, and Neural Networks were trained and validated using cross-validation techniques [4].

Fusion Approach: Intermediate fusion at the feature level, where normalized biomarker values were combined with clinical features to create a unified feature vector for model training [4].
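A feature-level fusion step of this kind can be sketched as z-score normalization of the biomarker columns followed by concatenation with the clinical features. The normalization method, the function names, and all values below are assumptions for illustration; the source does not specify how the biomarker values were normalized.

```python
from statistics import mean, pstdev
from typing import List, Sequence


def zscore_columns(rows: Sequence[Sequence[float]]) -> List[List[float]]:
    """Standardize each column to zero mean and unit (population) variance."""
    columns = list(zip(*rows))
    stats = [(mean(col), pstdev(col)) for col in columns]
    return [
        [(v - mu) / sd if sd > 0 else 0.0 for v, (mu, sd) in zip(row, stats)]
        for row in rows
    ]


def fuse_features(biomarkers: Sequence[Sequence[float]],
                  clinical: Sequence[Sequence[float]]) -> List[List[float]]:
    """Build one feature vector per patient: normalized biomarkers + clinical features."""
    if len(biomarkers) != len(clinical):
        raise ValueError("both inputs must describe the same patients, in the same order")
    return [b + list(c) for b, c in zip(zscore_columns(biomarkers), clinical)]


# Hypothetical values for three patients.
biomarker_values = [[10.0, 100.0], [20.0, 300.0], [30.0, 200.0]]  # e.g., CA-125, HE4
clinical_values = [[55.0, 0.0], [62.0, 1.0], [48.0, 1.0]]         # e.g., age, binary flag
fused = fuse_features(biomarker_values, clinical_values)
```

Each fused row can then be passed to any of the classifiers listed above. As with imputation, the normalization statistics would normally be estimated on the training split only.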

Validation: Rigorous internal validation using k-fold cross-validation and external validation when possible to assess model generalizability across diverse populations [4].
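The k-fold procedure can be sketched as an index splitter. This is a minimal, unstratified illustration with a hypothetical function name and a fixed shuffling seed; established libraries provide stratified variants that preserve the case-control ratio in every fold, which is usually preferable for imbalanced clinical cohorts.

```python
import random
from typing import Iterator, List, Tuple


def k_fold_indices(n_samples: int, k: int, seed: int = 0) -> Iterator[Tuple[List[int], List[int]]]:
    """Yield (train_indices, test_indices) for each of k folds."""
    if not 2 <= k <= n_samples:
        raise ValueError("k must be between 2 and n_samples")
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)      # reproducible shuffle
    folds = [indices[i::k] for i in range(k)]  # k nearly equal-sized folds
    for i in range(k):
        test = folds[i]
        train = [j for f, fold in enumerate(folds) if f != i for j in fold]
        yield train, test


# Hypothetical cohort of 10 samples split into 5 folds.
splits = list(k_fold_indices(10, 5))
```

Every sample appears in exactly one test fold, so each is used for evaluation once and for training k - 1 times. Setting k equal to the number of samples gives leave-one-out cross-validation.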

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Essential Research Reagents and Computational Tools for Multi-Modal Fusion

| Category | Item | Specifications/Features | Research Application |

|---|---|---|---|

| Imaging Modalities | MRI Scanner | High soft tissue contrast, functional imaging capabilities [36] | Provides detailed anatomical and functional information for fusion with molecular data |

| Imaging Modalities | CT Scanner | Excellent bone detail, rapid acquisition [36] | Structural imaging complementary to MRI's soft tissue focus |