Decoding Disease-Perturbed Networks: A Systems Biology Approach to Drug Discovery

Abstract

This article explores the paradigm of network medicine, which utilizes systems biology to analyze molecular interactions within disease-perturbed networks. We cover the foundational shift from single-target to network-based disease models and detail key methodological approaches, including causal network inference and controllability theory for identifying therapeutic targets. The content also addresses central challenges in network analysis, such as data integration and model contextualization, and reviews frameworks for the analytical and experimental validation of network-based predictions. Aimed at researchers and drug development professionals, this review synthesizes how network-based strategies are refining our understanding of complex diseases and accelerating the development of combination therapies.

From Single Genes to Interactomes: Foundations of Network Medicine

For decades, the classification of diseases, particularly in psychiatry and complex chronic illnesses, has relied predominantly on symptom-based categorization systems. These frameworks group diseases based on clinical presentation rather than underlying molecular mechanisms. The current taxonomies for psychotropic agents exemplify this problem, where drugs are classified as "antidepressants" or "antipsychotics" despite their demonstrated efficacy across multiple diagnostic categories. This approach fails to account for the dimensional nature of both psychopathology and the biology of psychiatric illnesses, creating a fundamental mismatch between our classification systems and biological reality [1].

The limitations of this symptom-centric paradigm are increasingly evident in drug development, where high failure rates and unpredictable efficacy across patient populations highlight our incomplete understanding of disease mechanisms. Traditional treatment designs based on physical parameters or simple ligand-protein interactions have proven insufficient for meeting clinical drug safety criteria or accounting for inter-individual variability [2]. This has created an urgent need for a paradigm shift toward molecular taxonomies that reflect the complex, network-based nature of disease pathogenesis.

Theoretical Foundation: Network Medicine and Systems Biology

Network medicine applies fundamental principles of complexity science and systems biology to characterize the dynamical states of health and disease within biological networks. This framework integrates and analyzes complex structured data, including genomics, transcriptomics, proteomics, and metabolomics, to map the intricate web of molecular interactions that underlie disease phenotypes [3].

Core Principles of Biological Networks

Biological systems function through complex networks of molecular interactions rather than through linear pathways. The network perspective reveals that:

- Diseases with overlapping network modules show significant co-expression patterns, symptom similarity, and comorbidity [2]

- Diseases residing in separated network neighborhoods are phenotypically distinct [2]

- Network topology provides critical insights into disease mechanisms and potential therapeutic targets [4]

Molecular interaction networks form the foundation for studying how biological functions are controlled by the complex interplay of genes and proteins. Investigating perturbed processes using these networks has been instrumental in uncovering mechanisms that underlie complex disease phenotypes [4].

From Single-Omics to Multi-Layer Integration

Traditional single-omics approaches provide limited views of disease mechanisms by focusing on isolated molecular layers. The integration of multi-omics data (genomic, proteomic, transcriptional, and metabolic layers) enables a comprehensive mapping of metabolism and molecular regulation [2]. This integrative approach reveals that genes work as part of complex networks rather than acting alone to perform cellular processes [2].

Table 1: Multi-Omics Data Types in Systems Biology

| Data Type | Biological Level | Key Analytical Methods |
|---|---|---|
| Genomics | DNA sequence variations | GWAS, sequence analysis |
| Transcriptomics | RNA expression | RNA-seq, microarray analysis |
| Proteomics | Protein abundance and modification | Mass spectrometry, protein arrays |
| Metabolomics | Metabolic products | Mass spectrometry, NMR |

Computational Methodologies for Molecular Taxonomy

The development of molecular taxonomies requires sophisticated computational approaches that can integrate diverse data types and extract biologically meaningful patterns. Several key methodologies have emerged as critical tools in this endeavor.

Network-Based Modeling Approaches

Network-based modeling represents a wide range of components, such as genes or proteins, together with their interconnections. A basic network consists of nodes (genes, proteins, drugs) and edges (functional interactions between nodes) [2]. Key network types include:

- Protein-Protein Interaction (PPI) Networks: Encode proteins and their interactions. They help predict potential disease-related proteins, based on the assumption that shared components in disease-related PPI networks may cause similar disease phenotypes [2]

- Gene Co-expression Networks: Identify functional gene clusters based on correlated gene expression patterns, under the assumption that co-expressed genes encode proteins that work together to perform metabolic functions [2]

- Heterogeneous Networks: Include different types of nodes and edges, enabling integration of multi-layer connections across biological scales [2]

Static Network Analysis

Static network models capture functional interactions from omics data at a single point in time and expose the topological properties of those interactions. The purpose of constructing a static network is to predict potential interactions among drug molecules and target proteins through shared components that can serve as intermediaries to convey information across different network layers [2].

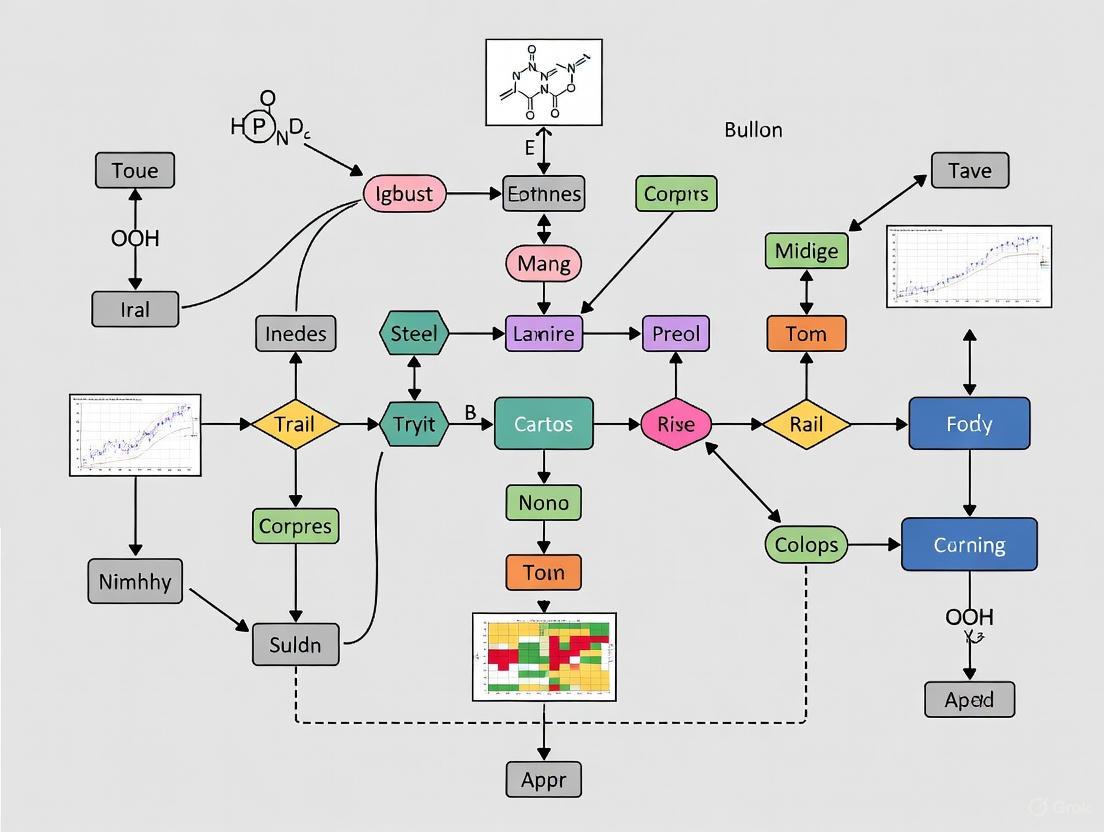

Network Construction Workflow: From raw multi-omics data to molecular taxonomy through sequential computational steps.

Semantic Similarity for Disease Classification

Semantic similarity measures derived from biomedical ontologies provide another approach to disease classification. This method uses the taxonomic structure of ontologies like the Human Phenotype Ontology (HPO) to determine how similar two classes or groups of classes are [5]. The underlying intuition is that a patient phenotype profile will be more similar to the phenotype profile describing their actual disease than to those of other conditions. When applied to clinical text narratives from electronic health records, this approach has shown promise for differential diagnosis of common diseases, achieving an Area Under the Curve (AUC) of 0.869 in classifying primary diagnoses [5].

Large Perturbation Models

Recent advances in deep learning have enabled the development of Large Perturbation Models (LPMs) that integrate heterogeneous perturbation experiments by representing perturbation, readout, and context as disentangled dimensions [6]. These models overcome limitations of earlier approaches by:

- Learning perturbation-response rules disentangled from the specifics of experimental context

- Integrating diverse perturbation data across readouts (transcriptomics, viability), perturbations (CRISPR, chemical), and experimental contexts (single-cell, bulk)

- Achieving state-of-the-art predictive accuracy across experimental conditions [6]

LPMs consistently outperform existing methods in predicting post-perturbation outcomes and enable the study of drug-target interactions for chemical and genetic perturbations in a unified latent space [6].

Experimental Protocols and Workflows

Implementing molecular taxonomies in research requires standardized protocols for data generation, processing, and analysis.

Gene Co-expression Network Analysis

The following protocol outlines the key steps for constructing gene co-expression networks from transcriptomic data:

Data Preparation: Collect RNA-sequencing or microarray data from disease-relevant tissues or cell types. Ensure adequate sample size (typically n > 10 per group) to achieve statistical power.

Differential Expression Analysis: Identify differentially expressed genes (DEGs) using moderated t-statistics and empirical Bayes methods (e.g., the limma package in R) [2]. Select genes with large variations in expression based on fold-change and adjusted p-value thresholds.

Network Construction:

- Calculate pairwise correlations between genes using Pearson Correlation Coefficient (PCC), Mutual Information, or other similarity measures

- Apply correlation thresholds or scale-free topology criteria to define significant connections

- Construct network using Weighted Gene Co-expression Network Analysis (WGCNA) or similar methods [2]

Module Identification: Detect functional gene clusters using hierarchical clustering or greedy algorithms. Identify hub genes with high connectivity within modules.

Validation: Validate network topology and hub genes using independent datasets or experimental approaches.
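The network construction and module steps above can be illustrated with a minimal sketch. It assumes a hard correlation threshold on the absolute Pearson correlation, which is simpler than the soft-thresholding used by WGCNA, and it uses a toy expression matrix with hypothetical gene names. The function names (`pearson`, `coexpression_network`, `hub_genes`) are illustrative and do not come from any package.

```python
from itertools import combinations
from math import sqrt


def pearson(x, y):
    """Pearson correlation coefficient between two equal-length profiles."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sqrt(sum((a - mx) ** 2 for a in x))
    sy = sqrt(sum((b - my) ** 2 for b in y))
    if sx == 0 or sy == 0:
        return 0.0  # constant profile: correlation undefined, treat as no edge
    return cov / (sx * sy)


def coexpression_network(expr, threshold=0.8):
    """Link every gene pair whose |PCC| meets the (assumed) hard threshold."""
    adj = {gene: set() for gene in expr}
    for g1, g2 in combinations(expr, 2):
        if abs(pearson(expr[g1], expr[g2])) >= threshold:
            adj[g1].add(g2)
            adj[g2].add(g1)
    return adj


def hub_genes(adj, top=1):
    """Rank genes by degree; ties are broken alphabetically."""
    return sorted(adj, key=lambda g: (-len(adj[g]), g))[:top]


# Toy expression matrix: hypothetical genes, six samples each.
expr = {
    "geneA": [1.0, 2.0, 3.0, 4.0, 5.0, 6.0],
    "geneB": [2.1, 3.9, 6.2, 8.0, 9.9, 12.1],
    "geneC": [6.0, 5.1, 3.9, 3.0, 2.2, 0.9],
    "geneD": [3.0, 1.0, 4.0, 1.0, 5.0, 2.0],
}

network = coexpression_network(expr, threshold=0.8)
print(network)
print(hub_genes(network, top=1))
```

Taking the absolute value of the correlation yields an unsigned network in which strongly anti-correlated genes are also linked. Whether that is appropriate depends on the biological question, and real analyses would add multiple-testing control and a data-driven threshold.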

Semantic Similarity for Differential Diagnosis

The application of semantic similarity to clinical diagnostics involves:

Data Extraction: Extract clinical narratives from Electronic Health Records (EHRs) associated with patient visits [5].

Phenotype Profile Creation: Use semantic text mining frameworks (e.g., Komenti) to annotate clinical texts with Human Phenotype Ontology (HPO) terms, creating phenotype profiles for each patient visit [5].

Similarity Calculation: Calculate semantic similarity scores between patient phenotype profiles and disease profiles using measures like Resnik similarity and Best Match Average for groupwise similarity [5].

Diagnostic Classification: Rank potential diagnoses based on similarity scores and evaluate classification performance using metrics including Area Under the Curve (AUC), Mean Reciprocal Rank (MRR), and Top Ten Accuracy [5].
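The similarity calculation and ranking steps can be sketched as follows. This is a minimal illustration, assuming a toy is-a hierarchy and made-up term frequencies; the term names are hypothetical placeholders rather than real HPO identifiers, and the code is not the Komenti or Semantic Measures Library implementation. Resnik similarity is taken as the information content of the most informative common ancestor, and Best Match Average as the mean of the two directed best-match averages.

```python
from math import log

# Toy is-a hierarchy (child -> parents). Hypothetical terms, not real HPO IDs.
PARENTS = {
    "root": [],
    "neuro": ["root"],
    "cardiac": ["root"],
    "seizure": ["neuro"],
    "tremor": ["neuro"],
    "arrhythmia": ["cardiac"],
}

# Assumed probability that a term (or one of its descendants) is annotated.
FREQ = {"root": 1.0, "neuro": 0.5, "cardiac": 0.5,
        "seizure": 0.25, "tremor": 0.25, "arrhythmia": 0.5}


def ancestors(term):
    """Return the term itself plus all of its ancestors."""
    seen, stack = set(), [term]
    while stack:
        t = stack.pop()
        if t not in seen:
            seen.add(t)
            stack.extend(PARENTS[t])
    return seen


def ic(term):
    """Information content: rarer terms are more informative."""
    return -log(FREQ[term])


def resnik(t1, t2):
    """IC of the most informative common ancestor of two terms."""
    return max(ic(t) for t in ancestors(t1) & ancestors(t2))


def best_match_average(profile_a, profile_b):
    """Groupwise similarity: average the best matches in both directions."""
    def directed(src, dst):
        return sum(max(resnik(s, d) for d in dst) for s in src) / len(src)
    return 0.5 * (directed(profile_a, profile_b) + directed(profile_b, profile_a))


def rank_diseases(patient, disease_profiles):
    """Order candidate diseases by decreasing similarity to the patient."""
    scores = {name: best_match_average(patient, prof)
              for name, prof in disease_profiles.items()}
    return sorted(scores, key=scores.get, reverse=True)


patient = ["seizure", "tremor"]
diseases = {"heart_like": ["arrhythmia"], "epilepsy_like": ["seizure"]}
print(rank_diseases(patient, diseases))
```

In this toy setting the neurological disease profile ranks first because it shares specific (high information content) ancestors with the patient profile, while the cardiac profile shares only the root term.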

Table 2: Performance Metrics for Semantic Similarity-Based Diagnosis [5]

| Method | AUC | MRR | Top Ten Accuracy |
|---|---|---|---|
| Patient-Patient Comparison | 0.774 | 0.423 | 0.606 |
| Patient-Disease Comparison | 0.869 | N/A | N/A |
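For readers less familiar with the ranking metrics reported in Table 2, the sketch below shows how Mean Reciprocal Rank and top-k accuracy are commonly computed from ranked candidate lists. The rankings are toy data with hypothetical labels and are unrelated to the values in the table; AUC is omitted for brevity.

```python
def reciprocal_rank(ranked, truth):
    """1/position of the true label in a ranked list, or 0 if absent."""
    for position, item in enumerate(ranked, start=1):
        if item == truth:
            return 1.0 / position
    return 0.0


def mean_reciprocal_rank(rankings, truths):
    """Average reciprocal rank over all cases."""
    return sum(reciprocal_rank(r, t) for r, t in zip(rankings, truths)) / len(truths)


def top_k_accuracy(rankings, truths, k=10):
    """Fraction of cases whose true label appears among the first k candidates."""
    hits = sum(1 for r, t in zip(rankings, truths) if t in r[:k])
    return hits / len(truths)


# Toy example: three patient visits, each with a ranked list of candidate diagnoses.
rankings = [["A", "B", "C"], ["B", "A", "C"], ["C", "B", "A"]]
truths = ["A", "A", "A"]

print(mean_reciprocal_rank(rankings, truths))
print(top_k_accuracy(rankings, truths, k=2))
```

MRR rewards placing the correct diagnosis near the top of the list, whereas top-k accuracy only asks whether it appears anywhere in the first k positions.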

Large Perturbation Model Implementation

Training and applying LPMs involves:

Data Integration: Pool heterogeneous perturbation experiments from diverse sources, ensuring proper normalization and batch effect correction.

Model Architecture: Implement PRC-disentangled architecture with separate conditioning variables for perturbation, readout, and context dimensions [6].

Model Training: Train model to predict outcomes of in-vocabulary combinations of perturbations, contexts, and readouts using appropriate loss functions.

Embedding Analysis: Extract and analyze perturbation embeddings to identify shared mechanisms of action and drug-target interactions [6].

Validation: Evaluate model performance on held-out experiments and using external datasets.

Visualization of Molecular Networks

Effective visualization is crucial for interpreting complex molecular networks and communicating insights.

Disease Module Interaction: Three interconnected disease modules with candidate therapeutic compounds targeting specific network components.

The Scientist's Toolkit: Essential Research Reagents

Implementing molecular taxonomy research requires specific reagents and computational resources.

Table 3: Essential Research Reagents for Molecular Taxonomy Studies

| Reagent/Resource | Function | Application Examples |
|---|---|---|
| BioGRID Database | Protein-protein interaction database | Network construction and validation [4] |
| STRING Database | Protein-protein association networks | Functional module identification [6] |
| Human Phenotype Ontology (HPO) | Standardized vocabulary of phenotypic abnormalities | Semantic similarity calculations [5] |
| LINCS Datasets | Library of Integrated Network-Based Cellular Signatures | Perturbation response data [6] |
| Komenti Framework | Semantic text mining tool | Extraction of HPO terms from clinical narratives [5] |
| WGCNA R Package | Weighted correlation network analysis | Gene co-expression network construction [2] |
| Semantic Measures Library | Calculation of semantic similarity measures | Patient-disease similarity profiling [5] |

Therapeutic Applications and Drug Development

The molecular taxonomy approach enables significant advances in therapeutic development through more precise target identification and drug repurposing.

Drug Repurposing Based on Network Proximity

Network-based approaches enable systematic drug repurposing by identifying new indications for existing drugs based on their proximity to disease modules in molecular networks. This method leverages the observation that drugs whose targets are located close to a specific disease module in the human interactome often have therapeutic value for that condition [2].
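One common way to quantify this proximity is the "closest" distance: for each drug target, take the shortest-path distance to the nearest disease-module gene, then average over targets. The sketch below assumes this measure and a toy unweighted interactome with hypothetical protein names. Published analyses typically also compare the observed distance against a randomized background to obtain a z-score, which is omitted here.

```python
from collections import deque


def build_adjacency(edges):
    """Undirected adjacency map from a list of (u, v) interactions."""
    adj = {}
    for u, v in edges:
        adj.setdefault(u, set()).add(v)
        adj.setdefault(v, set()).add(u)
    return adj


def bfs_distances(adj, source):
    """Shortest-path distance (in edges) from source to every reachable node."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        u = queue.popleft()
        for v in adj[u]:
            if v not in dist:
                dist[v] = dist[u] + 1
                queue.append(v)
    return dist


def closest_distance(adj, drug_targets, disease_genes):
    """Mean, over drug targets, of the distance to the nearest disease gene."""
    total = 0.0
    for target in drug_targets:
        dist = bfs_distances(adj, target)
        total += min(dist.get(gene, float("inf")) for gene in disease_genes)
    return total / len(drug_targets)


# Toy interactome with hypothetical proteins P1..P6.
interactome = build_adjacency([
    ("P1", "P2"), ("P2", "P3"), ("P3", "P4"), ("P4", "P5"), ("P2", "P6"),
])
disease_module = {"P1", "P2"}

print(closest_distance(interactome, {"P3", "P6"}, disease_module))  # drug X
print(closest_distance(interactome, {"P5"}, disease_module))        # drug Y
```

Under this measure, the hypothetical drug X (targets adjacent to the module) would be prioritized over drug Y (target three steps away).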

Unifying Chemical and Genetic Perturbations

Large Perturbation Models demonstrate that pharmacological inhibitors of molecular targets cluster closely with genetic interventions targeting the same genes in the perturbation embedding space [6]. This integration enables:

- Identification of shared molecular mechanisms between chemical and genetic perturbations

- Detection of off-target effects and potential side effects through anomalous compound positioning

- Discovery of novel therapeutic indications based on perturbation proximity

For example, LPM analysis revealed that pravastatin moved toward nonsteroidal anti-inflammatory drugs that target PTGS1 in perturbation space, indicating a potential anti-inflammatory mechanism that aligns with clinical observations [6].
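The idea of comparing perturbations in a shared embedding space can be illustrated with a nearest-neighbor lookup under cosine similarity. The vectors and perturbation names below are invented for illustration and are not LPM outputs; a trained model would supply the embeddings, and the choice of cosine similarity is an assumption of this sketch.

```python
from math import sqrt


def cosine(u, v):
    """Cosine similarity between two non-zero vectors of equal length."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = sqrt(sum(a * a for a in u))
    norm_v = sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def nearest(query, embeddings, k=1):
    """Names of the k perturbations most similar to the query perturbation."""
    scored = [(cosine(embeddings[query], vec), name)
              for name, vec in embeddings.items() if name != query]
    return [name for _, name in sorted(scored, reverse=True)[:k]]


# Hypothetical 3-dimensional embeddings for chemical and genetic perturbations.
embeddings = {
    "compound_A": [0.9, 0.1, 0.0],
    "crispr_G1": [1.0, 0.0, 0.1],
    "crispr_G2": [0.0, 1.0, 0.2],
    "compound_B": [0.1, 0.8, 0.3],
}

print(nearest("compound_A", embeddings))
print(nearest("compound_B", embeddings))
```

In this toy space each compound lands next to one genetic perturbation, which is the pattern one would look for when hypothesizing a shared target. A compound sitting far from the knockout of its annotated target would instead hint at off-target activity.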

Challenges and Future Perspectives

Despite significant progress, several challenges remain in fully implementing molecular taxonomies for disease classification and treatment.

Current Limitations

Key limitations in the field include:

- Data Integration Challenges: Difficulties in defining biological units and interactions, interpreting network models, and accounting for experimental uncertainties [3]

- Context Specificity: The complex dependence of experimental outcomes on biological context makes it challenging to integrate insights across experiments [6]

- Technical Variability: Batch effects, platform differences, and analytical variability can introduce noise and artifacts into molecular taxonomies

Future Directions

The next phase of network medicine must expand the current framework by incorporating more realistic assumptions about biological units and their interactions across multiple relevant scales [3]. Priority areas include:

- Development of dynamic network models that capture temporal changes in disease progression

- Integration of multi-scale data from molecular to clinical levels

- Implementation of machine learning approaches to identify robust molecular subtypes across diverse populations

- Creation of standardized frameworks for validation and clinical translation

As these approaches mature, molecular taxonomies promise to transform our understanding and treatment of complex diseases, enabling truly personalized therapeutic strategies based on the underlying network pathology of each patient's condition.

Disease-perturbed networks represent a systems biology framework for understanding how pathological conditions disrupt the normal molecular interactions within biological systems. These networks describe complex relationships in biological systems by representing biological entities as vertices (nodes) and their underlying connectivity as edges [7]. The fundamental premise is that diseases arise from and result in perturbations to these intricate molecular networks, moving the system from a state of health to a state of disease. Analyzing these networks requires integrating multiple sources of heterogeneous data and probing these data both visually and numerically to explore or validate mechanistic hypotheses [7]. This approach stands in contrast to traditional reductionist methods by maintaining the systemic context of biological function and dysfunction, providing a more comprehensive understanding of disease pathophysiology.

The study of disease-perturbed networks falls within the broader field of network medicine, which applies fundamental principles of complexity science and systems medicine to integrate and analyze complex structured data, including genomics, transcriptomics, proteomics, and metabolomics [3]. This perspective enables researchers to characterize the dynamical states of health and disease within biological networks, offering insights into disease mechanisms, biomarker discovery, and therapeutic interventions. As the field matures, it faces challenges in defining biological units and interactions, interpreting network models, and accounting for experimental uncertainties across multiple relevant biological scales [3].

Network Components and Biological Scales

Nodes: Biological Entities Across Scales

In disease-perturbed networks, nodes represent biological entities spanning multiple organizational levels. The composition of nodes determines the resolution and biological questions a network can address. The table below summarizes the primary node types and their representations across biological scales.

Table 1: Node Types in Disease-Perturbed Networks

| Biological Scale | Node Type | Representation | Network Interpretation |
|---|---|---|---|
| Molecular | Genes, Proteins, Metabolites | Molecular entities (e.g., TP53, glucose) | Fundamental units of biological function and regulation |
| Molecular Complexes | Protein complexes, Pathways | Functional modules (e.g., mTOR complex, NF-κB pathway) | Higher-order functional units representing biological processes |
| Cellular | Cell Types, Organelles | Cellular entities (e.g., T-cell, mitochondrion) | Structural and functional units of tissues and physiological systems |
| Phenotypic | Symptoms, Disease States | Clinical manifestations (e.g., inflammation, fibrosis) | System-level outputs connecting molecular changes to clinical presentation |

Edges: Biological Relationships and Interactions

Edges define the functional relationships between nodes, representing how biological entities interact and influence each other. The nature of these connections determines the network's dynamics and the flow of biological information.

Table 2: Edge Types in Disease-Perturbed Networks

| Edge Category | Specific Type | Nature of Relationship | Representation |
|---|---|---|---|
| Molecular Interactions | Protein-Protein Interaction | Physical binding between proteins | Undirected edge |
| Molecular Interactions | Metabolic Reaction | Enzyme-substrate relationship | Directed edge |
| Molecular Interactions | Gene Regulation | Transcription factor → target gene | Directed edge |
| Causal Relationships | Activation/Inhibition | Up-regulation or suppression | Directed, signed edge |
| Causal Relationships | Phosphorylation | Post-translational modification | Directed edge |
| Statistical Relationships | Correlation | Co-occurrence or co-expression | Undirected, weighted edge |
| Statistical Relationships | Bayesian Dependency | Probabilistic influence | Directed edge |

The Multiscale Nature of Biological Networks

Biological systems operate across multiple spatial and temporal scales, and disease perturbations can propagate across these scales. A systems biology approach aims to integrate these scales to study disease complexity [8]. This requires accounting for the complexity of biological scales and bridging the "translational distance" between discoveries in human cohorts and model-based experimental validation [8]. The temporal dimension adds further complexity to understanding network dynamics: relevant processes range from molecular vibrations occurring ~10¹² times per second to cellular diffusion taking several seconds [9].

Methodological Framework for Construction and Analysis

Experimental Data Integration for Network Construction

Constructing meaningful disease-perturbed networks requires integrating diverse experimental data types that provide evidence for nodes, edges, and their perturbations. The table below outlines key data sources and their applications.

Table 3: Experimental Data Sources for Network Construction

| Data Type | Experimental Method | Network Component | Perturbation Detection |
|---|---|---|---|
| Genomics | Whole genome sequencing, GWAS | Node identification | Mutation burden, pathway enrichment |
| Transcriptomics | RNA-seq, Microarrays | Node expression, co-expression edges | Differential expression, signature analysis |
| Proteomics | Mass spectrometry, Y2H | Protein nodes, physical interaction edges | Abundance changes, interaction rewiring |
| Metabolomics | LC/MS, GC/MS | Metabolite nodes, biochemical edges | Concentration flux, pathway disruption |
| Pharmacological | Drug perturbation screens | Drug nodes, drug-target edges | Signature reversal, mechanism of action |

The RPath Algorithm for Causal Reasoning

The RPath algorithm represents a novel methodology that prioritizes drugs for a given disease by reasoning over causal paths in a knowledge graph, guided by both drug-perturbed and disease-specific transcriptomic signatures [10]. This approach identifies causal paths connecting a drug to a particular disease and reasons over these paths to identify those that correlate with transcriptional signatures observed in a drug-perturbation experiment while anti-correlating with signatures observed in the disease of interest [10].

Experimental Protocol: RPath Implementation

- Knowledge Graph Construction: Assemble a heterogeneous biological knowledge graph incorporating proteins, drugs, diseases, and their relationships, with a focus on causal relations [10].

- Signature Generation: Generate disease-specific transcriptomic signatures from case-control studies and drug-perturbed transcriptomic signatures from perturbation experiments [10].

- Path Identification: Identify all causal paths connecting a drug node to a disease node within the knowledge graph [10].

- Signature Mapping: Map the transcriptomic signatures to corresponding entities in the knowledge graph to create a context-specific subnetwork [10].

- Path Scoring and Prioritization: Score paths based on their correlation with drug signatures and anti-correlation with disease signatures, then prioritize drugs based on the cumulative scores of their paths [10].

- Validation: Validate predictions using known drug-disease pairs from clinical investigations and through experimental case studies [10].
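The path identification and scoring steps can be illustrated with a simplified sketch. This is one reading of the description above, not the published RPath implementation: it assumes a signed directed graph (+1 activation, -1 inhibition), propagates the expected direction of change along each simple path, and counts a path as concordant only if every measured intermediate node agrees with the drug signature and opposes the disease signature. All node names, signatures, and the `max_len` cutoff are hypothetical.

```python
def simple_paths(graph, source, target, max_len=4):
    """Yield (nodes, edge_signs) for simple directed paths of at most max_len edges."""
    stack = [(source, [source], [])]
    while stack:
        node, path, signs = stack.pop()
        if node == target:
            yield path, signs
            continue
        if len(signs) >= max_len:
            continue
        for nxt, sign in graph.get(node, []):
            if nxt not in path:  # keep paths simple (no repeated nodes)
                stack.append((nxt, path + [nxt], signs + [sign]))


def is_concordant(path, signs, drug_sig, disease_sig):
    """Check intermediates against both signatures (+1 up, -1 down)."""
    effect = 1
    for node, sign in zip(path[1:-1], signs[:-1]):
        effect *= sign  # expected direction of change at this node
        if node in drug_sig and drug_sig[node] != effect:
            return False
        if node in disease_sig and disease_sig[node] != -effect:
            return False
    return True


def score_drug(graph, drug, disease, drug_sig, disease_sig, max_len=4):
    """Number of concordant causal paths linking the drug to the disease."""
    return sum(
        1 for path, signs in simple_paths(graph, drug, disease, max_len)
        if is_concordant(path, signs, drug_sig, disease_sig)
    )


# Toy knowledge graph: node -> list of (successor, edge sign).
graph = {
    "drug": [("P1", -1)],
    "P1": [("P2", +1), ("P3", +1)],
    "P2": [("disease", +1)],
    "P3": [("disease", +1)],
}
drug_signature = {"P1": -1, "P2": -1, "P3": +1}     # observed after treatment
disease_signature = {"P1": +1, "P2": +1, "P3": +1}  # observed in patients

print(score_drug(graph, "drug", "disease", drug_signature, disease_signature))
```

Here only the path through P2 is supported by both signatures, because the observed up-regulation of P3 under treatment contradicts what the path through P3 would predict. Ranking several drugs by such counts gives a rough analogue of the prioritization step.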

Knowledge Graph Design and Causal Relations

Knowledge graphs (KGs) provide a flexible framework for incorporating a broad range of biological scales, from the genetic and molecular level to biological concepts like phenotypes and diseases [10]. These KGs can model multiple heterogeneous relation types to represent biological processes governed by interactions between component entities [10]. Causal relations are particularly valuable in KGs as they enable inference of the effect of any given node on another through reasoning over the graph structure [10]. However, a significant challenge is that not all interactions in a KG are biologically relevant in every context, as they may be specific to particular cell types, tissues, or diseases [10].

Research Reagent Solutions

The experimental workflow for studying disease-perturbed networks relies on specific research reagents and computational tools that enable the construction and analysis of these complex systems.

Table 4: Essential Research Reagents and Tools

| Reagent/Tool Category | Specific Examples | Function/Application |
|---|---|---|
| Omics Profiling Platforms | RNA-seq kits, Mass spectrometers, GWAS arrays | Generate molecular data for node and edge identification |
| Perturbation Tools | CRISPR libraries, Small molecule inhibitors, siRNA | Experimentally perturb networks to establish causality |
| Database Resources | STRING, KEGG, Reactome, DrugBank | Source of prior knowledge for network construction |
| Visualization Software | Cytoscape, Gephi, MoFlow | Enable network visualization and exploration |
| Analysis Frameworks | RPath, drug2ways, Reverse Causal Reasoning | Computational algorithms for network-based inference |

Visualization and Analytical Techniques

Network Visualization Principles

The visual representation of biological networks has become challenging as underlying graph data grows larger and more complex [7]. Effective visualization requires collaboration between biological domain experts, bioinformaticians, and network scientists to create useful tools [7]. Current visualization practices show an overabundance of tools using schematic or straight-line node-link diagrams, despite the availability of powerful alternatives [7]. Additionally, there is a lack of visualization tools that integrate advanced network analysis techniques beyond basic graph descriptive statistics [7].

For molecular visualization specifically, design principles must address challenges in representing spatial and temporal scale, translating complex overlapping motions into decipherable visual language, and meeting the needs of different audiences [9]. When creating visualizations for educational purposes, designers must balance simplification with accuracy to avoid promoting misconceptions, such as the belief that molecules have agency or purpose [9].

Challenges and Future Directions

Despite significant advances, the field of disease-perturbed network analysis faces several challenges that must be addressed for continued progress. Limitations in defining biological units and interactions, interpreting network models, and accounting for experimental uncertainties hinder the field's advancement [3]. The next phase of network medicine must expand the current framework by incorporating more realistic assumptions about biological units and their interactions across multiple relevant scales [3].

Another significant challenge lies in applying systems biology approaches to human disease, which often depends on model systems that may not accurately capture the spatial and temporal complexity of human biology [8]. This creates a "translational distance" between discoveries in human cohorts and model-based experimental validation that must be bridged through improved conceptual frameworks [8]. Future research directions should focus on developing more sophisticated multiscale modeling approaches, improving the integration of causal inference methods, and creating more effective visualization tools that can handle the complexity of disease-perturbed networks while remaining accessible to domain experts.

The advent of systems biology has revolutionized our approach to understanding complex biological phenomena, shifting the focus from individual molecular components to the intricate networks that govern their interactions. This paradigm is particularly crucial in disease research, where perturbations in molecular networks underlie pathological states. Graph theory provides the mathematical foundation and computational tools to model, analyze, and visualize these complex systems. This whitepaper offers an in-depth technical guide on applying graph theory to represent and study biological pathways and protein-protein interactions (PPIs), framing the discussion within the context of disease-perturbed molecular network systems. We detail fundamental concepts, data sources, analytical methods, and visualization protocols, providing researchers and drug development professionals with a comprehensive framework for network-based disease biology research.

In systems biology, complex systems are understood through a bottom-up analysis that investigates not only individual components but also how these components are connected as a whole [11]. The myriad components of a biological system and their interactions are most effectively characterized as networks and represented mathematically as graphs, where thousands of nodes (also called vertices) represent biological entities (e.g., proteins, genes, metabolites), and edges (also called links) represent the interactions or relationships between them [12] [11].

The application of graph theory to biological problems has its historical origins in social network analysis and the foundational work of mathematician Leonhard Euler on the Seven Bridges of Königsberg problem [13]. Today, this mathematical framework is indispensable for modeling pairwise relations between biological objects and provides the abstract concepts and methods essential for visualizing and analyzing biological networks [13]. Within disease contexts, network analysis applications include drug target identification, determining protein or gene function, designing effective treatment strategies, and providing early diagnosis of disorders [11].

Graph Theory Foundations: Concepts and Definitions

Fundamental Graph Types

Biological networks are represented using several specialized graph types, each suited to capturing different kinds of biological relationships and data [12] [11].

- Undirected Graphs: A graph \( G \) is defined as a pair \( (V, E) \) where \( V \) is a set of vertices and \( E \) is a set of edges between the vertices, \( E \subseteq \{(i, j) \mid i, j \in V\} \). In such a graph, the connection between nodes \( i \) and \( j \) has no direction. This type is typical for representing gene co-expression networks or physical protein-protein interactions (PPIs), where the relationship is mutual [12] [11].

- Directed Graphs (Digraphs): A directed graph is defined as an ordered triple \( G = (V, E, f) \) where \( f \) maps each element in \( E \) to an ordered pair of vertices in \( V \) [12] [11]. Edges, represented as arrows, indicate a directional relationship from one node to another (e.g., from a transcription factor to its target gene). Directed graphs are essential for representing metabolic pathways, signal transduction networks, and regulatory networks, where directionality encodes the flow of information or biochemical conversions [11]. Standards like the Systems Biology Graphical Notation (SBGN) provide visual languages with specific arrow types to denote interactions like "inhibits," "enhances," or "regulates" [12].

- Weighted Graphs: A weighted graph associates a weight function \( w: E \rightarrow \mathbb{R} \) with the edges, where \( \mathbb{R} \) denotes the set of real numbers [12] [11]. The weight \( w_{ij} \) between nodes \( i \) and \( j \) often represents the strength, confidence, or capacity of the connection, such as sequence similarity scores, gene co-expression correlations, or interaction reliabilities derived from text mining [11].

- Bipartite Graphs: A bipartite graph is an undirected graph \( G = (V, E) \) in which the vertex set \( V \) can be partitioned into two disjoint sets, \( V' \) and \( V'' \), such that every edge connects a vertex in \( V' \) to a vertex in \( V'' \) [12]. This structure prohibits edges within the same set. Bipartite graphs are suitable for modeling relationships between different classes of entities, such as gene-disease associations or drug-target interactions [12] [11].
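The four graph types above map onto simple data structures. The sketch below is one possible representation using plain dictionaries, with hypothetical node names; it also includes a breadth-first two-coloring test that checks whether an undirected graph is bipartite. Dedicated graph libraries offer richer equivalents.

```python
from collections import deque


def undirected(edges):
    """Adjacency map in which every edge is stored in both directions."""
    adj = {}
    for u, v in edges:
        adj.setdefault(u, set()).add(v)
        adj.setdefault(v, set()).add(u)
    return adj


def directed(edges):
    """Successor map: an edge (u, v) is stored only as u -> v."""
    succ = {}
    for u, v in edges:
        succ.setdefault(u, set()).add(v)
        succ.setdefault(v, set())
    return succ


def is_bipartite(adj):
    """Two-color the graph by BFS; fail if an edge joins same-colored nodes."""
    color = {}
    for start in adj:
        if start in color:
            continue
        color[start] = 0
        queue = deque([start])
        while queue:
            u = queue.popleft()
            for v in adj[u]:
                if v not in color:
                    color[v] = 1 - color[u]
                    queue.append(v)
                elif color[v] == color[u]:
                    return False
    return True


# Undirected: physical protein-protein interactions (hypothetical proteins).
ppi = undirected([("A", "B"), ("B", "C"), ("A", "C")])

# Directed: transcription factor -> target gene.
grn = directed([("TF1", "geneX"), ("TF1", "geneY")])

# Weighted: edge weights w_ij, here stored per edge as a confidence score.
weights = {("A", "B"): 0.9, ("B", "C"): 0.4, ("A", "C"): 0.7}

# Bipartite: drugs on one side, targets on the other.
drug_target = undirected([("drug1", "T1"), ("drug1", "T2"), ("drug2", "T2")])

print(is_bipartite(drug_target), is_bipartite(ppi))
```

The triangle in the toy PPI network makes it non-bipartite, whereas the drug-target graph passes the test because no edge connects two drugs or two targets.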

Key Graph Properties and Metrics

The topological structure of a network reveals fundamental insights into its organization and function. Several key metrics are used to quantify this structure [12] [11]:

- Degree: In an undirected graph, the degree of a node ( i ), denoted ( deg(i) ) or ( k(i) ), is the number of connections or edges incident to the node, equivalent to the number of neighbors ( |N(i)| ) [11]. In directed graphs, a node has both an in-degree (number of incoming edges) and an out-degree (number of outgoing edges). In disease networks, a protein with an unexpectedly high degree might be a hub protein, potentially critical for cellular function and a candidate drug target.

- Path: A path is a sequence of edges that connects a sequence of distinct vertices. The length of the shortest path between two nodes is often interpreted as a measure of their functional proximity, and the path itself as the most direct functional route between them.

- Connectedness: A graph is connected if there is a path between every pair of vertices. Analyzing connected components can identify functional modules.

- Clustering Coefficient: This measures the degree to which nodes in a graph tend to cluster together. A high clustering coefficient indicates that neighbors of a node are likely to be connected, suggesting modular or functional grouping.
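The four metrics above can be computed with a few lines of standard-library Python. This is a minimal sketch for an unweighted, undirected graph stored as an adjacency dictionary; the five-node network is a made-up example.

```python
from collections import deque

# Undirected toy network as an adjacency dict (hypothetical node names).
graph = {
    "A": {"B", "C"},
    "B": {"A", "C"},
    "C": {"A", "B", "D"},
    "D": {"C"},
    "E": set(),  # isolated node, forms its own component
}


def degree(g, node):
    """Number of edges incident to `node`."""
    return len(g[node])


def shortest_path_length(g, source, target):
    """Breadth-first search; returns None if `target` is unreachable."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        u = queue.popleft()
        if u == target:
            return dist[u]
        for v in g[u]:
            if v not in dist:
                dist[v] = dist[u] + 1
                queue.append(v)
    return None


def connected_components(g):
    """List of node sets, one per connected component."""
    seen, components = set(), []
    for start in g:
        if start in seen:
            continue
        component, queue = {start}, deque([start])
        while queue:
            u = queue.popleft()
            for v in g[u]:
                if v not in component:
                    component.add(v)
                    queue.append(v)
        seen |= component
        components.append(component)
    return components


def clustering_coefficient(g, node):
    """Fraction of pairs of neighbors that are themselves connected."""
    neighbors = list(g[node])
    k = len(neighbors)
    if k < 2:
        return 0.0
    links = sum(
        1
        for i in range(k)
        for j in range(i + 1, k)
        if neighbors[j] in g[neighbors[i]]
    )
    return 2.0 * links / (k * (k - 1))
```

In this toy graph, node C has three neighbors of which only one pair (A, B) is linked, so its clustering coefficient is 1/3, while node A sits in a closed triangle and scores 1.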

The following Graphviz diagram illustrates these core graph types and their typical biological representations:

Major Network Types in Molecular Biology

- Protein-Protein Interaction (PPI) Networks: PPI networks describe the physical and functional interactions between proteins within a cell, revealing how proteins operate in coordination to enable biological processes [11]. Despite knowing the complete sequence for many proteins, their molecular functions often remain undetermined, making PPI networks crucial for predicting protein function [11].

- Gene Regulatory Networks (GRNs): GRNs represent the control of gene expression in cells, modulated by transcription factors, their post-translational modifications, and associations with other biomolecules [11]. These networks are typically represented as directed graphs to model the series of events in gene expression and often exhibit specific topological motifs [11].

- Signal Transduction Networks: These networks use multi-edged directed graphs to represent the series of interactions between bioentities (proteins, chemicals) that transmit signals from the outside to the inside of the cell or within the cell itself [11]. They describe how cells respond to environmental changes and, like GRNs, exhibit common topological patterns and motifs [11].

- Metabolic and Biochemical Networks: These networks are powerful tools for studying and modeling metabolism across organisms. They represent series of biochemical reactions (pathways) where enzymes play the main role in catalyzing reactions [11]. The collection of these interconnected pathways forms a metabolic network, which can be reconstructed using modern sequencing techniques [11].

Data for constructing biological networks can be generated through high-throughput experimental techniques or retrieved from curated databases [11].

- Experimental Techniques: Key methods for detecting PPIs include yeast two-hybrid (Y2H), tandem affinity purification (TAP), pull-down assays, mass spectrometry, and protein microarrays [11].

- Biological Databases: Widely used resources, grouped by network type, are listed in Table 1.

- Computer-Readable Formats: Standardized formats enable the exchange and computational analysis of network models [11].

- SBML (Systems Biology Markup Language): An XML-based format for representing models of biological processes, including metabolic networks, cell signaling pathways, and regulatory networks [11].

- PSI-MI (Proteomics Standards Initiative Interaction): Standard format for representing molecular interactions [11].

- BioPAX: Language for representing biological pathway data [11].

- CellML & RDF: Other formats used for exchanging computer-based mathematical models and representing web resources, respectively [11].

Table 1: Key Biological Databases for Network Construction

| Network Type | Database Name | Primary Focus | Data Content |
|---|---|---|---|
| Protein-Protein Interaction | BioGRID | Genetic and protein interactions | Curated PPI and genetic interaction data from multiple species |
| Protein-Protein Interaction | STRING | Known and predicted PPIs | Direct and indirect associations from multiple sources |
| Protein-Protein Interaction | HPRD | Human protein interactions | Curated proteomic information for human proteins |
| Regulatory Networks | JASPAR | Transcription factor binding profiles | Curated, non-redundant transcription factor binding motifs |
| Regulatory Networks | TRANSFAC | Transcription factors & binding sites | Eukaryotic transcription factors, their genomic binding sites and DNA profiles |
| Metabolic Pathways | KEGG | Pathways & molecular functions | Integrated database of biological pathways, diseases, drugs, and chemicals |
| Metabolic Pathways | BioCyc | Metabolic pathways & genomes | Collection of thousands of pathway/genome databases |

Analyzing Disease-Perturbed Networks

The core premise of systems biology in disease research is that pathological states arise from perturbations in molecular networks. Graph theory provides the tools to quantify these perturbations and identify critical components.

Network-Based Biomarker Discovery

Disease-induced perturbations can alter local and global network properties. Comparing network topologies between healthy and diseased states can reveal:

- Differential Connectivity: Identifying nodes (proteins/genes) whose degree centrality changes significantly in disease networks. A protein that becomes a hub only in a disease network may drive the pathology.

- Module Dysregulation: Detecting connected subgraphs or clusters (functional modules) that are differentially active or perturbed in disease. This moves beyond single-molecule biomarkers to pathway-level signatures.
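Differential connectivity can be sketched as a per-node degree comparison between two edge lists. The Python example below uses invented edges; a real analysis would also need a statistical test for whether a degree change is significant, which is omitted here.

```python
def degrees(edges):
    """Degree of every node in an undirected edge list."""
    deg = {}
    for u, v in edges:
        deg[u] = deg.get(u, 0) + 1
        deg[v] = deg.get(v, 0) + 1
    return deg


def differential_connectivity(healthy_edges, disease_edges):
    """Per-node degree change (disease minus healthy), largest gain first."""
    d_healthy = degrees(healthy_edges)
    d_disease = degrees(disease_edges)
    nodes = set(d_healthy) | set(d_disease)
    delta = {n: d_disease.get(n, 0) - d_healthy.get(n, 0) for n in nodes}
    # Sort by descending change, then by name for a stable order.
    return sorted(delta.items(), key=lambda item: (-item[1], item[0]))


# Hypothetical networks: P1 becomes a hub only in the disease state.
healthy = [("P1", "P2"), ("P2", "P3"), ("P3", "P4")]
disease = [("P1", "P2"), ("P1", "P3"), ("P1", "P4"), ("P1", "P5")]
ranking = differential_connectivity(healthy, disease)
```

The top of `ranking` holds nodes that gained the most connections in the disease network, which is where a disease-specific hub would appear.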

Drug Target Identification

Network analysis facilitates a paradigm shift from "single-target, single-drug" to "network-pharmacology" [11].

- Essentiality Analysis: Nodes whose removal (simulating drug inhibition) maximally disrupts network connectivity or integrity are potential drug targets. Hubs critical for network stability are often investigated, but targeting less-connected nodes with high betweenness centrality (acting as bridges) can be more specific, reducing side effects.

- Network Proximity: Measuring the network distance between drug targets and disease-associated genes can predict drug efficacy and repurposing opportunities. A drug whose targets are closer in the network to disease genes is more likely to be effective.
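A minimal Python sketch of the network proximity idea follows. It assumes one particular definition, the mean over drug targets of the shortest-path distance to the nearest disease gene; the text above does not fix a formula, and the toy interactome and all node names are invented.

```python
from collections import deque


def build_graph(edges):
    """Undirected adjacency dict from an edge list."""
    g = {}
    for u, v in edges:
        g.setdefault(u, set()).add(v)
        g.setdefault(v, set()).add(u)
    return g


def bfs_distances(g, source):
    """Shortest-path length from `source` to every reachable node."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        u = queue.popleft()
        for v in g[u]:
            if v not in dist:
                dist[v] = dist[u] + 1
                queue.append(v)
    return dist


def closest_proximity(g, drug_targets, disease_genes):
    """Mean over drug targets of the distance to the nearest disease gene."""
    total = 0
    for target in drug_targets:
        dist = bfs_distances(g, target)
        reachable = [dist[d] for d in disease_genes if d in dist]
        if not reachable:
            return float("inf")  # some target cannot reach any disease gene
        total += min(reachable)
    return total / len(drug_targets)


# Chain-shaped toy interactome: T1 - A - D1 - D2 - B - C - T2
interactome = build_graph(
    [("T1", "A"), ("A", "D1"), ("D1", "D2"), ("D2", "B"), ("B", "C"), ("C", "T2")]
)
disease_genes = {"D1", "D2"}
```

Under this definition a drug acting on A (distance 1) would be ranked as closer to the disease module than one acting on T2 (distance 3). In practice such raw distances are usually compared against randomized target sets, a step left out of this sketch.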

The following diagram conceptualizes the workflow for analyzing a disease-perturbed PPI network to identify critical modules and potential drug targets:

Visualization and Computational Implementation

Data Structures for Network Representation

Efficient computational handling of networks requires appropriate data structures [12]:

- Adjacency Matrix: A square ( N \times N ) matrix ( A ) (where ( N ) is the number of nodes) where the element ( A[i, j] ) indicates the connection between node ( i ) and node ( j ) (1 or a weight for connection, 0 for none) [12]. While intuitive, it is memory inefficient for large, sparse biological networks, requiring ( O(N^2) ) memory [12].

- Adjacency List: An array ( A ) of separate lists, where each element ( A_i ) is a list containing all vertices adjacent to vertex ( i ) [12]. This structure requires ( O(V+E) ) memory, making it vastly more efficient for sparse networks, and allows faster retrieval of a node's neighbors [12].
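The difference between the two structures can be seen by building both from the same edge list. This Python sketch uses integer node indices and a made-up four-node network.

```python
edges = [(0, 1), (0, 2), (2, 3)]  # undirected toy edges
n = 4                             # number of nodes

# Adjacency matrix: N x N cells are stored no matter how few edges exist.
matrix = [[0] * n for _ in range(n)]
for i, j in edges:
    matrix[i][j] = 1
    matrix[j][i] = 1  # symmetric because the graph is undirected

# Adjacency list: one neighbor list per node, so storage grows with the edges.
adj_list = [[] for _ in range(n)]
for i, j in edges:
    adj_list[i].append(j)
    adj_list[j].append(i)

matrix_cells = n * n
list_entries = sum(len(neighbors) for neighbors in adj_list)  # 2 * number of edges
```

Looking up the neighbors of a node is a direct read of `adj_list[i]`, whereas the matrix requires scanning a full row of length N.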

Visualization Principles and Tools

Effective visualization is critical for interpreting and communicating network biology findings [14].

- Determine the Figure's Purpose: Before creation, establish the purpose and key message of the visualization. This dictates the data included, the visual focus, and the encoding sequence [14]. The explanation might relate to the whole network, a node subset, topology, or a functional/temporal aspect.

- Consider Alternative Layouts:

- Node-Link Diagrams: The most common representation, familiar to readers and capable of showing non-neighbor relationships. However, they can become cluttered in dense, large networks [14].

- Adjacency Matrices: An alternative where nodes are listed on horizontal and vertical axes, and edges are represented by filled cells at their intersections. Matrices excel with dense networks, easily encode edge attributes via color, and facilitate cluster detection with optimized node ordering [14].

- Use Color Effectively: Color should be applied deliberately to convey meaning without overwhelming or biasing the reader [15].

- Identify Data Nature: Match color palettes to the nature of the data (e.g., categorical, ordinal, quantitative) [15].

- Color Space: Use perceptually uniform color spaces (e.g., CIE L*a*b* or CIE L*u*v*), in which equal distances in any direction correspond to approximately equal perceived color differences [15].

- Accessibility: Account for color vision deficiencies and ensure sufficient contrast. Also, consider that graphics may be printed or viewed in grayscale [15].

Table 2: Topological Metrics for Analyzing Biological Networks

| Metric | Mathematical Definition | Biological Interpretation | Application in Disease Research |
|---|---|---|---|
| Degree Centrality | ( deg(i) = \lvert N(i) \rvert ) | Number of direct interaction partners of a node (e.g., a protein). | Identifies highly connected "hub" proteins that may be critical for cell survival or disease progression. |
| Betweenness Centrality | ( g(v) = \sum_{s \neq v \neq t} \frac{\sigma_{st}(v)}{\sigma_{st}} ) | The number of shortest paths that pass through a node. | Pinpoints bottleneck proteins that connect functional modules; potential targets for disrupting disease pathways. |
| Clustering Coefficient | ( C_i = \frac{2e_i}{k_i(k_i-1)} ) | Measures the tendency of a node's neighbors to connect to each other. | Quantifies the modularity of a network; changes can indicate disruption of functional complexes in disease. |
| Shortest Path Length | ( d(s,t) ) = minimum number of edges to traverse from ( s ) to ( t ). | The most direct route of influence or information flow between two nodes. | Measures functional proximity; can reveal how distant a drug target is from a disease gene in the interactome. |
| Eigenvector Centrality | ( x_v = \frac{1}{\lambda} \sum_{t \in M(v)} x_t ) | A measure of the influence of a node in a network, based on the influence of its neighbors. | Identifies nodes connected to other influential nodes, potentially uncovering key regulators in disease networks. |
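To make the betweenness entry in Table 2 concrete, here is a compact Python sketch of Brandes' algorithm for unweighted, undirected graphs. Brandes' method is one standard way to evaluate the sum; the source does not say which algorithm its cited tools use. The toy network, two triangles joined through a single bridge node X, is invented.

```python
from collections import deque


def betweenness_centrality(g):
    """Unnormalized betweenness for an unweighted, undirected adjacency dict."""
    bc = {v: 0.0 for v in g}
    for s in g:
        stack = []                     # nodes in order of non-decreasing distance
        preds = {v: [] for v in g}     # predecessors on shortest paths from s
        sigma = {v: 0 for v in g}      # number of shortest paths from s
        dist = {v: -1 for v in g}
        sigma[s], dist[s] = 1, 0
        queue = deque([s])
        while queue:
            v = queue.popleft()
            stack.append(v)
            for w in g[v]:
                if dist[w] < 0:                # first visit
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:     # v lies on a shortest path to w
                    sigma[w] += sigma[v]
                    preds[w].append(v)
        delta = {v: 0.0 for v in g}
        while stack:                           # accumulate dependencies backwards
            w = stack.pop()
            for v in preds[w]:
                delta[v] += sigma[v] / sigma[w] * (1.0 + delta[w])
            if w != s:
                bc[w] += delta[w]
    # Every unordered pair (s, t) was counted from both ends.
    return {v: c / 2.0 for v, c in bc.items()}


def build_graph(edges):
    g = {}
    for u, v in edges:
        g.setdefault(u, set()).add(v)
        g.setdefault(v, set()).add(u)
    return g


# Two triangles (A, B, C) and (D, E, F) connected only through X.
bridge_net = build_graph(
    [("A", "B"), ("A", "C"), ("B", "C"),
     ("D", "E"), ("D", "F"), ("E", "F"),
     ("C", "X"), ("X", "D")]
)
scores = betweenness_centrality(bridge_net)
```

Node X has only two neighbors, fewer than C or D, yet every shortest path between the two triangles runs through it, so it receives the highest betweenness. This is the "bottleneck with low degree" situation described in the table.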

A Protocol for Visualizing a PPI Network with Graphviz

The following provides a detailed methodology for creating a publication-quality visualization of a disease-related PPI network using Graphviz.

Research Reagent Solutions:

- Graphviz Software: An open-source graph visualization toolkit. Its `dot` layout algorithm is ideal for hierarchical diagrams of directed graphs. Function: Generates the visual layout from a structured text (DOT) file. [16] [17]

- Cytoscape: An open-source platform for complex network analysis and visualization. Function: Provides an interactive environment with advanced layout algorithms and data integration capabilities, often used complementarily with Graphviz. [14]

- PPI Data from BioGRID or STRING: Curated databases of known and predicted protein-protein interactions. Function: Serves as the primary data source for constructing the network. [11]

- Gene Ontology (GO) Enrichment Analysis Tool: A computational method. Function: Identifies functionally related clusters (modules) within the network by finding GO terms that are statistically overrepresented. [11]

Experimental Protocol:

Data Retrieval and Network Construction:

- Query the BioGRID or STRING database for a set of proteins known to be associated with a specific disease (e.g., Glioblastoma multiforme).

- Download the resulting interaction data. Format the data into a simple table (e.g., CSV) with two columns: "ProteinA" and "ProteinB".

- Use a scripting language (e.g., Python, R) to convert this table into a basic Graphviz DOT file, defining each protein as a node and each interaction as an undirected edge.

Topological Analysis and Module Identification:

- Use a network analysis library (e.g., NetworkX in Python, igraph in R) to calculate topological metrics (see Table 2) for each node.

- Perform a community detection algorithm (e.g., Louvain method, Girvan-Newman) to identify densely connected clusters or modules within the network.

- Conduct a GO enrichment analysis on each identified module to assign putative biological functions.

Attribute Mapping and DOT Script Generation:

- Map the calculated attributes back to the DOT file to encode them visually:

  - Node Size: Map to degree centrality (`width`, `height` attributes).

  - Node Color: Map to the functional module (`fillcolor` attribute).

  - Node Border: Use a distinct color (`color`, `penwidth`) to highlight nodes identified as having high betweenness centrality.

  - Label Font Color: Explicitly set `fontcolor` to ensure high contrast against the node's `fillcolor` [18].

- Use an HTML-like label to make the node's identifier bold, improving readability [16] [17].

Layout Generation and Refinement:

- Process the final DOT file with the Graphviz `dot` engine to generate a layout (e.g., `dot -Tpng network.dot -o network.png`).

- Open the resulting image in a vector graphics editor (e.g., Adobe Illustrator, Inkscape) for final adjustments like label placement or adding annotations, if necessary.

The Graphviz code below implements this protocol, creating a stylized PPI network with visual encodings for topological properties:
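As an illustration of the attribute-mapping step, the following Python sketch emits DOT text from precomputed metrics. It is a hypothetical helper rather than the authors' script: the protein identifiers, degree values, module assignments, colors, and size scaling are all placeholders chosen for the example.

```python
def to_dot(edges, degree, module, bottlenecks, palette):
    """Build a DOT description of an undirected PPI network.

    degree: node -> degree centrality (drives node size)
    module: node -> module label (drives fill color via `palette`)
    bottlenecks: nodes with high betweenness (drawn with a thick red border)
    """
    lines = [
        "graph PPI {",
        "  node [shape=circle, style=filled, fontcolor=black];",
    ]
    for node in sorted(degree):
        size = 0.5 + 0.2 * degree[node]  # arbitrary scaling, in inches
        attrs = [
            f"label=<<b>{node}</b>>",    # HTML-like label, bold identifier
            f"width={size:.1f}",
            f"height={size:.1f}",
            "fixedsize=true",
            f'fillcolor="{palette[module[node]]}"',
        ]
        if node in bottlenecks:
            attrs += ['color="red"', "penwidth=3"]
        lines.append(f'  "{node}" [{", ".join(attrs)}];')
    for u, v in edges:
        lines.append(f'  "{u}" -- "{v}";')  # "--" denotes an undirected edge
    lines.append("}")
    return "\n".join(lines)


# Placeholder inputs standing in for the outputs of the earlier protocol steps.
edges = [("P1", "P2"), ("P1", "P3"), ("P3", "P4")]
degree = {"P1": 2, "P2": 1, "P3": 2, "P4": 1}
module = {"P1": "M1", "P2": "M1", "P3": "M2", "P4": "M2"}
palette = {"M1": "#a6cee3", "M2": "#b2df8a"}
dot_text = to_dot(edges, degree, module, bottlenecks={"P3"}, palette=palette)
```

Writing `dot_text` to a file such as `network.dot` would then allow it to be rendered with the `dot` command from the layout step of the protocol.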

Graph theory provides an indispensable mathematical framework for modeling, analyzing, and visualizing the complex molecular networks that underlie biological function and disease. By representing biological systems as graphs of interconnected nodes, researchers can move beyond a reductionist view to a systems-level understanding. This technical guide has outlined the core concepts, data sources, analytical methods, and visualization protocols required to effectively apply graph theory to the study of pathways and protein-protein interactions. Within the context of disease-perturbed networks, these approaches enable the identification of dysregulated modules, critical bottleneck proteins, and potential therapeutic targets, thereby accelerating the discovery of novel diagnostic and therapeutic strategies in precision medicine. As high-throughput technologies continue to generate data at an ever-increasing scale and depth, the role of graph theory in making sense of this complexity will only become more central to biological and medical research.

Complex diseases such as cancer are not merely a consequence of isolated genetic defects but represent a systemic pathology arising from the dynamic dysregulation of intricate molecular networks [19]. A reductionist approach, focusing on individual genes or proteins, fails to capture the emergent properties that define these diseases [19]. Instead, a systems biology perspective, which models diseases as perturbations within complex regulatory networks, is essential for understanding their initiation and progression [19]. This framework reveals that critical transitions in disease states, such as the shift from a normal to a cancerous phenotype, are often preceded by significant network reconfiguration [19]. This paper explores the consequences of defects in molecular networks through the powerful lens of the "Hallmarks of Cancer" [19], provides detailed methodologies for network analysis, and discusses the translation of these insights into clinical applications.

Biological systems are governed by complex regulatory networks whose evolution is driven by nonlinear interactions [19]. According to complex systems theory, these networks exhibit key properties like robustness, adaptability, and self-organization [19]. While generally robust to isolated perturbations, disordered collective perturbations can trigger irreversible transitions to disease states [19]. The "low-dimensional hypothesis" from statistical physics posits that the high-dimensional dynamics of a complex system can be captured by a reduced, coarse-grained model [19]. This principle is operationalized in disease biology by aggregating individual molecular components (e.g., genes) into macroscopic, functionally related units. The Hallmarks of Cancer framework provides a biologically grounded set of such units, delineating the core functional capabilities and enabling conditions that tumors acquire during malignant progression [19]. By constructing a "hallmark network"—where each hallmark is a node and their regulatory interdependencies are edges—researchers can simulate and analyze the macroscopic dynamics of tumorigenesis, uncovering universal patterns across different cancer types [19].

The Hallmarks of Cancer as a Network Perturbation Model

The Hallmarks of Cancer represent a coarse-graining of the multitude of genetic alterations into a tractable set of core functional modules. These include traits such as "Self-Sufficiency in Growth Signals," "Evading Apoptosis," "Tissue Invasion and Metastasis," and enabling characteristics like "Genome Instability and Mutation" [19]. From a network perspective, the transition from health to disease is a shift in the dynamic state of this hallmark network.

Pan-cancer analyses across 15 cancer types have quantified the differential activity of these hallmarks during tumorigenesis, revealing conserved and divergent patterns of network perturbation. The table below summarizes the quantitative differences in hallmark levels between normal and cancerous states, measured using Jensen-Shannon (JS) divergence, a metric that quantifies the dissimilarity between two probability distributions [19].

Table 1: Dynamics of Cancer Hallmarks During Tumorigenesis

| Hallmark of Cancer | JS Divergence (Normal vs. Cancer) | Biological Interpretation |

|---|---|---|

| Tissue Invasion and Metastasis | 0.692 (Highest) | Greatest difference; linked to EMT, cell migration [19]. |

| Evading Apoptosis | Notable change | Suppression of pro-apoptotic and overactivation of anti-apoptotic signals [19]. |

| Self-Sufficiency in Growth Signals | Notable change | Persistent activation of growth factor pathways [19]. |

| Reprogramming Energy Metabolism | 0.385 (Lowest) | Minimal difference; metabolic adaptations like glycolysis are also active in normal stressed cells [19]. |

| Limitless Replicative Potential | Smaller difference | Overlap with normal proliferative mechanisms or emergence at later stages [19]. |
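For readers unfamiliar with the metric, a Jensen-Shannon divergence between two discrete distributions can be computed as below. This Python sketch assumes base-2 logarithms, which bound the value between 0 and 1; the logarithm base and binning used in the cited study are not stated here, so the numbers in the table should not be expected to match this convention.

```python
import math


def kl_divergence(p, q):
    """Kullback-Leibler divergence KL(p || q) in bits; terms with p_i = 0 vanish."""
    return sum(pi * math.log2(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def js_divergence(p, q):
    """Jensen-Shannon divergence: symmetrized KL against the mixture m."""
    m = [(pi + qi) / 2.0 for pi, qi in zip(p, q)]
    return 0.5 * kl_divergence(p, m) + 0.5 * kl_divergence(q, m)


# Hypothetical histograms of a hallmark score in normal and tumor samples.
normal = [0.7, 0.2, 0.1]
tumor = [0.1, 0.3, 0.6]
js_value = js_divergence(normal, tumor)
```

Identical distributions give 0 and distributions with no overlap give 1 under this convention, so larger values indicate a hallmark whose activity differs more strongly between the two states.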

A key finding from network-based systems biology is that changes in network topology serve as an early warning signal of critical transitions, occurring before significant shifts in hallmark expression levels are detectable [19]. This suggests that analyzing the structure of molecular networks provides predictive power for identifying disease tipping points.

Quantitative Analysis of Network-Based Disease Transitions

Mathematical Modeling of Hallmark Dynamics

To simulate the transition from a normal to a cancerous state, a macroscopic stochastic dynamical model can be employed. The framework involves a set of stochastic differential equations (e.g., incorporating Ornstein-Uhlenbeck noise) that model the system's evolution [19].

The general form of the model for the hallmark network is based on a gene regulatory network framework [19]:

dx/dt = A(x(t)) * x(t) + S * ξ(t)

Where:

- `x(t)` is a vector representing the expression levels of the hallmarks at time `t`.

- `A(x(t))` is the time-dependent regulatory network matrix defining interactions between hallmarks.

- `S` is a scaling matrix for the noise term.

- `ξ(t)` is a Gaussian white noise vector representing stochastic biological fluctuations.

This model simulates three distinct phases:

- Initial Stationary State: A healthy homeostatic state (normal tissue data; e.g., t=0 to t=30 in simulations).

- Critical Transition Phase: A period of network reconfiguration (e.g., t=30 to t=70).

- Final Stationary State: A cancerous state (cancer data; e.g., t=70 to t=100) [19].
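A model of this form can be integrated numerically with the Euler-Maruyama scheme. The Python sketch below makes several assumptions not taken from the source: the noise scaling matrix is diagonal, the noise is plain Gaussian white noise, and the two-hallmark regulatory matrix is an invented constant.

```python
import math
import random


def simulate(x0, regulatory_matrix, noise_scale, dt, steps, seed=0):
    """Euler-Maruyama integration of dx = A(x) x dt + S dW with diagonal S.

    regulatory_matrix: function mapping the state x to the matrix A(x)
    noise_scale: list with the diagonal entries of S
    """
    rng = random.Random(seed)
    x = list(x0)
    trajectory = [list(x)]
    dims = range(len(x))
    for _ in range(steps):
        a = regulatory_matrix(x)
        drift = [sum(a[i][j] * x[j] for j in dims) for i in dims]
        x = [
            x[i]
            + drift[i] * dt
            + noise_scale[i] * math.sqrt(dt) * rng.gauss(0.0, 1.0)
            for i in dims
        ]
        trajectory.append(list(x))
    return trajectory


def toy_matrix(x):
    """Hypothetical A(x): both hallmarks decay, hallmark 0 activates hallmark 1."""
    return [[-1.0, 0.0], [0.5, -1.0]]


noise_free = simulate([1.0, 0.0], toy_matrix, [0.0, 0.0], dt=0.01, steps=1000)
noisy = simulate([1.0, 0.0], toy_matrix, [0.2, 0.2], dt=0.01, steps=1000, seed=1)
```

To mimic the three phases described above, `regulatory_matrix` could be made to switch between a "normal" and a "cancer" matrix over the transition window; that extension is left out to keep the sketch short.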

Identifying Critical Transitions with Dynamic Network Biomarkers (DNB)

The Dynamic Network Biomarker (DNB) theory is a computational method used to identify the critical transition point before a system shifts to a new state [19]. DNB detects a group of molecules (or hallmarks) that exhibit three key statistical properties as the system approaches the tipping point:

- A sharp increase in the standard deviation (SD) within the DNB group.

- A sharp increase in the Pearson correlation coefficient (PCC) within the DNB group.

- A sharp decrease in the PCC between the DNB group and non-DNB molecules/hallmarks.

The presence of a DNB module indicates that the system is losing resilience and is in a pre-disease state, allowing for early warning of the impending transition to a disease phenotype like cancer [19].
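The three DNB criteria can be folded into a single score for a candidate group. The sketch below assumes a composite of the form SD_in × PCC_in / PCC_out, which is one way to combine the criteria and is not specified in the text above; the measurements are invented to show the contrast between a stable window and a pre-transition window.

```python
import math
from itertools import combinations


def mean(values):
    return sum(values) / len(values)


def std(values):
    """Sample standard deviation."""
    m = mean(values)
    return math.sqrt(sum((v - m) ** 2 for v in values) / (len(values) - 1))


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def dnb_score(samples, group, others):
    """samples: name -> measurements across samples within one time window."""
    sd_in = mean([std(samples[g]) for g in group])
    pcc_in = mean(
        [abs(pearson(samples[a], samples[b])) for a, b in combinations(group, 2)]
    )
    pcc_out = mean(
        [abs(pearson(samples[g], samples[o])) for g in group for o in others]
    )
    return sd_in * pcc_in / (pcc_out + 1e-9)  # small constant avoids division by zero


group, others = ["g1", "g2"], ["o1"]
# Stable window: small fluctuations, group members uncorrelated with each other.
stable = {
    "g1": [1.0, 1.1, 0.9, 1.0, 1.1, 0.9],
    "g2": [1.0, 0.9, 1.1, 1.0, 1.1, 0.9],
    "o1": [1.0, 1.1, 0.9, 1.0, 1.1, 0.9],
}
# Pre-transition window: large, tightly coupled fluctuations inside the group.
critical = {
    "g1": [0.0, 2.0, 4.0, 6.0, 8.0, 10.0],
    "g2": [1.0, 3.0, 5.0, 7.0, 9.0, 11.0],
    "o1": [1.0, -1.0, 1.0, -1.0, 1.0, -1.0],
}
```

A score that rises sharply from one time window to the next, as it does between `stable` and `critical` here, would flag the group as a candidate DNB module.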

Experimental and Computational Protocols

Protocol 1: Constructing a Coarse-Grained Hallmark Network

This protocol details the steps to build a macroscopic hallmark interaction network from genomic data [19].

- Define Hallmark Gene Sets: Map the canonical Hallmarks of Cancer to specific gene sets using functional annotation databases like Gene Ontology (GO) [19].

- Source Regulatory Interactions: Obtain gene-gene regulatory interactions from a publicly available database such as the GRAND database or STRING for protein-protein interactions [19] [20].

- Compute Hallmark-Hallmark Interactions: For each pair of hallmarks, the regulatory interaction strength is computed by aggregating the known regulatory relationships between all genes in one hallmark set and all genes in the other. This can be based on the number and confidence of interactions or other network metrics.

- Construct the Network: Represent each hallmark as a node. The aggregated interaction strengths from the previous step form the weighted edges of the macroscopic hallmark network.
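The aggregation step of this protocol can be sketched in Python as follows. Summing confidence scores across hallmark boundaries is an assumption made for illustration, since the protocol leaves the aggregation rule open; gene names and scores are placeholders.

```python
def hallmark_network(gene_sets, interactions):
    """Aggregate gene-level regulatory edges into hallmark-level edge weights.

    gene_sets: hallmark name -> set of member genes
    interactions: (regulator, target) -> confidence score
    Returns (source hallmark, target hallmark) -> summed confidence.
    """
    weights = {}
    for (src, dst), confidence in interactions.items():
        for h_src, genes_src in gene_sets.items():
            if src not in genes_src:
                continue
            for h_dst, genes_dst in gene_sets.items():
                # Only edges that cross between two different hallmarks count.
                if h_dst != h_src and dst in genes_dst:
                    key = (h_src, h_dst)
                    weights[key] = weights.get(key, 0.0) + confidence
    return weights


gene_sets = {
    "Evading Apoptosis": {"G1", "G2"},
    "Tissue Invasion and Metastasis": {"G3", "G4"},
}
interactions = {
    ("G1", "G3"): 0.9,
    ("G2", "G4"): 0.6,
    ("G3", "G1"): 0.4,
    ("G1", "G2"): 0.8,  # within one hallmark, ignored by the aggregation
}
edges = hallmark_network(gene_sets, interactions)
```

Because a gene may belong to several hallmark gene sets, the nested loops let a single gene-level edge contribute to more than one hallmark pair. Whether that double counting is desirable is a modeling decision.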

Protocol 2: Automated Quantitative Molecular Network Analysis with QuantMap

The QuantMap method groups chemicals by biological activity based on their shared associations within a protein-protein interaction network [20]. The following workflow has been automated using the Galaxy platform for rapid analysis.

Table 2: Research Reagent Solutions for Network Analysis

| Reagent / Tool | Function / Application |
|---|---|
| GRAND Database | Provides gene regulatory network data for normal and malignant cells [19]. |
| STRING Database | Source of known and predicted protein-protein interactions [20]. |
| STITCH Database | Provides information on chemical-protein interactions [20]. |
| Galaxy Platform | Web-based, user-friendly platform for computational biological data analysis [20]. |
| R package `igraph` | Library for network analysis and visualization; calculates centrality measures [20]. |
| Dynamic Network Biomarker (DNB) Theory | Computational method to detect pre-disease critical transitions [19]. |

Workflow:

- Input: A list of chemicals (e.g., drugs, toxins) identified by common names or PubChem CIDs.

- Data Preparation (QuantMap Prep):

  - The tool checks input chemicals against the local STITCH database.

  - It returns a table of accepted Chemical IDs (CIDs), omitting unrecognized entries [20].

- Network Analysis (QuantMap Server):

  - For each chemical CID:

    - Retrieve the top 10 closely associated "seed" proteins from STITCH (minimum confidence 0.7).

    - Retrieve up to 150 proteins associated with these seeds from the STRING database (minimum confidence 0.7).

    - Calculate the relative importance of all proteins in this network using centrality measures: node degree, betweenness, and subgraph centrality [20].

    - Condense results into a single list of proteins ranked by the median of their importance measures.

- Integration and Clustering:

  - The ranked lists for all chemicals are combined using Spearman's footrule to compute pairwise distances.

  - The distance matrix is analyzed by hierarchical clustering (e.g., using `hclust` in R) to group chemicals by biological activity [20].
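The pairwise distance step of this workflow can be illustrated with a short Python sketch of Spearman's footrule. It assumes every ranked list contains the same proteins, which sidesteps the question of how QuantMap treats proteins missing from one list; chemical and protein names are placeholders.

```python
def footrule_distance(ranking_a, ranking_b):
    """Spearman's footrule: sum of absolute rank differences over all items."""
    position_b = {item: rank for rank, item in enumerate(ranking_b)}
    return sum(
        abs(rank - position_b[item]) for rank, item in enumerate(ranking_a)
    )


def distance_matrix(rankings):
    """All pairwise footrule distances, keyed by (name_a, name_b)."""
    names = sorted(rankings)
    return {
        (a, b): footrule_distance(rankings[a], rankings[b])
        for a in names
        for b in names
    }


# Hypothetical protein rankings for three chemicals (most important first).
rankings = {
    "chem1": ["P1", "P2", "P3", "P4"],
    "chem2": ["P1", "P3", "P2", "P4"],
    "chem3": ["P4", "P3", "P2", "P1"],
}
distances = distance_matrix(rankings)
```

In this toy case `chem1` and `chem2` differ by a single swap and end up close together, while `chem3` has the reversed order and is far from both, so a hierarchical clustering of `distances` would group the first two chemicals.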

Visualization of Network Transitions and Pathways

The following diagrams, generated using Graphviz, illustrate key concepts and workflows in the analysis of disease-perturbed molecular networks.

Hallmark Network State Transition

Dynamic Network Biomarker Detection

QuantMap Analysis Workflow

Clinical Translation and Therapeutic Insights

The network-based understanding of complex diseases provides a powerful framework for identifying novel prognostic biomarkers and therapeutic targets. An evolutionary perspective reinforces this, revealing that clinically validated biomarkers and drug targets are significantly enriched in evolutionarily ancient genes [21]. The Transcriptome Age Index (TAI), which quantifies the evolutionary age of a transcriptome, has emerged as a valuable tool. Studies show that TAI declines from clinical stage I to IV in several cancers, and a lower TAI (indicating a more "primitive" transcriptome) is often associated with poorer prognosis [21]. This supports the "atavism" theory of cancer, which posits that tumor progression involves a reversion to ancient unicellular survival programs [21]. Consequently, targeting fundamental processes upon which cancer cells rely, or exploiting stresses that only cooperative multicellular systems can withstand, represents a promising therapeutic strategy derived from this evolutionary systems biology view [21].

Furthermore, network pharmacology methods like QuantMap offer substantial assistance for drug repositioning and toxicology risk assessment by rapidly identifying chemicals with similar biological network profiles [20]. This allows for the prediction of novel therapeutic applications or potential adverse effects based on shared network interactions.

Complex diseases are quintessential network diseases. Defects in molecular networks drive the acquisition of hallmark capabilities that characterize conditions like cancer. A systems biology approach, which uses coarse-grained models, stochastic dynamics, and network topology analysis, is indispensable for unraveling the complexity of these diseases. This perspective reveals that network reconfiguration precedes phenotypic shifts, offering a window for early intervention. The integration of quantitative network models with evolutionary insights and automated computational tools provides a robust roadmap for identifying critical transitions, discovering new biomarkers, and developing targeted therapeutic strategies, ultimately advancing the frontier of precision medicine.

Methodological Toolkit: Inferring, Analyzing, and Targeting Disease Networks

Inferring causal, rather than merely correlational, relationships in molecular networks is a fundamental challenge in computational biology, crucial for unraveling disease mechanisms and identifying therapeutic targets. [22] This whitepaper delves into two powerful approaches for causal network inference: the Cross-Validation Predictability (CVP) method, a recent data-driven innovation for any observed data, and Structural Causal Modeling (SCM), a well-established framework. [23] We place these methodologies within the context of disease-perturbed molecular network research, providing a technical guide that includes quantitative performance benchmarks, detailed experimental protocols, and essential resources for researchers and drug development professionals. The emphasis is on moving beyond association to uncover definitive regulatory pathways.

A primary objective of biomedical research is to elucidate the complex networks of molecular interactions underlying complex human diseases. [24] While high-throughput technologies have enabled the holistic profiling of biological systems, the learned networks often remain correlational. A causal edge in a molecular network is defined as a directed link where inhibition of the parent node leads to a change in the child node, either directly or via unmeasured intermediates. [22] This is fundamentally distinct from correlation, as two highly correlated nodes may not have any causal relationship. [22]

Establishing causality is particularly challenging in biological settings due to the prevalence of feedback loops, the high-dimensionality of data, and the difficulty of conducting large-scale interventions. [23] [22] Methods like Bayesian networks often rely on conditional independence tests and can only learn causal structures up to Markov equivalence classes without additional perturbations. [24] The CVP and SCM frameworks address these limitations by leveraging interventional data and predictability to resolve true causal directions, making them indispensable for modeling disease-regulation and progression. [23] [25]

Methodological Deep Dive: CVP and SCM

Cross-Validation Predictability (CVP)

CVP is a statistical concept and model-free algorithm designed to quantify causal effects from any observed data, without requiring time-series or assuming an acyclic graph structure. [23] Its core principle is that a variable (X) causes a variable (Y) if the prediction of (Y)'s values is significantly improved by including the values of (X), assessed through a rigorous cross-validation procedure. [23]

The formal testing framework is as follows. For variables (X), (Y), and a set of other factors (\hat{Z} = \{Z_1, Z_2, \cdots, Z_{n-2}\}), two models are constructed using k-fold cross-validation:

Null Hypothesis (H₀): No causality. (Y) is predicted using only the other factors (\hat{Z}). [ Y = \hat{f}(\hat{Z}) + \hat{\varepsilon} ] The total squared prediction error from the testing sets across all k folds is (\hat{e} = \sum_{i=1}^{m} \hat{e}_i^2).

Alternative Hypothesis (H₁): Causality exists. (Y) is predicted using both (X) and (\hat{Z}). [ Y = f(X, \hat{Z}) + \varepsilon ] The total squared prediction error from the testing sets is (e = \sum_{i=1}^{m} e_i^2).

Causality from (X) to (Y) is inferred if (e) is significantly less than (\hat{e}), indicating that (X) provides unique predictive information about (Y). The causal strength is quantified as: [ \text{Causal Strength (CS): } CS_{X \to Y} = \ln \frac{\hat{e}}{e} ] A positive causal strength supports (X \to Y). [23]
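The comparison of the two cross-validated errors can be sketched in Python. This toy version substitutes ordinary least squares for the generic predictors ( \hat{f} ) and ( f ), uses a single background factor Z, and runs on synthetic data; these are simplifying assumptions, and the published method may use a different regression model and an explicit significance test.

```python
import math
import random


def solve(a, b):
    """Solve a small linear system by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    w = [0.0] * n
    for i in reversed(range(n)):
        w[i] = (m[i][n] - sum(m[i][j] * w[j] for j in range(i + 1, n))) / m[i][i]
    return w


def fit_linear(rows, y):
    """Least-squares coefficients (intercept first) via the normal equations."""
    xs = [[1.0] + list(r) for r in rows]
    p = len(xs[0])
    xtx = [[sum(x[i] * x[j] for x in xs) for j in range(p)] for i in range(p)]
    xty = [sum(x[i] * t for x, t in zip(xs, y)) for i in range(p)]
    return solve(xtx, xty)


def cv_error(rows, y, k=5):
    """Total squared prediction error on the held-out folds."""
    n = len(y)
    total = 0.0
    for fold in range(k):
        train = [i for i in range(n) if i % k != fold]
        test = [i for i in range(n) if i % k == fold]
        w = fit_linear([rows[i] for i in train], [y[i] for i in train])
        for i in test:
            pred = w[0] + sum(wj * v for wj, v in zip(w[1:], rows[i]))
            total += (y[i] - pred) ** 2
    return total


def causal_strength(x, y, z, k=5):
    """CS = ln(e_hat / e): H0 predicts Y from Z only, H1 from X and Z."""
    e_hat = cv_error([[zi] for zi in z], y, k)
    e = cv_error([[xi, zi] for xi, zi in zip(x, z)], y, k)
    return math.log(e_hat / e)


# Synthetic data: Y depends on X and Z; `unrelated` has no influence on Y.
rng = random.Random(0)
n = 200
x = [rng.gauss(0, 1) for _ in range(n)]
z = [rng.gauss(0, 1) for _ in range(n)]
unrelated = [rng.gauss(0, 1) for _ in range(n)]
y = [2 * xi + zi + rng.gauss(0, 0.1) for xi, zi in zip(x, z)]

cs_causal = causal_strength(x, y, z)
cs_null = causal_strength(unrelated, y, z)
```

The score for X is clearly positive because dropping X leaves most of the variance in Y unexplained, while the score for the unrelated variable stays near zero. With a linear predictor, a high score reflects added predictive information and does not by itself settle the direction of the edge.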

The following diagram illustrates the logical workflow of the CVP algorithm:

Structural Causal Models (SCM) and Functional Causal Modeling

The SCM framework, also referred to as functional causal modeling, involves a joint distribution function that, along with a graph, satisfies the causal Markov condition. [24] This approach can be seen as a generalization of the CVP method. A core idea is that the nonlinearity in the function defining the relationship between a cause (X) and an effect (Y), i.e., (Y = f(X)), provides information that allows the true causal mechanism to be identified. [24]

One advanced method within this class utilizes Bayesian belief propagation to infer the responses of molecular traits to perturbation events given a hypothesized graph structure. [24] A distance measure between the inferred response distribution and the observed data is then used to assess the 'fitness' of the hypothesized causal graph. This method can recapitulate causal structure and even recover feedback loops from steady-state data, a task where conventional methods often fail. [24] The posterior probability of a graph (G) given data (D) is (P(G|D) = P(D|G)P(G)/P(D)), and the data likelihood (P(D|G)) is optimized using maximum-a-posteriori estimation. [24]

Quantitative Performance Benchmarking

The performance of causal inference methods is rigorously assessed using community challenges and real-world benchmarks. The table below summarizes key results from recent evaluations.

Table 1: Benchmarking Causal Network Inference Methods on Real-World Data (CausalBench Suite) [26]

| Method Category | Method Name | Key Features | Performance on Biological Evaluation (F1 Score) | Performance on Statistical Evaluation |

|---|---|---|---|---|

| Challenge Leaders | Mean Difference | Uses interventional information | High | High (Best Mean Wasserstein-FOR trade-off) |

| Guanlab | Uses interventional information | High (Slightly better than Mean Difference) | High | |

| Observational | GRNBoost | Tree-based, high recall | Low Precision | Low FOR on K562 |

| Observational | NOTEARS | Continuous optimization, acyclicity constraint | Varying Precision | Similar recall, varying precision |

| Observational | PC, GES | Constraint/score-based | Varying Precision | Similar recall, varying precision |

| Interventional | GIES, DCDI | Extension of GES; continuous optimization | Varying Precision | Similar recall, varying precision |

Table 2: Performance of CVP on Diverse Benchmarking Datasets [23]

| Dataset | Dataset Type | Key Finding | CVP Performance |

|---|---|---|---|

| DREAM3/4 | In silico gene networks | Gold-standard benchmark for GRN inference | High accuracy in recapitulating known networks |

| IRMA | Biosynthesis network (Yeast) | Ground-truth network from synthetic biology | Validated network structure |

| SOS DNA Repair | Real network (E. coli) | Response to DNA damage | Identified known causal pathways |

| TCGA | Human cancer data | Liver cancer (HCC) data | Identified driver genes SNRNP200 and RALGAPB; validated by knockdown experiments |

A critical insight from recent large-scale benchmarks is that contrary to theoretical expectations, many existing interventional methods do not consistently outperform observational methods. [26] This highlights the challenges of scalability and effectively leveraging perturbation data in complex real-world biological systems. Furthermore, methods that perform well on synthetic benchmarks do not always generalize to real-data environments. [26]

Experimental Protocols for Validation

The HPN-DREAM Network Inference Challenge Protocol

This community challenge established a rigorous protocol for empirically assessing causal networks using held-out interventional data. [22]

- Training Data Generation: Collect phosphoprotein time-course data from cancer cell lines under various ligand stimuli and kinase inhibitors (e.g., using Reverse-Phase Protein Lysate Arrays - RPPAs). [22]

- Network Inference: Participants use training data to infer context-specific, directed, and weighted causal networks. [22]

- Causal Validation with Test Data:

  - A test intervention (e.g., with an mTOR inhibitor) not used in training is applied.

  - From the test data, a "gold-standard" set of descendant nodes (D_{\text{true}}) is identified as those showing salient changes under the test inhibitor. [22]

  - For a submitted network, the set of predicted descendants (D_{\text{pred}}) is computed from the inferred graph.

  - Causal accuracy is scored by comparing (D_{\text{pred}}) and (D_{\text{true}}) using the Area Under the Receiver Operating Characteristic Curve (AUROC). [22]
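The scoring step can be illustrated with a short sketch. It assumes that predicted descendants are obtained by thresholding edge weights, so each node is scored by the largest threshold at which it remains reachable from the inhibited node (a widest-path computation). The AUROC is then computed over those node scores. The challenge's actual scoring code may differ in detail, and the protein names, edge weights, and gold-standard set below are illustrative, not challenge data.

```python
import heapq

def descendant_scores(edges, source):
    """Score each node by the largest edge-weight threshold at which it is
    still a descendant of `source` (bottleneck weight of the widest path)."""
    adj = {}
    for (u, v), w in edges.items():
        adj.setdefault(u, []).append((v, w))
    best = {source: float("inf")}
    heap = [(-best[source], source)]
    while heap:
        neg_width, u = heapq.heappop(heap)
        width = -neg_width
        if width < best.get(u, 0.0):
            continue  # stale heap entry
        for v, w in adj.get(u, []):
            cand = min(width, w)
            if cand > best.get(v, 0.0):
                best[v] = cand
                heapq.heappush(heap, (-cand, v))
    del best[source]
    return best  # unreachable nodes are absent and treated as score 0

def auroc(scores, true_descendants, nodes):
    """Rank-based AUROC: probability that a true descendant outscores a
    non-descendant, with ties counted as one half."""
    pos = [scores.get(n, 0.0) for n in nodes if n in true_descendants]
    neg = [scores.get(n, 0.0) for n in nodes if n not in true_descendants]
    if not pos or not neg:
        raise ValueError("need at least one descendant and one non-descendant")
    wins = sum((p > q) + 0.5 * (p == q) for p in pos for q in neg)
    return wins / (len(pos) * len(neg))

# Hypothetical inferred network: (parent, child) -> edge weight in (0, 1].
inferred = {
    ("mTOR", "S6K"): 0.9, ("S6K", "S6"): 0.8, ("mTOR", "4EBP1"): 0.7,
    ("AKT", "mTOR"): 0.6, ("ERK", "S6"): 0.2, ("mTOR", "ERK"): 0.1,
}
measured = ["S6K", "S6", "4EBP1", "ERK", "AKT"]  # all nodes except the target
d_true = {"S6K", "S6", "ERK"}                     # illustrative gold standard

scores = descendant_scores(inferred, "mTOR")
causal_auroc = auroc(scores, d_true, measured)
print(round(causal_auroc, 3))
```

Scoring nodes by bottleneck weight is equivalent to sweeping a threshold over the edge weights and recomputing the descendant set at each step, but it needs only one graph traversal.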

Experimental Validation of CVP-Inferred Targets

A protocol for functionally validating causal predictions involves CRISPR-based knockdown and phenotypic assays: [23]

- Network Inference & Target Identification: Apply the CVP algorithm to transcriptomic data (e.g., from TCGA) to infer a causal gene network and identify key driver genes.

- Genetic Perturbation: Perform CRISPR-Cas9 knockdown of the predicted causal driver genes (e.g., SNRNP200 and RALGAPB) in relevant cell lines (e.g., liver cancer). [23]

- Phenotypic Assay: Measure the impact of knockdown on disease-relevant phenotypes, such as:

  - Cell proliferation and growth.

  - Colony formation ability. [23]

- Validation: A successful prediction is confirmed if knockdown of the CVP-identified gene significantly inhibits cancer cell growth and colony formation, demonstrating its causal role in the disease phenotype. [23]

[Figure: Key steps in the causal inference and validation pipeline]

The Scientist's Toolkit: Essential Research Reagents and Platforms

Table 3: Key Research Reagent Solutions for Causal Network Inference Studies

| Reagent / Platform | Function in Causal Inference | Application Context |

|---|---|---|

| CRISPRi Knockdown Pools | Provides targeted genetic perturbations to specific genes, generating interventional data essential for causal testing. | Large-scale single-cell perturbation screens. [26] |

| Single-Cell RNA Sequencing (scRNA-seq) | Measures gene expression at single-cell resolution under both control and perturbed states, providing high-quality observational and interventional data. | Profiling transcriptional responses in cell lines like RPE1 and K562. [26] |

| Reverse-Phase Protein Lysate Array (RPPA) | Quantifies protein abundance and post-translational modifications (e.g., phosphorylation) across many samples, enabling signaling network inference. | HPN-DREAM challenge for causal phosphoprotein signaling networks. [22] |

| CausalBench Benchmark Suite | An open-source benchmarking suite providing curated large-scale perturbation datasets and biologically-motivated metrics to evaluate network inference methods. | Objective comparison of causal inference algorithms on real-world data. [26] |

| Synapse Platform | A collaborative, open-data platform used to host community challenges, allowing for sharing of data, submissions, and code. | HPN-DREAM challenge infrastructure. [22] |

The advancement from correlational to causal network inference represents a paradigm shift in systems biology. Methods like CVP and SCM, assessed through community benchmarks and experimental protocols, provide tools for uncovering the regulatory logic of disease-perturbed networks, although the benchmarks above show that performance on real-world data remains uneven. The integration of large-scale perturbation data, robust computational algorithms, and functional validation is key to generating actionable insights for drug discovery and the development of targeted therapies. As these methods continue to evolve, they promise to deepen our understanding of disease mechanisms and accelerate progress in precision medicine.

Complex diseases like cancer arise from the deregulation of multiple interconnected pathways within molecular networks. Monotherapies often fail due to system redundancies and emerging drug resistance. Combination therapies targeting multiple pathogenic pathways simultaneously offer a promising alternative, but the astronomical number of potential target combinations presents a formidable challenge [27].

Network control theory has emerged as a powerful computational framework to address this challenge. By modeling gene regulatory networks as control systems, this approach identifies minimal sets of driver nodes capable of steering the network from a diseased state to a healthy state. The Optimal Control Node (OptiCon) algorithm represents a significant advancement in this field, enabling de novo identification of synergistic regulators that exert maximal control over disease-perturbed genes while minimizing influence on unperturbed genes [27]. This technical guide examines OptiCon's methodology, validation, and application within disease-perturbed molecular network research.

Theoretical Foundations of Network Controllability

Core Concepts in Structural Network Controllability

Network controllability theory applies principles from traditional control theory to complex biological networks. The fundamental objective is identifying a minimal set of driver nodes that can guide the system's dynamics from any initial state (diseased) to any desired final state (healthy) [27] [28].

In structural controllability frameworks, a Structural Control Configuration (SCC) defines the topological skeleton for controlling network dynamics. For a gene regulatory network represented as graph G, its SCC is identified by finding a maximum matching in the corresponding bipartite graph [27]. The unmatched nodes within this configuration comprise the minimal set of driver nodes. However, when this basic framework is applied to sparse, degree-heterogeneous molecular networks, it typically identifies a large proportion of nodes as drivers, which makes practical application infeasible [27].
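The maximum-matching construction can be sketched in a few lines. The example below uses a simple augmenting-path search on the bipartite representation, in which each directed edge u -> v links the "out" copy of u to the "in" copy of v. It is an illustrative implementation on a toy graph, not the code used in the cited work, and the node names are hypothetical.

```python
def driver_nodes(nodes, edges):
    """Return (drivers, matching) for a directed graph.

    A node is a driver if its 'in' copy is left unmatched by a maximum
    matching of the bipartite representation.
    """
    succ = {n: [] for n in nodes}
    for u, v in edges:
        succ[u].append(v)
    matched_by = {}  # v -> u, meaning edge u -> v is in the matching

    def try_augment(u, seen):
        # Try to match u's 'out' copy, re-routing earlier matches if needed.
        for v in succ[u]:
            if v in seen:
                continue
            seen.add(v)
            if v not in matched_by or try_augment(matched_by[v], seen):
                matched_by[v] = u
                return True
        return False

    for u in nodes:
        try_augment(u, set())

    drivers = [n for n in nodes if n not in matched_by]
    if not drivers:
        # With a perfect matching, a single (arbitrary) driver node suffices.
        drivers = [nodes[0]]
    return drivers, matched_by

# Toy regulatory graph: A regulates B and D; B regulates C.
genes = ["A", "B", "C", "D"]
links = [("A", "B"), ("B", "C"), ("A", "D")]
drivers, matching = driver_nodes(genes, links)
print(drivers, len(matching))
```

Even in this four-node example, half of the nodes are drivers, because A can be matched to only one of its two targets. The same effect, at scale, is what inflates driver sets in sparse, hub-dominated molecular networks.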

Algorithmic Approaches to Control Node Identification

Multiple algorithmic frameworks exist for identifying control nodes, each with distinct advantages:

- Maximum Matching (MM): Based on creating a bipartite graph representation and finding a maximum set of edges without common vertices. Driver nodes are unmatched nodes [27].

- Minimum Dominating Set (MDS): Identifies a minimal node subset where every node is either in the set or adjacent to a node in the set [28].

- Feedback Vertex Set (FVS): Focuses on identifying nodes whose removal eliminates all cycles from the network [29].

Advanced implementations like the Directed Critical Probabilistic MDS (DCPMDS) algorithm address the probabilistic nature of biological interactions and directionality, providing more biologically realistic control node identification [28].

The OptiCon Algorithm: Methodology and Implementation

OptiCon addresses limitations in basic network controllability by incorporating gene expression constraints to identify Optimal Control Nodes (OCNs) that specifically target the disease-perturbed components of a network [27]. The algorithm follows a structured workflow:

Defining Control Regions and Identifying OCNs

For each gene in the network, OptiCon defines its control region, comprising both directly and indirectly controlled genes. Based on structural controllability theory, a gene can fully control downstream genes located within its SCC. OptiCon extends this by identifying indirect control regions using expression correlation and shortest-path algorithms [27].

The identification of OCNs is formulated as a combinatorial optimization problem with the objective function: o = d - u, where:

- d = desired influence (fraction of deregulation controlled by OCNs)

- u = undesired influence (fraction of controllable non-deregulated genes)

The algorithm employs greedy search to identify OCN sets that maximize this objective function, with statistical significance determined through false discovery rate (FDR) cutoffs (typically 0.05) [27].
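A minimal sketch of the greedy search follows, assuming that control regions are already available as gene sets and that each gene carries a non-negative deregulation score (zero for non-deregulated genes). The data structures, names, and toy values are assumptions for illustration. The published algorithm's construction of control regions and its FDR-based significance step are not reproduced.

```python
def objective(selected, control_region, dereg_score):
    """o = d - u for a candidate set of control nodes."""
    covered = set().union(*(control_region[g] for g in selected))
    total_dereg = sum(dereg_score.values())
    n_normal = sum(1 for s in dereg_score.values() if s == 0)
    # d: fraction of total deregulation that falls inside the control regions.
    d = sum(dereg_score.get(g, 0.0) for g in covered) / max(total_dereg, 1e-12)
    # u: fraction of non-deregulated genes that would also be controlled.
    u = sum(1 for g in covered if dereg_score.get(g, 0.0) == 0) / max(n_normal, 1)
    return d - u

def greedy_ocns(control_region, dereg_score):
    """Add one regulator at a time while the objective keeps improving."""
    selected, best = [], 0.0
    while True:
        candidates = [g for g in control_region if g not in selected]
        if not candidates:
            break
        gains = {g: objective(selected + [g], control_region, dereg_score)
                 for g in candidates}
        g_best = max(gains, key=gains.get)
        if gains[g_best] <= best:
            break  # no candidate improves o; stop
        selected.append(g_best)
        best = gains[g_best]
    return selected, best

# Hypothetical deregulation scores (0 = not deregulated) and control regions.
dereg = {"g1": 2.0, "g2": 1.0, "g3": 1.0, "g4": 0.0, "g5": 0.0}
regions = {
    "R1": {"g1", "g2"},
    "R2": {"g3", "g4"},
    "R3": {"g2", "g4", "g5"},
}
ocns, score = greedy_ocns(regions, dereg)
print(ocns, score)
```

In this toy case R1 alone gives d = 0.75 and u = 0. Adding R2 would raise d to 1.0 but also raise u to 0.5, so the search stops after one regulator. This illustrates how the penalty term discourages regulators whose control regions spill into unperturbed genes.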

Synergy Scoring for Combination Therapy Targets

A critical innovation in OptiCon is its explicit identification of synergistic OCN pairs through a composite synergy score incorporating:

- Mutation Score: Measures enrichment of recurrently mutated cancer genes in each OCN's control region

- Crosstalk Score: Quantifies density of functional interactions between genes in the control regions of two OCNs [27]

This synergy scoring enables prioritization of regulator pairs as candidates for combination therapy, with statistical validation against null distributions.
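To make the crosstalk component concrete, the sketch below computes an interaction density between two control regions and an empirical p-value against randomly drawn gene sets of the same sizes. The density definition, the null model, and all gene names are assumptions chosen for illustration; the published score and its combination with the mutation score may be defined differently.

```python
import random
from itertools import product

def crosstalk_density(region_a, region_b, interactions):
    """Fraction of cross-region gene pairs linked by a functional interaction.

    `interactions` is a set of frozenset({gene1, gene2}) undirected pairs.
    """
    pairs = {frozenset((a, b)) for a, b in product(region_a, region_b) if a != b}
    if not pairs:
        return 0.0
    return sum(1 for p in pairs if p in interactions) / len(pairs)

def empirical_p(region_a, region_b, interactions, universe, n_perm=1000, seed=0):
    """Share of random region pairs (same sizes) at least as dense as observed."""
    rng = random.Random(seed)
    observed = crosstalk_density(region_a, region_b, interactions)
    genes = sorted(universe)
    exceed = 0
    for _ in range(n_perm):
        rand_a = set(rng.sample(genes, len(region_a)))
        rand_b = set(rng.sample(genes, len(region_b)))
        if crosstalk_density(rand_a, rand_b, interactions) >= observed:
            exceed += 1
    return (exceed + 1) / (n_perm + 1)  # add-one correction avoids p = 0

# Hypothetical control regions and functional interaction network.
region_1 = {"a1", "a2"}
region_2 = {"b1", "b2"}
ppi = {frozenset(p) for p in [("a1", "b1"), ("a2", "b1"), ("a2", "b2"), ("a1", "a2")]}
all_genes = {"a1", "a2", "b1", "b2", "c1", "c2", "c3", "c4"}

density = crosstalk_density(region_1, region_2, ppi)
p_value = empirical_p(region_1, region_2, ppi, all_genes)
print(density, p_value)
```

Only interactions that cross between the two regions contribute; the a1-a2 link lies within one region and is ignored.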

Experimental Validation and Performance Metrics

Benchmarking Against Known Combinatorial Therapies

OptiCon performance has been rigorously validated across multiple cancer types, demonstrating its ability to recapitulate known therapeutic synergies and identify novel combinations. The algorithm shows superior performance in predicting clinically efficacious combinatorial drugs compared to other state-of-the-art methods [29].

Table 1: Performance Comparison of Network Control Methods in Identifying Clinical Combinatorial Drugs

| Method | Network Framework | Breast Cancer Precision (%) | Lung Cancer Precision (%) | Personalization Capability |

|---|---|---|---|---|

| OptiCon | De novo OCN identification | 68% known cancer targets | Comparable performance | High - disease-specific networks |

| CPGD | FVS-based controllability | Superior to comparator methods | Superior to comparator methods | High - individual patient networks |

| RACS | Existing drug synergy | Limited to known drug targets | Limited to known drug targets | Low - cohort-based |

| DrugComboRanker | Existing drug synergy | Limited to known drug targets | Limited to known drug targets | Low - cohort-based |

Biological Validation of Predicted Regulators

Experimental validation demonstrates OptiCon's biological relevance, with 68% of predicted regulators corresponding to either known drug targets or proteins with critical roles in cancer development [27]. Predicted regulators are significantly depleted for proteins associated with side effects, suggesting favorable therapeutic windows. Additional validation comes from:

- Support by disease-specific synthetic lethal interactions

- Experimental confirmation of predicted synergies

- Enrichment in genes contributing to therapy resistance through dense inter-subnetwork interactions [27]

Practical Implementation Guide

Computational Requirements and Reagents

Successful implementation of OptiCon requires specific computational resources and biological data, detailed in the table below.

Table 2: Essential Research Reagents and Computational Tools for OptiCon Implementation

| Resource Type | Specific Resource | Function/Purpose | Implementation Notes |

|---|---|---|---|

| Network Data | Gene Regulatory Network (e.g., 5,959 genes, 108,281 regulatory links) | Backbone network for controllability analysis | Customizable based on disease context [27] |

| Expression Data | Disease vs. normal transcriptomes | Defining deregulated genes and control regions | RNA-seq or microarray data from matched samples [27] |

| Mutation Data | Cancer type-specific SNV datasets | Edge scoring in personalized networks | TCGA or comparable datasets [29] |

| Drug-Target Data | Combinatorial drug-gene interaction network | Mapping OCNs to therapeutic candidates | Integrates DCDB, DGIdb, DrugBank, TTD [29] |