Decoding Cellular Control: A Guide to GRN Topology, Dynamics, and Clinical Translation

Abstract

This article provides a comprehensive overview of the methods and challenges in inferring and analyzing Gene Regulatory Network (GRN) topology and dynamics. Aimed at researchers and drug development professionals, it covers foundational concepts of GRNs and their role in disease and development. The content explores cutting-edge computational methods, from machine learning to multi-omics integration, for reconstructing networks. It also addresses common pitfalls in GRN inference and strategies for optimization, and concludes with a review of validation techniques and performance benchmarks for state-of-the-art tools. The goal is to bridge the gap between theoretical network models and their practical application in biomedicine.

The Blueprint of Life: What GRN Topology and Dynamics Reveal About Cellular Function

Gene Regulatory Networks (GRNs) are intricate systems that control gene expression within the cell, serving as the fundamental architects of cellular identity and function. By mapping gene-gene interactions, GRNs expose the dynamic control of gene expression across environmental conditions and developmental stages, clarifying basic principles of life and underpinning studies of disease mechanisms and drug target discovery [1]. In cancer research, for example, GRN analysis reveals transcription factors such as p53 and MYC, whose dysregulation drives tumorigenesis, along with their downstream networks, providing insights that inform the design of personalized therapies [1]. A GRN is fundamentally represented as a directed graph where nodes correspond to genes and edges represent causal regulatory relationships, typically from transcription factors (TFs) to their target genes [2]. The precise inference of GRN architecture, characterized by properties such as hierarchical structure, modular organization, and sparsity, remains a central challenge and opportunity in systems biology [3].

Structural and Functional Properties of GRNs

Key Topological Characteristics

The topology of GRNs is not random; it exhibits specific structural properties that are crucial for their stability and function. Biological networks are thought to be well-described by directed graphs with a degree distribution that follows an approximate power-law, often referred to as a scale-free topology [3]. Key topological features include degree centrality (number of direct regulatory links), betweenness centrality (control over information flow), clustering coefficient (cohesiveness of local neighborhood), and k-core index (membership within dense network cores) [1]. These properties emerge from the generating principles of GRNs and confer robustness and specific dynamic behaviors. Notably, most nodes in these graphs are connected by short paths, a hallmark of the "small-world" property of networks, which facilitates efficient information transfer [3].
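To make these node-level metrics concrete, the sketch below computes in- and out-degree, the local clustering coefficient, and the k-core index for a small toy network using only the Python standard library. The gene names and edges are hypothetical, and clustering and k-core are computed on the undirected projection of the directed graph, which is one common convention rather than the only one.

```python
from collections import defaultdict

# Toy directed GRN as (regulator, target) pairs; gene names are hypothetical.
EDGES = [
    ("TF1", "TF2"), ("TF1", "G1"), ("TF1", "G2"), ("TF1", "G3"),
    ("TF2", "G1"), ("TF2", "G2"), ("G1", "G2"), ("G3", "G4"),
]


def degrees(edges):
    """Return (in_degree, out_degree) dictionaries for a directed edge list."""
    indeg, outdeg = defaultdict(int), defaultdict(int)
    for src, dst in edges:
        outdeg[src] += 1
        indeg[dst] += 1
    return indeg, outdeg


def undirected_neighbors(edges):
    """Ignore edge direction and return each node's neighbor set."""
    nbrs = defaultdict(set)
    for src, dst in edges:
        if src != dst:
            nbrs[src].add(dst)
            nbrs[dst].add(src)
    return nbrs


def clustering(nbrs, node):
    """Fraction of a node's neighbor pairs that are themselves connected."""
    ns = list(nbrs[node])
    k = len(ns)
    if k < 2:
        return 0.0
    links = sum(1 for a in range(k) for b in range(a + 1, k) if ns[b] in nbrs[ns[a]])
    return 2.0 * links / (k * (k - 1))


def core_numbers(nbrs):
    """k-core index of every node, by repeatedly removing a minimum-degree node."""
    deg = {v: len(s) for v, s in nbrs.items()}
    remaining = set(nbrs)
    core, k = {}, 0
    while remaining:
        v = min(remaining, key=deg.get)
        k = max(k, deg[v])
        core[v] = k
        remaining.remove(v)
        for u in nbrs[v]:
            if u in remaining:
                deg[u] -= 1
    return core


if __name__ == "__main__":
    indeg, outdeg = degrees(EDGES)
    nbrs = undirected_neighbors(EDGES)
    core = core_numbers(nbrs)
    for gene in sorted(nbrs):
        print(gene, outdeg[gene], indeg[gene], round(clustering(nbrs, gene), 2), core[gene])
```

In this toy graph TF1, TF2, G1, and G2 form a fully connected block and therefore share the highest k-core index, while the G3-G4 tail sits in the outermost shell.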

Quantitative Structural Properties

Table 1: Key Quantitative Properties of Biological GRNs

| Property | Description | Biological Significance | Typical Value/Pattern |
|---|---|---|---|
| Sparsity | The typical gene is directly affected by a small number of regulators. | Limits cascading effects of perturbations; enhances stability. | Only 41% of gene perturbations have significant effects on other genes [3]. |
| Scale-Free Topology | Node in- and out-degrees follow a power-law distribution. | Network resilience; presence of highly influential "hub" genes. | A few genes (hubs) have many connections, while most have few [3]. |
| Feedback Loops | Presence of directed cycles (e.g., A→B→A). | Enables dynamic memory, oscillations, and bistability. | Bidirectional regulation observed in 2.4% of interacting gene pairs [3]. |
| Modularity | Organization into densely connected, functionally related groups. | Supports coordinated expression of functional programs. | Evident from co-expression analysis and functional enrichment [3]. |

Methodologies for GRN Inference and Analysis

Experimental Data Generation and Protocols

Modern GRN research relies on high-throughput multiomic profiling to simultaneously capture transcriptional and epigenetic states from the same cell population.

Protocol 1: Paired Single-Cell RNA-seq and ATAC-seq for Enhancer-Driven Regulon Analysis

This protocol is used to map enhancer-driven regulatory networks, as demonstrated in studies of T cell differentiation [4] [5].

- Cell Preparation: Isolate TCR-matched CD8+ T cells from models of infection (e.g., LCMV Armstrong for acute infection, LCMV CL13 for chronic infection) and cancer (e.g., syngeneic tumor cell lines engineered to express the GP33–41 epitope).

- Library Preparation & Sequencing: Perform paired scRNA-seq and scATAC-seq on the isolated cells using a platform like the 10x Genomics Chromium. scATAC-seq libraries reveal chromatin accessibility, pinpointing potential enhancer regions.

- Data Processing:

- scRNA-seq: Align reads to the appropriate reference genome (for these murine samples, a mouse assembly such as GRCm39 rather than the human GRCh38) using a tool like STAR. Generate a count matrix for gene expression and perform clustering to identify cell states (e.g., naive, effector, memory, exhausted).

- scATAC-seq: Align reads and call peaks using software like MACS2. Create a cell-by-peak matrix of accessibility.

- Regulon Assembly: Link transcription factors to putative target genes by correlating TF gene expression (from scRNA-seq) with the accessibility of distal enhancer peaks near target genes (from scATAC-seq). This constructs enhancer-driven regulons.

- Network Analysis: Compare regulons across cell states (e.g., Trm-like TIL vs. exhausted T cells) to identify key differentiating transcription factors like KLF2 and BATF [4] [5].
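The Regulon Assembly step can be illustrated with a minimal correlation-based sketch. It assumes the scRNA-seq and scATAC-seq profiles have already been aggregated into matched metacells and that each enhancer peak has been pre-assigned to a putative target gene (for example, the nearest gene). The TF, peak, and gene names, the toy values, and the `min_r` threshold are hypothetical, and this is not the specific pipeline used in [4] [5].

```python
import math


def pearson(a, b):
    """Pearson correlation of two equal-length sequences (0.0 if either is constant)."""
    n = len(a)
    ma, mb = sum(a) / n, sum(b) / n
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    sa = math.sqrt(sum((x - ma) ** 2 for x in a))
    sb = math.sqrt(sum((y - mb) ** 2 for y in b))
    return cov / (sa * sb) if sa > 0 and sb > 0 else 0.0


def build_regulons(tf_expr, peak_access, peak_to_gene, min_r=0.5):
    """Link TF -> gene when TF expression correlates with accessibility of an
    enhancer peak assigned to that gene.

    tf_expr:      {tf: expression per metacell}
    peak_access:  {peak: accessibility per metacell}, same metacell order
    peak_to_gene: {peak: putative target gene}, assumed precomputed
    """
    regulons = {}
    for tf, expr in tf_expr.items():
        regulons[tf] = {
            peak_to_gene[peak]
            for peak, acc in peak_access.items()
            if pearson(expr, acc) >= min_r
        }
    return regulons


# Hypothetical toy data: five metacells along a differentiation trajectory.
TF_EXPR = {"TF_A": [0, 1, 2, 3, 4], "TF_B": [4, 3, 2, 1, 0]}
PEAK_ACCESS = {"peak1": [0, 1, 2, 3, 5], "peak2": [5, 3, 2, 1, 0]}
PEAK_TO_GENE = {"peak1": "GENE_A", "peak2": "GENE_B"}

if __name__ == "__main__":
    print(build_regulons(TF_EXPR, PEAK_ACCESS, PEAK_TO_GENE))
```

Correlation alone does not establish regulation; a fuller analysis would also require the TF's binding motif to be present in the linked peak before accepting the edge.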

Computational Inference Methods

Computational methods for GRN inference from single-cell data can be broadly categorized into unsupervised and supervised approaches.

Protocol 2: Supervised Deep Learning for GRN Inference using GAEDGRN

GAEDGRN is a framework that infers directed GRNs from scRNA-seq data [2].

- Input Data: A prior GRN (can be incomplete) and a scRNA-seq gene expression matrix \(X \in \mathbb{R}^{N \times G}\), where \(N\) is the number of cells and \(G\) is the number of genes.

- Weighted Feature Fusion:

- Calculate gene importance scores using an improved PageRank* algorithm, which emphasizes a gene's out-degree (number of genes it regulates) rather than in-degree.

- Fuse these importance scores with the gene expression features to make the model focus on key regulators.

- Gravity-Inspired Graph Autoencoder (GIGAE):

- The encoder uses a graph convolutional network to learn latent node embeddings ((Z)) that capture directed network topology.

- A "gravity-inspired" decoder reconstructs the graph by calculating a potential energy score for each possible edge \((i, j)\): \( \text{energy}_{ij} = \frac{(Z_i \cdot Z_j)\,\text{Importance}_j}{\|Z_i - Z_j\|^2} \). Because the importance term depends only on node \(j\), the score for \((i, j)\) generally differs from the score for \((j, i)\); this physics-inspired asymmetry helps model the directed nature of regulatory relationships.

- Random Walk Regularization:

- Perform random walks on the graph to capture local node neighborhoods.

- Use the Skip-Gram model to ensure that nodes with similar network contexts have similar embeddings, regularizing the latent space learned by the GIGAE.

- Output: A reconstructed, directed GRN with predicted regulatory edges ranked by their inferred strength.
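Two ingredients of this workflow, a regulator-centric importance score and the gravity-inspired edge score, can be sketched in a few lines. As a stand-in for PageRank*, whose exact definition is not given here, the sketch runs ordinary PageRank on the edge-reversed graph so that rank accumulates on genes with many targets; this substitution is an assumption. The embeddings are hypothetical toy vectors rather than encoder outputs, and the score follows the energy formula exactly as written in the text.

```python
def reversed_pagerank(nodes, edges, damping=0.85, iters=100):
    """Power-iteration PageRank on the edge-reversed graph, so that rank flows
    from targets back to their regulators. Illustrative stand-in for PageRank*."""
    n = len(nodes)
    rank = {v: 1.0 / n for v in nodes}
    back = {v: [] for v in nodes}  # reversed adjacency: target -> its regulators
    for src, dst in edges:
        back[dst].append(src)
    for _ in range(iters):
        new = {v: (1.0 - damping) / n for v in nodes}
        for v in nodes:
            if back[v]:
                share = damping * rank[v] / len(back[v])
                for u in back[v]:
                    new[u] += share
            else:  # no outgoing edges in the reversed graph: spread rank uniformly
                for u in nodes:
                    new[u] += damping * rank[v] / n
        rank = new
    return rank


def gravity_energy(z_i, z_j, importance_j, eps=1e-9):
    """Edge score (Z_i . Z_j) * Importance_j / ||Z_i - Z_j||^2, as written in the text.
    eps guards against division by zero for identical embeddings."""
    dot = sum(a * b for a, b in zip(z_i, z_j))
    dist2 = sum((a - b) ** 2 for a, b in zip(z_i, z_j))
    return dot * importance_j / (dist2 + eps)


# Hypothetical toy graph and 2-D embeddings (in GAEDGRN these come from the encoder).
NODES = ["TF", "G1", "G2"]
EDGES = [("TF", "G1"), ("TF", "G2")]
Z = {"TF": [1.0, 0.5], "G1": [0.8, 0.7], "G2": [0.2, 1.0]}

if __name__ == "__main__":
    imp = reversed_pagerank(NODES, EDGES)
    for i in NODES:
        for j in NODES:
            if i != j:
                print(i, j, round(gravity_energy(Z[i], Z[j], imp[j]), 3))
```

Because the dot product and distance are symmetric, any difference between the \((i, j)\) and \((j, i)\) scores comes entirely from the importance term; how the index order maps onto regulator and target is a convention this sketch does not settle.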

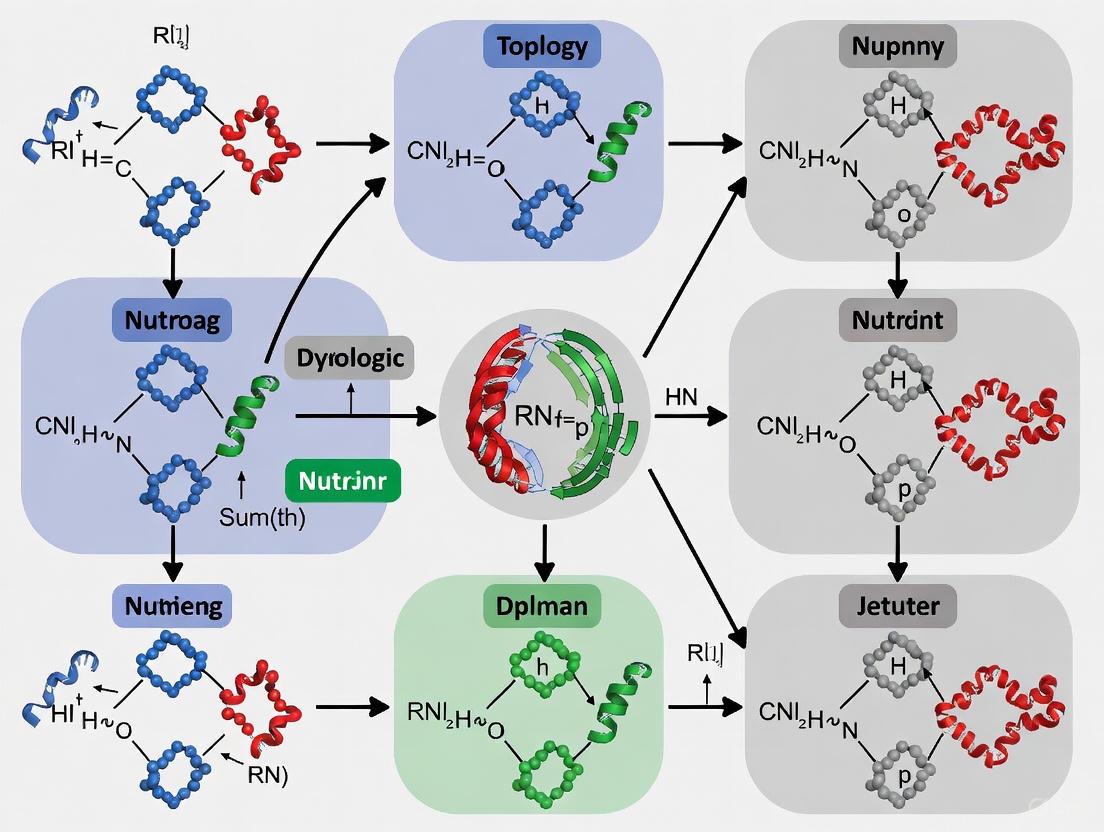

Diagram 1: The GAEDGRN computational workflow for directed GRN inference.

Advanced Computational Models: GTAT-GRN Framework

The GTAT-GRN model represents a state-of-the-art approach that integrates multi-source biological features with a topology-aware attention mechanism to enhance GRN inference [1].

Model Architecture and Protocol

Protocol 3: GRN Inference with GTAT-GRN

- Multi-Source Feature Fusion:

- Temporal Features: Extract mean, standard deviation, skewness, kurtosis, and time-series trend from gene expression time-series data after Z-score normalization: \( \hat{X}_t^{i,:} = (X_t^{i,:} - \mu_i) / \sigma_i \) [1].

- Expression-Profile Features: Compute baseline expression level, stability, specificity, and correlation from wild-type (control) expression data.

- Topological Features: Calculate node-level metrics (e.g., degree centrality, betweenness, PageRank) from the network structure.

- These heterogeneous features are fused into a comprehensive node representation for each gene.

- Graph Topology-Aware Attention (GTAT):

- The fused features are passed to a Graph Topology-Aware Attention Network. Unlike standard graph attention, GTAT dynamically captures high-order dependencies and asymmetric topological relationships among genes.

- It combines graph structure information with multi-head attention to weight the influence of neighboring genes, effectively learning potential regulatory dependencies.

- Feedforward Network & Output:

- The output of the GTAT layer is processed through a feedforward network with residual connections to enable deeper model training.

- The final layer produces a probability score for each potential regulatory edge, constituting the inferred GRN.
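The temporal feature step can be sketched for a single gene as follows. Because per-gene Z-scoring forces the mean to 0 and the standard deviation to 1, this sketch assumes mean and standard deviation are taken from the raw series while skewness, excess kurtosis, and trend (a least-squares slope against time) are taken from the normalized series; that split, and the example series, are assumptions rather than details confirmed by [1].

```python
import math


def zscore(series):
    """Per-gene Z-score over time points: (x_t - mu_i) / sigma_i."""
    n = len(series)
    mu = sum(series) / n
    sigma = math.sqrt(sum((x - mu) ** 2 for x in series) / n)
    z = [(x - mu) / sigma if sigma > 0 else 0.0 for x in series]
    return z, mu, sigma


def temporal_features(series, times=None):
    """Mean and std of the raw series; skewness, excess kurtosis and linear
    trend (least-squares slope against time) of the Z-scored series."""
    z, mu, sigma = zscore(series)
    n = len(z)
    times = list(range(n)) if times is None else times
    t_mean = sum(times) / n
    slope = (sum((t - t_mean) * v for t, v in zip(times, z))
             / sum((t - t_mean) ** 2 for t in times))
    return {
        "mean": mu,
        "std": sigma,
        "skewness": sum(v ** 3 for v in z) / n,
        "kurtosis": sum(v ** 4 for v in z) / n - 3.0,
        "trend": slope,
    }


if __name__ == "__main__":
    # Hypothetical expression of one gene over five time points.
    print(temporal_features([1.0, 2.0, 3.0, 4.0, 5.0]))
```

For a steadily rising gene like the example, skewness is zero and the trend is positive; applying the function to every row of the expression matrix yields the temporal block of the fused node representation.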

Table 2: Feature Types and Their Biological Functions in GTAT-GRN

| Feature Type | Source Data | Extracted Metrics | Biological Function Inferred |
|---|---|---|---|
| Temporal | Gene expression time-series | Mean, Std Dev, Skewness, Kurtosis, Trend | Dynamic expression patterns; response to stimuli [1]. |
| Expression-Profile | Baseline/wild-type expression data | Baseline level, Stability, Specificity, Correlation | Expression context; functional pathways; regulatory role [1]. |
| Topological | Structural properties of the GRN graph | Degree, Betweenness, Clustering Coefficient, PageRank | Gene's position, importance, and role in information flow [1]. |

Diagram 2: The GTAT-GRN architecture fusing multi-source features for enhanced inference.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagent Solutions for GRN Studies

| Reagent / Material | Function / Application | Example Use Case |
|---|---|---|
| scRNA-seq Kits (10x Genomics) | Profiling transcriptional heterogeneity at single-cell resolution. | Characterizing CD8+ T cell states (naive, effector, memory, exhausted) in infection and cancer [4] [5]. |
| scATAC-seq Kits (10x Genomics) | Mapping genome-wide chromatin accessibility at single-cell resolution. | Identifying active enhancers and promoters to build enhancer-driven regulons [4] [5]. |
| CRISPR-based Perturb-seq | Enabling large-scale functional screening by coupling genetic perturbation with single-cell RNA sequencing. | Determining causal gene functions and local GRN structure around a focal gene or pathway [3]. |
| Fluorophore-conjugated Antibodies (e.g., anti-CD45, anti-CD69) | Cell sorting and isolation of specific cell populations via FACS. | Isolation of TCR-matched CD8+ T cell subsets for multiomic profiling [5]. |
| Engineered Cell Lines | Modeling specific genetic alterations or disease contexts. | Syngeneic tumor cell lines engineered to express the LCMV GP33–41 epitope for studying tumor-specific T cell responses [5]. |

The inference of Gene Regulatory Networks has evolved from simplistic correlation-based models to sophisticated frameworks that integrate multi-source features, respect directional topology, and leverage deep learning. Models like GTAT-GRN and GAEDGRN exemplify the next generation of tools that capture the complex, asymmetric, and hierarchical nature of gene regulation [1] [2]. Furthermore, the application of enhancer-driven network analysis in immunology highlights how these approaches can reveal master transcriptional regulators, such as KLF2 and BATF, governing critical cell fate decisions in the tumor microenvironment [4] [5]. As these methodologies mature, they provide an increasingly powerful framework for mapping the causal architecture of complex traits and diseases, ultimately accelerating the discovery of novel therapeutic targets.

The topology of a Gene Regulatory Network (GRN), meaning the specific pattern of interconnections between its components, is not merely a structural artifact but a fundamental determinant of cellular function, stability, and response. Understanding GRN topology and dynamics is essential for deciphering how genetic programs produce phenotypic outcomes, respond to environmental cues, and malfunction in disease states. The arrangement of network nodes (genes, transcription factors) and edges (regulatory interactions) creates information-flow pathways that process signals and govern cellular decisions. Topological analysis moves beyond cataloging individual interactions to reveal the higher-order organizational principles and regulatory motifs that confer specific dynamical properties on the network. These motifs, recurring subgraphs that appear more often than expected by chance, act as functional circuit elements, performing operations such as signal processing, noise filtering, and pulse generation. This architectural perspective provides a powerful framework for interpreting complex biological data, predicting system behavior, and identifying critical control points for therapeutic intervention. For researchers and drug development professionals, these principles are increasingly critical for understanding disease mechanisms and for developing targeted strategies that exploit network vulnerabilities.

Fundamental Concepts of Network Topology

Basic Topological Features and Metrics

The structure of a GRN can be quantified using specific metrics that describe the importance of its components and their overall connectivity.

- Nodes and Edges: In any network, nodes represent individual entities (e.g., genes, transcription factors, proteins), while edges represent the relationships or interactions between them (e.g., activation, repression) [6]. In GRNs, these typically represent genes and their regulatory interactions.

- Centrality Metrics: These metrics identify the most influential or important nodes within a network [6] [7].

- Degree Centrality: The number of direct connections a node has. Highly connected "hub" genes are often critical for network stability and function [6].

- Betweenness Centrality: Measures how often a node acts as a bridge along the shortest path between two other nodes. Nodes with high betweenness are potential gatekeepers of information flow [6].

- Closeness Centrality: Reflects how quickly a node can reach all other nodes in the network, indicating potential efficiency in influencing the entire network [6].

- Global Topological Properties: These describe the overall structure of the network.

- Density: The proportion of potential connections that are actually realized. Dense networks are often more robust but less adaptable [6].

- Diameter: The longest shortest path between any two nodes, indicating how "spread out" the network is [6].

- Clustering Coefficient: The degree to which nodes tend to cluster together, revealing the potential for localized, modular function [6].
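Closeness, density, and diameter all reduce to shortest-path computations. The sketch below uses breadth-first search on a small hypothetical undirected network; it treats every edge as unweighted and assumes the graph is connected, which real GRN fragments often are not.

```python
from collections import deque


def bfs_distances(nbrs, source):
    """Shortest-path lengths (in edges) from source to every reachable node."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        u = queue.popleft()
        for v in nbrs[u]:
            if v not in dist:
                dist[v] = dist[u] + 1
                queue.append(v)
    return dist


def closeness(nbrs, node):
    """(number of other reachable nodes) / (sum of distances to them)."""
    dist = bfs_distances(nbrs, node)
    total = sum(dist.values())
    return (len(dist) - 1) / total if total > 0 else 0.0


def density(nbrs):
    """Realized edges divided by the n(n-1)/2 possible undirected edges."""
    n = len(nbrs)
    m = sum(len(s) for s in nbrs.values()) / 2
    return m / (n * (n - 1) / 2) if n > 1 else 0.0


def diameter(nbrs):
    """Longest shortest path; assumes the graph is connected."""
    return max(max(bfs_distances(nbrs, s).values()) for s in nbrs)


# Hypothetical undirected network: a hub with three partners, one of which has a tail.
NBRS = {
    "hub": {"a", "b", "c"},
    "a": {"hub"},
    "b": {"hub"},
    "c": {"hub", "d"},
    "d": {"c"},
}

if __name__ == "__main__":
    print(density(NBRS), diameter(NBRS), closeness(NBRS, "hub"))
```

Here the hub has the highest closeness because every other node is at most two steps away, while the peripheral node "d" sets the diameter of three.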

The Barabási-Albert Model and Scale-Free Networks

Many real-world GRNs exhibit a scale-free topology, as described by the Barabási-Albert model [6]. This model posits that networks grow through preferential attachment, where new nodes are more likely to connect to already well-connected nodes. The result is a network where a few nodes (hubs) have a very high number of connections, while the majority of nodes have few. This structure has profound implications: hub genes often stabilize the entire network, and their dysregulation can be disproportionately disruptive, making them potential high-value therapeutic targets.
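A short simulation shows how preferential attachment produces hubs. The sketch below is the textbook Barabási-Albert growth process on an undirected graph with hypothetical parameters (`n`, `m`, `seed`); it illustrates the mechanism rather than modeling any particular GRN, and real GRNs are directed.

```python
import random


def barabasi_albert(n, m, seed=0):
    """Grow an undirected graph: each new node attaches to m distinct existing
    nodes chosen with probability proportional to their current degree."""
    rng = random.Random(seed)
    edges = []
    # Seed with a complete graph on m + 1 nodes so every node starts with degree m.
    for i in range(m + 1):
        for j in range(i):
            edges.append((i, j))
    # Each node appears in `pool` once per incident edge, so a uniform draw from
    # the pool is a degree-proportional draw over nodes.
    pool = [v for e in edges for v in e]
    for new in range(m + 1, n):
        targets = set()
        while len(targets) < m:
            targets.add(rng.choice(pool))
        for t in targets:
            edges.append((new, t))
            pool.extend((new, t))
    return edges


def degree_counts(edges):
    deg = {}
    for u, v in edges:
        deg[u] = deg.get(u, 0) + 1
        deg[v] = deg.get(v, 0) + 1
    return deg


if __name__ == "__main__":
    deg = degree_counts(barabasi_albert(2000, 2))
    values = sorted(deg.values())
    print("median degree:", values[len(values) // 2], "max degree:", values[-1])
```

The printed maximum degree is typically far larger than the median, which is the heavy-tailed "few hubs, many sparsely connected nodes" pattern described above.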

Key Regulatory Motifs and Their Functional Consequences

Regulatory motifs are small, recurring circuit patterns that perform defined information-processing functions. Their identification is key to moving from a static map to a dynamic understanding of network behavior.

Table 1: Key Network Motifs and Their Functions

| Motif Type | Topological Description | Dynamic Function | Biological Example |
|---|---|---|---|
| Feed-Forward Loop (FFL) | A regulator (X) controls a second regulator (Y), and both jointly regulate a target (Z). | Filters out transient signals; creates temporal programs (e.g., pulse generation, delay). | Found in nutrient utilization networks; can accelerate or delay target gene expression. |
| Positive Feedback Loop | A node activates itself, directly or through a chain of intermediaries. | Enables bistability (toggle-switch behavior) that locks in a cellular state, such as a fate decision during differentiation. | In the Arabidopsis root epidermis, a WER/MYB23 positive feedback loop helps stabilize non-hair cell fate [8]. |
| Negative Feedback Loop | A node represses itself, either directly or indirectly. | Promotes homeostasis, ensures robustness, and can generate oscillatory behavior. | Circadian clocks, where repressors periodically inhibit their own expression. |
| Lateral Inhibition | A cell-to-cell communication pattern where a cell adopting a fate inhibits its neighbors from doing the same. | Creates spatial patterns of alternating cell fates from a field of equivalent cells. | Driven by diffusion of inhibitors such as CPC in the Arabidopsis root epidermis, forming alternating hair and non-hair cells [8]. |
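Motif analysis starts by enumerating candidate subgraphs. The sketch below lists feed-forward loops in a small signed, directed network and labels each one coherent (the direct and indirect paths have the same overall sign) or incoherent. The edges and signs are hypothetical, and a real motif analysis would also compare observed counts against randomized networks to test for over-representation, which is not shown.

```python
def find_ffls(signed_edges):
    """Return sorted (X, Y, Z, kind) tuples for every X->Y, Y->Z, X->Z triangle.

    signed_edges maps (src, dst) -> +1 (activation) or -1 (repression).
    kind is "coherent" when sign(X->Z) equals sign(X->Y) * sign(Y->Z).
    """
    targets = {}
    for src, dst in signed_edges:
        targets.setdefault(src, set()).add(dst)
    loops = []
    for x, x_targets in targets.items():
        for y in x_targets:
            for z in targets.get(y, ()):
                if z in x_targets and len({x, y, z}) == 3:
                    direct = signed_edges[(x, z)]
                    indirect = signed_edges[(x, y)] * signed_edges[(y, z)]
                    kind = "coherent" if direct == indirect else "incoherent"
                    loops.append((x, y, z, kind))
    return sorted(loops)


# Hypothetical signed network containing one coherent and one incoherent FFL.
SIGNED_EDGES = {
    ("X", "Y"): 1, ("Y", "Z"): 1, ("X", "Z"): 1,
    ("X", "W"): 1, ("Y", "W"): -1,
}

if __name__ == "__main__":
    for loop in find_ffls(SIGNED_EDGES):
        print(loop)
```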

Analytical Methods and Experimental Protocols for GRN Inference

A Multi-Method Workflow for Network Inference

Accurately reconstructing GRN topology from data is a foundational challenge. A robust approach integrates multiple computational and experimental techniques.

Table 2: Key Research Reagents and Solutions for GRN Analysis

| Research Reagent / Tool | Primary Function | Application Context |
|---|---|---|
| GENIE3 | A machine learning algorithm that infers regulatory relationships from gene expression data. | A top-performing non P-based method for GRN inference from transcriptomic data (e.g., RNA-seq) [9]. |
| Z-score | A statistical method that uses the perturbation design matrix to infer causal regulatory links. | A high-performing P-based method for GRN inference from knockdown/knockout data [9]. |
| shRNA/siRNA Libraries | Enable targeted gene knockdown for functional screening. | Used in perturbation experiments to test the necessity of predicted hub genes (e.g., in FLT3-ITD AML) [10]. |
| CHiC (Promoter Capture Hi-C) | Maps physical, long-range interactions between promoters and distal regulatory elements. | Integrates chromatin-contact data with GRN models to assign enhancers to target genes [10]. |
| DNaseI-seq / ATAC-seq | Identifies regions of open, accessible chromatin genome-wide. | Used to locate potential regulatory elements for integration into GRN models [10]. |

Protocol 1: Integrative GRN Construction from Multi-Omic Data

This protocol, adapted from studies in FLT3-ITD AML, constructs a high-confidence GRN by combining multiple data types [10].

- Data Acquisition: Collect transcriptomic (RNA-seq) data from both diseased and relevant healthy control cells. Acquire epigenomic data, including DNaseI-seq (for open chromatin) and promoter-capture HiC (for chromatin interactions).

- Identify Regulatory Regions: Using DNaseI-seq data, identify open chromatin regions (DHSs) specific to the condition of interest (e.g., with a >3-fold change compared to normal cells).

- Footprint Analysis: Perform digital footprinting on the condition-specific DHSs to identify transcription factor binding motifs that are actively occupied.

- Assign Targets: Link the regulated enhancers to their target gene promoters using the CHiC interaction data. As a fallback, assign to the nearest gene within a 200 kb window.

- Network Assembly: Integrate the data: a TF is connected to a target gene if its bound motif is located in a regulatory region that interacts with that gene's promoter. The network can be filtered to focus on interactions involving specifically upregulated genes.
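The Assign Targets and Network Assembly steps amount to a join between footprinted motifs, chromatin contacts, and gene positions. The sketch below assumes peak calling and footprinting have already produced `(tf, region_id)` pairs; the data structures, coordinates, and gene names are hypothetical, while the 200 kb nearest-gene fallback follows the protocol.

```python
def assign_targets(region, chic_links, gene_tss, window=200_000):
    """Target genes of one regulatory region: CHiC contacts if any, otherwise the
    nearest TSS on the same chromosome within `window` bp of the region midpoint."""
    if chic_links.get(region["id"]):
        return set(chic_links[region["id"]])
    mid = (region["start"] + region["end"]) // 2
    best, best_dist = None, None
    for gene, (chrom, tss) in gene_tss.items():
        d = abs(tss - mid)
        if chrom == region["chrom"] and d <= window and (best_dist is None or d < best_dist):
            best, best_dist = gene, d
    return {best} if best is not None else set()


def assemble_grn(footprints, regions, chic_links, gene_tss, upregulated=None):
    """TF -> gene edges from (tf, region_id) footprints of occupied motifs,
    optionally restricted to upregulated target genes."""
    edges = set()
    for tf, region_id in footprints:
        for gene in assign_targets(regions[region_id], chic_links, gene_tss):
            if upregulated is None or gene in upregulated:
                edges.add((tf, gene))
    return edges


# Hypothetical toy inputs (coordinates in bp).
REGIONS = {
    "r1": {"id": "r1", "chrom": "chr1", "start": 1_000, "end": 1_200},
    "r2": {"id": "r2", "chrom": "chr1", "start": 500_000, "end": 500_200},
}
CHIC_LINKS = {"r1": ["GENE_A"]}
GENE_TSS = {"GENE_A": ("chr1", 900_000), "GENE_B": ("chr1", 600_000), "GENE_C": ("chr2", 500_100)}
FOOTPRINTS = [("TF1", "r1"), ("TF2", "r2")]

if __name__ == "__main__":
    print(assemble_grn(FOOTPRINTS, REGIONS, CHIC_LINKS, GENE_TSS))
```

Region r1 is assigned through its CHiC contact even though the gene is far away, whereas r2 has no contact and falls back to the nearest same-chromosome gene inside the window.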

Figure 1: A workflow for the integrative construction of a Gene Regulatory Network from multi-omic data.

An Informed Functional Screen for Hub Validation

Once a GRN is inferred, its predictions about key regulators must be functionally validated.

Protocol 2: Informed shRNA Screen for Hub Gene Validation

This protocol details a targeted approach to validate the functional importance of highly connected nodes predicted by a GRN, as demonstrated in FLT3-ITD AML [10].

- Target Selection: From the constructed GRN, select ~150-200 genes representing highly connected nodes (hubs) and key members of predicted regulatory modules.

- Screen Design: Clone shRNAs targeting the selected genes into a pooled lentiviral vector library.

- In Vitro Screening: Transduce the shRNA library into relevant cell line models (e.g., MV4-11, MOLM-14 for AML). Culture cells for a set duration and harvest genomic DNA to track shRNA abundance by sequencing. A depletion of a specific shRNA indicates that its target gene is essential for cell growth/survival.

- In Vivo Validation (Optional): Transplant transduced cells into immunodeficient mice (e.g., NSG). After tumor formation or a set time, harvest tumors and analyze shRNA abundance to identify genes essential for in vivo growth.

- Hit Prioritization: Integrate the screening results with the original GRN topology. Genes whose knockdown causes a drop-out effect and that occupy central network positions are high-confidence master regulators.
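Hit prioritization can be sketched as a simple filter that combines per-gene shRNA depletion with network connectivity. The counts, shRNA-to-gene mapping, degrees, and both thresholds (`max_lfc`, `min_degree`) are hypothetical; a real screen would use replicates and a dedicated screen-analysis statistic rather than a plain mean log fold change.

```python
import math


def gene_dropout(counts_t0, counts_t1, shrna_to_gene, pseudo=1.0):
    """Mean log2 fold change per gene of library-size-normalized shRNA counts
    between the start (t0) and end (t1) of the screen."""
    tot0, tot1 = sum(counts_t0.values()), sum(counts_t1.values())
    per_gene = {}
    for sh, gene in shrna_to_gene.items():
        f0 = (counts_t0[sh] + pseudo) / tot0
        f1 = (counts_t1[sh] + pseudo) / tot1
        per_gene.setdefault(gene, []).append(math.log2(f1 / f0))
    return {g: sum(v) / len(v) for g, v in per_gene.items()}


def prioritize(dropout, degree, max_lfc=-1.0, min_degree=5):
    """Genes whose knockdown depletes cells AND that are highly connected in the GRN."""
    return sorted(g for g, lfc in dropout.items()
                  if lfc <= max_lfc and degree.get(g, 0) >= min_degree)


# Hypothetical screen: two shRNAs against a hub gene, one each against two others.
T0 = {"sh1": 100, "sh2": 100, "sh3": 100, "sh4": 100}
T1 = {"sh1": 10, "sh2": 12, "sh3": 100, "sh4": 278}
SH_TO_GENE = {"sh1": "HUB1", "sh2": "HUB1", "sh3": "GENE2", "sh4": "GENE3"}
DEGREE = {"HUB1": 12, "GENE2": 8, "GENE3": 1}

if __name__ == "__main__":
    lfc = gene_dropout(T0, T1, SH_TO_GENE)
    print(lfc, prioritize(lfc, DEGREE))
```

GENE2 is well connected but its shRNA is not depleted, so only HUB1 passes both criteria.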

Figure 2: An experimental workflow for validating the functional importance of hub genes predicted by a GRN using an informed shRNA screen.

Case Studies: Topology-Driven Insights in Biological Systems

Targeting Hub Genes in Acute Myeloid Leukemia (AML)

In FLT3-ITD mutant AML, a subtype with poor prognosis, researchers constructed a patient-specific GRN by integrating transcriptomic, epigenomic, and chromatin interaction data [10]. Topological analysis of this network revealed highly connected nodes corresponding to specific transcription factor families (e.g., RUNX, AP-1). The hypothesis that these hubs are crucial for AML maintenance was tested using an informed shRNA screen targeting the network's central nodes. The study demonstrated that disrupting these key topological elements, such as the RUNX1 module, led to a collapse of the GRN and subsequent cell death, validating hub genes as vulnerable therapeutic targets in this cancer [10].

Spatial Patterning in Arabidopsis Root Epidermis

The root epidermis of Arabidopsis thaliana provides a classic example of how GRN topology, coupled with cell-to-cell communication, generates precise spatial patterns. A meta-GRN model incorporating positive and negative feedback loops was developed to explain the formation of alternating hair and non-hair cell files [8]. The key topological feature is a lateral inhibition motif, implemented by the diffusion of proteins like CPC and GL3/EGL3 between adjacent cells. In this motif, a cell adopting the non-hair fate produces a mobile inhibitor (CPC) that prevents its neighbors from adopting the same fate. The feedback loops within each cell's GRN create bistability, while the diffusive coupling between cells creates the spatial pattern. This model successfully recapitulated the wild-type pattern and 28 mutant phenotypes, highlighting how a specific network motif, when coupled with a transport process, directly dictates macroscopic tissue organization [8].

The Critical Role of Perturbation Design in Accurate GRN Inference

The accuracy of an inferred GRN is profoundly influenced by the experimental design used to generate the input data. A key distinction lies between methods that use only observed gene expression changes and those that also incorporate knowledge of the perturbation design matrix (P-based methods), which specifies which genes were intentionally targeted in knockdown/knockout experiments [9].

Table 3: Benchmarking P-based vs. Non P-based GRN Inference Methods

| Method Category | Uses Perturbation Design? | Typical AUPR on High-Noise Data | Key Characteristics |
|---|---|---|---|
| P-based (e.g., Z-score) | Yes | High (~0.6 on GeneSPIDER data) [9] | Infers causality; near-perfect accuracy with correct design; performance drops to random with incorrect design. |
| Non P-based (e.g., GENIE3) | No | Low to Moderate (<0.3 on GeneSPIDER data) [9] | Infers association; limited accuracy even at low noise levels; does not require perturbation knowledge. |

Benchmarking studies show that P-based methods consistently and significantly outperform non P-based methods across various noise levels [9]. The advantage arises because P-based methods can distinguish direct from indirect effects by leveraging the causal information embedded in the perturbation design. Consequently, targeted gene perturbations combined with P-based inference methods are indispensable for achieving high-confidence GRN maps.
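The role of the design matrix can be seen in a toy z-score scorer. This is a simplified variant written for illustration, not the exact Z-score method benchmarked in [9]: it assumes control replicates are available to estimate each gene's baseline mean and standard deviation, and it scores edge j → i by the mean absolute z of gene i across the experiments that the design matrix `P` marks as targeting j. All data are hypothetical. Running it with a deliberately mislabeled design shows how an incorrect `P` redirects the top-scoring edge.

```python
import statistics


def zscore_edges(Y, P, controls, genes):
    """Score edge j -> i by how far gene i moves, in control-derived standard
    deviations, in the experiments whose design row marks gene j as targeted.

    Y[i][k]      expression of gene i in perturbation experiment k
    P[j][k]      nonzero when gene j was targeted in experiment k
    controls[i]  replicate expression of gene i in unperturbed samples
    """
    scores = {}
    for i, gi in enumerate(genes):
        mu = statistics.mean(controls[i])
        sd = statistics.stdev(controls[i])
        for j, gj in enumerate(genes):
            targeted = [k for k in range(len(P[j])) if P[j][k] != 0]
            if i == j or not targeted:
                continue  # skip self-loops and genes never perturbed
            zs = [(Y[i][k] - mu) / sd for k in targeted]
            scores[(gj, gi)] = abs(statistics.mean(zs))
    return scores


GENES = ["A", "B", "C"]
P_TRUE = [[-1, 0, 0], [0, -1, 0], [0, 0, -1]]   # row = gene, column = experiment
P_WRONG = [[0, 0, -1], [0, -1, 0], [-1, 0, 0]]  # experiments 0 and 2 mislabeled
Y = [[-2.0, 0.05, -0.05],
     [-1.2, -2.0, 0.02],   # B drops when A is knocked down: true edge A -> B
     [0.03, -0.02, -2.0]]
CONTROLS = [[0.0, 0.1, -0.1], [0.05, -0.05, 0.0], [0.1, -0.1, 0.0]]

if __name__ == "__main__":
    for design in (P_TRUE, P_WRONG):
        s = zscore_edges(Y, design, CONTROLS, GENES)
        print(max(s, key=s.get))
```

With the correct design the top edge is A → B; with the mislabeled design the same expression data point to C → B instead, mirroring the design sensitivity noted for P-based methods.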

Figure 3: The critical role of the perturbation design matrix in inferring causal GRNs versus associative networks.

Gene Regulatory Networks (GRNs) are complex systems of molecular interactions that control core developmental and biological processes, including cell fate decisions such as differentiation, reprogramming, and transdifferentiation [11] [3]. The architecture of a GRN—its topology (structure) and dynamics (behavior)—directly determines the stable cell states (attractors) a system can adopt and how it transitions between them during processes like the Epithelial to Mesenchymal Transition (EMT) [11]. Inferring the precise structure of these networks, including the direction and intensity of regulations between genes, remains one of the most significant challenges in systems biology, despite advances in computational approaches and high-throughput biological technologies [11] [12]. Research in this field is increasingly focused on understanding key structural properties of GRNs—such as sparsity, hierarchical organization, modularity, and the presence of feedback loops—and how these properties govern the distribution and dampening of perturbation effects to ensure robust cell fate control [3].

Foundational Principles of GRN Dynamics

Key Structural Properties of GRNs

The function of a GRN is profoundly shaped by its underlying structure. Analysis of large-scale perturbation data, such as from Perturb-seq studies, has revealed several defining architectural principles [3]:

- Sparsity: GRNs are sparse, meaning each gene is directly regulated by only a small number of other genes. In a major Perturb-seq study in K562 cells, only 41% of perturbations targeting a primary transcript had significant effects on the expression of any other gene, indicating that most genes are not highly connected regulators [3].

- Directed Edges and Feedback Loops: Regulatory relationships are directional (gene A regulating gene B is distinct from gene B regulating gene A), yet feedback loops are pervasive. In perturbation data, 2.4% of gene pairs with a one-directional effect also showed evidence of bidirectional regulation, confirming the presence of feedback [3].

- Hierarchical Organization and Modularity: GRNs often exhibit a hierarchical structure with modular subnetworks (groups of genes that work together to perform specific functions). This modular organization, combined with a "small-world" property where most nodes are connected by short paths, helps to localize the effects of perturbations and facilitate coordinated control of biological processes [3].

- Scale-Free Topology: The in- and out-degree (number of regulators and targets) of nodes in GRNs often follows a power-law distribution. This means a few "hub" genes have many connections while most genes have few, which has important implications for the network's robustness and susceptibility to perturbations [3].
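The two summary statistics quoted in this list can be computed from a table of significant perturbation effects. The sketch assumes significance calling has already been done; the genes and effects are hypothetical, and the bidirectional fraction is taken over unordered gene pairs with any effect, which is one reading of the statistic rather than the exact definition used in [3].

```python
def perturbation_summary(perturbed, effects):
    """perturbed: genes that were targeted.
    effects: set of (perturbed_gene, affected_gene) pairs judged significant.
    Returns (fraction of perturbations with at least one downstream effect,
             fraction of affected gene pairs with effects in both directions)."""
    active = {src for src, dst in effects if src != dst}
    frac_active = len(active & set(perturbed)) / len(perturbed)
    pairs = {frozenset((a, b)) for a, b in effects if a != b}
    both = {frozenset((a, b)) for a, b in effects if a != b and (b, a) in effects}
    frac_bidirectional = len(both) / len(pairs) if pairs else 0.0
    return frac_active, frac_bidirectional


# Hypothetical results: A and B affect each other; A -> C and C -> D are one-way.
PERTURBED = ["A", "B", "C", "D", "E"]
EFFECTS = {("A", "B"), ("B", "A"), ("A", "C"), ("C", "D")}

if __name__ == "__main__":
    print(perturbation_summary(PERTURBED, EFFECTS))
```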

Mathematical Frameworks for Modeling GRN Dynamics

The dynamic behavior of GRNs is frequently modeled using systems of Ordinary Differential Equations (ODEs) that describe the rate of change in concentration for each molecular species in the network [11]. A general form for such a model is \( \frac{dx}{dt} = f(x; p) \), where \(x\) is a vector representing the concentrations of \(n\) molecules, and \(p\) is a parameter vector encompassing biochemical rate constants [11]. These models can capture complex nonlinear dynamics, including multi-stability (the existence of multiple stable steady states, corresponding to different cell fates) and state transitions in response to signals or perturbations.
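Multi-stability is easy to see in the smallest possible example, a two-gene toggle switch in which each gene represses the other (a double-negative, and therefore positive, feedback loop). The sketch integrates \( \frac{dx}{dt} = f(x; p) \) with forward Euler; the Hill-type rate law and the parameter values `a` and `n` are hypothetical choices for illustration, not a model taken from [11].

```python
def toggle_rhs(x, y, a=4.0, n=2.0):
    """Mutual repression: each gene's production is inhibited by the other,
    and both decay with unit rate."""
    dx = a / (1.0 + y ** n) - x
    dy = a / (1.0 + x ** n) - y
    return dx, dy


def simulate(x0, y0, dt=0.01, steps=5000):
    """Forward-Euler integration from (x0, y0) until (near) steady state."""
    x, y = x0, y0
    for _ in range(steps):
        dx, dy = toggle_rhs(x, y)
        x, y = x + dt * dx, y + dt * dy
    return x, y


if __name__ == "__main__":
    # Same equations and parameters, different starting points.
    print(simulate(2.0, 0.5))  # settles in the "x high, y low" state
    print(simulate(0.5, 2.0))  # settles in the "y high, x low" state
```

Two initial conditions converge to two different stable steady states under identical parameters, which is the dynamical signature of the alternative cell fates described above.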

A critical quantitative measure for understanding direct regulatory influence within a GRN is the local response coefficient, \(r_{ij}\). This coefficient quantifies the relative change in the steady-state level of gene \(i\) with respect to a small change in the level of gene \(j\), with all other nodes held fixed, and is defined as [11]: \( r_{ij} = \frac{\partial \ln x_i}{\partial \ln x_j} = \frac{x_j}{x_i} \frac{\partial x_i}{\partial x_j} \). The sign and magnitude of \(r_{ij}\) reveal the direction and intensity of the regulatory interaction from node \(j\) to node \(i\). A negative value typically indicates repression, while a positive value suggests activation. The derivation of these coefficients from perturbation data forms the basis of powerful network inference methods like Modular Response Analysis (MRA) [11].
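For a single activating input the local response coefficient can be checked numerically. The sketch assumes a hypothetical Hill-type model \( \frac{dx_i}{dt} = V \frac{x_j^n}{K^n + x_j^n} - k\,x_i \), whose steady state with \(x_j\) held fixed gives the analytic value \( r_{ij} = \frac{n K^n}{K^n + x_j^n} \); the finite-difference estimate in log space should reproduce it.

```python
import math


def steady_state_target(x_j, V=2.0, K=1.0, n=2.0, k_deg=1.0):
    """Steady state of dx_i/dt = V*x_j^n/(K^n + x_j^n) - k_deg*x_i with x_j held fixed."""
    return (V / k_deg) * x_j ** n / (K ** n + x_j ** n)


def local_response(f, x_j, rel_step=1e-4):
    """Central-difference estimate of r_ij = d ln x_i / d ln x_j."""
    up, down = x_j * (1 + rel_step), x_j * (1 - rel_step)
    return (math.log(f(up)) - math.log(f(down))) / (math.log(up) - math.log(down))


if __name__ == "__main__":
    for xj in (0.5, 1.0, 3.0):
        analytic = 2.0 * 1.0 / (1.0 + xj ** 2)  # n*K^n/(K^n + x_j^n) with n=2, K=1
        print(xj, round(local_response(steady_state_target, xj), 6), analytic)
```

The coefficient is positive (activation) and shrinks as the input saturates the promoter, so the same edge can look strong or weak depending on the operating point.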

Advanced Methodologies for GRN Inference

Computational Inference from Perturbation Data

Systematic perturbation, combined with statistical and differential analysis, provides a robust framework for inferring GRN topology and identifying network differences across cell fates [11]. The following workflow outlines the core steps of this approach, which can be applied to various data types, including single-cell RNA sequencing (scRNA-seq) data and simulated expression data.

The process begins with a biological system at a stable steady state, representative of a specific cell fate. Systematic perturbations are applied to sensitive parameters (e.g., degradation rates, signal strengths) associated with each node, and the new steady-state expression levels of all molecules are measured [11]. From this data, the local response matrix is calculated, whose elements \(r_{ij}\) represent the direct regulatory influence of node \(j\) on node \(i\) [11].
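The perturb-and-measure step can be sketched with the standard MRA reconstruction \( r = -[\mathrm{dg}(R^{-1})]^{-1} R^{-1} \), where \(R\) holds the global (steady-state) log responses to perturbing each node's own parameter. The sketch assumes a hypothetical linear three-node model \( \frac{dx}{dt} = A x + p \), chosen because its true \(r_{ij} = -A_{ij} x_j / (A_{ii} x_i)\) is known in closed form; it is not the model or the code used in [11].

```python
import math


def inverse(M):
    """Gauss-Jordan inverse with partial pivoting (small dense matrices)."""
    n = len(M)
    aug = [list(map(float, row)) + [1.0 if i == j else 0.0 for j in range(n)]
           for i, row in enumerate(M)]
    for c in range(n):
        piv_row = max(range(c, n), key=lambda r: abs(aug[r][c]))
        aug[c], aug[piv_row] = aug[piv_row], aug[c]
        piv = aug[c][c]
        aug[c] = [v / piv for v in aug[c]]
        for r in range(n):
            if r != c:
                f = aug[r][c]
                aug[r] = [a - f * b for a, b in zip(aug[r], aug[c])]
    return [row[n:] for row in aug]


def steady_state(A, p):
    """Steady state of dx/dt = A x + p, i.e. x* = -A^{-1} p."""
    Ainv = inverse(A)
    return [-sum(Ainv[i][k] * p[k] for k in range(len(p))) for i in range(len(p))]


def mra_local_response(A, p, rel_step=1e-6):
    """Perturb each p_j (which acts only on node j), record global log responses R,
    then recover the local response matrix r = -[dg(R^-1)]^-1 R^-1."""
    n = len(p)
    x0 = steady_state(A, p)
    R = [[0.0] * n for _ in range(n)]
    for j in range(n):
        pj = list(p)
        pj[j] *= 1 + rel_step
        x1 = steady_state(A, pj)
        for i in range(n):
            R[i][j] = (math.log(x1[i]) - math.log(x0[i])) / math.log(1 + rel_step)
    Rinv = inverse(R)
    return [[-Rinv[i][j] / Rinv[i][i] for j in range(n)] for i in range(n)]


# Hypothetical model: node 0 activates node 1, node 1 represses node 2.
A_TOY = [[-1.0, 0.0, 0.0], [0.5, -1.0, 0.0], [0.0, -0.3, -1.0]]
P_TOY = [1.0, 1.0, 2.0]  # steady state x* = (1.0, 1.5, 1.55)

if __name__ == "__main__":
    for row in mra_local_response(A_TOY, P_TOY):
        print([round(v, 4) for v in row])
```

The recovered matrix has \(-1\) on the diagonal by construction, a positive entry for the activating edge, a negative entry for the repressive edge, and near-zero entries elsewhere.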

To enhance accuracy and account for variability, statistical analysis is performed. Confidence Intervals (CIs) for the local response matrices obtained under multiple perturbations are calculated and used to define a sparse network topology that eliminates spurious connections and reduces sensitivity to the magnitude of each perturbation [11]. This results in a redefined local response matrix that reflects the consensus network structure.
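One simple way to turn repeated estimates into a sparse consensus matrix is to keep \(r_{ij}\) only when its confidence interval excludes zero. The sketch uses a normal-approximation interval with `z=1.96`, which is an assumption (the text does not say how the CIs are computed, and with very few estimates a t-quantile would be more conservative); the three input matrices are hypothetical.

```python
import statistics


def ci_filter(matrices, z=1.96):
    """Average repeated local response matrices, keeping an off-diagonal r_ij only
    if its ~95% confidence interval (mean +/- z*SE) excludes zero."""
    n = len(matrices[0])
    m = len(matrices)
    consensus = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            vals = [mat[i][j] for mat in matrices]
            mean = statistics.mean(vals)
            half_width = z * statistics.stdev(vals) / m ** 0.5
            if i == j or abs(mean) > half_width:
                consensus[i][j] = mean
    return consensus


# Hypothetical estimates of a 2-node r from three perturbation strengths.
ESTIMATES = [
    [[-1, 0.05], [0.40, -1]],
    [[-1, -0.04], [0.38, -1]],
    [[-1, 0.02], [0.42, -1]],
]

if __name__ == "__main__":
    print(ci_filter(ESTIMATES))
```

The small, sign-flipping entry \(r_{01}\) is set to zero, while the consistently positive \(r_{10}\) survives as a consensus edge.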

Finally, differential analysis introduces the concept of a relative local response matrix. This enables the identification of critical regulations specific to each cell fate and helps determine the dominant cell state associated with particular regulatory interactions [11]. The output is a set of inferred, cell fate-specific GRN models that quantitatively capture network differences.

Machine Learning and Hybrid Approaches

Machine Learning (ML), Deep Learning (DL), and hybrid approaches have emerged as powerful alternatives for large-scale GRN construction. These methods can capture nonlinear, hierarchical, and context-dependent regulatory relationships that are difficult to model with traditional statistical methods [12].

Table 1: Comparison of GRN Inference Methodologies

| Method Category | Examples | Key Principles | Strengths | Limitations |
|---|---|---|---|---|
| Perturbation-Based & Differential Analysis | Modular Response Analysis (MRA), Statistical & Differential Analysis [11] | Infers direct regulations and intensities from system's response to targeted perturbations. | Quantifies direction and strength of regulation; Model-independent; Identifies state-specific differences. | Requires systematic perturbation data which can be costly to generate. |
| Machine Learning (ML) | GENIE3 [12], Support Vector Machine (SVM) [12] | Uses algorithms to learn regulatory relationships from expression data patterns. | Scalable; Can integrate diverse data types. | May struggle with high-dimensional data; Can fail to capture complex nonlinearities. |
| Deep Learning (DL) | DeepBind [12], CNN-based Models [12] | Uses multiple neural network layers to learn hierarchical features and complex patterns. | Excels at learning high-order dependencies; Powerful for sequence-based features. | Requires very large datasets; Can be prone to overfitting; "Black box" interpretability challenges. |
| Hybrid Models | CNN + ML Ensembles [12] | Combines deep feature extraction with ML classifiers for prediction. | Consistently outperforms traditional ML/DL alone; Improved accuracy and interpretability. | Implementation complexity; Computational resource demands. |

A significant innovation in this domain is the use of transfer learning. This strategy addresses the challenge of limited experimentally validated regulatory pairs in non-model species by leveraging knowledge from a data-rich source species (e.g., Arabidopsis thaliana) to improve GRN inference in a target species with limited data (e.g., poplar or maize) [12]. Hybrid models that combine Convolutional Neural Networks (CNNs) with machine learning have demonstrated superior performance, achieving over 95% accuracy on holdout test datasets and more effectively ranking key master regulators like MYB46 and MYB83 in lignin biosynthesis pathways [12].
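One ingredient of cross-species transfer is projecting experimentally validated TF-target pairs from the source species onto the target species through an ortholog table. The sketch below illustrates only that label-projection step, not the model training described in [12]. The gene IDs, the `orthologs` dictionary, and the function name are hypothetical; one-to-many orthologs are simply expanded, and pairs with an unmapped gene are dropped.

```python
from itertools import product


def project_pairs(source_pairs, orthologs):
    """Project validated TF->target pairs from a source species onto a target species.

    source_pairs: iterable of (tf_id, target_id) tuples in the source species.
    orthologs: dict mapping a source gene ID to a list of target-species ortholog IDs.
    One-to-many orthologs are expanded; pairs with an unmapped gene are dropped.
    """
    projected = set()
    for tf, target in source_pairs:
        tf_orthologs = orthologs.get(tf, [])
        target_orthologs = orthologs.get(target, [])
        for pair in product(tf_orthologs, target_orthologs):
            projected.add(pair)
    return sorted(projected)


# Hypothetical IDs for illustration only
source_pairs = [("AtTF1", "AtG1"), ("AtTF1", "AtG2"), ("AtTF2", "AtG3")]
orthologs = {"AtTF1": ["PtTF1a", "PtTF1b"], "AtG1": ["PtG1"], "AtTF2": ["PtTF2"]}
print(project_pairs(source_pairs, orthologs))
# [('PtTF1a', 'PtG1'), ('PtTF1b', 'PtG1')]
```

The projected pairs can then serve as positive labels in the target species; how ambiguous one-to-many mappings should be weighted is a design choice this sketch leaves open.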

Experimental Protocols and Research Tools

Detailed Protocol for Perturbation-Based GRN Inference

The following protocol details the steps for inferring GRN topology using perturbation data, statistical analysis, and differential analysis, as applied in recent studies [11].

System Preparation and Basal State Measurement

- Allow the biological system (e.g., a cell population) to reach a stable steady state under controlled conditions, representative of a specific cell fate (e.g., Epithelial state).

- Measure the basal, unperturbed steady-state expression levels \(\bar{x} = (\bar{x}_1, \bar{x}_2, \ldots, \bar{x}_n)\) for all \(n\) molecules (genes/proteins) of interest in the network. The sensitive parameter set is denoted as \(p_b = (p_{b,1}, \ldots, p_{b,n})\) [11].

Execution of Systematic Perturbations

- For each node \(k\) (where \(k = 1, \ldots, n\)), slightly perturb its associated sensitive parameter \(p_k\) from its basal value \(p_{b,k}\) to a perturbed value \(p_{s,k}\). The perturbation should be mild enough to allow the system to reach a new, nearby steady state of the same type [11].

- For each perturbation \(k\), measure the new stable steady-state expression levels of all molecules, denoted as \(\bar{x}_k = (\bar{x}_{k,1}, \ldots, \bar{x}_{k,n})\).

- Repeat this process to generate technical and biological replicates for robust statistical analysis.

Calculation of the Local Response Matrix

- For each ordered pair of distinct genes \((i, j)\), compute the local response coefficient, defined as the scaled sensitivity \(r_{ij} = \frac{\bar{x}_j}{\bar{x}_i} \cdot \frac{\partial x_i}{\partial x_j}\), by approximating the partial derivative with the observed changes [11]: \(r_{ij} \approx \frac{\bar{x}_j}{\bar{x}_i} \cdot \frac{\Delta x_i}{\Delta x_j}\), where \(\Delta x_i = \bar{x}_{k,i} - \bar{x}_i\) and \(\Delta x_j = \bar{x}_{k,j} - \bar{x}_j\) for a perturbation applied to node \(k\). Self-response coefficients \(r_{ii}\) are defined as \(-1\) [11].

- Construct the \(n \times n\) local response matrix \(R\), where each element \(R_{ij} = r_{ij}\).
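The pairwise finite-difference formula is the simplest estimator. Classical Modular Response Analysis, listed in Table 1, instead measures global response coefficients (how every node responds to each perturbation) and recovers all local coefficients in one matrix inversion, \(r = -[\mathrm{dg}(R_p^{-1})]^{-1} R_p^{-1}\), which automatically fixes every \(r_{ii}\) at \(-1\). The NumPy sketch below implements that textbook inversion under stated assumptions: one perturbation per node, log-ratio global responses, and an invertible \(R_p\). It is not necessarily the exact computation used in [11], and the function names are illustrative.

```python
import numpy as np


def global_response_matrix(x_basal, x_perturbed):
    """Global response coefficients from steady-state measurements.

    x_basal: shape (n,), unperturbed steady state.
    x_perturbed: shape (n, n); row k is the steady state after perturbing node k.
    Returns R_p with R_p[i, k] = ln(x_i after perturbing k) - ln(basal x_i).
    """
    x_basal = np.asarray(x_basal, dtype=float)
    x_perturbed = np.asarray(x_perturbed, dtype=float)
    return (np.log(x_perturbed) - np.log(x_basal)).T


def local_response_matrix(R_p):
    """Classical MRA inversion: r = -[dg(R_p^-1)]^-1 R_p^-1."""
    R_inv = np.linalg.inv(R_p)
    # Scale each row of R_p^-1 by its diagonal entry and negate,
    # which sets every self-response coefficient r_ii to -1.
    return -R_inv / np.diag(R_inv)[:, None]


# Round trip on a synthetic 3-node network with known local responses
r_true = np.array([[-1.0, 0.5, 0.0],
                   [0.0, -1.0, -0.8],
                   [0.3, 0.0, -1.0]])
d = np.diag([0.1, 0.2, 0.05])          # direct effect of each perturbation
R_p = -np.linalg.inv(r_true) @ d       # global responses implied by r_true
print(np.allclose(local_response_matrix(R_p), r_true))  # True
```

Unlike the pairwise ratio, the inversion uses every perturbation at once, so indirect paths through other nodes are factored out rather than folded into \(r_{ij}\).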

Statistical Analysis and Network Sparsification

- Using the replicate data, calculate Confidence Intervals (CIs) for each element of the local response matrix \(R\).

- Apply a sparsity constraint by setting to zero any element \(r_{ij}\) whose confidence interval includes zero or whose magnitude falls below a statistically defined threshold. This eliminates non-significant regulations [11].

- Construct the redefined local response matrix \(R'\), which contains only the statistically significant regulatory interactions. The accuracy of the inferred topology can be validated by calculating prediction errors against held-out perturbation data.
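The sparsification step can be sketched as follows, assuming the replicate local response matrices are already stacked in one array. The confidence interval here is a percentile bootstrap of the replicate mean, which is one reasonable choice rather than the specific CI construction of [11]; `alpha`, `n_boot`, and the function name are illustrative assumptions.

```python
import numpy as np


def sparsify_by_ci(R_reps, alpha=0.05, n_boot=2000, seed=0):
    """Zero out local response coefficients whose bootstrap CI contains zero.

    R_reps: array of shape (m, n, n), one local response matrix per replicate.
    Returns (R_sparse, lower, upper).
    """
    R_reps = np.asarray(R_reps, dtype=float)
    m = R_reps.shape[0]
    rng = np.random.default_rng(seed)
    # Resample replicates with replacement and average each bootstrap sample
    idx = rng.integers(0, m, size=(n_boot, m))
    boot_means = R_reps[idx].mean(axis=1)            # shape (n_boot, n, n)
    lower = np.percentile(boot_means, 100 * alpha / 2, axis=0)
    upper = np.percentile(boot_means, 100 * (1 - alpha / 2), axis=0)
    # Keep an edge only if its confidence interval excludes zero
    significant = (lower > 0) | (upper < 0)
    R_sparse = np.where(significant, R_reps.mean(axis=0), 0.0)
    np.fill_diagonal(R_sparse, -1.0)                 # r_ii = -1 by definition
    return R_sparse, lower, upper


# Five replicate 2x2 matrices: r_12 is consistently positive, r_21 flips sign
reps = np.array([[[-1.0, w], [v, -1.0]]
                 for w, v in zip([0.50, 0.52, 0.48, 0.51, 0.49],
                                 [0.20, -0.20, 0.10, -0.10, 0.00])])
R_sparse, lo, hi = sparsify_by_ci(reps)
print(R_sparse)  # r_12 is kept; r_21 is zeroed because its CI spans zero
```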

Differential Analysis Across Cell Fates

- Repeat the preceding four stages (basal measurement, systematic perturbation, local response calculation, and sparsification) for each distinct cell fate of interest (e.g., Epithelial, Hybrid, and Mesenchymal states during EMT).

- To identify regulations that are critical to a specific cell fate, compute the relative local response matrix.

- Compare the matrices \(R'_A\) and \(R'_B\) from two cell fates A and B. Regulations with the largest absolute differences in their \(r_{ij}\) values are the most state-critical. This quantifies how the network topology is rewired during cell fate decisions [11].
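A minimal sketch of this comparison, assuming the relative local response matrix is taken as the element-wise difference \(R'_A - R'_B\); the exact definition in [11] may differ, for example by normalizing the difference. Gene labels and matrix values are hypothetical. Because \(r_{ij}\) is the influence of node \(j\) on node \(i\), each ranked entry is reported as regulator \(j\) to target \(i\).

```python
import numpy as np


def rank_state_critical_edges(R_a, R_b, genes, top_k=5):
    """Rank regulations j -> i by |R'_A[i, j] - R'_B[i, j]|, largest first.

    R_a, R_b: sparsified local response matrices for cell fates A and B.
    genes: node labels in matrix order. Returns (regulator, target, difference).
    """
    diff = np.asarray(R_a, dtype=float) - np.asarray(R_b, dtype=float)
    np.fill_diagonal(diff, 0.0)          # r_ii = -1 in both fates; not informative
    ranked = []
    for flat in np.argsort(-np.abs(diff), axis=None)[:top_k]:
        i, j = np.unravel_index(flat, diff.shape)
        if diff[i, j] == 0.0:
            break                        # remaining entries do not differ
        ranked.append((genes[j], genes[i], float(diff[i, j])))
    return ranked


genes = ["G1", "G2", "G3"]  # hypothetical labels
R_a = np.array([[-1.0, 0.8, 0.0], [0.0, -1.0, 0.1], [0.0, 0.0, -1.0]])
R_b = np.array([[-1.0, 0.1, 0.0], [0.0, -1.0, 0.3], [-0.5, 0.0, -1.0]])
for regulator, target, delta in rank_state_critical_edges(R_a, R_b, genes):
    print(f"{regulator} -> {target}: {delta:+.2f}")
# G2 -> G1: +0.70
# G1 -> G3: +0.50
# G3 -> G2: -0.20
```

The sign of each difference indicates in which fate the regulation is stronger (positive means stronger or more activating in fate A).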

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Key Research Reagent Solutions for GRN Inference Experiments

| Reagent / Material | Function in GRN Research | Example Application |
|---|---|---|
| CRISPR-based Perturbation Libraries | Enables high-throughput, precise knockout or knockdown of target genes to systematically probe network function. | Genome-scale Perturb-seq studies in K562 cells to observe downstream effects of perturbing ~9,866 genes [3]. |
| Single-Cell RNA Sequencing (scRNA-seq) | Profiles the transcriptome of individual cells, capturing heterogeneity and revealing expression changes in response to perturbations. | Identifying distinct cell states (E, H, M) in Epithelial to Mesenchymal Transition (EMT) and their response to perturbations [11]. |
| Chromatin Immunoprecipitation Sequencing (ChIP-seq) | Identifies genome-wide binding sites for transcription factors and histone modifications, providing evidence for direct regulatory interactions. | Experimental validation of transcription factor binding to promoter regions of putative target genes [12]. |
| DNA Affinity Purification Sequencing (DAP-seq) | An in vitro method for identifying protein-DNA interactions, useful for mapping potential regulatory networks for transcription factors. | High-throughput screening of TF-target relationships, especially in plant species [12]. |
| Validated TF-Target Interaction Databases | Serve as a gold-standard training set for supervised machine learning models and for benchmarking inferred networks. | Curated sets of known interactions from Arabidopsis used to train models for transfer learning to poplar and maize [12]. |
| Specialized Software/Packages (e.g., SCODE, GENIE3, TGPred) | Implement various computational algorithms for inferring GRNs from expression data, each with different underlying models and assumptions. | GENIE3 was used to infer the existence of regulations from static transcriptomic data [12]. |

| Specialized Software/Packages (e.g., SCODE, GENIE3, TGPred) | Implement various computational algorithms for inferring GRNs from expression data, each with different underlying models and assumptions. | GENIE3 was used to infer the existence of regulations from static transcriptomic data [12]. |

Key Regulatory Motifs and Network-Level Control

The overall behavior and robustness of a GRN emerge from the interplay of smaller, recurring circuit patterns known as network motifs. These motifs perform specific information-processing functions.

The Feed-Forward Loop (FFL) is a three-node pattern where a master regulator (TF A) controls a target gene (Gene C) both directly and through an intermediate regulator (TF B). In its common coherent form with AND logic (A activates B, and C requires both A and B), this motif acts as a sign-sensitive delay: the target responds to the onset of a signal only after B has accumulated, but shuts off without delay when the signal is removed, so only persistent input signals activate Gene C [3].
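The persistence-detection behavior of a coherent FFL with AND logic can be seen in a toy simulation. The sketch below uses Hill-function activation and forward-Euler integration; all parameter values (`K`, `n`, `beta`, `alpha`, pulse lengths) are illustrative assumptions, not fitted to any real circuit.

```python
import numpy as np


def hill(x, K=0.5, n=4):
    """Activating Hill function."""
    return x**n / (K**n + x**n)


def simulate_ffl(signal_a, dt=0.01, beta=1.0, alpha=1.0):
    """Coherent FFL: A activates B, and C needs both A AND B.

    signal_a: activity of TF A at each time step.
    Returns the trajectory of target C.
    """
    b = c = 0.0
    c_trace = np.empty(len(signal_a))
    for step, a in enumerate(signal_a):
        db = beta * hill(a) - alpha * b
        dc = beta * hill(a) * hill(b) - alpha * c  # AND gate: product of inputs
        b += dt * db
        c += dt * dc
        c_trace[step] = c
    return c_trace


steps = 800                    # 8 time units at dt = 0.01
short_pulse = np.zeros(steps)
short_pulse[100:130] = 1.0     # 0.3 time units of input
long_pulse = np.zeros(steps)
long_pulse[100:600] = 1.0      # 5 time units of input
c_short = simulate_ffl(short_pulse)
c_long = simulate_ffl(long_pulse)
print(c_short.max(), c_long.max())
```

The brief pulse ends before B accumulates, so C barely moves; the sustained input drives C most of the way to its steady state. Once A is removed, C's production term vanishes immediately, which is the "no delay at OFF" half of the sign-sensitive behavior.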

Feedback Loops are crucial for dynamic control. Positive Feedback can lock a system into a stable state, making cell fate decisions irreversible and robust to minor fluctuations. This is fundamental to bistable switches that govern transitions between distinct fates, such as E and M states in EMT. Negative Feedback, in contrast, promotes homeostasis and dampens noise, allowing a system to return to a set point after a disturbance [3].

A classic motif underlying cell fate bifurcations is Mutual Inhibition, where two key transcription factors reciprocally repress each other. This architecture creates a toggle switch, enabling two mutually exclusive, stable states. The system can be flipped from one state to the other by a transient signal that temporarily overwhelms one factor's repression of the other. This motif is often coupled with positive feedback to solidify the chosen fate [11].
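A minimal deterministic sketch of the mutual-inhibition toggle switch, in the spirit of the classic two-repressor model: each factor is produced at a rate repressed by the other and degrades linearly. The parameters (`alpha`, `n`, the pulse timing and amplitude) are illustrative assumptions chosen so that the system is bistable.

```python
def simulate_toggle(u0, v0, t_end=50.0, dt=0.01, alpha=5.0, n=2.0, pulse=None):
    """Mutual inhibition: du/dt = alpha/(1+v^n) - u + s(t), dv/dt = alpha/(1+u^n) - v.

    pulse: optional (t_on, t_off, amplitude) transient input s(t) that boosts u.
    Returns the final (u, v) after forward-Euler integration.
    """
    u, v = u0, v0
    for step in range(int(round(t_end / dt))):
        t = step * dt
        s = pulse[2] if pulse is not None and pulse[0] <= t < pulse[1] else 0.0
        du = alpha / (1.0 + v**n) - u + s
        dv = alpha / (1.0 + u**n) - v
        u, v = u + dt * du, v + dt * dv
    return u, v


# Two initial conditions settle into two different stable states
print(simulate_toggle(2.0, 0.5))   # u high, v low
print(simulate_toggle(0.5, 2.0))   # u low, v high
# A transient boost to u flips the v-high state, and the new state persists
print(simulate_toggle(0.5, 2.0, pulse=(5.0, 15.0, 10.0)))
```

The last call shows the memory of the switch: the input is gone for the final 35 time units, yet the system stays in the state the transient signal selected.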

The dynamics of cellular decision-making are an emergent property of the complex topology and nonlinear interactions within Gene Regulatory Networks. The integration of systematic perturbation experiments, sophisticated computational inference methods like statistical and differential analysis of local response matrices, and the power of machine learning and hybrid models, is providing an increasingly precise and quantitative picture of these networks [11] [12]. Understanding the core principles of GRN architecture—including sparsity, hierarchy, modularity, and the functional roles of specific motifs—is not merely an academic exercise. It is fundamental to deciphering the logic of development, disease, and cellular reprogramming. As these research tools and protocols continue to advance, they pave the way for rationally intervening in cell fate decisions for therapeutic purposes, such as in regenerative medicine and cancer treatment, by targeting the critical regulatory nodes that control network-level state transitions.

Gene Regulatory Networks (GRNs) are sophisticated computational models that represent the complex web of interactions among genes, proteins, and other molecules that control cellular processes [13]. At the heart of these networks are transcription factors, specialized proteins that bind to specific DNA regions to activate or repress gene expression, thereby governing the production of proteins essential for cellular function [13]. GRNs are not merely collections of individual genes; they exhibit emergent properties through feedback loops and combinatorial control where genes mutually inhibit or activate one another, enabling cells to fine-tune responses to internal signals and external stimuli [13]. This complex interplay allows cells to differentiate into diverse cell types, execute specialized functions, and maintain homeostasis—processes that become dysregulated in disease states [13].

Understanding GRNs requires examining both their topology (structural arrangement of interactions) and dynamics (temporal changes in regulatory activities) [14]. The structure of a GRN is typically represented as a graph where nodes symbolize genes and edges represent regulatory relationships between them [13]. Technological advances in high-throughput data generation have created unprecedented opportunities for reconstructing GRNs, moving the field beyond single-gene studies toward a holistic systems biology approach that captures the complexity of biological systems [15] [13]. This paradigm shift has been particularly transformative for understanding complex diseases, where GRN modeling helps identify crucial genetic elements that contribute to disease susceptibility and progression [13].

Methodologies for GRN Reconstruction and Analysis

Data Types for GRN Inference

GRN reconstruction relies on diverse data types that provide complementary insights into regulatory relationships. The accuracy and reliability of GRN inference depend heavily on the quality and appropriateness of the underlying data, so potential sources of noise and technical variation must be carefully assessed and addressed [13].

Table 1: Data Types for GRN Reconstruction

| Data Type | Key Characteristics | Applications in GRN | Considerations |
|---|---|---|---|
| Microarray | Widely available for various organisms and tissues; measures gene expression levels | Initial GRN mapping; large-scale association studies | Lower dynamic range than sequencing; platform-specific biases |
| RNA-seq | More accurate quantification of gene expression; captures novel transcripts | Comprehensive GRN inference; isoform-specific regulation | Requires substantial computational resources; batch effects |
| Single-cell RNA-seq | Reveals cell-type-specific gene expression patterns; captures cellular heterogeneity | Cell-type-specific GRNs; developmental trajectories | Sparse data; technical noise; high cost per cell |
| Time-series expression | Enables studying changes in gene expression over time | Inference of dynamic GRNs; identification of causal relationships | Requires careful design of time intervals; computational complexity |
| Perturbation experiments (e.g., gene knockouts) | Provides causal information through intervention | Establishing directionality in regulation; validation of predicted interactions | Off-target effects; compensatory mechanisms |

Time-series expression data are particularly valuable for inferring dynamic GRNs and identifying regulatory relationships based on temporal patterns, while perturbation experiments (e.g., gene knockouts, drug treatments) provide crucial causal information about gene-gene interactions [13]. Emerging approaches increasingly leverage multi-omics datasets that integrate genomic, epigenomic, transcriptomic, and proteomic information to establish a more complete picture of gene regulation [13].

Computational Approaches and Model Architectures

The selection of computational approaches for GRN reconstruction depends on the nature of available data, biological questions, and computational constraints [13] [14]. Model architectures can be broadly categorized into several classes:

Topological models represent GRNs as graphs depicting connections between elements and have been applied to various biological datasets, including protein-protein interaction and co-expression networks [13]. These models focus on the network structure but do not capture the dynamic behavior or regulatory logic. Logical models provide a straightforward approach that incorporates control logic, representing regulatory relationships using Boolean logic or more complex rule-based systems [13]. They are particularly useful when quantitative kinetic data are limited, because they require no rate parameters yet can still pinpoint specific regulatory interactions.
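A logical model becomes concrete with a small Boolean network. The sketch below enumerates every state of a network under synchronous updating and collects the attractor each state reaches. The two-gene mutual-inhibition rules are an illustrative assumption, and exhaustive enumeration is feasible only for small networks because the state space grows as \(2^n\).

```python
from itertools import product


def step(state, rules):
    """Synchronous update: every gene applies its rule to the current state."""
    return tuple(int(rule(state)) for rule in rules)


def find_attractors(rules, n_genes):
    """Exhaustively collect attractors (fixed points and cycles) of a Boolean network."""
    attractors = set()
    for start in product((0, 1), repeat=n_genes):
        order = {}                      # state -> time of first visit
        state = start
        while state not in order:
            order[state] = len(order)
            state = step(state, rules)
        # States visited from the first repeated state onward form the attractor
        first = order[state]
        cycle = [s for s, t in order.items() if t >= first]
        attractors.add(tuple(sorted(cycle)))
    return attractors


# Hypothetical two-gene mutual inhibition: A = NOT B, B = NOT A
toggle_rules = [lambda s: not s[1], lambda s: not s[0]]
print(find_attractors(toggle_rules, 2))
```

Under synchronous updating this yields the two fixed points (A on, B off) and (A off, B on), which correspond to the toggle's alternative states, plus a 2-cycle between (0, 0) and (1, 1). That cycle is a known artifact of updating both genes simultaneously; asynchronous updating, which changes one gene at a time, removes it.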

Dynamic models represent the conventional approach for modeling GRNs and aim to describe and replicate fluctuations in system states over time [13]. These models can predict network responses to environmental changes and stimuli, making them invaluable for understanding system behavior under different conditions. Dynamic models include ordinary differential equations (ODEs), stochastic models, and neural network approaches that simulate the kinetic behavior of regulatory systems [14].

Machine learning approaches have gained prominence for GRN inference, with algorithms such as random forests, neural networks, and mutual information-based methods being employed to predict regulatory relationships from expression data [13]. The ARACNE algorithm, for instance, uses mutual information to reconstruct GRNs and eliminates likely indirect interactions by applying the Data Processing Inequality, which removes the weakest edge (lowest mutual information) in every fully connected gene triplet [14].
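The DPI step can be sketched in a few lines. Two simplifications are assumptions of this example, not features of ARACNE itself: mutual information is estimated under a Gaussian assumption, \(I = -\tfrac{1}{2}\ln(1-\rho^2)\), whereas ARACNE uses nonparametric estimators, and no MI significance threshold is applied before pruning. The tolerance parameter `tol` mirrors the idea of a DPI tolerance but its exact form here is illustrative.

```python
import numpy as np


def gaussian_mi(X):
    """Pairwise MI under a Gaussian assumption: I = -0.5 * ln(1 - rho^2)."""
    rho = np.corrcoef(X, rowvar=False)
    np.fill_diagonal(rho, 0.0)                  # no self-edges
    rho = np.clip(rho, -0.999999, 0.999999)     # avoid log(0)
    return -0.5 * np.log(1.0 - rho**2)


def dpi_prune(mi, tol=0.0):
    """Drop the weakest edge of every fully connected triplet (Data Processing Inequality).

    Every triplet is judged against the original MI matrix, so the result does not
    depend on the order in which edges are visited.
    """
    n = len(mi)
    present = mi > 0
    remove = np.zeros_like(present)
    for i in range(n):
        for j in range(i + 1, n):
            if not present[i, j]:
                continue
            for k in range(n):
                if k in (i, j) or not (present[i, k] and present[j, k]):
                    continue
                if mi[i, j] < (1.0 - tol) * min(mi[i, k], mi[j, k]):
                    remove[i, j] = remove[j, i] = True
                    break
    return np.where(present & ~remove, mi, 0.0)


# Synthetic cascade x0 -> x1 -> x2: the x0-x2 association is indirect
rng = np.random.default_rng(0)
x0 = rng.standard_normal(2000)
x1 = x0 + 0.5 * rng.standard_normal(2000)
x2 = x1 + 0.5 * rng.standard_normal(2000)
pruned = dpi_prune(gaussian_mi(np.column_stack([x0, x1, x2])))
print(pruned.round(2))  # the indirect x0-x2 edge is removed
```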

Experimental Workflow for GRN Reconstruction

The standard workflow for GRN reconstruction involves multiple stages, from experimental design to network validation:

Experimental Design and Data Generation

Effective GRN reconstruction begins with careful experimental design that matches the research question with appropriate assays and conditions. For dynamic GRN inference, time-series experiments should capture critical transition points with sufficient temporal resolution [14]. Perturbation experiments, including gene knockouts, RNAi-mediated knockdown, or drug treatments, provide valuable causal information by disrupting specific network components [13] [14]. Single-cell RNA-seq experiments require consideration of cell number, capture efficiency, and appropriate controls to account for technical variation [13].

Data Preprocessing and Quality Control

Raw sequencing data requires extensive preprocessing before GRN inference. For RNA-seq data, this typically includes quality control (FastQC), adapter trimming (Trimmomatic), read alignment (STAR, HISAT2), and quantification (featureCounts, HTSeq) [14]. Single-cell RNA-seq data necessitates additional steps for batch effect correction, normalization (SCTransform), and imputation to address sparsity [13]. For microarray data, background correction, normalization, and probe summarization are essential preprocessing steps [14].

Network Inference and Model Selection

Network inference involves applying computational algorithms to reconstruct regulatory relationships from processed expression data. The choice of inference method should align with the data characteristics and biological questions [14]. For large-scale networks with limited prior knowledge, correlation-based methods or mutual information approaches provide a starting point. When temporal data are available, dynamic models like ODEs or Boolean networks can capture regulatory dynamics [13]. For systems with extensive prior knowledge, Bayesian networks incorporate existing information while learning new relationships from data [14].

Model Optimization and Validation

GRN models require optimization to improve their biological accuracy and predictive power. Parameter tuning involves adjusting model-specific parameters to maximize agreement with experimental data [14]. Cross-validation techniques assess model generalizability, while resampling methods (bootstrapping, jackknifing) evaluate network stability [14]. Biological validation remains challenging but essential; predicted interactions should be tested through experimental validation such as chromatin immunoprecipitation (ChIP), luciferase reporter assays, or additional perturbation experiments [14].
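Resampling-based stability can be estimated by re-running inference on bootstrap resamples of the samples and recording how often each edge reappears. In the sketch below, inference is deliberately reduced to thresholding the absolute Pearson correlation; the threshold, the number of resamples, and the function name are illustrative assumptions, and any GRN method could be substituted for the correlation step.

```python
import numpy as np


def bootstrap_edge_stability(X, threshold=0.6, n_boot=200, seed=0):
    """Fraction of bootstrap resamples in which each gene pair is linked.

    X: (samples, genes) expression matrix. The inference step is a deliberately
    simple |Pearson r| >= threshold rule; any GRN method could be swapped in.
    """
    rng = np.random.default_rng(seed)
    n_samples, n_genes = X.shape
    hits = np.zeros((n_genes, n_genes))
    for _ in range(n_boot):
        idx = rng.integers(0, n_samples, size=n_samples)  # resample with replacement
        corr = np.corrcoef(X[idx], rowvar=False)
        hits += np.abs(corr) >= threshold
    freq = hits / n_boot
    np.fill_diagonal(freq, 0.0)
    return freq


# Synthetic data: gene 1 tracks gene 0, gene 2 is independent noise
rng = np.random.default_rng(1)
g0 = rng.standard_normal(50)
X = np.column_stack([g0, g0 + 0.1 * rng.standard_normal(50), rng.standard_normal(50)])
freq = bootstrap_edge_stability(X)
print(freq.round(2))  # the g0-g1 edge is recovered in nearly every resample
```

Edges that appear in only a small fraction of resamples are candidates for removal before committing to costly experimental validation.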

GRNs in Cancer Biology

Cancer Cell Plasticity and GRN Dynamics

Cancer cell plasticity—the ability of cancer cells to transition between different phenotypic states—represents a major mechanism underlying tumor progression, therapeutic resistance, and relapse [16]. This plasticity is governed by dynamic rearrangements in GRNs that enable cells to evade treatment and adapt to changing microenvironments. The concept of Waddington's epigenetic landscape provides a powerful metaphor for understanding how cancer cells shift between phenotypes [16]. In this analogy, cells occupy different valleys representing stable cell states, but cancer cells exhibit increased ability to transition between these states due to alterations in their underlying GRNs.

Quantifying cancer cell plasticity requires examining the attractor states and basins of attraction within the GRN landscape [16]. Attractor states represent stable phenotypic states toward which cells naturally evolve, while basins of attraction define the region of state space from which cells will converge to a particular attractor [16]. Cancer cells often exhibit shallow basins that facilitate transitions between states, enhancing their plasticity. Two key approaches for quantifying plasticity include: (1) quasi-potential analysis based on GRN dynamics, which measures the stability of cell states; and (2) inference of cell potency from single-cell trajectory analysis or lineage tracing [16].
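One common way to define the quasi-potential is \(U(x) = -\ln P_{ss}(x)\), where \(P_{ss}\) is the steady-state probability of state \(x\), so attractors appear as wells and well depth reflects stability. The sketch below estimates \(U\) from a long stochastic simulation of a one-dimensional double-well system standing in for a bistable GRN; the drift \(x - x^3\), the noise level, and the bin layout are illustrative assumptions.

```python
import numpy as np


def simulate_double_well(n_steps=300_000, dt=0.01, sigma=0.5, seed=0):
    """Euler-Maruyama path of dx = (x - x^3) dt + sigma dW (stable states at x = -1, +1)."""
    rng = np.random.default_rng(seed)
    kicks = rng.standard_normal(n_steps) * sigma * np.sqrt(dt)
    path = np.empty(n_steps)
    x = 1.0
    for t, kick in enumerate(kicks.tolist()):
        x += (x - x**3) * dt + kick
        path[t] = x
    return path


def quasi_potential(samples, edges):
    """U(x) = -ln P(x) from a histogram of samples; empty bins get U = inf."""
    counts, _ = np.histogram(samples, bins=edges)
    p = counts / counts.sum()
    with np.errstate(divide="ignore"):
        U = -np.log(p)
    centers = 0.5 * (edges[:-1] + edges[1:])
    return centers, U


edges = np.linspace(-2.0, 2.0, 41)
centers, U = quasi_potential(simulate_double_well(), edges)
for x in (-1.0, 0.0, 1.0):
    i = np.argmin(np.abs(centers - x))
    print(f"U near x = {x:+.0f}: {U[i]:.2f}")
```

Both wells sit clearly below the barrier at \(x = 0\); a shallower barrier (for example, from a larger `sigma` or a weaker drift) would correspond to the easier state switching described for plastic cancer cells.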

GRN Dysregulation in Cancer Progression

Dysregulation of GRNs contributes to cancer progression through multiple mechanisms. Oncogenic transcription factors can become rewired to activate pro-survival and proliferation programs, while tumor suppressor networks may be disrupted [16]. In many cancers, GRNs that normally control developmental processes are re-activated, leading to stem-like properties and enhanced plasticity [16]. Single-cell RNA-sequencing studies have revealed remarkable heterogeneity in cancer cell states within tumors, with distinct GRN configurations corresponding to different phenotypic states [16].

The layers of heterogeneity in cancer include genetic heterogeneity (selection of mutants with different treatment responses), epigenetic heterogeneity (variable chromatin accessibility, DNA methylation, and transcription factor binding), and stochastic heterogeneity (probabilistic biochemical reactions within cells) [16]. These layers collectively define phenotypic variability and create drug-tolerant persister cells that contribute to treatment resistance [16].

Analytical Framework for Cancer GRNs

GRNs in Developmental Disorders

Neurodevelopmental Processes and GRNs

Gene regulatory networks play fundamental roles in brain development, where they orchestrate neurogenesis, neuronal survival, axon and dendrite growth, synaptic plasticity, and myelination [17]. The functional genomics of human brain development involves complex spatiotemporal regulation of gene expression across different brain regions and cell types [18]. Disruptions in these carefully coordinated GRNs can lead to various neurodevelopmental disorders, including autism spectrum disorders, intellectual disability, and schizophrenia.

Neurotrophic factors represent crucial components of developmental GRNs, influencing essentially all aspects of nervous system development [17]. These factors include BDNF (Brain-Derived Neurotrophic Factor), NGF (Nerve Growth Factor), and NT-3/4 (Neurotrophin-3/4), which signal through specific receptor tyrosine kinases (Trk receptors) and the p75 neurotrophin receptor [17]. The 2025 Gordon Research Conference on Neurotrophic Mechanisms will highlight how these factors shape neural circuit connectivity, synaptic plasticity, and behavior through their integration into broader GRNs [17].

Analytical Approaches for Developmental GRNs

Studying GRNs in development presents unique challenges and opportunities. Time-series analysis during critical developmental windows can reveal dynamic rewiring of regulatory relationships [13]. Single-cell RNA-sequencing of developing tissues enables reconstruction of cell-type-specific GRNs and lineage relationships [13]. Spatial transcriptomics approaches capture the spatial organization of gene expression patterns, essential for understanding tissue patterning during development.

Integration of epigenomic data (ATAC-seq, ChIP-seq, DNA methylation) with transcriptomic data provides insights into the regulatory logic underlying developmental GRNs [13]. Chromatin accessibility patterns can reveal potential regulatory elements, while transcription factor binding profiles identify direct regulatory targets. Machine learning approaches that integrate multiple data types are particularly powerful for reconstructing accurate developmental GRNs [13].

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Research Reagents for GRN Studies

| Reagent/Category | Specific Examples | Function in GRN Research |
|---|---|---|
| Gene Expression Datasets | Microarray data; RNA-seq data; Single-cell RNA-seq data; Time-series expression data; Perturbation experiment data | Primary data for network inference; enables studying changes in gene expression over time and causal relationships [13] |
| Computational Tools | STRING; ARACNE; GeneMANIA; FunCoup; HumanNet | Network inference, analysis, and visualization; integration of multiple evidence types [19] [14] |
| Experimental Validation Reagents | CRISPR/Cas9 systems; siRNA/shRNA libraries; ChIP-seq kits; Luciferase reporter constructs | Functional validation of predicted regulatory interactions; perturbation studies [13] [14] |
| Database Resources | STRING; BioGRID; IntAct; MINT; KEGG; Reactome | Source of curated protein-protein associations; pathway information; prior knowledge for network inference [19] |
| Specialized Analysis Tools | DREAM Challenges datasets; Pathway enrichment tools; Network clustering algorithms | Benchmarking GRN inference methods; functional interpretation of networks; identifying modular organization [13] [19] |

The STRING database deserves special emphasis as a comprehensive resource that compiles, scores, and integrates protein-protein association information from experimental assays, computational predictions, and prior knowledge [19]. The latest version, STRING 12.5, introduces a regulatory network mode that captures the type and directionality of interactions using curated pathway databases and a fine-tuned language model that parses the scientific literature [19]. STRING provides three distinct network types—functional, physical, and regulatory—each applicable to different research needs, along with tools for network clustering and pathway enrichment analysis [19].

Future Directions and Therapeutic Applications

Emerging Technologies and Approaches

The field of GRN research is rapidly evolving, driven by technological advances and conceptual innovations. Single-cell multi-omics technologies that simultaneously measure transcriptome, epigenome, and proteome in the same cell promise to revolutionize GRN reconstruction by providing matched measurements across molecular layers [13]. Spatial transcriptomics and proteomics enable GRN mapping within tissue context, essential for understanding development and disease pathology [13]. Machine learning and artificial intelligence approaches are becoming increasingly sophisticated for GRN inference, with graph neural networks and transformer models showing particular promise for integrating diverse data types [13] [14].

The integration of network physiology concepts into GRN research represents another emerging direction, focusing on how regulatory networks operate across different scales—from molecular interactions to cellular responses to tissue-level phenotypes [16]. This approach is particularly relevant for cancer systems biology, where the built-in plasticity of heterogeneous cell states creates profound challenges for network inference [16].

Therapeutic Implications

Understanding GRNs in health and disease has profound therapeutic implications. In cancer, targeting plastic GRNs rather than individual genes may provide strategies to prevent or overcome therapy resistance [16]. Approaches include stabilizing specific attractor states corresponding to treatment-sensitive phenotypes or reducing overall network plasticity to prevent adaptation [16]. For developmental disorders, GRN-based approaches may identify key regulatory nodes whose modulation could restore normal developmental trajectories [17] [18].

Neurotrophic factors represent promising therapeutic targets for various neurological and psychiatric disorders, with treatments exploiting neurotrophin biology now in clinical trials for conditions ranging from chronic pain to autism and dementia [17]. The 2025 Gordon Research Conference on Neurotrophic Mechanisms will highlight translating knowledge of neurotrophin biology into therapies, bringing together researchers focusing on the intersection of neurotrophin biology with neuronal cell biology, circuit formation, plasticity, chronic pain, neurodegeneration/regeneration, and cancer [17].

As GRN research continues to advance, it will increasingly enable precision medicine approaches that account for the complex network dynamics underlying disease states, moving beyond single-gene or single-pathway models toward truly systems-level therapeutic strategies.

Gene Regulatory Networks (GRNs) represent the complex orchestration of molecular interactions where transcription factors (TFs) regulate target genes, controlling fundamental cellular processes, development, and responses to environmental cues [12] [1]. The central challenge in systems biology lies in reconstructing accurate network models from experimental data that are inherently noisy, high-dimensional, and sparse [12] [1]. Conventional GRN inference methods face significant hurdles due to the astronomical number of potential gene-gene interactions relative to the limited number of samples, technical artifacts in omics measurements, and the fundamental biological complexity of regulatory mechanisms [1].

The reconstruction of GRNs is essential for elucidating the molecular mechanisms underlying plant physiology, stress responses [12], and disease mechanisms in biomedical research, including cancer driven by transcription factors such as p53 and MYC [1]. While experimental techniques like ChIP-seq and yeast one-hybrid assays provide accurate validation of regulatory interactions, they remain labor-intensive and low-throughput, limiting their application to small gene sets [12]. This bottleneck has accelerated the development of computational approaches that can leverage large-scale transcriptomic data to infer regulatory relationships at genome scale [12].

Table 1: Key Challenges in GRN Inference from High-Dimensional Data

| Challenge | Impact on GRN Inference | Traditional Approaches |
|---|---|---|
| High Computational Complexity | Poor scaling with large genomic datasets; slow performance on large inputs [1] | Mutual information [1], regression-based methods [1] |
| Data Sparsity | Many gene-gene links remain unconfirmed; incomplete networks [1] | Pearson correlation [1], linear regression [1] |
| Nonlinear Regulatory Relationships | Failure to capture complex biological dependencies [1] | Linear dependency assumptions [1] |
| Limited Training Data | Particularly problematic in non-model species [12] | Species-specific model training [12] |

Advanced Computational Methodologies

Hybrid Machine Learning and Deep Learning Frameworks

Hybrid approaches that combine convolutional neural networks (CNNs) with traditional machine learning have demonstrated remarkable performance, achieving over 95% accuracy on holdout test datasets for GRN inference [12]. These models successfully identified a greater number of known transcription factors regulating the lignin biosynthesis pathway and demonstrated higher precision in ranking key master regulators such as MYB46 and MYB83, along with upstream regulators including members of the VND, NST, and SND families [12].

The GTAT-GRN model uses a graph topology-aware attention mechanism to fuse multi-source features [1] [20]. This approach integrates temporal expression patterns, baseline expression levels, and structural topological attributes to enrich node representations with multidimensional expressiveness [1]. The model dynamically captures high-order dependencies and asymmetric topological relationships among genes during graph learning, effectively uncovering latent regulatory patterns that conventional methods miss [1].
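The core idea of attention over a gene graph can be sketched with a single generic graph-attention (GAT-style) layer in NumPy. This is not the GTAT-GRN architecture, whose topology-aware scoring and multi-source fusion are more elaborate; the weight shapes, LeakyReLU slope, and self-loop handling are standard GAT conventions assumed here, and the weights are random rather than trained. Because the mask follows directed edges, attention is asymmetric: gene \(i\) may attend to gene \(j\) without \(j\) attending to \(i\).

```python
import numpy as np


def graph_attention(H, A, W, a, slope=0.2):
    """One single-head GAT-style layer.

    H: (n, f_in) node features; A: (n, n) adjacency with A[i, j] = 1 if
    gene j sends a message to gene i; W: (f_in, f_out) projection;
    a: (2 * f_out,) attention vector. Returns (new features, attention).
    """
    Z = H @ W
    f_out = Z.shape[1]
    # e_ij = LeakyReLU(a^T [z_i || z_j]) split into a target and a source term
    e = (Z @ a[:f_out])[:, None] + (Z @ a[f_out:])[None, :]
    e = np.where(e > 0, e, slope * e)
    # Only attend along existing edges, plus a self-loop for every gene
    mask = (A > 0) | np.eye(len(A), dtype=bool)
    e = np.where(mask, e, -np.inf)
    e -= e.max(axis=1, keepdims=True)   # softmax stabilization
    alpha = np.exp(e)
    alpha /= alpha.sum(axis=1, keepdims=True)
    return alpha @ Z, alpha


rng = np.random.default_rng(0)
A = np.array([[0, 1, 0, 0],
              [0, 0, 1, 0],
              [0, 0, 0, 1],
              [1, 0, 0, 0]])            # directed 4-gene cycle
H = rng.standard_normal((4, 3))
out, alpha = graph_attention(H, A, rng.standard_normal((3, 2)), rng.standard_normal(4))
print(alpha.round(2))                   # zero wherever no edge (or self-loop) exists
```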

Multi-Source Feature Fusion Framework

Effective GRN inference requires integrating heterogeneous biological data types to overcome the limitations of individual data modalities [1]. The multi-source feature fusion framework jointly models three critical information streams, each capturing distinct aspects of regulatory relationships [1].

Table 2: Multi-Source Feature Fusion for Enhanced GRN Inference

| Feature Type | Data Source | Key Metrics | Biological Significance |
|---|---|---|---|
| Temporal Features [1] | Gene expression time-series data | Mean, standard deviation, maximum/minimum values, skewness, kurtosis, time-series trend [1] | Reflects dynamic changes in gene expression; reveals expression levels and trends at different time points [1] |
| Expression-Profile Features [1] | Wild-type or multiple condition expression data | Baseline expression level, expression stability, expression specificity, expression pattern, expression correlation [1] | Describes expression characteristics under different conditions; provides background for inferring regulatory roles [1] |
| Topological Features [1] | Structural properties of GRN graph | Degree centrality, in-degree, out-degree, clustering coefficient, betweenness centrality, PageRank score [1] | Reveals structural role of genes in network; captures regulatory relationships and interactions [1] |
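Several of the topological features above can be computed directly from a directed adjacency matrix. The sketch below derives in-degree, out-degree, total degree, and PageRank by power iteration, assuming the conventional damping factor 0.85 and uniform redistribution from genes with no out-edges. Note that here `A[i, j] = 1` means gene \(i\) regulates gene \(j\). Clustering coefficient and betweenness centrality are omitted for brevity; in practice a graph library such as NetworkX would typically supply them.

```python
import numpy as np


def pagerank(A, d=0.85, tol=1e-10, max_iter=1000):
    """PageRank by power iteration; A[i, j] = 1 for a directed edge i -> j."""
    A = np.asarray(A, dtype=float)
    n = len(A)
    out_deg = A.sum(axis=1)
    r = np.full(n, 1.0 / n)
    for _ in range(max_iter):
        # Each node splits its score over its out-edges; sinks spread it uniformly
        share = np.divide(r, out_deg, out=np.zeros(n), where=out_deg > 0)
        sink_mass = r[out_deg == 0].sum()
        r_new = (1.0 - d) / n + d * (A.T @ share + sink_mass / n)
        if np.abs(r_new - r).sum() < tol:
            return r_new
        r = r_new
    return r


def topological_features(A):
    """Per-gene [in-degree, out-degree, total degree, PageRank]."""
    A = np.asarray(A, dtype=float)
    in_deg, out_deg = A.sum(axis=0), A.sum(axis=1)
    return np.column_stack([in_deg, out_deg, in_deg + out_deg, pagerank(A)])


# Hypothetical hub: genes 1, 2, and 3 all regulate gene 0
A = np.zeros((4, 4))
A[1:, 0] = 1.0
print(topological_features(A).round(3))
```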

Experimental Protocol for Hybrid GRN Inference

Data Collection and Preprocessing

- Data Retrieval: Raw datasets in FASTQ format are retrieved from the Sequence Read Archive (SRA) database at NCBI using SRA-Toolkit [12].

- Quality Control: Adaptor sequences and low-quality bases are removed using Trimmomatic (version 0.38); quality assessment is performed with FastQC [12].

- Alignment and Quantification: Trimmed reads are aligned to the reference genome using STAR (2.7.3a), and gene-level raw read counts are obtained using CoverageBed [12].

- Normalization: Read counts are normalized using the weighted trimmed mean of M-values (TMM) method from edgeR to minimize technical variations [12].
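The TMM step can be sketched in NumPy to show what edgeR computes. This is a simplified re-implementation under stated assumptions, not edgeR's own code: the reference sample is passed in explicitly (edgeR chooses one automatically by default), tied ranks are broken by position rather than averaged, and low-abundance filtering is omitted. The trimming fractions (30% of log-ratios and 5% of average abundances in each tail) follow the usual TMM defaults.

```python
import numpy as np

def _ranks(values):
    """1-based ranks; ties are broken by position (edgeR averages tied ranks instead)."""
    order = np.argsort(values, kind="stable")
    ranks = np.empty(values.size, dtype=int)
    ranks[order] = np.arange(1, values.size + 1)
    return ranks

def tmm_factors(counts, ref=0, logratio_trim=0.3, sum_trim=0.05):
    """Simplified TMM scaling factors for a genes x samples matrix of raw counts."""
    counts = np.asarray(counts, dtype=float)
    lib = counts.sum(axis=0)
    y_r, n_r = counts[:, ref], lib[ref]
    log_f = np.zeros(counts.shape[1])
    for k in range(counts.shape[1]):
        y_k, n_k = counts[:, k], lib[k]
        ok = (y_k > 0) & (y_r > 0)                  # M and A are undefined for zero counts
        yk, yr = y_k[ok], y_r[ok]
        m = np.log2((yk / n_k) / (yr / n_r))        # M: log-ratio vs. the reference
        a = 0.5 * np.log2((yk / n_k) * (yr / n_r))  # A: average log abundance
        var = (n_k - yk) / (n_k * yk) + (n_r - yr) / (n_r * yr)  # approximate variance of M
        n = m.size
        lo_m = int(np.floor(n * logratio_trim)) + 1
        lo_a = int(np.floor(n * sum_trim)) + 1
        rm, ra = _ranks(m), _ranks(a)
        keep = (rm >= lo_m) & (rm <= n + 1 - lo_m) & (ra >= lo_a) & (ra <= n + 1 - lo_a)
        log_f[k] = np.sum(m[keep] / var[keep]) / np.sum(1.0 / var[keep])  # weighted mean of M
    f = 2.0 ** log_f
    return f / np.exp(np.mean(np.log(f)))           # rescale so the factors multiply to 1

def tmm_cpm(counts, **kwargs):
    """Counts per million computed with TMM-adjusted (effective) library sizes."""
    counts = np.asarray(counts, dtype=float)
    effective_lib = counts.sum(axis=0) * tmm_factors(counts, **kwargs)
    return counts / effective_lib * 1e6
```

The point of the trimming shows up with a composition effect: if nine genes have 100 counts in both samples and a tenth rises from 100 to 1,000 in the second sample, plain CPM makes the nine unchanged genes look down-regulated in the second sample, whereas after TMM scaling their CPM values agree across samples.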

Feature Extraction and Model Training

- Temporal Feature Extraction: For gene expression time-series data \( X_t \in \mathbb{R}^{N \times T} \) (where \( N \) is the number of genes and \( T \) the number of time points), Z-score normalization is applied to each gene's trajectory, \( \hat{X}_{t}[i,:] = \frac{X_{t}[i,:] - \mu_i}{\sigma_i} \), where \( \mu_i \) and \( \sigma_i \) are the mean and standard deviation of gene \( i \) across time points, so that each gene has zero mean and unit variance [1].

- Multi-Feature Integration: Temporal, expression-profile, and topological features are concatenated into a unified representation [1].

- Cross-Species Transfer Learning: For species with limited training data, models trained on well-characterized species (e.g., Arabidopsis thaliana) are transferred using orthologous gene mappings [12].
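The temporal-feature and integration steps above can be sketched as follows. This is a minimal NumPy illustration that assumes an `N x T` matrix with genes in rows; the helper names, the use of a least-squares slope as the "time-series trend", the use of excess kurtosis, and computing the summary statistics on raw (not Z-scored) trajectories are all assumptions rather than details confirmed by [1]. Expression-profile and topological blocks would be computed separately and passed to the same concatenation helper.

```python
import numpy as np

def zscore_rows(x, eps=1e-12):
    """Z-score each gene (row) across its T time points; eps guards flat profiles."""
    mu = x.mean(axis=1, keepdims=True)
    sigma = x.std(axis=1, keepdims=True)
    return (x - mu) / (sigma + eps)

def temporal_stats(x):
    """Per-gene summary statistics of raw trajectories (N x T -> N x 7)."""
    mu = x.mean(axis=1)
    sd = x.std(axis=1)
    centered = x - mu[:, None]
    safe_sd = np.where(sd > 0, sd, 1.0)
    skew = np.where(sd > 0, (centered ** 3).mean(axis=1) / safe_sd ** 3, 0.0)
    kurt = np.where(sd > 0, (centered ** 4).mean(axis=1) / safe_sd ** 4 - 3.0, 0.0)
    t = np.arange(x.shape[1])
    trend = np.polyfit(t, x.T, deg=1)[0]      # least-squares slope per gene
    return np.column_stack([mu, sd, x.max(axis=1), x.min(axis=1), skew, kurt, trend])

def fuse_features(*blocks):
    """Concatenate per-gene feature blocks (each N x d_k) into one N x sum(d_k) matrix."""
    return np.concatenate(blocks, axis=1)
```

A usage sketch: `fuse_features(temporal_stats(x), baseline)` joins seven temporal statistics with a hypothetical `N x 1` baseline-expression block, giving one row of eight features per gene.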

Visualization of Methodologies

Hybrid GRN Inference Workflow

Graph Topology-Aware Attention Mechanism

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools and Data Resources for GRN Inference

| Resource | Type | Function in GRN Research |
|---|---|---|
| SRA-Toolkit [12] | Data Retrieval | Retrieves raw sequencing data in FASTQ format from the NCBI Sequence Read Archive [12] |
| Trimmomatic [12] | Quality Control | Removes adaptor sequences and low-quality bases from raw reads [12] |
| STAR Aligner [12] | Sequence Alignment | Aligns trimmed reads to reference genomes with high accuracy [12] |
| edgeR [12] | Normalization | Normalizes gene-level read counts using TMM method to minimize technical variations [12] |
| GTAT-GRN [1] [20] | Inference Model | Graph topology-aware attention method with multi-source feature fusion [1] |
| Transfer Learning Framework [12] | Cross-Species Analysis | Enables knowledge transfer from data-rich species (Arabidopsis) to data-scarce species [12] |
| DREAM4/DREAM5 [1] | Benchmark Datasets | Standardized datasets for systematic evaluation of GRN inference methods [1] |

Performance Benchmarks and Validation

Experimental results demonstrate that hybrid models consistently outperform traditional GRN inference methods across multiple benchmarks. On the standardized DREAM4 and DREAM5 datasets, topology-aware approaches such as GTAT-GRN achieve superior overall performance (AUC and AUPR) as well as strong high-confidence prediction on Top-k metrics (Precision@k, Recall@k, F1@k) [1]. Integrating multi-source features yields a 15-20% improvement in identifying key regulatory relationships compared with single-modality approaches [1].
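The metrics named above can be computed directly from a ranked list of candidate edges scored against a gold standard. The sketch below is a minimal NumPy version under simple assumptions: labels are binary (1 for a gold-standard edge), AUROC uses the Mann-Whitney formulation, and AUPR is approximated by average precision with score ties broken by position. The DREAM challenges distribute their own scoring code, which may interpolate the precision-recall curve differently, so values from this sketch need not match published numbers exactly.

```python
import numpy as np

def auroc(scores, labels):
    """AUROC as the probability that a true edge outscores a non-edge (ties count 1/2)."""
    scores, labels = np.asarray(scores, dtype=float), np.asarray(labels, dtype=bool)
    pos, neg = scores[labels], scores[~labels]
    wins = (pos[:, None] > neg[None, :]).sum() + 0.5 * (pos[:, None] == neg[None, :]).sum()
    return wins / (pos.size * neg.size)

def aupr(scores, labels):
    """AUPR estimated as average precision over the ranked candidate list."""
    order = np.argsort(-np.asarray(scores, dtype=float), kind="stable")
    hits = np.asarray(labels, dtype=bool)[order]
    precision = np.cumsum(hits) / np.arange(1, hits.size + 1)
    return precision[hits].sum() / hits.sum()

def top_k(scores, labels, k):
    """Precision@k, Recall@k and F1@k for the k highest-scoring candidate edges."""
    order = np.argsort(-np.asarray(scores, dtype=float), kind="stable")
    labels = np.asarray(labels, dtype=bool)
    tp = labels[order[:k]].sum()
    precision, recall = tp / k, tp / labels.sum()
    f1 = 0.0 if tp == 0 else 2 * precision * recall / (precision + recall)
    return precision, recall, f1
```

For five candidate edges scored 0.9 down to 0.5 with gold-standard labels `[1, 0, 1, 0, 0]`, AUROC and average precision both equal 5/6, and Precision@2, Recall@2, and F1@2 are all 0.5.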

Cross-species transfer learning has proven particularly valuable for non-model species with limited experimentally validated regulatory pairs. By leveraging training data from well-characterized species like Arabidopsis thaliana, models can successfully predict regulatory relationships in poplar and maize with significantly enhanced performance [12]. This strategy demonstrates the feasibility of knowledge transfer across species and provides a scalable framework for elucidating regulatory mechanisms in data-scarce plant systems [12].
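One ingredient of cross-species transfer is carrying known regulatory pairs over to the target species through an ortholog table, for example to assemble training or evaluation labels. The sketch below assumes a precomputed mapping from each source gene to a list of target-species orthologs, expanding one-to-many relationships to every combination. The gene identifiers are hypothetical, and this shows only a label-projection step, not the full transfer-learning procedure of [12].

```python
from itertools import product

def project_edges(source_edges, orthologs):
    """Map (regulator, target) pairs into another species via an ortholog table.

    orthologs: dict from a source gene ID to a list of target-species ortholog IDs.
    Pairs in which either gene has no ortholog are dropped.
    """
    projected = set()
    for regulator, target in source_edges:
        for reg_o, tgt_o in product(orthologs.get(regulator, []), orthologs.get(target, [])):
            projected.add((reg_o, tgt_o))
    return projected
```

With hypothetical IDs, `project_edges([("AtTF1", "AtG1")], {"AtTF1": ["PtTF1a", "PtTF1b"], "AtG1": ["PtG1"]})` yields two poplar pairs, one per TF paralog; how to weight such one-to-many projections is a design choice the sketch leaves open.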

The journey from noisy, high-dimensional biological data to accurate network models represents one of the most significant challenges in contemporary systems biology. The integration of hybrid machine learning approaches, multi-source feature fusion, and cross-species transfer learning has dramatically advanced our capacity to reconstruct reliable GRNs from complex transcriptomic data. These computational innovations not only enhance inference accuracy but also provide scalable frameworks for elucidating regulatory mechanisms across both model and non-model organisms. As these methodologies continue to evolve, they promise to unlock deeper insights into the topological organization and dynamic behavior of gene regulatory networks, ultimately advancing both basic biological understanding and applications in therapeutic development and precision medicine.

From Data to Networks: Methodologies for Inferring GRN Topology and Dynamics

Gene Regulatory Networks (GRNs) are intricate systems that represent the causal interactions between genes, controlling cellular processes and functional states [20]. Understanding their topology (structure) and dynamics (behavior over time) is a fundamental challenge in systems biology, with profound implications for deciphering disease mechanisms and accelerating drug discovery [21] [20]. The inference and analysis of GRNs are complicated by the noisy nature of genomic data, the high dimensionality of the problem, and the complex, often non-linear, nature of regulatory relationships [1] [3].

In recent years, machine learning (ML) has emerged as a transformative force in this domain. ML methods provide the computational framework needed to infer network topology from experimental data and model network dynamics. Supervised learning leverages known regulatory interactions to train predictive models. Unsupervised learning uncovers hidden patterns and structures without prior labeling. Deep learning, particularly Graph Neural Networks (GNNs), offers powerful tools for learning directly from graph-structured data, naturally aligning with the representation of GRNs [1] [22]. This technical guide explores the core ML paradigms revolutionizing the study of GRN topology and dynamics, providing researchers with a framework for selecting and implementing these advanced computational techniques.

Supervised Learning: Leveraging Known Regulatory Knowledge

Supervised learning approaches for GRN inference require a set of known gene regulatory relationships to train a model that can then predict new interactions. This formulation typically treats the problem as a link prediction task on a graph [22].

Core Methodology and Experimental Protocol

A standard protocol for supervised GRN inference involves the following steps [22]:

- Network Representation: Formally represent the GRN as a graph \( G = (V, E) \), where \( V \) is the set of genes (nodes) and \( E \) is the set of known regulatory interactions (edges).

- Feature Engineering: For each gene or gene pair, construct a feature vector to serve as model input.

- Model Training: Train a classifier (e.g., a Graph Neural Network) on labeled gene pairs to distinguish between existing (positive) and non-existing (negative) regulatory links.

- Evaluation: Validate the model on held-out test data using standard metrics such as Area Under the Receiver Operating Characteristic Curve (AUROC) and Area Under the Precision-Recall Curve (AUPRC) [22].
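The four steps can be illustrated end to end with a deliberately simple stand-in model. In the sketch below, pair features are the concatenated feature vectors of the putative regulator and target, negatives are sampled as random ordered gene pairs absent from the known edge set, and a NumPy logistic regression replaces the graph neural network. All of these are simplifying assumptions, not the setup of [22]; held-out pairs would then be scored with AUROC and AUPRC.

```python
import numpy as np

def make_pairs(gene_feats, pos_edges, n_neg, rng):
    """Pair features = [regulator features, target features]; label 1 for known edges."""
    n_genes = gene_feats.shape[0]
    seen = set(pos_edges)
    neg = []
    while len(neg) < n_neg:
        i, j = (int(v) for v in rng.integers(0, n_genes, size=2))
        if i != j and (i, j) not in seen:    # unannotated pair treated as a negative
            seen.add((i, j))                 # avoid sampling the same negative twice
            neg.append((i, j))
    pairs = list(pos_edges) + neg
    x = np.array([np.concatenate([gene_feats[i], gene_feats[j]]) for i, j in pairs])
    y = np.array([1.0] * len(pos_edges) + [0.0] * n_neg)
    return x, y

def train_logreg(x, y, lr=0.1, epochs=500):
    """Batch gradient descent on the logistic (cross-entropy) loss."""
    w, b = np.zeros(x.shape[1]), 0.0
    for _ in range(epochs):
        p = 1.0 / (1.0 + np.exp(-(x @ w + b)))
        err = p - y
        w -= lr * x.T @ err / len(y)
        b -= lr * err.mean()
    return w, b

def predict_proba(x, w, b):
    """Predicted probability that each pair is a regulatory link."""
    return 1.0 / (1.0 + np.exp(-(x @ w + b)))
```

Random negatives may include true but unannotated interactions, and in practice negatives should be sampled separately for training and test splits so that no pair leaks between them.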

Performance Comparison of Supervised Methods

Table 1: Performance metrics of selected supervised GRN inference methods on human cell line benchmarks. Metrics shown are AUROC (Area Under the ROC Curve) and AUPRC (Area Under the Precision-Recall Curve).

| Method | Model Type | A375 (AUROC/AUPRC) | A549 (AUROC/AUPRC) | HEK293T (AUROC/AUPRC) | PC3 (AUROC/AUPRC) |
|---|---|---|---|---|---|
| Meta-TGLink | Graph Meta-Learning | Highest Performance | Highest Performance | Highest Performance | Highest Performance |
| GNNLink | Graph Neural Network | Lower | Lower | Lower | Lower |
| GENELink | Graph Neural Network | Lower | Lower | Lower | Lower |
| CNNC | Convolutional Neural Network | Lower | Lower | Lower | Lower |
| GNE | Multi-Layer Perceptron | Lower | Lower | Lower | Lower |

As illustrated in Table 1, methods like Meta-TGLink, which employ sophisticated graph meta-learning, demonstrate superior performance across multiple cell lines. This highlights the advantage of architectures specifically designed for graph-structured data and few-shot learning scenarios, where known regulatory information is limited [22].

Unsupervised and Semi-Supervised Learning: Inference from Data Patterns

Unsupervised learning methods infer GRNs without relying on pre-existing knowledge of regulatory interactions. They primarily leverage statistical measures and machine learning techniques to identify gene associations directly from data [22].

Key Approaches and Workflows

- Correlation and Information-Theoretic Methods: These compute Pearson correlation coefficients or mutual information between gene expression profiles to infer associations. They are simple, but Pearson correlation captures only linear dependencies, and both measures are symmetric and cannot separate direct from indirect associations, which can yield high false-positive rates [22] [3].

- Tree-Based Methods: Algorithms like GENIE3 use ensemble trees (e.g., Random Forests) to infer networks. Each gene is modeled as a function of all other genes, and the importance of a gene as a predictor for another is interpreted as evidence of a regulatory link [22].

- Generative Models: Methods like DeepSEM use a beta-variational autoencoder combined with a structural equation model to infer interactions. Another approach, MetaSEM, extends this with bi-level optimization and meta-learning to generate pseudo-labels for unsupervised inference [22].
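To make the simplest of these approaches concrete, a correlation network can be built by computing all pairwise Pearson coefficients and keeping pairs above a cutoff. The sketch assumes a genes-by-samples matrix and an arbitrary threshold of 0.8; the resulting edges are undirected, which reflects the limitation of correlation-based inference noted above.

```python
import numpy as np

def correlation_network(expr, threshold=0.8):
    """expr: genes x samples. Returns (i, j, r) for gene pairs with |r| >= threshold."""
    r = np.corrcoef(expr)                        # gene-by-gene Pearson correlation
    iu, ju = np.triu_indices(r.shape[0], k=1)    # each unordered pair once
    keep = np.abs(r[iu, ju]) >= threshold
    edges = [(int(i), int(j), float(r[i, j])) for i, j in zip(iu[keep], ju[keep])]
    return sorted(edges, key=lambda e: -abs(e[2]))  # strongest associations first
```

Because `np.corrcoef` is symmetric, the sketch cannot tell whether gene `i` regulates `j` or the reverse, nor whether both are driven by a third gene.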

The following workflow diagram illustrates a modern unsupervised learning pipeline for GRN inference, integrating feature extraction and model inference.

Deep Learning and Graph Neural Networks: A Paradigm Shift

Deep learning models, particularly GNNs, have shown considerable potential for GRN inference due to their innate capacity to learn from graph structures and model complex, non-linear regulatory relationships [1] [22].

Advanced Architectures for GRN Inference

GTAT-GRN (Graph Topology-aware Attention GRN) is a state-of-the-art deep learning model that integrates multi-source feature fusion with a topology-aware attention mechanism [1] [20]. Its architecture consists of four key modules:

- A. Multi-Source Feature Fusion: Jointly models temporal expression patterns, baseline expression levels, and structural topological attributes to create enriched node (gene) representations.

- B. Graph Topology-Aware Attention Network (GTAT): Dynamically captures high-order dependencies and asymmetric relationships between genes by combining graph structure with multi-head attention.

- C. Feedforward Network & Residual Connections: Processes the refined representations and helps maintain stable gradient flow during training.

- D. GRN Prediction Output Layer: Produces the final predictions for regulatory links [20].
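A toy version of modules B to D helps show how these pieces fit together. The NumPy sketch below is not GTAT-GRN's published architecture. It uses a single attention head; it restricts each gene's attention to itself and its neighbors in a prior graph as a simple form of topology awareness; it wraps the attention and feed-forward sublayers in residual connections with layer normalization; and it scores a directed edge from gene i to gene j with separate regulator and target projections so that scores can be asymmetric. All weights are random placeholders for what training would learn, and the input `h0` stands in for the fused multi-source features of module A.

```python
import numpy as np

def masked_attention(h, adj, wq, wk, wv):
    """Single-head attention in which each gene attends only to itself and its graph neighbors."""
    n = adj.shape[0]
    mask = (adj + adj.T + np.eye(n)) > 0            # neighbors in either direction, plus self
    q, k, v = h @ wq, h @ wk, h @ wv
    scores = q @ k.T / np.sqrt(k.shape[1])
    scores = np.where(mask, scores, -np.inf)        # non-neighbors receive zero weight
    scores = scores - scores.max(axis=1, keepdims=True)
    weights = np.exp(scores)
    weights /= weights.sum(axis=1, keepdims=True)
    return weights @ v

def layer_norm(x, eps=1e-5):
    return (x - x.mean(axis=1, keepdims=True)) / (x.std(axis=1, keepdims=True) + eps)

def encoder_block(h, adj, p):
    h = layer_norm(h + masked_attention(h, adj, p["wq"], p["wk"], p["wv"]))  # residual 1
    ff = np.maximum(0.0, h @ p["w1"]) @ p["w2"]                              # ReLU feed-forward
    return layer_norm(h + ff)                                                 # residual 2

def edge_scores(h, w_reg, w_tgt):
    """s[i, j] = sigmoid(<regulator view of i, target view of j>); generally s[i, j] != s[j, i]."""
    logits = (h @ w_reg) @ (h @ w_tgt).T
    return 1.0 / (1.0 + np.exp(-logits))

# Hypothetical example: 6 genes, 8-dimensional fused features, 3 prior edges.
rng = np.random.default_rng(0)
n_genes, d = 6, 8
h0 = rng.normal(size=(n_genes, d))
adj = np.zeros((n_genes, n_genes))
adj[0, 1] = adj[0, 2] = adj[3, 4] = 1.0
params = {name: rng.normal(scale=d ** -0.5, size=(d, d)) for name in ("wq", "wk", "wv", "w1", "w2")}
h = encoder_block(h0, adj, params)
s = edge_scores(h, rng.normal(scale=d ** -0.5, size=(d, d)), rng.normal(scale=d ** -0.5, size=(d, d)))
```

Gene 5 has no prior edges, so in this sketch it attends only to itself; a multi-head variant would run several such heads in parallel and concatenate their outputs.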

Meta-TGLink is another advanced GNN model designed for few-shot learning, where known regulatory interactions are scarce. It is based on a model-agnostic meta-learning (MAML) framework, which enables it to learn transferable regulatory patterns from related tasks and adapt quickly to new genes or cell types with minimal labeled data [22].
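The MAML idea of learning an initialization that adapts quickly can be illustrated outside the GRN setting. The sketch below implements first-order MAML (which drops MAML's second-derivative term) on a hypothetical family of linear-regression tasks whose true weights scatter around a shared vector. It shows the inner adaptation step on a small support set and the outer meta-update from query-set gradients; it does not reproduce Meta-TGLink's graph-based model or task construction.

```python
import numpy as np

def mse_grad(w, x, y):
    """Gradient of 0.5 * mean squared error for the linear model y ~ x @ w."""
    return x.T @ (x @ w - y) / len(y)

def sample_task(rng, w_shared, n=10, spread=0.1):
    """Hypothetical task: a linear map whose weights scatter around w_shared."""
    w_task = w_shared + spread * rng.normal(size=w_shared.shape)
    x = rng.normal(size=(2 * n, w_shared.size))
    y = x @ w_task
    return (x[:n], y[:n]), (x[n:], y[n:])          # (support set, query set)

def fomaml(sample, dim, inner_lr=0.1, outer_lr=0.1, steps=200, tasks_per_step=5):
    """First-order MAML: `sample()` returns ((x_support, y_support), (x_query, y_query))."""
    theta = np.zeros(dim)                           # meta-learned initialization
    for _ in range(steps):
        meta_grad = np.zeros(dim)
        for _ in range(tasks_per_step):
            (xs, ys), (xq, yq) = sample()
            adapted = theta - inner_lr * mse_grad(theta, xs, ys)   # inner adaptation step
            meta_grad += mse_grad(adapted, xq, yq)                 # first-order meta-gradient
        theta -= outer_lr * meta_grad / tasks_per_step             # outer (meta) update
    return theta
```

For example, `fomaml(lambda: sample_task(rng, w_shared), dim=3)` drives `theta` toward the shared weights, so a single inner step on a new task's few support examples starts from a good point. In a GRN setting, tasks might instead correspond to different cell types or gene subsets.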

Performance of Deep Learning vs. Other Methods

Table 2: Comparative performance of GTAT-GRN against other state-of-the-art methods on the DREAM4 and DREAM5 benchmark datasets. Performance is measured by Area Under the Curve (AUC) and Area Under the Precision-Recall Curve (AUPR).

| Inference Method | DREAM4 (AUC) | DREAM4 (AUPR) | DREAM5 (AUC) | DREAM5 (AUPR) |
|---|---|---|---|---|
| GTAT-GRN | Highest | Highest | Highest | Highest |
| GENIE3 | Lower | Lower | Lower | Lower |
| GreyNet | Lower | Lower | Lower | Lower |
| Other SOTA methods | Lower | Lower | Lower | Lower |

Experimental results, as summarized in Table 2, demonstrate that GTAT-GRN consistently achieves higher inference accuracy and improved robustness across different benchmark datasets, confirming the effectiveness of integrating topological attention with multi-source features [20].

Building and analyzing GRNs requires a combination of software tools, datasets, and computational resources. The table below catalogs key components of the modern computational biologist's toolkit.

Table 3: Key research reagents, datasets, and tools for ML-based GRN inference.

| Item Name | Type | Function and Application |
|---|---|---|
| DREAM4 & DREAM5 | Benchmark Datasets | Standardized, gold-standard datasets for evaluating and benchmarking the performance of GRN inference algorithms [1] [20]. |
| GRiNS (Gene Regulatory Interaction Network Simulator) | Software Library | A Python library for parameter-agnostic simulation of GRN dynamics, integrating RACIPE and Boolean Ising formalisms with GPU acceleration for scalability [23]. |
| RACIPE | Modeling Framework | A method for generating a system of ODEs from a network topology and simulating it over random parameters to uncover possible steady states and dynamic behaviors [23]. |
| ChIP-Atlas | Validation Database | A data repository of ChIP-seq experiments used for the biological validation of computationally predicted gene regulatory interactions, such as TF-target links [22]. |
| GTAT-GRN / Meta-TGLink Model Code | Software Tool | Reference implementations of advanced GNN models for high-accuracy and few-shot GRN inference, typically available from research publications [1] [22] [20]. |
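To illustrate the RACIPE idea listed above, the sketch below simulates a two-gene toggle switch (A and B repress each other) over randomly sampled kinetic parameters and several random initial conditions, then collects the distinct end states reached for each parameter set. It is a simplified, hypothetical re-creation in NumPy, not the GRiNS API: the parameter ranges, forward Euler integration, and the rounding used to merge nearby end points are assumptions, and RACIPE itself chooses threshold ranges more carefully than the independent uniform sampling used here.

```python
import numpy as np

def shifted_hill(x, x0, n, lam):
    """Shifted Hill function: 1 when x = 0, tending to lam as x grows (lam < 1 = repression)."""
    return lam + (1.0 - lam) / (1.0 + (x / x0) ** n)

def simulate_toggle(rng, n_params=10, n_inits=10, dt=0.01, steps=5000):
    """Toggle switch (A -| B, B -| A) over random parameter sets.

    dA/dt = gA * H(B) - kA * A  and  dB/dt = gB * H(A) - kB * B.
    Returns, for each parameter set, the distinct end states (log2 scale, rounded).
    """
    results = []
    for _ in range(n_params):
        g = rng.uniform(1.0, 100.0, size=2)      # production rates
        k = rng.uniform(0.1, 1.0, size=2)        # degradation rates
        x0 = rng.uniform(1.0, 50.0, size=2)      # repression thresholds (assumed range)
        hill = rng.integers(1, 7, size=2)        # Hill coefficients 1..6
        lam = rng.uniform(0.01, 0.1, size=2)     # fold change of each repression
        x = rng.uniform(0.0, 100.0, size=(n_inits, 2))   # random initial conditions
        for _ in range(steps):                   # forward Euler integration
            da = g[0] * shifted_hill(x[:, 1], x0[0], hill[0], lam[0]) - k[0] * x[:, 0]
            db = g[1] * shifted_hill(x[:, 0], x0[1], hill[1], lam[1]) - k[1] * x[:, 1]
            x = x + dt * np.column_stack([da, db])
        results.append(np.unique(np.round(np.log2(x), 1), axis=0))
    return results
```

Counting the parameter sets whose entry holds more than one state, for example `sum(len(st) > 1 for st in simulate_toggle(np.random.default_rng(1)))`, gives candidates for bistability. Trajectories that have not fully converged can also split into separate entries, so longer integration or an adaptive ODE solver would be prudent in real use.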

Integrated Experimental Protocol for ML-Based GRN Analysis

This section provides a consolidated, step-by-step protocol for researchers aiming to infer GRN topology using a modern deep learning approach, based on methodologies from the cited works.

Protocol: GRN Inference using a Graph Neural Network with Feature Fusion

Data Acquisition and Preprocessing:

- Obtain gene expression data (e.g., RNA-seq, single-cell RNA-seq). This can be time-series data or data from multiple baseline conditions.

- Perform standard normalization. For temporal data, apply Z-score normalization per gene across time points: \( \hat{X}_{t}[i,:] = \frac{X_{t}[i,:] - \mu_i}{\sigma_i} \), where \( \mu_i \) and \( \sigma_i \) are the mean and standard deviation of gene \( i \)'s expression [20].

Feature Extraction:

- Temporal Features: For each gene, calculate statistical measures from its expression trajectory: mean, standard deviation, maximum, minimum, skewness, kurtosis, and time-series trend [20].