Data-Driven Biomarker Discovery: Integrating AI, Multi-Omics, and Clinical Translation for Precision Medicine


Abstract

This article provides a comprehensive overview of the current landscape and future directions of data-driven, knowledge-based biomarker discovery. Aimed at researchers, scientists, and drug development professionals, it explores the foundational shift from hypothesis-driven to AI-powered discovery, detailing cutting-edge methodologies from multi-omics integration and single-cell analysis to spatial biology. It addresses critical challenges in data standardization, model generalizability, and clinical translation, while providing a framework for rigorous validation and comparative analysis of biomarker signatures. The content synthesizes insights from recent technological breakthroughs, regulatory trends, and real-world case studies to offer an actionable guide for advancing personalized therapeutics and diagnostic strategies.

The New Paradigm: From Hypothesis-Driven to Data-Driven Biomarker Discovery

In modern oncology, a biomarker is defined as an objectively measurable indicator of normal biological processes, pathogenic processes, or pharmacological responses to therapeutic interventions [1]. These molecular signposts, detectable in blood, tissue, or other biological samples, have become indispensable tools across the cancer care continuum—from early detection and diagnosis to treatment selection and monitoring [2] [3]. The evolution of biomarker science represents a paradigm shift from traditional histopathological classification toward molecular stratification, fundamentally enabling precision oncology by tailoring therapeutic strategies to individual patient profiles [4].

The clinical utility of biomarkers is defined through their distinct roles: diagnostic biomarkers confirm the presence of a specific disease or subtype; prognostic biomarkers provide information about the likely course of the disease regardless of treatment; and predictive biomarkers identify patients who are more likely to respond to a specific therapeutic intervention [2]. This tripartite classification provides the foundational framework for understanding how biomarkers guide clinical decision-making in contemporary oncology practice, with the ultimate goal of matching the right patient with the right treatment at the right time.

Table 1: Classification of Biomarkers by Clinical Application in Oncology

| Biomarker Type | Primary Function | Representative Examples | Clinical Utility |
|---|---|---|---|
| Diagnostic | Confirms disease presence or subtype | HER2 amplification in breast cancer; BRAF V600E mutation in melanoma | Guides initial disease characterization and classification |
| Prognostic | Provides information on disease outcome independent of treatment | High PD-L1 expression in NSCLC; Circulating Tumor DNA (ctDNA) levels | Informs about natural disease history and overall aggressiveness |
| Predictive | Identifies patients likely to respond to specific therapy | EGFR mutations for EGFR inhibitors; NTRK fusions for TRK inhibitors | Enables therapy selection and predicts treatment efficacy |
| Monitoring | Tracks disease status or treatment response | PSA levels in prostate cancer; ctDNA dynamics during therapy | Assesses treatment effectiveness and detects recurrence |

The Molecular Taxonomy of Modern Biomarkers

The technological revolution in molecular profiling has dramatically expanded the classes of biomarkers available for clinical and research applications. Contemporary biomarker development extends beyond traditional single-analyte approaches to incorporate multi-omics integration, simultaneously examining DNA, RNA, proteins, and metabolites to provide a more holistic understanding of cancer biology [5].

Genomic biomarkers, including specific mutations, gene fusions, and copy number variations, were among the first to be incorporated into routine clinical practice. These include established markers such as EGFR mutations in non-small cell lung cancer (NSCLC) and KRAS mutations in colorectal cancer [3]. Epigenetic biomarkers, particularly DNA methylation patterns, have emerged as powerful tools for early cancer detection and monitoring, with technologies such as methylation-specific PCR and sequencing approaches enabling their clinical application [1].

Proteomic and metabolomic biomarkers provide insights into the functional state of tumor cells, reflecting the complex interplay between genomic alterations and the tumor microenvironment. The emergence of liquid biopsy technologies has further transformed the biomarker landscape by enabling non-invasive detection and monitoring of circulating tumor DNA (ctDNA), circulating tumor cells (CTCs), and extracellular vesicles (EVs) [3]. These circulating biomarkers offer a dynamic window into tumor evolution and treatment response, overcoming the limitations of traditional tissue biopsies.

Table 2: Technical Characteristics of Major Biomarker Classes in Oncology

| Biomarker Class | Molecular Characteristics | Primary Detection Technologies | Key Clinical Applications |
|---|---|---|---|
| Genetic | DNA sequence variants, gene expression changes | NGS, PCR, SNP arrays | Genetic risk assessment, tumor subtyping, target identification |
| Epigenetic | DNA methylation, histone modifications | Methylation arrays, ChIP-seq, ATAC-seq | Early cancer diagnosis, environmental exposure assessment |
| Transcriptomic | mRNA expression, non-coding RNAs | RNA-seq, microarrays, qPCR | Molecular subtyping, treatment response prediction |
| Proteomic | Protein expression, post-translational modifications | Mass spectrometry, ELISA, protein arrays | Disease diagnosis, therapeutic monitoring, prognosis evaluation |
| Metabolomic | Metabolite concentration profiles | LC-MS/MS, GC-MS, NMR | Metabolic pathway activity assessment, treatment toxicity evaluation |
| Imaging | Anatomical structures, functional activities | MRI, PET-CT, radiomics | Disease staging, treatment response assessment |
| Digital | Behavioral characteristics, physiological fluctuations | Wearable devices, mobile applications, IoT sensors | Remote monitoring, early warning systems |

Biomarker Applications Across the Cancer Care Continuum

Early Detection and Screening Biomarkers

The application of biomarkers in cancer screening aims to detect disease at its earliest stages when treatment is most likely to succeed. Traditional protein biomarkers such as prostate-specific antigen (PSA) for prostate cancer and cancer antigen 125 (CA-125) for ovarian cancer have been widely used but often disappoint due to limitations in sensitivity and specificity, resulting in overdiagnosis and overtreatment [3].
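The link between limited specificity and overdiagnosis can be made concrete with Bayes' rule. The sketch below is illustrative only: the sensitivity, specificity, and prevalence values are hypothetical and are not taken from any PSA or CA-125 study. It shows how the positive predictive value (PPV) of the same test falls as disease prevalence in the screened population drops.

```python
def positive_predictive_value(sensitivity: float, specificity: float,
                              prevalence: float) -> float:
    """Bayes' rule: probability of disease given a positive test result."""
    true_positive_rate = sensitivity * prevalence
    false_positive_rate = (1.0 - specificity) * (1.0 - prevalence)
    return true_positive_rate / (true_positive_rate + false_positive_rate)


# Hypothetical test characteristics, chosen only for illustration.
for prevalence in (0.001, 0.01, 0.10):
    ppv = positive_predictive_value(sensitivity=0.90, specificity=0.95,
                                    prevalence=prevalence)
    print(f"prevalence={prevalence:.3f}  PPV={ppv:.3f}")
```

At low prevalence most positive results are false positives even for a test that looks accurate on paper, which is one reason screening biomarkers need very high specificity.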

Recent advances have focused on multi-analyte approaches that combine multiple biomarker classes to improve detection accuracy. Circulating tumor DNA (ctDNA) analysis has emerged as a particularly promising non-invasive biomarker that detects fragments of DNA shed by cancer cells into the bloodstream [3]. The development of multi-cancer early detection (MCED) tests such as the Galleri test represents a transformative approach, capable of detecting over 50 cancer types simultaneously through ctDNA analysis [3]. These technologies, combined with artificial intelligence-driven pattern recognition, are laying the groundwork for population-level screening tools that could significantly reduce cancer mortality through earlier intervention.

Diagnostic and Prognostic Biomarker Applications

Biomarkers are vital for confirming cancer diagnoses, classifying molecular subtypes, and predicting disease course. In breast cancer, the evaluation of HER2 overexpression and hormone receptor (ER/PR) status has become standard practice, providing critical prognostic information and guiding therapeutic selection [3]. Similarly, in colorectal cancer, KRAS mutation status predicts resistance to EGFR-targeted therapies and is associated with worse patient outcomes [3].

The rise of immunotherapy has introduced new biomarker challenges and opportunities. PD-L1 expression levels have demonstrated utility in identifying patients with melanoma and NSCLC who are more likely to benefit from immune checkpoint inhibitors [3]. However, PD-L1 alone represents an incomplete predictor of treatment outcomes, particularly for patients with immune-impaired, inflammatory profiles, highlighting the need for more sophisticated biomarker panels that incorporate multiple immune parameters [3].

Predictive Biomarkers for Therapy Selection

Predictive biomarkers represent the cornerstone of precision oncology, enabling therapy selection based on the molecular profile of an individual patient's tumor. The efficacy of tropomyosin receptor kinase (TRK) inhibitors in neurotrophic receptor tyrosine kinase (NTRK) fusion-positive tumors exemplifies the power of targeted therapy guided by predictive biomarkers, demonstrating impressive efficacy across multiple tumor types in a truly tumor-agnostic fashion [4].

The development of companion diagnostics has created an essential link between biomarker testing and therapeutic application. For example, the addition of encorafenib and cetuximab to the FOLFOX regimen in first-line treatment of metastatic colorectal cancer is guided by BRAF mutation status [4]. These biomarker-therapy pairs exemplify the practical implementation of precision oncology. However, only a minority of patients currently benefit from genomics-guided precision cancer medicine, because many tumors lack actionable mutations or develop treatment resistance [4].

Experimental Protocols for Biomarker Discovery and Validation

High-Throughput Biomarker Screening Protocol

Objective: To identify novel biomarker candidates from patient-derived samples using high-throughput multi-omics technologies.

Materials: Patient tissue or blood samples, Abcam SimpleStep ELISA kits, automation-ready microplate readers (e.g., SpectraMax series), automated liquid handling systems, high-throughput sequencing platforms, multi-mode microplate washers (e.g., AquaMax 4000) [6].

Procedure:

- Sample Preparation: Collect and process biological samples (tissue, plasma, serum) using standardized protocols. For liquid biopsies, isolate ctDNA from plasma using commercially available extraction kits. For tissue samples, perform macro-dissection or micro-dissection to enrich for tumor content [3] [6].

- Multi-Omics Profiling:

  - Genomic Analysis: Perform next-generation sequencing using comprehensive gene panels or whole-exome sequencing to identify mutations, copy number alterations, and structural variants [3].

  - Transcriptomic Analysis: Conduct RNA sequencing to characterize gene expression patterns, alternative splicing, and fusion transcripts [1].

  - Proteomic Analysis: Utilize mass spectrometry-based approaches or multiplexed immunoassays to quantify protein expression and post-translational modifications [1].

- Automated Immunoassay Testing: Implement automated, high-throughput ELISA protocols using validated kits in 384-well format. Configure microplate washers and readers for walkaway operation to minimize manual handling and variability [6].

- Data Generation and Quality Control: Process raw data through standardized pipelines. Implement rigorous quality control metrics including sample-level metrics (e.g., DNA/RNA quality, library complexity) and assay-specific metrics (e.g., sequencing depth, coverage uniformity) [6].
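One assay-level quality control check for plate-based immunoassays is the coefficient of variation (CV) across replicate wells. The following is a minimal sketch, assuming replicate readings per sample are already available as plain numbers; the sample IDs, readings, and the 15% acceptance threshold are hypothetical and should be replaced by the limits defined in the assay's own validation plan.

```python
from statistics import mean, stdev


def percent_cv(replicates):
    """Coefficient of variation in percent: sample SD / mean * 100."""
    m = mean(replicates)
    if m == 0:
        raise ValueError("mean is zero; CV is undefined")
    return stdev(replicates) / m * 100.0


def flag_high_cv(samples, max_cv=15.0):
    """Return IDs of samples whose replicate CV exceeds max_cv (assumed limit)."""
    return [sample_id for sample_id, reps in samples.items()
            if percent_cv(reps) > max_cv]


# Hypothetical triplicate absorbance readings for two samples.
plate = {
    "S1": [1.02, 0.98, 1.00],
    "S2": [0.50, 0.80, 0.65],
}
print(flag_high_cv(plate))
```

Flagged samples would typically be re-run rather than silently dropped, so that the exclusion decision is documented.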

AI-Driven Biomarker Discovery Protocol

Objective: To identify predictive biomarker signatures from high-dimensional clinicogenomic data using artificial intelligence approaches.

Materials: Annotated clinicogenomic datasets, high-performance computing infrastructure, Python/R programming environments with machine learning libraries (e.g., PyTorch, TensorFlow), contrastive learning frameworks [7].

Procedure:

- Data Curation and Preprocessing: Aggregate multi-modal data including genomic, transcriptomic, proteomic, and clinical data. Implement standardized normalization procedures to correct for batch effects and platform-specific biases [1] [7].

- Feature Engineering: Perform dimensionality reduction using principal component analysis (PCA) or autoencoders. Create derived features that capture interactions between different molecular data types [7].

- Model Training: Implement contrastive learning frameworks such as the Predictive Biomarker Modeling Framework (PBMF) that systematically explore potential predictive biomarkers in an automated, unbiased manner. Train models to distinguish patients who respond to specific therapies from those who do not [7].

- Biomarker Validation: Apply trained models to independent validation cohorts. Assess biomarker performance using metrics including sensitivity, specificity, positive predictive value, and hazard ratios for survival outcomes [7].

- Clinical Interpretation: Generate interpretable biomarker signatures that can be translated into clinically actionable assays. Develop scoring algorithms and establish clinically relevant cutpoints [7].
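The validation metrics and cutpoint selection described in the steps above can be sketched as follows. This is a minimal illustration, not the evaluation code of any cited framework: the scores and response labels are hypothetical, and hazard ratios for survival outcomes would need a dedicated survival analysis package that is not shown here.

```python
def classification_metrics(scores, responded, cutpoint):
    """Sensitivity, specificity, and PPV when score >= cutpoint is called positive."""
    tp = fp = tn = fn = 0
    for score, is_responder in zip(scores, responded):
        predicted_positive = score >= cutpoint
        if predicted_positive and is_responder:
            tp += 1
        elif predicted_positive:
            fp += 1
        elif is_responder:
            fn += 1
        else:
            tn += 1

    def ratio(numerator, denominator):
        # Undefined ratios (empty denominator) are reported as NaN.
        return numerator / denominator if denominator else float("nan")

    return {
        "sensitivity": ratio(tp, tp + fn),
        "specificity": ratio(tn, tn + fp),
        "ppv": ratio(tp, tp + fp),
    }


# Hypothetical biomarker scores and observed responses in a validation cohort.
scores = [0.9, 0.8, 0.7, 0.4, 0.3, 0.2]
responded = [True, True, False, True, False, False]
for cutpoint in (0.25, 0.50, 0.75):
    print(cutpoint, classification_metrics(scores, responded, cutpoint))
```

Scanning several cutpoints on a development cohort and then fixing one before testing on the independent cohort keeps the reported performance from being optimistically biased.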

Dynamic Biomarker Monitoring Protocol

Objective: To monitor biomarker dynamics during treatment and assess their relationship with clinical outcomes.

Materials: Serial patient samples, liquid biopsy collection tubes, digital PCR systems, NGS platforms, statistical software for longitudinal data analysis [8].

Procedure:

- Sample Collection Strategy: Establish a standardized schedule for longitudinal sample collection (e.g., pre-treatment, every 2-3 treatment cycles, at progression). For liquid biopsy monitoring, collect blood in cell-stabilizing tubes to prevent degradation [8].

- Molecular Analysis: Process samples using consistent methodologies across all timepoints. For ctDNA analysis, employ tumor-informed assays when possible to enhance sensitivity. Quantify biomarker levels using appropriate techniques (e.g., variant allele frequency for mutations, concentration for proteins) [8].

- Data Integration: Combine longitudinal biomarker measurements with clinical data including radiographic assessments, symptom reports, and treatment details. Align all data on a common timeline [8].

- Dynamic Modeling: Apply statistical models such as joint models, landmark analysis, or multi-state models to characterize biomarker trajectories and their relationship with survival outcomes. Account for informative missing data mechanisms when appropriate [8].
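Before any formal dynamic modeling, the quantification step above usually produces a simple per-patient trajectory. The sketch below assumes read counts for a single tracked mutation at each timepoint; the timepoint labels and counts are hypothetical. It computes variant allele frequency (VAF) as alternate reads divided by total reads, plus the fold change relative to the first (baseline) sample.

```python
def variant_allele_frequency(alt_reads, total_reads):
    """VAF = reads supporting the variant / total reads at the locus."""
    if total_reads <= 0:
        raise ValueError("total_reads must be positive")
    return alt_reads / total_reads


def vaf_trajectory(timepoints):
    """timepoints: list of (label, alt_reads, total_reads) in collection order.

    Returns a list of (label, vaf, fold_change_vs_baseline), where the
    baseline is the first timepoint in the list.
    """
    rows = []
    baseline = None
    for label, alt_reads, total_reads in timepoints:
        vaf = variant_allele_frequency(alt_reads, total_reads)
        if baseline is None:
            baseline = vaf
        fold_change = vaf / baseline if baseline > 0 else float("nan")
        rows.append((label, vaf, fold_change))
    return rows


# Hypothetical serial ctDNA measurements for one patient.
serial_samples = [
    ("pre-treatment", 120, 4000),
    ("cycle 2", 30, 5000),
    ("cycle 4", 6, 6000),
]
for label, vaf, fold_change in vaf_trajectory(serial_samples):
    print(f"{label}: VAF={vaf:.4f}, fold change vs baseline={fold_change:.3f}")
```

Trajectories of this kind are the longitudinal input to the joint, landmark, or multi-state models named in the final step.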

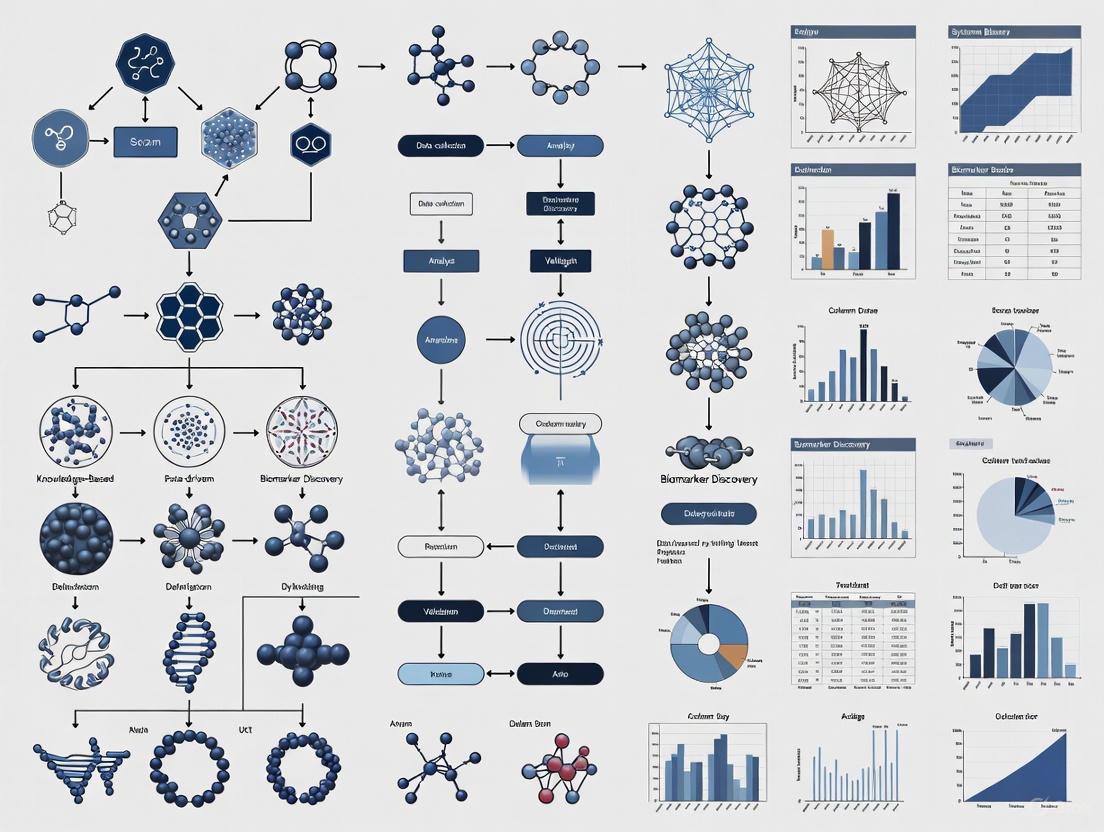

Diagram 1: Biomarker discovery workflow from sample to clinical application.

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Essential Research Reagents and Platforms for Biomarker Research

| Category | Specific Product/Platform | Primary Function | Key Applications |
|---|---|---|---|
| Sample Preparation | Omni LH 96 Homogenizer | Tissue disruption and homogenization | Standardized nucleic acid and protein extraction from tissue samples |
| Automation Systems | AquaMax 4000 Microplate Washer | Automated plate washing | High-throughput immunoassays in 96- or 384-well formats |
| Detection Instruments | SpectraMax ABS Plus Microplate Reader | Absorbance, fluorescence, and luminescence detection | Quantitative biomarker measurement in ELISA and other plate-based assays |
| Validated Assay Kits | Abcam SimpleStep ELISA Kits | Pre-optimized immunoassays with single-wash protocol | Rapid, reproducible quantification of specific protein biomarkers |
| Data Analysis Software | SoftMax Pro GxP Software | Curve fitting and data analysis | Compliance-ready data processing and reporting for biomarker assays |
| Next-Generation Sequencing | Comprehensive genomic profiling panels | Targeted sequencing of cancer-related genes | Mutation detection, fusion identification, and biomarker discovery |
| Liquid Biopsy Platforms | ctDNA extraction and analysis kits | Isolation and analysis of circulating tumor DNA | Non-invasive biomarker detection and monitoring |

Data-Driven Knowledge-Based Biomarker Discovery

The paradigm of biomarker discovery is shifting from traditional hypothesis-driven approaches toward hypothesis-free, data-driven strategies that leverage large-scale OMICS technologies and advanced computational analytics [5]. This approach systematically analyzes high-dimensional molecular data without preconceived notions of biological relevance, enabling the identification of novel biomarkers and associations that might be overlooked in targeted investigations.

Artificial intelligence is playing an increasingly transformative role in biomarker discovery. Machine learning algorithms, particularly deep learning networks, can identify complex patterns in multi-modal data that elude conventional statistical methods [9] [1]. The Predictive Biomarker Modeling Framework (PBMF) exemplifies this approach, using contrastive learning to systematically explore potential predictive biomarkers in an automated, unbiased manner [7]. When applied retrospectively to immuno-oncology trial data, this AI-driven framework has demonstrated the ability to identify biomarkers that could have improved patient selection for phase 3 trials, with identified patients showing a 15% improvement in survival risk compared to the original trial population [7].

The integration of dynamic prediction models (DPMs) represents another frontier in data-driven biomarker research. These models incorporate longitudinal biomarker measurements and time-dependent clinical events to continuously update prognostic predictions as new data becomes available during patient follow-up [8]. Joint models, which simultaneously analyze longitudinal biomarker data and time-to-event outcomes, are particularly valuable for capturing the evolving nature of cancer and its response to therapy [8].
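For readers unfamiliar with joint models, one widely used shared-random-effects formulation is written out below. The notation is an assumption introduced here for illustration and is not necessarily the parameterization used in [8]: $y_i(t)$ is the observed biomarker value for patient $i$ at time $t$, $m_i(t)$ its underlying true trajectory, $b_i$ the patient-specific random effects, $w_i$ the baseline covariates, and $h_0(t)$ the baseline hazard.

```latex
\begin{aligned}
y_i(t) &= m_i(t) + \varepsilon_i(t), \qquad \varepsilon_i(t) \sim \mathcal{N}(0, \sigma^2), \\
m_i(t) &= x_i(t)^\top \beta + z_i(t)^\top b_i, \qquad b_i \sim \mathcal{N}(0, D), \\
h_i(t) &= h_0(t)\, \exp\{\gamma^\top w_i + \alpha\, m_i(t)\}.
\end{aligned}
```

The association parameter $\alpha$ links the two submodels: it quantifies how strongly the current true biomarker level shifts the hazard of the event, which is what allows predictions to be updated as new measurements arrive.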

Diagram 2: Data-driven knowledge-based biomarker discovery framework integrating multi-modal data and AI.

The field of oncology biomarkers is evolving toward increasingly sophisticated approaches that integrate multiple data modalities and leverage advanced computational methods. The future will see greater emphasis on multi-omics integration, combining genomic, transcriptomic, proteomic, epigenomic, and metabolomic data to develop comprehensive biomarker signatures that more accurately reflect the complexity of cancer biology [1] [5].

Artificial intelligence will play an expanding role in biomarker discovery and validation, with algorithms capable of identifying subtle patterns in high-dimensional data that escape human detection [9] [7]. The application of contrastive learning and other self-supervised approaches will enable more efficient identification of predictive biomarkers from complex clinicogenomic datasets [7]. Furthermore, the development of dynamic prediction models that incorporate longitudinal biomarker data will provide continuously updated prognostic assessments that better reflect the evolving nature of cancer and its response to therapy [8].

As biomarker science advances, addressing challenges related to data standardization, model generalizability across diverse populations, clinical implementation pathways, and regulatory alignment will be critical for translating these innovations into improved patient outcomes [1]. Through continued innovation in both molecular technologies and analytical approaches, biomarkers will increasingly fulfill their potential as essential tools for guiding personalized cancer care across the entire disease continuum.

The field of biomarker discovery is undergoing a profound transformation, moving from traditional hypothesis-driven approaches to data-driven strategies powered by artificial intelligence (AI). Traditional biomarker discovery methods, which often focus on single molecular features, face significant challenges including limited reproducibility, high false-positive rates, and inadequate predictive accuracy due to the inherent complexity and biological heterogeneity of diseases [10]. Machine learning (ML) and deep learning (DL) address these limitations by analyzing large, complex multi-omics datasets to identify more reliable and clinically useful biomarkers that capture the multifaceted biological networks underlying disease mechanisms [10]. This revolution enables the identification of patterns and relationships in high-dimensional data that far exceed human observational capacity and analytical capabilities [9] [11].

The integration of AI into biomarker research represents a fundamental shift toward proactive health management, transitioning from traditional disease diagnosis and treatment models to approaches based on prediction and prevention [1]. This transformation is grounded in the integration of diverse data types—including genomics, transcriptomics, proteomics, metabolomics, imaging data, and clinical records—providing comprehensive molecular profiles that facilitate the identification of highly predictive biomarkers across various disease areas [10] [1]. AI-driven biomarker discovery now spans oncology, infectious diseases, neurodegenerative disorders, and chronic inflammatory diseases, illustrating the versatility of these methodologies [10].

Table 1: Core AI Technologies in Biomarker Discovery

| AI Technology | Primary Applications in Biomarker Discovery | Key Advantages |
|---|---|---|
| Convolutional Neural Networks (CNNs) | Analysis of histopathology images, radiology scans, and spatial biology data [10] [9] | Identifies spatial patterns and features invisible to human observation |
| Recurrent Neural Networks (RNNs) | Processing sequential data, temporal biomarker patterns, and longitudinal monitoring data [10] | Captures time-dependent patterns in disease progression |
| Transformers & Large Language Models | Multi-omics data integration, literature mining, and clinical note analysis [10] [12] | Identifies complex non-linear associations across disparate data types |
| Random Forests & XGBoost | Feature selection, biomarker classification, and handling high-dimensional omics data [10] [13] | Robust against noise and overfitting; provides feature importance metrics |
| Generative Adversarial Networks | Molecular generation techniques, creating novel drug molecules [14] [15] | Generates novel molecular structures with desired biological properties |

AI Applications Across Data Modalities

Multi-Omics Data Integration

AI approaches have demonstrated remarkable capabilities in analyzing large-scale multi-omics datasets, enabling the identification of intricate patterns and interactions among various molecular features that were previously unrecognized [10]. By integrating genomic, epigenomic, transcriptomic, and proteomic data, ML models can develop comprehensive molecular disease maps that identify complex marker combinations traditional methods might overlook [1]. For instance, AI-driven analysis of multi-omics data has improved early Alzheimer's disease diagnosis specificity by 32%, providing a crucial intervention window [1]. The integration of multi-omics data reveals novel insights into the molecular basis of diseases and drug responses, identifying new biomarkers and therapeutic targets that predict and optimize individualized treatments [11].

Digital Biomarkers and Real-World Data

Digital biomarkers derived from wearables, smartphones, and connected medical devices are becoming invaluable tools that offer continuous, objective insights into a patient's health in real-world settings [16]. Unlike traditional clinical outcome assessments that rely on intermittent and sometimes subjective clinic-based measurements, digital biomarkers enable a richer, more dynamic understanding of disease progression and treatment response [16]. In oncology, wearable devices monitoring heart rate variability, sleep quality, and activity levels have reshaped how clinicians assess treatment tolerance and functional status [16]. When combined with electronic patient-reported outcome tools, these approaches capture daily symptom fluctuations—moving beyond static clinic visits and providing a real-world perspective of each patient's experience [16].

Spatial Biology and Imaging Data

Spatial biology techniques represent one of the most significant advances in biomarker discovery, revealing the spatial context of dozens of markers within a single tissue specimen [11]. Unlike traditional approaches, spatial transcriptomics and multiplex immunohistochemistry allow researchers to study gene and protein expression in situ without altering the spatial relationships or interactions between cells [11]. This provides critical information about physical distance between cells, cellular organization, and tissue architecture that is essential for understanding the complex tumor microenvironment [11]. Deep learning models, particularly CNNs, can extract hidden prognostic and predictive information directly from routine histological images, significantly enhancing traditional pathology workflows [10] [9].

Diagram 1: AI-Powered Spatial Biomarker Workflow

Experimental Protocols and Methodologies

Protocol 1: Plasma Proteomics-Based Biomarker Panel Discovery

Objective: To identify and validate a plasma protein signature for disease diagnosis and prognosis using machine learning approaches, as demonstrated in amyotrophic lateral sclerosis (ALS) research [17].

Materials and Reagents:

- Olink Explore 3072 platform or similar high-throughput proteomics system

- Plasma samples from patients and matched controls

- EDTA or heparin blood collection tubes

- Quality control materials for proteomic assays

- ELISA validation kits for candidate biomarkers

Methodology:

- Sample Preparation and Quality Control: Collect plasma samples following standardized protocols. Implement rigorous quality control measures, noting that the Olink platform typically demonstrates median intra-assay and inter-assay coefficients of variation of 9.9% and 22.3%, respectively [17].

- Proteomic Profiling: Utilize the Olink Explore 3072 platform or equivalent to measure 2,886+ plasma proteins. Ensure adequate sample sizes (e.g., 183 ALS cases vs. 309 controls in discovery cohort) for statistical power [17].

- Differential Abundance Analysis: Conduct proteome-wide association testing using generalized linear regression adjusted for age, sex, collection tube type, and population stratification factors. Apply false discovery rate (FDR) correction (e.g., FDR P < 0.05) to identify significantly differentially abundant proteins [17].

- Machine Learning Model Development:

  - Feature Selection: Include significantly differentially abundant proteins (e.g., 33 in ALS study) along with clinical parameters (age, sex) as initial features [17].

  - Data Partitioning: Randomly designate 80% of samples as Discovery/Training Set and 20% as Replication/Test Set [17].

  - Model Training: Employ supervised machine learning algorithms (e.g., random forests, XGBoost) to create binary classification models distinguishing cases from controls [17].

  - Validation: Assess model performance using the independent replication cohort, with high-accuracy models achieving area under the curve (AUC) values up to 98.3% [17].

- Pathway Analysis: Conduct enrichment analysis of significantly altered proteins to identify associated biological processes and pathways (e.g., skeletal muscle development, energy metabolism, neuronal function) [17].
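The FDR correction in the differential abundance step can be illustrated with the Benjamini-Hochberg procedure. The text does not say which FDR method the original study used, so Benjamini-Hochberg is an assumption here, and the protein names and p-values are hypothetical.

```python
def benjamini_hochberg(p_values):
    """Return Benjamini-Hochberg adjusted p-values in the original input order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value to the smallest so that adjusted
    # values never increase as the raw p-value decreases.
    for rank in range(m, 0, -1):
        index = order[rank - 1]
        running_min = min(running_min, p_values[index] * m / rank)
        adjusted[index] = running_min
    return adjusted


# Hypothetical per-protein p-values from an association test.
raw = {"PROT_A": 0.001, "PROT_B": 0.008, "PROT_C": 0.039,
       "PROT_D": 0.041, "PROT_E": 0.60}
adjusted = benjamini_hochberg(list(raw.values()))
significant = [name for name, q in zip(raw, adjusted) if q < 0.05]
print(significant)
```

Note that proteins with raw p-values just under 0.05 can fail the adjusted threshold, which is the intended effect when thousands of proteins are tested at once.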

Implementation Considerations: For studies aiming to predict disease onset in pre-symptomatic individuals, analyze plasma samples collected before symptom emergence to estimate the age of clinical onset and understand prodromal phase biology [17].
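The data partitioning and validation steps of this protocol can be sketched without any machine learning library. The example below is a simplified illustration under stated assumptions: `split_train_test` and `roc_auc` are hypothetical helper names, the classifier itself is omitted, and the scores are made-up model outputs. AUC is computed directly as the probability that a randomly chosen case scores higher than a randomly chosen control.

```python
import random


def split_train_test(sample_ids, test_fraction=0.2, seed=0):
    """Randomly assign samples to a training set and a held-out test set."""
    ids = list(sample_ids)
    random.Random(seed).shuffle(ids)
    n_test = round(len(ids) * test_fraction)
    return ids[n_test:], ids[:n_test]


def roc_auc(scores, labels):
    """AUC via pairwise comparison of cases (1) and controls (0); ties count 0.5."""
    cases = [s for s, y in zip(scores, labels) if y == 1]
    controls = [s for s, y in zip(scores, labels) if y == 0]
    if not cases or not controls:
        raise ValueError("need at least one case and one control")
    wins = 0.0
    for case_score in cases:
        for control_score in controls:
            if case_score > control_score:
                wins += 1.0
            elif case_score == control_score:
                wins += 0.5
    return wins / (len(cases) * len(controls))


train_ids, test_ids = split_train_test(range(100))
print(len(train_ids), len(test_ids))

# Hypothetical classifier scores on held-out samples (1 = case, 0 = control).
print(roc_auc([0.9, 0.8, 0.4, 0.3], [1, 0, 1, 0]))
```

Fixing the random seed makes the split reproducible, and keeping the test set untouched until the final evaluation is what makes the reported AUC an honest estimate.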

Protocol 2: Predictive Biomarker Discovery Using Network-Based Approaches

Objective: To identify predictive biomarkers for targeted cancer therapies by integrating network motifs and protein disorder information using the MarkerPredict framework [13].

Materials and Resources:

- Network data from Human Cancer Signaling Network (CSN), SIGNOR, and ReactomeFI databases

- Protein disorder data from DisProt, AlphaFold (pLDDT < 50), and IUPred (score > 0.5) databases

- Biomarker annotations from CIViCmine text-mining database

- Machine learning environment with Random Forest and XGBoost implementations

Methodology:

- Network Motif Identification:

  - Extract three-node motifs (triangles) from cancer signaling networks using FANMOD or similar tools

  - Focus on triangles containing both intrinsically disordered proteins (IDPs) and known drug targets

  - Note the significant overrepresentation of IDP-target triangles compared to random chance [13]

- Training Dataset Construction:

  - Positive Controls: Compile neighbor-target pairs where the neighbor is an established predictive biomarker for drugs targeting its pair partner (e.g., 332 of 4550 pairs in original study) [13]

  - Negative Controls: Curate neighbor-target pairs where neighbors are not predictive biomarkers in CIViCmine database, supplemented with random pairs [13]

- Machine Learning Framework:

  - Feature Engineering: Incorporate topological network properties, protein disorder metrics, and functional annotations

  - Model Training: Develop 32 different models using Random Forest and XGBoost algorithms on network-specific and combined data across three IDP databases [13]

  - Hyperparameter Tuning: Utilize competitive random halving for parameter optimization [13]

- Validation and Scoring:

  - Employ leave-one-out cross-validation (LOOCV), k-fold cross-validation, and train-test splits (70:30)

  - Expect performance metrics in the range of 0.7-0.96 LOOCV accuracy for well-trained models [13]

  - Calculate Biomarker Probability Score (BPS) as a normalized summative rank of model predictions to harmonize probability values [13]
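The motif identification step at the start of this methodology can be illustrated with a small pure-Python sketch. It is a simplification and not a replacement for FANMOD: edge direction and sign are ignored, the network is treated as undirected, and the node names, disordered-protein set, and drug-target set are hypothetical.

```python
from itertools import combinations


def find_triangles(edges):
    """Enumerate three-node motifs (triangles) in an undirected view of the network."""
    neighbors = {}
    for a, b in edges:
        if a == b:
            continue  # ignore self-loops
        neighbors.setdefault(a, set()).add(b)
        neighbors.setdefault(b, set()).add(a)

    triangles = set()
    for node, adjacent in neighbors.items():
        # Two neighbors of the same node that are also linked close a triangle.
        for u, v in combinations(sorted(adjacent), 2):
            if v in neighbors[u]:
                triangles.add(tuple(sorted((node, u, v))))
    return sorted(triangles)


def idp_target_triangles(edges, disordered, drug_targets):
    """Keep triangles that contain at least one IDP and at least one drug target."""
    return [t for t in find_triangles(edges)
            if any(n in disordered for n in t)
            and any(n in drug_targets for n in t)]


# Hypothetical toy network with placeholder protein names.
edges = [("A", "B"), ("B", "C"), ("A", "C"),
         ("C", "D"), ("D", "E"), ("C", "E")]
print(find_triangles(edges))
print(idp_target_triangles(edges, disordered={"A"}, drug_targets={"C", "E"}))
```

Testing for overrepresentation would additionally require comparing the observed count against counts from randomized networks, which is not shown here.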

Implementation Considerations: This approach identified 2084 potential predictive biomarkers for targeted cancer therapeutics, with 426 classified as biomarkers by all four calculations in the original study [13].
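One plausible reading of the Biomarker Probability Score as a "normalized summative rank" is sketched below. The exact ranking and normalization used in [13] are not given in the text, so everything here is an assumption: each model ranks the candidate pairs by predicted probability (ties share an average rank), ranks are summed across models, and the sum is divided by the maximum possible sum. The model names, pair IDs, and probabilities are hypothetical.

```python
def biomarker_probability_scores(model_probabilities):
    """model_probabilities: {model_name: {pair_id: predicted_probability}}.

    Returns {pair_id: score in (0, 1]}, where higher means the pair is
    ranked consistently high across models.
    """
    pair_ids = sorted(next(iter(model_probabilities.values())))
    totals = dict.fromkeys(pair_ids, 0.0)

    for probabilities in model_probabilities.values():
        if sorted(probabilities) != pair_ids:
            raise ValueError("all models must score the same pairs")
        ordered = sorted(pair_ids, key=lambda p: probabilities[p])
        i = 0
        while i < len(ordered):
            # Find the run of tied probabilities starting at position i.
            j = i
            while (j + 1 < len(ordered)
                   and probabilities[ordered[j + 1]] == probabilities[ordered[i]]):
                j += 1
            average_rank = (i + j) / 2 + 1  # ranks start at 1 (lowest probability)
            for k in range(i, j + 1):
                totals[ordered[k]] += average_rank
            i = j + 1

    max_total = len(model_probabilities) * len(pair_ids)
    return {pair: totals[pair] / max_total for pair in pair_ids}


# Hypothetical predictions from two models for three neighbor-target pairs.
predictions = {
    "random_forest": {"X-T1": 0.9, "Y-T1": 0.4, "Z-T2": 0.1},
    "xgboost": {"X-T1": 0.7, "Y-T1": 0.8, "Z-T2": 0.2},
}
print(biomarker_probability_scores(predictions))
```

Working with ranks rather than raw probabilities is one way to make models with differently calibrated outputs comparable, which matches the stated goal of harmonizing probability values.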

Table 2: Research Reagent Solutions for AI-Driven Biomarker Discovery

| Research Reagent | Function in Biomarker Discovery | Application Context |
|---|---|---|
| Olink Explore 3072 Platform | High-throughput proteomic profiling of 3,072 proteins from minimal sample volumes [17] | Plasma proteomic biomarker discovery for neurological and other diseases |
| Spatial Transcriptomics Platforms | Gene expression analysis with tissue spatial context preservation [11] | Tumor microenvironment characterization, spatial biomarker identification |
| Human Cancer Signaling Network Database | Provides curated cancer signaling pathways for network-based biomarker discovery [13] | Predictive biomarker identification for targeted therapies |
| DisProt Database | Centralized resource of experimentally characterized intrinsically disordered proteins [13] | Identification of disordered proteins as potential biomarkers |
| Organoid & Humanized Models | Physiologically relevant systems for functional biomarker validation [11] | Biomarker screening, target validation, resistance mechanism exploration |

Implementation Considerations and Challenges

Data Quality and Standardization

The performance of AI models in biomarker discovery heavily depends on data quality and standardization. Challenges include limited sample sizes, noise, batch effects, and biological heterogeneity that can severely impact model performance, leading to issues such as overfitting and reduced generalizability [10] [1]. Differences in sensor calibration, environmental factors, and user behavior can introduce variability or measurement errors in digital biomarker data [16]. Successful implementation requires robust data governance frameworks, including encryption, anonymization, and adherence to regulatory standards such as GDPR and HIPAA to protect patient confidentiality and build trust [16].
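To make the batch-effect problem concrete, the sketch below applies the simplest possible adjustment: per-batch mean-centering of a single feature. This is a deliberately basic stand-in and not an established method such as ComBat; the measurements and batch labels are hypothetical.

```python
from statistics import mean


def center_by_batch(values, batches):
    """Remove per-batch mean shifts while preserving the overall mean."""
    global_mean = mean(values)
    grouped = {}
    for value, batch in zip(values, batches):
        grouped.setdefault(batch, []).append(value)
    batch_means = {batch: mean(group) for batch, group in grouped.items()}
    return [value - batch_means[batch] + global_mean
            for value, batch in zip(values, batches)]


# Hypothetical protein measurements from two processing batches,
# where batch B reads systematically higher than batch A.
values = [10.0, 12.0, 14.0, 20.0, 22.0, 24.0]
batches = ["A", "A", "A", "B", "B", "B"]
print(center_by_batch(values, batches))
```

This kind of correction is only safe when cases and controls are balanced across batches. If batch is confounded with disease status, centering removes the biological signal along with the technical shift, which is why study design matters as much as the correction method.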

Model Interpretability and Validation

The interpretability of ML models remains a significant hurdle, as many advanced algorithms function as "black boxes," making it difficult to elucidate how specific predictions are derived [10]. This lack of interpretability poses practical barriers to clinical adoption, where transparency and trust in predictive models are essential [10]. Explainable AI techniques such as SHAP analysis attribute each prediction to the contributions of individual features, increasing transparency for biomarker-driven modeling [12]. Furthermore, biomarkers identified through computational methods must undergo stringent validation using independent cohorts and experimental methods to ensure reproducibility and clinical reliability [10] [17].
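SHAP builds on Shapley values from cooperative game theory. As a minimal sketch of the underlying idea (not the SHAP library itself), the code below computes exact Shapley values for a single prediction by enumerating feature coalitions. The toy risk function, the feature names, and the all-zero reference profile are hypothetical and chosen only for illustration; exact enumeration is practical only for a handful of features.

```python
from itertools import combinations
from math import factorial

def shapley_values(predict, instance, baseline):
    """Exact Shapley values for one instance by enumerating feature coalitions.

    Features outside a coalition are replaced by their baseline value.
    """
    names = list(instance)
    n = len(names)

    def value(coalition):
        x = {f: (instance[f] if f in coalition else baseline[f]) for f in names}
        return predict(x)

    phi = {}
    for f in names:
        others = [g for g in names if g != f]
        total = 0.0
        for size in range(n):
            # Shapley weight for coalitions of this size
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                total += weight * (value(s | {f}) - value(s))
        phi[f] = total
    return phi

# Hypothetical toy model: two linear terms plus one interaction term.
def toy_risk(x):
    return 0.5 * x["gene_a"] + 0.2 * x["protein_b"] + 0.3 * x["gene_a"] * x["metabolite_c"]

patient = {"gene_a": 2.0, "protein_b": 1.0, "metabolite_c": 1.5}
reference = {"gene_a": 0.0, "protein_b": 0.0, "metabolite_c": 0.0}

phi = shapley_values(toy_risk, patient, reference)
for feature, contribution in phi.items():
    print(f"{feature}: {contribution:+.3f}")
```

The contributions sum to the difference between the patient's prediction and the reference prediction, and the interaction term is split evenly between the two features involved, which is the property that makes such attributions easy to audit.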

Regulatory and Ethical Considerations

The deployment of ML-derived biomarkers into clinical practice requires compliance with rigorous standards set by regulatory bodies such as the FDA and EMA [10] [9]. The dynamic nature of ML-driven biomarker discovery, where models continuously evolve with new data, presents particular challenges for regulatory oversight and demands adaptive yet strict validation and approval frameworks [10]. Algorithmic bias and generalizability also pose potential risks, as many digital biomarker algorithms are trained on limited demographic groups, potentially reducing accuracy in underrepresented populations [16]. Including diverse participants during algorithm development is essential to mitigate these biases [16].

Diagram 2: Biomarker Validation Pipeline

The future of AI-driven biomarker discovery lies in several promising directions. Expanding predictive models to rare diseases, incorporating dynamic health indicators, strengthening integrative multi-omics approaches, conducting longitudinal cohort studies, and leveraging edge computing solutions for low-resource settings emerge as critical areas requiring innovation and exploration [1]. Future research should focus on directly linking genomic data to functional outcomes, particularly with biosynthetic gene clusters and non-coding RNAs [10]. The recognition of the microbiome's impact on health has also spurred the application of ML to identify microbial pathways involved in metabolite production, revealing potential therapeutic targets and expanding the biomarker landscape beyond the human genome to include the broader holobiont [10].

The integration of AI biomarker analysis into early research and development will not happen in isolation; it requires collaboration across the entire ecosystem [9]. Pharmaceutical and biotech companies must invest in data infrastructure and AI partnerships, academic researchers should provide translational insights that bridge preclinical and clinical biology, and regulators need to evolve frameworks that foster innovation while ensuring patient safety [9]. As these partnerships mature and technologies advance, AI-driven biomarker discovery will continue to enhance our ability to develop personalized treatment strategies that improve patient outcomes, helping realize the promise of precision medicine by uncovering patterns that manual analysis would miss.

Multi-omics integration has emerged as a transformative approach in biomedical research, moving beyond the limitations of single-omics analyses to provide a comprehensive understanding of complex biological systems. By combining data from genomics, transcriptomics, proteomics, and metabolomics, researchers can now capture the full spectrum of molecular interactions that underlie health and disease states. This integrated framework is particularly powerful for biomarker discovery, offering unprecedented opportunities to identify robust diagnostic, prognostic, and predictive signatures across various disease areas, including oncology, tissue repair, and beyond. The implementation of multi-omics strategies requires sophisticated computational tools for data integration and visualization, coupled with rigorous experimental protocols. As the field advances, emerging technologies such as single-cell multi-omics, spatial omics, and artificial intelligence are further enhancing our ability to decipher disease mechanisms and develop personalized therapeutic strategies, ultimately driving the evolution of precision medicine.

Multi-omics represents a paradigm shift in biological research, integrating data from multiple molecular levels to construct comprehensive models of biological systems. This approach recognizes that biological functions emerge from complex interactions between various molecular layers—from genetic blueprint to metabolic activity. Where single-omics studies (examining only genomics, transcriptomics, proteomics, or metabolomics in isolation) provide limited insights, multi-omics integration reveals how alterations at one molecular level propagate through the system to influence phenotype and function [18]. This holistic perspective is particularly valuable for understanding complex diseases like cancer, where heterogeneity and dynamic changes across molecular layers drive pathogenesis and treatment response [19] [20].

The fundamental premise of multi-omics is that each molecular layer provides complementary information: genomics reveals inherited and acquired genetic variants; transcriptomics captures gene expression patterns; proteomics identifies protein abundance and modifications; and metabolomics profiles the end-products of cellular processes that most closely reflect phenotypic states [18] [20]. By integrating these layers, researchers can bridge the gap between genotype and phenotype, uncovering causal relationships and regulatory mechanisms that would remain invisible in single-omics approaches.

In the context of biomarker discovery, multi-omics strategies have demonstrated particular promise for identifying molecular signatures with greater specificity and clinical utility than single-omics biomarkers. The integration of multiple data types helps distinguish driver alterations from passenger events, identifies compensatory pathways that may mediate treatment resistance, and reveals biomarkers that accurately stratify patient populations for targeted therapies [19] [21] [20]. Furthermore, as pharmaceutical research increasingly focuses on personalized medicine, multi-omics approaches provide the comprehensive molecular profiling necessary to match patients with optimal treatments based on their unique molecular profiles [18] [22].

Methodological Framework for Multi-Omics Integration

Data Integration Strategies

Successful multi-omics integration requires methodological frameworks that can handle the complexity, high dimensionality, and heterogeneity of omics data. Several computational strategies have been developed, each with distinct strengths and applications in biomarker discovery research.

Table 1: Multi-Omics Data Integration Approaches

| Integration Method | Key Characteristics | Applications in Biomarker Discovery | Example Tools/Pipelines |

|---|---|---|---|

| Conceptual Integration | Links omics data through shared biological concepts or entities | Hypothesis generation; exploring associations between omics sets; functional annotation | STATegra, OmicsON, Gene Ontology, pathway databases |

| Statistical Integration | Uses quantitative measures to combine or compare datasets | Identifying co-expressed molecules across omics layers; clustering patients based on molecular profiles | Correlation analysis, regression models, clustering algorithms |

| Model-Based Integration | Employs mathematical models to simulate system behavior | Understanding dynamics and regulation of biological systems; predicting drug responses | Network models, PK/PD models, systems pharmacology |

| Network and Pathway Integration | Uses biological pathways to represent system structure and function | Visualizing interactions between molecules; identifying dysregulated pathways in disease | Protein-protein interaction networks, metabolic pathways |

Conceptual integration leverages existing biological knowledge from curated databases to link different omics datasets through shared entities such as genes, proteins, or pathways [18]. This approach is particularly valuable in the early stages of biomarker discovery, as it allows researchers to contextualize molecular findings within established biological processes. For example, identifying that differentially expressed genes, proteins, and metabolites from a multi-omics dataset all map to the same metabolic pathway significantly strengthens the case for that pathway's involvement in the disease process.

Statistical integration employs quantitative methods to identify patterns across omics datasets without requiring extensive prior biological knowledge [18]. These methods include correlation analysis to identify co-expressed genes or proteins across different molecular layers, and clustering techniques to group patients based on integrated molecular profiles. Such approaches can reveal novel biomarker combinations that would not be identified when analyzing each omics layer separately. For instance, statistical integration might identify that a specific genetic variant only leads to protein-level changes when accompanied by particular metabolic conditions—a finding with important implications for patient stratification.
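The correlation analysis described above can be sketched in a few lines. This is a minimal illustration, assuming each omics layer is stored as a mapping from feature name to a list of values measured on the same samples in the same order. The feature names, values, and the 0.8 threshold are hypothetical.

```python
from math import sqrt

def pearson(x, y):
    """Pearson correlation of two equal-length lists (0.0 if either is constant)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sqrt(sum((a - mx) ** 2 for a in x))
    sy = sqrt(sum((b - my) ** 2 for b in y))
    if sx == 0 or sy == 0:
        return 0.0
    return cov / (sx * sy)

def cross_layer_pairs(layer_a, layer_b, threshold=0.8):
    """Return (feature_a, feature_b, r) for pairs with |r| >= threshold."""
    pairs = []
    for name_a, values_a in layer_a.items():
        for name_b, values_b in layer_b.items():
            r = pearson(values_a, values_b)
            if abs(r) >= threshold:
                pairs.append((name_a, name_b, round(r, 3)))
    return sorted(pairs, key=lambda p: -abs(p[2]))

# Hypothetical measurements on five matched samples.
rna = {"GENE1": [1.0, 2.0, 3.0, 4.0, 5.0], "GENE2": [3.0, 1.0, 4.0, 1.0, 5.0]}
protein = {"PROT1": [2.1, 3.9, 6.2, 8.0, 9.8], "PROT2": [1.0, 1.1, 0.9, 1.0, 1.0]}

hits = cross_layer_pairs(rna, protein)
print(hits)
```

With many features, every pairwise test adds to the multiple-testing burden, so in practice the correlation threshold would be paired with a significance correction rather than used alone.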

Model-based integration uses mathematical and computational models to simulate biological system behavior, creating predictive frameworks that can inform biomarker discovery [18]. Network models can represent interactions between biomolecules across different omics layers, while pharmacokinetic/pharmacodynamic (PK/PD) models can describe how drugs are processed in the body and how they affect multiple molecular systems. These models are particularly valuable for predicting how interventions might affect biomarker levels and how biomarker combinations might predict treatment response.

Network and pathway-based integration provides a biological context for multi-omics data by mapping molecular measurements onto established pathways or interaction networks [18]. This approach helps researchers interpret complex multi-omics datasets in terms of disrupted biological processes, which is essential for understanding the functional implications of potential biomarkers. For example, mapping genomic, transcriptomic, and proteomic data onto protein-protein interaction networks can identify key hub proteins that might serve as biomarkers or therapeutic targets.
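As a rough sketch of the hub-identification idea, the code below counts interaction partners for each protein in an edge list and ranks the proteins that are altered in at least one omics layer. Degree is only one of several possible centrality measures, and the protein names, edges, and alteration calls are hypothetical placeholders rather than data from any interaction database.

```python
from collections import defaultdict

def node_degrees(edges):
    """Number of distinct interaction partners per node in an undirected network."""
    neighbors = defaultdict(set)
    for a, b in edges:
        if a != b:  # ignore self-interactions
            neighbors[a].add(b)
            neighbors[b].add(a)
    return {node: len(partners) for node, partners in neighbors.items()}

def rank_hubs(edges, altered_layers):
    """Rank altered nodes by degree, then by the number of omics layers supporting them."""
    degrees = node_degrees(edges)
    ranked = [
        (node, degrees.get(node, 0), len(layers))
        for node, layers in altered_layers.items()
    ]
    return sorted(ranked, key=lambda r: (-r[1], -r[2], r[0]))

# Hypothetical interaction edges and per-protein alteration calls.
edges = [("P1", "P2"), ("P1", "P3"), ("P1", "P4"), ("P2", "P3"), ("P4", "P5")]
altered = {
    "P1": {"genomics", "proteomics"},
    "P4": {"transcriptomics"},
    "P5": {"proteomics"},
}

for node, degree, n_layers in rank_hubs(edges, altered):
    print(node, degree, n_layers)
```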

Workflow for Multi-Omics Biomarker Discovery

The typical workflow for multi-omics biomarker discovery involves several standardized steps:

Experimental Design: Careful planning of sample collection, storage, and processing to minimize technical variation across different omics platforms. This includes determining appropriate sample sizes, considering batch effects, and ensuring ethical compliance.

Data Generation: Simultaneous or sequential generation of data from multiple omics platforms, potentially including whole-genome sequencing, RNA sequencing, mass spectrometry-based proteomics, and NMR or LC-MS-based metabolomics.

Quality Control and Preprocessing: Rigorous quality assessment for each dataset, followed by normalization, missing value imputation, and data transformation to make datasets comparable.

Data Integration: Application of integration methods (as described in Table 1) to combine information from multiple omics layers.

Biomarker Identification: Use of statistical and machine learning approaches to identify molecular patterns associated with diseases, outcomes, or treatment responses.

Validation: Experimental validation of candidate biomarkers using independent samples and different analytical techniques.
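The preprocessing step in this workflow can be illustrated with a simple per-feature standardization, one common way to make layers with very different scales comparable before they are combined. This is a sketch under the assumption that z-scoring is appropriate for the data at hand; real pipelines often apply layer-specific transformations first. The layer names and values are hypothetical.

```python
from statistics import mean, pstdev

def zscore_features(layer):
    """Standardize each feature across samples to mean 0 and standard deviation 1."""
    scaled = {}
    for feature, values in layer.items():
        mu, sd = mean(values), pstdev(values)
        # Constant features carry no information; map them to zeros.
        scaled[feature] = [0.0 if sd == 0 else (v - mu) / sd for v in values]
    return scaled

def stack_layers(layers):
    """Combine standardized layers into one table, prefixing features with the layer name."""
    combined = {}
    for layer_name, layer in layers.items():
        for feature, values in zscore_features(layer).items():
            combined[f"{layer_name}:{feature}"] = values
    return combined

# Hypothetical layers measured on the same three samples, on very different scales.
layers = {
    "rna": {"GENE1": [10.0, 12.0, 14.0]},
    "metabolomics": {"lactate": [0.001, 0.002, 0.003]},
}

combined = stack_layers(layers)
for name, values in combined.items():
    print(name, [round(v, 3) for v in values])
```

After standardization, both features follow the same profile across samples even though their raw values differ by four orders of magnitude.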

A critical consideration throughout this workflow is the handling of data heterogeneity. Multi-omics data varies in scale, distribution, and noise characteristics, requiring specialized normalization and transformation approaches before integration [18]. Additionally, the high dimensionality of multi-omics data (with far more features than samples) necessitates appropriate statistical corrections to avoid false discoveries.
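One widely used statistical correction for this setting is the Benjamini-Hochberg procedure, which controls the false discovery rate across many simultaneous tests. The sketch below is a plain-Python illustration; the p-values are made up, and the choice of Benjamini-Hochberg over other corrections is an assumption, not something the workflow above prescribes.

```python
def benjamini_hochberg(pvalues):
    """Return Benjamini-Hochberg adjusted p-values (q-values) in the original order."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down so q-values stay monotonic.
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, pvalues[i] * m / rank)
        adjusted[i] = running_min
    return adjusted

# Hypothetical p-values for five candidate features.
pvals = [0.001, 0.008, 0.039, 0.041, 0.20]
qvals = benjamini_hochberg(pvals)
significant = [i for i, q in enumerate(qvals) if q < 0.05]
print(qvals, significant)
```

In this example four features have raw p-values below 0.05, but only the first two remain below 0.05 after adjustment.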

Experimental Protocols and Applications

Protocol 1: Multi-Omics Biomarker Discovery for Cancer Diagnostics

Objective: To identify integrated molecular signatures for cancer diagnosis, prognosis, and treatment selection using horizontally and vertically integrated multi-omics approaches.

Background: In oncology, multi-omics approaches have proven particularly valuable for addressing tumor heterogeneity and understanding the complex interplay between cancer cells and their microenvironment [19] [20]. Horizontal integration combines data from the same omics layer (e.g., multiple transcriptomic datasets), while vertical integration connects different biological layers (e.g., genomics to transcriptomics to proteomics) [20].

Table 2: Experimental Steps for Cancer Multi-Omics Biomarker Discovery

| Step | Procedure | Key Parameters | Quality Controls |

|---|---|---|---|

| Sample Collection | Collect tumor and matched normal tissues; record clinical metadata | Snap-freeze in liquid N₂; maintain cold chain | Assess tissue viability; document ischemia time |

| Nucleic Acid Extraction | Isolate DNA and RNA using commercial kits | Quantity and quality assessment | Bioanalyzer RNA Integrity Number (RIN) > 7.0 |

| Genomics (WES/WGS) | Library preparation and sequencing | Minimum 80x coverage for WGS; 100x for WES | Check coverage uniformity; mapping rates >90% |

| Transcriptomics | RNA-seq library prep (poly-A selection) | Minimum 30 million reads per sample | Check rRNA contamination; alignment rates |

| Proteomics | Protein extraction, digestion, LC-MS/MS | TMT or label-free quantification | Include QC reference samples; monitor retention time |

| Data Integration | Apply computational integration methods | Choice of horizontal vs. vertical integration | Assess batch effects; implement correction |
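The thresholds in the table above lend themselves to an automated gate before samples enter integration. The following sketch applies them to a per-sample metrics record. The field names (rin, mapping_rate, coverage, rnaseq_reads) are hypothetical, and treating every threshold as a hard exclusion criterion is an assumption.

```python
def qc_failures(sample, assay="WGS"):
    """Return the names of QC checks that a sample fails (empty list if it passes)."""
    min_coverage = {"WGS": 80, "WES": 100}[assay]
    checks = [
        ("RIN > 7.0", sample["rin"] > 7.0),
        ("mapping rate > 90%", sample["mapping_rate"] > 0.90),
        (f"{assay} coverage >= {min_coverage}x", sample["coverage"] >= min_coverage),
        ("RNA-seq reads >= 30 million", sample["rnaseq_reads"] >= 30_000_000),
    ]
    return [name for name, passed in checks if not passed]

# Hypothetical per-sample metrics.
good = {"rin": 8.2, "mapping_rate": 0.95, "coverage": 85, "rnaseq_reads": 42_000_000}
poor = {"rin": 6.5, "mapping_rate": 0.88, "coverage": 85, "rnaseq_reads": 25_000_000}

print(qc_failures(good, assay="WGS"))
print(qc_failures(poor, assay="WES"))
```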

Detailed Procedures:

Sample Preparation and Quality Control:

- Obtain fresh-frozen tissue sections (5-10 mg) and allocate portions for each omics analysis.

- For nucleic acid extraction, use validated commercial kits with DNase/RNase-free conditions.

- Assess DNA/RNA quality using Agilent Bioanalyzer or TapeStation systems (RNA Integrity Number > 7.0 required).

- For proteomics, homogenize tissue in lysis buffer (e.g., 8M urea, 2M thiourea) with protease and phosphatase inhibitors.

Genomics Workflow:

- Perform library preparation using Illumina TruSeq DNA PCR-Free Library Prep Kit.

- Sequence on Illumina NovaSeq platform (150bp paired-end reads).

- Process data through bioinformatics pipeline: read quality assessment (FastQC), alignment to reference genome (BWA-MEM), variant calling (GATK), and annotation (ANNOVAR).

Transcriptomics Workflow:

- Isolate mRNA using poly-A selection (Illumina Stranded mRNA Prep).

- Sequence on Illumina platform (minimum 30 million reads per sample).

- Process data: quality control (FastQC), alignment (STAR), quantitation (featureCounts), and differential expression analysis (DESeq2).

Proteomics Workflow:

- Digest proteins with trypsin (filter-aided sample preparation protocol).

- Desalt peptides using C18 solid-phase extraction.

- Analyze by LC-MS/MS on Orbitrap Eclipse Tribrid mass spectrometer.

- Process data with MaxQuant: peak detection, database searching against UniProt protein sequences, and quantitation (LFQ or TMT-based).

Data Integration and Analysis:

- For horizontal integration: Combine scRNA-seq with spatial transcriptomics using Seurat v5 or Cell2location to map cell types to tissue locations [20].

- For vertical integration: Link genomic variants to transcriptomic and proteomic changes using iCluster or Multi-Omics Factor Analysis [20].

- Identify candidate biomarkers showing consistent alterations across multiple omics layers.
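The last step, selecting candidates with consistent alterations across layers, can be sketched as a simple concordance filter. The example assumes that features from every layer have already been mapped to a shared gene-level identifier and that each layer provides a log2 fold change and an adjusted p-value per gene. The cutoffs, gene names, and values are hypothetical.

```python
def concordant_candidates(layers, min_layers=2, min_abs_log2fc=1.0, max_q=0.05):
    """Keep genes altered in the same direction in at least `min_layers` layers.

    layers: {layer_name: {gene: (log2fc, q_value)}}
    """
    support = {}
    for layer_name, results in layers.items():
        for gene, (log2fc, q_value) in results.items():
            if abs(log2fc) >= min_abs_log2fc and q_value <= max_q:
                direction = 1 if log2fc > 0 else -1
                support.setdefault(gene, []).append((layer_name, direction))

    candidates = {}
    for gene, hits in support.items():
        directions = {d for _, d in hits}
        # Require enough supporting layers and a single shared direction.
        if len(hits) >= min_layers and len(directions) == 1:
            candidates[gene] = {
                "direction": "up" if directions == {1} else "down",
                "layers": sorted(name for name, _ in hits),
            }
    return candidates

# Hypothetical differential-analysis results per layer.
layers = {
    "transcriptomics": {"GENE_A": (2.1, 0.001), "GENE_B": (-1.5, 0.01), "GENE_C": (1.2, 0.20)},
    "proteomics": {"GENE_A": (1.4, 0.02), "GENE_B": (1.3, 0.03), "GENE_C": (1.1, 0.04)},
}

candidates = concordant_candidates(layers)
print(candidates)
```

Only GENE_A survives: GENE_B changes in opposite directions in the two layers, and GENE_C is significant in just one of them.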

Applications: This protocol has been successfully applied in lung cancer research, revealing biomarkers such as KRT8+ alveolar intermediate cells that represent transitional states during early tumorigenesis [20]. The integrated approach has also identified TIM-3+ immune cells with impaired antigen presentation capacity, providing both diagnostic and therapeutic insights [20].

Protocol 2: Multi-Omics Data Visualization for Pathway Analysis

Objective: To simultaneously visualize multiple omics datasets on biological pathway diagrams to identify dysregulated pathways and network interactions.

Background: Visual integration of multi-omics data enables researchers to interpret complex molecular relationships in the context of biological pathways. Several tools are available for this purpose, each with unique capabilities for visualizing different omics data types simultaneously [23] [24] [25].

Detailed Procedures:

Data Preparation for PathVisio:

- Create a single data file containing all omics measurements with appropriate database identifiers.

- Format columns to include: Identifier, System Code (e.g., "L" for Entrez Gene, "S" for UniProt, "Ce" for ChEBI), quantitative values (e.g., log2FC), p-values, and Type (e.g., "Transcriptomics," "Proteomics") [23].

- Example data format:
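A minimal sketch of such a file is generated below, assuming tab-delimited text and the column names listed above. The identifiers are illustrative, the numeric values are invented, and the exact identifier formatting expected by a given mapping database should be checked against the PathVisio documentation.

```python
import csv
import io

# Columns follow the description above; values are invented for illustration.
COLUMNS = ["Identifier", "System Code", "log2FC", "p-value", "Type"]
ROWS = [
    ["7157", "L", 1.8, 0.003, "Transcriptomics"],      # Entrez Gene identifier
    ["P04637", "S", 1.2, 0.020, "Proteomics"],         # UniProt accession
    ["CHEBI:15422", "Ce", -0.9, 0.041, "Metabolomics"],  # ChEBI identifier
]

def build_table(columns, rows):
    """Serialize the rows as tab-delimited text with a header line."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, delimiter="\t", lineterminator="\n")
    writer.writerow(columns)
    writer.writerows(rows)
    return buffer.getvalue()

table_text = build_table(COLUMNS, ROWS)
print(table_text)
```

Keeping all omics types in one file, distinguished by the Type column, is what allows a single import to drive both the gradient coloring and the rule-based coloring described in the visualization workflow.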

PathVisio Visualization Workflow:

- Open PathVisio and load the pathway of interest.

- Ensure appropriate identifier mapping databases are loaded (species-specific gene and metabolite mapping).

- Import expression data through Data > Import Expression Data.

- In the import wizard, select the appropriate system code column for identifier mapping.

- Create advanced visualization through Data > Visualization Options:

- Select "Text label" and "Expression as color" > "Advanced."

- For log2FC values, create a color gradient from blue (down-regulated) to red (up-regulated).

- For data types, add rule-based coloring with distinct colors for each omics type [23].
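PathVisio configures the gradient through its interface, but the mapping it represents is easy to state in code. The sketch below converts a log2FC value to an RGB color on a blue-to-red scale. The white midpoint at zero and the clamping limit of 2.0 are assumptions made for this illustration, not PathVisio defaults.

```python
def log2fc_to_rgb(log2fc, limit=2.0):
    """Map a log2 fold change to an (R, G, B) tuple: blue = down, white = 0, red = up."""
    # Clamp to [-limit, limit] and rescale to [-1, 1].
    x = max(-limit, min(limit, log2fc)) / limit
    if x < 0:
        shade = round(255 * (1 + x))   # fade from white toward blue
        return (shade, shade, 255)
    shade = round(255 * (1 - x))       # fade from white toward red
    return (255, shade, shade)

for value in (-3.0, -2.0, 0.0, 2.0, 5.0):
    print(value, log2fc_to_rgb(value))
```

Clamping keeps a few extreme fold changes from compressing the rest of the color scale.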

OmicCircos Circular Visualization:

- Install OmicCircos in R: BiocManager::install("OmicCircos")

- Prepare genomic data in appropriate formats for different tracks (e.g., chromosome ideogram, gene expression, copy number variations).

- Use core functions:

- sim.circos() to create simulated input data for practice.

- segAnglePo() to transform linear data into angular coordinates.

- circos() to create circular plots with multiple track types [24].

- Customize plots by adding scatterplots, lines, heatmaps, and curves to represent different omics data types and their relationships.

PTools Cellular Overview Multi-Omics Visualization:

- Load up to four omics datasets simultaneously, assigning each to a different visual channel:

- Reaction edge color (e.g., for transcriptomics data)

- Reaction edge thickness (e.g., for proteomics data)

- Metabolite node color (e.g., for metabolomics data)

- Metabolite node thickness (e.g., for additional metabolic data) [25].

- Use interactive controls to adjust color and thickness mappings for optimal data representation.

- Utilize semantic zooming to reveal additional details at higher magnification levels.

- For time-series data, employ animation features to visualize dynamic changes across molecular layers.


Applications: These visualization approaches have been used to map relationships between human papillomavirus (HPV) genome and human genes in cervical cancer, and to display multi-omics profiles of breast cancer subtypes from The Cancer Genome Atlas data [24]. The simultaneous visualization of multiple data types helps identify coordinated changes across molecular layers that might be missed when examining individual datasets separately.

Visualization Approaches for Multi-Omics Data

Effective visualization is crucial for interpreting complex multi-omics datasets. Several specialized tools have been developed to represent multiple molecular layers simultaneously in biologically meaningful contexts.

Multi-Omics Visualization Tool Ecosystem

Table 3: Comparison of Multi-Omics Visualization Tools

| Tool | Diagram Type | Simultaneous Omics Types | Key Features | Best Applications |

|---|---|---|---|---|

| PTools Cellular Overview | Automated pathway-specific layout | 4 | Semantic zooming, animation, organism-specific diagrams | Metabolic pathway analysis, time-series multi-omics |

| PathVisio | Manually curated pathways | 3 | Rule-based visualization, multiple identifier systems | Pathway-centric biomarker validation |

| OmicCircos | Circular genomic plots | Multiple tracks | Genomic coordinate mapping, multiple track types | Genome-wide association studies, copy number variation |

| KEGG Mapper | Manual uber pathways | 2 | Standardized pathway diagrams, wide pathway coverage | Cross-species comparison, communication |

| PaintOmics | Manually drawn pathways | 3 | Web-based interface, no installation required | Collaborative projects, quick visualization |

The PTools Cellular Overview represents one of the most advanced multi-omics visualization systems, supporting the simultaneous display of four different omics datasets through distinct visual channels [25]. This tool uses automated layout algorithms to generate organism-specific metabolic network diagrams, ensuring that visualizations accurately reflect the specific metabolic capabilities of the organism being studied. A key advantage is the support for semantic zooming, which reveals different levels of detail as users zoom in and out of the diagram. Additionally, the animation capabilities enable researchers to visualize dynamic processes and time-series multi-omics data, revealing how molecular relationships evolve over time or in response to perturbations.

PathVisio offers powerful capabilities for creating intuitive visualizations of multiple omics data types on pathway diagrams [23]. The software supports rule-based visualization, allowing researchers to define custom display rules based on statistical thresholds, fold-changes, or data types. This flexibility is particularly valuable in biomarker discovery, where visualizing the same dataset with different thresholds can reveal meaningful patterns. PathVisio's support for multiple database identifier systems facilitates the integration of diverse omics data types that may use different naming conventions (e.g., Entrez Gene IDs for transcriptomics, UniProt IDs for proteomics, and ChEBI IDs for metabolomics).

OmicCircos specializes in circular plots for genomic data, enabling researchers to visualize multiple types of genomic information in coordinated tracks [24]. This approach is particularly useful for displaying relationships between genomic position and various molecular measurements, such as showing gene expression, copy number variations, and mutation status simultaneously across all chromosomes. The circular format facilitates identification of chromosomal patterns and hotspots that might be associated with disease processes or treatment responses.

Successful implementation of multi-omics approaches requires both wet-lab reagents for data generation and computational tools for data integration and analysis. This section outlines essential resources for a comprehensive multi-omics biomarker discovery pipeline.

Table 4: Essential Research Reagent Solutions for Multi-Omics Studies

| Category | Specific Products/Technologies | Key Applications | Considerations |

|---|---|---|---|

| Nucleic Acid Extraction | Qiagen AllPrep, TRIzol, magnetic bead-based systems | Simultaneous DNA/RNA extraction | Maintain RNA integrity, avoid cross-contamination |

| Sequencing Library Prep | Illumina TruSeq, NEBNext, SMARTer kits | WGS, WES, RNA-seq | Compatibility with downstream analysis |

| Proteomics Sample Prep | Filter-aided sample preparation (FASP), S-Trap kits | Protein digestion, cleanup | Efficiency, reproducibility, compatibility with MS |

| Mass Spectrometry | Orbitrap (Thermo), TIMS-TOF (Bruker) systems | Proteomics, metabolomics | Resolution, sensitivity, quantitative accuracy |

| Metabolomics | Biocrates kits, IROA technologies | Targeted metabolomics | Coverage, standardization, quantification |

| Single-Cell Technologies | 10x Genomics, BD Rhapsody | scRNA-seq, single-cell multi-omics | Cell viability, capture efficiency, cost |

Computational Resources and Tools:

Data Integration Platforms:

- STATegra: Provides a comprehensive framework for multi-omics data integration, with particular strength in time-series analyses [18].

- iCluster: Joint latent-variable method for integrative clustering of multiple omics data types (a Bayesian variant, iClusterBayes, is also available), useful for patient stratification [20].

- Multi-Omics Factor Analysis (MOFA): Discovers principal sources of variation across multiple omics layers [20].

Visualization Tools:

- PathVisio: Creates pathway-based visualizations with support for multiple omics data types and custom visualization rules [23].

- OmicCircos: Generates circular plots for genomic data, with capabilities for displaying multiple data tracks simultaneously [24].

- PTools Cellular Overview: Provides organism-specific metabolic network diagrams with multi-omics visualization capabilities [25].

Specialized Databases:

- Gene Ontology: Provides standardized vocabulary for gene function annotation, essential for conceptual integration [18].

- Pathway Databases: KEGG, Reactome, and WikiPathways offer curated pathway information for biological context [18] [25].

- Protein-Protein Interaction Networks: STRING and BioGRID provide interaction data for network-based integration [18].

The selection of appropriate reagents and computational tools should be guided by the specific research question, sample types, and available infrastructure. As multi-omics technologies continue to evolve, staying current with emerging platforms and methodologies is essential for maintaining cutting-edge biomarker discovery capabilities.

The field of multi-omics research is rapidly evolving, driven by technological advancements and increasingly sophisticated computational methods. Several emerging trends are poised to further transform biomarker discovery and precision medicine.

Emerging Technologies: Single-cell multi-omics technologies are revealing unprecedented insights into cellular heterogeneity within tissues and tumors [19] [20]. These approaches allow researchers to measure multiple molecular layers simultaneously from the same cell, providing direct evidence of how genomic variation influences transcriptomic and epigenomic states at the single-cell level. Spatial omics technologies add another dimension by preserving the architectural context of cells within tissues, enabling researchers to map molecular relationships within the tissue microenvironment [19]. These technologies are particularly valuable for understanding cell-cell interactions and spatial organization patterns that drive disease processes.

Artificial Intelligence Integration: Machine learning and deep learning approaches are becoming increasingly integral to multi-omics data analysis [19] [26]. These methods can identify complex, non-linear patterns across omics layers that might escape conventional statistical approaches. AI-based integration methods are particularly promising for predictive biomarker discovery, where they can integrate diverse molecular data to forecast disease progression, treatment response, or adverse events [19] [26]. As these methods mature, they are expected to enhance the precision and personalization of clinical interventions.

Clinical Translation Challenges: Despite the exciting potential of multi-omics approaches, several challenges remain for widespread clinical implementation. Standardization of protocols across laboratories, establishment of quality control metrics, and development of regulatory frameworks for multi-omics-based diagnostics are active areas of development [19] [18]. Additionally, the cost and computational complexity of multi-omics analyses present barriers to routine clinical application. However, as technologies continue to advance and costs decrease, multi-omics approaches are likely to become increasingly accessible for clinical biomarker discovery and implementation.

Conclusion: Multi-omics integration represents a powerful framework for biomarker discovery, providing a more comprehensive understanding of biological systems and disease processes than single-omics approaches. By simultaneously considering multiple molecular layers, researchers can identify robust biomarker signatures with greater predictive power and clinical utility. The successful implementation of multi-omics strategies requires careful experimental design, appropriate computational integration methods, and effective visualization approaches. As technologies continue to advance and computational methods become more sophisticated, multi-omics approaches are poised to drive significant advances in precision medicine, enabling more accurate diagnosis, prognosis, and treatment selection across a wide range of diseases.

The paradigm of biomarker discovery is shifting from traditional, hypothesis-driven approaches to data-driven, knowledge-based research. This transition is fueled by the convergence of high-throughput biotechnology, which can generate millions of clinicogenomic measurements per individual [7], and advanced artificial intelligence (AI). However, two significant challenges impede progress: the data accessibility problem, where sensitive clinical and genomic data cannot be centralized for analysis due to privacy and regulatory concerns, and the trust deficit, where the "black-box" nature of complex AI models hinders their adoption in clinical practice [27] [28].

In response, two key technological drivers have emerged as foundational to modern biomarker research: Federated Learning (FL) for secure, collaborative data analysis and Explainable AI (XAI) for building clinical trust. FL enables the training of machine learning models across multiple decentralized data sources without moving or sharing the underlying raw data [29]. Simultaneously, XAI provides transparent, interpretable results that allow clinicians and researchers to understand the reasoning behind an AI's output, fostering trust and facilitating clinical actionability [27] [28]. This Application Note details the protocols and methodologies for integrating these technologies into a robust framework for biomarker discovery.

Federated Learning for Secure, Collaborative Biomarker Discovery

Federated Learning is a distributed machine learning approach that is revolutionizing how researchers leverage real-world data from multiple institutions. It is particularly vital for biomarker discovery in oncology and rare diseases, where sample sizes from single centers are often insufficient for robust model development.

Core Protocol: Federated Model Training for a Predictive Biomarker Signature

The following protocol outlines the key steps for training a predictive biomarker model using a federated approach across multiple hospital sites.

Objective: To collaboratively train a predictive biomarker model for immunotherapy response in non-small cell lung cancer (NSCLC) using distributed clinicogenomic datasets without centralizing patient data.

Data Type: Multi-modal data including genomic sequencing (e.g., from comprehensive genomic profiling panels), transcriptomics, and structured clinical data from Electronic Health Records (EHRs) [30] [29].

| Step | Procedure | Key Considerations & Parameters |

|---|---|---|

| 1. Initialization | A central server initializes a global machine learning model (e.g., a neural network or gradient boosting model). | Model architecture must be agreed upon by all participating sites. The PBMF framework is an example of a neural network suitable for this task [7]. |

| 2. Client Selection | The server selects a subset of available client sites (e.g., hospital servers) to participate in a training round. | Selection can be random or based on specific criteria like dataset size or computational availability. |

| 3. Client Download | Selected clients download the current global model weights from the central server. | Communication must be secured via encryption (e.g., HTTPS/TLS). |

| 4. Local Training | Each client trains the model on its local data. Training is performed for a predetermined number of local epochs. | Critical: Local data never leaves the hospital firewall. Algorithms like Stochastic Gradient Descent (SGD) are typically used. |

| 5. Model Export | Each client generates updated model parameters (e.g., weight updates, gradients) from the locally trained model. | Only the model parameters, not the training data, are exported. Differential privacy techniques can be added to further obscure the contribution of any single data point. |

| 6. Secure Aggregation | The clients send their model updates to the central server. The server aggregates these updates to improve the global model. | Aggregation algorithms like Federated Averaging (FedAvg) are standard. The formula for FedAvg is: \( w_{t+1} \leftarrow \sum_{k=1}^{K} \frac{n_k}{n} w_{t+1}^{k} \), where \( w \) are the weights, \( K \) is the number of clients, and \( n_k/n \) is the fraction of data on client \( k \). |

| 7. Model Update | The server updates the global model with the aggregated parameters. | The process repeats from Step 2 for a set number of rounds or until model performance converges. |

| 8. Validation | The final global model is evaluated on a held-out test set from each client or a centralized public dataset to assess its performance and generalizability. | Performance metrics (e.g., AUC, C-index) are reported for each site to check for consistency and identify potential biases [29]. |
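
The aggregation in Step 6 can be sketched in a few lines of Python. This is a minimal, dependency-free illustration of the FedAvg weighted average, not the implementation used by any of the platforms named in this note. The function name fed_avg, the dictionary layout of the weights, and the three site sizes are assumptions made for the example.

```python
from typing import Dict, List, Sequence

# Hypothetical layout: parameter name -> flat list of values.
Weights = Dict[str, List[float]]


def fed_avg(client_weights: Sequence[Weights], client_sizes: Sequence[int]) -> Weights:
    """Weighted average of client parameters: w = sum_k (n_k / n) * w_k."""
    if not client_weights or len(client_weights) != len(client_sizes):
        raise ValueError("need one sample count per client")
    n_total = sum(client_sizes)
    if n_total <= 0:
        raise ValueError("total sample count must be positive")

    aggregated: Weights = {}
    for name in client_weights[0]:
        length = len(client_weights[0][name])
        aggregated[name] = [
            sum(w[name][i] * n_k / n_total for w, n_k in zip(client_weights, client_sizes))
            for i in range(length)
        ]
    return aggregated


# Toy round with three hypothetical hospital sites (values are illustrative only).
site_updates = [
    {"coef": [1.0, 0.0], "bias": [0.0]},
    {"coef": [0.0, 1.0], "bias": [1.0]},
    {"coef": [1.0, 1.0], "bias": [2.0]},
]
site_sizes = [100, 300, 600]  # n_k: number of local training samples per site

global_weights = fed_avg(site_updates, site_sizes)
print(global_weights)
```

In this toy round the largest site contributes 60% of the average, which is the intended behavior of the \( n_k/n \) weighting. A production system would normally run this step inside the federated platform and operate on tensors rather than Python lists.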

Workflow Visualization

The diagram below illustrates the iterative cycle of federated model training.

Explainable AI for Clinical Trust and Actionable Insights

The development of a high-performance biomarker model is insufficient for clinical translation. Clinicians must understand the model's reasoning to trust and appropriately use its predictions. XAI techniques are essential for transforming a black-box prediction into an interpretable and clinically actionable insight [27] [31].

Core Protocol: Implementing XAI in a Biomarker-Driven Clinical Decision Support System (CDSS)

This protocol describes how to integrate XAI into a CDSS that provides biomarker-based treatment recommendations.

Objective: To explain an AI model's prediction of positive response to immunotherapy in a specific NSCLC patient, highlighting the key genomic and clinical features driving the decision.

Model: A trained machine learning model (e.g., Random Forest, XGBoost, or Neural Network) for predicting treatment response.

XAI Techniques: SHAP (SHapley Additive exPlanations) and LIME (Local Interpretable Model-agnostic Explanations) [27] [28].

| Step | Procedure | Key Considerations & Parameters |

|---|---|---|

| 1. Model & Data Setup | Deploy the trained predictive model and prepare the individual patient's data for explanation. | Data must be preprocessed identically to the training data (e.g., normalized, encoded). |

| 2. Global Explainability (SHAP) | Calculate SHAP values for the entire validation dataset to understand the model's overall behavior. | SHAP values quantify the marginal contribution of each feature to the model's prediction. Use shap.Explainer() and shap.summary_plot() to visualize global feature importance. |

| 3. Local Explainability (SHAP/LIME) | Generate an explanation for a single patient's prediction. | For SHAP: Use shap.force_plot() or shap.waterfall_plot() to show how each feature pushed the model's output from the base value to the final prediction. For LIME: Create a local surrogate model (e.g., linear model) that approximates the black-box model's behavior for that specific instance. Use lime_tabular.LimeTabularExplainer(). |

| 4. Explanation Presentation | Integrate the explanation into the CDSS user interface in a clear, concise manner for the clinician. | Present the top 5-10 features driving the prediction. Use natural language (e.g., "This patient is predicted to respond due to high PD-L1 expression and high tumor mutational burden"). Visual aids like bar charts or waterfall plots are highly effective [31]. |

| 5. Clinical Validation & Feedback | The clinician uses the explanation to contextualize the AI's recommendation against their own expertise. | This human-in-the-loop step is critical. Discrepancies between the explanation and clinical knowledge can reveal model biases or data quality issues, creating a feedback loop for model improvement [32] [31]. |
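
To make the "marginal contribution" in Step 2 concrete, the sketch below computes exact Shapley values for a single patient by enumerating every feature coalition. It illustrates the underlying idea only and does not call the SHAP library. The toy scoring function, its coefficients, the feature names (pdl1_tps, tmb, ecog), and the single reference patient used as the baseline are all hypothetical.

```python
from itertools import combinations
from math import factorial
from typing import Callable, Dict, FrozenSet


def shapley_values(
    predict: Callable[[Dict[str, float]], float],
    instance: Dict[str, float],
    baseline: Dict[str, float],
) -> Dict[str, float]:
    """Exact Shapley attribution of predict(instance) - predict(baseline)."""
    features = list(instance)
    m = len(features)

    def value(coalition: FrozenSet[str]) -> float:
        # Features in the coalition take the patient's value; the rest stay at baseline.
        x = {f: (instance[f] if f in coalition else baseline[f]) for f in features}
        return predict(x)

    phi: Dict[str, float] = {}
    for f in features:
        others = [g for g in features if g != f]
        total = 0.0
        for size in range(m):
            # Shapley weight for coalitions of this size: |S|! (m - |S| - 1)! / m!
            weight = factorial(size) * factorial(m - size - 1) / factorial(m)
            for subset in combinations(others, size):
                s = frozenset(subset)
                total += weight * (value(s | {f}) - value(s))
        phi[f] = total
    return phi


def toy_model(x: Dict[str, float]) -> float:
    # Hypothetical response score with one interaction term; not a clinical model.
    return (0.2 + 0.4 * x["pdl1_tps"] + 0.01 * x["tmb"]
            - 0.1 * x["ecog"] + 0.02 * x["pdl1_tps"] * x["tmb"])


patient = {"pdl1_tps": 0.8, "tmb": 15.0, "ecog": 1.0}
baseline = {"pdl1_tps": 0.3, "tmb": 5.0, "ecog": 1.0}

phi = shapley_values(toy_model, patient, baseline)
for name, contribution in sorted(phi.items(), key=lambda kv: -abs(kv[1])):
    print(f"{name}: {contribution:+.3f}")
```

The attributions sum to the difference between the patient's score and the baseline score, which is the property a waterfall plot visualizes. Exhaustive enumeration grows exponentially with the number of features, so it is practical only for very small feature sets. For realistic models, dedicated libraries estimate these values more efficiently.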

Workflow Visualization

The diagram below illustrates the pathway from a black-box model to a clinically trusted decision.

The Scientist's Toolkit: Essential Research Reagents & Computational Solutions

The following table details key resources and tools required for implementing the federated and explainable biomarker discovery workflows described in this note.

| Category | Item / Solution | Function & Application Note |

|---|---|---|

| Data & Standards | Real-World Clinicogenomic Data | Multi-modal data from EHRs, NGS, and transcriptomics. Must be harmonized using standards like OMOP CDM for federated analysis [30] [1]. |

| Data & Standards | Biomarker Definitions | BEST (Biomarkers, EndpointS, and other Tools) Resource guidelines for defining and validating biomarker types (prognostic vs. predictive) [30]. |

| Computational Frameworks | Federated Learning Platform | Software like Lifebit, NVIDIA FLARE, or Flower that orchestrates the federated training cycle across distributed data nodes [29]. |

| Computational Frameworks | Predictive Biomarker Modeling Framework (PBMF) | A specific AI framework based on contrastive learning, designed to systematically discover predictive (not just prognostic) biomarkers from clinicogenomic data [7]. |

| XAI Libraries | SHAP (SHapley Additive exPlanations) | A unified game theory-based library for explaining the output of any machine learning model. Provides both global and local interpretability [27] [28]. |

| XAI Libraries | LIME (Local Interpretable Model-agnostic Explanations) | Creates local, surrogate models to explain individual predictions. Useful for validating SHAP findings or for models where SHAP is computationally expensive [28]. |

| Validation & Evaluation | Independent Clinical Cohorts | Held-out datasets from different geographical or demographic populations are essential for assessing model generalizability and detecting overfitting [1]. |

| Validation & Evaluation | Performance Metrics | Standard metrics (AUC, Accuracy) and clinical utility metrics (e.g., Net Reclassification Index) to evaluate the biomarker's impact on decision-making [29] [7]. |

The integration of Federated Learning and Explainable AI represents a foundational shift in data-driven biomarker discovery. FL directly addresses the critical constraints of data privacy and accessibility, enabling the pooling of statistical power from globally distributed datasets. Concurrently, XAI addresses the "trust gap" by making model outputs interpretable, which is a non-negotiable requirement for clinical adoption [31] [28]. The protocols outlined herein provide an actionable roadmap for researchers to build more robust, generalizable, and clinically trustworthy biomarker models. By adopting this integrated framework, the field can accelerate the translation of complex data into meaningful knowledge, ultimately advancing the goals of personalized medicine.

Building the Pipeline: Technologies and Workflows for Next-Generation Biomarker Identification

The discovery of robust, clinically relevant biomarkers is a cornerstone of modern precision medicine, yet the process remains notoriously challenging, expensive, and time-consuming. The integration of Artificial Intelligence (AI) and machine learning (ML) creates a powerful, data-driven paradigm shift, moving from a hypothesis-limited approach to a holistic, systems-level understanding of disease biology [33]. This document details the application notes and protocols for implementing an AI-powered discovery pipeline, designed to accelerate biomarker identification and validation within knowledge-based research frameworks. By systematically orchestrating the flow of data from heterogeneous sources through to model deployment, this pipeline ensures scalable, reproducible, and actionable insights that can fundamentally enhance drug development.

Pipeline Architecture and Quantitative Impact

An AI data pipeline automates the end-to-end flow of data, from raw collection to model training and deployment. It is distinguished from traditional data pipelines by its incorporation of ML-specific processes such as feature engineering, model training, continuous learning, and real-time data processing capabilities [34]. For biomarker discovery, this translates to a structured framework that transforms multi-modal, high-volume data into validated, predictive models.

Table 1: Quantitative Impact of AI Pipelines on Discovery Timelines and Success Rates

| Stage | Traditional Timeline | Traditional Success Rate (Phase Transition) | AI-Accelerated Timeline (Estimate) | AI-Improved Success Rate (Hypothesis) | Key AI Interventions |

|---|---|---|---|---|---|

| Target ID & Validation | 2-3 years | N/A (Impacts downstream success) | < 1 year | N/A (Improves downstream success) | Genomic data mining, multi-omics analysis, literature NLP, pathway modeling [33] |

| Hit-to-Lead & Preclinical | 3-6 years | ~69% (Preclinical) | 1-3 years | >75% | AI-powered virtual screening, predictive ADMET & toxicology [33] |

| Phase II Clinical Trials | ~2 years | ~29% | 1-1.5 years | >50% (with stratification) | Biomarker discovery, precision patient stratification, digital twins [33] |

The strategic value is clear: AI pipelines can condense discovery timelines, in some cases reducing a 12-month target identification phase to under 5 months, while simultaneously improving the likelihood of clinical success through better target selection and patient stratification [33].

Core Components and Experimental Protocols

Data Ingestion and Preprocessing

The initial stage involves aggregating and preparing diverse data types foundational to biomarker research.

Protocol 3.1.1: Multi-Omics Data Ingestion and Harmonization

- Objective: To systematically collect and harmonize structured and unstructured data from disparate biological sources for downstream feature engineering.

- Materials:

- Data Sources: Genomic sequencers, proteomic and metabolomic platforms, Electronic Health Records (EHRs), published biomedical literature [33].

- Tools: Data integration platforms with pre-built connectors (e.g., Airbyte) or custom APIs [35]. Cloud storage solutions (e.g., AWS, Google Cloud, Azure) [34].

- Method:

- Configuration: Establish connections to source systems using secure credentials and APIs. For unstructured text data (e.g., scientific literature), implement a document processing pipeline [36].

- Synchronization: Set replication frequency (batch or real-time) and sync modes (e.g., Incremental - Append + Deduped) to ensure data currency without overloading systems [35].

- Validation & Checksumming: Implement automated data quality checks upon ingestion. Compute checksums (e.g., SHA-256) for all files to prevent and detect duplicate processing, a critical step for reproducibility [36].

- Metadata Capture: Record critical metadata including source, timestamp, and file checksum in a dedicated metadata table [36].
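
The checksum and metadata steps above can be sketched with the Python standard library. This is a minimal illustration under stated assumptions: the function names, the in-memory dictionary standing in for the metadata table, the record fields, and the example file names are all hypothetical, and a real pipeline would persist the records in a database.

```python
import hashlib
import tempfile
from datetime import datetime, timezone
from pathlib import Path
from typing import Dict, Optional


def sha256_of_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Stream the file in chunks so large sequencing outputs fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def register_file(path: Path, source: str, metadata_table: Dict[str, dict]) -> Optional[dict]:
    """Record source, timestamp, and checksum; return None for duplicate content."""
    checksum = sha256_of_file(path)
    if checksum in metadata_table:
        return None  # identical content was already ingested, skip reprocessing
    record = {
        "source": source,
        "file_name": path.name,
        "sha256": checksum,
        "ingested_at": datetime.now(timezone.utc).isoformat(),
    }
    metadata_table[checksum] = record
    return record


# Demonstration with two temporary files that share the same content.
with tempfile.TemporaryDirectory() as tmp:
    first = Path(tmp) / "sample_A.vcf"
    duplicate = Path(tmp) / "sample_A_copy.vcf"
    first.write_bytes(b"##fileformat=VCFv4.2\n")
    duplicate.write_bytes(b"##fileformat=VCFv4.2\n")

    metadata_table: Dict[str, dict] = {}
    first_record = register_file(first, "sequencer_01", metadata_table)
    second_record = register_file(duplicate, "sequencer_01", metadata_table)

print("first ingested:", first_record is not None)
print("duplicate skipped:", second_record is None)
```

Keying the table on the content hash rather than the file name means a renamed copy of the same file is still recognized as a duplicate.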

Protocol 3.1.2: Automated Data Preprocessing and Feature Engineering

- Objective: To clean, normalize, and transform raw data into informative features (biomarker candidates) suitable for ML models.

- Materials: Python environment with libraries like Pandas, Scikit-learn, and specialized AI tools (e.g., teradatagenai for entity recognition in text) [36].

- Method:

- Cleaning: Handle missing values (e.g., imputation, removal), correct errors, and remove outliers.

- Normalization: Standardize numerical data (e.g., Z-score normalization) to ensure features are on a comparable scale.

- Feature Engineering: Create derived features that may have higher predictive power. This can include generating interaction terms between omics data points or calculating specific ratios of analyte concentrations.

- Dimensionality Reduction: Apply techniques like Principal Component Analysis (PCA) or autoencoders to reduce the feature space, mitigating the "curse of dimensionality" common in omics data.
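
The cleaning, normalization, and feature engineering steps above can be sketched as follows. This is a dependency-free illustration, not the pipeline's actual implementation; in practice the Pandas and Scikit-learn tooling listed under Materials would normally be used. The helper names, the choice of median imputation, and the analyte values are assumptions made for the example.

```python
from statistics import mean, median, pstdev
from typing import List, Optional


def impute_median(column: List[Optional[float]]) -> List[float]:
    """Cleaning: replace missing values (None) with the median of observed values."""
    observed = [v for v in column if v is not None]
    if not observed:
        raise ValueError("cannot impute a column with no observed values")
    fill = median(observed)
    return [fill if v is None else v for v in column]


def z_score(column: List[float]) -> List[float]:
    """Normalization: center to mean 0 and scale to unit standard deviation."""
    mu = mean(column)
    sigma = pstdev(column)
    if sigma == 0:
        return [0.0 for _ in column]  # constant feature carries no signal
    return [(v - mu) / sigma for v in column]


def ratio_feature(numerator: List[float], denominator: List[float]) -> List[float]:
    """Feature engineering: ratio of two analyte measurements per sample."""
    return [n / d for n, d in zip(numerator, denominator)]


# Illustrative values for four samples; None marks a missing measurement.
crp = [2.0, None, 4.0, 6.0]
neutrophils = [4.0, 6.0, 3.0, 8.0]
lymphocytes = [2.0, 1.5, 1.5, 2.0]

crp_imputed = impute_median(crp)
crp_scaled = z_score(crp_imputed)
nlr = ratio_feature(neutrophils, lymphocytes)  # neutrophil-to-lymphocyte ratio

print(crp_imputed)
print([round(v, 3) for v in crp_scaled])
print(nlr)
```

When these steps feed a predictive model, the imputation value and the scaling parameters should be estimated on the training split only and then applied unchanged to validation and test data, otherwise information leaks across the split.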

Model Training and Validation

This phase involves selecting, training, and rigorously evaluating models to identify a candidate biomarker signature.

Protocol 3.2.1: Building and Training a Predictive Model for Biomarker Stratification

- Objective: To develop a model that identifies a biomarker pattern predictive of a specific clinical outcome or treatment response.

- Materials: Curated and preprocessed feature set from Protocol 3.1.2. ML frameworks like TensorFlow, PyTorch, or Scikit-learn. Access to GPU-accelerated computing (e.g., NVIDIA DGX Cloud) for deep learning models [37].

- Method:

- Data Partitioning: Split the dataset into training, validation, and hold-out test sets (e.g., 70/15/15). Ensure splits preserve the distribution of the target variable.

- Model Selection: Experiment with various algorithms, from simpler models (Logistic Regression, Random Forests) to more complex architectures (Graph Neural Networks for molecular data, Transformers for sequence data) [38].

- Hyperparameter Tuning: Use automated tools like Optuna or Ray Tune to systematically search the hyperparameter space for optimal model performance [39].