Clinical Proteomics for Biomarker Discovery: Techniques, Workflows, and Future Directions in Precision Medicine

Abstract

This article provides a comprehensive overview of the current landscape of clinical proteomics for biomarker identification, tailored for researchers and drug development professionals. It explores the foundational principles of proteomics and its critical role in bridging genomic information with biological function. The piece details advanced methodological workflows, from sample preparation to data acquisition using mass spectrometry and protein microarrays. It further addresses key challenges in the field, including experimental design and statistical power, and outlines rigorous biomarker validation and verification processes. By synthesizing insights from foundational concepts to clinical application, this review serves as a strategic guide for navigating the complexities of biomarker discovery and translation into clinically useful tools.

The Proteomics Landscape: From Genome to Clinical Biomarker

In the pursuit of precision medicine, biomarkers have become indispensable tools for early disease detection, accurate prognosis, and monitoring treatment efficacy. Among the various molecular tiers, the proteome—the entire set of proteins expressed by a genome—represents a uniquely valuable source of biomarkers. Proteins are the primary functional actors in biological systems, directly regulating metabolic pathways, cellular signaling, and structural integrity. Their dynamic expression, post-translational modifications, and secretion into readily accessible biofluids make them ideal sentinels of health and disease states. This application note details the experimental protocols and analytical frameworks for identifying and validating protein biomarkers, contextualized within clinical proteomics research for drug development.

Quantitative Foundations: The Case for Protein Biomarkers

The following table summarizes the key advantages that position proteins as superior biomarkers compared to other molecular classes.

Table 1: Key Advantages of Proteins as Clinical Biomarkers

| Characteristic | Significance for Biomarker Utility |
|---|---|
| Proximal to Phenotype | Proteins are the main effectors of cellular function; their expression levels, locations, and modifications directly reflect the current physiological or pathological state [1]. |
| Dynamic Nature | Protein expression and activity can change rapidly in response to environmental cues, disease progression, or therapeutic intervention, providing a real-time snapshot of biological status [1]. |
| Druggable Targets | Most therapeutic agents, including small molecules and biologics, are designed to target proteins, making their quantification directly relevant to drug development and efficacy monitoring [2]. |
| Accessible in Biofluids | Proteins and protein fragments are readily detectable in minimally or non-invasive liquid biopsies (e.g., plasma, serum, urine, CSF), enabling serial monitoring [3] [1]. |
| Post-Translational Modifications (PTMs) | PTMs (e.g., phosphorylation, glycosylation) offer a rich layer of functional regulation that can serve as sensitive biomarkers for disease-specific pathways [4]. |

Experimental Protocols for Biomarker Discovery and Verification

A robust proteomic pipeline is essential for translating a candidate protein into a validated biomarker. The workflow is typically segmented into discovery and targeted verification phases.

Discovery Phase Proteomics

Objective: To identify a broad panel of candidate biomarker proteins that are differentially expressed between comparative groups (e.g., disease vs. control).

Detailed Methodology:

Sample Preparation: Rigorous standard operating procedures are critical.

- Source: Begin with clinically relevant biofluids (plasma, serum, CSF) or tissues (including FFPE blocks) [1].

- Depletion: For plasma/serum, use immunoaffinity columns to remove high-abundance proteins (e.g., albumin, IgG) to compress the dynamic range and reveal lower-abundance potential biomarkers [3].

- Digestion: Digest proteins into peptides using a sequence-specific enzyme, most commonly trypsin [4] [1].

- Clean-up: Desalt peptides using C18 solid-phase extraction cartridges.
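
The digestion step above can be illustrated with a small in silico sketch. It assumes the commonly used trypsin rule (cleavage C-terminal to lysine or arginine, except when the next residue is proline); the function name `tryptic_digest`, its parameters, and the example sequences are hypothetical and not part of the protocol.

```python
import re


def tryptic_digest(sequence, missed_cleavages=0, min_length=1):
    """Return peptides from an in silico tryptic digest of a protein sequence.

    Assumed rule: cleave after K or R unless the following residue is P.
    """
    # Split at zero-width positions preceded by K/R and not followed by P.
    fragments = [f for f in re.split(r"(?<=[KR])(?!P)", sequence) if f]
    peptides = []
    for i in range(len(fragments)):
        # Join up to `missed_cleavages` additional neighbouring fragments.
        last = min(i + missed_cleavages, len(fragments) - 1)
        for j in range(i, last + 1):
            peptide = "".join(fragments[i:j + 1])
            if len(peptide) >= min_length:
                peptides.append(peptide)
    return peptides


# Hypothetical sequence, for illustration only.
print(tryptic_digest("MKWVTFISLLRPAGK", missed_cleavages=1))
```

Allowing one or two missed cleavages is a typical search setting, since digestion is rarely complete in practice.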

Data Acquisition via LC-MS/MS:

- Separation: Peptides are separated by reversed-phase liquid chromatography (LC) based on hydrophobicity [1].

- Ionization: Eluting peptides are ionized via electrospray ionization (ESI).

- Mass Analysis: Two primary data acquisition modes are used:

  - Data-Dependent Acquisition (DDA): The mass spectrometer automatically selects the most abundant precursor ions for fragmentation. Because selection is intensity-driven, sampling is stochastic and low-abundance ions can be missed from run to run [1].

  - Data-Independent Acquisition (DIA): This method fragments all ions within sequential, predefined mass windows; SWATH-MS is one widely used implementation. It generates a near-complete digital record of the detectable proteome, enabling retrospective data mining without re-running samples [1].

Data Analysis:

- Identification: MS/MS spectra are searched against protein sequence databases using software tools (e.g., MaxQuant, Spectronaut) to identify peptides and infer proteins [4].

- Quantification: Label-free or isotope-labeling methods are used to quantify relative protein abundance changes across sample groups.
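
As a minimal sketch of the relative quantification step above, the following computes a log2 fold change between two groups from label-free intensities. It assumes intensities have already been normalized and contain no zeros or missing values; the function name and the example numbers are hypothetical.

```python
import math
from statistics import mean


def log2_fold_change(case_intensities, control_intensities):
    """Difference of mean log2 intensities (case minus control).

    This equals the log2 ratio of the geometric means of the two groups.
    """
    case_log = [math.log2(x) for x in case_intensities]
    control_log = [math.log2(x) for x in control_intensities]
    return mean(case_log) - mean(control_log)


# Hypothetical intensities for one protein in disease vs. control samples.
lfc = log2_fold_change([400.0, 1600.0], [100.0, 400.0])
print(f"log2 fold change: {lfc:.2f}")
```

In a real study the fold change would be paired with a statistical test and multiple-testing correction before a protein is called differentially expressed.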

Targeted Verification Phase Proteomics

Objective: To precisely and reliably quantify a shortlist of candidate biomarkers in a larger cohort of samples.

Detailed Methodology: This phase typically employs Multiple Reaction Monitoring (MRM) on a triple-quadrupole instrument, or its high-resolution counterpart, Parallel Reaction Monitoring (PRM), on a high-resolution mass spectrometer [3] [1].

Assay Development:

- For each candidate protein, select 3-5 unique "proteotypic" peptides that are specific and efficiently ionized.

- For each peptide, select multiple specific fragment ions (transitions).

- Synthesize stable isotope-labeled (SIL) versions of these peptides to serve as internal standards for precise quantification.

Sample Analysis:

- The MS is programmed to only monitor the predefined precursor ion → fragment ion transitions for the target peptides.

  - Q1 selects the specific precursor ion (peptide) mass.

  - Q2 (collision cell) fragments the selected ion.

  - Q3 filters for the specific fragment ion masses.

- The intensity of these transition signals over the chromatographic elution time is integrated and compared to the internal standard to determine the absolute or relative concentration of the peptide, and thus the protein, in the sample [3] [1].
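
The final quantification step above reduces to a ratio calculation. The sketch below assumes single-point calibration (the endogenous "light" peptide and the SIL "heavy" standard respond identically) and that peak areas are summed over the same transitions for both forms; the function name, units, and numbers are illustrative assumptions.

```python
def quantify_with_sil(light_areas, heavy_areas, heavy_spike_fmol):
    """Estimate the endogenous peptide amount from light/heavy peak areas.

    light_areas, heavy_areas: integrated areas per transition, same order.
    heavy_spike_fmol: known amount of SIL standard spiked into the sample.
    """
    if len(light_areas) != len(heavy_areas):
        raise ValueError("light and heavy transitions must match one-to-one")
    heavy_total = sum(heavy_areas)
    if heavy_total <= 0:
        raise ValueError("heavy standard signal must be positive")
    ratio = sum(light_areas) / heavy_total
    return ratio * heavy_spike_fmol


# Hypothetical areas for two transitions and a 50 fmol spike.
amount = quantify_with_sil([1200.0, 800.0], [2400.0, 1600.0], 50.0)
print(f"Estimated endogenous peptide: {amount:.1f} fmol")
```

A multi-point calibration curve is generally preferred when absolute concentrations must be reported.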

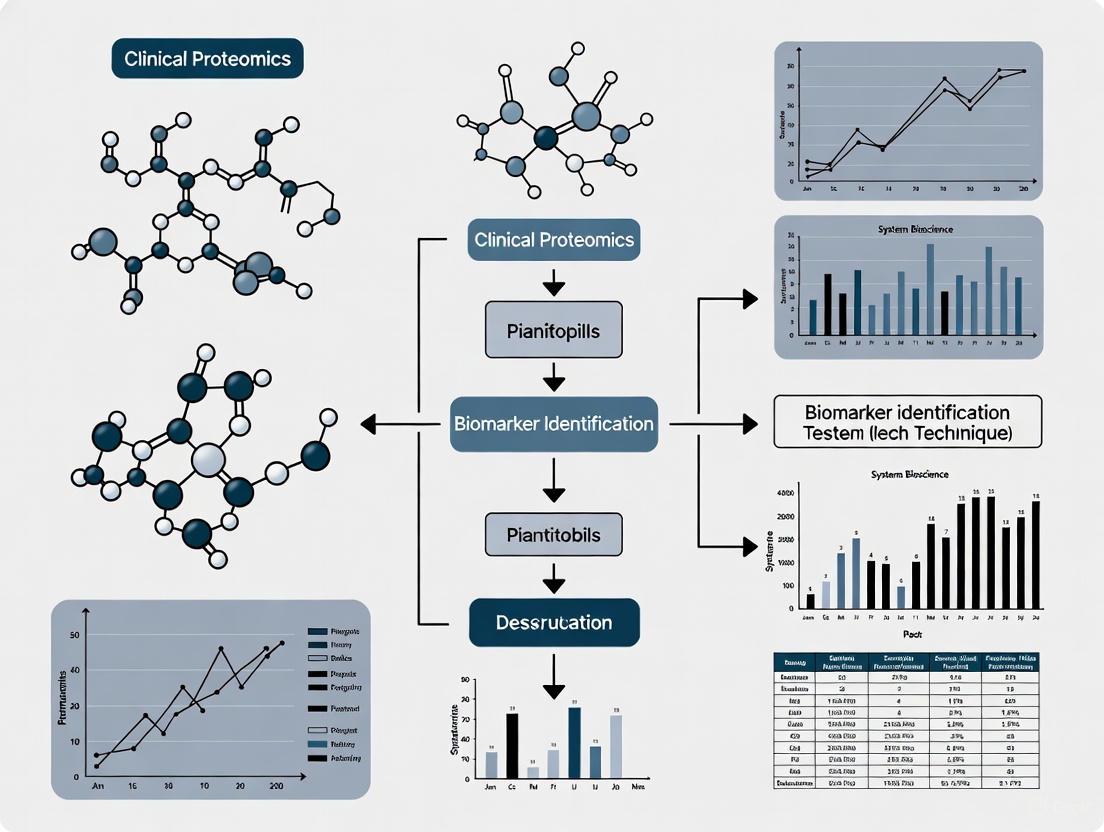

The following diagram illustrates the logical workflow and decision points in the biomarker development pipeline.

The Scientist's Toolkit: Research Reagent Solutions

Successful execution of a proteomics workflow relies on a suite of essential reagents and materials. The following table catalogs key solutions for biomarker discovery and validation studies.

Table 2: Essential Research Reagents for Clinical Proteomics

| Reagent / Material | Function in Workflow | Specific Examples / Notes |
|---|---|---|
| Trypsin (Sequencing Grade) | Enzyme for specific proteolytic digestion of proteins into peptides for MS analysis. | Cleaves C-terminal to lysine and arginine residues; sequencing-grade preparations support reproducible digestion. |
| Stable Isotope-Labeled (SIL) Peptides | Internal standards for absolute quantification in targeted proteomics (MRM). | Spiked into samples to correct for sample prep losses and ionization variability [3]. |
| Immunoaffinity Depletion Columns | Remove top 1-20 abundant proteins from serum/plasma to enhance detection of lower-abundance biomarkers. | Columns target proteins like albumin, IgG, transferrin [3]. |
| Liquid Chromatography Columns | Separate peptides based on hydrophobicity prior to MS injection. | Reversed-phase C18 columns, typically with nano-flow configurations for sensitivity. |
| Quality Control (QC) Samples | Monitor instrument performance and reproducibility across batches. | A pooled sample from all or a representative set of study samples. |
| Validated Antibodies | Used for immuno-enrichment of low-abundance target proteins or peptides prior to MS analysis. | Critical for quantifying proteins at pg/mL levels in blood [1]. |

A Framework for Clinical Translation: The CUSP Protocol

Transitioning a candidate biomarker from a research finding to a clinically useful tool requires careful evaluation beyond statistical significance. The Clinically Useful Selection of Proteins (CUSP) protocol provides a rational framework for this process [5].

This protocol combines statistical and non-statistical criteria to score and rank candidate proteins:

- Statistical Component: Proteins are initially ranked based on their ability to differentiate participant groups using logistic regression models.

- Non-Statistical Component: Proteins are then evaluated against five practical criteria, weighted by importance:

  - Commercial Assay Availability (40 points): Is there an existing, commercially available clinical-grade assay (e.g., ELISA) for this protein? This is the single most important factor for clinical translation.

  - Established Biological Role (25 points): Is the protein's function and link to the disease pathology already known? This de-risks validation.

  - Stability in Relevant Biofluid (15 points): Is the protein known to be stable in the biofluid of interest (e.g., plasma)?

  - Secreted Protein (10 points): Is the protein naturally secreted, making it more likely to be a robust biomarker in biofluids?

  - Known Druggable Target (10 points): Does the protein have known ligands or drugs that target it, increasing its translational value?

The total CUSP score (statistical + non-statistical rankings) provides a transparent metric to select the most promising candidates for costly and time-consuming validation studies [5].
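
The non-statistical component described above can be sketched as a weighted checklist. The weights come from the list above; everything else is an assumption made for illustration: each criterion is treated as all-or-nothing, the dictionary keys and candidate names are hypothetical, and the way the published protocol combines this score with the statistical ranking is not reproduced here.

```python
# Point weights as listed in the text (they sum to 100).
CUSP_WEIGHTS = {
    "commercial_assay": 40,
    "established_biology": 25,
    "biofluid_stability": 15,
    "secreted": 10,
    "druggable_target": 10,
}


def non_statistical_score(criteria_met):
    """Sum the weights of every criterion marked True (assumed yes/no scoring)."""
    unknown = set(criteria_met) - set(CUSP_WEIGHTS)
    if unknown:
        raise KeyError(f"unknown criteria: {sorted(unknown)}")
    return sum(w for name, w in CUSP_WEIGHTS.items() if criteria_met.get(name, False))


# Hypothetical candidates, for illustration only.
candidates = {
    "PROTEIN_A": {"commercial_assay": True, "secreted": True},
    "PROTEIN_B": {"established_biology": True, "biofluid_stability": True},
    "PROTEIN_C": {name: True for name in CUSP_WEIGHTS},
}
ranked = sorted(candidates, key=lambda n: non_statistical_score(candidates[n]), reverse=True)
for name in ranked:
    print(name, non_statistical_score(candidates[name]))
```

Keeping the weights in a single table makes the scoring transparent and easy to audit, which is the stated purpose of the framework.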

Proteins stand as ideal biomarkers by providing a dynamic, functional, and directly targetable readout of human health and disease. The structured proteomic workflows outlined here—from unbiased discovery using DIA/MS to rigorous verification via targeted MRM—provide a powerful roadmap for biomarker development. The integration of strategic frameworks like the CUSP protocol further ensures that discovered biomarkers have a viable path to clinical application, ultimately accelerating drug development and enabling more personalized patient care.

The development of robust biomarkers is a critical pathway in advancing precision medicine, yet the journey from discovery to clinical application remains fraught with challenges. Current estimates indicate that approximately 95% of biomarker candidates fail to progress from discovery to clinical use, creating a significant "validation valley of death" that frustrates researchers and delays patient benefits [6]. This high attrition rate persists despite advances in 'omics technologies that generate hundreds to thousands of candidate biomarkers [6]. The biomarker pipeline systematically transforms raw biological data into clinically validated indicators through three fundamental phases: discovery, verification, and validation. Within clinical proteomics—the large-scale study of proteins for clinical applications—this pipeline demands exceptional rigor in analytical methods and statistical assessment [7] [8]. The stakes are immense, with the global biomarker market projected to reach $104 billion by 2030, yet traditional validation approaches require 5-10 years and cost millions per candidate [6]. This application note details current protocols and best practices for navigating this complex pipeline, with special emphasis on proteomic approaches for autoimmune diseases and other complex conditions where biomarker development faces particular challenges [8].

The biomarker development pipeline constitutes a rigorous, multi-stage process designed to identify, verify, and validate measurable biological indicators that can predict, diagnose, or monitor disease. Successful navigation requires understanding both the technical requirements and the strategic framework necessary to overcome the high failure rates observed in biomarker development.

The Three-Legged Stool of Biomarker Assessment

Biomarker validity is not a single concept but rather three interconnected challenges that must all be successfully addressed, with weakness in any single area jeopardizing the entire program [6]:

Analytical Validity: The ability to accurately and reliably measure the biomarker across different laboratories, equipment, and technicians. This requires demonstrating measurement accuracy, precision across varying conditions, appropriate sensitivity and specificity, and consistent performance over time [6].

Clinical Validity: The ability of the biomarker to accurately predict or correlate with the clinical outcome or status of interest. This requires demonstrating meaningful associations with clinical outcomes, showing predictive capability for future events, and proving diagnostic accuracy across diverse patient populations [6].

Clinical Utility: The demonstration that using the biomarker actually improves patient outcomes and clinical decision-making. This requires evidence that clinical decisions change when doctors have biomarker information, and that these changes lead to better results [6].

Distinguishing Validation from Qualification

A critical distinction that can save development programs years of work is understanding that validation and qualification represent different processes with different endpoints [6]:

Validation: The scientific process of generating evidence, publishing papers, and building scientific consensus around a biomarker. This typically takes 3-7 years and results in peer-reviewed publications that convince the research community [6].

Qualification: The regulatory process where agencies like the FDA formally recognize a biomarker for specific uses in drug development. This is a 1-3 year regulatory process that results in official qualification letters [6].

The payoff for regulatory qualification is substantial, with qualified biomarkers reducing clinical trial costs by approximately 60% through better patient selection [6].

Table 1: Biomarker Pipeline Phase Overview

| Pipeline Phase | Primary Objective | Typical Duration | Key Outputs | Success Rate |
|---|---|---|---|---|
| Discovery | Identify candidate biomarkers | 6-12 months | List of candidate biomarkers with statistical associations | 100% (starting point) |
| Verification | Confirm analytical performance | 12-24 months | Optimized assay protocols with precision data | ~40% (60% fail inter-lab validation) |
| Validation | Establish clinical utility | 24-48 months | Evidence of improved patient outcomes | ~5% (95% overall failure rate) |
| Regulatory Qualification | Achieve regulatory endorsement | 12-36 months | FDA/EMA qualification for specific context | Limited to top performers |

Phase 1: Discovery

The discovery phase represents the initial identification of potential biomarker candidates through unbiased screening approaches. In clinical proteomics, this phase leverages high-throughput technologies to profile proteins across disease and control populations.

Experimental Protocols for Proteomic Discovery

Sample Collection and Preparation Protocol

Principle: Consistent pre-analytical sample handling is critical for reliable proteomic profiling [7].

Reagents and Materials:

- EDTA or heparin blood collection tubes

- Protease inhibitor cocktails

- Protein extraction buffers (RIPA, urea/thiourea)

- Bicinchoninic acid (BCA) or Bradford protein assay kits

- Centrifugal filters (3-100 kDa MWCO)

Procedure:

- Collect blood samples following standardized venipuncture protocols with appropriate anticoagulants

- Process samples within 2 hours of collection to prevent protein degradation

- Separate plasma/serum by centrifugation at 2,000-3,000 × g for 15 minutes

- Aliquot samples and store at -80°C in low-protein-binding tubes

- Document complete sample metadata including processing times and storage conditions

- For tissue samples, use laser capture microdissection to isolate specific cell populations

- Extract proteins using appropriate buffers supplemented with protease and phosphatase inhibitors

- Quantify protein concentration using colorimetric assays with bovine serum albumin standards

- Remove high-abundance proteins if necessary using immunoaffinity depletion columns

Mass Spectrometry-Based Protein Profiling Protocol

Principle: Liquid chromatography-tandem mass spectrometry (LC-MS/MS) enables comprehensive protein identification and quantification [8].

Reagents and Materials:

- Trypsin (sequencing grade modified)

- C18 solid-phase extraction cartridges

- Formic acid, acetonitrile (LC-MS grade)

- Water (LC-MS grade)

- iTRAQ or TMT labeling reagents (for multiplexed quantification)

Procedure:

- Digest proteins with trypsin at 1:50 enzyme-to-substrate ratio overnight at 37°C

- Desalt peptides using C18 solid-phase extraction

- For label-free quantification, analyze individual samples using 120-minute LC gradients

- For multiplexed quantification, label peptides with isobaric tags (iTRAQ/TMT) following manufacturer protocols

- Pool labeled samples and fractionate using high-pH reverse-phase chromatography

- Analyze fractions by LC-MS/MS using data-dependent acquisition

- Use Orbitrap mass analyzers for high mass accuracy (<5 ppm) measurements

- Perform database searching against human protein databases (SwissProt) using search engines such as MaxQuant or Proteome Discoverer

- Apply false discovery rate (FDR) threshold of <1% at protein and peptide levels
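
The FDR step above is often implemented with a target-decoy strategy. The protocol does not state which estimator is used, so the following is only a sketch under that assumption: each match carries a score (higher is better) and a decoy flag, and FDR is estimated as decoys divided by targets above a score cutoff. The function name and the example scores are hypothetical.

```python
def filter_at_fdr(matches, max_fdr=0.01):
    """Return target scores accepted at the given estimated FDR.

    matches: iterable of (score, is_decoy) tuples; higher score = better match.
    The longest score-ranked prefix with decoys/targets <= max_fdr is accepted.
    """
    ranked = sorted(matches, key=lambda m: m[0], reverse=True)
    targets = decoys = 0
    cutoff = 0  # number of ranked matches accepted
    for i, (_, is_decoy) in enumerate(ranked, start=1):
        if is_decoy:
            decoys += 1
        else:
            targets += 1
        if decoys / max(targets, 1) <= max_fdr:
            cutoff = i
    return [score for score, is_decoy in ranked[:cutoff] if not is_decoy]


# Hypothetical scored matches: (score, is_decoy).
example = [(10.0, False), (9.0, False), (8.0, True), (7.0, False)]
print(filter_at_fdr(example, max_fdr=0.01))
```

Search engines such as those named above apply their own FDR control internally; this sketch only shows the underlying idea.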

Biomarker Discovery Workflow

The following diagram illustrates the complete proteomic biomarker discovery workflow:

Phase 2: Verification

The verification phase assesses the analytical performance of candidate biomarkers in larger sample sets, transitioning from discovery platforms to robust, quantitative assays.

Experimental Protocols for Biomarker Verification

Multiplex Immunoassay Verification Protocol

Principle: Electrochemiluminescence-based multiplex assays (e.g., Meso Scale Discovery) enable verification of multiple candidates simultaneously with improved sensitivity over traditional ELISA [9].

Reagents and Materials:

- Meso Scale Discovery (MSD) multi-spot plates

- MSD read buffer

- Biotinylated detection antibodies

- Ruthenium-labeled streptavidin

- MSD plate washer

- MSD MESO QuickPlex SQ 120 imager

Procedure:

- Coat MSD plates with capture antibodies overnight at 4°C with shaking

- Block plates with MSD blocker A solution for 1 hour at room temperature

- Add samples and standards in duplicate and incubate for 2 hours with shaking

- Wash plates 3 times with PBS-Tween using an automated plate washer

- Add biotinylated detection antibodies and incubate for 2 hours

- Wash plates as in the previous wash step (3 times with PBS-Tween)

- Add ruthenium-labeled streptavidin and incubate for 30 minutes

- Wash plates and add MSD read buffer

- Measure electrochemiluminescence signal using MSD imager

- Generate standard curves using 4-parameter logistic regression

- Assess precision with coefficient of variation <15% across replicates
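
The last two steps of this procedure can be sketched as follows. The code shows one common parameterization of the 4-parameter logistic (4PL) model, its inverse for back-calculating concentration from signal, and a replicate CV check. Curve fitting itself is not shown, since it would need an optimizer; the parameter values below are hypothetical, already-fitted numbers, and the function names are assumptions.

```python
from statistics import mean, stdev


def four_pl(conc, bottom, top, ec50, hill):
    """4PL response: rises from `bottom` to `top`, half-maximal at `ec50`."""
    return bottom + (top - bottom) / (1.0 + (ec50 / conc) ** hill)


def back_calculate(signal, bottom, top, ec50, hill):
    """Invert the 4PL model to recover concentration from a measured signal."""
    if not bottom < signal < top:
        raise ValueError("signal is outside the asymptotes of the curve")
    ratio = (top - bottom) / (signal - bottom) - 1.0
    return ec50 / ratio ** (1.0 / hill)


def percent_cv(replicates):
    """Coefficient of variation (%) using the sample standard deviation."""
    return 100.0 * stdev(replicates) / mean(replicates)


# Hypothetical fitted parameters and duplicate readings.
params = dict(bottom=100.0, top=100100.0, ec50=50.0, hill=1.0)
duplicates = [four_pl(12.0, **params), four_pl(13.0, **params)]
concentrations = [back_calculate(s, **params) for s in duplicates]
print(concentrations, f"CV = {percent_cv(concentrations):.1f}%")
```

Computing the CV on back-calculated concentrations rather than raw signal reflects the precision that matters for reporting.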

LC-MS/MS-Based Verification Protocol

Principle: Targeted mass spectrometry (multiple reaction monitoring) provides highly specific verification without requirement for specific antibodies [9].

Reagents and Materials:

- Synthetic stable isotope-labeled peptide standards

- C18 reverse-phase columns (1.0 mm × 150 mm)

- Triple quadrupole mass spectrometer

- Nanoflow or capillary flow LC system

Procedure:

- Spike samples with stable isotope-labeled internal standard peptides

- Digest proteins as described in the Mass Spectrometry-Based Protein Profiling Protocol of the discovery phase

- Desalt peptides using C18 solid-phase extraction

- Separate peptides using 60-minute nanoLC gradients at 300 nL/min flow rate

- Monitor specific precursor-product ion transitions for target peptides

- Use scheduled MRM to monitor each transition at optimal retention time

- Integrate peak areas for native and heavy isotope-labeled peptides

- Calculate ratio of native to heavy peptide for quantification

- Assess linearity across 3 orders of magnitude with R² > 0.99

- Determine lower limits of quantification with CV <20%
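
The linearity and LLOQ checks at the end of this procedure can be sketched with plain Python. The example assumes an unweighted linear fit of measured light/heavy ratio against spiked concentration, and defines the LLOQ as the lowest level that, together with every level above it, has a replicate CV below the threshold; the function names and data are hypothetical.

```python
from statistics import mean, stdev


def r_squared(x, y):
    """Coefficient of determination for a simple (unweighted) linear fit."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy ** 2 / (sxx * syy)


def percent_cv(replicates):
    return 100.0 * stdev(replicates) / mean(replicates)


def lower_limit_of_quantification(replicates_by_conc, max_cv=20.0):
    """Walk down from the highest level and stop at the first CV failure."""
    lloq = None
    for conc in sorted(replicates_by_conc, reverse=True):
        if percent_cv(replicates_by_conc[conc]) >= max_cv:
            break
        lloq = conc
    return lloq


# Hypothetical dilution series spanning 3 orders of magnitude.
spiked = [1.0, 10.0, 100.0, 1000.0]
measured = [2.0, 20.0, 200.0, 2000.0]
replicates = {0.1: [0.05, 0.15], 1.0: [0.9, 1.1, 1.0], 10.0: [10.1, 9.9, 10.0]}
print(r_squared(spiked, measured) > 0.99, lower_limit_of_quantification(replicates))
```

Wide calibration ranges are often fitted with 1/x or 1/x² weighting in practice, which this unweighted sketch does not cover.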

Analytical Validation Requirements

Table 2: Analytical Performance Criteria for Biomarker Verification

| Performance Characteristic | Acceptance Criterion | Experimental Approach | Regulatory Reference |
|---|---|---|---|
| Precision | Coefficient of variation <15% | Repeated measurements of QC samples | CLSI EP05-A3 [6] |
| Accuracy | Recovery rates 80-120% | Spike-recovery experiments with known standards | CLSI EP05-A3 [6] |
| Linearity | R² > 0.95 across measuring range | Dilution series of pooled patient samples | CLSI EP05-A3 [6] |
| Sensitivity (LLOQ) | CV <20% at lower limit | Serial dilution of lowest measurable concentration | FDA Guidance (2007) [6] |
| Specificity | No interference from related analytes | Spike samples with structurally similar compounds | FDA Guidance (2007) [6] |
| Stability | <15% change after storage | Multiple freeze-thaw cycles, benchtop stability | CLSI EP05-A3 [6] |

Biomarker Verification Workflow

The following diagram illustrates the biomarker verification process:

Phase 3: Validation

The validation phase represents the most resource-intensive stage, requiring large-scale clinical studies to demonstrate that biomarker measurement improves patient outcomes.

Clinical Validation Protocol for Predictive Biomarkers

Principle: Prospective validation studies establish whether a biomarker can reliably predict treatment response or disease progression in relevant clinical populations [6] [10].

Study Design Considerations:

- Use stratified designs to account for biomarker misclassification [6]

- Implement blinding to treatment and biomarker status during outcome assessment

- Pre-specify statistical analysis plan including sample size justification

- Define clinical endpoints appropriate for intended use (diagnostic, prognostic, predictive)

Procedures:

- Sample Size Calculation: Power analysis based on expected effect size, typically requiring hundreds to thousands of patients [6]

- Patient Recruitment: Enroll representative population with predefined inclusion/exclusion criteria

- Sample Collection: Standardized collection across multiple clinical sites

- Biomarker Testing: Centralized testing with blinded personnel

- Clinical Follow-up: Assess predefined endpoints at scheduled intervals

- Statistical Analysis:
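
To illustrate the sample size calculation step in the procedure above, the following sketch applies the standard normal-approximation formula for comparing the means of two equal-sized groups. This is a simplifying assumption; a real predictive-biomarker study would use a calculation matched to its design and endpoint. The function name and default values are hypothetical.

```python
import math
from statistics import NormalDist


def n_per_group(effect_size, alpha=0.05, power=0.80):
    """Patients per group for a two-sided, two-sample comparison of means.

    effect_size: standardized mean difference (Cohen's d).
    Uses n = 2 * (z_{1-alpha/2} + z_{power})^2 / d^2, rounded up.
    """
    if effect_size <= 0:
        raise ValueError("effect_size must be positive")
    z_alpha = NormalDist().inv_cdf(1.0 - alpha / 2.0)
    z_power = NormalDist().inv_cdf(power)
    return math.ceil(2.0 * (z_alpha + z_power) ** 2 / effect_size ** 2)


for d in (0.5, 0.2):
    print(f"d = {d}: {n_per_group(d)} patients per group")
```

Smaller expected effects drive the required enrollment up quickly, which is consistent with the hundreds to thousands of patients noted above.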

Advanced Validation Technologies

While ELISA has traditionally been the gold standard for biomarker validation, advanced technologies now offer superior performance:

Table 3: Comparison of Biomarker Validation Technologies

| Technology | Sensitivity | Multiplexing Capacity | Cost per Sample | Key Advantages |
|---|---|---|---|---|
| Traditional ELISA | Moderate | Single-plex | ~$15-20 per analyte | Established workflow, widely available |
| Meso Scale Discovery (MSD) | 10-100x greater than ELISA | 10-100 plex | ~$19 for 4-plex panel | Broad dynamic range, low sample volume |
| LC-MS/MS | High | 10-100+ peptides | Variable based on plex | Absolute quantification, no antibodies needed |
| Multiplex Immunoassays | Moderate to high | 5-50 plex | $25-50 per multi-plex panel | Comprehensive profiling, pathway analysis |

Clinical Validation and Implementation Workflow

The following diagram illustrates the clinical validation and implementation pathway:

The Scientist's Toolkit: Essential Research Reagents and Platforms

Table 4: Essential Research Reagents and Platforms for Biomarker Development

| Tool Category | Specific Products/Platforms | Primary Function | Key Considerations |
|---|---|---|---|
| Sample Preparation | Protease inhibitor cocktails, RIPA buffer, BCA assay kits | Protein stabilization and quantification | Maintain sample integrity, ensure accurate quantification |
| Discovery Platforms | Orbitrap mass spectrometers, SWATH/DIA acquisition, iTRAQ/TMT labeling | Unbiased protein identification and quantification | Coverage, reproducibility, quantification accuracy |
| Verification Assays | Meso Scale Discovery U-PLEX, LC-MRM/MS, Luminex xMAP | Targeted candidate verification | Sensitivity, multiplexing capacity, dynamic range |
| Validation Technologies | Validated ELISA kits, LC-MS/MS assays, clinical grade IHC | Clinical grade biomarker measurement | Regulatory compliance, reproducibility across sites |
| Data Analysis Tools | MaxQuant, Skyline, R/Bioconductor, Python scikit-learn | Statistical analysis and biomarker modeling | False discovery control, model performance assessment |
| Biospecimen Resources | Biobanking systems, LN2 storage, sample tracking software | Sample management and quality control | Sample provenance, quality metrics, ethical compliance |

The biomarker pipeline remains a challenging but essential pathway for advancing precision medicine. The integration of AI and machine learning approaches is beginning to transform this landscape, with recent studies showing that machine learning improves validation success rates by 60% [6]. These approaches can analyze over 50 million scientific papers to identify hidden connections between diseases and biomarkers, predicting which candidates are most likely to succeed in validation [6].

Modern proteomic approaches are particularly promising for complex conditions like autoimmune diseases, where they offer the potential to identify unique biomarkers for more precise diagnosis, classification, and treatment decisions [8]. The emergence of standardized assessment tools like the Biomarker Toolkit—which provides an evidence-based checklist of 129 attributes associated with successful biomarker implementation—further supports more systematic development approaches [10].

As the field advances, researchers must maintain focus on the fundamental principles of analytical validity, clinical validity, and clinical utility while embracing new technologies that offer enhanced sensitivity, multiplexing capability, and efficiency. By applying the detailed protocols and frameworks presented in this application note, researchers can navigate the complex biomarker development pipeline more effectively, increasing the likelihood that promising discoveries will ultimately benefit patients through improved diagnosis, monitoring, and treatment selection.

In the field of clinical proteomics and precision medicine, biomarkers are objectively measured characteristics that provide critical insights into biological processes, pathogenic states, or pharmacological responses to therapeutic interventions [11]. The ideal clinical biomarker serves as a cornerstone for disease detection, diagnosis, prognosis, and monitoring treatment efficacy, ultimately enabling personalized treatment strategies [12]. As modern medicine increasingly shifts toward precision-based approaches, the demand for refined biomarkers has intensified, particularly with advancements in proteomic technologies such as mass spectrometry and protein microarrays that enhance diagnostic precision [13].

The defining characteristics of an ideal biomarker include high sensitivity and specificity, which ensure accurate disease detection and classification, alongside non-invasiveness, which facilitates repeated sampling and real-time monitoring [14]. These attributes are especially vital in oncology, where early detection of recurrence significantly impacts patient outcomes [14]. This application note delineates the essential properties of clinical biomarkers, structured protocols for their validation, and advanced methodological workflows, with a specific focus on proteomic applications for researchers and drug development professionals.

Core Characteristics of an Ideal Biomarker

The utility of a clinical biomarker is governed by a set of interdependent characteristics that determine its performance and applicability in real-world settings. These properties ensure that the biomarker reliably informs clinical decision-making from diagnosis through treatment monitoring.

- High Sensitivity and Specificity: A biomarker must demonstrate high sensitivity (the ability to correctly identify individuals with the disease, i.e., the true positive rate) and high specificity (the ability to correctly identify those without the disease, i.e., the true negative rate) [11]. These diagnostic metrics rest on the biomarker's analytical validity, which ensures the test itself is accurate and reproducible [12].

- Non-Invasiveness: Biomarkers detectable in easily accessible biofluids (e.g., blood, urine) via liquid biopsies offer a profound advantage. They enable repeated sampling for dynamic disease monitoring, support patient compliance, and allow early detection of conditions such as breast cancer recurrence, often before clinical symptoms or radiological evidence appear [14]. Platforms analyzing circulating tumor DNA (ctDNA), circulating tumor cells (CTCs), and exosomes are at the forefront of this non-invasive revolution [14] [15].

- Clinical Validity and Utility: Beyond technical performance, a biomarker must prove clinically valid—effectively identifying or predicting a specific disease or condition in the target patient population [12]. Its clinical utility is demonstrated by providing information that improves patient outcomes, guides treatment selection, and is cost-effective to implement in healthcare systems [12].

- Robustness and Standardization: An ideal biomarker must generate consistent results across different laboratories, operators, and over time. This requires standardized protocols for sample collection, processing, and analysis to minimize pre-analytical and analytical biases, which are common pitfalls in biomarker development [16].

Table 1: Key Quantitative Metrics for Biomarker Evaluation

| Metric | Definition | Interpretation in a Clinical Context |
|---|---|---|
| Sensitivity | Proportion of actual positive cases that are correctly identified. | A high sensitivity is crucial for ruling out disease (high negative predictive value) and is required for screening biomarkers. |
| Specificity | Proportion of actual negative cases that are correctly identified. | A high specificity minimizes false positives, reducing unnecessary follow-up tests and patient anxiety. |
| Positive Predictive Value (PPV) | Proportion of positive test results that are true positives. | Highly dependent on disease prevalence; indicates the probability that a positive test result is correct. |
| Negative Predictive Value (NPV) | Proportion of negative test results that are true negatives. | Also depends on disease prevalence; indicates the probability that a negative test result is correct. |
| Area Under the Curve (AUC) | Measures the overall ability of a biomarker to discriminate between cases and controls. | An AUC of 1.0 represents perfect discrimination, while 0.5 represents no discriminative ability (like a coin toss). |
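
The metrics in the table above can be computed directly from a confusion matrix. The sketch below also shows how PPV shifts with prevalence and computes AUC as the probability that a randomly chosen case scores higher than a randomly chosen control. Function names and the example counts are hypothetical.

```python
def diagnostic_metrics(tp, fp, tn, fn):
    """Sensitivity, specificity, PPV and NPV from confusion-matrix counts."""
    return {
        "sensitivity": tp / (tp + fn),
        "specificity": tn / (tn + fp),
        "ppv": tp / (tp + fp),
        "npv": tn / (tn + fn),
    }


def ppv_at_prevalence(sensitivity, specificity, prevalence):
    """PPV for a given disease prevalence (Bayes' rule)."""
    true_pos = sensitivity * prevalence
    false_pos = (1.0 - specificity) * (1.0 - prevalence)
    return true_pos / (true_pos + false_pos)


def auc(case_values, control_values):
    """AUC via pairwise comparison; ties count as half a win."""
    wins = sum(
        1.0 if c > k else 0.5 if c == k else 0.0
        for c in case_values
        for k in control_values
    )
    return wins / (len(case_values) * len(control_values))


# Hypothetical cohort: 100 cases and 1,000 controls.
print(diagnostic_metrics(tp=90, fp=50, tn=950, fn=10))
print(f"PPV at 1% prevalence: {ppv_at_prevalence(0.90, 0.95, 0.01):.3f}")
print(f"AUC: {auc([3, 4, 5], [1, 2, 3]):.3f}")
```

The prevalence example makes the table's point concrete: a test with 90% sensitivity and 95% specificity still yields mostly false positives when the disease is rare.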

Essential Validation Criteria

The journey from biomarker discovery to clinical application is long and arduous, requiring rigorous validation to ensure real-world reliability [16]. This process is structured around three pillars of validation.

- Analytical Validity refers to the performance of the assay itself—its ability to accurately and reliably measure the biomarker. This involves rigorous assessment of the test's sensitivity, specificity, precision, and accuracy under defined conditions [12]. For a test to be clinically viable, its analytical measurements must be reproducible, with low coefficients of variation (e.g., <20-30%) [16].

- Clinical Validity establishes that the biomarker is indeed associated with the clinical endpoint of interest (e.g., disease presence, prognosis, or response to therapy). It confirms that the biomarker measurements correlate meaningfully with the patient's clinical status [12]. This is typically evaluated using the same metrics described in Table 1, within a well-defined clinical cohort.

- Clinical Utility is the ultimate test, demonstrating that using the biomarker in practice leads to improved patient outcomes, better decision-making, or more efficient use of resources compared to standard care [12]. A biomarker may be analytically and clinically valid but fail if it does not change clinical management in a beneficial and cost-effective way.

Table 2: The Three Pillars of Biomarker Validation

| Validation Type | Core Question | Key Parameters Assessed |

|---|---|---|

| Analytical Validity | Does the test work reliably in the lab? | Sensitivity, Specificity, Precision, Accuracy, Reproducibility, Coefficient of Variation (CV) [12] [16]. |

| Clinical Validity | Does the test result correlate with the patient's condition? | Clinical Sensitivity, Clinical Specificity, Positive/Negative Predictive Value, Odds Ratios, Hazard Ratios [11] [12]. |

| Clinical Utility | Does using the test improve patient care? | Impact on treatment decisions, patient outcomes (survival, quality of life), cost-effectiveness, and feasibility of implementation [12]. |
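The coefficient of variation listed under analytical validity can be computed directly from replicate measurements. The sketch below is illustrative only: the replicate values are hypothetical, and the 20% acceptance limit is an assumption taken from the lower end of the range quoted above.

```python
from statistics import mean, stdev


def coefficient_of_variation(values):
    """Percent CV: sample standard deviation divided by the mean, times 100."""
    return 100 * stdev(values) / mean(values)


# Hypothetical replicate measurements of one biomarker (arbitrary units).
replicates = [98.0, 102.0, 100.0, 95.0, 105.0]
cv = coefficient_of_variation(replicates)
acceptable = cv < 20  # assumed acceptance limit
print(f"CV = {cv:.1f}%, acceptable = {acceptable}")
```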

Experimental Protocols for Biomarker Assessment

Protocol: Validation of a Protein Biomarker via Mass Spectrometry

This protocol outlines a targeted proteomic workflow for verifying a candidate protein biomarker in serum samples.

1. Sample Preparation

- Materials: Serum separator blood collection tubes, protease inhibitor cocktails, refrigerated centrifuge, immunoaffinity depletion column, trypsin, C18 solid-phase extraction tips, protein quantification assay.

- Procedure:

- Collect blood samples in serum separator tubes and allow to clot. Centrifuge at 2,000 x g for 10 minutes to isolate serum.

- Aliquot serum and store immediately at -80°C to preserve protein integrity.

- Deplete high-abundance proteins using an immunoaffinity column.

- Digest the protein sample into peptides using a standardized trypsinization protocol.

- Desalt and concentrate peptides using C18 solid-phase extraction tips.

2. Liquid Chromatography-Tandem Mass Spectrometry (LC-MS/MS) Analysis

- Materials: Nano-flow LC system, hybrid quadrupole-orbitrap mass spectrometer, C18 reversed-phase analytical column.

- Procedure:

- Separate peptides using a nano-LC system with a linear gradient of acetonitrile.

- Analyze eluting peptides using data-dependent acquisition (DDA) or, for higher sensitivity and reproducibility, scheduled parallel reaction monitoring (PRM).

- Use heavy isotope-labeled synthetic peptides as internal standards for absolute quantification.
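The quantification step with heavy isotope-labeled standards can be sketched as a single-point calculation. This is a simplified illustration that assumes the light (endogenous) and heavy (spiked) peptides respond identically and that the spiked amount is known; the function name and peak areas are hypothetical.

```python
def absolute_amount_fmol(light_area, heavy_area, heavy_spike_fmol):
    """Endogenous peptide amount from the light/heavy peak-area ratio."""
    if heavy_area <= 0:
        raise ValueError("heavy standard was not detected")
    return (light_area / heavy_area) * heavy_spike_fmol


# Hypothetical PRM peak areas for one peptide, with 50 fmol of heavy standard spiked in.
amount = absolute_amount_fmol(light_area=2.4e6, heavy_area=1.2e6, heavy_spike_fmol=50.0)
print(f"{amount:.1f} fmol on column")
```

In practice a multi-point calibration curve is usually preferred over a single ratio, because it also establishes the linear range and the limits of quantification.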

3. Data Processing and Statistical Analysis

- Materials: Proteomics software suite, statistical computing environment.

- Procedure:

- Identify and quantify peptides by searching MS/MS spectra against a curated protein sequence database.

- Normalize protein abundances across runs using internal standards.

- Perform statistical analysis (e.g., t-tests, ROC analysis) to assess the differential expression of the candidate biomarker between case and control groups.
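The statistical step can be illustrated with a small, dependency-free sketch. It computes Welch's t statistic and the ROC AUC (as the probability that a randomly chosen case scores higher than a randomly chosen control) for one candidate biomarker. The abundance values are hypothetical, and a real analysis would also report a p-value and confidence intervals.

```python
from math import sqrt
from statistics import mean, variance


def welch_t(cases, controls):
    """Welch's t statistic for two groups with possibly unequal variances."""
    se = sqrt(variance(cases) / len(cases) + variance(controls) / len(controls))
    return (mean(cases) - mean(controls)) / se


def roc_auc(cases, controls):
    """AUC as P(case > control); ties count one half (Mann-Whitney U / (n1 * n2))."""
    wins = 0.0
    for c in cases:
        for k in controls:
            if c > k:
                wins += 1.0
            elif c == k:
                wins += 0.5
    return wins / (len(cases) * len(controls))


# Hypothetical normalized abundances of one candidate biomarker.
cases = [5.1, 6.3, 5.8, 7.0, 6.1]
controls = [4.2, 5.0, 4.8, 5.5, 4.0]
print(round(welch_t(cases, controls), 2), roc_auc(cases, controls))
```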

Protocol: Evaluating a Multi-Biomarker Panel for Prognosis

This protocol describes the process for developing and validating a panel of biomarkers to improve prognostic accuracy.

1. Panel Construction and Assay Development

- Materials: Multiplex immunoassay platform, clinical data from a well-annotated patient cohort.

- Procedure:

- Select candidate biomarkers based on prior discovery-phase experiments.

- Develop a multiplex assay to measure all candidates simultaneously from a single sample.

- Use continuous biomarker values to retain maximal information; avoid early dichotomization [11].

2. Model Building and Validation

- Materials: Statistical software capable of machine learning algorithms.

- Procedure:

- Using a training cohort, employ variable selection methods to identify the most informative biomarkers for the panel.

- Build a prognostic model using logistic regression or a machine learning classifier.

- Validate the model's performance on a separate, independent validation cohort.

- Assess the panel's added value by comparing the AUC of the multi-marker model to that of any single biomarker or standard clinical factor.
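The model-building and validation steps above can be sketched as follows. This is a toy illustration under stated assumptions: the two-marker measurements are invented, variable selection is skipped, and the logistic regression is fitted with plain gradient descent rather than an established statistics library. The model is trained on one cohort, then the AUC of the panel score is compared with the AUC of a single marker on a separate validation cohort.

```python
from math import exp


def fit_logistic(X, y, lr=0.05, n_iter=2000):
    """Fit logistic regression by batch gradient descent; returns (weights, intercept)."""
    n, p = len(X), len(X[0])
    w, b = [0.0] * p, 0.0
    for _ in range(n_iter):
        grad_w, grad_b = [0.0] * p, 0.0
        for xi, yi in zip(X, y):
            z = sum(wj * xj for wj, xj in zip(w, xi)) + b
            err = 1.0 / (1.0 + exp(-z)) - yi  # predicted probability minus label
            grad_b += err
            for j in range(p):
                grad_w[j] += err * xi[j]
        w = [wj - lr * gj / n for wj, gj in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


def linear_score(model, x):
    """Risk score on the logit scale; higher means more case-like."""
    w, b = model
    return sum(wj * xj for wj, xj in zip(w, x)) + b


def roc_auc(cases, controls):
    """AUC as P(case score > control score); ties count one half."""
    wins = sum(1.0 if c > k else 0.5 if c == k else 0.0
               for c in cases for k in controls)
    return wins / (len(cases) * len(controls))


# Hypothetical continuous measurements (marker A, marker B); not real data.
train_cases = [(6, 1), (1, 6), (4, 3), (3, 4), (5, 3), (3, 5)]
train_controls = [(4, 1), (1, 4), (2, 3), (3, 2), (5, 0), (0, 5)]
val_cases = [(6, 2), (2, 6), (4, 4), (5, 2)]
val_controls = [(4, 0), (0, 4), (2, 2), (1, 3)]

X = train_cases + train_controls
y = [1] * len(train_cases) + [0] * len(train_controls)
model = fit_logistic(X, y)

panel_auc = roc_auc([linear_score(model, x) for x in val_cases],
                    [linear_score(model, x) for x in val_controls])
single_auc = roc_auc([a for a, _ in val_cases], [a for a, _ in val_controls])
print(panel_auc, single_auc)
```

In this constructed example neither marker separates the groups on its own, but their combination does, so the panel AUC on the validation cohort exceeds that of marker A alone. Real cohorts rarely separate this cleanly, and the comparison should be accompanied by confidence intervals.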

Visualization of Workflows and Relationships

Diagram 1: Biomarker development pipeline.

Diagram 2: Multi-omics data integration.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents and Platforms for Clinical Proteomics

| Research Tool | Function in Biomarker Workflow |

|---|---|

| Mass Spectrometer | High-sensitivity instrument for identifying and quantifying proteins and peptides in complex biological samples [13]. |

| Protein Microarrays | Platform for high-throughput screening of protein expression and interactions, facilitating biomarker discovery [13]. |

| Next-Generation Sequencing | Enables comprehensive genomic and transcriptomic profiling, used for discovering mutation-based biomarkers and analyzing ctDNA [14]. |

| Liquid Biopsy Kits | Reagents for the isolation and analysis of circulating biomarkers like ctDNA, CTCs, and exosomes from blood samples [14] [15]. |

| Immunoassay Kits | Antibody-based kits for validating and measuring specific protein biomarkers; the gold standard for clinical assays [16]. |

| Bioinformatics Software | Computational tools for analyzing large-scale omics data, performing statistical analysis, and building predictive models [13]. |

The success of clinical proteomics in biomarker discovery is fundamentally linked to the selection and proper handling of biological samples. Blood, tissue, urine, and proximal fluids each offer unique windows into physiological and pathological processes, with varying protein compositions, dynamic ranges, and clinical accessibility. Blood plasma and serum remain the most frequently used sources due to their rich protein content and minimal invasiveness, providing a systemic overview of an individual's health status. Tissue samples offer direct insight into disease mechanisms at the site of pathology but require invasive collection procedures. Urine provides a non-invasive alternative with relatively stable protein composition, while proximal fluids contain proteins shed or secreted from specific tissue microenvironments, potentially enriching for disease-relevant biomarkers. Understanding the technical considerations, advantages, and limitations of each sample type is crucial for designing robust proteomic studies that yield clinically actionable biomarkers.

Table 1: Comparative characteristics of major sample sources in clinical proteomics

| Sample Source | Key Advantages | Technical Challenges | Approximate Protein Complexity | Primary Clinical Applications |

|---|---|---|---|---|

| Blood (Plasma/Serum) | Minimally invasive; Rich protein content; Systemic health reflection [17] | Extreme dynamic range (>10 orders of magnitude); High-abundance protein masking [17] [18] | ~10,000 core proteins [19] | Cancer biomarker discovery [20]; Autoimmune disease profiling [8]; Therapeutic monitoring |

| Tissue Biopsy | Direct analysis of disease site; Pathological context preserved [21] | Invasive procedure; Sample heterogeneity; Limited material [21] | >10,000 proteins (tissue-dependent) | Cancer subtyping [21]; Molecular pathology; Drug target identification |

| Urine | Completely non-invasive; Large volumes obtainable; Stable protein composition [19] [22] | Low protein concentration; Variable composition (diet, time of day) [19] | ~2,000 proteins [19] | Renal diseases [19]; Urological cancers [20]; Systemic disease detection |

| Proximal Fluids | Enriched with tissue-specific proteins; Lower dynamic range than plasma [23] | Limited availability; Access requires specialized procedures [23] | Varies by fluid type | Organ-specific biomarker discovery; Local microenvironment assessment [23] |

Table 2: Quantitative performance of proteomic technologies across sample types

| Technology Platform | Typical Proteome Coverage | Quantitative Precision (CV) | Sample Throughput | Best Suited Sample Types |

|---|---|---|---|---|

| DIA-MS (e.g., SWATH) | ~2,000 proteins from tissue [21]; ~1,000+ from plasma [18] | 3.3-9.8% (protein level) [18] | Medium-High | Plasma, Tissue, Urine |

| DDA-MS | Fewer identifications than DIA in complex samples [18] | Higher variability than DIA [18] | Medium | All sample types |

| Aptamer-based (SomaScan) | Up to 11,000 proteins [17] | <5% (platform-dependent) | High | Plasma, Serum |

| Proximity Extension Assay (Olink) | ~3,000 proteins [17] | <10% (platform-dependent) | High | Plasma, Serum, Urine |

| Antibody Arrays | Up to hundreds of proteins | Varies by target | High | All sample types |

Blood (Plasma/Serum) Proteomics

Technical Considerations and Protocols

Blood-derived samples present significant analytical challenges due to the extreme dynamic range of protein concentrations, which spans over 10 orders of magnitude [17]. The 22 most abundant plasma proteins constitute approximately 99% of the total protein mass, necessitating specialized strategies to detect lower-abundance protein biomarkers. Recent advancements in depletion methods, acquisition techniques, and instrumentation have substantially improved the depth and quantitative accuracy of plasma proteome analysis.
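To make the scale of this dynamic range concrete, the short sketch below compares two plasma proteins at opposite ends of the concentration range. The concentrations are approximate, order-of-magnitude values chosen for illustration (albumin at roughly 40 mg/mL, a cytokine such as IL-6 at a few pg/mL), not measurements from the cited studies.

```python
from math import log10

# Approximate plasma concentrations in g/L (illustrative assumptions).
albumin_g_per_l = 40.0   # about 40 mg/mL
il6_g_per_l = 4e-9       # about 4 pg/mL

orders = log10(albumin_g_per_l / il6_g_per_l)
print(f"Dynamic range: about {orders:.0f} orders of magnitude")
```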

High-Abundance Protein Depletion Protocol:

- Sample Preparation: Collect venous blood in EDTA, heparin, or citrate tubes. Centrifuge at 2,000-3,000 × g for 10-15 minutes to separate plasma from cellular components. Aliquot and store at -80°C until analysis.

- Immunoaffinity Depletion: Use commercial columns (e.g., MARS-14) with immobilized antibodies against high-abundance proteins. Dilute plasma 1:5 with binding buffer and load onto the column according to the manufacturer's instructions [17].

- Alternative to Depletion (Nanoparticle Enrichment): For nanoparticle-based enrichment (e.g., Seer Proteograph), mix 200μL plasma with magnetic nanoparticles. Incubate with rotation for 30 minutes at room temperature. Capture the nanoparticles magnetically and discard the supernatant; the captured proteins remain bound to the particles [17].

- Protein Digestion: Add 8M urea/100mM ammonium bicarbonate to depleted samples. Reduce with 5mM tris(2-carboxyethyl)phosphine (60°C, 30 minutes), alkylate with 10mM iodoacetamide (room temperature, 30 minutes in dark). Dilute to 1.6M urea, digest with trypsin/Lys-C (1:50 enzyme:protein) overnight at 37°C [17] [18].

- Peptide Clean-up: Desalt peptides using C18 solid-phase extraction columns. Elute with 50-80% acetonitrile/0.1% formic acid. Dry in vacuum concentrator and reconstitute in 0.1% formic acid for MS analysis.
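The dilution and enzyme amounts in the digestion step follow from simple arithmetic (C1 × V1 = C2 × V2 for the urea dilution, and a weight ratio for the enzyme). The sketch below works this out for a hypothetical sample; the starting volume and protein amount are assumptions.

```python
def diluent_volume_ul(sample_volume_ul, start_molar, target_molar):
    """Volume of buffer to add so that C1 * V1 = C2 * V2."""
    final_volume = sample_volume_ul * start_molar / target_molar
    return final_volume - sample_volume_ul


def enzyme_mass_ug(protein_ug, ratio=50):
    """Enzyme mass for a 1:ratio (enzyme:protein, w/w) digest."""
    return protein_ug / ratio


# Hypothetical sample: 100 μL in 8 M urea containing 100 μg of protein.
buffer_to_add = diluent_volume_ul(100, 8.0, 1.6)
enzyme = enzyme_mass_ug(100)
print(f"Add {buffer_to_add:.0f} μL buffer and {enzyme:.1f} μg enzyme")
```

Going from 8 M to 1.6 M urea is a five-fold dilution, so the hypothetical 100 μL sample needs about 400 μL of ammonium bicarbonate buffer and 2 μg of enzyme at 1:50.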

Liquid Chromatography-Mass Spectrometry Analysis: For DIA (SWATH-MS) on TripleTOF or Orbitrap platforms, inject 1-2μg peptides onto a nanoflow LC system (C18 column, 75μm × 250mm) and separate them with a 90-180 minute gradient from 2% to 30% acetonitrile in 0.1% formic acid. Set variable precursor isolation windows covering the 400-1000 m/z range. Use 25ms accumulation time for MS1 (350-1500 m/z) and 20ms for MS2 (100-1500 m/z) [21] [18].

Plasma Proteomics Workflow

Microsampling Approaches

Blood microsampling (<100μL) using fingerstick or microblade devices offers advantages for pediatric populations, frequent monitoring, and remote sampling. Dried blood spots (DBS) and novel microsampling devices enable room temperature storage and transport, reducing cold-chain logistics [24]. A 2024 scoping review confirmed that microsamples are amenable to high-throughput proteomics, though quantification normalization remains challenging due to hematocrit effects and variable sample volumes [24].

Tissue Proteomics

Technical Considerations and Protocols

Tissue biopsies provide direct access to disease sites but present challenges including limited material, cellular heterogeneity, and efficient protein extraction. The PCT-SWATH method enables reproducible proteomic analysis from biopsy-level tissues (1-3mg), converting small tissue samples into permanent digital proteome maps [21].

PCT-SWATH Tissue Processing Protocol:

- Tissue Lysis and Protein Extraction: Place 1-3mg wet tissue in pressure-resistant MicroTubes. Add 100μL extraction buffer (8M urea, 100mM ammonium bicarbonate, protease inhibitors). Subject to pressure cycling: 50 seconds at 45,000 p.s.i. followed by 10 seconds at ambient pressure for 60 cycles (60 minutes total) [21].

- Protein Reduction and Alkylation: Add tris(2-carboxyethyl)phosphine to 5mM and iodoacetamide to 10mM directly to lysate. Incubate at room temperature in the dark for 30 minutes with pressure cycling (20,000 p.s.i., 50 seconds on/10 seconds off) [21].

- Protein Digestion: Add Lys-C (1:50 enzyme:protein) in 6M urea. Pressure cycle at 33°C for 45 cycles (50 seconds at 20,000 p.s.i./10 seconds ambient). Dilute to 1.6M urea with ammonium bicarbonate. Add trypsin (1:50 enzyme:protein) and pressure cycle for 90 cycles [21].

- Peptide Recovery: Acidify with 1% formic acid. Centrifuge at 14,000 × g for 10 minutes. Transfer supernatant to clean tubes. Desalt using C18 stage tips. The entire process from tissue to MS-ready peptides takes 6-8 hours [21].

- SWATH-MS Acquisition: Load 1-2μg peptides onto LC-MS system. Use 120-minute gradient. For SWATH-MS, acquire MS1 scan (350-1500 m/z, 250ms accumulation), followed by 64 sequential MS2 scans (100-1500 m/z, 20ms each) of variable precursor isolation windows [21].
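The acquisition settings in the last step determine the duty cycle, which in turn limits how many data points are collected across each chromatographic peak. The sketch below estimates both from the values given in the protocol. It ignores instrument overhead between scans, and the 30-second peak width is an assumption for illustration.

```python
def cycle_time_s(ms1_ms, n_windows, ms2_ms):
    """Duty-cycle length in seconds, ignoring overhead between scans."""
    return (ms1_ms + n_windows * ms2_ms) / 1000.0


def points_per_peak(peak_width_s, cycle_s):
    """Number of complete acquisition cycles that fit across one peak."""
    return int(peak_width_s // cycle_s)


# MS1 and MS2 settings from the protocol above.
cycle = cycle_time_s(ms1_ms=250, n_windows=64, ms2_ms=20)
# A 30 s chromatographic peak width is assumed here.
points = points_per_peak(peak_width_s=30, cycle_s=cycle)
print(f"cycle = {cycle:.2f} s, points per peak = {points}")
```

One MS1 scan of 250 ms plus 64 MS2 scans of 20 ms gives a cycle of about 1.53 s, or 19 complete cycles across the assumed 30 s peak.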

Tissue Proteomics Workflow

Urine Proteomics

Technical Considerations and Protocols

Urine has become an attractive biofluid for clinical proteomics due to non-invasive collection, relatively stable composition, and relevance to both urogenital and systemic diseases. Normal urine contains approximately 2,000 proteins, with composition influenced by factors including time of day, exercise, and diet [19]. Morning urine collection is preferred due to higher protein content.

Urinary Protein Preparation Protocol:

- Sample Collection and Preparation: Collect mid-stream morning urine (50-100mL). Centrifuge at 2,000 × g for 10 minutes to remove cells and debris. Aliquot supernatant and store at -80°C. Avoid multiple freeze-thaw cycles [19].

- Protein Concentration: Choose one method:

- Acetone Precipitation: Mix urine with 4 volumes cold acetone. Incubate at -20°C overnight. Centrifuge at 14,000 × g for 15 minutes. Discard supernatant, air-dry pellet.

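- Ultracentrifugation: Centrifuge at 100,000 × g for 60 minutes at 4°C. This step pellets extracellular vesicles and insoluble material rather than concentrating soluble protein; collect the supernatant for the soluble fraction, which still requires one of the other methods to concentrate it.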

- Lyophilization: Freeze urine at -80°C, then lyophilize to dryness. Reconstitute in 1/10 original volume with PBS [19].

- Protein Depletion and Enrichment: For abundant protein depletion, use multiple affinity removal system (MARS) columns specific for urine proteins. For low-abundance protein enrichment, employ strong cation exchange chromatography or combinatorial peptide ligand libraries [19].

- Protein Digestion and Clean-up: Dissolve proteins in 8M urea/100mM ammonium bicarbonate. Reduce, alkylate, and digest following similar protocol to plasma proteomics. Desalt peptides using C18 columns [19].

Proximal Fluids Proteomics

Technical Considerations and Protocols

Proximal fluids, derived from the extracellular milieu of specific tissues, contain proteins shed or secreted from tissue microenvironments. These fluids potentially enrich for disease-relevant biomarkers that may be diluted in systemic circulation. Examples include cerebrospinal fluid, synovial fluid, ascites, and pleural effusion [23]. The protein composition of proximal fluids typically has a less extreme dynamic range than plasma, facilitating detection of tissue-derived proteins.

Proximal Fluid Processing Protocol:

- Sample Collection: Collect fluid using clinical procedures specific to the fluid type (e.g., lumbar puncture for CSF, arthrocentesis for synovial fluid). Centrifuge at 2,000 × g for 10 minutes to remove cells and debris.

- Protein Concentration: Concentrate using centrifugal filtration devices (3-10kDa molecular weight cutoff) or precipitation methods. The choice depends on initial protein concentration and sample volume.

- Protein Digestion: Follow standard reduction, alkylation, and digestion protocols as described for plasma, adjusting buffer volumes according to sample size.

- LC-MS Analysis: Utilize DIA or DDA methods optimized for the specific complexity of the proximal fluid. CSF typically requires less extensive fractionation than plasma due to lower complexity.

The Scientist's Toolkit

Table 3: Essential research reagents and platforms for clinical proteomics

| Reagent/Platform | Function | Application Notes |

|---|---|---|

| Pressure Cycling Technology (PCT) | Integrated tissue lysis, protein extraction, and digestion [21] | Essential for small tissue biopsies; improves yield and reproducibility |

| Magnetic Nanoparticles (Seer Proteograph) | Dynamic range compression in plasma [17] | Enables detection of >3,000 plasma proteins; requires high initial investment |

| Immunoaffinity Depletion Columns (MARS-14) | Removal of high-abundance plasma proteins [17] | Standard approach for plasma proteome depth improvement |

| SWATH-MS | Data-independent acquisition for comprehensive proteome mapping [21] | Creates permanent digital proteome maps; enables retrospective analysis |

| Olink PEA | High-sensitivity multiplexed protein detection [17] [20] | Ideal for cytokine and low-abundance protein quantification |

| SomaScan | Aptamer-based proteomic platform [17] [20] | Highest multiplex capacity (up to 11,000 proteins); useful for biomarker discovery |

| ENRICHplus Beads (PreOmics) | Magnetic bead-based plasma protein enrichment [17] | Identifies >5,500 protein groups from 50μL plasma |

| Strong Cation Exchange (SCX) Chromatography | Fractionation and enrichment of basic peptides [19] | Particularly useful for phosphoproteome enrichment |

The selection of appropriate sample sources and optimized processing protocols is fundamental to successful clinical proteomics. Blood plasma and serum remain central to biomarker discovery despite analytical challenges posed by their extreme dynamic range. Tissue biopsies provide invaluable disease site information, with emerging technologies like PCT-SWATH enabling comprehensive analysis of minimal samples. Urine offers a completely non-invasive alternative with particular utility for renal and urological conditions, while proximal fluids enrich for tissue-specific biomarkers. Microsampling approaches are gaining traction for applications requiring frequent monitoring or remote collection. As proteomic technologies continue to advance with improved sensitivity, reproducibility, and multiplexing capabilities, the integration of multiple sample types will provide complementary insights, accelerating the translation of proteomic discoveries to clinical applications across diverse disease areas including cancer, autoimmune disorders, and renal diseases.

Advanced Proteomic Workflows: From Sample to Data

Within clinical proteomics, the success of biomarker identification and validation depends heavily on the initial quality of the sample. Inconsistent or suboptimal sample preparation introduces variability that can obscure true biological signals and compromise the reliability of downstream mass spectrometry analyses. This document provides detailed application notes and protocols for the preparation of three fundamental sample types in biomedical research: plasma, serum, and formalin-fixed paraffin-embedded (FFPE) tissues. FFPE tissue archives are a vast and largely untapped resource for retrospective biomarker discovery, and blood plasma and serum remain the most accessible biofluids for longitudinal studies, so standardizing their preparation is a critical step toward advancing precision medicine.

Blood Plasma and Serum Preparation

Fundamental Definitions and Collection Materials

The preparation of plasma and serum begins with the collection of whole blood, but the subsequent processing determines the final analyte composition.

- Serum is the liquid fraction of whole blood collected after allowing the blood to clot spontaneously. This process removes fibrinogen and other clotting factors, yielding a sample that is often less complex than plasma. [25]

- Plasma is produced when whole blood is collected in tubes containing an anticoagulant. The blood does not clot, and centrifugation removes the cellular components. Plasma thus retains the full complement of circulating proteins, including clotting factors. [25]

The choice of collection tube is critical and depends on the intended downstream analysis. The table below outlines the common tube types and their applications. [25]

Table 1: Blood Collection Tubes for Serum and Plasma Preparation

| Tube Color | Additive | Designated Sample Type | Notes on Use |

|---|---|---|---|

| Red | None | Serum | Allows blood to clot. |

| Red with black | Clot-activating gel | Serum | Gel forms a barrier between serum and clot during centrifugation. |

| Lavender | EDTA | Plasma | Chelates calcium to prevent clotting; common for proteomics. |

| Green | Heparin | Plasma | Can be contaminated with endotoxin, which may stimulate cytokine release. [25] |

| Blue | Citrate | Plasma | Binds calcium; often used in coagulation studies. |

| Grey/Yellow | Potassium Oxalate/Sodium Fluoride | Plasma | Fluoride inhibits glycolytic enzymes, often used for glucose assays. |

Step-by-Step Experimental Protocols

Serum Preparation Protocol

Materials: Whole blood collected in red-top or red/black-top serum tubes. [25]

- Collection: Collect whole blood into a serum tube.

- Clotting: Allow the blood to clot by leaving the tube undisturbed at room temperature for 15–30 minutes.

- Centrifugation: Centrifuge the tube at 1,000–2,000 x g for 10 minutes in a refrigerated centrifuge (2–8°C). The resulting supernatant is serum.

- Transfer: Using a clean Pasteur pipette, immediately transfer the clarified serum into a clean polypropylene tube. Maintain samples at 2–8°C during handling.

- Aliquoting and Storage: If the serum is not analyzed immediately, aliquot it into 0.5 mL portions to avoid repeated freeze-thaw cycles. Store and transport samples at –20°C or lower. [25]

Plasma Preparation Protocol

Materials: Whole blood collected in anticoagulant-treated tubes (e.g., lavender-top EDTA tubes). [25]

- Collection: Collect whole blood into a pre-treated tube (e.g., EDTA, citrate, or heparin) and invert gently to mix with the anticoagulant.

- Centrifugation: Centrifuge the tube at 1,000–2,000 x g for 10 minutes in a refrigerated centrifuge. For platelet-poor plasma, centrifuge at 2,000 x g for 15 minutes.

- Transfer: Carefully extract the supernatant (plasma) using a Pasteur pipette, taking care not to disturb the cell pellet. Maintain samples at 2–8°C.

- Aliquoting and Storage: Aliquot plasma into 0.5 mL portions and store at –20°C or lower. Avoid freeze-thaw cycles. [25]

Critical Note for Both Plasma and Serum: Samples that are hemolyzed (red blood cell rupture), icteric (high bilirubin), or lipemic (high lipids) can invalidate certain tests and should be noted. [25]
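The centrifugation steps above are specified in relative centrifugal force (× g), whereas many benchtop centrifuges are set in rpm. The conversion depends on the rotor radius, using the standard relation RCF = 1.118 × 10⁻⁵ × r (cm) × rpm². The sketch below applies it to a hypothetical rotor with a 10 cm radius; the radius is an assumption and should be replaced with the value for the rotor actually in use.

```python
from math import sqrt

RCF_CONSTANT = 1.118e-5  # relates RCF to rotor radius (cm) and speed (rpm)


def rcf_from_rpm(rpm, radius_cm):
    """Relative centrifugal force (x g) for a given speed and rotor radius."""
    return RCF_CONSTANT * radius_cm * rpm ** 2


def rpm_from_rcf(rcf, radius_cm):
    """Rotor speed (rpm) needed to reach a target RCF at the given radius."""
    return sqrt(rcf / (RCF_CONSTANT * radius_cm))


# Hypothetical rotor with a 10 cm radius.
for target in (1000, 2000):
    print(target, "x g ->", round(rpm_from_rcf(target, 10.0)), "rpm")
```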

The following workflow diagram summarizes the parallel paths for preparing serum and plasma from whole blood:

FFPE Tissue Preparation for Proteomics

Unlocking the Archive: The Value of FFPE Tissues

FFPE tissue archives represent the most extensive repository of preserved human biological specimens worldwide, encompassing billions of samples with decades of linked clinical data. [26] Their value for proteomics is immense, enabling retrospective biomarker validation across diverse patient populations and rare diseases. Recent advances have demonstrated that robust, high-resolution quantitative proteomics is possible from FFPE cardiac tissue, quantifying approximately 4,000-5,000 proteins per sample with minimal variation introduced by the fixation process itself (median variance ~1.1%). [27] This establishes FFPE tissue as a viable and powerful resource for clinical proteomics.

Critical Steps in FFPE Tissue Proteomics Workflow

The key challenge in FFPE proteomics is reversing the formalin-induced protein crosslinks that preserve the tissue, while efficiently removing paraffin to allow for effective protein extraction and digestion.

- Deparaffinization: This initial step involves removing the paraffin embedding matrix. Modern, scalable protocols are often xylene-free, using heat-based methods to melt and remove paraffin in a higher-throughput, safer manner. [28]

- Decrosslinking and Protein Extraction: A critical optimization step, this process uses specialized buffers and heat to break the methylene bridges formed by formalin fixation, thereby solubilizing proteins for extraction. The efficiency of this step directly impacts proteome depth, with optimized workflows successfully retrieving even proteins from specialized compartments like the plasma membrane. [27]

- Protein Digestion and Cleanup: The extracted proteins are digested into peptides (typically with trypsin) and desalted before mass spectrometry analysis. For comprehensive coverage, fractionation at high pH can be performed to reduce sample complexity. [27]

The following diagram illustrates the core workflow for preparing FFPE tissues for proteomic analysis:

Mass Spectrometry Acquisition Strategies for FFPE Proteomics

The choice of mass spectrometry acquisition method is determined by the goals of the study, balancing depth of coverage, quantitative accuracy, and throughput.

Table 2: Comparison of Mass Spectrometry Acquisition Methods for FFPE Proteomics

| Acquisition Method | Typical Proteins Quantified | Key Strengths | Ideal Use Case |

|---|---|---|---|

| TMT Multiplexing with DDA [27] | ~5,900 proteins (with fractionation) | High proteome depth; allows multiplexing of several samples. | In-depth discovery studies with a limited number of samples. |

| Label-Free DIA (diaPASEF) [27] | ~4,000 proteins (single-shot) | Minimal missing values; excellent reproducibility; highly scalable for large cohorts. | Large-scale retrospective studies and clinical cohort profiling. |

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful sample preparation relies on the use of specific, high-quality reagents and materials. The following table details essential items for the protocols described in this document.

Table 3: Essential Research Reagent Solutions for Sample Preparation

| Item | Function/Application | Key Considerations |

|---|---|---|

| EDTA Blood Collection Tubes (Lavender Top) [25] | Collects plasma by chelating calcium to prevent coagulation. | Preferred for many proteomic applications due to minimal interference. |

| Serum Tubes (Red Top) [25] | Collects serum by allowing blood to clot. | The clot-activating gel in red/black-top tubes can aid separation. |

| Pasteur Pipettes [25] | Transfer of supernatant (serum/plasma) after centrifugation. | Critical for avoiding disturbance of the cell pellet during transfer. |

| Optimized FFPE Lysis Buffer [27] | Decrosslinks formalin-induced bonds and extracts proteins from FFPE scrolls. | Composition is key for efficient protein retrieval, especially membrane proteins. |

| Tandem Mass Tags (TMT) [27] | Multiplexes peptide samples for relative quantification in MS. | Increases throughput and reduces instrument time for discovery studies. |

| High-pH Reversed-Phase Chromatography Kit [27] | Fractionates complex peptide mixtures to reduce sample complexity. | Significantly increases proteome coverage and depth in discovery modes. |

| Data-Independent Acquisition (DIA) Kits | Enables comprehensive, reproducible quantification in MS. | Ideal for large cohort studies; creates permanent digital proteome maps. [26] |

Standardized and meticulously executed sample preparation is the non-negotiable foundation of robust clinical proteomics. The protocols detailed here for plasma, serum, and FFPE tissues provide a roadmap to generate high-quality data from these invaluable sample types. By leveraging the vast archives of FFPE tissues with modern, optimized workflows, researchers can now unlock decades of clinical data for proteomics-driven disease characterization and patient stratification. As the field moves forward, the integration of artificial intelligence and multi-omics approaches with these solid foundational practices will further accelerate the discovery of novel biomarkers and the advancement of precision medicine. [27] [29]

In clinical proteomics, the identification of robust biomarkers for diseases such as acute myocardial infarction, lung adenocarcinoma, and various autoimmune conditions depends critically on the effective separation and analysis of proteins from complex biological samples [30] [31] [8]. The dynamic nature of the proteome, with its vast concentration range and numerous post-translational modifications (PTMs), presents a significant analytical challenge [32]. Two-dimensional gel electrophoresis (2D-GE) and liquid chromatography (LC), often coupled with mass spectrometry (MS), are two foundational techniques employed for this purpose. This article details the application, protocols, and key considerations of these techniques within a clinical proteomics workflow for biomarker discovery.

Technique 1: Two-Dimensional Gel Electrophoresis (2D-GE)

Principles and Clinical Applications

Two-dimensional gel electrophoresis separates complex protein mixtures based on two independent physicochemical properties: isoelectric point (pI) in the first dimension and molecular weight (MW) in the second dimension [33]. This orthogonality allows for the resolution of thousands of proteins, including different proteoforms resulting from PTMs like phosphorylation and glycosylation, which can cause observable shifts in protein migration [34] [33]. In clinical proteomics, 2D-GE is particularly valuable for visually detecting alterations in protein expression levels and PTM patterns between healthy and diseased states, making it applicable in cancer research, studies of cell differentiation, and the discovery of disease biomarkers [34] [33]. Its primary strength is that it separates and visualizes intact (although denatured) proteins rather than peptides, so each spot is a close proxy for a proteoform actually present in the sample [34].

Detailed Experimental Protocol for 2D-GE

Sample Preparation:

- Protein Extraction: Solubilize proteins from tissues (e.g., brain or tumor biopsies) or biofluids (e.g., serum) using a lysis buffer containing 8 M urea, 2 M thiourea, 4% CHAPS, and 30 mM Tris-HCl to denature proteins and maintain solubility [34] [35].

- Reduction and Alkylation: Reduce disulfide bonds with 1 mM DTT at 37°C for 1 hour. Subsequently, alkylate free thiol groups with 5 mM iodoacetamide in the dark for 1 hour to prevent the disulfide bonds from re-forming.

- Clean-up: Precipitate proteins using cold acetone or a commercial kit to remove contaminants like salts, nucleic acids, and lipids that can interfere with isoelectric focusing (IEF) [35]. Re-dissolve the cleaned protein pellet in a rehydration buffer compatible with IEF.

First Dimension - Isoelectric Focusing (IEF):

- Rehydration: Apply the protein sample (typically 50-100 µg for analytical gels, up to 1 mg for preparative gels) to immobilized pH gradient (IPG) strips (e.g., pH 3-11 nonlinear) via active or passive rehydration for 12-16 hours [33] [35].

- Focusing: Perform IEF using a programmed voltage gradient on an IEF device. A typical protocol for an 11 cm strip might include a step-and-hold regimen: 500 V for 1 hour, 1000 V for 1 hour, and 8000 V until 35,000 V-hr is reached, all at 20°C [35].
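The duration of the final focusing step follows from the volt-hour target. The sketch below estimates it for the step-and-hold program described above. It assumes that the 35,000 V-hr figure is a cumulative total across all steps and that voltage changes are instantaneous (no ramping); both are simplifying assumptions.

```python
def final_step_hours(steps, final_voltage, target_volt_hours):
    """Hours needed at final_voltage to reach a cumulative volt-hour target."""
    accumulated = sum(volts * hours for volts, hours in steps)
    return max(target_volt_hours - accumulated, 0) / final_voltage


# (voltage, hours) for the first two hold steps in the protocol above.
hours = final_step_hours([(500, 1), (1000, 1)],
                         final_voltage=8000, target_volt_hours=35_000)
print(f"{hours:.2f} h at 8000 V")
```

The first two steps contribute 1,500 V-hr, so roughly 4.2 hours at 8000 V are needed to reach the target under these assumptions.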

Second Dimension - SDS-PAGE:

- Strip Equilibration: After IEF, equilibrate the IPG strips in two steps. First, incubate in equilibration buffer (6 M Urea, 75 mM Tris-HCl pH 8.8, 30% glycerol, 2% SDS) containing 1% DTT for 15 minutes to reduce proteins. Second, incubate in the same buffer containing 2.5% iodoacetamide for 15 minutes to alkylate them.

- Gel Casting/Loading: Use pre-cast or hand-cast polyacrylamide gels (e.g., 8-16% gradient gels) for the second dimension. Place the equilibrated IPG strip onto the top of the SDS-PAGE gel.

- Electrophoresis: Run the gel in an electrophoresis chamber filled with running buffer (25 mM Tris, 192 mM glycine, 0.1% SDS). Apply a constant voltage (e.g., 100 V) until the dye front migrates to the bottom of the gel [36].

Protein Visualization and Analysis:

- Staining: Visualize separated proteins using sensitive stains like silver nitrate, Coomassie Brilliant Blue, or fluorescent dyes (e.g., SYPRO Ruby) [34] [36].

- Image Acquisition and Analysis: Scan the gel using a digital imaging system. Use specialized software to detect protein spots, match spots across different gels, and quantify differential expression [36].

- Spot Excision and Identification: Excise protein spots of interest robotically or manually. Digest the proteins within the gel plugs with trypsin, extract the resulting peptides, and identify them by mass spectrometry [33].
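
The differential-expression step can be illustrated with a small sketch. It assumes that spot volumes have already been exported from the image-analysis software and matched across gels, normalizes each spot to the total spot volume of its gel (a common convention, not one the protocol above prescribes), and reports log2 fold changes. Spot identifiers and values are hypothetical.

```python
import math


def normalize(spot_volumes):
    """Express each spot as a fraction of the gel's total spot volume."""
    total = sum(spot_volumes.values())
    return {spot: volume / total for spot, volume in spot_volumes.items()}


def log2_fold_changes(control, disease):
    """log2(disease / control) for spots matched on both gels.

    Inputs map spot IDs to raw, strictly positive spot volumes.
    """
    control_norm = normalize(control)
    disease_norm = normalize(disease)
    return {
        spot: math.log2(disease_norm[spot] / control_norm[spot])
        for spot in control_norm
        if spot in disease_norm
    }


if __name__ == "__main__":
    control_gel = {"spot_1": 100.0, "spot_2": 100.0}
    disease_gel = {"spot_1": 300.0, "spot_2": 100.0}
    for spot, change in log2_fold_changes(control_gel, disease_gel).items():
        print(spot, round(change, 3))
```

Spots that pass a chosen fold-change threshold across replicate gels would then be candidates for excision; the threshold and the replicate statistics are study-specific and are not shown here.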

2D-GE Workflow Visualization

Diagram 1: The sequential workflow for two-dimensional gel electrophoresis (2D-GE), from sample preparation to protein identification.

Technique 2: Liquid Chromatography (LC) in Proteomics

Principles and Clinical Applications

Liquid chromatography, particularly when coupled with tandem mass spectrometry (LC-MS/MS), is the cornerstone of modern bottom-up proteomics [32]. In this approach, complex protein mixtures are digested into peptides, which are then separated by LC based on properties like hydrophobicity (in reverse-phase LC) or charge (in ion-exchange LC) before being introduced into the mass spectrometer [32]. Multidimensional LC (MDLC) platforms, such as the combination of strong cation exchange (SCX) and reverse-phase liquid chromatography (RPLC), significantly increase peak capacity and resolution, enabling the deep profiling of complex proteomes like human plasma or tissue lysates [32] [37]. LC-MS/MS is well suited to biomarker verification in clinical proteomics because of its high throughput, its sensitivity, and its amenability to full automation [31] [38]. It is the method of choice for targeted, absolute quantification of specific protein biomarkers, as demonstrated for cardiac troponin I (cTnI), and for large-scale, untargeted discovery studies [30] [31].

Detailed Experimental Protocol for MDLC-MS/MS

Sample Preparation for Bottom-Up Proteomics:

- Protein Digestion: Denature and reduce proteins from serum or tissue lysates. Alkylate cysteine residues. Digest the protein mixture into peptides using trypsin (typically at a 1:50 enzyme-to-protein ratio) overnight at 37°C [30].

- Peptide Clean-up: Desalt and concentrate the peptide mixture using C18 solid-phase extraction (SPE) cartridges. Elute peptides in a solvent compatible with the first LC dimension, then dry down and reconstitute in the appropriate loading buffer.
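
The digestion step can be mimicked in silico to see which peptides a protein should yield. The sketch below applies the commonly used trypsin rule (cleave C-terminal to lysine or arginine unless the next residue is proline) and optionally allows missed cleavages. The sequence is a made-up example, and the function is a simplified illustration rather than the rule set of any particular search engine.

```python
def tryptic_peptides(sequence, missed_cleavages=0):
    """Return peptides from an in-silico tryptic digest.

    Cleaves after K or R unless the following residue is P. With
    missed_cleavages > 0, peptides spanning up to that many uncut
    sites are also returned.
    """
    if not sequence:
        return []
    # Index positions at which the chain is cut (between i and i + 1).
    sites = [
        i + 1
        for i, residue in enumerate(sequence[:-1])
        if residue in "KR" and sequence[i + 1] != "P"
    ]
    bounds = [0] + sites + [len(sequence)]
    peptides = []
    for start in range(len(bounds) - 1):
        for extra in range(missed_cleavages + 1):
            end = start + 1 + extra
            if end >= len(bounds):
                break
            peptides.append(sequence[bounds[start]:bounds[end]])
    return peptides


if __name__ == "__main__":
    example = "AAKRPGGRLLK"  # hypothetical sequence
    print(tryptic_peptides(example))
    print(tryptic_peptides(example, missed_cleavages=1))
```

Allowing one or two missed cleavages is a typical database-search setting, since overnight digestion is rarely complete.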

First Dimension - Fractionation (Off-line or On-line):

- Off-line SCX Fractionation: Load the peptide mixture onto a strong cation exchange column. Elute peptides using a linear salt gradient (e.g., 0-500 mM KCl or NaCl) over 60-90 minutes. Collect fractions at regular time intervals (e.g., 1-2 minutes/fraction) [37].

- Desalting of Fractions: Desalt each SCX fraction individually using C18 StageTips or a 96-well SPE plate to remove salts before the second dimension.
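
The fractionation parameters determine how many samples reach the second dimension. This sketch, assuming an ideal linear gradient with no delay volume, lists the fractions and the approximate salt concentration at the midpoint of each collection window; the function name and defaults are illustrative.

```python
def scx_fractions(gradient_min, fraction_min, start_mm=0.0, end_mm=500.0):
    """Return (fraction_number, midpoint_salt_mM) for a linear gradient.

    Assumes an ideal linear gradient and ignores system delay volume,
    so real elution concentrations will lag these values.
    """
    n_fractions = int(gradient_min // fraction_min)
    slope = (end_mm - start_mm) / gradient_min  # mM per minute
    return [
        (i + 1, start_mm + slope * (i + 0.5) * fraction_min)
        for i in range(n_fractions)
    ]


if __name__ == "__main__":
    fractions = scx_fractions(gradient_min=60, fraction_min=2)
    print(len(fractions), "fractions")
    for number, salt in fractions[:3]:
        print(number, round(salt, 1), "mM")
```

Because each fraction is desalted and injected separately, the fraction count multiplies second-dimension instrument time, which is why adjacent fractions are often pooled when throughput matters more than depth.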

Second Dimension - Reverse-Phase LC-MS/MS:

- LC Separation: Reconstitute each fraction in a low-organic solvent (e.g., 2% acetonitrile, 0.1% formic acid). Inject onto a C18 reverse-phase nanoLC or capillary LC column. Separate peptides using a gradient from 2% to 35% acetonitrile over 30-120 minutes, depending on the desired throughput and depth of analysis [37] [38].

- MS Analysis: The eluting peptides are ionized by electrospray ionization and introduced into a tandem mass spectrometer. Operate the MS in data-dependent acquisition (DDA) or data-independent acquisition (DIA/SWATH) mode. In DDA, the instrument continuously acquires MS1 survey scans and selects the most intense precursor ions for fragmentation and MS2 analysis [38].
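
The precursor selection logic of DDA can be sketched in a few lines. The example below picks the N most intense peaks from one MS1 survey scan and skips m/z values on an exclusion list. The exclusion list, the tolerance, and the peak values are assumptions added for illustration; instrument vendors implement this selection in firmware with additional criteria such as charge state and intensity thresholds.

```python
def select_precursors(ms1_peaks, top_n, excluded_mz=(), tolerance=0.01):
    """Choose precursors for MS2 from one MS1 survey scan.

    ms1_peaks: list of (mz, intensity) tuples.
    excluded_mz: m/z values fragmented recently (dynamic exclusion,
        an assumption not described in the protocol above).
    Returns the m/z values of the top_n most intense eligible peaks.
    """
    eligible = [
        (mz, intensity)
        for mz, intensity in ms1_peaks
        if not any(abs(mz - ex) <= tolerance for ex in excluded_mz)
    ]
    eligible.sort(key=lambda peak: peak[1], reverse=True)
    return [mz for mz, _ in eligible[:top_n]]


if __name__ == "__main__":
    survey = [(400.2, 1e5), (512.3, 5e5), (633.8, 2e5), (745.4, 8e4)]
    first = select_precursors(survey, top_n=2)
    print(first)
    # On the next cycle, the precursors just fragmented are excluded.
    print(select_precursors(survey, top_n=2, excluded_mz=first))
```

This intensity-driven selection is what biases DDA toward abundant peptides; DIA avoids it by fragmenting all precursors within predefined m/z windows.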

Data Analysis:

- Protein Identification and Quantification: Search the resulting MS/MS spectra against a protein sequence database using search engines or software platforms such as SEQUEST, Mascot, or MaxQuant (which uses the Andromeda search engine). For quantitative studies, use label-free or isobaric label-based (e.g., TMT, iTRAQ) quantification methods supported by the software [34] [32].

LC-MS/MS Workflow Visualization

Diagram 2: The workflow for multidimensional liquid chromatography coupled with tandem mass spectrometry (MDLC-MS/MS) in a bottom-up proteomics approach.

Comparative Analysis of 2D-GE and LC

The choice between 2D-GE and LC-MS hinges on the specific goals of the clinical proteomics study. Table 1 summarizes the key characteristics of both techniques to guide researchers in selecting the most appropriate method.

Table 1: Comparative analysis of 2D-GE and LC-MS for clinical proteomics applications.

| Feature | 2D-Gel Electrophoresis (2D-GE) | Liquid Chromatography-Mass Spectrometry (LC-MS) |
|---|---|---|
| Analytical Principle | Separation of intact proteins by charge (pI) and molecular weight (SDS-PAGE). | Separation of digested peptides by hydrophobicity/charge (LC) followed by mass-to-charge ratio (MS). |
| Throughput | Lower throughput; process is labor-intensive and difficult to automate fully [32]. | High throughput; fully automatable, especially in online MDLC setups [38]. |
| Dynamic Range | Limited (~3-4 orders of magnitude); abundant proteins can obscure low-abundance ones [32]. | Superior (~4-6 orders of magnitude); enhanced by fractionation and advanced MS [32]. |
| Sensitivity | Low µg range for detection with standard stains [36]. | High (amol-zmol range); capable of detecting low-abundance biomarkers [31] [38]. |
| Ability to Resolve PTMs/Proteoforms | Excellent. Directly visualizes protein shifts due to PTMs (e.g., phosphorylation, glycosylation) [34] [33]. | Indirect. Inferred from peptide mass shifts or specific MS fragmentation; requires specialized enrichment for comprehensive analysis. |
| Protein Hydrophobicity Handling | Poor for very hydrophobic proteins (e.g., membrane proteins) [32]. | Good, especially with optimized solvents and chromatography [32]. |
| Quantification | Relative quantification based on spot staining intensity (e.g., DIGE) [34]. | Highly accurate relative and absolute quantification using label-free or isotope-labeling methods [30] [38]. |
| Ideal Clinical Application | Discovery of proteoforms and PTM-based biomarkers; analysis of protein isoforms [34] [35]. | High-throughput biomarker discovery and verification; targeted, absolute quantification of specific biomarkers [30] [31] [8]. |

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of 2D-GE and LC-MS protocols relies on a suite of essential reagents and instruments. Table 2 lists key solutions and their functions in the clinical proteomics workflow.

Table 2: Key research reagent solutions and materials for protein separation techniques.

| Reagent / Material | Function in Protocol |
|---|---|
| Immobilized pH Gradient (IPG) Strips | Used in the first dimension of 2D-GE to separate proteins based on their isoelectric point across a defined pH range [33]. |
| Urea, Thiourea, CHAPS Detergent | Key components of lysis and rehydration buffers for 2D-GE; denature proteins and maintain solubility during IEF [35]. |
| Dithiothreitol (DTT) & Iodoacetamide | Reducing and alkylating agents, respectively. DTT breaks disulfide bonds; iodoacetamide alkylates cysteine thiols to prevent reformation [30] [35]. |
| Trypsin (Protease) | Enzyme used in bottom-up proteomics to digest proteins into peptides for LC-MS/MS analysis [30] [32]. |
| Stable Isotope-Labeled (SIL) Peptides/Proteins | Internal standards added to samples for precise absolute quantification in targeted LC-MS/MS assays (e.g., for cardiac troponin I) [30]. |
| C18 Reverse-Phase LC Columns | The most common stationary phase for peptide separation in the second dimension of LC-MS, separating peptides based on hydrophobicity [32] [38]. |
| Strong Cation Exchange (SCX) Resin | Stationary phase for the first dimension in MDLC; separates peptides based on their net positive charge [32] [37]. |
| Mass Spectrometer (e.g., Q-TOF, Orbitrap) | The detection system for LC-MS; identifies and quantifies peptides based on their mass-to-charge ratio and fragmentation patterns [31] [38]. |

Both 2D-GE and LC-MS are powerful, yet complementary, techniques in the clinical proteomics pipeline. 2D-GE remains invaluable for the direct visualization and analysis of intact proteoforms and PTMs, while LC-MS offers superior sensitivity, dynamic range, and throughput for large-scale biomarker discovery and validation. The choice between them should be guided by the specific clinical question, the sample type, and the resources available. As proteomics continues to advance towards precision medicine, the integration of data from these and other emerging platforms will be crucial for developing robust diagnostic assays and understanding disease mechanisms at the molecular level.

In the field of clinical proteomics, the identification of protein biomarkers for diseases such as multisystem inflammatory syndrome in children (MIS-C) or idiopathic pulmonary fibrosis (IPF) relies heavily on advanced mass spectrometry (MS) techniques [39] [40]. Two soft ionization methods—Matrix-Assisted Laser Desorption/Ionization (MALDI) and Electrospray Ionization (ESI)—have become cornerstone technologies for profiling complex biological samples, enabling the precise characterization of proteins, peptides, and other biomolecules [41]. These techniques allow for the ionization of fragile, high molecular weight molecules with minimal fragmentation, making them particularly suitable for clinical proteomics applications where sample integrity is paramount [41] [42]. The continual refinement of these ionization sources, coupled with increasingly sophisticated mass analyzers and machine learning algorithms for data analysis, has significantly advanced the precision and scope of biomarker discovery, paving the way for improved diagnostic and prognostic tools in medical practice [41] [43] [39].

Technology Fundamentals: MALDI and ESI

Principle of Matrix-Assisted Laser Desorption/Ionization (MALDI)

MALDI is a soft ionization technique that uses a laser energy-absorbing matrix to facilitate the desorption and ionization of analyte molecules from a solid sample preparation [42]. The process involves three critical steps: first, the sample is mixed with a suitable matrix material (e.g., trans-2-[3-(4-tert-butylphenyl)-2-methyl-2-propenylidene]malononitrile) and applied to a metal plate, forming crystals upon drying; second, a pulsed laser beam (typically at 337 nm, 349 nm, or 355 nm) impinges on the sample, causing desorption of the sample and matrix material; and finally, the analyte molecules are ionized via protonation or deprotonation in the hot plume of ablated gases [42]. A key characteristic of MALDI is that it typically produces ions with a net single charge, which simplifies mass spectrum interpretation and enables straightforward determination of molecular mass for most compounds [41] [42]. This technique has found extensive application in the analysis of biomolecules including proteins, peptides, DNA, polysaccharides, and synthetic polymers [42].

Principle of Electrospray Ionization (ESI)

Electrospray ionization (ESI) is a soft ionization technique that generates ions directly from liquid samples [41]. A solution containing the analyte is passed through a needle held at high voltage, producing a fine aerosol of charged droplets [41]. As solvent evaporates from these droplets in the strong electric field, their charge density rises until Coulombic repulsion overcomes surface tension, and the droplets break apart, ultimately releasing gas-phase ions [41]. A distinctive feature of ESI is its ability to yield multiply charged ions, which is particularly beneficial for the detection and analysis of high molecular weight substances such as proteins and protein complexes [41]. Because multiple charging lowers the mass-to-charge ratio of large molecules, it extends the effective mass range of the mass analyzer; and because ESI operates on a flowing liquid, it couples readily with liquid chromatography (LC) systems for complex mixture analysis [41].
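
The practical consequence of single versus multiple charging can be shown with the standard relation m/z = (M + z·m_p)/z for positive-mode protonation, where m_p is the proton mass. The sketch below computes an ESI charge-state series for a hypothetical 17,000 Da protein, compares it with the singly charged ion expected from MALDI, and recovers the neutral mass from two adjacent ESI peaks. Function names and the example mass are illustrative assumptions.

```python
PROTON_MASS = 1.007276  # Da


def mz_of(neutral_mass, charge):
    """m/z of an ion carrying `charge` protons (positive mode)."""
    return (neutral_mass + charge * PROTON_MASS) / charge


def mass_from_adjacent_peaks(mz_lower_charge, mz_higher_charge):
    """Recover charge and neutral mass from two adjacent ESI peaks.

    mz_lower_charge carries z protons and mz_higher_charge carries
    z + 1, so mz_lower_charge > mz_higher_charge.
    Returns (z, neutral_mass).
    """
    z = round(
        (mz_higher_charge - PROTON_MASS)
        / (mz_lower_charge - mz_higher_charge)
    )
    return z, z * (mz_lower_charge - PROTON_MASS)


if __name__ == "__main__":
    protein_mass = 17_000.0  # hypothetical protein
    print("MALDI [M+H]+ :", round(mz_of(protein_mass, 1), 3))
    for z in (10, 11, 12):
        print(f"ESI z={z}:", round(mz_of(protein_mass, z), 3))
    z, mass = mass_from_adjacent_peaks(
        mz_of(protein_mass, 10), mz_of(protein_mass, 11)
    )
    print("recovered:", z, round(mass, 3))
```

The singly charged ion sits near m/z 17,001, whereas the ESI series falls between roughly m/z 1,400 and 1,700, within reach of analyzers with a limited m/z range.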

Comparative Analysis of MALDI and ESI Technologies

The selection between MALDI and ESI for clinical proteomics applications requires careful consideration of their respective technical characteristics, advantages, and limitations, as summarized in Table 1.

Table 1: Comprehensive Comparison of MALDI and ESI Technologies for Clinical Proteomics

| Parameter | MALDI | ESI |

|---|---|---|

| Charge State | Primarily single-charged ions [41] | Multiply charged ions [41] |