Biomarker Validation with Triple Quadrupole Mass Spectrometry: A Complete Guide from Discovery to Clinical Application



Abstract

This article provides a comprehensive resource for researchers and drug development professionals on the application of triple quadrupole (QqQ) mass spectrometry in biomarker validation. It covers foundational principles of QqQ instrumentation and Selected Reaction Monitoring (SRM), explores methodological workflows for developing robust quantitative assays, addresses critical troubleshooting and optimization strategies to enhance sensitivity and specificity, and examines validation frameworks and comparative analyses with alternative platforms. The content synthesizes current trends, including the growing adoption of QqQ in clinical applications and its role in translating biomarker candidates into validated clinical tests.

The Triple Quadrupole Foundation: Core Principles and Its Central Role in the Biomarker Pipeline

Core Components and Operating Principle

The triple quadrupole mass spectrometer (TQMS), often denoted as the QqQ configuration, is a tandem mass spectrometer consisting of two mass-resolving quadrupoles (Q1 and Q3) separated by a non-mass-resolving radio frequency (RF)–only quadrupole that acts as a collision cell (q2 or Q2) [1] [2]. This instrumental setup operates on the principle of tandem-in-space mass spectrometry, where different mass-selective processes occur sequentially in separate physical regions of the instrument [1].

The fundamental operation involves ionization of the sample, primary mass selection in Q1, collision-induced dissociation (CID) in Q2, mass analysis of the resulting fragments in Q3, and finally, detection [1]. The system's robustness, sensitivity, and quantitative accuracy have cemented its role as a cornerstone technology in modern analytical chemistry, particularly in biomedical research and clinical applications, where its use has grown two- to threefold this decade [3] [4].

Function of Individual Quadrupoles

Q1: The First Mass Filter The first quadrupole (Q1) serves as the primary mass-to-charge (m/z) selector. It consists of four parallel, cylindrical metal rods controlled by a superposition of direct current (DC) and radio-frequency (RF) voltages [1] [2]. By precisely varying these RF and DC potentials, Q1 can be tuned to allow only ions of a single, specific m/z value to pass through to the next stage while rejecting all others [2] [5]. This selective transmission provides the first stage of mass filtration, isolating the precursor ion of interest for subsequent fragmentation and analysis.

Q2: The Collision Cell The second quadrupole (Q2 or q) functions as a collision cell and is typically operated with only an RF voltage, meaning it does not perform mass resolution [1] [2]. Its primary role is to induce fragmentation of the precursor ions selected by Q1. This is achieved through collision-induced dissociation (CID), where the precursor ions collide with an inert gas such as argon, nitrogen, or helium [1] [5]. These collisions convert kinetic energy into internal energy, causing the precursor ions to break apart into characteristic fragment or product ions [2]. In some modern instruments, the normal quadrupole collision cell has been replaced by hexapole or octopole cells to improve ion transmission efficiency [1].

Q3: The Second Mass Filter The third quadrupole (Q3) is the second mass-resolving analyzer, structurally identical to Q1 and also controlled by DC and RF potentials [1] [2]. It receives the product ions generated in Q2 and performs the final stage of mass analysis. Depending on the analytical mode, Q3 can be set to scan the entire m/z range of the fragments, monitor for a specific product ion, or correlate its scanning with Q1 [2]. This second stage of mass filtration is key to the exceptional selectivity of the triple quadrupole.

Table: Core Functions of the Triple Quadrupole Components

| Component | Common Designation | Primary Function | Key Operational Characteristic |
|---|---|---|---|
| First Quadrupole | Q1 | Primary mass selection; filters precursor ions | DC and RF voltages filter by m/z [1] [2] |
| Second Quadrupole | Q2 or q | Collision cell; fragments precursor ions | RF-only field; contains collision gas (e.g., N₂, Ar) [1] [5] |
| Third Quadrupole | Q3 | Secondary mass analysis; filters product ions | DC and RF voltages filter fragment ions by m/z [1] [2] |

Operational Scan Modes

The power and versatility of the triple quadrupole mass spectrometer are realized through its diverse scan modes. By independently controlling the mass-selective functions of Q1 and Q3, the instrument can be configured for various experiments tailored to qualitative identification or highly sensitive quantification [1] [2]. The most common scan modes include Product Ion Scan, Precursor Ion Scan, Neutral Loss Scan, and Selected/Multiple Reaction Monitoring.

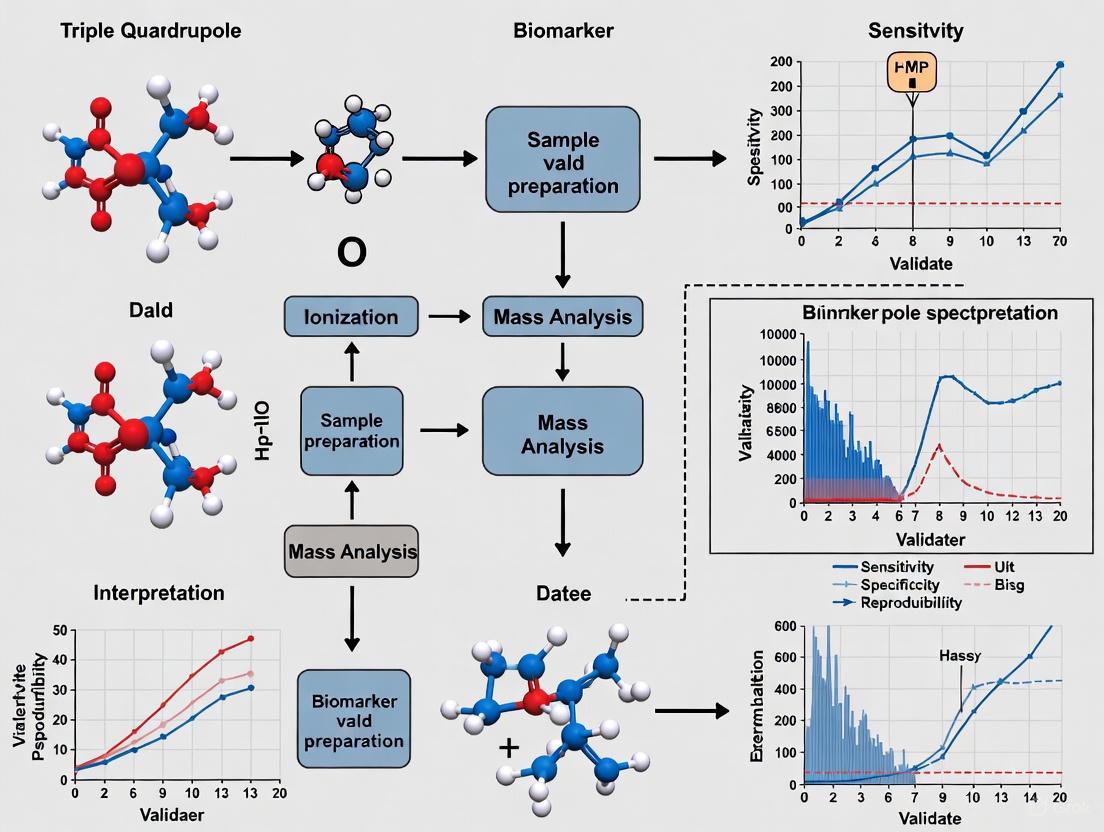

Triple Quadrupole Operational Flow

Key Analytical Modes

Product Ion Scan In this mode, Q1 is set to select a specific precursor ion of a known mass, which is then fragmented in Q2. Q3 is set to scan across a range of m/z values to record all the resulting product ions. This scan provides a characteristic fragmentation pattern, which is invaluable for structural elucidation of the original ion and is commonly used to identify optimal ion transitions for quantitative methods [1] [5].

Precursor Ion Scan A precursor ion scan involves setting Q3 to monitor for a specific product ion that is characteristic of a particular functional group or molecular moiety. Q1 then scans a wide m/z range. Any precursor ion that fragments to produce the characteristic product ion monitored in Q3 is identified. This mode is highly selective for recognizing ions sharing a common structural feature in a complex mixture [1].

Neutral Loss Scan In a neutral loss scan, both Q1 and Q3 are scanned simultaneously but with a constant mass offset between them. This configuration selectively detects all ions that lose a specific neutral fragment (e.g., H₂O, NH₃, CO₂) during fragmentation in Q2. Like the precursor ion scan, this mode is useful for selectively identifying closely related compounds within a sample [1] [5].

Selected Reaction Monitoring (SRM) / Multiple Reaction Monitoring (MRM) This is the most widely used mode for high-sensitivity quantification. In SRM/MRM, both Q1 and Q3 are fixed at specific m/z values to monitor a single, defined transition from a specific precursor ion to a specific product ion [1] [2]. This dual mass-filtering process provides exceptional selectivity and dramatically reduces chemical noise, leading to significantly lower limits of detection and quantification. When multiple such transitions are monitored in a single run, it is termed Multiple Reaction Monitoring (MRM), enabling high-throughput, multiplexed quantification of dozens of analytes simultaneously [1] [2]. The specificity is often enhanced by monitoring two mass transitions for a single analyte: a quantifier ion for concentration measurement and a qualifier ion to confirm the analyte's identity [2].
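The quantifier/qualifier check described above can be sketched in a few lines of Python. This is only an illustration, not a vendor or regulatory procedure: the function names, the example peak areas, and the 20% relative tolerance are all assumptions chosen for the example.

```python
def ion_ratio(qualifier_area: float, quantifier_area: float) -> float:
    """Return the qualifier-to-quantifier peak area ratio."""
    if quantifier_area <= 0:
        raise ValueError("quantifier area must be positive")
    return qualifier_area / quantifier_area


def ion_ratio_ok(sample_ratio: float, reference_ratio: float,
                 rel_tolerance: float = 0.20) -> bool:
    """Check that a sample's ion ratio lies within a relative tolerance
    of a reference ratio (e.g., one measured from a pure standard).

    The 20% default is an illustrative assumption, not a fixed rule.
    """
    return abs(sample_ratio - reference_ratio) <= rel_tolerance * reference_ratio


# Hypothetical peak areas in arbitrary units.
reference = ion_ratio(qualifier_area=4_000, quantifier_area=10_000)
clean_sample = ion_ratio(qualifier_area=2_100, quantifier_area=5_000)
interfered = ion_ratio(qualifier_area=4_500, quantifier_area=5_000)

print(ion_ratio_ok(clean_sample, reference))  # ratio close to the reference
print(ion_ratio_ok(interfered, reference))    # ratio distorted, e.g. by a co-eluting interference
```

A ratio that drifts far from the reference suggests that one of the two transitions is picking up signal from something other than the analyte.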

Table: Summary of Triple Quadrupole Scan Modes

| Scan Mode | Q1 Function | Q3 Function | Primary Application |
|---|---|---|---|
| Product Ion Scan | Selects single m/z | Scans a mass range | Structural elucidation, method development [1] [5] |
| Precursor Ion Scan | Scans a mass range | Selects single m/z | Selective detection of ions with a common fragment [1] |
| Neutral Loss Scan | Scans | Scans with fixed offset | Selective detection of ions that lose a common neutral group [1] |
| SRM/MRM | Selects single m/z | Selects single m/z | Highly sensitive and selective target quantification [1] [2] |
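The four scan modes differ only in whether Q1 and Q3 are held fixed or scanned, which can be captured in a small lookup structure. The sketch below is a hypothetical model for illustration and does not correspond to any instrument control API; the `ScanMode` class and the `neutral_loss_q3_mz` helper are invented names, and the helper assumes the fragment keeps the precursor's charge.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ScanMode:
    name: str
    q1: str  # "fixed" or "scan"
    q3: str  # "fixed", "scan", or "scan_offset"


SCAN_MODES = {
    "product_ion": ScanMode("Product Ion Scan", q1="fixed", q3="scan"),
    "precursor_ion": ScanMode("Precursor Ion Scan", q1="scan", q3="fixed"),
    "neutral_loss": ScanMode("Neutral Loss Scan", q1="scan", q3="scan_offset"),
    "srm": ScanMode("SRM/MRM", q1="fixed", q3="fixed"),
}

WATER = 18.010565  # monoisotopic mass of H2O in Da


def neutral_loss_q3_mz(q1_mz: float, neutral_mass: float, charge: int = 1) -> float:
    """Q3 m/z that tracks Q1 during a neutral loss scan.

    Assumes the fragment retains the precursor's charge, so the m/z
    offset is the neutral mass divided by the charge state.
    """
    return q1_mz - neutral_mass / charge


# While Q1 passes m/z 500.0, Q3 is set to the m/z of the water-loss fragment.
print(round(neutral_loss_q3_mz(500.0, WATER), 4))
print(round(neutral_loss_q3_mz(500.0, WATER, charge=2), 4))
```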

Experimental Protocol: MRM-Based Biomarker Quantification

This protocol details the application of a triple quadrupole mass spectrometer coupled with liquid chromatography (LC-MS/MS) for the absolute quantification of a protein biomarker candidate in human plasma using the Multiple Reaction Monitoring (MRM) approach. This workflow is central to biomarker validation studies in drug development [2].

Sample Preparation

- Internal Standard Addition: Add a known quantity of a stable isotope-labeled (SIL) analog of the target protein (e.g., 15N-labeled) to a measured volume of plasma (typically 50-100 µL) [2] [6]. The SIL protein serves as an internal standard to correct for variability in sample processing and ionization.

- Protein Digestion:

  - Denature the plasma proteins (for example, with urea or heat) in a digestion-compatible buffer such as ammonium bicarbonate.

  - Reduce disulfide bonds with dithiothreitol (DTT) and alkylate the free cysteines with iodoacetamide.

  - Digest the proteins into peptides using a sequencing-grade protease, most commonly trypsin, overnight at 37°C [2].

- Solid-Phase Extraction (SPE): Desalt and concentrate the resulting peptide mixture using a C18 solid-phase extraction cartridge to remove interfering salts and buffers, eluting peptides with an organic solvent like acetonitrile.

- Reconstitution: Evaporate the eluent to dryness under a gentle stream of nitrogen and reconstitute the dried peptides in a mobile phase compatible with the LC system (e.g., 0.1% formic acid in water).

LC-MS/MS Analysis

- Liquid Chromatography (LC):

  - Column: Use a reverse-phase C18 analytical column (e.g., 2.1 mm x 100 mm, 1.8 µm) for peptide separation [2].

  - Mobile Phase: A) 0.1% Formic acid in water; B) 0.1% Formic acid in acetonitrile.

  - Gradient: Employ a linear gradient from 2% B to 35% B over 10-20 minutes to elute the peptides based on hydrophobicity [2].

- Mass Spectrometry (MS) - MRM Acquisition:

  - Ionization: Utilize Electrospray Ionization (ESI) in positive ion mode [2].

  - Source Conditions: Optimize parameters like nebulizing gas flow, drying gas flow, and interface temperature for robust ion generation.

  - MRM Transitions: For each target peptide (proteotypic for the biomarker and its SIL analog), program the instrument to monitor at least two specific precursor ion → product ion transitions.

    - Quantifier Transition: The most intense transition for calculating concentration.

    - Qualifier Transition: A secondary transition to confirm peptide identity based on the intensity ratio relative to the quantifier [2].

  - Collision Energies: Optimize the collision energy in Q2 for each specific transition to achieve efficient and reproducible fragmentation.

Data Analysis and Quantification

- Peak Integration: Manually or automatically integrate the chromatographic peaks for the quantifier and qualifier transitions for both the endogenous peptide and the SIL internal standard peptide.

- Analyte Verification: Confirm the identity of the target peptide by ensuring the retention time of the endogenous peptide matches that of the SIL internal standard and that the ratio of qualifier-to-quantifier peak areas is consistent with that of the standard.

- Quantification: Calculate the peak area ratio (Area_endogenous / Area_SIL) for each sample. Build a calibration curve by plotting this ratio against the known analyte concentration in calibration standards that each contain the same amount of SIL internal standard. The concentration of the target biomarker in the original plasma sample is then determined by interpolating its measured ratio on this curve [2].
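The quantification step can be illustrated with a short, self-contained sketch. It assumes calibration standards of known analyte concentration, each spiked with the same amount of SIL internal standard, and an unweighted linear fit; real assays often use weighted regression (for example 1/x) and dedicated software. The function names and all numbers are hypothetical.

```python
def fit_line(x, y):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(x)
    if n < 2 or n != len(y):
        raise ValueError("need at least two paired points")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def back_calculate(area_endogenous, area_sil, slope, intercept):
    """Convert an endogenous/SIL peak area ratio into a concentration."""
    ratio = area_endogenous / area_sil
    return (ratio - intercept) / slope


# Hypothetical calibrators: analyte concentration (ng/mL) versus the
# measured endogenous/SIL area ratio at a constant SIL spike.
cal_conc = [1.0, 5.0, 10.0, 50.0, 100.0]
cal_ratio = [0.02, 0.10, 0.20, 1.00, 2.00]

slope, intercept = fit_line(cal_conc, cal_ratio)
unknown = back_calculate(area_endogenous=30_000, area_sil=100_000,
                         slope=slope, intercept=intercept)
print(f"slope={slope:.4f}  estimated concentration={unknown:.2f} ng/mL")
```

Because the endogenous and SIL peptides co-elute and ionize alike, taking their ratio cancels much of the run-to-run variation before the curve is applied.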

The Scientist's Toolkit

Table: Essential Reagents and Materials for LC-MS/MS Biomarker Assay Development

| Item | Function / Explanation |
|---|---|
| Stable Isotope-Labeled (SIL) Protein/Peptide | Serves as an internal standard; corrects for sample preparation losses and ion suppression, enabling absolute quantification [2]. |
| Sequencing-Grade Trypsin | High-purity protease for reproducible and complete protein digestion into measurable peptides [2]. |
| Solid-Phase Extraction (SPE) Cartridges (C18) | For sample clean-up, desalting, and concentration of peptides prior to LC-MS/MS analysis, reducing matrix effects [2]. |
| Reverse-Phase LC Columns (C18) | Provides high-resolution separation of complex peptide mixtures, reducing ion suppression and isobaric interferences [2]. |
| Ammonium Bicarbonate Buffer | A volatile buffer suitable for protein digestion and compatible with mass spectrometry, as it can be easily removed during evaporation. |
| Mass Spectrometry Calibrant Solution | A standard solution containing compounds of known mass for regular mass accuracy calibration of the instrument. |

Understanding Selected Reaction Monitoring (SRM) and Multiple Reaction Monitoring (MRM)

Selected Reaction Monitoring (SRM) and Multiple Reaction Monitoring (MRM) are targeted mass spectrometry techniques primarily utilized for the precise quantification of specific analytes within complex mixtures [7]. These methods involve isolating and monitoring predefined precursor-product ion transitions, enabling high selectivity and sensitivity even in challenging biological matrices like serum, plasma, and urine [7] [8]. The fundamental difference between SRM and MRM lies in their monitoring scope: SRM typically involves monitoring a single predefined precursor-to-product ion transition, whereas MRM extends this capability to simultaneously monitor multiple precursor-to-product ion transitions within a single analysis, enhancing throughput and efficiency in quantification experiments [7].

The development of SRM/MRM is rooted in the advancements of tandem mass spectrometry, particularly with the introduction of triple quadrupole mass spectrometers in the late 1970s [7]. These instruments provided the foundational capability to perform targeted quantification by selecting precursor ions in the first quadrupole, fragmenting them in a collision cell, and then selecting specific product ions in the third quadrupole for detection [7] [9]. This technical evolution addressed the growing need for more targeted and quantitative analysis across various fields including pharmaceuticals, clinical diagnostics, and environmental monitoring [7]. In recent decades, SRM/MRM has become indispensable in scientific disciplines such as proteomics, metabolomics, and biomarker validation, with technological advancements further expanding their utility and versatility [7] [10].

Technical Principles and Instrumentation

Fundamental Operating Principles

At its core, SRM/MRM methodology enhances standard mass spectrometry through tandem mass spectrometry (MS/MS) configurations [7]. The process begins with precursor ion selection, where specific precursor ions corresponding to target analytes are chosen based on their unique mass and fragmentation patterns in the first quadrupole (Q1) [7]. These selected ions then undergo controlled fragmentation through collision-induced dissociation (CID) in a collision cell (q2), producing characteristic product ions [7]. The final critical step involves product ion selection, where in SRM, a single product ion corresponding to the expected fragmentation is monitored, while MRM allows simultaneous monitoring of multiple product ions, enhancing throughput and versatility [7]. The specific pair of m/z values associated with the precursor and fragment ions is referred to as a "transition," and the combination of several transitions with the retention time of the targeted peptide can constitute a definitive assay [11].

The instrumentation central to SRM/MRM experiments is the triple quadrupole mass spectrometer, which consists of three quadrupole analyzers arranged in series [7] [9]. The first (Q1) and third (Q3) quadrupoles act as mass filters, while the second quadrupole (q2) serves as a collision cell [9]. This specific configuration enables the non-scanning, static monitoring of predefined transitions, resulting in a significantly increased duty cycle compared to full-scan techniques [11]. The two stages of mass selection with narrow mass windows provide exceptional selectivity, effectively filtering out co-eluting background ions [11]. This technical approach provides a linear response over a wide dynamic range of up to five orders of magnitude and enables the detection of low-abundance proteins in highly complex mixtures, which is crucial for systematic quantitative studies in systems biology and biomarker validation [11].

Comparative Mass Spectrometry Techniques

SRM/MRM offers distinct advantages compared to other mass spectrometry techniques. It provides enhanced selectivity by specifically monitoring predefined precursor-to-product ion transitions, which minimizes interference from background noise and co-eluting compounds [7]. The technique also delivers increased sensitivity by selectively amplifying the signal of target analytes while suppressing background noise, enabling detection even at low concentrations [7]. Furthermore, the ability to precisely control precursor and product ion transitions, coupled with robust calibration strategies, ensures high quantitative accuracy and reproducibility in SRM/MRM analyses [7].

Table: Comparison of Targeted Mass Spectrometry Approaches

| Feature | MRM (SRM) | Parallel Reaction Monitoring (PRM) |
|---|---|---|
| Instrumentation | Triple Quadrupole | Orbitrap, Q-TOF |
| Resolution | Unit resolution | High (HRAM) |
| Fragment Ion Monitoring | Predefined transitions | All fragments (full MS/MS spectrum) |
| Selectivity | Moderate | High (less interference) |
| Sensitivity | Very High | High, depending on resolution |
| Throughput | High | Moderate |
| Method Development | Requires transition tuning | Quick, minimal optimization |
| Data Reusability | No | Yes (retrospective) |
| Best Applications | High-throughput screening, routine quantification | Low-abundance targets, PTMs, validation |

Parallel Reaction Monitoring (PRM) has emerged as a complementary targeted technique performed on high-resolution, accurate-mass (HRAM) instruments like Orbitrap or Q-TOF systems [12]. While MRM monitors only predefined transitions on a triple quadrupole instrument, PRM captures the entire MS/MS spectrum of all resulting fragments using a high-resolution detector [12]. This allows for retrospective data analysis and increased confidence in analyte identification, making PRM especially useful for cases involving low-abundance targets, post-translational modifications, and samples with high background complexity [12]. However, MRM remains superior for high-throughput applications due to its faster cycle times and well-established standardized workflows [12].

Applications in Biomarker Validation

The Biomarker Development Pipeline

The biomarker development pipeline consists of several preclinical phases—discovery, verification, and validation—before final clinical evaluation [10] [13]. Biomarker discovery is typically performed using non-targeted "shotgun" proteomics approaches that provide relative quantitation of thousands of proteins in a small number of samples, yielding output as "up-or-down regulation" or "fold-increases" [10]. Following discovery, potential biomarker proteins undergo verification on sets of 10-50 patient samples to confirm their association with the disease state [10]. The final validation phase involves quantifying a small number of confirmed biomarkers across hundreds to thousands of samples to establish clinical utility [10] [13].

SRM/MRM plays a critical role in bridging the gap between biomarker discovery and validation [10] [13]. The technology's unique potential for reliable quantification of low-abundance analytes in complex mixtures makes it ideally suited for the verification phase [11]. Unlike discovery proteomics, which identifies potentially relevant proteins, SRM/MRM enables researchers to precisely measure predefined sets of candidate biomarkers across multiple samples with the consistency required for clinical application [11]. This targeted approach addresses a major limitation of conventional shotgun proteomics: the poor reproducibility of target selection, which often results in only partially overlapping sets of proteins being identified from similar samples [11].

Specific Applications in Clinical Proteomics

In clinical diagnostics and biomarker discovery, SRM/MRM techniques are increasingly adopted for the precise quantification of disease-associated biomarkers in biological fluids [7]. The accurate measurement of biomarker concentrations in clinical samples enables early disease detection, disease monitoring, and treatment optimization [7]. A key application is the absolute quantification of disease protein biomarkers in body fluids such as urine, which represents a critical step in the biomarker development pipeline [8]. The great advantage of targeted mass spectrometry-based methodologies like SRM/MRM is their capacity for accurate and specific simultaneous quantification of several biomarkers (multiplexing) using peptides as protein surrogates measured on triple quadrupole instruments [8].

For proteomics and protein quantification, SRM/MRM enables precise measurement of proteins across complex biological samples, providing insights into cellular processes, disease mechanisms, and therapeutic targets [7]. Researchers leverage these techniques to quantify protein expression levels, post-translational modifications, and protein-protein interactions with exceptional accuracy and sensitivity [7]. This application facilitates biomarker discovery, drug development, and personalized medicine by elucidating the dynamic proteome landscape [7]. Similarly, in metabolomics and small molecule analysis, the targeted quantification capabilities of SRM/MRM allow researchers to measure metabolite concentrations with high specificity and sensitivity, enabling comprehensive analysis of metabolic pathways, disease biomarkers, and drug metabolism [7].

Table: Performance Characteristics of SRM/MRM in Biomarker Validation

| Performance Measure | Typical Range | Context |
|---|---|---|
| Linear Range | 10^3 – 10^4 | For standard serum proteins [14] |
| Limit of Detection | 0.2 – 2 fmol | Comparable to commercial ELISA kits [14] |
| Reproducibility (R²) | 0.723 – 0.931 | Varies by protein/peptide [14] |
| Correlation with ELISA | R² = 0.565 – 0.928 | Depends on specific protein target [14] |
| Target Multiplexing | Up to 1000 transitions | Using scheduled SRM [11] |

Experimental Protocols for SRM/MRM Assay Development

Establishing a Targeted SRM/MRM Experiment

The development of a robust SRM/MRM assay involves a systematic approach that can be divided into distinct phases. The pre-mass spectrometry acquisition phase includes (1) generation of a target protein list, (2) selection of proteotypic peptides, and (3) experimental design [15]. The post-acquisition phase encompasses (1) peak detection, integration and quantification, (2) data quality assessment, (3) data visualization and exploratory analysis, and (4) fold change/statistical significance analysis [15].

The first critical step is the selection of a target protein set based on previous experiments, scientific literature, or various bioinformatics resources such as gene expression data, protein-protein interaction networks, or functionally related protein groups from gene ontology or KEGG databases [11]. For biomarker validation studies, this set typically derives from prior discovery experiments. In addition to the proteins of interest, several "housekeeping" proteins should be selected as an invariant reference set to correct for experimental variability such as uneven total protein amount per sample [11].

The next crucial step involves peptide selection for each targeted protein. Following tryptic digestion, proteins yield tens to hundreds of peptides, but typically only a few representative "proteotypic peptides" (PTPs) are targeted to infer the presence and quantity of a protein [11]. These peptides should exhibit good MS responses and uniquely identify the targeted protein or a specific isoform thereof [11]. Previous information from repositories like PeptideAtlas, Human Proteinpedia, GPM Proteomics Database, or PRIDE can help identify frequently observed peptides for proteins of interest [11]. Additional empirical rules for peptide selection include avoiding peptides with missed cleavages, methionine or cysteine residues (unless alkylated), and N-terminal glutamine or glutamate (which can form pyroglutamate), while favoring peptides 7 to 20 amino acids in length [11].
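The empirical selection rules above lend themselves to a simple in silico filter. The sketch below is a rough illustration, not a replacement for repository evidence or dedicated tools: it digests a made-up sequence with a basic trypsin rule (cleave after K or R, but not before P), allows no missed cleavages, and rejects every cysteine-containing peptide, which is stricter than the "unless alkylated" exception. It does not test whether a peptide is unique to its protein. All names and the sequence are hypothetical.

```python
import re


def tryptic_digest(sequence: str) -> list:
    """Fully tryptic peptides: cleave C-terminal to K/R unless followed by P."""
    return [p for p in re.split(r"(?<=[KR])(?!P)", sequence) if p]


def is_candidate(peptide: str, min_len: int = 7, max_len: int = 20) -> bool:
    """Apply the empirical rules: length window, no M or C, no N-terminal Q or E."""
    if not (min_len <= len(peptide) <= max_len):
        return False
    if "M" in peptide or "C" in peptide:
        return False  # stricter than the text: alkylated cysteine is also rejected
    if peptide[0] in "QE":
        return False  # N-terminal Q/E can cyclize to pyroglutamate
    return True


# Made-up protein sequence used only to exercise the filter.
protein = (
    "MSTAGK"
    "LVEPGSDATFNR"
    "QLDSAVEGK"
    "ASDLPEETVINK"
    "GCTDLVSAAR"
)

peptides = tryptic_digest(protein)
candidates = [p for p in peptides if is_candidate(p)]
print(peptides)
print(candidates)
```

In practice the surviving candidates would still be checked against empirical observations (for example in PeptideAtlas) and for uniqueness within the proteome.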

Transition Optimization and Assay Validation

For each proteotypic peptide, the optimization of transitions is essential for developing a sensitive and specific SRM assay [11]. This process involves identifying fragment ions that provide optimal signal intensity and discriminate the targeted peptide from other species in the sample [11]. Transition development typically involves importing protein sequences into specialized software that generates preferred Q1 and Q3 m/z values based on parameters such as enzyme specificity, allowed missed cleavages, fixed modifications, and fragment types [14]. Filters are applied to narrow down the MRM transitions list, including Q1 m/z > 400, Q3 m/z < 1200, and peptide length of 6 to 30 amino acids [14].
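To make the Q1/Q3 filters concrete, the sketch below computes candidate transitions for an unmodified peptide from standard monoisotopic residue masses and applies the thresholds quoted above. It is a simplified stand-in for what transition-design software does: it considers only singly charged y-ions, a single precursor charge state, and no modifications (carbamidomethylated cysteine, for instance, would add 57.02146 Da). The function names are hypothetical.

```python
# Standard monoisotopic residue masses in Da.
MONO = {
    "G": 57.02146, "A": 71.03711, "S": 87.03203, "P": 97.05276,
    "V": 99.06841, "T": 101.04768, "C": 103.00919, "L": 113.08406,
    "I": 113.08406, "N": 114.04293, "D": 115.02694, "Q": 128.05858,
    "K": 128.09496, "E": 129.04259, "M": 131.04049, "H": 137.05891,
    "F": 147.06841, "R": 156.10111, "Y": 163.06333, "W": 186.07931,
}
WATER = 18.010565
PROTON = 1.007276


def precursor_mz(peptide: str, charge: int = 2) -> float:
    """m/z of the protonated peptide at the given charge state (Q1 value)."""
    neutral = sum(MONO[aa] for aa in peptide) + WATER
    return (neutral + charge * PROTON) / charge


def y_ion_mz(peptide: str, n: int) -> float:
    """m/z of the singly charged y_n ion: last n residues + water + proton."""
    return sum(MONO[aa] for aa in peptide[-n:]) + WATER + PROTON


def candidate_transitions(peptide, charge=2, q1_min=400.0, q3_max=1200.0,
                          min_len=6, max_len=30):
    """List (ion, Q1 m/z, Q3 m/z) tuples that pass the filters from the text."""
    if not (min_len <= len(peptide) <= max_len):
        return []
    q1 = precursor_mz(peptide, charge)
    if q1 <= q1_min:
        return []
    transitions = []
    for n in range(1, len(peptide)):
        q3 = y_ion_mz(peptide, n)
        if q3 < q3_max:
            transitions.append((f"y{n}", round(q1, 4), round(q3, 4)))
    return transitions


# Hypothetical peptide used only for illustration.
for ion, q1, q3 in candidate_transitions("LVEPGSDATFNR"):
    print(ion, q1, q3)
```

The calculated list is only a starting point; as described next, the final choice depends on measured signal intensity and freedom from interference.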

The final transition list is refined by examining peak areas of candidate transitions after analyzing digested purified protein standards [14]. Typically, the top 3-4 peptides with the highest SRM/MRM response are selected as signature peptides, with 2-3 transitions monitored for each peptide to ensure specificity [14]. Because all transitions of a given peptide co-elute, their chromatographic peaks overlap at the same retention time and are easy to recognize, which confirms assay specificity [14].

Diagram: SRM/MRM Workflow on Triple Quadrupole Mass Spectrometer

Assay validation involves establishing key performance parameters including limit of detection (LOD), lower limit of quantification (LLOQ), linearity, carry-over, and specificity [15]. For "Response Curve" experiments, assays are typically performed in triplicate over multiple dilution points (e.g., seven points) to identify LLOQ, LOD, and linear range [15]. For "Mini-validation of Repeatability," experiments are performed in triplicate at three concentration levels ("High," "Medium," and "Low") and repeated on five different days to approximate the variability of the assay in real-world practice [15]. Tools like MRMPlus can compute these analytical measures as recommended by the Clinical Proteomic Tumor Analysis Consortium (CPTAC) Assay Development Working Group for "Tier 2" assays, which are non-clinical assays sufficient to measure changes due to both biological and experimental perturbations [15].
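The repeatability design (triplicates on five days at one concentration level) can be summarized with two coefficients of variation. The sketch below uses one simple convention, intra-assay CV as the mean of the per-day CVs and inter-assay CV as the CV of the daily means; the exact CPTAC-recommended computations, and what MRMPlus implements, may differ in detail. All numbers and names are hypothetical.

```python
from statistics import mean, stdev


def cv_percent(values):
    """Coefficient of variation (sample SD / mean), in percent."""
    return 100.0 * stdev(values) / mean(values)


def repeatability(days):
    """Summarize replicate measurements grouped by day.

    days: list of per-day replicate lists at a single concentration level.
    Returns (intra_assay_cv, inter_assay_cv) in percent.
    """
    intra = mean([cv_percent(reps) for reps in days])
    inter = cv_percent([mean(reps) for reps in days])
    return intra, inter


# Hypothetical area ratios for the "Medium" level: 5 days x 3 replicates.
medium_level = [
    [0.98, 1.02, 1.00],
    [1.05, 1.01, 1.03],
    [0.95, 0.97, 0.99],
    [1.00, 1.04, 1.02],
    [0.99, 1.01, 0.97],
]

intra_cv, inter_cv = repeatability(medium_level)
print(f"intra-assay CV = {intra_cv:.1f}%, inter-assay CV = {inter_cv:.1f}%")
```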

Practical Implementation and Quality Control

The Scientist's Toolkit: Essential Research Reagents and Materials

Table: Essential Research Reagent Solutions for SRM/MRM Assay Development

| Reagent/Material | Function/Application | Examples/Specifications |
|---|---|---|
| Triple Quadrupole Mass Spectrometer | Targeted quantification of predefined transitions | Instrumentation with three quadrupole analyzers [7] |
| Liquid Chromatography System | Analyte separation prior to MS analysis | Nano-HPLC systems for improved sensitivity [14] |
| Trypsin (Sequencing Grade) | Protein digestion to generate peptides | Enzyme to protein ratio of 1:50 (w/w) [14] |
| Isotope-Labeled Peptide Standards | Absolute quantification internal standards | Heavy-labeled (D, 13C, 15N) peptides [9] [11] |
| Solid-Phase Extraction Columns | Sample cleanup and desalting | C18-based columns for peptide purification [16] |
| LC Separation Columns | Peptide separation prior to MS injection | ZORBAX 300SB-C18, 3.5 µm, 150 mm × 0.075 mm [14] |
| Quality Control Pools | Monitoring assay performance over time | Representative sample aliquots for longitudinal QC [10] |

Analytical Considerations and Quality Assurance

Successful implementation of SRM/MRM requires careful attention to several analytical factors. Instrument performance optimization involves parameters such as collision energy, cone voltage, and dwell times to maximize sensitivity and selectivity [7]. Chromatographic conditions, including mobile phase composition, flow rate, and column temperature, must be meticulously optimized to achieve efficient analyte separation and peak resolution [7]. Prior to sample analysis, instrument calibration and performance verification procedures are essential to ensure accurate quantification and data quality [7].

Scheduled SRM represents an important advancement for quantifying multiple proteins in a single experiment [11]. When targeting many proteins requiring numerous transitions, the dwell time for individual transitions is reduced, potentially compromising sensitivity [11]. Practical dwell-time settings range between 10 ms for good sensitivity and 100 ms for excellent sensitivity [11]. To ensure precise LC-MS quantification, at least eight data points should be acquired across the chromatographic elution profile of a peptide [11]. Assuming a peak width of 20 s at 10% peak height, a cycle time of about 2 s meets this requirement (ten points across the peak), which translates to approximately 200 transitions per cycle at a 10 ms dwell time [11]. Scheduled SRM addresses this limitation by acquiring transitions for particular peptides only in time windows around their expected retention times. This significantly increases the number of peptides/proteins that can be detected and quantified in a single LC-MS experiment, potentially exceeding 1000 transitions with high sensitivity and reproducibility [11].
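The dwell-time arithmetic in this paragraph is easy to reproduce. The sketch below recomputes the figures from the text and adds a small helper showing why scheduling helps: only transitions whose retention-time windows overlap compete for the same cycle. The function names, the optional per-transition overhead (set to zero to match the text), and the example windows are assumptions made for illustration.

```python
def points_across_peak(peak_width_s: float, cycle_time_s: float) -> float:
    """Number of data points sampled across one chromatographic peak."""
    return peak_width_s / cycle_time_s


def max_transitions(cycle_time_ms: int, dwell_ms: int, overhead_ms: int = 0) -> int:
    """Transitions that fit in one cycle.

    overhead_ms models any per-transition pause or settling time; it is
    zero here so the result matches the idealized figure in the text.
    """
    return cycle_time_ms // (dwell_ms + overhead_ms)


def max_concurrent(windows) -> int:
    """Largest number of transitions active at once under scheduling.

    windows: iterable of (start_min, end_min, n_transitions) per peptide.
    """
    events = []
    for start, end, n in windows:
        events.append((start, n))
        events.append((end, -n))
    active = peak = 0
    # Sorting puts window ends before starts at the same time point.
    for _, delta in sorted(events):
        active += delta
        peak = max(peak, active)
    return peak


print(points_across_peak(20, 2))   # 20 s peak sampled every 2 s
print(max_transitions(2000, 10))   # 2 s cycle at 10 ms dwell
print(max_transitions(2000, 100))  # 2 s cycle at 100 ms dwell

# Hypothetical schedule: three peptides, three transitions each.
schedule = [(0.0, 5.0, 3), (4.0, 8.0, 3), (8.0, 12.0, 3)]
print(max_concurrent(schedule))    # compare against max_transitions(...)
```

As long as the peak concurrency stays below the per-cycle limit, the total number of transitions in the method can be far larger than that limit.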

Diagram: Biomarker Pipeline with SRM/MRM Verification

Quality control and assessment are critical components of robust SRM/MRM assay development [15]. The complexities of biological matrices result in somewhat unpredictable analytical behavior of peptides and transitions, making experimental and analytical validation essential to establish that peptides and associated transitions serve as stable, quantifiable, and reproducible representatives of proteins of interest [15]. Open-source tools like MRMPlus streamline the assay development analytical workflow and minimize error predisposition by computing performance measures as recommended by the CPTAC Assay Development Working Group [15]. These tools accept inputs from preprocessing software like Skyline and generate performance-associated visualizations that provide global perspectives of assay performance across all assayed peptide transitions [15].

SRM and MRM mass spectrometry techniques represent powerful tools for targeted quantification in biomarker validation research. Their exceptional selectivity, sensitivity, and quantitative accuracy make them particularly valuable for verifying candidate biomarkers in complex biological matrices during the critical transition from discovery to clinical validation [7] [11]. The robust nature of these techniques, combined with their multiplexing capabilities, positions them as essential methodologies in the proteomics workflow, especially when traditional immunoaffinity-based techniques are limited by antibody availability [14].

As mass spectrometry technology continues to advance, SRM/MRM approaches remain foundational for precise protein quantification in systems biology and clinical applications [11]. The ability to reliably quantify predefined sets of proteins across multiple samples addresses a major limitation of discovery proteomics and supports the development of mathematical models that simulate biological systems and predict their behavior under different conditions [11]. Furthermore, the continuous development of standardized guidelines, open-source analytical tools, and quality control frameworks ensures that SRM/MRM assays will maintain their critical role in generating high-quality quantitative data for biomarker validation and drug development pipelines [15].

The journey of a biomarker from initial discovery to clinical validation is a complex, multi-stage process often described as a pipeline. This pathway is designed to systematically reduce a large number of candidate biomarkers identified through discovery efforts down to a select few that demonstrate robust clinical utility [17] [10]. The development of clinically useful biomarkers represents a critical bridge between basic research and patient care, enabling advancements in disease diagnosis, prognosis, therapeutic monitoring, and personalized medicine [18].

Despite the application of advanced "omics" technologies that generate hundreds to thousands of biomarker candidates, a discouragingly small number successfully navigate the entire pipeline to achieve regulatory approval and clinical implementation [17] [10]. This high attrition rate stems from numerous challenges, including the incredible mismatch between the volume of biomarker candidates and the scarcity of reliable assays and methods for proper validation [17]. The verification stage, in particular, represents a significant bottleneck, described as the "tar pit" of the pipeline, where many promising candidates fail due to inadequate analytical validation or insufficient clinical performance [17]. Understanding each phase of this pipeline, along with the appropriate technologies and methodologies required at each step, is essential for improving the success rate of biomarker development, particularly when utilizing powerful analytical platforms such as triple quadrupole mass spectrometry for targeted validation studies [4] [10].

Phases of the Biomarker Pipeline

Biomarker Discovery

The biomarker pipeline begins with the discovery phase, where potential biomarker candidates are identified through unbiased screening approaches [10] [18]. During this stage, researchers utilize various "omics" technologies (including genomics, proteomics, metabolomics, and lipidomics) to comprehensively profile biological samples from distinct clinical groups (e.g., diseased versus healthy controls) [18]. In proteomics-based discovery, mass spectrometry techniques are typically employed in a non-targeted "shotgun" approach to identify proteins that exhibit differential expression between comparative groups [10] [13]. These methods rely on relative quantitation, with results typically expressed as "up-or-down regulation" or "fold-increases" rather than absolute concentration measurements [10]. Common techniques include isobaric tagging (e.g., iTRAQ, TMT), label-free quantitation, and spectral counting, which enable the simultaneous comparison of thousands of proteins across multiple samples [10] [19]. The output of this phase is an extensive list of candidate biomarkers, often numbering in the hundreds or thousands, that show statistically significant associations with the disease or condition of interest [17] [10]. However, it is crucial to recognize that discovery efforts produce candidates (hypotheses), not validated biomarkers, and these efforts are inherently error-prone due to technological limitations, biological variability, and study design constraints [17].

Biomarker Prioritization and Verification

Following discovery, an essential prioritization step occurs to select the most promising candidates for further verification [17]. This stage involves filtering the extensive candidate list based on various biological and practical criteria, such as the magnitude of observed change, known biological relevance to the disease mechanism, likelihood of detection in accessible biofluids (e.g., plasma), and feasibility of developing robust assays for quantification [17] [10]. Proteins discovered in diseased tissues that are predicted to be secreted or located on the cell surface are often prioritized based on the assumption they might have greater access to circulation [17]. The subsequent verification phase represents a critical bottleneck in the biomarker pipeline, where prioritized candidates are assessed using targeted, quantitative methods in larger sample sets (typically 10-50 patients) [10] [20]. This stage aims to confirm the differential expression of candidates before committing resources to large-scale validation studies [17]. Targeted mass spectrometry approaches, particularly Multiple Reaction Monitoring (MRM) using triple quadrupole mass spectrometers, have emerged as powerful tools for biomarker verification due to their high sensitivity, specificity, and multiplexing capabilities [17] [4] [10]. Unlike discovery-phase approaches, verification requires absolute or precise relative quantitation to determine actual protein concentrations, moving beyond simple fold-change comparisons [10].

Clinical Validation and Qualification

The final preclinical phase involves rigorous clinical validation of verified biomarkers in well-designed, large-scale studies involving hundreds to thousands of patient samples [17] [10]. This stage assesses the clinical performance of the biomarker for its intended use, establishing diagnostic sensitivity and specificity, prognostic value, or predictive utility for treatment response [18]. The bar for clinical validation is exceptionally high, as biomarkers must demonstrate not only statistical significance but also clinical relevance and cost-effectiveness for their proposed application [17]. For example, a biomarker intended for population screening must meet extraordinarily high specificity standards to avoid excessive false positives, while a biomarker for diagnosing symptomatic patients may have different performance requirements [17]. The validation process must also evaluate whether the biomarker provides added value beyond existing standards of care [21]. Successful clinical validation leads to regulatory qualification, wherein biomarkers undergo review by regulatory agencies (e.g., FDA, EMA) against stringent criteria for analytical validity, clinical validity, and clinical utility [22] [18]. This process requires extensive documentation, including assay validation data, clinical trial evidence, and proof of clinical significance [18].
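
The contrast between screening and symptomatic use can be made concrete with a short calculation. The sketch below computes positive and negative predictive values from sensitivity, specificity, and prevalence; the function name and all numeric inputs are hypothetical and chosen only to illustrate why a low-prevalence screening setting demands very high specificity.

```python
def predictive_values(sensitivity, specificity, prevalence):
    """Return (PPV, NPV) for a test applied to a population with the given prevalence."""
    true_pos = sensitivity * prevalence
    false_neg = (1.0 - sensitivity) * prevalence
    true_neg = specificity * (1.0 - prevalence)
    false_pos = (1.0 - specificity) * (1.0 - prevalence)
    ppv = true_pos / (true_pos + false_pos)
    npv = true_neg / (true_neg + false_neg)
    return ppv, npv


# Hypothetical biomarker: 90% sensitivity, 95% specificity.
screening_ppv, _ = predictive_values(0.90, 0.95, prevalence=0.005)   # population screening
symptomatic_ppv, _ = predictive_values(0.90, 0.95, prevalence=0.30)  # symptomatic patients

print(f"PPV in screening:   {screening_ppv:.1%}")
print(f"PPV in symptomatic: {symptomatic_ppv:.1%}")
```

With these assumed inputs, the same assay yields mostly false positives in the screening population but mostly true positives among symptomatic patients, which is the reason the intended use has to be fixed before performance targets are set.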

Table 1: Key Stages in the Biomarker Development Pipeline

| Pipeline Stage | Primary Objective | Sample Size | Key Technologies | Output |

|---|---|---|---|---|

| Discovery | Identify candidate biomarkers | Small (n < 50) | Shotgun proteomics, LC-MS/MS, Isobaric tagging | List of 100s-1000s of candidate proteins with fold changes |

| Prioritization | Filter candidates based on biological/practical criteria | N/A | Literature mining, bioinformatics, preliminary testing | Prioritized list of 10s of candidates |

| Verification | Confirm differential expression | Moderate (n = 10-50) | Targeted MS (MRM), immunoassays | Verified shortlist of candidates with quantitative data |

| Validation | Assess clinical performance | Large (n = 100s-1000s) | Validated assays (MS or immunoassays), clinical trials | Clinically validated biomarker with performance characteristics |

| Qualification | Regulatory approval | Very large (n = 1000s+) | GLP-compliant assays, multi-center trials | Approved biomarker for specific clinical context |

Mass Spectrometry in Biomarker Verification and Validation

Triple Quadrupole Mass Spectrometry and Multiple Reaction Monitoring (MRM)

Triple quadrupole (QqQ) mass spectrometers have become the cornerstone technology for biomarker verification and validation due to their exceptional sensitivity, specificity, and quantitative capabilities [4] [10]. These instruments operate through a tandem mass spectrometry approach where the first quadrupole (Q1) selects specific precursor ions, the second quadrupole (Q2) fragments these ions through collision-induced dissociation, and the third quadrupole (Q3) filters for specific product ions [4]. This configuration enables Multiple Reaction Monitoring (MRM)—a highly specific targeted analysis method that monitors predetermined precursor-product ion transitions corresponding to peptides of interest [10]. The exceptional selectivity of MRM allows for precise quantification of target analytes even in complex biological matrices like plasma or serum, making it ideally suited for biomarker verification studies [17] [10]. The strength of MRM lies in its ability to multiplex, simultaneously monitoring dozens to hundreds of candidate biomarkers in a single analysis, thus significantly accelerating the verification process [17]. Furthermore, the incorporation of stable isotope-labeled standard (SIS) peptides enables absolute quantification, providing precise concentration measurements essential for clinical assay development [10]. The robust nature, relatively low cost, and wide dynamic range of triple quadrupole instruments have established them as the preferred platform for targeted biomarker quantification in both research and clinical settings [4].

Advanced MS Techniques: TMT-MRM and Isobaric Tagging

Recent technological advancements have combined the multiplexing advantages of isobaric tagging with the sensitivity and specificity of MRM, creating powerful integrated approaches for biomarker verification [19]. Isobaric tagging methods, such as Tandem Mass Tags (TMT) and Isobaric Tags for Relative and Absolute Quantitation (iTRAQ), enable simultaneous analysis of multiple samples (2-11 plex) by labeling them with chemical tags that have identical overall mass but yield distinct reporter ions upon fragmentation [19]. When coupled with MRM, these techniques significantly enhance throughput and quantitative precision while reducing analytical variation [19]. For example, TMT labeling applied to phospholipid analysis allowed comprehensive screening of 196 human plasma samples from Alzheimer's disease cohorts with only 40 MRM measurements, dramatically improving efficiency without compromising data quality [19]. This integrated approach is particularly valuable for large-scale verification studies where analyzing hundreds of samples for dozens of candidates would be prohibitively time-consuming and expensive using conventional methods [19]. The method demonstrates high reproducibility in human plasma and enables direct comparison of biomarker levels across multiple patient cohorts, facilitating robust biomarker candidate assessment [19].

Table 2: Comparison of Mass Spectrometry Approaches in Biomarker Development

| Parameter | Discovery (Shotgun Proteomics) | Verification (MRM/QqQ) | Validation (Targeted MS) |

|---|---|---|---|

| Primary Goal | Comprehensive protein identification | Targeted candidate verification | Precise quantification for clinical use |

| Quantitation Type | Relative (fold-changes) | Absolute or precise relative | Absolute concentration |

| Multiplexing Capacity | 1000s of proteins | 10s-100s of targets | Typically < 10 targets |

| Sample Throughput | Low to moderate | High with multiplexing | High for targeted assays |

| Key Strengths | Unbiased, comprehensive | Specific, sensitive, quantitative | Robust, reproducible, validated |

| Limitations | Semi-quantitative, limited dynamic range | Requires a priori knowledge | Limited scope, extensive validation required |

Experimental Protocols

Protocol: MRM-Based Biomarker Verification Using Triple Quadrupole MS

Objective: To verify candidate protein biomarkers in plasma/serum samples using targeted MRM mass spectrometry.

Materials and Reagents:

- Triple quadrupole mass spectrometer with nanoflow or conventional LC system

- Stable Isotope-Labeled Standard (SIS) peptides for each target protein

- Trypsin (sequencing grade) for protein digestion

- Solid-phase extraction cartridges (C18) for sample cleanup

- Mobile phase solvents: water and acetonitrile with 0.1% formic acid

Sample Preparation Procedure:

- Protein Extraction and Digestion:

- Deplete high-abundance proteins from plasma/serum using immunoaffinity columns [23].

- Reduce proteins with 5 mM dithiothreitol (56°C, 30 min) and alkylate with 15 mM iodoacetamide (room temperature, 30 min in dark).

- Digest proteins with trypsin (1:20-1:50 enzyme-to-protein ratio) at 37°C for 12-16 hours.

- Add known quantities of SIS peptides before digestion (if quantifying pre-digestion analytes) or after digestion (if quantifying peptides).

- Peptide Cleanup:

- Desalt digested peptides using C18 solid-phase extraction cartridges.

- Dry samples under vacuum and reconstitute in 0.1% formic acid for LC-MS analysis.

LC-MRM/MS Analysis:

- Chromatographic Separation:

- Use reversed-phase C18 column (e.g., 15-25 cm length, 75 μm inner diameter).

- Apply linear gradient from 2% to 35% acetonitrile over 30-60 minutes at 200-300 nL/min flow rate.

- MRM Method Development:

- Select 2-3 proteotypic peptides per protein (typically 7-20 amino acids long).

- For each peptide, identify 3-5 optimal fragment ions for monitoring.

- Optimize collision energies for each transition.

- Schedule MRM transitions within specific retention time windows to maximize monitoring capacity.

- Data Acquisition:

- Set dwell times to achieve 8-12 data points across each chromatographic peak.

- Use unit resolution in both Q1 and Q3.

- Include heavy isotope-labeled internal standards for each target peptide.
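
The relationship between dwell time, the number of concurrently monitored transitions, and the number of data points per peak can be sketched as follows. This is a simplified model, not a vendor algorithm: the function name, the 20 s peak width, the 5 ms inter-transition pause, and the transition counts are all assumptions made for illustration.

```python
def max_dwell_time_ms(peak_width_s, points_per_peak, concurrent_transitions, pause_ms=0.0):
    """Longest dwell time per transition that still gives the requested points per peak.

    cycle time = peak width / points per peak
    dwell time = cycle time / concurrent transitions - pause between transitions
    """
    if peak_width_s <= 0 or points_per_peak <= 0 or concurrent_transitions <= 0:
        raise ValueError("peak width, points per peak and transition count must be positive")
    cycle_ms = peak_width_s * 1000.0 / points_per_peak
    dwell_ms = cycle_ms / concurrent_transitions - pause_ms
    if dwell_ms <= 0:
        raise ValueError("too many concurrent transitions for this cycle time")
    return dwell_ms


# Assumed values: 20 s wide peaks, 10 points per peak, 5 ms pause per transition.
scheduled = max_dwell_time_ms(20.0, 10, concurrent_transitions=100, pause_ms=5.0)
unscheduled = max_dwell_time_ms(20.0, 10, concurrent_transitions=250, pause_ms=5.0)

print(f"scheduled (100 concurrent):   {scheduled:.1f} ms per transition")
print(f"unscheduled (250 concurrent): {unscheduled:.1f} ms per transition")
```

Under these assumptions, restricting each transition to its retention time window reduces the number of concurrent transitions and leaves a longer dwell time per transition, which is the practical motivation for scheduling.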

Data Analysis:

- Peak Integration and Review:

- Integrate chromatographic peaks for all transitions using Skyline or similar software.

- Apply quality controls: co-elution of light and heavy peptides, consistent retention times, and matching relative intensities of fragment ions.

- Quantification:

- Calculate peak area ratios of light (natural) to heavy (SIS) peptides.

- Generate calibration curves using stable isotope-labeled standards for absolute quantification.

- Determine protein concentrations based on peptide standards.
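
The ratio-based arithmetic in the quantification steps can be sketched for the simplest case, a single known amount of SIS peptide spiked into the sample. The function names, peak areas, spike concentration, and protein molecular weight below are hypothetical, and the conversion to protein concentration assumes complete digestion and one copy of the peptide per protein molecule.

```python
def light_to_heavy_ratio(light_areas, heavy_areas):
    """Ratio of summed transition peak areas (endogenous light / SIS heavy)."""
    heavy_total = sum(heavy_areas)
    if heavy_total <= 0:
        raise ValueError("heavy (SIS) signal must be positive")
    return sum(light_areas) / heavy_total


def peptide_concentration(ratio, sis_fmol_per_ul):
    """Single-point estimate: endogenous peptide = ratio x spiked SIS concentration."""
    return ratio * sis_fmol_per_ul


def protein_ng_per_ml(peptide_fmol_per_ul, protein_mw_da):
    """Convert fmol/uL (equal to nmol/L) to ng/mL, assuming 1 peptide per protein."""
    return peptide_fmol_per_ul * protein_mw_da / 1000.0


# Hypothetical integrated areas for three transitions of one peptide.
light = [12000.0, 8000.0, 4000.0]
heavy = [30000.0, 20000.0, 10000.0]

ratio = light_to_heavy_ratio(light, heavy)
peptide = peptide_concentration(ratio, sis_fmol_per_ul=50.0)
protein = protein_ng_per_ml(peptide, protein_mw_da=66500.0)

print(f"L/H ratio {ratio:.2f}, peptide {peptide:.1f} fmol/uL, protein {protein:.0f} ng/mL")
```

Summing transitions before taking the ratio is one common convention; reporting the ratio per transition and checking their agreement is an equally valid choice when interference is suspected.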

This protocol enables specific, sensitive, and reproducible quantification of candidate protein biomarkers, providing crucial data for decision-making before proceeding to large-scale validation studies [17] [10] [20].

Protocol: TMT-MRM Multiplexed Biomarker Verification

Objective: To simultaneously verify multiple candidate biomarkers across several sample groups using TMT labeling combined with MRM.

Materials and Reagents:

- Tandem Mass Tag (TMT) 6-plex or 11-plex reagents

- Aminoxy TMT reagents for lipid analyses [19]

- Triethylamine solution (10% in THF)

- Ethanolamine solution (10% in THF) for quenching

- Acetic acid (10% in THF) for neutralization

Procedure:

- Sample Preparation and Labeling:

- Extract lipids from patient samples, or digest proteins as described in the MRM-based biomarker verification protocol above.

- Dissolve extracted samples in 50 μL tetrahydrofuran (THF).

- Add 9 μL of TMT reagent (pre-dissolved in acetonitrile) to each sample.

- Add 5 μL of triethylamine solution (10% in THF) to catalyze the reaction.

- Incubate at room temperature for 20 hours.

- Reaction Quenching and Pooling:

- Add 5 μL of ethanolamine solution (10% in THF) to quench the reaction.

- Incubate for 10 minutes.

- Add 5 μL of acetic acid (10% in THF) to neutralize samples.

- Combine TMT-labeled samples in desired multiplexing scheme (e.g., 6-plex).

- MRM Analysis:

- Develop MRM methods targeting TMT-labeled analytes of interest.

- Monitor specific transitions that include TMT reporter ions (e.g., m/z 126-131 for 6-plex).

- Use pooled quality control samples labeled with a distinct TMT channel for normalization.
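
The normalization step in the MRM analysis can be sketched as a ratio to the pooled quality control channel. The channel labels follow the 6-plex reporter ions mentioned above, but the function name, the choice of channel 131 for the pooled QC, and the intensities are assumptions for illustration only.

```python
def normalize_to_reference(reporter_intensities, reference_channel):
    """Divide each sample channel by the pooled-QC reference channel of the same run."""
    reference = reporter_intensities[reference_channel]
    if reference <= 0:
        raise ValueError("reference channel has no signal")
    return {
        channel: intensity / reference
        for channel, intensity in reporter_intensities.items()
        if channel != reference_channel
    }


# Hypothetical reporter ion intensities for one analyte in two separate runs.
# The second run has lower overall signal, as could happen with instrument drift.
run_1 = {"126": 2000.0, "127": 1000.0, "131": 1000.0}
run_2 = {"126": 1500.0, "127": 500.0, "131": 500.0}

normalized_1 = normalize_to_reference(run_1, reference_channel="131")
normalized_2 = normalize_to_reference(run_2, reference_channel="131")

print(normalized_1)
print(normalized_2)
```

Because every multiplexed run carries the same pooled sample in one channel, the resulting ratios are comparable across runs even when absolute signal differs.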

This multiplexed approach significantly enhances throughput while maintaining quantitative accuracy, making it particularly valuable for large-scale verification studies [19].

Workflow Visualization

Diagram: Biomarker Pipeline with MS Approaches.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Reagent Solutions for Biomarker MS Workflows

| Reagent/Material | Function | Application Examples |

|---|---|---|

| TMT/iTRAQ Reagents | Isobaric mass tags for multiplexed relative quantification | Simultaneous analysis of 2-11 samples; biomarker verification studies [19] |

| Stable Isotope-Labeled Standards (SIS) | Internal standards for absolute quantification | Precise measurement of protein/peptide concentrations in MRM assays [10] |

| Immunoaffinity Depletion Columns | Remove high-abundance proteins | Reduce dynamic range in plasma/serum samples to enhance detection of low-abundance biomarkers [23] |

| Trypsin (Sequencing Grade) | Proteolytic enzyme for protein digestion | Generates peptides for bottom-up proteomics; essential for MRM assay development [10] |

| Phospholipid Removal Plates | Extract lipids from biological samples | Sample preparation for lipidomic biomarker discovery and verification [19] |

| C18 Solid-Phase Extraction Plates | Desalt and concentrate peptide samples | Sample cleanup before LC-MS analysis; improves signal-to-noise ratio [20] |

Diagram: TMT-MRM Experimental Workflow.

Quality Assurance and Control in Biomarker Validation

Ensuring the reliability and reproducibility of biomarker assays requires rigorous quality assurance and control measures throughout the validation process [20]. Fit-for-purpose (FFP) biomarker assay validation has emerged as a guiding principle, where validation requirements are aligned with the proposed context of use (COU) for any given biomarker [22]. This approach recognizes that the level of validation needed for early research phases differs substantially from that required for clinical decision-making or regulatory submission [22]. Key analytical performance characteristics that must be established include precision, accuracy, sensitivity, specificity, and reproducibility [22] [20]. For mass spectrometry-based biomarker assays, specific challenges include the presence of endogenous analytes in control matrices and difficulties in procuring appropriate reference standards that accurately represent the endogenous molecules [22]. Implementing quality control samples at multiple levels—including process controls, internal standards, and pooled quality control samples—is essential for monitoring assay performance and ensuring data reliability [20] [19]. Additionally, proper study design elements such as sample blinding, randomization, and batch effect control are critical for minimizing bias and ensuring the validity of study conclusions [21] [20]. As biomarkers progress toward clinical implementation, adherence to regulatory guidelines and standards becomes increasingly important, with detailed documentation of assay validation data and clinical evidence required for regulatory qualification [22] [18].
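
Precision and accuracy are usually summarized from replicate quality control measurements as a coefficient of variation and a percent bias. The sketch below shows that arithmetic; the replicate values, the nominal concentration, and the 15% acceptance limits are assumptions, and under a fit-for-purpose approach the limits would be set by the context of use.

```python
import statistics


def precision_cv_percent(values):
    """Coefficient of variation (%) using the sample standard deviation."""
    return statistics.stdev(values) / statistics.mean(values) * 100.0


def accuracy_bias_percent(values, nominal):
    """Percent deviation of the mean measured value from the nominal value."""
    return (statistics.mean(values) - nominal) / nominal * 100.0


def passes_acceptance(values, nominal, max_cv=15.0, max_abs_bias=15.0):
    """Check replicates against assumed acceptance limits (both in percent)."""
    cv = precision_cv_percent(values)
    bias = accuracy_bias_percent(values, nominal)
    return cv <= max_cv and abs(bias) <= max_abs_bias


# Hypothetical QC replicates at a nominal concentration of 10.0 (arbitrary units).
qc_replicates = [9.8, 10.1, 10.4, 9.9, 10.3]
cv = precision_cv_percent(qc_replicates)
bias = accuracy_bias_percent(qc_replicates, nominal=10.0)

print(f"CV = {cv:.2f}%, bias = {bias:+.2f}%, pass = {passes_acceptance(qc_replicates, 10.0)}")
```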

The biomarker development pipeline represents a rigorous, multi-stage process designed to translate promising research findings into clinically useful tools for precision medicine. Despite the challenges and high attrition rates, systematic approaches that leverage appropriate technologies at each stage—particularly triple quadrupole mass spectrometry for verification—can significantly improve the efficiency and success of biomarker development [17] [4]. The integration of advanced methodologies such as TMT-MRM further enhances throughput and quantitative precision, helping to address the critical bottleneck in biomarker verification [19]. As mass spectrometry technologies continue to evolve and standardization improves, these platforms are poised to play an increasingly important role in bridging the gap between biomarker discovery and clinical validation, ultimately accelerating the delivery of novel biomarkers to improve patient care [17] [4] [23].

Why QqQ is the Gold Standard for Targeted Quantification

The triple quadrupole mass spectrometer (QqQ) maintains its position as the gold standard for targeted quantitative analysis in biomedical research due to its exceptional sensitivity, specificity, and robustness. Within the critical context of biomarker validation, QqQ systems, particularly when operating in Selected Reaction Monitoring (SRM) or Multiple Reaction Monitoring (MRM) modes, provide the precise and reproducible data required to translate potential biomarkers from discovery into clinically applicable tools [24] [4]. This application note details the operational principles, experimental protocols, and specific applications that solidify the QqQ's status for targeted quantification in biomarker research and drug development.

The triple quadrupole mass spectrometer, first developed in the late 1970s by Enke and Yost, consists of three quadrupoles arranged in series [1] [25]. The first (Q1) and third (Q3) quadrupoles act as mass filters, capable of selecting ions based on their mass-to-charge ratio (m/z). The second quadrupole (q2) is a radio-frequency (RF)–only collision cell that fragments the precursor ions selected by Q1 through collision-induced dissociation (CID) with an inert gas [1]. This linear arrangement of components, often abbreviated QqQ, enables a tandem-in-space configuration that is ideal for targeted analyses [1].

The Pillars of QqQ Quantification

The supremacy of QqQ in targeted quantification rests on three fundamental pillars, which are particularly crucial for the biomarker validation pipeline where reliability is paramount.

Unmatched Sensitivity and Specificity

The core strength of the QqQ lies in its two-stage mass filtering process. Q1 selects a specific precursor ion, excluding the vast majority of chemical background. After fragmentation in q2, Q3 monitors a specific product ion. This double mass selection creates a highly specific ion transition, dramatically reducing background noise and leading to superior signal-to-noise ratios [24] [25]. This configuration increases sensitivity by one to two orders of magnitude compared to full-scan methods, enabling the detection of low-abundance biomarkers in complex matrices like plasma or urine [24].

Robust and Reliable Quantification

QqQ systems provide a wide linear dynamic range and excellent analytical precision [26]. The predictable and efficient fragmentation in the RF-only collision cell, coupled with the unit mass resolution of the quadrupole mass filters, yields highly reproducible results essential for absolute quantitation [10]. This robustness makes QqQ the preferred platform for high-throughput clinical applications, including newborn screening programs where it is used to detect metabolic biomarkers for congenital diseases [4].

Operational and Economic Efficiency

Despite the emergence of high-resolution mass spectrometers (HRMS), QqQ systems remain more affordable and cost-effective for dedicated quantitative analyses [27] [4]. They are relatively easy to operate and maintain, making them accessible to a broad range of clinical and research laboratories. The number of biomedical studies utilizing QqQ has increased two- to threefold this decade, demonstrating its growing adoption and utility [4].

Table 1: QqQ Performance in Key Application Areas

| Application Area | Key Metric | Performance/Role |

|---|---|---|

| Newborn Screening [4] | Utilization Rate in Studies | 84% (924 out of 1098 studies) |

| Endocrine Testing [4] | Trend | Increasing adoption as reference method, displacing immunoassays |

| Biomarker Validation [24] [10] | Primary Mode | Selected Reaction Monitoring (SRM) / Multiple Reaction Monitoring (MRM) |

| General Quantitative Analysis [26] | Benefits | Increased selectivity, improved S/N, lower limits of quantitation, wider linear range |

The Biomarker Validation Pipeline and QqQ

The journey of a protein biomarker from discovery to clinical use is a multi-stage process with distinct analytical requirements at each phase. QqQ mass spectrometry plays an indispensable role in the verification and validation stages.

The Biomarker Pipeline

The pipeline begins with discovery, typically using non-targeted "shotgun" proteomics on high-resolution instruments to identify hundreds of potential protein candidates from a small number of samples [10]. This is followed by verification, where QqQ-based SRM assays are deployed to screen tens to hundreds of these candidate proteins across a larger set of samples (e.g., 10-50 patients) [10]. The final preclinical stage is validation, where a small number of the most promising biomarkers are quantified across hundreds of samples using highly optimized SRM assays on QqQ platforms [10]. The final clinical validation involves analyzing these biomarkers across 500–1000+ samples [10].

Diagram 1: The Biomarker Validation Pipeline. QqQ-MS is critical for the verification and validation phases.

QqQ Scan Modes for Biomarker Analysis

The flexibility of the QqQ instrument is demonstrated through its various scan modes, each serving a distinct purpose in quantitative and qualitative analysis [1].

Table 2: Key Operational Modes of a QqQ Mass Spectrometer

| Scan Mode | Q1 Function | Q3 Function | Primary Application in Biomarker Research |

|---|---|---|---|

| Selected/Multiple Reaction Monitoring (SRM/MRM) [1] | Selects specific precursor ion | Selects specific product ion | Targeted quantification of known biomarkers with high sensitivity and specificity. |

| Product Ion Scan [1] | Selects specific precursor ion | Scans all product ions | Obtaining fragmentation patterns for structural elucidation and transition selection. |

| Precursor Ion Scan [1] [28] | Scans all precursor ions | Selects specific product ion | Selective detection of all precursors that fragment to yield a common product ion (e.g., a characteristic functional group). |

| Neutral Loss Scan [1] [28] | Scans all precursor ions | Scans with a constant mass offset | Detection of all precursors that undergo a common neutral loss (e.g., H₂O, NH₃). |

Diagram 2: QqQ Operational Modes. Different scanning configurations support various analytical needs.

Detailed Protocol: Biomarker Verification via SRM on a QqQ Platform

The following protocol outlines a standardized workflow for verifying candidate protein biomarkers in human plasma using LC-SRM on a QqQ mass spectrometer.

Experimental Workflow

Diagram 3: SRM Experimental Workflow. Key steps for targeted biomarker verification.

Step-by-Step Methodology

Step 1: Sample Preparation

- Protein Extraction: Extract proteins from human plasma or serum samples. Reduce sample complexity before digestion, for example by depleting abundant proteins and by using Solid-Phase Extraction (SPE) to remove lipids and other interferents [27].

- Protein Digestion: Digest the protein extract into peptides using a sequence-specific protease, most commonly trypsin. Use appropriate buffers (e.g., ammonium bicarbonate) and control digestion conditions (time, temperature, enzyme-to-substrate ratio) to ensure complete and reproducible digestion [24] [10].

Step 2: Selection of Proteotypic Peptides and Transitions

- Proteotypic Peptide (PTP) Selection: For each candidate biomarker protein, select 1-3 peptides that uniquely identify the protein (proteotypic). These peptides should exhibit strong ionization efficiency and not contain unstable or modifiable amino acids [24]. Computational tools and databases like PeptideAtlas can aid in this selection [24].

- Transition Selection: For each PTP, select 2-4 optimal fragment ions to monitor. Singly charged y-type ions are typically the most abundant and stable fragments generated by CID in a QqQ [24]. Where possible, select transitions in which the fragment ion has a larger m/z than the precursor ion, which is feasible for multiply charged precursors that yield singly charged fragments, to minimize chemical background [24].
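
The transition selection rule can be sketched by computing the precursor m/z and the singly charged y-ion series for a peptide, then keeping the y ions that lie above the precursor m/z. This is a minimal illustration: the peptide sequence is an arbitrary example not taken from the source, the function names are hypothetical, and cysteine is assumed to be carbamidomethylated.

```python
# Monoisotopic residue masses in Da. Cysteine is listed as carbamidomethylated
# (an assumption, consistent with alkylation by iodoacetamide).
RESIDUE_MASS = {
    "G": 57.02146, "A": 71.03711, "S": 87.03203, "P": 97.05276,
    "V": 99.06841, "T": 101.04768, "C": 160.03065, "L": 113.08406,
    "I": 113.08406, "N": 114.04293, "D": 115.02694, "Q": 128.05858,
    "K": 128.09496, "E": 129.04259, "M": 131.04049, "H": 137.05891,
    "F": 147.06841, "R": 156.10111, "Y": 163.06333, "W": 186.07931,
}
WATER = 18.010565
PROTON = 1.007276


def precursor_mz(sequence, charge=2):
    """m/z of the protonated peptide at the given charge state."""
    neutral = sum(RESIDUE_MASS[aa] for aa in sequence) + WATER
    return (neutral + charge * PROTON) / charge


def y_ions(sequence):
    """Singly charged y-ion m/z values, keyed 'y1' up to 'y(n-1)'."""
    ions = {}
    running = WATER + PROTON
    for n, aa in enumerate(reversed(sequence), start=1):
        running += RESIDUE_MASS[aa]
        if n < len(sequence):
            ions[f"y{n}"] = running
    return ions


def candidate_transitions(sequence, charge=2):
    """Return the Q1 m/z and the y ions whose m/z exceeds the precursor m/z."""
    q1 = precursor_mz(sequence, charge)
    return q1, {name: mz for name, mz in y_ions(sequence).items() if mz > q1}


peptide = "LVNEVTEFAK"  # illustrative sequence, not from the source
q1_mz, transitions = candidate_transitions(peptide)
for name, q3_mz in transitions.items():
    print(f"{peptide}  Q1 {q1_mz:.3f} -> Q3 {q3_mz:.3f} ({name})")
```

In practice the final 2-4 transitions would be chosen from such candidates by measured intensity and freedom from interference, not by m/z alone.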

Step 3: Liquid Chromatography (LC) Separation

- Separate the digested peptide mixture using reversed-phase liquid chromatography (e.g., C18 column) or hydrophilic interaction liquid chromatography (HILIC) [27].

- Use a binary gradient with mobile phase A (e.g., water/0.1% formic acid) and B (e.g., acetonitrile/0.1% formic acid) to achieve optimal peptide separation and co-elution of the target peptide with its stable isotope-labeled internal standard.

Step 4: SRM/MRM Assay on QqQ

- Instrument Parameters:

- Ion Source: Electrospray Ionization (ESI) is most common.

- Q1/Q3 Resolution: Set to unit resolution (0.7 Da FWHM).

- Dwell Time: 10-50 ms per transition, adjusted to ensure sufficient data points across the chromatographic peak.

- Collision Energy: Optimized for each transition; can be predicted from precursor charge and m/z [27].

- Data Acquisition: Monitor the predefined precursor-product ion transitions for each PTP across their expected retention time windows.
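
Collision energy prediction from precursor charge and m/z is commonly expressed as a charge-specific linear equation, CE = slope × m/z + intercept. The sketch below shows that form together with a small ramp around the predicted value for empirical optimization. The coefficients are placeholders invented for illustration; real values are instrument-specific and should be taken from the vendor or determined experimentally.

```python
# Hypothetical (slope, intercept) pairs per precursor charge state.
# These numbers are placeholders, not values for any real instrument.
CE_COEFFICIENTS = {
    2: (0.034, 3.3),
    3: (0.044, 3.5),
}


def predicted_collision_energy(precursor_mz, charge):
    """Linear prediction: CE = slope * m/z + intercept for the given charge state."""
    try:
        slope, intercept = CE_COEFFICIENTS[charge]
    except KeyError:
        raise ValueError(f"no coefficients defined for charge {charge}") from None
    return slope * precursor_mz + intercept


def collision_energy_ramp(center, step=2.0, steps_each_side=2):
    """Values to test around the predicted CE when optimizing a transition."""
    return [center + i * step for i in range(-steps_each_side, steps_each_side + 1)]


ce = predicted_collision_energy(575.31, charge=2)
ramp = collision_energy_ramp(ce)

print(f"predicted CE: {ce:.1f}")
print("ramp:", [round(value, 1) for value in ramp])
```

The predicted value serves as a starting point; the per-transition optimum is then taken as the ramp setting that gives the highest peak area.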

Step 5: Data Analysis and Quantification

- Integrate the chromatographic peaks for each transition.

- Use a stable isotope-labeled internal standard (SIL) for each target peptide for absolute quantification. The SIL peptide is identical in sequence but contains heavy isotopes (e.g., 13C, 15N), so it behaves nearly identically to the native peptide during sample preparation, chromatography, and ionization while remaining distinguishable by mass [10].

- Calculate the ratio of the peak area of the native peptide to the peak area of the SIL peptide. Use a calibration curve, prepared by spiking known amounts of the SIL peptide into the sample matrix, to determine the absolute concentration of the target protein [10].
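
The calibration step can be sketched as an ordinary least-squares fit of peak area ratio against calibrator concentration, followed by back-calculation of an unknown. The calibrator levels, ratios, and function names are hypothetical, and the fit is unweighted for simplicity; weighted regression is often preferred when the range spans several orders of magnitude.

```python
def fit_line(x, y):
    """Unweighted least-squares fit of y = slope * x + intercept."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need at least two paired calibration points")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def back_calculate(area_ratio, slope, intercept):
    """Concentration corresponding to a measured area ratio."""
    return (area_ratio - intercept) / slope


# Hypothetical calibrators: concentration (fmol/uL) and measured area ratio.
calibrator_conc = [1.0, 5.0, 10.0, 50.0, 100.0]
calibrator_ratio = [0.03, 0.11, 0.21, 1.01, 2.01]

slope, intercept = fit_line(calibrator_conc, calibrator_ratio)
unknown_conc = back_calculate(0.51, slope, intercept)

print(f"slope {slope:.4f}, intercept {intercept:.4f}, unknown {unknown_conc:.1f} fmol/uL")
```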

The Scientist's Toolkit: Essential Reagents and Materials

Table 3: Essential Research Reagents and Materials for QqQ-based Biomarker Assays

| Reagent/Material | Function/Application | Example Specifications |

|---|---|---|

| Trypsin, Sequencing Grade [10] | Proteolytic enzyme for digesting proteins into peptides for bottom-up proteomics. | High purity to minimize autolysis; modified to reduce self-digestion. |

| Stable Isotope-Labeled (SIL) Peptides [10] | Internal standards for absolute quantification; correct for sample prep and ionization variability. | Synthesized with >97% purity and heavy amino acids (e.g., [13C6, 15N2]-Lysine). |

| LC-MS Grade Solvents [27] | Mobile phases for liquid chromatography; high purity is critical to reduce background noise. | Acetonitrile, Methanol, Water with low volatile impurities. |

| Abundant Protein Depletion Kit [10] | Immunoaffinity columns to remove high-abundance proteins (e.g., albumin) from plasma/serum. | Spin columns or cartridges designed for specific sample volumes. |

| Solid-Phase Extraction (SPE) Plates [27] | For sample clean-up and removal of phospholipids and other interferents post-digestion. | 96-well format for high-throughput; chemistries like C18 or EMR-Lipid. |

Application Example: Protocol for Analysis of New Psychoactive Substances

This protocol, adapted from Rodrigues de Morais et al. (2020), demonstrates the use of QqQ scan modes for qualitative screening and quantitative analysis of NBOMe and NBOH compounds in blotter papers [28].

Sample Preparation:

- Extraction: Cut a segment of the blotter paper and extract with 1 mL of methanol in an ultrasonic bath for 15 minutes.

- Preparation: Dilute the extract 1:100 with mobile phase for LC-MS/MS analysis.

LC-MS/MS Analysis:

- Chromatography: Use a C18 column with a gradient of water and acetonitrile, both with 0.1% formic acid.

- Initial Screening (Precursor Ion Scan):

- Set Q3 to monitor a characteristic product ion (e.g., m/z 121 for NBOMes).

- Scan Q1 over a mass range (e.g., m/z 300-500) to identify all precursors that generate this fragment.

- Confirmation (Product Ion Scan):

- For each potential precursor found, set Q1 to its m/z.

- Scan Q3 to obtain a full product ion spectrum for library matching and structural confirmation.

- Quantification (SRM/MRM):

- For confirmed analytes, develop a quantitative SRM method.

- Use two transitions per compound for confident identification (primary for quantification, secondary for qualification).

- Use deuterated internal standards if available.
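
The logic of the initial precursor ion scan can be sketched in a few lines: Q3 is fixed on one product ion while Q1 steps through a mass range, and any precursor that produces the product is flagged for confirmation. The code below simulates this on a small table of fragmentation data. All m/z values, the tolerance, and the function name are hypothetical and serve only to exercise the logic.

```python
def precursor_ion_scan(spectra, target_product_mz, q1_range=(300.0, 500.0), tolerance=0.5):
    """Return precursors within the Q1 range that yield the target product ion.

    spectra maps a precursor m/z to the list of product ion m/z values it generates.
    """
    low, high = q1_range
    hits = []
    for precursor_mz, product_ions in sorted(spectra.items()):
        if not low <= precursor_mz <= high:
            continue  # outside the scanned Q1 window
        if any(abs(mz - target_product_mz) <= tolerance for mz in product_ions):
            hits.append(precursor_mz)
    return hits


# Hypothetical fragmentation data for four ions in an extract.
simulated_spectra = {
    336.1: [121.1, 91.1],
    380.1: [121.1, 91.1],
    410.2: [150.1, 135.1],  # does not produce the target fragment
    520.3: [121.1],         # produces it, but lies outside the Q1 range
}

candidates = precursor_ion_scan(simulated_spectra, target_product_mz=121.0)
print("precursors to confirm by product ion scan:", candidates)
```

Each flagged precursor would then go through the product ion scan and, once confirmed, into an SRM method with a quantifier and a qualifier transition.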

Key Instrument Parameters:

- Ion Source: ESI, positive mode.

- Gas Temperature: 300°C

- Nebulizer Gas: 7 L/min

- Collision Gas: Nitrogen or Argon

This multi-mode approach showcases the power of QqQ for comprehensive analysis, from unknown screening to precise quantification.

The triple quadrupole mass spectrometer remains the undisputed gold standard for targeted quantification in biomarker validation and drug development. Its unparalleled sensitivity, derived from the dual mass filtering of SRM/MRM, combined with its robustness, quantitative linear dynamic range, and operational efficiency, makes it an indispensable tool for generating high-quality, reproducible data. As biomarker research continues to drive advances in precision medicine, the QqQ platform will remain a cornerstone technology for verifying and validating the next generation of diagnostic and prognostic biomarkers.

The adoption of triple quadrupole (QqQ) mass spectrometry in biomedical research represents a paradigm shift towards highly specific, quantitative analysis in biomarker discovery and validation. This technology, particularly through multiple reaction monitoring (MRM) and selected reaction monitoring (SRM), has become the cornerstone of modern proteomic pipelines seeking to translate candidate biomarkers into clinically applicable diagnostic tools [10]. The inherent complexity of biological systems and the critical need for precision in drug development have accelerated the integration of QqQ mass spectrometry, moving beyond qualitative identification to robust, reproducible quantification [20]. This transition is vital, as the biomarker development pipeline demands progressively higher levels of analytical stringency—from initial discovery in small cohorts to final clinical validation in populations of hundreds or thousands [10]. The rising adoption of QqQ methodologies is fundamentally linked to their ability to bridge this gap, offering the specificity, sensitivity, and multiplexing capacity required to verify and validate protein biomarkers with the rigor demanded by regulatory agencies [20] [29]. This protocol outlines the application of QqQ mass spectrometry within this context, providing a detailed framework for its use in targeted proteomic studies aimed at biomarker validation.

Current Landscape and Quantitative Data

The use of mass spectrometry in biomedical research, especially for protein biomarker analysis, has seen substantial growth and formal recognition. Key quantitative data and trends are summarized in the tables below.

Table 1: Adoption Metrics for Analytical Techniques in Biomarker Research

| Metric | Value / Trend | Context & Notes |
|---|---|---|
| Primary MS Technique for Verification | Multiple Reaction Monitoring (MRM) | Bridges gap between discovery and validation; considered the gold standard for verification [10]. |
| Number of FDA-Approved Protein Biomarkers (as of 2014) | ~24 for cancer | Highlights the challenge of translation; many are based on immunoassays, a target for MS-based replacement [10]. |
| Dynamic Range of Newer Immunoassays (e.g., MSD) | Up to 5 orders of magnitude | Shows performance of alternative techniques; QqQ MS offers comparable or superior specificity [29]. |
| Sample Throughput in Discovery vs. Validation | Discovery: 10s of samples; Verification: 10-50 samples; Validation: 100-500+ samples | Illustrates the inverse relationship between sample number and proteins quantified; QqQ MRM is optimized for the high-sample-number phases [10]. |

Table 2: Key Performance Characteristics of QqQ MRM versus Immunoassays

| Characteristic | QqQ MRM/MS | Immunoassay (e.g., ELISA) |
|---|---|---|
| Specificity | High (based on precursor ion mass + fragment ion mass) | High (dependent on antibody affinity and specificity) [29]. |
| Multiplexing Capability | High (can monitor 100s of proteins in a single run) | Low to Moderate (multiplexing requires multiple, compatible antibodies) [29]. |
| Assay Development Time | Relatively short (weeks) | Long (months to years for antibody production and validation) [29]. |
| Critical Reagents | Synthetic stable isotope-labeled peptides | Target protein standard and matched antibody pairs [29]. |
| Susceptibility to Matrix Effects | Can be mitigated with internal standards | Can be affected by cross-reactivity with homologous or endogenous proteins [29]. |

Experimental Protocol: QqQ MRM for Biomarker Verification

This protocol details the steps for verifying a panel of candidate protein biomarkers in human plasma or serum using liquid chromatography-coupled QqQ mass spectrometry (LC-MRM/MS).

Sample Preparation

Objective: To reproducibly process complex biofluids (e.g., plasma, serum) to generate peptides for LC-MRM/MS analysis.

Materials:

- Plasma/Serum Samples: From well-characterized cohorts (e.g., case-control). Aliquot and store at -80°C.

- Digestion Buffer: 50 mM Tris-HCl, pH 8.0-8.5.

- Reducing Agent: Dithiothreitol (DTT), used at a final concentration of 10 mM.

- Alkylating Agent: Iodoacetamide (IAA), used at a final concentration of 25 mM.

- Protease: Sequencing-grade modified trypsin.

- Solid-Phase Extraction (SPE) Plates: e.g., C18 resin for desalting and peptide cleanup.

- Internal Standards: Heavy isotope-labeled synthetic peptides (e.g., ¹³C/¹⁵N-labeled) for each target protein (AQUA peptides).

Procedure:

- High-Abundance Protein Depletion: Deplete major proteins (e.g., albumin, IgG) from plasma/serum using immunoaffinity columns per manufacturer's instructions. This step is optional but highly recommended for deeper analysis.

- Protein Denaturation, Reduction, and Alkylation:

  - Dilute 10-20 µL of plasma/serum (or depleted equivalent) with 100 µL of digestion buffer.

  - Add DTT to a final concentration of 10 mM. Incubate at 60°C for 30 minutes.

  - Cool to room temperature. Add IAA to a final concentration of 25 mM. Incubate in the dark for 30 minutes.

- Proteolytic Digestion:

  - Add trypsin at a 1:20 to 1:50 (w/w) enzyme-to-protein ratio.

  - Incubate at 37°C for 12-16 hours.

  - Quench the reaction by acidifying with 1% formic acid (FA).

- Peptide Cleanup:

  - Desalt the digested peptide mixture using C18 SPE plates. Elute peptides with 50% acetonitrile (ACN)/0.1% FA.

  - Dry the eluents completely in a vacuum concentrator.

- Spike-in of Internal Standards:

  - Reconstitute the dried peptide pellet in an appropriate volume of 0.1% FA containing a known concentration of the heavy isotope-labeled peptide internal standards.

  - Centrifuge at high speed to remove any insoluble material before LC-MS analysis.
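
The reagent additions in the procedure above reduce to two small calculations: the volume of a concentrated stock needed to reach a stated final concentration, and the trypsin mass for a given enzyme-to-protein ratio. The following is a minimal sketch only. The stock concentrations (200 mM DTT, 500 mM IAA), the total plasma protein concentration of 70 mg/mL, and the function names are illustrative assumptions, not values taken from this protocol.

```python
def volume_to_add(final_mM, stock_mM, start_volume_uL):
    """Volume of stock (uL) to add so the mixture reaches final_mM.

    Solves stock_mM * v = final_mM * (start_volume_uL + v) for v, so the
    dilution caused by the added volume itself is accounted for.
    """
    if stock_mM <= final_mM:
        raise ValueError("stock must be more concentrated than the target")
    return final_mM * start_volume_uL / (stock_mM - final_mM)


def trypsin_mass_ug(protein_ug, ratio):
    """Trypsin mass (ug) for a 1:ratio (w/w) enzyme-to-protein ratio."""
    return protein_ug / ratio


# Assumed inputs (illustrative only, not from the protocol)
plasma_uL = 10.0
buffer_uL = 100.0
protein_mg_per_mL = 70.0  # assumed; measure with a protein assay in practice
dtt_stock_mM = 200.0      # assumed stock concentration
iaa_stock_mM = 500.0      # assumed stock concentration

volume_uL = plasma_uL + buffer_uL
dtt_uL = volume_to_add(10.0, dtt_stock_mM, volume_uL)
volume_uL += dtt_uL
iaa_uL = volume_to_add(25.0, iaa_stock_mM, volume_uL)

protein_ug = plasma_uL * protein_mg_per_mL  # mg/mL is numerically ug/uL
print(f"DTT stock to add: {dtt_uL:.2f} uL")
print(f"IAA stock to add: {iaa_uL:.2f} uL")
print(f"Trypsin at 1:50: {trypsin_mass_ug(protein_ug, 50):.1f} ug")
print(f"Trypsin at 1:20: {trypsin_mass_ug(protein_ug, 20):.1f} ug")
```

If high-abundance proteins were depleted first, the protein amount entering digestion is far lower than the undepleted estimate used here, so the trypsin mass should be based on a measured protein concentration.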

LC-MRM/MS Method Development and Data Acquisition

Objective: To establish a highly specific and sensitive MRM assay for target peptides and acquire quantitative data.

Materials:

- Nano-flow or High-flow LC System: Configured with a C18 reverse-phase column.

- QqQ Mass Spectrometer: e.g., Agilent 6495, Sciex 7500, or Thermo Scientific TSQ Altis.

- Mobile Phase A: 0.1% Formic acid in water.

- Mobile Phase B: 0.1% Formic acid in acetonitrile.

Procedure:

- Peptide Selection: From the candidate protein list, select 2-3 proteotypic peptides per protein that are unique to the protein, 7-20 amino acids long, and free of known post-translational modification sites and ragged ends (adjacent lysine/arginine residues at a cleavage site).

- Transition Selection:

  - For each target peptide, use Skyline or similar software to predict the precursor ion m/z and the 3-5 most intense fragment ions (y- or b-series).

  - Synthesize the light and heavy peptides to empirically confirm and optimize transitions.

  - Define the final MRM method, including retention time, precursor ion > fragment ion transitions, and optimal collision energies for each transition.

- Liquid Chromatography:

  - Inject a fixed volume of the prepared sample.

  - Separate peptides using a binary gradient, e.g., from 3% to 35% Mobile Phase B over 30-60 minutes.

- Mass Spectrometry Data Acquisition:

  - Operate the QqQ mass spectrometer in MRM mode.

  - Set Q1 and Q3 to unit resolution.

  - Schedule MRMs based on the known retention time of each peptide to maximize the number of data points acquired across each peak.
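
The transition list and the scheduling step above both rest on simple arithmetic, sketched below as an illustration rather than a substitute for Skyline. The sketch computes the precursor m/z and singly charged y-ion m/z values from monoisotopic residue masses, and estimates how many data points fall across a chromatographic peak for a given number of concurrent transitions. It assumes cysteine is carbamidomethylated (consistent with IAA alkylation) and that the heavy standard carries a fully labeled C-terminal lysine (¹³C₆¹⁵N₂, +8.0142 Da) or arginine (¹³C₆¹⁵N₄, +10.0083 Da). The example peptide, the dwell and pause times, and all function names are hypothetical.

```python
# Monoisotopic residue masses (Da). C is carbamidomethyl-cysteine.
RESIDUE = {
    "G": 57.02146, "A": 71.03711, "S": 87.03203, "P": 97.05276,
    "V": 99.06841, "T": 101.04768, "C": 160.03065, "L": 113.08406,
    "I": 113.08406, "N": 114.04293, "D": 115.02694, "Q": 128.05858,
    "K": 128.09496, "E": 129.04259, "M": 131.04049, "H": 137.05891,
    "F": 147.06841, "R": 156.10111, "Y": 163.06333, "W": 186.07931,
}
WATER = 18.010565
PROTON = 1.007276
# Assumed label: fully 13C/15N-labeled C-terminal Lys or Arg.
HEAVY_SHIFT = {"K": 8.014199, "R": 10.008269}


def precursor_mz(peptide, charge=2, heavy=False):
    """m/z of the [M + charge*H] precursor ion."""
    mass = sum(RESIDUE[aa] for aa in peptide) + WATER
    if heavy:
        mass += HEAVY_SHIFT[peptide[-1]]
    return (mass + charge * PROTON) / charge


def y_ion_mz(peptide, n, heavy=False):
    """Singly charged y_n ion: last n residues plus water plus one proton."""
    mass = sum(RESIDUE[aa] for aa in peptide[-n:]) + WATER
    if heavy:
        mass += HEAVY_SHIFT[peptide[-1]]  # y ions retain the C-terminus
    return mass + PROTON


def transitions(peptide, charge=2, heavy=False):
    """(Q1 m/z, fragment label, Q3 m/z) for y3 up to y(n-1)."""
    q1 = round(precursor_mz(peptide, charge, heavy), 4)
    return [(q1, f"y{n}", round(y_ion_mz(peptide, n, heavy), 4))
            for n in range(3, len(peptide))]


def points_per_peak(n_concurrent, dwell_ms, pause_ms, peak_width_s):
    """Data points across a peak when n_concurrent transitions share a cycle."""
    cycle_s = n_concurrent * (dwell_ms + pause_ms) / 1000.0
    return peak_width_s / cycle_s


peptide = "AAGTLLDEVSNK"  # hypothetical target peptide
for q1, label, q3 in transitions(peptide):
    print(f"light {q1:>10.4f} > {q3:>10.4f} ({label})")
for q1, label, q3 in transitions(peptide, heavy=True):
    print(f"heavy {q1:>10.4f} > {q3:>10.4f} ({label})")

# Assumed acquisition settings: 50 concurrent transitions, 10 ms dwell,
# 2 ms inter-transition pause, 12 s wide chromatographic peak.
print(f"points per peak: {points_per_peak(50, 10, 2, 12):.1f}")
```

Because the cycle time grows with the number of transitions monitored at once, narrowing each peptide's retention-time window reduces the concurrent count and raises the number of points per peak, which is the rationale for scheduling.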

Data Analysis and Quantification

Objective: To extract and analyze MRM data to determine the relative or absolute concentration of target proteins.

Materials:

- Software: Skyline, MRM Peaktyper, or MultiQuant.

Procedure:

- Peak Integration: Import raw data into analysis software. Manually review and integrate peaks for all light (endogenous) and heavy (internal standard) transitions.

- Quality Control:

  - Confirm the co-elution of light and heavy peptide peaks.

  - Ensure the relative intensities of fragment ions for the light peptide match those of the heavy internal standard (within ~20%).

- Quantitative Calculation:

  - For absolute quantification, calculate the ratio of the peak area of the light peptide to the peak area of the heavy internal standard for each peptide.

  - Use a calibration curve (from serial dilutions of heavy peptide) to convert this ratio into a molar concentration.

  - For relative quantification (e.g., diseased vs. control), calculate the light-to-heavy ratio and perform statistical comparison between sample groups.
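
The quality-control and quantification steps above can be sketched in a few lines. This is an illustrative example under stated assumptions, not the procedure implemented by Skyline or MultiQuant. The "within ~20%" criterion is interpreted here as a relative difference in each fragment's share of the summed peak area, the calibration is fitted by unweighted least squares, and all peak areas, calibrator values, and function names are hypothetical.

```python
def fragment_fractions(areas):
    """Each transition's share of the summed peak area."""
    total = sum(areas.values())
    return {ion: area / total for ion, area in areas.items()}


def transition_ratios_match(light, heavy, tolerance=0.20):
    """True if every light fragment share is within tolerance of the heavy one.

    Both dicts must contain the same transition labels.
    """
    lf, hf = fragment_fractions(light), fragment_fractions(heavy)
    return all(abs(lf[ion] - hf[ion]) / hf[ion] <= tolerance for ion in hf)


def light_to_heavy_ratio(light, heavy):
    """Summed light peak area divided by summed heavy peak area."""
    return sum(light.values()) / sum(heavy.values())


def fit_line(x, y):
    """Unweighted least-squares slope and intercept of y on x."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def ratio_to_concentration(ratio, slope, intercept):
    """Invert the calibration line: concentration = (ratio - b) / m."""
    return (ratio - intercept) / slope


# Hypothetical integrated peak areas for one peptide in one sample
light = {"y7": 1200.0, "y6": 610.0, "y5": 290.0}
heavy = {"y7": 2400.0, "y6": 1200.0, "y5": 600.0}

# Hypothetical calibrators: concentration (fmol/uL) vs. measured area ratio
cal_conc = [1.0, 5.0, 10.0, 50.0, 100.0]
cal_ratio = [0.01, 0.05, 0.10, 0.50, 1.00]

if transition_ratios_match(light, heavy):
    ratio = light_to_heavy_ratio(light, heavy)
    slope, intercept = fit_line(cal_conc, cal_ratio)
    conc = ratio_to_concentration(ratio, slope, intercept)
    print(f"L/H ratio = {ratio:.3f}, concentration = {conc:.1f} fmol/uL")
else:
    print("Transition ratios disagree: inspect the peak for interference")
```

For relative quantification, the light-to-heavy ratio alone is carried forward to the statistical comparison between groups, and the calibration step is skipped.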

Workflow Visualization

[Figure: Biomarker Pipeline with QqQ]

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Research Reagent Solutions for QqQ MRM Biomarker Studies

| Reagent / Material | Function / Role | Critical Notes |
|---|---|---|
| Stable Isotope-Labeled Standard (SIS) Peptides | Internal standards for precise quantification; correct for MS variability and for losses in any sample-preparation steps performed after they are added. | Essential for absolute quantification. Must be identical in sequence and behavior to the target endogenous peptide [10]. |
| Sequencing-Grade Modified Trypsin | Proteolytic enzyme for digesting proteins into measurable peptides. | High purity and specificity are critical for reproducible and complete digestion [20]. |
| Immunoaffinity Depletion Columns | Remove high-abundance proteins from plasma/serum to enhance detection of lower-abundance biomarkers. | Increases dynamic range and reduces signal suppression [20]. |
| LC Columns (C18, nano-flow) | Separate complex peptide mixtures prior to MS analysis. | Column performance directly impacts sensitivity and resolution [20]. |
| Quality Control (QC) Sample | Monitors instrument performance and reproducibility across the entire batch of samples. | Typically a pooled sample from all study aliquots or a commercial standard [20]. |

From Theory to Practice: Developing Robust QqQ Assays for Biomarker Verification

In the pipeline of biomarker validation, targeted mass spectrometry, particularly using triple quadrupole instruments, has emerged as the method of choice for verifying and quantifying candidate proteins with high specificity and sensitivity [30]. The core of this targeted approach lies in the precise selection of proteotypic peptides (PTPs)—peptides that uniquely represent a target protein or protein isoform and can be consistently detected by mass spectrometry [31] [32]. Subsequently, the development of optimal ion transitions for Selected Reaction Monitoring (SRM) or Multiple Reaction Monitoring (MRM) is crucial for achieving the high sensitivity and quantitative accuracy required to detect low-abundance biomarkers in complex matrices [33] [30]. This protocol details a systematic workflow for building a robust targeted assay, from in silico peptide selection to experimental optimization of MS parameters, specifically framed within the context of biomarker validation research.

The Role of Proteotypic Peptides in Biomarker Validation

Proteotypic peptides are the cornerstone of targeted proteomics for biomarker verification. Their fundamental property is to act as a surrogate for the parent protein, enabling its unambiguous identification and quantification [31]. In a complex biological sample, such as plasma or urine, a well-chosen PTP must be unique to the protein of interest, thereby avoiding false positives from other proteins in the background proteome [34]. The use of PTPs also confers significant practical advantages. Searching MS/MS spectra against a database containing only proteotypic peptides, rather than the entire proteome, can reduce data analysis time 20-fold because the database is so much smaller [32]. Furthermore, concentrating on this most-observable subset of peptides can implicitly reduce the likelihood of false identifications [35].

Workflow for Developing a Targeted SRM/MRM Assay

The process of developing a targeted assay is multi-staged, involving both computational and experimental components. The following diagram illustrates the complete workflow from initial candidate selection to a finalized, quantitative assay.

Figure 1: A complete workflow for developing a targeted SRM/MRM assay, from protein candidate list to a validated quantitative method.

Selecting Proteotypic Peptides (PTPs)

Core Criteria for Selection

The initial selection of peptides is a computational process aimed at identifying the most suitable peptides to represent the target protein.

- Uniqueness to the Protein: The peptide sequence must be unique to the target protein or a specific isoform within the background proteome of the sample organism (e.g., human) to ensure the assay's specificity [31] [34].

- Consistent Observability: PTPs should be peptides that are consistently observed in mass spectrometry experiments, indicating favorable ionization and detection properties [35] [32].

- Ideal Physicochemical Properties: Peptides should typically be between 7 and 25 amino acids long. Shorter peptides may lack specificity, while longer ones may exhibit poor ionization and fragmentation [34].

- Avoidance of Problematic Sequences:

  - Methionine and Cysteine: These residues are prone to oxidation, which can lead to multiple forms and complicate quantification [34].

  - N-terminal Glutamine: This can cyclize to form pyro-glutamic acid, leading to non-quantitative conversion [34].

  - Consecutive Prolines: Can cause peak broadening in chromatography, reducing sensitivity [34].

  - Missed Tryptic Cleavages: Peptides with predictable and complete tryptic cleavage are preferred for reproducible generation [34].
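
The sequence-based criteria above lend themselves to a simple computational filter. The sketch below performs an in silico tryptic digest (cleavage after K or R, except before P) and keeps fully cleaved peptides that satisfy the length, residue, and uniqueness rules. It is a minimal illustration: the two protein sequences are invented, the function names are hypothetical, and consistent observability is not assessed because it requires empirical data or a predictor.

```python
def tryptic_digest(sequence):
    """Fully cleaved tryptic peptides: cut after K/R unless followed by P."""
    peptides, start = [], 0
    for i, aa in enumerate(sequence):
        next_aa = sequence[i + 1] if i + 1 < len(sequence) else ""
        if aa in "KR" and next_aa != "P":
            peptides.append(sequence[start:i + 1])
            start = i + 1
    if start < len(sequence):
        peptides.append(sequence[start:])
    return peptides


def passes_sequence_rules(peptide, min_len=7, max_len=25):
    """Length and problematic-residue checks from the criteria above."""
    return (
        min_len <= len(peptide) <= max_len
        and "M" not in peptide and "C" not in peptide  # oxidation-prone
        and not peptide.startswith("Q")                # pyro-Glu formation
        and "PP" not in peptide                        # consecutive prolines
    )


def select_proteotypic(target, proteome):
    """Peptides of `target` that pass the rules and occur in no other protein."""
    owners = {}
    for name, sequence in proteome.items():
        for peptide in set(tryptic_digest(sequence)):
            owners.setdefault(peptide, set()).add(name)
    return [p for p in tryptic_digest(proteome[target])
            if passes_sequence_rules(p) and owners[p] == {target}]


# Invented sequences for illustration only
proteome = {
    "TARGET": "AAGTLLDEVSNKQLSFDPPEAVTLKELVISGTWHNYRMCDEFGHILK",
    "OTHER": "GGSAAKELVISGTWHNYRTTPLLAEDK",
}
print(select_proteotypic("TARGET", proteome))
```

In this toy example only one peptide survives: the others are rejected for an N-terminal glutamine with consecutive prolines, for being shared with a second protein, or for containing methionine and cysteine. A real background proteome would be loaded from a sequence database for the sample organism.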

Practical Selection Methodology