Biomarker Clinical Endpoint Validation: Criteria, Challenges, and Regulatory Pathways for Drug Development

Abstract

This article provides a comprehensive guide to biomarker clinical endpoint validation for researchers and drug development professionals. It covers foundational concepts from biomarker definitions and BEST categories to the detailed regulatory criteria set by the FDA and EMA. The content explores methodological frameworks including fit-for-purpose validation, analytical and clinical validation processes, and the specific challenges in transitioning biomarkers from discovery to qualified clinical tools. Practical insights are offered on troubleshooting common pitfalls, optimizing validation strategies with advanced technologies, and navigating the complex regulatory qualification landscape to successfully integrate biomarkers into drug development and precision medicine.

Laying the Groundwork: Core Principles and Definitions of Biomarker Endpoints

In modern drug development, a biomarker is defined as a "characteristic that is objectively measured and evaluated as an indicator of normal biological processes, pathogenic processes, or pharmacological responses to a therapeutic intervention" [1] [2]. This standardized definition, established by the Biomarkers, EndpointS, and other Tools (BEST) Resource, provides a critical foundation for clear communication among researchers, regulators, and drug developers [3] [4]. The BEST Resource, created by an FDA-NIH joint working group, offers a comprehensive glossary that categorizes biomarkers by their specific applications in research and clinical practice, replacing vague terminology with precise functional classifications [3] [2].

The appropriate validation and application of biomarkers, especially their use as surrogate endpoints, is a core challenge in accelerating therapeutic development without compromising scientific rigor. For clinical endpoint validation, understanding these categories is not merely an academic exercise but a practical necessity for designing efficient trials and generating reliable evidence. This guide systematically compares the biomarker categories, giving researchers a framework for selecting and validating biomarkers for specific contexts of use in drug development programs [3].

Biomarker Categories: Definitions and Comparisons

The BEST Biomarker Classification System

The BEST Resource establishes seven primary biomarker categories, each defined by a specific context of use (COU) in drug development [3] [2]. A biomarker's COU is a "concise description of the biomarker's specified use in drug development," which includes its BEST category and intended application [3]. The table below provides a detailed comparison of these categories, their definitions, and representative examples.

Table 1: BEST Biomarker Categories and Applications

| Biomarker Category | Definition and Purpose | Typical Use in Drug Development | Real-World Example |
|---|---|---|---|
| Susceptibility/Risk | Indicates the potential for developing a disease or condition [3] [2]. | Identifying high-risk populations for preventive therapy or trial enrollment [3]. | BRCA1/BRCA2 genetic mutations for breast and ovarian cancer risk [3]. |
| Diagnostic | Confirms the presence of a disease or condition [3] [2]. | Accurately identifying patients with the target disease for trial enrollment [3]. | Hemoglobin A1c for diagnosing diabetes mellitus [3]. |
| Prognostic | Identifies the likelihood of a clinical event, disease recurrence, or progression [3] [2]. | Defining higher-risk disease populations to enhance trial efficiency or understand natural history [3] [5]. | Total kidney volume for predicting progression in autosomal dominant polycystic kidney disease [3]. |
| Predictive | Identifies individuals more likely than others to respond to a specific medical product, either positively or negatively [3] [2]. | Selecting patient populations most likely to respond to an investigational therapy [3] [6]. | EGFR mutation status for predicting response to EGFR inhibitors in non-small cell lung cancer [3] [6]. |
| Pharmacodynamic/Response | Shows a biological response has occurred in an individual who has been exposed to a medical product or environmental agent [3] [2]. | Providing evidence of biological activity, aiding in dose selection, and demonstrating target engagement [3]. | Reduction in HIV RNA viral load after initiating antiretroviral therapy [3]. |
| Safety | Used to measure the presence or likelihood of toxicity as an adverse effect of exposure to medical products [3] [2]. | Monitoring for potential adverse events during a clinical trial [3]. | Serum creatinine for monitoring acute kidney injury [3]. |
| Monitoring | Used to measure the status of a disease or medical condition for the purpose of assessing it over time [3] [2]. | Measuring the effects of a treatment and the body's response to it repeatedly to track progress [3] [2]. | HCV RNA viral load for monitoring response to antiviral therapy in Hepatitis C [3]. |

Biomarker Types by Measured Component

Beyond their functional application, biomarkers can also be classified by the biological component they measure, which directly influences the choice of discovery platform and analytical technique.

Table 2: Comparison of Biomarker Types by Biological Component

| Biomarker Type | Description | Key Technologies for Discovery/Measurement | Advantages and Considerations |
|---|---|---|---|
| Genomic | Measurable characteristics of DNA (e.g., SNPs, mutations) that indicate biological processes or disease risk [7] [6]. | Genome-wide association studies (GWAS), sequencing [7]. | Provides information on heritable disease risk and drug response; stable over time but does not capture environmental influences [7]. |
| Proteomic | Proteins or peptides that reflect cellular activities and functional pathways [7]. | Mass spectrometry, immunoassays [7]. | Directly reflects functional cellular state; however, proteins often remain in tissue beds and may not be easily accessible in circulation [7]. |
| Small Molecule | Low molecular weight compounds (e.g., metabolites, lipids) that provide a functional readout of biological processes [7]. | Liquid chromatography-mass spectrometry (LC-MS) [7]. | Captures interactions between genes, proteins, and environment; can be non-invasively captured in blood to provide real-time insight into tissue-level processes [7]. |
| Digital | Sensor-based data from wearables or devices providing continuous, objective physiological/behavioral insights [8]. | Wearables, smartphones, connected medical devices [8]. | Enables high-resolution, real-world data collection; reduces participant burden; challenges include data standardization and privacy [8]. |

Surrogate Endpoints and Validation Criteria

Defining Surrogate Endpoints

A surrogate endpoint is a specific type of biomarker used in clinical trials as a substitute for a direct measure of how a patient feels, functions, or survives [4]. It does not measure the clinical benefit of primary interest itself but is expected to predict that clinical benefit based on epidemiologic, therapeutic, pathophysiologic, or other scientific evidence [4]. Surrogate endpoints are particularly valuable when clinical outcome trials would be impractical, too long, or unethical [1] [4].

Regulatory agencies characterize surrogate endpoints by their level of validation [4]:

- Candidate Surrogate Endpoint: Still under evaluation for its ability to predict clinical benefit.

- Reasonably Likely Surrogate Endpoint: Supported by strong mechanistic and/or epidemiologic rationale but insufficient clinical data for full validation; can support Accelerated Approval [4].

- Validated Surrogate Endpoint: Supported by a clear mechanistic rationale and clinical data providing strong evidence that an effect on the surrogate predicts a specific clinical benefit [4].

Methodologies for Validating Surrogate Endpoints

The validation of a surrogate endpoint is a rigorous process requiring both statistical evidence and biological plausibility. Simple correlation between the biomarker and the clinical outcome is insufficient, as it can lead to misleading conclusions [1] [5].

The Principal Surrogate Endpoint Framework

A robust framework for evaluation is the principal surrogate endpoint criteria, based on causal associations between treatment effects on the biomarker and on the clinical endpoint [9]. This framework uses causal inference and principal stratification to avoid misleading results due to unmeasured confounding variables [9]. The two key criteria are:

- Average Causal Necessity: If the treatment (Z) has no effect on the surrogate (S), then it has no average effect on the clinical endpoint (Y). In other words, $\mathrm{risk}_{(1)}(s, s) = \mathrm{risk}_{(0)}(s, s)$ for all $s$, indicating no dissociative effects [9].

- Average Causal Sufficiency: If the treatment has a large enough effect on the surrogate, then it has an effect on the clinical endpoint. Formally, there exists a $C > 0$ such that if $S(1) - S(0) \geq C$, then $\mathrm{risk}_{(1)}(S(1), S(0)) \neq \mathrm{risk}_{(0)}(S(1), S(0))$, indicating associative effects [9].

The following diagram illustrates the causal pathways in the principal surrogate evaluation, highlighting how a valid surrogate should mediate the treatment's effect on the clinical outcome.

Diagram 1: Causal pathways for surrogate endpoint validation. A valid surrogate (S) should mediate the treatment's effect on the clinical outcome (Y), minimizing the direct effect (red arrow). Unmeasured confounders (U) can complicate this relationship.

Meta-Analytic and Statistical Evaluation

For a quantitative evaluation using data from multiple historical trials, a simple approach involves five key criteria [5]:

- An acceptable sample size multiplier: The sample size needed for a trial using the predicted treatment effect based on the surrogate endpoint should not be prohibitively larger than the sample size needed for a trial measuring the true endpoint directly.

- A prediction separation score > 1: This indicates that the effect of treatment on the surrogate endpoint is strongly informative for the effect on the true endpoint across the range of effects observed in historical trials.

- Similarity of biological mechanism: The biological mechanism of the treatment in the new trial must be similar to those in the historical trials used for surrogate validation.

- Similarity of secondary treatments: Any treatments administered after observing the surrogate endpoint should be similar between the new trial and the historical trials.

- Low risk of late harmful effects: The risk of harmful side effects occurring after the observation of the surrogate endpoint must be low.

The discovery and validation of biomarkers require a suite of specialized reagents, analytical tools, and data resources. The table below details key solutions essential for researchers in this field.

Table 3: Key Research Reagent Solutions for Biomarker Research

| Resource/Reagent | Function and Application | Example Uses |
|---|---|---|
| Mass Spectrometry Systems | High-throughput, untargeted profiling of small molecule biomarkers (e.g., metabolites, lipids) from biological samples [7]. | Sapient's rLC-MS systems can profile thousands of samples daily, measuring over 11,000 small molecule biomarkers for functional phenotyping [7]. |
| Genomic Assays | Tools for detecting genetic variants (e.g., SNPs, insertions/deletions) that serve as susceptibility, diagnostic, or predictive biomarkers [7] [6]. | Genome-wide association studies (GWAS); profiling EGFR mutations to predict response to tyrosine kinase inhibitors in oncology [7] [6]. |
| Proteomic Platforms | Technologies for identifying and quantifying protein biomarkers, including their post-translational modifications and interactions [7]. | Analyzing hundreds to thousands of protein biomarkers to elucidate cellular activities and functional pathways [7]. |
| FDA Table of Pharmacogenomic Biomarkers | A regulatory resource listing biomarkers in drug labeling that inform on exposure, response variability, and adverse event risk [6]. | Referencing HLA-B*57:01 status before prescribing abacavir to avoid severe hypersensitivity reactions [6]. |
| FDA Surrogate Endpoint Table | A curated list of surrogate endpoints that have been used as primary efficacy endpoints in approved drug applications, providing clarity for trial design [4]. | Justifying the use of blood pressure reduction as a surrogate for reduced stroke risk in a cardiovascular drug development program [4]. |
| Digital Biomarker Tools | Wearables, smartphones, and connected devices for passive, continuous collection of physiological and behavioral data [8]. | Using accelerometers in wearables to monitor activity levels and sleep quality in oncology or neurology trials [8]. |

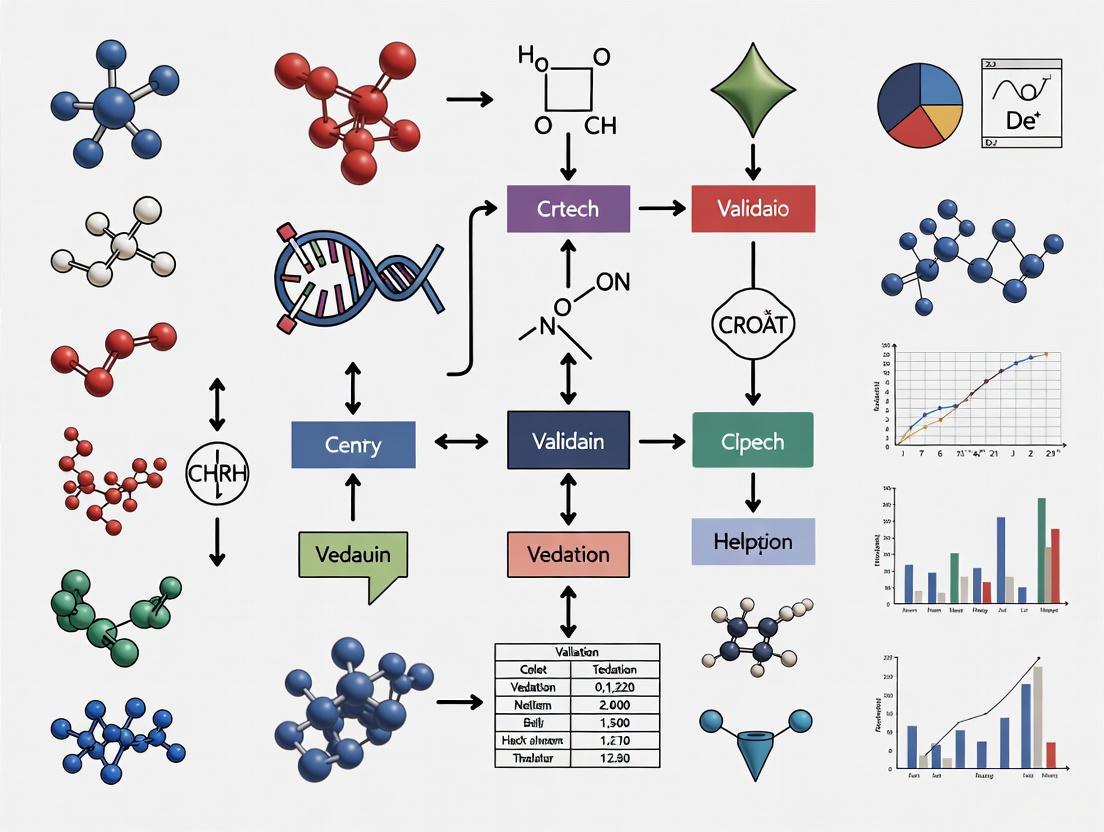

The following workflow diagram maps the key stages and decision points in the biomarker development and validation process, from initial discovery to regulatory acceptance.

Diagram 2: Biomarker development and validation workflow.

The BEST resource provides an indispensable framework for categorizing biomarkers, moving from broad characteristics like susceptibility/risk markers to the highly specific and rigorous category of validated surrogate endpoints. For researchers engaged in clinical endpoint validation, understanding these categories is fundamental to designing robust studies. The choice of biomarker and its intended context of use directly dictates the required validation pathway, which must be supported by strong biological rationale and robust empirical evidence [3] [1]. The increasing integration of novel biomarker types, from small molecules to digital biomarkers, promises to further refine drug development, enabling more precise, efficient, and patient-centric clinical trials [7] [8].

The Critical Role of Context of Use (COU) in Defining Validation Strategy

In the realm of drug development, a biomarker's Context of Use (COU) is formally defined as a concise description of the biomarker's specified application, encompassing both its BEST biomarker category and its intended drug development purpose [10]. This precise definition forms the critical foundation for determining the appropriate level and type of validation required, establishing a "fit-for-purpose" approach that aligns validation strategies with the biomarker's decision-making impact [3]. The COU framework ensures that validation efforts are both scientifically rigorous and practically efficient, avoiding both insufficient validation for critical applications and unnecessarily stringent requirements for exploratory biomarkers.

The structural formula for a COU follows: [BEST biomarker category] to [drug development use] [10]. Real-world examples include a predictive biomarker to enrich for enrollment of asthma patients more likely to respond to a novel therapeutic in Phase 2/3 trials, or a safety biomarker for detecting acute drug-induced renal tubule alterations in male rats [10]. Understanding this framework is essential for researchers designing validation strategies, as the same biomarker may require vastly different validation approaches depending on its intended context.
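The COU pattern can be made concrete with a small data structure. The sketch below is illustrative only: the `ContextOfUse` class, its field names, and the validation rule are assumptions introduced here, not an FDA schema. It simply assembles a statement in the "[BEST biomarker category] to [drug development use]" form and rejects categories outside the seven listed in this article.

```python
from dataclasses import dataclass

# The seven BEST categories named in this article.
BEST_CATEGORIES = {
    "susceptibility/risk", "diagnostic", "prognostic", "predictive",
    "pharmacodynamic/response", "safety", "monitoring",
}


@dataclass(frozen=True)
class ContextOfUse:
    """Hypothetical container for a COU statement (not a regulatory schema)."""
    category: str          # BEST biomarker category
    development_use: str   # intended drug development use

    def __post_init__(self):
        # Reject anything that is not one of the BEST categories.
        if self.category.lower() not in BEST_CATEGORIES:
            raise ValueError(f"Unknown BEST category: {self.category!r}")

    def statement(self) -> str:
        # Mirrors the pattern "[BEST biomarker category] to [drug development use]".
        return f"{self.category.capitalize()} biomarker to {self.development_use}"


cou = ContextOfUse(
    category="predictive",
    development_use="enrich for enrollment of asthma patients more likely "
                    "to respond to a novel therapeutic in Phase 2/3 trials",
)
print(cou.statement())
```

Keeping the category and the use as separate fields makes it easy to see that one biomarker can carry several COU statements, each of which may call for its own validation plan.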

How COU Dictates Validation Strategy

The Fit-for-Purpose Validation Paradigm

The "fit-for-purpose" approach to biomarker validation recognizes that different contexts of use demand distinct levels of evidentiary support [3]. This principle underscores that validation is not a one-size-fits-all process but rather a sliding scale of rigor dependent on the consequences of potential misinterpretation. For example, a biomarker used for early pharmacodynamic response may require less extensive validation than one serving as a surrogate endpoint supporting regulatory approval [3]. The validation process must demonstrate that the biomarker accurately identifies or predicts the clinical outcome of interest for the specified context, with the stringency of validation reflecting the biomarker's decision-making criticality.

Analytical validation assesses the performance characteristics of the measurement assay itself, including accuracy, precision, analytical sensitivity, analytical specificity, reportable range, and reference range [3]. Meanwhile, clinical validation establishes that the biomarker reliably identifies or predicts the relevant clinical outcome or biological process [3]. The degree of evidence required for both analytical and clinical validation is directly determined by the COU, creating a risk-based approach to biomarker qualification that efficiently allocates resources while ensuring scientific validity.

Comparative Case Study: Same Biomarker, Different COUs

A compelling illustration of COU-driven validation strategies comes from two Phase I trials utilizing the same complement factor protein biomarker for entirely different purposes [11].

Table: Validation Requirements for Different Contexts of Use

| Validation Parameter | COU A: Pharmacodynamic Response | COU B: Patient Stratification |
|---|---|---|
| Primary Decision | Measure biological effect of drug | Determine patient eligibility |
| Critical Performance Attribute | Accuracy at baseline | Precision around clinical cut-point |
| Acceptable Variability | Higher (large fold-change expected) | Very low (small differences matter) |
| Key Risk | Mischaracterization of effect size | False inclusion/exclusion of patients |
| Validation Focus | Baseline reproducibility | Precision across decision threshold |

In Case Study A, the complement factor served as a pharmacodynamic biomarker to demonstrate target engagement, where researchers expected a large (approximately 1000-fold) decrease post-dosing [11]. The validation emphasis was on baseline measurement accuracy, as results would be expressed as percent change from pre-dose values. Post-dose precision was less critical due to the substantial effect size.

In Case Study B, the identical biomarker was used for patient stratification based on pre-treatment levels [11]. This COU demanded high precision around clinical decision points, as small measurement variations could incorrectly include or exclude patients. The validation requirements were consequently more stringent, focusing on reproducibility and accuracy across the concentration range used for enrollment decisions.
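A short worked calculation shows why the two contexts tolerate measurement error so differently. All numbers below are hypothetical (arbitrary units, an assumed cut-point of 100, and an assumed "at or above cut-point" enrollment rule); none come from the cited trials.

```python
def percent_change(baseline: float, post_dose: float) -> float:
    """Percent change from the pre-dose value."""
    return 100.0 * (post_dose - baseline) / baseline


def is_eligible(measured: float, cut_point: float) -> bool:
    """Hypothetical enrollment rule: eligible when at or above the cut-point."""
    return measured >= cut_point


# COU A (pharmacodynamic): illustrative ~1000-fold drop, 1000 -> 1.
baseline, post = 1000.0, 1.0
pd_exact = percent_change(baseline, post)
pd_with_error = percent_change(baseline, post * 1.20)  # +20% post-dose error

# COU B (stratification): true level just below the assumed cut-point.
cut_point, true_level = 100.0, 95.0
measured = true_level * 1.10  # +10% measurement error

print(f"PD readout: {pd_exact:.2f}% exact vs {pd_with_error:.2f}% with 20% error")
print(f"Eligible on true value: {is_eligible(true_level, cut_point)}; "
      f"on measured value: {is_eligible(measured, cut_point)}")
```

In this sketch a 20% post-dose error barely moves the percent-change readout, whereas a 10% error near the cut-point flips the enrollment decision.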

Experimental Protocols for Biomarker Validation

Method Comparison Experiment Protocol

The comparison of methods experiment represents a fundamental approach for assessing systematic error or inaccuracy in biomarker assays [12]. This protocol is particularly relevant for biomarkers used in diagnostic, monitoring, or safety contexts where accurate quantification is critical.

Purpose and Design: The experiment estimates systematic error by analyzing patient samples with both the new method (test method) and a comparative method [12]. A minimum of 40 patient specimens is recommended, carefully selected to cover the entire working range of the method and to represent the spectrum of diseases expected in routine application [12]. Each specimen should be analyzed by the two methods within a short time of each other (generally within two hours) unless specific stability data support longer intervals [12].

Reference Method Selection: When possible, a reference method with documented correctness should serve as the comparative method [12]. If only a routine method is available, discrepant results may require additional experiments (recovery, interference) to identify which method is inaccurate [12].

Data Analysis Approach: The data should be graphed immediately during collection to identify discrepant results requiring reanalysis [12]. For wide analytical ranges, linear regression statistics (slope, y-intercept, standard deviation about the regression line) allow estimation of systematic error at medically important decision concentrations [12]. For narrow analytical ranges, calculating the average difference (bias) between methods is more appropriate [12].
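The two analysis options described above can be sketched in a few lines. This is a minimal illustration under stated assumptions: it uses ordinary least squares, the six paired results are invented for readability (a real study would use at least 40 specimens), and the decision concentration of 200 is hypothetical. The function names are introduced here and do not come from the cited protocol.

```python
from math import sqrt
from statistics import mean


def ols_fit(x, y):
    """Ordinary least squares: slope, intercept, and SD about the regression line."""
    n = len(x)
    x_bar, y_bar = mean(x), mean(y)
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    residual_ss = sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))
    s_yx = sqrt(residual_ss / (n - 2))
    return slope, intercept, s_yx


def systematic_error_at(decision_conc, slope, intercept):
    """Predicted test-method value minus the decision concentration."""
    return (intercept + slope * decision_conc) - decision_conc


def mean_bias(x, y):
    """Average difference (test minus comparative), for narrow analytical ranges."""
    return mean(yi - xi for xi, yi in zip(x, y))


# Hypothetical paired results: comparative method (x) and test method (y).
comparative = [50.0, 100.0, 150.0, 200.0, 250.0, 300.0]
test_method = [54.0, 104.5, 157.0, 207.5, 260.0, 310.5]

slope, intercept, s_yx = ols_fit(comparative, test_method)
se_200 = systematic_error_at(200.0, slope, intercept)
print(f"slope={slope:.4f}, intercept={intercept:.2f}, s_y/x={s_yx:.2f}")
print(f"Estimated systematic error at 200: {se_200:.2f}")
print(f"Mean bias across all specimens: {mean_bias(comparative, test_method):.2f}")
```

The regression estimate lets the systematic error vary with concentration, which is why it suits wide ranges; the mean bias collapses everything to one number and is more appropriate when the range is narrow.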

Method Comparison Experimental Workflow

Model Validation Protocol for Computational Biomarkers

For data-driven biomarker models, robust validation follows specific rules to ensure reliable performance generalization [13].

Rule 1: Independent Data for Model Building and Evaluation: Data-driven models require strict separation between data used for model building (training/validation sets) and evaluation (test set) [13]. This prevents overfitting and ensures accurate assessment of generalization capability. The independence requirement extends to all aspects of model building, including preprocessing operations and variable selection.

Rule 2: Consistency with Real-World Application: The test set must represent the population of interest, and the validation strategy should mimic real-life application conditions [13]. This includes considering factors like patient demographics, measurement protocols, and biological variability that the model will encounter in practice.

Implementation Approach: Use nested cross-validation routines that perform all model building operations (including preprocessing and variable selection) within the inner loop without using the test data [13]. This prevents data leakage and ensures the perceived generalization performance reflects real-world applicability.
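The nested structure can be sketched with the standard library alone. Everything here is an illustrative assumption rather than the cited authors' implementation: the data are synthetic, the model is a one-feature k-nearest-neighbour classifier, and the only tuned setting is `k`. The point is the data flow: scaling parameters and the choice of `k` are learned only from the outer training portion, and the outer test fold is used solely for the final score.

```python
import random
from statistics import mean, pstdev


def k_folds(n, k):
    """Split indices 0..n-1 into k interleaved folds."""
    return [list(range(i, n, k)) for i in range(k)]


def fit_scaler(values):
    """Learn standardization parameters from training values only."""
    sd = pstdev(values)
    return mean(values), (sd if sd > 0 else 1.0)


def knn_predict(train, x, k):
    """Majority vote among the k nearest training points (1-D feature, odd k)."""
    nearest = sorted(train, key=lambda p: abs(p[0] - x))[:k]
    return int(sum(label for _, label in nearest) * 2 > k)


def accuracy(train, test, k):
    """Fit preprocessing on `train`, apply it to both sets, score on `test`."""
    mu, sd = fit_scaler([x for x, _ in train])
    scaled_train = [((x - mu) / sd, y) for x, y in train]
    hits = sum(knn_predict(scaled_train, (x - mu) / sd, k) == y for x, y in test)
    return hits / len(test)


def select_k(data, candidates, n_inner):
    """Inner loop: choose k using only the data passed in."""
    def inner_score(k):
        scores = []
        for fold in k_folds(len(data), n_inner):
            held = set(fold)
            train = [d for i, d in enumerate(data) if i not in held]
            valid = [data[i] for i in fold]
            scores.append(accuracy(train, valid, k))
        return mean(scores)
    return max(candidates, key=inner_score)


def nested_cv(data, candidates=(1, 3, 5), n_outer=5, n_inner=4):
    """Outer loop: estimate generalization on folds never used for tuning."""
    outer_scores = []
    for fold in k_folds(len(data), n_outer):
        held = set(fold)
        outer_train = [d for i, d in enumerate(data) if i not in held]
        outer_test = [data[i] for i in fold]
        best_k = select_k(outer_train, candidates, n_inner)  # test fold unseen
        outer_scores.append(accuracy(outer_train, outer_test, best_k))
    return mean(outer_scores)


# Synthetic two-class data: one feature, class means 0 and 4 (assumed values).
rng = random.Random(0)
data = [(rng.gauss(4.0 * label, 1.0), label) for label in (0, 1) for _ in range(30)]
rng.shuffle(data)
estimate = nested_cv(data)
print(f"Nested CV accuracy estimate: {estimate:.2f}")
```

Standardization does not change nearest-neighbour rankings for a single feature; it is included only to show where preprocessing belongs in the loop. Fitting the scaler or choosing `k` on the full dataset before splitting would be the data leakage that Rule 1 warns against.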

Comparative Analysis of Biomarker Qualification Pathways

Regulatory Pathways and Timelines

Biomarkers can reach regulatory acceptance through two primary pathways, which differ significantly in timelines and evidence requirements [14].

Table: Biomarker Qualification Program Timeline Analysis

| Qualification Stage | FDA Target Timeline | Actual Median Timeline | Extension Beyond Target |
|---|---|---|---|
| Letter of Intent (LOI) Review | 3 months | 6 months (all projects); 13.4 months (post-guidance) | 100% (all projects); 347% (post-guidance) |
| Qualification Plan (QP) Review | 7 months | 14 months (all projects); 11.9 months (post-guidance) | 100% (all projects); 70% (post-guidance) |
| QP Development | Not specified | 32 months (all projects); 47 months (surrogate endpoints) | N/A |
| Full Qualification | Not specified | Only 8 biomarkers qualified (2018) | N/A |

Data extracted from the Biomarker Qualification Program (BQP) reveals that safety biomarkers constitute the most frequently qualified category (50% of qualified biomarkers), while surrogate endpoints face the longest development timelines (median 47 months for QP development) and have not yet achieved qualification through the BQP [14]. These timelines highlight the critical importance of early and precise COU definition, as biomarker qualification represents a substantial investment with uncertain outcomes.
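The "Extension Beyond Target" percentages reported for the LOI and QP review stages follow from a simple ratio, (actual - target) / target. The sketch below reproduces that arithmetic from the target and median durations given in the timeline table; the function name is introduced here for illustration.

```python
def extension_beyond_target(actual_months: float, target_months: float) -> float:
    """Percent by which the actual duration exceeds the target duration."""
    return 100.0 * (actual_months - target_months) / target_months


# (actual median, FDA target) in months, as reported in the timeline table.
reviews = {
    "LOI review, all projects": (6.0, 3.0),
    "LOI review, post-guidance": (13.4, 3.0),
    "QP review, all projects": (14.0, 7.0),
    "QP review, post-guidance": (11.9, 7.0),
}

for stage, (actual, target) in reviews.items():
    pct = extension_beyond_target(actual, target)
    print(f"{stage}: {pct:.0f}% beyond target")
```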

Pathway Selection Strategy

The Biomarker Qualification Program (BQP) provides a pathway for broader regulatory acceptance across multiple drug development programs but requires more extensive evidence and longer timelines [3]. In contrast, seeking acceptance through the IND application process offers a more streamlined approach for biomarkers specific to a particular drug development program [3].

The choice between pathways should consider the scope of intended use, available resources, and development timeline constraints. The BQP may be preferable for biomarkers with applicability across multiple development programs, despite longer timelines, while the IND pathway offers efficiency for program-specific biomarkers [3].

Essential Research Reagents and Materials

Table: Key Research Reagent Solutions for Biomarker Validation

| Reagent/Material | Function in Validation | Critical Considerations |
|---|---|---|
| Reference Standards | Calibrate assays and establish traceability | Purity, stability, commutability with patient samples |
| Quality Control Materials | Monitor assay performance over time | Matrix matching, concentration near medical decision points |
| Characterized Patient Samples | Assess analytical performance across measuring range | Coverage of pathological conditions, stability documentation |
| Interference Substances | Evaluate assay specificity | Common interferents (hemoglobin, bilirubin, lipids), drug metabolites |
| Matrix Components | Dilution linearity and recovery studies | Appropriate blank matrix, preservation of biomarker integrity |

The selection and characterization of research reagents must align with the biomarker's COU [11]. For example, patient stratification biomarkers require well-characterized samples spanning the clinical decision threshold, while pharmacodynamic biomarkers need samples representing the expected dynamic range of response [11]. The fit-for-purpose principle applies equally to reagent qualification, ensuring resources focus on the most critical performance characteristics for the intended use.

The context of use serves as the foundational blueprint for biomarker validation strategy, determining the scope, stringency, and methodology of validation activities. The case studies and comparative data presented demonstrate that precisely defining the COU enables resource-efficient validation that addresses the most critical performance characteristics without imposing unnecessary burdens. As biomarker technologies evolve and regulatory pathways mature, the disciplined application of COU-driven validation will continue to be essential for developing reliable, impactful biomarkers that accelerate drug development and improve patient care.

In the era of precision medicine, biomarkers have become indispensable tools for disease detection, diagnosis, prognosis, and predicting response to therapeutic interventions [15]. These biological characteristics, which are objectively measured and evaluated, provide critical insights into normal biological processes, pathogenic processes, or pharmacologic responses to an intervention [16]. The journey from biomarker discovery to clinical implementation requires rigorous evaluation through a structured validation process. This process ensures that biomarkers generate reliable, reproducible, and actionable data for informed decision-making in research and clinical settings [17]. Without proper validation, there is potential for misinterpretation of data leading to misleading clinical trials and possibly patient harm [18]. The validation spectrum encompasses three fundamental components: analytical validation, clinical validation, and clinical utility, each addressing distinct aspects of biomarker performance and application.

Defining the Three Pillars of Validation

Analytical Validation

Analytical validation focuses on the technical performance of the assay itself, assessing how accurately and reliably it measures the biomarker of interest [19] [16]. This process verifies that the test consistently produces results that correctly identify or quantify the analyte under defined conditions [20]. The key parameters established during analytical validation include:

- Accuracy: The closeness of agreement between a measured value and the true value

- Precision: The closeness of agreement between independent measurements obtained under stipulated conditions

- Sensitivity: The lowest amount of analyte that can be accurately detected

- Specificity: The ability to unequivocally assess the analyte in the presence of interfering components

- Reproducibility: The precision under different locations, operators, and instruments

For molecular genetic tests, analytical validation also considers factors such as selectivity (distinguishing target signals from background), interference (substances that may affect detection), and potential for carryover contamination [20]. This technical validation forms the foundational evidence demonstrating that the biomarker assay performs as intended from an analytical perspective before advancing to clinical studies.

Clinical Validation

Clinical validation establishes how accurately and reliably the biomarker predicts or correlates with the clinical status or outcome of interest [19]. While analytical validation confirms the test measures the biomarker correctly, clinical validation confirms that the biomarker measurement is clinically meaningful [18] [19]. This process evaluates:

- Clinical sensitivity: The proportion of positive test results among patients with the disease or condition

- Clinical specificity: The proportion of negative test results among patients without the disease or condition

- Predictive values: The probability that the disease or condition is present given a positive test result (positive predictive value) or absent given a negative test result (negative predictive value)

- Likelihood ratios: How much a given test result will raise or lower the probability of the target disorder
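The four quantities listed above all derive from a 2x2 table of test results against true disease status. The following sketch uses hypothetical counts and a `ConfusionCounts` class introduced here for illustration.

```python
from dataclasses import dataclass


@dataclass
class ConfusionCounts:
    """2x2 table of biomarker test result versus true disease status."""
    tp: int  # diseased, test positive
    fp: int  # not diseased, test positive
    fn: int  # diseased, test negative
    tn: int  # not diseased, test negative

    def sensitivity(self) -> float:
        return self.tp / (self.tp + self.fn)

    def specificity(self) -> float:
        return self.tn / (self.tn + self.fp)

    def ppv(self) -> float:
        return self.tp / (self.tp + self.fp)

    def npv(self) -> float:
        return self.tn / (self.tn + self.fn)

    def lr_positive(self) -> float:
        return self.sensitivity() / (1.0 - self.specificity())

    def lr_negative(self) -> float:
        return (1.0 - self.sensitivity()) / self.specificity()


# Hypothetical study: 100 patients with the condition, 200 without.
counts = ConfusionCounts(tp=90, fp=20, fn=10, tn=180)
print(f"Sensitivity {counts.sensitivity():.2f}, specificity {counts.specificity():.2f}")
print(f"PPV {counts.ppv():.3f}, NPV {counts.npv():.3f}")
print(f"LR+ {counts.lr_positive():.1f}, LR- {counts.lr_negative():.2f}")
```

Predictive values computed this way depend on the prevalence in the study sample, so they transfer to a target population only if its prevalence is similar. Sensitivity, specificity, and likelihood ratios do not depend on prevalence in this way.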

Clinical validation authenticates the biomarker's correlation with clinical outcomes, confirming its relevance as a prognostic or predictive factor [21]. For example, validating Nectin-4 as a serum biomarker in ovarian cancer required demonstrating its elevated expression in cancer tissues and serum compared to normal controls, and its ability to help discriminate benign gynecologic diseases from ovarian cancer [22].

Clinical Utility

Clinical utility represents the highest level of validation, assessing whether using the biomarker test in clinical practice leads to improved patient outcomes and provides value to clinical decision-making [19] [23]. The National Cancer Institute defines clinical utility as "the likelihood that a test will, by prompting an intervention, result in an improved health outcome" [19]. Key considerations for clinical utility include:

- Impact on clinical decision-making for diagnosis, monitoring, prognostication, or treatment selection

- Improvement in patient outcomes (morbidity, mortality, quality of life)

- Streamlined clinical workflow and more efficient use of healthcare resources

- Emotional, social, cognitive, and behavioral benefits for patients

- Cost-effectiveness and appropriate resource utilization

Clinical utility is highly context-dependent, varying based on the intended use, patient population, and existing standard of care [19]. A test must demonstrate practical value in real-world clinical settings beyond mere statistical associations to establish genuine clinical utility.

Comparative Analysis of Validation Types

Table 1: Comprehensive Comparison of Validation Types

| Aspect | Analytical Validation | Clinical Validation | Clinical Utility |
|---|---|---|---|
| Primary Purpose | Verify test measures analyte correctly [19] | Confirm biomarker correlates with clinical status [19] | Determine test improves patient outcomes [19] |
| Key Question | Does the test work technically? | Does the biomarker mean something clinically? | Does using the test help patients? |
| Focus | Technical performance of assay [16] | Clinical meaningfulness of biomarker [18] | Patient outcomes and healthcare value [23] |
| Key Metrics | Accuracy, precision, sensitivity, specificity, reproducibility [20] | Clinical sensitivity, clinical specificity, predictive values [19] | Impact on diagnosis, treatment decisions, patient outcomes, cost-effectiveness [19] |
| Context Dependence | Largely independent of clinical context | Dependent on clinical context and population | Highly dependent on clinical context, population, and healthcare setting |
| Regulatory Emphasis | FDA requirements for IVDs; CLIA for LDTs [19] | FDA requirements for IVDs [19] | CMS and payer requirements for coverage [19] |
| Evidence Generation | Laboratory studies with reference materials | Clinical studies comparing to reference standard | Clinical trials, outcomes research, cost-effectiveness analyses [23] |
| Stakeholders | Laboratory professionals, assay developers | Clinicians, researchers, regulators | Patients, clinicians, payers, health systems [19] |

Experimental Approaches and Methodologies

Methodologies for Analytical Validation

Robust analytical validation requires carefully designed experiments to establish performance characteristics under controlled conditions. The principles of "fit-for-purpose" validation guide this process, tailoring the extent of validation to the intended application [16]. Key methodological considerations include:

- Precision Studies: Testing repeatability (within-run), intermediate precision (within-lab), and reproducibility (between-lab) using multiple replicates across different days, operators, and instruments [20]

- Accuracy Assessment: Comparing results to reference methods or certified reference materials when available

- Linearity and Range: Establishing the interval of analyte concentrations over which measured results are directly proportional to the true analyte concentration

- Limit of Detection/Quantification: Determining the lowest amount of analyte that can be reliably detected or quantified

- Interference Testing: Identifying substances that might affect measurement accuracy

- Carryover Evaluation: Assessing potential contamination between samples
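The precision study listed above can be illustrated numerically. The following is a minimal sketch, assuming a balanced design (the same number of replicates in every run) and a one-way ANOVA variance-component estimate. The function name `precision_components` and the example values are hypothetical and are not taken from any cited guideline.

```python
from statistics import mean

def precision_components(runs):
    """Estimate repeatability and intermediate precision (as %CV).

    `runs` is a list of runs (for example days), each a list of replicate
    results for the same sample. A balanced design is assumed.
    """
    k = len(runs)       # number of runs
    n = len(runs[0])    # replicates per run
    if any(len(r) != n for r in runs):
        raise ValueError("balanced design required for this sketch")

    grand = mean(x for r in runs for x in r)
    run_means = [mean(r) for r in runs]

    # Within-run mean square: pooled replicate variance (repeatability)
    ms_within = sum(
        (x - m) ** 2 for r, m in zip(runs, run_means) for x in r
    ) / (k * (n - 1))
    # Between-run mean square
    ms_between = n * sum((m - grand) ** 2 for m in run_means) / (k - 1)
    # Between-run variance component; negative estimates are truncated to zero
    var_between = max(0.0, (ms_between - ms_within) / n)

    sd_repeat = ms_within ** 0.5
    sd_intermediate = (ms_within + var_between) ** 0.5
    return {
        "mean": grand,
        "cv_repeatability_pct": 100 * sd_repeat / grand,
        "cv_intermediate_pct": 100 * sd_intermediate / grand,
    }

# Hypothetical QC sample measured in duplicate on three days
result = precision_components([[98.0, 102.0], [105.0, 103.0], [97.0, 99.0]])
print(result)
```

Between-laboratory reproducibility would need a further nesting level (laboratory) that this sketch does not model.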

Automation platforms can significantly enhance analytical validation by improving consistency, reliability, and reproducibility while increasing throughput and standardization [17]. For protein biomarkers, technologies like ELISA, Meso Scale Discovery (MSD), and Luminex offer varying degrees of multiplexing capability and sensitivity, while genomic biomarkers may utilize platforms such as qPCR, next-generation sequencing, or nanopore sequencing depending on the application requirements [17].

Approaches for Clinical Validation

Clinical validation requires distinct methodological approaches focused on establishing clinically meaningful associations:

- Case-Control Studies: Comparing biomarker levels between well-characterized patient groups and healthy controls

- Longitudinal Studies: Tracking biomarker levels over time in relation to disease progression or treatment response

- Method Comparison Studies: Evaluating the biomarker against an accepted reference standard or "gold standard"

- Correlation Analyses: Assessing relationships between biomarker levels and clinical parameters

Statistical considerations are paramount in clinical validation to avoid false discoveries. Key issues include addressing within-subject correlation when multiple observations are collected from the same subject, correcting for multiple comparisons to control false discovery rates, and minimizing selection bias in retrospective studies [21]. Mixed-effects linear models that account for dependent variance-covariance structures within subjects can produce more realistic p-values and confidence intervals [21].
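As an illustration of controlling the false discovery rate across many candidate biomarkers, the sketch below implements the Benjamini-Hochberg step-up procedure. The choice of this particular procedure is an assumption made for the example (the source does not name a method), and the p-values are hypothetical.

```python
def benjamini_hochberg(p_values, alpha=0.05):
    """Return a list of booleans, True where the hypothesis is rejected
    while controlling the false discovery rate at level `alpha`."""
    m = len(p_values)
    # Indices of the p-values in ascending order
    order = sorted(range(m), key=lambda i: p_values[i])

    # Step-up rule: find the largest rank k with p_(k) <= (k / m) * alpha
    max_k = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= rank / m * alpha:
            max_k = rank

    # Reject every hypothesis ranked at or below max_k
    rejected = [False] * m
    for idx in order[:max_k]:
        rejected[idx] = True
    return rejected

# Hypothetical p-values from testing four candidate biomarkers
print(benjamini_hochberg([0.01, 0.04, 0.03, 0.5]))
```

Within-subject correlation is a separate issue that this correction does not address; it is normally handled in the model itself, for example with the mixed-effects approach described above.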

Assessing Clinical Utility

Determining clinical utility requires evaluating the real-world impact of biomarker testing on patient management and outcomes [23]. Methodological approaches include:

- Randomized Controlled Trials (RCTs): The highest level of evidence, comparing outcomes between patients managed with versus without the biomarker test

- Systematic Reviews: Summarizing available evidence on test performance and impact on clinical decisions

- Post-Market Surveillance: Monitoring test performance and impact in routine clinical practice

- Decision Analysis: Constructing mathematical models to evaluate the impact on clinical decision-making

- Cost-Effectiveness Analysis: Evaluating value in terms of cost and improvement in clinical outcomes [23]

These approaches help determine whether biomarker testing leads to more targeted therapies, improved clinical diagnosis, better prognostic stratification, or more efficient resource utilization [21] [23].
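For the cost-effectiveness approach, one widely used summary is the incremental cost-effectiveness ratio (ICER). The sketch below is illustrative only: the function name, the choice of effect unit, and all numbers are hypothetical assumptions, not values from the cited sources.

```python
def icer(cost_test, effect_test, cost_standard, effect_standard):
    """Incremental cost-effectiveness ratio: additional cost per additional
    unit of effect (for example per quality-adjusted life year) when care is
    guided by the biomarker test instead of standard care."""
    delta_cost = cost_test - cost_standard
    delta_effect = effect_test - effect_standard
    if delta_effect == 0:
        raise ValueError("no difference in effect; ICER is undefined")
    return delta_cost / delta_effect

# Hypothetical per-patient averages: biomarker-guided care vs. standard care
ratio = icer(cost_test=12000, effect_test=8.5,
             cost_standard=10000, effect_standard=8.0)
print(f"ICER: {ratio:.0f} per unit of effect gained")
```

A negative ratio needs separate interpretation, since it can mean either that the test-guided strategy is cheaper and better or that it is costlier and worse.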

Table 2: Common Technology Platforms for Biomarker Analysis

| Biomarker Type | Technology Platforms | Key Advantages | Common Applications |

|---|---|---|---|

| DNA/RNA | qPCR, RT-PCR, Next-Generation Sequencing, Nanopore Sequencing [17] | High sensitivity, quantitative results, comprehensive analysis | Mutation detection, gene expression, SNP genotyping |

| Protein | ELISA, Western Blot, Meso Scale Discovery (MSD), Luminex, GyroLab [17] | High specificity, multiplexing capabilities, quantitative | Protein expression, post-translational modifications, signaling pathways |

| Cellular | Flow Cytometry, Cell Sorting (FACS), Single-Cell RNA Sequencing [17] | Single-cell resolution, multiparameter analysis, live cell isolation | Immune monitoring, cell phenotype, functional assays |

| Spatial | CODEX, Spatial Transcriptomics, Imaging Mass Cytometry [17] | Spatial context, high-plex tissue imaging, tissue architecture | Tumor microenvironment, tissue heterogeneity, cellular interactions |

The Validation Workflow and Interrelationships

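The validation process typically follows a sequential pathway where each stage builds upon evidence generated in the previous stage. The relationship between the three stages can be visualized as a progressive evidentiary framework: analytical validation establishes that the test measures the analyte correctly, clinical validation then establishes that the result is clinically meaningful, and clinical utility finally establishes that acting on the result benefits patients.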

This sequential relationship highlights the dependency between validation stages. A test that fails analytical validation (inaccurate measurements) will inevitably show suboptimal clinical validity, potentially reporting false positive or negative outcomes that impact diagnosis and treatment decisions, thereby compromising clinical utility [19]. The principle of "fit-for-purpose" should guide the validation process, with the extent of validation tailored to the specific context of use and the required level of certainty [16].

Essential Research Reagents and Materials

Table 3: Essential Research Reagent Solutions for Biomarker Validation

| Reagent Category | Specific Examples | Primary Function | Validation Stage |

|---|---|---|---|

| Reference Standards | Certified reference materials, synthetic biomarkers, reference controls [20] | Establish accuracy and calibrate assays | Analytical Validation |

| Assay-Specific Reagents | Primers, probes, antibodies, enzymes, buffers [20] | Enable specific detection and quantification of target analyte | Analytical Validation |

| Biological Samples | Characterized patient samples, control specimens, biobank materials [22] | Assess clinical performance across relevant populations | Clinical Validation |

| Interference Substances | Hemolyzed blood, lipids, common medications, homologous substances | Evaluate assay specificity and potential interfering substances | Analytical Validation |

| Control Materials | Positive controls, negative controls, no-template controls, calibrators [20] | Monitor assay performance and detect contamination | Analytical & Clinical Validation |

| Automation Reagents | Compatible buffers, enzymes, and consumables for automated platforms [17] | Enable standardized, high-throughput validation | All Stages |

The validation spectrum for biomarkers encompasses a rigorous, multi-stage process that progresses from technical performance (analytical validation) to clinical meaningfulness (clinical validation) and ultimately to practical healthcare value (clinical utility). Each stage addresses distinct questions and requires specialized methodologies and expertise. Understanding these distinctions is crucial for researchers, developers, and clinicians working to translate promising biomarkers from discovery to clinical implementation. As digital biomarkers and novel technologies continue to emerge, adherence to this structured validation framework will ensure that new tests provide genuine value to patients and healthcare systems while maintaining scientific rigor and regulatory compliance.

In the highly regulated landscape of drug development, "qualification" and "validation" represent distinct but interconnected processes critical for ensuring product quality, efficacy, and patient safety. Within the U.S. Food and Drug Administration (FDA) framework, these terms carry specific meanings and applications. Qualification primarily refers to the documented process of ensuring that equipment, systems, or tools work correctly and are properly installed [24] [25]. In the specific context of biomarkers, the FDA's Biomarker Qualification Program (BQP) provides a structured pathway for evaluating a biomarker for a specific Context of Use (COU) across multiple drug development programs [3]. Conversely, Validation constitutes a broader concept, defined as "establishing documented evidence that provides a high degree of assurance that a specific process will consistently produce a product meeting its predetermined specifications and quality attributes" [26].

Understanding this distinction is paramount for researchers, scientists, and drug development professionals. While equipment and instruments are qualified, processes, procedures, and methods are validated [25]. A process, such as manufacturing or cleaning, must be validated using equipment that has already been qualified [24] [25]. This foundational understanding frames the rigorous criteria required for biomarker clinical endpoint validation, ensuring that tools and methodologies meet the evidential standards demanded by regulators for decision-making in drug development.

Core Definitions and Comparative Analysis

What is Qualification?

Qualification is a step-by-step documented process that proves a piece of equipment, system, or utility is correctly installed, operates according to design specifications, and performs as expected under load [24] [25]. It is the essential foundation upon which validated processes are built. The FDA's perspective on qualification, particularly for computerized systems, often follows a '4Q lifecycle model', which includes Design, Installation, Operational, and Performance Qualification [27].

The typical sequence for equipment qualification involves three critical stages, often referred to as IQ, OQ, and PQ [24] [28]:

- Installation Qualification (IQ): Verifies that the equipment has been delivered, installed, and configured correctly according to manufacturer specifications and design drawings [24].

- Operational Qualification (OQ): Demonstrates that the installed equipment will function as intended throughout its anticipated operating ranges. This tests controls, alarms, and other functions under expected conditions [24].

- Performance Qualification (PQ): Provides documented evidence that the equipment can consistently perform its intended function in its regular operating environment, producing repeatable results that meet predetermined acceptance criteria [24] [28].

In the context of biomarkers, the FDA has established a formal Biomarker Qualification Program (BQP). This program evaluates a biomarker for a specific Context of Use (COU), which is a concise description of the biomarker's specified application in drug development [3]. Once a biomarker is qualified through this program, it can be used by any drug developer for that specific COU without needing re-evaluation, promoting consistency and efficiency across the industry [3].

What is Validation?

Validation is a comprehensive, documented approach that provides a high degree of assurance that a specific process, procedure, or method will consistently yield a result meeting predetermined acceptance criteria [25] [26]. The FDA defines process validation as "the collection and evaluation of data, from the process design stage through commercial production, which establishes scientific evidence that a process is capable of consistently delivering quality product" [26].

Several key types of validation are employed in pharmaceutical development and manufacturing:

- Process Validation: Ensures the manufacturing process is robust, reproducible, and capable of consistently producing a product that meets its quality attributes. This occurs in three stages: Process Design, Process Qualification, and Continued Process Verification [26].

- Cleaning Validation: Scientifically demonstrates that cleaning methods consistently remove residues from contact surfaces, equipment, and packaging below established acceptance criteria to prevent contamination [26].

- Analytical Method Validation: Establishes documented evidence that a testing procedure is fit for its intended purpose in terms of quality, reliability, and consistency of analytical results [26].

- Computer System Validation (CSV): Confirms that software or computerized systems used in regulated processes consistently perform according to their intended use, ensuring data integrity and reliability [27].

For a biomarker to be accepted as a surrogate endpoint in drug development, it must undergo a rigorous validation process. This includes analytical validation (assessing the performance characteristics of the assay, such as accuracy and precision) and clinical validation (demonstrating that the biomarker accurately identifies or predicts the clinical outcome of interest) [3] [1].

Side-by-Side Comparison

The table below summarizes the key differences between qualification and validation within the FDA regulatory framework.

Table 1: Key Differences Between Qualification and Validation

| Aspect | Qualification | Validation |

|---|---|---|

| Primary Focus | Equipment, systems, utilities, and biomarkers for a specific Context of Use (COU) [24] [25] [3] | Processes, procedures, methods, and computer systems [25] [26] |

| Objective | Prove that an item is correctly installed, operates correctly, and performs as expected [24] [25] | Prove that a process leads to a consistent and reproducible result meeting quality standards [25] [26] |

| Documentation | Qualification protocols (e.g., IQ, OQ, PQ) and reports [24] | Validation protocols, master plans, and extensive performance data reports [24] [26] |

| Timing & Sequence | Conducted before validation; provides the foundation for it [24] [25] | Follows qualification; a process is validated using qualified equipment [24] [25] |

| Regulatory Emphasis | FDA's 4Q model for equipment [27]; Biomarker Qualification Program (BQP) for biomarkers [3] | Process Validation lifecycle (Design, Qualification, Continued Verification) [26]; Fit-for-purpose biomarker validation [3] |

| Common Examples | Qualifying a mixing tank, HVAC system, or analytical balance [24] [25] | Validating a manufacturing process, cleaning method, or analytical test procedure [25] [26] |

Biomarker Clinical Endpoint Validation: A Workflow

The process of establishing a biomarker as a valid clinical endpoint is complex and follows a fit-for-purpose approach, where the level of evidence required depends on the specific Context of Use (COU) [3]. The workflow summarized in Diagram 1 covers the key stages from biomarker identification through to regulatory acceptance.

Diagram 1: Biomarker Validation and Qualification Workflow. This chart outlines the key stages from initial biomarker identification through to regulatory acceptance, highlighting the iterative process of analytical and clinical validation. BQP: Biomarker Qualification Program; COU: Context of Use.

Biomarker Categorization and Context of Use (COU)

The initial critical step is defining the biomarker's Context of Use (COU), which is a concise description of its specific application in drug development [3]. The COU determines the type and amount of evidence needed for validation. Concurrently, the biomarker is categorized. The FDA-NIH BEST (Biomarkers, EndpointS, and other Tools) resource defines several categories [3] [29]:

Table 2: Biomarker Categories with Examples

| Biomarker Category | Intended Use | Real-World Example |

|---|---|---|

| Diagnostic | To accurately identify individuals with a disease or condition. | Hemoglobin A1c for diagnosing diabetes mellitus [3]. |

| Prognostic | To identify the likelihood of a clinical event, disease recurrence, or progression in patients with a disease. | Total kidney volume for assessing progression risk in autosomal dominant polycystic kidney disease [3]. |

| Predictive | To identify individuals who are more likely to experience a favorable or unfavorable effect from a specific medical product. | EGFR mutation status for predicting response to EGFR inhibitors in non-small cell lung cancer [3]. |

| Pharmacodynamic/Response | To show that a biological response has occurred in an individual who has received a therapeutic intervention. | HIV RNA viral load to monitor response to antiretroviral therapy [1]. |

| Safety | To indicate the potential for, or occurrence of, toxicity or an adverse effect. | Serum creatinine for monitoring renal function and potential nephrotoxicity [3]. |

| Susceptibility/Risk | To identify individuals with an increased susceptibility or risk of developing a disease or condition. | BRCA1 and BRCA2 genetic mutations for breast and ovarian cancer risk [3]. |

Analytical and Clinical Validation Protocols

For a biomarker to be considered for use as a surrogate endpoint, it must undergo rigorous analytical and clinical validation.

Analytical Validation

Objective: To assess the performance characteristics of the biomarker assay, ensuring it reliably measures the analyte of interest [3].

Methodology: The specific parameters evaluated depend on the assay technology and analyte but typically include [3]:

- Accuracy: The closeness of agreement between a measured value and its true value.

- Precision: The closeness of agreement among a series of measurements obtained from multiple samplings of the same homogeneous sample. This includes repeatability (within-run) and intermediate precision (between-run, between-day, between-analyst).

- Analytical Sensitivity: The lowest amount of analyte that can be reliably detected.

- Analytical Specificity: The ability to unequivocally assess the analyte in the presence of other components, such as interferents or cross-reactants.

- Reportable Range: The span of results that can be reliably reported by the assay, from the lower to the upper limit of quantitation.

- Reference Range: The range of test values expected in a healthy population.
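Analytical sensitivity is often quantified through a limit of blank (LoB) and a limit of detection (LoD). The sketch below uses the common parametric form, assuming approximately normally distributed replicate results and the conventional 1.645 multiplier (one-sided 95%). The function names and replicate values are hypothetical.

```python
from statistics import mean, stdev

def limit_of_blank(blank_results, z=1.645):
    """Highest apparent concentration expected from analyte-free samples."""
    return mean(blank_results) + z * stdev(blank_results)

def limit_of_detection(blank_results, low_sample_results, z=1.645):
    """Lowest concentration reliably distinguished from the blank,
    using replicates of a sample with a low analyte concentration."""
    return limit_of_blank(blank_results, z) + z * stdev(low_sample_results)

# Hypothetical replicate signals converted to concentration units
blanks = [0.2, 0.5, 0.1, 0.4, 0.3]
low_sample = [1.1, 1.6, 0.9, 1.4, 1.3]
print("LoB:", round(limit_of_blank(blanks), 3))
print("LoD:", round(limit_of_detection(blanks, low_sample), 3))
```

The lower limit of quantitation is a separate figure: it is the lowest concentration that also meets the predefined accuracy and precision criteria, so it is set from validation sample performance rather than from this formula.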

Clinical Validation

Objective: To demonstrate that the biomarker accurately identifies or predicts the clinical outcome, status, or endpoint of interest [3] [1].

Methodology: This involves epidemiological and clinical studies to establish a link between the biomarker and the clinical outcome. Key assessments include [3]:

- Sensitivity and Specificity: Determining the biomarker's ability to correctly identify patients with and without the condition or outcome.

- Positive and Negative Predictive Values: Evaluating the probability that a positive or negative biomarker result correctly predicts the presence or absence of the clinical outcome.

- Performance in the Intended Population: Validating the biomarker's predictive value in the specific patient population and clinical setting for which it is intended (the COU).
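The assessments above reduce to simple arithmetic on a 2x2 table of biomarker result against clinical outcome. The following sketch shows the calculation and, as a second step, why predictive values must be checked in the intended population: positive predictive value changes with prevalence even when sensitivity and specificity stay fixed. Function names and counts are hypothetical.

```python
def diagnostic_metrics(tp, fp, fn, tn):
    """Clinical performance metrics from a 2x2 table.

    tp/fn: outcome-positive patients with a positive/negative biomarker result
    fp/tn: outcome-negative patients with a positive/negative biomarker result
    """
    return {
        "sensitivity": tp / (tp + fn),
        "specificity": tn / (tn + fp),
        "ppv": tp / (tp + fp),
        "npv": tn / (tn + fn),
    }

def ppv_at_prevalence(sensitivity, specificity, prevalence):
    """Positive predictive value recomputed for a given prevalence (Bayes' rule)."""
    true_pos = sensitivity * prevalence
    false_pos = (1 - specificity) * (1 - prevalence)
    return true_pos / (true_pos + false_pos)

# Hypothetical case-control style study with 50% outcome prevalence
print(diagnostic_metrics(tp=90, fp=10, fn=10, tn=90))

# Same assay characteristics applied where only 1% of patients have the outcome
print(round(ppv_at_prevalence(0.90, 0.90, 0.01), 3))
```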

Pathways to Regulatory Acceptance

There are several pathways for achieving regulatory acceptance of a biomarker for use in drug development [3]:

- Early Engagement: Developers can engage with the FDA early via mechanisms like Critical Path Innovation Meetings (CPIM) or pre-IND meetings to discuss biomarker validation plans.

- IND Application Process: Biomarkers can be proposed and reviewed within the context of a specific Investigational New Drug (IND) application. This pathway is efficient for biomarkers intended for a specific drug development program.

- Biomarker Qualification Program (BQP): This is a formal, drug-development-program-independent pathway for qualifying biomarkers for a specific COU. While it requires more extensive data, once qualified, the biomarker can be referenced by any sponsor for that COU, streamlining multiple development programs [3].

The Scientist's Toolkit: Essential Reagents and Materials

The experimental validation of biomarkers relies on a suite of critical reagents and tools to ensure the generation of reliable, reproducible data.

Table 3: Key Research Reagent Solutions for Biomarker Validation

| Reagent / Material | Function in Validation |

|---|---|

| Validated Assay Kits | Pre-optimized kits (e.g., ELISA, PCR, NGS) for specific analyte detection that have undergone performance characterization, providing a foundation for analytical validation [3]. |

| Certified Reference Standards | Calibrators and controls with known analyte concentrations traceable to international standards, essential for establishing assay accuracy, precision, and reportable range [3]. |

| High-Quality Biological Matrices | Well-characterized patient-derived samples (serum, plasma, tissue, DNA) representing the target population, crucial for clinical validation and establishing reference ranges [3]. |

| Cell Lines and Tissue Sections | Model systems for developing and optimizing biomarker assays, particularly for immunohistochemistry or in situ hybridization, and for testing specificity [3]. |

| Data Analysis Software | Regulatory-compliant software for statistical analysis of validation data, including determination of sensitivity, specificity, and predictive values, ensuring data integrity [27]. |

The distinction between qualification and validation within the FDA framework is fundamental to robust drug development. Qualification establishes the fitness of tools, whether equipment or a biomarker for a specific COU, while validation provides the documented evidence that processes, including the use of a biomarker as a clinical endpoint, are consistently reliable. The validation of biomarkers as surrogate endpoints is a rigorous, fit-for-purpose endeavor requiring robust analytical and clinical validation based on a well-defined Context of Use. By adhering to these structured regulatory definitions and pathways, researchers and drug developers can generate the high-quality evidence necessary to advance new therapies, ensuring they are both effective and safe for patients.

The Validation Pathway: Methodologies and Regulatory Application

Implementing a Fit-for-Purpose (FFP) Validation Approach

The Fit-for-Purpose (FFP) validation approach represents a paradigm shift in biomarker method validation, emphasizing that the rigor and extent of validation should be appropriate for the biomarker's specific Context of Use (COU) in drug development [30]. This strategy acknowledges that biomarker assays support varied COUs—from early discovery and understanding mechanisms of action to patient selection and supporting efficacy claims in late-stage trials [31]. The U.S. Food and Drug Administration (FDA) has formally recognized this approach in its 2025 Bioanalytical Method Validation for Biomarkers (BMVB) guidance, which states that "a fit-for-purpose approach should be used when determining the appropriate extent of method validation" [31]. Unlike pharmacokinetic (PK) assays that measure drug concentrations in a singular context, biomarker assays must be validated with consideration of their unique scientific and technical challenges, particularly the measurement of endogenous analytes often without identical reference standards [31] [32].

The fundamental principle of FFP validation is that the assay's performance characteristics should be sufficiently demonstrated to ensure it generates reliable data for its intended decision-making purpose [33]. This framework provides a flexible yet rigorous pathway for biomarker method validation, ensuring quality while recognizing that different contexts require different levels of evidence [30]. This guide objectively compares FFP validation against traditional approaches and provides the experimental frameworks necessary for successful implementation.

Regulatory Evolution and Current Framework

Historical Development of FFP Guidance

The concept of fit-for-purpose biomarker validation first emerged in a 2006 publication from the AAPS Ligand Binding Assay Bioanalytical Focus Group [30]. This approach gained regulatory recognition in the FDA's 2018 Bioanalytical Method Validation guidance, which acknowledged that while drug assay validation approaches should be the starting point, different considerations might be needed for biomarkers [32]. The regulatory landscape evolved significantly with the January 2025 release of the FDA's dedicated Bioanalytical Method Validation for Biomarkers (BMVB) guidance [31].

The 2025 BMVB guidance replaced the 2018 FDA BMV guidance and specifically recognizes the substantial differences between biomarker and PK assays which impact method validation strategies [31]. A key development in this evolution is the guidance's reference to ICH M10 as a starting point while simultaneously acknowledging that its technical approaches cannot be directly applied to biomarker platforms [31]. This reflects the agency's understanding that biomarker assays require fundamentally different validation approaches from PK assays, primarily because they measure endogenous analytes often without fully characterized reference standards [31] [32].

The Critical Role of Context of Use

Establishing a clear Context of Use (COU) is the foundational step in FFP validation [30]. The FDA defines COU as "a concise description of a biomarker's specified use in drug development" comprised of two components: the biomarker category and its proposed use [31]. The COU dictates every aspect of validation, including assay platform selection, required performance parameters, and acceptance criteria [30].

Table 1: Context of Use Determinations for Biomarker Applications

| Development Stage | Typical COU Examples | Required Validation Rigor | Common Technologies |

|---|---|---|---|

| Early Discovery | Mechanism of Action, Target Engagement | Moderate (Exploratory) | Ligand Binding, Flow Cytometry |

| Preclinical | Pharmacodynamic Effect, Safety Assessment | Moderate to High | MS-based assays, LBAs |

| Clinical Proof-of-Concept | Patient Stratification, Dose Selection | High | PCR, Immunoassays, NGS |

| Regulatory Submission | Efficacy Endpoint, Diagnostic Claims | Highest (Definitive) | Validated LBAs, PCR, IHC |

Without a clearly defined COU, it is impossible to determine what constitutes adequate validation, as broad terms like "exploratory endpoint" do not provide sufficient specificity for establishing validation criteria [30]. As emphasized by regulatory experts, "no context, no validated assay" [30].

Comparative Analysis of Validation Approaches

Biomarker vs. Pharmacokinetic Assay Validation

Understanding the fundamental differences between biomarker and PK assay validation is essential for proper FFP implementation. The distinct analytical challenges of biomarker assays necessitate different technical approaches, even when evaluating similar performance parameters [31].

Table 2: Key Differences Between Biomarker and PK Assay Validation

| Validation Aspect | PK Assays (ICH M10) | Biomarker Assays (FFP) | Rationale for Difference |

|---|---|---|---|

| Reference Standards | Fully characterized drug substance identical to analyte [31] | Recombinant proteins or synthetic calibrators often different from endogenous analyte [31] | Endogenous biomarkers may be poorly characterized or unavailable |

| Accuracy Assessment | Spike-recovery of reference standard [31] | Relative accuracy; parallelism to demonstrate similarity [31] [33] | Cannot spike endogenous analyte; must show calibrator behaves like endogenous biomarker |

| Critical Sample Types | Calibrators and QCs from spiked reference material [31] | Endogenous quality controls and study samples [31] | Performance with endogenous analyte is most relevant |

| Primary Validation Focus | Performance with reference standard [31] | Performance with endogenous analyte [31] | Reference standard may not fully represent endogenous biomarker |

| Regulatory Framework | ICH M10 requirements [31] | Fit-for-purpose based on COU [31] | Diverse biomarker applications require flexible approach |

The most significant technical difference lies in accuracy assessment. For PK assays, spike-recovery experiments using the reference standard (the drug itself) directly demonstrate accuracy [31]. For biomarker assays, where the endogenous analyte cannot be spiked, parallelism assessment becomes critical to demonstrate that the calibrator (often recombinant) and the endogenous biomarker behave similarly in the assay [31] [33]. This fundamental distinction means that applying ICH M10 technical approaches directly to biomarker validation would be inappropriate and misleading [32].

Biomarker Assay Classification and Validation Requirements

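The American Association of Pharmaceutical Scientists (AAPS) has established five general classes of biomarker assays, each with distinct validation requirements [33]: definitive quantitative, relative quantitative, quasi-quantitative, and two qualitative classes (ordinal and nominal), which Table 3 combines into a single Qualitative column. This classification system provides a structured framework for implementing FFP validation based on analytical capability rather than intended use.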

Table 3: Validation Requirements by Biomarker Assay Category

| Performance Characteristic | Definitive Quantitative | Relative Quantitative | Quasi-quantitative | Qualitative |

|---|---|---|---|---|

| Accuracy | Required [33] | Not applicable | Not applicable | Not applicable |

| Trueness (Bias) | Not applicable | Required [33] | Not applicable | Not applicable |

| Precision | Required [33] | Required [33] | Required [33] | Not applicable |

| Reproducibility | Required [33] | Not applicable | Not applicable | Not applicable |

| Sensitivity | LLOQ [33] | LLOQ [33] | Not applicable | Required [33] |

| Specificity | Required [33] | Required [33] | Required [33] | Required [33] |

| Dilution Linearity | Required [33] | Required [33] | Not applicable | Not applicable |

| Parallelism | Required [33] | Required [33] | Not applicable | Not applicable |

| Assay Range | LLOQ-ULOQ [33] | LLOQ-ULOQ [33] | Not applicable | Not applicable |

Definitive quantitative assays use fully characterized reference standards representative of the biomarker and can report absolute quantitative values [33]. Relative quantitative assays use reference standards that are not fully representative of the biomarker [33]. Quasi-quantitative assays lack a calibration standard but produce continuous data expressed in terms of sample characteristics [33]. Qualitative assays provide categorical data, either ordinal (discrete scores) or nominal (yes/no) [33].
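The requirements in Table 3 lend themselves to a simple lookup that a validation plan can be checked against. The sketch below transcribes the "Required" and LLOQ entries from the table into a dictionary; the identifier names and the `missing_characteristics` helper are hypothetical conveniences, not part of any guidance.

```python
# Required performance characteristics per assay class, transcribed from Table 3
REQUIRED_BY_CLASS = {
    "definitive_quantitative": {
        "accuracy", "precision", "reproducibility", "sensitivity_lloq",
        "specificity", "dilution_linearity", "parallelism", "assay_range",
    },
    "relative_quantitative": {
        "trueness", "precision", "sensitivity_lloq", "specificity",
        "dilution_linearity", "parallelism", "assay_range",
    },
    "quasi_quantitative": {"precision", "specificity"},
    "qualitative": {"sensitivity", "specificity"},
}

def missing_characteristics(assay_class, evaluated):
    """Return the required characteristics not yet covered by a validation plan."""
    required = REQUIRED_BY_CLASS[assay_class]
    return sorted(required - set(evaluated))

# Hypothetical draft plan for a relative quantitative assay
draft_plan = ["precision", "specificity", "parallelism"]
print(missing_characteristics("relative_quantitative", draft_plan))
```

The lookup only covers assay class. Under a fit-for-purpose approach the acceptance criteria for each characteristic still have to be set from the COU.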

Experimental Protocols for FFP Validation

Five-Stage Validation Process

Implementing FFP validation follows a structured five-stage process that emphasizes continuous improvement and iterative refinement [33]. Each stage has distinct objectives and deliverables that collectively ensure the assay is appropriate for its COU.

Stage 1: Definition of Purpose and Assay Selection

The most critical phase involves precisely defining the COU, which informs all subsequent validation decisions [33]. This includes determining whether the biomarker will be used for internal decision-making or regulatory submission, which directly impacts validation stringency [31]. During this stage, researchers should also select appropriate technology platforms based on required sensitivity, specificity, and practical considerations like sample volume requirements [30].

Stage 2: Method Validation Planning

This stage involves assembling all necessary reagents and components, writing the detailed method validation plan, and finalizing the assay classification [33]. The validation plan should explicitly link each performance parameter to the COU and predefine acceptance criteria based on the biological variability of the biomarker and the consequences of decision errors [30].

Stage 3: Experimental Performance Verification

The experimental phase involves systematically evaluating predefined performance parameters [33]. For definitive quantitative assays, this includes accuracy, precision, sensitivity, specificity, dilution linearity, parallelism, and stability [33]. For relative quantitative assays, trueness (bias) replaces accuracy assessment [33]. The evaluation culminates in the formal determination of fitness-for-purpose against predefined criteria [33].

Stage 4: In-Study Validation This stage assesses assay performance in the actual clinical context using patient samples [33]. It enables identification of practical sampling issues, including collection, processing, storage, and stability under real-world conditions [33]. This phase also allows for detecting matrix effects or interferences specific to the study population [30].

Stage 5: Routine Use and Continuous Monitoring During routine implementation, quality control monitoring, proficiency testing, and batch-to-batch quality assurance are essential [33]. This stage employs statistical quality control rules to monitor assay performance over time and identify drift or deterioration [30]. The process driver is continuous improvement, with feedback mechanisms that may necessitate returning to earlier stages for refinement [33].
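The statistical quality control rules used in routine monitoring can be illustrated with a short sketch. The example below assumes two Westgard-style rules (a single QC result more than 3 SD from the established mean, and two consecutive results more than 2 SD away on the same side). The rule choice, the function name `qc_flags`, and the example values are illustrative assumptions rather than requirements taken from the cited sources.

```python
from statistics import mean, stdev

def qc_flags(history, new_values):
    """Flag new QC results against the mean and SD of historical in-control results.

    Returns a list of (index, rule) tuples. Rules are assumptions for illustration:
    "1_3s" = one result beyond 3 SD; "2_2s" = two consecutive results beyond 2 SD
    on the same side of the mean.
    """
    mu = mean(history)
    sd = stdev(history)
    z = [(v - mu) / sd for v in new_values]
    flags = []
    for i, zi in enumerate(z):
        if abs(zi) > 3:
            flags.append((i, "1_3s"))
        if i > 0 and ((zi > 2 and z[i - 1] > 2) or (zi < -2 and z[i - 1] < -2)):
            flags.append((i, "2_2s"))
    return flags

# Hypothetical QC values (arbitrary units); the upward drift triggers both rules.
history = [98, 100, 102, 100, 99, 101]
print(qc_flags(history, [100.5, 103, 103.2, 105]))
# [(2, '2_2s'), (3, '1_3s'), (3, '2_2s')]
```

A flagged run would typically prompt investigation and, where warranted, a return to an earlier validation stage.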

Critical Experimental Parameters and Methodologies

Parallelism Assessment

Parallelism experiments evaluate whether the dilution curve of a sample containing the endogenous biomarker is parallel to the standard curve prepared with the reference material [31] [33]. This critical validation parameter demonstrates that the reference material and endogenous biomarker behave similarly in the assay, supporting the validity of using the reference material for quantification [31]. The experimental protocol involves:

- Preparing serial dilutions of endogenous samples with high biomarker concentrations

- Comparing the resulting dilution response curve to the calibration standard curve

- Statistical assessment of curve parallelism using appropriate models

- Establishing acceptance criteria based on the COU (typically <25% variance for quantitative assays)
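One simplified way to screen for parallelism is to multiply each back-calculated concentration by its dilution factor and check how much the dilution-corrected values vary. The sketch below assumes the concentrations have already been read off the calibration curve and interprets the acceptance criterion as a %CV limit; that interpretation, the function name `parallelism_cv`, and the data are assumptions for illustration, and formal curve-comparison models may be more appropriate for a given COU.

```python
from statistics import mean, stdev

def parallelism_cv(measured, dilution_factors):
    """Return dilution-corrected concentrations and their %CV.

    measured: back-calculated concentrations at each dilution.
    dilution_factors: matching dilution factors (2 for 1:2, 4 for 1:4, ...).
    """
    corrected = [m * d for m, d in zip(measured, dilution_factors)]
    cv = stdev(corrected) / mean(corrected) * 100
    return corrected, cv

# Hypothetical endogenous sample diluted 1:2 through 1:16.
corrected, cv = parallelism_cv([50.0, 26.0, 12.0, 6.5], [2, 4, 8, 16])
print(corrected)                   # [100.0, 104.0, 96.0, 104.0]
print(f"%CV = {cv:.2f}")
print("parallel" if cv < 25.0 else "non-parallel")  # 25% limit assumed from the COU
```

If the corrected concentrations trend systematically with dilution, the %CV summary alone can hide the pattern, so plotting the values against dilution factor is a useful complement.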

Precision and Accuracy Profile

The accuracy profile approach incorporates total error (bias + intermediate precision) and pre-set acceptance limits to determine the validity of future measurements [33]. The experimental protocol recommends:

- Analyzing 3-5 different concentrations of calibration standards and 3 different concentrations of validation samples (high, medium, low) in triplicate on 3 separate days [33]

- Calculating β-expectation tolerance intervals (e.g., β = 95%) to display the range expected to contain that proportion of future measurements [33]

- Visually determining what percentage of future values will fall within predefined acceptance limits [33]

- Deriving sensitivity, dynamic range, LLOQ, and ULOQ from the accuracy profile [33]

For biomarker assays, greater flexibility is typically allowed compared to PK assays, with 25% being the default value for precision and accuracy (30% at LLOQ) during pre-study validation [33].
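The bias and intermediate precision that feed the accuracy profile can be estimated from a balanced days-by-replicates design. The sketch below uses a one-way ANOVA variance decomposition with day as the random factor and reports total error as |bias| + intermediate precision. It does not compute the β-expectation tolerance interval itself, and the function names and data are hypothetical.

```python
from math import sqrt
from statistics import mean

def accuracy_precision(runs, nominal):
    """Return (bias %, intermediate-precision CV %, total error %) for one level.

    runs: list of per-day replicate lists (balanced, e.g. 3 days x 3 replicates).
    nominal: nominal concentration of the validation sample.
    """
    k, n = len(runs), len(runs[0])
    day_means = [mean(day) for day in runs]
    grand_mean = mean(day_means)
    # One-way ANOVA mean squares: within-day and between-day.
    ms_within = sum((x - m) ** 2 for day, m in zip(runs, day_means) for x in day) / (k * (n - 1))
    ms_between = n * sum((m - grand_mean) ** 2 for m in day_means) / (k - 1)
    var_between = max(0.0, (ms_between - ms_within) / n)  # truncate negative estimates
    cv_ip = sqrt(ms_within + var_between) / grand_mean * 100
    bias = (grand_mean - nominal) / nominal * 100
    return bias, cv_ip, abs(bias) + cv_ip

def within_limits(bias, cv_ip, is_lloq=False):
    """Apply the default 25% limit (30% at the LLOQ) to bias and precision."""
    limit = 30.0 if is_lloq else 25.0
    return abs(bias) <= limit and cv_ip <= limit

# Hypothetical mid-level validation sample, nominal 10 units, 3 days x 3 replicates.
mid_qc = [[9.0, 10.0, 11.0], [10.0, 11.0, 12.0], [8.0, 9.0, 10.0]]
bias, cv_ip, total_error = accuracy_precision(mid_qc, 10.0)
print(f"bias={bias:.1f}%  CV_IP={cv_ip:.1f}%  total error={total_error:.1f}%")
print(within_limits(bias, cv_ip))
```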

Stability Assessment

Biomarker stability experiments evaluate pre-analytical variables that can significantly impact measurement results [30]. The protocol should assess:

- Short-term stability: Bench-top stability at processing temperatures

- Long-term stability: Storage stability at designated temperatures

- Freeze-thaw stability: Multiple freeze-thaw cycles simulating handling conditions

- Processed sample stability: Stability in the analytical matrix post-processing

Unlike PK assays that use spiked quality controls, biomarker stability should be assessed using endogenous quality controls whenever possible, as recombinant proteins may demonstrate different stability profiles from endogenous biomarkers [30].

Statistical Considerations for Biomarker Validation

Managing Analytical and Biological Variability

Statistical considerations are paramount in biomarker validation to ensure reliable and reproducible results [21]. A key principle is that the acceptable level of analytical variability depends on the magnitude of biological variability and the intended use of the biomarker [30]. For example, if biological variability is high, greater analytical imprecision may be acceptable, whereas for biomarkers with low biological variability, tighter analytical precision is necessary to detect meaningful changes [30].
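The interplay between analytical and biological variability can be made concrete with the reference change value (RCV), a standard laboratory-medicine formula that is not taken from the cited sources. It estimates the smallest percentage change between two serial results that exceeds combined analytical (CV_A) and within-subject biological (CV_I) variation. The sketch assumes a two-sided 95% level (z = 1.96) and hypothetical CV values.

```python
from math import sqrt

def reference_change_value(cv_analytical, cv_within_subject, z=1.96):
    """RCV (%) = sqrt(2) * z * sqrt(CV_A^2 + CV_I^2), with all CVs in percent."""
    return sqrt(2) * z * sqrt(cv_analytical ** 2 + cv_within_subject ** 2)

# Hypothetical biomarker with CV_I = 10%: compare a 5% and a 15% analytical CV.
for cv_a in (5.0, 15.0):
    print(cv_a, round(reference_change_value(cv_a, 10.0), 1))
```

When CV_I is large, the RCV is dominated by biology and tightening the assay changes it little. When CV_I is small, analytical imprecision largely determines the smallest detectable change.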

Within-subject correlation presents another critical statistical consideration, particularly when multiple observations are collected from the same subject [21]. Ignoring this correlation can inflate type I error rates and produce spurious findings [21]. Mixed-effects linear models that account for dependent variance-covariance structures within subjects provide more realistic p-values and confidence intervals [21].
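A quick way to see why ignoring within-subject correlation inflates type I error is the design-effect approximation for equally sized clusters, 1 + (m - 1) × ICC, where m is the number of observations per subject and ICC is the intraclass correlation. The sketch below is only an illustration with hypothetical numbers. It is not a substitute for fitting a mixed-effects model.

```python
def effective_sample_size(n_subjects, obs_per_subject, icc):
    """Return (design effect, effective number of independent observations)."""
    design_effect = 1 + (obs_per_subject - 1) * icc
    n_total = n_subjects * obs_per_subject
    return design_effect, n_total / design_effect

# Hypothetical study: 20 subjects, 5 samples each, ICC = 0.5.
deff, n_eff = effective_sample_size(20, 5, 0.5)
print(deff, round(n_eff, 1))  # 3.0 33.3
```

Here 100 measurements carry roughly the information of 33 independent ones, so an analysis that treats them as independent would report standard errors that are too small.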

Addressing Multiplicity and False Discovery

Biomarker validation studies are particularly susceptible to false positives due to the typically large number of potential markers investigated [21]. Multiplicity concerns arise from multiple candidate biomarkers, multiple endpoints, or multiple patient subsets [21]. While controlling false discovery is essential, researchers must balance this against the risk of false negatives that could discard potentially valuable biomarkers [21].

Statistical approaches for addressing multiplicity include:

- Family-wise error rate control: Methods like Bonferroni, Tukey, or Scheffé adjustments [21]

- False discovery rate control: Benjamini-Hochberg procedure for large-scale biomarker screening

- Prioritization of outcomes: Pre-specification of primary endpoints to minimize multiple testing

- Composite endpoints: Combining multiple related measures into a single endpoint
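The difference between family-wise error control and false discovery rate control is easiest to see on a small set of p-values. The sketch below implements the Bonferroni correction and the Benjamini-Hochberg step-up procedure in plain Python. The p-values are hypothetical and the function names are illustrative.

```python
def bonferroni(pvals, alpha=0.05):
    """Reject H0 where p <= alpha / m (controls the family-wise error rate)."""
    m = len(pvals)
    return [p <= alpha / m for p in pvals]

def benjamini_hochberg(pvals, alpha=0.05):
    """Benjamini-Hochberg step-up procedure (controls the false discovery rate)."""
    m = len(pvals)
    order = sorted(range(m), key=lambda i: pvals[i])
    # Find the largest rank k with p_(k) <= (k / m) * alpha.
    max_k = 0
    for rank, i in enumerate(order, start=1):
        if pvals[i] <= rank / m * alpha:
            max_k = rank
    # Reject every hypothesis ranked at or below k.
    rejected = [False] * m
    for rank, i in enumerate(order, start=1):
        if rank <= max_k:
            rejected[i] = True
    return rejected

# Hypothetical p-values for eight candidate biomarkers.
pvals = [0.001, 0.008, 0.039, 0.041, 0.042, 0.060, 0.074, 0.205]
print(sum(bonferroni(pvals)))          # 1 candidate retained
print(sum(benjamini_hochberg(pvals)))  # 2 candidates retained
```

The less conservative Benjamini-Hochberg result shows the trade-off described above: fewer false negatives at the cost of tolerating a controlled proportion of false discoveries.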

Table 4: Statistical Considerations in Biomarker Validation

| Statistical Issue | Impact on Validation | Recommended Approaches | Considerations |
|---|---|---|---|
| Within-Subject Correlation | Inflated type I error rate if ignored [21] | Mixed-effects models, Generalized Estimating Equations [21] | Particularly relevant for multiple tumors or longitudinal sampling |
| Multiplicity | Increased false discovery rate [21] | Family-wise error control, False discovery rate procedures [21] | Balance between false positives and false negatives |
| Selection Bias | Compromised generalizability [21] | Prospective designs, Stratified sampling | Common in retrospective studies |
| Multiple Endpoints | Interpretation challenges [21] | Pre-specified primary endpoints, Composite endpoints | Requires multiple testing corrections |

Essential Research Reagents and Materials

Successful FFP validation requires careful selection and characterization of research reagents tailored to biomarker-specific challenges. The table below details essential materials and their functions in biomarker assay development and validation.

Table 5: Research Reagent Solutions for Biomarker Validation

| Reagent Category | Specific Examples | Function in Validation | Special Considerations |
|---|---|---|---|
| Reference Standards | Recombinant proteins, Synthetic peptides, Certified reference materials | Calibrator for quantitative assays; enables assignment of numerical values [31] | May differ from endogenous analyte in structure, folding, glycosylation [31] |
| Quality Control Materials | Endogenous QCs, Pooled patient samples, Surrogate matrix samples | Monitor assay performance; validate sample analysis batches [30] | Endogenous QCs preferred over spiked recombinant materials [30] |
| Capture and Detection Reagents | Monoclonal antibodies, Polyclonal antibodies, Binding proteins | Determine assay specificity, sensitivity, and dynamic range [33] | Critical for ligand binding assays; require thorough characterization [33] |
| Assay Diluents and Matrices | Charcoal-stripped matrix, Artificial matrix, Diluent buffers | Define assay background; optimize signal-to-noise ratio [33] | Should mimic native matrix as closely as possible [33] |
| Stability Additives | Protease inhibitors, Stabilizer cocktails, Antimicrobial agents | Maintain analyte integrity during sample processing and storage [30] | Must be validated for compatibility with the assay [30] |

The Fit-for-Purpose validation approach represents a scientifically rigorous framework that aligns biomarker assay validation with specific contexts of use in drug development. By recognizing the fundamental differences between biomarker and PK assays—particularly the challenges of measuring endogenous analytes without identical reference standards—FFP validation provides a flexible yet standardized pathway for generating reliable biomarker data [31] [32]. The 2025 FDA BMVB guidance formalizes this approach while maintaining continuity with previous recommendations [31] [32].

Successful implementation requires careful attention to assay classification, appropriate statistical methods to address variability and multiplicity concerns, and thorough characterization of critical reagents [33] [21]. By adopting this tailored validation strategy, researchers can ensure that biomarker methods generate sufficiently reliable data for their intended decision-making purposes throughout the drug development continuum, from early discovery to regulatory submission.

Analytical validation is a foundational step in the biomarker development pipeline, serving as the critical gatekeeper between promising discovery and clinical application. For a biomarker to be considered fit-for-purpose, its measurement assay must undergo rigorous testing to prove it is reliable, reproducible, and accurate. [3] [34] [35] This process establishes that the test itself performs correctly from a technical standpoint, forming the essential evidence base that regulatory bodies like the U.S. Food and Drug Administration (FDA) require before a biomarker can be used in drug development or clinical trials. [3] [34]

The core components of analytical validation work together to build a complete picture of an assay's performance. The following table summarizes these key parameters and their roles in demonstrating reliability.

| Validation Parameter | Core Question | Role in Establishing Assay Reliability |
|---|---|---|
| Accuracy | Does the test measure the true value? | Quantifies closeness of agreement between the measured value and a known reference standard. [34] |
| Precision | How reproducible are the results? | Evaluates the closeness of agreement between a series of measurements from multiple samplings. Includes repeatability (same conditions) and reproducibility (different labs, operators, time). [34] |
| Specificity | Does the test only measure the target? | Ability to assess the target analyte unequivocally in the presence of other components, such as interfering substances or cross-reactive analogs. [3] [34] |
| Analytical Sensitivity | What is the lowest detectable concentration? | The lowest amount of the analyte that can be reliably distinguished from zero (Limit of Detection, LOD). [3] |
| Reportable Range | Over what range are results valid? | The span of analyte concentrations that can be directly measured without dilution, establishing the limits of quantification (LOQ). [3] |

Experimental Protocols for Validation

The validation process is not theoretical; it requires concrete experiments to generate evidence for each performance parameter. The following methodologies, drawn from established guidelines and real-world research, provide a template for this essential work.

1. Protocol for Assessing Accuracy and Precision

These two assessments are often run concurrently using the same data set. [34]

- Methodology: Prepare a minimum of three concentration levels of quality control (QC) samples (low, medium, high) spanning the assay's reportable range. For precision, analyze each QC level repeatedly (e.g., n=5) within a single run (intra-assay precision) and across multiple different runs, days, and operators (inter-assay precision). Calculate the coefficient of variation (CV%), with a common benchmark for success being CV <15%. [34] For accuracy, compare the mean measured concentration of the QC samples to their known, theoretical concentrations. Calculate the percentage recovery, with recovery rates typically required to be between 80-120%. [34]
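The two summary statistics in this protocol are simple to compute. The sketch below calculates CV% and percentage recovery for one QC level and applies the benchmarks quoted above (CV <15%, recovery 80-120%). The function names and replicate values are hypothetical.

```python
from statistics import mean, stdev

def cv_percent(values):
    """Coefficient of variation (%) using the sample standard deviation."""
    return stdev(values) / mean(values) * 100

def recovery_percent(values, nominal):
    """Mean measured concentration as a percentage of the nominal concentration."""
    return mean(values) / nominal * 100

def qc_level_passes(values, nominal, cv_limit=15.0, rec_low=80.0, rec_high=120.0):
    """Check one QC level against the CV and recovery benchmarks."""
    return cv_percent(values) < cv_limit and rec_low <= recovery_percent(values, nominal) <= rec_high

# Hypothetical mid-level QC (nominal 10 units), five replicates in one run.
replicates = [9.5, 10.0, 10.5, 10.2, 9.8]
print(f"CV = {cv_percent(replicates):.1f}%")
print(f"recovery = {recovery_percent(replicates, 10.0):.1f}%")
print(qc_level_passes(replicates, 10.0))
```

Running the same functions on replicates within a single run gives intra-assay precision; applying them to results pooled across runs, days, and operators gives a simple view of inter-assay precision.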

2. Protocol for Determining Specificity and Selectivity

- Methodology: Spike the sample matrix (e.g., plasma, serum) with potentially interfering substances (e.g., hemolyzed blood, lipids, bilirubin, or structurally similar molecules) at physiologically relevant high concentrations. Also, test samples from individuals with related but different disease states or healthy controls. The assay's measured result for the target analyte should not show significant deviation (e.g., <20% change) from baseline upon addition of interferents, confirming specificity. [3]
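The interference criterion reduces to a percentage change from the unspiked baseline. The sketch below applies the example <20% limit to a set of hypothetical interferents. The function name, interferent labels, and concentrations are assumptions for illustration.

```python
def interference_check(baseline, spiked, max_change=20.0):
    """Return {interferent: (percent change from baseline, passes)}."""
    results = {}
    for name, value in spiked.items():
        change = (value - baseline) / baseline * 100
        results[name] = (change, abs(change) < max_change)
    return results

# Hypothetical analyte result of 50 units before spiking.
results = interference_check(50.0, {"hemolysate": 52.0, "lipid": 61.0, "bilirubin": 47.5})
for name, (change, ok) in results.items():
    print(f"{name}: {change:+.1f}% {'pass' if ok else 'FAIL'}")
```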

3. Protocol for Establishing Analytical Sensitivity and Reportable Range

- Methodology: Serially dilute a sample with a known high concentration of the analyte using the appropriate blank matrix. Analyze multiple replicates of each dilution. The Limit of Detection (LOD) is often determined as the lowest concentration at which the signal-to-noise ratio reaches at least 3:1. The Lower Limit of Quantification (LLOQ) is the lowest concentration that can be measured with acceptable precision (e.g., CV <20%) and accuracy (e.g., 80-120% recovery). The Upper Limit of Quantification (ULOQ) is the highest concentration measurable with acceptable precision and accuracy. The range from LLOQ to ULOQ defines the reportable range. [3]
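Deriving the reportable range from a dilution series amounts to finding the contiguous block of concentration levels that meet the precision and accuracy criteria. The sketch below applies the example LLOQ limits (CV <20%, 80-120% recovery) to every level as a simplifying assumption, and returns the lowest and highest levels of the longest passing run. The function name and data are hypothetical.

```python
from statistics import mean, stdev

def reportable_range(levels, cv_limit=20.0, rec_low=80.0, rec_high=120.0):
    """Return (LLOQ, ULOQ) from {nominal concentration: [replicates]}, or None.

    A level passes if CV% < cv_limit and recovery lies within [rec_low, rec_high].
    The range is the longest contiguous run of passing levels.
    """
    best, run = [], []
    for nominal in sorted(levels):
        reps = levels[nominal]
        cv = stdev(reps) / mean(reps) * 100
        recovery = mean(reps) / nominal * 100
        if cv < cv_limit and rec_low <= recovery <= rec_high:
            run.append(nominal)
        else:
            run = []  # a failing level breaks the contiguous range
        if len(run) > len(best):
            best = list(run)
    return (best[0], best[-1]) if best else None

# Hypothetical dilution series: imprecise at 1 unit, under-recovering at 500 units.
series = {
    1: [0.5, 1.0, 1.6],
    2: [1.9, 2.1, 2.0],
    10: [9.8, 10.1, 10.3],
    100: [99.0, 101.0, 100.0],
    500: [300.0, 310.0, 305.0],
}
print(reportable_range(series))  # (2, 100)
```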

Visualizing the Analytical Validation Workflow

The journey of a biomarker assay from development to being deemed analytically valid follows a structured, multi-stage pathway. The diagram below maps this critical workflow.

The Scientist's Toolkit: Research Reagent Solutions

Executing the validation protocols above requires a suite of reliable reagents and tools. The following table details essential materials used in a real-world biomarker validation study for a radiation biodosimetry blood test, illustrating the practical application of these components. [36]

| Research Reagent / Tool | Function in Validation |

|---|---|