Beyond the Hubs: Correcting High-Degree Node Bias for Accurate Network Prediction in Biomedical Research

Abstract

This article addresses the critical challenge of high-degree hub bias, a pervasive issue that skews network prediction tasks in biology and medicine. We explore how an over-reliance on node degree can lead to misleading results in link prediction, node importance identification, and graph neural network performance. Drawing on the latest research, we provide a foundational understanding of hub bias origins, present methodological corrections from degree-aware benchmarks to targeted edge dropout, and offer troubleshooting guidance for real-world biomedical networks like protein-protein interactions. Finally, we establish a validation framework for comparing debiasing techniques, empowering researchers in drug discovery and clinical biomarker identification to build more robust and reliable network models.

The Hidden Influence of Hubs: Understanding the Sources and Impact of Degree Bias in Biological Networks

Your Questions Answered

1. What is high-degree hub bias in the context of network analysis?

High-degree hub bias is a form of sampling bias that occurs when the methods used to construct or evaluate a network systematically over-represent connections to and from nodes that already have a high number of connections (high-degree nodes) [1] [2]. In essence, it creates a distorted view of the network where "rich get richer," making hubs appear more central and influential than they truly are in the complete, unbiased network.

This bias can stem from various factors [2]:

- Experimental Limitations: In biological networks like Protein-Protein Interaction Networks (PINs), research focus is often on a small set of well-known proteins, leaving others under-examined.

- Evaluation Benchmarks: In graph machine learning, common link prediction benchmarks are intrinsically biased toward high-degree nodes, leading to misleading performance for methods that overfit to node degree [1].

2. Why does this bias matter for my research in drug discovery or network biology?

Hub bias matters because it can skew your results and lead to incorrect conclusions about which nodes are most important in your network [2]. This has direct implications:

- Misidentification of Key Targets: You might incorrectly prioritize a high-degree hub protein as a crucial drug target, while overlooking a truly critical but less-connected protein that acts as a key bottleneck in a signaling pathway [3] [4].

- Over-optimistic Model Performance: A link prediction model might appear highly accurate simply because it learned to exploit this degree-based bias, not because it captured any meaningful biological relationship. A "null" method based solely on node degree can yield nearly optimal performance in a biased benchmark, which is misleading [1].

- Reduced Generalizability: Findings and models based on biased networks may not hold up when applied to more complete data or real-world scenarios.

3. How can I detect if my network is affected by high-degree hub bias?

You can detect potential hub bias by analyzing the correlation between node degree and other centrality measures, or by using a degree-corrected null model. The following workflow outlines a practical diagnostic process:

Diagnostic Workflow for Hub Bias
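The first check mentioned above, the correlation between node degree and another centrality measure, can be sketched in plain Python as follows. This is a minimal illustration rather than a cited method: the adjacency-dict representation, the helper names, and the toy graph are all assumptions made for the example, and a library such as NetworkX would normally compute the centralities.

```python
from collections import deque


def closeness(adj, source):
    """Closeness of `source`: (reachable nodes) / (sum of BFS distances)."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        u = queue.popleft()
        for v in adj[u]:
            if v not in dist:
                dist[v] = dist[u] + 1
                queue.append(v)
    total = sum(dist.values())
    return (len(dist) - 1) / total if total else 0.0


def ranks(values):
    """1-based ranks; tied values share their mean rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    out = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            out[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return out


def spearman(x, y):
    """Spearman rank correlation (assumes neither input is constant)."""
    rx, ry = ranks(x), ranks(y)
    n = len(x)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    vx = sum((a - mx) ** 2 for a in rx)
    vy = sum((b - my) ** 2 for b in ry)
    return cov / (vx * vy) ** 0.5


def degree_closeness_correlation(adj):
    nodes = list(adj)
    degree = [len(adj[n]) for n in nodes]
    close = [closeness(adj, n) for n in nodes]
    return spearman(degree, close)


# Hypothetical toy graph: a star around node 0 with a short tail 4-5.
toy = {0: {1, 2, 3, 4}, 1: {0}, 2: {0}, 3: {0}, 4: {0, 5}, 5: {4}}
rho = degree_closeness_correlation(toy)
print(f"Spearman correlation between degree and closeness: {rho:.2f}")
```

A correlation close to 1 would suggest that, in this network, closeness carries little information beyond degree, which is one signal that rankings may be dominated by hubs. It is only a screening step and does not replace a comparison against a degree-preserving null model.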

4. What are the practical methods to correct for this bias?

Correcting for hub bias involves using analysis techniques and evaluation benchmarks that are not solely dependent on node degree.

- Use Multiple Centrality Measures: Relying only on degree centrality is not sufficient [4] [5]. Integrate other measures like betweenness centrality (which identifies bottleneck nodes) and closeness centrality (which identifies efficient broadcasters) to get a more nuanced view of node importance [6] [5].

- Employ Robust Benchmarks: For link prediction tasks, use a degree-corrected benchmark instead of a standard one. This provides a more reasonable assessment of your model's performance and helps reduce overfitting to node degrees [1].

- Apply Statistical Corrections: Utilize null models that explicitly account for the network's degree distribution when testing hypotheses about network structure.

Experimental Protocol: Assessing Robustness to Sampling Bias

This protocol helps you quantify how sampling bias might be affecting the centrality measures in your network.

1. Define Your 'Ground Truth' Network: Start with the most complete network dataset you have available [2].

2. Simulate Biased Sampling: Systematically remove edges from your ground truth network using different methods [2]:

   - Random Edge Removal (RER): Removes edges randomly.

   - Highly Connected Edge Removal (HCER): Preferentially removes edges connected to high-degree nodes.

   - Lowly Connected Edge Removal (LCER): Preferentially removes edges connected to low-degree nodes.

3. Recalculate Centrality Measures: On each of the sparser, down-sampled networks, recalculate the centrality measures of interest (e.g., degree, betweenness).

4. Analyze Robustness: Compare the centrality values and rankings from the down-sampled networks to those from the ground truth network. Measures that show little change are considered more robust to that particular type of bias.
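The protocol above can be prototyped with a short script. The sketch below is an assumption-laden illustration, not the implementation used in [2]: the edge weights chosen for HCER and LCER (the sum of endpoint degrees and its inverse), the function names, and the toy edge list are all hypothetical, and only degree is recalculated for brevity.

```python
import random


def degrees(edges):
    """Degree of every node that appears in the edge list."""
    deg = {}
    for u, v in edges:
        deg[u] = deg.get(u, 0) + 1
        deg[v] = deg.get(v, 0) + 1
    return deg


def remove_edges(edges, fraction, mode="RER", seed=0):
    """Return the edges kept after removing `fraction` of them.

    mode "RER": uniform removal.
    mode "HCER": removal weight k_u + k_v (assumed weighting).
    mode "LCER": removal weight 1 / (k_u + k_v) (assumed weighting).
    """
    rng = random.Random(seed)
    deg = degrees(edges)

    def weight(edge):
        s = deg[edge[0]] + deg[edge[1]]
        return {"RER": 1.0, "HCER": float(s), "LCER": 1.0 / s}[mode]

    n_remove = int(round(fraction * len(edges)))
    # Weighted sampling without replacement: draw key u**(1/w) per edge
    # and remove the edges with the largest keys.
    keyed = sorted(edges, key=lambda e: rng.random() ** (1.0 / weight(e)),
                   reverse=True)
    removed = set(keyed[:n_remove])
    return [e for e in edges if e not in removed]


def top_k(deg, k):
    """The k highest-degree nodes (ties broken by node id)."""
    return set(sorted(deg, key=lambda n: (-deg[n], n))[:k])


# Hypothetical 'ground truth': one hub (node 0) plus a few peripheral edges.
edges = [(0, i) for i in range(1, 9)] + [(1, 2), (3, 4), (5, 6), (7, 8),
                                         (9, 10), (10, 11)]
truth = degrees(edges)
for mode in ("RER", "HCER", "LCER"):
    kept = remove_edges(edges, 0.3, mode)
    overlap = len(top_k(truth, 3) & top_k(degrees(kept), 3)) / 3
    print(mode, "edges kept:", len(kept), "top-3 overlap:", round(overlap, 2))
```

The top-k overlap is only one possible robustness summary; a rank correlation between the ground-truth and down-sampled centrality values would serve the same purpose.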

Impact of Sampling Bias on Centrality Measures

Research on biological networks shows that the robustness of centrality measures varies under different sampling biases [2]. The table below summarizes how stable different measures are when edges are removed.

| Centrality Measure | Classification | Robustness to Sampling Bias | Remarks |
|---|---|---|---|
| Degree Centrality | Local | High | Generally robust, especially in scale-free networks [2]. |
| Betweenness Centrality | Global | Low | Highly sensitive to edge removal; less reliable in incomplete networks [2]. |
| Closeness Centrality | Global | Low | Values are heterogeneous and can be significantly distorted [2]. |
| Eigenvector Centrality | Global | Low | Particularly vulnerable compared to PageRank [2]. |
| Subgraph Centrality | Intermediate | Medium | More robust than global measures, less than local ones [2]. |

The Scientist's Toolkit: Research Reagent Solutions

| Item / Reagent | Function in Research |
|---|---|
| Network Analysis Library (e.g., NetworkX) | A Python library for the creation, manipulation, and study of the structure, dynamics, and functions of complex networks. Essential for calculating centrality measures [5]. |
| Protein-Protein Interaction (PIN) Database (e.g., STRING, BioGRID) | Provides a curated "ground truth" network of known protein interactions against which to test for biases and validate predictions [3] [2]. |
| Degree-Corrected Benchmark | A corrected evaluation benchmark for link prediction that reduces overfitting to node degree and offers a more reasonable assessment of model performance [1]. |
| Biased Edge Removal Script | A custom script (e.g., in Python) to simulate the six stochastic edge removal methods (RER, HCER, LCER, etc.) for robustness testing [2]. |

Hub bias represents a significant methodological challenge in network science, particularly affecting the evaluation of link prediction algorithms. This bias occurs when the performance of these algorithms is disproportionately evaluated on, or influenced by, a small subset of highly connected nodes (hubs) within a network. In real-world networks—from biological protein interactions to academic co-authorships—the connection structure is naturally uneven. Certain nodes amass a substantially higher number of connections, marking them as network hubs that exert undue influence on network function and algorithm performance [4]. When link prediction algorithms are benchmarked without accounting for this inherent structural bias, it can lead to overoptimistic performance claims and reduced generalizability to real-world applications where accurate prediction across all node types is crucial.

The problem is particularly acute because many standard evaluation metrics are sensitive to network structure. A link prediction method might appear superior simply because it performs exceptionally well on hub nodes, while failing to accurately predict connections for the majority of less-connected nodes. This compromises the practical utility of these algorithms in critical domains such as drug development, where predicting protein-protein interactions or gene-disease associations requires accurate modeling across the entire network topology, not just its most connected components [4]. This technical support document provides researchers with the methodological tools to identify, quantify, and correct for hub bias in their link prediction experiments.

Troubleshooting Guides & FAQs

Frequently Asked Questions

Q1: What are the primary indicators that my link prediction results might be affected by hub bias? A1: Several warning signs suggest potential hub bias contamination: (1) Performance inconsistency where algorithm superiority disappears when hubs are removed from evaluation; (2) Structural divergence where LLM-generated networks show different modularity and clustering coefficients than baseline networks [7]; (3) Demographic disparities where accuracy varies significantly across demographic groups in social networks [7].

Q2: How does hub bias specifically affect different types of network analysis? A2: Hub bias manifests differently across domains. In biological networks, it can lead to overestimation of protein significance. In LLM-generated co-authorship networks, it results in demographic biases where models produce more accurate co-authorship links for researchers with Asian or White names, particularly those with lower visibility or limited academic impact [7]. In directed networks, algorithms ignoring link direction show significantly degraded performance [8].

Q3: Which evaluation metrics are most susceptible to hub bias, and which are more robust? A3: Metrics like AUC (Area Under the ROC Curve) and H-measure demonstrate the strongest discriminability across network types and are less susceptible to structural biases [9]. Simple accuracy measures can be highly misleading when hub nodes dominate the evaluation set. Recent research recommends AUC followed by NDCG (Normalized Discounted Cumulative Gain) for balanced assessment [9].

Q4: What practical steps can I take to control for hub bias during experimental design? A4: Implement stratified evaluation by node degree, calculate Conditional Demographic Parity (CDP) and Conditional Predictive Equality (CPE) for sensitive attributes [7], employ multiple centrality measures beyond simple degree (betweenness, closeness, eigenvector) [4], and always report performance separately for hub and non-hub nodes using the protocols in the section "Experimental Protocols for Hub Bias Identification and Correction".

Troubleshooting Common Experimental Problems

Problem: Inconsistent algorithm performance across different networks Solution: This often indicates hub bias. Implement the stratified evaluation protocol ("Protocol: Stratified Evaluation for Hub Bias Assessment"). Calculate performance metrics separately for high-degree nodes (hubs), medium-degree nodes, and low-degree nodes. This reveals whether your method's performance depends on network structure rather than on predictive capability.

Problem: LLM-generated networks showing demographic disparities Solution: Audit your models using the bias measurement framework from [7]. Calculate Demographic Parity (DP) and Predictive Equality (PE) for gender and ethnicity attributes. The study found that while gender disparities were minimal, significant ethnicity biases existed, with LLMs producing more accurate co-authorship links for authors with Asian or White names [7].

Problem: Poor performance in directed network link prediction Solution: Utilize directed network-specific algorithms like HBCF (Hits Centrality and Bias random walk via Collaborative Filtering) and HBSCF (Hits Centrality and Bias random walk via Self-included Collaborative Filtering) that preserve node importance through HITS centrality while capturing higher-order path information via biased random walks [8].

Experimental Protocols for Hub Bias Identification and Correction

Protocol: Hub Identification and Characterization

Purpose: Standardized methodology for identifying hub nodes and characterizing the degree distribution of your network.

Materials Needed: Network dataset, computational environment (Python/R), basic network analysis libraries (NetworkX, igraph).

Procedure:

1. Calculate centrality metrics: Compute multiple centrality measures for all nodes (e.g., degree, betweenness, closeness, eigenvector).

2. Identify hub nodes: Use consensus scoring across multiple centrality measures. Classify a node as a hub if it ranks in the top 10-20% on at least two different centrality measures [4].

3. Characterize degree distribution: Test for a right-tailed distribution using:

   - Variance-to-mean ratio (dispersion index)

   - Power-law fitting (using maximum likelihood estimation)

   - Visualization through rank-degree plots

4. Document hub proportion: Calculate the percentage of nodes classified as hubs and the share of all connections that involve them.
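The consensus step, the dispersion index, and the hub coverage figure can be sketched as below. This is a minimal illustration under stated assumptions: the function names are hypothetical, the centrality scores are assumed to be precomputed (here hand-computed for a six-node toy graph), and power-law fitting is left out because it needs a dedicated fitting routine.

```python
import math
from statistics import mean, pvariance


def consensus_hubs(scores, top_fraction=0.2, min_votes=2):
    """Nodes ranked in the top `top_fraction` by at least `min_votes` measures.

    `scores` maps a measure name to a {node: value} dict.
    """
    votes = {}
    for values in scores.values():
        k = max(1, math.ceil(top_fraction * len(values)))
        for node in sorted(values, key=values.get, reverse=True)[:k]:
            votes[node] = votes.get(node, 0) + 1
    return {node for node, count in votes.items() if count >= min_votes}


def dispersion_index(degree):
    """Variance-to-mean ratio of the degree sequence."""
    values = list(degree.values())
    return pvariance(values) / mean(values)


def hub_edge_coverage(edges, hubs):
    """Fraction of edges with at least one hub endpoint."""
    return sum(1 for u, v in edges if u in hubs or v in hubs) / len(edges)


# Hypothetical toy network: star around A with a tail E-F.
edges = [("A", "B"), ("A", "C"), ("A", "D"), ("A", "E"), ("E", "F")]
scores = {
    "degree": {"A": 4, "B": 1, "C": 1, "D": 1, "E": 2, "F": 1},
    # Unnormalized shortest-path betweenness for the same graph.
    "betweenness": {"A": 9, "B": 0, "C": 0, "D": 0, "E": 4, "F": 0},
}
hubs = consensus_hubs(scores, top_fraction=0.2, min_votes=2)
dispersion = dispersion_index(scores["degree"])
coverage = hub_edge_coverage(edges, hubs)
print(sorted(hubs), round(dispersion, 3), coverage)
```

On a network this small the 20% cutoff selects two nodes per measure, so the numbers are illustrative only; a dispersion index well above 1 on a real network would point to a heavy-tailed degree sequence.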

Table: Hub Classification Criteria Based on Network Properties

| Network Type | Centrality Measures Recommended | Hub Threshold (Top Percentile) | Expected Hub Proportion |
|---|---|---|---|
| Social Networks | Degree, Betweenness, Eigenvector | Top 15% | 10-15% |
| Biological Networks | Degree, Betweenness | Top 20% | 15-20% |
| Co-authorship Networks | Degree, Closeness | Top 10% | 5-10% |
| Directed Networks | In-degree, HITS Authority | Top 15% | 10-15% |

Protocol: Stratified Evaluation for Hub Bias Assessment

Purpose: To evaluate link prediction performance across different node types while controlling for hub bias.

Materials Needed: Pre-identified hub nodes, link prediction algorithm, evaluation framework.

Procedure:

1. Stratify network nodes: Divide nodes into three categories:

   - Hub nodes (top 10-20% by centrality)

   - Medium-connectivity nodes (middle 60-70%)

   - Peripheral nodes (bottom 10-20%)

2. Generate test sets: For each stratum, randomly select node pairs for testing, ensuring proportional representation of each connection type.

3. Evaluate performance separately: Calculate precision, recall, AUC, and F1-score separately for each stratum.

4. Calculate bias metrics:

   - Performance disparity ratio: (Hub performance)/(Non-hub performance)

   - Stratified AUC difference: \( \text{AUC}_{\text{hub}} - \text{AUC}_{\text{non-hub}} \)

   - Demographic parity metrics for attributed networks [7]

5. Statistical testing: Use paired t-tests (for matched samples) or Mann-Whitney U tests (for independent samples) to determine if performance differences between strata are statistically significant.
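The per-stratum evaluation and the two bias metrics can be sketched as follows. The example is a simplified assumption: it uses only two strata (pairs touching a hub versus all other pairs), the function names are hypothetical, and the scored pairs are made-up values for illustration, not results from any cited study.

```python
def auc(pos_scores, neg_scores):
    """Probability that a positive outranks a negative (ties count 0.5).

    Assumes both lists are non-empty.
    """
    wins = 0.0
    for p in pos_scores:
        for n in neg_scores:
            wins += 1.0 if p > n else 0.5 if p == n else 0.0
    return wins / (len(pos_scores) * len(neg_scores))


def stratum_of(pair, hubs):
    """'hub' if either endpoint is a hub, otherwise 'non-hub'."""
    return "hub" if pair[0] in hubs or pair[1] in hubs else "non-hub"


def stratified_auc(scored_pairs, hubs):
    """AUC per stratum; `scored_pairs` holds ((u, v), score, label) tuples."""
    buckets = {"hub": ([], []), "non-hub": ([], [])}
    for pair, score, label in scored_pairs:
        pos, neg = buckets[stratum_of(pair, hubs)]
        (pos if label == 1 else neg).append(score)
    return {name: auc(pos, neg) for name, (pos, neg) in buckets.items()}


# Made-up model scores: label 1 = held-out true edge, 0 = sampled non-edge.
scored = [
    (("h", "a"), 0.9, 1), (("h", "b"), 0.8, 1),
    (("h", "c"), 0.3, 0), (("h", "d"), 0.2, 0),
    (("a", "b"), 0.6, 1), (("c", "d"), 0.4, 1),
    (("a", "c"), 0.5, 0), (("b", "d"), 0.1, 0),
]
result = stratified_auc(scored, hubs={"h"})
disparity_ratio = result["hub"] / result["non-hub"]
auc_difference = result["hub"] - result["non-hub"]
print(result, round(disparity_ratio, 2), round(auc_difference, 2))
```

Extending the sketch to three strata only requires a different `stratum_of` rule; the AUC and disparity calculations stay the same.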

Table: Example Bias Assessment Results (Adapted from [7])

| Node Category | Precision | Recall | AUC | F1-Score | Demographic Parity |
|---|---|---|---|---|---|
| Hub Nodes | 0.85 | 0.79 | 0.92 | 0.82 | 0.88 |
| Medium Nodes | 0.72 | 0.68 | 0.81 | 0.70 | 0.85 |
| Peripheral Nodes | 0.61 | 0.55 | 0.73 | 0.58 | 0.79 |
| Disparity Ratio (Hub / Peripheral) | 1.39 | 1.44 | 1.26 | 1.41 | 1.11 |

Protocol: Bias-Aware Algorithm Training and Validation

Purpose: To train link prediction algorithms that maintain robust performance across all node types.

Materials Needed: Training network data, computational resources for cross-validation.

Procedure:

1. Stratified sampling: During training/validation split, ensure proportional representation of hub and non-hub nodes in all splits.

2. Loss function modification: Incorporate fairness constraints or weighted loss functions that penalize performance disparities across node types.

3. Bias mitigation techniques:

   - Pre-processing: Resample training data to balance connection types

   - In-processing: Add regularization terms to minimize performance variance across strata

   - Post-processing: Calibrate prediction thresholds separately for different node types

4. Validation: Use nested cross-validation with stratification to obtain unbiased performance estimates.

5. Benchmarking: Compare against baseline methods using the stratified evaluation protocol above ("Protocol: Stratified Evaluation for Hub Bias Assessment").
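As one possible reading of the weighted-loss step, the sketch below reweights a binary cross-entropy loss so that each stratum contributes equally regardless of how many training pairs it contains. The inverse-frequency weighting scheme and the function names are assumptions chosen for illustration; the source does not prescribe a specific loss.

```python
import math
from collections import Counter


def stratum_weights(strata):
    """Inverse-frequency weight per example: n / (n_strata * stratum_count)."""
    counts = Counter(strata)
    n, k = len(strata), len(counts)
    return [n / (k * counts[s]) for s in strata]


def weighted_bce(probs, labels, weights, eps=1e-12):
    """Weighted binary cross-entropy, normalized by the total weight."""
    total = 0.0
    for p, y, w in zip(probs, labels, weights):
        p = min(max(p, eps), 1 - eps)  # guard against log(0)
        total += -w * (y * math.log(p) + (1 - y) * math.log(1 - p))
    return total / sum(weights)


# Hypothetical batch: three hub pairs and one non-hub pair.
strata = ["hub", "hub", "hub", "non-hub"]
weights = stratum_weights(strata)
loss = weighted_bce([0.5, 0.5, 0.5, 0.5], [1, 0, 1, 1], weights)
print([round(w, 3) for w in weights], round(loss, 4))
```

With these weights the single non-hub pair counts as much as the three hub pairs combined, which is the intended effect; whether that trade-off is appropriate depends on the application.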

Research Reagent Solutions: Essential Tools for Robust Link Prediction

Table: Key Research Reagents for Hub Bias-Aware Network Analysis

| Reagent/Tool | Type | Function | Usage Notes |
|---|---|---|---|
| HBCF Framework | Algorithm | Directed link prediction using HITS centrality and biased random walks | Parameter-free; preserves node significance and higher-order structures [8] |
| HBSCF Framework | Algorithm | Self-included variant of HBCF for enhanced structure capture | Fuses with directed local/global similarities; 12 index variants available [8] |
| Demographic Parity Metrics | Evaluation Metric | Measures fairness across sensitive attributes | Critical for auditing LLM-generated networks [7] |
| AUC & H-measure | Evaluation Metric | High-discriminability performance assessment | Recommended over accuracy due to better robustness to network structure [9] |
| Multi-Centrality Consensus | Methodology | Robust hub identification using multiple centrality measures | Mitigates limitations of single-metric approaches [4] |
| Stratified Cross-Validation | Methodology | Bias-controlled performance evaluation | Essential for realistic performance estimation |
| DCN, DAA, DRA | Algorithms | Directed extensions of common neighbor-based methods | Adapted for directed networks (Directed Common Neighbors, etc.) [8] |

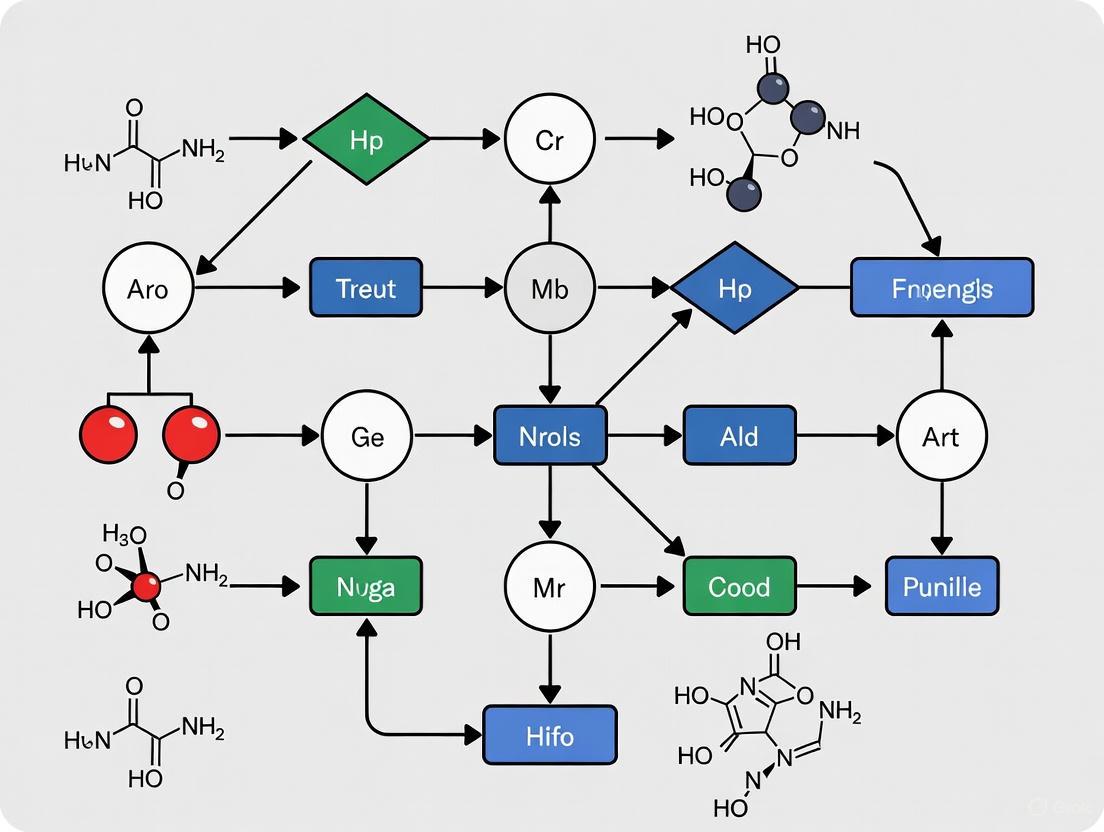

Workflow Visualization: Hub Bias Identification and Mitigation

Hub Bias Assessment Workflow: This diagram illustrates the comprehensive workflow for identifying, evaluating, and mitigating hub bias in link prediction benchmarks, ensuring robust and fair algorithm assessment.

Stratified Evaluation Architecture: This diagram shows the comprehensive architecture for stratified performance evaluation that controls for hub bias by assessing algorithm performance separately across different node connectivity strata.

Frequently Asked Questions

Q1: What is "implicit degree bias" in network analysis? Implicit degree bias is a systematic error in common network evaluation tasks, such as link prediction, where the standard sampling of edges for testing disproportionately focuses on connections to high-degree nodes (hubs). This creates a skewed benchmark that unfairly favors methods that simply overfit to node degree, making them appear more accurate than they truly are. In fact, a null model based solely on node degree can achieve nearly optimal performance on these biased benchmarks, misleading researchers about the actual capability of their models to learn relevant network structures [1] [11].

Q2: How does this bias affect real-world applications, like drug development? In contexts like identifying drug-target interactions within biological networks, a bias towards hubs can lead to a high rate of false positives. Models may repeatedly identify well-known, highly-connected proteins (hubs) as potential targets, while overlooking potentially novel but less-connected targets. This can stifle innovation and misdirect valuable research resources. Correcting for this bias is therefore essential for generating novel, reliable insights from biological network data [1].

Q3: My model performs well on standard link prediction benchmarks. Why should I be concerned? Strong performance on a biased benchmark is not a guarantee that your model has learned meaningful structures beyond the simple correlation with node degree. The benchmark itself may be the problem. Before trusting your results, it is critical to validate your model's performance on a degree-corrected benchmark to ensure it is capturing signals beyond just hub connectivity [1] [11].

Q4: What is the relationship between a node's degree and its closeness centrality? Research has revealed an explicit non-linear relationship: the inverse of closeness centrality is linearly dependent on the logarithm of degree. This means that in many networks, measuring closeness centrality is largely redundant unless this dependence on degree is first removed from the closeness calculation. This relationship further underscores how topological measures can be intrinsically influenced by node degree [12].

Troubleshooting Guides

Issue: Model is Overfitting to Node Degree

Symptoms:

- Excellent performance on standard link prediction tasks.

- Poor performance when predicting links for low-degree nodes.

- Model predictions are strongly correlated with node degree, and it struggles to identify important nodes that are not hubs.

Diagnosis and Solution: Implement a degree-corrected sampling procedure for your link prediction benchmark to ensure a more balanced evaluation.

Experimental Protocol: Degree-Corrected Link Prediction Benchmark

- Objective: To create a network train-test split that reduces the implicit bias toward high-degree nodes.

- Background: The standard link prediction task involves hiding a subset of edges and asking the model to distinguish these true edges from randomly sampled non-edges (node pairs without a connection). The bias arises because this random sampling is often not corrected for node degree [1] [11].

- Methodology:

- Step 1 - Graph Partitioning: Use a graph partitioning algorithm to divide the network into multiple communities or blocks.

- Step 2 - Stratified Edge Removal: When removing edges to create the test set, ensure that the sampling is stratified across these communities. This prevents the test set from being dominated solely by edges connected to high-degree hubs within a single large community.

- Step 3 - Degree-Aware Negative Sampling: When generating non-edges for the test set, sample node pairs in a way that accounts for their degree. This ensures that high-degree nodes are adequately represented in the negative sample set, rather than it being overwhelmingly populated by unconnected pairs of low-degree nodes.

- Validation: A successful implementation will show that a simple degree-based null model no longer achieves top performance, allowing more sophisticated models that learn genuine network patterns to be properly ranked [11].

Issue: Validating Centrality Measures Beyond Hubs

Symptoms: Your analysis identifies the same set of high-degree nodes as "central" or "important" across multiple different centrality measures.

Diagnosis and Solution: The measured importance may be an artifact of the hub bias. To extract unique information from a centrality measure like closeness, you must first remove the dependence on degree.

Experimental Protocol: Isolating Unique Closeness Centrality

- Objective: To identify nodes that have high closeness centrality after accounting for their degree.

- Background: The inverse of closeness (the average distance) has been shown to have a linear relationship with the logarithm of degree (log k) in many networks [12].

- Methodology:

- Calculate the closeness centrality, \( c_v \), and degree, \( k_v \), for every node \( v \).

- Perform a linear regression of \( \frac{1}{c_v} \) (the average distance) against \( \log(k_v) \).

- Compute the residuals (observed minus fitted values) from this regression for each node. A node with a large negative residual has a much shorter average distance to other nodes than would be expected given its degree. These nodes are uniquely central beyond being simple hubs.

- Interpretation: Nodes with strongly negative residuals, i.e., shorter average distances than their degree predicts, are your truly central nodes; their importance is not just a function of high degree and may be due to their strategic position in the network [12].
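The regression in this protocol can be sketched with an ordinary least-squares fit in plain Python. The sketch assumes a connected, undirected graph without isolated nodes, stored as an adjacency dict; the function names and the toy graph are hypothetical. The residual is defined here as observed average distance minus the fitted value, so under this convention a negative residual means a node is closer to the rest of the network than its degree alone predicts.

```python
import math
from collections import deque


def average_distance(adj, source):
    """Mean BFS distance from `source` to all other nodes (1 / closeness)."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        u = queue.popleft()
        for v in adj[u]:
            if v not in dist:
                dist[v] = dist[u] + 1
                queue.append(v)
    return sum(dist.values()) / (len(dist) - 1)


def fit_line(x, y):
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((a - mx) ** 2 for a in x)
    slope = sum((a - mx) * (b - my) for a, b in zip(x, y)) / sxx
    return slope, my - slope * mx


def closeness_residuals(adj):
    """Residual of average distance after regressing on log(degree)."""
    nodes = list(adj)
    x = [math.log(len(adj[n])) for n in nodes]
    y = [average_distance(adj, n) for n in nodes]
    slope, intercept = fit_line(x, y)
    return {n: yi - (slope * xi + intercept) for n, xi, yi in zip(nodes, x, y)}


# Hypothetical toy graph: star around node 0 with a tail 4-5.
toy = {0: {1, 2, 3, 4}, 1: {0}, 2: {0}, 3: {0}, 4: {0, 5}, 5: {4}}
residuals = closeness_residuals(toy)
for node, value in sorted(residuals.items(), key=lambda item: item[1]):
    print(node, round(value, 3))
```

In the toy graph, nodes 1 and 5 both have degree 1, but node 5 sits at the end of the tail and therefore receives the larger residual, which illustrates how the residual separates position from degree.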

Experimental Protocols & Workflows

Detailed Methodology for Degree-Corrected Benchmarking

The following workflow details the steps for creating a robust, degree-corrected link prediction benchmark.

Diagram: Workflow for a degree-corrected benchmark.

Protocol Steps:

- Input Network: Begin with your graph \( \mathcal{G} \).

- Partition Network: Use a community detection algorithm (e.g., Louvain, Infomap) to partition the network into \( K \) communities. This step ensures topological diversity in your test set.

- Stratified Edge Removal: Randomly remove a fixed percentage of edges (e.g., 10-20%) to form the positive test set. Crucially, ensure that the removed edges are proportionally sampled from within and between the identified communities, rather than purely at random from the entire graph.

- Degree-Aware Negative Sampling: Generate non-edges (node pairs without connections) for the test set. Instead of purely random sampling, use a stratified approach:

- Sample non-edges from within communities.

- Sample non-edges between communities.

- Ensure the degree distribution of nodes in the negative sample mirrors that of the positive test set.

- Model Training & Evaluation: Train your link prediction model on the training graph (the original graph minus the removed test edges). Evaluate its performance on the corrected test set. The model's ability to rank the true test edges higher than the sampled non-edges is a more robust indicator of its true learning capability [1] [11].
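The degree-aware negative sampling step can be sketched as below. This covers only that one step, under explicit assumptions: candidate endpoints are drawn with probability proportional to degree (by sampling from a list of edge endpoints), community stratification is omitted, and the function name and toy edge list are hypothetical. It is written in the spirit of the degree-corrected idea in [1] [11], not as a reproduction of the authors' code.

```python
import random


def degree_aware_negatives(edges, n_samples, seed=0, max_tries=100000):
    """Sample non-edges whose endpoints are drawn proportionally to degree."""
    rng = random.Random(seed)
    existing = {frozenset(e) for e in edges}
    # Each node appears once per incident edge, so a uniform draw from
    # `stubs` selects a node with probability proportional to its degree.
    stubs = [node for e in edges for node in e]
    negatives = set()
    tries = 0
    while len(negatives) < n_samples and tries < max_tries:
        tries += 1
        u, v = rng.choice(stubs), rng.choice(stubs)
        pair = frozenset((u, v))
        if u != v and pair not in existing:
            negatives.add(pair)
    return [tuple(sorted(p)) for p in negatives]


# Hypothetical toy graph: two hubs (0 and 5) joined by one edge.
toy_edges = [(0, 1), (0, 2), (0, 3), (0, 4),
             (5, 6), (5, 7), (5, 8), (5, 9), (0, 5)]
negatives = degree_aware_negatives(toy_edges, n_samples=5)
print(negatives)
```

Because hubs occupy many entries in `stubs`, they appear in the negative set far more often than under uniform pair sampling, which is what brings the degree distribution of negatives closer to that of the held-out positive edges.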

Research Reagent Solutions

Table: Essential "Reagents" for Network Bias Correction Research

| Item Name/Concept | Function / Explanation | Example / Note |
|---|---|---|
| Degree-Corrected Benchmark | A testing framework that removes degree bias from evaluation, providing a truer measure of model performance. | The core corrective methodology proposed to address the hub inflation problem [1]. |
| Stochastic Block Model (SBM) | A generative model for random graphs that can define network structure based on node blocks/communities. | Useful for creating synthetic benchmarks and for graph barycenter computation in spectral analysis [11]. |
| Topological Dirac Operator | An operator from topological deep learning that processes signals on nodes and edges jointly. | Used in advanced signal processing to overcome limitations of methods that assume smoothness or harmonic signals [11]. |
| Residual Closeness Centrality | The residual from regressing inverse closeness on log(degree). Isolates unique closeness information not redundant with degree. | Helps identify nodes that are centrally located for reasons other than just being a hub [12]. |
| Graph Barycenter | The Fréchet mean of a set of networks; a central graph in a dataset. | Key for machine learning tasks on networks. Can be computed efficiently using SBM approximations in the spectral domain [11]. |
| Control Profile | A summary of a directed network's structural controllability in terms of source nodes, external dilations, and internal dilations. | Reveals control principles; many models erroneously produce source-dominated profiles, unlike real networks [13]. |

Methodologies for Key Cited Experiments

Experiment: Demonstrating Implicit Degree Bias

Diagram: Logic of the bias demonstration.

- Objective: To prove that standard link prediction benchmarks are biased and that a model using only node degree can perform deceptively well.

- Procedure:

- Select standard benchmark networks and perform a standard random train-test split for link prediction.

- Create a simple "null" model whose prediction score for a node pair \( (u, v) \) is a function of their degrees, e.g., \( \text{score}(u,v) = k_u \times k_v \) or \( \text{score}(u,v) = k_u + k_v \).

- Evaluate this null model on the standard benchmark using standard metrics (AUC, Average Precision).

- Outcome: The null model will achieve competitively high scores, demonstrating that the benchmark itself can be gamed without learning complex patterns. This validates the need for a corrected benchmark [1].
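The procedure above can be tried on a synthetic network with the following sketch. Everything here is an illustrative assumption: the small preferential-attachment generator stands in for a real benchmark network, the split is a plain random hold-out with uniformly sampled non-edges, and the function names are hypothetical. No particular AUC value is claimed; the point is only to show how the degree-product null model is scored.

```python
import random
from itertools import combinations


def preferential_attachment_graph(n, m, rng):
    """Toy hub-heavy graph: each new node links to m degree-biased targets."""
    edges = [(i, j) for i in range(m + 1) for j in range(i + 1, m + 1)]
    stubs = [node for e in edges for node in e]
    for new in range(m + 1, n):
        targets = set()
        while len(targets) < m:
            targets.add(rng.choice(stubs))
        for t in sorted(targets):
            edges.append((t, new))
            stubs += [t, new]
    return edges


def degree_null_auc(edges, test_fraction=0.2, seed=0):
    """AUC of score(u, v) = k_u * k_v on a standard random split."""
    rng = random.Random(seed)
    shuffled = sorted(tuple(sorted(e)) for e in edges)
    rng.shuffle(shuffled)
    n_test = max(1, int(test_fraction * len(shuffled)))
    test_pos, train = shuffled[:n_test], shuffled[n_test:]
    nodes = sorted({node for e in shuffled for node in e})
    existing = set(shuffled)
    non_edges = [p for p in combinations(nodes, 2) if p not in existing]
    test_neg = rng.sample(non_edges, min(n_test, len(non_edges)))
    # Degrees come from the training graph only, so test edges are hidden.
    deg = dict.fromkeys(nodes, 0)
    for u, v in train:
        deg[u] += 1
        deg[v] += 1

    def score(pair):
        return deg[pair[0]] * deg[pair[1]]

    wins = 0.0
    for p in test_pos:
        for q in test_neg:
            wins += 1.0 if score(p) > score(q) else 0.5 if score(p) == score(q) else 0.0
    return wins / (len(test_pos) * len(test_neg))


toy_edges = preferential_attachment_graph(n=200, m=3, rng=random.Random(42))
null_auc = degree_null_auc(toy_edges)
print(f"AUC of the degree-product null model: {null_auc:.3f}")
```

Running the same null model against a degree-corrected negative set, instead of uniformly sampled non-edges, is the natural follow-up comparison.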

Experiment: Implementing Degree Correction for Better Training

- Objective: To show that training models on a degree-corrected benchmark reduces overfitting and facilitates learning of relevant network structures.

- Procedure:

- Take a state-of-the-art graph machine learning model (e.g., a Graph Neural Network).

- Train one instance of the model on a standard benchmark and another on the degree-corrected version.

- Evaluate both models on a held-out test set constructed with the degree-corrected method. Also, analyze what the models have learned by examining their embeddings or attention weights.

- Outcome: The model trained on the corrected benchmark will show improved generalization on the robust test set. Analysis will reveal that this model's embeddings capture structural features beyond node degree, while the model trained on the standard benchmark shows a stronger correlation between its output and node degree [1] [11].

Table: WCAG Color Contrast Requirements for Visualizations

This table provides the minimum contrast ratios for text and UI elements as defined by WCAG guidelines, which should be adhered to in all diagrams and visual outputs for clarity and accessibility [14] [15].

| Content Type | Minimum Ratio (Level AA) | Enhanced Ratio (Level AAA) |
|---|---|---|
| Body Text (Small) | 4.5 : 1 | 7 : 1 |
| Large-Scale Text (≥ 18pt or ≥ 14pt bold) | 3 : 1 | 4.5 : 1 |
| User Interface Components (icons, graphs) | 3 : 1 | Not Defined |

Frequently Asked Questions (FAQs)

Q1: What is the fundamental functional difference between a provincial hub and a connector hub? A1: Provincial hubs are highly connected nodes that primarily integrate information within their own brain network or module. In contrast, connector hubs are highly connected nodes that primarily distribute their connections and facilitate communication across different, distinct modules of the network [16]. Connector hubs are therefore crucial for the global integration of information throughout the modular brain [16].

Q2: How can hub misclassification due to high-degree hub bias impact my research findings? A2: Misclassification can lead to a fundamental misunderstanding of network dynamics. For example, in disease modeling on networks, sampling bias that over-represents high-degree nodes (size bias) can cause severe overestimation of epidemic metrics, such as the number of infected individuals and secondary infections [17]. In a clinical context, confusing a connector hub for a provincial hub could lead to targeting the wrong neural substrate for intervention, as their roles in information processing are distinct [18].

Q3: What is the standard metric for identifying and distinguishing these hubs in a functional network? A3: The normalized participation coefficient (PCnorm) is a standard metric used to identify connector hubs [16]. It quantifies how uniformly a node's connections are distributed across different modules. A higher PCnorm indicates a node is a connector hub, while a lower value suggests a provincial hub, which has most of its connections within its native module [16].
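To make the idea concrete, the sketch below computes the classic, un-normalized participation coefficient, \( PC_i = 1 - \sum_s (k_{is} / k_i)^2 \), where \( k_{is} \) is the number of links from node \( i \) to module \( s \). This is a simplification: the normalized variant (PCnorm) cited in [16] involves an additional normalization step that is not reproduced here, and the function name, toy graph, and module labels are hypothetical.

```python
def participation_coefficient(adj, module):
    """Classic participation coefficient for every node.

    adj: {node: set of neighbours}; module: {node: module label}.
    """
    pc = {}
    for node, neighbours in adj.items():
        k = len(neighbours)
        if k == 0:
            pc[node] = 0.0
            continue
        per_module = {}
        for nb in neighbours:
            per_module[module[nb]] = per_module.get(module[nb], 0) + 1
        pc[node] = 1.0 - sum((count / k) ** 2 for count in per_module.values())
    return pc


# Hypothetical toy network with two modules, A and B.
adj = {
    "p": {"a1", "a2", "a3", "c"},   # all links stay inside module A
    "c": {"p", "a1", "b1", "b2"},   # links split evenly between A and B
    "a1": {"p", "c"}, "a2": {"p"}, "a3": {"p"},
    "b1": {"c", "b2"}, "b2": {"c", "b1"},
}
module = {"p": "A", "c": "A", "a1": "A", "a2": "A", "a3": "A",
          "b1": "B", "b2": "B"}
pc = participation_coefficient(adj, module)
print({node: round(value, 2) for node, value in pc.items()})
```

Nodes "p" and "c" have the same degree, yet "p" behaves like a provincial hub (coefficient 0) while "c" behaves like a connector (coefficient 0.5), which is the distinction the metric is meant to capture.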

Q4: My analysis shows a hub with altered connectivity after an intervention (e.g., sleep deprivation). How do I determine if its core role has changed? A4: You should analyze changes in its participation coefficient and its pattern of allegiances. A systematic investigation involves:

- Recalculating PCnorm: A significant increase in PCnorm post-intervention indicates the hub is behaving more like a connector [16].

- Tracking Network Allegiance: Determine if the hub shows weaker connections to its native network and starts to follow the functional trajectories of other networks, a key sign of a shift toward connector function [18].

Q5: Are there specific brain networks where connector hubs are more prevalent? A5: Yes, research indicates that following sleep deprivation, the significantly affected connector hubs were primarily observed in both the Control Network and the Salience Network [16]. Furthermore, hub aberrancies in bipolar disorder have been linked to hubs in the somatomotor network forming weaker allegiances with their own network and instead following the trajectories of the limbic, salience, dorsal attention, and frontoparietal networks [18].

Troubleshooting Common Experimental Issues

| Problem | Possible Cause | Solution |

|---|---|---|

| All hubs are identified as provincial hubs; no connector hubs are found. | The network may have poor modularity or the threshold for defining a connector hub may be set too high. | Recalculate the modularity (Q-value) of your network. Validate your threshold for the participation coefficient against established literature for your specific type of network (e.g., fMRI, diffusion MRI) [16]. |

| Hub classification is unstable across multiple scanning sessions or datasets. | High within-subject variability or low signal-to-noise ratio in the data. | Implement a rigorous quality control pipeline for your neuroimaging data (e.g., using toolkits like fmriprep or mriqc) [16]. Use a longitudinal processing stream and aggregate connectivity matrices across sessions to improve reliability. |

| Sampling method over-represents high-degree nodes, creating size bias. | Use of a simple Random Walk (RW) sampling algorithm on a heterogeneous network. | Switch to the Metropolis-Hastings Random Walk (MHRW) algorithm, which corrects for size bias by adjusting node selection probability based on its connections, yielding more representative samples [17]. |

| Observed connector hub enhancement is correlated with reduced modularity. | This is an expected trade-off. Enhanced connector hub function increases inter-modular communication at the expense of intra-modular segregation. | This is a valid finding, not necessarily an error. As shown in sleep deprivation studies, increased connector hub diversity is associated with reduced modularity and small-worldness but also with enhanced global efficiency, potentially indicating a compensatory mechanism [16]. |

| A hub is classified as a connector but its allegiances are primarily with its own network. | This may indicate an error in the module assignment or participation coefficient calculation. | Re-check your module assignment algorithm (e.g., Louvain method). Ensure that the hub's connections are correctly assigned to their respective modules before recalculating the participation coefficient. |

Table 1: Key Properties of Provincial and Connector Hubs

| Property | Provincial Hub | Connector Hub |

|---|---|---|

| Primary Function | Intra-modular integration [16] | Inter-modular integration [16] |

| Connectivity Pattern | Most connections fall within its own module [16] | Connections distributed across many different modules [16] |

| Key Metric | Low Normalized Participation Coefficient (PCnorm) | High Normalized Participation Coefficient (PCnorm) [16] |

| Impact on Network | Supports specialized, segregated processing | Supports global integration and communication [16] |

| Network Cost | Lower | Higher; associated with increased network cost [16] |

| Example Network Location | Somatomotor network in healthy controls [18] | Hubs in Control and Salience networks after sleep deprivation [16] |

Table 2: Impact of Sampling Algorithms on Disease Metric Estimation (from Network Modelling)

| Sampling Algorithm | Estimated Infected Individuals (in ER/SW networks) | Estimated Secondary Infections | Representative for SF Networks? | Key Characteristic |

|---|---|---|---|---|

| Random Walk (RW) | Overestimates by ~25% [17] | Overestimates by ~25% [17] | No (significant variability) [17] | High size bias; computationally cheap [17] |

| Metropolis-Hastings RW (MHRW) | More accurate (aligns within ~1% in real data) [17] | More accurate (aligns within ~1% in real data) [17] | No (significant variability) [17] | Reduces size bias; 1.5-2x more computationally expensive [17] |

Detailed Experimental Protocols

Protocol 1: Identifying Connector Hubs in Functional MRI Data

This protocol is based on methodologies used in recent studies on sleep deprivation and bipolar disorder [16] [18].

- Data Preprocessing: Process resting-state fMRI data using a standardized pipeline (e.g., fmriprep) to perform motion correction, normalization, and denoising [16].

- Network Construction: Parcellate the brain into regions of interest (ROIs). Calculate the functional connectivity (e.g., using Pearson correlation) between the time series of every pair of ROIs to create a subject-specific connectivity matrix.

- Module Detection: Apply a community detection algorithm (e.g., the Louvain method) to the group-average connectivity matrix to partition the network into non-overlapping modules.

- Calculate Participation Coefficient: For each node (ROI) and each subject, calculate the participation coefficient (PC). This measures how a node's connections are distributed across all modules. The formula is: \( PC_i = 1 - \sum_{s=1}^{N_M} \left( \frac{k_{is}}{k_i} \right)^2 \), where \( N_M \) is the number of modules, \( k_{is} \) is the number of connections from node i to nodes in module s, and \( k_i \) is the total degree of node i.

- Normalize PC: Normalize the PC (PCnorm) of each node by comparing it to the mean PC of a set of random networks with the same degree distribution to control for underlying network structure [16].

- Hub Classification: Identify hubs as nodes with a high degree (e.g., above the network median). Then, classify these hubs as connector hubs if their PCnorm is above a defined threshold (e.g., the network average), and as provincial hubs otherwise.
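The participation coefficient and hub classification steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the pipeline used in [16]: it works on an unweighted toy graph, it uses the raw PC as a stand-in for PCnorm (the normalization against degree-preserving random networks is omitted), and the helper names `build_adjacency`, `participation_coefficient`, and `classify_hubs` are hypothetical.

```python
from statistics import mean, median


def build_adjacency(edges):
    """Undirected, unweighted adjacency: node -> set of neighbours."""
    adj = {}
    for u, v in edges:
        adj.setdefault(u, set()).add(v)
        adj.setdefault(v, set()).add(u)
    return adj


def participation_coefficient(adj, module_of):
    """Raw PC_i = 1 - sum_s (k_is / k_i)^2 for every node."""
    pc = {}
    for node, neighbours in adj.items():
        k_i = len(neighbours)
        per_module = {}
        for nb in neighbours:
            per_module[module_of[nb]] = per_module.get(module_of[nb], 0) + 1
        pc[node] = 1.0 - sum((k_is / k_i) ** 2 for k_is in per_module.values())
    return pc


def classify_hubs(adj, module_of):
    """Hubs: degree above the median. Connector: PC above the network mean.

    Assumption: raw PC replaces PCnorm here; thresholds follow the
    'e.g.' values given in the protocol.
    """
    degree = {node: len(nbs) for node, nbs in adj.items()}
    pc = participation_coefficient(adj, module_of)
    degree_cut = median(degree.values())
    pc_cut = mean(pc.values())
    labels = {}
    for node in adj:
        if degree[node] > degree_cut:
            labels[node] = "connector" if pc[node] > pc_cut else "provincial"
    return pc, labels


# Toy network: two modules (A and B) plus node "x", assigned to A,
# whose links are split evenly between the two modules.
edges = [
    ("a1", "a2"), ("a1", "a3"), ("a1", "a4"), ("a1", "a5"),
    ("b1", "b2"), ("b1", "b3"), ("b1", "b4"),
    ("x", "a2"), ("x", "a3"), ("x", "b2"), ("x", "b3"),
]
adj = build_adjacency(edges)
module_of = {node: ("B" if node.startswith("b") else "A") for node in adj}
pc, labels = classify_hubs(adj, module_of)
print(labels)
```

In this toy case `a1` and `b1` keep all their links inside one module, while `x` splits its links across both, which is the distinction the protocol is designed to surface. With real data, the module labels would come from the community detection step and the PC values would be normalized before thresholding.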

Protocol 2: Metropolis-Hastings Random Walk for Reducing Size Bias

This protocol outlines the use of MHRW to sample networks for epidemiological modeling without over-representing high-degree nodes [17].

- Seed Selection: Start from a randomly chosen seed node in the network.

- Proposal: From the current node u, randomly select a neighbor node v.

- Acceptance/Rejection: Accept the proposed move to node v with a probability A calculated as:

\( A = \min\left(1, \frac{k_u}{k_v}\right) \)

where \( k_u \) and \( k_v \) are the degrees of nodes u and v, respectively.

- If the move is accepted, the chain moves to v.

- If the move is rejected, the chain remains at u, and u is sampled again (duplicates are retained).

- Iteration: Repeat the Proposal and Acceptance/Rejection steps for a large number of iterations (e.g., until the sample size is sufficient and the chain has converged).

- Validation: Compare the degree distribution of the sampled nodes with the degree distribution of the underlying network to confirm that size bias has been reduced.
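The protocol above can be sketched as follows, alongside a simple random walk for comparison. This is an illustrative sketch rather than the implementation from [17]: the toy graph (one hub linked to every node of a ring), the step count, and the function names are assumptions chosen to make the size bias easy to see.

```python
import random


def build_hub_ring(n_leaves):
    """Hub 0 is linked to every leaf; leaves 1..n_leaves also form a ring."""
    adj = {0: list(range(1, n_leaves + 1))}
    for i in range(1, n_leaves + 1):
        left = n_leaves if i == 1 else i - 1
        right = 1 if i == n_leaves else i + 1
        adj[i] = [0, left, right]
    return adj


def random_walk(adj, start, steps, rng):
    """Simple RW: always move to a uniformly chosen neighbour."""
    node, visited = start, []
    for _ in range(steps):
        node = rng.choice(adj[node])
        visited.append(node)
    return visited


def mhrw(adj, start, steps, rng):
    """MHRW: accept a move u -> v with probability min(1, k_u / k_v)."""
    u, visited = start, []
    for _ in range(steps):
        v = rng.choice(adj[u])
        if rng.random() < min(1.0, len(adj[u]) / len(adj[v])):
            u = v
        visited.append(u)  # on rejection u is recorded again (duplicates kept)
    return visited


def mean_degree(adj, nodes):
    return sum(len(adj[n]) for n in nodes) / len(nodes)


rng = random.Random(0)
adj = build_hub_ring(20)
true_mean = mean_degree(adj, list(adj))
rw_mean = mean_degree(adj, random_walk(adj, 1, 20000, rng))
mh_mean = mean_degree(adj, mhrw(adj, 1, 20000, rng))
print(f"true={true_mean:.2f}  RW={rw_mean:.2f}  MHRW={mh_mean:.2f}")
```

Comparing the mean degree of sampled nodes with the true mean degree is a compact version of the Validation step: the simple walk visits the hub far more often than its share of the node set, while the MHRW sample should sit close to the true value.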

Visualizations

[Figure: Connector Hub Identification Workflow]

[Figure: Sampling Algorithms & Size Bias]

Research Reagent Solutions

Table 3: Essential Toolkits and Datasets for Hub Analysis

| Item Name | Function/Brief Explanation | Source/Reference |

|---|---|---|

| fmriprep | A robust functional MRI preprocessing pipeline for standardized and automated data cleaning and normalization. | Docker Hub: nipreps/fmriprep [16] |

| BCT (Brain Connectivity Toolbox) | A comprehensive MATLAB/Octave toolbox for complex brain network analysis, including modularity and participation coefficient calculation. | GitHub: BCT [16] |

| Normalized Participation Coefficient Code | Custom code for calculating the normalized participation coefficient (PCnorm), critical for identifying connector hubs. | GitHub: Code from Pedersen et al. (2020), as used in [16] |

| OpenNeuro Dataset ds000201 | A publicly available replication dataset containing fMRI data, suitable for validating hub analysis methods. | https://openneuro.org/datasets/ds000201/ [16] |

| mriqc | An MRI quality control tool for assessing the quality of structural and functional MRI data before analysis. | Docker Hub: nipreps/mriqc [16] |

Frequently Asked Questions (FAQs)

Q1: What is hub bias in the context of Protein-Protein Interaction (PPI) networks? Hub bias refers to the phenomenon where highly connected proteins, known as "hubs," are disproportionately identified and reported in PPI studies. This occurs due to a combination of study bias, where certain proteins like those associated with cancer are tested more frequently, and technical biases inherent to high-throughput experimental methods [19]. This can skew the observed network topology, making it appear as if the network has a power-law degree distribution even if the true biological interactome does not [19].

Q2: How does the choice of experimental method contribute to hub bias? Different high-throughput technologies detect interactions in distinct ways, each with unique biases. For example, affinity purification/mass spectrometry (AP/MS) and protein-fragment complementation assay (PCA) data sets can over- or under-represent proteins from specific functional categories. In contrast, yeast two-hybrid (Y2H) methods have been found to be the least biased toward any particular functional characterization [20]. The biases affect the recovery of interactions, especially for proteins in large complexes versus those involved in transient interactions [20].

Q3: Why is correcting for hub bias critical for drug discovery research? Hub proteins are often investigated as potential drug targets because of their central role in networks. However, if a protein appears as a hub largely due to study bias rather than its true biological role, targeting it may not yield the expected therapeutic results and could lead to unpredicted side effects. Accurate, bias-corrected networks are essential for identifying genuine, therapeutically relevant targets [21] [22].

Q4: What are some network properties used to define hub proteins? Hub proteins are typically defined by network properties such as:

- Degree Centrality: The number of interactions a protein has [22].

- Betweenness Centrality: The frequency with which a protein appears on the shortest path between other proteins [22].

- Eigenvector Centrality: A measure of a protein's influence based on the influence of its connections [22].

Note that there is no universal consensus on the specific degree threshold (e.g., 5, 10, or 20 interactions) for classifying a hub protein [22].

Q5: Can algorithmic methods help correct for hub bias? Yes, computational approaches are vital for bias correction. For instance, the Interaction Detection Based on Shuffling (IDBOS) procedure is a numerical approach that computes co-occurrence significance scores to identify high-confidence interactions from AP/MS data, reducing reliance on previous knowledge and its associated biases [20]. Furthermore, supervised learning methods like ClusterEPs use contrast patterns to distinguish true complexes from random subgraphs, improving prediction accuracy beyond methods that rely solely on network density [23].

Troubleshooting Guides

Issue: Low Overlap Between PPI Data Sets from Different Experiments

Problem: Biological insights derived from different PPI data sets (e.g., AP/MS vs. Y2H) do not agree or even contradict each other.

| Potential Cause | Explanation | Solution |

|---|---|---|

| Methodological Bias | Different technologies (AP/MS, PCA, Y2H) have inherent strengths and weaknesses, leading them to recover distinct sets of interactions [20]. | Interpret data in experimental context. Do not combine data from different methods without accounting for their biases. Treat data from each method as a complementary view of the interactome [20]. |

| High False Positive/Negative Rates | AP/MS may infer indirect interactions (false positives), while Y2H can have high false-negative rates [20]. | Apply high-confidence filters. Use scoring methods like IDBOS for AP/MS data or consolidate multiple Y2H data sets to create a more reliable interaction set [20]. |

Issue: Distinguishing Genuine Hubs from Artifactual Ones

Problem: It is challenging to determine if a highly connected protein is a true biological hub or an artifact of biased sampling.

| Potential Cause | Explanation | Solution |

|---|---|---|

| Study Bias / Preferential Sampling | Well-known proteins (e.g., cancer-associated proteins like p53) are tested as "bait" more frequently, artificially inflating their number of recorded interactions [19]. | Account for bait usage distribution. Analyze the provenance of interactions. Be skeptical of hubs whose high degree relies heavily on data from a single experimental method or a small number of over-studied baits [19]. |

| Aggregation of Multiple Studies | Combining results from thousands of individual studies can create a power-law degree distribution in the aggregated network, even if the underlying true interactome has a different topology [19]. | Analyze study-specific networks. Examine the degree distribution in networks derived from individual, controlled experiments rather than only relying on large, aggregated databases [19]. |

Experimental Protocol: Assessing Methodological Bias in PPI Data

This protocol helps characterize the functional biases in a given high-confidence PPI data set [20].

- Data Set Selection: Obtain high-confidence PPI data sets from different methodologies (e.g., AP/MS, PCA, Y2H).

- Functional Annotation: Annotate all proteins in each data set using a structured database like the Munich Information Center for Protein Sequences (MIPS) for categories such as "Function," "Location," and "Complex" [20].

- Calculate Intra-annotation Coherence: For each data set and category, classify every interaction as "intra-annotation" (both proteins share at least one common annotation) or "inter-annotation" (no common annotation) [20].

- Compare Across Methods: The percentage of intra-annotation interactions reveals methodological bias. For example, AP/MS data may show very high intra-annotation for "Location" (88%) but lower for "Complex" (31%), whereas PCA may show lower intra-annotation for "Function" (52%), indicating different functional recoveries [20].
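The intra-annotation calculation in this protocol reduces to a set-intersection test per interaction. The sketch below assumes annotations are available as a mapping from protein to a set of labels for one category; the protein identifiers, the labels, and the choice to skip pairs with an unannotated protein are illustrative assumptions, not details taken from [20].

```python
def intra_annotation_fraction(interactions, annotations):
    """Fraction of interactions whose two proteins share at least one label.

    interactions: iterable of (protein_a, protein_b) pairs
    annotations:  dict protein -> set of labels for ONE category
                  (e.g. "Location"); hypothetical input format.
    """
    intra = total = 0
    for a, b in interactions:
        labels_a = annotations.get(a, set())
        labels_b = annotations.get(b, set())
        if not labels_a or not labels_b:
            continue  # assumption: pairs with an unannotated protein are skipped
        total += 1
        if labels_a & labels_b:
            intra += 1
    return intra / total if total else float("nan")


# Hypothetical toy data for the "Location" category.
interactions = [("P1", "P2"), ("P1", "P3"), ("P2", "P4")]
location = {
    "P1": {"nucleus"},
    "P2": {"nucleus", "cytoplasm"},
    "P3": {"membrane"},
    "P4": {"cytoplasm"},
}
location_fraction = intra_annotation_fraction(interactions, location)
print(f"intra-annotation (Location): {location_fraction:.0%}")
```

Running the same function once per data set and per category gives the percentages that are compared across AP/MS, PCA, and Y2H in the final step.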

Experimental Protocol: Supervised Complex Prediction with ClusterEPs

This protocol uses the ClusterEPs method to predict protein complexes while mitigating biases from assuming all complexes are densely connected [23].

- Network Preprocessing: Start with a PPI network (e.g., from DIP database). Preprocess to remove low-confidence interactions, for example, by using a functional similarity score (like TCSS) and removing edges with a Biological Process score below 0.5 [23].

- Create Training Classes:

- Positive Class: Subgraphs from known true complexes (e.g., from MIPS or TAP06 catalogues). Remove single proteins, pairs, and duplicates.

- Negative Class: Random subgraphs from the PPI network that are not known complexes [23].

- Feature Vector Construction: Calculate key topological properties for each subgraph in both classes. Features can include average clustering coefficient, degree correlation variance, and other network statistics [23].

- Discover Emerging Patterns (EPs): Mine patterns that contrast sharply between the positive (true complexes) and negative (random subgraphs) classes. An example EP is {meanClusteringCoeff ≤ 0.3, 1.0 < varDegreeCorrelation ≤ 2.80}, which is highly indicative of non-complexes [23].

- Score and Predict New Complexes: Define an EP-based clustering score. New complexes are grown from seed proteins by iteratively updating this score to identify groups of proteins that are likely to form a biological complex [23].
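To make the idea of an emerging pattern concrete, the example EP quoted in the protocol can be encoded as a set of interval conditions and checked against a subgraph's feature vector. This sketch only shows pattern matching; it does not reproduce how ClusterEPs mines patterns or computes its clustering score, and the data layout and feature values are hypothetical.

```python
def matches_pattern(features, pattern):
    """True if every condition `low < value <= high` holds.

    pattern: dict feature_name -> (low, high); None means unbounded.
    """
    for name, (low, high) in pattern.items():
        value = features[name]
        if low is not None and not value > low:
            return False
        if high is not None and not value <= high:
            return False
    return True


# The example EP from the protocol, reported as indicative of non-complexes.
non_complex_ep = {
    "meanClusteringCoeff": (None, 0.3),
    "varDegreeCorrelation": (1.0, 2.80),
}

# Hypothetical feature vectors for two candidate subgraphs.
sparse_subgraph = {"meanClusteringCoeff": 0.2, "varDegreeCorrelation": 1.5}
dense_subgraph = {"meanClusteringCoeff": 0.8, "varDegreeCorrelation": 1.5}

sparse_hit = matches_pattern(sparse_subgraph, non_complex_ep)
dense_hit = matches_pattern(dense_subgraph, non_complex_ep)
print(sparse_hit, dense_hit)
```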

Key Experimental Methods for Characterizing PPIs

The table below summarizes prevalent biophysical methods for detecting PPIs, which are a common source of technical bias [21].

| Method | Advantages | Disadvantages | Affinity Range |

|---|---|---|---|

| Fluorescence Polarization (FP) | Automated high-throughput; simple mix-and-read format [21]. | Requires a large change in size upon binding; susceptible to fluorescence interference [21]. | nM to mM [21] |

| Surface Plasmon Resonance (SPR) | Label-free; provides real-time kinetic data [21]. | Immobilization of bait can interfere with binding [21]. | sub-nM to low mM [21] |

| Nuclear Magnetic Resonance (NMR) | Provides high-resolution structural information [21]. | Requires high sample consumption; can be time-consuming to analyze [21]. | µM to mM [21] |

| Isothermal Titration Calorimetry (ITC) | Label-free; directly measures thermodynamic parameters [21]. | Low throughput; requires significant preparation time [21]. | nM to sub-µM [21] |

Research Reagent Solutions

| Reagent / Resource | Function in PPI Research | Key Consideration |

|---|---|---|

| Tagged Bait Proteins (e.g., TAP, GFP) | Used in AP/MS to purify protein complexes from a native cellular environment [20]. | Tag placement and size can sterically hinder interactions, introducing false negatives. |

| Yeast Two-Hybrid System | Detects binary interactions by reconstituting a transcription factor via bait-prey interaction [20] [21]. | Interactions are forced to occur in the nucleus, which may not reflect native conditions. |

| Fluorescent Dyes (e.g., Fluorescein, Cy5) | Used to label proteins in Fluorescence Polarization (FP) and Microscale Thermophoresis (MST) assays [21]. | The fluorescent label can potentially alter the binding properties of the protein. |

| Sensor Chips (e.g., Gold Film) | The core of SPR systems; the bait protein is immobilized on this surface [21]. | The immobilization chemistry must maintain the bait protein in a functional state. |

Visualizations

[Figure: PPI Method Bias in Network Topology]

[Figure: Hub Bias Creation via Aggregation]

[Figure: Correcting Bias with Controlled Analysis]

Corrective Strategies in Practice: From Degree-Aware Algorithms to Biomedical Applications

Implementing Degree-Corrected Benchmarks for Unbiased Link Prediction

Frequently Asked Questions (FAQs)

Q1: What is the core problem that degree-corrected benchmarks solve? Traditional link prediction benchmarks carry an implicit degree bias: the common evaluation method of distinguishing hidden edges from random node pairs systematically favors high-degree nodes [1]. This skews evaluation, allowing a simple "null" method based solely on node degree to achieve nearly optimal performance, which misleadingly favors models that overfit to node degree rather than learning relevant network structures [1].

Q2: How does degree bias manifest in real-world networks like biological systems? In networks built from correlation data (e.g., functional brain networks), a node's degree is often substantially explained by the size of the network community (or system) it belongs to, rather than its unique importance [24]. This means degree-based approaches might simply identify parts of large systems instead of true critical hubs, confounding the analysis [24].

Q3: My graph is disconnected, containing multiple isolated components. Should I connect them into a single graph for link prediction? No. You should not create a "super graph" by connecting disconnected components if predicting links between them does not make sense for your problem [25]. The link prediction task should be performed individually on each connected component to avoid evaluating nonsensical connections between nodes that belong to inherently separate networks [25].
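One way to follow this advice in practice is to generate candidate node pairs only within each connected component, so that no cross-component pair is ever scored. The sketch below is a minimal illustration with hypothetical function names and a toy graph; it is not taken from [25].

```python
from itertools import combinations


def connected_components(adj):
    """Return a list of node sets, one per connected component."""
    seen, components = set(), []
    for start in adj:
        if start in seen:
            continue
        stack, component = [start], {start}
        seen.add(start)
        while stack:
            node = stack.pop()
            for nb in adj[node]:
                if nb not in seen:
                    seen.add(nb)
                    component.add(nb)
                    stack.append(nb)
        components.append(component)
    return components


def candidate_pairs(adj):
    """Non-adjacent node pairs, restricted to pairs inside one component."""
    pairs = []
    for component in connected_components(adj):
        for u, v in combinations(sorted(component), 2):
            if v not in adj[u]:
                pairs.append((u, v))
    return pairs


# Toy graph with two separate components: a path a-b-c, and a triangle
# x-y-z with a pendant node w attached to x.
adj = {
    "a": {"b"}, "b": {"a", "c"}, "c": {"b"},
    "w": {"x"}, "x": {"w", "y", "z"}, "y": {"x", "z"}, "z": {"x", "y"},
}
pairs = candidate_pairs(adj)
print(pairs)
```

Pairs such as ("a", "x") never appear in the candidate list, so the model is never asked to rank a link between inherently separate networks.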

Q4: What are the main advantages of switching to a degree-corrected benchmark? The degree-corrected benchmark provides a more reasonable assessment that better aligns with performance on real-world tasks like recommendation systems [1]. It helps prevent overfitting to node degrees during model training and facilitates the learning of meaningful network structures [1].

Troubleshooting Guides

Issue 1: Model Performance Remains Highly Dependent on Node Degree

Problem: After implementing a degree-corrected benchmark, your model's predictions are still overly correlated with node degree.

Solution:

- Verify Your Sampling Procedure: Ensure the negative edge sampling (selection of non-existent edges for evaluation) does not implicitly favor high-degree nodes. The proposed degree-corrected benchmark specifically addresses this by modifying the sampling procedure [1].

- Inspect Community Structure: Analyze if your network has strong community structure. In such cases, consider integrating community-aware metrics. Research indicates that in correlation networks, degree can be a confounded measure because it is driven by community size [24].

Issue 2: Unstable Centrality Rankings After Edge Removal

Problem: When simulating observational errors or data incompleteness through edge removal, the rankings of node importance (centrality) change drastically.

Solution:

- Diagnose Robustness: This is a known issue when networks are incomplete. Studies on sampling bias show that robustness differs between centrality measures and between network types [2].

- Choose Robust Centralities: Prefer local centrality measures (like degree centrality), which generally show greater robustness under sampling bias compared to global measures (like betweenness or closeness) [2].

- Understand Network Type: Be aware that protein interaction networks (PINs) have been found to be particularly robust to edge removal, while other biological networks like reaction networks are less so [2].
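A quick way to diagnose this on your own data is to remove a fraction of edges at random and check how much of the top-ranked set survives. The sketch below does this for degree centrality only, using Jaccard overlap of the top-k nodes as a simple stability score. The toy graph, the 20% removal fraction, and the overlap score are illustrative assumptions and not the exact procedure from [2]; targeted schemes such as HCER are not shown.

```python
import random


def degree_counts(edges):
    degree = {}
    for u, v in edges:
        degree[u] = degree.get(u, 0) + 1
        degree[v] = degree.get(v, 0) + 1
    return degree


def top_k_by_degree(edges, k):
    degree = degree_counts(edges)
    # Sort by descending degree, then node id, so ties break reproducibly.
    return set(sorted(degree, key=lambda n: (-degree[n], n))[:k])


def random_edge_removal(edges, fraction, rng):
    """Keep a uniformly random subset of edges (RER)."""
    keep = len(edges) - int(len(edges) * fraction)
    return rng.sample(edges, keep)


def jaccard(a, b):
    return len(a & b) / len(a | b)


# Toy graph: 4 hubs (nodes 0-3), each linked to its own 10 leaves;
# the 40 leaves (nodes 4-43) also form a ring.
edges = [(h, 4 + 10 * h + j) for h in range(4) for j in range(10)]
edges += [(i, i + 1) for i in range(4, 43)] + [(43, 4)]

rng = random.Random(1)
full_top = top_k_by_degree(edges, 4)
sampled_top = top_k_by_degree(random_edge_removal(edges, 0.2, rng), 4)
overlap = jaccard(full_top, sampled_top)
print(f"top-4 overlap after removing 20% of edges: {overlap:.2f}")
```

Repeating the removal many times and swapping in other centrality measures gives a rough picture of which rankings can be trusted for a given network.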

Issue 3: Poor Performance on Real-World Tasks Despite Good Benchmark Scores

Problem: Your model performs well on a standard link prediction benchmark but fails when deployed on a practical task like a recommendation system.

Solution:

- Re-evaluate Your Benchmark: This is a key sign of benchmark bias. The standard link prediction benchmark's validity has been questioned because it can be gamed by degree-dependent models [1].

- Adopt a Degree-Corrected Benchmark: Use the proposed degree-corrected benchmark, which has been shown to offer a more reasonable assessment and better align with performance on recommendation tasks [1].

Experimental Protocols & Data Presentation

Protocol 1: Reproducing the Core Degree-Bias Finding

This experiment demonstrates that node degree alone can achieve high performance on traditional link prediction benchmarks.

Methodology:

- Select Dataset: Use a standard link prediction benchmark network (e.g., a protein-protein interaction network from STRING or BioGRID) [2].

- Baseline Method: Implement a "null" model where the likelihood of a link between two nodes is proportional to the product of their degrees.

- Evaluation: Follow the common evaluation procedure of removing a subset of edges and trying to distinguish them from randomly sampled non-edges.

- Comparison: Compare the performance (e.g., AUC score) of this simple degree-based method against more complex graph machine learning models.

Expected Outcome: The degree-based null model will yield nearly optimal performance, highlighting the bias inherent in the standard evaluation task [1].
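The protocol can be tried end to end on a synthetic network before moving to STRING or BioGRID data. The sketch below is an assumption-laden stand-in: it grows a small preferential-attachment graph instead of loading a real PPI network, hides 20% of edges, samples the same number of uniformly random non-edges, and scores every pair by the product of endpoint degrees in the training graph. All function names and parameter values are hypothetical.

```python
import random


def preferential_attachment_edges(n, m, rng):
    """Each new node links to m distinct existing nodes, picked roughly in
    proportion to degree (seed nodes start with one extra pool entry)."""
    edges = []
    pool = list(range(m))
    for new in range(m, n):
        chosen = set()
        while len(chosen) < m:
            chosen.add(rng.choice(pool))
        for old in sorted(chosen):
            edges.append((old, new))  # old < new always holds
            pool.extend([old, new])
    return edges


def degree_product_auc(edges, n, holdout_fraction, rng):
    shuffled = edges[:]
    rng.shuffle(shuffled)
    n_test = int(len(shuffled) * holdout_fraction)
    positives, train = shuffled[:n_test], shuffled[n_test:]

    # Degrees come from the training graph only (held-out edges are hidden).
    degree = [0] * n
    for u, v in train:
        degree[u] += 1
        degree[v] += 1

    # Standard benchmark: negatives are uniformly random non-edges.
    known = set(edges)
    negatives = []
    while len(negatives) < n_test:
        u, v = rng.randrange(n), rng.randrange(n)
        if u != v and (min(u, v), max(u, v)) not in known:
            negatives.append((u, v))

    pos_scores = [degree[u] * degree[v] for u, v in positives]
    neg_scores = [degree[u] * degree[v] for u, v in negatives]
    # AUC = P(positive outscores negative), counting ties as one half.
    wins = sum(
        1.0 if p > q else 0.5 if p == q else 0.0
        for p in pos_scores
        for q in neg_scores
    )
    return wins / (len(pos_scores) * len(neg_scores))


rng = random.Random(42)
edges = preferential_attachment_edges(400, 3, rng)
auc = degree_product_auc(edges, 400, 0.2, rng)
print(f"degree-product AUC on the standard benchmark: {auc:.3f}")
```

An AUC well above 0.5 from a score that uses nothing but degree is the warning sign described in [1]; how close it gets to the best model depends on the network's degree heterogeneity.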

Protocol 2: Implementing a Degree-Corrected Benchmark

This protocol outlines the steps to create a more unbiased evaluation benchmark.

Methodology:

- Negative Sampling: Modify the negative sampling strategy to correct for the implicit bias toward high-degree nodes. The specific correction method is detailed in the originating research [1].

- Model Training: Train graph machine learning models using this new benchmark.

- Performance Assessment: Evaluate models on the degree-corrected benchmark and compare their performance on downstream tasks (e.g., recommendation systems) to validate the improved alignment [1].
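The exact correction is specified in [1] and is not reproduced here. One plausible reading, assumed for this sketch, is to draw the endpoints of negative pairs in proportion to node degree, so that negatives and held-out positives have similar degree profiles and degree alone no longer separates them. The function name, the toy graph, and the sample size below are hypothetical.

```python
import random


def sample_negatives(edges, n_nodes, n_samples, rng, degree_corrected):
    """Sample non-edges, with endpoints drawn uniformly or by degree."""
    known = {(min(u, v), max(u, v)) for u, v in edges}
    # Each node appears in this pool once per incident edge, so a uniform
    # draw from the pool is a degree-proportional draw over nodes.
    endpoint_pool = [node for edge in edges for node in edge]
    negatives = []
    while len(negatives) < n_samples:
        if degree_corrected:
            u, v = rng.choice(endpoint_pool), rng.choice(endpoint_pool)
        else:
            u, v = rng.randrange(n_nodes), rng.randrange(n_nodes)
        if u != v and (min(u, v), max(u, v)) not in known:
            negatives.append((u, v))
    return negatives


def mean_endpoint_degree(pairs, degree):
    return sum(degree[u] + degree[v] for u, v in pairs) / (2 * len(pairs))


# Toy graph: 4 hubs (nodes 0-3), each linked to its own 10 leaves;
# the 40 leaves (nodes 4-43) also form a ring.
edges = [(h, 4 + 10 * h + j) for h in range(4) for j in range(10)]
edges += [(i, i + 1) for i in range(4, 43)] + [(43, 4)]
degree = {}
for u, v in edges:
    degree[u] = degree.get(u, 0) + 1
    degree[v] = degree.get(v, 0) + 1

rng = random.Random(7)
uniform_neg = sample_negatives(edges, 44, 2000, rng, degree_corrected=False)
corrected_neg = sample_negatives(edges, 44, 2000, rng, degree_corrected=True)
uniform_mean = mean_endpoint_degree(uniform_neg, degree)
corrected_mean = mean_endpoint_degree(corrected_neg, degree)
print(f"uniform={uniform_mean:.2f}  degree-corrected={corrected_mean:.2f}")
```

With degree-proportional sampling the negative pairs contain hubs about as often as real edges do, which removes the shortcut a degree-only model would otherwise exploit. Consult [1] for the benchmark's actual sampling definition before using this in an evaluation.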

Key Quantitative Findings from Literature:

Table 1: Impact of Sampling Bias on Centrality Measure Robustness (BioGRID PIN) [2]

| Edge Removal Method | Degree Centrality Robustness | Betweenness Centrality Robustness | Eigenvector Centrality Robustness |

|---|---|---|---|

| Random Edge Removal (RER) | High | Medium | Low |

| Highly Connected Edge Removal (HCER) | Medium | Low | Low |

| Lowly Connected Edge Removal (LCER) | High | Medium | Medium |

Table 2: Community Size Explains Node Strength in Correlation Networks [24]

| Network Type | Analysis Scale | Variance in Strength Explained by Community Size |

|---|---|---|

| Functional Brain Network | Areal (264 areas) | ~11% (±4%) |

| Functional Brain Network | Voxelwise | ~34% (at common thresholds) |

Workflow Visualization

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Unbiased Link Prediction Research

| Item / Resource | Function / Purpose | Example / Note |

|---|---|---|

| Network Datasets | Provides ground-truth data for training and evaluation. | Protein Interaction Networks (e.g., BioGRID, STRING) [2]; Synthetic Networks (e.g., Scale-free, Watts-Strogatz) [2]. |

| Graph Analysis Libraries | Provides algorithms for computation of centrality measures, community detection, and link prediction. | Neo4j Graph Data Science (GDS) Library [26]; NetworkX (for Python) [2]. |

| Degree-Corrected Blockmodels | Statistical model for network data that accounts for degree heterogeneity and community structure. | A nonparametric approach using Dirichlet processes can automatically determine the number of communities [27]. |

| Robustness Testing Framework | Simulates sampling biases (e.g., edge removal) to test the stability of network metrics. | Implement methods like Random Edge Removal (RER) and Highly Connected Edge Removal (HCER) [2]. |

| Accessibility & Color Contrast Tools | Ensures visualizations and diagrams are readable by all, including those with color vision deficiencies. | Use tools like WebAIM's Contrast Checker [28] or axe DevTools [29] to verify color contrast in figures. |

Frequently Asked Questions (FAQs)

Fundamental Concepts

Q1: What is the key difference between a provincial hub and a connector hub? A1: Provincial hubs are high-degree nodes that primarily connect to other nodes within the same network module or community. In contrast, connector hubs are also high-degree nodes, but they are distinguished by their diverse connectivity profile, linking several different modules within the network. The alteration or removal of a connector hub results in more widespread disruption throughout the entire network compared to a provincial hub [30] [31] [32].

Q2: Why are current univariate hub identification methods insufficient? A2: Univariate methods, such as simple sorting-based approaches that rank nodes by degree or betweenness centrality, select hub nodes sequentially (one after another). This process ignores the interdependencies between hub nodes and the complex topology of the entire network. Consequently, these methods are biased toward identifying provincial hubs and often fail to capture the synergistic role of connector hubs, leading to redundant and less critical hub selections [30] [31].

Q3: How does the multivariate method define and identify a connector hub? A3: The multivariate method identifies connector hubs jointly as a set. The core principle is to find the critical nodes whose collective removal would break down the network into the largest number of disconnected components. This approach leverages the global network organization, moving beyond local node-level metrics to assess the multivariate topological dependency within the network [30] [31].

Experimental Design & Data

Q4: What data types are required to apply multivariate hub identification? A4: This method is designed for brain networks derived from neuroimaging data. It requires a connectivity matrix (e.g., a correlation matrix) representing the structural or functional connections between parcellated brain regions. The method can be applied to a single subject's network or extended to perform population-wise analysis across a group of networks [30] [31] [32].

Q5: Can this method be applied to multilayer networks? A5: Yes, advanced versions of the method have been extended to multilayer networks. These approaches use graph representation learning to infer a low-dimensional graph embedding that accounts for both intra-layer and inter-layer connectivity. This allows for the identification of hubs that are critical to the integrated topology of the multilayer network [32].

Interpretation and Validation

Q6: How can I validate the identified hub set in my experiment? A6: Validation can be performed through several means:

- Simulated Networks: Test the method on simulated networks with a known ground-truth hub structure to assess accuracy [30] [32].

- Replicability: Check the consistency of identified hubs across different sub-samples of your population or similar datasets [30].

- Clinical/Biological Relevance: Evaluate whether the identified hubs align with known disease foci or biological markers. For example, in Alzheimer's disease, check if hubs colocalize with regions known to have high amyloid-β deposition [30] [31].

- Robustness Analysis: Perturb the network data slightly and re-run the analysis to see if the hub set remains stable.

Q7: What is the relationship between network modules and connector hubs? A7: Network modules (or communities) are densely connected groups of nodes. Connector hubs are the nodes that facilitate communication between these modules. While module-based methods (functional cartography) can distinguish hub types, they still often rely on univariate sorting after module detection. The multivariate method identifies connector hubs based on their critical role in network integration without necessarily requiring a pre-defined module partition [30].

Troubleshooting Guides

Problem: Algorithm Identifies Too Many Redundant Hubs

Symptoms: The identified hub set contains multiple nodes that are densely connected to each other and appear to belong to the same network module, with no nodes that clearly link disparate parts of the network.

Possible Causes and Solutions:

- Cause 1: Use of a univariate sorting method.

- Cause 2: Over-reliance on a single centrality metric like degree.

- Solution: If you must use a univariate method, combine multiple nodal centrality metrics (e.g., betweenness, participation coefficient). However, be aware that this still uses sequential selection and may not fully resolve the redundancy issue [30].

Experimental Protocol for Verification:

- Run your preferred univariate method (e.g., degree ranking) on your network data and note the top 5 hubs.

- Run a multivariate method on the same data.

- Visualize the network, color-coding nodes based on the two different results. The multivariate result should show nodes that sit at the junctions between visually distinct modules.

Problem: Inability to Distinguish Connector Hubs from Provincial Hubs

Symptoms: The hub identification process selects high-degree nodes but cannot differentiate those with diverse inter-module connections from those with dense intra-module connections.

Possible Causes and Solutions:

- Cause: The method lacks a mechanism to incorporate the global network topology and module structure.

- Solution: Implement a method that uses graph spectrum theory. The multivariate method evaluates the criticality of each node in the eigenspace of the graph Laplacian. If removing a node significantly changes the graph spectrum, that node is a critical connector hub. For multilayer networks, ensure the method uses an embedding learned from both intra- and inter-layer connections [30] [32].

Methodology for Multivariate Hub Identification:

- Input: A graph G with N nodes.

- Objective: Find a set S of k nodes whose removal maximizes the number of connected components in the graph.

- Process: The method infers a low-dimensional latent representation of the network's organization. It then identifies the set of nodes that act as critical bridges between network communities by solving an optimization problem based on the graph's spectral properties [30] [32].

- Output: A joint set S of k connector hub nodes.
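The objective stated above can be illustrated by exhaustive search on a tiny graph. This is not the spectral optimization described in [30] [32]: brute force over all k-node subsets is only feasible for toy networks, and it is used here purely to show what "removal maximizes the number of connected components" selects. The graph and function names are hypothetical.

```python
from itertools import combinations


def build_adjacency(edges):
    adj = {}
    for u, v in edges:
        adj.setdefault(u, set()).add(v)
        adj.setdefault(v, set()).add(u)
    return adj


def count_components(adj, removed):
    """Number of connected components left after deleting `removed` nodes."""
    seen, count = set(removed), 0
    for start in adj:
        if start in seen:
            continue
        count += 1
        stack = [start]
        seen.add(start)
        while stack:
            node = stack.pop()
            for nb in adj[node]:
                if nb not in seen:
                    seen.add(nb)
                    stack.append(nb)
    return count


def best_fragmenting_set(adj, k):
    """Exhaustive search for the k-node set whose removal fragments most."""
    best_set, best_count = None, -1
    for candidate in combinations(sorted(adj), k):
        n_parts = count_components(adj, candidate)
        if n_parts > best_count:
            best_set, best_count = candidate, n_parts
    return best_set, best_count


# Module A: ring a1..a5 plus provincial hub p linked to every ring node.
# Modules B and C: triangles. Node x links one node from each module.
edges = [("p", f"a{i}") for i in range(1, 6)]
edges += [("a1", "a2"), ("a2", "a3"), ("a3", "a4"), ("a4", "a5"), ("a5", "a1")]
edges += [("b1", "b2"), ("b2", "b3"), ("b3", "b1")]
edges += [("c1", "c2"), ("c2", "c3"), ("c3", "c1")]
edges += [("x", "a1"), ("x", "b1"), ("x", "c1")]
adj = build_adjacency(edges)

top_degree_node = max(adj, key=lambda n: len(adj[n]))
best_set, n_components = best_fragmenting_set(adj, 1)
print(f"highest degree: {top_degree_node}; best fragmenting set: {best_set}")
```

Degree ranking picks `p`, whose removal leaves the graph connected, while the fragmentation objective picks `x`, the node that actually holds the three modules together. This is the provincial-versus-connector contrast the multivariate method is built to capture.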

Problem: Low Statistical Power in Population-Based Studies

Symptoms: When comparing hub properties between a patient group and a control group, no significant differences are found, even when other network measures show alterations.

Possible Causes and Solutions:

- Cause 1: Using an unstable hub identification method that yields different results across individuals in the same group.

- Cause 2: Focusing on the wrong type of hub (provincial vs. connector).

Validation Experiment Protocol:

- Data: Acquire functional MRI data from a publicly available dataset (e.g., Alzheimer's Disease Neuroimaging Initiative - ADNI).

- Groups: Define two groups: patients and healthy controls.

- Analysis:

- For each subject, create a functional connectivity matrix.

- Use the population-wise multivariate method to identify a common set of connector hubs for the entire cohort.

- Compare the connectivity strength or other properties of these common hubs between the two groups using a standard statistical test (e.g., t-test).

- Expected Outcome: Studies have shown that this method exhibits enhanced statistical power in identifying network alterations related to neurological disorders compared to conventional methods [30] [31].
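The group-comparison step of this protocol can be sketched as follows. The example uses synthetic connectivity matrices rather than ADNI data, assumes the common hub set has already been identified (the indices in `HUB_IDX` are hypothetical), and uses Welch's t-test from SciPy as one possible choice of "standard statistical test". It illustrates only the final comparison, not the population-wise hub identification itself.

```python
import numpy as np
from scipy import stats

rng = np.random.default_rng(0)
N_REGIONS = 20
HUB_IDX = [2, 7, 11]  # hypothetical common connector hubs for the cohort

def synthetic_connectivity(rng, hub_scale):
    """Random symmetric connectivity matrix; hub rows/columns scaled by hub_scale."""
    conn = rng.uniform(0.0, 1.0, size=(N_REGIONS, N_REGIONS))
    conn = (conn + conn.T) / 2.0
    np.fill_diagonal(conn, 0.0)
    conn[HUB_IDX, :] *= hub_scale
    conn[:, HUB_IDX] *= hub_scale
    return conn

def mean_hub_strength(conn):
    """Average connectivity strength (row sum) over the common hub set."""
    return conn[HUB_IDX].sum(axis=1).mean()

# Synthetic cohort: patients are simulated with weakened hub connectivity.
controls = np.array([mean_hub_strength(synthetic_connectivity(rng, 1.0))
                     for _ in range(15)])
patients = np.array([mean_hub_strength(synthetic_connectivity(rng, 0.7))
                     for _ in range(15)])

# Welch's t-test (does not assume equal variances between groups).
t_stat, p_value = stats.ttest_ind(patients, controls, equal_var=False)
print(f"t = {t_stat:.2f}, p = {p_value:.3g}")
```

Because every subject is summarized over the same hub set, the per-subject values are directly comparable, which is the motivation for identifying hubs at the population level rather than per individual.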

Table 1: Comparison of Hub Identification Methods

| Method Category | Key Principle | Pros | Cons | Best for Identifying |
|---|---|---|---|---|
| Univariate Sorting-Based [30] [31] | Ranks nodes one-by-one based on a centrality metric (e.g., degree, betweenness). | Computationally simple and efficient. | Ignores hub interdependencies; biased toward provincial hubs; results in redundant hub sets. | High-degree provincial hubs |
| Functional Cartography [30] [31] | Identifies hubs based on network modularity and the participation coefficient. | Can distinguish between provincial and connector hubs. | Relies on the quality of module detection; final hub selection is still univariate and sequential. | Provincial and connector hubs (with module info) |
| Multivariate Graph Inference [30] [31] | Jointly finds a set of nodes whose removal maximizes network fragmentation. | Utilizes global topology; reduces redundancy; more accurate and replicable; identifies critical connector hubs. | More computationally complex than univariate methods. | Connector hubs |
| Multilayer Graph Representation Learning [32] | Learns a low-dimensional graph embedding from intra- and inter-layer connections to identify hubs. | Captures complex topology of multilayer networks; identifies hubs critical to cross-layer integration. | High computational complexity; requires multilayer data. | Connector hubs in multilayer networks |

Table 2: Key Network Metrics for Hub Characterization

| Metric | Formula/Definition | Interpretation in Hub Context |
|---|---|---|
| Degree Centrality | \( k_i = \sum_{j \neq i} A_{ij} \), where \(A\) is the adjacency matrix. | Number of connections a node has. A basic measure, but high degree does not necessarily mean a node is a connector hub [30]. |
| Betweenness Centrality | \( b_i = \sum_{s \neq t \neq i} \frac{\sigma_{st}(i)}{\sigma_{st}} \), where \(\sigma_{st}\) is the number of shortest paths between \(s\) and \(t\), and \(\sigma_{st}(i)\) is the number of those paths that pass through node \(i\). | Measures how often a node lies on the shortest path between other nodes. High betweenness can indicate a bridge role [30] [31]. |
| Participation Coefficient [32] | \( PC_i = 1 - \sum_{m=1}^{M} \left( \frac{k_i(m)}{k_i} \right)^2 \), where \(k_i(m)\) is the degree of node \(i\) to module \(m\). | Measures the diversity of a node's connections across different modules. Key metric for connector hubs: values near 1 indicate a uniform distribution of links across all modules [32]. |
| Modularity [30] | \( Q = \sum_{m} \left( e_{mm} - a_m^2 \right) \), where \(e_{mm}\) is the fraction of links within module \(m\), and \(a_m\) is the fraction of links connected to nodes in \(m\). | Measures the strength of division of a network into modules. A prerequisite for calculating the participation coefficient [30]. |
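As a worked illustration of the degree and participation coefficient definitions in Table 2, the sketch below computes both with NumPy for a small hand-built graph. The function name, the edge list, and the module assignment are hypothetical; in practice the module labels would come from a community detection step.

```python
import numpy as np

def participation_coefficient(adj, modules):
    """PC_i = 1 - sum_m (k_i(m) / k_i)^2 for an undirected adjacency matrix."""
    adj = np.asarray(adj, dtype=float)
    modules = np.asarray(modules)
    degree = adj.sum(axis=1)                      # k_i
    pc = np.ones(len(adj))
    for m in np.unique(modules):
        k_im = adj[:, modules == m].sum(axis=1)   # links from i into module m
        ratio = np.divide(k_im, degree,
                          out=np.zeros_like(degree), where=degree > 0)
        pc -= ratio ** 2
    pc[degree == 0] = 0.0                         # isolated nodes: define PC as 0
    return pc

# Hypothetical toy network: two triangles (modules 0 and 1) plus node 6,
# which is assigned to module 0 but links equally into both modules.
edges = [(0, 1), (0, 2), (1, 2), (3, 4), (3, 5), (4, 5),
         (6, 0), (6, 1), (6, 3), (6, 4)]
modules = [0, 0, 0, 1, 1, 1, 0]
adj = np.zeros((7, 7))
for i, j in edges:
    adj[i, j] = adj[j, i] = 1.0

degree = adj.sum(axis=1)
pc = participation_coefficient(adj, modules)
for node in range(7):
    print(node, int(degree[node]), round(pc[node], 3))
```

Node 6 splits its four links evenly across two modules, so its participation coefficient is 1 - (0.25 + 0.25) = 0.5, the maximum possible with two modules, whereas node 2 connects only within its own module and scores 0.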

Experimental Workflow and Visualization

Conceptual Workflow for Multivariate Hub Identification

The following diagram illustrates the core logic of differentiating traditional univariate methods from the advanced multivariate approach for identifying connector hubs.

Protocol: Implementing Multivariate Hub Identification

Aim: To identify a set of critical connector hubs from a single-subject brain functional network.

Step-by-Step Methodology:

- Data Preprocessing:

- Obtain preprocessed fMRI time series for N brain regions.

- Compute the N x N functional connectivity matrix, typically using Pearson correlation between time series.

- Network Construction:

- Threshold the correlation matrix to create an adjacency matrix (A) for an undirected graph. This can be a binary or weighted matrix.

- Apply Multivariate Hub Identification Algorithm:

- The core algorithm is designed to find a set S of k nodes that solves the optimization problem \( \arg\max_{S} |C(G \setminus S)| \), where \( |C(G \setminus S)| \) is the number of connected components after removing the node set S from graph G.

- Implementation Note: The method uses spectral graph theory. It involves computing the graph Laplacian (L = D - A), where D is the diagonal degree matrix, and analyzing its eigenvalues and eigenvectors. The algorithm seeks the set of nodes whose removal causes the largest increase in the graph's nullity (the dimension of the zero-eigenspace), which directly relates to the number of disconnected components [30].

- Output and Interpretation:

- The algorithm returns a set S of node indices identified as critical connector hubs.

- Map these node indices back to their anatomical brain regions for biological interpretation.

Troubleshooting Tip: If the algorithm fails to converge or runs slowly on a dense network, consider applying a more stringent threshold during network construction to create a sparser graph.
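The preprocessing, network construction, and Laplacian steps of this protocol can be sketched with NumPy as shown below. The time series are synthetic (two groups of regions plus one region that correlates with both), the 0.4 threshold is an arbitrary assumption, and the per-node "nullity gain" is a simplified single-node stand-in for the joint set optimization in [30]. It is meant only to show how the number of zero eigenvalues of L = D - A tracks the number of connected components.

```python
import numpy as np

rng = np.random.default_rng(42)
n_timepoints = 400

# Synthetic "fMRI" time series: regions 0-2 follow latent signal 0,
# regions 3-5 follow latent signal 1, and region 6 mixes both.
latent = rng.standard_normal((n_timepoints, 2))
signal = np.hstack([latent[:, [0, 0, 0, 1, 1, 1]],
                    latent.sum(axis=1, keepdims=True)])
ts = signal + 0.3 * rng.standard_normal(signal.shape)

# Step 1: N x N functional connectivity via Pearson correlation.
fc = np.corrcoef(ts, rowvar=False)
np.fill_diagonal(fc, 0.0)

# Step 2: threshold to a binary, undirected adjacency matrix (assumed cutoff).
adj = (fc > 0.4).astype(float)

def laplacian_nullity(adj, tol=1e-8):
    """Number of (numerically) zero eigenvalues of L = D - A."""
    lap = np.diag(adj.sum(axis=1)) - adj
    eigvals = np.linalg.eigvalsh(lap)   # symmetric matrix, real eigenvalues
    return int(np.sum(eigvals < tol))

def nullity_gain(adj, node):
    """Increase in Laplacian nullity when a single node is removed."""
    keep = np.delete(np.arange(len(adj)), node)
    return laplacian_nullity(adj[np.ix_(keep, keep)]) - laplacian_nullity(adj)

# Step 3 (simplified): score each node by how much its removal fragments the graph.
gains = [nullity_gain(adj, node) for node in range(len(adj))]
print("components in full graph:", laplacian_nullity(adj))
print("nullity gain per node:", gains)
```

A stricter threshold in step 2 yields a sparser adjacency matrix, which is the adjustment the troubleshooting tip above recommends when the hub search is slow on dense networks.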

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Hub Identification Research

| Item | Function/Description | Example/Tool |
|---|---|---|
| Neuroimaging Data | Provides raw data to construct structural or functional brain networks. | fMRI, dMRI, or MEG data from databases like ADNI, HCP, or UK Biobank. |
| Parcellation Atlas | Defines the nodes (brain regions) of the network. | Automated Anatomical Labeling (AAL), Harvard-Oxford Atlas, Brainnetome Atlas. |
| Network Construction Tool | Converts raw time series or tractography data into a connectivity matrix. | MATLAB Toolboxes (e.g., CONN, Brain Connectivity Toolbox), Python (MNE, nilearn). |
| Hub Identification Software | Implements algorithms for identifying provincial and connector hubs. | Custom code based on multivariate graph inference [30] [31]; Brain Connectivity Toolbox (for participation coefficient). |
| Graph Visualization Platform | Enables visualization of networks and identified hubs for interpretation. | BrainNet Viewer, Gephi, Cytoscape. |
| Multilayer Network Analysis Tool | For advanced studies analyzing networks across multiple frequencies or conditions. | Multilayer extension of multivariate method [32]; multinet library in R. |

Frequently Asked Questions

Q1: What is "over-smoothing" in Graph Neural Networks, and why is it a problem? Over-smoothing is a phenomenon where, after too many graph convolution layers, the node representations (embeddings) become increasingly similar and eventually converge to constant vectors. This leads to a loss of discriminative information and a significant degradation in model performance, such as poor node classification accuracy [33].

Q2: How does random edge dropout (e.g., DropEdge) differ from targeted edge dropout? Random edge dropout removes connections uniformly from the graph during training. In contrast, targeted edge dropout strategically removes edges based on specific graph properties. The method discussed here, SDrop, specifically targets connections between highly connected nodes (hubs), which are often the first to suffer from over-smoothing, and combines this with a siamese network architecture to improve robustness [33].

Q3: My model performance drops when I use edge dropout. What could be wrong? A common issue is the inconsistency between the subgraphs used during training and the full graph used during inference. This can create an out-of-distribution (OOD) problem. To address this, consider using methods like SDrop that incorporate a siamese network to enforce prediction consistency between differently dropped versions of the graph, thereby stabilizing training [33]. Furthermore, be aware that some edge-dropping methods can reduce sensitivity to distant nodes, which might be detrimental to tasks requiring long-range dependency modeling [34].

Q4: Why should I focus on dropping hub-hub connections specifically? Empirical and theoretical studies show that regions of the graph with connected hub nodes (high-degree nodes) are the first to exhibit over-smoothing. Their features converge to a stationary state much faster than other node types. By selectively dropping these connections, you can directly delay the onset of this early over-smoothing, which in turn helps to relieve global over-smoothing in the entire graph [33].

Q5: Are there scenarios where edge dropout might be harmful? Yes. Recent research indicates that while edge dropout helps with over-smoothing, it can exacerbate the problem of "over-squashing," where information from too many nodes is compressed into a fixed-sized vector. This is particularly damaging for tasks that require modeling long-range interactions on the graph. For such tasks, sensitivity-aware methods like DropSens might be more appropriate [34].

Troubleshooting Guides

Problem: Rapid Performance Degradation with Deep GNNs

- Symptoms: Performance (e.g., accuracy) increases up to a certain number of GNN layers but then sharply decreases as more layers are added.

- Diagnosis: This is a classic sign of over-smoothing. The model loses its ability to distinguish between nodes from different classes.

- Solutions:

- Implement Targeted Dropout: Instead of random dropout, use a method like SDrop that focuses on hub-hub connections. This directly addresses the regions where over-smoothing begins [33].

- Add Residual Connections: Incorporate skip connections that bypass one or more GNN layers. This helps to propagate features from earlier layers forward, preserving node-specific information [33].

- Use Initial Residuals: Combine the output of a convolution layer with the initial node features (or a transformed version of them) to help the network retain a "memory" of the original input data [33].
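The idea of targeting hub-hub connections can be sketched as a simple edge filter. This is not the SDrop implementation from [33]; it assumes that a "hub" is any node whose degree is at or above a chosen quantile of the degree distribution, and the function name, `hub_quantile`, and `drop_prob` are hypothetical parameters chosen for illustration.

```python
import numpy as np

def drop_hub_hub_edges(edges, num_nodes, drop_prob, hub_quantile=0.9, rng=None):
    """Randomly drop edges whose two endpoints are both high-degree nodes.

    edges: array of shape (E, 2) listing each undirected edge once.
    Returns the kept edges and a boolean mask marking hub-hub edges.
    """
    rng = np.random.default_rng() if rng is None else rng
    edges = np.asarray(edges)
    degree = np.bincount(edges.ravel(), minlength=num_nodes)
    # Assumption: hubs are nodes at or above the given degree quantile.
    is_hub = degree >= np.quantile(degree, hub_quantile)
    hub_hub = is_hub[edges[:, 0]] & is_hub[edges[:, 1]]
    # Only hub-hub edges are candidates for removal; all others are kept.
    drop = hub_hub & (rng.random(len(edges)) < drop_prob)
    return edges[~drop], hub_hub

# Hypothetical toy graph: two hubs (0 and 1) joined to each other,
# each with four leaf neighbours.
edges = np.array([(0, 1)]
                 + [(0, leaf) for leaf in range(2, 6)]
                 + [(1, leaf) for leaf in range(6, 10)])
rng = np.random.default_rng(0)
kept_all, hub_hub_mask = drop_hub_hub_edges(edges, 10, drop_prob=0.0, rng=rng)
kept_none, _ = drop_hub_hub_edges(edges, 10, drop_prob=1.0, rng=rng)
print(len(edges), int(hub_hub_mask.sum()), len(kept_all), len(kept_none))
```

In a training loop, a filter like this would typically be re-sampled every epoch so that the model sees different sparsified versions of the hub-hub regions while hub-to-non-hub and non-hub edges stay intact.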

Problem: High Variance and Unstable Training with DropEdge

- Symptoms: Model performance fluctuates significantly between training epochs, and results are not reproducible.

- Diagnosis: The random removal of edges creates a significant disparity between the data distribution seen during training (subgraphs) and inference (full graph). This is the inconsistency problem [33].

- Solutions:

- Adopt a Siamese Framework: Implement the SDrop architecture. It uses two "views" (channels) of the graph with different targeted edge drops. A consistency regularization loss is applied to the predictions from these two channels, forcing the model to become robust to the dropout variations [33].

- Message-Dropout Alternatives: Consider methods like DropMessage, which randomly masks features during the message-passing step rather than removing entire edges. This can sometimes lead to more stable training [35].
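A consistency regularization term between two dropout "views" can be sketched as below. The exact loss used by SDrop is not reproduced here; this example assumes a mean squared difference between the two channels' class probabilities, added to a supervised cross-entropy term with a hypothetical weight. All function names and the random logits are illustrative.

```python
import numpy as np

def softmax(logits):
    """Row-wise softmax with the usual max-shift for numerical stability."""
    shifted = logits - logits.max(axis=1, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=1, keepdims=True)

def consistency_loss(logits_a, logits_b):
    """Mean squared difference between the two channels' class probabilities."""
    p_a, p_b = softmax(logits_a), softmax(logits_b)
    return float(np.mean(np.sum((p_a - p_b) ** 2, axis=1)))

def cross_entropy(logits, labels, mask):
    """Average negative log-likelihood over the labeled nodes only."""
    probs = softmax(logits)[mask]
    picked = probs[np.arange(int(mask.sum())), labels[mask]]
    return float(-np.mean(np.log(picked + 1e-12)))

def two_channel_loss(logits_a, logits_b, labels, labeled_mask, weight=1.0):
    """Supervised loss averaged over both views plus a weighted consistency term."""
    supervised = 0.5 * (cross_entropy(logits_a, labels, labeled_mask)
                        + cross_entropy(logits_b, labels, labeled_mask))
    return supervised + weight * consistency_loss(logits_a, logits_b)

# Stand-in predictions from two differently dropped views of the same graph.
rng = np.random.default_rng(1)
logits_a = rng.standard_normal((6, 3))
logits_b = logits_a + 0.5 * rng.standard_normal((6, 3))
labels = np.array([0, 1, 2, 0, 1, 2])
labeled_mask = np.array([True, True, False, False, True, False])

print(consistency_loss(logits_a, logits_b))
print(two_channel_loss(logits_a, logits_b, labels, labeled_mask, weight=1.0))
```

The consistency term uses all nodes, including unlabeled ones, so it penalizes disagreement between the two views even where no supervision is available; it is zero only when both channels predict identical class probabilities.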

Problem: Poor Performance on Long-Range Tasks

- Symptoms: The model performs well on tasks requiring only local information but fails when predictions depend on nodes that are far apart in the graph.

- Diagnosis: The model may be suffering from over-squashing, where information from a large receptive field is compressed into a fixed-sized vector, causing a bottleneck. Standard edge dropout can worsen this by reducing the number of available paths [34].

- Solutions:

- Use Sensitivity-Aware Dropout: Replace standard DropEdge with DropSens. This method controls the proportion of information loss by considering the sensitivity of nodes, helping to preserve important long-range interactions even as edges are dropped [34].

- Combine with Graph Rewiring: Consider using graph rewiring techniques (not covered in detail here) that add or re-connect edges to create shorter paths between distant nodes, explicitly mitigating the over-squashing bottleneck.

Experimental Protocols & Data

Protocol 1: Reproducing the Hub-Hub Connection Analysis

This experiment demonstrates that hub-hub connections are indeed the first to over-smooth.

- Objective: To validate that connected hub nodes experience early over-smoothing.

- Methodology:

- Node Categorization: For a given graph, categorize local regions into three types: Hub-Hub, Hub-Non-hub, and Non-hub-Non-hub connections based on node degrees.

- Model Training: Train a vanilla GCN or SGC model with a sufficient number of layers (e.g., 8-16) on a benchmark dataset (e.g., Cora, Citeseer).

- Metric Tracking: At each layer, calculate the average Euclidean distance between the embeddings of connected nodes for each of the three region types.

- Expected Outcome: The embedding distance for Hub-Hub connections will be significantly smaller and converge to its minimum value faster than the other two types, visually confirming early over-smoothing [33].
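The metric-tracking step of this protocol can be sketched without any training by using SGC-style propagation, that is, repeatedly multiplying the features by the symmetrically normalized adjacency matrix with self-loops. This is a simplification of the protocol (no learned weights, a small synthetic graph instead of Cora or Citeseer), and the quantile-based hub definition and all names are assumptions made for illustration.

```python
import numpy as np

def normalized_adjacency(adj):
    """Symmetric normalization with self-loops: D^-1/2 (A + I) D^-1/2."""
    a_hat = adj + np.eye(len(adj))
    d_inv_sqrt = 1.0 / np.sqrt(a_hat.sum(axis=1))
    return a_hat * d_inv_sqrt[:, None] * d_inv_sqrt[None, :]

def edge_type_distances(adj, features, num_layers, hub_quantile=0.8):
    """Track mean embedding distance across edges, grouped by endpoint type."""
    degree = adj.sum(axis=1)
    is_hub = degree >= np.quantile(degree, hub_quantile)   # assumed hub rule
    rows, cols = np.triu(adj, k=1).nonzero()               # each edge once
    n_hub_ends = is_hub[rows].astype(int) + is_hub[cols].astype(int)
    names = {2: "hub-hub", 1: "hub-nonhub", 0: "nonhub-nonhub"}
    prop = normalized_adjacency(adj)
    h = features.copy()
    history = {name: [] for name in names.values()}
    for _ in range(num_layers):
        h = prop @ h                                        # one propagation step
        dist = np.linalg.norm(h[rows] - h[cols], axis=1)
        for count, name in names.items():
            sel = n_hub_ends == count
            history[name].append(float(dist[sel].mean()) if sel.any() else float("nan"))
    return history

# Synthetic graph: three mutually connected hubs (0-2), four leaves per hub,
# and one leaf-leaf edge inside each hub's neighbourhood.
adj = np.zeros((15, 15))
edge_list = [(0, 1), (0, 2), (1, 2), (3, 4), (7, 8), (11, 12)]
edge_list += [(0, leaf) for leaf in range(3, 7)]
edge_list += [(1, leaf) for leaf in range(7, 11)]
edge_list += [(2, leaf) for leaf in range(11, 15)]
for i, j in edge_list:
    adj[i, j] = adj[j, i] = 1.0

features = np.random.default_rng(0).standard_normal((15, 8))
history = edge_type_distances(adj, features, num_layers=16)
for name, values in history.items():
    print(name, [round(v, 3) for v in values[:3]], "...", round(values[-1], 4))
```

Plotting the three curves in `history` against layer depth gives the per-region comparison that the protocol calls for; on real benchmarks the same tracking would be applied to the hidden representations of the trained GCN or SGC model.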

Protocol 2: Evaluating the SDrop Method

This protocol outlines how to implement and test the SDrop method for semi-supervised node classification.

- Objective: Compare the performance of SDrop against baseline methods like DropEdge.

- Methodology:

- Backbone Model: Select a GNN model (e.g., GCN, GAT) as the backbone.

- SDrop Implementation:

- Create two copies of the input graph.