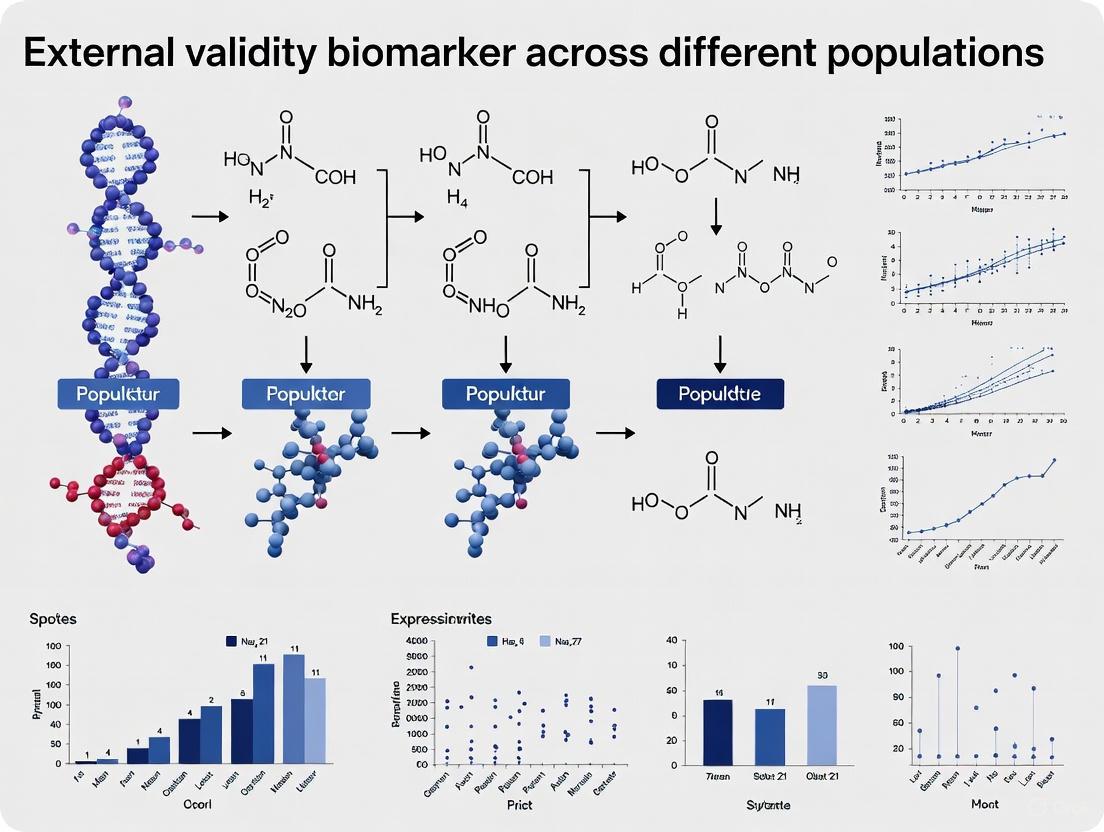

Beyond the Discovery Cohort: A Strategic Framework for Externally Validating Biomarkers Across Diverse Populations


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on establishing the external validity of biomarkers. It systematically addresses the journey from foundational concepts and methodological rigor to troubleshooting common pitfalls and executing robust validation studies. By synthesizing current evidence and regulatory perspectives, the content offers a strategic framework to enhance the generalizability, clinical applicability, and regulatory acceptance of biomarkers, ultimately ensuring they deliver on their promise in real-world, heterogeneous patient populations.

Why External Validity Is the Linchpin of Clinically Useful Biomarkers

In biomarker research, the journey from discovery to clinical application is fraught with challenges, chief among them the demonstration of external validity. This guide examines the role of external validity in biomarker research across diverse populations: we compare validation methodologies, present data from recent studies, and provide a practical toolkit for researchers and drug development professionals to enhance the generalizability and real-world impact of their work.

External validity refers to the extent to which the results of a study can be generalized beyond the specific context of the study to other populations, settings, times, and variables [1]. In biomarker research, this concept transcends traditional internal validation and cross-validation techniques to address a more fundamental question: will this biomarker perform reliably across diverse patient populations, clinical settings, and real-world conditions?

The ultimate goal of biomarker research is to produce knowledge that can be applied to real-world situations [1]. Without strong external validity, even the most promising biomarkers may fail during clinical implementation, unable to deliver on their potential for improving patient care. This is particularly critical in drug development, where decisions based on biomarker performance can significantly impact clinical trial design, patient stratification, and therapeutic efficacy assessments.

The relationship between internal and external validity often involves a delicate balance [2]. Studies with rigorous control over variables may achieve high internal validity but can be less applicable to real-world settings due to their artificial conditions. Conversely, studies conducted in naturalistic settings may have higher ecological validity but face challenges in controlling for confounding variables [2]. This guide explores methodologies and frameworks for enhancing both dimensions of validity, with particular emphasis on their application in biomarker research across diverse populations.

Theoretical Framework: Understanding External Validity

Core Components of External Validity

External validity encompasses two primary dimensions that determine the generalizability of research findings:

Population Validity: This aspect addresses how well the findings of a study can be extended to other populations or groups beyond the specific sample studied [1]. Key factors influencing population validity include sampling methods, sample size, and the characteristics of the sample (age, gender, ethnicity, socioeconomic status, and cultural background) [1]. In biomarker research, population validity is crucial for ensuring that a biomarker discovered in one demographic group performs reliably in others.

Ecological Validity: This concerns the generalizability of findings to real-world settings or environments [1]. It addresses whether results obtained in controlled research contexts can be meaningfully applied to natural environments where the phenomenon of interest occurs. For biomarkers, this translates to performance in routine clinical practice versus highly controlled laboratory conditions.

Conceptual Relationship Between Validity Types

The following diagram illustrates the relationship between internal and external validity and their subcomponents in the context of research generalization:

Threats to External Validity in Biomarker Studies

Several factors can compromise the external validity of biomarker research, creating significant barriers to clinical translation:

Sampling and Selection Bias: Using samples that are not representative of the target population severely limits generalizability [1]. In biomarker research, this often manifests as studies conducted primarily in homogeneous populations that do not reflect the diversity of real-world patients.

Artificiality of Research Settings: Highly controlled laboratory environments may not reflect the complexities of clinical practice where multiple confounding factors interact [1]. This is particularly relevant for biomarkers whose performance might be affected by variations in sample collection, processing, or analysis across different clinical sites.

Interaction Effects of Selection: The effects observed in a study might be specific to the particular experimental population and not hold true for other groups [1]. For example, a biomarker validated in a research-intensive academic medical center might not perform similarly in community hospital settings.

Temporal Factors: Changes in technology, healthcare practices, or disease patterns over time can affect the generalizability of biomarker research conducted at a specific point in time [1].

Methodologies for Establishing External Validity

Validation Frameworks for Biomarker Research

Establishing external validity requires systematic approaches that go beyond traditional internal validation methods. The following workflow outlines key methodological stages for demonstrating external validity in biomarker research:

Experimental Protocols for External Validation

Robust external validation of biomarkers requires carefully designed experimental protocols that test generalizability across multiple dimensions:

Multi-Cohort Validation Studies: These studies involve testing the biomarker in independent patient cohorts from different geographic locations, healthcare systems, and demographic compositions. The protocol typically includes standardized operating procedures for sample collection, processing, and analysis to minimize technical variability while maximizing population diversity.

Prospective-Blinded Evaluation: In this design, the biomarker is applied to new patient populations in a blinded manner where researchers and clinicians are unaware of the biomarker results until after clinical outcomes are documented. This approach reduces assessment bias and provides more realistic estimates of real-world performance.

Real-World Evidence Studies: These studies evaluate biomarker performance in routine clinical practice settings rather than controlled clinical trial environments. They typically involve larger, more diverse patient populations and assess how the biomarker performs amid the complexities and variations of actual healthcare delivery.

Comparative Analysis: Biomarker Performance Across Populations

Large-Scale Validation Study of Cancer Prediction Algorithms

A recent large-scale study published in Nature Communications provides compelling data on the external validation of cancer prediction algorithms that incorporate biomarker data [3]. The study developed and validated two diagnostic prediction algorithms for 15 cancer types using a population of 7.46 million adults in England, with external validation in separate cohorts totaling over 5 million patients from across the U.K. [3].

Table 1: Performance Metrics of Cancer Prediction Algorithms with Biomarker Data Across Validation Cohorts

| Cancer Type | Model with Clinical Factors Only (AUROC) | Model with Clinical Factors + Blood Biomarkers (AUROC) | Improvement with Biomarkers | Generalizability Across UK Regions |
|---|---|---|---|---|
| Any Cancer (Men) | 0.869 (95% CI 0.867-0.871) | 0.876 (95% CI 0.874-0.878) | +0.007 | Consistent across England, Scotland, Wales, N. Ireland |
| Any Cancer (Women) | 0.839 (95% CI 0.837-0.842) | 0.844 (95% CI 0.842-0.847) | +0.005 | Consistent across England, Scotland, Wales, N. Ireland |
| Colorectal Cancer | 0.854 (95% CI 0.848-0.860) | 0.872 (95% CI 0.866-0.878) | +0.018 | Maintained performance across regions |
| Lung Cancer | 0.882 (95% CI 0.878-0.886) | 0.892 (95% CI 0.888-0.896) | +0.010 | Slight variability by geographic area |
| Blood Cancer | 0.823 (95% CI 0.815-0.831) | 0.856 (95% CI 0.849-0.863) | +0.033 | Consistent performance across regions |

The incorporation of commonly used blood tests (full blood count and liver function tests) as affordable digital biomarkers improved discrimination between patients with and without cancer, with the algorithms demonstrating superior prediction estimates compared to existing scores [3]. Importantly, these models maintained performance across diverse populations from different UK nations, supporting their external validity.
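The AUROC comparison summarized in Table 1 can be illustrated with a minimal sketch. It computes the rank-based AUROC (the probability that a randomly chosen case scores higher than a randomly chosen control) for two models and reports the difference. The function name `auroc` and the toy scores and labels are hypothetical and are not data from [3]; the study's actual estimation procedure and confidence intervals are not reproduced here.

```python
def auroc(scores, labels):
    """Rank-based AUROC: share of case/control pairs where the case scores higher.

    Ties between a case and a control count as half a win.
    """
    cases = [s for s, y in zip(scores, labels) if y == 1]
    controls = [s for s, y in zip(scores, labels) if y == 0]
    if not cases or not controls:
        raise ValueError("need at least one case and one control")
    wins = 0.0
    for c in cases:
        for k in controls:
            if c > k:
                wins += 1.0
            elif c == k:
                wins += 0.5
    return wins / (len(cases) * len(controls))


# Hypothetical toy cohort: 3 cancer cases (1) and 5 controls (0).
labels = [1, 1, 1, 0, 0, 0, 0, 0]
clinical_only = [0.80, 0.40, 0.35, 0.50, 0.30, 0.20, 0.10, 0.05]
with_biomarkers = [0.85, 0.60, 0.45, 0.50, 0.25, 0.20, 0.10, 0.05]

auc_clinical = auroc(clinical_only, labels)
auc_combined = auroc(with_biomarkers, labels)
delta = auc_combined - auc_clinical

print(f"clinical only: {auc_clinical:.3f}")
print(f"with biomarkers: {auc_combined:.3f}")
print(f"improvement: {delta:+.3f}")
```

In an external validation setting the same calculation would be repeated separately in each independent cohort or region, so that a drop in discrimination in any one of them becomes visible.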

Performance of Decipher Prostate Biomarker Across Populations

The Decipher Prostate Genomic Classifier, a 22-gene test developed using RNA whole-transcriptome analysis and machine learning, demonstrates how biomarkers can achieve external validity across diverse populations [4]. With performance and clinical utility demonstrated in over 90 studies involving more than 200,000 patients, it is the only gene expression test to achieve "Level I" evidence status and inclusion in the risk-stratification table in the most recent NCCN Guidelines for prostate cancer [4].

Table 2: External Validation Metrics for Decipher Prostate Biomarker Across Diverse Cohorts

| Validation Cohort | Sample Size | Primary Endpoint | Performance Metric | Generalizability Assessment |
|---|---|---|---|---|
| Multi-institutional cohort | 2,135 patients | Prostate cancer metastasis | Concordance index: 0.79 | Consistent across treatment modalities |
| Prospective BALANCE trial | Stratified randomized | Benefit from hormone therapy | Hazard ratio: 0.41 (p<0.05) | Validated in recurrent prostate cancer setting |
| Diverse practice settings | >200,000 samples | Clinical utility | Level I evidence | Generalized across community and academic practices |
| Multiple ethnic groups | Population-based | Cancer-specific mortality | C-index: 0.77-0.80 | Maintained performance across ethnicities |

The Decipher GRID database, which includes more than 200,000 whole-transcriptome profiles from patients with urologic cancers, has been instrumental in establishing the external validity of this biomarker across diverse populations [4]. The presentation at ASTRO 2025 of the first prospective validation data for the biomarker as a predictor of hormone therapy benefit further strengthens this evidence [4].
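The concordance index (C-index) reported in Table 2 measures how often a model ranks patients correctly by time to event. The sketch below is a plain implementation of Harrell's C for right-censored data; the function name `concordance_index` and the toy follow-up data are hypothetical and unrelated to the Decipher cohorts in [4].

```python
def concordance_index(times, events, risk_scores):
    """Harrell's C: among usable pairs, the share where the higher-risk patient fails first.

    A pair (i, j) is usable when patient i has an observed event (events[i] == 1)
    strictly before patient j's event or censoring time. Tied risk scores count 0.5.
    """
    concordant = 0.0
    usable = 0
    n = len(times)
    for i in range(n):
        if events[i] != 1:
            continue  # a censored patient cannot be the earlier member of a usable pair
        for j in range(n):
            if times[i] < times[j]:
                usable += 1
                if risk_scores[i] > risk_scores[j]:
                    concordant += 1.0
                elif risk_scores[i] == risk_scores[j]:
                    concordant += 0.5
    if usable == 0:
        raise ValueError("no usable pairs")
    return concordant / usable


# Hypothetical cohort: follow-up in months, 1 = metastasis observed, 0 = censored.
times = [5, 8, 12, 20, 30]
events = [1, 1, 0, 1, 0]
risk_scores = [0.9, 0.4, 0.6, 0.5, 0.1]

c_index = concordance_index(times, events, risk_scores)
print(f"C-index: {c_index:.2f}")
```

A value of 0.5 corresponds to random ranking and 1.0 to perfect ranking, which is why stable C-index values across cohorts are read as evidence of generalizable discrimination.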

Enhancing External Validity: Strategies and Solutions

Methodological Approaches to Improve Generalizability

Researchers can employ several strategies to improve the external validity of biomarker studies:

Use Representative Sampling: Recruit participants who are similar to the population of interest in terms of relevant characteristics [1]. Probability sampling techniques, such as random sampling or stratified random sampling, can help ensure that the sample is representative of the target population.

Larger and More Diverse Samples: Larger samples with a wide range of characteristics are more likely to represent the target population and reduce sampling error [1]. In biomarker research, this specifically means intentional inclusion of diverse ethnic, geographic, and socioeconomic groups.

Multi-Center Study Designs: Conducting studies across multiple institutions with different healthcare systems, patient populations, and practice patterns provides built-in assessment of generalizability and enhances external validity.

Replication Studies: Repeating the study with different participants and in different settings can provide evidence for the generalizability of the findings [1]. For biomarkers, this means validation in independent cohorts with differing characteristics.

Real-World Evidence Generation: Supplementing traditional clinical trial data with real-world evidence from routine clinical practice provides critical insights into how biomarkers perform under less controlled but more representative conditions.
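The stratified random sampling mentioned above can be sketched as follows, assuming proportional allocation (each stratum contributes the same fraction of its members). The function name `stratified_sample`, the record field `group`, and the toy registry are hypothetical; real studies often oversample small strata instead, which this sketch does not do.

```python
import random
from collections import Counter, defaultdict


def stratified_sample(records, stratum_key, fraction, seed=0):
    """Draw the same fraction from every stratum (proportional allocation).

    Each stratum contributes at least one record so that small groups are never dropped.
    """
    rng = random.Random(seed)  # fixed seed keeps the draw reproducible
    strata = defaultdict(list)
    for rec in records:
        strata[rec[stratum_key]].append(rec)
    sample = []
    for members in strata.values():
        k = max(1, round(len(members) * fraction))
        sample.extend(rng.sample(members, k))
    return sample


# Hypothetical registry of 100 patients in three demographic groups.
records = (
    [{"id": i, "group": "A"} for i in range(70)]
    + [{"id": 70 + i, "group": "B"} for i in range(20)]
    + [{"id": 90 + i, "group": "C"} for i in range(10)]
)

sample = stratified_sample(records, "group", fraction=0.2)
counts = dict(Counter(rec["group"] for rec in sample))
print(counts)
```

Sampling within strata guarantees that every group appears in the validation cohort in a known proportion, which simple random sampling cannot promise for small groups.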

Technological Innovations Supporting External Validity

Emerging technologies are creating new opportunities to enhance the external validity of biomarker research:

Artificial Intelligence and Machine Learning: AI-driven algorithms can process complex datasets from diverse populations to identify robust biomarker signatures that maintain performance across subgroups [5]. These technologies can also help identify potential sources of heterogeneity in biomarker performance.

Multi-Omics Integration: Combining data from genomics, proteomics, metabolomics, and transcriptomics enables the development of comprehensive biomarker signatures that may be more robust across diverse populations [6] [5]. This systems biology approach captures the complexity of diseases more completely.

Liquid Biopsy Technologies: These minimally invasive approaches facilitate repeated sampling and real-time monitoring of biomarker dynamics across diverse populations [5]. Their non-invasive nature also reduces barriers to participation in validation studies.

Single-Cell Analysis Technologies: By examining individual cells within tissues, researchers can uncover insights into heterogeneity that might affect biomarker performance across different population subgroups [5].

Table 3: Essential Research Reagent Solutions for External Validation Studies

| Resource Category | Specific Tools/Platforms | Function in External Validation | Key Considerations |
|---|---|---|---|
| Biobanking Platforms | Decipher GRID [4], UK Biobank | Provide diverse sample collections for validation across populations | Sample quality, demographic diversity, clinical annotation |
| Analytical Technologies | Next-generation sequencing, Mass spectrometry, Liquid biopsy platforms [5] | Enable reproducible biomarker measurement across sites | Standardization, sensitivity, specificity, reproducibility |
| Computational Tools | AI/ML algorithms [7], Multi-omics integration platforms [6] | Identify robust biomarker signatures and assess generalizability | Algorithm transparency, validation across datasets |
| Statistical Methods | Random sampling, Stratified sampling, Bayesian methods | Ensure representative sampling and appropriate generalization | Sampling frame, stratification variables, prior distributions |
| Validation Frameworks | PROBAST, TRIPOD, STARD | Provide structured approaches to assess risk of bias and generalizability | Domain-specific considerations, reporting completeness |

External validity represents a critical bridge between biomarker discovery and clinical implementation. While internal validation and cross-validation provide essential foundational evidence, they are insufficient on their own to ensure that biomarkers will perform reliably across diverse real-world populations and settings. The comparative data presented in this guide demonstrate that biomarkers can achieve strong external validity when validated in large, diverse populations using rigorous methodologies.

Future directions in biomarker validation will likely involve greater incorporation of real-world evidence, more intentional diversity in validation cohorts, and innovative use of artificial intelligence to identify robust biomarker signatures that maintain performance across population subgroups. By prioritizing external validity throughout the biomarker development pipeline, researchers and drug development professionals can accelerate the translation of promising biomarkers into clinically useful tools that improve patient outcomes across diverse populations.

In the realm of precision oncology, the accurate identification and validation of biomarkers are fundamental to tailoring therapeutic strategies. A critical conceptual and practical distinction lies between prognostic and predictive biomarkers. Understanding this difference is essential for robust clinical trial design, appropriate patient management, and the advancement of personalized medicine. A prognostic biomarker provides information about the patient's likely disease course, such as the risk of recurrence or overall survival, independent of a specific treatment. In contrast, a predictive biomarker identifies patients who are more or less likely to benefit from a particular therapy [8] [9] [10].

This distinction is not merely academic; it directly impacts clinical decision-making. The same biomarker can, in some cases, serve both roles, but its validation and application differ significantly. For instance, the rat sarcoma-2 virus (RAS) gene status in metastatic colorectal cancer (mCRC) is a well-established negative predictive biomarker for response to anti-epidermal growth factor receptor (EGFR) therapies like cetuximab and panitumumab. Simultaneously, RAS mutations also carry a prognostic value, being associated with a generally poorer overall survival compared to patients with wild-type RAS tumors [9]. This guide will objectively compare these biomarker types, focusing on their validation in diverse populations, supported by experimental data and analytical methodologies.

Comparative Analysis: Characteristics and Clinical Impact

The following table summarizes the core characteristics, clinical implications, and examples of prognostic and predictive biomarkers.

Table 1: Comparative Analysis of Prognostic and Predictive Biomarkers

| Feature | Prognostic Biomarker | Predictive Biomarker |
|---|---|---|
| Core Definition | Informs about the natural history of the disease or likely outcome regardless of therapy [8] [9]. | Predicts the efficacy or lack of efficacy of a specific therapeutic intervention [8] [9]. |
| Clinical Question | What is the patient's likely disease outcome? | Will this specific treatment work for this patient? |
| Impact on Treatment | Indirect. Informs about disease aggressiveness and the need for/type of therapy (e.g., watchful waiting vs. intensive treatment) [10]. | Direct. Determines whether a specific drug should be used or avoided [9]. |
| Study Design for Validation | Analyzed within a single treatment arm or in patients receiving standard care. Correlates biomarker status with outcome. | Requires a randomized controlled trial. A statistical interaction between the biomarker and treatment effect must be tested [10]. |
| Example in Colorectal Cancer | KRAS mutation is associated with poorer overall survival, irrespective of the chemotherapy regimen used (e.g., FOLFOX vs. FOLFIRI) [9]. | RAS mutations predict a lack of response to anti-EGFR monoclonal antibodies (cetuximab, panitumumab) [9]. |
| Example in Brain Tumors | IDH1/2 mutations in gliomas are associated with a more favorable prognosis [11]. | BRAF p.V600E mutation in pediatric low-grade gliomas predicts response to BRAF inhibitors (dabrafenib, vemurafenib) [11]. |
| Statistical Model Representation | Main effect of the biomarker on the clinical endpoint (e.g., Overall Survival). | Interaction effect between the biomarker status and the treatment assignment on the clinical endpoint [10]. |
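The difference between a main effect and an interaction effect in the table above can be made concrete with a small numeric sketch. It uses the four cell means of a two-arm trial split by biomarker status. The function name `effects` and the outcome values are hypothetical, and the sketch reports point estimates only; a formal validation would fit a regression with a biomarker-by-treatment term and test that term.

```python
from statistics import mean


def effects(outcomes):
    """Separate prognostic and predictive effects from 2x2 cell means.

    `outcomes` maps (biomarker_positive, treated) -> list of outcome values.
    """
    m = {cell: mean(values) for cell, values in outcomes.items()}
    # Prognostic (main) effect: biomarker difference within the control arm.
    prognostic = m[(1, 0)] - m[(0, 0)]
    # Treatment effect within each biomarker stratum.
    effect_negative = m[(0, 1)] - m[(0, 0)]
    effect_positive = m[(1, 1)] - m[(1, 0)]
    # Predictive (interaction) effect: how much the treatment effect differs by biomarker.
    interaction = effect_positive - effect_negative
    return prognostic, effect_negative, effect_positive, interaction


# Hypothetical survival times in months for each (biomarker, treatment) cell.
outcomes = {
    (0, 0): [10, 12, 14],  # biomarker-negative, control
    (0, 1): [11, 13, 15],  # biomarker-negative, treated
    (1, 0): [7, 8, 9],     # biomarker-positive, control
    (1, 1): [13, 14, 15],  # biomarker-positive, treated
}

prognostic, effect_negative, effect_positive, interaction = effects(outcomes)
print(prognostic, effect_negative, effect_positive, interaction)
```

In this toy data the biomarker is both prognostic (worse outcome without treatment) and predictive (larger treatment benefit when positive), which is the dual role described for RAS earlier in the text.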

The visual below illustrates the distinct ways these biomarkers influence patient survival outcomes in a randomized clinical trial setting.

Methodologies for Biomarker Discovery and Validation

Statistical and Computational Frameworks

Validating biomarkers, particularly in high-dimensional genomic data, presents significant challenges due to correlation between biomarkers and the need to model both prognostic and predictive effects. The PPLasso (Prognostic Predictive Lasso) method is a novel computational approach designed to address this. It integrates both effects into a single statistical model based on an ANCOVA-type framework and is particularly adept at handling correlated biomarkers, a common issue in genomic data [10].

The core statistical model for PPLasso can be represented as a regression problem where the continuous response (e.g., tumor size) is modeled by the biomarker measurements from two treatment groups. The model simultaneously estimates:

- Prognostic effects (β): The main effect of a biomarker on the outcome.

- Predictive effects (γ): The interaction effect between the biomarker and the treatment, indicating a predictive value [10].

PPLasso employs a penalized regression approach (Lasso) that performs variable selection and coefficient estimation simultaneously, effectively identifying the most relevant prognostic and predictive biomarkers from a large pool of candidates. Performance evaluations show that PPLasso outperforms traditional Lasso and other extensions in accurately identifying both types of biomarkers in various scenarios [10].
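One way to write down the kind of model described above is a linear model with a treatment indicator and biomarker-by-treatment interactions. This is a generic ANCOVA-style sketch and not necessarily the exact parameterization used in [10]; the treatment indicator $t_i$, the intercept $\alpha$, the treatment effect $\tau$, and the penalty weights $\lambda_1, \lambda_2$ are assumptions introduced here for illustration, while $\beta$ and $\gamma$ follow the notation in the text.

$$
y_i = \alpha + \tau\, t_i + \sum_{k=1}^{p} \beta_k x_{ik} + t_i \sum_{k=1}^{p} \gamma_k x_{ik} + \varepsilon_i, \qquad t_i \in \{0, 1\}
$$

$$
(\hat{\alpha}, \hat{\tau}, \hat{\beta}, \hat{\gamma}) = \arg\min_{\alpha, \tau, \beta, \gamma} \; \sum_{i=1}^{n} \Big( y_i - \alpha - \tau\, t_i - \sum_{k=1}^{p} \beta_k x_{ik} - t_i \sum_{k=1}^{p} \gamma_k x_{ik} \Big)^2 + \lambda_1 \lVert \beta \rVert_1 + \lambda_2 \lVert \gamma \rVert_1
$$

Under this form, a biomarker $k$ with $\beta_k \neq 0$ and $\gamma_k = 0$ is purely prognostic, while $\gamma_k \neq 0$ marks a predictive effect. The $\ell_1$ penalties shrink many coefficients to exactly zero, which is how selection and estimation happen in a single step.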

Artificial Intelligence and Machine Learning Approaches

Artificial intelligence (AI) models are increasingly demonstrating high efficacy in identifying and predicting biomarker status from complex data, offering a non-invasive alternative to conventional assays. A recent systematic review and meta-analysis focusing on lung cancer found that AI models, particularly deep learning and machine learning algorithms, achieved a pooled sensitivity of 0.77 and a pooled specificity of 0.79 for predicting the status of key biomarkers like EGFR, PD-L1, and ALK [12].

These models leverage diverse data sources, including gene expression profiles, imaging features (radiomics), and clinical variables, to generate predictions. Where internal and external validation was performed, it supported the generalizability of these AI-driven predictions across heterogeneous patient cohorts [12]. Such validation remains the exception, however, and its scarcity is a major barrier to clinical adoption: a scoping review of AI models in lung cancer pathology found that only about 10% of developed models undergo external validation using independent datasets, a step that is crucial for assessing real-world performance [13].
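To make the role of external validation concrete, the sketch below computes sensitivity and specificity from confusion-matrix counts for an internal test set and for an independent external cohort. The function name `sensitivity_specificity` and all counts are hypothetical and are not taken from [12] or [13]; pooled estimates in a meta-analysis are usually obtained with random-effects models rather than this per-cohort arithmetic.

```python
def sensitivity_specificity(tp, fn, tn, fp):
    """Return (sensitivity, specificity) from confusion-matrix counts."""
    sensitivity = tp / (tp + fn)  # share of biomarker-positive patients detected
    specificity = tn / (tn + fp)  # share of biomarker-negative patients correctly ruled out
    return sensitivity, specificity


# Hypothetical counts for the same model on two datasets.
internal = sensitivity_specificity(tp=80, fn=20, tn=90, fp=10)
external = sensitivity_specificity(tp=70, fn=30, tn=75, fp=25)

sensitivity_drop = internal[0] - external[0]
specificity_drop = internal[1] - external[1]

print(f"internal: sensitivity={internal[0]:.2f}, specificity={internal[1]:.2f}")
print(f"external: sensitivity={external[0]:.2f}, specificity={external[1]:.2f}")
print(f"drop: sensitivity={sensitivity_drop:.2f}, specificity={specificity_drop:.2f}")
```

A gap of this kind between internal and external figures is exactly what goes unmeasured when a model is never tested on an independent dataset.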

Clinical Application and Validation Data

The table below summarizes validation data and clinical implications for key biomarkers across different cancer types, highlighting their prognostic and predictive roles.

Table 2: Clinical Validation and Performance of Key Biomarkers in Oncology

| Cancer Type | Biomarker | Role | Clinical/Therapeutic Implication | Validation Data / Performance |
|---|---|---|---|---|
| Colorectal Cancer (CRC) | RAS (KRAS/NRAS) | Predictive (Negative) [9] | Predicts lack of response to anti-EGFR therapy (cetuximab, panitumumab). | In KRAS wild-type mCRC, cetuximab improved OS (9.5 vs. 4.8 mos; HR 0.55) and PFS (3.7 vs. 1.9 mos; HR 0.40). No benefit in mutant KRAS [9]. |
| Non-Small Cell Lung Cancer (NSCLC) | PD-L1, EGFR, ALK | Predictive [12] | Guides use of immunotherapy and targeted therapies. | AI models for predicting status showed pooled sensitivity 0.77 (95% CI: 0.72–0.82) and specificity 0.79 (95% CI: 0.78–0.84) [12]. |
| Pediatric Low-Grade Glioma | BRAF p.V600E | Predictive [11] | Predicts response to BRAF inhibitors (dabrafenib, vemurafenib). | Alteration found in 20-25% of pLGG. BRAF/MEK inhibitors have received regulatory approval for this biomarker-defined population [11]. |
| Various Cancers | NTRK fusions | Predictive [11] | Tumor-agnostic biomarker for TRK inhibitors (larotrectinib, entrectinib). | In NTRK-fusion CNS tumors, pediatric patients showed a marked survival benefit (median OS 185.5 mos) vs. adults (24.8 mos) [11]. |
| Glioma | IDH1/2 mutation | Prognostic [11] | Associated with a more favorable prognosis. | A defining molecular feature used for diagnosis and risk stratification [11]. |

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table details key reagents and tools essential for conducting biomarker discovery and validation research.

Table 3: Key Research Reagent Solutions for Biomarker Validation

| Reagent / Tool | Function and Application in Biomarker Research |
|---|---|
| PPLasso R/Python Package [10] | A software tool implementing the PPLasso algorithm for the simultaneous selection of prognostic and predictive biomarkers from high-dimensional genomic data (e.g., transcriptomic, proteomic). |
| Digital Whole Slide Images (WSIs) [13] | Digitized histopathology slides used as the primary input data for developing and validating AI-based diagnostic and biomarker prediction models. |
| CIViCmine Database [14] | A text-mining database that annotates the biomarker properties (prognostic, predictive, diagnostic) of thousands of proteins, used for training and validating computational models like MarkerPredict. |
| BEAMing Technology [9] | A highly sensitive digital polymerase chain reaction (PCR)-based technique used for non-invasive analysis of biomarker status (e.g., RAS) from circulating tumor DNA (ctDNA) in liquid biopsies. |
| Human Cancer Signaling Network (CSN) [14] | A curated network of cancer signaling pathways used in systems biology approaches (e.g., MarkerPredict) to explore network properties of proteins for predictive biomarker discovery. |
| IUPred & AlphaFold [14] | Computational tools used to predict Intrinsically Disordered Proteins (IDPs), which have been shown to be enriched in network motifs and are likely candidates for cancer biomarkers. |

Analytical Workflow for Biomarker Identification

The process of identifying and validating prognostic and predictive biomarkers from a randomized clinical trial involves a structured analytical workflow, as illustrated below.

The critical distinction between prognostic and predictive biomarkers is the cornerstone of their valid clinical application. While prognostic markers help stratify patients based on their inherent disease risk, predictive markers are indispensable for matching patients with effective therapies, thereby realizing the promise of precision oncology. Robust validation, through advanced statistical methods like PPLasso and rigorous external validation of AI models, is paramount. As biomarker research evolves, integrating multi-omics data and leveraging sophisticated computational tools will be key to discovering novel biomarkers and ensuring they perform reliably across diverse patient populations, ultimately improving therapeutic outcomes and optimizing healthcare resources.

The translation of clinical trial results into effective real-world patient care is a fundamental challenge in medical research. Generalizability, or external validity, refers to the extent to which the results of a study can be reliably applied to broader patient populations and routine clinical practice settings beyond the controlled conditions of the trial. A significant generalizability gap exists when therapies demonstrating efficacy in randomized controlled trials (RCTs) fail to deliver comparable benefits in diverse patient populations, potentially compromising treatment decisions and patient outcomes.

This gap is particularly pronounced in oncology, where real-world survival associated with anti-cancer therapies is often significantly lower than that reported in RCTs, with some studies showing a median reduction of six months in median overall survival [15]. For novel agents such as checkpoint inhibitors, observational studies suggest that real-world patients experience both decreased overall survival and reduced survival benefits relative to standard of care [15]. This discrepancy represents a critical problem for researchers, clinicians, and drug development professionals who rely on trial evidence to make informed decisions about treatment strategies and resource allocation.

The emergence of sophisticated biomarker technologies and analytical frameworks offers promising pathways to bridge this gap. This guide examines the factors contributing to limited generalizability, compares emerging methodologies to enhance external validity, and provides actionable experimental protocols for evaluating and improving the applicability of clinical research across diverse populations.

Factors Limiting Trial Generalizability

Restrictive Eligibility Criteria and Selection Bias

Randomized controlled trials typically employ strict eligibility criteria that create homogeneous study populations poorly representative of actual clinical practice. Restrictive enrollment criteria systematically exclude patients with comorbidities, older age, compromised performance status, or concomitant medications—characteristics common in real-world settings [15]. Approximately one in five real-world oncology patients are ineligible for a phase 3 trial based on standard eligibility requirements [15].

This selection process introduces prognostic risk bias, as physicians often selectively recruit patients with better prognoses irrespective of formal eligibility criteria. Research reveals that real-world patients have more heterogeneous prognoses than RCT participants, with preferential recruitment based on factors such as race or socioeconomic status—both linked to prognosis—further contributing to this issue [15].

Methodological Limitations in Externally Controlled Trials

Externally controlled trials (ECTs), which compare a treatment group to patients external to the study, are increasingly used when RCTs are unfeasible, particularly for rare diseases or targeted therapies. However, a 2025 cross-sectional analysis of 180 ECTs published between 2010 and 2023 revealed critical methodological shortcomings that limit their reliability [16]:

| Methodological Practice | ECTs Reporting the Practice | Implication When Absent |
|---|---|---|
| Provided rationale for using external controls | 35.6% | Lack of transparency in design rationale |
| Prespecified use of external controls in protocol | 16.1% | Potential for post-hoc manipulation |
| Conducted feasibility assessments | 7.8% | Uncertain adequacy of data sources |
| Used statistical methods to adjust for covariates | 33.3% | Unaddressed confounding bias |
| Performed sensitivity analyses for primary outcomes | 17.8% | Limited assessment of result robustness |
| Used quantitative bias analyses | 1.1% | Almost no evaluation of systematic error |

Without randomization, ECTs are vulnerable to confounding, selection bias, and survivor-lead-time bias, risking study validity and potentially leading to incorrect clinical decisions [16]. The almost complete absence of quantitative bias analyses in current ECT practices further limits confidence in their results [16].
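As an illustration of what a quantitative bias analysis can look like, the sketch below computes an E-value: the minimum strength of association, on the risk-ratio scale, that an unmeasured confounder would need with both treatment and outcome to fully explain away an observed effect. The E-value is used here as one well-known example and is an assumption of this sketch; the source does not say which bias analyses the reviewed ECTs used. The function name `e_value` and the observed risk ratio are hypothetical.

```python
import math


def e_value(risk_ratio):
    """E-value for an observed risk ratio.

    Protective estimates (risk ratio below 1) are inverted first so that the
    formula RR + sqrt(RR * (RR - 1)) is always applied to a value of at least 1.
    """
    if risk_ratio <= 0:
        raise ValueError("risk ratio must be positive")
    rr = risk_ratio if risk_ratio >= 1 else 1.0 / risk_ratio
    return rr + math.sqrt(rr * (rr - 1.0))


# Hypothetical observed effect from an externally controlled comparison.
observed_rr = 2.0
e = e_value(observed_rr)
print(f"E-value for RR={observed_rr}: {e:.2f}")
```

A small E-value signals that even modest unmeasured confounding could account for the result, which is the kind of systematic error that the table above suggests is almost never evaluated.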

Emerging Solutions for Enhancing Generalizability

Machine Learning Frameworks for Generalizability Assessment

Novel computational approaches are addressing generalizability challenges by systematically evaluating how trial results translate across diverse patient subgroups. The TrialTranslator framework uses machine learning to risk-stratify real-world oncology patients and emulate phase 3 trials across these prognostic phenotypes [15].

This approach involves a two-step process:

Step I - Prognostic Model Development: Cancer-specific prognostic models predict patient mortality risk from the time of metastatic diagnosis. A gradient boosting survival model has demonstrated superior discriminatory performance across multiple cancer types, with a 1-year survival AUC of 0.783 for advanced non-small cell lung cancer (aNSCLC) compared to 0.689 for traditional Cox models [15].

Step II - Trial Emulation: Real-world patients meeting key eligibility criteria are stratified into low-risk, medium-risk, and high-risk phenotypes using mortality risk scores. Survival analysis then assesses treatment effects within each phenotype [15].

Application across 11 landmark oncology trials revealed that patients in low-risk and medium-risk phenotypes exhibit survival times and treatment-associated benefits similar to those observed in RCTs. In contrast, high-risk phenotypes show significantly lower survival times and diminished treatment benefits compared to RCT findings [15]. This demonstrates how prognostic heterogeneity substantially contributes to the generalizability gap.
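The stratification in Step II can be sketched as a tertile split of model-derived risk scores. This is a minimal illustration, not the TrialTranslator implementation: the function name and the scores are hypothetical, and the cut points come from Python's `statistics.quantiles`.

```python
import statistics

def assign_phenotypes(risk_scores):
    """Label each mortality risk score as low, medium, or high by tertile."""
    lower_cut, upper_cut = statistics.quantiles(risk_scores, n=3)
    labels = []
    for score in risk_scores:
        if score <= lower_cut:
            labels.append("low-risk")
        elif score <= upper_cut:
            labels.append("medium-risk")
        else:
            labels.append("high-risk")
    return labels

# Hypothetical risk scores from a prognostic model (not real patient data)
scores = [0.5, 0.1, 0.9, 0.3, 0.7, 0.2, 0.8, 0.4, 0.6]
for score, label in zip(scores, assign_phenotypes(scores)):
    print(f"{score:.1f} -> {label}")
```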

Figure 1. Machine learning workflow for trial generalizability assessment. This framework uses real-world electronic health record (EHR) data to develop prognostic models, stratify patients by risk, and emulate trials across phenotypes to evaluate external validity [15].

Advanced Biomarker Technologies and Multi-Omics Approaches

Biomarker innovation is critical for improving patient stratification and understanding treatment effects across diverse populations. Multi-omics approaches that integrate genomics, proteomics, metabolomics, and transcriptomics are reshaping biomarker development, enabling a more comprehensive view of disease complexity beyond single endpoints [17].

| Technology | Application | Impact on Generalizability |
|---|---|---|
| Liquid Biopsies | Non-invasive circulating tumor DNA (ctDNA) analysis | Enables real-time monitoring across diverse populations; expanding beyond oncology to infectious and autoimmune diseases [5] |
| Multi-Omics Platforms | Simultaneous analysis of DNA, RNA, proteins, metabolites | Reveals clinically actionable subgroups traditional assays miss; identifies dynamic biomarkers across populations [17] |
| Single-Cell Analysis | Examination of individual cells within tumor microenvironments | Identifies rare cell populations driving disease progression; reveals heterogeneity across patients [5] |
| AI-Powered Biomarkers | Digital histopathology feature detection | Uncovers prognostic signals in standard histology slides; outperforms established molecular markers [18] |

These technologies help address biological heterogeneity across populations, a key factor limiting generalizability. For example, protein profiling through multi-omics approaches has revealed tumor regions expressing poor-prognosis biomarkers that standard RNA analysis missed, demonstrating how multidimensional perspectives yield biomarkers more translatable across diverse patient groups [17].

Statistical Methods for Enhancing External Validity

Appropriate statistical methodologies are essential for addressing confounding and selection bias in non-randomized comparisons. The propensity score method is the most common approach (used in 58.3% of ECTs that employ statistical adjustment), helping balance baseline characteristics between treatment and external control arms [16].

More advanced techniques include:

Inverse Probability of Treatment Weighting (IPTW): Applied in the TrialTranslator framework to balance demographic information, area-level socioeconomic status, insurance status, cancer characteristics, and clinical features between treatment and control arms within risk phenotypes [15].

Quantitative Bias Analysis: Systematically evaluates how potential systematic errors might affect study results, though currently used in only 1.1% of ECTs [16].

Sensitivity Analyses: Assess the robustness of results to different assumptions or methodological choices, performed in only 17.8% of ECTs for primary outcomes [16].
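The weighting idea behind IPTW can be illustrated with a deliberately small example. The sketch assumes a single categorical covariate (a hypothetical performance-status stratum) and estimates the propensity score as the treated fraction within each stratum; real analyses such as the one described above fit a model over many covariates, so treat the names and data here as placeholders.

```python
from collections import defaultdict

def iptw_weights(rows):
    """rows: list of (treated, stratum) pairs; returns one weight per row."""
    counts = defaultdict(lambda: [0, 0])  # stratum -> [n_control, n_treated]
    for treated, stratum in rows:
        counts[stratum][treated] += 1
    weights = []
    for treated, stratum in rows:
        n_control, n_treated = counts[stratum]
        if n_control == 0 or n_treated == 0:
            raise ValueError(f"positivity violated in stratum {stratum!r}")
        propensity = n_treated / (n_control + n_treated)
        # Treated patients get 1/ps, controls get 1/(1 - ps).
        weights.append(1 / propensity if treated else 1 / (1 - propensity))
    return weights

def weighted_share(rows, weights, arm, stratum):
    """Weighted proportion of one arm that falls in the given stratum."""
    total = sum(w for (t, _), w in zip(rows, weights) if t == arm)
    inside = sum(w for (t, s), w in zip(rows, weights) if t == arm and s == stratum)
    return inside / total

# Hypothetical cohort: treated patients are over-represented in the fitter stratum
patients = [
    (1, "ecog_0_1"), (1, "ecog_0_1"), (1, "ecog_0_1"), (0, "ecog_0_1"),
    (1, "ecog_2plus"), (0, "ecog_2plus"), (0, "ecog_2plus"), (0, "ecog_2plus"),
]
w = iptw_weights(patients)
for arm in (1, 0):
    print(arm, weighted_share(patients, w, arm, "ecog_2plus"))
```

After weighting, both arms have the same share of patients in each stratum, which is the balance property the method is meant to deliver. The explicit positivity check reflects a practical limit: if a stratum contains only treated or only control patients, no weight can repair the comparison.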

Figure 2. Statistical workflow for enhancing external validity in clinical research. This methodology emphasizes feasibility assessment, transparent covariate selection, appropriate statistical adjustment, and bias analysis to improve the reliability of externally controlled trials [16].

Experimental Protocols for Generalizability Assessment

Machine Learning-Based Trial Emulation Protocol

Objective: To evaluate the generalizability of phase 3 oncology trial results across different prognostic phenotypes in real-world patient populations.

Materials and Methods:

- Data Source: Nationwide EHR-derived database (e.g., Flatiron Health) containing structured and unstructured data from approximately 280 cancer clinics [15].

- Patient Cohort: Patients diagnosed with advanced or metastatic disease (aNSCLC, mBC, mPC, mCRC) between 2011 and 2022 [15].

- Prognostic Model Development:

  - Develop cancer-specific gradient boosting survival models to predict mortality risk from metastatic diagnosis

  - Optimize predictive performance at timepoints aligned with median overall survival (mOS) for each cancer (1 year for aNSCLC, 2 years for others)

  - Validate model discrimination using time-dependent AUC on test sets [15]

- Trial Emulation Procedure:

  - Identify real-world patients receiving treatment or control regimens who meet key eligibility criteria from landmark RCTs

  - Calculate mortality risk scores for each patient using the trained prognostic model

  - Stratify patients into low-risk (bottom tertile), medium-risk (middle tertile), and high-risk (top tertile) phenotypes

  - Apply IPTW to balance features between treatment arms within each phenotype

  - Perform survival analysis to assess treatment effect for each phenotype using restricted mean survival time (RMST) and mOS [15]

Output Analysis: Compare survival times and treatment-associated benefits across phenotypes and against the original RCT results. In the cited application, low-risk and medium-risk phenotypes showed outcomes similar to the RCTs, while high-risk phenotypes showed significantly lower survival times and diminished treatment benefits [15].
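The survival comparison at the end of this protocol rests on the restricted mean survival time, which is the area under the Kaplan-Meier curve up to a chosen horizon. The following sketch computes an unweighted version from scratch; it omits the IPTW weighting used in the protocol, and the follow-up data, function names, and 24-month horizon are hypothetical.

```python
def km_curve(times, events):
    """Kaplan-Meier steps as [(time, survival)] at each distinct event time."""
    data = sorted(zip(times, events))
    n_at_risk = len(data)
    survival = 1.0
    steps = []
    i = 0
    while i < len(data):
        t = data[i][0]
        deaths = removed = 0
        while i < len(data) and data[i][0] == t:
            deaths += data[i][1]
            removed += 1
            i += 1
        if deaths:
            survival *= 1.0 - deaths / n_at_risk
            steps.append((t, survival))
        n_at_risk -= removed  # events and censored patients leave the risk set
    return steps

def rmst(times, events, tau):
    """Area under the Kaplan-Meier curve from 0 to tau."""
    area, prev_t, prev_s = 0.0, 0.0, 1.0
    for t, s in km_curve(times, events):
        if t >= tau:
            break
        area += prev_s * (t - prev_t)
        prev_t, prev_s = t, s
    return area + prev_s * (tau - prev_t)

# Hypothetical follow-up in months for one phenotype (1 = death, 0 = censored)
treated = ([6, 10, 14, 20, 24], [1, 1, 0, 1, 0])
control = ([4, 7, 9, 15, 24], [1, 1, 1, 1, 0])
tau = 24
benefit = rmst(*treated, tau) - rmst(*control, tau)
print(f"RMST difference over {tau} months: {benefit:.1f}")
```

Repeating the calculation within each risk phenotype gives the per-phenotype treatment benefit that is then compared with the trial result.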

Externally Controlled Trial Quality Assessment Protocol

Objective: To evaluate the methodological rigor of ECTs and identify potential threats to generalizability.

Materials and Methods:

- Data Extraction: Systematic assessment of ECT publications using standardized data extraction form [16]

- Quality Domains Evaluated:

  - Rationale and prespecification: Assess whether studies provide justification for using external controls and prespecify this approach in protocols

  - Data source adequacy: Evaluate whether feasibility assessments were conducted to determine if available data sources can adequately serve as external controls

  - Covariate handling: Document procedures for covariate selection and methods to address missing data in external control datasets

  - Statistical adjustment: Record use of propensity score methods, multivariable regression, or other techniques to adjust for confounding

  - Sensitivity analyses: Identify whether studies performed sensitivity analyses for primary outcomes or quantitative bias analyses [16]

Quality Metrics: Calculate percentages of studies addressing each methodological domain. Based on current evidence, benchmark against expected standards: >75% for rationale and prespecification, >50% for feasibility assessment, >80% for covariate selection procedures, >75% for statistical adjustment, and >50% for sensitivity analyses [16].
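As a rough illustration of the benchmarking step, the sketch below compares the prevalence figures reported earlier in this article for the 180 ECTs [16] with the benchmark thresholds stated above. The dictionary keys are shorthand chosen for this example, and covariate selection is left out because no observed prevalence is given for it in this section.

```python
# Observed prevalence (%) among published ECTs, as reported in this article [16]
observed = {
    "rationale": 35.6,
    "prespecification": 16.1,
    "feasibility assessment": 7.8,
    "statistical adjustment": 33.3,
    "sensitivity analyses": 17.8,
}

# Benchmark thresholds (%) from the quality metrics paragraph
benchmark = {
    "rationale": 75,
    "prespecification": 75,
    "feasibility assessment": 50,
    "statistical adjustment": 75,
    "sensitivity analyses": 50,
}

# Shortfall in percentage points for each domain
gaps = {domain: round(benchmark[domain] - observed[domain], 1) for domain in observed}
for domain, gap in sorted(gaps.items(), key=lambda item: item[1], reverse=True):
    status = "below benchmark" if gap > 0 else "meets benchmark"
    print(f"{domain:<24} {observed[domain]:>5.1f}%  {status} (gap {gap} points)")
```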

Essential Research Reagent Solutions

The following reagents and platforms are critical for implementing generalizability assessment protocols:

| Research Solution | Function | Application in Generalizability Research |
|---|---|---|
| Electronic Health Record Databases (Flatiron Health) | Provide real-world patient data for emulation studies | Source for prognostic model development and trial emulation across diverse populations [15] |
| Gradient Boosting Survival Models | Predict patient mortality risk from clinical and biomarker data | Risk stratification of real-world patients into prognostic phenotypes for comparative effectiveness research [15] |
| Liquid Biopsy Platforms | Analyze ctDNA, CTCs, and exosomes from blood samples | Non-invasive biomarker monitoring across diverse patient populations without requirement for tissue biopsies [19] |
| Multi-Omics Integration Systems (Sapient Biosciences, Element Biosciences) | Simultaneously profile thousands of molecules from single samples | Comprehensive biomarker discovery capturing disease complexity across populations [17] |
| Propensity Score Software (R, Python packages) | Statistical adjustment for confounding in non-randomized studies | Balance baseline characteristics between treatment and external control arms in ECTs [16] |

The generalizability of clinical trial results remains a critical challenge with significant implications for drug development and patient care. The high stakes involve not only the substantial financial investments in clinical research but, more importantly, the effective translation of scientific advances into improved outcomes for diverse patient populations.

Evidence suggests that prognostic heterogeneity among real-world patients plays a substantial role in the limited generalizability of RCT results, with high-risk phenotypes deriving significantly less benefit from treatments than reported in trial populations [15]. Addressing this challenge requires methodological rigor in externally controlled trials, including better feasibility assessment, transparent covariate selection, appropriate statistical adjustment, and comprehensive sensitivity analyses [16].

Emerging approaches leveraging machine learning, biomarker innovation, and real-world data offer promising pathways to bridge the generalizability gap. By systematically evaluating treatment effects across diverse prognostic phenotypes and developing more dynamic, predictive biomarkers, researchers can enhance the external validity of clinical evidence and ensure that trial success translates into meaningful patient benefit across the broader population.

The pursuit of reliable predictive models for Acute Respiratory Distress Syndrome (ARDS) mortality represents a crucial frontier in critical care medicine. Despite decades of research, ARDS continues to affect approximately 10.4% of intensive care unit (ICU) admissions with persistently high mortality rates ranging from 35% to 46% [20] [21]. This clinical challenge has spurred the development of numerous prediction models incorporating clinical parameters, biomarkers, and advanced machine learning algorithms. However, the true test of any predictive model lies not in its performance on the data from which it was derived, but in its external validation – its ability to generalize to new, independent patient populations across different healthcare settings and geographic locations.

External validation serves as a critical reality check for predictive models, revealing limitations that internal validation cannot detect. The process tests model performance across different patient demographics, clinical practices, and disease etiologies, providing essential insights into real-world applicability. This case study examines the lessons learned from the external validation of an ARDS mortality prediction model, with particular focus on the challenges of biological heterogeneity, data standardization, and model scalability across diverse clinical environments. Through this analysis, we aim to provide researchers and clinicians with evidence-based guidance for developing more robust, generalizable prediction tools that can ultimately improve patient outcomes through early risk stratification and personalized intervention.

Experimental Protocols and Methodologies

Original Model Development and Validation Framework

The foundational ARDS mortality prediction model under examination was developed using a retrospective cohort of 110 COVID ARDS (C-ARDS) patients [22]. The model employed a binary logistic regression framework incorporating four key predictor variables: PaO₂/FiO₂ ratio (P/F) at day one and day three of invasive mechanical ventilation, chest x-ray features extracted using convolutional neural networks (CNN), and patient age. The initial model demonstrated promising performance during internal testing on a holdout set of 23 patients from the original cohort, achieving an area under the receiver operating characteristic curve (AUC) of 0.862 (95% CI: 0.654-0.969) [22].

The experimental protocol for model development followed a structured approach. Chest radiographs were processed using a deep neural network to extract quantitative imaging features that complemented traditional clinical parameters. The combination of physiological measurements (P/F ratio), demographic data (age), and computationally-derived imaging biomarkers created a multimodal predictive framework. Internal validation employed standard machine learning practices with data partitioning to avoid overfitting, while performance metrics focused on discrimination capability as measured by the AUC.

External Validation Design and Patient Cohorts

The external validation protocol was designed to rigorously test model generalizability across distinct patient populations [22]. Two independent validation cohorts were assembled from a separate healthcare system:

- COVID ARDS Cohort: 66 patients with ARDS secondary to COVID-19 infection

- Non-COVID ARDS Cohort: 76 patients with primary ARDS from other etiologies

This deliberate separation of ARDS subtypes enabled researchers to test the critical hypothesis regarding whether COVID-ARDS represents a distinct pathological entity from other forms of primary ARDS. The validation methodology maintained consistency in predictor variable measurement across sites, with particular attention to standardized calculation of P/F ratios and chest imaging protocols. Model performance was assessed using the same discrimination metrics (AUC with 95% confidence intervals) employed during initial development, allowing direct comparison of validation results with original performance benchmarks.

Comparative Performance Analysis

Quantitative Performance Across Validation Cohorts

The external validation process revealed substantial variation in model performance across different patient populations, highlighting the critical importance of population-specific validation. The table below summarizes the key performance metrics observed during internal and external validation:

Table 1: Performance Comparison of ARDS Mortality Prediction Model Across Different Validation Cohorts

| Validation Cohort | Patient Population | Sample Size | AUC (95% CI) | Performance Interpretation |
|---|---|---|---|---|
| Internal Validation | COVID ARDS (holdout) | 23 | 0.862 (0.654-0.969) | Excellent discrimination |
| External Validation 1 | COVID ARDS | 66 | 0.741 (0.619-0.841) | Acceptable discrimination |
| External Validation 2 | Non-COVID ARDS | 76 | 0.611 (0.493-0.721) | Poor discrimination |
| Retrained Model | Combined training + COVID ARDS test | 176 | 0.855 (0.747-0.930) | Excellent discrimination |

The performance degradation from internal to external validation illustrates the "volatility" of predictive models when applied to new populations [22]. Most notably, the dramatic performance drop when applying the COVID-ARDS-derived model to non-COVID ARDS patients (AUC 0.611) suggests fundamental differences in how clinical and radiologic predictors relate to mortality across these populations. This finding has profound implications for the development of generalized ARDS prediction tools.
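The wide confidence intervals in Table 1 follow directly from the small cohort sizes. The sketch below shows one common way to obtain an AUC and a percentile bootstrap interval using only the standard library. It is not the method used in the cited study [22], whose interval procedure is not described here, and the labels and scores are synthetic.

```python
import random

def auc(labels, scores):
    """Probability that a random positive case outscores a random negative case."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative case")
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

def bootstrap_ci(labels, scores, n_boot=2000, alpha=0.05, seed=0):
    """Percentile bootstrap confidence interval for the AUC."""
    rng = random.Random(seed)
    indices = range(len(labels))
    stats = []
    while len(stats) < n_boot:
        sample = [rng.choice(indices) for _ in indices]
        ys = [labels[i] for i in sample]
        if len(set(ys)) < 2:  # resample if only one class was drawn
            continue
        stats.append(auc(ys, [scores[i] for i in sample]))
    stats.sort()
    lower = stats[int(alpha / 2 * n_boot)]
    upper = stats[max(int((1 - alpha / 2) * n_boot) - 1, 0)]
    return lower, upper

# Synthetic cohort of 66 patients (sized like External Validation 1, not its data)
rng = random.Random(42)
labels = [1 if rng.random() < 0.4 else 0 for _ in range(66)]
scores = [rng.gauss(0.6 if y else 0.4, 0.2) for y in labels]
low, high = bootstrap_ci(labels, scores)
print(f"AUC {auc(labels, scores):.3f} (95% CI {low:.3f}-{high:.3f})")
```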

Benchmarking Against Traditional Scoring Systems

To contextualize these results, it is valuable to compare the model's performance against established ICU scoring systems. Recent systematic reviews and meta-analyses provide benchmark data for conventional approaches:

Table 2: Performance Comparison with Conventional ICU Scoring Systems for ARDS Mortality Prediction

| Prediction Model | Pooled AUC (95% CI) | Clinical Utility Assessment |
|---|---|---|
| SOFA Score | 0.802 (0.719-0.885) | Moderate discrimination [23] |
| APACHE II | 0.667 (0.613-0.721) | Limited discrimination [23] |
| SAPS-II | 0.70 (0.66-0.74) | Limited discrimination [21] |
| Machine Learning Models (Pooled) | 0.81 (0.78-0.84) | Good discrimination [21] |
| Novel ML Model (XGBoost) | 0.887 (0.863-0.909) | Excellent discrimination [24] |

The superior performance of machine learning approaches, particularly the novel XGBoost model achieving an AUC of 0.887, demonstrates the potential advantage of sophisticated computational methods [24]. However, these models still face the same external validation challenges observed in the case study model, with performance often decreasing when applied to independent datasets.

Key Findings and Implications for Biomarker Research

The Fundamental Limitation: ARDS Heterogeneity

The most significant finding from this external validation study was the dramatic performance difference between COVID-19 and non-COVID ARDS populations [22]. The model maintained reasonable discrimination when validated on COVID ARDS patients (AUC 0.741) but performed only marginally better than chance when applied to non-COVID ARDS patients (AUC 0.611, with a 95% CI of 0.493-0.721 that includes the chance value of 0.5). This divergence strongly suggests that the biological mechanisms, clinical progression, and imaging manifestations of COVID-19 ARDS differ substantially from other forms of primary ARDS.

This finding aligns with emerging understanding of ARDS as a heterogeneous syndrome comprising multiple distinct endotypes and molecular phenotypes rather than a single uniform disease entity [25]. Omics technologies have revealed distinct biomarker profiles associated with ARDS pathogenesis, including dysregulated inflammatory signaling, epithelial and endothelial barrier dysfunction, and compromised immune responses [25]. Genetic studies have further identified polymorphisms in genes encoding angiotensin-converting enzyme, surfactant proteins, toll-like receptor 4, and interleukin-6 that influence ARDS susceptibility and progression [25].

Diagram 1: ARDS Heterogeneity Impact on Model Validation

The Scalability Paradox: Model Adaptation Potential

Despite the population-specific limitations, the case study revealed an important positive finding: the underlying model architecture demonstrated scalability when retrained on expanded datasets [22]. Researchers developed a new model using the complete set of 110 patients from the original cohort and validated it on the external COVID-ARDS cohort, achieving an AUC of 0.855 (95% CI: 0.747-0.930) – effectively matching the original internal validation performance.

This scalability demonstrates that while predictor variables may have population-specific relationships with outcomes, the fundamental model structure can remain valid across settings with appropriate retraining. This finding supports a "framework-based" approach to predictive model development, where the core analytical architecture is designed for adaptation rather than assuming universal predictor-outcome relationships.

Advanced Modeling Techniques in ARDS Prediction

Machine Learning and Feature Selection Methodologies

Recent advances in ARDS prediction have increasingly leveraged machine learning approaches with sophisticated feature selection techniques. The optimal performance of random forest models for predicting ARDS after liver transplantation (AUROC 0.766-0.844) demonstrates the value of ensemble methods that can capture complex nonlinear relationships [26]. These models employed recursive feature elimination (RFE) with cross-validation to identify the most predictive variables from initially 72 potential predictors, ultimately selecting nine key features including recipient age, BMI, MELD score, total bilirubin, prothrombin time, operation time, standard urine volume, total intake volume, and red blood cell infusion volume [26].

Similar feature selection methodologies were applied in developing the interpretable machine learning model for ARDS mortality risk, which used recursive feature elimination with cross-validation to screen features and Bayesian optimization for hyperparameter tuning [24]. The resulting XGBoost model achieved outstanding performance (AUC 0.887) by effectively identifying the most prognostically significant variables from numerous candidate predictors.

Diagram 2: Advanced Model Development Workflow

Interpretable AI and Model Explainability

The development of interpretable machine learning models represents a significant advancement in bridging the gap between predictive accuracy and clinical utility [24]. The application of SHapley Additive exPlanations (SHAP) methodology allows researchers and clinicians to understand which variables most strongly influence individual predictions, addressing the "black box" concern that often limits clinical adoption of complex machine learning models.

This explainable AI approach not only builds trust in predictive models but also provides valuable pathophysiological insights by identifying the relative importance of clinical and laboratory variables in mortality risk stratification. The interpretable model developed by Li et al. demonstrated that traditional severity scores combined with specific laboratory values and clinical parameters offered the most accurate prognostic assessment [24].
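The attribution idea behind SHAP can be shown with exact Shapley values on a toy model. In the sketch below, the risk score, its coefficients, the patient, and the reference values are all hypothetical, and "absent" features are simply set to a reference patient's values, which is a simplification of how SHAP libraries handle missing features.

```python
from itertools import permutations

def toy_risk_score(f):
    """Hypothetical risk score with one interaction term (not a published model)."""
    return (0.03 * f["age"] + 0.2 * f["sofa"] - 0.005 * f["pf_ratio"]
            + 0.001 * f["age"] * f["sofa"])

def shapley_values(model, patient, reference):
    """Average marginal contribution of each feature over all feature orderings."""
    names = list(patient)
    totals = dict.fromkeys(names, 0.0)
    orderings = list(permutations(names))
    for order in orderings:
        current = dict(reference)
        previous = model(current)
        for name in order:
            current[name] = patient[name]  # switch this feature to the patient's value
            updated = model(current)
            totals[name] += updated - previous
            previous = updated
    return {name: total / len(orderings) for name, total in totals.items()}

patient = {"age": 70, "sofa": 10, "pf_ratio": 120}
reference = {"age": 60, "sofa": 6, "pf_ratio": 200}
contributions = shapley_values(toy_risk_score, patient, reference)
for name, value in contributions.items():
    print(f"{name:<9} {value:+.3f}")
```

The contributions add up to the difference between the patient's score and the reference score, which is what lets a clinician read them as a decomposition of one individual prediction.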

The Scientist's Toolkit: Essential Research Reagents and Solutions

Successful development and validation of ARDS prediction models requires specialized methodological approaches and analytical tools. The table below summarizes key "research reagents" – essential methodologies, data resources, and analytical techniques that form the foundation of robust predictive modeling in ARDS research.

Table 3: Essential Research Reagent Solutions for ARDS Prediction Modeling

| Research Reagent | Category | Function & Application | Exemplary Use Cases |
|---|---|---|---|
| Multimodal Data Integration | Data Framework | Combines clinical, imaging, and omics data for comprehensive modeling | CNN-extracted chest X-ray features with clinical parameters [22] |
| Recursive Feature Elimination (RFE) | Feature Selection | Identifies most predictive variables from high-dimensional data | Selecting 9 key predictors from 72 potential variables [26] |
| SHAP (SHapley Additive exPlanations) | Model Interpretation | Explains individual predictions and overall variable importance | Interpreting XGBoost model predictions for clinical transparency [24] |
| MIMIC-IV & eICU-CRD | Data Resources | Large, publicly available ICU databases for model development & validation | Training and validating mortality models on diverse patient populations [24] |
| Regularized Logistic Regression | Modeling Algorithm | Prevents overfitting while handling correlated predictors | Retrospective ARDS identification from EHR data [27] |
| Bayesian Hyperparameter Optimization | Model Tuning | Efficiently searches optimal model parameters | Optimizing XGBoost parameters for mortality prediction [24] |
| Cross-Validation | Validation Technique | Assesses model performance while mitigating overfitting | 5-fold cross-validation for feature selection [26] |
| Decision Curve Analysis (DCA) | Clinical Utility | Evaluates clinical value of models across decision thresholds | Assessing net benefit of prediction models [20] |

This case study on the external validation of an ARDS mortality prediction model yields several crucial lessons for researchers and clinicians. First, the substantial performance degradation observed when applying a COVID-ARDS-derived model to non-COVID ARDS populations underscores the fundamental biological heterogeneity within the ARDS syndrome. Second, the model architecture regained its discrimination on an external cohort once it was retrained, which points toward adaptable, framework-based approaches rather than a search for universally applicable fixed models.

The implications for drug development and clinical trial design are significant. Predictive models used for patient stratification in clinical trials must be validated on populations representative of the intended study cohort, and researchers should account for potential etiological differences in treatment response. The emerging paradigm favors the development of modular prediction systems that can incorporate population-specific weighting of predictor variables while maintaining consistent analytical frameworks.

Future research should prioritize the development of transfer learning methodologies that allow models to be efficiently adapted to new populations with minimal retraining data. Additionally, increased integration of omics technologies may enable biologically-informed stratification that transcends traditional etiology-based classifications [25]. As ARDS prediction models evolve toward greater accuracy and generalizability, they hold the promise of enabling truly personalized management approaches for this complex and challenging syndrome.

The Context of Use (COU) is a foundational concept in regulatory science and biomarker development, providing a precise framework for how a biomarker should be applied in drug development and regulatory decision-making. According to the FDA's Biomarker Qualification Program, a COU is "a concise description of the biomarker’s specified use in drug development" [28]. It consists of two core components: the BEST (Biomarkers, EndpointS, and other Tools) biomarker category and the biomarker’s intended use in drug development [28]. This precise specification is critical because it determines the level of evidence needed for qualification and ensures that the biomarker is applied appropriately and consistently across development programs [29].

The COU framework enables a common understanding among researchers, pharmaceutical companies, and regulators about the exact circumstances under which a biomarker is considered valid. Once a biomarker is qualified for a specific COU, this information becomes publicly available through FDA guidance, allowing multiple drug developers to utilize the biomarker for that specified purpose without needing to re-establish its validity in each new development program [29]. This standardization accelerates drug development while maintaining scientific rigor and regulatory standards.

The Regulatory Significance of Precisely Defining COU

COU as a Driver of Biomarker Qualification

The Context of Use is not merely a descriptive statement but a critical determinant of the qualification process itself. As Dr. Shashi Amur of FDA's CDER explains, "the context of use drives the level of evidence needed, which in turn drives the qualification process" [29]. The COU statement comprehensively describes the conditions under which the biomarker is qualified, including the identity of the biomarker, what aspect is measured, the species and subject characteristics, the purpose in drug development, and the interpretation and action based on the biomarker results [29].

This precise specification is particularly important because a single biomarker category can support multiple contexts of use. For example, a prognostic biomarker might be used to stratify patients or for enrichment in clinical trials, with each distinct use requiring separate validation and qualification [29]. The FDA encourages a pragmatic approach where biomarkers may initially be qualified for a limited context of use, with the understanding that additional evidence can accumulate over time to support an expanded context of use [29].

COU in the Broader Framework of Measurement Tools

The COU concept extends beyond biomarkers to other clinical measurement tools. For Clinical Outcome Assessments (COAs), the Context of Use is similarly defined as "a statement that fully and clearly describes the way the medical product development tool is to be used and the medical product development-related purpose of the use" [30]. The development process for these tools begins with defining both the Concept of Interest (COI) - what is being measured - and the Context of Use - the specific situation in which the measurement will be applied [30].

Table: Key Components of Context of Use Definition Across Regulatory Frameworks

| Framework | Concept of Interest | Context of Use | Primary Regulatory Purpose |
|---|---|---|---|
| Biomarker Qualification | The biological process or parameter the biomarker measures | How the biomarker will be used in drug development [28] | Qualification for specific drug development applications [29] |
| Clinical Outcome Assessment | Aspect of patient's clinical status or experience being assessed [30] | Situation where the COA will be applied [30] | Ensure appropriate use of patient-reported outcomes in trials |

Defining and Implementing COU for Biomarkers

Structural Framework of a COU Statement

According to FDA guidance, a properly constructed Context of Use consists of two main parts: the Use Statement and the Conditions for Qualified Use [29]. The Use Statement should be concise and include the name and identity of the biomarker along with its purpose in drug development. The Conditions for Qualified Use provides a comprehensive description of the circumstances under which the biomarker can be appropriately applied in the qualified setting [29].

The general structure for a biomarker COU follows this pattern: [BEST biomarker category] to [drug development use] [28]. The drug development use may include descriptive information such as the patient population, disease or disease stage, model system, stage of drug development, and/or mechanism of action of the therapeutic intervention [28].
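The two-part pattern can be captured in a small data structure, which is sometimes convenient when tracking several candidate contexts of use for the same biomarker. The class and field names below are hypothetical conveniences, not part of any FDA schema; the example wording is taken from the FDA example statement cited in this article [28].

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ContextOfUse:
    """Hypothetical container for the two components of a COU statement."""
    best_category: str          # e.g. "Predictive biomarker"
    drug_development_use: str   # population, stage, and purpose, in free text

    def statement(self) -> str:
        # Pattern: [BEST biomarker category] to [drug development use]
        return f"{self.best_category} to {self.drug_development_use}."

cou = ContextOfUse(
    best_category="Predictive biomarker",
    drug_development_use=(
        "enrich for enrollment of a sub group of asthma patients who are more "
        "likely to respond to a novel therapeutic in Phase 2/3 clinical trials"
    ),
)
print(cou.statement())
```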

Table: Examples of Biomarker Context of Use Statements from FDA Guidance

| BEST Category | Intended Drug Development Use | Example COU Statement |
|---|---|---|
| Predictive Biomarker | Defining inclusion/exclusion criteria [28] | "Predictive biomarker to enrich for enrollment of a sub group of asthma patients who are more likely to respond to a novel therapeutic in Phase 2/3 clinical trials." [28] |
| Prognostic Biomarker | Enriching clinical trial for an event or population of interest [28] | "Prognostic biomarker to enrich the likelihood of hospitalizations during the timeframe of a clinical trial in phase 3 asthma clinical trials." [28] |
| Safety Biomarker | Supporting clinical dose selection [28] | "Safety biomarker for the detection of acute drug-induced renal tubule alterations in male rats." [28] |
| Prognostic Enrichment Biomarker | Selecting patients for clinical trials | "Total kidney volume as a prognostic enrichment biomarker in clinical studies for treatment of autosomal dominant polycystic kidney disease." [29] |

Practical Implementation Considerations

When developing a context of use, researchers should consider multiple factors relevant to the specific biomarker, including [29]:

- The identity of the biomarker

- The aspect of the biomarker that is measured and the form in which it is used for biological interpretation

- The species and characteristics of the animal or subjects studied

- The purpose of use in drug development

- The drug development circumstances for applying the biomarker

- The interpretation and decision or action based on the biomarker

Not all factors are equally relevant for every biomarker, but each should be evaluated for relevance to the biomarker being developed [29].

Experimental Validation and Statistical Considerations for COU

Methodological Rigor in Biomarker Validation

The validation of biomarkers for a specific Context of Use requires rigorous statistical approaches and study designs. Biomarker development follows a phased process from discovery to validation, with the intended use and target population defined early in development [31]. The patients and specimens used in validation studies should directly reflect the target population and intended use [31].

Key considerations for conducting biomarker discovery studies using archived specimens include [31]:

- The patient population represented by the specimen archive

- Power of the study (through the number of samples and number of events)

- Prevalence of the disease

- The analytical validity of the biomarker test

- The pre-planned analysis plan

Bias represents one of the greatest causes of failure in biomarker validation studies [31]. Bias can enter a study during patient selection, specimen collection, specimen analysis, and patient evaluation. Randomization and blinding are two of the most important tools for avoiding bias, with randomization controlling for non-biological experimental effects and blinding preventing bias induced by unequal assessment of biomarker results [31].
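As a minimal sketch of how randomization and blinding might be applied at the specimen-analysis stage, the example below shuffles specimens into assay plates and gives the analyst only blinded run labels. The function name, specimen identifiers, plate size, and seed are illustrative assumptions, not part of any cited protocol.

```python
import random

def randomize_and_blind(specimen_ids, plate_size, seed):
    """Shuffle specimens across plates and assign blinded run labels (hypothetical helper)."""
    rng = random.Random(seed)   # fixed seed keeps the allocation reproducible and auditable
    shuffled = list(specimen_ids)
    rng.shuffle(shuffled)       # random order breaks any link between case status and plate position
    allocation = []
    for position, specimen in enumerate(shuffled):
        allocation.append({
            "blinded_label": f"RUN-{position + 1:04d}",  # the only identifier the analyst sees
            "plate": position // plate_size + 1,
            "well": position % plate_size + 1,
            "specimen_id": specimen,                     # stored in a separate key file
        })
    return allocation

# Illustrative specimens: six cases and six controls.
specimens = [f"CASE-{i:03d}" for i in range(1, 7)] + [f"CTRL-{i:03d}" for i in range(1, 7)]
allocation = randomize_and_blind(specimens, plate_size=4, seed=2024)

# The analyst's worksheet omits the specimen identifier and therefore the case status.
analyst_view = [(row["blinded_label"], row["plate"], row["well"]) for row in allocation]
```

Keeping the key that links blinded labels to specimen identifiers away from the laboratory until all measurements are locked is one simple way to prevent unequal assessment of biomarker results.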

Statistical Metrics for Biomarker Evaluation

The appropriate statistical metrics depend on the study goals and should be chosen by a multidisciplinary team that includes clinicians, scientists, statisticians, and epidemiologists [31].

Table: Key Statistical Metrics for Biomarker Validation and Evaluation

| Metric | Description | Application in COU Definition |
|---|---|---|
| Sensitivity | The proportion of cases that test positive [31] | Critical for diagnostic biomarkers; impacts threshold setting for specific COU |
| Specificity | The proportion of controls that test negative [31] | Important for screening biomarkers; influences population selection criteria |
| Positive Predictive Value | Proportion of test positive patients who actually have the disease [31] | Function of disease prevalence; informs utility for patient stratification |
| Negative Predictive Value | Proportion of test negative patients who truly do not have the disease [31] | Function of disease prevalence; relevant for exclusion criteria |
| ROC Curve AUC | Measure of how well marker distinguishes cases from controls [31] | Primary metric for diagnostic performance; determines suitability for intended use |
| Calibration | How well a marker estimates the risk of disease or event of interest [31] | Important for risk stratification biomarkers; affects clinical utility |
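The following sketch shows how several of these metrics can be computed and why predictive values depend on disease prevalence while sensitivity and specificity do not. All counts, marker values, and function names are hypothetical and chosen only for illustration.

```python
def confusion_metrics(tp, fp, fn, tn):
    """Sensitivity, specificity, PPV, and NPV from a 2x2 confusion table."""
    return {
        "sensitivity": tp / (tp + fn),  # proportion of cases that test positive
        "specificity": tn / (tn + fp),  # proportion of controls that test negative
        "ppv": tp / (tp + fp),          # proportion of test positives with disease
        "npv": tn / (tn + fn),          # proportion of test negatives without disease
    }

def predictive_values(sensitivity, specificity, prevalence):
    """PPV and NPV at a given prevalence, via Bayes' theorem."""
    true_pos = sensitivity * prevalence
    false_pos = (1 - specificity) * (1 - prevalence)
    false_neg = (1 - sensitivity) * prevalence
    true_neg = specificity * (1 - prevalence)
    return true_pos / (true_pos + false_pos), true_neg / (true_neg + false_neg)

def auc(case_values, control_values):
    """Probability that a random case scores above a random control (ties count half)."""
    wins = 0.0
    for case in case_values:
        for control in control_values:
            if case > control:
                wins += 1.0
            elif case == control:
                wins += 0.5
    return wins / (len(case_values) * len(control_values))

# Hypothetical balanced study: 100 cases and 100 controls.
metrics = confusion_metrics(tp=90, fp=20, fn=10, tn=80)

# Same assay applied where only 5% of the target population has the disease.
ppv_low_prev, npv_low_prev = predictive_values(
    metrics["sensitivity"], metrics["specificity"], prevalence=0.05
)

marker_auc = auc(case_values=[3, 5, 7], control_values=[1, 3, 4])
```

In this illustration the PPV is roughly 0.82 in the balanced study but falls to roughly 0.19 at 5% prevalence, which is why predictive values estimated in an enriched discovery cohort should not be carried over unchanged to a target population with a different disease frequency.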

For biomarkers intended to inform treatment decisions, the statistical approach must align with the specific biomarker type. Prognostic biomarkers can be identified through properly conducted retrospective studies that use biospecimens from a cohort representing the target population. Predictive biomarkers, by contrast, must be identified in secondary analyses of data from randomized clinical trials, specifically through a test of the interaction between treatment and biomarker in a statistical model [31].

The Researcher's Toolkit: Essential Reagents and Methodologies

Core Research Reagent Solutions for Biomarker Development

The development and validation of biomarkers for a specific Context of Use requires specialized reagents and methodologies tailored to the biomarker type and intended application.

Table: Essential Research Reagent Solutions for Biomarker Development and Validation

| Reagent/Methodology | Function in Biomarker Development | Application Examples |
|---|---|---|
| Flow Cytometry Reagents | Immunophenotyping of cell surface and intracellular markers [32] | Analysis of T-cell subsets for immune-related adverse event biomarkers [32] |
| Next-Generation Sequencing (NGS) | Detection of genetic mutations, deletions, rearrangements, and copy number variations [31] | Identification of EGFR mutations in NSCLC as predictive biomarkers [31] |
| Liquid Biopsy Platforms | Isolation and analysis of circulating tumor DNA (ctDNA) [31] | Non-invasive disease monitoring and treatment response assessment [31] |
| DURAClone IM Phenotyping Tubes | Standardized multicolor flow cytometry panels for immune cell profiling [32] | Validation of biomarkers for immune-related adverse events [32] |
| Plasma Biomarker Assays | Quantitative measurement of analyte concentrations in blood samples [33] | Implementation of plasma measures as drug development tools for Alzheimer's disease [33] |

Analytical Methodologies for Different Biomarker Categories

The analytical methods should be chosen to address study-specific goals and hypotheses, with the analytical plan written and agreed upon by all research team members prior to receiving data to avoid the data influencing the analysis [31]. This includes defining outcomes of interest, hypotheses to be tested, and criteria for success.

For prognostic biomarker identification, researchers employ main effect tests of association between the biomarker and outcome in statistical models [31]. For predictive biomarkers, the key statistical test is the interaction between treatment and biomarker in a model assessing treatment outcomes [31].
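A simplified sketch of the interaction test is given below for a binary biomarker and a continuous outcome in a randomized trial. In that setting the interaction coefficient of a saturated linear model equals the difference between the treatment effect in biomarker-positive and biomarker-negative patients. The data, group labels, and function name are hypothetical, and the p-value uses a normal approximation that is rough at this sample size; a real analysis would typically fit a pre-specified regression model with covariates.

```python
import math
import statistics

def interaction_test(groups):
    """Treatment-by-biomarker interaction as a difference of treatment effects.

    groups maps (arm, biomarker_status) to a list of continuous outcomes.
    Returns (estimate, z statistic, two-sided p-value under a normal approximation).
    """
    def treatment_effect(status):
        treated = statistics.mean(groups[("treated", status)])
        control = statistics.mean(groups[("control", status)])
        return treated - control

    estimate = treatment_effect("positive") - treatment_effect("negative")
    # Independent cell means: the variance of their signed sum is the sum of s^2 / n.
    variance = sum(statistics.variance(values) / len(values) for values in groups.values())
    z = estimate / math.sqrt(variance)
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided tail area of the standard normal
    return estimate, z, p_value

# Hypothetical trial outcomes (higher is better), five patients per cell.
trial = {
    ("treated", "positive"): [8.1, 7.9, 8.4, 8.0, 7.6],
    ("control", "positive"): [5.0, 5.2, 4.8, 5.1, 4.9],
    ("treated", "negative"): [5.1, 4.9, 5.3, 5.0, 4.7],
    ("control", "negative"): [5.0, 4.8, 5.2, 4.9, 5.1],
}
estimate, z, p_value = interaction_test(trial)
```

Here treatment helps only biomarker-positive patients, so the interaction estimate is large and the biomarker behaves as predictive. A purely prognostic biomarker would instead shift outcomes in both arms equally and leave the interaction estimate near zero.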

When information from a panel of multiple biomarkers is required to achieve better performance than a single biomarker, researchers should use each biomarker in its continuous state instead of dichotomized versions to retain maximal information for model development [31]. The optimal analytical strategy for combining multiple biomarkers depends on both sample size and clinical context, with incorporation of variable selection techniques to minimize overfitting [31].

Case Studies and Applications in Therapeutic Areas

Biomarker Applications in Alzheimer's Disease Development

The Alzheimer's disease drug development pipeline demonstrates the critical importance of biomarkers with well-defined Contexts of Use. According to the pipeline review cited here, 138 drugs were being assessed in 182 clinical trials, with biomarkers serving as primary outcomes in 27% of active trials [33]. Biomarkers play essential roles in determining trial eligibility and as outcome measures, particularly for biological disease-targeted therapies [33].

In Alzheimer's development, biomarkers were key to the development and approval of monoclonal antibodies directed against amyloid-beta protein. The approval of these therapies was dependent on biomarkers to establish the presence of the treatment target and to demonstrate its removal by the intervention [33]. Simultaneously, fluid biomarkers, including plasma measures, have been implemented as drug development tools useful in diagnosis, monitoring, and assessment of pharmacodynamic response in clinical trials [33].

Pain Therapeutics and the HEAL Initiative

The NIH Helping to End Addiction Long-term (HEAL) Initiative highlights the pressing need for biomarkers in areas where subjective measures dominate clinical assessment. In pain therapeutics, the lack of reliable biomarkers to demonstrate therapeutic target engagement, stratify patients, and predict therapeutic response has contributed to numerous clinical trial failures [34].

The HEAL Initiative supports biomarker discovery and rigorous validation to accelerate high-quality clinical research into neurotherapeutics and pain [34]. Different categories of biomarkers are being sought for pain applications, including susceptibility/risk biomarkers, diagnostic biomarkers, prognostic biomarkers, pharmacodynamic/response biomarkers, predictive biomarkers, monitoring biomarkers, and safety biomarkers [34].

Oncology and Immune-Related Adverse Event Prediction

In oncology, significant effort has been directed toward developing biomarkers to predict immune-related adverse events (irAEs) following immune checkpoint inhibitor therapy. However, external validation studies have demonstrated how difficult it is for such biomarkers to generalize across populations. One study attempting to validate 59 previously reported markers of irAE risk found poor discriminatory value when they were tested in a new cohort of 110 patients receiving nivolumab and ipilimumab therapy [32].

This research highlights the critical importance of external validation for biomarkers and their specified Contexts of Use. Even the four T-cell subsets identified by unsupervised clustering of flow cytometry data, which showed higher discriminatory capacity for colitis than previously reported populations, could not be considered reliable classifiers in the validation cohort [32]. Such findings suggest that the mechanisms predisposing patients to particular irAEs may be captured inadequately by pre-therapy flow cytometry and clinical data alone, emphasizing the need for continued refinement of COU definitions and validation approaches.

The Context of Use framework represents an essential regulatory and scientific paradigm for ensuring biomarkers are developed, validated, and applied appropriately in drug development. By precisely specifying the circumstances under which a biomarker is qualified, the COU creates a common language between researchers and regulators, facilitates biomarker qualification, and ultimately accelerates therapeutic development. As biomarker technologies continue to evolve across therapeutic areas from Alzheimer's disease to oncology and pain therapeutics, the disciplined application of COU principles will remain fundamental to translating promising biomarkers into validated tools that enhance drug development efficiency and patient care.

Building a Robust Framework for External Validation: From Assays to Analytics

In the field of biomarker research, the transition from promising discovery to clinically useful tool requires rigorous validation. While internal validation demonstrates a model's performance on data from the same source, external validation assesses its generalizability to new, independent datasets collected from different populations or settings. This process is crucial for verifying that a biomarker or predictive model performs reliably across diverse patient demographics, healthcare systems, and technical variations [6]. Without robust external validation, biomarkers risk exhibiting degraded performance in real-world clinical practice, potentially leading to inaccurate diagnoses, suboptimal treatment selections, and ultimately, compromised patient care [35].

The challenge of validation is particularly acute for artificial intelligence (AI)-based biomarkers, especially in complex fields like oncology. As these tools are derived from routine clinical data such as medical imaging and electronic health records, they promise to enhance the accessibility of personalized medicine [7]. However, their successful integration into clinical practice depends critically on large-scale validation and prospective clinical trials to demonstrate trustworthiness and cost-effectiveness [7]. This guide provides a structured comparison of validation methodologies, detailed experimental protocols, and essential resources to help researchers achieve the gold standard in external validation for biomarker models.

Comparative Performance: Internal vs. External Validation

Robust external validation requires testing on datasets that are fully independent from the training data, often from different institutions, geographic locations, or patient populations. The performance metrics from such validation provide a realistic estimate of how a model will perform in broad clinical practice. The table below summarizes quantitative evidence from published studies, highlighting the performance gap that can emerge between internal and external validation settings.

Table 1: Performance Comparison of Models in Internal vs. External Validation Settings

| Model / Study Focus | Internal Validation Performance (Metric) | External Validation Performance (Metric) | Performance Gap & Key Insight |
|---|---|---|---|
| Overactive Bladder Treatment Prediction [36] | Not Explicitly Stated | AUC: 0.66 (Objective); 0.64 (Patient-Reported) | Outperformed human experts (AUC: 0.47-0.53) and other ML algorithms in an external cohort, demonstrating value in complex prediction tasks. |
| Lung Cancer Subtyping AI Models [35] | High (Often >90% accuracy in development) | Average AUC ranged from 0.746 to 0.999 | High variability in external performance; common use of restricted, non-representative datasets limits real-world generalizability. |
| CRP Classification in Wastewater [37] | Not Applicable | Accuracy: ~65% (Best Model, CSVM) | Demonstrates application in a novel, complex matrix; moderate performance underscores challenge of noisy, real-world data. |

Experimental Protocols for Rigorous External Validation

Implementing a methodologically sound external validation study is fundamental to assessing a biomarker's true clinical utility. The following protocols detail the critical steps, from dataset selection to performance analysis.

Protocol 1: Independent Cohort Validation for Clinical Biomarkers

This protocol is designed for validating clinical biomarker models, such as those predicting treatment response or diagnostic status, using a completely independent cohort from a different institution or study.

- External Cohort Acquisition: Secure a dataset that was not used in any part of the model development or training process. This dataset should ideally come from a different clinical site, use different equipment, or represent a different demographic population [35]. Key variables should be defined a priori.

- Data Preprocessing and Harmonization: Apply the same preprocessing steps (e.g., normalization, handling of missing data, feature scaling) that were defined during the model development phase to the external dataset. It is critical not to re-tune these steps on the new data.

- Blinded Model Prediction: Apply the finalized, frozen model to the preprocessed external dataset to generate predictions. This process should be automated to prevent any manual intervention or adjustment.

- Outcome Assessment and Comparison: For the external cohort, obtain the ground truth labels (e.g., clinical responder status, confirmed diagnosis) through established clinical methods, independent of the model's predictions.

- Statistical Performance Analysis: Calculate performance metrics (e.g., AUC, accuracy, precision, recall, F1-score) by comparing the model's predictions against the independent ground truth. Compare these metrics to the model's internal validation performance and to the performance of existing standards, such as human expert judgment [36].
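The steps of Protocol 1 can be sketched as follows, assuming a simple logistic model whose preprocessing constants and coefficients were fixed during development. Every value, feature name, and helper here is hypothetical; the point is only that nothing is re-estimated on the external cohort.

```python
import math

# Hypothetical frozen model: scaling constants and coefficients come from the
# development cohort and are never re-fitted on external data.
FROZEN_MODEL = {
    "feature_means": {"marker_a": 2.0, "marker_b": 10.0},
    "feature_sds": {"marker_a": 0.5, "marker_b": 4.0},
    "coefficients": {"marker_a": 1.2, "marker_b": 0.6},
    "intercept": -0.3,
}

def predict_risk(record, model=FROZEN_MODEL):
    """Apply development-phase scaling, then the frozen logistic model."""
    score = model["intercept"]
    for name, coefficient in model["coefficients"].items():
        scaled = (record[name] - model["feature_means"][name]) / model["feature_sds"][name]
        score += coefficient * scaled
    return 1.0 / (1.0 + math.exp(-score))

def auc(scores, labels):
    """Rank-based AUC: chance that a case outscores a non-case (ties count half)."""
    cases = [s for s, y in zip(scores, labels) if y == 1]
    controls = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum(1.0 if c > k else 0.5 if c == k else 0.0 for c in cases for k in controls)
    return wins / (len(cases) * len(controls))

# Illustrative external cohort; outcomes are ascertained independently of the model.
external_cohort = [
    {"marker_a": 2.8, "marker_b": 14.0, "outcome": 1},
    {"marker_a": 2.4, "marker_b": 12.0, "outcome": 1},
    {"marker_a": 1.9, "marker_b": 9.0, "outcome": 1},
    {"marker_a": 2.2, "marker_b": 11.0, "outcome": 0},
    {"marker_a": 1.6, "marker_b": 8.0, "outcome": 0},
    {"marker_a": 1.8, "marker_b": 6.0, "outcome": 0},
]

predictions = [predict_risk(record) for record in external_cohort]
labels = [record["outcome"] for record in external_cohort]

external_auc = auc(predictions, labels)              # discrimination in the new cohort
mean_predicted = sum(predictions) / len(predictions)
observed_rate = sum(labels) / len(labels)            # compare with mean_predicted for calibration-in-the-large
```

Reporting the external AUC next to the internal estimate, and comparing the mean predicted risk with the observed event rate, gives a first view of both discrimination and calibration in the new population. A real study would use a much larger cohort and report confidence intervals.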

Protocol 2: Validation of AI-Digital Pathology Tools