Beyond Single-Method Validation: A Strategic Guide to Orthogonal Methods for Robust Interaction Confirmation

Abstract

This article provides researchers, scientists, and drug development professionals with a comprehensive framework for employing orthogonal experimental methods to validate predicted molecular interactions. As high-throughput and in silico techniques generate vast biological predictions, confirming these findings with independent, non-redundant methods has become critical for scientific rigor. We explore the foundational principles of orthogonality, detail methodological applications across diverse fields like kinase-substrate mapping and pharmaceutical development, address common troubleshooting scenarios, and provide a comparative analysis of validation strategies. This guide synthesizes current best practices to help scientists design robust validation workflows that enhance confidence in their findings and accelerate translational research.

The 'Why' Behind Orthogonal Validation: From Conceptual Frameworks to Practical Necessity

In scientific research, particularly in drug development, the reproducibility of experimental results remains a significant challenge. Orthogonal validation has emerged as a powerful framework that moves beyond simple replication to provide independent corroboration of findings through fundamentally different experimental methods. The core principle is to verify a result with multiple, methodologically independent approaches, thereby controlling for technique-specific artifacts and biases. The logic resembles checking a measurement against a reference standard: just as you need a separate, calibrated weight to check whether a scale is working correctly, you need data from an independent method (antibody-independent data, in the case of antibody validation) to cross-reference and verify an experimental result [1].

The statistical foundation of orthogonality describes systems where variables are statistically independent or unrelated, enabling researchers to disentangle complex biological interactions [1] [2]. In the context of biological research and drug development, orthogonal methods provide complementary evidence that strengthens confidence in experimental conclusions, especially when evaluating research reagents or validating therapeutic targets. This approach forms a critical component of rigorous scientific methodology, helping to address the reproducibility crisis that has affected biomedical research by ensuring that findings reflect biological reality rather than methodological artifacts.

Theoretical Framework and Statistical Foundations

The Mathematical Principle of Orthogonality

The concept of orthogonality in experimental design originates from mathematical principles where factors are balanced such that their effects can be estimated independently. In statistical terms, orthogonal contrasts allow researchers to test specific hypotheses about treatment effects while maintaining statistical efficiency. Two contrasts are considered orthogonal when the sum of the products of their corresponding coefficients equals zero; in a balanced design with equal group sizes, this ensures that the comparisons being made are statistically independent [3].
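The zero-sum-of-products condition can be checked mechanically. The sketch below is illustrative only: the three-group layout (control, dose A, dose B) and the contrast names are hypothetical, and equal group sizes are assumed.

```python
from itertools import combinations

def is_contrast(coeffs):
    """A valid contrast has coefficients that sum to zero."""
    return abs(sum(coeffs)) < 1e-12

def are_orthogonal(c1, c2):
    """Two contrasts are orthogonal (equal group sizes assumed) when the
    sum of products of corresponding coefficients is zero."""
    return abs(sum(a * b for a, b in zip(c1, c2))) < 1e-12

# Hypothetical contrasts over three groups: control, dose A, dose B.
contrasts = {
    "control_vs_treated": [2, -1, -1],
    "doseA_vs_doseB": [0, 1, -1],
    "control_vs_doseA": [1, -1, 0],
}

for (name1, c1), (name2, c2) in combinations(contrasts.items(), 2):
    print(f"{name1} / {name2}: orthogonal = {are_orthogonal(c1, c2)}")
```

Only the first two contrasts form an orthogonal pair; the third overlaps with both, so testing it alongside them would reuse the same information.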

This mathematical foundation enables the design of efficient experiments that can test multiple factors simultaneously without requiring full factorial designs, which would be prohibitively large and resource-intensive. Orthogonal arrays, a key tool in this framework, allow researchers to arrange experimental factors in a balanced way so that their individual effects can be distinguished without confounding [4] [5]. The property of orthogonality ensures that the factors being studied are uncorrelated, meaning that the effect of one factor can be assessed without interference from the others.

Orthogonal Arrays in Experimental Design

Orthogonal arrays provide a structured approach to designing experiments that explore multiple factors simultaneously. These arrays are mathematical matrices constructed so that researchers can test a small, balanced subset of all possible factor combinations while still obtaining statistically valid estimates of each factor's effect [5].

The efficiency gains from orthogonal arrays can be dramatic. For instance, testing 7 factors with 3 levels each would require 2,187 experiments in a full factorial design, but can be accomplished with just 18 experiments using an orthogonal array [5]. This efficiency makes comprehensive experimental designs feasible in contexts where full factorial designs would be prohibitively expensive or time-consuming. The Taguchi method, widely used in quality engineering and industrial optimization, relies heavily on orthogonal arrays to identify factor settings that produce robust, consistent results even in the presence of noise and variability [4].
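To make the balance property concrete, the following sketch builds a small 9-run array for four three-level factors and verifies that every pair of columns contains each level combination equally often. The modular-arithmetic construction and the function names are illustrative assumptions, not taken from the cited sources, and the 18-run array mentioned above is not constructed here.

```python
from collections import Counter
from itertools import combinations

def l9_array():
    """Build a 9-run, 4-column, 3-level array from two base columns (a, b)."""
    return [(a, b, (a + b) % 3, (a + 2 * b) % 3)
            for a in range(3) for b in range(3)]

def is_orthogonal_array(rows, levels):
    """Check pairwise balance: every pair of columns must show each of the
    levels**2 level combinations the same number of times."""
    expected, remainder = divmod(len(rows), levels ** 2)
    if remainder:
        return False
    for i, j in combinations(range(len(rows[0])), 2):
        counts = Counter((row[i], row[j]) for row in rows)
        if len(counts) != levels ** 2 or set(counts.values()) != {expected}:
            return False
    return True

array = l9_array()
print("full factorial, 4 factors x 3 levels:", 3 ** 4, "runs")
print("orthogonal array:", len(array), "runs; balanced =",
      is_orthogonal_array(array, 3))
print("full factorial, 7 factors x 3 levels:", 3 ** 7, "runs")
```

The same balance check applies to larger arrays; only the construction differs.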

Orthogonal Methodologies in Practice

Orthogonal Validation for Research Reagents

Antibody validation represents a prime application of orthogonal strategies in biological research. The International Working Group on Antibody Validation recommends orthogonal approaches as one of five conceptual pillars for confirming antibody specificity [1]. This approach involves cross-referencing antibody-based results with data obtained using non-antibody-based methods, thus verifying specificity through independent mechanisms.

Case Example: Nectin-2/CD112 Antibody Validation

Cell Signaling Technology scientists provided a clear example of orthogonal validation when validating their recombinant monoclonal antibody targeting Nectin-2/CD112. They first consulted RNA expression data from the Human Protein Atlas to identify cell lines with predicted high (RT4 and MCF7) and low (HDLM-2 and MOLT-4) expression of the target protein. They then performed western blot analysis using the antibody, with results confirming that protein expression levels aligned with the orthogonal RNA data: strong signals in RT4 and MCF7 lines and minimal to no detection in HDLM-2 and MOLT-4 lines [1]. This combination of orthogonal data source (RNA expression) with a binary experimental model (high/low expression systems) provided compelling evidence of antibody specificity.

A second case involved validation of a DLL3 antibody for immunohistochemistry applications, where researchers used liquid chromatography-mass spectrometry (LC-MS) data to identify tissues with high, medium, and low levels of DLL3 peptides. Subsequent IHC analysis with the antibody demonstrated a strong correlation between antibody-based protein detection and mass spectrometry peptide counts across the three tissue types [1]. This orthogonal approach provided additional confidence in the antibody's performance for IHC applications.
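One simple way to quantify agreement between an antibody-based readout and an orthogonal one is a rank correlation. The sketch below computes Spearman's rho in plain Python; the six tissue values are invented placeholders for illustration, not data from the cited validation, and the choice of Spearman's rho is an assumption rather than the method used in [1].

```python
import math

def average_ranks(values):
    """Rank values from 1..n, giving tied values their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # mean of the 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks

def pearson(x, y):
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)

def spearman(x, y):
    """Spearman's rho is the Pearson correlation of the ranks."""
    return pearson(average_ranks(x), average_ranks(y))

# Hypothetical values for six tissues (illustration only).
ms_peptide_counts = [120, 95, 40, 35, 5, 2]    # orthogonal LC-MS readout
ihc_scores = [250, 270, 110, 90, 10, 15]       # antibody-based readout

print(f"Spearman rho = {spearman(ms_peptide_counts, ihc_scores):.3f}")
```

A high rho supports, but does not prove, specificity; with only a handful of samples the estimate is coarse, which is one reason the binary high/low design described above is often paired with it.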

Orthogonal Approaches in Genetic Perturbation Studies

Orthogonal validation strengthens genetic perturbation studies by combining different gene modulation technologies to verify results. RNA interference (RNAi), CRISPR knockout (CRISPRko), and CRISPR interference (CRISPRi) each have distinct mechanisms of action, delivery methods, and potential off-target effects, making them ideal for orthogonal approaches [6].

Table 1: Comparison of Genetic Perturbation Technologies for Orthogonal Validation

| Feature | RNAi | CRISPRko | CRISPRi |
|---|---|---|---|
| Mechanism of Action | Degrades target mRNA in cytoplasm using endogenous RNAi machinery | Creates permanent DNA double-strand breaks repaired with indels | Blocks transcription using catalytically dead Cas9 fused to repressors |
| Effect Duration | Temporary (2-7 days with siRNA) | Permanent, heritable | Temporary to long-term depending on system |
| Efficiency | ~75-95% knockdown | Variable editing (10-95% per allele) | ~60-90% knockdown |
| Primary Off-Target Concerns | miRNA-like off-target effects | Off-target genomic edits | Off-target transcriptional repression |
| Best Use Cases | Acute knockdown studies | Permanent gene disruption | Reversible transcription inhibition |

When these technologies produce concordant results despite their different mechanisms and potential artifacts, confidence in the observed phenotypic effects increases substantially. For example, a gene that shows consistent phenotypic effects when targeted by both RNAi (which operates at the mRNA level) and CRISPRko (which creates permanent DNA mutations) provides stronger evidence for the gene's function than results from either method alone [6].
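A concordance check of this kind can be sketched in a few lines. Everything specific here is an assumption for illustration: the gene names are placeholders, the scores stand for a generic per-gene phenotype effect (more negative meaning a stronger loss-of-function phenotype), and the threshold of -1.0 is arbitrary.

```python
def classify_genes(rnai_scores, crispr_scores, threshold=-1.0):
    """Label each gene by whether both, one, or neither method shows an
    effect at or below `threshold`."""
    labels = {}
    for gene in sorted(set(rnai_scores) & set(crispr_scores)):
        rnai_hit = rnai_scores[gene] <= threshold
        crispr_hit = crispr_scores[gene] <= threshold
        if rnai_hit and crispr_hit:
            labels[gene] = "concordant"   # supported by both mechanisms
        elif rnai_hit or crispr_hit:
            labels[gene] = "discordant"   # possible method-specific artifact
        else:
            labels[gene] = "no_effect"
    return labels

# Hypothetical effect scores (illustration only).
rnai = {"GENE_A": -2.1, "GENE_B": -1.4, "GENE_C": -0.2}
crispr = {"GENE_A": -1.8, "GENE_B": -0.1, "GENE_C": 0.1}

labels = classify_genes(rnai, crispr)
for gene, label in labels.items():
    print(gene, label)
```

Discordant genes are not necessarily false positives; they are candidates for follow-up, for example with a third method such as CRISPRi.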

Factor Analysis for Confirmatory Studies

Confirmatory Factor Analysis (CFA) represents another application of orthogonal principles in experimental biology. CFA uses a pre-defined hypothesis about the latent structure among observed variables to identify biologically relevant factors. In microarray studies, for example, researchers can design experiments with orthogonal contrasts that enable identification of gene expression patterns associated with specific biological states or experimental conditions [2].

In one documented application, researchers used CFA to analyze gene expression data from ovarian cancer cell lines with differing degrees of cisplatin resistance. The orthogonal design allowed them to identify two latent factors representing differences in cisplatin resistance, from which they selected 315 genes associated with the resistance phenotype [2]. The orthogonal nature of the design ensured that these factors could be distinguished statistically, providing clearer biological interpretation than would be possible with unplanned comparisons.

Experimental Design and Workflows

Implementing Orthogonal Validation Strategies

Implementing effective orthogonal validation requires careful experimental planning and execution. The general workflow involves identifying independent methods that can address the same biological question, executing these methods in parallel or sequential fashion, and integrating the results to form a coherent conclusion.

Table 2: Key Research Reagent Solutions for Orthogonal Validation

| Reagent/Technology | Primary Function | Application in Orthogonal Validation |
|---|---|---|
| siRNAs/shRNAs | Gene knockdown via mRNA degradation | Comparing with CRISPR-based methods to control for off-target effects |
| CRISPRko/i/a systems | Gene editing or transcriptional control | Providing independent confirmation of RNAi results |
| Mass Spectrometry | Protein identification and quantification | Verifying antibody specificity and protein expression |
| qPCR Assays | mRNA expression quantification | Correlating protein and transcript levels |
| Omics Databases | Publicly available gene/protein expression data | Providing independent evidence for expected expression patterns |

The workflow typically begins with identifying appropriate orthogonal methods that address the same biological question through different mechanisms. For antibody validation, this might involve comparing antibody-based detection with mass spectrometry, RNA expression data, or genetic knockout models [1]. For genetic studies, combining RNAi with CRISPR technologies provides orthogonal evidence [6]. Careful experimental design ensures that the methods being compared are truly independent and not subject to the same potential artifacts or confounding factors.

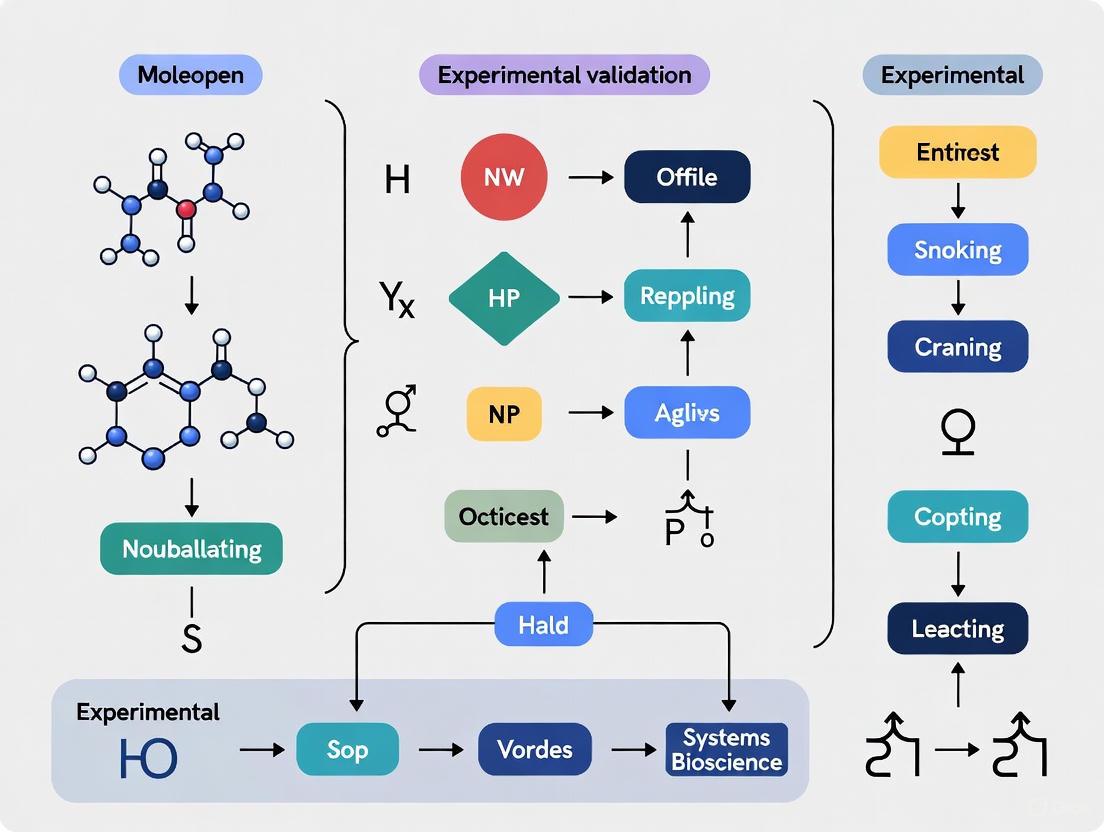

Visualization of Orthogonal Validation Workflow

The following diagram illustrates a generalized workflow for implementing orthogonal validation in experimental research:

Comparative Analysis of Orthogonal Approaches

Performance Metrics Across Validation Methods

Different orthogonal approaches offer varying strengths, limitations, and performance characteristics. Understanding these differences helps researchers select the most appropriate combination of methods for their specific validation needs.

Table 3: Performance Comparison of Orthogonal Validation Methods

| Validation Method | Key Advantages | Limitations | Typical Applications | Evidence Strength |
|---|---|---|---|---|
| Genetic Knockout/Knockdown | Direct causal evidence; targets gene of interest specifically | Potential compensatory mechanisms; viability issues | Functional validation; pathway analysis | Strong |
| Mass Spectrometry | Direct protein detection; no antibody required | Limited sensitivity; complex sample preparation | Protein identification; antibody verification | Strong |
| Transcriptomics | Comprehensive expression profiling; public data available | May not correlate perfectly with protein levels | Target expression validation; biomarker discovery | Moderate to Strong |
| In Situ Hybridization | Spatial context preservation; direct nucleic acid detection | Technical complexity; RNA stability concerns | Localization studies; RNA vs. protein correlation | Moderate |

The evidence strength provided by different orthogonal methods varies based on their directness, specificity, and technical reliability. Genetic methods provide strong evidence because they directly manipulate the gene of interest, while mass spectrometry offers strong orthogonal evidence for protein studies because it detects proteins through physical properties rather than affinity reagents. Transcriptomic methods provide moderate to strong evidence depending on the correlation between mRNA and protein levels for the specific target.

Orthogonal validation represents a fundamental shift from simple replication to independent corroboration through methodologically distinct approaches. By combining techniques such as antibody-based detection with mass spectrometry, RNAi with CRISPR technologies, or different computational approaches with experimental validation, researchers can build compelling evidence for their biological conclusions. This approach significantly reduces the likelihood that observed effects result from method-specific artifacts rather than true biological phenomena.

For researchers in drug development and biomedical science, implementing orthogonal strategies requires additional effort in experimental design and execution, but provides substantial returns in research reliability and credibility. As the examples in this guide demonstrate, orthogonal validation strengthens experimental conclusions across multiple domains, from reagent validation to functional studies. The rigorous application of these principles contributes to more reproducible, robust scientific research that can better withstand the challenges of translation to therapeutic applications.

The Critical Shift from 'Validation' to 'Corroboration' in the Big Data Era

The advent of big data and artificial intelligence has fundamentally transformed the landscape of drug discovery, necessitating an equally fundamental shift in how researchers evaluate predictive models. Traditional validation—a binary concept of establishing something as "true" or "correct"—becomes increasingly inadequate when dealing with the probabilistic predictions and complex relationships unearthed by AI systems. In its place, a more nuanced framework of corroboration is emerging, where evidence accumulates from multiple, independent angles to build confidence in predictions. This paradigm shift is particularly critical in the study of drug-target interactions (DTI) and drug-drug interactions (DDI), where AI models can screen thousands of potential relationships but require orthogonal experimental verification to establish biological relevance [7] [8]. This article examines this critical transition, comparing traditional and modern approaches to establishing scientific credibility in the age of big data.

The limitations of single-method validation are particularly pronounced in fields like drug discovery because AI models typically generate probabilistic predictions based on patterns in training data. Without multi-angle verification, researchers risk conflating statistical correlation with biological causation. The corroboration framework addresses this by treating evidence as a cumulative continuum rather than a binary state, recognizing that different experimental methods provide complementary strengths that collectively build a more complete evidentiary picture [8]. This approach is especially valuable for addressing the "black box" nature of many advanced AI models, where understanding why a prediction was made is as important as the prediction itself.

Comparative Analysis: Validation vs. Corroboration Frameworks

Table 1: Fundamental differences between validation and corroboration paradigms

| Aspect | Traditional Validation | Modern Corroboration |
|---|---|---|
| Primary Goal | Establish correctness against a single gold standard | Build convergent evidence across multiple methods |
| Evidence Structure | Binary (pass/fail) | Cumulative and weighted |
| Methodology | Single experimental standard | Orthogonal techniques |
| Data Foundation | Controlled, standardized datasets | Heterogeneous, multi-modal data |
| Uncertainty Handling | Seeks to eliminate | Explicitly characterizes and quantifies |
| Model Interpretation | Focuses on predictive accuracy | Emphasizes mechanistic understanding |
| Regulatory Alignment | Fixed checklist compliance | Risk-adaptive, evidence-based |

This paradigm shift is driven by several factors inherent to modern drug discovery challenges. First, the complexity of biological systems means that any single experimental method captures only one aspect of a multifaceted reality. Second, the scale of big data enables the detection of subtle patterns that may be statistically valid but biologically irrelevant without contextual evidence. Third, the probabilistic nature of AI predictions requires a correspondingly probabilistic approach to evaluation. Industry reports indicate that organizations are increasingly adopting these principles, with 46% expecting more agile and adaptable validation processes that can accommodate this richer evidentiary framework [9].
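One possible way to formalize "cumulative and weighted" evidence is Bayesian updating with likelihood ratios. This is offered as an illustrative sketch, not as the framework used in the cited sources: the prior, the per-assay likelihood ratios, and the assumption that the assays are conditionally independent are all hypothetical.

```python
def posterior_probability(prior, likelihood_ratios):
    """Update a prior probability with independent lines of evidence.

    Each likelihood ratio is P(result | interaction real) divided by
    P(result | interaction not real). Values above 1 support the
    prediction; values below 1 count against it.
    """
    if not 0.0 < prior < 1.0:
        raise ValueError("prior must be strictly between 0 and 1")
    odds = prior / (1.0 - prior)
    for lr in likelihood_ratios:
        odds *= lr  # independence assumption: ratios multiply
    return odds / (1.0 + odds)

# Hypothetical example: a predicted interaction with a 10% prior,
# supported by two assays and weakly contradicted by a third.
evidence = {
    "binding_assay": 4.0,
    "cellular_assay": 3.0,
    "phenotypic_screen": 0.5,
}
posterior = posterior_probability(0.10, evidence.values())
print(f"posterior probability = {posterior:.2f}")
```

The independence assumption is exactly where orthogonality matters: two assays that share an artifact would not justify multiplying their ratios.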

Orthogonal Methodologies for Corroborating AI Predictions

Experimental Design for Multi-Angle Verification

Orthogonal experimental design provides a systematic approach for corroborating computational predictions by testing them through independent methodological pathways. The core principle is that independent lines of evidence that converge on the same conclusion provide substantially greater confidence than any single method alone. In practice, this involves selecting techniques that probe different aspects of the predicted interaction—such as structural, functional, and phenotypic readouts—to build a comprehensive evidentiary case [7].

Orthogonal experimentation has emerged as a particularly powerful framework for this purpose, allowing researchers to efficiently explore multiple factors and their interactions through carefully designed experimental arrays. This methodology selects a subset of representative points from a full factorial design that maintain the property of being "uniformly dispersed" and "comparable," making it highly efficient for investigating multi-attribute and multi-level experimental spaces [10]. The resulting data provides independent verification points that can corroborate or challenge computational predictions across different dimensions of evidence.

Implementation in Drug-Target Interaction Research

In DTI prediction, a comprehensive corroboration strategy might integrate multiple experimental modalities:

- Biophysical assays (e.g., surface plasmon resonance) to quantify binding affinity and kinetics

- Functional cellular assays to measure downstream signaling effects

- Structural biology approaches (e.g., cryo-EM, X-ray crystallography) to visualize binding modes

- Phenotypic screening to assess overall cellular responses

This multi-modal approach is particularly valuable because it addresses the fundamental challenge that drug-target interactions can be context-dependent—a compound may bind its target but fail to produce the expected functional outcome due to cellular background, compensatory mechanisms, or off-target effects. By combining techniques that probe different aspects of the interaction, researchers can distinguish between truly functional interactions and biologically irrelevant contacts [7].

Table 2: Orthogonal methods for corroborating predicted drug-target interactions

| Method Category | Specific Techniques | What It Measures | Strengths | Limitations |
|---|---|---|---|---|
| Binding Assays | SPR, ITC, NMR | Direct physical interaction | Quantifies affinity and kinetics | May not reflect functional consequences |
| Cellular Activity | Reporter assays, second messenger measurements | Functional effects in living systems | Provides physiological context | Complex signal interpretation |
| Structural Methods | X-ray crystallography, Cryo-EM | Atomic-level interaction details | Reveals binding mode and mechanism | Static picture of dynamic process |
| Omics Approaches | Transcriptomics, proteomics | System-wide responses | Captures network-level effects | Challenging to attribute causality |

Comparative Performance of AI Models in Interaction Prediction

The shift from validation to corroboration is particularly evident when comparing the performance of different AI approaches for predicting drug-target and drug-drug interactions. Different model architectures exhibit distinct strengths and limitations that become apparent only when evaluated across multiple orthogonal metrics rather than a single performance measure.

Model Architectures and Their Corroboration Profiles

Graph Neural Networks (GNNs) have emerged as particularly powerful for DTI and DDI prediction because they naturally represent the network-like structure of biological systems. GNNs can integrate multiple data types—including chemical structures, protein sequences, and known interaction networks—to predict novel interactions. However, their performance must be corroborated through multiple angles: not just overall accuracy, but also performance on different interaction classes, generalization to novel chemical space, and robustness to data incompleteness [8].
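The difference between a single aggregate score and a per-class view can be shown with a small, self-contained AUC calculation. The records below are invented for illustration (the class names, labels, and scores are not from any benchmark), and the pairwise AUC definition is used for clarity rather than speed.

```python
from collections import defaultdict

def auc(labels, scores):
    """AUC as the fraction of (positive, negative) pairs ranked correctly,
    counting ties as half. Returns None if a class of labels is missing."""
    pos = [s for l, s in zip(labels, scores) if l == 1]
    neg = [s for l, s in zip(labels, scores) if l == 0]
    if not pos or not neg:
        return None
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0
               for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

def auc_by_class(records):
    """records: iterable of (interaction_class, true_label, predicted_score)."""
    grouped = defaultdict(lambda: ([], []))
    for cls, label, score in records:
        grouped[cls][0].append(label)
        grouped[cls][1].append(score)
    return {cls: auc(ls, ss) for cls, (ls, ss) in grouped.items()}

# Hypothetical predictions for two target families (illustration only).
records = [
    ("kinase", 1, 0.9), ("kinase", 1, 0.8), ("kinase", 0, 0.3), ("kinase", 0, 0.2),
    ("gpcr", 1, 0.6), ("gpcr", 0, 0.7), ("gpcr", 1, 0.4), ("gpcr", 0, 0.1),
]

overall = auc([r[1] for r in records], [r[2] for r in records])
per_class = auc_by_class(records)
print("overall AUC:", overall)
print("per-class AUC:", per_class)
```

In this toy case a respectable overall AUC hides chance-level performance on one class, which is the kind of gap a single summary metric cannot reveal.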

Transformer-based models, which have revolutionized natural language processing, are increasingly applied to biological sequences for DTI prediction. These models can capture complex patterns in protein sequences and drug structures when they are pre-trained on large-scale databases and then fine-tuned for specific prediction tasks. Their predictions gain credibility when corroborated through both computational benchmarks (e.g., performance on held-out test sets) and experimental verification of novel predictions [7].

Knowledge graph-based approaches integrate diverse biological data—including genes, diseases, drug structures, and clinical manifestations—into structured networks that can be mined for novel interactions. These models explicitly represent the evidence pathways supporting their predictions, naturally supporting a corroboration framework by showing how different data sources converge on a predicted relationship [8].

Table 3: Performance comparison of AI architectures for interaction prediction

| Model Architecture | Reported AUC | Key Strengths | Limitations | Corroboration Needs |
|---|---|---|---|---|
| Graph Neural Networks | 0.89-0.94 [8] | Captures network structure | Requires substantial data | Experimental verification of novel predictions |
| Transformer Models | 0.87-0.92 [7] | Handles sequence context | Computationally intensive | Specificity testing across target families |
| Knowledge Graph Embeddings | 0.83-0.90 [8] | Integrates diverse evidence | Complex implementation | Clinical relevance assessment |
| Traditional Machine Learning | 0.79-0.86 [7] | Computationally efficient | Limited to handcrafted features | Generalization beyond training data |

The Critical Role of Data Quality and Diversity

A fundamental principle in the corroboration framework is that model performance is intrinsically linked to data quality and diversity. The adage "garbage in, garbage out" takes on new dimensions in big data analytics, where biases and gaps in training data can propagate through complex models to produce confidently wrong predictions. The 2025 State of Validation Report highlights that data integrity remains a top challenge, ranked as the #3 concern by professionals in the field [11].

Different AI architectures show varying sensitivities to data quality issues. GNNs generally handle missing data more gracefully than sequence-based models but may propagate errors through the network structure. Transformer models require massive datasets for pre-training but can sometimes learn robust representations that transfer well to new prediction tasks. The process of corroboration must therefore include careful assessment of training data characteristics and their alignment with the intended application domain [7] [12].

Experimental Protocols for Orthogonal Corroboration

Orthogonal Experimental Design for Material Optimization

Orthogonal experimental design provides a systematic framework for efficiently exploring complex parameter spaces to corroborate computational predictions. This method is particularly valuable in contexts such as the development of similar materials (analog materials that mimic the behavior of rock or coal in scaled physical models), where multiple factors interact to determine overall properties [13] [10].

Protocol: L9(3^4) Orthogonal Array Design for Material Optimization

Factor Selection: Identify critical factors influencing the system (e.g., for similar materials: cement content, coal powder ratio, aggregate composition, moisture content) [10]

Level Assignment: Define three levels for each factor representing low, medium, and high values based on preliminary experiments or literature data

Array Selection: Choose an appropriate orthogonal array (e.g., L9 for 4 factors at 3 levels each) that enables testing only 9 combinations rather than all 81 (3^4) possible combinations

Experimental Execution: Prepare specimens according to the designated combinations and measure key output parameters (e.g., compressive strength, elastic modulus, density)

Data Analysis:

- Calculate range values (R) to determine factor influence magnitude

- Perform analysis of variance (ANOVA) to identify statistically significant factors

- Build predictive models relating factors to outputs

Validation: Confirm model predictions with additional test points not in the original array

This approach was successfully applied in developing similar materials for simulated coal seam sampling, where cement content was identified as the main controlling factor for mechanical properties, while moisture content exhibited a complex three-stage relationship with strength parameters [10].
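The range-analysis step of the protocol can be sketched as follows. The factor names follow the example in the text, but the response function is entirely synthetic (made-up coefficients standing in for measured strength values), and the array construction is one convenient assumption among several equivalent L9 layouts.

```python
def l9_array():
    """9-run array for four 3-level factors (levels coded 0, 1, 2)."""
    return [(a, b, (a + b) % 3, (a + 2 * b) % 3)
            for a in range(3) for b in range(3)]

FACTORS = ["cement", "coal_powder", "aggregate", "moisture"]

def synthetic_strength(levels):
    """Made-up response surface used only to exercise the analysis;
    it does not represent measured data from the cited study."""
    a, b, c, d = levels
    return 10.0 * a + 2.0 * b + 0.0 * c + 1.0 * d

def range_analysis(rows, responses, n_levels=3):
    """For each factor, average the response at each level and report
    R = max(level mean) - min(level mean). Larger R means more influence."""
    ranges = {}
    for col, name in enumerate(FACTORS):
        level_means = []
        for level in range(n_levels):
            ys = [y for row, y in zip(rows, responses) if row[col] == level]
            level_means.append(sum(ys) / len(ys))
        ranges[name] = max(level_means) - min(level_means)
    return ranges

rows = l9_array()
responses = [synthetic_strength(row) for row in rows]
ranges = range_analysis(rows, responses)
for name, r in sorted(ranges.items(), key=lambda kv: -kv[1]):
    print(f"{name}: R = {r:.1f}")
```

Because the array is balanced, each R value recovers the span of that factor's own effect even though only 9 of the 81 combinations were run. With real measurements, ANOVA would then test whether the largest ranges exceed experimental noise.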

Machine Learning Enhancement of Experimental Optimization

Orthogonal experimental design can be further enhanced through integration with machine learning approaches:

Protocol: PSO-BP Neural Network for Experimental Optimization

Data Collection: Conduct orthogonal experiments to generate a comprehensive dataset covering the factor space [13]

Network Architecture: Design a backpropagation (BP) neural network with:

- Input layer: Experimental factors (e.g., material ratios)

- Hidden layers: 1-2 layers with sigmoid activation functions

- Output layer: Target properties (e.g., compressive strength, elastic modulus)

Particle Swarm Optimization:

- Initialize particle positions representing neural network weights and thresholds

- Evaluate fitness using prediction error on experimental data

- Iteratively update particle velocities and positions to minimize error

- Continue until convergence or maximum iterations reached

Model Validation: Compare PSO-BP neural network performance against traditional BP networks using metrics such as the coefficient of determination (R²), root mean square error (RMSE), and mean absolute error (MAE)

Prediction and Optimization: Use the trained model to predict optimal factor combinations beyond the experimentally tested points

This hybrid approach demonstrated superior performance in similar material proportioning, with the PSO-BP model achieving a higher coefficient of determination (R²) and lower error metrics than traditional BP neural networks [13].
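The particle swarm step of the protocol above can be sketched in plain Python. This is a minimal illustration under stated assumptions, not the implementation from [13]: the network size (2 inputs, 3 sigmoid hidden units, 1 linear output), the swarm hyperparameters, and the synthetic training data are all hypothetical, and the sketch omits any gradient-based fine-tuning that a full PSO-BP pipeline might add.

```python
import math
import random

N_IN, N_HID = 2, 3
N_PARAMS = N_HID * (N_IN + 1) + N_HID + 1  # hidden weights/biases + output weights/bias

def sigmoid(z):
    z = max(-60.0, min(60.0, z))  # clamp to avoid overflow in exp
    return 1.0 / (1.0 + math.exp(-z))

def predict(params, x):
    out = params[-1]  # output bias
    for h in range(N_HID):
        base = h * (N_IN + 1)
        z = params[base + N_IN] + sum(params[base + i] * x[i] for i in range(N_IN))
        out += params[N_HID * (N_IN + 1) + h] * sigmoid(z)
    return out

def mse(params, data):
    return sum((predict(params, x) - y) ** 2 for x, y in data) / len(data)

def pso_train(data, n_particles=20, n_iter=150, inertia=0.7, c1=1.5, c2=1.5, seed=0):
    """Each particle position is one full set of network weights and thresholds."""
    rng = random.Random(seed)
    pos = [[rng.uniform(-1, 1) for _ in range(N_PARAMS)] for _ in range(n_particles)]
    vel = [[0.0] * N_PARAMS for _ in range(n_particles)]
    pbest = [p[:] for p in pos]
    pbest_err = [mse(p, data) for p in pos]
    g = min(range(n_particles), key=pbest_err.__getitem__)
    gbest, gbest_err = pbest[g][:], pbest_err[g]
    initial_err = gbest_err
    for _ in range(n_iter):
        for k in range(n_particles):
            for d in range(N_PARAMS):
                r1, r2 = rng.random(), rng.random()
                vel[k][d] = (inertia * vel[k][d]
                             + c1 * r1 * (pbest[k][d] - pos[k][d])
                             + c2 * r2 * (gbest[d] - pos[k][d]))
                pos[k][d] += vel[k][d]
            err = mse(pos[k], data)
            if err < pbest_err[k]:
                pbest[k], pbest_err[k] = pos[k][:], err
                if err < gbest_err:
                    gbest, gbest_err = pos[k][:], err
    return gbest, initial_err, gbest_err

def regression_metrics(y_true, y_pred):
    n = len(y_true)
    mean = sum(y_true) / n
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean) ** 2 for t in y_true)
    mae = sum(abs(t - p) for t, p in zip(y_true, y_pred)) / n
    return {"r2": 1.0 - ss_res / ss_tot, "rmse": math.sqrt(ss_res / n), "mae": mae}

# Synthetic data on a 3x3 grid of normalized factor levels (illustration only).
data = [((a / 2, b / 2), 0.8 * (a / 2) + 0.3 * (b / 2) ** 2)
        for a in range(3) for b in range(3)]
best, initial_err, final_err = pso_train(data)
metrics = regression_metrics([y for _, y in data], [predict(best, x) for x, _ in data])
print(f"MSE before: {initial_err:.4f}, after: {final_err:.4f}")
print({k: round(v, 4) for k, v in metrics.items()})
```

The metrics here are computed on the training points, so they only show that the swarm reduced the fitting error. A fair comparison against a plain BP network would use held-out points, as the validation step in the protocol implies.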

Visualization of Workflows and Relationships

Corroboration Workflow for AI Predictions

Orthogonal Experimental Design Process

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Essential research reagents and materials for orthogonal corroboration studies

| Category | Specific Items | Function/Application | Key Considerations |
|---|---|---|---|
| Material Components | Quartz sand, barite powder, cement, gypsum, glycerol [13] | Similar material development for physical models | Particle size distribution, purity, consistency |
| Binding Assay Reagents | Sensor chips, labeling kits, buffer components | Biophysical interaction studies | Compatibility with instrumentation, lot-to-lot variability |
| Cell-Based Assay Systems | Reporter constructs, signaling pathway inhibitors, detection reagents | Functional validation in biological systems | Cell line authentication, passage number control |
| Structural Biology Tools | Crystallization screens, cryo-protectants, grid materials | 3D structure determination | Sample purity, stability requirements |
| Data Analysis Resources | Public databases (BindingDB, UniProt, PubChem) [7] | Computational validation and benchmarking | Data currency, completeness, annotation quality |

The selection of appropriate research materials is critical for generating reliable, reproducible evidence in corroboration studies. For experimental models in mining and geotechnical engineering, carefully controlled similar materials with specific mechanical properties enable realistic simulation of field conditions. These materials typically include aggregates like quartz sand, binding agents like cement and gypsum, density modifiers like barite powder, and regulators like glycerol to control mechanical properties [13] [10]. The proportional combinations of these components significantly influence key parameters including uniaxial compressive strength, elastic modulus, and density, making systematic optimization through orthogonal experimental design particularly valuable.

In biological contexts, the quality and appropriateness of reagents directly impact the evidentiary value of experimental results. Cell-based assay systems require careful authentication and contamination screening to ensure biological relevance. Public databases like BindingDB, UniProt, and PubChem provide essential reference data for benchmarking computational predictions and designing experimental corroboration strategies [7]. The integration of these resources into a coherent corroboration workflow enables researchers to efficiently transition from computational prediction to experimental verification.

The shift from validation to corroboration represents more than just a semantic change—it reflects a fundamental evolution in how we establish scientific credibility in the big data era. This paradigm acknowledges the complexity of biological systems and the probabilistic nature of AI predictions, emphasizing cumulative evidence over binary determinations. As AI systems become increasingly integral to drug discovery, adopting this multifaceted approach to evidence generation will be essential for translating computational predictions into clinically meaningful interventions.

The future of drug discovery will likely see further formalization of corroboration frameworks, with standardized metrics for evidence quality and weighting. Industry trends already point in this direction, with increasing adoption of digital validation systems (58% of organizations in 2025) and growing recognition of the need for more adaptable, evidence-based approaches to establishing confidence in research findings [9] [11]. By embracing corroboration as a guiding principle, researchers can navigate the complexities of big data while maintaining the rigorous standards that underpin scientific progress.

The reliability of scientific discovery, particularly in drug development, hinges on the accurate validation of predicted interactions. A significant challenge in this process is literature bias, where well-studied phenomena are over-represented in training data for computational models, creating a "dark space" of understudied interactions that remain unvalidated. This bias is particularly problematic in mental health research, where citation fabrication rates in large language model (LLM) outputs can reach 29% for less-studied disorders like body dysmorphic disorder compared to only 6% for major depressive disorder, demonstrating how limited literature coverage directly impacts reliability [14]. Orthogonal methods—defined as techniques that use different physical or chemical principles to measure the same property—provide a powerful framework for addressing this validation gap [15]. This guide compares analytical approaches for confirming predicted interactions when limited published data exists, providing researchers with methodologies to illuminate this scientific dark space.

Understanding Orthogonal and Complementary Measurements

Definitions and Distinctions

Within pharmaceutical development and validation, the terms "orthogonal" and "complementary" have specific meanings that guide effective method selection:

Orthogonal Measurements: Techniques that apply different physical principles to measure the same specific property or attribute of a sample, thereby minimizing method-specific biases and interferences. The primary aim is to provide confidence in the measurement of a single critical quality attribute (CQA) by addressing unknown bias or interference through fundamentally different measurement physics [15].

Complementary Measurements: A broader set of methods that corroborate each other to support the same decision or conclusion, often by measuring different properties that collectively build evidence for a hypothesis. These measurements reinforce each other to support a common decision rather than targeting the same specific attribute [15].

Theoretical Framework for Method Selection

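The relationship between these approaches in validating understudied interactions follows a logical progression: orthogonal measurements first establish confidence in a single attribute, and complementary measurements then combine evidence across different attributes to support the overall conclusion.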

Comparative Analysis of Orthogonal Method Performance

Chromatographic Orthogonal Screening for Impurity Detection

Chromatographic orthogonal methods provide a robust approach for detecting impurities and degradation products that might be missed by a single method. The systematic approach developed by Johnson & Johnson Pharmaceutical Research & Development involves screening samples under 36 different conditions across six columns with different bonded phases and various mobile phase modifiers [16].

Table 1: Orthogonal HPLC Screening Conditions for Comprehensive Impurity Profiling

| Parameter | Primary Method Conditions | Orthogonal Method Conditions |
|---|---|---|
| Column | Zorbax XDB-C8 (150 mm × 4.6 mm, 5 μm) | Phenomenex Curosil PFP (150 mm × 4.6 mm, 3 μm) |
| Mobile Phase | Acetonitrile and water with 0.1% formic acid | Acetonitrile, methanol, and water with 0.1% trifluoroacetic acid |
| Temperature | 25°C | 25°C |
| Gradient | 25 minutes | 30 minutes |
| Detection Capability | Baseline separation of main components | Reveals co-eluted impurities and highly retained compounds |

In application, this orthogonal approach demonstrated significant value in multiple case studies. For Compound A, the primary method showed no new impurities in a new API batch, while the orthogonal method detected co-elution of impurities (A1 and A2) and highly retained compounds (dimer 1 and dimer 2) [16]. Similarly, for Compound B, the orthogonal method revealed that a 0.40% impurity detected by the primary method was actually the result of co-eluted compounds (Impurity A and Impurity B), plus a previously unknown API isomer [16].

Orthogonal Methods in Nanopharmaceutical Characterization

Characterizing complex nano-enabled drug products requires multiple orthogonal techniques to accurately measure critical quality attributes (CQAs) where literature may be limited.

Table 2: Orthogonal Methods for Nanoparticle Characterization

| Property | Primary Method | Orthogonal Methods | Key Advantages |
|---|---|---|---|
| Particle Size Distribution | Dynamic Light Scattering (DLS) | Nanoparticle Tracking Analysis (NTA), Analytical Ultracentrifugation (AUC) | NTA provides concentration data; AUC handles polydisperse samples |
| Hydrodynamic Radius | Dynamic Light Scattering (DLS) | Asymmetric Flow Field Flow Fractionation (AF4) | AF4 separates by size prior to measurement |
| Geometric Radius | Transmission Electron Microscopy (TEM) | Multiangle Light Scattering (MALS) | TEM provides direct visualization; MALS gives solution-state data |
| Elemental Composition | ICP-OES | sp-ICP-MS | sp-ICP-MS provides single particle data |

The combination of these techniques is particularly valuable for products like liposomes, polymeric nanoparticles, lipid-based nanoparticles, and virus-like particles, where multiple complex CQAs must be monitored simultaneously [15]. For instance, measuring the particle size distribution of lipid-based nanoparticles using both DLS and NTA provides different but reinforcing information about the same attribute, with DLS measuring hydrodynamic radius based on diffusion and NTA providing direct particle-by-particle sizing and concentration [15].
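To make the diffusion-based sizing concrete, the sketch below converts a translational diffusion coefficient into a hydrodynamic radius with the Stokes-Einstein relation, R_h = k_B·T / (6·π·η·D), and compares two estimates of the same attribute. The diffusion coefficients, the function names, and the 10% agreement tolerance are illustrative assumptions, not values from the cited work; the viscosity default is that of water at 25 °C.

```python
import math

BOLTZMANN_J_PER_K = 1.380649e-23  # Boltzmann constant (exact SI value)


def hydrodynamic_radius_m(diffusion_m2_per_s, temperature_k=298.15,
                          viscosity_pa_s=0.00089):
    """Stokes-Einstein relation: R_h = k_B * T / (6 * pi * eta * D)."""
    if diffusion_m2_per_s <= 0:
        raise ValueError("diffusion coefficient must be positive")
    return (BOLTZMANN_J_PER_K * temperature_k
            / (6 * math.pi * viscosity_pa_s * diffusion_m2_per_s))


def relative_difference(a, b):
    """Absolute difference divided by the mean of the two values."""
    return abs(a - b) / ((a + b) / 2)


# Hypothetical diffusion coefficients for the same sample from two techniques.
d_dls = 4.9e-12  # m^2/s, ensemble value from DLS (assumed)
d_nta = 4.6e-12  # m^2/s, mean of single-particle tracks from NTA (assumed)

r_dls = hydrodynamic_radius_m(d_dls)
r_nta = hydrodynamic_radius_m(d_nta)

AGREEMENT_TOLERANCE = 0.10  # assumed acceptance window, not a regulatory value
methods_agree = relative_difference(r_dls, r_nta) <= AGREEMENT_TOLERANCE
print(f"DLS: {r_dls * 1e9:.1f} nm, NTA: {r_nta * 1e9:.1f} nm, agree: {methods_agree}")
```

In practice the two techniques weight the size distribution differently (intensity-weighted versus number-weighted), so a fixed tolerance like this is only a starting point for discussion.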

Experimental Protocols for Orthogonal Validation

Comprehensive HPLC Orthogonal Screening Protocol

Objective: To develop orthogonal HPLC methods that comprehensively detect synthetic impurities and degradation products during pharmaceutical development.

Materials:

- All available batches of drug substances and drug products

- Multiple HPLC columns with different bonded phases (C8, C18, PFP, etc.)

- Various mobile phase modifiers (formic acid, trifluoroacetic acid, ammonium acetate)

- Forced degradation study materials (acid, base, oxidant, heat, light)

Methodology:

- Sample Preparation: Generate potential degradation products via forced decomposition studies under various stress conditions (acid, base, oxidative, thermal, photolytic). Select samples degraded by 5-15% to avoid secondary degradation products [16].

- Primary Screening: Screen all samples using a single chromatographic method (either from discovery phase or a generic broad gradient) to identify samples for further method development.

- Orthogonal Screening: Screen samples of interest using six broad gradients on each of six different columns (36 total conditions per sample). Maintain constant gradient while varying pH modifiers [16].

- Method Selection: Identify conditions that separate all components of interest. Select one primary method and one orthogonal method with significantly different selectivity.

- Validation: Analyze the samples that contain degradation products, along with the most heavily stressed samples, under both primary and orthogonal conditions to verify that no peaks were missed.

Expected Outcomes: The orthogonal method should detect co-eluting impurities and highly retained compounds not visible in the primary method, as demonstrated in the case studies where orthogonal methods revealed additional impurities in multiple API batches [16].
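The 6 × 6 screening matrix described in the protocol can be enumerated programmatically so that no column and gradient combination is skipped. The sketch below is a minimal illustration; the column and gradient labels are placeholders (assumptions), since the exact six gradients are not listed here.

```python
from itertools import product

# Placeholder labels: substitute the six columns and six broad gradients
# actually used in the laboratory. These names are assumptions.
columns = [f"column_{i}" for i in range(1, 7)]
gradients = [f"gradient_{i}" for i in range(1, 7)]

# Every column is paired with every gradient: 6 x 6 = 36 conditions per sample.
conditions = list(product(columns, gradients))


def run_list(sample_ids):
    """Expand each sample into one injection per screening condition."""
    return [(sample, column, gradient)
            for sample in sample_ids
            for column, gradient in conditions]


injections = run_list(["stressed_acid", "stressed_base"])  # hypothetical sample IDs
print(len(conditions), len(injections))
```

Generating the run list this way also makes it straightforward to randomize injection order or to export the matrix to an instrument sequence file.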

Orthogonal Assay Development for Therapeutics Discovery

Objective: To confirm biological activity identified during primary screening and eliminate false positives through orthogonal assay approaches.

Materials:

- Primary assay system (e.g., AlphaLISA FcRn binding assay)

- Orthogonal detection technology (e.g., Surface Plasmon Resonance)

- Relevant biological reagents (therapeutic antibodies, receptors)

- Data integration platform (e.g., Revvity Signals One)

Methodology:

- Primary Screening: Conduct initial high-throughput screening using a robust primary method such as AlphaLISA FcRn binding assay for measuring relative affinities of therapeutic antibodies to FcRn [17].

- Orthogonal Confirmation: Employ a fundamentally different detection technology such as High-Throughput Surface Plasmon Resonance (HT-SPR) to reinforce primary findings using different physical principles [17].

- Data Integration: Combine results from both techniques using unified data management systems that support cross-study analytics and integration of in vitro and in vivo data.

- Decision Point: Proceed with lead optimization only when the primary and orthogonal methods support the same conclusion, so that subsequent decisions rest on trustworthy data [17].

Applications: This approach is particularly valuable in lead identification, where orthogonal assay approaches eliminate false positives or confirm activity identified during primary assays, as demonstrated in FcRn binding studies for predicting therapeutic antibody half-life in vivo [17].
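The decision point above can be expressed as a simple concordance rule. The following sketch is illustrative only: the `Candidate` fields, the cutoff values, and the category names are assumptions introduced for the example and are not taken from the cited FcRn studies.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    primary_signal: float    # relative binding signal from the primary assay (assumed scale 0-1)
    orthogonal_kd_nm: float  # equilibrium dissociation constant from SPR, in nM


# Hypothetical hit-calling cutoffs; real cutoffs come from assay validation.
PRIMARY_HIT_MIN = 0.5
ORTHOGONAL_KD_MAX_NM = 100.0


def classify(candidate):
    """Label a candidate by whether the two independent assays agree."""
    primary_hit = candidate.primary_signal >= PRIMARY_HIT_MIN
    orthogonal_hit = candidate.orthogonal_kd_nm <= ORTHOGONAL_KD_MAX_NM
    if primary_hit and orthogonal_hit:
        return "confirmed"
    if primary_hit:
        return "possible false positive"
    if orthogonal_hit:
        return "possible false negative"
    return "inactive"


panel = [
    Candidate("mAb-1", 0.92, 15.0),
    Candidate("mAb-2", 0.81, 850.0),
    Candidate("mAb-3", 0.10, 40.0),
]
for c in panel:
    print(c.name, classify(c))
```

Only the "confirmed" group would move forward under the rule described above; discordant candidates are the ones that warrant troubleshooting of either assay.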

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Research Reagents for Orthogonal Method Development

| Reagent/Technology | Primary Function | Application Context |
|---|---|---|
| Multiple HPLC Columns (C8, C18, PFP, etc.) | Provide different selectivity for separation | Chromatographic method development |
| Various Mobile Phase Modifiers (Formic acid, TFA, ammonium acetate) | Adjust pH and interaction with analytes | Optimizing separation conditions |
| Forced Degradation Materials | Generate potential degradation products | Stress testing of drug substances |
| Surface Plasmon Resonance | Measure biomolecular interactions in real-time | Orthogonal confirmation of binding assays |
| Dynamic Light Scattering | Measure hydrodynamic size of nanoparticles | Primary particle size distribution |
| Nanoparticle Tracking Analysis | Visualize and size individual particles | Orthogonal particle size and concentration |
| AlphaLISA Assays | High-throughput binding assays | Primary screening for therapeutic antibodies |
| Data Integration Platforms | Combine and analyze results from multiple techniques | Cross-assay analytics and decision support |

Orthogonal methods provide an essential framework for addressing literature bias in scientific research, particularly when validating predicted interactions in understudied areas. The systematic application of fundamentally different measurement techniques—whether in chromatographic method development, nanoparticle characterization, or biological assay confirmation—significantly reduces the risk of measurement bias and decision uncertainty [15]. As demonstrated in the pharmaceutical case studies, orthogonal screening revealed critical impurities and co-elutions that single methods missed, preventing potentially serious oversight in drug development [16]. For researchers navigating the "dark space" of understudied interactions, implementing the comparative approaches and detailed protocols outlined in this guide provides a pathway to more robust validation, ultimately contributing to more reliable scientific discovery and therapeutic development.

In the rigorous world of scientific research and drug development, the pursuit of data integrity is paramount. False positives and technical artifacts pose significant threats, potentially leading to erroneous conclusions, wasted resources, and failed clinical trials. Orthogonal methods provide a robust defense against these risks. An orthogonal method is defined as an analytical approach that uses different physical or chemical principles to measure the same attribute of a sample, thereby minimizing the risk of method-specific biases and interferences [15]. This strategy is distinct from complementary methods, which are used to measure different attributes that, together, support a broader decision about product quality [15].

Regulatory agencies strongly recommend the use of orthogonal techniques to ensure the reliability of analytical results, particularly for complex biologics and pharmaceuticals [18]. The core principle is that while one method might be susceptible to a specific interference or artifact, an independent method based on a different mechanism is unlikely to share the same vulnerability. When these independent methods concur, the confidence in the result is substantially increased. This guide explores the application of orthogonal methods across pharmaceutical and clinical diagnostics, providing a comparative analysis of their implementation and efficacy in mitigating false positives.

Orthogonal Methodologies in Practice

Orthogonal Chromatography in Pharmaceutical Development

In pharmaceutical development, High Performance Liquid Chromatography (HPLC) is a cornerstone for analyzing drug substances and products. However, relying on a single chromatographic method carries the risk of missing critical impurities or degradation products that co-elute with the main active ingredient.

Experimental Protocol: A systematic approach to orthogonal HPLC method development involves several key stages [16]:

- Sample Generation: Generate a comprehensive set of samples containing all potential impurities and degradation products. This includes multiple batches of the drug substance and forced degradation studies (stressing the drug under acidic, basic, oxidative, and thermal conditions) [16].

- Orthogonal Screening: The samples of interest are screened using a matrix of 36 different chromatographic conditions. This matrix is built from six different broad gradient methods on each of six distinct column chemistries (e.g., C8, C18, PFP, Gemini C18) with varied mobile phase modifiers (e.g., formic acid, trifluoroacetic acid, ammonium acetate) at different pH levels [16].

- Method Selection and Optimization: From the screening results, a primary method is selected and validated for routine release and stability testing. Crucially, a second, orthogonal method that provides starkly different selectivity is also identified. Software tools like DryLab are often used to optimize both methods further [16].

- Ongoing Monitoring: The orthogonal method is then used to screen samples from new synthetic routes and pivotal stability batches. This ensures that the primary method remains specific and has not been compromised by new, previously unseen impurities [16].

Table 1: Orthogonal Screening Conditions for HPLC Method Development [16]

| Factor | Typical Options Used in Orthogonal Screening |
|---|---|
| Columns (Stationary Phase) | Zorbax XDB-C8, Phenomenex Curosil PFP, YMC-Pack Pro C18, Phenomenex Gemini C18, and others with different bonded phases. |
| Mobile Phase Modifiers | 0.1% Formic Acid, 0.1% Trifluoroacetic Acid, 5 mM Ammonium Acetate, at various pH levels. |
| Organic Solvents | Acetonitrile, Methanol, or Acetonitrile-Methanol mixtures. |
| Gradient | Broad, linear gradients (e.g., 25-35 minutes) to minimize non-elution or elution at the solvent front. |

Orthogonal Next-Generation Sequencing in Clinical Diagnostics

In clinical genetics, Next-Generation Sequencing (NGS) has revolutionized the diagnosis of genetic disorders. However, NGS variant calling is error-prone, and false positive variant calls can lead to misdiagnosis. Guidelines from the American College of Medical Genetics and Genomics (ACMG) recommend orthogonal confirmation of variant calls [19].

Experimental Protocol: Orthogonal NGS for Exome Sequencing

- Platform Selection: Utilize two NGS platforms that are orthogonal in both their DNA selection and sequencing chemistry. A common approach combines:

  - Platform A: DNA selection by bait-based hybridization (e.g., Agilent SureSelect) followed by sequencing by synthesis with reversible terminators (e.g., Illumina NextSeq).

  - Platform B: DNA selection by amplification (e.g., Life Technologies AmpliSeq) followed by semiconductor sequencing (e.g., Ion Proton) [19].

- Library Preparation and Sequencing: Prepare libraries for the same sample independently using the two different platforms and sequence them to a high mean coverage (e.g., >100x) [19].

- Variant Calling and Integration: Call variants independently for each platform's data. A custom algorithm (e.g., "Combinator") is then used to integrate the variant calls from both platforms. Variants are categorized based on whether they were called by one or both platforms, and their zygosity [19].

- Validation and Bypass: Variants identified by both orthogonal platforms are classified as high-confidence and typically do not require further Sanger sequencing confirmation. This significantly reduces turnaround time and cost [19].
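The integration step can be pictured as a set comparison of the two call sets. The sketch below is a hypothetical illustration of that categorization; it is not the "Combinator" algorithm from [19], whose internals are not described here, and the coordinates and category names are invented for the example.

```python
from typing import Dict, Tuple

VariantKey = Tuple[str, int, str, str]  # (chromosome, position, ref allele, alt allele)
Calls = Dict[VariantKey, str]           # variant -> zygosity, "het" or "hom"


def integrate_calls(platform_a: Calls, platform_b: Calls) -> Dict[VariantKey, str]:
    """Categorize each variant by which platform(s) called it and whether zygosity agrees."""
    categories = {}
    for key in platform_a.keys() | platform_b.keys():
        in_a, in_b = key in platform_a, key in platform_b
        if in_a and in_b:
            if platform_a[key] == platform_b[key]:
                categories[key] = "both_platforms_concordant"
            else:
                categories[key] = "both_platforms_zygosity_mismatch"
        elif in_a:
            categories[key] = "platform_a_only"
        else:
            categories[key] = "platform_b_only"
    return categories


# Hypothetical call sets for one sample.
calls_a = {("chr1", 1000, "A", "G"): "het", ("chr2", 2000, "C", "T"): "hom",
           ("chr3", 3000, "G", "A"): "het"}
calls_b = {("chr1", 1000, "A", "G"): "het", ("chr2", 2000, "C", "T"): "het",
           ("chr4", 4000, "T", "C"): "het"}

result = integrate_calls(calls_a, calls_b)
needs_confirmation = [k for k, v in result.items() if v != "both_platforms_concordant"]
print(len(result), len(needs_confirmation))
```

Under the bypass policy described above, only the concordant category would skip Sanger confirmation; how zygosity mismatches are handled is a laboratory policy decision.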

Table 2: Performance Comparison of Single vs. Orthogonal NGS Platforms for SNV Detection [19]

| Sequencing Strategy | DNA Selection Method | Sequencing Chemistry | Sensitivity | Positive Predictive Value (PPV) |
|---|---|---|---|---|
| Illumina NextSeq Only | Hybridization Capture (Agilent CRE) | Reversible Terminator | 99.6% | >99.5% |
| Ion Proton Only | Amplification (AmpliSeq) | Semiconductor | 96.9% | >99.5% |
| Orthogonal NGS (Combined) | Hybridization & Amplification | Terminator & Semiconductor | ~99.9% | ~99.9% |
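For readers less familiar with the two metrics in the table, sensitivity is TP / (TP + FN) and positive predictive value is TP / (TP + FP). The toy example below, built on made-up variant identifiers rather than the published data, shows why combining call sets can help: the union of two platforms recovers variants that either one misses, while the intersection removes platform-specific false positives.

```python
def sensitivity(calls, truth):
    """Fraction of true variants that were called: TP / (TP + FN)."""
    return len(calls & truth) / len(truth)


def ppv(calls, truth):
    """Fraction of calls that are true variants: TP / (TP + FP)."""
    return len(calls & truth) / len(calls)


# Toy data: integers stand in for variant identifiers (assumed, not real calls).
truth = set(range(1, 11))                # 10 true variants
calls_a = set(range(1, 10)) | {101}      # misses variant 10, one false positive
calls_b = set(range(2, 11)) | {102}      # misses variant 1, a different false positive

union_calls = calls_a | calls_b
shared_calls = calls_a & calls_b

for label, calls in [("A only", calls_a), ("B only", calls_b),
                     ("union", union_calls), ("intersection", shared_calls)]:
    print(label, round(sensitivity(calls, truth), 2), round(ppv(calls, truth), 2))
```

The trade-off is visible in the toy numbers: the union maximizes sensitivity at some cost in PPV, and the intersection does the reverse, which is why an integration step that treats the categories differently is useful.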

Machine Learning as an Orthogonal Tool in NGS

A more recent advancement involves using machine learning (ML) as an orthogonal filter for NGS data, reducing the need for wet-lab confirmatory tests.

Experimental Protocol: ML for Sanger Confirmation Bypass

- Training Data Curation: Use whole exome sequencing data from well-characterized reference samples (e.g., Genome in a Bottle (GIAB) cell lines) where the true positive and false positive variants are known [20].

- Feature Extraction: For each variant call, extract numerous quality metrics such as allele frequency, read depth, mapping quality, read position probability, and sequence context (e.g., homopolymer presence) [20].

- Model Training: Train multiple supervised machine learning models (e.g., Logistic Regression, Random Forest, Gradient Boosting) to classify variants as high-confidence (true positive) or low-confidence (false positive) based on the extracted features [20].

- Pipeline Integration: The best-performing model is integrated into a two-tiered confirmation bypass pipeline. Variants classified as high-confidence by the ML model bypass Sanger confirmation, while low-confidence variants are flagged for orthogonal wet-lab testing [20].
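The two-tiered routing in the final step can be sketched independently of any particular model. In the example below, `route_variants` accepts any scoring function that returns a probability of a variant being a true positive; `toy_score` is a hand-written stand-in used only so the example runs, not a trained model, and the feature names and the 0.99 threshold are assumptions.

```python
from typing import Callable, Dict, List, Tuple

Variant = Dict[str, object]  # feature name -> value for one variant call


def route_variants(variants: List[Variant],
                   score_fn: Callable[[Variant], float],
                   threshold: float = 0.99) -> Tuple[List[Variant], List[Variant]]:
    """Split variants into (bypass Sanger, send to wet-lab confirmation)."""
    bypass, confirm = [], []
    for variant in variants:
        if score_fn(variant) >= threshold:
            bypass.append(variant)
        else:
            confirm.append(variant)
    return bypass, confirm


def toy_score(variant: Variant) -> float:
    """Stand-in for a trained classifier's predicted probability (assumption)."""
    looks_clean = (variant["read_depth"] >= 30
                   and variant["mapping_quality"] >= 50
                   and not variant["in_homopolymer"])
    return 0.995 if looks_clean else 0.50


variants = [
    {"id": "v1", "read_depth": 120, "mapping_quality": 60, "in_homopolymer": False},
    {"id": "v2", "read_depth": 12, "mapping_quality": 60, "in_homopolymer": False},
    {"id": "v3", "read_depth": 90, "mapping_quality": 60, "in_homopolymer": True},
]
bypass, confirm = route_variants(variants, toy_score)
print([v["id"] for v in bypass], [v["id"] for v in confirm])
```

In a real pipeline, `score_fn` would wrap the best-performing trained model, and the threshold would be chosen on the truth set so that the bypass tier meets the laboratory's required positive predictive value.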

Comparative Analysis of Orthogonal Strategies

The following table summarizes the core characteristics, advantages, and limitations of the different orthogonal strategies discussed.

Table 3: Comparison of Orthogonal Method Strategies

| Strategy | Core Principle | Key Advantage | Primary Limitation | Ideal Use Case |
|---|---|---|---|---|
| Orthogonal Chromatography [16] | Different column chemistries and mobile phases. | Directly resolves co-eluting impurities missed by a single method. | Method development is resource-intensive. | Impurity and degradation product profiling for drug substances and products. |
| Orthogonal NGS Platforms [19] | Different DNA selection and sequencing chemistries. | Genomic-scale confirmation; improves both sensitivity and specificity. | Higher initial cost and data processing complexity. | Clinical exome sequencing where maximum accuracy is required. |
| Machine Learning Filter [20] | Computational analysis of variant quality metrics. | Dramatically reduces Sanger sequencing costs and turnaround time. | Model requires training on a validated truth set and may be pipeline-specific. | High-volume sequencing labs aiming to optimize efficiency without sacrificing quality. |

The Scientist's Toolkit: Essential Reagents and Materials

Successful implementation of orthogonal methods relies on a set of key research reagents and tools.

Table 4: Key Research Reagent Solutions for Orthogonal Methods

| Item | Function in Orthogonal Methods |
|---|---|
| Forced Degradation Reagents [16] | Acids, bases, oxidants, etc., used to stress a drug substance and generate a wide range of potential degradation products for orthogonal method development. |
| Diverse HPLC Columns [16] | Columns with different stationary phases (C8, C18, PFP, etc.) are the foundation of orthogonal chromatography, providing the selectivity differences needed to resolve impurities. |
| Orthogonal NGS Kits [19] | Different exome capture kits (e.g., hybridization-based vs. amplification-based) ensure comprehensive coverage of the target region and mitigate biases inherent to any single platform. |
| Genome in a Bottle (GIAB) Reference Materials [20] | Highly characterized genomic DNA from cell lines like NA12878, providing a gold-standard "truth set" for benchmarking NGS pipelines and training machine learning models. |
| Mass Spectrometry [18] | Serves as a powerful orthogonal technique to immunoassays (like ELISA) for impurity profiling (e.g., Host Cell Proteins), offering superior specificity and the ability to identify individual contaminants. |

Workflow and Data Analysis Diagrams

Orthogonal HPLC Method Development Workflow

[Diagram not reproduced: the systematic workflow for developing and applying orthogonal HPLC methods in pharmaceutical analysis.]

Orthogonal NGS with ML Filter Pipeline

[Diagram not reproduced: the integrated pipeline for using orthogonal NGS platforms and machine learning to achieve high-confidence variant calls with minimal Sanger confirmation.]

The integration of orthogonal methods is an essential component of modern scientific research, particularly in regulated industries like drug development and clinical diagnostics. Whether implemented through dual chromatographic systems, multiple NGS platforms, or machine learning filters, the core principle remains the same: leveraging independent lines of evidence to mitigate the risk of false positives and technical artifacts. In the NGS comparison above, combining two orthogonal platforms raised both sensitivity and positive predictive value to approximately 99.9%, higher than either platform achieved alone [19]. As therapeutic modalities and analytical technologies continue to grow in complexity, the deliberate and informed application of orthogonality will remain a cornerstone of robust, reliable, and trustworthy science.

In pharmaceutical development, accurate impurity profiling is non-negotiable for ensuring drug safety and efficacy. Reversed-phase high-performance liquid chromatography (RP-HPLC) serves as the primary workhorse for these analyses but faces a significant limitation: it may fail to separate chemically similar impurities that co-elute with the target compound, creating a hidden risk of inaccurate purity assessment [21]. This challenge has propelled orthogonal chromatography—the use of two distinct separation mechanisms—from a specialized technique to an essential component of robust analytical control strategies.

Orthogonal separations are defined as "two separations of quite different selectivity, with marked changes in relative retention so that two peaks which are unresolved in one chromatogram will likely be separated in the second chromatogram" [22]. This approach is particularly valuable for synthetic peptides and complex APIs where traditional RP-HPLC may overlook critical impurities due to their similar hydrophobic properties [21] [23]. The following case study demonstrates how implementing an orthogonal method revealed co-eluted impurities that remained undetected by a primary RP-HPLC method, fundamentally changing the purity assessment and control strategy for a challenging peptide API.

Case Study: Challenging Impurity Profile of Histone H3 (1-20) Peptide

The Analytical Challenge

The purification of the hydrophilic peptide Histone H3 (1-20) (sequence: H-ARTKQTARKS TGGKAPRKQL-OH), synthesized via solid-phase peptide synthesis, presented a formidable analytical challenge [21]. Initial RP-HPLC analysis suggested a relatively clean chromatogram, potentially misleading scientists into concluding the crude peptide required minimal purification. However, this initial assessment proved dangerously incomplete.

Orthogonal Method Reveals Hidden Truth

When researchers applied an orthogonal purification approach using PurePep EasyClean (PEC) technology followed by RP-HPLC, a different reality emerged [21]. The PEC technology employs a chemo-selective separation principle, fundamentally different from the hydrophobic interaction mechanism of RP-HPLC. Through capping during synthesis, only the full-length peptide becomes accessible for modification with a traceless cleavable purification linker, enabling selective isolation from a complex mixture via catch-and-release principles [21].

Mass spectral analysis of the seemingly clean primary RP-HPLC peak revealed several co-eluting impurities that the primary method failed to resolve [21]. These included significant Ala (A)-, Arg (R)-, and Thr (T) deletion sequences that remained hidden within the main peak [21]. The initial "clean" appearance of the chromatogram was deceiving—the crude peptide actually had a purity of only 29%, a fact only revealed through orthogonal analysis [21].

Quantitative Comparison of Purification Efficacy

The dramatic improvement achieved through orthogonal purification is quantified in the table below, which compares the performance of different purification approaches for the Histone H3 peptide:

Table 1: Comparison of Purification Performance for Histone H3 (1-20) Peptide [21]

| 1st Dimension Purification | 2nd Dimension Purification | Final Purity | ACN Used | Total Waste |
|---|---|---|---|---|
| PEC | - | 86% | 50 mL | 200 mL |
| PEC | RP-HPLC | 96% | 1050 mL | 3200 mL |
| Flash (HFBA-enhanced) | - | 66% | 500 mL | 1500 mL |
| Flash (HFBA-enhanced) | RP-HPLC | 85% | 1500 mL | 4500 mL |

The data show that a single orthogonal PEC purification achieved higher purity (86%) than the first-dimension flash purification (66%) while using a tenth of the acetonitrile (50 mL versus 500 mL) and generating far less waste (200 mL versus 1500 mL) [21]. When combined with subsequent RP-HPLC as a second dimension, the orthogonal approach reached 96% purity, compared with 85% for the two-step flash and RP-HPLC approach [21].
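The solvent and waste savings can be checked directly from the table values. The short calculation below uses only the figures reported in the table; the dictionary layout and function name are illustrative.

```python
# Values transcribed from the purification comparison table [21].
purification = {
    "PEC": {"purity_pct": 86, "acn_ml": 50, "waste_ml": 200},
    "PEC + RP-HPLC": {"purity_pct": 96, "acn_ml": 1050, "waste_ml": 3200},
    "Flash": {"purity_pct": 66, "acn_ml": 500, "waste_ml": 1500},
    "Flash + RP-HPLC": {"purity_pct": 85, "acn_ml": 1500, "waste_ml": 4500},
}


def percent_reduction(baseline, alternative):
    """Reduction of the alternative relative to the baseline, in percent."""
    return 100.0 * (baseline - alternative) / baseline


comparisons = [("Flash", "PEC"), ("Flash + RP-HPLC", "PEC + RP-HPLC")]
reductions = {}
for baseline, alternative in comparisons:
    reductions[alternative] = {
        metric: percent_reduction(purification[baseline][metric],
                                  purification[alternative][metric])
        for metric in ("acn_ml", "waste_ml")
    }
    print(alternative, {k: round(v, 1) for k, v in reductions[alternative].items()})
```

For the single-step comparison this gives a 90% reduction in acetonitrile and roughly 87% less waste; for the two-step workflows the reductions are 30% and roughly 29%.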

Additional Case Studies: Orthogonal HPLC in Small Molecule API Development

Systematic Screening Approach for Small Molecules

Beyond peptide applications, orthogonal method development has proven equally valuable for small molecule pharmaceuticals. One systematic approach employs six different broad gradient methods across six different columns—totaling 36 screening conditions—to develop a comprehensive understanding of impurity profiles [16]. This extensive screening uses columns with different bonded phases (C18, C8, PFP, phenyl, and polar-embedded) combined with mobile phases modified with different pH regulators (formic acid, trifluoroacetic acid, ammonium acetate, ammonium formate, phosphate buffer) to maximize selectivity differences [16].

Case Study 1: Compound A - Revealing Co-eluted Impurities and Dimers

For Compound A, a primary HPLC method showed no new impurities in a new API batch, suggesting consistent quality [16]. However, orthogonal method analysis revealed a different profile—previously undetected impurities (A1 and A2) were co-eluting in the primary method, and highly retained dimeric compounds (dimer 1 and dimer 2) were also present but missed by the primary method [16]. This discovery fundamentally changed the understanding of the impurity profile and necessitated method enhancement.

Case Study 2: Compound B - Identifying Co-elution and Isomers

In another instance, analysis of a new drug substance lot of Compound B with the primary method showed a 0.40% impurity [16]. The orthogonal method demonstrated this single peak actually represented two co-eluted compounds (Impurity A and Impurity B) [16]. Additionally, a previously unknown isomer of the API was detected only by the orthogonal method, highlighting a critical gap in the primary method's selectivity [16].

Case Study 3: Compound C - API Co-elution with Impurity

For Compound C, both primary and orthogonal methods detected two impurities in a new drug substance batch [16]. However, the orthogonal method exclusively revealed a third component (Impurity 3) at 0.10% that was co-eluting with the API in the primary method—a particularly concerning finding given that API-impurity co-elution represents one of the most challenging scenarios for accurate quantification [16].

Experimental Protocols for Orthogonal Method Development

Systematic Screening Protocol

A robust orthogonal screening protocol involves these critical steps [16]:

Sample Preparation: Obtain all available batches of drug substances and drug products. Generate potential degradation products via forced decomposition studies under stressed conditions (acid, base, oxidation, thermal, photolytic), typically degrading samples 5-15% to avoid secondary degradation products [16].

Primary Screening: Analyze generated samples using a single chromatographic method (either a method established during discovery or a generic broad gradient) to identify samples with unique impurity profiles for further method development [16].

Orthogonal Screening: Screen selected samples using multiple broad gradients on different columns. A standardized approach uses six different gradients on each of six columns (36 conditions per sample) [16]. Mobile phases should include different pH modifiers prepared at 20× the required concentration and added at constant 5% (v/v). Typical modifiers include [16]:

- 0.1% formic acid (pH ~2.7)

- 0.1% trifluoroacetic acid (pH ~2.0)

- 10 mM ammonium acetate (pH ~6.8)

- 10 mM ammonium formate (pH ~3.8)

- 10 mM phosphate buffer (pH ~2.7, 4.5, 7.0)
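The 20× stock and constant 5% (v/v) addition are two sides of the same dilution: 5% of the final volume is a 1-in-20 dilution, so the modifier reaches its intended working concentration. A minimal arithmetic check, with illustrative function names, is shown below.

```python
STOCK_FOLD = 20        # stock is prepared at 20x the working concentration
ADDED_FRACTION = 0.05  # stock is delivered at a constant 5% (v/v) of the mobile phase


def stock_concentration(working_concentration):
    """Concentration to prepare so that a 5% addition gives the working value."""
    return working_concentration * STOCK_FOLD


def final_concentration(stock, added_fraction=ADDED_FRACTION):
    """Concentration in the mobile phase after adding the stock at the given fraction."""
    return stock * added_fraction


# Units carry through unchanged: percent stays percent, mM stays mM.
formic_stock_pct = stock_concentration(0.1)    # for 0.1% formic acid
acetate_stock_mm = stock_concentration(10.0)   # for 10 mM ammonium acetate
print(formic_stock_pct, acetate_stock_mm,
      final_concentration(formic_stock_pct), final_concentration(acetate_stock_mm))
```

So a 0.1% formic acid condition calls for a 2% stock, and a 10 mM buffer condition calls for a 200 mM stock.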

Column Selection: Utilize columns with different selectivity mechanisms. A potential column set includes [16]:

- Zorbax Eclipse XDB-C18

- Zorbax Eclipse XDB-C8

- YMC-Pack Pro C18

- Phenomenex Curosil-PFP

- Phenomenex Synergi Polar-RP

- Waters XBridge Shield RP18

Method Selection and Optimization: Based on screening results, select a primary method that separates all known components and an orthogonal method that provides significantly different selectivity. Software tools like DryLab can assist in optimizing both methods [16].

HILIC-RP-HPLC Orthogonal System for Cyclic Peptides

Recent research has established HILIC-RP-HPLC as a particularly effective orthogonal system for synthetic cyclic peptides [23]. The experimental protocol involves:

- Column Selection: Three polymer-based HILIC columns with different functionalities: acidic, basic, and zwitterionic [23].

- Mobile Phase Optimization: Seven different mobile phases are screened to investigate effects of additives and pH. Ammonium acetate has been identified as an effective additive [23].

- Parameter Optimization: Derringer's desirability function is employed based on five criteria (purity, impurity factor, peak symmetry factor, theoretical plate number, and retention time) to identify optimal screening conditions [23].

- Orthogonality Confirmation: RP-HPLC and HILIC are confirmed to be mutually orthogonal, with most impurities exhibiting small RP-HPLC retention times but large HILIC retention times, and vice versa [23].
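Derringer's desirability approach maps each response onto a 0 to 1 scale and combines the individual scores as a geometric mean, so that a single unacceptable response drives the overall score to zero. The sketch below shows the standard one-sided forms; the limits, targets, and example responses are hypothetical and are not the values used in [23].

```python
import math


def desirability_larger_is_better(y, low, target, weight=1.0):
    """0 at or below `low`, 1 at or above `target`, power-scaled in between."""
    if y <= low:
        return 0.0
    if y >= target:
        return 1.0
    return ((y - low) / (target - low)) ** weight


def desirability_smaller_is_better(y, target, high, weight=1.0):
    """1 at or below `target`, 0 at or above `high`, power-scaled in between."""
    if y <= target:
        return 1.0
    if y >= high:
        return 0.0
    return ((high - y) / (high - target)) ** weight


def overall_desirability(scores):
    """Geometric mean of the individual desirabilities."""
    if not scores:
        raise ValueError("at least one desirability score is required")
    return math.prod(scores) ** (1.0 / len(scores))


# Hypothetical responses for one screening condition.
scores = [
    desirability_larger_is_better(96.0, low=90.0, target=99.0),      # purity (%)
    desirability_smaller_is_better(1.2, target=1.0, high=2.0),       # peak symmetry factor
    desirability_larger_is_better(8000, low=2000, target=10000),     # theoretical plate number
]
print(round(overall_desirability(scores), 3))
```

Ranking every column and mobile phase combination by this overall score gives a single, transparent criterion for choosing the screening condition to carry forward.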

Visualization of Orthogonal Method Concepts

Diagram 1: Orthogonal HPLC Workflow

Diagram 2: Orthogonal Separation Techniques

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Research Reagents and Materials for Orthogonal HPLC Method Development

| Reagent/Material | Function in Orthogonal Method Development | Application Notes |
|---|---|---|
| C18 Columns | Primary reversed-phase separation mechanism based on hydrophobic interactions | Standard first-line approach; multiple brands show different selectivity [16] |
| PFP Columns | Complementary reversed-phase separation with π-π interactions and shape selectivity | Effective for separating structural isomers and compounds with aromatic rings [16] |
| HILIC Columns (acidic, basic, zwitterionic) | Orthogonal hydrophilic interaction mechanism | Particularly effective for polar compounds and peptides; provides different selectivity vs. RP-HPLC [22] [23] |
| Ammonium Acetate | MS-compatible buffer for mobile phase modification | Effective additive for both RP-HPLC and HILIC; compatible with mass spectrometry detection [16] [23] |
| Trifluoroacetic Acid (TFA) | Ion-pairing reagent for improved peak shape in RP-HPLC | Enhances separation of basic compounds; may suppress MS ionization [16] |
| Formic Acid | MS-compatible acidic mobile phase modifier | Suitable for positive ionization MS detection; typically used at 0.1% concentration [16] |
| Phosphate Buffers | UV-transparent buffers for high-sensitivity detection | Provide precise pH control for UV-based methods; non-volatile, so not suited to MS detection [16] |
| Fused-Core Columns | High-efficiency columns for challenging separations | Enable minute-scale runs of complex samples like oligonucleotides with high resolution [24] |

The case studies presented show that orthogonal HPLC methods are not optional advanced techniques but essential components of robust pharmaceutical development. The ability to detect co-eluting impurities that escape primary methods directly affects product quality, safety profiles, and regulatory compliance.

The strategic implementation of orthogonal methods begins early in development, employing systematic screening approaches that leverage different separation mechanisms (RP-HPLC, HILIC, SFC, CE) with varied stationary and mobile phases [22] [16]. This comprehensive approach ensures "hidden" impurities are identified before they can impact clinical development or commercial production.

As pharmaceutical compounds grow more complex, from synthetic cyclic peptides to oligonucleotides, the role of orthogonal methods will only expand [23] [24]. Building orthogonality into analytical control strategies is a critical investment in product quality, because it ensures that purity assessments reflect the true impurity profile rather than the limitations of a single analytical method.

Building Your Orthogonal Toolkit: Method Selection and Implementation Across Domains

In pharmaceutical development, chromatographic orthogonality refers to the use of separation methods that operate by distinct and independent mechanisms to maximize the probability of resolving all components in a complex sample. The fundamental principle is that two analytes co-eluting under one set of conditions will likely be separated under another, orthogonal set due to differences in their physicochemical interactions with the chromatographic system [25]. This approach is particularly critical for impurity profiling and method validation, where a primary stability-indicating method must be challenged by an orthogonal method to demonstrate specificity and ensure no critical peaks are missed [16]. Orthogonality is quantitatively assessed using various orthogonality metrics (OMs) that measure how effectively the two-dimensional separation space is utilized, with ideal orthogonal systems exhibiting minimal correlation between retention times in different dimensions [26] [27].

Systematic screening with multiple columns and mobile phases enables researchers to identify optimal orthogonal systems prior to method development, providing a strategic advantage for characterizing impurities in drug substances with unknown impurity profiles [28]. This approach has demonstrated particular value when drug substance synthetic routes and drug product dosage forms are being selected during early phase development, where iterative processes require HPLC methods to separate potentially different sets of impurities and degradation products as development advances [16]. The systematic nature of this screening ensures that methods developed for release and stability testing of clinical supplies can unequivocally monitor all impurities and degradation products to assure products are safe and effective in vivo while meeting regulatory guidelines for reporting, identification, and toxicological qualification [16].

Theoretical Framework and Orthogonality Metrics

Defining and Quantifying Orthogonality

Chromatographic orthogonality can be defined as the condition where "two separations of quite different selectivity with marked changes in relative retention so that two peaks which are unresolved in one chromatogram will likely be separated in the second chromatogram" [25]. Operationally, orthogonal separations occur when the separation space is "uniformly covered" with zones without particular bias in their location [25]. From a practical perspective, orthogonality is achieved when the retention mechanisms in each dimension are independent, providing complementary selectivities that spread sample components across a broad range of retention factors [29].

Multiple mathematical approaches have been developed to quantify orthogonality, each with distinct advantages and limitations. These orthogonality metrics (OMs) generally measure either the correlation between retention times in different dimensions or the effective utilization of the available separation space [26] [25]. Effective OMs must possess certain essential properties: they should be scaled between defined limits (typically 0 to 1 or 0% to 100%), preserve data symmetry (giving the same result regardless of the order of dimensions), and accurately reflect the practical separation effectiveness [26]. The selection of an appropriate orthogonality metric depends on the specific application, with different metrics sometimes favoring certain chromatographic patterns [25].

Key Orthogonality Metrics

Table 1: Comparison of Major Orthogonality Metrics

| Metric Category | Specific Metrics | Basis of Calculation | Advantages | Limitations |
|---|---|---|---|---|
| Correlation Coefficients | Pearson, Kendall | Statistical correlation between retention factors | Simple to calculate, requires no data processing | Limited to linear relationships; insensitive to space utilization [27] |
| Bin-Counting Approaches | %O, %BIN | Division of 2D space into bins; count occupied bins | Intuitive; measures space utilization | Dependent on number of bins selected [26] [30] |
| Geometric Approaches | Convex Hull | Area enclosing all data points in 2D space | Measures overall zone occupancy | Overly sensitive to outliers [30] [25] |
| Distance-Based Methods | Nearest Neighbor Distances (NND) | Distances between closest peaks | Emphasizes critical shortest distances | Correlates poorly with expert assessment in some studies [25] |
| Polynomial Fitting | %FIT | Fitting polynomials through xy and yx data | High correlation with expert scores; requires no settings | Newer method with limited validation [30] |

Research comparing 20 different orthogonality metrics found that no single metric stands out as clearly superior, and products of specific OMs (particularly a global metric like convex hull paired with a local metric like box-counting fractal dimension) often correlate better with expert assessments of chromatographic quality than individual metrics [25]. This suggests that a comprehensive approach utilizing multiple complementary metrics may provide the most reliable assessment of orthogonality for method selection and optimization.
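To make two of the metric categories concrete, the sketch below computes a Pearson correlation and a simple bin-occupancy fraction for retention times measured in two dimensions. It is an illustration under stated assumptions: the retention data are invented, the bin count is arbitrary, and the occupancy fraction is a simplified stand-in for the bin-counting idea, not the published %O or %BIN formulas.

```python
from math import sqrt

def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)

def normalize(values):
    """Scale values to the range 0..1 (assumes they are not all identical)."""
    lo, hi = min(values), max(values)
    return [(v - lo) / (hi - lo) for v in values]

def bin_coverage(x, y, bins_per_axis):
    """Fraction of bins in a square grid that contain at least one peak."""
    last = bins_per_axis - 1
    occupied = {
        (min(int(a * bins_per_axis), last), min(int(b * bins_per_axis), last))
        for a, b in zip(normalize(x), normalize(y))
    }
    return len(occupied) / bins_per_axis ** 2

# Hypothetical retention times (min) for eight components.
first_dim = [1, 2, 3, 4, 5, 6, 7, 8]
correlated = [1, 2, 3, 4, 5, 6, 7, 8]    # second dimension repeats the first
scattered = [5, 2, 8, 1, 6, 3, 7, 4]     # second dimension with different selectivity

for label, second_dim in (("correlated", correlated), ("scattered", scattered)):
    r = pearson_r(first_dim, second_dim)
    cov = bin_coverage(first_dim, second_dim, bins_per_axis=4)
    print(f"{label}: r = {r:.2f}, bin coverage = {cov:.2f}")
```

With only eight peaks and sixteen bins, coverage cannot exceed 0.5 in this toy case, which illustrates the dependence on bin number listed as a limitation in Table 1.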

Experimental Design for Systematic Screening

Screening Methodology

A robust systematic approach to orthogonal screening involves multiple phases designed to comprehensively characterize the separation landscape for a given drug substance and its potential impurities. One well-established methodology consists of five key steps [16]:

First, all available batches of drug substances and drug products are obtained to assure all synthetic impurities are assessed, while potential degradation products are generated via forced decomposition studies [16]. Second, these samples are screened by a single chromatographic method (either a method established during drug discovery or a generic broad gradient method) to identify samples for further method development. The samples carried forward are each drug substance lot with a unique impurity profile and the samples of interest from forced degradation studies, which are typically degraded 5-15% to avoid secondary degradation products [16].
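The sample selection logic described above can be sketched as a small filter: keep one lot per distinct impurity profile, and keep stressed samples whose degradation falls in the 5-15% window. The lot names, relative retention times, and degradation percentages are hypothetical, and representing an impurity profile as a set of relative retention times is an assumption made only for this illustration.

```python
def unique_profile_lots(lots):
    """Return the first lot seen for each distinct impurity profile."""
    seen = set()
    selected = []
    for name, impurity_rrts in lots.items():
        profile = frozenset(impurity_rrts)
        if profile not in seen:
            seen.add(profile)
            selected.append(name)
    return selected

def stressed_samples_in_window(samples, low=5.0, high=15.0):
    """Return stressed samples whose percent degradation lies within [low, high]."""
    return [name for name, pct in samples.items() if low <= pct <= high]

# Hypothetical impurity profiles: relative retention times of observed impurities.
lots = {
    "lot_A": [0.85, 1.12],
    "lot_B": [0.85, 1.12],        # same profile as lot_A, so it is skipped
    "lot_C": [0.85, 1.12, 1.30],  # additional impurity, so it is kept
}

# Hypothetical forced degradation results: percent of parent compound degraded.
stressed = {"acid": 8.0, "base": 32.0, "peroxide": 12.5, "light": 1.0}

print(unique_profile_lots(lots))             # lots carried into screening
print(stressed_samples_in_window(stressed))  # stressed samples carried into screening
```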

The core screening phase analyzes the selected samples with six broad gradients on each of six different columns, for a total of 36 conditions per sample. Broad gradients are chosen to minimize both elution at the solvent front and non-elution of components [16]. The modifiers are typically prepared at 20× the required concentration and added to the mobile phase at a constant 5% (v/v), which delivers the required concentration after dilution. Commonly used modifiers include formic acid, trifluoroacetic acid, ammonium acetate, ammonium hydroxide, ammonium bicarbonate, and ammonium carbonate, which together provide a pH range from approximately 2.7 to 9.5 [16]. Columns are selected based on anticipated selectivity differences. A representative set might include Zorbax Eclipse XDB-C8, Zorbax Bonus-RP, Zorbax StableBond CN, Zorbax Extend-C18, Zorbax SB-Phenyl, and Zorbax SB-C18, though this set should be periodically revised as new columns with novel selectivity become available [16].
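As a quick check on the arithmetic of this screening design, the sketch below enumerates the column and modifier combinations and confirms the dilution of the modifier stock. It assumes one broad gradient per modifier, which is an interpretation of the six-by-six design rather than something stated explicitly in [16].

```python
from itertools import product

# Columns and modifiers as listed in the screening description.
COLUMNS = [
    "Zorbax Eclipse XDB-C8",
    "Zorbax Bonus-RP",
    "Zorbax StableBond CN",
    "Zorbax Extend-C18",
    "Zorbax SB-Phenyl",
    "Zorbax SB-C18",
]
MODIFIERS = [
    "formic acid",
    "trifluoroacetic acid",
    "ammonium acetate",
    "ammonium hydroxide",
    "ammonium bicarbonate",
    "ammonium carbonate",
]

# Assumption: each modifier defines one broad gradient, giving 6 x 6 conditions.
conditions = list(product(COLUMNS, MODIFIERS))
print(f"{len(conditions)} screening conditions per sample")

# A 20x stock delivered at 5% (v/v) of the mobile phase gives the 1x working level.
STOCK_FOLD = 20
MODIFIER_FRACTION = 0.05
final_fold = STOCK_FOLD * MODIFIER_FRACTION
print(f"modifier concentration in the mobile phase: {final_fold:.1f}x the required level")
```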

Based on the screening results, conditions that separate all components of interest are identified, with particular attention to finding both a primary method and an orthogonal method that provides very different selectivity [16]. Finally, to verify the selected methods, the previously identified samples containing degradation products, along with the most stressed samples from other stress conditions, are analyzed under both sets of conditions to assure no peaks were missed by the initial generic gradient [16].
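One way to picture the selection of a primary and an orthogonal method from screening data is sketched below. It keeps only conditions that resolve every component, takes the best-resolved condition as the primary method, and picks as the orthogonal method the remaining condition whose retention times correlate least with the primary. The retention times, the 0.2 min resolution threshold, and the use of Pearson correlation as the selectivity criterion are all illustrative assumptions, not the procedure in [16].

```python
from math import sqrt

def min_gap(times):
    """Smallest spacing between adjacent peaks, in minutes."""
    ordered = sorted(times)
    return min(b - a for a, b in zip(ordered, ordered[1:]))

def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)

def pick_methods(retention, required_gap=0.2):
    """Return (primary, orthogonal) condition names from screening results."""
    resolving = {n: t for n, t in retention.items() if min_gap(t) >= required_gap}
    if len(resolving) < 2:
        raise ValueError("fewer than two conditions resolve all components")
    primary = max(resolving, key=lambda n: min_gap(resolving[n]))
    candidates = [n for n in resolving if n != primary]
    orthogonal = min(
        candidates,
        key=lambda n: abs(pearson_r(resolving[primary], resolving[n])),
    )
    return primary, orthogonal

# Hypothetical retention times (min) of the same five components per condition.
retention = {
    "C18 / formic acid": [2.0, 4.0, 6.0, 8.0, 10.0],
    "C8 / formic acid": [2.1, 3.9, 5.8, 7.5, 9.0],           # similar elution order
    "Phenyl / ammonium acetate": [7.0, 2.5, 9.5, 4.0, 5.5],  # different elution order
    "CN / TFA": [3.0, 3.1, 6.0, 7.0, 9.0],                   # two components co-elute
}

primary, orthogonal = pick_methods(retention)
print("primary:", primary)
print("orthogonal:", orthogonal)
```

In practice the choice would also weigh peak shape, run time, and detector compatibility, so a ranking like this is best treated as a shortlist for expert review.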

Research Reagent Solutions

Table 2: Essential Materials for Orthogonal Screening

| Category | Specific Items | Function/Purpose |
|---|---|---|
| Stationary Phases | Zirconia-based (PBD-coated), silica-based (base-deactivated, polar-embedded, monolithic), C8, C18, CN, Phenyl, HILIC | Provide different selectivity mechanisms for orthogonal separations [28] [16] |
| Mobile Phase Modifiers | Formic acid, trifluoroacetic acid, ammonium acetate, ammonium hydroxide, ammonium bicarbonate, ammonium carbonate | Control pH and ion-pair interactions; different modifiers alter selectivity [16] |
| Organic Solvents | Acetonitrile, methanol, mixtures thereof | Varying solvent strength and selectivity through different organic modifiers [16] [27] |
| Buffers and Additives | Tributylammonium acetate (IP-RP-TBuAA), sodium perchlorate (SAX-NaClO4) | Enable specific separation modes (e.g., ion-pair RP, strong anion exchange) [31] |
| Instrumentation | Multi-port switching valves, trapping columns, different column ovens | Enable automated screening and method coupling; temperature provides additional selectivity dimension [32] [27] |

Workflow for Orthogonal Method Selection

The process of selecting orthogonal chromatographic systems follows a logical sequence that progresses from system characterization through data analysis to final method implementation.

Comparative Performance of Orthogonal Systems

Quantitative Assessment of System Orthogonality

The effectiveness of different chromatographic system combinations can be compared quantitatively using orthogonality metrics, which provide an objective basis for system selection. In one systematic study, the most orthogonal system identified was a zirconia-based stationary phase coated with a polybutadiene (PBD) polymer with methanol at pH 2.5, which showed high orthogonality toward several silica-based systems, particularly a base-deactivated C16-amide silica with methanol at pH 2.5 [28]. This result was confirmed by cross-validation and by additional validation sets that included non-ionizable solutes and mixtures of drugs and their impurities [28].

Recent advances in orthogonality metrics have introduced new methods such as %BIN and %FIT, which show high correlation with experts' orthogonality scores (r-squared values of 0.94-0.95) and offer improved discriminative power compared to earlier metrics like the Asterisks equations [30]. These metrics are particularly valuable because they require no specific settings for calculation and are easy to obtain, making them practical for routine method development [30]. Studies comparing orthogonality metrics have found that products of OMs (particularly a global metric measuring separation space utilization paired with a local metric measuring peak spacing) often show better correlation with expert assessments than single metrics, suggesting that optimization should target maximizing such OM products [25].
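The idea of multiplying a global metric by a local one can be sketched as follows. Here the global term is the convex hull area of the normalized retention data, and the local term is a scaled mean nearest-neighbor distance. Both the local metric and its scaling are hypothetical choices made for illustration; they are not the specific metric pairs evaluated in [25], and the retention data are invented.

```python
from math import dist, sqrt

def normalize(values):
    """Scale values to the range 0..1 (assumes they are not all identical)."""
    lo, hi = min(values), max(values)
    return [(v - lo) / (hi - lo) for v in values]

def convex_hull_area(points):
    """Area of the convex hull (monotone chain plus shoelace formula)."""
    pts = sorted(set(points))
    if len(pts) < 3:
        return 0.0

    def cross(o, a, b):
        return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])

    lower, upper = [], []
    for p in pts:
        while len(lower) >= 2 and cross(lower[-2], lower[-1], p) <= 0:
            lower.pop()
        lower.append(p)
    for p in reversed(pts):
        while len(upper) >= 2 and cross(upper[-2], upper[-1], p) <= 0:
            upper.pop()
        upper.append(p)
    hull = lower[:-1] + upper[:-1]
    twice_area = sum(
        x1 * y2 - x2 * y1
        for (x1, y1), (x2, y2) in zip(hull, hull[1:] + hull[:1])
    )
    return abs(twice_area) / 2.0

def mean_nearest_neighbor(points):
    """Average distance from each peak to its closest neighbor."""
    nearest = [
        min(dist(p, q) for j, q in enumerate(points) if j != i)
        for i, p in enumerate(points)
    ]
    return sum(nearest) / len(points)

def om_product(x, y):
    """Product of a global (hull area) and a local (peak spacing) term."""
    pts = list(zip(normalize(x), normalize(y)))
    global_om = convex_hull_area(pts)  # fraction of the unit square enclosed
    # Hypothetical scaling: cap the spacing term at 1 so the product stays in 0..1.
    local_om = min(1.0, mean_nearest_neighbor(pts) * sqrt(len(pts)))
    return global_om * local_om

# Hypothetical retention times (min) for eight components in two dimensions.
first_dim = [1, 2, 3, 4, 5, 6, 7, 8]
correlated = [1, 2, 3, 4, 5, 6, 7, 8]
scattered = [5, 2, 8, 1, 6, 3, 7, 4]

print(f"correlated pair: {om_product(first_dim, correlated):.3f}")
print(f"scattered pair:  {om_product(first_dim, scattered):.3f}")
```

A perfectly correlated pair collapses onto a line, so the hull area and therefore the product are zero, while the scattered pair scores higher on both terms.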

Practical Comparison of System Combinations

Table 3: Performance Comparison of Different Orthogonal Systems

| System Combination | Application Focus | Orthogonality Assessment | Practical Peak Capacity | Key Advantages |
|---|---|---|---|---|
| RPLC × RPLC | Charged compounds, pharmaceuticals, peptides | Moderate to high orthogonality with proper condition selection [27] | High due to efficiencies in both dimensions [27] | High separation power; mobile phase compatibility |
| RPLC × HILIC | Complex mixtures, natural products | Very high theoretical orthogonality [27] [29] | Limited by HILIC performance, especially for peptides [27] | Different separation mechanisms; good for polar compounds |
| IP-RP × SAX | Oligonucleotides, charged molecules | High orthogonality for charged compounds [31] | Significantly increased vs. 1D methods [31] | Complementary mechanisms for size and sequence variants |