Beyond Sequence: A Comparative Analysis of Topological and Sequence Similarity for Advanced Biological Alignment

Abstract

This article provides a comprehensive comparative analysis of sequence-based and topology-based alignment methodologies, crucial for researchers, scientists, and drug development professionals. We first explore the foundational principles of both approaches, establishing the conceptual shift from discrete, hierarchical to continuous, network-based models of biological space. The review then details cutting-edge methodological frameworks, including Enrichment of Network Topological Similarity (ENTS), data-driven network alignment (TARA/TARA++), and alignment-free comparators. A dedicated troubleshooting section addresses persistent challenges like statistical validation, data noise, and algorithmic complexity, offering optimization strategies such as meta-alignment and integration of physicochemical properties. Finally, we present a rigorous validation of these methods through benchmark studies and real-world applications in protein fold recognition and function prediction, synthesizing key performance differentiators. This analysis aims to guide the selection and development of next-generation alignment tools for enhanced biomedical discovery.

From Sequences to Networks: Foundational Concepts in Biological Similarity Search

Sequence alignment represents one of the most fundamental methodologies in bioinformatics, providing the foundation for comparing biological sequences to identify similarities, infer evolutionary relationships, and predict molecular functions. For decades, alignment-based approaches have served as the cornerstone of computational biology, enabling researchers to extract meaningful patterns from DNA, RNA, and protein sequences. These methods operate on the principle that related sequences share common ancestry, which is reflected in their residue patterns and structural conservation.

Despite their widespread adoption and utility, traditional sequence alignment techniques face significant challenges when operating in scenarios of low sequence similarity or when processing the massive datasets generated by modern sequencing technologies. This comprehensive analysis examines the core principles governing sequence alignment algorithms, their operational classifications, and the intrinsic limitations that emerge particularly in the "twilight zone" of remote homology detection, where sequence similarity falls below 20-35% [1]. Furthermore, we contextualize these established methods within the emerging paradigm of topological data analysis, which offers complementary approaches for capturing structural relationships that may elude sequence-based comparison.

Algorithmic Foundations and Classification

Sequence alignment methods can be broadly categorized into three distinct classes based on their operational principles and application domains: global, local, and hybrid approaches. Each category employs specific algorithmic strategies to optimize the comparison between biological sequences.

Global Alignment Algorithms

Global alignment methods enforce end-to-end comparison of sequences, assuming similarity across their entire length. The Needleman-Wunsch algorithm is the pioneering dynamic programming approach in this category: it fills a scoring matrix in which each cell holds the best score for aligning a prefix of one sequence with a prefix of the other [2]. The algorithm is guaranteed to find an optimal alignment under the chosen scheme of match, mismatch, and gap scores. This rigor comes at quadratic cost: time and memory grow as O(n²) for two sequences of length n (O(mn) for lengths m and n), which is computationally prohibitive for large-scale databases or lengthy genomic sequences [2].
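The matrix-filling recurrence can be sketched in a few lines. This is a minimal illustration, assuming a linear gap penalty and simple match/mismatch scores; the function name and default scores are chosen here for illustration and are not taken from [2].

```python
def needleman_wunsch_score(a: str, b: str, match: int = 1,
                           mismatch: int = -1, gap: int = -1) -> int:
    """Return the optimal global alignment score of a and b (linear gap penalty)."""
    rows, cols = len(a) + 1, len(b) + 1
    score = [[0] * cols for _ in range(rows)]

    # Aligning a prefix against the empty string costs one gap per residue.
    for i in range(1, rows):
        score[i][0] = i * gap
    for j in range(1, cols):
        score[0][j] = j * gap

    for i in range(1, rows):
        for j in range(1, cols):
            substitution = match if a[i - 1] == b[j - 1] else mismatch
            score[i][j] = max(
                score[i - 1][j - 1] + substitution,  # align a[i-1] with b[j-1]
                score[i - 1][j] + gap,               # gap in b
                score[i][j - 1] + gap,               # gap in a
            )
    # The bottom-right cell covers both full sequences (end-to-end alignment).
    return score[-1][-1]
```

The nested loops visit every cell of the (len(a)+1) by (len(b)+1) matrix once, which is where the quadratic time and memory cost comes from.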

Local Alignment Algorithms

For sequences of dissimilar length, or those sharing only isolated regions of similarity, local alignment methods are more suitable. The Smith-Waterman algorithm, derived from Needleman-Wunsch, identifies local regions of high similarity without enforcing end-to-end alignment [2]. Any cell score that would fall below zero is reset to zero during matrix filling, so a poorly matching stretch ends the current alignment instead of penalizing the next one; the highest-scoring cell then marks the end of the best local alignment. The method has the same quadratic complexity as global alignment, which limits its practical application to large datasets.
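A minimal sketch of the zero-reset rule follows, assuming linear gap penalties and illustrative default scores (the names and values are not from [2]). It returns only the best local score, so it keeps just two matrix rows; recovering the alignment itself by traceback would require the full matrix.

```python
def smith_waterman_score(a: str, b: str, match: int = 2,
                         mismatch: int = -1, gap: int = -1) -> int:
    """Return the best local alignment score between a and b."""
    best = 0
    prev = [0] * (len(b) + 1)  # previous matrix row; first row is all zeros
    for i in range(1, len(a) + 1):
        curr = [0] * (len(b) + 1)
        for j in range(1, len(b) + 1):
            substitution = match if a[i - 1] == b[j - 1] else mismatch
            curr[j] = max(
                0,                           # reset: start a new local alignment
                prev[j - 1] + substitution,  # extend diagonally
                prev[j] + gap,               # gap in b
                curr[j - 1] + gap,           # gap in a
            )
            best = max(best, curr[j])        # the optimum may end anywhere
        prev = curr
    return best
```

For example, `smith_waterman_score("XXXACGTXXX", "YYYACGTYYY")` scores only the shared `ACGT` core, because the flanking mismatches are cut off by the reset to zero.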

Heuristic and Hybrid Methods

To address the computational limitations of exact algorithms, heuristic approaches sacrifice guaranteed optimality for practical efficiency. The Basic Local Alignment Search Tool (BLAST) is the most widely adopted heuristic: it identifies short exact or near-exact matches ("words") and uses them as seeds for alignment extension [2] [3]. Seeding sharply reduces the search space, enabling rapid database queries with time and memory complexity reported as linear, O(n), in [2]. Hybrid methods like FASTA combine heuristic filtering with dynamic programming: the query is divided into short words (k-mers) to locate candidate regions, which are then refined with dynamic programming [2].
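The seed-and-extend idea can be illustrated with a toy example. This sketch assumes exact k-mer seeds and identity-only ungapped extension; production tools such as BLAST use scored neighborhood words and score-based drop-off rules, which are omitted here. The function names are hypothetical.

```python
from collections import defaultdict


def find_seeds(query: str, subject: str, k: int = 3) -> list[tuple[int, int]]:
    """Return (query_pos, subject_pos) pairs where an exact k-mer is shared."""
    index = defaultdict(list)
    for j in range(len(subject) - k + 1):
        index[subject[j:j + k]].append(j)  # k-mer -> positions in subject
    seeds = []
    for i in range(len(query) - k + 1):
        for j in index.get(query[i:i + k], []):
            seeds.append((i, j))
    return seeds


def extend_seed(query: str, subject: str, i: int, j: int, k: int) -> tuple[int, int, int]:
    """Grow a seed left and right while residues stay identical (no gaps).

    Returns (query_start, subject_start, length) of the extended match.
    """
    left = 0
    while (i - left - 1 >= 0 and j - left - 1 >= 0
           and query[i - left - 1] == subject[j - left - 1]):
        left += 1
    right = 0
    while (i + k + right < len(query) and j + k + right < len(subject)
           and query[i + k + right] == subject[j + k + right]):
        right += 1
    return i - left, j - left, k + left + right
```

Only positions that share a word are examined further, which is why the search avoids filling a full dynamic programming matrix for every database sequence.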

Table 1: Classification of Sequence Alignment Algorithms

| Algorithm Type | Representative Methods | Key Principle | Computational Complexity | Primary Use Cases |
|---|---|---|---|---|
| Global Alignment | Needleman-Wunsch | End-to-end sequence comparison | O(n²) time and memory | Sequences of similar length and domain structure |
| Local Alignment | Smith-Waterman, BLAST | Identify regions of local similarity | O(n²) for exact; O(n) for heuristic | Divergent sequences with isolated similar regions |
| Hybrid Approaches | FASTA, NASA | Combine heuristic filtering with alignment | O(n) to O(n²) depending on implementation | Balancing sensitivity with computational efficiency |

Performance Benchmarking and Quantitative Comparison

Recent algorithms such as NASA (Novel Algorithm for Sequence Alignment) and LexicMap target large-scale database searches, and their reported benchmarks illustrate the trade-offs among speed, memory use, and accuracy [2] [3].

NASA employs a unique two-phase approach consisting of preprocessing and alignment steps. During preprocessing, it determines residue positions within sequences, focusing subsequent comparisons only on informative regions. The alignment phase then calculates sequence similarity scores based on a constant number of comparisons, achieving linear time and memory complexity while maintaining competitive accuracy [2]. Performance benchmarks indicate that NASA outperforms basic algorithms in elapsed time, memory requirements, system resource utilization, and alignment score precision [2].

LexicMap addresses the challenge of aligning sequences against massive genomic databases containing millions of prokaryotic genomes. By selecting a small set of probe k-mers (20,000 31-mers) that efficiently sample the entire database, LexicMap ensures that every 250-bp window of each database genome contains multiple seed k-mers [3]. This strategic seeding approach, combined with a hierarchical indexing system, enables rapid alignment with comparable accuracy to state-of-the-art methods but with greater speed and lower memory consumption [3].

Table 2: Performance Comparison of Modern Alignment Tools

| Tool | Algorithm Type | Time Complexity | Memory Complexity | Key Innovation | Optimal Use Case |
|---|---|---|---|---|---|
| Needleman-Wunsch | Global, exact | O(n²) | O(n²) | Dynamic programming for optimal global alignment | Protein sequences with similar length |
| Smith-Waterman | Local, exact | O(n²) | O(n²) | Dynamic programming for optimal local alignment | Identifying local domains of similarity |
| BLAST | Local, heuristic | O(n) | O(n) | Word-based seeding and extension | Rapid database searches with moderate sensitivity |
| NASA | Hybrid, heuristic | O(n) | O(n) | Preprocessing to identify informative regions | Large datasets with balanced accuracy/speed |
| LexicMap | Heuristic | Not specified | Low memory use | Probe k-mers with hierarchical indexing | Querying genes against millions of prokaryotic genomes |

Critical Limitations in Remote Homology Detection

Despite algorithmic advancements, sequence alignment methods face fundamental limitations in detecting remote homologies, particularly in the "twilight zone" of 20-35% sequence identity [1]. In this region, the accuracy of traditional alignment-based approaches declines rapidly, and they struggle to distinguish true evolutionary relationships from chance similarity.

The core challenge stems from the differential conservation rates between protein sequence and structure. While protein sequences diverge rapidly through evolutionary time, their three-dimensional structures demonstrate significantly higher conservation [1]. Consequently, proteins sharing less than 20-35% sequence identity may maintain nearly identical folds and functions, creating a detection gap for sequence-based methods.

This limitation carries profound implications for drug discovery and protein function prediction, where identifying distant evolutionary relationships can reveal novel therapeutic targets and functional mechanisms. Structure-based alignment tools such as TM-align, DALI, and FAST can accurately detect remote homologs by superimposing protein three-dimensional structures, but they require experimentally determined or predicted structures that remain unavailable for most proteins [1]. Even with advances in protein structure prediction like AlphaFold2, the exponential growth of available protein sequences—particularly from metagenomic studies encompassing billions of unique sequences—far outpaces structural characterization efforts [1].

Emerging Paradigm: Topological Data Analysis as a Complementary Approach

Topological Data Analysis (TDA) has emerged as a powerful framework for extracting robust, multiscale, and interpretable features from complex molecular data [4]. By applying algebraic topology to analyze the "shape" of data, TDA captures topological invariants—such as connectivity, loops, and voids—that persist across multiple scales of observation. These persistent homology descriptors provide explainable representations that cannot be obtained through traditional sequence-based methods [4] [5].

The integration of TDA with machine learning, known as Topological Deep Learning (TDL), has demonstrated remarkable success in challenging bioinformatics applications. In the D3R Grand Challenges for computer-aided drug design, TDL models achieved competitive performance by capturing topological features critical for molecular interactions [4]. Similarly, TDL approaches have revealed SARS-CoV-2 evolutionary mechanisms and accurately predicted emerging dominant variants approximately two months in advance [4].

For drug-target interaction (DTI) prediction, frameworks like Top-DTI integrate persistent homology extracted from protein contact maps and drug molecular images with embeddings from protein language models (pLMs) and drug SMILES strings [6]. This hybrid approach significantly outperforms state-of-the-art methods across multiple evaluation metrics (AUROC, AUPRC, sensitivity, specificity), particularly in challenging cold-split scenarios where test sets contain drugs or targets absent from training data [6].

Experimental Protocols and Methodologies

Embedding-Based Alignment with Clustering Refinement

Recent advances in protein language models (pLMs) have enabled novel alignment approaches that leverage residue-level embeddings. The following protocol describes a method that refines embedding similarity matrices using K-means clustering and double dynamic programming (DDP) for improved remote homology detection [1]:

Embedding Generation: Convert protein sequences into residue-level embeddings using pre-trained pLMs (ProtT5, ProstT5, or ESM-1b) that capture sequence context and physicochemical properties.

Similarity Matrix Construction: Compute a residue-residue similarity matrix $SM_{u \times v}$ where each entry represents the similarity between a residue pair, derived from the Euclidean distance $\delta$ between their embedding vectors: $SM_{a,b} = \exp(-\delta(p_a, q_b))$.

Z-score Normalization: Reduce noise in the similarity matrix through row-wise and column-wise Z-score normalization, averaging the results to create a refined similarity matrix.

K-means Clustering: Apply K-means clustering to group similar residues, creating clusters that capture local structural contexts.

Double Dynamic Programming: Implement DDP to identify optimal alignments by first performing local alignments within clusters followed by global optimization across cluster boundaries.

This approach consistently improves performance in detecting remote homology compared to both traditional sequence-based methods and state-of-the-art embedding-based approaches, demonstrating the value of combining embedding representations with clustering-based refinement [1].
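The similarity-matrix and Z-score steps of this protocol can be sketched without external dependencies. This is an illustrative reading of the description above, not the implementation from [1]: embeddings are assumed to be plain lists of floats (in practice they would come from a pLM), the population standard deviation is assumed, and the small `eps` guard against constant rows or columns is an addition made here.

```python
import math
from statistics import mean, pstdev


def similarity_matrix(p, q):
    """SM[a][b] = exp(-Euclidean distance between embeddings p[a] and q[b])."""
    return [[math.exp(-math.dist(pa, qb)) for qb in q] for pa in p]


def _zscores(values, eps=1e-8):
    """Z-score a sequence of numbers; eps avoids division by zero."""
    mu, sigma = mean(values), pstdev(values)
    return [(v - mu) / (sigma + eps) for v in values]


def zscore_refine(sm):
    """Average the row-wise and column-wise Z-scores of a similarity matrix."""
    row_z = [_zscores(row) for row in sm]
    col_z = [_zscores(col) for col in zip(*sm)]  # col_z[b][a]: one list per column
    return [
        [0.5 * (row_z[a][b] + col_z[b][a]) for b in range(len(sm[0]))]
        for a in range(len(sm))
    ]
```

After refinement, an entry is high only when the residue pair stands out both within its row and within its column, which suppresses residues that look moderately similar to everything.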

Topological Feature Extraction Protocol

For topological approaches, the following workflow enables extraction of persistent homology features from molecular data [6] [5]:

Molecular Representation: Convert molecular structures into appropriate topological representations:

- For proteins: Generate contact maps from 3D structures or predicted distance matrices.

- For drugs: Create 2D molecular images or graph representations from SMILES strings.

Filtration Construction: Construct a filtration of simplicial complexes across multiple scales by varying a proximity parameter ε, which controls the connectivity threshold between molecular nodes.

Persistence Diagram Computation: Apply persistent homology to track the birth and death of topological features (connected components, loops, voids) across the filtration, encoding this information in persistence diagrams.

Feature Vectorization: Convert persistence diagrams into machine-learning-ready feature vectors using methods such as persistence images, landscapes, or silhouettes.

Integration with Sequence Features: Combine topological features with sequence-based embeddings (from pLMs or traditional alignment scores) using feature fusion modules that dynamically weight their relative importance during model training.

This protocol has demonstrated superior performance in predicting drug-target interactions, particularly for cold-split scenarios where traditional sequence-based methods struggle with generalization [6].
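For intuition, the simplest case of the filtration and persistence steps (connected components, i.e. dimension 0) can be computed with a union-find structure. This is a minimal sketch, assuming a Vietoris-Rips filtration in which an edge appears once ε reaches the distance between two points; loops and voids require a full persistent homology library and are not covered. The function names are illustrative.

```python
import math
from itertools import combinations


def h0_persistence(points):
    """Return (birth, death) pairs for connected components as epsilon grows."""
    parent = list(range(len(points)))

    def find(x):
        # Follow parent links to the component root, halving the path as we go.
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    # Every pairwise distance is a candidate scale at which an edge appears.
    edges = sorted(
        (math.dist(points[i], points[j]), i, j)
        for i, j in combinations(range(len(points)), 2)
    )
    pairs = []
    for eps, i, j in edges:
        root_i, root_j = find(i), find(j)
        if root_i != root_j:          # the edge merges two components
            parent[root_i] = root_j
            pairs.append((0.0, eps))  # one component (born at 0) dies at eps
    if points:
        pairs.append((0.0, math.inf))  # the last component never dies
    return pairs


def finite_lifetimes(pairs):
    """A very simple vectorization: sorted lifetimes of the finite features."""
    return sorted(death - birth for birth, death in pairs if death != math.inf)
```

For three collinear points at 0, 1, and 10, the two nearby points merge at ε = 1 while the distant point survives until ε = 9; that long-lived component is the kind of multiscale signal a persistence diagram records.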

Visualization of Method Workflows

Figure 1: Generalized Sequence Alignment Workflow

Figure 2: Topological Data Analysis Workflow

Research Reagent Solutions

Table 3: Essential Research Tools for Sequence and Topological Analysis

| Tool/Category | Specific Examples | Primary Function | Application Context |
|---|---|---|---|
| Traditional Alignment Tools | BLAST, FASTA, Clustal | Sequence comparison and database search | Homology detection, functional annotation |
| Modern Alignment Algorithms | NASA, LexicMap | Efficient large-scale alignment | Processing massive genomic datasets |
| Structure-Based Alignment | TM-align, DALI | 3D structure comparison | Remote homology detection when structures available |
| Protein Language Models | ProtT5, ESM-1b, ProstT5 | Generate residue-level embeddings | Embedding-based alignment and feature extraction |
| Topological Data Analysis | Persistent homology tools, TDA packages | Extract topological invariants from data | Multiscale structural analysis and feature engineering |
| Topological Deep Learning | Top-DTI, TCoCPIn | Integrate topological features with deep learning | Drug-target interaction prediction, molecular property prediction |
| Benchmarking Platforms | AFproject | Comprehensive evaluation of alignment methods | Objective comparison of tool performance |

The established paradigm of sequence alignment continues to serve as an indispensable methodology in bioinformatics, with ongoing algorithmic innovations addressing computational efficiency challenges for large-scale datasets. However, fundamental limitations persist in detecting remote homologies where sequence similarity falls below the twilight zone threshold. The emerging framework of topological data analysis offers complementary approaches that capture structural relationships and conserved features that may elude sequence-based methods. Integrating these paradigms—leveraging the strengths of sequence alignment for high-similarity comparisons while employing topological approaches for remote homology detection and structural analysis—represents a promising direction for advancing computational biology and drug discovery. As both fields continue to evolve, this integrative approach will enhance our ability to extract meaningful biological insights from the increasingly complex and voluminous data generated by modern experimental techniques.

For decades, the field of bioinformatics has been dominated by sequence-based approaches for protein analysis, with dynamic programming algorithms like Needleman-Wunsch and Smith-Waterman serving as fundamental tools for alignment tasks [2]. These methods operate on a simple premise: proteins with similar sequences likely share similar structures and functions. However, this paradigm faces significant limitations when dealing with proteins that share structural or functional similarities despite having divergent sequences—a common occurrence in the continuous landscape of protein space known as the "protein universe." This theoretical recognition has catalyzed a fundamental shift toward topological methods that capture the intricate structural and relational properties of proteins beyond their primary sequences.

The limitations of traditional methods become particularly evident when analyzing proteins with circular permutations, domain shuffling, or those sharing structural motifs without significant sequence identity [7]. In such cases, the true biological relationship is not captured by a sequential path but by a more complex, global mapping of residues that considers the overall topological arrangement. This shift in perspective represents a fundamental reimagining of protein alignment from a path-finding problem to a global matching challenge, enabling researchers to detect non-sequential similarities that were previously overlooked.

Topological approaches are gaining traction across multiple domains of biological research, from drug discovery and therapeutic peptide design to protein function prediction and structural alignment [8] [9] [10]. By leveraging advanced mathematical frameworks including persistent homology, optimal transport theory, and graph-based representations, these methods provide a more nuanced understanding of protein relationships within the continuous protein universe. This comparative guide examines the performance of emerging topological methods against established sequence-based alternatives, providing researchers with experimental data and implementation frameworks to inform their methodological choices.

Theoretical Foundations: From Sequence Alignment to Topological Matching

The Limitation of Traditional Sequence-Based Approaches

Traditional sequence alignment methods are fundamentally constrained by their reliance on sequential residue matching. Dynamic programming algorithms, while guaranteed to find optimal alignments under their scoring schemes, operate with time and memory complexities of O(n²), making them computationally prohibitive for large-scale database searches [2]. Heuristic methods like BLAST address these computational constraints but struggle with divergent sequences and fail to detect non-sequential similarities [7] [2].

The core theoretical limitation lies in the inherent assumption that biological relationships manifest as continuous paths of residue matches. This framework breaks down when analyzing proteins with:

- Circular permutations: Where the N- and C-terminal segments appear in swapped order relative to a homolog, as if the chain termini had been joined and reopened elsewhere, while the fold is retained

- Domain shuffling: Where evolutionary events have rearranged functional domains

- Distant homologs: Proteins sharing structural and functional attributes despite sequence identity within or below the "twilight zone" (roughly 20-35%)

These limitations have driven the development of alternative paradigms that can capture the complex, multi-scale nature of protein relationships.
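A toy example makes the circular permutation case concrete. The sketch below uses exact string matching on made-up sequences, which is an assumption for illustration only; real permuted proteins also diverge in sequence and need approximate matching.

```python
def is_circular_permutation(a: str, b: str) -> bool:
    """True if b equals a rotated by some offset (exact matching only)."""
    return len(a) == len(b) and b in a + a


def positional_identity(a: str, b: str) -> float:
    """Fraction of identical residues when the sequences are compared in register."""
    if len(a) != len(b) or not a:
        raise ValueError("sequences must be non-empty and of equal length")
    return sum(x == y for x, y in zip(a, b)) / len(a)


original = "ABCDEFGH"
permuted = "EFGHABCD"  # the two halves are swapped

print(is_circular_permutation(original, permuted))
print(positional_identity(original, permuted))
```

The two strings contain the same segments, yet no position matches in register, and a single order-preserving alignment path can cover only one of the two swapped halves. Capturing both halves requires a mapping that is allowed to break sequential order.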

The Rise of Topological Frameworks

Topological methods reconceptualize protein comparison as a global matching problem rather than a path-finding exercise. The UniOTalign framework exemplifies this shift by replacing dynamic programming with optimal transport theory, representing proteins as distributions of residues in a high-dimensional feature space and computing an optimal transport plan between them [7]. This approach leverages Fused Unbalanced Gromov-Wasserstein (FUGW) distance, which simultaneously minimizes feature dissimilarity while preserving the internal geometric structure of sequences.

Similarly, TopoDockQ applies topological data analysis through persistent combinatorial Laplacian (PCL) features to evaluate peptide-protein interface quality, capturing substantial topological changes and shape evolution at binding interfaces [8]. This method demonstrates how topological invariants—mathematical properties that remain unchanged under continuous deformation—can characterize biological interactions with greater accuracy than sequence-based metrics.

The TAFS (Topology-Aware Functional Similarity) framework extends beyond direct neighbors in protein-protein interaction networks by incorporating a distance-dependent functional attenuation factor (γ) that dynamically adjusts the weights of distant nodes [11]. This multi-scale topological modeling captures both local neighborhood characteristics and global network topology, addressing limitations of conventional methods that focus exclusively on second-order neighbors.

Table 1: Theoretical Comparison of Alignment Paradigms

| Aspect | Sequence-Based Methods | Topological Methods |
|---|---|---|
| Fundamental Approach | Path-finding via dynamic programming | Global matching via optimal transport or topological invariants |
| Primary Data Source | Amino acid sequences | Protein structures, interaction networks, residue embeddings |
| Handling of Non-sequential Similarities | Limited | Excellent for circular permutations, domain shuffling |
| Computational Complexity | O(n²) for exact algorithms | Ranges from O(n) to O(n²) depending on method |
| Theoretical Foundation | Information theory, evolutionary models | Algebraic topology, optimal transport, graph theory |

Methodological Comparison: Frameworks and Workflows

Topological Deep Learning for Complex Prediction

TopoDockQ represents a cutting-edge application of topological deep learning for evaluating peptide-protein complexes. The methodology employs persistent combinatorial Laplacians to extract topological features from peptide-protein interfaces, which are then used to predict DockQ scores (p-DockQ) for assessing interface quality [8]. The experimental workflow involves:

- Feature Extraction: Computing persistent combinatorial Laplacian features from the 3D structure of peptide-protein complexes

- Model Training: Training a deep learning model to predict DockQ scores based on topological features

- Model Selection: Using p-DockQ scores to select high-quality complex models from AlphaFold2 predictions

- False Positive Reduction: Applying TopoDockQ to mitigate high false positive rates in AF2's built-in confidence score

In comparative evaluations across five datasets filtered to ≤70% peptide-protein sequence identity, TopoDockQ reduced false positives by at least 42% and increased precision by 6.7% compared to AlphaFold2's confidence score, while maintaining high recall and F1 scores [8]. This demonstrates the practical advantage of topological features over conventional confidence metrics.
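The metrics quoted above follow from confusion-matrix counts. The helper below shows the arithmetic; the function names are illustrative, and the counts in the usage note are hypothetical, not data from [8].

```python
def precision_recall_f1(tp: int, fp: int, fn: int) -> tuple[float, float, float]:
    """Compute precision, recall, and F1 from true/false positive and false negative counts."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    return precision, recall, f1


def relative_fp_reduction(fp_baseline: int, fp_new: int) -> float:
    """Fraction of baseline false positives removed by the new scoring method."""
    if fp_baseline <= 0:
        raise ValueError("baseline must contain at least one false positive")
    return (fp_baseline - fp_new) / fp_baseline
```

With hypothetical counts, a baseline that accepts 50 incorrect models and a rescoring step that accepts 29 gives `relative_fp_reduction(50, 29)`, a reduction of 42%. If true positives are largely retained, precision rises while recall stays nearly unchanged.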

Unified Optimal Transport for Protein Alignment

UniOTalign implements a fundamentally different approach to protein comparison by reformulating alignment as an optimal transport problem. The methodological workflow consists of [7]:

- Protein Representation: Generating residue embeddings using ESM-2 protein language model

- Cost Matrix Construction: Creating feature dissimilarity matrices and intra-protein distance matrices

- FUGW Optimization: Solving the Fused Unbalanced Gromov-Wasserstein problem to obtain a transport plan

- Alignment Extraction: Converting the dense transport plan into a discrete 1-to-1 alignment

The FUGW objective function balances feature similarity with geometric consistency through a weighting parameter α, while an unbalanced term controlled by ρ acts as a mathematical equivalent to gap penalties in traditional alignment [7]. This approach naturally handles sequences of different lengths and detects non-sequential similarities that challenge dynamic programming methods.
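To show how a transport plan can encode a non-sequential alignment, the sketch below solves a much simpler problem than FUGW: balanced, entropy-regularized optimal transport via Sinkhorn iterations, using a feature cost only. It has no Gromov-Wasserstein structure term and no unbalanced relaxation, so α and ρ do not appear. It assumes uniform residue masses and costs scaled to roughly [0, 1]; much larger costs or a smaller `reg` can underflow. The row-wise argmax used to discretize the plan is a simplification that does not guarantee a 1-to-1 mapping.

```python
import math


def sinkhorn_plan(cost, reg=0.1, n_iters=200):
    """Entropic OT plan between uniform distributions over rows and columns."""
    n, m = len(cost), len(cost[0])
    a = [1.0 / n] * n  # mass on each residue of protein 1
    b = [1.0 / m] * m  # mass on each residue of protein 2
    kernel = [[math.exp(-c / reg) for c in row] for row in cost]
    u, v = [1.0] * n, [1.0] * m
    for _ in range(n_iters):
        # Alternately rescale so that row sums match a and column sums match b.
        u = [a[i] / sum(kernel[i][j] * v[j] for j in range(m)) for i in range(n)]
        v = [b[j] / sum(kernel[i][j] * u[i] for i in range(n)) for j in range(m)]
    return [[u[i] * kernel[i][j] * v[j] for j in range(m)] for i in range(n)]


def hard_alignment(plan):
    """For each row residue, pick the column receiving the most mass."""
    return [max(range(len(row)), key=row.__getitem__) for row in plan]


# Toy cost matrix: residue i of protein 1 resembles residue (i + 1) % 3 of protein 2.
cost = [[1.0, 0.0, 1.0],
        [1.0, 1.0, 0.0],
        [0.0, 1.0, 1.0]]
print(hard_alignment(sinkhorn_plan(cost)))
```

The recovered mapping wraps around the end of the sequence, which no monotonic dynamic programming path can represent. This is the property that global matching formulations exploit.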

Topology-Aware Functional Similarity

The TAFS framework addresses limitations in conventional network-based functional similarity measures by integrating both local neighborhood information and global topological features. The methodology [11]:

- Computes co-functional probabilities between protein pairs based on their topological relationships

- Incorporates a distance-dependent attenuation factor (γ) that weights the influence of distant nodes

- Constructs a bidirectional joint co-function probability model that eliminates directional bias

- Calculates functional scores for candidate functions using the similarity-weighted sum method

In experimental evaluations, TAFS outperformed traditional FSWeight across both single-species and cross-species assessments, demonstrating improved prediction accuracy and interpretability through refined topological modeling [11].
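The idea of distance-dependent attenuation can be illustrated on a small interaction graph. The exact TAFS weighting and its co-function probability model are not specified in this article, so the sketch assumes a plain geometric decay, weight = γ^(d − 1) at shortest-path distance d, and a simple weighted vote over annotations. All names, the decay form, and the toy data are assumptions made for illustration.

```python
from collections import deque


def attenuated_weights(adjacency, source, gamma=0.5, max_dist=3):
    """Weight each node within max_dist hops of source by gamma ** (distance - 1)."""
    dist = {source: 0}
    queue = deque([source])
    while queue:  # breadth-first search gives shortest-path hop counts
        node = queue.popleft()
        if dist[node] == max_dist:
            continue
        for neighbor in adjacency.get(node, ()):
            if neighbor not in dist:
                dist[neighbor] = dist[node] + 1
                queue.append(neighbor)
    return {n: gamma ** (d - 1) for n, d in dist.items() if d > 0}


def score_functions(adjacency, annotations, source, gamma=0.5, max_dist=3):
    """Sum attenuated weights of neighbors carrying each candidate function."""
    scores = {}
    weights = attenuated_weights(adjacency, source, gamma, max_dist)
    for node, weight in weights.items():
        for function in annotations.get(node, ()):
            scores[function] = scores.get(function, 0.0) + weight
    return scores


# Hypothetical path-shaped network A - B - C - D with toy annotations.
ppi = {"A": ["B"], "B": ["A", "C"], "C": ["B", "D"], "D": ["C"]}
labels = {"B": {"kinase"}, "C": {"kinase", "transport"}, "D": {"transport"}}
print(score_functions(ppi, labels, "A"))
```

Direct neighbors contribute fully, while more distant nodes still contribute at a reduced weight. This is the qualitative behavior the attenuation factor is meant to provide.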

Experimental Performance and Benchmarking

Quantitative Comparison of Alignment Accuracy

Rigorous benchmarking across multiple protein comparison tasks reveals distinct performance patterns between topological and sequence-based methods. In information retrieval experiments using SCOP family-level homologs, structural alignment methods consistently outperformed sequence-based approaches across all recall levels [12].

Table 2: Accuracy Comparison Across Protein Alignment Methods

| Method | Type | Average Precision | Key Strength | Limitation |
|---|---|---|---|---|
| SARST2 | Structural/Topological | 96.3% | Integrates primary, secondary, tertiary features | Requires structural data |
| Foldseek | Structural | 95.9% | Fast structural alignment | Lower precision than SARST2 |
| FAST | Structural | 95.3% | Accurate pairwise alignment | Computationally intensive |
| TM-align | Structural | 94.1% | Effective fold comparison | Limited to structural data |
| iSARST | Structural/Topological | 94.4% | Filter-and-refine strategy | Outperformed by SARST2 |
| BLAST | Sequence-based | <94.0% | Fast and widely available | Lowest accuracy in benchmarks |

SARST2 demonstrated superior accuracy (96.3%) in structural homolog retrieval, outperforming both traditional sequence alignment (BLAST) and structural alignment methods (FAST, TM-align, Foldseek) [12]. This performance advantage stems from its integration of multiple structural features—amino acid types, secondary structure elements, weighted contact numbers—with evolutionary information in a machine learning-enhanced framework.
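For readers unfamiliar with the metric, one common definition of average precision for a ranked retrieval list is sketched below. Whether the benchmark in [12] uses exactly this definition is an assumption; some studies instead average precision over fixed recall levels.

```python
def average_precision(ranked_relevance) -> float:
    """Mean of precision@k taken at each rank k where a true homolog appears.

    ranked_relevance: booleans ordered from the top hit downward,
    True where the retrieved entry is a true homolog of the query.
    """
    hits = 0
    precision_sum = 0.0
    for rank, is_relevant in enumerate(ranked_relevance, start=1):
        if is_relevant:
            hits += 1
            precision_sum += hits / rank  # precision at this rank
    return precision_sum / hits if hits else 0.0
```

A method that ranks every true homolog ahead of every non-homolog scores 1.0, and each false hit that outranks a true homolog lowers the score. This makes the metric sensitive to the ordering of hits and not only to which hits are returned.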

Performance in Drug Discovery Applications

Topological methods show particular promise in drug discovery applications where accurately modeling molecular interactions is crucial. The Top-DTI framework, which integrates topological data analysis with large language models, demonstrated superior performance in predicting drug-target interactions [9].

In experiments on BioSNAP and Human benchmark datasets, Top-DTI outperformed state-of-the-art approaches across multiple metrics including AUROC, AUPRC, sensitivity, and specificity [9]. Notably, it maintained strong performance in challenging cold-split scenarios where test drugs or targets were absent from training data—a critical capability for real-world drug discovery where novel compounds are frequently encountered.
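A cold split can be sketched as follows for the drug side. This is a generic illustration, not the splitting code used in [9]: interactions are assumed to be `(drug, target, label)` tuples, and the function name, default fraction, and seed are hypothetical.

```python
import random


def cold_drug_split(pairs, test_fraction=0.2, seed=0):
    """Split interactions so that no test drug ever appears in training."""
    drugs = sorted({drug for drug, _, _ in pairs})  # sort for reproducibility
    rng = random.Random(seed)
    rng.shuffle(drugs)
    n_test = max(1, round(len(drugs) * test_fraction))
    test_drugs = set(drugs[:n_test])

    # Assign whole drugs, not individual pairs, to one side of the split.
    train = [p for p in pairs if p[0] not in test_drugs]
    test = [p for p in pairs if p[0] in test_drugs]
    return train, test
```

Splitting by drug identity prevents a model from scoring well simply by memorizing compounds it has already seen. The same pattern applies to a cold-target split by grouping on the second tuple element.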

Similarly, PS3N leveraged protein sequence-structure similarity for drug-drug interaction prediction, achieving precision of 91%-98%, recall of 90%-96%, F1 scores of 86%-95%, and AUC values of 88%-99% across different datasets [10]. By directly integrating both protein sequence and 3D-structure representations, PS3N captured functional and structural subtleties of drug targets that are often missed by methods relying solely on chemical structures or interaction networks.

Computational Efficiency and Scalability

As protein databases expand rapidly, with the AlphaFold Database now providing 214 million predicted structures, computational efficiency becomes increasingly critical [12]. The methods compared here show varied computational profiles:

SARST2 employs a filter-and-refine strategy enhanced by machine learning to complete AlphaFold Database searches in just 3.4 minutes using 9.4 GiB memory with 32 Intel i9 processors—significantly faster than Foldseek (18.6 minutes) and BLAST (52.5 minutes) while using less memory [12]. This efficiency enables researchers to search massive structural databases using ordinary personal computers.

The NASA pairwise alignment algorithm achieves linear time and memory complexity (O(n)) through an innovative preprocessing phase that identifies informative regions for comparison [2]. This represents a significant improvement over traditional dynamic programming approaches while maintaining higher accuracy than heuristic methods like BLAST.

Table 3: Computational Efficiency Comparison

| Method | Time Complexity | Memory Complexity | AlphaFold DB Search Time | Memory Usage |
|---|---|---|---|---|
| SARST2 | Not specified | Not specified | 3.4 minutes | 9.4 GiB |
| Foldseek | Not specified | Not specified | 18.6 minutes | 19.6 GiB |
| BLAST | O(n) | O(n) | 52.5 minutes | 77.3 GiB |
| NASA | O(n) | O(n) | Not tested | Not tested |
| Dynamic Programming | O(n²) | O(n²) | Impractical | Impractical |

Research Reagent Solutions: Computational Tools for Topological Analysis

Implementing topological methods requires specialized computational tools and resources. The following table summarizes key "research reagents"—software tools, databases, and libraries—that enable researchers to apply topological approaches to protein analysis.

Table 4: Essential Research Reagents for Topological Protein Analysis

| Tool/Resource | Type | Function | Application Context |
|---|---|---|---|
| TopoDockQ | Software Model | Predicts peptide-protein interface quality using topological features | Therapeutic peptide design and optimization |
| UniOTalign | Algorithm/Framework | Protein alignment via optimal transport | Detecting non-sequential similarities, circular permutations |
| SARST2 | Structural Alignment Tool | Rapid protein structural alignment against massive databases | Large-scale structural homolog identification |
| TAFS | Computational Framework | Topology-aware functional similarity calculation | Protein function prediction from PPI networks |
| Top-DTI | Prediction Framework | Drug-target interaction prediction using TDA and LLMs | Drug discovery and repurposing |
| PS3N | Neural Network Framework | Drug-drug interaction prediction using sequence-structure similarity | Drug safety profiling and adverse event prediction |
| ESM-2 | Protein Language Model | Generates contextual residue embeddings | Feature generation for topological methods |
| AlphaFold DB | Structure Database | 214 million predicted protein structures | Source of structural data for topology-based analysis |

The shift from sequence-based to topological methods represents a fundamental transformation in how researchers conceptualize and analyze relationships within the protein universe. Traditional sequence alignment algorithms, while foundational to bioinformatics, face inherent limitations in detecting complex biological relationships that manifest beyond linear sequence similarity. Topological approaches—including persistent homology, optimal transport, graph-based analysis, and topological deep learning—provide a more nuanced framework for capturing the continuous nature of protein space.

The benchmarks reviewed here indicate that topological methods can match or exceed sequence-based approaches in accuracy while remaining computationally efficient. In structural alignment, SARST2 achieves higher precision (96.3%) than both sequence-based BLAST and other structural alignment tools [12]. In therapeutic peptide design, TopoDockQ reduces false positive rates by at least 42% compared to AlphaFold2's built-in confidence metrics [8]. In drug discovery applications, Top-DTI and PS3N deliver superior prediction performance by integrating topological features with complementary data modalities [9] [10].

The theoretical foundation of topological methods—representing proteins as multi-scale topological objects rather than linear sequences—aligns more closely with the biological reality of protein function and evolution. As the field advances, the integration of topological approaches with emerging technologies like protein language models and geometric deep learning promises to further enhance our ability to navigate the continuous protein universe, accelerating discoveries in basic biology and applied drug development.

For researchers selecting protein analysis methods, the choice between sequence-based and topological approaches should be guided by the specific biological question, data availability, and computational resources. While sequence methods remain valuable for rapid screening and high-similarity detection, topological approaches offer superior capabilities for detecting distant relationships, modeling complex interactions, and predicting function from structure—making them indispensable tools for exploring the expanding universe of protein diversity.

In bioinformatics and computational biology, homology and topology represent two foundational but distinct paradigms for comparing biological entities, each with its own theoretical underpinnings and methodological approaches. Homology, rooted in evolutionary biology, infers common ancestry from sequence similarity and provides the primary framework for characterizing genes and proteins. Topology, derived from mathematical sciences, analyzes the shape, connectivity, and persistent features of biological structures, offering complementary insights that often transcend sequence-level information. Understanding the core definitions, methodological applications, and statistical frameworks of these concepts is crucial for researchers leveraging comparative analyses in fields ranging from functional genomics to drug discovery. This guide provides a comprehensive comparison of these approaches, supported by experimental data and benchmark studies, to inform methodological selection in research and development.

Core Conceptual Frameworks

Homology: Inference from Evolutionary Descent

Homology is a concept signifying that two or more biological sequences share a common evolutionary ancestor. The inference of homology is fundamentally based on detecting statistically significant sequence similarity that exceeds what would be expected by random chance [13]. This excess similarity provides the simplest explanation for common ancestry. The key operational principle is that homologous sequences, due to their shared origin, often share similar structures and may share similar functions [13]. It is critical to note that "homology" is a binary state—sequences are either homologous or not—and should not be used quantitatively (e.g., "sequences share 50% homology") [14].

- Sequence Identity vs. Sequence Similarity: These are distinct quantitative measures used to infer homology. Sequence identity is the percentage of characters (nucleotides or amino acids) that match exactly between two aligned sequences, ignoring gaps [14]. Sequence similarity is a broader measure, often incorporating biochemical properties or substitution costs (e.g., using scoring matrices like BLOSUM or PAM) to evaluate the quality of an alignment, typically calculated as the solution to an optimal matching problem that considers edits like substitutions, insertions, and deletions [14].
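
The distinction between identity and similarity can be illustrated with a short sketch operating on an already aligned pair of sequences. The substitution values in `TOY_SCORES` and the default match, mismatch, and gap scores are hypothetical placeholders chosen for illustration; they are not BLOSUM or PAM entries.

```python
# Hypothetical scores for biochemically similar residue pairs (NOT real BLOSUM values).
TOY_SCORES = {("I", "L"): 2, ("L", "I"): 2, ("K", "R"): 2, ("R", "K"): 2}


def percent_identity(a: str, b: str) -> float:
    """Percentage of exactly matching columns, ignoring columns that contain a gap."""
    if len(a) != len(b):
        raise ValueError("aligned sequences must have equal length")
    columns = [(x, y) for x, y in zip(a, b) if x != "-" and y != "-"]
    if not columns:
        return 0.0
    matches = sum(x == y for x, y in columns)
    return 100.0 * matches / len(columns)


def similarity_score(a: str, b: str, match: int = 4,
                     mismatch: int = -2, gap: int = -4) -> int:
    """Alignment score that rewards conservative substitutions and penalizes gaps."""
    score = 0
    for x, y in zip(a, b):
        if x == "-" or y == "-":
            score += gap
        elif x == y:
            score += match
        else:
            # Similar residues receive partial credit; all other pairs are mismatches.
            score += TOY_SCORES.get((x, y), mismatch)
    return score
```

For the aligned pair `IK-A` and `LRAA`, identity counts only the final column as a match, while the similarity score also credits the I/L and K/R substitutions.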

Topology: Analysis of Shape and Connectivity

Topology, in the context of computational biology, concerns the study of structural properties and spatial relationships that remain invariant under continuous deformation, such as stretching or bending. Where homology focuses on linear sequence descent, topology focuses on shape, connectivity, and higher-order structural features.

A powerful modern application is Topological Data Analysis (TDA), which provides a mathematical framework for quantifying the shape of data. A key tool within TDA is Persistent Homology (a mathematical term distinct from biological homology), which characterizes topological features such as connected components, loops (1D holes), and voids (2D holes) across multiple scales [15] [16]. These features are summarized in a persistence diagram, which plots the "birth" and "death" scales of each topological feature; long-persisting features are generally treated as significant signal, whereas short-lived features are more likely to reflect noise [15].

Methodologies and Experimental Protocols

Homology-Based Sequence Alignment Methods

Sequence alignment is the primary methodological approach for identifying homology. The protocols can be broadly categorized as follows [17]:

Table 1: Classification of Sequence Alignment Methods

| Method Category | Description | Common Algorithms | Typical Use Cases |
|---|---|---|---|
| Pairwise Sequence Alignment (PSA) | Aligns two sequences (DNA, RNA, or protein) at a time. | BLAST [13], FASTA [13], SSEARCH [13], Smith-Waterman [17], Needleman-Wunsch [17] | Database searching, functional annotation of query sequences. |
| Multiple Sequence Alignment (MSA) | Aligns three or more sequences simultaneously to identify conserved regions. | CLUSTAL Omega [18], MUSCLE [18], MAFFT [18], T-Coffee [18] | Identifying conserved domains, building phylogenetic trees, inferring evolutionary relationships. |

The basic workflow for homology inference via sequence alignment involves:

- Sequence Comparison: Using an algorithm (e.g., BLAST) to compare a query sequence against a database.

- Alignment Scoring: Generating an alignment score based on matches, mismatches, and gaps.

- Statistical Evaluation: Calculating an Expectation value (E-value), which estimates the number of times a given alignment score would occur by chance in the searched database [13]. A low E-value (e.g., < 0.001) indicates statistically significant similarity, allowing a confident inference of homology [13].

- Functional Inference: Transferring functional annotation from a well-characterized homolog to the query sequence, with the caveat that functional similarity is not guaranteed even when homology is established [13].
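
As a rough illustration of the statistical evaluation step, the sketch below uses the standard relation between a normalized bit score S' and the expected number of chance hits, E ≈ m · n · 2^(−S'), where m is the query length and n the total database length. This relation is background knowledge rather than something stated in the cited sources, real tools apply effective length corrections that are omitted here, and the function names are hypothetical.

```python
def evalue_from_bit_score(bit_score: float, query_len: int, db_len: int) -> float:
    """Approximate E-value: expected number of chance alignments scoring at least bit_score.

    Simplified form E = m * n * 2**(-S'); edge-effect (effective length)
    corrections used by production tools are deliberately left out.
    """
    return query_len * db_len * 2.0 ** (-bit_score)


def is_significant(evalue: float, threshold: float = 1e-3) -> bool:
    """Apply the illustrative E-value cutoff mentioned in the workflow (< 0.001)."""
    return evalue < threshold
```

Because the E-value scales with database size, the same alignment score becomes less significant as the searched database grows.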

Topology-Based Comparison Methods

Topological methods analyze biological data as geometric objects. A standard protocol for Persistent Homology analysis, as applied to structures like proteins or RNA-protein complexes, includes [16]:

- Data Representation: Representing the 3D structure as a set of points in space (e.g., atomic coordinates or alpha-carbon positions).

- Filtration: Constructing a sequence of simplicial complexes (e.g., Vietoris-Rips complexes) across a growing distance scale (e.g., from 0Å to 10Å).

- Feature Tracking: As the scale increases, topological features are born and die. This information is recorded.

- Persistence Diagram Creation: Each feature is plotted on a diagram with its birth and death scales. Features far from the diagonal (long lifetime) are considered robust topological signals.

- Feature Vectorization: The persistence diagram is converted into a numerical vector (e.g., a persistence image) for use in machine learning models [16].

Diagram 1: Topological Data Analysis Workflow
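
The filtration and feature-tracking steps can be sketched for the simplest case, connected components (dimension 0), using only the standard library. This is a minimal illustration under stated assumptions: an edge enters the filtration when the scale equals the pairwise distance (some conventions use half that distance), every point is born at scale 0, and loops or voids are not computed; dedicated software such as Ripser is needed for higher dimensions.

```python
import math
from itertools import combinations


def h0_persistence(points):
    """Return (birth, death) pairs for connected components of a Vietoris-Rips filtration."""
    n = len(points)
    parent = list(range(n))  # union-find forest over the points

    def find(i):
        while parent[i] != i:
            parent[i] = parent[parent[i]]  # path compression
            i = parent[i]
        return i

    # Process edges in order of increasing length, i.e. increasing filtration scale.
    edges = sorted((math.dist(points[i], points[j]), i, j)
                   for i, j in combinations(range(n), 2))
    pairs = []
    for scale, i, j in edges:
        root_i, root_j = find(i), find(j)
        if root_i != root_j:
            parent[root_i] = root_j
            pairs.append((0.0, scale))  # two components merge: one of them dies here
    pairs.append((0.0, math.inf))  # the final component persists at every scale
    return pairs
```

Applied to three collinear points at x = 0, 1, and 10, the output contains one short-lived component (death at 1.0) and one long-lived component (death at 9.0), which is the kind of separation a persistence diagram makes visible.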

Performance Benchmarking and Comparative Analysis

Benchmarking Alignment Methods for Homology Detection

A benchmark study comparing PSA and MSA methods for protein clustering provides quantitative performance data. The study used cluster validity scores, which measure how well the sequence distances from an alignment method recapitulate the true biological classification of proteins into families [18].

Table 2: Benchmarking Protein Sequence Alignment Methods Using BAliBASE Datasets [18]

| Alignment Method Category | Representative Algorithms | Reported Cluster Validity Performance |
|---|---|---|
| Pairwise Sequence Alignment (PSA) | EMBOSS, BLAST, CD-HIT, UCLUST | Superior performance on most BAliBASE benchmark datasets. |
| Multiple Sequence Alignment (MSA) | MUSCLE, MAFFT, CLUSTAL Omega, T-Coffee | Generally inferior performance compared to PSA methods in this clustering task. |

The study concluded that PSA methods outperformed MSA methods on most benchmark datasets, validating that drawbacks of MSA methods observed with nucleotide sequences also exist at the protein level [18]. This highlights the importance of selecting the correct alignment strategy for the biological question.

Performance of Integrated Topology-Based Models

Topology-based methods, particularly when integrated with other data types, have demonstrated high predictive power in complex biological prediction tasks. For instance, a model predicting RNA-protein interactions integrated TDA-derived features with conventional sequence and structure descriptors [16].

Table 3: Performance of a TDA-Informed Model for RNA-Protein Interaction Prediction [16]

| Model Version | Predictive Accuracy | AUC-ROC | Precision | Recall |
|---|---|---|---|---|
| Baseline (Conventional features only) | 78% | 0.83 | 0.80 | 0.77 |
| TDA-Informed Model (Integrated features) | 88% | 0.91 | 0.87 | 0.89 |

Ablation studies confirmed the unique contribution of topological features: removing them caused a drop in accuracy of 10 percentage points, matching the gap between the integrated model (88%) and the baseline (78%). The first-order persistence features (loops) were among the most discriminative in the model [16].

Application in Drug Discovery and Development

The synergy between homology and topology is powerfully illustrated in modern drug discovery, as seen in the PS3N framework for predicting Drug-Drug Interactions (DDIs). This method leverages both protein sequence similarity (a homology-derived measure) and 3D protein structure similarity (which encompasses topological aspects) to compute comprehensive drug-drug similarity networks [10].

This integrated approach outperformed state-of-the-art methods, achieving high predictive performance (Precision: 91%–98%, Recall: 90%–96%, F1 Score: 86%–95%) [10]. This success demonstrates that moving beyond proxy features to directly use the functional and structural information encoded in sequences and structures can provide a more granular understanding of molecular interactions, enhancing both predictive accuracy and biological explainability.

Diagram 2: Drug-Drug Interaction Prediction via Similarity

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 4: Key Computational Tools and Resources

| Tool / Resource | Category | Primary Function | Application Context |
|---|---|---|---|
| BLAST [13] | Homology / Alignment | Fast sequence database search and homology inference. | Initial characterization of novel genes/proteins. |
| FASTA [13] | Homology / Alignment | Sequence database search and comparison. | An alternative to BLAST for homology search. |
| HMMER [13] | Homology / Alignment | Profile-based sequence search using Hidden Markov Models. | Detecting very distant homologs. |
| CLUSTAL Omega [18] [17] | Homology / Alignment | Multiple sequence alignment. | Identifying conserved regions across a protein family. |
| MAFFT [18] [17] | Homology / Alignment | Multiple sequence alignment. | Aligning large numbers of sequences or those with long gaps. |
| Ripser [16] | Topology / TDA | Computing persistent homology. | Generating persistence diagrams from point cloud data. |
| USR/USR-VS [19] | Topology / Shape | Ultra-fast 3D molecular shape similarity calculation. | Virtual screening for drug discovery; scaffold hopping. |
| HOOMD-blue [15] | Simulation | Particle-based dynamics simulation. | Generating configurational data for quasi-particle systems (e.g., skyrmions). |
| Persistence Images [16] | Topology / TDA | Vectorizing persistence diagrams. | Preparing topological features for machine learning. |
| Gene Ontology (GO) [20] | Functional Database | Standardized functional annotation. | Ground truth for evaluating functional predictions. |

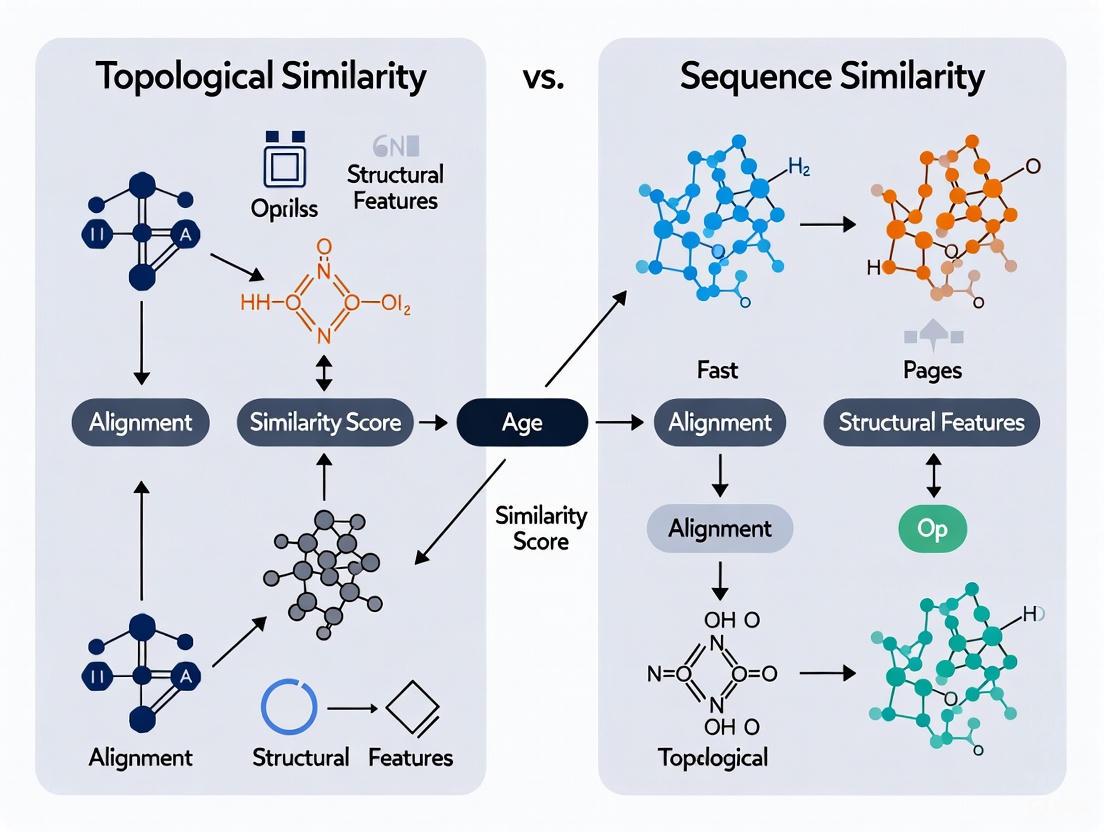

Network alignment (NA) is a foundational computational methodology for comparing biological systems across different species or conditions [21]. By identifying conserved structures, functions, and interactions within graphs representing entities like proteins or genes, NA provides invaluable insights into shared biological processes and evolutionary relationships [21]. This comparative analysis primarily navigates two methodological pathways: one leveraging the topological similarity of the networks themselves, and the other utilizing sequence similarity of the constituent nodes. The choice between these approaches fundamentally shapes the analysis, influencing everything from the initial data representation to the final biological interpretation. This guide provides an objective comparison of contemporary frameworks and tools grounded in these paradigms, evaluating their performance, experimental protocols, and applicability for research and drug development.

Methodological Frameworks: Topological vs. Sequence-Centric Approaches

The computational strategies for aligning biological networks can be broadly categorized based on their core alignment rationale. The table below summarizes the primary frameworks discussed in this guide.

Table 1: Core Methodological Frameworks for Biological Network Analysis

| Framework Name | Core Alignment Methodology | Representation Type | Primary Application |
|---|---|---|---|
| Probabilistic Alignment [22] | Infers a latent "blueprint" network; aligns multiple observed networks via posterior distribution over mappings. | Topological (Graph) | Multiple network alignment, connectome comparison |
| Topotein [23] | Topological Deep Learning using combinatorial complexes for hierarchical message passing. | Topological (Protein Combinatorial Complex) | Protein representation learning, fold classification |
| ENGINE [24] | Multi-channel deep learning integrating equivariant GNNs (structure) and protein language models (sequence). | Hybrid (Graph & Sequence) | Protein function prediction |
| StructSeq2GO [25] | Graph representation learning on AlphaFold structures combined with ProteinBERT sequence embeddings. | Hybrid (Graph & Sequence) | Protein function prediction |

Topology-Centric Alignment

Topology-centric methods prioritize the structure and connectivity of the network.

Probabilistic Network Alignment: This framework posits the existence of a latent, underlying blueprint network. Observed networks are modeled as noisy copies of this blueprint, and the alignment problem is recast as finding the most plausible mapping of nodes in each observed network to nodes in the unknown blueprint [22]. A key advantage is its transparency, as all model assumptions are explicit. Unlike heuristic approaches that yield a single "best" alignment, this probabilistic method provides the entire posterior distribution over alignments, which has been shown to correctly match nodes even when the single most probable alignment fails [22]. This approach is particularly powerful for aligning multiple networks simultaneously, such as comparing functional connectomes across several species [22].

Topological Deep Learning for Proteins (Topotein): Topotein addresses a key limitation of standard graph representations of proteins, where message-passing can be inefficient within secondary structures. It introduces a Protein Combinatorial Complex (PCC), a hierarchical data structure that represents proteins at multiple levels—residues, secondary structures, and the complete protein—while preserving geometric information [23]. Its Topology-Complete Perceptron Network (TCPNet) performs SE(3)-equivariant message passing across this hierarchy, effectively capturing multi-scale structural patterns. This approach is inherently topological and demonstrates particular strength in tasks like fold classification that require understanding secondary structure arrangements [23].

Sequence- and Hybrid-Based Alignment

These methods integrate the amino acid sequence information of proteins, often through modern protein language models, with or without structural data.

ENGINE: A Multi-Channel Deep Learning Framework: ENGINE integrates three complementary channels for protein function prediction. Its Structural Channel transforms 3D protein structures into graphs and processes them with Equivariant Graph Neural Networks (EGNNs) to capture geometric features. The Sequence Channel uses the ESM-C protein language model to encode evolutionary and contextual information. The 3Di Sequence Channel incorporates a discrete structural representation from Foldseek, encoding tertiary interactions into a sequence format [24]. Information from these channels is fused to predict Gene Ontology (GO) terms, effectively leveraging both sequence and structure.

StructSeq2GO: A Unified Graph-Based Approach: This hybrid model combines structural data from AlphaFold with sequence features from ProteinBERT. It converts AlphaFold-predicted structures into residue-level graphs and uses graph representation learning to extract spatial features [25]. These structural embeddings are then integrated with sequence embeddings for multi-label GO term classification, highlighting the critical importance of 3D context not captured by sequence alone.

Performance Comparison: Experimental Data and Benchmarks

Performance across key protein function prediction benchmarks reveals the strengths of integrated models.

Table 2: Benchmark Performance on Protein Function Prediction (Gene Ontology)

| Model | Molecular Function (AUC) | Biological Process (AUC) | Cellular Component (AUC) | Key Advantage |
|---|---|---|---|---|
| ENGINE [24] | 0.9253 | 0.8708 | 0.9206 | Superior AUC; integrates 3D structure, sequence, and 3Di tokens |
| StructSeq2GO [25] | 0.764 | 0.939 | 0.891 | High performance in Biological Process; uses AlphaFold structures & ProteinBERT |
| DeepGOZero [24] | 0.6144 (AUPR) | - | - | Strong performance on Molecular Function (Fmax) |
| PFresGO [24] | - | - | Top CC Performance | Leading performance in Cellular Component ontology |

The reported data show that ENGINE achieves top-tier AUC scores and outperforms the baselines it was evaluated against across all three GO domains [24], which supports the value of its multi-channel integration framework. The figures for ENGINE and StructSeq2GO come from separate studies [24] [25] and may not be directly comparable; StructSeq2GO, for instance, reports a higher Biological Process AUC (0.939). StructSeq2GO is also reported to achieve state-of-the-art performance, particularly in Biological Process AUC, with Fmax scores of 0.485 (BPO), 0.681 (CCO), and 0.663 (MFO) [25].

Ablation studies for ENGINE provide crucial insight: removing any single channel (structural, sequence, or 3Di) leads to a significant drop in predictive performance [24]. This underscores the complementary nature of the different data types and confirms that neither topological nor sequence information alone is sufficient for optimal performance.

Experimental Protocols and Workflows

Workflow for Probabilistic Multiple Network Alignment

The probabilistic alignment method involves a well-defined inference procedure, illustrated below.

Diagram 1: Probabilistic Network Alignment Workflow

Detailed Methodology [22]:

- Input: K observed networks with adjacency matrices {A₁, A₂, ..., Aₖ}.

- Model Definition: The model assumes an unknown latent blueprint network L with binary edges. Each observed network Aₖ is considered a noisy copy of L, subject to a node permutation πₖ.

- Likelihood: The probability of observing an edge in Aₖ given the blueprint is defined by two error parameters: the probability p of a non-edge in L becoming an edge (false positive), and the probability q of an edge in L becoming a non-edge (false negative) [22]. The likelihood of the observed data given the blueprint and permutations is the product of these independent probabilities across all node pairs and networks.

- Inference: The goal is to compute the joint posterior distribution of the blueprint L and the permutations πₖ given the observed networks. This is typically done using Markov Chain Monte Carlo (MCMC) sampling methods to explore the space of possible blueprints and alignments.

- Output Analysis: Instead of a single alignment, the result is an ensemble of plausible alignments. This allows for calculating consensus mappings and confidence estimates, providing a more robust solution than methods that output only a single point estimate.
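
The likelihood described above can be sketched as a log-likelihood over all node pairs and networks. This is a minimal illustration, assuming undirected networks with the same number of nodes, adjacency matrices given as nested lists of 0/1 values, and a permutation convention in which `perms[k][i]` is the blueprint node matched to node `i` of observed network `k`. These conventions and the function name are assumptions made for the example and are not taken from the cited work.

```python
import math
from itertools import combinations


def log_likelihood(blueprint, observed, perms, p, q):
    """Log-probability of the observed networks given a blueprint and node mappings.

    p: probability that a non-edge in the blueprint appears as an edge (false positive).
    q: probability that an edge in the blueprint appears as a non-edge (false negative).
    """
    n = len(blueprint)
    total = 0.0
    for adjacency, mapping in zip(observed, perms):
        for i, j in combinations(range(n), 2):
            latent = blueprint[mapping[i]][mapping[j]]  # edge state in the blueprint
            seen = adjacency[i][j]                      # edge state in the observation
            if latent == 1:
                prob = (1.0 - q) if seen == 1 else q
            else:
                prob = p if seen == 1 else (1.0 - p)
            total += math.log(prob)  # independent pairs: probabilities multiply
    return total
```

An MCMC sampler of the kind mentioned in the inference step would evaluate a quantity like this repeatedly while proposing changes to the blueprint and to the permutations; the sampler itself is not shown here.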

Workflow for Hybrid Protein Function Prediction

The workflow for hybrid models like ENGINE and StructSeq2GO involves feature extraction from multiple data channels.

Diagram 2: Hybrid Protein Function Prediction Workflow

Detailed Methodology for ENGINE [24]:

- Structural Channel: The 3D protein structure (experimentally resolved or predicted) is transformed into a graph where nodes represent amino acid residues. A K-Nearest Neighbors (KNN) graph is constructed based on spatial proximity of Cα atoms. This graph is processed by an Equivariant Graph Neural Network (EGNN) to capture invariant geometric features.

- 3Di Sequence Channel: The protein structure is also processed using Foldseek to generate a sequence of 3Di tokens. This alphabet represents the local structural context of each residue, creating a structure-aware sequential representation that is encoded into an embedding vector.

- Sequence Channel: The primary amino acid sequence is fed into a pre-trained protein language model, ESM-C, to generate contextualized residue-level embeddings. These are pooled to form a protein-level sequence embedding.

- Feature Fusion and Classification: The embeddings from all three channels are concatenated. The combined representation is fed into a Multilayer Perceptron (MLP) to generate confidence scores for thousands of Gene Ontology terms, resulting in a multi-label classification.
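
Two of the steps above, building a KNN graph from Cα coordinates and concatenating channel embeddings, can be sketched without any deep learning library. This is a simplified illustration rather than the ENGINE implementation: the function names are hypothetical, the value of `k` is arbitrary, and the EGNN, language model, and MLP components are not reproduced.

```python
import math


def knn_edges(ca_coords, k):
    """Directed edges (i, j) where residue j is among the k nearest residues to i.

    ca_coords: list of (x, y, z) C-alpha coordinates, one per residue.
    """
    edges = []
    for i, ci in enumerate(ca_coords):
        # Distances from residue i to every other residue, nearest first.
        neighbours = sorted((math.dist(ci, cj), j)
                            for j, cj in enumerate(ca_coords) if j != i)
        edges.extend((i, j) for _, j in neighbours[:k])
    return edges


def fuse(*embeddings):
    """Concatenate per-channel embedding vectors into one feature vector."""
    fused = []
    for vector in embeddings:
        fused.extend(vector)
    return fused
```

Note that KNN edges are not necessarily symmetric: a residue at the end of a chain can list a neighbour that does not list it in return, which is why the edges are stored as directed pairs.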

Successful implementation of network-based bioinformatics research requires a suite of computational "reagents" and data resources.

Table 3: Essential Research Reagents and Resources

| Resource / Tool | Type | Primary Function | Application in Research |
|---|---|---|---|
| AlphaFold DB [23] [25] | Database | Provides high-accuracy predicted protein structures for millions of proteins. | Source of 3D structural data for structure-based channels when experimental structures are unavailable. |
| ESM / ProteinBERT [24] [25] | Protein Language Model | Generates contextual, evolutionary-aware embeddings from amino acid sequences. | Encodes sequence-based information for function prediction and provides complementary signals to structural data. |
| Foldseek [24] | Algorithm & Database | Rapidly compares protein structures and generates 3Di token sequences from 3D coordinates. | Creates compact, structure-aware sequence representations for efficient comparison and feature extraction. |
| UniProt / Gene Ontology [24] [25] | Database / Ontology | Provides standardized protein sequences, annotations, and functional vocabularies (GO terms). | Source of ground-truth data for model training, benchmarking, and evaluation. |
| EGNN / GNN Libraries [24] | Software Library | Implements equivariant and standard graph neural networks for deep learning on graph-structured data. | Core computational engine for learning from graph-based representations of protein structures and networks. |
| HUGO Gene Nomenclature [21] | Standardization Resource | Provides approved, standardized gene symbols to ensure node identity consistency across networks. | Critical preprocessing step for data harmonization in cross-species or multi-source network alignment. |

The comparative analysis presented herein demonstrates a clear trajectory in the field: while powerful specialized methods exist for pure topological alignment (e.g., probabilistic methods) or pure sequence-based prediction, the leading edge of performance is occupied by hybrid models that integrate multiple data types. Frameworks like ENGINE and StructSeq2GO show that combining topological information from 3D structures with sequential and evolutionary information from protein language models yields a synergistic effect, leading to more accurate and generalizable predictions [24] [25].

The choice between a topological, sequence-based, or hybrid approach should be guided by the specific research question and data availability. For aligning multiple networks, such as brain connectomes, where node identities are unknown and sequence data is irrelevant, probabilistic topological methods are paramount [22]. For annotating protein function, where the relationship between structure, sequence, and function is complex, hybrid models are demonstrably superior. As the volume of structural data grows with resources like the AlphaFold Database, the effective integration of topological and sequence-based information will become increasingly critical for advancing our understanding of biological systems and accelerating drug discovery.

Methodologies in Action: Frameworks for Topological and Sequence Analysis

This guide provides a comparative analysis of advanced sequence analysis methods, framing their performance within research that contrasts traditional sequence similarity with emerging concepts of topological and structural relatedness for detecting remote homologies.

Table 1: Core Characteristics and Performance of Homology Detection Methods

| Method | Core Principle | Key Advantages | Reported Limitations | Typical Sensitivity (SCOPe) |
|---|---|---|---|---|
| PSI-BLAST [26] [27] | Iterative search using an evolving Position-Specific Scoring Matrix (PSSM). | High speed; well-established statistics; widely used. | Sensitive to narrow blocks in MSA for PSSM construction; can miss very remote homologs. [27] | Baseline (Varies with query and database) |
| HMMER [28] | Uses profile hidden Markov models for sequence comparison. | Implicitly learns complex position-specific rules; sensitive. | Speed can be a limitation; highly dependent on quality of input MSA. [28] | Not explicitly quantified in results, but generally high. |
| HHsenser [29] | Exhaustive transitive profile search using HMM-HMM comparison. | High sensitivity; produces diverse MSAs with few false positives. | Very long computation times for large superfamilies (e.g., ~5 hours for 1000 homologs). [29] | High (Exhaustive search) |
| DHR (Deep Learning) [28] | Alignment-free retrieval using embeddings from a protein language model. | Ultrafast (up to 22x faster than PSI-BLAST); high sensitivity for remote homologs (>10% increase); incorporates structural information. | Requires GPU for optimal speed; performance optimal only when comparing sequences of similar lengths for some tasks. [28] | >10% increase over traditional methods [28] |

Table 2: Computational Requirements and Scalability

| Method | Search Speed (Relative) | Scalability to Large Databases | Key Resource Constraints |
|---|---|---|---|
| PSI-BLAST | 1x (Baseline) | Good [28] | CPU, Memory |
| HMMER | Up to 28,700x slower than DHR [28] | Moderate | CPU, MSA Quality |
| HHsenser | Slow (Exhaustive search) [29] | Poor for large superfamilies | CPU, Time |
| DHR | Up to 22x faster than PSI-BLAST [28] | Excellent (searches ~70 million entries in seconds on a GPU) [28] | GPU Availability |

Experimental Protocols and Workflows

To ensure reproducibility and objective comparison, this section details the standard experimental protocols and workflows for benchmarking homology detection methods.

Benchmarking Protocol for Sensitivity and Speed

The following workflow outlines the standard procedure for evaluating the performance of homology detection tools, as applied in studies such as those for DHR. [28]

Detailed Steps:

- Dataset Selection: A standardized, curated database with known structural and evolutionary relationships is used. The SCOPe (Structural Classification of Proteins, extended) database is a common choice. [28] It provides a hierarchical classification (Family, Superfamily, Fold) where proteins within a superfamily are inferred to share a common ancestor, offering a ground truth for homology.

- Query Set Definition: A set of query protein sequences or domains is selected from the SCOPe database.

- Tool Execution: Each method (PSI-BLAST, HMMER, DHR, etc.) is run against a target database (often a non-redundant sequence database like UniRef) using the same set of queries. Critical parameters (e.g., E-value threshold, number of iterations) must be documented.

- Hit Parsing: For each query, the list of significant hits (e.g., with E-values below a threshold) returned by each tool is recorded.

- Sensitivity Evaluation: Sensitivity is typically measured as the ability to detect known homologous relationships. A standard metric is the True Positive Rate (TPR) at a given E-value threshold, calculated by checking if the returned hits belong to the same SCOPe superfamily as the query. [28]

- Time Measurement: The computational time for each search is recorded, often normalized by the number of queries or database size.

- Performance Comparison: Results are compiled to compare the sensitivity-speed trade-offs, often presented as plots of TPR against E-value or in summary tables.
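
The sensitivity evaluation step can be sketched as follows. The data layout (a list of `(target_id, evalue)` hits and a dictionary mapping identifiers to superfamily labels), the function name, and the default cutoff are assumptions made for illustration; they do not correspond to the output format of any particular tool.

```python
def true_positive_rate(hits, superfamily, query, evalue_cutoff=1e-3):
    """Fraction of the query's known superfamily members recovered by a search.

    hits: list of (target_id, evalue) pairs returned for the query.
    superfamily: dict mapping each sequence id to its superfamily label.
    """
    # Ground truth: all other sequences sharing the query's superfamily.
    positives = {seq_id for seq_id, label in superfamily.items()
                 if label == superfamily[query] and seq_id != query}
    if not positives:
        return 0.0
    # True positives: significant hits that are genuine superfamily members.
    found = {target for target, evalue in hits
             if evalue <= evalue_cutoff and target in positives}
    return len(found) / len(positives)
```

Averaging this value over all queries, and repeating it across a range of cutoffs, gives the kind of sensitivity curve used to compare tools.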

Protocol for Evaluating MSA Quality in Structure Prediction

The quality of an MSA is paramount for modern structure prediction tools like AlphaFold. The following protocol evaluates how different MSA construction methods impact prediction accuracy. [28] [30]

Detailed Steps:

- Target Selection: Protein targets with known experimental structures but which were not used in the training of the prediction model (e.g., from CASP blind experiments) are selected. [28]

- MSA Generation: Multiple MSAs are constructed for each target using different methods or pipelines (e.g., JackHMMER, DHR, DHR-meta which combines DHR and default MSAs). [28]

- Structure Prediction: AlphaFold2 is run using each distinct MSA as input, keeping all other parameters constant.

- Accuracy Measurement: The predicted structure is compared to the experimental native structure using metrics like Root-Mean-Square Deviation (RMSD) or Template Modeling Score (TM-score). [28] [30] Lower RMSD and higher TM-score indicate better accuracy.

- MSA Diversity Measurement: The diversity of each MSA is quantified using the effective number of sequences (Meff), which accounts for sequence redundancy. [28]

- Correlation Analysis: The structural prediction accuracy (e.g., RMSD) is correlated with the MSA generation method and its diversity (Meff) to determine which pipeline produces the most useful alignments for structure prediction.
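
The MSA diversity measurement can be sketched with one common formulation of the effective number of sequences, in which each sequence is down-weighted by the number of sequences in the alignment that are at least a given fraction identical to it. The 0.8 threshold, the column-wise identity definition (gaps are treated as ordinary characters), and the function name are assumptions for this example; published pipelines differ in these details.

```python
def effective_sequences(msa, identity_threshold=0.8):
    """Effective number of sequences (Meff) for equal-length aligned sequences."""

    def identity(a, b):
        # Fraction of alignment columns in which the two sequences agree.
        return sum(x == y for x, y in zip(a, b)) / len(a)

    meff = 0.0
    for seq in msa:
        # Count sequences within the identity threshold, including seq itself.
        cluster_size = sum(identity(seq, other) >= identity_threshold for other in msa)
        meff += 1.0 / cluster_size
    return meff
```

Under this definition, two identical sequences contribute a combined weight of 1, so a deep but redundant alignment can have a much lower Meff than its raw sequence count suggests.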

Table 3: Essential Databases and Software for Homology Detection Research

| Resource Name | Type | Primary Function in Research | Relevance to Method Development |
|---|---|---|---|
| SCOPe Database [28] | Curated Protein Structure Database | Provides a gold-standard benchmark for remote homology detection, with proteins classified by evolutionary and structural relationships. | Essential for training and evaluating data-driven methods like DHR and TARA, and for benchmarking all homology detection tools. [28] |
| UniProt/UniRef [27] | Comprehensive Protein Sequence Database | Serves as the primary search space for finding homologous sequences during MSA construction and iterative searches. | The primary database for PSI-BLAST, HMMER, and DHR searches. Filtered versions (e.g., clustered at 90% identity) are often used to reduce redundancy. [29] [27] |
| Protein Data Bank (PDB) [31] [30] | Repository for 3D Structural Data | Provides experimental structures for validation, structure-based alignment, and for training models that incorporate structural information. | Critical for creating structure-based MSAs and for validating predictions from methods like AlphaFold. Used to verify homology predictions. [31] |
| ESM (Evolutionary Scale Modeling) [28] | Protein Language Model | A transformer-based model pre-trained on millions of protein sequences to learn evolutionary and structural patterns. | Provides the foundational embeddings for DHR, enabling its sensitivity and speed by encapsulating complex biological information without explicit alignment. [28] |
| Gene Ontology (GO) [20] | Functional Annotation Database | Provides standardized functional annotations for proteins. | Used as ground truth to evaluate the functional prediction accuracy of network alignment methods like TARA++, bridging sequence and function. [20] |

Integration with Broader Research Context

The evolution of these tools reflects a shift in the field from a pure sequence-similarity paradigm to one that incorporates topological relatedness and structural constraints.

- Beyond Sequence Similarity: Research has shown that pure topological similarity (isomorphism) in protein-protein interaction networks is a poor predictor of functional relatedness. [20] This finding challenges traditional network alignment assumptions and underscores the value of data-driven methods like TARA, which learn from annotated data which patterns of "topological relatedness" correspond to shared function. This concept aligns with the structural constraints used in advanced HMMs and language models. [20]

- Structural Validation is Key: The highest-quality MSAs and homology inferences are often derived from or validated against known 3D structures. [31] For example, parsimonious, structure-based MSAs of human kinase domains achieve very high accuracy (>97%), resolving previous misclassifications and providing a reliable foundation for functional inference. [31] This demonstrates that structural data provides a crucial ground truth that can rectify errors introduced by sequence analysis alone.

- The Rise of Integrated, Data-Driven Frameworks: The limitations of individual methods are being overcome by hybrid and integrated approaches. PSI-BLAST can be significantly improved by initializing its search with profiles derived from structural HMMs. [26] Deep learning takes this integration further: AlphaFold's Evoformer jointly processes MSAs and pairwise features to reason about evolutionary history and 3D structure in an end-to-end manner, while retrieval systems like DHR use protein language model embeddings to find homologs without explicit alignment. [28] [30] This trend moves beyond using these methods in isolation toward pipelines that leverage their combined strengths.

The prevailing paradigm for studying protein sequence, structure, function, and evolution has long rested on the assumption that the protein universe is discrete and hierarchical. However, cumulative evidence now suggests that the protein universe is fundamentally continuous. This continuity renders conventional sequence homology search methods, such as PSI-BLAST and hidden Markov models (HMMs), insufficient for detecting novel structural, functional, and evolutionary relationships between proteins from weak and noisy sequence signals [32]. Built upon discrete and hierarchical assumptions, these methods often miss relationships between very divergent sequences.

To overcome these limitations, the Enrichment of Network Topological Similarity (ENTS) framework was proposed. ENTS represents a paradigm shift from local, pairwise similarity comparisons to a global, network-based approach that integrates entire database structures into the search process. By representing the protein space as a graph and exploiting global network topology, ENTS can uncover remote homologies that conventional methods overlook. This guide provides a comparative analysis of ENTS against state-of-the-art alternatives, focusing on its application to the challenging problem of protein fold recognition, with supporting experimental data and methodologies relevant to researchers and drug development professionals [32] [33].

Methodological Framework: How ENTS Integrates Global Structure

Core Algorithmic Components of ENTS

The ENTS framework synthesizes several innovative concepts to address the challenges of similarity search in a continuous protein space. Its algorithmic workflow can be decomposed into four fundamental components:

- Weighted Graph Representation: ENTS initializes by constructing a structural similarity graph of protein domains. Each node represents a structural domain, and an edge connects two nodes only if their pairwise structural similarity, measured by tools like TM-align, exceeds a defined threshold (typically 0.4). The graph can be built from any similarity metric, including Euclidean distance, Jaccard index, or kernel-based similarities [32].

- Domain Classification/Clustering: Structural domains in the database are labeled using classification systems like SCOP or clustered using unsupervised techniques such as k-means, mean-shift, or affinity propagation. These clusters form the basis for subsequent enrichment analysis [32].

- Network Topological Similarity Calculation: Given a query domain sequence, ENTS links it to all nodes in the structural similarity graph. The weights of these new edges are based on sequence profile-profile similarity derived from HHSearch. Random Walk with Restart (RWR) then performs a probabilistic traversal of the graph across all paths leading from the query, outputting a ranked list of all instances based on the probability of being reached from the query node. This global ranking captures relationships missed by pairwise similarity [32] [33].

- Statistical Significance Assessment: Unlike conventional network searches that only provide rankings, ENTS assesses the statistical significance of similarities using an efficient random-set method. It compares the mean topological similarity score of a cluster against the null distribution of randomly drawn clusters of the same size, generating a normalized Z-score that quantifies reliability and helps distinguish true positives from false positives [32].
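The graph construction, query linking, and Random Walk with Restart steps can be sketched on a toy graph as follows. This is a minimal illustration, not the ENTS implementation: the function names, the restart probability of 0.3, the handling of isolated nodes, and all scores are assumptions, with the pairwise values standing in for TM-align output and the query weights standing in for HHSearch profile-profile scores.

```python
from typing import Dict, Tuple

Graph = Dict[str, Dict[str, float]]


def build_graph(pair_scores: Dict[Tuple[str, str], float],
                threshold: float = 0.4) -> Graph:
    """Keep an undirected weighted edge only when similarity exceeds the threshold."""
    graph: Graph = {}
    for (a, b), score in pair_scores.items():
        graph.setdefault(a, {})
        graph.setdefault(b, {})
        if score > threshold:
            graph[a][b] = score
            graph[b][a] = score
    return graph


def link_query(graph: Graph, query: str, profile_scores: Dict[str, float]) -> Graph:
    """Return a copy of the graph with the query attached by weighted edges."""
    linked = {node: dict(nbrs) for node, nbrs in graph.items()}
    linked[query] = {}
    for node, score in profile_scores.items():
        if score > 0:
            linked[query][node] = score
            linked[node][query] = score
    return linked


def random_walk_with_restart(graph: Graph, start: str, restart: float = 0.3,
                             tol: float = 1e-10, max_iter: int = 1000) -> Dict[str, float]:
    """Iterate p <- (1 - c) * W p + c * e until the distribution stops changing."""
    prob = {node: 0.0 for node in graph}
    prob[start] = 1.0
    for _ in range(max_iter):
        nxt = {node: 0.0 for node in graph}
        for node, nbrs in graph.items():
            total = sum(nbrs.values())
            if total == 0:
                # Isolated node: send its mass back to the start (an assumption).
                nxt[start] += (1 - restart) * prob[node]
                continue
            for nbr, weight in nbrs.items():
                nxt[nbr] += (1 - restart) * prob[node] * weight / total
        nxt[start] += restart  # restart mass returns to the query
        delta = sum(abs(nxt[n] - prob[n]) for n in graph)
        prob = nxt
        if delta < tol:
            break
    return prob


# Toy structural graph: the A-C pair (0.3) falls below the threshold.
pairs = {("A", "B"): 0.7, ("B", "C"): 0.6, ("A", "C"): 0.3, ("C", "D"): 0.5}
graph = link_query(build_graph(pairs), "Q", {"A": 0.9})
scores = random_walk_with_restart(graph, "Q")
ranking = sorted((n for n in scores if n != "Q"), key=scores.get, reverse=True)
```

In this toy case the query is directly linked only to `A`, yet `B`, `C`, and `D` still receive nonzero scores through intermediate paths, which is the kind of indirect relationship a purely pairwise search would not rank.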

Experimental Workflow for Protein Fold Recognition

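Applied to protein fold recognition, the ENTS workflow chains its core components into a single process: the structural similarity graph is built from database domains, the query is linked into the graph through HHSearch profile-profile scores, RWR ranks all domains by their probability of being reached from the query, and the enrichment analysis assigns each candidate fold cluster a Z-score.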

Comparative Performance Analysis: ENTS vs. State-of-the-Art Methods

Experimental Design and Benchmarking Protocol

The performance evaluation of ENTS for protein fold recognition followed rigorous benchmarking standards. Researchers constructed a structural similarity graph using 36,003 non-redundant protein domains from the PDB. The query benchmark set consisted of 885 SCOP domains, randomly selected from folds spanning at least two superfamilies. A critical aspect of the experimental design was the removal of all domains from the structural graph that belonged to the same superfamily as the query, ensuring the evaluation tested the method's ability to recognize fold-level similarities beyond closer homologous relationships [33].
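The superfamily hold-out described above amounts to a filter on the graph before each query is searched. The sketch below assumes domains are labeled with SCOP-style identifiers of the form class.fold.superfamily; the function names and toy graph are hypothetical.

```python
from typing import Dict

Graph = Dict[str, Dict[str, float]]


def fold_of(superfamily_id: str) -> str:
    """'c.1.8' -> 'c.1' (class and fold fields of a SCOP-style identifier)."""
    return ".".join(superfamily_id.split(".")[:2])


def hold_out_superfamily(graph: Graph,
                         superfamily: Dict[str, str],
                         query_superfamily: str) -> Graph:
    """Drop every domain in the query's superfamily, plus edges that touch it."""
    keep = {node for node in graph if superfamily[node] != query_superfamily}
    return {
        node: {nbr: w for nbr, w in nbrs.items() if nbr in keep}
        for node, nbrs in graph.items()
        if node in keep
    }


# Toy data: d1 and d2 share the query's superfamily c.1.8;
# d3 has the same fold (c.1) but a different superfamily.
superfamily = {"d1": "c.1.8", "d2": "c.1.8", "d3": "c.1.10", "d4": "a.4.5"}
graph = {
    "d1": {"d2": 0.8, "d3": 0.5},
    "d2": {"d1": 0.8},
    "d3": {"d1": 0.5, "d4": 0.45},
    "d4": {"d3": 0.45},
}
held_out = hold_out_superfamily(graph, superfamily, "c.1.8")
same_fold_left = [d for d in held_out if fold_of(superfamily[d]) == "c.1"]
```

After filtering, the only remaining correct answers for the query are same-fold domains from other superfamilies, so a hit can only be credited at the fold level.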

The benchmark was designed to evaluate the method's performance specifically on fold recognition, where the goal is to identify proteins with the same overall fold but different functions and evolutionary origins. This represents a more challenging and biologically significant problem than detecting close homologs. The experimental protocol assessed the methods based on their precision in identifying the correct fold from a large database of possibilities, with particular attention to the trade-off between sensitivity (finding true relationships) and specificity (avoiding false positives) [32] [33].

Quantitative Performance Comparison

The following table summarizes the comparative performance of ENTS against other state-of-the-art methods for protein fold recognition, based on the benchmark studies cited in the literature:

Table 1: Performance Comparison of Protein Fold Recognition Methods

| Method | Approach Type | Key Features | Performance Highlights | Limitations |
|---|---|---|---|---|
| ENTS | Network Topological | Integrates global network structure; Uses RWR and statistical enrichment; Combines sequence and structural information | "Considerably outperforms state-of-the-art methods" in fold recognition [32]; Higher accuracy than CNFPred and HHSearch [33] | False positive rate remains high; Computationally intensive for very large graphs [33] |
| HHSearch | Profile-based | Profile-profile comparison; Hidden Markov Models | Established high performance for remote homology detection | Limited by pairwise comparison scope; Cannot leverage global database structure [32] |
| CNFPred | Network-based | Contact potential scoring; Neural networks | Competitive performance for fold recognition | Does not fully utilize network topological information [33] |
| TARA/TARA++ | Data-driven Network Alignment | Learns topological relatedness from functional data; Uses graphlet-based features | Outperforms traditional NA methods in protein function prediction [20] | Designed primarily for function prediction, not structure |

Analysis of Performance Advantages

ENTS's superior performance in protein fold recognition stems from its unique ability to integrate both sequence and structural information within a global network context. While profile-based methods like HHSearch are limited to comparing the query against single database entries one at a time, ENTS leverages the interconnectedness of the entire protein structure universe. The random walk with restart algorithm effectively propagates similarity signals through the network, allowing it to discover relationships that are not apparent from direct pairwise comparisons [32].

The enrichment analysis step, with its statistical significance testing, provides another crucial advantage over other methods. By evaluating how related domains cluster in the ranking rather than scoring individual hits, ENTS can separate reliable matches from random background noise. This is particularly valuable for detecting very distant relationships where sequence and structural signals are weak. However, the authors note that the false positive rate, while improved, remains substantial, suggesting potential for integration with energy-based scoring functions for further refinement [33].
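One way to picture the cluster-level test is the closed-form sketch below. It assumes the null model is the mean score of a same-sized set drawn without replacement from all ranked domains, so the null mean and variance follow from finite-population sampling rather than from repeated random draws. Whether ENTS uses exactly this form is not stated here; the function name and toy scores are hypothetical.

```python
import math
import statistics
from typing import Dict, Iterable


def enrichment_z(scores: Dict[str, float], cluster: Iterable[str]) -> float:
    """Z-score of a cluster's mean score against random sets of the same size."""
    members = list(cluster)
    n_all, m = len(scores), len(members)
    if m == 0 or m >= n_all:
        return 0.0  # no meaningful null distribution
    observed = statistics.fmean(scores[d] for d in members)
    mu = statistics.fmean(scores.values())
    pop_var = statistics.pvariance(scores.values())
    # Variance of the mean of m draws without replacement from n_all values.
    null_var = (pop_var / m) * (n_all - m) / (n_all - 1)
    if null_var == 0:
        return 0.0
    return (observed - mu) / math.sqrt(null_var)


# Toy RWR-style scores: domains a and b form a high-scoring cluster.
scores = {"a": 0.30, "b": 0.25, "c": 0.05, "d": 0.04, "e": 0.03, "f": 0.03}
z_top = enrichment_z(scores, ["a", "b"])
z_low = enrichment_z(scores, ["e", "f"])
```

A cluster whose members sit together near the top of the ranking gets a large positive Z-score, while a cluster of low-ranked domains scores below zero, so a Z-score cutoff can act as the reliability filter the text describes.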

Successful implementation of the ENTS framework or comparative analysis of topological similarity methods requires familiarity with several key resources and computational tools. The following table catalogs essential "research reagents" for this domain:

Table 2: Essential Research Reagents and Resources for Network Topological Similarity Research

| Resource Category | Specific Tools/Databases | Function and Application |
|---|---|---|
| Protein Structure Databases | Protein Data Bank (PDB), SCOP, CATH | Source of known protein structures and authoritative classifications for benchmark construction and graph building [32] |
| Structure Comparison Tools | TM-align | Calculates structural similarity scores for edge weighting in structural similarity graphs (threshold typically 0.4) [32] |
| Sequence Analysis Tools | HHSearch, PSI-BLAST | Generates sequence profiles and profile-profile similarities for query integration and edge weighting [32] |
| Network Analysis Libraries | Boost Graph Library (BGL) | Provides efficient implementations of graph algorithms like Random Walk with Restart for large-scale networks [32] |
| Data-Driven NA Frameworks | TARA, TARA++ | Implements alternative, learning-based approaches to network alignment using topological relatedness for functional prediction [20] |

The ENTS framework represents a significant methodological advance in the comparison of topological and sequence similarity for biological analysis. By shifting from a local, pairwise comparison paradigm to a global, network-based approach, ENTS achieves higher accuracy than profile-based methods such as HHSearch on challenging problems like protein fold recognition [32] [33]. This has direct implications for drug development and functional genomics, where accurate annotation of protein structure and function is crucial for target identification and understanding disease mechanisms.