Beyond Accuracy: A Strategic Framework for Evaluating Objective Functions in Biological Models


Abstract

This article provides a comprehensive framework for researchers and drug development professionals to evaluate objective functions in biological models, a critical step in ensuring model utility and preventing costly errors. Moving beyond simple accuracy metrics, we explore the foundational principles of model evaluation, detail methodological applications ranging from Bayesian optimization to AI-driven approaches, and address common pitfalls and optimization strategies. The guide further covers rigorous validation techniques and comparative analysis of different model types, emphasizing the shift from predictive performance to decision-oriented evaluation. By bringing these strands together, this resource aims to enhance the reliability, efficiency, and clinical relevance of computational models in biomedical research.

The 'Why' Behind the Model: Defining Success Metrics for Biological Systems

The Critical Role of Objective Functions in Model-Informed Drug Development (MIDD)

Model-Informed Drug Development (MIDD) is an essential framework that uses quantitative models to facilitate drug discovery, development, and regulatory evaluation. By integrating knowledge of physiology, disease, and pharmacology, MIDD approaches inform objective decisions, streamline clinical programs, and improve the efficiency of bringing new therapies to patients [1] [2]. The application of MIDD has demonstrated significant value, with recent studies reporting annualized average savings of approximately 10 months of cycle time and $5 million per program through systematic implementation [1].

At the core of every MIDD approach lies the objective function—a mathematical criterion that quantifies how well a model's simulations match observed experimental data. The choice of objective function fundamentally influences parameter estimation, model predictability, and ultimately, the quality of decisions guided by these models. In biological systems, where parameters are often poorly specified and data is noisy, selecting an appropriate objective function becomes crucial for generating reliable, actionable insights [3]. This review systematically evaluates objective functions used in MIDD, providing comparative analysis of their performance across different drug development contexts to guide researchers in selecting optimal methodologies for their specific applications.

Objective Functions in MIDD: Theory and Application

Fundamental Concepts and Definitions

Objective functions, also called goodness-of-fit functions, mathematically define the error between experimentally measured values and corresponding model simulations [4]. In MIDD, they provide the numerical basis for parameter estimation, model validation, and uncertainty quantification. The most common objective functions include least-squares (LS), chi-square, and log-likelihood (LL) formulations [4].
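
To make the three formulations concrete, the following minimal sketch computes each one for the same set of measurements and simulations. It assumes independent Gaussian measurement errors with known standard deviations for the chi-square and log-likelihood versions; the function names and the toy numbers are illustrative and do not come from the cited studies.

```python
import math

def least_squares(measured, simulated):
    """Sum of squared residuals between data and model simulations."""
    return sum((y - s) ** 2 for y, s in zip(measured, simulated))

def chi_square(measured, simulated, sigma):
    """Squared residuals, each weighted by its measurement standard deviation."""
    return sum(((y - s) / sd) ** 2 for y, s, sd in zip(measured, simulated, sigma))

def neg_log_likelihood(measured, simulated, sigma):
    """Negative Gaussian log-likelihood (assumes independent errors)."""
    return sum(
        0.5 * math.log(2 * math.pi * sd ** 2) + 0.5 * ((y - s) / sd) ** 2
        for y, s, sd in zip(measured, simulated, sigma)
    )

# Hypothetical data: three measurements, their simulations, and error estimates.
measured = [1.0, 2.1, 2.9]
simulated = [1.1, 2.0, 3.0]
sigma = [0.1, 0.1, 0.2]

ls = least_squares(measured, simulated)
chi2 = chi_square(measured, simulated, sigma)
nll = neg_log_likelihood(measured, simulated, sigma)
print(ls, chi2, nll)
```

Under these assumptions, with fixed standard deviations the negative log-likelihood equals half the chi-square value plus a constant, so both are minimized by the same parameters. The two diverge only when the error model itself is estimated.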

In practice, two primary approaches exist for aligning simulated outputs with experimental data: the scaling factor (SF) approach, which introduces unknown multiplicative parameters to scale simulations to measured data, and data-driven normalization of simulations (DNS), which applies the same normalization method to both simulations and experimental data [4]. Research indicates that DNS does not aggravate non-identifiability problems and improves optimization speed compared to SF, especially when the number of unknown parameters is large [4].
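
The difference between the two approaches can be sketched in a few lines. In this illustrative example the simulation is in molar units and the data are in arbitrary units. The SF objective needs an extra parameter `alpha`, whereas the DNS objective divides both series by the value at the same reference point. The choice of the first time point as reference, the function names, and the numbers are all assumptions made for illustration.

```python
def sf_objective(data, sim, alpha):
    """Scaling-factor approach: alpha is an extra unknown to be estimated."""
    return sum((d - alpha * s) ** 2 for d, s in zip(data, sim))

def normalise(values, ref_index=0):
    """Divide every value by the value at the chosen reference point."""
    ref = values[ref_index]
    return [v / ref for v in values]

def dns_objective(data, sim, ref_index=0):
    """DNS approach: the same normalisation is applied to data and simulation."""
    data_n = normalise(data, ref_index)
    sim_n = normalise(sim, ref_index)
    return sum((d - s) ** 2 for d, s in zip(data_n, sim_n))

# Hypothetical example: simulation in nM, data in arbitrary units (true scale 0.5).
sim = [10.0, 20.0, 40.0]
data = [5.0, 10.0, 20.0]

print(sf_objective(data, sim, alpha=1.0))  # wrong alpha gives a large error
print(sf_objective(data, sim, alpha=0.5))  # correct alpha gives zero error
print(dns_objective(data, sim))            # zero error without any alpha
```

The DNS version adds no parameter to the optimization problem, which is the property the cited comparison links to better identifiability and faster convergence.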

Methodological Framework for Objective Function Evaluation

Evaluating objective functions requires standardized methodologies to ensure fair comparison. Key considerations include:

- Data Types: Experimental data in biology often constitute relative measurements (e.g., Western blotting, multiplexed ELISA) in arbitrary units, while models simulate well-defined units such as molar concentrations [4]

- Optimization Algorithms: Common algorithms include LevMar SE (Levenberg-Marquardt with sensitivity equations), LevMar FD (finite differences), and GLSDC (Genetic Local Search with Distance Control) [4]

- Performance Metrics: Evaluation should consider computational speed, parameter identifiability, convergence reliability, and predictive accuracy [4]

The "fit-for-purpose" principle guides objective function selection, emphasizing alignment with the Question of Interest (QOI), Context of Use (COU), and appropriate model evaluation within the specific drug development stage [2].

Comparative Performance of Objective Functions

Optimization Algorithm Efficiency

Computational studies have systematically evaluated how objective functions perform across different optimization methods. One comprehensive analysis compared three algorithms with least-squares and log-likelihood objective functions [4]:

Table 1: Performance of Optimization Algorithms with Different Objective Functions

| Algorithm | Gradient Method | Best Use Case | Convergence Speed | Identifiability |
|---|---|---|---|---|
| LevMar SE | Sensitivity Equations | Medium-scale deterministic models | Fast with DNS | High with DNS |
| LevMar FD | Finite Differences | Models where sensitivities are costly to compute | Moderate | Medium |
| GLSDC | Gradient-free | Complex problems with local minima | Best for large parameter sets | High with DNS |

A separate comparison of differential sensitivity methods found that forward-mode automatic differentiation gave the shortest computation time, while the complex perturbation method was the simplest to implement and the most generalizable [3]. In the algorithm comparison itself, GLSDC performed better than LevMar SE when the number of parameters was large (e.g., 74 parameters) [4].

Predictive Accuracy Across Biological Contexts

A systematic evaluation of 11 objective functions combined with eight constraints tested their capacity to predict ¹³C-determined in vivo fluxes in Escherichia coli under six environmental conditions [5]. The study revealed that:

Table 2: Optimal Objective Functions for Different Metabolic States

| Environmental Condition | Optimal Objective Function | Predictive Accuracy | Key Constraints Required |
|---|---|---|---|
| Nutrient abundance (batch cultures) | Nonlinear maximization of ATP yield per flux unit | High (R² > 0.85) | Thermodynamic constraints |
| Nutrient scarcity (continuous cultures) | Linear maximization of overall ATP yield | High (R² > 0.82) | Capacity constraints |
| Nutrient scarcity (continuous cultures) | Linear maximization of biomass yield | High (R² > 0.81) | Capacity constraints |

The study concluded that no single objective function described flux states under all conditions, but identified condition-specific principles. Nonlinear objectives excelled in nutrient-rich environments, while linear objectives proved superior under nutrient scarcity [5].

Impact of Normalization Methods on Parameter Identifiability

The choice between scaling factors (SF) and data-driven normalization of simulations (DNS) significantly impacts parameter identifiability:

Table 3: Scaling Factor vs. Data-Driven Normalization Approaches

| Characteristic | Scaling Factor (SF) Approach | Data-Driven Normalization (DNS) |
|---|---|---|
| Additional parameters | Introduces scaling factors (αj) | No additional parameters |
| Practical non-identifiability | Increases non-identifiability | Does not aggravate non-identifiability |
| Convergence speed | Slower, especially for large parameter sets | Markedly improved speed |
| Implementation complexity | Lower - supported by most software | Higher - requires custom implementation |

Research demonstrates that DNS greatly improves convergence speed for all tested algorithms when the overall number of unknown parameters is relatively large (e.g., 74 parameters). DNS also markedly improves performance of non-gradient-based algorithms even with relatively small parameter sets (10 parameters) [4].

Experimental Protocols for Objective Function Evaluation

Parameter Estimation Protocol for Dynamic Models

A standardized protocol for parameter estimation in dynamic biological models involves [4]:

- Model Formulation: Define ordinary differential equations describing the system: dx/dt = f(x,θ), where x represents state variables and θ represents parameters

- Data Normalization: Apply either SF (ŷ<sub>i</sub> ≈ α<sub>j</sub>y<sub>i</sub>(θ)) or DNS (ŷ<sub>i</sub> ≈ y<sub>i</sub>/y<sub>ref</sub>) approaches

- Objective Function Selection: Choose based on data characteristics (e.g., least-squares for continuous data, log-likelihood for count data)

- Optimization Execution: Implement chosen algorithm (e.g., LevMar SE, GLSDC) with appropriate restarts to avoid local minima

- Identifiability Analysis: Check practical identifiability by examining the Hessian matrix or parameter confidence intervals
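
The steps above can be sketched end to end on a deliberately small problem. This example assumes a one-parameter decay model dx/dt = -θx, noise-free synthetic data in arbitrary units, DNS normalisation to the first time point, a least-squares objective, and a golden-section search in place of LevMar or GLSDC. None of these choices come from the cited protocol. They are stand-ins that keep the sketch self-contained, and a multi-parameter model would need a real optimizer with restarts.

```python
import math

def simulate(theta, times, x0=1.0, n_sub=50):
    """Integrate dx/dt = -theta * x with fixed-step RK4; return x at each time."""
    x, t, out = x0, 0.0, []
    for t_next in times:
        h = (t_next - t) / n_sub
        for _ in range(n_sub):
            k1 = -theta * x
            k2 = -theta * (x + 0.5 * h * k1)
            k3 = -theta * (x + 0.5 * h * k2)
            k4 = -theta * (x + h * k3)
            x += h * (k1 + 2 * k2 + 2 * k3 + k4) / 6
        t = t_next
        out.append(x)
    return out

def dns_least_squares(theta, times, data):
    """Least-squares objective after normalising data and simulation to t = times[0]."""
    sim = simulate(theta, times)
    sim_n = [s / sim[0] for s in sim]
    data_n = [d / data[0] for d in data]
    return sum((d - s) ** 2 for d, s in zip(data_n, sim_n))

def golden_section(fun, lo, hi, tol=1e-6):
    """Minimise a unimodal one-dimensional function on [lo, hi]."""
    inv_phi = (math.sqrt(5) - 1) / 2
    a, b = lo, hi
    while abs(b - a) > tol:
        c = b - inv_phi * (b - a)
        d = a + inv_phi * (b - a)
        if fun(c) < fun(d):
            b = d
        else:
            a = c
    return (a + b) / 2

# Synthetic data in arbitrary units (scale 7.3), generated with theta = 0.8.
times = [0.0, 1.0, 2.0, 3.0, 4.0]
data = [7.3 * math.exp(-0.8 * t) for t in times]

objective = lambda th: dns_least_squares(th, times, data)
theta_hat = golden_section(objective, 0.01, 5.0)

# Crude identifiability check: curvature of the objective at the optimum.
h = 1e-3
curvature = (objective(theta_hat + h) - 2 * objective(theta_hat)
             + objective(theta_hat - h)) / h ** 2
print(theta_hat, curvature)
```

A clearly positive curvature indicates that the objective is not flat around the estimate, which is the one-dimensional analogue of inspecting the Hessian in the final step of the protocol.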

Flux Balance Analysis Protocol for Metabolic Networks

For evaluating objective functions in metabolic networks, the following protocol applies [5]:

- Network Reconstruction: Build a stoichiometric model representing major carbon flows (98 reactions and 60 metabolites in the cited study)

- Split Ratio Calculation: Express systemic degrees of freedom as split ratios at pivotal branch points (e.g., R1 = Pgi flux/∑(Zwf + Glk + Pts fluxes))

- Objective Function Implementation: Test 11 linear and nonlinear objective functions (e.g., maximize biomass yield, maximize ATP yield, minimize total flux)

- Alternate Optima Analysis: Quantify variance of in silico fluxes by determining absolute ranges for individual split ratios

- Experimental Validation: Compare predictions against ¹³C-determined in vivo fluxes using goodness-of-fit metrics

Sensitivity Analysis Protocol

Differential sensitivity analysis protocols help assess how model predictions depend on parameter values [3]:

- Method Selection: Choose from adjoint sensitivity analysis, complex perturbation sensitivity analysis, or forward mode sensitivity analysis based on model characteristics

- Gradient Computation: Calculate gradients of model outputs with respect to parameters using selected method

- Second-Order Sensitivity: Compute Hessian matrices where needed for refined predictions

- Uncertainty Propagation: Quantify how parameter uncertainties affect prediction uncertainties
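
As an illustration of the gradient computation step, the sketch below compares the complex perturbation (complex-step) method with a forward finite difference on a toy output x(t) = x0·exp(-kt), whose exact sensitivity to k is known. The model, step sizes, and function names are assumptions chosen for illustration, and the method requires that the model code accepts complex-valued parameters.

```python
import cmath
import math

def model_output(k, t=2.0, x0=1.0):
    """Toy model output x(t) = x0 * exp(-k * t); accepts real or complex k."""
    return x0 * cmath.exp(-k * t)

def complex_step_sensitivity(fun, k, h=1e-20):
    """d fun / d k from the imaginary part of fun(k + i*h); no subtraction error."""
    return fun(complex(k, h)).imag / h

def forward_difference_sensitivity(fun, k, h=1e-6):
    """Classic finite difference; accuracy is limited by truncation and round-off."""
    return (fun(k + h) - fun(k)).real / h

k = 0.5
exact = -2.0 * math.exp(-k * 2.0)  # analytical derivative of exp(-k t) at t = 2
cs = complex_step_sensitivity(model_output, k)
fd = forward_difference_sensitivity(model_output, k)
print(abs(cs - exact), abs(fd - exact))
```

Because the complex-step estimate involves no difference of nearly equal numbers, the step can be made extremely small, which is why the method is often regarded as easy to implement and robust.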

Essential Research Reagents and Computational Tools

Successful implementation of objective functions in MIDD requires specific computational tools and methodologies:

Table 4: Research Reagent Solutions for Objective Function Evaluation

| Tool Category | Specific Tools | Function | Application Context |
|---|---|---|---|
| Optimization Algorithms | LSQNONLIN SE, GLSDC, LevMar FD | Parameter estimation for dynamic models | ODE models of signaling pathways [4] |
| Sensitivity Analysis | DifferentialEquations.jl, PESTO, CVODES/SUNDIALS | Gradient calculation for parameter uncertainty | Local sensitivity analysis [3] |
| Flux Analysis | Constraint-based modeling tools | Prediction of metabolic flux states | Metabolic network analysis [5] |
| Model Normalization | PEPSSBI | Data-driven normalization implementation | Parameter estimation with relative data [4] |
| Visualization | Neo4j Bloom, CluePoints, SAS JMP Clinical | Knowledge graph exploration and KPI tracking | Clinical trial data visualization [6] [7] |

Objective functions play a critical role in determining the success of Model-Informed Drug Development approaches. The comparative analysis presented demonstrates that objective function performance is highly context-dependent, with different functions excelling in specific biological scenarios and model configurations. Key findings indicate that data-driven normalization approaches outperform scaling factor methods for large parameter sets, and condition-specific objective functions yield more accurate predictions than one-size-fits-all approaches.

The systematic evaluation of objective functions across optimization algorithms, biological contexts, and normalization methods provides researchers with evidence-based guidance for selecting appropriate methodologies. As MIDD continues to evolve with increased integration of artificial intelligence and machine learning, objective function selection will remain fundamental to generating reliable, actionable insights throughout the drug development pipeline. The demonstrated savings of approximately 10 months and $5 million per program underscore the substantial value of optimizing these foundational components of quantitative drug development.

In the pursuit of precision medicine and AI-driven drug discovery, the primary metric for model success has historically been predictive accuracy [8] [9]. However, for a biological or clinical prediction model to be truly valuable, it must demonstrate utility, mitigate risk, and prove clinical relevance [10]. This guide compares traditional and novel evaluation paradigms, providing a framework for researchers to select objective functions that align with real-world application goals, moving beyond abstract accuracy metrics.

Comparative Analysis of Model Evaluation Metrics

The table below summarizes key performance measures, detailing their calculation, interpretation, and primary use case to facilitate direct comparison.

Table 1: Comparison of Model Performance Evaluation Metrics

| Metric Category | Specific Metric | Definition & Formula | Interpretation | Primary Use Case |
|---|---|---|---|---|
| Overall Performance | Brier Score | Mean squared difference between predicted probability (p) and actual outcome (Y): (Y - p)² [10]. | Ranges from 0 (perfect) to 0.25 for a non-informative model at 50% incidence. Lower is better. Measures overall calibration and discrimination [10]. | Assessing the average closeness of predictions to true outcomes. |
| Discrimination | Concordance (C) Statistic / AUC-ROC | Probability that a randomly selected subject with the outcome has a higher predicted risk than one without [10]. | Ranges from 0.5 (no discrimination) to 1.0 (perfect discrimination). A rank-order statistic. | Evaluating a model's ability to separate populations (e.g., diseased vs. healthy). |
| Discrimination | Discrimination Slope | Difference in the mean of predictions between those with and without the outcome [10]. | A larger positive slope indicates better separation between groups. | Simple visualization of predictive separation. |
| Calibration | Calibration-in-the-large | Comparison of the mean observed outcome rate with the mean predicted risk [10]. | A difference of zero indicates perfect calibration at the aggregate level. | Checking for systematic over- or under-prediction. |
| Calibration | Calibration Slope | Slope of the linear predictor in a logistic regression of the outcome on the model's linear predictor [10]. | Ideal slope is 1.0. A slope <1 suggests overfitting; >1 suggests underfitting. | Internal and external validation to assess need for coefficient shrinkage. |
| Reclassification | Net Reclassification Improvement (NRI) | Quantifies the correct movement of risk categories after adding a new marker: `(P(up \| event) - P(down \| event)) - (P(up \| nonevent) - P(down \| nonevent))` [10]. | Positive NRI indicates improved reclassification with the new model. | Evaluating the incremental value of a new predictor to an existing model. |
| Reclassification | Integrated Discrimination Improvement (IDI) | Difference in discrimination slopes between new and old models. Integrates NRI over all possible thresholds [10]. | Positive IDI indicates improved discrimination. | Alternative to NRI that does not depend on predefined risk categories. |
| Clinical Usefulness | Net Benefit (Decision Curve Analysis) | Net Benefit = (True Positives / N) - (False Positives / N) * (pt / (1-pt)), where pt is the threshold probability [10]. | Plotted across threshold probabilities to show the range where using the model for decisions provides a net benefit over default strategies. | Assessing whether a model should be used to guide clinical decisions at specific risk thresholds. |
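
Several of the measures in the table follow directly from their definitions. The sketch below implements the Brier score, the C-statistic (by pairwise comparison, with ties counted as one half), the discrimination slope, and calibration-in-the-large on a small hypothetical set of outcomes and predicted risks. The data and function names are invented for illustration. Established packages would normally be used in practice.

```python
def mean(values):
    return sum(values) / len(values)

def brier_score(y, p):
    """Mean squared difference between outcome (0/1) and predicted probability."""
    return mean([(yi - pi) ** 2 for yi, pi in zip(y, p)])

def c_statistic(y, p):
    """Share of event/non-event pairs in which the event has the higher prediction."""
    events = [pi for yi, pi in zip(y, p) if yi == 1]
    nonevents = [pi for yi, pi in zip(y, p) if yi == 0]
    score = 0.0
    for e in events:
        for n in nonevents:
            if e > n:
                score += 1.0
            elif e == n:
                score += 0.5  # ties count as half-concordant
    return score / (len(events) * len(nonevents))

def discrimination_slope(y, p):
    """Mean prediction among events minus mean prediction among non-events."""
    events = [pi for yi, pi in zip(y, p) if yi == 1]
    nonevents = [pi for yi, pi in zip(y, p) if yi == 0]
    return mean(events) - mean(nonevents)

def calibration_in_the_large(y, p):
    """Observed event rate minus mean predicted risk (zero is ideal)."""
    return mean(y) - mean(p)

# Hypothetical validation data: 3 events and 5 non-events.
y = [1, 1, 1, 0, 0, 0, 0, 0]
p = [0.9, 0.7, 0.4, 0.5, 0.3, 0.2, 0.2, 0.1]

print(brier_score(y, p), c_statistic(y, p),
      discrimination_slope(y, p), calibration_in_the_large(y, p))
```

In this toy example the model discriminates well but slightly over-predicts on average, which is why discrimination and calibration are reported separately.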

Detailed Experimental Protocol for Model Evaluation

The following protocol, based on established methodology, outlines the steps for a comprehensive evaluation of a clinical prediction model [10].

1. Study Design and Data Preparation:

- Objective: Develop and validate a model to predict residual tumor vs. benign tissue in post-chemotherapy testicular cancer patients.

- Cohorts: Split data into a model development cohort (n=544) and a fully independent, external validation cohort (n=273) [10].

- Predictors: Include readily available clinical variables (e.g., tumor markers, pathology). A novel biomarker (e.g., a genomic signature) is the candidate for incremental value assessment.

- Outcome: Binary histopathological confirmation of residual tumor.

2. Model Development and Initial Validation:

- Develop a baseline logistic regression model using established clinical predictors.

- Develop an extended model incorporating the novel biomarker.

- Perform internal validation on the development cohort using bootstrapping to estimate optimism and correct performance metrics (e.g., the calibration slope).

3. Performance Assessment on External Validation Cohort:

- Overall Performance: Calculate the Brier Score for both baseline and extended models.

- Discrimination: Calculate the C-statistic (AUC-ROC) for both models. Compare the Discrimination Slopes via box plots of predictions by outcome group.

- Calibration: Assess Calibration-in-the-large and generate a calibration plot. Calculate the Calibration Slope for each model in the validation set.

- Reclassification: For clinically relevant risk thresholds (e.g., 20%, 50%), construct reclassification tables. Calculate the NRI and IDI to quantify the improvement offered by the extended model.

- Clinical Usefulness: Perform Decision Curve Analysis (DCA). Plot the Net Benefit of the baseline model, the extended model, and the default strategies of "treat all" and "treat none" across a range of threshold probabilities (e.g., 10% to 90%).

4. Interpretation and Reporting:

- A model is considered clinically relevant if it demonstrates not only improved discrimination (C-statistic, IDI) but also better calibration and a positive Net Benefit for threshold probabilities relevant to clinical decision-making (e.g., where biopsy or further treatment is considered) [10].
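
The reclassification and clinical usefulness steps of the protocol can be sketched as follows. The code computes a categorical NRI for a baseline and an extended model and the Net Benefit of the extended model against the "treat all" strategy at a single threshold. The outcomes, predicted risks, and thresholds are hypothetical and are not the cohort data from the cited study.

```python
def net_benefit(y, p, pt):
    """Net Benefit = TP/N - FP/N * pt / (1 - pt) at threshold probability pt."""
    n = len(y)
    tp = sum(1 for yi, pi in zip(y, p) if pi >= pt and yi == 1)
    fp = sum(1 for yi, pi in zip(y, p) if pi >= pt and yi == 0)
    return tp / n - fp / n * pt / (1 - pt)

def net_benefit_treat_all(y, pt):
    """Net Benefit when every patient is treated regardless of prediction."""
    prevalence = sum(y) / len(y)
    return prevalence - (1 - prevalence) * pt / (1 - pt)

def categorical_nri(y, p_old, p_new, thresholds):
    """(P(up|event) - P(down|event)) - (P(up|nonevent) - P(down|nonevent))."""
    def category(prob):
        return sum(1 for t in thresholds if prob >= t)

    def net_upward(outcome):
        moves = [category(new) - category(old)
                 for yi, old, new in zip(y, p_old, p_new) if yi == outcome]
        up = sum(1 for m in moves if m > 0)
        down = sum(1 for m in moves if m < 0)
        return (up - down) / len(moves)

    return net_upward(1) - net_upward(0)

# Hypothetical validation data for a baseline and an extended model.
y = [1, 1, 1, 0, 0, 0, 0, 0]
p_old = [0.6, 0.4, 0.15, 0.5, 0.3, 0.25, 0.1, 0.1]
p_new = [0.9, 0.7, 0.4, 0.5, 0.3, 0.2, 0.2, 0.1]

nri = categorical_nri(y, p_old, p_new, thresholds=(0.2, 0.5))
nb_model = net_benefit(y, p_new, pt=0.2)
nb_all = net_benefit_treat_all(y, pt=0.2)
print(nri, nb_model, nb_all)  # "treat none" has a Net Benefit of 0 by definition
```

A full decision curve repeats the Net Benefit calculation over a grid of thresholds and plots the model against both default strategies.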

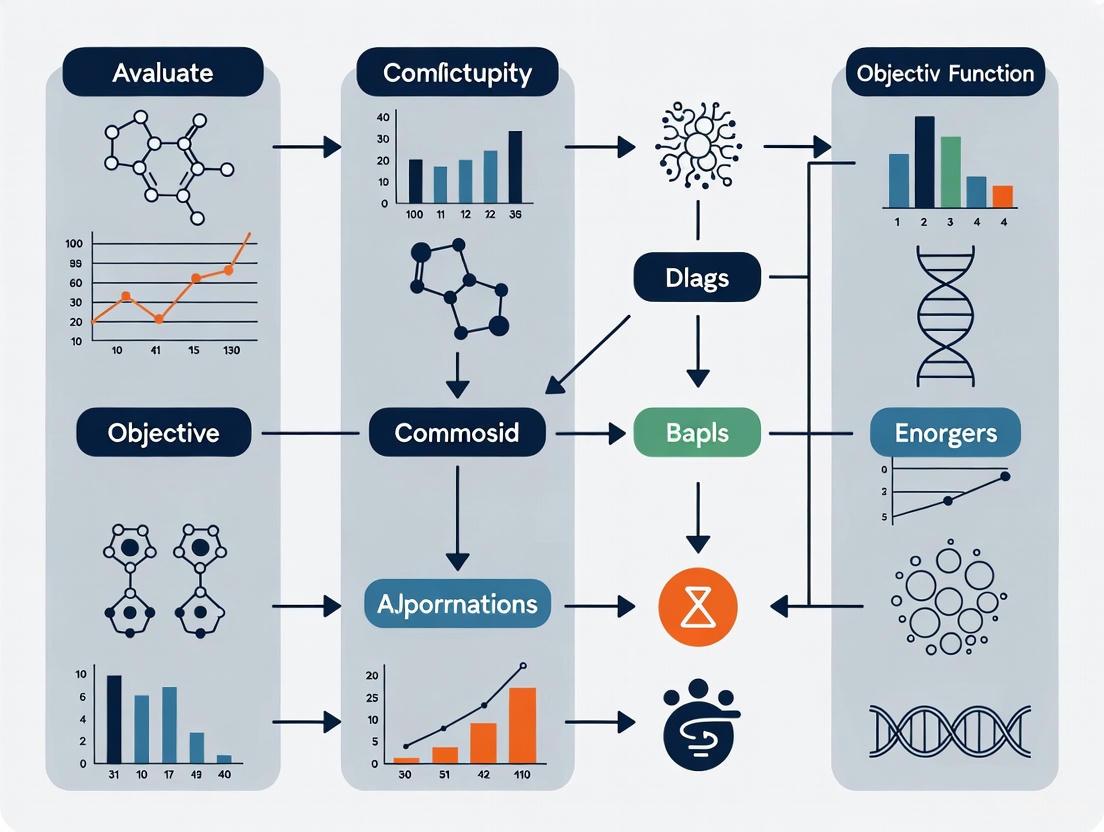

Visualizing the Evaluation Framework and a Novel Targeting Strategy

Framework for Model Performance Evaluation

High-Benefit vs. High-Risk Clinical Targeting

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Resources for Advanced Model Evaluation Research

| Tool / Resource | Category | Function in Evaluation | Example / Note |
|---|---|---|---|
| Curated Clinical & Omics Databases | Data Source | Provides structured, often annotated datasets for model training and, crucially, for external validation, which is the gold standard for assessing generalizability [10]. | Genomics England's Generation Study data [8]; headache disorder datasets [11]. |
| Statistical Software with ML Libraries | Software | Enables implementation of traditional metrics (Brier, AUC) and novel algorithms for estimating heterogeneous treatment effects (CATE), such as causal forests [12]. | R (riskRegression, grf packages), Python (scikit-learn, EconML). |
| Federated Data Analytics Platforms | Infrastructure | Enables model training and validation on distributed, privacy-sensitive datasets without moving the data, addressing key ethical and data integrity concerns [8]. | Essential for multi-center studies using genomic or real-world health data. |
| AI Observability & Model Monitoring Suites | Monitoring | Provides continuous evaluation of model accuracy and performance in production, detecting data drift, performance shifts, and bias [13]. | Critical for maintaining model utility and managing risk post-deployment. |
| Decision Curve Analysis (DCA) Tools | Analysis | Calculates and visualizes the Net Benefit of a model to determine its clinical usefulness across different risk thresholds, directly informing utility [10]. | Available in R (dcurves package) or as standalone scripts. |
| Regulatory & Safety Benchmarking Frameworks | Guideline | Provides standardized tests to evaluate model capabilities and potential dual-use risks, especially relevant for biological AI models [14] [15]. | RAND's biological knowledge benchmarks [14]; EU AI Act guidance [15]. |

This comparison guide evaluates key computational and experimental strategies for navigating the complex, constrained, and noisy optimization landscapes inherent to biological systems. Framed within the broader thesis of evaluating objective functions for biological models, we objectively compare the performance of innovative approaches against traditional methods, supported by experimental data and detailed protocols.

Core Challenges in Biological Optimization

Biological optimization problems are fundamentally difficult due to expensive-to-evaluate objective functions, inherent experimental noise (often heteroscedastic), and high-dimensional design spaces where traditional exhaustive screening is prohibitive [16]. The "landscape" is frequently rugged, discontinuous, and stochastic due to complex molecular interactions, making gradient-based methods inapplicable [16]. Furthermore, biological systems are governed by trade-offs, where optimizing for one objective (e.g., rapid growth) can reduce performance in another (e.g., survival or adaptability) [17]. Noise is not merely a nuisance; according to the Constrained Disorder Principle (CDP), an optimal range of noise is essential for proper system functionality, adaptation, and resilience, with malfunctions arising when noise levels are disrupted [18].

Performance Comparison of Optimization Strategies

The table below summarizes the performance of advanced strategies compared to conventional methods, based on data from key validation studies.

Table 1: Performance Comparison of Biological Optimization Strategies

| Optimization Strategy | Key Innovation | Compared To | Performance Outcome | Key Experimental Context |
|---|---|---|---|---|
| Bayesian Optimization (BioKernel) [16] | No-code framework with heteroscedastic noise modeling & modular kernels. | Traditional Grid Search / OFAT | Converged to optimum (10% normalized Euclidean distance) in ~18-22 points (22% of unique points). | vs. 83 points for grid search in optimizing a 4D limonene production landscape in E. coli [16]. |
| CDP-based AI Therapy [18] | Introducing regulated noise in drug timing/dosage within approved ranges. | Standard Fixed Regimens | Improved clinical/lab functions in heart failure, stabilized multiple sclerosis, improved response in drug-resistant cancer and Gaucher disease [18]. | Managed diuretic resistance, reduced hospital admissions. |
| Steered Generation for Protein Optimization (SGPO) [19] | Steering generative priors (e.g., diffusion models) with small labeled fitness data. | Supervised MLDE & Zero-Shot Generation | Effectively identifies high-fitness variants with few (10^2-10^3) labels; offers steerability and scales to large design spaces [19]. | Tested on TrpB and CreiLOV protein fitness datasets. |
| Functional Redundancy in Ecosystems [20] | Ill-conditioning from species redundancy maps to hard optimization problems. | Well-Conditioned Systems | Manifests as transient chaos, arbitrarily delaying equilibration and causing sensitive dependence on initial conditions/assembly sequence [20]. | Generalized Lotka-Volterra models with rank-deficient interaction matrices. |

Detailed Experimental Protocols

1. Protocol: Validating Bayesian Optimization on a Biological Dataset [16]

- Objective: Compare the sample efficiency of Bayesian Optimization (BO) against the exhaustive search used in a published study.

- Dataset: Limonene production data from a study applying four-dimensional transcriptional control in E. coli (83 unique parameter combinations with six technical replicates each) [16].

- Surface Reconstruction: A Gaussian Process with a scaled Radial Basis Function (RBF) kernel and additional white noise kernel was fitted to the experimental means to approximate the true optimization landscape. A separate mixed model of Random Forest (RF) and K-Nearest Neighbours (KNN) was trained on the standard deviations to create a heteroscedastic noise meshgrid [16].

- BO Policy: The reconstructed surface served as the test function for the BioKernel package. Optimization was run using a Matern kernel with a gamma noise prior.

- Metric: Convergence was defined as reaching a point within 10% of the total possible normalized Euclidean distance to the global optimum. The number of unique points evaluated by BO to reach this threshold was compared to the total points evaluated in the original grid search (83).
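
The convergence metric in this protocol can be sketched as below. The code assumes that each of the four dimensions is rescaled to [0, 1] and that the "total possible" distance is the diagonal of the unit hypercube (the square root of the number of dimensions). That reading of the metric, the bounds, the optimum, and the sequence of evaluated points are all assumptions made for illustration.

```python
import math

def normalised_distance(point, optimum, bounds):
    """Euclidean distance in the unit hypercube divided by its diagonal length."""
    squared = 0.0
    for p, o, (lo, hi) in zip(point, optimum, bounds):
        squared += ((p - lo) / (hi - lo) - (o - lo) / (hi - lo)) ** 2
    return math.sqrt(squared) / math.sqrt(len(bounds))

def unique_points_to_converge(evaluated, optimum, bounds, tol=0.10):
    """Count unique points evaluated until one lies within tol of the optimum."""
    seen = set()
    for point in evaluated:
        seen.add(tuple(point))
        if normalised_distance(point, optimum, bounds) <= tol:
            return len(seen)
    return None  # never converged within the evaluated sequence

# Hypothetical 4D design space (e.g., four inducer levels, each 0 to 100).
bounds = [(0.0, 100.0)] * 4
optimum = (80.0, 20.0, 50.0, 60.0)
evaluated = [
    (0.0, 0.0, 0.0, 0.0),
    (100.0, 100.0, 100.0, 100.0),
    (50.0, 50.0, 50.0, 50.0),
    (50.0, 50.0, 50.0, 50.0),   # repeated point, not counted twice
    (70.0, 30.0, 50.0, 50.0),
]

n_points = unique_points_to_converge(evaluated, optimum, bounds)
print(n_points)
```

Comparing this count with the 83 points of the original grid gives the sample-efficiency figure reported in the comparison table.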

2. Protocol: Simulating Optimization Hardness in Ecological Networks [20]

- Model: Generalized Lotka-Volterra equations: dn_i/dt = n_i * (r_i + Σ_j A_ij * n_j).

- Interaction Matrix Design: To introduce functional redundancy, the matrix A was sampled as A = P * P^T + ε * J, where P is a low-rank assignment matrix mapping N species to M functional groups (creating redundancy), J is a perturbation matrix with small amplitude ε, and ^T denotes transpose [20].

- Simulation: The dynamical system was integrated from varied initial conditions. The condition number of the matrix A was used as a measure of "ill-conditioning" or optimization hardness.

- Analysis: Transient duration and sensitivity to initial conditions were measured. The relationship between steady-state diversity, the degree of redundancy (affecting condition number), and the onset of transient chaos was quantified.
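
The effect of redundancy on the interaction matrix can be checked directly. The sketch below builds a one-hot assignment matrix P for N = 6 species in M = 2 functional groups and shows that P * P^T has rank M, while adding a small perturbation restores full rank. For reproducibility it uses the identity matrix as the perturbation J. This is an assumption of the sketch, since the source only describes J as a small-amplitude perturbation matrix, and the rank routine is a plain Gaussian elimination written for illustration.

```python
def assignment_matrix(n_species, n_groups):
    """One-hot N x M matrix: species i is assigned to group i % M."""
    return [[1.0 if i % n_groups == g else 0.0 for g in range(n_groups)]
            for i in range(n_species)]

def gram(P):
    """Return P * P^T as a list of lists."""
    return [[sum(a * b for a, b in zip(ri, rj)) for rj in P] for ri in P]

def rank(matrix, tol=1e-9):
    """Numerical rank via Gaussian elimination with partial pivoting."""
    m = [row[:] for row in matrix]
    rows, cols = len(m), len(m[0])
    r = 0
    for c in range(cols):
        if r == rows:
            break
        pivot = max(range(r, rows), key=lambda i: abs(m[i][c]))
        if abs(m[pivot][c]) < tol:
            continue  # no usable pivot in this column
        m[r], m[pivot] = m[pivot], m[r]
        for i in range(r + 1, rows):
            factor = m[i][c] / m[r][c]
            for j in range(c, cols):
                m[i][j] -= factor * m[r][j]
        r += 1
    return r

n_species, n_groups, eps = 6, 2, 1e-3
P = assignment_matrix(n_species, n_groups)
redundant = gram(P)

# Assumption: J is the identity matrix here, chosen only to keep the example deterministic.
A = [[redundant[i][j] + (eps if i == j else 0.0) for j in range(n_species)]
     for i in range(n_species)]

print(rank(redundant), rank(A))
```

With this particular J, the eigenvalues of A are 3 + ε and ε, so the condition number is (3 + ε)/ε and grows without bound as the perturbation shrinks, which is the sense in which redundancy makes the system ill-conditioned.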

3. Protocol: Applying CDP-based AI for Drug Regimen Optimization [18]

- Intervention: Drug administration times and dosages were diversified using random-based algorithms, but strictly within the clinically approved pharmacokinetic and safety ranges for the drug.

- Control: Standard-of-care fixed scheduling and dosing.

- Population: Patients with conditions demonstrating treatment tolerance or resistance (e.g., heart failure with diuretic resistance, multiple sclerosis, drug-resistant cancer).

- Outcomes: Measured improvements in specific clinical scores, laboratory values (e.g., biomarkers), radiological response rates, and reduction in adverse events or hospital admissions [18].

Visualizing Concepts and Workflows

Diagram 1: Bayesian Optimization Loop for Biological Experiments

Diagram 2: Functional Redundancy Leads to Optimization Hardness & Transient Chaos

Diagram 3: Biological Noise: From Disruption to Essential Function

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Key Research Reagents and Tools for Biological Optimization Studies

| Tool/Reagent | Category | Primary Function in Optimization Context | Example Use Case |
|---|---|---|---|
| Marionette-wild E. coli Strains [16] | Engineered Chassis | Provides a library of orthogonal, sensitive inducible promoters for creating high-dimensional, tunable optimization landscapes (e.g., 12-dimensional). | Optimizing multi-step heterologous pathways like astaxanthin production [16]. |
| Small Molecule Inducers (e.g., Naringenin) [16] | Chemical Modulators | Precisely control gene expression levels from inducible systems (like Marionette array) to explore transcriptional space. | Used in initial screening campaigns to find optimal expression levels for pathway enzymes [16]. |
| Spectrophotometry / Fluorescence Assays [16] [19] | Analytical Measurement | Enable rapid, quantitative evaluation of the objective function (e.g., pigment concentration, fluorescent protein intensity) for high-throughput feedback. | Quantifying astaxanthin production [16] or fluorescent protein fitness in directed evolution [19]. |
| Genome-Scale Metabolic Models (GEMs) [21] [17] | In Silico Model | Provide a mechanistic network to simulate metabolism, test objective functions (e.g., biomass vs. other goals), and identify engineering targets. | Inferring cellular objectives from omics data and exploring trade-offs [17]. |
| Heteroscedastic Gaussian Process Software (e.g., BioKernel) [16] | Computational Framework | Models non-constant experimental noise, selects kernels/acquisition functions, and proposes sequential experiments to efficiently find optima. | Guiding media or induction condition optimization with limited experimental budget [16]. |
| Protein Generative Models (Discrete Diffusion/PLMs) [19] | AI/ML Model | Provides a strong prior over functional protein sequence space, which can be steered with limited fitness data to propose improved variants. | Steered Generation for Protein Optimization (SGPO) with low-throughput assay data [19]. |
| CDP-based Algorithmic Randomizer [18] | Clinical Decision Support | Introduces regulated, personalized variability into treatment parameters (timing/dose) to overcome tolerance and improve therapeutic outcomes. | Managing diuretic resistance in heart failure or drug resistance in cancer [18]. |

The integration of predictive models, particularly artificial intelligence (AI) and large language models (LLMs), into clinical decision-making heralds a new era of precision medicine [22]. However, this integration carries the latent risk of creating self-fulfilling prophecies, where a model's prediction directly influences clinical actions that subsequently validate the prediction, irrespective of its initial accuracy [22] [23]. This comparison guide objectively evaluates the performance of AI-driven clinical models against traditional clinical judgment, framed within the critical thesis of selecting appropriate objective functions for biological and clinical models [5] [24] [25]. We present quantitative experimental data, detailed methodologies, and essential research toolkits to inform researchers and drug development professionals about these pivotal risks and considerations.

A self-fulfilling prophecy is a prediction that causes itself to become true through the feedback loop of belief and subsequent action [23] [26]. In clinical modeling, this manifests when an AI's risk prediction prompts a therapeutic intervention (e.g., early intubation), and the outcome of that intervention (intubation) is then recorded as data that reinforces the model's future predictions [22]. This cycle can amplify existing biases and lead to suboptimal patient care, effectively "overfitting" clinical practice to the model's assumptions.

This risk is intrinsically linked to the foundational concept of an objective function in model design. An objective function is a mathematical expression that quantifies the goal of a model, such as maximizing diagnostic accuracy or predicting a specific clinical outcome [24]. In systems biology, the choice of objective function (e.g., maximizing biomass yield vs. ATP yield) critically determines the predictive accuracy and biological relevance of metabolic models under different conditions [5] [25]. Similarly, in clinical AI, the objective function (e.g., minimizing prediction error on historical data) may not account for the model's own influence on future data generation, creating a dangerous feedback loop [22]. Evaluating models requires scrutinizing not just their statistical performance but their integration into a dynamic clinical environment where prediction influences reality.

Case Study: AI Prediction of Intubation Risk in Respiratory Failure

2.1 Experimental Protocol & Methodology

A pivotal study evaluated the performance of GPT-4.0 in predicting the need for endotracheal intubation within 48 hours for patients with respiratory failure on high-flow nasal cannula (HFNC) oxygen therapy [22].

- Patient Cohort: 71 patients receiving HFNC therapy.

- Model & Comparator: The predictive performance of GPT-4.0 was compared against specialist physicians and non-specialist physicians.

- Input Data: Clinical data from the patient cases were used as input for the LLM.

- Outcome Measure: The primary metric was the Area Under the Receiver Operating Characteristic Curve (AUROC), which measures the model's ability to discriminate between patients who would and would not require intubation.

- Statistical Analysis: Performance comparisons were made using p-values to determine statistical significance.

2.2 Quantitative Performance Comparison

The study yielded the following comparative results [22]:

Table 1: Performance Comparison in Predicting Intubation Risk (AUROC)

| Predictor | AUROC | Comparison vs. GPT-4.0 (p-value) |

|---|---|---|

| GPT-4.0 (AI Model) | 0.821 | (Reference) |

| Specialist Physicians | 0.782 | p = 0.475 (Not Significant) |

| Non-Specialist Physicians | 0.662 | p = 0.011 (Significant) |

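Interpretation: In this specific predictive task, GPT-4.0's discrimination was not significantly different from that of specialist physicians (p = 0.475) and was significantly better than that of non-specialists (p = 0.011) [22]. With only 71 patients, the absence of a significant difference from specialists should not be read as proof of equivalence.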
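To make the AUROC metric concrete, the sketch below computes it as the probability that a randomly chosen positive case receives a higher score than a randomly chosen negative case, with ties counted as half. The labels and scores are hypothetical toy values, not data from the cited study [22].

```python
def auroc(labels, scores):
    """AUROC as the chance that a random positive outscores a random negative.

    Ties count as half a win (the Mann-Whitney U formulation).
    """
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("AUROC needs at least one positive and one negative case")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Hypothetical toy data: 1 = intubated within 48 h, 0 = not intubated.
labels = [1, 1, 1, 0, 0, 0, 0]
scores = [0.9, 0.7, 0.4, 0.6, 0.3, 0.2, 0.1]
print(f"AUROC = {auroc(labels, scores):.3f}")
```

Because AUROC depends only on the ranking of scores, it says nothing about calibration or about how a prediction changes clinical behavior once deployed.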

2.3 Illustration of the Self-Fulfilling Prophecy Feedback Loop

The hypothetical scenario described in the study illustrates the risk [22]. An AI predicts a 70% risk of intubation for a patient. Driven by this high-risk prediction and the desire to avoid mortality from a delayed procedure, the physician opts for immediate intubation. This action makes the "intubation" outcome a reality. The new outcome is then fed back into the AI's training cycle, reinforcing the association between the patient's initial presentation and intubation and thereby increasing predicted risks for similar future cases. The result is a positive feedback loop that biases both the model and clinical practice.
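The loop can be illustrated with a deliberately simplified simulation. It assumes that a clinician intubates pre-emptively with probability equal to the predicted risk, that "retraining" simply moves the estimate toward the recorded intubation rate, and that the true need rate is 30%. All of these are illustrative assumptions, not parameters from the cited study [22].

```python
def simulate(initial_risk, true_need=0.3, rounds=20, learning_rate=0.5, reviewed=False):
    """Track a model's predicted intubation risk over retraining rounds.

    Assumption: a clinician intubates pre-emptively with probability equal to
    the predicted risk; otherwise the patient is intubated only if truly needed.
    """
    risk = initial_risk
    history = [risk]
    for _ in range(rounds):
        if reviewed:
            # Post-decision review relabels cases by true clinical need.
            recorded_rate = true_need
        else:
            # Raw records count every intubation, including model-prompted ones.
            recorded_rate = risk + (1.0 - risk) * true_need
        # "Retraining" is reduced to moving the estimate toward the recorded rate.
        risk = (1.0 - learning_rate) * risk + learning_rate * recorded_rate
        history.append(risk)
    return history


naive = simulate(0.7)
curated = simulate(0.7, reviewed=True)
print(f"naive loop:  {naive[0]:.2f} -> {naive[-1]:.2f}")
print(f"with review: {curated[0]:.2f} -> {curated[-1]:.2f}")
```

Under these assumptions the unreviewed loop drifts upward every round regardless of the true need rate, while labels corrected by review pull the estimate back toward it.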

Diagram 1: Self-Fulfilling Prophecy Loop in Clinical AI

Comparative Analysis: Objective Functions in Biological vs. Clinical Models

The performance and pitfalls of clinical models can be better understood by analogy to the rigorous evaluation of objective functions in systems biology.

3.1 Lessons from Metabolic Network Analysis

A systematic evaluation of 11 different objective functions for predicting metabolic fluxes in E. coli under various environmental conditions found that no single objective function was optimal under all conditions [5] [25]. For instance, maximizing ATP yield per flux unit best described unlimited growth in batch cultures, while maximizing overall biomass or ATP yield was more accurate under nutrient-scarce continuous cultures [5]. This underscores that the choice of objective function must be context-dependent.
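A toy example shows how the objective alone can change the predicted flux distribution. The two-pathway network, its ATP yields, and its capacity limit are invented for illustration, and the search is a brute-force grid rather than the linear programming used in real flux balance analysis. The ratio objective is only a loose stand-in for "ATP yield per flux unit".

```python
ATP_RESP, ATP_FERM = 10.0, 2.0  # hypothetical ATP yield per unit flux


def feasible_fluxes(uptake_max=10.0, resp_cap=4.0, step=0.5):
    """Yield (respiration, fermentation) flux pairs that satisfy the constraints."""
    n = int(uptake_max / step)
    for i in range(n + 1):
        for j in range(n + 1 - i):  # total uptake stays within uptake_max
            v_resp, v_ferm = i * step, j * step
            if v_resp <= resp_cap and v_resp + v_ferm > 0:
                yield v_resp, v_ferm


def total_atp(v):
    """Linear objective: overall ATP production."""
    return ATP_RESP * v[0] + ATP_FERM * v[1]


def atp_per_flux(v):
    """Ratio objective: ATP produced per unit of total flux."""
    return total_atp(v) / (v[0] + v[1])


best_total = max(feasible_fluxes(), key=total_atp)
best_ratio = max(feasible_fluxes(), key=atp_per_flux)
print("max total ATP:    ", best_total)
print("max ATP per flux: ", best_ratio)
```

Maximizing total ATP saturates respiration and sends the remaining uptake through fermentation, whereas the ratio objective avoids fermentation entirely. The same network therefore yields two different flux predictions.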

Table 2: Comparison of Objective Functions in Metabolic Models

| Modeling Context | Optimal Objective Function | Key Insight | Source |

|---|---|---|---|

| E. coli, Unlimited Growth | Nonlinear maximization of ATP yield per flux unit | Different biological objectives drive network behavior under different conditions. | [5] [25] |

| E. coli, Nutrient Scarcity | Linear maximization of overall ATP or biomass yield | The "goal" of the system changes with environmental constraints. | [5] [25] |

| Clinical AI, Static Validation | Minimizing prediction error on historical dataset | May not account for the model's future influence on data generation (self-fulfilling prophecy). | [22] |

| Clinical AI, Dynamic Integration | Needs to incorporate post-decision review and feedback correction | The objective must evolve to ensure model actions improve real-world outcomes, not just self-validate. | [22] |

3.2 Parameter Estimation and the Risk of Bias

In dynamic biological models, parameter estimation is fraught with challenges like non-identifiability, where many parameter sets fit the data equally well [4]. Using a scaling factor (SF) approach to align model simulations with experimental data introduces extra parameters and can aggravate non-identifiability [4]. In contrast, data-driven normalization of simulations (DNS) avoids this pitfall and improves optimization performance [4]. This mirrors the clinical AI dilemma: introducing an AI's recommendation (an external "scale") into the clinical decision process adds a complex, poorly understood parameter that can distort the system's natural state and create biased feedback data.
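The difference between the two approaches can be sketched for a single observable. The numbers are hypothetical, the scaling factor is solved in closed form by least squares (in practice it is often estimated jointly with the model parameters), and normalizing to the maximum is just one possible DNS convention.

```python
def sse(a, b):
    """Sum of squared errors between two equally long sequences."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def sf_objective(sim, data):
    """Scaling-factor objective: fit an extra parameter s so that s * sim ~ data."""
    s = sum(d * y for d, y in zip(data, sim)) / sum(y * y for y in sim)
    return sse([s * y for y in sim], data), s


def dns_objective(sim, data_normalized):
    """DNS objective: normalize the simulation the same way the data were normalized."""
    peak = max(sim)
    return sse([y / peak for y in sim], data_normalized)


sim = [2.0, 6.0, 10.0, 8.0]  # hypothetical model output in concentration units
raw = [0.5, 1.5, 2.5, 2.0]   # hypothetical measurements in arbitrary units
data_norm = [d / max(raw) for d in raw]  # data normalized to their own maximum

sf_error, scale = sf_objective(sim, raw)
dns_error = dns_objective(sim, data_norm)
print(f"SF:  error={sf_error:.3g}, extra parameter s={scale}")
print(f"DNS: error={dns_error:.3g}, no extra parameter")
```

Both objectives reach the same fit here, but the SF version carries one additional free parameter per observable, which is the source of the identifiability cost described above.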

Diagram 2: Objective Function Evaluation & Feedback Workflow

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Resources for Clinical & Biological Outcome Modeling

| Item / Solution | Category | Primary Function in Research |

|---|---|---|

| Large Language Models (e.g., GPT-4) | AI/Software | Serve as the predictive engine for clinical risk assessment or answering medical queries; require rigorous clinical validation [22]. |

| Curated Clinical Datasets | Data | High-quality, annotated patient data is the substrate for training and validating predictive models. Post-decision reviewed datasets are crucial to break self-fulfilling cycles [22]. |

| Flux Balance Analysis (FBA) Software | Modeling Tool | Enables constraint-based modeling of metabolic networks to test different biological objective functions and predict phenotypes [5] [25]. |

| Parameter Estimation Algorithms (e.g., LSQNONLIN, GLSDC) | Software/Algorithm | Used to fit dynamic model parameters to experimental data. The choice between SF and DNS approaches significantly impacts identifiability [4]. |

| Post-Decision Review Framework | Protocol/Process | A mandatory clinical workflow where AI-influenced decisions are archived and reviewed by multi-specialist panels to generate corrected, high-quality data for model refinement [22]. |

| Stability Constraint Formalism (e.g., TEAPS) | Modeling Framework | Algorithms like TEAPS (Thorough Exploration of Allowable Parameter Space) incorporate biological stability and resilience as constraints to find plausible parameter sets for dynamic models without overfitting [27]. |

The comparative analysis reveals a critical parallel: just as biological models require context-specific objective functions [5] [25], clinical AI models require objective functions and evaluation frameworks that account for their dynamic interaction with the clinical environment. The peril of the self-fulfilling prophecy is a direct result of using a static objective function (predictive accuracy on past data) in a dynamic, interactive system.

To mitigate this, the clinical modeling field must adopt strategies from advanced systems biology:

- Implement Mandatory Feedback Loops with Curation: Establish institutional protocols for post-decision review by specialist panels to audit AI-influenced outcomes and create refined datasets [22].

- Adopt Dynamic Objective Functions: Develop model evaluation metrics that penalize predictions leading to unnecessary interventions, effectively making the avoidance of self-fulfilling outcomes part of the model's objective.

- Employ Stability and Resilience Constraints: Similar to incorporating Biological Stable and Resilient (BSR) constraints in dynamic models [27], clinical models should be designed with constraints that promote system (clinical pathway) stability and prevent runaway feedback loops.

The ultimate objective function for clinical AI must evolve from simply "being right on a test set" to "enabling better, unbiased patient outcomes in a continuously learning healthcare system."

Framing the Context of Use (COU) and Fit-for-Purpose Model Selection

In computational biology and drug development, the Context of Use defines the specific purpose and conditions under which a model is expected to function, while Fit-for-Purpose Model Selection is the practice of choosing and validating models based on their performance for that specific context. This approach moves beyond generic model accuracy metrics, recognizing that a model perfect for one scientific aim may be inadequate for another. A Fit-for-Purpose paradigm is crucial because, as research shows, model performance and the relevance of different evaluation criteria depend heavily on the specific application. For instance, in species distribution modeling, the best-performing algorithm varies significantly depending on whether the goal is to understand a species' overall distribution, predict its occurrence at specific locations, or define its ecological niche limits [28].

This guide provides a structured framework for comparing model performance within a defined Context of Use, focusing on evaluating objective functions for biological models. We synthesize experimental data and methodologies to help researchers and drug development professionals make informed, evidence-based decisions in their model selection process.

Conceptual Framework: Objectives, Trade-offs, and Model Fit

The Foundation of Cellular and Biological Objectives

At the heart of biological modeling lies the need to accurately represent cellular objectives. Cells manage limited resources to achieve specific biological goals, which extend beyond simple biomass production, especially in mammalian systems. Neurons may prioritize electrical activity and neurotransmitter synthesis, muscle cells manage energy for contraction, and stem cells focus on developmental regulation. A model's objective function must reflect these priorities to be biologically accurate [17]. The term "Fit-for-Purpose" also names a distinct clinical model discussed later in this guide: it conceptualizes chronic nonspecific low back pain as an information problem, in which patients hold strong internal models of a fragile back, and its treatment framework aims to shift these internal models toward viewing the back as healthy and adaptable [29].

The Inevitability of Trade-offs

A core principle in systems biology is the trade-off. Cells, and by extension the models that represent them, cannot simultaneously optimize all objectives. This leads to a Pareto front, where improving performance in one objective necessitates a decline in another. For example, a trade-off exists between growth rate and survival in Escherichia coli, and similarly, cancer cell populations navigate trade-offs between proliferation and survival phenotypes [17]. Understanding which trade-offs are material to your Context of Use is critical for selecting a model whose inherent biases align with your primary research question.
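The Pareto front described above can be computed directly from a set of candidate phenotypes or models. The sketch below keeps every point that no other point matches or beats on both objectives. The (growth, survival) scores are hypothetical.

```python
def pareto_front(points):
    """Return the points not dominated by any other (both objectives maximized)."""
    front = []
    for p in points:
        # q dominates p if it is at least as good on both objectives and differs from p.
        dominated = any(q[0] >= p[0] and q[1] >= p[1] and q != p for q in points)
        if not dominated:
            front.append(p)
    return front


# Hypothetical (growth rate, survival) scores for six strategies.
strategies = [(0.9, 0.2), (0.7, 0.5), (0.6, 0.4), (0.4, 0.8), (0.3, 0.7), (0.2, 0.9)]
print(pareto_front(strategies))
```

Points on the front represent different compromises. Choosing among them is a Context of Use decision, not a purely statistical one.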

Table 1: Common Trade-offs in Biological Model Selection

| Objective A | Objective B | Biological Context | Model Implication |

|---|---|---|---|

| Growth Rate | Survival & Stress Resistance | Microbial populations, Cancer cells | Models optimized for fast growth may fail under stress conditions. |

| Predictive Accuracy | Model Interpretability | Drug discovery, Diagnostic tools | Complex "black box" models may be accurate but hard to validate scientifically. |

| Overall Distribution Fit | Local Occurrence Prediction | Species Distribution Modeling | Algorithms like Maxent and GAM excel locally, while consensus models fit overall distribution better [28]. |

| Sensitivity (Recall) | Precision | Early disease detection, Screening | Balancing false positives against false negatives depends on the clinical cost of each error type [30]. |

Experimental Protocols for Model Comparison

A rigorous, standardized protocol is essential for an objective Fit-for-Purpose model evaluation. The following methodology, drawing from best practices in machine learning and species distribution modeling, provides a template for comparative studies [31] [28].

Model Evaluation Workflow

The following diagram illustrates the core experimental workflow for comparing model performance against a defined Context of Use.

Detailed Methodological Steps

- Define the Context of Use (COU): Formally specify the model's intended purpose, the specific questions it must answer, and the required output format (e.g., a continuous prediction, a binary classification, a ranked list of features). This step is the foundation for all subsequent evaluation.

- Select Candidate Models: Choose a diverse set of models representing different algorithmic families and objective functions. For a biological context, this might include:

  - Regression Models: Generalized Linear Models (GLM), Generalized Additive Models (GAM).

  - Machine Learning Models: Random Forests (RF), Gradient Boosted Machines (GBM), Artificial Neural Networks (ANN), and Maximum Entropy modeling (Maxent) [28].

- Configure Model Objectives and Hyperparameters: Set the objective functions (e.g., maximize likelihood, minimize error) and tune hyperparameters for each model. This may involve techniques like grid search or Bayesian optimization, using a separate validation set to prevent overfitting.

- Implement Robust Data Partitioning: Split the dataset into training, validation, and test sets. Use techniques like holdout validation for large datasets or k-fold cross-validation for smaller datasets to ensure a robust estimate of model performance on unseen data [31]. The key is to ensure the test set is held out until the final evaluation phase.

- Execute Model Training and Evaluation: Train each model on the training set and make predictions on the test set. It is critical that the test data is completely unseen during the training and tuning phases to guarantee an unbiased performance assessment.

- Calculate Performance Metrics: Compute a suite of metrics relevant to the COU. The table below summarizes key metrics. For example, in species distribution modeling, the consistency of environmental variable selection is as important as raw predictive accuracy [28].
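The partitioning step in the list above can be sketched without any library. The helper name `k_fold_indices` and the 80/20 holdout split are illustrative choices. In practice a library routine such as scikit-learn's `KFold` would normally be used instead.

```python
import random


def k_fold_indices(n_samples, k=5, seed=0):
    """Yield (train_idx, test_idx) pairs; every sample is tested exactly once."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    folds = [indices[i::k] for i in range(k)]
    for i, test_idx in enumerate(folds):
        train_idx = [j for f, fold in enumerate(folds) if f != i for j in fold]
        yield train_idx, test_idx


# Hold out a final test set first, then cross-validate on the remainder only.
all_idx = list(range(100))
random.Random(42).shuffle(all_idx)
final_test, development = all_idx[:20], all_idx[20:]

for train_idx, val_idx in k_fold_indices(len(development), k=5):
    train = [development[i] for i in train_idx]
    val = [development[i] for i in val_idx]
    # Fit on `train`, tune on `val`; `final_test` stays untouched until the end.
    assert not set(train) & set(val)
```

Keeping `final_test` outside the cross-validation loop is what preserves an unbiased final estimate after hyperparameter tuning.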

Table 2: Key Performance Metrics for Model Evaluation [31] [30]

| Metric | Formula | Model Type | Interpretation | Context of Use |

|---|---|---|---|---|

| Mean Absolute Error (MAE) | \(\frac{1}{n}\sum_{i=1}^{n} \lvert y_i-\hat{y}_i \rvert\) | Regression | Average magnitude of error, robust to outliers. | When all prediction errors are equally important. |

| Root Mean Squared Error (RMSE) | \(\sqrt{\frac{1}{n}\sum_{i=1}^{n}(y_i-\hat{y}_i)^2}\) | Regression | Average error, penalizes larger errors more. | When large errors are particularly undesirable. |

| R-squared (R²) | \(1 - \frac{\sum_{i}(y_i-\hat{y}_i)^2}{\sum_{i}(y_i-\bar{y})^2}\) | Regression | Proportion of variance explained by the model. | To understand how well the model captures data variance. |

| Accuracy | \(\frac{TP+TN}{TP+TN+FP+FN}\) | Classification | Overall correctness of the model. | When classes are balanced and cost of errors is similar. |

| F1-Score | \(2\times\frac{Precision\times Recall}{Precision+Recall}\) | Classification | Harmonic mean of precision and recall. | When a balance between false positives and false negatives is needed. |

| Area Under ROC Curve (AUC-ROC) | Area under the ROC plot | Classification | Model's ability to distinguish between classes. | For overall performance across all classification thresholds. |
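The regression and classification formulas in the table can be written out in a few lines. The sketch below uses hypothetical values chosen so that one large error separates MAE from RMSE. It is meant to make the definitions concrete, not to replace a tested library implementation.

```python
import math


def mae(y, y_hat):
    return sum(abs(a - b) for a, b in zip(y, y_hat)) / len(y)


def rmse(y, y_hat):
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(y, y_hat)) / len(y))


def r_squared(y, y_hat):
    mean_y = sum(y) / len(y)
    ss_res = sum((a - b) ** 2 for a, b in zip(y, y_hat))
    ss_tot = sum((a - mean_y) ** 2 for a in y)
    return 1.0 - ss_res / ss_tot


def classification_metrics(y_true, y_pred):
    """Accuracy, precision, recall and F1 for binary labels (1 = positive)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": (tp + tn) / len(y_true), "precision": precision,
            "recall": recall, "f1": f1}


# Hypothetical regression example: the last prediction is off by 3 units.
y, y_hat = [3.0, 5.0, 7.0, 9.0], [2.0, 5.0, 8.0, 12.0]
print(mae(y, y_hat), rmse(y, y_hat), r_squared(y, y_hat))

# Hypothetical binary classification example.
y_true, y_pred = [1, 1, 1, 0, 0, 0, 0, 1], [1, 1, 0, 0, 0, 1, 0, 1]
print(classification_metrics(y_true, y_pred))
```

In the regression example RMSE exceeds MAE because squaring amplifies the single 3-unit error, which is the behavior the table describes.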

Case Study: Fit-for-Purpose Model in Chronic Pain Research

The "Fit-for-Purpose" model for chronic nonspecific low back pain (CLBP) provides a powerful case study of a model built from the ground up for a specific biological context, rather than being repurposed from other conditions [32]. This model frames CLBP as a state where the patient's brain holds a strong, intransigent internal model of the back as damaged and fragile. The therapeutic goal is to shift this model toward viewing the back as healthy, strong, and "fit for purpose" [29] [33].

The following diagram outlines the four-stage rehabilitation framework of this model, demonstrating how a clear theoretical framework translates into a structured experimental or therapeutic protocol.

Experimental Evidence and Protocol: The model proposes specific, testable interventions at each stage. For the "Refine" stage, this includes graded retraining of sensory precision (e.g., tactile localisation and discrimination), motor imagery (e.g., left/right judgement tasks), and motor control with low-load, precision-focused movements [33]. This framework is currently being tested in clinical trials against other treatment comparators, with outcomes focused on functional recovery and changes in self-perception of back health, demonstrating a direct link between a defined COU and a tailored experimental design [32].

The Scientist's Toolkit: Essential Reagents and Materials

The following table details key computational and analytical "research reagents" essential for conducting rigorous model comparison and evaluation studies.

Table 3: Essential Reagents and Tools for Model Evaluation Research

| Item/Tool | Function in Research | Application Context |

|---|---|---|

| BIOMOD2 Platform | An R package that provides a unified framework for running multiple species distribution model algorithms [28]. | Ecological niche modeling, species distribution forecasting. |

| Neptune.ai | A platform for experiment tracking and visualization, used for monitoring and comparing machine learning model performance metrics [30]. | Managing multiple model training runs, hyperparameter tuning, result comparison. |

| Scikit-learn | A Python library providing simple and efficient tools for predictive data analysis, including model training and metrics calculation [31]. | Implementing holdout validation, cross-validation, and calculating accuracy, precision, F1-score, etc. |

| DataRobot | An automated machine learning platform that includes features for model comparison, lineage tracking, and accuracy metric visualization [34]. | Automated model selection, blueprint comparison, and model compliance reporting. |

| Confusion Matrix | A specific table layout that allows visualization of the performance of a classification algorithm [30]. | Evaluating Type-I (False Positive) and Type-II (False Negative) errors in diagnostic or classification models. |

| ROC Curves | A graphical plot that illustrates the diagnostic ability of a binary classifier system across varying discrimination thresholds [30]. | Selecting an optimal classification threshold and comparing the performance of different classifiers. |

| WorldClim Bioclimatic Data | A dataset of high-resolution global climate surfaces for bioclimatic modeling [28]. | Building species distribution models based on climatic variables. |

| Pareto Front Analysis | A mathematical technique for identifying a set of optimal trade-offs between competing objectives [17]. | Analyzing the trade-off between model accuracy and interpretability, or between growth and survival in cellular models. |

From Theory to Bench: A Toolkit of Objective Functions for Biological Research

Bayesian Optimization for High-Dimensional, Resource-Constrained Experiments

Optimizing complex biological systems represents a fundamental challenge in life sciences research and biotechnology. Whether engineering metabolic pathways in microorganisms or developing cell culture media for mammalian bioprocessing, researchers must navigate high-dimensional parameter spaces with severely constrained experimental resources. Biological optimization problems are inherently difficult: they involve expensive-to-evaluate objective functions, significant experimental noise (often heteroscedastic), and complex interactions between factors that create rugged, discontinuous response landscapes [16]. Traditional approaches become impractical as dimensionality increases: exhaustive screening suffers from the well-known "curse of dimensionality," where the number of required experiments grows exponentially with parameter count, while one-factor-at-a-time (OFAT) experimentation misses interactions between factors [16] [35].

Bayesian optimization (BO) has emerged as a powerful machine learning approach that transforms how researchers navigate these complex experimental spaces. As a sample-efficient, sequential strategy for global optimization of black-box functions, BO enables identification of optimal input parameters while making minimal assumptions about the objective function [16]. This review provides a comprehensive comparison of Bayesian optimization against traditional experimental design methods, with specific focus on performance in high-dimensional, resource-constrained biological experiments. Through analysis of quantitative benchmarks and experimental case studies, we demonstrate how BO's unique combination of probabilistic modeling and intelligent decision-making accelerates scientific discovery while substantially reducing experimental burdens.

Performance Benchmarking: BO Versus Traditional Methods

Quantitative Comparison Across Experimental Domains

Extensive benchmarking studies across diverse experimental domains reveal consistent advantages of Bayesian optimization over traditional methods. The performance gains are particularly pronounced in high-dimensional biological spaces where experimental resources are severely constrained.

Table 1: Performance Comparison of Optimization Methods Across Biological Applications

| Application Domain | Traditional Method | BO Method | Experimental Reduction | Performance Improvement | Citation |

|---|---|---|---|---|---|

| Metabolic Engineering (Limonene Production) | Adapted Grid Search (83 points) | Bayesian Optimization (18 points) | 78% fewer experiments | Equivalent optimal yield | [16] |

| Cell Culture Media Development | Design of Experiments | Bayesian Optimization | 3-30x fewer experiments | Improved cell viability & protein production | [36] |

| PBMC Culture Optimization | Design of Experiments | Bayesian Optimization | 3x fewer experiments | Maintained viability & distribution | [36] |

| K. phaffii Protein Production | Design of Experiments | Bayesian Optimization | 3x fewer experiments | Higher recombinant protein titers | [36] |

| CHO Cell Bioprocessing | Design of Experiments | Thermodynamics-Constrained BO | Not specified | Higher product titers | [37] |

| Vaccine Formulation Development | Traditional Excipient Screening | Bayesian Optimization | Significant reduction in screening | Improved stability attributes | [38] |

The acceleration factors demonstrated in these studies stem from BO's sample efficiency. In the limonene production optimization, BO achieved convergence to within 10% of the optimal normalized Euclidean distance using just 22% of the experimental points required by grid search [16]. This efficiency becomes increasingly valuable as dimensionality grows, with studies reporting 10- to 30-fold experimental reductions when optimizing media compositions with 9+ design factors including categorical variables [36].

Benchmarking Surrogate Models and Acquisition Functions

The performance of Bayesian optimization depends critically on appropriate selection of surrogate models and acquisition functions. Comprehensive benchmarking across multiple experimental materials science domains provides valuable insights for biological applications.

Table 2: Surrogate Model Performance Comparison for Bayesian Optimization

| Surrogate Model | Key Characteristics | Performance Advantages | Computational Considerations | Citation |

|---|---|---|---|---|

| Gaussian Process (Isotropic) | Single length scale parameter | Baseline performance | Lower computational cost | [39] |

| Gaussian Process (Anisotropic/ARD) | Individual length scales per dimension | Most robust performance across domains | Moderate computational cost | [39] |

| Random Forest | Non-parametric, tree-based | Comparable to GP-ARD, no distribution assumptions | Lower time complexity, less hyperparameter tuning | [39] |

| Bayesian Neural Networks | Flexible function approximation | Suitable for very complex landscapes | Higher computational cost | [40] |

Benchmarking studies demonstrate that GP with anisotropic kernels (automatic relevance determination, ARD) and Random Forest surrogates deliver comparable performance, both substantially outperforming GP with isotropic kernels [39]. The anisotropic GP's individual length scales for each dimension enable it to handle parameters with varying sensitivities effectively, making it particularly suitable for biological optimization where different media components or genetic parts may exert dramatically different influences on the objective function.

Acquisition function selection similarly influences optimization performance, with different strategies excelling in specific experimental contexts. Expected Improvement (EI) generally provides robust performance across diverse biological applications, effectively balancing exploration and exploitation [16] [40]. Probability of Improvement (PI) tends toward more exploitative behavior, while Upper Confidence Bound (UCB) can be tuned toward exploration by increasing the weight it places on predictive uncertainty [16]. For high-dimensional spaces, recent approaches that promote local search behavior, through trust regions or targeted perturbations, have proven more effective than global modeling [35].

Experimental Protocols and Methodologies

Standard Bayesian Optimization Workflow

The Bayesian optimization framework follows a consistent iterative workflow that integrates machine learning with experimental execution. The standard protocol comprises four key phases that cycle until convergence or resource exhaustion.

Figure 1: Standard Bayesian Optimization Workflow for Experimental Design

Phase 1: Initial Experimental Design

The optimization begins with an initial sampling of the parameter space to build a preliminary surrogate model. While random sampling or Latin hypercube sampling are common, studies demonstrate that domain-informed initialization can significantly accelerate convergence. In drug discovery applications, docking-based initialization outperformed diversity-based approaches, requiring 24% fewer experiments on average to identify optimal compounds [41]. The initial batch size typically ranges from 5 to 20 points, scaled appropriately for the dimensionality and experimental constraints.
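As a concrete example of an initial design, the sketch below implements basic Latin hypercube sampling with the standard library. The factor names and ranges are hypothetical. Established implementations (for example in SciPy's `scipy.stats.qmc` module) offer additional options and would usually be preferred.

```python
import random


def latin_hypercube(n_points, bounds, seed=0):
    """Sample n_points so that each dimension is covered by n_points equal strata."""
    rng = random.Random(seed)
    columns = []
    for low, high in bounds:
        # One random draw inside each of n_points equal-width strata.
        strata = [(i + rng.random()) / n_points for i in range(n_points)]
        rng.shuffle(strata)  # decouple the stratum order across dimensions
        columns.append([low + u * (high - low) for u in strata])
    return [tuple(col[i] for col in columns) for i in range(n_points)]


# Hypothetical factors: temperature (deg C), pH, inducer concentration (mM).
bounds = [(25.0, 37.0), (6.0, 8.0), (0.0, 1.0)]
for point in latin_hypercube(8, bounds):
    print(tuple(round(v, 2) for v in point))
```

Unlike plain random sampling, every factor's range is covered evenly even with only a handful of initial experiments.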

Phase 2: Surrogate Model Training

The core of BO involves training a probabilistic surrogate model, typically a Gaussian Process (GP), on all accumulated experimental data. The GP is defined by a mean function m(x) and covariance kernel k(x,x'), creating a full probability distribution over functions that could explain the observed data [40]. For biological applications, the Matérn kernel (particularly with ν=5/2) is often preferred as it accommodates realistic smoothness without being overly restrictive [40]. Critical implementation details include:

- Heteroscedastic Noise Modeling: Biological measurements often exhibit non-constant variance, which can be addressed through specialized noise models [16]

- Anisotropic Kernels: Automatic Relevance Determination (ARD) kernels with individual length scales for each parameter significantly improve performance in high-dimensional spaces [39]

- Hyperparameter Optimization: Length scales and noise parameters are typically optimized via maximum likelihood estimation (MLE) or maximum a posteriori (MAP) estimation [35]
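The Matérn ν=5/2 kernel with per-dimension (ARD) length scales has a simple closed form, sketched below. The function name and the example length scales are illustrative. GP libraries such as GPyTorch or scikit-learn provide their own kernel classes.

```python
import math


def matern52_ard(x1, x2, length_scales, variance=1.0):
    """Matern nu=5/2 covariance with one length scale per input dimension."""
    # Scaled Euclidean distance: each dimension is divided by its own length scale.
    r = math.sqrt(sum(((a - b) / l) ** 2 for a, b, l in zip(x1, x2, length_scales)))
    s = math.sqrt(5.0) * r
    return variance * (1.0 + s + s * s / 3.0) * math.exp(-s)


# Hypothetical 2-factor problem: factor 0 is sensitive (short length scale),
# factor 1 barely matters (long length scale).
length_scales = [0.5, 10.0]
base = [1.0, 1.0]
k_sensitive = matern52_ard(base, [2.0, 1.0], length_scales)
k_insensitive = matern52_ard(base, [1.0, 2.0], length_scales)
print(f"shift along sensitive factor:   k = {k_sensitive:.3f}")
print(f"shift along insensitive factor: k = {k_insensitive:.3f}")
```

The same unit shift decorrelates the outputs far more along the short-length-scale factor, which is how ARD encodes differing sensitivities across factors.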

Phase 3: Acquisition Function Optimization

The trained surrogate model informs the selection of subsequent experiments through an acquisition function that balances exploration (sampling uncertain regions) and exploitation (refining promising regions). The standard acquisition functions include:

- Expected Improvement (EI): Measures expected improvement over the current best observation [42] [39]

- Upper Confidence Bound (UCB): Uses mean prediction plus weighted uncertainty [16] [39]

- Probability of Improvement (PI): Estimates probability that a point will improve upon current best [16] [39]

For high-dimensional spaces, studies demonstrate that local optimization strategies and trust region methods outperform global acquisition function optimization [35].
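The three acquisition functions listed above have closed forms when the surrogate's prediction at a candidate point is Gaussian with mean `mu` and standard deviation `sigma`. The sketch below assumes a maximization problem. The exploration parameters `xi` and `kappa` and the two candidate points are illustrative.

```python
import math


def norm_pdf(z):
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)


def norm_cdf(z):
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def expected_improvement(mu, sigma, best, xi=0.0):
    """Expected amount by which a candidate exceeds the current best."""
    if sigma <= 0.0:
        return max(mu - best - xi, 0.0)
    z = (mu - best - xi) / sigma
    return (mu - best - xi) * norm_cdf(z) + sigma * norm_pdf(z)


def probability_of_improvement(mu, sigma, best, xi=0.0):
    """Probability that a candidate exceeds the current best."""
    if sigma <= 0.0:
        return 1.0 if mu > best + xi else 0.0
    return norm_cdf((mu - best - xi) / sigma)


def upper_confidence_bound(mu, sigma, kappa=2.0):
    """Mean prediction plus kappa-weighted uncertainty."""
    return mu + kappa * sigma


best = 1.0
safe = (1.0, 0.1)   # (mu, sigma): close to the current best, low uncertainty
risky = (0.8, 0.6)  # lower mean, high uncertainty
for name, (mu, sigma) in [("safe", safe), ("risky", risky)]:
    print(name,
          round(expected_improvement(mu, sigma, best), 3),
          round(probability_of_improvement(mu, sigma, best), 3),
          round(upper_confidence_bound(mu, sigma, kappa=0.1), 3),
          round(upper_confidence_bound(mu, sigma, kappa=2.0), 3))
```

In this example PI prefers the low-uncertainty candidate, EI prefers the uncertain one, and UCB switches preference as `kappa` grows, which mirrors the exploitation and exploration tendencies described above.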

Phase 4: Iteration and Convergence

The cycle of experimentation, model updating, and candidate selection continues until convergence criteria are met: typically minimal improvement over multiple iterations, exhaustion of experimental resources, or achievement of target performance. In practice, most biological optimizations converge within 20-100 experimental iterations, even for high-dimensional problems [16] [36].
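The three stopping conditions named above can be combined into a single check that runs after each experiment. The function name, the `patience` window, and the `min_gain` threshold are hypothetical defaults that would need tuning to the assay's noise level.

```python
def should_stop(best_so_far, budget, target=None, patience=5, min_gain=1e-3):
    """Return a reason string if the optimization loop should stop, else None.

    best_so_far: running best objective value after each experiment (maximization).
    """
    n = len(best_so_far)
    if n >= budget:
        return "budget exhausted"
    if target is not None and best_so_far and best_so_far[-1] >= target:
        return "target reached"
    # Compare the current best with the best from `patience` experiments ago.
    if n > patience and best_so_far[-1] - best_so_far[-1 - patience] < min_gain:
        return "no meaningful improvement"
    return None


print(should_stop([1.0, 2.0, 2.0, 2.0, 2.0, 2.0, 2.0], budget=30))
print(should_stop([1.0, 2.0, 3.0], budget=30))
```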

Specialized Methodologies for Biological Applications

Biological optimization introduces unique challenges that require methodological adaptations, several of which have demonstrated significant performance improvements.

Multi-Objective Optimization

Many biological applications involve competing objectives, such as maximizing product titer while minimizing byproduct formation. Multi-objective Bayesian optimization (MOBO) approaches like TSEMO (Thompson Sampling Efficient Multi-Objective) extend the BO framework to identify Pareto-optimal solutions [42]. These methods simultaneously model multiple responses and use specialized acquisition functions that balance improvements across all objectives.

Categorical and Constrained Parameter Handling

Biological optimization frequently involves categorical variables (e.g., solvent types, nitrogen sources) and constraints (e.g., media component summation to 100%). Successful implementations use specialized kernels for categorical variables and incorporate constraints directly into the acquisition function optimization [36] [37]. For media optimization with composition constraints, methods that ensure suggested formulations remain feasible have demonstrated particular effectiveness [36].
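One simple way to keep suggested formulations feasible under a sum-to-100% constraint is to rescale each proposal onto the composition simplex and reject any that violate per-component bounds. This is a generic sketch with hypothetical bounds, not the constraint-handling method used in the cited studies [36] [37].

```python
def to_composition(raw_amounts, total=100.0):
    """Rescale non-negative raw amounts so the components sum to `total` percent."""
    if any(a < 0 for a in raw_amounts):
        raise ValueError("component amounts must be non-negative")
    s = sum(raw_amounts)
    if s == 0:
        raise ValueError("at least one component must be non-zero")
    return [total * a / s for a in raw_amounts]


def is_feasible(composition, bounds, total=100.0, tol=1e-9):
    """Check per-component bounds and the sum-to-total constraint."""
    in_bounds = all(lo <= c <= hi for c, (lo, hi) in zip(composition, bounds))
    return in_bounds and abs(sum(composition) - total) <= tol


# Hypothetical three-component medium; each component must lie within 10-60%.
bounds = [(10.0, 60.0)] * 3
for raw in ([2.0, 1.0, 1.0], [9.0, 0.5, 0.5]):
    comp = to_composition(raw)
    print(comp, "feasible" if is_feasible(comp, bounds) else "rejected")
```

Filtering candidates this way before they reach the bench avoids spending experiments on formulations that cannot be prepared.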

Transfer Learning and Multi-Fidelity Approaches

When prior knowledge exists from related systems or cheaper screening assays, transfer learning and multi-fidelity modeling can dramatically reduce experimental burden. These approaches incorporate data from related tasks or lower-fidelity experiments to initialize or constrain the surrogate model, improving sample efficiency [36] [42].

Research Reagent Solutions and Experimental Toolkits

Successful implementation of Bayesian optimization for biological experiments requires both computational tools and experimental resources. The following toolkit encompasses essential reagents, software, and methodologies employed in the cited studies.

Table 3: Essential Research Toolkit for Bayesian Optimization in Biological Applications

| Toolkit Component | Specific Examples | Function/Purpose | Application Examples |

|---|---|---|---|

| Surrogate Modeling Software | GPyTorch, Scikit-learn, SUMO | Probabilistic modeling of biological response surfaces | All cited applications |

| BO Frameworks | BoTorch, Ax, Summit | End-to-end Bayesian optimization implementation | Chemical reaction optimization [42] |

| Experimental Platforms | Automated bioreactors, HTS liquid handlers | Automated execution of suggested experiments | Bioprocess optimization [37] [40] |

| Biological Assays | Spectrophotometry, HPLC, flow cytometry | Quantitative measurement of objective functions | Astaxanthin quantification [16], cell viability [36] |

| Specialized Kernels | Matérn, ARD, composite kernels | Capture domain-specific response characteristics | High-dimensional optimization [35] [39] |

| Constraint Handling | Lagrangian methods, penalty approaches | Ensure experimental feasibility | Media formulation [36] [37] |

The integration of automated experimental systems with BO frameworks has been particularly transformative, enabling fully autonomous optimization cycles. Platforms like the "Summit" framework for chemical reaction optimization exemplify this integration, combining BO algorithms with robotic execution systems to substantially accelerate discovery [42]. Similarly, automated bioreactor systems (e.g., AMBR systems) interfaced with BO have demonstrated improved bioprocess optimization outcomes compared to traditional DOE approaches [37].

Advanced Applications and Future Directions

Emerging Applications in Biological Research

Bayesian optimization continues to expand into new biological domains, addressing increasingly complex experimental challenges. Recent advances include:

Drug Discovery and Molecular Optimization: BO has demonstrated remarkable efficiency in navigating chemical space for drug discovery. The integration of structure-based virtual screening with BO, using docking scores as features or initialization strategies, has reduced the number of experiments needed to identify highly active compounds by up to 77% in some cases [41]. This hybrid approach combines the generality of structure-based methods with the inference power of machine learning, creating a more data-efficient optimization strategy.

Bioprocess Intensification and Scale-Up: Upstream bioprocess optimization represents a natural application for BO due to the high dimensionality and resource intensity of experimentation. Recent studies have integrated BO with physical constraints based on solution thermodynamics to design feasible, high-performance cell culture media [37]. This integration of machine learning with domain knowledge ensures that suggested media formulations remain physically realizable while achieving improved product titers compared to traditional DOE methods.
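One simple way to keep suggested formulations feasible is a penalty term on the objective. The sketch below is only a stand-in for the thermodynamic constraints used in the cited work: it assumes a single hypothetical cap on total solute concentration, and the component names, titers, limit, and penalty weight are all invented for illustration.

```python
# Penalty-based constraint handling, sketched with a hypothetical
# "total concentration" cap standing in for a real solubility model.

def total_concentration(formulation):
    """Sum of component concentrations (g/L) in a candidate medium."""
    return sum(formulation.values())


def penalized_score(predicted_titer, formulation, max_total_g_per_l, weight=5.0):
    """Subtract a penalty proportional to how far the cap is exceeded."""
    excess = max(0.0, total_concentration(formulation) - max_total_g_per_l)
    return predicted_titer - weight * excess


# (formulation, predicted titer) pairs; values are illustrative only.
candidates = {
    "feasible": ({"glucose": 20.0, "glutamine": 4.0, "salts": 10.0}, 3.1),
    "oversaturated": ({"glucose": 45.0, "glutamine": 8.0, "salts": 12.0}, 3.6),
}
limit = 50.0  # hypothetical cap in g/L

scores = {
    name: penalized_score(titer, formulation, limit)
    for name, (formulation, titer) in candidates.items()
}
best = max(scores, key=scores.get)
print(scores, best)
```

The candidate with the higher raw titer loses here because it violates the cap, which is the behavior a penalty approach is meant to produce. A hard feasibility filter applied before scoring would be an alternative design.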

Vaccine Formulation Development: BO has proven valuable in optimizing complex biological formulations, as demonstrated in vaccine development studies. By modeling the relationship between excipient compositions and critical quality attributes (e.g., viral titer retention, glass transition temperature), BO has identified stabilized formulations with significantly reduced experimental burden compared to conventional excipient screening approaches [38].

Methodological Advances for High-Dimensional Challenges

The "curse of dimensionality" presents particular challenges for biological optimization, where parameter spaces frequently exceed 20 dimensions. Recent research has identified several key strategies for high-dimensional Bayesian optimization (HDBO):

Length Scale Initialization and Vanishing Gradients: Studies have revealed that vanishing gradients during Gaussian process fitting significantly impact HDBO performance. Carefully scaled length scale initialization, for example through dimensionality-scaled log-normal hyperpriors or uniform priors, has demonstrated state-of-the-art performance by counteracting the increasing distances between points in high-dimensional spaces [35].
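The effect of growing distances can be seen numerically. The sketch below compares the average RBF kernel value between random points in the unit cube for a fixed length scale and for one multiplied by the square root of the dimension. The base length scale of 0.5 and the square-root scaling rule are assumptions chosen for illustration, not settings taken from [35].

```python
import math
import random


def rbf_kernel(x, y, length_scale):
    """Squared-exponential kernel with a single shared length scale."""
    sq_dist = sum((a - b) ** 2 for a, b in zip(x, y))
    return math.exp(-0.5 * sq_dist / length_scale ** 2)


def mean_kernel_value(dim, length_scale, n_pairs=500, seed=0):
    """Average kernel value over random point pairs in the unit cube."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n_pairs):
        x = [rng.random() for _ in range(dim)]
        y = [rng.random() for _ in range(dim)]
        total += rbf_kernel(x, y, length_scale)
    return total / n_pairs


base = 0.5  # hypothetical length scale that works in low dimensions
for dim in (2, 20, 100):
    fixed = mean_kernel_value(dim, base)
    scaled = mean_kernel_value(dim, base * math.sqrt(dim))
    print(f"dim={dim:3d}  fixed={fixed:.3e}  sqrt(d)-scaled={scaled:.3f}")
```

With the fixed length scale, the kernel values collapse toward zero as the dimension grows, so the model sees every pair of points as unrelated and the fitting signal fades. With the scaled length scale, correlations stay at a usable level.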

Local Search and Trust Region Methods: Contrary to earlier assumptions that global modeling was essential, recent work shows that promoting local search behavior is highly effective in high-dimensional spaces. Methods that generate candidates through local perturbations of high-performing points, or that use trust regions to focus search, have shown superior performance on high-dimensional real-world benchmarks [35].
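The sketch below illustrates the local-perturbation idea with a trust region that grows after repeated successes and shrinks after repeated failures. It is deliberately simplified: candidates are drawn at random rather than chosen by a surrogate model and acquisition function, and the tolerances, the side lengths, and the toy objective are all assumptions made for illustration.

```python
import random


def trust_region_search(objective, x0, n_iter=200, init_len=0.4,
                        min_len=1e-3, max_len=1.0,
                        success_tol=3, failure_tol=5, seed=0):
    """Minimize `objective` on [0, 1]^d by perturbing the incumbent
    inside a box whose side length adapts to recent progress."""
    rng = random.Random(seed)
    best_x, best_y = list(x0), objective(x0)
    length, successes, failures = init_len, 0, 0
    for _ in range(n_iter):
        # Candidate: uniform perturbation inside the trust region,
        # clipped to the unit cube.
        cand = [min(1.0, max(0.0, xi + rng.uniform(-length / 2, length / 2)))
                for xi in best_x]
        y = objective(cand)
        if y < best_y:
            best_x, best_y = cand, y
            successes, failures = successes + 1, 0
        else:
            successes, failures = 0, failures + 1
        if successes >= success_tol:      # expand after a success streak
            length, successes = min(max_len, 2 * length), 0
        elif failures >= failure_tol:     # shrink after a failure streak
            length, failures = max(min_len, length / 2), 0
    return best_x, best_y


def toy_objective(x):
    """Hypothetical 10-dimensional response with optimum at 0.3 everywhere."""
    return sum((xi - 0.3) ** 2 for xi in x)


x0 = [0.9] * 10
best_x, best_y = trust_region_search(toy_objective, x0)
print(toy_objective(x0), best_y)
```

In a Bayesian optimization setting, the random draw inside the box would be replaced by candidates ranked with a locally fitted surrogate, while the expand and shrink logic would stay broadly the same.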

Additive and Embedded Structure Exploitation: When prior knowledge suggests additive structure or the existence of low-dimensional embeddings, specialized BO approaches can leverage these patterns for improved efficiency. Additive Gaussian processes decompose high-dimensional functions into lower-dimensional components, while methods that identify active subspaces reduce effective dimensionality [35].
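A minimal sketch of the additive decomposition, assuming the grouping of input dimensions is known in advance: the kernel is a sum of low-dimensional RBF kernels, one per group. The grouping, the length scale, and the example points are hypothetical.

```python
import math


def rbf(x, y, length_scale=1.0):
    """Squared-exponential kernel on (sub)vectors x and y."""
    sq_dist = sum((a - b) ** 2 for a, b in zip(x, y))
    return math.exp(-0.5 * sq_dist / length_scale ** 2)


def additive_kernel(x, y, groups, length_scale=1.0):
    """Sum of low-dimensional kernels, one per group of input indices."""
    return sum(
        rbf([x[i] for i in group], [y[i] for i in group], length_scale)
        for group in groups
    )


# Hypothetical decomposition of a 5-dimensional input into three components.
groups = [(0, 1), (2,), (3, 4)]
x = [0.1, 0.2, 0.3, 0.4, 0.5]
y = [0.1, 0.2, 0.9, 0.4, 0.5]  # differs from x only in dimension 2

print(additive_kernel(x, x, groups))  # each component contributes 1
print(additive_kernel(x, y, groups))  # only the middle component drops
```

Because a change in one input affects only the component that contains it, each component can be learned from data as if it were a low-dimensional problem.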

Figure 2: Strategic Approaches for High-Dimensional Bayesian Optimization

Bayesian optimization represents a paradigm shift in how researchers approach complex biological optimization problems. In the benchmarking and case studies reviewed, BO consistently outperforms traditional experimental design methods, particularly in the high-dimensional, resource-constrained scenarios common in biological research. The reported performance advantages are substantial: 3-30x reductions in experimental requirements, successful navigation of 20+ dimensional parameter spaces, and consistent identification of superior solutions compared to OFAT, DOE, and grid search approaches.

The key to successful implementation lies in appropriate method selection—matching surrogate models (GP with anisotropic kernels or Random Forest) and acquisition functions to problem characteristics—and careful handling of biological specifics such as heteroscedastic noise, categorical variables, and multi-objective optimization. As the field advances, integration with automated experimental systems and domain-informed constraints will further expand BO's applicability across biological domains.

For researchers facing complex optimization challenges with limited experimental resources, Bayesian optimization offers a rigorously demonstrated, computationally efficient framework for accelerating discovery while substantially reducing costs. The method's growing track record across diverse biological applications—from metabolic engineering and bioprocess development to drug discovery and vaccine formulation—establishes it as an essential tool in the modern biological researcher's toolkit.

Leveraging Large Language Models (LLMs) for Target Identification and Molecule Design

The integration of Large Language Models (LLMs) into the drug discovery pipeline represents a significant paradigm shift, offering novel methodologies for understanding disease mechanisms and accelerating therapeutic development [43]. Traditionally, the process of discovering new medicines is notoriously cumbersome and expensive, consuming vast computational resources and months of human labor to narrow down the enormous space of potential candidates [44]. LLMs, initially designed to understand and generate human language, are now being adapted to "understand" scientific data, including the complex language of DNA, proteins, and chemical structures, thereby transforming how researchers pinpoint biological targets and design novel drug molecules [43]. This review provides a comprehensive comparison of emerging LLM-powered approaches, evaluating their performance against traditional computational methods and examining their applicability within the broader context of evaluating objective functions for biological models research.

Comparative Analysis of LLM-Powered Target Identification Methods

Target identification is a critical first step in the drug discovery process, with in silico methods offering enhanced efficiency over traditional experimental approaches [45]. Recent systematic comparisons have evaluated seven target prediction methods, including both stand-alone codes and web servers (MolTarPred, PPB2, RF-QSAR, TargetNet, ChEMBL, CMTNN, and SuperPred), using a shared benchmark dataset of FDA-approved drugs to ensure reliability and consistency [45]. These methods generally fall into two categories: target-centric approaches that build predictive models for each target using Quantitative Structure-Activity Relationship (QSAR) models with various machine learning algorithms, and ligand-centric approaches that focus on similarity between query molecules and known ligands annotated with their targets [45].

Table 1: Performance Comparison of Target Prediction Methods

| Method | Type | Algorithm/Approach | Database Source | Key Performance Findings |
|---|---|---|---|---|
| MolTarPred | Ligand-centric | 2D similarity | ChEMBL 20 | Most effective method; Morgan fingerprints with Tanimoto scores outperform MACCS [45] |
| RF-QSAR | Target-centric | Random Forest | ChEMBL 20&21 | Uses ECFP4 fingerprints; performance varies with top similar ligand parameters [45] |
| TargetNet | Target-centric | Naïve Bayes | BindingDB | Utilizes multiple fingerprints (FP2, MACCS, E-state, ECFP2/4/6) [45] |
| ChEMBL | Target-centric | Random Forest | ChEMBL 24 | Uses Morgan fingerprints [45] |
| CMTNN | Target-centric | ONNX runtime | ChEMBL 34 | Employs Morgan fingerprints [45] |
| PPB2 | Ligand-centric | Nearest neighbor/Naïve Bayes/deep neural network | ChEMBL 22 | Uses MQN, Xfp, and ECFP4 fingerprints; considers top 2000 similar ligands [45] |
| SuperPred | Ligand-centric | 2D/fragment/3D similarity | ChEMBL and BindingDB | Utilizes ECFP4 fingerprints [45] |

The evaluation identified MolTarPred as the most effective method, particularly when using Morgan fingerprints with Tanimoto scores, which outperformed MACCS fingerprints with Dice scores [45]. Model optimization strategies such as high-confidence filtering (keeping only ChEMBL interactions with a confidence score of at least 7) can enhance prediction quality, but at the cost of reduced recall. This makes filtering less suitable for comprehensive drug repurposing efforts, where broader target identification is valuable [45]. For practical applications, researchers have developed programmatic pipelines for target prediction and mechanism of action hypothesis generation, exemplified by a case study on fenofibric acid that demonstrated its potential for repurposing as a THRB modulator for thyroid cancer treatment [45].
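To make the similarity scores and the filtering trade-off concrete, the sketch below computes Tanimoto and Dice coefficients on fingerprints represented as sets of "on" bit indices, then ranks a small annotated library against a query. The fingerprints, target labels, and confidence scores are invented for illustration, and `predict_targets` is a hypothetical helper, not the interface of MolTarPred or any other tool in Table 1. Real Morgan or MACCS fingerprints would come from a cheminformatics toolkit.

```python
def tanimoto(a, b):
    """Tanimoto coefficient: shared on-bits over the union of on-bits."""
    a, b = set(a), set(b)
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def dice(a, b):
    """Dice coefficient: twice the shared on-bits over total on-bits."""
    a, b = set(a), set(b)
    total = len(a) + len(b)
    return 2 * len(a & b) / total if total else 0.0


def predict_targets(query_fp, library, top_k=2, min_confidence=0):
    """Return targets of the top_k most similar ligands, best first.

    Ligands whose annotation confidence is below `min_confidence`
    are dropped before ranking.
    """
    kept = [rec for rec in library if rec["confidence"] >= min_confidence]
    ranked = sorted(kept, key=lambda rec: tanimoto(query_fp, rec["fp"]),
                    reverse=True)
    targets = []
    for rec in ranked[:top_k]:
        if rec["target"] not in targets:
            targets.append(rec["target"])
    return targets


# Hypothetical library: fingerprints as sets of on-bit indices.
library = [
    {"fp": {1, 2, 3, 4}, "target": "T1", "confidence": 9},
    {"fp": {1, 2, 3, 9, 10}, "target": "T2", "confidence": 5},
    {"fp": {1, 5, 8, 9}, "target": "T3", "confidence": 8},
]
query = {1, 2, 3, 5}

print(predict_targets(query, library))                    # all annotations
print(predict_targets(query, library, min_confidence=7))  # high-confidence only
```

With the filter applied, the second-most-similar ligand is discarded because its annotation is low-confidence, so its target no longer appears. This is the recall cost described above.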

Comparative Analysis of LLM-Powered Molecule Design Methods

Where target identification focuses on finding biological targets, molecule design involves creating compounds that can effectively and safely interact with these targets. LLMs are demonstrating remarkable capabilities in this domain through various architectural approaches.

Multimodal Architectures for Molecular Design

An approach from MIT and the MIT-IBM Watson AI Lab addresses a fundamental challenge: enabling LLMs to understand and reason about the atoms and bonds that form a molecule. It does so by augmenting an LLM with graph-based models specifically designed for generating and predicting molecular structures [44]. The method, called Llamole (large language model for molecular discovery), employs a base LLM as a gatekeeper that interprets natural language queries specifying desired molecular properties, then automatically switches between the base LLM and graph-based AI modules to design the molecule, explain the rationale, and generate a step-by-step synthesis plan [44]. This interleaving of text, graph, and synthesis step generation combines words, graphs, and reactions into a common vocabulary for the LLM to consume.

Table 2: Performance Comparison of Molecule Design Methods

| Method | Architecture | Key Capabilities | Performance Advantages | Limitations |
|---|---|---|---|---|
| Llamole | Multimodal LLM + graph-based models | Natural language query interpretation, molecular structure generation, synthesis planning | Improved success ratio from 5% to 35% for retrosynthetic planning; outperforms LLMs 10x its size [44] | Trained on only 10 molecular properties; requires generalization [44] |
| MolT5-based Models | Text-based LLM | Translation between drug molecules and indications (drug-to-indication and indication-to-drug tasks) | Larger models outperform smaller ones across configurations; custom tokenizer improves DrugBank performance [46] | Fine-tuning can have negative impact on performance; overall performance still not satisfying [46] |
| ChatChemTS | LLM-powered chatbot + ChemTSv2 generator | Automated reward function construction, configuration generation, molecule generation analysis | Enables single and multi-objective molecule optimization without AI expertise [47] | Requires preparation of physicochemical property data or target protein information [47] |

In comparative evaluations, Llamole significantly outperformed existing approaches, generating molecules that better matched user specifications and were more likely to have a valid synthesis plan; the retrosynthetic planning success rate improved from 5% to 35% [44]. It also outperformed LLMs more than 10 times its size that design molecules and synthesis routes using only text-based representations, suggesting that multimodality is key to its success [44].

Translation Between Molecules and Indications

Another promising approach involves using LLMs for the translation between drug molecules and their therapeutic indications. Researchers have proposed this as a new task and evaluated T5-based LLMs on two public datasets obtained from ChEMBL and DrugBank [46]. In the drug-to-indication task, models take Simplified Molecular-Input Line-Entry System (SMILES) strings of existing drugs as input and generate matching indications, while the indication-to-drug task takes therapeutic indications as input and seeks to generate corresponding SMILES strings for drugs that treat those conditions [46]. Experiments showed that larger MolT5 models outperformed smaller ones across all configurations, though fine-tuning sometimes negatively impacted performance, and creating molecules from indications remains challenging with current models [46].
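The two task directions can be viewed as the same records read in opposite orders. The sketch below builds input/output pairs for each direction from a tiny illustrative record list. The record layout and the `make_pairs` helper are assumptions for illustration and do not reproduce the dataset format used in [46].

```python
# Illustrative records only; real datasets would come from ChEMBL or DrugBank.
records = [
    {"smiles": "CC(=O)OC1=CC=CC=C1C(=O)O", "indication": "pain"},  # aspirin
    {"smiles": "CC(C)CC1=CC=C(C=C1)C(C)C(=O)O",
     "indication": "inflammation"},                                # ibuprofen
]


def make_pairs(records, task):
    """Return (model_input, expected_output) pairs for the chosen task."""
    if task == "drug-to-indication":
        return [(r["smiles"], r["indication"]) for r in records]
    if task == "indication-to-drug":
        return [(r["indication"], r["smiles"]) for r in records]
    raise ValueError(f"unknown task: {task}")


d2i = make_pairs(records, "drug-to-indication")
i2d = make_pairs(records, "indication-to-drug")
print(d2i[0])
print(i2d[0])
```

The asymmetry in difficulty is visible even here: the indication-to-drug direction must produce a syntactically valid SMILES string from a short text prompt, which is a much less constrained generation problem.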

Accessible Molecule Design Through Chatbot Interfaces

To address the specialized knowledge barrier required for effective use of AI-based molecule generators, researchers have developed ChatChemTS, an LLM-powered chatbot that assists users in utilizing ChemTSv2—an AI-based molecule generator—solely through interactive chats [47]. This approach eliminates the need for users to possess deep expertise in machine learning or reward function design, as ChatChemTS automatically prepares appropriate reward functions, configures desired conditions, and executes the molecule generator based on natural language requests [47]. The system employs a ReAct framework with predefined tools including a reward generator, prediction model builder, configuration generator, ChemTSv2 API, molecule generation analyzer, and file writing tool [47].
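The control flow of such a tool-using agent can be sketched as a loop that alternates between choosing an action and observing a tool's output. In the sketch below the LLM is replaced by a fixed, scripted policy, and the four tool functions are placeholders named loosely after the tools listed above. None of this reproduces the actual ChatChemTS code or the ChemTSv2 API.

```python
# Placeholder tools: each wraps its input so the call chain is visible.
def reward_generator(text):
    return f"reward[{text}]"


def config_generator(text):
    return f"config[{text}]"


def run_generator(text):
    return f"molecules[{text}]"


def analyzer(text):
    return f"report[{text}]"


TOOLS = {
    "reward_generator": reward_generator,
    "config_generator": config_generator,
    "run_generator": run_generator,
    "analyzer": analyzer,
}

PLAN = ["reward_generator", "config_generator", "run_generator", "analyzer"]


def scripted_policy(history):
    """Stand-in for the LLM: pick the next tool from a fixed plan."""
    step = len(history)
    return PLAN[step] if step < len(PLAN) else None


def react_loop(request, policy, tools, max_steps=10):
    """Alternate between choosing an action and observing its result."""
    history = []
    observation = request
    for _ in range(max_steps):
        action = policy(history)                   # reason: choose next tool
        if action is None:                         # policy decides to stop
            break
        observation = tools[action](observation)   # act and observe
        history.append((action, observation))
    return history


history = react_loop("maximize logP", scripted_policy, TOOLS)
for action, observation in history:
    print(action, "->", observation)
```

In a real agent the policy would be an LLM call that reads the history and the tool descriptions, which lets it reorder or repeat steps based on what each observation contains.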

Experimental Protocols and Methodologies

Benchmarking Target Prediction Methods