Benchmarking Optimization Algorithms for Systems Biology: A Practical Guide for Method Selection and Validation

Abstract

The effective application of optimization algorithms is crucial for tackling core problems in computational systems biology, including model tuning, parameter estimation, and biomarker identification. This article provides a comprehensive framework for benchmarking these algorithms, addressing the critical need for standardized and neutral evaluation in the field. We explore foundational concepts, from the role of optimization in model fitting and network reconstruction to the principles of rigorous benchmarking. A detailed analysis of key algorithm classes—including multi-start least squares, Markov Chain Monte Carlo, and nature-inspired heuristics—is presented alongside their specific biological applications. Furthermore, the guide delves into troubleshooting common challenges like overfitting and non-convexity, and culminates with a review of modern validation strategies, such as challenge-based assessments and continuous benchmarking ecosystems. This resource is designed to empower researchers and drug development professionals in selecting, optimizing, and validating the most appropriate optimization methods for their specific systems biology tasks.

The Role of Optimization and Benchmarking in Systems Biology

In computational systems biology, researchers face the constant challenge of extracting meaningful knowledge from vast, complex biological datasets. Optimization provides the essential mathematical foundation for this endeavor, serving as the critical link between raw data and biological insight. From tuning parameters in dynamical models of cellular processes to identifying minimal biomarker sets for disease diagnosis, optimization algorithms determine the success and accuracy of computational methods. The field relies on optimization to navigate problems characterized by high dimensionality, multiple local solutions, and complex constraints that defy manual solution [1]. This guide examines the fundamental role of optimization across key applications in systems biology, comparing algorithmic performance through experimental data and providing standardized protocols for implementation.

The necessity of optimization in systems biology stems from the nature of biological systems themselves. Whether estimating rate constants in differential equation models of tumor-immune interactions or selecting informative genes from thousands of candidates in transcriptomic data, researchers must solve complex optimization problems to achieve reliable results [1] [2]. These problems often involve non-linear, non-convex objective functions with multiple possible solutions, requiring sophisticated global optimization approaches rather than simple analytical solutions [1]. As high-throughput technologies generate increasingly large datasets, the strategic selection and application of optimization methods becomes ever more critical for biological discovery.

Optimization Algorithms for Systems Biology: A Comparative Framework

Algorithm Categories and Characteristics

Optimization methods applied in systems biology generally fall into three main categories: deterministic, stochastic, and heuristic approaches. Each category offers distinct advantages for particular problem types and data characteristics [1]. The table below summarizes the core properties of representative algorithms from each category.

Table 1: Comparison of Optimization Algorithm Classes in Systems Biology

| Algorithm Class | Representative Methods | Convergence Properties | Parameter Types Supported | Key Applications in Systems Biology |
|---|---|---|---|---|
| Deterministic | Multi-start non-linear Least Squares (ms-nlLSQ) | Proven convergence under specific hypotheses [1] | Continuous parameters only [1] | Model tuning for systems of ODEs [1] |
| Stochastic | Random Walk Markov Chain Monte Carlo (rw-MCMC) | Proven convergence to global minimum under specific hypotheses [1] | Continuous parameters and non-continuous objective functions [1] | Fitting models with stochastic equations or simulations [1] |
| Heuristic | Simple Genetic Algorithm (sGA) | Convergence to global solution for discrete parameter problems [1] | Continuous and discrete parameters [1] | Biomarker identification, feature selection [3] |
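As a concrete illustration of the rw-MCMC row, the following is a minimal random-walk Metropolis sampler in plain Python. It is a sketch rather than the implementation evaluated in [1]: the one-parameter Gaussian target centred at 3.0, the proposal width `step`, and the burn-in length are illustrative assumptions. In a real model-fitting task, `log_target` would be the log-likelihood of the data given the simulated model output, plus a log-prior.

```python
import math
import random

def log_target(theta):
    """Hypothetical unnormalised log-posterior: Gaussian centred at 3.0 with unit variance."""
    return -0.5 * (theta - 3.0) ** 2

def random_walk_metropolis(log_p, theta0, n_samples, step=1.0, seed=0):
    """Propose theta' = theta + N(0, step^2); accept with probability min(1, p(theta') / p(theta))."""
    rng = random.Random(seed)
    theta, logp = theta0, log_p(theta0)
    samples, accepted = [], 0
    for _ in range(n_samples):
        proposal = theta + rng.gauss(0.0, step)
        logp_new = log_p(proposal)
        # Symmetric proposal, so no Hastings correction; 1 - random() lies in (0, 1], keeping log() finite
        if math.log(1.0 - rng.random()) < logp_new - logp:
            theta, logp = proposal, logp_new
            accepted += 1
        samples.append(theta)
    return samples, accepted / n_samples

samples, acceptance_rate = random_walk_metropolis(log_target, theta0=0.0, n_samples=20000)
kept = samples[2000:]  # discard burn-in
posterior_mean = sum(kept) / len(kept)
print(f"acceptance rate {acceptance_rate:.2f}, posterior mean {posterior_mean:.2f}")
```

Because the proposal is symmetric, the acceptance test only compares target densities; an acceptance rate close to 0 or 1 usually signals that `step` needs retuning.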

Experimental Performance Comparison

Recent benchmarking studies provide empirical data on the performance of various optimization algorithms for biological applications. One comprehensive comparison evaluated nine hyperparameter optimization (HPO) methods for tuning extreme gradient boosting models to predict high-need high-cost healthcare users [4]. The study found that while default hyperparameters yielded reasonable discrimination (AUC=0.82), all HPO methods improved model performance (AUC=0.84) and significantly enhanced calibration. Notably, in datasets with large sample sizes, limited features, and strong signal-to-noise ratios, all HPO algorithms produced similar performance gains, suggesting that problem characteristics should guide algorithm selection [4].

Table 2: Hyperparameter Optimization Method Comparison for Predictive Modeling

| Optimization Method Category | Specific Methods Tested | Performance Improvement over Default | Computational Efficiency | Optimal Use Cases |
|---|---|---|---|---|
| Probabilistic Processes | Random Search, Simulated Annealing, Quasi-Monte Carlo | Similar AUC improvements across methods [4] | Variable | Large sample sizes, strong signal-to-noise [4] |
| Bayesian Optimization | Tree-Parzen Estimation, Gaussian Processes, Random Forests | Improved calibration and discrimination [4] | Generally efficient | Limited features, complex parameter spaces [4] |
| Evolutionary Strategies | Covariance Matrix Adaptation Evolutionary Strategy | Comparable performance gains [4] | Can be computationally intensive | Mixed parameter types, complex landscapes [4] |
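To make the simplest "probabilistic process" method concrete, the sketch below runs random search over two XGBoost-style hyperparameters, `learning_rate` and `max_depth`. The objective `validation_loss` is a hypothetical stand-in for a cross-validated loss such as 1 - AUC; in the study cited above, each evaluation would train and score a model [4]. The search ranges are assumptions, and the default values (0.3 and 6) follow XGBoost's documented defaults.

```python
import math
import random

def validation_loss(learning_rate, max_depth):
    """Hypothetical stand-in for a cross-validated loss; minimum at learning_rate=0.1, max_depth=6."""
    return (math.log10(learning_rate) + 1.0) ** 2 + 0.05 * (max_depth - 6) ** 2

def random_search(objective, n_trials, seed=0):
    """Sample configurations independently and keep the one with the lowest loss."""
    rng = random.Random(seed)
    best_loss, best_params = float("inf"), None
    for _ in range(n_trials):
        params = {
            "learning_rate": 10 ** rng.uniform(-3, 0),  # log-uniform between 0.001 and 1
            "max_depth": rng.randint(2, 10),             # integer between 2 and 10 inclusive
        }
        loss = objective(**params)
        if loss < best_loss:
            best_loss, best_params = loss, params
    return best_loss, best_params

default_loss = validation_loss(learning_rate=0.3, max_depth=6)  # XGBoost defaults
best_loss, best_params = random_search(validation_loss, n_trials=200)
print(f"default loss {default_loss:.3f}, tuned loss {best_loss:.3f}, params {best_params}")
```

Sampling the learning rate on a log scale is a common design choice because its useful values span orders of magnitude; libraries such as Hyperopt offer random search alongside Tree-Parzen Estimation and simulated annealing.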

Optimization for Biological Model Tuning

Parameter Estimation in Dynamic Models

In computational systems biology, models frequently take the form of ordinary or stochastic differential equations that describe biological phenomena such as cellular growth, metabolic pathways, or tumor-immune interactions [1]. The Lotka-Volterra model, for instance, describes population dynamics between predators and prey using four parameters: growth rate of prey (α), death rate of predators (b), rate at which predators consume prey (a), and rate at which predator population grows from eating prey (β) [1]. Estimating these parameters from experimental data constitutes a fundamental optimization problem where the objective is to minimize the difference between model simulations and observed data.
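One common way to write the Lotka-Volterra model with the four parameters named above, assuming x(t) denotes prey density and y(t) predator density, is shown below; [1] may arrange the symbols differently.

$$
\frac{dx}{dt} = \alpha x - a\,x y, \qquad \frac{dy}{dt} = \beta\,x y - b\,y
$$

Estimating (α, a, β, b) then means choosing the values whose simulated trajectories best match measured prey and predator counts.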

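The mathematical formulation of this optimization problem can be expressed as:

$$
\min_{\theta} \; c(\theta) \quad \text{subject to} \quad g(\theta) \le 0, \quad h(\theta) = 0, \quad lb \le \theta \le ub
$$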

Here, θ represents the vector of parameters, c(θ) is the cost function that quantifies the difference between model output and experimental data, while constraints (g, h, lb, ub) represent biological knowledge or physical limitations on parameter values [1]. This formulation highlights how optimization integrates both data fitting and biological constraints.

Protocol: Model Tuning with Multi-Start Least Squares

Problem Formulation: Define the objective function c(θ) as the sum of squared differences between experimental data and model simulations [1]

Parameter Initialization: Establish bounds for parameters based on biological constraints and initialize multiple starting points within the parameter space [1]

Local Optimization: At each starting point, apply a local optimization algorithm (e.g., Gauss-Newton) to minimize c(θ) [1]

Solution Comparison: Compare locally optimal solutions from different starting points to identify the global minimum [1]

Validation: Test the optimized parameter set on withheld experimental data to ensure generalizability [1]
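The protocol above can be sketched in plain Python as follows. To keep the example short and dependency-free, it swaps the Lotka-Volterra system for a logistic growth model, which has a closed-form solution and needs no ODE solver, and implements the local step as Gauss-Newton with step halving. The true parameters, noise level, bounds, and number of starts are illustrative assumptions. In practice a library routine such as SciPy's `scipy.optimize.least_squares` would usually replace the hand-written local solver.

```python
import math
import random

X0 = 1.0  # assumed known initial population size

def model(t, theta):
    """Closed-form logistic growth x(t) for dx/dt = r x (1 - x/K), x(0) = X0."""
    r, K = theta
    return K / (1.0 + (K - X0) / X0 * math.exp(-r * t))

def cost(theta, ts, ys):
    """c(theta): sum of squared differences between model output and data."""
    return sum((model(t, theta) - y) ** 2 for t, y in zip(ts, ys))

def clip(theta, lb, ub):
    return [min(max(v, lo), hi) for v, lo, hi in zip(theta, lb, ub)]

def gauss_newton(theta, ts, ys, lb, ub, max_iter=100, h=1e-6):
    """Local Gauss-Newton with a forward-difference Jacobian, step halving and bound clipping."""
    theta = clip(theta, lb, ub)
    current = cost(theta, ts, ys)
    for _ in range(max_iter):
        res = [model(t, theta) - y for t, y in zip(ts, ys)]
        jac = []
        for t in ts:
            base = model(t, theta)
            jac.append([(model(t, [theta[0] + h, theta[1]]) - base) / h,
                        (model(t, [theta[0], theta[1] + h]) - base) / h])
        # Normal equations (J^T J) delta = -J^T res, solved explicitly for two parameters
        a = sum(j0 * j0 for j0, _ in jac)
        b = sum(j0 * j1 for j0, j1 in jac)
        d = sum(j1 * j1 for _, j1 in jac)
        g0 = sum(j0 * ri for (j0, _), ri in zip(jac, res))
        g1 = sum(j1 * ri for (_, j1), ri in zip(jac, res))
        det = a * d - b * b
        if abs(det) < 1e-12:
            break  # singular Gauss-Newton matrix: abandon this start
        step = [-(d * g0 - b * g1) / det, -(a * g1 - b * g0) / det]
        alpha = 1.0
        while alpha > 1e-8:  # halve the step until the cost decreases
            cand = clip([theta[0] + alpha * step[0], theta[1] + alpha * step[1]], lb, ub)
            new = cost(cand, ts, ys)
            if new < current:
                break
            alpha *= 0.5
        else:
            break  # no descent step found: treat as converged
        improvement = current - new
        theta, current = cand, new
        if improvement < 1e-12 * (1.0 + current):
            break
    return theta, current

def multi_start(ts, ys, lb, ub, n_starts=10, seed=0):
    """Run the local optimizer from random points inside the bounds and keep the best result."""
    rng = random.Random(seed)
    results = [gauss_newton([rng.uniform(lo, hi) for lo, hi in zip(lb, ub)], ts, ys, lb, ub)
               for _ in range(n_starts)]
    return min(results, key=lambda pair: pair[1])

# Synthetic data from r = 0.8, K = 50 with small Gaussian noise (illustrative only)
rng = random.Random(1)
true_theta = [0.8, 50.0]
train_t = [float(t) for t in range(11)]   # t = 0, 1, ..., 10 used for fitting
valid_t = [t + 0.5 for t in range(10)]    # t = 0.5, ..., 9.5 withheld for validation
train_y = [model(t, true_theta) + rng.gauss(0, 0.1) for t in train_t]
valid_y = [model(t, true_theta) + rng.gauss(0, 0.1) for t in valid_t]

lb, ub = [0.01, 1.0], [3.0, 100.0]  # assumed biologically plausible bounds
best_theta, best_cost = multi_start(train_t, train_y, lb, ub)
valid_rmse = math.sqrt(cost(best_theta, valid_t, valid_y) / len(valid_t))
print(best_theta, best_cost, valid_rmse)
```

The lowest-cost start is kept (step 4), and the withheld time points (step 5) give a validation RMSE that should sit near the noise level if the fit generalizes.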

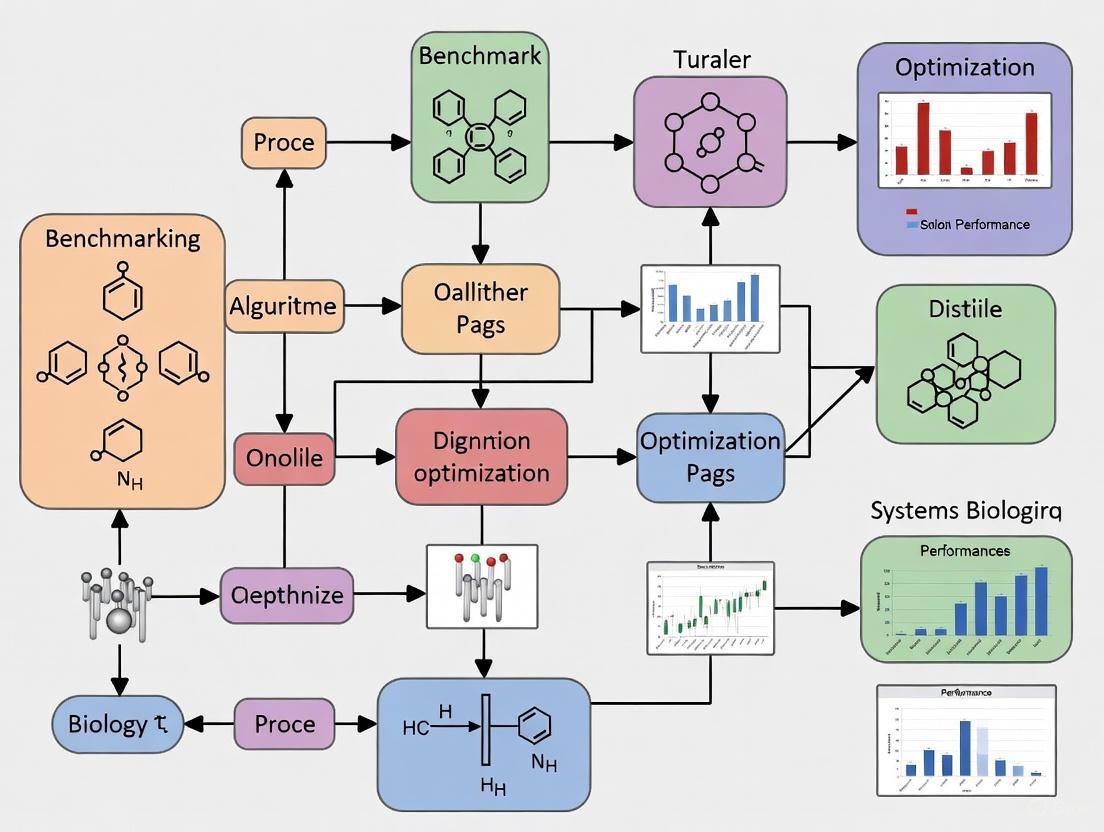

The following workflow diagram illustrates the model tuning process using multi-start optimization:

Optimization for Biomarker Identification

Feature Selection as an Optimization Problem

Biomarker identification represents a critical optimization challenge in computational biology, particularly for disease diagnosis and treatment selection. The goal is to identify a minimal set of molecular features (e.g., genes, proteins) that reliably discriminate between biological states from high-dimensional omics data [3] [5]. This problem is characterized by the "large p, small n" paradigm, where the number of features (p) vastly exceeds the number of samples (n), creating a high risk of overfitting without appropriate optimization techniques [3].

One advanced approach, the Multi-Objective Evolution Algorithm for Identifying Biomarkers (MOESIB), formulates biomarker identification as a multi-objective optimization problem with two competing goals: maximizing classification accuracy while minimizing the number of selected features [6]. This method incorporates sample similarity networks to capture relationships between biological samples, overcoming limitations of methods that focus solely on gene or molecular networks [6]. Experimental results on five gene expression datasets demonstrate that MOESIB outperforms several state-of-the-art biomarker identification methods by simultaneously optimizing both objectives through specialized evolutionary strategies [6].
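Written out, the two competing goals described above can be stated as a bi-objective problem. The notation here (a candidate subset S of the full feature set F, and Acc(S) for the classification accuracy achieved with S) is illustrative rather than taken from [6]:

$$
\max_{S \subseteq F} \ \mathrm{Acc}(S) \quad \text{and} \quad \min_{S \subseteq F} \ |S|
$$

Because the goals conflict, candidate subsets are compared by Pareto dominance: S₁ dominates S₂ when

$$
\mathrm{Acc}(S_1) \ge \mathrm{Acc}(S_2) \ \text{and} \ |S_1| \le |S_2|, \ \text{with at least one inequality strict.}
$$

The non-dominated subsets form the Pareto front from which a final biomarker set is chosen.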

Hybrid Optimization Approaches for Biomarker Discovery

Hybrid optimization methods that combine multiple algorithmic approaches have shown particular success in biomarker identification. One study implemented a hybrid of Particle Swarm Optimization (PSO) and Genetic Algorithm (GA) for gene selection, using Artificial Neural Networks (ANN) as the classifier [3]. This approach simultaneously identifies informative gene subsets and optimizes classifier parameters, addressing both feature selection and model tuning within a unified optimization framework [3].

The experimental protocol evaluated the hybrid method on three benchmark gene expression datasets: blood (leukemia), colon, and breast cancer [3]. Using 10-fold cross-validation, the method successfully reduced data dimensionality while improving classification accuracy, identifying compact biomarker sets with enhanced discriminatory power [3]. The hybrid nature of the algorithm allowed it to leverage the advantages of both PSO (fast convergence) and GA (effective information sharing between solutions), demonstrating how strategic algorithm combination can address complex biological optimization problems [3].
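The 10-fold cross-validation used in this evaluation can be sketched as an index splitter in plain Python. The sample count of 50 and the fixed seed are illustrative; a stratified variant, which preserves class proportions in each fold, is often preferable for imbalanced disease cohorts but is not shown.

```python
import random

def k_fold_indices(n_samples, k=10, seed=0):
    """Yield (train_idx, test_idx) pairs for k-fold cross-validation."""
    idx = list(range(n_samples))
    random.Random(seed).shuffle(idx)
    # Spread samples as evenly as possible: the first n_samples % k folds get one extra sample
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        test = idx[start:start + size]
        train = idx[:start] + idx[start + size:]
        yield train, test
        start += size

folds = list(k_fold_indices(50, k=10))
```

In a "large p, small n" setting, gene selection has to run inside each training split; selecting genes on the full dataset before splitting leaks test information and inflates the reported accuracy.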

Protocol: Biomarker Identification with Multi-Objective Evolutionary Optimization

Data Preparation: Obtain gene expression dataset with sample class labels (e.g., healthy vs. diseased) [6]

Network Construction: Build sample similarity networks to capture relationships between biological samples [6]

Algorithm Initialization: Initialize population with random feature subsets and set multi-objective optimization parameters [6]

Evolutionary Optimization: Iteratively apply selection, crossover, and mutation operators to improve solution population [6]

Elite Guidance: Implement elite guidance strategy to preserve high-quality solutions across generations [6]

Fusion Selection: Apply fusion selection strategy to identify optimal biomarker set balancing size and accuracy [6]

Validation: Evaluate final biomarker set on independent test data using classification metrics [6]
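The loop below sketches steps 3 to 6 of this protocol in plain Python. It is not MOESIB: the sample similarity networks are omitted, the "accuracy" is a toy function that rewards three hypothetical informative features (`INFORMATIVE`), elitism is implemented as simplified non-dominated sorting (NSGA-II style, without crowding distance), and `pick_final` is a hypothetical stand-in for fusion selection that takes the smallest subset within a tolerance of the best accuracy.

```python
import random

N_FEATURES = 10
INFORMATIVE = {0, 3, 7}  # hypothetical "true" biomarkers used only by the toy objective

def objectives(mask):
    """Return (accuracy, size); the accuracy is a toy stand-in for a cross-validated classifier score."""
    hits = sum(1 for i in INFORMATIVE if mask[i])
    size = sum(mask)
    return 0.5 + 0.15 * hits - 0.01 * (size - hits), size

def dominates(a, b):
    """Pareto dominance for (accuracy, size): maximise accuracy, minimise size."""
    return a[0] >= b[0] and a[1] <= b[1] and (a[0] > b[0] or a[1] < b[1])

def non_dominated(pop):
    scores = [objectives(m) for m in pop]
    return [m for m, s in zip(pop, scores) if not any(dominates(o, s) for o in scores)]

def evolve(pop_size=20, generations=40, p_mut=0.1, seed=0):
    rng = random.Random(seed)
    # Step 3: random initial feature subsets encoded as 0/1 masks
    pop = [tuple(rng.randint(0, 1) for _ in range(N_FEATURES)) for _ in range(pop_size)]

    def pick():
        """Binary tournament: prefer a dominating parent, otherwise choose at random."""
        a, b = rng.sample(pop, 2)
        if dominates(objectives(a), objectives(b)):
            return a
        if dominates(objectives(b), objectives(a)):
            return b
        return rng.choice((a, b))

    for _ in range(generations):
        # Step 4: selection, uniform crossover and bit-flip mutation
        offspring = []
        while len(offspring) < pop_size:
            p1, p2 = pick(), pick()
            child = [rng.choice(pair) for pair in zip(p1, p2)]
            child = [1 - g if rng.random() < p_mut else g for g in child]
            offspring.append(tuple(child))
        # Step 5: elitist survival, filling the next population front by front
        pool = list(dict.fromkeys(pop + offspring))  # drop duplicates, keep order
        survivors = []
        while pool and len(survivors) < pop_size:
            front = non_dominated(pool)
            rng.shuffle(front)
            survivors.extend(front[: pop_size - len(survivors)])
            pool = [m for m in pool if m not in front]
        pop = survivors
    return non_dominated(pop)

def pick_final(front, tolerance=0.01):
    """Step 6 (hypothetical fusion rule): smallest subset within `tolerance` of the best accuracy."""
    best_acc = max(objectives(m)[0] for m in front)
    eligible = [m for m in front if objectives(m)[0] >= best_acc - tolerance]
    return min(eligible, key=lambda m: objectives(m)[1])

front = evolve()
final = pick_final(front)
print(sorted(i for i, g in enumerate(final) if g), objectives(final))
```

Replacing `objectives` with a cross-validated classifier score, and testing the chosen subset on held-out data (step 7), turns the toy into a usable template.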

The following diagram illustrates the multi-objective evolutionary optimization workflow for biomarker identification:

Benchmarking Optimization Algorithms: Towards Standardized Evaluation

The Need for Benchmarking Ecosystems

As the number of optimization methods applied in systems biology grows, standardized benchmarking becomes essential for objective comparison and method selection. A robust benchmarking ecosystem requires well-defined tasks, appropriate datasets, ground truth definitions, and standardized performance metrics [7]. Such ecosystems benefit multiple stakeholders: data analysts selecting methods for specific tasks, method developers comparing new algorithms to existing approaches, and journals/funding agencies ensuring rigorous evaluation of published tools [7].

The PROPER package represents one approach to standardized evaluation, providing visualization tools for comparing ranking classifiers in MATLAB [8]. This system enables researchers to generate two- and three-dimensional performance curves incorporating multiple metrics simultaneously, facilitating comprehensive algorithm comparison and optimization [8]. By implementing over 13 different performance measures including sensitivity, specificity, precision, recall, F-score, and Matthews correlation coefficient, PROPER addresses the multi-faceted nature of optimization algorithm evaluation in biological contexts [8].
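For reference, the threshold-based measures listed above can be computed from a binary confusion matrix as sketched below in Python (PROPER itself is a MATLAB package, and this is not its code). The confusion-matrix counts in the example are made up; undefined ratios are returned as NaN rather than raising an error.

```python
import math

def classification_metrics(tp, fp, tn, fn):
    """Common threshold metrics from a binary confusion matrix."""
    def ratio(num, den):
        return num / den if den else float("nan")
    sensitivity = ratio(tp, tp + fn)  # identical to recall (true positive rate)
    specificity = ratio(tn, tn + fp)
    precision = ratio(tp, tp + fp)
    f1 = ratio(2 * precision * sensitivity, precision + sensitivity)
    mcc_den = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    mcc = ratio(tp * tn - fp * fn, mcc_den)  # Matthews correlation coefficient
    return {"sensitivity": sensitivity, "recall": sensitivity, "specificity": specificity,
            "precision": precision, "f1": f1, "mcc": mcc}

m = classification_metrics(tp=40, fp=10, tn=45, fn=5)
print({name: round(value, 3) for name, value in m.items()})
```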

Continuous Benchmarking Infrastructure

Advanced benchmarking approaches now advocate for continuous benchmarking ecosystems that automatically evaluate new methods as they emerge [7]. Such systems formalize benchmark definitions through configuration files that specify components, software environments, parameters, and snapshot procedures for reproducible comparisons [7]. This infrastructure addresses the challenge of rapidly evolving methodologies while maintaining standards of reproducibility and fairness in algorithm evaluation.

Continuous benchmarking systems incorporate multiple layers including hardware infrastructure, data management, software implementation, community standards, and knowledge generation [7]. Each layer presents specific challenges for optimization algorithm benchmarking, from computational resource management to long-term maintainability and community trust building [7]. By addressing these layers systematically, the systems biology community can develop robust evaluation frameworks that accelerate methodological progress through objective comparison.
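A benchmark definition of the kind described above can be sketched as plain data plus an environment snapshot. The field names in `BENCHMARK` are hypothetical rather than a published schema; a real ecosystem would typically keep the definition in a configuration file and pin software versions in a container image. Hashing the canonical JSON form gives each result a stable link to the exact configuration that produced it.

```python
import hashlib
import json
import platform
import sys

# Hypothetical benchmark definition; field names are illustrative, not a published schema
BENCHMARK = {
    "name": "ode-parameter-estimation",
    "datasets": ["toy_logistic", "toy_lotka_volterra"],
    "methods": {
        "multistart_gauss_newton": {"n_starts": 10},
        "random_walk_mcmc": {"n_samples": 20000, "step": 1.0},
    },
    "metrics": ["final_cost", "wall_time_s", "peak_memory_kb"],
    "repetitions": 10,
}

def environment_snapshot():
    """Record the software and hardware context a result depends on."""
    return {
        "python": sys.version.split()[0],
        "implementation": platform.python_implementation(),
        "platform": platform.platform(),
        "machine": platform.machine(),
    }

def definition_id(definition):
    """Stable short hash of the definition; key order does not affect it."""
    canonical = json.dumps(definition, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]

run_record = {"benchmark_id": definition_id(BENCHMARK), "environment": environment_snapshot()}
print(json.dumps(run_record, indent=2))
```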

Essential Research Tools for Optimization in Systems Biology

Implementing optimization methods in systems biology requires specialized software tools and computational resources. The table below summarizes key resources cited in this review.

Table 3: Essential Research Tools for Optimization in Systems Biology

| Tool/Resource | Application Domain | Key Features | Reference |
|---|---|---|---|
| PROPER (MATLAB Package) | Classifier evaluation and comparison | 20+ performance curves, multiple metrics | [8] |
| Hyperopt | Hyperparameter optimization | Random sampling, simulated annealing, Bayesian optimization | [4] |
| XGBoost | Gradient boosting models | Extreme gradient boosting with tunable hyperparameters | [4] |
| NetworkAnalyst | Gene expression analysis | Differential expression identification, network analysis | [9] |
| MOESIB | Biomarker identification | Multi-objective evolutionary optimization | [6] |
| SLEP | Sparse learning | Efficient projections for feature selection | [8] |
| Weka | General machine learning | Comprehensive algorithm collection | [8] |

Optimization serves as the computational backbone of modern systems biology, enabling researchers to extract meaningful patterns from complex biological data. As this review demonstrates, specialized optimization approaches deliver significant performance improvements across diverse applications including dynamic model tuning, biomarker identification, and predictive model development. The continuing evolution of optimization algorithms—particularly hybrid and multi-objective methods—promises to enhance our ability to understand biological systems and improve human health. By adopting standardized benchmarking practices and selecting algorithms matched to specific problem characteristics, researchers can maximize the insights gained from precious experimental data.

In the data-rich field of systems biology, where researchers model complex cellular networks and predict drug interactions, the selection of optimization algorithms can dramatically influence research outcomes and the pace of discovery. Algorithm benchmarking provides the methodological foundation for making these critical choices objectively. It is the science of systematically measuring and comparing the performance of different algorithms in terms of specific metrics such as time complexity, space complexity, and practical efficiency [10]. For scientists developing models of signaling pathways or drug target identification networks, rigorous benchmarking moves algorithm selection from a matter of preference to an evidence-based decision, ensuring that the computational tools used can handle the vast and intricate datasets characteristic of modern biological research.

This guide establishes a framework for the rigorous evaluation of optimization algorithms, with a specific focus on applications in systems biology. We will objectively compare algorithmic performance through structured experimental data, provide detailed methodologies for replication, and visualize the key relationships and workflows that underpin a robust benchmarking process. The goal is to equip researchers and drug development professionals with the protocols and criteria needed to critically assess the tools that drive their computational research.

Theoretical Foundations of Algorithm Analysis

A foundational element of algorithm benchmarking is understanding how an algorithm's resource consumption grows as the input size increases, which is formally expressed using Big O notation. This mathematical concept describes the upper bound of an algorithm's time or space complexity, providing a high-level understanding of its scalability [11]. This is particularly relevant in systems biology, where datasets, such as those from genomic sequencing or metabolic network modeling, can be extraordinarily large.

Common Time Complexities

The table below summarizes the most common time complexities, which are critical for predicting how an algorithm will perform as a biological dataset grows in size [11].

| Big O Notation | Complexity Type | Example Algorithm | Implication for Large Biological Datasets |
|---|---|---|---|
| O(1) | Constant Time | Accessing an array element | Ideal; performance is unaffected by data size. |
| O(log n) | Logarithmic Time | Binary Search | Excellent; performance degrades very slowly. |
| O(n) | Linear Time | Linear Search | Good; processing time doubles if data size doubles. |
| O(n log n) | Linearithmic Time | Merge Sort, Heap Sort | Acceptable; common for efficient sorting algorithms. |
| O(n²) | Quadratic Time | Bubble Sort, naive all-pairs comparison of network nodes | Poor; becomes slow with moderately sized datasets. |
| O(2ⁿ) | Exponential Time | Enumerating all subsets of a set (e.g., exhaustive feature-subset search) | Critical; becomes infeasible even for small datasets. |
| O(n!) | Factorial Time | Generating all permutations of a set (e.g., brute-force traveling salesman) | Intractable; to be avoided for any practical problem. |

For systems biology applications, algorithms with linear (O(n)), linearithmic (O(n log n)), or low-order polynomial (O(n^c) for small c) time complexities are generally sought, as they can scale to analyze large-scale molecular interaction networks. In contrast, algorithms with exponential (O(2^n)) or factorial (O(n!)) complexities are typically impractical for all but the smallest problems [11].
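These growth classes can be checked empirically by counting basic operations at several input sizes and fitting the slope of log(cost) against log(n): a slope near 1 indicates linear growth, near 2 quadratic growth, and near 0 logarithmic growth. The sketch below does this in plain Python; `all_pairs_steps` is a hypothetical stand-in for naive pairwise comparison of network nodes.

```python
import math

def linear_search_steps(data, target):
    """Count comparisons made by a linear scan."""
    steps = 0
    for value in data:
        steps += 1
        if value == target:
            break
    return steps

def binary_search_steps(data, target):
    """Count probes made by binary search on sorted data."""
    lo, hi, steps = 0, len(data) - 1, 0
    while lo <= hi:
        steps += 1
        mid = (lo + hi) // 2
        if data[mid] == target:
            break
        if data[mid] < target:
            lo = mid + 1
        else:
            hi = mid - 1
    return steps

def all_pairs_steps(n):
    """Count comparisons in a naive all-pairs loop over n nodes."""
    return sum(1 for i in range(n) for _ in range(i + 1, n))

def fit_exponent(ns, costs):
    """Least-squares slope of log(cost) against log(n)."""
    xs = [math.log(n) for n in ns]
    ys = [math.log(c) for c in costs]
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    return sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum((x - mx) ** 2 for x in xs)

ns = [125, 250, 500, 1000, 2000]
exponents = {
    # searching for an absent target (-1) forces the worst case
    "linear search": fit_exponent(ns, [linear_search_steps(range(n), -1) for n in ns]),
    "binary search": fit_exponent(ns, [binary_search_steps(range(n), -1) for n in ns]),
    "all pairs": fit_exponent(ns, [all_pairs_steps(n) for n in ns]),
}
print({name: round(slope, 2) for name, slope in exponents.items()})
```

Counting operations rather than timing them keeps the estimate free of hardware noise; the same `fit_exponent` applied to measured run times gives the practical, hardware-dependent counterpart.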

A Framework for Benchmarking Optimization Algorithms

Effective benchmarking transcends simply measuring execution time. It involves a structured approach to ensure results are accurate, reproducible, and meaningful [10] [12].

Key Performance Metrics

When benchmarking algorithms, multiple metrics must be considered to gain a complete picture of performance [10].

| Metric Category | Specific Metric | Description | Relevance to Systems Biology |
|---|---|---|---|
| Time Complexity | Big O Notation | Theoretical upper bound on growth of runtime with input size. | Predicts scalability for growing biological datasets (e.g., from single-cell to multi-omics). |
| Practical Timing | Execution Time | Measured runtime on specific hardware with a given input. | Determines feasibility for time-sensitive tasks like drug screening simulations. |
| Space Complexity | Memory Usage | Amount of memory consumed during algorithm execution. | Critical for large in-memory models, such as whole-cell or organ-scale models. |
| Solution Quality | Objective Function Value | The quality of the solution found (e.g., model fit error). | Ensures the algorithm finds biologically plausible solutions, not just fast ones. |

Experimental Best Practices

To ensure benchmarking results are reliable, the following best practices should be adhered to [10] [12]:

- Use Realistic Inputs: Test algorithms with data that closely resembles real-world biological data, including varying sizes and edge cases. This could include simulated datasets of different network topologies or experimental data of varying quality and completeness.

- Control the Environment: Run benchmarks on a consistent hardware and software configuration to minimize interference from other processes. A dedicated testing environment that mirrors the production system is ideal.

- Repeat Measurements: Run benchmarks multiple times to account for system performance variations, so that differences between algorithms can be tested for statistical significance rather than read off a single run.

- Compare Equivalents: Ensure all algorithms are solving the same problem and producing equivalent outputs. Be transparent about any trade-offs between time complexity, space complexity, and solution quality.

Experimental Protocol for Algorithm Benchmarking

This section provides a detailed, step-by-step methodology for conducting a rigorous benchmarking study, adaptable for evaluating optimization algorithms used in pathway inference or parameter estimation.

Benchmarking Workflow

The following diagram illustrates the logical workflow of a robust benchmarking process, from planning to analysis.

Detailed Methodology

Planning and Definition (Pre-Experimental)

- Define Objectives: Clearly state the goal (e.g., "Identify the fastest optimization algorithm for parameter fitting in ordinary differential equation models of cell cycle regulation").

- Select Algorithms: Choose a set of candidate algorithms for comparison (e.g., Particle Swarm Optimization, Simulated Annealing, Genetic Algorithms).

- Identify Metrics: Determine the key performance indicators (KPIs) to be collected, based on the Key Performance Metrics table above [10] [12].

Test Environment Setup

- Hardware/Software: Document all specifications, including CPU, RAM, operating system, and programming language versions. Use a dedicated machine or container (e.g., Docker) to ensure consistency.

- Datasets: Prepare a set of standardized, biologically relevant input datasets. These should cover a range of sizes and complexities, from small toy models to large-scale network models.

Test Execution and Data Collection

- Instrumentation: Implement code using profiling tools (e.g., cProfile in Python) or benchmarking frameworks (e.g., pytest-benchmark) to automatically collect metrics [10].

- Multiple Trials: Execute each algorithm on each dataset multiple times (e.g., 10-30 repetitions) to account for random variation, especially in stochastic algorithms.

- Data Recording: Log all relevant data for each run, including execution time, memory consumption, final objective function value, and other solution quality metrics.
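A minimal harness covering these three steps might look like the Python sketch below. The two optimizers (`random_search` and a (1+1) evolution strategy, `hill_climber`), the sphere test function, the evaluation budget, and the seeds are illustrative assumptions. Timing uses `time.perf_counter` and peak memory uses `tracemalloc`; because `tracemalloc` adds overhead, a careful study would measure time and memory in separate runs.

```python
import random
import statistics
import time
import tracemalloc

def sphere(x):
    """Toy objective with its minimum 0 at the origin."""
    return sum(v * v for v in x)

def random_search(f, dim, budget, rng):
    best = float("inf")
    for _ in range(budget):
        best = min(best, f([rng.uniform(-5, 5) for _ in range(dim)]))
    return best

def hill_climber(f, dim, budget, rng, step=0.5):
    """(1+1) evolution strategy: keep a Gaussian mutation only if it improves f."""
    x = [rng.uniform(-5, 5) for _ in range(dim)]
    fx = f(x)
    for _ in range(budget - 1):
        y = [v + rng.gauss(0, step) for v in x]
        fy = f(y)
        if fy < fx:
            x, fx = y, fy
    return fx

def benchmark(methods, f, dim=5, budget=2000, repetitions=10):
    """Run every method `repetitions` times and log one record per run."""
    records = []
    for name, method in methods.items():
        for rep in range(repetitions):
            rng = random.Random(rep)  # fixed seed per repetition for reproducibility
            tracemalloc.start()
            t0 = time.perf_counter()
            value = method(f, dim, budget, rng)
            elapsed = time.perf_counter() - t0
            _, peak = tracemalloc.get_traced_memory()
            tracemalloc.stop()
            records.append({"method": name, "rep": rep, "final_value": value,
                            "time_s": elapsed, "peak_kb": peak / 1024})
    return records

records = benchmark({"random_search": random_search, "hill_climber": hill_climber}, sphere)
for name in ("random_search", "hill_climber"):
    values = [r["final_value"] for r in records if r["method"] == name]
    print(name, statistics.mean(values), statistics.stdev(values))
```

Each record corresponds to one row of the raw log described in the Data Recording step; summary statistics and significance tests are computed from these rows afterwards.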

Comparative Performance Analysis

To illustrate the benchmarking process, we present a comparative analysis of two sorting algorithms, Bubble Sort and Merge Sort. While sorting is a foundational computer science task, the principles of comparison directly apply to evaluating optimization algorithms used in biology, such as those for sorting candidate drug compounds by binding affinity.

Experimental Data

The following table summarizes quantitative performance data collected from executing the two algorithms on random integer arrays of increasing size. The experiment was conducted in a controlled Python environment using the timeit module for precise timing [10].

| Algorithm | Theoretical Time Complexity | Execution Time (n=100) | Execution Time (n=1,000) | Execution Time (n=10,000) |
|---|---|---|---|---|
| Bubble Sort | O(n²) | 0.000302 seconds | 0.031245 seconds | 3.152687 seconds |
| Merge Sort | O(n log n) | 0.000201 seconds | 0.002514 seconds | 0.030125 seconds |

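Key Finding: The performance gap between the two algorithms widens rapidly with input size. The Bubble Sort/Merge Sort time ratio rises from about 1.5 at n=100 to about 12 at n=1,000 and about 105 at n=10,000, as expected when one cost grows as n² and the other as n log n, whose ratio n/log n increases almost linearly with n. For n=10,000, Merge Sort was over 100 times faster than Bubble Sort [10]. This illustrates how an algorithm's theoretical complexity translates into large real-world performance differences, a critical consideration when selecting methods for large-scale biological data processing.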
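The comparison can be reproduced in outline with the Python sketch below, which times both sorts with `timeit.repeat` and keeps the best of three runs. This Bubble Sort includes the common early-exit check. Absolute times depend on hardware and will not match the table; n=10,000 is omitted because Bubble Sort takes seconds per call at that size.

```python
import random
import timeit

def bubble_sort(values):
    a = list(values)
    n = len(a)
    for i in range(n):
        swapped = False
        for j in range(n - 1 - i):
            if a[j] > a[j + 1]:
                a[j], a[j + 1] = a[j + 1], a[j]
                swapped = True
        if not swapped:  # early exit once no swaps occur
            break
    return a

def merge_sort(values):
    if len(values) <= 1:
        return list(values)
    mid = len(values) // 2
    left, right = merge_sort(values[:mid]), merge_sort(values[mid:])
    merged, i, j = [], 0, 0
    while i < len(left) and j < len(right):
        if left[i] <= right[j]:
            merged.append(left[i])
            i += 1
        else:
            merged.append(right[j])
            j += 1
    merged.extend(left[i:])
    merged.extend(right[j:])
    return merged

rng = random.Random(42)
results = {}
for n in (100, 1000):
    data = [rng.randint(0, 10**6) for _ in range(n)]
    for name, fn in (("bubble", bubble_sort), ("merge", merge_sort)):
        # one call per run, best of three runs, in seconds
        results[(name, n)] = min(timeit.repeat(lambda: fn(data), number=1, repeat=3))
print(results)
```

Fitting a straight line to log(time) against log(n) for the table's own timings gives slopes of about 2.01 for Bubble Sort and about 1.09 for Merge Sort, matching the O(n²) and O(n log n) expectations.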

The Scientist's Toolkit: Essential Research Reagents

A rigorous benchmarking study relies on both computational tools and structured methodologies. The following table details key components of the benchmarking "toolkit" and their functions in the context of systems biology research.

| Tool/Reagent | Category | Function in Benchmarking | Example Tools / Protocols |
|---|---|---|---|
| Performance Profiling Tool | Software Library | Measures detailed execution statistics (time per function, memory allocation) to identify code bottlenecks. | Python: cProfile, memory_profiler; Java: JProfiler [10]. |
| Benchmarking Framework | Software Library | Provides standardized, repeatable methods for running and timing code, often with statistical analysis. | Python: pytest-benchmark; Java: JMH; JavaScript: Benchmark.js [10]. |
| Controlled Test Environment | Protocol | Isolates the experiment from external variables, ensuring consistent and reproducible results. | Docker container, dedicated compute instance [10] [12]. |
| Standardized Biological Dataset | Data | Serves as a consistent, biologically relevant input for comparing algorithm performance and solution quality. | Public model repositories such as BioModels, or custom-generated synthetic datasets. |
| Statistical Analysis Method | Protocol | Validates that observed performance differences are statistically significant and not due to random noise. | Student's t-test, confidence interval analysis. |
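Because run times are noisy and their variances often differ between algorithms, Welch's unequal-variance form of the t-test is a common choice for comparing repeated timings. The sketch below computes Welch's t statistic and the Welch-Satterthwaite degrees of freedom with Python's `statistics` module; the timing values are made up for illustration. For a p-value, SciPy's `scipy.stats.ttest_ind(a, b, equal_var=False)` performs the same test, and for strongly skewed timings a rank-based test such as Mann-Whitney U is a reasonable alternative.

```python
import math
import statistics

def welch_t(a, b):
    """Welch's t statistic and Welch-Satterthwaite degrees of freedom for two independent samples."""
    se2_a = statistics.variance(a) / len(a)  # squared standard error of the mean of a
    se2_b = statistics.variance(b) / len(b)
    t_stat = (statistics.mean(a) - statistics.mean(b)) / math.sqrt(se2_a + se2_b)
    dof = (se2_a + se2_b) ** 2 / (se2_a ** 2 / (len(a) - 1) + se2_b ** 2 / (len(b) - 1))
    return t_stat, dof

# Hypothetical wall-clock times (seconds) from 5 repetitions of two algorithms
times_a = [1.02, 0.98, 1.05, 1.01, 0.99]
times_b = [0.51, 0.55, 0.49, 0.52, 0.53]
t_stat, dof = welch_t(times_a, times_b)
print(f"t = {t_stat:.1f}, df = {dof:.1f}")
```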

Visualization and Reporting of Benchmarking Data

Effectively communicating benchmarking results is as important as generating them. Clear visualizations allow researchers to grasp complex performance trade-offs quickly.

Principles of Effective Data Visualization

Adhering to design best practices ensures that charts and graphs are interpretable and accurate [13].

- Know Your Audience and Message: Tailor the complexity and message of the visualization for fellow scientists and researchers.

- Use Color Effectively: Employ color palettes that are accessible to all readers, including those with color vision deficiencies. Use sequential color palettes for numeric data and qualitative palettes for categorical data [13].

- Ensure Sufficient Color Contrast: All text and key graphical elements must have a high contrast against their background. For body text, the Web Content Accessibility Guidelines (WCAG) recommend a minimum contrast ratio of 4.5:1 [14] [15] [16].

- Avoid Chartjunk: Eliminate any non-essential elements that do not convey information, such as excessive gridlines, 3D effects, or decorative images. Keep the design simple and clean [13].
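The WCAG contrast ratio can be checked programmatically. The sketch below implements the WCAG 2.x relative-luminance and contrast-ratio formulas for colors given as `#RRGGBB` strings; the example colors are arbitrary.

```python
def relative_luminance(hex_color):
    """WCAG 2.x relative luminance of an sRGB color given as '#RRGGBB'."""
    rgb = [int(hex_color[i:i + 2], 16) / 255 for i in (1, 3, 5)]
    # Undo the sRGB transfer curve to get linear channel values
    lin = [c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4 for c in rgb]
    return 0.2126 * lin[0] + 0.7152 * lin[1] + 0.0722 * lin[2]

def contrast_ratio(fg, bg):
    """(lighter + 0.05) / (darker + 0.05), ranging from 1 to 21."""
    lighter, darker = sorted((relative_luminance(fg), relative_luminance(bg)), reverse=True)
    return (lighter + 0.05) / (darker + 0.05)

print(round(contrast_ratio("#000000", "#FFFFFF"), 1))  # 21.0
print(contrast_ratio("#777777", "#FFFFFF") >= 4.5)     # False
print(contrast_ratio("#767676", "#FFFFFF") >= 4.5)     # True
```

The two grays differ by a single step per channel yet fall on opposite sides of the 4.5:1 threshold, which is why checking the ratio numerically is safer than judging contrast by eye.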

Performance Scalability Diagram

The following diagram visualizes the core relationship between algorithm complexity and practical performance, which is the fundamental insight gained from benchmarking.

Rigorous algorithm evaluation is a cornerstone of robust, reproducible computational systems biology. By framing the benchmarking problem through theoretical analysis, controlled experimentation, and clear visualization, researchers can move beyond anecdotal evidence when selecting optimization algorithms. This guide provides a pathway to generating objective, data-driven comparisons, empowering scientists to choose the most efficient and scalable algorithms for modeling complex biological systems and accelerating the drug development process. As biological datasets continue to grow in size and complexity, a disciplined approach to benchmarking will become increasingly critical to scientific progress.

In the field of systems biology, optimizing complex biological models is a fundamental task, essential for endeavors ranging from predicting cellular organization to engineering metabolic pathways [17] [18]. The algorithms powering this optimization must navigate challenging landscapes riddled with non-convexity, scale to high-dimensional parameter spaces, and converge reliably to meaningful solutions. This guide provides an objective comparison of contemporary optimization algorithms, benchmarking their performance on key properties critical to systems biology research. We focus on convergence behavior, scalability to large problems, and efficacy in handling non-convex functions, providing structured experimental data and protocols to aid researchers and drug development professionals in selecting the appropriate algorithmic tools.

Optimization algorithms can be broadly categorized by their underlying strategies for navigating complex solution spaces. The following table summarizes the core algorithms considered in this guide.

Table 1: Overview of Featured Optimization Algorithms

| Algorithm Class | Key Mechanism | Representative Algorithms |
|---|---|---|
| Gradient-Based Methods | Iteratively updates parameters using gradient of the loss function [19]. | Stochastic Gradient Descent (SGD), Momentum, Nesterov Accelerated Gradient (NAG) [19]. |
| Adaptive Moment Estimation | Combines momentum with parameter-specific adaptive learning rates [20] [19]. | Adam, RMSProp, AdaGrad [20] [19]. |
| Bayesian Optimization | Uses a probabilistic surrogate model and acquisition function to guide experimentation [18]. | BioKernel (No-code framework) [18]. |
| Manifold Optimization | "Convexifies" the objective by adding a multiple of the squared retraction distance for problems on manifolds [21]. | Accelerated manifold algorithms [21]. |
| Matrix Factorization Methods | Solves low-rank factorization without storing full Hessian matrices [22]. | BB scaling Conjugate Gradient method [22]. |

To facilitate a structured comparison, we define the three key properties under evaluation:

- Convergence: The guarantee and rate at which an algorithm approaches a stationary point (a local/global minimum or a saddle point). This is crucial for ensuring reliable and reproducible results in biological simulations [20] [19] [21].

- Scalability: The algorithm's performance as the number of parameters (dimensionality) and the volume of data increase. Systems biology models can involve optimizing dozens of parameters, such as inducer concentrations in a metabolic pathway [18].

- Handling of Non-Convexity: The algorithm's ability to navigate loss landscapes populated with multiple local minima and saddle points to find a sufficiently good solution, as the loss functions for complex models like deep neural networks are inherently non-convex [19] [23].
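The difference between plain momentum and Adam's per-parameter step sizes can be seen on the Rosenbrock function, a standard non-convex, ill-conditioned test problem with its minimum at (1, 1). The sketch uses exact gradients, so it shows the update rules of heavy-ball momentum and Adam rather than the mini-batch noise of true SGD; the starting point, learning rates, and step counts are illustrative assumptions, and a single run on one function says nothing general about either method.

```python
import math

def rosenbrock(x, y):
    """Non-convex, ill-conditioned test function with its minimum at (1, 1)."""
    return (1 - x) ** 2 + 100 * (y - x * x) ** 2

def rosenbrock_grad(x, y):
    return (-2 * (1 - x) - 400 * x * (y - x * x), 200 * (y - x * x))

def momentum_descent(start, lr=1e-4, beta=0.9, steps=5000):
    """Heavy-ball momentum: v <- beta * v - lr * grad; p <- p + v."""
    p, v = list(start), [0.0, 0.0]
    for _ in range(steps):
        g = rosenbrock_grad(*p)
        v = [beta * vi - lr * gi for vi, gi in zip(v, g)]
        p = [pi + vi for pi, vi in zip(p, v)]
    return p

def adam(start, lr=0.01, b1=0.9, b2=0.999, eps=1e-8, steps=5000):
    """Adam: bias-corrected first and second moment estimates give per-parameter step sizes."""
    p, m, s = list(start), [0.0, 0.0], [0.0, 0.0]
    for t in range(1, steps + 1):
        g = rosenbrock_grad(*p)
        m = [b1 * mi + (1 - b1) * gi for mi, gi in zip(m, g)]
        s = [b2 * si + (1 - b2) * gi * gi for si, gi in zip(s, g)]
        m_hat = [mi / (1 - b1 ** t) for mi in m]
        s_hat = [si / (1 - b2 ** t) for si in s]
        p = [pi - lr * mh / (math.sqrt(sh) + eps) for pi, mh, sh in zip(p, m_hat, s_hat)]
    return p

start = (-1.5, 2.0)
for name, optimizer in (("momentum", momentum_descent), ("adam", adam)):
    x, y = optimizer(start)
    print(f"{name}: f = {rosenbrock(x, y):.4g} at ({x:.3f}, {y:.3f})")
```

The momentum learning rate has to stay small because the curvature across the curved valley is large, whereas Adam's normalization by the second-moment estimate keeps each coordinate's step close to `lr` regardless of gradient scale.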

Comparative Performance Analysis

This section synthesizes quantitative and qualitative findings from the literature to compare the algorithms based on convergence, scalability, and handling of non-convexity.

Table 2: Comparative Analysis of Key Algorithmic Properties

| Algorithm | Convergence Behavior | Scalability | Handling of Non-Convexity |

|---|---|---|---|

| Stochastic Gradient Descent (SGD) | Can exhibit oscillations; slower convergence on ill-conditioned problems; convergence to a stationary point is proven [19]. | Highly scalable to large datasets due to mini-batch processing [19]. | Noisy updates help escape shallow local minima; can get stuck in saddle points [19]. |

| Adam | Can diverge in some convex settings; converges with proper tuning of learning rate and moment estimates [20]. | Excellent for large-scale, high-dimensional problems; default choice for deep neural networks [20] [19]. | Effective at escaping saddle points due to adaptive learning rates and momentum [19]. |

| Bayesian Optimization (BioKernel) | Sample-efficient; finds optimum with fewer evaluations; converged to a near-optimum in ~22% of the evaluations needed by a grid search in one case study [18]. | Handles up to ~20 dimensions effectively; performance can degrade in very high-dimensional spaces [18]. | Excellent for "black-box" functions; does not require gradients; naturally designed for rugged, non-convex landscapes [18]. |

| Manifold Optimization | Provable convergence to the optimum for convex functions on manifolds; converges to a stationary point for non-convex functions [21]. | Applied to tasks like low-rank matrix factorization on the Netflix dataset; performance depends on the manifold's structure [21]. | Adapts to the objective's complexity without prior knowledge of its convexity [21]. |

| BB Scaling CG (for NMF) | Established convergence results; improved CPU time and fewer function evaluations in experiments [22]. | Designed for large-scale low-rank matrix factorization; avoids storing Hessian matrices, saving memory [22]. | Applied directly to the non-convex NMF problem; uses a nonmonotone strategy to navigate the landscape [22]. |

Supporting Experimental Data

The following table summarizes key quantitative results from experiments detailed in the cited literature, providing a direct comparison of algorithmic efficiency.

Table 3: Summary of Experimental Performance Data

| Experiment Context | Algorithm | Performance Metric | Result | Source |

|---|---|---|---|---|

| Optimizing 4D transcriptional control for limonene production [18] | Grid Search | Number of unique points evaluated | 83 | [18] |

| Optimizing 4D transcriptional control for limonene production [18] | Bayesian Optimization (BioKernel) | Number of unique points evaluated to reach within 10% of the optimum | 18 (≈22% of grid search) | [18] |

| Low-rank Matrix Factorization [22] | Proposed BB Scaling CG | CPU Time, Efficiency | Significantly improved vs. existing methods | [22] |

| Non-Negative Matrix Factorization (NMF) [22] | Proposed BB Scaling CG | Number of Function Evaluations | Reduced vs. existing methods | [22] |

Detailed Experimental Protocols

To ensure reproducibility and provide context for the data in Table 3, this section outlines the methodologies of key experiments.

Protocol 1: Bayesian Optimization for Metabolic Pathway Tuning

This protocol is derived from the iGEM Imperial 2025 study that validated the BioKernel framework [18].

- Objective: To demonstrate that Bayesian Optimization (BO) can identify optimal pathway induction conditions with dramatically fewer experiments than a combinatorial grid search.

- Biological System: The Marionette-wild E. coli strain with a genomically integrated array of twelve orthogonal, inducible transcription factors, creating a high-dimensional optimization landscape [18].

- Target Output: Maximize the production of a target compound (e.g., astaxanthin or limonene).

- Validation Method:

  - Retrospective Validation: A published dataset from a 4-dimensional limonene production optimization was used. A Gaussian process with a scaled RBF kernel was fitted to the existing data to approximate the true optimization landscape.

  - Noise Modeling: The standard deviation from technical replicates was used to create a heteroscedastic noise model, making the validation more biologically realistic.

  - BO Policy Execution: The BioKernel BO policy was run on this simulated landscape, using a Matern kernel. Its performance was measured by the number of unique parameter combinations (experiments) it needed to evaluate to get within 10% of the known global optimum.

- Key Outcome: The BO policy achieved convergence in an average of 18 points, compared to the 83 points required by the grid search used in the original study, representing a 78% reduction in experimental effort [18].
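The BO loop in this protocol can be sketched in a few dozen lines. This is a simplified stand-in, not the BioKernel implementation or its API. It assumes:

- a one-dimensional candidate grid;
- a Gaussian process with a fixed RBF kernel and a tiny constant noise term (the protocol used a Matérn kernel and a heteroscedastic noise model);
- an upper-confidence-bound acquisition rule;
- a hypothetical response curve that peaks at x = 0.7.

```python
import math


def rbf(a, b, length=0.15):
    # Squared-exponential (RBF) kernel with unit signal variance
    return math.exp(-0.5 * ((a - b) / length) ** 2)


def cholesky(A):
    n = len(A)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = A[i][j] - sum(L[i][k] * L[j][k] for k in range(j))
            L[i][j] = math.sqrt(s) if i == j else s / L[j][j]
    return L


def solve_lower(L, b):
    # Forward substitution: solve L x = b
    x = []
    for i, bi in enumerate(b):
        x.append((bi - sum(L[i][k] * x[k] for k in range(i))) / L[i][i])
    return x


def solve_upper_t(L, b):
    # Back substitution: solve L^T x = b
    n = len(b)
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (b[i] - sum(L[k][i] * x[k] for k in range(i + 1, n))) / L[i][i]
    return x


def fit_gp(X, y, noise=1e-6):
    K = [[rbf(a, b) + (noise if i == j else 0.0) for j, b in enumerate(X)]
         for i, a in enumerate(X)]
    L = cholesky(K)
    return L, solve_upper_t(L, solve_lower(L, y))   # alpha = K^{-1} y


def predict(X, L, alpha, x):
    k = [rbf(x, a) for a in X]
    mu = sum(ki * ai for ki, ai in zip(k, alpha))
    w = solve_lower(L, k)
    var = max(1.0 - sum(wi * wi for wi in w), 1e-12)
    return mu, math.sqrt(var)


def bayes_opt(f, candidates, init, n_iter=12, kappa=2.0):
    X = list(init)
    y = [f(x) for x in X]
    for _ in range(n_iter):
        L, alpha = fit_gp(X, y)

        def ucb(c):
            mu, sd = predict(X, L, alpha, c)
            return mu + kappa * sd          # upper confidence bound acquisition

        nxt = max((c for c in candidates if c not in X), key=ucb)
        X.append(nxt)
        y.append(f(nxt))                    # one "experiment"
    best = max(range(len(y)), key=lambda i: y[i])
    return X[best], y[best], X


def response(x):
    # Hypothetical 1-D "titre vs. inducer level" curve peaking at x = 0.7
    return math.exp(-((x - 0.7) / 0.1) ** 2)


grid = [i / 100 for i in range(101)]
x_best, y_best, evaluated = bayes_opt(response, grid, init=[0.0, 0.5, 1.0])
print(x_best, round(y_best, 3), len(evaluated))
```

Each call to `f` inside `bayes_opt` corresponds to one experiment. The effort figure compared against grid search in Table 3 is therefore the number of evaluations made by the time the best observed value first comes within the chosen tolerance of the optimum.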

Protocol 2: Computational Analysis of Cellular Self-Organization

This protocol is based on research from Harvard SEAS on extracting rules for cellular morphogenesis [17].

- Objective: To develop a computational framework that can uncover the genetic and physical rules cells use to self-organize into complex structures.

- Computational System: A physics-based systems biology model that simulates cell growth, division, and interaction.

- Target Output: A set of inferred genetic network rules that, when followed by individual cells, lead to a target collective behavior or tissue shape.

- Methodology:

  - Formulation as Optimization: The process of finding the correct cellular rules is framed as an optimization problem. The goal is to minimize the difference between the model's final structure and a desired target structure.

  - Application of Automatic Differentiation: The backbone of the framework is automatic differentiation (AD), a technique central to training deep neural networks. AD is used to efficiently compute how small changes in the underlying gene network parameters affect the final, collective tissue-level outcome.

  - Inversion for Design: Once the model is trained and predictive, it can be inverted. Researchers can specify a desired tissue shape or function, and the model can predict the cellular programming required to achieve it [17].

- Key Outcome: This framework serves as a proof-of-concept for predicting how to program cells to achieve specific organizational outcomes, a foundational step toward computational organ design [17].
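The central idea of Protocol 2 is to differentiate a simulated collective outcome with respect to an underlying parameter. Forward-mode automatic differentiation with dual numbers illustrates this in a few lines.

This is a minimal sketch that assumes a toy logistic-growth simulation in place of the physics-based cell model. The `Dual` class and `simulate_growth` function are hypothetical. Production AD libraries implement the same chain-rule bookkeeping far more generally.

```python
class Dual:
    """Forward-mode AD number: a value plus its derivative w.r.t. one chosen input."""

    def __init__(self, val, der=0.0):
        self.val, self.der = val, der

    @staticmethod
    def lift(o):
        return o if isinstance(o, Dual) else Dual(o)

    def __add__(self, o):
        o = Dual.lift(o)
        return Dual(self.val + o.val, self.der + o.der)

    __radd__ = __add__

    def __sub__(self, o):
        o = Dual.lift(o)
        return Dual(self.val - o.val, self.der - o.der)

    def __rsub__(self, o):
        return Dual.lift(o) - self

    def __mul__(self, o):
        o = Dual.lift(o)
        # Product rule
        return Dual(self.val * o.val, self.der * o.val + self.val * o.der)

    __rmul__ = __mul__

    def __truediv__(self, o):
        o = Dual.lift(o)
        # Quotient rule
        return Dual(self.val / o.val, (self.der * o.val - self.val * o.der) / o.val ** 2)


def simulate_growth(r, n0=0.1, capacity=1.0, dt=0.1, steps=50):
    # Toy stand-in for a tissue-level simulation: explicit-Euler logistic growth
    n = n0
    for _ in range(steps):
        n = n + r * n * (1 - n / capacity) * dt
    return n


# Seeding the input's derivative with 1.0 yields d(final population)/d(r)
out = simulate_growth(Dual(0.8, 1.0))
h = 1e-6
fd = (simulate_growth(0.8 + h) - simulate_growth(0.8 - h)) / (2 * h)
print(out.val, out.der, fd)
```

The central finite difference is included only as a sanity check. The dual-number derivative, unlike it, is exact up to floating-point error and needs no step size.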

Visual Workflows and Pathways

The following diagrams illustrate the core logical workflow of a key algorithm and a general experimental pipeline for biological optimization.

Bayesian Optimization Workflow

Biological Optimization Experimental Pipeline

The Scientist's Toolkit: Research Reagent Solutions

This table details key computational and biological reagents essential for implementing the optimization experiments discussed in this guide.

Table 4: Essential Research Reagents and Materials

| Reagent/Material | Function in Optimization | Context of Use |

|---|---|---|

| Marionette-wild E. coli Strain | Provides a genetically engineered chassis with multiple orthogonal inducible promoters, creating a high-dimensional optimization landscape for metabolic pathways [18]. | Tuning expression levels in multi-step biosynthetic pathways (e.g., for astaxanthin or limonene production) [18]. |

| No-Code Bayesian Optimization Framework (BioKernel) | Makes advanced BO accessible to experimental biologists without programming expertise for optimizing laboratory experiments [18]. | General-purpose optimization of biological experiments with modular kernels and support for heteroscedastic noise [18]. |

| Automatic Differentiation (AD) Libraries | Enables efficient computation of gradients in complex computational models, which is essential for training and optimizing deep neural networks and physics-based models [17]. | Computational research, such as inferring cellular interaction rules that lead to target tissue morphologies [17]. |

| Synthetic Biological Parts (Promoters, RBS, etc.) | The modular components that are assembled and whose expression levels are optimized to achieve a desired system-level function [18]. | Constructing and tuning genetic circuits and metabolic pathways in synthetic biology projects [18]. |

| Heteroscedastic Noise Model | A statistical model that accounts for non-constant measurement uncertainty, which is common in biological data, leading to more robust optimization [18]. | Integrated into Bayesian Optimization frameworks to improve their performance and reliability with real experimental data [18]. |

Parameter estimation and network reconstruction represent two foundational challenges in systems biology. Accurate parameter estimation is crucial for developing predictive dynamic models of cellular processes, while reliable network inference is essential for mapping the complex interactions that underlie biological function. This guide provides a comparative analysis of contemporary methodologies addressing these core problems, framed within a broader thesis on benchmarking optimization algorithms for systems biology research. We objectively evaluate performance using empirical data from recent studies, detailing experimental protocols to ensure reproducibility and providing structured comparisons to guide method selection.

Comparative Analysis of Parameter Estimation Methods

Parameter estimation transforms conceptual mathematical models into quantitatively predictive tools. This process involves determining parameter values that minimize the discrepancy between model simulations and experimental data.

Methodologies and Performance Metrics

Table 1: Performance Comparison of Parameter Estimation Methods

| Estimation Method | Computational Demand | Best-Suited Model Complexity | Key Advantage | Key Limitation | Reported RMSE (Sample) |

|---|---|---|---|---|---|

| Profiled Estimation (PEP) [24] | Moderate | ODE-based models (e.g., 3-12 parameters) | Avoids repeated ODE solution; stable convergence | Performance varies with parameter influence | 0.54 (Lettuce Model) |

| Frequentist (Differential Evolution) [24] | High | Models with fewer than 10 parameters | Conceptual simplicity; global search capability | High error with many parameters; computationally intensive | 0.89 (Lettuce Model) |

| Bayesian (MCMC) [24] | Very High | Models where uncertainty quantification is critical | Provides uncertainty estimates; incorporates prior knowledge | Extremely high computational cost | Not Specified |

| Maximum Likelihood (MLE) [25] | Low to Moderate | Various, including Gompertz distribution | Well-established theoretical properties | Can be sensitive to initial guesses | Varies by application |

| Maximum Product of Spacings (MPSE) [25] | Low to Moderate | Various, including Gompertz distribution | Robust to outliers | Less common than MLE | Varies by application |

Experimental Protocols for Parameter Estimation

Protocol 1: Profiled Estimation Procedure (PEP) for Dynamic Crop Models [24]

This protocol outlines the application of PEP to models defined by ordinary differential equations (ODEs).

- Model Definition: Formulate the dynamic model as a set of ODEs. For example, a lettuce growth model with three state variables (e.g., biomass, nutrient status) and twelve parameters.

- Data Collection: Gather time-series measurements of the state variables (e.g., biomass at different growth stages).

- Spline Approximation: Approximate each state variable using a B-spline basis function expansion, rather than numerically solving the ODEs at each step.

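- Three-Level Optimization: Solve the nested optimization problem:

  - Inner Level: For fixed model parameters and smoothing parameters, optimize the B-spline coefficients to fit the data while penalizing departures from the ODEs.

  - Middle Level: Optimize the model parameters, treating the spline coefficients as functions of those parameters (that is, profiling them out).

  - Outer Level: Optimize the smoothing parameters to balance fit to the data against fidelity to the ODEs.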

- Validation: Compare model predictions against a held-out validation dataset using metrics like Root Mean Squared Error (RMSE) and Modeling Efficiency.
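PEP's key advantage is that it avoids repeated ODE solutions. Simpler two-stage (gradient-matching) estimators share this advantage. The sketch below is such a simplification, not the three-level PEP itself. It assumes:

- a hypothetical logistic model, dx/dt = r·x·(1 - x/K);
- noise-free data;
- central differences standing in for the B-spline smoothing step.

```python
import math


def logistic(t, r=0.5, K=10.0, x0=1.0):
    # Closed-form logistic curve, used here only to generate synthetic data
    return K / (1 + (K / x0 - 1) * math.exp(-r * t))


def gradient_matching_logistic(ts, xs):
    """Two-stage estimate of (r, K) without ever solving the ODE.

    Stage 1: approximate dx/dt from the data (central differences here).
    Stage 2: (dx/dt) / x = r - (r/K) * x is linear in x, so ordinary least
             squares gives intercept r and slope -r/K.
    """
    pts = []
    for i in range(1, len(ts) - 1):
        dxdt = (xs[i + 1] - xs[i - 1]) / (ts[i + 1] - ts[i - 1])
        pts.append((xs[i], dxdt / xs[i]))
    n = len(pts)
    mx = sum(p[0] for p in pts) / n
    my = sum(p[1] for p in pts) / n
    slope = (sum((p[0] - mx) * (p[1] - my) for p in pts)
             / sum((p[0] - mx) ** 2 for p in pts))
    r_hat = my - slope * mx
    return r_hat, -r_hat / slope


ts = [0.1 * i for i in range(101)]   # hypothetical sampling times, 0 to 10
xs = [logistic(t) for t in ts]
r_hat, K_hat = gradient_matching_logistic(ts, xs)
print(round(r_hat, 3), round(K_hat, 3))
```

With noisy data, differencing the raw measurements amplifies noise. That is one reason PEP fits penalized splines and tunes the smoothing level instead.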

Protocol 2: Model Comparison under Accelerated Lifetime Tests [25]

This protocol compares different life-stress relationship models (e.g., linear vs. inverse power-law) for reliability data.

- Data Generation: Conduct constant-stress accelerated lifetime tests or generate data from a flexible distribution like the Sine-Modified Power Gompertz (SMPoG) to test robustness.

- Parameter Estimation: Apply multiple estimation methods (Maximum Likelihood, Least Squares, Maximum Product of Spacings, Cramér-von Mises) to the test data.

- Performance Evaluation: Calculate the Mean Squared Error (MSE) for each model-method combination across different sample sizes (small, medium, large).

- Model Selection: Use information criteria such as the Akaike Information Criterion (AIC) and cross-validation such as Leave-One-Out Cross-Validation (LOOCV) to determine the best-fitting and most generalizable model.
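To show how two of the listed estimators can differ on the same sample, the sketch below compares Maximum Likelihood and Maximum Product of Spacings on a deliberately simple case. It assumes:

- an exponential lifetime distribution, not the SMPoG distribution used in the protocol;
- a hypothetical, evenly spaced sample;
- golden-section search as the one-dimensional optimizer, which is an arbitrary choice.

```python
import math


def exp_cdf_gap(lam, a, b):
    # F(b) - F(a) for an exponential with rate lam, computed from survival terms
    # so that spacings near F = 1 do not round to zero
    return math.exp(-lam * a) - math.exp(-lam * b)


def mps_objective(lam, data):
    """Mean log-spacing over the n + 1 gaps defined by x_(0) = 0 and x_(n+1) = inf."""
    xs = sorted(data)
    total = 0.0
    for a, b in zip([0.0] + xs, xs):
        gap = exp_cdf_gap(lam, a, b)
        if gap <= 0.0:
            return float("-inf")
        total += math.log(gap)
    total += -lam * xs[-1]            # log(1 - F(x_(n))) = -lam * x_(n)
    return total / (len(xs) + 1)


def golden_max(f, lo, hi, iters=200):
    """Golden-section search for the maximum of a unimodal function on [lo, hi]."""
    g = (math.sqrt(5) - 1) / 2
    c, d = hi - g * (hi - lo), lo + g * (hi - lo)
    fc, fd = f(c), f(d)
    for _ in range(iters):
        if fc > fd:                   # maximum lies in [lo, d]
            hi, d, fd = d, c, fc
            c = hi - g * (hi - lo)
            fc = f(c)
        else:                         # maximum lies in [c, hi]
            lo, c, fc = c, d, fd
            d = lo + g * (hi - lo)
            fd = f(d)
    return (lo + hi) / 2


# Hypothetical lifetimes: n evenly spaced quantiles of an exponential with rate 2
n, true_rate = 50, 2.0
data = [-math.log(1 - i / (n + 1)) / true_rate for i in range(1, n + 1)]

mle = len(data) / sum(data)           # closed-form MLE of the exponential rate
mps = golden_max(lambda lam: mps_objective(lam, data), 0.05, 10.0)
print(round(mle, 3), round(mps, 3))
```

This sample places the true CDF values exactly at i/(n+1), so MPS recovers the generating rate of 2.0 while the MLE lands near 2.08. With real data both estimators carry sampling error, which is what the MSE comparison across sample sizes quantifies.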

Figure 1: Profiled Estimation Workflow. The diagram illustrates the three-level optimization structure of the Profiled Estimation Procedure (PEP) for calibrating dynamic models. [24]

Comparative Analysis of Network Reconstruction Methods

Network inference aims to reconstruct the wiring diagrams of biological systems, such as functional brain networks or gene regulatory networks, from observational and interventional data.

Benchmarking with CausalBench

The CausalBench suite provides a standardized framework for evaluating network inference methods on large-scale, real-world single-cell perturbation data, moving beyond synthetic benchmarks. [26]

Table 2: Performance of Network Inference Methods on CausalBench [26]

| Method | Type | Key Principle | Mean F1 Score (K562) | Mean F1 Score (RPE1) | Scalability to Large Networks |

|---|---|---|---|---|---|

| Mean Difference [26] | Interventional | Calculates mean expression differences post-perturbation | 0.197 | 0.205 | High |

| Guanlab [26] | Interventional | Custom algorithm from CausalBench challenge | 0.192 | 0.203 | High |

| GRNBoost [26] | Observational | Tree-based ensemble learning | 0.075 | 0.085 | Moderate |

| NOTEARS [26] | Observational | Continuous optimization with acyclicity constraint | 0.044 | 0.042 | Low |

| PC [26] | Observational | Constraint-based causal discovery | 0.031 | 0.029 | Low |

| GIES [26] | Interventional | Score-based search using interventional data | 0.038 | 0.035 | Low |

Experimental Protocols for Network Reconstruction

Protocol 3: Evaluating Network Inference with CausalBench [26]

This protocol uses the CausalBench framework to benchmark network inference methods on single-cell CRISPRi perturbation data.

- Data Curation: Obtain two large-scale perturbational single-cell RNA-seq datasets (e.g., from RPE1 and K562 cell lines), containing measurements from both control (observational) and genetically perturbed (interventional) cells.

- Method Implementation: Apply a suite of network inference methods, including observational (NOTEARS, PC, GRNBoost) and interventional methods (GIES, DCDI, Mean Difference, Guanlab).

- Statistical Evaluation: Compute causal effect metrics.

  - Mean Wasserstein Distance: Measures the strength of causal effects for predicted interactions.

  - False Omission Rate (FOR): Measures the rate at which true causal interactions are missed.

- Biological Evaluation: Compare predicted networks to biologically motivated, approximate ground truths to calculate precision, recall, and F1 scores.

- Analysis: Evaluate the trade-off between precision and recall, and rank methods based on their aggregate performance across statistical and biological metrics.
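The core of the interventional Mean Difference baseline, together with the evaluation metrics above, can be sketched as follows. This is a simplified illustration under stated assumptions, not CausalBench's code:

- Expression data are plain Python lists keyed by hypothetical gene names.
- Edges are ranked by the absolute change in a target's mean expression when a regulator is knocked down.
- The Wasserstein distance is the one-dimensional version for equal-size samples.

```python
from statistics import mean


def mean_difference_edges(control, perturbed, top_k):
    """Rank regulator->target edges by |mean(target | knockdown) - mean(target | control)|."""
    scored = []
    for regulator, profile in perturbed.items():
        for target, values in profile.items():
            if target != regulator:
                scored.append((abs(mean(values) - mean(control[target])), regulator, target))
    scored.sort(reverse=True)
    return {(reg, tgt) for _, reg, tgt in scored[:top_k]}


def wasserstein_1d(a, b):
    # Wasserstein-1 distance between two equal-size empirical samples
    return sum(abs(x - y) for x, y in zip(sorted(a), sorted(b))) / len(a)


def precision_recall_f1(predicted, truth):
    tp = len(predicted & truth)
    precision = tp / len(predicted) if predicted else 0.0
    recall = tp / len(truth) if truth else 0.0
    f1 = 2 * precision * recall / (precision + recall) if tp else 0.0
    return precision, recall, f1


# Toy data (hypothetical): A activates B, B activates C
control = {"A": [5.0, 5.2, 4.8, 5.0], "B": [3.0, 3.1, 2.9, 3.0], "C": [2.0, 2.1, 1.9, 2.0]}
perturbed = {
    "A": {"A": [1.0, 1.1, 0.9, 1.0], "B": [1.0, 1.1, 0.9, 1.0], "C": [2.0, 2.1, 1.9, 2.0]},
    "B": {"A": [5.0, 5.2, 4.8, 5.0], "B": [0.5, 0.6, 0.4, 0.5], "C": [0.8, 0.9, 0.7, 0.8]},
}
edges = mean_difference_edges(control, perturbed, top_k=2)
effects = {e: wasserstein_1d(perturbed[e[0]][e[1]], control[e[1]]) for e in edges}
print(sorted(edges), precision_recall_f1(edges, {("A", "B"), ("B", "C")}))
```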

Protocol 4: Benchmarking Functional Connectivity (FC) Mapping [27]

This protocol evaluates how different pairwise statistics influence the properties of inferred functional brain networks.

- Data Acquisition: Use resting-state functional MRI (fMRI) time series from a large cohort (e.g., N=326 from the Human Connectome Project).

- FC Matrix Calculation: Generate 239 different FC matrices for each subject using a comprehensive library of pairwise interaction statistics (e.g., covariance, precision, spectral, information-theoretic measures).

- Feature Extraction: For each FC matrix, compute canonical network features:

  - Hub identification via weighted degree.

  - Structure-function coupling (correlation with diffusion MRI-based structural connectivity).

  - Relationship between FC and Euclidean distance.

  - Individual fingerprinting (uniqueness of an individual's network).

- Benchmarking: Compare how each pairwise statistic performs on these features to identify methods optimized for specific research questions (e.g., precision-based statistics excelled in structure-function coupling and individual fingerprinting). [27]
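A three-region toy example shows why the choice of pairwise statistic matters. The sketch assumes hypothetical signals in which X drives Y and Y drives Z, and it computes the statistics by hand rather than through PySPI.

Pearson correlation links X and Z strongly. The precision-based partial correlation, which conditions on Y, is close to zero for that pair.

```python
import math
import random


def covariance_matrix(cols):
    n = len(cols[0])
    means = [sum(c) / n for c in cols]
    return [[sum((a - ma) * (b - mb) for a, b in zip(ca, cb)) / (n - 1)
             for cb, mb in zip(cols, means)]
            for ca, ma in zip(cols, means)]


def invert(M):
    # Gauss-Jordan elimination with partial pivoting (adequate for small matrices)
    n = len(M)
    A = [row[:] + [1.0 if i == j else 0.0 for j in range(n)] for i, row in enumerate(M)]
    for col in range(n):
        piv = max(range(col, n), key=lambda r: abs(A[r][col]))
        A[col], A[piv] = A[piv], A[col]
        p = A[col][col]
        A[col] = [v / p for v in A[col]]
        for r in range(n):
            if r != col:
                factor = A[r][col]
                A[r] = [vr - factor * vc for vr, vc in zip(A[r], A[col])]
    return [row[n:] for row in A]


def correlation(S, i, j):
    return S[i][j] / math.sqrt(S[i][i] * S[j][j])


def partial_correlation(P, i, j):
    # From the precision matrix P = S^{-1}: rho_ij|rest = -P_ij / sqrt(P_ii * P_jj)
    return -P[i][j] / math.sqrt(P[i][i] * P[j][j])


# Hypothetical three-region chain: X drives Y, Y drives Z, no direct X-Z link
random.seed(0)
x = [random.gauss(0, 1) for _ in range(2000)]
y = [xi + random.gauss(0, 0.5) for xi in x]
z = [yi + random.gauss(0, 0.5) for yi in y]

S = covariance_matrix([x, y, z])
P = invert(S)
print(round(correlation(S, 0, 2), 3), round(partial_correlation(P, 0, 2), 3))
```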

Figure 2: CausalBench Evaluation Pipeline. The workflow for benchmarking network inference methods using real-world single-cell perturbation data within the CausalBench suite. [26]

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents and Resources

| Item | Function/Application | Specific Example/Note |

|---|---|---|

| CausalBench Suite [26] | Open-source benchmark for network inference on real-world perturbation data. | Provides curated datasets, baseline method implementations, and biologically motivated metrics. |

| PySPI Package [27] | Python library for computing a vast library of pairwise interaction statistics. | Enables benchmarking of 239 different functional connectivity metrics from fMRI data. |

| Large-Scale Perturbation Datasets [26] | Provides interventional and observational data for causal inference. | e.g., single-cell RNA-seq datasets from RPE1 and K562 cell lines with CRISPRi knockouts. |

| Human Connectome Project (HCP) Data [27] | Source of high-quality, multi-modal neuroimaging data. | Used for benchmarking functional connectivity mapping methods. |

| Catphan Phantom [28] | Physical phantom for quantitative evaluation of CT image quality parameters. | Used to assess noise, contrast-to-noise ratio, and resolution of reconstruction algorithms. |

This comparison guide demonstrates that the choice of methodology for parameter estimation and network reconstruction is highly context-dependent. For parameter estimation in dynamic models, the emerging Profiled Estimation Procedure offers a robust and efficient alternative to traditional frequentist and Bayesian methods, particularly for ODE-based models of moderate complexity. [24] In network reconstruction, methods that effectively leverage interventional data, such as Mean Difference and Guanlab, consistently outperform those relying solely on observational data in real-world benchmarks like CausalBench. [26] Furthermore, the choice of association metric (e.g., precision vs. correlation) fundamentally alters the properties of inferred functional connectivity networks, highlighting the need for tailored method selection. [27] A successful strategy involves aligning the methodological choice with the specific biological question, data modality, and computational constraints, while leveraging standardized benchmarking suites to ensure rigorous and reproducible evaluation.

A Practical Guide to Key Optimization Algorithms and Their Biological Use Cases

In systems biology and drug development, the calibration of mathematical models to experimental data is a fundamental step for generating predictive and mechanistic insights. This process, known as parameter estimation, is most often formulated as a nonlinear least squares (NLS) optimization problem, where the goal is to find the parameter set that minimizes the sum of squared differences between experimental observations and model predictions [29]. A significant challenge inherent to this process is the presence of multiple local minima in the objective function landscape. These local minima can trap standard optimization algorithms, resulting in parameter estimates that are suboptimal and poorly reflective of the underlying biology [30] [1].

The multi-start nonlinear least squares (ms-nlLSQ) approach addresses this limitation by repeatedly applying a deterministic local solver. Instead of relying on a single, potentially poor, initial guess, the method runs a local NLS optimizer from a population of different starting points distributed throughout the parameter space [31] [1]. The core premise is that initiating searches from diverse locations gives the algorithm a higher probability of locating the global optimum (the best possible set of parameters) rather than becoming stuck in a local minimum [30]. This strategy reduces dependence on the initial guess, which can otherwise introduce user bias and lead to inconsistent modeling results, a critical consideration for robust and reproducible research [30] [29]. In the context of benchmarking, evaluating multi-start NLS involves comparing its effectiveness in finding the global minimum against other classes of optimization algorithms, such as purely stochastic or heuristic methods.

Theoretical Foundation of Multi-Start NLS

The Nonlinear Least Squares Problem

The parameter estimation problem for a deterministic model, such as a system of Ordinary Differential Equations (ODEs), is formally defined as follows. For a parameter vector θ and experimental data points (t_i, y_i), the objective is to minimize the cost function c(θ), which is typically the residual sum of squares:

c(θ) = Σ_i [y_i - f(t_i, θ)]²

where f(t_i, θ) is the model prediction at time t_i [1] [29]. The optimization is often subject to constraints. These can include lower and upper bounds on parameters (lb ≤ θ ≤ ub) or other nonlinear functional relationships (g(θ) ≤ 0, h(θ) = 0) [1]. Under suitable constraint qualifications, the Karush-Kuhn-Tucker (KKT) conditions are the first-order necessary conditions for a solution to be locally optimal in a constrained setting [32].

The Critical Role of Initial Values

Deterministic local optimizers, such as the Levenberg-Marquardt or trust-region algorithms, work by iteratively improving an initial parameter guess θ_0 until a minimum is reached [30] [1]. As illustrated below, if the error landscape is complex, the local minimum found is entirely dependent on the starting point.

Figure 1: Dependence of local optimizers on initial values. The final solution found by a single local optimization run is highly sensitive to its starting point in the parameter space.

Multi-Start as a Global Search Strategy

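The multi-start procedure automates and systematizes the process of testing multiple initial guesses. At a high level:

- starting points are drawn from within the parameter bounds;
- a local optimizer is run from each starting point;
- the converged solution with the lowest cost is retained, as summarized in Figure 2.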

Figure 2: A generic workflow for multi-start nonlinear least squares optimization.

This strategy improves the odds of landing in the basin of attraction of the global minimum. Advanced implementations, like the one in the gslnls R package, use modified algorithms to explore the parameter space efficiently, potentially updating undefined parameter ranges dynamically during the search to avoid a naive, exhaustive grid search [31].
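The workflow can be illustrated with a self-contained sketch. It makes the following assumptions:

- The model is a hypothetical two-parameter fit, y = A·sin(ωt). Its least-squares landscape has many local minima in ω.
- A hand-written Levenberg-Marquardt solver stands in for gsl_nls or lsqnonlin.
- Starting points lie on a deterministic grid over ω so that runs are reproducible. A random or Latin hypercube design would be an equally valid choice.

```python
import math


def model(t, theta):
    amp, omega = theta
    return amp * math.sin(omega * t)


def jacobian_row(t, theta):
    amp, omega = theta
    # Partial derivatives with respect to amp and omega
    return math.sin(omega * t), amp * t * math.cos(omega * t)


def rss(theta, ts, ys):
    return sum((y - model(t, theta)) ** 2 for t, y in zip(ts, ys))


def levenberg_marquardt(theta, ts, ys, iters=200, lam=1e-3):
    """Local NLS solver for the 2-parameter model, with Marquardt diagonal damping."""
    cost = rss(theta, ts, ys)
    for _ in range(iters):
        a = b = d = g0 = g1 = 0.0          # J^T J = [[a, b], [b, d]], J^T r = [g0, g1]
        for t, y in zip(ts, ys):
            j0, j1 = jacobian_row(t, theta)
            r = y - model(t, theta)
            a += j0 * j0
            b += j0 * j1
            d += j1 * j1
            g0 += j0 * r
            g1 += j1 * r
        a_d, d_d = a * (1 + lam), d * (1 + lam)
        det = a_d * d_d - b * b
        if det == 0.0:
            lam = min(lam * 10, 1e12)
            continue
        step = ((d_d * g0 - b * g1) / det, (a_d * g1 - b * g0) / det)
        trial = (theta[0] + step[0], theta[1] + step[1])
        trial_cost = rss(trial, ts, ys)
        if trial_cost < cost:              # accept: move toward Gauss-Newton behaviour
            theta, cost, lam = trial, trial_cost, lam * 0.3
        else:                              # reject: move toward gradient-descent behaviour
            lam = min(lam * 10, 1e12)
    return theta, cost


def multi_start(ts, ys, starts):
    fits = [levenberg_marquardt(s, ts, ys) for s in starts]
    return min(fits, key=lambda fit: fit[1]), fits


# Hypothetical noise-free data generated with amp = 2, omega = 3
ts = [0.1 * i for i in range(1, 61)]
ys = [2.0 * math.sin(3.0 * t) for t in ts]
starts = [(1.0, 0.5 + 4.5 * k / 19) for k in range(20)]   # omega spread over [0.5, 5]
(best_theta, best_cost), fits = multi_start(ts, ys, starts)
success_rate = sum(c < 1e-8 for _, c in fits) / len(fits)
print(best_theta, best_cost, success_rate)
```

`success_rate` is the fraction of starts whose local fit reaches the global minimum. It corresponds to the success-rate metric listed among the benchmarking guidelines below.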

Performance Benchmarking and Comparison

Established Benchmarking Protocols

Informed benchmarking of optimization algorithms requires carefully designed experiments. Key guidelines for benchmarking in systems biology include [29]:

- Using Realistic Test Problems: Benchmarks should be based on models and data that reflect the challenges of real-world systems biology, including non-identifiable parameters, limited data coverage, and non-trivial error structures.

- Comparing Against Established Solvers: Performance should be compared against robust, well-regarded solvers. Studies have shown that multi-start gradient-based optimization is a strong performer, as evidenced by its success in DREAM challenges [29].

- Reporting Comprehensive Metrics: Evaluation must go beyond a single metric. Key performance indicators include success rate (finding the global optimum), convergence speed, computational time, and parameter identifiability.

A typical benchmarking protocol applies multiple optimization algorithms to a suite of test problems. These include reference problems such as the NIST StRD Gauss1 problem and more challenging real-data examples such as the Lubricant dataset from Bates & Watts [31].

Comparative Performance Data

The table below gives an illustrative, not directly measured, performance comparison for fitting medium-complexity ODE models (5-20 parameters). It is synthesized from findings in the literature [31] [1] [29].

Table 1: Comparative performance of optimization approaches for model fitting

| Algorithm Class | Example | Success Rate | Computational Cost | Ease of Automation | Handling of Constraints | Best for Problem Type |

|---|---|---|---|---|---|---|

| Multi-start NLS | gsl_nls (R), lsqnonlin (MATLAB) | High | Medium | High | Good | Models with several local minima, good initial range knowledge |

| Genetic Algorithm | Simple GA | Medium | High | High | Good | Very complex landscapes, discrete/continuous mixed parameters |

| Markov Chain Monte Carlo | rw-MCMC | Medium (Exploration) | High | Medium | Fair | Probabilistic fitting, uncertainty quantification |

| Single-start NLS | nls (R) | Low (guess-dependent) | Low | Low | Good | Well-behaved, convex problems |

Analysis of Comparative Results

- Multi-start NLS vs. Stochastic/Heuristic Methods: Multi-start NLS often achieves a higher success rate than single-start NLS. Especially when paired with an effective local solver, it can also be more computationally efficient than purely stochastic methods like Genetic Algorithms (GAs) or particle swarm optimization [1] [29]. GAs are less prone to getting trapped, but they can be slower to converge to a precise optimum. Because they are stochastic, repeated runs can return different solutions unless the random seed is fixed [30] [1].

- Impact of Implementation: The performance of multi-start NLS is heavily influenced by the underlying local optimizer and the strategy for generating initial points. For example, using a trust-region algorithm for the local search has been found to be particularly effective [29]. The ability to specify parameter ranges instead of fixed points, as in gsl_nls, also enhances robustness [31].

Application in Systems Biology: A Case Study

Case Study: Fitting a Gene Regulatory Network Model

Consider a model of autoregulatory gene expression, a common motif in cellular networks. The model might involve the concentration of a protein that represses its own transcription, described by a nonlinear ODE. The goal is to estimate kinetic parameters (e.g., production and degradation rates) from time-course measurements of protein levels [33].

Experimental Protocol:

- Model Definition: Formulate the ODEs representing the biochemical reactions.

- Data Preparation: Use experimental data (e.g., from Western blots or fluorescence microscopy) measuring the system's response over time.

- Optimization Setup: Define the parameter ranges based on biological knowledge (e.g., degradation rates must be positive). The gslnls package can be employed with a start list in which some parameters are given fixed ranges and others are left to be determined dynamically [31].

- Benchmarking Run: Execute the multi-start NLS algorithm alongside a genetic algorithm and an MCMC method on the same dataset.

- Validation: Compare the fitted models based on their goodness-of-fit (e.g., residual sum of squares), predictive capability on a validation dataset, and biological plausibility of the estimated parameters.
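A minimal version of the case-study model and cost function might look like the sketch below. It assumes:

- a Hill-type negative autoregulation ODE, dp/dt = β/(1 + (p/K)^n) - γp, with n = 2;
- hypothetical parameter values;
- a fixed-step RK4 integrator.

Parameters are passed on a log10 scale, in line with the log-scale recommendation in Table 2. The cost function could be handed to any of the optimizers being benchmarked.

```python
def autorepression_rhs(p, beta, gamma, K, n=2.0):
    # dp/dt = beta / (1 + (p/K)^n) - gamma * p: the protein represses its own production
    return beta / (1.0 + (p / K) ** n) - gamma * p


def simulate(log10_params, t_obs, p0=0.0, dt=0.01):
    """Fixed-step RK4 integration, reporting p at each observation time."""
    beta, gamma, K = (10.0 ** v for v in log10_params)   # parameters searched on log10 scale

    def f(p):
        return autorepression_rhs(p, beta, gamma, K)

    p, t, out = p0, 0.0, []
    for t_target in t_obs:
        while t < t_target - 1e-12:
            h = min(dt, t_target - t)
            k1 = f(p)
            k2 = f(p + 0.5 * h * k1)
            k3 = f(p + 0.5 * h * k2)
            k4 = f(p + h * k3)
            p += h * (k1 + 2 * k2 + 2 * k3 + k4) / 6.0
            t += h
        out.append(p)
    return out


def cost(log10_params, t_obs, y_obs):
    # Residual sum of squares: c(theta) = sum_i (y_i - f(t_i, theta))^2
    return sum((y - m) ** 2 for y, m in zip(y_obs, simulate(log10_params, t_obs)))


t_obs = [0.5 * i for i in range(1, 41)]   # hypothetical sampling times, 0.5 to 20
true_log = (1.0, 0.0, 0.0)                # beta = 10, gamma = 1, K = 1
y_obs = simulate(true_log, t_obs)         # synthetic, noise-free "measurements"
print(round(y_obs[-1], 4), cost(true_log, t_obs, y_obs), cost((1.0, 0.2, 0.0), t_obs, y_obs))
```

With β = 10, γ = 1, and K = 1, the steady state solves 10/(1 + p²) = p, which gives p = 2. The simulated trajectory approaches this value by t = 20.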

Essential Research Reagents and Tools

Table 2: Key research reagents and computational tools for optimization

| Item Name | Function/Benefit | Example/Note |

|---|---|---|

| Trust-Region Algorithm | Robust local optimizer for NLS problems; often the core of performant multi-start suites. | Found in Data2Dynamics [29] |

| Parameter Ranges | Defines the search space for initial values; crucial for guiding the multi-start search. | Can be fixed or dynamically updated [31] |

| Sensitivity Analysis | Post-fitting analysis to determine parameter identifiability and estimate confidence intervals. | Evaluates reliability of fitted parameters [29] |

| Log-scale Optimization | Performing optimization on a log-transformed parameter space to handle parameters spanning orders of magnitude. | Recommended practice for biological parameters [29] |

Based on the synthesized benchmarking data, multi-start nonlinear least squares stands as a highly effective deterministic approach for data fitting in systems biology. Its primary strength lies in its high probability of locating the global optimum while maintaining a deterministic and reproducible workflow.

Recommendations for practitioners:

- Use Multi-start NLS as a first-choice method for fitting ODE models, especially when some prior knowledge of plausible parameter ranges is available.

- Benchmark critically by comparing the results of a multi-start fit against those from a few runs of a stochastic algorithm (like a GA) to check whether the solution might be only a local minimum.

- Invest in good initial ranges; the efficiency and success of the method are enhanced by biologically informed constraints on the parameter space.

For the systems biology and drug development community, the adoption of rigorous and well-benchmarked optimization strategies like multi-start NLS is not merely a technical detail but a fundamental requirement for building reliable, predictive models that can accelerate scientific discovery.

Markov Chain Monte Carlo (MCMC) for Stochastic Models

Markov Chain Monte Carlo (MCMC) algorithms are indispensable tools for sampling complex posterior distributions in stochastic models, particularly in systems biology and drug development. This guide objectively compares the performance of established and emerging MCMC methods based on recent benchmarking studies, providing researchers with data-driven insights for algorithm selection. The comparison focuses on sampling efficiency, convergence robustness, and applicability to challenging biological inference problems.

Table 1: Overview of MCMC Algorithms and Primary Applications

| Algorithm | Class | Key Mechanism | Best-Suited Problems |

|---|---|---|---|

| Metropolis-Hastings (MH) [34] [35] | Single-Chain | Random walk with accept/reject step | Baseline comparisons, simple posteriors |

| Adaptive Metropolis (AM) [36] [35] | Single-Chain | Proposal covariance adapted using sampling history | Posteriors with high parameter correlations |

| DRAM [36] [35] | Single-Chain | AM combined with delayed rejection | Problems where initial proposals are frequently rejected |

| MALA [36] [35] | Single-Chain | Uses gradient and Fisher Information for proposals | Models where gradient information is available |

| CMA-MCMC [37] | Multi-Chain | Integrates CMA-ES optimization with Metropolis | High-dimensional, non-Gaussian posteriors |

| DREAM/Zs [37] | Multi-Chain | Genetic algorithm-inspired inter-chain interactions | Multimodal and high-dimensional parameter spaces |

| NUTS [34] | Single-Chain | Hamiltonian dynamics with automatic path length tuning | Models with differentiable log-posteriors |

| Affine Invariant Stretch Move [34] | Multi-Chain | Proposal based on positions of other chains | Distributions with unknown scale or correlation |
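The baseline single-chain method in this table can be written compactly, together with the effective sample size (ESS) diagnostic commonly used to compare samplers. This sketch assumes:

- a one-dimensional standard normal target;
- a Gaussian random-walk proposal with step 1.0;
- an ESS estimator truncated at the first non-positive autocorrelation, which is one of several common truncation rules.

```python
import math
import random


def random_walk_metropolis(log_target, x0, step, n_samples, seed=1):
    """Metropolis-Hastings with a symmetric Gaussian random-walk proposal."""
    rng = random.Random(seed)
    x, lp = x0, log_target(x0)
    samples, accepted = [], 0
    for _ in range(n_samples):
        proposal = x + rng.gauss(0.0, step)
        lp_prop = log_target(proposal)
        # The proposal is symmetric, so the Hastings ratio reduces to the target ratio
        if math.log(1.0 - rng.random()) < lp_prop - lp:
            x, lp = proposal, lp_prop
            accepted += 1
        samples.append(x)
    return samples, accepted / n_samples


def effective_sample_size(chain, max_lag=1000):
    """ESS = N / (1 + 2 * sum of autocorrelations), truncated at the first non-positive lag."""
    n = len(chain)
    mu = sum(chain) / n
    var = sum((c - mu) ** 2 for c in chain) / n
    tau = 1.0
    for lag in range(1, min(max_lag, n - 1)):
        rho = sum((chain[i] - mu) * (chain[i + lag] - mu) for i in range(n - lag)) / (n * var)
        if rho <= 0.0:
            break
        tau += 2.0 * rho
    return n / tau


def log_std_normal(x):
    # Unnormalised log-density of N(0, 1)
    return -0.5 * x * x


chain, acc_rate = random_walk_metropolis(log_std_normal, x0=0.0, step=1.0, n_samples=20000)
print(round(acc_rate, 2), round(sum(chain) / len(chain), 3), round(effective_sample_size(chain)))
```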

Performance Benchmarking and Quantitative Comparison

Rigorous benchmarking reveals significant performance variations across MCMC algorithms, influenced by target distribution properties and computational resources.

Benchmarking Results on Analytical and Biological Problems

Large-scale evaluations demonstrate that multi-chain methods generally outperform single-chain algorithms, particularly for challenging distributions with multimodality or complex correlation structures [36] [35].
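A small experiment shows why multi-chain schemes help with multimodality. The sketch implements parallel tempering, one of the multi-chain families used in these benchmarks, under assumptions chosen for illustration:

- a bimodal target built from N(-4, 1) and N(4, 1);
- four hand-picked temperatures;
- all chains starting in the left mode.

A single random-walk chain with a comparable step size, started at -4, would be expected to cross the low-density region near zero only rarely. The tempered ladder lets the T = 1 chain visit both modes.

```python
import math
import random


def log_bimodal(x):
    # Unnormalised log-density of an equal mixture of N(-4, 1) and N(4, 1)
    a, b = -0.5 * (x + 4.0) ** 2, -0.5 * (x - 4.0) ** 2
    m = max(a, b)
    return m + math.log(math.exp(a - m) + math.exp(b - m))


def parallel_tempering(log_target, temps, n_iter, step=1.0, seed=2):
    """One random-walk chain per temperature, plus swap proposals between neighbours."""
    rng = random.Random(seed)
    xs = [-4.0] * len(temps)                  # every chain starts in the left mode
    cold = []
    for _ in range(n_iter):
        for k, temp in enumerate(temps):      # Metropolis update targeting p(x)^(1/T)
            prop = xs[k] + rng.gauss(0.0, step * math.sqrt(temp))
            if math.log(1.0 - rng.random()) < (log_target(prop) - log_target(xs[k])) / temp:
                xs[k] = prop
        k = rng.randrange(len(temps) - 1)     # propose swapping chains k and k + 1
        delta = (1 / temps[k] - 1 / temps[k + 1]) * (log_target(xs[k + 1]) - log_target(xs[k]))
        if math.log(1.0 - rng.random()) < delta:
            xs[k], xs[k + 1] = xs[k + 1], xs[k]
        cold.append(xs[0])                    # only the T = 1 chain targets the posterior
    return cold


samples = parallel_tempering(log_bimodal, temps=[1.0, 2.5, 6.0, 15.0], n_iter=30000)
print(round(sum(s > 0 for s in samples) / len(samples), 2))
```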

Table 2: Performance Metrics Across MCMC Algorithms

| Algorithm | Target Distribution Type | Performance Metric | Result | Reference |
|---|---|---|---|---|
| CMAM | High-dimensional hydrogeological inverse problems | Inversion accuracy vs. state-of-the-art | Comparable to DREAM(ZS) | [37] |
| NUTS | Newtonian squeeze flow calibration | Kullback-Leibler divergence to true posterior | Lowest divergence | [34] |
| Affine Invariant Stretch Move | Newtonian squeeze flow calibration | Kullback-Leibler divergence to true posterior | Intermediate divergence | [34] |
| Metropolis-Hastings | Newtonian squeeze flow calibration | Kullback-Leibler divergence to true posterior | Highest divergence | [34] |
| Multi-chain algorithms | Dynamical systems with bistability or oscillations | Overall sampling performance | Superior to single-chain | [36] [35] |
| Normalizing flow-enhanced MCMC | Multimodal synthetic and real-world Bayesian posteriors | Sampling acceleration vs. classic MCMC | Superior for suitable NF architectures | [38] |
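Table 2 compares samplers by their Kullback-Leibler (KL) divergence to the true posterior. The estimator used in [34] is not described here, so the following shows only one simple, assumed approach. It fits a Gaussian to the MCMC samples and another to reference samples from the true posterior, then applies the closed-form KL divergence between two multivariate Gaussians. The helper names `gaussian_kl` and `sample_kl` are hypothetical. The Gaussian approximation also ignores multimodality and heavy tails.

```python
import numpy as np


def gaussian_kl(mu0, cov0, mu1, cov1):
    """Closed-form KL( N(mu0, cov0) || N(mu1, cov1) )."""
    mu0, mu1 = np.asarray(mu0, dtype=float), np.asarray(mu1, dtype=float)
    cov0, cov1 = np.atleast_2d(cov0), np.atleast_2d(cov1)
    k = mu0.size
    cov1_inv = np.linalg.inv(cov1)
    diff = mu1 - mu0
    _, logdet0 = np.linalg.slogdet(cov0)
    _, logdet1 = np.linalg.slogdet(cov1)
    return 0.5 * (np.trace(cov1_inv @ cov0) + diff @ cov1_inv @ diff
                  - k + logdet1 - logdet0)


def sample_kl(samples, ref_samples):
    """Approximate KL(sampled || reference) by Gaussian fits to both sample sets."""
    s = np.asarray(samples, dtype=float)
    r = np.asarray(ref_samples, dtype=float)
    return gaussian_kl(s.mean(axis=0), np.cov(s, rowvar=False),
                       r.mean(axis=0), np.cov(r, rowvar=False))


# Usage with synthetic draws standing in for MCMC output and a reference posterior.
rng = np.random.default_rng(0)
ref = rng.multivariate_normal([0.0, 0.0], np.eye(2), size=5000)
mcmc = rng.multivariate_normal([0.2, 0.0], 1.5 * np.eye(2), size=5000)
kl_est = sample_kl(mcmc, ref)
```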

Emerging Methods: Normalizing Flow-Enhanced MCMC

Recent advances integrate normalizing flows (NFs) with MCMC to precondition target distributions or facilitate distant jumps. Empirical evaluations show:

- NF-enhanced MCMC outperforms classic MCMC when gradients of the target density are available and an appropriate NF architecture is chosen [38].

- Contractive residual flows emerge as the best general-purpose models with relatively low sensitivity to hyperparameters [38].

- Key application areas include lattice field theory, molecular dynamics, and gravitational-wave analysis. In these areas, NF-enhanced samplers accelerate sampling from distributions with complex geometries [38].

Experimental Protocols and Methodologies

Benchmarking Framework for Dynamical Systems

Comprehensive benchmarking studies employ rigorous methodologies to ensure fair algorithm comparison [36] [35]:

1. Problem Selection: Benchmarks include dynamical systems with bifurcations, periodic orbits, multistability, and chaotic regimes, generating posterior distributions with realistic challenges like multimodality and heavy tails [36] [35].

2. Algorithm Implementation:

- Both single-chain (AM, DRAM, MALA) and multi-chain (Parallel Tempering, Parallel Hierarchical Sampling) methods are implemented [36] [35].

- Multiple initialization and adaptation schemes are tested [36] [35].

- Knowledge of the true posterior is not used for method selection or tuning to maintain realism [36] [35].

3. Evaluation Metrics:

- Effective Sample Size (ESS) per function evaluation or per unit time [39].

- Gelman-Rubin convergence diagnostic (R̂) for multiple chains [40].

- Kullback-Leibler divergence from sampled to true posterior where available [34].

4. Computational Scale: One benchmarking effort required approximately 300,000 CPU hours to evaluate >16,000 MCMC runs across methods and benchmarks [36] [35].
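The two most common diagnostics in the list above can be computed with a few lines of NumPy. The sketch below implements the classic Gelman-Rubin statistic for one scalar parameter. It also gives a simple autocorrelation-based ESS estimator that stops summing at the first non-positive autocorrelation. Both are simplified textbook forms assumed for illustration. Modern software often uses rank-normalized, split-chain R̂ and more careful truncation rules. The function names, including the helper `ar1_chain` used to make an autocorrelated test chain, are hypothetical.

```python
import numpy as np


def gelman_rubin(chains):
    """Classic Gelman-Rubin R-hat for one scalar parameter.

    chains: array of shape (m, n) holding m chains of n draws each.
    """
    chains = np.asarray(chains, dtype=float)
    _, n = chains.shape
    B = n * chains.mean(axis=1).var(ddof=1)   # between-chain variance
    W = chains.var(axis=1, ddof=1).mean()     # mean within-chain variance
    var_plus = (n - 1) / n * W + B / n        # pooled estimate of posterior variance
    return float(np.sqrt(var_plus / W))


def effective_sample_size(x):
    """ESS = N / (1 + 2 * sum_k rho_k), summing autocorrelations rho_k from
    lag 1 until the first non-positive value (a simple truncation rule)."""
    x = np.asarray(x, dtype=float)
    n = x.size
    xc = x - x.mean()
    c0 = xc @ xc / n                          # lag-0 autocovariance
    rho_sum = 0.0
    for lag in range(1, n):
        rho = (xc[:-lag] @ xc[lag:]) / (n * c0)
        if rho <= 0.0:
            break
        rho_sum += rho
    return n / (1.0 + 2.0 * rho_sum)


def ar1_chain(phi, n, rng):
    """Autocorrelated AR(1) series standing in for a slowly mixing MCMC chain."""
    x = np.empty(n)
    x[0] = rng.normal()
    for i in range(1, n):
        x[i] = phi * x[i - 1] + rng.normal()
    return x


rng = np.random.default_rng(0)
iid_chains = rng.normal(size=(4, 2000))       # four well-mixed chains
rhat_iid = gelman_rubin(iid_chains)           # should be close to 1
sticky = ar1_chain(0.9, 5000, rng)
ess_sticky = effective_sample_size(sticky)    # far fewer than 5000 effective draws
```

Dividing the ESS by the number of posterior evaluations, or by wall-clock time, gives the efficiency measures listed above.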

CMAM Algorithm Workflow

The Covariance Matrix Adaptation Metropolis (CMAM) algorithm integrates population-based CMA-ES optimization with Metropolis sampling. It uses CMA-ES to tune the proposal distribution [37].

Bayesian Inference Workflow for Biological Systems

The application of MCMC to systems biology follows a structured process from model formulation to uncertainty quantification [36] [35].
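That workflow can be sketched end to end on a deliberately tiny problem. The steps are model formulation, likelihood and prior, sampling, and posterior summary. The example infers the rate constant of the one-state decay ODE dx/dt = -k x from synthetic noisy data. It runs random-walk Metropolis on log k. The following are illustrative assumptions: the model, the true value k = 0.5, the noise level, the Normal(0, 2²) prior on log k, and the step size. The analytic ODE solution stands in for a numerical solver to keep the sketch self-contained.

```python
import numpy as np


# 1. Model formulation: dx/dt = -k * x with known x(0) = x0.
#    The analytic solution replaces a numerical ODE solver here.
def simulate(k, t, x0=10.0):
    return x0 * np.exp(-k * t)


# 2. Synthetic data from a hypothetical experiment: true k = 0.5, Gaussian noise.
rng = np.random.default_rng(1)
t_obs = np.linspace(0.0, 8.0, 20)
sigma = 0.3
y_obs = simulate(0.5, t_obs) + rng.normal(0.0, sigma, t_obs.size)


# 3. Likelihood and prior, parameterized in log k (positive rate constant).
def log_posterior(log_k):
    resid = y_obs - simulate(np.exp(log_k), t_obs)
    log_lik = -0.5 * np.sum(resid**2) / sigma**2
    log_prior = -0.5 * (log_k / 2.0) ** 2     # log k ~ Normal(0, 2^2)
    return log_lik + log_prior


# 4. Sampling: random-walk Metropolis on log k.
def rw_metropolis(log_post, theta0, n_steps=20000, step=0.05, seed=2):
    rng_s = np.random.default_rng(seed)
    theta, lp = theta0, log_post(theta0)
    samples = np.empty(n_steps)
    for i in range(n_steps):
        prop = theta + step * rng_s.normal()
        lp_prop = log_post(prop)
        if np.log(rng_s.uniform()) < lp_prop - lp:
            theta, lp = prop, lp_prop
        samples[i] = theta
    return samples


# 5. Uncertainty quantification: discard burn-in, summarize k.
samples = rw_metropolis(log_posterior, theta0=0.0)
k_post = np.exp(samples[5000:])
k_mean = k_post.mean()
k_ci = np.percentile(k_post, [2.5, 97.5])     # 95% credible interval
```

For nonlinear models without closed-form solutions, `simulate` would call a numerical integrator such as SciPy's `solve_ivp`. The rest of the pipeline would stay the same.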

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Computational Tools for MCMC in Systems Biology

| Tool/Resource | Function | Application Context |
|---|---|---|
| DRAM Toolbox [36] [35] | MATLAB implementation of single-chain MCMC methods | Parameter estimation for ODE models in systems biology |
| CMA-ES Library [37] | Optimization module for covariance matrix adaptation | Integration with CMAM for proposal distribution tuning |
| Gelman-Rubin Diagnostic [40] | Convergence assessment for multiple chains | Verifying MCMC convergence before posterior analysis |
| Effective Sample Size (ESS) [39] | Metric for sampling efficiency per function evaluation | Comparing algorithm performance across different targets |
| Normalizing Flow Architectures [38] | Deep learning models for density estimation | Preconditioning complex distributions for MCMC sampling |
| Bayesian Regression Framework [41] | Statistical inference for network topology | Multi-omic network inference from time-series data |

Benchmarking studies show that multi-chain MCMC algorithms generally outperform single-chain methods on complex inference problems in systems biology [36] [35]. Method selection should be guided by the characteristics of the posterior distribution. CMAM and DREAM(ZS) perform well on high-dimensional and multimodal problems [37]. Gradient-based methods such as MALA [36] [35] and NUTS [34] are preferable when derivatives of the log-posterior are available.

Future methodological development should address the critical need for comprehensive, large-scale benchmarks analogous to those established in the optimization literature [39]. Emerging approaches that integrate normalizing flows [38] show particular promise for advancing biological discovery, as do specialized frameworks for multi-omic network inference [41]. Both rely on enhanced sampling of complex stochastic models.

In the intricate field of systems biology, researchers increasingly turn to heuristic and nature-inspired optimization algorithms to solve complex, multi-dimensional problems. These problems range from elucidating gene regulatory networks and predicting protein structures to identifying drug targets and analyzing high-throughput genomic data. Classical optimization techniques struggle on rough, discontinuous, and multimodal objective landscapes. Methods such as Genetic Algorithms (GA), Particle Swarm Optimization (PSO), and Differential Evolution (DE) can navigate these landscapes effectively because they do not require gradient information and make few assumptions about the underlying problem [42].

Selecting the most appropriate algorithm is crucial for research efficiency and outcomes. This guide provides an objective, data-driven comparison of these prevalent algorithms, framed in the context of rigorous benchmarking for systems biology research. We summarize quantitative performance data from controlled studies, detail standard experimental protocols for evaluation, and describe the key algorithm mechanisms to inform the choices of researchers and drug development professionals.

Algorithm Performance at a Glance

The following tables summarize the core characteristics and experimental performance of key algorithms, providing a foundation for initial comparison.

Table 1: Fundamental Characteristics of Heuristic Algorithms

| Algorithm | Core Inspiration | Key Operators | Primary Strength | Primary Weakness |
|---|---|---|---|---|
| Genetic Algorithm (GA) | Natural evolution / survival of the fittest [43] | Selection, crossover, mutation [43] | Strong exploration; good at escaping local optima [43] | Slow convergence [43] |
| Particle Swarm Optimization (PSO) | Social behavior of bird flocking/fish schooling [43] [44] | Velocity and position updates based on personal and swarm bests [45] | Rapid convergence [43] [44] | Prone to premature convergence to local optima [43] [44] |
| Differential Evolution (DE) | Darwinian evolution [44] | Mutation, recombination, selection [44] | Often superior overall performance and robustness [46] | Performance depends strongly on the variant and parameter settings [46] [47] |
| Bio Particle Swarm Optimization (BPSO) | PSO variant with enhanced search ability | Velocity update using randomly generated angles [48] | Avoids premature convergence; strong performance on unimodal problems [48] | Limited adaptability to moving obstacles in dynamic scenarios [48] |
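The three DE operators in Table 1 can be illustrated with the classic DE/rand/1/bin scheme. This is a minimal sketch under assumed settings (F = 0.5, CR = 0.9, a population of 30), not the specific variants compared in [46] [47]. The function name `de_rand_1_bin` is hypothetical. SciPy's full-featured `scipy.optimize.differential_evolution` is a separate implementation that this sketch does not reproduce.

```python
import numpy as np


def de_rand_1_bin(f, bounds, pop_size=30, F=0.5, CR=0.9, n_gen=200, seed=0):
    """Minimal DE/rand/1/bin sketch (minimization over a box)."""
    rng = np.random.default_rng(seed)
    bounds = np.asarray(bounds, dtype=float)
    lo, hi = bounds[:, 0], bounds[:, 1]
    d = lo.size
    pop = lo + rng.uniform(size=(pop_size, d)) * (hi - lo)
    fit = np.array([f(x) for x in pop])
    for _ in range(n_gen):
        new_pop, new_fit = pop.copy(), fit.copy()
        for i in range(pop_size):
            # Mutation: donor = a + F * (b - c), from three distinct other members.
            others = [j for j in range(pop_size) if j != i]
            a, b, c = pop[rng.choice(others, size=3, replace=False)]
            donor = np.clip(a + F * (b - c), lo, hi)
            # Binomial recombination: each gene comes from the donor with
            # probability CR, and one random gene always does.
            take = rng.uniform(size=d) < CR
            take[rng.integers(d)] = True
            trial = np.where(take, donor, pop[i])
            # Selection: greedy one-to-one replacement of the target vector.
            f_trial = f(trial)
            if f_trial <= fit[i]:
                new_pop[i], new_fit[i] = trial, f_trial
        pop, fit = new_pop, new_fit
    best = int(np.argmin(fit))
    return pop[best], fit[best]


# Usage on the 5-D sphere function (optimum 0 at the origin).
best_x, best_f = de_rand_1_bin(lambda x: float(np.sum(x**2)), [(-5.0, 5.0)] * 5)
```

The comment in Table 1 about sensitivity to variant and settings applies directly here. Swapping the donor formula (for example, using the current best instead of `a`) or changing F and CR yields a different DE variant with different behavior.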

Table 2: Experimental Performance Comparison on Benchmark Problems

| Algorithm | Convergence Speed | Solution Quality (Average Performance) | Notes on Experimental Findings |
|---|---|---|---|
| Genetic Algorithm (GA) | Slow [43] | Good, but not always the most accurate [43] | Favors exploration over exploitation [43]. |
| Particle Swarm Optimization (PSO) | Fast [43] [44] | Lower on average than DE [46] | Excels at low computational budgets but is outperformed by DE on most problems when the budget is adequate [46]. |
| Differential Evolution (DE) | Varies by variant; generally competitive | Clearly outperforms PSO on average [46] | Has been markedly successful in algorithm competitions [46]; its advantage is significant across numerous single-objective benchmarks and real-world problems [46]. |
| Hybrid (GA-PSO) | Better balance; faster than GA alone [43] | Consistently accurate results [43] | Achieves a better balance between exploration and exploitation than either parent algorithm [43]. |

Experimental Protocols for Benchmarking

To ensure fair and reproducible comparisons between algorithms, researchers should adhere to standardized experimental protocols. The following methodologies are commonly employed in the field.

Standard Benchmarking Workflow

A typical benchmarking process involves several key stages, from problem selection through algorithm configuration to performance evaluation.

Key Methodological Details

Benchmark Problems: Algorithms are tested on diverse problem sets, including classical mathematical functions (e.g., Rastrigin, Rosenbrock [49]) and real-world problems. For example, one large-scale comparison used 22 real-world problems to check that its conclusions held beyond synthetic benchmarks [46]. For systems biology, domain-specific problem sets such as causal reasoning for gene expression analysis [50] or pooled CRISPR screen analysis [51] are highly relevant.
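For reference, the two classical test functions named above have standard closed forms, sketched here in NumPy. These are the unshifted, unrotated textbook versions. Benchmark suites such as those used in [46] [49] may apply shifts, rotations, or search bounds that are not shown.

```python
import numpy as np


def rastrigin(x, A=10.0):
    """Rastrigin: highly multimodal; global minimum 0 at x = (0, ..., 0)."""
    x = np.asarray(x, dtype=float)
    return A * x.size + np.sum(x**2 - A * np.cos(2.0 * np.pi * x))


def rosenbrock(x):
    """Rosenbrock: narrow curved valley; global minimum 0 at x = (1, ..., 1)."""
    x = np.asarray(x, dtype=float)
    return np.sum(100.0 * (x[1:] - x[:-1] ** 2) ** 2 + (1.0 - x[:-1]) ** 2)
```

The two functions stress different landscape properties. Rastrigin tests an algorithm's ability to escape many regularly spaced local optima. Rosenbrock tests its ability to follow an ill-conditioned, curved valley to a single optimum.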

Performance Measurement: Two primary approaches are used, often in parallel:

- Fixed-Budget Approach: The number of objective function evaluations is fixed, and algorithms are compared based on the quality of the solution found [46]. This is practical for computationally expensive problems.

- Fixed-Target Approach: A target value of the objective function is set in advance, and algorithms are compared based on the number of function evaluations needed to reach it [46].
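Both measurement approaches can be computed from the same run if every objective evaluation is logged. In the sketch below, the wrapper class `RecordingObjective` and the two helper functions are hypothetical names. The wrapper stores the best-so-far value after each call. A fixed-budget score (best value within a given number of evaluations) and a fixed-target score (evaluations needed to reach a threshold) then follow directly from that trace.

```python
import numpy as np


class RecordingObjective:
    """Wraps an objective and records the best-so-far value after each call."""

    def __init__(self, f):
        self.f = f
        self.trace = []                       # best-so-far value per evaluation

    def __call__(self, x):
        y = float(self.f(x))
        best = y if not self.trace else min(self.trace[-1], y)
        self.trace.append(best)
        return y


def fixed_budget_value(trace, budget):
    """Best objective value reached within `budget` evaluations."""
    return trace[min(budget, len(trace)) - 1]


def fixed_target_evals(trace, target):
    """Evaluations needed to reach f <= target, or None if never reached."""
    hits = np.nonzero(np.asarray(trace) <= target)[0]
    return int(hits[0]) + 1 if hits.size else None


# Usage: pure random search on a 3-D sphere function.
rng = np.random.default_rng(0)
sphere = RecordingObjective(lambda x: np.sum(x**2))
for _ in range(1000):
    sphere(rng.uniform(-5.0, 5.0, size=3))
score_at_100 = fixed_budget_value(sphere.trace, 100)
evals_to_1 = fixed_target_evals(sphere.trace, 1.0)
```

In practice, each algorithm would be run over many independent seeds. The fixed-budget values and fixed-target counts would then be summarized across runs, with a failure to reach the target recorded explicitly rather than dropped.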

Algorithm Configuration: Each algorithm has control parameters that must be set. For example, PSO requires an inertia weight ($w$) and cognitive/social coefficients ($\phi_p$, $\phi_g$) [45], while DE has mutation and recombination parameters [47]. Benchmarking studies often perform parameter tuning to identify optimal values for a given problem class, or use adaptive mechanisms to control parameters during runtime [47] [45].
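One well-known adaptive mechanism is the jDE scheme. In jDE, each DE individual carries its own F and CR values and occasionally resamples them. The sketch below shows only that update step. The constants (tau1 = tau2 = 0.1, with new F values drawn from [0.1, 1.0)) are the values commonly reported for jDE. Whether [47] discusses this particular scheme is not stated here, and the function name `jde_update` is hypothetical.

```python
import numpy as np


def jde_update(F, CR, rng, tau1=0.1, tau2=0.1, F_l=0.1, F_u=0.9):
    """jDE-style self-adaptation of DE control parameters (sketch).

    F, CR: arrays with one value per individual. Returns candidate values that
    are used to build each individual's trial vector; in jDE they survive only
    if that trial vector wins selection.
    """
    F_new, CR_new = F.copy(), CR.copy()          # leave the inputs untouched
    reset_F = rng.uniform(size=F.size) < tau1    # which F values to resample
    reset_CR = rng.uniform(size=CR.size) < tau2  # which CR values to resample
    F_new[reset_F] = F_l + rng.uniform(size=reset_F.sum()) * F_u  # in [0.1, 1.0)
    CR_new[reset_CR] = rng.uniform(size=reset_CR.sum())           # in [0, 1)
    return F_new, CR_new


# Usage: one update for a population of 20 individuals.
rng = np.random.default_rng(0)
F, CR = np.full(20, 0.5), np.full(20, 0.9)
F_trial, CR_trial = jde_update(F, CR, rng)
```

Because the parameter values are carried by the individuals, good settings tend to spread through the population along with good solutions. This removes most of the manual tuning burden.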

Population Topology: The structure defining how individuals in a population interact (the communication graph) significantly impacts performance. Common topologies include global (fully connected), ring, von Neumann (grid), and random structures. Using structured populations instead of a panmictic (fully mixed) one can help maintain diversity and prevent premature convergence [44].
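As a small illustration of how topology changes information flow, the sketch below computes each particle's neighborhood best under a ring topology. In a ring, particle i sees only particles i-k to i+k, with wrap-around. The function name and the neighborhood radius `k` are assumptions. With a global topology, every particle would instead follow the single swarm-best position.

```python
import numpy as np


def ring_neighborhood_best(personal_best_fit, k=1):
    """Index of the best personal best among each particle's ring neighbors
    i-k..i+k (wrapping around, including i itself), for minimization."""
    fit = np.asarray(personal_best_fit, dtype=float)
    n = fit.size
    offsets = np.arange(-k, k + 1)
    neighbors = (np.arange(n)[:, None] + offsets[None, :]) % n   # shape (n, 2k+1)
    return neighbors[np.arange(n), np.argmin(fit[neighbors], axis=1)]


# Usage: five particles whose personal-best values are [3, 1, 4, 1, 5].
leaders = ring_neighborhood_best([3.0, 1.0, 4.0, 1.0, 5.0])
```

Here, particles follow two different local leaders (indices 1 and 3) instead of a single global one. This is how a ring slows the spread of information and helps preserve diversity.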

Visualizing Algorithm Mechanisms

Understanding the fundamental workflows of these algorithms is key to appreciating their differences in performance.

Core Workflow of a Genetic Algorithm

The GA process begins with a randomly generated population of candidate solutions (chromosomes). Each individual's fitness is evaluated against the objective function. The algorithm then selects fitter individuals to be parents, applying crossover (combining two parents to create offspring) and mutation (introducing random small changes) to generate a new population. This generational process repeats, driven by the principle of "survival of the fittest," until a stopping condition is met [43].
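The generational loop described above can be sketched as a minimal real-coded GA. The specific operators are common textbook options assumed for illustration, not the configuration used in [43]. They are binary tournament selection, intermediate crossover with random per-gene weights, Gaussian mutation, and retention of the single best individual. The function name `genetic_algorithm` and all default settings are hypothetical.

```python
import numpy as np


def genetic_algorithm(f, bounds, pop_size=40, n_gen=150, p_cross=0.9,
                      p_mut=0.1, mut_scale=0.05, seed=0):
    """Minimal real-coded GA sketch (minimization over a box)."""
    rng = np.random.default_rng(seed)
    bounds = np.asarray(bounds, dtype=float)
    lo, hi = bounds[:, 0], bounds[:, 1]
    d = lo.size
    pop = lo + rng.uniform(size=(pop_size, d)) * (hi - lo)  # random initial population
    fit = np.array([f(x) for x in pop])                      # fitness evaluation

    def tournament():
        # Selection: the fitter of two random individuals becomes a parent.
        i, j = rng.integers(pop_size, size=2)
        return pop[i] if fit[i] <= fit[j] else pop[j]

    for _ in range(n_gen):
        children = [pop[np.argmin(fit)].copy()]              # keep the current best
        while len(children) < pop_size:
            p1, p2 = tournament(), tournament()
            if rng.uniform() < p_cross:
                # Crossover: per-gene random convex combination of the parents.
                w = rng.uniform(size=d)
                child = w * p1 + (1.0 - w) * p2
            else:
                child = p1.copy()
            # Mutation: small Gaussian changes to a random subset of genes.
            mask = rng.uniform(size=d) < p_mut
            child[mask] += rng.normal(0.0, mut_scale * (hi - lo)[mask])
            children.append(np.clip(child, lo, hi))
        pop = np.array(children)                             # next generation
        fit = np.array([f(x) for x in pop])
    best = int(np.argmin(fit))
    return pop[best], fit[best]


# Usage on the 3-D sphere function.
best_x, best_f = genetic_algorithm(lambda x: float(np.sum(x**2)), [(-5.0, 5.0)] * 3)
```

The mutation step is what maintains exploration. Without it, intermediate crossover alone steadily shrinks the population toward its mean, which is one route to the premature loss of diversity discussed above.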

Core Workflow of Particle Swarm Optimization

PSO initializes a swarm of particles with random positions and velocities. Each particle moves through the search space, remembering its own best position ($p$) and knowing the swarm's global best position ($g$). The velocity update (Eq. 4 in [43]) is a key component, combining three elements:

- Inertial Component: A fraction of the previous velocity.

- Cognitive Component: A pull toward the particle's own best position.

- Social Component: A pull toward the swarm's best-known position.

The particle's position is then updated by adding its new velocity. This process guides the swarm toward promising regions of the search space [43] [45].
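A minimal global-best PSO built on the three-component velocity update above might look like the sketch below. The coefficient values (`w=0.7298`, `phi_p=phi_g=1.4962`) are commonly used settings assumed here. `r_p` and `r_g` are per-dimension uniform random numbers. The code is a sketch, not the implementation evaluated in [43] or [45], and the function name `pso` is hypothetical.

```python
import numpy as np


def pso(f, bounds, n_particles=30, n_iter=200, w=0.7298, phi_p=1.4962,
        phi_g=1.4962, seed=0):
    """Minimal global-best PSO sketch (minimization over a box)."""
    rng = np.random.default_rng(seed)
    bounds = np.asarray(bounds, dtype=float)
    lo, hi = bounds[:, 0], bounds[:, 1]
    d = lo.size
    span = hi - lo
    x = lo + rng.uniform(size=(n_particles, d)) * span          # random positions
    v = rng.uniform(-1.0, 1.0, size=(n_particles, d)) * span    # random velocities
    p = x.copy()                                                # personal bests
    p_fit = np.array([f(xi) for xi in x])
    g = p[np.argmin(p_fit)].copy()                              # swarm best
    for _ in range(n_iter):
        r_p = rng.uniform(size=(n_particles, d))
        r_g = rng.uniform(size=(n_particles, d))
        # Velocity update: inertial + cognitive + social components.
        v = w * v + phi_p * r_p * (p - x) + phi_g * r_g * (g - x)
        x = np.clip(x + v, lo, hi)                              # position update
        fit = np.array([f(xi) for xi in x])
        improved = fit < p_fit
        p[improved] = x[improved]
        p_fit[improved] = fit[improved]
        g = p[np.argmin(p_fit)].copy()
    return g, float(p_fit.min())


# Usage on the 5-D sphere function.
best_x, best_f = pso(lambda x: float(np.sum(x**2)), [(-5.0, 5.0)] * 5)
```

Because every particle is pulled toward the same `g`, information spreads through the swarm in one iteration. This explains both PSO's fast convergence and its tendency toward premature convergence noted in Table 1.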

The Scientist's Toolkit: Key Reagents for Computational Benchmarking

Table 3: Essential "Research Reagents" for Optimization Benchmarking

| Item | Function in Computational Experiments |
|---|---|
| Benchmark Function Suite | A standardized set of mathematical problems (e.g., Rastrigin, Rosenbrock) with known optima, used to evaluate and compare algorithm performance on different landscape properties [46] [49]. |
| Real-World Problem Set | A collection of practical optimization problems from specific domains (e.g., a set of 22 real-world evolutionary computation problems) used to validate algorithm performance beyond synthetic benchmarks [46]. |
| Prior Knowledge Network | A biological network (e.g., OmniPath, MetaBase) used by causal reasoning algorithms to infer dysregulated signaling proteins from transcriptomics data; crucial for mechanism-of-action (MoA) analysis [50]. |
| Normalized Log Fold Change | A summary statistic computed in CRISPR screens by comparing guide RNA abundance in treated vs. control populations; the primary input for many analysis algorithms [51]. |
| Causal Reasoning Algorithm | A computational method (e.g., SigNet, CARNIVAL) that uses transcriptomics data and prior knowledge networks to infer upstream causes of gene expression changes, such as a compound's mechanism of action [50]. |
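The normalized log fold change in Table 3 is typically derived from per-guide read counts. The sketch below assumes simple reads-per-million normalization and a pseudocount of 1. The normalization used in [51] may differ, and the function name is hypothetical.

```python
import numpy as np


def normalized_log_fold_change(treated_counts, control_counts, pseudocount=1.0):
    """Per-guide log2 fold change after library-size normalization (sketch).

    Counts are scaled to reads per million so that differences in sequencing
    depth between samples do not masquerade as enrichment or depletion.
    """
    t = np.asarray(treated_counts, dtype=float)
    c = np.asarray(control_counts, dtype=float)
    t_norm = t / t.sum() * 1e6
    c_norm = c / c.sum() * 1e6
    # The pseudocount avoids division by zero and log of zero for absent guides.
    return np.log2((t_norm + pseudocount) / (c_norm + pseudocount))


# Usage: three guides; the treated sample was sequenced at half the depth.
lfc = normalized_log_fold_change([100, 200, 700], [200, 400, 1400])
```

In this usage example, all three guides have identical relative abundance in both samples, so their normalized log fold changes are essentially zero despite the twofold difference in raw counts.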

Empirical evidence from large-scale comparisons indicates that, although PSO is more popular in the literature, DE variants often perform better on a wider range of problems, particularly single-objective numerical benchmarks and real-world problems [46]. However, the "no free lunch" theorems show that, averaged over all possible problems, no algorithm outperforms any other. No single method can therefore be best for every task. The optimal choice depends on the specific problem structure, the computational budget, and the desired balance between exploration and exploitation.