Analytical Validation and Biomarker Qualification: A Comprehensive Guide for Drug Development

Abstract

This article provides a comprehensive guide to the analytical validation and regulatory qualification of biomarkers for researchers, scientists, and drug development professionals. It covers foundational definitions, the stepwise qualification process as defined by the FDA, best practices for assay validation, and strategies for navigating common challenges. By integrating methodological frameworks with practical troubleshooting advice, this resource aims to equip teams with the knowledge to efficiently develop robust, qualified biomarkers that can accelerate drug development and enhance regulatory decision-making.

Biomarker Fundamentals: Definitions, Importance, and the Regulatory Pathway

The Biomarkers, EndpointS, and other Tools (BEST) resource, established by a joint FDA-NIH working group, provides standardized definitions and a common framework for biomarker applications in medical product development [1] [2]. According to BEST, a biomarker is formally defined as "a defined characteristic that is measured as an indicator of normal biological processes, pathogenic processes, or biological responses to an exposure or intervention, including therapeutic interventions" [1]. This comprehensive definition encompasses molecular, histologic, radiographic, or physiologic characteristics that can be objectively measured and evaluated [3].

The development of the BEST resource addressed significant confusion and inconsistency in biomarker terminology that had been impeding progress in drug development and regulatory science [2]. By establishing a standardized lexicon, BEST enables more precise communication among researchers, regulators, and drug developers, facilitating more efficient development of diagnostic and therapeutic technologies [2]. The resource categorizes biomarkers into specific types based on their application, with each category serving distinct purposes in drug development and clinical practice [1] [4].

Biomarker Categories in the BEST Glossary

The BEST resource classifies biomarkers into seven primary categories based on their specific applications in drug development and clinical practice. Each category serves a distinct purpose, from disease identification to predicting treatment outcomes. The table below summarizes these categories with their definitions and representative examples.

Table 1: BEST Biomarker Categories and Applications

| Category | Definition | Example |
|---|---|---|
| Susceptibility/Risk | Identifies potential for developing a disease/condition [4] | BRCA1/2 mutations for breast/ovarian cancer risk [4] |
| Diagnostic | Detects or confirms presence of a disease/condition [1] [4] | Hemoglobin A1c for diabetes diagnosis [4] |
| Monitoring | Serially assesses disease/condition status or exposure/response effects [1] [4] | HCV RNA viral load for Hepatitis C treatment monitoring [4] |
| Prognostic | Identifies likelihood of a clinical event, disease recurrence, or progression [4] | Total kidney volume for predicting autosomal dominant polycystic kidney disease progression [4] |
| Predictive | Identifies individuals more likely to experience a favorable/unfavorable effect from a specific medical product [4] | EGFR mutation status for predicting response to tyrosine kinase inhibitors in NSCLC [4] |
| Pharmacodynamic/Response | Shows a biological response has occurred in an individual who has received a medical product [4] | HIV RNA viral load as a surrogate endpoint in HIV drug trials [4] |
| Safety | Indicates potential for, presence of, or extent of toxicity related to a medical product [1] [4] | Serum creatinine for detecting drug-induced kidney injury [4] |

A single biomarker can often function in multiple categories depending on its specific application. For instance, Hemoglobin A1c serves as both a diagnostic biomarker for identifying diabetes and a monitoring biomarker for tracking long-term glycemic control in diagnosed individuals [4]. This multifunctionality highlights the importance of precisely defining a biomarker's context of use rather than considering its classification in isolation.

Table 2: Methodological Approaches for Different Biomarker Categories

| Biomarker Category | Primary Validation Focus | Common Methodologies |
|---|---|---|
| Susceptibility/Risk | Epidemiological evidence, biological plausibility, causality [4] | Genomic sequencing, family history analysis [5] |
| Diagnostic | Sensitivity, specificity, accurate disease identification [4] | ELISA, molecular diagnostics, imaging [5] [6] |
| Monitoring | Ability to reflect disease status changes over time [4] | Serial laboratory testing, repeated imaging [2] |
| Prognostic | Consistent correlation with disease outcomes [4] | Multivariate models, long-term clinical tracking [4] |
| Predictive | Sensitivity, specificity, mechanistic link to treatment [4] | Companion diagnostics, targeted sequencing [4] [5] |
| Pharmacodynamic/Response | Direct relationship between drug action and biomarker changes [4] | LC-MS/MS, multiplex immunoassays [6] |
| Safety | Consistent indication of adverse effects across populations [4] | Clinical chemistry panels, histopathology [5] |

Context of Use: The Critical Framework for Biomarker Application

The Context of Use (COU) is defined as "a concise description of the biomarker's specified use in drug development" [7]. This structured statement precisely defines how and when a biomarker should be deployed within medical product development and regulatory review [1] [7]. The COU consists of two essential components: the BEST biomarker category and the biomarker's specific intended use in drug development [7].

A well-constructed COU statement typically follows this structure: "[BEST biomarker category] to [drug development use]" [7]. For example, a complete COU might be "Predictive biomarker to enrich for enrollment of a sub group of asthma patients who are more likely to respond to a novel therapeutic in Phase 2/3 clinical trials" [7]. This precise framing eliminates ambiguity about the biomarker's purpose and sets clear boundaries for its appropriate application.

The drug development use component of a COU may include additional descriptive elements such as the specific patient population, disease stage, model system, stage of drug development, or mechanism of action of the therapeutic intervention [7]. This specificity ensures that the biomarker is applied consistently and appropriately across different development programs. Establishing a clear COU is particularly important for biomarkers used in regulatory decision-making, as it forms the basis for evaluating the adequacy of validation evidence [4].
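As an illustration, the two-part COU structure can be captured in a small data structure. The Python sketch below is hypothetical: the `ContextOfUse` class, its field names, and the `statement` method are illustrative choices and not part of BEST or any FDA tool. It only checks that the category is one of the seven BEST categories named in this article and composes a statement from the "[BEST biomarker category] to [drug development use]" template.

```python
from dataclasses import dataclass

# The seven BEST categories named in this article.
BEST_CATEGORIES = {
    "Susceptibility/Risk", "Diagnostic", "Monitoring", "Prognostic",
    "Predictive", "Pharmacodynamic/Response", "Safety",
}


@dataclass(frozen=True)
class ContextOfUse:
    """Hypothetical container for the two COU components."""
    category: str          # must be one of BEST_CATEGORIES
    development_use: str   # free-text drug development use

    def __post_init__(self):
        if self.category not in BEST_CATEGORIES:
            raise ValueError(f"Unknown BEST category: {self.category!r}")
        if not self.development_use.strip():
            raise ValueError("development_use must not be empty")

    def statement(self) -> str:
        # Template: "[BEST biomarker category] biomarker to [drug development use]"
        return f"{self.category} biomarker to {self.development_use.strip()}"


cou = ContextOfUse(
    category="Predictive",
    development_use=(
        "enrich for enrollment of a sub group of asthma patients who are more "
        "likely to respond to a novel therapeutic in Phase 2/3 clinical trials"
    ),
)
print(cou.statement())
```

Published COU statements do not always use the connective "to" (some are phrased "biomarker for the detection of ..."), so the template is better treated as a starting point than as a fixed rule.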

Table 3: Examples of Context of Use Statements in Drug Development

| BEST Category | Drug Development Use | Complete COU Statement |
|---|---|---|
| Predictive | Enrich enrollment of patients likely to respond in Phase 2/3 trials [7] | Predictive biomarker to enrich for enrollment of a sub group of asthma patients who are more likely to respond to a novel therapeutic in Phase 2/3 clinical trials [7] |
| Prognostic | Enrich likelihood of events during clinical trial timeframe [7] | Prognostic biomarker to enrich the likelihood of hospitalizations during the timeframe of a clinical trial in phase 3 asthma clinical trials [7] |
| Safety | Detect acute drug-induced organ injury in preclinical models [7] | Safety biomarker for the detection of acute drug-induced renal tubule alterations in male rats [7] |
| Diagnostic | Identify patients with specific molecular subtype for targeted therapy | Diagnostic biomarker to identify non-small cell lung cancer patients with ALK rearrangements for targeted therapy in Phase 3 trials |

The Biomarker Validation Process: From Analytical to Clinical

Biomarker validation follows a rigorous, multi-stage process to ensure reliability and relevance for the specified COU. This process encompasses three distinct but interconnected components: analytical validation, clinical validation, and qualification [2].

Analytical Validation

Analytical validation establishes that the performance characteristics of a test, tool, or instrument are acceptable in terms of its sensitivity, specificity, accuracy, precision, and other relevant performance characteristics using a specified technical protocol [1]. This process validates the technical performance of the measurement assay but does not establish the biomarker's usefulness for its intended purpose [1]. Key parameters evaluated during analytical validation include:

- Accuracy: The closeness of agreement between a measured value and the true value [8]

- Precision: The closeness of agreement between a series of measurements obtained from multiple sampling of the same homogeneous sample [8]

- Sensitivity: The lowest amount of an analyte that can be accurately measured [8]

- Specificity: The ability to unequivocally assess the analyte in the presence of other components [8]

- Range: The interval between the upper and lower concentrations of analyte for which the method has suitable precision, accuracy, and linearity [8]

Advanced technologies such as liquid chromatography tandem mass spectrometry (LC-MS/MS) and Meso Scale Discovery (MSD) platforms are increasingly supplementing or replacing traditional ELISA methods due to their superior precision, sensitivity, and efficiency [6]. For example, MSD provides up to 100 times greater sensitivity than traditional ELISA and enables multiplexing of multiple biomarkers simultaneously, significantly reducing costs per data point [6].

Clinical Validation

Clinical validation demonstrates that the biomarker acceptably identifies, measures, or predicts the concept of interest relevant to the COU [1] [4]. This process establishes that the biomarker accurately identifies or predicts the clinical outcome or biological process it is intended to measure [4]. Clinical validation includes:

- Assessing clinical sensitivity and specificity

- Determining positive and negative predictive values

- Evaluating the biomarker's performance in the intended use population

- Establishing reference ranges or clinical cutpoints
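The first two items in this list reduce to simple ratios over a 2x2 table of biomarker result versus true disease status. The sketch below is a minimal illustration with made-up counts; the function name `diagnostic_metrics` and the example numbers are assumptions, not values from any cited study.

```python
def diagnostic_metrics(tp, fp, fn, tn):
    """Clinical performance metrics from 2x2 counts.

    tp/fn: diseased subjects with positive/negative biomarker result
    fp/tn: non-diseased subjects with positive/negative biomarker result
    """
    def ratio(numerator, denominator):
        # Return NaN rather than raising when a margin of the table is empty.
        return numerator / denominator if denominator else float("nan")

    return {
        "sensitivity": ratio(tp, tp + fn),  # positives among diseased
        "specificity": ratio(tn, tn + fp),  # negatives among non-diseased
        "ppv": ratio(tp, tp + fp),          # diseased among positives
        "npv": ratio(tn, tn + fn),          # non-diseased among negatives
    }


# Hypothetical study: 100 diseased and 200 non-diseased subjects.
m = diagnostic_metrics(tp=90, fp=20, fn=10, tn=180)
for name, value in m.items():
    print(f"{name}: {value:.3f}")
```

Sensitivity and specificity are properties of the biomarker at a given cutpoint, whereas the predictive values also depend on disease prevalence in the sample. This is one reason performance should be evaluated in the intended use population.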

The level of evidence required for clinical validation varies significantly depending on the COU. A biomarker used for patient stratification in early-phase trials requires different evidence than one used as a surrogate endpoint for regulatory approval [4].

Biomarker Qualification

Biomarker qualification is the formal regulatory process through which a biomarker receives regulatory endorsement for a specific COU [3]. The FDA's Biomarker Qualification Program (BQP) provides a structured, collaborative framework for this process, involving three distinct stages [3] [9]:

- Stage 1: Letter of Intent (LOI) - Initial submission describing the biomarker proposal, drug development need, and proposed COU

- Stage 2: Qualification Plan (QP) - Detailed proposal describing the development plan to generate necessary evidence

- Stage 3: Full Qualification Package (FQP) - Comprehensive compilation of supporting evidence for FDA's qualification decision

Recent analyses of the BQP reveal that as of July 2025, only eight biomarkers had been fully qualified through the program, with seven of these qualified before the 21st Century Cures Act was enacted in 2016 [9]. The program has accepted 61 projects, with safety biomarkers (30%), diagnostic biomarkers (21%), and pharmacodynamic/response biomarkers (20%) being the most common categories [9]. The qualification process involves substantial timelines, with median qualification plan development taking 32 months and reviews frequently exceeding FDA target timeframes [9].

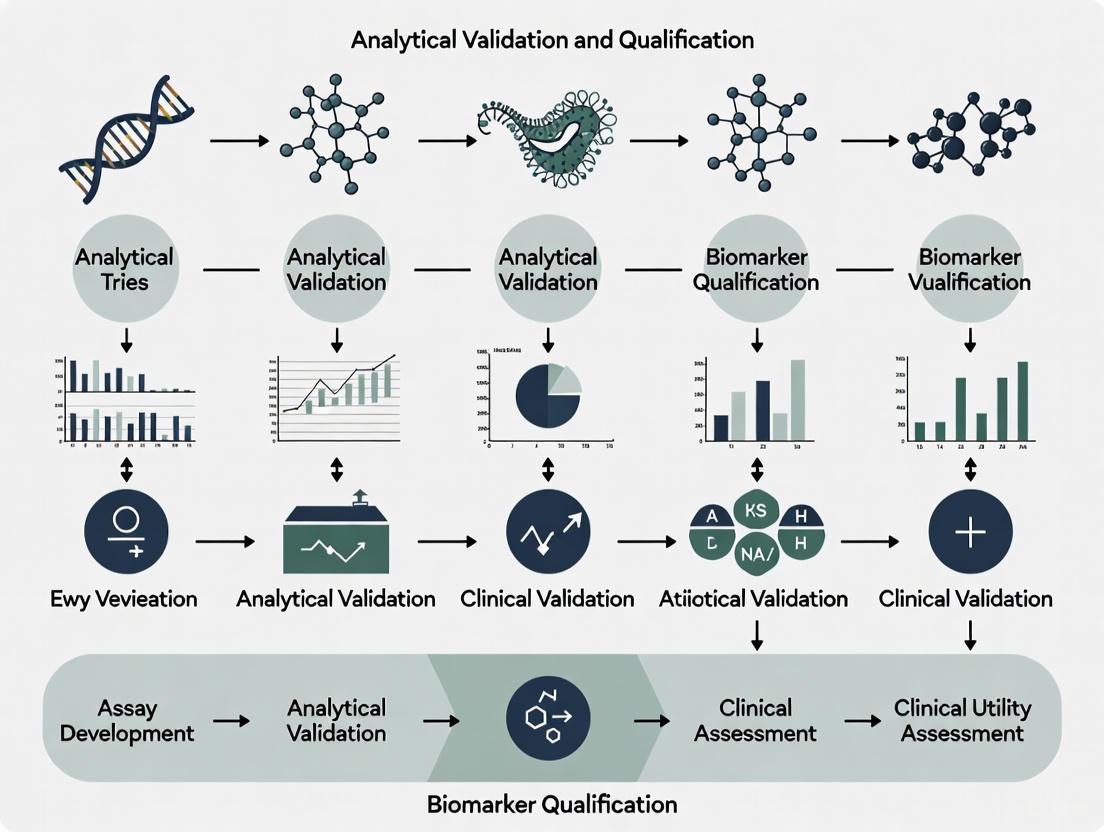

Figure 1: Biomarker Qualification Pathway. This diagram illustrates the sequential process from defining Context of Use through validation stages to regulatory qualification.

Biomarker Qualification and Regulatory Pathways

Regulatory Acceptance Pathways

Several pathways exist for achieving regulatory acceptance of biomarkers, each with distinct advantages and considerations [4]:

- Early Engagement: Through mechanisms like Critical Path Innovation Meetings (CPIM), drug developers can discuss biomarker validation plans and receive non-binding advice from regulatory agencies [3]

- IND Process: Biomarkers can be developed and validated within specific drug development programs via the Investigational New Drug application process, with regulatory review occurring during product approval [4]

- Biomarker Qualification Program (BQP): This standalone pathway provides broader acceptance of biomarkers across multiple drug development programs once qualified, though it requires more extensive evidence generation [4] [3]

The choice between these pathways involves strategic considerations. The IND pathway may be more efficient for biomarkers with established evidence used within a specific drug program, while the BQP offers value for biomarkers with applicability across multiple development programs, despite longer timelines and greater evidence requirements [4].

Fit-for-Purpose Validation Principle

A fundamental principle in biomarker validation is the fit-for-purpose approach, which recognizes that the level and type of validation needed should be appropriate for the specific COU [1] [4]. This principle acknowledges that validation requirements differ substantially between biomarker categories and applications [4]. For example:

- A safety biomarker used for monitoring organ toxicity in preclinical studies requires different validation than a predictive biomarker used for patient selection in registrational trials

- A pharmacodynamic biomarker used for dose selection may require less extensive validation than a surrogate endpoint used for accelerated approval

- An exploratory biomarker used for internal decision-making requires less rigorous validation than one used for regulatory decision-making

The fit-for-purpose approach ensures efficient resource allocation while maintaining scientific rigor appropriate to the biomarker's application and associated decision consequences [4].

Experimental Approaches and Research Reagent Solutions

Methodological Comparisons for Biomarker Analysis

Selecting appropriate analytical methods is crucial for generating reliable biomarker data. The table below compares commonly used technologies for biomarker analysis, highlighting their respective advantages and limitations.

Table 4: Comparison of Biomarker Analytical Platforms

| Technology | Sensitivity | Multiplexing Capacity | Throughput | Key Applications | Limitations |
|---|---|---|---|---|---|
| ELISA | Moderate (ng-pg/mL) [6] | Low (single-plex) [6] | Moderate | Validated single-analyte tests [6] | Narrow dynamic range, antibody-dependent [6] |
| Meso Scale Discovery (MSD) | High (100x ELISA) [6] | Medium (up to 10-plex) [6] | High | Cytokine profiling, signaling pathways [6] | Platform-specific reagents, specialized instrumentation [6] |
| LC-MS/MS | High (fg-pg/mL) [6] | High (100s-1000s) [6] | Low to medium | Metabolomics, proteomics, drug monitoring [6] | Complex sample preparation, technical expertise [6] |
| Genomic Sequencing | Variable | High (whole genome) | Variable | Mutational analysis, expression profiling [5] | Data interpretation challenges, bioinformatics requirements [5] |

Essential Research Reagent Solutions

Successful biomarker development and validation require carefully selected reagents and materials. The following table outlines key research reagent solutions and their functions in biomarker workflows.

Table 5: Essential Research Reagents for Biomarker Development

| Reagent Category | Specific Examples | Function in Biomarker Workflows |
|---|---|---|
| Capture & Detection Antibodies | Monoclonal, polyclonal, recombinant antibodies [6] | Specific binding to target analytes in immunoassays [6] |
| Calibrators & Quality Controls | Recombinant proteins, synthetic peptides, reference materials [8] | Establish standard curves, monitor assay performance [8] |
| Assay Diluents & Matrices | Buffer systems, matrix-matched diluents [8] | Minimize matrix effects, maintain analyte stability [8] |
| Signal Detection Reagents | Chemiluminescent, electrochemical, fluorescent substrates [6] | Generate measurable signals proportional to analyte concentration [6] |
| Sample Collection & Storage | Collection tubes, preservatives, protease inhibitors | Maintain sample integrity from collection to analysis |
| Multiplex Assay Kits | U-PLEX, cytokine panels, pathway arrays [6] | Simultaneous measurement of multiple analytes in limited samples [6] |

Figure 2: Logical Flow from Biomarker Category to Regulatory Application. This workflow depicts the logical progression from identifying drug development needs through biomarker application and regulatory decisions.

The standardized definitions provided by the BEST resource and the precise framework of Context of Use have fundamentally transformed biomarker development and application in medical product development. This systematic approach enables clearer communication between researchers and regulators, more efficient drug development, and ultimately, better targeted therapies for patients. The rigorous validation and qualification processes ensure that biomarkers can be relied upon for specific interpretations and applications within drug development and regulatory review.

As biomarker science continues to evolve with emerging technologies such as complex composite biomarkers, digital biomarkers from sensors and mobile technologies, and advanced analytical platforms, the foundational principles established by BEST and the COU framework provide the necessary structure for validating and implementing these novel tools [2] [6]. The ongoing development of biomarker qualification pathways and fit-for-purpose validation approaches will continue to support the translation of innovative biomarkers from discovery to regulatory acceptance and clinical application, ultimately enhancing the efficiency and success of drug development programs.

In the rigorous landscape of drug development, biomarkers have emerged as indispensable tools for diagnosing diseases, identifying therapeutic targets, monitoring patients, and stratifying patient populations [4] [10]. Their weight as decision-drivers in the development process has grown substantially, making the evidence supporting their use critical [10]. However, the terminology surrounding biomarker assessment often creates confusion, particularly between the processes of analytical validation and clinical qualification. These are two distinct but interconnected pillars in the biomarker lifecycle.

Analytical validation and clinical qualification represent sequential yet fundamentally different phases of evaluation. Analytical validation asks, "Does the assay measure the biomarker accurately and reliably?" whereas clinical qualification asks, "Does the biomarker measurement meaningfully predict or reflect the biological or clinical outcome of interest?" [11] [12]. Clarity on this distinction is not merely semantic; it is fundamental to efficient drug development and regulatory success. This guide provides a structured comparison of these two critical processes, equipping researchers and drug development professionals with the knowledge to navigate this complex terrain.

Conceptual Frameworks and Definitions

What is Analytical Validation?

Analytical validation is the process of establishing that the performance characteristics of an assay are suitable for its intended purpose [11] [10]. It focuses on the technical performance of the method used to measure the biomarker, confirming that the assay produces reliable, accurate, and reproducible results across a defined range [13] [12]. The goal is to demonstrate that the tool itself works correctly, independent of its biological or clinical interpretation.

What is Clinical Qualification?

Clinical qualification, often referred to in regulatory contexts as biomarker qualification, is the evidentiary process of linking a biomarker with biological processes and clinical endpoints [11]. It provides evidence that the biomarker accurately and reliably identifies a clinically or biologically defined disorder or state, and that it is capable of discriminating between groups with different clinical or biological characteristics [12]. The U.S. Food and Drug Administration (FDA) defines biomarker qualification as the conclusion that "within the stated context of use, the results of assessment with a drug development tool can be relied upon to have a specific interpretation and application in drug development and regulatory review" [14].

The Interrelationship in the Biomarker Development Workflow

The following diagram illustrates the sequential yet interconnected relationship between analytical validation, clinical validation, and clinical qualification in the overall biomarker development workflow.

Comparative Analysis: Core Dimensions

The distinction between analytical validation and clinical qualification extends across multiple dimensions, from their fundamental questions to their regulatory implications. The table below provides a structured, point-by-point comparison.

| Dimension | Analytical Validation | Clinical Qualification |
|---|---|---|
| Core Question | Does the assay measure the biomarker accurately and reliably? [12] | Does the biomarker predict or reflect a meaningful biological/clinical outcome? [11] |
| Primary Focus | Assay performance and technical characteristics [13] [12] | Clinical/biological significance of the biomarker result [11] |
| Key Parameters | Accuracy, precision, sensitivity, specificity, reproducibility, stability, reportable range [13] [10] [12] | Sensitivity, specificity, positive/negative predictive value, clinical utility, association with outcomes [4] [12] |
| Context Dependence | Dependent on the assay's intended measurement range and sample type [10] | Dependent on the specific Context of Use (COU) in drug development [14] [4] |
| Regulatory Goal | Ensure consistent and reliable biomarker measurement [13] | Qualify the biomarker for a specific COU across multiple drug development programs [14] |
| Stage in Pipeline | Earlier stage; prerequisite for clinical qualification [12] | Later stage; requires analytically validated assay [11] |

Experimental Protocols and Methodologies

Protocol for Core Analytical Validation Experiments

A robust analytical validation protocol must assess key performance parameters to ensure the assay is fit-for-purpose. The following table outlines the core experiments, their methodologies, and typical acceptance criteria.

| Parameter | Experimental Methodology | Key Acceptance Criteria |
|---|---|---|
| Accuracy | Measure agreement between measured value and true value (or reference standard) [12]. | Bias within pre-specified limits (e.g., ±20% of nominal value) [10]. |
| Precision | Perform repeated measurements of Quality Control (QC) samples within and between runs [13] [12]. | %CV for repeatability (within-run) and intermediate precision (between-run) below a threshold (e.g., 20-25%) [10]. |
| Sensitivity | Determine the Lower Limit of Quantification (LLOQ) as the lowest concentration measured with acceptable accuracy and precision [13]. | LLOQ samples meet accuracy and precision criteria. |
| Specificity/Selectivity | Assess interference from matrix components (e.g., hemolyzed or lipemic samples) or similar analytes [10]. | Measured concentration of analyte in the presence of interferents is within ±20% of baseline. |
| Stability | Evaluate analyte stability under various conditions (freeze-thaw, benchtop, long-term storage) [10] [12]. | Stability samples maintain concentration within ±20% of baseline fresh sample. |
| Assay Measurement Range (AMR) | Establish the range from LLOQ to Upper Limit of Quantification (ULOQ) where assay is linear, precise, and accurate [10]. | Linearity with R² > 0.95, and QCs across range meet accuracy/precision criteria. |
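To make the accuracy, precision, and linearity criteria concrete, the sketch below computes percent bias, percent CV, and R² for a simple least-squares line, then compares them with limits. Everything here is illustrative: the function names, the QC replicates, and the calibration data are invented, and the limits simply reuse the example values from the table (±20% bias, 20% CV, R² > 0.95). Actual limits are set per assay and Context of Use.

```python
from statistics import mean, stdev

# Example limits only; real limits are pre-specified for each assay.
MAX_ABS_BIAS_PCT = 20.0
MAX_CV_PCT = 20.0
MIN_R_SQUARED = 0.95


def percent_bias(measured, nominal):
    """Accuracy: relative deviation of the mean from the nominal value, in %."""
    return 100.0 * (mean(measured) - nominal) / nominal


def percent_cv(measured):
    """Precision: coefficient of variation (sample SD / mean), in %."""
    return 100.0 * stdev(measured) / mean(measured)


def r_squared(x, y):
    """R^2 of a simple least-squares line y = a + b*x."""
    mx, my = mean(x), mean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    syy = sum((yi - my) ** 2 for yi in y)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    return (sxy * sxy) / (sxx * syy)


# Hypothetical within-run replicates of one QC sample (nominal value 50).
qc_replicates = [46.0, 50.0, 48.0, 47.0, 49.0]
bias = percent_bias(qc_replicates, nominal=50.0)
cv = percent_cv(qc_replicates)

# Hypothetical calibration data: nominal concentration vs. instrument response.
conc = [1.0, 2.0, 4.0, 8.0, 16.0]
resp = [3.1, 4.9, 9.2, 16.8, 33.1]
r2 = r_squared(conc, resp)

passed = abs(bias) <= MAX_ABS_BIAS_PCT and cv <= MAX_CV_PCT and r2 > MIN_R_SQUARED
print(f"bias={bias:.1f}%  CV={cv:.1f}%  R2={r2:.4f}  pass={passed}")
```

The CV here covers replicates from a single run (repeatability). Estimating intermediate precision would require QC results from several runs, and many ligand-binding assays are fitted with nonlinear calibration models rather than the straight line assumed in this sketch.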

Protocol for Core Clinical Qualification Experiments

Clinical qualification experiments aim to establish the relationship between the biomarker and the clinical endpoint of interest. The methodology is highly dependent on the Context of Use (COU).

| Experiment Objective | Methodology & Study Design | Data Analysis & Endpoints |
|---|---|---|
| Establish Clinical Sensitivity & Specificity | Case-control or cross-sectional study comparing biomarker measurements in subjects with and without the condition/disease [12]. | ROC curve analysis to determine AUC, optimal cut-off, sensitivity, and specificity [12]. |
| Prove Prognostic Value | Longitudinal cohort study measuring biomarker at baseline and following subjects for clinical outcomes over time. | Hazard Ratios (HR) or Relative Risk (RR) from Cox proportional hazards or regression models, correlating biomarker level with outcome. |
| Demonstrate Predictive Value | Randomized controlled trial (RCT) analyzing biomarker's interaction with treatment effect (e.g., biomarker-positive vs. biomarker-negative subgroups) [4]. | Test for interaction between treatment arm and biomarker status on the primary clinical endpoint (e.g., p-value for interaction). |
| Validate as a Surrogate Endpoint | Meta-analysis of multiple RCTs to establish if the biomarker effect reliably predicts the overall clinical treatment effect [4]. | Correlation analysis between the treatment effect on the biomarker and the treatment effect on the final clinical endpoint. |
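The ROC analysis named in the first row can be sketched without any statistics library. The example below assumes that higher biomarker values indicate disease, computes the AUC as the probability that a randomly chosen case scores above a randomly chosen control (the Mann-Whitney formulation), and picks a cut-off by maximizing Youden's J. Youden's J is one common criterion, chosen here for illustration; the source does not prescribe a specific one. The function names and data are invented.

```python
def roc_auc(cases, controls):
    """AUC as P(case value > control value); ties count as one half."""
    wins = 0.0
    for case in cases:
        for control in controls:
            if case > control:
                wins += 1.0
            elif case == control:
                wins += 0.5
    return wins / (len(cases) * len(controls))


def youden_cutoff(cases, controls):
    """Cut-off maximizing J = sensitivity + specificity - 1.

    A subject is called positive when its value is >= the cut-off.
    Returns (cutoff, J, sensitivity, specificity).
    """
    best = None
    for cut in sorted(set(cases) | set(controls)):
        sensitivity = sum(v >= cut for v in cases) / len(cases)
        specificity = sum(v < cut for v in controls) / len(controls)
        j = sensitivity + specificity - 1.0
        if best is None or j > best[1]:
            best = (cut, j, sensitivity, specificity)
    return best


# Hypothetical biomarker values in subjects with and without the condition.
cases = [5.1, 6.3, 7.2, 8.0, 3.5]
controls = [2.0, 3.1, 4.5, 3.8, 2.9]

auc = roc_auc(cases, controls)
cutoff, j, sens, spec = youden_cutoff(cases, controls)
print(f"AUC={auc:.2f}  cutoff={cutoff}  J={j:.2f}  sens={sens:.2f}  spec={spec:.2f}")
```

With samples this small the estimates are very unstable. A real qualification study would report confidence intervals and confirm the chosen cut-off in an independent cohort.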

The Central Role of Context of Use (COU) and Fit-for-Purpose Validation

The rigor required for both analytical and clinical studies is governed by the Context of Use (COU)—a concise description of the biomarker's specified use in drug development [4] [10]. The COU defines the fit-for-purpose validation approach, meaning the level of evidence must be sufficient to support the specific decision the biomarker will inform [4] [15].

For example, an exploratory biomarker used for internal decision-making in early research may only require a minimally validated assay and limited clinical data [15]. In contrast, a biomarker intended as a surrogate endpoint to support regulatory approval requires the highest level of evidence: a fully analytically validated assay and extensive clinical qualification, often through meta-analysis of multiple clinical trials [4]. This principle ensures that resources are allocated efficiently while meeting the necessary regulatory standards for the intended application.

Regulatory Pathways and Considerations

Regulatory acceptance of a biomarker rests on both analytical validation and clinical qualification. There are two primary pathways for achieving this acceptance, each with different implications.

The Drug Approval Pathway

Biomarkers are reviewed within a specific drug's marketing application (e.g., NDA, BLA). The biomarker's analytical validation and clinical qualification are evaluated for that specific drug development program and patient population [14].

The Biomarker Qualification Program (BQP)

This is a collaborative, evidence-based process where the FDA's Center for Drug Evaluation and Research (CDER) qualifies a biomarker for a specific COU independent of a single drug approval [14]. Once qualified, the biomarker can be used in multiple drug development programs without the need for CDER to reconfirm its suitability for that qualified COU [14]. This pathway is intended for biomarkers with broad applicability.

A recent analysis of the BQP revealed that as of 2025, only eight biomarkers have been fully qualified through the program, with safety biomarkers being the most common type achieving qualification [9]. The process is rigorous and time-consuming, with median development times for a Qualification Plan exceeding 2.5 years [9]. This underscores the significant investment required for the formal clinical qualification of a biomarker for widespread use.

The Scientist's Toolkit: Essential Research Reagent Solutions

The successful validation and qualification of a biomarker rely on a foundation of critical reagents and materials. The following table details key components of the research toolkit.

| Tool/Reagent | Critical Function | Considerations for Use |
|---|---|---|
| Reference Standard | Serves as the benchmark for quantifying the biomarker and assessing assay accuracy [10]. | Purity, stability, and commutability (behaving like the endogenous biomarker in the sample matrix) are paramount. |
| Quality Control (QC) Samples | Used to monitor assay precision, accuracy, and stability over time during validation and routine use [10]. | Should be matrix-matched and span the assay's dynamic range (low, mid, high). |
| Characterized Sample Panels | Essential for clinical validation to establish reference intervals and for assessing clinical sensitivity/specificity [10] [12]. | Must be well-annotated and representative of the intended use population (e.g., healthy vs. diseased). |
| Specific Binding Reagents | For immunoassays: antibodies (capture/detection). For LC-MS: stable isotope-labeled internal standards (SIS). | Specificity and affinity for the target biomarker are critical. Cross-reactivity must be assessed during analytical validation [10]. |
| Matrix-Blank Samples | Used to demonstrate assay specificity and confirm the absence of significant matrix interference [10]. | The ideal matrix is the same as the study sample (e.g., human plasma, serum, CSF) but without the analyte. |

The journey from a promising biomarker discovery to a regulatory-qualified tool is complex and demands a clear understanding of the critical distinction between analytical validation and clinical qualification. Analytical validation ensures you have a reliable ruler—a precise and accurate method for measurement. Clinical qualification confirms that the measurements taken with that ruler have a meaningful and interpretable relationship with clinical or biological outcomes.

For researchers and drug development professionals, adhering to this distinction is not an academic exercise but a practical necessity. A flawless assay is useless if the biomarker lacks clinical relevance, and a clinically relevant biomarker cannot be reliably employed without a rigorously validated assay. By adopting a fit-for-purpose mindset that is driven by the Context of Use, teams can design efficient yet robust development strategies. This approach ensures that biomarkers fulfill their potential as powerful tools to accelerate the development of safe and effective therapies.

The FDA's Biomarker Qualification Program (BQP) provides a formal framework for qualifying biomarkers for specific uses in drug development, enabling their broader application across multiple drug development programs without needing re-evaluation in each new context [16]. Established to address the significant resource challenges often associated with biomarker development, the BQP operates on the principle that qualified biomarkers serve as publicly available tools that can accelerate therapeutic development and regulatory decision-making [17] [18]. The program was formally structured under Section 507 of the Federal Food, Drug, and Cosmetic Act, added by the 21st Century Cures Act of 2016, which established a transparent, three-stage submission pathway for biomarker qualification [17] [19].

Qualification is distinct from biomarker acceptance within a specific drug application. When a biomarker is qualified through the BQP, it means that within a specifically defined Context of Use (COU), the biomarker has been demonstrated to reliably support a specified manner of interpretation and can be used in any drug development program for that qualified purpose [16]. This process is particularly valuable for addressing drug development challenges that extend beyond a single sponsor's program, creating efficiencies through collaborative evidence generation and regulatory review [17]. The qualification does not apply to a specific test or assay but to the biomarker itself, meaning any analytically validated method can be used to measure the qualified biomarker in IND, NDA, or BLA submissions [16].

The Three-Stage Qualification Roadmap

The biomarker qualification process follows a structured, sequential pathway consisting of three formal stages that increase in evidentiary requirements. This progressive approach allows for early feedback and course correction while building the comprehensive evidence base needed for qualification.

Figure 1: The FDA's Three-Stage Biomarker Qualification Pathway

Stage 1: Letter of Intent (LOI)

The qualification process begins with the submission of a Letter of Intent (LOI), which serves as an initial proposal outlining the biomarker's potential value and feasibility [3] [20]. The LOI provides FDA with essential information to conduct a preliminary assessment, including the drug development need the biomarker is intended to address, detailed biomarker information, the proposed Context of Use, and information on how the biomarker will be measured [3]. FDA reviews the LOI to assess whether the biomarker addresses an unmet drug development need and whether the proposal is scientifically feasible based on current understanding [3]. If the FDA accepts the LOI, the requestor receives permission to proceed to the next stage and submit a Qualification Plan [3] [21]. The agency aims to complete its review of LOIs within three months, though real-world median review times have historically exceeded this target [18].

Stage 2: Qualification Plan (QP)

The Qualification Plan (QP) represents a detailed, comprehensive proposal describing the complete biomarker development strategy [3] [20]. This stage requires requestors to provide a thorough summary of existing information supporting the proposed Context of Use, identify significant knowledge gaps, and propose specific studies or analyses to address these gaps [3]. The QP must include detailed information about analytical methods and performance characteristics of the biomarker measurement [3]. A successfully accepted QP essentially constitutes FDA agreement with the overall development approach and provides the requestor with specific instructions for preparing the Full Qualification Package [3] [21]. The FDA's target timeline for QP review is six months, though this timeframe has also proven challenging to maintain consistently [18].

Stage 3: Full Qualification Package (FQP)

The Full Qualification Package (FQP) constitutes the complete evidentiary submission for biomarker qualification [3]. This comprehensive compilation contains all accumulated information supporting qualification of the biomarker for the specified Context of Use, organized by topic area and representing the culmination of the development activities outlined in the Qualification Plan [20]. The FQP serves as the basis for FDA's final qualification decision, with the agency reviewing the complete evidence base to determine whether the biomarker can be relied upon to have a specific interpretation and application within the stated Context of Use [3] [16]. The FDA aims to complete its review of FQPs within ten months [18]. Upon successful qualification, the biomarker becomes publicly available for use in any drug development program for the qualified Context of Use [16].

Table 1: Key Components of Each Qualification Stage

| Stage | Primary Purpose | Key Submission Components | FDA Review Focus | Target Timeline |
|---|---|---|---|---|
| Letter of Intent (LOI) | Initial proposal and feasibility assessment | Drug development need, Biomarker information, Proposed Context of Use, Measurement approach [3] | Value in addressing unmet need, Scientific feasibility [3] | 3 months [18] |
| Qualification Plan (QP) | Detailed development strategy | Evidence summary, Knowledge gaps, Studies to address gaps, Analytical validation plan [3] | Soundness of development approach, Adequacy of proposed evidence [3] | 6 months [18] |
| Full Qualification Package (FQP) | Comprehensive evidence submission | All accumulated data supporting qualification, Organized by topic area [3] [20] | Sufficiency of evidence for qualified use [3] | 10 months [18] |
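The sequential structure of the pathway can be captured in a small data model. The following is a minimal sketch, assuming only the stage names and FDA review targets listed in Table 1; the class and function names (`QualificationStage`, `next_stage`, `total_target_review_months`) are illustrative and are not part of any FDA system.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class QualificationStage:
    name: str
    abbreviation: str
    target_review_months: int  # FDA review target from Table 1, not observed time


# Stages in the order a requestor moves through them.
BQP_STAGES = (
    QualificationStage("Letter of Intent", "LOI", 3),
    QualificationStage("Qualification Plan", "QP", 6),
    QualificationStage("Full Qualification Package", "FQP", 10),
)


def total_target_review_months(stages=BQP_STAGES) -> int:
    """Sum of FDA review targets only; sponsor development time is excluded."""
    return sum(stage.target_review_months for stage in stages)


def next_stage(abbreviation: str) -> Optional[QualificationStage]:
    """Return the stage that follows the given one, or None after the FQP."""
    order = [stage.abbreviation for stage in BQP_STAGES]
    position = order.index(abbreviation)  # raises ValueError for unknown stages
    if position + 1 < len(BQP_STAGES):
        return BQP_STAGES[position + 1]
    return None


if __name__ == "__main__":
    print(total_target_review_months())
    print(next_stage("LOI"))
```

Summing the targets gives only the nominal FDA review time across the three stages. It says nothing about the time a requestor spends preparing each submission, which the program data discussed in this guide suggest is often the larger share.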

Program Performance and Quantitative Metrics

As of June 2025, the Biomarker Qualification Program has qualified only 8 biomarkers since its inception, with most qualifications occurring prior to the enactment of the 21st Century Cures Act in December 2016 [22] [18]. The most recent qualification occurred in 2018, indicating a significant slowdown in the program's output in recent years [18]. Currently, 59 biomarker projects are in various stages of development within the program, with 49 at the LOI stage and 10 at the QP stage [22]. These metrics suggest that while interest in biomarker qualification remains substantial, the transition from initial interest to full qualification presents significant challenges.

Analysis of program performance reveals persistent timing challenges throughout the qualification pathway. FDA review timelines for LOIs and QPs have regularly exceeded the agency's targets, with median review times more than double the respective three- and six-month goals [18]. Sponsor-side development also contributes to timeline extensions, with qualification plan development taking a median of over two-and-a-half years among programs with analyzable data [18]. The development complexity is particularly pronounced for biomarkers intended as surrogate endpoints, which have a median development time of nearly four years – 16 months longer than for other biomarker types [18]. Despite these challenges, the program has demonstrated particular effectiveness for safety biomarkers, which constitute approximately one-third of accepted programs and half of the successfully qualified biomarkers [18].

Table 2: Biomarker Qualification Program Metrics (as of June 2025) [22]

| Program Metric | Biomarker Qualification Program | All DDT Programs Combined |
|---|---|---|
| Total Projects in Development | 59 | 141 |
| LOIs Accepted | 49 | 121 |
| QPs Accepted | 10 | 20 |
| Newly Qualified (past 12 months) | 0 | 1 |
| Total Qualified to Date | 8 | 17 |
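The proportions implied by Table 2 are easy to derive and help show where projects accumulate in the pipeline. The sketch below uses only the counts from the table; the dictionary keys and the `share` helper are hypothetical names chosen for illustration.

```python
# Counts copied from Table 2 (as of June 2025).
BQP = {"in_development": 59, "loi_accepted": 49, "qp_accepted": 10, "qualified_total": 8}
ALL_DDT = {"in_development": 141, "loi_accepted": 121, "qp_accepted": 20, "qualified_total": 17}


def share(part: int, whole: int) -> float:
    """Percentage of `whole` represented by `part`, rounded to one decimal."""
    return round(100 * part / whole, 1)


def stage_counts_are_consistent(program: dict) -> bool:
    """Check that LOI-stage and QP-stage projects add up to the total in development."""
    return program["loi_accepted"] + program["qp_accepted"] == program["in_development"]


if __name__ == "__main__":
    print("BQP projects at LOI stage (%):", share(BQP["loi_accepted"], BQP["in_development"]))
    print("BQP projects at QP stage (%):", share(BQP["qp_accepted"], BQP["in_development"]))
    print("BQP share of all DDT projects (%):", share(BQP["in_development"], ALL_DDT["in_development"]))
```

On these figures, roughly five of every six BQP projects in development are still at the LOI stage, which is consistent with the observation that the transition toward full qualification is where programs stall.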

Analytical and Evidentiary Standards

The biomarker qualification process requires rigorous analytical and clinical validation tailored to the specific Context of Use. The evaluation framework encompasses three critical components: analytical validation, qualification (evidentiary assessment), and utilization analysis [23]. This structured approach brings consistency and transparency to what was previously a non-uniform evaluation process [23].

Analytical Validation Requirements

Analytical validation involves comprehensive assessment of the assay's measurement performance characteristics, determining the range of conditions under which the assay produces reproducible and accurate data [23]. This process includes establishing accuracy, precision, analytical sensitivity, analytical specificity, reportable range, and reference range appropriate for the measurement method and analyte of interest [4]. The level of analytical validation required follows a "fit-for-purpose" principle, meaning the extent of validation should match the degree of certainty needed for the proposed use [4]. For example, a biomarker used for early drug discovery decisions may require less extensive validation than one used as a primary endpoint in a pivotal trial [4].
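Two of the performance characteristics named above, precision and accuracy, are commonly summarized as the coefficient of variation (%CV) and the relative bias of replicate quality control measurements. The following is a minimal sketch under that assumption. The function names are illustrative, and the 20% limits in `meets_criteria` are placeholder defaults, not regulatory criteria; fit-for-purpose limits would be set from the Context of Use.

```python
from statistics import mean, stdev


def percent_cv(replicates):
    """Precision: sample standard deviation as a percentage of the mean."""
    return 100 * stdev(replicates) / mean(replicates)


def percent_bias(replicates, nominal):
    """Accuracy: deviation of the mean from the nominal value, in percent."""
    return 100 * (mean(replicates) - nominal) / nominal


def meets_criteria(replicates, nominal, max_cv=20.0, max_abs_bias=20.0):
    """Compare precision and accuracy against pre-specified limits.

    The default limits are hypothetical placeholders for illustration only.
    """
    return (
        percent_cv(replicates) <= max_cv
        and abs(percent_bias(replicates, nominal)) <= max_abs_bias
    )


if __name__ == "__main__":
    qc_replicates = [98.0, 102.0, 100.0, 101.0, 99.0]  # invented example data
    print(round(percent_cv(qc_replicates), 2))
    print(round(percent_bias(qc_replicates, nominal=100.0), 2))
    print(meets_criteria(qc_replicates, nominal=100.0))
```

Passing the limits in as parameters reflects the fit-for-purpose principle: the same calculation can be held to looser limits for exploratory work and tighter ones for a pivotal-trial endpoint.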

Evidentiary Qualification Framework

The qualification component focuses on assessing available evidence on associations between the biomarker and disease states, including data showing effects of interventions on both the biomarker and clinical outcomes [23]. The evidence requirements vary significantly by biomarker category, with each type demanding tailored validation approaches focusing on specific evidence characteristics [4]. Susceptibility/risk biomarkers require epidemiological evidence and biological plausibility, while diagnostic biomarkers prioritize sensitivity and specificity across diverse populations [4]. Predictive biomarkers need robust clinical data showing consistent correlation with treatment response, often requiring demonstration of a mechanistic link [4].

Figure 2: Three-Component Framework for Biomarker Evaluation [23]

Context of Use and Utilization Analysis

The final component, utilization analysis, involves contextual assessment based on the specific proposed use and applicability of available evidence to this use [23]. This includes determining whether the validation and qualification conducted provide sufficient support for the proposed Context of Use [23]. The utilization analysis also encompasses benefit/risk assessment, considering the consequences of false positive or false negative results, availability of alternative tools, and impact on the target patient population [4]. This contextual analysis is critical as the same biomarker may have substantially different evidence requirements depending on whether it is used for early dose selection versus as a surrogate endpoint supporting regulatory approval [4].

Alternative Regulatory Pathways and Strategic Considerations

While the BQP offers a pathway for broad biomarker qualification, it is not the only mechanism for gaining regulatory acceptance of biomarkers in drug development. Understanding the alternative pathways enables strategic selection of the most efficient approach based on specific development objectives.

Drug-Specific Biomarker Acceptance

Biomarkers do not require formal qualification through the BQP to be used in drug development [16]. Through the traditional IND/NDA/BLA process, biomarkers can be reviewed and accepted within the context of a specific drug application [16] [4]. This pathway is often more efficient for biomarkers with established use in a particular therapeutic area or when the biomarker is being developed primarily for a specific drug program [4]. Importantly, biomarkers that have not been formally qualified by the BQP may still be acceptable for use in drug development and can support labeling claims through the standard drug approval process [16].

Early Engagement Mechanisms

FDA provides several mechanisms for early engagement on biomarker development outside the formal qualification process. The Critical Path Innovation Meeting (CPIM) offers a non-regulatory forum for discussing proposed biomarkers and receiving non-binding advice from FDA on their potential value in drug development [3]. For developers who identify promising but not yet fully validated biomarkers, FDA may issue a Letter of Support (LOS) briefly describing the agency's thoughts on the biomarker's potential value and encouraging further evaluation [3]. These alternative engagement strategies provide valuable feedback without committing to the more resource-intensive formal qualification pathway.

Table 3: Comparison of Biomarker Regulatory Pathways

| Pathway Characteristic | BQP Qualification | Drug-Specific Acceptance | Early Engagement (CPIM/LOS) |
|---|---|---|---|
| Regulatory Scope | Qualified for use across multiple drug development programs [16] | Accepted within a specific drug application [16] | Non-binding, preliminary feedback [3] |
| Evidence Requirements | Comprehensive evidence for broad applicability [3] | Sufficient for specific drug context [4] | Variable based on development stage [3] |
| Resource Investment | High (often requiring consortia) [17] [3] | Moderate (focused on specific drug) [4] | Low (exploratory discussions) [3] |
| Timeline | Multi-year process [18] | Aligned with drug development timeline [4] | Short-term engagement [3] |
| Strategic Value | Creates public tool for broader community [17] | Efficient for sponsor-specific needs [4] | Early de-risking of biomarker strategy [3] |

Essential Research Reagents and Methodologies

Successful biomarker qualification requires carefully selected research reagents and methodological approaches that ensure reliability and reproducibility across the development process.

Table 4: Essential Research Reagent Solutions for Biomarker Qualification

| Research Reagent Category | Specific Examples | Function in Qualification Process |
|---|---|---|
| Analytical Standards & Controls | Reference materials, Calibrators, Quality control samples [4] | Establish assay accuracy, precision, and reproducibility for analytical validation [4] |
| Assay Platforms & Kits | Immunoassays, PCR assays, Sequencing kits, Mass spectrometry platforms [16] [4] | Provide validated methods for biomarker measurement with established performance characteristics [16] |
| Biological Sample Collections | Biobanked specimens, Prospective cohort samples, Disease-specific panels [4] | Enable clinical validation across diverse populations and disease states [4] |
| Data Analysis Tools | Statistical software, Bioinformatics pipelines, Algorithm development platforms [4] | Support evidence generation through rigorous data analysis and pattern recognition [4] |
| Documentation Systems | Electronic lab notebooks, Data management platforms, Quality management systems [20] | Maintain comprehensive records required for regulatory submissions and audit trails [20] |

The FDA's three-stage Biomarker Qualification Program represents a structurally sound pathway for establishing biomarkers as qualified drug development tools with clearly defined Contexts of Use. The structured process of Letter of Intent, Qualification Plan, and Full Qualification Package provides a systematic framework for building the comprehensive evidence base required for regulatory qualification [3] [20]. However, program metrics indicate significant challenges in both timeline efficiency and throughput, with only eight biomarkers qualified to date and review timelines regularly exceeding FDA targets [22] [18].

The future utility of the BQP will likely depend on addressing current limitations through increased resources, potentially linked to user fee programs, and enhanced interaction opportunities between FDA and biomarker developers [18]. Despite these challenges, the program maintains distinct value for biomarkers addressing cross-cutting drug development needs that benefit multiple therapeutic programs, particularly in the safety biomarker domain where it has demonstrated relative success [18]. For targeted development needs, alternative pathways including drug-specific acceptance and early engagement mechanisms provide potentially more efficient routes to regulatory acceptance [16] [4]. Strategic selection among these pathways, based on specific development objectives and available resources, remains essential for optimizing biomarker integration into drug development programs.

In the realm of modern drug development, biomarkers are indispensable tools that provide objective indicators of biological processes, pathogenic processes, or pharmacological responses to therapeutic interventions [11]. The U.S. Food and Drug Administration (FDA) emphasizes that appropriately validated biomarkers are critical for benefiting both drug development and regulatory assessments, playing pivotal roles in patient population selection, dose selection, and safety and efficacy evaluations [4]. The context of use (COU)—a concise description of a biomarker's specified application in drug development—is fundamental to determining the necessary validation strategy [4]. Biomarker development involves identifying a drug development need, establishing the COU, analytically validating assays, clinically validating the biomarker for the COU, and determining if the biomarker provides advantages over existing methods [4].

The BEST (Biomarkers, EndpointS, and other Tools) Resource, an online glossary created by an FDA-NIH joint working group, provides standardized definitions and categories for biomarkers [4]. This classification system is integral to the framework of biomarker qualification, which requires a fit-for-purpose approach where the level of evidence needed depends on the specific COU [4]. As biomarkers become increasingly integrated into drug development and clinical trials, quality assurance—particularly rigorous analytical method validation—becomes essential for establishing standardized guidelines for biomarker measurements [11]. This guide explores the major biomarker categories, their distinct applications in research and therapy, and the experimental protocols essential for their validation.

Biomarker Categories: Definitions, Applications, and Examples

Biomarkers are categorized based on their specific applications in medical research and clinical practice. The FDA's BEST Resource defines several key types, including susceptibility/risk, diagnostic, monitoring, prognostic, predictive, pharmacodynamic/response, and safety biomarkers [4]. Understanding these categories is crucial for their proper application in drug development and personalized medicine. The table below provides a detailed comparison of these major biomarker categories, their definitions, primary uses, and specific examples.

Table 1: Classification and Comparison of Major Biomarker Categories

| Biomarker Category | Definition and Primary Use | Typical Applications in Drug Development | Real-World Examples |
|---|---|---|---|
| Susceptibility/Risk | Identifies individuals with an increased likelihood of developing a disease [4]. | Patient screening for preventive trials or identifying at-risk populations [4]. | BRCA1 and BRCA2 genetic mutations for assessing increased risk of breast and ovarian cancer [4]. |
| Diagnostic | Used to detect or confirm the presence of a disease or specific disease subtype [4]. | Patient stratification and accurate enrollment in clinical trials based on disease status [4]. | Hemoglobin A1c for diagnosing diabetes and pre-diabetes in adults [4]. |
| Prognostic | Defines the likely course of a disease, independent of treatment [4]. | Identifying patients with higher-risk disease to enhance clinical trial efficiency [4]. | Total kidney volume for assessing the likely progression of autosomal dominant polycystic kidney disease [4]. |
| Predictive | Predicts the likelihood of response to a specific therapeutic intervention [4]. | Selecting patients for targeted therapies; identifying intrinsic or acquired therapy resistance [24]. | EGFR mutation status for predicting response to EGFR tyrosine kinase inhibitors in non-small cell lung cancer (NSCLC) [4]. |
| Pharmacodynamic/Response | Indicates a biological response to a therapeutic intervention, often used for dose selection [4]. | Demonstrating biological activity, aiding in dose selection and schedule optimization [4] [11]. | HIV RNA viral load used as a surrogate for clinical benefit in HIV drug trials [4]. |
| Safety | Monitors for potential adverse events or drug-induced toxicity during treatment [4]. | Detecting organ injury earlier than traditional clinical signs, potentially before irreversible damage occurs [4]. | Serum creatinine for monitoring renal function and potential nephrotoxicity during drug treatment [4]. |
| Monitoring | Tracks the status of a disease or measures exposure to a drug over time [4]. | Monitoring disease progression or repeated assessment of response to antiviral therapy [4]. | HCV RNA viral load for monitoring response to antiviral therapy in patients with chronic Hepatitis C [4]. |

It is important to note that a single biomarker can fall into multiple categories depending on its use. For instance, Hemoglobin A1c is used both to diagnose diabetes (diagnostic biomarker) and to monitor long-term glycemic control (monitoring biomarker) in individuals with the condition [4].

Analytical Validation and Regulatory Qualification of Biomarkers

The Fit-for-Purpose Validation Framework

The validation of biomarkers is a complex process where the required level of evidence is determined by the biomarker's category and its specific Context of Use (COU) [4]. This principle, known as fit-for-purpose validation, ensures that the validation approach is tailored to the specific role a biomarker will play in drug development or clinical decision-making [4] [11]. The process involves two critical components: analytical validation and clinical validation.

- Analytical Validation: This assesses the performance characteristics of the biomarker measurement assay itself. It involves establishing metrics such as accuracy, precision, analytical sensitivity, analytical specificity, reportable range, and reference range to ensure the assay reliably measures the biomarker [4].

- Clinical Validation: This demonstrates that the biomarker accurately identifies or predicts the clinical outcome of interest. This may involve assessing sensitivity, specificity, and positive and negative predictive values in the intended patient population [4].

The FDA also considers the potential benefits and risks of using a biomarker, including the consequences of false positive or false negative results and the availability of alternative tools [4].
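The clinical validation metrics named above follow directly from a two-by-two comparison of biomarker result against true clinical status. The sketch below shows the standard definitions; the `ConfusionCounts` class, the function name, and the example counts are illustrative assumptions, not data from any study.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConfusionCounts:
    tp: int  # biomarker positive, condition present
    fp: int  # biomarker positive, condition absent
    fn: int  # biomarker negative, condition present
    tn: int  # biomarker negative, condition absent


def clinical_performance(counts: ConfusionCounts) -> dict:
    """Standard diagnostic accuracy metrics from a 2x2 table."""
    return {
        "sensitivity": counts.tp / (counts.tp + counts.fn),
        "specificity": counts.tn / (counts.tn + counts.fp),
        "ppv": counts.tp / (counts.tp + counts.fp),
        "npv": counts.tn / (counts.tn + counts.fn),
    }


if __name__ == "__main__":
    # Invented counts for a hypothetical cohort of 300 subjects.
    example = ConfusionCounts(tp=80, fp=30, fn=20, tn=170)
    for metric, value in clinical_performance(example).items():
        print(f"{metric}: {value:.3f}")
```

Sensitivity and specificity are properties of the biomarker and its cutoff, whereas the predictive values also depend on how common the condition is in the tested population. That dependence is one reason the consequences of false positives and false negatives have to be weighed for the intended patient population rather than in the abstract.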

The Regulatory Qualification Pathway

For regulatory acceptance, the FDA provides several pathways, with the Biomarker Qualification Program (BQP) being a key structured framework [4] [25]. The mission of the BQP is to work with external stakeholders to develop biomarkers as drug development tools, with the goal of qualifying biomarkers for specific contexts of use that address specified drug development needs [25]. The qualification process promotes consistency across the industry, reduces duplication of efforts, and helps streamline the development of safe and effective therapies [4].

The pathway from biomarker discovery to regulatory acceptance involves multiple stages of development and validation, as illustrated below.

Diagram: Biomarker Development and Regulatory Pathways

The BQP involves a structured, multi-stage submission and review process [26]. Drug developers can also engage with the FDA early in the development process via the pre-Investigational New Drug (IND) process or Critical Path Innovation Meetings (CPIM) to discuss biomarker validation plans [4]. The ideal pathway depends on factors such as whether the biomarker is for a specific drug program or for broader use across multiple programs [4].

Experimental Protocols for Biomarker Validation

Multi-Omics Workflow for Biomarker Discovery

Modern biomarker discovery heavily relies on multi-omics strategies, which integrate large-scale, high-throughput analyses of different molecular layers, including genomics, transcriptomics, proteomics, metabolomics, and epigenomics [27]. This integrated approach provides a comprehensive understanding of cellular dynamics and facilitates the identification of robust biomarker signatures.

The following diagram outlines a generalized workflow for a multi-omics based biomarker discovery and validation study.

Diagram: Multi-Omics Biomarker Discovery Workflow

Detailed Protocol:

- Sample Collection and Cohort Definition: Collect biological samples (e.g., tissue, blood) from well-characterized patient cohorts. The cohort should be designed to address the specific Context of Use (COU), for instance, comparing responders vs. non-responders to a therapy for a predictive biomarker [27].

- Multi-Omic Data Generation:

  - Genomics: Use Whole Exome Sequencing (WES) or Whole Genome Sequencing (WGS) to identify genetic variations like single nucleotide polymorphisms (SNPs) and copy number variations (CNVs) [27].

  - Transcriptomics: Apply RNA sequencing (RNA-seq) to profile gene expression levels of mRNA and non-coding RNAs [27].

  - Proteomics: Utilize mass spectrometry-based methods like Liquid Chromatography-Mass Spectrometry (LC-MS) or Reverse-Phase Protein Arrays (RPPA) to quantify protein abundance and post-translational modifications [27].

  - Epigenomics: Employ techniques such as Whole Genome Bisulfite Sequencing (WGBS) or ChIP-seq to analyze DNA methylation and histone modifications [27].

  - Metabolomics: Use LC-MS or Gas Chromatography-Mass Spectrometry (GC-MS) to measure the levels of cellular metabolites [27].

- Data Processing and Quality Control: Process raw data through specialized pipelines for each omics layer. This includes read alignment for sequencing data, peak detection for mass spectrometry, and rigorous quality control to remove technical artifacts and batch effects [27].

- Data Integration and Biomarker Identification:

  - Horizontal Integration: Combines data of the same type from different sources or cohorts to increase statistical power [27].

  - Vertical Integration: Combines different types of omics data from the same subjects to build a comprehensive molecular network. Computational tools and machine learning models (e.g., the MarkerPredict framework using Random Forest and XGBoost) are used to identify biomarker panels with high diagnostic, prognostic, or predictive value [27] [24].

- Analytical Validation: Develop a robust, fit-for-purpose assay (e.g., an immunoassay or a targeted mass spectrometry assay) for the candidate biomarkers. Establish assay performance characteristics including accuracy, precision, sensitivity, specificity, and dynamic range according to regulatory guidelines [4] [11].

- Clinical Validation and Qualification: Test the validated assay in independent, larger clinical cohorts to establish the biomarker's clinical utility for its intended COU. This step generates the evidence needed for regulatory submission and qualification [4] [11].
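The difference between horizontal and vertical integration in the protocol above can be illustrated with plain dictionaries keyed by subject ID. This is a simplified sketch, not the method of any cited framework: the function names, the `layer:feature` naming scheme, and the example values are all assumptions made for illustration, and real pipelines would add normalization and batch correction first.

```python
def integrate_horizontally(*cohorts):
    """Stack same-type measurements from several cohorts.

    Result: more subjects, same feature set. Duplicate subject IDs are
    rejected because they would silently overwrite data.
    """
    merged = {}
    for cohort in cohorts:
        for subject_id, features in cohort.items():
            if subject_id in merged:
                raise ValueError(f"duplicate subject ID: {subject_id}")
            merged[subject_id] = dict(features)
    return merged


def integrate_vertically(**layers):
    """Join different omics layers measured on the same subjects.

    Result: same subjects, wider feature set. Only subjects present in
    every layer are kept; features are prefixed with their layer name.
    """
    if not layers:
        return {}
    shared = set.intersection(*(set(layer) for layer in layers.values()))
    return {
        subject_id: {
            f"{layer_name}:{feature}": value
            for layer_name, layer in layers.items()
            for feature, value in layer[subject_id].items()
        }
        for subject_id in sorted(shared)
    }


if __name__ == "__main__":
    # Invented values for two hypothetical cohorts and two omics layers.
    cohort_a = {"S1": {"EGFR": 2.1}}
    cohort_b = {"S2": {"EGFR": 0.7}}
    transcriptomics = integrate_horizontally(cohort_a, cohort_b)
    proteomics = {"S1": {"EGFR": 1.4}}
    print(integrate_vertically(transcriptomics=transcriptomics, proteomics=proteomics))
```

Keeping only subjects present in every layer is one possible design choice; it trades sample size for complete profiles, which matters when the combined table feeds a downstream classifier.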

Research Reagent Solutions and Essential Materials

The following table details key reagents, technologies, and platforms essential for executing the experimental protocols in modern biomarker research.

Table 2: Research Reagent Solutions for Biomarker Discovery and Validation

| Tool Category | Specific Technology/Reagent | Primary Function in Biomarker Workflow |
|---|---|---|
| Multi-Omics Profiling | Next-Generation Sequencing (NGS) Panels | Targeted sequencing for genomic (e.g., EGFR, BRCA) and transcriptomic biomarker discovery and validation [27]. |
| Multi-Omics Profiling | Mass Spectrometry (LC-MS, GC-MS) | High-throughput identification and quantification of proteins and metabolites in complex biological samples [27]. |
| Spatial Biology | Multiplex Immunohistochemistry (IHC)/Immunofluorescence (IF) | Simultaneous detection of multiple protein biomarkers in situ, preserving spatial context within the tumor microenvironment [28]. |
| Spatial Biology | Spatial Transcriptomics | Genome-wide expression analysis with direct retention of spatial location information, revealing tissue architecture [27] [28]. |
| Advanced Model Systems | Organoids | 3D cell cultures that recapitulate human tissue architecture and function, used for functional biomarker screening and validation [28]. |
| Advanced Model Systems | Humanized Mouse Models | Mouse models with functional human immune systems, essential for validating predictive biomarkers for immunotherapies [28]. |
| Computational & Data Analysis | AI/ML Platforms (e.g., MarkerPredict) | Machine learning tools that integrate network topology and protein features to identify and rank potential predictive biomarkers [24]. |
| Computational & Data Analysis | Electronic Lab Notebook (ELN) & LIMS | Centralized systems for managing experimental data, protocols, and inventory, ensuring data integrity, traceability, and regulatory compliance [29] [30]. |

The systematic categorization of biomarkers into susceptibility, diagnostic, prognostic, predictive, and other types provides a critical framework for their application in precision medicine and rational drug development [4] [11]. The successful translation of a biomarker from discovery to clinical use is contingent upon a rigorous, fit-for-purpose validation strategy that encompasses both analytical and clinical components, all tailored to its specific Context of Use [4]. Emerging technologies like multi-omics integration, spatial biology, and artificial intelligence are significantly advancing the resolution and predictive power of biomarker discovery [27] [28]. Furthermore, structured regulatory pathways, such as the FDA's Biomarker Qualification Program, are essential for establishing biomarker reliability and encouraging their broader adoption across drug development programs, ultimately accelerating the delivery of targeted and effective therapies to patients [4] [25].

The Method Validation Blueprint: From Assay Development to Regulatory Submission

Core Principles of Fit-for-Purpose Assay Validation

Fit-for-purpose assay validation is a strategic framework in bioanalysis that tailors the rigor and extent of method validation to the specific intended use of the data generated [31]. This approach recognizes that different stages of drug development and different decision-making contexts require varying levels of analytical assurance [32]. The core principle is that the validation process should provide "confirmation by examination and the provision of objective evidence that the particular requirements for a specific intended use are fulfilled" [32] [33].

This paradigm is particularly essential for biomarker assays, which present unique challenges compared to traditional pharmacokinetic assays, including frequent absence of fully characterized reference standards and the need to measure endogenous analytes across diverse biological matrices [34]. The fit-for-purpose approach fosters flexibility while maintaining scientific rigor, allowing researchers to efficiently generate reliable data without undertaking unnecessarily extensive validation procedures during early discovery phases [35] [36].

Table 1: Key Differences Between Fit-for-Purpose and Fully Validated Assays

| Characteristic | Fit-for-Purpose Assay | Fully Validated Assay |
|---|---|---|
| Purpose | Early-stage research, feasibility testing, exploratory studies [35] | Regulatory-compliant clinical data for submissions [35] |
| Validation Level | Partial, optimized for specific study needs [35] | Fully validated per FDA/EMA/ICH guidelines [35] |
| Flexibility | High – can be adjusted as needed [35] | Low – must follow strict SOPs [35] |
| Regulatory Requirements | Not required for early research [35] | Required for clinical trials and approvals [35] |
| Application Examples | Biomarker analysis, PK screening, proof-of-concept research [35] | GLP studies, clinical bioanalysis, IND/CTA submissions [35] |

The Context of Use Framework

Defining Context of Use

The cornerstone of fit-for-purpose validation is establishing a clear Context of Use (COU) – a concise description of the biomarker's specified application in drug development [4] [36]. The COU encompasses both the biomarker category and its proposed function within the development pipeline [34]. This definition drives all subsequent validation decisions, as "no context [means] no validated assay" [36].

The COU determines the specific purpose in "fit-for-purpose," guiding selection of assay platform, format, and key development drivers [36]. For example, a biomarker used for internal decision-making on mechanism of action requires less extensive validation than one supporting pivotal safety determinations or efficacy claims in registrational trials [4].

Biomarker Categories and Applications

Biomarkers serve diverse functions throughout drug development, with each category demanding distinct validation considerations [4]:

- Diagnostic biomarkers identify disease or patient subsets, prioritizing sensitivity/specificity across populations

- Monitoring biomarkers track disease status changes over time, requiring validation of longitudinal performance

- Predictive biomarkers forecast treatment response, emphasizing specificity and mechanistic links

- Pharmacodynamic/response biomarkers indicate biological responses to therapeutic intervention, needing evidence of direct relationship to drug action

- Safety biomarkers detect potential adverse effects, requiring demonstration of consistent performance across populations [4]

Staged Implementation Approach

The Validation Lifecycle

Fit-for-purpose validation proceeds through discrete, iterative stages that enable continuous refinement as a biomarker progresses toward qualification [32] [33]. This lifecycle approach ensures appropriate resource allocation while maintaining scientific rigor throughout development.

Stage 1: Definition of Purpose and Assay Selection – The most critical phase involves establishing the COU and selecting appropriate candidate assays based on technological feasibility and analytical requirements [32] [36]. This stage requires cross-functional collaboration to align on the specific decisions the biomarker will support and the acceptable uncertainty around those decisions.

Stage 2: Method Validation Planning – Researchers assemble necessary reagents and components, write the method validation plan, and finalize assay classification [32]. This includes determining whether the assay will be definitive quantitative, relative quantitative, quasi-quantitative, or qualitative [32] [33].

Stage 3: Experimental Performance Verification – This phase involves laboratory studies to characterize assay performance against predefined acceptance criteria [32]. The critical evaluation of fitness-for-purpose occurs here, culminating in standard operating procedures (SOPs) for the validated method.

Stage 4: In-Study Validation – Further assessment occurs within the clinical context, identifying patient sampling issues, stability concerns under real-world conditions, and assay robustness across the study population [32] [33].

Stage 5: Routine Use and Continuous Monitoring – Once deployed, quality control monitoring, proficiency testing, and batch-to-batch quality assurance provide ongoing performance verification [32]. The driver is continual improvement, with iterations potentially returning to earlier stages as needed [32].

Application Across Development Phases

The stringency of fit-for-purpose validation escalates appropriately throughout drug development [35] [32]. Early discovery employs more flexible approaches to enable rapid candidate screening, while later stages demand increasing rigor to support regulatory decision-making.

Table 2: Validation Requirements by Development Phase

| Development Phase | Typical Applications | Recommended Validation Approach | Key Parameters |

|---|---|---|---|

| Early Discovery | Target validation, lead optimization, preliminary mechanism of action [35] | Flexible fit-for-purpose with minimal validation | Precision, sensitivity, specificity [35] [36] |

| Preclinical Development | Pharmacodynamic studies, biomarker candidate qualification, toxicology support [35] | Intermediate fit-for-purpose with core parameters | Accuracy, precision, selectivity, stability, dilutional linearity [32] |

| Early Clinical | Proof-of-concept, patient stratification, dose selection [35] [4] | More rigorous fit-for-purpose approaching full validation | All quantitative parameters with tighter acceptance criteria (e.g., 25% for precision/accuracy) [32] |

| Late Clinical & Regulatory Submissions | Pivotal trial endpoints, companion diagnostics, surrogate endpoints [4] | Full validation per regulatory standards | Complete validation per ICH/FDA guidelines with strict acceptance criteria (e.g., 15% for precision/accuracy) [35] [34] |

Experimental Design and Protocols

Method Comparison Experiments

The comparison of methods experiment is fundamental for assessing systematic error when transitioning between platforms or establishing new methods [37]. Proper experimental design is crucial for generating meaningful data.

Sample Requirements: A minimum of 40 different patient specimens should be tested by both methods, carefully selected to cover the entire working range and represent the spectrum of diseases expected in routine application [37]. Specimen quality and concentration distribution are more important than sheer numbers, though 100-200 specimens may be needed to adequately assess specificity differences between methods [37].

Experimental Execution: Each specimen should be analyzed by the test and comparative methods within two hours of each other to ensure stability, unless specific analytes require shorter intervals [37]. The study should span multiple days (a minimum of 5 is recommended) to minimize systematic errors from single-run artifacts, with 2-5 patient specimens analyzed daily [37]. Duplicate measurements are advantageous for identifying sample mix-ups and transposition errors and for confirming discrepant results [37].

Data Analysis Approaches: Visual data inspection through difference plots (test minus comparative result versus comparative result) or comparison plots (test versus comparative) should be performed during data collection to identify discrepant results while specimens remain available [37]. For data spanning a wide analytical range, linear regression statistics are preferred, allowing estimation of systematic error at medical decision concentrations and providing information about constant or proportional error nature [37].
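As a rough illustration of the regression approach described above, the sketch below fits an ordinary least-squares line to paired results and estimates the systematic error at one decision concentration. The specimen values, the decision concentration of 120, and the function names are hypothetical and chosen only for illustration; a real study would use at least the 40 specimens recommended above and might call for a different regression model.

```python
from statistics import mean

def fit_line(x, y):
    """Ordinary least-squares fit of y = intercept + slope * x."""
    x_bar, y_bar = mean(x), mean(y)
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    return slope, intercept

def systematic_error(slope, intercept, decision_conc):
    """Estimated bias of the test method at a medical decision concentration."""
    return (intercept + slope * decision_conc) - decision_conc

# Hypothetical paired results in the same units (illustrative values only).
comparative = [50.0, 100.0, 150.0, 200.0, 250.0]
test = [54.0, 105.0, 156.0, 207.0, 258.0]

slope, intercept = fit_line(comparative, test)
bias_at_120 = systematic_error(slope, intercept, 120.0)
print(f"slope={slope:.3f}, intercept={intercept:.2f}, bias at 120 = {bias_at_120:.2f}")
```

A slope different from 1 points to proportional error and a non-zero intercept to constant error, which is the distinction the regression statistics are meant to expose.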

Validation Parameters by Assay Category

The American Association of Pharmaceutical Scientists (AAPS) has identified five general classes of biomarker assays, each requiring distinct validation approaches [32] [33]. They are presented below as four categories, with qualitative assays subdivided into ordinal and nominal types. Understanding these categories is essential for appropriate parameter selection.

Definitive Quantitative Assays utilize fully characterized calibrators representative of the endogenous biomarker [32]. Examples include mass spectrometric methods with stable isotope-labeled internal standards [32]. These assays require the most comprehensive validation, assessing accuracy, precision, sensitivity, specificity, dilution linearity, and stability [32].

Relative Quantitative Assays employ response-concentration calibration with reference standards that are not fully representative of the biomarker [32]. Ligand binding assays for endogenous proteins typically fall into this category [32] [33]. Parallelism assessment becomes critical to demonstrate similarity between endogenous analytes and calibrators [34].

Quasi-Quantitative Assays lack calibration standards but produce continuous response data expressed in terms of sample characteristics [32]. These require precision, sensitivity, and specificity assessments but not accuracy or trueness [32].

Qualitative Assays provide categorical data, either ordinal (discrete scoring scales) or nominal (yes/no determinations) [32]. These primarily require demonstration of sensitivity and specificity appropriate to their classification purpose [32].

Table 3: Recommended Validation Parameters by Assay Category

| Performance Characteristic | Definitive Quantitative | Relative Quantitative | Quasi-Quantitative | Qualitative |

|---|---|---|---|---|

| Accuracy | + | | | |

| Trueness (Bias) | + | + | | |

| Precision | + | + | + | |

| Reproducibility | + | | | |

| Sensitivity | + (LLOQ) | + (LLOQ) | + | + |

| Specificity | + | + | + | + |

| Dilution Linearity | + | + | | |

| Parallelism | + | | | |

| Assay Range | + (LLOQ-ULOQ) | + (LLOQ-ULOQ) | + | |

Adapted from Cummings et al. [32]

Precision and Accuracy Assessment

Precision and accuracy form the foundation of assay performance characterization, with methodologies tailored to the validation stage and COU [38].

Precision Measurements: Precision is evaluated at three levels: repeatability (intra-assay precision), intermediate precision (within-laboratory variation), and reproducibility (between-laboratory consistency) [38]. For biomarker assays, precision is typically documented through replicate analyses (a minimum of nine determinations across three concentration levels) and reported as percentage coefficient of variation (%CV) [38]. While small-molecule bioanalysis often uses 15% CV acceptance criteria (20% at LLOQ), biomarker validation may allow 25-30% CV during early development, tightening as the assay advances toward regulatory submission [32].
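The %CV calculation can be sketched as follows, assuming three replicates at each of three concentration levels (nine determinations in total) and a 25% early-development limit. The replicate values, the level names, and the choice of limit are hypothetical.

```python
from statistics import mean, stdev

def percent_cv(values):
    """Percentage coefficient of variation using the sample standard deviation."""
    return 100.0 * stdev(values) / mean(values)

# Hypothetical replicate results: 3 levels x 3 replicates = 9 determinations.
replicates = {
    "low": [9.5, 10.0, 10.5],
    "mid": [48.0, 50.0, 52.0],
    "high": [190.0, 200.0, 210.0],
}
cv_limit = 25.0  # assumed early-development acceptance limit, in %CV

cv_by_level = {level: percent_cv(vals) for level, vals in replicates.items()}
passed = {level: cv <= cv_limit for level, cv in cv_by_level.items()}

for level, cv in cv_by_level.items():
    print(f"{level}: %CV = {cv:.1f}, pass = {passed[level]}")
```

Running the same check with a 15% limit would model the tighter criterion applied as an assay approaches regulatory submission.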

Accuracy Assessment: Accuracy represents the closeness of agreement between measured and accepted reference values [38]. For definitive quantitative assays, accuracy is established across the method range using spiked samples, with data from a minimum of nine determinations across three concentration levels [38]. For relative quantitative biomarker assays without authentic reference standards, "relative accuracy" may be demonstrated through parallelism experiments comparing diluted patient samples to the calibration curve [34].
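For spiked samples, accuracy is often summarized as percent relative error (bias) against the nominal concentration. The sketch below assumes that convention; the nominal concentrations and measured values are hypothetical.

```python
from statistics import mean

def percent_relative_error(measured, nominal):
    """Mean bias of replicate measurements relative to the nominal value, in percent."""
    return 100.0 * (mean(measured) - nominal) / nominal

# Hypothetical spiked samples: nominal concentration -> replicate measurements.
spiked = {
    10.0: [10.5, 11.0, 11.5],
    50.0: [49.0, 50.0, 51.0],
    200.0: [188.0, 190.0, 192.0],
}

bias_by_level = {nominal: percent_relative_error(vals, nominal)
                 for nominal, vals in spiked.items()}

for nominal, bias in bias_by_level.items():
    print(f"nominal {nominal}: bias = {bias:+.1f}%")
```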

Sensitivity and Specificity Determination

Sensitivity Parameters: Assay sensitivity is defined through the limit of detection (LOD) and limit of quantitation (LOQ) [38]. The LOD represents the lowest concentration distinguishable from zero with 95% confidence, typically calculated as mean blank + 3.29 × standard deviation [39]. The LOQ is the lowest concentration meeting predefined precision and accuracy criteria, often defined as the concentration at which %CV falls below 20% [39]. Alternatively, these limits can be established using signal-to-noise ratios (3:1 for LOD, 10:1 for LOQ) or from the standard deviation of the response and the slope of the calibration curve [38].
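A minimal sketch of the blank-based LOD and the precision-based LOQ described above follows. It assumes the standard deviation in the LOD formula is that of replicate blank measurements, and that the LOQ is taken as the lowest tested concentration whose replicate %CV is below 20%. All data values and function names are hypothetical.

```python
from statistics import mean, stdev

def limit_of_detection(blank_results):
    """LOD as mean blank + 3.29 x SD, with SD assumed to come from the blanks."""
    return mean(blank_results) + 3.29 * stdev(blank_results)

def limit_of_quantitation(replicates_by_conc, max_cv=20.0):
    """Lowest tested concentration whose replicate %CV is below max_cv, else None."""
    for conc in sorted(replicates_by_conc):
        values = replicates_by_conc[conc]
        if 100.0 * stdev(values) / mean(values) < max_cv:
            return conc
    return None

# Hypothetical blank results and low-concentration precision profile.
blanks = [1.0, 2.0, 3.0]
profile = {
    1.0: [0.6, 1.0, 1.4],   # about 40 %CV, fails
    2.0: [1.7, 2.0, 2.3],   # about 15 %CV, passes
    5.0: [4.8, 5.0, 5.2],   # about 4 %CV, passes
}

lod = limit_of_detection(blanks)
loq = limit_of_quantitation(profile)
print(f"LOD = {lod:.2f}, LOQ = {loq}")
```

The LOD here is in the same units as the blank results; if blanks are recorded as raw signal, the value still has to be converted to concentration through the calibration curve.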

Specificity and Selectivity: Specificity represents the ability to measure the analyte accurately despite potential interferents [38]. For chromatographic assays, specificity is demonstrated through resolution, plate count, and tailing factor measurements, complemented by peak purity assessment using photodiode array or mass spectrometry detection [38]. For biomarker assays, parallelism assessment demonstrates that endogenous analyte in patient samples behaves similarly to the calibration standard upon dilution [34].
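One common way to summarize a parallelism experiment is to multiply each back-calculated concentration by its dilution factor and examine the %CV of the corrected values across the dilution series. The sketch below assumes that approach and a 30% limit; the dilution factors, concentrations, and limit are hypothetical and are not taken from the cited guidance.

```python
from statistics import mean, stdev

def parallelism_cv(measured_by_dilution):
    """Return (%CV, dilution-corrected concentrations) for one serially diluted sample."""
    corrected = [factor * conc for factor, conc in measured_by_dilution.items()]
    cv = 100.0 * stdev(corrected) / mean(corrected)
    return cv, corrected

# Hypothetical patient sample: dilution factor -> back-calculated concentration.
sample = {2: 50.0, 4: 24.0, 8: 13.0, 16: 6.0}
cv_limit = 30.0  # assumed acceptance limit, in %CV

cv, corrected = parallelism_cv(sample)
is_parallel = cv <= cv_limit
print(f"corrected = {corrected}, %CV = {cv:.1f}, parallel = {is_parallel}")
```

A low %CV across dilutions suggests the endogenous analyte dilutes like the calibrator; a systematic trend in the corrected values with dilution would argue against parallelism even if the %CV is within the limit.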

Essential Research Reagent Solutions

Successful fit-for-purpose validation requires appropriate reagents and materials tailored to biomarker-specific challenges. Unlike pharmacokinetic assays, which use the drug substance as the reference standard, biomarker assays frequently lack fully characterized reference materials [34].

Table 4: Key Research Reagent Solutions for Biomarker Assay Validation

| Reagent/Material | Function | Special Considerations |

|---|---|---|

| Reference Standard | Calibration and accuracy assessment | For biomarkers, often recombinant proteins that may differ from endogenous analyte in structure, folding, or post-translational modifications [34] |

| Quality Control Materials | Monitoring assay performance | Endogenous QCs preferred over recombinant materials for stability testing and performance monitoring [36] |

| Matrix | Diluent for standards and sample background | Analyte-free matrix often unavailable for biomarkers; alternatives may include surrogate matrices or background subtraction [32] |

| Binding Reagents | Detection and capture (for LBAs) | Specificity and selectivity critical; may require extensive screening and characterization [36] |

| Stability Samples | Establishing pre-analytical conditions | Should represent endogenous analyte in appropriate matrix [32] |

Regulatory and Practical Considerations

Regulatory Framework

The regulatory landscape for biomarker assay validation continues to evolve, with the FDA issuing specific guidance on Bioanalytical Method Validation for Biomarkers (BMVB) in 2025 [34]. This guidance formally recognizes that biomarker assays require different validation approaches than pharmacokinetic assays and endorses the fit-for-purpose principle [34].

Regulatory acceptance pathways include early engagement through Critical Path Innovation Meetings (CPIM) or pre-IND consultations, the IND application process for biomarker use within specific drug development programs, and the Biomarker Qualification Program (BQP) for broader acceptance across multiple development programs [4]. The BQP provides a structured framework with three stages: Letter of Intent, Qualification Plan, and Full Qualification Package [4].

Practical Implementation Guidance

Successful implementation of fit-for-purpose validation requires careful consideration of several practical aspects:

Pre-analytical Variables: Controllable factors (matrix selection, specimen collection, processing, transport) and uncontrollable factors (patient characteristics, comorbidities) must be identified and addressed [36]. Standardized procedures are essential, particularly for multisite trials where pre-analytical variations can significantly impact results [36].

Biological Variability: Unlike pharmacokinetic assays measuring administered compounds, biomarker levels exhibit inherent biological variation that must be considered when setting precision requirements and interpreting data [36]. The acceptable level of analytical imprecision depends on the magnitude of biological variability and the COU [36].
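One way to reason about this trade-off, assuming analytical and within-subject biological variation are independent, is to combine them in quadrature. The symbols $CV_A$ (analytical), $CV_I$ (within-subject biological), and $CV_T$ (total observed) and the numbers in the worked example are illustrative assumptions, not values from the cited sources.

```latex
CV_T = \sqrt{CV_A^{2} + CV_I^{2}}
% Worked example with assumed values CV_I = 20\% and CV_A = 10\%:
% CV_T = \sqrt{10^{2} + 20^{2}} = \sqrt{500} \approx 22.4\%
```

In this example the assay adds only about 2.4 percentage points to the observed variation, which illustrates why a biomarker with large biological variability can tolerate a looser analytical precision requirement than one that is tightly regulated.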

Continuous Quality Monitoring: Implementing quality control procedures, including regular analysis of endogenous quality control samples, enables ongoing performance verification and detection of assay drift [39]. Quality control monitoring is particularly important for biomarkers used in long-term studies.
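Drift detection on endogenous QC samples can be sketched with two control rules applied to z-scores: one result beyond 3 SD of the established mean, or two consecutive results beyond 2 SD on the same side. These Westgard-style rules are an assumption introduced here for illustration and are not prescribed by the cited sources; the QC mean, SD, and results are hypothetical.

```python
def qc_flags(results, target_mean, target_sd):
    """Return (run index, rule) pairs for QC results that violate either rule."""
    z = [(r - target_mean) / target_sd for r in results]
    flags = []
    for i, zi in enumerate(z):
        if abs(zi) > 3:
            # Single result more than 3 SD from the established mean.
            flags.append((i, "1_3s"))
        elif i > 0 and ((zi > 2 and z[i - 1] > 2) or (zi < -2 and z[i - 1] < -2)):
            # Two consecutive results more than 2 SD out on the same side.
            flags.append((i, "2_2s"))
    return flags

# Hypothetical endogenous QC pool with an established mean of 100 and SD of 5.
qc_results = [101.0, 98.0, 111.0, 112.0, 99.0, 84.0]
flags = qc_flags(qc_results, target_mean=100.0, target_sd=5.0)
print(flags)
```

The consecutive-result rule is the one more suited to gradual drift, since a shift in the mean tends to push successive QC results to the same side before any single result becomes extreme.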