AI-Driven Network Analysis: Uncovering Novel Protein Functions for Advanced Drug Discovery


Abstract

This article explores the transformative role of computational network analysis in discovering novel protein functions, a critical frontier in systems biology and drug development. It provides researchers and drug development professionals with a comprehensive framework covering the foundational principles of protein-protein interaction (PPI) networks, advanced methodological applications of graph neural networks and multi-omics integration, strategies for overcoming data sparsity and analytical challenges, and rigorous validation techniques. By synthesizing the latest advances in deep learning and heterogeneous biological data fusion, this resource demonstrates how network-based approaches are accelerating the deconvolution of protein functional ambiguity, identifying new therapeutic targets, and reshaping the landscape of precision medicine.

The Architecture of Life: Understanding Protein Networks and Their Functional Significance

Protein-protein interactions (PPIs) represent a fundamental biological mechanism through which proteins combine to form complex structures and execute the vast majority of cellular processes. These interactions constitute the primary framework for cellular organization, governing everything from signal transduction and cell cycle regulation to transcriptional control and metabolic pathways [1] [2]. A systems-level understanding of the dynamic PPI network, or interactome, is crucial for deciphering normal cellular physiology and the molecular origins of disease, thereby enabling the discovery of novel protein functions and therapeutic targets [3] [4].

The Biological Significance of Protein-Protein Interactions

PPIs are indispensable for maintaining cellular structure and function. They regulate the interaction of transcription factors with their target genes, modulate intracellular signaling pathways in response to external stimuli, ensure cytoskeletal stability, and play a vital role in protein folding and quality control [1] [5]. The diverse nature of these interactions can be categorized based on their characteristics:

- Direct vs. Indirect: Direct interactions involve physical contact between proteins, whereas indirect interactions occur through functional associations within a pathway [2].

- Stable vs. Transient: Stable interactions form long-lasting complexes, while transient interactions are temporary and often signal-specific [1] [2].

- Homomeric vs. Heteromeric: Homomeric interactions occur between identical proteins, and heteromeric interactions occur between different proteins [2].

Disrupted PPIs are a major cause of cellular dysfunction and contribute to many diseases, which makes them attractive targets for drug development. The clinical approval of PPI modulators such as venetoclax and sotorasib underscores their therapeutic relevance [4].

Core Deep Learning Architectures for PPI Analysis

The prediction and analysis of PPIs have been revolutionized by artificial intelligence, particularly deep learning. Unlike earlier computational methods that relied on manually engineered features, deep learning models automatically extract meaningful patterns from complex, high-dimensional biological data [1] [6]. The following table summarizes the core deep learning architectures employed in modern PPI research.

Table 1: Core Deep Learning Models for PPI Prediction and Analysis

| Model Architecture | Key Functionality | Representative Examples | Primary Application in PPI |

|---|---|---|---|

| Graph Neural Networks (GNNs) | Models proteins as nodes in a graph to capture topological relationships and spatial dependencies [1]. | GCN, GAT, GraphSAGE, DGAE, AG-GATCN [1] | Network-level prediction, identifying complex membership, modeling structural interfaces [1] [2]. |

| Convolutional Neural Networks (CNNs) | Processes spatial data through convolutional filters to detect local patterns and features [1] [2]. | Standard CNN architectures with pooling and fully connected layers [1] | Predicting interaction probability from sequence and structural motifs, interaction site identification [2]. |

| Recurrent Neural Networks (RNNs) | Handles sequential data by maintaining an internal state, ideal for time-series or ordered data [2]. | Long Short-Term Memory (LSTM) networks [2] | Modeling dynamic interaction patterns and conformational changes over time [1]. |

| Transformers & Attention Mechanisms | Uses self-attention to weigh the importance of different input elements, such as amino acid residues [2]. | Pre-trained models like ESM, AlphaFold2 [1] | Processing protein sequences for structure prediction and identifying critical binding residues [2] [6]. |

| Multi-task & Multi-modal Learning | Simultaneously learns multiple related tasks or integrates diverse data types to improve generalizability [2]. | Frameworks integrating sequence, structure, and expression data [2] | Enhancing prediction accuracy and robustness by leveraging complementary information [1]. |

Among these, GNNs are especially well suited to PPI analysis because they operate directly on graph-structured data, representing proteins as nodes and their interactions as edges [1]. Variants like Graph Attention Networks (GAT) extend this by adaptively weighting the importance of neighboring nodes, which is crucial for identifying key interaction partners within a crowded cellular environment [1].
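To make the node-and-edge picture concrete, the following minimal sketch performs one parameter-free neighbor-aggregation step of the kind used in a graph convolutional layer. It is an illustration under stated assumptions, not any published model: the three-protein chain, the single feature column, and the function name `normalized_aggregate` are hypothetical, and the learnable weight matrix and nonlinearity of a real GCN layer are omitted.

```python
import math

def normalized_aggregate(adjacency, features):
    """One parameter-free graph-convolution step: D^-1/2 (A + I) D^-1/2 X."""
    n = len(adjacency)
    # Self-loops let each protein retain part of its own feature vector.
    a_hat = [[adjacency[i][j] + (1.0 if i == j else 0.0) for j in range(n)]
             for i in range(n)]
    degree = [sum(row) for row in a_hat]
    aggregated = []
    for i in range(n):
        row = [0.0] * len(features[0])
        for j in range(n):
            if a_hat[i][j] == 0.0:
                continue  # proteins i and j do not interact
            # Symmetric degree normalisation keeps hubs from dominating.
            weight = a_hat[i][j] / math.sqrt(degree[i] * degree[j])
            for k, value in enumerate(features[j]):
                row[k] += weight * value
        aggregated.append(row)
    return aggregated

# Hypothetical three-protein chain: A - B - C.
adjacency = [[0.0, 1.0, 0.0],
             [1.0, 0.0, 1.0],
             [0.0, 1.0, 0.0]]
# A single made-up feature that is "on" only for protein A.
features = [[1.0], [0.0], [0.0]]

hidden = normalized_aggregate(adjacency, features)
print(hidden)
```

After one step, protein B has picked up signal from its neighbor A, while C (two edges away) has not. An attention-based variant such as GAT would replace the fixed degree-based weights with learned, neighbor-specific ones.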

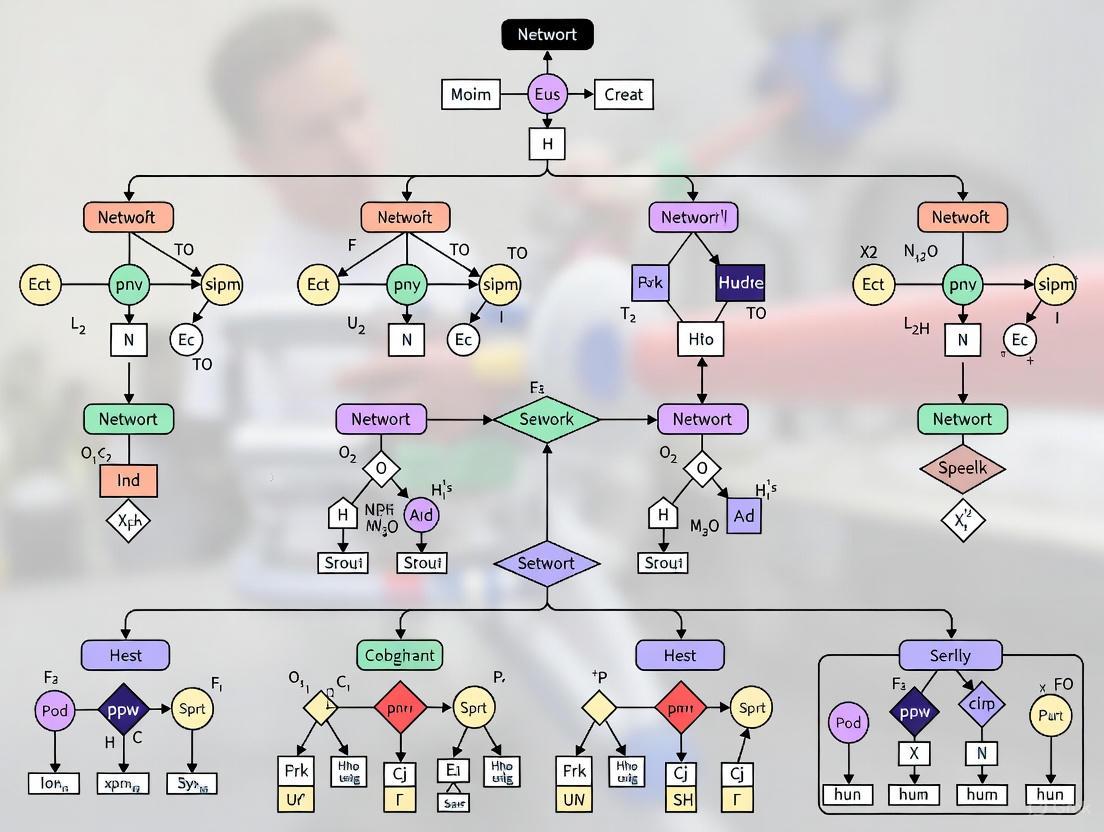

Diagram: General Workflow for Deep Learning-Based PPI Prediction

Key Databases and Research Reagents for PPI Research

The advancement of PPI research is underpinned by publicly available databases that compile interaction data from experimental assays, computational predictions, and prior knowledge [5]. Furthermore, specific experimental reagents and methodologies are essential for validating these computational predictions.

Table 2: Essential Databases and Research Reagents for PPI Discovery

| Resource Name | Type / Category | Primary Function and Utility |

|---|---|---|

| STRING | Database [7] [5] | Compiles known and predicted protein-protein associations, including physical and functional interactions, across numerous species [7]. |

| BioGRID | Database [7] [5] | A curated database of protein and genetic interactions from high-throughput studies and manual curation [7]. |

| CORUM | Database [3] | A specialized resource for experimentally verified mammalian protein complexes, often used as ground-truth for training ML models [3]. |

| AlphaFold2/3 | Computational Tool / Reagent [4] [6] | An AI system that predicts 3D protein structures with high accuracy, enabling structure-based analysis of PPIs [6]. |

| Mass Spectrometry | Experimental Assay [3] | Used to identify and quantify proteins in complex mixtures; key for co-fractionation and AP-MS workflows to discover novel interactions [3]. |

| Co-fractionation | Experimental Protocol [3] | Separates protein complexes based on physical properties under native conditions, inferring associations through co-elution [3]. |

| Yeast Two-Hybrid (Y2H) | Experimental Assay [1] [4] | A high-throughput method for detecting binary physical interactions between proteins [1]. |

| Antibodies for AP/Co-IP | Research Reagent [3] | Specific antibodies are essential for affinity purification (AP) or co-immunoprecipitation (Co-IP) to isolate specific protein complexes [3]. |

Methodologies: From Computational Prediction to Tissue-Specific Validation

AI-Driven Structure Prediction and Docking

Computational methods for modeling PPIs have been transformed by deep learning. End-to-end frameworks like AlphaFold-Multimer and AlphaFold3 have shown remarkable success in predicting the 3D structure of protein complexes directly from their amino acid sequences [6]. AlphaFold3 in particular uses a diffusion-based architecture and is trained on diverse biomolecular interactions. These end-to-end methods are significantly more accurate than traditional template-free docking, which struggles with protein flexibility and the vast conformational space [6].

Constructing a Tissue-Specific Protein Association Atlas

Understanding that the interactome is not static but highly context-dependent is a frontier in PPI research. A recent landmark methodology involved compiling protein abundance data from 7,811 human proteomic samples across 11 tissues to create a tissue-specific atlas of protein associations [3].

The core methodology uses protein co-abundance, measured by the Pearson correlation of protein abundance profiles across many samples, to infer functional associations. The underlying principle is that subunits of protein complexes are co-regulated and maintain defined stoichiometries, leading to strong co-abundance signals [3]. This workflow is summarized below.

Diagram: Workflow for Building a Tissue-Specific PPI Atlas

Key Experimental Validation Protocols: The protein associations derived from the co-abundance atlas were rigorously validated in brain tissue using orthogonal methods [3]:

- Cofractionation Experiments in Synaptosomes: Biochemical fractionation of synaptic terminals was performed, followed by MS analysis. Proteins that consistently co-eluted through the fractionation process were considered validated interacting partners.

- Curation of Brain-Derived Pulldown Data: Existing experimental data from affinity purification mass spectrometry (AP-MS) studies conducted on brain tissue was curated and compared to the atlas predictions.

- AlphaFold2 Modeling: The AF2 system was used to predict the 3D structure of protein pairs with high association scores. The formation of physically plausible, high-confidence complex structures provided computational validation of the potential for direct physical interaction.

This integrated approach demonstrated that protein co-abundance (AUC = 0.80 ± 0.01) outperformed both mRNA coexpression (AUC = 0.70 ± 0.01) and protein cofractionation (AUC = 0.69 ± 0.01) in recovering known protein complex members from the CORUM database [3]. The final atlas scored 116 million protein pairs across 11 tissues, with over 25% of associations being tissue-specific, providing an unprecedented resource for prioritizing candidate disease genes in a tissue-relevant context [3].
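The co-abundance scoring and its evaluation against a reference set can be sketched in a few lines. This is a toy illustration of the general idea (Pearson correlation of abundance profiles, then ROC AUC against known complex pairs), not the atlas authors' pipeline. The protein names, abundance values, and the single "known" pair are invented for the example.

```python
import math
from itertools import combinations

def pearson(x, y):
    """Pearson correlation of two equal-length abundance profiles."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    norm_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    norm_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (norm_x * norm_y)

def roc_auc(scores, labels):
    """Probability that a random positive pair outscores a random negative pair.

    Ties count as half a win (the Mann-Whitney formulation of ROC AUC).
    """
    positives = [s for s, is_pos in zip(scores, labels) if is_pos]
    negatives = [s for s, is_pos in zip(scores, labels) if not is_pos]
    wins = sum(1.0 if p > q else 0.5 if p == q else 0.0
               for p in positives for q in negatives)
    return wins / (len(positives) * len(negatives))

# Hypothetical abundance of four proteins across five samples.
abundance = {
    "P1": [1.0, 2.0, 3.0, 4.0, 5.0],
    "P2": [2.0, 4.0, 6.0, 8.0, 10.0],
    "P3": [5.0, 3.0, 4.0, 1.0, 2.0],
    "P4": [1.0, 3.0, 2.0, 5.0, 4.0],
}
# Stand-in for a curated reference such as CORUM (assumed for illustration).
known_complex_pairs = {("P1", "P2")}

pairs = list(combinations(sorted(abundance), 2))
scores = {pair: pearson(abundance[pair[0]], abundance[pair[1]]) for pair in pairs}
labels = [pair in known_complex_pairs for pair in pairs]
auc = roc_auc([scores[pair] for pair in pairs], labels)
print(f"P1-P2 co-abundance: {scores[('P1', 'P2')]:.2f}, AUC: {auc:.2f}")
```

In a real analysis the profiles would span hundreds or thousands of samples and the reference set would contain many complexes, so the AUC would rarely be as clean as in this toy case.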

Protein-protein interactions form the essential framework of cellular function. The convergence of large-scale biological databases, sophisticated deep learning models, and innovative methodologies for assessing tissue specificity has fundamentally advanced our ability to map and understand the interactome. This integrated, network-based perspective is pivotal for discovering new protein functions, elucidating disease mechanisms, and ultimately accelerating the development of novel therapeutics.

Protein-protein interactions (PPIs) are fundamental regulators of nearly all cellular processes, including signal transduction, gene regulation, and metabolic pathways [8]. Within the intricate network of cellular signaling (the interactome), proteins communicate through specific, physical interactions that can be classified based on their stability, duration, and functional requirements [4]. The accurate classification of these interactions into categories such as obligate, non-obligate, stable, and transient provides a critical framework for discovering new protein functions through network analysis [9] [10]. For researchers and drug development professionals, these classifications have practical value: they enable the functional annotation of newly discovered protein complexes, aid in predicting novel interaction partners, and help identify potential therapeutic targets within dysregulated pathways [9] [4]. This technical guide provides a comprehensive overview of the defining characteristics, experimental methodologies, and computational tools essential for classifying PPI types within the broader context of biological network analysis.

Defining Protein-Protein Interaction Types

Core Classification Frameworks

Protein-protein interactions are primarily classified along two intersecting spectra: obligate versus non-obligate and stable versus transient. These classifications are defined by the thermodynamic stability, lifetime, and functional dependence of the interacting partners [8] [11].

- Obligate Interactions: In these permanent complexes, the protomers (individual protein units) are not structurally stable on their own in vivo. They depend on complex formation to maintain their native fold and function. The complex is stable and exists predominantly in its bound form [9] [10]. Examples include the P22 Arc repressor dimer (a homodimer) and human cathepsin D (a heterodimer) [8].

- Non-Obligate Interactions: In these interactions, the protomers are independently stable and can exist in a functional, folded state without being complexed. They can form either transient or permanent complexes [9] [10]. The association between thrombin and the rhodniin inhibitor is an example of a non-obligate permanent heterodimer [8].

- Stable (Permanent) Interactions: These are strong, long-lasting complexes that remain intact over time. They are often, but not exclusively, obligate in nature. Stable interactions are crucial for structural integrity and core cellular machinery, such as the RNA polymerase complex [8] [11].

- Transient Interactions: These are weak, short-lived interactions that occur for brief periods before dissociating. They are typically non-obligate and are essential for dynamic processes like signaling cascades and biochemical pathways. An example is the transient interaction of Rsc8 with the NuA3 histone acetyltransferase in Saccharomyces cerevisiae [8].

Table 1: Core Definitions of Protein-Protein Interaction Types

| Interaction Type | Structural Stability of Protomers | Complex Lifetime | Functional Dependence | Example |

|---|---|---|---|---|

| Obligate | Unstable in isolation; require complex formation for stability [9] [8] | Permanent [8] | Function is dependent on permanent complex formation [8] | Arc repressor dimer (Homodimer) [8] |

| Non-Obligate | Independently stable [9] [8] | Transient or Permanent [9] [8] | Proteins function independently; interaction modulates activity [8] | Thrombin-rhodniin inhibitor complex [8] |

| Stable/Permanent | Varies (can be obligate or non-obligate) | Long-lasting, strong affinity [8] [11] | Essential for core structural or functional complexes [11] | RNA polymerase multi-subunit complex [11] |

| Transient | Independently stable (inherently non-obligate) [9] | Short-lived, weak affinity [8] | Regulatory roles; often triggered by specific stimuli [8] [11] | Rsc8 interaction with NuA3 [8] |

It is critical to note that "obligate" and "permanent" are sometimes used interchangeably in literature, as most obligate interactions are indeed permanent. Similarly, most non-obligate interactions are transient, though permanent non-obligate complexes also exist [11].
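The decision logic implied by these definitions can be written down as a small function. This is only a sketch of the two-axis scheme described above: the boolean inputs are a deliberate simplification (real stability and lifetime are continuous and measured experimentally), and the function name `classify_ppi` is hypothetical.

```python
def classify_ppi(protomers_stable_alone: bool, complex_is_long_lived: bool) -> str:
    """Map the two classification axes onto the interaction types in the text."""
    if not protomers_stable_alone:
        # Protomers that cannot fold or persist alone form obligate complexes,
        # which are permanent by nature.
        return "obligate (permanent)"
    if complex_is_long_lived:
        return "non-obligate permanent"
    return "non-obligate transient"

# Examples taken from the definitions above.
examples = {
    "Arc repressor dimer": classify_ppi(False, True),
    "Thrombin-rhodniin complex": classify_ppi(True, True),
    "Rsc8-NuA3 interaction": classify_ppi(True, False),
}
for name, label in examples.items():
    print(f"{name}: {label}")
```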

Structural and Biophysical Characteristics

The classification of PPIs is underpinned by distinct structural and biophysical properties of their interaction interfaces, which can be quantitatively measured.

- Interface Size and Composition: Obligatory interfaces tend to be larger and more hydrophobic, resembling the protein's core, while non-obligatory interfaces are smaller and more polar [12].

- Atomic Contacts and Secondary Structure: Research indicates that obligatory chains have a higher number of contacts per interface (20 ± 14) compared to non-obligatory chains (13 ± 6). The involvement of main chain atoms is also higher in obligatory chains (16.9%) compared to non-obligatory ones (11.2%). Furthermore, β-sheet formation across subunits is observed almost exclusively among obligatory protein complexes [12].

- Energetic "Hot Spots": A key concept in PPI interfaces is the "hot spot," defined as a residue whose substitution (typically to alanine) substantially weakens binding, changing the binding free energy by ΔΔG ≥ 1.5 to 2.0 kcal/mol. These hot spots are not uniformly distributed; symmetric PPIs (e.g., homodimers) have been found to exhibit significantly higher densities of hot spots per 100 Ų of buried surface area compared to non-symmetric interactions [8] [4].

Table 2: Quantitative Interface Properties Across PPI Types

| Property | Obligate/Obligatory Interfaces | Non-Obligate/Non-Obligatory Interfaces | Citation |

|---|---|---|---|

| Contacts per Interface | 20 ± 14 | 13 ± 6 | [12] |

| Main Chain Atom Involvement | 16.9% | 11.2% | [12] |

| β-sheet Formation Across Subunits | Observed | Rarely or not observed | [12] |

| Hydrophobicity | Higher, more core-like | Lower, more polar | [12] |

| Hot Spot Density (per 100 Ų BSA) | Higher in symmetric interfaces | Lower, especially in peptide interfaces | [8] |

The following diagram summarizes the logical relationship between protein stability, complex lifetime, and the resulting PPI classification.

Experimental Methodologies for PPI Classification

Accurately classifying a PPI requires a multi-faceted approach that combines biochemical, biophysical, and genetic techniques. The choice of method depends on the nature of the interaction, the required information (simple detection vs. kinetic parameters), and the sample context [8] [11].

Biochemical and Genetic Methods

These methods are foundational for detecting and confirming physical interactions.

- Co-immunoprecipitation (Co-IP): This technique uses an antibody specific to one protein ("bait") to immunoprecipitate it from a cell lysate. Any tightly bound interacting partners ("prey") are co-precipitated and can be identified via Western blot or mass spectrometry. It is excellent for confirming suspected interactions from native cellular environments but provides limited kinetic data [8] [11].

- Pull-Down Assays: Similar to Co-IP but performed in vitro. One protein is tagged (e.g., GST, His-tag) and immobilized on a bead. A solution containing potential binding partners is incubated with the beads. After washing, specifically bound proteins are eluted and analyzed. This is ideal for confirming direct binary interactions [8].

- Yeast Two-Hybrid (Y2H) Screening: A genetic method performed in yeast. A "bait" protein is fused to a DNA-binding domain, and a "prey" protein (or library) is fused to a transcription activation domain. If the bait and prey interact, they reconstitute a functional transcription factor that drives the expression of a reporter gene. Y2H is powerful for high-throughput screening of novel interaction partners [11].

- Crosslinking Techniques: Chemical crosslinkers are used to covalently bind proteins in close proximity. This stabilizes transient or weak interactions, allowing for their isolation and identification. Crosslinking is often coupled with mass spectrometry to identify interaction partners and provide insights into protein complex topology [8].

Biophysical and Label-Free Methods

These techniques provide detailed quantitative data on binding affinity, kinetics, and thermodynamics, which are crucial for distinguishing stable from transient interactions.

- Surface Plasmon Resonance (SPR): A label-free technique where one interactor is immobilized on a sensor chip. The other interactor (analyte) is flowed over the surface in solution. Binding causes a change in the refractive index at the sensor surface, measured in real-time. SPR directly provides association (kon) and dissociation (koff) rate constants, from which the equilibrium binding affinity (KD) is calculated. It is the gold standard for kinetic characterization [11].

- Biolayer Interferometry (BLI): Another label-free, real-time technology. A biosensor tip-bound protein is dipped into a solution containing its partner. Binding changes the interference pattern of reflected light, allowing measurement of kinetics and affinity. BLI's fluidics-free design simplifies experiments and allows for analysis of crude samples [11].

- Isothermal Titration Calorimetry (ITC): This method measures the heat released or absorbed during a binding event. By titrating one protein into another, ITC directly provides the stoichiometry (n), affinity (KD), and thermodynamic parameters (enthalpy ΔH, entropy ΔS) of the interaction, offering a complete thermodynamic profile [11].
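The quantities reported by these instruments are linked by a few standard relations: K_D = k_off / k_on, ΔG = RT ln K_D (1 M standard state), and ΔG = ΔH − TΔS. The sketch below applies them to made-up numbers. The rate constants and the enthalpy value are illustrative assumptions, not measurements from any cited study, and the function names are hypothetical.

```python
import math

R_KCAL = 1.987204e-3  # gas constant in kcal/(mol*K)

def kd_from_rates(k_on, k_off):
    """Equilibrium dissociation constant (M) from k_on (1/(M*s)) and k_off (1/s)."""
    return k_off / k_on

def binding_free_energy(kd, temperature_k=298.15):
    """Delta G of binding in kcal/mol; more negative means tighter binding."""
    return R_KCAL * temperature_k * math.log(kd)

def entropic_term(delta_g, delta_h):
    """Return -T*dS (kcal/mol) from dG = dH - T*dS."""
    return delta_g - delta_h

def complex_half_life(k_off):
    """Half-life of the complex in seconds, assuming first-order dissociation."""
    return math.log(2) / k_off

# Illustrative SPR-style rate constants (assumed values).
k_on, k_off = 1.0e5, 1.0e-3
kd = kd_from_rates(k_on, k_off)
delta_g = binding_free_energy(kd)
# Illustrative ITC-style enthalpy (assumed value).
delta_h = -8.0
minus_t_delta_s = entropic_term(delta_g, delta_h)
half_life = complex_half_life(k_off)

print(f"KD = {kd:.1e} M, dG = {delta_g:.2f} kcal/mol")
print(f"-TdS = {minus_t_delta_s:.2f} kcal/mol, half-life = {half_life:.0f} s")
```

The half-life is often the most direct handle on the stable versus transient distinction: two complexes with the same K_D can differ greatly in how long they persist if their rate constants differ.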

The following workflow diagram illustrates how these techniques can be integrated in a sequential experimental strategy for PPI discovery and characterization.

Computational Prediction and Network Analysis

Computational approaches are indispensable for predicting PPIs at scale and integrating them into functional networks, which is the basis for discovering new protein functions through network analysis.

Traditional Machine Learning and Association Rules

Early computational methods relied on manually engineered features derived from sequence, structure, and evolutionary information.

- Association Rule-Based Classification (ARBC): One study used the APRIORI algorithm on 14 interface properties (e.g., solvent-accessible surface area, hydrophobicity, secondary structure content) from domain-interaction sites (dom-faces) to generate interpretable rules for classifying four PPI types: Enzyme-inhibitor (ENZ), non-Enzyme-inhibitor (nonENZ), hetero-obligate (HET), and homo-obligate (HOM). This method's key advantage is the high interpretability of the discovered rules, which can provide biological insights [9] [10].

- Support Vector Machines (SVMs) and Random Forests (RFs): These are common algorithms used for PPI prediction and classification. They are trained on datasets of known interacting and non-interacting protein pairs, using features like amino acid composition, evolutionary conservation, and structural properties to build a predictive model [4].
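The "if-then" rules used in association rule-based classification are ranked by two standard quantities, support and confidence. The sketch below computes both for hand-written rules on a toy dataset. It covers only rule scoring, not the candidate-generation step of the APRIORI algorithm, and the discretized property labels (`large_area`, `polar`, and so on) and the six example interfaces are invented for illustration.

```python
def support(transactions, items):
    """Fraction of transactions that contain every item in `items`."""
    items = set(items)
    return sum(items <= transaction for transaction in transactions) / len(transactions)

def confidence(transactions, antecedent, consequent):
    """Estimated P(consequent | antecedent) over the transactions."""
    joint = support(transactions, set(antecedent) | set(consequent))
    return joint / support(transactions, antecedent)

# Each hypothetical interface is a set of discretized properties plus its class.
interfaces = [
    {"large_area", "hydrophobic", "HOM"},
    {"large_area", "hydrophobic", "HOM"},
    {"large_area", "polar", "HET"},
    {"small_area", "polar", "ENZ"},
    {"small_area", "polar", "ENZ"},
    {"small_area", "hydrophobic", "nonENZ"},
]

rule_support = support(interfaces, {"small_area", "polar"})
enz_confidence = confidence(interfaces, {"small_area", "polar"}, {"ENZ"})
hom_confidence = confidence(interfaces, {"large_area"}, {"HOM"})

print(f"support(small_area, polar) = {rule_support:.2f}")
print(f"confidence(small_area, polar -> ENZ) = {enz_confidence:.2f}")
print(f"confidence(large_area -> HOM) = {hom_confidence:.2f}")
```

Rules that clear chosen support and confidence thresholds remain human-readable, which is the interpretability advantage noted above.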

Advanced Deep Learning Architectures

Deep learning has revolutionized PPI prediction by automatically learning relevant features from complex data.

- Graph Neural Networks (GNNs): GNNs are exceptionally suited for modeling PPIs as they can represent proteins as nodes in a graph, with edges representing interactions or similarities. Variants like Graph Convolutional Networks (GCNs) and Graph Attention Networks (GATs) can aggregate information from a protein's neighbors in the network, capturing both local and global relational patterns [5].

- Multi-Modal and Transformer-Based Approaches: Modern frameworks integrate diverse data types (sequence, structure, gene expression) using architectures like Transformers, which leverage attention mechanisms to weigh the importance of different input features. Pre-trained protein language models (e.g., ESM, ProtBERT) extract semantic information from millions of protein sequences, providing powerful representations for downstream PPI prediction tasks [5].

Table 3: Computational Approaches for PPI Prediction and Classification

| Method Category | Key Examples | Principle | Advantages | Limitations |

|---|---|---|---|---|

| Association Rules | APRIORI Algorithm [9] | Discovers frequent "if-then" patterns in interface property data [9] | High interpretability of rules; biological insights [9] | Limited to predefined features; lower predictive power vs. DL |

| Traditional Machine Learning | Support Vector Machines (SVMs), Random Forests (RFs) [4] | Learns a classifier from manually engineered features [4] | Effective with good feature sets; less computationally intensive | Performance capped by quality of manual feature engineering |

| Graph Neural Networks (GNNs) | GCN, GAT, GraphSAGE [5] | Models PPI networks as graphs; learns from node/edge structure [5] | Captures topological network properties; powerful for network analysis | Requires substantial data; can be computationally complex |

| Deep Learning (Sequence/Structure) | Transformers, Protein Language Models (ESM) [5] | Uses attention and transfer learning on sequences/structures [5] | Automatic feature extraction; state-of-the-art accuracy | "Black-box" nature; low interpretability; high data demand |

Successful experimental analysis of PPIs relies on a suite of trusted reagents, tools, and databases.

Table 4: Research Reagent Solutions for PPI Analysis

| Tool / Reagent | Function / Application | Example / Source |

|---|---|---|

| Co-Immunoprecipitation Kits | Provides optimized buffers, beads (e.g., Protein A/G), and protocols for efficient IP/Co-IP. | ab206996 (Abcam) [8] |

| Label-Free Analysis Systems | Performs real-time, label-free kinetic and affinity analysis (BLI). | Octet Systems (Sartorius) [11] |

| Surface Plasmon Resonance Systems | Provides high-quality kinetic and affinity data (SPR). | Octet SF3 SPR [11] |

| Crosslinking Reagents | Chemically stabilizes protein complexes for isolation and MS analysis. | Various commercial suppliers (e.g., Thermo Fisher) [8] |

| PPI Databases | Provides reference data for known and predicted interactions. | STRING, BioGRID, DIP, IntAct [5] |

| Structural Databases | Source of 3D protein complex structures for interface analysis. | Protein Data Bank (PDB) [5] |

| Functional Annotation Databases | Provides Gene Ontology (GO) and pathway data for functional inference. | Gene Ontology, KEGG [5] |

Therapeutic Targeting of PPI Types

The classification of PPIs has direct implications for drug discovery, as different interaction types present unique challenges and opportunities for therapeutic modulation.

- Challenges and Strategies: PPI interfaces are often large, flat, and lack deep pockets, making them historically considered "undruggable." Strategies to overcome this include: 1) targeting key "hot spot" residues, 2) using fragment-based drug discovery (FBDD) to find small molecules that bind to discrete sub-pockets, and 3) designing peptidomimetics that replicate key secondary structures involved in the interaction [4].

- Allosteric Modulation (Type II PPI): Instead of targeting the interface directly, allosteric modulators bind to a distant site on the protein, inducing a conformational change that either disrupts or stabilizes the PPI. This approach can offer greater specificity. A prime example is Maraviroc, an HIV drug that allosterically binds to the CCR5 receptor, altering its conformation and preventing interaction with the viral gp120 protein [13].

- Stabilizers vs. Inhibitors: While most efforts focus on PPI inhibitors, there is growing interest in stabilizers that enhance beneficial interactions. However, stabilizer development is more challenging, as it requires a deep understanding of PPI thermodynamics and often involves allosteric mechanisms [4].

- Clinical Examples: Several FDA-approved drugs target PPIs. These include Venetoclax (Bcl-2 inhibitor for cancer), Sotorasib (KRAS inhibitor for cancer), and monoclonal antibodies that block the PD-1/PD-L1 immune checkpoint interaction in immunotherapy [4].

The precise classification of protein-protein interactions into obligate, non-obligate, stable, and transient types is a cornerstone of modern network analysis research. This classification, grounded in measurable structural and biophysical properties, enables researchers to infer protein function, map signaling pathways, and identify critical nodes within cellular networks. The integration of robust experimental methodologies—from Y2H and Co-IP to SPR and ITC—with powerful and interpretable computational models provides a comprehensive framework for the discovery and characterization of PPIs. As the field advances, the ability to therapeutically target specific PPI types continues to grow, moving previously "undruggable" targets into the realm of clinical possibility. For scientists engaged in deconvoluting complex biological systems, a deep understanding of PPI classification is not merely beneficial—it is essential for driving innovation in functional genomics and targeted drug development.

Protein-protein interaction (PPI) networks provide a powerful framework for understanding cellular physiology in both normal and disease states. As mathematical representations of the physical contacts between proteins in a cell, these networks are essential for deciphering the molecular etiology of disease and discovering putative therapeutic targets [14]. This technical review examines how PPI networks serve as biological blueprints, enabling researchers to move from analyzing local complexes to understanding global cellular regulation. By integrating network analysis with functional annotation and machine learning approaches, scientists can uncover novel protein functions and identify functional sites critical for cellular processes. The application of these approaches holds particular promise for elucidating pathogenic mechanisms in complex multi-genic diseases and developing effective diagnostic and therapeutic strategies [15].

Protein-protein interactions are physical contacts of high specificity established between two or more protein molecules as a result of biochemical events steered by electrostatic forces, hydrogen bonding, and hydrophobic effects [16]. These interactions can be transient, as seen in signal transduction processes, or stable, leading to the formation of permanent complexes that function as molecular machines [14] [16]. PPIs determine molecular and cellular mechanisms that control both healthy and diseased states in organisms, making their systematic study fundamental to understanding cellular function [15].

The totality of PPIs occurring in a cell or organism constitutes the interactome [14]. Current knowledge of the interactome remains both incomplete and noisy, with PPI detection methods producing false positives and negatives despite advances in high-throughput screening techniques [14]. Nevertheless, the development of large-scale PPI screening technologies has caused an explosion in available interaction data, enabling construction of increasingly complex and complete interactomes that serve as foundational resources for biological discovery [14].

Structural and Topological Organization of PPI Networks

Network Architecture Principles

PPI networks exhibit distinctive architectural properties that reflect their biological organization and evolutionary constraints. These networks have been shown to be scale-free, meaning their degree distribution follows a power-law rule where most nodes have few connections, while a small number of highly connected nodes, known as hubs, possess a disproportionate number of interactions [15]. This topological organization has profound implications for network robustness and function, as the removal of random nodes typically has minimal effect on network connectivity, whereas targeted hub removal can disrupt the entire network [15].
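The contrast between random and targeted removal can be illustrated on a very small hub-and-spoke network. The example is a toy sketch: the six-node graph is hypothetical, and a real robustness analysis would average over many random removals on a full interactome.

```python
def largest_component(adjacency, removed=frozenset()):
    """Size of the largest connected component after deleting `removed` nodes."""
    seen = set()
    best = 0
    for start in adjacency:
        if start in removed or start in seen:
            continue
        # Depth-first traversal of the component containing `start`.
        stack = [start]
        seen.add(start)
        size = 0
        while stack:
            node = stack.pop()
            size += 1
            for neighbour in adjacency[node]:
                if neighbour not in removed and neighbour not in seen:
                    seen.add(neighbour)
                    stack.append(neighbour)
        best = max(best, size)
    return best

# Hypothetical network: hub H touches every other protein; A and B also interact.
hub_network = {
    "H": {"A", "B", "C", "D", "E"},
    "A": {"H", "B"},
    "B": {"H", "A"},
    "C": {"H"},
    "D": {"H"},
    "E": {"H"},
}

intact = largest_component(hub_network)
without_peripheral = largest_component(hub_network, removed={"E"})
without_hub = largest_component(hub_network, removed={"H"})
print(intact, without_peripheral, without_hub)
```

Removing a peripheral protein leaves the rest of the network connected, whereas removing the hub fragments it into small pieces.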

The structure of PPI networks also demonstrates small-world properties, characterized by shorter-than-expected path lengths and high clustering coefficients [15]. This organization facilitates efficient information transfer and functional integration across the network while maintaining specialized local domains. Another crucial structural aspect is the presence of modules: subnetworks with high internal connectivity and relatively sparse connections to other modules [15]. These modules often correspond to functional units such as protein complexes or pathways.

Key Topological Parameters

Systematic analysis of PPI network topology relies on several quantitative parameters that characterize network structure and organization:

Table 1: Key Topological Parameters for PPI Network Analysis

| Parameter | Definition | Biological Interpretation |

|---|---|---|

| Degree (k) | Number of connections a node possesses | Proteins with high degree (hubs) may have essential cellular functions |

| Average Degree | Mean of all degree values in a network | Overall network connectivity |

| Clustering Coefficient | Measure of how connected a node's neighbors are to each other | Tendency of proteins to form functional modules or complexes |

| Shortest Path Length | Minimum number of edges required to connect two nodes | Efficiency of communication or influence between proteins |

| Betweenness Centrality | How often a node appears on shortest paths between other nodes | Proteins that connect different functional modules (bottlenecks) |

| Heterogeneity | Coefficient of variation of the degree distribution | Inequality of connection distribution among proteins |

These topological parameters provide critical insights into cellular evolution, molecular function, network stability, and dynamic responses to perturbation [15]. The quantitative analysis of these properties enables researchers to identify biologically significant nodes and modules within complex networks.
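To make the definitions in Table 1 concrete, the following minimal sketch computes several of them on a toy undirected network. The protein names and edge list are hypothetical, and heterogeneity is taken here as the population standard deviation of the degrees divided by their mean, which is one reasonable reading of "coefficient of variation". Betweenness centrality is omitted to keep the example short.

```python
from collections import deque
from statistics import mean, pstdev

# Hypothetical toy PPI network (undirected edges between made-up proteins).
EDGES = [("A", "B"), ("A", "C"), ("A", "D"), ("B", "C"), ("D", "E")]


def build_adjacency(edges):
    """Return {node: set of neighbours} for an undirected edge list."""
    adj = {}
    for u, v in edges:
        adj.setdefault(u, set()).add(v)
        adj.setdefault(v, set()).add(u)
    return adj


def degree(adj):
    """Degree k of every node."""
    return {node: len(nbrs) for node, nbrs in adj.items()}


def clustering_coefficient(adj, node):
    """Fraction of neighbour pairs of `node` that are themselves connected."""
    nbrs = list(adj[node])
    k = len(nbrs)
    if k < 2:
        return 0.0
    links = sum(
        1
        for i in range(k)
        for j in range(i + 1, k)
        if nbrs[j] in adj[nbrs[i]]
    )
    return 2.0 * links / (k * (k - 1))


def shortest_path_length(adj, source, target):
    """Breadth-first search; returns None if target is unreachable."""
    seen = {source}
    queue = deque([(source, 0)])
    while queue:
        node, dist = queue.popleft()
        if node == target:
            return dist
        for nxt in adj[node]:
            if nxt not in seen:
                seen.add(nxt)
                queue.append((nxt, dist + 1))
    return None


def heterogeneity(adj):
    """Coefficient of variation of the degree distribution (assumed definition)."""
    degs = list(degree(adj).values())
    return pstdev(degs) / mean(degs)


adj = build_adjacency(EDGES)
print("degrees:", degree(adj))
print("average degree:", mean(degree(adj).values()))
print("clustering of A:", clustering_coefficient(adj, "A"))
print("path length B to E:", shortest_path_length(adj, "B", "E"))
print("heterogeneity:", heterogeneity(adj))
```

For networks of realistic size, a dedicated graph library would normally replace these hand-written routines; the sketch is only meant to show what each parameter measures.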

Methodological Approaches for PPI Network Construction and Analysis

Experimental Methods for PPI Detection

Experimental technologies for identifying PPIs can be broadly categorized into biophysical methods and high-throughput approaches:

Biophysical Methods provide the most detailed information about protein interactions and include techniques such as X-ray crystallography, NMR spectroscopy, fluorescence, and atomic force microscopy [15]. These approaches not only identify interacting partners but also yield detailed information about biochemical features of the interactions, including binding mechanisms and allosteric changes [15]. While offering high-resolution structural data, these methods are typically expensive, labor-intensive, and limited to studying a few complexes at a time [15].

High-Throughput Methods enable systematic mapping of interactomes and include:

Yeast Two-Hybrid (Y2H) Systems: These examine binary protein interactions by fusing proteins to transcription factor domains and detecting interaction through reporter gene activation [15] [17]. Y2H is well suited to systematic, proteome-scale screens of pairwise interactions, although its coverage is limited by false positives from spurious reporter activation and by the requirement that the interaction occur in the nucleus.

Affinity Purification Coupled with Mass Spectrometry: This approach identifies proteins present in complexes under near-physiological conditions, making it suitable for detecting stable interactions [17].

Indirect Methods: These include gene co-expression analysis (based on the assumption that interacting proteins must be co-expressed) and synthetic lethality (where mutations in two separate genes are viable alone but lethal when combined) [15].

Computational Prediction and Analysis Methods

Computational approaches complement experimental methods by predicting interactions and extracting biological insights from network data:

PPI Prediction Algorithms utilize various genomic features and evolutionary information to identify potential interactions, significantly expanding genome coverage beyond experimentally determined interactions [17]. These methods are particularly valuable for organisms with limited experimental data.

Frequent Pattern Identification techniques like PPISpan adapt frequent subgraph identification methods specifically for PPI networks to identify recurring functional interaction patterns [17]. This approach maps functional annotations onto PPI networks to discover overrepresented patterns of interaction in the functional space, revealing higher-level functional templates that recur in different contexts within the network [17].

Machine Learning Approaches combine statistical models for protein sequences with biophysical models of stability to predict functional sites [18]. These methods integrate multiple data types, including evolutionary sequence information, predicted changes in thermodynamic stability, hydrophobicity, and weighted contact number to identify residues conserved due to functional rather than structural constraints [18].

Table 2: Comparison of Major PPI Detection Methods

| Method | Principle | Advantages | Limitations |

|---|---|---|---|

| Yeast Two-Hybrid | Protein interaction reconstitutes transcription factor | Tests binary interactions directly; High-throughput | False positives from spurious activation; Limited to nuclear proteins |

| Affinity Purification + MS | Purification of protein complexes under native conditions | Identifies physiological complexes; Works with post-translational modifications | Cannot distinguish direct from indirect interactions |

| Gene Co-expression | Correlated expression of genes encoding interacting proteins | Can leverage existing transcriptomic data; Context-specific networks | Indirect evidence; Correlation does not prove physical interaction |

| Computational Prediction | Genomic features, evolutionary conservation | High coverage; Cost-effective; Applicable to poorly studied organisms | Requires validation; Dependent on training data quality |

Analytical Framework for Discovering Novel Protein Functions

Functional Annotation and Pattern Discovery

Mapping known functional annotations onto PPI networks enables the identification of frequently occurring interaction patterns in functional space [17]. Using the Molecular Function hierarchy of Gene Ontology (GO) annotations, particularly the GO Slim subset that provides broad functional categories, researchers can project functional annotation space onto the physical interaction network [17]. This approach reveals recurring functional interaction patterns that represent abstract functional templates reused in different biological contexts.

The PPISpan algorithm, adapted from frequent subgraph identification methods, enables discovery of these functional patterns by searching for arbitrary topological motifs rather than being restricted to specific cluster types or linear pathways [17]. This flexibility is particularly important for capturing the diverse topological arrangements found in molecular complexes, which recent studies show favor a small number of topological arrangements in the space of all possible configurations [17].

Identification of Functionally Important Sites

Machine learning approaches represent a powerful strategy for identifying functionally important sites in proteins by combining evolutionary information with biophysical principles. These methods address the challenge of distinguishing residues conserved for functional roles from those conserved primarily for structural stability [18].

The methodology involves training gradient boosting classifiers on multiplexed experimental data on variant effects, incorporating features such as:

- Predicted changes in thermodynamic stability (ΔΔG)

- Evolutionary sequence information scores (ΔΔE)

- Hydrophobicity of amino acids

- Weighted contact number [18]

This approach successfully identifies stable but inactive (SBI) variants, that is, substitutions that affect function without perturbing structural stability. Such variants often mark residues with direct roles in function, such as catalytic sites, substrate interaction regions, and protein interfaces [18]. Across several proteins, approximately one in ten positions appears to be functionally relevant and conserved for reasons other than structural stability [18].

Integration of Dynamic Information

Static PPI networks provide only one dimension of the biochemical machinery controlling cellular behavior. Several research groups have integrated gene expression dynamics with protein interaction networks to understand how these networks change across different biological states [15]. Studies of the yeast cell cycle revealed a "just in time" model where dynamic protein complexes are activated by expressing key elements at specific periods, while most complex components remain co-expressed throughout the cycle [15].

This dynamic modular structure has also been observed in human protein interaction networks, suggesting it represents a fundamental organizational principle rather than a species-specific artifact [15]. The integration of temporal and contextual information with static interaction maps significantly enhances our ability to predict protein function and understand regulatory mechanisms.

Applications in Disease Research and Drug Development

Elucidating Disease Mechanisms

The structure and dynamics of PPI networks are frequently disturbed in complex diseases such as cancer, autoimmune disorders, and neurodegenerative conditions [15]. Network-based analyses facilitate understanding of pathogenic mechanisms that trigger disease onset and progression, which can subsequently be translated into effective diagnostic and therapeutic strategies [15].

Aberrant PPIs form the basis of multiple aggregation-related diseases, including Creutzfeldt-Jakob and Alzheimer's diseases [16]. Similarly, in Parkinson's disease and cancer, signal propagation inside cells depends on PPIs between various signaling molecules, and disruption of these interactions can lead to disease [16]. The application of PPI network analysis enables researchers to move beyond a univariate approach that studies individual gene expression to a systems-level understanding that can explicate the underlying mechanisms of complex diseases arising from the interplay of multiple genetic and environmental factors [15].

Network-Based Therapeutic Strategies

The comprehensive view of cellular systems provided by PPI networks supports the development of novel therapeutic paradigms. Rather than targeting individual molecules in isolation, PPI networks can themselves become the target of therapy for treating complex multi-genic diseases [15]. This approach is particularly valuable for identifying putative protein targets of therapeutic interest and understanding the molecular mechanisms by which disease-associated variants disrupt function [18] [16].

Prospective prediction and experimental validation of the functional consequences of missense variants, as demonstrated with HPRT1 variants causing Lesch-Nyhan syndrome, illustrate how computational models can pinpoint molecular disease mechanisms [18]. Such approaches provide powerful tools for personalized therapeutic development by identifying specific residues that directly contribute to protein function and pathogenicity.

Research Reagent Solutions

Table 3: Essential Research Reagents and Resources for PPI Network Studies

| Resource Category | Specific Tools/Databases | Primary Function | Application Context |

|---|---|---|---|

| Experimental Databases | DIP [17], IntAct [14] | Repository of experimentally determined protein interactions | Network construction; Validation of predictions |

| Predicted Interaction Databases | STRING [17], WI-PHI [17] | Source of confidence-weighted predicted interactions | Expanding network coverage; Integrating multiple evidence types |

| Functional Annotation Resources | Gene Ontology (GO) Slim [17] | Broad functional categories for protein annotation | Functional pattern discovery; Annotation of uncharacterized proteins |

| Computational Tools | PPISpan [17], gSpan [17] | Identification of frequent functional interaction patterns | Discovery of recurring network motifs; Functional template identification |

| Structure Analysis | Rosetta [18], GEMME [18] | Prediction of stability changes and evolutionary constraints | Identification of functional residues; SBI variant prediction |

| Visualization Platforms | Gephi [19] | Network visualization and exploration | Data interpretation; Presentation of network topology |

Protein-protein interaction networks serve as fundamental biological blueprints that enable researchers to bridge the gap between local molecular complexes and global cellular regulation. The continuing development of high-throughput experimental methods, sophisticated computational prediction algorithms, and advanced analytical frameworks is progressively transforming our understanding of cellular systems biology. As these technologies mature, the integration of multidimensional data—including structural information, dynamic expression patterns, and functional annotations—will further enhance the predictive power and biological relevance of PPI network analyses.

The application of these approaches to disease research, particularly for complex multi-genic disorders, holds exceptional promise for identifying novel therapeutic targets and understanding pathogenic mechanisms at a systems level. The emerging paradigm of targeting network properties rather than individual molecules represents a significant shift in therapeutic development that may ultimately yield more effective treatments for challenging diseases. Future advances will likely focus on enhancing network completeness and accuracy, improving dynamic modeling capabilities, and developing more sophisticated computational tools for extracting biological insights from increasingly complex interactome data.

The Central Dogma of molecular biology, as originally proposed by Francis Crick, established a fundamental principle: genetic information flows unidirectionally from DNA to RNA to protein [20]. This framework posited that DNA sequences encode RNA, which in turn codes for proteins, the primary functional actors within biological systems. While this foundational principle correctly identified the sequence-structure-function relationship, our contemporary understanding has expanded well beyond this initial one-way view to incorporate environmental influences and complex informational networks [20].

In the modern post-genomic era, we recognize that the primary sequence of a protein contains all essential information required to fold into a specific three-dimensional structure, which ultimately determines its cellular function [21]. This sequence-function relationship represents the functional manifestation of the Central Dogma at the protein level. However, the mechanistic path from sequence to function is far more complex than originally envisioned, involving evolutionary constraints, environmental signals, and intricate biomolecular networks.

The expansion of this paradigm is particularly relevant for drug discovery and biomedical research, where understanding protein function is pivotal for comprehending health, disease, and therapeutic development [21] [22]. With more than 200 million proteins remaining uncharacterized in databases like UniProt, and the vast majority (~80%) lacking functional annotations, computational approaches have become indispensable for bridging this sequence-function gap [21]. This whitepaper examines how network analysis and modern computational methods are revolutionizing our ability to discover new protein functions within this expanded Central Dogma framework.

The Computational Framework: Predicting Function from Sequence

The Fundamental Challenge

The core challenge in protein function prediction lies in deciphering the complex relationship between amino acid sequence and biological activity. Proteins perform nearly all essential biological activities by binding to other molecules, and understanding these interactions is crucial for comprehending molecular mechanisms underlying health and disease [21]. Traditional experimental methods for determining protein function, while highly accurate, are time-consuming and costly, and they cannot keep pace with the exponentially growing number of sequenced proteins [23].

The statistics underscore this challenge: even in well-studied model organisms like Saccharomyces cerevisiae, approximately 20% of genes have no functional annotations below the root of the Gene Ontology (GO) biological process hierarchy, and about 60% of annotated genes have only a single GO term annotation, suggesting that annotation is substantially incomplete [24]. This annotation sparsity becomes even more pronounced in higher eukaryotes including humans, creating an urgent need for robust computational methods that can generalize from limited known examples [24].

Network-Based Approaches

Network-based methods have emerged as powerful tools for protein function prediction by leveraging the "guilt-by-association" principle—the concept that proteins interacting with or resembling known functional proteins likely perform similar functions [25]. These approaches represent biological data as graphs where nodes correspond to proteins and edges represent various relationships including:

- Protein-protein interactions (PPIs) [25]

- Structural similarities [23]

- Evolutionary relationships [21]

- Genetic associations [22]

Table 1: Network-Based Protein Function Prediction Methods

| Method Type | Key Principle | Representative Algorithms | Applications |

|---|---|---|---|

| Neighborhood Counting | Assigns function based on frequencies among interacting partners | χ²-like scoring [25] | Initial functional annotation, homology extension |

| Graph Theoretic | Partitions network to maximize functional consistency | Minimum multiway cut, simulated annealing, network flow [25] | Protein complex identification, functional module discovery |

| Markov Random Fields | Probabilistic models where function depends on neighbors' functions | Gibbs sampling, quasi-likelihood methods [25] | Integrating heterogeneous data sources, confidence estimation |

| Deep Learning | Learns complex sequence-structure-function relationships | PhiGnet, DPFunc [21] [23] | Residue-level function prediction, novel function discovery |

The fundamental observation underpinning these methods is that proteins lying closer to one another in biological networks are more likely to share functional annotations [25]. This correlation between network proximity and functional similarity enables predictions even for previously uncharacterized proteins.
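The guilt-by-association idea can be illustrated with the simplest form of neighborhood counting: each candidate function is ranked by how many direct interaction partners carry it. This is a plain frequency vote rather than the χ²-like scoring cited in the table, and the protein names and annotations below are hypothetical.

```python
from collections import Counter

# Hypothetical toy data: direct interaction partners and known annotations.
INTERACTIONS = {
    "P1": ["P2", "P3", "P4"],
}
ANNOTATIONS = {
    "P2": {"kinase activity"},
    "P3": {"kinase activity", "ATP binding"},
    "P4": {"DNA binding"},
}


def predict_functions(protein, interactions, annotations, top_n=2):
    """Rank candidate functions by how many interaction partners carry them."""
    counts = Counter()
    for partner in interactions.get(protein, []):
        counts.update(annotations.get(partner, set()))
    # Sort by descending count, then alphabetically so ties are deterministic.
    ranked = sorted(counts.items(), key=lambda item: (-item[1], item[0]))
    return ranked[:top_n]


print(predict_functions("P1", INTERACTIONS, ANNOTATIONS))
```

A raw count favors functions that are common across the whole network, which is why the scoring schemes listed in the table normalize against background frequencies or model dependencies between neighbors.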

Advanced Methodologies: Statistics-Informed and Structure-Based Prediction

Statistics-Informed Graph Networks (PhiGnet)

PhiGnet represents a significant advancement in protein function prediction by leveraging evolutionary information directly from sequence data [21]. This method utilizes a dual-channel architecture with stacked graph convolutional networks (GCNs) to assimilate knowledge from evolutionary couplings (EVCs) and residue communities (RCs). The approach specializes in assigning functional annotations including Enzyme Commission (EC) numbers and Gene Ontology (GO) terms across biological process (BP), cellular component (CC), and molecular function (MF) categories [21].

The PhiGnet workflow processes protein sequences through several stages:

- Sequence Embedding: Derives initial protein representations using pre-trained ESM-1b model

- Graph Construction: Incorporates EVCs and RCs as graph edges

- Feature Processing: Utilizes six graph convolutional layers in dual stacked GCNs

- Function Assignment: Generates probability tensors for functional annotations

- Residue Scoring: Applies gradient-weighted class activation maps (Grad-CAMs) to quantify functional significance of individual residues [21]

A key innovation of PhiGnet is its ability to identify functional sites at residue level through activation scores, enabling quantitative assessment of each amino acid's contribution to specific functions. For example, in the mutual gliding-motility protein MglA, PhiGnet identified residues with high activation scores (≥0.5) that formed a pocket binding guanosine diphosphate (GDP), corresponding closely with experimentally verified functional sites [21].

Domain-Guided Structure Information (DPFunc)

DPFunc addresses limitations in existing structure-based methods by incorporating domain information to guide functional annotation [23]. This approach recognizes that proteins consist of specific domains that are closely related to both their structures and functions. Traditional structure-based methods often average all amino acid features into protein-level representations, potentially overlooking functionally critical domains [23].

The DPFunc architecture comprises three integrated modules:

- Residue-level feature learning based on pre-trained protein language models and graph neural networks

- Protein-level feature learning that transforms residue-level insights into comprehensive representations guided by domain information

- Protein function prediction that annotates functions through fully connected layers with post-processing for GO term consistency [23]

DPFunc employs InterProScan to detect domains in protein sequences, converting them into dense representations through embedding layers. An attention mechanism then interweaves protein-level domain features with residue-level features to assess the importance of different residues, enabling the model to detect key motifs or residues strongly correlated with specific functions [23].
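The text does not spell out how DPFunc's post-processing enforces GO term consistency. One common way to obtain hierarchy-consistent predictions, shown here purely as an assumed illustration, is to raise each ancestor's score to at least the highest score among its descendants, so that predicting a specific term always implies its more general parents. The term names and the hierarchy are hypothetical placeholders.

```python
def propagate_scores(scores, parents):
    """Return scores in which every ancestor scores at least as high as its descendants.

    scores:  {term: predicted probability}
    parents: {term: list of direct parent terms} (hypothetical hierarchy)
    """
    result = dict(scores)

    def lift(term, value):
        # Push `value` up to every ancestor whose score is currently lower.
        for parent in parents.get(term, ()):
            if result.get(parent, 0.0) < value:
                result[parent] = value
                lift(parent, value)

    for term, value in scores.items():
        lift(term, value)
    return result


# Hypothetical three-level hierarchy: specific term -> general term -> root.
parents = {"ATP binding": ["binding"], "binding": ["molecular_function"]}
raw = {"ATP binding": 0.9, "binding": 0.4, "molecular_function": 0.2}
print(propagate_scores(raw, parents))
```

In this toy case the general terms inherit the 0.9 score of the specific term, which removes the inconsistency of predicting "ATP binding" with more confidence than "binding".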

Table 2: Performance Comparison of Protein Function Prediction Methods (Fmax Scores)

| Method | Molecular Function (MF) | Cellular Component (CC) | Biological Process (BP) |

|---|---|---|---|

| Naive | 0.380 | 0.420 | 0.320 |

| Blast | 0.450 | 0.510 | 0.410 |

| DeepGO | 0.520 | 0.580 | 0.490 |

| DeepFRI | 0.570 | 0.620 | 0.540 |

| GAT-GO | 0.590 | 0.640 | 0.560 |

| DPFunc (without post-processing) | 0.637 | 0.672 | 0.605 |

| DPFunc (with post-processing) | 0.685 | 0.815 | 0.690 |

Performance metrics demonstrate DPFunc's significant advantages over existing state-of-the-art methods, with particularly notable improvements in cellular component and biological process prediction after implementing post-processing procedures [23].
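For readers unfamiliar with the Fmax metric used in the table above, the sketch below follows the protein-centric definition commonly used in CAFA-style evaluations: at each score threshold, precision is averaged over proteins with at least one prediction, recall is averaged over all proteins, and Fmax is the best resulting F-score. The proteins, terms, and scores are hypothetical, and the threshold grid of 0.01 steps is an assumption.

```python
def fmax(predictions, truth, thresholds=None):
    """Maximum protein-centric F-score over score thresholds.

    predictions: {protein: {term: score}}
    truth:       {protein: non-empty set of true terms}
    """
    if thresholds is None:
        thresholds = [i / 100 for i in range(1, 101)]
    best = 0.0
    for t in thresholds:
        precisions, recalls = [], []
        for protein, true_terms in truth.items():
            predicted = {
                term
                for term, score in predictions.get(protein, {}).items()
                if score >= t
            }
            hits = len(predicted & true_terms)
            if predicted:
                # Precision only counts proteins with at least one prediction.
                precisions.append(hits / len(predicted))
            recalls.append(hits / len(true_terms))
        if not precisions:
            continue
        pr = sum(precisions) / len(precisions)
        rc = sum(recalls) / len(recalls)
        if pr + rc > 0:
            best = max(best, 2 * pr * rc / (pr + rc))
    return best


# Hypothetical example with two proteins.
truth = {"P1": {"a", "b"}, "P2": {"c"}}
predictions = {
    "P1": {"a": 0.9, "b": 0.4, "x": 0.3},
    "P2": {"c": 0.8, "y": 0.6},
}
print(round(fmax(predictions, truth), 3))
```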

Experimental Protocols and Validation

Benchmark Validation Strategies

Rigorous validation of computational function predictions remains challenging due to incomplete gold standards in biological databases [24]. To address this, researchers have developed experimental benchmarks through comprehensive validation of predictions for specific biological processes. For example, one benchmark focused on mitochondrion organization and biogenesis (MOB) in S. cerevisiae, validating 241 unique genes through laboratory experiments [24].

The experimental validation pipeline typically involves:

- Computational Prediction Generation: Multiple methods generate ranked lists of genes assigned to specific functional terms

- Initial Evaluation: Assessment against existing database annotations (e.g., Gene Ontology)

- Experimental Validation: Medium-throughput laboratory assays (e.g., petite frequency assays for mitochondrial function)

- Gold Standard Augmentation: Incorporation of verified functions into reference datasets

- Method Re-evaluation: Performance assessment using expanded gold standards [24]

This approach revealed that computational methods actually perform significantly better than estimated using incomplete database annotations—with an average of 68% higher precision at 10% recall than initially measured [24]. However, comparative evaluation between methods remains challenging even with the same training data, as incomplete knowledge causes individual methods' performances to be differentially underestimated [24].

Residue-Level Functional Site Identification

Advanced methods like PhiGnet enable quantitative examination of individual amino acid contributions to protein function through activation scores [21]. Validation experiments typically involve:

Protocol: Residue-Level Function Validation

- Activation Score Calculation: Compute per-residue activation scores for proteins with known functional sites

- Threshold Application: Identify residues with scores ≥0.5 as predicted functional residues

- Comparative Analysis: Compare predictions against experimentally determined or semi-manually curated binding sites (e.g., BioLip database)

- Structure Mapping: Map high-scoring residues onto three-dimensional protein structures

- Conservation Analysis: Assess evolutionary conservation of predicted functional residues [21]

This protocol has demonstrated promising accuracy (≥75%) in predicting significant sites at residue level across diverse proteins including cPLA2α, Tyrosine-protein kinase BTK, Ribokinase, and others with varying sizes, folds, and functions [21].
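The thresholding and comparison steps of this protocol can be sketched as follows. The activation scores and the set of known binding-site positions are hypothetical, and precision and recall are used here as stand-ins because the text does not define how the reported accuracy was computed.

```python
def predicted_sites(activation_scores, threshold=0.5):
    """Return 1-based residue positions whose activation score meets the threshold."""
    return {
        position
        for position, score in enumerate(activation_scores, start=1)
        if score >= threshold
    }


def site_agreement(predicted, known):
    """Precision and recall of predicted positions against known functional sites."""
    true_positives = len(predicted & known)
    precision = true_positives / len(predicted) if predicted else 0.0
    recall = true_positives / len(known) if known else 0.0
    return precision, recall


# Hypothetical per-residue scores for a six-residue fragment.
scores = [0.1, 0.7, 0.5, 0.2, 0.9, 0.3]
known_sites = {2, 5, 6}  # hypothetical curated binding-site positions

sites = predicted_sites(scores)
print(sorted(sites), site_agreement(sites, known_sites))
```

Residue numbering is assumed to start at 1 and to match the numbering of the reference annotation; in practice the two often need to be aligned first.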

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Protein Function Prediction Research

| Resource Category | Specific Tools/Databases | Primary Function | Application Context |

|---|---|---|---|

| Protein Databases | UniProt, PDB, BioLip | Provide sequence, structure, and functional annotation data | Reference data for training, validation, and comparative analysis |

| Function Ontologies | Gene Ontology (GO), Enzyme Commission (EC) | Standardized vocabulary for functional annotation | Consistent evaluation and cross-study comparison |

| Domain Detection | InterProScan | Identifies functional domains in protein sequences | Domain-guided prediction (e.g., DPFunc) |

| Structure Prediction | AlphaFold2, ESMFold | Generates protein 3D structures from sequences | Structure-based function prediction |

| Language Models | ESM-1b | Creates residue-level feature representations | Sequence embedding for deep learning approaches |

| Interaction Networks | STRING, BioGRID | Protein-protein interaction data | Network-based function inference |

| Evaluation Frameworks | CAFA Challenge | Standardized assessment protocols | Method performance benchmarking |

Applications in Drug Discovery and Biomedical Research

The integration of protein function prediction with network analysis has profound implications for drug discovery and development. Network-based approaches can model complex relationships between drugs, targets, diseases, and side effects, significantly accelerating the identification of new therapeutic applications [22].

Drug-Target Interaction Prediction: Network link prediction methods can identify potential interactions between drugs and target proteins, facilitating drug repurposing and novel therapeutic development [22]. These approaches convert the drug discovery problem into a missing link prediction challenge within heterogeneous networks containing drugs, proteins, diseases, and genes [22].
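As a rough illustration of framing drug-target prediction as missing-link prediction, the sketch below scores a candidate drug-target pair by summing the similarity between the query drug and every other drug already known to bind that target. This neighborhood heuristic with Jaccard similarity is an assumed stand-in, not the method of any cited work, and all drug and target names are hypothetical.

```python
def jaccard(a, b):
    """Jaccard similarity of two sets (0.0 when both are empty)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def score_candidate(drug, target, drug_targets):
    """Score a missing drug-target link from drugs that share targets with `drug`."""
    own_targets = drug_targets[drug]
    return sum(
        jaccard(own_targets, targets)
        for other, targets in drug_targets.items()
        if other != drug and target in targets
    )


# Hypothetical known drug-target links.
drug_targets = {
    "drugA": {"T1", "T2"},
    "drugB": {"T1", "T2", "T3"},
    "drugC": {"T4"},
}

for candidate in ("T3", "T4"):
    print(candidate, round(score_candidate("drugA", candidate, drug_targets), 3))
```

Here T3 scores higher than T4 for drugA because drugB, which shares two targets with drugA, already binds T3.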

Side Effect Prediction: By analyzing drug-drug interaction networks, computational methods can predict adverse side effects of drug combinations, addressing a critical challenge in polypharmacy [22]. Traditional experimental testing of all possible drug combinations is infeasible—for n drugs, there are n×(n-1)/2 pairwise combinations—making computational approaches essential [22].
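The scale of the pairwise testing problem follows directly from the formula above. The drug counts in this short example are arbitrary illustrations.

```python
from math import comb


def pairwise_combinations(n_drugs):
    """Number of unordered drug pairs: n * (n - 1) / 2."""
    return n_drugs * (n_drugs - 1) // 2


for n in (10, 100, 1000):
    # math.comb(n, 2) gives the same count and serves as a cross-check.
    print(n, pairwise_combinations(n), comb(n, 2))
```

Going from 100 to 1,000 drugs raises the number of pairs from 4,950 to 499,500, roughly a hundredfold increase for a tenfold increase in drugs.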

Mechanistic Insights: Residue-level function prediction provides atomic-level insights into protein mechanisms, enabling more targeted drug design and understanding of disease mutations [21]. For example, identifying specific residues involved in binding pockets guides structure-based drug development and optimization.

The application of these methods has demonstrated practical success, with approximately 30% of drugs introduced in 2013 representing repurposed existing medications [22]. This highlights the real-world impact of computational function prediction in pharmaceutical development.

The field of protein function prediction continues to evolve rapidly, with several promising research directions emerging. Integration of multi-omics data—including genomics, transcriptomics, and proteomics—provides additional layers of functional context [22]. Explainable artificial intelligence approaches are increasing the interpretability of predictions, enabling researchers to understand the rationale behind functional assignments [21] [23]. Meanwhile, transfer learning techniques allow models trained on well-characterized model organisms to be adapted for less-studied species, addressing annotation sparsity in non-model organisms [23].

The Central Dogma's sequence-structure-function relationship remains a foundational principle in molecular biology, but our understanding of this relationship has grown considerably more sophisticated. Modern computational methods now leverage evolutionary information, structural features, domain architecture, and biological network context to predict protein function with increasing accuracy and resolution down to individual residues.

These advances are particularly valuable for drug discovery and biomedical research, where understanding protein function is essential for deciphering disease mechanisms and developing new therapeutics [21] [22]. As these computational methods continue to improve, they will play an increasingly central role in bridging the gap between the exponentially growing number of protein sequences and their biological functions, ultimately enhancing our ability to discover new protein functions and their applications in human health and disease.

Protein-protein interaction (PPI) data serve as the foundational framework for discovering novel protein functions through network analysis. By mapping the intricate relationship networks within cells, researchers can infer unknown protein functions based on interaction patterns with well-characterized partners. This whitepaper provides an in-depth technical examination of four pivotal resources (the STRING, BioGRID, and DIP databases and the MINT protein language model) that enable systematic PPI network analysis for research and therapeutic development. We present comprehensive quantitative comparisons, experimental protocols for utilizing these resources, visualization of analytical workflows, and essential research reagent solutions to equip scientists with practical tools for functional proteomics discovery.

Resource Descriptions and Specializations

STRING is a comprehensive database that compiles, scores, and integrates both physical and functional protein associations from experimental assays, computational predictions, and prior knowledge sources. The latest version, STRING 12.5, introduces regulatory networks with directionality of interactions using curated pathway databases and a fine-tuned language model for literature parsing [7]. It provides three distinct network types—functional, physical, and regulatory—to address diverse research needs [7].

BioGRID is a curated biological database of protein, genetic, and chemical interactions. Its core data encompasses interactions, chemical associations, and post-translational modifications (PTMs) from over 87,000 publications [26]. BioGRID also maintains the Open Repository of CRISPR Screens (ORCS), a curated database of CRISPR screens compiled from biomedical literature [26].

DIP (Database of Interacting Proteins) catalogs experimentally determined interactions between proteins, combining information from various sources to create a consistent set of protein-protein interactions [27]. The data within DIP are curated both manually by expert curators and automatically using computational approaches [27].

MINT (Multimeric INteraction Transformer) takes a different approach: it is a Protein Language Model (PLM) specifically designed for contextual and scalable modeling of interacting protein sequences [28] [29]. Unlike the databases above, MINT is not an interaction repository but a model trained on a large, curated set of 96 million protein-protein interactions from STRING [28].

Quantitative Database Comparison

Table 1: Key quantitative metrics for PPI databases

| Database | Interaction Count | Coverage | Data Types | Key Features |

|---|---|---|---|---|

| STRING | >20 billion interactions [30] | 59.3 million proteins across 12,535 organisms [30] | Functional, physical, and regulatory associations [7] | Directionality of regulation; network embeddings; pathway enrichment [7] |

| BioGRID | 2.25 million non-redundant interactions from 87,393 publications [26] | Multiple organisms with themed projects (COVID-19, Alzheimer's, etc.) [26] | Protein-protein, genetic interactions; chemical associations; PTMs [26] | CRISPR screen curation (ORCS); expert manual curation; monthly updates [26] |

| DIP | Not reported in the cited source | Not reported in the cited source | Experimentally verified binary interactions [27] | Combined manual and computational curation; focused on core reliable data [27] |

| MINT | Trained on 96 million PPIs from STRING [28] | 16.4 million unique protein sequences [29] | Machine learning-generated interaction predictions | Cross-chain attention mechanism; state-of-the-art performance across PPI tasks [29] |

Methodologies for Network Analysis in Functional Discovery

Experimental Protocol: Multi-Database PPI Network Construction

Objective: To construct a comprehensive PPI network for identifying novel protein functions through guilt-by-association principles.

Workflow Steps:

Gene/Protein List Compilation: Assemble target proteins using standardized identifiers (UniProt, Ensembl) to ensure cross-database compatibility.

Multi-Source Data Retrieval:

- Query STRING via API using the "multiple proteins" endpoint with a minimum required interaction score of 0.7 (high confidence) [30].

- Extract experimentally validated interactions from BioGRID using download packages or direct SQL queries, applying filters for evidence type (e.g., biochemical, genetic) [26].

- Retrieve core interaction data from DIP to supplement with high-quality binary interactions [27].
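The STRING retrieval step can be sketched in Python as follows. This is a minimal illustration rather than a complete client: it only assembles the request for the STRING "network" method and leaves the HTTP call to the reader. The gene symbols, the human taxonomy ID, and the caller_identity string are example values chosen for illustration, not part of the protocol. Note that the API expects the score threshold on a 0 to 1000 scale, so the 0.7 cutoff becomes 700.

```python
from urllib.parse import urlencode

STRING_API_BASE = "https://string-db.org/api"


def build_string_network_request(identifiers, species=9606, min_score=0.7,
                                 caller_identity="example_pipeline"):
    """Return (url, params) for a STRING 'network' request over several proteins."""
    if not 0.0 <= min_score <= 1.0:
        raise ValueError("min_score must be between 0 and 1")
    params = {
        # STRING expects multiple identifiers separated by carriage returns.
        "identifiers": "\r".join(identifiers),
        "species": species,  # NCBI taxonomy ID; 9606 is human
        # The API takes the threshold on a 0-1000 scale, so 0.7 becomes 700.
        "required_score": int(round(min_score * 1000)),
        # Example value only; STRING asks callers to identify themselves.
        "caller_identity": caller_identity,
    }
    url = f"{STRING_API_BASE}/tsv/network"
    return url, params


# Example proteins chosen purely for illustration.
url, params = build_string_network_request(["TP53", "MDM2", "CDKN1A"])
print(url + "?" + urlencode(params))
```

Keeping request construction separate from the network call makes the confidence threshold easy to audit before any data are downloaded.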

Data Integration and Network Merging:

- Consolidate interactions from all sources using protein identifier mapping services.

- Resolve conflicting evidence through confidence scoring systems weighted by evidence type.

- Apply statistical frameworks to identify significantly enriched interaction modules using hypergeometric testing.
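The integration steps above can be illustrated with a short Python sketch. It makes two assumptions that are not specified in the protocol: the per-evidence weights are invented for the example, and independent lines of evidence are combined as 1 - prod(1 - w), which is one common choice among several. The second function computes the upper-tail hypergeometric probability typically used to ask whether a module contains more annotated proteins than expected by chance.

```python
from math import comb

# Illustrative weights per evidence type; these values are assumptions.
EVIDENCE_WEIGHTS = {"biochemical": 0.9, "genetic": 0.6, "predicted": 0.4}


def merge_interactions(records):
    """Merge (protein_a, protein_b, evidence_type) records into scored edges.

    Independent lines of evidence are combined as 1 - prod(1 - w), so each
    additional source raises the confidence without exceeding 1.
    """
    not_supported = {}
    for a, b, evidence in records:
        edge = tuple(sorted((a, b)))  # undirected: (A, B) equals (B, A)
        weight = EVIDENCE_WEIGHTS.get(evidence, 0.0)
        not_supported[edge] = not_supported.get(edge, 1.0) * (1.0 - weight)
    return {edge: 1.0 - q for edge, q in not_supported.items()}


def hypergeom_enrichment_p(population, annotated, module_size, hits):
    """P(X >= hits) when drawing module_size proteins without replacement."""
    upper = min(annotated, module_size)
    total = comb(population, module_size)
    tail = sum(
        comb(annotated, k) * comb(population - annotated, module_size - k)
        for k in range(hits, upper + 1)
    )
    return tail / total


# Hypothetical records: the same pair reported by two sources, plus one more edge.
records = [
    ("P1", "P2", "biochemical"),
    ("P2", "P1", "genetic"),
    ("P2", "P3", "predicted"),
]
merged = merge_interactions(records)
print(merged)

# 10 proteins in total, 4 carry the annotation, module of 3 contains 2 of them.
p_value = hypergeom_enrichment_p(population=10, annotated=4, module_size=3, hits=2)
print(p_value)
```

Sorting each pair before merging ensures that the same interaction reported in opposite orientations by two databases is counted once with combined support.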

Functional Annotation Transfer:

- Implement cross-species functional transfer by leveraging orthologous relationships for poorly characterized proteins.

- Apply Gene Ontology enrichment analysis to interaction modules using STRING's pathway enrichment functionality with improved false discovery rate corrections [7].

- Integrate regulatory directionality information from STRING's new regulatory networks to hypothesize signaling hierarchies [7].
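As a rough illustration of annotation transfer and multiple-testing correction, the Python sketch below implements a simple neighbor-vote form of guilt by association and the Benjamini-Hochberg procedure. Both are generic textbook versions under stated assumptions: the min_support threshold, the protein names, and the annotation labels are hypothetical, and the correction shown is not necessarily the one STRING applies internally.

```python
from collections import Counter


def transfer_annotations(protein, edges, annotations, min_support=2):
    """Suggest terms for `protein` that at least `min_support` neighbors share."""
    neighbors = set()
    for a, b in edges:
        if a == protein:
            neighbors.add(b)
        elif b == protein:
            neighbors.add(a)
    votes = Counter(
        term for n in neighbors for term in set(annotations.get(n, ()))
    )
    return sorted(term for term, count in votes.items() if count >= min_support)


def benjamini_hochberg(p_values):
    """Return BH-adjusted p-values in the original order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down, enforcing monotonicity.
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, p_values[i] * m / rank)
        adjusted[i] = running_min
    return adjusted


# Hypothetical network: X is uncharacterized, A, B and C are annotated.
edges = [("X", "A"), ("X", "B"), ("C", "X"), ("A", "B")]
annotations = {
    "A": {"kinase activity", "DNA repair"},
    "B": {"kinase activity"},
    "C": {"DNA repair", "transport"},
}
suggested = transfer_annotations("X", edges, annotations)
print(suggested)

adjusted = benjamini_hochberg([0.01, 0.04, 0.03, 0.20])
print(adjusted)
```

Requiring support from at least two neighbors is a crude guard against propagating an annotation through a single spurious edge; real pipelines often weight votes by edge confidence instead.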

Protocol for MINT-Based Interaction Prediction and Functional Inference

Objective: To employ deep learning methodologies for predicting novel interactions and mutational effects on protein function.

Workflow Steps:

Environment Setup:

- Create a Conda environment from the provided environment.yml file: conda env create --name mint --file=environment.yml

- Activate the environment: conda activate mint

- Install the package from source: pip install -e . [28]

Embedding Generation:

- Prepare input data as a CSV file with separate columns for interacting protein sequences ("ProteinSequence1", "ProteinSequence2").

- Implement the MINTWrapper class with appropriate configuration and checkpoint paths.

- Generate embeddings using the sep_chains=True argument for maximum performance on downstream tasks, producing concatenated embeddings of shape (2, 2560) for protein pairs [28].
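The input-preparation step can be sketched with the Python standard library. The example below only writes the paired-sequence CSV with the two column names given above and checks that sequences use the 20 standard amino acid letters; the call into MINTWrapper itself is deliberately omitted, because its exact arguments should be taken from the MINT repository rather than assumed here. The sequences are arbitrary toy strings, not real proteins.

```python
import csv
import io

VALID_RESIDUES = set("ACDEFGHIKLMNPQRSTVWY")


def pairs_to_csv(pairs):
    """Serialize (seq1, seq2) pairs into CSV text with the expected headers."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(["ProteinSequence1", "ProteinSequence2"])
    for seq1, seq2 in pairs:
        for seq in (seq1, seq2):
            unknown = set(seq.upper()) - VALID_RESIDUES
            if unknown:
                raise ValueError(f"unexpected residues: {sorted(unknown)}")
        writer.writerow([seq1.upper(), seq2.upper()])
    return buffer.getvalue()


# Toy sequences for illustration only.
csv_text = pairs_to_csv([("MKTAYIAK", "GSHMLEDP"), ("mqifvk", "TLTGK")])
print(csv_text)
```

Validating residues before embedding avoids silently passing gap characters or lowercase-masked regions to the tokenizer.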

Interaction Prediction:

Functional Impact Assessment:

- Utilize MINT's mutational effect estimation capabilities by introducing point mutations into input sequences.

- Quantify binding affinity changes using the SKEMPI dataset benchmarking framework, where MINT has demonstrated a 29% improvement in predicting binding affinity changes upon mutation [29].

- Identify potential functional disruptions through significant changes in interaction probabilities.
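The mutation-scanning idea above can be sketched as follows. The helper applies a substitution written in the common "wild type, position, mutant" notation (for example K2A) and reports how a scoring function changes between the wild-type and mutant pair. The score_fn argument is a placeholder for whatever interaction predictor is in use; the toy_score function here is purely hypothetical and exists only so the example runs.

```python
import re

MUTATION_PATTERN = re.compile(r"^([A-Z])(\d+)([A-Z])$")


def apply_point_mutation(sequence, mutation):
    """Apply a substitution such as 'K2A' (wild type, 1-based position, mutant)."""
    match = MUTATION_PATTERN.match(mutation)
    if match is None:
        raise ValueError(f"cannot parse mutation: {mutation!r}")
    wild_type, mutant = match.group(1), match.group(3)
    position = int(match.group(2))
    index = position - 1
    if not 0 <= index < len(sequence):
        raise ValueError("position outside the sequence")
    if sequence[index] != wild_type:
        raise ValueError(
            f"expected {wild_type} at position {position}, found {sequence[index]}"
        )
    return sequence[:index] + mutant + sequence[index + 1:]


def interaction_shift(score_fn, seq_a, seq_b, mutation):
    """Return score(mutant pair) - score(wild-type pair) for a mutation in seq_a."""
    mutated = apply_point_mutation(seq_a, mutation)
    return score_fn(mutated, seq_b) - score_fn(seq_a, seq_b)


def toy_score(seq_a, seq_b):
    """Hypothetical stand-in for a model: fraction of lysines in the pair."""
    return (seq_a.count("K") + seq_b.count("K")) / (len(seq_a) + len(seq_b))


mutant = apply_point_mutation("MKTAYIAK", "K2A")
shift = interaction_shift(toy_score, "MKTAYIAK", "GSHK", "K2A")
print(mutant, shift)
```

Checking the wild-type residue before substituting catches off-by-one errors between sequence numbering schemes, a frequent source of mislabeled mutations.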

Visualization of PPI Network Analysis Workflow

[Figure: PPI Network Analysis Pathway]

[Figure: Cross-Species Functional Transfer Logic]

Research Reagent Solutions for PPI Studies

Table 2: Essential research reagents and computational tools for PPI network analysis

| Resource/Tool | Type | Function in PPI Research |
|---|---|---|
| STRING API | Computational Tool | Programmatic access to protein association networks for integration into analytical pipelines [30] |
| BioGRID ORCS | Data Resource | Curated CRISPR screening data for functional validation of PPIs through genetic perturbation [26] |
| MINT Model Checkpoint | Computational Tool | Pre-trained weights for the Multimeric INteraction Transformer model for interaction prediction [28] |
| ESM-2 Base Model | Computational Tool | Foundational protein language model serving as the architectural basis for MINT [29] |
| Gene Ontology Resources | Annotation Database | Standardized functional terminology for enrichment analysis of PPI networks [5] |
| PDB-Bind Database | Experimental Data | Binding affinity data for protein-protein complexes used in benchmarking predictive models [29] |
| SKEMPI Database | Experimental Data | Mutational effects on binding affinity for training and validating mutational impact predictors [29] |

Discussion and Future Perspectives

Integrating curated databases with machine learning approaches marks a substantial shift in how protein function is discovered through PPI network analysis. Established resources such as STRING, BioGRID, and DIP provide broad, evidence-backed interaction maps, while newer models such as MINT are trained on these data to predict interactions for which no direct experimental evidence yet exists.

The directional regulatory information newly incorporated in STRING 12.5 enables more accurate hypothesis generation regarding signaling pathways and hierarchical relationships [7]. Meanwhile, MINT's reported performance in predicting mutational effects on oncogenic PPIs, matching 23 of 24 experimentally validated effects, illustrates the potential for computational methods to accelerate functional characterization [29].

Future developments in this field will likely focus on the integration of multi-omics data layers with PPI networks, enhanced directionality predictions, and single-cell resolution interaction mapping. As deep learning approaches continue to evolve, their synergy with curated biological databases will progressively transform our ability to discover novel protein functions and their roles in disease mechanisms, ultimately advancing drug discovery and therapeutic development.

From Data to Discovery: Computational Tools and AI Methods for Functional Prediction

The analysis of protein-protein interactions (PPIs) is fundamental to understanding cellular functions, biological processes, and the molecular mechanisms underlying diseases. PPIs regulate everything from signal transduction and cell cycle progression to transcriptional regulation and cytoskeletal dynamics [5]. Traditionally, PPI detection relied on experimental methods such as yeast two-hybrid screening and co-immunoprecipitation, which, while effective, are often time-consuming, resource-intensive, and difficult to scale [5]. Deep learning has changed this picture by enabling computational models that predict interactions accurately and at a scale experimental screening cannot easily reach. These models are now central to discovering new protein functions and advancing drug discovery, particularly for hard-to-treat diseases [31]. This section provides an in-depth technical guide to the three core deep learning architectures driving innovation in PPI analysis, namely Graph Neural Networks (GNNs), Convolutional Neural Networks (CNNs), and Transformers, within the broader context of network-based protein function discovery.

Core Architectural Frameworks

Graph Neural Networks (GNNs) for PPI

GNNs have emerged as a powerful architecture for PPI prediction because they naturally represent proteins as graph structures, where nodes correspond to amino acid residues and edges represent spatial or functional relationships [5] [32]. This representation allows GNNs to capture both local patterns and global topological information within protein structures [5].

Key Variants and Applications:

- Graph Convolutional Networks (GCNs): Apply convolutional operations to aggregate information from a node's neighbors, making them effective for node classification and graph embedding tasks. A limitation is their uniform treatment of neighboring nodes, which may not capture heterogeneous relationships in complex graphs [5].

- Graph Attention Networks (GAT): Incorporate an attention mechanism that adaptively weights the importance of neighboring nodes, enhancing flexibility in modeling diverse interaction patterns [5] [33]. The DSSGNN-PPI model, for instance, uses a GAT module combined with a gate augmentation mechanism to process PPI networks, extracting complex topological patterns for multi-type interaction prediction [33].

- GraphSAGE: Designed for large-scale graph processing, it uses neighbor sampling and feature aggregation to reduce computational complexity, making it suitable for massive PPI networks [5].

- Graph Autoencoders (GAE): Employ an encoder-decoder framework to learn compact, low-dimensional node embeddings, which can be used for graph reconstruction or predictive tasks [5]. The Deep Graph Auto-Encoder (DGAE) innovatively combines canonical auto-encoders with graph auto-encoding for hierarchical representation learning [5].

Advanced frameworks like RGCNPPIS integrate GCN and GraphSAGE to simultaneously extract macro-scale topological patterns and micro-scale structural motifs [5]. Furthermore, AG-GATCN integrates GAT with Temporal Convolutional Networks (TCNs) to provide robust solutions against noise interference in PPI analysis [5].
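The contrast between uniform (GCN-style) and attention-weighted (GAT-style) neighbor aggregation can be made concrete with a small Python sketch. It is deliberately simplified: there are no learned weight matrices, the mean replaces the symmetric degree normalization used in standard GCNs, and a plain dot product with the central node stands in for the learned attention score of a real GAT. The node names and feature vectors are invented for the example.

```python
import math


def mean_aggregate(features, neighbors, node):
    """GCN-style update: every neighbor (and the node itself) counts equally."""
    group = [node] + neighbors[node]
    dim = len(features[node])
    return [sum(features[n][d] for n in group) / len(group) for d in range(dim)]


def attention_aggregate(features, neighbors, node):
    """GAT-style update: neighbors are weighted by a softmax over similarity."""
    group = [node] + neighbors[node]
    dim = len(features[node])
    # Dot product with the central node stands in for a learned attention score.
    scores = [
        sum(features[node][d] * features[n][d] for d in range(dim)) for n in group
    ]
    peak = max(scores)  # subtract the maximum for numerical stability
    exp_scores = [math.exp(s - peak) for s in scores]
    total = sum(exp_scores)
    weights = [e / total for e in exp_scores]
    return [
        sum(w * features[n][d] for w, n in zip(weights, group)) for d in range(dim)
    ]


# Hypothetical residues: A and B look alike, C differs.
features = {"A": [1.0, 0.0], "B": [1.0, 0.0], "C": [0.0, 1.0]}
neighbors = {"A": ["B", "C"], "B": ["A"], "C": ["A"]}

uniform = mean_aggregate(features, neighbors, "A")
attended = attention_aggregate(features, neighbors, "A")
print(uniform)
print(attended)
```

In this toy graph the attention variant down-weights the dissimilar neighbor C, which is the behavior that lets GAT-based models treat heterogeneous neighbors differently.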

Convolutional Neural Networks (CNNs) for PPI

CNNs are renowned for their ability to capture spatial hierarchies and local patterns, making them well-suited for tasks involving image-like representations of biological data [34] [35]. In PPI analysis, CNNs are applied to both sequence and structural data.

Key Methodologies:

- Image Representation of Sequences: The ProtConv approach converts amino acid sequences into two-dimensional, single-channel images after generating feature vectors via protein embedding techniques like TAPE. These images are then processed by a CNN architecture inspired by LeNet-5 to predict protein function, demonstrating state-of-the-art performance on tasks such as identifying proinflammatory cytokines and anticancer peptides [34].

- Contact Map Prediction: DeepCov utilizes a Fully Convolutional Network (FCN) architecture that operates directly on pairwise covariance data calculated from raw sequence alignments. This model demonstrates that simple alignment statistics contain sufficient information to achieve state-of-the-art precision in residue-residue contact prediction, outperforming methods like CCMpred and MetaPSICOV2, especially on shallow sequence alignments with fewer than 160 effective sequences [35]. FCNs are advantageous because they can process inputs of arbitrary size and produce correspondingly-sized outputs, making them ideal for proteins of different lengths [35].
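To make the notion of pairwise covariance features concrete, the sketch below computes, for a toy alignment, the quantity f_ij(a, b) - f_i(a) f_j(b) for every pair of columns and every pair of residue types. This is a simplified illustration of the kind of input described above, not a reproduction of DeepCov's preprocessing: sequence reweighting is omitted, and a five-letter alphabet replaces the full amino acid set to keep the output small.

```python
def pair_covariance(alignment, i, j, a, b):
    """Covariance of residue a at column i with residue b at column j."""
    n = len(alignment)
    f_i = sum(seq[i] == a for seq in alignment) / n
    f_j = sum(seq[j] == b for seq in alignment) / n
    f_ij = sum(seq[i] == a and seq[j] == b for seq in alignment) / n
    return f_ij - f_i * f_j


def covariance_map(alignment, alphabet):
    """Return an L x L grid whose cells hold len(alphabet)**2 covariance channels."""
    length = len(alignment[0])
    if any(len(seq) != length for seq in alignment):
        raise ValueError("aligned sequences must share one length")
    return [
        [
            [
                pair_covariance(alignment, i, j, a, b)
                for a in alphabet
                for b in alphabet
            ]
            for j in range(length)
        ]
        for i in range(length)
    ]


# Toy alignment: columns 0 and 1 co-vary perfectly, column 2 is conserved.
alignment = ["AKL", "AKL", "GEL", "GEL"]
grid = covariance_map(alignment, "AGKEL")

coupled = pair_covariance(alignment, 0, 1, "A", "K")
conserved = pair_covariance(alignment, 0, 2, "A", "L")
print(coupled, conserved)
```

The grid has one cell per residue pair regardless of protein length, which is why a fully convolutional network can consume it without resizing.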

Transformer Models for PPI

Transformers, with their self-attention mechanisms, excel at capturing long-range dependencies and complex patterns in sequential data. Their application in biology has grown rapidly, particularly for PPI analysis and network medicine [36].

Key Mechanisms and Applications:

- Self-Attention and Embeddings: Transformers dynamically weigh the significance of different elements in the input data. In models like Geneformer, which is pre-trained on 30 million single-cell transcriptomes, genes are treated as tokens. The cosine similarity of gene embeddings and the attention weights between gene pairs have been shown to implicitly capture experimentally validated PPIs [36]. Genes with physical interactions exhibit higher cosine similarity and attention weights compared to non-interacting pairs [36].

- Integration with Network Medicine: When PPI networks are weighted with Geneformer-derived cosine similarities and attention weights, they show improved performance in disease module detection and drug repurposing predictions. For example, in a case study on dilated cardiomyopathy, this approach successfully highlighted the specific network neighborhood of disease-associated genes [36].

- Multi-Modal Integration: The DPFunc model incorporates an attention mechanism inspired by the transformer architecture to integrate protein-level domain features with residue-level features. This guides the model to detect significant, function-related residues within protein structures, enhancing the accuracy of function prediction [23].
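The edge-weighting idea described above can be sketched in a few lines of Python. The example computes the cosine similarity between embedding vectors and attaches it as a weight to each PPI edge. The two-dimensional vectors and gene names are invented placeholders; real Geneformer embeddings are much higher-dimensional and would be loaded from the model rather than typed in.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two equal-length vectors (0.0 if either is zero)."""
    dot = sum(x * y for x, y in zip(u, v))
    norm_u = math.sqrt(sum(x * x for x in u))
    norm_v = math.sqrt(sum(y * y for y in v))
    if norm_u == 0.0 or norm_v == 0.0:
        return 0.0
    return dot / (norm_u * norm_v)


def weight_edges(edges, embeddings):
    """Attach a cosine-similarity weight to every edge with embeddings on both ends."""
    return {
        (a, b): cosine_similarity(embeddings[a], embeddings[b])
        for a, b in edges
        if a in embeddings and b in embeddings
    }


# Hypothetical embeddings and edges for illustration.
embeddings = {"G1": [1.0, 0.0], "G2": [2.0, 0.0], "G3": [0.0, 3.0]}
edges = [("G1", "G2"), ("G1", "G3"), ("G1", "G4")]

weighted = weight_edges(edges, embeddings)
print(weighted)
```

Edges whose endpoints lack an embedding are dropped here; depending on the analysis, assigning them a neutral default weight may be preferable.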

Quantitative Performance Comparison

The following table summarizes the performance of various deep learning models on key PPI and function prediction tasks, highlighting their specific applications and achieved metrics.

Table 1: Performance Comparison of Deep Learning Models in PPI Analysis

| Model Name | Core Architecture | Primary Application | Key Performance Metrics | Reference |
|---|---|---|---|---|
| DeepFRI | Graph Convolutional Network (GCN) | Protein Function Prediction | Outperforms sequence-based CNNs; scalable to large sequence repositories [37]. | [37] |
| DPFunc | GNN + Attention Mechanism | Protein Function Prediction | Significant improvement over state-of-the-art structure-based methods (e.g., 16-27% increase in Fmax over GAT-GO) [23]. | [23] |
| DSSGNN-PPI | Double GNN (GAT + Gated GNN) | Multi-type PPI Prediction | Remarkable effectiveness validated on STRING datasets; excels in capturing local/global features [33]. | [33] |
| PPI-GNN (GCN) | Graph Convolutional Network (GCN) | PPI Prediction (Binary) | Achieved 94.69% Accuracy, 95.25% Precision, 94.01% Recall, 94.63% F1-score on Pan's Human dataset [32]. | [32] |
| PPI-GNN (GAT) | Graph Attention Network (GAT) | PPI Prediction (Binary) | Achieved 95.71% Accuracy, 96.23% Precision, 95.16% Recall, 95.69% F1-score on Pan's Human dataset [32]. | [32] |
| Geneformer | Transformer | PPI & Disease Module Detection | Enhanced disease gene discovery and drug repurposing accuracy for dilated cardiomyopathy [36]. | [36] |
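For readers comparing the binary-classification rows, the F1-score is the harmonic mean of precision and recall, F1 = 2PR / (P + R). The short Python check below recomputes it from the precision and recall values quoted in the table for the two PPI-GNN variants; it uses only the figures in the table and serves as a consistency check, not as new results.

```python
def f1_from_precision_recall(precision, recall):
    """Harmonic mean of precision and recall (same units as the inputs)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# (precision %, recall %, reported F1 %) as quoted in the table.
reported = {
    "PPI-GNN (GCN)": (95.25, 94.01, 94.63),
    "PPI-GNN (GAT)": (96.23, 95.16, 95.69),
}

recomputed = {
    model: f1_from_precision_recall(precision, recall)
    for model, (precision, recall, _) in reported.items()
}
for model, (_, _, f1) in reported.items():
    print(f"{model}: recomputed F1 = {recomputed[model]:.2f}, reported = {f1:.2f}")
```

Both recomputed values agree with the reported F1-scores to two decimal places.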