Advanced Strategies for Ill-Conditioned Optimization Problems in Pharmaceutical Research and Drug Development

Ill-conditioned optimization problems present significant challenges in pharmaceutical research, leading to unstable solutions, slow convergence, and unreliable results in critical applications from formulation design to pharmacokinetic modeling.

Advanced Strategies for Ill-Conditioned Optimization Problems in Pharmaceutical Research and Drug Development

Abstract

Ill-conditioned optimization problems present significant challenges in pharmaceutical research, leading to unstable solutions, slow convergence, and unreliable results in critical applications from formulation design to pharmacokinetic modeling. This article provides a comprehensive framework for understanding and addressing ill-conditioning, exploring its fundamental characteristics across numerical analysis, nonlinear regression, and AI-driven modeling. We examine proven methodological approaches including regularization techniques, model reparameterization, and preconditioning strategies, with specific applications in drug release optimization and catalyst design. The content further investigates advanced troubleshooting protocols and systematic validation frameworks to enhance solution robustness, offering researchers and drug development professionals practical tools for navigating complex, ill-posed problems in biomedical applications.

Understanding Ill-Conditioning: Fundamental Challenges in Pharmaceutical Optimization

Ill-conditioning is a property of a mathematical problem where small changes or errors in the input data cause large changes in the output solution. This high sensitivity makes it difficult to obtain reliable, accurate results, even with sophisticated numerical algorithms [1] [2].

The condition number is a crucial metric that quantifies the degree of this sensitivity. A low condition number indicates a well-conditioned problem, where errors in the input have minimal effect on the output. A high condition number indicates an ill-conditioned problem, where small input errors are unacceptably amplified [1] [3].

Core Concepts and Mathematical Definitions

What is a Condition Number?

In numerical analysis, the condition number measures how much the output value of a function can change for a small change in the input argument. It provides a bound on the worst-case relative change in output for a relative change in input [1].

For a general differentiable function ( f ), the relative condition number at a point ( x ) is defined as [1]: [ \left| \frac{x f'(x)}{f(x)} \right| ]

Condition Number of a Matrix

For the linear system ( A\mathbf{x} = \mathbf{b} ), the condition number of matrix ( A ) is defined as [1] [3]: [ \kappa(A) = \|A\| \|A^{-1}\| ] where ( \|\cdot\| ) denotes a consistent matrix norm.

Using the L²-norm, the condition number can be computed from the singular values of ( A ) [1] [3]: [ \kappa(A) = \frac{\sigma{\text{max}}(A)}{\sigma{\text{min}}(A)} ] where ( \sigma{\text{max}} ) and ( \sigma{\text{min}} ) are the largest and smallest singular values of ( A ), respectively.

Table 1: Interpretation of Condition Number Values

| Condition Number | Problem Classification | Implication for Solution Stability |

|---|---|---|

| ( \kappa \approx 1 ) | Well-conditioned | Input errors do not significantly amplify |

| ( \kappa \gg 1 ) | Ill-conditioned | Small input errors cause large output errors |

| ( \kappa = \infty ) | Singular (non-invertible) | No unique solution exists |

As a rule of thumb, if ( \kappa(A) = 10^k ), you may lose up to ( k ) digits of accuracy in your solution [1].

FAQ: Diagnosing and Troubleshooting Ill-Conditioning

How can I quickly check if my matrix is ill-conditioned?

Compute the condition number using the ratio of largest to smallest singular value. A high condition number indicates ill-conditioning. In practice, you can also check the relative residual [4]: [ \frac{\| \mathbf{b} - A\mathbf{\hat{x}} \|}{\|A\| \|\mathbf{\hat{x}}\|} ] where ( \mathbf{\hat{x}} ) is your computed solution. If the relative residual is small but the error in ( \mathbf{\hat{x}} ) is large, your problem is likely ill-conditioned [4].

Why is an ill-conditioned model difficult to solve?

Ill-conditioned models are difficult to solve because [1] [2]:

- High Sensitivity: Tiny perturbations in input data (e.g., from measurement error or finite-precision rounding) cause large changes in the solution.

- Numerical Instability: Algorithms may converge slowly, fail to converge, or exhibit catastrophic cancellation.

- Loss of Precision: The effective number of accurate digits in your solution can be dramatically reduced.

- Near-singularity: The matrix is close to being non-invertible [2].

- Poor scaling: Variables or equations have vastly different magnitudes [2] [5].

- Overparameterization: Redundant or highly correlated variables in optimization problems [2].

- Functional redundancy: In physical models, multiple components serve overlapping functions, leading to a system that is difficult to equilibrate [6].

- Specific matrix structures: Hilbert matrices, Vandermonde matrices with closely spaced points, and matrices from certain discretizations are often ill-conditioned [2].

My linear system has a high condition number. What can I do?

Several techniques can help manage ill-conditioned systems:

- Preconditioning: Transform the system ( A\mathbf{x} = \mathbf{b} ) into an equivalent system with a lower condition number using a preconditioner matrix ( P ) [2].

- Regularization: Reformulate the problem to make it more stable. A common method is Tikhonov regularization, which adds a penalty term to suppress large, unstable solutions [2].

- Variable Scaling: Ensure all variables and equations are scaled to have similar magnitudes [2].

- Use Specialized Algorithms: For optimization, consider using second-order methods (e.g., quasi-Newton methods) that are more robust to ill-conditioning than first-order methods like gradient descent [7].

Experimental Protocol: Diagnosing Ill-Conditioning in a Linear System

This protocol provides a step-by-step methodology to diagnose ill-conditioning when solving a linear system of equations ( A\mathbf{x} = \mathbf{b} ).

Objective

To determine if a given matrix ( A ) is ill-conditioned and to assess the reliability of the numerical solution ( \mathbf{\hat{x}} ).

Materials and Computational Tools

Table 2: Research Reagent Solutions for Numerical Analysis

| Item Name | Function / Purpose |

|---|---|

Linear Algebra Library (e.g., numpy.linalg, scipy.linalg, LinearAlgebra in Julia) |

Provides core routines for SVD, norm calculation, and linear system solving. |

| Condition Number Calculator | Computes ( \kappa(A) ) via the ratio of singular values. |

| Norm Function | Calculates vector and matrix norms (e.g., L2-norm, Frobenius norm) for error analysis. |

Visualization Tool (e.g., CairoMakie, Matplotlib) |

Plots error distributions and condition numbers for analysis [3]. |

Step-by-Step Procedure

Compute the Condition Number:

- Perform a Singular Value Decomposition (SVD) on matrix ( A ) to obtain its singular values ( \sigma_i ).

- Calculate ( \kappa(A) = \sigma{\text{max}} / \sigma{\text{min}} ).

- Interpret the result using Table 1. A high value (e.g., ( > 10^{10} ) for double precision) indicates severe ill-conditioning.

Solve the System and Calculate the Residual:

- Compute the numerical solution ( \mathbf{\hat{x}} ) using a standard solver (e.g.,

A \ b). - Calculate the relative residual: [ \text{Relative Residual} = \frac{\| \mathbf{b} - A\mathbf{\hat{x}} \|}{\|A\| \|\mathbf{\hat{x}}\|} ]

- A small relative residual (e.g., near machine precision) indicates that ( \mathbf{\hat{x}} ) solves a nearby system exactly, which is a sign of a backward stable algorithm [4].

- Compute the numerical solution ( \mathbf{\hat{x}} ) using a standard solver (e.g.,

Perform a Perturbation Analysis:

- Introduce a small, random perturbation ( \delta \mathbf{b} ) to the right-hand side vector ( \mathbf{b} ).

- Solve the perturbed system ( A\mathbf{y} = \mathbf{b} + \delta \mathbf{b} ).

- Calculate the relative error in the solution: [ \text{Relative Error} = \frac{\| \mathbf{y} - \mathbf{\hat{x}} \|}{\|\mathbf{\hat{x}}\|} ]

- Compare this to the bound predicted by the condition number: [ \text{Relative Error} \lessapprox \kappa(A) \cdot \frac{\| \delta \mathbf{b} \|}{\|\mathbf{b}\|} ]

- If the observed error is close to this upper bound, the system is highly sensitive to input perturbations, confirming ill-conditioning.

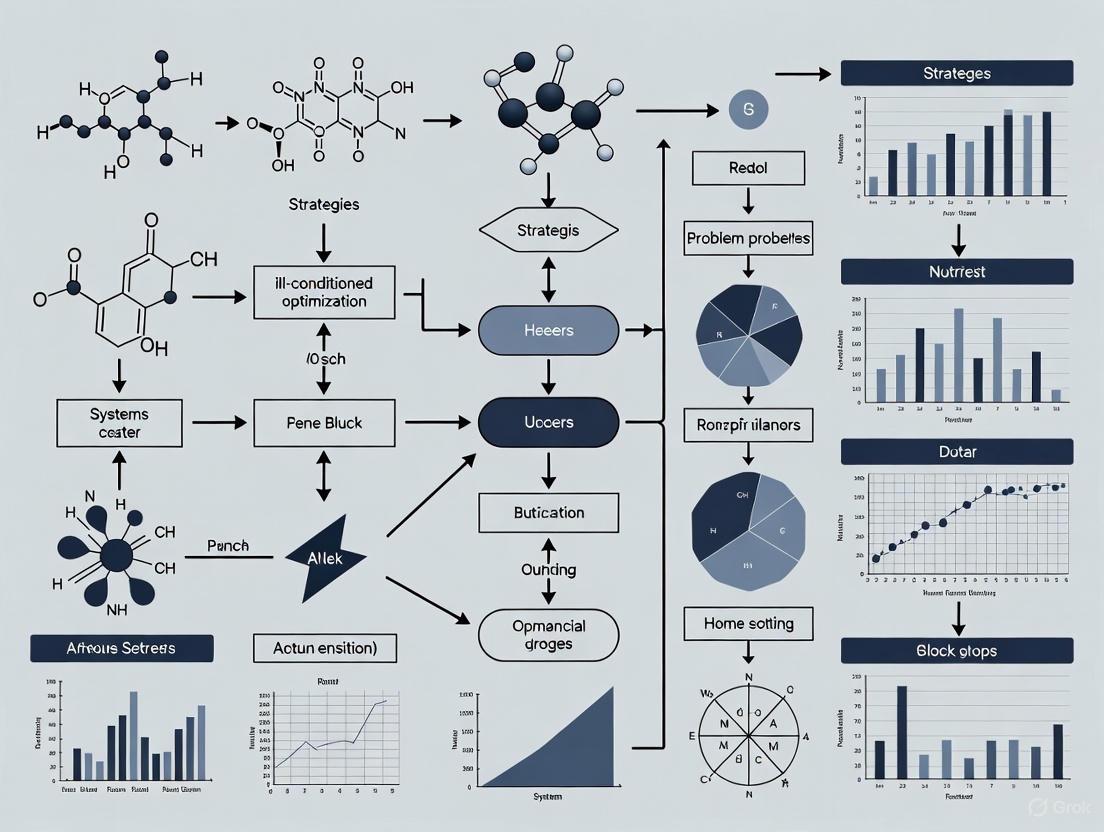

Workflow Visualization

Advanced Context: Ill-Conditioning in Optimization Problems

Within a broader thesis on strategies for ill-conditioned optimization problems, it is critical to understand that ill-conditioning manifests in the Hessian matrix of the objective function.

For an optimization problem ( \min f(\mathbf{x}) ), the Hessian ( \nabla^2 f(\mathbf{x}) ) at the solution ( \mathbf{x}^* ) is ill-conditioned if its eigenvalues vary widely. The condition number is again given by the ratio of largest to smallest eigenvalue [1] [5]: [ \kappa(\nabla^2 f(\mathbf{x}^*)) = \frac{|\lambda{\text{max}}|}{|\lambda{\text{min}}|} ]

A poorly scaled function, such as ( f(x, y) = 10^9 x^2 + y^2 ), will have a Hessian with a very high condition number, causing first-order methods (like gradient descent) to converge slowly. Second-order methods, like Newton's method, can suffer from numerical instability unless the ill-conditioning is addressed via preconditioning or regularization techniques [2] [5] [7].

Frequently Asked Questions

What is the fundamental difference between an ill-conditioned problem and an unstable algorithm? An ill-conditioned problem is inherently sensitive to small changes in its input; this is a property of the problem itself. In contrast, numerical instability is a property of a specific algorithm, where the method used to solve the problem amplifies small errors (like rounding errors) during computation [8]. A stable algorithm will not cure an ill-conditioned problem, but it will ensure that the computed solution is as accurate as the problem's conditioning allows.

Why does my optimization solver fail to converge or produce erratic results for my large-scale model? This is a classic symptom of numerical instability in optimization. Common causes include [9] [10]:

- Ill-conditioning: The geometry of your problem leads to a very high condition number, making the solution highly sensitive.

- Poor scaling: Decision variables or constraints have coefficients that span too many orders of magnitude (e.g., from 1e-10 to 1e+10).

- Rounding of input data: Manually rounding coefficients when building the model introduces artificial inaccuracies.

- Limits of floating-point arithmetic: Finite precision calculations can cause catastrophic cancellation when subtracting nearly equal numbers [11].

How can I check if my model is ill-conditioned?

- Analyze coefficient range: Calculate the ratio between the largest and smallest absolute values of nonzero coefficients in your model. A ratio exceeding 10^6 indicates potential danger [10].

- Inspect solver logs: Advanced solvers like Gurobi can provide a "numerical instability" warning or report an "attention level" that estimates the likelihood of numerical errors [9] [10].

- Compute the condition number: For linear systems (Ax = b), the condition number (\kappa(A) = \|A\| \|A^{-1}\|) quantifies sensitivity. A large condition number indicates ill-conditioning [3] [2].

What is catastrophic cancellation and how can I avoid it? Catastrophic cancellation occurs when subtracting two nearly equal floating-point numbers, leading to a massive loss of significant digits and a sharp increase in relative error [11] [2]. To avoid it, reformulate your calculations. For example, instead of directly computing (p - \sqrt{p^2 + q}), use the algebraically equivalent but numerically stable formula (-q/(p + \sqrt{p^2 + q})) [3].

Troubleshooting Guide: Diagnosing and Resolving Numerical Instability

Follow this workflow to systematically identify and address numerical issues in your optimization problems.

Step 1: Check Input Data and Model Scaling

The first step is to eliminate issues introduced during model building.

- Action: Audit your model generation code to ensure no unnecessary rounding of input parameters occurs [9].

- Action: Scale your model. Ensure all decision variables and constraints are scaled so that their coefficients are within a manageable range, ideally within a few orders of magnitude. A good rule of thumb is to aim for a ratio of maximum-to-minimum nonzero coefficients of less than 10^6 [10].

Step 2: Diagnose Problem Conditioning

Determine if the instability stems from the problem itself (ill-conditioning).

- Action: For linear systems, compute the condition number, (\kappa(A) = \sigma{\max}(A) / \sigma{\min}(A)), where (\sigma) are the singular values of (A). A large condition number indicates an ill-conditioned system [3] [2].

- Action: Use solver-specific diagnostics. For instance, Gurobi provides parameters and tools to analyze numerical instability [9].

Step 3: Apply Problem Reformulation and Stabilization Techniques

If the problem is ill-conditioned, use techniques to mitigate the issue.

- Action: Preconditioning transforms the problem into an equivalent, better-conditioned one. For linear systems, this involves finding a matrix (P) such that (\kappa(PA) \ll \kappa(A)) [2].

- Action: Use Stable Algorithms. Replace unstable numerical methods with robust alternatives [11].

| Unstable Method | Stable Alternative | Rationale |

|---|---|---|

| Gaussian elimination without pivoting | Gaussian elimination with partial/complete pivoting | Avoids division by small numbers [11] |

| Explicit Euler method for stiff ODEs | Implicit methods (e.g., Backward Euler) | Larger stability region [8] [11] |

| High-degree polynomial interpolation | Piecewise polynomials (Splines) | Avoids Runge's phenomenon [11] |

| Normal equations for least squares | QR decomposition or SVD | Avoids condition number squaring [3] |

- Action: For Physics-Informed Neural Networks (PINNs) and other machine learning models, ill-conditioning of the Jacobian matrix of the underlying PDE system can cause unstable training. Mitigation strategies include using time-stepping schemes or constructing controlled systems to improve the condition number [12].

Step 4: Verify the Solution

After applying fixes, verify the stability and reliability of your solution.

- Action: Perform a sensitivity analysis to quantify how your solution changes with small perturbations in the input data. Global sensitivity analysis methods (e.g., variance-based) are preferred for nonlinear models [13] [14].

- Action: Check the solver's final output, including the status, iteration count, and any remaining warnings about numerical issues [9].

The Scientist's Toolkit: Research Reagent Solutions

This table details key computational tools and techniques essential for diagnosing and managing numerical instability.

| Research Reagent | Function & Purpose |

|---|---|

| Condition Number Estimator | Quantifies the inherent sensitivity of a problem (e.g., a linear system). A high value signals ill-conditioning and potential for large output errors [3] [2]. |

| Preconditioner | A transformation applied to a problem to improve its condition number, thereby accelerating solver convergence and improving numerical stability [2]. |

| Stable Linear Solver (QR/SVD) | Algorithms that use orthogonal transformations (QR decomposition) or singular value decomposition (SVD) to solve least-squares and linear systems reliably, avoiding the numerical pitfalls of methods like normal equations [11] [3]. |

| Global Sensitivity Analysis | A suite of statistical techniques (e.g., Sobol' indices) used to apportion output uncertainty to input factors, helping identify which model parameters require precise estimation [13] [14]. |

| Implicit Integration Scheme | A class of methods for differential equations (e.g., Backward Euler) that remain stable for much larger step sizes than explicit methods, making them essential for solving stiff systems [8] [11]. |

Experimental Protocol: Assessing Model Stability via Sensitivity Analysis

This protocol provides a detailed methodology for performing a global sensitivity analysis to understand input-output relationships and identify factors contributing to output instability.

- Objective: To identify which uncertain input parameters ( (X1, X2, ..., X_p) ) have the greatest influence on the variability of a critical model output (Y) and to assess the model's robustness [13] [14].

- Background: Unlike local "one-at-a-time" (OAT) methods, which can be misleading for nonlinear models, global sensitivity analysis varies all inputs simultaneously over their entire feasible space. This approach captures interaction effects between parameters and provides a more reliable ranking of factor importance [14].

Procedure:

- Define Input Uncertainty: For each of the (p) input parameters considered uncertain, define a plausible range and probability distribution based on expert opinion, literature, or physical constraints [14].

- Generate Input Sample: Using a space-filling design like Latin Hypercube Sampling or a Sobol' sequence, generate a large number (N) of input vectors. This ensures efficient exploration of the multi-dimensional input space [13].

- Execute Model: Run the numerical model (e.g., your optimization or simulation) for each of the (N) input vectors and record the corresponding output (Y) for each run.

- Compute Sensitivity Indices: Calculate variance-based sensitivity indices, specifically the first-order and total-order Sobol' indices.

Interpretation:

- Factor Prioritization (Ranking): Inputs with high first-order indices (S_i) are the most influential and should be prioritized for further measurement to reduce output uncertainty.

- Factor Fixing (Screening): Inputs with very low total-order indices (S_{Ti}) have a negligible effect and can be fixed to nominal values in future studies without significantly affecting the output, thus simplifying the model [14].

Troubleshooting Guides

Guide: Resolving Matrix Near-Singularity in Drug Response Prediction

Problem Description: Matrix near-singularity, or ill-conditioning, occurs when a matrix has a very high condition number, making its inverse numerically unstable. This is a common issue in drug sensitivity analysis where data matrices are often low-rank and contain missing values, leading to unreliable computations and failed experiments [15] [16].

Primary Symptoms:

- Algorithm fails to converge or converges very slowly

- Large numerical errors in model predictions

- High sensitivity to small changes in input data

- Unstable parameter estimates in dose-response models

Diagnostic Steps:

- Calculate the condition number of your data matrix. A high condition number (e.g., >>1000) indicates ill-conditioning [17].

- Check for multicollinearity among model parameters, which can be a source of parametric collinearity in nonlinear regression [16].

- Analyze the eigenspectrum of the matrix. A few dominant eigenvalues suggest a low-rank, near-singular structure [15].

Resolution Procedures:

- Apply Singular Value Thresholding (SVT): Use SVT for matrix completion on input drug response data. This algorithm exploits the inherent low-rank structure of biological data to reconstruct a stable, complete matrix [15].

- Implement Model Reparameterization: Replace the original set of parameters with a new set possessing increased orthogonality properties. This systematic strategy decreases ill-conditioning in nonlinear regression [16].

- Use K-Optimal Experimental Designs: Construct a family of surface curves using the support points of locally K-optimal designs. This provides a more stable foundation for model fitting and can be solved using Semidefinite Programming [16].

Guide: Correcting Poor Scaling in Pre-Clinical Assay Data

Problem Description: Poor scaling arises when features or variables in a dataset have vastly different magnitudes (e.g., IC50 values vs. gene expression counts). This creates an ill-conditioned optimization landscape, slowing down training and reducing the effectiveness of gradient-based optimization [17].

Primary Symptoms:

- Inefficient or unstable convergence during model training

- Model predictions are biased towards features with larger scales

- "Loss of plasticity" where learning stalls prematurely in deep reinforcement learning models [17]

Diagnostic Steps:

- Examine the effective learning rate (ELR): A highly variable ELR indicates issues with gradient norms, often stemming from poor scaling [17].

- Plot the distribution of feature values to identify those with disproportionately large ranges.

- Analyze the Hessian of the loss function; a high condition number confirms an ill-conditioned landscape [17].

Resolution Procedures:

- Integrate Normalization Layers: Use batch normalization (BN) and weight normalization (WN) in neural network architectures. BN consistently produces better-conditioned local loss landscapes than alternatives like layer normalization, leading to condition numbers that are orders of magnitude smaller [17].

- Adopt a Distributional Loss Function: For critic networks, use a categorical cross-entropy (CE) loss instead of a mean squared error (MSE) loss. The CE loss induces a remarkably well-conditioned optimization landscape compared to MSE [17].

- Apply Standard Preprocessing: Use standard scaling (z-score normalization) or min-max scaling on all input features to ensure they reside on a similar scale before model training.

Guide: Managing Overparameterization in AI-based Drug Discovery

Problem Description: Overparameterization refers to designing machine learning models with more parameters than the amount of training data. While this can improve performance on complex tasks, it risks overfitting, where the model memorizes training data noise instead of learning generalizable patterns [18].

Primary Symptoms:

- Excellent performance on training data but poor performance on validation/test data

- Model exhibits high variance in predictions with small data changes

- Training becomes computationally expensive and resource-intensive [18]

Diagnostic Steps:

- Monitor the gap between training and validation loss. A growing gap indicates overfitting.

- Perform ablation studies to see if a smaller model can achieve comparable performance.

- Use visualization tools like learning curves to diagnose overfitting.

Resolution Procedures:

- Employ Regularization Techniques: Apply weight decay, dropout, and batch normalization to constrain the model during training and discourage memorization of noise [18] [17].

- Utilize Large and Diverse Datasets: Feed the model vast amounts of varied data to provide the raw material it needs to learn meaningful patterns rather than superficial correlations [18].

- Implement Early Stopping: Monitor validation performance during training and halt the process before the model begins to overfit [18].

- Apply Model Pruning and Compression: After training, trim away unnecessary parameters to reduce model complexity and improve deployment efficiency without sacrificing performance [18].

Frequently Asked Questions (FAQs)

Q1: What is the practical impact of a high condition number in my drug sensitivity matrix? A high condition number means your matrix is near-singular, leading to highly sensitive and unstable solutions. In practice, this can cause significant errors in predicting drug responses, potentially misguiding subsequent research efforts and leading to wasted resources. It directly challenges the reliability of your computational findings [15] [19].

Q2: Are overparameterized models always a problem in drug discovery? Not necessarily. When trained correctly, overparameterized models can offer greater representational power and flexibility, capturing intricate patterns in biological data. They can also be easier to optimize and can generalize well if techniques like regularization and large, diverse datasets are used. The key is balancing scale with methods that prevent overfitting [18].

Q3: My optimization algorithm for a nonlinear regression model is converging very slowly. Could poor scaling be the cause? Yes, poor scaling is a common cause of slow convergence. When features have vastly different scales, the loss landscape can become ill-conditioned, with curvature varying greatly across dimensions. This makes it difficult for gradient-based optimizers to navigate efficiently, drastically slowing down the training process [16] [17].

Q4: How can I prevent my model from overfitting when working with limited pre-clinical data? With limited data, it's crucial to leverage regularization techniques such as weight decay and dropout. Additionally, employing early stopping based on a validation set is highly effective. If possible, using a simpler model with fewer parameters or exploring data augmentation strategies to artificially expand your training dataset can also help mitigate overfitting [18].

Table 1: Impact of Architectural Choices on Optimization Landscape Conditioning

| Architectural Component | Condition Number | Impact on Optimization | Primary Use Case |

|---|---|---|---|

| Batch Normalization (BN) [17] | Orders of magnitude smaller than Layer Norm | Creates smoother loss landscapes, easier optimization | Deep learning models for drug response |

| Weight Normalization (WN) [17] | Reduces effective condition number | Stabilizes Effective Learning Rate (ELR) | Models with non-stationary targets |

| Cross-Entropy Loss (Critic) [17] | Remarkably well-conditioned vs. MSE | Superior convergence properties | Distributional reinforcement learning |

| Singular Value Thresholding (SVT) [15] | Improves matrix stability | Enables accurate low-rank matrix completion | Drug sensitivity data with missing values |

Table 2: Common Bottlenecks in Pre-Clinical Drug Discovery and Computational Solutions [19]

| Bottleneck | Impact | Computational Strategy |

|---|---|---|

| Target Identification & Validation | Poor validation leads to failed drug development | High-throughput screening, genomic/proteomic analysis |

| Assay Development & Optimization | Inaccurate results, false positives/negatives | Robust assay development, automation, standardized protocols |

| Compound Screening & Optimization | Missed opportunities, suboptimal drug candidates | Computational chemistry, AI/ML for prediction & prioritization |

| Pre-Clinical Safety & Toxicology | Costly late-stage failures, patient risk | Advanced in silico models, organ-on-a-chip technologies |

Experimental Protocols

Protocol: Two-Stage Matrix Completion for Drug Sensitivity Data

This protocol uses a Two-stage Matrix Completion for Drug Sensitivity Discovery (TSMC-DSD) to address missing data and ill-conditioned matrices in anticancer drug sensitivity testing [15].

Workflow Diagram:

Methodology:

- First Stage - Initial Matrix Completion:

- Input: The original drug response matrix (e.g., rows as cell lines, columns as drugs) with missing values.

- Procedure: Apply the Singular Value Thresholding (SVT) algorithm to perform an initial matrix completion. This results in a primary filled matrix that serves as a foundation for the next stage [15].

- Matrix Analysis and Block Segmentation:

- Hierarchical Clustering: Perform hierarchical clustering on the primary filled matrix based on row similarity under correlation coefficients.

- Segmentation: Group together rows with higher similarities to produce distinct matrix blocks [15].

- Second Stage - Refined Completion:

- Block Selection: Identify the largest block from the segmented matrix, which has been proven to possess the largest entropy.

- SVT Application: Apply the SVT algorithm once more to reconstruct this largest block.

- Data Restoration: Embed the reconstructed largest block back into the primary filled matrix to obtain the final, completed matrix [15].

Protocol: Achieving Well-Conditioned Optimization in Deep RL for Molecular Design

This protocol outlines steps to create a well-conditioned optimization landscape for deep reinforcement learning (RL) models, which can be applied to tasks like molecular optimization [17].

Workflow Diagram:

Methodology:

- Architecture Design:

- Normalization: Incorporate Batch Normalization (BN) layers into the critic network. BN consistently produces better-conditioned local loss landscapes than other normalization schemes [17].

- Weight Scaling: Apply Weight Normalization (WN) by periodically projecting the network's weights to the unit sphere. This technique improves the effective learning rate (ELR) and works synergistically with BN [17].

- Loss Function Selection:

- Choose a distributional critic using a categorical cross-entropy (CE) loss instead of a standard critic with a mean squared error (MSE) loss. The CE loss induces a remarkably well-conditioned optimization landscape, which is easier to optimize [17].

- Validation and Analysis:

- Hessian Analysis: Systematically investigate the impact of the design decisions by analyzing the eigenspectrum and condition number of the critic's Hessian. A low condition number confirms a well-conditioned landscape [17].

- ELR Monitoring: Monitor the effective learning rate during training to ensure it remains stable, which is critical for maintaining plasticity and stable training under non-stationary targets [17].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Key Computational Tools and Data Resources

| Tool/Resource | Function | Application Context |

|---|---|---|

| Singular Value Thresholding (SVT) Algorithm [15] | Performs low-rank matrix completion by thresholding singular values. | Recovering missing data in drug sensitivity matrices; general matrix completion problems. |

| K-Optimal Designs (via Semidefinite Programming) [16] | Identifies optimal experimental support points to reduce model collinearity. | Designing experiments for nonlinear regression models to mitigate ill-conditioning. |

| Batch Normalization (BN) [17] | Normalizes layer inputs for smoother loss landscapes and better conditioning. | A key component in deep learning architectures (e.g., for drug response prediction) to accelerate and stabilize training. |

| Weight Normalization (WN) [17] | Decouples weight direction from magnitude to stabilize the effective learning rate. | Used in conjunction with BN in neural networks to further improve optimization dynamics. |

| Cross-Entropy Loss (for Distributional Critics) [17] | Reformulates regression as a classification problem for a better-conditioned loss. | Replacing MSE loss in deep RL critics and other models to improve convergence. |

| KEGG Database / DrugBank [20] [21] | Provides canonical data on drug structures, protein sequences, and known drug-target associations. | Source for constructing drug-drug and target-target similarity matrices for predictive models. |

| NCI-60 Screening Data [20] | Provides drug response (GI50) and gene expression data across 60 cancer cell lines. | A benchmark dataset for building and validating drug sensitivity prediction models. |

Optimizing drug release from multilaminated devices involves determining design parameters—such as initial drug concentration distribution across layers—to achieve a desired release profile. This mathematical inversion of the diffusion process is a classic ill-posed problem, where small errors in desired release specifications lead to large, often unphysical, oscillations in the calculated optimal parameters [22].

This case study, framed within broader research on strategies for ill-conditioned optimization problems, explores the manifestation of ill-conditioning in this context and presents a robust inverse problem solution scheme to achieve stable, physically meaningful solutions.

Technical FAQ: Understanding the Core Problem

Q1: What does "ill-conditioning" mean in the context of optimizing a multilaminated drug delivery device?

It means that the mathematical problem of calculating the optimal initial drug concentration (v(x)) to produce a specific release flux (j(t)) is highly sensitive. Minuscule errors or "noise" in the specification of the desired j(t), or in numerical computations, can cause enormous, non-physical swings in the calculated v(x). This makes direct numerical solutions unstable and impractical without specialized techniques [22].

Q2: Why is the optimization of a multilaminated device formulated as an "inverse problem"?

A forward problem predicts the drug release profile (the effect) from a known initial drug concentration (the cause). Optimization requires the inverse: finding the cause (initial concentration) that produces a desired effect (release profile). This inversion is the core of the inverse problem [22].

Q3: What is the fundamental mathematical reason for this ill-conditioning?

The problem can be reduced to solving a Fredholm integral equation of the first kind [22] [23]. The solution of this type of equation requires solving for an unknown function (v(x)) that appears inside an integral. This process inherently amplifies high-frequency components, including any measurement or numerical noise, making the solution process unstable and ill-posed.

Troubleshooting Guide: Common Numerical Issues and Solutions

| Symptom | Likely Cause | Recommended Solution |

|---|---|---|

| Computed initial concentration shows large, rapid oscillations between positive and negative values. | Severe ill-posedness of the Fredholm integral equation; solution is overly sensitive to numerical noise. | Implement a regularization method (e.g., Tikhonov, Modified Regularization) to stabilize the solution [22]. |

| Solution changes drastically with a tiny change in the desired release profile. | Ill-conditioning of the system matrix; high condition number. | Use Truncated Singular Value Decomposition (TSVD) to filter out the small, noise-amplifying singular values [22]. |

| Difficulty in choosing the right regularization parameter. | Subjective trade-off between solution fidelity (accuracy) and stability (smoothness). | Employ the L-curve method to visually select a parameter that balances these two properties [22]. |

| Inability to achieve a near-constant (zero-order) release profile. | Suboptimal initial configuration of the multilayer device. | Consider a universal design with three layers. For example, a design with scaled thicknesses of [0.5, 0.5, 0.14] and scaled concentrations of [1.6, 0.4, 0] can provide a robust starting point for optimization [24]. |

Experimental Protocol: Implementing the Inverse Problem Solution

The following methodology outlines the key steps for determining the optimal initial drug concentration profile.

Step 1: Mathematical Model Formulation

The drug release from a one-dimensional multilaminated device is modeled using Fick's second law of diffusion. The dimensionless formulation is used for generality [22].

- Governing Equation:

∂c/∂t = ∂²c/∂x² - Boundary Conditions:

- Zero flux at the sealed end (x=0):

∂c/∂x |_(x=0) = 0 - Perfect sink at the release surface (x=1):

c(t,1) = 0

- Zero flux at the sealed end (x=0):

- Initial Condition (Unknown):

c(0,x) = v(x) - Additional Measurement (Desired Release Rate):

j(t) = - ∂c/∂x |_(x=1)

Step 2: Transformation to an Integral Equation

Using the method of separation of variables, the solution to the forward model is found. The release flux j(t) is then expressed in terms of the unknown v(x), resulting in a Fredholm integral equation of the first kind [22]:

j(t) = ∫_0^1 K(x, t) v(x) dx

where K(x, t) is the kernel function derived from the diffusion model.

Step 3: Application of a Modified Regularization Method

To solve the ill-posed integral equation, a modified regularization method is employed. This method combines the strengths of Tikhonov regularization and the Truncated Singular Value Decomposition (TSVD) [22].

- Discretize the Problem: Convert the integral equation into a system of linear equations,

A * v = j, whereAis the system matrix. - Apply TSVD: Perform SVD on

Aand discard singular values below a chosen threshold to prevent noise amplification. - Tikhonov Regularization: Introduce a stabilizing term (regularizer) to the least-squares problem, minimizing the objective function

||A * v - j||² + λ²||L * v||², whereλis the regularization parameter andLis often the identity matrix. - Parameter Selection: Use the L-curve method to select an optimal

λ, which provides a trade-off between solution residual and smoothness.

Step 4: Numerical Validation with Noise

Test the robustness of the obtained solution v(x) by:

- Adding small, random noise to the desired release profile

j(t). - Recalculating

v(x)using the same regularization parameters. - Verifying that the solution does not exhibit large, unphysical oscillations and remains close to the noise-free solution.

Diagram 1: Inverse Problem Solution Workflow. This flowchart outlines the process from defining a target drug release profile to obtaining a stable, optimized initial drug concentration for a multilaminated device.

Research Reagent Solutions: Key Computational Tools

This table details the essential "reagents" or tools required to implement the optimization scheme.

| Tool / Component | Function in the Experiment | Key Specification / Note |

|---|---|---|

| Diffusion Model | The physics-based core that predicts drug release from a given initial state [22]. | Based on Fick's second law; requires dimensionless processing for generality. |

| Fredholm Solver | A numerical solver to address the core integral equation of the inverse problem. | Native solvers are unstable; must be paired with regularization. |

| Tikhonov Regularization | Adds a constraint to the solution to enforce smoothness and stability [22]. | Penalizes large oscillations in v(x). |

| Truncated SVD (TSVD) | Filters out the components of the solution that are most sensitive to noise [22]. | Acts as a numerical stabilizer by removing small singular values. |

| L-Curve Criterion | A heuristic for choosing the optimal regularization parameter (λ) [22]. | Balances solution fidelity (fit to data) and stability (smoothness). |

Advanced Analysis: Deeper into the Mathematics

The forward solution for the concentration c(t,x) is given by:

c(t,x) = ∑_(k=0)^∞ 2e^(-(k+1/2)²π²t) cos((k+1/2)πx) ∫_0^1 v(ξ) cos((k+1/2)πξ) dξ [22]

Differentiating at x=1 gives the expression for the release flux, j(t):

j(t) = -∂c/∂x|_(x=1) = ∑_(k=0)^∞ [2e^(-(k+1/2)²π²t) (k+1/2)π ∫_0^1 v(ξ) cos((k+1/2)πξ) dξ] [22]

This equation is of the form j(t) = ∫ K(ξ, t) v(ξ) dξ, confirming it is a Fredholm integral equation of the first kind. The ill-posedness is evident from the decaying exponential term, which mutes the contribution of higher-frequency components in v(ξ), making their reconstruction from j(t) unstable.

Diagram 2: Problem Diagnosis and Resolution Path. This diagram illustrates the root cause of ill-conditioning in the drug release optimization problem and the two primary regularization strategies used to resolve it.

The optimization of initial drug concentration in multilaminated devices is a computationally challenging ill-posed problem. By reframing it as an inverse problem and employing a modified regularization strategy that hybridizes Tikhonov regularization and TSVD, researchers can overcome the inherent instability. This provides a robust framework for designing sophisticated drug delivery systems with precise, pre-specified release profiles, contributing valuable strategies to the broader field of ill-conditioned optimization.

Technical Support Center: Troubleshooting Ill-Conditioned Optimization in Computational Research

Welcome, Researchers. This center addresses common challenges encountered when formulating and solving optimization problems in scientific computing, with a focus on mitigating ill-conditioning. The guidance below is framed within ongoing research into strategies for ill-conditioned optimization problems.

Frequently Asked Questions & Troubleshooting Guides

Q1: My Physics-Informed Neural Network (PINN) training is unstable and converges poorly. What could be the root cause, and how can I diagnose it? A: Unstable PINN training is frequently attributed to the ill-conditioning of the underlying partial differential equation (PDE) system, manifested in the Jacobian matrix of the discretized residuals [12]. A high condition number of this Jacobian can severely slow convergence. To diagnose:

- Construct a Controlled System: Following recent research, create a modified version of your PDE system where the Jacobian's condition number can be artificially adjusted while preserving the true solution. Observing faster convergence and higher accuracy with a lower condition number confirms ill-conditioning as the bottleneck [12].

- Monitor Loss Landscape: Consider that PINN training is fundamentally an optimization problem. Ill-conditioning here relates to the curvature of the loss landscape, where a high condition number of the Hessian matrix indicates vastly different curvatures along different parameter directions, making gradient-based updates inefficient [17].

Q2: In deep reinforcement learning, my critic network learns slowly despite tuning the learning rate. Are there architectural changes that can improve optimization stability? A: Yes. Slow learning can stem from an ill-conditioned critic loss landscape. Focus on architectural components that improve the conditioning of the optimization problem:

- Adopt Batch Normalization (BN): Empirical Hessian analysis shows BN leads to significantly better-conditioned landscapes compared to Layer Normalization in this context, yielding condition numbers orders of magnitude smaller [17].

- Utilize Weight Normalization (WN) with a Distributional Critic: Combine WN with a categorical cross-entropy (CE) loss for a distributional critic. The CE loss inherently creates a well-conditioned landscape, and WN helps stabilize the effective learning rate. This synergy dramatically improves conditioning and sample efficiency [17].

Q3: How does the mathematical formulation of a structural design problem affect its suitability for novel solvers like Quantum Annealing (QA)? A: The formulation is critical. QA requires problems to be expressed as a Quadratic Unconstrained Binary Optimization (QUBO) model. A traditional finite element analysis (FEA) coupled with an optimizer is not directly compatible. A reformulation that integrates the governing physics (e.g., via the principle of minimum complementary energy) directly into a single minimization objective allows mapping to a QUBO. This unified formulation avoids iterative analysis-optimization loops and exploits QA's strengths, though problem scale is currently limited by hardware [25].

Q4: What is a practical first step to mitigate ill-conditioning in a general optimization problem? A: Prior to algorithmic changes, reformulate the problem. The model structure directly impacts conditioning properties. For dynamic systems, this might involve variable scaling or preconditioning inspired by traditional numerical methods [12]. In machine learning, this translates to choosing loss functions (e.g., CE over MSE) and network architectures (e.g., using normalization layers) that are known to produce better-conditioned Hessian matrices [17]. A well-formulated problem often renders advanced solvers more effective.

Q5: Are there optimization methods that maintain efficiency for ill-conditioned problems in high-dimensional, streaming data contexts? A: First-order methods (e.g., SGD) struggle with ill-conditioning in streaming data. Recent advances propose adaptive stochastic quasi-Newton methods that are inversion-free. These methods approximate second-order information to improve conditioning, achieve a computational complexity of O(dN) (matching first-order methods), and demonstrate effectiveness under complex covariance structures, making them suitable for streaming applications [7].

The following table summarizes experimental findings on how model structure and formulation affect conditioning and performance.

Table 1: Impact of Formulation and Architecture on Problem Conditioning

| Study / Method | Key Structural Intervention | Measured Effect on Conditioning/Performance | Context |

|---|---|---|---|

| PINNs with Controlled System [12] | Reformulating PDE system to adjust Jacobian condition number (κ). | As κ(Jacobian) decreases, PINN convergence accelerates and accuracy increases. Direct correlation established. | Physics-Informed Neural Networks |

| XQC Algorithm [17] | Combination of Batch Norm (BN), Weight Norm (WN), and Categorical Cross-Entropy (CE) loss. | Critic Hessian condition number reduced by orders of magnitude. Achieved state-of-the-art sample efficiency on 70+ control tasks. | Deep Reinforcement Learning |

| Adaptive Stochastic Quasi-Newton [7] | Inversion-free second-order adaptation for streaming data. | Effectively addresses ill-conditioning with O(dN) complexity, outperforming first-order methods in poorly conditioned settings. | Streaming Data Optimization |

Detailed Experimental Protocols

Protocol 1: Diagnosing Ill-Conditioning in PINNs via a Controlled System Objective: To empirically verify the link between the Jacobian matrix's condition number and PINN training difficulty. Methodology:

- System Definition: Start with the target PDE system:

dq/dt = f(q), wherefencompasses PDE and boundary condition operators. - Construct Controlled System: Define a modified dynamics:

dq/dt = J(q_s) * (q - q_s) + f(q), whereJ(q_s)is the Jacobian offevaluated at the (unknown) steady solutionq_s. Introduce a control parameterαto scaleJ(q_s), creating a family of systems with adjustable condition numbers but identical steady-state solutionq_s[12]. - PINN Training & Evaluation: Train identical PINNs on systems with varying

α. Monitor and record:- The loss convergence trajectory over iterations.

- The final solution accuracy (e.g., L2 error vs. reference).

- Analysis: Correlate the convergence speed and final accuracy with the condition number of

α * J(q_s)(or its approximation). Faster convergence with lower condition number confirms the hypothesis.

Protocol 2: Analyzing Hessian Conditioning in Deep RL Critics Objective: To systematically evaluate how normalization layers and loss functions affect the critic network's loss landscape. Methodology:

- Architectural Variants: Train multiple Soft Actor-Critic (SAC) critics with different combinations: (LN vs. BN), (MSE vs. Categorical CE loss), (with/without WN).

- Hessian Eigenvalue Computation: At regular training intervals, compute an approximation of the Hessian matrix for the critic's loss with respect to its parameters. Use efficient iterative methods (e.g., Lanczos algorithm) to estimate the largest (

λ_max) and smallest (λ_min) eigenvalues. - Condition Number Calculation: Compute the condition number

κ = |λ_max| / |λ_min|(or a similar spectral measure). Log this value over training time for each architectural variant [17]. - Performance Correlation: Compare the sample efficiency (average return vs. environment steps) of the full RL algorithm using each critic variant against the recorded condition number trends.

Visualization of Key Concepts and Workflows

Diagram 1: Problem Formulation Optimization Workflow

Diagram 2: XQC Critic Architecture for Improved Conditioning

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Components for Mitigating Ill-Conditioning

| Item | Primary Function in Optimization Context |

|---|---|

| Batch Normalization (BN) | Normalizes layer activations, reducing internal covariate shift. Proven to yield a better-conditioned Hessian matrix in RL critics compared to alternatives [17]. |

| Weight Normalization (WN) | Periodically projects network weights onto the unit sphere. Works synergistically with normalization layers to stabilize the Effective Learning Rate (ELR), crucial for non-stationary RL targets [17]. |

| Cross-Entropy (CE) Loss | When used in distributional value learning, induces a more favorable (lower condition number) optimization landscape compared to Mean Squared Error (MSE) loss, easing gradient-based training [17]. |

| Controlled System Formulation | A diagnostic and interventional framework for PDE-based optimization (e.g., PINNs). Allows direct manipulation of the Jacobian condition number to isolate and address ill-conditioning [12]. |

| QUBO Formulation | A specific problem formulation (Quadratic Unconstrained Binary Optimization) required for Quantum Annealing. Enables the solution of integrated analysis-and-design problems in a single optimization step [25]. |

Methodological Approaches for Ill-Conditioned Systems in Drug Development

In the research of ill-conditioned optimization problems, a frequently encountered challenge is the numerical instability of solutions when the underlying mathematical problem is ill-posed or the system matrix is ill-conditioned. These conditions are characterized by a large condition number, where small perturbations in the input data (e.g., due to experimental noise) lead to large, often unbounded, oscillations in the solution. Within this context, regularization describes the process of replacing an ill-posed problem with a nearby well-posed one to obtain a stable, meaningful solution. Two pivotal techniques for stabilizing such systems are Tikhonov Regularization (also known as ridge regression) and Truncated Singular Value Decomposition (TSVD). Tikhonov regularization achieves stability by introducing a penalty term to the solution norm, while TSVD operates by discarding the contributions from the smallest singular values responsible for the system's instability. The strategic selection between these methods forms a cornerstone of reliable computational research in fields ranging from inverse problem resolution to drug development modeling [26] [27] [28].

The fundamental problem is often formulated as solving the linear system ( A\mathbf{x} = \mathbf{b} ), where ( A ) is an ( m \times n ) matrix that is ill-conditioned. The inherent instability can be understood through the Singular Value Decomposition (SVD). For a matrix ( A ), its SVD is given by ( A = U\Sigma V^T ), where ( U ) and ( V ) are orthogonal matrices, and ( \Sigma ) is a diagonal matrix containing the singular values ( \sigmai ) in non-increasing order: ( \sigma1 \geq \sigma2 \geq \cdots \geq \sigman \geq 0 ). The condition number is ( \text{cond}(A) = \sigma1 / \sigman ). For ill-conditioned problems, ( \sigman ) is very small (often close to zero), leading to a large condition number. The naive solution ( \mathbf{x} = \sum{i=1}^{N} \frac{\mathbf{u}i^T \mathbf{b}}{\sigmai} \mathbf{v}_i ) is dominated by the terms corresponding to the smallest singular values, which amplify noise exponentially [27] [29].

Technical Deep Dive: Methodologies and Comparative Analysis

Tikhonov Regularization: Methodology and Implementation

Tikhonov regularization addresses the ill-posedness by solving a modified minimization problem. Instead of minimizing only the residual norm ( \|A\mathbf{x} - \mathbf{b}\|^2 ), it introduces a constraint on the solution norm. The core problem is transformed into finding [ \mathbf{x}\alpha = \text{argmin}\mathbf{x} \left{ \|A\mathbf{x} - \mathbf{b}\|2^2 + \alpha^2 \|\Gamma \mathbf{x}\|2^2 \right}, ] where ( \alpha > 0 ) is the regularization parameter and ( \Gamma ) is a matrix defining the regularization properties, often chosen as the identity matrix ( I ) [27] [28]. The solution can be expressed in closed form using the normal equations: [ \mathbf{x}\alpha = (A^TA + \alpha^2 \Gamma^T\Gamma)^{-1} A^T \mathbf{b}. ] When analyzed through the lens of the SVD with ( \Gamma = I ), the solution takes on a revealing spectral filtering form: [ \mathbf{x}\alpha = \sum{i=1}^{N} \frac{\sigmai^2}{\sigmai^2 + \alpha^2} \frac{\mathbf{u}i^T \mathbf{b}}{\sigmai} \mathbf{v}i. ] Here, the factors ( \phii(\alpha) = \frac{\sigmai^2}{\sigmai^2 + \alpha^2} ) are the filter factors. These factors dictate the contribution of each SVD component: for ( \sigmai \gg \alpha ), ( \phii \approx 1 ), and the component is largely preserved; for ( \sigmai \ll \alpha ), ( \phii \approx 0 ), and the component is effectively filtered out. This provides a smooth, continuous damping of the solution components most susceptible to noise amplification [27] [29]. An advanced variant known as distributed Tikhonov regularization allows for finer control by using a vector of parameters, minimizing ( \|A\mathbf{x} - \mathbf{b}\|^2 + \sum{\ell=1}^p \frac{\|L\ell \mathbf{x}\|^2}{\theta\ell} ). This is particularly beneficial when the data exhibit significantly different sensitivity to various components of the unknown parameter vector ( \mathbf{x} ) [28].

Truncated SVD (TSVD): Methodology and Implementation

The Truncated SVD (TSVD) method is a more direct spectral filtering approach. It regularizes the problem by constructing a rank-( k ) approximation ( Ak ) of the original matrix ( A ), defined by: [ Ak = U \Sigmak V^T = \sum{i=1}^k \sigmai \mathbf{u}i \mathbf{v}i^T, ] where ( \Sigmak ) is a diagonal matrix containing only the ( k ) largest singular values, and all others are set to zero. The TSVD solution is then computed using the pseudoinverse of this truncated matrix: [ \mathbf{x}k = Ak^+ \mathbf{b} = \sum{i=1}^{k} \frac{\mathbf{u}i^T \mathbf{b}}{\sigmai} \mathbf{v}i. ] In the spectral filtering framework, TSVD employs a sharp, step-function filter: ( \phii = 1 ) for ( i \leq k ) and ( \phii = 0 ) for ( i > k ). This means components corresponding to the ( N-k ) smallest singular values are completely discarded. The crucial choice in TSVD is the truncation parameter ( k ), which controls the trade-off between stability (lower ( k )) and fidelity to the data (higher ( k )) [30] [29]. The optimality property of TSVD, as defined by the Eckart–Young theorem, states that ( A_k ) is the closest rank-( k ) matrix to ( A ) in both the Frobenius and spectral norms. This makes TSVD not just a regularization method, but also an optimal tool for model reduction and overcoming the curse of dimensionality in large-scale problems [30].

Quantitative Comparison and Decision Framework

The table below provides a systematic comparison of Tikhonov regularization and TSVD to guide researchers in selecting the appropriate technique.

Table 1: Comparative Analysis of Tikhonov Regularization vs. Truncated SVD

| Feature | Tikhonov Regularization | Truncated SVD (TSVD) |

|---|---|---|

| Mathematical Form | (\text{argmin}_x |Ax-b|^2 + \alpha^2 |x|^2) | (xk = \sum{i=1}^k \frac{ui^T b}{\sigmai} v_i) |

| Filter Factors | (\phii = \frac{\sigmai^2}{\sigma_i^2 + \alpha^2}) (smooth decay) | (\phi_i = 1) for (i \leq k), (0) otherwise (sharp cutoff) |

| Primary Control Parameter | Regularization parameter ( \alpha ) | Truncation index ( k ) |

| Solution Norm | Controlled continuously by ( \alpha ) | Non-increasing with decreasing ( k ) |

| Stability | Very stable, continuous solution | Stable, but can be sensitive to choice of ( k ) |

| Computational Cost | Requires solving linear system (e.g., via CG) | Requires full or partial SVD computation |

| Ideal Use Case | Problems requiring smooth solutions, generalized regularization via ( \Gamma ) | Problems with a clear spectral gap, sparse or low-rank solutions |

The choice between these methods often hinges on the nature of the singular value spectrum. Tikhonov regularization is generally preferred when the singular values decay gradually without a clear cutoff, as its smooth filter provides more nuanced control. In contrast, TSVD can be more effective when there is a distinct spectral gap—a noticeable drop in the magnitude of singular values—as it allows for a clear separation between signal and noise-dominated components [29]. In practice, hybrid approaches are increasingly common. A Tikhonov-TSVD united algorithm has been demonstrated in a muon positioning system, where it successfully reduced the vertical mean error to 0.922 m and the RMS error in the Z-direction from 4.254 m to 1.026 m, effectively mitigating oscillations in the localization results [26]. Another study on separable nonlinear least squares problems confirmed that an improved Tikhonov method, which neither discards small singular values nor treats all corrections equally, was more effective at reducing the mean square error than standalone TSVD or standard Tikhonov approaches [31].

Frequently Asked Questions (FAQs) and Troubleshooting

FAQ 1: How do I choose the regularization parameter α for Tikhonov or the truncation parameter k for TSVD in a real experiment?

Parameter selection is critical. If an estimate of the noise norm ( \delta ) in your data ( \mathbf{b} ) is available, the Morozov discrepancy principle is a standard choice. It selects ( \alpha ) (or ( k )) such that the residual norm is approximately equal to the noise norm: ( \|A\mathbf{x}_{\alpha} - \mathbf{b}\| \approx \delta ) [28]. Other established methods include:

- L-curve Criterion: Plots the solution norm ( \|\mathbf{x}{\alpha}\| ) against the residual norm ( \|A\mathbf{x}{\alpha} - \mathbf{b}\| ) on a log-log scale. The optimal parameter is often located near the "corner" of the resulting L-shaped curve [32] [27].

- Generalized Cross-Validation (GCV): Chooses the parameter that minimizes the prediction error [28].

- Sensitivity Index: A newer method that selects the parameter ( \lambda ) (or ( \alpha )) by minimizing a sensitivity index, which indicates the severity of ill-conditioning via solution accuracy. This can also be applied to find the optimal parameter for L-curves [32].

FAQ 2: My regularized solution is still highly inaccurate. What could be going wrong?

This is a common issue in experimental research. Consider these troubleshooting steps:

- Check Problem Formulation: Verify that the underlying physical model (matrix ( A )) is correct. No regularization can fix a fundamentally flawed model.

- Re-evaluate Regularization Parameter: An poorly chosen parameter is the most likely culprit. Use one of the systematic methods listed in FAQ 1 instead of an ad-hoc selection. A too-small ( \alpha ) leads to under-regularization (solution is still noisy), while a too-large ( \alpha ) causes over-regularization (solution is oversmoothed and lacks detail) [28].

- Consider Distributed Regularization: If your data have significantly different sensitivity to different components of ( \mathbf{x} ), a single regularization parameter ( \alpha ) might be insufficient. Explore distributed Tikhonov regularization, which uses a vector of parameters ( \theta_\ell ) to allow different regularization for different components or groups of components [28].

- Examine the Singular Value Spectrum: Plot the singular values of your matrix. If there is no clear decay, the problem might be too ill-posed for standard regularization to be effective without incorporating additional prior knowledge.

FAQ 3: When should I use a generalized Tikhonov regularization with a matrix L ≠ I?

Use a regularization matrix ( L ) other than the identity when you have prior knowledge about the desired solution. Common choices include:

- Discrete Gradient Operators: If you expect the solution to be smooth, using the first or second derivative operator as ( L ) will penalize roughness, forcing a smoother solution [27].

- Sparsifying Transforms: To promote sparsity in a transformed domain (e.g., for signal processing or compressed sensing), you can set ( L ) to be a wavelet or Fourier transform matrix. This is linked to sparsity-promoting ( \ell^1 )-type penalties like LASSO [28].

FAQ 4: Are Tikhonov regularization and ridge regression the same thing?

Yes, Tikhonov regularization and ridge regression are essentially the same technique, developed independently in different fields (integral equations and statistics, respectively). Both solve the same core problem of adding a quadratic penalty term to stabilize an ill-conditioned system [33].

The Scientist's Toolkit: Essential Research Reagents and Computational Materials

Table 2: Key Computational "Reagents" for Regularization Experiments

| Item / Concept | Function / Purpose | Example/Notes |

|---|---|---|

| SVD Solver | Computes the singular value decomposition ( A = U\Sigma V^T ). | Essential for spectral analysis and implementing TSVD. Use scipy.linalg.svd. |

| Conjugate Gradient Solver | Iteratively solves large, sparse linear systems. | Efficient for solving Tikhonov system ( (A^TA + \alpha^2 I)x = A^Tb ) without explicit inversion. |

| L-curve Plotting Tool | Visualizes the trade-off between solution and residual norms. | Critical for empirical parameter selection. |

| Condition Number Calculator | Quantifies the ill-posedness of matrix ( A ). | High condition number (( > 10^6 )) indicates strong need for regularization. |

| Distributed Regularization Framework | Allows component-wise control of regularization. | For problems with uneven data sensitivity. Implement via Bayesian hierarchical models [28]. |

| Sparsity-Promoting Package | Solves ( \ell^1 )-regularized problems (e.g., LASSO). | Used when the goal is a solution with few non-zero components (e.g., scikit-learn). |

## Frequently Asked Questions (FAQs)

Q1: Why does my nonlinear regression model converge slowly or fail to converge, and how is this related to model parameterization?

Slow convergence or failure to converge in nonlinear regression is often a symptom of ill-conditioning, frequently caused by parametric collinearity. This occurs when model parameters are highly correlated, leading to an ill-conditioned Hessian matrix and making the least-squares optimization process computationally inefficient and unstable. The core issue is that the optimization landscape becomes difficult to navigate, with multiple optima and flat regions that hinder progress. Ill-conditioning is mathematically tractable and can be addressed by reparameterizing the model to improve the orthogonality of its parameters [16] [34].

Q2: What are the primary strategies for diagnosing ill-conditioning in a nonlinear model?

You can diagnose ill-conditioning using several quantitative metrics, summarized in the table below. These metrics help assess the degree of correlation between parameters and the stability of the optimization problem.

Table 1: Diagnostic Metrics for Ill-Conditioning in Nonlinear Regression

| Metric | Description | Interpretation |

|---|---|---|

| Condition Number | Ratio of the largest to smallest singular value of the Jacobian or Hessian matrix. | A high number (e.g., >1e3) indicates ill-conditioning [12]. |

| Variance Inflation Factor (VIF) | Measures how much the variance of a parameter estimate is inflated due to collinearity. | A VIF > 5-10 suggests significant collinearity [16]. |

| Parameter Correlation Matrix | Examines pairwise correlations between parameter estimates. | High off-diagonal absolute values (e.g., >0.9) indicate strong dependencies [35]. |

| Eccentricity of Confidence Region | Shape of the parametric confidence region. | A highly elongated, narrow shape indicates ill-conditioning [16]. |

Q3: How does reparameterization improve orthogonality and mitigate ill-conditioning?

Reparameterization introduces a new set of parameters with better orthogonality properties, transforming the model so that the parameters have reduced correlation in the likelihood. This directly addresses the ill-conditioning of the Jacobian matrix associated with the underlying PDE system. As the condition number of this matrix decreases, the optimization process exhibits faster convergence and higher accuracy. The core idea is to find a parameterization where the model's sensitivity to each parameter is as independent as possible [12] [16] [35].

Q4: Can you provide a simple example of a basic reparameterization technique?

A common and powerful technique is the QR Reparameterization for linear predictor components in generalized linear and nonlinear models. In this approach, the design matrix X is decomposed into an orthogonal matrix Q and an upper-triangular matrix R (X = QR). The model is then fit using the orthogonal Q instead of the original X. This transformation reduces correlations between the predictors, leading to more stable and efficient estimation. This method is recommended in standard statistical software like Stan [36].

Q5: Are there reparameterization strategies for problems with hard constraints?

Yes, novel neural network architectures have been developed for hard constraints. Π-nets (Pi-nets) incorporate an output layer that uses operator splitting for rapid and reliable orthogonal projections during the forward pass. This ensures the network's output always satisfies specified convex constraints, making the model feasible-by-design. The backpropagation step is handled via the implicit function theorem. This is particularly useful for creating optimization proxies for parametric constrained problems [37].

Q6: How do I handle ill-conditioning caused by nuisance parameters that are correlated with parameters of interest?

For problems involving nuisance parameters, a GAM-based (Generalized Additive Model) reparameterization can be highly effective. This method uses an initial set of posterior samples to model the relationship between the parameters of interest ((C)) and the nuisance parameters ((N)). The goal is to find a transformation ( N' = N - f(C) ) such that (N') is statistically independent of (C) in the likelihood. Once this orthogonalization is achieved, the prior sensitivity for the parameters of interest is dramatically reduced, leading to more robust inference [35].

Q7: What advanced experimental design techniques can aid in creating better parameterizations?

K-optimal design of experiments can systematically guide model reparameterization. The support points from a locally K-optimal design are used to construct a response surface. This surface then informs a transformation of the original parameters into a new set with improved orthogonality properties. This approach can be implemented using Semidefinite Programming to find the optimal design points, providing a data-driven strategy for building a well-conditioned parameter space [16].

## Experimental Protocols

Protocol 1: Systematic Model Reparameterization using K-Optimality

This protocol outlines a method for finding a parameterization with improved orthogonality, based on the principles of K-optimal design [16].

- Problem Formulation: Define the original nonlinear model (y = f(x, \theta)), where (\theta) is the vector of original parameters.

- Local K-Optimal Design: Compute a locally K-optimal experimental design for the model. This involves using Semidefinite Programming to find a set of support points in the design space that are optimal for estimating the model's parameters.

- Response Surface Generation: Use the support points from Step 2 to generate a response surface. This creates a family of curves that capture the model's behavior across the parameter space.

- Transformation Construction: Analyze the generated response surface to construct a mathematical transformation (T) such that (\phi = T(\theta)), where (\phi) is the new set of parameters.

- Model Refitting: Refit the model using the reparameterized form (y = f(x, T^{-1}(\phi))).

- Validation: Compare the condition number and VIF of the new parameterization (\phi) against the original (\theta) to validate the improvement.

The following workflow visualizes this experimental protocol:

Protocol 2: Orthogonalization of Nuisance Parameters using GAMs

This protocol details a method to decorrelate parameters of interest from nuisance parameters, reducing prior sensitivity in Bayesian inference [35].

- Initial Sampling: Fit the original model (y = f(C, N)) with independent priors for the parameters of interest (C) and nuisance parameters (N). Obtain a sample of (n) posterior draws, creating matrices ( \tilde{C} ) and ( \tilde{N} ).

- Relationship Modeling: Fit a Generalized Additive Model (GAM) to model the relationship between (N) and (C) observed in the samples: (N \sim g(C) + \epsilon), where (g) is a smooth non-linear function.

- Define New Parameters: Define the orthogonalized nuisance parameters as (N' = N - \hat{g}(C)), where (\hat{g}(C)) is the predicted value from the fitted GAM.

- Reparameterize the Model: Rewrite the original model in terms of (C) and (N').

- Refit with New Priors: Fit the reparameterized model. Due to the achieved separability, the marginal posterior for (C) will now be robust to the choice of independent priors for (N').

The logical flow for orthogonalizing nuisance parameters is shown below:

## The Scientist's Toolkit

Table 2: Essential Research Reagents and Computational Tools for Reparameterization

| Tool/Reagent | Function in Reparameterization |

|---|---|

| QR Decomposition | A foundational linear algebra technique used to orthogonalize the design matrix, directly reducing collinearity in linear predictor components [36]. |

| K-Optimal Experimental Design | A strategy using Semidefinite Programming to select support points that guide the construction of a parameterization with improved orthogonality properties [16]. |

| Generalized Additive Models (GAMs) | Used to model and remove complex, non-linear dependencies between parameters of interest and nuisance parameters, enabling effective orthogonalization [35]. |

| Jacobian Matrix Analysis | The condition number of the PDE system's Jacobian matrix is a key diagnostic. Reparameterization aims to reduce this condition number to improve PINN convergence [12]. |

| Orthogonal Constraints (Π-net) | A specialized neural network layer that uses operator splitting and the implicit function theorem to enforce hard constraints via orthogonal projections, acting as a built-in reparameterization [37]. |

| Stochastic Quasi-Newton Methods | Adaptive optimization methods (e.g., inversion-free quasi-Newton) that can handle ill-conditioned problems in streaming data contexts with O(dN) complexity [38]. |

| Parameter Expansion | A reparameterization technique used in MCMC to improve the mixing of Markov chains by introducing auxiliary parameters to break correlations [39]. |

Preconditioning and Scaling Methods for Improved Convergence

Technical Support Center: Troubleshooting Convergence in Ill-Conditioned Problems

This support center is designed within the context of doctoral research on novel strategies for mitigating ill-conditioning in scientific optimization, common in computational chemistry and drug development. Below are common issues and their solutions.

Frequently Asked Questions (FAQs)

Q1: My gradient-based optimizer (e.g., SGD, L-BFGS) is extremely slow or fails to converge when training my neural network potential for molecular energy prediction. What is the most likely cause and first step? A1: The issue is highly likely an ill-conditioned problem landscape, where the curvature of the loss function varies drastically across parameters. This is common in systems with multi-scale features. The first step is to implement gradient scaling. Ensure all input features (e.g., atomic coordinates, charges) and target outputs (energy) are normalized to zero mean and unit variance. For the network parameters, consider adding a diagonal preconditioner that adapts the learning rate per parameter.

Q2: After applying standard mean-variance scaling, my conjugate gradient solver for a large linear system (from a finite-element model of protein-ligand binding) still converges poorly. What should I try next? A2: Basic scaling is insufficient for severely ill-conditioned systems. You must investigate preconditioning. A robust starting point is the Incomplete LU (ILU) factorization preconditioner. For the system Ax = b, compute an approximate LU factorization (M ≈ LU) and solve M⁻¹Ax = M⁻¹b. This effectively clusters the eigenvalues of the preconditioned system, dramatically improving convergence.

Q3: I am using a diagonal preconditioner for a quasi-Newton method, but it seems to destabilize the optimization in later iterations. How can I diagnose this? A3: This indicates that the local curvature is changing, and your fixed diagonal preconditioner is outdated. Transition to an adaptive preconditioning strategy. For example, implement a variant of AdaGrad or RMSProp, which accumulate squared gradient information to update a diagonal preconditioner iteratively: Dₖ = diag(δ + √(Gₖ))⁻¹, where Gₖ is the sum of squared gradients. This automatically adjusts to the problem's geometry.

Q4: When solving a large-scale PDE-constrained optimization problem for clinical trial model fitting, how do I choose between Jacobi and SSOR preconditioning? A4: The choice depends on the matrix structure and available resources. Use the decision guide below.

| Preconditioner | Operation (for M) | Cost & Storage | Best For | Not Recommended For |

|---|---|---|---|---|

| Jacobi (Diagonal) | M = diag(A) | Very Low / Low | Strongly diagonal-dominant matrices. | Matrices with significant off-diagonal coupling. |

| Symmetric Successive Over-Relaxation (SSOR) | M = (D/ω + L)D⁻¹(D/ω + U) | Moderate / Moderate | General symmetric positive-definite matrices. Improves with tuning ω. | Non-symmetric systems without modification. |

Q5: My second-order optimization using a Hessian-based preconditioner is too computationally expensive. Are there efficient approximations suitable for high-dimensional parameter spaces? A5: Yes. Avoid computing the full Hessian. Use limited-memory Hessian approximations.

- L-BFGS: Maintains a history of m past updates (sₖ, yₖ) to implicitly build an inverse Hessian approximation. Precondition the gradient as pₖ = -Hₖ ∇fₖ.

- Diagonal BFGS (DBFGS): Enforces the Hessian approximation to be diagonal, drastically reducing memory and computation to O(n) while still capturing per-parameter scaling.

Experimental Protocol: Benchmarking Preconditioner Efficacy

Objective: Quantify the impact of different preconditioners on solver convergence for a sparse linear system derived from a reaction-diffusion model of tumor growth.

Materials: See "Research Reagent Solutions" below.

Methodology:

- System Generation: Use a finite difference discretization on a 3D grid (100x100x100) of the PDE ∂u/∂t = ∇·(D(x)∇u) + ρu(1 - u), with spatially varying diffusion coefficient D(x). Apply implicit Euler timestepping to generate a large, sparse, ill-conditioned linear system Ax = b at each time step.

- Preconditioner Setup:

- P₁: Jacobi (Diagonal): M = diag(A).

- P₂: Incomplete Cholesky (IC(0)): Zero-fill-in factorization for the symmetric part.

- P₃: Algebraic Multigrid (AMG): A hierarchical method using coarse-grid correction.

- Solver Execution: Use the Preconditioned Conjugate Gradient (PCG) method. Set convergence tolerance to 1e-10 and maximum iterations to 2000. For each preconditioner Pᵢ, solve the same system Pᵢ⁻¹Ax = Pᵢ⁻¹b.

- Data Collection: For each run, record:

- Number of iterations to convergence.

- Wall-clock time to solution.

- Final relative residual norm (||b - Ax||₂ / ||b||₂).

Quantitative Benchmark Results:

| Preconditioner | Avg. Iterations | Time to Solve (s) | Final Residual | Convergence Factor (ρ) |

|---|---|---|---|---|

| None (Vanilla CG) | 1487 | 45.2 | 8.7e-11 | 0.998 |

| Jacobi | 632 | 21.3 | 9.2e-11 | 0.995 |

| Incomplete Cholesky (IC(0)) | 89 | 9.1 | 7.4e-11 | 0.960 |

| Algebraic Multigrid (AMG) | 14 | 5.8 | 6.1e-11 | 0.350 |

Analysis: AMG demonstrates superior performance, reducing iteration count by two orders of magnitude. While its setup time is higher, its fast convergence makes it the most efficient for this class of problem. Jacobi offers a simple but meaningful improvement over no preconditioning.

The Scientist's Toolkit: Research Reagent Solutions

| Item / Solution | Function in Preconditioning & Scaling Experiments |

|---|---|

| SuiteSparse Library | A suite of sparse matrix software (e.g., KLU, CHOLMOD) providing high-performance factorizations for preconditioner construction. |

| PETSc/TAO Framework | Portable, scalable toolkit for numerical PDEs and optimization. Essential for implementing and comparing a wide array of preconditioners (e.g., ILU, ICC, AMG) with solvers. |

| Hypre Library | Focuses on parallel multigrid and other scalable preconditioning methods, particularly effective for large-scale linear systems from PDEs. |

| Automatic Differentiation Tool (e.g., JAX, PyTorch) | Enables exact and efficient computation of gradients and Hessian-vector products, crucial for constructing and testing adaptive preconditioners in optimization. |

| L-BFGS Implementation (e.g., SciPy, libLBFGS) | Provides a robust, memory-efficient quasi-Newton optimizer that implicitly applies a variable preconditioner, serving as a benchmark. |

Visualization of Key Concepts

Leveraging Generative AI and Diffusion Models as Powerful Priors for Inverse Problems