A Researcher's Guide to PPI Data: Mastering IntAct, BioGRID, and Beyond for Robust Network Analysis



Abstract

This guide provides researchers, scientists, and drug development professionals with a comprehensive framework for leveraging public Protein-Protein Interaction (PPI) databases. It covers foundational knowledge of major resources like IntAct and BioGRID, strategic methodologies for data integration and tissue-specific application, solutions for common challenges including data heterogeneity and validation, and finally, best practices for comparative analysis and quality assessment. The article synthesizes current practices and emerging trends to empower the construction of biologically relevant PPI networks for advanced biomedical discovery.

Navigating the PPI Landscape: A Deep Dive into Core Databases and Their Specialties

Protein-protein interactions (PPIs) are fundamental to virtually every cellular process, from signal transduction and metabolic regulation to DNA replication and immune response. The systematic mapping of these interactions has become a cornerstone of modern biology, enabling researchers to model complex cellular networks and identify novel therapeutic targets. Public PPI databases have emerged as critical infrastructure for the life sciences, providing centralized, curated repositories of interaction data. These resources transform scattered experimental findings from the scientific literature into structured, computationally accessible knowledge. The field is characterized by a collaborative yet complementary ecosystem of databases, each with distinct strengths in curation focus, data types, and analytical tools. This guide provides an in-depth technical examination of six core resources—IntAct, BioGRID, HPRD, MINT, DIP, and REACTOME—framed within the context of biomedical research and drug discovery.

Database Comparison and Technical Specifications

The following table summarizes the key technical specifications and content focus of each major PPI database, enabling researchers to quickly identify the most appropriate resource for their specific needs.

Table 1: Core Features of Major Public PPI Databases

| Database | Primary Focus | Data Coverage | Curation Approach | Key Features |
|---|---|---|---|---|
| BioGRID | Protein & genetic interactions [1] [2] | ~1.93M interactions (2020); Human (670K), Yeast (755K) [2] | Manual curation from high & low-throughput studies [1] [2] | Includes PTMs, chemical interactions, CRISPR screens (ORCS) [2] |
| HPRD | Human proteome annotation [3] [4] | 20,000+ proteins; 30,000+ PPIs (2009) [3] | Manual literature extraction by biologists [3] [4] | PhosphoMotif Finder, disease associations, linked to NetPath [3] [4] |
| MINT | Experimentally verified PPIs [5] | Focused on curated physical interactions | Expert manual curation, PSI-MI standards [5] | IMEx consortium member; data integrated via IntAct [5] |
| DIP | Experimentally determined PPIs [6] | 1,089 proteins; 1,269 interactions (1999) [6] | Manual entry with expert review [6] | Details domains, amino acid ranges, dissociation constants [6] |
| IntAct | Molecular interaction data [7] | Provides molecular interaction data | Open source database; PSICQUIC service [7] | Confidence scores (MI score ≥ 0.45); framework for other resources [7] |
| REACTOME | Pathways & reactions [8] [9] | 2,825 human pathways; 16,002 reactions [8] | Manually curated, peer-reviewed pathways [9] | SBGN visualization; orthology-based predictions for 20 species [9] |

Table 2: Data Accessibility and Integration Features

| Database | Download Formats | Programmatic Access | Integration/Partnerships |
|---|---|---|---|
| BioGRID | Multiple formats including PSI MI XML [1] | REST API, Cytoscape plugin [1] | IMEx; data feeds to SGD, TAIR, FlyBase [1] |
| HPRD | Not specified | Human Proteinpedia submission portal [3] [4] | Linked to NetPath signaling pathways [3] [4] |
| MINT | PSI-MI standards [5] | PSICQUIC webservice [5] | IMEx consortium; data in IntAct [5] |
| DIP | Relational SQL database [6] | Web editing interface [6] | Links to sequence databases and pathway resources [6] |
| IntAct | Standardized downloads | PSICQUIC service [7] | Hosts MINT data; PSICQUIC aggregator [5] [7] |
| REACTOME | Various formats including SBGN [9] | Analysis tools API [8] | Overlays data from IntAct, BioGRID, MINT, etc. [9] |

Experimental Methodologies and Curation Standards

Curation Pipelines and Evidence Codes

PPI databases employ rigorous curation methodologies to ensure data quality and reliability. BioGRID maintains particularly detailed curation standards, with all interactions exclusively derived from manual curation of experimental data in peer-reviewed publications [2]. Each interaction is assigned structured evidence codes, including 17 different protein interaction evidence types (e.g., affinity capture-mass spectrometry, co-crystal structure, FRET, two-hybrid) and 11 genetic interaction evidence codes (e.g., synthetic lethality, synthetic rescue, dosage growth defect) [2]. This granular approach allows researchers to assess experimental context and reliability. High-throughput datasets are typically extracted from supplementary files and converted into consistent formats, while computationally predicted interactions are explicitly excluded to maintain high-confidence data standards [2].

Orthology-Based Pathway Predictions

REACTOME employs a sophisticated orthology inference system to extend human pathway knowledge to model organisms. The platform uses Ensembl Compara to identify orthologs of curated human proteins across 20 different species, enabling electronic inference of conserved reactions and pathways [9]. This approach significantly expands the utility of REACTOME for comparative biology and studies using model organisms. The Species Comparison tool allows direct comparison of predicted pathways between human and selected species, facilitating evolutionary analyses and translational research [9].
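The idea of projecting a curated human reaction onto another species through an ortholog map can be sketched in a few lines. This is only an illustration of the general technique: the function name, the `orthologs` dictionary, and the `min_fraction` cutoff are all hypothetical and are not taken from REACTOME's actual pipeline or thresholds.

```python
from typing import Dict, List, Optional


def infer_reaction(
    human_participants: List[str],
    orthologs: Dict[str, str],
    min_fraction: float = 0.75,  # illustrative cutoff, an assumption
) -> Optional[Dict[str, str]]:
    """Project a human reaction onto another species.

    Returns a human -> ortholog mapping when enough participants have an
    ortholog, otherwise None (the reaction is not inferred).
    """
    if not human_participants:
        return None
    mapped = {p: orthologs[p] for p in human_participants if p in orthologs}
    fraction = len(mapped) / len(human_participants)
    return mapped if fraction >= min_fraction else None


# Toy data: three of four human proteins have an ortholog in the target species.
toy_orthologs = {"HUMAN_A": "MOUSE_A", "HUMAN_B": "MOUSE_B", "HUMAN_C": "MOUSE_C"}
reaction = ["HUMAN_A", "HUMAN_B", "HUMAN_C", "HUMAN_D"]
inferred = infer_reaction(reaction, toy_orthologs)
```

Raising `min_fraction` makes the inference stricter; with `min_fraction=1.0` the toy reaction above would not be inferred because one participant lacks an ortholog.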

Data Integration Frameworks

The International Molecular Exchange (IMEx) consortium represents a critical collaborative framework in the PPI database ecosystem, with MINT and BioGRID as participating members [5] [2]. IMEx establishes common curation standards and enables resource sharing to minimize redundancy. The PSICQUIC (Proteomics Standard Initiative Common QUery InterfaCe) web service provides unified programmatic access to multiple interaction databases, including IntAct, BioGRID, MINT, and others [5] [9]. This interoperability allows researchers to query multiple resources simultaneously and facilitates more comprehensive network analyses.
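As a rough illustration of what PSICQUIC access looks like in practice, the sketch below builds a REST query URL and parses the tab-delimited MITAB 2.5 lines such a service returns, keeping only records at or above the MI score threshold of 0.45 mentioned in Table 1. The base URL and the `intact-miscore` confidence label are assumptions to be checked against the PSICQUIC registry and the current IntAct output; the helper names are hypothetical.

```python
from urllib.parse import quote

# Assumed IntAct PSICQUIC endpoint; confirm against the PSICQUIC registry.
PSICQUIC_BASE = (
    "https://www.ebi.ac.uk/Tools/webservices/psicquic/intact/"
    "webservices/current/search/query/"
)

# The 15 columns of PSI-MI TAB 2.5, in order.
MITAB25_COLUMNS = [
    "id_a", "id_b", "alt_id_a", "alt_id_b", "alias_a", "alias_b",
    "detection_method", "first_author", "publication", "taxid_a",
    "taxid_b", "interaction_type", "source_db", "interaction_id",
    "confidence",
]


def build_query_url(miql, base=PSICQUIC_BASE):
    """Percent-encode a MIQL query and append it to the service URL."""
    return base + quote(miql, safe="")


def parse_mitab25(line):
    """Split one MITAB line into a dict keyed by column name."""
    fields = line.rstrip("\n").split("\t")
    if len(fields) < len(MITAB25_COLUMNS):
        raise ValueError("expected at least 15 tab-separated columns")
    return dict(zip(MITAB25_COLUMNS, fields))


def mi_score(record):
    """Return the MI score from the confidence column, or None if absent."""
    for entry in record["confidence"].split("|"):
        name, _, value = entry.partition(":")
        if name == "intact-miscore":  # assumed label for the score
            return float(value)
    return None


def high_confidence(lines, threshold=0.45):
    """Keep parsed records whose MI score meets the threshold."""
    records = (parse_mitab25(line) for line in lines)
    return [r for r in records if (mi_score(r) or 0.0) >= threshold]
```

The functions do no network I/O themselves, so the URL can be passed to whichever HTTP client a pipeline already uses and the response lines fed to `high_confidence`.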

Pathway Visualization and Data Integration

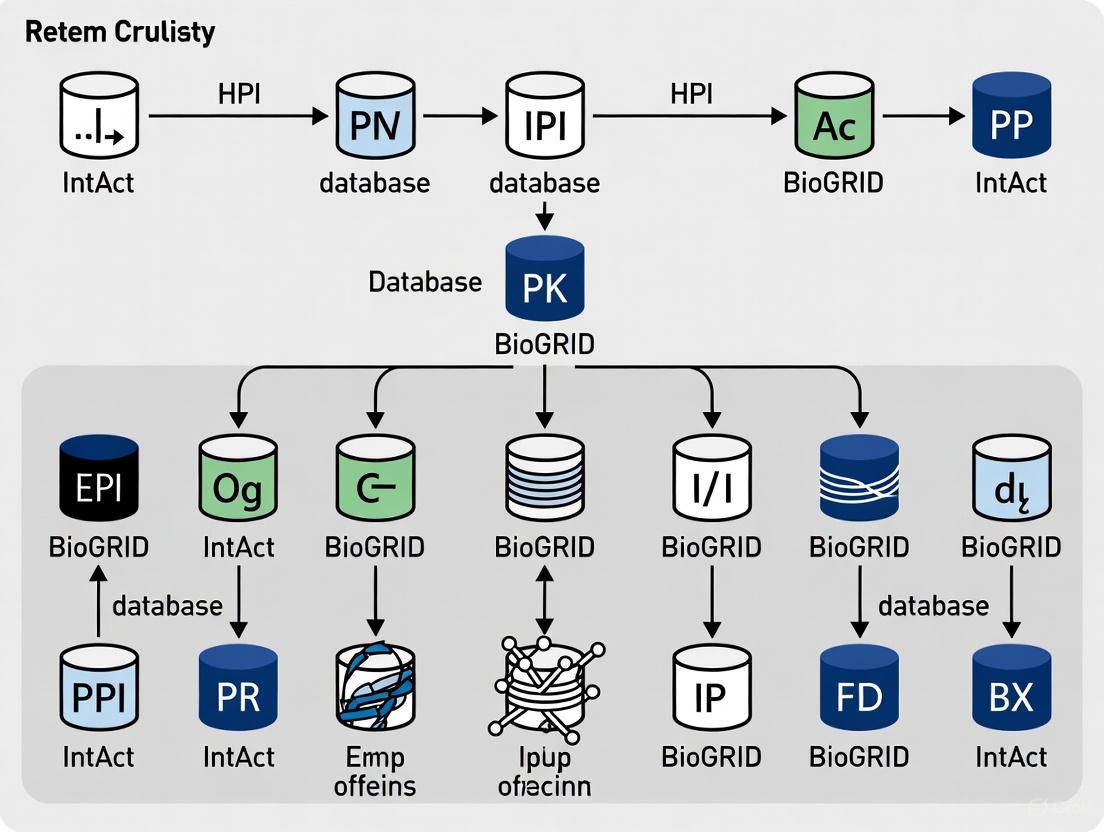

The following diagram illustrates the relationships and data integration between the major PPI databases and analytical tools:

Diagram 1: PPI Database Ecosystem and Data Flow (64 characters)

REACTOME's Pathway Browser implements Systems Biology Graphical Notation (SBGN) for standardized pathway visualization [9]. This enables consistent representation of biological entities and processes across different pathway diagrams. The browser supports zooming, scrolling, and event highlighting, with context-sensitive menus providing additional information about pathway components. A key innovation is the ability to overlay curated pathways with molecular interaction data from external databases, including IntAct, BioGRID, MINT, and others via PSICQUIC web services [9]. This integration creates a powerful environment for contextualizing interaction networks within established pathway frameworks.

Table 3: Key Research Reagent Solutions for PPI Studies

| Reagent/Resource | Function in PPI Research | Example Applications |
|---|---|---|
| CRISPR/Cas9 Systems | Gene knockout for genetic interaction screens | BioGRID-ORCS: 1,042+ CRISPR screens in human, mouse, fly [2] |
| Affinity Capture Reagents | Antibodies for immunoprecipitation | BioGRID evidence code: affinity capture-MS [2] |
| Two-Hybrid Systems | Binary interaction detection | Yeast two-hybrid; documented in DIP, BioGRID [6] [2] |
| Mass Spectrometry | Identification of co-purified proteins | Large-scale interaction datasets; PTM detection [2] [4] |
| PSICQUIC Tools | Unified querying of multiple databases | Programmatic access to IntAct, BioGRID, MINT [5] [9] |

The experimental workflow for generating and analyzing PPI data involves multiple complementary techniques, as shown in the following diagram:

Diagram 2: PPI Experimental and Analysis Workflow

Research Applications and Future Directions

Themed Curation for Disease-Focused Research

BioGRID has implemented themed curation projects to build depth in critical areas of human biology and disease [2]. These focused efforts include the ubiquitin-proteasome system (UPS), chromatin modification, autophagy, glioblastoma, Fanconi anemia, and most recently, SARS-CoV-2 coronavirus interactions [2]. Domain experts develop curated gene/protein lists to guide literature curation strategies, enabling comprehensive coverage of these specialized areas. This approach demonstrates how PPI databases can evolve beyond general repositories to become targeted discovery tools for specific research communities and disease areas.

Expanding Data Types and Modalities

The PPI database landscape continues to evolve beyond simple binary interactions. BioGRID now captures over 515,000 unique protein post-translational modifications and more than 28,000 interactions between drugs/chemicals and their protein targets [2]. The development of BioGRID-ORCS (Open Repository of CRISPR Screens) extends this further by capturing single mutant phenotypes and genetic interactions from genome-wide CRISPR/Cas9 screens [2]. This expansion reflects the growing integration of multi-modal data in network biology, providing richer context for interpreting interaction networks.

Challenges and Considerations

Researchers must recognize several considerations when using these resources. Data currency varies significantly between databases; for example, HPRD has not been updated since 2009, while BioGRID and REACTOME maintain regular updates [3] [8] [2]. Species coverage differs substantially, with some resources focusing exclusively on human data while others encompass multiple model organisms. Evidence quality should be critically evaluated through experimental method annotations and confidence scores. The complementary nature of these resources often necessitates querying multiple databases to obtain comprehensive interaction networks for a protein of interest.

Protein-protein interaction (PPI) data is fundamental to systems biology, providing critical insights into cellular signaling, regulatory pathways, and the molecular mechanisms underlying disease. For researchers, scientists, and drug development professionals, selecting the appropriate database is crucial for experimental design and data interpretation. This technical guide provides a comprehensive comparison of major PPI resources, focusing on their distinct curation methodologies, coverage, and specialized strengths to inform their use within biomedical research pipelines.

Key PPI Databases at a Glance

Table 1: Core Features of Major Protein-Protein Interaction Databases

| Database | Primary Focus | Curation Policy | Interaction Types | Notable Strengths |
|---|---|---|---|---|
| BioGRID [10] [11] | Protein, genetic, and chemical interactions for major model organisms and humans | Manual curation from literature; no unpublished data or reviews [12] | Physical, genetic, chemical, post-translational modifications | Extensive genetic interaction data; CRISPR screen data via ORCS [11] [13] |
| IntAct [14] | Molecular interaction data from literature curation and direct submissions | Open-source, open data; IMEx-level annotation and MIMIx-compatible entries [14] | Protein-protein, protein-small molecule, protein-nucleic acid | Detailed experimental condition description; compliant with IMEx consortium standards [14] |
| APID [15] | Unified "interactomes" by integrating data from primary sources | Data integration and unification from primary databases (e.g., BioGRID, IntAct, HPRD, MINT, DIP) [15] | Protein-protein (with "binary" vs "indirect" classification) | Provides unified, non-redundant interactomes; distinguishes binary physical interactions [15] |
| STRING [16] | Experimental and predicted interactions | Integration of curated data and predictions from genomic context, text-mining, etc. [17] | Experimental and predicted | High coverage; combined results with UniHI cover ~84% of experimentally verified PPIs [16] |

Quantitative Coverage and Performance

A systematic comparison of 16 PPI databases provides critical metrics for database selection based on coverage. The study found that combined results from STRING and UniHI covered approximately 84% of 'experimentally verified' PPIs for a test set of genes. For 'total' interactions (including predicted), about 94% of available PPIs were retrieved by the combined use of hPRINT, STRING, and IID. Among exclusively found experimentally verified PPIs, STRING contributed around 71% of the unique hits. Analysis with a gold-standard set of curated interactions revealed that GPS-Prot, STRING, APID, and HIPPIE each covered approximately 70% of these high-quality interactions [16].

Table 2: Database Coverage Metrics from a User's Perspective Study [16]

| Metric | Finding | Key Databases |
|---|---|---|
| Experimentally Verified PPIs | ~84% coverage | Combined use of STRING & UniHI |
| Total PPIs (Experimental & Predicted) | ~94% coverage | Combined use of hPRINT, STRING, & IID |
| Exclusively Found PPIs | ~71% of unique hits | STRING |
| Gold-Standard Curated PPIs | ~70% coverage each | GPS-Prot, STRING, APID, HIPPIE |
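Coverage figures of this kind come down to set arithmetic: the share of a reference set of interactions that one database, or the union of several, can retrieve. The sketch below shows that calculation on toy data. The helper names and the protein labels are hypothetical, and the numbers it produces have nothing to do with the percentages reported in [16].

```python
def pair(a, b):
    """Order-independent key so that (A, B) and (B, A) count once."""
    return tuple(sorted((a, b)))


def coverage(reference, *databases):
    """Fraction of reference interactions found in the union of databases."""
    if not reference:
        raise ValueError("reference set must not be empty")
    combined = set().union(*databases)
    return len(reference & combined) / len(reference)


# Toy reference set of five interactions and two toy databases.
reference = {pair("P1", "P2"), pair("P1", "P3"), pair("P2", "P4"),
             pair("P3", "P5"), pair("P4", "P5")}
db_x = {pair("P2", "P1"), pair("P1", "P3"), pair("P2", "P4")}
db_y = {pair("P2", "P4"), pair("P5", "P3"), pair("P6", "P7")}

single = coverage(reference, db_x)          # 3 of 5 reference pairs
combined = coverage(reference, db_x, db_y)  # 4 of 5 reference pairs
```

Normalizing each pair before comparison matters: without it, the same interaction recorded in opposite orientations by two databases would be counted as two different edges.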

Specialized databases have also been developed for specific biological contexts. For instance, InterMitoBase, a database for human mitochondrial PPIs, contains 5,883 non-redundant interactions from 2,813 proteins integrated from PubMed, KEGG, BioGRID, HPRD, DIP, and IntAct. Of these, 1,640 are novel interactions not covered by the four major PPI databases among those sources (BioGRID, HPRD, DIP, and IntAct) [18].

Detailed Curation Methods and Workflows

BioGRID Curation Methodology

BioGRID employs a rigorous manual curation process where all interactions are captured as gene identifier pairs from the primary literature. The curation workflow involves:

- Evidence Capture: Curators record interacting partners, experimental evidence codes, and PubMed IDs. They capture all interactions in a paper, including those not the main focus and interactions found in supplementary files [10].

- Experimental Classification: Interactions are categorized using controlled vocabularies that map to the PSI-MI 2.5 standard.

- Quality Assurance: Interactions from retracted publications are systematically removed, though data conflicts between non-retracted publications are maintained as a reflection of the literature [10].

- CRISPR Screen Curation: Through its ORCS (Open Repository of CRISPR Screens) platform, BioGRID curates genome-wide screens, capturing gene-phenotype and gene-gene relationships, including cell line metadata, experimental conditions, and gRNA library information [11] [13].

The following workflow diagram illustrates BioGRID's comprehensive curation process:

IntAct Curation and Quality Standards

IntAct employs a dual-level curation system with stringent quality control measures:

- Curation Standards: Supports both IMEx-level annotation (comprehensive details of experimental conditions, constructs, and participant methodologies) and MIMIx-compatible entries (less comprehensive but capturing essential data for confidence assessment) [14].

- Participant Detail: Protein interactions can be described to the isoform level or post-translationally cleaved mature peptide level using appropriate UniProtKB identifiers. Participant status is checked with every UniProtKB release, with remapping performed when sequences are updated or withdrawn [14].

- Quality Control: Each entry undergoes peer review by a senior curator before release. Additional rule-based checks are run at the database level, and original authors are contacted to verify data representation after release [14].

- Interaction Scoring: IntAct employs a quantitative scoring system for exporting interactions to UniProtKB/GOA, weighting interaction detection methods (e.g., Biochemical=3, Biophysical=3) and interaction types (e.g., Direct interaction=5, Association=1) [14].
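To make the weighting idea concrete, the sketch below tallies the example weights quoted above over the evidence recorded for one protein pair. Only the four weights come from the text; combining them by simple addition is an assumption made for illustration and should not be read as IntAct's actual export formula.

```python
# Example weights quoted in the text; other categories are omitted here.
METHOD_WEIGHTS = {"biochemical": 3, "biophysical": 3}
TYPE_WEIGHTS = {"direct interaction": 5, "association": 1}


def evidence_score(evidences):
    """Sum method and type weights over (method, interaction_type) pairs.

    Additive combination is an illustrative assumption. Unknown categories
    raise KeyError rather than silently scoring zero.
    """
    total = 0
    for method, interaction_type in evidences:
        total += METHOD_WEIGHTS[method] + TYPE_WEIGHTS[interaction_type]
    return total


# One pair supported by two experiments.
pair_evidence = [
    ("biochemical", "association"),         # 3 + 1
    ("biophysical", "direct interaction"),  # 3 + 5
]
score = evidence_score(pair_evidence)
```

The point of the sketch is the shape of the calculation: a pair backed by direct-interaction evidence accumulates weight much faster than one backed only by association-level evidence.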

APID Data Integration and Redefinition

APID functions as a meta-database that redefines and unifies PPI data from primary sources through a systematic pipeline:

- Data Unification: Integrates PPIs from BioGRID, DIP, HPRD, IntAct, and MINT, plus human data sources and 3D structures from PDB, removing duplicate and incomplete records [15].

- Method Re-evaluation: APID re-evaluated the PSI-MI ontology vocabulary to separate terms that denote genuine experimental methods from those that do not. It introduced a "Method_Type" category in which 11 terms are labeled "NotAssigned" because they do not demonstrate experimental detection [15].

- Binary vs. Indirect Classification: APID classifies interaction detection methods as "binary" (direct physical detection between specific protein pairs) or "indirect" (detecting interactions within protein groups without direct pairwise distinction) [15].

- Binary Interactomes: This classification enables construction of binary interactomes, with the human binary interactome containing 83,949 PPIs (~21% of all reported human interactions) but covering over 90% of the reference proteome [15].
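The binary versus indirect distinction can be sketched as a lookup on the detection method followed by a filter. The method names placed in each set below are illustrative assumptions, not APID's actual assignment tables, and the record layout is hypothetical.

```python
# Illustrative method sets; APID's real classification covers the full
# PSI-MI method vocabulary.
BINARY_METHODS = {"two hybrid", "x-ray crystallography"}
INDIRECT_METHODS = {"tandem affinity purification", "coimmunoprecipitation"}


def classify_method(method):
    """Return 'binary', 'indirect', or 'NotAssigned' for a method name."""
    name = method.strip().lower()
    if name in BINARY_METHODS:
        return "binary"
    if name in INDIRECT_METHODS:
        return "indirect"
    return "NotAssigned"


def binary_interactome(records):
    """Keep protein pairs supported by at least one binary method.

    records: iterable of (protein_a, protein_b, method) tuples.
    """
    kept = set()
    for protein_a, protein_b, method in records:
        if classify_method(method) == "binary":
            kept.add(frozenset((protein_a, protein_b)))
    return kept


toy_records = [
    ("P1", "P2", "two hybrid"),
    ("P2", "P1", "coimmunoprecipitation"),
    ("P1", "P3", "tandem affinity purification"),
    ("P4", "P5", "inferred by curator"),
]
binary_pairs = binary_interactome(toy_records)
```

In the toy data, P1 and P2 survive because one of their two supporting experiments is binary, while P1 and P3 are dropped because their only evidence is a co-complex purification.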

The following diagram illustrates APID's data integration and refinement pipeline:

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents and Resources for PPI Research

| Resource/Reagent | Function in PPI Research | Application Context |
|---|---|---|
| CRISPR/Cas9 gRNA Libraries | Genome-wide screening for gene-phenotype and gene-gene relationships [11] | Identification of novel genetic interactions and functional gene modules |
| Affinity Tags (TAP, GST, etc.) | Protein purification and interaction capture for mass spectrometry or western analysis [10] | In vivo and in vitro interaction validation (Affinity Capture-MS/Western) |
| PSI-MI Controlled Vocabularies | Standardized annotation of experiments for consistent data interchange [15] | Database curation, data sharing, and meta-analysis across resources |
| Antibodies for Immunoblotting | Detection of specific proteins in co-immunoprecipitation experiments [10] | Validation of physical interactions and complex formation |
| Recombinant Protein Expression Systems | Production of purified proteins for in vitro interaction studies [10] | Reconstituted complex experiments and direct binding assays |

The landscape of PPI databases offers diverse resources with complementary strengths. BioGRID excels in genetic interactions and manual curation from literature, IntAct provides exceptionally detailed experimental annotations adhering to IMEx consortium standards, APID offers unified, non-redundant interactomes distinguishing binary interactions, and STRING delivers broad coverage by integrating experimental and predicted data. Research indicating that database usage frequencies do not always correlate with their respective advantages underscores the importance of informed selection [16]. For researchers in drug development and biomedical science, strategic use of multiple databases—particularly those with complementary coverage—provides the most comprehensive foundation for network analysis and therapeutic discovery.

The Biological General Repository for Interaction Datasets (BioGRID) is a primary database for the collection and standardization of protein-protein and genetic interactions. Its mission is to provide a comprehensive repository of molecular interactions that are manually curated from the primary biomedical literature, enabling systems-level biological approaches and facilitating the understanding of human disease and physiology. Unlike computationally predicted interactions, BioGRID provides experimentally evidenced data, making it an essential resource for researchers validating disease targets, understanding signaling pathways, and building network models of cellular processes. The core principle of BioGRID's curation philosophy is the systematic capture of binary molecular relationships directly supported by experimental evidence, providing researchers with a reliable foundation for network analysis and hypothesis generation [12] [19]. This technical guide details the principles, workflow, and methodologies underlying BioGRID's publication-driven curation process, providing researchers with the contextual knowledge needed to effectively utilize this critical bioinformatics resource.

Core Curation Principles and Data Scope

BioGRID operates on several foundational principles that govern what data is curated and how it is represented. Understanding these principles is essential for properly interpreting the interaction data provided by the resource.

- Publication-Driven Evidence: BioGRID curators exclusively capture interactions from primary research articles that provide direct experimental evidence. The database does not include interactions reported only in reviews or as unpublished data, ensuring that all curated interactions are traceable to a verifiable, peer-reviewed source [12].

- Binary Relationship Model: All interactions are recorded as binary relationships between two genes or proteins, accompanied by an evidence code that describes the experimental system used to detect the interaction and a reference to the supporting publication. This standardized format ensures computational tractability and consistent data representation [20].

- Comprehensive Organism Coverage: While BioGRID aims to curate all interactions for major model organisms, it also selectively curates topic-driven human datasets relevant to specific diseases or biological processes. The database encompasses interactions from numerous organisms, with substantial data for Homo sapiens, Saccharomyces cerevisiae, Drosophila melanogaster, and Arabidopsis thaliana, among others [21].

- Evidence over Interpretation: BioGRID curates interaction evidence as presented in each publication independently, without making judgments on the absolute biological truth of an interaction. This approach can lead to data conflicts in the database, which simply reflect contradictions in the published literature itself [10].

Table 1: BioGRID Data Statistics (Latest Build 4.4.241 - January 2025)

| Organism | Physical Interactions (Non-Redundant) | Genetic Interactions (Non-Redundant) | Unique Publications |
|---|---|---|---|
| Homo sapiens | 1,009,107 | 18,689 | 39,579 |
| Saccharomyces cerevisiae | 268,815 | 424,370 | 9,811 |
| Drosophila melanogaster | 68,703 | 10,764 | 8,053 |
| Arabidopsis thaliana | 74,009 | 299 | 2,450 |
| Caenorhabditis elegans | 41,075 | 2,295 | 1,560 |

The Curation Workflow: From Publication to Database

The BioGRID curation process follows a systematic workflow designed to ensure consistency and accuracy across all curated data. The workflow can be visualized as a multi-stage process where curators extract specific information from scientific publications and record it in a standardized format.

Diagram 1: BioGRID Curation Workflow

Literature Screening and Selection

The curation process begins with the identification of relevant scientific publications that contain reportable interaction data. BioGRID employs multiple strategies for literature identification, including automated PubMed searches, direct author submissions, and monitoring of high-impact journals. Curators prioritize articles that report novel interactions while also capturing additional evidence for previously reported interactions from new publications. The database aims for comprehensive curation of all interactions within a paper, including those that are not central to the main findings and those already curated from earlier publications, to build a complete evidence trail for each interaction [10].

Interaction Identification and Data Extraction

Once a publication is selected for curation, expert curators perform a detailed reading of the full text to identify all reportable interactions. During this phase, curators:

- Identify specific figures, tables, and results sections that provide experimental evidence for molecular interactions

- Distinguish between physical interactions (direct binding or co-complex membership) and genetic interactions (functional relationships between genes)

- Extract the specific genes/proteins involved in each interaction, using standardized gene identifiers

- Determine experimental context, including the organism, cell type, and specific experimental conditions [19]

For complex data sets, particularly those from high-throughput studies presented in supplementary tables, curators may employ specialized loading scripts to efficiently process large numbers of interactions while maintaining data quality [10].

Experimental Evidence Annotation

A critical component of BioGRID curation is the annotation of the experimental evidence supporting each interaction. The database employs a detailed evidence code system that precisely describes the experimental methodology used to detect each interaction. This system allows users to assess the nature and quality of evidence supporting any given interaction in the database [20].

For each experiment supporting an interaction, curators record:

- The specific experimental system from the standardized evidence code ontology

- The publication reference (PubMed ID)

- The interacting partners as a pair of gene identifiers

- For physical interactions, directionality (bait and prey) when clearly defined in the experiment

- Any relevant qualifications or experimental details in free-text form [12]
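The fields listed above map naturally onto a small record type. The sketch below is one possible in-memory representation for downstream analysis; the class and attribute names are hypothetical and do not reflect BioGRID's internal schema or download column names.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class CuratedInteraction:
    """One experiment supporting one binary interaction."""
    gene_a: str
    gene_b: str
    experimental_system: str    # evidence code, e.g. "Two-hybrid"
    pubmed_id: int
    bait: Optional[str] = None  # set only when directionality is defined
    qualification: str = ""     # free-text experimental details

    def __post_init__(self):
        # A declared bait must be one of the two interactors.
        if self.bait is not None and self.bait not in (self.gene_a, self.gene_b):
            raise ValueError("bait must be gene_a or gene_b")

    @property
    def prey(self) -> Optional[str]:
        """The partner opposite the bait, or None if no bait is recorded."""
        if self.bait is None:
            return None
        return self.gene_b if self.bait == self.gene_a else self.gene_a


# Placeholder PubMed ID; not a real reference.
record = CuratedInteraction("MDM2", "TP53", "Affinity Capture-Western", 0, bait="MDM2")
```

Making the record immutable (`frozen=True`) lets instances be stored in sets and used as dictionary keys, which is convenient when collapsing repeated evidence later.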

Data Standardization and Quality Control

Before integration into the public database, all curated interactions undergo standardization and quality control checks. This process includes:

- Identifier standardization: Ensuring all interactors use correct and consistent gene identifiers across the database

- Evidence code validation: Verifying that the assigned evidence codes accurately reflect the described experimental methodology

- Directionality checks: Applying consistent rules for bait-prey relationships in pull-down experiments and other directional assays

- Duplicate detection: Identifying and appropriately handling interactions that may have been reported in multiple publications
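Two of these checks, identifier standardization and duplicate detection, can be sketched together: identifiers are first mapped to a canonical form, then records describing the same pair are collapsed while every supporting experiment is retained. The `aliases` mapping and function names are hypothetical stand-ins for a real identifier-mapping resource.

```python
from collections import defaultdict


def canonical(identifier, aliases):
    """Map an identifier to its canonical form (identity if unknown)."""
    cleaned = identifier.strip()
    return aliases.get(cleaned, cleaned)


def collapse_duplicates(records, aliases=None):
    """Group evidence by unordered, canonicalized gene pair.

    records: iterable of (gene_a, gene_b, evidence_code, pubmed_id).
    Returns {(gene_x, gene_y): {(evidence_code, pubmed_id), ...}}.
    """
    aliases = aliases or {}
    merged = defaultdict(set)
    for gene_a, gene_b, evidence_code, pubmed_id in records:
        key = tuple(sorted((canonical(gene_a, aliases),
                            canonical(gene_b, aliases))))
        merged[key].add((evidence_code, pubmed_id))
    return dict(merged)


# Toy input: the same pair reported twice under different names and
# orientations. The PubMed IDs are placeholders.
toy_aliases = {"p53": "TP53"}
toy_records = [
    ("TP53", "MDM2", "Two-hybrid", 1),
    ("MDM2", "p53", "Affinity Capture-Western", 2),
    ("MDM2", "TP53", "Two-hybrid", 1),
]
merged = collapse_duplicates(toy_records, toy_aliases)
```

Keeping a set of (evidence code, publication) tuples per pair preserves the evidence trail: the pair appears once in the network, but the number of independent supporting experiments remains available for confidence filtering.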

BioGRID employs a spoke model for representing interactions, where a bait protein is connected to all identified prey proteins. This avoids artificial inflation of interaction counts that can occur when reciprocally validating interactions [10].
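The effect of the spoke model on interaction counts is easiest to see next to the usual alternative, matrix expansion, in which every member of a purified complex is paired with every other member. Matrix expansion is named here only for contrast and is not described in the source; the function names are hypothetical.

```python
from itertools import combinations


def spoke_expansion(bait, preys):
    """Connect the bait to each prey: one edge per prey."""
    return [(bait, prey) for prey in preys]


def matrix_expansion(bait, preys):
    """Connect every pair among bait and preys: n * (n - 1) / 2 edges."""
    return list(combinations([bait, *preys], 2))


# One pull-down with a bait and three co-purified preys.
bait, preys = "BAIT", ["PREY1", "PREY2", "PREY3"]
spoke_edges = spoke_expansion(bait, preys)    # 3 edges
matrix_edges = matrix_expansion(bait, preys)  # 6 edges
```

For a bait with k preys the spoke model yields k edges while matrix expansion yields k(k+1)/2, so the gap widens quickly for large complexes. The spoke edges also correspond directly to what the experiment tested, namely association with the bait.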

Database Integration and Release

The final stage of the curation workflow involves integrating the curated data into the BioGRID database and making it publicly available through regular monthly releases. The database provides multiple access methods, including:

- A web-based search interface for interactive querying

- Bulk download files in standard formats for computational analysis

- A web service (API) for programmatic access to the data

- Specialized themed projects focused on specific biological processes or diseases [22]
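For the programmatic route, a request is typically a URL with query parameters. The sketch below only assembles such a URL. The endpoint and parameter names (`accesskey`, `geneList`, `taxId`, `evidenceList`, and so on) reflect the editor's understanding of the BioGRID REST service and should be treated as assumptions to verify against the official web service documentation; `YOUR_ACCESS_KEY` is a placeholder.

```python
from urllib.parse import urlencode

# Assumed endpoint of the BioGRID REST service.
BIOGRID_BASE = "https://webservice.thebiogrid.org/interactions/"


def build_biogrid_url(access_key, genes, tax_id, evidence=None):
    """Assemble an interaction query URL (no request is sent)."""
    params = {
        "accesskey": access_key,
        "geneList": "|".join(genes),  # pipe-separated gene names
        "searchNames": "true",
        "taxId": str(tax_id),
        "includeInteractors": "true",
        "format": "json",
    }
    if evidence:
        # Restrict results to the listed experimental systems.
        params["evidenceList"] = "|".join(evidence)
        params["includeEvidence"] = "true"
    return BIOGRID_BASE + "?" + urlencode(params)


url = build_biogrid_url(
    "YOUR_ACCESS_KEY", ["TP53", "MDM2"], 9606,
    evidence=["Affinity Capture-MS"],
)
```

Separating URL construction from the HTTP call keeps the query logic easy to test and lets the same function serve scripts, notebooks, and scheduled pipelines.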

Experimental Evidence Codes: A Detailed Taxonomy

BioGRID employs a comprehensive classification system for experimental evidence that enables precise annotation of the methods used to detect each interaction. This detailed taxonomy allows users to filter interactions based on experimental approach and assess the nature of supporting evidence.

Physical Interaction Evidence Codes

Physical interaction evidence codes describe experimental systems that detect direct or indirect physical associations between molecules. The specific methodologies are categorized as follows:

Table 2: Physical Interaction Evidence Codes in BioGRID

| Evidence Code | Experimental Principle | Key Methodological Features |
|---|---|---|
| Affinity Capture-MS | Protein complex isolation followed by mass spectrometry | Bait protein affinity-captured from cell extracts; associated partners identified by MS [20] |
| Affinity Capture-Western | Protein complex isolation followed by immunoblotting | Bait affinity-captured; interaction partners identified by Western blot with specific antibodies [20] |
| Co-crystal Structure | Direct atomic-level demonstration of interaction | X-ray crystallography, NMR, or EM structures showing physical interaction at atomic resolution [20] |
| Two-hybrid | Protein interaction detection via reporter gene activation | Bait expressed as DBD fusion, prey as TAD fusion; interaction measured by reporter activation [20] |
| FRET | Detection of molecular proximity by energy transfer | Fluorescence resonance energy transfer between fluorophore-labeled molecules in live cells [20] |
| Reconstituted Complex | In vitro demonstration of interaction between purified components | Includes GST pull-downs, surface plasmon resonance, bio-layer interferometry with recombinant proteins [20] [10] |
| Proximity Label-MS | Enzymatic labeling of vicinal proteins followed by MS | BioID and similar systems; bait-enzyme fusion labels nearby proteins for capture and identification [20] |

Genetic Interaction Evidence Codes

Genetic interactions describe functional relationships between genes, typically revealed through combinatorial genetic perturbations. Key genetic evidence codes include:

- Synthetic Lethality: Double mutant combination results in lethality, while single mutants are viable [10]

- Dosage Lethality: Overexpression of one gene causes lethality in a mutant background of another gene [20]

- Dosage Growth Defect: Overexpression causes a growth defect in a mutant background [20]

- Phenotypic Suppression: Mutation or overexpression of one gene reverses the phenotypic effect of another mutation

- Synthetic Rescue: Mutation in one gene reverses the phenotypic effect of another mutation [10]

BioGRID curators capture genetic interactions only when single mutants and double/multiple mutants are directly compared within the same publication or clearly referenced, ensuring the reliability of the genetic interaction evidence [10].
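Because every record carries an evidence code, separating physical from genetic interactions in a downloaded dataset is a simple lookup. The sketch below uses only the codes named in this section, which is a subset of the full vocabulary; the record layout and function names are hypothetical.

```python
# Evidence codes named in this section (not the complete BioGRID list).
PHYSICAL_CODES = {
    "Affinity Capture-MS", "Affinity Capture-Western", "Co-crystal Structure",
    "Two-hybrid", "FRET", "Reconstituted Complex", "Proximity Label-MS",
}
GENETIC_CODES = {
    "Synthetic Lethality", "Dosage Lethality", "Dosage Growth Defect",
    "Phenotypic Suppression", "Synthetic Rescue",
}


def interaction_class(evidence_code):
    """Return 'physical', 'genetic', or 'unknown' for an evidence code."""
    if evidence_code in PHYSICAL_CODES:
        return "physical"
    if evidence_code in GENETIC_CODES:
        return "genetic"
    return "unknown"


def filter_by_class(records, wanted):
    """Keep (gene_a, gene_b, evidence_code) records of the wanted class."""
    return [r for r in records if interaction_class(r[2]) == wanted]


toy_records = [
    ("GENE1", "GENE2", "Two-hybrid"),
    ("GENE1", "GENE3", "Synthetic Lethality"),
    ("GENE2", "GENE3", "Affinity Capture-MS"),
]
physical_only = filter_by_class(toy_records, "physical")
```

Returning "unknown" for unlisted codes, rather than guessing, makes it obvious when a dataset contains evidence types that the lookup tables have not yet been extended to cover.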

Distinguishing Between Similar Experimental Systems

Curatorial judgment is particularly important for distinguishing between experimentally similar but conceptually distinct evidence codes. Key differentiations include:

- Affinity Capture vs. Reconstituted Complex: The critical distinction is whether the relevant proteins are co-expressed in the cell (Affinity Capture) or the interaction is demonstrated in vitro with purified components (Reconstituted Complex) [10]

- Affinity Capture-Western/MS vs. Co-purification: Co-purification involves at least one extra purification step beyond standard affinity capture methods to remove potential contaminating proteins [20]

- Protein-RNA vs. Affinity Capture-RNA: Protein-RNA covers in vitro interactions, while Affinity Capture-RNA involves protein and RNA co-expressed in vivo [20]

Specialized Curation Projects and Methodologies

Beyond its core curation activities, BioGRID has developed specialized curation projects and methodologies to address specific biological questions and data types.

Themed Curation Projects

BioGRID's themed curation projects focus on specific biological processes with disease relevance. These projects involve:

- Assembling core genes/proteins central to a biological process with expert input

- Curating relevant publications for biological interactions with enhanced annotation

- Providing focused datasets for diseases including Alzheimer's Disease, COVID-19 Coronavirus, Autism Spectrum Disorder, and Glioblastoma [22]

These themed projects are updated monthly and provide researchers with pre-compiled interaction networks for specific pathological contexts.

BioGRID ORCS: CRISPR Screen Curation

The BioGRID Open Repository of CRISPR Screens (ORCS) is a specialized database for CRISPR screen data compiled through comprehensive curation of genome-wide CRISPR screens reported in the literature. ORCS provides:

- Structured metadata annotation capturing salient CRISPR experimental details

- Data from hundreds of publications encompassing thousands of individual screens

- Search functionality by gene/protein, phenotype, cell line, and other attributes [22]

Handling Complex Curation Scenarios

BioGRID curators follow specific guidelines for handling complex or edge-case scenarios:

- Self-interactions: Recorded when clear evidence exists for homodimerization or multimerization, most commonly through tagging a single protein with two different tags and demonstrating interaction [10]

- Cross-species interactions: Curated between proteins from different species, excluding cross-species complementation experiments that test functional orthology rather than genetic interaction [10]

- "Data not shown" interactions: Generally not curated, but curator judgment may be applied when the interactions are clearly supported by the experimental context [10]

- Conflicting data: All interactions are curated as presented in each publication, reflecting the current state of the literature, with systematic removal only for retracted publications [10]

Research Reagent Solutions for Interaction Studies

The experimental methods captured by BioGRID evidence codes rely on specific research reagents and tools. The table below details key reagents and their applications in interaction studies.

Table 3: Essential Research Reagents for Interaction Studies

| Research Reagent | Primary Function | Application in Interaction Studies |

|---|---|---|

| Epitope Tags (TAP, HA, FLAG) | Protein labeling and detection | Enable affinity capture of bait proteins and their interaction partners [20] |

| Polyclonal/Monoclonal Antibodies | Target-specific protein recognition | Used for Western blot detection and immunoprecipitation in affinity capture experiments [20] |

| Luciferase Reporters | Bioluminescence detection | Serve as detectable markers in protein-fragment complementation assays [20] |

| Fluorescent Proteins (CFP, YFP) | Fluorescence emission | Act as donor-acceptor pairs in FRET-based interaction detection [20] |

| Cross-linking Reagents | Covalent protein linkage | Stabilize transient interactions for Cross-Linking-MS studies [20] |

| GST Fusion Systems | Affinity purification | Facilitate pull-down assays for Reconstituted Complex experiments [20] [10] |

| CRISPR Libraries | Gene knockout screening | Enable genome-wide functional genetic interaction studies [22] |

Data Access and Utilization

Access Methods and Formats

BioGRID provides multiple access pathways to accommodate diverse research needs:

- Web Interface: Interactive searching with filters for organisms, interaction types, and evidence codes

- Bulk Downloads: Complete datasets available in standard formats including PSI-MI XML, PSI-MITAB, and BioGRID TAB

- Web Services: RESTful API access for programmatic querying and integration into analytical pipelines

- Browser Extensions: GIX extension retrieves gene product information directly on any webpage by double-clicking gene names [22]

Data Statistics and Growth

As of the latest 2025 statistics, BioGRID has curated interactions, chemical associations, and post-translational modifications from over 87,000 publications. The database contains:

- More than 2.25 million non-redundant molecular interactions

- Over 14,000 non-redundant chemical associations

- Nearly 564,000 non-redundant post-translational modification sites [22]

The database undergoes monthly curation updates, with new data added on a continuous basis to maintain current coverage of the scientific literature.

BioGRID data interoperates with numerous complementary resources through data sharing and standardization initiatives:

- STRING Database: BioGRID physical and genetic interactions contribute to the evidence network in STRING, which integrates additional prediction algorithms and pathway databases [23]

- Pathway Databases: Interactions from BioGRID feed into Reactome, KEGG, and other pathway resources

- Model Organism Databases: Collaboration with organism-specific databases ensures consistent gene annotation and identifier mapping

BioGRID's publication-driven curation model provides an essential foundation for systems biology and network-based approaches to understanding cellular function and disease mechanisms. By manually extracting experimentally supported interactions from the literature and representing them in a standardized, computationally accessible format, BioGRID enables researchers to move beyond individual interactions to system-level analyses. The detailed annotation of experimental evidence allows users to assess the nature and quality of support for each interaction, while the comprehensive coverage across model organisms and human datasets facilitates comparative network biology. As the volume of interaction data continues to grow, BioGRID's rigorous curation standards and specialized projects will remain critical for distilling high-quality molecular interaction networks from the expanding biomedical literature.

The Critical Role of Manual Curation and Expert Review in Databases like DIP and HPRD

In the complex landscape of systems biology, protein-protein interaction (PPI) networks serve as fundamental maps for understanding cellular processes and disease mechanisms. The accuracy and reliability of these networks depend critically on the curation processes behind the databases that house them. Manual curation and expert review represent the gold standard in this field, transforming raw experimental data into biologically meaningful information. Databases such as the Human Protein Reference Database (HPRD) and the Database of Interacting Proteins (DIP) have established themselves as authoritative resources precisely because of their rigorous curation methodologies. These curated databases form the foundation for diverse biomedical applications, from identifying novel drug targets to understanding the molecular basis of genetic diseases. Within the broader ecosystem of PPI resources that includes repositories like IntAct and BioGRID, the distinctive value of manually curated databases lies in their ability to provide context, resolve contradictions, and maintain consistently high-quality annotations across the entire proteome.

The essential challenge in PPI database management stems from the tremendous heterogeneity in experimental data quality and methodology. As Cusick et al. noted, different experimental techniques, from yeast two-hybrid (Y2H) systems to affinity purification followed by mass spectrometry (AP-MS), produce fundamentally different types of interaction data [24]. Without expert interpretation, these data remain isolated facts rather than connected biological knowledge. Manual curation addresses this limitation by applying consistent standards and biological expertise to create structured, searchable, and interconnected data resources. This section examines the curation methodologies, quantitative impacts, and practical applications of manual curation in PPI databases, giving researchers a framework for using these resources.

Manual Curation Methodologies: Protocols and Workflows

Core Curation Workflow

The manual curation process in databases like HPRD and DIP follows a systematic protocol to ensure consistency and accuracy. The workflow begins with comprehensive literature surveillance, where curators identify relevant publications containing experimental protein interaction data. This initial screening process typically employs sophisticated text-mining algorithms to identify candidate papers, which are then subjected to expert biological review. Trained curators, often holding advanced degrees in molecular biology or related fields, carefully examine the experimental details, methodology, and results reported in each publication.

The critical evaluation phase involves assessing the experimental evidence according to predefined quality metrics. Curators extract essential information including the specific experimental method used (e.g., Y2H, co-immunoprecipitation, TAP-MS), experimental conditions, interaction domains identified, and any quantitative measurements of binding affinity. This information is then structured according to standardized ontologies, particularly the Proteomics Standards Initiative - Molecular Interaction (PSI-MI) format, which enables data exchange and integration across resources [24]. Throughout this process, curators make critical judgments about which interactions meet quality thresholds for inclusion, resolving ambiguities in the primary literature that automated methods might overlook.

Figure 1: The sequential workflow for manual curation of protein-protein interaction data, highlighting the stages from literature identification to public release.

Specialized Curation Protocols for Different Experimental Methods

Manual curation requires distinct approaches for different experimental methodologies. For yeast two-hybrid experiments, curators focus on validating the binary nature of interactions, examining bait-prey pairs, and assessing false-positive rates based on control experiments. For affinity purification-mass spectrometry approaches, curators face the additional complexity of distinguishing direct physical interactions from co-purifying components of protein complexes. In this context, the curation protocol must address the representation model—whether to use the "matrix" model (assuming all components interact with each other) or the "spokes" model (connecting the bait protein to each prey) [24].
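The difference between the two representation models is easiest to see by expanding a single purification both ways. The following is a minimal sketch, assuming a hypothetical bait and three hypothetical preys; the function names are illustrative and do not come from any database's tooling.

```python
from itertools import combinations


def spoke_edges(bait, preys):
    """Spoke model: connect the bait to each prey only."""
    return {frozenset((bait, prey)) for prey in preys if prey != bait}


def matrix_edges(bait, preys):
    """Matrix model: connect every pair among the bait and all preys."""
    members = sorted({bait, *preys})
    return {frozenset(pair) for pair in combinations(members, 2)}


# Hypothetical purification: one bait that pulled down three preys.
bait, preys = "BAIT1", ["PREY_A", "PREY_B", "PREY_C"]
spokes = spoke_edges(bait, preys)
matrix = matrix_edges(bait, preys)

print(len(spokes), len(matrix))  # 3 spoke edges vs. 6 matrix edges
```

For a complex with n members the spoke model yields n - 1 edges while the matrix model yields n(n - 1)/2, which is one reason the same AP-MS publication can be recorded with very different interaction counts depending on the model a resource applies.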

HPRD has developed particularly sophisticated curation protocols for post-translational modifications (PTMs), with phosphorylation events constituting 63% of all PTM data in the database [25]. For these annotations, curators not only record the modification itself but also contextual information including the modifying enzyme, specific modified residues, and functional consequences of the modification. This granular level of detail enables researchers to construct regulatory networks that extend beyond simple physical interactions to include functional relationships. The PhosphoMotif Finder tool within HPRD further exemplifies specialized curation, containing known kinase/phosphatase substrate and binding motifs curated exclusively from published literature [25].

Quantitative Impact of Manual Curation on Data Quality and Coverage

Coverage Statistics of Major PPI Databases

The rigorous manual curation methodologies employed by databases like HPRD and DIP directly translate into superior data quality and unique coverage advantages. The table below summarizes the documented coverage of major PPI databases, highlighting the distinctive position of manually curated resources:

Table 1: Protein-Protein Interaction Database Coverage Comparisons

| Database | Primary Curation Method | Reported Interactions | Publication Sources | Organism Focus | Key Strengths |

|---|---|---|---|---|---|

| HPRD | Manual expert curation | 38,000+ PPIs (2009) [26] | 18,777+ publications [24] | Human-specific | Integrated PTM data, disease associations, tissue expression |

| DIP | Manual curation with binary interaction focus | 53,431 interactions (2008) [24] | 3,193 publications [24] | Multiple organisms (134 species) | High-quality binary interactions, IMEx consortium member |

| BioGRID | Mixed curation approaches | 42,800 human PPIs (2009) [26] | 16,369 publications (2008) [24] | Multiple organisms (10 species) | Extensive genetic interaction data, themed curation projects |

| IntAct | Mixed curation approaches | 129,559 interactions (2008) [24] | 3,166 publications [24] | Multiple organisms (131 species) | IMEx consortium partner, comprehensive species coverage |

| MINT | Mixed curation approaches | 80,039 interactions (2008) [24] | 3,047 publications [24] | Multiple organisms (144 species) | Confidence scoring, protein-promoter/mRNA interactions |

The table indicates that HPRD draws on substantially more publications than DIP, IntAct, or MINT: over 18,000, compared with roughly 3,000 for each of those three resources [24]. BioGRID, at 16,369 publications, is the only listed resource with comparable literature coverage. This extensive literature mining translates into more comprehensive annotation of biologically relevant interactions, particularly those reported in smaller-scale studies that might be missed by approaches focusing primarily on high-throughput datasets.

Comparative Analysis of Interaction Overlap and Unique Contributions

Systematic comparisons reveal limited overlap between different PPI databases, with each resource contributing unique interactions. A study analyzing 14,899 publications shared across multiple databases found that 39% were reported with different numbers of interactions in different databases [24]. These discrepancies arise from varying curation standards, identifier mapping challenges, and different interpretations of experimental results. In one notable example, the same publication reporting human PPIs was documented with 2,371 interactions in HPRD, 2,671 in IntAct, and 2,463 in MINT, while BioGRID reported 6,295 interactions from the same study, indicating fundamental differences in curation methodology [24].
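Overlap between two resources can be quantified once interactions are reduced to unordered identifier pairs. The sketch below assumes both datasets already use the same identifier namespace and uses made-up protein labels; the Jaccard index is one common overlap measure and is not necessarily the metric used in the cited comparison.

```python
def normalize(pairs):
    """Treat A-B and B-A as the same interaction."""
    return {frozenset(pair) for pair in pairs}


def jaccard(a, b):
    """Shared interactions divided by all interactions seen in either set."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


# Hypothetical interaction lists from two databases.
db_x = normalize([("P1", "P2"), ("P2", "P3"), ("P3", "P4")])
db_y = normalize([("P2", "P1"), ("P3", "P4"), ("P4", "P5")])

shared = db_x & db_y
only_x = db_x - db_y
print(len(shared), len(only_x), jaccard(db_x, db_y))  # 2 1 0.5
```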

Manual curation particularly excels in capturing interactions from small-scale, hypothesis-driven studies that provide crucial biological context. Analysis has shown that combined use of STRING and UniHI covers approximately 84% of experimentally verified PPIs, while nearly 94% of total PPIs (experimental and predicted) require combined data from hPRINT, STRING, and IID [16]. However, these metrics of breadth must be balanced against quality assessments, with studies revealing that GPS-Prot, STRING, APID, and HIPPIE each cover approximately 70% of curated interactions from a gold-standard PPI set [16].

Key Research Reagent Solutions for PPI Investigation

Table 2: Essential Research Reagents and Resources for Protein-Protein Interaction Studies

| Resource/Reagent | Function/Application | Database Implementation |

|---|---|---|

| Yeast Two-Hybrid (Y2H) Systems | Detection of binary protein interactions | HPRD, DIP, BioGRID categorize Y2H-derived interactions with specific evidence tags |

| Tandem Affinity Purification (TAP) Tags | Protein complex purification for mass spectrometry | Curators distinguish bait-prey relationships in AP-MS data |

| Co-immunoprecipitation (Co-IP) Antibodies | Validation of physical interactions in native cellular environments | HPRD documents specific antibodies used in validated interactions |

| CRISPR Screening Libraries | Genome-wide functional interaction studies | BioGRID ORCS database compiles CRISPR screen data [22] |

| Phospho-Specific Antibodies | Detection of post-translational modifications | HPRD curates phosphorylation sites with modifying enzyme data |

| Proteomics Standards Initiative MI (PSI-MI) | Data standardization and exchange format | IMEx consortium databases (DIP, IntAct, MINT) use PSI-MI for data sharing [24] |

The specialized reagents and resources listed in Table 2 represent critical tools for generating experimentally validated PPI data. Manual curation databases document the specific experimental methods and reagents used to identify each interaction, enabling researchers to assess the reliability of specific data points. This granular documentation is particularly valuable when designing follow-up experiments, as it provides insight into validated experimental approaches.

Complementary Roles in the PPI Data Ecosystem

Manually curated databases like HPRD and DIP do not exist in isolation but function as crucial components within a broader ecosystem of PPI resources. Meta-databases such as STRING, UniHI, and APID aggregate data from multiple sources, including manually curated databases, to provide more comprehensive coverage [26] [27]. The distinct value of manually curated databases in this ecosystem lies in their role as authoritative sources for high-quality, context-rich interaction data. The integration relationships between these resources can be visualized as follows:

Figure 2: Integration framework showing how manually curated databases contribute to meta-databases and directly support research applications.

The critical importance of manual curation becomes evident when examining how these integrated resources are employed in practice. For example, STRING incorporates PPI information from HPRD, BioGRID, MINT, BIND, and DIP, and supplements these data with text-mining results and predicted interactions [26]. Similarly, UniHI integrates PPIs from both high-throughput yeast two-hybrid screens and curated databases including HPRD, DIP, BIND, and Reactome [26]. In these contexts, the manually curated data from HPRD and DIP serve as benchmark datasets for validating computational predictions and text-mining results.

Applications in Disease Research and Drug Development

From Network Biology to Therapeutic Insights

The rigorous manual curation practices employed by databases like HPRD directly enable important applications in disease research and drug development. The annotation of disease-associated proteins and their interconnection within PPI networks provides a systems-level framework for understanding pathogenesis. For example, HPRD explicitly links proteins involved in human diseases to the Online Mendelian Inheritance in Man (OMIM) database, creating a critical bridge between genetics and proteomics [25].

A compelling example of how manually curated PPI data advance disease research comes from a study of inherited neurodegenerative disorders characterized by ataxia. Lim et al. constructed a protein interaction network for 54 proteins involved in 23 ataxias by combining yeast two-hybrid data with literature-curated interactions from BIND, HPRD, DIP, and MINT [26]. This integrated network revealed unexpected connections between ataxia proteins, suggesting shared pathways and disease mechanisms that had not been apparent from studying individual proteins in isolation. The manually curated interactions were essential for establishing the biological relevance of the network, with 68% of literature-curated interactions and 63% of interolog interactions annotated to similar Gene Ontology compartments [26].

Manual curation also plays a crucial role in drug target identification and validation. By mapping disease-associated proteins within the broader context of interaction networks, researchers can identify critical hubs or bottlenecks that represent attractive therapeutic targets. The annotation of enzyme-substrate relationships in HPRD further supports drug discovery by identifying potential modulators of pathway activity [25]. For drug development professionals, these curated networks provide insight into potential mechanism-based toxicities and off-target effects by revealing unanticipated connections between pathways.
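As a first pass at spotting candidate hubs, node degree can be computed directly from an edge list. This is a minimal sketch on a hypothetical toy network; degree is only a rough proxy, and identifying bottlenecks would normally require a path-based measure such as betweenness centrality, which is not shown here.

```python
from collections import defaultdict


def degree_table(edges):
    """Count distinct interaction partners per protein."""
    neighbors = defaultdict(set)
    for a, b in edges:
        if a == b:
            continue  # self-interactions do not add a partner
        neighbors[a].add(b)
        neighbors[b].add(a)
    return {node: len(partners) for node, partners in neighbors.items()}


def top_hubs(edges, n=1):
    """Return the n highest-degree proteins, ties broken alphabetically."""
    degrees = degree_table(edges)
    return sorted(degrees.items(), key=lambda item: (-item[1], item[0]))[:n]


# Hypothetical network of five proteins.
edges = [("A", "B"), ("A", "C"), ("A", "D"), ("B", "C"), ("D", "E")]
print(top_hubs(edges, n=1))  # [('A', 3)]
```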

Future Directions and Implementation Recommendations

Advancing Curation Practices in the Era of Big Data Biology

As the volume and complexity of proteomic data continue to grow, manual curation methodologies must evolve to maintain their critical role in ensuring data quality. Future developments will likely involve more sophisticated human-computer partnership approaches, where expert curators train machine learning algorithms to handle routine annotation tasks while focusing their expertise on particularly complex or contradictory findings. The continued development and adoption of community standards through initiatives like IMEx and PSI-MI will be essential for enabling data integration while preserving the nuanced contextual information that manual curation provides [24].

For researchers and drug development professionals leveraging PPI data, we recommend a stratified approach to database selection and use. For initial exploratory network analysis, meta-databases like STRING and UniHI provide valuable comprehensive overviews. However, for hypothesis-driven research and validation studies, direct consultation of manually curated databases like HPRD and DIP is essential. When designing follow-up experiments, researchers should pay particular attention to the experimental methods documented in these curated resources, as they provide validated approaches for confirming specific interaction types. The continued support and utilization of manually curated databases will be essential for ensuring that our maps of the human interactome remain both comprehensive and biologically accurate.

Protein-protein interaction (PPI) data is fundamental to understanding cellular functions, with direct implications for drug discovery and the understanding of disease mechanisms. Resources like BioGRID and IntAct provide critical repositories of curated interaction data, making them indispensable for researchers in biomedical science [28]. However, the practical utility of these resources depends significantly on a researcher's ability to access and use their data through the available download formats and web interfaces. This part of the guide provides a technical overview of those access routes. For researchers, scientists, and drug development professionals, selecting the appropriate data format and understanding access methodologies is not merely a preliminary step but a critical determinant of research efficiency and analytical success. The following sections detail the specific technical characteristics of major PPI databases, present structured comparisons, and provide actionable protocols for data retrieval and application.

The Biological General Repository for Interaction Datasets (BioGRID) is a comprehensive curated database of protein, genetic, and chemical interactions. As of late 2025, BioGRID release 5.0.251 contains curated data from 87,393 publications, encompassing approximately 2.25 million non-redundant interactions and over 563,000 post-translational modification sites [29] [22]. The repository is freely available to both academic and commercial users under the MIT License, supporting open science initiatives [29] [30]. BioGRID's data is compiled through rigorous manual curation from the scientific literature, with updates released on a monthly basis to ensure researchers have access to the most current interaction information [22].

The IntAct Molecular Interaction Database is an open-source, open data resource maintained by the European Bioinformatics Institute (EBI). As a core member of the International Molecular Exchange (IMEx) consortium, IntAct provides fine-grained molecular interaction data curated from both scientific literature and direct data depositions [31]. The database employs a deep annotation model that captures extensive experimental details essential for the accurate interpretation of molecular interaction data. This granular approach to data curation ensures that researchers have access to the contextual experimental information necessary for robust biological conclusions. The IntAct platform also serves as a shared curation and dissemination platform for multiple global partners within the IMEx consortium, enhancing data standardization and accessibility [31].

Table 1: Core PPI Database Profiles

| Database | Primary Focus | Data Volume | Update Frequency | Licensing |

|---|---|---|---|---|

| BioGRID | Protein, genetic and chemical interactions | 2.25M+ non-redundant interactions from 87K+ publications [22] | Monthly [22] | MIT License [29] |

| IntAct | Molecular interactions with fine-grained annotation | 1M+ binary interactions (as of 2021) [31] | Regularly updated | Open source, open data [31] |

BioGRID Data Access Formats

Recommended File Formats

BioGRID provides data in multiple file formats, each designed for specific use cases and analytical workflows. For new projects, the following formats are recommended:

- PSI-MI XML 2.5 (PSI25): This standardized format follows the Proteomics Standards Initiative guidelines and is particularly suitable for data exchange and integration with other bioinformatics tools. The files contain extensive metadata about interactions and experimental conditions [32].

- BioGRID TAB 3.0 (TAB3): A tab-delimited format that offers a balance between comprehensive data content and ease of use. This format is particularly accessible for researchers using scripting languages like Python or R for data analysis, and it includes all core interaction information in a structured columnar format [32].

- Osprey Custom Network 1.3.1 (OSPREY): Specifically designed for network visualization and analysis in the Osprey Network Visualization System. This format optimizes data for graphical representation and topological analysis of interaction networks [32].

- PSI MITAB Version 2.5: A simplified tabular variant of the PSI-MI standard that facilitates easy parsing and processing while maintaining standardized interaction data representation [32].
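To illustrate why a tab-delimited format is convenient for scripting, the sketch below reads a small in-memory sample with Python's standard csv module. The column names are modelled on the TAB3 header and should be treated as assumptions to check against the header line of the file actually downloaded; the gene symbols and rows are invented.

```python
import csv
import io

# Invented sample; real TAB3 files contain many more columns.
SAMPLE_TAB3 = (
    "Official Symbol Interactor A\tOfficial Symbol Interactor B\t"
    "Experimental System\tExperimental System Type\n"
    "GENE1\tGENE2\tTwo-hybrid\tphysical\n"
    "GENE1\tGENE3\tSynthetic Lethality\tgenetic\n"
    "GENE2\tGENE3\tAffinity Capture-MS\tphysical\n"
)


def read_interactions(handle, system_type=None):
    """Return (A, B, experimental system) tuples, optionally filtered by type."""
    reader = csv.DictReader(handle, delimiter="\t", quoting=csv.QUOTE_NONE)
    rows = []
    for row in reader:
        if system_type and row["Experimental System Type"] != system_type:
            continue
        rows.append(
            (
                row["Official Symbol Interactor A"],
                row["Official Symbol Interactor B"],
                row["Experimental System"],
            )
        )
    return rows


physical = read_interactions(io.StringIO(SAMPLE_TAB3), system_type="physical")
print(physical)
```

For a real download, the same function can be given an open file handle instead of the `io.StringIO` wrapper.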

Specialized Data Files

Beyond general interaction data, BioGRID offers several specialized datasets:

- Multi-Validated (MV) Physical Datasets: These files contain interactions that have been experimentally validated through multiple independent methods or publications, providing a high-confidence subset of physical interactions for rigorous analysis [29] [32].

- Chemical Interaction Data (CHEMTAB): This format captures bioactive chemical-protein relationships, including chemical perturbations and interactions, which is particularly valuable for drug discovery and chemical biology research [29] [32].

- Post-Translational Modification Data (PTM): These files provide comprehensive information on post-translational modification sites, a critical regulatory layer in cellular signaling pathways [29] [22].

- Themed Project Datasets: Focused datasets on specific disease areas or biological processes, including Alzheimer's disease, COVID-19 coronavirus, autism spectrum disorder, and glioblastoma [29] [22].
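The idea behind a multi-validated subset can be approximated on any interaction table by counting independent lines of support per protein pair. The sketch below is a simplified illustration only: the threshold and the rule (at least two distinct experimental systems or two distinct publications) are assumptions for demonstration and are not BioGRID's actual Multi-Validated criteria, which should be taken from BioGRID's own documentation.

```python
from collections import defaultdict


def multi_supported(records, min_support=2):
    """Keep pairs backed by >= min_support distinct systems or publications."""
    systems = defaultdict(set)
    publications = defaultdict(set)
    for a, b, system, publication in records:
        key = frozenset((a, b))  # A-B and B-A count as the same pair
        systems[key].add(system)
        publications[key].add(publication)
    return {
        key
        for key in systems
        if len(systems[key]) >= min_support
        or len(publications[key]) >= min_support
    }


# Hypothetical records: (interactor A, interactor B, system, publication).
records = [
    ("G1", "G2", "Two-hybrid", "PUB1"),
    ("G2", "G1", "Affinity Capture-MS", "PUB1"),
    ("G1", "G3", "Two-hybrid", "PUB2"),
    ("G3", "G4", "Two-hybrid", "PUB3"),
    ("G3", "G4", "Two-hybrid", "PUB4"),
]
supported = multi_supported(records)
print(sorted(sorted(pair) for pair in supported))  # [['G1', 'G2'], ['G3', 'G4']]
```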

Table 2: BioGRID Download Formats and Specifications

| Format Type | File Extension | Typical Size Range | Primary Use Case |

|---|---|---|---|

| PSI-MI XML 2.5 | .psi25.zip | 181-200 MB | Data exchange, computational analysis [29] [32] |

| BioGRID Tab 3.0 | .tab3.zip | 167-172 MB | Script-based analysis, custom pipelines [29] [32] |

| PSI MITAB 2.5 | .mitab.zip | 169-176 MB | Standardized tabular analysis [29] [32] |

| Organism-Specific | Varies | 61-188 MB | Species-focused research [29] |

| Chemical Data | .chemtab.zip | ~1.3 MB | Chemical biology, drug discovery [29] |

| Post-Translational Modifications | .ptm.zip | ~56 MB | Signaling pathway analysis [29] |

Legacy Format Considerations

BioGRID maintains several legacy formats, including BioGRID TAB 2.0, TAB 1.0, and PSI-MI XML 1.0, for backward compatibility with existing research pipelines [32]. New projects should use the current recommended formats, which carry the most up-to-date data structures and the most complete interaction records; the legacy formats are recommended only for projects that already depend on them [32].

IntAct Data Access Framework

Data Model and Access Capabilities

IntAct employs a sophisticated data model that supports two levels of curation detail: full IMEx-level annotation and MIMIx-compatible entries [31]. This flexible framework allows researchers to access data at different levels of granularity based on their specific requirements. The database provides both web-based query interfaces and programmatic access options, enabling interactive exploration and large-scale computational analysis. IntAct's website has been specifically redesigned to enhance user experience, featuring improved search processes and more detailed graphical displays of interaction results [31].

Data Export and Integration

IntAct supports multiple data export formats that facilitate various analytical approaches. The resource provides specialized data visualization tools that allow researchers to generate interaction network diagrams directly from query results. Additionally, IntAct data is available in formats compatible with the Semantic Web, enhancing computational accessibility and integration with other linked data resources [31]. This commitment to standardized data representation ensures that IntAct datasets can be seamlessly incorporated into broader bioinformatics workflows and analytical pipelines.

Experimental and Computational Methodologies

Practical Workflow for PPI Data Retrieval

The following workflow diagram illustrates a standardized protocol for accessing PPI data from major databases:

Diagram 1: PPI Data Retrieval Workflow

Protocol 1: Targeted Gene Query via Web Interface

Purpose: To extract interaction data for specific candidate genes through graphical web interfaces.

Materials:

- Computer with internet access

- Supported web browser (Chrome, Firefox, or Edge)

- List of target gene identifiers

Procedure:

- Navigate to the BioGRID or IntAct website using a supported web browser [29] [31].

- Locate the search interface, typically prominently displayed on the homepage.

- Input official gene symbols or identifiers for your target genes.

- Apply relevant filters to restrict results by:

  - Organism (e.g., Homo sapiens)

  - Experimental system (e.g., physical vs. genetic interactions)

  - Interaction detection method

- Review the returned interaction network visually.

- Select appropriate download format based on intended use (refer to Table 2).

- Export the data file to your local analysis environment.

Technical Notes: For BioGRID, the "Multi-Validated" dataset filter can be applied to obtain high-confidence physical interactions [32]. For IntAct, leverage the fine-grained annotation to filter interactions by specific experimental evidence.

Protocol 2: Bulk Data Download for Network Analysis

Purpose: To download complete datasets for comprehensive network analysis or integration with internal data.

Materials:

- Stable internet connection with sufficient bandwidth

- Adequate local storage capacity (250 MB or more recommended)

- Data extraction tool (scripting environment or archive utility)

Procedure:

- Access the BioGRID download repository at https://downloads.thebiogrid.org/ [29] [30].

- Identify the most current release directory (e.g., Release 5.0.251) [29].

- Select the appropriate file format based on analytical requirements:

  - Use PSI25 for computational integration

  - Use TAB3 for custom script-based analysis

  - Use MITAB for standardized tabular processing [32]

- Download the compressed data file.

- Extract the archive using appropriate decompression tools.

- Validate data integrity through record counts or checksum verification.
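The final validation step can be scripted with the Python standard library. The sketch below computes a SHA-256 digest and per-file record counts for a zip archive; it builds a tiny throwaway archive so that it runs as written, and the file names are hypothetical. Whether a reference checksum is published for a given release is not stated here, so the digest is mainly useful for confirming that two copies of a download are identical.

```python
import hashlib
import os
import tempfile
import zipfile


def sha256_of_file(path, chunk_size=1 << 20):
    """Hash a file in chunks so large archives need not fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def count_records(zip_path):
    """Count non-empty lines, minus one header line, per archive member."""
    counts = {}
    with zipfile.ZipFile(zip_path) as archive:
        for name in archive.namelist():
            with archive.open(name) as member:
                lines = sum(1 for line in member if line.strip())
            counts[name] = max(lines - 1, 0)
    return counts


# Demonstration on a throwaway archive with a hypothetical file name.
with tempfile.TemporaryDirectory() as tmp:
    demo_zip = os.path.join(tmp, "demo.tab3.zip")
    with zipfile.ZipFile(demo_zip, "w") as archive:
        archive.writestr("demo.tab3.txt", "col_a\tcol_b\nG1\tG2\nG1\tG3\n")
    demo_counts = count_records(demo_zip)
    demo_digest = sha256_of_file(demo_zip)

print(demo_counts)  # {'demo.tab3.txt': 2}
```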

Technical Notes: For large-scale analyses, consider using BioGRID's REST service with JSON formatting for efficient programmatic access [32]. Always use the most recent release for new projects to ensure data comprehensiveness [29].
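A programmatic query typically starts by assembling a request URL. The sketch below only builds the URL and makes no network call. The base address and the parameter names (`accessKey`, `geneList`, `searchNames`, `taxId`, `format`, `max`) reflect our understanding of the BioGRID REST service and should be verified against its current documentation before use; the access key shown is a placeholder.

```python
from urllib.parse import parse_qs, urlencode, urlsplit

# Assumed endpoint; confirm against the BioGRID REST documentation.
BASE_URL = "https://webservice.thebiogrid.org/interactions/"


def build_query(genes, access_key, tax_id=9606, max_results=100):
    """Assemble a JSON interaction query for a list of gene symbols."""
    params = {
        "accessKey": access_key,        # personal key issued by BioGRID
        "geneList": "|".join(genes),    # pipe-separated gene symbols
        "searchNames": "true",          # match against official symbols
        "taxId": tax_id,                # 9606 = Homo sapiens
        "format": "json",
        "max": max_results,
    }
    return BASE_URL + "?" + urlencode(params)


demo_url = build_query(["TP53", "MDM2"], access_key="YOUR_ACCESS_KEY")
print(demo_url)
```

The resulting URL can then be passed to any HTTP client; paging through large result sets and handling rate limits are left out of this sketch.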

Protocol 3: Cross-Database Integration Methodology

Purpose: To integrate complementary PPI data from multiple databases for comprehensive coverage.

Materials:

- Data from at least two PPI databases (e.g., BioGRID and STRING)

- Data integration pipeline (custom scripts or workflow tools)

- Identifier mapping resources

Procedure:

- Download datasets from selected databases using appropriate formats.

- Standardize protein identifiers across datasets using mapping resources.

- Apply confidence filters specific to each database's metrics.

- Merge interaction records while preserving source attribution.

- Resolve conflicting interactions through evidence weighting.

- Generate a unified interaction network for analysis.

Technical Notes: Systematic comparisons indicate that combined use of STRING and UniHI covers approximately 84% of experimentally verified PPIs, while adding IID and hPRINT extends coverage to 94% of total available interactions [16]. BioGRID contributes significantly to experimentally verified interactions, with STRING providing approximately 71% of exclusive experimentally verified hits [16].
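The merge step of the procedure can be illustrated with a small sketch. It assumes identifiers have already been standardized to one namespace and that each source has already been filtered by its own confidence metric. The function name, source labels, and example edges are hypothetical. Interactions are treated as undirected, so A-B and B-A collapse into one record, and each record keeps the set of sources that reported it.

```python
from collections import defaultdict


def merge_networks(sources):
    """Merge undirected edge lists, keeping the set of sources behind each edge.

    sources: dict mapping a source label to an iterable of (protein_a, protein_b).
    Returns a dict mapping a sorted (protein_a, protein_b) tuple to a set of labels.
    """
    merged = defaultdict(set)
    for source_name, edges in sources.items():
        for protein_a, protein_b in edges:
            # Sorting makes (A, B) and (B, A) map to the same key.
            key = tuple(sorted((protein_a, protein_b)))
            merged[key].add(source_name)
    return dict(merged)


# Hypothetical, already standardized and pre-filtered edge lists.
example_sources = {
    "BioGRID": [("P04637", "Q00987"), ("Q00987", "P04637")],
    "STRING": [("P04637", "Q00987"), ("P38398", "P04637")],
}

merged_network = merge_networks(example_sources)
for pair, supporting in sorted(merged_network.items()):
    print(pair, sorted(supporting))
```

The number of supporting sources per edge is one simple input for the evidence-weighting step. More elaborate schemes would weight sources by evidence type.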

Table 3: Essential Research Reagents and Computational Resources for PPI Research

| Resource Type | Specific Tool/Reagent | Function/Application |

|---|---|---|

| Core Databases | BioGRID [29] [22] | Comprehensive curated protein, genetic and chemical interactions |

| | IntAct [31] | Fine-grained molecular interaction data with deep annotation |

| | STRING [28] [16] | Known and predicted protein-protein interactions with confidence metrics |

| Specialized Resources | BioGRID-ORCS [22] | CRISPR screening data and results |

| | BioGRID Themed Projects [29] [22] | Disease-focused interaction sets (Alzheimer's, COVID-19, etc.) |

| Analytical Formats | PSI-MI XML 2.5 [32] | Standardized format for data exchange and computational analysis |

| | BioGRID TAB 3.0 [32] | Tab-delimited format for custom analytical pipelines |

| Software & Libraries | Osprey Network Visualization [32] | Network visualization and analysis of interaction data |

| | Graph Neural Networks [28] | Deep learning approaches for PPI prediction and analysis |

Advanced Data Integration and Analysis Techniques

Computational Framework for PPI Network Analysis

The following diagram illustrates a sophisticated computational pipeline for integrated PPI data analysis:

Diagram 2: Computational Analysis Pipeline

Deep Learning Architectures for PPI Analysis

Modern PPI research increasingly incorporates deep learning frameworks to extract meaningful patterns from complex interaction data. Several architectural approaches have demonstrated particular utility:

Graph Neural Networks (GNNs): These networks directly operate on graph-structured data, making them ideally suited for PPI networks. Variants such as Graph Convolutional Networks (GCNs) aggregate information from neighboring nodes to capture local patterns, while Graph Attention Networks (GATs) introduce attention mechanisms that adaptively weight the importance of different interactions [28].

Multi-Modal Frameworks: Advanced systems like the AG-GATCN framework integrate multiple architectural components (GAT and Temporal Convolutional Networks) to enhance robustness against biological noise in PPI data [28].

Representation Learning Methods: Architectures such as the Deep Graph Auto-Encoder (DGAE) combine canonical auto-encoders with graph auto-encoding mechanisms to enable hierarchical representation learning for PPI characterization [28].

These computational approaches are particularly valuable for addressing inherent challenges in PPI data analysis, including data imbalances, biological variations, and high-dimensional feature sparsity [28].
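To make the neighborhood aggregation performed by a GCN concrete, the sketch below implements one propagation step in plain Python. It uses one widely used formulation, ReLU(D^-1/2 (A + I) D^-1/2 H W), which is an assumption here and not necessarily the variant used by the frameworks cited above. The three-protein path network, feature values, and weights are toy data.

```python
import math


def gcn_layer(adjacency, features, weights):
    """One graph-convolution step: add self-loops, apply symmetric degree
    normalization, aggregate neighbor features, apply a linear map and ReLU."""
    n = len(adjacency)
    f_in = len(features[0])
    f_out = len(weights[0])

    # A + I: every node also keeps its own features.
    a_hat = [
        [adjacency[i][j] + (1.0 if i == j else 0.0) for j in range(n)]
        for i in range(n)
    ]
    degree = [sum(row) for row in a_hat]

    # D^-1/2 (A + I) D^-1/2
    norm = [
        [a_hat[i][j] / math.sqrt(degree[i] * degree[j]) for j in range(n)]
        for i in range(n)
    ]

    # Aggregate features from each node's neighborhood.
    aggregated = [
        [sum(norm[i][k] * features[k][f] for k in range(n)) for f in range(f_in)]
        for i in range(n)
    ]

    # Linear transform followed by ReLU.
    return [
        [
            max(0.0, sum(aggregated[i][f] * weights[f][o] for f in range(f_in)))
            for o in range(f_out)
        ]
        for i in range(n)
    ]


# Toy path network A - B - C; only protein A carries a non-zero feature.
toy_adjacency = [[0.0, 1.0, 0.0], [1.0, 0.0, 1.0], [0.0, 1.0, 0.0]]
toy_features = [[1.0], [0.0], [0.0]]
toy_weights = [[1.0]]

toy_output = gcn_layer(toy_adjacency, toy_features, toy_weights)
print(toy_output)
```

After one layer, information from A reaches its direct neighbor B but not C, which is two hops away. Stacking layers widens the neighborhood each node can draw on.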

Effective access to PPI data through appropriate download formats and web interfaces is a critical competency for modern biological research. BioGRID and IntAct provide complementary resources with distinct strengths—BioGRID offers extensive curation volume and specialized datasets, while IntAct provides granular experimental annotation. The selection of specific data formats should be guided by analytical objectives, with PSI-MI XML 2.5 and BioGRID TAB 3.0 representing optimal choices for most new research initiatives. As the field advances, integration of multiple data sources and application of sophisticated computational methods like graph neural networks will increasingly drive discoveries in systems biology and drug development. Researchers are encouraged to leverage the standardized protocols and resource comparisons presented in this guide to optimize their PPI data access strategies, ensuring robust and reproducible research outcomes in the evolving landscape of interaction bioinformatics.

From Data to Biological Insight: Strategies for Integration and Specialized Network Construction

Protein-protein interaction (PPI) networks are fundamental to systems biology, providing a framework for understanding cellular machinery, signal transduction, and disease mechanisms [33]. The set of all interactions within an organism forms a protein interaction network (PIN), which serves as a critical tool for studying cellular behavior [34]. While public databases such as IntAct, BioGRID, and STRING provide vast repositories of interaction data, simply taking the union of data from these sources constitutes a naive approach that fails to address critical challenges including identifier inconsistencies, varying evidence types, and confidence scoring disparities [35] [22]. A robust integrated network requires sophisticated methodologies that move beyond simple data aggregation to create biologically coherent and analytically reliable networks suitable for hypothesis generation and validation in biomedical research.

The process of building these networks must address multiple dimensions of complexity. First, PPI data originates from diverse experimental techniques (e.g., yeast two-hybrid, mass spectrometry) and computational predictions, each with different reliability metrics and systematic biases [33] [35]. Second, the heterogeneity of nodes (proteins) and edges (interactions) requires semantic integration of biological annotations from ontologies like Gene Ontology (GO) and pathway databases such as KEGG and Reactome [33] [35]. Finally, effective visualization and analysis demand specialized software platforms that can handle the scale and complexity of integrated networks while providing analytical capabilities for biological discovery [36] [34]. This guide provides a comprehensive technical framework for constructing robust integrated PPI networks, with specific protocols and resources for research scientists and drug development professionals.

Core PPI Databases and Their Characteristics

A strategic integration approach begins with understanding the specialized strengths and limitations of available databases. The table below summarizes major PPI resources and their distinctive properties.

Table 1: Key Protein-Protein Interaction Databases and Resources

| Database Name | Primary Focus | Evidence Types | Update Frequency | Key Features |

|---|---|---|---|---|

| BioGRID [22] | Physical & genetic interactions | Curated from literature, high- & low-throughput experiments | Monthly | Extensive curation with >2.2 million non-redundant interactions; themed curation projects for specific diseases |

| STRING [35] | Functional & physical associations | Experimental, predictive, co-expression, text mining | Regularly updated | Comprehensive confidence scoring; cross-species transfer via interologs; regulatory networks |

| IntAct [35] | Molecular interaction data | Curated experiments from literature | Regular updates | IMEx consortium member; standardized data formats |

| MINT [35] | Experimentally verified PPIs | Focus on high-throughput experiments | Regular updates | Specialized in molecular interactions |

| HPRD [28] | Human protein reference | Manual curation from literature | Not specified | Human-specific data with enzymatic and localization data |

| DIP [28] | Experimentally verified PPIs | Curated experiments | Not specified | Database of Interacting Proteins |

| Reactome [35] | Pathway-centered interactions | Expert-curated pathways | Regular updates | Hierarchically nested pathway modules; pathway enrichment analysis |

Understanding the scale and composition of PPI data is essential for designing integration strategies. The following table provides comparative metrics for major resources (based on latest available data).

Table 2: Comparative Quantitative Metrics of PPI Resources

| Database | Publications | Interactions | Organisms | Confidence Scoring | Specialized Networks |

|---|---|---|---|---|---|

| BioGRID [22] | 87,393+ | >2.25M non-redundant | Multiple | Based on experimental evidence type | Themed projects (Autism, Alzheimer's, COVID-19) |

| STRING [35] | Not specified | Comprehensive coverage | 1000s of organisms | Probability score (0-1) for each association | Physical, regulatory, and functional networks |

| CORUM [28] | Not specified | Focus on complexes | Human | Experimental validation | Protein complexes specifically |

Methodological Framework: From Simple Union to Robust Integration

Core Challenges in PPI Network Integration

Building a robust integrated PPI network requires addressing several fundamental challenges that extend beyond simple data aggregation. The high number of nodes and connections in real PINs demands significant computational resources and can complicate graphical rendering and analysis [34]. Furthermore, the heterogeneity of nodes (proteins) and edges (interactions) creates integration complexity, particularly when combining data from multiple sources with different identifier systems and annotation standards [33]. The ability to annotate proteins and interactions with biological information extracted from ontologies (e.g., Gene Ontology) enriches PINs with semantic information but substantially complicates their visualization and analysis [33] [34]. Additionally, the availability of numerous data formats for representing PPI and PINs data creates interoperability challenges that must be addressed through standardized conversion pipelines [34].

Strategic Integration Workflow

The following diagram illustrates a comprehensive workflow for robust PPI network integration, moving systematically from data acquisition to functional validation:

Diagram 1: PPI Network Integration Workflow

Advanced Integration Protocols

Protocol 1: Identifier Mapping and Standardization

Effective integration requires resolving identifier inconsistencies across databases. This protocol ensures uniform protein identification:

- Source Data Acquisition: Download PPI data from multiple sources (BioGRID, STRING, IntAct) in standard formats (PSI-MI, TSV, or XML).

- Identifier Extraction: Extract all protein identifiers, noting the database of origin and identifier type (UniProt, Ensembl, Entrez Gene, RefSeq).

- Mapping Service Utilization: Use robust mapping services (UniProt ID Mapping, BioMart, g:Profiler) to convert all identifiers to a standardized namespace (recommended: UniProtKB accession numbers).

- Ambiguity Resolution: Manually resolve identifier ambiguities through sequence-based matching when automatic methods fail.

- Identifier Consolidation: Create a master mapping table that preserves all original identifiers while maintaining the standardized identifier as the primary key.
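The consolidation step can be sketched as follows. The example assumes the mapping results have already been retrieved from a mapping service and flattened into (identifier type, original identifier, UniProtKB accession) triples. The function name is hypothetical, and the `NP_EXAMPLE`, `ACC_A`, and `ACC_B` entries are made-up placeholders for an ambiguous mapping. Ambiguous identifiers are set aside for manual resolution instead of being assigned automatically.

```python
from collections import defaultdict

# Hypothetical mapping results: (identifier type, original ID, UniProtKB accession).
RAW_MAPPINGS = [
    ("Entrez Gene", "7157", "P04637"),
    ("Ensembl", "ENSG00000141510", "P04637"),
    ("Entrez Gene", "4193", "Q00987"),
    ("RefSeq", "NP_EXAMPLE", "ACC_A"),  # made-up ambiguous identifier
    ("RefSeq", "NP_EXAMPLE", "ACC_B"),
]


def build_master_table(raw_mappings):
    """Return (master, ambiguous).

    master: accession -> set of (identifier type, original ID) mapping uniquely to it.
    ambiguous: (identifier type, original ID) -> sorted list of candidate accessions.
    """
    candidates = defaultdict(set)
    for id_type, original_id, accession in raw_mappings:
        candidates[(id_type, original_id)].add(accession)

    master = defaultdict(set)
    ambiguous = {}
    for source_id, accessions in candidates.items():
        if len(accessions) == 1:
            # Unique mapping: file the original ID under the standardized key.
            master[next(iter(accessions))].add(source_id)
        else:
            # More than one candidate: hold back for manual review.
            ambiguous[source_id] = sorted(accessions)
    return dict(master), ambiguous


master_table, needs_review = build_master_table(RAW_MAPPINGS)
print(master_table)
print(needs_review)
```

Keeping the original identifiers in the table means every merged interaction can still be traced back to the record in its source database.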

Protocol 2: Evidence-Weighted Confidence Scoring

Simple union approaches treat all interactions equally, regardless of evidence quality. This advanced protocol implements evidence-weighted confidence assessment:

Evidence Channel Classification: Categorize interaction evidence into distinct channels:

- Experimental (high-throughput vs. low-throughput)

- Computational predictions (genomic context, sequence-based)

- Database annotations (curated pathways)

- Text mining co-occurrence

Channel-Specific Scoring: Calculate confidence scores for each evidence channel using platform-specific metrics (e.g., STRING's neighborhood, fusion, and co-occurrence scores) [35].

Probabilistic Integration: Combine channel-specific scores using probabilistic integration, assuming evidence independence across channels. The combined confidence score is computed as:

$$P_{\text{combined}} = 1 - \prod_{i=1}^{n} (1 - P_i)$$

where $P_i$ is the confidence score from evidence channel $i$ and $n$ is the number of evidence channels.

Threshold Application: Apply organism- and context-specific confidence thresholds (typically 0.7-0.9 for high-confidence networks).

Directionality Annotation: For regulatory networks, incorporate directionality information using natural language processing of literature and curated pathway databases [35].
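The probabilistic integration and thresholding steps of this protocol can be sketched in a few lines. The code applies the combination rule given above under its stated independence assumption. The channel names, scores, and the 0.7 cutoff are illustrative only. STRING's own combined score additionally corrects each channel for a prior probability before combining, which this sketch omits.

```python
import math


def combine_scores(channel_scores):
    """Combine per-channel probabilities as 1 - prod(1 - P_i), assuming independence."""
    for channel, score in channel_scores.items():
        if not 0.0 <= score <= 1.0:
            raise ValueError(f"score for {channel!r} must lie in [0, 1], got {score}")
    return 1.0 - math.prod(1.0 - score for score in channel_scores.values())


def filter_by_confidence(edge_scores, threshold=0.7):
    """Keep edges whose combined score reaches the threshold."""
    combined = {edge: combine_scores(scores) for edge, scores in edge_scores.items()}
    return {edge: score for edge, score in combined.items() if score >= threshold}


# Illustrative per-channel scores for two hypothetical interactions.
example_edges = {
    ("P04637", "Q00987"): {"experimental": 0.6, "text_mining": 0.5},
    ("P04637", "P38398"): {"text_mining": 0.4},
}

high_confidence = filter_by_confidence(example_edges, threshold=0.7)
print(high_confidence)
```

Two moderate, independent lines of evidence (0.6 and 0.5) combine to 0.8 and pass the cutoff, while a single weak channel does not. The independence assumption matters: correlated channels would overstate the combined confidence.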

Protocol 3: Semantic Integration of Functional Annotations

Moving beyond structural networks to functionally annotated networks enables deeper biological insights:

Ontology Resource Identification: Identify relevant ontologies (Gene Ontology, KEGG pathways, Reactome pathways) for functional annotation.

Annotation Mapping: Map standardized protein identifiers to functional annotations using services provided by EBI QuickGO, KEGG API, or custom mapping pipelines.

Enrichment Analysis Preparation: Precompute background gene sets appropriate for your organism and research context.

Semantic Similarity Calculation: Implement semantic similarity measures (Resnik, Lin, or Wang methods) to quantify functional relationships between proteins beyond direct interactions.

Annotation Integration: Integrate functional annotations as node attributes in the network for subsequent visualization and analysis.
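As an illustration of the semantic similarity step, the sketch below computes Resnik similarity on a toy ontology. Resnik similarity between two terms is the information content (IC) of their most informative common ancestor, where IC is the negative log of the fraction of annotated proteins carrying that term or any of its descendants. The ontology, annotations, and the choice to score protein pairs by their best-matching term pair are all assumptions made for this example. Real analyses would use Gene Ontology and a full annotation corpus.

```python
import math

# Toy ontology: term -> list of parent terms (made up for illustration).
PARENTS = {
    "root": [],
    "signaling": ["root"],
    "metabolism": ["root"],
    "apoptosis": ["signaling"],
    "dna_damage_response": ["signaling"],
}

# Toy annotations: protein -> directly annotated terms.
ANNOTATIONS = {
    "protA": {"apoptosis"},
    "protB": {"dna_damage_response"},
    "protC": {"metabolism"},
    "protD": {"apoptosis"},
}


def ancestors(term):
    """Return the term together with all of its ancestors."""
    seen = {term}
    stack = [term]
    while stack:
        for parent in PARENTS[stack.pop()]:
            if parent not in seen:
                seen.add(parent)
                stack.append(parent)
    return seen


def information_content():
    """IC(t) = log(total / n_t), where n_t counts proteins annotated to t or below."""
    total = len(ANNOTATIONS)
    counts = {term: 0 for term in PARENTS}
    for terms in ANNOTATIONS.values():
        covered = set().union(*(ancestors(t) for t in terms))
        for term in covered:
            counts[term] += 1
    return {t: math.log(total / c) for t, c in counts.items() if c > 0}


def resnik(term_a, term_b, ic):
    """IC of the most informative common ancestor of two terms."""
    return max(ic[t] for t in ancestors(term_a) & ancestors(term_b))


def protein_similarity(protein_a, protein_b, ic):
    """Best-matching term pair between two proteins (one of several conventions)."""
    return max(
        resnik(ta, tb, ic)
        for ta in ANNOTATIONS[protein_a]
        for tb in ANNOTATIONS[protein_b]
    )


IC = information_content()
sim_ab = protein_similarity("protA", "protB", IC)
sim_ac = protein_similarity("protA", "protC", IC)
sim_ad = protein_similarity("protA", "protD", IC)
print(sim_ab, sim_ac, sim_ad)
```

Proteins sharing only the root term score zero, while proteins sharing a specific, rarely used term score highest. This lets the measure rank functional relatedness even between proteins with no direct interaction.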

Computational Implementation and Visualization Solutions

Software Platforms for PPI Network Analysis