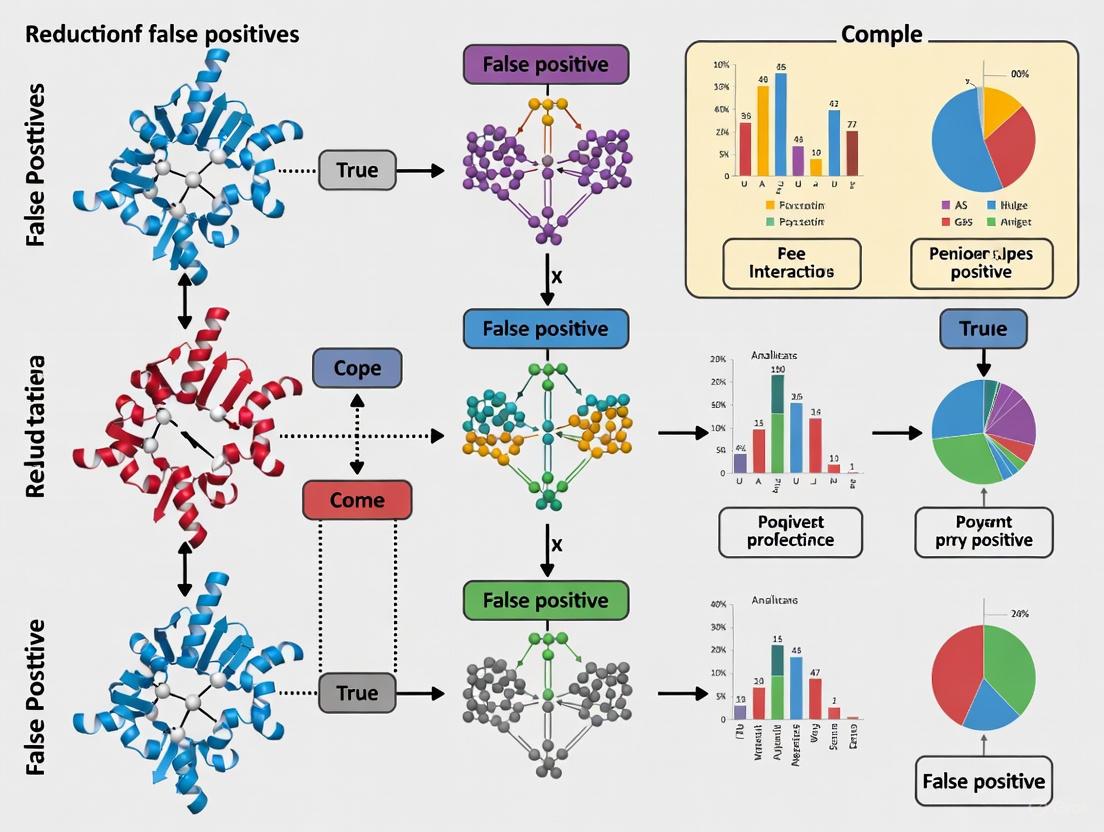

Strategies for Reducing False Positives in Co-Complex Interaction Data: From Computational Filters to AI-Driven Validation


Abstract

This article provides a comprehensive guide for researchers and drug development professionals tackling the pervasive challenge of false positives in co-complex interaction data. It explores the fundamental sources of error in experimental and computational protein interaction datasets and details rigorous methodological approaches for filtering and refinement. The content covers practical troubleshooting strategies for optimizing prediction algorithms, alongside current frameworks for the statistical validation and comparative analysis of interaction data. By synthesizing insights from foundational concepts to advanced AI applications, this resource aims to equip scientists with the knowledge to enhance data reliability, thereby accelerating robust drug discovery and therapeutic target identification.

Understanding False Positives: The Fundamental Challenge in Protein Interaction Data

Defining False Positives in Experimental and Computational Co-Complex Data

Frequently Asked Questions

What are the common sources of false positives in affinity purification-mass spectrometry (AP-MS) experiments?

One specific source is the creation of artificial binding motifs due to cloning artifacts. For example, using a commercially available ORF library that appends a C-terminal valine (a "cloning scar") to bait proteins can, in combination with the bait's native C-terminal sequence, create a peptide motif that is recognized by endogenous cellular proteins containing PDZ domains. This results in the aberrant co-purification of prey proteins that do not interact with the native bait protein in cells [1].

How can I reduce false positives in computationally predicted protein-protein interaction datasets?

A proven method is to use Gene Ontology (GO) annotations to establish knowledge-based filtering rules. One approach deduces rules from the top-ranking keywords in GO molecular function annotations and from the co-localization of interacting proteins. Applying these rules can significantly increase the true positive fraction of a dataset. The improvement in signal-to-noise ratio ranges from two- to ten-fold compared with randomly removing protein pairs [2].

What strategies can help minimize false positives when accounting for receptor flexibility in virtual screening?

A strategy based on the binding energy landscape theory posits that a true ligand can bind favorably to different conformations of a flexible binding site. When screening a molecule library against multiple receptor conformations (MRCs), you can select the intersection of top-ranked ligands from all conformations. This approach helps exclude false positives that appear high-ranked in only one or a few specific receptor conformations [3].

Troubleshooting Guides

Problem: Suspicious interactions with PDZ domain-containing proteins in AP-MS.

Diagnosis: This is likely caused by a C-terminal cloning scar on your bait protein, which can create an artificial PDZ-binding motif [1].

Solution:

- Verify Construct: Check the amino acid sequence of your expressed bait protein for any additional residues added by the cloning system.

- Redesign Construct: Re-clone your bait protein to remove the C-terminal cloning scar, ensuring the native sequence is restored.

- Use Controls: Include control baits with and without the scar to identify interactions that are dependent on the artificial sequence.

Problem: Low overlap between computational PPI predictions and experimental results.

Diagnosis: The computational dataset likely contains a high number of false positive predictions [2].

Solution:

- Apply GO Filtering: Use Gene Ontology annotations to filter the predicted pairs.

- Implement Rules: Establish and apply knowledge-based rules. For example, require that interacting proteins share relevant functional keywords and are co-localized in the same cellular component.

- Measure Improvement: Assess the improvement in your dataset's quality by calculating the strength or signal-to-noise ratio before and after filtering [2].

Problem: An overwhelming number of potential hits in structure-based virtual screening with multiple receptor conformations.

Diagnosis: Each distinct receptor conformation can introduce its own set of false positives, making it difficult to identify true binders [3].

Solution:

- Generate MRCs: Use methods like molecular dynamics simulations to generate multiple distinct conformations of your target receptor.

- Dock Separately: Perform docking exercises separately against each receptor conformation.

- Select Intersection: Identify and select the ligands that are consistently top-ranked across all or most of the receptor conformations. This selects for true binders and filters out conformation-specific false positives [3].
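The intersection step above can be sketched in a few lines of Python. This is a minimal illustration, not the procedure from [3]: the input format (one ranked list of ligand IDs per conformation), the function name `consensus_hits`, and the `min_conformations` parameter are all assumptions made for the example.

```python
def consensus_hits(rankings, top_n, min_conformations=None):
    """Return ligands found in the top_n of at least min_conformations rankings.

    rankings: dict mapping a conformation label to a list of ligand IDs,
              ordered best score first (hypothetical input format).
    min_conformations: defaults to all conformations (strict intersection).
    """
    if min_conformations is None:
        min_conformations = len(rankings)
    counts = {}
    for ranked_ligands in rankings.values():
        # Use a set so a ligand is counted once per conformation.
        for ligand in set(ranked_ligands[:top_n]):
            counts[ligand] = counts.get(ligand, 0) + 1
    return sorted(lig for lig, n in counts.items() if n >= min_conformations)


# Toy docking results for three receptor conformations (invented data).
rankings = {
    "conf_1": ["lig_A", "lig_B", "lig_C", "lig_D"],
    "conf_2": ["lig_B", "lig_A", "lig_E", "lig_C"],
    "conf_3": ["lig_A", "lig_C", "lig_B", "lig_F"],
}

strict = consensus_hits(rankings, top_n=3)                        # all conformations
relaxed = consensus_hits(rankings, top_n=3, min_conformations=2)  # "most" conformations
print(strict)
print(relaxed)
```

Relaxing `min_conformations` trades some false-positive filtering for a lower risk of discarding a true binder that docks poorly to one conformation.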

Experimental Protocols

Protocol: Using GO Annotations to Filter Computational PPI Predictions [2]

- Prepare Training Data: Compile a set of high-confidence, experimentally determined protein-protein interactions for your organism of interest.

- Extract GO Keywords: From the Molecular Function annotations of the interacting proteins in the training set, extract and cluster GO terms into general keywords.

- Identify Top Keywords: Rank the keywords by their frequency of appearance in the training set. Select the eight top-ranking keywords for the filtering rules.

- Deduce and Apply Rules: Establish knowledge rules based on the extracted keywords and the co-localization of proteins. A sample rule is that a predicted interacting pair must share at least one of the top keywords and be annotated to the same cellular component.

- Filter Dataset: Apply the rules to your computationally predicted PPI dataset. Pairs that do not satisfy the rules are considered false positives and are removed.

- Validate Improvement: Calculate the true positive fraction and signal-to-noise ratio (strength) of your dataset before and after filtering to quantify the improvement.
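As a rough sketch of the filtering and validation steps, the sample rule can be applied as below. It assumes the keyword extraction has already been done; the protein IDs, keyword sets, and helper names are invented for illustration and are not taken from [2].

```python
def passes_rules(pair, keywords, components, top_keywords):
    """Sample rule: the pair shares at least one top keyword and one cellular component."""
    a, b = pair
    shared_keywords = keywords.get(a, set()) & keywords.get(b, set()) & top_keywords
    shared_components = components.get(a, set()) & components.get(b, set())
    return bool(shared_keywords) and bool(shared_components)


def true_positive_fraction(pairs, known_true):
    """Fraction of pairs that appear in a reference set of true interactions."""
    if not pairs:
        return 0.0
    hits = sum(1 for pair in pairs if frozenset(pair) in known_true)
    return hits / len(pairs)


# Hypothetical annotations (in practice derived from GO Molecular Function
# and Cellular Component terms).
keywords = {
    "P1": {"kinase"}, "P2": {"kinase", "binding"}, "P3": {"transporter"},
    "P4": {"binding"}, "P5": {"kinase"},
}
components = {
    "P1": {"nucleus"}, "P2": {"nucleus"}, "P3": {"membrane"},
    "P4": {"nucleus"}, "P5": {"cytoplasm"},
}
top_keywords = {"kinase", "binding"}

predicted = [("P1", "P2"), ("P1", "P3"), ("P2", "P4"), ("P1", "P5")]
known_true = {frozenset(("P1", "P2")), frozenset(("P2", "P4"))}

filtered = [p for p in predicted if passes_rules(p, keywords, components, top_keywords)]
tpf_before = true_positive_fraction(predicted, known_true)
tpf_after = true_positive_fraction(filtered, known_true)
print(filtered, tpf_before, tpf_after)
```

Comparing `tpf_before` with `tpf_after` on a held-out reference set gives a quick check that the rules remove more false pairs than true ones.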

Protocol: Identifying False Positives from Cloning Scars in AP-MS [1]

- Observe Suspicious Preys: Identify prey proteins that consistently co-purify with your bait but lack prior biological evidence for an interaction. PDZ domain-containing proteins are a red flag.

- Map the Interaction Region: Create truncated versions of your bait protein to localize the region required for the interaction with the suspicious prey.

- Check Tag Position: If the interaction is lost when the affinity tag is moved from the N-terminus to the C-terminus of the bait, the free C-terminal sequence is likely required for binding, which is the expected behavior for PDZ domain recognition.

- Sequence Analysis: Examine the exact C-terminal amino acid sequence of the bait construct, paying attention to any non-native residues added by the cloning system (e.g., a valine from a PmeI restriction site).

- Construct a Scarless Bait: Create a new version of your bait construct that removes the C-terminal cloning scar.

- Comparative AP-MS: Repeat the affinity purification with both the original and the scarless bait. The disappearance of the suspicious prey in purifications with the scarless bait confirms it was a false positive.
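The sequence-analysis step can be partly automated. The sketch below compares a construct sequence with the native sequence and checks whether the construct ends in a class I PDZ-binding pattern (X-[S/T]-X-hydrophobic at the free C-terminus). The sequences are invented, the hydrophobic residue set is a simplifying assumption, and a regex match only flags a construct for closer inspection.

```python
import re

# Class I PDZ-binding pattern at a free C-terminus: [S/T]-X-hydrophobic.
# The hydrophobic set below is a simplifying assumption for illustration.
CLASS_I_PDZ = re.compile(r"[ST].[VILFMA]$")


def c_terminal_scar(native_seq, construct_seq):
    """Return residues that follow the native sequence in the construct.

    Returns None if the native sequence is not found in the construct.
    """
    start = construct_seq.rfind(native_seq)
    if start == -1:
        return None
    return construct_seq[start + len(native_seq):]


def creates_pdz_motif(native_seq, construct_seq):
    """True if the construct ends in a class I PDZ-like motif that the native protein lacks."""
    in_construct = CLASS_I_PDZ.search(construct_seq) is not None
    in_native = CLASS_I_PDZ.search(native_seq) is not None
    return in_construct and not in_native


# Hypothetical bait: native C-terminus "...STE"; the cloning system appends "V".
native = "MKTAYIAKQRSTE"
construct = "MHHHHHH" + native + "V"  # N-terminal tag plus C-terminal scar

scar = c_terminal_scar(native, construct)
flagged = creates_pdz_motif(native, construct)
print(scar, flagged)
```

A flagged construct is a candidate for the scarless re-cloning and comparative AP-MS steps described above.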

Data Presentation

Table 1: Performance of GO-Based Filtering in Reducing False Positives [2]

| Organism | Sensitivity in Experimental Dataset | Average Specificity in Predicted Datasets | Improvement in Signal-to-Noise Ratio |

|---|---|---|---|

| S. cerevisiae (Yeast) | 64.21% | 48.32% | 2 to 10-fold |

| C. elegans (Worm) | 80.83% | 46.49% | 2 to 10-fold |

Table 2: Selection of True Ligands by Intersection of Multiple Receptor Conformations [3]

| Level of Comparison (T-Loop Pocket) | Molecules Selected | Level of Comparison (RNA Binding Site) | Molecules Selected |

|---|---|---|---|

| Top-ranked 50 | A | Top-ranked 10 | - |

| Top-ranked 100 | HAC and B | Top-ranked 20 | HAC1 |

| Top-ranked 150 | C-E | Top-ranked 30 | HAC2 |

| Top-ranked 200 | F-M | Top-ranked 50 | HAC3, 2-4 |

| Total Selected | 14 | Total Selected | 7 |

Table 3: Research Reagent Solutions

| Reagent / Material | Function in Experimental Context |

|---|---|

| Flexi-format ORFeome Collection | A cloned open reading frame (ORF) library used for systematic expression of proteins [1]. |

| Halo Tag | An affinity tag for purifying bait proteins and their interacting partners (preys) in AP-MS [1]. |

| Gene Ontology (GO) Annotations | A structured, controlled vocabulary used to annotate gene products for functional analysis and filtering [2]. |

| Multiple Receptor Conformations (MRCs) | A set of distinct 3D structures of a target protein used in docking to account for flexibility [3]. |

| GOLD Software | A program for flexibly docking ligands into protein binding sites, used in virtual screening [3]. |

Experimental Workflow Visualization

Welcome to the Technical Support Center

This resource is designed to help researchers, scientists, and drug development professionals navigate common challenges in generating and analyzing co-complex interaction data. The following troubleshooting guides and FAQs provide practical solutions for reducing false positives, a critical focus for improving the reliability of research in this field.

Frequently Asked Questions (FAQs)

FAQ 1: My virtual screening pipeline returns a high rate of false positive hits. How can I make my machine learning classifier more effective?

A high false-positive rate in virtual screening is often due to insufficiently challenging training data. Models trained on decoys that are trivially distinguishable from active compounds will fail in real-world applications.

Solution: Implement a training strategy that uses highly compelling, individually matched decoy complexes. This approach aims to generate decoy complexes that closely mimic the types of complexes encountered during actual virtual screens, forcing the model to learn more nuanced distinctions. For example, the D-COID dataset strategy has been used to train classifiers like vScreenML, which significantly improved prospective screening outcomes, with nearly all candidate inhibitors for acetylcholinesterase showing detectable activity and one hit reaching 280 nM IC50 [4].

Actionable Protocol:

- Compile Active Complexes: Start with experimentally determined structures from the PDB. Filter ligands to adhere to the same physicochemical properties required for your actual screening library to ensure relevance [4].

- Generate Compelling Decoys: Create decoy complexes that are matched to your active complexes and do not contain obvious flaws like steric clashes or systematic under-packing. The goal is to eliminate trivial differences the model could exploit [4].

- Train a Binary Classifier: Use a framework like XGBoost to train a model to distinguish between your active and compelling decoy complexes, rather than training a regression model on binding affinities alone [4].

FAQ 2: How can I distinguish direct physical interactions from indirect co-complex associations in my AP-MS data?

AP-MS techniques naturally identify co-complex memberships, which include both direct physical interactions and indirect associations. Most standard scoring methods do not differentiate between these, leading to an overly connected and potentially misleading interaction network.

Solution: Apply computational network topology methods designed specifically to infer direct binary interactions from co-complex data. The Binary Interaction Network Model (BINM) is one such method that uses mathematical frameworks to reassign confidence scores to observed interactions based on their propensity to be direct [5].

Actionable Protocol:

- Construct a Co-complex Network: Use a scoring method like Purification Enrichment (PE) or Bootstrap on your combined AP-MS data to build a high-confidence co-complex interaction network [5].

- Apply a Binary Interaction Model: Run a model like BINM on your co-complex network. This model assumes that observed interactions are the sum of direct and indirect links, with indirect links mediated by common neighbors.

- Filter and Validate: Use the confidence scores generated by BINM to predict direct physical interactions. These high-confidence binary interactions can then be validated against reference sets from Y2H assays, protein-fragment complementation assays (PCA), or structural information [5].

FAQ 3: The drug-target interaction (DTI) datasets I use for training are highly imbalanced. How can I prevent my model from being biased towards the majority class?

Imbalanced datasets, where non-interacting pairs far outnumber interacting ones, are a fundamental challenge in DTI prediction. This leads to models with high specificity but poor sensitivity, meaning they miss true positives (high false negative rate).

Solution: Integrate advanced data balancing techniques into your model training pipeline. One effective method is to use Generative Adversarial Networks (GANs) to create synthetic data for the underrepresented minority class (positive interactions) [6].

Actionable Protocol:

- Feature Engineering: Represent drugs using molecular fingerprints (e.g., MACCS keys) and targets using amino acid composition. Unify them into a single feature representation [6].

- Data Balancing: Train a GAN on the feature vectors of your known positive DTI pairs. Use the generator to create realistic synthetic positive interaction samples [6].

- Model Training and Validation: Combine the synthetic positive samples with your original data to create a balanced dataset. Train your classifier (e.g., a Random Forest model) and validate its performance on held-out test sets. This approach has been shown to achieve high sensitivity and specificity, with ROC-AUC scores exceeding 0.99 on some benchmark datasets [6].
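The feature-engineering step can be illustrated without any external libraries. The sketch below computes an amino acid composition vector and concatenates it with a fingerprint bit vector. The four-bit fingerprint is a toy stand-in (real MACCS keys have 166 bits and would normally come from a cheminformatics toolkit), and the function names are assumptions. The GAN and classifier steps are not shown.

```python
AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"  # the 20 standard residues


def amino_acid_composition(sequence):
    """Fraction of each standard residue in the sequence (20 values)."""
    sequence = sequence.upper()
    counts = [sequence.count(aa) for aa in AMINO_ACIDS]
    total = sum(counts)
    return [c / total if total else 0.0 for c in counts]


def pair_features(fingerprint_bits, sequence):
    """Concatenate drug fingerprint bits with the target's composition vector."""
    return [float(bit) for bit in fingerprint_bits] + amino_acid_composition(sequence)


# Toy inputs: a 4-bit fingerprint and a short peptide (both invented).
features = pair_features([1, 0, 1, 1], "ACCA")
print(len(features), features[:6])
```

Each drug-target pair then becomes one fixed-length vector, which is the representation a generator would be trained on for the positive class.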

FAQ 4: How can I correct for statistical biases in public drug-target interaction databases to reduce false positive predictions?

Public DTI databases often contain biases, such as over-representation of well-studied drugs and proteins. A key issue is the lack of confirmed negative examples (pairs known not to interact), which are essential for training a robust binary classifier.

Solution: Carefully construct your training set by sampling negative examples in a way that mitigates inherent database biases. A balanced sampling method, where negative examples are chosen so that each protein and each drug appears an equal number of times in both positive and negative interactions, has been shown to improve model performance and reduce false positives [7].

Actionable Protocol:

- Define Positive Interactions: Curate positive DTIs from a high-quality source like DrugBank [7].

- Generate Balanced Negative Examples: Instead of random sampling, use a balanced sampling approach. Randomly select negative examples from the pool of unlabeled pairs such that the count of positive and negative interactions is balanced for each individual drug and each individual protein in the dataset [7].

- Train and Evaluate: Train your model on this balanced dataset. This method has been shown to recover true targets more effectively and decrease the number of false positives among the top-ranked predictions for drugs with few known targets [7].
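One simple way to approximate balanced sampling is a greedy pass over proteins. This is only a sketch of the idea, not the algorithm from [7]: the function name, the greedy ordering, and the toy pairs are assumptions. A greedy pass can leave some drugs or proteins under-sampled when too few unlabeled pairs are available.

```python
import random
from collections import Counter


def balanced_negatives(positives, seed=0):
    """Greedily pick unlabeled pairs as negatives so that each drug and protein
    appears at most as often among negatives as among positives."""
    rng = random.Random(seed)
    positive_set = set(positives)
    drug_need = Counter(drug for drug, _ in positives)
    protein_need = Counter(protein for _, protein in positives)
    negatives = set()
    # Handle proteins with the most positives first; they are hardest to balance.
    for protein, _ in protein_need.most_common():
        while protein_need[protein] > 0:
            candidates = [
                drug for drug, need in drug_need.items()
                if need > 0
                and (drug, protein) not in positive_set
                and (drug, protein) not in negatives
            ]
            if not candidates:
                break  # exact balance is not always achievable
            rng.shuffle(candidates)  # random tie-breaking among equally needy drugs
            drug = max(candidates, key=lambda d: drug_need[d])
            negatives.add((drug, protein))
            drug_need[drug] -= 1
            protein_need[protein] -= 1
    return sorted(negatives)


# Toy positive set (invented identifiers).
positives = [
    ("drug_1", "prot_A"), ("drug_1", "prot_B"),
    ("drug_2", "prot_A"), ("drug_3", "prot_C"),
]
negatives = balanced_negatives(positives)
print(negatives)
```

Compared with uniform random sampling, this keeps frequently studied drugs and proteins from appearing mostly in positive examples, which is the bias the balanced approach targets.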

Experimental Protocols & Workflows

Protocol 1: Distinguishing Direct from Indirect Interactions in AP-MS Data

Objective: To computationally identify direct binary protein-protein interactions from a network of co-complex associations derived from AP-MS data.

Methodology:

Data Acquisition and Preprocessing:

- Obtain a combined set of protein purifications from AP-MS experiments [5].

- Apply a scoring scheme (e.g., Purification Enrichment (PE) with a threshold, or Bootstrap scores) to identify high-confidence co-complex interactions [5].

- Construct an undirected network where nodes are proteins and edges represent high-confidence co-complex associations.
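A minimal sketch of the network-construction step follows. The input format (protein, protein, score triples) and the function name are assumptions. The threshold of 3.19 is the PE cutoff reported for the combined yeast dataset in [5], and the scores are invented.

```python
from collections import defaultdict


def build_network(scored_pairs, threshold):
    """Build an undirected adjacency map from (protein_a, protein_b, score) triples."""
    network = defaultdict(set)
    for protein_a, protein_b, score in scored_pairs:
        if protein_a != protein_b and score >= threshold:
            network[protein_a].add(protein_b)
            network[protein_b].add(protein_a)
    return dict(network)


# Invented PE-style scores for three protein pairs.
scored_pairs = [("A", "B", 5.2), ("B", "C", 3.19), ("A", "C", 1.0)]
network = build_network(scored_pairs, threshold=3.19)
print(network)
```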

Application of the Binary Interaction Network Model (BINM):

- The BINM model is based on two assumptions:

  - The observed co-complex network (O) is the sum of a latent direct interaction network (D) and an indirect interaction network.

  - An indirect interaction between two proteins is mediated by their common direct neighbors [5].

- The model estimates a parameter for each observed interaction that represents its likelihood of being a direct link.

- The model equation can be conceptualized as O = D + D^2 (or similar), representing the sum of direct and indirect (direct neighbor of a direct neighbor) interactions.

- Solve for the latent direct interaction network D using the estimators of the model parameters.
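To make the conceptual relation O = D + D^2 concrete, the toy example below runs it in the forward direction only: starting from a known direct network, it shows which links a co-complex experiment would be expected to report. It does not implement BINM's parameter estimation, and the binarization of the result is an assumption made for readability.

```python
def matmul(x, y):
    """Multiply two square matrices given as nested lists."""
    n = len(x)
    return [[sum(x[i][k] * y[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]


def observed_from_direct(direct):
    """Binarized O = D + D^2: a link is observed if two proteins interact
    directly or share at least one direct neighbor (self-links ignored)."""
    n = len(direct)
    two_step = matmul(direct, direct)  # entry (i, j) counts common neighbors
    return [[1 if i != j and (direct[i][j] or two_step[i][j]) else 0
             for j in range(n)]
            for i in range(n)]


# Direct network: a chain A - B - C (A and C do not touch each other).
direct = [
    [0, 1, 0],
    [1, 0, 1],
    [0, 1, 0],
]
observed = observed_from_direct(direct)
print(observed)
```

The chain appears as a fully connected triangle in the observed network, which is exactly the kind of indirect A-C edge that a binary interaction model tries to down-weight.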

Output and Validation:

- The output is a list of observed interactions reassigned with a new confidence score indicating the propensity of each to be a direct physical interaction [5].

- Predict interactions with scores above a chosen threshold as direct binary interactions.

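- Benchmark the resulting set of direct interactions against independent reference sets, such as interactions from Y2H assays, protein-fragment complementation assays (PCA), and structural information [5].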

BINM Workflow for Direct Interaction Identification

Protocol 2: Building a Robust Classifier for Virtual Screening

Objective: To train a machine learning classifier that effectively reduces false positives in structure-based virtual screening.

Methodology:

Curate a High-Quality Set of Active Complexes:

- Source 3D structures of protein-ligand complexes from the Protein Data Bank (PDB).

- Apply filters to ensure ligands meet the physicochemical property criteria (e.g., molecular weight, logP) of your target screening library. This ensures the model remains "in-distribution" during deployment [4].

- Subject these crystal structures to energy minimization to better resemble computational docking poses and prevent the model from simply learning crystal packing artifacts.

Generate a Matched Set of Compelling Decoys (D-COID Strategy):

- For each active complex, generate decoy complexes that are highly challenging to distinguish.

- Ensure decoys are "compelling" by having realistic binding poses without steric clashes, appropriate packing, and the potential to form intermolecular hydrogen bonds. This prevents the classifier from using trivial chemical or structural differences [4].

Feature Extraction and Model Training:

- Extract features from both active and decoy complexes. These can include physics-based energy terms, knowledge-based potentials, and geometric descriptors.

- Train a binary classifier (e.g., using the XGBoost framework) to distinguish between the active and decoy complexes. The use of a classifier, rather than an affinity-predicting regressor, is key for this task [4].

- Validate model performance rigorously using retrospective benchmarks before prospective application.

Workflow for Training a Virtual Screening Classifier

Data Presentation: Scoring Method Comparison

The table below summarizes key scoring methods and their performance in identifying high-quality interactions, which is crucial for reducing false positives.

Table 1: Comparison of Methods for Protein-Protein Interaction Analysis

| Method Name | Primary Application | Key Strength | Reported Performance / Benchmark |

|---|---|---|---|

| BINM (Binary Interaction Network Model) [5] | Identifying direct physical interactions from AP-MS co-complex data. | Uses network topology to discriminate direct from indirect links. | Comprehensive benchmarking showed competitive performance against state-of-the-art methods using HINT, Y2H, PCA, and structural reference sets [5]. |

| HINT Database [8] | Providing a gold-standard set of high-quality interactions for validation. | Systematically and manually filtered to remove low-quality/erroneous interactions from multiple databases. | Serves as a high-quality reference. Used to benchmark methods like BINM. Classifies interactions by type (binary vs. co-complex) and source (HT vs. LC) [8] [5]. |

| PE (Purification Enrichment) [5] [9] | Scoring co-complex interactions from AP-MS data. | A standard scoring scheme for identifying high-confidence co-complex associations from purification data. | Used on a combined yeast dataset, applying a threshold (3.19) to identify 9,070 high-confidence interactions among 1,622 proteins [5]. |

| Bootstrap Scoring [5] | Scoring co-complex interactions from AP-MS data. | Uses bootstrap technique to determine confidence scores for interactions. | On a combined yeast dataset, 10,096 interactions between 2,684 proteins had confidence scores ≥0.1 [5]. |

Table 2: Performance of Data Balancing with GANs on DTI Prediction

| Dataset | Model | Accuracy | Precision | Sensitivity (Recall) | Specificity | ROC-AUC |

|---|---|---|---|---|---|---|

| BindingDB-Kd | GAN + Random Forest | 97.46% | 97.49% | 97.46% | 98.82% | 99.42% |

| BindingDB-Ki | GAN + Random Forest | 91.69% | 91.74% | 91.69% | 93.40% | 97.32% |

| BindingDB-IC50 | GAN + Random Forest | 95.40% | 95.41% | 95.40% | 96.42% | 98.97% |

Performance metrics demonstrating the effectiveness of using Generative Adversarial Networks (GANs) to address data imbalance in Drug-Target Interaction (DTI) prediction, significantly improving sensitivity and reducing false negatives [6].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for High-Quality Interactome Research

| Item | Function in Research | Explanation / Application Note |

|---|---|---|

| HINT Database [8] | A gold-standard reference set of high-quality protein-protein interactions. | Provides filtered, reliable binary and co-complex interactions for human, yeast, and other organisms. Essential for benchmarking new predictions and training models. |

| BioGRID Database [5] | A public repository of protein and genetic interactions. | A primary source for interaction data. Used to compile reference sets of interactions from Y2H and PCA assays for validation [5]. |

| D-COID Dataset Strategy [4] | A method for building training datasets for virtual screening classifiers. | Provides a framework for generating "compelling decoys" matched to active complexes, which is critical for training ML models that generalize well to prospective screens. |

| DrugBank Database [7] | A bioinformatics and chemoinformatics resource containing drug and target information. | A high-quality source for building positive Drug-Target Interaction (DTI) datasets for training machine learning models. |

| Generative Adversarial Network (GAN) [6] | A deep learning framework for generating synthetic data. | Used to create synthetic minority-class samples (positive DTIs) to correct for severe class imbalance in training datasets, thereby improving model sensitivity. |

| XGBoost Framework [4] | A machine learning library implementing optimized gradient boosting. | An effective framework for training binary classifiers in virtual screening tasks, as demonstrated by the vScreenML model. |

The Impact of Data Quality on Drug Discovery Pipelines and Target Identification

In drug discovery, the integrity of data directly dictates the success and cost of bringing a new therapeutic to market. Poor data quality, particularly the prevalence of false positives, can misdirect research, consume vast resources, and ultimately lead to late-stage failures. This technical support center is designed within the context of a broader thesis on reducing false positives in co-complex interaction data. It provides targeted troubleshooting guides and FAQs to help researchers, scientists, and drug development professionals enhance the reliability of their experimental data, ensuring that drug discovery pipelines and target identification processes are built on a foundation of high-quality, trustworthy information.

FAQs: Data Quality and False Positives

What are the most common sources of false positives in early drug discovery?

In high-throughput screening (HTS), over 95% of positive results can be false positives arising from various interference mechanisms [10]. The most common sources are:

- Colloidal Aggregators: Compounds that form aggregates which non-specifically inhibit proteins.

- Assay Interference Compounds: These include autofluorescent compounds and firefly luciferase (FLuc) inhibitors that disrupt spectroscopic detection methods.

- Chemically Reactive Compounds: Promiscuous compounds that react with protein targets non-specifically.

- Instrumental and Data Processing Errors: Issues such as data transformation errors, pipeline incidents, and incorrect data freshness can also lead to false interpretations [11] [12].

How can computational tools help mitigate false positives before costly wet-lab experiments?

Computational pre-screening is an effective strategy to triage compound libraries virtually. Tools like ChemFH use a directed message-passing neural network (DMPNN) to predict frequent hitters (FHs) with high accuracy (average AUC of 0.91) [10]. These platforms leverage large datasets (>800,000 compounds) and defined substructure rules to flag potential false positives, allowing researchers to prioritize compounds with a higher probability of being true positives before moving to experimental validation.

Why can my Co-IP results be misleading, and how do I confirm a true protein-protein interaction?

Co-immunoprecipitation (Co-IP) is prone to false positives from non-specific binding or antibody cross-reactivity. A true interaction should be confirmed with carefully designed controls and orthogonal methods [13] [14]. Critical steps include:

- Using a negative control with non-treated affinity support (minus bait protein) to identify non-specific binding to the beads.

- Ensuring the antibody against the target (bait) does not itself recognize the co-precipitated partner protein.

- Performing additional studies, such as surface plasmon resonance (SPR), to confirm interactions and obtain quantitative affinity data [14].

Troubleshooting Guides

Guide 1: Troubleshooting False Positives in High-Throughput Screening

Symptoms: An unusually high hit rate in HTS; hit compounds exhibit non-dose-dependent activity or inconsistent results in follow-up assays.

Root Causes & Solutions:

| Root Cause | Diagnostic Checks | Corrective Actions |

|---|---|---|

| Colloidal Aggregation | Test sensitivity to non-ionic detergents (e.g., Triton X-100). Perform dynamic light scattering (DLS) to detect aggregates. | Add detergents (e.g., 0.01% Triton X-100) to assay buffer. Use computational tools (e.g., ChemFH, Aggregator Advisor) for pre-screening [10]. |

| Spectroscopic Interference | Check for compound autofluorescence in the assay's wavelength range. Run a counter-screen against the assay enzyme (e.g., FLuc). | Use red-shifted fluorophores. Pre-screen compounds with computational models like ChemFLuc or ChemFluo [10]. |

| Chemical Reactivity | Inspect for reactive functional groups (e.g., aldehydes, Michael acceptors). Check for time-dependent inhibition. | Use covalent binding assays to confirm mechanism. Apply substructure filters (e.g., PAINS, Lilly Medchem rules) [10]. |

| Data Quality Issues | Monitor data pipeline incidents and freshness [12]. Check for a high number of empty values in screening data [11]. | Implement data quality monitoring for data downtime (Number of Incidents × (Time-to-Detection + Time-to-Resolution)) [12]. |

Guide 2: Troubleshooting False Positives in Co-Immunoprecipitation (Co-IP)

Symptoms: Bait protein is successfully pulled down, but suspected interaction partners appear in negative controls or cannot be validated.

Root Causes & Solutions:

| Root Cause | Diagnostic Checks | Corrective Actions |

|---|---|---|

| Antibody Specificity | Run a pre-adsorption control: pre-treat antibody with a sample devoid of the bait protein [13]. Use a monoclonal antibody or independently derived antibodies against different epitopes [13]. | Use antibodies validated for Co-IP and native protein binding [14]. Consider covalently linking the antibody to beads to prevent leakage [14]. |

| Non-Specific Binding | Include a rigorous negative control with beads but no antibody, and another with an irrelevant antibody [13] [14]. Save wash buffers to check if your protein of interest is being depleted appropriately [14]. | Increase the stringency of wash buffers (e.g., increase salt concentration, add mild detergents). Use a more specific lysis buffer; optimize lysis conditions to preserve specific interactions while removing non-specific ones [14]. |

| Transient or Weak Interactions | The interaction may not survive the lysis and wash steps. | Use crosslinkers (e.g., DSS, BS3) to "freeze" interactions before lysis [13]. Ensure the crosslinker is membrane-permeable for intracellular targets and that the buffer is free of interfering substances like Tris or azide [13]. |

| Detection Issues | The co-precipitated protein is masked by the antibody heavy (~50 kDa) and light (~25 kDa) chains in western blot analysis [14]. | Use beads with covalently bound antibody. Ensure the secondary antibody in western blotting recognizes a different species than your Co-IP antibody [14]. |

Essential Data Quality Metrics for Drug Discovery

Monitoring data quality metrics is crucial for maintaining the integrity of the drug discovery pipeline. The table below summarizes key metrics tailored for discovery research, expanding on the concept of Data Downtime—the total time data is incorrect or missing [12].

| Metric | Definition | Target in Discovery | Why It Matters |

|---|---|---|---|

| Data Completeness | Percentage of required data fields that are not empty [11]. | >99% for critical fields (e.g., compound ID, target). | Incomplete data on compound structure or assay results leads to flawed SAR analysis. |

| Data Freshness | Time elapsed between data generation and its availability for analysis [11] [12]. | As per assay SLA (e.g., HTS results within 24h). | Delayed data slows decision-making cycles in iterative compound optimization. |

| Number of Data Incidents (N) | The count of errors (e.g., pipeline failures, missing data) across all data pipelines [12]. | Trend should decrease over time as processes mature. | A high number indicates unstable data generation processes, risking all downstream research. |

| Time-to-Detection (TTD) | The median time from when a data incident occurs until it is detected [12]. | Minimize to <1 hour for critical assay data streams. | Slow detection allows false leads to propagate, wasting resources on invalid hypotheses. |

| Time-to-Resolution (TTR) | The median time from incident detection to its resolution [12]. | Minimize to <4 hours for critical incidents. | Long resolution times extend data downtime, halting research progress. |

| Data Validity | The degree to which data conforms to predefined syntax and format rules [11]. | 100% for all new data entries. | Invalid data formats (e.g., incorrect units) can cause catastrophic calculation errors in dose-response. |
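Two of the metrics above reduce to simple arithmetic. The sketch below computes data downtime using the formula cited from [12] (number of incidents × (time-to-detection + time-to-resolution)) and data completeness as the percentage of non-empty required fields. The function names, record layout, and example numbers are assumptions for illustration.

```python
def data_downtime(num_incidents, time_to_detection_h, time_to_resolution_h):
    """Data downtime in hours: N x (TTD + TTR)."""
    return num_incidents * (time_to_detection_h + time_to_resolution_h)


def completeness(records, required_fields):
    """Percentage of required fields that are present and non-empty."""
    total = len(records) * len(required_fields)
    if total == 0:
        return 0.0
    filled = sum(
        1
        for record in records
        for field in required_fields
        if record.get(field) not in (None, "")
    )
    return 100.0 * filled / total


# Example: 6 incidents, each detected after 1 h and resolved after 4 more hours.
downtime_hours = data_downtime(6, 1.0, 4.0)

# Invented screening records; the second one is missing its target.
records = [
    {"compound_id": "C1", "target": "T1"},
    {"compound_id": "C2", "target": ""},
]
completeness_pct = completeness(records, ["compound_id", "target"])
print(downtime_hours, completeness_pct)
```

Tracking these values per assay data stream over time makes it easier to see whether the targets in the table are being met.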

Research Reagent Solutions

The following table details key reagents and their critical functions in experiments designed to generate high-quality, reliable data.

| Reagent / Material | Function in Experiment | Key Quality Considerations |

|---|---|---|

| Protein A/G Beads | Capture and purify antibody-protein complexes from a lysate [14]. | Choose based on antibody species and isotype (Protein A generally binds rabbit IgG well, while Protein G is usually preferred for mouse IgG, particularly IgG1). Magnetic beads are gentler for large complexes; agarose offers higher yield [14]. |

| Protease Inhibitors | Prevent degradation of the protein-of-interest and its complexes during and after cell lysis [14]. | Use a broad-spectrum cocktail. Must be added fresh to the lysis buffer for every experiment. |

| Non-ionic Detergents (e.g., NP-40, Triton X-100) | Solubilize membrane proteins and disrupt weak, non-specific interactions in Co-IP [14]. | Concentration is critical; too little fails to solubilize, too much can disrupt genuine protein-protein interactions. Must be optimized empirically. |

| Crosslinkers (e.g., DSS, BS3) | Covalently "freeze" transient protein-protein interactions before lysis, preventing dissociation during Co-IP [13]. | Membrane permeability is key: use membrane-permeable DSS for intracellular targets and water-soluble, membrane-impermeable BS3 for cell-surface proteins. Ensure buffer is amine-free (avoid Tris) to prevent reaction quenching [13]. |

| High-Resolution Accurate Mass Spectrometry (HRAMS) | Provides definitive identification and confirmation of chemical structures, crucial for distinguishing true positives from false signals in nitrosamine analysis and metabolomics [15]. | Provides high selectivity and specificity, essential for confirmatory analysis and reducing false positives [15]. |

Experimental Workflows for Robust Data Generation

Workflow 1: Computational Pre-screening of Compound Libraries

This workflow outlines the use of computational tools to filter out compounds likely to cause false positives before they are tested in wet-lab assays.

Workflow 2: Co-Immunoprecipitation with Integrated Controls

This detailed Co-IP protocol emphasizes controls and steps to minimize false positives and verify specific interactions.

Workflow 3: Data Quality Monitoring Pipeline

This diagram illustrates a continuous process for monitoring and ensuring the quality of data throughout the drug discovery pipeline.

FAQs: Navigating Protein Interaction Databases

FAQ 1: What are the primary publicly available databases for curated protein-protein interactions (PPIs), and how current is their data?

Several databases provide curated PPI data. A leading resource is the Biological General Repository for Interaction Datasets (BioGRID) [16]. This open-access repository is continuously updated; its most recent curation update is dated November 2025. As of that update, BioGRID contains data from over 87,000 publications, encompassing more than 2.2 million non-redundant protein and genetic interactions [16]. Another key resource is the Human Protein Atlas Interaction resource, which integrates data from four different external interaction databases, covering 15,216 genes and featuring predicted 3D structures for interactions [17]. For dynamic network data, DPPIN is a biological repository that provides data on dynamic protein-protein interaction networks [18].

FAQ 2: What specific resources exist for CRISPR-based genetic interaction screening data?

The BioGRID Open Repository of CRISPR Screens (ORCS) is a dedicated, searchable database for CRISPR screen data [16]. It is compiled through the curation of genome-wide CRISPR screens from the biomedical literature. As of October 2025, ORCS contained data from 418 publications, representing 2,217 curated CRISPR screens. These screens encompass over 94,000 genes, 825 different cell lines, and 145 cell types across multiple organisms, including humans, mice, and fruit flies [16]. This database is updated quarterly.

FAQ 3: My analysis of AP-MS data yields many potential interactions. How can I computationally prioritize direct co-complex pairs and reduce false positives?

A proven computational method is to use a Support Vector Machine (SVM) classifier with a diffusion kernel [19]. This machine learning approach integrates heterogeneous data sources to predict co-complexed protein pairs (CCPPs). The method uses a gold standard dataset of known complexes (e.g., from MIPS) for training. It combines multiple data types, including protein sequences, protein interaction networks (from yeast two-hybrid, AP-MS, and genetic interactions), gene expression, and Gene Ontology annotations [19]. One study achieved a coverage of 89.3% at an estimated false discovery rate of 10% using this integrated approach, successfully enriching for true positives validated across independent AP-MS datasets [19].
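To make the diffusion kernel concrete, here is a minimal sketch that assumes the standard graph-theoretic definition K = exp(-beta * L), where L is the graph Laplacian of the interaction network. The toy adjacency matrix and the value of `beta` are illustrative assumptions, not parameters from [19].

```python
import numpy as np


def diffusion_kernel(adjacency, beta=1.0):
    """Return K = exp(-beta * L) for the graph Laplacian L = D - A."""
    A = np.asarray(adjacency, dtype=float)
    L = np.diag(A.sum(axis=1)) - A
    # L is symmetric, so the matrix exponential follows from its
    # eigendecomposition: K = V * diag(exp(-beta * eigenvalues)) * V^T.
    eigvals, eigvecs = np.linalg.eigh(L)
    return (eigvecs * np.exp(-beta * eigvals)) @ eigvecs.T


# Toy network: a path P0 - P1 - P2 plus an isolated protein P3.
A = [
    [0, 1, 0, 0],
    [1, 0, 1, 0],
    [0, 1, 0, 0],
    [0, 0, 0, 0],
]
K = diffusion_kernel(A, beta=0.5)
```

P0 and P2 never interact directly, yet `K[0, 2]` is positive because they share the neighbor P1, whereas the isolated P3 has zero similarity to every other protein. A matrix of this kind can be passed to any SVM implementation that accepts precomputed kernels, for example scikit-learn's `SVC(kernel="precomputed")`.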

FAQ 4: Are there specialized databases for protein interactions related to specific diseases?

Yes, BioGRID runs "themed curation projects" that focus on specific biological processes with disease relevance [16]. These projects involve the expert-guided curation of publications related to core genes and proteins for those diseases. Current themed projects listed include Autism spectrum disorder, Alzheimer's Disease, COVID-19 Coronavirus, Fanconi Anemia, and Glioblastoma [16]. These projects are updated monthly, providing a refined set of interactions pertinent to those disease contexts.

Troubleshooting Common Experimental & Computational Issues

Problem 1: High false positive rates in co-complex interaction data from AP-MS experiments.

Solution: This is a common challenge, and several scoring approaches have been developed to address it.

- Apply a Co-occurrence Significance (CS) Score: This computational scoring method uses a shuffling-based randomization technique to calculate the statistical propensity for two proteins to co-purify [20]. It compares the experimentally observed co-purification frequency against a random background, identifying specific, high-scoring associations and filtering out prevalent non-specific ones. This method requires no pre-defined training set and can be applied to AP/MS data for any species [20].

- Use an Integrated Computational Classifier: As referenced in FAQ 3, employ an SVM-based framework that doesn't rely solely on AP-MS data. By integrating AP-MS data with other evidence like genetic interactions and sequence information, the classifier can more accurately distinguish true direct co-complex pairs from non-specific associations captured in the purification process [19].
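The shuffling idea behind co-occurrence scoring can be illustrated with a simple permutation test. This is a simplified sketch in the spirit of the approach, not the published CS score from [20]: it assumes each purification is represented as a set of detected proteins, and the null model (scattering one protein's occurrences uniformly across purifications) is an assumption made here for brevity.

```python
import random


def cooccurrence_pvalue(purifications, a, b, n_perm=2000, seed=0):
    """Empirical p-value that a and b co-purify more often than chance.

    The null keeps a's purifications fixed and scatters b's occurrences
    uniformly over all purifications, preserving how often b is seen.
    """
    rng = random.Random(seed)
    n = len(purifications)
    with_a = {i for i, prey in enumerate(purifications) if a in prey}
    with_b = {i for i, prey in enumerate(purifications) if b in prey}
    observed = len(with_a & with_b)
    at_least_as_extreme = 0
    for _ in range(n_perm):
        shuffled_b = set(rng.sample(range(n), len(with_b)))
        if len(with_a & shuffled_b) >= observed:
            at_least_as_extreme += 1
    # Add-one correction keeps the estimate away from exactly zero.
    return (at_least_as_extreme + 1) / (n_perm + 1)


# Hypothetical data: 20 purifications, each a set of detected proteins.
purifications = []
for i in range(20):
    prey = set()
    if i < 5:
        prey |= {"A", "B"}   # A and B always appear together
    if i < 18:
        prey.add("STICKY")   # abundant, non-specific co-purifier
    purifications.append(prey)

p_specific = cooccurrence_pvalue(purifications, "A", "B")
p_sticky = cooccurrence_pvalue(purifications, "A", "STICKY")
```

Both pairs co-occur in five purifications, but only the A-B pair is significant: the abundant protein appears almost everywhere, so its overlap with A is what chance alone would produce.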

Problem 2: My protein interaction network appears random and lacks the expected modular structure.

Solution: This often indicates a high level of false positives or a specific bias in the detection method.

- Validate with a High-Confidence PIN: Generate a high-confidence Protein Interaction Network (PIN) using stringent scoring methods like the CS score or the integrated SVM classifier. Research has shown that such high-confidence networks derived from AP/MS data are highly modular, containing localized, densely-connected regions that represent functional units [20]. The lack of observed modularity in your network may stem from an overabundance of lower-confidence, non-specific interactions obscuring the true biological structure.

- Cross-validate with Binary Interaction Data: Compare your co-complex network with data from techniques that detect direct binary interactions (e.g., Yeast Two-Hybrid). Be aware that Y2H datasets may themselves underrepresent modularity due to false negatives, so discrepancies require careful interpretation [20].
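One way to quantify whether a network "lacks modular structure" is Newman's modularity Q, which compares the fraction of edges inside communities with the fraction expected by chance. The sketch below assumes an undirected, unweighted edge list and a community assignment obtained from a separate clustering step; the toy network is hypothetical.

```python
from collections import defaultdict


def modularity(edges, community_of):
    """Newman modularity Q of a partition of an undirected, unweighted network."""
    m = len(edges)
    internal = defaultdict(int)    # edges with both ends in the same community
    degree_sum = defaultdict(int)  # summed node degrees per community
    for u, v in edges:
        cu, cv = community_of[u], community_of[v]
        degree_sum[cu] += 1
        degree_sum[cv] += 1
        if cu == cv:
            internal[cu] += 1
    return sum(
        internal[c] / m - (degree_sum[c] / (2 * m)) ** 2
        for c in degree_sum
    )


# Toy network: two triangles joined by a single bridging edge.
edges = [
    ("a", "b"), ("b", "c"), ("a", "c"),
    ("d", "e"), ("e", "f"), ("d", "f"),
    ("c", "d"),
]
by_triangle = {"a": 0, "b": 0, "c": 0, "d": 1, "e": 1, "f": 1}
scrambled = {"a": 0, "b": 1, "c": 0, "d": 1, "e": 0, "f": 1}

q_modular = modularity(edges, by_triangle)
q_scrambled = modularity(edges, scrambled)
```

A partition that follows the two triangles gives a clearly positive Q, while a scrambled assignment gives a negative one. Comparing Q before and after confidence filtering gives a rough indication of whether removing low-scoring interactions recovers modular structure.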

Table 1: Key Databases for Protein Interaction Data

| Database Name | Primary Focus / Data Type | Key Features & Metrics | Update Frequency |

|---|---|---|---|

| BioGRID [16] | Curated protein, genetic, and chemical interactions | >2.2M non-redundant interactions from >87k publications; includes themed disease projects and CRISPR data (ORCS). | Monthly |

| BioGRID ORCS [16] | CRISPR Screen Data | 2,217 curated screens; 94k+ genes; 825 cell lines. | Quarterly |

| Human Protein Atlas Interaction [17] | Protein-protein interaction networks and 3D structures | Integrates four external databases; covers 15k+ genes; features predicted 3D structures via AlphaFold. | Not Specified |

| DPPIN [18] | Dynamic Protein-Protein Interaction Networks | A repository providing data on the dynamics of interaction networks. | Not Specified |

Table 2: Computational Methods for Reducing False Positives

| Method / Algorithm | Underlying Principle | Application Context | Key Outcome |

|---|---|---|---|

| SVM with Diffusion Kernels [19] | Machine learning that integrates heterogeneous data types (e.g., networks, sequence) to generalize from known complexes. | Predicting Co-Complexed Protein Pairs (CCPPs) from noisy high-throughput data. | 89.3% coverage at 10% FDR; effectively identifies true CCPPs validated across datasets. |

| Co-occurrence Significance (CS) Scoring [20] | Statistical comparison of observed co-purification frequency against randomized profiles. | Analyzing AP/MS data to assess interaction specificity and abundance bias. | Reveals underlying high-specificity associations; produces a highly modular, abundance-effect-free PIN. |

Experimental & Computational Workflows

The following diagram illustrates a robust computational workflow for refining raw protein interaction data into a high-confidence network, integrating the methods discussed above.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents and Computational Tools

| Reagent / Tool | Function / Description | Application in Interaction Research |

|---|---|---|

| CRISPR Screening Libraries | Pooled guides for genome-wide knockout. | Used in genetic interaction studies to identify genes affecting specific phenotypes, with data often housed in BioGRID ORCS [16]. |

| Affinity Purification Tags | Tags for purifying protein complexes. | Crucial for AP-MS experiments to isolate native complexes and identify co-purifying prey proteins [20]. |

| Diffusion Kernel | A computational kernel for network analysis. | Used in SVM classifiers to predict interactions by considering the full topology of interaction networks, not just direct neighbors [19]. |

| Gold Standard Complex Sets | Manually curated sets of known protein complexes. | Serve as a training set and benchmark for computational methods. MIPS complex catalogue is a commonly used example [19]. |

Computational Filters and AI-Driven Methods for Data Refinement

Leveraging Gene Ontology Annotations as a Heuristic Filtering Tool

The False Positive Challenge in Co-complex Interaction Data

Protein-protein interaction (PPI) data, particularly from high-throughput co-complex studies like co-elution, frequently contain false positives that compromise downstream analyses. Computational PPI prediction methods consider "functionally interacting proteins" that cooperate on tasks without physical contact, while experimental techniques like yeast two-hybrid and affinity purification with mass spectrometry aim to detect direct physical interactions. This fundamental difference contributes to limited overlap between datasets [2]. Co-elution methods, which separate protein complexes into fractions and analyze similar elution profiles, provide valuable global interactome mapping but remain susceptible to false associations [21].

Gene Ontology as a Biological Filter

The Gene Ontology (GO) provides a structured, controlled vocabulary to describe gene products across three aspects: Molecular Function (elemental activities like catalysis), Biological Process (operations achieved by multiple molecular activities), and Cellular Component (locations where gene products act) [22]. GO annotations offer a biological knowledge framework to distinguish legitimate protein interactions from spurious ones by requiring functional coherence, as proteins interacting within the same complex typically share aspects of their GO profiles [2].

Key Experiments and Validation

Foundational Validation of GO Filtering

A seminal study quantitatively demonstrated GO annotation effectiveness for false positive reduction. Using experimental PPI pairs as training data, researchers extracted significant keywords from GO Molecular Function annotations and established knowledge rules incorporating both function and co-localization [2].

Table 1: Performance of GO-Based Filtering in Model Organisms

| Metric | S. cerevisiae (Yeast) | C. elegans (Worm) |

|---|---|---|

| Sensitivity in Experimental Dataset | 64.21% | 80.83% |

| Average Specificity in Predicted Datasets | 48.32% | 46.49% |

| Strength Improvement (Signal-to-Noise) | 2 to 10-fold | 2 to 10-fold |

The eight top-ranking keywords provided the core filtering criteria. The strength metric, measuring signal-to-noise ratio improvement, confirmed that rule-based filtering significantly outperformed random pair removal [2].

Advanced Integration in Multi-Objective Optimization

Recent research has incorporated GO more deeply into computational frameworks. One study developed a novel mutation operator, the Functional Similarity-Based Protein Translocation Operator (FSPTO), within a multi-objective evolutionary algorithm. This operator uses GO-based functional similarity to guide the search for protein complexes, directly integrating biological knowledge into the optimization process [23]. This approach demonstrated superior performance in identifying protein complexes, particularly in noisy PPI networks, highlighting the advantage of moving beyond simple filtering to integrated heuristic strategies [23].

Multi-Property Approaches

The MP-AHSA method further exemplifies advanced GO integration. It constructs a weighted PPI network using functional annotation similarities and employs a fitness function that combines multiple topological and biological properties to detect co-localized, co-expressed protein complexes with significant functional enrichment [24]. This method's success confirms that combining GO with other data types provides a robust framework for improving complex detection accuracy.

Implementation Workflows

Core GO Filtering Protocol

The following workflow outlines the primary steps for implementing a basic GO-based heuristic filter to refine co-complex interaction datasets.

Step-by-Step Methodology:

- Data Preparation and Mapping: Begin with your computationally predicted or experimentally derived co-complex PPI dataset. Obtain current GO annotations (Molecular Function-MF, Biological Process-BP, Cellular Component-CC) for all proteins in the dataset from the Gene Ontology Consortium or organism-specific databases [22].

- Similarity Calculation: For each protein pair in the dataset, calculate a functional similarity score. Common methods include semantic similarity measures based on the information content of shared GO terms, focusing primarily on Molecular Function and Biological Process ontologies.

- Co-localization Check: A critical step is to verify that both proteins in a pair are annotated to the same or related cellular compartments (e.g., both "cytosol" or both "nucleus"). Pairs in disparate locations (e.g., one "extracellular" and one "nuclear") are strong candidates for filtering out [2].

- Rule Application and Thresholding: Apply predefined knowledge rules. A basic rule is: IF (Functional_Similarity > Threshold_X AND Co_localization == TRUE) THEN RETAIN PAIR ELSE FILTER OUT. The optimal similarity threshold (Threshold_X) can be determined empirically from training data or literature [2].

- Output Filtered Set: The resulting dataset contains PPIs that are functionally coherent and spatially plausible, constituting a refined, high-confidence interactome.
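The retain-or-filter rule can be sketched in a few lines. This is an illustrative outline only: Jaccard overlap of GO term sets stands in for a proper semantic similarity measure, the 0.5 threshold is arbitrary, and the protein names and annotations are hypothetical.

```python
def jaccard(terms_a, terms_b):
    """Placeholder functional similarity: overlap of two GO term sets."""
    union = terms_a | terms_b
    return len(terms_a & terms_b) / len(union) if union else 0.0


def filter_pairs(pairs, function_terms, component_terms, threshold):
    """Retain pairs that pass both the similarity and co-localization rules."""
    retained = []
    for a, b in pairs:
        similarity = jaccard(
            function_terms.get(a, set()), function_terms.get(b, set())
        )
        # Co-localized if the two proteins share at least one compartment.
        co_localized = bool(
            component_terms.get(a, set()) & component_terms.get(b, set())
        )
        if similarity > threshold and co_localized:
            retained.append((a, b))
    return retained


# Hypothetical annotations for four proteins.
function_terms = {
    "P1": {"DNA binding", "transcription regulation"},
    "P2": {"DNA binding", "transcription regulation", "chromatin binding"},
    "P3": {"DNA binding", "transcription regulation"},
    "P4": {"protein kinase activity"},
}
component_terms = {
    "P1": {"nucleus"},
    "P2": {"nucleus"},
    "P3": {"extracellular region"},
    "P4": {"nucleus"},
}
pairs = [("P1", "P2"), ("P1", "P3"), ("P1", "P4")]

high_confidence = filter_pairs(pairs, function_terms, component_terms, threshold=0.5)
```

Only the P1-P2 pair survives: P1-P3 is functionally identical but spatially implausible, and P1-P4 is co-localized but functionally unrelated under this placeholder similarity.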

Integrated Complex Detection Workflow

For a more comprehensive analysis aimed at detecting protein complexes, GO can be embedded into a larger workflow, as demonstrated by modern algorithms like MP-AHSA [24].

This integrated approach uses GO not just for post-hoc filtering but throughout the process: weighting the initial network, guiding complex formation, and finally, filtering the predicted complexes based on statistical functional enrichment to ensure biological relevance [24].

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Resources for GO-Based Analysis

| Resource / Reagent | Type | Primary Function in Analysis | Key Features |

|---|---|---|---|

| Gene Ontology Consortium Database | Database | Provides structured vocabularies (terms) and gene product annotations. | Standardized GO terms for MF, BP, CC; manual and electronic annotations [22]. |

| Semantic Similarity Measures (e.g., Resnik, Lin) | Algorithm | Quantifies functional relatedness between proteins based on their GO annotations. | Enables calculation of a numerical similarity score for heuristic filtering [2]. |

| Cytoscape with GO Plugins | Software | Network visualization and analysis; integrates GO data for functional module identification. | Allows visual overlay of GO term enrichment results on PPI networks. |

| BioConductor Packages (e.g., topGO, GOSemSim) | R Software Package | Perform statistical GO enrichment analysis and calculate semantic similarities. | Provides robust, scriptable environment for high-throughput analysis. |

| Experimental PPI Gold Standards (e.g., MIPS) | Dataset | Serves as a positive control training set to derive and validate keyword rules. | Curated set of known complexes for benchmarking and threshold setting [23]. |

Troubleshooting Guide and FAQs

FAQ 1: Why is there a poor overlap between my filtered dataset and experimental validation results?

- Potential Cause: Overly stringent filtering thresholds or reliance on incomplete GO annotations.

- Solution: Systematically optimize your similarity score threshold using a training set of known positives. Be aware that GO annotation is an ongoing process; proteins with incomplete or missing annotations (especially in non-model organisms) will lead to unnecessary filtering. Consider using electronic annotations in addition to manual ones to improve coverage [22].

FAQ 2: How do we handle proteins with multiple, diverse GO annotations during the similarity calculation?

- Potential Cause: Many proteins are multifunctional, participating in different complexes or processes.

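- Solution: Implement a "best-match average" (BMA) approach for semantic similarity calculation. For each GO term of one protein, BMA takes its best-matching term in the other protein's annotation set, then averages these best-match scores over both proteins. Unrelated annotations therefore lower the score only moderately, and a specific functional aspect shared by the two proteins is still captured [2]. If a single shared aspect should be decisive, the maximum similarity over all term pairs can be used instead.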
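Two aggregation strategies are easy to confuse here: the maximum over all term pairs and the best-match average. The sketch below contrasts them, assuming a term-to-term similarity function is already available; the lookup table `PAIR_SIM` and its values are hypothetical placeholders for a real semantic similarity measure such as Resnik or Lin.

```python
# Hypothetical term-to-term similarities (symmetric, so keyed by frozenset).
PAIR_SIM = {
    frozenset(("kinase", "atp_binding")): 0.4,
    frozenset(("kinase", "dna_binding")): 0.1,
    frozenset(("atp_binding", "dna_binding")): 0.2,
}


def toy_term_sim(s, t):
    """Similarity of two terms; identical terms score 1.0."""
    return 1.0 if s == t else PAIR_SIM[frozenset((s, t))]


def max_similarity(terms_a, terms_b, term_sim):
    """Single best score over all cross-protein term pairs."""
    return max(term_sim(s, t) for s in terms_a for t in terms_b)


def best_match_average(terms_a, terms_b, term_sim):
    """Average, over every term of both proteins, of its best match in the other set."""
    best_a = [max(term_sim(s, t) for t in terms_b) for s in terms_a]
    best_b = [max(term_sim(s, t) for s in terms_a) for t in terms_b]
    return (sum(best_a) + sum(best_b)) / (len(best_a) + len(best_b))


protein_a = ["kinase", "atp_binding"]
protein_b = ["kinase", "dna_binding"]

sim_max = max_similarity(protein_a, protein_b, toy_term_sim)
sim_bma = best_match_average(protein_a, protein_b, toy_term_sim)
```

The maximum strategy reports a perfect score as soon as one term is shared, while the best-match average is tempered by the annotations that do not match. Which behavior is preferable depends on how strongly multifunctional proteins should be penalized.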

FAQ 3: Our co-complex data suggests an interaction, but the proteins are in different cellular components. Should we always filter this pair?

- Potential Cause: True biological exceptions (e.g., transient interactions during transport) or inaccurate subcellular localization data.

- Solution: This is a key heuristic decision point. While co-localization is a powerful rule [2], initial filtering should flag these pairs for manual inspection rather than automatic removal. Review recent literature on the specific proteins to check for validated transient or cross-compartment interactions.

FAQ 4: What is the most common mistake when implementing a GO-based filtering pipeline?

- Answer: Using outdated GO annotations. The GO is continuously updated. Using static, old annotations will miss new functional information and reduce filtering efficacy. Always download the most current annotations from the GO Consortium before beginning your analysis [22].

FAQ 5: Can GO filtering remove true positives?

- Answer: Yes, this is a risk inherent to any filtering method. Proteins within a complex can have distinct molecular functions (e.g., a kinase and its substrate) while being part of the same biological process. Over-reliance on Molecular Function similarity alone might remove such valid pairs. To mitigate this, ensure your filtering strategy incorporates Biological Process similarity and considers the core-attachment structure of complexes, where core proteins share high function similarity, but attachments may not [24].

Incorporating Cellular Localization Data to Prioritize Plausible Interactions

Core Concepts: The Role of Localization in Validating Interactions

Why is subcellular localization critical for reducing false positives in co-complex data?

Cellular localization provides essential contextual information for evaluating protein-protein interactions (PPIs). Proteins must reside in the same cellular compartment to physically interact under normal physiological conditions. When co-complex data from methods like affinity purification mass spectrometry (AP-MS) indicates an interaction between proteins with conflicting localization, this raises a red flag about potential false positives. Incorporating localization data allows researchers to prioritize interactions where proteins share compatible subcellular locations, significantly increasing confidence in biological relevance [25] [26].

Experimental studies have demonstrated that protein interaction networks naturally organize according to subcellular architecture. The BioPlex network analysis found that interaction communities strongly correlate with cellular compartments and biological processes, with proteins within complexes showing highly correlated localization patterns [25]. This principle enables functional characterization of thousands of proteins and provides a framework for filtering implausible interactions from large-scale datasets.

Key Methodologies and Experimental Protocols

How do researchers integrate localization data with interaction studies?

Computational Prediction Tools: Protein subcellular localization prediction represents an active research area in bioinformatics, with numerous computational tools developed to predict localization from protein sequence data. These tools use machine learning and deep learning algorithms to provide fast, reliable localization predictions that complement experimental methods. For proteins without experimentally determined localization, these predictors fill critical information gaps and enable preliminary localization-based filtering of interaction data [26].

Experimental Verification Workflows: Advanced mass spectrometry-based techniques now enable comprehensive interactome mapping while accounting for localization context. The following workflow illustrates the integration of localization data in interaction validation:

Detailed Methodology from Large-Scale Studies: The BioPlex project employed systematic AP-MS using C-terminally FLAG-HA-tagged baits expressed in HEK293T cells, identifying 23,744 interactions among 7,668 proteins. Their CompPASS-Plus analysis framework integrated multiple evidence layers, including localization context, to distinguish true interactions from background [25]. Similarly, a nearly saturated yeast interactome study demonstrated how network architecture reflects cellular localization, with membrane complexes and organellar complexes forming distinct interaction communities [27].

Troubleshooting Guides and FAQs

Frequently Asked Questions: Localization-Interaction Conflicts

Q: My AP-MS data suggests interactions between proteins with different annotated localizations. What should I do?

A: First, verify the localization annotations using recent databases or conduct localization experiments. Consider these possibilities:

- True biological process involving translocation

- Shared components between complexes in different compartments

- Contamination during purification

- Incorrect localization annotation

Experimental approaches:

- Perform fractionation studies followed by western blotting

- Use immunofluorescence co-localization

- Apply proximity-dependent labeling methods

- Conduct cross-linking MS to confirm direct interactions [28]

Q: How can I prevent localization-based false positives in my co-IP experiments?

A: Implement these controls:

- Include compartment-specific markers in your experiments

- Perform subcellular fractionation before co-IP

- Use cross-linking to stabilize transient interactions that might be lost during fractionation

- Verify bait localization in your experimental system, as localization can be cell-type specific [29] [30]

Q: What computational resources are available for localization-based interaction filtering?

A: Multiple databases and tools exist:

| Resource Type | Examples | Key Features |

|---|---|---|

| Localization Prediction Tools | Recent eukaryotic predictors | Machine learning-based localization prediction from sequence [26] |

| Protein Interaction Databases | BioPlex, BioGRID | Include localization context for interactions [25] |

| Integrated Analysis Frameworks | LIANA+ | Combines multiple evidence types including spatial context [31] |

Common Experimental Issues and Solutions

Problem: Inconsistent localization data between databases

- Solution: Use multiple independent databases and prioritize experimentally verified localization data. Consider species-specific and cell-type-specific variations.
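A consensus rule of this kind might look like the following sketch. It assumes each annotation is a `(compartment, is_experimental)` tuple gathered from different sources; the tuple layout and the tie-handling policy are assumptions made for illustration.

```python
from collections import Counter


def consensus_localization(annotations):
    """Choose one compartment per protein, preferring experimental evidence.

    Returns (compartment or None, conflict), where conflict is True whenever
    the sources disagree, and compartment is None if no annotation exists or
    the preferred evidence tier is tied.
    """
    experimental = [comp for comp, is_experimental in annotations if is_experimental]
    pool = experimental or [comp for comp, _ in annotations]
    conflict = len({comp for comp, _ in annotations}) > 1
    if not pool:
        return None, conflict
    ranked = Counter(pool).most_common()
    tie = len(ranked) > 1 and ranked[0][1] == ranked[1][1]
    return (None if tie else ranked[0][0]), conflict


# Hypothetical annotations collected from several sources.
bait = [("nucleus", True), ("cytosol", False), ("cytosol", False)]
prey = [("nucleus", True), ("mitochondrion", True)]
clean = [("cytosol", False), ("cytosol", False)]

bait_call = consensus_localization(bait)
prey_call = consensus_localization(prey)
clean_call = consensus_localization(clean)
```

The bait resolves to the nucleus because a single experimental annotation outranks two predictions, but it is still flagged as conflicting. The prey stays unresolved, which marks it for manual review rather than silent filtering.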

Problem: Weak or transient interactions lost during fractionation

- Solution: Use gentle lysis conditions, cross-linking, or proximity labeling techniques that preserve transient interactions. Perform all steps at 4°C with protease inhibitors [29].

Problem: Endogenous protein expression too low for detection

- Solution: Consider mild overexpression of tagged proteins, but verify this doesn't alter natural localization. Use highly sensitive detection methods like targeted mass spectrometry [28].

Research Reagent Solutions

Essential Materials for Localization-Integrated Interaction Studies

| Reagent/Material | Function | Application Notes |

|---|---|---|

| Compartment-Specific Marker Antibodies | Verification of subcellular fractions | Use validated antibodies for organelles of interest |

| Cross-linkers (e.g., formaldehyde, DSS) | Stabilize transient interactions | Concentration and time must be optimized for each system |

| Subcellular Fractionation Kits | Isolate cellular compartments | Maintain cold temperatures throughout procedure |

| Tandem Affinity Purification Tags | High-stringency purification | Reduce false positives in AP-MS workflows |

| Protease/Phosphatase Inhibitors | Preserve protein complexes | Add fresh to all buffers immediately before use |

| Localization Prediction Software | Computational localization assessment | Complement with experimental verification |

Advanced Applications and Future Directions

Emerging Technologies for Enhanced Specificity

Recent advances in mass spectrometry-based interactome studies now integrate experimental approaches with cutting-edge computational tools. These include affinity purification, proximity labeling, cross-linking, and co-fractionation MS, combined with sophisticated bioinformatic analysis [28]. For cell-cell interaction studies, frameworks like LIANA+ provide all-in-one solutions that leverage rich knowledge bases to decode coordinated intercellular signalling events, incorporating spatial context directly into interaction assessment [31].

Spatial transcriptomics and proteomics technologies now enable unprecedented mapping of cellular interactions within tissue contexts. These approaches can validate whether interacting proteins actually co-localize in intact tissues, providing ultimate confirmation of interaction plausibility [32] [33]. As these technologies mature, they will become standard tools for reducing false positives in interaction networks.

The integration of these multidimensional data types—protein interactions, cellular localization, and spatial context—represents the future of high-confidence interactome mapping, moving the field closer to comprehensive understanding of cellular organization and function.

Machine Learning and Deep Learning Architectures for CPI Prediction

Frequently Asked Questions (FAQs)

Q1: What is the most significant challenge when applying deep learning to Co-Complexed Protein Pair (CCPP) prediction, and how can it be mitigated?

A1: The most significant challenge is the high false positive rate (FPR) often associated with predicted interactions. This can be mitigated by employing advanced topological scoring methods instead of relying solely on a model's built-in confidence score. For instance, replacing AlphaFold's built-in af_confidence score with a dedicated topological deep learning model like TopoDockQ has been shown to reduce false positives by at least 42% and increase precision by 6.7% across diverse evaluation datasets [34].

Q2: My dataset of known protein complexes is limited. How can I generate high-quality training data for my model?

A2: You can leverage heterogeneous data integration. Construct kernels or feature sets from various complementary data sources, such as:

- Protein interaction networks: Yeast two-hybrid (physical interactions) and affinity purification mass spectrometry (AP-MS) data [5] [19].

- Genetic interaction networks [19].

- Sequence information using sequence kernels [19].

- Auxiliary data: Gene expression, co-regulation, and sub-cellular localization data [19].

Combining these sources into a single classifier has been shown to achieve a high ROC50 score of 0.937 [19].
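One common way to merge several kernels into a single classifier input is a (weighted) sum after normalization, since a sum of valid kernels is itself a valid kernel. The sketch below assumes this simple scheme; the exact combination used in [19] is not described here, and the two toy kernel matrices are hypothetical.

```python
import numpy as np


def normalize_kernel(K):
    """Scale a kernel so that every self-similarity K[i, i] equals 1."""
    K = np.asarray(K, dtype=float)
    d = np.sqrt(np.diag(K))
    return K / np.outer(d, d)


def combine_kernels(kernels, weights=None):
    """Weighted sum of normalized kernels; equal weights by default."""
    if weights is None:
        weights = [1.0 / len(kernels)] * len(kernels)
    return sum(w * normalize_kernel(K) for w, K in zip(weights, kernels))


# Hypothetical kernels over the same three items from two data sources.
K_network = [[2, 1, 0],
             [1, 2, 0],
             [0, 0, 2]]
K_sequence = [[4, 0, 2],
              [0, 4, 0],
              [2, 0, 4]]

K_combined = combine_kernels([K_network, K_sequence])
```

Normalizing first prevents a source with large raw values from dominating the sum. In the combined matrix, item 0 is moderately similar to both item 1 (network evidence) and item 2 (sequence evidence), although neither source alone supports both relationships.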

Q3: How can I distinguish direct physical interactions from indirect co-complex associations in my AP-MS data?

A3: You can use network topology-based models. The Binary Interaction Network Model (BINM) is designed specifically for this task. It uses the topology of a co-complex interaction network to reassign confidence scores to each observed interaction, indicating its propensity to be a direct physical interaction. This method relies on the mathematical relationship between direct interactions and observed co-complex interactions through common neighbors, and has demonstrated competitive performance against state-of-the-art methods [5].

Q4: What is an effective machine learning architecture for handling the sequential and spatial dependencies in biological interaction data?

A4: A hybrid CNN-LSTM architecture is particularly effective. In this setup, the Convolutional Neural Network (CNN) layers are responsible for extracting local spatial features and patterns from the input data (e.g., from protein sequences or structural representations). The Long Short-Term Memory (LSTM) layers then model the long-range temporal or sequential dependencies within these extracted features. This combination has proven successful in related domains for capturing complex patterns in multivariate time-series data [35].

Troubleshooting Guides

Issue: Model Performance is Hampered by Severe Data Imbalance

Problem: The number of non-interacting protein pairs in my dataset far outweighs the number of interacting pairs, leading to a model with poor sensitivity and a high false negative rate.

Solution: Employ synthetic data generation techniques to balance the dataset.

- Recommended Technique: Use Generative Adversarial Networks (GANs) to create synthetic data for the minority class (interacting pairs) [6].

- Expected Outcome: This approach directly addresses class imbalance, helping the model learn the characteristics of the minority class more effectively. In drug-target interaction studies, a GAN-based approach has achieved high sensitivity scores of over 97%, dramatically reducing false negatives [6].

Procedure:

- Preprocess Data: Encode your confirmed positive CCPPs (the minority class) into a suitable feature representation.

- Train GAN: Train a GAN model on the feature vectors of the positive pairs. The generator learns to produce new, synthetic feature vectors that resemble the real positive pairs.

- Generate Data: Use the trained generator to create a sufficient number of synthetic positive samples.

- Combine Datasets: Merge the synthetic positive samples with the original positive and negative samples to create a balanced training set.

- Retrain Model: Retrain your primary predictive model (e.g., a Random Forest classifier) on this new balanced dataset [6].

Issue: Inaccurate Model Selection from Prediction Pool

Problem: When using structure prediction tools like AlphaFold-Multimer, the built-in confidence score selects models with a high rate of false positives, reducing the reliability of downstream analyses.

Solution: Implement a post-processing scoring function specifically designed to evaluate interface quality.

- Recommended Tool: Use TopoDockQ, a topological deep learning model [34].

- How it Works: Instead of relying on global confidence scores, TopoDockQ uses Persistent Combinatorial Laplacian (PCL) features to capture substantial topological changes and shape evolution at the peptide-protein interface. It predicts a DockQ score (p-DockQ), which is a specialized metric for evaluating the quality of a model's interface [34].

Procedure:

- Generate Complex Models: Run your protein and peptide sequences through a structure prediction tool (e.g., AlphaFold-Multimer) to generate multiple candidate complex models.

- Extract Topological Features: For each predicted model, calculate the PCL-based features from the interaction interface.

- Predict DockQ Score: Feed the topological features into the pre-trained TopoDockQ model to obtain a p-DockQ score for each candidate.

- Select Best Model: Rank all candidate models based on their p-DockQ score and select the one with the highest value. This model is most likely to have a correct interface geometry, thereby reducing false positives [34].
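
The final ranking step can be sketched as below. The candidate names and scores are invented for illustration, and the p-DockQ values are assumed to have already been produced by the pre-trained model; no TopoDockQ API is shown or implied. The 0.23 cutoff is the conventional DockQ boundary between "incorrect" and "acceptable" models, applied here to the predicted score as an assumption.

```python
# Hypothetical candidates: each carries the predictor's built-in confidence
# and a p-DockQ value assumed to come from the post-processing model.
candidates = [
    {"name": "ranked_0", "af_confidence": 0.82, "p_dockq": 0.21},
    {"name": "ranked_1", "af_confidence": 0.79, "p_dockq": 0.35},
    {"name": "ranked_2", "af_confidence": 0.74, "p_dockq": 0.58},
    {"name": "ranked_3", "af_confidence": 0.61, "p_dockq": 0.12},
]

ACCEPTABLE_DOCKQ = 0.23  # conventional DockQ "acceptable" boundary (assumed cutoff)


def select_best_model(models, min_score=ACCEPTABLE_DOCKQ):
    """Return the model with the highest p-DockQ, or None if none passes the cutoff."""
    passing = [m for m in models if m["p_dockq"] >= min_score]
    if not passing:
        return None
    return max(passing, key=lambda m: m["p_dockq"])


best_by_pdockq = select_best_model(candidates)
best_by_confidence = max(candidates, key=lambda m: m["af_confidence"])

print(best_by_pdockq["name"], best_by_confidence["name"])  # prints: ranked_2 ranked_0
```

In this toy pool the two criteria disagree: the model favoured by the built-in confidence falls below the interface-quality cutoff, which is the situation the post-processing step is meant to catch.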

The following table summarizes key experimental setups from the literature for predicting protein interactions and reducing false positives.

Table 1: Summary of Experimental Protocols for Interaction Prediction

| Method / Model | Core Objective | Input Data / Features | Key Preprocessing / Balancing | Validation / Benchmarking |
|---|---|---|---|---|
| SVM with Heterogeneous Kernels [19] | Predict Co-Complexed Protein Pairs (CCPPs) | Diffusion kernels on interaction networks; sequence kernels; auxiliary data (expression, GO terms). | Gold standard from MIPS complex catalogue; random selection of negatives. | Cross-validation; validation against independent AP-MS datasets. |
| Binary Interaction Network Model (BINM) [5] | Identify direct physical interactions from AP-MS co-complex data. | Co-complex interaction network topology. | Uses high-confidence co-complex networks from scoring methods (e.g., PE score). | Benchmarking against reference sets (HINT, Y2H, PCA, structural data). |
| Topological Deep Learning (TopoDockQ) [34] | Accurately select high-quality peptide-protein complex models to reduce FPR. | Persistent Combinatorial Laplacian (PCL) features from the interface. | Datasets filtered for ≤70% sequence identity to training set to prevent data leakage. | Performance compared to AlphaFold's built-in confidence score (af_confidence). |
| GAN + Random Forest Classifier [6] | Predict Drug-Target Interactions with balanced data. | Drug features (MACCS keys); target features (amino acid/dipeptide composition). | GANs used to generate synthetic data for the minority (interacting) class. | BindingDB benchmarks; metrics: Accuracy, Precision, Sensitivity, Specificity, AUC. |

Research Reagent Solutions

Table 2: Essential Research Reagents, Tools, and Datasets

| Item Name | Type | Function / Application | Example Source / Reference |
|---|---|---|---|
| MIPS Complex Catalogue | Gold Standard Dataset | Provides a curated set of known protein complexes for training and benchmarking CCPP predictors. | [19] |
| HINT Database | Gold Standard Dataset | A high-quality, filtered database of binary protein-protein interactions for validation. | [5] |
| BindingDB | Benchmark Dataset | A public database of measured binding affinities for drug-target interactions, used for validation in DTI/DTA studies. | [6] |
| Persistent Combinatorial Laplacian (PCL) | Computational Feature | A mathematical tool for extracting robust topological descriptors from the 3D structure of protein-protein interfaces. | [34] |
| Diffusion Kernel | Algorithm | A kernel function for SVMs that measures similarity between nodes in a network by considering paths of all lengths, superior to simple clustering coefficients. | [19] |
| MACCS Keys | Molecular Feature | A set of 166 structural fragments used to create a binary fingerprint representation of drug molecules. | [6] |
| Amino Acid/Dipeptide Composition | Protein Feature | Simple, effective representations of protein sequences that capture compositional information for machine learning models. | [6] |
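
To make the last table entry concrete, the sketch below computes amino acid composition (a 20-dimensional vector) and dipeptide composition (a 400-dimensional vector) for a sequence. The function names are illustrative, and skipping non-standard residues is an assumption; the preprocessing used in [6] may differ.

```python
from collections import Counter
from itertools import product

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"  # the 20 standard residues


def amino_acid_composition(sequence):
    """Fraction of each standard residue in the sequence (20 values)."""
    residues = [aa for aa in sequence.upper() if aa in AMINO_ACIDS]
    if not residues:
        return [0.0] * len(AMINO_ACIDS)
    counts = Counter(residues)
    return [counts[aa] / len(residues) for aa in AMINO_ACIDS]


def dipeptide_composition(sequence):
    """Fraction of each ordered pair of adjacent standard residues (400 values)."""
    seq = sequence.upper()
    # Only count pairs in which both residues are standard, so that dropping
    # an unknown residue never creates an artificial neighbouring pair.
    pairs = [seq[i:i + 2] for i in range(len(seq) - 1)
             if seq[i] in AMINO_ACIDS and seq[i + 1] in AMINO_ACIDS]
    if not pairs:
        return [0.0] * (len(AMINO_ACIDS) ** 2)
    counts = Counter(pairs)
    return [counts[a + b] / len(pairs) for a, b in product(AMINO_ACIDS, repeat=2)]


aac = amino_acid_composition("ACCA")
dpc = dipeptide_composition("ACCA")
print(len(aac), len(dpc))  # prints: 20 400
```

Concatenating the two vectors gives a fixed-length 420-dimensional protein descriptor regardless of sequence length, which is what makes these features convenient for classifiers such as Random Forests.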

Workflow Diagrams

TopoDockQ Model Selection Workflow

GAN-Based Data Balancing for Prediction

Structure-Based Validation Using Co-Crystal Data and AlphaFold Models

Frequently Asked Questions (FAQs)

1. How reliable are AlphaFold models for predicting protein-ligand complexes? While AlphaFold3 (AF3) and RoseTTAFold All-Atom (RFAA) have shown high initial accuracy in benchmarks, recent investigations raise concerns about their understanding of fundamental physics. Through adversarial testing, these models demonstrated notable discrepancies when subjected to biologically plausible perturbations, such as binding site mutagenesis. They often maintained incorrect ligand placements despite mutations that should displace the ligand, indicating potential overfitting and limited generalization [36].

2. What are the key confidence metrics for evaluating an AlphaFold2 model, and how should I interpret them? AlphaFold2 provides two primary confidence metrics:

- pLDDT (predicted Local Distance Difference Test): A per-residue score (0-100) indicating the model's confidence in the local atomic structure. Scores below 70 suggest low confidence in that region, which may be unstructured or poorly modeled.

- PAE (Predicted Aligned Error): A matrix estimating the expected positional error (in Å) at each residue when the model is aligned on another residue. It reflects confidence in the relative position and orientation of different parts of the model. High PAE values (>5 Å) between domains indicate low confidence in their relative placement, even if the domains themselves are well-folded [37].

A high pLDDT does not guarantee that the model matches a biologically relevant conformation, especially for dynamic systems [37].
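
The two thresholds above can be applied programmatically. The sketch below uses toy values for a four-residue model split into two hypothetical domains; the function names are illustrative and the domain boundaries are assumed to be known in advance.

```python
def low_confidence_residues(plddt, cutoff=70.0):
    """Return 1-based residue numbers whose pLDDT falls below the cutoff."""
    return [i for i, score in enumerate(plddt, start=1) if score < cutoff]


def mean_interdomain_pae(pae, domain_a, domain_b):
    """Average PAE between two domains, given 0-based residue indices.

    PAE is not symmetric, so both directions (a aligned on b, b aligned on a)
    are included in the average.
    """
    values = [pae[i][j] for i in domain_a for j in domain_b]
    values += [pae[j][i] for i in domain_a for j in domain_b]
    return sum(values) / len(values)


# Toy model: residues 1-2 form domain A, residues 3-4 form domain B.
plddt = [92.1, 88.4, 65.0, 45.3]
pae = [
    [0.5, 1.0, 12.0, 13.0],
    [1.0, 0.5, 11.0, 12.0],
    [12.0, 11.0, 0.5, 1.0],
    [13.0, 12.0, 1.0, 0.5],
]

flagged = low_confidence_residues(plddt)
interdomain = mean_interdomain_pae(pae, [0, 1], [2, 3])
print(flagged, interdomain, interdomain > 5.0)  # prints: [3, 4] 12.0 True
```

Here each domain has low internal PAE but the inter-domain average is well above 5 Å, so the relative domain placement should not be trusted even though the individual folds look plausible.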

3. My AF2 model has high pLDDT but conflicts with my experimental data. What could be wrong? High pLDDT scores indicate local structural confidence but do not guarantee that the conformation is biologically correct. AF2 can be inaccurate in several scenarios, even with high confidence scores:

- Incorrect structure in high-confidence regions.

- Correct backbone but incorrect side-chain rotamer placements.

- Correct individual domains but inaccurate relative domain placement (as revealed by high PAE) [37].

AF2 models are static snapshots and may not represent alternate biologically relevant states or conformational ensembles [37].

4. Can I use AlphaFold models for molecular replacement phasing in crystallography? Yes, AlphaFold-predicted models can be used successfully as search models for molecular replacement to solve crystal structures. This approach has been demonstrated for proteins like human trans-3-hydroxy-l-proline dehydratase, where the AF2 model facilitated straightforward phasing and structure solution. However, be aware that the AF2 model might lack functionally relevant structural elements present in the crystal structure, such as flexible loops involved in catalysis or oligomerization interfaces [38].

5. What is the advantage of integrating computational prediction with experimental validation for cocrystal discovery? A combined workflow saves significant time and resources. Computational screening prioritizes the most promising coformers from vast chemical libraries based on interaction energy, molecular complementarity, and stability. This allows experimentalists to focus validation efforts (e.g., via XRPD, SCXRD) on a smaller, higher-probability set of candidates, dramatically increasing the efficiency of discovering stable cocrystals with improved pharmaceutical properties [39].

Troubleshooting Guides

Issue 1: AlphaFold Model Shows Poor Agreement with Co-Crystal Ligand Pose

Problem: The ligand pose predicted by a co-folding model (like AF3 or RFAA) does not match the pose observed in your experimental co-crystal structure.

Investigation and Solution:

| Investigation Step | Action | Interpretation & Next Step |
|---|---|---|
| Check Model Confidence | Examine the pLDDT scores around the binding pocket and PAE between the ligand and protein. | Low confidence suggests inherent model uncertainty. High confidence with a wrong pose suggests the model has not captured the underlying physics [36] [37]. |
| Validate Physics | Manually inspect for unphysical interactions: steric clashes, unrealistic bond lengths/angles, or lack of expected hydrogen bonds. | The presence of steric clashes or other artifacts suggests the model struggles with atomic-level physical constraints [36]. |
| Test Robustness | Perform in silico mutagenesis: mutate key binding residues to alanine or glycine and re-predict. | If the model still places the ligand in the mutated pocket, which can no longer support binding, it is likely overfit and memorizing training data rather than learning physics [36]. |
| Use Specialized Docking | For small molecules, consider using AF2 for the apo-protein structure and then employing physics-based (AutoDock Vina) or machine-learning docking tools (DiffDock) for ligand placement. | These tools may better handle specific protein-ligand physics and are benchmarked for this specific task, potentially offering a more accurate pose [36]. |
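
For the robustness test, the mutant sequences to re-predict can be prepared with a small helper such as the one below. The sequence and binding-site positions are made up for illustration; the re-prediction itself depends on whichever co-folding tool is in use and is not shown.

```python
def mutate_residues(sequence, positions, target="A"):
    """Replace the residues at the given 1-based positions with `target`.

    Returns the mutant sequence and standard mutation labels such as 'K2A'.
    """
    residues = list(sequence)
    labels = []
    for pos in sorted(positions):
        if not 1 <= pos <= len(residues):
            raise ValueError(f"position {pos} is outside the sequence")
        labels.append(f"{residues[pos - 1]}{pos}{target}")
        residues[pos - 1] = target
    return "".join(residues), labels


# Hypothetical 8-residue fragment with assumed binding residues at 2 and 5.
wild_type = "MKTLYDEW"
mutant, labels = mutate_residues(wild_type, [2, 5])
print(mutant, labels)  # prints: MATLADEW ['K2A', 'Y5A']

# Glycine scanning uses the same helper with a different target residue.
gly_mutant, gly_labels = mutate_residues(wild_type, [2, 5], target="G")
```

Comparing the ligand pose predicted for `wild_type` with the pose predicted for `mutant` then indicates whether the model responds to the loss of the binding residues.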

Issue 2: High False Positive Rate in Computationally Predicted Protein Complexes

Problem: Many of the protein-protein or protein-ligand complexes predicted by computational tools fail to validate experimentally.

Investigation and Solution:

| Investigation Step | Action | Interpretation & Next Step |
|---|---|---|
| Apply Gene Ontology (GO) Filters | Check the Gene Ontology (GO) annotations of the putative interacting partners. Filter out pairs that are not co-localized in the same cellular component or that lack related molecular functions/biological processes. | This uses biological priors to remove implausible interactions. One study showed this method could increase the true positive fraction of a dataset by improving the signal-to-noise ratio [2]. |
| Review Confidence Metrics | Scrutinize PAE plots for the predicted complex. High error between protein subunits suggests low confidence in the quaternary structure assembly. | Low-confidence interfaces from prediction should be prioritized lower for experimental validation. AF2 is less reliable for certain complexes, especially those involving large conformational changes [37]. |
| Consider System Properties | Be cautious with specific protein classes: membrane proteins, proteins with large intrinsically disordered regions, and proteins requiring co-factors not included in the prediction. | These systems are inherently challenging for current deep learning models and have higher reported false-discovery rates [37] [40]. |
| Integrate Orthogonal Data | Correlate predictions with co-elution or co-fractionation data from mass spectrometry. | Proteins that co-elute across a separation gradient and are predicted to interact have a much higher probability of forming a true complex, as this provides independent experimental support [21]. |
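
Two of the steps above, the GO cellular-component filter and the co-elution check, lend themselves to a short sketch. The protein identifiers, annotations, and elution profiles below are toy data, the function names are illustrative, and the 0.7 correlation cutoff is an assumption rather than a value taken from [2] or [21].

```python
def pearson(x, y):
    """Pearson correlation of two equal-length profiles (0.0 if either is flat)."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        return 0.0
    return cov / (var_x * var_y) ** 0.5


def filter_pairs(pairs, go_cc, elution, min_corr=0.7):
    """Keep pairs that share a GO cellular component and co-elute."""
    kept = []
    for a, b in pairs:
        shares_compartment = bool(go_cc.get(a, set()) & go_cc.get(b, set()))
        if shares_compartment and pearson(elution[a], elution[b]) >= min_corr:
            kept.append((a, b))
    return kept


# Toy annotations: nucleus (GO:0005634), cytoplasm (GO:0005737),
# mitochondrion (GO:0005739).
go_cc = {
    "P1": {"GO:0005634"},
    "P2": {"GO:0005634", "GO:0005737"},
    "P3": {"GO:0005739"},
    "P4": {"GO:0005737"},
}
# Toy abundance profiles across six chromatography fractions.
elution = {
    "P1": [0, 1, 5, 9, 4, 1],
    "P2": [0, 2, 6, 8, 3, 0],
    "P3": [0, 1, 5, 9, 4, 1],
    "P4": [9, 5, 1, 0, 0, 0],
}
predicted_pairs = [("P1", "P2"), ("P1", "P3"), ("P2", "P4")]

kept = filter_pairs(predicted_pairs, go_cc, elution)
print(kept)  # prints: [('P1', 'P2')]
```

P1 and P3 co-elute perfectly but share no compartment, while P2 and P4 share a compartment but elute in different fractions; only the pair supported by both lines of evidence survives.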

Experimental Protocols for Validation

Protocol 1: Computational Screening for Cocrystal Prediction

This protocol outlines the key steps for using computational methods to rationally design cocrystals, helping to reduce false positives before lab work begins [39].

Workflow Diagram: Cocrystal Prediction and Validation Workflow

Methodology:

- API Selection: Define the Active Pharmaceutical Ingredient (API) and the target properties for improvement (e.g., solubility, stability) [39].

- Computational Screening:

- Database Search: Use databases like the Cambridge Structural Database (CSD), PubChem, and ZINC to identify potential coformers [39].

- Interaction Analysis: Employ computational methods to predict interaction strength. Common approaches include:

- Quantum Mechanical (QM) Methods: Use software like Gaussian or CASTEP for Density Functional Theory (DFT) calculations to evaluate interaction energies and analyze Molecular Electrostatic Potential (MEP) surfaces for interaction site complementarity [39].

- Machine Learning (ML) & Network-Based Prediction: Apply algorithms trained on known cocrystals to predict new likely coformers [41].

- Lattice Energy Minimization: Predict the stable crystal structure of the API-coformer pair.

- Ranking and Analysis: Rank the potential cocrystals based on calculated interaction energies, binding affinity, and predicted stability [39].

- Experimental Design: Prioritize the top-ranked cocrystal candidates for experimental synthesis, considering synthetic feasibility and scalability [39].
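
The ranking and prioritization steps can be sketched as a simple sort. The coformer names, interaction energies, and feasibility flags below are hypothetical placeholders, not results from [39]; a real workflow would combine several computed descriptors rather than a single energy.

```python
# Hypothetical screening results; more negative energy means a stronger
# predicted API-coformer interaction.
candidates = [
    {"coformer": "coformer_A", "interaction_energy_kj_mol": -42.5, "feasible": True},
    {"coformer": "coformer_B", "interaction_energy_kj_mol": -55.1, "feasible": False},
    {"coformer": "coformer_C", "interaction_energy_kj_mol": -48.3, "feasible": True},
    {"coformer": "coformer_D", "interaction_energy_kj_mol": -12.0, "feasible": True},
]


def prioritize(screened, top_n=2):
    """Drop synthetically infeasible candidates, then rank by interaction energy."""
    feasible = [c for c in screened if c["feasible"]]
    ranked = sorted(feasible, key=lambda c: c["interaction_energy_kj_mol"])
    return [c["coformer"] for c in ranked[:top_n]]


shortlist = prioritize(candidates)
print(shortlist)  # prints: ['coformer_C', 'coformer_A']
```

The strongest predicted binder, `coformer_B`, is excluded because it was marked infeasible, which mirrors the point that ranking and synthetic feasibility are considered together before any lab work.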

Protocol 2: Experimental Validation of Predicted Cocrystals

This protocol details common experimental methods used to validate computationally predicted cocrystals [41].

Methodology:

- Cocrystal Synthesis:

- Liquid-Assisted Grinding (LAG): Grind the API and coformer together with a small, catalytic amount of solvent using a ball mill or mortar and pestle. This is often the quickest and most successful method for initial screening [41].

- Solvent Evaporation (SE): Dissolve the API and coformer in a suitable volatile solvent and allow the solvent to evaporate slowly, promoting cocrystal formation [41].

- Characterization and Validation:

- X-ray Powder Diffraction (XRPD): The primary tool for initial validation. Compare the XRPD pattern of the experimental product to the patterns of the pure starting materials. The appearance of new, distinct peaks indicates the formation of a new solid phase (i.e., a cocrystal) [41].