Overcoming Parameter Estimation Challenges in Biochemical Pathways: From Data Scarcity to Predictive Models

Abstract

Parameter estimation is a fundamental yet formidable challenge in building quantitative models of biochemical pathways, essential for metabolic engineering and drug discovery. This article explores the core obstacles—including problem ill-conditioning, data limitations, and algorithmic multimodality—that render traditional local optimization methods ineffective. It systematically reviews and compares a modern arsenal of solutions, from robust global optimization strategies like Evolution Strategies and hybrid algorithms to innovative, data-efficient approaches such as fuzzy-inferred Kalman filtering and Bayesian optimal experimental design. Aimed at researchers and drug development professionals, this review provides a structured framework for selecting appropriate estimation methodologies, implementing validation protocols, and strategically designing experiments to build more reliable, predictive models of cellular processes.

The Core Hurdles: Why Parameter Estimation in Biochemical Pathways is Inherently Challenging

What is the Inverse Problem in Biochemical Pathway Modeling?

In the context of biochemical pathways, the "inverse problem" refers to the challenge of determining the unknown parameters of a dynamic model—such as kinetic rate constants—from a set of experimental observations. Solving this problem is fundamental for building predictive models that can reproduce experimental results and promote a functional understanding of biological systems at a whole-system level [1].

The problem is mathematically formulated as a Nonlinear Programming (NLP) problem with differential-algebraic constraints. The core objective is to find the set of parameters that minimizes the difference between the model's predictions and the experimental data, subject to the constraints defined by the system's dynamics [1].

Mathematical Formulation of the Inverse Problem

The parameter estimation problem for a nonlinear dynamic system is formally defined as follows [1]:

Find the vector of parameters p that minimizes the cost function:

$$ J = \sum \left(y_{msd} - y(p, t)\right)^T W(t) \left(y_{msd} - y(p, t)\right) $$

Subject to the following constraints:

- The system dynamics: f(dx/dt, x, p, v, t) = 0

- Other possible equality constraints: h(x, p, v, t) = 0

- Other possible inequality constraints: g(x, p, v, t) ≥ 0

- Upper and lower bounds on parameters: p^L ≤ p ≤ p^U

Where:

- J is the cost function that measures the goodness of the fit.

- p is the vector of parameters to be estimated (e.g., kinetic rate constants).

- y_msd is the measured experimental data.

- y(p, t) is the model prediction for the output variables.

- W(t) is a weighting (or scaling) matrix.

- x is the vector of differential state variables (e.g., metabolite concentrations).

- v is a vector of other time-invariant parameters that are not being estimated.

- f is the set of differential and algebraic equality constraints describing the nonlinear system dynamics.

- h and g represent possible additional equality and inequality constraints on the system.
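As a minimal sketch of how this cost function can be evaluated, the snippet below simulates a hypothetical two-state Michaelis-Menten model (substrate to product) and computes J as a weighted sum of squared residuals over the sampling times. The model, the parameter values, the identity weighting matrix, and the function names `simulate` and `cost` are illustrative assumptions, not part of the cited formulation.

```python
import numpy as np
from scipy.integrate import solve_ivp

def simulate(p, t_eval, x0=(10.0, 0.0)):
    """Integrate a toy S -> P Michaelis-Menten model; p = (vmax, km)."""
    vmax, km = p

    def rhs(t, x):
        s, _ = x
        rate = vmax * s / (km + s)
        return [-rate, rate]

    sol = solve_ivp(rhs, (t_eval[0], t_eval[-1]), x0, t_eval=t_eval,
                    rtol=1e-8, atol=1e-10)
    return sol.y.T  # shape (n_times, n_outputs)

def cost(p, t_eval, y_msd, W):
    """J = sum_k r_k^T W r_k with r_k = y_msd(t_k) - y(p, t_k)."""
    r = y_msd - simulate(p, t_eval)
    return float(np.einsum("ki,ij,kj->", r, W, r))

t = np.linspace(0.0, 10.0, 21)
p_true = (2.0, 3.0)                 # assumed "true" parameters
y_msd = simulate(p_true, t)         # synthetic, noise-free measurements
W = np.eye(2)                       # constant weighting matrix (assumption)

J_true = cost(p_true, t, y_msd, W)      # cost at the generating parameters
J_off = cost((1.0, 3.0), t, y_msd, W)   # cost at a perturbed parameter set
```

Any optimizer discussed later only needs a callable like `cost`; the model itself can stay a black box.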

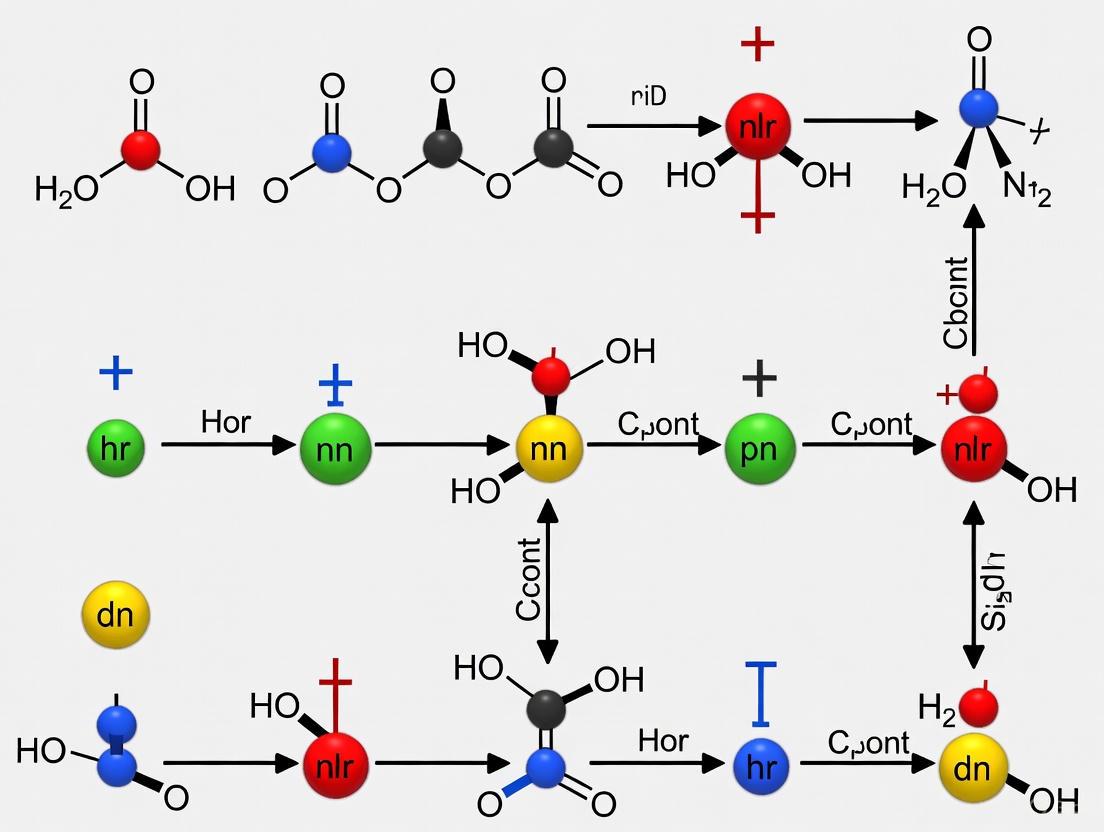

The following diagram illustrates the logical structure and components of this inverse problem.

Practical Implementation: Methodologies and Protocols

What are the Standard Methodologies for Solving the Inverse Problem?

Because the inverse problem is frequently ill-conditioned and multimodal, traditional local optimization methods (e.g., Levenberg-Marquardt) often fail by converging to unsatisfactory local solutions [1]. Therefore, global optimization (GO) methods are essential. The table below summarizes key methodological categories and examples cited in the literature.

| Method Category | Description | Specific Examples |
|---|---|---|
| Stochastic Global Optimization | Population-based methods that use probabilistic operators to explore the parameter space widely, helping to escape local optima [1] [2]. | Evolution Strategies (ES) [1] [3], Evolutionary Programming (EP) [1], Genetic Algorithms (GA) [4] [2], Particle Swarm Optimization (PSO) [2], Random Drift PSO (RDPSO) [2]. |
| Hybrid & Machine Learning Methods | Combines mechanistic models with data-driven approximators like neural networks to handle partially known systems or reduce computational cost [5]. | Hybrid Neural ODEs (HNODE) [5], Constrained Regularized Fuzzy Inferred Extended Kalman Filter (CRFIEKF) [6] [7]. |
| Deterministic Global Optimization | Methods with theoretical guarantees of convergence to a global optimum for certain problem types, but computational effort can be prohibitive for large problems [1]. | Branch-and-bound [1]. |

Detailed Protocol: Parameter Estimation Using Global Optimization

This protocol outlines the general steps for estimating parameters in a biochemical pathway model using a stochastic global optimization method, as derived from benchmark studies [1] [2].

Problem Formulation:

- Define the Dynamic Model: Formulate the system of ordinary differential equations (ODEs) representing your biochemical pathway (e.g., using Michaelis-Menten kinetics or power-law approximations).

- Define the NLP: Specify the cost function J (e.g., sum of squared errors) and identify all parameters p to be estimated, including their plausible upper and lower bounds (p^L, p^U).

Data Preparation:

- Gather experimental time-course data (y_msd) for a subset of the model's state variables (e.g., metabolite concentrations).

- Pre-process the data (e.g., normalization, handling missing points) and define an appropriate weighting matrix W(t) if necessary.

Optimizer Configuration and Execution:

- Algorithm Selection: Choose a suitable global optimizer from the categories above (e.g., an Evolution Strategy or a PSO variant).

- Parameter Tuning: Set the algorithm-specific parameters (e.g., population size, mutation rates, convergence criteria). This often requires preliminary tests.

- Run Optimization: Execute the optimization process. The algorithm will repeatedly simulate the model with different parameter sets p to minimize J.

Validation and Analysis:

- Solution Assessment: Validate the final parameter set p* by simulating the model and visually and statistically comparing the output to the experimental data.

- Identifiability Analysis: Perform a practical identifiability analysis to determine if the parameters can be uniquely estimated from the available data [5].
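The protocol steps can be sketched end to end on a toy problem. The example below uses SciPy's `differential_evolution`, an evolutionary algorithm swapped in here for illustration rather than the ES or PSO variants cited above. The saturating model x(t) = a(1 - exp(-kt)), the noise level, the bounds, and the optimizer settings are all assumptions chosen to keep the sketch small.

```python
import numpy as np
from scipy.optimize import differential_evolution

def model(p, t):
    """Closed-form solution of dx/dt = k (a - x), x(0) = 0 (toy model)."""
    a, k = p
    return a * (1.0 - np.exp(-k * t))

def sse(p, t, y_msd):
    """Sum of squared errors between data and model prediction."""
    return float(np.sum((y_msd - model(p, t)) ** 2))

# Data preparation: synthetic noisy time course (assumed values).
t = np.linspace(0.0, 10.0, 25)
p_true = np.array([4.0, 0.7])
rng = np.random.default_rng(0)
y_msd = model(p_true, t) + rng.normal(0.0, 0.05, t.size)

# Problem formulation: lower and upper bounds (p^L, p^U) for a and k.
bounds = [(0.1, 10.0), (0.01, 5.0)]

# Optimizer configuration and execution.
result = differential_evolution(sse, bounds, args=(t, y_msd),
                                seed=1, tol=1e-8, maxiter=200)
p_star = result.x

# Solution assessment: largest residual at the estimated parameters.
max_abs_residual = float(np.max(np.abs(y_msd - model(p_star, t))))
```

In a real study the closed-form `model` would be replaced by a numerical ODE simulation, which dominates the run time.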

The workflow for implementing a hybrid methodology that combines mechanistic knowledge with machine learning is shown below.

Troubleshooting Common Issues

The Optimization Algorithm Converges to a Poor Fit. What Should I Do?

This is a common symptom of the inverse problem's multimodality.

- Potential Solution 1: Switch to a Robust Global Optimizer. Do not rely on a single local optimization method. Use a state-of-the-art stochastic global algorithm. Studies have shown that for a 36-parameter pathway model, Evolution Strategies (ES) were the only type of algorithm able to successfully find a good solution [1] [3]. More recently, variants of Particle Swarm Optimization (PSO), such as Random Drift PSO (RDPSO), have also demonstrated excellent performance on complex benchmark models [2].

- Potential Solution 2: Use a Multi-Start Strategy with Clustering. If computational resources are limited, a multi-start approach of a local optimizer can be attempted. However, to avoid re-finding the same local minimum, implement it with a clustering method that identifies the vicinity of local optima to improve search efficiency [1].

- Potential Solution 3: Check Parameter Bounds and Scaling. Ensure the upper and lower bounds for parameters p are biologically plausible and do not exclude the true solution. Poorly scaled parameters can also hinder optimizer performance.

My Model is Complex and Optimization is Computationally Prohibitive. Are There Efficient Alternatives?

Yes, several strategies can improve efficiency.

- Potential Solution 1: Incorporate Problem Decomposition. Use a method that embeds training functions, such as a multiple shooting approach or a decomposition strategy, within the optimization framework. This can help handle noisy data and improve computational efficiency [4].

- Potential Solution 2: Leverage Hybrid Machine Learning Models. For cases where the system structure is only partially known, a Hybrid Neural ODE (HNODE) framework can be effective. Here, a neural network approximates the unknown dynamics, which can make the overall problem more tractable [5].

- Potential Solution 3: Employ Regularization for Ill-Posed Problems. If the problem is ill-posed (e.g., due to limited data), use techniques like Tikhonov regularization to penalize unrealistic parameter values and stabilize the solution [6] [7].

How Can I Assess the Reliability of My Estimated Parameters?

It is crucial to determine if your parameters are identifiable.

- Potential Solution: Perform a Practical Identifiability Analysis. After parameter estimation, conduct an a posteriori identifiability analysis. This evaluates how uncertainties in the experimental measurements propagate to uncertainties in the estimated parameters. This can be done by profiling the cost function or using a sensitivity-based approach to compute confidence intervals for the parameters [5].
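One sensitivity-based route to confidence intervals can be sketched as follows, assuming independent Gaussian measurement noise and a linearization of the model around the fitted parameters. The toy model, the data, and the starting guess are hypothetical. The covariance estimate s²(JᵀJ)⁻¹ is the standard asymptotic result for nonlinear least squares, not a method specific to the cited work.

```python
import numpy as np
from scipy.optimize import least_squares
from scipy.stats import t as student_t

def model(p, times):
    a, k = p
    return a * (1.0 - np.exp(-k * times))

def residuals(p, times, y_msd):
    return model(p, times) - y_msd

times = np.linspace(0.0, 10.0, 25)
p_true = np.array([4.0, 0.7])               # assumed generating parameters
rng = np.random.default_rng(0)
y_msd = model(p_true, times) + rng.normal(0.0, 0.05, times.size)

fit = least_squares(residuals, x0=[3.0, 1.0], args=(times, y_msd))

# Residual variance: fit.cost is 0.5 * sum(residuals**2).
dof = times.size - fit.x.size
sigma2 = 2.0 * fit.cost / dof

# Linearized parameter covariance from the output sensitivities (Jacobian).
cov = sigma2 * np.linalg.inv(fit.jac.T @ fit.jac)
half_width = student_t.ppf(0.975, dof) * np.sqrt(np.diag(cov))
conf_int = np.column_stack([fit.x - half_width, fit.x + half_width])
```

Intervals that span orders of magnitude, or a Jacobian product that cannot be inverted reliably, are themselves signs of poor practical identifiability.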

The Scientist's Toolkit: Key Research Reagent Solutions

The following table lists essential computational "reagents" and their functions for tackling the inverse problem in biochemical pathways.

| Tool / Resource | Function in the Inverse Problem |
|---|---|
| Global Optimization Algorithm | The core computational engine for searching the parameter space to minimize the cost function J (e.g., ES, PSO, GA). |
| Differential Equation Solver | A numerical integrator (e.g., Runge-Kutta methods) used to simulate the dynamic model f(dx/dt, x, p, v, t) = 0 for a given parameter set p. |
| S-System Canonical Model | A specific power-law formalism for representing biochemical networks, often used in inverse problem studies for its structured representation [4]. |
| Hybrid Neural ODE (HNODE) Framework | A modeling framework that combines a mechanistic ODE model with a neural network component to represent unknown dynamics, facilitating parameter estimation for partially known systems [5]. |
| Fuzzy Inference System (FIS) | Used in some advanced methods (e.g., CRFIEKF) to encapsulate imprecise, qualitative knowledge about relationships among molecules in a network when precise data is scarce [6] [7]. |

FAQ: Understanding the Core Problem

What is the fundamental reason local optimization methods fail for parameter estimation in biochemical pathways? Local optimization methods fail primarily because the parameter estimation problem for nonlinear dynamic biochemical pathways is frequently ill-conditioned and multimodal [8] [1]. These methods, such as the Levenberg-Marquardt or Gauss-Newton algorithms, converge to the nearest local minimum in the cost function [9]. In multimodal landscapes, this means the solution found is often a suboptimal local fit, not the true global best fit to the experimental data, unless the algorithm is initialized with a parameter vector that is already very close to the global solution [9].

How does the structure of a biochemical model contribute to this challenge? The problem is formulated as a nonlinear programming problem subject to nonlinear differential-algebraic constraints [8] [1]. The nonlinear and constrained nature of the system dynamics directly leads to a cost function landscape that is non-convex, meaning it contains multiple peaks and valleys (optima) [9]. This structure makes it difficult for local, gradient-based methods to navigate out of a local valley to find a deeper, more optimal one.

Are some biochemical pathway topologies more prone to this issue than others? Yes, while all nonlinear dynamic models can be challenging, the complexity is amplified in certain motifs. For example, the problem has been notably documented in models of a three-step pathway with 36 parameters [8] [2], branched pathways like the violacein biosynthetic pathway [10], and merging metabolic pathways [11]. These topologies introduce complex interactions that exacerbate the multimodality of the optimization landscape.

FAQ: Identifying Symptoms and Consequences

What are the practical symptoms of a failed optimization in my experiment? You may observe several key symptoms:

- Poor Fit: The model simulations do not adequately match the experimental time-course data, even after repeated optimization attempts from different starting points [9].

- Parameter Sensitivity: The estimated parameter values change drastically with slightly different initial guesses, indicating a lack of robustness [9].

- Inability to Validate: A model that converges to a local solution may fail validation against a new, independent set of experimental data [9].

What is the impact of an inadequate measurement on the optimization process? Inadequate or imprecise measurements compound the difficulties of parameter estimation. Noisy or insufficient data can make the objective function even more irregular and difficult to navigate. Some advanced methods attempt to address this by incorporating imprecise relationships among molecules, but the fundamental challenge remains [12].

Troubleshooting Guide: Diagnostic Steps

How can I diagnose if my optimization has converged to a local minimum? A reliable diagnostic is the multistart strategy: run a local optimization algorithm from a large number of different, randomly selected starting parameter vectors [1]. If the runs end at many different parameter sets, many of them with poor objective function values, your problem is likely multimodal and local methods are failing. If nearly all runs converge to the same parameter values, you can have more confidence in the solution.
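A minimal sketch of this multistart diagnostic is given below. It fits the frequency of a hypothetical noise-free oscillating signal, a deliberately multimodal one-parameter problem. The signal, the bounds, the number of starts, and the rounding used to group end points are assumptions for illustration.

```python
import numpy as np
from scipy.optimize import minimize

t = np.linspace(0.0, 10.0, 50)
w_true = 3.0                       # assumed true frequency
y_msd = np.sin(w_true * t)         # synthetic oscillating read-out

def sse(p):
    """Sum of squared errors for the one-parameter oscillator."""
    return float(np.sum((y_msd - np.sin(p[0] * t)) ** 2))

# Local optimizer started from many random points within the bounds.
rng = np.random.default_rng(0)
starts = rng.uniform(0.5, 6.0, size=200)
runs = [minimize(sse, [w0], method="L-BFGS-B", bounds=[(0.5, 6.0)])
        for w0 in starts]

finals = np.array([r.x[0] for r in runs])
values = np.array([r.fun for r in runs])

# Group end points: more than one distinct value signals multimodality.
n_distinct = np.unique(np.round(finals, 2)).size
best = runs[int(np.argmin(values))]
```

A histogram of `values` is a quick visual check: one narrow peak suggests a well-behaved problem, a broad spread suggests many local minima.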

What preliminary analyses can I perform before starting optimization?

- Identifiability Analysis: Determine whether it is theoretically possible to uniquely determine your model's parameter values from the available data [9]. This can reveal if the problem is ill-posed before optimization even begins.

- Evaluate Data Quality: Ensure your experimental data, such as time-course observations, has sufficient quality and quantity to constrain the model parameters [12].

Troubleshooting Guide: Solutions and Best Practices

What are the recommended alternatives to local optimization methods? The most robust alternatives are Stochastic Global Optimization (GO) methods [8] [1] [9]. These methods are specifically designed to explore the entire parameter space and have a higher probability of locating the global optimum. The following table summarizes the key classes of algorithms that have been successfully applied.

Table 1: Global Optimization Methods for Biochemical Pathway Models

| Method Class | Key Examples | Principles and Advantages | Considerations |
|---|---|---|---|
| Evolution Strategies (ES) | Evolution Strategies (ES), Stochastic Ranking ES (SRES) | Inspired by biological evolution; strong performance in benchmarks, good robustness, and self-tuning properties [8] [9] [2]. | Computational cost can be high, though more efficient than many alternatives [8] [9]. |
| Other Evolutionary Algorithms | Differential Evolution (DE), Genetic Algorithms (GAs) | Population-based search; can handle arbitrary constraints [9]. | GAs can have slower convergence speed [2]. |
| Swarm Intelligence | Particle Swarm Optimization (PSO), Random Drift PSO (RDPSO) | Simulates social behavior; fast convergence and lower computational need [2]. | Performance can be sensitive to swarm topology and parameters [2]. |
| Metaheuristics | Scatter Search (SS) | A population-based method that systematically combines solutions [9]. | A novel SS metaheuristic has been shown to significantly outperform previous methods for some benchmarks [9]. |
| Hybrid Methods | Hybrid Stochastic-Deterministic | Combines a global method for broad exploration with a local method for fast refinement [9]. | Can reduce computation time by an order of magnitude while maintaining robustness [9]. |

Are there strategies to make the optimization process more efficient? Yes, employing advanced computational strategies is crucial:

- Hybrid Approaches: Combine stochastic global methods with local methods. The global method locates the basin of attraction of the global optimum, and the local method quickly refines the solution [9].

- Modeling and Regression: For pathway expression optimization, use regression modeling on a sparsely sampled combinatorial library to predict high-performing genotypes without exhaustive testing [10].

- Specialized Algorithms: Consider newer methods like CRFIEKF, which can work with imprecise relationships and address ill-posed problems through Tikhonov regularization, reducing reliance on perfect time-course data [12].

Experimental Protocols for Robust Parameter Estimation

Protocol: Global Parameter Estimation using a Stochastic Method

- Problem Formulation: Define your parameter estimation task as a Nonlinear Programming problem with Differential-Algebraic Constraints (NLP-DAEs), including the objective function (e.g., sum of squared errors) and parameter bounds [1] [9].

- Algorithm Selection: Choose a suitable global optimizer from Table 1 (e.g., Evolution Strategies, RDPSO, or Scatter Search).

- Implementation: Link the optimizer to your dynamic model simulation module (e.g., in MATLAB, Python, or C++). The model is often treated as a "black-box" by the optimizer [1].

- Parallelization (Recommended): Leverage the inherent parallel nature of many stochastic methods by distributing objective function evaluations across multiple cores or a computing cluster [8] [9].

- Multi-Run Execution: Execute the global optimizer multiple times with different random seeds to build confidence that the best-found solution is near the global optimum.

- Solution Refinement: (Optional) Use the solution from the stochastic method as the initial guess for a fast local method to polish the result to high precision [9].

- Validation: Finally, validate the calibrated model with a previously unused dataset to assess its predictive power [9].
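The multi-run and refinement steps of this protocol might look like the sketch below. It fits a hypothetical Michaelis-Menten rate law to synthetic initial-rate data, runs SciPy's `differential_evolution` with several seeds without its built-in polishing, and then refines the best run with `least_squares`. The data, bounds, seeds, and iteration limits are assumptions, and differential evolution stands in for whichever global optimizer is actually selected.

```python
import numpy as np
from scipy.optimize import differential_evolution, least_squares

def rate(p, s):
    """Michaelis-Menten rate law; p = (vmax, km)."""
    vmax, km = p
    return vmax * s / (km + s)

def residuals(p, s, v_msd):
    return rate(p, s) - v_msd

def sse(p, s, v_msd):
    return float(np.sum(residuals(p, s, v_msd) ** 2))

s = np.array([0.1, 0.25, 0.5, 1.0, 2.0, 4.0, 8.0, 16.0])
p_true = np.array([2.0, 1.5])              # assumed generating parameters
rng = np.random.default_rng(0)
v_msd = rate(p_true, s) + rng.normal(0.0, 0.02, s.size)
bounds = [(0.01, 10.0), (0.01, 10.0)]

# Multi-run execution: same problem, different random seeds.
runs = [differential_evolution(sse, bounds, args=(s, v_msd), seed=seed,
                               maxiter=50, polish=False)
        for seed in range(3)]
coarse = min(runs, key=lambda r: r.fun)

# Solution refinement: local least-squares started from the best global run.
lower, upper = zip(*bounds)
refined = least_squares(residuals, coarse.x, bounds=(lower, upper),
                        args=(s, v_msd))
sse_refined = 2.0 * refined.cost   # least_squares reports 0.5 * SSE
```

If the seeds disagree strongly on `r.x` while reaching similar `r.fun`, that points to an identifiability problem rather than an optimizer failure.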

Diagram: Workflow for Robust Parameter Estimation

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Key Reagents and Computational Tools for Pathway Optimization

| Item / Resource | Function / Description | Example Use Case |
|---|---|---|
| Constitutive Promoter Library | A set of promoters providing a wide, predictable range of gene expression levels in a host (e.g., S. cerevisiae) [10]. | Combinatorial optimization of enzyme expression levels in a heterologous pathway to balance flux [10]. |
| Standardized Assembly Strategy | A DNA assembly method (e.g., isothermal assembly) allowing for efficient, combinatorial construction of multi-gene pathways [10]. | Rapid generation of large variant libraries to explore the expression landscape. |
| Biochemical Simulation Package | Software like Gepasi for simulating and analyzing biochemical systems [8]. | Pre-optimization testing and in silico validation of pathway models. |
| Global Optimization Toolbox | Software libraries implementing algorithms like ES, PSO, or Scatter Search (e.g., in MATLAB, Python SciPy) [8] [9]. | Solving the inverse problem for parameter estimation in nonlinear dynamic models. |
| Cluster/Grid Computing | High-performance computing technologies (e.g., Beowulf cluster, Globus) [8]. | Providing the computational power required for stochastic global optimization runs. |

Diagram: Local vs. Global Method Challenges

Frequently Asked Questions

What are the main types of noise affecting biochemical time-course data? Biological noise is categorized as either intrinsic or extrinsic. Intrinsic noise stems from random, inherent cellular processes like biochemical reactions involving low-copy-number molecules (e.g., mRNAs, transcription factors). Extrinsic noise arises from cell-to-cell variations in the cellular environment, such as fluctuating concentrations of ribosomes or polymerases, which simultaneously affect multiple genes or pathways [13] [14].

How can I estimate model parameters when experimental time-course data is unavailable or severely limited? Novel techniques like the Constrained Regularized Fuzzy Inferred Extended Kalman Filter (CRFIEKF) have been developed for this specific scenario. This approach integrates a Fuzzy Inference System (FIS) to leverage existing, imprecise knowledge about molecular relationships within a network, bypassing the need for traditional experimental measurements. Tikhonov regularization is then used to fine-tune the estimated parameters, preventing overfitting and stabilizing the solution [6].

What practical computational methods can handle highly noisy data from sensor measurements? A robust strategy combines sparse identification with subsampling and co-teaching. Subsampling randomly uses fractions of the dataset for model identification, while co-teaching mixes a small amount of available noise-free data (e.g., from first-principles simulations) with the noisy experimental measurements. This hybrid approach creates a mixed, less-corrupted dataset for more effective model training [15].

How can I assess the reliability of my parameter estimates? Uncertainty Quantification (UQ) is essential. Key methods include:

- Profile likelihood: Determines parameter identifiability and confidence intervals.

- Bootstrapping: Assesses parameter variability by repeatedly fitting models to resampled data.

- Bayesian inference: Incorporates prior knowledge to provide posterior distributions for parameters, fully characterizing uncertainty [16].
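Of the methods listed, bootstrapping is the simplest to sketch. The example below resamples the data points of a hypothetical Michaelis-Menten data set with replacement, refits each resample, and reads percentile intervals from the resulting parameter cloud. The data, the number of resamples, and the helper name `fit` are assumptions.

```python
import numpy as np
from scipy.optimize import least_squares

def rate(p, s):
    vmax, km = p
    return vmax * s / (km + s)

def residuals(p, s, v):
    return rate(p, s) - v

def fit(s_data, v_data):
    """Least-squares estimate of (vmax, km), constrained to be non-negative."""
    return least_squares(residuals, x0=[1.0, 1.0], bounds=(0.0, np.inf),
                         args=(s_data, v_data)).x

s = np.geomspace(0.1, 16.0, 20)
rng = np.random.default_rng(0)
v_msd = rate([2.0, 1.5], s) + rng.normal(0.0, 0.02, s.size)  # synthetic data

p_hat = fit(s, v_msd)

# Case resampling: draw data points with replacement and refit each time.
n_boot = 200
boot = np.empty((n_boot, 2))
for b in range(n_boot):
    idx = rng.integers(0, s.size, s.size)
    boot[b] = fit(s[idx], v_msd[idx])

# 95% percentile intervals for vmax and km.
ci_low, ci_high = np.percentile(boot, [2.5, 97.5], axis=0)
```

Each bootstrap replicate costs one full fit, so for expensive ODE models the number of resamples is usually limited by compute budget.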

What should I do if my mathematical model is only partially known? Hybrid Neural Ordinary Differential Equations (HNODEs) offer a powerful solution. This framework embeds the known mechanistic parts of your model (expressed as ODEs) with neural networks that learn the unknown or overly complex components directly from data. This allows for parameter estimation even with incomplete system knowledge [5].

Troubleshooting Guides

Problem: Model Fitting Fails with Sparse or Noisy Data

| Observation | Possible Cause | Solution |
|---|---|---|
| Poor parameter convergence and high uncertainty | Non-identifiability: Parameters cannot be uniquely determined from the available data [16]. | Perform a practical identifiability analysis. Use profile likelihood to check which parameters are identifiable. For non-identifiable parameters, consider simplifying the model or collecting more informative data [16] [5]. |
| Model overfits to noise in the training data | High noise levels and lack of regularization [15]. | Apply regularization methods (e.g., Tikhonov regularization [6], Lasso, or Ridge regression [17]) during optimization to penalize overly complex solutions and reduce overfitting. |
| Optimization algorithm gets stuck in local minima | The objective function landscape is complex and multi-modal [16]. | Use global optimization or metaheuristic algorithms (e.g., genetic algorithms, particle swarm optimization). Perform multistart optimization by running the estimation from many different initial parameter values [16] [17]. |

Problem: Handling Low Copy Number Molecular Noise

| Observation | Possible Cause | Solution |
|---|---|---|
| High cell-to-cell variability in pathway activity | Intrinsic noise due to low abundance of key signaling molecules (e.g., transcription factors, mRNAs) [13] [14]. | Formulate a stochastic model instead of deterministic ODEs. Use the Chemical Master Equation framework and simulate trajectories with the Gillespie Stochastic Simulation Algorithm (SSA) to capture the discrete, random nature of reactions [13]. |
| Bimodal or broad distributions in single-cell measurements | A combination of intrinsic and extrinsic noise [14]. | Employ dual-reporter gene systems (e.g., expressing CFP and YFP from identical promoters) in the same cell. The difference between the two reporters quantifies intrinsic noise, while the total variation measures the combined effect [14]. |
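To make the stochastic-model suggestion concrete, here is a minimal Gillespie SSA sketch for a hypothetical birth-death process: constant production of an mRNA and first-order degradation. The rate constants, the simulation length, and the function name are assumptions. For this process the stationary copy-number distribution is Poisson with mean k_prod / k_deg, which gives a simple sanity check on the simulated time average.

```python
import numpy as np

def gillespie_birth_death(k_prod, k_deg, n0, t_end, rng):
    """Simulate 0 -> mRNA (rate k_prod) and mRNA -> 0 (rate k_deg * n)."""
    t, n = 0.0, n0
    times, counts = [t], [n]
    while True:
        a_prod = k_prod               # propensity of production
        a_deg = k_deg * n             # propensity of degradation
        a_total = a_prod + a_deg
        t += rng.exponential(1.0 / a_total)   # waiting time to next event
        if t >= t_end:
            break
        # Choose which reaction fires, proportional to its propensity.
        if rng.random() * a_total < a_prod:
            n += 1
        else:
            n -= 1
        times.append(t)
        counts.append(n)
    return np.array(times), np.array(counts)

rng = np.random.default_rng(0)
k_prod, k_deg, t_end = 10.0, 1.0, 200.0      # assumed rate constants
times, counts = gillespie_birth_death(k_prod, k_deg, 0, t_end, rng)

# Time-weighted average copy number over the trajectory.
durations = np.diff(np.append(times, t_end))
mean_count = float(np.sum(counts * durations) / t_end)
```

Repeating the simulation with different seeds gives an ensemble of trajectories whose spread reflects intrinsic noise only, since all rate constants are held fixed.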

Experimental Protocols

Protocol: Parameter Estimation Using Gradient-Based Optimization

This protocol outlines steps to estimate parameters for a system of Ordinary Differential Equations (ODEs) using gradient-based optimization, which is efficient for high-dimensional parameter spaces [16].

Model and Data Formulation:

- Model Specification: Cast your biochemical pathway model into a system of ODEs. Standardize the format using Systems Biology Markup Language (SBML) or BioNetGen Language (BNGL) for compatibility with software tools [16].

- Data Preparation: Gather normalized experimental time-course data (e.g., concentration dynamics). Ensure data is related to variables in your model.

- Objective Function: Define a model misfit function, typically a weighted residual sum of squares: \(\sum_i \omega_i (y_i - \hat{y}_i)^2\), where \(y_i\) is the experimental data, \(\hat{y}_i\) is the model prediction, and \(\omega_i\) is a weighting constant (often \(1/\sigma_i^2\), the inverse variance) [16].

Gradient Calculation (Choose One Method):

- Finite Difference Approximation: A simple but inefficient method where each parameter is perturbed slightly. Not recommended for models with many parameters [16].

- Forward Sensitivity Analysis: The preferred method for ODE systems. Augments the original ODEs with additional equations that compute the derivative of each species with respect to each parameter. This yields gradients that are accurate to within the ODE solver's tolerance, without the step-size error of finite differences [16].

- Adjoint Sensitivity Analysis: A more complex but highly efficient method for models with many parameters. It involves a backward integration of an adjoint system and is superior for large-scale problems [16].
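Forward sensitivity analysis can be illustrated on a one-state toy model, dx/dt = k(a - x) with x(0) = 0. The sensitivities s_a = ∂x/∂a and s_k = ∂x/∂k obey ds/dt = (∂f/∂x)s + ∂f/∂p and are integrated alongside the state. The model, the parameter values, and the stand-in data below are assumptions; the closed-form expressions in the comments allow a direct check.

```python
import numpy as np
from scipy.integrate import solve_ivp

def augmented_rhs(t, z, a, k):
    """State equation plus forward sensitivity equations."""
    x, s_a, s_k = z
    dx = k * (a - x)
    ds_a = -k * s_a + k            # (df/dx) * s_a + df/da
    ds_k = -k * s_k + (a - x)      # (df/dx) * s_k + df/dk
    return [dx, ds_a, ds_k]

a, k = 4.0, 0.7                    # assumed parameter values
times = np.linspace(0.0, 5.0, 11)
sol = solve_ivp(augmented_rhs, (0.0, 5.0), [0.0, 0.0, 0.0], t_eval=times,
                args=(a, k), rtol=1e-10, atol=1e-12)
x, s_a, s_k = sol.y

# Closed-form references: x = a(1 - e^{-kt}), dx/da = 1 - e^{-kt},
# dx/dk = a t e^{-kt}.
s_a_exact = 1.0 - np.exp(-k * times)
s_k_exact = a * times * np.exp(-k * times)

# Gradient of J = sum (y_msd - x)^2 with respect to (a, k).
y_msd = 4.2 * (1.0 - np.exp(-0.6 * times))   # hypothetical stand-in data
r = y_msd - x
grad_J = -2.0 * np.array([np.sum(r * s_a), np.sum(r * s_k)])
```

The augmented system grows with the number of states times the number of parameters, which is why adjoint methods become attractive for large models.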

Parameter Optimization:

- Algorithm Selection: Use a quasi-Newton method such as L-BFGS-B, or Levenberg-Marquardt for least-squares problems, to minimize the objective function [16]. Both exploit approximate curvature (second-order) information built from gradients.

- Multistart Optimization: To avoid local minima, run the optimization from many different, randomly chosen starting points in the parameter space [16] [17].

Validation:

- Use cross-validation (e.g., k-fold) on held-out data to ensure the model has not overfitted and generalizes well [17].

Protocol: Mitigating Noise via Sparse Identification and Subsampling

This protocol is designed for building models from exceptionally noisy time-series data [15].

Data Preprocessing:

- Acquire noisy time-course measurement data from sensor readings.

Subsampling and Model Identification:

- Sparse Identification: Use a sparse-regression algorithm (e.g., SINDy) to identify a parsimonious model from the data.

- Random Subsampling: Randomly select a fraction of the full dataset.

- Model Construction: Perform sparse model identification on the selected subset.

Co-teaching Integration:

- Generate Clean Data: Use first-principles simulations of your system to generate a small amount of noise-free training data.

- Data Mixing: Create a mixed training dataset by combining the clean, simulated data with the subsampled noisy experimental data.

Iterative Training and Benchmarking:

- Train the model on the mixed dataset.

- Benchmark the model's prediction accuracy against models trained without subsampling or without co-teaching to validate performance improvement [15].
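The subsampling and data-mixing steps can be sketched with a small NumPy implementation of sequentially thresholded least squares, the regression commonly used in SINDy. Everything specific here is an assumption for illustration: the one-state decay system dx/dt = -2x, the candidate library [1, x, x²], the mild noise level, the threshold, and the use of the exact rate law as the source of "clean" data. This is not the cited authors' implementation.

```python
import numpy as np

def library(x):
    """Candidate terms [1, x, x^2] for a one-state system."""
    return np.column_stack([np.ones_like(x), x, x ** 2])

def stlsq(theta, dxdt, threshold=0.5, n_iter=10):
    """Sequentially thresholded least squares."""
    coef = np.linalg.lstsq(theta, dxdt, rcond=None)[0]
    for _ in range(n_iter):
        small = np.abs(coef) < threshold
        coef[small] = 0.0
        keep = ~small
        if not keep.any():
            break
        coef[keep] = np.linalg.lstsq(theta[:, keep], dxdt, rcond=None)[0]
    return coef

rng = np.random.default_rng(0)

# Noisy measurements; derivatives estimated by finite differences.
t = np.linspace(0.0, 2.0, 101)
x_full = np.exp(-2.0 * t) + rng.normal(0.0, 1e-3, t.size)
dx_noisy = np.gradient(x_full, t)[1:-1]   # drop less accurate end points
x_noisy = x_full[1:-1]

# Small noise-free set from a first-principles simulation (assumed known).
x_clean = np.exp(-2.0 * np.linspace(0.0, 2.0, 10))
dx_clean = -2.0 * x_clean

# Repeated random subsampling, mixing, and sparse identification.
coefs = []
for _ in range(20):
    idx = rng.choice(x_noisy.size, size=x_noisy.size // 2, replace=False)
    x_mix = np.concatenate([x_noisy[idx], x_clean])
    dx_mix = np.concatenate([dx_noisy[idx], dx_clean])
    coefs.append(stlsq(library(x_mix), dx_mix))
coef_median = np.median(np.array(coefs), axis=0)
```

Taking the median over subsamples is one simple way to aggregate the per-subset models; other aggregation rules are possible.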

Research Reagent Solutions

| Reagent / Tool | Function in Research |
|---|---|
| Dual-Reporter Gene System (CFP/YFP) | A critical experimental tool for disentangling intrinsic and extrinsic noise in gene expression. Two identical promoters drive expression of different fluorescent proteins in the same cell, allowing precise noise quantification [14]. |
| High-Fidelity Polymerase (e.g., Q5, Phusion) | Reduces errors in PCR amplification during cloning and other preparatory steps, minimizing sequence errors that could introduce unintended variability in experiments [18]. |
| Software: PyBioNetFit | A general-purpose software tool for parameter estimation and uncertainty quantification in biological models, supporting both optimization and profile likelihood analysis [16]. |
| Software: AMICI/PESTO | A toolchain for model simulation (AMICI) and parameter estimation (PESTO), offering advanced features like adjoint sensitivity analysis for efficient gradient computation [16]. |
| Hybrid Neural ODE (HNODE) Framework | A computational framework that combines mechanistic ODEs with neural networks. It acts as a universal approximator for unknown system components, enabling parameter estimation with incomplete models [5]. |

Workflow and Pathway Diagrams

Parameter Estimation with Scarce/Noisy Data

Intrinsic vs. Extrinsic Noise

Frequently Asked Questions (FAQs)

FAQ 1: What do "ill-posedness" and "sloppiness" mean in the context of my model?

- Answer: Ill-posedness refers to a problem where the solution (i.e., the set of estimated parameters) is not unique; many different parameter sets can fit your experimental data equally well [19]. Sloppiness describes a common property in systems biology models where the model's predictions are exquisitely sensitive to a few "stiff" parameter combinations but remarkably insensitive to many other "sloppy" directions in parameter space. The result is a spectrum of sensitivities in which the eigenvalues of the cost function's Hessian matrix are spread roughly evenly on a logarithmic scale over many decades [20].

FAQ 2: How can I identify if my parameter estimation problem is ill-posed?

- Answer: Key indicators include:

- Non-unique solutions: The optimization algorithm converges to vastly different parameter values when started from different initial points [1].

- Extremely high parameter uncertainty: After fitting, the confidence intervals for parameters span orders of magnitude [20].

- Ill-conditioned Hessian matrix: The eigenvalues of the Hessian matrix (or an approximation thereof) calculated at the solution span a very wide range, for example, over 10^6 [20].
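The Hessian-spectrum indicator can be checked with a few lines of NumPy. The sketch below uses a hypothetical sum-of-exponentials model, y(t) = Σ exp(-k_i t), builds the sensitivity matrix with respect to log-parameters, and uses the Gauss-Newton approximation H ≈ SᵀS. The rate constants and time grid are assumptions. Singular values of S are used instead of eigenvalues of H to avoid round-off problems when the spectrum is very wide.

```python
import numpy as np

t = np.linspace(0.0, 5.0, 50)
k = np.array([0.5, 1.0, 1.1, 3.0])     # assumed decay rates

# Sensitivity of y(t) = sum_i exp(-k_i t) with respect to log(k_i):
# dy/dlog(k_i) = -k_i * t * exp(-k_i t). Shape: (n_times, n_params).
S = -(k * t[:, None]) * np.exp(-np.outer(t, k))

# Eigenvalues of H = S^T S are the squared singular values of S.
sv = np.linalg.svd(S, compute_uv=False)     # sorted in descending order
eigenvalues = sv ** 2
decades = float(2.0 * np.log10(sv[0] / sv[-1]))
```

Here `decades` is the number of orders of magnitude spanned by the spectrum; a value in the range mentioned above (six or more) would flag a badly conditioned estimation problem.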

FAQ 3: My model is sloppy. Does this mean my model predictions are unreliable?

- Answer: Not necessarily. A sloppy model can still make accurate and well-constrained predictions for specific system behaviors, even when individual parameters are poorly constrained [20]. The key is to focus on the reliability of the prediction you care about, rather than the uncertainty of individual parameters. What sloppiness does imply is that predictions built from individually measured parameter values can be fragile to uncertainty in even a single parameter, so it is crucial to assess prediction uncertainty directly using methods like profile likelihood or ensemble modeling [20].

FAQ 4: What practical steps can I take to overcome these challenges?

- Answer: A multi-faceted approach is recommended:

- Use Global Optimization: Employ stochastic global optimization methods (e.g., Evolution Strategies) to navigate the complex, multi-modal objective function and avoid local minima [1].

- Regularization: Incorporate techniques like Tikhonov regularization to penalize unrealistic parameter values and stabilize the solution of ill-posed problems [6] [12].

- Parameter Selection: Use frameworks like the "parameter Houlihan" to intelligently select a subset of parameters to estimate from a larger model, minimizing identifiability issues while retaining model flexibility [19].

- Focus on Predictions: Design experiments to directly test and constrain the model's predictions of interest, rather than aiming to measure all parameters precisely [20].

Troubleshooting Guides

Problem 1: Optimization Converges to Different Parameters on Each Run

This is a classic symptom of an ill-posed, multi-modal problem where the objective function has many local minima [1].

- Step 1: Diagnose the Problem. Run your estimation algorithm multiple times (≥ 50) from randomly sampled starting points within parameter bounds. Plot the resulting parameter values and the final objective function value. A large scatter in parameters with similar objective values confirms non-uniqueness.

- Step 2: Switch to a Global Optimizer. Replace local optimization methods (e.g., Levenberg-Marquardt) with global stochastic methods. The following table compares some options:

| Method | Principle | Best For | Key Reference |

|---|---|---|---|

| Evolution Strategies (ES) | Population-based stochastic search inspired by biological evolution. | Medium to large-scale problems; shown to successfully estimate 36 parameters in a pathway model [1]. | [1] |

| Simulated Annealing (SA) | Mimics the annealing process in metallurgy. | Smaller problems; can be computationally demanding [1]. | [1] |

| Adaptive Chaos Synchronization | Uses chaos theory to avoid local minima. | Noisy, chaotic systems like hormonal oscillators [21]. | [21] |

- Step 3: Implement Regularization. Augment your cost function with a regularization term. For example, Tikhonov regularization minimizes J(p) + λ||Lp||², where J(p) is the original cost function, λ is a regularization parameter, and L is a matrix (often the identity) that penalizes large parameter values [6] [12].

- Step 4: Refine the Parameter Set. If the model has many parameters, use a method like the parameter Houlihan [19] to select the most influential subset for estimation, fixing the others to nominal values to reduce the problem's dimensionality and improve identifiability.
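The regularized cost in Step 3 can be illustrated on a small linear-in-parameters problem, where the minimizer of ||Ap - y||² + λ||Lp||² has a closed form. This is only a sketch under stated assumptions: the function name `tikhonov_solve`, the toy design matrix, and the value of λ are hypothetical, and a real pathway model would be nonlinear and need an iterative optimizer instead of the normal equations.

```python
import numpy as np

def tikhonov_solve(A, y, lam, L=None):
    """Minimize ||A p - y||^2 + lam * ||L p||^2 for a linear-in-parameters model."""
    n = A.shape[1]
    L = np.eye(n) if L is None else L  # identity penalty: shrink all parameters
    # Normal equations of the regularized problem: (A^T A + lam L^T L) p = A^T y
    return np.linalg.solve(A.T @ A + lam * (L.T @ L), A.T @ y)

# Hypothetical toy problem: two nearly collinear regressors make A ill-conditioned.
rng = np.random.default_rng(0)
t = np.linspace(0.0, 1.0, 20)
A = np.column_stack([t, t + 1e-4 * rng.standard_normal(t.size)])
p_true = np.array([1.0, 1.0])
y = A @ p_true + 0.01 * rng.standard_normal(t.size)

p_unreg = tikhonov_solve(A, y, lam=0.0)   # plain least squares
p_reg = tikhonov_solve(A, y, lam=1e-3)    # Tikhonov-regularized

print("unregularized:", p_unreg, "norm:", np.linalg.norm(p_unreg))
print("regularized:  ", p_reg, "norm:", np.linalg.norm(p_reg))
```

With L set to the identity, increasing λ can only shrink the norm of the solution, which is what stabilizes the poorly determined direction. Choosing λ remains problem-specific.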

Problem 2: Parameter Confidence Intervals are Unrealistically Large

This indicates sloppiness and that your available data is insufficient to constrain all parameters [20].

- Step 1: Compute the Sensitivity Spectrum. Calculate the Hessian matrix of your cost function with respect to the log-parameters at your best-fit solution. Compute the eigenvalues of this Hessian. A sloppy model will exhibit eigenvalues spread roughly evenly on a logarithmic scale over many orders of magnitude [20].

- Step 2: Construct a Parameter Ensemble. Instead of relying on a single parameter set, generate an ensemble of thousands of parameter vectors that all fit the data adequately (e.g., with a cost function value below a certain threshold). This represents the plausible parameter space given the data [20].

- Step 3: Analyze Prediction Uncertainty. Use the generated ensemble to simulate your model's key predictions. The distribution of the prediction outcomes quantifies the uncertainty in your forecasts, which is often much smaller than the uncertainty in the individual parameters [20].

- Step 4: Design New Experiments. Identify which hypothetical new measurement would most effectively reduce the uncertainty in your critical prediction. This approach of optimal experimental design is more efficient than trying to measure all parameters directly [20].
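Step 1 above can be sketched for a toy model. The example below assumes a sum-of-exponentials model, y(t) = Σ exp(-k_i t), and uses the Gauss-Newton approximation JᵀJ in place of the exact Hessian. The model, the rate values, and the function names are illustrative assumptions, not taken from [20].

```python
import numpy as np

def log_param_jacobian(log_k, t):
    """Sensitivities dy/dlog(k_i) for y(t) = sum_i exp(-k_i * t)."""
    k = np.exp(log_k)
    # d/dlog(k_i) exp(-k_i t) = -k_i * t * exp(-k_i t); one column per parameter
    return -(k * t[:, None]) * np.exp(-np.outer(t, k))

def hessian_spectrum(log_k, t):
    """Eigenvalues (ascending) of the Gauss-Newton Hessian approximation J^T J."""
    J = log_param_jacobian(log_k, t)
    return np.linalg.eigvalsh(J.T @ J)

t = np.linspace(0.0, 10.0, 200)
k_fit = np.array([0.5, 0.75, 1.125, 1.6875])  # hypothetical best-fit rate constants
eig = hessian_spectrum(np.log(k_fit), t)

print("eigenvalues:", eig)
print("spread (decades):", np.log10(eig[-1] / eig[0]))
```

A spread of several decades means some parameter combinations are constrained far more tightly than others, which is the signature to look for before moving on to ensembles.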

Experimental Protocols & Methodologies

Protocol 1: Constrained Regularized Fuzzy Inferred Extended Kalman Filter (CRFIEKF)

This method is designed for when experimental time-course data is inaccessible or of poor quality, relying instead on imprecise molecular relationships [6] [12].

- Define the State-Space Model: Formulate your biochemical pathway as a state-space model, where differential equations describe the system dynamics.

- Construct the Fuzzy Inference System (FIS): Capture the approximate, qualitative relationships between molecules in the network using a FIS with Gaussian membership functions [6] [12].

- Implement the Extended Kalman Filter (EKF): Use the EKF to estimate the states and parameters of the nonlinear system.

- Apply Tikhonov Regularization: Integrate Tikhonov regularization within the EKF framework to constrain the parameter estimates and handle the ill-posed nature of the problem [6].

- Solve the Optimization: The regularized problem is solved as a convex quadratic programming problem to obtain the final parameter values [12].
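To make the fuzzy-inference step more concrete, here is a minimal sketch of a two-rule inference system with Gaussian membership functions. It is not the FIS used in CRFIEKF [6] [12]: the rule base, the centers and widths, and the zero-order Sugeno-style weighted average are all assumptions chosen for illustration.

```python
import math

def gaussian_mf(x, center, sigma):
    """Gaussian membership degree of x in a fuzzy set."""
    return math.exp(-0.5 * ((x - center) / sigma) ** 2)

# Hypothetical rule base on normalized [0, 1] concentrations:
#   IF activator is LOW  THEN target is LOW  (0.1)
#   IF activator is HIGH THEN target is HIGH (0.9)
RULES = [
    {"center": 0.2, "sigma": 0.15, "output": 0.1},
    {"center": 0.8, "sigma": 0.15, "output": 0.9},
]

def infer_target(activator):
    """Weighted average of rule outputs, weighted by rule firing strength."""
    weights = [gaussian_mf(activator, r["center"], r["sigma"]) for r in RULES]
    total = sum(weights)
    return sum(w * r["output"] for w, r in zip(weights, RULES)) / total

for level in (0.2, 0.5, 0.8):
    print(level, round(infer_target(level), 3))
```

A system like this turns a qualitative statement ("more activator, more target") into a smooth numerical relationship that an estimator can use in place of measured time courses.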

[Figure: Logical workflow of the CRFIEKF method]

Protocol 2: Global Optimization with Evolution Strategies (ES)

Use this protocol for calibrating nonlinear dynamic models where gradient-based methods fail [1].

- Problem Formulation: Define the parameter estimation task as a Nonlinear Programming (NLP) problem with differential-algebraic constraints, aiming to minimize the difference between model predictions and experimental data [1].

- Initialize Population: Generate an initial population of candidate parameter vectors (μ parents).

- Create Offspring: Generate λ offspring by applying mutation (e.g., adding a normal random vector) to the parents. Recombination may also be used.

- Selection and Evaluation: Simulate the model for each offspring parameter set and compute the cost function (fitness). Select the μ best offspring to become the parents of the next generation.

- Termination Check: Repeat the offspring-creation and selection steps until a termination criterion is met (e.g., a maximum number of generations or no improvement in fitness).
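The problem formulation in the first step can be written out explicitly. The form below is a sketch for data sampled at discrete time points. The weighting matrices \( \mathbf{W}_k \), the number of time points \( N \), and the initial condition \( \mathbf{x}_0 \) are notational assumptions; the dynamics \( f \) and the bounds \( \mathbf{p}_L, \mathbf{p}_U \) follow the formulation cited from [1].

```latex
\[
\min_{\mathbf{p}} \; J(\mathbf{p}) = \sum_{k=1}^{N}
  \big(\mathbf{y}_{msd}(t_k) - \mathbf{y}(\mathbf{p}, t_k)\big)^{T}
  \mathbf{W}_k
  \big(\mathbf{y}_{msd}(t_k) - \mathbf{y}(\mathbf{p}, t_k)\big)
\]
\[
\text{subject to} \quad
f\big(\dot{\mathbf{x}}(t), \mathbf{x}(t), \mathbf{p}, \mathbf{v}\big) = 0, \qquad
\mathbf{x}(t_0) = \mathbf{x}_0, \qquad
\mathbf{p}_L \le \mathbf{p} \le \mathbf{p}_U
\]
```

Each cost evaluation requires solving the dynamics for the candidate \( \mathbf{p} \), which is why the number of evaluations dominates the run time of population-based methods.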

[Figure: High-level workflow for global parameter estimation]

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Parameter Estimation |

|---|---|

| Global Optimization Software (e.g., SloppyCell) | Provides implementations of algorithms like Evolution Strategies and tools for analyzing parameter sloppiness and uncertainties [20]. |

| Constraint Regularization Framework | A computational module to implement Tikhonov regularization, essential for stabilizing ill-posed problems [6] [12]. |

| Fuzzy Inference System (FIS) Library | A software library (e.g., in MATLAB or Python) to construct a FIS that encodes qualitative network relationships when quantitative data is scarce [6] [12]. |

| Model Ensembles | A collection of parameter sets that are all consistent with experimental data, used for robust uncertainty quantification and prediction [20]. |

| Sensitivity & Identifiability Analysis Tools | Software routines to compute the Hessian matrix and its eigenvalues, diagnosing sloppiness and structural non-identifiability [20]. |

| The "Parameter Houlihan" Framework | A machine-learning-guided method to select the optimal subset of parameters to estimate, balancing forecast error and identifiability [19]. |

High-Dimensionality and Computational Cost in Large-Scale Pathway Models

Frequently Asked Questions

1. Why are traditional local optimization methods often inadequate for parameter estimation in pathway models? Parameter estimation problems for nonlinear dynamic systems are frequently ill-conditioned and multimodal (i.e., they contain multiple local optima). Traditional gradient-based local optimization methods can easily get trapped in these local solutions, failing to find the globally optimal set of parameters that best fits the experimental data [1].

2. What are the main classes of global optimization methods, and which is best for my problem? Global optimization methods can be roughly classified as deterministic and stochastic [1].

- Deterministic methods (e.g., branch and bound) can, in theory, guarantee global optimality for certain problems, but their computational effort often increases exponentially with the problem size, making them unsuitable for high-dimensionality.

- Stochastic methods (e.g., Evolution Strategies, Simulated Annealing) use probabilistic approaches to search the parameter space. They cannot guarantee global optimality but are often more efficient at locating the vicinity of excellent solutions for complex, high-dimensional problems. For biochemical pathway estimation, Evolution Strategies (ES) and Evolutionary Programming (EP) have been shown to be particularly robust, though they can require significant computational effort [1].

3. My model is a large system of differential equations. Are there ways to simplify the estimation process? Yes, for models in the S-system formalism, a method called Alternating Regression (AR) combined with decoupling can drastically reduce computational cost [22]. This technique dissects the nonlinear inverse problem into iterative steps of linear regression by temporarily holding one part of the equation constant while solving for the other. This method has been reported to be three to five orders of magnitude faster than directly estimating coupled nonlinear differential equations [22].

4. Are there methods that can work without extensive experimental time-course data? Emerging methods are addressing the challenge of limited experimental data. One innovative approach is the Constrained Regularized Fuzzy Inferred Extended Kalman Filter (CRFIEKF), which eliminates the need for experimental time-course measurements. Instead, it leverages existing imprecise relationships among molecules within the network, using a Fuzzy Inference System (FIS) and Tikhonov regularization to fine-tune parameter values [6].

5. How can foundation models assist in the analysis of large-scale biological data? Foundation models, pre-trained on massive datasets, provide a powerful starting point for various downstream tasks. For example, CellFM is a single-cell foundation model pre-trained on the transcriptomics of 100 million human cells. It can be fine-tuned for specific applications like gene function prediction and perturbation prediction, helping to overcome data noise, sparsity, and batch effects that complicate parameter estimation and model building from raw data [23].

Troubleshooting Guides

Problem 1: Algorithm Convergence Failure

Symptoms: The optimization algorithm fails to converge, oscillates between poor solutions, or consistently settles on a parameter set that does not reproduce the experimental data.

| Possible Cause | Diagnostic Steps | Solution |

|---|---|---|

| Ill-posed or multimodal problem | Run the optimization from multiple, widely dispersed starting points. If results vary significantly, the problem is likely multimodal [1]. | Switch to a robust stochastic global optimization method like Evolution Strategies (ES) [1]. |

| Poorly scaled parameters | Check the magnitude of parameters and state variables. Differences of several orders of magnitude can cause numerical instability. | Normalize state variables and parameters to a common scale (e.g., [0, 1]) before estimation [6]. |

| Insufficient or noisy data | Examine the quality of time-series data and the derived slopes. Noisy data magnifies errors in slope calculations [22]. | Apply data smoothing (e.g., splines, Whittaker filter) before estimation [22]. Consider methods like CRFIEKF that are designed for imprecise data [6]. |

Problem 2: Prohibitive Computational Time

Symptoms: A single parameter estimation run takes days or weeks, hindering research progress.

| Possible Cause | Diagnostic Steps | Solution |

|---|---|---|

| High-dimensional parameter space | Count the number of parameters to be estimated. Traditional methods scale poorly with dimensionality [1]. | For S-system models, use the Alternating Regression (AR) method to decouple the system and reduce computation time by several orders of magnitude [22]. |

| Inefficient optimization algorithm | Determine if a local (e.g., Levenberg-Marquardt) or a naive stochastic search is being used. | Implement more efficient stochastic algorithms like Evolution Strategies or hybrid methods [1]. |

| Complex model evaluation | Profile your code to see where computation time is spent. Often, solving the ODEs is the bottleneck. | Where possible, use simplifications like the decoupling technique for parameter estimation, which works with algebraic equations instead of repeatedly solving differential equations [22]. |

Detailed Experimental Protocols

Protocol 1: Parameter Estimation Using Alternating Regression for S-Systems

This protocol outlines the use of the Alternating Regression (AR) method for estimating parameters in S-system models, a highly efficient alternative to conventional methods [22].

Research Reagent Solutions:

| Item | Function in the Protocol |

|---|---|

| Time-series concentration data | The experimental measurements of metabolite concentrations at discrete time points. |

| Estimated slopes of concentrations | The derived rates of change (dX_i/dt) for each metabolite at each time point. |

| S-system model structure | The defined network topology, indicating which metabolites influence the production and degradation of others. |

Methodology:

1. System Decoupling: For each metabolite \( X_i \) in the S-system model, use the estimated slopes \( S_i(t_k) \) to reformulate the differential equation into an algebraic equation at each time point \( t_k \): \( S_i(t_k) = \alpha_i \prod_{j=1}^n X_j(t_k)^{g_{ij}} - \beta_i \prod_{j=1}^n X_j(t_k)^{h_{ij}} \). This converts a system of \( n \) coupled differential equations into \( n \times N \) uncoupled algebraic equations, where \( N \) is the number of time points [22].

2. Initialization: For the differential equation of metabolite \( X_i \), select initial guesses for the parameters of the degradation term (\( \beta_i \) and \( h_{ij} \)). Incorporate any prior knowledge by constraining certain kinetic orders (e.g., setting them to zero if an influence is known not to exist) [22].

3. Phase 1 - Regression for Production Term:

- Compute the transformed observation vector from the current degradation term: \( \mathbf{y}_d = \log(\beta_i \prod_{j=1}^n X_j^{h_{ij}} + S_i) \). By the decoupled equation, the argument of the logarithm equals the production term, so it must be positive at every time point.

- Using the matrix of log-transformed regressors \( \mathbf{L}_p \), estimate the production term parameters (\( \log(\alpha_i) \) and \( g_{ij} \)) via multiple linear regression: \( \mathbf{b}_p = (\mathbf{L}_p^T \mathbf{L}_p)^{-1} \mathbf{L}_p^T \mathbf{y}_d \) [22].

4. Phase 2 - Regression for Degradation Term:

- Using the new estimates from Phase 1, compute the transformed observation vector from the production term: \( \mathbf{y}_p = \log(\alpha_i \prod_{j=1}^n X_j^{g_{ij}} - S_i) \). Here the argument equals the degradation term.

- Estimate the degradation term parameters (\( \log(\beta_i) \) and \( h_{ij} \)) via regression: \( \mathbf{b}_d = (\mathbf{L}_d^T \mathbf{L}_d)^{-1} \mathbf{L}_d^T \mathbf{y}_p \) [22].

5. Iteration: Iterate steps 3 and 4, using the updated parameter estimates from each phase in the next, until the solution converges (i.e., the change in parameter values falls below a set tolerance) [22].
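The two regression phases can be sketched for a single decoupled equation. The example assumes a hypothetical equation dX1/dt = α X2^g - β X1^h, so production and degradation have different regressors, and uses the fact that the decoupled equation gives production = S + degradation and degradation = production - S. The synthetic series, the function name, and the positivity floor are assumptions. The slopes are generated directly from the algebraic equation rather than estimated from data, so this is a consistency check of the algebra, not evidence of convergence from arbitrary starting guesses.

```python
import numpy as np

def alternating_regression(X1, X2, S, beta, h, max_iter=50, tol=1e-8, floor=1e-12):
    """AR sketch for S = alpha * X2**g - beta * X1**h (one S-system equation)."""
    Lp = np.column_stack([np.ones_like(X2), np.log(X2)])  # production regressors
    Ld = np.column_stack([np.ones_like(X1), np.log(X1)])  # degradation regressors
    for _ in range(max_iter):
        # Phase 1: log(production) = log(degradation + S) is linear in log(X2)
        y_d = np.log(np.maximum(beta * X1**h + S, floor))
        log_alpha, g = np.linalg.lstsq(Lp, y_d, rcond=None)[0]
        # Phase 2: log(degradation) = log(production - S) is linear in log(X1)
        y_p = np.log(np.maximum(np.exp(log_alpha) * X2**g - S, floor))
        log_beta, h_new = np.linalg.lstsq(Ld, y_p, rcond=None)[0]
        beta_new = np.exp(log_beta)
        converged = abs(beta_new - beta) + abs(h_new - h) < tol
        beta, h = beta_new, h_new
        if converged:
            break
    return np.exp(log_alpha), g, beta, h

# Hypothetical synthetic series (all values positive so logarithms are defined)
t = np.linspace(0.0, 5.0, 25)
X1 = 1.0 + 0.5 * np.sin(t)
X2 = 2.0 + np.cos(0.7 * t)
S = 2.0 * X2**0.5 - 1.0 * X1**1.0   # slopes implied by alpha=2, g=0.5, beta=1, h=1

# Starting from the true degradation parameters, one sweep should reproduce them.
print(alternating_regression(X1, X2, S, beta=1.0, h=1.0))
```

The `floor` guard is an assumption added to keep the logarithm defined if an intermediate estimate drives its argument negative. With real, noisy slopes that situation indicates a poor guess or poor slope estimates and deserves inspection rather than silent clipping.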

Protocol 2: Global Optimization with Evolution Strategies

This protocol describes using a stochastic global optimizer, Evolution Strategies (ES), for parameter estimation in complex, nonlinear pathway models [1].

Methodology:

1. Problem Formulation: Define the parameter estimation as a Nonlinear Programming (NLP) problem. The objective is to find the parameter vector \( \mathbf{p} \) that minimizes the cost function \( J \), which is often a weighted sum of squared errors between model predictions \( \mathbf{y}(\mathbf{p}, t) \) and experimental data \( \mathbf{y}_{msd} \), subject to the system dynamics \( f \) (e.g., the ODE model) [1].

2. Algorithm Selection: Choose an Evolution Strategies (ES) algorithm. ES are population-based stochastic search methods inspired by biological evolution, which are particularly effective for multimodal and non-convex problems [1].

3. Initialization: Initialize a population of candidate parameter vectors (μ parents).

4. Generational Loop: For each generation:

- Recombination and Mutation: Generate λ offspring by recombining and mutating the parent population. Mutation is typically performed by adding a normally distributed random vector.

- Evaluation: Simulate the model for each offspring parameter vector and compute the cost function \( J \).

- Selection: Select the μ best offspring to form the next parent generation.

- Termination Check: Repeat the loop until a maximum number of generations is reached or the improvement in the best solution falls below a threshold [1].
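The generational loop can be sketched as a minimal (μ, λ)-ES. This is not the specific ES variant benchmarked in [1]. The toy exponential-decay model stands in for a pathway simulation, recombination is omitted for brevity, and the step-size adaptation rule, population sizes, and bounds are all assumptions.

```python
import numpy as np

def model(p, t):
    """Toy stand-in for a pathway simulation: y(t) = A * exp(-k * t)."""
    A, k = p
    return A * np.exp(-k * t)

def cost(p, t, y_obs):
    """Sum of squared errors between model output and data."""
    return float(np.sum((model(p, t) - y_obs) ** 2))

def evolution_strategy(cost_fn, lower, upper, mu=5, lam=35, generations=60, seed=0):
    """Minimal (mu, lambda)-ES with one self-adapted step size per individual."""
    rng = np.random.default_rng(seed)
    n = lower.size
    tau = 1.0 / np.sqrt(2.0 * n)                    # learning rate for step sizes
    parents = rng.uniform(lower, upper, size=(mu, n))
    sigmas = np.full(mu, 0.1)                       # step size as fraction of range
    costs = np.array([cost_fn(p) for p in parents])
    best_p, best_c = parents[costs.argmin()].copy(), float(costs.min())
    for _ in range(generations):
        idx = rng.integers(0, mu, size=lam)         # one random parent per offspring
        child_sig = sigmas[idx] * np.exp(tau * rng.standard_normal(lam))
        step = child_sig[:, None] * (upper - lower) * rng.standard_normal((lam, n))
        children = np.clip(parents[idx] + step, lower, upper)
        child_cost = np.array([cost_fn(c) for c in children])
        keep = np.argsort(child_cost)[:mu]          # comma selection: offspring only
        parents, sigmas = children[keep], child_sig[keep]
        if child_cost[keep[0]] < best_c:            # remember the best-ever solution
            best_p, best_c = children[keep[0]].copy(), float(child_cost[keep[0]])
    return best_p, best_c

t = np.linspace(0.0, 5.0, 30)
y_obs = model(np.array([2.0, 0.7]), t)              # synthetic, noise-free data
lower, upper = np.array([0.1, 0.01]), np.array([10.0, 5.0])
p_best, c_best = evolution_strategy(lambda p: cost(p, t, y_obs), lower, upper)
print("best parameters:", p_best, "cost:", c_best)
```

Because comma selection discards the parents each generation, the sketch tracks the best-ever candidate separately. The evaluation of `children` is the step that would be distributed across processors for an expensive model.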

The Modern Toolkit: Methodologies for Robust Parameter Estimation

Frequently Asked Questions (FAQs)

FAQ 1: Why do traditional local optimization methods (like gradient-based methods) often fail for parameter estimation in biochemical pathway models?

Traditional gradient-based local optimizers frequently fail because parameter estimation problems for nonlinear dynamic biochemical pathways are often ill-conditioned and multimodal (nonconvex) [1]. This means the cost function has many local optima, causing local methods to get trapped in suboptimal solutions. Stochastic global optimizers like ES, DE, and PSO are better equipped to explore the entire search space and locate the vicinity of the global solution, though they cannot guarantee global optimality with certainty [1].

FAQ 2: My optimization with Particle Swarm Optimization (PSO) is converging prematurely to a local optimum. What strategies can I use to improve its performance?

Premature convergence in PSO is a common issue, often due to a loss of population diversity and an imbalance between exploration and exploitation [24] [25]. You can employ several strategies:

- Dynamic Parameter Control: Use an adaptive inertia weight (ω) that starts with a higher value (e.g., 0.9) to promote global exploration and gradually decreases to a lower value (e.g., 0.4) to refine the search. Adaptive acceleration coefficients can also help [25] [24].

- Hybridization: Integrate the mutation and crossover operators from Differential Evolution (DE) into PSO. This can enhance population diversity and help the swarm escape local optima [24].

- Alternative Topologies: Instead of the global best (gbest) topology, use a local best (lbest) topology, such as a ring structure, where particles only share information with immediate neighbors. This slows convergence and can prevent the entire swarm from clustering prematurely around a suboptimal point [26].
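Two of these strategies are small enough to sketch directly: a linearly decreasing inertia weight and a ring (lbest) neighbourhood lookup. The function names and the neighbourhood size of one particle on each side are assumptions; adaptive schemes in the cited work may differ.

```python
def inertia_weight(iteration, max_iter, w_start=0.9, w_end=0.4):
    """Linearly decreasing inertia weight from w_start to w_end."""
    frac = iteration / max(1, max_iter)
    return w_start - (w_start - w_end) * frac

def ring_local_best(pbest_costs, i):
    """Index of the best personal best among particle i and its two ring neighbours."""
    n = len(pbest_costs)
    neighbourhood = [(i - 1) % n, i, (i + 1) % n]
    return min(neighbourhood, key=lambda j: pbest_costs[j])

costs = [5.0, 3.0, 9.0, 1.0, 7.0]  # hypothetical personal-best costs
print([ring_local_best(costs, i) for i in range(len(costs))])  # [1, 1, 3, 3, 3]
print(inertia_weight(0, 100), inertia_weight(50, 100), inertia_weight(100, 100))
```

In the ring topology, particles 0 and 1 are guided by particle 1 rather than by the overall best (particle 3), so information about the best region spreads gradually instead of pulling the whole swarm at once.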

FAQ 3: For a problem with over 30 parameters to estimate, which of these optimizers has been shown to be effective?

In a benchmark case study involving the estimation of 36 parameters for a nonlinear biochemical dynamic model, a specific type of stochastic algorithm, Evolution Strategies (ES), was able to solve the problem successfully [1] [8]. While other stochastic methods like Evolutionary Programming (EP) were also tested, they often required excessive computation times. This demonstrates the robustness of ES for high-dimensional inverse problems in systems biology [1].

FAQ 4: Is there a significantly faster alternative method for parameter estimation in S-system models?

Yes, a method called Alternating Regression (AR) combined with system decoupling has been developed for S-system models within Biochemical Systems Theory [22]. This method dissects the nonlinear inverse problem into iterative steps of linear regression. A key advantage is its speed; it has been reported to be three to five orders of magnitude faster than methods that directly estimate systems of nonlinear differential equations, making it highly efficient for structure identification and parameter estimation from time series data [22].

Troubleshooting Guides

Issue 1: Algorithm Fails to Find a Biologically Plausible Solution

Potential Causes:

- The solution found violates known biological constraints (e.g., a parameter for a reaction rate is negative).

- The algorithm is converging to a local optimum that fits the data poorly or is not interpretable.

Solutions:

- Implement Parameter Constraints: Enforce upper and lower bounds on all parameters based on prior biological knowledge. If a kinetic order is known to represent an inhibitory effect, constrain its value to be negative; for an activating effect, constrain it to be positive [22].

- Utilize Multi-Start Strategies: Run the optimization multiple times from different random initial points. If several runs converge to a similar solution, it increases confidence that it is a robust optimum [1].

- Refine Cost Function: Ensure the cost function (which measures the fit to experimental data) appropriately weights different datasets or state variables. Poorly scaled objectives can lead the optimizer to ignore key data features.

Issue 2: Unacceptably Long Computation Times

Potential Causes:

- The problem is high-dimensional (many parameters) and complex.

- The algorithm settings are inefficient for the specific problem.

- The function evaluations (simulating the biochemical model) are themselves computationally expensive.

Solutions:

- Algorithm Selection and Tuning: Consider using faster algorithms like Alternating Regression (AR) for S-system models if applicable [22]. For population-based methods (ES, DE, PSO), avoid an excessively large population size. For PSO, strategies like dynamic inertia weight can improve convergence speed [25] [24].

- Exploit Parallel Computing: Stochastic optimizers like ES, DE, and PSO are inherently parallelizable. The evaluation of candidate solutions in each generation can be distributed across multiple processors or cores, significantly reducing wall-clock time [1].

- Problem Decomposition: If possible, use methods to decouple the system of differential equations. This allows you to estimate parameters for one equation at a time, transforming a large, coupled problem into several smaller, more manageable ones [22].

Issue 3: Handling Noisy or Limited Experimental Data

Potential Causes:

- Experimental measurements of metabolite concentrations are inherently noisy.

- The available time-series data is sparse, making it difficult to accurately calculate slopes (derivatives) needed for the optimization.

Solutions:

- Data Smoothing and Slope Estimation: Before parameter estimation, smooth the experimental time-series data using methods like B-splines or the Whittaker filter. These can then be used to robustly estimate the slopes of the concentration changes, which are critical for the fitting process [22].

- Validate on Noise-Free Test Cases: First, test your optimization pipeline on a known, in-silico (computer-generated) model where you know the true parameter values. Add artificial noise to the simulated data to assess the robustness of your approach and tune algorithm parameters accordingly.
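Both suggestions can be combined in a short sketch: generate a known in-silico signal, add artificial noise, smooth it with a Whittaker-type smoother, and estimate slopes. The dense-matrix formulation, the smoothing parameter, and the decay signal are assumptions chosen for brevity; longer series would call for a sparse solver.

```python
import numpy as np

def whittaker_smooth(y, lam=10.0, order=2):
    """Whittaker smoother: minimize ||y - z||^2 + lam * ||D z||^2 over z."""
    n = y.size
    D = np.diff(np.eye(n), n=order, axis=0)   # finite-difference operator
    return np.linalg.solve(np.eye(n) + lam * D.T @ D, y)

rng = np.random.default_rng(1)
t = np.linspace(0.0, 10.0, 50)
clean = 2.0 * np.exp(-0.4 * t)                # hypothetical metabolite decay
noisy = clean + 0.05 * rng.standard_normal(t.size)

smooth = whittaker_smooth(noisy, lam=10.0)
slopes = np.gradient(smooth, t)               # slope estimates dX/dt

print("max slope error:", np.max(np.abs(slopes - (-0.4 * clean))))
```

Because the true slopes are known here, the printed error gives a direct way to tune `lam` before applying the same pipeline to experimental data.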

Quantitative Comparison of Optimizers

The table below summarizes the core characteristics of the three stochastic global optimizers in the context of biochemical pathway parameter estimation.

Table 1: Comparison of Stochastic Global Optimization Algorithms for Biochemical Pathways

| Feature | Evolution Strategies (ES) | Differential Evolution (DE) | Particle Swarm Optimization (PSO) |

|---|---|---|---|

| Core Inspiration | Biological evolution (mutation, selection) [1] | Darwinian evolution, vector differences [27] | Social behavior of bird flocking/fish schooling [28] [26] |

| Key Mechanism | Mutation of object parameters and strategy parameters [1] | Mutation based on weighted vector differences & crossover [27] | Velocity update guided by personal & swarm bests [28] |

| Reported Use / Scale | 36 parameters estimated in a benchmark pathway model [1] [8] | Extensive use in engineering & optimization [27] | Widely applied; performance can be topology-dependent [26] |

| Handling Multimodality | Robust; successful on multimodal benchmark problems [1] | Good; mutation strategy helps explore multiple peaks [27] | Can suffer from premature convergence; requires mitigation [24] [25] |

| Major Strength | Proven robustness on difficult, high-dimensional inverse problems [1] | Simple structure, effective mutation strategy, good convergence [27] | Simple implementation, fast initial convergence, few parameters to tune [28] [24] |

| Common Challenge | Can be computationally expensive [1] | Parameter tuning (e.g., crossover rate) influences performance [27] | Premature convergence to local optima; sensitive to parameter settings [24] [25] |

Detailed Experimental Protocol: Parameter Estimation Using a PSO-DE Hybrid

This protocol outlines the methodology for implementing a hybrid MDE-DPSO algorithm, which combines the strengths of PSO and DE to address PSO's tendency for premature convergence [24].

1. Problem Formulation: Define the parameter estimation task as a Nonlinear Programming problem with Differential-Algebraic Constraints (NLP-DAEs) [1].

- Objective: Find the parameter vector p that minimizes the cost function J, which is typically the weighted sum of squared errors between model predictions y(p,t) and experimental data y_msd [1].

- Subject to: The system dynamics f(x'(t), x(t), p, v) = 0 (the biochemical model) and parameter bounds p_L ≤ p ≤ p_U [1].

2. Algorithm Initialization:

- Swarm/Population: Initialize a population of S particles, each representing a candidate parameter vector X_i. Positions are randomly initialized within the specified parameter bounds [26].

- Velocity: Initialize each particle's velocity V_i to zero or a small random value [26].

- Personal & Global Best: Set each particle's personal best Pbest_i to its initial position. Identify the swarm's global best Gbest [26].

- Algorithm Parameters: Set initial values for the control parameters used in the optimization loop, such as the inertia weight w and the acceleration coefficients c1 and c2.

3. Iterative Optimization Loop: Repeat until a termination criterion is met (e.g., maximum iterations or sufficient cost reduction).

- Dynamic Parameter Update: At each iteration, update the inertia weight w (e.g., using a linear decrease from 0.9 to 0.4) and acceleration coefficients adaptively [24] [25].

- Velocity and Position Update (PSO Core):

  - Update velocity: V_i(t+1) = w * V_i(t) + c1 * r1 * (Pbest_i - X_i(t)) + c2 * r2 * (Gbest - X_i(t)) [28].

  - For MDE-DPSO, incorporate a dynamic strategy: include the "center nearest particle" and a perturbation term in the velocity update to enhance information sharing and randomness [24].

  - Update position: X_i(t+1) = X_i(t) + V_i(t+1) [28].

- Hybrid DE Mutation and Crossover: Apply DE mutation and crossover to generate a DE trial vector for each particle [24].

- Selection and Evaluation:

  - Evaluate the cost function J for both the new PSO position and the DE trial vector.

  - For each particle, accept the new position (PSO or DE) that yields the lower cost.

- Update Best Positions:

  - Update each particle's Pbest_i if a better position is found.

  - Update the swarm's Gbest if any particle's Pbest_i is better than the current Gbest [26].

4. Solution Validation:

- Once converged, validate the final parameter set p* by simulating the biochemical model and comparing the output to experimental data not used in the calibration.

- Perform practical identifiability analysis (e.g., via bootstrapping) to assess the uncertainty of the estimated parameters.
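The overall loop of this protocol can be sketched in simplified form. The code below is not MDE-DPSO [24]: it omits the "center nearest particle" and perturbation terms, uses a plain DE/rand/1/bin trial vector built from personal bests, and does not force the DE indices to differ from the current particle. The toy cost function, bounds, and the values of `c1`, `c2`, `F`, and `CR` are assumptions.

```python
import numpy as np

def pso_de(cost_fn, lower, upper, n_particles=20, iters=100,
           c1=2.0, c2=2.0, F=0.5, CR=0.9, seed=0):
    """Simplified PSO with a DE/rand/1/bin trial vector and greedy acceptance."""
    rng = np.random.default_rng(seed)
    n = lower.size
    X = rng.uniform(lower, upper, size=(n_particles, n))
    V = np.zeros_like(X)
    pbest = X.copy()
    pbest_cost = np.array([cost_fn(x) for x in X])
    g = pbest_cost.argmin()
    gbest, gbest_cost = pbest[g].copy(), float(pbest_cost[g])
    for it in range(iters):
        w = 0.9 - 0.5 * it / max(1, iters - 1)        # inertia decreases 0.9 -> 0.4
        r1, r2 = rng.random(X.shape), rng.random(X.shape)
        V = w * V + c1 * r1 * (pbest - X) + c2 * r2 * (gbest - X)
        X_pso = np.clip(X + V, lower, upper)
        for i in range(n_particles):
            # DE/rand/1 mutation on personal bests, then binomial crossover
            a, b, c = rng.choice(n_particles, size=3, replace=False)
            mutant = np.clip(pbest[a] + F * (pbest[b] - pbest[c]), lower, upper)
            mask = rng.random(n) < CR
            mask[rng.integers(n)] = True              # keep at least one mutant gene
            trial = np.where(mask, mutant, X_pso[i])
            # Greedy choice between the PSO move and the DE trial vector
            c_pso, c_de = cost_fn(X_pso[i]), cost_fn(trial)
            X[i], c_new = (X_pso[i], c_pso) if c_pso <= c_de else (trial, c_de)
            if c_new < pbest_cost[i]:
                pbest[i], pbest_cost[i] = X[i].copy(), c_new
        g = pbest_cost.argmin()
        if pbest_cost[g] < gbest_cost:
            gbest, gbest_cost = pbest[g].copy(), float(pbest_cost[g])
    return gbest, gbest_cost

# Hypothetical toy problem: recover A and k in y(t) = A * exp(-k * t)
t = np.linspace(0.0, 5.0, 30)
y_obs = 2.0 * np.exp(-0.7 * t)
cost = lambda p: float(np.sum((p[0] * np.exp(-p[1] * t) - y_obs) ** 2))
lower, upper = np.array([0.1, 0.01]), np.array([10.0, 5.0])
p_best, c_best = pso_de(cost, lower, upper)
print("best parameters:", p_best, "cost:", c_best)
```

Each particle costs two model evaluations per iteration in this variant, so the diversity gained from the DE trial vector has to be weighed against the doubled simulation budget.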

Workflow and Pathway Diagrams

Diagram 1: Parameter Estimation Workflow.

Diagram 2: Generic Signaling Pathway with Feedback.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for Optimization in Biochemical Research

| Tool/Reagent | Function/Purpose |

|---|---|

| S-system Formulation | A canonical modeling framework within Biochemical Systems Theory (BST) where each differential equation is a power-law function, simplifying structure identification and parameter estimation [22]. |

| Alternating Regression (AR) | A fast estimation method that dissects the nonlinear inverse problem into iterative steps of linear regression, drastically reducing computation time for S-system models [22]. |

| Slope Estimation Tools (Splines, Filters) | Methods like B-splines or Whittaker filters are used to smooth noisy experimental time-series data and robustly estimate the derivatives (slopes) required for parameter fitting [22]. |

| Common Benchmark Suites (CEC) | Standardized sets of test functions (e.g., CEC2013, CEC2017) used to rigorously evaluate and compare the performance of different optimization algorithms before applying them to biological models [24]. |

| Dynamic Inertia Weight | A parameter control strategy in PSO where the inertia starts high for global exploration and decreases over time to facilitate local exploitation, helping to balance the search [25] [24]. |

| Hybrid PSO-DE Algorithm | An optimizer that combines the social guiding mechanism of PSO with the mutation/crossover operations of DE to improve population diversity and avoid premature convergence [24]. |

FAQs

General Concepts

1. What is the primary challenge of parameter estimation in dynamical models of biochemical pathways? The primary challenge is that the parameter estimation problem is often formulated as a non-linear optimization problem which frequently results in a multi-modal (non-convex) cost function. Most local deterministic optimization methods can converge to suboptimal local minima if multiple optima are present, leading to misleading simulation results [29].

2. What is a hybrid optimization strategy, and why is it beneficial? A hybrid optimization strategy combines a global search method with a local search method. It leverages the global optimizer's ability to rapidly find the vicinity of the global solution and the local optimizer's capacity for fast convergence to a precise solution from a good starting point. This combination offers a reliable and efficient alternative for solving large-scale parameter estimation problems, saving significant computational effort [29].

3. How does the presented hybrid method improve upon previous hybrid approaches? This refined hybrid strategy offers two main advantages:

- It employs a multiple-shooting method as the local search procedure, which reduces the multi-modality of the problem and enhances the robustness of the hybrid approach by avoiding spurious solutions.

- It features a systematic switching strategy to determine the transition from the global to the local search during the estimation process, eliminating the need for computationally expensive preliminary tests [29].

Methodology & Implementation

4. What is the limitation of using a pure local method like single-shooting? The single-shooting (or initial value) approach, which optimizes the cost function directly with respect to initial values and parameters, has a limited domain of convergence to the global minimum in search space. Due to the presence of multiple minima, it often gets trapped in local solutions unless the initial guess is already very close to the global optimum [29].

5. How does the multiple-shooting method enhance the local search? Multiple-shooting avoids possible spurious solutions in the vicinity of the global optimum that can trap single-shooting methods. By doing so, it possesses a larger domain of convergence to the global optimum, which significantly increases the stability and success rate of the subsequent local search within the hybrid strategy [29].

6. What are examples of global and local methods used in hybrid strategies?

- Global Stochastic Methods: Evolutionary strategies, Genetic Algorithms, Simulated Annealing. Their advantage is rapidly arriving near the global solution [29].

- Local Deterministic Methods: Levenberg-Marquardt algorithm, Sequential Quadratic Programming. Their advantage is fast local convergence from a good starting point [29].
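The global-then-local structure can be sketched in a few lines. This is a deliberately simplified stand-in for the strategy in [29]. The global stage is plain random sampling rather than an evolutionary algorithm, the local stage is a single-shooting Levenberg-Marquardt iteration rather than multiple shooting, and the switch happens after a fixed sampling budget rather than by a systematic criterion. The toy model and all names are assumptions.

```python
import numpy as np

def residuals(p, t, y_obs):
    """Toy model residuals: y(t) = A * exp(-k * t) minus data."""
    return p[0] * np.exp(-p[1] * t) - y_obs

def global_stage(res_fn, lower, upper, n_samples=200, seed=0):
    """Crude global exploration by uniform random sampling within bounds."""
    rng = np.random.default_rng(seed)
    P = rng.uniform(lower, upper, size=(n_samples, lower.size))
    costs = np.array([np.sum(res_fn(p) ** 2) for p in P])
    return P[costs.argmin()], float(costs.min())

def local_stage(res_fn, p0, n_iter=50, damping=1e-2, h=1e-6):
    """Levenberg-Marquardt refinement with a forward-difference Jacobian."""
    p, r = p0.copy(), res_fn(p0)
    for _ in range(n_iter):
        J = np.column_stack([(res_fn(p + h * e) - r) / h for e in np.eye(p.size)])
        step = np.linalg.solve(J.T @ J + damping * np.eye(p.size), -J.T @ r)
        r_new = res_fn(p + step)
        if np.sum(r_new ** 2) < np.sum(r ** 2):   # accept only improving steps
            p, r, damping = p + step, r_new, damping * 0.5
        else:
            damping *= 10.0                        # retreat toward gradient descent
    return p, float(np.sum(r ** 2))

t = np.linspace(0.0, 5.0, 30)
y_obs = 2.0 * np.exp(-0.7 * t)                     # synthetic, noise-free data
res = lambda p: residuals(p, t, y_obs)
lower, upper = np.array([0.1, 0.01]), np.array([10.0, 5.0])

p_glob, c_glob = global_stage(res, lower, upper)   # coarse global search
p_loc, c_loc = local_stage(res, p_glob)            # local refinement from best sample
print("global stage:", p_glob, c_glob)
print("after local refinement:", p_loc, c_loc)
```

The local stage here does not enforce the parameter bounds, which a production implementation would need to handle. The point of the sketch is only the division of labour: a cheap, broad search supplies the starting point, and a fast local method does the final convergence.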

Troubleshooting Guides

Issue 1: Optimization consistently converges to poor data fits (likely a local minimum)

- Problem Identification: The optimized model simulations show a consistently and significantly poor fit to the experimental time-course data, regardless of initial parameter guesses.

- List Possible Explanations:

- The optimization is trapped in a local minimum of the non-convex cost function.

- The chosen local optimizer is not robust against spurious solutions.

- The switching from global to local search happened too early.

- Collect Data & Eliminate Explanations:

- Check with Experimentation & Identify Cause:

- Solution: Implement a hybrid strategy. Use a global stochastic method (e.g., Evolutionary Algorithm) to broadly explore the parameter space, then switch to a robust local method (e.g., multiple-shooting) to refine the solution [29].

- Ensure the hybrid method uses a systematic switching strategy to avoid premature local search [29].

Issue 2: Parameter estimation fails due to poor quality or sparse experimental data

- Problem Identification: The model cannot be adequately fitted to the data, or parameters have unphysically large confidence intervals.

- List Possible Explanations:

- The available experimental data is too sparse or noisy.

- The model is not identifiable, either structurally or practically, with the current data set.

- The experiment lacks necessary positive and negative controls.

- Collect Data & Eliminate Explanations:

- Review your experimental data. Check if time points are too few or if measurement errors are high.

- Check your controls. For example, in an immunohistochemistry experiment, a dim signal could mean a protocol problem, or it could correctly indicate low protein expression. A positive control (a known highly-expressed protein) can distinguish between these [30].

- Check with Experimentation & Identify Cause:

- Solution: Consider innovative estimation techniques that can handle data limitations, such as methods incorporating fuzzy inference systems to leverage imprecise prior knowledge about molecular relationships [6].

- Redesign experiments to include more informative time points and essential controls to validate the protocol itself [30].

Issue 3: Biochemical solution for assay is not dissolving correctly

- Problem Identification: A powdered biochemical product will not fully dissolve in the intended solvent, potentially affecting concentration and assay results.

- List Possible Explanations:

- Incorrect solvent or buffer used.

- The solution temperature is too low, causing precipitation.

- The solubilization procedure is insufficient.

- Collect Data & Eliminate Explanations:

- Check with Experimentation & Identify Cause:

- Solution: To solubilize the product, try:

- Rapidly stirring or vortexing.

- Warming gently in a water bath.

- Sonicating.

- For difficult amino acids, using NaOH equivalents to help dissolution may be appropriate [31].


Experimental Protocols

Protocol 1: Parameter Estimation using a Hybrid Global-Local Strategy

1. Objective To reliably estimate the unknown parameters of a non-linear ODE model representing a biochemical pathway by minimizing a cost function that quantifies the difference between model predictions and experimental measurements.

2. Background Parameter estimation is formulated as a non-linear optimization problem. The cost function is often multi-modal, necessitating a hybrid approach for robustness and efficiency [29].

3. Materials

- Computational Environment: MATLAB, Python (with SciPy), or similar scientific computing platform.

- Model: A defined ODE model of the biochemical pathway, ẋ(t) = f(x(t), t, p).

- Data: Experimental time-course data, Y_ij.

- Observation Function: A function g_j(x(t_i), p) mapping state variables to measurable outputs.

4. Methodology

- Step 1: Define the Cost Function. Formulate the maximum-likelihood cost function, ℒ(x₀, p), which sums the squared differences between model predictions and experimental data across all time points and observables [29].

- Step 2: Configure the Hybrid Optimizer.

- Global Phase: Select a global stochastic optimizer (e.g., an Evolutionary Algorithm).

- Local Phase: Select a local deterministic optimizer that supports the multiple-shooting method.

- Switching Strategy: Implement a rule to switch from global to local search (e.g., after a fixed number of iterations, or when improvement falls below a threshold).

- Step 3: Execute the Hybrid Optimization.

- Run the global optimizer to explore the parameter space.

- Once the switching criterion is met, pass the current best parameter set as the initial guess to the local multiple-shooting optimizer.

- Run the local optimizer to refine the solution and converge to a precise minimum.

- Step 4: Validate the Solution. Check the quality of the fit. Perform identifiability analysis or cross-validation with withheld data to ensure the estimated parameters are meaningful.
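
The four steps above can be sketched in Python with SciPy. This is a minimal illustration under several assumptions rather than the method of [29]: the two-state pathway, the synthetic data, the noise level `sigma`, and the parameter bounds are invented for the example; SciPy's `differential_evolution` stands in for the global evolutionary phase; the switching rule is simply a fixed generation budget; and the local phase uses single-shooting least squares for brevity, not multiple-shooting.

```python
import numpy as np
from scipy.integrate import solve_ivp
from scipy.optimize import differential_evolution, least_squares

def rhs(t, x, k1, k2):
    # Hypothetical two-step pathway A -> B -> (degraded)
    return [-k1 * x[0], k1 * x[0] - k2 * x[1]]

def simulate(p, t, x0=(1.0, 0.0)):
    sol = solve_ivp(rhs, (t[0], t[-1]), x0, args=tuple(p),
                    t_eval=t, rtol=1e-8, atol=1e-10)
    return sol.y                      # shape (n_states, n_times)

def residuals(p, t, y, sigma):
    # Noise-weighted differences between predictions and data
    return ((simulate(p, t) - y) / sigma).ravel()

def cost(p, t, y, sigma):
    # Step 1: weighted sum of squared residuals over all time points and observables
    return float(np.sum(residuals(p, t, y, sigma) ** 2))

# Synthetic "experimental" data with known parameters (for checking only)
rng = np.random.default_rng(0)
t = np.linspace(0.0, 10.0, 25)
p_true = np.array([0.7, 0.3])
sigma = 0.02
y = simulate(p_true, t) + sigma * rng.normal(size=(2, t.size))

bounds = [(1e-3, 10.0), (1e-3, 10.0)]

# Steps 2 and 3, global phase: explore for a fixed budget of 15 generations
# (tol=0 disables early stopping; polish=False leaves refinement to the local phase)
global_fit = differential_evolution(cost, bounds, args=(t, y, sigma),
                                    maxiter=15, popsize=10, tol=0,
                                    polish=False, seed=1)

# Step 3, local phase: refine from the best point found by the global search
lower, upper = zip(*bounds)
local_fit = least_squares(residuals, global_fit.x, args=(t, y, sigma),
                          bounds=(lower, upper))

print("after global phase:", global_fit.x, "cost", global_fit.fun)
print("after local phase: ", local_fit.x, "cost", 2.0 * local_fit.cost)
```

Note that `least_squares` reports half the sum of squares in `cost`, hence the factor of 2 in the final print. A threshold on improvement per generation could replace the fixed budget as the switching rule.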

Protocol 2: Troubleshooting a Failed Biochemical Assay (e.g., Immunohistochemistry)

1. Objective To systematically identify the cause of a failed experiment (e.g., unexpectedly dim fluorescent signal in immunohistochemistry) and rectify it.

2. Background Troubleshooting is a critical skill that involves a structured process of problem identification, data collection, and hypothesis testing [32].

3. Materials

- Lab notebook and original protocol.

- Required reagents and equipment.

- Control samples.

4. Methodology

- Step 1: Identify the Problem. Clearly define the issue without assuming the cause (e.g., "dim fluorescent signal").

- Step 2: List All Possible Explanations. Brainstorm potential causes for each step of the protocol (e.g., fixation time, antibody concentrations, reagent degradation, equipment failure).

- Step 3: Collect Data. Review your procedure and controls.

- Check if positive and negative controls yielded expected results.

- Verify equipment is functioning (e.g., microscope settings).

- Check reagent storage conditions and expiration dates.

- Step 4: Eliminate Explanations. Rule out causes based on the collected data (e.g., if the positive control worked, the core reagents are likely fine).

- Step 5: Check with Experimentation. Design experiments to test remaining hypotheses. Change only one variable at a time (e.g., test a range of secondary antibody concentrations in parallel).

- Step 6: Identify the Cause. Based on the experimental results, pinpoint the root cause (e.g., "the secondary antibody concentration was too low").

- Step 7: Document Everything. Record all steps, observations, and conclusions in your lab notebook.

Data Presentation

Table 1: Comparison of Optimization Methods for Parameter Estimation

| Method Type | Examples | Advantages | Disadvantages | Best Use Case |
|---|---|---|---|---|
| Local Deterministic | Levenberg-Marquardt, Sequential Quadratic Programming | Fast local convergence from a good starting point [29] | High probability of converging to local (not global) minima in multi-modal problems [29] | Problems where parameters are well-known and the cost function is suspected to be convex |
| Global Stochastic | Evolutionary Algorithms, Genetic Algorithms, Simulated Annealing | Rapidly arrives in the vicinity of the global solution; large convergence domain [29] | Computationally expensive to refine the solution; no guarantee of finding the global optimum [29] | Large, complex problems with many parameters and no good initial guess |
| Hybrid (Global-Local) | Evolutionary Strategy + Multiple-Shooting | Combines robustness of global search with speed of local convergence; systematic switching enhances efficiency [29] | Implementation is more complex than standalone methods | Recommended: Large-scale parameter estimation problems in systems biology where robustness and efficiency are critical [29] |
| Deterministic Global | Branch-and-Bound, Interval Analysis | Guaranteed convergence to the global optimum [29] | Computational cost increases drastically with the number of parameters; only feasible for small systems [29] | Small-scale models with a handful of unknown parameters |

Table 2: Research Reagent Solutions for Key Experiments

| Item | Function / Explanation | Example Application in Protocol |
|---|---|---|
| Primary Antibody | Binds specifically to the protein of interest for detection [30] | Immunohistochemistry, Western Blot [30] [33] |
| Secondary Antibody | Conjugated to a fluorophore or enzyme; binds to the primary antibody to allow visualization [30] | Immunohistochemistry, Western Blot [30] [33] |
| Taq DNA Polymerase | Enzyme that synthesizes new DNA strands during PCR by extending primers [32] | Polymerase Chain Reaction (PCR) [33] |
| dNTPs | Deoxynucleoside triphosphates (dATP, dCTP, dGTP, dTTP); the building blocks for DNA synthesis [32] | Polymerase Chain Reaction (PCR) [32] |
| Magnetic Beads | Used to bind and purify specific molecules, like mRNA, from a complex mixture [33] | RNA Extraction and Purification [33] |
| Restriction Enzymes | Enzymes that cut DNA at specific recognition sequences | Molecular Cloning [32] |
| Competent Cells | Bacterial cells (e.g., E. coli) treated so that they can take up foreign plasmid DNA [32] | Transformation in Molecular Cloning [32] |

Pathway and Workflow Visualizations

Diagram Title: Hybrid Optimization Strategy Workflow

Diagram Title: Systematic Troubleshooting Process

Frequently Asked Questions (FAQs)

Q1: Why does my Joint Extended Kalman Filter (JEKF) fail to estimate parameters in my biological pathway model?

This is a known failure case for JEKF when it is applied to Unstructured Mechanistic Models (UMMs), which are common in biomanufacturing and biochemical pathway modeling. The failure occurs when three conditions hold together: (1) the UMM contains unshared parameters (parameters that affect only a single state variable); (2) the initial state error covariance matrix P(t=0) and the process noise covariance Q are initialized with uncorrelated elements, that is, with zero off-diagonal entries; and (3) only one state variable is measured. In this scenario, the Kalman gain for the unshared parameter remains zero, so the parameter estimate is never updated [34].

Solution: The SANTO approach can side-step this failure. It involves adding a small, non-zero quantity to the state error covariance between the measured state variable and the unshared parameter in the initial P(t=0) matrix. This prevents the Kalman gain from being zero and allows for simultaneous state and parameter estimation [34].
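
The effect of that small covariance term can be seen directly in the Kalman gain formula, K = P Hᵀ (H P Hᵀ + R)⁻¹. The sketch below is only an illustration of the first measurement update, not the SANTO implementation from [34]: the three-element augmented state, the numerical values, and the names `P0_diag`, `P0_santo`, and `eps` are assumptions chosen for the example. In a full JEKF the covariance is also propagated through the model Jacobian between updates, which this snippet does not show.

```python
import numpy as np

def kalman_gain(P, H, R):
    # Standard Kalman gain: K = P H^T (H P H^T + R)^{-1}
    S = H @ P @ H.T + R
    return P @ H.T @ np.linalg.inv(S)

# Augmented state z = [x_measured, x_unmeasured, theta]; only x_measured is observed
H = np.array([[1.0, 0.0, 0.0]])
R = np.array([[0.01]])               # measurement noise variance (assumed)

# Case 1: uncorrelated initialisation (diagonal P0)
P0_diag = np.diag([0.1, 0.1, 0.5])

# Case 2: add a small covariance between the measured state and the parameter
eps = 1e-3                           # arbitrary small value, chosen for illustration
P0_santo = P0_diag.copy()
P0_santo[0, 2] = P0_santo[2, 0] = eps

K_diag = kalman_gain(P0_diag, H, R)
K_santo = kalman_gain(P0_santo, H, R)

print("parameter gain, diagonal P0:      ", K_diag[2, 0])
print("parameter gain, with covariance:  ", K_santo[2, 0])
```

With a diagonal P0 the parameter row of the gain is exactly zero, so the measurement innovation cannot move the parameter estimate; the off-diagonal term makes that entry equal to eps / (P0[0, 0] + R). The value of `eps` must stay small enough that P0 remains positive definite.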

Q2: How do I choose between the Joint EKF (JEKF), Dual EKF (DEKF), and Ensemble KF (EnKF) for parameter estimation?

The choice depends on your specific priorities regarding computational cost, accuracy, and implementation complexity.

- JEKF is often the best choice when you need a numerically economical and robust algorithm for moderate-size systems. It avoids the computational overhead of running two separate filters and can be simpler to implement and tune [34].

- DEKF uses two consecutive EKFs to separate state and parameter estimation. This separation can be advantageous in some specific scenarios, though it comes with higher computational cost [34].

- EnKF is particularly powerful for high-dimensional systems and for estimating the complete background error covariance matrix, which is essential for techniques like Strongly Coupled Data Assimilation (SCDA). It uses an ensemble of model states to compute the error statistics [35] [36].

Q3: My parameter estimates for a nonlinear biochemical pathway are unstable or inaccurate. What advanced strategies can I use?

Standard Kalman filters can struggle with the high nonlinearities and parameter correlations (sloppiness) common in biological models. Consider these advanced hybrid approaches:

- Incorporate Regularization: Techniques like Tikhonov regularization can be integrated with the Kalman filter to handle ill-posed problems and prevent overfitting, especially when parameters are correlated [6] [37].

- Use Sensitivity Analysis: Perform local sensitivity analysis to identify which parameters are practically identifiable. You can then use methods like orthogonalization and D-optimal design to select a subset of parameters for estimation, reducing variance and improving numerical stability [37].

- Apply a Fuzzy Inference System (FIS): For systems where precise relationships are unknown, a Constrained Regularized Fuzzy Inferred EKF (CRFIEKF) can use imprecise, existing knowledge about molecular relationships within the network to estimate parameters, even without experimental time-course data [6].

- Integrate Deep Learning: A Deep Neural Network with Ensemble Adjustment Kalman Filter (DNN-EAKF) can learn nonlinear relationships between model variables. This is especially useful for cross-component updates in coupled models, as it improves the estimation of error covariances when ensemble sizes are limited [35].
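
As an illustration of the sensitivity-analysis strategy in the list above, the following sketch ranks parameters by how much independent information the data carry about them. It rests on several assumptions: the Michaelis-Menten-plus-offset model, the nominal values, and the substrate range are hypothetical, and column-pivoted QR is used here as a simple stand-in for an orthogonalization-based ranking, not as the exact procedure of [37].

```python
import numpy as np
from scipy.linalg import qr

def model(p, s):
    # Hypothetical observable: Michaelis-Menten rate plus a constant offset
    vmax, km, offset = p
    return vmax * s / (km + s) + offset

def scaled_sensitivities(p, s, rel_step=1e-6):
    # Forward finite differences; column j approximates p_j * dy/dp_j
    p = np.asarray(p, dtype=float)
    base = model(p, s)
    S = np.empty((s.size, p.size))
    for j in range(p.size):
        dp = np.zeros_like(p)
        dp[j] = rel_step * p[j]
        S[:, j] = (model(p + dp, s) - base) / rel_step
    return S

names = ["vmax", "km", "offset"]
s = np.linspace(0.01, 0.1, 20)        # substrate far below Km (assumed design)
p_nominal = [1.0, 10.0, 0.05]

S = scaled_sensitivities(p_nominal, s)

# Pivoted QR orders columns by remaining independent norm; |diag(R)| measures
# what each parameter adds beyond those ranked before it
_, R, order = qr(S, mode="economic", pivoting=True)
independent_info = np.abs(np.diag(R))

for rank, j in enumerate(order):
    print(f"{rank + 1}. {names[j]:6s} independent sensitivity = {independent_info[rank]:.2e}")
```

In this design the substrate stays far below Km, so the data constrain mainly the ratio vmax/Km. The last-ranked parameter therefore contributes almost nothing independent, which makes it a candidate to fix at a literature value or to target with a new experiment at higher substrate concentrations.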

Q4: How can I handle time-varying parameters in my epidemiological or biochemical dynamic model?

Time-varying parameters can be estimated using an augmented state-space approach with the Ensemble Kalman Filter: the model parameters are appended to the state vector and treated as additional state variables with their own dynamics. To mitigate issues with strong nonlinearity in the augmented system, a damping factor can be applied to the parameter evolution, which slows down the adaptation rate and improves stability [36].
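
A compact sketch of the augmented-state idea follows. It is written under explicit assumptions and is not the algorithm of [36]: the one-state production/degradation model, the random-walk parameter dynamics, the ensemble size, and the noise levels are invented for illustration, and the damping factor is implemented in one plausible form, as a multiplier on the parameter increment in the analysis step.

```python
import numpy as np

rng = np.random.default_rng(0)
dt, n_steps, n_ens = 0.1, 300, 100
obs_sd = 0.05
damping = 0.5      # in (0, 1]; assumed form: scales the parameter update
walk_sd = 0.02     # random-walk noise that keeps the parameter ensemble spread

def step(x, k):
    # Euler step of dx/dt = 1 - k * x (constant production, first-order loss)
    return x + dt * (1.0 - k * x)

# Synthetic truth: the rate constant jumps from 0.5 to 1.0 halfway through
k_true = np.where(np.arange(n_steps) < n_steps // 2, 0.5, 1.0)
x = 0.0
obs = np.empty(n_steps)
for i in range(n_steps):
    x = step(x, k_true[i])
    obs[i] = x + obs_sd * rng.normal()

# Augmented ensemble: each member carries a state x and a parameter k
x_ens = rng.normal(0.0, 0.1, n_ens)
k_ens = rng.uniform(0.1, 2.0, n_ens)
k_est = np.empty(n_steps)

for i in range(n_steps):
    # Forecast: parameter follows a random walk (reflected to stay positive)
    k_ens = np.abs(k_ens + walk_sd * rng.normal(size=n_ens))
    x_ens = step(x_ens, k_ens)

    # Analysis with perturbed observations (stochastic EnKF)
    y_pert = obs[i] + obs_sd * rng.normal(size=n_ens)
    innov = y_pert - x_ens
    var_x = np.var(x_ens, ddof=1)
    cov_kx = np.cov(k_ens, x_ens)[0, 1]
    gain_x = var_x / (var_x + obs_sd**2)
    gain_k = cov_kx / (var_x + obs_sd**2)

    x_ens = x_ens + gain_x * innov
    k_ens = k_ens + damping * gain_k * innov   # damped parameter update
    k_est[i] = k_ens.mean()

print("estimate before the jump:", k_est[n_steps // 2 - 1])
print("estimate at the end:     ", k_est[-1])
```

Setting `damping` to 1 recovers the undamped augmented-state update; smaller values make the parameter track changes more slowly but react less to individual noisy measurements.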

Troubleshooting Guides

Problem: Filter Divergence or Numerical Instability

- Symptoms: Estimates become unrealistically large, or the covariance matrix loses its positive definiteness.

- Potential Causes and Solutions:

- Cause 1: Excessive linearization error in highly nonlinear systems (a problem for EKF).

- Solution: Switch to a filter better suited for nonlinear systems, such as the Unscented Kalman Filter (UKF) or Cubature Kalman Filter (CKF). These sigma-point filters more accurately capture the mean and covariance of states through nonlinear transformations [38] [37].

- Cause 2: Poorly chosen initial covariance matrices or process noise.