Network-Guided Biomarker Discovery: Integrating AI, Graphs, and Multi-Omics for Precision Oncology

Abstract

The complexity of cancer and other complex diseases demands a paradigm shift beyond single-molecule biomarkers. This article explores the transformative field of network-guided biomarker discovery, an approach that leverages biological networks and artificial intelligence to uncover robust, clinically actionable molecular signatures. We cover the foundational principles of moving from single-entity to systems-level thinking, detail cutting-edge methodological frameworks like Graph Neural Networks (GNNs) and multi-omics integration, and address key challenges in model interpretability and data heterogeneity. Through comparative analysis and validation strategies, we demonstrate how these approaches are yielding superior biomarkers for patient stratification, treatment response prediction, and drug development, ultimately advancing the goals of precision medicine.

From Single Molecules to Systems: The Foundation of Network-Based Biomarkers

The pursuit of molecular biomarkers has long been dominated by reductionist approaches focusing on single molecules, yet this paradigm has yielded disappointingly few clinically validated biomarkers. This application note delineates the fundamental limitations of single-gene and single-molecule approaches in capturing the multifactorial nature of complex diseases. We present evidence that network-based biomarker discovery strategies, which integrate multi-omics data with biological context, overcome these limitations by providing more robust, interpretable, and clinically actionable signatures. Supported by quantitative comparisons and detailed protocols, this note provides researchers with practical frameworks for implementing network-guided approaches in oncological and complex disease research.

Despite decades of intensive research and significant investment, the translation of biomarker discoveries into clinical practice remains remarkably poor. The U.S. Food and Drug Administration (FDA) has approved fewer than 30 protein biomarkers for cancer, with only two biomarker panels approved for breast cancer prognosis (Oncotype DX and MammaPrint) and one for ovarian cancer (OVA1) [1]. This translation gap underscores fundamental limitations in traditional biomarker discovery paradigms.

Biomarkers are defined as objectively measurable indicators of specific biological conditions, particularly those related to disease, while biosignatures represent collections of features that together define a biomarker [1]. The traditional approach has oscillated between two poles: hypothesis-based discovery, which builds on mechanistic understanding of disease processes, and discovery-based approaches, which identify statistically significant molecular associations with disease states [1]. With the advent of high-throughput technologies, the discovery-based approach has predominated, yet its success has been constrained by analytical limitations and biological complexity.

Table 1: Clinically Utilized Biomarker Types and Examples

| Biomarker Type | Clinical Function | Examples |
|---|---|---|
| Diagnostic | Detect early disease state; classify disease subtypes | PSA (prostate cancer), OVA1 (ovarian cancer) |
| Prognostic | Predict disease progression and recurrence | Oncotype DX (breast cancer recurrence), Decipher (prostate cancer aggressiveness) |
| Predictive | Identify patients likely to respond to specific treatments | HER2/neu (trastuzumab response), EGFR mutations (tyrosine kinase inhibitor response) |
| Risk | Identify patients likely to develop disease | BRCA1/2 mutations (breast/ovarian cancer risk) |

Fundamental Limitations of Single-Gene Approaches

Inability to Capture Disease Complexity

Complex diseases such as cancer, neurodegenerative disorders, and metabolic syndromes arise from dysregulated molecular networks rather than isolated molecular defects. Single-gene approaches fundamentally cannot capture this multifactorial nature of complex diseases [2]. These diseases typically involve subtle alterations across multiple biological pathways, with no single molecule bearing sufficient discriminatory power. The traditional single-biomarker-to-single-disease approach fails to reflect the biological reality that complex diseases have diverse origins and manifestations [2].

Statistical and Analytical Challenges

High-dimensional omics data presents significant statistical challenges that single-marker approaches struggle to address appropriately. With thousands of metabolites or genes measured simultaneously, univariate statistical methods (e.g., t-tests with Bonferroni correction) exhibit critical limitations:

- High false discovery rates emerge due to intercorrelation between molecular features [3]

- Limited sensitivity for detecting coordinated subtle changes across multiple molecules [3]

- Biological pathway bias: a tendency to identify metabolites or genes from a single biological pathway while missing orthogonal pathways [3]

As the number of assayed metabolites grows, as in nontargeted compared with targeted metabolomics, multivariate methods outperform univariate ones, particularly in selectivity and in producing fewer spurious associations [3].
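To make the univariate baseline concrete, the sketch below shows the two standard ways of adjusting per-feature p-values (for example, from a t-test on each metabolite): Bonferroni, which controls the family-wise error rate, and Benjamini-Hochberg, which controls the false discovery rate. It is a minimal, dependency-free illustration; in practice an established routine such as `multipletests` in Python's statsmodels or `p.adjust` in R would normally be used. Neither correction accounts for correlation between features, which is the source of the spurious hits described above.

```python
def bonferroni(pvalues):
    """Bonferroni-adjusted p-values: multiply by the number of tests, capped at 1."""
    m = len(pvalues)
    return [min(1.0, p * m) for p in pvalues]


def benjamini_hochberg(pvalues):
    """Benjamini-Hochberg (FDR) adjusted p-values using the step-up procedure."""
    m = len(pvalues)
    # Indices of the p-values in ascending order.
    order = sorted(range(m), key=lambda i: pvalues[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down so adjusted values stay monotone.
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, pvalues[i] * m / rank)
        adjusted[i] = running_min
    return adjusted
```

For five evenly spaced p-values from 0.01 to 0.05, Bonferroni multiplies each by 5, whereas Benjamini-Hochberg adjusts all of them to 0.05, which illustrates why FDR control is less conservative.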

Lack of Biological Context and Interpretability

Single-gene approaches evaluate biomarkers in isolation, disregarding their functional and statistical dependencies within biological systems [4]. This limitation has profound implications:

- Poor mechanistic insight into disease processes

- Reduced biological interpretability of biomarker signatures

- Limited ability to prioritize candidate biomarkers for functional validation

The absence of biological context means that statistically significant single molecules may be epiphenomenal rather than causally linked to disease processes, reducing their utility for understanding disease mechanisms or identifying therapeutic targets.

Quantitative Evidence: Comparing Traditional and Network-Based Approaches

Performance Metrics in Cancer Classification

Recent studies provide quantitative evidence of the superiority of network-based approaches. In a comprehensive evaluation across 19 cancer types from The Cancer Genome Atlas (TCGA), network-based biomarker discovery demonstrated remarkable classification performance:

Table 2: Performance of NetRank Biomarker Signatures Across Cancer Types

| Cancer Type | Sample Size | AUC | Accuracy | Signature Size |
|---|---|---|---|---|
| Breast Cancer (BRCA) | 862 cases, 2526 controls | 93% | 98% | 100 genes |
| Thyroid Cancer (THCA) | 502 cases | 99% | 99% | Compact signature |
| Prostate Cancer (PRAD) | 497 cases | 98% | 97% | Compact signature |
| Cholangiocarcinoma (CHOL) | 36 cases | 82% | 80% | Compact signature |

The NetRank algorithm, which integrates protein interactions, co-expressions, and functions with phenotypic associations, achieved area under the curve (AUC) values above 90% for most cancer types using compact gene signatures [4]. Notably, the algorithm favored "proteins strongly associated with the phenotype and connected to other significant proteins," leveraging network properties to enhance biomarker performance [4].

Statistical Power in Multivariate Analysis

A quantitative comparison of statistical methods across simulated and experimental metabolomics data revealed crucial advantages of multivariate approaches:

Table 3: Statistical Performance Comparison in Metabolomics Biomarker Discovery

| Statistical Method | Scenario (N = subjects, M = metabolites) | Positive Predictive Value | False Positive Rate | Key Strength |
|---|---|---|---|---|
| Univariate (FDR) | N=200, M=2000 | Low | High | Simplicity |
| LASSO | N=200, M=2000 | High | Low | Feature selection |
| SPLS | N=200, M=2000 | High | Low | Handling high dimensionality |
| Random Forest | N=5000, M=200 | Moderate | Moderate | Robustness |

As sample size increased, univariate methods showed a higher apparent false discovery rate, driven by substantial correlation between metabolites directly associated with the outcome and metabolites not associated with it [3]. In scenarios where the number of metabolites was similar to or exceeded the number of study subjects, sparse multivariate models (LASSO, SPLS) exhibited the most robust statistical power and the most consistent results [3].

Network-Based Biomarker Discovery: Principles and Mechanisms

Theoretical Foundation

Network-based biomarker discovery operates on the principle that disease-associated molecules do not function in isolation but within interconnected functional modules. This approach leverages two key biological insights:

- Network proximity: Molecules associated with similar phenotypes tend to lie close to one another within molecular interaction networks [4]

- Functional coherence: Robust biomarker signatures comprise molecules participating in coordinated biological processes or pathways [2]

The random surfer model, implemented in algorithms like NetRank, integrates protein connectivity with statistical phenotypic correlation, favoring "proteins strongly associated with the phenotype and connected to other significant proteins" [4]. This integration follows the mathematical formulation:

$$r_j^{n} = (1-d)\,s_j + d \sum_{i=1}^{N} \frac{m_{ij}\, r_i^{n-1}}{\deg_i}, \qquad 1 \le j \le N$$

where r represents the ranking score, s is the statistical association with phenotype, m_ij represents connectivity between nodes, d is a damping factor balancing statistical association and network connectivity, n is the iteration number, N is the number of nodes, and deg_i is the degree of node i [4].
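The ranking update can be iterated directly. The following is a minimal sketch, assuming an unweighted, undirected network (so m_ij is 1 for interacting nodes and 0 otherwise) and the degree-normalized update r_j = (1 - d) s_j + d Σ_i m_ij r_i / deg_i. It is not the NetRank R package itself; the function name `netrank` and the toy inputs are hypothetical, while the defaults d = 0.85 and 100 iterations follow the protocol below.

```python
def netrank(adjacency, scores, d=0.85, iterations=100):
    """Iterate r_j <- (1 - d) * s_j + d * sum_i m_ij * r_i / deg_i.

    adjacency: dict node -> set of neighbours (undirected and unweighted, so m_ij is 1
               when i and j interact and 0 otherwise).
    scores:    dict node -> association s_j with the phenotype (e.g. |correlation|).
    """
    rank = dict(scores)  # start from the association scores
    for _ in range(iterations):
        new_rank = {}
        for j in scores:
            # Each neighbour i passes on its current score, split evenly across its deg_i edges.
            spread = sum(rank[i] / len(adjacency[i]) for i in adjacency.get(j, ()))
            new_rank[j] = (1 - d) * scores[j] + d * spread
        rank = new_rank
    return rank


graph = {"A": {"B", "C"}, "B": {"A", "C"}, "C": {"A", "B"}, "D": set()}
association = {"A": 0.9, "B": 0.1, "C": 0.1, "D": 0.9}
ranks = netrank(graph, association)
```

In this toy example, A and D have the same association score, but an isolated node such as D keeps only the (1 - d) share of its score, so the connected node A ends up ranked higher.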

Key Advantages of Network Approaches

- Enhanced Biological Interpretability: Network-derived biomarkers naturally map to biological pathways and processes, providing immediate mechanistic context [2]

- Improved Robustness: By leveraging network topology, these approaches are less sensitive to technical noise and individual sample variability [4]

- Compact Signature Size: Network prioritization identifies minimally redundant yet maximally informative biomarker sets [4]

- Cross-Platform Consistency: Studies have demonstrated strong correlation (Pearson's R = 0.68) between biomarker rankings derived from precomputed biological networks (e.g., STRINGdb) and those derived from co-expression networks computed directly from the data [4]

Experimental Protocols for Network-Guided Biomarker Discovery

Protocol 1: NetRank Implementation for Transcriptomic Data

Purpose: To identify robust biomarker signatures for cancer classification from RNA-seq data using network-based prioritization.

Materials and Reagents:

- RNA-seq gene expression data (e.g., from TCGA)

- R statistical environment (v3.6.3 or higher)

- NetRank R package (github.com/Alfatlawi/Omics-NetRank)

- STRINGdb R package for protein-protein interaction networks

- WGCNA package for co-expression network construction

Procedure:

- Data Preprocessing: Normalize expression data using MinMaxScaler function and log2 transformation. Split data into development (70%) and test (30%) sets.

- Network Construction:

- Option A: Retrieve protein-protein interaction network from STRINGdb

- Option B: Construct co-expression network using WGCNA method

- Phenotypic Association: Calculate Pearson correlation coefficients between gene expression and phenotypic traits using WGCNA package

- Network Ranking: Execute NetRank algorithm with damping factor d=0.85 for 100 iterations to integrate network connectivity with phenotypic association

- Signature Selection: Select top 100 proteins with highest NetRank scores and P-value < 0.05 for further validation

- Performance Validation: Evaluate signature performance on test set using PCA and SVM classification
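The preprocessing, splitting, and selection steps can be sketched in plain Python. This is a hedged illustration rather than the Omics-NetRank code: it assumes a samples-by-genes matrix of non-negative expression values and applies log2(x + 1) before per-gene min-max scaling (the protocol does not state the pseudocount or the order, so both are assumptions), and all helper names are hypothetical. For brevity the scaling is computed on one matrix; in a real pipeline the minimum and maximum should be learned on the development set only and then applied to the test set, to avoid information leakage.

```python
import math
import random


def log2_minmax(matrix):
    """log2(x + 1)-transform, then min-max scale each gene (column) to [0, 1]."""
    logged = [[math.log2(x + 1) for x in row] for row in matrix]
    scaled = [row[:] for row in logged]
    for g in range(len(logged[0])):
        column = [row[g] for row in logged]
        lo, hi = min(column), max(column)
        span = hi - lo
        for row in scaled:
            # Constant genes carry no information; map them to 0.
            row[g] = (row[g] - lo) / span if span > 0 else 0.0
    return scaled


def split_development_test(n_samples, test_fraction=0.3, seed=0):
    """Reproducibly shuffle sample indices and split them (70/30 by default)."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    n_test = round(n_samples * test_fraction)
    return indices[n_test:], indices[:n_test]


def select_signature(netrank_scores, pvalues, top_k=100, alpha=0.05):
    """Keep genes with P < alpha, then return the top_k by NetRank score."""
    passing = [g for g in netrank_scores if pvalues[g] < alpha]
    passing.sort(key=lambda g: netrank_scores[g], reverse=True)
    return passing[:top_k]
```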

Validation Metrics: Area under ROC curve (AUC), accuracy, F1 score, and functional enrichment analysis [4].
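For reference, the classification metrics listed above can be computed as follows. This minimal sketch computes ROC AUC through its rank (Mann-Whitney) interpretation, the probability that a randomly chosen case scores higher than a randomly chosen control, together with accuracy and F1 for hard 0/1 predictions. Libraries such as scikit-learn (`roc_auc_score`, `accuracy_score`, `f1_score`) provide equivalent, faster implementations.

```python
def roc_auc(labels, scores):
    """ROC AUC as P(score of a random positive > score of a random negative).

    Ties count as half a win (Mann-Whitney U formulation).
    """
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("need at least one positive and one negative sample")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))


def accuracy(labels, predictions):
    """Fraction of predictions that match the labels."""
    return sum(y == p for y, p in zip(labels, predictions)) / len(labels)


def f1_score(labels, predictions):
    """Harmonic mean of precision and recall for the positive class (label 1)."""
    tp = sum(1 for y, p in zip(labels, predictions) if y == 1 and p == 1)
    fp = sum(1 for y, p in zip(labels, predictions) if y == 0 and p == 1)
    fn = sum(1 for y, p in zip(labels, predictions) if y == 1 and p == 0)
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)
```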

Protocol 2: Multivariate Statistical Analysis for Metabolomic Biomarkers

Purpose: To identify multivariate metabolite signatures associated with clinical phenotypes while minimizing false discoveries.

Materials and Reagents:

- Mass spectrometry-based metabolomics data

- R or Python statistical environment

- LASSO or SPLS implementation (e.g., glmnet, mixOmics packages in R)

Procedure:

- Data Quality Control: Apply missing value imputation, outlier detection, and batch effect correction

- Data Normalization: Perform probabilistic quotient normalization or similar approach to account for sample concentration variation

- Feature Pre-screening: Remove metabolites with low coefficient of variation or excessive missing values

- Model Training: Implement sparse multivariate method (LASSO or SPLS) with repeated k-fold cross-validation for hyperparameter tuning

- Signature Extraction: Identify non-zero coefficients in the final model as the biomarker signature

- Independent Validation: Apply signature to completely independent cohort to assess generalizability
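Probabilistic quotient normalization (step 2) estimates one dilution factor per sample as the median ratio between that sample and a reference profile. The sketch below assumes a samples-by-metabolites matrix of positive, already imputed intensities and uses the feature-wise median across samples as the reference; published workflows often apply a total-area normalization first, which is omitted here. The function name is hypothetical.

```python
import statistics


def pqn_normalize(samples, reference=None):
    """Probabilistic quotient normalisation of metabolite intensities.

    samples:   list of rows (one per sample), each a list of positive intensities.
    reference: reference profile; defaults to the feature-wise median across samples.
    """
    n_features = len(samples[0])
    if reference is None:
        reference = [statistics.median(row[j] for row in samples) for j in range(n_features)]
    normalized = []
    for row in samples:
        # Ratio of each feature to the reference; the median ratio estimates
        # the sample's overall dilution factor.
        quotients = [x / r for x, r in zip(row, reference) if r > 0]
        dilution = statistics.median(quotients)
        normalized.append([x / dilution for x in row])
    return normalized
```

Samples that differ only by an overall concentration factor, such as `[1, 2, 3]` and `[2, 4, 6]`, are mapped onto the same normalized profile.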

Validation Metrics: Positive predictive value, negative predictive value, false positive rate, and cross-validation error [3].
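The core of steps 4 and 5, a sparse model whose non-zero coefficients form the signature, can be illustrated with a small LASSO solver based on cyclic coordinate descent with soft-thresholding. This is a teaching sketch under stated assumptions: features and outcome are centered, no intercept is fitted, and the penalty `lam` is fixed rather than tuned. In practice glmnet in R (with `cv.glmnet` choosing the penalty by cross-validation) or an equivalent library would be used, and the helper names here are hypothetical.

```python
def soft_threshold(value, penalty):
    """Shrink value towards zero by penalty (the LASSO proximal operator)."""
    if value > penalty:
        return value - penalty
    if value < -penalty:
        return value + penalty
    return 0.0


def lasso_coordinate_descent(X, y, lam, n_iter=200):
    """Minimise (1 / 2n) * ||y - X b||^2 + lam * ||b||_1 by cyclic coordinate descent.

    X: list of n rows with p features each (assumed centred); y: length-n outcome (assumed centred).
    """
    n, p = len(X), len(X[0])
    beta = [0.0] * p
    residual = list(y)  # y - X @ beta, updated incrementally
    for _ in range(n_iter):
        for j in range(p):
            column = [row[j] for row in X]
            z = sum(x * x for x in column) / n
            if z == 0:
                continue
            # Correlation of feature j with the partial residual (its own fit added back).
            rho = sum(x * r for x, r in zip(column, residual)) / n + z * beta[j]
            new_beta = soft_threshold(rho, lam) / z
            delta = new_beta - beta[j]
            if delta:
                residual = [r - x * delta for x, r in zip(column, residual)]
                beta[j] = new_beta
    return beta


def signature(beta, names):
    """Biomarker signature: features whose coefficients are non-zero."""
    return [name for name, b in zip(names, beta) if b != 0.0]


X = [[1, 1], [-1, 1], [1, -1], [-1, -1]]
y = [2, -2, 2, -2]  # depends only on the first metabolite
beta = lasso_coordinate_descent(X, y, lam=0.1)
```

In this toy data only `m1` keeps a non-zero coefficient (shrunk from 2 to about 1.9 by the penalty), and a sufficiently large penalty such as `lam=3.0` shrinks every coefficient to zero.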

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 4: Essential Research Reagents for Network-Guided Biomarker Discovery

| Reagent/Solution | Function | Application Notes |
|---|---|---|
| STRINGdb | Protein-protein interaction database | Provides known and predicted biological interactions; use the STRINGdb R package (STRING v10) for direct access [4] |
| WGCNA R Package | Weighted gene co-expression network analysis | Constructs biologically meaningful co-expression networks from transcriptomic data [4] |
| LASSO Implementation | Sparse multivariate regression | Performs variable selection and regularization; use glmnet package in R [3] |
| PRM Mass Spectrometry | Targeted protein quantification | Enables antibody-free validation of protein biomarkers; high sensitivity and accuracy [5] |
| MinMaxScaler | Data normalization | Preserves relationships in RNA-seq data without assuming distribution; available in scikit-learn [4] |

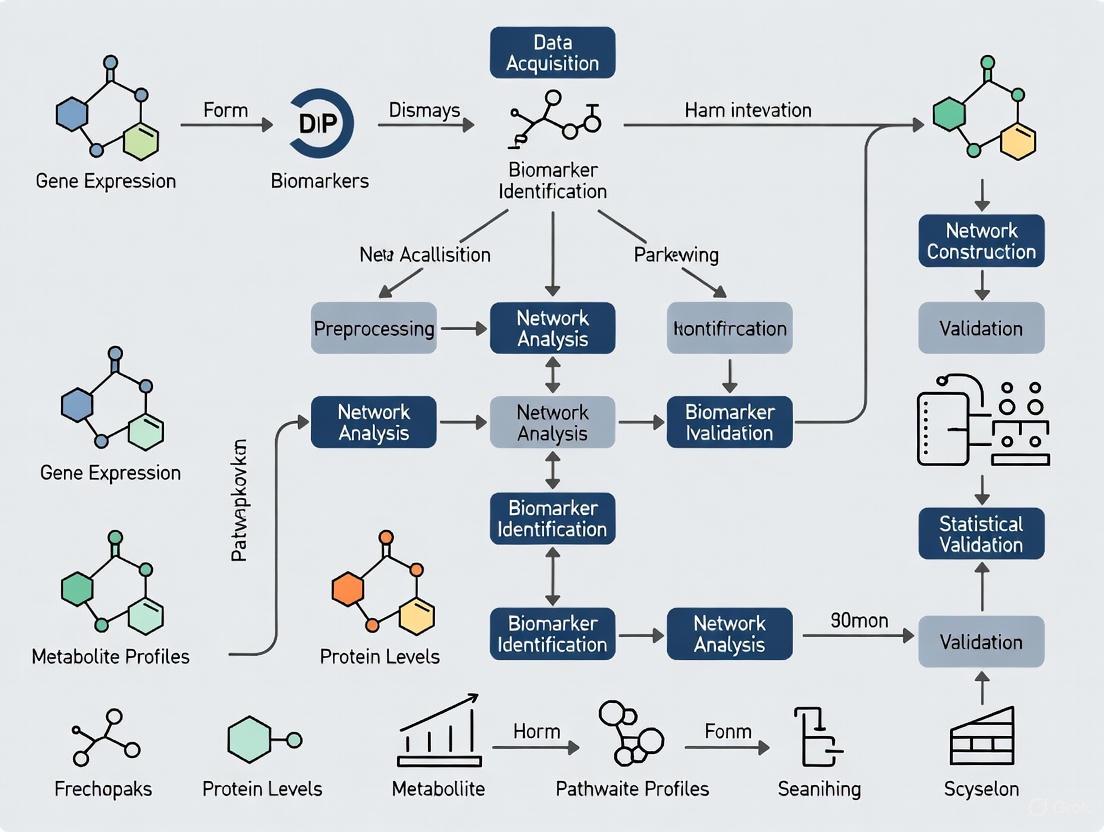

Visualizing Network-Based Biomarker Discovery

Workflow Diagram: Network-Guided Biomarker Discovery Pipeline

Conceptual Diagram: Single-Gene vs Network Approaches

The limitations of single-gene approaches in biomarker discovery for complex diseases are evident in both biological rationale and empirical performance. Network-based strategies address these limitations by embracing the complexity of disease processes through integration of multi-omics data, biological context, and sophisticated computational methods. The quantitative evidence demonstrates that network-guided biomarkers achieve superior classification accuracy, biological interpretability, and clinical potential.

Future directions in biomarker discovery will likely involve greater incorporation of artificial intelligence methods, including deep learning for multi-modal data integration and explainable AI for interpreting complex models [6]. Furthermore, federated learning approaches enable analysis across distributed datasets while protecting patient privacy, addressing a significant constraint in biomarker validation [6]. As these technologies mature, network-guided biomarker discovery will play an increasingly central role in realizing the promise of precision medicine for complex diseases.

Biological networks provide a powerful systems-level framework for understanding complex diseases and identifying robust biomarkers. By moving beyond the analysis of individual molecules, network-based approaches capture the intricate interconnected relationships within biological data, which traditional statistical and machine learning methods often fail to adequately model [7]. These networks represent biological entities—such as genes, proteins, or metabolites—as nodes and their functional relationships as edges, creating a map of cellular organization and function. In the context of biomarker discovery, this framework enables the identification of molecular signatures that are not only statistically significant but also biologically relevant within their functional context [7] [8]. The application of biological networks has been particularly transformative in precision medicine, where it helps stratify patients, predict treatment responses, and elucidate disease mechanisms across diverse clinical contexts [7] [6].

Core Network Types: Definitions, Construction, and Applications

Biological networks can be categorized based on the types of interactions they represent. The three primary categories most relevant to biomarker discovery are Protein-Protein Interaction (PPI) networks, co-expression networks, and pathway networks.

Protein-Protein Interaction (PPI) Networks

Definition and Biological Significance: PPI networks map the physical contacts between proteins within a cell. These interactions are fundamental to virtually all cellular processes, including signal transduction, gene expression regulation, metabolic pathways, and response to environmental stresses [9]. Proteins rarely operate in isolation; instead, they function in coordinated complexes and pathways. The collective behavior of proteins, studied through PPI networks, provides a system-level understanding of their regulatory behavior [10]. Higher-order interactions within these networks, such as cooperative or competitive triplets of proteins, can reveal sophisticated regulatory dynamics that are crucial for understanding complex diseases [11].

Construction and Data Sources: PPI networks are built from experimentally validated and computationally predicted interactions. Key resources include:

- Experimental Data: High-throughput techniques like yeast two-hybrid (Y2H) assays and affinity purification coupled with mass spectrometry (AP-MS) have been essential in mapping interactomes [11].

- Databases: Repositories like the Search Tool for the Retrieval of Interacting Genes (STRING) and the Biological General Repository for Interaction Datasets (BioGRID) provide crucial ground truth data [9]. The Human Protein–Protein Interaction Network (hPIN) is a high-confidence network constructed from experimentally supported data, such as that filtered from the HIPPIE database [11].

- Computational Predictions: Machine learning models, particularly those leveraging protein structural information from AlphaFold, are increasingly used to predict interactions at scale [9] [11].

Table 1: Key Data Sources for Constructing PPI Networks

| Data Source | Description | Coverage & Key Insights | Applications in Biomarker Discovery |
|---|---|---|---|
| STRING | A database of known and predicted PPIs from experimental data, computational methods, and text mining. | Limited for specific organisms like rice compared to model organisms; provides a global perspective. | Provides ground truth for known PPIs; useful for initial network building and hypothesis generation. |
| BioGRID | A comprehensive repository of biologically relevant, experimentally validated PPIs for multiple species. | Limited but high-quality, experimentally validated data. | Serves as a source of high-confidence interactions for training machine learning models and validating predictions. |
| Interactome3D | Provides 3D structural information for protein interactions. | Contains residue-level interface annotations for complexes. | Enables structural validation of interactions and identification of binding interfaces critical for drug targeting. |
| AlphaFold Predictions | Protein structure predictions for proteomes. | Nearly complete structural data for several proteomes (e.g., rice, human). | Predicts potential binding interfaces; useful for uncovering interactions in disease-responsive complexes when experimental data is scarce. |

Co-expression Networks

Definition and Biological Significance: Co-expression networks are built from gene expression data (e.g., from RNA sequencing or microarrays), where nodes represent genes and edges represent significant correlations in their expression patterns across different conditions, tissues, or perturbations [7]. The fundamental premise is that genes with highly correlated expression profiles are often involved in related biological processes, co-regulated, or part of the same protein complex or pathway.

Construction and Data Sources: A prominent method for constructing these networks is the Weighted Gene Co-expression Network Analysis (WGCNA) [7]. The process typically involves:

- Calculating Correlation: A correlation matrix (e.g., Pearson correlation) is computed for all gene pairs across the samples.

- Defining the Adjacency Matrix: The correlation matrix is transformed into an adjacency matrix, often using a power function to emphasize strong correlations.

- Identifying Modules: Genes are clustered into modules (highly interconnected subnetworks) using topological overlap and hierarchical clustering. These modules represent functional units.

- Relating Modules to Traits: Module eigengenes (the first principal component of a module's expression matrix) are correlated with clinical traits or phenotypes to identify modules associated with the disease or condition of interest.
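The first two steps, together with the topological overlap used for module detection, can be sketched as follows. This assumes an unsigned network, a soft-thresholding power of β = 6 (a common starting value; the WGCNA package's `pickSoftThreshold` function is normally used to choose it), and a dictionary of gene expression profiles measured over the same samples. It mirrors the standard WGCNA formulas but is not the package's implementation (`adjacency` and `TOMsimilarity` in R); the function names are hypothetical.

```python
import math


def pearson(a, b):
    """Pearson correlation between two equal-length sequences."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / math.sqrt(var_a * var_b)


def soft_threshold_adjacency(genes, beta=6):
    """Unsigned adjacency a_ij = |cor(x_i, x_j)| ** beta, with the diagonal set to 1."""
    names = list(genes)
    adj = {i: {} for i in names}
    for i in names:
        for j in names:
            adj[i][j] = 1.0 if i == j else abs(pearson(genes[i], genes[j])) ** beta
    return adj


def topological_overlap(adj):
    """TOM_ij = (l_ij + a_ij) / (min(k_i, k_j) + 1 - a_ij), with l_ij = sum_u a_iu * a_uj."""
    names = list(adj)
    # Connectivity k_i excludes the self-connection.
    k = {i: sum(adj[i][u] for u in names if u != i) for i in names}
    tom = {i: {} for i in names}
    for i in names:
        for j in names:
            if i == j:
                tom[i][j] = 1.0
                continue
            shared = sum(adj[i][u] * adj[u][j] for u in names if u not in (i, j))
            tom[i][j] = (shared + adj[i][j]) / (min(k[i], k[j]) + 1 - adj[i][j])
    return tom


genes = {"g1": [1, 2, 3, 4, 5], "g2": [2, 4, 6, 8, 10], "g3": [2, 1, 0, 1, 2]}
tom = topological_overlap(soft_threshold_adjacency(genes, beta=6))
```

Here `g1` and `g2` are perfectly correlated and share a topological overlap of 1, while `g3` is uncorrelated with both and its overlap with them is essentially 0; 1 - TOM is the dissimilarity used for hierarchical clustering.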

Pathway Networks

Definition and Biological Significance: Pathway networks represent curated sequences of molecular interactions and reactions that collectively perform a specific biological function, such as a metabolic pathway (e.g., glycolysis) or a signaling pathway (e.g., MAPK signaling) [12]. They provide a holistic, multi-dimensional view of cellular processes by linking genetic information with gene expression, protein activity, and metabolic fluxes [13]. Understanding molecular pathways is critical to understanding the functioning of higher-order structures like cells, tissues, and organs [14].

Construction and Data Sources: Unlike PPI and co-expression networks, pathway networks are typically pre-defined based on accumulated biological knowledge from decades of research. Key resources include:

- KEGG (Kyoto Encyclopedia of Genes and Genomes): A comprehensive database containing pathways for metabolism, genetic information processing, environmental information processing, and human diseases [12].

- Reactome: A curated and peer-reviewed knowledgebase of biological pathways.

- GO (Gene Ontology): Provides a controlled vocabulary of terms related to biological processes, molecular functions, and cellular components, which can be used to annotate and interpret network modules.

Table 2: Comparative Overview of Core Biological Network Types

| Characteristic | PPI Networks | Co-expression Networks | Pathway Networks |
|---|---|---|---|
| Nature of Interaction | Physical or functional binding between proteins. | Statistical correlation of gene expression levels. | Curated sequence of molecular reactions/events. |
| Primary Data Source | Y2H, AP-MS, structural data, predictive models. | Transcriptomics data (RNA-Seq, microarrays). | Literature curation, expert knowledge. |
| Temporal Dynamics | Relatively stable, but can be context-dependent. | Highly dynamic, condition-specific. | Often represent canonical, conserved processes. |
| Key Strength | Identifies direct physical partners and complexes. | Infers functional relationships and co-regulated modules without prior knowledge. | Provides mechanistic context and functional annotation. |
| Application in Biomarker Discovery | Identifying druggable targets, protein complexes. | Finding gene modules associated with clinical traits. | Understanding disease mechanisms, pathway-level dysregulation. |

Experimental and Computational Protocols

This section outlines detailed methodologies for constructing and analyzing biological networks for biomarker discovery.

Protocol 1: Constructing a Context-Specific PPI Network

This protocol, inspired by tools like konnect2prot 2.0, details how to build a PPI network from a list of candidate proteins and analyze it for biomarker identification [10].

Materials and Reagents:

- Input: A list of proteins of interest (e.g., from a differential expression analysis).

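- Software/Tools:

- Network generation: konnect2prot 2.0 or the STRING database.

- Network analysis and visualization: Cytoscape.

- Functional enrichment resources: Gene Ontology (GO) and KEGG.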

Procedure:

- Network Generation: Submit the protein list to a network generation tool like konnect2prot 2.0 or the STRING database to retrieve a context-specific PPI network. The output will be a network file (e.g., .sif, .graphml, or .cyjs).

- Topological Analysis: Import the network into an analysis environment like Cytoscape. Calculate key topological properties for each node (protein):

- Degree: The number of connections a node has.

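- Betweenness Centrality: The number (or fraction) of shortest paths between other pairs of nodes that pass through a node, identifying potential bottlenecks.

- Closeness Centrality: The reciprocal of a node's average shortest-path distance to all other nodes; high values indicate nodes that can reach the rest of the network quickly.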

- Functional Enrichment: Perform gene set enrichment analysis (GSEA) or over-representation analysis (ORA) on the proteins in the network, or on specific high-degree modules, using databases like Gene Ontology (GO) and KEGG. This identifies biological processes, molecular functions, and pathways that are statistically over-represented.

- Identify Key Nodes: Integrate the results to pinpoint "influential spreaders" [10]. These are typically proteins with high degree and centrality scores that are also members of significantly enriched pathways related to the disease phenotype. These nodes represent high-priority candidate biomarkers.
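The three topological measures in step 2 can be computed with breadth-first search. The sketch below is a dependency-free illustration for an unweighted, undirected network stored as a dictionary of neighbor sets. It uses Brandes' algorithm for betweenness (reported unnormalized) and defines closeness as the number of reachable nodes divided by the summed distance to them; the function names are hypothetical, and Cytoscape or networkx (`betweenness_centrality`, `closeness_centrality`) would normally be used for real networks.

```python
from collections import deque


def shortest_path_counts(graph, source):
    """BFS from source: distances, shortest-path counts, predecessors, visit order."""
    dist = {source: 0}
    sigma = {node: 0 for node in graph}
    sigma[source] = 1
    preds = {node: [] for node in graph}
    order = []
    queue = deque([source])
    while queue:
        v = queue.popleft()
        order.append(v)
        for w in graph[v]:
            if w not in dist:
                dist[w] = dist[v] + 1
                queue.append(w)
            if dist[w] == dist[v] + 1:
                sigma[w] += sigma[v]
                preds[w].append(v)
    return dist, sigma, preds, order


def centralities(graph):
    """Degree, betweenness (Brandes, unnormalised, undirected) and closeness per node."""
    degree = {v: len(graph[v]) for v in graph}
    betweenness = {v: 0.0 for v in graph}
    closeness = {}
    for s in graph:
        dist, sigma, preds, order = shortest_path_counts(graph, s)
        # Closeness: reachable nodes divided by the total distance to them.
        total = sum(dist.values())
        closeness[s] = (len(dist) - 1) / total if total > 0 else 0.0
        # Accumulate pair dependencies in reverse BFS order.
        delta = {v: 0.0 for v in graph}
        for w in reversed(order):
            for v in preds[w]:
                delta[v] += sigma[v] / sigma[w] * (1 + delta[w])
            if w != s:
                betweenness[w] += delta[w]
    # Each undirected pair was counted once from each endpoint.
    return degree, {v: b / 2 for v, b in betweenness.items()}, closeness


path = {"a": {"b"}, "b": {"a", "c"}, "c": {"b", "d"}, "d": {"c"}}
deg, btw, clo = centralities(path)
```

On the path a-b-c-d, the inner nodes b and c each lie on two shortest paths between other nodes, so they score highest on betweenness, as a bottleneck would.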

Protocol 2: Building a Co-expression Network for Module Discovery

This protocol describes the process of constructing a weighted co-expression network from transcriptomic data to identify gene modules associated with a clinical trait using methods like WGCNA [7].

Materials and Reagents:

- Input: A normalized gene expression matrix (e.g., FPKM, TPM, or normalized counts from RNA-Seq) with samples from various conditions or with associated clinical data.

- Software/Tools:

- Primary Software: WGCNA package in R.

- Visualization: Cytoscape, ggplot2 (R).

Procedure:

- Data Preprocessing and Network Construction: Preprocess the expression data to remove lowly expressed genes and correct for batch effects. Use the WGCNA protocol to choose an appropriate soft-thresholding power (β) that yields an approximately scale-free topology. Construct a weighted adjacency matrix and transform it into a Topological Overlap Matrix (TOM) to minimize the effects of spurious connections.

- Module Detection: Perform hierarchical clustering on the TOM-based dissimilarity matrix. Dynamically cut the dendrogram to assign genes to modules. Assign each module a unique color label (e.g., "blue module," "turquoise module").

- Relate Modules to Traits: Calculate the module eigengene (ME) for each module. Correlate the MEs with external clinical traits (e.g., tumor stage, survival status, treatment response). Identify modules with highly significant ME-trait correlations.

- Biomarker Selection: Within the significant modules, select genes with high module membership (correlation with the module eigengene) and high gene significance (correlation with the clinical trait). These hub genes are potential biomarkers representing the core of a biologically relevant process.
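Steps 3 and 4 can be sketched as follows. The module eigengene is obtained as the first principal component of the standardized module genes (here by simple power iteration), module membership is each gene's correlation with the eigengene, and gene significance is its correlation with the trait. The cut-offs `mm_cutoff=0.8` and `gs_cutoff=0.2` are illustrative assumptions, not values prescribed by WGCNA, and the function names are hypothetical; in R, WGCNA's `moduleEigengenes` provides the eigengene calculation.

```python
import math


def standardize(values):
    """Centre to mean 0 and scale to sample standard deviation 1."""
    n = len(values)
    mean = sum(values) / n
    sd = math.sqrt(sum((x - mean) ** 2 for x in values) / (n - 1))
    return [(x - mean) / sd for x in values]


def correlation(a, b):
    """Pearson correlation via standardised profiles."""
    za, zb = standardize(a), standardize(b)
    return sum(x * y for x, y in zip(za, zb)) / (len(a) - 1)


def module_eigengene(module_expr, iterations=200):
    """First principal component (over samples) of the standardised module genes.

    module_expr: dict gene -> expression values across the same samples.
    Returns a unit-norm vector; WGCNA scales eigengenes differently, which does
    not change any correlation computed from them.
    """
    rows = [standardize(v) for v in module_expr.values()]
    n_samples = len(rows[0])
    vec = rows[0][:]  # start the power iteration from the first gene's profile
    for _ in range(iterations):
        # One power-iteration step with Z^T Z: gene loadings, then back to sample scores.
        loadings = [sum(z * v for z, v in zip(row, vec)) for row in rows]
        vec = [sum(loadings[g] * rows[g][s] for g in range(len(rows))) for s in range(n_samples)]
        norm = math.sqrt(sum(v * v for v in vec))
        vec = [v / norm for v in vec]
    # Fix the sign so the eigengene follows the module's average standardised expression.
    average = [sum(row[s] for row in rows) / len(rows) for s in range(n_samples)]
    if sum(a * v for a, v in zip(average, vec)) < 0:
        vec = [-v for v in vec]
    return vec


def hub_genes(module_expr, trait, mm_cutoff=0.8, gs_cutoff=0.2):
    """Genes with high |module membership| and high |gene significance|."""
    me = module_eigengene(module_expr)
    hubs = []
    for gene, values in module_expr.items():
        mm = correlation(values, me)     # module membership (kME)
        gs = correlation(values, trait)  # gene significance for the trait
        if abs(mm) >= mm_cutoff and abs(gs) >= gs_cutoff:
            hubs.append(gene)
    return hubs


module = {"g1": [1, 2, 3, 4, 5], "g2": [2, 4, 6, 8, 10], "g3": [5, 4, 3, 2, 1], "g4": [2, 1, 0, 1, 2]}
trait = [0, 0, 1, 1, 1]
```

In this toy module, `g1`, `g2` and `g3` (which is anti-correlated but still tightly tied to the eigengene) are retained as hubs, while `g4`, whose profile is unrelated to the eigengene, is not.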

Protocol 3: A Machine Learning Framework for Network-Based Classification

This protocol describes the Expression Graph Network Framework (EGNF), which integrates network generation with Graph Neural Networks (GNNs) for sample classification and biomarker discovery [7].

Materials and Reagents:

- Input: Gene expression data and clinical attributes.

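- Software/Tools:

- Differential expression: DESeq2.

- Graph construction and storage: Neo4j (e.g., with the Graph Data Science library).

- Graph neural networks: PyTorch Geometric (GCN and GAT models).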

Procedure:

- Differential Expression and Feature Selection: Perform differential expression analysis on a training set (e.g., 80% of data) using a tool like DESeq2 to identify a set of candidate genes [7].

- Build Graph Network: Construct a biologically informed network in a graph database like Neo4j. Nodes can be sample clusters generated from hierarchical clustering of expression data for each gene. Connections (edges) are established between sample clusters of different genes that share samples [7].

- Graph Neural Network (GNN): Apply GNN models like Graph Convolutional Networks (GCNs) or Graph Attention Networks (GATs) to the constructed graph. These models learn node representations by propagating and aggregating information from neighboring nodes, effectively capturing the interconnected relationships in the data [7].

- Prediction and Interpretation: Use the trained GNN for sample-specific graph-based predictions. The model can identify statistically significant and biologically relevant gene modules important for classification. The attention mechanisms in GATs can further help interpret the model by revealing which connections were most influential for the prediction [7].
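To illustrate the two core ideas, the sketch below builds a toy graph in the spirit of step 2 (one node per sample cluster of a gene, with edges between clusters of different genes that share samples) and applies one graph-convolution layer, H' = ReLU(D^-1/2 (A + I) D^-1/2 H W), as in a standard GCN. It is a hypothetical, dependency-free illustration, not the EGNF code: cluster assignments are taken as given, node features and weights are supplied by hand rather than learned, and real implementations would use a library such as PyTorch Geometric (for example, its `GCNConv` layer).

```python
import math
from itertools import combinations


def cluster_graph(gene_clusters):
    """Toy EGNF-style graph (hypothetical helper).

    gene_clusters: dict gene -> dict cluster_id -> set of sample ids, e.g. from
    clustering each gene's expression values. One node per (gene, cluster); an edge
    joins clusters of different genes that share at least one sample.
    """
    nodes = [(g, c) for g, clusters in gene_clusters.items() for c in clusters]
    edges = set()
    for (g1, c1), (g2, c2) in combinations(nodes, 2):
        if g1 != g2 and gene_clusters[g1][c1] & gene_clusters[g2][c2]:
            edges.add(((g1, c1), (g2, c2)))
    return nodes, edges


def gcn_layer(nodes, edges, features, weights):
    """One GCN layer, H' = ReLU(D^-1/2 (A + I) D^-1/2 H W), on an undirected graph.

    features: dict node -> input feature list (length F).
    weights:  F x F' matrix as a list of rows.
    """
    neighbours = {v: {v} for v in nodes}  # start with self-loops (the "+ I")
    for u, v in edges:
        neighbours[u].add(v)
        neighbours[v].add(u)
    degree = {v: len(neighbours[v]) for v in nodes}
    out_dim = len(weights[0])
    output = {}
    for v in nodes:
        # Symmetrically normalised sum over the node and its neighbours.
        agg = [0.0] * len(features[v])
        for u in neighbours[v]:
            scale = 1.0 / math.sqrt(degree[u] * degree[v])
            agg = [a + scale * x for a, x in zip(agg, features[u])]
        # Linear transform with W, then ReLU.
        output[v] = [max(0.0, sum(a * weights[i][k] for i, a in enumerate(agg))) for k in range(out_dim)]
    return output


clusters = {"G1": {0: {"s1", "s2"}, 1: {"s3"}}, "G2": {0: {"s1"}, 1: {"s2", "s3"}}}
nodes, edges = cluster_graph(clusters)
```

Stacking such layers and training `weights` against sample labels is what lets a GNN propagate and aggregate information from neighboring nodes; attention-based layers (GATs) replace the fixed normalization with learned edge weights.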

Table 3: Key Research Reagents and Computational Tools for Network-Based Discovery

| Item Name | Type | Function and Application |
|---|---|---|
| STRING | Database | Provides known and predicted protein-protein interactions for network construction and preliminary analysis [9]. |
| Cytoscape | Software Platform | An open-source platform for visualizing, analyzing, and annotating molecular interaction networks. Supports plugins for enrichment analysis and network layout [15]. |
| WGCNA R Package | Software Tool | Provides a comprehensive set of functions for performing weighted gene co-expression network analysis to identify correlated gene modules [7]. |
| PyTorch Geometric | Software Library | A library for deep learning on irregularly structured input data such as graphs, used for implementing Graph Neural Networks like GCNs and GATs [7]. |
| Interactome3D | Database | Provides 3D structural information for protein interactions, enabling structural validation and analysis of binding interfaces [11]. |
| KEGG/Reactome | Database | Curated knowledge bases of biological pathways used for functional enrichment analysis of network modules [12]. |
| AlphaFold DB | Database | Repository of protein structure predictions for entire proteomes, used for structure-based feature extraction in PPI prediction [9] [11]. |
| konnect2prot 2.0 | Web Tool | Generates context-specific directional PPI networks from a protein list, identifies influential spreaders, and performs enrichment analysis [10]. |
| Neo4j GDS Library | Software Tool | A graph database and analytics platform used to store biological network data and perform graph algorithms (e.g., centrality, community detection) at scale [7]. |

The pursuit of precise biomarkers is being redefined by a paradigm shift from reductionist, single-molecule approaches to holistic, network-based strategies. Complex diseases often arise from the interplay of a group of interacting molecules rather than the malfunction of an individual gene or protein [16]. Network biomarkers leverage the mathematical principles of graph theory to model biological systems as interconnected nodes (e.g., genes, proteins, physiological metrics) and edges (their interactions or correlations). The underlying rationale is that the topology—the structural arrangement of these connections—and the position of an element within this network are profound determinants of its biological function and, consequently, its value as a biomarker. This approach provides a systems-level view, capturing the emergent properties of biological systems that are invisible when examining components in isolation [17].

The clinical need for more comprehensive and integrative biomarkers is a key driver of this field. The single-biomarker paradigm has inherent flaws; for instance, PD-L1 expression is an imperfect predictor of immunotherapy response on its own [16]. Network-based biomarkers address this by integrating multi-modal data—including molecular, clinical, and imaging-derived features—into a unified model. This allows for patient stratification based on the diagnostic and prognostic value of the entire network and its properties, moving toward the goals of predictive, preventive, personalized, and participatory (4P) medicine [18] [19].

The Conceptual Framework: From Topology to Function

Key Topological Properties as Functional Indicators

In network science, specific topological properties of a node or a network module serve as powerful proxies for biological function and resilience. The interpretation of these properties within a biological context is summarized in the table below.

Table 1: Key Network Topological Properties and Their Biological Interpretations

| Topological Property | Mathematical Definition | Biological/Functional Interpretation | Biomarker Utility |
|---|---|---|---|
| Degree Centrality | Number of connections a node has. | Indicates functional pleiotropy; high-degree nodes (hubs) often regulate core biological processes. | Hub disruption can signal system-wide failure, relevant in cancer and neurodegenerative diseases [20]. |
| Betweenness Centrality | Sum, over all pairs of other nodes, of the fraction of shortest paths between them that pass through the node. | Identifies bottleneck nodes that control information flow between network modules. | Bottlenecks are potential therapeutic targets; their failure can fragment the network [21]. |
| Modularity | The extent to which a network is partitioned into densely connected subgroups (modules). | Reflects functional specialization (e.g., distinct pathways). | Altered modularity can indicate disease-driven loss of functional specialization [17]. |
| Dynamic Network Index (DNI) | Quantifies a node's structural variability across different states (e.g., health vs. disease). | Captures genes or proteins undergoing significant regulatory role transitions. | Identifies state-specific "switch" genes critical in disease progression, such as in cancer [20]. |
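Degree and betweenness centrality from Table 1 can be computed directly from an adjacency list. The sketch below implements Brandes' algorithm for an unweighted, undirected graph in plain Python; the toy network and its node names are hypothetical, and libraries such as NetworkX provide the same measures for real analyses.

```python
from collections import deque


def degree_centrality(adj):
    """Number of neighbours of each node (unnormalised degree)."""
    return {node: len(nbrs) for node, nbrs in adj.items()}


def betweenness_centrality(adj):
    """Brandes' algorithm for an unweighted, undirected graph.

    For each node v, sums over unordered pairs (s, t) with s, t != v the
    fraction of shortest s-t paths that pass through v.
    """
    bc = {v: 0.0 for v in adj}
    for s in adj:
        # Breadth-first search from s: shortest-path counts and predecessors.
        stack = []
        preds = {v: [] for v in adj}
        sigma = {v: 0 for v in adj}
        dist = {v: -1 for v in adj}
        sigma[s], dist[s] = 1, 0
        queue = deque([s])
        while queue:
            v = queue.popleft()
            stack.append(v)
            for w in adj[v]:
                if dist[w] < 0:
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:
                    sigma[w] += sigma[v]
                    preds[w].append(v)
        # Accumulate dependencies from the farthest nodes back toward s.
        delta = {v: 0.0 for v in adj}
        while stack:
            w = stack.pop()
            for v in preds[w]:
                delta[v] += sigma[v] / sigma[w] * (1.0 + delta[w])
            if w != s:
                bc[w] += delta[w]
    # In an undirected graph every pair is visited from both endpoints.
    return {v: score / 2.0 for v, score in bc.items()}


# Toy network: two triangles joined through a single bridge node "B".
adj = {
    "A1": ["A2", "A3"], "A2": ["A1", "A3"], "A3": ["A1", "A2", "B"],
    "B": ["A3", "C1"],
    "C1": ["B", "C2", "C3"], "C2": ["C1", "C3"], "C3": ["C1", "C2"],
}
```

Here the bridge node `B` has a lower degree (2) than the triangle nodes it links (3), yet the highest betweenness (9.0), which is the hub-versus-bottleneck distinction the table draws.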

Rationale for Position-Dependent Biomarker Discovery

The position of a molecule within a network is not random; it is a product of evolution and a direct reflection of its functional importance. The "hub-bottleneck" concept is a cornerstone of this rationale. Nodes that are both highly connected (hubs) and critical for inter-modular communication (bottlenecks) are often essential genes, and their dysregulation is disproportionately linked to disease [21]. Furthermore, analyzing a node's neighborhood—the identity and states of its direct interaction partners—can provide more robust biomarkers than the node's activity alone, as it accounts for functional context.

The concept of dynamic network biomarkers (DNBs) extends this further. Instead of a static snapshot, DNBs focus on the rewiring of interactions during a critical transition, for example, from a pre-disease state to a disease state. A group of molecules may show a sudden, coordinated increase in correlations just before this transition, serving as a powerful early-warning signal [20].

Applications and Experimental Protocols

Network topology approaches have been successfully applied across diverse disease areas, demonstrating their versatility and clinical potential. The following table summarizes key applications and the topological features they leverage.

Table 2: Applications of Network Topology in Biomarker Discovery

| Disease Area | Network Type | Key Topological Feature Used | Outcome/Biomarker Identified |
|---|---|---|---|
| Aging & Functional Disability | Physiological (clinical metrics) [17] | Global connectivity & modularity | Network topology metrics (e.g., increased connectivity) predicted incident ADL disability and mortality. |
| Cancer (Gastric Adenocarcinoma) | Gene Regulatory (scRNA-seq) [20] | Dynamic Network Index (DNI) | Genes with high DNI (major regulatory shifts) classified disease states and revealed progression biomarkers. |
| HIV Reservoir Control | Functional Genome [22] | Task-evoked topology | Topological properties of the host functional genome linked to immunologic control of the HIV reservoir. |
| Post-Stroke Motor Recovery | Functional Muscle (sEMG) [23] | Shift from redundancy to synergy | Muscle network patterns stratified patients by impairment and responsiveness to rehabilitation. |
| Alzheimer's Disease | Structural & Functional Brain (MRI) [24] | Persistent Homology | A novel topological framework was developed to detect early alterations in whole-brain connectivity. |
| Immune Checkpoint Inhibitor Response | Pathway & Protein-Protein Interaction [21] | PageRank score within pathways | PathNetGene scores quantified gene contribution to immune response, predicting therapy responders. |

Protocol 1: Identifying Dynamic Network Biomarkers in Cancer

Objective: To identify genes with significant regulatory role transitions (dynamic network biomarkers) during cancer progression using single-cell RNA sequencing data.

Methodology: The TransMarker framework [20].

Step-by-Step Procedure:

Multilayer Network Construction:

- For each disease state (e.g., normal, pre-cancer, tumor), create a distinct network layer.

- Build a state-specific gene network by integrating prior protein-protein interaction (PPI) data with state-specific gene expression correlations from scRNA-seq data. This creates an "attributed graph" for each state.

Contextualized Embedding Generation:

- Use a Graph Attention Network (GAT) to learn a low-dimensional representation (embedding) for each gene in each state.

- The GAT is trained to incorporate features from a node's neighbors, producing embeddings that capture both the node's own attributes and its topological context within each state-specific network.
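The attention step at the heart of a GAT layer fits in a few lines of NumPy. The sketch below is a single-head forward pass with random, untrained weights, meant only to show how each gene's embedding becomes an attention-weighted mix of its neighbours; a working pipeline would train a model from a library such as PyTorch Geometric (`GATConv`).

```python
import numpy as np


def gat_layer(x, adj, w, a, negative_slope=0.2):
    """Single-head graph attention layer (forward pass only).

    x   -- (n_nodes, in_dim) node features.
    adj -- (n_nodes, n_nodes) binary adjacency of one state network.
    w   -- (in_dim, out_dim) shared linear projection.
    a   -- (2 * out_dim,) attention vector.
    """
    h = x @ w
    out_dim = h.shape[1]
    # e_ij = LeakyReLU(a^T [h_i || h_j]), split into source and target terms.
    e = (h @ a[:out_dim])[:, None] + (h @ a[out_dim:])[None, :]
    e = np.where(e > 0, e, negative_slope * e)
    # Attend only over neighbours plus a self-loop.
    mask = (adj + np.eye(adj.shape[0])) > 0
    e = np.where(mask, e, -np.inf)
    e = e - e.max(axis=1, keepdims=True)  # numerical stability
    alpha = np.exp(e)
    alpha = alpha / alpha.sum(axis=1, keepdims=True)
    # An output nonlinearity (e.g. ELU) is omitted for clarity.
    return alpha @ h, alpha


rng = np.random.default_rng(0)
adj = np.array([[0, 1, 0], [1, 0, 1], [0, 1, 0]])
x = rng.normal(size=(3, 4))
w = rng.normal(size=(4, 2))
a = rng.normal(size=(4,))
embeddings, attention = gat_layer(x, adj, w, a)
```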

Cross-State Structural Shift Quantification:

- Employ the Gromov-Wasserstein optimal transport method to compute a distance between the network embeddings of one state and another.

- This distance quantifies the overall structural rewiring. The alignment cost for each gene is used to measure its specific contribution to this shift.
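Because every state network is built over the same gene set, a useful simplification is to evaluate the squared-loss Gromov-Wasserstein objective at the identity coupling, matching gene i in one state to gene i in the other (with uniform gene weights). This is not the optimized transport plan TransMarker computes, which needs a dedicated solver such as the POT (Python Optimal Transport) library, but it gives an analogous per-gene shift score: how much a gene's embedding distances to all other genes change between states.

```python
import numpy as np


def pairwise_distances(emb):
    """Euclidean distance matrix between the rows of an embedding matrix."""
    diff = emb[:, None, :] - emb[None, :, :]
    return np.sqrt((diff ** 2).sum(axis=-1))


def identity_gw_costs(emb_a, emb_b):
    """Per-gene contribution to the squared-loss Gromov-Wasserstein objective
    evaluated at the identity coupling T = I / n.

    Row i of both embedding matrices must refer to the same gene.
    """
    c_a = pairwise_distances(emb_a)
    c_b = pairwise_distances(emb_b)
    n = c_a.shape[0]
    per_gene = ((c_a - c_b) ** 2).sum(axis=1) / n ** 2
    return per_gene, per_gene.sum()


# Toy embeddings: gene 2 moves away from the others in the tumor state.
emb_normal = np.array([[0.0, 0.0], [1.0, 0.0], [0.0, 1.0]])
emb_tumor = np.array([[0.0, 0.0], [1.0, 0.0], [0.0, 3.0]])
per_gene, total = identity_gw_costs(emb_normal, emb_tumor)
```

Since only intra-state distances enter the cost, rotating or reflecting an embedding leaves it unchanged, which is why Gromov-Wasserstein suits embeddings learned separately per state.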

Candidate Biomarker Ranking:

- Calculate a Dynamic Network Index (DNI) for all genes and for connected subnetworks derived from the top candidates.

- The DNI integrates the gene's alignment cost (from the cross-state structural shift quantification step) and its expression variance, capturing both structural and activity-based dynamics.

- Rank genes/subnetworks by their DNI value; the highest scorers are the putative dynamic network biomarkers.
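The DNI formula is not specified here, so the sketch below uses a hypothetical combination: a weighted sum of the z-scored alignment cost and the z-scored expression variance, with an assumed mixing weight `lam`. It only illustrates how a structural and an activity-based signal can be merged into one ranking; the published TransMarker index may be defined differently.

```python
import statistics


def zscores(values):
    """Standardize a list of values (population standard deviation)."""
    mu = statistics.mean(values)
    sd = statistics.pstdev(values)
    return [(v - mu) / sd if sd > 0 else 0.0 for v in values]


def rank_by_hypothetical_dni(genes, alignment_cost, expr_variance, lam=0.5):
    """Rank genes by lam * z(alignment cost) + (1 - lam) * z(expression variance).

    This scoring rule is an illustrative assumption, not the published DNI.
    """
    z_cost = zscores(alignment_cost)
    z_var = zscores(expr_variance)
    scores = {g: lam * c + (1 - lam) * v for g, c, v in zip(genes, z_cost, z_var)}
    return sorted(scores, key=scores.get, reverse=True), scores


ranking, scores = rank_by_hypothetical_dni(
    ["G1", "G2", "G3"], alignment_cost=[0.1, 0.9, 0.4], expr_variance=[1.0, 2.0, 0.5]
)
```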

Validation:

- Use the top-ranked DNBs as features to train a deep neural network (DNN) classifier to predict disease states.

- Evaluate classifier performance on a held-out test set or an independent validation cohort using metrics like accuracy and area under the ROC curve (AUC). Perform ablation studies to confirm the contribution of each step.
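For the evaluation step, the ROC AUC can be computed without any machine-learning library through its rank interpretation: the probability that a randomly chosen positive case receives a higher score than a randomly chosen negative case, with ties counted as one half. The quadratic loop below favours clarity over speed, and the labels and scores are made up for illustration.

```python
def roc_auc(labels, scores):
    """ROC AUC as the probability that a positive outranks a negative."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative case")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


auc = roc_auc([1, 1, 0, 0], [0.9, 0.4, 0.6, 0.2])
```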

Protocol 2: Deriving Physiological Network Biomarkers for Aging

Objective: To construct personalized physiological networks and determine if their topology predicts functional disability and health outcomes in aging populations.

Methodology: Personalized network analysis as applied in the Rugao Longevity and Aging Study and other cohorts [17].

Step-by-Step Procedure:

Cohort Data Collection:

- Collect a wide range of physiological biomarkers from a large cohort. Example biomarkers include systolic and diastolic blood pressure, heart rate, cholesterol levels (HDL, LDL), C-reactive protein (CRP), and albumin.

- In parallel, collect longitudinal data on clinical outcomes, primarily Activities of Daily Living (ADL) disability and mortality.

Single-Sample Network Construction:

- For each individual participant, construct a personalized network.

- Using the vector of biomarker measurements for that single individual across multiple time points or using a resampling approach, calculate a partial correlation matrix. This matrix represents the network adjacency matrix, where nodes are biomarkers and edges are the partial correlation coefficients, controlling for the influence of other biomarkers.
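Given repeated measurements for one participant (rows are time points or resamples, columns are biomarkers), partial correlations follow from the inverse covariance (precision) matrix P as ρ_ij = -P_ij / sqrt(P_ii P_jj). The sketch assumes more observations than biomarkers so the covariance is invertible; with few time points a regularized (for example, shrinkage) covariance estimate would be needed. The simulated chain x → y → z shows the key property: x and z are correlated marginally, but their partial correlation is near zero once y is controlled for.

```python
import numpy as np


def partial_correlation(samples):
    """Partial correlation matrix from an (n_observations, n_biomarkers) array."""
    cov = np.cov(samples, rowvar=False)
    precision = np.linalg.inv(cov)
    d = np.sqrt(np.diag(precision))
    pcor = -precision / np.outer(d, d)
    np.fill_diagonal(pcor, 1.0)
    return pcor


# Simulated chain: x influences z only through y.
rng = np.random.default_rng(0)
x = rng.normal(size=2000)
y = x + rng.normal(size=2000)
z = y + rng.normal(size=2000)
pcor = partial_correlation(np.column_stack([x, y, z]))
```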

Network Metric Calculation:

- From each individual's network, calculate summary metrics of topology.

- Key metrics include:

  - Network Connectivity: The density of connections in the network, reflecting the overall level of co-regulation among physiological systems.

  - Modularity: The extent to which the network is organized into distinct, separable communities (modules).
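Once an individual's partial-correlation matrix has been thresholded into an edge list (the cut-off is a modelling choice not given here), both summary metrics take a few lines. The sketch treats edges as unweighted and evaluates Newman's modularity Q for a given partition of biomarkers into physiological systems; the biomarker groupings and edges are hypothetical, and in practice the partition is usually found by a community-detection algorithm such as Louvain.

```python
def density(n_nodes, edges):
    """Fraction of possible undirected edges that are present."""
    return len(edges) / (n_nodes * (n_nodes - 1) / 2)


def modularity(edges, community):
    """Newman modularity Q for an unweighted, undirected graph.

    edges     -- list of (u, v) pairs, each undirected edge listed once.
    community -- dict node -> community label.
    Q = sum over communities c of [L_c / m - (d_c / (2m))^2], where L_c is the
    number of edges inside c, d_c the total degree of c, and m the edge count.
    """
    m = len(edges)
    internal, degree_sum = {}, {}
    for u, v in edges:
        for node in (u, v):
            c = community[node]
            degree_sum[c] = degree_sum.get(c, 0) + 1
        if community[u] == community[v]:
            internal[community[u]] = internal.get(community[u], 0) + 1
    return sum(internal.get(c, 0) / m - (degree_sum[c] / (2 * m)) ** 2 for c in degree_sum)


# Hypothetical thresholded network: two tightly linked systems joined by one edge.
edges = [("SBP", "DBP"), ("SBP", "HR"), ("DBP", "HR"),
         ("HDL", "LDL"), ("HDL", "CRP"), ("LDL", "CRP"), ("HR", "CRP")]
community = {"SBP": "cardio", "DBP": "cardio", "HR": "cardio",
             "HDL": "metabolic", "LDL": "metabolic", "CRP": "metabolic"}
connectivity = density(6, edges)
q = modularity(edges, community)
```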

Statistical Association Analysis:

- Use regression models (e.g., Cox proportional hazards for mortality, logistic regression for ADL disability) to test the association between the network topology metrics (connectivity, modularity) and the clinical outcomes.

- Adjust models for potential confounders such as age, sex, and body mass index.

Validation and Sensitivity Analysis:

- Validate the findings by repeating the analysis in one or more independent, external cohorts.

- Perform sensitivity analyses to ensure the predictive performance of network topology is robust to the specific choice of biomarkers included and the parameters used for network construction.

The Scientist's Toolkit: Research Reagent Solutions

Successful implementation of network topology-based biomarker discovery requires a suite of computational and data resources.

Table 3: Essential Tools and Resources for Network Biomarker Research

| Category | Item/Resource | Specific Example | Function/Purpose |
|---|---|---|---|
| Computational Frameworks | TransMarker [20] | Custom Python scripts | Implements the full pipeline for dynamic network biomarker identification from scRNA-seq data. |
| | PathNetDRP [21] | Custom R/Python scripts | Prioritizes biomarkers by integrating pathways, PPIs, and gene expression for therapy response. |
| | Brain Connectivity Toolbox | MATLAB/Python library | Provides algorithms for calculating network topology metrics (e.g., centrality, modularity). |
| Data Resources | Protein-Protein Interaction Networks | STRING, BioGRID | Provide prior knowledge of established molecular interactions for network construction. |
| | Biological Pathways | KEGG, Reactome | Curated knowledge bases for interpreting and enriching network modules and biomarker function. |
| | Multi-omics Databases | TCGA, CPTAC, DriverDBv4 [25] | Provide integrated genomic, transcriptomic, and proteomic data for analysis and validation. |
| Analytical Techniques | Graph Neural Networks | Graph Attention Networks (GATs) [20] | Learn complex node representations that integrate features and topology. |
| | Optimal Transport | Gromov-Wasserstein distance [20] | Quantifies structural dissimilarity between networks from different states. |
| | Network Propagation | PageRank algorithm [21] | Prioritizes nodes based on their connectivity and influence within a network. |
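Network propagation with PageRank, as listed above for PathNetDRP [21], can be sketched as power iteration. Supplying a restart (personalization) vector concentrated on seed genes, for example genes altered in a patient or members of a pathway, gives personalized PageRank. The seed choice, the damping factor of 0.85, and the toy graph are assumptions, not PathNetDRP's settings.

```python
def pagerank(adj, seeds=None, damping=0.85, tol=1e-10, max_iter=1000):
    """(Personalized) PageRank on an undirected graph given as an adjacency dict.

    adj   -- dict node -> list of neighbours (every node needs >= 1 neighbour).
    seeds -- optional dict node -> restart weight; uniform restart if omitted.
    """
    nodes = list(adj)
    if seeds is None:
        restart = {v: 1.0 / len(nodes) for v in nodes}
    else:
        total = sum(seeds.values())
        restart = {v: seeds.get(v, 0.0) / total for v in nodes}
    rank = dict(restart)
    for _ in range(max_iter):
        # Teleport to the restart distribution, then spread rank along edges.
        new = {v: (1 - damping) * restart[v] for v in nodes}
        for v in nodes:
            share = damping * rank[v] / len(adj[v])
            for w in adj[v]:
                new[w] += share
        converged = sum(abs(new[v] - rank[v]) for v in nodes) < tol
        rank = new
        if converged:
            break
    return rank


# Toy graph: hub "H" linked to A, B, C, plus an A-B edge.
star_graph = {"H": ["A", "B", "C"], "A": ["H", "B"], "B": ["H", "A"], "C": ["H"]}
uniform_scores = pagerank(star_graph)
seeded_scores = pagerank(star_graph, seeds={"C": 1.0})
```

Seeding on `C` lifts it above the better-connected `A`, showing how personalization shifts priority toward the biological context of interest.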

The shift towards precision oncology represents a move away from a one-size-fits-all approach to cancer treatment, instead relying on the molecular characterization of individual tumors to guide therapeutic decisions [26]. Central to this paradigm are cancer biomarkers, which are defined as measurable indicators signaling an event or condition in a biological system, providing a measure of exposure, effect, or susceptibility [27]. In oncology, these biomarkers are most often assessed by measuring the levels of various biomolecules, including proteins, peptides, DNA, and RNA [28]. The integration of network-guided biomarker discovery approaches allows for a more comprehensive understanding of the complex molecular interactions within cancer biology, moving beyond single-marker analysis to interconnected biomarker networks. This application note details the distinct categories of biomarkers—diagnostic, prognostic, and predictive—and provides structured experimental protocols for their validation within a network biology framework, serving as an essential resource for researchers and drug development professionals.

Biomarker Classification and Clinical Utility

Biomarkers in oncology are broadly classified into three main types based on their clinical application: diagnostic, prognostic, and predictive. While some biomarkers can serve dual roles, understanding their primary function is critical for proper clinical implementation [29] [28].

Table 1: Core Types of Cancer Biomarkers and Their Clinical Applications

| Biomarker Type | Primary Function | Key Clinical Question Answered | Representative Examples |
|---|---|---|---|
| Diagnostic | Identifies the presence or type of cancer [6] [28]. | "Does the patient have cancer, and if so, what type?" | Bence-Jones protein for multiple myeloma [28]; PSA levels for prostate cancer suspicion [29] [28]; CD20 for lymphoma diagnosis [28]. |
| Prognostic | Provides information on the likely course of the disease, such as the risk of recurrence or progression, independent of therapy [26] [29]. | "How aggressive is this cancer likely to be?" | BRCA1/BRCA2 mutations indicating increased risk of breast and ovarian cancer [29] [28]; Oncotype DX 21-gene panel for breast cancer recurrence risk [6] [29]; Circulating Tumor Cells (CTCs) correlating with metastasis [30] [28]. |
| Predictive | Indicates the likelihood of response to a specific therapeutic intervention [26] [29]. | "Will this patient benefit from this specific drug?" | HER2 positivity predicting response to trastuzumab in breast cancer [26] [28]; EGFR mutations predicting sensitivity to osimertinib in lung cancer [26]; KRAS mutations associated with resistance to anti-EGFR monoclonal antibodies (e.g., cetuximab, panitumumab) in colorectal cancer [28]. |

A critical conceptual distinction exists between prognostic and predictive biomarkers. Prognostic biomarkers inform about the innate aggressiveness of a disease and the overall cancer outcome in a patient, regardless of the therapy administered. In contrast, predictive biomarkers provide information on the differential benefit of a specific treatment, determining whether a patient is likely or unlikely to respond to a particular drug [6] [29]. Some biomarkers, such as estrogen receptor (ER) status in breast cancer, can be both prognostic (indicating a generally better outcome) and predictive (indicating response to hormonal therapies) [6].
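A small worked example makes the distinction concrete. Using hypothetical event-free rates from a randomized trial stratified by biomarker status, the prognostic effect is the outcome difference by biomarker status in the control arm, while the predictive effect is the difference in treatment benefit between biomarker-positive and biomarker-negative patients (a treatment-by-biomarker interaction).

```python
# Hypothetical event-free rates: (biomarker status, arm) -> proportion event-free.
rates = {
    ("pos", "control"): 0.70,
    ("neg", "control"): 0.50,
    ("pos", "treated"): 0.85,
    ("neg", "treated"): 0.52,
}

# Prognostic signal: outcome difference by biomarker status without the drug.
prognostic_effect = rates[("pos", "control")] - rates[("neg", "control")]

# Treatment benefit within each biomarker stratum.
benefit_pos = rates[("pos", "treated")] - rates[("pos", "control")]
benefit_neg = rates[("neg", "treated")] - rates[("neg", "control")]

# Predictive signal: how much larger the benefit is in marker-positive patients.
predictive_effect = benefit_pos - benefit_neg
```

With these made-up numbers the marker is both prognostic (a 0.20 better outcome without treatment) and predictive (a 0.13 larger treatment benefit), the same dual role described for ER status.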

Diagram 1: Clinical Decision Pathway Integrating Different Biomarker Types. This workflow illustrates how diagnostic, prognostic, and predictive biomarkers are sequentially integrated in clinical oncology to guide personalized treatment plans.

Biomarker Classes and Molecular Characteristics

Cancer biomarkers encompass a wide array of biomolecules, each providing distinct insights into tumor biology. The major classes include genetic, transcriptomic, epigenetic, proteomic, and metabolomic biomarkers, all of which can be leveraged in a network-guided discovery approach to build a comprehensive molecular signature of cancer [28].

Table 2: Molecular Classes of Cancer Biomarkers and Their Applications

| Biomarker Class | Description | Key Technologies for Detection | Examples in Precision Oncology |
|---|---|---|---|
| Genetic | Variations in the DNA sequence (somatic or germline) [28]. | Next-Generation Sequencing (NGS); PCR-based methods; Liquid Biopsy (ctDNA) | BRAF V600E mutation in melanoma (predictive) [28]; ALK rearrangement in lung cancer (predictive) [26] [28]; BRCA1/2 mutations (prognostic) [29] [28]. |
| Transcriptomic | Global measurement of mRNA expression patterns [28]. | Microarrays; RNA Sequencing (RNAseq); qRT-PCR | 70-gene MammaPrint panel (prognostic in breast cancer) [29]; 21-gene Oncotype DX panel (prognostic in breast cancer) [6] [29]; KAT2B, PCNA in cervical cancer (prognostic) [28]. |
| Epigenetic | Reversible modifications to DNA or histones that affect gene expression without altering the DNA sequence (e.g., DNA methylation) [28]. | Bisulfite Sequencing; Methylation-Specific PCR | SHOX2 promoter methylation for lung cancer diagnosis (diagnostic) [28]; SEPT9 promoter methylation for colorectal cancer detection (diagnostic) [28]; APC, GSTP1 methylation in prostate cancer (prognostic) [28]. |
| Proteomic | Analysis of protein expression, post-translational modifications, and interactions [28]. | Mass Spectrometry (MS); Immunohistochemistry (IHC); ELISA | HER2 protein overexpression by IHC (predictive) [26]; Estrogen Receptor (ER) status (prognostic/predictive) [28]; CTC detection via EpCAM, cytokeratins (prognostic) [30] [28]. |
| Metabolomic | Profiling of small-molecule metabolites that reflect the functional output of cellular processes [28]. | Mass Spectrometry (MS); NMR Spectroscopy | Decreased lysophosphatidylethanolamine in breast cancer (diagnostic) [28]; Decreased choline and linoleic acid in lung cancer (diagnostic) [28]. |

Experimental Protocols for Biomarker Validation

Protocol 1: Predictive Biomarker Validation for Targeted Therapies

This protocol outlines a standardized method for validating predictive biomarkers, such as EGFR mutations, that are used to guide therapy with tyrosine kinase inhibitors (e.g., Osimertinib) in non-small cell lung cancer (NSCLC) [26].

1. Objective: To analytically and clinically validate a predictive genomic biomarker using tumor tissue or liquid biopsy samples to identify patients eligible for a targeted therapy.

2. Research Reagent Solutions & Essential Materials:

- Nucleic Acid Extraction Kit: For isolating high-quality DNA from FFPE tissue sections or plasma (for ctDNA).

- PCR Master Mix: For amplification of target genomic regions.

- Next-Generation Sequencing (NGS) Panel: A targeted panel covering relevant mutations (e.g., EGFR exons 19 and 21).

- Digital PCR System: For ultra-sensitive validation and monitoring of low-frequency variants.

- Positive and Negative Control Cell Lines: Genotyped cell lines with known mutation status for assay calibration.

- Bioinformatic Analysis Pipeline: Software for variant calling, annotation, and clinical interpretation.

3. Procedure:

   1. Sample Acquisition and Processing: Obtain tumor tissue via biopsy (preferred) or blood for liquid biopsy. For tissue, process into formalin-fixed paraffin-embedded (FFPE) blocks. For blood, collect in EDTA tubes and isolate plasma within 2-4 hours, or use cell-free DNA stabilization tubes (e.g., Streck), which extend the permissible processing window; then extract ctDNA.
   2. Nucleic Acid Extraction: Extract genomic DNA from FFPE sections or ctDNA from plasma using a commercial kit. Quantify DNA using a fluorometric method and assess quality (e.g., DNA Integrity Number for tissue, fragment size for ctDNA).
   3. Library Preparation and Sequencing: Prepare sequencing libraries from 20-50 ng of input DNA using the targeted NGS panel according to the manufacturer's protocol. Sequence on an approved NGS platform to achieve a minimum coverage of 1000x for tissue and 5000x for ctDNA.
   4. Bioinformatic Analysis: Align sequencing reads to the reference genome (e.g., GRCh38). Call variants (single nucleotide variants, indels) using validated algorithms. Annotate variants using curated databases (e.g., COSMIC, ClinVar) to determine clinical significance.
   5. Clinical Reporting and Actionability: Report the presence or absence of the target predictive biomarker (e.g., EGFR exon 19 deletion or L858R). A positive result indicates eligibility for the corresponding targeted therapy.
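The higher coverage target for ctDNA follows from sampling statistics: if a variant is present at allele fraction f and a locus is sequenced to depth D, the number of mutant reads is approximately Binomial(D, f). The sketch computes the probability of observing at least k supporting reads. The 0.2% allele fraction and the minimum of 5 supporting reads are illustrative assumptions, and real assays must also model sequencing error and deduplicated (unique-molecule) depth.

```python
from math import comb


def detection_probability(depth, allele_fraction, min_alt_reads):
    """P(at least min_alt_reads mutant reads) under Binomial(depth, allele_fraction)."""
    p_below = sum(
        comb(depth, k) * allele_fraction ** k * (1 - allele_fraction) ** (depth - k)
        for k in range(min_alt_reads)
    )
    return 1.0 - p_below


for depth in (1000, 5000):
    p = detection_probability(depth, allele_fraction=0.002, min_alt_reads=5)
    print(f"depth {depth}x: P(detect 0.2% VAF variant) = {p:.3f}")
```

Under these assumptions, 1000x detects such a variant only about 5% of the time, whereas 5000x detects it more than 95% of the time.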

Protocol 2: Prognostic Transcriptomic Signature Development

This protocol describes the process for developing and validating a multi-gene prognostic RNA signature, such as the Oncotype DX Recurrence Score, to stratify patients by risk of disease recurrence [6] [29].

1. Objective: To develop a robust prognostic gene expression signature from tumor RNA that predicts the likelihood of disease recurrence (e.g., in breast cancer) independently of treatment.

2. Research Reagent Solutions & Essential Materials:

- RNA Stabilization Reagent: (e.g., RNAlater) for immediate stabilization of RNA in fresh tumor tissue.

- RNA Extraction Kit: For isolation of intact, high-quality total RNA.

- RNA Integrity Assessment: A capillary electrophoresis assay (e.g., Agilent Bioanalyzer) to confirm RIN > 7.0.

- Reverse Transcription Kit: For synthesis of cDNA.

- qRT-PCR Assay: TaqMan-based assays or microarray/RNAseq platform for the target gene panel.

- Statistical Analysis Software: (e.g., R) with packages for survival analysis and risk modeling.

3. Procedure:

   1. Cohort Selection and RNA Extraction: Select a well-annotated patient cohort with long-term clinical follow-up (e.g., 10 years). Extract total RNA from macro-dissected tumor tissue to ensure >70% tumor content.
   2. Gene Expression Profiling: Convert RNA to cDNA. Perform gene expression analysis using a pre-defined panel of genes (e.g., 21 genes for Oncotype DX) via qRT-PCR or a designated microarray platform. Include reference genes for normalization.
   3. Algorithm Development and Risk Scoring: Using the training cohort, employ multivariate Cox regression to weight the contribution of each gene to the recurrence risk. Combine the expression values and their weights into a continuous recurrence score algorithm.
   4. Risk Stratification: Establish pre-defined cut-off points (e.g., low, intermediate, high risk) for the recurrence score based on clinical outcomes in the training set.
   5. Clinical Validation: Validate the locked-down model and risk categories in an independent, prospectively collected validation cohort to confirm its prognostic utility.
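The scoring steps can be sketched as follows: expression is normalized against the mean Ct of the reference genes, combined with Cox-derived weights into a linear score, and binned with locked-down cut-offs. Gene names, weights, and cut-offs are hypothetical placeholders; they are not the Oncotype DX algorithm.

```python
import statistics


def normalized_expression(ct_values, reference_genes):
    """Reference-normalized expression: mean reference Ct minus target Ct.

    Higher values mean higher expression (fewer PCR cycles to threshold).
    """
    ref_mean = statistics.mean(ct_values[g] for g in reference_genes)
    return {g: ref_mean - ct for g, ct in ct_values.items() if g not in reference_genes}


def risk_score(expression, weights):
    """Linear predictor: sum of Cox-derived weight * normalized expression."""
    return sum(weights[g] * expression[g] for g in weights)


def risk_group(score, low_cutoff, high_cutoff):
    """Assign a pre-defined, locked-down risk category."""
    if score < low_cutoff:
        return "low"
    if score < high_cutoff:
        return "intermediate"
    return "high"


# Hypothetical panel: two reference genes and three prognostic genes.
ct = {"REF1": 20.0, "REF2": 22.0, "PROLIF1": 24.0, "INVAS1": 27.0, "HORMONE1": 19.0}
weights = {"PROLIF1": 0.8, "INVAS1": 0.3, "HORMONE1": -0.5}  # hypothetical Cox weights
expr = normalized_expression(ct, ["REF1", "REF2"])
score = risk_score(expr, weights)
category = risk_group(score, low_cutoff=-4.0, high_cutoff=-2.0)
```

Keeping the weights and cut-offs fixed after training is what makes the independent validation in the final step a fair test of the model.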

Diagram 2: Biomarker Discovery and Validation Workflow. This flowchart outlines the three-phase pipeline for the discovery, analytical validation, and clinical translation of biomarkers, emphasizing the integration of multi-omics data and network-guided analysis.

The Scientist's Toolkit: Essential Reagents and Technologies

Successful biomarker research and development rely on a suite of specialized reagents and platforms. The following table details key solutions essential for experiments in this field.

Table 3: Research Reagent Solutions for Biomarker Discovery and Validation

| Tool Category | Specific Product Examples | Primary Function in Biomarker Workflows |
|---|---|---|
| Nucleic Acid Isolation | QIAamp DNA FFPE Tissue Kit; Circulating Nucleic Acid Kit; RNeasy Mini Kit | Extraction of high-quality, amplifiable DNA from challenging FFPE tissue samples; isolation of cell-free DNA (cfDNA) and circulating tumor DNA (ctDNA) from blood plasma; purification of intact total RNA for gene expression analysis. |
| Target Enrichment & Sequencing | Illumina TruSight Oncology 500 panel; Archer FusionPlex; IDT xGen Lockdown Probes | Comprehensive profiling of cancer-related genes for mutation, TMB, and MSI analysis from solid and liquid biopsies; targeted RNA sequencing for detection of gene fusions (e.g., ALK, ROS1); custom hybrid capture probes for focused NGS panels. |
| PCR & Digital PCR | TaqMan SNP Genotyping Assays; Bio-Rad ddPCR Mutation Detection Assays; Roche cobas EGFR Mutation Test v2 | Sensitive and specific allele detection and quantification for validation studies; absolute quantification of rare mutant alleles in liquid biopsies without a standard curve; FDA-approved companion diagnostic test for specific predictive biomarkers. |
| Immunoassay & Proteomics | Dako HER2 IHC Assay; R&D Systems Quantikine ELISA Kits; Olink Target 96 Proteomics Panels | Semi-quantitative detection of protein expression (e.g., HER2) in tumor tissue; quantitative measurement of specific soluble protein biomarkers in serum/plasma; high-throughput, multiplexed measurement of proteins in minimal sample volumes. |
| Bioinformatics | GATK (Genome Analysis Toolkit); R/Bioconductor; Commercial Clinical Interpretation Platforms (e.g., PierianDx) | Standardized pipeline for variant discovery from NGS data; open-source environment for statistical analysis, visualization, and development of risk scores; clinical-grade software for annotating, filtering, and reporting genomic variants. |

Emerging Frontiers: AI and Novel Methodologies

The field of biomarker discovery is being transformed by artificial intelligence (AI) and machine learning (ML). These technologies can systematically explore massive, high-dimensional datasets (e.g., genomics, radiomics, clinical records) to uncover complex, non-intuitive patterns that traditional hypothesis-driven approaches might miss [6]. AI-powered biomarker discovery has been reported to shorten development timelines from years to months, and it can integrate multiple data types simultaneously to identify "meta-biomarkers": composite signatures that more completely capture disease complexity [6]. For instance, the AI-driven Predictive Biomarker Modeling Framework (PBMF) uses contrastive learning to discover specifically predictive, rather than merely prognostic, biomarkers. In a retrospective analysis of a phase 3 immuno-oncology trial, this framework uncovered a predictive biomarker that, had it been used for patient selection, would have yielded a 15% improvement in survival risk [31]. Machine learning algorithms, including random forests, support vector machines, and deep neural networks, are increasingly applied to identify biomarker patterns from multi-omics data, medical images, and real-world evidence, thereby enhancing the predictive power and clinical actionability of biomarkers [6] [31].

AI and Graph-Based Methodologies: A Technical Deep Dive into Modern Frameworks

The discovery of robust biomarkers is a critical step in advancing precision medicine, enabling improved disease diagnosis, prognosis, and treatment selection. Traditional statistical and machine learning methods often struggle to capture the intricate, interconnected relationships within high-dimensional biological data. Graph Neural Networks (GNNs) have emerged as a powerful framework for biomarker discovery by explicitly modeling biological systems as networks, where nodes represent biomolecules and edges represent their functional interactions. This application note explores several cutting-edge GNN architectures—including EGNF, MOLUNGN, and MOGKAN—that are advancing the field of network-guided biomarker identification. These frameworks demonstrate how integrating multi-omics data with prior biological knowledge through graph-based deep learning can yield more accurate, interpretable, and biologically relevant biomarkers across diverse disease contexts, from cancer to neurodegenerative disorders.

Featured GNN Architectures: Core Principles and Applications

MOLUNGN: Multi-Omics Integration for Lung Cancer Staging

Core Architecture: The Multi-Omics Lung Cancer Graph Network (MOLUNGN) is designed for biomarker discovery and accurate classification of lung cancer stages, specifically focusing on non-small cell lung cancer (NSCLC) subtypes including lung adenocarcinoma (LUAD) and lung squamous cell carcinoma (LUSC). The framework incorporates omics-specific Graph Attention Network (OSGAT) modules combined with a Multi-Omics View Correlation Discovery Network (MOVCDN) to effectively capture both intra-omics and inter-omics correlations [32].

Key Application: MOLUNGN was developed to systematically integrate biomedical datasets, particularly incorporating traditional Chinese medicine (TCM)-associated multi-omics data. It investigates molecular mechanisms underlying stage-wise lung cancer progression and identifies pivotal stage-specific biomarkers to support precise cancer staging classification [32].

EGNF: Expression Graph Network Framework

Core Architecture: The Expression Graph Network Framework (EGNF) is a cutting-edge graph-based approach that integrates GNNs with network-based feature engineering. It constructs biologically informed networks by combining gene expression data and clinical attributes within a graph database, utilizing hierarchical clustering to generate dynamic, patient-specific representations of molecular interactions [33] [34].

Key Application: EGNF employs graph learning techniques, including graph convolutional networks and graph attention networks, to identify statistically significant and biologically relevant gene modules for classification. It has been validated across three independent datasets involving contrasting tumor types and clinical scenarios, demonstrating superior performance in classifying disease progression and predicting treatment outcomes [33].

MOGKAN: Interpretable Multi-Omics Integration

Core Architecture: The Multi-Omics Graph Kolmogorov–Arnold Network (MOGKAN) is a deep learning framework that integrates messenger RNA (mRNA) expression, micro-RNA (miRNA) sequencing data, and DNA methylation profiles with Protein-Protein Interaction (PPI) networks. Its architecture builds on the Kolmogorov–Arnold representation theorem, using trainable univariate functions in place of fixed activation functions to enhance interpretability and feature analysis [35].

Key Application: MOGKAN was developed for cancer classification across 31 cancer types, integrating heterogeneous multi-omics datasets at a systems level. To reduce multi-omics dimensionality while preserving relevant biological features, the framework combines differential expression analysis with DESeq2, Linear Models for Microarray Data (LIMMA), and LASSO regression [35].

Table 1: Performance Comparison of Featured GNN Architectures

| Architecture | Primary Application | Key Metrics | Data Types Integrated |
|---|---|---|---|
| MOLUNGN [32] | Lung cancer staging (LUAD/LUSC) | ACC: 0.84 (LUAD), 0.86 (LUSC); F1_weighted: 0.83 (LUAD), 0.85 (LUSC) | mRNA expression, miRNA mutation profiles, DNA methylation |
| EGNF [33] | Pan-cancer biomarker discovery | Perfect normal-tumor separation; superior disease progression classification | Gene expression, clinical attributes |
| MOGKAN [35] | Multi-cancer classification (31 types) | Classification accuracy: 96.28%; low experimental variability | mRNA, miRNA, DNA methylation, PPI networks |
| GNNRAI [36] | Alzheimer's disease classification | Improved prediction accuracy over single-omics analyses | Transcriptomics, proteomics, biological knowledge graphs |

Experimental Protocols and Workflows

MOLUNGN Implementation Protocol

Data Preprocessing Pipeline:

- Data Extraction: Extract LUAD and LUSC samples from The Cancer Genome Atlas (TCGA) database. For mRNA data, obtain FPKM_unstranded values indicating gene expression levels in non-strand-specific RNA-seq data [32].

- Data Cleaning: Perform rigorous data cleaning, noise reduction, normalization, and standardization, scaling feature values to a [0,1] interval for each sample.

- Feature Selection: Eliminate low-quality data with incomplete or zero expression, refining the dataset from an initial 60,660 gene features to 14,542 high-quality genes using dimensionality reduction algorithms [32].
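The cleaning and scaling steps can be sketched on a samples-by-genes matrix. Genes that are missing or zero in more than a chosen fraction of samples are dropped (the 20% tolerance is an assumption), and min-max scaling maps values into [0, 1]. Following the text, scaling is applied within each sample by default, with `axis=0` available for per-gene scaling; the toy FPKM values are made up.

```python
import numpy as np


def filter_genes(matrix, max_missing_or_zero=0.2):
    """Keep gene columns whose fraction of NaN or zero values is at most the cut-off."""
    bad = np.isnan(matrix) | (matrix == 0)
    keep = bad.mean(axis=0) <= max_missing_or_zero
    return matrix[:, keep], keep


def min_max_scale(matrix, axis=1):
    """Scale values to [0, 1] along `axis` (1 = within each sample row).

    Any NaNs remaining after filtering should be imputed before this step.
    """
    lo = matrix.min(axis=axis, keepdims=True)
    hi = matrix.max(axis=axis, keepdims=True)
    span = np.where(hi > lo, hi - lo, 1.0)  # avoid division by zero for constant rows
    return (matrix - lo) / span


# Toy FPKM matrix: 3 samples x 4 genes.
fpkm = np.array([[5.0, 0.0, 1.0, 3.0],
                 [7.0, 0.0, np.nan, 1.0],
                 [2.0, 4.0, 2.0, 6.0]])
filtered, kept = filter_genes(fpkm)
scaled = min_max_scale(filtered)
```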

Graph Construction and Model Training:

- Construct a complex network integrating gene-protein-clinical data from lung cancer patients.

- Employ OSGAT modules for feature learning from specific omics data.

- Integrate multi-omics data through MOVCDN at a higher-level label space.

- Train the model to classify clinical cases into precise cancer stages while extracting stage-specific biomarkers.

Validation Approach:

- Evaluate model performance using publicly available datasets

- Compare against existing methodologies using standard metrics: accuracy, recall_weighted, F1_weighted, and F1_macro

- Validate biological relevance of identified biomarkers through gene-disease association analysis [32]
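The weighted and macro variants differ only in how per-class scores are averaged: macro averaging gives every stage equal weight, whereas weighted averaging weights each class by its support (number of true samples), which matters when stages are imbalanced. A plain-Python sketch follows with made-up stage labels; scikit-learn exposes the same choices through the `average` argument of `f1_score` and `recall_score`.

```python
def per_class_prf(y_true, y_pred):
    """Precision, recall, F1, and support for each class label."""
    labels = sorted(set(y_true) | set(y_pred))
    stats = {}
    for c in labels:
        tp = sum(t == c and p == c for t, p in zip(y_true, y_pred))
        fp = sum(t != c and p == c for t, p in zip(y_true, y_pred))
        fn = sum(t == c and p != c for t, p in zip(y_true, y_pred))
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
        stats[c] = (precision, recall, f1, tp + fn)
    return stats


def summary_metrics(y_true, y_pred):
    """Accuracy plus support-weighted and macro-averaged scores."""
    stats = per_class_prf(y_true, y_pred)
    n = len(y_true)
    return {
        "accuracy": sum(t == p for t, p in zip(y_true, y_pred)) / n,
        "recall_weighted": sum(r * s for _, r, _, s in stats.values()) / n,
        "F1_weighted": sum(f * s for _, _, f, s in stats.values()) / n,
        "F1_macro": sum(f for _, _, f, _ in stats.values()) / len(stats),
    }


y_true = ["I", "I", "I", "II", "III"]
y_pred = ["I", "I", "II", "II", "I"]
metrics = summary_metrics(y_true, y_pred)
```

For single-label classification, weighted recall always equals accuracy, so reporting F1_macro alongside it is what exposes poor performance on rare stages (here stage III).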

EGNF Experimental Protocol

Network Construction Workflow:

- Graph Database Creation: Integrate gene expression data and clinical attributes within a graph database [33].

- Hierarchical Clustering: Apply hierarchical clustering to generate dynamic, patient-specific representations of molecular interactions.

- GNN Implementation: Leverage graph convolutional networks and graph attention networks to identify significant gene modules for classification [33].
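A graph convolution aggregates each node's features with those of its neighbours through the symmetrically normalized adjacency with self-loops, H' = ReLU(D^-1/2 (A + I) D^-1/2 H W), following the standard Kipf and Welling formulation. The NumPy sketch below is a single untrained layer on a toy graph; how EGNF builds its graphs from the database and clustering steps is not reproduced here.

```python
import numpy as np


def gcn_layer(adj, features, weight):
    """One graph convolution: ReLU(D^-1/2 (A + I) D^-1/2 H W)."""
    a_hat = adj + np.eye(adj.shape[0])              # add self-loops
    d_inv_sqrt = 1.0 / np.sqrt(a_hat.sum(axis=1))   # degrees are >= 1 thanks to self-loops
    norm_adj = d_inv_sqrt[:, None] * a_hat * d_inv_sqrt[None, :]
    return np.maximum(norm_adj @ features @ weight, 0.0)


rng = np.random.default_rng(0)
adj = np.array([[0, 1, 0], [1, 0, 1], [0, 1, 0]], dtype=float)  # 3-node path
h = rng.normal(size=(3, 4))
w = rng.normal(size=(4, 2))
out = gcn_layer(adj, h, w)
```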

Validation Framework:

- Test across three independent datasets involving different tumor types and clinical scenarios

- Evaluate classification accuracy and interpretability compared to traditional machine learning models

- Assess performance on nuanced tasks including normal vs. tumor separation, disease progression classification, and treatment outcome prediction [33]

MOGKAN Processing Protocol

Multi-Omics Data Preprocessing:

- Differential Expression Analysis: Apply DESeq2 to mRNA expression data to identify genes with significant expression changes (p-value threshold: 0.001) [35].

- Methylation Analysis: Use LIMMA to analyze DNA methylation data and identify differentially methylated CpG sites from the Human Methylation 450K array (485,577 features across 9,171 samples) [35].

- Dimensionality Reduction: Apply LASSO regression to mRNA and DNA methylation data to further reduce data dimensionality [35].
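LASSO shrinks coefficients with an L1 penalty, setting uninformative features exactly to zero, which is why it doubles as a feature selector. A minimal cyclic coordinate-descent sketch follows. It minimizes $\frac{1}{2n}\lVert y - Xb\rVert_2^2 + \lambda \lVert b \rVert_1$ on centred data with a continuous synthetic target. The data, the penalty value, and the use of a regression target rather than class labels are assumptions for illustration. In practice a library implementation such as glmnet or scikit-learn would be used.

```python
import numpy as np


def soft_threshold(z, gamma):
    return np.sign(z) * max(abs(z) - gamma, 0.0)


def lasso_cd(X, y, lam, n_iter=500, tol=1e-8):
    """Minimize (1/2n)||y - Xb||^2 + lam * ||b||_1 by cyclic coordinate descent.

    Assumes the columns of X and the target y are centred (no intercept).
    """
    n, p = X.shape
    b = np.zeros(p)
    col_sq = (X ** 2).sum(axis=0) / n
    r = y.astype(float).copy()                         # residual y - Xb
    for _ in range(n_iter):
        max_change = 0.0
        for j in range(p):
            if col_sq[j] == 0:
                continue
            rho = X[:, j] @ r / n + col_sq[j] * b[j]   # partial-residual correlation
            new = soft_threshold(rho, lam) / col_sq[j]
            delta = new - b[j]
            if delta != 0.0:
                r -= delta * X[:, j]
                b[j] = new
                max_change = max(max_change, abs(delta))
        if max_change < tol:
            break
    return b


rng = np.random.default_rng(0)
X = rng.standard_normal((200, 10))
X -= X.mean(axis=0)
y = 3.0 * X[:, 0] - 2.0 * X[:, 3] + 0.1 * rng.standard_normal(200)
y -= y.mean()
coef = lasso_cd(X, y, lam=0.1)
print(np.flatnonzero(coef))  # indices of retained features
```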

Graph-KAN Integration:

- Construct the graph model, using protein-protein interaction (PPI) network information to define the graph structure

- Implement the GKAN architecture, which applies the Kolmogorov-Arnold representation theorem to graph learning

- Utilize spline-based transformations for precise feature extraction and transparency [35]
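Kolmogorov-Arnold networks replace fixed node activations with learnable univariate functions on edges. In the original KAN formulation each edge function is $\phi(x) = w_b\,\mathrm{SiLU}(x) + w_s \sum_i c_i B_i(x)$, where the $B_i$ are B-spline basis functions on a grid. The NumPy sketch below evaluates such an edge function with the Cox-de Boor recursion and fits its spline coefficients to a toy target by least squares. It is not the GKAN code from [35]. The grid size, spline degree, and target function are assumptions.

```python
import numpy as np


def extended_knots(lo, hi, grid_size, degree):
    """Uniform grid on [lo, hi], extended by `degree` knots on each side."""
    h = (hi - lo) / grid_size
    return lo + h * np.arange(-degree, grid_size + degree + 1)


def bspline_basis(x, knots, degree):
    """All B-spline basis functions of the given degree at points x (Cox-de Boor recursion)."""
    x = np.asarray(x, dtype=float)[:, None]
    t = np.asarray(knots, dtype=float)
    B = ((x >= t[:-1]) & (x < t[1:])).astype(float)   # degree-0 indicator functions
    for k in range(1, degree + 1):
        left = (x - t[:-(k + 1)]) / (t[k:-1] - t[:-(k + 1)]) * B[:, :-1]
        right = (t[k + 1:] - x) / (t[k + 1:] - t[1:-k]) * B[:, 1:]
        B = left + right
    return B  # shape: (len(x), len(knots) - degree - 1)


def kan_edge(x, coef, knots, degree, w_base=1.0, w_spline=1.0):
    """phi(x) = w_base * SiLU(x) + w_spline * sum_i coef_i * B_i(x)."""
    x = np.asarray(x, dtype=float)
    silu = x / (1.0 + np.exp(-x))
    return w_base * silu + w_spline * bspline_basis(x, knots, degree) @ coef


knots = extended_knots(-1.0, 1.0, grid_size=5, degree=3)
xs = np.linspace(-1.0, 1.0, 101, endpoint=False)      # stay inside [lo, hi)
basis = bspline_basis(xs, knots, 3)                    # 8 cubic basis functions
coef, *_ = np.linalg.lstsq(basis, np.sin(xs), rcond=None)
approx = kan_edge(xs, coef, knots, 3, w_base=0.0)      # spline part only
print(np.abs(approx - np.sin(xs)).max())
```

Because the coefficients $c_i$ are ordinary parameters, a trained edge function can be plotted directly. This is the source of the transparency that spline-based layers offer.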

Validation and Biomarker Analysis:

- Validate classification performance across 31 cancer types

- Conduct functional relevance analysis of identified biomarkers through Gene Ontology (GO) and Kyoto Encyclopedia of Genes and Genomes (KEGG) pathway enrichment [35]

Signaling Pathways and Workflow Visualizations

Generalized GNN Biomarker Discovery Workflow

Diagram 1: Generalized workflow for GNN-based biomarker discovery integrating multi-omics data and prior biological knowledge.

MOLUNGN Architecture Schematic

Diagram 2: MOLUNGN architecture with omics-specific GAT modules and multi-omics view correlation discovery network.

Research Reagent Solutions and Essential Materials

Table 2: Key Research Reagents and Computational Tools for GNN Biomarker Discovery

| Resource Category | Specific Tools/Databases | Application in GNN Biomarker Discovery |
|---|---|---|
| Data Sources | The Cancer Genome Atlas (TCGA) [32], Pan-Cancer Atlas [35], Autism Brain Imaging Data Exchange (ABIDE I) [37] | Provide standardized, multi-omics datasets for model training and validation across different diseases |
| Biological Networks | Protein-Protein Interaction (PPI) Networks [35], Pathway Commons [36], Prior Knowledge Networks (PKNs) [38] | Supply graph topology and biological relationships for constructing meaningful network structures |
| Analysis Tools | DESeq2 [35], LIMMA [35], LASSO Regression [35] | Perform differential expression analysis, methylation analysis, and dimensionality reduction |
| GNN Frameworks | Graph Attention Networks (GAT) [32], Graph Convolutional Networks (GCN) [36], Graph Kolmogorov-Arnold Networks (GKAN) [35] | Provide core algorithmic architectures for graph-based learning and biomarker identification |
| Validation Resources | Gene Ontology (GO) [35], KEGG Pathways [35], Permutation Testing [37] | Enable functional validation and statistical verification of identified biomarkers |

Discussion and Future Directions

The integration of GNNs with multi-omics data represents a paradigm shift in biomarker discovery, moving beyond traditional correlation-based approaches to models that capture complex biological relationships. Architectures like MOLUNGN, EGNF, and MOGKAN demonstrate several key advantages: (1) their ability to integrate heterogeneous data types through biologically meaningful graph structures; (2) improved classification performance across diverse disease contexts; and (3) enhanced interpretability through attention mechanisms and specialized architectures that highlight biologically relevant features [32] [33] [35].

Future development in this field will likely focus on several key areas. Causal inference integration approaches, as exemplified by Causal-GNN, aim to distinguish genuine causal relationships from spurious correlations by incorporating causal effect estimation and GNN-based propensity scoring [39]. Explainability enhancement through methods like integrated gradients and integrated Hessians will be crucial for clinical translation, helping researchers understand which features drive predictions and how biological domains interact [36]. Federated learning frameworks will enable analysis across distributed datasets without moving sensitive patient data, addressing privacy concerns while maintaining analytical power [6].

As these technologies mature, we anticipate increased translation of GNN-identified biomarkers into clinical applications, potentially revolutionizing precision medicine through more accurate diagnosis, prognosis, and treatment selection across diverse disease areas.

PathNetDRP represents a novel biomarker discovery framework that integrates biological pathways, protein-protein interaction (PPI) networks, and machine learning to identify functionally relevant biomarkers for predicting response to Immune Checkpoint Inhibitors (ICIs) [21]. Unlike conventional methods that rely primarily on differential gene expression analysis, PathNetDRP systematically incorporates biological context to improve biomarker selection. The framework addresses a significant challenge in cancer immunotherapy: despite the success of ICIs, only a minority of patients respond favorably, creating an urgent need for robust predictive biomarkers [21].

The core innovation of PathNetDRP lies in its application of the PageRank algorithm to prioritize ICI-associated genes within biological networks. PageRank, originally developed for ranking web pages, operates on the principle that a node's importance is determined by the quantity and quality of its connections [40]. In biological terms, this translates to the concept that genes interacting with numerous important partners in a PPI network are likely to have significant functional roles. PathNetDRP adapts this principle to identify key players in immune response mechanisms by applying PageRank to pathway-specific subnetworks, enabling a more precise, context-aware analysis of gene contributions to ICI response prediction [21].

Theoretical Foundations of Network Propagation

PageRank and Network Propagation Algorithms

Network propagation, also referred to as network smoothing, encompasses a class of algorithms that integrate information from input data across connected nodes in a given network [41]. These algorithms have found broad applications in systems biology, including protein function prediction, inferring conditionally altered sub-networks, and prioritizing disease genes [41] [42].

The PageRank algorithm operates on the principle of influence propagation through iterative updates. In the context of PathNetDRP, the score of gene $g_i$ at iteration $t$ is computed as follows:

$$PR(g_i; t) = \frac{1-d}{N} + d \sum_{g_j \in B(g_i)} \frac{PR(g_j; t-1)}{L(g_j)}$$

where $d$ is the damping factor (typically set to 0.85), $N$ is the total number of genes, $B(g_i)$ is the set of genes linking to $g_i$, and $L(g_j)$ is the number of outbound links from gene $g_j$ [21] [40].
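The update above can be run as a power iteration until the scores stop changing. The NumPy sketch below implements it for an adjacency matrix, assuming an undirected PPI network so that $L(g_j)$ is the degree of $g_j$. The optional `personalization` vector replaces the uniform $1/N$ teleport term with seed weights. This is one common way to start propagation from seed genes such as ICI targets, but whether PathNetDRP uses exactly this variant is an assumption here, as is the toy network.

```python
import numpy as np


def pagerank(A, d=0.85, personalization=None, tol=1e-10, max_iter=1000):
    """Power iteration for PR(g_i) = (1 - d) v_i + d * sum_{g_j in B(g_i)} PR(g_j) / L(g_j).

    A[j, i] = 1 encodes a link g_j -> g_i (symmetric for an undirected PPI network).
    v is uniform (1/N, as in the formula above) unless a personalization vector is given.
    Nodes without outbound links redistribute their score according to v.
    """
    A = np.asarray(A, dtype=float)
    n = A.shape[0]
    v = np.full(n, 1.0 / n) if personalization is None else np.asarray(personalization, dtype=float)
    v = v / v.sum()
    out_links = A.sum(axis=1)                   # L(g_j)
    pr = np.full(n, 1.0 / n)
    for _ in range(max_iter):
        share = np.divide(pr, out_links, out=np.zeros(n), where=out_links > 0)
        dangling = pr[out_links == 0].sum()
        new = (1 - d) * v + d * (A.T @ share + dangling * v)
        if np.abs(new - pr).sum() < tol:
            return new
        pr = new
    return pr


# Toy PPI network: hub gene 0 linked to genes 1-3, plus an edge 3-4
edges = [(0, 1), (0, 2), (0, 3), (3, 4)]
A = np.zeros((5, 5))
for i, j in edges:
    A[i, j] = A[j, i] = 1.0
pr = pagerank(A)
pr_seed = pagerank(A, personalization=[0, 0, 0, 0, 1])   # gene 4 as the only seed
print(pr.round(3), pr_seed.round(3))
```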

Alternative network propagation algorithms include Random Walk with Restart (RWR) and Heat Diffusion (HD). RWR updates node scores according to:

$$F_i = (1-\alpha)F_0 + \alpha W F_{i-1}, \quad i = 1, 2, \ldots$$

where $\alpha$ is the spreading coefficient, $W$ is the normalized network matrix, and $F_0$ contains the initial node scores [41]. Heat Diffusion is a continuous-time analogue:

$$F_t = \exp(-Wt)\,F_0$$

where $t$ controls how far the signal spreads over time [41].
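Both propagation schemes take only a few lines of NumPy. The sketch makes two assumptions about the network matrix:

- RWR uses the degree-normalized adjacency matrix $W = AD^{-1}$.
- Heat diffusion uses the Laplacian $W = D - A$.

For a symmetric $W$, the matrix exponential is computed through an eigendecomposition. The iterative RWR result is checked against the closed-form fixed point $F = (1-\alpha)(I - \alpha W)^{-1}F_0$. The toy path network is hypothetical.

```python
import numpy as np


def rwr(W, F0, alpha=0.5, tol=1e-12, max_iter=10_000):
    """Iterate F_i = (1 - alpha) F_0 + alpha W F_{i-1} until convergence."""
    F = F0.copy()
    for _ in range(max_iter):
        F_next = (1 - alpha) * F0 + alpha * (W @ F)
        if np.abs(F_next - F).max() < tol:
            return F_next
        F = F_next
    return F


def heat_diffusion(W, F0, t=1.0):
    """F_t = exp(-W t) F_0 for a symmetric W, using W = V diag(lambda) V^T."""
    evals, evecs = np.linalg.eigh(W)
    return evecs @ (np.exp(-evals * t) * (evecs.T @ F0))


# Toy path network 0-1-2-3 with the signal initially on gene 0
A = np.array([[0, 1, 0, 0],
              [1, 0, 1, 0],
              [0, 1, 0, 1],
              [0, 0, 1, 0]], dtype=float)
deg = A.sum(axis=0)
F0 = np.array([1.0, 0.0, 0.0, 0.0])

F_rwr = rwr(A / deg[None, :], F0, alpha=0.5)          # W = A D^-1 (column-normalized)
F_exact = 0.5 * np.linalg.solve(np.eye(4) - 0.5 * (A / deg[None, :]), F0)
F_heat = heat_diffusion(np.diag(deg) - A, F0, t=1.0)  # W = D - A (Laplacian)
print(F_rwr.round(3), F_heat.round(3))
```

With these choices both schemes conserve the total score: $AD^{-1}$ is column-stochastic, and the Laplacian's columns sum to zero.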

Network Normalization and Topology Bias

A critical consideration in network propagation is network normalization, which significantly influences how network topology affects results [41]. Different normalization approaches include:

- Laplacian transformation: $W_L = D - A$, where $D$ is the diagonal matrix of node degrees and $A$ is the adjacency matrix [41]

- Normalized Laplacian

- Degree normalized adjacency matrix

Improper normalization can lead to "topology bias," in which node scores reflect network structure (for example, node degree) rather than biological relevance [41]. PathNetDRP mitigates this risk through careful network construction and parameter optimization.
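The three normalizations listed above can be written down directly, and a toy star network shows how topology bias arises. The sketch assumes these standard definitions:

- normalized Laplacian: $W_{NL} = I - D^{-1/2} A D^{-1/2}$;
- degree-normalized adjacency: $W_D = A D^{-1}$ (column normalization). Some tools use the row-normalized $D^{-1}A$ instead.

Propagating a uniform, signal-free score vector through $A D^{-1}$ already favours the hub, which is the structure-driven effect described above. $D^{-1}A$ leaves the same vector unchanged.

```python
import numpy as np


def normalize(A):
    """Return the Laplacian, normalized Laplacian and column-normalized adjacency of A."""
    deg = A.sum(axis=1)
    n = len(deg)
    inv_sqrt = np.divide(1.0, np.sqrt(deg), out=np.zeros(n), where=deg > 0)
    inv = np.divide(1.0, deg, out=np.zeros(n), where=deg > 0)
    W_L = np.diag(deg) - A                                          # D - A
    W_NL = np.eye(n) - inv_sqrt[:, None] * A * inv_sqrt[None, :]    # I - D^-1/2 A D^-1/2
    W_D = A * inv[None, :]                                          # A D^-1
    return W_L, W_NL, W_D


# Star network: hub gene 0 connected to three leaf genes
A = np.zeros((4, 4))
A[0, 1:] = A[1:, 0] = 1.0
W_L, W_NL, W_D = normalize(A)
uniform = np.ones(4)                               # no biological signal at all
print(W_D @ uniform)                               # the hub's score grows with its degree
print((A / A.sum(axis=1)[:, None]) @ uniform)      # row-normalized D^-1 A keeps scores uniform
```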

PathNetDRP Workflow and Implementation

Algorithmic Framework

The PathNetDRP framework implements a multi-stage biomarker prioritization process [21]:

- ICI-related gene selection via PageRank: The algorithm begins with ICI target genes as seeds and propagates their influence across a PPI network to identify candidate genes associated with drug response.

- Identification of ICI-related biological pathways: The candidate genes are mapped to biological pathways using hypergeometric testing to identify pathways significantly enriched with ICI-response-associated genes.

- Calculation of PathNetGene scores: The algorithm applies PageRank to individual pathway subnetworks to quantify each gene's contribution within its pathway context, generating PathNetGene scores that reflect functional importance in immune response.

- Biomarker selection and validation: Genes with the highest PathNetGene scores are selected as biomarkers and validated through machine learning models for ICI response prediction.
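The hypergeometric test in the pathway-identification step asks how likely it is to see at least $k$ candidate genes in a pathway of size $n$ when $N$ candidates are drawn from a universe of $M$ genes. A minimal pure-Python sketch follows. The gene identifiers and pathway memberships are hypothetical. A real analysis would also correct the p-values for multiple testing (for example, Benjamini-Hochberg).

```python
from math import comb


def hypergeom_sf(k, M, n, N):
    """P(X >= k) for X ~ Hypergeometric(M genes, n in the pathway, N candidates drawn)."""
    upper = min(n, N)
    hits = sum(comb(n, i) * comb(M - n, N - i) for i in range(k, upper + 1))
    return hits / comb(M, N)


def pathway_enrichment(candidates, pathways, universe):
    """Map each pathway name to its enrichment p-value for the candidate gene set."""
    candidates = set(candidates) & universe
    M, N = len(universe), len(candidates)
    results = {}
    for name, members in pathways.items():
        members = set(members) & universe
        k = len(members & candidates)
        results[name] = hypergeom_sf(k, M, len(members), N)
    return results


universe = {f"G{i}" for i in range(20)}
candidates = {"G0", "G1", "G2", "G3", "G4"}            # e.g. top PageRank genes
pathways = {
    "P1": {"G0", "G1", "G2", "G3", "G10"},              # 4 of 5 members are candidates
    "P2": {"G10", "G11", "G12", "G13", "G14"},          # no overlap
}
print(pathway_enrichment(candidates, pathways, universe))
```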

Table 1: Key Stages of the PathNetDRP Workflow

| Stage | Primary Input | Algorithm/Method | Output |
|---|---|---|---|
| ICI Gene Selection | ICI target genes, PPI network | PageRank algorithm | Candidate ICI-associated genes |
| Pathway Identification | Candidate genes, pathway databases | Hypergeometric test | Significantly enriched pathways |
| PathNetGene Scoring | Pathway subnetworks | Pathway-specific PageRank | Quantitative gene importance scores |
| Biomarker Validation | PathNetGene scores, expression data | Machine learning classification | Predictive biomarkers for ICI response |


Performance Evaluation and Comparative Analysis

Quantitative Performance Metrics

PathNetDRP has demonstrated robust predictive performance for ICI response across eight independent ICI-treated patient cohorts. Its cross-validated area under the receiver operating characteristic curve (AUROC) increased from 0.780 to 0.940 compared with conventional methods [21].
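The reported metric, AUROC, equals the probability that a randomly chosen responder receives a higher predicted score than a randomly chosen non-responder. This is the Mann-Whitney formulation, with ties counted as one half. A small pure-Python sketch with hypothetical labels and scores:

```python
def auroc(labels, scores):
    """AUROC via pairwise comparison of responders (label 1) and non-responders (label 0)."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one responder and one non-responder")
    wins = 0.0
    for p in pos:
        for q in neg:
            wins += 1.0 if p > q else 0.5 if p == q else 0.0
    return wins / (len(pos) * len(neg))


labels = [1, 1, 0, 0, 1, 0]            # 1 = responder
scores = [0.9, 0.8, 0.7, 0.3, 0.4, 0.5]
print(auroc(labels, scores))           # 7 of 9 pairs ranked correctly
```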

Table 2: Performance Comparison of Network-Based Biomarker Discovery Methods

| Method | Key Features | Advantages | Limitations |
|---|---|---|---|
| PathNetDRP | Integrates pathways, PPIs, and PageRank; Calculates PathNetGene scores | High predictive accuracy (AUC: 0.78-0.94); Interpretable biomarkers; Biological context integration | Computational complexity; Requires high-quality pathway annotations |
| NetBio | Network propagation with pathway enrichment; Uses PPI networks | Superior to conventional biomarkers; Validated in multiple cancer types | Limited gene-level investigation capability [21] |
| ICINet | PageRank + Graph Neural Network; Integrates 14 knowledge bases | Leverages diverse biological data; Graph neural network architecture | Limited transparency in identifying specific biomarkers [21] |
| TIDE | Models T cell dysfunction and exclusion | More accurate than PD-L1 or mutation load alone; Identifies resistance mechanisms | Limited by immune system complexity [21] |
| DeepGeneX | Deep neural network with feature elimination | Identifies key genes from large feature space; Potential for target discovery | "Black box" interpretation; Limited by dataset size [21] |

In comparative analyses, PathNetDRP outperformed existing methods [21], each of which has specific limitations. TIDE identifies biomarkers based on genes associated with tumor immune dysfunction and exclusion, but its predictive performance is limited by the complexity of the immune system [21]. DeepGeneX applies deep learning to select ICI-response-associated features but is difficult to interpret because of its "black box" nature [21].

Key Parameter Optimization

Effective implementation of network propagation algorithms requires careful parameter optimization [41]:

- Spreading coefficient (α in RWR): Controls the fraction of signal spread to neighboring nodes. Small α keeps node scores close to initial values, while large α averages scores more strongly across connected nodes.

- Damping factor (d in PageRank): Typically set to 0.85, representing the probability that a "random surfer" continues following links.

- Network normalization method: Critical to avoid topology bias; different normalization methods (Laplacian, normalized Laplacian, degree-normalized) significantly impact results.

Optimal parameters can be identified by maximizing consistency between biological replicates or agreement between different omics layers (e.g., transcriptomics and proteomics) [41].
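The replicate-consistency criterion can be illustrated with a grid search over the RWR spreading coefficient. In this sketch, two noisy "replicates" of the same smooth signal on a ring network are propagated with the closed-form RWR solution. The $\alpha$ whose smoothed profiles agree best, measured by Pearson correlation, is selected. The synthetic data, network, noise model, correlation measure, and candidate grid are all assumptions for illustration.

```python
import numpy as np


def rwr_closed_form(W, F0, alpha):
    """Fixed point of F = (1 - alpha) F0 + alpha W F."""
    n = W.shape[0]
    return (1 - alpha) * np.linalg.solve(np.eye(n) - alpha * W, F0)


def pick_alpha(W, rep1, rep2, alphas):
    """Choose the alpha that maximizes agreement between two propagated replicates."""
    agreement = {}
    for a in alphas:
        s1 = rwr_closed_form(W, rep1, a)
        s2 = rwr_closed_form(W, rep2, a)
        agreement[a] = float(np.corrcoef(s1, s2)[0, 1])
    return max(agreement, key=agreement.get), agreement


n = 200
A = np.zeros((n, n))
for i in range(n):                                  # ring network
    A[i, (i + 1) % n] = A[(i + 1) % n, i] = 1.0
W = A / A.sum(axis=0)[None, :]                      # A D^-1
rng = np.random.default_rng(1)
signal = np.sin(2 * np.pi * np.arange(n) / n)       # shared smooth "biology"
rep1 = signal + rng.standard_normal(n)
rep2 = signal + rng.standard_normal(n)
best, agreement = pick_alpha(W, rep1, rep2, [0.0, 0.5, 0.8, 0.9])
print(best, {a: round(r, 3) for a, r in agreement.items()})
```

The same loop can score agreement between omics layers (for example, propagated transcript and protein profiles) instead of replicates.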

Experimental Protocols

Protocol: Implementing PathNetDRP for Biomarker Discovery

Objective: Identify and validate network-based biomarkers for ICI response prediction using the PathNetDRP framework.

Materials:

- Gene expression data from ICI-treated patients (responders vs. non-responders)

- Protein-protein interaction network (e.g., from STRING database)

- Biological pathway databases (e.g., Reactome, KEGG)

- Computational environment with Python/R and necessary libraries

Procedure:

Data Preprocessing (Day 1)

- Obtain gene expression data from ICI-treated patients with documented clinical response

- Normalize expression data using appropriate methods (e.g., TPM for RNA-seq, RMA for microarrays)

- Annotate samples as responders and non-responders based on clinical criteria (e.g., RECIST criteria)
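For RNA-seq input, TPM normalization and response labelling can be sketched as below. The sketch assumes raw read counts and gene lengths in base pairs. It maps complete or partial response to responder and stable or progressive disease to non-responder. This RECIST mapping is a common convention, but it is an assumption here, since studies differ in how they treat stable disease.

```python
import numpy as np


def counts_to_tpm(counts, lengths_bp):
    """TPM for a genes x samples count matrix and per-gene lengths in base pairs."""
    rpk = counts / (np.asarray(lengths_bp, dtype=float)[:, None] / 1e3)  # reads per kilobase
    return rpk / rpk.sum(axis=0, keepdims=True) * 1e6                     # scale to one million


# Assumed RECIST-to-label convention: CR/PR = responder (1), SD/PD = non-responder (0)
RECIST_TO_LABEL = {"CR": 1, "PR": 1, "SD": 0, "PD": 0}

counts = np.array([[100, 200],
                   [300, 0],
                   [600, 800]], dtype=float)    # 3 genes x 2 patients
tpm = counts_to_tpm(counts, [1000, 2000, 3000])
labels = [RECIST_TO_LABEL[r] for r in ["PR", "PD"]]
print(tpm.round(1), labels)
```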

PPI Network Construction (Day 1)

- Download a comprehensive PPI network from the STRING database (a combined score > 700, STRING's high-confidence threshold, is recommended)