From Data to Discovery: A Modern Guide to Gene Regulatory Network Inference

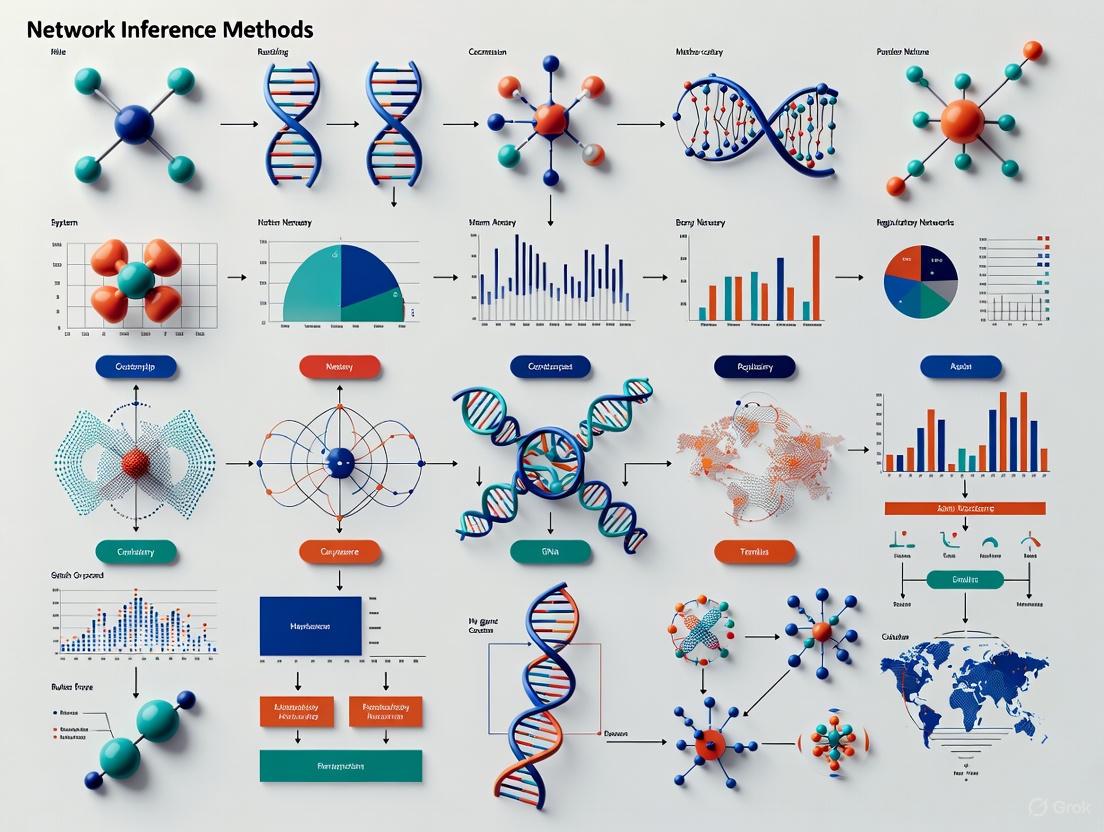

Abstract

This article provides a comprehensive overview of the rapidly evolving field of Gene Regulatory Network (GRN) inference, a critical computational challenge in systems biology and drug discovery. Aimed at researchers and drug development professionals, it explores the foundational principles of modeling gene interactions and the central challenge of distinguishing true regulatory relationships from mere correlation. The review systematically categorizes and evaluates modern inference methodologies, from classical correlation-based techniques to cutting-edge machine learning and deep learning models like DAZZLE. It addresses key practical hurdles, including the pervasive 'dropout' noise in single-cell RNA-seq data and the critical trade-off between prediction precision and recall. Furthermore, the article synthesizes findings from recent large-scale benchmarking efforts, such as CausalBench, which reveal the performance and scalability limits of current methods on real-world perturbation data. Finally, it discusses the translation of inferred networks into biological insights and clinical applications, offering a forward-looking perspective on the integration of multi-omics data and the path toward more accurate, reliable network models for understanding disease mechanisms and identifying therapeutic targets.

Decoding the Blueprint: What Are Gene Regulatory Networks and Why Do They Matter?

This application note details modern methodologies for investigating gene regulatory networks (GRNs), moving beyond the classical central dogma to incorporate multi-omics integration and three-dimensional genomic architecture. Designed for researchers and drug development professionals, it provides actionable protocols and resources to decipher the complex interactions governing gene expression, from DNA to functional protein, within the context of network inference.

The classical central dogma of molecular biology describes the linear flow of genetic information from DNA to RNA to protein. However, contemporary research reveals that this process is governed by intricate Gene Regulatory Networks (GRNs). These networks encompass multi-gene interactions, regulatory elements, transcription factors (TFs), and co-factors that orchestrate spatiotemporal gene expression [1]. Advances in sequencing and computational biology now allow for the detailed inference and modeling of these networks, providing systems-level insights into developmental biology, cellular response, and disease mechanisms [2]. The regulatory landscape includes not only promoters but also distal elements like enhancers and silencers, whose interactions with target genes are often mediated by the three-dimensional (3D) architecture of chromatin within the nucleus [1] [3]. This note outlines practical experimental and computational workflows to study these regulatory interactions, framing them within the overarching goal of GRN inference.

Mapping the 3D Genomic Architecture

The 3D organization of chromatin is a critical, yet often overlooked, layer of transcriptional regulation. It brings distal regulatory elements into physical proximity with their target genes, forming the structural basis of the GRN.

Key Regulatory Structures and Elements

- Topologically Associating Domains (TADs): TADs are self-interacting genomic regions ranging from hundreds of kilobases to several megabases. They are highly conserved across cell types and act as functional units to constrain enhancer-promoter interactions [1]. Disruption of TAD boundaries can lead to aberrant gene expression and disease [1].

- Chromatin Loops: Facilitated by protein complexes like CTCF and cohesin, chromatin loops directly connect promoters with distal regulatory elements through a process called loop extrusion [1]. These loops are fundamental for precise gene regulation.

- Enhancers and Super-Enhancers (SEs): Enhancers are distal regulatory elements that can activate gene transcription. SEs are large clusters of enhancers that possess a stronger ability to function within GRNs and are often critical for cell identity [1].

Experimental Protocols for 3D Genome Mapping

Protocol: Mapping Chromatin Interactions with ChIA-PET

Chromatin Interaction Analysis by Paired-End Tag Sequencing (ChIA-PET) is a high-resolution method to map genome-wide, protein-mediated chromatin interactions.

- Crosslinking: Fix cells with formaldehyde to covalently link DNA and bound proteins, preserving in vivo interactions.

- Chromatin Fragmentation: Sonicate or enzymatically digest the crosslinked chromatin into smaller fragments.

- Immunoprecipitation (ChIP): Use an antibody specific to a protein or histone mark of interest (e.g., RNA Polymerase II, CTCF, or H3K4me3) to pull down the associated DNA fragments.

- Proximity Ligation: The protein-bound DNA fragments are processed with linkers and subjected to proximity ligation, connecting DNA fragments that were in close spatial proximity.

- Library Preparation and Sequencing: The ligated products are converted into a sequencing library and analyzed using high-throughput sequencing.

- Bioinformatic Analysis: Map the sequenced paired-end tags to a reference genome to identify significant, protein-mediated chromatin interactions [3].

Table 1: Technologies for Profiling the 3D Genome and Transcriptome

| Technology | Application | Key Output | Considerations |
|---|---|---|---|
| Hi-C / in situ Hi-C [1] | Genome-wide chromatin interaction profiling | TAD maps, A/B compartments | Population-averaged, lower resolution |
| ChIA-PET [3] | Protein-specific chromatin interaction mapping | High-resolution chromatin loops | Requires a specific protein target (antibody) |
| STARR-seq [1] | High-throughput enhancer identification | Quantitative assessment of enhancer activity | Functional screening in a plasmid context |
| ChIP-seq (H3K27ac, etc.) [1] | Mapping histone modifications & TF binding | Genome-wide locations of regulatory elements | Requires high-quality antibodies |
| ATAC-seq [1] | Assaying chromatin accessibility | Identification of open, putative regulatory regions | Simple protocol, works on low cell numbers |

Diagram 1: 3D chromatin architecture enabling gene regulation.

Quantifying Regulatory Relationships

Inferring GRNs from high-throughput data requires robust statistical measures to quantify the strength and type of relationships between molecular components.

Association Measures for Network Inference

A wide array of association measures can be applied to gene expression profiling data (e.g., from RNA-seq) to infer regulatory connections. The choice of measure depends on the expected nature of the relationship (linear vs. non-linear) and computational considerations [4].

Table 2: Association Measures for Quantifying Gene Regulatory Relationships

| Measure | Abbrev. | Statistical Basis | Relationship Type | Key Feature |
|---|---|---|---|---|
| Pearson's Correlation [4] | PCC | Covariance | Linear | Widely used, fast computation |
| Spearman's Rank [4] | ρ | Rank-based | Monotonic | Non-parametric, robust to outliers |
| Mutual Information [4] | MI | Information entropy | Non-linear | Detects complex, non-linear dependencies |
| Maximal Information Coefficient [4] | MIC | Information entropy | Non-linear | Captures a wide range of associations |
| Distance Correlation [4] | dCor | Distance covariance | Non-linear | Generalizes Pearson's to non-linear |
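
To make the "Relationship Type" column concrete, the following minimal sketch compares three of the measures on a synthetic, non-monotonic dependency. The simulated regulator and target, the noise level, and the helper function names are illustrative assumptions rather than part of any cited method; only NumPy is used.

```python
import numpy as np

def pearson(x, y):
    """Pearson correlation: covariance scaled by both standard deviations."""
    xc, yc = x - x.mean(), y - y.mean()
    return float((xc @ yc) / np.sqrt((xc @ xc) * (yc @ yc)))

def spearman(x, y):
    """Spearman's rho: Pearson correlation of the ranks (assumes no ties)."""
    def rank(v):
        return np.argsort(np.argsort(v)).astype(float)
    return pearson(rank(x), rank(y))

def distance_correlation(x, y):
    """Sample distance correlation from double-centered distance matrices."""
    def centered(v):
        d = np.abs(v[:, None] - v[None, :])
        return d - d.mean(axis=0) - d.mean(axis=1)[:, None] + d.mean()
    a, b = centered(x), centered(y)
    dcov2 = (a * b).mean()
    denom = np.sqrt((a * a).mean() * (b * b).mean())
    return float(np.sqrt(max(dcov2, 0.0) / denom))

rng = np.random.default_rng(0)
tf = rng.uniform(-1, 1, size=500)                  # hypothetical centered regulator level
target = tf ** 2 + rng.normal(0, 0.05, size=500)   # symmetric, non-monotonic response

print(f"PCC  = {pearson(tf, target):+.2f}")
print(f"rho  = {spearman(tf, target):+.2f}")
print(f"dCor = {distance_correlation(tf, target):+.2f}")
```

On data like this, the linear and rank-based measures stay near zero while distance correlation is clearly positive, which is the practical reason to consider a non-linear measure when the expected relationship is not monotonic.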

Protocol: Inferring a Co-expression Network from RNA-seq Data

- Data Preprocessing: Obtain a gene expression matrix (genes x samples) from RNA-seq data. Perform quality control, normalization, and batch effect correction.

- Calculate Association Matrix: Select an association measure (e.g., PCC or MI) from Table 2. Compute a pairwise matrix of association scores for all gene pairs.

- Statistical Thresholding: Determine a significance threshold for the associations. This can be based on permutation testing or a fixed cutoff (e.g., top 1% of edges).

- Network Construction: Create a network where nodes represent genes and edges represent significant associations, which are candidate rather than confirmed regulatory relationships. The weight of each edge corresponds to the association score.

- Integration with Prior Knowledge: Annotate nodes with information such as Transcription Factor (TF) status. This allows for inferring directionality (TF → target gene) in an otherwise undirected co-expression network [4].
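
The protocol above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions: Pearson correlation as the association measure, a fixed top-fraction cutoff instead of permutation testing, and a hypothetical function name, gene names, and TF annotation. It is not a substitute for a dedicated co-expression package.

```python
import numpy as np

def coexpression_edges(expr, genes, tfs, top_fraction=0.01):
    """Build co-expression edges from a genes x samples matrix.

    Returns tuples (gene_a, gene_b, score, directed). When exactly one gene
    of a pair is an annotated TF, the edge is oriented TF -> target.
    """
    corr = np.corrcoef(expr)                      # pairwise Pearson between gene rows
    iu, ju = np.triu_indices(len(genes), k=1)     # each unordered pair once
    strength = np.abs(corr[iu, ju])
    cutoff = np.quantile(strength, 1.0 - top_fraction)  # keep the strongest pairs
    edges = []
    for i, j, s in zip(iu, ju, strength):
        if s < cutoff:
            continue
        a, b = genes[i], genes[j]
        score = float(corr[i, j])
        if a in tfs and b not in tfs:
            edges.append((a, b, score, True))
        elif b in tfs and a not in tfs:
            edges.append((b, a, score, True))
        else:
            edges.append((a, b, score, False))    # direction left undetermined
    return edges

# Synthetic demo: G1 closely tracks TF1, all other genes are independent noise.
rng = np.random.default_rng(1)
genes = ["TF1", "G1", "G2", "G3", "G4", "G5"]
expr = rng.normal(size=(6, 50))
expr[1] = expr[0] + 0.1 * rng.normal(size=50)
edges = coexpression_edges(expr, genes, tfs={"TF1"}, top_fraction=0.05)
print(edges)
```

Orientation here comes entirely from the annotation, not from the data, so a directed edge in the output is still a hypothesis to be tested.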

Multi-Level Control of Gene Expression

For stringent control in synthetic biology or when working with toxic genes, regulating a single step of the central dogma is often insufficient. Multi-level controllers (MLCs) that simultaneously regulate transcription and translation offer a solution.

Principles of Multi-Level Control

MLCs implement a coherent type 1 feed-forward loop (C1-FFL). In this design, an input inducer controls both a Level 1 transcriptional regulator and a Level 2 translational regulator. The gene of interest (GOI) is only expressed when both regulators are active, leading to significantly reduced basal (leaky) expression and a higher fold-change upon induction compared to single-level controllers (SLCs) [5]. Furthermore, MLCs can suppress transcriptional noise by requiring coincident signals from two independent promoters, filtering out transient fluctuations [5].
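
The arithmetic behind the reduced leakiness can be illustrated with a toy model in which output is the product of transcriptional and translational activity. The leak fractions below are hypothetical values chosen only to show the multiplication; they are not measurements from [5], and the function names are illustrative.

```python
def relative_output(induced, tx_leak, tl_leak=None):
    """Toy model: GOI output = transcription activity x translation activity."""
    transcription = 1.0 if induced else tx_leak
    # A single-level controller (tl_leak=None) leaves translation constitutively on.
    translation = 1.0 if (induced or tl_leak is None) else tl_leak
    return transcription * translation

def fold_change(tx_leak, tl_leak=None):
    """Ratio of induced to uninduced output."""
    on = relative_output(True, tx_leak, tl_leak)
    off = relative_output(False, tx_leak, tl_leak)
    return on / off

slc = fold_change(tx_leak=0.02)                 # hypothetical 2% transcriptional leak
mlc = fold_change(tx_leak=0.02, tl_leak=0.05)   # plus a hypothetical 5% translational leak
print(f"SLC fold-change: {slc:.0f}, MLC fold-change: {mlc:.0f}")
```

Because the two leak terms multiply in the uninduced state, basal expression falls faster than induced expression, which is where the larger fold-change comes from in this simplified picture.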

Protocol: Implementing a Multi-Level Controller for Stringent Protein Expression

- Genetic Template Assembly: Using a standardized toolkit (e.g., Golden Gate assembly), construct a plasmid containing:

  - An inducible promoter (e.g., Ptac) controlling the expression of a Level 1 transcriptional regulator (e.g., LacI).

  - The same inducible promoter controlling the expression of a Level 2 translational regulator (e.g., a toehold switch RNA).

  - The GOI, whose transcript contains the recognition sequence for the Level 2 regulator [5].

- Transformation and Cell Culture: Transform the assembled construct into a suitable host (e.g., E. coli). Grow cultures with and without the inducer (e.g., IPTG).

- Characterization: Measure the output protein expression (e.g., via fluorescence or western blot) in both induced and uninduced states. The MLC should demonstrate a dramatically higher fold-change (>1000-fold) and lower basal expression than a traditional SLC [5].

Diagram 2: Multi-level vs. single-level control of gene expression.

Integration and Visualization of Omics Data

GRN inference generates complex datasets that require sophisticated integration and visualization to extract biological meaning.

Pathway Enrichment Analysis

Pathway enrichment analysis is a standard method to interpret gene lists derived from omics experiments (e.g., differentially expressed genes). It identifies biological pathways that are over-represented in a gene list relative to what would be expected by chance [6].
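
One common way to quantify over-representation is a one-sided hypergeometric test. The sketch below assumes that formulation; the function name and the example counts are hypothetical, and real analyses would additionally correct for testing many pathways.

```python
from math import comb

def hypergeom_enrichment_p(n_background, n_pathway, n_list, n_overlap):
    """P(overlap >= n_overlap) when n_list genes are drawn without replacement
    from n_background genes, of which n_pathway belong to the pathway."""
    total = comb(n_background, n_list)
    upper = min(n_pathway, n_list)
    tail = sum(
        comb(n_pathway, k) * comb(n_background - n_pathway, n_list - k)
        for k in range(n_overlap, upper + 1)
    )
    return tail / total

# Hypothetical numbers: 20,000 background genes, a 150-gene pathway,
# 300 differentially expressed genes, 12 of which fall in the pathway.
p_value = hypergeom_enrichment_p(20000, 150, 300, 12)
print(f"enrichment p-value = {p_value:.3g}")
```

The choice of background matters as much as the test itself: using all annotated genes instead of the genes actually detectable in the experiment tends to inflate significance.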

Protocol: Functional Enrichment Analysis with Cytoscape and STRING

- Network Retrieval: In Cytoscape, use the STRING app to query the protein-protein interaction database with a list of genes of interest (e.g., differentially expressed genes). Set the species and a confidence score cutoff (e.g., 0.4) [7].

- Data Integration: Import experimental data (e.g., log fold-change, p-values) and map it onto the network nodes using a unique identifier (e.g., Entrez Gene ID) [7].

- Visualization: Use the Style interface in Cytoscape to create data-driven visualizations. For example, map `logFC` to `Node Fill Color` using a color gradient (e.g., red for up-regulated, blue for down-regulated) [7].

- Enrichment Analysis: Use the built-in STRING enrichment function to identify significantly enriched Gene Ontology (GO) terms or KEGG pathways. Apply filters to remove redundant terms [7].

- Visualize Enrichment: Add enrichment charts (e.g., split donut charts) directly onto the network nodes to display the top associated biological processes [7].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents and Tools for Gene Regulatory Network Research

| Item | Function/Description | Example Use Case |
|---|---|---|
| pREF Universal Control [8] | Synthetic multi-omics control plasmid for NGS and MS. Encodes 21 synthetic genes for spike-in mRNA/protein controls. | Calibrating genomic, transcriptomic, and proteomic measurements in a single experiment. |
| ChIP-grade Antibodies (e.g., H3K27ac) [1] | High-specificity antibodies for immunoprecipitation of chromatin-bound proteins or histone marks. | Identifying active enhancers and promoters via ChIP-seq. |
| Gateway or Golden Gate Cloning Kits [9] | Advanced molecular cloning technologies for efficient and flexible assembly of genetic constructs. | Rapid construction of multi-level controllers and other synthetic gene circuits. |
| Competent E. coli Cells (e.g., One Shot) [9] | Chemically or electrocompetent bacterial cells for plasmid transformation and propagation. | Standard plasmid amplification and protein expression in a prokaryotic system. |
| Cytoscape Software [7] [6] | Open-source platform for complex network visualization and analysis. | Integrating, visualizing, and analyzing GRNs and associated omics data. |
| Anion Exchange Plasmid Kits [9] | High-purity plasmid purification technology with low endotoxin levels. | Preparing transfection-grade plasmid DNA for mammalian cell experiments. |

The journey from DNA to protein is governed by a sophisticated, multi-layered regulatory system. By integrating 3D genomics, quantitative association measures, multi-level control strategies, and powerful bioinformatics visualization, researchers can move from observing correlations to inferring causal relationships within GRNs. The protocols and tools outlined here provide a robust framework for advancing our understanding of cellular regulation and accelerating therapeutic development.

A Gene Regulatory Network (GRN) is a collection of molecular regulators that interact with each other and with other substances in the cell to govern the gene expression levels of mRNA and proteins, which in turn determine cellular function [10]. In computational systems biology, formalizing the process of inferring these networks from experimental data is a fundamental challenge. This formalization frames the biological system as a graph, a mathematical structure consisting of nodes (genes, proteins) and edges (their regulatory interactions) [10] [11]. The core inference problem is to accurately predict the presence and strength of these edges, represented mathematically as a prediction matrix, from high-throughput biological data such as single-cell RNA sequencing (scRNA-seq) [12] [13].

Core Concepts: Nodes, Edges, and the Matrix Representation

Nodes: The Molecular Entities

In a GRN, nodes represent the key molecular entities involved in regulation. These can include [10]:

- Genes and their messenger RNA (mRNA) transcripts.

- Transcription Factors (TFs), which are proteins that bind to DNA to activate or inhibit gene expression.

- Protein complexes formed by the combination of multiple proteins.

- Cellular processes that influence the network's state.

The value or state of a node, such as the expression level of a gene, is dynamic and depends on a function of the values of its regulatory inputs in previous time steps [10].

Edges: The Functional Interactions

Edges represent the functional interactions between nodes. These interactions are characterized by their direction and effect [10]:

- Inductive (Activating): An increase in the concentration or activity of the source node leads to an increase in the target node. Represented by arrows (`→`) or a plus sign (+).

- Inhibitory: An increase in the source node leads to a decrease in the target node. Represented by blunt arrows (`┴`) or a minus sign (-).

- Dual: Depending on context, the regulator can either activate or inhibit the target.

These edges can be direct, such as a TF binding to a DNA promoter sequence, or indirect, operating through intermediate transcribed RNA or translated proteins [10].

The Prediction Matrix: Formalizing the Inference Output

The goal of GRN inference is to reconstruct the network's connectivity. This is formalized by learning a prediction matrix, often an adjacency matrix, where each element describes the predicted regulatory relationship from one gene to another [12] [13].

Mathematical Formalization:

Let G be the set of all genes in the network, with size N. The inferred GRN can be represented by a weighted adjacency matrix A of dimensions N x N. An entry A[i, j] represents the predicted strength or probability of a regulatory interaction where the product of gene j regulates the expression of gene i. A value of zero indicates no predicted interaction. In many models, this matrix A is parameterized and forms the core component that is optimized during the learning process to best explain the observed expression data [12].
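
The indexing convention is easy to get backwards, so a small sketch may help. It follows the definition above, where `A[i, j]` scores gene j regulating gene i. The gene names, the scores, and the use of a negative sign to mark repression are illustrative assumptions.

```python
import numpy as np

genes = ["TF_A", "TF_B", "gene_C"]          # hypothetical gene names
idx = {g: k for k, g in enumerate(genes)}

# A[i, j]: predicted strength with which the product of gene j regulates gene i.
A = np.zeros((len(genes), len(genes)))
A[idx["gene_C"], idx["TF_A"]] = 0.9         # TF_A -> gene_C
A[idx["TF_B"], idx["TF_A"]] = 0.4           # TF_A -> TF_B
A[idx["gene_C"], idx["TF_B"]] = -0.7        # TF_B -| gene_C (sign convention assumed here)

def ranked_edges(A, genes, threshold=0.0):
    """List (regulator, target, score) for entries above threshold, strongest first."""
    targets, regulators = np.nonzero(np.abs(A) > threshold)
    edges = [(genes[j], genes[i], float(A[i, j])) for i, j in zip(targets, regulators)]
    return sorted(edges, key=lambda e: abs(e[2]), reverse=True)

for regulator, target, score in ranked_edges(A, genes):
    print(f"{regulator} -> {target}: {score:+.1f}")
```

Reading a column of `A` gives all targets of one regulator, and reading a row gives all regulators of one target.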

Diagram 1: The basic elements of a GRN. Nodes represent biological entities, and edges represent their regulatory interactions, which can be direct or indirect, activating or inhibitory.

Quantitative Frameworks for GRN Inference

Different mathematical and computational models are used to infer the prediction matrix. The table below summarizes the key quantitative approaches.

Table 1: Quantitative Models for GRN Inference

| Model Type | Core Principle | Key Inputs | Prediction Matrix Output |
|---|---|---|---|
| Linear Models [11] | Models gene expression as a linear function of its regulators' expression. | Time-series gene expression data. | A matrix of influence coefficients (Wij or λij), where non-zero entries indicate edges. |
| Machine Learning (ML) [2] | Uses algorithms (e.g., tree-based, neural networks) to learn complex, non-linear regulatory patterns from data. | Gene expression data, sometimes with prior network information. | A matrix of interaction scores or probabilities derived from the trained ML model. |
| Deep Learning (DL) [12] [13] | Employs deep neural networks (e.g., autoencoders, graph transformers) to learn hierarchical features and interactions. | Gene expression data, often combined with a prior GRN graph. | A parameterized adjacency matrix, often refined through graph representation learning. |
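
As an illustration of the linear-model row, the sketch below fits influence coefficients from a time series by ridge-regularized least squares, assuming the discrete-time form x(t+1) ≈ W x(t). The simulated network, the noise model, and the function name are assumptions for demonstration and do not reproduce a specific published method.

```python
import numpy as np

def fit_linear_grn(X, ridge=1e-3):
    """Estimate W in x(t+1) ~ W x(t) from a (time x genes) matrix X.

    W[i, j] is the estimated influence of gene j on gene i.
    """
    past, nxt = X[:-1], X[1:]
    gram = past.T @ past + ridge * np.eye(X.shape[1])
    # Solve past @ B ~ nxt in the ridge sense; B equals W transposed.
    return np.linalg.solve(gram, past.T @ nxt).T

# Simulate a small, stable three-gene network driven by random noise.
rng = np.random.default_rng(0)
W_true = np.array([[0.5, 0.0, 0.0],
                   [0.8, 0.3, 0.0],
                   [0.0, -0.6, 0.4]])
X = np.zeros((2000, 3))
for t in range(1999):
    X[t + 1] = W_true @ X[t] + rng.normal(size=3)

W_hat = fit_linear_grn(X)
print(np.round(W_hat, 2))
```

Real expression time series are far shorter than this simulation, which is one reason sparsity penalties or prior knowledge are usually needed to obtain a usable coefficient matrix.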

Experimental Protocols for Modern GRN Inference

Protocol: GRN Inference with DAZZLE from scRNA-seq Data

DAZZLE is a deep learning method that uses a stabilized autoencoder-based structural equation model and Dropout Augmentation (DA) to improve inference from single-cell data [12].

Workflow Overview:

Diagram 2: The DAZZLE workflow for GRN inference, highlighting the key step of Dropout Augmentation to improve model robustness.

Detailed Step-by-Step Methodology:

Input Data Preparation:

- Material: A single-cell RNA sequencing gene expression matrix (`X`). Rows represent cells, and columns represent genes.

- Protocol: Transform the raw count matrix to reduce variance and handle zeros. A common transformation is `log(x+1)` [12].

Dropout Augmentation (DA):

- Principle: To improve model robustness against "dropout" noise (false zeros prevalent in scRNA-seq data), augment the input data by artificially setting a small, random subset of non-zero expression values to zero. This acts as a form of model regularization [12].

- Execution: During model training, for each batch of data, randomly select a small percentage of non-zero values and set them to zero, creating an augmented training batch.
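
The augmentation step can be sketched as a masking function applied to each training batch. This is a minimal illustration of the idea as described above, not the DAZZLE code itself; the 10% rate, the function name, and the simulated counts are assumptions.

```python
import numpy as np

def dropout_augment(batch, rate=0.1, rng=None):
    """Set a random fraction of the non-zero entries of a batch to zero.

    Returns the augmented copy and the boolean mask of entries that were zeroed.
    """
    rng = np.random.default_rng() if rng is None else rng
    mask = (batch != 0) & (rng.random(batch.shape) < rate)
    augmented = batch.copy()          # leave the original batch untouched
    augmented[mask] = 0.0
    return augmented, mask

rng = np.random.default_rng(0)
counts = rng.poisson(1.0, size=(8, 5)).astype(float)   # cells x genes, simulated
X = np.log1p(counts)                                   # log(x + 1) transform
X_aug, mask = dropout_augment(X, rate=0.1, rng=rng)
print(f"zeros before: {(X == 0).sum()}, zeros after: {(X_aug == 0).sum()}")
```

Drawing a fresh mask for every batch means the model never sees the same simulated dropout pattern twice, which is what lets the augmentation act as a regularizer.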

Model Architecture and Training (DAZZLE):

- Framework: The model is based on a structural equation model (SEM) framework within an autoencoder.

- Core Component: The adjacency matrix `A` is a parameterized matrix that is learned and used in both the encoder and decoder parts of the autoencoder.

- Training Objective: The model is trained to reconstruct its input (the gene expression matrix) while simultaneously learning the sparse adjacency matrix `A` that best explains the regulatory relationships underlying the data [12].
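
To show what "reconstruct the input while learning a sparse `A`" means in the simplest setting, the sketch below fits a plain linear structural equation model, X ≈ X Aᵀ with a zero diagonal and an L1 penalty, by proximal gradient descent. It is a deliberately reduced stand-in, not the DAZZLE architecture: there is no autoencoder, no dropout augmentation, and all names and hyperparameters are assumptions.

```python
import numpy as np

def fit_linear_sem(X, l1=0.05, steps=500):
    """Minimize (1/2n)||X A^T - X||^2 + l1*||A||_1 with diag(A) = 0.

    X is cells x genes; A[i, j] weights gene j in the reconstruction of gene i.
    """
    n_cells, n_genes = X.shape
    A = np.zeros((n_genes, n_genes))
    lr = n_cells / np.linalg.norm(X, 2) ** 2      # 1 / Lipschitz constant of the gradient
    for _ in range(steps):
        residual = X @ A.T - X                    # reconstruction error
        grad = residual.T @ X / n_cells
        A = A - lr * grad
        A = np.sign(A) * np.maximum(np.abs(A) - lr * l1, 0.0)   # soft-threshold (L1 prox)
        np.fill_diagonal(A, 0.0)                  # a gene may not explain itself
    return A

# Synthetic demo: gene 1 depends on gene 0; gene 2 is independent.
rng = np.random.default_rng(0)
g0 = rng.normal(size=500)
g1 = 0.8 * g0 + 0.3 * rng.normal(size=500)
g2 = rng.normal(size=500)
X = np.column_stack([g0, g1, g2])

A = fit_linear_sem(X)
print(np.round(A, 2))
```

A linear fit of this kind recovers which genes co-vary but cannot by itself decide which of two correlated genes is the regulator, so the learned weights are roughly symmetric between gene 0 and gene 1.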

Output and Interpretation:

- Output: The primary output is the trained, parameterized adjacency matrix `A`. The absolute values of the entries `A[i, j]` indicate the predicted strength of regulation from gene `j` to gene `i`.

- Validation: The inferred network should be benchmarked against known ground-truth networks (e.g., derived from the STRING database or from ChIP-seq experiments) using metrics like Area Under the Precision-Recall Curve (AUPRC) and Area Under the Receiver Operating Characteristic Curve (AUROC) [13].
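
The two validation metrics can be computed directly from a predicted matrix and a binary ground-truth matrix. The sketch below is a plain NumPy version under simplifying assumptions (no tied scores for the precision-recall computation, self-edges excluded); the example matrices and function names are made up for illustration, and established libraries offer equivalent functions.

```python
import numpy as np

def off_diagonal(M):
    """Flatten a square matrix, skipping self-edges on the diagonal."""
    return M[~np.eye(M.shape[0], dtype=bool)]

def auroc(y_true, scores):
    """Probability that a true edge outscores a non-edge (ties count as 0.5)."""
    pos, neg = scores[y_true == 1], scores[y_true == 0]
    diff = pos[:, None] - neg[None, :]
    return float(((diff > 0) + 0.5 * (diff == 0)).mean())

def average_precision(y_true, scores):
    """Step-wise area under the precision-recall curve (assumes no tied scores)."""
    hits = y_true[np.argsort(-scores)]
    precision_at_k = np.cumsum(hits) / np.arange(1, len(hits) + 1)
    return float((precision_at_k * hits).sum() / hits.sum())

truth = np.array([[0, 0, 0],
                  [1, 0, 0],
                  [1, 1, 0]])                 # truth[i, j] = 1: gene j regulates gene i
pred = np.array([[0.0, 0.1, -0.2],
                 [0.9, 0.0, 0.3],
                 [-0.8, 0.25, 0.0]])          # hypothetical learned matrix A

y = off_diagonal(truth)
s = off_diagonal(np.abs(pred))                # rank edges by absolute weight
print(f"AUROC = {auroc(y, s):.3f}, AUPRC = {average_precision(y, s):.3f}")
```

Because true edges are a small minority of all gene pairs in realistic networks, AUPRC is usually the more informative of the two numbers.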

Protocol: GRN Inference with GRLGRN Using Prior Knowledge

GRLGRN is a deep learning model that uses a graph transformer network to infer GRNs by integrating a prior network with single-cell gene expression profiles [13].

Workflow Overview:

Diagram 3: The GRLGRN workflow, which leverages a prior GRN and gene expression data to learn refined gene embeddings for predicting regulatory relationships.

Detailed Step-by-Step Methodology:

Input Data Preparation:

- Materials:

  - A prior GRN presented as a directed graph `𝒢 = (𝒱, ℰ)`, where `𝒱` is the set of genes (nodes) and `ℰ` is the set of known regulatory relationships (edges).

  - A gene expression profile matrix from scRNA-seq data, corresponding to the genes in `𝒱`.

- Protocol: From the prior GRN `𝒢`, formulate five derived graphs to capture different relational aspects: TF-to-target (`𝒢1`), target-to-TF (`𝒢2`), TF-to-TF (`𝒢3`), the reverse of `𝒢3` (`𝒢4`), and a self-connected graph (`𝒢5`). Concatenate their adjacency matrices into a tensor `𝑨ₛ` [13].
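
The construction of the five derived graphs can be sketched as follows. Several details are assumptions made for illustration and should be checked against the GRLGRN description in [13]: TF-to-target is taken to mean TF to non-TF gene, target-to-TF is taken as its transpose, the self-connected graph is taken as the identity, and the function name and stacking order are hypothetical.

```python
import numpy as np

def derived_graph_tensor(n_genes, edges, tf_idx):
    """Stack five adjacency matrices derived from a prior GRN.

    edges: iterable of (regulator, target) index pairs.
    Entry [i, j] = 1 means gene j regulates gene i, matching A[i, j] above.
    """
    is_tf = np.zeros(n_genes, dtype=bool)
    is_tf[list(tf_idx)] = True
    prior = np.zeros((n_genes, n_genes))
    for src, dst in edges:
        prior[dst, src] = 1.0
    tf_cols = is_tf[None, :]      # regulator (column) is a TF
    tf_rows = is_tf[:, None]      # target (row) is a TF
    g1 = prior * tf_cols * ~tf_rows     # TF -> non-TF target
    g2 = g1.T                           # target -> TF (reverse of g1)
    g3 = prior * tf_cols * tf_rows      # TF -> TF
    g4 = g3.T                           # reverse of g3
    g5 = np.eye(n_genes)                # self-connections
    return np.stack([g1, g2, g3, g4, g5])

# Hypothetical prior: genes 0 and 1 are TFs; edges 0->2, 1->3, 0->1.
A_s = derived_graph_tensor(4, edges=[(0, 2), (1, 3), (0, 1)], tf_idx={0, 1})
print(A_s.shape)
```

Edges whose regulator is not an annotated TF are dropped by this sketch, which may or may not match how a given prior network should be handled.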

Gene Embedding Module:

- Graph Transformer Layer: Processes the tensor `𝑨ₛ` to extract implicit links that are not explicitly present in the prior network but are suggested by its topology.

- Graph Convolutional Network (GCN) Layer: Uses the implicit links and the gene expression matrix to generate low-dimensional vector representations (embeddings) for each gene, capturing their features in the context of the network [13].

Feature Enhancement and Output:

- Convolutional Block Attention Module (CBAM): Applies an attention mechanism to the gene embeddings to refine them, emphasizing more informative features.

- Output Module: The refined gene embeddings are fed into a neural network layer (e.g., a multi-layer perceptron) to predict the probability of a regulatory relationship for every possible pair of genes, thus generating the final prediction matrix for the GRN [13].

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Reagents for GRN Inference Experiments

| Item | Function in GRN Research |
|---|---|
| scRNA-seq Datasets [12] [13] | Provides the primary quantitative input of gene expression profiles across individual cells, capturing cellular heterogeneity. Key to inferring context-specific GRNs. |
| Benchmark Datasets (e.g., BEELINE) [13] | Provide standardized scRNA-seq data and validated ground-truth networks for the purpose of fairly comparing and benchmarking the performance of different GRN inference methods. |
| Prior GRN Databases (e.g., STRING, ChIP-seq data) [13] | Provide pre-existing, often experimentally derived, knowledge of gene interactions. Used as input for methods like GRLGRN to guide and improve the accuracy of inference. |
| Transcription Factor Binding Site Databases | Contain information on known or predicted DNA binding sites for TFs, providing direct physical evidence for potential regulatory edges in the network. |
| Computational Frameworks (e.g., Python, R, specialized GRN software) [12] [13] | The essential environment for implementing, training, and evaluating the complex mathematical and machine learning models used for GRN inference. |

Single-cell RNA sequencing (scRNA-seq) represents a revolutionary advancement in transcriptomic analysis, enabling researchers to investigate gene expression profiles at the individual cell level within complex biological systems. This technology has fundamentally transformed our understanding of cellular heterogeneity, a critical factor in development, disease progression, and tissue function that was previously obscured by bulk RNA sequencing methods [14]. Unlike bulk RNA sequencing, which averages gene expression across thousands of cells, scRNA-seq captures the unique transcriptional identity of each cell, revealing rare cell populations, developmental trajectories, and subtle variations in cellular states that were previously undetectable [15]. This unprecedented resolution has positioned scRNA-seq as an indispensable tool for gene regulatory network (GRN) inference, as it enables the delineation of regulatory relationships within specific cell types and states.

The evolution of scRNA-seq technology began with its first demonstration in 2009 on a 4-cell blastomere stage, followed by the development of the first multiplexed scRNA-seq method in 2014 [14]. The field has since expanded rapidly, with numerous protocol innovations and the establishment of dedicated databases such as scRNASeqDB in 2017 [14]. Current applications span diverse domains including drug discovery, tumor microenvironment characterization, biomarker identification, and microbial profiling, demonstrating the technology's versatility and transformative potential across biological research and therapeutic development [14].

Experimental Workflow and Protocol Selection

Sample Preparation and Cell Isolation

The initial critical stage in scRNA-seq involves extracting viable individual cells from the tissue of interest. The quality of this starting material profoundly impacts downstream results, emphasizing the importance of optimized dissociation protocols that maintain cell viability while minimizing stress responses [16]. For tissues difficult to dissociate or when working with frozen samples, novel methodologies such as single nuclei RNA-seq (snRNA-seq) provide a valuable alternative [14]. Another innovative approach, "split-pooling" scRNA-seq techniques, applies combinatorial indexing (cell barcodes) to single cells, offering distinct advantages including the ability to process large sample sizes (up to millions of cells) with greater efficiency while eliminating the need for expensive microfluidic devices [14].

The table below summarizes the primary cell isolation methods used in scRNA-seq experiments:

Table 1: Cell Isolation Methods for scRNA-seq

| Method | Principle | Advantages | Limitations | Applications |
|---|---|---|---|---|
| FACS | Fluorescence-activated cell sorting | High purity, precise selection based on markers | Lower throughput, potential cell stress | Targeted analysis of specific cell populations |
| Droplet-based | Microfluidic partitioning of cells | High throughput, cost-effective for many cells | Limited visual confirmation of single cells | Large-scale studies, complex tissues |
| Microwell-based | Physical confinement in wells | Controlled cell deposition, compatible with imaging | Medium throughput, potential well occupancy issues | Applications requiring cell location tracking |
| Laser capture microdissection | Direct visual selection of cells | Spatial context preservation, precise selection | Very low throughput, technical expertise required | Rare cell populations, spatially defined samples |

Following cell isolation, individual cells undergo lysis to release RNA molecules. Poly(T) primers are frequently employed to selectively capture polyadenylated mRNA molecules while minimizing the capture of ribosomal RNAs [14]. This targeted approach enhances the efficiency of transcriptome coverage for protein-coding genes.

Protocol Selection Guidelines

Choosing an appropriate scRNA-seq protocol depends on the specific research objectives, sample type, and analytical requirements. The two primary categories of protocols are full-length transcript and 3'/5' end counting methods, each with distinct advantages and limitations:

Table 2: Comparison of scRNA-seq Protocols

| Protocol | Isolation Strategy | Transcript Coverage | UMI | Amplification Method | Unique Features |
|---|---|---|---|---|---|
| Smart-Seq2 | FACS | Full-length | No | PCR | Enhanced sensitivity for detecting low-abundance transcripts; generates full-length cDNA |
| Drop-Seq | Droplet-based | 3'-end | Yes | PCR | High-throughput and low cost per cell; scalable to thousands of cells simultaneously |
| inDrop | Droplet-based | 3'-end | Yes | IVT | Uses hydrogel beads; low cost per cell; efficient barcode capture |
| CEL-Seq2 | FACS | 3'-end | Yes | IVT | Linear amplification reduces bias compared to PCR |
| Seq-Well | Microwell-based | 3'-end | Yes | PCR | Portable, low-cost, easily implemented without complex equipment |
| MATQ-Seq | FACS or manual picking | Full-length | Yes | PCR | Increased accuracy in quantifying transcripts; efficient detection of transcript variants |
| Fluidigm-C1 | Microfluidic chip | Full-length | No | PCR | Microfluidics-based single-cell capture; precise cell handling |

Full-length transcript protocols (e.g., Smart-Seq2, MATQ-Seq) excel in applications requiring isoform usage analysis, allelic expression detection, and identification of RNA editing due to their comprehensive coverage of transcripts [14]. These methods generally demonstrate superior performance in detecting more expressed genes compared to 3' end counting methods [14]. Conversely, droplet-based techniques like Drop-Seq, inDrop, and 10X Genomics Chromium enable higher throughput at a lower cost per cell, making them particularly valuable for detecting diverse cell subpopulations in complex tissues or tumor samples [14].

Commercial Platform Considerations

Commercial scRNA-seq platforms such as the 10X Genomics Chromium system have significantly standardized and democratized single-cell research. These systems utilize microfluidic partitioning to capture single cells in Gel Beads-in-emulsion (GEMs), where all cDNAs from a single cell receive the same barcode, enabling multiplexed analysis [15]. The recent GEM-X technology has improved upon earlier versions by generating twice as many GEMs at smaller volumes, reducing multiplet rates two-fold and increasing throughput capabilities [15]. The Flex Gene Expression assay further expands compatibility to include fresh, frozen, and fixed samples, including FFPE tissues and fixed whole blood, providing unprecedented flexibility for precious clinical samples [15].

scRNA-seq Experimental Workflow

Essential Research Reagent Solutions

The successful implementation of scRNA-seq protocols relies on a suite of specialized reagents and tools designed to maintain RNA integrity, ensure efficient cDNA synthesis, and enable precise barcoding. The following table outlines key research reagent solutions essential for scRNA-seq experiments:

Table 3: Essential Research Reagent Solutions for scRNA-seq

| Reagent Category | Specific Examples | Function | Technical Considerations |
|---|---|---|---|
| Cell Viability Kits | LIVE/DEAD staining, Propidium iodide exclusion | Assessment of cell integrity and selection of viable cells | Critical for reducing background from dead cells; optimal concentration varies by cell type |
| Nuclease Inhibitors | RNase inhibitors, SUPERase-In | Protection of RNA integrity during processing | Essential throughout protocol; particularly critical during cell lysis |
| Cell Lysis Buffers | Detergent-based lysis solutions | Release of RNA from individual cells | Must balance complete lysis with RNA preservation; often contain nuclease inhibitors |
| Reverse Transcriptase Enzymes | SmartScribe, Maxima H-minus | cDNA synthesis from single-cell RNA | High processivity and fidelity essential; thermostable versions preferred |
| Template Switching Oligos | TSO sequences for Smart-seq protocols | Enable full-length cDNA amplification | Critical for SMART-based protocols; sequence optimization improves efficiency |
| Unique Molecular Identifiers (UMIs) | Barcoded beads (10X), UMI oligos | Correction for amplification bias and quantitative accuracy | Enable digital counting; essential for accurate transcript quantification |
| Barcoded Beads | 10X Gel Beads, inDrop beads | Single-cell indexing and library preparation | Barcode diversity must exceed cell number; quality control critical |
| Library Amplification Kits | KAPA HiFi, NEB Next | Amplification of cDNA for sequencing | High-fidelity polymerases essential to minimize errors in amplified libraries |
| Sample Preservation Reagents | RNAlater, methanol fixation, Nuclei EZ lysis | Stabilization of RNA for delayed processing | Enable batch processing; particularly important for clinical samples |

For commercial platforms like 10X Genomics, integrated reagent kits provide standardized solutions that ensure reproducibility across experiments. The Universal 3' and 5' Gene Expression assays include all necessary reagents for GEM formation, barcoding, and library preparation in optimized formulations [15]. The newer Flex assay incorporates specialized reagents for sample fixation and permeabilization, enabling workflow flexibility while maintaining data quality [15]. These integrated solutions significantly reduce technical variability and implementation barriers for researchers new to single-cell technologies.

Data Processing and Computational Analysis

Quality Control and Preprocessing

The computational analysis of scRNA-seq data begins with rigorous quality control to identify and remove low-quality cells and technical artifacts. Key quality metrics include the number of detected genes per cell, total counts per cell, and the percentage of mitochondrial reads [16]. Cells with low gene counts, high mitochondrial percentages (indicating apoptosis or stress), or aberrantly high counts (potential multiplets) should be filtered out [16]. For droplet-based methods, tools like EmptyDrops distinguish true cells from empty droplets containing ambient RNA [16]. Doublet detection algorithms such as Scrublet or DoubletFinder identify and remove multiple cells captured in the same droplet or well [16].
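The filtering logic described above can be sketched with plain NumPy. This is a minimal illustration, not a substitute for dedicated tools such as EmptyDrops or Scrublet; the function name `qc_filter` and all threshold defaults are assumptions chosen for the example and should be tuned per dataset.

```python
import numpy as np

def qc_filter(counts, is_mito, min_genes=200, max_counts=None, max_mito_frac=0.2):
    """Return a boolean mask of cells that pass simple QC thresholds.

    counts  : (n_cells, n_genes) array of raw counts
    is_mito : (n_genes,) boolean array marking mitochondrial genes
    All thresholds are illustrative assumptions, not recommended values.
    """
    counts = np.asarray(counts, dtype=float)
    is_mito = np.asarray(is_mito, dtype=bool)

    genes_per_cell = (counts > 0).sum(axis=1)      # number of detected genes
    total_counts = counts.sum(axis=1)              # library size per cell
    mito_counts = counts[:, is_mito].sum(axis=1)

    # Fraction of counts from mitochondrial genes (0 for empty cells).
    mito_frac = np.divide(
        mito_counts, total_counts,
        out=np.zeros_like(total_counts), where=total_counts > 0,
    )

    keep = (genes_per_cell >= min_genes) & (mito_frac <= max_mito_frac)
    if max_counts is not None:
        # Very high totals are treated as potential multiplets.
        keep &= total_counts <= max_counts
    return keep
```

Applying the mask as `counts[qc_filter(counts, is_mito)]` keeps only the cells that pass all three criteria.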

Normalization approaches must account for technical variability in sequencing depth across cells. Methods like SCnorm or regularized negative binomial regression address systematic technical biases and zero-inflation characteristic of scRNA-seq data [16]. Unlike bulk RNA-seq normalization methods, which can introduce errors in scRNA-seq data, these specialized approaches model the unique statistical properties of single-cell count data [17].

Batch Effect Correction

Technical variability between experiments, known as batch effects, represents a significant challenge in scRNA-seq analysis. Multiple computational approaches have been developed to address this issue, including Seurat's CCA alignment, Harmony, and mutual nearest neighbors (MNN) correction [16]. Recent advances in deep learning-based methods such as scPhere and DV (deep visualization) provide integrated approaches for batch correction while preserving biological signal [18]. These methods learn low-dimensional representations of the biological contents of cells disentangled from technical variations, enabling effective integration of datasets across different conditions, technologies, and even species [18] [15].

Dimensionality Reduction and Visualization

The high-dimensional nature of scRNA-seq data (measuring thousands of genes across thousands of cells) necessitates dimensionality reduction for interpretation and visualization. Principal component analysis (PCA) remains a foundational linear technique that conserves global distance relationships between cells [19]. Non-linear methods such as t-SNE (t-Distributed Stochastic Neighbor Embedding) and UMAP (Uniform Manifold Approximation and Projection) have gained popularity for their ability to capture complex local structures, though they may distort global distances [18] [16].
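As a small illustration of the linear case, PCA can be computed from a singular value decomposition of the centered expression matrix. The sketch below assumes a dense, already normalized cells-by-genes matrix; the function name `pca` is hypothetical, and real pipelines typically use optimized or randomized solvers.

```python
import numpy as np

def pca(X, n_components=2):
    """Project cells onto the top principal components via SVD.

    X : (n_cells, n_genes) normalized expression matrix
    Returns the per-cell scores and the explained-variance ratios.
    """
    X = np.asarray(X, dtype=float)
    Xc = X - X.mean(axis=0)                       # center each gene
    U, S, Vt = np.linalg.svd(Xc, full_matrices=False)
    scores = U[:, :n_components] * S[:n_components]
    explained = (S ** 2) / (S ** 2).sum()         # variance share per component
    return scores, explained[:n_components]
```

Because the projection is an orthogonal rotation followed by truncation, distances between cells are preserved exactly when all components are kept and only approximately when the matrix is truncated to a few components.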

Innovative visualization approaches continue to emerge to address specific analytical challenges. The scBubbletree method represents clusters of transcriptionally similar cells as "bubbles" at the tips of dendrograms, providing quantitative summaries of cluster properties while avoiding overplotting issues common in large datasets [19]. For dynamic processes such as development, trajectory inference methods like Poincaré maps employ hyperbolic geometry to better represent hierarchical and branched developmental trajectories [18] [20]. These advanced visualization techniques facilitate biological interpretation while maintaining quantitative accuracy.

scRNA-seq Data Analysis Pipeline

Network Inference from Single-Cell Data

Methodological Approaches for GRN Inference

Gene regulatory network inference from scRNA-seq data represents a powerful application that benefits from the technology's ability to capture cell-to-cell heterogeneity. Traditional GRN inference methods developed for bulk data, such as GENIE3 and GRNBoost2, have been adapted for single-cell analysis, though they face challenges from the unique characteristics of scRNA-seq data, particularly technical noise and dropout events [17]. More specialized approaches include LEAP, which estimates pseudotime to infer gene co-expression over lagged windows, and SCODE, which combines pseudotime with ordinary differential equations to model regulatory relationships [17]. SCENIC represents another influential framework that first identifies gene co-expression modules using GENIE3/GRNBoost2, then identifies key transcription factors that regulate these modules or regulons [17].

The prevalence of false zeros or "dropout" events in scRNA-seq data, where some transcripts' expression values are erroneously not captured, presents a particular challenge for GRN inference [17]. In typical scRNA-seq datasets, 57 to 92 percent of observed counts are zeros, with a substantial proportion representing technical artifacts rather than true biological absence [17] [18]. Conventional approaches to this issue have focused on data imputation methods that replace missing values with imputed estimates, though these methods often depend on restrictive assumptions and may introduce additional biases [17].

Advanced Computational Frameworks

Neural network-based approaches have emerged as powerful tools for GRN inference from single-cell data. DeepSEM parameterizes the adjacency matrix and uses a variational autoencoder (VAE) architecture optimized on reconstruction error, demonstrating superior performance in benchmark evaluations [17]. However, these methods can be susceptible to overfitting dropout noise in the data, leading to degradation in inferred network quality as training progresses [17].

The recently introduced DAZZLE (Dropout Augmentation for Zero-inflated Learning Enhancement) model addresses this limitation through a novel approach called Dropout Augmentation (DA) [17]. Rather than eliminating zeros through imputation, DA regularizes the model by augmenting the input data with a small amount of simulated dropout noise during training, making the model more robust against dropout artifacts [17]. This counter-intuitive approach draws its theoretical foundation from the equivalence between adding noise and Tikhonov regularization established in the machine learning literature [17]. DAZZLE incorporates this approach within a structural equation model (SEM) framework, using a simplified model structure and a closed-form prior to improve stability and reduce computational requirements compared to DeepSEM [17].
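The core idea of dropout augmentation, setting a small random fraction of entries to zero during training, can be sketched in a few lines. This is a conceptual illustration only and is not taken from the DAZZLE codebase; the function name `dropout_augment`, the default rate of 0.1, and the choice to resample the mask on each call are all assumptions.

```python
import numpy as np

def dropout_augment(x, rate=0.1, rng=None):
    """Return a copy of x with a random fraction of entries set to zero.

    x    : (n_cells, n_genes) expression matrix (e.g. log-transformed)
    rate : probability that any entry is replaced by a synthetic dropout
           (illustrative default, not a published setting)
    rng  : seed or numpy Generator for reproducibility
    """
    rng = np.random.default_rng(rng)
    x = np.asarray(x, dtype=float)
    mask = rng.random(x.shape) < rate     # True where a zero is injected
    return np.where(mask, 0.0, x), mask
```

In a training loop, one would typically call this on each batch so the model sees a different pattern of synthetic zeros at every step, which is what gives the procedure its regularizing effect.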

Validation and Interpretation

Validating inferred GRNs remains challenging due to the scarcity of comprehensive ground truth networks. The BEELINE benchmark provides standardized evaluation frameworks for comparing GRN inference methods on scRNA-seq data [17]. Practical applications on real-world datasets, such as the longitudinal mouse microglia dataset containing over 15,000 genes, demonstrate DAZZLE's ability to handle minimal gene filtration while maintaining biological relevance [17]. The improved robustness and stability of these advanced inference methods make them valuable additions to the computational toolkit for extracting regulatory insights from single-cell data.

Visualization and Interpretation Tools

Interactive Analysis Platforms

The complexity of scRNA-seq data has driven the development of specialized visualization tools that enable interactive exploration and interpretation. scViewer represents an R/Shiny application designed to facilitate visualization of scRNA-seq gene expression data through an intuitive graphical interface [21]. This tool takes processed Seurat objects as input and provides functionalities for exploring cell-type-specific gene expression, co-expression analysis of gene pairs, and differential expression analysis with different biological conditions [21]. A key advantage of scViewer is its implementation of negative binomial gamma mixed modeling (NBGMM) to compare gene expression in individual subjects between groups, accounting for both cell-level and subject-level variability [21].

Other available tools include SchNAPPs, which follows workflows from Seurat or Scran packages to perform quality control, normalization, clustering, and differential expression analysis [21]. ShinyCell dynamically converts scRNA-seq datasets into interactive interfaces for exploration, though it excludes differential expression analysis due to long processing times with large cell numbers [21]. Specialized visualization platforms such as singlecellVR and CellexalVR employ 3D plots or virtual reality environments to enhance pattern recognition in single-cell clusters [21].

Optimized Visual Representation

Effective color palette selection represents a subtle but critical aspect of scRNA-seq visualization, particularly as studies routinely identify tens of distinct cell clusters. Current visualization methods often assign visually similar colors to spatially neighboring clusters in dimensionality reduction plots, making it difficult to distinguish adjacent populations [20]. The Palo R package addresses this issue by optimizing color palette assignments in a spatially aware manner [20]. Palo calculates spatial overlap scores between cluster pairs using kernel density estimation and Jaccard indices, then identifies color assignments that maximize perceptual differences between neighboring clusters [20]. This approach resolves visualization issues where neighboring clusters share similar colors that are hard to differentiate, improving the identification of boundaries between spatially adjacent populations [20].

For dynamic data visualization, methods like deep visualization (DV) embed cells into Euclidean or hyperbolic spaces depending on whether the analysis focuses on static cell clustering or dynamic trajectory inference [18]. DV learns a structure graph based on local scale contraction to describe relationships between cells more accurately, transforming data into visualization space while preserving geometric structure and correcting batch effects in an end-to-end manner [18]. For dynamic scenarios involving developmental trajectories, hyperbolic embedding with negative curvature better represents the exponential growth of cell states characteristic of hierarchical differentiation processes [18].

Applications in Drug Discovery and Development

The unprecedented resolution provided by scRNA-seq has transformative implications for drug discovery and development pipelines. In oncology, scRNA-seq enables detailed characterization of the tumor microenvironment, revealing complex cellular ecosystems and cell-cell interactions that drive cancer progression and therapeutic resistance [14]. The technology can identify rare cell populations, such as drug-resistant subclones or cancer stem cells, that may represent critical therapeutic targets [14]. Similarly, in neuroscience, scRNA-seq has illuminated the diversity of neuronal and glial cell types in both healthy and diseased states, providing new insights into neurodegenerative diseases and potential intervention strategies [21].

Drug screening applications benefit from scRNA-seq's ability to resolve heterogeneous responses to compounds across different cell types within complex cultures or tissue samples. This enables the identification of cell-type-specific drug effects and mechanisms of action that would be obscured in bulk analyses [14]. In immunotherapy development, scRNA-seq provides detailed characterization of immune cell states and dynamics, facilitating the identification of predictive biomarkers and novel targets for immunomodulatory therapies [14]. The technology's capacity to uncover novel cell types and states continues to expand the universe of potential therapeutic targets across disease areas.

Biomarker discovery represents another major application area where scRNA-seq offers significant advantages over bulk approaches. By identifying cell-type-specific expression patterns associated with disease states or treatment responses, researchers can develop more precise diagnostic and prognostic biomarkers [14]. The technology's sensitivity to rare cell populations enables detection of minimal residual disease or early pathological changes that precede clinical symptoms. As single-cell technologies continue to evolve toward higher throughput and lower cost, their integration into drug development pipelines is expected to accelerate, enabling more targeted therapeutic strategies and personalized treatment approaches.

Molecular interaction networks form the foundation for understanding how complex biological functions are controlled by the intricate interplay of genes and proteins. The investigation of perturbed biological processes using these networks has been instrumental in uncovering the mechanisms that underlie complex disease phenotypes [22]. In recent years, rapid advances in omics technologies have generated high-throughput datasets that enable large-scale, network-based analyses. Consequently, various computational modeling techniques have proven invaluable for gaining new mechanistic insights into disease pathogenesis and therapeutic development [22]. These network-based approaches have created powerful applications for extracting meaningful and interpretable knowledge from molecular profiling data, particularly through the field of systems medicine which specializes in network-based modeling of biological systems.

Gene Regulatory Networks (GRNs) represent particularly intricate biological systems that control gene expression and regulation in response to environmental and developmental cues [2]. Advances in computational biology, coupled with high-throughput sequencing technologies, have significantly improved the accuracy of GRN inference and modeling. Modern approaches increasingly leverage artificial intelligence (AI), particularly machine learning techniques including supervised, unsupervised, semi-supervised, and contrastive learning to analyze large-scale omics data and uncover regulatory gene interactions [2]. The reconstruction and analysis of GRNs and other biological networks have become crucial methodologies for modeling and understanding complex biological processes, helping to decipher regulatory relationships among genes and model changes in gene expression under various conditions [23].

Table 1: Key Network Types in Biomedical Research

| Network Type | Components | Interactions Represented | Primary Applications |
|---|---|---|---|
| Protein-Protein Interaction (PPI) Networks | Proteins | Physical binding between proteins | Identifying functional complexes, drug target discovery [22] |
| Gene Regulatory Networks (GRNs) | Transcription factors, target genes | Regulatory relationships controlling gene expression | Understanding developmental processes, disease mechanisms [2] [23] |
| Co-expression Networks | Genes | Similar expression patterns across conditions | Identifying functionally related genes, biomarker discovery [22] |
| Metabolic Networks | Metabolites, enzymes | Biochemical transformations | Studying metabolic disorders, identifying therapeutic targets [22] |
| Signaling Networks | Signaling molecules, receptors | Signal transduction pathways | Understanding cell communication, cancer mechanisms [22] |

Uncovering Disease Mechanisms Through Network Analysis

Differential Network Analysis (DINA)

Differential Network Analysis (DINA) has emerged as a powerful methodology for comparing biological networks under disparate conditions, such as contrasting disease states with healthy ones [24]. DINA algorithms are specifically designed to pinpoint changes in network structures by identifying association measures that differ between two biological states. When presented with two different biological conditions, represented as two networks of molecular interactions, DINA algorithms aim to uncover the network rewiring that underpins the mechanistic differences between these states [24]. The fundamental premise is that the rewiring of molecular interactions in various conditions leads to distinct phenotypic outcomes, and understanding these rewiring events can reveal specific molecular signatures of disease.

A novel non-parametric approach to DINA incorporates differential gene expression based on sex and gender attributes, hypothesizing that gene expression can be accurately represented through non-Gaussian processes [24]. This methodology involves quantifying changes in non-parametric correlations among gene pairs and expression levels of individual genes. When applied to public expression datasets concerning diabetes mellitus and atherosclerosis in liver tissue, this method has successfully identified gender-specific differential networks, underscoring the biological relevance of this approach in uncovering meaningful molecular distinctions [24]. The relevance of DINA is exemplified in research by Ha et al., who described differential networks between two different subtypes of glioblastoma estimated from genomic data, and Basha et al., who conducted an extensive differential network analysis of multiple human tissue interactomes to evidence differences in biological processes between tissues [24].
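A simplified version of the rewiring idea is to compute a rank-based correlation matrix in each condition and flag gene pairs whose correlation changes substantially. The sketch below uses Spearman correlation as one possible non-parametric measure; this choice, the fixed threshold, and the function names are assumptions for illustration and do not reproduce the cited method, which also accounts for changes in individual gene expression levels.

```python
import numpy as np

def rank_columns(X):
    """Ordinal ranks per gene (ties broken by position; fine for continuous data)."""
    return np.argsort(np.argsort(X, axis=0), axis=0).astype(float)

def spearman_matrix(X):
    """Gene-by-gene Spearman correlation for a (n_samples, n_genes) matrix."""
    return np.corrcoef(rank_columns(np.asarray(X, dtype=float)), rowvar=False)

def differential_edges(X_a, X_b, threshold=0.5):
    """List gene pairs whose correlation differs between two conditions.

    Returns (gene_i, gene_j, corr_a - corr_b) for pairs with an absolute
    difference at or above the (illustrative) threshold.
    """
    diff = spearman_matrix(X_a) - spearman_matrix(X_b)
    i, j = np.triu_indices(diff.shape[0], k=1)    # each unordered pair once
    keep = np.abs(diff[i, j]) >= threshold
    return [(int(a), int(b), float(diff[a, b])) for a, b in zip(i[keep], j[keep])]
```

A production analysis would replace the fixed threshold with a permutation test or another significance procedure, since correlation differences are noisy at small sample sizes.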

Disease Module Identification

The concept of disease modules represents a fundamental framework in network medicine, based on the observation that disease-associated genes are not scattered randomly throughout the interactome but, due to their functional association, tend to be highly connected among themselves or reside in the same neighborhood [22]. The accurate identification of disease modules can help identify new disease genes and pathways and aid rational drug target identification [22]. De novo network enrichment (DNE) methods, also referred to as active module identification methods, can be used to identify these disease modules, which are connected subnetworks of the human interactome that can be linked to a disease of interest [22].

In contrast to classical enrichment analysis that relies on predefined pathways or curated gene sets, DNE methods construct "active" subnetworks by projecting experimental data (mostly transcriptomic or genomic profiles) onto a molecular interaction network [22]. Candidate subnetworks are then scored by an objective function, and heuristics are implemented to identify locally optimal solutions efficiently. These approaches can be categorized into several computational frameworks:

Aggregate score methods compute a summary score for a candidate subnetwork based on assigned scores to individual genes, typically calculated using fold changes or P-values from differential expression analyses [22]. Tools in this category include SigMod, which takes gene-level P-values from genome-wide association studies (GWAS) and implements a min-cut algorithm to identify optimally enriched disease modules, and IODNE, which scores nodes and edges based on differential expression and PPI network topology using Kruskal's algorithm [22].

Module cover approaches either accept a user-provided list of relevant genes for a specific condition or implement a separate preprocessing step to determine such genes, then extract subnetworks that "cover" a large number of the pre-selected active genes [22]. KeyPathwayMiner exemplifies this approach by expecting molecular profiles encoded as binary indicator matrices as input to solve a variant of the maximal connected subnetwork problem [22].

Score propagation methods assign initial scores to nodes and propagate them through the network before extracting high-scoring subnetworks [22]. NetDecoder exemplifies this approach by extracting sparse subnetworks as directed graphs using information flow between sources and sinks that act as regulators [22].
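One widely used propagation scheme is a random walk with restart, in which seed scores diffuse along network edges while being repeatedly pulled back toward the seeds. The sketch below is a generic illustration of that idea, not the algorithm used by NetDecoder or any other specific tool named above; the function name and the restart probability of 0.3 are assumptions.

```python
import numpy as np

def random_walk_with_restart(adj, seed_scores, restart=0.3, tol=1e-8, max_iter=1000):
    """Propagate seed scores over a network until they stabilize.

    adj         : (n, n) symmetric adjacency matrix
    seed_scores : (n,) non-negative initial scores (at least one positive)
    restart     : probability of jumping back to the seeds at each step
    """
    adj = np.asarray(adj, dtype=float)
    col_sums = adj.sum(axis=0)
    col_sums[col_sums == 0] = 1.0                 # isolated nodes: avoid 0/0
    W = adj / col_sums                            # column-normalized transitions

    p0 = np.asarray(seed_scores, dtype=float)
    p0 = p0 / p0.sum()
    p = p0.copy()
    for _ in range(max_iter):
        p_next = (1 - restart) * (W @ p) + restart * p0
        if np.abs(p_next - p).sum() < tol:        # L1 convergence check
            return p_next
        p = p_next
    return p
```

After propagation, a high-scoring subnetwork can be extracted by keeping the top-ranked nodes and the edges among them.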

Table 2: Computational Methods for Disease Module Identification

| Method Category | Representative Tools | Input Data Types | Key Algorithms | Example Applications |
|---|---|---|---|---|
| Aggregate Score Methods | SigMod, IODNE, PCSF, Omics Integrator | GWAS p-values, gene expression, mutation profiles | Min-cut, prize-collecting Steiner forest, minimum spanning trees | Childhood-onset asthma genes, triple-negative breast cancer targets [22] |
| Module Cover Approaches | KeyPathwayMiner, ModuleDiscoverer, NoMAS, nCOP | Gene expression, mutation profiles | Maximal connected subnetwork, maximum clique enumeration | Epigenetic targets in SARS-CoV-2, cancer survival subnetworks [22] |
| Score Propagation Methods | NetDecoder, HotNet2, Hierarchical HotNet | Mutation profiles, gene expression | Heat diffusion models, information flow | Glioblastoma resistance mechanisms, pancreatic cancer subtyping [22] |
| Machine Learning Methods | Grand Forest, N2V-HC, BiCoN, TiCoNe | Gene expression, methylation data, time-series data | Network embedding, biclustering, hierarchical clustering | Lung cancer stratification, Parkinson's disease modules [22] |

Figure 1: Differential Network Analysis Workflow for Identifying Disease Mechanisms

Advanced GRN Inference from Single-Cell Data

Single-cell RNA sequencing (scRNA-seq) has revolutionized the study of cellular diversity, but presents unique challenges for GRN inference due to the prevalence of "dropout" events where some transcripts' expression values are erroneously not captured [12]. Addressing this zero-inflation issue is crucial for accurate inference of gene regulatory networks. DAZZLE (Dropout Augmentation for Zero-inflated Learning Enhancement) represents an innovative approach that introduces "dropout augmentation" (DA)—a model regularization method to improve resilience to zero inflation in single-cell data by augmenting the data with synthetic dropout events [12]. This approach offers a novel perspective to solve the dropout problem beyond traditional imputation methods.

DAZZLE uses the same variational autoencoder (VAE)-based GRN learning framework introduced by DeepSEM and DAG-GNN but employs dropout augmentation and several other model modifications [12]. These include an optimized adjacency matrix sparsity control strategy, a simplified model structure, and a closed-form prior. Benchmark experiments have demonstrated improved performance and increased stability of the DAZZLE model over existing approaches [12]. The practical application of DAZZLE on a longitudinal mouse microglia dataset containing over 15,000 genes illustrates its ability to handle real-world single-cell data with minimal gene filtration, making it a valuable addition to the toolkit for GRN inference from single-cell data [12].

Accelerating Drug Discovery Through Network-Based Approaches

Drug Repurposing Using Gene Regulatory Networks

Network-based approaches have dramatically accelerated drug discovery, particularly through drug repurposing strategies that leverage computational techniques and expanding biomedical data. A compelling example comes from a study on bipolar disorder (BD), which utilized gene regulatory networks to identify significant regulatory changes in the disease, then used these network-based signatures for drug repurposing [25]. Using the PANDA algorithm, researchers investigated variations in transcription factor-GRNs between individuals with BD and unaffected individuals, incorporating binding motifs, protein interactions, and gene co-expression data [25]. The differences in edge weights between BD and controls were then used as differential network signatures to identify drugs potentially targeting the disease-associated gene signature using the CLUEreg tool in the GRAND database.

This analysis used a large RNA-seq dataset of 216 post-mortem brain samples from the CommonMind consortium, constructing co-expression-based GRNs for individuals with BD and unaffected controls that involved 15,271 genes and 405 transcription factors [25]. The analysis highlighted significant influences of these transcription factors on immune response, energy metabolism, cell signalling, and cell adhesion pathways in the disorder. Through this network-based drug repurposing approach, researchers identified 10 promising candidates for repurposing as BD treatments, including kaempferol and pramocaine, which have preclinical evidence supporting their efficacy [25]. Additionally, novel targets such as PARP1 and A2b offer opportunities for future research on their relevance to the disorder.

Target Identification and Prioritization

Network-based approaches have proven particularly valuable for target identification and prioritization in drug development. The accurate identification of disease modules enables researchers to identify new disease genes and pathways and aids rational drug target identification [22]. Minimum-weight Steiner trees (MWSTs), which can be viewed as generalizations of shortest paths to more than two endpoints (terminals), are a good choice for covering a large fraction of disease-relevant molecular pathways [22]. Using known disease genes as seeds (terminals), methods like MuST identify disease modules by aggregating several approximations of Steiner trees into a single subnetwork [22].

ROBUST is another method using MWST which offers improved robustness compared to MuST by enumerating pairwise diverse rather than pairwise non-identical disease modules [22]. Levi et al. addressed limitations in most DNE methods using gene expression data through DOMINO, which aims to find disjoint connected Steiner trees in which the active genes (seeds) are over-represented, thereby more fully exploiting the information contained in expression data [22]. These approaches facilitate the identification of key genes and pathways that may be responsible for diseased conditions or could be potential therapeutic targets.

Figure 2: Network-Based Drug Discovery and Repurposing Pipeline

Experimental Protocols for Network Analysis

Protocol 1: Chromatin Conformation Analysis (4C-seq)

Chromosome conformation capture (3C) methods measure DNA contact frequencies based on nuclear proximity ligation to uncover in vivo genomic folding patterns [26]. 4C-seq is a derivative 3C method designed to search the genome for sequences contacting a selected genomic site of interest. This technique employs inverse PCR and next-generation sequencing to amplify, identify, and quantify its proximity ligated DNA fragments, generating high-resolution contact profiles for selected genomic sites based on limited amounts of sequencing reads [26].

Sample Preparation Protocol:

- Crosslinking: Fix chromatin with formaldehyde to capture in vivo interactions

- Digestion: Restrict DNA with a primary restriction enzyme

- Ligation: Perform proximity ligation under diluted conditions to favor intramolecular ligation

- Reverse Crosslinking: Purify and reverse crosslinks to release DNA

- Secondary Digestion: Use a frequent cutter restriction enzyme to generate smaller fragments

- Second Ligation: Perform a second ligation to create circular DNA templates

- Purification: Remove non-ligated fragments and purify circular templates

Library Preparation and Sequencing:

- Inverse PCR: Design primers outward-facing from the viewpoint of interest

- Amplification: Amplify the ligation products using optimized PCR conditions

- Library Construction: Prepare sequencing libraries using standard protocols

- Multiplexing: Barcode samples for multiplexed sequencing

- Sequencing: Perform high-throughput sequencing on appropriate platform

Data Analysis Pipeline:

- Demultiplexing: Separate sequenced reads by barcode

- Quality Control: Assess read quality and filter low-quality sequences

- Alignment: Map reads to reference genome

- Contact Frequency Calculation: Normalize and quantify interaction frequencies

- Peak Calling: Identify significant interactions using peakC algorithm

- Visualization: Generate interaction profiles compatible with genome browsers

Protocol 2: Gene Regulatory Network Inference from Single-Cell Data

Data Preprocessing:

- Quality Control: Filter cells based on quality metrics (mitochondrial content, number of genes detected)

- Normalization: Normalize counts to account for sequencing depth variations

- Feature Selection: Identify highly variable genes for network construction

- Zero Handling: Address dropout events using appropriate methods (imputation or dropout augmentation)
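The normalization and feature selection steps above can be sketched as follows. This is a deliberately simple illustration: depth scaling to a fixed target followed by a log transform, and ranking genes by raw variance. The target of 10,000 counts per cell is a common convention rather than a requirement, the function names are hypothetical, and dedicated packages use more refined, mean-adjusted variance estimates.

```python
import numpy as np

def normalize_log1p(counts, target_sum=1e4):
    """Scale each cell to the same total count, then apply log(1 + x)."""
    counts = np.asarray(counts, dtype=float)
    depth = counts.sum(axis=1, keepdims=True)
    depth[depth == 0] = 1.0                  # leave empty cells as all zeros
    return np.log1p(counts / depth * target_sum)

def top_variable_genes(logged, n_top):
    """Return sorted column indices of the n_top genes with highest variance."""
    variances = np.asarray(logged, dtype=float).var(axis=0)
    return np.sort(np.argsort(variances)[::-1][:n_top])
```

A typical call chain would be `logged = normalize_log1p(counts)` followed by `logged[:, top_variable_genes(logged, 2000)]`, where 2000 is again only an illustrative choice.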

DAZZLE Implementation Protocol:

- Data Transformation: Transform raw counts using \(\log(x+1)\) to reduce variance and avoid taking the log of zero

- Dropout Augmentation: Augment data with synthetic dropout events for model regularization

- Model Configuration:

  - Parameterize the adjacency matrix for use in the autoencoder framework

  - Implement a structural equation model (SEM) framework

  - Apply a simplified model structure with a closed-form prior

- Training:

  - Train the model to reconstruct the input while learning regulatory relationships

  - Employ an optimized sparsity control strategy for the adjacency matrix

  - Monitor training stability and performance

- Network Inference: Extract regulatory relationships from trained model parameters

- Validation: Compare inferred networks with known regulatory interactions from benchmark datasets
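The final two steps, extracting edges from a learned weight matrix and comparing them with reference interactions, can be sketched as below. The sketch assumes that entry `weights[i, j]` scores a regulatory edge from gene `i` to gene `j`; that orientation, the function names, and the top-k cutoff are assumptions for illustration and may differ from what a given tool or benchmark uses.

```python
import numpy as np

def top_k_edges(weights, k, gene_names):
    """Return the k highest-magnitude directed edges as (regulator, target)."""
    W = np.abs(np.asarray(weights, dtype=float)).copy()
    np.fill_diagonal(W, 0.0)                          # ignore self-loops
    flat = np.argsort(W, axis=None)[::-1][:k]         # largest weights first
    rows, cols = np.unravel_index(flat, W.shape)
    return [(gene_names[r], gene_names[c]) for r, c in zip(rows, cols)]

def precision_recall(predicted, truth):
    """Precision and recall of predicted edges against a reference edge set."""
    predicted, truth = set(predicted), set(truth)
    tp = len(predicted & truth)
    precision = tp / len(predicted) if predicted else 0.0
    recall = tp / len(truth) if truth else 0.0
    return precision, recall
```

Sweeping `k` and recording precision and recall at each value gives a precision-recall curve, which makes the trade-off between the two explicit.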

Downstream Analysis:

- Network Characterization: Identify key transcription factors and regulatory hubs

- Module Detection: Discover co-regulated gene modules

- Differential Analysis: Compare networks across conditions or cell types

- Functional Enrichment: Interpret regulatory programs using pathway analysis
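As one concrete example of network characterization, candidate regulatory hubs can be ranked by how many targets they regulate after thresholding the inferred weights. The function name, the threshold, and the row-as-regulator orientation of the matrix are assumptions made for this sketch.

```python
import numpy as np

def rank_regulators(weights, gene_names, threshold=0.1):
    """Rank genes by out-degree in the thresholded network.

    weights[i, j] is assumed to score an edge from gene i to gene j.
    Returns (gene, out_degree) pairs, highest out-degree first.
    """
    A = np.abs(np.asarray(weights, dtype=float)) >= threshold
    np.fill_diagonal(A, False)                    # ignore self-regulation
    out_degree = A.sum(axis=1)
    order = np.argsort(-out_degree, kind="stable")
    return [(gene_names[i], int(out_degree[i])) for i in order]
```

Out-degree is only one notion of importance; centrality measures or restricting candidates to known transcription factors are natural refinements.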

Table 3: Essential Research Reagents and Computational Tools for Network Analysis

| Category | Item/Software | Specification/Version | Primary Function |
|---|---|---|---|
| Wet Lab Reagents | Formaldehyde | Molecular biology grade | Chromatin crosslinking for 3C methods [26] |
| | Restriction Enzymes | 4-base and 6-base cutters | Chromatin digestion for 4C-seq [26] |
| | DNA Ligase | High-concentration T4 | Proximity ligation of crosslinked DNA [26] |
| | PCR Reagents | High-fidelity polymerase | Amplification of ligation products [26] |
| Computational Tools | DAZZLE | Python implementation | GRN inference from single-cell data with dropout augmentation [12] |
| | PANDA | R/Python package | GRN inference using message passing algorithm [25] |
| | KeyPathwayMiner | Java application | De novo network enrichment analysis [22] |
| | peakC | R package | Peak calling for 4C-seq data [26] |
| Databases | GRAND | Database | Network-based drug repurposing signatures [25] |
| | Human Interactome | Public databases | Protein-protein interaction networks [22] |
| | BEELINE | Benchmarking platform | Evaluation of GRN inference methods [12] |

Network-based approaches have substantially improved our ability to understand complex disease mechanisms and accelerate therapeutic development. Through differential network analysis, disease module identification, and GRN inference from single-cell data, researchers can systematically map the molecular rewiring that occurs in disease states. Combining these approaches with drug repurposing strategies provides frameworks for identifying new therapeutic applications for existing compounds, which can shorten the traditional drug development timeline. As these methodologies continue to evolve, particularly with advances in artificial intelligence and single-cell technologies, network-based approaches are likely to play an increasingly central role in precision medicine, enabling more targeted therapeutic strategies for complex diseases.

The Methodological Toolkit: From Correlation to Causal AI Models

Inferring Gene Regulatory Networks (GRNs) is a fundamental challenge in computational biology, aimed at deciphering the complex interplay of genes, regulatory elements, and signaling molecules that orchestrate cellular behavior [27]. GRNs formally represent gene-gene interactions as graphs, where nodes correspond to genes and edges represent regulatory interactions, often mediated by transcription factors [28]. Understanding these networks is crucial for elucidating the mechanistic basis of phenotypic plasticity in cancer, identifying master regulators of cell fate decisions, and designing synthetic circuits for therapeutic applications [27].

The core challenge in GRN inference is that experimental data, such as gene expression measured by single-cell RNA-sequencing (scRNA-seq), provide only indirect evidence of regulatory interactions rather than direct observations of causality [28]. Computational methods must therefore contend with this ambiguity, alongside technical noise and biological complexity. Classical computational approaches form the foundation of GRN inference; they use statistical dependencies in gene expression data to predict regulatory relationships. This application note details the protocols and practical implementation of three cornerstone classical methods: correlation networks, information-theoretic approaches, and regression models.

The table below summarizes the key characteristics, performance metrics, and applications of the primary classical GRN inference approaches, synthesizing findings from benchmark studies [29] [17].

Table 1: Comparison of Classical GRN Inference Approaches

| Method Category | Core Principle | Typical Performance (AUROC) | Strengths | Limitations |

|---|---|---|---|---|

| Correlation Networks (e.g., Pearson) | Measures linear statistical dependence between gene expression profiles [29] [28]. | Moderate, better than random but far from perfect [29]. | Computationally efficient, simple to implement. | Cannot distinguish direct from indirect regulation; undirected predictions [29]. |

| Information Theory (e.g., PIDC) | Uses mutual information and partial information decomposition to detect non-linear dependencies [17] [28]. | Varies; can capture non-linearities. | Captures non-linear and combinatorial relationships; can model cellular heterogeneity [17]. | High computational cost for large networks; can be sensitive to data sparsity. |

| Regression Models (e.g., GENIE3, GRNBoost2) | Models the expression of each target gene as a function of its potential regulators [17] [28]. | Strong performance on single-cell data; often used as a baseline in benchmarks [17]. | Provides directed predictions; handles high-dimensional data well. | Assumes pre-defined set of transcription factors; may neglect combinatorial cooperativity [27]. |

Detailed Experimental Protocols

Protocol 1: Constructing a Correlation-Based GRN

This protocol outlines the steps for inferring a GRN using Pearson correlation, a fundamental and widely used undirected method [29].

Research Reagent Solutions

- scRNA-seq Data Matrix: A cell-by-gene matrix of raw mRNA counts. Serves as the primary input for inferring statistical relationships.

- Computational Environment: Software environment (e.g., R or Python with libraries like pandas, numpy) for data manipulation and calculation.

Procedure

- Data Preprocessing: Begin with a gene expression matrix `X` of dimensions `m x n`, where `m` is the number of cells and `n` is the number of genes. Normalize the data (e.g., counts per million) and apply a variance-stabilizing transformation (e.g., `log(x+1)`) [17].

- Compute Correlation Matrix: Calculate the Pearson correlation coefficient for every pair of genes `(i, j)` where `i ≠ j`. This produces a symmetric `n x n` matrix `R`, where each element `R[i, j]` represents the correlation strength.

- Generate Prediction Matrix: The correlation matrix `R` itself serves as the network prediction matrix `Â`. Since correlation is symmetric, `Â[i, j] = Â[j, i]`, resulting in an undirected network prediction [29].

- Apply Threshold (Optional): For a binary network, apply a threshold to `Â`. All edges with a correlation coefficient above the threshold are considered predicted interactions. The choice of threshold navigates the trade-off between true positive rate and false positive rate [29].
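The four steps above can be sketched with NumPy alone. This is a minimal illustration, not a reference implementation: the function name `correlation_network` and the toy data are hypothetical, and ranking edges by the absolute value of the correlation is an assumption that the protocol does not specify.

```python
import numpy as np

def correlation_network(counts, threshold=None):
    """counts: (cells x genes) raw count matrix. Returns a genes x genes matrix."""
    # Step 1: counts-per-million normalisation per cell, then log(x + 1)
    lib_size = counts.sum(axis=1, keepdims=True)
    cpm = counts / np.maximum(lib_size, 1) * 1e6
    X = np.log1p(cpm)
    # Step 2: Pearson correlation between gene columns
    R = np.nan_to_num(np.corrcoef(X, rowvar=False))  # zero-variance genes give NaN
    np.fill_diagonal(R, 0.0)                          # ignore self-edges (i == j)
    # Step 3: prediction matrix (assumption: rank edges by |r|)
    A_hat = np.abs(R)
    # Step 4: optional binarisation
    if threshold is not None:
        return (A_hat > threshold).astype(int)
    return A_hat

# Toy data: 300 cells x 50 genes, with gene 1 tracking gene 0
rng = np.random.default_rng(0)
counts = rng.poisson(20.0, size=(300, 50)).astype(float)
counts[:, 1] = 2 * counts[:, 0] + rng.poisson(1.0, size=300)

A_hat = correlation_network(counts)
A_bin = correlation_network(counts, threshold=0.5)
```

Because `A_hat` is symmetric, the edge between genes 0 and 1 appears in both `A_bin[0, 1]` and `A_bin[1, 0]`; the method cannot say which gene regulates the other.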

Protocol 2: Information-Theoretic GRN Inference with PIDC

This protocol details the use of Partial Information Decomposition and Context (PIDC) to infer GRNs from multivariate information measures between genes, capturing non-linear dependencies [17].

Research Reagent Solutions

- Normalized scRNA-seq Matrix: Processed gene expression data, ready for information-theoretic analysis.

- PIDC Software Package: Implementation of the PIDC algorithm (e.g., in Python or R).

Procedure

- Data Discretization: Information-theoretic methods often require discrete data. Discretize the preprocessed gene expression matrix into a finite number of bins (e.g., 3-5 bins representing low, medium, and high expression).

- Calculate Mutual Information: For each pair of genes `(i, j)`, compute the mutual information `I(X_i; X_j)`, which quantifies the amount of information gained about one gene's expression by knowing the other's.

- Perform Partial Information Decomposition: To distinguish direct from indirect regulation, PIDC considers every third gene `k` and decomposes the information that genes `i` and `k` provide about gene `j` into redundant, unique, and synergistic parts. The score for a pair `(i, j)` is built from the unique information between `i` and `j`, taken as a proportion of their mutual information and aggregated over all `k`. This helps control for the effects of common regulators [17].

- Construct Undirected Network: The PIDC score between each pair of genes forms the basis of the prediction matrix `Â`. Because the score is symmetric in `i` and `j`, the resulting network is undirected. Higher PIDC scores indicate stronger confidence in a direct regulatory interaction.
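The first two steps of this protocol, discretization and pairwise mutual information, can be sketched as follows. This covers only the pairwise building block and does not implement the partial information decomposition itself. The function names, the equal-frequency binning, and the plug-in estimator are illustrative assumptions rather than the choices made in the PIDC software.

```python
import numpy as np

def discretize(X, n_bins=3):
    """Equal-frequency binning of each gene (column) into n_bins levels."""
    D = np.zeros(X.shape, dtype=int)
    inner_quantiles = np.linspace(0, 1, n_bins + 1)[1:-1]
    for g in range(X.shape[1]):
        edges = np.quantile(X[:, g], inner_quantiles)
        D[:, g] = np.searchsorted(edges, X[:, g], side="right")
    return D

def mutual_information(a, b, n_bins):
    """Plug-in estimate of I(a; b) in bits from two discrete vectors."""
    joint = np.zeros((n_bins, n_bins))
    np.add.at(joint, (a, b), 1)          # contingency table of bin co-occurrences
    joint /= joint.sum()
    pa = joint.sum(axis=1, keepdims=True)
    pb = joint.sum(axis=0, keepdims=True)
    nz = joint > 0
    return float((joint[nz] * np.log2(joint[nz] / (pa @ pb)[nz])).sum())

def mi_matrix(X, n_bins=3):
    """Symmetric genes x genes matrix of pairwise mutual information."""
    D = discretize(X, n_bins)
    n = D.shape[1]
    M = np.zeros((n, n))
    for i in range(n):
        for j in range(i + 1, n):
            M[i, j] = M[j, i] = mutual_information(D[:, i], D[:, j], n_bins)
    return M

# Toy data: gene 1 depends on gene 0 through a non-monotonic (quadratic) relationship
rng = np.random.default_rng(0)
X = rng.normal(size=(500, 4))
X[:, 1] = X[:, 0] ** 2 + 0.1 * rng.normal(size=500)
M = mi_matrix(X, n_bins=3)
```

The quadratic dependence in the toy data is the kind of relationship a linear correlation coefficient tends to miss, while `M[0, 1]` stands out clearly against the independent gene pairs.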

Protocol 3: Regression-Based GRN Inference with Tree Models

This protocol describes the use of tree-based regression models, as implemented in methods like GENIE3 and GRNBoost2, which are known for their strong performance on single-cell data [17].

Research Reagent Solutions

- Expression Matrix & TF List: The normalized expression matrix and a pre-defined list of transcription factors (TFs) to be considered as potential regulators.

- Software Implementation: Access to the GENIE3 (R/Python) or GRNBoost2 (Python) software.

Procedure

- Define Target Genes and Regulators: Split the full set of genes into two: a list of potential regulators (e.g., all known TFs) and a list of target genes (which can be all genes).

- Train Ensemble Model per Target: For each target gene, train a tree-based model (e.g., Random Forest or Gradient Boosting) in which the expression of the target is the response variable and the expression levels of all potential regulators (excluding the target itself) are the predictor variables.

- Extract Feature Importance: From the trained model for each target, compute a feature importance score for every potential regulator. This score quantifies how much each regulator contributes to predicting the target's expression.

- Compile Global Network: Aggregate the feature importance scores across all target genes to form the final prediction matrix `Â`. The element `Â[i, j]` is the importance of regulator `i` for predicting target `j`, resulting in a directed network [17].
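The per-target regression loop described above can be sketched with scikit-learn. Note that `RandomForestRegressor` stands in here for the GENIE3 and GRNBoost2 implementations, whose APIs are not shown; the function name `tree_based_grn`, the hyperparameters, and the toy data are hypothetical. scikit-learn normalizes importances to sum to one per model, which may differ from how the original tools scale their scores.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor

def tree_based_grn(X, regulator_idx, n_trees=100, seed=0):
    """X: (cells x genes) normalised expression.

    Returns A_hat (genes x genes), where A_hat[i, j] is the importance of
    regulator i for predicting target j.
    """
    n_genes = X.shape[1]
    A_hat = np.zeros((n_genes, n_genes))
    for j in range(n_genes):
        # A gene is never used to predict itself
        predictors = [i for i in regulator_idx if i != j]
        if not predictors:
            continue
        model = RandomForestRegressor(
            n_estimators=n_trees, max_features="sqrt", random_state=seed
        )
        model.fit(X[:, predictors], X[:, j])
        A_hat[predictors, j] = model.feature_importances_
    return A_hat

# Toy data: genes 0-2 are candidate regulators; gene 3 is driven by gene 0
rng = np.random.default_rng(0)
X = rng.normal(size=(300, 5))
X[:, 3] = np.tanh(2 * X[:, 0]) + 0.1 * rng.normal(size=300)

A_hat = tree_based_grn(X, regulator_idx=[0, 1, 2], n_trees=50)
```

Rows of `A_hat` that correspond to non-regulators stay at zero, so the matrix is asymmetric and each nonzero entry can be read as a candidate directed edge from regulator to target.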

Evaluation and Benchmarking Framework

Rigorous evaluation is essential for validating the performance of any GRN inference method. The following protocol outlines a standard benchmarking workflow [29].

Procedure

- Inputs: Prepare the network prediction matrix `Â` from your chosen method and the ground truth network matrix `A` (if available for benchmark data).

- Threshold Sweep: Apply a series of thresholds to the prediction matrix `Â` to convert confidence scores into binary predictions (edge exists: 1; does not exist: 0).

- Calculate Metrics per Threshold: For each threshold, compare the binary predictions to the ground truth and compute:

  - True Positive Rate and False Positive Rate for the ROC curve.

  - Precision and Recall for the Precision-Recall curve.

- Compute Final Score: Calculate the Area Under the ROC Curve (AUROC) and the Area Under the Precision-Recall Curve (AUPR) to get single-number performance scores. A perfect AUROC is 1.0 [29].
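The benchmarking workflow above can be sketched as a single pass over the ranked edge list, where each rank position acts as one threshold. This is a minimal illustration under stated assumptions: self-edges are excluded, scores are assumed to have no ties, and AUPR is computed as average precision. The function name `evaluate_ranking` and the toy matrices are hypothetical.

```python
import numpy as np

def evaluate_ranking(A_hat, A_true):
    """Threshold sweep over off-diagonal scores; returns (AUROC, AUPR)."""
    off_diag = ~np.eye(A_true.shape[0], dtype=bool)
    scores = A_hat[off_diag]
    labels = A_true[off_diag].astype(bool)

    # Sort edges by decreasing confidence; keeping the top k is one threshold
    order = np.argsort(-scores, kind="stable")
    labels = labels[order]
    tp = np.cumsum(labels)    # true positives among the top-k edges
    fp = np.cumsum(~labels)   # false positives among the top-k edges

    tpr = np.concatenate([[0.0], tp / labels.sum()])
    fpr = np.concatenate([[0.0], fp / (~labels).sum()])
    precision = tp / (tp + fp)

    # AUROC by the trapezoidal rule over the ROC curve
    auroc = float(np.sum(np.diff(fpr) * (tpr[1:] + tpr[:-1]) / 2))
    # AUPR as average precision: mean precision at each recovered true edge
    aupr = float(precision[labels].mean())
    return auroc, aupr

# Toy example: the two true edges (0 -> 1, 0 -> 2) hold the two highest scores
A_true = np.array([[0, 1, 1],
                   [0, 0, 0],
                   [0, 0, 0]])
A_hat = np.array([[0.0, 0.9, 0.8],
                  [0.1, 0.0, 0.3],
                  [0.2, 0.4, 0.0]])
auroc, aupr = evaluate_ranking(A_hat, A_true)   # both equal 1.0 for this ranking
```

On real benchmarks the true edges are a small minority of all gene pairs, so AUPR is usually the more informative of the two numbers.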

Table 2: Key Evaluation Metrics for GRN Inference [29]

| Metric | Calculation | Interpretation |

|---|---|---|

| True Positive (TP) | Edge exists in both A and thresholded Â | Correctly predicted regulatory interaction. |

| False Positive (FP) | Edge exists in Â but not in A | Incorrectly predicted interaction. |

| True Negative (TN) | Edge exists in neither A nor Â | Correctly predicted absence of interaction. |

| False Negative (FN) | Edge exists in A but not in Â | Missed true regulatory interaction. |

| True Positive Rate (TPR/Recall) | TP / (TP + FN) | Proportion of true edges correctly identified. |

| False Positive Rate (FPR) | FP / (FP + TN) | Proportion of non-edges incorrectly identified as edges. |

| Area Under ROC Curve (AUROC) | Area under TPR vs. FPR plot | Overall measure of method's ability to rank true edges above non-edges. |

The inference of Gene Regulatory Networks (GRNs) is a central challenge in systems biology, crucial for understanding the complex mechanisms that govern cellular processes, development, and disease [30]. GRNs represent the ensemble of interactions among genes and their regulators, providing a contextual model of transcriptional control within a cell [17]. With the advent of single-cell RNA sequencing (scRNA-seq) technologies, researchers can now analyze transcriptomic profiles at an unprecedented resolution, offering a detailed view of cellular diversity and enabling the inference of context-specific GRNs [17] [12].

Among the various computational approaches developed for GRN inference, tree-based machine learning methods have emerged as particularly powerful and widely adopted tools. GENIE3 (GEne Network Inference with Ensemble of trees) and its gradient-boosted variant, GRNBoost2, are the most prominent representatives of this approach [30] [31]. These methods treat the network inference problem as a set of separate feature selection problems, where the expression of each gene is modeled as a function of the expression levels of all other potential regulator genes [32]. Their success is attributed to their fully non-parametric nature, their scalability to thousands of genes, and their intrinsic explainability through the importance scores derived from the tree models [30].

The impact of these tree-based methods is extended by their integration into comprehensive pipelines such as SCENIC (Single-Cell rEgulatory Network Inference and Clustering) and its Python implementation, pySCENIC [33] [34]. SCENIC builds upon the co-expression modules identified by GENIE3 or GRNBoost2 by incorporating cis-regulatory motif analysis to refine the networks into biologically meaningful "regulons", that is, transcription factors together with their direct target genes [34] [35]. This combined approach has become a cornerstone of computational biology, enabling researchers not only to infer regulatory interactions but also to identify key transcription factors, characterize cell types, and understand cellular dynamics from single-cell data [33] [35].

Core Algorithmic Foundations

Theoretical Framework and Problem Formulation

Tree-based GRN inference methods operate on a foundational machine learning principle, conceptualizing the complex problem of network inference as a series of predictive modeling tasks. The core assumption is that the expression level of any given gene in a particular cellular condition can be predicted from the expression levels of its potential transcriptional regulators [32]. This formulation transforms an unsupervised network inference problem into a supervised regression framework, leveraging the power of ensemble tree methods while maintaining interpretability through feature importance metrics.

Mathematically, for a gene expression matrix ( X \in \mathbb{R}^{g \times c} ) containing measurements of ( g ) genes across ( c ) cells or conditions, the fundamental relationship modeled by both GENIE3 and GRNBoost2 for each gene ( j ) can be expressed as:

[ x_j = f_j(\mathbf{x}_{\setminus j}) + \varepsilon ]