Dynamic Modeling of Drug Responses: From Systems Biology to Clinical Translation

Abstract

This article provides a comprehensive overview of dynamic modeling approaches for predicting and understanding drug responses within the framework of systems biology. It explores the foundational principles of mechanistic and data-driven models, detailing their application across the drug development pipeline from discovery to clinical use. The content addresses critical methodological challenges, including model identifiability and parameter estimation, and presents robust workflows for model troubleshooting and optimization. Furthermore, it examines validation strategies and comparative analyses of different modeling paradigms, highlighting their impact through case studies in areas like pediatric rare diseases and cancer therapy. Designed for researchers, scientists, and drug development professionals, this review synthesizes current advancements and practical insights to guide the effective implementation of dynamic models in accelerating therapeutic innovation.

The Core Principles of Dynamic Modeling in Biological Systems

Article 1: Core Principles and Quantitative Foundations of Mechanistic Modeling

Defining Mechanistic Models in Systems Biology

Mechanistic computational models are mathematical frameworks that simulate biological systems by explicitly representing the underlying physical and chemical interactions between molecular entities. Unlike purely data-driven empirical models, mechanistic models encode prior knowledge of regulatory networks as sets of mathematical equations that represent fundamental biological processes and chemical reactions (e.g., [A]+[B]⇄[A·B]), which are then solved to simulate system behavior [1]. This approach allows researchers to move beyond correlation-based inference and capture causal relationships within complex biological systems, which makes mechanistic models particularly valuable for predicting drug responses, where understanding the mechanism of action is critical for success.

The key distinguishing feature of mechanistic modeling is its foundation in established biological knowledge rather than statistical inference from data alone. These models explicitly represent molecular species (proteins, RNA, metabolites), their interactions (binding, phosphorylation, degradation), and cellular processes (expression, trafficking, signaling) [1] [2]. This mechanistic foundation enables greater predictive power when extrapolating to new conditions, such as different dosing regimens, patient populations, or related drug compounds—scenarios where purely empirical models often fail [1].

Mathematical Frameworks: From ODEs to Whole-Cell Representations

Mechanistic dynamic models span multiple mathematical formalisms, each suited to different biological questions and scales of investigation:

Ordinary Differential Equations (ODEs) form the backbone of dynamic modeling in systems biology, describing the continuous rate of change of biological variables with respect to time [3]. ODE-based models are particularly well-suited for simulating biochemical reaction networks where concentrations vary continuously, such as signaling pathways, metabolic networks, and pharmacokinetic/pharmacodynamic (PK/PD) relationships. The kinetic laws governing these reactions—from simple first-order rate laws to more complex Michaelis-Menten enzyme kinetics—are implemented as systems of coupled ODEs that can be analyzed for steady states, stability, and dynamic behavior [3].
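As a minimal sketch of the ODE formalism, the reversible binding reaction [A]+[B]⇄[A·B] can be written with mass-action kinetics and integrated numerically. The rate constants and initial concentrations are hypothetical, and SciPy's `solve_ivp` is used here as a generic integrator rather than any tool named in this article. The analytical equilibrium, obtained from mass conservation and the dissociation constant K_d = k_off/k_on, serves as a check on the simulation.

```python
import numpy as np
from scipy.integrate import solve_ivp

# Hypothetical rate constants (arbitrary concentration and time units)
K_ON = 1.0    # association rate constant
K_OFF = 0.1   # dissociation rate constant

def binding_rhs(t, y):
    """Mass-action ODEs for A + B <-> AB."""
    a, b, ab = y
    net = K_ON * a * b - K_OFF * ab   # net forward flux of the binding reaction
    return [-net, -net, net]

def equilibrium_complex(a0, b0, kd):
    """Analytical steady-state [AB]: smaller root of c^2 - (a0 + b0 + kd) c + a0 b0 = 0."""
    s = a0 + b0 + kd
    return (s - np.sqrt(s * s - 4.0 * a0 * b0)) / 2.0

y0 = [1.0, 0.5, 0.0]                      # initial [A], [B], [AB]
sol = solve_ivp(binding_rhs, (0.0, 200.0), y0, rtol=1e-8, atol=1e-10)
ab_numeric = sol.y[2, -1]
ab_exact = equilibrium_complex(1.0, 0.5, K_OFF / K_ON)
print(f"[AB] at t=200: numeric {ab_numeric:.6f}, analytical {ab_exact:.6f}")
```

The same pattern (state vector, right-hand-side function, integrator call) scales to the coupled signaling and PK/PD systems described above; only the number of states and rate laws grows.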

Whole-cell models represent the most comprehensive approach to mechanistic modeling, aiming to predict cellular phenotypes from genotype by representing the function of every gene, gene product, and metabolite [2]. These integrative models combine multiple mathematical approaches—including ODEs, constraint-based methods, stochastic simulation, and rule-based modeling—to capture the full complexity of cellular processes. Recent advances have enabled the development of whole-cell models that track the sequence of each chromosome, RNA, and protein; molecular structures; subcellular organization; and all chemical reactions and physical processes that influence their rates [2].

Reaction-diffusion models incorporate spatial heterogeneity using either particle-based methods that track individual molecules in three-dimensional space or lattice-based methods that track site occupancy in a discretized cellular space [4]. Tools like Lattice Microbes provide GPU-accelerated stochastic simulators for reaction-diffusion processes in whole-cell models, accounting for how cytoplasmic crowding and spatial localization influence cellular behavior [4].
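Lattice methods such as those in Lattice Microbes sample the reaction-diffusion master equation, which extends the chemical master equation to a discretized space. The non-spatial core can be sketched with Gillespie's stochastic simulation algorithm (SSA). The example below omits diffusion entirely and uses a hypothetical birth-death process (constant production of X, first-order degradation). Its stationary mean, k_prod/k_deg, provides a sanity check.

```python
import numpy as np

def gillespie_birth_death(k_prod, k_deg, x0, t_end, rng):
    """Exact SSA trajectory for 0 -> X (propensity k_prod) and X -> 0 (propensity k_deg * X)."""
    t, x = 0.0, x0
    times, counts = [t], [x]
    while True:
        a_prod, a_deg = k_prod, k_deg * x
        a_total = a_prod + a_deg
        t_next = t + rng.exponential(1.0 / a_total)   # waiting time to the next event
        if t_next > t_end:
            break
        t = t_next
        # Choose which reaction fires, in proportion to its propensity
        x += 1 if rng.random() * a_total < a_prod else -1
        times.append(t)
        counts.append(x)
    return np.array(times), np.array(counts)

T_END = 2000.0
rng = np.random.default_rng(1)
times, counts = gillespie_birth_death(k_prod=10.0, k_deg=0.5, x0=0, t_end=T_END, rng=rng)

# Time-weighted mean copy number (each state persists until the next event or T_END)
durations = np.diff(np.append(times, T_END))
mean_x = np.sum(counts * durations) / T_END
print(f"{len(times) - 1} events, time-averaged X = {mean_x:.2f} (theory: {10.0 / 0.5:.1f})")
```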

Empirical Dynamic Modeling (EDM) offers a complementary data-driven approach for reconstructing system dynamics from time series data without requiring pre-specified mechanistic equations [5] [6]. Based on Takens' theorem for state-space reconstruction, EDM uses time-lagged coordinates of observed variables to reconstruct the underlying system attractor, enabling forecasting and causal inference in complex nonlinear systems where complete mechanistic knowledge is unavailable [6].
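The state-space reconstruction behind EDM can be illustrated with simplex projection, one of the standard EDM forecasting methods (also implemented in rEDM). The NumPy sketch below is written from scratch and does not use the rEDM API. It builds time-lagged vectors from a single observed series, then forecasts one step ahead from the E+1 nearest neighbours in the reconstructed space, weighting each neighbour by an exponential function of its distance. The logistic-map series is synthetic, and the embedding dimension E and library size are illustrative choices.

```python
import numpy as np

def delay_embed(x, E, tau=1):
    """Row for time t is the lagged vector [x_t, x_(t-tau), ..., x_(t-(E-1)tau)]."""
    start = (E - 1) * tau
    return np.column_stack([x[start - k * tau: len(x) - k * tau] for k in range(E)])

def simplex_forecast(lib_vectors, lib_futures, queries, E):
    """Predict each query's future as an exponentially weighted mean of its E+1 nearest neighbours."""
    preds = np.empty(len(queries))
    for i, q in enumerate(queries):
        dist = np.linalg.norm(lib_vectors - q, axis=1)
        nn = np.argsort(dist)[: E + 1]
        d_min = max(dist[nn[0]], 1e-12)              # guard against a zero distance
        w = np.exp(-dist[nn] / d_min)
        preds[i] = np.sum(w * lib_futures[nn]) / np.sum(w)
    return preds

# Synthetic chaotic series from the logistic map (illustrative data only)
x = np.empty(600)
x[0] = 0.4
for t in range(len(x) - 1):
    x[t + 1] = 3.8 * x[t] * (1.0 - x[t])

E, tp = 2, 1                                  # embedding dimension and forecast horizon
vectors = delay_embed(x, E)
times = np.arange(E - 1, len(x))              # time index of each embedded row
keep = times + tp < len(x)                    # rows whose future value is observed
vectors, times = vectors[keep], times[keep]
futures = x[times + tp]

split = 400                                   # first part is the library, the rest is forecast
pred = simplex_forecast(vectors[:split], futures[:split], vectors[split:], E)
rho = np.corrcoef(pred, futures[split:])[0, 1]
print(f"one-step forecast skill rho = {rho:.3f}")
```

In practice E is usually chosen by scanning candidate values and keeping the one with the highest forecast skill. Skill that declines as the forecast horizon grows is a common signature of nonlinear, chaotic dynamics.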

Table 1: Comparison of Mechanistic Modeling Approaches in Drug Response Research

| Modeling Approach | Mathematical Foundation | Key Applications in Drug Response | Representative Tools |
|---|---|---|---|
| ODE-based PK/PD | Systems of differential equations | Drug distribution, target engagement, dose-response relationships | COPASI, CVODES, MATLAB ode45 [7] [3] |
| Systems Pharmacology | Hybrid ODE/PDE with mechanistic signaling | Biomarker identification, patient stratification, combination therapy prediction | Framework described in [3] |
| Whole-cell Modeling | Multi-algorithmic integration | Target identification, off-target effect prediction, personalized therapy | WholeCellKB, E-Cell, Lattice Microbes [2] [4] |
| Reaction-Diffusion Modeling | Spatial stochastic simulation | Intracellular drug distribution, pathway localization effects | Lattice Microbes, MesoRD [4] |
| Empirical Dynamic Modeling | State-space reconstruction | Forecasting nonlinear treatment responses, identifying causal interactions | rEDM, multiview [5] [6] |

Article 2: Experimental Protocols and Computational Methodologies

Protocol: Developing Mechanistic ODE Models of Signaling Pathways

Objective: Construct a mechanistic ODE model of cancer-associated signaling pathways capable of predicting response to single drugs and drug combinations from molecular profiling data [8].

Materials and Computational Resources:

Table 2: Essential Computational Tools for Mechanistic Modeling

| Tool/Resource | Type | Function in Protocol | Key Features |
|---|---|---|---|
| COPASI | Software package | ODE model simulation, parameter estimation | SBML support, parameter scanning, sensitivity analysis [7] [3] |
| CVODES | ODE solver suite | Numerical integration of stiff ODE systems | Variable-order methods, Newton-type nonlinear solver [7] |
| SBML Models | Data standard | Model representation and exchange | Community standard, compatibility with multiple tools [7] |
| Parameter Estimation Framework | Computational method | Model calibration to experimental data | Efficient gradient-based optimization, >10⁴-fold speedup vs. state-of-the-art methods [8] |
| BioModels Database | Model repository | Access to curated biochemical models | Quality-controlled models, simulation-ready [7] |

Experimental Workflow:

Step 1: Model Construction and Representation. Begin by defining the biochemical species and reactions comprising the signaling pathways of interest. For a pan-cancer pathway model, include major cancer-associated signaling pathways (>1,200 species and >2,600 reactions) [8]. Represent the reaction network using Systems Biology Markup Language (SBML), ensuring proper annotation of all components. Assemble reaction rate equations using mass-action kinetics for elementary reactions and Michaelis-Menten or Hill equations for enzymatic processes. Compartmentalize the model to distinguish membrane, cytoplasmic, and nuclear species where appropriate.
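The three kinds of rate law named in Step 1 can be written as small, reusable functions. A model assembler then evaluates one law per reaction and combines the resulting rates through the stoichiometric matrix (dx/dt = N·v). This is a minimal Python sketch with hypothetical function and parameter names. SBML-based tools such as COPASI express the same laws as kinetic-law expressions rather than as Python code.

```python
import numpy as np

def mass_action(k, *concentrations):
    """Elementary reaction: rate constant times the product of reactant concentrations."""
    rate = k
    for c in concentrations:
        rate = rate * c
    return rate

def michaelis_menten(s, vmax, km):
    """Single-substrate enzymatic rate: Vmax * S / (Km + S)."""
    return vmax * s / (km + s)

def hill(s, vmax, k_half, n):
    """Cooperative rate: Vmax * S^n / (K^n + S^n); n = 1 recovers Michaelis-Menten."""
    return vmax * s**n / (k_half**n + s**n)

# Hypothetical toy pathway with species [S, P] and reactions
# [influx 0 -> S, enzymatic S -> P, first-order degradation P -> 0]
N = np.array([[1, -1, 0],
              [0, 1, -1]])   # stoichiometric matrix (species x reactions)

def rhs(x, k_in=1.0, vmax=2.0, km=0.5, k_deg=0.4):
    """Net rate of change of each species: stoichiometry times the reaction-rate vector."""
    s, p = x
    v = np.array([k_in, michaelis_menten(s, vmax, km), mass_action(k_deg, p)])
    return N @ v

print(rhs(np.array([0.5, 2.5])))   # evaluated at the analytical steady state S = 0.5, P = 2.5
```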

Step 2: Numerical Integration and Solver Configuration. Select appropriate numerical integration methods based on model characteristics. For stiff ODE systems common in biological modeling (where variables evolve on widely different timescales), use backward differentiation formula (BDF) methods with Newton-type nonlinear solvers [7]. Configure error tolerances (relative and absolute) based on desired precision; typical values range from 10⁻⁴ to 10⁻⁶ for relative tolerance and 10⁻⁶ to 10⁻⁸ for absolute tolerance [7]. For large models, employ sparse LU decomposition (KLU) linear solvers to improve computational efficiency [7].
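To illustrate Step 2, the Robertson chemical kinetics problem can be solved as below. This classic stiff benchmark has rate constants spanning roughly nine orders of magnitude. The sketch uses SciPy's BDF implementation, an analytical Jacobian for the Newton iterations, and tolerances inside the ranges quoted above. SciPy stands in for CVODES here. SciPy does not provide KLU, although its BDF solver can use a sparse LU factorization when given a sparse Jacobian or a `jac_sparsity` pattern.

```python
import numpy as np
from scipy.integrate import solve_ivp

def robertson(t, y):
    """Robertson kinetics: three species with rate constants 0.04, 1e4, and 3e7."""
    y1, y2, y3 = y
    return [-0.04 * y1 + 1e4 * y2 * y3,
            0.04 * y1 - 1e4 * y2 * y3 - 3e7 * y2**2,
            3e7 * y2**2]

def robertson_jac(t, y):
    """Analytical Jacobian used by the Newton iterations inside BDF."""
    _, y2, y3 = y
    return np.array([[-0.04, 1e4 * y3, 1e4 * y2],
                     [0.04, -1e4 * y3 - 6e7 * y2, -1e4 * y2],
                     [0.0, 6e7 * y2, 0.0]])

sol = solve_ivp(robertson, (0.0, 1e5), [1.0, 0.0, 0.0], method="BDF",
                jac=robertson_jac, rtol=1e-6, atol=1e-8)
print(f"success={sol.success}, steps={sol.t.size}, total mass={sol.y[:, -1].sum():.6f}")
```

Swapping `method="BDF"` for the explicit `"RK45"` (comparable to MATLAB's ode45) typically requires far more steps on this problem, which is the practical signature of stiffness.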

Step 3: Parameter Estimation and Model Calibration. Leverage efficient parameter estimation frameworks to calibrate model parameters to experimental data. Utilize gradient-based optimization methods that can achieve >10,000-fold speedup compared to state-of-the-art approaches [8]. Integrate multi-omics data (exome and transcriptome sequencing) from cancer cell lines to inform parameter values. Employ regularization techniques to handle parameter identifiability issues and avoid overfitting. Validate parameter estimates using cross-validation and uncertainty quantification.
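The calibration step can be sketched on a toy problem. The example estimates an amplitude and a first-order rate constant from noisy synthetic data using SciPy's trust-region least-squares solver, which uses Jacobian information. An L2 (ridge) penalty pulls the log-parameters toward a prior guess. This is a small stand-in, not the large-scale framework of [8]. The model, prior, and regularization weight `lam` are hypothetical. Parameters are estimated on the log scale, which is a common choice for positive rate constants.

```python
import numpy as np
from scipy.optimize import least_squares

rng = np.random.default_rng(0)
t = np.linspace(0.0, 10.0, 25)

def model(log_theta, t):
    """Hypothetical observable: amplitude * exp(-k t), parameters on the log scale."""
    amp, k = np.exp(log_theta)
    return amp * np.exp(-k * t)

true_log_theta = np.log([2.0, 0.5])                  # hypothetical "true" values
data = model(true_log_theta, t) + rng.normal(0.0, 0.05, t.size)

prior_log_theta = np.log([1.0, 1.0])                 # hypothetical prior guess
lam = 0.1                                            # regularization weight

def residuals(log_theta):
    """Data misfit stacked with a ridge penalty toward the prior (both squared by the solver)."""
    misfit = model(log_theta, t) - data
    penalty = np.sqrt(lam) * (log_theta - prior_log_theta)
    return np.concatenate([misfit, penalty])

fit = least_squares(residuals, x0=prior_log_theta, method="trf")
amp_hat, k_hat = np.exp(fit.x)
print(f"amplitude = {amp_hat:.3f}, k = {k_hat:.3f}")
```

Increasing `lam` trades goodness of fit for stability. Poorly constrained parameters are pulled toward the prior, while well-constrained ones barely move.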

Step 4: Simulation and Prediction. Simulate drug responses by modifying model parameters to represent drug-target interactions (e.g., inhibiting kinase activity). For combination therapy prediction, simulate simultaneous modulation of multiple targets and analyze emergent network behaviors. Perform Monte Carlo simulations to account for parametric uncertainty and cell-to-cell variability. Generate dose-response curves and synergy scores for drug combinations.
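A minimal sketch of Step 4 follows. It assumes Hill-type inhibition of target activity and uses Bliss independence as the reference model for synergy scoring; other references, such as Loewe additivity, are also common. The toy readout multiplies the activities of two independently inhibited kinases, so it should be exactly Bliss-additive. Replacing `toy_pathway_output` with a simulation of a network containing feedback or cross-talk is where nonzero synergy scores would emerge. All names and parameter values are hypothetical.

```python
import numpy as np

def fractional_activity(conc, ic50, hill_n=1.0):
    """Remaining target activity under a Hill-type inhibitor (1 = uninhibited)."""
    return 1.0 / (1.0 + (conc / ic50) ** hill_n)

def toy_pathway_output(c_a, c_b, ic50_a=1.0, ic50_b=0.5):
    """Hypothetical readout: two independently inhibited kinases acting multiplicatively."""
    return fractional_activity(c_a, ic50_a) * fractional_activity(c_b, ic50_b)

def bliss_excess(c_a, c_b):
    """Observed minus Bliss-expected inhibition; > 0 suggests synergy, < 0 antagonism."""
    inh_a = 1.0 - toy_pathway_output(c_a, 0.0)
    inh_b = 1.0 - toy_pathway_output(0.0, c_b)
    expected = inh_a + inh_b - inh_a * inh_b
    observed = 1.0 - toy_pathway_output(c_a, c_b)
    return observed - expected

doses = np.logspace(-2, 2, 9)                       # concentrations for both drugs
CA, CB = np.meshgrid(doses, doses, indexing="ij")
excess = bliss_excess(CA, CB)
print(f"max |Bliss excess| = {np.abs(excess).max():.2e}")
```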

Step 5: Validation and Analysis. Compare model predictions to experimental measurements of drug response in cell lines. Validate combination therapy predictions using orthogonal experimental data. Perform sensitivity analysis to identify key parameters controlling drug response. Analyze network dynamics to elucidate mechanisms of drug synergy and resistance.
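Local sensitivity analysis is often reported as normalized (logarithmic) sensitivity coefficients, S_i = ∂ln y / ∂ln p_i. These coefficients are unitless and therefore comparable across parameters. The sketch below estimates them by central finite differences. The Michaelis-Menten readout and parameter values are hypothetical. They were chosen because the exact answers are easy to verify: 1 for V_max and -K_m/(K_m + S) for K_m. Global methods (e.g., Sobol indices) would be needed to capture parameter interactions over wide ranges.

```python
import numpy as np

def normalized_sensitivities(f, params, rel_step=1e-6):
    """Local normalized sensitivities d ln f / d ln p_i via central differences."""
    params = np.asarray(params, dtype=float)
    base = f(params)
    sens = np.empty(params.size)
    for i in range(params.size):
        up, down = params.copy(), params.copy()
        up[i] *= 1.0 + rel_step
        down[i] *= 1.0 - rel_step
        # (df/dp_i) * (p_i / f), with dp_i = 2 * rel_step * p_i
        sens[i] = (f(up) - f(down)) / (2.0 * rel_step * base)
    return sens

SUBSTRATE = 1.0  # fixed input concentration (hypothetical)

def readout(p):
    """Hypothetical model output: Michaelis-Menten rate at a fixed substrate level."""
    vmax, km = p
    return vmax * SUBSTRATE / (km + SUBSTRATE)

sens = normalized_sensitivities(readout, [2.0, 1.0])
for name, s in zip(["Vmax", "Km"], sens):
    print(f"{name}: {s:+.4f}")
```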

Protocol: Whole-Cell Model Simulation for Drug Target Identification

Objective: Develop a whole-cell mechanistic model to identify novel drug targets by simulating the complete cellular network and identifying key sensitive nodes [2] [4].

Materials and Computational Resources:

Table 3: Whole-Cell Modeling Resources and Databases

| Resource | Content Type | Application in Protocol | Access |
|---|---|---|---|
| WholeCellKB | Knowledge base | Organize data for whole-cell modeling | Public [2] |
| UniProt | Protein database | Protein sequences, functions, interactions | Public [2] |
| BioCyc | Pathway database | Metabolic and signaling pathways | Public [2] |
| PaxDb | Protein abundance | Quantitative proteomics data | Public [2] |
| SABIO-RK | Kinetic parameters | Reaction kinetic data | Public [2] |
| Martini Ecosystem | Coarse-grained modeling | Molecular dynamics of cellular components | Public [9] |

Experimental Workflow:

Step 1: Data Integration and Curation. Collect and integrate heterogeneous data types required for whole-cell modeling. This includes genomic data (gene sequences, locations), proteomic data (protein structures, abundances, localizations), metabolic data (reaction networks, kinetic parameters), and cellular architecture data (organelle structures, spatial organization) [2]. Utilize pathway/genome database tools (Pathway Tools) and specialized knowledge bases (WholeCellKB) to organize this information into a structured format suitable for modeling [2].

Step 2: Multi-algorithmic Model Assembly. Construct the whole-cell model using a multi-algorithmic approach that combines different mathematical representations appropriate for various cellular processes. Represent metabolism using constraint-based modeling (flux balance analysis), gene regulation using Boolean networks, signal transduction using ODEs, and macromolecular assembly using stochastic simulation [2] [4]. Ensure proper communication between submodels by defining shared variables and integration time steps.
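The constraint-based (flux balance analysis) submodel can be sketched as a linear program. The program maximizes a biomass flux subject to steady-state mass balance, S·v = 0, and flux bounds. The network below is a hypothetical four-reaction toy, not part of any published whole-cell model. SciPy's `linprog` stands in for dedicated constraint-based packages such as COBRApy.

```python
import numpy as np
from scipy.optimize import linprog

# Hypothetical toy network. Rows: metabolites A, B, C. Columns: reactions
#   v0: uptake -> A,  v1: A -> B,  v2: A -> C,  v3: B + C -> biomass
S = np.array([[1, -1, -1, 0],
              [0, 1, 0, -1],
              [0, 0, 1, -1]])
bounds = [(0, 10), (0, None), (0, None), (0, None)]  # uptake capped at 10 (arbitrary units)
c = np.array([0.0, 0.0, 0.0, -1.0])                  # linprog minimizes, so negate biomass

res = linprog(c, A_eq=S, b_eq=np.zeros(S.shape[0]), bounds=bounds, method="highs")
fluxes = res.x
biomass = -res.fun
print(f"optimal fluxes: {np.round(fluxes, 3)}, biomass flux: {biomass:.3f}")
```

Because biomass needs both B and C, the optimum splits the uptake flux evenly between the two branches. In a multi-algorithmic model, bounds such as the uptake cap could be updated at each shared time step from the state of the other submodels.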

Step 3: Whole-Cell Simulation. Execute whole-cell simulations using platforms capable of multi-algorithmic integration (e.g., E-Cell), and store and query the resulting simulation output with dedicated databases such as WholeCellSimDB [2]. Simulate the complete cell cycle under baseline conditions to establish reference behavior. Implement numerical methods that efficiently handle the multi-scale nature of cellular processes, from rapid biochemical reactions (milliseconds) to slow cellular growth (hours). Monitor key cellular phenotypes including growth rate, energy status, and macromolecular synthesis.

Step 4: Target Identification via Sensitivity Analysis. Perform systematic sensitivity analysis by perturbing each molecular component in the model (gene knockouts, protein inhibitions, expression modifications). Identify key nodes whose perturbation significantly alters phenotypes relevant to disease (e.g., cancer cell proliferation). Prioritize targets based on the magnitude of effect, essentiality in the network, and druggability. Validate predictions using orthogonal genetic and pharmacological data.
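For the knockout screen in Step 4, a common constraint-based shortcut is to set each reaction's flux bounds to zero in turn and re-optimize. The sketch uses a hypothetical toy FBA network that includes a lower-capacity isozyme. As a result, one knockout is lethal, one is partially compensated, and one is silent. A real screen would map genes to reactions through gene-protein-reaction rules, and the thresholds for calling a target essential are a modeling choice.

```python
import numpy as np
from scipy.optimize import linprog

# Hypothetical toy network; rows: metabolites A, B, C
NAMES = ["uptake", "A->B", "A->C", "A->C (isozyme)", "biomass"]
S = np.array([[1, -1, -1, -1, 0],
              [0, 1, 0, 0, -1],
              [0, 0, 1, 1, -1]])
BOUNDS = [(0, 10), (0, None), (0, None), (0, 2), (0, None)]  # isozyme has lower capacity
c = np.zeros(len(NAMES))
c[-1] = -1.0                                  # maximize biomass flux

def max_biomass(bounds):
    """Optimal biomass flux for the given bounds (0 if the LP fails)."""
    res = linprog(c, A_eq=S, b_eq=np.zeros(S.shape[0]), bounds=bounds, method="highs")
    return -res.fun if res.status == 0 else 0.0

wild_type = max_biomass(BOUNDS)
effects = {}
for j, name in enumerate(NAMES[:-1]):         # knock out every reaction except biomass
    ko_bounds = list(BOUNDS)
    ko_bounds[j] = (0, 0)
    effects[name] = max_biomass(ko_bounds) / wild_type

for name, ratio in sorted(effects.items(), key=lambda kv: kv[1]):
    print(f"{name:>15}: {ratio:.2f} of wild-type biomass flux")
```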

Step 5: Drug Response Prediction. Simulate drug action by modifying model parameters to represent compound-target interactions at measured binding affinities. Predict cellular responses across a range of drug concentrations and treatment durations. Identify biomarkers of drug response by correlating molecular changes with phenotypic outcomes. Explore combination therapies by simulating multi-target interventions and identifying synergistic interactions.

Article 3: Applications in Drug Development and Emerging Frontiers

Quantitative Applications in Pharmaceutical Research

Mechanistic dynamic models have demonstrated significant value across multiple stages of the drug development pipeline, from target identification to clinical trial design. The table below summarizes key quantitative findings from recent applications:

Table 4: Quantitative Applications of Mechanistic Models in Drug Development

| Application Area | Model Type | Key Performance Metrics | Impact/Results |
|---|---|---|---|
| Virtual Drug Screening | Cardiac electrophysiology model | Identification of compounds with reduced arrhythmia risk | Early elimination of candidates with adverse effects [1] |
| Drug Combination Prediction | Pan-cancer pathway model (>1,200 species) | Prediction of synergistic combinations from single drug data | Accurate combination response prediction without combinatorial testing [8] |
| Species Translation | Systems pharmacology PK/PD | Prediction of human efficacious dose from animal data | Improved translatability accounting for species-specific biology [1] |
| Patient Stratification | Cancer signaling models | Identification of responsive subpopulations by genomic features | Biomarker-defined patient selection for clinical trials [8] |
| Cellular Metabolism | Population flux balance analysis | Prediction of metabolic heterogeneity in clonal populations | Understanding of diverse metabolic phenotypes in identical environments [4] |

Protocol: Systems Pharmacology Modeling for Translational Research

Objective: Develop a mechanistic systems pharmacology model that integrates pharmacokinetics with dynamic pathway models to translate preclinical findings to human patients [1].

Materials and Computational Resources:

Table 5: Systems Pharmacology Modeling Resources

| Tool/Resource | Application | Key Features | Reference |
|---|---|---|---|
| Mechanistic PK/PD | Drug distribution and target engagement | Physiologically based PK, tissue distribution | [1] |
| Pathway Modeling | Intracellular signaling dynamics | Molecular-detailed reaction networks | [1] [8] |
| Biomarker Linking | Connecting tissue and plasma measurements | Correlation of accessible and tissue biomarkers | [1] |
| Population Modeling | Inter-individual variability | Integration of genomic, proteomic variability | [1] [4] |

Experimental Workflow:

Step 1: Pharmacokinetic Model Development. Construct a physiologically based pharmacokinetic (PBPK) model representing drug absorption, distribution, metabolism, and excretion (ADME). Parameterize the model using in vitro ADME assays and in vivo animal pharmacokinetic studies. Include key tissues relevant to drug action and toxicity, with special attention to the disease target tissue.
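Step 1 can be sketched with a deliberately small, flow-limited PBPK structure with three compartments: blood, an eliminating liver, and a lumped rest-of-body compartment. Each tissue exchanges drug with blood at its blood flow and equilibrates according to a tissue:blood partition coefficient. This sketch simplifies a real PBPK model in several ways:
- All physiological and drug parameters are hypothetical.
- Absorption is replaced by an IV bolus.
- Plasma protein binding is ignored (fraction unbound assumed to be 1).
- A full PBPK model would include many more tissues.

The eliminated amount is tracked as a state so that mass balance can be checked.

```python
import numpy as np
from scipy.integrate import solve_ivp

# Hypothetical physiological and drug parameters (illustrative only)
P = dict(V_b=5.0, V_l=1.8, V_r=60.0,      # volumes (L): blood, liver, rest of body
         Q_l=90.0, Q_r=210.0,              # blood flows (L/h)
         Kp_l=2.0, Kp_r=1.5,               # tissue:blood partition coefficients
         CL_int=30.0)                      # hepatic intrinsic clearance (L/h)

def pbpk_rhs(t, y, p=P):
    """Flow-limited PBPK: concentrations in blood, liver, rest of body, plus eliminated amount."""
    c_b, c_l, c_r, _ = y
    cv_l = c_l / p["Kp_l"]                 # venous concentration leaving the liver
    cv_r = c_r / p["Kp_r"]                 # venous concentration leaving rest of body
    elim_rate = p["CL_int"] * cv_l         # hepatic elimination (mg/h)
    dc_b = (p["Q_l"] * cv_l + p["Q_r"] * cv_r - (p["Q_l"] + p["Q_r"]) * c_b) / p["V_b"]
    dc_l = (p["Q_l"] * (c_b - cv_l) - elim_rate) / p["V_l"]
    dc_r = p["Q_r"] * (c_b - cv_r) / p["V_r"]
    return [dc_b, dc_l, dc_r, elim_rate]

dose = 100.0                               # mg, IV bolus into blood
y0 = [dose / P["V_b"], 0.0, 0.0, 0.0]
sol = solve_ivp(pbpk_rhs, (0.0, 24.0), y0, method="LSODA", rtol=1e-8, atol=1e-10)
c_b, c_l, c_r, elim = sol.y[:, -1]
amount_in_body = c_b * P["V_b"] + c_l * P["V_l"] + c_r * P["V_r"]
print(f"after 24 h: {amount_in_body:.3f} mg in body, {elim:.3f} mg eliminated")
```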

Step 2: Mechanistic Pharmacodynamic Model Development. Develop a detailed mechanistic model of the drug's target pathway, incorporating molecular interactions, signaling events, and downstream physiological effects. Parameterize the model using in vitro binding assays, receptor trafficking studies, phosphorylation measurements, and functional cellular responses [1]. Ensure the model captures key feedback loops, cross-talk with related pathways, and adaptive responses.

Step 3: Model Integration and Validation. Integrate the PK and PD components into a unified systems pharmacology model. Validate the integrated model by comparing simulations to observed in vivo responses in animal models, including time-course data on both drug concentrations and pharmacological effects. Refine model parameters to improve agreement with experimental data while maintaining biological plausibility.
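One simple way to join PK and PD components is an effect-compartment (link) model feeding an Emax response. This approach is useful when effects lag behind plasma concentrations. It is only a sketch: the detailed mechanistic PD model of Step 2 would replace the Emax function, and the one-compartment PK, rate constants, and potency values are hypothetical. The time of peak effect gives a checkable quantity. For an IV bolus it equals ln(k_e0/k_el)/(k_e0 - k_el), about 2.55 h with these values.

```python
import numpy as np
from scipy.integrate import solve_ivp

DOSE, V, K_EL = 100.0, 10.0, 0.3      # mg, L, 1/h (hypothetical one-compartment PK)
KE0, EMAX, EC50 = 0.5, 1.0, 2.0       # 1/h, maximal fractional effect, mg/L (hypothetical)

def pkpd_rhs(t, y):
    """Plasma concentration with first-order elimination, plus a lagged effect-site concentration."""
    c_plasma, c_effect = y
    return [-K_EL * c_plasma, KE0 * (c_plasma - c_effect)]

t_eval = np.linspace(0.0, 24.0, 241)
sol = solve_ivp(pkpd_rhs, (0.0, 24.0), [DOSE / V, 0.0], t_eval=t_eval, rtol=1e-8, atol=1e-10)
c_effect = sol.y[1]
effect = EMAX * c_effect / (EC50 + c_effect)   # Emax model driven by the effect site
t_peak = t_eval[np.argmax(effect)]
print(f"peak effect {effect.max():.3f} at t = {t_peak:.1f} h")
```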

Step 4: Translation to Human Context. Adapt the validated model to human physiology by incorporating human-specific parameters including tissue sizes, blood flows, protein expression levels, and genetic variants [1]. Where available, utilize human in vitro systems (e.g., human hepatocytes, primary cells) to inform human-specific parameters. Leverage clinical data from similar compounds to validate translational assumptions.
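Where measured human values are not available, a common empirical fallback for physiological parameters is allometric scaling: P_human = P_animal × (BW_human / BW_animal)^b. The exponent b is often taken as about 0.75 for flows and clearances and about 1 for organ volumes. The sketch applies this rule to hypothetical rat values. It complements, rather than replaces, the mechanistic, human-specific parameterization described in Step 4.

```python
def allometric_scale(value, bw_from, bw_to, exponent):
    """Scale a parameter between species: value * (bw_to / bw_from) ** exponent."""
    return value * (bw_to / bw_from) ** exponent

# Hypothetical rat values (0.25 kg body weight) and commonly assumed exponents
rat = {"cardiac_output_L_per_h": 5.0, "liver_volume_L": 0.01}
exponents = {"cardiac_output_L_per_h": 0.75, "liver_volume_L": 1.0}

human = {name: allometric_scale(v, 0.25, 70.0, exponents[name]) for name, v in rat.items()}
for name, v in human.items():
    print(f"{name}: {v:.2f}")
```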

Step 5: Clinical Prediction and Biomarker Identification. Simulate clinical scenarios to predict human dose-response relationships, optimal dosing regimens, and potential adverse effects. Identify measurable biomarkers in accessible compartments (e.g., blood) that correlate with target engagement and response in tissues [1]. Design clinical trial simulations to explore different patient stratification strategies and endpoint measurements.

Emerging Frontiers and Future Directions

The field of mechanistic dynamic modeling is rapidly advancing toward increasingly comprehensive and multiscale representations of biological systems. Several emerging frontiers promise to further transform drug development:

Whole-cell modeling for personalized medicine is progressing toward the creation of patient-specific models that incorporate individual genomic, proteomic, and metabolic data to predict personalized drug responses [2]. Recent efforts have demonstrated the feasibility of building whole-cell models of minimal cells (JCVI-syn3A with 493 genes) using coarse-grained molecular dynamics approaches capable of simulating over 550 million particles [9]. These developments pave the way for virtual patient models that simulate drug effects at unprecedented resolution.

Multiscale modeling of tissue and organ responses extends cellular models to higher-level physiological responses by integrating cellular models with tissue-scale physiology [4]. Emerging hybrid methodologies combine flux balance analysis of metabolism with spatially resolved kinetic simulations to study how cells compete and cooperate within dense colonies, tumors, and tissues [4]. These approaches capture emergent behaviors that arise from cell-cell interactions and microenvironmental influences.

Integrative modeling with machine learning combines the mechanistic understanding of dynamic models with the pattern recognition power of machine learning. Computational efficiency is a key enabler: recent gradient-based parameter estimation frameworks report speedups of more than 10,000-fold over conventional approaches [8]. Such advances make it feasible to calibrate large-scale mechanistic models to high-throughput drug screening data and to use them for personalized response prediction.

The continued development of mechanistic dynamic models promises to transform drug development from a predominantly empirical process into a more predictive, mechanism-driven endeavor. As these models incorporate increasingly comprehensive biological knowledge and computational power grows, they offer the potential to significantly reduce attrition rates in drug development by providing deeper insights into drug mechanisms, patient variability, and therapeutic outcomes before costly clinical trials begin [1].

The Crucial Role of QSP and Systems Biology in Modern Drug Development

Quantitative Systems Pharmacology (QSP) and Systems Biology represent transformative approaches that are reshaping modern drug development by moving beyond traditional single-target strategies to embrace the inherent complexity of biological systems. Systems Biology constructs comprehensive, multi-scale models of biological processes by integrating data from molecular, cellular, organ, and organism levels [10] [11]. This holistic perspective enables researchers to gain deeper insights into disease mechanisms and predict how drugs interact with the human body. Building on this foundation, QSP leverages computational modeling to simulate drug behaviors, predict patient responses, and optimize drug development strategies [10] [11]. By incorporating QSP into the drug discovery process, pharmaceutical companies can make more informed decisions, reduce development costs, and ultimately accelerate the delivery of safer, more effective therapies to patients [12].

The adoption of Model-Informed Drug Development (MIDD) frameworks, in which QSP plays a pivotal role, has demonstrated significant potential to shorten development timelines, reduce discovery and trial costs, avoid costly late-stage failures, and improve quantitative risk assessment. Evidence from drug development and regulatory approval processes indicates that well-implemented MIDD approaches can deliver these benefits [13]. Regulatory acceptance is also growing: an increasing number of submissions to agencies such as the FDA leverage QSP models, which underscores their expanding influence in pharmaceutical R&D [12].

QSP Methodologies and Applications Across the Drug Development Pipeline

Core Methodologies in QSP

QSP integrates diverse mathematical and computational approaches to create mechanistic frameworks that bridge biological, physiological, and pharmacological data. The discipline employs a suite of specialized modeling techniques, each with distinct applications and strengths throughout the drug development continuum.

Table 1: Key Computational Modeling Approaches in Modern Drug Development

| Modeling Approach | Description | Primary Applications |
|---|---|---|
| Quantitative Systems Pharmacology (QSP) | Integrative modeling combining systems biology and pharmacology to simulate drug effects across biological scales | Target validation, clinical trial simulation, dose optimization, biomarker strategy |
| Physiologically Based Pharmacokinetic (PBPK) | Mechanistic modeling focusing on interplay between physiology and drug product quality | Drug-drug interaction prediction, special population dosing, formulation optimization |
| Population PK/PD | Statistical approach characterizing variability in drug exposure and response across individuals | Dose selection, covariate analysis, individualization strategies |
| Quantitative Structure-Activity Relationship (QSAR) | Computational modeling predicting biological activity from chemical structure | Lead compound optimization, toxicity prediction, ADME profiling |
| Systems Biology Models | Comprehensive networks representing biological processes across multiple data levels | Target identification, disease mechanism elucidation, pathway analysis |

Applications Across the Drug Development Continuum

QSP methodologies provide value throughout the entire drug development pipeline, from early discovery through post-market optimization. During early discovery, QSP models facilitate target identification and validation by simulating the potential impact of modulating specific pathways on disease phenotypes [13]. For lead optimization, QSP integrates structural information with physiological context to predict compound behavior and refine chemical entities [13]. In preclinical development, QSP models improve prediction accuracy by translating in vitro findings to in vivo expectations and guiding first-in-human (FIH) dose selection through integrated toxicokinetic and pharmacodynamic modeling [13].

The clinical development phase benefits substantially from QSP approaches through optimized trial designs, identification of responsive patient populations, and exposure-response characterization [13]. Particularly valuable is the ability to generate virtual patient populations and digital twins, which are especially impactful for rare diseases and pediatric populations where clinical trials are often infeasible [12]. During regulatory review and post-market surveillance, QSP supports label updates, additional indication approvals, and lifecycle management through model-informed extrapolation and benefit-risk assessment [13].
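The virtual-population idea can be sketched in a few lines. Patient-level parameters are sampled from an assumed distribution, each virtual patient is simulated, and the fraction meeting a target is summarized. Here the "simulation" is deliberately the closed-form steady-state concentration of a constant-rate infusion, Css = R/CL. The log-normal clearance distribution, regimen, and threshold are hypothetical. A QSP workflow would instead run the full mechanistic model for each virtual patient.

```python
import numpy as np

rng = np.random.default_rng(42)
n_patients = 5000

# Hypothetical population: clearance log-normal with median 5 L/h and ~30% variability
cl = rng.lognormal(mean=np.log(5.0), sigma=0.3, size=n_patients)
infusion_rate = 10.0                 # mg/h, hypothetical regimen
css = infusion_rate / cl             # steady-state concentration of a constant-rate infusion (mg/L)

target = 1.5                         # hypothetical efficacy threshold (mg/L)
fraction_on_target = np.mean(css >= target)
print(f"median Css = {np.median(css):.2f} mg/L; fraction >= target = {fraction_on_target:.2f}")
```

Re-running the summary across candidate regimens or stratified subpopulations gives a simple form of the trial simulation described above.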

Experimental Protocols for QSP and Systems Biology

Protocol 1: Development of a Multi-Scale QSP Model for Oncology Therapeutics

This protocol outlines the systematic development of a QSP model for predicting efficacy of oncology therapeutics, integrating cellular, tissue, and system-level dynamics.

Model Scope Definition and Conceptualization

- Define Context of Use: Clearly articulate the model's purpose, such as optimizing combination therapy dosing schedules or identifying biomarkers of response [13]

- Establish System Boundaries: Determine the biological scope, including key pathways, cell types, and physiological processes relevant to the therapeutic mechanism

- Identify Data Requirements: Specify experimental and clinical data needed for model development and validation, including prior knowledge and novel assays

Knowledge Assembly and Network Construction

- Literature Mining and Data Curation: Systematically extract mechanistic information and quantitative parameters from published literature and databases

- Pathway Mapping: Construct comprehensive network diagrams of relevant signaling pathways, drug mechanisms, and feedback loops using standardized systems biology markup

- Hypothesis Formulation: Explicitly state biological assumptions and their evidence basis to maintain model transparency

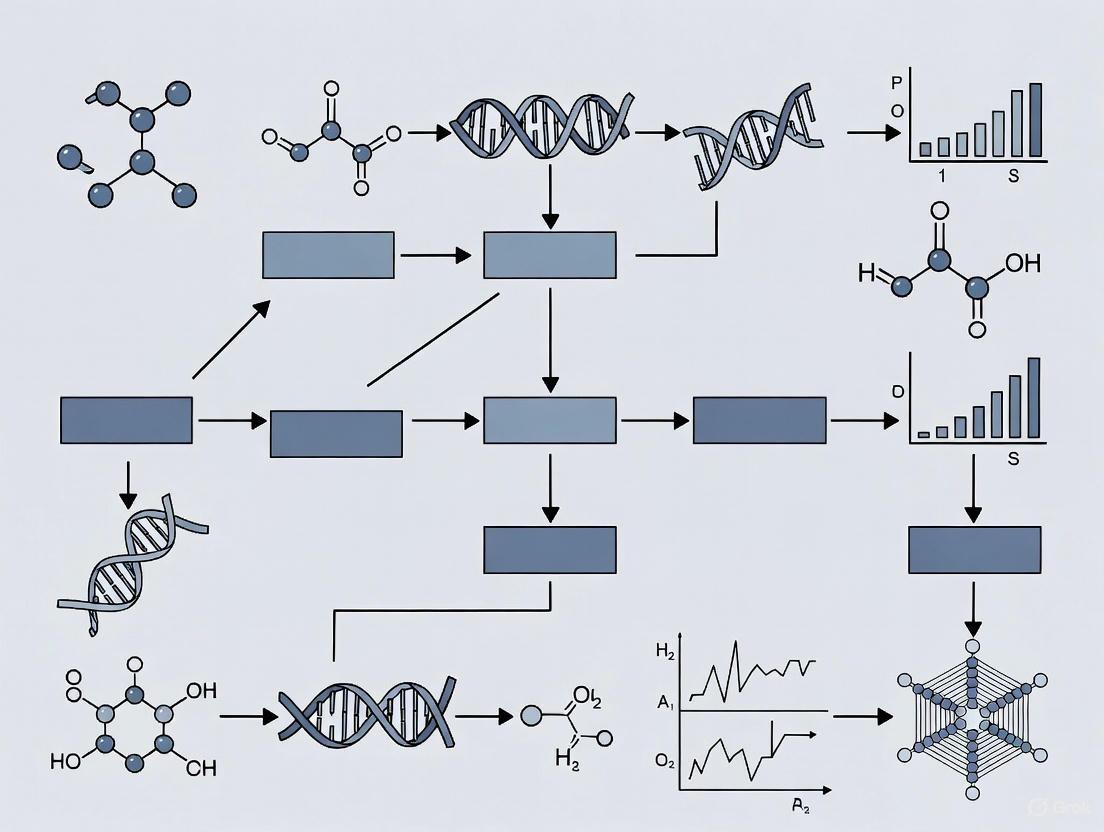

Diagram: QSP Model Development Workflow

Mathematical Formulation and Implementation

- Select Modeling Framework: Choose appropriate mathematical representations (ordinary differential equations, partial differential equations, agent-based modeling) based on system characteristics

- Implement Model Structure: Translate biological network into mathematical equations using platforms such as MATLAB, R, Python, or specialized systems biology tools

- Establish Initial Conditions: Define baseline physiological states based on healthy and disease conditions

Parameter Estimation and Model Calibration

- Leverage Prior Knowledge: Incorporate literature-derived parameter values with appropriate uncertainty distributions

- Calibrate Against Experimental Data: Use optimization algorithms to estimate unknown parameters by fitting to in vitro and in vivo data

- Perform Identifiability Analysis: Determine which parameters can be reliably estimated from available data
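Identifiability analysis can start with the sensitivity matrix of the observables with respect to the parameters. If its columns are linearly dependent (equivalently, if the Fisher information matrix SᵀS is singular), some parameter combinations cannot be estimated from those measurements at any noise level. In the hypothetical example below, a drug is cleared by two parallel first-order routes, but only the total concentration is observed. Because k1 and k2 enter only through their sum, the matrix loses a rank. Practical identifiability with finite, noisy data is usually assessed further, for example with profile likelihoods.

```python
import numpy as np

t = np.linspace(0.5, 10.0, 20)                 # hypothetical sampling times

def observable(theta):
    """Total drug concentration with two parallel first-order elimination routes."""
    amp, k1, k2 = theta
    return amp * np.exp(-(k1 + k2) * t)

def sensitivity_matrix(f, theta, h=1e-6):
    """Columns are central finite-difference derivatives of f with respect to each parameter."""
    theta = np.asarray(theta, dtype=float)
    cols = []
    for i in range(theta.size):
        step = np.zeros_like(theta)
        step[i] = h
        cols.append((f(theta + step) - f(theta - step)) / (2.0 * h))
    return np.column_stack(cols)

S = sensitivity_matrix(observable, [2.0, 0.2, 0.1])   # hypothetical nominal values
sv = np.linalg.svd(S, compute_uv=False)
rank = int(np.sum(sv > 1e-8 * sv[0]))
print(f"singular values: {sv}, numerical rank: {rank} of {S.shape[1]}")
```

Reparameterizing in terms of the total elimination rate, k1 + k2, restores a full-rank sensitivity matrix for this observable.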

Model Validation and Qualification

- Internal Validation: Assess model performance against training data using goodness-of-fit metrics and residual analysis

- External Validation: Test model predictions against datasets not used in model development

- Context Qualification: Establish model credibility for the specific context of use through verification, validation, and uncertainty quantification

Model Application and Analysis

- Simulate Experimental Scenarios: Conduct virtual studies to explore drug effects under different conditions

- Perform Sensitivity Analysis: Identify key parameters and uncertainties driving model outcomes

- Generate Testable Hypotheses: Formulate predictions for subsequent experimental verification

Protocol 2: Machine Learning-Enhanced Prediction of Drug Responses in Patient-Derived Cell Cultures

This protocol combines traditional QSP with machine learning approaches to predict drug responses in patient-derived cell cultures, enabling personalized therapy prediction.

Experimental Design and Data Generation

- Cell Culture Establishment: Generate patient-derived cell lines or organoids maintaining original tumor characteristics [14]

- Drug Sensitivity Screening: Perform high-throughput screening of compound libraries across cell models to generate response profiles

- Multi-Omics Characterization: Conduct genomic, transcriptomic, and proteomic profiling of cell models to capture molecular features

Feature Selection and Data Preprocessing

- Probing Panel Identification: Select a minimal drug set (approximately 30 compounds) that captures maximum response variability across cell lines [14]

- Response Matrix Construction: Organize screening data into a structured matrix with cell lines as rows and drug responses as columns

- Data Transformation and Normalization: Apply appropriate scaling and normalization to ensure comparability across assays and platforms

Machine Learning Model Training

- Algorithm Selection: Implement random forest or other ensemble methods with approximately 50 trees as the base predictor [14]

- Model Training: Use historical screening data (approximately 100 cell lines recommended) to train the predictor [14]

- Hyperparameter Optimization: Tune model parameters using cross-validation to optimize predictive performance
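The training step can be sketched with scikit-learn's `RandomForestRegressor`, using 50 trees as suggested above, on a synthetic response matrix. Responses to a probing panel of 30 drugs serve as features, and responses to the remaining library drugs are predicted jointly as a multi-output regression. The data generator, the panel choice (simply the first 30 columns), and the 80/20 split are hypothetical stand-ins for the historical screening data described in [14].

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor

rng = np.random.default_rng(0)
n_lines, n_drugs, n_probe, n_latent = 100, 80, 30, 3

# Synthetic response matrix (cell lines x drugs): drug-specific means plus low-rank structure
drug_mean = rng.normal(0.0, 1.0, n_drugs)
latent = rng.normal(0.0, 1.0, (n_lines, n_latent))
loadings = rng.normal(0.0, 0.3, (n_latent, n_drugs))
responses = drug_mean + latent @ loadings + rng.normal(0.0, 0.1, (n_lines, n_drugs))

# Hypothetical split: first n_probe drugs form the probing panel, the rest are predicted
X, Y = responses[:, :n_probe], responses[:, n_probe:]
train, test = np.arange(80), np.arange(80, 100)

model = RandomForestRegressor(n_estimators=50, random_state=0)
model.fit(X[train], Y[train])
Y_pred = model.predict(X[test])

# Per-cell-line Pearson correlation between predicted and observed library responses
per_line_r = [np.corrcoef(Y_pred[i], Y[test][i])[0, 1] for i in range(len(test))]
print(Y_pred.shape, f"mean per-line r = {np.mean(per_line_r):.2f}")
```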

Diagram: ML-Driven Drug Response Prediction

Model Validation and Performance Assessment

- Cross-Validation: Employ k-fold or leave-one-out cross-validation to estimate model generalizability

- Performance Metrics: Evaluate predictions using Pearson correlation (Rpearson), Spearman correlation (Rspearman), and root mean square error (RMSE) [14]

- Hit Rate Analysis: Assess clinical relevance by calculating the fraction of accurate predictions within top-ranked compounds (e.g., top 10, 20, or 30 drugs) [14]
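The metrics listed above can be computed for one patient's predicted versus measured drug responses as shown below. Two conventions are assumptions made for this sketch, and [14] may define them differently. First, the hit rate is the overlap between the predicted and observed top-k drugs, divided by k. Second, larger values are taken to mean a stronger response.

```python
import numpy as np
from scipy.stats import pearsonr, spearmanr

def evaluate(pred, obs, k=10):
    """Pearson, Spearman, RMSE, and top-k hit rate for one patient's drug responses."""
    pred, obs = np.asarray(pred, dtype=float), np.asarray(obs, dtype=float)
    r_pearson = pearsonr(pred, obs)[0]
    r_spearman = spearmanr(pred, obs)[0]
    rmse = float(np.sqrt(np.mean((pred - obs) ** 2)))
    top_pred = set(np.argsort(pred)[::-1][:k])   # assumption: larger value = stronger response
    top_obs = set(np.argsort(obs)[::-1][:k])
    hit_rate = len(top_pred & top_obs) / k
    return r_pearson, r_spearman, rmse, hit_rate

rng = np.random.default_rng(0)
observed = rng.normal(size=100)                    # synthetic responses for 100 drugs
predicted = observed + rng.normal(scale=0.5, size=100)
for k in (10, 20, 30):
    print(k, evaluate(predicted, observed, k=k))
```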

Clinical Application and Translation

- Prospective Testing: Apply the trained model to new patient samples screened only against the probing panel

- Therapeutic Prioritization: Rank all drugs in the library based on predicted efficacy for the specific patient

- Experimental Confirmation: Validate top predictions (typically 10-15 candidates) through direct testing

Essential Research Tools and Reagents for QSP

The implementation of QSP and systems biology approaches requires specialized computational tools, experimental platforms, and reagent systems. The following table summarizes key components of the QSP research toolkit.

Table 2: Essential Research Reagent Solutions for QSP and Systems Biology

| Category | Specific Tools/Platforms | Function and Application |
|---|---|---|
| Computational Modeling Platforms | MATLAB, R, Python, Julia | Implementation of mathematical models, parameter estimation, and simulation |
| Systems Biology Model Repositories | BioModels Database, CellML | Access to curated, peer-reviewed models for reuse and adaptation |
| Pathway Analysis Tools | Pathway Commons, WikiPathways, KEGG | Biological network construction and annotation |
| Specialized QSP Software | Certara QSP Platform, DBSolve | Integrated development environment for QSP models |
| Patient-Derived Model Systems | 3D organoids, patient-derived cell cultures | Physiologically relevant experimental systems for model validation [14] |
| High-Content Screening Systems | Automated microscopy, image analysis | Generation of quantitative, multi-parameter data for model parameterization |
| Multi-Omics Technologies | RNA-Seq, mass spectrometry proteomics, metabolomics | Comprehensive molecular profiling for multi-scale model construction |

Emerging Trends and Future Perspectives

The field of QSP continues to evolve rapidly, driven by methodological advances and increasing integration with cutting-edge technologies. Artificial intelligence (AI) and machine learning (ML) are transforming QSP by enhancing model generation, parameter estimation, and predictive capabilities [15]. Novel approaches such as surrogate modeling, virtual patient generation, and digital twin technologies are expanding the scope and utility of QSP applications [15]. The emergence of QSP as a Service (QSPaaS) promises to democratize access to these sophisticated modeling approaches beyond large pharmaceutical companies [15].

The integration of AI with QSP is particularly promising for addressing challenges of model complexity and high-dimensional parameter spaces. AI-driven databases and cloud-based platforms are streamlining QSP model development and enabling more robust predictions [15]. However, key challenges remain, including computational complexity, model explainability, data integration, and regulatory acceptance [15]. Community-driven efforts to improve model transparency, reproducibility, and trustworthiness are critical for addressing these challenges [16].

Industry-academia partnerships are playing an increasingly important role in advancing QSP education and methodology development. Collaborative initiatives such as co-designed academic curricula, specialized training programs, and industrial internships are helping to cultivate a workforce equipped with the unique blend of biological, mathematical, and computational skills required for success in this interdisciplinary field [10] [11]. These partnerships provide invaluable opportunities for students and researchers to gain practical experience with real-world challenges while accelerating the translation of innovative modeling approaches into pharmaceutical R&D.

As QSP continues to mature, its integration across the drug development enterprise promises to enhance decision-making, reduce late-stage failures, and ultimately deliver better therapies to patients more efficiently. The ongoing refinement of QSP methodologies, coupled with advances in complementary technologies, positions this approach as an increasingly central component of modern drug development.

In systems biology, understanding complex drug responses requires moving beyond single-layer analysis to an integrated multi-omics approach. This paradigm involves the simultaneous measurement and computational integration of various molecular layers—including genomics, transcriptomics, proteomics, and epigenomics—to construct comprehensive models of biological systems [17]. The central premise is that disease states and therapeutic interventions manifest across multiple molecular layers, and by capturing these coordinated changes, researchers can pinpoint biological dysregulation more accurately than with any single data type alone [17]. This integrated approach is particularly valuable for elucidating mechanisms of adverse drug reactions and predicting patient-specific therapeutic outcomes, ultimately accelerating the development of personalized treatment strategies [18] [17].

The challenge of multi-omics integration lies not only in the technical complexity of generating diverse datasets but also in developing computational frameworks that can effectively reconcile data with varying formats, scales, and biological contexts [17]. Recent advances in artificial intelligence and machine learning have enabled the development of more powerful analytical tools that extract meaningful insights from these complex datasets [17]. When properly executed, integrated multi-omics provides unprecedented insights into the molecular mechanisms of drug action, enabling more accurate prediction of drug efficacy and toxicity before clinical deployment [18] [19].

Foundational Protocols for Multi-Omics Data Generation and Integration

Experimental Design Considerations for Dynamic Drug Response Studies

Effective multi-omics studies require careful experimental design to capture meaningful biological signals across molecular layers. For dynamic drug response profiling, researchers should implement longitudinal designs that measure molecular responses across multiple time points and physiologically relevant drug concentrations [18]. This approach captures the temporal dynamics of drug effects, revealing how molecular networks adapt and respond over time.

A proven protocol involves challenging relevant cellular models (e.g., iPSC-derived human 3D cardiac microtissues for cardiotoxicity studies) with therapeutic compounds at both therapeutic and toxic doses across an extended time period (e.g., 14 days) [18]. Molecular profiling should include at a minimum time-resolved proteomics (LC-MS), transcriptomics (RNA-seq), and epigenomics (MeDIP-seq for methylation) with multiple biological replicates at each time point (typically n=3) [18]. Control samples (e.g., DMSO-treated) must be collected at matched time points to account for natural temporal variations in the model system.
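A longitudinal design of this kind is easiest to keep consistent as an explicit sample sheet. The sketch below enumerates treated samples and time-matched vehicle controls. The drug label `drug_A` and the two dose labels are hypothetical placeholders; the time points are those used in the anthracycline case study [18].

```python
from itertools import product

def build_design(treatments, doses, timepoints_h, n_reps, control="DMSO"):
    """Enumerate samples for a longitudinal design with time-matched vehicle controls."""
    samples = []
    for drug, dose, t, rep in product(treatments, doses, timepoints_h, range(1, n_reps + 1)):
        samples.append({"treatment": drug, "dose": dose, "time_h": t, "replicate": rep})
    # Vehicle controls at every time point capture the model system's own temporal drift.
    for t, rep in product(timepoints_h, range(1, n_reps + 1)):
        samples.append({"treatment": control, "dose": None, "time_h": t, "replicate": rep})
    return samples

design = build_design(["drug_A"], ["therapeutic", "toxic"], [2, 8, 24, 72, 168, 240, 336], n_reps=3)
print(len(design))  # 1*2*7*3 treated + 7*3 control = 63 samples per omics layer
```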

Data Generation Methodologies

Methylome Profiling using MeDIP-seq:

- Protocol: Perform methylated DNA immunoprecipitation followed by sequencing using validated antibodies against 5-methylcytosine. Fragment genomic DNA to 100-500 bp, immunoprecipitate methylated fragments, and prepare sequencing libraries following the manufacturer's protocols [18].

- Data Analysis: Quantify enrichment signals as percentage methylation using established tools such as QSEA [18]. Identify differentially methylated regions (DMRs) between treated and control samples using longitudinal statistical models, with a significance threshold of q < 0.01 after multiple testing correction [18].

- Quality Control: Verify that methylation patterns in the model system recapitulate known in vivo characteristics (e.g., inverse correlation between gene body methylation and expression levels) [18].
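Multiple-testing correction for a q < 0.01 threshold is commonly done with the Benjamini-Hochberg procedure. The text does not say whether [18] used exactly this procedure, so treat the following as an assumed, generic implementation applied to per-region p-values.

```python
import numpy as np

def benjamini_hochberg(pvals):
    """Return Benjamini-Hochberg adjusted p-values (q-values) in the original order."""
    p = np.asarray(pvals, dtype=float)
    n = p.size
    order = np.argsort(p)
    scaled = p[order] * n / np.arange(1, n + 1)
    # Enforce monotonicity: q_(i) = min over j >= i of p_(j) * n / j, capped at 1.
    q_sorted = np.minimum.accumulate(scaled[::-1])[::-1]
    q = np.empty(n)
    q[order] = np.minimum(q_sorted, 1.0)
    return q

pvals = [0.01, 0.04, 0.03, 0.005]   # hypothetical per-region p-values
q = benjamini_hochberg(pvals)
print(q)          # approximately [0.02, 0.04, 0.04, 0.02]
print(q < 0.01)   # no region passes q < 0.01 in this toy example
```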

Transcriptome Profiling using RNA-seq:

- Protocol: Extract total RNA using column-based methods with DNase treatment. Assess RNA quality (RIN > 8.0), prepare stranded RNA-seq libraries, and sequence on an appropriate platform (e.g., Illumina) to a minimum depth of 30 million reads per sample [18].

- Data Analysis: Process raw reads through a standardized pipeline including adapter trimming, alignment to a reference genome, and gene-level quantification. Perform differential expression analysis using appropriate longitudinal models.

Proteome Profiling using LC-MS:

- Protocol: Lyse cells or tissues in an appropriate lysis buffer, digest proteins with trypsin, and desalt the peptides. Analyze by liquid chromatography-mass spectrometry (LC-MS) with data-independent acquisition (DIA) for comprehensive quantification [18].

- Data Analysis: Process raw spectra using tools like MaxQuant or Spectronaut for identification and quantification. Normalize data and perform statistical analysis to identify differentially expressed proteins across time points.

Computational Integration Frameworks

Multiple computational approaches exist for integrating multi-omics datasets, each with distinct advantages and applications:

Table 1: Multi-Omics Data Integration Approaches

| Integration Method | Description | Use Cases | Tools/Examples |
|---|---|---|---|
| Concatenation-based (Low-level) | Direct merging of raw or processed datasets from different omics layers | Early-stage integration; Pattern discovery | Standard statistical software |
| Transformation-based (Mid-level) | Joint dimensionality reduction of multiple datasets | Data compression; Visualizing relationships | MOFA; iCluster |
| Model-based (High-level) | Integration through machine learning models on separate analyses | Prediction tasks; Network modeling | PASO; PaccMann; MOLI |
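To make the first two rows of the table concrete, here is a sketch on synthetic data of concatenation-based integration and a simple joint dimensionality reduction. The joint step is a plain block-weighted SVD (joint PCA), used as an assumed stand-in. It is not MOFA or iCluster, which fit probabilistic models.

```python
import numpy as np

def zscore(block):
    """Standardize each feature (column) so layers with different scales are comparable."""
    return (block - block.mean(axis=0)) / block.std(axis=0, ddof=1)

def early_integration(blocks):
    """Concatenation-based (low-level) integration: stack standardized features side by side."""
    return np.hstack([zscore(b) for b in blocks])

def joint_svd_factors(blocks, n_factors=2):
    """Mid-level sketch: SVD of the block-weighted concatenation.

    Dividing each block by sqrt(n_features) keeps a layer with many features
    (e.g. transcripts) from dominating one with few (e.g. proteins).
    """
    weighted = np.hstack([zscore(b) / np.sqrt(b.shape[1]) for b in blocks])
    u, s, _ = np.linalg.svd(weighted, full_matrices=False)
    return u[:, :n_factors] * s[:n_factors]  # sample scores on shared factors

rng = np.random.default_rng(0)
latent = rng.standard_normal((12, 1))  # hypothetical shared drug-response signal
rna = latent @ rng.standard_normal((1, 50)) + 0.5 * rng.standard_normal((12, 50))
prot = latent @ rng.standard_normal((1, 8)) + 0.5 * rng.standard_normal((12, 8))

X = early_integration([rna, prot])
scores = joint_svd_factors([rna, prot])
r = abs(np.corrcoef(scores[:, 0], latent[:, 0])[0, 1])
print(X.shape, scores.shape, round(r, 3))  # first factor should track the shared signal
```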

Network integration represents a particularly powerful approach, where multiple omics datasets are mapped onto shared biochemical networks to improve mechanistic understanding [17]. In this framework, analytes (genes, transcripts, proteins, metabolites) are connected based on known interactions (e.g., transcription factors mapped to their target genes, or metabolic enzymes mapped to their substrates and products) [17]. This network-based approach provides a systems-level context for interpreting multi-omics signatures of drug response.

Case Study: Network Modeling of Anthracycline Cardiotoxicity

Experimental Workflow and Multi-Omics Profiling

A landmark study demonstrating the power of multi-omics integration examined anthracycline-induced cardiotoxicity using iPSC-derived human 3D cardiac microtissues treated with four anthracycline drugs (doxorubicin, epirubicin, idarubicin, daunorubicin) at physiologically relevant doses over 14 days [18]. The researchers collected comprehensive methylome, transcriptome, and proteome measurements at seven time points (2, 8, 24, 72, 168, 240, 336 hours) with three biological replicates per time point, generating 372 different molecular profiles [18].

Analysis revealed that anthracycline treatment induced significant methylation changes, particularly affecting transcription factor binding sites for cardiac development factors including YY1, ETS1, and SRF (odds ratios 1.87-13.18) [18]. These epigenetic changes correlated with transcriptional and proteomic alterations in mitochondrial function, sarcomere assembly, and extracellular matrix organization. Through network propagation modeling on a protein-protein interaction network, the researchers identified a core network of 175 proteins representing the common signature of anthracycline cardiotoxicity [18].
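Network propagation spreads signals from measured proteins to their network neighbours. One common variant is random walk with restart (RWR), sketched below on a toy five-protein chain. The study's exact propagation algorithm, restart probability, and thresholds are not reproduced here, and `restart=0.5` is a hypothetical choice. The adjacency matrix must have no isolated nodes.

```python
import numpy as np

def random_walk_with_restart(adj, seeds, restart=0.5, tol=1e-10, max_iter=1000):
    """Iterate p <- (1 - r) W p + r p0, with W the column-normalized adjacency matrix."""
    adj = np.asarray(adj, dtype=float)
    W = adj / adj.sum(axis=0, keepdims=True)  # column-stochastic transition matrix
    p0 = np.asarray(seeds, dtype=float)
    p0 = p0 / p0.sum()
    p = p0.copy()
    for _ in range(max_iter):
        p_next = (1 - restart) * W @ p + restart * p0
        if np.abs(p_next - p).sum() < tol:
            return p_next
        p = p_next
    return p

# Toy chain P1-P2-P3-P4-P5 with the drug signal seeded on P1 (hypothetical).
adj = np.zeros((5, 5))
for i in range(4):
    adj[i, i + 1] = adj[i + 1, i] = 1.0
p = random_walk_with_restart(adj, [1, 0, 0, 0, 0])
core = np.argsort(-p)[:3]  # e.g. keep the top-scoring proteins as a "core" module
print(p.round(4), core)
```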

Diagram 1: Multi-omics workflow for drug response profiling

Key Findings and Clinical Validation

The integrated analysis revealed that anthracyclines disrupt multiple interconnected biological modules, including:

- Mitochondrial function: Significant alterations in proteins involved in oxidative phosphorylation and energy metabolism

- Sarcomere function: Changes in structural and regulatory proteins essential for cardiac contraction

- Extracellular matrix: Remodeling of matrix composition and adhesion properties

Crucially, these in vitro-identified modules were validated using cardiac biopsies from cardiomyopathy patients with historic anthracycline treatment, demonstrating the clinical relevance and predictive power of the multi-omics approach [18]. This study established a reproducible workflow for molecular medicine and serves as a template for detecting adverse drug responses from complex omics data.

Advanced Computational Framework: PASO Deep Learning Model

Model Architecture and Implementation

The PASO (Pathway Attention with SMILES-Omics interactions) deep learning model represents a cutting-edge approach for predicting anticancer drug sensitivity by integrating multi-omics data with drug structural information [19]. This model addresses limitations of previous methods by incorporating pathway-level biological features and comprehensive drug chemical structure representation.

The PASO framework implements several innovative components:

- Pathway-level feature computation: Statistical calculation of differences in gene expression, mutation, and copy number variations within and outside biological pathways using Mann-Whitney U test and Chi-square-G test [19]

- Multi-scale drug feature extraction: Combination of embedding networks, multi-scale convolutional neural networks, and transformer encoders to represent drug features from SMILES sequences [19]

- Attention mechanisms: Learning complex interactions between omics features and drug properties, assigning interpretable weights to pathways and chemical structures [19]
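The pathway-level feature idea can be illustrated with the Mann-Whitney U statistic. For each cell line, it compares the expression of genes inside a pathway with that of genes outside it. The normalization U / (n_in × n_out) used below is an assumed effect-size summary. PASO's exact feature transform, and its chi-square G test for mutation data, are not reproduced. The pathway and gene names are hypothetical.

```python
import numpy as np

def mann_whitney_u(x, y):
    """Mann-Whitney U for x vs y: number of pairs with x > y, ties counted as 1/2."""
    x = np.asarray(x, dtype=float)[:, None]
    y = np.asarray(y, dtype=float)[None, :]
    return float((x > y).sum() + 0.5 * (x == y).sum())

def pathway_features(expr, gene_names, pathways):
    """Per-sample pathway score U / (n_in * n_out) = P(pathway gene > non-pathway gene).

    expr: (n_samples, n_genes) matrix; pathways: dict name -> set of gene names.
    Values near 1 (0) mean pathway genes are systematically higher (lower) than the rest.
    """
    genes = np.asarray(gene_names)
    feats = np.empty((expr.shape[0], len(pathways)))
    for j, members in enumerate(pathways.values()):
        in_mask = np.isin(genes, list(members))
        n_in, n_out = in_mask.sum(), (~in_mask).sum()
        for i, row in enumerate(expr):
            feats[i, j] = mann_whitney_u(row[in_mask], row[~in_mask]) / (n_in * n_out)
    return feats

genes = ["G1", "G2", "G3", "G4", "G5", "G6"]
expr = np.array([[5, 6, 1, 2, 3, 4], [1, 2, 3, 4, 5, 6]], dtype=float)
feats = pathway_features(expr, genes, {"toy_pathway": {"G1", "G2"}})
print(feats)  # pathway up in sample 1, down in sample 2
```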

Diagram 2: PASO model architecture for drug response prediction

Performance and Clinical Utility

The PASO model demonstrates superior performance in predicting anticancer drug sensitivity compared to existing methods, achieving higher accuracy across multiple evaluation metrics including mean squared error (MSE), Pearson's correlation coefficient (PCC), and coefficient of determination (R²) [19]. The model was rigorously validated using three data splitting strategies (Mixed-Set, Cell-Blind, and Drug-Blind) to assess generalization capability [19].
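The three splitting strategies differ in what is withheld from training. The function below is a hypothetical helper, not PASO code:

- Mixed-Set splits random (cell line, drug) pairs.
- Cell-Blind holds out whole cell lines.
- Drug-Blind holds out whole drugs, which is the hardest generalization test.

The 20% test fraction is an arbitrary assumption.

```python
import numpy as np

def split_pairs(pairs, strategy, test_frac=0.2, seed=0):
    """Split (cell_line, drug) pairs into train/test index arrays by the named strategy."""
    rng = np.random.default_rng(seed)
    pairs = list(pairs)
    if strategy == "mixed":
        idx = rng.permutation(len(pairs))
        n_test = int(round(test_frac * len(pairs)))
        return np.sort(idx[n_test:]), np.sort(idx[:n_test])
    keys = {"cell_blind": 0, "drug_blind": 1}  # which element of the pair is held out
    if strategy not in keys:
        raise ValueError(f"unknown strategy: {strategy}")
    key = keys[strategy]
    groups = sorted({p[key] for p in pairs})
    n_test_groups = max(1, int(round(test_frac * len(groups))))
    test_groups = {groups[i] for i in rng.permutation(len(groups))[:n_test_groups]}
    test = np.array([i for i, p in enumerate(pairs) if p[key] in test_groups])
    train = np.array([i for i, p in enumerate(pairs) if p[key] not in test_groups])
    return train, test

pairs = [(f"cell{c}", f"drug{d}") for c in range(10) for d in range(5)]
train, test = split_pairs(pairs, "cell_blind")
print(len(train), len(test))  # test cell lines never appear in training
```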

In an analysis of lung cancer cell lines, PASO predicted that small cell lung cancer (SCLC) cell lines are particularly sensitive to PARP inhibitors and topoisomerase I inhibitors [19]. Clinical validation using TCGA data showed that the model not only accurately predicted patient drug responses but also correlated significantly with patient survival outcomes, highlighting its potential for guiding personalized cancer treatment decisions [19].

Essential Research Reagents and Computational Tools

Successful implementation of multi-omics drug response studies requires specific reagents, computational tools, and data resources. The following table summarizes key components of the research toolkit:

Table 2: Essential Research Reagents and Resources for Multi-Omics Drug Response Studies

| Category | Specific Resource | Function/Application | Source/Reference |
|---|---|---|---|
| Cellular Models | iPSC-derived 3D cardiac microtissues | Recapitulate human tissue complexity for cardiotoxicity testing | [18] |
| Omics Technologies | MeDIP-seq for methylome profiling | Genome-wide methylation analysis | [18] |
| | RNA-seq for transcriptome profiling | Comprehensive transcript quantification | [18] |
| | LC-MS for proteome profiling | Quantitative protein measurement | [18] |
| Data Resources | CCLE (Cancer Cell Line Encyclopedia) | Multi-omics data for cancer cell lines | [19] |
| | GDSC (Genomics of Drug Sensitivity in Cancer) | Drug response data for cell lines | [19] |
| | PubChem | Drug SMILES structures and chemical information | [19] |
| | MSigDB | Pathway gene sets for feature computation | [19] |
| Computational Tools | QSEA | Methylation data analysis | [18] |
| | PASO framework | Drug response prediction with pathway attention | [19] |
| | Network propagation algorithms | Integration of multi-omics data onto interaction networks | [18] |

The integration of multi-omics data represents a transformative approach for modeling drug responses and understanding complex biological systems. The methodologies outlined here—from experimental design to advanced computational integration—provide a robust framework for researchers seeking to implement these approaches in their own work. As the field advances, several trends are shaping its future direction:

Single-Cell Multi-Omics: Technological advancements now enable multi-omic measurements from individual cells, allowing investigators to correlate specific genomic, transcriptomic, and epigenomic changes within the same cellular context [17]. This approach is particularly valuable for understanding tumor heterogeneity and cell-type-specific drug responses.

AI-Driven Integration: Artificial intelligence and machine learning are playing an increasingly important role in multi-omics data analysis [17]. These technologies can detect intricate patterns and interdependencies across molecular layers, providing insights that would be impossible to derive from single-analyte studies [17].

Clinical Translation: Multi-omics approaches are increasingly being applied in clinical settings, particularly in oncology [17]. By integrating molecular data with clinical information, multi-omics can help stratify patients, predict disease progression, and optimize treatment plans [18] [17]. Liquid biopsies exemplify this trend, analyzing biomarkers like cell-free DNA, RNA, proteins, and metabolites non-invasively [17].

As these methodologies continue to evolve, collaboration among academia, industry, and regulatory bodies will be essential to establish standards and create frameworks that support the clinical application of multi-omics research [17]. By addressing current challenges in data harmonization, interpretation, and validation, integrated multi-omics approaches will continue to advance personalized medicine, offering deeper insights into human health and disease and more accurate prediction of drug responses across diverse patient populations.

Dynamic modeling of drug responses is indispensable for modern systems biology and drug development, enabling the prediction of complex physiological behaviors that emerge from molecular interactions. These models serve as in silico testbeds for hypothesis validation and therapeutic intervention planning [20]. However, the path to building reliable models is fraught with challenges, primarily stemming from nonlinear system dynamics, the need to bridge multiscale complexity, and the critical task of quantifying and managing uncertainty [21] [22] [20]. These interconnected challenges can obscure the interpretability of models and compromise the reliability of their predictions. This document outlines structured application notes and experimental protocols to navigate these challenges, framed within the context of a broader thesis on dynamic modeling of drug responses. The guidance provided is designed for researchers, scientists, and drug development professionals engaged in creating robust, predictive biological models.

Application Note: Managing Nonlinear Dynamics in Drug Response

Protocol for Simulating Emergent Drug Effects in Excitable Tissues

Objective: To predict use-dependent and frequency-dependent block of cardiac ion channels by antiarrhythmic drugs, an emergent property of nonlinear dynamics, across cellular and tissue scales. Background: The nonlinear interactions between drugs and ion channels result in complex kinetics where the action potential waveform alters drug potency, which in turn changes the action potential, creating strong bidirectional feedback [22].

- Step 1: Atomic-Scale Molecular Modeling: Model drug interactions with cardiac ion channels (e.g., potassium, sodium) using simulated docking and molecular dynamics (MD) simulations based on high-resolution channel structures [22].

- Step 2: Cellular-Level Simulation: Incorporate the drug-channel kinetic model into a computational model of a cardiac myocyte (e.g., O’Hara-Rudy human ventricular model). Simulate to test for proarrhythmic cellular phenotypes like action potential duration (APD) prolongation and alternans [22].

- Step 3: Tissue-Level Simulation: Integrate the cellular model into a one-dimensional cable or two-dimensional tissue sheet to simulate action potential propagation. Assess emergent tissue-level phenomena such as conduction velocity restitution and spiral wave breakup [22].

- Step 4: 3D Organ-Scale Prediction: Incorporate the model into a high-resolution reconstruction of human ventricles to predict drug-induced vulnerability to reentrant arrhythmias like torsades de pointes [22].
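The use- and frequency-dependence that Steps 2 to 4 aim to capture can be illustrated with a deliberately reduced model, assumed here for illustration only. In it, the drug binds only while the channel is activated and unbinds throughout the cycle. It is not a molecular dynamics or O'Hara-Rudy simulation. Because it fixes the activation time per beat, it also omits the feedback from action potential shape to drug potency described above. All rate constants are hypothetical.

```python
import math

def steady_state_block(freq_hz, kon_d=5.0, koff=1.0, t_act=0.3, n_beats=200):
    """Fraction of channels drug-bound at the end of each cycle after pacing to steady state.

    Loosely guarded-receptor-style sketch: binding (pseudo-first-order rate kon_d = kon*[D])
    occurs only during the activated phase of length t_act; unbinding (koff) occurs always.
    Units: rates in 1/s, times in s, frequency in Hz. All values are hypothetical.
    """
    t_rest = 1.0 / freq_hz - t_act
    if t_rest <= 0:
        raise ValueError("pacing too fast for the assumed activation time")
    b_inf = kon_d / (kon_d + koff)  # equilibrium block if the channel stayed activated
    b = 0.0
    for _ in range(n_beats):
        # Activated phase: exact exponential relaxation toward b_inf at rate kon_d + koff.
        b = b_inf + (b - b_inf) * math.exp(-(kon_d + koff) * t_act)
        # Rest phase: unbinding only, so block recovers between beats.
        b *= math.exp(-koff * t_rest)
    return b

for f in (0.5, 1.0, 2.0, 3.0):
    print(f, round(steady_state_block(f), 3))  # block rises with pacing rate
```

Shorter diastolic intervals leave less time for unbinding, so block accumulates at faster rates. This is the frequency-dependent behavior that the full multiscale models resolve mechanistically.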

Table 1: Key Metrics for Assessing Proarrhythmic Drug Risk Across Scales

| Scale | Key Simulation Outputs | Proarrhythmic Risk Indicators |
|---|---|---|
| Cellular | Action Potential Duration (APD), Restitution | APD prolongation, steep APD restitution slope, alternans |
| 1D/2D Tissue | Conduction Velocity (CV), Restitution | CV slowing, wavebreak, stable reentry |
| 3D Organ | Spiral Wave Dynamics, ECG Biomarkers | Spiral wave breakup, T-wave alternans on pseudo-ECG |

Workflow Visualization: From Ion Channel to Tissue-Level Prediction

The following diagram illustrates the multi-scale workflow for simulating nonlinear drug effects in cardiac tissue.

Diagram 1: Multi-scale workflow for simulating nonlinear drug effects.

Application Note: Navigating Multiscale Complexity in Pharmacology

Protocol for Multiscale Pharmacometric Model Development

Objective: To develop a Nonlinear Mixed Effects (NLME) model that quantifies hierarchical variability (Between-Subject Variability, BSV; Residual Unexplained Variability, RUV) in drug dose-exposure-response relationships from clinical trial data [22]. Background: Physiological processes and drug effects occur over a wide range of length and time scales. Multiscale modeling bridges these scales to enable patient-specific predictions for personalized medicine [22].

- Step 1: Structural Model Definition: Establish the base pharmacokinetic (PK) and pharmacodynamic (PD) model using nonlinear differential equations (e.g., two-compartment PK, indirect response PD) [22].

- Step 2: Statistical Model Specification: Define the statistical model for BSV, assuming model parameters follow a multivariate log-normal distribution. Specify the model for RUV, which may be additive, proportional, or a combination [22].

- Step 3: Parameter Estimation (Model Calibration): Use maximum likelihood estimation (MLE) to estimate the fixed effects (population means) and the variances of the random effects simultaneously. MLE is commonly implemented with expectation-maximization variants such as the stochastic approximation EM (SAEM) algorithm [22] [20].

- Step 4: Model Validation: Perform predictive checks and bootstrap analysis to assess model robustness and predictive performance [20].

- Step 5: Systems Pharmacology Enhancement: Increase biological realism by incorporating prior knowledge of biological pathways and relevant disease mechanisms into the PK/PD model structure [22].
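The predictive checks in Step 4 rely on simulating from the statistical model of Step 2. The sketch below makes several assumptions:

- It uses a one-compartment IV-bolus model instead of the two-compartment and indirect-response structure mentioned in Step 1.
- Between-subject variability is log-normal.
- Residual error is combined proportional plus additive.
- All parameter values are hypothetical.

Estimation (Step 3) is left to dedicated NLME software.

```python
import numpy as np

def simulate_population(n_subjects, times, dose=100.0, cl_pop=5.0, v_pop=50.0,
                        omega_cl=0.3, omega_v=0.2, sigma_prop=0.1, sigma_add=0.05, seed=0):
    """Simulate a one-compartment IV-bolus population PK dataset with NLME-style variability.

    BSV: P_i = P_pop * exp(eta_i), eta_i ~ N(0, omega^2) for CL and V.
    RUV: y = C * (1 + eps_prop) + eps_add.
    Hypothetical units: dose mg, CL L/h, V L, time h, concentration mg/L.
    """
    rng = np.random.default_rng(seed)
    eta = rng.normal(0.0, [omega_cl, omega_v], size=(n_subjects, 2))
    cl = cl_pop * np.exp(eta[:, 0])
    v = v_pop * np.exp(eta[:, 1])
    t = np.asarray(times, dtype=float)[None, :]
    conc = (dose / v)[:, None] * np.exp(-(cl / v)[:, None] * t)  # structural model
    eps_prop = rng.normal(0.0, sigma_prop, size=conc.shape)
    eps_add = rng.normal(0.0, sigma_add, size=conc.shape)
    obs = conc * (1.0 + eps_prop) + eps_add
    return cl, v, conc, obs

cl, v, conc, obs = simulate_population(2000, [0.5, 1, 2, 4, 8, 12, 24])
print(np.exp(np.mean(np.log(cl))), np.percentile(obs, [5, 50, 95], axis=0).round(3))
```

The 5th/50th/95th percentiles of simulated observations per time point are the basis of a visual predictive check against observed data.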

Table 2: Common Techniques for Multiscale Model Analysis and Simulation

| Technique | Primary Function | Application in Drug Development |
|---|---|---|
| Nonlinear Mixed Effects (NLME) | Quantifies BSV and RUV | Population PK/PD analysis from sparse clinical trial data |
| Markov Chain Monte Carlo (MCMC) | Bayesian parameter estimation & UQ | Inferring posterior parameter distributions from data [23] |
| Flux Balance Analysis (FBA) | Simulates steady-state metabolic fluxes | Predicting drug effects on genome-scale metabolic networks [24] |
| Optimal Experimental Design (OED) | Identifies most informative experiments | Optimizing sampling schedules for efficient parameter estimation [20] |

Workflow Visualization: Multiscale Integration in Drug Development

The following diagram outlines the integration of data and models across biological scales for drug development.

Diagram 2: Data and model integration across scales in drug development.

Application Note: Quantifying and Managing Uncertainty

Protocol for Prediction Uncertainty Analysis Using Bayesian Inference

Objective: To perform a full computational uncertainty analysis for a dynamic model, quantifying how parameter uncertainty propagates to uncertainty in a specific model prediction [23]. Background: Systems biology models are often "sloppy," with many uncertain parameters. However, this does not automatically imply all predictions are uncertain. Uncertainty must be assessed on a per-prediction basis [23].

- Step 1: Define the Posterior Parameter Distribution: Using time-series data $y_d$, define the log-posterior distribution of the parameters $\theta$ as $\log \pi(\theta) = c - \frac{1}{2}\chi^2(\theta) + \log p(\theta)$, where $\chi^2(\theta)$ is the fitting error and $p(\theta)$ is the prior density [23].

- Step 2: Generate a Parameter Sample (Ensemble): Use a Markov Chain Monte Carlo (MCMC) algorithm (e.g., Differential Evolution Markov Chain, DE-MCz) to draw a large sample (e.g., >1000) of parameter vectors from the posterior distribution $\pi(\theta)$. Discard the initial burn-in iterations [23].

- Step 3: Propagate Uncertainty to Predictions: For the prediction of interest (e.g., a future time course or dose-response curve), simulate the model for every parameter vector in the sample [23].

- Step 4: Quantify Prediction Uncertainty: Calculate the uncertainty metric $Q_{0.95}$ for a predicted time course. This metric is defined as the 95th percentile of the dimensionless error relative to the median prediction, integrated over time. A value of $Q_{0.95} < 1$ indicates a tight prediction, while $Q_{0.95} \ge 1$ signifies high uncertainty [23].
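The four steps can be walked through end to end on a toy model. This sketch makes several substitutions:

- A random-walk Metropolis sampler replaces DE-MCz.
- The model is a two-parameter exponential decay fitted to synthetic data.
- $\chi^2$ assumes known Gaussian noise $\sigma$.
- The prior is a hypothetical broad Gaussian on log-parameters.
- $Q_{0.95}$ follows one reading of the definition above: the time-integrated absolute deviation from the median prediction, divided by the time-integrated median, with the 95th percentile taken over the sample.

```python
import numpy as np

def model(theta, t):
    """Toy model y(t) = A * exp(-k t) with theta = (log A, log k)."""
    return np.exp(theta[0]) * np.exp(-np.exp(theta[1]) * t)

def log_posterior(theta, t, y_d, sigma, prior_sd=3.0):
    chi2 = np.sum(((y_d - model(theta, t)) / sigma) ** 2)
    log_prior = -0.5 * np.sum((np.asarray(theta) / prior_sd) ** 2)  # hypothetical N(0, 3^2) prior
    return -0.5 * chi2 + log_prior  # log pi(theta) up to the constant c

def metropolis(logp, theta0, n_steps, step, rng):
    """Random-walk Metropolis sampler (a simpler stand-in for DE-MCz)."""
    theta = np.asarray(theta0, dtype=float)
    lp = logp(theta)
    chain = np.empty((n_steps, theta.size))
    for i in range(n_steps):
        proposal = theta + step * rng.standard_normal(theta.size)
        lp_prop = logp(proposal)
        if np.log(rng.uniform()) < lp_prop - lp:  # accept with prob min(1, ratio)
            theta, lp = proposal, lp_prop
        chain[i] = theta
    return chain

rng = np.random.default_rng(42)
t_obs = np.linspace(0.0, 5.0, 11)
sigma = 0.05
y_d = model(np.log([2.0, 0.7]), t_obs) + sigma * rng.standard_normal(t_obs.size)

# Step 2: sample the posterior, discard burn-in, thin to 1500 parameter vectors.
chain = metropolis(lambda th: log_posterior(th, t_obs, y_d, sigma), [0.0, 0.0], 20000, 0.05, rng)
sample = chain[5000::10]

# Step 3: simulate the prediction (extrapolation to t = 10) for every sampled vector.
t_pred = np.linspace(0.0, 10.0, 101)
preds = np.array([model(th, t_pred) for th in sample])
median = np.median(preds, axis=0)

# Step 4: on a uniform grid, ratios of sums equal ratios of (rectangle-rule) integrals.
rel_err = np.abs(preds - median).sum(axis=1) / np.abs(median).sum()
q95 = np.quantile(rel_err, 0.95)
print(f"k median = {np.exp(np.median(sample[:, 1])):.3f}, Q_0.95 = {q95:.3f}")
```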

Protocol for Node-Level Resilience Uncertainty in Networks

Objective: To quantify the probability of individual nodes in a networked system (e.g., metabolic network) losing resilience, considering parameter uncertainty following arbitrary distributions [25]. Background: Macro-scale network resilience can hide non-resilient behavior at the micro-scale (individual nodes). Uncertainty affects nodes differently based on their local and global network properties [25].

- Step 1: Formulate Node Dynamics: Define the nonlinear dynamic equations for each node, incorporating coupling terms based on the network topology [25].

- Step 2: Characterize Parameter Uncertainty: Define the arbitrary probability distributions for uncertain model parameters [25].

- Step 3: Apply Arbitrary Polynomial Chaos (aPC) Expansion: Use the aPC method to construct an orthogonal polynomial basis that is tailored to the specific arbitrary distributions of the uncertain parameters [25].

- Step 4: Compute Stochastic Node Dynamics: Solve the resulting system of equations to obtain the probability density functions for the states of each node over time [25].

- Step 5: Calculate Resilience Probability: For each node, identify the probability of being in a desirable stable state. Relate this probability to the node's in-degree and the network's average degree [25].
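Step 3 can be sketched for a single scalar uncertain parameter. The construction below is one standard moment-based way to build an aPC basis: orthonormal polynomials are derived directly from sample moments, so no named distribution is required. The bimodal parameter distribution and the sigmoidal node response are hypothetical. The network coupling of Steps 1 and 5 is omitted.

```python
import numpy as np

def apc_basis(samples, degree):
    """Arbitrary polynomial chaos basis from raw moments.

    Builds the Hankel moment matrix M[i, j] = E[xi^(i+j)], factors M = L L^T (Cholesky),
    and returns C = L^-1. Row j of C holds the monomial coefficients (lowest power first)
    of the degree-j polynomial; these are orthonormal under the sample distribution.
    """
    xi = np.asarray(samples, dtype=float)
    moments = np.array([np.mean(xi ** k) for k in range(2 * degree + 1)])
    M = np.array([[moments[i + j] for j in range(degree + 1)] for i in range(degree + 1)])
    return np.linalg.inv(np.linalg.cholesky(M))

def apc_eval(C, xi):
    """Evaluate all basis polynomials at points xi -> shape (len(xi), degree + 1)."""
    V = np.vander(np.asarray(xi, dtype=float), C.shape[0], increasing=True)
    return V @ C.T

rng = np.random.default_rng(3)
xi = np.concatenate([rng.normal(-1.0, 0.3, 3000), rng.normal(1.5, 0.5, 2000)])  # non-Gaussian
C = apc_basis(xi, degree=4)
Psi = apc_eval(C, xi)
G = Psi.T @ Psi / xi.size  # approximately the identity matrix

f = 1.0 / (1.0 + np.exp(-4.0 * xi))  # hypothetical node state as a function of the parameter
coef = Psi.T @ f / xi.size           # spectral projection onto the orthonormal basis
p_surrogate = np.mean(Psi @ coef > 0.5)  # P(node in the "desirable" state), via the expansion
p_direct = np.mean(f > 0.5)
print(p_surrogate, p_direct)
```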

Workflow Visualization: Bayesian Uncertainty Quantification Pipeline

The following diagram illustrates the sequential workflow for assessing prediction uncertainty using Bayesian inference.

Diagram 3: Bayesian prediction uncertainty assessment workflow.

The Scientist's Toolkit: Key Research Reagents & Computational Solutions

Table 3: Essential Reagents and Tools for Dynamic Modeling of Drug Responses

| Category / Item | Function & Application | Specific Examples / Tools |
|---|---|---|
| Preclinical Model Systems | Provide pharmacogenomic data for drug response prediction | Cancer Cell Lines (CCLs), Patient-Derived Xenografts (PDX) [26] |
| Multi-Omic Data Platforms | Generate input features for predictive models (mRNA expression, mutations, proteomics) | RNA-Seq, Whole Exome Sequencing, Mass Spectrometry Proteomics [26] |
| Parameter Estimation Software | Solve the inverse problem of fitting model parameters to data | pyPESTO (Python) [20], Monolix (NLME), MATLAB Optimization Toolbox [24] |
| Uncertainty Quantification Tools | Characterize parameter & prediction uncertainty | MCMC Samplers (DE-MCz) [23], Arbitrary Polynomial Chaos (aPC) [25] |
| Hybrid Modeling Frameworks | Combine mechanistic ODE models with machine learning for improved interpretability & performance | Universal Differential Equations [20] |

Methodologies and Real-World Applications in Drug Development

Model-Informed Drug Development (MIDD) employs quantitative frameworks to integrate diverse data sources, enhancing the efficiency and effectiveness of drug discovery and development [27]. Within the broader thesis on the dynamic modeling of drug responses in systems biology research, this article details the application notes and protocols for three pivotal MIDD methodologies: Physiologically-Based Pharmacokinetic (PBPK) modeling, Quantitative Systems Pharmacology (QSP), and Machine Learning (ML). These tools form an integrated toolkit for predicting the complex interplay between drugs and biological systems, from systemic exposure to cellular-level pharmacological effects.

Application Note 1: Physiologically-Based Pharmacokinetic (PBPK) Modeling

Core Principles and Applications

PBPK modeling is a mechanistic framework that describes the absorption, distribution, metabolism, and excretion (ADME) of a drug by constructing a multi-compartment model representing key organs or tissues [28]. Its strength lies in the ability to incorporate system-specific physiological parameters (e.g., organ volumes, blood flow rates) and drug-specific physicochemical properties, enabling the prediction of drug concentration-time profiles in various tissues [28] [27]. A primary application is the extrapolation of PK across populations, such as from adults to pediatrics or from healthy volunteers to patients with organ impairment, in situations where clinical data are limited or ethically difficult to obtain [28] [27]. Furthermore, PBPK models are increasingly used to assess drug-drug interactions (DDIs) and support the development of complex biological products, such as therapeutic proteins and gene therapies [28] [27].

Quantitative Data from Regulatory Submissions

The utility of PBPK modeling is demonstrated by its growing role in regulatory submissions. A landscape analysis of the U.S. FDA's Center for Biologics Evaluation and Research (CBER) from 2018 to 2024 shows its increasing adoption.

Table 1: PBPK in CBER Regulatory Submissions (2018-2024)

| Category | Number/Type | Specific Details |
|---|---|---|
| Total Submissions/Interactions | 26 | From 17 sponsors for 18 products [27] |
| Product Types | Gene therapies (8), Plasma-derived products (3), Vaccines (1), Cell therapy (1), Others (5) [27] | 11 of 18 products were for rare diseases [27] |
| Application Types | IND (10), pre-IND (8), BLA (1), INTERACT/MIDD/DMF (7) [27] | Used for dose justification, DDI prediction, and mechanistic understanding [27] |

A specific case study involved the use of a minimal PBPK model to support the pediatric dose selection for ALTUVIIIO, a recombinant Factor VIII therapy. The model, qualified against data from a similar product (ELOCTATE), demonstrated predictive accuracy within ±25% for key exposure metrics [27].

Table 2: PBPK Model Performance for FVIII Therapies

| Population | Drug | Dose (IU/kg) | Cmax Prediction Error (%) | AUC Prediction Error (%) |
|---|---|---|---|---|
| Adult | ELOCTATE | 25 | -25 | -11 |
| Adult | ELOCTATE | 65 | -21 | -11 |
| Adult | ALTUVIIIO | 25 | +2 | -8 |
| Adult | ALTUVIIIO | 65 | +2 | -18 |
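The ±25% qualification criterion is simple arithmetic on predicted and observed exposure metrics. The sketch assumes the common convention (predicted - observed) / observed × 100 and treats the bound as inclusive. The 80 vs 100 pair is hypothetical; the list of errors is taken from Table 2.

```python
def prediction_error_pct(predicted, observed):
    """Percent prediction error: (predicted - observed) / observed * 100."""
    return (predicted - observed) / observed * 100.0

def within_bounds(errors_pct, limit=25.0):
    """True if every error lies within +/- limit percent (inclusive)."""
    return all(abs(e) <= limit for e in errors_pct)

# Cmax and AUC prediction errors (%) from Table 2, row by row.
table_errors = [-25, -11, -21, -11, 2, -8, 2, -18]
print(within_bounds(table_errors))  # True: -25% sits exactly on the boundary

example = prediction_error_pct(80.0, 100.0)  # hypothetical pair, about -20%
print(example, within_bounds([example]))
```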

Protocol: Developing a Minimal PBPK Model for Therapeutic Proteins

Objective: To develop and qualify a minimal PBPK model for a therapeutic protein (e.g., an Fc-fusion protein) to support pediatric dose selection.

Workflow Overview:

Materials and Reagents:

- In vitro data on target binding affinity and FcRn interaction

- Preclinical PK data from animal models

- Clinical PK data from a reference compound (e.g., ELOCTATE for FVIII)

- Population-specific physiological data (e.g., pediatric organ weights, blood flows, FcRn abundance)

Procedure:

- Define Model Structure: Implement a minimal PBPK model with compartments for plasma and peripheral tissues. Incorporate key clearance pathways, including FcRn-mediated recycling and target-mediated drug disposition (TMDD) if applicable [27].

- System-Specific Parameterization: Populate the model with physiological parameters for the target population (e.g., adults). Sources include published literature and specialized software databases [28].

- Drug-Specific Parameterization: Input drug-specific parameters, such as binding constants for FcRn and the target, and non-specific clearance rates, often derived from in vitro assays [28].

- Parameter Estimation and Model Qualification: Calibrate the model using clinical PK data from a reference drug with a similar mechanism. Optimize sensitive parameters (e.g., FcRn abundance, vascular reflection coefficient in pediatrics) to achieve a prediction error for AUC and Cmax within ±25% [27].

- Simulation and Prediction: Execute the qualified model to simulate PK profiles in the target special population (e.g., pediatrics) and recommend dosing regimens that maintain target drug exposure.
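The model-structure step can be sketched as a single-tissue minimal PBPK model. Plasma exchanges with an interstitial compartment by convection, governed by lymph flow and a vascular reflection coefficient, and drug returns to plasma through the lymph. Several simplifying assumptions apply:

- FcRn-mediated recycling and any target-mediated disposition are lumped into one linear clearance.
- A fixed-step RK4 integrator is used.
- Every parameter value is hypothetical rather than a product-specific estimate.

Calibration and qualification (the preceding steps) are not shown.

```python
import numpy as np

# Hypothetical parameters per kg body weight; NOT values for any approved product.
PARAMS = {
    "V_plasma": 40.0,  # mL/kg plasma volume
    "V_isf": 100.0,    # mL/kg interstitial fluid volume
    "L": 2.0,          # mL/h/kg lymph flow
    "sigma": 0.8,      # vascular reflection coefficient (0 = free passage, 1 = none)
    "CL": 2.5,         # mL/h/kg lumped plasma clearance (net of FcRn recycling, assumed linear)
}

def rhs(y, p):
    """Derivatives of (plasma conc, interstitial conc, plasma AUC)."""
    cp, cisf, _auc = y
    uptake = p["L"] * (1.0 - p["sigma"]) * cp  # convective transport into tissue
    lymph = p["L"] * cisf                      # return to plasma via lymph
    dcp = (-uptake + lymph - p["CL"] * cp) / p["V_plasma"]
    dcisf = (uptake - lymph) / p["V_isf"]
    return np.array([dcp, dcisf, cp])          # third state accumulates plasma AUC

def simulate(dose_per_kg, p=PARAMS, t_end=1000.0, dt=0.25):
    """IV bolus into plasma, integrated with fixed-step RK4."""
    n = int(round(t_end / dt))
    y = np.array([dose_per_kg / p["V_plasma"], 0.0, 0.0])
    out = np.empty((n + 1, 3))
    out[0] = y
    for i in range(n):
        k1 = rhs(y, p)
        k2 = rhs(y + 0.5 * dt * k1, p)
        k3 = rhs(y + 0.5 * dt * k2, p)
        k4 = rhs(y + dt * k3, p)
        y = y + dt / 6.0 * (k1 + 2 * k2 + 2 * k3 + k4)
        out[i + 1] = y
    return np.linspace(0.0, n * dt, n + 1), out

for dose in (25.0, 65.0):
    t, states = simulate(dose)
    print(f"{dose:.0f} IU/kg: Cmax = {states[:, 0].max():.3f} IU/mL, "
          f"AUC(0-{t[-1]:.0f} h) = {states[-1, 2]:.2f} IU*h/mL")
```

Because elimination occurs only from plasma, $V_p C_p + V_{isf} C_{isf} + CL \cdot AUC$ stays equal to the dose. This is a useful sanity check when the structure is extended with FcRn or TMDD terms. For a pediatric simulation, the same code would be rerun with a population-specific parameter set.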

Application Note 2: Quantitative Systems Pharmacology (QSP)

Core Principles and Applications

QSP is a computational approach that builds mechanistic, mathematical models to understand the interactions between a drug and the biological system, with a primary focus on pharmacodynamics (PD) and clinical efficacy outcomes [29]. It integrates knowledge of biological pathways, disease processes, and drug mechanisms to simulate patient responses [30]. QSP is particularly valuable for hypothesis generation, simulating clinical trial scenarios that are impractical to test experimentally, and for de-risking drug development by identifying efficacy and safety concerns early on [12] [29]. Its applications span from exploring combination therapies in oncology to predicting cardiovascular effects and drug-induced liver injury [29].

Key Differentiators: PBPK vs. QSP

While both are "bottom-up" mechanistic approaches, PBPK and QSP have distinct focuses, as summarized below.

Table 3: Comparison of PBPK and QSP Modeling Approaches

| Feature | PBPK Modeling | QSP Modeling |
|---|---|---|
| Primary Focus | Pharmacokinetics (PK) / "What the body does to the drug" [29] | Pharmacodynamics (PD) / "What the drug does to the body" [29] |
| Core Prediction | Drug concentrations in plasma and tissues (Exposure) [29] | Drug effects on biological pathways and clinical efficacy (Response) [29] |
| System Components | Physiological organs, blood flows, tissue partition coefficients [28] | Biological networks, signaling pathways, disease mechanisms, omics data [10] [29] |
| Typical Application | Dose selection in special populations, DDI prediction [28] [27] | Target validation, combination therapy design, biomarker identification [12] [29] |

Protocol: Building a QSP Model for a Novel Oncology Target

Objective: To develop a QSP model for a novel oncology drug candidate to simulate its effect on a key signaling pathway (e.g., MAPK) and predict optimal combination regimens.

Materials and Reagents:

- Omics data (genomics, proteomics) characterizing the target pathway

- In vitro data on drug-target binding and inhibition constants (Ki, IC50)

- Preclinical data on pathway modulation and tumor growth inhibition in animal models

- Clinical biomarker data from early-phase trials (if available)

Procedure:

- Network Reconstruction: Define the core biological network, including key signaling nodes (e.g., Receptor, RAS, RAF, MEK, ERK) and their interactions, based on literature and pathway databases [29].

- Mathematical Representation: Formulate a system of ordinary differential equations (ODEs) to describe the dynamics of the network. Incorporate the drug's mechanism of action (e.g., competitive inhibition of MEK) [30].

- Parameterization: Populate the model with kinetic parameters (e.g., synthesis/degradation rates, activation constants) from literature and in vitro assays. Drug-specific parameters (e.g., Ki) are derived from experimental data [30].

- Model Calibration and Validation: Calibrate the model using preclinical time-course data on pathway phosphorylation and tumor volume. Validate the model by assessing its ability to predict data not used in calibration [12].

- Virtual Patient Population and Simulation: Generate a population of virtual patients by varying key system parameters (e.g., protein expression levels) to reflect inter-individual variability. Simulate different dosing regimens and combination therapies to identify optimal strategies for Phase 2 [29].
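The procedure above can be sketched with a three-tier RAF → MEK → ERK cascade. Everything here is an assumption for illustration: the rate laws, the dimensionless parameter values, and the reading of "competitive inhibition of MEK" as the drug competing with ERK for MEK, which raises MEK's apparent Km by the factor (1 + [I]/Ki). Virtual patients are generated by log-normally varying total MEK and ERK abundance, mirroring the protein-expression variability named in the last step.

```python
import random
import statistics

# Hypothetical, dimensionless parameters for a RAF -> MEK -> ERK cascade.
BASE_PARAMS = dict(signal=1.0, k_raf=1.0, d_raf=1.0, raf_tot=1.0,
                   k_mek=2.0, d_mek=1.0, mek_tot=1.0,
                   kcat=2.0, km=0.5, d_erk=1.0, erk_tot=1.0, ki=0.1)


def cascade_rhs(state, p, drug):
    """Mass-action activation of RAF and MEK; Michaelis-Menten ERK
    phosphorylation by active MEK, with the drug competing against ERK."""
    raf, mek, erk = state
    erk_free = p["erk_tot"] - erk
    km_app = p["km"] * (1.0 + drug / p["ki"])  # assumed competitive inhibition
    return [
        p["k_raf"] * p["signal"] * (p["raf_tot"] - raf) - p["d_raf"] * raf,
        p["k_mek"] * raf * (p["mek_tot"] - mek) - p["d_mek"] * mek,
        p["kcat"] * mek * erk_free / (km_app + erk_free) - p["d_erk"] * erk,
    ]


def simulate_perk(p, drug, t_end=30.0, dt=0.02):
    """Integrate to (near) steady state with RK4; return active (p)ERK."""
    y = [0.0, 0.0, 0.0]
    for _ in range(int(round(t_end / dt))):
        r1 = cascade_rhs(y, p, drug)
        r2 = cascade_rhs([a + 0.5 * dt * b for a, b in zip(y, r1)], p, drug)
        r3 = cascade_rhs([a + 0.5 * dt * b for a, b in zip(y, r2)], p, drug)
        r4 = cascade_rhs([a + dt * b for a, b in zip(y, r3)], p, drug)
        y = [a + dt / 6.0 * (b + 2 * c + 2 * d + e)
             for a, b, c, d, e in zip(y, r1, r2, r3, r4)]
    return y[2]


def virtual_population(n, sd_log=0.3, seed=0):
    """Hypothetical virtual patients: log-normal MEK and ERK expression."""
    rng = random.Random(seed)
    patients = []
    for _ in range(n):
        p = dict(BASE_PARAMS)
        p["mek_tot"] *= rng.lognormvariate(0.0, sd_log)
        p["erk_tot"] *= rng.lognormvariate(0.0, sd_log)
        patients.append(p)
    return patients


def percent_inhibition(p, drug):
    """Steady-state pERK suppression relative to the same patient untreated."""
    return 100.0 * (1.0 - simulate_perk(p, drug) / simulate_perk(p, 0.0))


if __name__ == "__main__":
    cohort = virtual_population(20)
    for conc in (0.1, 1.0):
        inh = [percent_inhibition(p, conc) for p in cohort]
        print(f"drug={conc}: median pERK inhibition {statistics.median(inh):.1f}%")
```

With these base values the untreated steady state has half of each kinase active, and a drug level equal to Ki doubles the apparent Km. Comparing the distribution of inhibition across the virtual cohort, rather than a single typical patient, is what lets such a model rank dosing regimens.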

Application Note 3: Machine Learning Integration

Core Principles and Applications

Machine Learning (ML) introduces powerful data-driven capabilities to complement mechanistic PBPK and QSP models. ML techniques can address several limitations of traditional MIDD, including high-dimensional parameter estimation, covariate selection, and the analysis of complex, multimodal datasets (e.g., incorporating real-world data and novel biomarkers) [31] [32]. A key application is the development of hybrid Pharmacometric-ML (hPMxML) models, which integrate the interpretability of mechanistic models with the predictive power of ML for tasks such as precision dosing and clinical outcome prediction [32]. Furthermore, ML can enhance PBPK modeling by informing parameter estimation and reducing model uncertainty [28].

Protocol: Developing a Hybrid PMx-ML Model for Precision Dosing in Oncology

Objective: To build a hybrid model that combines a population PK (PopPK) model with an ML classifier to personalize dosing for an oncology drug and minimize the risk of severe neutropenia.

Materials and Reagents:

- Rich, individual-level PK data from clinical trials

- Patient covariate data (e.g., demographics, genetics, laboratory values)

- Clinical outcome data (e.g., neutrophil counts over time, adverse event records)

- Software for pharmacometric analysis (e.g., NONMEM, Monolix) and ML (e.g., Python/R)

Procedure:

- Estimand Definition and Data Curation: Pre-define the clinical question and ensure rigorous data cleaning and curation. Split data into training, testing, and (if possible) external validation sets [32].

- Base PopPK Model Development: Develop a traditional PopPK model to describe the drug's exposure. From this model, extract individual empirical Bayes estimates (EBEs) of PK parameters (e.g., clearance, volume of distribution) [32].

- Feature Engineering and ML Model Training: Create a feature set that includes the individual PK parameters (EBEs) from the base PopPK model and relevant patient covariates. Train an ML model (e.g., XGBoost, Random Forest) to classify patients at high risk of grade 3/4 neutropenia [32].

- Model Explainability and Diagnostics: Perform feature importance analysis to interpret the ML model's predictions and ensure biological plausibility. Conduct extensive diagnostic checks on both the PopPK and ML components [32].

- Uncertainty Quantification and Validation: Apply techniques like bootstrapping or conformal prediction to quantify the uncertainty in the ML model's outputs. Critically assess model performance on the held-out test set and external validation set [32].
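The workflow can be sketched end to end on synthetic data. The cohort generator is hypothetical: it fabricates clearance EBEs, baseline neutrophil counts, and outcomes from an assumed exposure-risk relationship, and exists only so the code runs. The sketch also swaps in plain logistic regression, trained by gradient descent in NumPy, for the XGBoost or Random Forest models named in the procedure, and uses a percentile bootstrap rather than conformal prediction for uncertainty. External validation is not shown.

```python
import numpy as np


def make_synthetic_cohort(n=2000, seed=0):
    """Hypothetical stand-in for PopPK EBEs, covariates, and outcomes."""
    rng = np.random.default_rng(seed)
    cl_ebe = rng.lognormal(np.log(10.0), 0.3, n)   # individual clearance EBE, L/h
    anc0 = rng.lognormal(np.log(4.0), 0.25, n)     # baseline ANC, 10^9/L
    auc = 500.0 / cl_ebe                           # exposure for a 500 mg dose
    # Assumed truth: risk rises with exposure, falls with baseline ANC.
    logit = -1.0 + 3.0 * np.log(auc / 50.0) - 1.5 * np.log(anc0 / 4.0)
    y = (rng.random(n) < 1.0 / (1.0 + np.exp(-logit))).astype(float)
    X = np.column_stack([np.log(auc), np.log(anc0)])  # engineered features
    return X, y


def _design(X, mu, sd):
    """Intercept column plus standardized features."""
    return np.column_stack([np.ones(len(X)), (X - mu) / sd])


def fit_logistic(X, y, lr=0.5, n_iter=2000):
    """Logistic regression by batch gradient descent (stand-in for XGBoost/RF)."""
    mu, sd = X.mean(axis=0), X.std(axis=0)
    Z = _design(X, mu, sd)
    w = np.zeros(Z.shape[1])
    for _ in range(n_iter):
        p = 1.0 / (1.0 + np.exp(-Z @ w))
        w -= lr * Z.T @ (p - y) / len(y)
    return w, mu, sd


def predict_proba(model, X):
    w, mu, sd = model
    return 1.0 / (1.0 + np.exp(-_design(X, mu, sd) @ w))


def bootstrap_accuracy_ci(y_true, y_pred, n_boot=500, seed=1):
    """Percentile bootstrap 95% interval for held-out accuracy."""
    rng = np.random.default_rng(seed)
    correct = (y_true == y_pred).astype(float)
    stats = [correct[rng.integers(0, len(correct), len(correct))].mean()
             for _ in range(n_boot)]
    return np.percentile(stats, [2.5, 97.5])


if __name__ == "__main__":
    X, y = make_synthetic_cohort()
    idx = np.random.default_rng(2).permutation(len(y))
    train, test = idx[:1500], idx[1500:]
    model = fit_logistic(X[train], y[train])
    y_hat = (predict_proba(model, X[test]) >= 0.5).astype(float)
    acc = (y_hat == y[test]).mean()
    lo, hi = bootstrap_accuracy_ci(y[test], y_hat)
    # Standardized coefficients serve as a simple feature-importance readout.
    print("coefficients [intercept, log AUC, log ANC0]:", np.round(model[0], 2))
    print(f"test accuracy {acc:.3f} (95% bootstrap CI {lo:.3f}-{hi:.3f})")
```

A plausibility check in the spirit of the explainability step is that the exposure coefficient comes out positive and the baseline-ANC coefficient negative. For tree ensembles, permutation importance or SHAP values are the more typical readout.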

The Scientist's Toolkit: Essential Research Reagents and Solutions

The following table lists key resources for implementing the described MIDD methodologies.

Table 4: Key Research Reagent Solutions for MIDD

| Item Name | Function/Application | Specific Examples/Notes |
|---|---|---|
| IVIVE-PBPK Platforms | Software for bottom-up PBPK model building and simulation, incorporating in vitro-in vivo extrapolation. | Simcyp Simulator (Certara); Used for predicting interspecies and inter-population PK [33]. |
| QSP Model Repositories | Curated, peer-reviewed QSP models that serve as starting points for new drug development projects. | Models from publications on immuno-oncology, metabolic diseases; Can be adapted and modified for specific candidates [12]. |
| ML Libraries for hPMxML | Software libraries providing algorithms for building hybrid models, feature selection, and validation. | Python's Scikit-learn, XGBoost; R's Tidymodels; Used for covariate selection and clinical outcome prediction [32]. |
| Virtual Patient Generators | Tools integrated within QSP/PBPK platforms to simulate clinically and biologically plausible virtual populations. | Used to explore inter-individual variability and design clinical trials, especially for rare diseases [12]. |
| Domain Expertise | Critical, non-computational knowledge required to guide model development and interpret results. | Collaboration between modelers, clinical pharmacologists, and biologists is essential for model credibility [10]. |

The dynamic modeling of drug responses represents a critical frontier in systems biology, aiming to bridge the gap between complex molecular profiles and clinical therapeutic outcomes. In precision oncology, the profound heterogeneity of cancer genomes means that non-targeted therapies often fail to address specific genetic events, limiting their effectiveness [34]. Deep learning architectures have emerged as powerful tools for predicting drug response by capturing the intricate, non-linear relationships between diverse molecular inputs and phenotypic outputs. These models leverage large-scale pharmacogenomic datasets from preclinical models, including cancer cell lines and patient-derived xenografts (PDXs), to forecast individual patient responses to anticancer compounds [34] [35]. This application note details two prominent architectural paradigms in this domain: DrugCell, a knowledge-guided interpretable system, and DrugS, a data-driven predictive model, providing comprehensive protocols for their implementation and evaluation within a systems biology framework.

Architectures and Mechanisms

DrugCell: A Pathway-Guided Interpretable Architecture

The DrugCell architecture exemplifies Pathway-Guided Interpretable Deep Learning Architectures (PGI-DLA), which integrate prior biological knowledge directly into the model structure to enhance interpretability and biological plausibility [36].

- Core Principle: DrugCell is a Visible Neural Network (VNN) that uses the hierarchical structure of biological systems, specifically Gene Ontology (GO) processes, as its blueprint. Unlike black-box models, its network layers and connections mirror known functional biological relationships [36].

- Architecture Design: The system processes two primary inputs:

  - Somatic Mutations: A binary vector representing the presence or absence of mutations in a set of genes.

  - Drug Features: A molecular fingerprint of the compound (e.g., derived from its Simplified Molecular-Input Line-Entry System, or SMILES, string).

- Mechanism: The genetic features are processed through a hierarchy of "gene ontology terms" (subsystems), where each subsystem's activity is computed from the activities of its constituent parts (e.g., genes or smaller subsystems). This bottom-up information flow culminates in a final output that predicts the drug response, ensuring that the model's decision-making logic is intrinsically consistent with established biological mechanisms [36].
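A toy forward pass makes the bottom-up flow concrete. The three-term ontology, the gene list, the layer sizes, the tanh activations, the random untrained weights, and the way the drug fingerprint is joined to the root term's output are all assumptions for illustration, not DrugCell's published configuration. Each term's state is computed only from its own genes and its child terms, so every intermediate activity can be inspected by subsystem.

```python
import numpy as np

# Hypothetical toy ontology: term -> (genes annotated directly, child terms).
ONTOLOGY = {
    "DNA_repair": (["BRCA1", "BRCA2"], []),
    "cell_cycle": (["CDKN2A", "RB1"], []),
    "root": (["TP53"], ["DNA_repair", "cell_cycle"]),
}
GENES = ["BRCA1", "BRCA2", "CDKN2A", "RB1", "TP53"]


class ToyVNN:
    """Visible-neural-network sketch: one small hidden layer per ontology term."""

    def __init__(self, ontology, genes, n_hidden=4, fp_bits=16, seed=0):
        rng = np.random.default_rng(seed)
        self.ontology = ontology
        self.gene_index = {g: i for i, g in enumerate(genes)}
        self.weights = {}
        for term, (term_genes, children) in ontology.items():
            # Inputs: this term's own mutation bits plus each child term's state.
            n_in = len(term_genes) + n_hidden * len(children)
            self.weights[term] = (rng.normal(0.0, 0.5, (n_hidden, n_in)),
                                  np.zeros(n_hidden))
        # Assumed drug branch and output layer (untrained, random weights).
        self.w_drug = rng.normal(0.0, 0.5, (n_hidden, fp_bits))
        self.w_out = rng.normal(0.0, 0.5, 2 * n_hidden)

    def term_state(self, term, mutations, states):
        """Compute a term's activity from its genes and children (memoized)."""
        if term not in states:
            term_genes, children = self.ontology[term]
            parts = [mutations[[self.gene_index[g] for g in term_genes]]]
            parts += [self.term_state(c, mutations, states) for c in children]
            w, b = self.weights[term]
            states[term] = np.tanh(w @ np.concatenate(parts) + b)
        return states[term]

    def predict(self, mutations, fingerprint, root="root"):
        """Return (response score, dict of per-term activities)."""
        states = {}
        root_state = self.term_state(root, mutations, states)
        drug_state = np.tanh(self.w_drug @ fingerprint)
        score = float(self.w_out @ np.concatenate([root_state, drug_state]))
        return score, states


if __name__ == "__main__":
    model = ToyVNN(ONTOLOGY, GENES)
    mutations = np.array([1, 0, 0, 1, 0], dtype=float)  # BRCA1 and RB1 mutated
    fingerprint = np.zeros(16)
    fingerprint[[1, 5, 9]] = 1.0  # hypothetical fingerprint bits
    score, states = model.predict(mutations, fingerprint)
    for term, activity in states.items():
        print(term, np.round(activity, 3))
    print("response score:", round(score, 3))
```

Because connections follow the hierarchy, removing the BRCA1 mutation changes the DNA_repair state but leaves the cell_cycle state untouched, which is the structural property that makes such a network readable by subsystem.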

DrugS: A Data-Driven Predictive Model

In contrast, the DrugS model employs a robust, data-driven deep learning approach to predict drug responses based primarily on genomic features [34].

- Core Principle: DrugS uses a Deep Neural Network (DNN) that integrates high-dimensional gene expression data and drug structural information to predict the natural logarithm of the half-maximal inhibitory concentration (LN IC50) as a measure of drug sensitivity [34].

- Architecture Design:

  - Input Processing: The model takes as input a vector of expression values for 20,000 protein-coding genes. To handle this high dimensionality and ensure cross-dataset compatibility, gene expression values are log-transformed and scaled.

  - Dimensionality Reduction: An autoencoder compresses the 20,000 gene features into a concise set of 30 latent features, capturing the intrinsic structure of the data.

  - Drug Representation: 2,048 features are extracted from the drug's SMILES string.

  - Prediction Network: The combined 2,078 features (30 genomic + 2,048 drug) serve as input to a main DNN, which incorporates dropout layers to prevent overfitting and enhance generalizability across diverse data sources [34].
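The dimensions described above can be checked with an untrained NumPy forward pass. Only the 20,000 → 30 compression, the 2,048 drug features, the 2,078-wide concatenation, the use of dropout, and the LN IC50 output come from the text. The log2(x + 1) transform, per-sample z-scoring, a single-layer tanh encoder (decoder and autoencoder pretraining omitted), the hidden sizes, the ReLU activations, and the dropout rate are assumptions.

```python
import numpy as np

N_GENES, N_LATENT, N_FP = 20_000, 30, 2_048


def preprocess_expression(expr):
    """log2(x + 1) then per-sample z-scoring (both choices are assumptions)."""
    logged = np.log2(expr + 1.0)
    mean = logged.mean(axis=1, keepdims=True)
    std = logged.std(axis=1, keepdims=True)
    return (logged - mean) / (std + 1e-8)


class DrugSSketch:
    """Untrained forward pass: encoder -> concat with fingerprint -> DNN."""

    def __init__(self, hidden=(256, 64), dropout=0.3, seed=0):
        self.rng = np.random.default_rng(seed)
        # Encoder half of an autoencoder (decoder and pretraining omitted).
        self.w_enc = self.rng.normal(0.0, 1.0 / np.sqrt(N_GENES), (N_GENES, N_LATENT))
        sizes = [N_LATENT + N_FP, *hidden, 1]  # 30 + 2,048 = 2,078 inputs
        self.layers = [(self.rng.normal(0.0, np.sqrt(2.0 / m), (m, n)), np.zeros(n))
                       for m, n in zip(sizes[:-1], sizes[1:])]
        self.dropout = dropout

    def encode(self, expr):
        """Compress 20,000 preprocessed genes to 30 latent features."""
        return np.tanh(preprocess_expression(expr) @ self.w_enc)

    def forward(self, expr, fingerprints, training=False):
        """Return predicted LN IC50, one value per (cell line, drug) pair."""
        h = np.concatenate([self.encode(expr), fingerprints], axis=1)
        for i, (w, b) in enumerate(self.layers):
            h = h @ w + b
            if i < len(self.layers) - 1:  # hidden layers only
                h = np.maximum(h, 0.0)    # ReLU
                if training and self.dropout > 0:
                    keep = self.rng.random(h.shape) >= self.dropout
                    h = h * keep / (1.0 - self.dropout)  # inverted dropout
        return h[:, 0]


if __name__ == "__main__":
    rng = np.random.default_rng(3)
    expr = rng.lognormal(1.0, 1.0, (4, N_GENES))   # 4 hypothetical samples
    fps = rng.integers(0, 2, (4, N_FP)).astype(float)
    print(DrugSSketch().forward(expr, fps))
```

Dropout is applied only when `training=True`, so inference is deterministic; in a real model the weights would be fitted to measured LN IC50 values rather than drawn at random.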

Table 1: Comparative Overview of DrugCell and DrugS Architectures

| Feature | DrugCell | DrugS |
|---|---|---|
| Core Paradigm | Knowledge-guided (PGI-DLA) | Data-driven DNN |
| Primary Inputs | Somatic mutations, drug fingerprints | Gene expression, drug SMILES strings |
| Basis | Gene Ontology (GO) hierarchy | Automated feature extraction |
| Interpretability | High (intrinsically interpretable structure) | Medium (relies on post-hoc analysis) |
| Key Innovation | Network structure mirrors biological subsystems | Autoencoder for robust feature compression and integration |
| Output | Drug response classification/score | Continuous LN IC50 value |

Quantitative Performance and Evaluation

Rigorous benchmarking against established datasets and baselines is crucial for evaluating model performance.

DrugS Performance Metrics

The DrugS model was validated on several large-scale pharmacogenomic databases, demonstrating superior predictive performance [34].

- Benchmarking Datasets: Evaluations were conducted using the Cancer Cell Line Encyclopedia (CCLE), Genomics of Drug Sensitivity in Cancer (GDSC), and NCI-60 datasets.

- Robustness Measures: The model's design, particularly the use of log-transformation, scaling, and dropout layers, ensured robust performance across these different datasets and normalization methods [34].

Table 2: Performance Evaluation of the DrugS Model

| Evaluation Metric | Dataset/Context | Performance Outcome |
|---|---|---|
| Predictive Accuracy | CTRPv2, NCI-60 datasets | Consistently outperformed baseline models and demonstrated robust performance across different normalization methods [34]. |
| Clinical Relevance | The Cancer Genome Atlas (TCGA) | Predictions correlated with patient prognosis when combined with clinical drug administration data [34]. |
| Translational Utility | Patient-Derived Xenograft (PDX) Models | Model predictions showed correlation with drug response data and viability scores from PDX models [34]. |
| Resistance Modeling | Ibrutinib-resistant cell lines | Identified CDK inhibitors, mTOR inhibitors, and apoptosis inhibitors as potential agents to reverse resistance [34]. |

Advancing Translation with TRANSPIRE-DRP

The TRANSPIRE-DRP framework addresses a key limitation of many models trained on cell lines: the translational gap to clinical patients. It uses Patient-Derived Xenograft (PDX) models, which offer greater biological fidelity than cell lines, as its source domain [35].